Wearable Sensors vs. Drone-Based Monitoring: A 2025 Comparative Analysis for Precision Agriculture

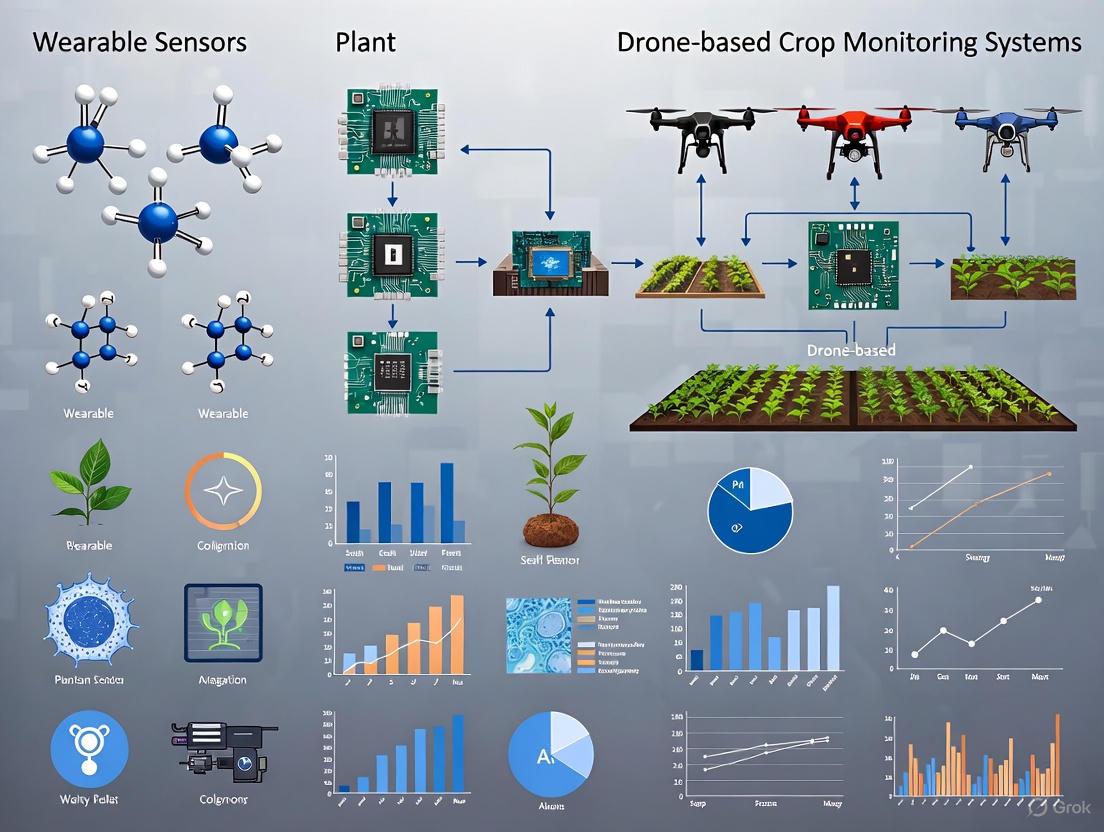

Abstract

This article provides a comprehensive comparative analysis for researchers and agricultural scientists on two transformative crop monitoring technologies: wearable sensors and drone-based systems. It explores the foundational principles of both approaches, detailing how flexible, biocompatible wearable devices enable direct, continuous measurement of plant physiology and chemistry, while aerial drones equipped with multispectral and AI-powered analytics facilitate large-scale field assessment. The analysis delves into specific methodological applications, from monitoring plant volatiles and stem diameter to generating NDVI maps and targeted spraying. It further addresses critical troubleshooting aspects, including sensor durability, data integration, and regulatory hurdles. A direct validation and comparison of spatial resolution, data types, cost-effectiveness, and suitability for different research and farming scales is presented, concluding with a synthesis of their complementary roles and future trajectories in smart, sustainable agriculture.

Understanding the Core Technologies: From Plant-Level Wearables to Field-Scale Drones

Wearable plant sensors represent a groundbreaking frontier in precision agriculture, enabling real-time, non-invasive monitoring of plant physiological status. Defined as flexible electronic devices that conform intimately to plant surfaces, these sensors leverage advanced materials and sensing mechanisms to continuously track vital signs, from water relations and growth to chemical biomarkers [1] [2]. This capability marks a paradigm shift from reactive to proactive crop management, allowing researchers and farmers to optimize plant health with unprecedented precision. The World Economic Forum has recognized this transformative potential, selecting wearable plant sensors as one of the Top 10 Emerging Technologies in 2023 [3].

This review provides a comparative analysis between wearable plant sensors and established drone-based monitoring systems, focusing on their underlying principles, operational capabilities, and experimental applications. While drone technology offers macro-scale field assessment through aerial imaging, wearable sensors provide direct, continuous physiological monitoring at the micro-scale [4] [5]. This distinction is fundamental to understanding their complementary roles in modern agricultural research and practice, particularly as global agricultural systems face increasing pressure from population growth and climate change [6] [2].

Fundamental Principles and Material Foundations

Core Operating Principles of Flexible Electronics

Wearable plant sensors operate on transduction principles that convert physiological parameters into quantifiable electrical signals. The fundamental mechanisms include:

- Piezoresistive Effect: Materials change electrical resistance in response to mechanical strain, enabling monitoring of plant growth and movement [5] [7]. This principle is particularly valuable for tracking stem diameter variations that indicate water status.

- Electrochemical Sensing: Selective detection of ions, nutrients, and biomarkers through redox reactions at electrode interfaces [3]. This enables real-time monitoring of soil nutrient levels, pesticide residues, and stress biomarkers.

- Capacitive Sensing: Measurement of dielectric property changes in response to humidity, vapor, or proximity [8]. This mechanism is widely employed in flexible humidity sensors for monitoring leaf surface microclimates.

- Potentiometric Sensing: Measurement of potential differences at electrode surfaces in response to specific ions or chemical species [9]. Using arrays of ion-selective electrodes, this allows simultaneous measurement of multiple ions in nutrient solutions.
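The piezoresistive and potentiometric principles above reduce to simple relations. As a minimal sketch, assuming a strain sensor with a constant gauge factor (ΔR/R₀ = GF·ε) wrapped around the full stem circumference, and an ideal ion-selective electrode obeying the Nernst equation E = E₀ + (2.303RT/zF)·log₁₀(a), the conversions could look like the following. The function names are hypothetical, and real devices are calibrated empirically rather than relying on ideal slopes.

```python
import math

# Physical constants (SI units)
R_GAS = 8.314462618     # molar gas constant, J/(mol*K)
FARADAY = 96485.33212   # Faraday constant, C/mol


def strain_from_resistance(r_now: float, r_baseline: float, gauge_factor: float) -> float:
    """Solve the piezoresistive relation dR/R0 = GF * strain for strain."""
    return (r_now - r_baseline) / (r_baseline * gauge_factor)


def stem_diameter(d_baseline_mm: float, strain: float) -> float:
    """Assumption: the sensor wraps the full stem circumference, so
    circumferential strain equals the relative change in diameter."""
    return d_baseline_mm * (1.0 + strain)


def nernst_slope_mV(z: int, temp_c: float) -> float:
    """Ideal Nernstian slope in mV per decade of ion activity.
    z is the signed ion charge, so the slope is negative for anions."""
    t_kelvin = temp_c + 273.15
    return 1000.0 * math.log(10) * R_GAS * t_kelvin / (z * FARADAY)


def ion_activity(e_mV: float, e0_mV: float, z: int, temp_c: float) -> float:
    """Invert E = E0 + slope * log10(a) for the ion activity a."""
    return 10 ** ((e_mV - e0_mV) / nernst_slope_mV(z, temp_c))
```

For example, a 1 % rise in resistance on a sensor with GF = 2 corresponds to 0.5 % strain, i.e., a 10 mm stem reading as 10.05 mm; at 25 °C the ideal slope for a monovalent cation is about 59.2 mV per decade. In dilute solutions activity is often approximated by concentration.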

Biocompatible Materials for Plant Integration

The development of effective plant wearables requires materials that balance electronic performance with biocompatibility and environmental resilience:

Table 1: Key Material Classes for Wearable Plant Sensors

| Material Class | Specific Examples | Key Properties | Primary Applications |
|---|---|---|---|
| Carbon-Based Materials | Carbonized silk georgette, Graphene, CNTs [5] [8] | High conductivity, stretchability, biocompatibility | Strain sensing, electrophysiological monitoring |
| Polymeric Substrates | PDMS, Ecoflex, Polyimide (PI), PET [7] [8] | Flexibility, stretchability, environmental protection | Sensor encapsulation and structural support |
| Conductive Polymers | PEDOT:PSS, PANI [8] | Tunable conductivity, mechanical flexibility | Electrodes, chemical sensing |
| 2D Materials | MXenes [8] | High surface area, hydrophilic properties | Humidity sensing, gas detection |
| Metal-Based | Gold nanoparticles, Silver paste electrodes [7] [8] | High conductivity, electrochemical stability | Electrodes, electrochemical sensing |

These materials enable the creation of devices that can conform to complex plant morphologies without impeding growth or causing damage—a critical consideration for long-term monitoring applications. Material selection directly influences key performance parameters including sensitivity, detection range, and durability in harsh agricultural environments [6] [8].

Experimental Methodologies for Sensor Development and Deployment

Prototype Fabrication and Performance Characterization

Rigorous experimental protocols are essential for developing reliable wearable plant sensors. Standard methodologies include:

Sensor Fabrication: For resistive strain sensors, a common approach involves laser patterning of carbonized silk georgette on polyimide substrates, followed by encapsulation with biocompatible silicone elastomers [5]. Electrochemical sensors typically employ screen-printed electrodes fabricated with carbon or noble metal inks (e.g., silver paste) on flexible substrates [9] [8].

Performance Validation: Laboratory characterization includes mechanical cycling tests (e.g., ≥10,000 bending cycles) to verify durability, and environmental exposure tests to assess stability under varying temperature and humidity conditions [7]. Calibration against reference instruments establishes measurement accuracy, with statistical analysis of sensitivity, linearity, and detection limits [5] [8].
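When designing a bending-cycle test, it helps to know how much strain each bend imposes on the sensing layer. A common thin-plate approximation, assuming the neutral axis sits at mid-thickness of the substrate and the active film is much thinner than the substrate, gives a surface strain of roughly ε ≈ h/(2R). The sketch below pairs this with a simple baseline-drift check; the function names and the 5 % drift tolerance are hypothetical choices, not values from the cited work.

```python
def surface_bending_strain(substrate_thickness_mm: float, bend_radius_mm: float) -> float:
    """Approximate surface strain of a thin substrate bent to radius R.
    Assumes the neutral axis is at mid-thickness: strain ~= h / (2R)."""
    return substrate_thickness_mm / (2.0 * bend_radius_mm)


def baseline_drift_percent(baseline_before: float, baseline_after: float) -> float:
    """Relative change (%) in a sensor baseline, e.g. resistance, after cycling."""
    return 100.0 * (baseline_after - baseline_before) / baseline_before


def passes_cycling_test(baseline_before: float, baseline_after: float,
                        max_drift_percent: float = 5.0) -> bool:
    """Hypothetical acceptance rule: |drift| must not exceed the tolerance."""
    return abs(baseline_drift_percent(baseline_before, baseline_after)) <= max_drift_percent
```

For a hypothetical 125 µm polyimide film bent to a 5 mm radius, ε ≈ 0.125 / (2 × 5) = 1.25 %, which gives a sense of the deformation a sensor must survive on each of the ≥10,000 cycles.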

Plant Integration Studies: Controlled experiments monitor plant physiological responses post-sensor attachment, assessing potential impacts on growth, gas exchange, and development over full growth cycles [5] [2].

Field Deployment and Data Acquisition Protocols

Successful translation from laboratory to field settings requires standardized deployment methodologies:

Sensor Attachment: Gentle mounting using biocompatible adhesives or mechanical fixtures that minimize restriction of plant growth [5]. Orientation is optimized for target parameter measurement while minimizing interference with natural plant functions.

Data Acquisition Systems: Implementation of wireless nodes (e.g., Bluetooth, LoRaWAN) for continuous data logging with minimal power requirements [9] [6]. Timing protocols synchronize multi-sensor measurements across plant populations.
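Synchronizing readings from many wireless nodes often comes down to placing samples on a common time grid. A minimal sketch, assuming node clocks are already synchronized and each sample carries a Unix timestamp in seconds, averages each sensor's samples into fixed bins. The function name and sensor IDs are hypothetical, and bin averaging is only one possible alignment choice (interpolation is another).

```python
from collections import defaultdict
from statistics import mean


def align_to_grid(readings, interval_s=60):
    """Average each sensor's samples into fixed time bins so that
    measurements from different nodes can be compared bin by bin.

    readings: dict mapping sensor_id -> list of (unix_time_s, value)
    returns:  dict mapping bin_start_s -> {sensor_id: mean_value}, sorted by time
    """
    bins = defaultdict(lambda: defaultdict(list))
    for sensor_id, samples in readings.items():
        for t, value in samples:
            bin_start = int(t // interval_s) * interval_s  # floor to the bin edge
            bins[bin_start][sensor_id].append(value)
    return {
        b: {sid: mean(vals) for sid, vals in per_sensor.items()}
        for b, per_sensor in sorted(bins.items())
    }
```

With 60 s bins, samples from `stem_01` at 0 s and 30 s are averaged into the bin starting at 0, while a sample at 65 s falls into the bin starting at 60; bins where a sensor has no samples simply omit that sensor, making gaps explicit.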

Environmental Correlation: Simultaneous monitoring of microclimatic conditions (temperature, humidity, light intensity) enables correlation between plant physiological responses and environmental drivers [5] [2].
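Vapor pressure deficit (VPD) is one environmental driver commonly derived from microclimate readings before correlating it with stem diameter or other plant signals. A minimal sketch, assuming air temperature in °C and relative humidity in percent, uses the saturation vapor pressure equation given in FAO Irrigation and Drainage Paper 56, e_s = 0.6108·exp(17.27T/(T + 237.3)) kPa. The function names and the logged values are hypothetical.

```python
import math


def saturation_vapor_pressure_kpa(temp_c: float) -> float:
    """Saturation vapor pressure (kPa) over water, FAO-56 form."""
    return 0.6108 * math.exp(17.27 * temp_c / (temp_c + 237.3))


def vpd_kpa(temp_c: float, rh_percent: float) -> float:
    """Vapor pressure deficit: how far the air is from saturation (kPa)."""
    if not 0.0 <= rh_percent <= 100.0:
        raise ValueError("relative humidity must be between 0 and 100 %")
    return saturation_vapor_pressure_kpa(temp_c) * (1.0 - rh_percent / 100.0)


# Hypothetical microclimate log: (air temperature in °C, relative humidity in %)
log = [(18.0, 85.0), (25.0, 50.0), (32.0, 30.0)]
vpd_series = [round(vpd_kpa(t, rh), 2) for t, rh in log]
```

The resulting VPD series can then be aligned in time with the plant signal and compared, keeping in mind that plant responses may lag the driver and that correlation alone does not establish cause.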

The experimental workflow below illustrates the complete process from sensor development to data application:

Comparative Performance Analysis: Wearable Sensors vs. Drone-Based Monitoring

Technical Capabilities and Operational Parameters

Direct comparison of wearable plant sensors and drone-based systems reveals distinct advantages and limitations for each technology:

Table 2: Performance Comparison: Wearable Sensors vs. Drone-Based Monitoring

| Parameter | Wearable Plant Sensors | Drone-Based Monitoring |
|---|---|---|
| Spatial Resolution | Millimeter to centimeter scale [5] | Centimeter to meter scale [4] [10] |
| Temporal Resolution | Continuous, real-time (seconds to minutes) [1] [2] | Periodic (hours to days) [4] [10] |
| Measured Parameters | Direct physiological metrics: sap flow, stem diameter, nutrient uptake, VOC emissions [5] [3] | Indirect proxies: canopy vegetation indices, surface temperature, chlorophyll fluorescence [4] [10] |
| Detection Capability | Early stress detection (pre-visual) through physiological changes [5] [3] | Stress detection once visible symptoms manifest [4] |
| Plant Interaction | Direct physical contact with plant organs [1] [2] | Remote, non-contact sensing [4] [10] |
| Scalability | Limited by sensor cost and deployment labor [6] | Highly scalable for large acreages [4] [10] |
| Implementation Cost | High per-unit cost, potential for reuse [6] | High initial investment, lower marginal cost for additional acres [10] |

Complementary Applications in Precision Agriculture

The comparative analysis reveals how these technologies address different needs within agricultural research and management:

Wearable sensors excel in detailed physiological studies, such as investigating hydraulic mechanisms in fruit cracking [5] or quantifying stomatal sensitivity to soil drought [5]. Their continuous data streams enable discovery of novel plant behaviors and gene functions, as demonstrated in research linking circadian clock genes to stomatal regulation [5].

Drone systems provide unmatched efficiency for field-scale assessment, enabling rapid identification of spatial variability in crop health, soil conditions, and irrigation efficacy across hundreds of acres [4] [10]. Their macro-perspective is invaluable for whole-field management decisions and targeted scouting.

The integration framework below illustrates how these complementary technologies can be combined in agricultural research:

Essential Research Reagent Solutions and Materials

Successful implementation of wearable plant sensing requires specific materials and reagents optimized for plant biological interfaces:

Table 3: Essential Research Reagents and Materials for Wearable Plant Sensors

| Material/Reagent | Function | Application Example |
|---|---|---|
| Carbonized Silk Georgette | Strain-sensing material with high stretchability and durability [5] | PlantRing system for monitoring stem diameter variations [5] |
| Amine-terminated PAMAM Dendrimer-Gold Nanoparticles | Humidity-sensitive composite for impedance-based sensing [8] | Flexible humidity sensors on PET substrates [8] |
| Screen-printable Electrode Inks (Carbon, Silver) | Create conductive patterns on flexible substrates [9] [8] | Electrochemical sensors for nutrient detection [9] |
| Polydimethylsiloxane (PDMS) | Flexible, gas-permeable encapsulation material [7] [8] | Protective coating for field-deployable sensors [8] |
| Ion-Selective Membranes | Enable potentiometric detection of specific ions [9] | Multi-ion sensors for root zone monitoring [9] |
| Biocompatible Adhesives | Secure sensor attachment without plant damage [5] [2] | Mounting sensors to stems and leaves long-term [5] |

Wearable plant sensors and drone-based monitoring represent complementary rather than competing technologies in the precision agriculture ecosystem. Wearable sensors provide unprecedented access to plant physiological processes at high temporal resolution, enabling fundamental discoveries and plant-centered irrigation control [5]. Meanwhile, drone systems offer scalable solutions for field-level assessment and management [4] [10].

The future of agricultural monitoring lies in integrated systems that combine the micro-scale precision of wearable sensors with the macro-scale perspective of drone-based remote sensing. Such integration will require advances in data fusion algorithms, wireless communication networks, and multi-scale modeling approaches. As materials science continues to develop more robust, biocompatible, and cost-effective sensing platforms [6] [8], and as artificial intelligence enhances data interpretation capabilities [4], these technologies will collectively transform our approach to crop management, breeding programs, and sustainable agricultural intensification.

Wearable sensors and drone-based crop monitoring represent two advanced, yet functionally distinct, sensing paradigms. Wearable sensors are engineered for intimate, continuous contact with a biological host—either human or livestock—to monitor internal physiological and biochemical states in real-time [11] [12]. In contrast, agricultural drones operate as remote, macroscopic platforms, capturing spatial and spectral data across vast areas of crops and environment from above [13] [14]. This comparative analysis delineates their core functions, data types, and underlying technological principles, providing a framework for researchers and scientists to evaluate their applications in healthcare and precision agriculture.

Table 1: Fundamental Comparison of Sensing Paradigms

| Feature | Wearable Sensors | Drone-Based Crop Monitoring |
|---|---|---|
| Primary Domain | Healthcare, Livestock Management | Precision Agriculture |
| Sensing Distance | Intimate/Contact-based | Remote/Macroscopic |
| Temporal Resolution | Continuous, Real-time | Periodic, Snapshot |
| Spatial Resolution | Individual Organ/Body System | Field, Plant, or Leaf Level |
| Core Data Types | Physiological, Biochemical, Environmental | Spectral, Spatial, Topographic |
| Key Outputs | Heart rate, glucose, temperature | Vegetation indices, health maps, yield predictions |

Core Functions & Data Types of Wearable Sensors

Wearable sensors function as a non-invasive window into the body, capturing a multifaceted stream of data directly from the host [11] [12]. Their functionality falls into three primary domains.

Monitoring Physiological Signals

These sensors capture physical and electrical signals generated by the body's functional activities, crucial for health management and preventive medicine [11].

- Electrophysiological Signals: These include electrocardiogram (ECG) for cardiac electrical activity, electromyogram (EMG) for skeletal muscle contraction, and electroencephalogram (EEG) for neural activity in the brain. They are vital for diagnosing cardiovascular pathologies, assessing neuromuscular health, and detecting neurological conditions like epilepsy [11].

- Biomechanical Signals: These are generated by musculoskeletal kinematics, such as motion, strain, and pressure, and are commonly measured by accelerometers and gyroscopes for activity and gait analysis [11] [15].

- Supplementary Biophysical Indicators: This category includes core metrics like body temperature and respiratory rate, which are fundamental indicators of metabolic state and overall health [11].

Sensing Biochemical Markers

Wearable biosensors incorporate biorecognition elements to selectively detect and quantify chemical biomarkers in bodily fluids, providing molecular-level health insights [12].

- Target Analytes: Key biomarkers include glucose (for diabetes management), lactate (for muscle fatigue), electrolytes, and pH levels [11] [12].

- Biofluid Sources: These sensors are designed to analyze sweat, saliva, tears, and interstitial fluid, enabling non-invasive monitoring [12]. Sweat, with its rich biochemical composition, is a particularly well-suited medium for this purpose [12].

Tracking Environmental Parameters

Wearables also monitor the user's immediate ambient environment, contextualizing physiological and biochemical data.

- Common Metrics: Devices can track exposure to factors like ambient temperature, humidity, and airborne pollutants, providing a more complete picture of the factors influencing an individual's health [15].

Table 2: Core Data Types and Specifications of Wearable Sensors

| Data Category | Specific Signals/Markers | Example Sensing Modality | Typical Device/Platform |
|---|---|---|---|
| Physiological | ECG, EMG, EEG, EOG | Conductive electrodes (e.g., MXene, Hydrogel) | Smart patches, Chest straps |
| | Heart Rate, Blood Pressure | Photoplethysmography (PPG) | Smartwatches, Fitness bands |
| | Motion, Strain, Pressure | Accelerometer, Gyroscope, Piezoresistive sensors | All-in-one wearables |
| | Skin Temperature | Thermistor | Smart patches, Rings |
| Biochemical | Glucose, Lactate | Enzyme-based electrochemical sensors | Smart patches, Textile sensors |
| | Electrolytes (e.g., Na+, K+) | Ion-selective electrodes (ISEs) | Textile sensors |
| | pH | Potentiometric sensors | Smart patches |
| Environmental | Ambient Temperature, Humidity | Integrated environmental sensors | Smartwatches |

Core Functions & Data Types of Agricultural Drones

Agricultural drones perform two primary types of tasks: mechanical and informational [13]. This analysis focuses on their informational and sensing capabilities, which involve mapping, monitoring, and generating data to assess crop and field conditions.

Informational & Remote Sensing Functions

Drones serve as aerial platforms for a suite of remote sensing technologies.

- Field & Crop Mapping: Using high-resolution cameras, drones create detailed maps of field topography, boundaries, and plant populations [13].

- Crop Health Assessment: This is a primary function. Equipped with multispectral and hyperspectral sensors, drones capture data beyond visible light to compute vegetation indices like the Normalized Difference Vegetation Index (NDVI), which correlates with plant vigor, chlorophyll content, and photosynthetic activity [14] [16].

- Stress & Pathogen Detection: Advanced AI-powered diagnostics can spot diseases, nutrient deficiencies, and pest infestations by analyzing subtle changes in crop canopy reflectance [14] [17].

- Sustainability Monitoring: Emerging applications include tracking soil carbon levels and monitoring regenerative practices for carbon credit verification [14].
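Computing a vegetation index such as NDVI is a pixel-wise array operation on co-registered bands. A minimal sketch with NumPy, assuming the Red and NIR bands are already reflectance-calibrated, aligned arrays of the same shape (for example, cut from an orthomosaic); the function name is hypothetical, and returning NaN where NIR + Red is zero is a design choice rather than a standard.

```python
import numpy as np


def ndvi(nir: np.ndarray, red: np.ndarray) -> np.ndarray:
    """Pixel-wise NDVI = (NIR - Red) / (NIR + Red).

    Inputs are cast to float64 first so that unsigned-integer rasters
    cannot wrap around on subtraction. Pixels where NIR + Red == 0 are
    returned as NaN instead of raising a division warning.
    """
    nir = nir.astype(np.float64)
    red = red.astype(np.float64)
    denom = nir + red
    out = np.full(denom.shape, np.nan)
    np.divide(nir - red, denom, out=out, where=denom != 0)
    return out
```

Dense, healthy canopy tends toward higher values because leaves reflect strongly in NIR and absorb red light, while bare soil and stressed vegetation sit lower on the -1 to 1 scale.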

Key Data Outputs

The raw sensor data is processed into actionable insights for precision farming.

- Vegetation Indices: Quantified metrics of plant health (e.g., NDVI) [16].

- Health Zonation Maps: Georeferenced maps that highlight areas of stress, disease, or poor growth within a field, enabling targeted intervention [17].

- Yield Predictions: Data-driven forecasts of crop production [16].

- Treatment Prescription Files: Zoned maps that can be exported to guide variable-rate application of water, fertilizer, or pesticides by other farm machinery [17].
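Turning an index map into a zoned prescription amounts to binning pixels into classes and assigning each class an application rate. A minimal sketch with NumPy follows; the class breaks (0.3 and 0.6), the rates, and the function names are hypothetical placeholders chosen for illustration, and exporting the grid to a format that farm machinery accepts is not shown.

```python
import numpy as np

# Hypothetical NDVI class breaks and per-zone application rates (kg/ha).
# Real thresholds are crop-, sensor-, and growth-stage-specific.
NDVI_BREAKS = [0.3, 0.6]               # zone 0: < 0.3, zone 1: 0.3-0.6, zone 2: >= 0.6
ZONE_RATES_KG_HA = [60.0, 40.0, 20.0]  # illustrative: more input where vigor is lowest


def zone_map(ndvi: np.ndarray) -> np.ndarray:
    """Assign each pixel a zone index (0, 1, 2) from the NDVI class breaks."""
    return np.digitize(ndvi, NDVI_BREAKS)


def prescription(ndvi: np.ndarray) -> np.ndarray:
    """Convert zone indices into a per-pixel application-rate grid.
    Pixels with no valid NDVI (NaN) get NaN, i.e. no prescription."""
    rates = np.asarray(ZONE_RATES_KG_HA)[zone_map(ndvi)]
    return np.where(np.isnan(ndvi), np.nan, rates)
```

Whether low-vigor zones should receive more or less input depends on the cause of the low index and the management goal, so the rate table is the part most in need of agronomic validation.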

Table 3: Core Data Types and Specifications of Agri-Drone Monitoring

| Data Category | Specific Applications | Sensing Technology | Key Outputs |
|---|---|---|---|
| Spatial & Topographic | Field mapping, 3D modeling | RGB Cameras, LiDAR | Field maps, elevation models |
| Spectral & Health | Crop vigor, chlorophyll content | Multispectral, Hyperspectral sensors | NDVI, other vegetation indices |
| | Disease, pest, nutrient deficiency | AI-powered analysis of spectral data | Health alerts, zonation maps |
| | Plant-level imaging | 4th-gen multispectral imaging | Targeted treatment maps |
| Environmental | Soil moisture, carbon monitoring | Advanced specialized sensors | Sustainability insights, carbon data |

Experimental Protocols & Methodologies

The validation of performance for these technologies relies on distinct experimental protocols, tailored to their specific operational environments.

Experimental Protocol for Wearable Biosensor Development

The following methodology outlines the development and benchtop validation of a typical hydrogel-based electrochemical biosensor for sweat analysis [11] [12].

- Sensor Fabrication:

  - Substrate Preparation: A flexible polymer (e.g., Polydimethylsiloxane (PDMS)) or textile is selected as the substrate.

  - Electrode Patterning: Conductive inks (e.g., Carbon, Silver/Silver Chloride) are screen-printed or deposited via spray-coating to form working, reference, and counter electrodes.

  - Functionalization: The working electrode is modified with a biorecognition element (e.g., Glucose Oxidase for glucose sensing). A hydrogel layer (e.g., Gelatin-based, Polyvinyl alcohol (PVA)) may be added to enhance biocompatibility and fluid wicking.

- In-Vitro Calibration:

  - The sensor is exposed to a series of standard solutions with known concentrations of the target analyte (e.g., 0-10 mM glucose).

  - The electrochemical response (e.g., amperometric current) is measured using a potentiostat.

  - A calibration curve (Response vs. Concentration) is plotted to determine key performance metrics: sensitivity, linear detection range, and limit of detection (LOD).

- Mechanical Testing: The sensor's flexibility and durability are tested under repeated bending cycles (e.g., 1000 cycles at a 5 mm bend radius) while monitoring for signal drift or physical degradation.

- Selectivity Assessment: The sensor's response is tested against common interfering agents (e.g., Ascorbic acid, Uric acid for glucose sensors) to confirm specificity.
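The calibration step can be reduced to a least-squares fit of response against concentration. A minimal sketch, assuming the response is linear over the tested range and using the common 3σ convention (LOD = 3 × standard deviation of blank signals / sensitivity); the function names and the example values are hypothetical.

```python
from statistics import mean, stdev


def linear_fit(x, y):
    """Ordinary least-squares fit y = slope * x + intercept.
    Returns (slope, intercept, r2); slope is the sensor's sensitivity."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((yi - (slope * xi + intercept)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return slope, intercept, 1.0 - ss_res / ss_tot


def limit_of_detection(blank_signals, slope):
    """3-sigma LOD: three standard deviations of repeated blank
    measurements, converted to concentration units via the sensitivity."""
    return 3.0 * stdev(blank_signals) / slope
```

For instance, with hypothetical currents following 0.5 µA/mM × concentration + 0.1 µA across 0-10 mM, the fit returns a sensitivity of 0.5 µA/mM with R² = 1, and blank readings of 0.10, 0.12, and 0.08 µA (standard deviation 0.02 µA) give an LOD of 0.12 mM. The linear range should be checked separately, since enzymatic sensors typically saturate at high concentrations.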

Experimental Protocol for Drone-Based Crop Health Assessment

This protocol describes the workflow for generating an AI-powered crop health zonation map [14] [17].

- Mission Planning:

  - A flight plan is programmed into the drone's ground control software, defining the area, flight altitude, and image overlap (e.g., 80% front and side overlap).

  - The appropriate sensors (e.g., multispectral camera) are mounted and calibrated on the drone.

- Data Acquisition:

  - The autonomous flight is executed, ensuring consistent lighting conditions (e.g., near solar noon).

  - The drone captures geotagged imagery across multiple spectral bands (e.g., Red, Green, Red-Edge, Near-Infrared).

- Data Processing:

  - Orthomosaic Generation: Captured images are stitched together using photogrammetry software to create a single, georeferenced map for each spectral band.

  - Index Calculation: Vegetation indices (e.g., NDVI) are computed pixel-by-pixel using the formula: NDVI = (NIR - Red) / (NIR + Red).

- AI-Powered Analysis & Zonation:

  - The computed index map is processed by a trained machine learning model. This model, often trained on a dataset of annotated crop images, classifies areas as "healthy," "stressed," or "diseased" [17].

  - The output is a color-coded health zonation map, which can be converted into a prescription file for variable-rate application systems.
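Flight altitude and overlap settings in the mission-planning step translate into ground sampling distance (GSD), photo spacing, and flight-line spacing through similar-triangle geometry. A minimal sketch, assuming a nadir-pointing camera over flat terrain with the image height aligned to the direction of flight; the `Camera` fields, function name, and example camera values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Camera:
    sensor_width_mm: float
    sensor_height_mm: float
    focal_length_mm: float
    image_width_px: int
    image_height_px: int


def plan_spacing(cam: Camera, altitude_m: float,
                 front_overlap: float, side_overlap: float):
    """Return (GSD in cm/px, photo spacing along track in m,
    flight-line spacing in m) for the given overlaps (0 <= overlap < 1)."""
    for overlap in (front_overlap, side_overlap):
        if not 0.0 <= overlap < 1.0:
            raise ValueError("overlap must be in [0, 1)")
    # Ground footprint of one image, by similar triangles
    footprint_w_m = cam.sensor_width_mm * altitude_m / cam.focal_length_mm
    footprint_h_m = cam.sensor_height_mm * altitude_m / cam.focal_length_mm
    gsd_cm = 100.0 * footprint_w_m / cam.image_width_px
    photo_spacing_m = footprint_h_m * (1.0 - front_overlap)
    line_spacing_m = footprint_w_m * (1.0 - side_overlap)
    return gsd_cm, photo_spacing_m, line_spacing_m
```

For a hypothetical camera with a 10 × 7.5 mm sensor, 10 mm focal length, and 2000 × 1500 px images flown at 100 m with 80% front and side overlap, each image covers 100 × 75 m, the GSD is 5 cm/px, photos are triggered every 15 m, and flight lines are 20 m apart.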

Signaling Pathways, Workflows & Logical Diagrams

The operational logic of both sensing systems can be visualized through their core workflows.

Wearable Biosensor Signaling Pathway

The following diagram illustrates the transduction pathway from a biological event to a measurable digital signal in a wearable biosensor.

Drone-Based Crop Monitoring Workflow

This workflow outlines the logical sequence from mission planning to actionable insight in precision agriculture.

The Scientist's Toolkit: Research Reagent Solutions

The development and operation of these technologies rely on specialized materials and software tools.

Table 4: Essential Research Tools for Sensor Development and Deployment

| Category | Item | Function & Application |
|---|---|---|
| Advanced Materials for Wearables | MXenes (e.g., Ti₃C₂Tₓ) | Provide ultrahigh electrical conductivity and specific surface area for sensitive electrophysiological and biochemical electrodes [11]. |
| | Conductive Polymers (e.g., PEDOT:PSS) | Used as flexible, conductive coatings for electrodes and interconnects in flexible sensors [11]. |
| | Hydrogels (e.g., Gelatin, PVA) | Biocompatible, hydrating interfaces that mimic biological tissues, ideal for in vivo monitoring and enhancing contact with skin [11] [12]. |
| | Gold Nanowires | Create highly conductive and stretchable networks within flexible substrates for durable sensors [12]. |
| Drone Sensing & Analysis | Multispectral/Hyperspectral Sensors | Capture light reflectance data at specific wavelengths (e.g., Red, NIR) essential for calculating vegetation indices like NDVI [14] [16]. |
| | AI-Powered Analytics Platforms (e.g., DroneDeploy) | Process aerial imagery to identify patterns of disease, stress, and nutrient deficiency, providing real-time crop diagnostics [14]. |
| | Ground Control Points (GCPs) | Physical markers placed in the field to geometrically correct and improve the spatial accuracy of stitched drone imagery. |
| General Research Equipment | Potentiostat/Galvanostat | An essential electronic instrument for performing electrochemical measurements (e.g., amperometry, impedance) in biosensor development and testing. |
| | Limb/Skin Phantoms (Tissue Simulants) | Synthetic platforms that mimic the mechanical and electrical properties of human tissue for controlled testing of wearable sensor performance. |

Agricultural drones, or Unmanned Aerial Vehicles (UAVs), are sophisticated technological platforms that integrate an airframe (platform) with data collection sensors and onboard intelligence to enable precision farming. Within the broader comparative analysis of plant monitoring technologies, they offer a distinct, aerial-based solution contrasted with ground-based or wearable sensor approaches [1]. Their core function is to provide high-resolution, spatially explicit data for informed crop management.

Drone Platforms: Structural and Functional Categories

The platform defines the drone's physical structure and flight capabilities, determining its suitability for different agricultural tasks and farm scales. The three primary categories are fixed-wing, multirotor, and hybrid Vertical Takeoff and Landing (VTOL) designs that combine vertical lift with fixed-wing cruise, each with distinct advantages and limitations [18].

Table 1: Comparative Analysis of Agricultural Drone Platform Types.

| Platform Type | Key Advantages | Key Limitations | Ideal Use Cases |
|---|---|---|---|
| Fixed-Wing | Long flight times; Efficient coverage of large areas; Better performance in windy conditions [18]. | Cannot hover; Requires runway for takeoff/landing; Lower maneuverability [18]. | Large-scale mapping and surveying of extensive farmland [18]. |
| Multirotor | High maneuverability; Ability to hover and fly at low altitudes; Vertical Takeoff and Landing (VTOL) [18]. | Shorter flight times; Limited to smaller or medium-sized fields [18]. | Close-range crop inspection, precision spraying on complex plots [18]. |
| VTOL | Versatile VTOL capability; No need for a runway; Efficient long-distance coverage [18]. | More complex operation and maintenance; Higher cost due to hybrid design [18]. | Farms with varied topography and mixed requirements for close inspection and large-area coverage [18]. |

Sensor Technologies for Data Acquisition

Sensors are the primary data-gathering components of a drone system. The choice of sensor dictates the type of information that can be extracted about the crop and its environment [19]. These can be broadly categorized into four types.

Table 2: Overview of Primary Sensor Types Used in Agricultural Drones.

| Sensor Type | Spectral Bands | Measured Parameters / Applications | Relative Cost |
|---|---|---|---|
| Visual (RGB) | Red, Green, Blue [19] | True-color imagery for field observation, plant counting, and visual assessment [19]. | Low [19] |
| Multispectral | Typically includes B, G, R, Red Edge, Near-Infrared (NIR) [19] | Plant health assessment (e.g., NDVI), chlorophyll levels, nutrient deficiency, and biomass estimation [19] [20]. | Medium [19] |
| Thermal Infrared | Long-wave infrared [19] | Crop water stress, irrigation scheduling, and detection of waterlogging [19] [20]. | High [19] |
| LiDAR | Active laser pulses [21] | Creation of 3D point clouds for topographic mapping, canopy structure, and volume estimation [21]. | High |
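Thermal imagery becomes a crop water stress indicator once canopy temperature is normalized against reference temperatures. One widely used normalization, not described in the cited sources and shown here only as an illustration, is the empirical Crop Water Stress Index, CWSI = (T_c - T_wet) / (T_dry - T_wet), where T_wet and T_dry approximate a fully transpiring and a non-transpiring canopy. A minimal sketch, assuming the reference temperatures are obtained from the same thermal scene; the function name is hypothetical.

```python
def cwsi(canopy_temp_c: float, wet_ref_c: float, dry_ref_c: float) -> float:
    """Empirical Crop Water Stress Index.
    0 = canopy as cool as the wet (fully transpiring) reference,
    1 = canopy as warm as the dry (non-transpiring) reference."""
    if dry_ref_c <= wet_ref_c:
        raise ValueError("dry reference must be warmer than wet reference")
    index = (canopy_temp_c - wet_ref_c) / (dry_ref_c - wet_ref_c)
    return min(1.0, max(0.0, index))  # clip to the physically meaningful range
```

Applied per pixel or per plot, a canopy at 30 °C between references of 25 °C and 35 °C scores 0.5; clipping keeps noisy pixels outside the reference range from producing values below 0 or above 1.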

Experimental Data: Sensor Performance in Estimating Grapevine Parameters

A 2024 study provides a direct, quantitative comparison of how different UAV sensors perform against a terrestrial benchmark (Terrestrial Laser Scanner, TLS) in estimating the geometric parameters of grapevines, which are key indicators of plant vigor [21]. These experimental data are useful for selecting the appropriate sensor for a specific research goal.

Experimental Protocol:

- Objective: To compare the accuracy of point cloud data from a TLS and various UAV sensors (RGB, LiDAR, multispectral, panchromatic, Thermal Infrared) in estimating grapevine height, projected area, and volume [21].

- Methodology: Data was collected from a 0.30-hectare experimental vineyard. The TLS and UAV systems were used to scan the grapevines, generating 3D point clouds. Maximum grapevine height was manually measured in the field for validation, and canopy projected area was measured in a GIS [21].

- Data Analysis: The accuracy of each sensor was evaluated using linear correlations (r and R²) and the Root Mean Square Error (RMSE) between the sensor-derived parameters and the reference measurements [21].
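The agreement metrics named in the data analysis step can be computed directly from paired values. The sketch below, with a hypothetical function name, returns Pearson's r, R² taken as r² (which equals the coefficient of determination of a simple linear fit with an intercept), and RMSE in the units of the measurements. It is a generic implementation, not the code used in the cited study.

```python
import math


def agreement_stats(predicted, measured):
    """Pearson r, R^2 (as r squared), and RMSE between sensor-derived
    values and reference measurements of the same plants."""
    n = len(measured)
    if n != len(predicted) or n < 2:
        raise ValueError("need two equal-length sequences with at least 2 values")
    mp = sum(predicted) / n
    mm = sum(measured) / n
    cov = sum((p - mp) * (m - mm) for p, m in zip(predicted, measured))
    sp = math.sqrt(sum((p - mp) ** 2 for p in predicted))
    sm = math.sqrt(sum((m - mm) ** 2 for m in measured))
    r = cov / (sp * sm)
    # RMSE captures absolute error, which r alone cannot: a constant
    # offset leaves r = 1 but still produces a non-zero RMSE.
    rmse = math.sqrt(sum((p - m) ** 2 for p, m in zip(predicted, measured)) / n)
    return r, r * r, rmse
```

Reporting both kinds of metric matters because a sensor can track relative differences between vines perfectly (high r) while still being biased in absolute terms (non-zero RMSE).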

Table 3: Sensor Performance in Estimating Grapevine Geometric Parameters (Adapted from [21])

| Sensor Type | Max Height vs. Measured (r / R² / RMSE in m) | Projected Area in GIS (r / R² / RMSE in m²) | Performance Summary |
|---|---|---|---|
| TLS (Benchmark) | 0.95 / 0.90 / 0.027 [21] | N/A | Highest accuracy for height estimation [21]. |
| UAV Panchromatic | >0.83 / >0.70 / <0.084 [21] | >0.83 / >0.70 / <0.084 [21] | Performed well, closely matching TLS and measured values [21]. |
| UAV RGB | >0.83 / >0.70 / <0.084 [21] | >0.83 / >0.70 / <0.084 [21] | Performed well, closely matching TLS and measured values [21]. |
| UAV Multispectral | >0.83 / >0.70 / <0.084 [21] | >0.83 / >0.70 / <0.084 [21] | Performed well, closely matching TLS and measured values [21]. |
| UAV LiDAR | >0.83 / >0.70 / <0.084 [21] | >0.83 / >0.70 / <0.084 [21] | Performed well, closely matching TLS and measured values [21]. |
| UAV Thermal (TIR) | 0.76 / 0.58 / 0.147 [21] | 0.82 / 0.66 / 0.165 [21] | Poor performance in estimating geometric parameters [21]. |

Onboard Intelligence: AI and Autonomous Systems

Onboard intelligence turns drones from simple data collectors into automated field analysis tools. This encompasses the computing hardware and algorithms that enable real-time data processing, autonomous flight, and targeted action [20] [14].

The core of this intelligence is Artificial Intelligence (AI), particularly machine learning models trained on thousands of plant images. These models can detect early signs of pests, diseases, nutrient deficiencies, and water stress during the flight itself, a process known as edge computing [20]. This allows for immediate diagnosis and shortens response time dramatically.

This AI-driven analysis enables fully automated and precise mechanical tasks. For example, spray-equipped drones can use AI-generated zonal maps to identify affected patches and autonomously adjust nozzle flow and spray volume based on real-time crop density, ensuring inputs are applied only where needed [20]. Advanced systems now feature AI-powered drone swarms, where fleets of drones coordinate to spray, monitor, or map massive areas simultaneously [14].

The Researcher's Toolkit for Drone-Based Crop Monitoring

Implementing a drone-based monitoring study requires a suite of hardware, software, and analytical tools. The following table details key components and their functions in a typical research workflow.

Table 4: Essential Research Toolkit for Drone-Based Crop Monitoring.

| Tool / Reagent | Category | Primary Function in Research |
|---|---|---|
| VTOL Drone Platform | Hardware | Provides the aerial vehicle for data collection; VTOL capability is versatile for complex terrain [18]. |
| Multispectral Sensor | Hardware | Captures data in non-visible wavelengths (e.g., NIR, Red Edge) for calculating vegetation indices like NDVI [19] [20]. |
| Terrestrial Laser Scanner | Hardware | Serves as a high-accuracy ground truthing instrument for validating drone-based geometric measurements [21]. |
| Ground Control Points | Equipment | Physical markers placed in the field to geometrically correct and improve the spatial accuracy of drone imagery. |
| Flight Planning Software | Software | Enables autonomous mission planning, defining flight paths, altitude, overlap, and sensor triggering [22]. |
| Photogrammetry Software | Software | Processes hundreds of overlapping drone images to generate orthomosaics, digital elevation models, and 3D point clouds [21]. |
| Normalized Difference Vegetation Index | Analytical | A key vegetation index calculated from multispectral data to assess plant health and density [19]. |

Comparative Positioning: Drones vs. Wearable Sensors

Positioning drone-based monitoring within the broader context of plant sensing reveals its complementary role alongside other technologies, such as wearable plant sensors. The following diagram illustrates this technological relationship.

As shown, drone-based systems are defined by their platform versatility, sophisticated multi-sensor payloads, and increasingly autonomous onboard intelligence. They provide a powerful, spatially explicit solution for crop monitoring that is highly complementary to the continuous, micro-scale data from wearable sensors, together enabling a multi-scale understanding of plant health.

Drone technology has become a pivotal tool in modern agricultural research, enabling high-throughput, non-destructive data collection and intervention. This guide provides a comparative analysis of its three core functions—large-scale mapping, precision spraying, and phenotypic analysis—contrasting their capabilities with ground-based alternatives like wearable plant sensors to highlight distinct applications and performance.

Large-Scale Mapping and Field Analysis

Large-scale mapping with drones provides researchers with high-resolution, georeferenced maps of experimental plots, enabling the detailed analysis of spatial variability in crop health, soil conditions, and resource distribution.

Key Applications and Technologies: Drones equipped with RGB, multispectral, and thermal sensors can rapidly survey hundreds of acres, capturing data that is processed into various analytical maps [22] [23]. Normalized Difference Vegetation Index (NDVI) maps, derived from multispectral imagery, are crucial for assessing crop vigor and health status [24] [23]. Drones are also employed for automated field mapping and soil analysis, estimating moisture and nutrient levels to optimize resource management [22].
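As a worked example of the index behind these maps, NDVI is computed per pixel from calibrated near-infrared and red reflectance. The reflectance values below are hypothetical, chosen only to contrast a vigorous canopy pixel with a bare-soil pixel.

$$\mathrm{NDVI} = \frac{\rho_{\mathrm{NIR}} - \rho_{\mathrm{Red}}}{\rho_{\mathrm{NIR}} + \rho_{\mathrm{Red}}}$$

$$\text{canopy: } \frac{0.45 - 0.05}{0.45 + 0.05} = \frac{0.40}{0.50} = 0.80, \qquad \text{soil: } \frac{0.30 - 0.20}{0.30 + 0.20} = \frac{0.10}{0.50} = 0.20$$

Values close to 1 indicate dense, healthy vegetation, while values near 0 are typical of bare soil, which is also why NDVI is useful for separating plant pixels from background.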

Comparative Performance Data: The table below summarizes the performance and adoption of mapping technologies.

Table 1: Comparative Analysis of Field Mapping Technologies

| Technology | Key Applications | Spatial Resolution | Coverage Speed | Estimated Adoption in 2025 [22] | Cost per Acre (Mapping) [22] |
|---|---|---|---|---|---|
| Drone-based Mapping | NDVI mapping, soil analysis, growth tracking, irrigation planning [22] [23] | High (Centimeter-level) [14] | Hundreds of acres per flight [23] | 52% | $5 - $11 |
| Satellite Imaging | Regional crop health assessment, large-area monitoring [22] | Low (Meter-level) | Global coverage | N/A | Lower (often subscription-based) |
| Wearable Plant Sensors | In-situ monitoring of sap flow, leaf temperature, and micro-climate [25] | Single plant level | Manual deployment per plant | Emerging | N/A |

Experimental Protocol for Drone-Based Mapping: A typical research protocol for generating field maps involves [24]:

- Flight Planning: Define the area of interest and set a flight path with high image overlap (e.g., 80% forward and side overlap) to ensure complete coverage.

- Ground Control: Place ground control points (GCPs) with known GPS coordinates in the field to achieve high geolocation accuracy (within 2 cm).

- Data Acquisition: Execute the autonomous flight using a drone equipped with a multispectral camera. Flights are typically conducted at altitudes of 50-120 meters under clear skies for consistent illumination.

- Data Processing: Use photogrammetry software (e.g., Agisoft Metashape) to align images and generate geo-referenced orthomosaics and digital surface models (DSMs).

- Radiometric Calibration: Convert raw sensor data to reflectance values using a reference panel with known reflectance properties imaged during the flight [24].

- Analysis: The orthomosaic is processed with scripts (e.g., in R) to calculate vegetation indices like NDVI for every plot, segment plant pixels from soil, and extract plot-level mean reflectance values [24].
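The radiometric calibration and analysis steps can be sketched in Python with NumPy (the protocol itself uses R; Python is used here only for illustration). This minimal sketch assumes a single-panel empirical line correction with zero dark offset, co-registered NIR and red bands already clipped to one plot, and a hypothetical NDVI threshold of 0.3 for separating plant pixels from soil; real workflows often use several panels and thresholds tuned per crop and date.

```python
import numpy as np


def dn_to_reflectance(dn, panel_dn_mean, panel_reflectance):
    """Single-panel empirical-line calibration.

    Assumes a linear sensor response with zero dark offset, so
    reflectance = DN * (known panel reflectance / mean panel DN).
    """
    return np.asarray(dn, dtype=float) * (panel_reflectance / panel_dn_mean)


def plot_mean_ndvi(nir_dn, red_dn, panel_nir_dn, panel_red_dn,
                   panel_refl_nir, panel_refl_red, soil_threshold=0.3):
    """Return (mean NDVI of plant pixels, plant-cover fraction) for one plot.

    `soil_threshold` is a hypothetical cut-off: pixels at or below it are
    treated as soil and excluded from the plot mean.
    """
    nir = dn_to_reflectance(nir_dn, panel_nir_dn, panel_refl_nir)
    red = dn_to_reflectance(red_dn, panel_red_dn, panel_refl_red)
    denom = nir + red
    # Avoid division by zero: pixels with no signal become NaN.
    ndvi = np.divide(nir - red, denom,
                     out=np.full_like(denom, np.nan), where=denom > 0)
    plant = ndvi > soil_threshold  # NaN pixels compare as False
    if not plant.any():
        return float("nan"), 0.0
    return float(ndvi[plant].mean()), float(plant.mean())


if __name__ == "__main__":
    # Hypothetical 2x2 plot: top row canopy, bottom row soil.
    mean_ndvi, cover = plot_mean_ndvi(
        nir_dn=[[900, 900], [600, 600]], red_dn=[[100, 100], [400, 400]],
        panel_nir_dn=1000.0, panel_red_dn=1000.0,
        panel_refl_nir=0.5, panel_refl_red=0.5)
    print(f"plot NDVI={mean_ndvi:.2f}, plant cover={cover:.0%}")
```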

Diagram 1: Drone-based mapping and analysis workflow.

Precision Spraying and Targeted Application

Precision spraying with drones allows for the site-specific application of agrochemicals, revolutionizing pest control and nutrient management by targeting only areas requiring intervention.

Key Applications and Technologies: Spray drones combine AI-based targeting with sensor-controlled tanks to apply pesticides, herbicides, and fertilizers with high precision [22]. A major application is drone-based weed mapping for targeted spraying [26]: drones first map the field to identify weed patches, then generate a prescription map that is executed by a sprayer (either drone or ground-based), targeting only the infested zones.
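The map-then-prescribe step can be illustrated with a simple grid-based sketch. It assumes weed detections are already available as field coordinates in meters (no particular detection service's output format is assumed), rasterizes them onto a hypothetical 3 m spray grid, and adds a one-cell buffer around each detection. The fraction of unsprayed cells then gives a rough estimate of chemical savings relative to broadcast application, assuming the same rate is applied in every sprayed cell.

```python
import math

import numpy as np


def prescription_grid(weed_xy, field_w_m, field_h_m, cell_m=3.0, buffer_cells=1):
    """Boolean spray map: True where a grid cell (or its buffer) holds a weed.

    Coordinates are assumed to lie inside the field, with (0, 0) at one corner.
    """
    nx = math.ceil(field_w_m / cell_m)
    ny = math.ceil(field_h_m / cell_m)
    grid = np.zeros((ny, nx), dtype=bool)
    for x, y in weed_xy:
        # Map coordinates to cell indices, clamping points on the far edge.
        col = min(int(x // cell_m), nx - 1)
        row = min(int(y // cell_m), ny - 1)
        r0, r1 = max(row - buffer_cells, 0), min(row + buffer_cells + 1, ny)
        c0, c1 = max(col - buffer_cells, 0), min(col + buffer_cells + 1, nx)
        grid[r0:r1, c0:c1] = True
    return grid


def chemical_savings(grid):
    """Share of the field left unsprayed versus broadcast (uniform rate assumed)."""
    return 1.0 - float(grid.mean())


if __name__ == "__main__":
    detections = [(1.0, 1.0), (15.0, 15.0)]  # hypothetical weed positions (m)
    rx = prescription_grid(detections, field_w_m=30.0, field_h_m=30.0)
    print(f"cells sprayed: {int(rx.sum())}, savings: {chemical_savings(rx):.0%}")
```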

Comparative Performance Data: The table below compares the efficacy of targeted spraying versus broadcast methods.

Table 2: Experimental Results from Targeted Spraying Trials

| Parameter | Broadcast Application (Control) | Targeted Spraying (Drone-Based) | Notes/Source |
|---|---|---|---|
| Herbicide Savings | 0% (Baseline) | ~50% | Iowa State University demonstration on soybeans [26]. |
| Cost Savings per Acre | N/A | $13.42 | From reduced chemical use [26]. |
| Weed Control Efficacy | Baseline | >99% herbicide injury; 94% weeds dead [26] | No significant difference in final yield compared to control [26]. |
| Weed Detection Accuracy | N/A | 94% | Sentera Aerial WeedScout program (2024 data) [26]. |
| Adoption Rate in 2025 | N/A | 55% | Projected for precision spraying applications [22]. |

Experimental Protocol for Targeted Weed Spraying: A demonstrated protocol for drone-based weed control is as follows [26]:

- High-Resolution Mapping: Fly a drone equipped with a high-precision camera over the field to capture detailed imagery of the weed pressure.

- Prescription Generation: Use automated analysis software (e.g., Sentera's Aerial WeedScout) to process the imagery, detect weeds as small as 1/4 inch, and automatically generate a targeted spray prescription map within 24 hours.

- Application: Upload the prescription file to a sprayer with nozzle control capabilities (e.g., a self-propelled sprayer or a spray drone). The sprayer then applies herbicide only to the predefined zones, optimizing tank mix and volume for the specific weed pressure.

- Validation: Conduct pre- and post-application weed counts in the treatment area to quantify control efficacy. Compare crop yield at harvest with a control area treated with broadcast application.
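The validation step reduces to simple arithmetic. The sketch below computes percent weed control per quadrat from paired pre- and post-application counts, and the relative yield difference between targeted and broadcast areas. The quadrat-based design and the input values are assumptions for illustration; deciding whether a yield difference is statistically significant would additionally require a formal test on replicated data.

```python
from statistics import mean


def control_efficacy(pre_counts, post_counts):
    """Mean percent weed control, 100 * (pre - post) / pre, over quadrats.

    Quadrats with no weeds before application are skipped, since control
    is undefined there.
    """
    if len(pre_counts) != len(post_counts):
        raise ValueError("pre and post counts must be paired by quadrat")
    per_quadrat = [100.0 * (pre - post) / pre
                   for pre, post in zip(pre_counts, post_counts) if pre > 0]
    if not per_quadrat:
        raise ValueError("no quadrat had weeds before application")
    return mean(per_quadrat)


def yield_difference_pct(targeted_yields, broadcast_yields):
    """Relative yield difference of targeted vs. broadcast areas, in percent."""
    base = mean(broadcast_yields)
    return 100.0 * (mean(targeted_yields) - base) / base


if __name__ == "__main__":
    # Hypothetical weed counts for three quadrats and yields for two strips.
    print(control_efficacy([20, 10, 0], [1, 0, 0]))
    print(yield_difference_pct([50.0, 52.0], [51.0, 51.0]))
```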

Phenotypic Analysis and Yield Prediction

Drones enable high-throughput field phenotyping (HTFP), using advanced sensors and artificial intelligence to quantitatively measure key plant traits and predict yield at scale.

Key Applications and Technologies: This function involves using drones to estimate agronomic traits like plant height, biomass, leaf area index (LAI), and, crucially, yield components [24] [27]. Advanced AI-powered systems, such as CropQuant-Air, combine deep learning models with multispectral and RGB imagery to detect and count wheat spikes—a key yield component—and perform yield classification [27]. This allows researchers to screen hundreds of varieties for stress tolerance and yield performance under complex field conditions.

Comparative Performance Data: The table below contrasts drone-based phenotyping with traditional manual methods.

Table 3: Comparison of Phenotypic Analysis Methods

| Trait / Metric | Traditional Manual Phenotyping | Drone-Based Phenotyping (AI-Powered) | Correlation with Manual Scoring |
|---|---|---|---|
| Spike Number per m² (SNpM2) | Laborious, prone to error [27] | Automated using optimized YOLOv7 model [27] | Significant positive correlation [27] |
| Plant Height & Biomass | Destructive sampling or manual measurements | Estimated via vegetation indices and DSM analysis [24] | Good correlation with LAI and biomass [24] |
| Throughput (plots per day) | Low (10s-100s) | High (1000s) | N/A |
| Scalability | Limited to small populations | Suitable for large-scale breeding trials [27] | N/A |

Experimental Protocol for AI-Powered Phenotypic Analysis (e.g., Wheat Spike Detection): The workflow for a system like CropQuant-Air involves [27]:

- Field Trial and Image Acquisition: Establish a field trial with hundreds of wheat varieties (e.g., 210 varieties with two replicates). Use a drone with an RGB camera to capture high-resolution canopy images during the reproductive growth stage.

- Plot Segmentation: Process the stitched orthomosaic of the entire field using a deep learning model (e.g., YOLACT-Plot) to automatically segment and delineate individual experimental plots.

- Spike Detection and Counting: Within each segmented plot, run an optimized object detection model (e.g., YOLOv7) that has been trained on a large dataset of labeled wheat spikes (including public datasets like the Global Wheat Head Detection dataset) to detect and count spikes.

- Trait Extraction and Yield Classification: Extract the spike density (SNpM2) and other canopy-level spectral and textural features. Use these computed traits as input to a machine learning classifier (e.g., XGBoost) to classify plots into different yield groups.

- Validation: Validate the system's accuracy by performing correlation analysis between the computationally derived traits (e.g., spike counts) and manually scored ground truth data.
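The trait-extraction and validation steps can be sketched in a few lines. Spike density is the detected spike count divided by the plot's ground area, and agreement with manual scoring is commonly summarized with a Pearson correlation coefficient. The plot area and counts below are hypothetical, and the detector output is assumed to be a per-plot count; the sketch does not reproduce the CropQuant-Air models themselves.

```python
import math


def spikes_per_m2(spike_counts, plot_area_m2):
    """Convert per-plot spike counts into spike density (SNpM2)."""
    if plot_area_m2 <= 0:
        raise ValueError("plot area must be positive")
    return [count / plot_area_m2 for count in spike_counts]


def pearson_r(x, y):
    """Pearson correlation between model-derived and manually scored values."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two equal-length sequences with n >= 2")
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for constant input")
    return sxy / math.sqrt(sxx * syy)


if __name__ == "__main__":
    model_counts = [300, 420, 510, 610]   # hypothetical detector output per plot
    manual_counts = [310, 400, 530, 600]  # hypothetical manual scores
    density = spikes_per_m2(model_counts, plot_area_m2=1.5)
    print([round(d, 1) for d in density])
    print(round(pearson_r(model_counts, manual_counts), 3))
```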

Diagram 2: AI-powered phenotypic analysis workflow for yield prediction.

The Researcher's Toolkit: Essential Reagents and Solutions

For researchers designing experiments in drone-based agriculture and comparative monitoring, the following key resources and technologies are essential.

Table 4: Key Research Reagent Solutions for Drone-Based Agricultural Research

| Category / Solution | Specific Examples | Function in Research |
|---|---|---|
| Drone Platforms | DJI Agras T30 (spraying), Sentera (weed mapping), Parrot Bluegrass Fields (mapping) [23] | Physical vehicle for sensor and applicator deployment. |
| Sensor Packages | Multispectral (e.g., Airphen), Thermal (e.g., FLIR), RGB [24] | Captures raw data on crop reflectance, temperature, and morphology. |
| AI/Software Models | YOLOv7 (spike detection), Custom CNNs (disease detection), XGBoost (yield classification) [27] | Extracts meaningful phenotypic information from raw image data. |
| Data Processing Suites | Agisoft Metashape, Pix4D, FIELDimageR package in R [24] [26] | Processes raw images into orthomosaics and DSMs, and extracts plot-level data. |
| Validation Benchmarks | Global Wheat Head Detection (GWHD) dataset [27] | Provides standardized data for training and benchmarking AI models. |
| Comparative Technology | Wearable Plant Sensors [25] | Provides in-situ, continuous data on plant physiology (e.g., sap flow, leaf temperature) for ground-truthing and complementary studies. |

Drone technology offers distinct capabilities in mapping, spraying, and phenotyping that are highly complementary to, rather than a direct replacement for, other monitoring technologies like wearable sensors. Wearable sensors excel at continuous, high-frequency monitoring of individual plant physiology [25], while drones provide a scalable, canopy-level overview. The integration of data from both platforms—detailed physiological data from wearables and scalable spatial data from drones—holds the promise of a more holistic understanding of plant-environment interactions, which is crucial for advancing breeding programs and developing sustainable agricultural practices.

Deployment in Action: Methodologies and Real-World Applications in Crop Monitoring

The pursuit of precision agriculture has given rise to two distinct technological paradigms for crop monitoring: wearable sensor technology and drone-based remote sensing. Wearable sensors, attached directly to plants, provide continuous, high-resolution physiological data at the individual plant level, enabling real-time detection of stresses before visible symptoms appear [28] [29]. In contrast, drone-based systems utilize aerial platforms equipped with advanced sensors to capture spatial and temporal data across entire fields, facilitating large-scale monitoring and the identification of management zones [4] [30]. This comparative analysis examines the technical capabilities, experimental methodologies, and research applications of these approaches within the specific context of measuring volatile organic compounds (VOCs), sap flow, stem microvariations, and microclimate parameters, which are critical indicators of plant health and stress response.

The integration of these technologies is driving a paradigm shift from reactive to proactive agriculture. Where traditional methods often rely on visual identification of stress symptoms, these advanced sensing platforms enable early intervention, potentially reducing crop losses and optimizing resource use [31] [29]. For researchers and agricultural professionals, understanding the comparative advantages, technical requirements, and data output of each approach is fundamental to designing effective monitoring strategies and advancing sustainable crop management practices.

Wearable Sensors for Plant Physiology Monitoring

Volatile Organic Compound (VOC) Sensing

2.1.1 Sensing Technologies and Materials

Wearable VOC sensors represent a cutting-edge application of materials science for plant health diagnostics. These sensors typically utilize chemiresistive or electrochemical sensing mechanisms, where exposure to target VOCs induces measurable changes in electrical properties [28]. Advanced sensing materials include metal oxide semiconductors (e.g., SnO₂, ZnO), conducting polymers (e.g., polyaniline, polypyrrole), and carbon nanomaterials (e.g., graphene, MXenes), which offer high sensitivity and low detection limits crucial for capturing subtle plant emissions [31] [28]. Recent innovations focus on developing flexible, biocompatible substrates that conform to plant surfaces without inhibiting growth or causing damage, with materials such as polydimethylsiloxane (PDMS) and biodegradable hydrogels gaining prominence for their mechanical properties and environmental sustainability [28] [29].

Plant-emitted VOCs serve as noninvasive biomarkers for tracking health and diagnosing diseases, with emission profiles changing significantly in response to both biotic and abiotic stresses [31]. For example, tomatoes infected with late blight release hexenal, while maize plants under insect attack emit increased methanol and terpenoids [31]. Monitoring these VOC signatures enables early detection of pathogens like Fusarium oxysporum and Ralstonia solanacearum, which can cause yield losses of up to 90% in tomato and potato crops [28].

Table 1: Key Plant VOC Biomarkers and Their Significance

| VOC Compound | Plant Source | Stimulant/Context | Significance |
|---|---|---|---|
| Methanol | Maize | Insect attack | General stress response indicator |
| Hexenal | Tomato | Late blight infection | Specific disease biomarker |
| Terpenoids | Corn seedlings | Insect herbivory | Direct and indirect defense response |
| Jasmonate | Corn | Mechanical damage | Defense hormone signaling |
| Monoterpene α-pinene | Pinus sylvestris | Mechanical damage, water stress | Abiotic stress indicator |
| (E)-β-caryophyllene | Maize (root) | Western corn rootworm infestation | Below-ground pest detection |
| Salicylic acid | Tobacco, soybean, potato, rice, cucumber | Biotic and abiotic stresses | Systemic acquired resistance |

2.1.2 Experimental Protocol for Wearable VOC Sensor Deployment

Objective: To continuously monitor stress-induced VOC emissions from tomato plants subjected to fungal pathogen (Fusarium oxysporum) inoculation.

Materials Required:

- Flexible chemiresistive VOC sensors (e.g., metal oxide semiconductor-based)

- Readout electronics: a resistance-measurement circuit for chemiresistive sensors, or a potentiostat if electrochemical sensors are used

- Data logging system with wireless transmission capability

- Reference analysis instrument (e.g., portable GC-MS for validation)

- Plant attachment materials (biocompatible adhesive, flexible straps)

- Pathogen culture and inoculation tools

- Environmental control chamber

Methodology:

- Sensor Calibration: Pre-deploy sensors in controlled atmosphere chambers with standard VOC solutions at known concentrations (0.1-100 ppm) to establish calibration curves for key biomarkers (e.g., hexenal, jasmonate) [28].

- Plant Preparation: Group tomato plants (n=20) into treatment (inoculated) and control groups. Maintain consistent growing conditions (25°C, 60% RH, 16/8h light/dark cycle).

- Sensor Attachment: Mount pre-calibrated sensors on fully expanded leaves using biocompatible attachment systems that minimize damage to plant tissues. Ensure proper sensor-leaf contact while allowing for natural growth.

- Pathogen Inoculation: Inoculate treatment plants with Fusarium oxysporum spore suspension (10⁶ spores/mL) applied to root zone. Control plants receive sterile water.

- Data Collection: Record continuous sensor measurements at 15-minute intervals for 14 days post-inoculation. Simultaneously collect microclimate data (temperature, humidity, light intensity).

- Validation Sampling: Conduct grab air sampling adjacent to sensor locations 2-3 times daily for GC-MS analysis to validate sensor accuracy.

- Data Analysis: Process the time-series data to identify VOC emission patterns and correlate them with disease progression, assessed visually using standard disease rating scales.
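The calibration and data-analysis steps for the VOC sensors can be sketched as an ordinary least-squares calibration curve. This minimal example assumes the sensor response (for example, the relative resistance change of a chemiresistive film) is linear in concentration over the calibrated range. That is an assumption: metal oxide sensors are often nonlinear and may need a log or power-law fit. The detection limit uses the common 3σ criterion, three times the standard deviation of blank (zero-VOC) readings divided by the calibration slope. All numbers are hypothetical.

```python
from statistics import mean, stdev


def fit_linear(conc_ppm, response):
    """Least-squares fit of response = slope * concentration + intercept."""
    mx, my = mean(conc_ppm), mean(response)
    sxx = sum((x - mx) ** 2 for x in conc_ppm)
    if sxx == 0:
        raise ValueError("need at least two distinct concentrations")
    sxy = sum((x - mx) * (y - my) for x, y in zip(conc_ppm, response))
    slope = sxy / sxx
    return slope, my - slope * mx


def response_to_ppm(response, slope, intercept):
    """Invert the calibration curve to estimate concentration in the field."""
    return (response - intercept) / slope


def detection_limit_ppm(blank_responses, slope):
    """3-sigma limit of detection from repeated blank measurements."""
    return 3.0 * stdev(blank_responses) / slope


if __name__ == "__main__":
    standards_ppm = [0.0, 1.0, 2.0, 4.0]  # hypothetical hexenal standards
    readings = [1.0, 3.0, 5.0, 9.0]       # hypothetical sensor responses
    slope, intercept = fit_linear(standards_ppm, readings)
    print(slope, intercept, response_to_ppm(7.0, slope, intercept))
    print(detection_limit_ppm([1.0, 1.2, 0.8], slope))
```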

This protocol enables real-time, non-invasive monitoring of plant stress responses, overcoming limitations of conventional VOC analysis techniques like GC-MS that lack continuous monitoring capability and require extensive sample preparation [31] [28].

Sap Flow, Stem Microvariations, and Microclimate Sensing

2.2.1 Sensing Approaches and Technical Specifications

Wearable sensors for monitoring plant hydrodynamics include dendrometers for stem microvariations and heat-based sensors for sap flow. These sensors provide critical insights into plant water relations, growth patterns, and responses to environmental stresses. Modern implementations utilize microelectromechanical systems (MEMS) technology with high-resolution strain gauges and temperature sensors that offer minimal intrusion while capturing diurnal variations in stem diameter and water flux [29]. Microclimate sensors concurrently monitor ambient conditions immediately surrounding the plant, including air temperature, relative humidity, light intensity, and leaf wetness, providing essential context for interpreting physiological data.

Table 2: Wearable Sensor Performance Specifications for Plant Physiology Monitoring

| Parameter | Sensor Technology | Accuracy/Resolution | Measurement Range | Key Applications |
|---|---|---|---|---|
| VOC Detection | Chemiresistive (Metal Oxide) | 0.1-5 ppm (detection limit) | 0.1-500 ppm | Early disease detection, stress response monitoring |
| VOC Detection | Electrochemical | 0.5-2 ppm (detection limit) | 0.5-1000 ppm | Specific biomarker detection (e.g., ethylene) |
| Stem Diameter | Resistive strain gauge | ±1 µm resolution | 0-20 mm variation | Water status, growth patterns, drought stress |
| Sap Flow | Heat pulse/heat balance | ±5-10% accuracy | 0-300 g/h | Irrigation scheduling, transpiration studies |
| Temperature | Thermistor | ±0.1°C | -40°C to +85°C | Microclimate characterization |
| Relative Humidity | Capacitive sensor | ±2% RH | 0-100% RH | Microclimate characterization, disease risk assessment |
| Light Intensity | Photodiode | ±5% | 0-2000 µmol/m²/s | Photosynthetically active radiation (PAR) monitoring |

2.2.2 Experimental Protocol for Hydrodynamic Monitoring

Objective: To simultaneously monitor stem microvariations, sap flow, and microclimate parameters on potato plants under progressive drought stress.

Materials Required:

- High-resolution dendrometer (e.g., resistive strain gauge type)

- Heat ratio method sap flow sensors

- Microclimate sensor array (temperature, RH, light, wind)

- Data logger with multi-channel capability

- Power supply (solar/battery hybrid system)

- Calibration instruments (digital calipers, reference thermometers)

Methodology:

- Sensor Installation: Install dendrometers on main stems of potato plants (n=15) at 15 cm above soil level. Apply minimal contact pressure to avoid constricting growth. Install sap flow sensors on adjacent stems following manufacturer specifications for proper thermal isolation.

- Microclimate Setup: Position microclimate sensors at plant canopy height, ensuring representative exposure without shading from the sensors themselves.

- Baseline Recording: Collect data for 3-5 days under well-watered conditions to establish baseline variability and plant-specific patterns.

- Treatment Application: Withhold irrigation to initiate drought stress while continuing to log all sensors at 10-minute intervals.

- Reference Measurements: Periodically collect reference measurements for validation during the experimental period, including leaf water potential (destructive) and stomatal conductance.

- Data Processing: Apply temperature compensation to dendrometer data. Calculate heat-pulse velocity with the algorithm appropriate to the installed heat ratio method sensors, then convert it to sap flow. Correlate stem contractions and expansions with sap flow rates and microclimate conditions.
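The data-processing step for the hydrodynamic sensors can be sketched as follows. The first function applies the heat ratio method equation, in which heat-pulse velocity is proportional to the natural log of the ratio of temperature rises at probes placed equidistant downstream and upstream of the heater. The thermal diffusivity (0.0025 cm²/s) and probe spacing (0.6 cm) defaults are typical values used here as assumptions; the result is uncorrected heat-pulse velocity, and converting it to sap flow in g/h would require wound, tissue, and stem cross-section corrections not shown. The second function approximates maximum daily shrinkage (MDS), a common dendrometer-based water-stress indicator, as the daily maximum minus the daily minimum stem diameter.

```python
import math


def heat_ratio_velocity_cm_per_h(dt_downstream, dt_upstream,
                                 k_cm2_per_s=2.5e-3, x_cm=0.6):
    """Heat-pulse velocity (cm/h) by the heat ratio method.

    Vh = (k / x) * ln(v1 / v2) * 3600, where v1 and v2 are the temperature
    rises at the downstream and upstream probes, k is thermal diffusivity,
    and x is the heater-to-probe distance (defaults are assumptions).
    """
    if dt_downstream <= 0 or dt_upstream <= 0:
        raise ValueError("temperature rises must be positive")
    return (k_cm2_per_s / x_cm) * math.log(dt_downstream / dt_upstream) * 3600.0


def max_daily_shrinkage(diameter_um_by_day):
    """Approximate MDS per day as max minus min stem diameter (micrometers)."""
    return {day: max(values) - min(values)
            for day, values in diameter_um_by_day.items() if values}


if __name__ == "__main__":
    print(round(heat_ratio_velocity_cm_per_h(0.55, 0.45), 2))
    readings = {"day1": [5000, 4985, 4970, 4990],
                "day5": [4960, 4920, 4900, 4930]}  # hypothetical readings
    print(max_daily_shrinkage(readings))
```

A rising MDS over successive days of withheld irrigation, alongside declining heat-pulse velocity, is the kind of pattern the correlation step is meant to reveal.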

This integrated approach reveals the complex interplay between environmental conditions and plant water relations, providing insights into drought tolerance mechanisms and supporting irrigation optimization research.

Drone-Based Crop Monitoring Technologies

Sensor Platforms and Capabilities

Drone-based crop monitoring utilizes unmanned aerial vehicles (UAVs) equipped with multispectral, thermal, and hyperspectral sensors to assess crop health across large areas [4] [30]. These systems capture spatial and temporal data on plant physiology, water status, and pest/disease incidence, enabling researchers to identify variability that might not be visible to the naked eye. Advanced drone platforms are increasingly integrated with AI and IoT technologies, creating sophisticated data collection and analysis ecosystems for precision agriculture [30].

The sensor capabilities of agricultural drones have expanded significantly, with common payloads including:

- Multispectral Sensors: Capture data in specific wavelength bands (e.g., red edge, near-infrared) used to calculate vegetation indices like NDVI (Normalized Difference Vegetation Index), which correlate with crop health, biomass, and nutrient status [4] [28].

- Thermal Sensors: Measure canopy temperature as an indicator of plant water stress, enabling targeted irrigation management [30].

- Hyperspectral Sensors: Provide high spectral resolution data for detecting subtle physiological changes and specific stress responses [29].

- LiDAR: Creates detailed 3D models of crop canopies for growth monitoring and biomass estimation [32].
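As an example of how thermal imagery becomes a water-stress indicator, the crop water stress index (CWSI) normalizes canopy temperature between a non-stressed (wet) reference and a non-transpiring (dry) reference. The sketch below assumes the wet and dry reference temperatures are already known for the flight, for example from reference surfaces imaged alongside the crop; how they are obtained varies between studies and is not specified in the sources cited here.

```python
def cwsi(canopy_c, wet_ref_c, dry_ref_c):
    """Crop water stress index: 0 = unstressed, 1 = fully stressed.

    CWSI = (Tc - Twet) / (Tdry - Twet), clipped to [0, 1] because noisy
    pixels can fall outside the reference range.
    """
    span = dry_ref_c - wet_ref_c
    if span <= 0:
        raise ValueError("dry reference must be warmer than wet reference")
    value = (canopy_c - wet_ref_c) / span
    return min(max(value, 0.0), 1.0)


if __name__ == "__main__":
    # Hypothetical canopy pixel temperatures (deg C) from one thermal flight.
    for tc in (26.0, 30.0, 36.5):
        print(tc, cwsi(tc, wet_ref_c=25.0, dry_ref_c=35.0))
```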

Recent trends include the development of sensor fusion technologies that combine data from multiple sensors to provide more comprehensive insights, and the integration of 5G and edge computing for real-time data processing and decision support [33].

Table 3: Drone-Based Sensors for Agricultural Monitoring Applications

| Sensor Type | Key Parameters Measured | Spatial Resolution | Application Examples | Limitations |
|---|---|---|---|---|
| Multispectral | Vegetation indices (NDVI, NDRE) | 1-20 cm/pixel | Crop health assessment, nutrient deficiency detection | Limited to surface-level phenomena |
| Thermal | Canopy temperature | 10-50 cm/pixel | Water stress identification, irrigation scheduling | Affected by ambient conditions |
| Hyperspectral | Narrowband spectral reflectance | 5-30 cm/pixel | Disease detection, pigment composition analysis | High cost, complex data processing |
| LiDAR | Canopy structure, height | 5-50 cm/pixel | Biomass estimation, growth monitoring | Limited penetration through dense canopies |
| RGB | Visual assessment, canopy cover | 1-10 cm/pixel | Growth stage assessment, stand count | Limited to visible spectrum |

Experimental Protocol for Drone-Based Field Monitoring

Objective: To map spatial variability of water stress and disease incidence in a maize field using a multi-sensor drone platform.

Materials Required:

- Multirotor or fixed-wing UAV platform

- Multi-sensor payload (multispectral, thermal, RGB cameras)

- Ground control targets for georeferencing and calibrated reflectance panels for radiometric calibration

- GPS base station for precise geotagging

- Data processing software (e.g., Pix4D, Agisoft Metashape)

- Field validation equipment (SPAD meter, leaf water potential meter)

Methodology:

- Flight Planning: Design autonomous flight missions ensuring adequate forward and side overlap (80%/70% minimum), appropriate altitude for target resolution, and consistent sun geometry (within 2 hours of solar noon).

- Ground Control: Establish and survey ground control points with differential GPS for spatial accuracy and radiometric calibration targets for spectral consistency.

- Data Acquisition: Conduct repeated flights at critical crop growth stages (e.g., V6, VT, R3) maintaining consistent flight parameters. Capture simultaneous multispectral and thermal imagery.

- Field Validation: Collect coincident ground truth data including leaf water potential, chlorophyll content, and visual disease ratings at pre-determined sample locations across the field.

- Data Processing: Generate orthomosaics, vegetation index maps, and canopy temperature maps using photogrammetric software. Apply radiometric calibration to ensure data comparability across dates.

- Data Analysis: Conduct spatial analysis to identify patterns of variability. Correlate drone-derived indices with ground measurements. Develop prescription maps for targeted interventions.
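The flight-planning arithmetic behind the overlap and altitude requirements can be sketched with the standard pinhole-camera relations: ground sampling distance (GSD) scales with altitude times sensor width divided by focal length times image width, and photo and flight-line spacing follow from the image footprint and the chosen overlap. The camera parameters in the example are hypothetical, and the sketch assumes a nadir-pointing camera over flat terrain with the sensor's long side oriented across track.

```python
from dataclasses import dataclass


@dataclass
class Camera:
    """Hypothetical camera description; values are not tied to any product."""
    sensor_width_mm: float   # across-track sensor dimension
    sensor_height_mm: float  # along-track sensor dimension
    focal_length_mm: float
    image_width_px: int


def gsd_cm_per_px(cam: Camera, altitude_m: float) -> float:
    """Ground sampling distance in cm/pixel for a nadir image over flat ground."""
    return (cam.sensor_width_mm * altitude_m * 100.0) / (
        cam.focal_length_mm * cam.image_width_px)


def photo_and_line_spacing_m(cam: Camera, altitude_m: float,
                             forward_overlap: float = 0.8,
                             side_overlap: float = 0.7):
    """Distance between exposures and between flight lines for given overlaps."""
    footprint_across_m = cam.sensor_width_mm * altitude_m / cam.focal_length_mm
    footprint_along_m = cam.sensor_height_mm * altitude_m / cam.focal_length_mm
    photo_spacing = footprint_along_m * (1.0 - forward_overlap)
    line_spacing = footprint_across_m * (1.0 - side_overlap)
    return photo_spacing, line_spacing


if __name__ == "__main__":
    cam = Camera(sensor_width_mm=10.0, sensor_height_mm=5.0,
                 focal_length_mm=10.0, image_width_px=1000)
    print(gsd_cm_per_px(cam, altitude_m=100.0))
    print(photo_and_line_spacing_m(cam, altitude_m=100.0))
```

Lowering the altitude or raising the overlap shortens both spacings, which increases the number of images and the flight time needed to cover the same field.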

This approach enables researchers to capture field-scale variability efficiently, identifying problem areas that might be missed with point-based sampling and enabling targeted collection of more detailed ground observations.

Comparative Analysis: Wearable Sensors vs. Drone-Based Monitoring

Technical and Operational Comparison

The selection between wearable sensors and drone-based monitoring approaches involves balancing multiple factors including spatial and temporal resolution, parameters measured, and operational constraints. Each approach offers distinct advantages that make them suitable for different research scenarios and questions.

Table 4: Comprehensive Comparison of Monitoring Approaches

| Characteristic | Wearable Sensors | Drone-Based Monitoring |
|---|---|---|
| Spatial Scale | Single plant or organ level | Field scale (hectares) |
| Temporal Resolution | Continuous (minutes) | Periodic (days/weeks) |
| Spatial Resolution | Point measurements | High-resolution maps (cm-pixel) |
| Primary Parameters | Direct physiological measures (VOCs, sap flow, stem growth) | Proxy indicators (vegetation indices, canopy temperature) |
| Data Output | High-resolution time series | Georeferenced imagery and maps |
| Early Detection Capability | High (pre-symptomatic detection) | Moderate (stress usually must be expressed in the canopy to be detected) |
| Labor Requirements | High initial installation, lower maintenance | Lower per data collection event |
| Cost Structure | Lower per unit, higher at scale | Higher platform investment, lower marginal cost |
| Integration with AI | Emerging for pattern recognition | Well-established for image analysis and automation |
| Limitations | Limited spatial coverage, potential plant interference | Weather-dependent, limited direct physiological measurement |

Integrated Research Framework

For comprehensive crop monitoring research, wearable sensors and drone-based approaches should be viewed as complementary rather than competing technologies. Wearable sensors provide the high-temporal-resolution physiological grounding for interpreting drone-derived spatial patterns, while drones identify spatial variability that guides strategic placement of wearable sensors.
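One way to let drone maps guide wearable-sensor placement is stratified sampling: divide an NDVI map into classes by quantile and choose a few candidate pixel locations from each class, so that sensors cover low-, medium-, and high-vigor zones. The sketch below is a hypothetical illustration of that idea using NumPy; the number of classes, the sites per class, and random selection within a class are assumptions, and in practice candidate sites would also be checked for accessibility and edge effects.

```python
import numpy as np


def pick_sensor_sites(ndvi, n_classes=3, per_class=2, seed=0):
    """Return (row, col, class) tuples sampled from quantile-based NDVI classes.

    Class 0 holds the lowest-NDVI pixels; NaN pixels (e.g., masked soil or
    areas outside the field) are ignored.
    """
    ndvi = np.asarray(ndvi, dtype=float)
    valid = np.isfinite(ndvi)
    edges = np.quantile(ndvi[valid], np.linspace(0.0, 1.0, n_classes + 1))
    # Interior edges split the valid range into n_classes bins (0..n_classes-1).
    classes = np.digitize(ndvi, edges[1:-1])
    rng = np.random.default_rng(seed)
    sites = []
    for c in range(n_classes):
        rows, cols = np.nonzero(valid & (classes == c))
        if rows.size == 0:
            continue
        chosen = rng.choice(rows.size, size=min(per_class, rows.size), replace=False)
        sites.extend((int(rows[i]), int(cols[i]), c) for i in chosen)
    return sites


if __name__ == "__main__":
    ndvi_map = np.array([[0.10, 0.20, 0.30],
                         [0.50, 0.60, 0.70],
                         [0.80, 0.85, 0.90]])  # hypothetical NDVI map
    for row, col, cls in pick_sensor_sites(ndvi_map):
        print(f"class {cls}: pixel ({row}, {col})")
```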

The following workflow diagram illustrates how these technologies can be integrated in a research context:

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of wearable sensor and drone-based monitoring research requires specific materials and technologies. The following table details key solutions and their functions for researchers designing experiments in this field.

Table 5: Essential Research Reagents and Solutions for Advanced Crop Monitoring

| Category | Item/Technology | Specification/Function | Application Context |
|---|---|---|---|
| Wearable Sensor Materials | Chemiresistive sensing films | Metal oxide (SnO₂, WO₃) or polymer-based (PANI, PPy) sensitive layers | VOC detection and monitoring |
| | Flexible substrates | PDMS, polyimide, or biodegradable hydrogel materials | Conformable plant attachment |
| | Stretchable conductors | Ag/AgCl ink, graphene, or liquid metal (Galinstan) circuits | Durable electrical connections on moving plant parts |
| | Biocompatible adhesives | Silicone-based, acrylic, or hydrogel formulations | Secure attachment minimizing plant damage |
| Drone Sensor Technologies | Multispectral cameras | 5-12 band sensors capturing visible to near-infrared spectra | Vegetation health assessment via NDVI, NDRE |
| | Thermal imaging cameras | Uncooled microbolometer with <50 mK thermal sensitivity | Canopy temperature measurement for water stress |
| | LiDAR systems | Rotary or solid-state with specific point density capabilities | 3D canopy modeling and biomass estimation |
| | Hyperspectral imagers | 100+ contiguous bands with 3-10 nm spectral resolution | Detailed pigment and biochemical analysis |
| Data Acquisition & Processing | IoT sensor nodes | Low-power microcontrollers (nRF52840, ARM Cortex-M4) with BLE | Field data collection and wireless transmission |
| | Edge computing devices | Onboard processing units for real-time data analysis | Immediate data processing and decision support |
| | Photogrammetry software | Pix4D, Agisoft Metashape, or OpenDroneMap | Orthomosaic and 3D model generation from drone imagery |
| Calibration & Validation | Portable gas chromatographs | GC-MS systems for VOC identification and quantification | Wearable sensor validation |
| | Spectroradiometers | Field portable instruments with 1-3 nm resolution | Drone sensor radiometric calibration |
| | Plant physiology tools | Porometer, pressure chamber, fluorometer | Ground truth physiological measurements |

Wearable sensors and drone-based monitoring represent complementary paradigms in modern agricultural research, each with distinct advantages and optimal application domains. Wearable sensors excel in providing high-temporal-resolution physiological data at the individual plant level, enabling pre-symptomatic detection of stresses through direct measurement of VOCs, sap flow, and stem microvariations [31] [28]. These technologies are particularly valuable for detailed mechanism studies, genotype screening, and precise irrigation management. In contrast, drone-based systems offer unparalleled capabilities for spatial assessment at field scale, identifying variability patterns and hotspots that guide targeted management and ground-level investigations [4] [30].

The choice between these approaches—or their strategic integration—should be guided by specific research objectives, scale requirements, and resource constraints. As both technologies continue to advance, driven by innovations in materials science, AI integration, and sensor miniaturization, their combined application promises to accelerate our understanding of plant-environment interactions and support the development of more resilient and productive agricultural systems. For the research community, mastering both technological domains and their integrative potential will be essential for addressing the complex challenges of sustainable crop production in a changing climate.

The quantitative monitoring of crop health and growth is a cornerstone of precision agriculture and agricultural research [34]. Among the array of technologies available, drone-based methodologies have emerged as a powerful tool, offering a unique combination of high spatial resolution, extensive coverage, and operational flexibility. This guide provides a comparative analysis of drone-based sensing technologies—specifically multispectral/hyperspectral imaging, NDVI mapping, and 3D topography—framed within a broader thesis examining their role alongside emerging contact-based methods, such as wearable sensors. For researchers and scientists, understanding the capabilities, limitations, and appropriate application contexts of these aerial technologies is critical for designing robust experiments and monitoring systems. Drones, sometimes termed "flying tractors," have evolved from hobbyist gadgets to multifunctional agricultural tools capable of spraying, sowing, and, most critically for research, high-resolution sensing and mapping [35]. This analysis will dissect their performance through experimental data, detailed methodologies, and comparative benchmarks.

Drone-based remote sensing captures information about crops without direct contact, primarily by detecting reflected electromagnetic radiation. This contrasts with wearable sensors, which are attached directly to plants to achieve high temporal and spatial resolution when monitoring physiological and ecological information [34] [36].

Multispectral Imaging captures data across a few discrete, predefined wavelength bands (e.g., blue, green, red, red-edge, near-infrared). It is the workhorse for calculating established Vegetation Indices (VIs) like NDVI.

Hyperspectral Imaging is a more advanced technology that captures data across hundreds of contiguous, narrow spectral bands, generating a continuous spectrum for each pixel [37]. This allows detailed analysis of spectral signatures to detect subtle changes in plant health, moisture, and nutrients that broadband multispectral sensors cannot resolve.

NDVI Mapping is an application rather than a sensing technology itself. The Normalized Difference Vegetation Index (NDVI) is a widely used vegetation index calculated from multispectral or hyperspectral data as the normalized difference between near-infrared reflectance (which healthy vegetation strongly reflects) and red reflectance (which chlorophyll absorbs): NDVI = (NIR - Red) / (NIR + Red). Values range from -1 to 1, with higher values indicating denser, more vigorous green vegetation, which makes NDVI a proxy for relative biomass and health.
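As a minimal sketch of how an NDVI map is produced, the Python snippet below applies the index pixel by pixel to two co-registered reflectance bands held in NumPy arrays. It assumes the bands have already been radiometrically calibrated to reflectance and that loading them from imagery (for example, a GeoTIFF orthomosaic) happens elsewhere; the function name `ndvi_map` is illustrative.

```python
import numpy as np


def ndvi_map(nir, red, nodata=np.nan):
    """Return per-pixel NDVI = (NIR - Red) / (NIR + Red).

    `nir` and `red` are co-registered reflectance arrays of the same shape.
    Pixels where NIR + Red is not positive (e.g., masked or empty pixels)
    are set to `nodata` instead of raising a division error.
    """
    nir = np.asarray(nir, dtype=np.float64)
    red = np.asarray(red, dtype=np.float64)
    if nir.shape != red.shape:
        raise ValueError("NIR and red bands must have the same shape")

    denom = nir + red
    out = np.full(nir.shape, nodata, dtype=np.float64)
    valid = denom > 0
    out[valid] = (nir[valid] - red[valid]) / denom[valid]
    return out


if __name__ == "__main__":
    # Toy 2x2 scene: dense canopy, sparse canopy, empty pixel, bare soil.
    nir = [[0.50, 0.30], [0.00, 0.25]]
    red = [[0.05, 0.10], [0.00, 0.15]]
    print(ndvi_map(nir, red))
```

Keeping no-data pixels as NaN rather than 0 prevents them from being mistaken for bare soil in later plot-level statistics.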

3D Topography involves creating digital elevation models of the land surface. This is often achieved using photogrammetric techniques with high-resolution RGB imagery or, more precisely, with LiDAR sensors, which use laser pulses to measure distance.
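Plant height, listed in Table 1 as a key 3D measurable, is commonly derived by subtracting a terrain model (DTM, the ground surface) from a surface model (DSM, the top of the canopy). The sketch below assumes both rasters have already been generated by photogrammetry or LiDAR processing on the same grid and in the same vertical units; the function names and the 0 m floor for negative differences are illustrative choices, not a prescribed workflow.

```python
import numpy as np


def canopy_height_model(dsm, dtm, min_height=0.0):
    """Canopy height model (CHM) = DSM - DTM on a shared raster grid.

    Small negative differences usually reflect interpolation or image-matching
    noise, so they are clipped to `min_height`.
    """
    dsm = np.asarray(dsm, dtype=np.float64)
    dtm = np.asarray(dtm, dtype=np.float64)
    if dsm.shape != dtm.shape:
        raise ValueError("DSM and DTM must share the same grid")
    return np.clip(dsm - dtm, min_height, None)


def plot_height_summary(chm, plot_mask, percentile=95):
    """Mean and upper-percentile canopy height for the pixels inside one plot."""
    heights = np.asarray(chm)[np.asarray(plot_mask, dtype=bool)]
    return float(heights.mean()), float(np.percentile(heights, percentile))


if __name__ == "__main__":
    dsm = [[101.2, 100.8], [100.0, 99.9]]   # surface elevations in metres
    dtm = [[100.0, 100.0], [100.0, 100.0]]  # ground elevations in metres
    chm = canopy_height_model(dsm, dtm)
    print(chm)
    print(plot_height_summary(chm, [[True, True], [False, False]]))
```

An upper percentile is often reported alongside the mean because it is less sensitive to ground pixels visible through gaps in the canopy.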

Table 1: Core Characteristics of Drone-Based Monitoring Technologies

| Technology | Primary Data | Spectral Resolution | Key Measurables | Best Suited For |
|---|---|---|---|---|
| Multispectral | Reflectance in 3-10 bands | Low (broad bands) | Vegetation indices (NDVI, EVI), general health, biomass estimation | High-level crop health assessment, yield prediction, routine monitoring |
| Hyperspectral | Reflectance in 100s of bands | High (narrow, contiguous bands) | Biochemical composition (chlorophyll, water, nitrogen), early stress detection, species discrimination | In-depth phenotyping, pre-symptomatic disease detection, nutrient management, research on stress physiology |
| 3D Topography | 3D point clouds / digital surface models | N/A (spatial/geometric) | Plant height, canopy structure, terrain models, erosion mapping | Growth monitoring, canopy structure analysis, field drainage planning |

Diagram 1: Workflow for drone-based crop monitoring and research applications.

Comparative Performance Analysis: Hyperspectral vs. Multispectral Indices

The choice between multispectral and hyperspectral data has direct implications for the accuracy and depth of agricultural insights. A benchmark study comparing multispectral Vegetation Indices (VIs) to hyperspectral mixture models provides critical experimental data for this comparison.

Experimental Protocol & Methodology

- Objective: To investigate the relationships between common multispectral VIs and hyperspectral mixture models for estimating photosynthetic vegetation fraction (Fv) in diverse croplands [38].

- Data Acquisition: The study leveraged 64 million high-resolution (3-5 m ground sampling distance) hyperspectral spectra collected by the Airborne Visible/Infrared Imaging Spectrometer-Next Generation (AVIRIS-ng) instrument over various agricultural landscapes in California. The AVIRIS-ng instrument measures radiance from 380 to 2510 nm at 5 nm intervals [38].

- Hyperspectral Analysis: Surface reflectance was derived using the Imaging Spectrometer Optimal Fitting algorithm (ISOFIT). The photosynthetic vegetation fraction (Fv) for each pixel was then estimated by inverting a three-endmember (photosynthetic vegetation, substrate, shadow) linear spectral mixture model [38].

- Multispectral Simulation: The AVIRIS-ng surface reflectance spectra were convolved with the spectral response of the Planet SuperDove multispectral sensor to simulate real-world multispectral data. Six popular VIs (NDVI, NIRv, EVI, EVI2, SR, DVI) were computed from these simulated spectra [38].

- Comparison Metrics: The relationships between each multispectral VI and the hyperspectral Fv were quantified using both parametric (Pearson correlation, ρ) and nonparametric (Mutual Information, MI) metrics [38].
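To make the mixture-model step concrete, the sketch below inverts a linear spectral mixture model for three endmembers, with the fractions constrained to sum to one, by eliminating one fraction and solving the rest with ordinary least squares. The six-band endmember spectra are hypothetical values chosen for illustration; [38] used its own endmembers on full AVIRIS-ng spectra, and this solver (which does not force fractions to stay within 0-1) is an assumption rather than the study's exact inversion.

```python
import numpy as np


def unmix_sum_to_one(spectra, endmembers):
    """Linear spectral unmixing with fractions constrained to sum to 1.

    Model: x = sum_i f_i * e_i with sum_i f_i = 1. Substituting
    f_k = 1 - sum_{i<k} f_i gives x - e_k = sum_{i<k} f_i * (e_i - e_k),
    which is solved by least squares for all pixels at once.

    spectra:    (n_pixels, n_bands) reflectance
    endmembers: (n_endmembers, n_bands) reflectance; the last row is the reference
    returns:    (n_pixels, n_endmembers) fractions
    """
    E = np.asarray(endmembers, dtype=np.float64)
    X = np.atleast_2d(np.asarray(spectra, dtype=np.float64))
    ref = E[-1]
    A = (E[:-1] - ref).T          # (n_bands, n_endmembers - 1)
    B = (X - ref).T               # (n_bands, n_pixels)
    f, *_ = np.linalg.lstsq(A, B, rcond=None)
    f = f.T                       # (n_pixels, n_endmembers - 1)
    last = 1.0 - f.sum(axis=1, keepdims=True)
    return np.hstack([f, last])


# Hypothetical endmembers over six broad bands (blue, green, red, NIR, SWIR1, SWIR2).
PV = [0.03, 0.06, 0.04, 0.45, 0.30, 0.15]          # photosynthetic vegetation
SUBSTRATE = [0.12, 0.16, 0.20, 0.26, 0.32, 0.28]   # soil / non-photosynthetic material
SHADOW = [0.01, 0.01, 0.01, 0.02, 0.01, 0.01]      # dark endmember

if __name__ == "__main__":
    true_f = np.array([0.6, 0.3, 0.1])
    pixel = true_f @ np.array([PV, SUBSTRATE, SHADOW])
    fractions = unmix_sum_to_one(pixel, [PV, SUBSTRATE, SHADOW])
    print("Fv =", fractions[0, 0])  # first column is the vegetation fraction
```

Putting the dark shadow endmember last makes it the reference that absorbs the sum-to-one constraint, so the first column can be read directly as Fv.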

Key Findings and Comparative Data

The study revealed significant differences in how well the various VIs correlate with the hyperspectrally derived vegetation fraction.
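The study paired Pearson's ρ with mutual information (MI) because ρ only captures linear association, while MI also registers nonlinear dependence. Below is a minimal sketch of both metrics, assuming a simple equal-width histogram estimator for MI (in nats). The estimator and bin count used in [38] are not given here, so the values this code produces are not directly comparable to Table 2.

```python
import numpy as np


def pearson_rho(x, y):
    """Pearson correlation coefficient between two 1-D samples."""
    return float(np.corrcoef(x, y)[0, 1])


def mutual_information(x, y, bins=32):
    """Histogram (plug-in) estimate of mutual information, in nats."""
    joint, _, _ = np.histogram2d(x, y, bins=bins)
    pxy = joint / joint.sum()
    px = pxy.sum(axis=1, keepdims=True)   # marginal of x, shape (bins, 1)
    py = pxy.sum(axis=0, keepdims=True)   # marginal of y, shape (1, bins)
    nonzero = pxy > 0                     # skip empty cells to avoid log(0)
    return float(np.sum(pxy[nonzero] * np.log(pxy[nonzero] / (px @ py)[nonzero])))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    fv = rng.uniform(0.0, 1.0, 5000)
    noise = rng.normal(0.0, 0.02, fv.size)
    linear_vi = 0.8 * fv + noise                      # tracks Fv linearly
    saturating_vi = 1.0 - np.exp(-5.0 * fv) + noise   # flattens at high Fv
    for name, vi in [("linear", linear_vi), ("saturating", saturating_vi)]:
        print(f"{name:10s} rho={pearson_rho(fv, vi):.3f}  MI={mutual_information(fv, vi):.3f}")
```

Plug-in MI estimates are biased upward for finite samples and depend on the bin count, so relative comparisons between indices computed with the same settings are more meaningful than absolute values.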

Table 2: Benchmarking Multispectral VIs against Hyperspectral Mixture Models [38]

| Vegetation Index (VI) | Pearson's ρ vs. Fv | Mutual Information (MI) vs. Fv | Linearity & Key Characteristics |
|---|---|---|---|
| NIRv | > 0.94 | > 1.2 | Strong linear relationship with Fv, but deviates from 1:1 correspondence. |
| DVI | > 0.94 | > 1.2 | Strong linear relationship with Fv, performs similarly to NIRv (ρ > 0.99). |
| EVI | > 0.94 | > 1.2 | Strong linear relationship and more closely approximates a 1:1 relationship with Fv. |
| EVI2 | > 0.94 | > 1.2 | Strongly interrelated with EVI (ρ > 0.99) and shows similar 1:1 correspondence with Fv. |
| NDVI | < 0.84 | 0.69 | Weaker, nonlinear, heteroskedastic relation. Severe sensitivity to background and saturation. |
| SR | < 0.84 | 0.69 | Exhibited a weaker, nonlinear relationship similar to NDVI. |

These results show that EVI and EVI2 track the hyperspectral vegetation fraction most closely (near 1:1), whereas the widely used NDVI has significant limitations, including saturation in moderate-to-dense canopies and high sensitivity to bare-soil background [38]. This distinction is critical for researchers selecting indices for quantitative studies.
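The linearity contrast in Table 2 can be reproduced qualitatively with a toy two-endmember mixture. The sketch below mixes hypothetical vegetation and soil reflectances linearly in Fv, computes the six indices with their standard formulas (EVI with G = 2.5, C1 = 6, C2 = 7.5, L = 1; EVI2 with a red coefficient of 2.4), and measures each index's departure from a straight line between Fv = 0 and Fv = 1. It ignores shadow, noise, and sensor band shapes, so it illustrates the mechanism rather than reproducing the study's numbers.

```python
import numpy as np

# Hypothetical pure-endmember reflectances (illustrative values only).
VEGETATION = {"blue": 0.04, "red": 0.05, "nir": 0.50}
SOIL = {"blue": 0.10, "red": 0.15, "nir": 0.25}


def mix(fv):
    """Linear two-endmember mixture: R = fv * R_veg + (1 - fv) * R_soil, per band."""
    return {band: fv * VEGETATION[band] + (1.0 - fv) * SOIL[band] for band in VEGETATION}


def vegetation_indices(r):
    """The six VIs compared in Table 2, from blue, red, and NIR reflectance."""
    blue, red, nir = r["blue"], r["red"], r["nir"]
    ndvi = (nir - red) / (nir + red)
    return {
        "NDVI": ndvi,
        "SR": nir / red,
        "DVI": nir - red,
        "NIRv": ndvi * nir,
        "EVI": 2.5 * (nir - red) / (nir + 6.0 * red - 7.5 * blue + 1.0),
        "EVI2": 2.5 * (nir - red) / (nir + 2.4 * red + 1.0),
    }


def linearity_gap(name):
    """Index value at Fv = 0.5 minus the straight line joining Fv = 0 and Fv = 1."""
    v0, v_half, v1 = (vegetation_indices(mix(fv))[name] for fv in (0.0, 0.5, 1.0))
    return v_half - 0.5 * (v0 + v1)


if __name__ == "__main__":
    for name in ("NDVI", "SR", "NIRv", "EVI", "EVI2", "DVI"):
        print(f"{name:5s} gap at Fv = 0.5: {linearity_gap(name):+.4f}")
```

In this setup DVI is exactly linear in Fv (its gap is zero up to rounding), while NDVI shows a positive gap: it rises quickly at low cover and flattens toward full cover, the saturation behavior described above.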

The Impact of Topography on Drone-Based Vegetation Indices

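A crucial consideration for drone-based monitoring in non-flat terrain is the impact of topography. A comprehensive 2024 study quantified these effects using radiative transfer models and MODIS satellite products, showing that topographic variation can significantly compromise the reliability of vegetation indices. Because that analysis spans 30 m to 3 km resolutions, the error magnitudes it reports should not be assumed to carry over directly to centimeter-scale drone imagery.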

Experimental Protocol for Topographic Effect Analysis

- Evaluation Strategies: The study employed three parallel strategies: 1) an analytic radiative transfer model, 2) a 3D ray-tracing radiative transfer model, and 3) analysis of real MODIS satellite products [39].

- Key Measured Variables: The research quantified the impact of topography, particularly shadow effects, on ten different vegetation indices across various spatial resolutions (from 30 m to 3 km) and temporal scales (daily to multi-year trends) [39].

- Trend Analysis: The study analyzed long-term VI data (2003-2020) from MODIS-Terra and MODIS-Aqua over the Tibetan Plateau to assess how topography influences interannual trend calculations [39].
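The trend comparison can be sketched as fitting a least-squares linear slope to each sensor's annual VI series and taking the absolute difference between the Terra and Aqua slopes. This summary does not state which trend estimator [39] used (Theil-Sen slopes are also common), so the ordinary least-squares fit and the synthetic series below are assumptions for illustration.

```python
import numpy as np


def linear_trend(years, values):
    """Ordinary least-squares slope of `values` against `years` (units per year)."""
    years = np.asarray(years, dtype=np.float64)
    values = np.asarray(values, dtype=np.float64)
    # Centering the years keeps the fit well conditioned for calendar-year inputs.
    slope, _intercept = np.polyfit(years - years.mean(), values, 1)
    return float(slope)


def trend_deviation(years, vi_terra, vi_aqua):
    """Absolute difference between the Terra and Aqua VI trends."""
    return abs(linear_trend(years, vi_terra) - linear_trend(years, vi_aqua))


if __name__ == "__main__":
    years = np.arange(2003, 2021)  # 2003-2020 inclusive
    rng = np.random.default_rng(42)
    base = 0.30 + 0.002 * (years - 2003)
    # Hypothetical series: the two overpass times see slightly different illumination.
    terra = base + rng.normal(0.0, 0.005, years.size)
    aqua = base + 0.0005 * (years - 2003) + rng.normal(0.0, 0.005, years.size)
    print(f"Terra-Aqua trend deviation: {trend_deviation(years, terra, aqua):.5f} per year")
```

Grouping pixels by slope class and computing this deviation per class is one way to examine how trend disagreement grows on steeper terrain.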

Quantitative Findings on Topographic Impact

- Spatial Scale Impact: Topographic effects were significant across scales. The Mean Relative Error (MRE) for NDVI reached 28.5% at a 30 m resolution and remained substantial (11.1%) even at a 3 km resolution [39].

- Shadow vs. Non-Shadow Areas: Shadow effects dramatically increased errors. The MRE for NDVI was 14.7% in non-shadow areas compared to 26.1% in shadow areas [39].

- Impact on Long-Term Trends: Topography-induced variations can bias long-term vegetation studies. The study found that VI trend deviations between MODIS-Terra and MODIS-Aqua generally doubled as the slope steepened [39].
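The error statistics above can be reproduced in outline as a mean relative error (MRE) between a VI computed over rugged terrain and a flat-terrain reference for the same scene, split by a shadow mask. The exact MRE definition and reference used in [39] are not given in this summary. The version below averages absolute relative differences over valid pixels and reports the result in percent; that definition is an assumption, and the `shadow_mask` input is hypothetical.

```python
import numpy as np


def mean_relative_error(vi_terrain, vi_flat, eps=1e-9):
    """MRE (%) = mean(|VI_terrain - VI_flat| / |VI_flat|) over valid pixels."""
    t = np.asarray(vi_terrain, dtype=np.float64)
    f = np.asarray(vi_flat, dtype=np.float64)
    valid = np.isfinite(t) & np.isfinite(f) & (np.abs(f) > eps)
    return float(np.mean(np.abs(t[valid] - f[valid]) / np.abs(f[valid])) * 100.0)


def mre_by_shadow(vi_terrain, vi_flat, shadow_mask):
    """Report MRE separately for shadowed and sunlit pixels."""
    t = np.asarray(vi_terrain, dtype=np.float64)
    f = np.asarray(vi_flat, dtype=np.float64)
    shadow = np.asarray(shadow_mask, dtype=bool)
    return {
        "shadow": mean_relative_error(t[shadow], f[shadow]),
        "non_shadow": mean_relative_error(t[~shadow], f[~shadow]),
    }


if __name__ == "__main__":
    flat = np.array([0.60, 0.60, 0.50, 0.50])       # flat-terrain reference VI
    terrain = np.array([0.55, 0.66, 0.35, 0.40])    # same pixels over rugged terrain
    shadow = np.array([False, False, True, True])
    print(mean_relative_error(terrain, flat))
    print(mre_by_shadow(terrain, flat, shadow))
```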

These findings underscore the necessity of accounting for topographic effects in any drone-based research conducted in undulating or mountainous terrain, as ignoring them can lead to incorrect conclusions about vegetation dynamics.

Comparative Framework: Drones vs. Wearable Sensors

To frame drone-based methodologies within the broader thesis of crop monitoring, a direct comparison with the emerging paradigm of wearable sensors is essential. These technologies represent two fundamentally different approaches: non-contact remote sensing versus direct, on-plant measurement.

Table 3: Drone-Based Monitoring vs. Wearable Crop Sensors

| Parameter | Drone-Based Sensing (Multispectral/Hyperspectral) | Wearable Crop Sensors |
|---|---|---|
| Spatial Coverage | Extensive (entire fields) | Localized (single plant or organ) |
| Spatial Resolution | Centimeter to meter scale | Millimeter to centimeter scale (on-plant) |
| Temporal Resolution | Minutes to days (flight-dependent) | Continuous, real-time |
| Measured Variables | Canopy-level spectral reflectance, vegetation indices, canopy structure | Direct biophysical (e.g., stem diameter, sap flow) and biochemical (e.g., xylem pH) parameters [34] [36] |
| Key Advantage | Scalability, ability to map spatial variability, non-invasive | High temporal resolution, direct measurement of physiological status, minimal latency [36] |
| Primary Limitation | Affected by atmosphere/topography, indirect inference of plant status, data processing demands | Limited spatial coverage, potential to damage plant tissues if not designed properly [34] |
| Ideal Research Use Case | Field-scale phenotyping, yield prediction, stress mapping, topographic studies | Deep-dive physiological studies, monitoring rapid plant responses, optimizing irrigation timing |

Diagram 2: Data flow and applications for wearable crop sensors.

The Scientist's Toolkit: Essential Reagents & Materials

For researchers designing experiments in drone-based crop monitoring, familiarity with the following key tools and platforms is essential.

Table 4: Key Research Reagent Solutions for Drone-Based Monitoring

| Item / Platform | Category | Primary Function in Research | Noteworthy Features |
|---|---|---|---|
| Planet SuperDove | Multispectral Satellite Data | Provides high-cadence (daily) baseline data for validating/calibrating drone-derived VIs. | 8 spectral bands, ~3 m resolution, global coverage [38]. |
| AVIRIS-ng | Airborne Hyperspectral Sensor | Gold-standard hyperspectral data for method development and validation against drone sensors. | 5 nm spectral resolution, 380-2510 nm range, used for benchmarking [38]. |
| FlyPix AI | Geospatial Analysis Platform | AI-powered platform for processing drone and satellite imagery, including NDVI and custom analysis. | Supports multispectral, hyperspectral, LiDAR; no-code interface for AI model training [40]. |
| QGIS | Geographic Information System | Open-source software for spatial data analysis, map creation, and integrating drone data with other layers. | Free, extensible with plugins, supports numerous GIS file formats [40]. |
| ISOFIT | Algorithm / Software | Performs atmospheric correction of radiance data to convert it to surface reflectance, a critical preprocessing step. | State-of-the-art radiative transfer-based correction model [38]. |
| Flexible Sensor Materials | Sensor Fabrication | Enable creation of conformable, biocompatible wearable sensors for concurrent, direct plant monitoring. | Polymers, hydrogels; minimize plant damage during long-term monitoring [34] [36]. |

Drone-based methodologies offer an unparalleled capacity for scalable, high-resolution spatial monitoring of crop health, stress, and topography. The comparative data show that while standard indices like NDVI have limitations, indices such as EVI and EVI2, together with the rich spectral information from hyperspectral imaging, provide powerful tools for agricultural research. However, these aerial methods are inherently susceptible to environmental confounders such as topography, as quantified in [39].