Troubleshooting Replicability in Complex Plant Protocols: A Guide to Consistent Multi-Step Experiments


Abstract

This article provides a comprehensive framework for researchers and scientists to achieve and troubleshoot replicability in complex, multi-step plant science protocols. Covering foundational concepts, methodological standardization, proactive troubleshooting, and robust validation techniques, it synthesizes best practices from recent large-scale reproducibility studies. The guide is tailored for professionals in plant research and related biomedical fields who rely on consistent, verifiable experimental outcomes to advance drug development and sustainable agriculture.

Defining the Replicability Challenge: Why Complex Plant Protocols Often Fail

For researchers working with complex multi-step plant protocols, a clear understanding of scientific reliability is crucial. The terms repeatability, reproducibility, and replicability represent hierarchical levels of verification that guard against experimental artifacts and build confidence in your findings. Confusion between these terms can lead to miscommunication and flawed validation attempts within your research team. This guide clarifies these concepts and provides a practical troubleshooting framework to address challenges when your results cannot be consistently replicated.

Defining the Core Concepts

The terms repeatability, reproducibility, and replicability describe different levels of scientific verification. The table below summarizes their key characteristics for easy reference [1] [2].

| Term | Core Question | Key Conditions | What is Reused? | What is New? |
|---|---|---|---|---|
| Repeatability | Can I get the same result again in my own lab? | Same location, operator, equipment, and methods [2]. | Data, methods, and analysis by the same team [3]. | Successive attempts or trials [3]. |
| Reproducibility | Can another team get our results using our data and methods? | Different team, same experimental setup and data [1] [2]. | Original data and research methods [1]. | Independent team reanalyzing the data [1]. |
| Replicability | Can another team get similar results by conducting a new experiment? | Different team, location, and experimental setup [1] [2]. | Research methods and the scientific hypothesis [1]. | Newly collected data and independent analysis [1]. |

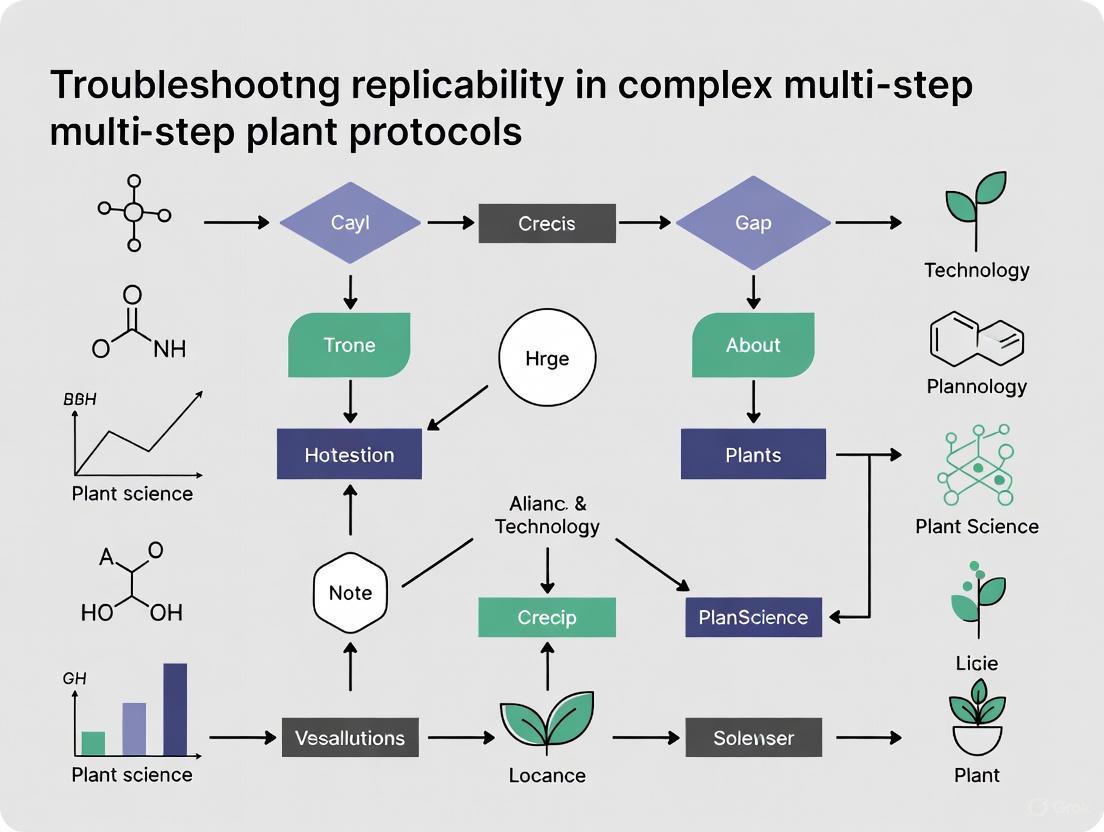

The following diagram illustrates the hierarchical relationship between these concepts and the key elements that change at each level.

The Replicability Crisis in Scientific Research

A significant challenge facing modern science is the replication crisis. Findings in many fields, including psychology, medicine, and economics, frequently fail to replicate [1]. For instance, a large-scale effort to replicate 100 psychology studies found that only about 36% of the replications produced statistically significant results, compared with 97% of the original studies [2]. In other words, when other research teams repeat a study with new data, they often obtain a different result, suggesting that the initial findings may not be reliable [1].

Several factors contribute to this problem [1] [4]:

- Unclear definitions and methods: Poor description of research methods and a lack of transparency in the discussion section.

- Publication bias: Journals are more likely to accept original studies that report positive, statistically significant results, creating a disincentive to publish replication studies or negative results.

- Pressure to publish: Intense competition for funding and academic tenure can create incentives for researchers to overstate the importance of their results or engage in questionable research practices [4].

- Lack of raw data: Unclear presentation of raw data and poor description of the data analysis undertaken.

Troubleshooting Guide: Addressing Replicability Failures in Plant Protocols

When your multi-step plant experiments fail to yield replicable results, a systematic approach to troubleshooting is essential. The following workflow provides a structured method for diagnosing and resolving these issues.

Step 1: Define and Verify the Problem

- Identify the Problem: Clearly state the expected outcome versus the actual outcome you observed. Do not assign a cause at this stage [5]. For example, "The gene expression level in the treated plant group was expected to increase 5-fold but showed no significant change."

- Verify and Replicate: Repeat the experiment to confirm the issue is consistent and not a one-time error [6]. Ask: "Can I consistently replicate this problem under the same conditions?"
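To make an expected-versus-observed statement like the gene-expression example concrete, the sketch below computes a relative fold change from qPCR cycle-threshold (Ct) values. It assumes the widely used Livak 2^-ΔΔCt method with roughly 100% amplification efficiency for both the target and the reference gene; the function names and Ct values are hypothetical, chosen only for illustration.

```python
def fold_change_ddct(ct_target_treated: float, ct_ref_treated: float,
                     ct_target_control: float, ct_ref_control: float) -> float:
    """Relative expression via the Livak 2^-ΔΔCt method (assumes ~100% efficiency)."""
    # Normalize the target gene to a reference gene within each condition.
    dct_treated = ct_target_treated - ct_ref_treated
    dct_control = ct_target_control - ct_ref_control
    # Compare the normalized values between treated and control groups.
    ddct = dct_treated - dct_control
    return 2 ** (-ddct)


def describe_discrepancy(expected_fold: float, observed_fold: float) -> str:
    """State the expected vs. observed outcome without assigning a cause."""
    return (f"Expected a {expected_fold:.1f}-fold change; "
            f"observed {observed_fold:.2f}-fold.")


# Hypothetical Ct values: the target barely moves relative to the reference gene.
observed = fold_change_ddct(24.9, 18.0, 25.0, 18.0)
print(describe_discrepancy(5.0, observed))
```

Keeping the calculation in a function means every repeat run is summarized the same way, which makes it easier to confirm that the discrepancy recurs.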

Step 2: Review Methods and Challenge Assumptions

- Inspect Equipment and Reagents:

  - Equipment: Ensure all instruments are properly calibrated and maintained [7]. For plant growth chambers, verify temperature, humidity, and light cycle settings.

  - Reagents: Check the storage conditions and expiration dates of all reagents [8]. Biological reagents like enzymes or antibodies can be sensitive to improper storage. Visually inspect solutions for cloudiness or precipitation [8].

- Check Your Controls: Re-examine your positive and negative controls. A failed positive control indicates a problem with the protocol itself, while a valid positive control narrows the issue to your specific test samples [8].

- Challenge Your Hypothesis: Consider if your initial assumptions were correct. Could the unexpected result be a novel finding? Re-evaluate if your experimental design and hypothesis are robust [7].
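The control logic in Step 2 can be written down as an explicit rule so that every team member interprets control outcomes the same way. This is a minimal sketch: the positive-control branch follows the rule stated above, while the negative-control branch (unexpected signal pointing to contamination or nonspecific signal) is a common convention added here as an assumption. The function name is hypothetical.

```python
def interpret_controls(positive_ok: bool, negative_clean: bool) -> str:
    """Map control outcomes to the most likely place to look next (a rule of thumb)."""
    if not negative_clean:
        # Assumed convention: signal where none is expected suggests contamination
        # or nonspecific signal, which must be resolved before anything else.
        return "check for contamination or nonspecific signal"
    if not positive_ok:
        # A failed positive control points at the protocol itself.
        return "troubleshoot the protocol itself"
    # Both controls behave as expected: the issue is likely in the test samples.
    return "focus on the test samples"


print(interpret_controls(positive_ok=True, negative_clean=True))
```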

Step 3: Isolate Variables Systematically

- Change One Variable at a Time: Generate a list of variables that could have caused the failure (e.g., incubation time, reagent concentration, sample age). Change only one variable per experimental iteration to clearly identify the cause [8].

- Prioritize Likely Causes: Start with the variables that are easiest to test (e.g., microscope settings) before moving to more time-consuming ones (e.g., antibody concentration). Use your experience and the literature to judge which variable is most likely at fault [8].
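The two rules of Step 3 (change one variable per run, test the cheapest candidates first) can be combined into a simple run plan. The sketch below is illustrative only: the baseline parameters, candidate values, and the 1 to 5 effort scale are hypothetical placeholders for your own protocol's variables.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str           # protocol variable suspected of causing the failure
    test_value: object  # alternative value to try
    effort: int         # rough cost to test (1 = quick, 5 = slow); hypothetical scale


def one_factor_plan(baseline: dict, candidates: list[Candidate]) -> list[dict]:
    """Return one run per candidate, each differing from the baseline in exactly one variable.

    Runs are ordered from the cheapest to the most time-consuming test.
    """
    plan = []
    for cand in sorted(candidates, key=lambda c: c.effort):
        if cand.name not in baseline:
            raise KeyError(f"{cand.name!r} is not a baseline parameter")
        run = dict(baseline)          # copy so the baseline itself is never modified
        run[cand.name] = cand.test_value
        run["_changed"] = cand.name   # record which variable this run tests
        plan.append(run)
    return plan


baseline = {"incubation_min": 30, "reagent_mM": 5.0, "sample_age_days": 14}
candidates = [
    Candidate("reagent_mM", 2.5, effort=3),
    Candidate("incubation_min", 45, effort=1),
    Candidate("sample_age_days", 7, effort=5),
]
for run in one_factor_plan(baseline, candidates):
    print(run)
```

Recording the changed variable in each run makes it straightforward to link results back to the single factor that was tested.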

Step 4: Test Hypotheses and Document Everything

- Design Diagnostic Experiments: Based on your hypotheses, design small, focused experiments to test the remaining possible causes [5]. For example, to test if a plant extraction reagent has degraded, run the extraction with a new batch of the same reagent.

- Document the Process: Keep a detailed and organized record of every troubleshooting step, including dates, methods, changes made, and results [8] [7]. This creates a valuable record for you and your colleagues.
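One way to keep troubleshooting records organized and machine-readable is an append-only log with one structured entry per step. The sketch below writes JSON Lines to a temporary file; the field names and the example entry are hypothetical, not a standard schema, and an electronic lab notebook would serve the same purpose.

```python
import json
import os
import tempfile
from dataclasses import asdict, dataclass, field
from datetime import date


@dataclass
class TroubleshootingEntry:
    """One troubleshooting step (hypothetical field names, not a standard schema)."""
    day: str          # ISO date of the run
    hypothesis: str   # suspected cause being tested
    change: str       # the single change made in this run
    method: str       # protocol or SOP version used
    result: str       # what was observed
    notes: list[str] = field(default_factory=list)


def append_entry(path: str, entry: TroubleshootingEntry) -> None:
    """Append one entry as a JSON line; earlier entries are never rewritten."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(json.dumps(asdict(entry)) + "\n")


def read_log(path: str) -> list[TroubleshootingEntry]:
    """Load all entries, oldest first."""
    with open(path, encoding="utf-8") as fh:
        return [TroubleshootingEntry(**json.loads(line)) for line in fh if line.strip()]


log_path = os.path.join(tempfile.mkdtemp(), "troubleshooting_log.jsonl")
append_entry(log_path, TroubleshootingEntry(
    day=date.today().isoformat(),
    hypothesis="extraction reagent degraded",
    change="ran the extraction with a new batch of the same reagent",
    method="extraction SOP v2 (hypothetical)",
    result="recorded after the run",
))
print(len(read_log(log_path)))
```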

Essential Research Reagent Solutions for Plant Protocols

The table below lists key reagents and materials used in complex plant research, along with common troubleshooting points.

| Reagent/Material | Function in Plant Protocols | Common Troubleshooting Checks |
|---|---|---|
| Enzymes (e.g., Taq Polymerase, Restriction Enzymes) | Catalyze specific biochemical reactions like PCR or DNA digestion. | Check expiration date and storage temperature (-20°C). Verify activity with a positive control reaction [5]. |
| Antibodies (Primary & Secondary) | Detect specific proteins of interest via techniques like immunohistochemistry or Western blot. | Confirm antibody specificity for your plant species. Check for compatibility between primary and secondary antibodies [8]. |
| Plant Growth Media & Supplements | Provide nutrients and hormones to support plant growth in vitro. | Verify pH and sterilization. Ensure supplements like auxins or cytokinins are fresh and added at the correct concentration. |
| DNA/RNA Extraction Kits | Isolate high-quality nucleic acids from complex plant tissues. | Ensure tissue was properly homogenized. Check for RNA degradation using an agarose gel [5]. |
| Competent Cells | Facilitate cloning by taking up plasmid DNA during transformation. | Test transformation efficiency with a known, intact control plasmid [5]. Ensure cells are not expired and were stored correctly. |
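The expiry and storage checks in the table lend themselves to a routine inventory scan. The sketch below is hypothetical: the reagent records, the logged storage temperatures, and the ±2 °C tolerance are illustrative assumptions, as is the -80 °C storage requirement used for the competent cells.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Reagent:
    name: str
    expires: date
    required_temp_c: float  # storage temperature stated by the supplier
    logged_temp_c: float    # temperature recorded for the storage unit


def reagent_problems(reagents: list[Reagent], today: date, tol_c: float = 2.0) -> list[str]:
    """Flag expired reagents and storage temperatures outside an assumed tolerance."""
    problems = []
    for r in reagents:
        if r.expires < today:
            problems.append(f"{r.name}: expired on {r.expires.isoformat()}")
        if abs(r.logged_temp_c - r.required_temp_c) > tol_c:
            problems.append(
                f"{r.name}: stored at {r.logged_temp_c} °C, expected {r.required_temp_c} °C"
            )
    return problems


stock = [  # hypothetical inventory
    Reagent("Taq polymerase", date(2030, 1, 1), -20.0, -19.5),
    Reagent("Restriction enzyme", date(2020, 6, 30), -20.0, -20.0),
    Reagent("Competent cells", date(2030, 1, 1), -80.0, -20.0),
]
for problem in reagent_problems(stock, today=date(2025, 1, 1)):
    print(problem)
```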

Frequently Asked Questions (FAQs)

Q1: Why should I care about these definitions? My results are correct.

These concepts are vital for building trustworthy and reliable science. They allow you and others to check the quality of work, which increases the chance that your results are valid and not distorted by research bias [1]. A replicable finding is a robust finding that forms a stronger foundation for future research and drug development.

Q2: What is the most common cause of non-replicable results in my own lab?

Often, the issue lies in uncontrolled variability in the protocol or reagents. Minor deviations in a multi-step plant protocol (e.g., slight changes in incubation times, reagent concentrations, or plant handling) can compound and lead to different outcomes. This is why meticulous documentation and systematic troubleshooting are critical.
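A quick simulation shows why small per-step deviations matter. Purely for illustration, the sketch assumes that each protocol step multiplies the final outcome by an independent factor drawn from a normal distribution with mean 1 and a 5% standard deviation. Under those assumptions the run-to-run coefficient of variation (CV) grows roughly with the square root of the number of steps.

```python
import random
import statistics


def simulate_outcome_cv(n_steps: int, step_cv: float = 0.05,
                        n_runs: int = 20_000, seed: int = 1) -> float:
    """CV of a multi-step outcome under independent multiplicative noise per step.

    Assumes each step scales the result by a factor ~ Normal(1, step_cv).
    """
    rng = random.Random(seed)  # fixed seed so the simulation itself is reproducible
    outcomes = []
    for _ in range(n_runs):
        value = 1.0
        for _ in range(n_steps):
            value *= rng.gauss(1.0, step_cv)
        outcomes.append(value)
    return statistics.stdev(outcomes) / statistics.mean(outcomes)


for steps in (1, 4, 9):
    print(steps, round(simulate_outcome_cv(steps), 3))
```

With these settings the printed CVs land near 0.05, 0.10, and 0.15: a nine-step protocol is about three times as variable as a single step, even though no individual step changed.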

Q3: I've checked everything, and I'm still stuck. What should I do?

- Seek Help: Consult your supervisor, mentor, or colleagues. A fresh perspective can offer new insights or suggestions you may not have considered [7].

- Literature Deep Dive: Conduct a thorough literature review to see if other researchers have encountered similar challenges and published solutions.

- Contact Vendors: For issues potentially linked to a specific reagent or kit, contact the vendor's technical support. They may be aware of batch-specific issues or have optimized protocols to share.

Q4: How can I design my experiments to be more replicable from the start?

- Transparent Methodology: Write a methodology section detailed enough that someone with no prior knowledge of your work could repeat the experiment from your description alone [1]. Include details on plant growth conditions, exact reagent concentrations, and equipment models.

- Use Clear Language: Avoid vague terms. For example, instead of "the plants were watered," write "the plants were watered with 50 mL of distilled water every 48 hours" [1].

- Automate and Standardize: Where possible, use automation to reduce human error. Employ tools for version control of data and code to maintain full provenance of your analysis [3].
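Alongside a version-control system such as Git, a lightweight way to maintain provenance is to record a checksum of every raw data file together with the analysis parameters used. The sketch below is one possible approach; the manifest layout, file names, and parameter names are hypothetical.

```python
import hashlib
import json
import tempfile
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Checksum a file in 1 MiB chunks so large raw-data files need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def write_manifest(data_files: list[Path], params: dict, out: Path) -> dict:
    """Record file checksums and analysis parameters in one JSON manifest."""
    manifest = {
        "files": {str(p): sha256_of(p) for p in sorted(data_files)},
        "parameters": params,
    }
    out.write_text(json.dumps(manifest, indent=2, sort_keys=True), encoding="utf-8")
    return manifest


def verify_manifest(manifest_path: Path) -> list[str]:
    """Return the files whose current checksum no longer matches the manifest."""
    manifest = json.loads(manifest_path.read_text(encoding="utf-8"))
    return [name for name, digest in manifest["files"].items()
            if sha256_of(Path(name)) != digest]


tmp = Path(tempfile.mkdtemp())
raw = tmp / "plant_heights.csv"  # hypothetical raw data file
raw.write_text("plant,height_cm\nP1,12.4\n", encoding="utf-8")
manifest_file = tmp / "manifest.json"
write_manifest([raw], {"watering_ml": 50, "watering_interval_h": 48}, manifest_file)
print(verify_manifest(manifest_file))
```

An empty list means the raw files are unchanged since the manifest was written. Committing the manifest next to the analysis code ties each result to the exact inputs and parameters that produced it.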

What are the major biological factors that cause variation in plant experiments?

Variation in plant experiments arises from a complex interplay of genetic, developmental, and tissue-specific factors. Key sources include:

- Genetic Variation: Closely related plant species can show significant, phylogenetically correlated differences in their chemical profiles, a phenomenon observed in studies of wild figs. This means that the genetic background of your plant material is a fundamental source of variation [9].

- Tissue-Specific Function (Convergent Evolution): The function of a specific plant organ can be a stronger driver of its chemical makeup than its species identity. For instance, the metabolome of fruits from different fig species can be more similar to each other than to the leaf metabolome from the same individual plant. This indicates that the experimental organ or tissue you sample is critical [9].

- Intraspecific Variation (ITV): Individuals within a single species show variability in physical and chemical traits due to local adaptations to their environment. This "hidden biodiversity" is a significant source of variation that is often overlooked when using only species-mean data [10].

- Developmental and Environmental Signals: Complex internal signaling pathways, such as the multi-step phosphorelay (MSP) system, regulate plant responses to stimuli. These pathways involve interactions between various proteins (e.g., histidine kinases, AHPs, and ARRs), and natural variation in these components can lead to different experimental outcomes [11].

How can environmental conditions impact the reproducibility of my plant growth studies?

Environmental factors are a major contributor to the "reproducibility crisis" in science. Even when genetic material is consistent, environmental differences can alter results.

- Non-Genetic Differences: A study on Nicotiana attenuata found that the largest share of variance in leaf reflectance spectra (a measure of plant physiology and chemistry) was explained by "between-experiment" and "non-genetic between-sample" differences. This means that the conditions in which plants are grown (greenhouse vs. field) and measured can overshadow genetically driven variation [12].

- Inconsistent Conditions: Factors like light exposure, water availability, and soil composition can vary between growth chambers or over time, introducing unintended variability in plant growth, chemical composition, and stress responses [13].
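Environmental drift is easier to catch when logged readings are compared with setpoints automatically. The sketch below assumes a growth chamber that exports timestamped temperature and relative-humidity readings; the record layout, setpoints, and tolerances (±1 °C, ±5% RH) are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    time: str          # timestamp as exported by the logger (format assumed)
    temp_c: float
    rh_percent: float


def out_of_range(readings: list[Reading], temp_set: float, rh_set: float,
                 temp_tol: float = 1.0, rh_tol: float = 5.0) -> list[str]:
    """List readings that drift beyond the tolerated band around each setpoint."""
    flagged = []
    for r in readings:
        if abs(r.temp_c - temp_set) > temp_tol:
            flagged.append(f"{r.time}: temperature {r.temp_c} °C")
        if abs(r.rh_percent - rh_set) > rh_tol:
            flagged.append(f"{r.time}: humidity {r.rh_percent}%")
    return flagged


log = [  # hypothetical chamber export
    Reading("2024-05-01T08:00", 22.1, 60.0),
    Reading("2024-05-01T12:00", 24.6, 58.0),  # temperature spike
    Reading("2024-05-01T16:00", 22.0, 71.0),  # humidity excursion
]
print(out_of_range(log, temp_set=22.0, rh_set=60.0))
```

Running the same check on every chamber used in a study gives a documented basis for comparing conditions across chambers or over time.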

What methodological errors commonly lead to irreproducible results?

Many issues with replicability stem from shortcomings in experimental practice and documentation.

- Insufficient Protocol Detail: A lack of transparency and rigorous reporting of methods makes it impossible for other researchers to replicate the exact conditions of an experiment. This is a failure of "replicability of the method" [14].

- Low Statistical Power: Studies that are underpowered, often due to small sample sizes, are less likely to detect true effects and are more likely to produce results that cannot be replicated [14].

- Uncontrolled Variability: Failing to account for and measure inherent biological variation (e.g., between individual plants) or environmental variation within a growth facility can lead to inaccurate conclusions [13].

- Fragmented Record-Keeping: Data and protocols recorded in scattered, handwritten notes are prone to omission and error, making it difficult to retroactively compile a complete experimental record [15].
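To check whether a planned experiment is underpowered, a standard normal-approximation formula gives the number of plants per group needed to compare two group means: n = 2(z₁₋α/₂ + z₁₋β)²σ²/δ², where δ is the smallest difference worth detecting and σ is the plant-to-plant standard deviation. The sketch uses only the Python standard library. The effect size and standard deviation in the example are hypothetical, and for small samples the normal approximation gives a slightly lower n than a t-based calculation.

```python
import math
from statistics import NormalDist


def plants_per_group(delta: float, sd: float, alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate n per group for a two-sided, two-sample comparison of means.

    Normal approximation: n = 2 * (z_{1-alpha/2} + z_{power})^2 * sd^2 / delta^2.
    """
    z = NormalDist()  # standard normal distribution
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_beta = z.inv_cdf(power)
    n = 2 * (z_alpha + z_beta) ** 2 * sd ** 2 / delta ** 2
    return math.ceil(n)


# Hypothetical: detect a 2 cm height difference when plant-to-plant SD is 3 cm.
print(plants_per_group(delta=2.0, sd=3.0))
```

Because n scales with 1/δ², halving the detectable difference roughly quadruples the number of plants required.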

What strategies can I use to control for variation and improve replicability?

Proactive measures in experimental design and data management are key to enhancing replicability.

- Standardized Protocols and Training: Using standardized protocols and ensuring all team members are adequately trained in methodology and metrology minimizes variability in procedure administration [14].

- Adequate Sample Size and Nested Designs: Ensure your study has sufficient statistical power. Using nested study designs (e.g., measuring multiple leaves from multiple plants across multiple treatments) helps to quantify and account for different levels of variation [10].

- Rigorous Reporting and Data Sharing: Provide exhaustive detail on procedures, including genetic material, growth conditions, and sampling methods. Publicly share raw data, analysis scripts, and materials to allow for verification and repurposing [14].

- Pre-registration: Publishing your research hypotheses and analysis plan before conducting the study helps prevent selective reporting of results and provides a time-stamped record of the original experimental design [14].

- Digitization and IoT: Utilizing connected laboratory tools can automatically and accurately record each step of an experiment, such as intricate pipetting sequences, creating a centralized, traceable, and reliable digital record [15].
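For a nested design with several leaves measured on each of several plants, a one-way random-effects ANOVA gives a simple estimate of how much variation lies between plants and how much lies within plants. The sketch assumes a balanced design (the same number of leaves per plant) and uses method-of-moments estimators. The readings are invented for illustration, and a mixed-model package would be the usual choice for unbalanced or multi-level data.

```python
from statistics import mean


def variance_components(groups: list[list[float]]) -> dict[str, float]:
    """Between-plant and within-plant variance for a balanced one-level nested design.

    groups: one list of leaf measurements per plant, all of the same length.
    """
    k = len(groups)       # number of plants
    n = len(groups[0])    # leaves per plant
    if any(len(g) != n for g in groups):
        raise ValueError("this sketch assumes a balanced design")
    grand = mean(x for g in groups for x in g)
    plant_means = [mean(g) for g in groups]
    ss_between = n * sum((m - grand) ** 2 for m in plant_means)
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, plant_means) for x in g)
    ms_between = ss_between / (k - 1)
    ms_within = ss_within / (k * (n - 1))
    var_within = ms_within
    var_between = max((ms_between - ms_within) / n, 0.0)  # truncated at zero
    total = var_between + var_within
    return {
        "between_plants": var_between,
        "within_plants": var_within,
        "share_between": var_between / total if total else 0.0,
    }


leaf_readings = [  # hypothetical values: 3 leaves on each of 4 plants
    [40.1, 41.0, 39.8],
    [35.2, 36.0, 35.5],
    [42.3, 41.7, 42.9],
    [38.0, 37.4, 38.5],
]
print(variance_components(leaf_readings))
```

A large between-plant share suggests that adding more plants will improve precision more than measuring more leaves per plant.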

Troubleshooting Guides

Problem: Inconsistent Results in Plant Growth Assays

| Observed Issue | Potential Cause | Recommended Action |
|---|---|---|
| High variation in growth metrics (e.g., plant height) within a single treatment group. | Natural biological variation between individual plants is not being accounted for in the experimental design or analysis. | Increase sample size. Use a nested design to measure and account for variation at different levels (within-plant, between plants). Employ statistical methods that model variability [10] [13]. |
| Inability to replicate the chemical profile (e.g., metabolome) of a specific plant organ. | Sampling may be inconsistent regarding tissue type, developmental stage, or diurnal timing. Phylogenetic differences between plant lines may be involved. | Strictly standardize the organ, developmental stage, and time of day for all sampling. Verify the genetic identity of plant material. Acknowledge that different organs (leaf vs. fruit) have fundamentally different chemical profiles, even within the same species [9]. |
| Gene expression or signaling pathway outcomes are not consistent. | Redundancy in signaling pathways (e.g., multiple AHPs interacting with multiple ARRs in MSP) allows for compensatory mechanisms. Environmental conditions may be altering pathway activity. | Conduct experiments in more controlled environmental conditions. Use genetic lines with multiple knockouts to overcome pathway redundancy. Perform biophysical assays (e.g., affinity studies) to characterize specific molecular interactions [11]. |
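The recommendation to standardize organ, developmental stage, and time of day can be enforced when samples are logged. The sketch below checks each sample record against a study-wide sampling standard. The field names, allowed values, and the 09:00 to 11:00 sampling window are hypothetical choices for illustration.

```python
from datetime import time

# Hypothetical sampling standard for one study.
STANDARD = {
    "organ": "leaf",
    "developmental_stage": "rosette, 10 true leaves",
    "window": (time(9, 0), time(11, 0)),  # sample only between 09:00 and 11:00
}


def sampling_deviations(sample: dict) -> list[str]:
    """Return the ways a sample record departs from the sampling standard."""
    issues = []
    for key in ("organ", "developmental_stage"):
        if sample.get(key) != STANDARD[key]:
            issues.append(f"{key}: {sample.get(key)!r} != {STANDARD[key]!r}")
    start, end = STANDARD["window"]
    sampled_at = sample.get("sampled_at")
    if sampled_at is None or not (start <= sampled_at <= end):
        issues.append(f"sampled_at {sampled_at} outside {start}-{end}")
    if not sample.get("genotype_verified", False):
        issues.append("genotype identity not verified")
    return issues


record = {"id": "S07", "organ": "leaf", "developmental_stage": "rosette, 10 true leaves",
          "sampled_at": time(13, 30), "genotype_verified": True}
print(sampling_deviations(record))
```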

Problem: Failure to Replicate Published Research

| Step | Action |
|---|---|
| 1 | Verify Methodological Detail: Scrutinize the original publication and contact the authors to obtain any missing details on protocols, plant growth conditions, and data analysis procedures [14]. |
| 2 | Source Identical Materials: Obtain the exact same plant genotypes, seeds, or genetic constructs used in the original study, if possible from the same supplier or repository. |
| 3 | Replicate Environmental Conditions: Carefully match greenhouse or growth chamber conditions (light cycles, humidity, temperature, soil composition) as described in the original work [12]. |
| 4 | Control for Intraspecific Variation: Do not assume a different accession or ecotype of the same plant species will behave identically. Use the same genetically defined material [10]. |
| 5 | Implement Quality Controls: Establish systematic verification procedures within your lab to detect errors in data collection and analysis [14]. |

Data Presentation

| Source of Variation | Description | Impact on Replicability | Method for Control |
|---|---|---|---|
| Phylogenetic History | Chemical and trait diversity correlated with evolutionary relatedness [9]. | Can lead to systematic differences when different species or genotypes are used. | Use phylogenetically informed designs; verify and report species/genotype. |
| Organ-Specific Function | Different plant organs (leaf, fruit, root) have distinct metabolomes driven by function [9]. | Sampling different organs will yield fundamentally different results. | Standardize and meticulously report the specific organ and tissue sampled. |
| Genetic Redundancy | Multiple proteins (e.g., AHP1-5) can perform similar functions in signaling pathways [11]. | Can mask the effect of single-gene manipulations due to compensatory mechanisms. | Use multiple knock-out lines; conduct interaction affinity studies. |
| Intraspecific Variation (ITV) | Variability in functional traits among individuals of the same species [10]. | Using species-mean data can obscure individual-level effects and lead to erroneous conclusions. | Report individual or population-level data; use nested designs. |
| Non-Genetic (Environmental) | Variance explained by differences in growth and measurement environments [12]. | Can be the largest source of variation, overwhelming genetic signals. | Control and meticulously document all environmental conditions. |

Experimental Protocols

Protocol: Investigating Tissue-Specific Chemodiversity

Objective: To characterize and compare the chemical profiles (metabolomes) of different organs from multiple plant species while accounting for phylogenetic relatedness [9].

Key Materials:

- Plant material from multiple, phylogenetically defined species.

- Liquid nitrogen or silica gel for rapid tissue preservation.

- Ultra-performance liquid chromatography–mass spectrometry (UPLC-MS) system.

- Materials for DNA extraction and sequencing for phylogenetic reconstruction.

Methodology:

- Sample Collection: Collect target organs (e.g., leaves and unripe fruits) from multiple individual plants per species. Immediately preserve tissues by freezing in liquid nitrogen or drying in silica gel to halt metabolic activity.

- Metabolite Extraction: Homogenize the plant tissue and use a standardized solvent system (e.g., methanol-water) to extract secondary metabolites.

- Metabolomic Profiling: Analyze the extracts using UPLC-MS in untargeted mode. Use consistent chromatography and mass spectrometry settings across all samples.

- Data Processing: Process raw data to align peaks, correct for retention time shifts, and create a data matrix of metabolite features (mass-to-charge ratio and retention time) with corresponding intensities.

- Phylogenetic Reconstruction: Isolate DNA from the same species. Sequence several genetic markers and use computational tools to reconstruct a phylogenetic tree.

- Statistical Integration: Use multivariate methods (e.g., PCoA ordination to visualize structure and PERMANOVA to test it) to determine whether chemical profiles cluster more strongly by organ type or by species. Test for phylogenetic signal in the chemical data.
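As a rough first look before formal tests such as PERMANOVA, you can compare the average chemical dissimilarity between samples that share an organ (but differ in species) with the average between samples that share a species (but differ in organ). The sketch below uses Bray-Curtis dissimilarity on feature intensities. It is a simplified illustration, not a substitute for PERMANOVA or a phylogenetic-signal test, and the sample labels and intensities are invented.

```python
from itertools import combinations
from statistics import mean


def bray_curtis(a: list[float], b: list[float]) -> float:
    """Bray-Curtis dissimilarity between two non-negative intensity profiles."""
    total = sum(a) + sum(b)
    return sum(abs(x - y) for x, y in zip(a, b)) / total if total else 0.0


def mean_dissimilarity_by(samples: dict, key: str) -> float:
    """Average dissimilarity among pairs that share `key` ("organ" or "species") but differ in the other."""
    other = "species" if key == "organ" else "organ"
    values = [
        bray_curtis(s1["profile"], s2["profile"])
        for s1, s2 in combinations(samples.values(), 2)
        if s1[key] == s2[key] and s1[other] != s2[other]
    ]
    return mean(values)


samples = {  # hypothetical intensities for four metabolite features
    "A_leaf":  {"species": "A", "organ": "leaf",  "profile": [10, 0, 5, 1]},
    "A_fruit": {"species": "A", "organ": "fruit", "profile": [1, 9, 0, 6]},
    "B_leaf":  {"species": "B", "organ": "leaf",  "profile": [9, 1, 6, 0]},
    "B_fruit": {"species": "B", "organ": "fruit", "profile": [0, 10, 1, 5]},
}
same_organ = mean_dissimilarity_by(samples, "organ")
same_species = mean_dissimilarity_by(samples, "species")
print(f"same organ: {same_organ:.3f}, same species: {same_species:.3f}")
```

A lower average dissimilarity among same-organ pairs suggests that profiles group by organ rather than by species, which is the pattern described for fig fruits and leaves.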

Protocol: Assessing Genetic vs. Environmental Influence on Leaf Traits

Objective: To dissect the genetic versus non-genetic contributions to variation in leaf spectral phenotypes [12].

Key Materials:

- A set of plant genotypes including wild accessions, recombinant inbred lines (RILs), and transgenic lines.

- A hand-held field spectroradiometer (400–2500 nm).

- Controlled environment (glasshouse) and field plot.

- A standard radiation source and background for measurement.

Methodology:

- Plant Cultivation: Grow the diverse set of genotypes in both highly controlled (glasshouse) and more variable (field) environments.

- Standardized Spectroscopy: Measure leaf reflectance using the spectroradiometer under standardized conditions (e.g., fixed distance from leaf, using an integrating sphere) to minimize measurement uncertainty. Measure leaves both on and off the plant.

- Data Collection: Collect the entire reflectance spectrum for each sample.

- Variance Partitioning: Use statistical models (e.g., ANOVA) to partition the total variance in the spectral data into components attributable to "Genotype," "Environment," "Experiment," and their interactions.

- Analysis: Identify which wavelengths or spectral regions show the highest heritability (strong genetic influence) and which are most affected by the environment.
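For a balanced design in which every genotype is measured in every environment, the total sum of squares for a single spectral band can be split into genotype, environment, interaction, and residual parts. The sketch below implements that classical two-way ANOVA decomposition with the standard library. It assumes a complete, balanced design, omits the separate "Experiment" term, and uses hypothetical reflectance values and genotype labels.

```python
from statistics import mean


def two_way_partition(data: dict[tuple[str, str], list[float]]) -> dict[str, float]:
    """Share of the total sum of squares by source for a balanced genotype x environment design.

    data maps (genotype, environment) to replicate measurements of one spectral band.
    """
    genotypes = sorted({g for g, _ in data})
    envs = sorted({e for _, e in data})
    reps = {len(v) for v in data.values()}
    if len(reps) != 1 or len(data) != len(genotypes) * len(envs):
        raise ValueError("this sketch assumes a complete, balanced design")
    n = reps.pop()
    grand = mean(x for v in data.values() for x in v)
    g_mean = {g: mean(x for e in envs for x in data[(g, e)]) for g in genotypes}
    e_mean = {e: mean(x for g in genotypes for x in data[(g, e)]) for e in envs}
    cell = {k: mean(v) for k, v in data.items()}

    ss_g = len(envs) * n * sum((g_mean[g] - grand) ** 2 for g in genotypes)
    ss_e = len(genotypes) * n * sum((e_mean[e] - grand) ** 2 for e in envs)
    ss_ge = n * sum((cell[(g, e)] - g_mean[g] - e_mean[e] + grand) ** 2
                    for g in genotypes for e in envs)
    ss_res = sum((x - cell[k]) ** 2 for k, v in data.items() for x in v)
    ss_total = sum((x - grand) ** 2 for v in data.values() for x in v)
    parts = {"genotype": ss_g, "environment": ss_e,
             "interaction": ss_ge, "residual": ss_res}
    return {source: ss / ss_total for source, ss in parts.items()}


band = {  # hypothetical reflectance at one wavelength, 3 replicates per cell
    ("G1", "glasshouse"): [0.050, 0.052, 0.051],
    ("G1", "field"): [0.071, 0.069, 0.074],
    ("G2", "glasshouse"): [0.055, 0.054, 0.056],
    ("G2", "field"): [0.073, 0.078, 0.075],
}
shares = two_way_partition(band)
for source, share in shares.items():
    print(f"{source}: {share:.1%}")
```

Repeating the calculation for each band shows which spectral regions are dominated by genotype and which by environment.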

Signaling Pathway and Experimental Workflow Diagrams

Cytokinin Multi-Step Phosphorelay

Plant Chemodiversity Study Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function |
|---|---|
| Silica Gel | Used for rapid drying and preservation of plant tissue (e.g., leaves, fruits) in the field to stabilize the metabolome until laboratory analysis [9]. |
| Recombinant Inbred Lines (RILs) | A population of plants that are genetically distinct but largely homozygous, allowing for the mapping of traits and the separation of genetic from environmental effects [12]. |
| Transgenic Lines (Knock-Down/Knock-Out) | Plants with targeted reductions or eliminations in the expression of specific genes (e.g., in biosynthetic or signaling pathways) to determine gene function and its contribution to phenotypic variation [12]. |
| Histidine-Containing Phosphotransfer Proteins (AHPs) | Key shuttle proteins in the multi-step phosphorelay system; studying their interactions with various Response Regulators (ARRs) helps unravel the complexity and potential redundancy in plant signaling pathways [11]. |
| Standardized Spectral Library | A reference database of leaf reflectance spectra from genetically defined plants grown under controlled conditions, used to calibrate and interpret spectral data from new experiments [12]. |

Frequently Asked Questions (FAQs)

Q1: What is the tangible impact of the reproducibility crisis on drug development? A1: The impact is severe and quantifiable. In oncology drug development, one attempt to confirm the preclinical findings of 53 "landmark" studies succeeded in only 6 cases [16]. Furthermore, a 90% failure rate exists for drugs progressing from phase 1 trials to final approval, a problem exacerbated by a lack of replicable preclinical evidence [17].

Q2: What are the most common causes of irreproducibility in preclinical research? A2: According to a survey of scientists, the top causes include selective reporting, pressure to publish, low statistical power or poor analysis, insufficient replication within the original laboratory, and insufficient oversight/mentoring [16]. Other factors are poor experimental design and lack of access to raw data or methods [16].

Q3: In plant single-cell research, what are the key considerations for choosing between protoplast and nucleus isolation? A3: The choice has significant implications for reproducibility. The table below summarizes the key differences:

| Characteristic | Protoplast (scRNA-seq) | Nucleus (snRNA-seq) |
|---|---|---|
| Transcripts Captured | Nuclear and cytoplasmic | Primarily nuclear [18] |
| Average Genes Detected | Higher [18] | Fewer [18] |
| Tissue Applicability | Limited to tissues susceptible to enzymatic digestion [18] | Suitable for tissues resistant to protoplast isolation [18] |
| Major Caveat | Can induce stress responses that alter the transcriptome (e.g., expression of WOX2) [18] | May capture more immature mRNA and miss cytoplasmic transcripts [18] |

Q4: What concrete steps can I take to improve the reproducibility of my data management? A4: Reproducible data management requires an auditable trail. Best practices include:

- Keep Original Data: Always preserve copies of the original, raw data file.

- Document Changes: Maintain data management programs that document all changes and the rationale for any data cleaning. Avoid "point, click, drag, and drop" methods in favor of programmable, auditable systems [16].

- Retain Analysis Programs: Keep the final version of all analysis programs used to generate the results reported in a manuscript [16].
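The "keep the original and document every change" practice can be followed even in a short script: the raw data are only read, every cleaning rule records what it removed and why, and the cleaned rows are returned separately. The sketch below assumes a CSV with `plant` and `height_cm` columns and a plausibility limit fixed before analysis; the column names, limit, and data are hypothetical.

```python
import csv
import io


def clean_heights(raw_csv: str, max_height_cm: float) -> tuple[list[dict], list[dict]]:
    """Return (cleaned rows, change log) without modifying the raw input."""
    cleaned, log = [], []
    for row in csv.DictReader(io.StringIO(raw_csv)):
        value = row["height_cm"].strip()
        if value == "":
            log.append({"plant": row["plant"], "action": "dropped",
                        "reason": "missing height"})
            continue
        height = float(value)
        if height > max_height_cm:
            log.append({"plant": row["plant"], "action": "dropped",
                        "reason": f"height {height} exceeds pre-set limit {max_height_cm}"})
            continue
        cleaned.append({"plant": row["plant"], "height_cm": height})
    return cleaned, log


RAW = "plant,height_cm\nP1,12.4\nP2,\nP3,412\n"  # hypothetical raw export, kept unchanged
rows, changes = clean_heights(RAW, max_height_cm=100)
for change in changes:
    print(change)
```

In practice the raw string would be read from the preserved original file, and the change log would be saved next to the cleaned output so every exclusion can be audited.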

Q5: How can I make the charts and graphs in my research more accessible? A5: To be accessible, charts and graphs must not use color as the only means of conveying information [19]. For a bar graph, this means using different patterns or textures in addition to colors, and directly labeling data series where possible [19]. Graphical elements needed to understand the chart, such as bars, lines, and data markers, require a minimum contrast ratio of 3:1 against adjacent colors [19].
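The 3:1 threshold can be checked numerically. The sketch below follows the WCAG 2.x definitions of relative luminance and contrast ratio for sRGB colors given as hex strings. The color values are examples, and the 0.03928 linearization cutoff is the value in the WCAG 2.x text; the sRGB standard uses 0.04045, which makes no practical difference for 8-bit colors.

```python
def relative_luminance(hex_color: str) -> float:
    """WCAG 2.x relative luminance of an sRGB color given as '#RRGGBB'."""
    hex_color = hex_color.lstrip("#")
    channels = [int(hex_color[i:i + 2], 16) / 255 for i in (0, 2, 4)]
    # Linearize each gamma-encoded sRGB channel.
    linear = [c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4
              for c in channels]
    r, g, b = linear
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(color_a: str, color_b: str) -> float:
    """(L_lighter + 0.05) / (L_darker + 0.05), ranging from 1 to 21."""
    lighter, darker = sorted((relative_luminance(color_a), relative_luminance(color_b)),
                             reverse=True)
    return (lighter + 0.05) / (darker + 0.05)


def meets_non_text_contrast(fg: str, bg: str) -> bool:
    """True if a graphical element meets the 3:1 minimum against its neighbor."""
    return contrast_ratio(fg, bg) >= 3.0


print(round(contrast_ratio("#000000", "#FFFFFF"), 1))  # 21.0
print(meets_non_text_contrast("#AAAAAA", "#FFFFFF"))
```

Light gray (#AAAAAA) on white reaches only about 2.3:1, so it would fail the 3:1 requirement for a bar or line that readers must distinguish.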

Troubleshooting Guides

Issue: Inconsistent Results in Plant Single-Cell RNA Sequencing Workflows

Problem: Transcriptome data varies significantly between experiments, potentially due to the cell/nucleus isolation method.

Solution: Follow this structured guide to select and optimize your isolation protocol.

Step 1: Evaluate Your Research Goal and Tissue Type

- If your study requires full transcriptome data (including cytoplasmic RNAs) AND your plant tissue is easily digestible (e.g., Arabidopsis roots), then protoplast isolation may be suitable, but you must account for stress responses [18].

- If your study focuses on nuclear transcripts, involves snapshot profiling, OR uses tissues resistant to enzymatic digestion (e.g., woody tissues), then nucleus isolation (snRNA-seq) is the more reliable and representative method [18].
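The two conditions in Step 1 can be written as a single rule that resolves mixed cases toward nucleus isolation. This is one reading of the guidance, not a published decision procedure, and the function and parameter names are hypothetical. It recommends protoplasts only when full-transcriptome coverage is needed, the tissue digests easily, and snapshot profiling is not the aim.

```python
def choose_isolation(needs_cytoplasmic_rna: bool, tissue_digestible: bool,
                     snapshot_profiling: bool = False) -> str:
    """Suggest an isolation method; any condition favoring nuclei wins (an interpretation)."""
    if needs_cytoplasmic_rna and tissue_digestible and not snapshot_profiling:
        # Protoplasts are acceptable here, but isolation-induced stress must be tracked.
        return "protoplast (scRNA-seq); quantify isolation-induced stress genes"
    # Nuclear focus, snapshot profiling, or digestion-resistant tissue.
    return "nucleus (snRNA-seq)"


print(choose_isolation(needs_cytoplasmic_rna=True, tissue_digestible=False))
```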

Step 2: Mitigate Stress-Induced Artifacts

- For protoplast isolation, the process itself can quickly induce stress genes (e.g., WOX2), altering the transcriptome [18]. To troubleshoot:

  - Minimize Isolation Time: Reduce the time between tissue harvesting and protoplast fixation/lysis as much as possible.

  - Use Controls: Include a positive control to quantify the stress response in your specific protocol.

- For nucleus isolation, a key advantage is the ability to immediately freeze tissue in liquid nitrogen, halting biological activity and limiting stress-related gene activation [18].

Step 3: Validate Cell Type Representation

- A unique cell cluster found in nucleus profiling may not be detected in cell profiling, and vice-versa [18]. To ensure your data is representative:

  - Cross-validate with Markers: Use known cell-type-specific marker genes to check if all expected cell types are present in your data.

  - Consider Multi-modal Approach: For critical findings, consider using both methods on parallel samples to confirm results.
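The marker cross-check can be automated once your clustering pipeline reports the genes enriched in each cluster. The sketch below flags expected cell types whose curated markers do not appear in any cluster. The gene and cell-type names are hypothetical placeholders, and the one-marker threshold is an assumption you would tune.

```python
def missing_cell_types(cluster_markers: dict[str, set[str]],
                       expected_markers: dict[str, set[str]],
                       min_hits: int = 1) -> list[str]:
    """Return expected cell types whose markers are not enriched in any cluster.

    cluster_markers: genes reported as enriched in each cluster by your pipeline.
    expected_markers: curated marker genes per cell type, e.g. from the literature.
    """
    missing = []
    for cell_type, markers in expected_markers.items():
        found = any(len(markers & genes) >= min_hits for genes in cluster_markers.values())
        if not found:
            missing.append(cell_type)
    return sorted(missing)


clusters = {"c0": {"GENE_1", "GENE_2"}, "c1": {"GENE_5"}}      # hypothetical output
expected = {"type_A": {"GENE_1"}, "type_B": {"GENE_3", "GENE_4"},
            "type_C": {"GENE_5", "GENE_6"}}                     # hypothetical markers
print(missing_cell_types(clusters, expected))
```

A cell type that is missing from one isolation method's data but present in the other's is a candidate for the method-specific bias described above.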

Issue: Failure to Replicate a Published Experimental Protocol

Problem: You are unable to achieve the same results as a published study, even when following the described methods.

Solution: Systematically address common gaps in protocol reporting.

Step 1: Scrutinize Reagent and Material Specifications

- Research Reagent Solutions: The table below lists common reagents and critical details often omitted, leading to irreproducibility.

| Reagent/Material | Critical Specification for Reproducibility | Function |
|---|---|---|
| Enzymes for Cell Wall Digestion | Exact brand, specific activity, and batch number [18] | Breaks down rigid plant cell wall to release protoplasts for single-cell analysis. |
| Antibodies | Clone ID, host species, and dilution buffer composition [16] | Binds to specific target proteins for detection or quantification. |
| Cell Culture Media | Serum batch and precise concentrations of all growth factors [16] | Provides nutrients and signaling molecules to support cell growth. |
| Biological Models (e.g., Seedlings) | Passage number, exact growth conditions, and handling stress history [16] | The biological unit (e.g., cell line, plant variety) under study. |

Step 2: Improve Data Management and Analysis Transparency

- Pre-specify Analysis Plans: Define your data analysis plan, including how outliers will be handled, before conducting the experiment to decrease selective reporting [16].

- Maintain Raw Data and Code: Keep the original raw data files, the final analysis files, and all data management and analysis programs. This allows for an auditable trail from raw data to final result [16].

Step 3: Implement Active Laboratory Management

- Senior investigators should adopt practices like random audits of raw data, more hands-on oversight of experiments, and fostering a culture of healthy skepticism among all contributors [16].

Essential Workflows and Visualizations

Experimental Workflow for Single-Cell Plant Transcriptomics

The diagram below outlines the critical decision points in a plant single-cell transcriptomics protocol, highlighting steps that are key for reproducibility.

The Path from Preclinical Discovery to Clinical Approval

This diagram visualizes the drug development pipeline, highlighting the "valley of death" where reproducibility failures often occur.

This technical support guide is framed within a broader thesis on troubleshooting replicability in complex, multi-step plant research protocols. A significant challenge in environmental and biological research is that scientific findings are not always reproducible [20]. A 2016 survey, for instance, found that more than 70% of responding biologists had tried and failed to reproduce another scientist's experiments [20].

This case study analyzes a pioneering international ring trial, a powerful tool for proficiency testing [21]. Five independent laboratories performed the same experiment to investigate the assembly of a synthetic microbial community (SynCom) on the roots of the model grass Brachypodium distachyon within standardized fabricated ecosystems (EcoFAB 2.0 devices) [22] [21]. The following sections provide a detailed breakdown of the experimental parameters, the quantitative results, and a troubleshooting guide for researchers aiming to design replicable multi-laboratory studies.

The ring trial was designed to test the hypothesis that the inclusion of a specific bacterial strain, Paraburkholderia sp. OAS925, would consistently influence microbiome assembly, plant growth, and root exudate composition across all laboratories. The experiment consisted of four treatments with seven biological replicates each at every site [21].

Table 1: Consolidated Plant Phenotype Data Across Five Laboratories

| Treatment | Shoot Fresh Weight (mg) | Shoot Dry Weight (mg) | Root Development (after 14 DAI) |

|---|---|---|---|

| Axenic (Control) | Baseline | Baseline | Baseline |

| SynCom16 | Decreased | Decreased | Similar to Control |

| SynCom17 | Significantly Decreased | Significantly Decreased | Consistent Decrease |

Note: DAI = Days After Inoculation. SynCom16 = 16-member community without Paraburkholderia. SynCom17 = 17-member community with Paraburkholderia. [21]

Table 2: Final Root Microbiome Composition (22 DAI)

| Treatment | Dominant Strain(s) | Relative Abundance (Mean ± SD) |

|---|---|---|

| SynCom17 Inoculum | Paraburkholderia sp. OAS925 | 98% ± 0.03% |

| SynCom16 Inoculum | Rhodococcus sp. OAS809 | 68% ± 33% |

| | Mycobacterium sp. OAE908 | 14% ± 27% |

| | Methylobacterium sp. OAE515 | 15% ± 20% |

The data from the SynCom16 treatment showed significantly higher variability across labs compared to the SynCom17 treatment, highlighting how the presence of a dominant competitor can reduce overall outcome variability [21].
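To put the spread in Table 2 on a common scale, one can divide each standard deviation by its mean (the coefficient of variation, CV). The short calculation below uses only the mean ± SD values reported in the table. It is an illustrative arithmetic check, not an analysis reported in the ring-trial publication.

```python
# Mean and SD (in % relative abundance) as reported in Table 2.
table2 = {
    "Paraburkholderia sp. OAS925 (SynCom17)": (98.0, 0.03),
    "Rhodococcus sp. OAS809 (SynCom16)": (68.0, 33.0),
    "Mycobacterium sp. OAE908 (SynCom16)": (14.0, 27.0),
    "Methylobacterium sp. OAE515 (SynCom16)": (15.0, 20.0),
}


def coefficient_of_variation(mean, sd):
    """CV = SD / mean: a unitless measure of relative spread."""
    return sd / mean


for strain, (mean, sd) in table2.items():
    print(f"{strain}: CV = {coefficient_of_variation(mean, sd):.2f}")
```

On these figures the dominant SynCom17 strain has a CV close to zero, while every SynCom16 entry has a CV of roughly 0.5 or higher, which is the contrast in between-lab variability described above.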

Frequently Asked Questions (FAQs) and Troubleshooting

Q1: Our lab is unable to maintain sterile conditions in our EcoFAB devices, leading to contamination. What critical steps might we be missing?

- A: In the ring trial, less than 1% of sterility tests (2 out of 210) showed contamination [21]. This high success rate was achieved by:

- Using Centralized Supplies: Critical components like the EcoFAB 2.0 devices themselves, filters, and seeds were provided from a single source to minimize variation [21].

- Following Detailed Protocols: The published protocol includes explicit instructions for device assembly and surface sterilization of seeds [21] [23]. Ensure you are meticulously following every step.

- Conducting Regular Sterility Checks: The protocol mandates testing sterility by incubating spent medium on LB agar plates at multiple time points. Consistently perform these checks to catch failures early [21].
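A contamination rate estimated from a finite number of sterility checks carries uncertainty, which matters when comparing your lab's rate against the ring trial's. The sketch below uses the Wilson score interval, one standard choice for a binomial proportion; applying it here is our illustration, not a calculation reported in [21]. The only inputs taken from the text are the 2 contaminated tests out of 210.

```python
import math


def wilson_interval(failures, n, z=1.96):
    """Wilson score interval for a binomial proportion (z = 1.96 gives ~95%)."""
    p = failures / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)


# Ring-trial figures from the text: 2 contaminated out of 210 sterility tests.
low, high = wilson_interval(2, 210)
print(f"observed {2 / 210:.2%}, 95% CI {low:.2%} to {high:.2%}")
```

For the ring-trial numbers the interval runs from roughly 0.3% to 3.4%, so a lab seeing one or two failures in a few dozen checks is not necessarily performing worse.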

Q2: We observe high variability in plant phenotype and microbiome assembly outcomes between our experimental replicates. How can we improve consistency?

- A: Variability can stem from differences in the starting inoculum, growth chambers, and sample collection.

- Standardize Inoculum Preparation: The ring trial used SynComs from a public biobank. Cells were counted using pre-calibrated OD600 to CFU (colony-forming unit) conversions and shipped as concentrated glycerol stocks on dry ice to ensure equal cell numbers for the final inoculum at every lab [21].

- Control Growth Chamber Conditions: The study identified variability in light quality (fluorescent vs. LED), light intensity, and temperature as a likely source of phenotypic variation between labs [21]. Use data loggers to continuously monitor and report these environmental parameters.

- Minimize Analytical Variation: For downstream 'omics' analyses, the ring trial sent all samples to a single organizing laboratory for sequencing and metabolomics [21]. If this isn't possible for your project, establish standardized protocols and cross-validate methods between labs.
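The OD600-to-CFU standardization described above reduces to two small calculations: converting an absorbance reading to a cell density, then diluting to the target inoculum (C1V1 = C2V2). The sketch below assumes a linear, strain-specific calibration factor (`cfu_per_ml_per_od`), which is hypothetical and must be measured for each strain against plate counts; the linear relationship generally holds only over a limited OD range. All numbers in the example are illustrative.

```python
def cfu_per_ml(od600, cfu_per_ml_per_od):
    """Convert an OD600 reading to CFU/mL using a pre-calibrated factor.

    `cfu_per_ml_per_od` is a hypothetical, strain-specific calibration
    (OD600 vs. plate counts); it is not a universal constant.
    """
    return od600 * cfu_per_ml_per_od


def culture_volume_ml(target_cfu_per_ml, final_volume_ml, stock_cfu_per_ml):
    """Volume of stock giving `target_cfu_per_ml` in `final_volume_ml` (C1V1 = C2V2)."""
    if stock_cfu_per_ml < target_cfu_per_ml:
        raise ValueError("stock is more dilute than the target")
    return target_cfu_per_ml * final_volume_ml / stock_cfu_per_ml


# Illustrative numbers only.
stock = cfu_per_ml(od600=0.5, cfu_per_ml_per_od=1e9)  # 5e8 CFU/mL
vol = culture_volume_ml(target_cfu_per_ml=1e5, final_volume_ml=10.0,
                        stock_cfu_per_ml=stock)
print(f"add {vol * 1000:.1f} uL of culture to reach 10 mL at 1e5 CFU/mL")
```

The same dilution function applies to concentrated glycerol stocks: pass the stock's CFU/mL as `stock_cfu_per_ml`.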

Q3: Our research is affected by the "file drawer problem," where negative results go unpublished. How does this case study address that?

- A: The case study explicitly discusses that a competitive academic culture often undervalues negative results, which hinders reproducibility by preventing others from learning from unsuccessful experiments [20]. This guide and the underlying ring trial publication themselves help to counter this by:

- Publishing Detailed Challenges: The study documents the obstacles encountered, providing invaluable information for other researchers [22].

- Providing Benchmarking Data: The publicly shared dataset includes all raw data, which can be used by other labs to troubleshoot their own protocols and understand the range of expected outcomes, including negative or null results [22].

Experimental Protocol and Workflow

The detailed, step-by-step protocol used in the ring trial is available on protocols.io [23]. The general workflow is summarized in the diagram below.

Key Methodology Details:

- Fabricated Ecosystem: The experiment used the EcoFAB 2.0, a sterile, closed laboratory habitat that enables highly reproducible plant growth [21].

- Model Organism: The model grass Brachypodium distachyon was used for its standardized genetics and growth characteristics [21].

- Synthetic Community (SynCom): The study employed a defined community of 17 bacterial isolates from a grass rhizosphere, available through a public biobank (DSMZ) [21]. The community included representatives from key phyla: Actinomycetota, Bacillota, Pseudomonadota, and Bacteroidota [21].

- Critical Pre-inoculation Step: A sterility test was performed just before inoculation to confirm the integrity of the system [21].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Reproducible Plant-Microbiome Research

| Item | Function in the Experiment | Source/Specification |

|---|---|---|

| EcoFAB 2.0 Device | A sterile, fabricated ecosystem habitat that provides a controlled environment for plant growth and microbe interaction. | Standardized device distributed from a central lab [21]. |

| Brachypodium distachyon Seeds | A model plant organism with consistent genetics and growth patterns, ideal for standardized experiments. | Fresh seeds distributed from a central lab to ensure uniform genetic background [21]. |

| Synthetic Community (SynCom) | A defined mixture of bacterial strains that reduces complexity while retaining functional diversity, enabling mechanistic studies. | 17-member community available from public biobank (DSMZ) [21] [22]. |

| Standardized Growth Medium | A defined nutritional substrate (e.g., MS media) that supports consistent plant and microbial growth without introducing unknown variables. | Part of the detailed protocol [23]. |

| Cryopreserved Bacterial Stocks | Long-term storage of SynCom members in 20% glycerol at -80°C ensures a consistent and viable starting inoculum across experiments and replications. | Shipped as 100x concentrated stocks on dry ice [21]. |

Mechanism of Microbial Dominance

Follow-up experiments to the ring trial investigated why Paraburkholderia sp. OAS925 so effectively dominated the root microbiome. The findings revealed a pH-dependent mechanism, summarized in the diagram below.

Key mechanistic insights:

- Comparative Genomics: Genomic analysis of Paraburkholderia sp. OAS925 revealed a heightened capacity to utilize a wide array of compounds found in root exudates [21].

- Exometabolite Profiling: Analytical chemistry (LC-MS/MS) confirmed that this strain could efficiently consume root-derived metabolites, giving it a competitive nutrient advantage [21].

- Motility Assays: Follow-up lab experiments demonstrated that the strain's motility (its ability to move) was enhanced at the specific pH levels created by the plant root, allowing it to outcompete other strains in reaching and colonizing the root niche [21].

Blueprint for Standardization: Methodologies to Ensure Cross-Lab Consistency

Implementing Standardized Fabricated Ecosystems (EcoFABs) for Controlled Studies

Troubleshooting Guide for EcoFAB Experiments

Common Problem 1: Microbial Contamination

Problem Description: Unwanted bacterial, fungal, or yeast growth within EcoFABs, compromising experimental integrity and replicability. Contaminants can originate from external sources or exist as endophytes within plant tissues [24] [25].

Diagnosis and Analysis:

- Bacteria: Liquid media appears cloudy; slimy, glistening film forms on solid surfaces, often starting at the plant explant base [24].

- Fungi: Fuzzy, cottony growth with possible green, blue, or black spores on medium or plant surfaces [24].

- Yeast: Small, round, shiny colonies that grow rapidly without immediately altering medium appearance [24].

Solution Steps:

- Immediate Isolation: Remove and seal contaminated EcoFABs; autoclave before disposal to prevent spore spread [25].

- Sterilization Protocols: Implement multi-step explant sterilization: wash with detergent, immerse in 70-95% ethanol (30-60 seconds), disinfect in 10-20% bleach solution (10-20 minutes), then rinse with sterile distilled water [24].

- Chemical Additives: Incorporate broad-spectrum biocides like Plant Preservative Mixture (PPM) at 0.5-2.0 mL/L in culture medium [24].

- Antibiotic Application: Use targeted antibiotics (e.g., Carbenicillin, Cefotaxime) for bacterial contamination, noting potential phytotoxicity [24].
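The dosing steps above involve two kinds of arithmetic: additives quoted per litre of medium (PPM at 0.5-2.0 mL/L) and percentage dilutions (10-20% bleach). The helpers below sketch both. They assume "10-20% bleach" means % v/v of undiluted commercial bleach in water, which is our interpretation rather than a detail given in the source. The PPM range check uses the range quoted in the text.

```python
PPM_RANGE_ML_PER_L = (0.5, 2.0)  # range quoted in the text [24]


def additive_volume_ml(dose_ml_per_l, medium_volume_ml, allowed=None):
    """Volume of a liquid additive dosed in mL per litre of medium."""
    if allowed is not None:
        low, high = allowed
        if not low <= dose_ml_per_l <= high:
            raise ValueError(f"dose {dose_ml_per_l} mL/L outside {low}-{high} mL/L")
    return dose_ml_per_l * medium_volume_ml / 1000.0


def dilution_volumes_ml(stock_percent, target_percent, final_volume_ml):
    """Stock and diluent volumes for a v/v dilution (C1V1 = C2V2)."""
    stock_ml = target_percent * final_volume_ml / stock_percent
    return stock_ml, final_volume_ml - stock_ml


# 500 mL of medium with PPM at 1.0 mL/L.
ppm_ml = additive_volume_ml(1.0, 500.0, allowed=PPM_RANGE_ML_PER_L)
# 200 mL of 15% bleach from undiluted commercial bleach (assumed 100% stock).
bleach_ml, water_ml = dilution_volumes_ml(100.0, 15.0, 200.0)
print(ppm_ml, bleach_ml, water_ml)
```

Raising an error on out-of-range doses, rather than silently clamping, keeps protocol deviations visible in the lab record.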

Prevention Strategies:

- Maintain strict aseptic technique in laminar flow hood [24] [25]

- Autoclave all media, vessels, and tools at 121°C [25]

- Regularly disinfect workspace with 70% ethanol [25]

- Use PPM as prophylactic in initial culture medium [24] [25]

Common Problem 2: Oxidative Browning

Problem Description: Tissue and media turn brown due to phenolic oxidation, particularly common in woody plant species, inhibiting cell division and reducing regeneration capacity [25].

Diagnosis and Analysis: Browning results from plant wound response where phenolic compounds mix with oxidative enzymes like polyphenol oxidase (PPO), producing toxic quinones that polymerize into brown pigments [24].

Solution Steps:

- Antioxidant Application: Add ascorbic acid (Vitamin C) to reduce toxic quinones to stable forms and citric acid to inhibit PPO enzyme activity [24].

- Adsorbent Utilization: Incorporate activated charcoal (0.1-0.5%) or polyvinylpyrrolidone (PVP) to physically remove phenolic compounds [24].

- Cultural Practices: Soak explants in antioxidant solution before culture; incubate in darkness for first week to reduce phenolic synthesis [24] [25].

- Frequent Subculturing: Transfer to fresh media regularly to prevent phenolic compound accumulation [25].

Prevention Strategies:

- Pre-treat donor plants to reduce endogenous phenolics [25]

- Work quickly to minimize time between explant excision and culture [25]

- Use vertical explant placement to reduce browning surface area [25]

Common Problem 3: Replicability Challenges

Problem Description: Inability to reproduce consistent results across EcoFAB studies targeting the same scientific question, stemming from both helpful and unhelpful sources of variation [26].

Diagnosis and Analysis:

- Helpful Non-Replicability: Arises from inherent uncertainties in biological systems, leading to discovery of new phenomena [26].

- Unhelpful Non-Replicability: Results from methodological shortcomings, poor study design, or insufficient transparency [26].

Solution Steps:

- Enhanced Documentation: Provide clear, specific descriptions of all methods, instruments, materials, procedures, measurements, and data analysis decisions [26].

- Computational Transparency: Share complete data, code, and computational workflows to enable reproducibility [27] [26].

- Evidence-Based Data Analysis: Favor data-analysis practices whose robustness has been demonstrated empirically; the cited proposal pairs large-scale training in data analysis with empirical studies of which practices hold up [27].

- Pilot Studies: Conduct small-scale tests to identify optimal conditions before full experimentation [25].

Prevention Strategies:

- Adhere to the highest standards of research practice [26]

- Understand and express uncertainty inherent in scientific conclusions [26]

- Utilize tools for checking analysis and results [26]

Experimental Protocols for EcoFAB Studies

Standardized EcoFAB Assembly Protocol

Objective: Establish consistent fabricated ecosystem platforms for controlled plant studies.

Materials Required:

- EcoFAB chambers (standardized dimensions)

- Sterile growth medium (specific composition documented)

- Plant materials (standardized source and developmental stage)

- Inoculum (if studying plant-microbe interactions)

Procedure:

- Sterilization: Autoclave EcoFAB components at 121°C for 20 minutes [25].

- Medium Preparation: Prepare standardized growth medium with documented pH (typically 5.6-5.8), nutrient composition, and gelling agent concentration [25].

- Plant Establishment: Surface-sterilize plant materials using optimized sterilization protocol [24].

- Assembly: Transfer sterilized plants to EcoFABs under laminar flow conditions [25].

- Environmental Standardization: Maintain consistent temperature (22°C-25°C), light intensity, and photoperiod across replicates [25].

- Monitoring: Document all procedural details and environmental conditions [26].
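Continuous monitoring is most useful when logger exports are summarized the same way for every run. The sketch below assumes a hypothetical logger export of (hours since start, temperature) pairs, plus an illustrative setpoint and tolerance. It reports the mean, range, and any readings outside the allowed band, which can then be recorded alongside the replicate data.

```python
from statistics import mean

# Hypothetical data-logger export: (hours since start, temperature in °C).
readings = [(0, 22.1), (6, 22.4), (12, 21.8), (18, 23.6), (24, 22.0)]


def summarize_logger(readings, setpoint, tolerance):
    """Summarize logged values and list readings outside setpoint ± tolerance."""
    values = [v for _, v in readings]
    excursions = [(t, v) for t, v in readings if abs(v - setpoint) > tolerance]
    return {
        "mean": mean(values),
        "min": min(values),
        "max": max(values),
        "excursions": excursions,
    }


# Setpoint and tolerance are assumptions; use the values from your own protocol.
summary = summarize_logger(readings, setpoint=22.0, tolerance=1.0)
print(summary)
```

The same function works for light intensity or humidity channels; only the setpoint and tolerance change.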

Contamination Control Protocol

Objective: Prevent and manage microbial contamination in EcoFAB studies.

Materials Required:

- Laminar flow hood

- Sterilization solutions (ethanol, bleach)

- Antimicrobial agents (PPM, antibiotics, fungicides)

- Filter sterilization equipment (0.22 μm filters)

Procedure:

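- Preventive Measures: Work only in the laminar flow hood; autoclave media, vessels, and tools at 121°C; filter-sterilize heat-labile additives such as antibiotics through 0.22 μm filters; disinfect surfaces with 70% ethanol; and consider PPM in the initial culture medium [24] [25].

- Contamination Response: Identify the contaminant type (bacteria, fungi, or yeast), isolate and seal affected EcoFABs, and autoclave them before disposal. Apply targeted antibiotics or fungicides only after checking for phytotoxicity [24] [25].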

Data Collection and Documentation Protocol

Objective: Ensure computational reproducibility and research transparency.

Materials Required:

- Electronic lab notebook

- Data management system

- Code repository (e.g., GitHub)

- Standardized data collection templates

Procedure:

- Comprehensive Documentation: Record all experimental details including methods, instruments, materials, procedures, measurements, and environmental conditions [26].

- Data Management: Implement consistent data organization with clear metadata [26].

- Code Sharing: Document computational steps, analysis code, and software versions [27] [26].

- Uncertainty Quantification: Document measurement errors, biological variability, and analysis limitations [26].
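Software versions are easiest to document when they are captured automatically at analysis time. The sketch below uses only the Python standard library (`importlib.metadata`, `platform`, `sys`) to write a small provenance record. The package names passed in are placeholders; substitute the packages your analysis actually imports, and commit the resulting JSON to the code repository with the results it describes.

```python
import json
import platform
import sys
from datetime import datetime, timezone
from importlib import metadata


def provenance_record(packages):
    """Collect interpreter, platform, and package versions for an analysis run."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None  # not installed in this environment
    return {
        "recorded_utc": datetime.now(timezone.utc).isoformat(),
        "python": sys.version.split()[0],
        "platform": platform.platform(),
        "packages": versions,
    }


# Placeholder package list; replace with your analysis dependencies.
record = provenance_record(["numpy", "pandas"])
print(json.dumps(record, indent=2))
```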

Quantitative Data Tables

Table 1: Contamination Control Agents and Applications

| Agent | Concentration Range | Target Contaminants | Phytotoxicity Risk | Application Notes |

|---|---|---|---|---|

| PPM | 0.5-2.0 mL/L | Bacteria, Fungi, Yeast | Low | Heat-stable; add before autoclaving [24] |

| Carbenicillin | 100-500 mg/L | Bacteria | Low to Moderate | Filter-sterilize; add to cooled media [24] |

| Cefotaxime | 100-500 mg/L | Bacteria | Low to Moderate | Filter-sterilize; effective against both Gram-positive and Gram-negative bacteria [24] |

| Benomyl | 10-100 mg/L | Fungi | Moderate | Systemic fungicide; test concentration for specific species [24] |

| Activated Charcoal | 0.1-0.5% | Phenolic compounds | None | Adsorbs inhibitory compounds; may also adsorb hormones [24] [25] |

Table 2: Antioxidant Treatments for Oxidative Browning

| Antioxidant | Concentration Range | Mode of Action | Application Method | Effectiveness |

|---|---|---|---|---|

| Ascorbic Acid | 50-200 mg/L | Reduces quinones to stable forms | Add to medium or pre-soak solution | High [24] |

| Citric Acid | 50-150 mg/L | Inhibits PPO enzyme; lowers pH | Add to medium or pre-soak solution | Medium-High [24] |

| Polyvinylpyrrolidone (PVP) | 0.1-1.0% | Binds phenolic compounds | Add to solid or liquid medium | Medium [24] |

| Activated Charcoal | 0.1-0.5% | Adsorbs phenolic compounds | Add to solid medium | High (but non-specific) [24] [25] |

Research Reagent Solutions

Essential Materials for EcoFAB Experiments

| Reagent | Function | Application Notes |

|---|---|---|

| Plant Preservative Mixture (PPM) | Broad-spectrum biocide against bacteria, fungi, and yeasts | Heat-stable; add to medium before autoclaving; use at 0.5-2.0 mL/L [24] |

| Activated Charcoal | Adsorbs phenolic compounds and inhibitory substances | May also adsorb hormones and nutrients; use at 0.1-0.5% in medium [24] [25] |

| Ascorbic Acid (Vitamin C) | Antioxidant that reduces toxic quinones | Use at 50-200 mg/L in medium or as pre-soak solution [24] |

| Citric Acid | Lowers pH and inhibits polyphenol oxidase enzyme | Synergistic with ascorbic acid; use at 50-150 mg/L [24] |

| MS Medium | Standard plant tissue culture nutrient base | Contains macro/micronutrients, vitamins; may require modification for specific species [25] |

| Agar/Gellan Gum | Gelling agents for solid media | Concentration affects water availability; adjust based on plant requirements [25] |

| Plant Growth Regulators | Control development and organogenesis | Cytokinins promote shoot growth; auxins promote root formation; balance is critical [25] |

Experimental Workflows and Signaling Pathways

EcoFAB Troubleshooting Workflow

Phenolic Oxidation Pathway in Plant Tissue

Frequently Asked Questions

Experimental Design Questions

Q: How can I improve the replicability of my EcoFAB experiments? A: Focus on three key areas: (1) Enhanced documentation of all methods, materials, and environmental conditions [26]; (2) Computational transparency by sharing data, code, and analysis workflows [27] [26]; and (3) Standardization of protocols across research groups. Implement systematic monitoring of environmental variables and conduct pilot studies to identify optimal conditions before full-scale experiments [25].

Q: What is the difference between reproducibility and replicability in EcoFAB research? A: Reproducibility refers to obtaining consistent computational results using the same input data, computational steps, methods, and code [26]. Replicability means obtaining consistent results across studies aimed at answering the same scientific question, each of which has obtained its own data [26]. In EcoFAB contexts, reproducibility ensures you can recompute results from existing data, while replicability ensures independent researchers can obtain consistent findings using the same methods but different plant materials or EcoFAB setups.

Technical Implementation Questions

Q: What concentration of PPM should I use for contamination prevention? A: Use 0.5-2.0 mL/L of PPM in your culture medium [24]. For initial experiments, start with 1.0 mL/L and adjust based on results. PPM is heat-stable and can be added to medium before autoclaving, simplifying preparation. Note that effectiveness varies by plant species, so conduct small-scale tests with your specific plant material before large-scale application [24].

Q: How do I troubleshoot persistent oxidative browning in sensitive plant species? A: Implement a multi-pronged approach: (1) Pre-soak explants in antioxidant solution (100 mg/L ascorbic acid + 50 mg/L citric acid) for 30-60 minutes before culture [24]; (2) Include both antioxidants and adsorbents in initial medium (150 mg/L ascorbic acid + 0.3% activated charcoal) [24] [25]; (3) Maintain cultures in darkness for the first 7-10 days [25]; (4) Transfer to fresh medium more frequently (every 7-10 days initially) to remove accumulated phenolics [25].
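The recipe above mixes mg/L and percentage units, so a quick calculation helps when preparing a batch. The sketch below converts both to weighed amounts. It assumes that "0.3% activated charcoal" means % w/v (grams per 100 mL), which is the usual convention in media recipes but is not stated in the source. The batch volumes are illustrative.

```python
def mass_mg(conc_mg_per_l, volume_ml):
    """Milligrams of solute needed for a mg/L concentration in `volume_ml`."""
    return conc_mg_per_l * volume_ml / 1000.0


def percent_wv_to_g(percent, volume_ml):
    """Grams for a % (w/v) concentration, i.e. grams per 100 mL (assumed)."""
    return percent * volume_ml / 100.0


# Pre-soak: 250 mL of 100 mg/L ascorbic acid + 50 mg/L citric acid.
soak_ascorbic = mass_mg(100, 250)
soak_citric = mass_mg(50, 250)
# Medium: 1 L with 150 mg/L ascorbic acid + 0.3% activated charcoal.
medium_ascorbic = mass_mg(150, 1000)
medium_charcoal_g = percent_wv_to_g(0.3, 1000)
print(soak_ascorbic, soak_citric, medium_ascorbic, round(medium_charcoal_g, 3))
```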

Data Management Questions

Q: What specific information should I document to ensure computational reproducibility? A: Beyond standard methods descriptions, include: (1) Complete computational workflow including software versions and parameters [27] [26]; (2) All data preprocessing steps and exclusion criteria [26]; (3) Raw data and metadata in accessible formats [26]; (4) Environmental conditions throughout the experiment (temperature, humidity, light cycles) [25]; (5) Any deviations from planned protocols with explanations [26].

Q: How should I handle failed replication attempts in my research? A: First, determine whether non-replicability stems from helpful or unhelpful sources [26]. Helpful sources include inherent biological variability that may lead to new discoveries, while unhelpful sources include methodological errors or insufficient documentation [26]. Systematically examine potential sources: check environmental consistency, reagent quality, technique variations, and data analysis methods. Document all findings thoroughly, as understanding why replication fails can be scientifically valuable [26].

Developing and Using Synthetic Microbial Communities (SynComs)

FAQs: Addressing Common Experimental Challenges

FAQ 1: Why does the actual composition of my SynCom drift significantly from the designed community after a few generations in experiments?

This is a common issue related to community stability. A SynCom's stability is influenced by ecological interactions, functional redundancy, and environmental conditions [28]. To troubleshoot:

- Check Interaction Balance: The community may be dominated by competitive or negative interactions. Re-design to include a balance of cooperative and competitive relationships to foster a dynamic equilibrium [28].

- Assess Functional Redundancy: A lack of functional redundancy can lead to the loss of key functions if one strain drops out. Incorporate multiple strains that can perform the same critical function to bolster resilience [29] [28].

- Validate Keystone Species: Ensure your design includes and properly establishes keystone species, which are critical for governing the structure and function of the entire community [28].
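Drift is easier to troubleshoot once it is measured consistently, for example by comparing the designed inoculum against observed relative abundances at each sampling point. The sketch below uses Bray-Curtis dissimilarity (0 = identical profiles, 1 = no shared abundance), one common choice for this purpose; it is not a metric prescribed by the cited sources. The strain names and abundances are hypothetical.

```python
def bray_curtis(designed, observed):
    """Bray-Curtis dissimilarity between two abundance profiles (dicts)."""
    strains = set(designed) | set(observed)
    num = sum(abs(designed.get(s, 0.0) - observed.get(s, 0.0)) for s in strains)
    den = sum(designed.get(s, 0.0) + observed.get(s, 0.0) for s in strains)
    return num / den if den else 0.0


# Hypothetical four-member SynCom, designed as an even mix.
designed = {"strain_A": 0.25, "strain_B": 0.25, "strain_C": 0.25, "strain_D": 0.25}
observed = {"strain_A": 0.70, "strain_B": 0.20, "strain_C": 0.10}  # strain_D lost
print(f"drift (Bray-Curtis) = {bray_curtis(designed, observed):.2f}")
```

Tracking this value over successive transfers shows whether the community is converging on a new equilibrium or still drifting, and a strain that falls to zero, as `strain_D` does here, points to the redundancy and keystone checks listed above.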

FAQ 2: Why does my SynCom perform well in controlled lab conditions but fail in more complex natural environments, such as soil?

This performance gap often stems from the inability to adapt to real-world complexity [30].

- Insufficient Environmental Adaptation: The lab-grown SynCom may not withstand biotic (e.g., native microbiota) and abiotic (e.g., soil pH, moisture fluctuations) stresses. Consider using "helper" bacteria already adapted to the target environment to assist the invasion and establishment of your SynCom [29] [28].

- Lack of Multikingdom Considerations: Many SynComs are bacteria-only, but fungal members can be crucial. For example, fungi can facilitate bacterial colonization in dry soil and improve soil structure, which enhances overall resilience [29] [28]. Incorporating multikingdom members can improve environmental fitness.

- Host-Specificity Mismatch: The SynCom might not be compatible with the specific plant host or its developmental stage. Re-assess the host's recruitment of microbes via root exudates and ensure your SynCom design aligns with the host's physiology [30].

FAQ 3: My SynCom amplicon sequencing results show many unexpected sequences. How can I accurately determine which of my designed strains are present and in what abundance?

Standard amplicon analysis tools can misclassify PCR/sequencing errors or paralogous gene copies as contaminants or new strains. For a defined SynCom, use a reference-based error correction tool like Rbec [31].

- Solution: Use the Rbec tool (available as an R package), which is specifically designed for SynComs where reference sequences for each strain are known. It accurately corrects PCR and sequencing errors, identifies true intra-strain polymorphism, and detects external contaminants, providing a more precise abundance estimation of your strains than standard methods [31].

FAQ 4: How can I efficiently test all possible combinations of a candidate strain library to find the optimal consortium without the process being prohibitively time-consuming or expensive?

A full factorial construction method using basic lab equipment can solve this.

- Protocol Logic: The method uses a multi-well plate and a multichannel pipette. Each consortium is represented by a unique binary number where each bit represents the presence or absence of a species. By strategically duplicating and adding new species to plate columns, you can rapidly assemble all possible combinations [32].

- Benefit: This low-cost, accessible protocol allows a single user to assemble all combinations of up to 10 species in under an hour, enabling the empirical mapping of community-function landscapes and the identification of optimal combinations [32].
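The binary encoding behind the method can be made concrete in a few lines. In the sketch below, which is our illustration rather than code from [32], bit i (counting from the least significant bit) marks strain i + 1, matching the convention that strain 1 is 00000001. The strain names are hypothetical.

```python
def members(consortium, strain_names):
    """List the strains present in a consortium encoded as a bitmask."""
    return [name for i, name in enumerate(strain_names) if (consortium >> i) & 1]


def all_consortia(m):
    """Every consortium of m strains, from 0 (empty) to 2**m - 1 (all strains)."""
    return range(2 ** m)


strains = ["S1", "S2", "S3"]  # hypothetical strain labels
print(members(0b101, strains))  # strains 1 and 3
print(len(all_consortia(10)))   # number of combinations for 10 species
```

The count grows as 2^m, which is why ten species (1,024 combinations) is close to the practical limit for manual assembly in multi-well plates.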

Troubleshooting Guides

Guide 1: Troubleshooting SynCom Stability and Composition

| Problem | Potential Causes | Solutions & Diagnostic Steps |

|---|---|---|

| Rapid Drift in Community Composition | • Dominance of competitive/antagonistic interactions [28]• "Cheating" behavior where some strains exploit public goods without contributing [28]• Lack of functional redundancy [29] | • Pre-design screening: Use genome-scale metabolic models (GSMNs) to predict metabolic competition and cross-feeding potential [33] [28].• Engineer spatial structure: Use solid media or microenvironments to limit cheater dominance and stabilize interactions [28].• Increase diversity: Introduce metabolically interdependent strains to create division of labor [29] [28]. |

| Loss of Key Function (e.g., pathogen suppression) | • Drop-out of the one strain responsible for that function.• Environmental conditions suppress the expression of key genes. | • Build in redundancy: Include multiple strains with the same plant growth-promoting trait (PGPT) in the initial design [33].• Pre-validate in conditions: Test SynCom function in a medium that mimics the target environment's nutrient conditions [33]. |

Guide 2: Troubleshooting Functional Performance

| Problem | Potential Causes | Solutions & Diagnostic Steps |

|---|---|---|

| Poor Performance in Complex Environments (e.g., field soil) | • Failure to establish against native microbiota [30]• Abiotic stressors (e.g., drought, salinity) [29]• Incompatibility with plant host [30] | • Use native "helpers": Co-inoculate with strains already adapted to the target soil to aid SynCom establishment [29].• Include stress-tolerant strains: Design SynComs with halophiles or drought-tolerant bacteria/fungi that produce exopolysaccharides [29] [28].• Align with plant physiology: Use host-specific root exudate profiles in design; employ multi-omics to verify plant-SynCom interactions [30]. |

| Suboptimal Biodegradation/Production | • Inefficient division of labor.• Accumulation of toxic intermediates. | • Full factorial screening: Use the method above [32] to find the combination that maximizes function.• Design synergistic consortia: Assemble strains that sequentially degrade a compound, like a linuron-degrading community where different strains handle different breakdown intermediates [29]. |

Key Data and Experimental Protocols

Table 1: Quantitative Data on SynCom Applications from Research

This table summarizes measurable outcomes of SynCom applications in various areas as reported in the literature.

| Application Area | SynCom Composition / Type | Key Quantitative Results | Source |

|---|---|---|---|

| Composting & Lignocellulose Degradation | Synthetic community inoculated during thermophilic phase. | • Reduced lignin, cellulose, hemicellulose content.• Significantly increased activity of laccase, Mn peroxidase, cellulase, xylanase.• Enriched key fungal genera (Cephaliophora, Thermomyces). | [34] |

| Soil Fertility Restoration | Combination of N2-fixing, P-solubilizing, K-solubilizing, IAA-producing bacteria. | • Increased content of available N, P, and K in soil.• Effectively improved plant N/P/K uptake and growth. | [29] |

| Pollutant Bioremediation | Variovorax sp. WDL1 (degrades linuron) mixed with non-degrading helper strains. | • Dramatically increased linuron degradation rate compared to Variovorax alone. | [29] |

| Bioinformatics Analysis | Rbec tool vs. other error-correction methods (DADA2, Deblur). | • Corrected 89.2% of erroneous reads on average.• Outperformed all other tested methods, especially for reads from polymorphic gene copies. | [31] |

Objective: To assemble all possible combinations of a library of m microbial strains to empirically identify the optimal consortium for a desired function.

Materials:

- Pure cultures of each of the m strains, grown to a standardized optical density.

- 96-well deep-well plates (or similar).

- Multichannel pipette.

- Fresh growth medium.

Methodology:

- Binary Representation: Assign each strain a unique position in a binary number of length m. For example, for 8 strains, the presence of strain 1 is 00000001, strain 2 is 00000010, and so on. Each unique consortium is a unique binary number.

- Initial Plate Setup: For the first 3 strains, use one column of the 96-well plate (8 wells) to assemble all 2³ = 8 combinations, from the empty consortium (000) to the full consortium (111).

- Iterative Expansion:

- Duplicate the entire set of assemblages created so far into a new plate section.

- Using a multichannel pipette, add the next strain (e.g., strain 4, represented as 1000) to every well in the duplicated section. This binary addition creates all combinations that include the new strain.

- This process of duplication and addition is repeated for each remaining strain.

- Incubation and Measurement: Incubate the plate under desired conditions and measure the function of interest (e.g., biomass yield via absorbance, pollutant degradation, etc.) for every well.

Troubleshooting Note: Ensure all cultures are at the same physiological state and density before pooling to avoid biased initial inoculation.
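The duplicate-and-add logic can be checked in silico before committing cultures to it. The sketch below simulates which strains each well receives; pipetting volumes and plate geometry are deliberately left out, and the function name is hypothetical. Because each newly added strain's bit is not yet set in any duplicated well, "adding" the strain is the same as setting its bit (a bitwise OR).

```python
def factorial_assembly(m, first_block=3):
    """Simulate duplicate-and-add assembly; return the bitmask held by each well."""
    if m < first_block:
        raise ValueError("m must be at least first_block")
    wells = list(range(2 ** first_block))  # all combinations of the first strains
    for strain in range(first_block, m):   # 0-based index of the next strain
        duplicated = [w | (1 << strain) for w in wells]  # add strain to every copy
        wells = wells + duplicated
    return wells


wells = factorial_assembly(5)
print(len(wells), wells[:8])
```

In this simplified model each well's index equals the binary number of the consortium it holds, so a plate map can be generated directly from the well position.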

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for SynCom Research

A list of key reagents, tools, and their primary functions in SynCom development and analysis.

| Item / Tool Name | Type | Primary Function in SynCom Research |

|---|---|---|

| Rbec (R Package) | Bioinformatics Tool | Reference-based error correction for amplicon sequencing data from defined SynComs; identifies contaminants and polymorphic variation [31]. |

| Genome-Scale Metabolic Models (GSMNs) | Computational Model | Predicts metabolic interactions, potential for division of labor, and helps in selecting non-redundant, complementary strains for a minimal community [33] [28]. |

| Multichannel Pipette & 96-Well Plates | Lab Equipment | Enables high-throughput, full factorial assembly of strain combinations for systematic functional screening [32]. |

| Root Exudate-Mimicking Growth Media | Growth Medium | Used as a nutritional constraint in metabolic models and experiments to pre-adapt SynComs to the rhizosphere environment [33]. |

| Keystone Species | Biological Concept | A strategically selected strain that disproportionately impacts community structure and function, enhancing stability and performance [28]. |

| Helper Bacteria | Biological Concept | Native strains co-inoculated to assist the establishment and function of the primary, introduced SynCom members in a complex environment [29]. |

Experimental and Ecological Workflows

The following diagram illustrates the integrated design-build-test-learn (DBTL) cycle, which is a foundational framework for the rational development of high-performance SynComs.

SynCom Rational Design Workflow

The diagram below outlines the logical process of moving from a natural environment to a designed, minimal synthetic community.

From Natural Microbiome to SynCom

Creating and Sharing Detailed Protocols with Annotated Videos

Frequently Asked Questions

Q1: Why should I use annotated videos for my plant research protocols instead of traditional written methods? Annotated videos transform complex, multi-step plant protocols into dynamic visual guides. They capture nuanced techniques, precise timing, and spatial relationships that are difficult to describe in text alone. This visual documentation is crucial for troubleshooting and ensuring that your research can be accurately replicated by your team and the broader scientific community, thereby enhancing the reliability of your findings.

Q2: What are the most common errors in video annotation projects, and how can I avoid them? The five most common errors are [35]:

- Misaligned Annotation Guidelines: The annotation guidelines do not match the learning objectives of the model or the goals of the protocol.

- Over-Reliance on Generic Tooling: Using tools that lack features for complex video tasks like temporal tracking.

- Inadequate Quality Assurance (QA): Manual or inconsistent QA allows errors to propagate.

- Neglecting Edge Case Strategy: Failing to properly label rare but critical scenarios.

- Underestimating Annotation Workforce Training: Treating annotation as low-skill labor without proper training.

Q3: How do I choose the right video annotation method for my protocol? The choice of method depends on what you need to demonstrate in your protocol. The table below summarizes common techniques [36]:

| Annotation Method | Description | Best Use in Plant Protocols |
|---|---|---|
| Bounding Boxes | Drawing rectangles around objects. | Simple, well-defined objects like fruits or specific leaves; cost-effective and widely used. |
| Polygonal Annotation | Drawing precise, multi-sided shapes around objects. | Irregularly shaped plant structures or root systems that require precise outlines. |
| Key Point Annotation | Marking specific points or landmarks on an object. | Measuring distances between nodes or marking specific features on a plant's anatomy. |
| Object Tracking | Annotating an object across consecutive video frames. | Monitoring the growth or movement of a plant organ over time in a time-lapse video. |
| Semantic Segmentation | Labeling each pixel of an object to distinguish its components. | Detailed analysis of disease spots on leaves or differentiating between tissue types. |

Q4: What features are critical when selecting video annotation software for scientific use? Choose software with an intuitive user interface, support for multiple video file formats (e.g., MP4, AVI, MOV), and robust collaboration tools for team review. For scientific accuracy, advanced capabilities like polygonal annotations, keypoint marking, and object tracking are essential. Auto-annotation features can also significantly improve efficiency [36].

Q5: My annotated text in diagrams becomes unreadable in dark mode. How can I fix this?

This is a common contrast issue. To ensure clarity in all viewing environments, you must explicitly set the text color (fontcolor) to have high contrast against the node's background color (fillcolor). A good rule is to use light-colored text (e.g., #FFFFFF) on dark backgrounds and dark-colored text (e.g., #202124) on light backgrounds [37].
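Rather than judging contrast by eye, you can compute it. The sketch below assumes the WCAG 2.x definitions of relative luminance and contrast ratio (these formulas are not given in the source) and chooses whichever of the two suggested text colors, #FFFFFF or #202124, contrasts more strongly with a given fill color. The function names are illustrative, not part of any diagramming tool.

```python
def _channel(c8: int) -> float:
    """Linearize one 8-bit sRGB channel as in the WCAG 2.x luminance formula."""
    c = c8 / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a '#RRGGBB' color (0 = black, 1 = white)."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _channel(r) + 0.7152 * _channel(g) + 0.0722 * _channel(b)


def contrast_ratio(color_a: str, color_b: str) -> float:
    """WCAG contrast ratio, from 1:1 (identical colors) to 21:1 (black on white)."""
    la, lb = relative_luminance(color_a), relative_luminance(color_b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)


def pick_fontcolor(fillcolor: str, light: str = "#FFFFFF", dark: str = "#202124") -> str:
    """Return whichever candidate text color contrasts more with the node fill."""
    if contrast_ratio(fillcolor, light) >= contrast_ratio(fillcolor, dark):
        return light
    return dark


if __name__ == "__main__":
    # Hypothetical fill colors for diagram nodes
    for fill in ["#4285F4", "#FBBC05", "#F1F3F4", "#202124"]:
        font = pick_fontcolor(fill)
        print(fill, "->", font, f"{contrast_ratio(fill, font):.2f}:1")
```

Computing the ratio is most useful for mid-tone fills, where the "light text on dark, dark text on light" rule of thumb can be ambiguous.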

Troubleshooting Guides

Problem: Inconsistent Annotations Across a Video Sequence

- Symptoms: The same object or action is labeled differently in subsequent frames, leading to confusion and inaccurate protocol instructions.

- Causes: Annotator fatigue; lack of clear, continuous guidelines; using tools without object tracking features.

- Solutions: [35] [36]

- Use Specialized Tools: Employ annotation software that supports object tracking and temporal interpolation. These features help maintain consistent labels across frames by automatically propagating annotations.

- Implement a Robust QA Pipeline: Move beyond manual checks. Establish a multi-level QA process that includes automated checks for label consistency and dynamic sampling, where complex sequences are reviewed more thoroughly.

- Continuous Training: Regularly train and retrain your annotators using feedback from the QA process and updated guidelines to prevent "label drift" over time.
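Annotation tools implement temporal interpolation and consistency checks internally; the sketch below only illustrates the two ideas. It assumes a single tracked object whose axis-aligned bounding boxes are stored per frame as (x_min, y_min, x_max, y_max), and whose class label is stored per frame as a string. All names and data are hypothetical.

```python
from typing import Dict, List, Tuple

# Hypothetical box format: (x_min, y_min, x_max, y_max) in pixels
Box = Tuple[float, float, float, float]


def interpolate_boxes(keyframes: Dict[int, Box]) -> Dict[int, Box]:
    """Fill every frame between manually annotated keyframes by linear interpolation."""
    if not keyframes:
        return {}
    frames = sorted(keyframes)
    filled: Dict[int, Box] = {}
    for start, end in zip(frames, frames[1:]):
        a, b = keyframes[start], keyframes[end]
        for f in range(start, end):
            t = (f - start) / (end - start)  # 0 at this keyframe, approaching 1 at the next
            filled[f] = tuple(ai + t * (bi - ai) for ai, bi in zip(a, b))
    filled[frames[-1]] = keyframes[frames[-1]]  # keep the last keyframe as annotated
    return filled


def label_switches(labels_by_frame: Dict[int, str]) -> List[int]:
    """Frames where a tracked object's label differs from the previous annotated frame."""
    frames = sorted(labels_by_frame)
    return [cur for prev, cur in zip(frames, frames[1:])
            if labels_by_frame[cur] != labels_by_frame[prev]]


if __name__ == "__main__":
    boxes = interpolate_boxes({0: (10, 20, 50, 80), 10: (20, 20, 60, 90)})
    print(boxes[5])
    print(label_switches({0: "inflorescence", 1: "inflorescence", 2: "leaf", 3: "inflorescence"}))
```

Frames returned by `label_switches` are candidates for the more thorough review described under the QA pipeline; a genuine change of object class is rare within one track, so each switch is worth a second look.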

Problem: Failing to Account for Edge Cases in Plant Phenotypes

- Symptoms: The protocol works for standard plant specimens but fails when researchers try to replicate it with unusual variants, disease states, or under different environmental conditions.

- Causes: The annotation dataset lacks examples of rare but critical scenarios, causing the instructional model to be overfitted to "ideal" conditions.

- Solutions: [35]

- Proactive Identification: Work with domain experts to identify potential edge cases (e.g., nutrient deficiencies, rare mutations) before annotation begins.

- Active Learning: Use a strategy that prioritizes annotating video clips where the model or protocol is least confident, as these are often edge cases.

- Dedicated Workflows: Create specific annotation guidelines and, if necessary, use specialized tools to ensure these critical examples are labeled with high accuracy.
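One common way to implement the active-learning step is least-confidence uncertainty sampling: score each clip by 1 minus the model's highest predicted class probability and annotate the highest-scoring clips first. The sketch below assumes a model that outputs per-clip class probabilities; the clip IDs, classes, and budget are hypothetical, and other uncertainty measures (margin, entropy) are equally valid choices.

```python
from typing import Dict, List, Sequence


def least_confidence(probs: Sequence[float]) -> float:
    """Uncertainty score: 1 minus the highest predicted class probability."""
    return 1.0 - max(probs)


def select_for_annotation(predictions: Dict[str, Sequence[float]], budget: int) -> List[str]:
    """Return the `budget` clips on which the model is least confident."""
    ranked = sorted(predictions, key=lambda clip: least_confidence(predictions[clip]),
                    reverse=True)
    return ranked[:budget]


if __name__ == "__main__":
    # Hypothetical class probabilities per clip (e.g. healthy / chlorotic / necrotic)
    predictions = {
        "clip_001": [0.97, 0.02, 0.01],
        "clip_002": [0.40, 0.35, 0.25],
        "clip_003": [0.55, 0.40, 0.05],
        "clip_004": [0.90, 0.05, 0.05],
    }
    print(select_for_annotation(predictions, budget=2))
```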

Problem: Poor Readability of Diagrams and On-Screen Text in Annotations

- Symptoms: Text in workflow diagrams or embedded in the video is blurry or hard to read, especially when viewed on different devices or in dark mode.

- Causes: Insufficient color contrast between the text and its background; default color settings that are not optimized for accessibility.

- Solutions: [37]

- Explicit Color Settings: Always explicitly define fontcolor and fillcolor in your diagrams. Do not rely on default settings.

- High Contrast Ratios: Follow accessibility guidelines such as WCAG by ensuring a high contrast ratio between text and background (WCAG 2 level AA requires at least 4.5:1 for normal-size text and 3:1 for large text). The "Contrasting Color" node in some tools can automatically select the color with the best visibility against a given background [38].

- Test in Multiple Environments: Always check how your annotated videos and diagrams appear in both light and dark modes before finalizing your protocol.


Experimental Protocol: Creating an Annotated Video for a Plant Transformation Procedure

1. Objective: To create a detailed, reproducible, annotated video protocol for the Agrobacterium-mediated transformation of Arabidopsis thaliana.

2. Materials and Equipment

- Plant Materials: Sterilized A. thaliana seeds.

- Biological Reagents: Agrobacterium tumefaciens strain GV3101 harboring the plasmid of interest, MS media, selection antibiotics.

- Equipment: Laminar flow hood, growth chambers, camera with macro lens capable of recording high-resolution video, tripod.

- Software: Video annotation software (e.g., tools with bounding box and key point features).

3. Methodology

- Video Recording:

- Set up the camera on a stable tripod within the laminar flow hood to avoid shaking.

- Record the entire procedure from seed sterilization to plant transformation.

- Ensure consistent lighting and capture close-up shots of critical steps, such as floral dipping.

- Video Annotation:

- Critical Step Marking: Use bounding boxes to highlight key objects like the floral inflorescence and the Agrobacterium culture.

- Temporal Tracking: Apply object tracking to follow the same plant throughout the multi-day process.

- Action Labeling: Use key points or polygons to mark the exact site of inoculation and text annotations to describe the duration of each step.

- QA Review: Have a second researcher review the annotated video against the written protocol to flag any inconsistencies or missing information.
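Part of the QA review can be made mechanical. The sketch below assumes each annotated video segment is recorded as a step name with start and end times in seconds, and compares that list with the steps of the written protocol: steps missing from the video, steps absent from the text, order mismatches, and segments without a positive duration. The data structure and step names are hypothetical; the second researcher still has to judge whether each step is shown correctly.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class StepAnnotation:
    """One annotated step in the video; times are seconds from the start of the recording."""
    step: str
    start_s: float
    end_s: float


def review(written_steps: List[str], annotations: List[StepAnnotation]) -> List[str]:
    """List discrepancies between the written protocol and the annotated video."""
    annotated = [a.step for a in annotations]
    issues = [f"missing from video: {s}" for s in written_steps if s not in annotated]
    issues += [f"not in written protocol: {s}" for s in annotated if s not in written_steps]
    # Steps present in both should appear in the same order
    if [s for s in written_steps if s in annotated] != [s for s in annotated if s in written_steps]:
        issues.append("steps appear in a different order than in the written protocol")
    # Each segment needs a positive duration for the on-screen timing annotation
    issues += [f"non-positive duration: {a.step}" for a in annotations if a.end_s <= a.start_s]
    return issues


if __name__ == "__main__":
    written = ["prepare Agrobacterium culture", "dip inflorescences", "cover plants after dipping"]
    video = [StepAnnotation("prepare Agrobacterium culture", 0, 420),
             StepAnnotation("dip inflorescences", 430, 470)]
    for issue in review(written, video):
        print(issue)
```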

Research Reagent Solutions

The following table details essential materials used in plant transformation protocols, a common complex procedure benefiting from video annotation [36].

| Reagent/Material | Function in the Protocol |
|---|---|
| Agrobacterium tumefaciens | A biological vector used to transfer foreign DNA into the plant genome. |
| Selection Antibiotics | Allows for the growth of only successfully transformed plants or bacteria by eliminating non-modified ones. |
| MS (Murashige and Skoog) Media | A nutrient-rich, sterile gel or liquid that provides essential minerals and vitamins for plant tissue growth. |
| Plant Growth Regulators | Hormones that control plant cell processes, such as callus induction and shoot formation. |

Workflow Visualization

The following diagrams illustrate the experimental workflow and the strategic approach to video annotation.

FAQs & Troubleshooting Guides

What are the core concepts of analytical and biological variation, and how do they impact my results?

A single laboratory result does not represent one absolute value but rather one point within a range of possible values. This range is determined by two key sources of variation [39]:

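- Biological Variation (BV): This refers to the innate, physiological fluctuation of a measurand (the quantity being measured) around an individual's homeostatic set point. It has two components:

- Within-individual variation (CVI): fluctuation of the measurand over time within a single subject.

- Between-individual variation (CVG): differences in homeostatic set points between subjects.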

- Analytical Variation (CVA): This is the variation introduced by the measurement system itself, including the equipment, reagents, and operator technique [39].

Understanding these components is crucial because they represent the "noise" that can obscure the "signal" of your experimental findings. High variability can lead to false negatives or overestimated effect sizes, undermining the replicability of your research [39].

Table 1: Components of Variation in Laboratory Measurements

| Component | Symbol | Definition | Source of Fluctuation |
|---|---|---|---|
| Within-Individual Biological Variation | CVI | Variation in a measurand over time within a single subject. | Natural physiological rhythms and daily fluctuations [39]. |
| Between-Individual Biological Variation | CVG | Variation due to differences in the homeostatic set points between different subjects. | Genetic differences, diet, long-term health status [39]. |
| Analytical Variation | CVA | Variation introduced by the measurement process and equipment. | Instrument imprecision, reagent quality, operator technique [39] [40]. |
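The source does not give formulas, but in the biological-variation literature the components in Table 1 are commonly combined in quadrature, assuming analytical and within-individual variation are independent: the total within-subject CV is sqrt(CVA² + CVI²), and the reference change value RCV = √2 · Z · sqrt(CVA² + CVI²) estimates how large a difference between two serial results must be before it is unlikely to be noise alone. A widely used desirable analytical goal is CVA ≤ 0.5 · CVI. The sketch below applies these conventions to hypothetical CVs; whether they transfer directly to plant measurands is an assumption.

```python
import math


def total_cv(cv_a: float, cv_i: float) -> float:
    """Combined within-subject CV (%), assuming independent analytical and biological parts."""
    return math.sqrt(cv_a ** 2 + cv_i ** 2)


def reference_change_value(cv_a: float, cv_i: float, z: float = 1.96) -> float:
    """Minimum % difference between two serial results (two-sided; z = 1.96 for ~95%)."""
    return math.sqrt(2) * z * total_cv(cv_a, cv_i)


def meets_desirable_precision(cv_a: float, cv_i: float) -> bool:
    """Common desirable goal: analytical CV no more than half the within-individual CV."""
    return cv_a <= 0.5 * cv_i


if __name__ == "__main__":
    cv_a, cv_i = 3.0, 4.0  # hypothetical CVs in percent
    print(f"total CV = {total_cv(cv_a, cv_i):.1f}%")
    print(f"RCV = {reference_change_value(cv_a, cv_i):.1f}%")
    print("desirable precision met:", meets_desirable_precision(cv_a, cv_i))
```

In this hypothetical case the analytical CV exceeds half the biological CV, so improving measurement precision would narrow the RCV and make real treatment effects easier to detect.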

How can a structured troubleshooting process help resolve reproducibility issues in complex protocols?

When facing irreproducible results, a systematic approach is more effective than random checks. The following principles can help isolate and resolve problems efficiently [6]:

- Define the Problem: Clearly state the actual result versus the expected result. "The plant growth assay is broken" is insufficient. Instead, specify: "Expected: Plants in group A show a 20% height increase vs. controls. Actual: No significant difference was observed." [6]

- Verify and Replicate: Can you consistently reproduce the problem? Document the exact steps and conditions. Inconsistent replication may point to an uncontrolled variable [6].

- Research and Investigate: Look for changes in the environment (e.g., new reagent lot, minor protocol deviation). Check if others have encountered similar issues [6].

- Form a Hypothesis: Based on your research, make an educated guess about the root cause (e.g., "The pH of the new growth medium is the cause.") [6]

- Isolate the Problem: Systematically test your hypothesis by changing one variable at a time in a controlled test environment to pinpoint the exact source of error [6].
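The "one variable at a time" step can be planned systematically by comparing the records of a known-good run with those of the failing run. The sketch below assumes each run is described by a flat dictionary of parameters (the parameter names and values are hypothetical) and builds one test condition per differing parameter, each identical to the known-good setup except for that single change. Parameters that appear only in the failing record are ignored here.

```python
from typing import Any, Dict, List, Tuple

Config = Dict[str, Any]


def one_factor_variants(known_good: Config, failing: Config) -> List[Tuple[str, Config]]:
    """One test condition per differing parameter: the known-good setup with only
    that parameter switched to its value from the failing run."""
    variants = []
    for name, good_value in known_good.items():
        bad_value = failing.get(name, good_value)
        if bad_value != good_value:
            condition = dict(known_good)
            condition[name] = bad_value
            variants.append((name, condition))
    return variants


if __name__ == "__main__":
    # Hypothetical run records
    known_good = {"medium_lot": "A12", "medium_pH": 5.7, "photoperiod_h": 16, "operator": "JS"}
    failing = {"medium_lot": "B07", "medium_pH": 6.2, "photoperiod_h": 16, "operator": "JS"}
    for changed, condition in one_factor_variants(known_good, failing):
        print(f"test: change only {changed} -> {condition}")
```

If only one variant reproduces the failure, that parameter is the prime suspect; if none does, the cause may be an interaction between parameters or a variable that was not recorded.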

What is the difference between centralized and distributed analysis, and when should I use each strategy?

The choice between a centralized or distributed analytical strategy is a fundamental decision that impacts data consistency, resilience, and management complexity.

Table 2: Centralized vs. Distributed Analysis Comparison

| Basis of Comparison | Centralized Analysis | Distributed Analysis |
|---|---|---|
| Definition | All data storage, processing, and analysis occur at a single location or are managed by a single core team [41] [42]. | Data and analysis responsibilities are spread across multiple databases or teams in different locations [41] [42]. |
| Data Consistency & Governance | High data consistency and uniform governance, as all procedures are controlled from one location [41] [42]. | Lower inherent consistency due to potential replication issues; governance can be challenging to standardize [41]. |
| Failure Resilience | The central system is a single point of failure. If it goes down, all analysis halts [41]. | High resilience. Failure of one node does not prevent access to other databases or analytical streams [41]. |
| Cost & Maintenance | Generally less costly and easier to maintain due to its simplicity [41]. | More expensive and complex to maintain due to distributed infrastructure [41]. |
| Best For | Projects requiring strict governance, uniform procedures, and a single source of truth. Ideal for validating core protocols [41] [42]. | Large, complex projects where agility, local expertise, and fault tolerance are prioritized. Ideal for multi-site studies [41] [42]. |

What specific sample handling and preparation techniques can minimize analytical variability?

Sample preparation is often the largest source of variability in analysis. Controlling this step is critical for reproducibility [43].

- Create an Analytical Target Profile (ATP): Before starting, define the method's required performance criteria (e.g., accuracy, precision). This sets the goalposts for evaluating every step [43].

- Conduct a Risk Assessment: Evaluate each sample handling step (collection, storage, extraction, filtration) for potential risks to integrity. Key considerations include [43]:

- Representativeness: Is the sample truly representative of the whole?

- Integrity: Is the sample protected from light, temperature excursions, and microbial contamination?

- Homogeneity: Is the solution well-mixed before sampling?

- Control Extraction and Filtration: The extraction of an analyte from its matrix is critical. Control the diluent, mixing type, duration, and speed. When filtering, discard the first few milliliters to avoid adsorptive losses [43].

- Implement an Analytical Control Strategy (ACS): Document all controlled parameters (reagents, consumables, equipment, procedures) in the method to ensure consistent application by all analysts [43].
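Once an ATP is defined, replicate results can be checked against it automatically. The sketch below assumes the ATP expresses accuracy as mean recovery against a known (e.g. spiked) content and precision as percent relative standard deviation (%RSD) of replicates; the 98 to 102% recovery and 2% RSD limits are placeholders, not values from the source, and should be replaced by whatever your ATP specifies.

```python
import statistics
from typing import Dict, Sequence, Tuple


def evaluate_against_atp(replicates: Sequence[float], nominal: float,
                         recovery_limits: Tuple[float, float] = (98.0, 102.0),
                         max_rsd_pct: float = 2.0) -> Dict[str, object]:
    """Compare replicate results with placeholder ATP accuracy and precision limits.

    Needs at least two replicates (sample standard deviation).
    """
    mean = statistics.mean(replicates)
    recovery_pct = mean / nominal * 100.0                  # accuracy
    rsd_pct = statistics.stdev(replicates) / mean * 100.0  # precision
    low, high = recovery_limits
    return {
        "recovery_pct": recovery_pct,
        "rsd_pct": rsd_pct,
        "accuracy_ok": low <= recovery_pct <= high,
        "precision_ok": rsd_pct <= max_rsd_pct,
    }


if __name__ == "__main__":
    # Hypothetical replicate results for a spiked sample with nominal content 50.0
    print(evaluate_against_atp([49.6, 50.3, 49.9, 50.8, 49.4, 50.1], nominal=50.0))
```

Recording the limits alongside the controlled parameters of the ACS keeps the pass/fail criteria identical for every analyst.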