Transfer Learning Performance Across Plant Species: A Comprehensive Review for Biomedical and Life Science Applications

Abstract

This comprehensive review synthesizes current research on transfer learning (TL) applications for plant species classification and trait prediction, drawing critical parallels for biomedical research. We explore foundational TL concepts in plant phenotyping, evaluate diverse methodological approaches including CNN architectures and spectroscopic analysis, and address key troubleshooting challenges such as dataset limitations and model generalization. Across studies that validate models over spatial, temporal, and species boundaries, TL performs robustly despite data scarcity, reaching up to 97.79% accuracy in disease detection and R² values up to 0.79 in trait prediction. These findings offer practical guidance for researchers developing automated classification systems in resource-constrained environments, with implications for drug discovery from plant sources and clinical image analysis.

The Foundation of Transfer Learning in Plant Science: Concepts, Challenges, and Cross-Domain Potential

Core Transfer Learning Paradigms in Plant Classification

Transfer learning has emerged as a pivotal technique in computational biology, enabling researchers to develop accurate plant disease classification models while mitigating the challenges of limited datasets and computational resources. In plant species research, TL applications center on four paradigms that transfer knowledge from a source domain to a target domain, summarized in Table 1.

Table 1: Fundamental Transfer Learning Approaches in Biological Classification

| Paradigm | Core Methodology | Primary Applications in Plant Research | Key Advantages |
|---|---|---|---|
| Feature-based Transfer | Extracts and reuses feature representations from pre-trained source models [1] | Plant disease recognition across species [2] [3] | Reduces need for large datasets; improves feature extraction |
| Fine-tuning | Adjusts pre-trained model parameters on target plant data [4] [5] | Adaptation of ImageNet models to plant classification tasks [4] [3] | Leverages previously learned features; enhances accuracy |
| Domain Adaptation | Aligns feature distributions between source (lab) and target (field) domains [6] | Cross-environment plant disease recognition [6] | Addresses domain shift; improves field applicability |
| Multi-representation Learning | Captures domain-invariant features through multiple representations [6] | Cross-species plant disease classification [6] | Handles large interdomain discrepancies; improves robustness |

The feature-based transfer learning approach has demonstrated remarkable success in plant disease classification, where convolutional neural networks (CNNs) pre-trained on large datasets like ImageNet are repurposed to extract meaningful features from plant images [4] [2]. This paradigm significantly reduces the requirement for extensive labeled plant disease datasets while maintaining high classification accuracy. Researchers have effectively employed this method to identify diseases across various plant species including tomatoes, rice, cassava, and apples [2] [3].
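The feature-based paradigm can be sketched without a deep learning framework: a frozen feature extractor is reused unchanged, and only a lightweight new classifier is fit on the target data. In this minimal sketch, `frozen_backbone` is a hypothetical stand-in for a pre-trained CNN truncated before its classification layer (a real pipeline would load, for example, ImageNet weights), and the new head is a nearest-centroid classifier rather than the softmax layer most studies train.

```python
import math
from typing import Callable, Dict, List, Sequence

Vector = List[float]


def frozen_backbone(image: Sequence[float]) -> Vector:
    """Hypothetical stand-in for a pre-trained CNN whose weights stay frozen.

    A real pipeline would run an ImageNet model up to its pooling layer; this
    fixed feature map (mean, std, intensity range) just lets the sketch run offline.
    """
    mean = sum(image) / len(image)
    std = math.sqrt(sum((x - mean) ** 2 for x in image) / len(image))
    return [mean, std, max(image) - min(image)]


def fit_centroid_head(features: List[Vector], labels: List[str]) -> Dict[str, Vector]:
    """Train only the new head: store the mean feature vector of each class."""
    sums: Dict[str, Vector] = {}
    counts: Dict[str, int] = {}
    for feat, label in zip(features, labels):
        acc = sums.setdefault(label, [0.0] * len(feat))
        for i, value in enumerate(feat):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [v / counts[label] for v in s] for label, s in sums.items()}


def predict(head: Dict[str, Vector],
            extractor: Callable[[Sequence[float]], Vector],
            image: Sequence[float]) -> str:
    """Extract frozen features, then return the label of the nearest centroid."""
    feat = extractor(image)
    return min(head, key=lambda label: math.dist(feat, head[label]))


# Toy "images" (flattened pixel intensities); labels are illustrative only.
train_images = [[0.10, 0.20, 0.10, 0.20], [0.15, 0.20, 0.10, 0.25],
                [0.90, 0.10, 0.80, 0.20], [0.85, 0.15, 0.90, 0.10]]
train_labels = ["healthy", "healthy", "diseased", "diseased"]
head = fit_centroid_head([frozen_backbone(im) for im in train_images], train_labels)
print(predict(head, frozen_backbone, [0.80, 0.20, 0.90, 0.10]))  # diseased
```

Because the backbone is never updated, only the small head depends on the target dataset, which is why this paradigm needs far fewer labeled plant images.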

Domain adaptation techniques address a critical challenge in plant disease recognition: the performance degradation that occurs when models trained under controlled laboratory conditions are deployed in field environments with different imaging characteristics [6]. Advanced methods like the Multi-representation Subdomain Adaptation Network with Uncertainty Regularization (MSUN) have been developed specifically to handle the large interdomain discrepancies, intraclass variations, and fuzzy boundaries between disease categories that commonly occur in plant pathology [6].
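Domain shift between laboratory and field images can be quantified by comparing the feature distributions of the two domains. A common statistic is the squared Maximum Mean Discrepancy (MMD), which also underlies the LMMD loss used in MSUN. The minimal sketch below computes the biased MMD² estimate with a Gaussian (RBF) kernel; the feature vectors and the kernel width `gamma` are hypothetical.

```python
import math
from typing import List, Sequence


def rbf(x: Sequence[float], y: Sequence[float], gamma: float = 1.0) -> float:
    """Gaussian kernel k(x, y) = exp(-gamma * ||x - y||^2)."""
    return math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, y)))


def mean_kernel(a: List[Sequence[float]], b: List[Sequence[float]], gamma: float) -> float:
    """Average kernel value over all pairs (x in a, y in b)."""
    return sum(rbf(x, y, gamma) for x in a for y in b) / (len(a) * len(b))


def mmd2(source: List[Sequence[float]], target: List[Sequence[float]],
         gamma: float = 1.0) -> float:
    """Biased estimate of squared MMD between source and target feature samples."""
    return (mean_kernel(source, source, gamma) + mean_kernel(target, target, gamma)
            - 2 * mean_kernel(source, target, gamma))


lab = [[0.0, 0.0], [0.1, 0.0], [0.0, 0.1]]            # features of lab images
field_close = [[0.05, 0.05], [0.0, 0.05], [0.1, 0.1]]
field_shifted = [[2.0, 2.0], [2.1, 2.0], [2.0, 2.1]]  # e.g. new lighting/background
print(mmd2(lab, field_close) < mmd2(lab, field_shifted))  # True
```

Domain adaptation methods add a term like this to the training loss so that the network learns features for which the two domains look alike.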

Performance Comparison of Transfer Learning Architectures

Experimental evaluations across multiple plant disease datasets reveal significant performance variations among different deep learning architectures when applied through transfer learning paradigms. Comprehensive benchmarking studies provide crucial insights for researchers selecting appropriate models for specific plant classification tasks.

Table 2: Model Performance Comparison Across Plant Disease Datasets

| Model Architecture | Reported Performance | Computational Efficiency | Best-performing Use Cases |
|---|---|---|---|
| Vision Transformer (ViT) | 86.29% mean accuracy (20-shot) [3] | Moderate | Limited data scenarios [3] |
| Ensemble Methods (PDDNet) | 96.74-97.79% accuracy [2] | Lower | Complex multi-disease classification [2] |
| YOLOv8 | 91.05% mAP [7] | Higher | Real-time disease detection [7] |
| EfficientNet | Varies by dataset [5] | Higher | Resource-constrained environments [5] |
| ResNet Variants | Varies by dataset [5] [2] | Moderate | General plant disease classification [2] |

The Vision Transformer (ViT) model, when pre-trained using a dual transfer learning strategy on the PlantCLEF2022 dataset (2,885,052 images across 80,000 classes), achieved a remarkable mean testing accuracy of 86.29% across 12 plant disease datasets in 20-shot learning scenarios [3]. This performance surpassed conventional approaches by 12.76%, demonstrating the efficacy of transformer architectures in plant pathology with limited data.
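In a 20-shot setting, each disease class contributes only 20 labeled images to fine-tuning. The helper below builds such a split. It is a minimal sketch: the sampling details (a single seeded draw, all remaining images used for testing) are assumptions rather than the exact protocol of [3].

```python
import random
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple

Sample = Tuple[str, str]  # (image_id, class_label)


def k_shot_split(samples: Sequence[Sample], k: int,
                 seed: int = 0) -> Tuple[List[Sample], List[Sample]]:
    """Return (train, test): k random images per class for training, the rest for testing."""
    by_class: Dict[str, List[Sample]] = defaultdict(list)
    for sample in samples:
        by_class[sample[1]].append(sample)
    rng = random.Random(seed)  # fixed seed makes the split reproducible
    train: List[Sample] = []
    test: List[Sample] = []
    for label in sorted(by_class):
        items = list(by_class[label])
        if len(items) <= k:
            raise ValueError(f"class {label!r} has {len(items)} images; need more than {k}")
        rng.shuffle(items)
        train.extend(items[:k])
        test.extend(items[k:])
    return train, test


images = [(f"img_{i:03d}", "rust" if i % 2 else "healthy") for i in range(100)]
train, test = k_shot_split(images, k=20)
print(len(train), len(test))  # 40 60
```

Repeating the split with several seeds and averaging the test accuracy gives a more stable estimate than a single draw.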

Ensemble methods such as the Plant Disease Detection Network (PDDNet) integrate multiple pre-trained CNNs including DenseNet201, ResNet101, ResNet50, GoogleNet, and AlexNet [2]. The PDDNet-LVE (Lead Voting Ensemble) variant achieved 97.79% accuracy on the PlantVillage dataset (54,305 images across 38 disease categories), outperforming individual CNN models and demonstrating the power of collective intelligence in plant disease classification [2].
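The voting step of an ensemble can be illustrated with plain hard majority voting over the labels predicted by each member CNN. This is a simplified stand-in: the exact "lead voting" rule of PDDNet-LVE is not detailed here, and the tie-breaking policy below (the earliest model in the list wins) is an assumption.

```python
from collections import Counter
from typing import List, Sequence


def majority_vote(predictions: Sequence[Sequence[str]]) -> List[str]:
    """Combine per-model predictions; predictions[m][i] is model m's label for sample i."""
    combined = []
    for i in range(len(predictions[0])):
        votes = [model_preds[i] for model_preds in predictions]
        counts = Counter(votes)
        top = max(counts.values())
        # Tie-break: the label of the first model (in list order) that reaches the top count.
        combined.append(next(v for v in votes if counts[v] == top))
    return combined


# Hypothetical outputs of three member networks (e.g. DenseNet201, ResNet101, ResNet50).
preds = [
    ["early_blight", "healthy", "leaf_mold"],
    ["early_blight", "late_blight", "leaf_mold"],
    ["late_blight", "late_blight", "healthy"],
]
print(majority_vote(preds))  # ['early_blight', 'late_blight', 'leaf_mold']
```

Soft voting, which averages class probabilities instead of labels, is a common alternative when the member networks output calibrated probabilities.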

For real-time detection applications, YOLO architectures have shown exceptional performance. YOLOv8 achieved a mean Average Precision (mAP) of 91.05% for detecting diseases including Powdery Mildew, Angular Leaf Spot, Early Blight, and Tomato Mosaic Virus, outperforming YOLOv7 while maintaining higher computational efficiency [7].
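Detection metrics such as the mAP reported for YOLOv8 combine localization and classification: a predicted box counts as correct only if its Intersection over Union (IoU) with a still-unmatched ground-truth box reaches a threshold, and the average precision (AP) summarizes the resulting precision-recall curve. mAP is then the mean AP over disease classes. The sketch below computes AP for one class in one image with a 0.5 IoU threshold and all-point interpolation; whether [7] used this exact threshold and interpolation is not stated here, and detection toolkits differ in these details.

```python
from typing import List, Sequence, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2), with x2 > x1 and y2 > y1


def iou(a: Box, b: Box) -> float:
    """Intersection over Union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def average_precision(detections: Sequence[Tuple[float, Box]],
                      ground_truth: Sequence[Box], iou_thr: float = 0.5) -> float:
    """AP for one class: detections are (confidence, box) pairs."""
    if not ground_truth:
        return 0.0
    matched = [False] * len(ground_truth)
    is_tp: List[bool] = []
    # Greedy matching in order of decreasing confidence.
    for _, box in sorted(detections, key=lambda d: d[0], reverse=True):
        best_j, best_iou = -1, iou_thr
        for j, gt in enumerate(ground_truth):
            overlap = iou(box, gt)
            if not matched[j] and overlap >= best_iou:
                best_j, best_iou = j, overlap
        if best_j >= 0:
            matched[best_j] = True
        is_tp.append(best_j >= 0)
    # Precision and recall after each ranked detection.
    precisions: List[float] = []
    recalls: List[float] = []
    tp = 0
    for rank, hit in enumerate(is_tp, start=1):
        tp += hit
        precisions.append(tp / rank)
        recalls.append(tp / len(ground_truth))
    # All-point interpolation: precision at each rank = best precision at any later rank.
    for k in range(len(precisions) - 2, -1, -1):
        precisions[k] = max(precisions[k], precisions[k + 1])
    ap, prev_recall = 0.0, 0.0
    for p, r in zip(precisions, recalls):
        ap += (r - prev_recall) * p  # area under the interpolated curve
        prev_recall = r
    return ap


gt_boxes = [(0, 0, 10, 10), (20, 20, 30, 30)]
dets = [(0.9, (0, 0, 10, 10)), (0.8, (50, 50, 60, 60)), (0.7, (20, 20, 30, 30))]
print(round(average_precision(dets, gt_boxes), 4))  # 0.8333
```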

Experimental Protocols and Methodologies

Dual Transfer Learning with Vision Transformers

The dual transfer learning protocol for Vision Transformers reduces pre-training cost while preserving classification performance. It proceeds in three phases:

Pre-training Phase 1: ViT models initially undergo self-supervised pre-training on ImageNet to learn general visual representations without label dependency [3].

Pre-training Phase 2: Models are further pre-trained on PlantCLEF2022 using supervised learning, specializing in plant-specific features [3].

Fine-tuning Phase: The specialized model is finally fine-tuned on target plant disease datasets with limited samples [3].

This dual-phase pre-training approach significantly reduces computational requirements compared to training ViT models directly on PlantCLEF2022 from scratch, which would require approximately five months using four RTX 3090 GPUs [3].

Domain Adaptation Experimental Protocol

The Multi-representation Subdomain Adaptation Network with Uncertainty Regularization (MSUN) tackles cross-domain plant disease classification with three complementary components:

Multi-representation Module: Implements a hybrid neural structure to learn multiple domain-invariant representations, capturing both overall feature structures and fine details [6].

Subdomain Adaptation: Employs Local Maximum Mean Discrepancy (LMMD) to align feature distributions of relevant subdomains, addressing higher interclass similarity and lower intraclass variation [6].

Uncertainty Regularization: Introduces auxiliary regularization to suppress uncertainty from domain transfer and mitigate negative effects of potentially incorrect pseudo-labels for target domain data [6].
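The subdomain alignment step can be sketched as a class-conditional version of MMD. In the common LMMD formulation, each source sample is weighted by its one-hot label and each target sample by its predicted class probability (a soft pseudo-label), the weights are normalized within each class, and a weighted MMD is averaged over classes. This minimal sketch uses a Gaussian kernel and toy one-dimensional features; MSUN's multi-representation module and uncertainty regularizer are not shown.

```python
import math
from typing import List, Sequence

Vec = Sequence[float]


def rbf(x: Vec, y: Vec, gamma: float = 1.0) -> float:
    """Gaussian kernel k(x, y) = exp(-gamma * ||x - y||^2)."""
    return math.exp(-gamma * sum((a - b) ** 2 for a, b in zip(x, y)))


def class_weights(probs: Sequence[Vec], c: int) -> List[float]:
    """w_i^c = p_i(c) / sum_j p_j(c); all zeros if no sample has mass on class c."""
    total = sum(p[c] for p in probs)
    return [p[c] / total if total > 0 else 0.0 for p in probs]


def weighted_kernel_sum(wa: List[float], xa: Sequence[Vec],
                        wb: List[float], xb: Sequence[Vec], gamma: float) -> float:
    """sum_i sum_j wa_i * wb_j * k(xa_i, xb_j)."""
    return sum(wa[i] * wb[j] * rbf(xa[i], xb[j], gamma)
               for i in range(len(xa)) for j in range(len(xb)))


def lmmd(src: Sequence[Vec], src_onehot: Sequence[Vec],
         tgt: Sequence[Vec], tgt_probs: Sequence[Vec], gamma: float = 1.0) -> float:
    """Local MMD: class-weighted MMD^2 computed per class, averaged over classes."""
    n_classes = len(src_onehot[0])
    total = 0.0
    for c in range(n_classes):
        ws, wt = class_weights(src_onehot, c), class_weights(tgt_probs, c)
        total += (weighted_kernel_sum(ws, src, ws, src, gamma)
                  + weighted_kernel_sum(wt, tgt, wt, tgt, gamma)
                  - 2 * weighted_kernel_sum(ws, src, wt, tgt, gamma))
    return total / n_classes


src = [[0.0], [3.0]]                 # lab features of class 0 and class 1
src_onehot = [[1, 0], [0, 1]]
tgt = [[0.1], [2.9]]                 # field features
good = [[0.9, 0.1], [0.1, 0.9]]      # pseudo-labels that match the true classes
bad = [[0.1, 0.9], [0.9, 0.1]]       # pseudo-labels that swap the classes
print(lmmd(src, src_onehot, tgt, good) < lmmd(src, src_onehot, tgt, bad))  # True
```

The example also shows why uncertainty handling matters: wrong pseudo-labels inflate the loss and would push the network to align the wrong subdomains.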

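This non-adversarial approach outperformed competing methods on challenging cross-domain datasets including PlantDoc, Plant-Pathology, Corn-Leaf-Diseases, and Tomato-Leaf-Diseases, reaching accuracies of 56.06%, 72.31%, 96.78%, and 50.58%, respectively [6]. The modest values on PlantDoc and Tomato-Leaf-Diseases show how difficult transfer to field conditions remains even for the strongest method.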

Table 3: Key Research Reagents and Computational Resources

| Resource Type | Specific Examples | Research Application | Performance Considerations |
|---|---|---|---|
| Plant Datasets | PlantVillage [5] [2], PlantDoc [6] [7], PlantCLEF2022 [3] | Model training and validation | PlantVillage: Lab images; PlantDoc: Field conditions [7] |
| Pre-trained Models | ResNet50 [2], Vision Transformer [3], EfficientNet [5], YOLOv8 [7] | Feature extraction and transfer learning | ViT excels in limited data; YOLO for real-time detection [7] [3] |
| Computational Frameworks | TensorFlow, Keras [7], PyTorch | Model implementation and training | GPU acceleration essential for large datasets [7] |
| Domain Adaptation Tools | MSUN framework [6], Subdomain alignment modules | Cross-environment model transfer | Critical for field applications [6] |

The PlantVillage dataset represents one of the most comprehensive resources for plant disease classification, containing 54,305 images across 38 categories of diseased and healthy plant leaves [2]. However, researchers must consider that PlantVillage images were primarily captured under controlled laboratory conditions, which may limit model generalization to field environments [7].

PlantDoc addresses this limitation with real-world field images containing complex backgrounds, making it more representative of practical agricultural scenarios but also more challenging for classification algorithms [6] [7]. For large-scale pre-training, PlantCLEF2022 offers an extensive collection of 2,885,052 images across 80,000 plant classes, providing an exceptional resource for developing specialized plant recognition models through transfer learning [3].

The choice of computational framework significantly affects research workflow efficiency. Most contemporary studies use TensorFlow or PyTorch with GPU acceleration, and Google Colab provides accessible GPU resources (for example, a Tesla T4 GPU in a runtime reported with 12.68 GB of system memory) for researchers without local computational infrastructure [7].

Performance Optimization Strategies

Computational Efficiency Improvements

Recent research has demonstrated that strategic feature management in transfer learning can significantly reduce computational requirements while maintaining classification accuracy:

Domain-Specific Feature Discarding: Selective removal of domain-specific features from pre-trained models can reduce training time by approximately 12%, processor utilization by 25%, and memory usage by 22% while potentially improving accuracy by 7% [1].

Feature Optimization Algorithms: Integration of optimization algorithms like the Gravitational Search Algorithm (GSA) with transfer learning enables feature reduction exceeding 50%, dramatically decreasing computational requirements without compromising diagnostic quality [8].

These approaches address a critical challenge in plant disease classification research: the trade-off between model complexity and practical deployability in resource-constrained agricultural environments.

Cross-Domain Generalization Techniques

The effectiveness of transfer learning paradigms in plant species research heavily depends on their ability to generalize across domains with different characteristics. The domain adaptation approach has proven particularly valuable for addressing the "domain shift" problem, where models trained on laboratory images fail to perform effectively on field-collected images [6].

Advanced methodologies like the MSUN framework specifically target three key challenges in cross-species plant disease classification: large interdomain discrepancies between laboratory and field environments, significant intraclass variations within species, and fuzzy boundaries between different disease categories that display similar visual manifestations [6]. By incorporating uncertainty regularization and multi-representation learning, these approaches achieve more robust performance across diverse agricultural environments.

Future Directions in Transfer Learning for Plant Science

The evolution of transfer learning paradigms in biological classification continues to address emerging challenges in plant species research. The integration of vision transformers with specialized plant datasets represents a promising direction, particularly for few-shot learning scenarios where limited labeled examples are available [3].

Similarly, the development of lightweight ensemble methods that maintain high accuracy while reducing computational demands addresses practical deployment constraints in agricultural settings [2]. As these paradigms mature, they offer increasingly sophisticated tools for researchers and agricultural professionals working to enhance global food security through improved plant disease management.

Automated plant species identification is crucial for biodiversity conservation, ecological monitoring, and medical research, yet it faces significant technical challenges. This domain grapples with the vast diversity of plant species, limited data availability for rare taxa, and subtle visual differences between closely related species. Transfer learning, in which pre-trained deep learning models are adapted to botanical tasks, has emerged as a powerful strategy to address these challenges. This guide compares the performance of various transfer learning approaches, analyzing their effectiveness across different plant identification scenarios and providing experimental data to inform model selection.

The Computational Challenges in Plant Identification

Automated plant classification must overcome several interconnected obstacles rooted in biological reality. The extraordinary diversity of plant species presents a fundamental scalability challenge, with 350,386 accepted vascular plant species documented globally [9]. This complexity is compounded by fine-grained visual characteristics: many species exhibit high inter-class similarity and significant intra-class variability [10]. Figure 1 of [10] illustrates both effects, contrasting leaves of different species that look alike with samples of a single species that differ in shape, color, and texture.

For rare and endangered species, data scarcity creates additional bottlenecks. Since medicinal plants with limited populations often have few available images, traditional deep learning approaches that require large datasets become impractical [11]. Furthermore, environmental variability introduces complexity, as factors like shooting angles, lighting conditions, seasonal variations, and complex natural backgrounds substantially impact model performance [11].

Comparative Analysis of Transfer Learning Architectures

Researchers have evaluated numerous convolutional neural network (CNN) architectures adapted through transfer learning for plant identification tasks. The table below summarizes experimental results from key studies:

Table 1: Performance Comparison of CNN Architectures for Plant Identification

| Model Architecture | Dataset | Accuracy | F1-Score | Key Strengths | Limitations |
|---|---|---|---|---|---|
| EfficientNetB0 [12] | Swedish Leaf (15 species) | 94.67% | 94.6% | High accuracy on venation patterns, robust feature extraction | - |
| MobileNetV2 [12] | Swedish Leaf (15 species) | 93.34% | 93.23% | Lightweight, suitable for real-time applications, better generalization | Lower peak accuracy than EfficientNet |
| ResNet50 [12] | Swedish Leaf (15 species) | 88.45% | 87.82% | Strong feature representation capabilities | Pronounced overfitting, reduced testing accuracy |
| Two-view S-CNN [10] | PlantCLEF 2015 | Superior to baseline CNNs | - | Handles fine-grained differences, hierarchical classification | Complex two-stage workflow |
| BDCC Framework [11] | FewMedical-XJAU (540 species) | Superior accuracy in few-shot settings | - | Multimodal fusion, handles data scarcity | Requires textual and image data |

EfficientNetB0 demonstrates particularly strong performance for standard identification tasks, achieving 94.67% testing accuracy on the Swedish Leaf Dataset while keeping precision, recall, and F1-score balanced (F1 = 94.6%) [12]. Its compound scaling method scales network depth, width, and input resolution together with fixed coefficients instead of tuning each dimension independently. MobileNetV2 gives up only 1.33 percentage points of accuracy (93.34% versus 94.67%) in exchange for a lightweight architecture, making it suitable for field applications or resource-constrained environments [12].

ResNet50 reached high training accuracy (94.11%) but overfit noticeably: its testing accuracy of 88.45% is 5.66 percentage points lower [12]. With only 1,125 images in the dataset, the comparatively large ResNet50 (about 25 million parameters, several times more than EfficientNetB0 or MobileNetV2) appears to memorize dataset specifics rather than learn generalizable features unless it is more strongly regularized.

Advanced Methodologies for Specific Challenges

Handling Data Scarcity with Few-Shot Learning

For rare medicinal plants where training data is limited, the BDCC (Bilinear Deep Cross-modal Composition) framework introduces innovative solutions. This approach integrates textual priors with visual features through a deep metric learning framework, significantly enhancing semantic discrimination [11]. The method employs a Class-Aware Structured Text Prompt Construction strategy, generating category descriptions from multiple perspectives including appearance and growth habits [11]. A dynamic fusion mechanism automatically allocates weights to visual and textual modalities based on their discriminative power for each specific task [11].

Addressing Fine-Grained Variation with Multi-View Approaches

The two-view similarity learning strategy tackles fine-grained classification through a hierarchical process [10]. In the first stage, a Siamese CNN performs coarse classification at the genus level using global features (shape, color) from entire leaf images [10]. The second stage then performs fine species classification using local features (texture, vein patterns) from cropped leaf centers [10]. This approach mimics botanical taxonomy relationships and reduces computational complexity by progressively narrowing candidate species [10].

Table 2: Specialized Methods for Specific Identification Challenges

| Challenge | Solution Approach | Key Components | Performance Advantage |
|---|---|---|---|
| Data Scarcity [11] | BDCC Framework | Cross-modal learning, structured text prompts, dynamic fusion | Superior accuracy for rare species with few samples |
| Fine-Grained Differences [10] | Two-View S-CNN | Global and local feature extraction, hierarchical classification | Effectively distinguishes visually similar species |
| Complex Environments [11] | FewMedical-XJAU Dataset | Multi-angle images, varied lighting, complex backgrounds | Improved generalization to real-world conditions |
| Multi-Species Communities [13] | PlantCLEF 2025 Approaches | Multi-label classification, self-supervised learning | Identifies all species in vegetation plot images |
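Identifying every species in a vegetation plot is a multi-label problem: instead of a softmax that selects one class, each species receives an independent sigmoid score, and all species above a threshold are reported. The sketch below shows only this decision step; the threshold, the top-1 fallback, and the species logits are hypothetical and not taken from PlantCLEF 2025 submissions.

```python
import math
from typing import Dict, List


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict_species_set(logits: Dict[str, float], threshold: float = 0.5,
                        min_species: int = 1) -> List[str]:
    """Return every species whose sigmoid score reaches `threshold`, best first.

    If no species qualifies, fall back to the `min_species` highest-scoring ones
    so that each plot image receives at least one prediction.
    """
    scores = {species: sigmoid(z) for species, z in logits.items()}
    ranked = sorted(scores, key=scores.get, reverse=True)
    chosen = [species for species in ranked if scores[species] >= threshold]
    return chosen if chosen else ranked[:min_species]


plot_logits = {"Trifolium repens": 2.0, "Poa annua": 0.4, "Bellis perennis": -1.5}
print(predict_species_set(plot_logits))  # ['Trifolium repens', 'Poa annua']
```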

Experimental Protocols and Methodologies

Standard Transfer Learning Protocol

Most studies follow a consistent transfer learning methodology: (1) Model Selection: Choosing a CNN architecture pre-trained on ImageNet; (2) Adaptation: Replacing the final classification layer with a new one matching the number of plant species; (3) Fine-Tuning: Training with plant image datasets, typically using strategies like progressive unfreezing or differential learning rates [12] [10]. For the Swedish Leaf Dataset experiments, models were trained using 1,125 images across 15 species (75 images per species) with standard data augmentation techniques [12].
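Differential learning rates and progressive unfreezing can both be expressed as simple schedules over layer groups ordered from input to output. In this sketch the group names, the head rate of 1e-3, the decay factor of 0.1, and the one-group-per-epoch unfreezing pace are hypothetical choices, not settings reported in [12] or [10].

```python
from typing import Dict, List


def differential_lrs(groups: List[str], head_lr: float, decay: float) -> Dict[str, float]:
    """Give earlier (more generic) layer groups smaller learning rates.

    `groups` runs from input to output; the last entry is the new classification
    head and gets `head_lr`; each earlier group gets its successor's rate times `decay`.
    """
    n = len(groups)
    return {g: head_lr * decay ** (n - 1 - i) for i, g in enumerate(groups)}


def trainable_groups(groups: List[str], epoch: int) -> List[str]:
    """Progressive unfreezing: epoch 0 trains only the head, and each later epoch
    unfreezes one more group, moving from the output toward the input."""
    n_unfrozen = min(len(groups), epoch + 1)
    return groups[len(groups) - n_unfrozen:]


layer_groups = ["stem", "stage1", "stage2", "stage3", "head"]  # hypothetical grouping
lrs = differential_lrs(layer_groups, head_lr=1e-3, decay=0.1)
for epoch in range(3):
    active = trainable_groups(layer_groups, epoch)
    print(epoch, {g: lrs[g] for g in active})
```

In a framework such as PyTorch, the per-group rates would typically be passed to the optimizer as parameter groups, and frozen groups would have gradient computation disabled.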

Two-View Similarity Learning Protocol

The two-view approach employs a more specialized workflow: (1) Genus-Level Classification: Training a Siamese CNN on entire leaf images to compute similarity with genus reference images; (2) Species-Level Classification: Using a second Siamese CNN on center-cropped leaf images to compare with species references from candidate genera; (3) Result Combination: Merging similarity scores from both stages to produce final species rankings [10]. This method requires careful selection of reference images and similarity thresholds at each hierarchical level.
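The coarse-to-fine ranking of the two-view protocol can be sketched once embeddings are available. Here the vectors stand in for outputs of the two Siamese branches (whole leaf and center crop), cosine similarity replaces the learned similarity, and the final score is a weighted average of genus and species similarity with `alpha = 0.5`. All three are assumptions, since the exact combination rule of [10] is not given here.

```python
import math
from typing import Dict, List, Sequence, Tuple

Vec = Sequence[float]


def cosine(a: Vec, b: Vec) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))


def two_view_rank(whole_emb: Vec, center_emb: Vec,
                  genus_refs: Dict[str, Vec],
                  species_refs: Dict[str, Tuple[str, Vec]],
                  top_genera: int = 2, alpha: float = 0.5) -> List[Tuple[str, float]]:
    """Rank species: genus filtering on the whole leaf, then species matching on the crop."""
    # Stage 1: compare the whole-leaf embedding with one reference per genus.
    genus_sim = {g: cosine(whole_emb, ref) for g, ref in genus_refs.items()}
    candidates = set(sorted(genus_sim, key=genus_sim.get, reverse=True)[:top_genera])
    # Stage 2: compare the center-crop embedding only with species of candidate genera.
    ranked = []
    for species, (genus, ref) in species_refs.items():
        if genus in candidates:
            # Stage 3: combine both similarity scores into one ranking score.
            score = alpha * genus_sim[genus] + (1 - alpha) * cosine(center_emb, ref)
            ranked.append((species, score))
    return sorted(ranked, key=lambda item: item[1], reverse=True)


genus_refs = {"Acer": [1.0, 0.0], "Quercus": [0.0, 1.0], "Betula": [-1.0, 0.0]}
species_refs = {
    "Acer rubrum": ("Acer", [1.0, 0.0]),
    "Acer saccharum": ("Acer", [0.7, 0.7]),
    "Quercus alba": ("Quercus", [0.0, 1.0]),
    "Betula pendula": ("Betula", [1.0, 0.0]),
}
result = two_view_rank([0.9, 0.2], [0.8, 0.6], genus_refs, species_refs)
print([name for name, _ in result])  # ['Acer saccharum', 'Acer rubrum', 'Quercus alba']
```

Restricting stage 2 to a few candidate genera is what keeps the second comparison cheap: species of excluded genera (here *Betula*) are never scored.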

BDCC Framework Protocol

The BDCC framework implements cross-modal integration: (1) Structured Prompt Generation: Creating textual descriptions for each plant category using botanical characteristics; (2) Feature Alignment: Mapping both visual and textual features into a shared semantic space; (3) Dynamic Fusion: Automatically weighting modality contributions based on task-specific performance [11]. This approach requires both image datasets and botanical text descriptions for optimal performance.
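The dynamic fusion step can be illustrated for a single query image whose embedding already lies in the shared space produced by feature alignment. The sketch scores the query against visual prototypes (mean support-image embeddings) and against text embeddings of the class descriptions, measures each modality's discriminative power as the margin between its top two class probabilities, and converts the margins into fusion weights. This margin rule, the temperatures, and the toy vectors are hypothetical; BDCC's actual weighting mechanism may differ.

```python
import math
from typing import Dict, List, Sequence, Tuple

Vec = Sequence[float]


def cosine(a: Vec, b: Vec) -> float:
    return sum(x * y for x, y in zip(a, b)) / (math.hypot(*a) * math.hypot(*b))


def softmax(xs: List[float], temp: float) -> List[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp((x - m) / temp) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def class_probs(query: Vec, prototypes: Dict[str, Vec], temp: float = 0.1) -> Dict[str, float]:
    labels = sorted(prototypes)
    return dict(zip(labels, softmax([cosine(query, prototypes[c]) for c in labels], temp)))


def margin(probs: Dict[str, float]) -> float:
    """Gap between the two most probable classes: a proxy for discriminative power."""
    top = sorted(probs.values(), reverse=True)
    return top[0] - (top[1] if len(top) > 1 else 0.0)


def fuse(query: Vec, visual_protos: Dict[str, Vec], text_protos: Dict[str, Vec],
         weight_temp: float = 0.1) -> Tuple[str, Dict[str, float]]:
    """Return the fused prediction and per-modality weights (both modalities share classes)."""
    pv, pt = class_probs(query, visual_protos), class_probs(query, text_protos)
    wv, wt = softmax([margin(pv), margin(pt)], weight_temp)
    fused = {c: wv * pv[c] + wt * pt[c] for c in pv}
    return max(fused, key=fused.get), {"visual": wv, "text": wt}


# Two visually similar classes whose textual descriptions differ clearly.
visual = {"Ephedra": [1.0, 0.0], "Glycyrrhiza": [0.9, 0.1]}
text = {"Ephedra": [1.0, 0.2], "Glycyrrhiza": [0.0, 1.0]}
label, weights = fuse([0.95, 0.05], visual, text)
print(label, weights["text"] > weights["visual"])  # Ephedra True
```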

Table 3: Key Research Reagents and Resources for Plant Identification Studies

| Resource | Type | Key Features | Application in Research |
|---|---|---|---|
| Swedish Leaf Dataset [12] | Image Dataset | 15 species, 1,125 total images, controlled conditions | Benchmarking model performance on leaf venation patterns |
| FewMedical-XJAU [11] | Specialized Dataset | 540 medicinal species, 4,992 images, complex backgrounds | Few-shot learning research, fine-grained classification |
| PlantCLEF Datasets [13] [10] | Large-Scale Benchmark | 7,800+ species, 1.4M+ images, multi-organ | Large-scale species identification, multi-label classification |
| Pl@ntNet Training Data [13] | Crowdsourced Dataset | Global coverage, multiple plant organs, expert-verified | Real-world application development, transfer learning |
| Xper3 [14] | Software Tool | Freely available, text and image-based key construction | Morphological identification, taxonomic key development |
| DeepBDC [11] | Algorithmic Framework | Deep metric learning, covariance modeling | Feature representation for fine-grained classification |
| Pre-trained CNN Models [12] [9] | Model Weights | ImageNet initialization, various architectures | Transfer learning baseline, feature extraction backbone |

Transfer learning has substantially advanced plant species identification, but model selection must align with specific research constraints and challenges. For standard identification tasks, EfficientNetB0 achieved the highest accuracy among the CNNs compared in [12], while MobileNetV2 offered the best efficiency-accuracy balance for resource-limited applications. For rare species with limited samples, the BDCC framework demonstrates how multimodal approaches can overcome data scarcity. When dealing with taxonomically complex species, two-view hierarchical methods effectively address fine-grained visual differences.

Future research directions include developing more sophisticated cross-modal learning techniques, creating standardized benchmarks for long-tailed species distributions, and improving model interpretability for botanical experts. The integration of genomic data with visual recognition represents a promising frontier for resolving taxonomically challenging species complexes [15]. As these technologies evolve, they will increasingly support critical scientific workflows in biodiversity monitoring, ecological research, and medicinal plant conservation.

The accurate classification of plant species is a cornerstone of botanical research, with profound implications for biodiversity conservation, ecosystem monitoring, and pharmaceutical discovery. Historically, plant taxonomy has relied on morphological characteristics observed through detailed field study and herbarium collections. However, technological advancements have introduced powerful computational methods that enhance and, in some cases, transform traditional approaches. This guide objectively compares the performance of contemporary methodologies for plant species identification, with a specific focus on the analysis of leaves, venation patterns, and spectral signatures. These methodologies range from handcrafted feature extraction to deep learning and transfer learning approaches. The evaluation is framed within the context of a broader thesis on transfer learning performance, a technique that is increasingly vital in botanical research where large, annotated datasets are often scarce [16]. We present supporting experimental data from recent studies to provide researchers, scientists, and drug development professionals with a clear comparison of the capabilities and limitations of each technique.

Performance Benchmarking of Classification Approaches

Quantitative Comparison of Model Accuracy

The performance of different feature extraction and classification methodologies varies significantly across plant species and dataset types. The following table summarizes key performance metrics from recent experimental studies.

Table 1: Performance comparison of plant classification approaches

| Classification Approach | Key Features/Methods | Dataset(s) Used | Reported Accuracy/F1-Score | Reference |
|---|---|---|---|---|
| Handcrafted Feature Aggregation | Multiscale entropy of curvature, texture features | Plantscan, MED117, Flavia, Swedish | Exceeded 99.50% (F1 & Accuracy) | [17] |
| Deep Learning (CNN) Benchmark | 23 state-of-the-art CNN models with transfer learning | 18 public plant leaf disease datasets | Varied by model and dataset; comprehensive benchmarking provided | [5] |
| Hyperspectral ML Framework | ANN on hyperspectral reflectance data | Bean plants under thermal stress | 99.4% (Overall Accuracy) | [18] |
| Optimized Hyperspectral CNN | Machine Learning Vegetation Indices (MLVI, H_VSI) | UAV-acquired hyperspectral data | 83.40% (Classification Accuracy) | [19] |
| Sentinel-2 Time Series Classification | Random Forest on multi-temporal satellite data | German National Forest Inventory | F1 scores between 67% and 99% for frequent species | [20] |

Dataset Characteristics and Model Generalizability

The scale and diversity of training data significantly impact model performance and generalizability. The following table compares notable datasets used in plant species classification research.

Table 2: Comparison of plant species classification datasets

| Dataset Name | Number of Species/Classes | Data Modality | Key Characteristics | Reported Challenges |
|---|---|---|---|---|
| Swedish Leaf Dataset | 15 species [12] | Leaf images | Historical benchmark for leaf classification | Controlled conditions, limited scope [9] |
| iNaturalist/PlantNet | Extensive (global scale) | Multi-organ plant images | Large-scale, diverse organs, global coverage | Inter-class similarity, intra-class variation [9] |
| German Tree Species Dataset | 48 species + 3 species groups | Sentinel-2 satellite time series | Temporal patterns, large area coverage | Pixel-level validation challenges [20] |
| Proximal Hyperspectral Dataset | 7 crop and weed species | Hyperspectral (400-1000 nm) | Detailed spectral profiles | Noise in specific wavelengths, occlusion [21] |
| Leaf Disease Datasets (18 sets) | Varies per dataset | RGB leaf images | Focus on pathological symptoms | Dataset quality variability, accessibility [5] |

Experimental Protocols and Methodologies

Handcrafted Morphological Feature Extraction

The protocol for handcrafted feature extraction emphasizes shape complexity and texture details from leaf images, achieving exceptional performance on several standard datasets [17].

Experimental Protocol:

- Image Acquisition: Collect leaf images against controlled backgrounds to ensure clear contour detection.

- Contour Processing: Extract the leaf shape contour and compute multiscale curvature representations.

- Entropy Calculation: Apply differential entropy to probability distributions of multiscale curvatures to create coarse-to-fine shape representations.

- Texture Analysis: Combine Local Binary Pattern (LBP) and Gray-Level Co-occurrence Matrix (GLCM) statistics to quantify surface texture.

- Feature Aggregation: Aggregate multiscale entropy of curvature, bending energy, and texture features into a comprehensive descriptor.

- Classification: Implement Random Forest classifier on the aggregated feature set, replacing fully connected layers in CNN comparisons.
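The shape part of this pipeline (multiscale curvature, its entropy, and bending energy) can be sketched for a closed contour given as an ordered list of points. The curvature estimator (turning angle over neighbours `step` points away), the scales, the fixed histogram range, and the use of mean squared curvature as a bending-energy proxy are assumptions; [17] may compute these quantities differently, and the LBP/GLCM texture features and Random Forest stage are omitted.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def curvature(contour: Sequence[Point], step: int) -> List[float]:
    """Discrete curvature at each point of a closed contour.

    Neighbours `step` positions away are used, so a larger step gives a coarser scale.
    Curvature = turning angle / mean length of the two chords.
    """
    n = len(contour)
    values = []
    for i in range(n):
        p0, p1, p2 = contour[i - step], contour[i], contour[(i + step) % n]  # wraps around
        a1 = math.atan2(p1[1] - p0[1], p1[0] - p0[0])
        a2 = math.atan2(p2[1] - p1[1], p2[0] - p1[0])
        turn = (a2 - a1 + math.pi) % (2 * math.pi) - math.pi  # wrap into [-pi, pi)
        values.append(turn / (0.5 * (math.dist(p0, p1) + math.dist(p1, p2))))
    return values


def histogram_entropy(values: Sequence[float], lo: float, hi: float, bins: int = 16) -> float:
    """Differential entropy estimate h = -sum p_b * log(p_b / width) over a fixed range."""
    width = (hi - lo) / bins
    counts = [0] * bins
    for v in values:
        counts[min(bins - 1, max(0, int((v - lo) / width)))] += 1  # clip outliers to edge bins
    n = len(values)
    return -sum((c / n) * math.log((c / n) / width) for c in counts if c)


def shape_descriptor(contour: Sequence[Point], scales=(1, 2, 4, 8),
                     lo: float = -1.0, hi: float = 1.0) -> List[float]:
    """Fine-to-coarse curvature entropies followed by a bending-energy proxy."""
    feats = [histogram_entropy(curvature(contour, s), lo, hi) for s in scales]
    fine = curvature(contour, 1)
    feats.append(sum(k * k for k in fine) / len(fine))  # mean squared curvature
    return feats


def ellipse(a: float, b: float, n: int = 64) -> List[Point]:
    return [(a * math.cos(2 * math.pi * k / n), b * math.sin(2 * math.pi * k / n))
            for k in range(n)]


circle_feats = shape_descriptor(ellipse(10, 10))
elongated_feats = shape_descriptor(ellipse(20, 5))
print(circle_feats[0] < elongated_feats[0])  # True: curvature varies more on the elongated leaf
```

A constant-curvature outline (the circle) yields the lowest possible entropy for the chosen bin width, while irregular outlines spread their curvature values over more bins.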

Advantages: This approach provides interpretable features and has demonstrated superior performance compared to some CNN architectures, particularly with limited training data [17]. The method achieved better results with 40 features than LeNet achieved with 50 features, indicating high feature efficiency.

Deep Learning and Transfer Learning Framework

Transfer learning has emerged as a solution to limited annotated data in plant science, leveraging pre-trained CNN models adapted for botanical tasks [16].

Experimental Protocol:

- Model Selection: Choose pre-trained CNN architectures (commonly VGG, ResNet, AlexNet) trained on large generic image datasets.

- Dataset Preparation: Curate plant image datasets, applying data augmentation techniques to increase effective dataset size.

- Model Adaptation: Replace and retrain the final classification layer to match target plant species classes while retaining pre-trained weights in earlier layers.

- Fine-tuning: Optionally fine-tune weights in deeper layers to adapt features to botanical characteristics.

- Performance Validation: Evaluate on held-out test sets using accuracy, F1-score, and other relevant metrics.
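Accuracy, per-class precision and recall, and F1-score are straightforward to compute from predicted and true labels. The sketch below uses macro averaging (each species or disease class weighted equally), which is an assumption: studies do not always state whether they report macro, weighted, or micro averages.

```python
from typing import Dict, Sequence


def macro_scores(y_true: Sequence[str], y_pred: Sequence[str]) -> Dict[str, float]:
    """Accuracy plus macro-averaged precision, recall, and F1 over all labels seen."""
    labels = sorted(set(y_true) | set(y_pred))
    totals = {"precision": 0.0, "recall": 0.0, "f1": 0.0}
    for c in labels:
        tp = sum(t == c and p == c for t, p in zip(y_true, y_pred))
        fp = sum(t != c and p == c for t, p in zip(y_true, y_pred))
        fn = sum(t == c and p != c for t, p in zip(y_true, y_pred))
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        totals["precision"] += precision
        totals["recall"] += recall
        totals["f1"] += f1
    scores = {name: value / len(labels) for name, value in totals.items()}
    scores["accuracy"] = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
    return scores


y_true = ["oak", "oak", "maple", "maple"]
y_pred = ["oak", "maple", "maple", "maple"]
print(macro_scores(y_true, y_pred))
```

Macro averaging makes rare species count as much as common ones, so it drops faster than accuracy when a model neglects minority classes.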

Advantages: Transfer learning reduces training time and data requirements while achieving state-of-the-art performance on many plant classification tasks [16]. Comprehensive benchmarking has identified top-performing architectures across diverse plant disease datasets [5].

Hyperspectral Analysis for Stress Detection and Classification

Hyperspectral sensing captures detailed reflectance patterns that correlate with physiological and biochemical properties, enabling early stress detection and species discrimination [19].

Experimental Protocol:

- Spectral Data Acquisition: Collect hyperspectral data using field spectroradiometers or UAV-mounted sensors across visible, NIR, and SWIR regions.

- Data Preprocessing: Convert radiance to reflectance, remove noise, and apply spectral corrections.

- Feature Selection: Implement Recursive Feature Elimination (RFE) to identify optimal spectral bands for specific classification tasks.

- Index Development: Formulate novel vegetation indices (e.g., MLVI, H_VSI) based on selected bands.

- Model Training: Apply machine learning classifiers (ANN, Random Forest, SVM) or 1D CNN models to hyperspectral data or derived indices.

- Validation: Correlate spectral classifications with ground-truth measurements of pigment content, stress markers, or species identities.

Advantages: Hyperspectral approaches can detect physiological changes before visible symptoms appear, enabling early intervention [19]. Specific spectral regions (green: 530-570 nm; red-edge: 700-710 nm) have been identified as particularly sensitive to thermal stress [18].
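Two of the steps above lend themselves to a short sketch: converting raw sensor counts to reflectance with white and dark reference measurements, and summarizing band windows into index values. The green (530-570 nm) and red-edge (700-710 nm) windows come from the text; the red and NIR windows used for NDVI and the synthetic spectrum are illustrative assumptions, and the MLVI and H_VSI formulas are not reproduced because they are not given here.

```python
from typing import List, Sequence


def to_reflectance(raw: Sequence[float], white: Sequence[float],
                   dark: Sequence[float]) -> List[float]:
    """Per-band calibration: R = (raw - dark) / (white - dark)."""
    return [(r - d) / (w - d) for r, w, d in zip(raw, white, dark)]


def window_mean(wavelengths: Sequence[float], reflectance: Sequence[float],
                lo_nm: float, hi_nm: float) -> float:
    """Mean reflectance of all bands whose centre wavelength lies in [lo_nm, hi_nm]."""
    values = [r for wl, r in zip(wavelengths, reflectance) if lo_nm <= wl <= hi_nm]
    if not values:
        raise ValueError(f"no bands between {lo_nm} and {hi_nm} nm")
    return sum(values) / len(values)


def normalized_difference(a: float, b: float) -> float:
    """Generic (a - b) / (a + b) index; NDVI uses a = NIR and b = red."""
    return (a - b) / (a + b)


# Synthetic leaf spectrum, 400-1000 nm in 10 nm steps: low visible, high NIR reflectance.
wavelengths = list(range(400, 1001, 10))
white = [1000.0] * len(wavelengths)
dark = [50.0] * len(wavelengths)
raw = [50.0 + (95.0 if wl < 700 else 427.5) for wl in wavelengths]
refl = to_reflectance(raw, white, dark)

ndvi = normalized_difference(window_mean(wavelengths, refl, 780, 820),
                             window_mean(wavelengths, refl, 650, 680))
green = window_mean(wavelengths, refl, 530, 570)
red_edge = window_mean(wavelengths, refl, 700, 710)
print(round(ndvi, 3), round(green, 3), round(red_edge, 3))
```

Averaging over a window rather than reading a single band makes the summary less sensitive to noise in individual wavelengths, one of the challenges noted for proximal hyperspectral data.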

Workflow Visualization

Handcrafted Feature Extraction Workflow

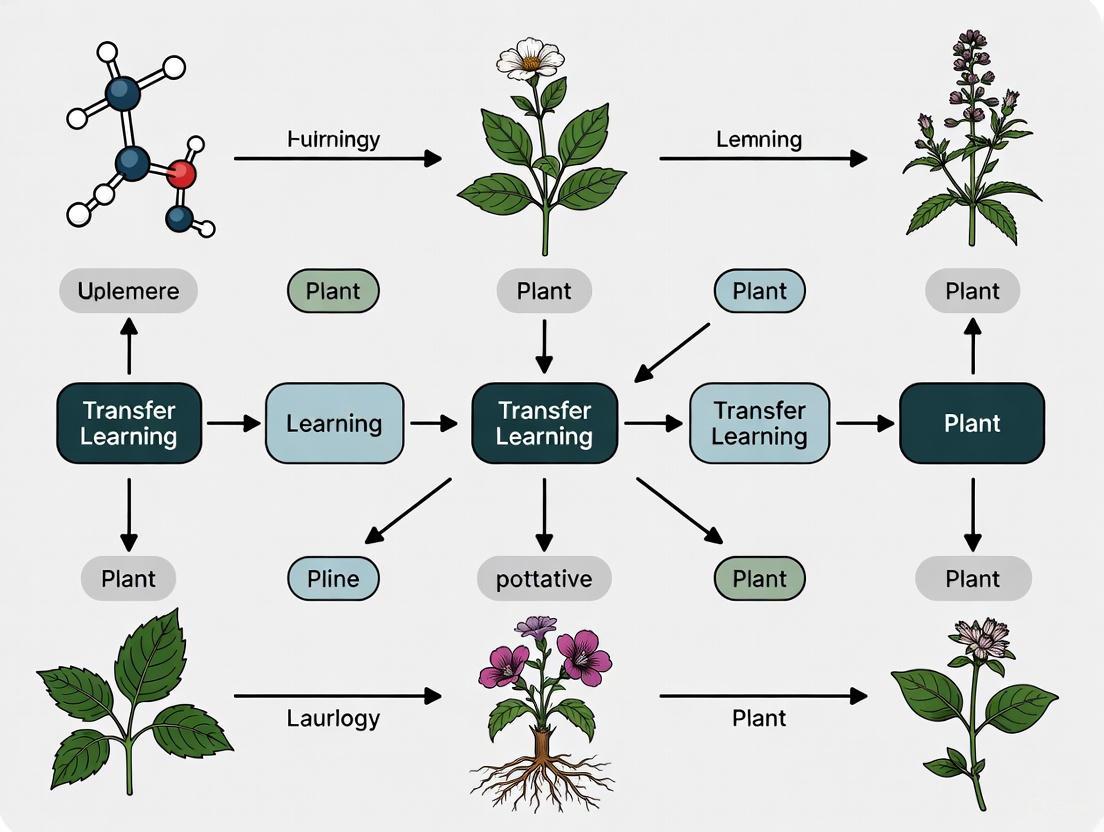

Transfer Learning Workflow for Plant Classification

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential research reagents and equipment for plant morphological and spectral analysis

| Tool/Reagent | Function/Application | Specification Considerations |
|---|---|---|
| Field Spectroradiometer | Measures spectral reflectance of plant tissues | Spectral range (350-2500 nm), resolution, field of view [22] |
| Hyperspectral Imaging Sensors | Captures spatial-spectral data cubes for analysis | Spectral bands, spatial resolution, platform compatibility [19] |
| Vegetation Indices (NDVI, PRI, MCARI) | Quantifies vegetation properties from spectral data | Sensitivity to target pigments, resistance to noise [23] |
| CNN Architectures (VGG, ResNet, EfficientNet) | Deep feature extraction for image classification | Model depth, parameter count, computational requirements [5] |
| Public Datasets (iNaturalist, PlantVillage, GBIF) | Training and benchmarking data for models | Species diversity, image quality, annotation reliability [9] |
| Random Forest Classifier | Machine learning for morphological and spectral data | Number of trees, feature sampling, interpretability [17] |
| Reference Pigment Sets | Calibration for spectral pigment assessment | Purity, stability, solvent compatibility [23] |
| Laboratory Spectrophotometry | Destructive pigment validation method | Accuracy, precision, detection limits [23] |

Discussion and Performance Analysis

The comparative analysis reveals that no single approach universally outperforms others across all scenarios. The choice of methodology depends on specific research objectives, data availability, and resource constraints.

Handcrafted feature extraction demonstrates remarkable efficiency, achieving over 99.5% accuracy on several benchmark datasets with relatively few features [17]. This approach provides interpretable features and strong performance, particularly when training data is limited. However, feature engineering requires domain expertise and may not generalize well across diverse plant organs and species.

Deep learning with transfer learning has become dominant for large-scale plant classification tasks, benefiting from deep architectures pre-trained on general-purpose image datasets [16]. Comprehensive benchmarking of 23 CNN models across 18 datasets provides guidance for architecture selection [5]. Transfer learning effectively addresses the data limitation problem common in botanical applications, though model interpretability remains challenging.

Hyperspectral analysis offers unique capabilities for early stress detection and subtle species discrimination by capturing biochemical and physiological properties beyond visible morphology [19]. The development of machine learning-optimized vegetation indices (MLVI, H_VSI) represents an advance over traditional indices like NDVI, detecting stress 10-15 days earlier and correlating more strongly with ground-truth markers [19]. However, hyperspectral data acquisition requires specialized equipment and processing expertise.
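For reference, the traditional NDVI baseline is a normalized band ratio, NDVI = (NIR - Red) / (NIR + Red). The sketch below computes it from a reflectance spectrum by averaging a red window (640-680 nm) and an NIR window (780-900 nm); these window limits are illustrative assumptions, and the MLVI and H_VSI formulations from [19] are not reproduced here.

```python
import numpy as np


def band_mean(reflectance, wavelengths, lo_nm, hi_nm):
    """Mean reflectance within [lo_nm, hi_nm]; works on (..., n_bands) arrays."""
    mask = (wavelengths >= lo_nm) & (wavelengths <= hi_nm)
    return reflectance[..., mask].mean(axis=-1)


def ndvi(nir, red, eps=1e-9):
    """Normalized Difference Vegetation Index; eps guards against division by zero."""
    nir = np.asarray(nir, dtype=float)
    red = np.asarray(red, dtype=float)
    return (nir - red) / (nir + red + eps)


# Toy spectrum: low red reflectance, high NIR plateau (typical of healthy leaves).
wavelengths = np.arange(400, 1001, 10)
spectrum = np.where(wavelengths >= 750, 0.45, 0.05)

red = band_mean(spectrum, wavelengths, 640, 680)   # assumed red window
nir = band_mean(spectrum, wavelengths, 780, 900)   # assumed NIR window
print(round(float(ndvi(nir, red)), 3))  # 0.8
```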

The integration of multi-modal data—combining morphological, spectral, and temporal information—shows particular promise for challenging classification tasks. Studies utilizing Sentinel-2 time series demonstrate the value of phenological patterns for tree species discrimination, achieving F1 scores between 67% and 99% for frequent species [20]. This approach captures seasonal variations that single-timepoint analysis misses.

For drug development professionals, these advanced classification techniques enable more efficient screening of medicinal plants like Ficus and Moringa species, where hyperspectral indices can indicate pharmacological potential through correlations with pigment density and physiological vigor [22]. The non-destructive nature of spectral methods allows for continuous monitoring of valuable specimens without harvest damage.

The taxonomic significance of plant morphological features remains undiminished, though modern analytical approaches have dramatically enhanced our ability to quantify and interpret these characteristics. Handcrafted feature extraction provides interpretability and efficiency for specific leaf-based classification tasks, while deep learning with transfer learning offers scalable solutions for diverse plant organs and large datasets. Hyperspectral analysis enables early stress detection and subtle species discrimination through biochemical sensing. The optimal approach frequently involves integrating multiple methodologies and data sources, leveraging the complementary strengths of each technique. As these technologies continue to mature, they will increasingly support critical applications in biodiversity conservation, agricultural management, and pharmaceutical discovery from plant resources.

The fields of plant science and biomedical research are increasingly converging on a common set of technological challenges in image-based classification. Despite vast differences in their biological subjects, both domains face remarkably similar obstacles in fine-grained visual categorization, limited data availability, and the need for robust model generalization. Advances in deep learning architectures and transfer learning methodologies are creating unexpected synergies between these seemingly disparate fields, enabling knowledge transfer that benefits both agricultural and medical applications.

This review examines the parallel challenges in plant species identification and biomedical image analysis, with particular focus on transfer learning performance across domains. By comparing experimental protocols, model architectures, and performance benchmarks, we identify cross-disciplinary insights that can accelerate research in both fields. The analysis reveals that solutions developed for plant biodiversity monitoring can directly inform biomedical diagnostic approaches, and vice versa, creating a virtuous cycle of methodological innovation.

Parallel Classification Challenges: Technical Landscape

Common Obstacles in Fine-Grained Visual Categorization

Plant species classification and biomedical image analysis share fundamental technical challenges that stem from the inherent complexity of their respective subjects:

Fine-grained visual classification: Both domains require distinguishing between categories with subtle visual differences. In plant science, this involves differentiating between closely related species based on minor variations in leaf morphology, texture, or coloration [9]. Similarly, biomedical imaging requires identifying subtle cellular abnormalities or pathological features that may indicate disease states [24].

High intra-class variation: Individual specimens within the same category can exhibit significant visual differences due to developmental stage, environmental factors, or genetic diversity. Plant images capture natural variation in appearance across growth stages and environmental conditions [25], while biomedical samples show variability due to patient-specific factors and tissue heterogeneity.

Limited annotated data: Both fields face constraints in obtaining large-scale, expertly annotated datasets. Rare species in botany and uncommon pathologies in medicine create natural imbalances in data availability, necessitating specialized approaches for low-data regimes [25].

Complex backgrounds and artifacts: Real-world plant images contain complex natural backgrounds that can interfere with classification [25], while biomedical images often include tissue artifacts, staining variations, and imaging noise that complicate analysis [24].

Domain-Specific Dataset Characteristics

The datasets used in plant science and biomedical research reflect both these common challenges and their specialized requirements:

Table 1: Representative Dataset Characteristics in Plant Science and Biomedical Research

| Dataset Name | Domain | Categories | Image Count | Key Characteristics | Annotation Type |
|---|---|---|---|---|---|
| PlantCLEF 2025 [26] | Plant Science | ~400 species | 1.4M training + 2,105 test | Multi-label quadrat images, high-resolution | Expert-annotated species presence |
| FewMedical-XJAU [25] | Plant Science | 540 medicinal species | 4,992 images | Complex backgrounds, multi-view, seasonal variation | Expert annotations with fine-grained labels |
| PlantVillage [5] | Plant Science | 15-38 disease classes | 54,305 images | Controlled backgrounds, focused on leaves | Single-label disease classification |
| Glioma-MDC 2025 [24] | Biomedical | Multiple | Annotated image patches | H&E-stained tissue, mitotic figures | Expert-annotated pathological features |
| Pap Smear Cell Classification [24] | Biomedical | Multiple cell types | Not specified | Cervical cell images, cancer screening | Abnormal cell identification |

Transfer Learning Performance: Comparative Analysis

Model Architecture Benchmarking

Recent comprehensive studies have evaluated diverse model architectures across both domains, providing critical insights into transfer learning effectiveness:

Table 2: Cross-Domain Model Performance Comparison on Benchmark Tasks

| Model Architecture | Reported Plant Disease Classification Performance [5] | Key Characteristics | Computational Efficiency |
|---|---|---|---|
| EfficientNetB7 | High performance (ensemble) | Compound scaling, optimized architecture | Moderate parameters |
| DenseNet201 | High performance (ensemble) | Feature reuse, connectivity pattern | Higher memory usage |
| ResNet50 | Strong baseline | Residual connections, proven architecture | Balanced efficiency |
| ConvNeXt | Modernized CNN | Modernized ResNet, competitive with transformers | Variable sizes available |
| Vision Transformers | Emerging leader | Global attention, strong on large datasets | High computational demand |

The performance benchmarks reveal several cross-domain patterns. In plant disease classification, ensemble approaches integrating multiple architectures consistently achieve superior performance, with PDDNet-LVE and PDDNet-AE models reaching 97.79% and 96.74% accuracy respectively on the PlantVillage dataset [27]. Similarly, biomedical challenges increasingly leverage hybrid architectures that combine the strengths of convolutional networks and attention mechanisms [24].
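Ensembles of this kind combine per-model class probabilities. The sketch below shows two generic fusion rules on hypothetical softmax outputs: probability averaging (soft voting) and majority voting over each model's top class (hard voting). It is not the PDDNet implementation from [27]; the exact fusion and voting logic of PDDNet-AE and PDDNet-LVE may differ.

```python
import numpy as np


def soft_vote(prob_list):
    """Average fusion: mean of (n_samples, n_classes) probability matrices."""
    mean_probs = np.mean(np.stack(prob_list), axis=0)
    return mean_probs.argmax(axis=1)


def hard_vote(prob_list):
    """Majority vote over each model's predicted class; ties go to the lowest index."""
    preds = np.stack([p.argmax(axis=1) for p in prob_list])  # (n_models, n_samples)
    n_samples, n_classes = prob_list[0].shape
    counts = np.zeros((n_samples, n_classes), dtype=int)
    rows = np.arange(n_samples)
    for model_preds in preds:
        counts[rows, model_preds] += 1                     # one vote per model
    return counts.argmax(axis=1)


# Hypothetical outputs of three CNNs for one leaf image and two classes.
member_probs = [np.array([[0.40, 0.60]]),
                np.array([[0.45, 0.55]]),
                np.array([[0.95, 0.05]])]
print(soft_vote(member_probs), hard_vote(member_probs))  # [0] [1]
```

The example shows why the choice matters: soft voting lets one confident model outweigh two uncertain ones, while hard voting ignores confidence. Which behaves better depends on how well calibrated the member models are.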

Specialized Architectures for Domain-Specific Challenges

Both fields have developed specialized architectural innovations to address their unique classification challenges:

Attention mechanisms for plant disease detection: The CNN-SEEIB architecture incorporates Squeeze-and-Excitation attention within identity blocks, achieving 99.79% accuracy on PlantVillage by adaptively emphasizing informative features while suppressing less relevant ones [28]. This approach mirrors attention mechanisms used in biomedical imaging for highlighting pathological features.
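The Squeeze-and-Excitation operation itself is compact. Global average pooling squeezes each channel to one number, a two-layer bottleneck (ReLU, then sigmoid) produces per-channel gates, and the feature map is rescaled by those gates. The NumPy sketch below shows a single forward pass with random, untrained weights; the channel count and reduction ratio are assumptions, and the way CNN-SEEIB wires the block into its identity blocks [28] is not shown.

```python
import numpy as np


def squeeze_excitation(x, w1, b1, w2, b2):
    """SE forward pass on one feature map.

    x:  (C, H, W) feature map
    w1: (C // r, C), b1: (C // r,)  bottleneck layer, r = reduction ratio
    w2: (C, C // r), b2: (C,)       expansion layer
    Returns the recalibrated map and the per-channel gates.
    """
    z = x.mean(axis=(1, 2))                        # squeeze: (C,)
    s = np.maximum(0.0, w1 @ z + b1)               # excitation FC + ReLU: (C // r,)
    gates = 1.0 / (1.0 + np.exp(-(w2 @ s + b2)))   # FC + sigmoid: (C,) in (0, 1)
    return x * gates[:, None, None], gates         # scale each channel


rng = np.random.default_rng(0)
C, r = 16, 4                                       # assumed channel count and ratio
x = rng.normal(size=(C, 8, 8))
w1, b1 = rng.normal(size=(C // r, C)), np.zeros(C // r)
w2, b2 = rng.normal(size=(C, C // r)), np.zeros(C)
out, gates = squeeze_excitation(x, w1, b1, w2, b2)
print(out.shape, gates.round(2))
```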

Multimodal learning for fine-grained discrimination: The BDCC framework combines visual and textual features through bilinear deep cross-modal composition, using class-aware structured text prompts to enhance semantic discrimination for medicinal plant identification [25]. This approach demonstrates how auxiliary information can compensate for limited visual discriminability in fine-grained categories.

Transformer-CNN hybrids: Models like DaViT incorporate dual attention mechanisms (spatial and channel) that have proven effective in both domains, achieving 90.4% top-1 accuracy on ImageNet with the giant configuration [29]. The complementary attention patterns help capture both local discriminative features and global contextual relationships.

Experimental Protocols and Methodologies

Standardized Evaluation Frameworks

Both domains have established rigorous evaluation frameworks through organized challenges that enable meaningful performance comparisons:

The experimental workflow follows a consistent pattern across domains. Challenges begin with precisely defined tasks and carefully curated datasets, followed by a training phase where participants develop models, and conclude with an evaluation phase where models are assessed on withheld data to ensure fair benchmarking [30].

Transfer Learning Methodologies

Transfer learning has emerged as a foundational methodology in both domains, with several standardized approaches:

The transfer learning protocol typically begins with source domain pre-training on large-scale datasets like ImageNet, followed by model adaptation through various techniques, target domain fine-tuning on domain-specific data, and final evaluation on task-specific metrics [5] [27].

Data Augmentation and Preprocessing

Both domains employ sophisticated data augmentation strategies to address limited training data and improve model robustness:

Geometric transformations: Standard augmentations including rotation, flipping, scaling, and cropping are widely applied to increase data diversity and encourage invariance to orientation, position, and scale [28].

Photometric adjustments: Modifications to brightness, contrast, saturation, and hue help models generalize across varying imaging conditions and staining protocols [27].

Domain-specific simulations: Plant science incorporates synthetic leaf damage and environmental variations [25], while biomedical imaging may simulate tissue artifacts or staining variations [24].

The Scientist's Toolkit: Essential Research Reagents

Researchers in both domains rely on a common set of computational tools and frameworks:

Table 3: Essential Research Reagents for Image-Based Classification Research

| Tool/Category | Specific Examples | Function | Domain Applications |
|---|---|---|---|
| Deep Learning Frameworks | PyTorch, TensorFlow, Keras | Model development and training | Universal |
| Pre-trained Models | ImageNet weights, CLIP, CoCa | Transfer learning foundation | Universal |
| Model Architectures | CNN-based: ResNet, EfficientNet, ConvNeXt; Transformer-based: ViT, DaViT; Hybrid: CoCa | Feature extraction and classification | Universal, with domain-specific adaptations |
| Attention Mechanisms | Squeeze-and-Excitation, Self-Attention, Dual Attention | Feature emphasis, contextual understanding | Plant disease detection, biomedical image analysis |
| Evaluation Metrics | Accuracy, Precision, Recall, F1-Score, mAP, MA-MRR | Performance quantification | Universal, with domain-specific emphasis |
| Data Augmentation Tools | Albumentations, TensorFlow image ops (tf.image), Custom transforms | Dataset expansion, robustness improvement | Universal |
| Ensemble Methods | Early Fusion, Lead Voting Ensemble, Average Fusion | Performance enhancement, overfitting reduction | Plant disease detection, biomedical challenges |

Emerging Trends and Future Directions

Cross-Domain Methodological Exchange

The parallel evolution of image classification in plant science and biomedical research has created opportunities for fruitful methodological exchange:

Few-shot and zero-shot learning: Both fields are increasingly focusing on learning from limited labeled examples. Plant science has developed specialized approaches like the BDCC framework for rare medicinal plants [25], while biomedical research faces similar challenges with rare diseases and conditions where annotated data is scarce [24].

Multi-modal learning: Integration of visual data with textual descriptions, taxonomic information, and genomic data is becoming increasingly common. The CLIP model and its derivatives demonstrate how cross-modal pretraining can enhance generalization in both domains [25].
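The mechanism CLIP-style models use for zero-shot classification can be stated briefly: embed the image and one text prompt per class (for example "a photo of a leaf of {species}"), compare them by cosine similarity, and apply a softmax over the scaled similarities. The NumPy sketch below assumes the embeddings were already produced by a pretrained image encoder and text encoder; the toy 3-D vectors are placeholders, and the scale of 100 is an assumed value close to the temperature used in released CLIP models.

```python
import numpy as np


def zero_shot_probs(image_emb, text_embs, logit_scale=100.0):
    """Class probabilities from one image embedding and one text embedding per class."""
    img = image_emb / np.linalg.norm(image_emb)
    txt = text_embs / np.linalg.norm(text_embs, axis=1, keepdims=True)
    logits = logit_scale * (txt @ img)   # scaled cosine similarities, one per class
    logits = logits - logits.max()       # stabilize the softmax
    exp = np.exp(logits)
    return exp / exp.sum()


# Toy embeddings standing in for encoder outputs of these prompts.
prompts = ["a photo of a leaf of species A",
           "a photo of a leaf of species B",
           "a photo of a leaf of species C"]
text_embs = np.array([[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.6, 0.8, 0.0]])
image_emb = np.array([0.9, 0.1, 0.0])
probs = zero_shot_probs(image_emb, text_embs)
print(prompts[int(probs.argmax())])
```

Because new classes only require new prompts, no labeled images of a rare species are needed at inference time, which is what makes this attractive for the low-data settings discussed above.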

Self-supervised learning: Methods that leverage unlabeled data through pretext tasks are gaining traction to reduce annotation burden. These approaches show particular promise for domains with abundant unlabeled images but limited expert annotations [9].

Technological Convergence

The technological stack for image-based classification is converging across domains, with several shared development trajectories:

Transformer architecture adoption: Originally developed for natural language processing, transformer architectures are being increasingly adapted for visual tasks in both plant science and biomedical research, often in hybrid configurations with convolutional networks [29].

Explainable AI integration: As models become more complex, both fields are placing greater emphasis on interpretability and explainability, developing visualization techniques that help experts understand model decisions and build trust in automated systems [28].

Edge deployment optimization: Both agricultural and clinical applications often require deployment in resource-constrained environments, driving development of optimized models that balance accuracy with computational efficiency [28].

The parallels between plant science and biomedical research in image-based classification challenges reveal fundamental similarities in computational approaches despite surface-level domain differences. Transfer learning has emerged as a cornerstone methodology in both fields, with consistent patterns in model adaptation strategies, performance benchmarks, and architectural innovations.

The cross-pollination of ideas between these domains accelerates progress for both. Biomedical research benefits from plant science's innovations in fine-grained categorization of visually similar specimens, while plant science adopts advanced architectures and learning paradigms developed for medical applications. This synergistic relationship demonstrates how methodological advances in one domain can transcend disciplinary boundaries to drive innovation across multiple fields.

Future progress will likely depend on continued methodological exchange, standardized benchmarking through organized challenges, and the development of specialized architectures that address the unique characteristics of biological imagery while leveraging general advances in computer vision and deep learning.

Transfer learning has become a cornerstone of modern artificial intelligence (AI), enabling the adaptation of pre-trained models to specialized tasks with remarkable efficiency. In scientific domains such as plant species research, where labeled data is often scarce and acquisition costly, these techniques dramatically reduce training time and data requirements while delivering substantial performance gains compared to training models from scratch [31]. This guide provides a systematic comparison of transfer learning approaches, with a specific focus on their application and performance in plant science research, encompassing areas from disease detection to species identification and leaf trait analysis.

The field encompasses a spectrum of strategies, from parameter-efficient fine-tuning methods to sophisticated domain adaptation techniques. For researchers in botany, agriculture, and drug development from plant sources, selecting the appropriate approach is crucial for developing accurate, robust, and scalable models. This article objectively compares the performance of these methods using empirical data from recent plant science studies, providing detailed experimental protocols and resource guidance to inform research design.

Conceptual Framework of Transfer Learning Approaches

Transfer learning techniques can be systematically categorized based on their methodology and implementation approach. Table 1 outlines the primary strategies relevant to plant science applications.

Table 1: Systematic Categorization of Key Transfer Learning Approaches

| Category | Key Techniques | Mechanism | Primary Use Cases in Plant Science |
|---|---|---|---|
| Supervised Fine-Tuning (SFT) | Full fine-tuning, Layer-wise tuning | Updates all or most model weights on labeled target data | Domain-specific adaptation with sufficient labeled data (e.g., plant disease classification) [31] [32] |
| Parameter-Efficient Fine-Tuning (PEFT) | LoRA (Low-Rank Adaptation), QLoRA | Adds and trains small adapter modules while freezing base model | Adapting large models with limited compute resources [31] |
| Domain Adaptation | Continued Pretraining (CPT), Unsupervised Domain Adaptation | Exposure to domain-specific corpora before task-specific tuning | Handling domain shift (e.g., lab to field conditions) [32] [33] |
| Model Merging | SLERP (Spherical Linear Interpolation) | Combines parameters from multiple specialized models | Integrating capabilities from different plant science domains [32] |
| Transfer Learning with CNNs | Feature extraction, Fine-tuning pre-trained vision models | Leverages features learned on large datasets like ImageNet | Plant disease detection, species identification [12] [34] [2] |

These approaches form a methodological continuum. Supervised Fine-Tuning represents the most straightforward approach, adapting all model parameters to a new task. Parameter-Efficient Fine-Tuning methods have gained prominence due to their reduced computational demands, with LoRA and QLoRA enabling adaptation of billion-parameter models on single GPUs by training only small, low-rank matrices injected into the model architecture [31]. Domain Adaptation techniques address the challenge of distribution shift between training and deployment environments, a common issue in plant pathology where models trained on lab images must perform in field conditions. Model Merging represents an advanced approach where multiple fine-tuned models are combined to create new capabilities beyond what any single model possesses [32].
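The core of LoRA fits in a few lines. The pre-trained weight W0 stays frozen and the layer learns a low-rank update B·A, scaled by alpha / r, with B initialized to zero so that training starts from the unmodified model. The NumPy sketch below shows the forward pass and the parameter count only (no training loop, and no 4-bit quantization as in QLoRA); the rank, alpha, initialization scale, and the 4096-wide example layer are assumed values.

```python
import numpy as np


class LoRALinear:
    """Frozen linear weight W0 (d_out, d_in) plus a trainable rank-r update B @ A."""

    def __init__(self, w0, rank=8, alpha=16, seed=0):
        rng = np.random.default_rng(seed)
        d_out, d_in = w0.shape
        self.w0 = w0                                        # frozen, never updated
        self.A = rng.normal(0.0, 0.01, size=(rank, d_in))   # trainable
        self.B = np.zeros((d_out, rank))                    # trainable, zero init
        self.scale = alpha / rank

    def __call__(self, x):
        """x: (batch, d_in) -> (batch, d_out)."""
        return x @ self.w0.T + self.scale * (x @ self.A.T) @ self.B.T

    def merged_weight(self):
        """Fold the update into a single matrix for deployment."""
        return self.w0 + self.scale * self.B @ self.A

    def trainable_params(self):
        return self.A.size + self.B.size


d = 4096                                   # hidden size of a hypothetical layer
full, lora = d * d, 8 * (d + d)            # full fine-tuning vs. rank-8 LoRA
print(f"{lora:,} vs {full:,} trainable ({lora / full:.2%})")
# 65,536 vs 16,777,216 trainable (0.39%)
```

Since the update can be merged back into W0 after training, the adapted model has the same inference cost as the original.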

Performance Comparison in Plant Science Applications

Computer Vision for Plant Disease Detection

Computer vision approaches, particularly Convolutional Neural Networks (CNNs), have demonstrated exceptional performance in plant disease detection and classification. Table 2 compares the performance of various transfer learning approaches across multiple studies and architectures.

Table 2: Performance Comparison of Transfer Learning Models in Plant Disease Detection

| Model Architecture | Dataset | Key Metrics | Performance | Experimental Notes |
|---|---|---|---|---|
| EfficientNetB0 [12] | Swedish Leaf (15 species, 1,125 images) | Testing Accuracy | 94.67% | Leaf venation pattern classification |
| MobileNetV2 [12] | Swedish Leaf Dataset | Testing Accuracy, F1-score | 93.34%, 93.23% | Better generalization capabilities |
| ResNet50 [12] | Swedish Leaf Dataset | Training/Testing Accuracy | 94.11%/88.45% | Exhibited overfitting |
| YOLOv8 [35] | Plant Disease Detection Dataset | mAP, F1-score, Precision, Recall | 91.05%, 89.40%, 91.22%, 87.66% | Detected multiple disease types |
| PDDNet-LVE Ensemble [2] | PlantVillage (54,305 images, 38 categories) | Accuracy | 97.79% | Combined multiple CNNs with logistic regression |
| PDDNet-AE Ensemble [2] | PlantVillage Dataset | Accuracy | 96.74% | Early fusion of multiple CNNs |
| Custom CNN [34] | PlantVillage Dataset | Testing Accuracy | 97.6% | Several convolution and pooling layers |

The comparative data reveals several key insights for plant science researchers. Ensemble methods achieve the highest accuracy in this comparison, with the PDDNet-LVE model combining multiple CNNs reaching 97.79% accuracy on the PlantVillage dataset of 54,305 images across 38 disease categories [2]. Among the single models evaluated on the Swedish Leaf dataset, EfficientNetB0 achieved the highest testing accuracy (94.67%) on leaf venation patterns, while MobileNetV2 showed better generalization and ResNet50 overfit (94.11% training vs. 88.45% testing accuracy) [12]. Object detection models like YOLOv8 perform well across multiple metrics, achieving 91.05% mAP while detecting various disease types in realistic conditions [35], which makes them suitable for real-world agricultural applications.
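As a quick consistency check on Table 2, the F1-score is the harmonic mean of precision and recall, F1 = 2PR / (P + R). Plugging in the YOLOv8 precision and recall reproduces the reported F1 of 89.40%. mAP, by contrast, averages precision over recall levels and classes, so it cannot be derived from a single precision/recall pair this way.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall (same units in, same units out)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# YOLOv8 row of Table 2: precision 91.22%, recall 87.66%.
print(f"{f1_score(91.22, 87.66):.2f}")  # 89.40
```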

Advanced Fine-Tuning Strategies for Domain Adaptation

Beyond standard transfer learning, advanced fine-tuning strategies offer sophisticated approaches to domain adaptation. Table 3 outlines the performance characteristics of these methods based on experimental studies.

Table 3: Advanced Fine-Tuning Strategies and Model Merging Approaches

| Technique | Mechanism | Performance Advantages | Implementation Considerations |
|---|---|---|---|
| Continued Pretraining (CPT) [32] | Further pre-training on domain-specific corpora | Enhances domain relevance and knowledge integration | Requires substantial domain-specific data |
| Direct Preference Optimization (DPO) [32] | Optimizes model based on human or AI preferences | Aligns model outputs with expert preferences | Requires carefully curated preference data |
| Model Merging via SLERP [32] | Spherical linear interpolation of model parameters | Enables capability emergence beyond parent models | Highly dependent on parent model diversity |
| Fine-tuning-based Transfer Learning [33] | Adjusts pre-trained models on limited target data | Improved accuracy and transferability across domains | Effective even with limited target data |

The experimental data indicates that model merging via Spherical Linear Interpolation (SLERP) can produce capabilities that surpass those of the individual parent models, creating emergent functionalities through highly nonlinear interactions between model parameters [32]. Continued Pretraining (CPT) has proven effective for building domain-specific knowledge, particularly when followed by Supervised Fine-Tuning (SFT) for task-specific optimization [32]. Preference optimization methods like DPO provide mechanisms for aligning model behavior with scientific requirements, while fine-tuning-based transfer learning has demonstrated improved accuracy and transferability across diverse spatial, species, and temporal domains in leaf trait prediction [33].
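SLERP merging interpolates along the arc between two weight vectors rather than along the straight line between them, so for vectors of equal norm the interpolated vector keeps that norm, unlike plain averaging. The NumPy sketch below applies it tensor by tensor to two hypothetical state dicts with a single interpolation factor `t`, and falls back to linear interpolation for (nearly) parallel vectors, a common safeguard. Per-layer schedules for `t` and other details of the merges in [32] are not reproduced.

```python
import numpy as np


def slerp(t, v0, v1, eps=1e-6):
    """Spherical linear interpolation between two flattened parameter vectors."""
    n0, n1 = np.linalg.norm(v0), np.linalg.norm(v1)
    if n0 < eps or n1 < eps:                      # degenerate: zero vector
        return (1 - t) * v0 + t * v1
    cos_omega = np.clip(np.dot(v0 / n0, v1 / n1), -1.0, 1.0)
    omega = np.arccos(cos_omega)                  # angle between the two vectors
    if np.sin(omega) < eps:                       # (anti-)parallel: linear fallback
        return (1 - t) * v0 + t * v1
    return (np.sin((1 - t) * omega) * v0 + np.sin(t * omega) * v1) / np.sin(omega)


def merge_state_dicts(sd0, sd1, t=0.5):
    """SLERP every parameter tensor of two models with identical architectures."""
    return {name: slerp(t, sd0[name].ravel(), sd1[name].ravel()).reshape(sd0[name].shape)
            for name in sd0}


v0, v1 = np.array([1.0, 0.0]), np.array([0.0, 1.0])
mid = slerp(0.5, v0, v1)
print(mid, np.linalg.norm(mid))   # linear averaging would give [0.5, 0.5], norm ~0.707
```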

Experimental Protocols and Methodologies

Standardized Experimental Workflow

The following diagram illustrates a comprehensive transfer learning workflow integrating multiple adaptation strategies, from initial domain adaptation to specialized model development:

Key Experimental Protocols

Cross-Domain Validation Protocol

Robust evaluation of transfer learning models in plant science requires rigorous cross-domain validation. The protocol implemented by [33] for leaf trait prediction exemplifies best practices:

- Dataset Composition: Compile data across diverse geographic locations, plant functional types (PFTs), and seasons to test model transferability.

- Domain Shift Assessment: Explicitly measure performance degradation when applying models to new domains (unseen species, locations, or temporal periods).

- Fine-tuning Data Stratification: Systematically vary the quantity and diversity of fine-tuning data to quantify sample efficiency gains.

- Benchmarking: Compare against established baselines including PLSR (Partial Least Squares Regression), GPR (Gaussian Process Regression), and physical models.

This protocol revealed that fine-tuning-based transfer learning models significantly outperformed traditional methods, with improved accuracy and transferability across geographic locations, plant functional types, and seasons [33].

Comprehensive Model Benchmarking Framework

Large-scale benchmarking studies provide invaluable guidance for model selection. The approach described by [36] offers a systematic framework:

- Model Diversity: Evaluate numerous state-of-the-art architectures (23 models in their study) to identify optimal inductive biases for plant science tasks.

- Dataset Variety: Test across multiple openly available datasets (18 datasets in their study) to assess generalization beyond single data distributions.

- Training Strategies: Compare transfer learning with and without additional fine-tuning, repeating each configuration 5 times to estimate run-to-run variability.

- Comprehensive Metrics: Report multiple performance metrics including accuracy, precision, recall, and F1-score to capture different aspects of model capability.

This extensive benchmarking approach, encompassing 4,140 trained models in their implementation (23 models × 18 datasets × 2 training strategies × 5 runs), provides robust guidance for selecting architectures that balance performance, efficiency, and generalization for specific plant science applications [36].

Implementing effective transfer learning solutions in plant science requires both computational resources and specialized datasets. Table 4 catalogs essential resources for developing and deploying plant science AI applications.

Table 4: Essential Research Reagents and Computational Resources

| Resource Category | Specific Tools & Datasets | Application in Plant Science Research | Access/Requirements |
|---|---|---|---|
| Public Datasets | PlantVillage Dataset [2], Swedish Leaf Dataset [12], Detecting Diseases Dataset [35] | Benchmarking, model training, transfer learning evaluation | Publicly available, contains thousands of annotated plant images |
| Deep Learning Frameworks | TensorFlow, Keras, PyTorch [35] | Model implementation, training, and fine-tuning | Open-source, GPU acceleration support |
| Transfer Learning Libraries | Hugging Face Transformers, PEFT [31] | Parameter-efficient fine-tuning, pre-trained model access | Python libraries, pre-trained models available |
| Computational Infrastructure | Google Colab (Tesla T4 GPU) [35], NVIDIA DGX Systems [31] | Model training and experimentation | Cloud and on-premises solutions, varying compute capabilities |
| Specialized Architectures | YOLOv7/v8 [35], EfficientNet [12], U-Net [37] | Object detection, classification, segmentation tasks | Open-source implementations available |
| Model Optimization Tools | LoRA, QLoRA [31] | Memory-efficient fine-tuning of large models | Integrated into major ML frameworks |

For plant scientists embarking on transfer learning projects, the PlantVillage dataset is one of the most widely used publicly available resources for disease detection, containing 54,305 images across 38 categories [2]. Computational resources range from free cloud-based platforms like Google Colab with Tesla T4 GPUs [35] to enterprise-scale on-premises solutions like NVIDIA DGX systems for large-scale model training [31]. Parameter-efficient fine-tuning libraries like PEFT, implementing methods such as LoRA and QLoRA, enable adaptation of billion-parameter models on limited hardware [31], substantially lowering the barrier for research institutions with limited compute budgets.

The systematic comparison of transfer learning approaches reveals a complex landscape of complementary techniques, each with distinct advantages for plant science applications. Ensemble methods and architectures like EfficientNet and YOLOv8 demonstrate superior performance for classification and detection tasks, while advanced strategies like model merging and preference optimization offer pathways to specialized capabilities. The experimental data consistently shows that transfer learning approaches significantly outperform traditional methods and training-from-scratch approaches, with performance advantages of 10-20% commonly reported across diverse plant science applications.

For researchers, selection criteria should include not only raw accuracy but also computational requirements, data efficiency, and domain transfer capabilities. Parameter-efficient fine-tuning methods like LoRA have dramatically reduced the computational barriers to adapting large models, while comprehensive benchmarking studies provide robust guidance for architecture selection. As transfer learning methodologies continue to evolve, their integration into plant science research workflows promises to accelerate discoveries in species identification, disease detection, and trait analysis, ultimately supporting enhanced agricultural productivity and biodiversity conservation.

Methodological Approaches and Real-World Applications: Architectures, Spectroscopic Integration, and Ensemble Strategies

The application of deep learning in plant science, particularly for species identification and disease diagnosis, has witnessed significant advancements through transfer learning. This approach allows models pre-trained on large-scale datasets like ImageNet to be adapted for specific agricultural tasks, often with limited data. Evaluating the performance of diverse neural architectures within this context is crucial for developing accurate, efficient, and deployable tools for researchers and agricultural professionals. This guide provides a comparative analysis of four prominent architectures—ResNet, EfficientNet, MobileNetV2, and Vision Transformers (ViT)—framed within the context of transfer learning performance across plant species research. The analysis focuses on their applicability in plant leaf classification and disease detection, synthesizing experimental data to offer an objective performance benchmark.

Key Architectures in Plant Science Research

- ResNet (Residual Network): Known for its residual (skip) connections. These mitigate the vanishing-gradient and degradation problems of very deep networks, so architectures with dozens or even hundreds of layers can be trained. The 50-layer variant, ResNet50, offers a strong balance between depth and computational feasibility for feature extraction [12] [38].

- EfficientNet: A family of models that use a compound scaling method to uniformly scale network depth, width, and resolution, achieving state-of-the-art efficiency and accuracy. EfficientNet-B0, the lightest variant, is particularly favored for its low computational demand and high performance in plant disease classification [39] [40].

- MobileNetV2: Designed for mobile and embedded vision applications, it uses inverted residual blocks and linear bottlenecks to reduce model size and latency while maintaining satisfactory accuracy. It demonstrates strong generalization capabilities [12].

- Vision Transformer (ViT): Applies the transformer architecture, which is based on self-attention, directly to sequences of image patches. Self-attention lets the model relate distant regions of the image from the earliest layers. This departs from the locality and translation-equivariance biases built into CNNs [41] [40].
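Two of the mechanisms above reduce to simple arithmetic.

- EfficientNet's compound scaling sets the depth, width, and resolution multipliers to α^φ, β^φ, and γ^φ for a user-chosen coefficient φ. The original EfficientNet paper reports α = 1.2, β = 1.1, and γ = 1.15, chosen so that α·β²·γ² ≈ 2 and FLOPs grow by roughly 2^φ.
- A ViT splits an H × W image into non-overlapping P × P patches, which gives (H/P)(W/P) tokens.

The sketch below computes both. The 16-pixel patch size matches the common ViT-B/16 configuration. It is an assumption here, because the cited studies do not all state their exact variant.

```python
def compound_scaling(phi: float, alpha: float = 1.2, beta: float = 1.1, gamma: float = 1.15):
    """Return (depth, width, resolution) multipliers and approximate FLOPs factor for phi."""
    depth = alpha ** phi
    width = beta ** phi
    resolution = gamma ** phi
    # FLOPs scale roughly linearly with depth and quadratically with width and resolution.
    flops_factor = (alpha * beta ** 2 * gamma ** 2) ** phi
    return depth, width, resolution, flops_factor


def vit_num_patches(height: int, width: int, patch: int = 16) -> int:
    """Number of non-overlapping patch tokens a ViT sees (excluding the [CLS] token)."""
    if height % patch or width % patch:
        raise ValueError("image size must be divisible by the patch size")
    return (height // patch) * (width // patch)


for phi in (0, 1, 2):
    d, w, r, f = compound_scaling(phi)
    print(f"phi={phi}: depth x{d:.2f}, width x{w:.2f}, resolution x{r:.2f}, FLOPs x{f:.2f}")

print(vit_num_patches(224, 224))  # 14 x 14 = 196 patches
print(vit_num_patches(384, 384))  # 24 x 24 = 576 patches
```

The patch count matters in practice because self-attention cost grows quadratically with the number of tokens. Moving from 224 × 224 to 384 × 384 inputs therefore makes a ViT considerably more expensive.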

Quantitative Performance Comparison

The following tables summarize the performance of these architectures across various plant science tasks, as reported in recent studies.

Table 1: Performance on Plant Species and Disease Classification

| Model | Task / Dataset | Accuracy (%) | Precision (%) | Recall (%) | F1-Score (%) | References |
|---|---|---|---|---|---|---|
| EfficientNet-B0 | Apple Leaf Diseases (APV Dataset) | 99.69 | - | - | - | [39] |
| EfficientNet-B0 | Apple Leaf Diseases (PV Dataset) | 99.78 | - | - | - | [39] |
| EfficientNet-B0 | Coffee Leaf Diseases | 88.37 | 87.9 | 88.02 | 87.96 | [40] |
| EfficientNet-B0 | Plant Species ID (Venation Patterns) | 94.67 | >94.6 | >94.6 | >94.6 | [12] |
| Vision Transformer (ViT) | Coffee Leaf Diseases | 85.12 | 84.76 | 84.93 | 84.85 | [40] |
| Vision Transformer (ViT) | Cross-domain (PlantVillage to PlantDoc) | 68.00 | - | - | - | [41] |
| MobileNetV2 | Plant Species ID (Venation Patterns) | 93.34 | - | - | 93.23 | [12] |
| ResNet50 | Plant Species ID (Venation Patterns) | 88.45 | - | - | 87.82 | [12] |
| ResNet50 | Chili Plant Disease Classification | 91.00 | 94.00 | 94.00 | 93.00 | [38] |
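The F1-score is the harmonic mean of precision and recall, F1 = 2PR/(P + R), so it can be recomputed from the other columns as a quick consistency check. The sketch below does this for the two coffee-leaf rows in Table 1. The tolerance is an arbitrary choice meant to absorb two-decimal rounding in the published values.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (inputs and output in percent)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (model, precision %, recall %, reported F1 %) from the coffee-leaf rows of Table 1
rows = [
    ("EfficientNet-B0", 87.90, 88.02, 87.96),
    ("ViT", 84.76, 84.93, 84.85),
]

for model, p, r, reported in rows:
    computed = f1_score(p, r)
    ok = abs(computed - reported) < 0.05  # tolerance for rounding in the reported values
    print(f"{model}: computed F1 = {computed:.3f}, reported = {reported}, consistent = {ok}")
```

One caveat applies when precision, recall, and F1 are macro-averaged over classes. In that case the reported F1 is the mean of the per-class F1 values, which need not equal the harmonic mean of the averaged precision and recall. Small mismatches of this kind are therefore not necessarily errors.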

Table 2: Computational Efficiency and Robustness

| Model | Computational Efficiency | Data Efficiency & Robustness | References |
|---|---|---|---|
| EfficientNet-B0 | Low memory consumption and FLOPs; suitable for resource-constrained environments. | High accuracy with limited data; robust to field conditions in some studies. | [39] [40] |
| MobileNetV2 | Lightweight; designed for mobile and real-time applications. | Good generalization; suitable for lightweight, real-time applications. | [12] |
| Vision Transformer (ViT) | Higher computational cost than lightweight CNNs; requires more data. | Performance can drop with small datasets; shows promise in cross-domain generalization. | [41] [40] |
| ResNet50 | Moderate to high computational complexity. | Can suffer from overfitting on smaller datasets. | [12] [38] |
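One rough way to compare the computational footprints in Table 2 is the memory needed just to store the weights. The sketch below uses approximate parameter counts published for the standard ImageNet models: about 3.5M for MobileNetV2, 5.3M for EfficientNet-B0, 25.6M for ResNet50, and 86M for ViT-B/16. It assumes 32-bit floats. The choice of the ViT-B/16 variant is an assumption, since the cited studies may use others. Activations, optimizer state, and input resolution add substantially to training memory and are ignored here.

```python
# Approximate parameter counts (millions) for standard ImageNet classifiers.
PARAMS_MILLIONS = {
    "MobileNetV2": 3.5,
    "EfficientNet-B0": 5.3,
    "ResNet50": 25.6,
    "ViT-B/16": 86.0,
}

BYTES_PER_PARAM = 4  # float32 weights; float16 would halve this


def weight_memory_mib(params_millions: float, bytes_per_param: int = BYTES_PER_PARAM) -> float:
    """Memory needed to hold the weights alone, in MiB."""
    return params_millions * 1e6 * bytes_per_param / 2**20


for name, p in sorted(PARAMS_MILLIONS.items(), key=lambda kv: kv[1]):
    print(f"{name:16s} {p:6.1f}M params  ~{weight_memory_mib(p):7.1f} MiB")
```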

Experimental Protocols and Methodologies

The comparative data presented here come from broadly similar experimental protocols in plant science research. The general transfer learning process used to adapt these models is broken down below.

Detailed Methodological Breakdown

Data Acquisition and Curation: Studies utilize publicly available datasets such as PlantVillage, PlantDoc, and specialized collections for specific crops (e.g., coffee, apple, chili). A critical distinction is made between lab-condition images (e.g., original PlantVillage) and in-the-wild images (e.g., PlantDoc), which contain complex backgrounds and variable lighting [39] [41]. Performance can drop significantly (e.g., from >99% to <40% accuracy) when models trained on lab data are tested on in-the-wild images, highlighting the importance of dataset choice for real-world generalization [41].

Data Preprocessing and Augmentation: Standard practices include image resizing to match model input dimensions (e.g., 224x224 or 384x384), pixel normalization, and data augmentation techniques to improve model robustness and combat overfitting. Common augmentations include random rotation, flipping, zooming, and brightness adjustments [39] [38]. To address class imbalance, techniques like stratified data splitting and class weighting are employed [39].
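Stratified splitting and class weighting can be sketched without any framework. The first function below splits sample indices class by class, so each split keeps the original class proportions. The second computes "balanced" class weights with the formula n_samples / (n_classes × n_c), which is the heuristic scikit-learn uses for `class_weight='balanced'`. The function names, the 80/20 ratio, and the toy labels are illustrative assumptions. In practice, scikit-learn's `train_test_split(..., stratify=labels)` performs the split.

```python
import random
from collections import Counter, defaultdict


def stratified_split(labels, test_fraction=0.2, seed=0):
    """Return (train_idx, test_idx) with each class split in the same proportion."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    train_idx, test_idx = [], []
    for idxs in by_class.values():
        rng.shuffle(idxs)
        n_test = max(1, round(len(idxs) * test_fraction))  # keep every class in the test set
        test_idx.extend(idxs[:n_test])
        train_idx.extend(idxs[n_test:])
    return sorted(train_idx), sorted(test_idx)


def balanced_class_weights(labels):
    """Weight each class inversely to its frequency: n_samples / (n_classes * count)."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {c: n / (k * cnt) for c, cnt in counts.items()}


# Hypothetical, imbalanced leaf-disease labels.
labels = ["healthy"] * 60 + ["rust"] * 30 + ["blight"] * 10
train_idx, test_idx = stratified_split(labels)
print(Counter(labels[i] for i in test_idx))  # 12 healthy, 6 rust, 2 blight
print(balanced_class_weights([labels[i] for i in train_idx]))
```

The rare "blight" class receives the largest weight, so each misclassified blight sample contributes more to a weighted loss.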

Transfer Learning and Fine-Tuning: This is the core methodology. Models are initialized with weights pre-trained on ImageNet.

- CNN Models (ResNet, EfficientNet, MobileNetV2): Typically, the convolutional base is used for feature extraction, and the final classification layers are replaced and trained from scratch. Fine-tuning may involve unfreezing and training some of the higher-level layers of the base model to adapt generic features to the specific plant pathology domain [39].
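The two-stage recipe described above has two steps. First, a new head is trained on a frozen base. Then, optionally, the top of the base is unfrozen. Both steps amount to deciding which layers receive gradient updates at each stage. The framework-agnostic sketch below builds that plan from an ordered list of layer names; the layer names and the number of unfrozen blocks are hypothetical. In PyTorch, such a plan would be applied by setting `requires_grad` on each parameter. In Keras, it would be applied through each layer's `trainable` attribute.

```python
from typing import Dict, List


def trainable_plan(base_layers: List[str], head_layers: List[str], unfreeze_top: int = 0) -> Dict[str, bool]:
    """Map each layer name to whether it is trainable.

    Stage 1 (unfreeze_top=0): the pre-trained base is frozen and only the new head trains.
    Stage 2 (unfreeze_top>0): additionally unfreeze the last `unfreeze_top` base layers.
    """
    if not 0 <= unfreeze_top <= len(base_layers):
        raise ValueError("unfreeze_top must be between 0 and the number of base layers")
    plan = {name: False for name in base_layers}
    for name in base_layers[len(base_layers) - unfreeze_top:]:
        plan[name] = True
    for name in head_layers:
        plan[name] = True  # the replacement head is always trained from scratch
    return plan


base = ["stem", "block1", "block2", "block3", "block4"]  # hypothetical ImageNet backbone
head = ["head_dense", "head_output"]                      # new head sized to the plant classes

stage1 = trainable_plan(base, head)
stage2 = trainable_plan(base, head, unfreeze_top=2)
print([n for n, t in stage1.items() if t])  # ['head_dense', 'head_output']
print([n for n, t in stage2.items() if t])  # ['block3', 'block4', 'head_dense', 'head_output']
```

A lower learning rate is commonly used in the second stage, so the unfrozen pre-trained weights are adjusted gently rather than overwritten.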

- Vision Transformer (ViT): The standard ViT architecture is adapted, often by replacing the final classification head. Fine-tuning starts from weights pre-trained on large vision datasets, which offsets the large training-data requirements typically associated with transformers [41] [40].

Training Configuration: Models are typically trained using the Adam optimizer and categorical cross-entropy loss. A critical step is the use of callbacks for early stopping and learning rate reduction on plateau to prevent overfitting and ensure convergence [38] [40].
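The interplay of the two callbacks can be traced on a sequence of validation losses. The sketch below is a simplified re-implementation of the logic behind Keras's `EarlyStopping` and `ReduceLROnPlateau` callbacks. It ignores options such as `min_delta`, `cooldown`, and restoring the best weights, so it illustrates the idea rather than reproducing their exact semantics. The patience values, reduction factor, and loss curve are hypothetical.

```python
def simulate_training(val_losses, lr=1e-3, lr_patience=2, lr_factor=0.5, min_lr=1e-6, stop_patience=4):
    """Replay validation losses and report LR changes and the early-stopping epoch.

    Returns (best_epoch, stop_epoch, lr_history). Epochs are 0-indexed; stop_epoch is
    None if training runs through all epochs.
    """
    best = float("inf")
    best_epoch = -1
    wait_lr = wait_stop = 0
    lr_history = []
    for epoch, loss in enumerate(val_losses):
        lr_history.append(lr)  # LR used during this epoch
        if loss < best:
            best, best_epoch = loss, epoch
            wait_lr = wait_stop = 0
            continue
        wait_lr += 1
        wait_stop += 1
        if wait_lr >= lr_patience:  # plateau: shrink the learning rate for the next epoch
            lr = max(lr * lr_factor, min_lr)
            wait_lr = 0
        if wait_stop >= stop_patience:  # no improvement for too long: stop
            return best_epoch, epoch, lr_history
    return best_epoch, None, lr_history


losses = [0.90, 0.62, 0.48, 0.47, 0.49, 0.48, 0.50, 0.51, 0.45]  # hypothetical curve
best_epoch, stop_epoch, lrs = simulate_training(losses)
print(best_epoch, stop_epoch, lrs)
```

With these settings, training stops at epoch 7 and never sees the improvement at epoch 8. This illustrates the trade-off in choosing the patience value.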

Performance Evaluation: Models are evaluated on a held-out test set using standard metrics: Accuracy, Precision, Recall, F1-Score, and Area Under the Curve (AUC). For object detection tasks, mean Average Precision (mAP) is used [35]. Cross-validation and ablation studies are sometimes conducted to ensure result robustness [38].
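The threshold-based classification metrics listed above can all be derived from confusion-matrix counts. The sketch below computes accuracy and macro-averaged precision, recall, and F1 from true and predicted labels. Macro averaging (an unweighted mean over classes) is one common convention; individual studies may instead report weighted or micro averages. AUC and detection mAP require ranked scores or bounding boxes and are not covered. The labels are hypothetical.

```python
from collections import Counter


def classification_metrics(y_true, y_pred):
    """Accuracy plus macro-averaged precision, recall and F1 (as fractions)."""
    classes = sorted(set(y_true) | set(y_pred))
    pairs = Counter(zip(y_true, y_pred))  # confusion-matrix counts keyed by (true, pred)
    precisions, recalls, f1s = [], [], []
    for c in classes:
        tp = pairs[(c, c)]
        fp = sum(n for (t, p), n in pairs.items() if p == c and t != c)
        fn = sum(n for (t, p), n in pairs.items() if t == c and p != c)
        prec = tp / (tp + fp) if tp + fp else 0.0
        rec = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * prec * rec / (prec + rec) if prec + rec else 0.0
        precisions.append(prec)
        recalls.append(rec)
        f1s.append(f1)
    k = len(classes)
    accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
    return {
        "accuracy": accuracy,
        "precision": sum(precisions) / k,
        "recall": sum(recalls) / k,
        "f1": sum(f1s) / k,
    }


y_true = ["rust", "rust", "healthy", "healthy", "blight", "blight"]
y_pred = ["rust", "healthy", "healthy", "healthy", "blight", "rust"]
print(classification_metrics(y_true, y_pred))
```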

The Scientist's Toolkit: Essential Research Reagents

The following reagents, datasets, and computational tools are fundamental for conducting research in this field.

Table 3: Key Research Reagents and Resources

| Item Name | Function / Application in Research | Example Sources / Instances |
|---|---|---|
| Benchmark Datasets | Provides standardized data for training and evaluating models; crucial for reproducibility and comparison. | PlantVillage Dataset, PlantDoc Dataset, Swedish Leaf Dataset [12] [41] |
| Pre-trained Models | Enables transfer learning; provides powerful feature extractors to boost performance with limited data. | Keras Applications, PyTorch Hub, Hugging Face Transformers [39] [40] |
| Data Augmentation Tools | Increases effective dataset size and diversity; improves model generalization and robustness. | TensorFlow ImageDataGenerator, PyTorch Torchvision.Transforms, Albumentations [39] [38] |
| Explainability Tools | Provides visual explanations for model predictions; builds trust and aids in biological insight. | Grad-CAM++ [42] |
| Optimizers & Loss Functions | Algorithms to update model weights and define the objective for training. | Adam Optimizer, Categorical Cross-Entropy Loss [38] [40] |
| Computational Resources | Hardware accelerators essential for training deep learning models in a feasible time. | GPUs (e.g., NVIDIA Tesla T4) [35] |

This comparative analysis shows that the choice of architecture involves a trade-off between accuracy, computational efficiency, and generalization capability. EfficientNet-B0 consistently emerges as a high-performing and efficient solution, well suited to resource-constrained environments. MobileNetV2 offers a compelling balance of speed and accuracy for real-time applications. ResNet50 provides strong baseline performance but can be computationally heavier and prone to overfitting on smaller datasets. Vision Transformers show considerable potential, particularly for capturing global context. However, their performance often depends on larger datasets or sophisticated regularization to avoid overfitting on smaller plant disease corpora. Hybrid models that integrate attention mechanisms with CNNs are a promising research direction for improving both performance and interpretability in plant species research [38]. Ultimately, the optimal model depends on the specific requirements of the research task, including the available data, computational resources, and deployment constraints.

The integration of multi-modal data is a transformative approach in plant science research, expanding capabilities in species identification, disease detection, and physiological monitoring. This paradigm combines the accessibility of Red-Green-Blue (RGB) imagery with the rich spectral information of hyperspectral spectroscopy and the predictive power of physical models. RGB cameras record three broad, overlapping wavelength bands that together produce images resembling human color vision. Hyperspectral imaging (HSI), by contrast, divides the spectrum into hundreds of contiguous narrow bands, revealing chemical composition details invisible to conventional cameras [43] [44]. Multi-modal integration is particularly valuable within transfer learning frameworks. There, models pre-trained on one plant species or dataset can be adapted to others, addressing the critical shortage of annotated data in specialized agricultural domains.
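One simple way to integrate modalities is late fusion. Each modality's model produces class probabilities, and these are combined by a weighted average. The sketch below assumes two already-trained classifiers, one on RGB and one on hyperspectral data. Their outputs, the class names, and the equal weights are all hypothetical. Late fusion is only one of several strategies (feature-level fusion is another) and is not presented as the method of any study cited here.

```python
from typing import Dict, List


def late_fusion(prob_by_modality: Dict[str, List[float]], weights: Dict[str, float]) -> List[float]:
    """Weighted average of per-modality class-probability vectors (weights are normalized)."""
    total = sum(weights[m] for m in prob_by_modality)
    n_classes = len(next(iter(prob_by_modality.values())))
    fused = [0.0] * n_classes
    for modality, probs in prob_by_modality.items():
        if len(probs) != n_classes:
            raise ValueError(f"{modality}: expected {n_classes} class probabilities")
        for i, p in enumerate(probs):
            fused[i] += weights[modality] / total * p
    return fused


classes = ["healthy", "early_blight", "late_blight"]  # hypothetical tomato classes
probs = {
    "rgb": [0.40, 0.35, 0.25],  # RGB model is unsure between two classes
    "hsi": [0.10, 0.75, 0.15],  # spectral model separates them more clearly
}
fused = late_fusion(probs, weights={"rgb": 0.5, "hsi": 0.5})
print(classes[max(range(len(fused)), key=fused.__getitem__)], [round(p, 3) for p in fused])
```

The modality weights could be tuned on a validation set, for example to give more influence to the modality that is more reliable for a given crop.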

The fundamental advantage of hyperspectral technology over RGB imaging is its ability to identify materials by their chemical composition rather than merely by their shape and visible color [44]. Each material exhibits a unique spectral signature, or "fingerprint", when interacting with electromagnetic radiation. This allows hyperspectral cameras to detect subtle differences between biologically similar samples. For instance, hyperspectral imaging can distinguish almonds from their shells using a spectral feature at 930 nm related to the nut's oil content [44]. RGB cameras, limited to three broad color bands, cannot make this distinction. This capability makes hyperspectral technology well suited to demanding applications that require precise material identification and quantification.
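Matching a pixel's spectrum against reference signatures is one way to put the "fingerprint" idea into practice. The sketch below uses the spectral angle mapper (SAM), a standard hyperspectral technique chosen here for illustration rather than taken from [44]. SAM assigns a spectrum to the reference with the smallest angle between the two vectors, which makes the comparison insensitive to overall brightness. The five-band reference spectra for kernel and shell are invented toy values, not measured signatures.

```python
import math
from typing import Dict, List


def spectral_angle(a: List[float], b: List[float]) -> float:
    """Angle (radians) between two spectra; 0 means identical shape."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return math.acos(max(-1.0, min(1.0, dot / norm)))  # clamp to guard against rounding


def classify_sam(pixel: List[float], references: Dict[str, List[float]]) -> str:
    """Return the reference material whose signature has the smallest spectral angle."""
    return min(references, key=lambda name: spectral_angle(pixel, references[name]))


# Hypothetical 5-band reflectance signatures; the dip in band 4 mimics an absorption feature.
references = {
    "kernel": [0.30, 0.35, 0.40, 0.22, 0.38],
    "shell": [0.25, 0.30, 0.36, 0.34, 0.37],
}
pixel = [0.45, 0.52, 0.61, 0.34, 0.58]  # brighter than the kernel reference, similar shape
print(classify_sam(pixel, references))
```

Because only the shape of the spectrum matters, a kernel imaged under stronger illumination is still matched to the kernel reference.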

Technical Comparison of Imaging Modalities

Fundamental Principles and Capabilities

RGB Imaging utilizes conventional cameras that capture light in three broad overlapping bands corresponding to red, green, and blue wavelengths. These systems are suitable for characterizing objects based on shape and color but provide minimal identification capability due to their limited spectral information [44]. The RGB color space effectively represents how human vision perceives scenes but contains only a fraction of the information available in the full electromagnetic spectrum.

Hyperspectral Imaging acquires data as a three-dimensional "hyperspectral cube" with two spatial dimensions and one spectral dimension, containing complete spectral information for each pixel in the image [43]. Unlike RGB cameras, hyperspectral systems capture hundreds of contiguous spectral bands, typically ranging from the visible spectrum into the near-infrared (NIR) and sometimes short-wave infrared (SWIR) regions [44]. This detailed spectral data enables the identification of materials based on their chemical composition through their unique spectral signatures.
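The cube structure maps directly onto a three-dimensional array indexed by (row, column, band). The sketch below builds a tiny synthetic cube and performs two operations. It extracts the full spectrum of one pixel, and it pulls out the single-band image closest to a target wavelength such as 930 nm. The band layout (evenly spaced centers from 400 to 1000 nm) and the cube size are assumptions for illustration. Real sensors supply their own band-center tables.

```python
from typing import List

Cube = List[List[List[float]]]  # indexed as cube[row][col][band]


def band_centers(start_nm: float, stop_nm: float, n_bands: int) -> List[float]:
    """Evenly spaced band-center wavelengths (a simplifying assumption)."""
    step = (stop_nm - start_nm) / (n_bands - 1)
    return [start_nm + i * step for i in range(n_bands)]


def nearest_band(wavelengths: List[float], target_nm: float) -> int:
    """Index of the band whose center is closest to target_nm."""
    return min(range(len(wavelengths)), key=lambda i: abs(wavelengths[i] - target_nm))


def pixel_spectrum(cube: Cube, row: int, col: int) -> List[float]:
    """Full spectrum (one value per band) at a single spatial location."""
    return list(cube[row][col])


def band_image(cube: Cube, band: int) -> List[List[float]]:
    """2-D spatial image for one spectral band."""
    return [[px[band] for px in row] for row in cube]


# Synthetic 2 x 3 pixel cube with 61 bands (400-1000 nm in 10 nm steps).
wl = band_centers(400, 1000, 61)
cube = [[[r * 0.1 + c * 0.01 + b * 0.001 for b in range(len(wl))] for c in range(3)] for r in range(2)]

b930 = nearest_band(wl, 930)
print(b930, wl[b930])                   # band 53 is centered at 930 nm
print(len(pixel_spectrum(cube, 1, 2)))  # 61 values for one pixel
print(band_image(cube, b930))           # 2 x 3 image at ~930 nm
```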

Table 1: Technical Comparison of RGB and Hyperspectral Imaging Modalities

| Characteristic | RGB Imaging | Hyperspectral Imaging |
|---|---|---|
| Spectral Bands | 3 broad bands (Red, Green, Blue) [44] | Hundreds of contiguous narrow bands [44] |
| Spectral Range | Visible spectrum (approximately 400-700 nm) | Extended range (e.g., 400-1000 nm for Specim FX10, 900-1700 nm for Specim FX17) [44] |
| Information Content | Shape, texture, human-perceptible color [44] | Chemical composition, material properties [44] |
| Data Volume per Image | Low (3 values per pixel) | High (hundreds of values per pixel) [45] |
| Primary Applications | Basic color distinction, shape analysis [44] | Precise material identification, quantitative analysis [44] |
| Cost & Accessibility | Widely available, low cost | Specialized equipment, higher cost [45] |
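The "Data Volume per Image" row can be quantified with simple arithmetic. The sketch below compares the raw, uncompressed size of one 3-band RGB frame, stored at 8 bits per channel, with a hyperspectral cube of the same spatial size. The 512 × 512 resolution, 224 bands, and 16-bit storage are illustrative assumptions, not the specifications of any camera cited in this review.

```python
def raw_size_bytes(height: int, width: int, bands: int, bits_per_value: int) -> int:
    """Uncompressed size of an image or cube, in bytes."""
    return height * width * bands * bits_per_value // 8


rgb = raw_size_bytes(512, 512, bands=3, bits_per_value=8)
hsi = raw_size_bytes(512, 512, bands=224, bits_per_value=16)  # e.g. 12-bit data stored in 16 bits

print(f"RGB: {rgb / 2**20:.2f} MiB")  # 0.75 MiB
print(f"HSI: {hsi / 2**20:.2f} MiB")  # 112.00 MiB
print(f"ratio: {hsi / rgb:.0f}x")     # ~149x
```

A difference of roughly two orders of magnitude per frame drives the storage, bandwidth, and preprocessing costs that accompany hyperspectral pipelines.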

Performance Comparison in Plant Science Applications

The studies summarized below indicate that hyperspectral imaging offers advantages for precise plant phenotyping and disease detection. In agricultural sorting applications, RGB-based models struggle to distinguish biologically similar materials. Hyperspectral systems, by contrast, achieve high accuracy by exploiting distinctive spectral features outside the visible range.

Table 2: Experimental Performance Comparison in Plant Science Applications

| Application | RGB Performance | Hyperspectral Performance | Experimental Context |
|---|---|---|---|
| Nut/Shell Discrimination | Limited to color and shape differences [44] | High accuracy using 930 nm oil-related spectral feature [44] | Almond sorting with Specim FX10 [44] |
| Plant Disease Classification | 96.40% accuracy with EfficientNetB0 [46] | Not explicitly quantified but enables functional tissue analysis [45] | Tomato disease diagnosis [46] |
| Surgical Tissue Differentiation | Limited to visual appearance | Enables tissue oxygenation (StO2) mapping without contrast agents [45] | Surgical imaging using reconstructed HSI from RGB [45] |
| Multi-Species Plant Identification | 82.61% accuracy with multimodal deep learning [47] | Provides complementary chemical composition data | PlantCLEF2015 dataset with 979 classes [47] |