The AI Revolution in Sensor Technology: A 2025 Outlook for Biomedical Research and Drug Development

Abstract

This article explores the transformative impact of Artificial Intelligence (AI) and Machine Learning (ML) on sensor technology, with a specific focus on implications for biomedical research and drug development. We examine the foundational technologies powering next-generation smart sensors, from IoT connectivity to advanced data analytics. The analysis covers methodological applications in predictive maintenance, real-time process optimization, and autonomous systems within research and production environments. The article also provides a critical comparative analysis of different AI approaches, addressing troubleshooting and validation strategies to ensure data integrity and system reliability. Finally, we synthesize key takeaways and future directions, outlining how these technological synergies are poised to accelerate drug discovery, enhance manufacturing quality, and advance clinical research.

The Building Blocks: Core Technologies Powering AI-Integrated Sensor Systems

The field of plant science is undergoing a profound transformation, moving from periodic, manual data collection to continuous, intelligent monitoring systems. Smart sensor technology, characterized by its integration with Artificial Intelligence (AI) and Internet of Things (IoT) platforms, is revolutionizing how researchers understand plant physiology, stress responses, and health. This evolution aligns with the emergence of Agriculture 5.0, which emphasizes a human-centric, sustainable, and resilient approach to agricultural innovation through collaboration between human expertise and machine efficiency [1] [2]. For researchers and drug development professionals, this shift enables unprecedented precision in probing plant biological systems, opening new frontiers in phytochemical research, stress adaptation studies, and the development of plant-based therapeutics. The synergy between next-generation sensors and AI is not merely an incremental improvement but a fundamental redesign of the research toolkit, allowing complex plant signaling mechanisms to be decoded in real time [3].

The Architectural Evolution of Plant Sensors

From Simple Measurements to Complex Diagnostics

The journey of plant sensing began with simple environmental monitors that measured basic parameters like soil moisture and temperature. Today's sensors have evolved into sophisticated diagnostic tools capable of detecting molecular-level changes in plant systems.

First-generation sensors were primarily physical sensors focused on external environmental conditions: soil moisture, ambient temperature, humidity, and light levels. While valuable, these provided only indirect inferences about plant status [4].

Second-generation sensors introduced direct plant-based monitoring, measuring physiological parameters such as stem diameter, leaf thickness, and sap flow. This was a significant advance because it captured the plant's own response to its environment rather than inferring stress from external conditions [4].

Third-generation sensors now encompass chemical and electrophysiological sensing, capable of detecting volatile organic compounds (VOCs), reactive oxygen species (ROS), ions, pigments, and even action potentials in plants [5]. These sensors provide a window into the molecular signaling pathways that underlie plant stress responses and defense mechanisms—critical intelligence for pharmaceutical research involving plant-derived compounds.

The Convergence with AI and Machine Learning

The true intelligence of modern sensor systems emerges from their integration with AI algorithms. This convergence enables not only data collection but also predictive analytics and prescriptive interventions. The AI model families most commonly applied to plant sensor data include:

- Convolutional Neural Networks (CNNs) such as VGG16, VGG19, and ResNet50, which consistently perform well across stress types for image-based classification [3]

- Detection-focused models such as YOLO and lightweight architectures such as MobileNet, whose performance is more variable, particularly in biotic stress identification tasks [3]

- Traditional machine learning methods including Support Vector Machines (SVM), Decision Trees, and k-Nearest Neighbors, which remain relevant for structured, low-resolution data, especially under constrained computational conditions [3] [2]

The training optimizers most commonly reported are Adam (predominantly in abiotic stress monitoring) and Stochastic Gradient Descent (SGD; more common in biotic stress studies) [3]. This task-specific choice of optimizer reflects a broader trend toward tailoring models to particular stress types in order to model plant physiological responses more precisely.
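For readers less familiar with the two optimizers, the sketch below applies plain SGD and Adam to a toy one-dimensional quadratic loss. It only illustrates the update rules; the loss function, learning rates, and step counts are assumptions chosen for the example, not settings reported in [3].

```python
import math


def grad(w: float) -> float:
    """Gradient of the toy loss L(w) = (w - 3)^2, which is minimized at w = 3."""
    return 2.0 * (w - 3.0)


def sgd(w: float, lr: float = 0.1, steps: int = 100) -> float:
    """Plain gradient descent: step against the gradient with a fixed learning rate."""
    for _ in range(steps):
        w -= lr * grad(w)
    return w


def adam(w: float, lr: float = 0.1, beta1: float = 0.9, beta2: float = 0.999,
         eps: float = 1e-8, steps: int = 500) -> float:
    """Adam: step sizes scaled by running estimates of the gradient's first and second moments."""
    m = 0.0  # exponential moving average of gradients
    v = 0.0  # exponential moving average of squared gradients
    for t in range(1, steps + 1):
        g = grad(w)
        m = beta1 * m + (1 - beta1) * g
        v = beta2 * v + (1 - beta2) * g * g
        m_hat = m / (1 - beta1 ** t)  # bias correction: m and v start at zero
        v_hat = v / (1 - beta2 ** t)
        w -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return w


print(f"SGD: {sgd(0.0):.4f}  Adam: {adam(0.0):.4f}")
```

Both reach the minimum at w = 3 in this toy case. The practical difference is that Adam normalizes each step by the recent gradient magnitude, making it less sensitive to the scale of the gradients, whereas the SGD step size depends directly on that scale.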

Table 1: Evolution of Smart Plant Sensor Capabilities

| Generation | Primary Focus | Key Parameters Measured | Technological Enablers | Limitations |
|---|---|---|---|---|
| First Generation | Environmental conditions | Soil moisture, air temperature, humidity, light intensity | Basic analog sensors, manual data collection | Indirect plant assessment, delayed response |
| Second Generation | Plant physiology | Stem diameter, leaf thickness, sap flow, chlorophyll content | Digital sensors, wireless communication, basic data loggers | Limited molecular information, post-symptom detection |
| Third Generation | Molecular signaling & early stress detection | VOCs, ROS, ions, pigments, electrophysiological signals | AI/ML integration, IoT networks, flexible electronics, nanosensors | Cost, technical complexity, data management challenges |

Next-Generation Sensor Technologies for Advanced Research

Wearable Plant Sensors

Wearable plant sensors represent a cutting-edge frontier in plant health monitoring. These devices offer non-invasive, high-sensitivity, and highly integrated capabilities for continuous, real-time monitoring [5]. They can be categorized into three primary types based on their sensing mechanisms:

- Physical Sensors: Designed to sense strain, temperature, humidity, and light directly from plant surfaces [5].

- Chemical Sensors: Capable of detecting volatile organic compounds, reactive oxygen species, ions, and pigments that signal specific stress responses or metabolic changes [5].

- Electrophysiological Sensors: Engineered to monitor action potentials and variation potentials in plants, analogous to neurological monitoring in medical research [5].

The development of these sensors faces significant challenges, particularly in ensuring long-term stability in harsh and unpredictable agricultural environments. Issues such as the melting of coating materials, changes in the internal stress of sensing layers, and the loosening of sensor adhesion to plants due to physiological effects or environmental changes need to be addressed for widespread adoption [6]. Current research focuses on creating flexible wearable sensors fabricated from biocompatible materials to ensure high-resolution data acquisition without impeding plant growth [6].

Hyperspectral Imaging and Electronic Noses

Advanced sensor technologies are moving beyond single-point measurements to comprehensive spatial and chemical profiling:

Hyperspectral imaging records reflectance in many narrow, contiguous wavelength bands, allowing researchers to identify subtle changes in plant physiology before they become visible to the naked eye. This technology enables the detection of nutrient deficiencies, water stress, and disease at their earliest stages [3].
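As an illustration of how per-band reflectance can become a per-pixel physiological indicator, the sketch below computes a normalized difference index, (NIR - red) / (NIR + red), from a hyperspectral reflectance cube. The choice of index (the widely used NDVI form), the 670 nm and 800 nm band positions, and the synthetic cube are assumptions for the example; the studies cited in [3] may rely on different bands, indices, or full-spectrum models.

```python
import numpy as np


def band_index(wavelengths_nm: np.ndarray, target_nm: float) -> int:
    """Index of the band whose centre wavelength is closest to target_nm."""
    return int(np.argmin(np.abs(wavelengths_nm - target_nm)))


def normalized_difference(cube: np.ndarray, wavelengths_nm: np.ndarray,
                          nir_nm: float = 800.0, red_nm: float = 670.0) -> np.ndarray:
    """Per-pixel (NIR - red) / (NIR + red) for a reflectance cube shaped (rows, cols, bands)."""
    nir = cube[:, :, band_index(wavelengths_nm, nir_nm)].astype(float)
    red = cube[:, :, band_index(wavelengths_nm, red_nm)].astype(float)
    denom = nir + red
    # Dark pixels (e.g., background) have denom == 0; leave them at 0 instead of dividing by zero.
    return np.divide(nir - red, denom, out=np.zeros_like(denom), where=denom > 0)


# Synthetic 2 x 2 cube with 5 bands (reflectance values invented for illustration).
wavelengths = np.array([500.0, 600.0, 670.0, 750.0, 800.0])
cube = np.zeros((2, 2, 5))
cube[:, :, 2] = [[0.05, 0.05], [0.20, 0.0]]   # red reflectance
cube[:, :, 4] = [[0.50, 0.50], [0.25, 0.0]]   # NIR reflectance
ndvi = normalized_difference(cube, wavelengths)
print(np.round(ndvi, 3))
```

Pixels whose index falls well below that of healthy tissue can then be flagged for closer inspection; in practice any threshold would need calibration per species and per sensor.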

Electronic noses use arrays of gas sensors to detect and profile the volatile organic compounds (VOCs) that plants release under different stress conditions. These VOC profiles act as chemical fingerprints for specific biotic and abiotic stresses, and published studies report high accuracy in identifying plant stress from them [6] [3]. For pharmaceutical researchers, this technology offers potential for non-destructive quality assessment of medicinal plants and early detection of phytochemical changes.
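The sketch below shows the basic pattern-recognition idea behind an electronic nose. Each sample is the response vector of the sensor array; responses are normalized so that the pattern, rather than the overall concentration, drives classification; and a new sample is assigned to the stress class with the nearest mean pattern. Nearest-centroid classification is a deliberately simple stand-in for the SVM and neural-network classifiers discussed in this article, and the four-sensor array, class names, and response values are hypothetical.

```python
import numpy as np


def normalize(responses: np.ndarray) -> np.ndarray:
    """Scale each sample (row) to unit length so classification depends on the response pattern."""
    norms = np.linalg.norm(responses, axis=1, keepdims=True)
    return responses / np.where(norms == 0, 1.0, norms)


def fit_centroids(X: np.ndarray, y: np.ndarray) -> dict:
    """Mean normalized response pattern (the 'fingerprint') for each class label."""
    Xn = normalize(X)
    return {str(label): Xn[y == label].mean(axis=0) for label in np.unique(y)}


def predict(centroids: dict, X: np.ndarray) -> list:
    """Assign each sample to the class whose fingerprint is closest in Euclidean distance."""
    labels = list(centroids)
    C = np.stack([centroids[k] for k in labels])   # shape: (n_classes, n_sensors)
    Xn = normalize(X)
    dist = np.linalg.norm(Xn[:, None, :] - C[None, :, :], axis=2)
    return [labels[i] for i in dist.argmin(axis=1)]


# Hypothetical responses from a four-sensor array (arbitrary units).
X_train = np.array([[1.0, 0.2, 0.1, 0.1],
                    [2.0, 0.5, 0.2, 0.2],
                    [0.1, 0.2, 1.0, 0.8],
                    [0.2, 0.3, 2.0, 1.5]])
y_train = np.array(["healthy", "healthy", "infected", "infected"])

centroids = fit_centroids(X_train, y_train)
print(predict(centroids, np.array([[3.0, 0.7, 0.3, 0.2], [0.1, 0.1, 1.5, 1.2]])))
```

Real sensor arrays also drift over time, so some form of drift compensation would normally be applied before this classification step.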

IoT and Edge Computing for Distributed Intelligence

The integration of sensor networks with Internet of Things (IoT) platforms enables remote monitoring, data analysis via AI, and automated control systems [1]. The emergence of Edge AI represents a significant advancement, where data processing occurs on the device itself rather than being transmitted to the cloud, enabling immediate decisions [7] [2].

This distributed intelligence is particularly valuable in field research settings where connectivity may be limited. Where 5G coverage is available, it further strengthens this capability by enabling faster, real-time connections between equipment and systems [7]. For multi-site clinical trials involving plant-based therapeutics, such infrastructure can help keep monitoring protocols consistent and synchronized across geographically dispersed locations.

Table 2: Performance Comparison of AI Algorithms in Plant Stress Monitoring

| Algorithm Type | Primary Applications | Reported Accuracy Ranges | Strengths | Limitations |
|---|---|---|---|---|
| Convolutional Neural Networks (CNNs) | Image-based stress classification, disease identification | 85-96% [3] | High accuracy with complex image data, minimal feature engineering required | Computationally intensive, requires large datasets |
| YOLO Models | Real-time stress detection and localization | 78-92% [3] | Fast processing, suitable for video and continuous monitoring | Lower accuracy with small stress features, variable performance across stress types |
| Support Vector Machines (SVM) | Structured data analysis, nutrient deficiency identification | 82-90% [3] [2] | Effective with smaller datasets, robust against overfitting | Limited performance with unstructured data, requires careful feature selection |
| Random Forests | Multi-parameter sensor fusion, yield prediction | 80-88% [2] | Handles mixed data types, provides feature importance | Can overfit with noisy data, less interpretable than single decision trees |
| Lightweight Architectures (MobileNet) | Edge device deployment, mobile applications | 75-87% [3] | Low computational requirements, suitable for resource-constrained environments | Lower accuracy compared to more complex models |

Experimental Protocols for Intelligent Plant Sensing

Protocol 1: Multi-Modal Stress Response Profiling

Objective: To simultaneously monitor physical, chemical, and electrophysiological responses of plants to controlled stress stimuli.

Methodology:

- Sensor Deployment: Attach flexible physical sensors (strain, temperature) to plant stems and leaves. Install chemical sensors for VOC monitoring in the plant's immediate environment. Place microelectrode arrays for electrophysiological signal capture [5].

- Stress Induction: Apply controlled abiotic (drought, salinity) or biotic (pathogen inoculation) stress according to experimental requirements.

- Data Acquisition: Collect continuous data from all sensors at high frequency (minimum 1 Hz sampling rate) throughout the experimental period.

- Data Fusion: Employ sensor fusion algorithms to integrate multi-modal data streams and identify cross-correlated events.

- Model Training: Use the synchronized dataset to train ML models (CNN for spatial data, LSTM for temporal patterns) to predict stress states from early signals.

Validation: Compare sensor-derived stress classifications with conventional physiological assays (chlorophyll fluorescence, ion leakage, molecular markers) to establish correlation metrics [3].
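The protocol leaves the fusion algorithm open. One simple starting point, sketched below under stated assumptions, is to resample every stream onto a shared 1 Hz time grid (the protocol's minimum sampling rate) and then search for the time lag at which two streams are most strongly correlated, for example how long after an electrophysiological event a VOC response appears. The stream names, sampling rates, and the 30 s delay built into the synthetic data are hypothetical.

```python
import numpy as np


def resample_to_grid(t: np.ndarray, x: np.ndarray, grid: np.ndarray) -> np.ndarray:
    """Linearly interpolate a stream sampled at times t (seconds, increasing) onto a shared grid."""
    return np.interp(grid, t, x)


def best_lag(a: np.ndarray, b: np.ndarray, max_lag: int) -> tuple:
    """Return (lag, r) maximizing Pearson r between a[k] and b[k + lag]; positive lag means b follows a."""
    best = (0, -np.inf)
    for lag in range(-max_lag, max_lag + 1):
        if lag >= 0:
            x, y = a[: len(a) - lag], b[lag:]
        else:
            x, y = a[-lag:], b[: len(b) + lag]
        r = np.corrcoef(x, y)[0, 1]
        if r > best[1]:
            best = (lag, float(r))
    return best


# Synthetic example: a 2 Hz electrical signal and a 1.25 Hz VOC signal whose peak trails it by 30 s.
grid = np.arange(0.0, 300.0, 1.0)                 # shared 1 Hz time base
t_elec = np.arange(0.0, 300.0, 0.5)
t_voc = np.arange(0.0, 300.0, 0.8)
elec = np.exp(-(((t_elec - 100.0) / 10.0) ** 2))
voc = np.exp(-(((t_voc - 130.0) / 10.0) ** 2))

lag, r = best_lag(resample_to_grid(t_elec, elec, grid),
                  resample_to_grid(t_voc, voc, grid), max_lag=60)
print(f"VOC response trails the electrical event by {lag} s (r = {r:.3f})")
```

Lagged correlation only reveals association, not causation; the physiological assays listed under Validation remain the reference for interpreting such cross-correlated events.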

Protocol 2: High-Throughput Phenotyping with AI-Assisted Imaging

Objective: To automate the detection and quantification of plant stress symptoms using integrated sensor platforms.

Methodology:

- Platform Setup: Deploy autonomous mobile robots or UAVs equipped with multi-spectral cameras, hyperspectral imagers, and LiDAR sensors [3].

- Data Collection: Program automated traversal paths for consistent, repeatable data collection across large plant populations.

- Image Processing: Apply pre-trained CNN architectures (VGG16, ResNet50) for feature extraction and symptom identification [3].

- Segmentation and Quantification: Use U-Net or similar architectures to segment affected plant areas and quantify stress severity.

- Temporal Tracking: Implement object detection models (YOLO) to track individual plants over time and monitor symptom progression.

Validation: Establish ground truth through manual annotation by plant pathologists and calculate precision/recall metrics for the AI system [3].
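The validation and quantification steps can be made concrete at the pixel level. The sketch below computes precision and recall of a predicted "affected tissue" mask against an annotated mask, plus a severity score defined as the affected fraction of plant pixels. The toy 3 x 3 masks and this severity definition are assumptions for illustration; object-level evaluation of detection models such as YOLO would instead match predicted and annotated bounding boxes (for example by intersection-over-union) before counting hits.

```python
import numpy as np


def precision_recall(pred: np.ndarray, truth: np.ndarray) -> tuple:
    """Pixel-level precision and recall of a binary predicted mask against an annotated mask."""
    pred, truth = pred.astype(bool), truth.astype(bool)
    tp = int(np.sum(pred & truth))    # affected pixels correctly flagged
    fp = int(np.sum(pred & ~truth))   # healthy pixels wrongly flagged
    fn = int(np.sum(~pred & truth))   # affected pixels missed
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def severity(lesion_mask: np.ndarray, plant_mask: np.ndarray) -> float:
    """Stress severity as the fraction of plant pixels that are segmented as affected."""
    plant = plant_mask.astype(bool)
    affected = lesion_mask.astype(bool) & plant
    return float(affected.sum()) / max(int(plant.sum()), 1)


truth = np.array([[1, 1, 0], [0, 1, 0], [0, 0, 0]])   # pathologist annotation (toy example)
pred = np.array([[1, 0, 0], [0, 1, 1], [0, 0, 0]])    # model segmentation (toy example)
plant = np.ones((3, 3), dtype=int)                    # every pixel belongs to the plant here

p, r = precision_recall(pred, truth)
print(f"precision={p:.2f} recall={r:.2f} severity={severity(pred, plant):.2f}")
```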

Research Reagent Solutions for Advanced Plant Sensing

Table 3: Essential Research Reagents and Materials for Plant Sensor Development

| Reagent/Material | Function | Application Examples | Technical Considerations |
|---|---|---|---|
| Biocompatible Polymers (e.g., PDMS) | Flexible sensor substrate | Wearable plant sensors that adhere to plant surfaces without impeding growth [5] | Must maintain adhesion during plant growth; should not inhibit gas exchange |
| Ion-Selective Membranes | Chemical sensing layer | Detection of specific ions (K+, Ca2+, NO3-) in plant sap or apoplast [5] | Requires calibration for different plant species; sensitivity to temperature variations |
| Carbon Nanotube/Graphene Inks | Conductive sensing elements | Printed electrochemical sensors for metabolite detection [5] | Consistency in deposition crucial for reproducible results; potential toxicity concerns |
| VOC-Binding Ligands | Chemical recognition elements | Electronic noses for plant stress volatile detection [6] [3] | Selectivity against complex background odors; drift compensation needed |
| Fluorescent Nanoparticles | Optical sensing probes | Hyperspectral imaging of pH, ions, or reactive oxygen species [3] | Photostability under prolonged illumination; potential interference with plant physiology |
| Enzyme-Based Biosensors | Specific metabolite detection | Monitoring glucose, sucrose, or stress-related metabolites [8] | Enzyme stability under field conditions; calibration requirements |

Data Integration and Workflow Architecture

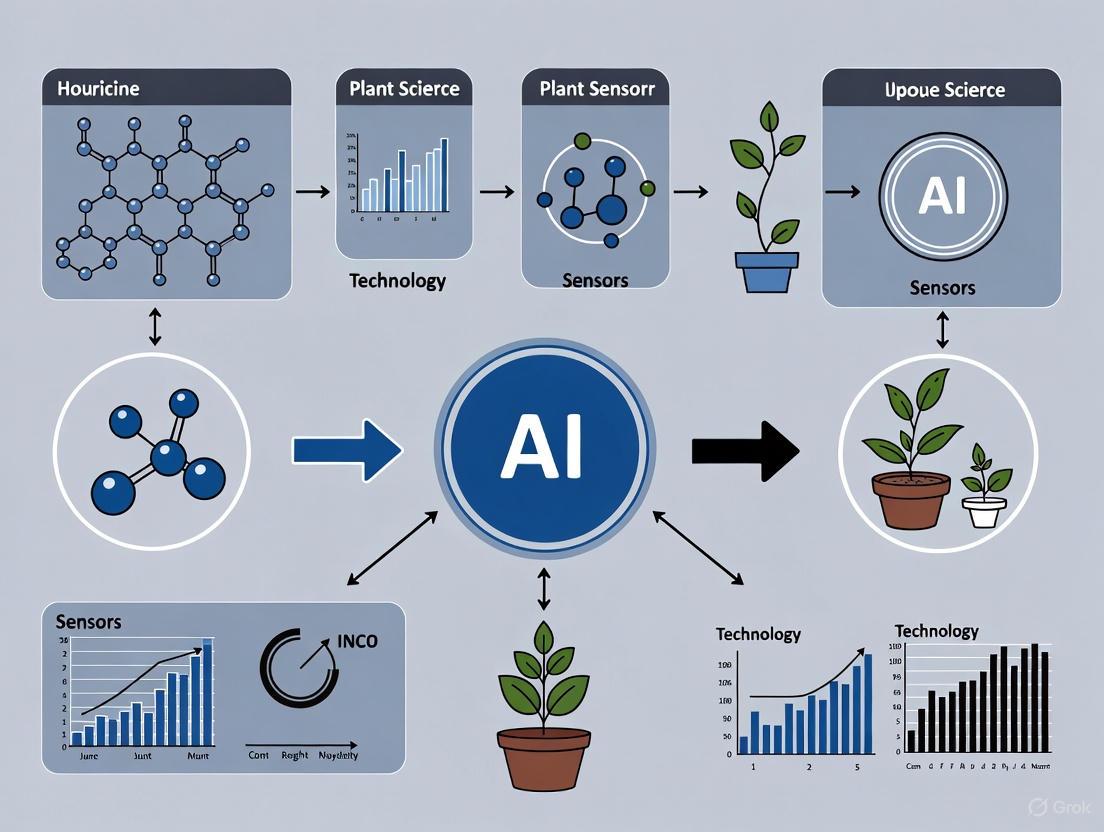

The intelligence derived from smart sensors depends critically on the architecture for data processing and analysis. The following workflow represents the standard pipeline for transforming raw sensor data into actionable research insights:

This workflow illustrates the transformation of multi-modal sensor data through edge processing and AI analytics into actionable research insights. The architecture emphasizes distributed computing, where initial data processing occurs at the edge to reduce bandwidth requirements, while more complex analytics leverage cloud or high-performance computing resources [7] [2].
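A minimal sketch of the edge-side data reduction described above, assuming the edge node forwards fixed-window summaries (count, mean, minimum, maximum) instead of every raw sample. The window length, the summary fields, and the `WindowSummary` type are illustrative choices, not features of a specific platform.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class WindowSummary:
    start_s: float       # window start time, seconds
    count: int
    mean_value: float
    min_value: float
    max_value: float


def summarize(samples: list, window_s: float) -> list:
    """Group (timestamp_s, value) samples into fixed windows and reduce each window to a summary."""
    windows: dict = {}
    for t, v in samples:
        windows.setdefault(int(t // window_s), []).append(v)
    return [WindowSummary(k * window_s, len(vs), mean(vs), min(vs), max(vs))
            for k, vs in sorted(windows.items())]


# Ten minutes of 1 Hz readings (600 values) become ten 60 s summaries (50 values in total).
readings = [(float(t), 20.0 + 0.01 * t) for t in range(600)]
summaries = summarize(readings, window_s=60.0)
print(len(summaries), summaries[0])
```

If the cloud tier later needs raw data, for example to retrain a model, the edge node could retain raw samples locally and upload them in bulk during off-peak periods; that is a design choice, not a requirement of the architecture.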

Future Directions and Research Opportunities

The future of smart sensors in plant research will be shaped by several emerging technologies and paradigms:

Digital Twins for Plant Systems

Digital twin technology—virtual replicas of physical systems—enables researchers to create computational models of individual plants or entire ecosystems [7]. These twins can be used to simulate stress responses, test interventions, and optimize sensor placement without disturbing actual plants. For pharmaceutical researchers working with medicinal plants, digital twins offer the potential to model phytochemical production under various environmental conditions, accelerating the discovery of optimal cultivation protocols.

AI-Driven Predictive Phenotyping

The integration of predictive analytics with sensor data will enable researchers to forecast plant development, stress susceptibility, and chemical composition based on early growth patterns [3] [4]. This approach is particularly valuable for breeding programs targeting specific phytochemical profiles, where traditional analytical methods are time-consuming and destructive.

Sustainable and Biodegradable Sensors

Addressing the environmental impact of sensor deployment is a critical research frontier. Biodegradable sensors made from eco-friendly materials will be essential for large-scale deployment with minimal ecological impact [6]. Research in this area focuses on "set and forget" solutions that are both biocompatible and biodegradable, which also addresses concerns about long-term usability [6].

Human-Machine Collaboration in Research

The Agriculture 5.0 paradigm emphasizes collaborative intelligence between human researchers and AI systems [1]. This approach leverages human expertise in hypothesis generation and experimental design while utilizing AI capabilities for pattern recognition in high-dimensional data. For drug development professionals, this collaboration enables more efficient identification of promising plant-derived compounds and their optimal production conditions.

The evolution of smart sensors from simple data collection devices to intelligent analytical platforms represents a paradigm shift in plant science research. This transformation, driven by advances in AI integration, sensor miniaturization, and IoT connectivity, enables researchers to decode complex plant signaling networks with unprecedented temporal and spatial resolution. For the pharmaceutical and drug development community, these technologies offer powerful new tools for understanding plant-derived compounds, optimizing their production, and discovering new therapeutic agents from plant sources.

The future trajectory points toward increasingly non-invasive, predictive, and context-aware sensing platforms that will further blur the boundaries between biological and digital research methodologies. As these technologies mature, they are likely to accelerate the pace of discovery in plant-based pharmaceutical research while enabling more sustainable and precise cultivation of medicinal plants. Researchers who integrate these intelligent sensor systems into their workflows early stand to gain a significant advantage in developing new plant-based therapeutics and optimizing their production.

The integration of artificial intelligence (AI) and advanced sensor technologies is fundamentally transforming how vital parameters are monitored in both research and production environments, particularly within the biomedical and agricultural sectors. This whitepaper delineates the core sensor types that form the backbone of this transformation. These technologies are central to a broader thesis on the future of AI and machine learning in research: that they enable a shift from reactive to predictive and personalized approaches. The synergy between sophisticated sensors, capable of continuous, real-time data acquisition, and intelligent algorithms is accelerating drug discovery, optimizing production processes, and paving the way for precision medicine and smart agriculture [9] [10] [3]. This guide provides a technical examination of these key sensors, their operational methodologies, and their integrated applications within AI-driven frameworks.

Key Sensor Types and Technical Specifications

The evolution of sensor technology has been marked by advancements in miniaturization, flexibility, and multi-modality. The following sensor types are at the forefront of modern monitoring systems.

Table 1: Key Sensor Types for Vital Parameter Monitoring

| Sensor Type | Sensing Principle | Measured Parameters | Key Technologies & Materials | Performance Specifications |
|---|---|---|---|---|
| Wearable/Implantable Electrochemical (Bio)sensors [11] | Measurement of electrical signals (current, potential) from chemical reactions. | Agrochemicals, phytohormones (e.g., salicylic acid), stress biomarkers, H₂O₂, NH₄⁺ [9] [11]. | Nanomaterials, bioreceptors (enzymes, antibodies), flexible substrates. | High sensitivity & selectivity; real-time, in-situ monitoring; detection limit for NH₄⁺: ~3 ppm [9]. |
| Flexible Mechanical Sensors [12] | Measurement of physical deformation or force. | Plant growth (stem/fruit elongation), sap flow, transpiration rates [12]. | Conductive polymers (PEDOT:PSS), carbon nanotubes (CNTs), graphite-chitosan inks, Fiber Bragg Gratings (FBGs) in silicone [12]. | Gauge factor up to 352 [12]; resolves small elongations (e.g., 720 µm) [12]; stretchability up to 150%. |
| Optical & Spectroscopic Sensors [13] [3] | Measurement of light interaction with plant tissue (absorption, reflection). | Nitrogen levels, water content, plant secondary metabolites, chlorophyll content [13] [3]. | Hyperspectral imaging, near-infrared (NIR) & shortwave infrared (SWIR) spectroscopy, handheld spectrometers [13]. | Non-invasive; provides high spatial resolution; rapid analysis (seconds). |
| Electronic Noses (E-Noses) [3] | Detection of volatile organic compound (VOC) profiles via sensor arrays. | Early disease identification, plant stress response [3]. | Arrays of gas sensors with partial specificity, pattern recognition algorithms. | Enables early stress detection before visible symptoms appear. |

Experimental Protocols for Key Sensor Deployment

Protocol 1: Direct-Write Strain Sensor for Plant Growth Monitoring

Objective: To fabricate a highly stretchable, direct-write strain sensor for in-situ monitoring of fruit or stem elongation.

Materials:

- Conductive ink: A composite of carbon nanotubes (CNTs) and graphite flakes.

- Substrate: Buna-N rubber or the plant surface itself.

- Instrumentation: Data acquisition system (e.g., source meter) with Bluetooth module for wireless transmission.

Methodology:

- Ink Preparation: A multi-matrix composite ink is prepared by dispersing CNTs and graphite flakes in a solvent to form a highly conductive and stretchable mixture.

- Sensor Fabrication: The conductive ink is applied directly onto the target plant surface (e.g., fruit skin or stem) using a direct writing technique, creating a specific serpentine or linear pattern.

- Curing: The applied ink is left to air-dry at room temperature for approximately 15 minutes to form the final sensor.

- Calibration: The sensor is calibrated by measuring the relative change in electrical resistance (ΔR/R) under known applied mechanical strains (ε). The gauge factor, GF = (ΔR/R)/ε, is then calculated.

- Deployment & Data Acquisition: The sensor is connected to a simple serial resistance circuit and a wireless data acquisition system. Changes in resistance, corresponding to plant growth (strain), are transmitted in real-time via Bluetooth to a computer or smartphone for analysis.
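The calibration and read-out steps above reduce to a few lines of arithmetic. The sketch below assumes the "simple serial resistance circuit" is a voltage divider with a fixed reference resistor, takes R in the gauge-factor definition to be the zero-strain baseline resistance R0, fits GF as a least-squares slope of ΔR/R0 against strain through the origin, and inverts the fit to convert a new reading into strain. The divider topology, function names, and calibration values (chosen to give GF = 50) are hypothetical.

```python
import numpy as np


def sensor_resistance(v_out: float, v_in: float, r_ref: float) -> float:
    """Divider V_in -> R_ref -> node -> R_sensor -> ground; v_out is measured across R_sensor."""
    return r_ref * v_out / (v_in - v_out)


def fit_gauge_factor(strain: np.ndarray, resistance: np.ndarray) -> float:
    """Least-squares slope of dR/R0 against strain, forced through the origin."""
    r0 = resistance[0]                  # assumes the first calibration point is at zero strain
    rel = (resistance - r0) / r0
    return float(np.dot(strain, rel) / np.dot(strain, strain))


def strain_from_resistance(r: float, r0: float, gf: float) -> float:
    """Invert the calibration: strain = (dR/R0) / GF."""
    return (r - r0) / r0 / gf


# Hypothetical calibration: known strains applied, resistances recorded (ohms).
strain = np.array([0.0, 0.01, 0.02, 0.05])
resistance = np.array([1000.0, 1500.0, 2000.0, 3500.0])
gf = fit_gauge_factor(strain, resistance)

r_now = sensor_resistance(v_out=2.5, v_in=5.0, r_ref=1250.0)   # a new reading from the divider
print(f"GF = {gf:.1f}, R = {r_now:.0f} ohm, strain = {strain_from_resistance(r_now, 1000.0, gf):.4f}")
```

If a sensor shows hysteresis or a non-linear response at large strains, separate loading and unloading fits, or a higher-order calibration curve, may be more appropriate than a single gauge factor.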

Protocol 2: Rapid Leaf Nutrient Analysis with a Handheld Spectrometer

Objective: To non-invasively determine key nutrient levels in a plant leaf within seconds using a handheld spectrometer and a cloud-based machine learning model.

Materials:

- Handheld spectrometer (covering visible to shortwave infrared: 400–2400 nm).

- Mobile application with cloud connectivity.

- Pre-trained machine learning model (e.g., convolutional neural network).

Methodology:

- Data Collection: A leaf is scanned using a handheld spectrometer connected via Bluetooth to a mobile app. The device illuminates the leaf and measures the reflected light at each wavelength, from which the leaf's absorption is inferred.

- Spectral Data Generation: The instrument produces a characteristic absorption spectrum based on the excitation of molecular bonds (C–H, N–H, O–H) at specific wavelengths.

- Cloud-Based Prediction: The spectral data is sent to a cloud service where a pre-trained machine learning model processes it. The model was trained on a large database matching leaf spectral data to nutrient values obtained through traditional laboratory analysis.

- Result Delivery: The predicted nutrient levels (e.g., nitrogen, water content) are returned to the mobile app and displayed to the user within seconds, enabling immediate decision-making.
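The service described above runs a pre-trained model in the cloud, with a convolutional neural network given as an example. To keep a runnable example self-contained, the sketch below swaps in ridge regression, a common linear baseline for spectra-to-composition calibration, and trains it on synthetic spectra in which a hypothetical absorption feature near 1500 nm deepens with nitrogen content. The spectra, band count, feature position, and nitrogen range are all invented; this is not the model behind any particular service.

```python
import numpy as np


def fit_ridge(X: np.ndarray, y: np.ndarray, alpha: float = 1e-3) -> tuple:
    """Closed-form ridge regression on mean-centred spectra; returns (weights, x_mean, y_mean)."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc = X - x_mean
    A = Xc.T @ Xc + alpha * np.eye(X.shape[1])   # the alpha term keeps the system well conditioned
    w = np.linalg.solve(A, Xc.T @ (y - y_mean))
    return w, x_mean, y_mean


def predict(model: tuple, X: np.ndarray) -> np.ndarray:
    """Apply the fitted linear model to new spectra."""
    w, x_mean, y_mean = model
    return (X - x_mean) @ w + y_mean


# Synthetic "laboratory" dataset: 60 leaves, 50 bands between 400 and 2400 nm.
rng = np.random.default_rng(0)
wavelengths = np.linspace(400.0, 2400.0, 50)
feature = np.exp(-(((wavelengths - 1500.0) / 60.0) ** 2))    # hypothetical absorption band
nitrogen = rng.uniform(1.5, 4.5, 60)                         # % dry mass (invented range)
spectra = 0.5 - 0.05 * nitrogen[:, None] * feature + rng.normal(0.0, 0.001, (60, 50))

model = fit_ridge(spectra[:40], nitrogen[:40])                # "training database"
pred = predict(model, spectra[40:])                           # new scans
rmse = float(np.sqrt(np.mean((pred - nitrogen[40:]) ** 2)))
print(f"hold-out RMSE: {rmse:.3f} % N")
```

Holding out scans that were not used for fitting, as done here, mirrors how a model trained on laboratory reference values would be checked before its predictions are trusted in the field.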

The AI and Machine Learning Engine

The raw data from advanced sensors gains its transformative power through analysis by AI and machine learning models. These algorithms identify complex, non-linear patterns that are often imperceptible to human observation.

Table 2: Dominant AI/ML Algorithms in Sensor Data Analysis

| Algorithm | Primary Application | Key Advantage | Example Use-Case |
|---|---|---|---|
| Convolutional Neural Networks (CNNs) [3] | Image and spectral data classification. | Excellent at feature extraction from spatial data. | Identifying disease patterns from hyperspectral leaf images [3]. |
| YOLO (You Only Look Once) [3] | Real-time object detection. | High speed and accuracy in locating and classifying objects. | Detecting and localizing pest infestations from drone-captured imagery [3]. |
| Random Forest (RF) [10] [3] | Structured data analysis, QSAR modeling. | Handles high-dimensional data well; reduces overfitting. | Predicting compound efficacy and toxicity in drug discovery [10]. |
| Support Vector Machines (SVM) [3] | Classification and regression tasks. | Effective in high-dimensional spaces with a clear margin of separation. | Classifying plant stress types from sensor-derived VOC profiles [3]. |
| Ensemble Learning [14] | Combining multiple models for improved prediction. | Increases predictive accuracy and robustness by leveraging multiple models. | Combining 10 ML models to assess rice yield performance under climate change [14]. |
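Ensemble learning, as in the ten-model rice-yield study [14], often comes down to combining per-model predictions. The sketch below shows the simplest version, a (weighted) average of regression outputs, and uses synthetic models with independent errors to show why averaging helps. The combination rule, the independence of errors, and the yield units are assumptions for illustration, not details taken from [14]; real models usually make correlated errors, so the gain is typically smaller than in this idealized case.

```python
import numpy as np


def ensemble_predict(predictions: np.ndarray, weights=None) -> np.ndarray:
    """Combine per-model predictions of shape (n_models, n_samples) by weighted averaging."""
    if weights is None:
        weights = np.ones(predictions.shape[0])   # equal weights: a plain average
    weights = np.asarray(weights, dtype=float)
    return weights @ predictions / weights.sum()


def rmse(pred: np.ndarray, target: np.ndarray) -> float:
    """Root-mean-square error between predictions and targets."""
    return float(np.sqrt(np.mean((pred - target) ** 2)))


# Ten hypothetical yield models (t/ha), each unbiased but with independent random error.
rng = np.random.default_rng(1)
true_yield = np.linspace(3.0, 8.0, 200)
model_preds = true_yield + rng.normal(0.0, 0.5, size=(10, 200))

individual = [rmse(p, true_yield) for p in model_preds]
combined = rmse(ensemble_predict(model_preds), true_yield)
print(f"best single model RMSE: {min(individual):.3f}, ensemble RMSE: {combined:.3f}")
```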

The workflow from data acquisition to actionable insight is a continuous, iterative cycle. The diagram below illustrates this integrated pipeline.

[Figure: AI-Sensor Integration Pipeline]

The Scientist's Toolkit: Essential Research Reagents and Materials

The development and deployment of advanced monitoring systems rely on a suite of specialized reagents and materials.

Table 3: Key Research Reagent Solutions

| Item | Function | Application Example |
|---|---|---|
| Carbon Nanotubes (CNTs) & Graphite Flakes [12] | Form the conductive network in composite inks, providing stretchability and piezoresistivity. | Primary component in direct-write flexible strain sensors for plant growth monitoring [12]. |
| Single-Walled Carbon Nanotube (SWNT) Probes [9] | Act as a nanosensor for specific biomarkers; high surface area for sensitivity. | Real-time detection of hydrogen peroxide (H₂O₂) in plant tissues for wound response monitoring [9]. |
| Fiber Bragg Grating (FBG) [12] | Optical sensor whose reflected wavelength shifts with applied strain or temperature. | Embedded in silicone to create flexible sensors for stem elongation and fruit diameter monitoring [12]. |
| Bioreceptors (Enzymes/Antibodies) [11] | Provide high specificity for target analytes in biosensors. | Functionalization of electrochemical sensors for detecting specific phytohormones or pathogens [11]. |
| Chitosan [12] | Biocompatible polymer used as a binder in conductive inks; enables adhesion to plant surfaces. | Matrix material in graphite-based conductive inks for plant-wearable sensors [12]. |
| Hyperspectral Imaging Sensors [3] | Capture spectral data across many wavelengths, creating a detailed chemical fingerprint. | Non-invasive detection of nutrient deficiencies and early-stage biotic stress in crops [3]. |

The confluence of key sensor types—electrochemical, mechanical, optical, and volatile compound detectors—with sophisticated AI/ML algorithms is creating an unprecedented capability for monitoring vital parameters. This synergy is the cornerstone of the future of research and production, enabling a paradigm shift towards intelligent, data-driven decision-making. As these technologies continue to evolve, becoming more integrated, miniaturized, and powerful, they will further dissolve the boundaries between physical biological systems and digital intelligence, driving innovation across biomedical science and agricultural production.

The convergence of the Internet of Things (IoT), 5G connectivity, and edge computing is creating an unprecedented technological backbone for real-time data flow. This infrastructure is fundamentally reshaping research and application across numerous fields. Within the specific context of plant science, this connectivity triad serves as the central nervous system for a new era of intelligent monitoring. It enables the transition from traditional, manual data collection to a continuous, automated, and intelligent stream of phenotypic and physiological data [15] [9]. This real-time data flow is the critical enabler for advanced artificial intelligence (AI) and machine learning (ML) models, allowing researchers to move from retrospective analysis to proactive intervention and discovery. The future of AI in plant sensor research is inextricably linked to the evolution of this robust, low-latency connectivity layer, which empowers everything from single-sensor readings to complex, ecosystem-wide digital twins [16].

The Architectural Framework for Real-Time Data Intelligence

The seamless flow of data from physical sensors to actionable insights relies on a sophisticated, layered architecture. This framework efficiently distributes computational tasks across the network, optimizing for latency, bandwidth, and security.

The Hierarchical Data Flow Model

The logical progression of data in a modern plant sensing system can be visualized through the following architecture, which integrates edge, cloud, and business layers:

[Figure: Hierarchical data flow architecture integrating edge, cloud, and business layers]

This architecture illustrates the stratified flow of information, which is critical for managing the scale and security of modern agricultural IoT systems [15] [17]. At Level 1, a network of sensors, including wearable plant sensors, spectral imagers, and soil monitors, collects raw data [9]. This data is transmitted to the Level 2 edge layer either via 5G, for high-bandwidth applications such as video phenotyping, or via Low-Power Wide-Area Networks (LPWAN), for intermittent, low-power readings such as soil moisture [15]. At the edge, initial processing and real-time AI inference occur, minimizing latency for immediate responses [15]. Processed data is then securely passed through a Demilitarized Zone (DMZ) at Level 3.5 before reaching the cloud for intensive storage and model training [17]. Finally, insights are delivered to end-users at Level 4 through dashboards and mobile applications, with security maintained through mechanisms such as data diodes, which allow data to flow in one direction only and so prevent reverse access [17].

The Role of 5G and Advanced Communication Protocols

The efficacy of the entire connectivity backbone hinges on the communication protocols that link the sensors to the edge and cloud. 5G technology is a cornerstone of this system, providing the ultra-low latency and high bandwidth essential for applications like autonomous vehicles and real-time high-resolution phenotyping [15]. For example, transmitting 3D plant imagery or data from high-throughput phenotyping platforms requires a robust and fast connection to be practical [16]. Alongside 5G, LPWAN technologies like LoRaWAN are vital for applications that prioritize long-range communication and minimal energy consumption, such as environmental monitoring across vast fields, where sensors can operate autonomously for years [15].

Table 1: Communication Technologies for Agricultural IoT

| Technology | Key Features | Best-Suited Applications in Plant Research | Limitations |
|---|---|---|---|
| 5G | High bandwidth (Gbps), Ultra-low latency (<1 ms) [15] | Real-time video phenotyping, Autonomous scouting drones, High-resolution sensor networks | Higher power consumption, Limited rural infrastructure |
| LPWAN (e.g., LoRaWAN) | Long range (several km urban, >10 km rural), Very low power consumption [15] | Soil moisture networks, Climate stations, Low-frequency plant wearables | Low data rate, Not suitable for image/video streaming |
| Wi-Fi 6 | High capacity, Low latency in local areas [18] | Greenhouse networks, Lab-based phenotyping systems | Limited range, Requires power infrastructure |
| Bluetooth Low Energy (BLE) | Short range, Low power, Low cost [13] | Hand-held sensor links (e.g., Leaf Monitor), Personal area networks | Very limited range (<100 m) |
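To show how the trade-offs in the table above might be applied when planning a deployment, the sketch below encodes them as a simple rule of thumb. The numeric thresholds (1 Mbps for imagery, 100 m for short-range links, 10 kbps for LPWAN-class traffic) and the `LinkRequirement` type are assumptions made for the example, not figures from [15], [18], or [13].

```python
from dataclasses import dataclass


@dataclass
class LinkRequirement:
    data_rate_kbps: float   # sustained uplink rate the application needs
    range_m: float          # distance from the sensor node to its gateway or base station
    battery_powered: bool   # True if the node has no mains power


def choose_link(req: LinkRequirement) -> str:
    """Rule-of-thumb mapping onto the technologies in the table; thresholds are illustrative."""
    if req.data_rate_kbps > 1_000:                # image or video streams
        return "Wi-Fi 6" if req.range_m <= 100 else "5G"
    if req.range_m <= 100:                        # short range, modest data rate
        return "BLE" if req.battery_powered else "Wi-Fi 6"
    if req.data_rate_kbps <= 10:                  # sparse readings over long distances
        return "LPWAN (e.g., LoRaWAN)"
    return "5G"                                   # moderate data rate over long distances


print(choose_link(LinkRequirement(data_rate_kbps=0.1, range_m=5_000, battery_powered=True)))
```

Real deployments would also weigh coverage on site, subscription costs, and gateway availability, which a rule this simple does not capture.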

Core Technologies Powering the Backbone

Internet of Things and Smart Sensors

IoT sensor networks form the foundational layer for real-time data acquisition in modern plant science [15]. These are no longer simple, passive data collectors. A new generation of smart sensors features built-in processing capabilities, often directly incorporating AI or ML algorithms [15]. This allows for on-device analysis, which reduces the need for constant data transmission and conserves bandwidth. For instance, a smart sensor can locally analyze temperature variations to detect potential equipment issues without sending raw data to the cloud [15].
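As an illustration of the on-device analysis described above, the sketch below keeps a rolling window of temperature readings and flags a reading only when it deviates strongly from the recent mean, so that the node transmits alerts rather than the full raw stream. The class name `EdgeAnomalyFilter`, the window size, and the 3-sigma threshold are hypothetical choices for illustration, not details taken from [15].

```python
from collections import deque
from statistics import mean, pstdev


class EdgeAnomalyFilter:
    """Hypothetical on-device filter that flags readings far from the recent rolling mean."""

    def __init__(self, window: int = 20, z_threshold: float = 3.0, min_history: int = 5) -> None:
        self.history = deque(maxlen=window)  # keeps only the most recent readings in memory
        self.z_threshold = z_threshold
        self.min_history = min_history  # readings needed before any judgement is made

    def update(self, value: float) -> bool:
        """Add a reading; return True if it lies more than z_threshold std devs from the window mean."""
        anomalous = False
        if len(self.history) >= self.min_history:
            mu = mean(self.history)
            sigma = pstdev(self.history)
            if sigma > 0 and abs(value - mu) / sigma > self.z_threshold:
                anomalous = True
        self.history.append(value)
        return anomalous


# Simulated greenhouse temperatures (°C); only flagged readings would be transmitted upstream.
readings = [24.1, 24.3, 24.0, 24.2, 24.1, 24.4, 24.2, 31.5, 24.3]
detector = EdgeAnomalyFilter(window=10, z_threshold=3.0)
alerts = [(i, r) for i, r in enumerate(readings) if detector.update(r)]
print(alerts)  # [(7, 31.5)]
```

In this simple version a flagged value still enters the window and temporarily widens the spread; a deployed filter might exclude flagged readings from the history instead.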

Driven by innovations in micro-nano technology and flexible electronics, sensors are becoming smaller, more intelligent, and multimodal [9]. For example, wearable plant sensors with flexible adhesion can be attached directly to the irregular surfaces of crop tissues for in-situ, real-time monitoring of physiological parameters [9]. Similarly, nanosensors based on single-walled carbon nanotubes (SWNTs) have been developed to detect specific compounds such as hydrogen peroxide (H₂O₂) produced in response to plant wounding, offering high sensitivity and enabling continuous monitoring of plant stress in the field [9].

Edge Computing for Low-Latency Intelligence

Edge computing has emerged as a transformative paradigm to address the limitations of cloud-centric models, particularly concerning latency, bandwidth, and real-time decision-making [15]. By processing data closer to its source—at the "edge" of the network—this approach reduces dependence on centralized cloud systems. For latency-sensitive applications, such as real-time disease detection or automated irrigation control, edge computing is not merely an enhancement but a necessity [15].

The ability to perform AI inference directly on edge devices is a key advancement. Frameworks like TensorFlow Lite and PyTorch Mobile enable the deployment of lightweight, optimized AI models on resource-constrained devices [15]. This allows edge systems to analyze data locally. A prime example is found in high-throughput phenotyping, where edge devices equipped with AI can process images from drones or rovers in real-time to count organs, monitor growth, or detect stress, significantly improving response times while conserving network bandwidth [16]. This hybrid architecture, which dynamically allocates tasks between edge and cloud, optimizes resource use and ensures high performance [15].
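The hybrid allocation between edge and cloud can be sketched as a simple routing rule: a task runs at the edge when its latency budget is shorter than the expected cloud round trip, or when its compute demand fits the edge device; otherwise it is offloaded. The `Task` fields, the latency and capacity figures, and the rule itself are illustrative assumptions, not a scheduler described in [15].

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    deadline_ms: float  # maximum tolerable end-to-end latency
    gflops: float       # approximate compute demand


def place_task(task: Task, cloud_rtt_ms: float = 120.0, edge_capacity_gflops: float = 5.0) -> str:
    """Return 'edge' or 'cloud' for a task (hypothetical placement rule)."""
    if task.deadline_ms < cloud_rtt_ms:
        # The cloud cannot respond in time, so the task must run locally,
        # even if that requires a smaller or quantized model.
        return "edge"
    if task.gflops <= edge_capacity_gflops:
        # Cheap enough to run locally, which also saves bandwidth.
        return "edge"
    return "cloud"


tasks = [
    Task("leaf-lesion detection", deadline_ms=50, gflops=2.0),
    Task("irrigation valve trigger", deadline_ms=30, gflops=0.01),
    Task("yield model retraining", deadline_ms=3_600_000, gflops=5_000.0),
]
for t in tasks:
    print(f"{t.name}: {place_task(t)}")
```

A real orchestrator would also weigh current network conditions, device load, and energy budget, but the same latency-first logic usually sits at its core.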

Cloud-Based AI and Large-Scale Analytics

Cloud computing serves as the computational backbone of the IoT ecosystem, providing the scalable infrastructure required for storing, processing, and analyzing the vast amounts of data generated by edge devices and sensors [15]. The integration of AI into cloud platforms has redefined how raw data is transformed into actionable insights. Cloud services like AWS SageMaker, Google Vertex AI, and Microsoft Azure AI streamline the deployment and management of complex ML models [15]. These platforms are essential for resource-intensive tasks such as training deep learning models on large-scale datasets, which is impractical on limited edge hardware [15].

In plant research, the cloud is indispensable for consolidating data from multiple field sites to train robust models for predicting crop yield [19], identifying genetic markers [20], or simulating crop performance under future climate scenarios [20]. Furthermore, cloud platforms address critical concerns of data privacy and security through advanced encryption and compliance with international regulations, which is crucial when handling sensitive data [15] [21].

Practical Implementation and Experimental Protocols

Translating the theoretical connectivity backbone into a functional research tool requires a clear understanding of its implementation. The following workflow and accompanying toolkit detail the process of establishing a real-time plant monitoring system.

Experimental Workflow for a Real-Time Plant Monitoring System

The diagram below outlines a generalized protocol for deploying and operating an IoT-enabled system for real-time plant health monitoring, from sensor deployment to insight generation.

Phase 1: Sensor Deployment & Data Acquisition

Researchers first deploy a suite of multimodal sensors tailored to the experimental variables of interest [9]. This may include flexible wearable sensors attached to plant leaves or stems to monitor physiological status, soil sensor arrays for moisture and nutrient levels, and drone- or rover-based spectral imaging systems for canopy-level phenotyping [16] [9]. A critical step is the calibration of these sensors against laboratory-grade equipment to ensure data fidelity. For example, a handheld spectrometer used for leaf nutrient analysis must be correlated with traditional chemical analysis results to build a reliable machine learning model [13].
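The calibration step can be illustrated with an ordinary least-squares fit that maps raw sensor output onto laboratory reference values. The paired readings below are invented for illustration, and a real spectrometer calibration would typically use a multivariate model (many wavelengths) rather than the single predictor shown here.

```python
from __future__ import annotations


def fit_linear_calibration(raw: list[float], reference: list[float]) -> tuple[float, float]:
    """Least-squares fit of reference = slope * raw + intercept."""
    n = len(raw)
    if n != len(reference) or n < 2:
        raise ValueError("need at least two paired readings")
    mean_x = sum(raw) / n
    mean_y = sum(reference) / n
    sxx = sum((x - mean_x) ** 2 for x in raw)
    if sxx == 0:
        raise ValueError("raw readings must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(raw, reference))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# Hypothetical paired data: spectrometer index vs. lab-measured leaf nitrogen (%).
raw_index = [0.10, 0.20, 0.30, 0.40, 0.50]
lab_nitrogen = [1.2, 2.2, 3.2, 4.2, 5.2]
slope, intercept = fit_linear_calibration(raw_index, lab_nitrogen)
print(f"nitrogen ≈ {slope:.2f} × index + {intercept:.2f}")
```

The fitted equation is then applied to every field reading; its quality should be judged on samples held out from the fit rather than on the calibration set itself.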

Phase 2: Secure Data Transmission

Collected data is transmitted wirelessly using the appropriate protocol from Table 1. Security measures are paramount. As demonstrated in a recent IoT monitoring system, implementing Two-Factor Authentication (2FA) and JSON Web Tokens (JWT) protects sensitive agricultural data from unauthorized access [21]. In industrial settings, data is routed through a DMZ, a security buffer that protects the internal process control network from the internet-connected business network [17].
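To make token-based protection concrete, the following standard-library sketch signs and verifies an HS256 JSON Web Token (RFC 7519) for a sensor node. It is illustrative only: the secret, claim values, and five-minute expiry are hypothetical, and a production system should rely on a maintained JWT library and proper key management rather than hand-rolled token handling.

```python
from __future__ import annotations

import base64
import hashlib
import hmac
import json
import time


def _b64url(data: bytes) -> str:
    """Unpadded base64url encoding, as used in compact JWTs."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def _b64url_decode(text: str) -> bytes:
    """Inverse of _b64url: restore padding, then decode."""
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def sign_jwt(payload: dict, secret: bytes) -> str:
    """Create a compact HS256 token: header.payload.signature (each base64url-encoded)."""
    header = {"alg": "HS256", "typ": "JWT"}
    signing_input = ".".join(
        _b64url(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, payload)
    )
    signature = hmac.new(secret, signing_input.encode("ascii"), hashlib.sha256).digest()
    return f"{signing_input}.{_b64url(signature)}"


def verify_jwt(token: str, secret: bytes, now: float | None = None) -> dict:
    """Return the payload if the signature is valid and unexpired; otherwise raise ValueError."""
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("malformed token")
    header_b64, payload_b64, signature_b64 = parts
    header = json.loads(_b64url_decode(header_b64))
    if header.get("alg") != "HS256":
        raise ValueError("unexpected algorithm")  # never let the token choose the algorithm
    expected = hmac.new(secret, f"{header_b64}.{payload_b64}".encode("ascii"), hashlib.sha256).digest()
    if not hmac.compare_digest(expected, _b64url_decode(signature_b64)):  # constant-time compare
        raise ValueError("invalid signature")
    payload = json.loads(_b64url_decode(payload_b64))
    current = time.time() if now is None else now
    if "exp" in payload and current >= payload["exp"]:
        raise ValueError("token expired")
    return payload


# Hypothetical usage: a gateway issues a short-lived token for one sensor node.
SECRET = b"replace-with-a-securely-stored-key"
token = sign_jwt({"sub": "sensor-node-17", "exp": int(time.time()) + 300}, SECRET)
claims = verify_jwt(token, SECRET)
```

The token only proves that the payload was issued by a holder of the key and has not been altered; it does not encrypt the data, so transport encryption (e.g., TLS) is still needed.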

Phases 3 & 4: Distributed Computing & Analytics

Time-sensitive processing, such as real-time anomaly detection for disease, occurs at the edge [15] [16]. This involves lightweight ML models running on local devices. The processed data and non-urgent tasks are then sent to the cloud. In the cloud, data from multiple sources is aggregated, and more complex, resource-intensive AI models are trained and refined [15]. For instance, a cloud-based AI might integrate historical weather, soil data, and real-time sensor readings to predict future nutrient deficiencies [20] [19].

Phase 5: Insight Delivery & Visualization

The final insights are delivered to researchers and farmers through user-friendly interfaces like mobile apps or web dashboards [13] [21]. A successful example is the Leaf Monitor tool, which allows a user to scan a leaf and receive key nutrient values within seconds, enabling immediate, data-driven decisions [13].

The Researcher's Toolkit: Key Technologies and Reagents

Implementing the connectivity backbone and associated analyses requires a suite of specialized tools and platforms. The following table catalogs essential components for building a real-time plant sensing system.

Table 2: Research Reagent Solutions for an IoT-Enabled Plant Lab

| Category | Item | Function & Application |
|---|---|---|
| Sensing & Imaging | Hand-held Spectrometer [13] | Captures leaf spectral data (400-2400 nm) for non-destructive estimation of nitrogen, water content, and secondary metabolites. |
| | Wearable Plant Sensor [9] | Flexible, adhesive patches for in-situ, continuous monitoring of plant physiological status (e.g., sap flow, biomarkers). |
| | Drone-based Multispectral Camera [16] | Enables high-throughput field phenotyping by capturing canopy-level data for growth monitoring and stress detection. |
| Edge Hardware | Single-Board Computer (e.g., Raspberry Pi) | A low-cost, versatile computing node for building custom edge devices to run lightweight AI models for real-time inference. |
| | Micro-electromechanical Systems (MEMS) [9] | Miniaturized sensors and structures that enable the development of compact, low-power sensors for plant and environmental monitoring. |
| Cloud & AI Platforms | AWS IoT Core / Google Cloud IoT | Managed cloud services to securely connect, manage, and ingest data from a global network of IoT devices [21]. |
| | TensorFlow / PyTorch [15] | Open-source machine learning frameworks used to develop, train, and deploy models for tasks like image-based disease diagnosis [16] [22]. |
| Security & Connectivity | Two-Factor Authentication (2FA) [21] | A security process that requires two forms of identification to access data, protecting sensitive plant and field data. |
| | LoRaWAN Gateway [15] | A network gateway that enables long-range, low-power communication between sensors and the network server, ideal for large farms. |

Quantitative Performance and Future Outlook

Performance Metrics of the Connected Backbone

The real-world efficacy of this technological backbone is supported by concrete performance metrics. A 2024 IoT plant monitoring system showed strong agreement with reference data for temperature (coefficient of determination, R² = 0.979) and moderate agreement for humidity (R² = 0.750) [21]. Furthermore, by implementing power management strategies at the edge, the system extended its battery life to 10 days on a single charge, a significant improvement over existing systems that required daily recharging [21]. From an AI perspective, studies reviewing crop disease detection have found that while Convolutional Neural Networks (CNNs) are the most widely used and cost-effective, emerging Vision Transformers (ViTs) can achieve superior accuracy, albeit at a higher computational cost [22]. The choice of architecture thus represents a trade-off between performance and resource constraints, a key consideration for practical deployment.
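Much of the battery-life gain from edge power management comes from duty cycling: the node sleeps most of the time and wakes briefly to sample and transmit. A back-of-the-envelope estimate weights the active and sleep currents by the fraction of time spent in each state. All currents, the battery capacity, and the duty cycle below are hypothetical values, not the parameters of the system in [21].

```python
def battery_life_days(capacity_mah: float, active_ma: float, sleep_ma: float, duty_cycle: float) -> float:
    """Estimate runtime from a two-state current model (ignores self-discharge and temperature effects)."""
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must lie in [0, 1]")
    average_ma = duty_cycle * active_ma + (1.0 - duty_cycle) * sleep_ma
    return capacity_mah / average_ma / 24.0  # mAh / mA = hours; divide by 24 for days


# Hypothetical node: 2500 mAh battery, 60 mA while awake, 0.2 mA while asleep.
always_on = battery_life_days(2500, active_ma=60, sleep_ma=0.2, duty_cycle=1.0)
duty_cycled = battery_life_days(2500, active_ma=60, sleep_ma=0.2, duty_cycle=0.05)
print(f"always on: {always_on:.1f} days; awake 5% of the time: {duty_cycled:.1f} days")
```

Because the sleep current is small, runtime scales almost inversely with the duty cycle, which is why reducing wake-ups (for example, by transmitting only edge-filtered events) matters more than shaving the active current.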

Envisioning the Future: AI-Driven Plant Research

The continuous maturation of this connectivity backbone paves the way for transformative advancements in AI-driven plant research. Key future directions include:

- Scalable and Robust AI Models: Future research must prioritize making high-performance AI models like Vision Transformers more computationally efficient and accessible [22]. This involves exploring techniques like neural architecture search and model quantization to reduce their footprint for edge deployment, making them viable for a broader range of researchers and applications.

- Multimodal Data Fusion and Digital Twins: The next frontier is the intelligent fusion of data from diverse sources—genomics, sensor readings, drone imagery, and weather forecasts—into a unified AI model [16]. This will enable the creation of "digital twins" of plants or entire fields, which are virtual models that can be used to simulate growth, predict the impact of stressors, and test intervention strategies in silico before applying them in the real world [16].

- Pervasive Environmental Intelligence: The ultimate goal is the development of fully integrated, sustainable agricultural systems [15]. In this future, the seamless convergence of IoT, edge, and cloud computing will create a pervasive network of intelligence that autonomously optimizes resource use, enhances crop resilience, and provides unprecedented insights into plant biology, contributing directly to global food security [15] [19].
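Model quantization, mentioned in the first item above, stores weights as 8-bit integers plus a scale and zero point instead of 32-bit floats, reducing weight memory roughly fourfold. The sketch below shows per-tensor asymmetric (affine) quantization in plain Python; it illustrates the arithmetic only and is not the exact scheme of any particular framework such as TensorFlow Lite. The weight values are invented.

```python
def quantize_int8(weights):
    """Asymmetric per-tensor quantization of float weights to int8 codes in [-128, 127]."""
    w_min = min(min(weights), 0.0)  # include 0.0 so that zero maps to an exact integer code
    w_max = max(max(weights), 0.0)
    scale = (w_max - w_min) / 255.0 or 1.0  # 256 codes span 255 steps; guard all-zero tensors
    zero_point = int(round(-128 - w_min / scale))
    codes = [max(-128, min(127, int(round(w / scale)) + zero_point)) for w in weights]
    return codes, scale, zero_point


def dequantize_int8(codes, scale, zero_point):
    """Map int8 codes back to approximate float values."""
    return [(c - zero_point) * scale for c in codes]


weights = [-0.5, -0.1, 0.0, 0.25, 0.8]
codes, scale, zero_point = quantize_int8(weights)
restored = dequantize_int8(codes, scale, zero_point)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(codes, f"scale={scale:.5f}", f"zero_point={zero_point}", f"max error={max_error:.5f}")
```

The reconstruction error per weight is bounded by about one quantization step (`scale`); whether that is acceptable is checked empirically by re-measuring model accuracy after quantization.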

The integration of advanced artificial intelligence (AI) paradigms with sophisticated sensor technologies is fundamentally transforming plant science research. This transition moves beyond traditional data collection towards creating intelligent, closed-loop systems capable of sensing, understanding, and autonomously acting upon complex plant physicochemical data. As global agricultural systems face escalating pressures from climate change and resource scarcity, the fusion of predictive AI, generative AI, and agentic AI with multimodal sensor networks offers a revolutionary pathway to enhance crop resilience, optimize resource efficiency, and secure sustainable food production. This technical guide explores the core principles, applications, and experimental implementations of these AI paradigms within plant sensor research, providing researchers with a framework for developing next-generation intelligent agricultural systems.

Core AI Paradigms: Definitions and Synergies

The power of modern AI in sensor data analysis stems from the complementary strengths of three distinct paradigms.

Predictive AI utilizes historical and real-time sensor data to forecast future events or outcomes. It applies statistical models and machine learning (ML) algorithms—including regression analysis, time-series forecasting, and classification techniques—to identify patterns in data, enabling the anticipation of plant stress, disease outbreaks, or optimal harvest times [23] [24] [25]. Its primary function is to answer "What is likely to happen?".
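As a minimal example of time-series forecasting, Holt's linear exponential smoothing tracks a level and a trend and extrapolates both forward. The soil-moisture series and the smoothing constants `alpha` and `beta` are hypothetical; in practice the constants would be fitted to historical data, and many predictive systems use richer machine learning models on multiple sensor streams.

```python
def holt_forecast(series, alpha=0.5, beta=0.3, horizon=3):
    """Holt's linear exponential smoothing; returns forecasts for the next `horizon` steps."""
    if len(series) < 2:
        raise ValueError("need at least two observations")
    level, trend = series[0], series[1] - series[0]  # simple initialization
    for y in series[1:]:
        previous_level = level
        level = alpha * y + (1 - alpha) * (level + trend)                 # smoothed current value
        trend = beta * (level - previous_level) + (1 - beta) * trend      # smoothed slope
    return [level + h * trend for h in range(1, horizon + 1)]


# Hypothetical daily soil-moisture readings (% volumetric water content) during a drying period.
moisture = [34.0, 32.8, 31.9, 30.7, 29.8, 28.6]
forecast = holt_forecast(moisture, horizon=3)
print([round(v, 1) for v in forecast])
```

A forecast crossing a crop-specific moisture threshold within the horizon is the kind of output that downstream decision logic can act on before visible stress appears.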

Generative AI differs by creating new data or content based on learned patterns from existing datasets. In plant science, it can generate synthetic spectral images, draft reports from complex sensor data, or create hypothetical growth models [23] [26]. It moves beyond forecasting to synthesize new information, answering "What are possible scenarios or solutions?".

Agentic AI represents a transformative leap by enabling AI systems to take autonomous actions based on predictions and generative insights. These "agents" can perceive their environment via sensor data, reason to make decisions, execute actions through connected systems (e.g., adjusting irrigation), and learn from the outcomes [23] [24] [27]. Agentic AI closes the loop between insight and action, creating autonomous systems for continuous plant health management.

Table 1: Comparative Analysis of Core AI Paradigms in Plant Sensor Research

| Aspect | Predictive AI | Generative AI | Agentic AI |
|---|---|---|---|
| Primary Goal | Forecast future outcomes or probabilities [24] | Create new content or data samples [23] [24] | Take autonomous action to achieve a goal [24] |
| Core Function | Uses historical data to forecast likelihood [24] | Learns patterns and generates original outputs [24] | Perceives, reasons, acts, and learns autonomously [24] |
| Key Technologies | Statistical modeling, regression, time-series forecasting [24] | Large Language Models (LLMs), diffusion models, transformers [24] | Multi-agent systems, reinforcement learning, contextual decision engines [24] |
| Example Application | Predicting plant stress from sensor data [28] | Generating daily shift reports from sensor data [23] | Automatically adjusting irrigation and nutrient delivery [24] [26] |

The paradigms are not mutually exclusive; their integration creates powerful synergies. A typical workflow may involve Predictive AI forecasting a water deficit, Generative AI creating multiple optimized irrigation strategies, and Agentic AI autonomously selecting and executing the most effective strategy while learning from its impact [24].

AI-Driven Sensor Data Analysis in Plant Research

Sensor Modalities and Data Acquisition

Modern plant research leverages a suite of advanced sensor technologies to capture a holistic view of plant physiology and its environment.

- Electrical Impedance Spectroscopy (EIS): A non-invasive method measuring the electrical impedance of plant tissues across various frequencies. It provides insights into cell membrane integrity, ion transport, and water content, serving as a sensitive indicator of physiological stress [28].

- Hyperspectral and Multispectral Imaging: Hyperspectral imaging captures reflectance data across hundreds of narrow, contiguous spectral bands, while multispectral imaging records a smaller number of broader bands. Both allow the detection of non-visible biochemical shifts, such as altered chlorophyll fluorescence or pigment concentrations, often before visual stress symptoms appear [29].

- Wearable/Attachable Plant Sensors: Flexible, micro-nano sensors mounted directly on plants enable real-time, in-situ monitoring of parameters like stem diameter, leaf moisture, sap flow, and micro-climate (temperature, humidity, light intensity) [30].

- Environmental Sensor Suites: These measure ambient conditions including temperature, relative humidity, vapor pressure deficit (VPD), and light intensity, which are critical for contextualizing plant physiological data [28] [30].
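A common way to turn multispectral or hyperspectral reflectance into a stress indicator is a vegetation index. The normalized difference vegetation index (NDVI) contrasts near-infrared (NIR) and red reflectance, which healthy, chlorophyll-rich canopies separate strongly. The choice of NDVI and the reflectance values below are illustrative; the sources cited above do not prescribe a specific index.

```python
def ndvi(nir: float, red: float) -> float:
    """NDVI = (NIR - Red) / (NIR + Red); values range from -1 to 1."""
    denom = nir + red
    if denom == 0:
        raise ValueError("NIR + Red reflectance must be non-zero")
    return (nir - red) / denom


# Illustrative reflectance values (fractions of incident light).
healthy = ndvi(nir=0.50, red=0.08)
stressed = ndvi(nir=0.35, red=0.15)
print(f"healthy: {healthy:.2f}, stressed: {stressed:.2f}")
```

Applied per pixel, the same formula converts co-registered NIR and red bands into an index map in which declining values can flag stressed regions.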

The integration of these diverse data streams through Multi-Mode Analytics (MMA) or sensor fusion techniques significantly enhances the accuracy and reliability of plant stress detection and diagnosis compared to single-mode approaches [29].
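A basic building block of such sensor fusion is aligning streams sampled at different rates. The sketch below pairs each EIS measurement with the nearest-in-time environmental reading within a tolerance and drops measurements with no close partner. The stream contents, the function name `align_nearest`, and the 5-minute tolerance are assumptions for illustration.

```python
from bisect import bisect_left


def align_nearest(primary, secondary, tolerance_s=300):
    """Join each (t, value) in `primary` to the nearest-in-time (t, value) in `secondary`.

    Both lists must be sorted by timestamp (seconds). Rows without a partner
    within `tolerance_s` are dropped.
    """
    times = [t for t, _ in secondary]
    fused = []
    for t, value in primary:
        i = bisect_left(times, t)  # first secondary timestamp >= t
        candidates = [j for j in (i - 1, i) if 0 <= j < len(times)]
        if not candidates:
            continue
        j = min(candidates, key=lambda k: abs(times[k] - t))
        if abs(times[j] - t) <= tolerance_s:
            fused.append((t, value, secondary[j][1]))
    return fused


eis = [(0, 1520.0), (540, 1498.0), (1080, 1475.0)]  # impedance every 9 min (hypothetical, ohms)
climate = [(0, {"rh": 62.0}), (600, {"rh": 58.5}), (1500, {"rh": 55.0})]
fused = align_nearest(eis, climate)
for row in fused:
    print(row)
```

Here the third EIS sample is dropped because the closest climate reading is 7 minutes away; choosing the tolerance is a trade-off between keeping data and pairing measurements that no longer describe the same conditions.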

Predictive AI for Forecasting and Early Detection

Predictive models are primarily deployed for the early identification of abiotic and biotic stress. For instance, AdapTree, an ensemble method that combines AdaBoost with decision trees, has performed strongly in predicting stress-related parameters from EIS, temperature, and humidity data, achieving R² scores as high as 0.999 for environmental variables [28]. In a related vision task, the CNN-based YOLOv8 detector exceeded 90% accuracy in detecting bumblefoot in poultry [31]; although that study concerns livestock rather than plants, it suggests the architecture's potential for plant disease detection from spectral images.

Generative AI for Data Augmentation and System Design

Generative AI's role is expanding in plant science. It is used to create synthetic sensor data, which is invaluable for training robust ML models when real-world data is scarce or imbalanced. Furthermore, generative design systems are being applied to indoor agriculture, where AI algorithms process countless parameters (lighting layout, airflow, spatial configuration) to generate and simulate thousands of potential farm layouts, identifying those that maximize yield and resource efficiency [26]. These systems can also draft natural language summaries from complex sensor data, improving report generation and knowledge transfer [23].
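Generative models such as GANs are one route to synthetic training data. A much simpler and widely used baseline for class imbalance is SMOTE-style interpolation, which creates new minority-class samples along line segments between a real sample and one of its nearest neighbours. The sketch below implements that simpler technique, not a generative AI model; the function name `smote_like` and the feature vectors (an NDVI value and an impedance value) are hypothetical.

```python
import math
import random


def smote_like(samples, n_new, k=2, seed=0):
    """Create `n_new` synthetic vectors by interpolating toward one of the k nearest neighbours.

    Features should normally be standardized first so that large-valued
    features (e.g., impedance) do not dominate the distance calculation.
    """
    if len(samples) < 2:
        raise ValueError("need at least two samples to interpolate")
    rng = random.Random(seed)
    k = min(k, len(samples) - 1)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(samples)
        neighbours = sorted((s for s in samples if s is not base), key=lambda s: math.dist(base, s))[:k]
        partner = rng.choice(neighbours)
        gap = rng.random()  # position along the segment, in [0, 1)
        synthetic.append([b + gap * (p - b) for b, p in zip(base, partner)])
    return synthetic


# Hypothetical minority-class (stressed) samples: [NDVI, stem impedance in ohms].
stressed_samples = [[0.41, 1480.0], [0.38, 1455.0], [0.44, 1502.0]]
synthetic = smote_like(stressed_samples, n_new=4)
```

Because every synthetic point lies between existing samples, interpolation cannot invent genuinely new stress signatures; that limitation is part of the motivation for generative models.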

Agentic AI for Autonomous Closed-Loop Systems

Agentic AI represents the frontier of autonomous plant management. These systems employ a Sense-Infer-Control (SIC) architecture [27]. They continuously sense the environment and plant status via sensor networks, infer the optimal action using predictive and generative models (e.g., diagnosing a nutrient deficiency and generating a corrective formulation), and control actuators to execute the action (e.g., adjusting nutrient dosing in an irrigation system) [24] [27]. This creates a closed-loop system that autonomously maintains optimal growing conditions, responding to stressors in real-time.
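The Sense-Infer-Control cycle can be illustrated with a toy closed loop: a simulated moisture sensor is read, an inference step estimates the irrigation dose needed to approach a set point, and the control step applies it. The soil model, the proportional rule, and every constant below are hypothetical stand-ins for the predictive models and actuators described in [24] [27]; the sketch does not include the learning component of a true agent.

```python
def sense(state):
    """Sense: read the (simulated) volumetric water content, in percent."""
    return state["moisture"]


def infer(moisture, setpoint=30.0, gain=0.8, max_dose_mm=5.0):
    """Infer: proportional rule mapping the moisture deficit to an irrigation dose (mm)."""
    deficit = setpoint - moisture
    return max(0.0, min(max_dose_mm, gain * deficit))


def control(state, dose_mm, pct_per_mm=1.0, daily_loss=2.5):
    """Control: apply the dose, then let evapotranspiration remove water (toy model)."""
    state["moisture"] += dose_mm * pct_per_mm - daily_loss


state = {"moisture": 22.0}
history = []
for day in range(15):
    dose = infer(sense(state))
    control(state, dose)
    history.append(round(state["moisture"], 2))
print(history)
```

A purely proportional rule settles near 26.9% rather than the 30% set point, because it needs a standing deficit to generate the dose that offsets the daily loss. An integral term, a model-predictive controller, or a learned policy would remove that offset.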

Experimental Protocols and Methodologies

Protocol: A Multimodal Framework for Plant Stress Assessment

The following protocol, inspired by the AdapTree study and multi-mode analytics reviews, provides a template for implementing AI-driven plant stress research [29] [28].

1. Experimental Setup and Sensor Integration:

- Plant Material: Select uniform plants (e.g., 3- to 4-month-old tobacco plants) and grow them in a controlled environment such as a greenhouse [28].

- Sensor Deployment:

  - Install a Four-Point-Probe EIS System to collect impedance magnitude and phase data from the plant stem at frequencies from 50 Hz to 2 MHz at regular intervals (e.g., every 9 minutes) [28].

  - Deploy Environmental Sensors to continuously log relative humidity (RH), temperature, and vapor pressure deficit (VPD) [28].

  - Utilize a Gravimetric System for automated phenotyping, capturing data on plant weight and water use efficiency [28].

  - Optionally, incorporate Hyperspectral Imaging for periodic capture of spectral reflectance data [29].

2. Data Acquisition and Stress Induction:

- Collect baseline data under optimal conditions.

- Induce controlled stress conditions, such as drought, salinity, or temperature extremes.

- Continue simultaneous data collection from all sensors throughout the stress period and recovery.

3. Data Preprocessing and Fusion:

- Clean and synchronize all time-series data streams.

- Extract features from raw data (e.g., specific impedance values at key frequencies, vegetation indices from hyperspectral data).

- Fuse the multi-modal data into a unified dataset for analysis, aligning data points by timestamp.

4. Model Development and Training:

- For Predictive Tasks: Implement a boosting-based ensemble model like AdapTree (or baseline models like Random Forest, SVM) to predict stress parameters (e.g., EIS values, RH, temperature) from the fused dataset [28].

- For Generative Tasks: Train a model (e.g., a Generative Adversarial Network) on hyperspectral images to generate synthetic data of stressed and healthy plants.

- For Agentic Tasks: Develop a reinforcement learning agent that learns optimal control policies (e.g., for irrigation) based on the sensor data stream and reward signals (e.g., plant weight gain).

5. Model Evaluation:

- Evaluate predictive models using regression metrics (R², Root Mean Squared Error, Mean Absolute Error) [28].

- Assess generative models using quality metrics for synthetic data (e.g., Fréchet Inception Distance).

- Test agentic systems in simulation or real-world environments, measuring key performance indicators (e.g., water saved, yield maintained).
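The regression metrics named in step 5 are straightforward to compute directly, which is also useful when checking reported figures such as R² values. The sketch below implements R², RMSE, and MAE from their standard definitions; the observed and predicted humidity values are invented.

```python
import math


def regression_metrics(y_true, y_pred):
    """Return (R², RMSE, MAE) for paired observations and predictions."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    n = len(y_true)
    mean_y = sum(y_true) / n
    residuals = [t - p for t, p in zip(y_true, y_pred)]
    ss_res = sum(r * r for r in residuals)              # residual sum of squares
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)     # total sum of squares
    r2 = 1.0 - ss_res / ss_tot if ss_tot > 0 else float("nan")
    rmse = math.sqrt(ss_res / n)
    mae = sum(abs(r) for r in residuals) / n
    return r2, rmse, mae


observed = [61.0, 58.5, 55.0, 57.2, 63.4]   # relative humidity, %
predicted = [60.2, 59.1, 55.8, 56.5, 62.9]
r2, rmse, mae = regression_metrics(observed, predicted)
print(f"R² = {r2:.3f}, RMSE = {rmse:.2f}, MAE = {mae:.2f}")
```

R² is scale-free and compares the model with simply predicting the mean, whereas RMSE and MAE are expressed in the variable's own units, so reporting all three gives a fuller picture.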

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Materials and Technologies for AI-Driven Plant Sensor Research

| Item | Function/Description | Research Application |
|---|---|---|
| Electrical Impedance Spectroscopy (EIS) System [28] | Measures frequency-dependent electrical impedance of plant tissues. | Non-invasive monitoring of physiological status (cell membrane integrity, water content) for early stress detection. |
| Hyperspectral Imaging Camera [29] | Captures image data across hundreds of narrow spectral bands. | Detection of non-visible biochemical shifts (e.g., chlorophyll fluorescence, pigment changes) associated with stress. |
| Wearable Flexible Sensors [30] | Attachable micro-sensors for monitoring micro-climate (temp, humidity) and plant physiology (water potential). | Real-time, in-situ monitoring of plant and environmental parameters on living specimens. |
| Gravimetric Plant Monitoring System [28] | Automated system for measuring plant weight and water use. | High-precision phenotyping for quantifying plant growth and water use efficiency, often used as ground truth. |
| Multi-Agent AI Software Platform [24] | Enables the creation of multiple collaborative AI agents. | Orchestrating complex, autonomous systems where different agents manage irrigation, nutrition, and climate control. |
| Laser-Induced Graphene (LIG) Sensors [30] | Flexible, low-cost sensors fabricated via laser for humidity/gas sensing. | Creating low-power, wearable sensors for continuous plant health and environment monitoring. |

Visualizing the Integrated AI-Sensor Workflow

The entire process, from data collection to autonomous action, can be visualized as an integrated workflow that leverages all three AI paradigms, as shown in the diagram below.

The Future of AI and Machine Learning in Plant Sensors Research

The trajectory of AI in plant sensor research points towards increasingly intelligent, autonomous, and scalable systems. Key future directions include:

- Advanced Multi-Agent Systems: The coordination of specialized AI agents (for irrigation, nutrition, pest control) that collaborate to manage entire agricultural ecosystems, optimizing for competing objectives like yield, sustainability, and cost [24].

- Generalizable and Lightweight Models: A critical focus will be on developing models that are transferable across plant species, environments, and scales, while also being computationally efficient for deployment on edge devices in resource-limited settings [31] [30].

- Quantum-Enhanced AI: Early exploration into quantum computing for agentic AI suggests a future where complex biomanufacturing and plant system design problems can be solved with unprecedented speed [27].

- Human-AI Collaboration: The future will not be about replacing scientists but augmenting their expertise. AI will handle massive, repetitive data analysis and routine control tasks, freeing researchers to focus on high-level experimental design, hypothesis generation, and innovation [23] [25].

In conclusion, the synergistic application of predictive, generative, and agentic AI to data from advanced sensor networks is poised to create a new paradigm in plant science. This integration enables a shift from reactive observation to proactive and autonomous management of plant health, paving the way for highly resilient and efficient agricultural systems capable of meeting the demands of the future.

The convergence of artificial intelligence (AI) and advanced sensor technologies is fundamentally transforming agricultural research and practice. This whitepaper examines the market trajectory and adoption trends of these integrated systems, with a specific focus on plant sensors and precision agriculture. Driven by the need to meet a projected 70% increase in global agricultural demand by 2050, the sector is rapidly evolving from automated data collection to intelligent, predictive, and self-optimizing agricultural systems (Agriculture 5.0) [3]. We provide a quantitative analysis of market growth, detail the experimental protocols underpinning key AI-sensor integrations, and visualize the core workflows. The synthesis presented herein highlights a pivotal shift towards scalable, data-driven plant science that is poised to accelerate crop breeding, enhance stress resilience, and optimize resource management for researchers and industry professionals.

The integration of AI into sensor systems represents a transition from simple data logging to complex, intelligent interpretation of the plant environment. This shift is characterized by the move from Agriculture 4.0, which focused on automation, to Agriculture 5.0, which emphasizes a harmonious collaboration between human intelligence, smart machines, and computational power for sustainable food production [3]. Core to this paradigm is the development of systems capable of monitoring plant stresses—both biotic (e.g., pests, diseases causing up to 42% crop loss) and abiotic (e.g., drought, heat)—with a precision and scale previously unattainable [3].

This evolution is critical for overcoming the dual challenges of labor shortages in agriculture and the need for high-throughput phenotyping in crop breeding programs [32] [33]. The fusion of AI with a new generation of sensors, including hyperspectral imagers, electronic noses for volatile organic compound (VOC) detection, and miniaturized wearable plant sensors, creates a powerful toolkit for decoding plant physiology and its response to a dynamic environment [3] [34]. This whitepaper dissects the components of this toolkit, analyzes its market trajectory, and provides a technical guide to its implementation in a research context.

Market Analysis & Quantitative Data

The market for integrated AI-sensor systems in agriculture is experiencing robust, multi-faceted growth, reflecting broad investment and adoption across hardware, software, and platform solutions.

Global Market Size and Projections

The table below summarizes the projected growth for key market segments related to AI and sensors in agriculture, illustrating a significant financial commitment to these technologies.

Table 1: Global Market Size and Growth Projections for AI and Sensor Technologies in Agriculture

| Market Segment | Base Value (Year) | Projected Value (Year) | CAGR | Key Drivers |
|---|---|---|---|---|
| Industrial Sensors Market [35] | USD 27.97 Billion (2024) | USD 42.1 Billion (2029) | 8.5% | Adoption of Industry 4.0/IIoT, smart manufacturing, predictive maintenance. |
| Plant Sensors Market [36] | ~USD 1.5 Billion (2023) | USD 3.2 Billion (2032) | ~8.5% | Smart agriculture practices, water scarcity, demand for food production efficiency. |
| Wearable Plant Sensors Market [37] | USD 153 Million (2025) | Not stated (forecast period to 2033) | 5.2% | Precision agriculture adoption, sensor miniaturization, data-driven insights. |
| Precision Planting Market [38] | USD 1.65 Billion (2025) | USD 3.50 Billion (2035) | 7.76% | Rising seed costs, need for yield maximization, sustainability targets. |
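The CAGR column follows from the base and projected values as CAGR = (V_end / V_start)^(1 / years) - 1. The short check below applies that formula to two rows of the table above; small differences from the tabulated rates are expected because the published base and projected values are rounded.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate as a fraction (0.085 means 8.5% per year)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Values in USD billions, taken from the table above.
industrial = cagr(27.97, 42.1, years=2029 - 2024)
planting = cagr(1.65, 3.50, years=2035 - 2025)
print(f"industrial sensors: {industrial:.1%}, precision planting: {planting:.1%}")
```

The same function can be inverted mentally as V_end = V_start × (1 + CAGR)^years, which is a quick way to sanity-check any projection quoted without its underlying values.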

Sensor Type and Regional Adoption Trends

Growth is not uniform across all sensor types or geographies. Specific segments and regions are emerging as leaders due to technological advancements and local economic drivers.

Table 2: Market Characteristics by Sensor Type and Region

| Category | Leading Segments / Regions | Characteristics and Growth Catalysts |
|---|---|---|
| Sensor Type | Level Sensors [35] | Dominated the industrial sensor market in 2023; crucial for process control and safety in various industries, including environmental applications. |
| Sensor Type | Soil Moisture Sensors [36] | A crucial component of the plant sensors market; demand driven by water conservation priorities and integration with advanced irrigation systems. |
| Connectivity | Wireless Sensors [36] | Experiencing higher growth than wired variants due to flexibility, scalability, and advancements in low-power protocols (LoRaWAN, NB-IoT). |
| Region | Asia-Pacific [35] [36] | Expected to be the fastest-growing market (CAGR of 9.7% for industrial sensors), fueled by smart city projects, manufacturing growth, and government support for agritech in India and China. |
| Region | North America [35] [38] | Holds the largest market share (44% of industrial sensor growth); driven by a strong R&D ecosystem, advanced agricultural practices, and leading OEMs (John Deere, AGCO). |

Technical Framework: AI and Sensor Integration

The power of this technological shift lies in the seamless integration of physical sensing devices with sophisticated AI algorithms for data analysis and decision-making.

Core Architectural Workflow

The following diagram illustrates the standard workflow for an AI-driven sensor system in plant research, from data acquisition to actionable insight.

Diagram 1: AI-Sensor System Workflow. This illustrates the pipeline from multi-source data acquisition through AI analysis to precision intervention.

Key Algorithmic Approaches in AI-Driven Plant Sensing

The choice of AI model is critical and is dictated by the specific research objective, whether it is classification, detection, or analysis of complex traits.

Table 3: Dominant AI Algorithms and Their Applications in Plant Sensor Research

| Algorithm Type | Specific Models | Primary Research Application | Reported Performance / Characteristics |
|---|---|---|---|
| Deep Learning (Classification) | VGG16, VGG19, ResNet50 [3] | General plant stress classification (biotic & abiotic). | Consistent high performance across various stress types. |
| Deep Learning (Detection) | YOLO, MobileNet [3] | Real-time detection and localization of biotic stresses (pests, disease). | High variability; offers a balance of speed and accuracy. |
| Traditional Machine Learning | Support Vector Machine (SVM), Decision Trees, K-Nearest Neighbors (KNN) [3] | Structured, low-resolution data analysis; relevant under constrained computational resources. | Remains relevant for specific datasets; often used as a benchmark. |
| Generative Adversarial Network (GAN) | ESGAN (Efficiently Supervised GAN) [33] | Reducing the need for human-annotated training data in image-based phenotyping. | Reduces annotation requirements by "one-to-two orders of magnitude". |
| Optimization Algorithms | Adam, Stochastic Gradient Descent [3] | Training and fine-tuning deep learning models for stress monitoring. | Adam is prominent in abiotic stress; SGD in biotic stress tasks. |

Detailed Experimental Protocols

To ground this overview in practical science, we detail two critical experimental approaches that highlight the integration of AI and sensors.

Protocol 1: AI-Driven Stress Detection Using Multimodal Sensor Fusion

This protocol is designed for the early detection and identification of plant stresses in a field setting.

- Objective: To automatically detect and classify biotic and abiotic plant stresses by fusing data from multiple sensor modalities.
- Materials & Equipment:
  - Sensor Platforms: Ground vehicle or UAV (drone) equipped with mounting points.
  - Sensors: RGB camera, multispectral or hyperspectral camera, thermal imaging camera.
  - Positioning System: High-precision GPS/GNSS receiver.
  - Computing Unit: Onboard computer (e.g., NVIDIA Jetson) or system for data offloading.
  - Software: Python environment with libraries (TensorFlow/PyTorch, OpenCV, Scikit-learn).
- Methodology:
  - Data Collection:
    - Conduct autonomous or manual transects of the research plot using the sensor platform.
    - Simultaneously capture co-registered RGB, multispectral, and thermal images. Geotag all data.
    - Collect ground-truthed data by manually tagging and identifying healthy and stressed plants across the plot.
  - Data Preprocessing:
    - Perform image orthorectification and radiometric calibration.
    - Align and fuse image data from different sensors into a unified data structure.
    - Extract patches of interest (individual plants or leaves) and augment the dataset (rotations, flips) to increase robustness.
  - Model Training & Validation:
    - Design a convolutional neural network (CNN) architecture, such as a modified ResNet50, with input streams for each sensor modality.
    - Train the model using the prepared dataset, employing optimization algorithms like Adam.
    - Validate model performance on a held-out test set, using metrics such as accuracy, precision, recall, and F1-score.
  - Deployment & Inference:
    - Deploy the trained model to the onboard computing unit or a cloud platform.
    - Perform real-time or near-real-time inference on new sensor data to generate a stress map of the field.
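Two steps of this protocol are easy to pin down in code: the rotation/flip augmentation in preprocessing and the validation metrics. The minimal sketch below uses only the Python standard library and represents a patch as a hypothetical list of pixel rows; in practice these operations would run on arrays from the libraries listed above, and the same transform would have to be applied to every co-registered band (RGB, multispectral, thermal) of a patch so the modalities stay aligned. All function names are illustrative and not part of any cited toolkit.

```python
from typing import List, Sequence, Tuple

Patch = List[List[float]]  # hypothetical: one single-band patch as rows of pixel values


def rotate90(p: Patch) -> Patch:
    """Rotate a patch 90 degrees clockwise."""
    return [list(row) for row in zip(*p[::-1])]


def hflip(p: Patch) -> Patch:
    """Mirror a patch left-to-right."""
    return [row[::-1] for row in p]


def augment(p: Patch) -> List[Patch]:
    """Return the 8 rotation/flip variants of a square patch (original first)."""
    variants, q = [], p
    for _ in range(4):
        variants.append(q)
        variants.append(hflip(q))
        q = rotate90(q)
    return variants


def classification_metrics(y_true: Sequence[int], y_pred: Sequence[int],
                           positive: int = 1) -> Tuple[float, float, float, float]:
    """Accuracy, precision, recall and F1 for one class (e.g. 1 = "stressed")."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must be non-empty and equally long")
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    accuracy = sum(1 for t, p in pairs if t == p) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return accuracy, precision, recall, f1


print(len(augment([[1, 2], [3, 4]])))  # 8 variants
print(classification_metrics([1, 1, 0, 0, 1], [1, 0, 0, 1, 1]))
```

Precision and recall are reported separately because a stress detector that misses infections (low recall) and one that raises false alarms (low precision) fail in different ways; F1 summarizes the balance between the two.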


Protocol 2: High-Throughput Phenotyping of Flowering Time Using ESGAN

This protocol leverages a novel AI approach to minimize the labor-intensive process of annotating data for plant phenotyping.

- Objective: To accurately determine flowering time in a crop breeding trial from aerial imagery while minimizing human annotation effort.
- Materials & Equipment:
  - Imaging Platform: UAV (drone) with a high-resolution RGB camera.
  - Plant Material: Thousands of varieties of a target crop (e.g., Miscanthus grasses) in a field trial [33].
  - Computing Infrastructure: Server or workstation with a high-performance GPU.
- Methodology:
  - Initial Data Acquisition:
    - Capture high-resolution aerial imagery of the field trial at regular intervals throughout the growing season, focusing on the flowering period.
  - Implementation of ESGAN:
    - Utilize a Generative Adversarial Network (GAN) framework in which two models compete [33].
    - The generator model learns to create synthetic images of flowering and non-flowering plants that are increasingly difficult to distinguish from real images.
    - The discriminator model learns to differentiate between real and synthetic images.
    - Through this competition, the models learn the visual features that characterize flowering from largely unannotated imagery.
  - Efficient Supervision & Model Training:
    - Introduce a small amount of human-annotated data (e.g., 1-10% of what a traditional model would require) to guide the ESGAN.
    - Fine-tune the pre-trained ESGAN model on this small, annotated dataset for the specific task of classifying images as "flowering" or "non-flowering."
  - Phenotypic Data Extraction:
    - Run the entire time series of aerial images through the trained ESGAN model.
    - Automatically generate a dataset recording the first and peak flowering times for each plant variety in the trial.
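The final extraction step can be sketched as a small post-processing function. The code assumes a hypothetical input format: for each variety, a list of (day of year, fraction of that plot's images classified as "flowering") pairs produced by the trained classifier. The definitions used here, onset as the first survey date at or above an onset threshold (0.1 is an arbitrary illustrative value) and peak as the date with the highest flowering fraction, are assumptions for illustration and not necessarily those used in [33].

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical input: (day of year, fraction of a variety's images classified "flowering")
Series = List[Tuple[int, float]]
Dates = Tuple[Optional[int], Optional[int]]


def flowering_dates(series: Series, onset_threshold: float = 0.1) -> Dates:
    """Return (first_flowering_day, peak_flowering_day) for one variety.

    Onset is the first survey date whose flowering fraction reaches onset_threshold;
    peak is the date with the highest fraction (the earliest date wins ties).
    Both are None if flowering never reaches the threshold.
    """
    ordered = sorted(series)  # surveys in chronological order
    onset = next((day for day, frac in ordered if frac >= onset_threshold), None)
    if onset is None:
        return None, None
    peak_day, _ = max(ordered, key=lambda item: item[1])  # max() keeps the first maximum
    return onset, peak_day


def summarise_trial(trial: Dict[str, Series], onset_threshold: float = 0.1) -> Dict[str, Dates]:
    """Apply flowering_dates to every variety in the trial."""
    return {variety: flowering_dates(series, onset_threshold)
            for variety, series in trial.items()}


trial = {
    "variety_A": [(200, 0.0), (207, 0.05), (214, 0.3), (221, 0.8), (228, 0.6)],
    "variety_B": [(200, 0.0), (214, 0.02)],
}
print(summarise_trial(trial))
```

Keeping the threshold as a parameter makes it easy to check how sensitive the extracted onset dates are to that choice before using them in downstream genetic analyses.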


The Scientist's Toolkit: Key Research Reagent Solutions

For researchers embarking on projects in this domain, the following table outlines essential "reagent solutions" – the key hardware, software, and data components required.

Table 4: Essential Research Toolkit for AI-Driven Plant Sensor Projects

| Category | Item | Function / Application in Research |
|---|---|---|
| Sensor Platforms | Unmanned Aerial Vehicle (UAV / Drone) | High-throughput aerial imaging for large field plots; enables temporal studies at high resolution [34]. |
| Sensor Platforms | Autonomous Ground Vehicle | Proximal sensing; carries heavier sensor payloads for root-level or under-canopy data collection [3]. |
| Physical Sensors | Hyperspectral Imaging Sensor | Captures spectral data across hundreds of narrow bands; used for detailed analysis of plant physiology, nutrient status, and early stress detection [3]. |
| Physical Sensors | Soil Sensor Network (Moisture, Temp, Nutrients) | Provides real-time, below-ground environmental data; critical for irrigation studies and understanding soil-plant interactions [32] [36]. |
| Physical Sensors | "Wearable" Plant Sensors (e.g., Leaf Wetness) | Monitors micro-climatic conditions directly at the plant surface; used for disease risk modeling (e.g., fungal outbreaks) [37]. |
| AI Software & Models | Pre-trained CNN Models (e.g., VGG16, ResNet50) | Serves as a starting point for transfer learning, significantly reducing the data and time required to develop custom plant stress models [3]. |
| AI Software & Models | Generative Adversarial Network (GAN) Framework | Used to create synthetic plant image data and to develop models, like ESGAN, that require minimal manual annotation [33]. |
| Data & Analytics | IoT Platform & Edge Computing Device | Handles data ingestion from multiple sensors, real-time processing, and model execution at the edge for low-latency decision-making [32] [39]. |
| Data & Analytics | Phenotypic Analysis Software (e.g., Leaf Doctor) | Quantifies disease severity or specific plant traits from imagery, providing standardized metrics for research analysis [40]. |

The integration of AI into sensor systems is not merely an incremental improvement but a foundational shift in agricultural research methodology. The quantitative market data confirm strong, sustained investment and growth across sensor hardware, AI software, and integrated platforms. The experimental protocols and toolkit detailed herein provide a roadmap for researchers to implement these technologies, which are critical for addressing the grand challenges of food security and sustainable intensification. The future of plant sensor research is inextricably linked to the advancement of AI, particularly in overcoming current limitations of scalability, context-dependency, and data annotation overhead. As these intelligent systems become more adaptable and accessible, they will unlock new frontiers in predictive phenotyping, accelerated breeding, and fully autonomous crop management systems.

From Data to Decisions: Methodological Applications of AI-Driven Sensors in Research and Manufacturing

Predictive maintenance represents a paradigm shift in how industries manage physical assets, moving from reactive repairs and rigid schedules to a proactive, data-driven approach. By utilizing advanced technologies such as machine learning (ML) and statistical models, predictive maintenance analyzes sensor and historical data to forecast when specific components will fail [41]. This methodology enables organizations to plan repairs with precision, avoid unnecessary part replacements, and minimize unexpected stoppages that disrupt operations [41]. Within industrial plants and research facilities—particularly those supporting drug development—this approach is increasingly critical for maintaining sensitive equipment where failures can compromise research integrity, result in substantial financial losses, or create safety hazards.

Across both industrial plants and plant-science research, AI and machine learning are moving sensor systems toward increasingly intelligent, interconnected designs. In sectors ranging from manufacturing to agriculture, pairing next-generation sensors with AI algorithms yields systems that interpret, rather than merely record, equipment behavior and plant physiology [3]. This shift from simple data collection to predictive analytics and prescriptive recommendations is changing how researchers approach equipment maintenance and experimental continuity.

Fundamental Concepts: From Data to Predictions

Defining Predictive Maintenance in the AI Context

Predictive maintenance (PdM) is a data-driven approach to predicting machinery failure and making proactive repairs [42]. Unlike traditional methods, it services equipment neither on fixed intervals nor after breakdowns, but only when measurable indicators point to emerging degradation [41]. This approach combines continuous monitoring of operating conditions with an estimate of failure probability, so that maintenance can be performed precisely when needed [41].

AI elevates this concept by using algorithms that not only follow predefined rules but learn from data as they go [42]. Instead of merely flagging current issues, AI-based analytics can identify even the faintest indication of performance deviation, sensing emerging problems before they cause disruptions [42]. For research and drug development professionals, this capability is particularly valuable for protecting sensitive experiments and expensive biological materials that require stable environmental conditions and equipment performance.
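As a concrete, deliberately simple baseline for flagging deviations in a sensor stream, the sketch below marks any reading that lies more than k standard deviations from the mean of a trailing window. This is a statistical rule rather than a learned model; the window length (20) and k (3.0) are hypothetical defaults, and learned detectors such as isolation forests or autoencoders (Table 1) would take its place in an AI-based system.

```python
from collections import deque
from statistics import mean, stdev
from typing import Iterable, List


def rolling_zscore_alerts(readings: Iterable[float], window: int = 20, k: float = 3.0) -> List[int]:
    """Return indices of readings more than k standard deviations from the trailing-window mean."""
    history: deque = deque(maxlen=window)  # only the most recent `window` readings are kept
    alerts: List[int] = []
    for i, x in enumerate(readings):
        if len(history) == window:  # wait until the baseline window is full
            mu, sigma = mean(history), stdev(history)
            if sigma > 0 and abs(x - mu) > k * sigma:
                alerts.append(i)
        history.append(x)
    return alerts


# Hypothetical incubator temperature trace with one sudden excursion at index 40
trace = [20.0 + 0.1 * (i % 3) for i in range(40)] + [25.0] + [20.0] * 5
print(rolling_zscore_alerts(trace))
```

Because flagged readings are still added to the window, a single spike widens the baseline for the following samples; a production system might exclude confirmed anomalies from the history instead.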

How AI and Machine Learning Enable Failure Forecasting

Machine learning, a branch of computer science that develops algorithms capable of identifying patterns and correlations in large datasets, serves as the analytical engine of modern predictive maintenance systems [41]. In predictive maintenance, ML transforms raw operational data into actionable insights, allowing maintenance teams to anticipate failures rather than react to breakdowns [41].

AI systems employ several learning approaches:

- Supervised learning: Models trained using labeled datasets to recognize patterns associated with known failure states [41]

- Unsupervised learning: Algorithms that identify anomalies and patterns without pre-labeled data, useful for detecting previously unknown failure modes [41]

- Reinforcement learning: Systems that improve their predictive capabilities through continuous feedback from maintenance outcomes [41]

These approaches enable AI systems to continuously refine their understanding of equipment behavior, becoming increasingly accurate at forecasting failures and recommending interventions.
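For the supervised case, a k-nearest-neighbours classifier (listed among the classical methods in Table 3) is about the smallest complete example. The sketch assumes hypothetical, already standardized two-dimensional features (for example, scaled vibration RMS and scaled temperature) and hypothetical fault labels; it illustrates the technique and is not a validated fault model.

```python
import math
from collections import Counter
from typing import List, Sequence, Tuple

Example = Tuple[Sequence[float], str]  # (feature vector, failure-state label)


def knn_predict(train: List[Example], query: Sequence[float], k: int = 3) -> str:
    """Label a query by majority vote among its k nearest labelled examples (Euclidean distance)."""
    nearest = sorted(train, key=lambda ex: math.dist(ex[0], query))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


# Hypothetical standardized features: (scaled vibration RMS, scaled temperature)
train: List[Example] = [
    ((0.1, 0.2), "normal"), ((0.0, 0.1), "normal"), ((0.2, 0.0), "normal"),
    ((2.0, 0.3), "imbalance"), ((2.2, 0.1), "imbalance"), ((1.9, 0.4), "imbalance"),
    ((0.3, 2.1), "bearing_wear"), ((0.1, 1.9), "bearing_wear"), ((0.2, 2.3), "bearing_wear"),
]
print(knn_predict(train, (2.1, 0.2)))
```

Features should be on comparable scales before this step, because Euclidean distance weights every dimension equally; an unscaled feature with large numeric range would otherwise dominate the vote.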

Technical Framework: Implementing AI-Driven Predictive Maintenance

Core Workflow and Architecture

The implementation of AI-driven predictive maintenance follows a systematic workflow that transforms raw equipment data into actionable maintenance recommendations. This process involves multiple coordinated stages that ensure accurate predictions and timely interventions.

Figure 1: AI-Powered Predictive Maintenance Workflow

The workflow begins with data collection from multiple sources, including sensors that track vibration, temperature, pressure, and power consumption, as well as historical logs of repairs and operating conditions [41]. This data then undergoes processing and feature engineering to remove noise, handle missing values, and create meaningful indicators of equipment health [41]. The processed data fuels model training, where algorithms learn normal equipment behavior and failure patterns [41]. Once deployed, the system continuously monitors equipment, detects anomalies, predicts failures, and triggers maintenance scheduling [41] [42].
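The feature-engineering stage can be illustrated with a few condition indicators commonly computed from a window of vibration samples: root-mean-square (RMS) level, peak amplitude, crest factor (peak divided by RMS), and kurtosis, which tends to rise when impulsive events such as bearing defects appear. The sources cited here do not specify which features their systems use, so this feature set, the function name, and the choice to drop missing readings (None or NaN) rather than interpolate them are assumptions of this sketch.

```python
import math
from typing import Dict, Optional, Sequence


def vibration_features(window: Sequence[Optional[float]]) -> Dict[str, float]:
    """Compute simple health indicators from one window of vibration samples."""
    samples = [x for x in window if x is not None and not math.isnan(x)]  # drop missing readings
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two valid samples")
    mean = sum(samples) / n
    rms = math.sqrt(sum(x * x for x in samples) / n)
    peak = max(abs(x) for x in samples)
    var = sum((x - mean) ** 2 for x in samples) / n  # population variance
    # Non-excess (Pearson) kurtosis: fourth central moment over squared variance
    kurtosis = sum((x - mean) ** 4 for x in samples) / (n * var ** 2) if var > 0 else float("nan")
    return {
        "rms": rms,
        "peak": peak,
        "crest_factor": peak / rms if rms > 0 else float("nan"),
        "kurtosis": kurtosis,
    }


# One full period of a sine sampled at 8 points, with no missing values
sine = [math.sin(2 * math.pi * k / 8) for k in range(8)]
print(vibration_features(sine))
```

For a pure sine wave the crest factor is √2 (about 1.41), and the kurtosis returned here is about 3 for Gaussian noise; values well above these baselines are often treated as early warning signs worth investigating.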

Key Algorithms and Their Applications

Different machine learning algorithms serve distinct purposes in predictive maintenance systems, each with particular strengths for specific types of analysis and prediction tasks.

Table 1: Machine Learning Algorithms in Predictive Maintenance

| Algorithm Category | Specific Algorithms | Application in Predictive Maintenance | Use Case Examples |
|---|---|---|---|
| Classification Models | Support Vector Machines (SVM), Decision Trees, Random Forest | Failure type classification, fault categorization | Identifying specific failure modes in robotic arms [41] |
| Regression Models | Linear Regression, Gradient Boosting | Remaining Useful Life (RUL) estimation | Predicting time until bearing failure in motors [41] |
| Anomaly Detection | Isolation Forest, Autoencoders | Detecting deviations from normal operation | Identifying unusual vibration patterns in compressors [41] |
| Deep Learning | CNN, LSTM, Neural Networks | Complex pattern recognition in multivariate data | Analyzing vibration spectra for early fatigue detection [41] [3] |
| Optimization Algorithms | Adam, Stochastic Gradient Descent | Model training and parameter optimization | Fine-tuning neural networks for temperature drift prediction [3] |

The selection of appropriate algorithms depends on multiple factors, including data characteristics, failure mode complexity, and computational constraints. In research environments, deep learning models such as Convolutional Neural Networks (CNNs) and Long Short-Term Memory (LSTM) networks have shown strong performance for complex pattern recognition tasks, while traditional methods like Support Vector Machines and Decision Trees remain relevant for structured, lower-dimensional data [3].
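The regression row of Table 1 (RUL estimation) can be sketched with the simplest possible model: fit a straight line to a health indicator by ordinary least squares and extrapolate to a failure threshold. The linear-degradation assumption, the time unit (hours), the indicator values, and the threshold in the usage lines are all hypothetical; gradient-boosting or LSTM models would replace the straight-line fit when degradation is non-linear.

```python
from typing import Optional, Sequence, Tuple


def fit_line(t: Sequence[float], y: Sequence[float]) -> Tuple[float, float]:
    """Ordinary least-squares fit of y = intercept + slope * t."""
    n = len(t)
    if n < 2 or n != len(y):
        raise ValueError("need at least two paired observations")
    t_mean = sum(t) / n
    y_mean = sum(y) / n
    sxx = sum((ti - t_mean) ** 2 for ti in t)
    if sxx == 0:
        raise ValueError("time stamps must not all be equal")
    sxy = sum((ti - t_mean) * (yi - y_mean) for ti, yi in zip(t, y))
    slope = sxy / sxx
    return y_mean - slope * t_mean, slope


def remaining_useful_life(t: Sequence[float], y: Sequence[float],
                          threshold: float) -> Optional[float]:
    """Time from the last observation until the fitted trend reaches threshold.

    Returns None if the indicator is not trending upward (no degradation detected),
    and 0.0 if the fitted trend has already crossed the threshold.
    """
    intercept, slope = fit_line(t, y)
    if slope <= 0:
        return None
    t_fail = (threshold - intercept) / slope
    return max(t_fail - t[-1], 0.0)


# Hypothetical hourly vibration-RMS readings and an illustrative alarm threshold
hours = [0, 10, 20, 30, 40]
rms = [1.0, 1.5, 2.0, 2.5, 3.0]
print(remaining_useful_life(hours, rms, threshold=7.1))  # roughly 82 hours
```

A single straight-line estimate says nothing about its own uncertainty; in practice the fitted RUL would be reported with a confidence interval and re-estimated as each new window of data arrives.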

Sensor Technologies and Data Infrastructure

Predictive maintenance relies on a sophisticated ecosystem of sensor technologies that capture equipment health indicators in real-time. These sensors form the fundamental data-gathering layer that enables all subsequent analysis.

Table 2: Essential Sensor Technologies for Predictive Maintenance

| Sensor Type | Parameters Measured | Research Application | Technical Specifications |
|---|---|---|---|
| Vibration Sensors | Frequency, amplitude, harmonics | Detecting imbalance, misalignment, bearing wear in centrifuges | High-frequency sampling (≥10 kHz) for detection of micro-cracks [41] |
| Thermal Sensors | Temperature, heat distribution | Monitoring reactor vessels, HVAC systems in labs | Infrared imaging for thermal profiles [41] |