Telepresence Technologies for Remote BLSS Monitoring: A Comprehensive Guide for Biomedical Researchers

Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive analysis of telepresence technologies for remote monitoring of Bioregenerative Life Support Systems (BLSS) and related biomedical applications. It explores the foundational principles of telepresence robotics, details methodological approaches for integration into research environments, offers practical troubleshooting and optimization strategies, and presents a comparative validation of current systems. By synthesizing the latest technological advancements with practical implementation frameworks, this guide aims to equip professionals with the knowledge needed to leverage telepresence for enhanced remote monitoring, data collection, and research continuity in biomedical settings.

Understanding Telepresence Robotics: Core Principles and Healthcare Transformation

Telepresence technology creates the sensation of being fully immersed in a remote location, constructing a virtual environment that mirrors genuine experiences for the operator [1]. This field has evolved from basic video conferencing to sophisticated immersive robotics, enabling spatial and social presence over distance where direct physical presence is impossible or undesired [1]. For remote Bioregenerative Life Support System (BLSS) monitoring research, telepresence provides critical capabilities for maintaining continuous observation and intervention in controlled environment agriculture and life support systems without physical intrusion that could compromise delicate ecological balances.

The fundamental distinction between simple video conferencing and advanced telepresence lies in mobility, spatial awareness, and environmental interaction. While video conferencing locks participants to a fixed screen perspective, telepresence robots allow remote operators to navigate environments freely, choose viewpoints, and focus attention on specific areas or components [1] [2]. This mobility enables researchers to conduct thorough remote inspections of BLSS components, from plant growth chambers to air revitalization systems, with the freedom to examine equipment from multiple angles as if physically present.

Telepresence Technology Spectrum and Quantitative Comparison

Technology Classification and Market Landscape

The telepresence ecosystem encompasses everything from stationary video systems to mobile robotic platforms with advanced sensor capabilities. The market landscape reflects this diversity, with key players including SMP Robotics, Anybots, Double Robotics, Mantaro, Revolve Robotics, OhmniLabs, and Inbot Technology [3]. These systems are categorized primarily as mobile or stationary robots serving education, healthcare, manufacturing, and other specialized applications [3].

Table 1: Global Virtual Telepresence Robot Market Forecast

| Metric | 2024 Value | Projected 2033 Value | CAGR (2026-2033) |
|---|---|---|---|
| Market Size | USD 150 Million | USD 931.79 Million | 22.5% |

Source: [3]
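As a quick sanity check on Table 1, the growth rate implied by the two market-size figures can be recomputed. The sketch below is only illustrative: the helper name `implied_cagr` is hypothetical, and the only inputs are the values quoted in the table. Under these assumptions, the quoted 22.5% matches compounding from the 2024 base value over nine years, whereas compounding only over the 2026-2033 window named in the column header would imply a noticeably higher rate.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by two values `years` apart."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted in Table 1 (USD million).
start_2024, end_2033 = 150.0, 931.79

# Compounding from the 2024 base value to 2033 (9 years).
cagr_9y = implied_cagr(start_2024, end_2033, years=2033 - 2024)

# Compounding only over the 2026-2033 window named in the table header (7 years).
cagr_7y = implied_cagr(start_2024, end_2033, years=2033 - 2026)

print(f"2024-2033 compounding: {cagr_9y:.1%}")
print(f"2026-2033 compounding: {cagr_7y:.1%}")
```

Readers comparing market reports may want to confirm which base year a quoted CAGR refers to before combining figures from different sources.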

The 3D telepresence segment shows particularly promising growth, with an anticipated compound annual growth rate (CAGR) of approximately 15% from 2025-2033, driven by integration of artificial intelligence and virtual reality technologies [4]. This segment includes both software and hardware solutions that enable more immersive remote experiences through holographic projection and improved bandwidth efficiency [4].

Table 2: Telepresence Technology Comparison Matrix

| Feature | Basic Video Conferencing | Standard Telepresence Robots | Advanced 3D/Immersive Telepresence |
|---|---|---|---|
| Mobility | Fixed perspective | Mobile navigation | Mobile with environmental manipulation |
| Spatial Awareness | Limited 2D view | Basic 3D navigation | Enhanced 3D spatial understanding |
| Visualization | 2D camera feed | 2D/3D hybrid interfaces | Augmented Virtuality (AV), point clouds |
| Typical Applications | Meetings, consultations | Remote inspections, healthcare | Complex industrial tasks, precision monitoring |
| User Control | Camera angle adjustment | Full robotic navigation | Advanced interaction with environment |

Technical Specifications and Performance Metrics

Recent research has quantified the performance characteristics of various telepresence visualization modalities. A 2025 study systematically evaluated four interface types for industrial robot teleoperation: 2D camera feed, 3D point cloud, combined 2D3D, and Augmented Virtuality (AV) [5]. The findings revealed distinct trade-offs between cognitive load and operational precision that directly inform BLSS monitoring applications.

The 3D visualization modality imposed the highest cognitive load (as measured by NASA-TLX and pupillometry) but enabled the most precise navigation with low collision rates [5]. The combined 2D3D interface offered the lowest cognitive load and highest user comfort while maintaining reasonable distance accuracy. The AV approach suffered from significantly higher collision rates and usability issues, suggesting it requires further refinement for critical monitoring applications [5]. No significant differences were found for task completion time across modalities, indicating that interface choice should prioritize safety and accuracy over speed for BLSS monitoring tasks.

Application Notes for BLSS Monitoring Research

Healthcare-Derived Monitoring Protocols

BLSS monitoring can adapt telepresence applications validated in healthcare settings, where continuous patient observation shares similarities with ecological system monitoring. Research indicates that telepresence robots (TPRs) offer promising solutions for scenarios where physical presence is impossible or physical isolation is required to prevent contamination [1]. This directly translates to BLSS applications where researcher presence could introduce pathogens or disrupt delicate atmospheric balances.

Three key usage scenarios tested in simulated healthcare settings provide applicable protocols for BLSS research:

- Anamnesis (System Status Assessment): Continuous remote monitoring of BLSS parameters including atmospheric composition, nutrient solution metrics, and plant health indicators.

- Measurements (Data Collection): Strategic deployment of TPRs for physical sampling or sensor reading verification at multiple locations within the BLSS.

- Falls and Frailty (System Failure Detection): Early identification of component failures or suboptimal performance through regular robotic inspection routes.

These applications demonstrate particularly strong potential for addressing the challenges of providing continuous monitoring to complex biological systems, emphasizing the technology's ability to extend specialist reach while minimizing system disruptions [1].

Industrial Inspection Methodologies

Manufacturing applications provide equally relevant protocols for BLSS monitoring. Companies now utilize telepresence robots for "gemba walks" (going to the actual place where work is done), audits, inspections, and virtual visits [2]. This approach enables process improvement professionals to monitor facility health remotely and quickly identify solutions when problems arise [2].

For BLSS applications, this translates to:

- Regular System Audits: Scheduled telepresence reviews of all BLSS components without requiring physical researcher presence.

- Remote Expert Consultation: Enabling specialized researchers to visually inspect and guide troubleshooting without travel.

- Multi-operator Collaboration: Allowing several researchers to sequentially or simultaneously evaluate system status from distributed locations.

Industrial applications highlight the cost-saving potential of telepresence, with one case study noting the technology "replace[s] the need for you or your colleagues to fly out to a client location" while maintaining the effectiveness of in-person assessment [2].

Experimental Protocols for Telepresence Evaluation

Visualization Modality Assessment Protocol

Based on the experimental framework from the "Study of Visualization Modalities on Industrial Robot Teleoperation for Inspection in a Virtual Co-Existence Space" [5], the following protocol evaluates telepresence interfaces for BLSS monitoring:

Objective: To determine the optimal visualization modality for remote BLSS monitoring tasks balancing cognitive load, operational precision, and task efficiency.

Equipment:

- Telepresence robot platform with navigation capabilities

- VR headset with integrated eye-tracking

- Performance monitoring software

- NASA-TLX questionnaire for subjective workload assessment

- BLSS simulation environment or physical testbed

Procedure:

- Participant Briefing: Explain experimental objectives and obtain informed consent.

- System Orientation: Familiarize participants with each visualization interface (2D, 3D, 2D3D, AV).

- Task Assignment: Assign standardized monitoring tasks including:

- Component inspection

- Sensor reading verification

- Anomaly identification

- Navigation through constrained spaces

- Performance Metrics Collection:

- Task completion time

- Collision count

- Distance accuracy

- Identification accuracy

- Workload Assessment:

- Administer NASA-TLX questionnaire after each condition

- Collect pupillometry data throughout tasks

- Data Analysis:

- Employ repeated measures ANOVA to compare conditions

- Conduct post-hoc tests for significant main effects

Expected Outcomes: Based on prior research [5], the 2D3D combined interface is anticipated to offer the best balance of low cognitive load and acceptable accuracy for routine monitoring tasks, while 3D point cloud visualization may be preferable for precision tasks despite higher cognitive demands.
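The NASA-TLX workload step in the protocol above can be scored with a few lines of code. The following is a minimal sketch, assuming the standard six subscales rated on a 0-100 scale and, for the weighted variant, tallies from the 15 pairwise comparisons. The function names and the example participant data are hypothetical and are not taken from the cited study.

```python
from statistics import mean

# The six NASA-TLX subscales.
TLX_SUBSCALES = (
    "mental", "physical", "temporal", "performance", "effort", "frustration",
)


def raw_tlx(ratings: dict) -> float:
    """Unweighted (Raw TLX) score: mean of the six 0-100 subscale ratings."""
    return mean(ratings[s] for s in TLX_SUBSCALES)


def weighted_tlx(ratings: dict, weights: dict) -> float:
    """Weighted score; weights are tallies from the 15 pairwise comparisons."""
    if sum(weights[s] for s in TLX_SUBSCALES) != 15:
        raise ValueError("pairwise-comparison weights must sum to 15")
    return sum(ratings[s] * weights[s] for s in TLX_SUBSCALES) / 15


# Hypothetical ratings from one participant after one interface condition.
ratings = {"mental": 70, "physical": 20, "temporal": 40,
           "performance": 30, "effort": 60, "frustration": 50}
weights = {"mental": 5, "physical": 0, "temporal": 2,
           "performance": 3, "effort": 4, "frustration": 1}

print(raw_tlx(ratings), weighted_tlx(ratings, weights))
```

Computing one score per participant and condition yields the long-format data that a repeated measures ANOVA typically expects.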

[Figure: Experimental Protocol for Telepresence Evaluation]

Healthcare Scenario Adaptation Protocol

Adapting the methodology from healthcare telepresence research [1], this protocol validates BLSS-specific applications:

Objective: To evaluate telepresence robot effectiveness for continuous BLSS monitoring and specialist intervention.

Equipment:

- Telepresence robot with camera, microphones, speakers, and sensors

- Secure communication platform

- BLSS simulation or operational environment

- Data collection instruments

Procedure:

1. Scenario Development: Create simulated BLSS monitoring scenarios:

   - Routine system assessment

   - Emergency response to component failure

   - Multi-expert collaborative diagnosis

2. Participant Selection: Engage BLSS researchers and technicians with varying telepresence experience

3. Implementation:

   - Deploy TPR in BLSS environment

   - Conduct remote monitoring sessions

   - Record interaction metrics

4. Data Collection:

   - System assessment accuracy

   - Response time to anomalies

   - User satisfaction measures

   - Technology acceptance metrics

5. Analysis:

   - Qualitative analysis of user feedback

   - Quantitative performance comparisons

   - Identification of implementation barriers

Expected Outcomes: This protocol is expected to validate telepresence as a viable method for reducing physical intrusions into sensitive BLSS environments while maintaining monitoring fidelity, particularly for scenarios where specialist expertise is required but physical presence is impractical.

The Researcher's Toolkit: Essential Materials and Solutions

Table 3: Research Reagent Solutions for Telepresence Experiments

| Item | Function | Application Notes |
|---|---|---|
| Telepresence Robot Platform | Mobile remote presence platform | Select models with appropriate sensor suites for BLSS monitoring; consider Ohmni, Double Robotics, or custom solutions |
| VR Headset with Eye-Tracking | Immersive visualization and cognitive load measurement | Essential for advanced visualization studies; provides objective workload data via pupillometry |
| NASA-TLX Questionnaire | Subjective workload assessment | Validated instrument for measuring perceived cognitive load across multiple dimensions |
| BLSS Simulation Environment | Controlled testbed for evaluation | Enables standardized testing of telepresence interfaces without risking operational BLSS |
| Data Logging Software | Performance metric collection | Captures task completion time, accuracy, collision data, and navigation efficiency |
| Network Infrastructure | Latency-controlled communication | Critical for maintaining responsive control; aim for <200ms latency for optimal performance |
| 3D Sensing Technology | Environmental mapping and point cloud generation | LiDAR or RGBD cameras for spatial awareness and 3D representation of BLSS components |
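The latency target in the table above can be checked against logged measurements. The sketch below is one possible approach, not a prescribed procedure: it assumes round-trip latency samples in milliseconds have already been collected by the data logging software, and it treats the link as meeting the target only when the 95th percentile stays under 200 ms. That percentile criterion and the function name `summarize_latency` are assumptions made for illustration.

```python
from statistics import median, quantiles

LATENCY_TARGET_MS = 200.0  # target quoted in the toolkit table


def summarize_latency(samples_ms, target_ms=LATENCY_TARGET_MS):
    """Summarize round-trip latency samples (milliseconds) against a target."""
    if len(samples_ms) < 2:
        raise ValueError("need at least two latency samples")
    # quantiles(..., n=20) returns 19 cut points; the last is the 95th percentile.
    p95 = quantiles(samples_ms, n=20)[-1]
    over = sum(1 for s in samples_ms if s >= target_ms)
    return {
        "median_ms": median(samples_ms),
        "p95_ms": p95,
        "fraction_over_target": over / len(samples_ms),
        # Assumption: the target is "met" only if the 95th percentile is below it.
        "meets_target": p95 < target_ms,
    }


# Hypothetical samples from one monitoring session.
samples = [80, 95, 110, 120, 130, 150, 160, 170, 180, 250]
summary = summarize_latency(samples)
print(summary)
```

A percentile-based check is used here because a single slow packet can make remote navigation feel unresponsive even when the median looks comfortable.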

Operational Workflow for BLSS Telepresence Monitoring

[Figure: BLSS Telepresence Monitoring Workflow]

Implementation Considerations for BLSS Research

Successful integration of telepresence technology into BLSS monitoring requires addressing several critical implementation factors:

Technical Requirements: Telepresence systems demand robust network infrastructure with minimal latency. Research indicates that high-speed internet connections are essential for optimal performance, with bandwidth requirements varying by visualization modality [4]. 3D telepresence applications particularly benefit from advanced compression techniques that reduce bandwidth demands while maintaining immersive quality.

Human Factors: Interface design must balance information richness with cognitive load. The demonstrated trade-offs between visualization modalities indicate that BLSS monitoring applications should match interface complexity to task requirements [5]. Routine monitoring may benefit from lower-load 2D3D hybrid interfaces, while complex diagnostic tasks may warrant the higher cognitive demands of pure 3D visualization for enhanced spatial understanding.

Ethical and Security Considerations: As with healthcare applications where HIPAA compliance is crucial [2], BLSS research must ensure data security and integrity. This is particularly important for closed-loop life support systems where unauthorized access could compromise system stability. Additionally, researcher acceptance should be addressed through user-centered technology adoption approaches, analogous to the patient-centered approaches used in healthcare, to overcome potential reluctance to fully replace human presence [1].

Future Development Trajectory: The telepresence field is evolving toward more immersive experiences through integration of artificial intelligence and virtual reality technologies [4]. For BLSS applications, this promises increasingly sophisticated remote monitoring capabilities, including predictive anomaly detection and automated response systems guided by remote human expertise.

Telepresence technology enables individuals to feel and interact as if they are present in a remote location, overcoming geographical and physical barriers through advanced communication systems. These systems have evolved beyond simple video conferencing to offer immersive and high-fidelity experiences that replicate in-person interactions, making them particularly valuable for specialized applications such as remote Bioregenerative Life Support System (BLSS) monitoring and research. The global telepresence market demonstrates robust growth, projected to reach approximately $5,800 million by 2025 with a compound annual growth rate (CAGR) of around 12.5% anticipated through 2033, reflecting increasing adoption across research and professional sectors [6].

Telepresence systems are characterized by their ability to create a sense of "being there" through various technological implementations. According to Minsky, who coined the term in 1980, telepresence refers to teleoperation systems for manipulating remote physical objects, creating a virtual or simulated environment that mirrors real experience [7]. This foundational concept has expanded to encompass multiple system categories, each with distinct capabilities suited to different research and monitoring applications. Modern systems integrate advanced audio-visual technologies, including high-definition video, spatial audio, and artificial intelligence features, to enhance the user experience and facilitate more effective remote collaboration and monitoring tasks [6].

The growing demand for remote collaboration solutions, accelerated by hybrid work models and the need for specialized remote monitoring capabilities, has driven significant innovation in telepresence technologies. These systems now offer increasingly sophisticated features, including seamless integration with existing IT ecosystems, cloud-based deployment options, and immersive interfaces that provide more natural and intuitive remote interaction capabilities [6]. For BLSS monitoring and similar research applications, where continuous observation and precise intervention are critical, the evolution of telepresence systems offers promising tools for enhancing research efficiency and enabling remote collaboration between geographically dispersed scientific teams.

Quantitative Analysis of Telepresence Systems

The telepresence market encompasses diverse system types with varying technological implementations, performance characteristics, and application suitability. The following tables provide a comprehensive quantitative comparison of current telepresence technologies based on market data and technical specifications, offering researchers a foundation for selecting appropriate systems for BLSS monitoring applications.

Table 1: Telepresence System Types and Market Characteristics

| System Type | Key Characteristics | Primary Applications | Projected Market Growth |
|---|---|---|---|
| Video Conferencing Systems | High-definition video/audio, multi-codec support, room-based or personal setups | Corporate meetings, remote consultations, team collaboration | Stable growth driven by hybrid work models [6] |
| Robotic Platforms (TPRs) | Mobile robotic base, cameras, microphones, screens, sensor-assisted motion control | Healthcare, education, remote facility monitoring | Expanding due to aging population and telehealth needs [7] [1] |
| Holographic Telepresence | 3D projection technology, immersive visual experience, specialized display systems | High-end presentations, medical visualization, design collaboration | Significant growth potential with AR/VR adoption [8] |
| VR Telepresence | Virtual reality headsets, fully immersive environments, spatial audio | Training simulations, virtual collaboration, remote operations | Rapid growth driven by metaverse technologies [6] |

Table 2: Technical Specifications and Implementation Requirements

| System Type | Key Technical Components | Bandwidth Requirements | Implementation Complexity |
|---|---|---|---|
| Room-based Video Systems | Multiple codecs, high-resolution cameras, array microphones, large displays | High (10-20 Mbps) | High (dedicated space, specialized equipment) [6] |
| Personal Telepresence | Single codec, integrated camera/mic, desktop monitor | Medium (5-10 Mbps) | Low (personal device integration) [6] |
| Telepresence Robots | Mobile platform, navigation sensors, bilateral communication, battery system | Medium (5-15 Mbps) | Medium (navigation mapping, charging infrastructure) [7] [1] |
| Holographic Systems | 3D capture technology, specialized displays, projection systems | Very High (20+ Mbps) | Very High (specialized hardware, calibrated environment) [8] |

Market analysis indicates that the telepresence equipment market is concentrated among major players including Cisco Systems, Polycom, and Avaya, who hold significant market shares due to extensive product portfolios and technological advancements [6] [8]. The continuous innovation cycle in this sector is characterized by heavy investment in research and development, particularly in enhancing video quality, audio fidelity, and user interface design. North America currently dominates the market, driven by early technology adoption and strong enterprise IT infrastructure, though the Asia-Pacific region is expected to witness the highest growth rate due to increasing digital transformation initiatives [6].

For BLSS monitoring applications, the selection of appropriate telepresence technology must consider both the quantitative metrics above and specific research requirements, including precision of observation, need for mobility within the monitoring environment, communication latency tolerance, and integration with existing sensor networks and data collection systems. Room-based systems with multi-codec capabilities may be suitable for centralized monitoring stations, while mobile robotic platforms offer advantages for physical inspection of multiple BLSS components, and emerging holographic technologies could provide enhanced 3D visualization of complex biological systems.
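The selection logic described above can be expressed as a simple shortlist filter. This is a rough sketch under stated assumptions: the bandwidth figures are the lower bounds of the ranges in Table 2, treating that lower bound as the minimum workable bandwidth is an assumption, and the `mobile` flag, `SystemProfile` type, and `shortlist` function are hypothetical names introduced only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SystemProfile:
    name: str
    min_bandwidth_mbps: float  # lower bound of the range quoted in Table 2
    mobile: bool               # can the system move between BLSS components?


# Profiles transcribed from Table 2; only robots are treated as mobile.
PROFILES = (
    SystemProfile("Room-based Video Systems", 10, mobile=False),
    SystemProfile("Personal Telepresence", 5, mobile=False),
    SystemProfile("Telepresence Robots", 5, mobile=True),
    SystemProfile("Holographic Systems", 20, mobile=False),
)


def shortlist(available_mbps: float, needs_mobility: bool) -> list:
    """Return system types whose minimum bandwidth fits the available link."""
    return [
        p.name
        for p in PROFILES
        if p.min_bandwidth_mbps <= available_mbps
        and (p.mobile or not needs_mobility)
    ]


print(shortlist(12, needs_mobility=True))
print(shortlist(8, needs_mobility=False))
```

A real selection would also weigh latency tolerance, observation precision, and sensor-network integration, which this filter does not model.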

Application Notes for Research Environments

Video Conferencing Systems

Video conferencing systems represent the foundational technology for telepresence, providing real-time audio and visual communication between remote locations. These systems have evolved from basic video calling applications to sophisticated telepresence solutions that create the illusion of participants being in the same room through careful attention to sightlines, camera placement, and audio quality. For BLSS monitoring and research collaboration, these systems facilitate regular communication between distributed team members, enable expert consultation without travel requirements, and support routine observation of system status and experimental conditions [6].

Advanced video telepresence systems now incorporate specialized features to enhance the sense of spatial presence. The Portal Display system, for example, synchronizes the user's viewpoint with their head position and orientation to provide stereoscopic vision through a single monitor, creating a more convincing sense of depth and spatial awareness [9]. This technology uses a single depth camera to capture RGB-D data, making it both economically and spatially efficient compared to multi-camera arrays. Research indicates that point cloud streaming of remote users significantly improves social telepresence, usability, and concentration compared with graphical avatars, while the type of background representation has negligible impact on these metrics [9]. These findings suggest that for BLSS monitoring applications, research teams can prioritize high-quality user representation over background fidelity when bandwidth limitations require compromise.

Implementation of video telepresence for BLSS monitoring should consider both technical and human factors. On the technical side, systems must provide sufficient resolution to observe relevant visual details of plant growth, system components, and instrumentation readings. From a human factors perspective, attention to sightlines, eye contact, and audio clarity significantly affects communication effectiveness during collaborative problem-solving sessions. The integration of video telepresence with data visualization systems and shared digital workspaces can further enhance research collaboration by providing contextual information alongside video feeds [6].

Robotic Platforms (Telepresence Robots)

Telepresence robots (TPRs) represent a significant advancement beyond stationary video systems by providing mobility and physical presence in remote environments. These systems typically consist of a mobile robotic base equipped with cameras, microphones, speakers, and a display screen, allowing remote operators to navigate through environments and interact with people and objects as if physically present. For BLSS monitoring applications, TPRs offer the unique advantage of enabling researchers to visually inspect multiple system components, respond to alerts by navigating to specific locations, and maintain a physical presence in specialized laboratory environments that may have access restrictions or require containment [7] [1].

Research on TPR implementation in healthcare settings provides valuable insights for BLSS applications. Studies have demonstrated the effectiveness of TPRs for tasks including anamnesis (history taking), measurements, and monitoring of critical events, all functions directly transferable to BLSS monitoring requirements [1]. In these applications, TPRs successfully facilitated remote interactions while maintaining a sense of social presence, with users reporting higher engagement compared to traditional video conferencing systems. The mobile nature of TPRs allows operators to change viewpoint and focus attention on specific system components, making them particularly valuable for monitoring distributed BLSS systems with multiple interconnected modules [1].

A critical consideration for BLSS implementation is interface design tailored to researcher requirements. Studies with older adults have demonstrated that customized user interfaces incorporating features such as obstacle detection, adjustable height, and room access restrictions significantly improved usability and addressed privacy concerns [10]. Similar principles apply to BLSS monitoring interfaces, where researchers may need to control navigation precision, manipulate robotic sensors, or restrict access to sensitive experimental areas. The implementation of TPRs in BLSS environments requires careful attention to navigation infrastructure, with methods such as laser pointers, auto-navigation, and mapping features enhancing operational efficiency in complex laboratory layouts [7].

Holographic and VR Telepresence

Holographic and virtual reality telepresence systems represent the cutting edge of immersive remote interaction technologies. Holographic telepresence creates 3D representations of remote participants or objects using technologies such as volumetric capture and specialized displays, enabling viewers to perceive depth and spatial relationships without requiring head-mounted equipment. These systems are particularly valuable for BLSS applications requiring detailed spatial understanding of system configurations, plant growth structures, or complex mechanical assemblies, as they provide more natural depth cues than conventional 2D displays [8].

Virtual reality telepresence takes immersion further by placing users in completely synthetic environments that may replicate physical spaces or provide abstracted visualizations of system data. VR systems typically require head-mounted displays and motion tracking technology to create a convincing sense of presence within the virtual environment. For BLSS monitoring, VR telepresence offers unique capabilities for data visualization, allowing researchers to interact with system parameters, biological models, or sensor data in three-dimensional space, potentially revealing patterns and relationships difficult to discern through traditional interfaces [6].

Current research in advanced telepresence interfaces explores hybrid approaches that combine elements of video, holographic, and VR technologies. The Portal Display system mentioned previously represents one such innovation, using head pose-responsive view transformation to create a sense of depth on conventional 2D displays [9]. These approaches offer increasingly sophisticated spatial communication capabilities while minimizing specialized hardware requirements. For BLSS applications with limited resources or specific technical constraints, such solutions may provide an optimal balance between immersion and practicality, particularly when integrated with existing monitoring infrastructure and data systems.

Experimental Protocols for Telepresence Evaluation

Protocol for Evaluating Social Presence in Telepresence Systems

Objective: To quantitatively assess the sense of social presence and usability of different telepresence systems for remote BLSS monitoring tasks.

Materials:

- Telepresence systems to be evaluated (e.g., video conferencing, robotic platform, VR system)

- Standardized rating scales (Social Presence Questionnaire, System Usability Scale)

- Task materials relevant to BLSS monitoring (system diagrams, data interpretation exercises)

- Recording equipment for session documentation (if required for analysis)

Procedure:

- Participant Recruitment: Recruit researchers and BLSS specialists with varying levels of technical proficiency (target N=25-30 to support adequate statistical power) [10].

- System Orientation: Provide standardized training on each telepresence system interface, ensuring all participants achieve basic proficiency before evaluation.

- Task Implementation:

- Conduct collaborative problem-solving sessions using each telepresence system

- Utilize BLSS-specific scenarios including:

- Joint analysis of system performance data

- Simulation of emergency response procedures

- Equipment troubleshooting exercises

- Counterbalance the order of telepresence system conditions across participants (e.g., with a Latin square) to control for order effects

- Data Collection:

- Administer standardized questionnaires after each task condition

- Measure task completion time and accuracy for objective performance metrics

- Conduct structured interviews to gather qualitative feedback on system strengths and limitations
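The counterbalancing step in the procedure above can be illustrated with a cyclic Latin square, in which every system appears once in every serial position. This is a minimal sketch, assuming three system conditions and hypothetical participant IDs. A cyclic square balances position but not which condition follows which, so studies concerned with carryover effects may prefer a fully balanced design.

```python
def cyclic_latin_square(conditions):
    """Build an n x n Latin square by rotating the condition list one step per row."""
    n = len(conditions)
    return [[conditions[(row + col) % n] for col in range(n)] for row in range(n)]


def assign_orders(participant_ids, conditions):
    """Cycle participants through the rows of the square in recruitment order."""
    square = cyclic_latin_square(conditions)
    return {pid: square[i % len(square)] for i, pid in enumerate(participant_ids)}


# Hypothetical conditions and participant IDs.
conditions = ["video conferencing", "robotic platform", "VR system"]
participants = ["P01", "P02", "P03", "P04", "P05", "P06"]

for pid, order in assign_orders(participants, conditions).items():
    print(pid, "->", order)
```

Recruiting participants in multiples of the number of conditions keeps each ordering equally represented.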

Analysis:

- Employ quantitative analysis of rating scale data using appropriate statistical tests (e.g., repeated measures ANOVA)

- Perform thematic analysis of qualitative feedback to identify recurring usability themes

- Correlate system usability scores with task performance metrics to identify interface features that impact functional effectiveness

Table 3: Key Metrics for Telepresence System Evaluation

| Evaluation Dimension | Specific Metrics | Measurement Method |
|---|---|---|
| Social Presence | Co-presence, psychological involvement, behavioral engagement | Standardized questionnaires [9] |
| Usability | Efficiency, learnability, error rate, satisfaction | System Usability Scale, task performance measures [10] |
| Technical Performance | Video/audio quality, latency, navigation precision | Objective measures, expert ratings [9] |
| Task Effectiveness | Completion time, accuracy, solution quality | Performance metrics, expert evaluation [1] |
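The System Usability Scale listed in the table above has a fixed scoring rule that is easy to get wrong by hand. The sketch below assumes the standard ten-item form with responses from 1 (strongly disagree) to 5 (strongly agree). Odd-numbered items contribute the response minus 1, even-numbered items contribute 5 minus the response, and the sum is multiplied by 2.5 to give a 0-100 score. The function name `sus_score` and the example responses are hypothetical.

```python
def sus_score(responses):
    """Score one completed 10-item System Usability Scale questionnaire (0-100)."""
    if len(responses) != 10:
        raise ValueError("SUS requires exactly 10 item responses")
    if any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("each response must be an integer from 1 to 5")
    total = 0
    for item_number, response in enumerate(responses, start=1):
        if item_number % 2 == 1:
            total += response - 1   # odd items are positively worded
        else:
            total += 5 - response   # even items are negatively worded
    return total * 2.5


# Hypothetical responses from one participant for one telepresence system.
print(sus_score([4, 2, 5, 1, 4, 2, 4, 2, 5, 1]))
```

Scoring each participant per system condition produces values that can be compared alongside the task performance measures.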

Protocol for Robotic Telepresence in Monitoring Scenarios

Objective: To evaluate the effectiveness of telepresence robots for remote BLSS monitoring and inspection tasks.

Materials:

- Telepresence robot with camera, microphone, and navigation capabilities

- Simulated or actual BLSS environment with multiple monitoring points

- Standardized checklist of system parameters for assessment

- Data collection forms for recording observation accuracy

Procedure:

- Environment Preparation:

- Establish a BLSS monitoring scenario with multiple inspection stations

- Introduce simulated anomalies or predetermined observation targets at specific locations

- Participant Training:

- Provide standardized robot operation training to all participants

- Allow practice time until proficiency is demonstrated with basic navigation and inspection tasks

- Task Execution:

- Participants complete timed monitoring routines using the telepresence robot

- Tasks include:

- Navigation to specific monitoring stations

- Reading and reporting simulated instrument values

- Identifying and describing pre-placed "anomalies" in the system

- Conducting visual assessment of plant growth or system status

- Data Collection:

- Record navigation efficiency (time to reach stations, path efficiency)

- Measure observation accuracy (correct identification of anomalies, accurate reading of instruments)

- Administer workload assessment (NASA-TLX) and usability questionnaires

Analysis:

- Compare monitoring task performance between telepresence robot conditions and direct observation

- Analyze relationship between navigation proficiency and observation accuracy

- Evaluate variation in performance across different BLSS monitoring task types

- Identify common operational challenges and interface limitations
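The data collection measures in this protocol can be reduced to a few simple numbers per trial. The sketch below is one possible way to do so, under stated assumptions: path efficiency is defined here as straight-line distance divided by travelled distance, observation accuracy as the fraction of placed anomalies that were found, and workload as the unweighted ("raw") NASA-TLX mean of the six subscale ratings. All function names and sample values are hypothetical.

```python
from math import hypot
from statistics import mean


def path_efficiency(points):
    """Straight-line distance / travelled distance (1.0 means a direct route)."""
    travelled = sum(
        hypot(x2 - x1, y2 - y1) for (x1, y1), (x2, y2) in zip(points, points[1:])
    )
    if travelled == 0:
        return 1.0
    (x0, y0), (xn, yn) = points[0], points[-1]
    return hypot(xn - x0, yn - y0) / travelled


def detection_rate(found, placed):
    """Fraction of pre-placed anomalies that the participant identified."""
    return found / placed


def raw_tlx(ratings):
    """Unweighted mean of the six NASA-TLX subscale ratings (0-100 each)."""
    if len(ratings) != 6:
        raise ValueError("expected six subscale ratings")
    return mean(ratings.values())


# Made-up trial data for illustration only.
route = [(0.0, 0.0), (3.0, 0.0), (3.0, 4.0)]  # logged robot positions in metres
workload = {
    "mental": 60, "physical": 20, "temporal": 50,
    "performance": 30, "effort": 70, "frustration": 40,
}
print(path_efficiency(route), detection_rate(3, 4), raw_tlx(workload))
```

Computing the same measures for the direct-observation condition gives the baseline for the comparison described under Analysis.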

This protocol adapts methodologies successfully employed in healthcare telepresence research, where TPRs have been evaluated for tasks including patient assessment, environmental monitoring, and equipment operation [1]. The structured approach allows for systematic comparison between telepresence options and identification of optimal implementation strategies for specific BLSS monitoring requirements.

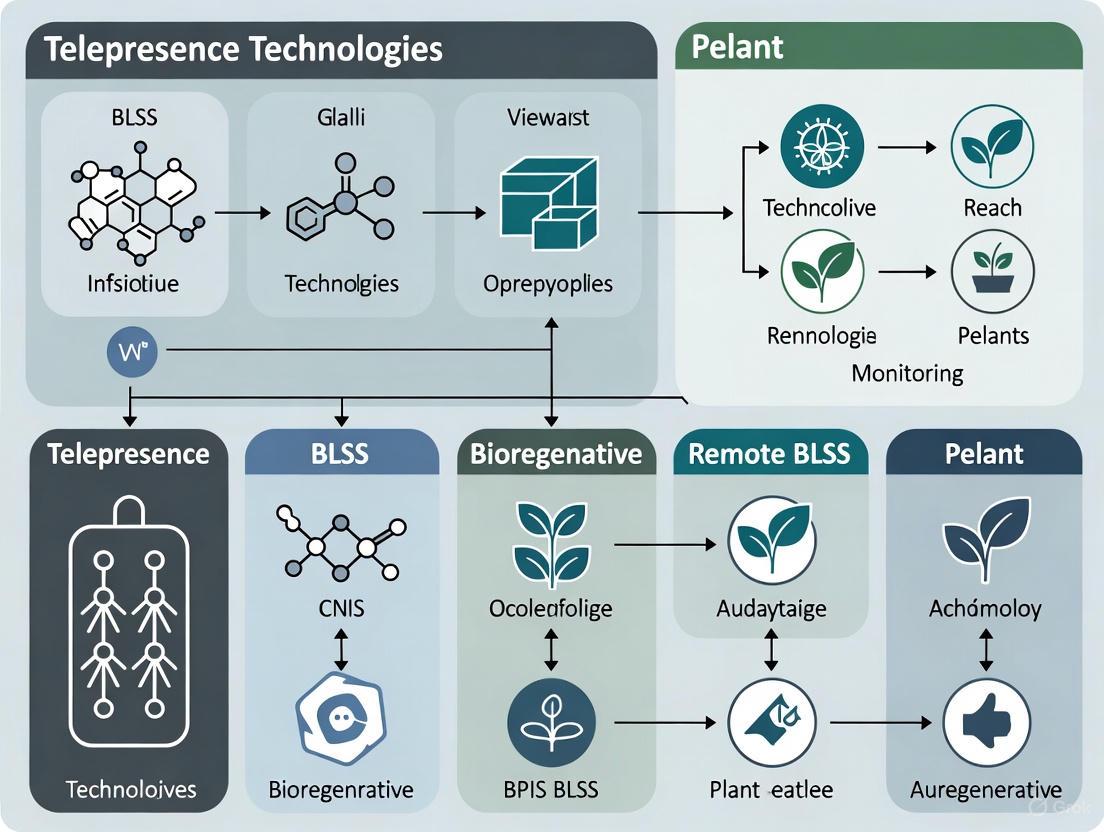

Visualization of Telepresence System Workflows

The following diagrams illustrate key workflows and system architectures for telepresence technologies relevant to BLSS monitoring applications.

Diagram 1: Telepresence System Selection Workflow

Diagram 2: Robotic Telepresence Monitoring Protocol

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential Components for Telepresence Research Implementation

| Component Category | Specific Items | Research Function |

|---|---|---|

| Core Telepresence Systems | Room-based telepresence systems, Personal telepresence units, Telepresence robots (TPRs) | Provide foundational remote presence capabilities for different monitoring scenarios [6] [7] |

| Sensing and Perception | HD cameras with zoom capability, Depth-sensing cameras (e.g., Intel D435), Microphone arrays, Environmental sensors | Capture visual, auditory, and environmental data from remote locations [9] [1] |

| Interface and Control | Tablets/computers for robot control, VR headsets for immersive viewing, Customized user interface software | Enable researchers to operate remote systems and interpret collected data [10] |

| Network Infrastructure | High-speed internet connectivity, 5G network equipment, Quality of Service (QoS) enabled routers | Ensure reliable, low-latency communication for real-time interaction [6] |

| Evaluation Tools | Social Presence Questionnaires, System Usability Scales, Task performance metrics, User satisfaction surveys | Quantitatively assess system effectiveness and user experience [9] [10] |

The selection of appropriate components for BLSS telepresence research should consider both current monitoring requirements and future scalability needs. Room-based telepresence systems with multiple codecs offer the highest fidelity for centralized monitoring stations where multiple researchers may collaborate in observing BLSS operations [6]. These systems typically incorporate high-resolution cameras capable of capturing fine details of plant development and system components, along with advanced audio systems that support natural conversation between remote and local team members.

Telepresence robots provide mobility for distributed monitoring applications, with systems ranging from simpler tablet-based implementations to sophisticated platforms with autonomous navigation capabilities [7] [1]. For BLSS applications, TPRs with adjustable height capabilities offer advantages for inspecting systems at different vertical levels, while obstacle detection sensors prevent collisions with critical infrastructure. Research indicates that customized user interfaces specifically designed for researcher requirements significantly enhance operational efficiency and reduce cognitive load during extended monitoring sessions [10].

Specialized sensors integrated with telepresence systems expand monitoring capabilities beyond standard audio-visual communication. Depth-sensing cameras, such as the Intel D435 used in the Portal Display system, enable more accurate spatial understanding and can support 3D reconstruction of the remote environment [9]. Environmental sensors for parameters including temperature, humidity, CO2 levels, and light intensity can be streamed alongside video feeds, providing comprehensive situational awareness for BLSS management. The integration of these diverse data streams into coherent user interfaces represents an ongoing research challenge with significant implications for monitoring effectiveness.

Medical telepresence represents a revolutionary shift in healthcare delivery, enabling remote clinical consultation, monitoring, and intervention through robotic and virtual presence technologies. These systems integrate audio, video, and mobility capabilities to allow healthcare providers to interact with patients, colleagues, and medical environments across geographic barriers. The global COVID-19 pandemic dramatically accelerated adoption of telepresence solutions, establishing them as critical infrastructure for modern healthcare systems [1] [11]. For researchers investigating Bioregenerative Life Support Systems (BLSS), medical telepresence offers a compelling analog for remote monitoring and intervention in isolated, confined environments where direct human presence may be limited or impossible. The evolution of these technologies provides valuable insights into the technical and human-factors requirements for sustaining life in extreme environments through remote means.

This article analyzes the market growth and adoption trends of medical telepresence technologies, with particular emphasis on their application to remote BLSS monitoring research. We examine quantitative market data, present experimental protocols for technology validation, and explore the specialized requirements for monitoring closed-loop biological systems where continuous, non-invasive observation is essential for system stability and experimental integrity.

Market Landscape and Quantitative Analysis

The medical telepresence market encompasses diverse technologies including mobile telepresence robots, 3D telepresence systems, and integrated remote monitoring platforms. Current market data reveals robust growth across all segments, fueled by technological advancements and changing healthcare delivery models.

Global Market Size and Projections

Table 1: Medical Telepresence Market Size and Growth Projections

| Market Segment | 2023/2024 Value | 2030/2034 Projection | CAGR | Data Source |

|---|---|---|---|---|

| Medical Telepresence Robots | USD 70.5 million (2024) | USD 110.5 million (2034) | 4.4% | [12] |

| Telepresence Robots (Overall) | USD 368.33 million (2024) | USD 1,251.53 million (2032) | 16.5% | [13] |

| 3D Telepresence | USD 2.08 billion (2023) | USD 5.66 billion (2030) | 15.37% | [14] |

| Telehealth Services (Overall) | USD 57.6 billion (2024) | USD 505.3 billion (2034) | 24.3% | [15] |

The disparity between the specialized medical telepresence robot market and the broader telehealth services market indicates that while dedicated medical robots represent a smaller market segment, they operate within a rapidly expanding digital health ecosystem. The significant growth in 3D telepresence suggests a trend toward more immersive remote experiences, which holds particular relevance for BLSS monitoring where spatial perception and depth recognition may be critical for accurate system assessment [14].
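The growth rates in Table 1 follow the usual compound annual growth rate formula, CAGR = (end / start)^(1 / years) - 1. The short sketch below recomputes it from the tabulated start and end values; the function name `cagr` is illustrative, and small differences from the reported figures can arise from the base year or rounding used by each source.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate as a fraction (0.10 means 10% per year)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# (start value, end value, start year, end year) as listed in Table 1.
segments = {
    "Medical telepresence robots (USD million)": (70.5, 110.5, 2024, 2034),
    "Telepresence robots overall (USD million)": (368.33, 1251.53, 2024, 2032),
    "3D telepresence (USD billion)": (2.08, 5.66, 2023, 2030),
    "Telehealth services overall (USD billion)": (57.6, 505.3, 2024, 2034),
}

for name, (start, end, first_year, last_year) in segments.items():
    print(f"{name}: {cagr(start, end, last_year - first_year):.1%}")
```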

Adoption Trends by Sector and Region

Table 2: Medical Telepresence Adoption Trends by Sector and Region

| Adoption Category | Leading Segment/Region | Market Share/Characteristics | Data Source |

|---|---|---|---|

| Robot Type | Mobile Telepresence Robots | 62.67% market share in 2025 | [13] |

| Application Sector | Enterprise/Corporate | 46.28% market share in 2025 | [13] |

| Regional Adoption | North America | Dominant market position | [13] |

| End-User | Healthcare Providers | Expanding through telemedicine | [15] |

The dominance of mobile platforms reflects the importance of navigational capability in medical environments, a requirement that translates directly to BLSS monitoring where fixed camera systems provide limited contextual awareness. Regional adoption patterns highlight the influence of technological infrastructure, with North America's leadership attributable to advanced connectivity ecosystems and earlier adoption of robotic solutions [13].

Experimental Protocols for Medical Telepresence Evaluation

Rigorous assessment protocols are essential for validating telepresence systems in clinical and research environments. The following experimental frameworks provide methodologies for evaluating system performance, user experience, and technical reliability.

Protocol 1: Simulated Clinical Scenario Testing

Objective: To evaluate telepresence robot functionality and user acceptance in controlled healthcare environments.

Methodology:

- Participant Recruitment: Employ non-random purposive sampling of healthcare professionals (n≥25) with varying technical proficiency [1].

- Scenario Development: Create simulated clinical scenarios including:

  - Patient anamnesis (medical history taking)

  - Vital signs measurement integration

  - Emergency response simulation (falls, frailty assessment) [1]

- Environment Setup: Conduct testing in medical simulation laboratories replicating clinical settings with standardized lighting, acoustic properties, and network conditions.

- System Configuration: Implement mobile telepresence robots equipped with:

  - High-definition cameras (minimum 1080p resolution)

  - Dual-microphone arrays for noise suppression

  - Automated navigation capabilities

  - Secure data transmission protocols

- Data Collection:

  - Quantitative: Task completion time, error rates, number of technical interventions

  - Qualitative: Structured interviews using Likert scales for usability, presence, and communication quality [1]

Analysis: Employ mixed-methods approach combining descriptive statistics for quantitative measures and thematic analysis for qualitative feedback.

Protocol 2: BLSS Monitoring Integration Framework

Objective: To establish technical requirements and validation protocols for telepresence integration with BLSS monitoring.

Methodology:

- System Architecture Implementation:

  - Deploy dew-roof-fog-cloud computing hierarchy for distributed data processing [16]

  - Install sensor networks for continuous physiological and environmental monitoring

  - Implement redundant communication pathways for fault tolerance

- Performance Metrics Establishment:

  - Response time: Target <100ms for critical alerts

  - Bandwidth utilization: Optimize for limited connectivity scenarios

  - Energy consumption: Minimize for extended deployment

- Validation Testing:

  - Conduct controlled disconnection tests to evaluate offline functionality

  - Implement security stress testing for data protection verification

  - Perform longitudinal reliability assessment over 30-day continuous operation

Analysis: Compare performance against traditional cloud-centric models for response time, energy efficiency, and bandwidth utilization.
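The controlled disconnection test in this protocol checks that readings taken while the uplink is down are not lost. One way to picture the offline behaviour being tested is a local store-and-forward buffer, sketched below. This is an illustrative assumption, not the mechanism described in [16]; the class `DewBuffer`, its parameters, and the `send` callback are all hypothetical.

```python
from collections import deque


class DewBuffer:
    """Hypothetical local buffer: queue readings while offline, flush on reconnect."""

    def __init__(self, max_items=10_000):
        # A bounded deque drops the oldest reading once the buffer is full.
        self.pending = deque(maxlen=max_items)

    def record(self, reading, link_up, send):
        """Store a reading locally and upload the backlog if the link is available."""
        self.pending.append(reading)
        if link_up:
            self.flush(send)

    def flush(self, send):
        """Upload buffered readings in order; returns how many were sent."""
        sent = 0
        while self.pending:
            send(self.pending[0])    # if this raises, the reading stays queued
            self.pending.popleft()   # remove only after a successful send
            sent += 1
        return sent


# Simulated disconnection: two readings taken offline, the third after reconnect.
uploaded = []
buffer = DewBuffer()
buffer.record({"co2_ppm": 410}, link_up=False, send=uploaded.append)
buffer.record({"co2_ppm": 415}, link_up=False, send=uploaded.append)
buffer.record({"co2_ppm": 420}, link_up=True, send=uploaded.append)
print(uploaded)
```

A disconnection test would then compare the readings received upstream with the readings generated locally and report any gaps.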

Technical Architecture for Remote BLSS Monitoring

Advanced medical telepresence systems employ sophisticated computational architectures that enable reliable operation in challenging environments. The DeW-IoMT (Dew-Internet of Medical Things) framework provides a relevant model for BLSS monitoring applications where connectivity may be intermittent or limited.

This hierarchical architecture demonstrates a 74.61% reduction in response time, 38.78% decrease in energy consumption, and 33.56% reduction in data transmission compared to traditional cloud-centric models [16]. For BLSS applications, this efficiency translates to more sustainable operation in resource-constrained environments and greater resilience during communication disruptions.
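To make the reported percentages concrete, the sketch below applies the 74.61% response-time reduction to a baseline and checks the result against the <100ms alert target from Protocol 2. The 200 ms cloud-centric baseline is a made-up figure for illustration only, and both function names are hypothetical.

```python
def percent_reduction(baseline, candidate):
    """Reduction of `candidate` relative to `baseline`, in percent."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - candidate) / baseline * 100.0


def after_reduction(baseline, reduction_pct):
    """Value remaining after reducing `baseline` by `reduction_pct` percent."""
    return baseline * (1 - reduction_pct / 100.0)


# Hypothetical baseline: a cloud-centric alert path that takes 200 ms.
cloud_ms = 200.0
dew_ms = after_reduction(cloud_ms, 74.61)  # reduction figure reported in [16]
print(f"{dew_ms:.2f} ms, meets <100 ms target: {dew_ms < 100}")
```

The same two functions apply to the energy and data-transmission figures once measured baselines are available.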

Research Reagent Solutions for Telepresence Experiments

Table 3: Essential Research Materials for Telepresence Experimental Protocols

| Category | Specific Solution | Research Function | Application Context |

|---|---|---|---|

| Hardware Platforms | Mobile Telepresence Robots (e.g., Double Robotics, OhmniLabs) | Remote physical presence and navigation | Clinical simulations, BLSS facility inspection |

| Sensor Systems | Pulse sensors, environmental monitors | Physiological and environmental data acquisition | Patient vitals monitoring, BLSS parameter tracking |

| Computing Infrastructure | Arduino/Raspberry Pi devices | Dew computing layer implementation | Local data processing in connectivity-limited environments |

| Network Components | 5G compatible modems, redundant connectivity modules | High-speed, low-latency data transmission | Real-time video transmission for remote diagnosis |

| Software Platforms | HIPAA-compliant video conferencing, secure data storage | Protected health information management | Patient data security in clinical trials |

| Testing Tools | iFogSim simulation software | Fog layer performance analysis | Architecture optimization for specific use cases |

The research reagents outlined in Table 3 represent the core components required for experimental implementation of medical telepresence systems. For BLSS research applications, particular emphasis should be placed on environmental monitoring sensors and robust computing infrastructure capable of operating in potentially isolated environments with limited technical support [16] [12].

Emerging Trends and Research Directions

The medical telepresence landscape continues to evolve with several trends particularly relevant to BLSS monitoring applications:

Technology Integration Trends

Artificial Intelligence Implementation: AI algorithms are increasingly being embedded in telepresence platforms to enable predictive analytics, automated anomaly detection, and personalized interaction patterns. For BLSS research, these capabilities could enable early identification of system imbalances or biological stress indicators before they reach critical levels [17] [12].

5G and Advanced Connectivity: The rollout of 5G networks significantly enhances telepresence capabilities through reduced latency and increased bandwidth. This enables higher-quality video transmission and more responsive remote control, essential for detailed visual assessment of biological systems in BLSS environments [12].

Dew Computing Architectures: The development of more sophisticated edge computing capabilities supports continued operation during connectivity disruptions. This resilience is particularly valuable for BLSS applications in extreme environments where communication infrastructure may be unreliable [16].

Implementation Challenges

Despite promising advances, several challenges persist in medical telepresence implementation:

Technical Reliability: Operational failures due to technical complexities remain a significant concern, with connectivity issues, software glitches, and hardware malfunctions potentially disrupting critical monitoring functions [13].

User Acceptance: Resistance to technology adoption among healthcare professionals and patients continues to impede widespread implementation. This highlights the importance of intuitive design and comprehensive training protocols [1].

Regulatory Compliance: Evolving regulatory frameworks for telehealth and data privacy create compliance challenges, particularly for cross-border research collaborations relevant to international BLSS initiatives [17] [11].

Medical telepresence technologies have evolved from conceptual innovations to essential healthcare tools, with demonstrated efficacy in expanding care access and enabling remote specialist involvement. The market growth trajectory indicates accelerating adoption across healthcare sectors, supported by advancing technology and evolving reimbursement models. For BLSS research, these technologies offer a framework for remote monitoring of closed-loop biological systems, with particular value for applications in isolated or extreme environments. The experimental protocols and technical architectures presented provide a foundation for adapting medical telepresence solutions to BLSS monitoring requirements. As dew computing architectures advance and AI integration deepens, the capabilities of these systems will continue to expand, offering increasingly sophisticated tools for sustaining and monitoring biological life support systems in remote settings.

Telepresence robots are sophisticated cyber-physical systems that enable users to project a real-time, interactive presence into a remote environment. These robots function as the physical avatar for a remote operator, combining advanced sensing, communication, and mobility technologies to create an immersive experience for both the operator and individuals in the robot's environment. For researchers working on remote Bioregenerative Life Support System (BLSS) monitoring, these systems provide a critical capability for maintaining continuous oversight of complex biological systems without physical intrusion. The architecture of a modern telepresence robot rests on three fundamental technological pillars: cameras for visual perception, sensors for environmental awareness and navigation, and communication systems for real-time data transmission and control [18] [19].

The operational paradigm for BLSS monitoring applications requires particularly robust implementations of these core components. Unlike standard commercial applications, BLSS monitoring demands exceptional reliability, precise data collection capabilities, and seamless operation over potentially extended mission durations. The cameras must capture not only general scene information but also specific biological indicators; sensors must monitor both the robot's navigation and the BLSS's environmental parameters; and communication systems must maintain uninterrupted connectivity for continuous data streaming and command transmission [20] [1].

Core Component Analysis

Camera Systems

Visual perception systems in telepresence robots serve as the primary sensory interface for remote operators, delivering critical visual data that enables environmental assessment and decision-making. For BLSS research applications, these systems require capabilities beyond standard video conferencing, including the ability to monitor plant growth, assess organism health, and identify potential system anomalies.

Table: Camera System Specifications for Research-Grade Telepresence Robots

| Parameter | Standard Configuration | Research-Grade (BLSS) Configuration | Functional Impact |

|---|---|---|---|

| Resolution | 1080p (Full HD) [2] | 4K Ultra HD (8MP+) [21] | Enables detailed inspection of plant health, microbial cultures, and system components |

| Field of View | Standard wide-angle (~110°) [2] | Ultra-wide angle with digital pan/tilt/zoom [18] | Provides comprehensive environmental awareness and focused inspection capability |

| Frame Rate | 30 fps [18] | 60 fps or higher [20] | Ensures smooth video for navigating complex environments and observing dynamic processes |

| Low-Light Performance | Standard CMOS sensor [18] | Low-light optimized and IR-capable sensors [20] | Allows for monitoring during simulated night cycles without disrupting photoperiods |

| Specialized Imaging | RGB only [19] | Multi-spectral or hyperspectral capabilities [20] | Facilitates advanced plant health monitoring and physiological assessment beyond visible spectrum |

Camera systems in advanced telepresence robots employ sophisticated image processing algorithms to optimize video quality, reduce bandwidth requirements through efficient compression, and maintain low latency for real-time operator feedback. The Ohmni Robot, for instance, incorporates a 4K ultra-high-definition wide-angle camera combined with a highly responsive tilting mechanism, providing an immersive visual experience with preserved detail [21]. For BLSS applications, this granular visual detail is essential for detecting subtle changes in plant coloration, water surface characteristics, or condensation patterns that might indicate system imbalances.

Sensor Systems

Sensor suites form the autonomous intelligence foundation of telepresence robots, enabling both self-navigation and environmental data acquisition. These systems transform the robot from a simple remotely-controlled camera into an intelligent mobile sensing platform capable of operating semi-independently while collecting vital BLSS parameters.

Table: Sensor Configurations for Environmental Navigation and BLSS Monitoring

| Sensor Type | Primary Function | BLSS Research Application | Integration Method |

|---|---|---|---|

| LIDAR | Spatial mapping and obstacle avoidance [18] | 3D mapping of growth chamber layout and biomass structure | Robot-native integration for navigation |

| Ultrasonic/Infrared | Close-range obstacle detection [18] | Proximity detection for delicate experimental apparatus | Robot-native integration for safety |

| Inertial Measurement Unit (IMU) | Position tracking and orientation [18] | Localization within BLSS modules and motion stability | Robot-native integration for navigation |

| Environmental (Temp, Humidity, CO2) | Basic ambient monitoring [19] | Core BLSS parameter tracking and system health validation | Add-on modular sensor package |

| Gas Sensors (O2, VOCs, Ethylene) | Not typically included | Advanced atmospheric composition monitoring in closed-loop systems | Add-on research-grade sensor package |

| Hyperspectral/NDVI Sensors | Not typically included | Non-destructive plant health and stress assessment | Add-on specialized imaging system |

The sensor and control system constitutes a critical component of the robot's body, working in concert with processing algorithms to interpret sensor data and execute navigation commands [19]. Prior work in healthcare settings shows that effective sensor integration is crucial for operational reliability [1], a finding directly transferable to the high-reliability demands of BLSS monitoring. Advanced platforms like the CPR-OS support the integration of additional sensor modalities, including IR cameras, and can merge this sensor data with video streams to create a comprehensive environmental dataset [20].

Communication Systems

Communication infrastructure serves as the critical link between the remote researcher and the telepresence robot operating within the BLSS environment. This bidirectional data pipeline must simultaneously handle high-bandwidth video/audio streams, sensor data transmission, and low-latency command signals with exceptional reliability.

Table: Communication Protocols and Performance Requirements

| Communication Technology | Data Rate Requirements | Latency Tolerance | BLSS Application Context |

|---|---|---|---|

| Wi-Fi 6/6E (802.11ax) | High (50+ Mbps for 4K video) [22] | Low (<100ms) [18] | Primary connectivity for indoor BLSS facilities with existing infrastructure |

| 5G Cellular | High (100+ Mbps) [18] | Very Low (<50ms) [23] | Mobile applications or facilities without dedicated Wi-Fi; future-proof for lunar/Martian networks |

| Ethernet (Wired) | Very high (1 Gbps+), with maximum reliability | Lowest (<10ms) | Preferred for fixed monitoring stations where mobility is not critical |

| Bluetooth/LE | Low (1-2 Mbps for sensor data) | Moderate (<200ms) | Secondary connection for peripheral sensors and control devices |

Modern telepresence robots utilize advanced connectivity modules—typically Wi-Fi, 4G/5G, or Ethernet—to ensure seamless data transmission between the robot and the user's device [18]. Cloud-based platforms often host control interfaces, data storage, and analytics, providing a centralized hub for operations [18]. For BLSS applications requiring secure and reliable data transmission, implementations utilize encryption protocols like TLS and end-to-end encryption to protect sensitive data and operational commands [18]. The CPR-OS exemplifies modern communication architecture, supporting advanced video formats and real-time data streaming to multiple endpoints simultaneously, a capability valuable for collaborative BLSS research and monitoring [20].
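As a minimal sketch of the TLS protection mentioned above, the Python standard library can build a client-side context that verifies the server certificate and refuses protocol versions older than TLS 1.2. This shows only generic transport security, not the configuration of any particular robot platform; the function name and the TLS 1.2 floor are assumptions chosen for illustration.

```python
import ssl


def make_client_tls_context():
    """Client TLS context with certificate and hostname verification enabled."""
    # create_default_context() turns on certificate and hostname checks.
    context = ssl.create_default_context()
    # Assumed policy for this sketch: reject anything older than TLS 1.2.
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


context = make_client_tls_context()
print(context.minimum_version, context.verify_mode, context.check_hostname)
```

A telemetry client would pass this context to `context.wrap_socket(sock, server_hostname=...)` before sending sensor data or commands, with the hostname supplied by the deployment.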

Experimental Protocols for Component Validation

Protocol 1: Camera System Performance Assessment

Objective: To quantitatively evaluate the performance of telepresence robot camera systems for BLSS monitoring applications, focusing on resolution, color accuracy, and low-light performance.

Materials:

- Telepresence robot unit (e.g., Ohmni Robot, Double Robotics)

- Standardized test chart (ISO 12233 resolution chart)

- Color calibration chart (X-Rite ColorChecker Classic)

- Illuminance meter

- Adjustable lighting system (0-2000 lux)

- Data recording station with calibrated reference display

Methodology:

- Setup Phase: Position the robot 2 meters from the test charts in a controlled lighting environment. Establish a stable communication link between the robot and the monitoring station.

- Resolution Testing: Systematically vary illumination levels (50, 200, 1000 lux) to simulate different BLSS operating conditions. Capture images of the resolution chart at each level. Use Imatest or equivalent software to calculate MTF50 values for center and edge regions.

- Color Accuracy Assessment: At 1000 lux illumination, capture an image of the ColorChecker chart. Analyze 24 color patches using color difference analysis (ΔE*ab) compared to reference values.

- Low-Light Performance: Gradually reduce illumination to 10 lux while capturing video of a simulated BLSS scene containing plant specimens. Subjectively evaluate usable video quality and objectively measure signal-to-noise ratio.

- Latency Measurement: Using a high-speed camera (240 fps), record the time between a physical movement in the robot's environment and its appearance on the operator's display.

Data Analysis: Calculate minimum resolvable detail (in lp/PH) across illumination conditions, average color error (ΔE), and total system latency. Compare results against BLSS monitoring requirements, where ΔE < 5 and latency < 200ms are considered minimum performance thresholds [2] [21].
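The color error and latency figures in this analysis come from two short calculations, sketched below. ΔE*ab is the Euclidean distance between measured and reference CIELAB values, and latency is the number of high-speed camera frames between the physical event and its appearance on the display, divided by the frame rate. The Lab values and frame count are made-up examples, converting camera RGB output to CIELAB is outside this sketch, and the function names are hypothetical.

```python
from math import sqrt
from statistics import mean


def delta_e_ab(lab_measured, lab_reference):
    """CIE76 color difference between two (L*, a*, b*) triples."""
    return sqrt(sum((m - r) ** 2 for m, r in zip(lab_measured, lab_reference)))


def mean_delta_e(measured_patches, reference_patches):
    """Average color error over all chart patches (24 for the ColorChecker Classic)."""
    return mean(
        delta_e_ab(m, r) for m, r in zip(measured_patches, reference_patches)
    )


def latency_ms(frames_elapsed, camera_fps=240):
    """Glass-to-glass latency from a frame count in the high-speed recording."""
    return frames_elapsed / camera_fps * 1000.0


# Illustrative values only: two patches and a 36-frame delay.
measured = [(50.0, 2.0, 2.0), (70.0, 10.0, -5.0)]
reference = [(50.0, -1.0, -2.0), (70.0, 10.0, -5.0)]
avg_error = mean_delta_e(measured, reference)
delay = latency_ms(36)
print(avg_error < 5, delay < 200)  # thresholds stated in the protocol
```

At 240 fps each frame spans about 4.2 ms, which sets the resolution of the latency measurement.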

Protocol 2: Sensor Integration and Navigation Accuracy

Objective: To validate the performance of integrated sensor systems for autonomous navigation and environmental monitoring in a simulated BLSS environment.

Materials:

- Telepresence robot with LIDAR and environmental sensors

- Mock BLSS setup with plant growth racks, instrumentation, and obstacles

- Reference environmental sensors (research-grade CO2, O2, temperature, humidity)

- Motion capture system or reference positioning system

- Data logging equipment

Methodology:

- Environment Mapping: Deploy the robot in the mock BLSS environment and execute its autonomous mapping routine. Compare the generated map with ground truth blueprint measurements.

- Navigation Testing: Program a series of waypoints throughout the environment, including narrow passages between equipment. Execute 10 repeated autonomous navigation trials, recording success rate, deviation from planned path, and obstacle avoidance performance.

- Environmental Monitoring: Position the robot at predetermined monitoring stations within the mock BLSS. Collect environmental data from both the robot's sensors and reference instruments simultaneously over a 24-hour period.

- Sensor Fusion Assessment: Evaluate how effectively the robot integrates multiple sensor inputs (LIDAR, IMU, camera) for localization and navigation, particularly in areas with similar visual features.

Data Analysis: Calculate root mean square error for positional accuracy, compare environmental sensor readings against reference values using Bland-Altman analysis, and document any navigation failures or manual interventions required [20] [1].
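Both statistics named in this analysis are short to compute. The sketch below assumes positional errors are already expressed as distances in metres and that robot and reference sensors were sampled at the same times; the Bland-Altman summary reports the mean difference (bias) and the 95% limits of agreement, bias ± 1.96 standard deviations of the differences. Function names and sample readings are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev


def rmse(errors):
    """Root mean square of a sequence of error values."""
    return sqrt(mean(e * e for e in errors))


def bland_altman(robot_readings, reference_readings):
    """Return (bias, lower limit, upper limit) for paired measurements."""
    differences = [a - b for a, b in zip(robot_readings, reference_readings)]
    bias = mean(differences)
    spread = 1.96 * stdev(differences)  # sample standard deviation
    return bias, bias - spread, bias + spread


# Made-up example: waypoint position errors (m) and paired CO2 readings (ppm).
position_errors = [0.03, 0.04, 0.02, 0.05]
robot_co2 = [401.0, 399.0, 402.0, 398.0]
reference_co2 = [400.0, 400.0, 400.0, 400.0]

print(rmse(position_errors))
print(bland_altman(robot_co2, reference_co2))
```

A bias far from zero points to a calibration offset in the robot's sensor, while wide limits of agreement point to noisy or inconsistent readings.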

Protocol 3: Communication System Reliability

Objective: To stress-test communication systems under conditions simulating BLSS operational environments, including network variability and interference.

Materials:

- Telepresence robot unit

- Network emulation hardware (e.g., Wi-Fi attenuator)

- Spectrum analyzer

- Packet capture software (e.g., Wireshark)

- Multiple access points for roaming tests

Methodology:

- Baseline Performance: Establish optimal connection conditions and measure baseline video quality (SSIM index), audio latency, and control responsiveness.

- Network Degradation Testing: Systematically introduce packet loss (0-10%), latency (0-500ms), and jitter (0-100ms) using network emulation tools. At each degradation level, assess operational performance and video quality.

- Roaming Tests: Evaluate seamless handoff between access points as the robot moves through different sections of a facility, measuring interruption duration during handoffs.

- Long-Duration Stability: Conduct a continuous 72-hour operational test with the robot performing predefined monitoring patterns, logging all communication failures and quality degradation events.

Data Analysis: Determine minimum network requirements for reliable BLSS operation, identify failure modes during network degradation, and quantify reliability metrics (uptime, mean time between failures) [18] [22].
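The reliability metrics named here can be derived from the outage log of the 72-hour test. The sketch below assumes each communication failure is logged as a (start, end) pair in hours since the start of the test, and uses the common definition of mean time between failures as operating time divided by the number of failures. The function name and the logged values are illustrative.

```python
def reliability_metrics(test_hours, outages):
    """Return (availability fraction, MTBF in hours) from logged outages."""
    downtime = sum(end - start for start, end in outages)
    operating_hours = test_hours - downtime
    availability = operating_hours / test_hours
    # With no failures, MTBF is undefined; report infinity for this sketch.
    mtbf = operating_hours / len(outages) if outages else float("inf")
    return availability, mtbf


# Made-up log: a 30-minute outage at hour 10 and a 1-hour outage at hour 40.
availability, mtbf = reliability_metrics(72.0, [(10.0, 10.5), (40.0, 41.0)])
print(f"uptime {availability:.2%}, MTBF {mtbf:.2f} h")
```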

System Integration and Workflow

The core components of a telepresence robot do not operate in isolation. Cameras, sensors, and communication systems function as one integrated system, and remote presence depends on all three working together: a failure in any one of them degrades what the operator can see, measure, or control.

Diagram: Telepresence Robot System Architecture for BLSS Monitoring

This systems architecture illustrates how the core components interact to create a functional telepresence robot. The sensing subsystem continuously acquires environmental data, which is processed by the central computing unit. The communication subsystem transmits this data to the remote operator while simultaneously receiving control commands. Finally, the actuation subsystem executes navigation commands and facilitates social interaction through audio-visual components [18] [20].

For BLSS applications, this integrated workflow enables:

- Continuous Monitoring: Environmental sensors track BLSS parameters while navigation sensors enable autonomous patrols

- Remote Intervention: High-quality video and audio allow researchers to assess system status and guide maintenance procedures

- Data Correlation: Simultaneous collection of visual, environmental, and positional data creates comprehensive system understanding

- Adaptive Operation: Sensor fusion allows the robot to modify its behavior based on environmental conditions, such as focusing camera attention on areas with anomalous sensor readings
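The adaptive operation item can be illustrated with a simple rule: flag a station when its latest reading deviates strongly from that station's own recent history, then direct the camera to the most anomalous stations first. The sketch below uses a z-score with an assumed threshold of 3; the rule, the threshold, the station names, and the readings are all illustrative assumptions rather than a method from the cited sources.

```python
from statistics import mean, stdev


def stations_to_inspect(history, latest, z_threshold=3.0):
    """Return station names whose latest reading is anomalous, most extreme first."""
    flagged = []
    for station, past_readings in history.items():
        center = mean(past_readings)
        spread = stdev(past_readings)
        if spread == 0:
            continue  # no variation in history, so a z-score is undefined
        z = abs(latest[station] - center) / spread
        if z >= z_threshold:
            flagged.append((station, z))
    flagged.sort(key=lambda item: item[1], reverse=True)
    return [station for station, _ in flagged]


# Made-up CO2 readings (ppm) for two hypothetical growth racks.
history = {
    "rack_a": [400, 402, 398, 401, 399],
    "rack_b": [400, 402, 398, 401, 399],
}
latest = {"rack_a": 400, "rack_b": 450}
print(stations_to_inspect(history, latest))
```

In practice the threshold would be tuned per parameter, since normal variation in CO2, humidity, and temperature differs considerably.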

The Researcher's Toolkit

Table: Essential Research Reagents and Hardware Solutions for Telepresence Robotics

| Component Category | Specific Solution/Product | Research Application | Implementation Notes |

|---|---|---|---|

| Platform Architecture | CPR-OS (TRC Robotics) [20] | Development framework for custom BLSS applications | Provides secure authentication (CPR-ID chip) and supports hardware accessory integration |

| Camera System | Ohmni Robot 4K UHD Camera [21] | High-fidelity visual inspection of BLSS components | Offers wide-angle view with responsive tilting; suitable for detailed plant health monitoring |

| Navigation Sensor | LIDAR-based Mapping [18] | Autonomous navigation in structured BLSS environments | Enables creation of precise environment maps and obstacle avoidance during monitoring routes |

| Environmental Sensing | Modular Sensor Packages [20] | Customized BLSS parameter monitoring | Allows integration of research-specific sensors (gas, atmospheric, water quality) via API |

| Communication Security | End-to-End Encryption [18] | Protection of sensitive research data | Implements TLS and other protocols to secure video feeds and experimental data |

| Development Platform | CPR SDK & App Store [20] | Custom application development | Enables creation of BLSS-specific behaviors and monitoring protocols through 3rd party development |

The effective implementation of telepresence robotics for BLSS monitoring and research depends on the careful integration and optimization of camera systems, sensor suites, and communication architecture. As demonstrated through the technical specifications and validation protocols outlined in this document, research-grade applications demand performance standards exceeding those of commercial telepresence solutions. The ongoing advancement in these core technologies—particularly in imaging resolution, sensor fusion algorithms, and 5G connectivity—promises even greater capabilities for remote BLSS operation and monitoring in future missions [23] [22]. By leveraging the component analysis and experimental frameworks provided herein, researchers can systematically evaluate, select, and implement telepresence robotics solutions that meet the rigorous demands of life support system research and development.

The integration of telepresence technologies is revolutionizing biomedical research by overcoming traditional limitations of physical presence and manual processes. This application note details how telepresence robots and continuous monitoring systems provide remote, real-time access to laboratory environments, enable uninterrupted data collection in critical settings such as pharmaceutical manufacturing, and significantly reduce contamination risks in sensitive experiments. Framed within the context of remote monitoring for Biological Life Support Systems (BLSS) research, this document provides validated protocols and quantitative data to guide researchers and drug development professionals in adopting these transformative technologies.

Remote Access via Medical Telepresence Robots

Telepresence robots are mobile devices equipped with audiovisual communication systems that allow researchers to interact with laboratory environments and collaborate with colleagues in real-time from any location. These systems are pivotal for enabling expert oversight and maintaining research continuity outside traditional laboratory settings [24].

Key Technical Specifications: Modern medical telepresence robots are typically outfitted with high-definition cameras for detailed visual inspection, two-way microphones and speakers for seamless communication, and mobility controls that allow remote navigation through laboratory spaces [24]. Some advanced models can be integrated with specialized sensors or robotic arms for basic manipulation tasks, though this remains an emerging capability.

Quantitative Market Growth: The adoption of this technology is accelerating. The medical telepresence robots market, valued at approximately $75 million in 2024, is projected to reach $116.47 million by 2034, reflecting a compound annual growth rate (CAGR) of 4.5% [24]. This growth is driven by the increasing demand for remote collaboration and access to specialized expertise.
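
The projection above can be checked with a short compound-growth calculation. The sketch below is illustrative only: the function name `project_value` and the variable names are assumptions introduced here, and the inputs are the rounded figures quoted from [24].

```python
def project_value(start_value: float, annual_rate: float, years: int) -> float:
    """Compound start_value at a constant annual_rate for the given number of years."""
    return start_value * (1.0 + annual_rate) ** years


# Figures quoted in the text: ~$75 million in 2024, 4.5% CAGR, horizon 2034.
start_2024_musd = 75.0
cagr = 0.045
projected_2034_musd = project_value(start_2024_musd, cagr, 2034 - 2024)

print(f"Projected 2034 market: ${projected_2034_musd:.2f} million")
```

Compounding $75 million at 4.5% for ten years gives about $116.47 million, which matches the quoted 2034 projection.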

Table 1: Key Features of Medical Telepresence Robots for Biomedical Research

| Feature | Description | Research Application |
|---|---|---|
| HD Cameras & Zoom | Provides high-resolution, close-up visual inspection of samples, equipment readouts, and cell cultures. | Remote data collection, visual monitoring of experimental outcomes, and equipment status verification. |
| Two-Way Audio/Video | Enables real-time communication between on-site and remote researchers. | Facilitation of collaborative experiment planning, troubleshooting, and peer review of procedures. |
| Remote Mobility | Allows the operator to navigate the robot through the lab environment from a distance. | Remote lab tours, monitoring of multiple workstation setups, and inspection of BLSS components. |
| Secure Data Transmission | Ensures that research data and intellectual property are protected during transmission. | Maintenance of data integrity and confidentiality, which is crucial for proprietary drug development research. |

Continuous Monitoring in Controlled Environments

Continuous monitoring involves the uninterrupted, real-time collection of environmental and process data throughout a critical operation. In biomedical research, this is essential for maintaining the integrity of classified environments like cleanrooms, where factors such as non-viable and viable particle counts are critical quality attributes [25].

This approach is a cornerstone of quality by design (QbD). Regulatory guidelines, such as the revised Annex 1 from the European Commission, explicitly advocate for continuous monitoring as the best practice for aseptic processes, stating that it should be undertaken "for the full duration of critical processing" [25]. This shift in regulatory expectation emphasizes the importance of capturing all interventions and transient events that sporadic sampling might miss.

Key Monitoring Parameters:

- Non-viable Particles: Continuous monitoring of particulate matter (e.g., ≥0.5 and ≥5 µm) with a suitable sample flow rate (at least 28 liters per minute) [25].

- Viable Particles: Continuous air sampling or the use of settle plates to monitor for microbial contamination throughout critical processes [25].

- Environmental Conditions: Tracking of temperature, humidity, and pressure differentials in real-time.
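
As a worked illustration of the flow-rate requirement, a raw count from a particle counter can be normalized to a concentration per cubic metre using the sampled volume. The following is a minimal sketch; the function name `particles_per_m3` and the example count are hypothetical and are not taken from [25] or from any instrument's software.

```python
def particles_per_m3(count: int, flow_l_per_min: float, sample_minutes: float) -> float:
    """Convert a raw particle count into a concentration in particles per cubic metre."""
    if flow_l_per_min <= 0 or sample_minutes <= 0:
        raise ValueError("flow rate and sample duration must be positive")
    # Sampled volume in cubic metres (1 m^3 = 1000 L).
    sampled_volume_m3 = flow_l_per_min * sample_minutes / 1000.0
    return count / sampled_volume_m3


# Hypothetical one-minute sample taken at the 28 L/min minimum flow rate.
conc = particles_per_m3(count=56, flow_l_per_min=28.0, sample_minutes=1.0)
print(f"{conc:.0f} particles/m^3")
```

In this example, 56 counts in 0.028 m³ of sampled air corresponds to 2000 particles per cubic metre. Normalizing in this way lets readings taken at different flow rates or sample durations be compared against the same limit.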

Table 2: Quantitative Data on Remote and Continuous Monitoring Adoption

| Parameter | Metric | Significance for Research |
|---|---|---|
| U.S. RPM Market Value (2024) | ~$14-15 Billion [17] | Indicates massive and growing investment in remote data collection technologies. |
| Projected U.S. RPM Market (2030) | >$29 Billion [17] | Reflects a CAGR of ~12-13%, signaling long-term sustainability. |
| American RPM Users (2025 Projection) | 71 Million (26% of population) [17] | Demonstrates widespread acceptance and normalization of remote monitoring. |
| Provider RPM Adoption (2023) | 81% of Clinicians [17] | Shows rapid integration into professional practice, supporting its reliability. |
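
The growth rate implied by the two market values in Table 2 can be recovered from the endpoints. The sketch below is a rough consistency check, assuming the rounded endpoints of $14-15 billion (2024) and $29 billion (2030); the function name `implied_cagr` is introduced here for illustration. Because the 2030 figure is stated as a lower bound, the results are lower bounds as well.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the constant annual growth rate that takes start_value to end_value."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("values and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


years = 2030 - 2024
# Upper end of the 2024 range gives the lower growth rate, and vice versa.
low = implied_cagr(15.0, 29.0, years)
high = implied_cagr(14.0, 29.0, years)

print(f"Implied CAGR range: {low:.1%} to {high:.1%}")
```

With these inputs the implied rate falls between roughly 11.6% and 12.9% per year, which is broadly in line with the ~12-13% figure given in the table.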

Experimental Protocol: Continuous Environmental Monitoring for Aseptic Experimentation

1. Objective: To ensure the continuous integrity of the experimental environment by monitoring non-viable and viable particle counts throughout the duration of a critical aseptic procedure.

2. Materials:

- Continuous laser particle counter (capable of monitoring ≥0.5 and ≥5 µm particles).

- Volumetric air sampler for viable particles.

- Data logging software with real-time alarm capabilities.

- 70% ethanol or 5-10% bleach disinfectants for surface decontamination [26].

3. Methodology:

- Risk Assessment & Sensor Placement: Conduct a risk assessment to identify critical control points for particle monitoring. Place sensors in locations representative of the air quality in the zone of operation [25].

- Calibration & Pre-check: Calibrate all monitoring equipment according to manufacturer specifications. Verify data logging and alarm functions.

- Baseline Recording: Initiate continuous monitoring at least 15 minutes before the experiment begins to establish an environmental baseline.

- In-Process Monitoring: Allow the system to monitor uninterrupted for the full duration of the critical process. The system should be configured to trigger an alert immediately upon exceeding predefined alert levels [25].

- Data Review & Response: Document all monitoring data. If an action limit is exceeded, follow a predetermined procedure for investigation and corrective action.
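
The alert and action logic described in the methodology could be sketched as follows. This is a minimal illustration, not a validated monitoring implementation: the names `Status`, `Limits`, `classify`, and `review` are hypothetical, and the numeric limits are placeholders that a laboratory would replace with values from its own risk assessment.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    OK = "ok"
    ALERT = "alert"    # early warning; process drifting from normal conditions
    ACTION = "action"  # requires investigation and corrective action


@dataclass(frozen=True)
class Limits:
    alert: float
    action: float


def classify(reading: float, limits: Limits) -> Status:
    """Classify one reading; a limit counts as exceeded only when strictly surpassed."""
    if reading > limits.action:
        return Status.ACTION
    if reading > limits.alert:
        return Status.ALERT
    return Status.OK


def review(readings, limits):
    """Return (index, reading, status) for every reading that exceeds the alert level."""
    events = []
    for index, reading in enumerate(readings):
        status = classify(reading, limits)
        if status is not Status.OK:
            events.append((index, reading, status))
    return events


# Placeholder limits and readings (particles/m^3), for illustration only.
limits = Limits(alert=1000.0, action=3000.0)
readings = [120.0, 450.0, 1200.0, 3500.0, 800.0]
events = review(readings, limits)

for index, reading, status in events:
    print(f"sample {index}: {reading:.0f} particles/m^3 -> {status.value}")
```

Keeping alert and action as separate levels mirrors the protocol: an alert prompts immediate attention during the process, while an exceeded action limit triggers the predetermined investigation procedure.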

Reduction of Sample Contamination

Contamination during sample preparation is a major source of error: studies indicate that up to 75% of laboratory errors occur in the pre-analytical phase due to improper handling or contamination [26]. Remote technologies and optimized protocols can substantially reduce these risks.

Strategies for Contamination Reduction:

- Remote Visual Assistance: Using telepresence robots for remote supervision allows experts to guide on-site technicians without physically entering the cleanroom, thereby reducing human-borne contamination [24].

- Use of Disposable Components: Employing single-use, sterile tools like disposable homogenizer probes (e.g., Omni Tips) or hybrid probes (e.g., Omni Tip Hybrid) virtually eliminates the risk of cross-contamination between samples [26].

- Rigorous Decontamination Protocols: For reusable tools, validate cleaning procedures. This includes running a blank solution after cleaning to check for residual analytes [26]. Use specific decontamination solutions (e.g., DNA Away for molecular biology workflows) on lab surfaces [26].

- Process Refinements: For plate-based assays, centrifuging sealed plates before slowly removing seals can reduce well-to-well contamination [26].

The Scientist's Toolkit: Key Reagent Solutions for Contamination Control

Table 3: Essential Materials for Reducing Contamination in Sensitive Assays

| Item | Function | Application Example |
|---|---|---|
| Disposable Homogenizer Probes | Single-use probes for sample homogenization that prevent cross-contamination between samples. | Processing multiple tissue samples for RNA/DNA extraction in a single session [26]. |
| Hybrid Homogenizer Probes | Probes with a stainless steel outer shaft and disposable plastic inner rotor, balancing durability and contamination control. | Homogenizing tough or fibrous samples where pure plastic probes may be insufficient [26]. |
| Decontamination Solutions (e.g., DNA Away) | Chemical solutions designed to degrade and remove specific contaminants like nucleic acids from lab surfaces and equipment. | Preparing a DNA-free workspace for PCR setup to prevent false positives [26]. |
| Surface Disinfectants (70% Ethanol, 10% Bleach) | Used in routine cleaning of lab surfaces (benches, pipettors) to reduce microbial and particulate load. | Daily and pre-experiment cleaning of laminar flow hoods and workstations [26]. |
| Validated Cleaning Protocols | Documented, step-by-step procedures for cleaning reusable labware to a defined standard. | Ensuring trace metal analyzers are free of contaminant residues from previous runs [26]. |

Integrated Workflow Diagram

The following diagram illustrates an integrated research protocol leveraging telepresence and continuous monitoring to minimize contamination in a BLSS or pharmaceutical research context.

Integrated Research Workflow for Remote-Enabled Biomedical Research

The synergistic application of telepresence robotics, continuous monitoring systems, and stringent contamination control protocols presents a paradigm shift for biomedical research. These technologies collectively enhance collaboration, ensure data integrity through real-time oversight, and uphold the sterility of critical experiments. For researchers focused on BLSS and drug development, adopting these practices is a strategic imperative for improving reproducibility, efficiency, and the overall reliability of scientific outcomes.

Implementing Telepresence Solutions: Methodologies for BLSS Monitoring and Research Applications

Bioartificial Liver Support Systems (abbreviated BLSS throughout this application note) are a promising therapeutic modality for patients with fulminant hepatic failure [27]. These complex biomedical systems require continuous monitoring and parameter adjustment to maintain optimal patient support. Telepresence robots let researchers monitor BLSS instrumentation and experimental protocols remotely, enabling real-time observation without physical presence in the laboratory. This application note establishes systematic criteria for selecting telepresence platforms that match the specific technical and operational requirements of BLSS research, ensuring reliable data collection and system oversight while maintaining experimental integrity.

The fundamental value of telepresence technology in this context lies in its ability to provide remote visual and auditory access to laboratory spaces containing BLSS equipment [24] [28]. These robotic systems typically incorporate high-definition cameras, microphones, speakers, and mobility features that enable researchers to visually inspect equipment readings, observe experimental conditions, and communicate with on-site personnel [24]. For BLSS research, which may involve monitoring bioreactor parameters, blood circuit integrity, and patient physiological responses [27], this remote capability provides crucial oversight while potentially reducing contamination risks and enabling specialist consultation across geographical boundaries.

Key Selection Criteria for Research Applications

Quantitative Technical Specifications

Selecting an appropriate telepresence robot for BLSS monitoring requires careful evaluation of technical specifications against research-specific needs. The following parameters represent minimum requirements for effective remote monitoring in laboratory settings.

Table 1: Essential Technical Specifications for BLSS Research Telepresence

| Parameter | Minimum Specification | Recommended Specification | Research Application Rationale |

|---|---|---|---|

| Video Resolution | 1080p HD | 4K UHD | Clear reading of equipment displays and fine visual details |

| Audio System | Two-way microphone/speaker | Noise-canceling directional mics | Clear communication despite equipment background noise |

| Battery Life | 4 hours | 8+ hours | Sustained monitoring throughout extended experiments |

| Mobility | Two-wheel drive | Omnidirectional wheels | Navigation in narrow laboratory spaces between equipment |

| Height Adjustment | Fixed position | Adjustable range (1.1-1.6m) | Optimal viewing angles for different equipment configurations |