Synthetic Solutions: How Generative AI is Overcoming Data Scarcity in Plant Phenotyping

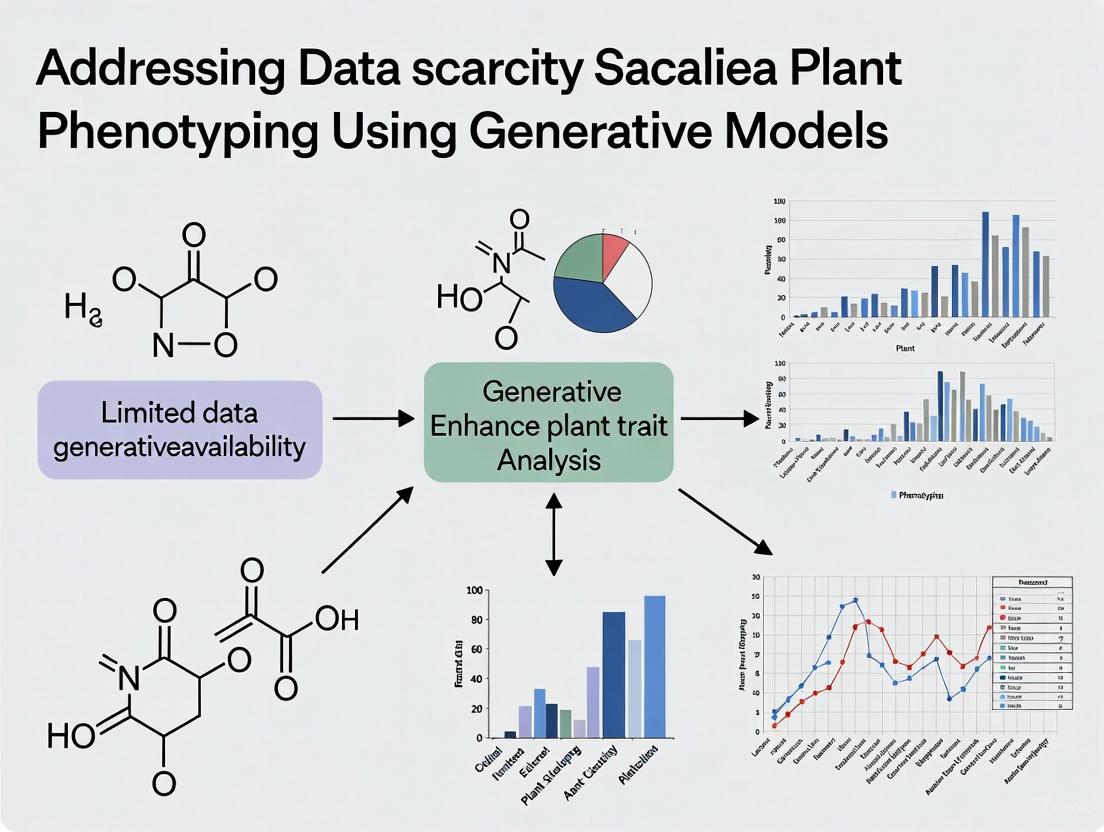

Abstract

This article addresses the critical challenge of data scarcity in plant phenotyping, a major bottleneck for training robust deep learning models in agricultural and biomedical research. We explore how generative models, particularly Generative Adversarial Networks (GANs) and Variational Autoencoders (VAEs), are being deployed to create realistic, diverse, and annotated synthetic plant image data. The scope covers foundational concepts, practical methodologies for model implementation, strategies for troubleshooting and optimization, and rigorous validation frameworks. Designed for researchers, scientists, and drug development professionals, this guide provides a comprehensive roadmap for leveraging generative AI to enhance dataset quality, improve model generalizability, and accelerate innovation in plant science and related fields.

The Data Famine in Plant Science: Understanding the Scarcity Problem and the Generative AI Opportunity

Frequently Asked Questions (FAQs)

Q1: What are the primary technical challenges in annotating plant phenotyping data? Annotation is hindered by the inherent complexity of plant structures. Challenges include occlusion and overlap of plant organs (e.g., dense wheat heads), variability in appearance due to maturity, genotype, and environment, and the presence of visual noise like wind-blurred images [1]. These factors make it difficult for both human annotators and models to identify and delineate individual structures consistently, requiring extensive training and calibration for annotators [1].

Q2: How does environmental variability contribute to data scarcity? Phenotypic traits are highly dependent on genotype-by-environment interactions, meaning the same plant can look drastically different under varying conditions [2] [3]. To build a robust model, training data must encompass a wide range of environments, soils, and management practices. This necessity for massive environmental diversity makes collecting a comprehensively labeled dataset prohibitively expensive and time-consuming.

Q3: Why is a lack of data standardization a problem? Without standardized formats and descriptions, data from different experiments and platforms end up in isolated, non-interoperable silos [2] [3]. The community suffers from a "vast heterogeneity" in data, with different research groups using inconsistent nomenclatures and protocols [3]. This lack of harmonization makes it difficult to aggregate smaller datasets into a larger, more useful resource, effectively compounding the problem of data scarcity.

Q4: What are the key resource bottlenecks in creating high-quality datasets? The primary bottlenecks are expert labor, time, and cost. Manual phenotyping is "labor-intensive, time-consuming, and prone to human error" [4] [5]. High-quality annotation requires skilled personnel, and the process of managing annotators and ensuring quality control places a significant burden on researchers [1]. Furthermore, high-throughput phenotyping platforms themselves represent major financial investments [3].

Q5: How can generative AI models help address this scarcity? Generative models, such as Generative Adversarial Networks (GANs) and Diffusion Models, can create synthetic phenotypic data [4] [6]. This synthetic data can augment limited real-world datasets, helping to balance class distributions and simulate rare traits or environmental conditions. This approach reduces dependency on extensive and expensive field experiments [4] [6].

Troubleshooting Common Experimental Issues

Issue 1: Poor Model Generalization to New Environments

- Problem: A model trained in a controlled greenhouse fails when deployed in a field setting.

- Root Cause: Domain shift due to changes in lighting, background, plant density, and other environmental factors [3].

- Solution:

- Employ Domain Adaptation: Use techniques like style transfer [6] or environment-aware modules [4] to make models invariant to domain-specific features.

- Incorporate Diverse Data: Source training images from multiple locations and conditions. The Global Wheat Head Dataset, for example, includes images from several continents to reduce geographic bias [1].

- Use Biologically-Constrained Optimization: Integrate prior biological knowledge into the model to ensure predictions are biologically realistic, improving generalizability [4] [5].

Issue 2: Inconsistent and Non-Reproducible Annotations

- Problem: High inter-annotator variability, leading to noisy labels and unreliable model training.

- Root Cause: Lack of a clear, detailed protocol for handling edge cases (e.g., overlapping leaves, blurred heads) [1].

- Solution:

- Develop a Detailed Annotation Guide: Create a visual guide with examples of correct/incorrect annotations and rules for edge cases.

- Implement Calibration Rounds: Before full-scale annotation, run small calibration batches to train annotators to the required standard and refine the protocol [1].

- Leverage FAIR Principles: Ensure data is Findable, Accessible, Interoperable, and Reusable by describing it with standardized ontologies (such as Crop Ontology) and metadata standards (such as MIAPPE) [2].

Issue 3: Handling Multi-Scale and Multi-Modal Data

- Problem: Difficulty integrating data from different sources (e.g., drone images, soil sensors, genomic data) for a holistic analysis.

- Root Cause: Data stored in incompatible formats with different spatial and temporal scales.

- Solution:

- Adopt Interoperability Standards: Utilize frameworks like the Breeding API (BrAPI) to enable standard data exchange between systems [2].

- Use Interoperability Pivots: Identify and use common identifiers (e.g., specific plant IDs, GPS coordinates, trait ontologies) to link disparate datasets [2].

- Implement Multimodal Deep Learning Frameworks: Design models that can process and fuse data from images, sensors, and genomics simultaneously [4] [5].

Experimental Protocols & Workflows

Protocol 1: Creating a High-Quality Annotated Image Dataset

This protocol outlines the steps for generating a robust dataset for a task like wheat head detection and segmentation [1].

Workflow Diagram: Image Dataset Annotation Pipeline

Step-by-Step Methodology:

- Image Acquisition: Collect images from a diverse set of environments, genotypes, and growth stages. Ensure variety in planting densities, patterns, and field conditions to build a robust dataset [1].

- Data Curation and Selection: Select a representative subset of images that captures the full spectrum of expected variability.

- Develop a Detailed Annotation Guide: Create a comprehensive guide that defines the target object (e.g., "What constitutes a wheat head?"), includes visual examples, and specifies how to handle edge cases like occlusion, blur, and overlap [1].

- Annotator Calibration and Training: Conduct training sessions with annotators using the guide. Perform several calibration rounds where annotators label the same small set of images. Compare results with expert labels, provide feedback, and refine the guide until acceptable agreement is achieved [1].

- Initial Bounding Box Annotation: Begin with simpler bounding box annotation to familiarize the annotation team with the data and its specificities without the complexity of segmentation [1].

- Iterative Quality Checks and Feedback: Implement a quality assurance process where a subset of annotations is reviewed by experts. Provide continuous feedback to annotators to correct systematic mistakes and clarify ambiguities [1].

- Precise Polygon Annotation: Once the team is proficient, switch to polygon annotation for precise delineation of each object's boundaries. This step is more time-consuming but yields higher-quality data for segmentation tasks [1].

- Final Validation and Dataset Publication: Have experts perform a final validation on the annotated dataset. Publish the dataset following FAIR principles, ensuring it is citable and comes with clear documentation on the annotation protocol [2] [1].
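Calibration rounds need a concrete measure of agreement with the expert labels. The sketch below is one way to compute it for the bounding-box stage, assuming boxes given as (x_min, y_min, x_max, y_max) in pixels and an illustrative IoU threshold of 0.5 for counting a match. The function names and the greedy matching rule are assumptions, not part of the cited protocol [1].

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x_min, y_min, x_max, y_max)."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def agreement_with_expert(annotator_boxes, expert_boxes, threshold=0.5):
    """Greedy one-to-one matching; returns (precision, recall) against the expert boxes.

    threshold is an assumed IoU cut-off, not a value taken from the cited protocol.
    """
    unmatched = list(expert_boxes)
    matches = 0
    for box in annotator_boxes:
        # Pair each annotator box with the best-overlapping expert box still unmatched.
        best = max(unmatched, key=lambda e: iou(box, e), default=None)
        if best is not None and iou(box, best) >= threshold:
            matches += 1
            unmatched.remove(best)
    precision = matches / len(annotator_boxes) if annotator_boxes else 0.0
    recall = matches / len(expert_boxes) if expert_boxes else 0.0
    return precision, recall
```

For example, `agreement_with_expert([(1, 1, 10, 10), (50, 50, 60, 60)], [(0, 0, 10, 10), (20, 20, 30, 30)])` returns (0.5, 0.5): the first box overlaps an expert box with an IoU of 0.81, while the second matches nothing. Tracking these two numbers per annotator across rounds shows when the guide has converged.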

Protocol 2: A Framework for Generative Model-Assisted Phenotyping

This protocol describes how to integrate generative AI to mitigate data scarcity, based on proposed frameworks in recent literature [4] [6] [5].

Workflow Diagram: Generative Model Training & Deployment

Step-by-Step Methodology:

- Base Data Collection: Assemble a limited set of high-quality, annotated real-world data. This dataset must be carefully curated to be as representative as possible.

- Synthetic Data Generation: Use generative models (e.g., GANs or Diffusion Models) to create synthetic plant images and corresponding annotations. This can be done by training the generative model on the available real data to learn the underlying data distribution [6].

- Domain Adaptation: Apply techniques like style transfer [6] or an environment-aware module [4] [5] to bridge the "reality gap" between synthetic and real images. This step enhances the utility of synthetic data for training models destined for real-world deployment.

- Hybrid Model Training: Train a hybrid deep learning model (e.g., combining transformers for context and CNNs for feature extraction) on the augmented dataset comprising both real and domain-adapted synthetic data [4] [5].

- Biologically-Constrained Optimization: During training, incorporate a biologically-constrained optimization strategy. This uses prior knowledge (e.g., physical constraints on plant architecture) to penalize biologically implausible model predictions, ensuring outputs are realistic and interpretable [4] [5].

- Model Deployment and Monitoring: Deploy the trained model in a real-world setting (e.g., a phenotyping platform or field). Continuously monitor its performance and use the newly collected data to further refine and retrain the model.
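The biologically-constrained optimization step can be illustrated as an extra penalty term in the training loss. This is a minimal sketch, not the formulation used in [4] [5]: it assumes a regression model that predicts a single trait (for example, plant height) and a hypothetical plausible range [lower, upper] supplied by domain experts, and it penalizes predictions that fall outside that range.

```python
def constrained_loss(predictions, targets, lower, upper, weight=1.0):
    """Mean squared error plus a penalty for biologically implausible predictions.

    lower/upper: hypothetical bounds on the trait, supplied by domain experts.
    weight: assumed trade-off between fitting the labels and respecting the bounds.
    """
    n = len(predictions)
    mse = sum((p - t) ** 2 for p, t in zip(predictions, targets)) / n
    # Squared hinge: zero inside [lower, upper], growing quadratically outside it.
    penalty = sum(
        max(0.0, lower - p) ** 2 + max(0.0, p - upper) ** 2 for p in predictions
    ) / n
    return mse + weight * penalty
```

In a real training loop the same expression would be written with the framework's tensor operations so that gradients flow through the penalty; `weight` sets how strongly plausibility is traded against fit to the labels.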

Table 1: Key Sources of Data Scarcity and Their Impact

| Bottleneck Category | Specific Challenge | Impact on Data Quality & Availability |
|---|---|---|
| Annotation Complexity | Occlusion and overlap of plant organs [1] | Increases annotation time and cost; introduces label noise and inconsistency. |
| Annotation Complexity | High phenotypic variability (maturity, genotype) [1] | Requires a larger number of annotated examples to capture full diversity. |
| Environmental Variability | Genotype-by-Environment (GxE) interactions [2] [3] | Necessitates data from countless environments for generalizability, which is infeasible to collect exhaustively. |
| Data Standardization | Heterogeneous formats & nomenclatures [3] | Prevents data pooling and integration, leading to ineffective, small, isolated datasets. |
| Resource Constraints | Labor-intensive manual processes [4] [5] | Limits the scale and speed at which new annotated datasets can be produced. |

Table 2: Key Research Reagent Solutions for Plant Phenotyping Data Generation

| Research Reagent / Resource | Function in Addressing Data Scarcity |
|---|---|
| Public Benchmark Datasets (e.g., Plant Phenotyping Datasets [7]) | Provide a common ground for developing and evaluating computer vision algorithms, reducing the initial overhead for researchers. |
| Standardized Ontologies and Metadata Standards (e.g., Crop Ontology, MIAPPE [2]) | Enable interoperability and reuse of data by providing a common language for describing traits, methods, and experimental conditions. |
| Data Repositories (e.g., GnpIS [2]) | Facilitate long-term access to Findable, Accessible, Interoperable, and Reusable (FAIR) phenotyping data, promoting collaboration and meta-analysis. |
| Generative AI Models (e.g., GANs, Diffusion Models [4] [6]) | Create synthetic data to augment real datasets, simulate rare scenarios, and reduce dependency on physical experiments. |
| Professional Annotation Services [1] | Provide scalable, high-quality human annotation, reducing the management burden on researchers and accelerating dataset creation. |

Frequently Asked Questions (FAQs)

FAQ 1: What exactly is "ground truth" data in the context of plant phenotyping research?

In plant phenotyping and machine learning, ground truth data is the accurately labeled, verified information that serves as the definitive reference against which AI models are trained and evaluated [8]. It is considered the "gold standard" and represents the most accurate result achievable for a given dataset [9]. For example, in a disease detection model, the ground truth would be plant images that have been definitively diagnosed and annotated by expert plant pathologists for specific diseases [10] [8].

FAQ 2: Why is the creation of ground truth data so labor-intensive and expensive?

The process is labor-intensive due to several factors:

- Expert Dependency: Accurate annotation requires the time of expert plant pathologists, who are a limited resource [10]. Their specialized knowledge is essential for correct classification, creating a bottleneck in dataset creation.

- Manual Effort: The preparation of hand-curated "gold standard" data is "extremely labor intensive" [9]. For image-based phenotyping, this often involves experts manually drawing bounding boxes or segmentation masks around plant organs or diseased areas in thousands of images [9] [8].

- Handling Subjectivity: To mitigate individual annotator bias and error, a consensus approach is often needed, where multiple, independent annotators label the same data, and a final label is determined by majority agreement. This process, while improving quality, further multiplies the required effort [11].
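The majority-vote step can be expressed in a few lines. A minimal sketch, assuming each item has a list of labels from independent annotators; the `min_agreement` threshold and the choice to return None (send the item to an expert) when no label has a clear majority are illustrative assumptions.

```python
from collections import Counter


def consensus_label(labels, min_agreement=0.5):
    """Return the majority label, or None if no label exceeds a min_agreement share.

    min_agreement is an assumed threshold; items returning None go to an expert.
    """
    if not labels:
        return None
    label, count = Counter(labels).most_common(1)[0]
    return label if count / len(labels) > min_agreement else None
```

For example, `consensus_label(["rust", "rust", "healthy"])` returns "rust", while `consensus_label(["rust", "healthy"])` returns None because neither label has more than half of the votes.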

FAQ 3: What are the specific consequences of using poor-quality ground truth data?

The quality of your ground truth data sets the performance ceiling for your AI model [8]. Consequences of poor ground truth include:

- Catastrophic Model Failure: A model trained on flawed, inaccurate, or biased data will build a flawed understanding of the world, leading to unreliable and inaccurate predictions [8].

- Perpetuation of Bias: Any biases present in the labels will be learned and replicated by the model, potentially leading to unfair or dangerous outcomes in real-world applications [8].

- Wasted Resources: The financial and computational resources invested in training a model on poor-quality data are ultimately wasted, as the model will never be reliable [8].

FAQ 4: Our research involves rare plant diseases. How can we create ground truth with limited examples?

This challenge of class imbalance is common. Potential strategies include:

- Data Augmentation: Artificially increasing the number of examples for the rare class by applying transformations (e.g., rotation, scaling, color adjustment) to the existing images [4] [5].

- Weighted Loss Functions: Assigning a higher penalty during training when the model misclassifies examples from the under-represented rare disease class, forcing the model to pay more attention to them [10].

- Generative Models: Exploring the use of Generative Adversarial Networks (GANs) to synthesize realistic and scientifically grounded phenotypic data for conditions where real data is scarce [4] [5].
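A weighted loss needs one weight per class. One common choice, assumed here rather than taken from [10], is inverse class frequency, N / (K · n_c) for N labels, K classes, and n_c examples of class c. The sketch normalizes the weighted loss by the sum of the weights of the true classes, which matches what PyTorch's `torch.nn.CrossEntropyLoss` does when given a `weight` tensor and `reduction='mean'`.

```python
import math
from collections import Counter


def inverse_frequency_weights(labels):
    """Weight each class by N / (K * n_c) so rare classes contribute more to the loss."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {c: n / (k * cnt) for c, cnt in counts.items()}


def weighted_cross_entropy(probs, labels, weights):
    """Weighted mean of -log p(true class).

    probs: one dict per example mapping class -> predicted probability.
    """
    total, norm = 0.0, 0.0
    for p, y in zip(probs, labels):
        w = weights[y]
        total += -w * math.log(p[y])
        norm += w
    return total / norm
```

With nine healthy images and one rare-blight image, `inverse_frequency_weights` gives the rare class a weight of 5.0 and the healthy class about 0.56, so each rare example contributes roughly nine times as much to the loss.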

FAQ 5: Are there any methods to reduce the manual labor involved in ground truth annotation?

While full automation is difficult, several techniques can improve efficiency:

- Semi-Supervised Learning: Leveraging a small amount of high-quality, expert-labeled data together with a larger set of unlabeled data [11].

- Active Learning: Implementing a process where the AI model itself flags the data points it is most uncertain about, allowing human experts to focus their annotation efforts where they are most needed [8] [11].

- Automation for Consistency Checks: Using specialized AI models to perform initial, tedious annotation tasks, which are then validated and corrected by human experts. This combines the speed of automation with the accuracy of human oversight [11].
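Active learning needs a rule for deciding which items are "most uncertain". Predictive entropy is one common rule; the sketch below assumes the model outputs class probabilities for each unlabeled image and that the annotation budget per round is fixed. The image ids and the entropy criterion are illustrative; [8] and [11] do not prescribe a specific rule.

```python
import math


def entropy(probs):
    """Shannon entropy (in nats) of a predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_annotation(predictions, budget):
    """Return the ids of the `budget` unlabeled items with the highest predictive entropy.

    predictions: dict mapping a hypothetical image id -> list of class probabilities.
    """
    ranked = sorted(predictions, key=lambda k: entropy(predictions[k]), reverse=True)
    return ranked[:budget]
```

Given predictions `{"img_a": [0.98, 0.02], "img_b": [0.5, 0.5], "img_c": [0.7, 0.3]}` and a budget of 2, the function returns `["img_b", "img_c"]`, the two images the model is least sure about.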

Troubleshooting Guides

Problem: Your deep learning model for plant disease classification is performing poorly, and you suspect an issue with your ground truth data.

Diagnosis and Resolution Steps:

Audit Your Annotation Guidelines

- Symptom: High rates of disagreement between annotators (low inter-annotator agreement).

- Solution: Reconvene with your expert annotators and pathologists. Review and refine the annotation guidelines to ensure they are unambiguous. Create a clear, visual guide for classifying borderline cases. Re-annotate a sample of data to measure the improvement in agreement [11].
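Inter-annotator agreement is usually reported as a chance-corrected statistic rather than raw percent agreement. The sketch computes Cohen's kappa for two annotators who labeled the same items; scikit-learn offers the same statistic as `sklearn.metrics.cohen_kappa_score`, and Fleiss' kappa is the usual extension to more than two annotators. The disease labels in the example are hypothetical.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Chance-corrected agreement between two annotators labeling the same items."""
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    # Agreement expected if both annotators labeled at random with their own class rates.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # kappa is undefined here; both used one identical label throughout
    return (observed - expected) / (1 - expected)
```

For annotator A = ["rust", "rust", "healthy", "healthy"] and annotator B = ["rust", "healthy", "healthy", "healthy"], raw agreement is 0.75 but chance agreement is 0.5, so kappa is 0.5. Recomputing kappa on the re-annotated sample after revising the guidelines shows whether agreement actually improved.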

Check for Class Imbalance

- Symptom: The model performs excellently on common diseases but fails to detect rare ones.

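- Solution: Analyze the distribution of labels in your dataset. If the rare diseases are heavily under-represented, apply the class-imbalance strategies described in FAQ 4, such as data augmentation, weighted loss functions, or synthetic data from generative models [10] [4] [5].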
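Counting labels is usually enough to reveal the imbalance. A minimal sketch, assuming one label per image and an illustrative 5% share below which a class is flagged as rare; the threshold is an assumption to adjust per project.

```python
from collections import Counter


def audit_class_balance(labels, min_share=0.05):
    """Return per-class shares and the classes below an assumed minimum share."""
    counts = Counter(labels)
    total = sum(counts.values())
    shares = {c: n / total for c, n in counts.items()}
    rare = sorted(c for c, s in shares.items() if s < min_share)
    return shares, rare
```

For 90 healthy, 8 leaf-spot, and 2 rare-blight images, the function flags only the rare-blight class, which holds 2% of the labels.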

Validate Against a "Gold Standard" Subset

- Symptom: Uncertainty about the overall accuracy of your labels.

- Solution: Select a random subset of your data (e.g., 5-10%) and have it re-annotated by a senior, board-certified expert whose judgment you trust as the final authority. Use this "gold standard" subset to quantify the error rate in your main dataset and identify systematic labeling errors [9] [8].
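The gold-standard audit has two parts: drawing the random subset and comparing its expert labels with the original ones. The sketch below assumes labels stored as dictionaries keyed by a hypothetical item id and a 10% sample; the function names are illustrative. The confusion counts point to systematic errors, such as one disease being consistently labeled as another.

```python
import random
from collections import Counter


def sample_gold_subset(item_ids, fraction=0.1, seed=0):
    """Randomly pick a fraction of items for expert re-annotation (seeded for reproducibility)."""
    rng = random.Random(seed)
    k = max(1, round(len(item_ids) * fraction))
    return rng.sample(list(item_ids), k)


def label_error_report(main_labels, gold_labels):
    """Compare main-dataset labels with expert labels on the re-annotated subset.

    Both arguments map item id -> label; only ids present in gold_labels are scored.
    Returns the error rate and the (given label, correct label) pairs, most frequent first.
    """
    errors = Counter()
    for item, gold in gold_labels.items():
        if main_labels[item] != gold:
            errors[(main_labels[item], gold)] += 1
    error_rate = sum(errors.values()) / len(gold_labels)
    return error_rate, errors.most_common()
```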

Assess Temporal Drift

- Symptom: A model that was once accurate is now degrading in performance.

- Solution: Consider that the "truth" may have changed. New disease strains or environmental conditions can make old ground truth data obsolete.

- Action: Implement continuous data collection and periodic re-annotation cycles to keep your ground truth dataset current. This is managed through data versioning and continuous retraining [8].

Problem: Your high-throughput phenotyping system's proxy measurements (e.g., digital biomass) do not accurately reflect destructive measurements (e.g., dry weight).

Diagnosis and Resolution Steps:

Re-establish the Calibration Curve

- Symptom: A consistent, biased error between non-destructive measurements and destructive harvests.

- Solution: Do not assume a simple linear relationship.

- Action: Conduct a dedicated calibration experiment where you take non-destructive measurements immediately followed by destructive harvests across a wide range of plant sizes and treatments.

- Action: Fit an appropriate model (e.g., a curvilinear relationship) to this data. Neglecting this curvilinearity can result in linear calibration curves that have a high r² but still exhibit large relative errors [12].

Determine if Treatment-Specific Calibrations are Needed

- Symptom: The calibration is accurate for control plants but fails under different growing conditions (e.g., drought, high CO₂).

- Solution: Test whether your different experimental treatments affect the relationship between the proxy and the actual trait.

- Action: Generate calibration curves for each major treatment or genotype if you find significant differences. A single universal calibration may not be sufficient [12].

Control for Diurnal Variation

- Symptom: Plant size estimates from top-view cameras vary significantly throughout the day without actual growth.

- Solution: This is often due to diurnal changes in leaf angle (nyctinasty).

- Action: Standardize the timing of your image acquisitions to a specific time of day to minimize this effect. Research has shown that diurnal leaf movement can cause deviations of more than 20% in size estimates over the course of a day [12].

Quantitative Data on Plant Phenotyping and Model Performance

Table 1: Performance and Cost Comparison of Plant Phenotyping Technologies

| Technology | Reported Lab Accuracy | Reported Field Accuracy | Approximate Cost | Key Challenges |
|---|---|---|---|---|
| RGB Imaging | 95–99% [10] | 70–85% [10] | $500–$2,000 [10] | Sensitivity to environmental variability (illumination, background) [10] |
| Hyperspectral Imaging | Information Missing | Information Missing | $20,000–$50,000 [10] | High cost, complex data analysis, annotation difficulty [10] |
| Transformer Models (e.g., SWIN) | Information Missing | 88% (on real-world datasets) [10] | High (Computational) | Significant computational resource requirements [4] [5] |
| Traditional CNNs (e.g., ResNet) | Information Missing | 53% (on real-world datasets) [10] | Moderate (Computational) | Struggles with generalization to new conditions [10] |

Table 2: Labor and Data Challenges in Ground Truth Creation

| Challenge Category | Specific Issue | Impact on Research |
|---|---|---|
| Data Annotation | Requires expert plant pathologists for labeling [10]. | Creates a significant bottleneck, slowing down dataset expansion and diversification. |
| Class Distribution | Natural imbalance in disease occurrence [10]. | Biases models toward common diseases, reducing accuracy for rare but devastating conditions. |
| Dataset Variability | Differences in illumination, background, plant growth stage [10]. | Models must be robust to these variations to ensure reliable field performance. |
| Ground Truth Evolution | New disease strains and changing environments [8]. | Models can become obsolete, requiring continuous data collection and re-annotation. |

Experimental Protocols for Key Cited Experiments

Protocol 1: Establishing a Curvilinear Calibration for Projected Leaf Area

This protocol addresses the pitfall of assuming a simple linear relationship between projected leaf area (PLA) from images and total leaf area (TLA) from destructive measurement [12].

- Plant Material and Growth: Select a rosette species (e.g., Plantago major). Grow plants under controlled conditions to generate a wide range of sizes. A large number of replicates per harvest (e.g., n=12) is recommended.

- Non-Destructive Imaging: At weekly intervals over the growth cycle, capture top-view images of each plant under standardized lighting to determine the Projected Leaf Area (PLA). Ensure the camera is fixed at a consistent height.

- Destructive Harvesting: Directly after imaging, destructively harvest the same plants. Separate all leaves.

- Total Leaf Area Measurement: Use a leaf area meter (e.g., LiCor 3100) equipped with a conveyor belt system to measure the Total Leaf Area (TLA) for each plant.

- Data Analysis: Fit different models to the PLA and TLA data:

- A simple linear model: TLA ~ PLA

- A model with a quadratic term: TLA ~ PLA + PLA²

- A log-transformed model: ln(TLA) ~ ln(PLA)

- Select the model that provides the best fit and lowest error, which is often curvilinear rather than linear [12].
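The model comparison in the Data Analysis step can be sketched with NumPy's `polyfit`. This is an illustration under stated assumptions, not the analysis code of [12]: it fits the three candidate models, back-transforms the log-log predictions without a bias correction, and reports in-sample RMSE together with mean relative error, since a linear fit can have a high r² and still show large relative errors [12].

```python
import numpy as np


def compare_calibrations(pla, tla):
    """Fit the three candidate PLA -> TLA models and score each on the original scale.

    Returns {model: (RMSE, mean relative error)}; both are in-sample statistics.
    """
    pla = np.asarray(pla, dtype=float)
    tla = np.asarray(tla, dtype=float)
    predictions = {
        # TLA ~ PLA
        "linear": np.polyval(np.polyfit(pla, tla, 1), pla),
        # TLA ~ PLA + PLA^2
        "quadratic": np.polyval(np.polyfit(pla, tla, 2), pla),
        # ln(TLA) ~ ln(PLA), back-transformed without a bias correction
        "log-log": np.exp(np.polyval(np.polyfit(np.log(pla), np.log(tla), 1), np.log(pla))),
    }
    scores = {}
    for name, pred in predictions.items():
        rmse = float(np.sqrt(np.mean((pred - tla) ** 2)))
        rel_err = float(np.mean(np.abs(pred - tla) / tla))
        scores[name] = (rmse, rel_err)
    return scores
```

In-sample scores favor the more flexible model (the quadratic fit can never have a higher training RMSE than the linear one), so final selection is better made on held-out plants or with an information criterion. With hypothetical power-law data such as TLA = 2 · PLA^1.3, the log-log model recovers the relationship almost exactly, while the linear model shows a clearly larger relative error.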

Protocol 2: Assessing the Impact of Diurnal Leaf Movement on Size Estimation

This protocol quantifies how diurnal changes in leaf angle can impact digital biomass estimates [12].

- Plant Material: Use Arabidopsis thaliana (Col-0) plants grown in a controlled growth room with a consistent light-dark cycle (e.g., 12-hour day).

- Imaging Schedule: On a selected day (e.g., 39 days after sowing), image the plants repeatedly at regular intervals (e.g., every 2 hours) throughout the day, including both light and dark periods.

- Image Analysis: Process all images using consistent software to calculate a size metric, such as projected leaf area or digital biomass.

- Statistical Analysis: Plot the calculated size metric against the time of day. The analysis will typically reveal significant oscillations, demonstrating deviations of more than 20% over the day, highlighting the need for standardized timing for image acquisition [12].
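The statistical analysis step reduces to comparing each time point with the daily mean. A minimal sketch, assuming one size value per imaging time (for example, mean projected leaf area across plants) and measuring deviation relative to the daily mean; that definition is an assumption, and [12] may define the deviation differently.

```python
from statistics import mean


def diurnal_deviation(sizes_by_hour):
    """Largest deviation of a size metric from its daily mean, in percent.

    sizes_by_hour: dict mapping hour of day -> size metric (e.g. projected leaf area).
    Returns (hour with the largest deviation, deviation in percent of the daily mean).
    """
    avg = mean(sizes_by_hour.values())
    worst_hour = max(sizes_by_hour, key=lambda h: abs(sizes_by_hour[h] - avg))
    return worst_hour, abs(sizes_by_hour[worst_hour] - avg) / avg * 100
```

With hypothetical values `{0: 90.0, 6: 90.0, 12: 120.0, 18: 100.0}`, the daily mean is 100 and the function returns hour 12 with a 20% deviation.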

Workflow and System Diagrams

Ground Truth Creation and Use Workflow

Troubleshooting Ground Truth Data Issues

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Ground Truth Creation and Plant Phenotyping Experiments

| Item / Solution | Function in Research |
|---|---|
| High-Throughput Phenotyping Platform (e.g., PlantArray) | Automated system for non-destructive, frequent measurement of plant physiological traits like water use efficiency and daily biomass gain, providing high-resolution time-series data [13] [12]. |
| RGB Camera Systems | Captures high-resolution visible spectrum images for analysis of morphological traits (e.g., leaf area, color, disease spots). The most accessible and cost-effective imaging modality [10]. |
| Hyperspectral Imaging Sensors | Captures data across a wide spectral range (e.g., 250–1500 nm), enabling the identification of physiological changes associated with stress or disease before visible symptoms appear [10]. |
| Leaf Area Meter (e.g., LiCor 3100) | Provides accurate, destructive measurement of total leaf area, serving as the "gold standard" for calibrating non-destructive image-based projected leaf area measurements [12]. |
| Controlled Environment Growth Chambers | Provides standardized conditions for plant growth, minimizing environmental variability and enabling the generation of reproducible phenotypic data for model training and calibration [12]. |
| Data Annotation Software Platform | Software tools that facilitate the manual labeling of images by experts, often including features for managing annotator consensus and quality control [9] [11]. |

Frequently Asked Questions (FAQs)

Q1: What is the fundamental relationship between data scarcity and model overfitting?

A1: Deep learning models possess millions of parameters, enabling them to learn highly complex, non-linear relationships. When training data is scarce, the model lacks sufficient examples to learn the true underlying data distribution. Instead, it begins to memorize the noise, outliers, and specific patterns present in the limited training set rather than learning generalizable features. This results in a model that performs exceptionally well on its training data but fails to make accurate predictions on new, unseen test data, a phenomenon known as overfitting [14]. In plant phenotyping, this could mean a model perfectly identifies diseases in the images it was trained on but fails when presented with a new plant variety or different lighting conditions.

Q2: Beyond overfitting, how does data scarcity lead to poor generalization in plant phenotyping tasks?

A2: Poor generalization manifests as a model's inability to perform well across different environmental conditions, plant species, or sensor types. Data scarcity exacerbates this because the limited dataset cannot possibly capture the full variability of real-world agricultural settings [15]. A model trained on a small, unrepresentative dataset will learn features that are specific to that narrow context. For instance, if a stress-detection model is trained only on images of maize under controlled greenhouse lighting, it will likely associate the lighting conditions with the plant's health, causing it to fail when deployed in a field with natural, variable light [16]. This is often described as the model learning "shortcuts" or spurious correlations instead of the true phenotypic traits.

Q3: What are the specific challenges of data scarcity in 3D plant phenotyping?

A3: The challenge is particularly acute for 3D phenotyping. Extensive 3D datasets remain scarce compared to 2D images, creating a significant bottleneck for developing robust deep learning models [17]. 3D data acquisition is often more time-consuming and expensive, requiring specialized sensors and reconstruction methods. Consequently, models trained on limited 3D point clouds or meshes struggle to learn the complex, organic geometry of plant structures. They may fail to accurately reconstruct occluded leaves or stems and will not generalize to plants with architectural variations not present in the small training set.

Q4: How can generative models help mitigate these problems of overfitting and poor generalization?

A4: Generative models, such as Generative Adversarial Networks (GANs) and Diffusion Models, act as a powerful data augmentation tool. They learn the underlying distribution of your existing limited dataset and can generate novel, synthetic samples that reflect that distribution [14] [16]. By augmenting a small real dataset with high-quality synthetic data, you effectively increase the size and diversity of your training set. This provides the model with more examples to learn from, discouraging memorization and forcing it to learn more robust, generalizable features. Furthermore, generative models can be used for domain adaptation, translating images from one domain (e.g., simulated plants) to another (e.g., real plants), to create more realistic training data [15].

Troubleshooting Guides

Guide 1: Diagnosing and Addressing Overfitting in Your Plant Phenotyping Model

Symptoms:

- Training accuracy is very high and continues to improve, while validation/test accuracy stagnates or decreases.

- The model performs poorly on new plant images, especially from different cultivars, growth stages, or environments.

Step-by-Step Solutions:

- Verify Data Splits: Ensure your training and validation sets are strictly separated. Check for data leakage, where information from the validation set may have inadvertently influenced the training process.

- Implement Robust Regularization:

- Dropout: Add dropout layers to your network to randomly disable neurons during training, preventing complex co-adaptations on training data.

- Weight Decay (L2 Regularization): Apply a penalty on large weights in the model to encourage a simpler, more generalized function.

- Early Stopping: Monitor the validation loss during training and halt once it has stopped improving for a set number of consecutive epochs (the patience), keeping the weights from the best epoch.

- Leverage Generative Data Augmentation:

- Train a Generative Model: Use a GAN or Diffusion Model trained on your limited plant image dataset.

- Generate Synthetic Data: Produce a large number of synthetic plant images with variations in color, texture, and minor occlusions.

- Augment Training Set: Combine your original real data with the newly generated synthetic data.

- Re-train Your Model: Train your phenotyping model on this augmented dataset. The increased diversity will help the model learn invariant features rather than memorizing the original small set.
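The early-stopping rule from the regularization step can be made precise with a patience counter. A framework-independent sketch, assuming one validation loss per epoch; `patience` and `min_delta` are illustrative defaults. Keras implements the same idea as `tf.keras.callbacks.EarlyStopping`, with `patience`, `min_delta`, and `restore_best_weights` arguments.

```python
def early_stopping_epoch(val_losses, patience=5, min_delta=0.0):
    """Return (best_epoch, stop_epoch) for a sequence of per-epoch validation losses.

    Training stops once the loss has not improved by more than min_delta for
    `patience` consecutive epochs; the weights from best_epoch should be restored.
    """
    best_loss, best_epoch, waited = float("inf"), 0, 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch, waited = loss, epoch, 0
        else:
            waited += 1
            if waited >= patience:
                return best_epoch, epoch
    # Never triggered: training ran to the last epoch.
    return best_epoch, len(val_losses) - 1
```

For validation losses `[1.0, 0.8, 0.7, 0.72, 0.75, 0.74, 0.8]` and `patience=3`, training stops at epoch 5 and the weights from epoch 2 are restored.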

Guide 2: Improving Model Generalization Across Different Plant Species and Environments

Symptoms:

- The model performs well only on data from the same source (e.g., a specific lab's imaging setup) but fails in cross-domain applications (e.g., field images from a partner institution).

Step-by-Step Solutions:

- Analyze Data Diversity: Audit your current training dataset. Is it representative of the target environments? You may need to intentionally collect or generate data for underrepresented conditions.

- Employ Transfer Learning:

- Source a Pre-trained Model: Start with a model pre-trained on a large, general-purpose dataset (e.g., ImageNet). This model has already learned useful low-level features like edges and textures [14] [16].

- Fine-tune on Your Data: Replace the final classification/regression layers and fine-tune the entire model, or just the later layers, on your specific plant phenotyping dataset. This transfers general feature knowledge to your domain with less data.

- Utilize Domain Adaptation with Generative Models:

- If you have a source domain with ample data (e.g., simulated plants from an L-System [17]) and a target domain with little data (e.g., real plant images), use a framework like a CycleGAN.

- Train the model to translate images from the source domain to the style of the target domain. This generates realistic, labeled synthetic data in the target domain, which can then be used to train a more robust phenotyping model that generalizes to real-world conditions [15].

Experimental Protocols & Data

Table 1: Quantitative Impact of Data Scarcity and Mitigation Strategies

This table summarizes key metrics from studies investigating data scarcity and the performance gains from using generative models.

| Model / Task | Training Data Size | Baseline Performance (Without Augmentation) | Performance with Generative Augmentation | Key Metric |
|---|---|---|---|---|
| Object Detection (Underwater) [18] | A few hundred real images | Low detection accuracy | Performance comparable to training on thousands of images | mAP (Mean Average Precision) |
| 3D Plant Generation (PlantDreamer) [17] | N/A (synthetic generation) | PSNR (masked): 11.01 dB (GaussianDreamer) | PSNR (masked): 16.12 dB (PlantDreamer) | PSNR (higher is better) |
| Drought Stress Prediction [16] | Multimodal dataset | SVM: ~82% accuracy | LSTM: 97% accuracy | Prediction accuracy |
| Segmentation Model Adaptation [15] | Small new dataset | Original network failed on new data | Fine-tuning & synthetic data improved segmentation accuracy | Segmentation accuracy |
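PSNR, the metric in the PlantDreamer row, is defined as PSNR = 10 * log10(MAX^2 / MSE). The reported gain of 16.12 - 11.01 = 5.11 dB therefore corresponds to roughly a 3.2-fold lower mean squared error (10^0.511 ≈ 3.24), assuming the same pixel range. The sketch below computes PSNR on flat pixel lists. We assume "masked" means restricting the score to a boolean mask, such as the plant pixels; that reading is ours and is not a definition taken from [17].

```python
import math


def psnr(reference, test, max_value=1.0, mask=None):
    """Peak signal-to-noise ratio in dB between two flat pixel lists.

    If `mask` (a list of bools) is given, only pixels where it is True are
    scored, e.g. to evaluate the plant region and ignore the background.
    """
    pairs = [
        (r, t)
        for i, (r, t) in enumerate(zip(reference, test))
        if mask is None or mask[i]
    ]
    if not pairs:
        raise ValueError("no pixels selected")
    mse = sum((r - t) ** 2 for r, t in pairs) / len(pairs)
    if mse == 0:
        return math.inf  # identical images: PSNR is unbounded
    return 10.0 * math.log10(max_value ** 2 / mse)
```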

Detailed Experimental Protocol: Leveraging a Generative Model for Data Augmentation

Objective: To improve the accuracy and generalization of a plant disease classifier suffering from a small, imbalanced training dataset.

Materials:

- Dataset: A limited set of RGB images of healthy and diseased leaves.

- Base Model: A standard CNN classifier (e.g., a lightweight ResNet).

- Generative Model: A Diffusion Model or GAN (e.g., Stable Diffusion or a StyleGAN variant).

Methodology:

Pre-processing:

- Resize all images to a uniform resolution (e.g., 256x256 pixels).

- Normalize pixel values.

- Separate data into training, validation, and (held-out) test sets.
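The splitting step can be done per class (a stratified split). This matters for the small, imbalanced datasets this protocol targets, because a purely random split could leave a rare disease class out of the test set entirely. The function name, the default fractions, and the `(path, label)` sample format are assumptions for illustration.

```python
import random
from collections import defaultdict


def stratified_split(samples, val_fraction=0.15, test_fraction=0.15, seed=42):
    """Split (path, label) pairs into train/val/test, preserving class ratios.

    Splitting within each class keeps rare classes present in every split.
    """
    by_label = defaultdict(list)
    for path, label in samples:
        by_label[label].append((path, label))
    rng = random.Random(seed)
    train, val, test = [], [], []
    for label in sorted(by_label):  # sorted for reproducibility
        items = by_label[label]
        rng.shuffle(items)
        n_test = round(len(items) * test_fraction)
        n_val = round(len(items) * val_fraction)
        test += items[:n_test]
        val += items[n_test:n_test + n_val]
        train += items[n_test + n_val:]
    return train, val, test
```

If several images show the same plant (for example, a time series), split by plant ID rather than by image, so that near-duplicates cannot leak between the training and test sets.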

Baseline Model Training:

- Train the CNN classifier solely on the original, limited training set.

- Evaluate its performance on the validation and test sets to establish a baseline accuracy. Expect signs of overfitting.

Synthetic Data Generation:

- Train the generative model on the training split only, never on validation or test images, so that it learns the data distribution without seeing the held-out data.

- Use the trained generative model to produce a large number (e.g., 10,000) of novel synthetic images of healthy and diseased plants. Ensure the generated images cover a wide range of variations.
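Because the objective targets an imbalanced dataset, it helps to decide per class how many images to generate, rather than drawing one undifferentiated batch. The minimal sketch below assumes a class-conditional generator (or one generator per class) and a simple policy: top up every class to the size of the largest. Both the policy and the `synthetic_budget` name are illustrative assumptions.

```python
from collections import Counter


def synthetic_budget(labels, target_per_class=None):
    """Number of synthetic images to generate per class to reach a target count.

    `labels` holds one class label per real training image. By default the
    target is the size of the largest class, so minority classes are topped
    up to the majority count.
    """
    counts = Counter(labels)
    target = max(counts.values()) if target_per_class is None else target_per_class
    return {label: max(0, target - n) for label, n in counts.items()}
```

With 400 healthy, 60 rust, and 25 blight images, this policy would request 375 synthetic blight images, so 375 of the final 400 blight images would be synthetic. In such cases it may be worth also capping the synthetic share within each class.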

Augmented Model Training:

- Create an augmented training set by combining the original real images with the generated synthetic images.

- Re-train the CNN classifier from scratch on this new, larger augmented dataset.

Evaluation:

- Finally, evaluate the re-trained model on the held-out test set, which contains only real images not used in training or generation.

- Compare the final test accuracy against the baseline to quantify the improvement.

The Scientist's Toolkit: Research Reagent Solutions

Table detailing key computational tools and their functions for addressing data scarcity.

| Research Reagent | Function & Application |
|---|---|
| Generative Adversarial Networks (GANs) | Generate synthetic plant images to augment training datasets; can be used for style transfer to adapt images from one domain (e.g., simulation) to another (e.g., real field) [15] [16]. |
| Diffusion Models | High-fidelity image generation; can be guided by text prompts or depth maps (ControlNet) to create specific plant phenotypes or complex 3D structures [17] [18]. |
| 3D Gaussian Splatting (3DGS) | A 3D representation enabling efficient and high-quality rendering of novel views; used as a target output for 3D generative models like PlantDreamer [17]. |
| Low-Rank Adaptation (LoRA) | A parameter-efficient fine-tuning method; allows for rapid adaptation of large pre-trained models (e.g., diffusion models) to specific plant textures and domains without full retraining [17]. |
| L-Systems | A procedural modeling technique for generating complex plant and fractal-like structures; provides the initial geometric priors for 3D generative pipelines [17]. |

Workflow Visualization

Diagram 1: Generative Augmentation Workflow for Plant Phenotyping

Diagram 2: Technical Consequences of Data Scarcity

Frequently Asked Questions (FAQs)

Q1: What is the primary data-related challenge in plant phenotyping that generative models can solve? The core challenge is data scarcity, specifically the lack of large volumes of accurately labeled ground truth data needed to train deep learning models for tasks like image segmentation. Manually generating this data is labor-intensive and time-consuming, creating a major bottleneck in automated image analysis workflows for quantitative plant phenotyping [19].

Q2: How do Generative Adversarial Networks (GANs) differ from traditional data augmentation? Traditional data augmentation applies simple pixel-level transformations (like rotation, scaling, or flipping) to existing images. It rearranges existing pixels but cannot create genuinely new plant phenotypes or lighting conditions. In contrast, GANs learn the underlying probability distribution of plant appearances and morphological traits. This allows them to sample and generate entirely new, realistic images, introducing plant variations not present in the original dataset [19].

Q3: What are the key functional differences between GANs and Variational Autoencoders (VAEs) for generating plant images? While both are generative models, they have distinct strengths and weaknesses, as summarized in the table below.

Table 1: Comparison of GANs and VAEs for Plant Image Synthesis

| Feature | Generative Adversarial Networks (GANs) | Variational Autoencoders (VAEs) |
|---|---|---|
| Core Mechanism | Adversarial training between a generator and a discriminator [19] | Optimization of a reconstruction-based loss function [19] |
| Output Quality | Can produce visually sharper and structurally rich images [19] | Tend to produce over-smoothed outputs [19] |
| Best Suited For | Generating high-fidelity images where fine details (e.g., leaf boundaries) are crucial [19] | Applications where some loss of fine texture detail is acceptable |

Q4: In a two-stage GAN pipeline, what are the roles of FastGAN and Pix2Pix? In a typical pipeline for generating plant images and their segmentations:

- FastGAN is used in the first stage for data augmentation. It performs non-linear intensity and texture transformations to generate new, realistic RGB images of plants [19].

- Pix2Pix, a conditional GAN, is used in the second stage. It is trained on a limited set of real RGB images and their corresponding binary segmentation masks. After training, it can automatically generate accurate segmentation masks for the synthetic RGB images produced by FastGAN [19].

Troubleshooting Guides

Issue 1: Poor Quality or Unrealistic Generated Images

Problem: The generative model (e.g., GAN) produces plant images that look blurry, contain artifacts, or are biologically implausible.

Possible Causes and Solutions:

- Insufficient Training Data: The model may not have learned the true distribution of plant features.

- Solution: Incorporate a biologically-constrained optimization strategy. This involves integrating prior biological knowledge into the training process to ensure generated outputs are realistic and adhere to known plant structures [4].

- Mode Collapse in GANs: The generator produces a limited variety of samples.

- Solution: Experiment with different GAN architectures and loss functions. For instance, one study found that using Sigmoid Loss enabled more efficient model convergence and higher accuracy [19].

Issue 2: Inaccurate Segmentation Masks from Generated Data

Problem: When using a generated RGB image and its corresponding mask to train a segmentation model, the model's performance is poor because the masks are incorrect.

Solution:

- Implement a rigorous validation loop. Manually annotate a subset of the generated images and compute a similarity metric, like the Dice coefficient, between the manual annotations and the generated masks. This quantitatively assesses the accuracy of the synthetic ground truth data [19].
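The Dice coefficient compares two binary masks A and B as 2|A ∩ B| / (|A| + |B|). It ranges from 0 (no overlap) to 1 (identical masks). Below is a minimal sketch on flat 0/1 pixel lists. Returning 1.0 when both masks are empty is a convention we chose, and it should be stated when reporting results.

```python
def dice_coefficient(mask_a, mask_b):
    """Dice = 2|A ∩ B| / (|A| + |B|) for two binary masks given as flat 0/1 lists."""
    if len(mask_a) != len(mask_b):
        raise ValueError("masks must have the same number of pixels")
    intersection = sum(1 for a, b in zip(mask_a, mask_b) if a and b)
    total = sum(1 for a in mask_a if a) + sum(1 for b in mask_b if b)
    if total == 0:
        return 1.0  # both masks empty: treated as perfect agreement by convention
    return 2.0 * intersection / total
```

Averaging this score over the manually annotated subset gives a single accuracy figure per species.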

Table 2: Example Dice Coefficient Performance of a GAN-Generated Segmentation Model [19]

| Plant Species | Dice Coefficient | Key Experimental Note |
|---|---|---|
| Arabidopsis | 0.94 | Achieved using Sigmoid Loss function |
| Maize | 0.95 | Achieved using Sigmoid Loss function |
| Barley | 0.88 - 0.95 | Performance range reported for different setups |

Issue 3: Managing Large Volumes of Generated Phenotyping Data

Problem: The high-throughput generation of synthetic images and masks leads to challenges in data storage, management, and traceability.

Solution:

- Adopt standardized data management practices. Use ontologies for unique and repeatable annotation of data to ensure traceability and reuse [20].

- Implement the Minimal Information About a Plant Phenotyping Experiment (MIAPPE) standard to describe your experiments. This is crucial for integrating data from different sources and promoting synergism across studies [20].

Experimental Protocol: Two-Stage GAN for Plant Image and Segmentation Mask Generation

This protocol details the methodology for using GANs to generate synthetic plant images and their corresponding binary segmentation masks, based on a published feasibility study [19].

Image Acquisition and Data Preparation

- Imaging: Acquire high-resolution top-view or side-view RGB images of plants (e.g., Arabidopsis, maize, barley) using a phenotyping system (e.g., LemnaTec platform).

- Preprocessing: Resize all images to a standardized resolution (e.g., 1024x1024 pixels). Apply per-channel normalization to scale pixel values to a [0, 1] range. Minor cropping may be applied to remove peripheral non-plant regions.

- Dataset Splitting: Divide the original real images into training and test sets. For example:

- FastGAN Training: Use 300 images for a species with high variability (e.g., barley side-views) and 120 images for species with lower variability (e.g., Arabidopsis, maize).

- Pix2Pix Training: Use a smaller set of hand-annotated RGB-mask pairs (e.g., 100 for barley, 80 for Arabidopsis/maize). Hold out a separate test set (e.g., 25 images for barley, 20 for Arabidopsis/maize) with no overlap with the training data.
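One reading of the per-channel normalization step is min-max scaling of each color channel independently. The sketch below assumes that reading; the cited study may instead simply divide 8-bit values by 255. An image is represented as a list of flat channel lists.

```python
def normalize_per_channel(channels):
    """Min-max scale each channel independently to [0, 1].

    `channels` is a list of flat pixel lists, e.g. [red, green, blue].
    A constant channel is mapped to all zeros to avoid division by zero.
    """
    normalized = []
    for channel in channels:
        lo, hi = min(channel), max(channel)
        span = hi - lo
        normalized.append([(v - lo) / span if span else 0.0 for v in channel])
    return normalized
```

Per-image min-max scaling stretches each image's color range, which can distort color-based traits such as greenness. Dividing by a fixed constant such as 255 keeps images comparable to one another, so choose the method based on what downstream measurements need.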

Stage One: RGB Image Generation with FastGAN

- Objective: Augment the dataset with novel, realistic RGB plant images.

- Model: Train a FastGAN model on the preprocessed RGB training images.

- Output: A set of synthetic RGB images that mimic the visual characteristics and morphological diversity of the real plant training data.

Stage Two: Segmentation Mask Generation with Pix2Pix

- Objective: Generate accurate binary segmentation masks for the synthetic RGB images from Stage One.

- Model: Train a Pix2Pix model, a conditional GAN, on the set of real RGB images and their corresponding manually created binary ground truth masks.

- Inference: Apply the trained Pix2Pix model to the synthetic RGB images generated by FastGAN. The model will output the predicted binary segmentation masks.

Validation and Performance Evaluation

- Manual Annotation: Manually annotate a random subset of the synthetic RGB images generated by FastGAN to create a ground truth benchmark.

- Quantitative Metric: Calculate the Dice coefficient to measure the similarity between the masks generated by Pix2Pix and the manual annotations. A Dice score above 0.90 is typically indicative of strong performance.

The following workflow diagram illustrates the complete two-stage experimental protocol:

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Components for a Generative Models Pipeline in Plant Phenotyping

| Tool / Resource | Type | Function / Description | Example from Literature |
|---|---|---|---|
| High-Throughput Phenotyping System | Imaging Hardware | Automated system for acquiring large volumes of plant images under controlled conditions. | LemnaTec greenhouse phenotyping system [19] |
| FastGAN | Generative Model (GAN) | Used for data augmentation to generate new, realistic RGB images of plants through non-linear transformations [19]. | Generating synthetic RGB images of barley, Arabidopsis, and maize [19] |
| Pix2Pix | Conditional Generative Model (GAN) | Translates an input image from one domain to another; used to generate segmentation masks from RGB images [19]. | Creating binary masks from synthetic RGB images [19] |
| U-Net | Deep Learning Model | A convolutional neural network used for image segmentation; often serves as a performance benchmark [19]. | Supervised baseline model for segmentation [19] |
| Dice Coefficient | Evaluation Metric | A statistical measure of similarity between two samples; used to validate the accuracy of generated segmentation masks [19]. | Quantifying mask accuracy, with scores of 0.88-0.95 achieved [19] |
| Sigmoid Loss | Loss Function | A specific loss function used during model training to optimize performance. | Achieved highest Dice scores (0.94-0.95) for Arabidopsis and maize [19] |

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between simple data augmentation and synthetic data generation? Simple data augmentation applies predefined transformations (e.g., rotation, flipping, brightness changes) to existing images. It rearranges existing pixels but cannot introduce genuinely novel plant phenotypes, lighting conditions, or morphological combinations. In contrast, synthetic data generation uses generative models like GANs or diffusion models to learn the underlying probability distribution of plant appearances. This allows it to sample entirely new images, introducing phenotypes, illumination conditions, or canopy architectures never originally captured by the camera [19].

FAQ 2: Why are traditional computer vision models insufficient for capturing complex phenotypic variations? Traditional models, including simple thresholding or classic machine learning (e.g., Random Forest, SVMs), often require manual feature extraction and preprocessing. This limits their scalability and ability to generalize across diverse plant varieties, complex backgrounds, and varying lighting conditions found in environments like vertical farms. They struggle to capture the non-linear and complex relationships between multiple physiological indicators that define emergent phenotypes [21] [22].

FAQ 3: How can synthetic data help in detecting outliers or abnormal phenotypes? Complex phenotypes often manifest as coordinated perturbations across multiple physiological indicators, even when individual measurements appear normal. Advanced methods like ODBAE (Outlier Detection using Balanced Autoencoders) use machine learning to uncover these subtle outliers by capturing latent relationships among multiple parameters. Synthetic data can be used to train such models on a wider range of potential abnormal scenarios, enhancing their ability to detect both subtle and extreme outliers that disrupt normal biological correlations [21].

FAQ 4: What are the primary risks associated with using synthetic data, and how can they be mitigated? Key risks include:

- Temporal Gap: The synthetic data becomes outdated compared to real-world data. Mitigation involves regularly regenerating datasets and incorporating up-to-date information [23].

- Data Bias: Biases in the original seed data can be amplified. Mitigation requires using fairness tools to test data and models and collaborating with domain experts [23].

- Data Privacy: There's a potential for re-identification. Mitigation includes robust anonymization of source data and avoiding generation of real personally identifiable information (PII) [23].

- Overfitting: The generative model may produce data too similar to the original. Mitigation involves ensuring sufficient variability and using holdout sets for validation [24].

FAQ 5: What metrics and methods are essential for validating synthetic phenotypic data? Robust validation should not rely on a single metric. It must include:

- Statistical Validation: Comparing distributions, correlations, and other statistical properties against real data.

- Task-Specific Utility: Using the synthetic data to train a model and evaluating its performance on a real-world test set.

- Visual Inspection: Leveraging diagrams and visualizations for domain experts to spot errors or unrealistic patterns [24].

- Quality Scores: Utilizing automated reports that provide scores for fidelity and usefulness, such as those offered by platforms like YData Fabric [24].
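One simple statistical check is to compare a scalar trait measured on real and on synthetic images, such as projected leaf area from segmentation masks, using the two-sample Kolmogorov-Smirnov statistic. The sketch below computes only the statistic, and the choice of trait is an assumption. If SciPy is available, `scipy.stats.ks_2samp` computes the same statistic together with a p-value.

```python
import bisect


def ks_statistic(real_values, synthetic_values):
    """Two-sample Kolmogorov-Smirnov statistic D = max |F_real(x) - F_syn(x)|.

    Inputs are 1-D samples of one trait. D near 0 means the empirical
    distributions are close; D = 1 means the samples do not overlap at all.
    """
    a, b = sorted(real_values), sorted(synthetic_values)
    d = 0.0
    # The largest gap between the two step-function CDFs occurs at a sample point.
    for x in a + b:
        cdf_a = bisect.bisect_right(a, x) / len(a)
        cdf_b = bisect.bisect_right(b, x) / len(b)
        d = max(d, abs(cdf_a - cdf_b))
    return d
```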

Troubleshooting Guide

Problem: Generative Model Produces Low-Fidelity or Blurry Synthetic Images

- Symptoms: Generated plant images lack sharp leaf boundaries or fine textural details; segmentation masks are imprecise.

- Possible Causes & Solutions:

- Cause 1: Inadequate Training Data. The initial seed dataset is too small or lacks diversity.

- Solution: Utilize a two-stage generation process. First, use a GAN like FastGAN to augment the original RGB images with non-linear intensity and texture transformations, expanding the feature space. Then, use a model like Pix2Pix trained on a limited set of real image-mask pairs to generate the corresponding segmentation masks for the synthetic RGB images [19].

- Cause 2: Suboptimal Loss Function. The model's loss function does not prioritize structural accuracy.

- Solution: Experiment with alternative loss functions. For instance, in plant segmentation tasks, Sigmoid Loss has been shown to enable more efficient model convergence and achieve higher Dice Coefficient scores (e.g., 0.94-0.95) compared to other functions [19].

- Cause 3: Model Architecture Limitations. The generator cannot capture the complexity of plant morphology.

- Solution: Consider more advanced architectures. For 3D plant phenotyping, explore volumetric regression networks that perform domain adaptation simultaneously with training. For 2D, transformer-based architectures can sometimes outperform traditional CNNs when fine-tuned with synthetic data [6].

Problem: Synthetic Data Fails to Generalize to Real-World Experimental Data

- Symptoms: Models trained on synthetic data perform poorly when applied to real images from greenhouses or fields.

- Possible Causes & Solutions:

- Cause 1: Domain Gap. The synthetic data does not reflect the lighting, background, or sensor characteristics of the target environment.

- Solution: Implement domain adaptation techniques. This can include style transfer to adapt synthetic images to the target domain's visual characteristics or using unsupervised domain adaptation approaches that learn from unlabeled real data during training [6].

- Cause 2: Lack of Representativeness. The synthetic data misses rare edge cases or specific phenotypic variations.

- Solution: Collaborate with domain experts to identify underrepresented traits. Refine the generation process to explicitly include these variations and use clustering approaches during training to improve generalization on diverse target datasets [6]. Ensure your original seed data is as representative as possible.

Problem: Difficulty in Segmenting Plants in Dense or Complex Environments

- Symptoms: Inability to accurately separate individual plants or leaves in images from vertical farms with high planting density and merged canopies.

- Possible Causes & Solutions:

- Cause: Weak Prompts for Foundation Models. Using generic prompts with models like the Segment Anything Model (SAM) in complex agricultural contexts.

- Solution: Enhance prompt engineering. For box prompts, integrate Vegetation Cover Aware Non-Maximum Suppression (VC-NMS) with indices like the Normalized Cover Green Index (NCGI) to refine object localization. For point prompts, use similarity maps with a max distance criterion to improve spatial coherence in sparse annotations. Combining Grounding DINO with SAM and these enhanced prompts has been shown to outperform SAM's default modes in zero-shot segmentation tasks [22].

Problem: Synthetic Genomic Data Does Not Capture Complex Genetic Relationships

- Symptoms: Models cannot accurately predict complex, non-linear genotype-phenotype relationships (e.g., epistasis).

- Possible Causes & Solutions:

- Cause: Limitations of Linear Models. Relying on traditional methods like Linear Mixed Models (LMM) that are designed for additive effects.

- Solution: Use AI tools specifically designed for complex genetic data. Software like AIGen implements Kernel Neural Networks (KNN) and Functional Neural Networks (FNN) which combine the strengths of deep learning with statistical frameworks to model non-linear and non-additive genetic effects while handling high-dimensional data efficiently [25].

Experimental Protocols for Synthetic Data Generation

Protocol 1: Two-Stage GAN for Generating Plant Image and Segmentation Mask Pairs

This protocol details the methodology from [19] for generating synthetic plant images and their corresponding ground-truth segmentation masks.

- Objective: To synthesize pairs of realistic RGB images and binary segmentation masks of greenhouse-grown plant shoots to overcome the bottleneck of manual annotation.

- Research Reagents & Materials:

| Item | Function / Description |
|---|---|
| FastGAN | A Generative Adversarial Network used in Stage 1 to generate novel, high-resolution RGB images of plants through non-linear feature transformations. |
| Pix2Pix | A conditional GAN used in Stage 2. It is trained to translate a synthetic RGB image (from FastGAN) into a corresponding binary segmentation mask. |
| High-Throughput Phenotyping System (e.g., LemnaTec) | For acquiring high-resolution original RGB images of plants (e.g., Barley, Arabidopsis, Maize) under controlled greenhouse conditions. |
| Annotation Software (e.g., kmSeg, GIMP) | For creating a small set of manually annotated ground truth masks from original images to train the Pix2Pix model. |

- Methodology:

- Data Preparation:

- Acquire original RGB images (e.g., 2056x2454 px for Arabidopsis).

- Resize all images to a standard size (e.g., 1024x1024 px) and normalize pixel values to [0, 1].

- Manually annotate a subset of images to create a seed set of [RGB image : binary mask] pairs.

- Stage 1: RGB Image Generation with FastGAN:

- Train FastGAN on the original RGB images (e.g., 120 images each for Arabidopsis and maize).

- Use the trained FastGAN to generate a large set of novel synthetic RGB images.

- Stage 2: Mask Generation with Pix2Pix:

- Train the Pix2Pix model on the seed set of real [RGB image : binary mask] pairs.

- After training, apply the trained Pix2Pix model to the synthetic RGB images generated by FastGAN. The model will output the predicted segmentation masks.

- Validation:

- Manually annotate a subset of the FastGAN-generated images to create a gold-standard test set.

- Calculate the Dice Coefficient between the Pix2Pix-predicted masks and the manual annotations to evaluate accuracy. Reported results range from 0.88 to 0.95 [19].

The workflow for this two-stage process is as follows:

Protocol 2: Zero-Shot Instance Segmentation for Plant Phenotyping

This protocol outlines the use of foundation models for segmenting plant images without target-specific training data, as described in [22].

- Objective: To perform accurate instance segmentation of diverse plant types in vertical farming environments without relying on manually annotated training data for each plant variety.

- Research Reagents & Materials:

| Item | Function / Description |
|---|---|
| Grounding DINO | A zero-shot object detector that generates bounding box prompts from text descriptions (e.g., "plant leaf"). |
| Segment Anything Model (SAM) | A foundation model for image segmentation that uses prompts (points, boxes) to generate masks. |
| Normalized Cover Green Index (NCGI) | A vegetation index used to calculate vegetation cover and refine object localization. |

- Methodology:

- Box Prompt Enhancement with VC-NMS:

- Use Grounding DINO with a text prompt to generate initial bounding box proposals for plants/leaves.

- Incorporate the Normalized Cover Green Index (NCGI) into the Non-Maximum Suppression (NMS) process to create Vegetation Cover Aware NMS (VC-NMS). This refines the bounding boxes by leveraging spectral vegetation features, improving localization accuracy.

- Point Prompt Enhancement:

- To guide SAM with points, generate similarity maps from the image features.

- Apply a max distance criterion to select the most salient points, which improves spatial coherence and addresses the ambiguity of generic point prompts in complex agricultural scenes.

- Segmentation:

- Feed the enhanced box and point prompts to SAM to generate the final instance segmentation masks.

- Validation:

- Evaluate segmentation performance on test datasets using metrics like mean Average Precision (mAP) and compare against supervised baselines (e.g., YOLOv11) and SAM's default "everything" mode.

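The box-refinement step builds on standard greedy non-maximum suppression (NMS). The sketch below shows plain NMS with one hypothetical twist: each detector score is blended with the fraction of vegetation pixels inside the box, for example the pixels that pass a threshold on a vegetation index such as NCGI. The blending rule and the `weight` parameter are our assumptions for illustration. They are not the VC-NMS formulation of [22], and the vegetation-cover values are taken as precomputed inputs.

```python
def iou(box_a, box_b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def vegetation_aware_nms(boxes, scores, cover, iou_threshold=0.5, weight=0.5):
    """Greedy NMS on scores blended with per-box vegetation cover (0..1).

    HYPOTHETICAL re-scoring rule: score' = (1 - weight) * score + weight * cover.
    It illustrates the idea only and is not the VC-NMS formula from [22].
    Returns the indices of kept boxes, highest blended score first.
    """
    blended = [(1 - weight) * s + weight * c for s, c in zip(scores, cover)]
    order = sorted(range(len(boxes)), key=lambda i: blended[i], reverse=True)
    keep = []
    for i in order:
        # Keep a box only if it does not overlap too much with any kept box.
        if all(iou(boxes[i], boxes[k]) <= iou_threshold for k in keep):
            keep.append(i)
    return keep
```

With `weight=0.0` this reduces to ordinary NMS. Raising the weight lets a slightly less confident box that covers more vegetation win over an overlapping box that is mostly background.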

The logical flow for this zero-shot segmentation framework is visualized below:

Table 1: Performance of the Two-Stage GAN Pipeline for Different Plant Species. Data adapted from [19], showing the Dice coefficient between Pix2Pix-generated masks for synthetic images and manual annotations, with Pix2Pix trained on real RGB-mask pairs.

| Plant Species | View | Training Set Size (RGB-Mask Pairs) | Dice Coefficient (Average) |
|---|---|---|---|
| Arabidopsis | Top | 80 | 0.94 - 0.95 |
| Maize | Top | 80 | 0.94 - 0.95 |
| Barley | Side | 100 | 0.88 - 0.95 |

Table 2: Publicly Available Synthetic Datasets for Genomic and Phenotypic Research. A selection of resources for researchers to obtain or generate synthetic data.

| Dataset / Tool | Description | Key Features | Reference / Access |
|---|---|---|---|
| HAPNEST | A program for simulating large-scale, diverse, and realistic genotypes and phenotypes. | 6.8M variants; 1,008,000 individuals; 6 genetic ancestry groups; 9 continuous traits. | BioStudies (S-BSST936) [26] |
| AIGen | C++ software for complex genetic data analysis using Kernel and Functional Neural Networks. | Models non-linear genetic effects (e.g., interactions); robust for high-dimensional data. | GitHub [25] |
| GAN-Generated Plant Shoot Images | Two-stage GAN pipeline output for greenhouse-grown plants. | Pairs of synthetic RGB images and binary segmentation masks for Arabidopsis, maize, and barley. | Methodology in [19] |

Building the Digital Greenhouse: A Practical Guide to Implementing Generative Models for Phenotyping

Frequently Asked Questions

Q1: Which generative model is best for creating high-fidelity, diverse plant images for my phenotyping research?

The choice depends on your primary requirement: perceptual quality, diversity, or training stability. Diffusion Models currently dominate for tasks requiring high diversity and strong alignment with complex conditions, such as generating plant images across a spectrum of health states [27]. They excel in producing diverse outputs and are highly flexible for conditioning on various inputs like text or other images [28]. However, if your project demands the sharpest possible images and fast inference speed for real-time applications, GANs like StyleGAN can produce images with high perceptual quality and structural coherence [28] [27]. VAEs are less common for high-fidelity synthesis, as they tend to produce blurrier images than the other two architectures [29].

Q2: I have limited data for a rare "slightly wilted" plant state. Can generative models help, and which one is most effective?

Yes, generative models are specifically suited to address this data scarcity. Recent research demonstrates that Diffusion Models, particularly Denoising Diffusion Probabilistic Models (DDPM), are highly effective for this task [30]. One successful methodology involves taking images of "Normal" and "Wilted" plants, transforming them into a latent space, and then interpolating between these states to generate realistic "Slightly Wilted" images [30]. In contrast to GANs, diffusion models provide a more stable training framework and are better at capturing fine-grained morphological details for intermediate plant states [30].
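The interpolation step can be illustrated on plain latent vectors. The sketch assumes you already have latents for a "Normal" and a "Wilted" image; how they are obtained, for example by encoding images into a latent diffusion model's space, is not specified here. It shows linear and spherical interpolation. Spherical interpolation (slerp) is a common choice for Gaussian-like latents because, for endpoints of equal norm, it stays on the sphere of that norm instead of cutting toward the origin. Decoding the interpolated latent back into an image is not shown.

```python
import math


def lerp(z0, z1, t):
    """Linear interpolation between two latent vectors (t=0 gives z0, t=1 gives z1)."""
    return [(1 - t) * a + t * b for a, b in zip(z0, z1)]


def slerp(z0, z1, t):
    """Spherical linear interpolation between two non-zero latent vectors."""
    dot = sum(a * b for a, b in zip(z0, z1))
    norm0 = math.sqrt(sum(a * a for a in z0))
    norm1 = math.sqrt(sum(b * b for b in z1))
    cos_omega = max(-1.0, min(1.0, dot / (norm0 * norm1)))  # clamp rounding error
    omega = math.acos(cos_omega)  # angle between the two latents
    if math.sin(omega) < 1e-6:
        # (Anti)parallel vectors: slerp is ill-defined, fall back to linear.
        return lerp(z0, z1, t)
    s0 = math.sin((1 - t) * omega) / math.sin(omega)
    s1 = math.sin(t * omega) / math.sin(omega)
    return [s0 * a + s1 * b for a, b in zip(z0, z1)]
```

Intermediate values of `t` (for example, around 0.5) would give candidate "Slightly Wilted" latents. Domain experts would still need to confirm which values of `t` produce biologically plausible images.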

Q3: My GAN training is unstable and often collapses. What are the best practices to mitigate this?

Training instability and mode collapse are classic challenges with GANs [31] [27]. To address these, consider the following approaches:

- Architectural Improvements: Use modern, stabilized architectures like StyleGAN or incorporate self-attention layers [28] [31].

- Objective Functions: Replace the standard objective function with more stable alternatives, such as the Wasserstein loss used in WGANs [31].

- Combination with Other Models: Integrating GANs with autoencoders (e.g., in a VAE-GAN framework) or using ensemble techniques can also help improve training dynamics and output diversity [31].
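Below is a minimal sketch of the Wasserstein objectives. It works on lists of raw critic scores (no sigmoid) so that the sign conventions are explicit. It omits the Lipschitz constraint that WGANs also require (weight clipping in the original WGAN, a gradient penalty in WGAN-GP), because that needs automatic differentiation.

```python
def mean(values):
    return sum(values) / len(values)


def wgan_critic_loss(real_scores, fake_scores):
    """The critic minimizes E[D(fake)] - E[D(real)], i.e. it learns to score
    real images higher than generated ones."""
    return mean(fake_scores) - mean(real_scores)


def wgan_generator_loss(fake_scores):
    """The generator minimizes -E[D(fake)], pushing its samples' scores up."""
    return -mean(fake_scores)
```

The negated critic loss acts as an estimate of the Wasserstein distance, up to the Lipschitz constant. It tends to track sample quality, which makes it more informative to monitor than a standard GAN loss.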

Q4: How do I validate that my synthetic plant images are scientifically useful, not just visually plausible?

This is a critical step. Standard quantitative metrics like Fréchet Inception Distance (FID) or Structural Similarity Index (SSIM) can be used, but they have limitations in capturing scientific relevance [28]. It is essential to complement these metrics with expert-driven qualitative assessment [28]. Domain experts (e.g., plant biologists) should validate that the synthetic images preserve fundamental physical and biological principles and do not introduce hallucinations or misrepresentations [28]. Establishing robust verification protocols is mandatory for scientific image generation.
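FID fits Gaussians to feature embeddings of real and of generated images, classically Inception-v3 pool features, and reports the Fréchet distance between them: ||mu1 - mu2||^2 + Tr(S1 + S2 - 2 (S1 S2)^(1/2)). The sketch below is a simplified version that assumes diagonal covariances, in which case the trace term reduces to a per-dimension sum. The real metric uses full covariance matrices and a matrix square root (for example, `scipy.linalg.sqrtm`). Because the features come from a general-purpose network, a low score says little about whether plant traits are preserved, which is why the expert review described above remains necessary.

```python
import math


def frechet_distance_diagonal(mu1, var1, mu2, var2):
    """Squared Fréchet distance between two Gaussians with diagonal covariance.

    mu1, mu2: per-dimension means; var1, var2: per-dimension variances.
    With diagonal covariances, Tr(S1 + S2 - 2 (S1 S2)^(1/2)) equals
    sum_i (sqrt(var1_i) - sqrt(var2_i))^2.
    """
    mean_term = sum((a - b) ** 2 for a, b in zip(mu1, mu2))
    cov_term = sum((math.sqrt(v1) - math.sqrt(v2)) ** 2 for v1, v2 in zip(var1, var2))
    return mean_term + cov_term
```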

Troubleshooting Guides

Issue 1: Handling Mode Collapse in GANs

Problem: The generator produces limited varieties of plant images, ignoring some input modes.

Solution Steps:

- Switch to a Stabilized Architecture: Implement architectures like WGAN-GP or StyleGAN, which are designed to be more robust against mode collapse [31].

- Modify the Objective Function: Use losses like Wasserstein distance or Maximum Mean Discrepancy (MMD) that encourage broader distribution coverage [31].

- Incorporate Mini-batch Discrimination: This technique allows the discriminator to look at multiple data examples in combination, helping it detect a lack of diversity in the generator's output [31].

- Apply Experience Replay: Occasionally show the discriminator samples from earlier generator iterations so that it does not "forget" previously seen fakes. This discourages the generator from cycling back to modes it has already abandoned [31].
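MMD can also serve as a diagnostic. Computed between embeddings of real images and of a batch of generated images, it rises when the generated set has collapsed onto a few modes. The sketch below is a biased estimate of squared MMD with a Gaussian kernel. The bandwidth of 1.0, and using MMD as a monitoring statistic rather than as the training loss (as MMD-based GANs do), are assumptions for illustration. The cost grows quadratically with batch size.

```python
import math


def rbf_kernel(a, b, bandwidth=1.0):
    """Gaussian (RBF) kernel between two feature vectors."""
    sq_dist = sum((x - y) ** 2 for x, y in zip(a, b))
    return math.exp(-sq_dist / (2.0 * bandwidth ** 2))


def mmd_squared(xs, ys, bandwidth=1.0):
    """Biased estimate of squared Maximum Mean Discrepancy between two samples.

    xs, ys: lists of feature vectors (e.g. embeddings of real and generated
    plant images). Values near 0 suggest the two sets are similarly spread.
    """
    def mean_kernel(p, q):
        total = sum(rbf_kernel(a, b, bandwidth) for a in p for b in q)
        return total / (len(p) * len(q))

    return mean_kernel(xs, xs) + mean_kernel(ys, ys) - 2.0 * mean_kernel(xs, ys)
```

In the toy test, a "collapsed" batch that repeats a single embedding scores a much higher MMD against the real set than a diverse batch does.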

Issue 2: Addressing Blurry Outputs from VAEs

Problem: The synthetic plant images generated by the VAE lack sharpness and appear blurry.

Solution Steps:

- Check the Loss Function: The standard VAE loss includes a Kullback-Leibler (KL) divergence term that regularizes the latent space. A common cause of blurriness is an overly strong weight on this KL term relative to the reconstruction loss. Try adjusting the balance between these two terms [29].

- Use a More Powerful Decoder: Enhance the capacity of the decoder network to better reconstruct fine details from the latent representation.

- Explore Alternative Architectures: Consider more advanced variants like Vector Quantised-VAEs (VQ-VAEs) or NVAEs, which are designed to generate sharper images [29].
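The balance between the two loss terms can be made explicit by placing a weight on the KL term, as in the beta-VAE formulation. Below is a minimal sketch for a diagonal Gaussian encoder. It uses the closed-form KL divergence to a standard normal prior and a sum-of-squared-errors reconstruction term, which is one common choice among several. The function names are ours.

```python
import math


def gaussian_kl(mu, logvar):
    """KL( N(mu, diag(exp(logvar))) || N(0, I) ), summed over latent dimensions."""
    return -0.5 * sum(1 + lv - m * m - math.exp(lv) for m, lv in zip(mu, logvar))


def vae_loss(reconstruction, target, mu, logvar, beta=1.0):
    """Reconstruction error (sum of squared errors) plus the beta-weighted KL term."""
    recon = sum((r - t) ** 2 for r, t in zip(reconstruction, target))
    return recon + beta * gaussian_kl(mu, logvar)
```

With `beta = 1` this is the standard VAE objective. Reducing `beta` weakens the pull toward the prior, which often sharpens reconstructions at the cost of a less regular latent space.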

Issue 3: Managing Slow Inference Speed in Diffusion Models

Problem: Generating plant images with a diffusion model takes a very long time due to the iterative denoising process.

Solution Steps:

- Use a Latent Diffusion Model (LDM): LDMs, such as Stable Diffusion, perform the diffusion process in a lower-dimensional latent space instead of the pixel space, significantly reducing computational demands and speeding up generation [27] [30].

- Reduce Sampling Steps: Employ optimized samplers (e.g., DPM-Solver) that can generate high-quality images in far fewer steps (e.g., 20-50 steps instead of 1000) without a major loss in quality [27].

- Knowledge Distillation: Explore methods where a student model is trained to mimic the output of the full diffusion process in fewer steps [29].
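The saving from reduced-step sampling can be seen in the timestep subsequence a sampler visits. This sketch assumes a model trained with 1000 steps and uses uniform spacing, as in DDIM-style respacing. DPM-Solver and other samplers may choose their timesteps differently, and each visited step still costs one network evaluation. The function name is hypothetical.

```python
def respaced_timesteps(train_steps=1000, sample_steps=50):
    """Evenly spaced subset of training timesteps, ordered from noisiest to cleanest."""
    if not 0 < sample_steps <= train_steps:
        raise ValueError("sample_steps must be in (0, train_steps]")
    stride = train_steps // sample_steps
    return list(range(0, train_steps, stride))[:sample_steps][::-1]


schedule = respaced_timesteps(1000, 50)  # [980, 960, ..., 20, 0]
speedup = 1000 / len(schedule)           # 20x fewer denoising network calls
```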

Model Comparison for Plant Phenotyping

The table below summarizes the key characteristics of the three main generative architectures to help you select the most appropriate one for your plant phenotyping task.

| Aspect | GANs (e.g., StyleGAN) | VAEs | Diffusion Models (e.g., DDPM) |
|---|---|---|---|
| Output Quality | High perceptual quality, sharp images [28] | Can produce blurry images; lower fidelity [29] | High diversity, strong prompt alignment [27] |
| Training Stability | Unstable; prone to mode collapse [31] [27] | Stable and predictable [29] | Stable and predictable [27] |
| Inference Speed | Very fast (single forward pass) [27] | Fast (single forward pass) | Slower (multiple iterative steps) [27] |
| Data Efficiency | Requires large, curated datasets [27] | Can work with smaller datasets | Requires large datasets but adaptable [27] |
| Primary Strength | High visual sharpness, fast generation | Stable training, meaningful latent space | High output diversity, training stability, flexibility [27] |
| Key Weakness | Training instability, mode collapse [31] | Blurry outputs [29] | Slow inference speed [27] |
| Best for Phenotyping | Generating high-fidelity images of specific plant structures [28] | Exploring continuous latent spaces of plant traits | Augmenting datasets with diverse, complex plant states [30] |

Experimental Protocol: DDPM for "Slightly Wilted" Plant Image Synthesis

This protocol details a methodology for generating synthetic images of intermediate plant health states using Denoising Diffusion Probabilistic Models (DDPM), as validated in recent research [30].

Objective: To augment a scarce dataset for the "Slightly Wilted" plant health category by interpolating between latent representations of "Normal" and "Wilted" plant images.

Materials & Dataset:

- Image Data: A dataset of high-resolution plant images (e.g., Golden Pothos, Parlour Palm) categorized into "Normal" and "Wilted" states.

- Pre-trained Latent Diffusion Model (LDM): A model, such as Stable Diffusion, to encode images into a latent space.

- Computational Resources: A GPU cluster with sufficient memory for training and running diffusion models.

Procedure:

- Data Preprocessing: Resize and normalize all "Normal" and "Wilted" plant images to be compatible with the chosen LDM's input dimensions.

- Latent Encoding: Use the encoder of the LDM to transform all "Normal" and "Wilted" images into their corresponding latent vectors.

- Latent Space Interpolation: For each pair of "Normal" and "Wilted" latent vectors, compute a new synthetic latent vector by linear interpolation, $z_{\text{synthetic}} = z_{\text{normal}} + \lambda \, (z_{\text{wilted}} - z_{\text{normal}})$. Here $\lambda$ is an interpolation ratio between 0 and 1 (e.g., 0.3, 0.5, 0.7) that simulates different degrees of wilting.

- Image Decoding: Use the decoder of the LDM to transform the synthetic latent vectors ($z_{\text{synthetic}}$) back into image space. This produces the final "Slightly Wilted" synthetic images.

- Validation: Have domain experts (botanists) qualitatively assess the generated images for biological plausibility. For a quantitative check, train a plant health classifier on the augmented dataset (original data plus synthetic data). Then measure the improvement in accuracy and F1-score for the "Slightly Wilted" class on a held-out test set of real images [30].
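The interpolation step can be sketched in plain Python, treating each latent as a flat list of floats. In practice the latents would be tensors produced by the LDM encoder and passed back to its decoder. Both function names are hypothetical, and the default ratios mirror the examples given in the procedure.

```python
def interpolate_latent(z_normal, z_wilted, lam):
    """Element-wise z_normal + lam * (z_wilted - z_normal)."""
    if len(z_normal) != len(z_wilted):
        raise ValueError("latents must have the same shape")
    if not 0.0 <= lam <= 1.0:
        raise ValueError("lam must lie in [0, 1]")
    return [zn + lam * (zw - zn) for zn, zw in zip(z_normal, z_wilted)]


def slightly_wilted_latents(pairs, lambdas=(0.3, 0.5, 0.7)):
    """Yield (lam, z_synthetic) for every (z_normal, z_wilted) pair and every ratio."""
    for z_normal, z_wilted in pairs:
        for lam in lambdas:
            yield lam, interpolate_latent(z_normal, z_wilted, lam)


print(interpolate_latent([0.0, 0.0], [1.0, 2.0], 0.5))  # [0.5, 1.0]
```

Keeping `lam` as a label on each synthetic sample makes it possible to check later whether classifier gains depend on the simulated severity.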

Research Reagent Solutions

The table below lists key computational tools and concepts essential for experiments in generative plant image synthesis.

| Item / Technique | Function in Experiment |
|---|---|
| Denoising Diffusion Probabilistic Models (DDPM) | A class of diffusion model that learns to reverse a gradual noising process, generating data by iteratively denoising random Gaussian noise; used for high-quality synthetic image generation [30]. |
| Latent Diffusion Model (LDM) | A variant of diffusion models that operates in a compressed latent space, drastically reducing computational cost for training and inference [30]. |
| StyleGAN | A specific GAN architecture that allows for fine-grained control over image styles; capable of generating high-fidelity plant images [28]. |
| Structural Similarity Index (SSIM) | A metric for measuring the perceptual similarity between two images; used to evaluate the quality of reconstructed or synthetic images [28]. |
| Fréchet Inception Distance (FID) | A metric that calculates the distance between the feature distributions of real and generated images; lower scores indicate that the two sets of images are more similar [28] [31]. |
| Latent Space Interpolation | The technique of generating new data points by moving between existing points in a model's latent space; key for creating intermediate states like "Slightly Wilted" [30]. |
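For reference, FID is usually computed by fitting a Gaussian to the Inception-v3 feature embeddings of each image set. The real set gives mean $\mu_r$ and covariance $\Sigma_r$, and the generated set gives $\mu_g$ and $\Sigma_g$. The score is the Fréchet distance between the two Gaussians:

$$
\mathrm{FID} = \lVert \mu_r - \mu_g \rVert_2^2 + \operatorname{Tr}\!\left(\Sigma_r + \Sigma_g - 2\,(\Sigma_r \Sigma_g)^{1/2}\right)
$$

Because the estimate depends on the number of images used, FID values are best compared across runs that use the same sample sizes.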

Workflow Diagram for Synthetic Data Generation

[Figure: Synthetic plant image generation flow]

Frequently Asked Questions (FAQs)

FAQ 1: What are the most effective data augmentation techniques for improving genomic selection (GS) accuracy in plant breeding?

Data augmentation (DA) artificially expands training datasets to improve the prediction performance of genomic selection models. This is valuable in plant breeding, where large genomic datasets are hard to acquire. Research has shown that applying DA to genomic data can improve prediction accuracy for the top-performing lines in a testing set. Across 14 real plant breeding datasets, the DA approach improved prediction performance for the top 20% of lines by an average of 108.4% in terms of Normalized Root Mean Square Error (NRMSE) and 107.4% in terms of Mean Arctangent Absolute Percentage Error (MAAPE), compared with conventional methods without augmentation [32]. Techniques such as mixup, which creates virtual training examples by linearly interpolating pairs of existing data points, are particularly effective [32].
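Mixup for tabular genomic data can be sketched as follows. The sketch assumes markers are coded numerically (e.g., 0/1/2) and the phenotype is continuous. The function name, the `alpha` default and the random pairing scheme are illustrative assumptions; the procedure in the cited study [32] may differ in detail.

```python
import random


def mixup(X, y, n_new, alpha=0.4, seed=None):
    """Create n_new virtual samples as convex combinations of random training pairs.

    X: list of marker vectors; y: list of phenotype values (same length as X).
    The mixing weight lam is drawn from Beta(alpha, alpha), as in standard mixup.
    """
    rng = random.Random(seed)
    X_new, y_new = [], []
    for _ in range(n_new):
        i, j = rng.randrange(len(X)), rng.randrange(len(X))
        lam = rng.betavariate(alpha, alpha)
        X_new.append([lam * a + (1 - lam) * b for a, b in zip(X[i], X[j])])
        y_new.append(lam * y[i] + (1 - lam) * y[j])
    return X_new, y_new


# Toy example: three lines, four SNP markers each, one phenotype value per line.
X = [[0, 1, 2, 1], [2, 2, 0, 1], [1, 0, 1, 2]]
y = [5.1, 6.3, 4.8]
X_aug, y_aug = mixup(X, y, n_new=6, seed=42)
```

The virtual samples belong in the training set only. The testing set should stay made of real lines so that the reported accuracy is not inflated.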

FAQ 2: How can I address severe class imbalance when segmenting plant organs, such as wheat stems?

Class imbalance is a common issue in plant phenotyping, where certain organs (e.g., stems) occupy far fewer pixels in an image than others (e.g., leaves). To address this:

- Stem-Aware Sampling: Instead of using all available unlabeled data for semi-supervised learning, carefully curate a subset of images that are rich in the minority class. A model trained on initially labeled data can help filter and select these relevant images [33].

- Loss Functions and Metrics: Track class-specific metrics such as Intersection over Union (IoU) rather than a single overall score. For instance, a model might achieve an IoU of 0.90 for leaves but only 0.69 for stems, which clearly identifies the performance bottleneck. Tailoring your approach to improve this specific metric is key [33].
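Per-class IoU is straightforward to compute from flattened label sequences. This is a dependency-free sketch; the label encoding (0 = background, 1 = leaf, 2 = stem) is a hypothetical example, and real masks would be flattened arrays.

```python
def per_class_iou(pred, target, num_classes):
    """IoU for each class from flattened predicted and ground-truth label sequences."""
    ious = {}
    for c in range(num_classes):
        inter = sum(1 for p, t in zip(pred, target) if p == c and t == c)
        union = sum(1 for p, t in zip(pred, target) if p == c or t == c)
        # A class absent from both prediction and ground truth has undefined IoU.
        ious[c] = inter / union if union else float("nan")
    return ious


# Toy labels: 0 = background, 1 = leaf, 2 = stem (hypothetical encoding).
target = [0, 0, 1, 1, 2, 2]
pred = [0, 1, 1, 1, 2, 0]
scores = per_class_iou(pred, target, num_classes=3)
# scores[0] == 1/3, scores[1] == 2/3, scores[2] == 0.5
```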

FAQ 3: Can foundation models like the Segment Anything Model (SAM) be used effectively for zero-shot plant phenotyping in complex environments?

Yes, but their performance can be limited without domain-specific enhancements. SAM was trained on over a billion masks from general-domain images, and it struggles with the low contrast and complex backgrounds typical of agricultural imagery [22]. To improve its zero-shot performance:

- Enhance Prompts with Domain Knowledge: Use models like Grounding DINO to generate better box prompts. Refine these prompts using vegetation indices like the Normalized Cover Green Index (NCGI) to improve object localization [22].

- Optimize Point Prompts: Use criteria such as the max distance criterion to select the most informative point prompts. Compared with generic points, this improves spatial coherence in sparse annotations [22].

This enhanced framework has been shown to outperform SAM's default "everything" mode. In some vertical farming scenarios, it can also achieve better zero-shot generalization than supervised methods such as YOLOv11 [22].
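The source does not define the max distance criterion precisely. One plausible reading, shown here purely as an assumption, is to place the point prompt at the foreground pixel farthest from any background pixel. That puts the point deep inside the plant instead of near its edges. This brute-force sketch works on a small binary mask, and its function name is hypothetical. On full-size images, a Euclidean distance transform such as `scipy.ndimage.distance_transform_edt` would be the practical choice.

```python
def max_distance_point(mask):
    """Return (row, col) of the foreground pixel farthest from any background pixel.

    mask: 2-D list of 0/1 values. Pixels outside the image are not treated as
    background. Returns None if the mask has no foreground.
    """
    fg = [(r, c) for r, row in enumerate(mask) for c, v in enumerate(row) if v]
    bg = [(r, c) for r, row in enumerate(mask) for c, v in enumerate(row) if not v]
    if not fg:
        return None
    if not bg:
        return fg[len(fg) // 2]  # no background at all: fall back to a middle pixel

    def clearance(p):
        # Squared Euclidean distance to the nearest background pixel.
        return min((p[0] - q[0]) ** 2 + (p[1] - q[1]) ** 2 for q in bg)

    return max(fg, key=clearance)


leaf = [[0, 0, 0, 0, 0],
        [0, 1, 1, 1, 0],
        [0, 1, 1, 1, 0],
        [0, 1, 1, 1, 0],
        [0, 0, 0, 0, 0]]
print(max_distance_point(leaf))  # (2, 2)
```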

FAQ 4: What are the key steps in building a modern data augmentation pipeline for a machine vision system in 2025?

Building an effective data augmentation pipeline involves a structured process [34]:

- Define Objectives: Set clear goals using formal metrics (e.g., Service Level Objectives) for data quality and model performance.

- Select Techniques: Choose augmentation methods based on your dataset and task. Geometric transformations (rotation, scaling) are crucial for spatial tasks, while color-based adjustments help with varying lighting.

- Implementation: Use libraries such as PyTorch's torchvision or TensorFlow to apply transformations programmatically.

- Integration: Seamlessly integrate the pipeline into your computer vision workflow, connecting it to data loaders for on-the-fly augmentation during model training.

- Evaluation and Optimization: Continuously measure the pipeline's impact on model accuracy, precision, and recall, and fine-tune the techniques and their parameters accordingly [34].
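In torchvision, the implementation and integration steps usually mean composing random transforms (for example with `transforms.Compose` and `transforms.RandomHorizontalFlip`) and attaching them to a dataset. The sketch below only mirrors that pattern without a framework, so the on-the-fly behavior is visible. All names are hypothetical, and images are nested lists.

```python
import random


def hflip(img):
    """Mirror a 2-D image (list of rows) left to right."""
    return [row[::-1] for row in img]


def transpose(img):
    """Swap rows and columns (the 'image transpose' augmentation)."""
    return [list(col) for col in zip(*img)]


class RandomPipeline:
    """Apply each (transform, probability) step in order, re-sampled on every call."""

    def __init__(self, steps, seed=None):
        self.steps = steps
        self.rng = random.Random(seed)

    def __call__(self, img):
        for transform, p in self.steps:
            if self.rng.random() < p:
                img = transform(img)
        return img


def augmented_batches(images, pipeline, batch_size):
    """Yield batches augmented on the fly, so each epoch sees new variants."""
    for start in range(0, len(images), batch_size):
        yield [pipeline(img) for img in images[start:start + batch_size]]


pipeline = RandomPipeline([(hflip, 0.5), (transpose, 0.5)], seed=0)
for batch in augmented_batches([[[1, 2], [3, 4]]] * 4, pipeline, batch_size=2):
    pass  # hand each batch to the training step here
```

Because augmentation happens when a batch is drawn, the stored dataset never grows. The evaluation step can then compare runs simply by changing the probabilities in `steps`.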

Troubleshooting Guides

Problem: Model Performance is Poor Due to Limited and Imbalanced Training Data

This is a fundamental challenge in plant phenotyping, where collecting large, balanced datasets is often expensive and time-consuming.

Solution Steps:

- Diagnose the Imbalance: Quantify the class distribution in your dataset. For image segmentation, calculate the percentage of pixels belonging to each class (e.g., background, leaf, stem, head). In one wheat segmentation challenge, the stem class comprised only 9% of pixels, creating a significant imbalance [33].

- Implement a Hybrid Data Augmentation Strategy:

  - For Image Data: Apply a combination of geometric and photometric transformations. The table below summarizes techniques and their impacts [34].

  - For Tabular/Genomic Data: Explore techniques such as mixup and other data augmentation routines that generate synthetic data from the vicinity distribution of the original training set [32].

- Leverage Generative Models: For severe data scarcity, use Generative Adversarial Networks (GANs) or Variational Autoencoders (VAEs) to synthesize realistic, high-dimensional plant images. These models can learn the underlying distribution of your limited data and generate new, diverse samples [16].

- Utilize Semi-Supervised Learning (SSL): Capitalize on unlabeled data, which is often more readily available. A guided distillation approach, where a "teacher" model generates pseudo-labels for unlabeled data to train a "student" model, can significantly improve generalization. Remember to use class-aware sampling to select unlabeled data that will benefit the minority classes most [33].
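The diagnosis step, measuring the share of pixels per class, can be sketched as follows. It assumes integer label masks stored as nested lists. The class order (background, leaf, stem, head) follows the example above, but the integer encoding is a hypothetical choice.

```python
from collections import Counter


def class_pixel_fractions(label_masks, class_names):
    """Fraction of all labeled pixels that belong to each class, across a list of masks."""
    counts = Counter(v for mask in label_masks for row in mask for v in row)
    total = sum(counts.values())
    return {name: counts.get(idx, 0) / total for idx, name in enumerate(class_names)}


masks = [[[0, 0], [1, 3]],
         [[0, 2], [1, 1]]]
fractions = class_pixel_fractions(masks, ["background", "leaf", "stem", "head"])
# {'background': 0.375, 'leaf': 0.375, 'stem': 0.125, 'head': 0.125}
```

A minority class at a share like the 9% stem figure cited above is a signal to apply class-aware sampling or targeted augmentation.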

Table: Impact of Common Image Augmentation Techniques on Model Performance

| Data Augmentation Method | Impact on Model Performance | Recommended for Dataset Characteristics |
|---|---|---|
| Affine Transformation | Strong performance boost | Effective for diverse datasets; good for object detection [34] |
| Random Rotation | Performance varies significantly | Dependent on object sizes and shapes; test for your use case [34] |
| Image Transpose | Consistent performance improvement | Effective across various datasets [34] |
| Gaussian Noise | Enhances generalization capabilities | Effective for imbalanced datasets and varying lighting conditions [34] |
| Random Perspective | Shows versatility in performance | Adaptable to various dataset properties [34] |
| Color Jitter | Improves robustness to lighting changes | Essential for field conditions with variable illumination [16] |
| Salt & Pepper Noise | Limited impact on performance | Less effective for complex datasets [34] |
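The two noise-based entries in the table can be sketched for 8-bit grayscale images stored as nested lists. This representation is an illustrative assumption: real pipelines operate on arrays or tensors, and on each channel for RGB. The default `sigma` and `amount` values are arbitrary.

```python
import random


def gaussian_noise(img, sigma=10.0, rng=random):
    """Add zero-mean Gaussian noise to an 8-bit grayscale image, clipped to [0, 255]."""
    return [[min(255, max(0, round(v + rng.gauss(0.0, sigma)))) for v in row] for row in img]


def salt_and_pepper(img, amount=0.05, rng=random):
    """Replace a random fraction of pixels with 0 (pepper) or 255 (salt)."""
    out = []
    for row in img:
        new_row = []
        for v in row:
            if rng.random() < amount:
                v = 255 if rng.random() < 0.5 else 0
            new_row.append(v)
        out.append(new_row)
    return out


rng = random.Random(0)
leaf_patch = [[120, 130, 125], [118, 122, 127]]
noisy = gaussian_noise(leaf_patch, sigma=15.0, rng=rng)
speckled = salt_and_pepper(leaf_patch, amount=0.1, rng=rng)
```

Passing a seeded `random.Random` instance as `rng` makes the augmented samples reproducible across experiments.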

Problem: Automated Stomata Phenotyping Suffers from Inaccurate Orientation Measurement

Solution Steps:

- Move Beyond Bounding Boxes: Standard detection and instance segmentation models such as YOLOv8 output axis-aligned bounding boxes and pixel masks, not an explicit orientation angle. Relying solely on these outputs will yield inaccurate angle measurements for features like stomatal guard cells [35].

- Implement Ellipse Fitting on Segmentation Masks: Use a state-of-the-art instance segmentation model (e.g., YOLOv8) to generate precise pixel-level masks for each stomatal pore and guard cell. Then, apply a post-processing step to fit an ellipse to each segmented mask. The orientation of the major axis of the ellipse provides a robust measurement of the stomatal angle [35].

- Incorporate a Novel Opening Ratio Metric: From the segmented areas of the guard cells and the stomatal pore, calculate a new metric, Opening Ratio = Pore Area / Guard Cell Area. This provides a functional phenotyping descriptor beyond simple orientation [35].
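The orientation and opening-ratio steps can be sketched without OpenCV. The sketch uses the ellipse that has the same second-order central moments as the mask, which is the construction behind scikit-image's `regionprops` orientation (that function reports the angle in a different convention). This is an assumed stand-in for whatever fitting routine [35] used; OpenCV's `cv2.fitEllipse` on the mask contour is another common choice. Function names are hypothetical, and pixel counts serve as areas.

```python
import math


def mask_orientation_deg(mask):
    """Major-axis angle in degrees, within [-90, 90], of the moment-equivalent ellipse.

    mask: 2-D list of 0/1 values. x runs along columns and y down the rows, so
    positive angles are clockwise on screen. Returns None for an empty mask.
    """
    pts = [(c, r) for r, row in enumerate(mask) for c, v in enumerate(row) if v]
    if not pts:
        return None
    n = len(pts)
    cx = sum(x for x, _ in pts) / n
    cy = sum(y for _, y in pts) / n
    # Second-order central moments of the pixel coordinates.
    mu20 = sum((x - cx) ** 2 for x, _ in pts) / n
    mu02 = sum((y - cy) ** 2 for _, y in pts) / n
    mu11 = sum((x - cx) * (y - cy) for x, y in pts) / n
    theta = 0.5 * math.atan2(2 * mu11, mu20 - mu02)
    return math.degrees(theta)


def opening_ratio(pore_mask, guard_mask):
    """Opening Ratio = pore area / guard cell area, with areas as pixel counts."""
    pore_area = sum(1 for row in pore_mask for v in row if v)
    guard_area = sum(1 for row in guard_mask for v in row if v)
    if guard_area == 0:
        raise ValueError("guard cell mask is empty")
    return pore_area / guard_area


diagonal = [[1 if r == c else 0 for c in range(4)] for r in range(4)]
angle = mask_orientation_deg(diagonal)  # about 45 degrees
ratio = opening_ratio([[0, 1, 1, 0]], [[1, 1, 1, 1], [1, 1, 1, 1]])  # 0.25
```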

Problem: Foundation Model (e.g., SAM) Fails to Segment Plants in Complex Vertical Farm Imagery

Solution Steps:

- Identify the Failure Mode: Determine if the issue is due to poor prompt quality, complex backgrounds, or domain shift. SAM often underperforms in agricultural images due to low target-background contrast and uneven lighting [22].

- Enhance Prompt Quality: