Stoichiometric Modeling of BLSS Mass Flows: From Closed-Loop Systems to Clinical Applications

Abstract

Stoichiometric modeling is pivotal for designing Bioregenerative Life Support Systems (BLSS) that enable long-duration space missions by closing mass loops and providing essential resources. This article explores the foundational principles of element cycling in artificial ecosystems, detailing methodologies like Flux Balance Analysis for predicting intracellular fluxes. It addresses key challenges in model optimization and thermodynamic feasibility, and reviews advanced validation techniques to ensure predictive reliability. By synthesizing insights from space-life-support research and constraint-based metabolic modeling, we highlight the cross-disciplinary applications of these frameworks in biomedical research, including drug discovery and understanding metabolic diseases.

Principles of Mass Flow and Element Cycling in Closed Ecosystems

The Role of BLSS in Long-Duration Space Missions and Mass Closure

Bioregenerative Life Support Systems (BLSS) are advanced artificial ecosystems considered vital for future long-duration and remote space missions. These systems are designed to recycle human metabolic wastes into nutrients, carbon dioxide, and water for plants and other edible organisms, which in turn provide food, fresh water, and oxygen for astronauts. The central concept is a materially closed loop that significantly reduces mission mass and volume by reducing or even eliminating disposable waste and reliance on resupply missions from Earth [1]. For autonomous long-duration missions with no possibility of resupply, a BLSS that generates all essential resources with minimal material loss is fundamental to mission sustainability [1] [2].

The core principle of a BLSS mimics natural ecological networks, comprising three main types of biological compartments: producers (e.g., plants, microalgae), consumers (i.e., crew), and degraders/recyclers (e.g., bacteria) [3]. The ultimate goal is to achieve a high degree of mass closure, where the majority of resources are regenerated within the system. Recent achievements, such as the Chinese "Lunar Palace 365" mission, have demonstrated an overall system closure degree of 98.2% over a 370-day experiment, providing strong evidence for the feasibility of this technology for future lunar bases [4].

Stoichiometric Modeling of BLSS Mass Flows

Fundamental Principles

Stoichiometric modeling provides the foundational framework for describing and quantifying the mass flows of elements within a BLSS. It involves establishing a compact set of chemical equations with fixed coefficients to describe the cycling of key elements—primarily Carbon (C), Hydrogen (H), Oxygen (O), and Nitrogen (N)—through all interconnected compartments of the system [1]. This approach allows researchers to simulate the flow of all relevant compounds and balance the dimensions of different compartments to maximize closure at steady state.

The stoichiometric relations govern the material flows in the ecosystem model, enabling the prediction of system dynamics, long-term reliability, and the impact of design changes or perturbations [1]. In a successfully balanced system, most compounds exhibit minimal or zero loss between process iterations. For instance, recent modeling efforts have demonstrated that 12 out of 14 compounds can achieve zero loss, with only oxygen and CO2 displaying minor, manageable losses [1].
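
The steady-state balance described above can be written compactly as follows. This is a generic sketch: the symbols $m_i$, $s_{ij}$, $r_j$, and $\ell_i$ are notation introduced here for illustration and are not taken from the cited model [1].

```latex
\frac{dm_i}{dt} = \sum_{j} s_{ij}\, r_j ,
\qquad
\ell_i \;=\; \sum_{j} s_{ij}\, r_j \quad \text{(net imbalance of compound } i \text{ per iteration)}
```

Here $m_i$ is the inventory of compound $i$, $s_{ij}$ is its stoichiometric coefficient in the process of compartment $j$ (positive when produced, negative when consumed), and $r_j$ is the rate of that process. A compound with zero loss satisfies $\ell_i = 0$; in the result quoted above, this would hold for 12 of the 14 compounds, with small nonzero $\ell_i$ for O2 and CO2.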

MELiSSA as a Modeling Framework

The Micro-Ecological Life Support System Alternative (MELiSSA) project, developed by the European Space Agency with international partners, serves as a leading reference framework for BLSS stoichiometric modeling. The MELiSSA loop is structured as an artificial ecosystem consisting of five interconnected compartments inhabited by different organisms, each with specific metabolic functions [1]:

- C1: Thermophilic anaerobic compartment for initial waste breakdown

- C2: Photoheterotrophic compartment

- C3: Nitrifying compartment

- C4a & C4b: Photoautotrophic compartments (microalgae and higher plants)

- C5: Crew compartment (human inhabitants)

This compartmentalized approach enables specialized processing of waste streams and efficient regeneration of resources through controlled biochemical pathways, providing an ideal structure for stoichiometric analysis [1].

BLSS Compartment Protocols and Methodologies

Higher Plant Compartment (C4b) Protocol

The higher plant compartment serves as the primary producer of food and oxygen and returns water through transpiration, while consuming CO2 and nutrients. The experimental protocol varies significantly with mission duration and objectives.

- Application: Food production, oxygen generation, CO2 removal, water purification, psychological support [3]

- Species Selection Criteria:

  - Short-duration missions (<6 months): Fast-growing species with high nutritive value and minimal volume requirements (leafy greens, microgreens, dwarf cultivars) [3]

  - Long-duration missions (>6 months): Staple crops providing carbohydrates, proteins, and fats (wheat, potato, rice, soy), plus vegetables and fruits with longer growth cycles (~100 days) [3]

Experimental Workflow:

- Cultivation System Setup: Install controlled environment agriculture systems with precise monitoring of temperature, humidity, light intensity, photoperiod, and CO2 concentration [3].

- Nutrient Delivery: Implement hydroponic, aeroponic, or soil-like substrate systems using recycled nutrients from waste processing compartments [4].

- Growth Monitoring: Daily tracking of plant health, development stage, and environmental parameters.

- Harvest and Processing: Scheduled harvesting of edible biomass with waste biomass redirected to recycling compartments.

- Yield Analysis: Quantification of edible biomass production, nutritional content, and resource consumption rates.

Table 1: Plant Species Selection for Different Mission Scenarios

| Mission Type | Example Species | Growth Cycle | Primary Output | Resource Contribution |

|---|---|---|---|---|

| Short-duration | Lettuce, Kale, Microgreens | 20-30 days | Nutritional supplementation, antioxidants | Limited resource recycling |

| Long-duration | Wheat, Potato, Rice, Soy | 80-120 days | Caloric and protein provision | Significant O2 production & CO2 consumption |

| Supplemental | Tomato, Peppers, Beans, Berries | ~100 days | Dietary variety, phytonutrients | Moderate resource recycling |

Microbial Waste Processing Compartments (C1-C3) Protocol

The microbial compartments are responsible for the systematic breakdown of human waste and conversion into usable nutrients for plant compartments.

- Application: Waste mineralization, nutrient recovery, CO2 production [1]

- Organisms: Anaerobic bacteria (C1), photoheterotrophic bacteria (C2), nitrifying bacteria (C3) [1]

Experimental Workflow:

- Bioreactor Inoculation: Establish pure or controlled mixed cultures of specific bacterial strains in appropriate bioreactors [1].

- Waste Feed Introduction: Introduce standardized human waste simulants or actual metabolic wastes at controlled feed rates.

- Process Monitoring: Continuously monitor temperature, pH, dissolved oxygen, and metabolic intermediates (e.g., volatile fatty acids).

- Output Characterization: Analyze effluent for nutrient content (nitrates, phosphates), elemental composition, and potential contaminants.

- System Integration: Transfer processed waste streams to appropriate downstream compartments (C3 for nitrification, C4 for plant nutrition).

Gas Balance Management Protocol

Maintaining O2 and CO2 concentrations within appropriate ranges is a critical indicator of BLSS stability and requires active management [4].

- Application: Atmospheric homeostasis, crew health protection [4]

- Target Parameters: CO2 concentration 246-4131 ppm, O2 concentration at breathable levels [4]

Experimental Workflow:

- Continuous Monitoring: Implement real-time gas sensors for O2, CO2, and trace contaminants throughout the system.

- Metabolic Load Prediction: Calculate expected O2 consumption and CO2 production based on crew activity and plant respiration cycles.

- Intervention Implementation: Deploy strategic countermeasures during crew shift changes or system perturbations.

- Performance Validation: Verify system recovery to equilibrium gas concentrations following interventions.
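
The monitoring step in this workflow can be sketched as a simple range check. Only the CO2 window (246-4131 ppm) comes from the text [4]; the function names and the sensor log are hypothetical and exist only for illustration.

```python
# Target CO2 window quoted in the text [4]; everything else is illustrative.
CO2_MIN_PPM = 246.0
CO2_MAX_PPM = 4131.0


def classify_co2(ppm):
    """Return 'low', 'ok', or 'high' relative to the target window."""
    if ppm < CO2_MIN_PPM:
        return "low"
    if ppm > CO2_MAX_PPM:
        return "high"
    return "ok"


def flag_excursions(readings):
    """Return (index, ppm, status) for every reading outside the window."""
    flagged = []
    for index, ppm in enumerate(readings):
        status = classify_co2(ppm)
        if status != "ok":
            flagged.append((index, ppm, status))
    return flagged


# Hypothetical sensor log in ppm, e.g. around a crew shift change.
log = [400.0, 1200.0, 4300.0, 3900.0, 200.0]
excursions = flag_excursions(log)
print(excursions)
```

Each flagged reading would be a candidate trigger for the intervention step; the choice of countermeasure is outside the scope of this sketch.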

Quantitative Analysis of BLSS Performance

Table 2: Mass Closure Performance in Recent BLSS Experiments

| System/Experiment | Duration | Crew Size | O2 Closure (%) | Water Closure (%) | Food Closure (%) | Overall Closure (%) |

|---|---|---|---|---|---|---|

| Lunar Palace 365 [4] | 370 days | 4 (rotating) | 100% | 100% | High (partial resupply) | 98.2% |

| MELiSSA Model [1] | Steady-state simulation | 6 | ~100% (minor losses) | Not specified | 100% | High (12/14 compounds zero loss) |

| Early CELSS [2] | 91 days | 4 | Significant contribution | Not specified | Partial supplementation | Not specified |

Table 3: Stoichiometric Element Tracking in BLSS Modeling

| Element | Input Sources | Output Sinks | Recycling Pathways | Measurement Techniques |

|---|---|---|---|---|

| Carbon (C) | Crew respiration (CO2), waste | Plant biomass, microbial biomass | Photosynthesis, waste degradation | CO2 sensors, biomass composition analysis |

| Hydrogen (H) | Water, organic compounds | Water vapor, biomass | Transpiration, condensation | Mass balance, humidity sensors |

| Oxygen (O) | CO2, water, plant production | Crew consumption, oxidation processes | Photosynthesis, respiration | O2 sensors, gas chromatography |

| Nitrogen (N) | Crew waste (urea), food | Plant proteins, microbial biomass | Nitrification, assimilation | Elemental analysis, ion chromatography |

Research Reagent Solutions and Essential Materials

Table 4: Key Research Reagents and Materials for BLSS Experimentation

| Reagent/Material | Function/Application | Specification Requirements |

|---|---|---|

| Trimethylolpropane (TMP) [5] | Bio-lubricant synthesis for machinery maintenance | High purity, esterification grade |

| Hydroponic nutrient solutions [3] | Plant mineral nutrition | Balanced macro/micronutrients, pH buffered |

| Bacterial culture media [1] | Waste processor inoculation | Sterile, defined composition for target microbes |

| Gas standard mixtures [4] | Sensor calibration | Certified O2, CO2, trace contaminants in balance gas |

| Water quality test kits [6] | Monitoring recycled water safety | Tests for microbial contamination, organics, ions |

BLSS System Visualization and Workflows

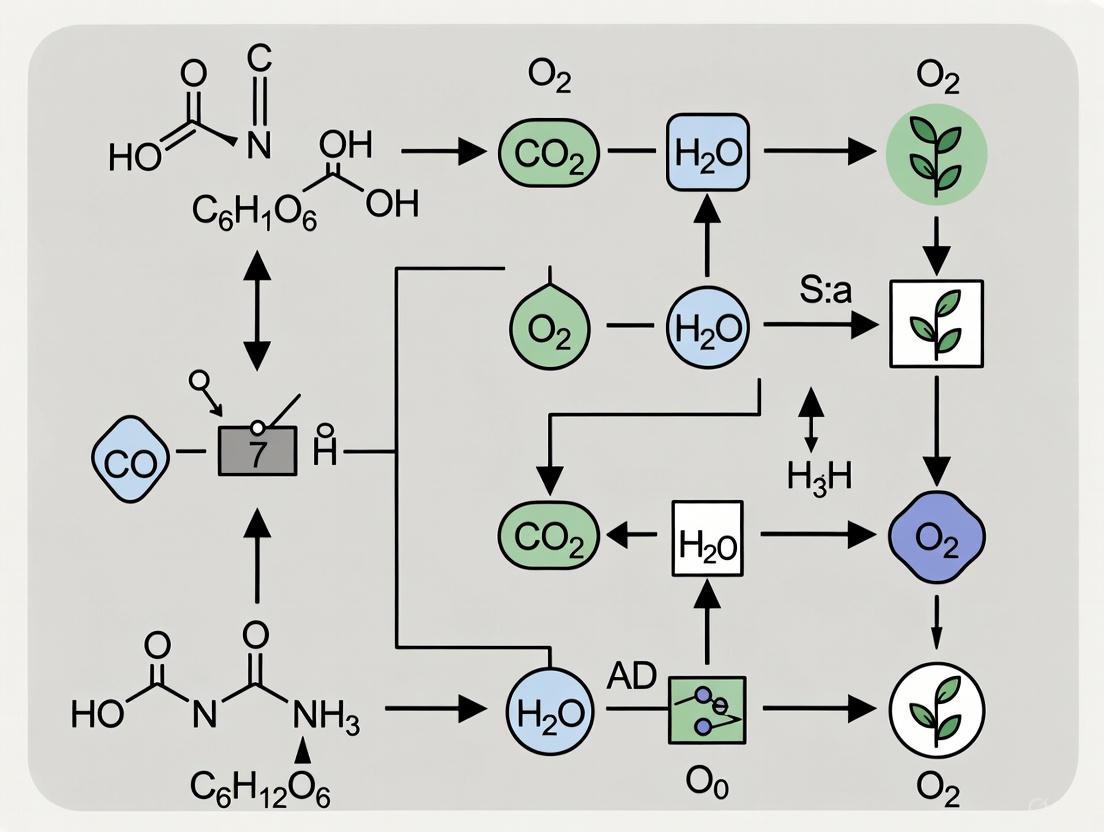

BLSS Compartment Mass Flow Diagram

BLSS Mass Flow Diagram

Stoichiometric Modeling Workflow

Stoichiometric Modeling Workflow

Bioregenerative Life Support Systems coupled with stoichiometric modeling are a leading candidate life support technology for long-duration space missions. Integrating biological components with engineering controls enables high levels of mass closure and reduces reliance on resupply from Earth. Ground-based demonstrations have reported overall closure above 98%, and mathematical models support the feasibility of fully autonomous systems [4] [1].

Future development should focus on enhancing system resilience to perturbations, particularly during crew shift changes, improving the oxidation stability of biological components, and validating system performance under actual space conditions [2] [4]. As space agencies worldwide prepare for sustained lunar presence and eventual Mars exploration, BLSS technology with robust stoichiometric modeling will be fundamental to mission success and crew survival in the challenging environment of deep space.

The Micro-Ecological Life Support System Alternative (MELiSSA) is an artificial ecosystem conceived as a tool for understanding the behavior of closed-loop biological systems and developing technology for future biological life support systems (BLSS) in long-term space missions [7]. The primary objective of MELiSSA is the recovery of oxygen and edible biomass from waste materials, including faeces and urea [7]. Due to the intrinsic instability of such complex biological systems and the stringent safety requirements of manned space missions, a sophisticated hierarchical control strategy has been developed to pilot the system and optimize its recycling performance [7]. The framework is structured as an assembly of unit processes, or compartments, designed to simplify the behavior of the artificial ecosystem and enable a deterministic engineering approach [8]. This organization into specific compartments with assigned functions allows for detailed stoichiometric modeling of mass flows, which is fundamental to the research and development of BLSS.

The Five-Compartment Structure

The MELiSSA loop is engineered as a sequential process where the output of one compartment serves as the input for the next, ultimately supporting human life. The specific functions of each compartment are detailed in Table 1.

Table 1: The Five Compartments of the MELiSSA Loop

| Compartment | Key Microorganisms / Components | Primary Function | Key Inputs | Key Outputs |

|---|---|---|---|---|

| CI (Liquefying Compartment) | Thermophilic anoxygenic bacteria [8] | Organic waste degradation & solubilisation [8] | Organic wastes (e.g., non-edible plant parts, paper) [8] | CO₂, volatile fatty acids, ammonia [8] |

| CII (Photoheterotrophic Compartment) | Photoheterotrophic bacteria [8] | Removal of organic carbon compounds [8] | Volatile fatty acids, ammonia from CI [8] | Inorganic carbon source [8] |

| CIII (Nitrifying Compartment) | Nitrosomonas europaea, Nitrobacter winogradskyi (in a biofilm) [8] | Conversion of ammonia into nitrates [8] | Ammonia from preceding compartments [8] | Nitrates (suitable nitrogen source for plants) [8] |

| CIVa (Photoautotrophic Compartment - Bacteria) | Arthrospira platensis (cyanobacteria) [8] | Food and oxygen production [8] | CO₂ from CI and crew, nutrients [8] | Edible biomass, oxygen, water [8] |

| CIVb (Photoautotrophic Compartment - Higher Plants) | Higher plants (e.g., Lactuca sativa; 32 crops considered) [8] [9] | Food, oxygen, and water production [8] | CO₂ from CI and crew, nitrates from CIII [8] | Edible biomass, oxygen, water [8] |

| CV (Crew Compartment) | Human crew | Consumption of resources and production of waste | O₂, food, water from CIVa and CIVb [8] | CO₂, organic waste, urea [7] |

The logical flow and mass exchange between these compartments and the crew can be visualized as a circular ecosystem.

Stoichiometric Modeling and Control Strategies

The driving element of MELiSSA is the efficient recovery of mass and energy. A hierarchical control strategy is employed to ensure system stability and performance [7]. This strategy operates at two primary levels:

- Local Control: Each MELiSSA compartment has its own local control system [7].

- Global Control: An upper-level control system, taking into account the states of all compartments and a desired global functioning point, determines the optimal setpoints for each local controller [7].

This approach is fundamentally based on first principles models of each compartment, which incorporate physico-chemical equations, stoichiometries, and kinetic rates [7]. These models are used both for developing a global system simulator and for implementing a non-linear predictive model-based control strategy [7]. For higher plant chambers (Compartment IVb), modeling is particularly complex. A multilevel mechanistic modeling approach has been developed to integrate phenomena across different scales, from the canopy level down to the metabolic network [9]. This approach can include Flux Balance Analysis (FBA) to predict the distribution of metabolic fluxes, providing a deeper understanding of the plant's internal stoichiometry and its response to environmental conditions [9]. The integration of these detailed models allows for the development of advanced Model Predictive Control (MPC) architectures that can manage the chamber environment to optimize plant growth and system-level mass flows [9].
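
For reference, FBA is usually posed as a linear program. The formulation below is the standard textbook form, not a transcription of the model in [9]; the symbols $S$, $v$, $c$, $v^{\min}$, and $v^{\max}$ are introduced here for illustration.

```latex
\begin{aligned}
\max_{v}\quad & c^{\top} v \\
\text{subject to}\quad & S\,v = 0, \\
& v^{\min} \le v \le v^{\max}
\end{aligned}
```

$S$ is the stoichiometric matrix (metabolites by reactions), $v$ is the vector of reaction fluxes, and $c$ selects the objective, typically the biomass-forming reaction. The constraint $S\,v = 0$ expresses the steady-state assumption for intracellular metabolites. In a multilevel setting, the bounds on exchange fluxes such as CO2 uptake or light absorption would plausibly be supplied by the canopy-scale and biochemical models.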

Experimental Protocols for System Validation

Protocol: Global Control Strategy Simulation and Validation

This protocol outlines the procedure for using first-principles models to simulate the global MELiSSA ecosystem and validate its control strategy [7].

1. Objective: To simulate the dynamic behavior of the interconnected MELiSSA loop and validate the hierarchical control strategy's ability to maintain system stability and performance at a defined global functioning point.

2. Research Reagent Solutions and Essential Materials:

Table 2: Key Materials for MELiSSA Research

| Item / Organism | Function in the Ecosystem | Research Context |

|---|---|---|

| Thermophilic anoxygenic bacteria | Degrades solid organic waste into soluble compounds in CI [8]. | Used in bioreactor studies for waste liquefaction efficiency. |

| Photoheterotrophic bacteria | Removes organic carbon compounds from CI effluent in CII [8]. | Key for preventing feedback inhibition in CI. |

| Nitrifying bacteria consortium (Nitrosomonas europaea, Nitrobacter winogradskyi) | Converts toxic ammonia into nitrate, the preferred nitrogen source for plants, in CIII [8]. | Essential for nitrogen cycle closure. |

| Arthrospira platensis (Cyanobacteria) | Produces oxygen, edible biomass, and water through photosynthesis in CIVa [8]. | Studied for its high growth rate and nutritional value. |

| Lactuca sativa (Lettuce) and other higher plants | Produces a varied diet, oxygen, and water, and contributes to well-being in CIVb [9]. | Model organism for higher plant chamber research. |

| First-Principles Compartment Models | Mathematical models containing physico-chemical equations, stoichiometries, and kinetic rates [7]. | Core component of the global simulator and predictive controller. |

3. Methodology:

   1. Model Integration: Develop or obtain the validated first-principles models for each of the five MELiSSA compartments (CI, CII, CIII, CIVa, CIVb) and the crew (CV). These models should encapsulate the core stoichiometries and kinetics of the biological processes [7].

   2. Simulator Configuration: Integrate the individual compartment models into a global simulator. The outputs of one compartment (e.g., CO₂ from CI and CV) must be correctly linked as inputs to the downstream compartments (e.g., CIVa and CIVb) [7] [8].

   3. Control System Implementation:

      - Implement the local controllers for each compartment, which regulate internal parameters based on local setpoints.

      - Implement the upper-level global controller, which uses the global simulator in a predictive manner. This controller monitors the state of all compartments and calculates new setpoints for the local controllers to drive the system towards an optimal, safe operating point [7].

   4. Simulation Execution: Run the coupled simulation and control system over a defined mission period. Introduce realistic perturbations, such as a variation in crew waste output or a change in light intensity for the photosynthetic compartments.

   5. Data Collection and Analysis: Monitor key performance indicators (KPIs) including:

      - Oxygen and carbon dioxide levels.

      - Production rates of edible biomass.

      - Stability of each compartment's key process variables.

      - Overall mass flow closure.

4. Anticipated Outcomes: The simulation will demonstrate whether the hierarchical control strategy can successfully reject disturbances and maintain the entire MELiSSA loop at the desired recycling performance, thereby validating the control approach before implementation in a physical pilot plant.
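
The perturbation test in this protocol can be illustrated with a deliberately small toy model. The sketch below is not the predictive controller of [7]: it uses a plain proportional feedback loop on a single O₂ inventory, and all names, units, and numbers are assumptions chosen for illustration.

```python
def simulate(steps=60, kp=0.5, setpoint=100.0, step_at=10):
    """Toy O2 inventory under proportional feedback (arbitrary units)."""
    inventory = setpoint
    history = []
    for k in range(steps):
        # Perturbation: crew O2 demand rises from 1.0 to 1.2 units per step.
        demand = 1.0 if k < step_at else 1.2
        # Local controller: nominal production plus a proportional correction.
        production = 1.0 + kp * (setpoint - inventory)
        inventory += production - demand
        history.append(inventory)
    return history


history = simulate()
print(round(history[-1], 3))
```

With these numbers the inventory settles at `setpoint - 0.2 / kp`, that is 99.6 rather than 100. The residual offset is a known property of proportional-only control, and it illustrates why an upper-level controller that recomputes local setpoints can be useful.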

Protocol: Multilevel Modeling and Control of a Higher Plant Chamber

This protocol details the methodology for developing and validating a multilevel model for higher plant growth (CIVb) and integrating it into a model-based predictive controller [9].

1. Objective: To create and validate a mechanistic multilevel model of Lactuca sativa (lettuce) growth and use it to design a predictive controller for optimizing environmental conditions in the plant chamber.

2. Methodology:

   1. Model Development (Multilevel Approach):

      - Level 1 (Canopy/Chamber Scale): Develop sub-models for irradiance distribution within the canopy, energy balance (to determine leaf temperature), and gas exchange (CO₂, O₂, H₂O) between the plant and the chamber atmosphere [9].

      - Level 2 (Biochemical Level): Implement enzyme-kinetic based models for fundamental processes like photosynthesis (e.g., the Farquhar model) and respiration [9].

      - Level 3 (Metabolic Network Level): Reconstruct a genome-scale metabolic network for Lactuca sativa. Use Flux Balance Analysis (FBA) to predict intracellular flux distributions and growth rates under the constraints provided by Level 1 and 2 models [9].

   2. Model Validation: Grow Lactuca sativa in a controlled environment chamber. Collect experimental data on gas exchange rates (CO₂ uptake, O₂ production, water transpiration), biomass accumulation, and environmental conditions (light, temperature, humidity). Compare these measurements against the predictions of the multilevel model to validate its accuracy [9].

   3. Controller Design: Embed the validated multilevel model into a Model Predictive Control (MPC) framework. The MPC algorithm will use the model to predict future plant growth and gas exchange based on current states. It will then compute optimal adjustments to the chamber's control variables (e.g., light intensity, CO₂ concentration, irrigation) to maximize a predefined objective, such as biomass production rate or oxygen regeneration [9].

   4. Experimental Control Validation: Implement the MPC system on the actual plant growth chamber and run a controlled experiment. Compare the system's performance (e.g., growth rate, resource use efficiency) against traditional control strategies.

3. Anticipated Outcomes: This protocol enables a deeper, mechanistic understanding of plant growth in controlled environments. The resulting MPC strategy is anticipated to outperform traditional controllers, leading to more precise and efficient management of the photoautotrophic compartment (CIVb), which is critical for the overall success of a BLSS [9].

Bioregenerative Life Support Systems (BLSS) are artificial ecosystems critical for long-duration space missions, as they recycle human waste into oxygen, water, and food, thereby creating a materially closed loop and reducing mission mass and volume [1]. Stoichiometric modeling forms the foundational framework for understanding and predicting the mass flows of key elements—Carbon (C), Hydrogen (H), Oxygen (O), and Nitrogen (N)—through these systems. The accurate balancing of these elements is paramount for achieving a high degree of closure and ensuring the continuous, sustainable provision of vital resources for the crew without external resupply [1]. This document outlines the core stoichiometric equations and associated protocols for modeling the mass flows of C, H, O, and N within a BLSS, based on the established MELiSSA (Micro-Ecological Life Support System Alternative) concept.

The MELiSSA loop, developed by the European Space Agency, is a benchmark BLSS architecture composed of five distinct, interconnected compartments, each with a specific metabolic function [1]. The system's operation relies on the sequential processing of waste by various organisms to ultimately sustain human life. A conceptual model of the mass flows between these compartments is provided in the diagram below.

Core Stoichiometric Equations by Compartment

The following section details the fundamental stoichiometric equations for the cycling of C, H, O, and N in each compartment. These equations are based on a simplified, balanced model designed for a crew of six and assume steady-state operation [1].

Table 1: Core Stoichiometric Equations for the MELiSSA Loop [1]

| Compartment | Primary Function | Core Stoichiometric Equation |

|---|---|---|

| C1 | Thermophilic Anaerobic Digestion | Organic Waste (CxHyOz) + H₂O → Volatile Fatty Acids (VFAs) + CO₂ + CH₄ + NH₄⁺ + ... |

| C2 | Photoheterotrophic Oxidation | VFAs (e.g., C₂H₄O₂) + O₂ + NH₄⁺ → Bacterial Biomass (C₅H₇O₂N) + CO₂ + H₂O |

| C3 | Nitrification | NH₄⁺ + 1.5 O₂ → NO₂⁻ + 2 H⁺ + H₂O; NO₂⁻ + 0.5 O₂ → NO₃⁻ |

| C4a | Microalgae (e.g., Limnospira) | CO₂ + NO₃⁻ + H₂O + Light → Algal Biomass (C₆H₁₀O₅N) + O₂ |

| C4b | Higher Plants (e.g., Wheat) | CO₂ + NO₃⁻ + H₂O + Light → Plant Biomass (C₆H₁₂O₆) + O₂ |

| C5 | Human Crew | Food (C₆H₁₂O₆, C₆H₁₀O₅N, etc.) + O₂ → CO₂ + H₂O + Urea (CH₄N₂O) + Feces |

The logical sequence of these chemical transformations, which close the elemental loops, is visualized below.
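
Separately from the diagram, the element and charge balance of a fully specified equation can be checked mechanically. The sketch below does this for the two nitrification steps in the C3 row of the table above; the helper names are hypothetical, and the other rows are schematic and would need explicit coefficients before such a check applies.

```python
from collections import Counter

# Element counts and net charge for each species in the C3 (nitrification) row.
SPECIES = {
    "NH4+": ({"N": 1, "H": 4}, +1),
    "O2": ({"O": 2}, 0),
    "NO2-": ({"N": 1, "O": 2}, -1),
    "H+": ({"H": 1}, +1),
    "H2O": ({"H": 2, "O": 1}, 0),
    "NO3-": ({"N": 1, "O": 3}, -1),
}


def side_totals(side):
    """Sum element counts and charge over a list of (coefficient, species)."""
    atoms = Counter()
    charge = 0.0
    for coeff, name in side:
        composition, species_charge = SPECIES[name]
        for element, count in composition.items():
            atoms[element] += coeff * count
        charge += coeff * species_charge
    return dict(atoms), charge


def is_balanced(reactants, products):
    """True when both sides carry the same atoms and the same net charge."""
    return side_totals(reactants) == side_totals(products)


ammonia_oxidation = ([(1, "NH4+"), (1.5, "O2")],
                     [(1, "NO2-"), (2, "H+"), (1, "H2O")])
nitrite_oxidation = ([(1, "NO2-"), (0.5, "O2")], [(1, "NO3-")])

print(is_balanced(*ammonia_oxidation), is_balanced(*nitrite_oxidation))
```

Dropping a term, for example the H₂O product of the first step, makes `is_balanced` return `False`, which is the kind of error this check is meant to catch before equations enter a loop-level model.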

Quantitative Data for a 6-Person Crew

For practical system design, the stoichiometric model must be quantified. The table below summarizes the key mass flows for a system supporting a crew of six, based on a balanced steady-state model [1].

Table 2: Key Mass Flow Rates for a 6-Person Crew in a Closed BLSS [1]

| Compound / Element | Mass Flow Rate (g/day) | Source Compartment | Sink Compartment | Notes |

|---|---|---|---|---|

| O₂ (Oxygen) | ~2000 | C4a, C4b | C5 | Primary product of photosynthesis; consumed by crew. |

| CO₂ (Carbon Dioxide) | ~2500 | C5, C1, C2 | C4a, C4b | Primary product of respiration and breakdown; consumed by plants/microalgae. |

| H₂O (Water) | Variable | All | All | Recycled and purified throughout the loop. |

| Edible Biomass | ~1500 (dry weight) | C4a, C4b | C5 | Provides 100% of crew's nutritional needs in a fully closed system. |

| Nitrate (NO₃⁻) | To be balanced | C3 | C4a, C4b | Key nitrogen source for photoautotrophs. |

| Ammonium (NH₄⁺) | To be balanced | C1, C5 | C2, C3 | Key intermediate in nitrogen cycle. |

Experimental Protocols for Stoichiometric Model Calibration

Protocol: Determination of Crew Metabolic Stoichiometry

Objective: To empirically determine the consumption and production rates of C, H, O, and N for a human in a controlled environment, providing the foundational input (C5) for the BLSS model.

Materials:

- Calorimetry chamber (whole-room or mask-based)

- Gas analyzers (for O₂ and CO₂)

- Automated urine and feces collection system

- Food and water with precisely known elemental composition

- Microbalance for accurate mass measurements

Methodology:

- Subject Preparation: Subjects reside in a sealed calorimetry chamber for a minimum of 72 hours.

- Controlled Diet: Provide subjects with a diet of known mass and stoichiometric composition (CxHyOzN). Record all food and water intake mass.

- Gas Exchange Monitoring: Continuously monitor the concentration and flow rate of inlet and outlet air to calculate real-time O₂ consumption and CO₂ production rates.

- Waste Collection: Collect all urine and feces excreted during the study period. Record mass.

- Sample Analysis:

- Elemental Analysis: Use CHNS elemental analysis on aliquots of homogenized food, feces, and urine to determine C, H, N content.

- Calorimetry: Use bomb calorimetry to cross-validate energy content, which correlates with carbon oxidation.

- Data Calculation:

- Calculate daily intake of each element (from food, water, O₂).

- Calculate daily output of each element (in CO₂, feces, urine, H₂O).

- Formulate an empirical chemical equation representing human metabolism, balancing input and output masses for C, H, O, and N.
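
The data calculation step amounts to summing element masses over all input and output streams and inspecting the residual. The sketch below shows that bookkeeping. As a stand-in for measured diet and waste data, it uses the complete oxidation of one mole of glucose (C₆H₁₂O₆ + 6 O₂ → 6 CO₂ + 6 H₂O); the function names and this example are assumptions, not part of the protocol.

```python
ATOMIC_MASS = {"C": 12.011, "H": 1.008, "O": 15.999, "N": 14.007}  # g/mol


def stream(moles, counts):
    """Build (mass in g/day, element mass fractions) for a pure compound."""
    molar_mass = sum(ATOMIC_MASS[e] * n for e, n in counts.items())
    fractions = {e: ATOMIC_MASS[e] * n / molar_mass for e, n in counts.items()}
    return moles * molar_mass, fractions


def element_totals(streams):
    """Sum element masses (g/day) over a list of (mass, fractions) streams."""
    totals = {}
    for mass, fractions in streams:
        for element, fraction in fractions.items():
            totals[element] = totals.get(element, 0.0) + mass * fraction
    return totals


def balance_residual(inputs, outputs):
    """Input minus output per element; values near zero mean the balance closes."""
    total_in = element_totals(inputs)
    total_out = element_totals(outputs)
    elements = set(total_in) | set(total_out)
    return {e: total_in.get(e, 0.0) - total_out.get(e, 0.0) for e in elements}


# Stand-in data: 1 mol glucose + 6 mol O2 -> 6 mol CO2 + 6 mol H2O.
inputs = [stream(1, {"C": 6, "H": 12, "O": 6}), stream(6, {"O": 2})]
outputs = [stream(6, {"C": 1, "O": 2}), stream(6, {"H": 2, "O": 1})]
residual = balance_residual(inputs, outputs)
print(residual)
```

With real measurements the residuals will not be zero; their size relative to the input totals gives a rough indication of unmeasured streams or analytical error.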

Protocol: Characterization of Photobioreactor (C4a) Stoichiometry

Objective: To establish the growth stoichiometry of Limnospira indica microalgae under defined light and nutrient conditions, quantifying its O₂ production and nutrient uptake.

Materials:

- Photobioreactor (PBR) with controlled temperature, pH, and lighting

- Sterile culture medium with known NO₃⁻ concentration

- CO₂ supply system with mass flow controller

- In-situ optical density (OD) probe or cell counter

- Gas analyzer for outlet O₂ and CO₂

- Filtration setup for biomass harvesting

Methodology:

- Inoculation: Aseptically inoculate the PBR with an axenic culture of L. indica.

- Continuous Cultivation: Operate the PBR in continuous or semi-continuous mode to achieve steady-state growth.

- Process Monitoring: Continuously log light intensity, temperature, pH, and OD.

- Gas Analysis: Precisely measure the inflow rate of CO₂ and the outflow rates of O₂ and residual CO₂.

- Medium & Biomass Sampling:

- Take periodic samples from the culture medium. Use ion chromatography to measure NO₃⁻ depletion over time.

- Harvest a known volume of culture, filter, and wash the biomass.

- Biomass Analysis:

- Dry Weight: Determine the biomass dry weight.

- Elemental Analysis: Perform CHNS analysis on the dried biomass to determine its empirical formula (e.g., ~C₆H₁₀O₅N).

- Stoichiometry Calculation:

- Using the data on CO₂ consumed, O₂ produced, NO₃⁻ consumed, and biomass produced, derive the balanced stoichiometric equation for microalgae growth under the tested conditions.
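
Once an empirical biomass formula is available, element balances fix the remaining coefficients. The sketch below solves them for growth on CO₂, NO₃⁻, and H₂O. It assumes nitrate is the only nitrogen source and adds H⁺ as a reactant to balance charge; neither assumption is stated in the protocol, and the function name is hypothetical.

```python
from fractions import Fraction


def photoautotrophic_growth(c, h, o, n):
    """Coefficients per mole of biomass C_c H_h O_o N_n.

    Reaction form (assumed): CO2 + NO3- + H+ + H2O -> biomass + O2.
    """
    co2 = Fraction(c)                      # carbon balance
    no3 = Fraction(n)                      # nitrogen balance
    h_plus = no3                           # charge balance: one H+ per NO3-
    h2o = (Fraction(h) - h_plus) / 2       # hydrogen balance
    o2 = (2 * co2 + 3 * no3 + h2o - Fraction(o)) / 2  # oxygen balance
    return {"CO2": co2, "NO3-": no3, "H+": h_plus, "H2O": h2o, "O2": o2}


# Approximate formula from the protocol: C6H10O5N.
coeffs = photoautotrophic_growth(6, 10, 5, 1)
print({name: float(value) for name, value in coeffs.items()})
```

For C₆H₁₀O₅N this gives 6 CO₂ + NO₃⁻ + H⁺ + 4.5 H₂O → C₆H₁₀O₅N + 7.25 O₂. Comparing the implied O₂/CO₂ molar ratio (about 1.21) with the measured gas exchange is one way to check whether the assumed formula and nitrogen source are consistent with the data.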

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagents and Materials for BLSS Stoichiometric Research

| Item Name | Function / Application | Critical Specifications |

|---|---|---|

| CHNS Elemental Analyzer | Precisely determines the mass fractions of Carbon, Hydrogen, Nitrogen, and Sulfur in solid and liquid samples (e.g., biomass, food, waste). | High accuracy (±0.3%), ability to handle small sample masses (1-3 mg). |

| Gas Chromatography (GC) System | Separates and quantifies gas mixtures; essential for monitoring CH₄, CO₂, and O₂ in the headspace of anaerobic (C1) and aerobic (C2, C4) reactors. | Equipped with TCD (Thermal Conductivity Detector) and FID (Flame Ionization Detector). |

| Ion Chromatography (IC) System | Measures concentrations of anions (NO₃⁻, NO₂⁻, PO₄³⁻) and cations (NH₄⁺, K⁺, Ca²⁺) in liquid culture media, tracking nutrient uptake and conversion. | High sensitivity in the parts-per-million (ppm) range. |

| Photobioreactor (PBR) | Provides a controlled environment (light, temperature, pH, gas mixing) for cultivating microalgae (C4a) and characterizing its growth stoichiometry. | Integrated sensors for pH, dO₂, temperature; adjustable light intensity. |

| Synthetic Waste Stream | A chemically defined simulant of human waste used for reproducible experimentation with C1 and C2 compartments, avoiding variability of real waste. | Precise composition of carbohydrates, proteins, lipids, and minerals based on human metabolic studies. |

| Limnospira indica Culture | A model cyanobacterium for the C4a compartment; efficiently produces O₂ and edible biomass from CO₂ and minerals. | Axenic (free of contaminating organisms), high growth rate strain. |

Defining Empirical Formulas for Key Biomolecules and Biomass

In the context of stoichiometric modeling of Bioregenerative Life Support Systems (BLSS), the precise definition of empirical formulas for biomolecules and bulk biomass is not merely an analytical exercise but a foundational requirement for predicting mass flows and achieving system closure. A BLSS aims to recycle astronaut metabolic waste into food, oxygen, and clean water through a series of interconnected biological compartments [1]. Accurate stoichiometric models, which track the flow of elements like carbon, hydrogen, oxygen, and nitrogen (CHON), are essential for designing these systems to be sustainable for long-duration space missions without resupply [1]. The empirical formula, which expresses the simplest whole-number ratio of atoms of each element in a compound, provides the fundamental building block for these complex mass balance calculations [10]. This document outlines the theoretical principles and detailed experimental protocols for determining these critical values, enabling researchers to construct reliable models for BLSS research and development.

Fundamental Concepts: Empirical vs. Molecular Formulas

Understanding the distinction between empirical and molecular formulas is crucial for accurate stoichiometric accounting.

- Empirical Formula: Represents the simplest whole-number ratio of the atoms of each element present in a compound. It is a standardized descriptor of composition. For example, the empirical formula for glucose is CH₂O, indicating a 1:2:1 ratio of carbon, hydrogen, and oxygen atoms [10] [11].

- Molecular Formula: Indicates the actual number of atoms of each element in a single molecule of the compound. The molecular formula is always a whole-number multiple of the empirical formula. For glucose, the molecular formula is C₆H₁₂O₆, which is six times its empirical formula [10].

For stoichiometric modeling of processes like biomass combustion or microbial digestion, the empirical formula is often sufficient as it defines the fundamental elemental ratio being transformed [10].

Analytical Protocols for Compositional Analysis

Determining the empirical formula of a homogeneous biomolecule follows a well-established calculative procedure, while defining a representative formula for heterogeneous biomass requires extensive analytical characterization.

Protocol 1: Determining the Empirical Formula from Percentage Composition

This method is suitable for purified compounds [10] [11].

Detailed Procedure:

- Obtain Percentage Composition: Acquire data on the mass percentage of each element (C, H, O, N, etc.) in the compound through elemental analysis. The sum should be 100%.

- Assume a 100g Sample: Simplify calculations by assuming a 100-gram sample. This converts percentage values directly into gram masses (e.g., 40% C becomes 40g C).

- Convert Mass to Moles: For each element, divide the mass by its atomic molar mass.

- Example: For 40g of Carbon: Moles of C = 40 g / 12.01 g/mol ≈ 3.33 mol [10].

- Determine the Simplest Ratio: Divide all mole values by the smallest number of moles calculated in the previous step.

- Achieve Whole Numbers: If the ratios are not whole numbers (e.g., 1.33), multiply all ratios by a small integer (e.g., 3) to obtain values very close to whole numbers (e.g., 1.33 × 3 ≈ 4). Round to the nearest whole number.

- Write the Empirical Formula: Use these whole numbers as subscripts to construct the formula.

Table 1: Example Calculation for a Hypothetical Biomolecule

| Element | Mass (%) | Mass in 100 g (g) | Molar Mass (g/mol) | Moles (mol) | Moles ÷ Smallest (3.33) | Rounded Ratio | Whole-Number Subscript |

|---|---|---|---|---|---|---|---|

| Carbon (C) | 40.0 | 40.0 | 12.01 | 3.33 | 1.00 | 1 | 1 |

| Hydrogen (H) | 6.7 | 6.7 | 1.01 | 6.63 | 1.99 | 2 | 2 |

| Oxygen (O) | 53.3 | 53.3 | 16.00 | 3.33 | 1.00 | 1 | 1 |

Resulting Empirical Formula: CH₂O [10].
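The procedure in Protocol 1 can be sketched in a few lines of Python. This is a minimal illustration, not a validated analytical tool: the function names `empirical_formula` and `format_formula`, the tolerance `tol`, and the cap `max_multiplier` are assumptions introduced here, and the atomic masses are the rounded values used in Table 1.

```python
# Rounded atomic masses, matching the values used in Table 1 (g/mol).
ATOMIC_MASS = {"C": 12.01, "H": 1.01, "O": 16.00, "N": 14.01}

def empirical_formula(mass_percent, max_multiplier=6, tol=0.05):
    """Return whole-number subscripts from elemental mass percentages."""
    # Assume a 100 g sample, so percentages are grams; convert grams to moles.
    moles = {el: pct / ATOMIC_MASS[el] for el, pct in mass_percent.items()}
    # Divide every mole value by the smallest one to get the simplest ratio.
    smallest = min(moles.values())
    ratios = {el: n / smallest for el, n in moles.items()}
    # Try small integer multipliers until every ratio is close to a whole number.
    for k in range(1, max_multiplier + 1):
        scaled = {el: r * k for el, r in ratios.items()}
        if all(abs(x - round(x)) <= tol for x in scaled.values()):
            return {el: round(x) for el, x in scaled.items()}
    raise ValueError("no small integer multiplier gives whole-number ratios")

def format_formula(subscripts):
    """Write the subscripts as a plain-text formula, omitting subscripts of 1."""
    return "".join(el if n == 1 else f"{el}{n}" for el, n in subscripts.items())

table_1 = {"C": 40.0, "H": 6.7, "O": 53.3}
print(format_formula(empirical_formula(table_1)))  # CH2O
```

The tolerance is a judgment call: real elemental analysis data carry measurement error, so a ratio such as 1.99 is treated as 2, while a ratio such as 1.33 triggers the multiplier search.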

Protocol 2: Deriving a Representative Empirical Formula for Biomass

Biomass is a complex, heterogeneous mixture of structural polymers (cellulose, hemicellulose, lignin) and other components. Its "empirical formula" is therefore a weighted average representing the overall elemental ratio of the sample, not the formula of any single compound. The U.S. National Renewable Energy Laboratory (NREL) has established standardized Laboratory Analytical Procedures (LAPs) for this purpose [12].

Detailed Procedure:

Sample Preparation:

Determination of Extractives:

- Perform a solvent extraction (e.g., with water and ethanol) to remove non-structural components like sugars, oils, and pigments [12].

- This step is critical for reporting the final composition on an "as-received" basis. The mass loss corresponds to the extractives fraction.

Determination of Structural Carbohydrates and Lignin (Core Protocol):

- This procedure quantifies the main polymeric components [12].

- Two-Stage Acid Hydrolysis:

- Primary Hydrolysis: React the extractives-free biomass with 72% sulfuric acid (H₂SO₄) at 30°C for 1 hour, with continuous stirring. This step solubilizes the polymeric carbohydrates.

- Secondary Hydrolysis: Dilute the acid to 4% and hydrolyze in an autoclave at elevated temperature (e.g., 121°C). This step completes the breakdown of oligomers into monomeric sugars.

- Analysis of Hydrolysate (Liquid Fraction):

- Use High-Performance Liquid Chromatography (HPLC) to quantify the monomeric sugars (glucose, xylose, arabinose, etc.) in the liquid. These values are converted back to their anhydrous polymer masses (e.g., glucan, xylan) using anhydro corrections [12].

- The hydrolysate is also analyzed for acid-soluble lignin (via UV-Vis spectroscopy) and carbohydrate degradation products like furfural.

- Analysis of Solid Residue (Solid Fraction):

Summative Mass Closure:

- The final composition is the sum of the masses of:

- Structural Carbohydrates (Glucan, Xylan, etc.)

- Acid-Insoluble Lignin

- Acid-Soluble Lignin

- Extractives

- Ash

- The total should approach 100% of the dry weight of the original sample, providing a mass closure that validates the analysis [12].

- From the detailed composition, a representative empirical formula (e.g., CH₁.₄O₀.₆N₀.₀₅) can be calculated by aggregating the elemental contributions from each quantified component.
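The aggregation step can be illustrated with a short sketch. It assumes each quantified component is assigned its own elemental formula and that ash contributes no C, H, O, or N. The function name `representative_formula`, the sample mass fractions, and the lignin and protein unit formulas are hypothetical placeholders chosen for illustration, not measured values.

```python
ATOMIC_MASS = {"C": 12.011, "H": 1.008, "O": 15.999, "N": 14.007}

def representative_formula(components):
    """Carbon-normalized formula from (dry-mass fraction, unit formula) pairs."""
    moles_per_gram = {el: 0.0 for el in ATOMIC_MASS}
    for mass_fraction, formula in components:
        # Mass of one formula unit of this component (g/mol).
        unit_mass = sum(ATOMIC_MASS[el] * n for el, n in formula.items())
        for el, n in formula.items():
            # Moles of each element contributed per gram of dry sample.
            moles_per_gram[el] += mass_fraction * n / unit_mass
    carbon = moles_per_gram["C"]
    return {el: round(n / carbon, 3) for el, n in moles_per_gram.items()}

# Hypothetical sample; the remaining mass is ash, which carries no CHON here.
sample = [
    (0.40, {"C": 6, "H": 10, "O": 5}),                 # glucan (anhydroglucose unit)
    (0.25, {"C": 5, "H": 8, "O": 4}),                  # xylan (anhydroxylose unit)
    (0.20, {"C": 10, "H": 12, "O": 3}),                # lignin, assumed average unit
    (0.10, {"C": 1, "H": 1.6, "O": 0.3, "N": 0.27}),   # protein, assumed average unit
]
print(representative_formula(sample))
```

Normalizing to one carbon atom gives a formula in the same CHₓOᵧN_z style used for biomass streams in BLSS models, which makes streams directly comparable.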

Table 2: Key Research Reagent Solutions for Biomass Analysis

| Reagent/Item | Function in Protocol |

|---|---|

| Sulfuric Acid (H₂SO₄) | Primary catalyst for the two-stage hydrolysis process that breaks down structural polymers into quantifiable monomers [12]. |

| HPLC System with Refractive Index (RI) / UV Detector | Quantifies concentrations of monomeric sugars (glucose, xylose), sugar alcohols, and degradation products in the hydrolysate [12]. |

| De-ashing Cartridges | Used during HPLC sample preparation to remove salts that can interfere with the RI detector and produce false signals [12]. |

| Vacuum Filtration Apparatus | Used with a defined crucible to separate the solid residue (acid-insoluble lignin) from the liquid hydrolysate after the second-stage hydrolysis [12]. |

| Reference Biomass Materials (e.g., from NIST) | Homogeneous standard materials used to validate analytical methods and ensure accuracy and precision across measurements [12]. |

Application in BLSS Stoichiometric Modeling

In a BLSS, the mass flows of elements must be balanced to sustain the crew. A recent stoichiometric model of a fully closed MELiSSA (Micro-Ecological Life Support System Alternative) loop, designed for a crew of six, uses fixed chemical equations to describe the cycling of C, H, O, and N through its five compartments [1]. These equations rely on the empirical compositions of all inputs and outputs, from human waste to plant biomass.

For instance, the consumption of plant food by the crew (Compartment 5) and the subsequent processing of solid waste in bioreactors (Compartments 1-3) are modeled using stoichiometric equations. The model's high degree of closure—where most compounds exhibit zero loss between cycles—is predicated on accurate empirical formulas for these biomass streams [1]. Using an inaccurate empirical formula for, say, Limnospira (spirulina) biomass grown in Compartment 4a would lead to erroneous predictions of oxygen production or carbon dioxide consumption, ultimately jeopardizing the system's balance. Therefore, the rigorous analytical protocols described herein are not just best practices but necessities for viable BLSS design.

Defining the empirical formulas of biomolecules and biomass through standardized analytical protocols provides the non-negotiable data foundation for stoichiometric modeling of BLSS. The calculative method for pure compounds and the detailed, multi-step wet chemical procedures for heterogeneous biomass, as established by bodies like NREL, ensure the accuracy and reliability of this data. Integrating these precisely defined elemental ratios into mass flow models, such as those for the MELiSSA loop, is the key to achieving the high degree of material closure required for autonomous, long-duration human space exploration. This approach transforms the abstract concept of "biomass" into a quantifiable and manageable variable within a closed ecological system.

The Challenge of Achieving Full Closure in Bioregenerative Systems

For long-duration space missions beyond Earth's orbit, Bioregenerative Life Support Systems (BLSS) become essential for human survival by regenerating resources through biological processes. The central challenge lies in achieving full material closure: creating a system where waste products are entirely recycled into food, water, and oxygen without significant resupply from Earth [13] [3]. On missions exceeding three months, the equivalent system mass (ESM) trade-offs favor BLSS over purely physicochemical systems due to reduced resupply requirements [14]. Stoichiometric modeling provides the foundational framework for understanding and balancing the mass flows of carbon, hydrogen, oxygen, and nitrogen through all system compartments, enabling the design of a truly closed ecosystem [1]. This application note details the specific challenges and protocols for achieving this closure, with a focus on quantitative mass balance and experimental validation.

Key Obstacles to System Closure

Mass Flow Imbalances and Cycling Acceleration

In a materially closed BLSS, the small reservoir sizes of critical elements, compared to Earth's biosphere, lead to accelerated cycling rates and heightened sensitivity to imbalances [13]. A transient disruption in one process can rapidly propagate through the entire system, causing destabilizing fluctuations in oxygen, carbon dioxide, or nutrient levels. Accumulation of trace gases or recalcitrant materials not accounted for in the initial stoichiometric model can further jeopardize closure by tying up elements in unusable forms [13]. These challenges necessitate robust, resilient biological communities and precise control systems not required in terrestrial or resupply-dependent environments.

Integration and Operational Hurdles

- Nitrogen Recovery Complexity: Nitrogen is a critical element for protein synthesis in food. While urine is the primary source of recoverable nitrogen (85%), its efficient conversion into a plant-accessible form (e.g., nitrate) via processes like nitrification is a complex, multi-step biological challenge [15]. Inadequate nitrogen recovery directly impacts food production and system closure.

- Food Production Limitations: Higher plants are vital as food producers and for air and water regeneration. However, for full closure, staple crops (e.g., wheat, potato) with long growth cycles must be incorporated, requiring significant growing area and precise resource allocation [3]. The choice between fast-growing "salad machine" species and calorie-dense staple crops directly impacts the system's mass balance.

- Pathogen and Pest Management: The introduction of plants and their associated microbiomes creates the risk of phytopathogen outbreaks, as demonstrated by a Fusarium oxysporum outbreak in the International Space Station's Veggie module [14]. Effective Integrated Pest Management (IPM) protocols are therefore not optional but essential for maintaining the stability and productivity of the plant compartment [14].

Quantitative Analysis of Mass Flows

Elemental Mass Flow in a Conceptual BLSS

Stoichiometric modeling tracks the flow of elements through interconnected compartments. The following table summarizes the steady-state mass flow for a crew of six, based on a MELiSSA-inspired model aiming for high closure [1].

Table 1: Daily Elemental Mass Flow for a Crew of Six in a Closed BLSS (Data adapted from [1])

| Element | Human Consumption (g/day) | Plant & Algal Uptake (g/day) | Waste Processing Output (g/day) | Closure Efficiency |

|---|---|---|---|---|

| Carbon (C) | 811.2 | 810.5 | 810.5 | ~99.9% |

| Hydrogen (H) | 111.6 | 111.4 | 111.4 | ~99.8% |

| Oxygen (O) | 1062.4 | 1060.1 | 1060.1 | ~99.8% |

| Nitrogen (N) | 19.8 | 19.7 | 19.7 | ~99.5% |
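The closure column can be reproduced from the other columns. The sketch below assumes closure efficiency is the ratio of waste processing output to human consumption; the source table does not state its definition, but this ratio matches the listed percentages. The helper names are introduced here for illustration.

```python
# element: (human consumption, waste processing output), both in g/day, from the table
flows = {
    "C": (811.2, 810.5),
    "H": (111.6, 111.4),
    "O": (1062.4, 1060.1),
    "N": (19.8, 19.7),
}

def closure_efficiency(consumed, recovered):
    """Percentage of the consumed element mass that is returned to the loop."""
    return 100.0 * recovered / consumed

def daily_loss(consumed, recovered):
    """Element mass (g/day) that leaves the loop and would need resupply."""
    return consumed - recovered

for element, (consumed, recovered) in flows.items():
    print(f"{element}: {closure_efficiency(consumed, recovered):.1f}% closed, "
          f"{daily_loss(consumed, recovered):.1f} g/day unrecovered")
```

Even small percentage losses matter over long missions: a loss of a fraction of a gram per day accumulates into a resupply or storage requirement over several years.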

System Performance Metrics

The performance of BLSS ground demonstrators can be evaluated against key closure metrics.

Table 2: Performance Metrics for BLSS Closure

| Performance Metric | Target for Full Closure | Current State-of-the-Art (Example) |

|---|---|---|

| Food Production Closure | 100% | Varies; often only a fraction is targeted in tests [1] |

| Oxygen Closure | 100% | High (>99%) achievable in models [1] |

| Water Recovery | >98% | ~96.5% on ISS (physicochemical); targets are higher for BLSS [15] |

| Nitrogen Recovery | >98% | A major focus of current R&D (e.g., MELiSSA C3) [15] |

| Mission Duration without Resupply | >3 years | 1-year demonstration in Lunar Palace 1 [2] |

Experimental Protocols for Closure Research

Protocol: Stoichiometric Model Development for BLSS

This protocol outlines the creation of a stoichiometric model to describe mass flows in a BLSS.

1. Research Question: How do the elements C, H, O, and N cycle through all compartments of a BLSS at steady state, and what are the system's closure points?

2. Experimental Workflow:

3. Procedure:

- Step 1: System Definition. Define the functional compartments of the BLSS loop (e.g., C1: Waste Bioreactor, C2: Photoheterotrophs, C3: Nitrifier, C4a: Algae, C4b: Higher Plants, C5: Crew) [1].

- Step 2: Stream Identification. List all material inputs and outputs for each compartment, including human waste, plant biomass, food, water, and gases [1] [16].

- Step 3: Formula Assignment. Assign empirical chemical formulas to all components. For example, human solid waste can be represented as CH₁.₈O₀.₅N₀.₁, and algal biomass as CH₁.₈O₀.₄N₀.₂ [1] [17].

- Step 4: Equation Balancing. Write balanced chemical equations for the key processes in each compartment (e.g., nitrification, photosynthesis, human consumption). Ensure mass balance for each element [1] [18].

- Step 5: Model Implementation. Implement the system of equations in a spreadsheet or computational solver. Use a crew of six as a baseline for scaling input and output fluxes [1].

- Step 6: Iteration and Validation. Run the model iteratively to balance all flows. Validate the model outputs against data from experimental ground-based facilities like the MELiSSA Pilot Plant or Lunar Palace [1] [2].

4. Interpretation: A successful model will show minimal losses for most compounds at steady state, with oxygen and CO₂ exhibiting only minor losses between iterations [1]. Non-closing fluxes indicate where system inefficiencies lie or where additional processing compartments are required.
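The element-balance check in Steps 3 and 4 can be automated. The sketch below uses the algal biomass formula CH₁.₈O₀.₄N₀.₂ given in Step 3 and assumes, purely for illustration, photosynthetic growth on CO₂ with nitrate (written as HNO₃) as the nitrogen source. The coefficients were balanced by hand for this example and are not taken from the cited model; `element_residuals` and `is_balanced` are hypothetical helper names.

```python
# Atoms per formula unit for each species in the example reaction.
FORMULAS = {
    "CO2": {"C": 1, "O": 2},
    "HNO3": {"H": 1, "N": 1, "O": 3},
    "H2O": {"H": 2, "O": 1},
    "O2": {"O": 2},
    "algal_biomass": {"C": 1, "H": 1.8, "O": 0.4, "N": 0.2},
}

def element_residuals(reaction):
    """Net atoms of each element; coefficients are negative for reactants."""
    residuals = {}
    for species, coeff in reaction.items():
        for element, atoms in FORMULAS[species].items():
            residuals[element] = residuals.get(element, 0.0) + coeff * atoms
    return residuals

def is_balanced(reaction, tol=1e-9):
    """True when every element's residual is zero within tolerance."""
    return all(abs(r) <= tol for r in element_residuals(reaction).values())

# CO2 + 0.2 HNO3 + 0.8 H2O -> CH1.8O0.4N0.2 + 1.5 O2 (assumed illustrative equation)
photosynthesis = {"CO2": -1.0, "HNO3": -0.2, "H2O": -0.8,
                  "algal_biomass": 1.0, "O2": 1.5}
print(is_balanced(photosynthesis))  # True
```

Running such a check on every compartment equation before implementing the model catches transcription errors that would otherwise show up later as unexplained non-closing fluxes.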

Protocol: Operation of a Nitrifying Bioreactor for Nitrogen Recovery

This protocol details the operation of the nitrifying compartment (e.g., MELiSSA C3), which is critical for converting ammonia from waste streams into nitrate fertilizer for plants [15].

1. Research Question: Can a nitrifying bioreactor stably convert the ammonium load from a crew's urine into nitrate with a conversion efficiency of >98%?

2. Experimental Workflow:

3. Procedure:

- Step 1: Feedstock Preparation. Collect and chemically stabilize pretreated urine to prevent scaling and urea hydrolysis. Acidification (e.g., with H₃PO₄) converts volatile ammonia to non-volatile ammonium and reduces scaling [15].

- Step 2: Inoculation and Operation. Inoculate the bioreactor with a defined nitrifying consortium (e.g., Nitrosomonas europaea, Nitrobacter winogradskyi). Operate the reactor in a continuous or fed-batch mode.

- Step 3: Process Monitoring. Continuously monitor key parameters: Ammonium (NH₄⁺) and Nitrite (NO₂⁻) concentrations (target: near zero), Nitrate (NO₃⁻) concentration (target: steady increase proportional to NH₄⁺ decrease), and pH (controlled around 7.5-8.0 for optimal nitrifier activity) [15].

- Step 4: Product Formulation. The effluent, rich in nitrate, is combined with other recovered nutrients (P, K) and trace elements to form a complete hydroponic nutrient solution.

- Step 5: Plant Growth Trial. Deliver the nutrient solution to a plant growth compartment (e.g., growing lettuce or wheat). Monitor plant biomass production and nitrogen uptake efficiency [3].

4. Interpretation: Successful nitrogen closure is demonstrated by a high conversion efficiency of urine nitrogen into plant biomass nitrogen, with minimal accumulation of intermediate nitrite or gaseous nitrogen losses.
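The monitoring data from Step 3 can be turned into the efficiency figure asked for in the research question. The sketch below assumes the overall nitrification stoichiometry NH₄⁺ + 2 O₂ → NO₃⁻ + 2 H⁺ + H₂O (1.5 mol O₂ per mol N for the nitrite step, 0.5 mol O₂ for the nitrate step) and ignores nitrogen and oxygen taken up for nitrifier growth. The function name and the example loads are hypothetical.

```python
N_MOLAR_MASS = 14.007    # g/mol
O2_MOLAR_MASS = 31.998   # g/mol

def nitrification_summary(nh4_n_in, nh4_n_out, no2_n_out, no3_n_out):
    """Summarize a nitrogen balance; all arguments are in g N/day."""
    conversion_pct = 100.0 * no3_n_out / nh4_n_in
    # Nitrogen not found in any measured dissolved form (e.g., gaseous losses, biomass).
    unaccounted = nh4_n_in - (nh4_n_out + no2_n_out + no3_n_out)
    # Every N atom leaving as nitrite or nitrate passed the first oxidation step.
    o2_mol = (1.5 * (no2_n_out + no3_n_out) + 0.5 * no3_n_out) / N_MOLAR_MASS
    return {
        "conversion_pct": conversion_pct,
        "unaccounted_g_n": unaccounted,
        "o2_demand_g": o2_mol * O2_MOLAR_MASS,
        "meets_98_pct_target": conversion_pct > 98.0,
    }

# Hypothetical daily loads, for illustration only.
print(nitrification_summary(nh4_n_in=100.0, nh4_n_out=1.0,
                            no2_n_out=0.5, no3_n_out=98.5))
```

The oxygen demand estimate links this compartment back to the loop-level oxygen balance, since nitrification competes with the crew for the oxygen produced by the photosynthetic compartments.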

The Scientist's Toolkit: Key Reagents and Materials

Table 3: Essential Research Reagents for BLSS Stoichiometry and Closure Experiments

| Reagent/Material | Function in BLSS Research |

|---|---|

| Defined Microbial Consortia (e.g., Nitrosomonas, Nitrobacter) | To conduct specific waste recycling processes like nitrification with predictable stoichiometry [15]. |

| Hydroponic Nutrient Solutions | To provide precise mineral nutrition for plant growth studies and validate nutrient uptake models [3]. |

| Chemical Tracers (e.g., ¹⁵N-labeled Urea) | To quantitatively track the fate of nitrogen atoms through different compartments (e.g., urine to plant biomass) [15]. |

| Standardized Synthetic Waste Feeds | To simulate human waste (feces, urine) with a consistent chemical composition for reproducible bioreactor experiments [1] [16]. |

| Gas Analysis Standards (e.g., CO₂, O₂, CH₄) | To calibrate sensors for real-time monitoring of atmospheric gases, crucial for detecting leaks or imbalances [13]. |

Achieving full closure in Bioregenerative Life Support Systems remains a formidable challenge that hinges on precise stoichiometric balancing of mass flows and the robust integration of biological components. While current research, exemplified by projects like MELiSSA and the Lunar Palace, has demonstrated the feasibility of long-duration operation, gaps in nitrogen recovery, trace gas management, and system stability under space conditions persist. Future work must focus on closing these specific loops through advanced modeling, ground-based testing in integrated facilities, and the development of dynamic control strategies that can respond to the inherent variability of biological systems. Success will enable the sustainable human exploration of deep space.

Computational Frameworks: From FBA to Genome-Scale Models

Flux Balance Analysis (FBA) is a mathematical approach for analyzing the flow of metabolites through metabolic networks. It calculates the steady-state fluxes in a biochemical reaction network to predict outcomes such as growth rate or metabolite production. This methodology is particularly valuable for simulating complex systems such as Bioregenerative Life Support Systems (BLSS), where understanding mass flows of elements like carbon, hydrogen, oxygen, and nitrogen is critical for sustainability [1].

FBA operates on constraint-based modeling, using the stoichiometric coefficients of every reaction in a genome-scale metabolic model (GEM) to form a numerical matrix [19]. A GEM contains all known metabolic reactions for an organism and the genes encoding each enzyme [20]. The core principle involves defining a solution space bounded by constraints and applying an optimization function to identify a flux distribution that maximizes a biological objective, such as biomass production [21] [19].

For BLSS research, which aims to create materially closed loops for long-duration space missions, FBA provides a powerful in-silico tool. It enables scientists to model and optimize the metabolic interactions between crew and organisms (e.g., plants, microalgae, bacteria) that recycle waste into oxygen, water, and food [1]. By predicting how these systems consume and regenerate resources, FBA can inform the design of more robust and efficient BLSS, helping to achieve the high degree of closure necessary for mission autonomy [1].

Core Principles and Key Assumptions

The application of FBA is founded on several key principles and assumptions:

- Steady-State Assumption: The model assumes the network is in a steady state, meaning that for each internal metabolite, the rate of production equals the rate of consumption, resulting in no net accumulation [19].

- Stoichiometric Constraints: The stoichiometric matrix (S), derived from the metabolic reconstruction, defines the mass balance constraints under which the system operates [19].

- Physiological Constraints: Additional constraints, based on experimental measurements, are applied to flux variables, such as substrate uptake rates or the maximum capacity of certain reactions [20].

- Objective Function: An objective function is chosen to represent a biological goal, which the model optimizes to find a particular flux distribution within the solution space. A common objective is the Biomass Objective Function (BOF), which simulates the production of all biomass precursors in the correct proportions to support cellular growth [20].

Protocol: A Step-by-Step Guide to Performing FBA

The following protocol outlines the standard workflow for conducting an FBA.

Step 1: Define the Metabolic Network and Stoichiometric Matrix

- Action: Compile all metabolic reactions relevant to the organism and system under study into a stoichiometric matrix. For genome-scale models, this can involve thousands of reactions and metabolites [20].

- BLSS Context: In a multi-compartment BLSS like MELiSSA, this may require integrating models for the different organisms inhabiting each compartment to describe the cycling of elements [1].

Step 2: Set Constraints on the Reaction Network

- Action: Apply constraints to the flux variables (v). These typically include:

- Reversibility Constraints: Define whether each reaction can proceed in both forward and reverse directions.

- Capacity Constraints: Set upper and lower bounds (vmax, vmin) for reaction fluxes based on physiological data [19].

- Example: In a study simulating E. coli growth, the uptake rate of glucose might be constrained to a measured value [19].

Step 3: Formulate the Biomass Objective Function (BOF)

- Action: Define the BOF as a pseudo-reaction that consumes all necessary biomass precursors (e.g., amino acids, nucleotides, lipids) in their known cellular proportions [20].

- Detail: The BOF can be formulated at different levels:

Step 4: Solve the Linear Programming Problem

- Action: Perform the optimization. The standard formulation is:

- Maximize Z = c^T v

- Subject to: S • v = 0

- and vmin ≤ v ≤ vmax

- where Z is the objective function (e.g., biomass growth rate), c is a vector of weights indicating how much each flux contributes to the objective, S is the stoichiometric matrix, and v is the flux vector [19] [20].
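The linear program above can be solved with any LP solver. The following sketch uses `scipy.optimize.linprog` on a hypothetical four-reaction toy network (it is not iML1515 or any BLSS organism); the network, bounds, and variable names are assumptions made for illustration. Because `linprog` minimizes, the objective vector is negated to maximize Z.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical toy network with two internal metabolites (rows) and four reactions (columns):
# v0: uptake -> A, v1: A -> B, v2: A -> secreted by-product, v3: B -> biomass
S = np.array([
    [1, -1, -1,  0],   # metabolite A
    [0,  1,  0, -1],   # metabolite B
], dtype=float)

c = np.array([0.0, 0.0, 0.0, 1.0])            # objective weights: maximize biomass flux v3
bounds = [(0, 10), (0, 1000), (0, 1000), (0, 1000)]  # (vmin, vmax) per reaction

# Steady state is enforced through the equality constraint S v = 0.
result = linprog(-c, A_eq=S, b_eq=np.zeros(S.shape[0]), bounds=bounds, method="highs")
fluxes = result.x

print("solver status:", result.message)
print("flux distribution v:", fluxes)
print("objective Z = c^T v:", c @ fluxes)
```

In this toy case the uptake bound is the only active capacity constraint, so the maximal biomass flux equals the uptake limit. Genome-scale problems have the same structure with thousands of columns, which is why dedicated packages such as COBRApy are normally used instead of hand-built matrices.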

Step 5: Analyze and Validate the Results

- Action: Interpret the predicted flux distribution. Compare the in-silico predictions, such as growth rates or substrate uptake/secretion rates, with experimental data to validate the model [20].

Application Notes: FBA for BLSS Stoichiometric Modeling

Integrating FBA into a Multi-Compartment BLSS Model

In the MELiSSA BLSS concept, mass flows connect several compartments. FBA can model each biological compartment (e.g., nitrifying bacteria, photoheterotrophic bacteria, higher plants) as an individual metabolic network [1]. The challenge is to appropriately define the exchange fluxes between compartments, ensuring that the waste outputs from the crew (C5) become the inputs for waste-processing compartments (C1, C2, C3), and that the nutrients produced by these compartments support the growth of autotrophic organisms (C4) that, in turn, sustain the crew [1].

Refining Predictions with Enzyme Constraints

A known limitation of traditional FBA is that it can predict unrealistically high fluxes. This can be addressed by incorporating enzyme constraints using workflows like ECMpy [19]. This method caps the flux through a reaction based on enzyme availability and catalytic efficiency (Kcat), adding a layer of biophysical reality without altering the stoichiometric matrix. For a BLSS model, this increases the accuracy of predicting how genetic modifications in key organisms might affect overall system flux.
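The idea of capping a flux by enzyme capacity can be illustrated with a deliberately simplified per-reaction bound, v ≤ kcat · [E]. This is a sketch of the concept only and is not ECMpy's actual formulation or API; the function name, the enzyme abundance, and the default stoichiometric bound are hypothetical, while the kcat values for PGCD are those listed in the modification table of this section.

```python
def enzyme_capped_bound(kcat_per_s, enzyme_mmol_per_gdw, stoichiometric_bound=1000.0):
    """Upper flux bound in mmol/gDW/h: the smaller of the original bound and kcat * [E]."""
    # Convert kcat from 1/s to 1/h so the product has units of mmol/gDW/h.
    capacity = kcat_per_s * 3600.0 * enzyme_mmol_per_gdw
    return min(stoichiometric_bound, capacity)

enzyme_level = 1e-5  # mmol enzyme per gDW, hypothetical abundance

wild_type = enzyme_capped_bound(20.0, enzyme_level)     # original PGCD kcat
mutant = enzyme_capped_bound(2000.0, enzyme_level)      # modified PGCD kcat
print(f"wild-type cap: {wild_type:.2f} mmol/gDW/h, mutant cap: {mutant:.2f} mmol/gDW/h")
```

The resulting value would replace the generic upper bound of the corresponding reaction before the FBA problem is solved, which leaves the stoichiometric matrix untouched.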

Lexicographic Optimization for Realistic Scenarios

Optimizing for a single objective, like L-cysteine export in an engineered organism, can result in solutions with zero biomass growth, which is biologically unrealistic [19]. Lexicographic optimization is a solution: the model is first optimized for biomass. It is then constrained to require a percentage of that maximum growth (e.g., 30-90%) while a second objective (e.g., product synthesis) is optimized [19]. This ensures the solution reflects a growing, metabolically active system, which is essential for a sustainable BLSS.
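The two-stage procedure can be sketched with `scipy.optimize.linprog` on a hypothetical one-metabolite network in which biomass and product compete for the same precursor. The network, the bounds, and the helper names `maximize` and `lexicographic` are assumptions for illustration, not part of the cited workflow.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical network: v0 uptake -> A, v1 A -> biomass, v2 A -> exported product.
S = np.array([[1.0, -1.0, -1.0]])
BASE_BOUNDS = [(0, 10), (0, 1000), (0, 1000)]
BIOMASS, PRODUCT = 1, 2

def maximize(flux_index, bounds):
    """Maximize a single flux subject to S v = 0 and the given bounds."""
    c = np.zeros(S.shape[1])
    c[flux_index] = -1.0  # linprog minimizes, so negate to maximize
    res = linprog(c, A_eq=S, b_eq=np.zeros(S.shape[0]), bounds=bounds, method="highs")
    if not res.success:
        raise RuntimeError(res.message)
    return res.x

def lexicographic(growth_fraction):
    """Stage 1: maximize biomass. Stage 2: maximize product with growth held above a floor."""
    max_growth = maximize(BIOMASS, BASE_BOUNDS)[BIOMASS]
    bounds = list(BASE_BOUNDS)
    bounds[BIOMASS] = (growth_fraction * max_growth, BASE_BOUNDS[BIOMASS][1])
    return maximize(PRODUCT, bounds)

single = maximize(PRODUCT, BASE_BOUNDS)
two_stage = lexicographic(0.3)
print("single objective: biomass", single[BIOMASS], "product", single[PRODUCT])
print("lexicographic:    biomass", two_stage[BIOMASS], "product", two_stage[PRODUCT])
```

The single-objective run drives biomass to zero, while the two-stage run gives up part of the product flux to keep growth at the chosen fraction of its maximum.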

Data Presentation

Table 1: Example Modifications to a Base Metabolic Model for FBA

This table illustrates how a base model like E. coli's iML1515 can be modified to reflect genetic engineering and simulate altered metabolic behavior, a key process for optimizing BLSS organisms [19].

| Parameter | Gene/Enzyme/Reaction | Original Value | Modified Value | Justification |

|---|---|---|---|---|

| Kcat_forward | PGCD | 20 1/s | 2000 1/s | 100-fold increase in mutant enzyme activity [19] |

| Kcat_forward | SERAT | 38 1/s | 101.46 1/s | Removal of feedback inhibition [19] |

| Gene Abundance | SerA/b2913 | 626 ppm | 5,643,000 ppm | Reflects modified promoter and copy number [19] |

Table 2: Uptake Reaction Bounds for a Defined Growth Medium (SM1 + LB)

FBA simulations require defining the environmental conditions through constraints on uptake reactions. This table provides an example for a specific medium [19].

| Medium Component | Associated Uptake Reaction | Upper Bound (mmol/gDW/h) |

|---|---|---|

| Glucose | EX_glc__D_e_reverse | 55.51 |

| Ammonium Ion | EX_nh4_e_reverse | 554.32 |

| Phosphate | EX_pi_e_reverse | 157.94 |

| Sulfate | EX_so4_e_reverse | 5.75 |

| Thiosulfate | EX_tsul_e_reverse | 44.60 |

The Scientist's Toolkit: Essential Reagents and Software

Research Reagent Solutions

- Genome-Scale Metabolic Model (GEM): A structured database of all known metabolic reactions for an organism (e.g., iML1515 for E. coli K-12). It serves as the fundamental scaffold for constructing an FBA model [19].

- Stoichiometric Matrix: A mathematical representation of the metabolic network derived from the GEM. It defines the mass balance constraints for the FBA problem [19].

- Biomass Objective Function (BOF): A pseudo-reaction that defines the drain of metabolic precursors required for cell growth. It is the most common objective function used to simulate growth [20].

- Enzyme Constraint Data: Data on enzyme kinetic parameters (Kcat values) and abundances, used to add capacity constraints on reactions and improve the predictive accuracy of FBA [19].

Computational Tools

- COBRApy: A popular Python package for performing constraint-based reconstruction and analysis, including FBA [19].

- ECMpy: A workflow for incorporating enzyme constraints into existing GEMs without altering the stoichiometric matrix [19].

Constructing Genome-Scale Metabolic Models

Genome-Scale Metabolic Models (abbreviated here as GSSMs; the same class of model is called a GEM in the FBA discussion above) are computational reconstructions of the metabolic network of an organism, based on its genomic annotation. They represent a comprehensive, stoichiometric accounting of the reactions and metabolites that constitute metabolism, enabling in silico simulation of metabolic fluxes. In the context of Bioregenerative Life Support Systems (BLSS), GSSMs are indispensable tools for modeling mass flows of carbon, hydrogen, oxygen, and other elements, allowing researchers to predict how these closed-loop systems will behave under various conditions. The construction of a high-quality GSSM involves a multi-step process of network reconstruction, curation, and mathematical formulation, culminating in a model that can be used for simulation and analysis via techniques such as Flux Balance Analysis (FBA) [22] [23].

GSSM Reconstruction Workflow and Protocol

The construction of a GSSM is a systematic process that transforms genomic information into a mathematical model capable of predicting phenotypic behavior. The following workflow outlines the key stages, from initial data collection to final model validation.

Figure 1: A high-level workflow for the systematic reconstruction of a Genome-Scale Metabolic Model.

Protocol: Draft Model Reconstruction and Curation

This protocol details the steps for generating a draft model from a genome annotation and refining it into a functional GSSM.

- Inputs:

- Annotated genome sequence (e.g., from RAST or Prokka).

- Biochemical reaction database (e.g., ModelSEED, KEGG).

- Procedure:

- Generate Draft Model: Use an automated reconstruction tool (e.g., the Build Metabolic Model app in KBase) to map annotated genes to reactions from a biochemical database. This process creates an initial draft network [23].

- Define the Biomass Objective Function (BOF): Assemble a reaction that represents the composition of a new cell, including all necessary metabolites in their correct proportions (e.g., amino acids, nucleotides, lipids, cofactors). The BOF is typically set as the objective function for simulating growth [22].

- Perform Gapfilling: The draft model will likely be unable to produce all biomass precursors due to missing annotations. The gapfilling process algorithmically adds a minimal set of reactions from a database to enable growth on a specified medium.

- Algorithm: The process uses Linear Programming (LP) to minimize the sum of flux through the added (gapfilled) reactions. Transporters and non-KEGG reactions are assigned higher penalties to favor biologically plausible solutions [23].

- Media Selection: It is recommended to perform initial gapfilling on a minimal medium. This ensures the algorithm adds the maximal set of reactions required for the biosynthesis of essential substrates. Gapfilling on "complete" media (where all transportable compounds are available) can be performed subsequently, but will result in a model that is more dependent on nutrient uptake [23].

- Validate the Model: Simulate growth under different environmental conditions (e.g., varying carbon sources) using Flux Balance Analysis (FBA). Compare the predicted growth outcomes, substrate uptake rates, and by-product secretion rates against experimental data from the literature to assess model accuracy [22].
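The gapfilling step in the procedure above can be illustrated as a small linear program. This sketch is not the KBase implementation: it uses a hypothetical draft network that cannot make the biomass precursor B, two hypothetical candidate reactions with assumed penalties (a higher one for the transporter), and `scipy.optimize.linprog` to minimize the penalized flux through candidates while forcing a minimum biomass flux.

```python
import numpy as np
from scipy.optimize import linprog

# Columns: two draft reactions followed by two candidate (database) reactions.
reactions = ["uptake_A", "biomass_from_B", "cand_A_to_B", "cand_B_transport"]
S = np.array([
    [1,  0, -1, 0],   # metabolite A
    [0, -1,  1, 1],   # metabolite B (biomass precursor the draft cannot produce)
], dtype=float)

# Penalties: zero for draft reactions, higher for the transporter than for the conversion.
penalty = np.array([0.0, 0.0, 1.0, 5.0])

# Biomass flux is forced to be at least 1 so that the model must grow.
bounds = [(0, 10), (1, 1000), (0, 1000), (0, 1000)]

result = linprog(penalty, A_eq=S, b_eq=np.zeros(S.shape[0]), bounds=bounds, method="highs")

# Candidate reactions that carry flux in the optimum are the ones to add to the model.
added = [name for name, flux, cost in zip(reactions, result.x, penalty)
         if cost > 0 and flux > 1e-9]
print("reactions added by gapfilling:", added)
```

Because the conversion reaction is cheaper than the transporter, the solver restores growth through biosynthesis instead of through uptake, which mirrors the rationale for penalizing transporters and for gapfilling on minimal medium first.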

Key Reagents and Computational Tools for GSSM Construction

The following table details essential data resources and tools required for the construction and analysis of GSSMs.

Table 1: Key Research Reagent Solutions for GSSM Construction

| Item Name | Function/Application | Critical Specifications |

|---|---|---|

| AGORA2 Resource | A database of curated, strain-level GEMs for 7,302 human gut microbes. Used as a source of pre-reconstructed models or as a reference for reaction and metabolite formatting [22]. | Includes models with mapped taxonomic and phenotypic data. |

| ModelSEED Biochemistry | A standardized biochemistry database that provides controlled vocabularies for roles, reactions, and compounds. Essential for ensuring consistency during draft model generation [23]. | Integrated into the KBase reconstruction pipeline. |

| KBase Gapfill App | A computational tool that identifies and adds a minimal set of reactions to a draft model to enable it to produce biomass on a specified growth medium [23]. | Uses Linear Programming (LP) with a cost function for reactions; allows user-defined media conditions. |

| Custom Media Formulation | A defined set of extracellular metabolites available to the model during simulation and gapfilling. Critical for contextualizing the model to a specific environment, such as a BLSS [23]. | Can be minimal or complex; must be defined in a compatible format (e.g., in KBase). |

| RAST Annotation Pipeline | A service for annotating genome sequences. Its functional roles are recommended for metabolic modeling in KBase due to their use as a controlled vocabulary for deriving reactions [23]. | Provides consistent gene-to-function assignments. |

Advanced Applications and Customization in a BLSS Context

Once a functional GSSM is constructed, it can be used for advanced in silico analyses to probe system capabilities and design strategies for optimizing BLSS mass flows.

Protocol: Simulating Interspecies Interactions in a BLSS

A primary application of GSSMs in a BLSS is modeling the metabolic exchange between organisms (e.g., plants, microbes, and humans) to stabilize the closed-loop system.

- Objective: To predict how introducing a microbial Live Biotherapeutic Product (LBP) or another biological component affects the resident BLSS community.

- Procedure:

1. Select Candidate Models: Obtain GEMs for the resident BLSS microbes and the candidate organism (e.g., from AGORA2 or by constructing them de novo) [22].

2. Define the Community Medium: Formulate a custom media condition that represents the BLSS nutrient environment.

3. Simulate Pairwise Interactions: For the candidate strain and each key resident microbe, perform a pairwise simulation.

   A. Maximize the growth of the candidate strain.

   B. Take the fermentative by-products secreted by the candidate and add them as nutritional inputs to the model of the resident microbe.

   C. Simulate the growth of the resident microbe with and without these candidate-derived metabolites.

4. Analyze Results: Compare the growth rates from step 3C. An increase suggests a commensal or mutualistic relationship (e.g., cross-feeding), while a decrease suggests competition or inhibition. This helps screen for candidates that positively influence the BLSS community [22].
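The comparison in the final analysis step can be sketched in a few lines. This is a minimal illustration, assuming the two growth rates for each resident microbe have already been obtained from separate FBA runs. The function name `classify_interaction`, the 5% tolerance, and all growth-rate values are hypothetical placeholders rather than part of the cited workflow.

```python
def classify_interaction(mu_alone: float, mu_with_byproducts: float,
                         rel_tol: float = 0.05) -> str:
    """Label the effect of candidate by-products on a resident's growth.

    mu_alone           -- resident growth rate (1/h) on the BLSS medium only
    mu_with_byproducts -- growth rate when candidate by-products are added
    rel_tol            -- assumed threshold below which a change is ignored
    """
    if mu_alone < 0 or mu_with_byproducts < 0:
        raise ValueError("growth rates must be non-negative")
    if mu_alone == 0:
        # The resident cannot grow alone, so any growth is a positive effect.
        return "positive" if mu_with_byproducts > 0 else "neutral"
    change = (mu_with_byproducts - mu_alone) / mu_alone
    if change > rel_tol:
        return "positive"   # consistent with cross-feeding
    if change < -rel_tol:
        return "negative"   # consistent with competition or inhibition
    return "neutral"


# Hypothetical (without, with) growth rates in 1/h for three residents.
screen = {
    "resident_A": (0.20, 0.31),
    "resident_B": (0.45, 0.44),
    "resident_C": (0.30, 0.18),
}
results = {name: classify_interaction(*mu) for name, mu in screen.items()}
print(results)
```

A relative threshold is used here so that small numerical differences between solver runs are not read as biological effects; the appropriate cutoff is a judgment call for each study.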

Customization for BLSS: Visualizing Regulatory Interactions

Understanding dynamic regulation is key for BLSS management. The concept of Regulatory Strength (RS) can be used to visualize how metabolite pools regulate reaction fluxes within a GSSM. The RS quantifies the strength of an effector (inhibitor or activator) on a reaction step at a given metabolic state, providing a percentage that indicates its contribution to the total regulation of that reaction [24]. The following diagram illustrates the logic for determining and interpreting these interactions.

Figure 2: A logic flow for calculating and interpreting Regulatory Strength (RS) to visualize metabolite-reaction interactions in a GSSM [24].

Integrating Omics Data into Stoichiometric Network Models

The integration of multi-omics data into stoichiometric network models represents a transformative approach in systems biology, enabling researchers to bridge the gap between genomic potential and observed phenotypic behavior. This integration is particularly critical for complex biological systems such as Bioregenerative Life Support Systems (BLSS), where understanding and predicting mass flows of elements like carbon, hydrogen, oxygen, and nitrogen is essential for system stability and closure [1]. Stoichiometric models, traditionally based on Genome-Scale Metabolic Models (GEMs), provide a structured framework for analyzing the organization and dynamics of cellular mechanisms but often lack the capacity to incorporate real-time molecular data [25] [26]. The advent of high-throughput omics technologies—including genomics, transcriptomics, proteomics, and metabolomics—has generated unprecedented amounts of data that, when effectively integrated, can constrain and refine these models, significantly enhancing their predictive accuracy [27] [28].

For BLSS research, which aims to create sustainable closed-loop environments for long-duration space missions, this integration is paramount. These systems rely on interconnected compartments of organisms to recycle waste into oxygen, water, and food [1]. The precise quantification of mass flows through metabolic networks is thus crucial for achieving a high coefficient of closure—the percentage of resources regenerated within the system [29]. This protocol details methodologies for embedding multi-omics data into stoichiometric models to achieve such precision, providing a structured guide for researchers and scientists engaged in predictive metabolic modeling.
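The coefficient of closure described above reduces to a simple ratio. The sketch below assumes that regenerated and required amounts are expressed in the same mass units over the same period. The function name `closure_coefficient` and every number in the example budget are illustrative placeholders, not measured BLSS data.

```python
def closure_coefficient(regenerated: float, required: float) -> float:
    """Percentage of a resource demand met by in-system regeneration."""
    if required <= 0:
        raise ValueError("required amount must be positive")
    return 100.0 * regenerated / required


# Placeholder daily budget: resource -> (regenerated, required), same units.
daily_budget = {
    "oxygen": (0.9, 1.0),
    "water": (3.0, 4.0),
    "food": (0.5, 2.0),
}

per_resource = {name: closure_coefficient(reg, req)
                for name, (reg, req) in daily_budget.items()}

# Overall closure on a total-mass basis across all listed resources.
total_regenerated = sum(reg for reg, _ in daily_budget.values())
total_required = sum(req for _, req in daily_budget.values())
overall = closure_coefficient(total_regenerated, total_required)

print(per_resource, round(overall, 1))
```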

Background and Principles

Stoichiometric Modeling Fundamentals

Stoichiometric models are built around the concept of mass balance and the stoichiometry of biochemical reactions within a metabolic network. The core mathematical framework is often based on Constraint-Based Reconstruction and Analysis (COBRA), which assumes steady-state metabolite concentrations. This is represented as:

S · v = 0

where S is the stoichiometric matrix (m × n), with m metabolites and n reactions, and v is the flux vector of reaction rates [26]. The solution space is further constrained by enzyme capacity and nutrient availability, expressed as lower and upper bounds on individual fluxes. Together with the steady-state equation, these bounds typically define a linear programming problem in which an objective function (e.g., biomass production) is maximized or minimized.
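The steady-state condition can be checked directly for any candidate flux vector. The following sketch uses a hypothetical three-reaction toy network and plain Python lists. It only verifies mass balance and does not solve the linear program itself, for which a dedicated LP solver would normally be used.

```python
def net_production(S, v):
    """Return S·v, the net production rate of each metabolite."""
    if any(len(row) != len(v) for row in S):
        raise ValueError("each row of S needs one entry per reaction")
    return [sum(s * f for s, f in zip(row, v)) for row in S]


def is_steady_state(S, v, tol=1e-9):
    """True when every metabolite is produced as fast as it is consumed."""
    return all(abs(rate) <= tol for rate in net_production(S, v))


# Hypothetical toy network: R1: -> A, R2: A -> B, R3: B ->
# Rows are metabolites (A, B); columns are reactions (R1, R2, R3).
S = [
    [1, -1, 0],   # A is made by R1 and consumed by R2
    [0, 1, -1],   # B is made by R2 and consumed by R3
]

balanced = [10.0, 10.0, 10.0]
unbalanced = [10.0, 8.0, 8.0]   # A would accumulate because R2 lags R1

print(net_production(S, balanced), is_steady_state(S, balanced))
print(net_production(S, unbalanced), is_steady_state(S, unbalanced))
```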

The integration of omics data introduces additional constraints that refine this solution space. For instance, proteomic data can be used to constrain the maximum flux through a reaction based on the measured abundance of its catalyzing enzyme and its turnover number [26]. This moves the model from a genetically defined potential state to a context-specific state that reflects actual physiological conditions.

Omics Data Types and Their Roles

Different omics layers provide distinct and complementary information for constraining metabolic models:

- Genomics defines the network structure itself, providing the gene-protein-reaction (GPR) associations that form the foundation of GEMs [30].

- Transcriptomics indicates which genes are being actively transcribed. Transcript levels are often used as a proxy for enzyme capacity, although post-transcriptional regulation weakens this link [27].

- Proteomics directly quantifies enzyme abundance, offering a more reliable constraint for flux calculations [26].

- Metabolomics provides snapshots of metabolite pool sizes, which can inform on thermodynamic constraints and reaction directions [27].

In the context of BLSS, the primary goal is to model the cycling of key elements (C, H, O, N) through the system's compartments. A successfully integrated model can predict how perturbations in one compartment affect the entire system, which is vital for managing essential outputs like oxygen and food production [1].
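Tracking C, H, O, and N through a model presupposes that each reaction is itself elementally balanced. The sketch below shows one way to test this, using two well-known overall reactions (oxygenic photosynthesis to glucose, and complete nitrification of ammonia written in neutral form). The data layout and the function name `element_imbalance` are assumptions made for illustration.

```python
# Elemental composition of each species (uncharged formulas).
FORMULAS = {
    "CO2": {"C": 1, "O": 2},
    "H2O": {"H": 2, "O": 1},
    "C6H12O6": {"C": 6, "H": 12, "O": 6},
    "O2": {"O": 2},
    "NH3": {"N": 1, "H": 3},
    "HNO3": {"H": 1, "N": 1, "O": 3},
}


def element_imbalance(reaction):
    """Return the net atoms gained per element; empty dict if balanced.

    reaction maps species -> stoichiometric coefficient
    (negative for substrates, positive for products).
    """
    totals = {}
    for species, coeff in reaction.items():
        for element, count in FORMULAS[species].items():
            totals[element] = totals.get(element, 0) + coeff * count
    return {el: n for el, n in totals.items() if n != 0}


# 6 CO2 + 6 H2O -> C6H12O6 + 6 O2
photosynthesis = {"CO2": -6, "H2O": -6, "C6H12O6": 1, "O2": 6}
# NH3 + 2 O2 -> HNO3 + H2O
nitrification = {"NH3": -1, "O2": -2, "HNO3": 1, "H2O": 1}
# Deliberately wrong water coefficient to show what a failure looks like.
miswritten = {"CO2": -6, "H2O": -5, "C6H12O6": 1, "O2": 6}

for name, rxn in [("photosynthesis", photosynthesis),
                  ("nitrification", nitrification),
                  ("miswritten", miswritten)]:
    print(name, element_imbalance(rxn))
```

Running such a check over every reaction in a reconstruction is a cheap way to catch curation errors before they distort system-level element budgets.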

Computational Approaches and Methodologies

Several computational approaches have been developed to integrate omics data into stoichiometric models, which can be broadly categorized into four main strategies [26]. Table 1 summarizes these approaches, their characteristics, and representative algorithms.

Table 1: Categories of Methods for Integrating Omics Data into Stoichiometric Models

| Category | Description | Key Methods | Data Requirements | Output |
|---|---|---|---|---|
| Proteomics-Driven Flux Constraints | Uses enzyme abundance data to directly constrain upper flux bounds. | FBAwMC [26], MOMENT [26] | Quantitative proteomics, enzyme turnover numbers | Context-specific flux distributions |
| Proteomics-Enriched Stoichiometric Matrix Expansion | Expands the stoichiometric matrix to include explicit reactions for protein synthesis and degradation. | GECKO [26] | Proteomics, enzyme kinetic parameters | Resource allocation-aware flux solutions |
| Proteomics-Driven Flux Estimation | Uses statistical methods to integrate expression data and map it onto the network. | IOMA [26], MADE [26] | Relative or absolute proteomics/transcriptomics | Condition-specific metabolic states |
| Fine-Grained Methods | Incorporates detailed transcriptional and translational processes. | ETFL [26] | Multi-omics data (mRNA, protein, flux) | Integrated predictions of mRNA, enzyme, and flux |

Hybrid Machine Learning Approaches

Recent advances have introduced hybrid frameworks that combine mechanistic stoichiometric models with data-driven machine learning (ML). The Metabolic-Informed Neural Network (MINN) is one such architecture that embeds GEMs within a neural network, allowing for the seamless integration of multi-omics data to predict metabolic fluxes [25]. This approach leverages the pattern recognition strength of ML while respecting the biochemical constraints enforced by the stoichiometric model. Similarly, NEXT-FBA represents another hybrid stoichiometric/data-driven approach designed to improve intracellular flux predictions [31].

These hybrid models are particularly valuable for addressing the "omics cascade" (the sequential flow of information from genes to transcripts, proteins, and metabolites), which is influenced by numerous regulatory mechanisms and environmental factors [27]. By learning complex, non-linear relationships from data while adhering to stoichiometric constraints, they can achieve higher predictive accuracy than purely mechanistic or purely data-driven approaches.

Application Notes and Protocols

Protocol: Integrating Proteomics Data into a BLSS Stoichiometric Model

This protocol details the steps for integrating quantitative proteomics data into a stoichiometric model of a BLSS compartment, using the GECKO (GEnome-scale model with Enzymatic Constraints using Kinetic and Omics data) method as a framework [26].

Experimental Design and Workflow

The entire process, from model preparation to simulation and validation, is outlined in Figure 1 below.

Figure 1: Workflow for integrating proteomics data into a stoichiometric model using a GECKO-like approach.

Step-by-Step Procedure

Step 1: Model and Data Preparation

- GEM Preparation: Obtain a high-quality Genome-Scale Metabolic Model (GEM) for the organism of interest (e.g., Limnospira indica or a higher plant from the BLSS C4 compartment) [1] [26].

- Proteomics Acquisition: Generate or acquire quantitative proteomics data for the target organism under the specific BLSS growth condition (e.g., using mass spectrometry). Data should be in units of mg protein per gDW (gram dry weight) of cells [26].

Step 2: Model Expansion with Enzymatic Constraints

- Expand Stoichiometric Matrix: The GEM is expanded to include pseudo-reactions that represent the investment of enzymes in catalytic steps. This links metabolic fluxes to enzyme usage.

- The enzyme allocation constraint is formulated as $\sum_i v_i / k_{\mathrm{cat},i} \le P_{\mathrm{tot}}$, where $v_i$ is the flux of reaction $i$, $k_{\mathrm{cat},i}$ is the turnover number of the enzyme catalyzing reaction $i$, and $P_{\mathrm{tot}}$ is the total enzyme pool capacity [26].
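The pool constraint can be evaluated for a given flux distribution as follows. This is a minimal sketch that assumes fluxes in mmol/gDW/h, turnover numbers in 1/s, and a pool capacity in mmol enzyme per gDW, so that the units match the inequality as written. Reaction identifiers and all numerical values are hypothetical, and taking the absolute value of each flux is a simplification standing in for the usual split of reversible reactions into forward and backward steps.

```python
SECONDS_PER_HOUR = 3600.0


def enzyme_pool_usage(fluxes, kcat_per_s):
    """Sum of |v_i| / kcat_i over all reactions, in mmol enzyme per gDW."""
    usage = 0.0
    for rxn, v in fluxes.items():
        kcat_per_h = kcat_per_s[rxn] * SECONDS_PER_HOUR  # 1/s -> 1/h
        usage += abs(v) / kcat_per_h
    return usage


def within_pool(fluxes, kcat_per_s, p_tot):
    """True when the flux distribution respects the total enzyme pool."""
    return enzyme_pool_usage(fluxes, kcat_per_s) <= p_tot


# Hypothetical values for two reactions.
fluxes = {"R1": 3.6, "R2": 7.2}          # mmol/gDW/h
kcat_per_s = {"R1": 100.0, "R2": 50.0}   # 1/s

usage = enzyme_pool_usage(fluxes, kcat_per_s)
print(usage, within_pool(fluxes, kcat_per_s, p_tot=1e-4))
print(within_pool(fluxes, kcat_per_s, p_tot=4e-5))
```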

Step 3: Incorporation of Proteomics Data

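- Apply Measured Enzyme Levels: Use the proteomics data to constrain the upper bound for each enzyme's usage. For an enzyme $E_i$ measured at a concentration $[E_i]$, the flux through its catalyzed reaction is constrained by $v_i \le [E_i] \, k_{\mathrm{cat},i}$. Abundances reported in mg/gDW must first be converted to molar units (mmol/gDW) using each enzyme's molecular weight so that the bound has the same units as the flux.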

- For enzymes with missing measurements, apply a default constraint or use gap-filling algorithms [26].
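The two bullets above can be sketched as a small helper that turns measured abundances into flux caps. The example assumes one enzyme per reaction, abundances in mg/gDW, molecular weights in g/mol, and turnover numbers in 1/s. The function name `flux_caps`, the fallback value of 1000 mmol/gDW/h for unmeasured enzymes, and all data are hypothetical choices for illustration.

```python
SECONDS_PER_HOUR = 3600.0


def flux_caps(abundance_mg_per_gdw, mw_g_per_mol, kcat_per_s,
              default_cap=1000.0):
    """Upper flux bound (mmol/gDW/h) for each reaction with a known kcat.

    Reactions whose enzyme was not measured receive default_cap, an
    assumed loose bound that leaves them effectively unconstrained.
    """
    caps = {}
    for rxn, kcat in kcat_per_s.items():
        mg = abundance_mg_per_gdw.get(rxn)
        if mg is None:
            caps[rxn] = default_cap
            continue
        # mg/gDW divided by g/mol gives mmol/gDW.
        enzyme_mmol_per_gdw = mg / mw_g_per_mol[rxn]
        caps[rxn] = enzyme_mmol_per_gdw * kcat * SECONDS_PER_HOUR
    return caps


# Hypothetical inputs: the enzyme for R2 was not detected.
abundance = {"R1": 0.5}                   # mg/gDW
mol_weight = {"R1": 50000.0, "R2": 80000.0}  # g/mol
kcat = {"R1": 100.0, "R2": 20.0}          # 1/s

caps = flux_caps(abundance, mol_weight, kcat)
print(caps)
```

A very loose default keeps the model feasible but adds no information; the alternative of letting unmeasured enzymes draw on a shared pool would tie them back to the total-capacity constraint from Step 2.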

Step 4: Simulation and Analysis

- Define Objective: Set an appropriate biological objective function. While biomass maximization is common, for BLSS, objectives like oxygen production (C4 compartment) or waste degradation (C1 compartment) may be more relevant [1].

- Perform Flux Analysis: Solve the constrained model using Parsimonious Flux Balance Analysis (pFBA) or similar techniques to obtain a flux distribution that satisfies the proteomic constraints and optimizes the objective [26] [31].

- Validate Predictions: Compare predicted fluxes (e.g., substrate uptake, production rates) with experimentally measured rates, if available. For BLSS, this could involve comparing predicted CO2 uptake and O2 production by algae or plants to measured gas exchange data [1].
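The validation step amounts to comparing predicted and measured exchange rates one by one. A minimal sketch follows, assuming both sets of rates share units and identifiers. The rate names, the 15% acceptance threshold, and every value shown are placeholders rather than experimental results.

```python
def relative_errors(predicted, measured):
    """Relative error |pred - obs| / |obs| for each measured rate."""
    errors = {}
    for name, obs in measured.items():
        if name not in predicted:
            raise KeyError(f"no prediction available for {name!r}")
        if obs == 0:
            raise ValueError(f"measured rate for {name!r} is zero")
        errors[name] = abs(predicted[name] - obs) / abs(obs)
    return errors


# Placeholder rates (same units within each pair).
predicted = {"CO2_uptake": 9.0, "O2_production": 11.0, "biomass": 0.06}
measured = {"CO2_uptake": 10.0, "O2_production": 10.0, "biomass": 0.05}

errors = relative_errors(predicted, measured)

# Flag rates whose error exceeds an assumed acceptance threshold.
THRESHOLD = 0.15
flagged = [name for name, err in errors.items() if err > THRESHOLD]

print(errors)
print("needs review:", flagged)
```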

Reagent and Computational Tools

Successful implementation of this protocol requires specific reagents and computational tools. Table 2 lists the essential components of the "Researcher's Toolkit" for this workflow.

Table 2: Research Reagent and Computational Solutions for Omics-Stoichiometric Integration

| Category | Item | Specifications / Function | Example Use in Protocol |
|---|---|---|---|
| Wet-Lab Reagents | Protein Lysis Buffer | For efficient cell disruption and protein extraction from BLSS organism samples (e.g., microalgae, higher plants). | Preparing samples for mass spectrometry-based proteomics. |
| Wet-Lab Reagents | Quantitative Proteomics Kit (e.g., TMT/iTRAQ) | For isobaric labeling of peptides to enable multiplexed, relative quantification of protein abundance across different BLSS conditions. | Comparing enzyme abundance between different BLSS operational stages. |
| Wet-Lab Reagents | Internal Standard (e.g., SILAC) | Labeled amino acids for spike-in absolute protein quantification. | Determining absolute enzyme concentrations (mg/gDW). |
| Software & Databases | COBRA Toolbox | A MATLAB toolbox for constraint-based modeling. The GECKO toolbox is built upon it. | Implementing the model expansion and simulation steps [26]. |
| Software & Databases | R/Python Environment | For data pre-processing, statistical analysis, and visualization of omics data. | Normalizing proteomics data and generating correlation plots. |
| Software & Databases | Genome-Scale Model (GEM) Database (e.g., BiGG Models) | A repository of curated GEMs for various organisms. | Sourcing a starting GEM for a BLSS-relevant organism [26]. |