Smart Vision for Wheat: Integrating RGB Imagery and Weather Data to Predict Flowering with AI

This article explores the cutting-edge integration of RGB imagery and in-situ meteorological data with multimodal machine learning to predict anthesis in individual wheat plants.


Abstract

This article explores the integration of RGB imagery and in-situ meteorological data with multimodal machine learning to predict anthesis in individual wheat plants. Aimed at researchers and agricultural scientists, it details a shift from field-scale estimates to individual plant-level forecasting, which is crucial for hybrid breeding and regulatory compliance. The content covers the methodological framework, which involves few-shot learning and advanced architectures such as Swin V2; addresses troubleshooting through environmental adaptation and data limitation strategies; and validates the approach with F1 scores above 0.8 across diverse planting environments. The implications for enhancing breeding efficiency and ensuring biosafety in field trials are also discussed.

The Critical Need for Precision: Why Individual Wheat Flowering Prediction is a Game-Changer

The Limitations of Conventional Field-Scale Anthesis Prediction Models

Accurate prediction of wheat anthesis, the period during which a plant flowers, is critically important for optimizing breeding programs and ensuring regulatory compliance for field trials. Conventional anthesis prediction models have primarily operated at the field scale, providing estimates of average flowering dates for a crop stand. However, these approaches fail to address a fundamental need in modern wheat breeding: accurate prediction for individual plants rather than whole fields. This application note details the specific constraints of conventional models and outlines advanced, scalable protocols that address these gaps by integrating RGB imagery and meteorological data, directly supporting the broader research objective of developing robust, individual plant-level forecasting tools.

Critical Limitations of Conventional Models

Conventional field-scale models face several significant constraints that limit their practical utility for precision breeding and regulatory reporting.

Inability to Capture Individual Plant Variation

Field-scale models successfully estimate average flowering dates but cannot account for the substantial variations in anthesis timing among individual plants of the same cultivar within a single field [1]. These variations, driven by micro-environmental heterogeneity in factors such as soil moisture, nutrient distribution, and light exposure, are a major source of prediction inaccuracy at the individual plant level [1] [2]. Breeders require this granular data for critical tasks like planning hybridization, which must be finalized at least 10 days before flowering is due [1].

Regulatory and Operational Challenges

Biotechnology field trials in the United States and Australia operate under strict regulatory mandates that require reporting to regulators 7–14 days before the first plant flowers [1] [2]. Conventional models, which provide field-level averages, are ill-suited for predicting the flowering time of the very first plant, creating compliance challenges. Furthermore, the current alternative—manual monitoring of individual plants—is a labour-intensive, inefficient, and costly process prone to human error [1] [2].

Table 1: Key Deficiencies of Conventional Field-Scale Prediction Models

| Deficiency Category | Specific Limitation | Impact on Breeding and Research |
|---|---|---|
| Spatial Resolution | Provides only field-scale averages, cannot predict individual plant flowering [1] | Inadequate for planning pollination of specific plants in hybrid breeding programs |
| Temporal Precision | Lacks accuracy for predicting the "first flower" in a population [2] | Fails to meet regulatory reporting requirements for biotech trials [1] |
| Data Inputs | Often relies solely on genetic markers or macro-environmental variables (e.g., temperature, photoperiod) [2] | Cannot account for micro-environmental variations affecting individual plants [1] |
| Operational Efficiency | Manual ground-truthing is required for validation [3] | Labour-intensive, costly, and limits the scale of field trials [1] [3] |

Quantitative Performance Comparison of Modeling Approaches

Emerging methodologies that integrate multiple data modalities consistently outperform conventional approaches. The table below summarizes the performance of different modeling frameworks as reported in recent studies.

Table 2: Performance Comparison of Anthesis Prediction and Related Phenotyping Models

| Model Approach | Primary Data Modality | Reported Performance Metric | Application Context |
|---|---|---|---|
| Multimodal Few-Shot Learning | RGB Imagery & Meteorological Data [1] | F1 score > 0.8 across planting settings [1] [2] | Individual wheat plant anthesis prediction |
| Support Vector Machine (SVM) | Hyperspectral Imaging [3] | F1 score of 0.832 for pre-anthesis growth stage classification [3] | Classification of Zadoks stages Z37, Z39, Z41 |
| Vision Transformer (ViT) | RGB Images of Wheat Grains [4] | Precision: 99.03%, Recall: 99.00% [4] | Predicting Days After Anthesis (DAA) |
| Random Forest (RF) | RGB Images of Wheat Grains [4] | Precision: 88.71%, Recall: 87.93% [4] | Predicting Days After Anthesis (DAA) |
| Artificial Neural Network (ANN) | Meteorological Variables [5] | R² of 0.96 for disease severity prediction [5] | Forecasting yellow rust and powdery mildew severity |

Experimental Protocol for Multimodal Few-Shot Anthesis Prediction

This protocol details the methodology for developing a multimodal framework that integrates RGB imagery and meteorological data for individual wheat plant anthesis prediction, as validated in recent research [1] [2].

Phase 1: Data Acquisition and Preprocessing

Objective: To collect and standardize high-quality RGB and environmental data from individual wheat plants.

Materials & Equipment:

- RGB Imaging System: A standardized RGB camera (e.g., DSLR) mounted on a tripod or UAV for consistent top-down image capture [6] [4].

- Meteorological Station: An on-site weather station capable of logging temperature, humidity, solar radiation, and precipitation at regular intervals [1] [7].

- Growth Environment: Wheat plants grown in pots or field plots with unique identifiers for tracking individuals over time [3].

Procedure:

- Image Acquisition: Capture high-resolution RGB images of individual wheat plants at regular intervals (e.g., daily) from early development stages through anthesis. Maintain consistent camera settings, distance, and lighting conditions where possible [6].

- Weather Data Logging: Record concurrent in-situ meteorological data at a temporal resolution matching or exceeding the image capture frequency [1].

- Data Labeling: For each plant image, annotate the phenological stage based on the Zadoks scale, with particular focus on pre-anthesis stages (Z37, Z39, Z41) and anthesis itself (Z65) [3].

- Preprocessing: Resize all images to a uniform resolution (e.g., 512x512 pixels). Normalize pixel values to the [0,1] range. Synchronize image and weather data timestamps [6].
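The normalization and timestamp-synchronization steps above can be illustrated with a minimal sketch using only the Python standard library. The function names, the hourly log, and the image timestamp are hypothetical and not taken from the cited studies; image resizing is left out because it would normally be delegated to an imaging library.

```python
from bisect import bisect_left
from datetime import datetime, timedelta


def normalize_pixels(pixels):
    """Scale 8-bit pixel values (0-255) to the [0, 1] range."""
    return [value / 255.0 for value in pixels]


def nearest_weather_record(image_time, weather_times):
    """Return the index of the weather timestamp closest to image_time.

    weather_times must be sorted in ascending order; ties go to the earlier record.
    """
    pos = bisect_left(weather_times, image_time)
    if pos == 0:
        return 0
    if pos == len(weather_times):
        return len(weather_times) - 1
    before, after = weather_times[pos - 1], weather_times[pos]
    return pos if (after - image_time) < (image_time - before) else pos - 1


# Hypothetical hourly weather log for one day and a single image timestamp.
start = datetime(2024, 9, 1, 0, 0)
weather_times = [start + timedelta(hours=h) for h in range(24)]
image_time = datetime(2024, 9, 1, 12, 40)

idx = nearest_weather_record(image_time, weather_times)
print(weather_times[idx])  # 2024-09-01 13:00:00
print(normalize_pixels([0, 128, 255]))
```

Matching each image to the nearest logged record is only one possible alignment rule; interpolating between records or averaging over a window may suit slowly varying parameters better.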

Phase 2: Model Development and Training with Few-Shot Learning

Objective: To train a robust classification model that can generalize well to new environments with limited data.

Materials & Equipment:

- Computational Hardware: A computing workstation with a high-performance GPU (e.g., NVIDIA Tesla series) for efficient deep learning model training.

- Software Framework: Python programming environment with deep learning libraries such as PyTorch or TensorFlow.

Procedure:

- Problem Formulation: Frame anthesis prediction as a classification task. For example, a binary classification (e.g., "flowering within 24 hours" vs. "not flowering") or a three-class problem (e.g., "flower before," "within," or "after" a critical date) [1].

- Model Architecture Selection: Implement advanced architectures such as Swin V2 or ConvNeXt as backbone networks for feature extraction from images [2].

- Multimodal Integration: Fuse the extracted image features with the processed meteorological data. This can be achieved using a Fully Connected (FC) comparator or a Transformer (TF) comparator to integrate the two data streams [2].

- Few-Shot Learning Training: Incorporate a metric-based few-shot learning approach (e.g., Prototypical Networks).

- The model is first trained on a "base" dataset with ample labeled examples.

- For adaptation to a new environment, the model is fine-tuned using only a very small number of labeled examples ("K-shots," e.g., 1 or 5 examples per class) from the new environment. This step allows the model to quickly adapt its understanding to novel conditions without extensive retraining [1] [2].
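To make the metric-based idea concrete, the sketch below classifies a query embedding by its distance to class prototypes, in the spirit of Prototypical Networks. It assumes the backbone has already turned each image-weather sample into an embedding; the two-dimensional vectors and class names are hypothetical toy values, and this is not presented as the exact procedure of the cited work.

```python
import math


def prototype(vectors):
    """Mean of a list of equal-length embedding vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def classify(query, support):
    """Assign the query to the class whose prototype is nearest (Euclidean distance)."""
    prototypes = {label: prototype(vecs) for label, vecs in support.items()}
    return min(prototypes, key=lambda label: math.dist(query, prototypes[label]))


# Hypothetical 2-D embeddings, two shots per class from the new environment.
# A real backbone would output vectors with far more dimensions.
support = {
    "flowering_soon": [[0.9, 0.8], [1.1, 1.0]],
    "not_flowering": [[0.1, 0.2], [0.0, 0.1]],
}

print(classify([0.8, 0.9], support))  # flowering_soon
```

Because the prototypes are recomputed from whatever support examples are supplied, adapting to a new site only requires swapping in a handful of labeled samples from that site.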

Phase 3: Model Evaluation and Validation

Objective: To rigorously assess model performance and generalization capability.

Procedure:

- Cross-Dataset Validation: Train the model on data from one set of growing environments and validate its performance on a completely independent dataset from different environments. Target F1 scores above 0.8 on independent data indicate strong generalization [2].

- Ablation Study: Systematically evaluate the contribution of each data modality by training models with (a) images only, (b) weather data only, and (c) combined multimodal data. Integration of weather data typically boosts accuracy, particularly 12–16 days before anthesis when visual cues are subtle [2].

- Anchor-Transfer Test: Validate the model's deployability by testing its performance at new field sites using environmental anchors derived from previous data, demonstrating that environmental alignment is more critical than dataset size [2].
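As a rough illustration of how the ablation comparison might be scored, the following sketch computes the F1 score for an image-only model and a multimodal model on the same labels and reports the difference. The label lists are made-up toy data chosen for readability, not results from the cited studies.

```python
def f1_score(y_true, y_pred, positive=1):
    """F1 score for one positive class, computed from precision and recall."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Hypothetical ground truth and predictions (1 = flowers within the window).
y_true = [1, 1, 1, 0, 0, 1, 0, 1]
image_only = [1, 0, 1, 0, 1, 0, 0, 1]
multimodal = [1, 1, 1, 0, 1, 1, 0, 1]

f1_image = f1_score(y_true, image_only)
f1_multi = f1_score(y_true, multimodal)
print(f"image only: {f1_image:.3f}")            # image only: 0.667
print(f"multimodal: {f1_multi:.3f}")            # multimodal: 0.909
print(f"gain:       {f1_multi - f1_image:.3f}")  # gain:       0.242
```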

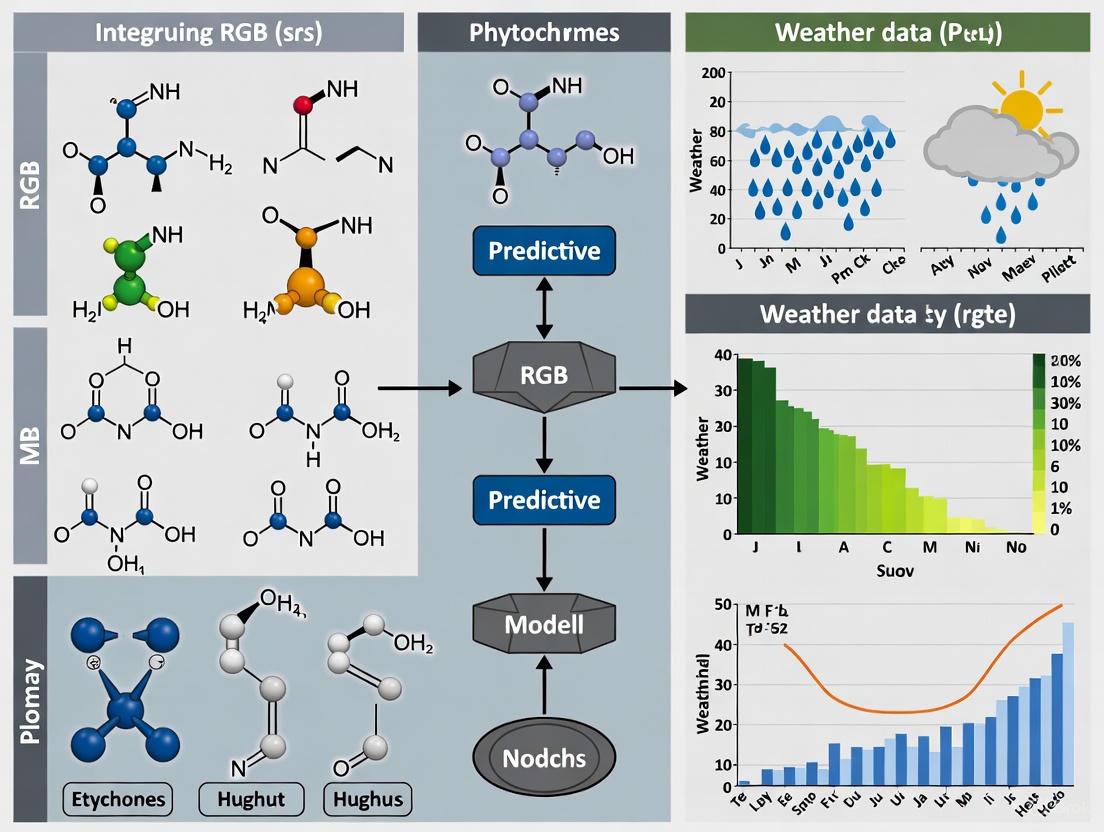

Workflow Diagram: Conventional vs. Multimodal Prediction

The following diagram illustrates the fundamental operational differences between the conventional field-scale approach and the advanced individual plant-focused multimodal protocol.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Multimodal Anthesis Prediction

| Item Name | Specification / Example | Primary Function in Protocol |
|---|---|---|
| High-Resolution RGB Camera | Canon EOS 1500D DSLR; 6000 × 4000 pixel resolution [6] | Captures detailed visual data on color, shape, and texture of individual wheat plants and grains. |
| On-Site Meteorological Station | Logging interval of 1 hour or less; measures temperature, humidity, solar radiation [1] [7] | Provides micro-environmental data correlated with plant development and anthesis timing. |
| Hyperspectral Imaging Sensor | Specim FX10 camera (400–1000 nm range) [3] | Enables detailed spectral analysis for fine-scale growth stage classification (e.g., Z37, Z39, Z41) [3]. |
| GPU Computing Workstation | NVIDIA Tesla or equivalent high-performance GPU | Accelerates training and inference of complex deep learning models (CNNs, Transformers). |
| Zadoks Growth Stage Scale | Standardized phenology scale (e.g., Z37, Z39, Z41, Z65) [3] | Provides the ground-truth labeling standard for model training and validation. |
| Few-Shot Learning Algorithm | Metric-based approaches (e.g., Prototypical Networks) | Enhances model adaptability to new environments with very limited labeled data [1] [2]. |

Accurately predicting the flowering time, or anthesis, of individual wheat plants is a critical challenge in both hybrid breeding and regulated biotechnology trials. For breeders, timely prediction—typically 8–10 days in advance—is essential for planning hybrid pollination strategies [2]. Meanwhile, regulatory agencies in the United States and Australia mandate that researchers accurately report anthesis 7–14 days before the first plant flowers in genetically modified (GM) crop field trials [1]. Currently, predicting anthesis of individual wheat plants is a labour-intensive, inefficient, and costly process, primarily reliant on manual visual inspections [1]. This document outlines automated, AI-driven protocols that integrate RGB imagery and meteorological data to meet these precise forecasting imperatives, transforming a traditionally subjective task into a smart, automated process [2].

Quantitative Performance Data

The following tables summarize the quantitative performance of the AI models reported in the cited studies, providing key benchmarks for researchers.

Table 1: Model Performance Metrics for Flowering Prediction

| Model / Framework | Key Metric | Performance Value | Forecast Lead Time | Plant Scale |
|---|---|---|---|---|
| Multimodal Few-Shot Learning [2] | F1 Score | > 0.8 | Up to 16 days before anthesis | Individual plant |
| Multimodal Few-Shot Learning [2] | F1 Score (One-shot) | 0.984 | 8 days before anthesis | Individual plant |
| Multimodal Few-Shot Learning [2] | F1 Score (Five-shot) | 0.889 | 8 days before anthesis | Individual plant |
| Support Vector Machine (Hyperspectral) [3] | F1 Score | 0.832 | For growth stages Z37, Z39, Z41 | Individual plant |

Table 2: Impact of Integrated Data on Model Performance

| Integrated Data Type | Impact on Model Performance | Context / Condition |
|---|---|---|
| Meteorological Data [2] | Boosted accuracy by 0.06–0.13 F1 units | Particularly 12–16 days before anthesis |
| Few-Shot Learning [2] | Improved weaker results (e.g., 0.75 → 0.889 F1) | With five-shot training at 8 days pre-anthesis |

Experimental Protocols

Core Multimodal Framework for Anthesis Prediction

This protocol details the primary methodology for predicting wheat anthesis using a multimodal AI approach.

- Objective: To predict the anthesis of individual wheat plants as a binary (e.g., will flower within ±1 day of a critical date) or three-class classification task, 7–16 days in advance [2] [1].

- Key Equipment:

- Procedure:

- Data Acquisition:

- Data Labeling:

- Annotate each image data point with the corresponding ground-truth anthesis date or growth stage (e.g., Zadoks stages Z37, Z39, Z41) [3].

- Model Architecture & Training:

- Image Processing: Utilize advanced deep learning architectures like Swin V2 or ConvNeXt for feature extraction from RGB images [2].

- Data Fusion: Integrate the extracted image features with the meteorological data using a fully connected or transformer-based comparator [2].

- Few-Shot Learning: To enhance adaptability to new environments with limited data, employ few-shot learning techniques based on metric similarity. This involves training the model to generalize from a very small number of examples (e.g., one or five images) from the target environment [2] [1].

- Validation:

- Perform cross-dataset validation on independent datasets to assess model robustness and generalizability [2].

Hyperspectral Protocol for Pre-Anthesis Growth Staging

This protocol provides an alternative method using hyperspectral imaging for classifying earlier growth stages that precede anthesis.

- Objective: To automatically classify individual wheat plants into key pre-anthesis growth stages (Zadoks Z37, Z39, Z41) to support flowering forecasts [3].

- Key Equipment: Hyperspectral imaging sensor (e.g., Specim FX10) covering visible and near-infrared spectra (400–1000 nm) [3].

- Procedure:

- Image Acquisition: Capture hyperspectral images of plants under controlled lighting or in a semi-natural environment using a top-down view [3].

- Spectral Transformation: Apply transformations to the raw spectral data, such as Standard Normal Variate (SNV), Hyper-hue, or Principal Component Analysis (PCA), to enhance features and reduce noise [3].

- Feature Selection: Identify the most informative wavelengths to reduce data dimensionality. Studies show robust classification can be achieved with as few as five optimized wavelengths [3].

- Model Training & Classification: Train a Support Vector Machine (SVM) classifier on the transformed and selected spectral features to distinguish between the three distinct pre-anthesis growth stages [3].
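The spectral transformation and feature-selection steps can be sketched as follows. Standard Normal Variate centres each spectrum on its own mean and scales it by its own standard deviation; the sample standard deviation is assumed here, and conventions differ between toolkits. The reflectance values and the chosen band indices are hypothetical, and the SVM training step is omitted.

```python
from statistics import mean, stdev


def snv(spectrum):
    """Standard Normal Variate: centre and scale one spectrum by its own mean and SD."""
    mu = mean(spectrum)
    sd = stdev(spectrum)  # sample standard deviation (n - 1 denominator), an assumption
    return [(value - mu) / sd for value in spectrum]


def select_bands(spectrum, band_indices):
    """Keep only the wavelengths at the given indices to reduce dimensionality."""
    return [spectrum[i] for i in band_indices]


# Hypothetical reflectance values for six wavelengths of one plant pixel.
raw = [0.21, 0.25, 0.40, 0.62, 0.58, 0.55]
transformed = snv(raw)
selected = select_bands(transformed, [0, 2, 3])  # hypothetical band choice

print([round(v, 3) for v in transformed])
print([round(v, 3) for v in selected])
```

The selected values would then form the feature vector passed to the classifier, one vector per plant or per pixel depending on how the spectra are aggregated.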

Workflow and System Architecture Diagrams

The following diagrams illustrate the logical workflow of the core multimodal framework and the architecture of a modern agricultural weather AI system.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Models for AI-Driven Flowering Prediction

| Item Name | Type | Function / Application |
|---|---|---|
| Swin V2 & ConvNeXt [2] | Deep Learning Model | Advanced neural network architectures for extracting complex features from RGB imagery of plants. |
| Graph Neural Networks (GNNs) [8] | Deep Learning Model | Represents atmospheric states for efficient, high-quality weather forecasting in AI systems. |
| Support Vector Machine (SVM) [3] | Machine Learning Model | Effective classifier for growth stage classification using hyperspectral or processed data. |
| Few-Shot Learning (Metric-based) [2] [1] | Machine Learning Technique | Enables model adaptation to new growth environments with very limited new training data. |
| Standard Normal Variate (SNV) [3] | Spectral Transformation | Preprocessing method for hyperspectral data to reduce scattering effects and improve model robustness. |
| Google Earth Engine (GEE) [9] [10] | Computing Platform | Cloud-based platform for processing and integrating large-scale satellite, weather, and soil data. |
| WIWAM / LemnaTec Scanalyzer [3] | Hyperspectral Imaging System | Automated, high-throughput phenotyping system for capturing precise plant spectral data in controlled conditions. |
| FarmCast [11] | Forecasting Service | Provides year-ahead weather intelligence and crop milestone predictions to inform planting and management strategies. |

In wheat breeding and biotechnology trials, the precise prediction of anthesis (flowering) is critical for orchestrating successful hybridization and complying with biosecurity regulations. While field-scale prediction models have existed, their primary limitation lies in the inability to account for micro-environmental variations—highly localized differences in temperature, light, and other conditions within a single field. These variations can cause flowering timing to differ by 5 to 10 days even among individual plants of the same cultivar [12] [13]. Understanding and quantifying these micro-effects is essential for advancing precision agriculture. This Application Note frames the investigation of micro-environmental impacts within a broader research thesis on integrating RGB imagery and weather data, providing the experimental protocols and analytical tools necessary to dissect this complex relationship.

Quantitative Impact of Micro-Environment on Flowering

The following table synthesizes key quantitative evidence from recent studies, demonstrating how micro-environmental factors influence wheat flowering dynamics and the performance of models designed to predict it.

Table 1: Quantitative Evidence of Micro-Environmental Impacts on Wheat Flowering

| Observed Phenomenon / Model Feature | Quantitative Impact | Research Context & Citation |
|---|---|---|
| Intra-field Flowering Variation | 5 to 10 days difference between individual plants [12] [13] | Same cultivar, field conditions [12] [13] |
| Impact of Sowing Date (Macro to Micro) | Flowering duration: 18.4 days (Early sowing) vs. 11.6 days (Late sowing) [2] | Different sowing conditions, ANOVA confirmed significant differences (P ≤ 0.001) [2] |
| Value of Integrated Weather Data in AI Models | F1 score boost of 0.06 to 0.13, particularly 12–16 days pre-anthesis [2] [12] | Multimodal model (RGB + Weather) vs. image-only model [2] [12] |
| Few-Shot Learning Model Performance | F1 score of 0.984 at 8 days before anthesis with one-shot learning [2]; Five-shot training raised F1 from 0.75 to 0.889 [2] [12] | Model generalization to new environments with minimal data [2] [12] |
| Fine-Scale Growth Stage Classification | F1 score of 0.832 for classifying pre-anthesis stages (Z37, Z39, Z41) [13] | Hyperspectral imaging with Support Vector Machine [13] |

Experimental Protocols for Micro-Environmental Analysis

Protocol: Multimodal Data Acquisition for Individual Plant Phenotyping

This protocol outlines the procedure for collecting synchronized image and environmental data from individual wheat plants in a field setting.

I. Primary Objective

To acquire high-quality, co-registered RGB image data and localized weather parameters from individual wheat plants to build a dataset for micro-environmentally aware flowering prediction models.

II. Research Reagent Solutions

Table 2: Essential Materials and Equipment

| Item Name | Specification / Example | Primary Function in Protocol |
|---|---|---|
| RGB Imaging System | Allied Vision Technologies GT3300C camera [13] or similar | Captures high-resolution (e.g., 2472 × 3296 pixels) visual data of plant morphology and color. |
| Meteorological Station | On-site weather logger measuring temperature, solar radiation, humidity, precipitation. | Records localized historical and forecast weather data (e.g., 90-day history + 6-day forecast) [12]. |
| Phenotyping Platform | Mobile field-based platform (e.g., as used in [14]) | Ensures consistent camera angle (e.g., side-view, 45°, 1 m height) and positioning for repeatable image capture. |
| Data Processing Unit | Computer with GPU (e.g., NVIDIA GTX series) | Handles image preprocessing, storage, and subsequent model training tasks. |

III. Step-by-Step Procedure

Experimental Setup & Sowing:

Synchronized Data Acquisition:

- Imaging: Conduct imaging sessions daily from the flag leaf stage (Z37) until at least two days post-anthesis. Capture images consistently between 12:00 PM and 2:00 PM to minimize variation in natural lighting [13].

- Weather Data Logging: Ensure the meteorological station records parameters (temperature, radiation, precipitation, etc.) at hourly intervals. The data should be synchronized with image timestamps.

Data Preprocessing:

- Image Correction: Apply necessary corrections for lens distortion and perform white balancing.

- Plant Segmentation: Use an object detection model like YOLOv8 [12] to identify and crop individual wheat spikes or plants from the raw images.

- Weather Data Alignment: For each image, create an "Image-Weather Composite" (IWC) by aligning it with the relevant historical and short-term forecast weather data [12].

Data Storage:

- Store processed images and their aligned weather data in a structured database, ensuring each data point is linked to a unique plant ID and timestamp.
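One way to picture the Image-Weather Composite and its storage record is the sketch below, which pairs an image with a window of daily weather records keyed by plant ID and date. The class name, field names, file path, and the choice to count the image date as the last history day are all assumptions made for illustration; only the 90-day history and 6-day forecast window lengths come from the example given in the materials table.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class ImageWeatherComposite:
    plant_id: str
    image_path: str
    image_date: date
    history: list   # daily records up to and including image_date, oldest first
    forecast: list  # daily records for the days after image_date


def build_iwc(plant_id, image_path, image_date, daily_weather,
              history_days=90, forecast_days=6):
    """Slice daily_weather (a dict mapping date -> record) around image_date."""
    history_dates = [image_date - timedelta(days=d)
                     for d in range(history_days - 1, -1, -1)]
    forecast_dates = [image_date + timedelta(days=d)
                      for d in range(1, forecast_days + 1)]
    history = [daily_weather[d] for d in history_dates if d in daily_weather]
    forecast = [daily_weather[d] for d in forecast_dates if d in daily_weather]
    return ImageWeatherComposite(plant_id, image_path, image_date, history, forecast)


# Hypothetical daily mean temperatures for 120 consecutive days.
first_day = date(2024, 6, 1)
daily_weather = {first_day + timedelta(days=i): {"temp_c": 10 + 0.1 * i}
                 for i in range(120)}
image_date = first_day + timedelta(days=100)

iwc = build_iwc("plant_017", "images/plant_017_day100.png", image_date, daily_weather)
print(len(iwc.history), len(iwc.forecast))  # 90 6
```

In a deployed system the forecast portion would come from a forecasting service rather than from logged observations; the dictionary here simply stands in for both sources.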

Protocol: Multi-Modal Few-Shot Learning for Anthesis Prediction

This protocol describes how to train and validate a model that can predict whether an individual wheat plant will flower within a specific time window, and can generalize to new environments with minimal data.

I. Primary Objective

To develop and evaluate a machine learning framework that integrates RGB image features and weather data for robust, few-shot prediction of individual wheat plant anthesis.

II. Step-by-Step Procedure

Problem Formulation & Labeling:

Model Architecture Design:

- Implement a dual-branch neural network.

- Image Branch: Use a modern vision transformer (e.g., Swin V2) or CNN (e.g., ConvNeXt) as a backbone to extract visual features from the preprocessed RGB images [2] [12].

- Weather Branch: Use a Gated Recurrent Unit (GRU) or similar sequential model to process the time-series weather data embedded in the IWC [12].

- Fuse the outputs of both branches using a comparator module, such as a Fully Connected (FC) layer or a Transformer (TF) comparator [2].

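The fusion step of the dual-branch design can be sketched in plain Python as a concatenation followed by a single fully connected layer and a softmax. This is one plausible reading of a fully connected comparator, not a confirmed description of the cited architecture; the feature vectors, weights, and biases are hypothetical, untrained toy values standing in for the outputs of the image and weather branches.

```python
import math


def softmax(logits):
    """Convert raw scores to probabilities that sum to 1."""
    peak = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def fc_fuse(image_features, weather_features, weights, biases):
    """Concatenate both feature vectors and apply one fully connected layer."""
    fused = image_features + weather_features
    logits = [sum(w * x for w, x in zip(row, fused)) + b
              for row, b in zip(weights, biases)]
    return softmax(logits)


# Hypothetical branch outputs and untrained weights (2 classes x 3 fused inputs).
image_features = [0.5, 1.0]   # stand-in for the image branch output
weather_features = [0.2]      # stand-in for the weather branch output
weights = [[1.0, 0.5, 0.0], [-1.0, 0.0, 2.0]]
biases = [0.0, 0.1]

probs = fc_fuse(image_features, weather_features, weights, biases)
print([round(p, 3) for p in probs])  # [0.731, 0.269]
```

In practice both branches and the fusion layer would be trained jointly in a deep learning framework; the sketch only shows how the two feature streams meet.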

Model Training with Few-Shot Learning:

- Pre-train the model on a source dataset with abundant labeled examples.

- To adapt to a new target environment, employ a metric-based few-shot learning approach. The model learns a feature space where simple similarity metrics (e.g., cosine distance) can classify new examples based on a very small "support set" (e.g., 1 or 5 labeled examples per class from the new environment) [2] [12].
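A one-shot version of this similarity-based classification might look like the sketch below, where each class is represented by a single labeled embedding from the new environment and the query takes the label of the most similar support example. The three-dimensional embeddings and class names are hypothetical toy values; a trained backbone would supply the real vectors.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def classify_by_support(query, support_set):
    """Return the label of the support example most similar to the query.

    support_set is a list of (embedding, label) pairs.
    """
    _, best_label = max(support_set,
                        key=lambda item: cosine_similarity(query, item[0]))
    return best_label


# Hypothetical one-shot support set: one embedding per class from the new site.
support_set = [
    ([1.0, 0.1, 0.0], "before"),
    ([0.1, 1.0, 0.0], "within"),
    ([0.0, 0.1, 1.0], "after"),
]

print(classify_by_support([0.2, 0.9, 0.1], support_set))  # within
```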

Model Evaluation:

- Perform cross-dataset validation to test generalizability.

- Use the F1 score as the primary metric for evaluating classification performance across different prediction timeframes (e.g., 8, 12, 16 days before anthesis) [2].

- Conduct ablation studies to quantify the specific contribution of weather data to the overall model accuracy.
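Scoring by prediction timeframe can be sketched as grouping predictions by lead time and computing one F1 score per group. The records below are invented toy data used only to show the mechanics; they do not reproduce any reported result.

```python
from collections import defaultdict


def f1(pairs, positive=1):
    """F1 from (true, predicted) pairs, using 2TP / (2TP + FP + FN)."""
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    denominator = 2 * tp + fp + fn
    return 2 * tp / denominator if denominator else 0.0


def f1_by_lead_time(records):
    """records: iterable of (lead_days, true_label, predicted_label)."""
    grouped = defaultdict(list)
    for lead_days, true_label, predicted_label in records:
        grouped[lead_days].append((true_label, predicted_label))
    return {lead: f1(pairs) for lead, pairs in sorted(grouped.items())}


# Hypothetical predictions at 8, 12, and 16 days before anthesis.
records = [
    (8, 1, 1), (8, 0, 0), (8, 1, 1), (8, 0, 0),
    (12, 1, 1), (12, 1, 0), (12, 0, 0), (12, 0, 1),
    (16, 1, 0), (16, 1, 1), (16, 0, 1), (16, 0, 1),
]

scores = f1_by_lead_time(records)
for lead, score in scores.items():
    print(f"{lead} days before anthesis: F1 = {score:.2f}")
```

Running the same grouping once for an image-only model and once for the multimodal model gives the per-timeframe ablation comparison described above.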

Visualization of Workflows and Signaling

Multi-Modal Few-Shot Learning Workflow

This diagram illustrates the complete computational pipeline for predicting anthesis by fusing image and weather data, highlighting the few-shot learning adaptation process.

Gene-Environment Signaling Pathway

This diagram conceptualizes the simplified signaling pathway through which macro- and micro-environmental signals are integrated by the wheat plant to regulate the timing of flowering.

The High Cost and Inefficiency of Current Manual Monitoring Practices

In both agricultural breeding and regulatory field trials, the precise prediction of wheat flowering, or anthesis, is a critical determinant of success. For breeders, a lead time of 8–10 days is essential to plan hybridization and manage pollination windows effectively. Similarly, regulatory agencies in the United States and Australia mandate that genetically modified (GM) crop trials report anthesis 7–14 days before the first plant flowers [2] [1]. Currently, meeting these requirements relies on manual monitoring practices, which are inherently labor-intensive, inefficient, costly, and prone to human error [2]. This document details the limitations of these conventional methods and frames them within the urgent need for automated solutions that integrate RGB imagery and meteorological data.

Quantitative Analysis of Manual Monitoring Costs and Limitations

The inefficiency of manual phenotyping presents a significant bottleneck in agricultural research and development. The following table summarizes the core drawbacks and their operational impacts.

Table 1: Key Limitations and Associated Costs of Manual Wheat Anthesis Monitoring

| Limitation | Quantitative/Specific Impact | Consequence for Research & Compliance |
|---|---|---|
| High Labor Demand | Relies on frequent, skilled human labor for field scouting [2]. | Significantly increases operational costs and limits the scale of trials. |
| Subjectivity & Human Error | Prone to subjective bias and inaccuracies in stage identification [15]. | Reduces data quality and reliability, compromising experimental validity. |
| Insufficient Temporal Resolution | Provides only periodic "snapshots" of crop status [15]. | High risk of missing critical, rapid phenological events like the exact start of anthesis. |
| Inability to Predict Individual Plants | Cannot reliably forecast anthesis for individual plants 7–14 days in advance [1]. | Hinders hybrid breeding planning and risks non-compliance with regulatory mandates. |

The Automated Alternative: A Protocol for Multimodal Few-Shot Learning

The integration of RGB imagery and weather data presents a transformative solution. The following experimental protocol, derived from a peer-reviewed study, outlines a robust framework for automated anthesis prediction [2] [1].

Experimental Workflow for Automated Anthesis Prediction

The diagram below illustrates the end-to-end workflow for implementing this automated prediction system.

Detailed Experimental Protocols

Protocol 3.2.1: Multimodal Data Acquisition and Preprocessing

Objective: To systematically collect and fuse high-quality RGB image series and meteorological data for model development [2] [15].

Materials:

- RGB Imaging Sensor: A high-resolution RGB camera (e.g., 1920×1080 pixels or higher) mounted on a near-surface platform (3 m height) or a UAV [15].

- Data Storage & Compute: Secure digital (SD) cards and cloud/on-premise servers for image storage.

- Weather Station: A station capable of logging in-situ temperature, precipitation, and solar radiation data [2].

Procedure:

- Image Capture: Position the camera at a vertical viewing angle of 40°–60° for optimal feature capture [15]. Capture images daily from 8:00 to 17:00 throughout the wheat growth cycle.

- Image Preprocessing: Manually annotate images with phenological stage labels. Construct standardized image series samples (e.g., 30 images per series) that represent the temporal progression towards anthesis. Apply data augmentation techniques, including random rotation, flipping, and brightness adjustment, to the training dataset [15].

- Weather Data Collection: Program the weather station to record meteorological parameters at hourly intervals. Ensure the weather station is located in close proximity to the experimental plots.

- Data Fusion: Align image series with corresponding meteorological data using timestamps to create a unified multimodal dataset for model input.
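The series-construction and augmentation steps can be sketched with the standard library alone. The sliding-window rule is an assumption (the source specifies only a series length of, for example, 30 images), the file names are hypothetical, and rotation is omitted because it would normally be handled by an imaging library. Images are represented as small nested lists of values in [0, 1] purely for illustration.

```python
import random


def make_series(frames, length=30):
    """Split chronologically ordered frames into sliding windows of fixed length."""
    return [frames[i:i + length] for i in range(len(frames) - length + 1)]


def augment(image, rng):
    """Randomly flip an image horizontally and adjust its brightness.

    image is a list of rows of values in [0, 1]; results are clipped to 1.0.
    """
    if rng.random() < 0.5:
        image = [row[::-1] for row in image]
    factor = rng.uniform(0.8, 1.2)  # hypothetical brightness range
    return [[min(1.0, value * factor) for value in row] for row in image]


# Hypothetical file names for 45 consecutive captures of one plot.
frames = [f"frame_{i:03d}.png" for i in range(45)]
series = make_series(frames)
print(len(series), len(series[0]))  # 16 30

rng = random.Random(0)  # fixed seed so the augmentation is repeatable
tiny_image = [[0.0, 0.5], [1.0, 0.25]]
print(augment(tiny_image, rng))
```

Augmentation should be applied to the training split only, as the protocol states, so that validation images remain representative of real captures.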

Protocol 3.2.2: Model Training and Few-Shot Learning Implementation

Objective: To train a deep learning model that can accurately predict anthesis and generalize to new environments with minimal data [2].

Materials:

- Software: Python programming environment with deep learning libraries (e.g., PyTorch, TensorFlow).

- Computing Hardware: A computer with a high-performance GPU (Graphics Processing Unit) for accelerated model training.

Procedure:

- Model Selection: Implement advanced neural network architectures such as Swin V2 or ConvNeXt as the core feature extractors for image data [2].

- Problem Formulation: Reformulate the flowering prediction into a classification task:

- Binary Classification: Predict if a plant will flower before or after a critical date.

- Three-Class Classification: Predict if a plant will flower before, after, or within one day of a critical date [1].

- Integrate Weather Data: Use a Fully Connected (FC) or Transformer (TF) comparator to integrate the extracted image features with the meteorological data [2].

- Apply Few-Shot Learning: To enhance model adaptability, employ a few-shot learning technique based on metric similarity. This allows the model, trained on a source dataset, to be rapidly fine-tuned for a new environment using only a handful (1-5) of labeled examples from the target environment [2] [1].

- Model Training: Train the model using the multimodal dataset. Utilize a multi-step evaluation process including cross-dataset validation and ablation studies (to test the contribution of weather data).
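The two problem formulations can be expressed as simple labeling rules, sketched below. The function names and dates are hypothetical, and treating a plant that flowers exactly on the critical date as "after" in the binary case is an assumption, since the source does not specify how that boundary is handled.

```python
from datetime import date


def binary_label(anthesis_date, critical_date):
    """'before' if the plant flowers before the critical date, otherwise 'after'."""
    return "before" if anthesis_date < critical_date else "after"


def three_class_label(anthesis_date, critical_date, tolerance_days=1):
    """'within' if flowering falls within tolerance_days of the critical date."""
    delta = (anthesis_date - critical_date).days
    if abs(delta) <= tolerance_days:
        return "within"
    return "before" if delta < 0 else "after"


# Hypothetical critical date and observed anthesis dates for three plants.
critical = date(2024, 10, 10)
for observed in (date(2024, 10, 5), date(2024, 10, 11), date(2024, 10, 15)):
    print(observed, binary_label(observed, critical),
          three_class_label(observed, critical))
```

Labels produced this way can be attached to every image of a plant once its anthesis date has been observed, giving the supervised targets for the training step.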

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagent Solutions for Automated Anthesis Prediction

| Item/Category | Specification/Example | Primary Function in the Protocol |
|---|---|---|
| RGB Imaging System | High-resolution camera (e.g., Hikvision DS-2DE4223IW-D) [15] | Captures high-temporal-resolution image series of the crop canopy for visual phenotyping. |
| Near-Surface Platform | Fixed mount at 3 m height with 40°–60° viewing angle [15] | Enables continuous, high-quality image acquisition under various weather conditions. |
| Meteorological Station | Station measuring temperature, rainfall, solar radiation [2] [16] | Provides in-situ environmental covariates that significantly influence flowering timing. |
| Deep Learning Models | Swin V2, ConvNeXt, LSTM, 3D-CNN [2] [15] | Advanced neural networks for spatiotemporal feature extraction and sequence modeling from image series. |
| Few-Shot Learning Algorithm | Metric-based similarity learning [2] [1] | Dramatically improves model adaptability to new sites and varieties with minimal new data. |
| Automated ML Library | PyCaret [17] | Streamlines and automates the process of model selection, training, and hyperparameter tuning. |

The high cost and inefficiency of manual monitoring are no longer tenable for modern, data-driven wheat research and regulatory compliance. The protocol detailed herein, centered on the integration of RGB imagery and weather data within a multimodal, few-shot learning framework, offers a scalable, accurate, and cost-effective alternative. By adopting these automated methods, researchers and institutions can overcome the critical bottlenecks of traditional practices, enhancing the precision and pace of wheat breeding and biotechnology development.

Building the Predictive System: A Technical Deep Dive into Multimodal AI Frameworks

The prediction of wheat anthesis, or flowering, is a critical agronomic process with direct implications for global food security. Timely prediction enables breeders to optimize hybridization plans and allows regulatory agencies to monitor genetically modified (GM) crop trials effectively. This document details the core architecture and experimental protocols for a multimodal framework that integrates RGB imagery with on-site meteorological data to predict the anthesis of individual wheat plants. The presented approach addresses the limitations of conventional methods by leveraging machine learning to account for micro-environmental variations, providing a cost-effective, scalable, and precise tool for wheat breeding and biotechnology trials [2] [1].

Core Architectural Framework

The foundational architecture reformulates the flowering prediction problem into a classification task. The system determines whether an individual wheat plant will flower before, after, or within one day of a critical date, aligning with the operational needs of breeders and regulators who require lead times of 7 to 14 days [2] [3].
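
The classification targets described above can be sketched as a small labeling function. This is a minimal illustration, not the published implementation: the function name `anthesis_class`, the label strings, and the tie rule for the binary case (a plant flowering exactly on the critical date counts as "after") are assumptions made here for the example.

```python
from datetime import date


def anthesis_class(anthesis: date, critical: date, three_class: bool = True) -> str:
    """Label a plant by comparing its anthesis date with a critical date.

    Assumption: "within one day" means an absolute difference of at most
    one day; in the binary case, a tie on the critical date maps to "after".
    """
    delta_days = (anthesis - critical).days
    if three_class and abs(delta_days) <= 1:
        return "within"
    return "before" if delta_days < 0 else "after"
```

The same plant can therefore receive different labels under the two formulations: a plant flowering one day before the critical date is "within" in the three-class task and "before" in the binary task.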

The framework's robustness stems from its multimodal design and its incorporation of few-shot learning based on metric similarity. This allows models trained on one dataset to generalize effectively to new growth environments with minimal additional data, overcoming a significant challenge in agricultural AI applications [2] [1]. Advanced neural network architectures, specifically Swin V2 and ConvNeXt, form the visual backbone of the system. These are paired with comparators, either Fully Connected (FC) or Transformer (TF) layers, to process and fuse the features extracted from the different data streams [2].

Table 1: Core Components of the Architectural Framework

| Component | Description | Function in Prediction Model |

|---|---|---|

| RGB Imaging | Standard color images of individual wheat plants. | Captures visual phenotypic traits and morphological changes associated with pre-anthesis growth stages [3]. |

| Meteorological Data | On-site weather measurements (e.g., temperature). | Accounts for environmental drivers of development that are not visible in images [2]. |

| Few-Shot Learning | Machine learning technique for learning from limited data. | Enables model adaptation to new environments, cultivars, or planting conditions with minimal new data [2] [1]. |

| Swin V2 / ConvNeXt | Advanced deep learning architectures for image processing. | Acts as a feature extractor to identify relevant visual patterns from RGB imagery [2]. |

| Comparator (FC/TF) | A module (Fully Connected or Transformer) for data fusion. | Integrates the extracted visual features with the meteorological data for a unified prediction [2]. |

A multi-step evaluation process, including cross-dataset validation and ablation studies, has demonstrated the robustness of this architecture. The integration of weather data is particularly crucial in the early prediction window, enhancing model accuracy when visual cues from images are subtle or insufficient.

Table 2: Summary of Model Performance Metrics

| Evaluation Metric | Performance Outcome | Context and Significance |

|---|---|---|

| Overall F1 Score | > 0.8 | Achieved across all planting settings (early, mid, and late sowing), indicating high and consistent reliability [2] [1]. |

| Cross-Dataset F1 Score | ~0.80 | On independent datasets, demonstrating strong generalization and adaptability to new environments [2]. |

| Impact of Weather Data | +0.06 to +0.13 F1 | Increase in F1 score, particularly 12-16 days before anthesis, highlighting the value of multimodal integration [2]. |

| Few-Shot (One-Shot) | F1 = 0.984 | Achieved at 8 days before anthesis, showing the model's capability to adapt with very limited new data [2]. |

| Three-Class Prediction | F1 > 0.6 | Maintained robust performance on the more complex task of predicting "before", "within", or "after" a 1-day window [2]. |

Detailed Experimental Protocols

Protocol 1: Multimodal Data Acquisition and Preprocessing

This protocol covers the simultaneous collection of image and weather data from wheat plants in a controlled or semi-natural environment.

Key Materials:

- Plant Material: Wheat plants (e.g., cultivar 'Scepter') grown in pots or field plots with staggered sowing dates to introduce developmental variation [3].

- RGB Imaging System: A high-resolution RGB camera (e.g., Allied Vision Technologies GT330) mounted on a stable platform or automated scanalyzer system (e.g., LemnaTec 3D Scanalyzer) [3].

- Meteorological Station: An on-site weather station capable of logging data for parameters such as temperature, solar radiation, and humidity.

Methodology:

- Imaging Setup: Position the RGB camera top-down, approximately 1.4 meters above the plant canopy, to ensure a consistent field of view. For controlled environments, use halogen lighting in a closed cabinet to eliminate external light variation [3].

- Imaging Schedule: Capture images of individual plants daily, from growth stage Z37 (flag leaf just visible) until several days after Z41 (flag leaf sheath extending). Conduct imaging sessions during a fixed time window (e.g., 12:00 PM to 2:00 PM) to minimize diurnal effects [3].

- Weather Data Logging: Ensure the meteorological station records data at high temporal resolution (e.g., hourly) throughout the experiment. The data must be time-synchronized with the image capture events.

- Data Preprocessing:

- Images: Apply standard normalization and augmentation techniques. For few-shot learning, organize images into support and query sets based on the target task.

- Weather Data: Align weather parameters (e.g., average daily temperature, cumulative solar radiation) with the corresponding image data for each plant and day.
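
The weather alignment step can be sketched as follows. This is an illustrative outline only: the record layouts, the field names `mean_temp_c` and `solar_mj`, and the choice to treat "cumulative solar radiation" as a per-day total are assumptions, since the protocol does not specify a data format.

```python
from collections import defaultdict
from datetime import datetime


def daily_weather(hourly_records):
    """Aggregate hourly (timestamp, temp_c, solar_mj) tuples into per-day features."""
    temps = defaultdict(list)
    solar = defaultdict(float)
    for ts, temp_c, solar_mj in hourly_records:
        day = ts.date()
        temps[day].append(temp_c)
        solar[day] += solar_mj
    return {
        day: {"mean_temp_c": sum(vals) / len(vals), "solar_mj": solar[day]}
        for day, vals in temps.items()
    }


def attach_weather(image_records, daily):
    """Pair each (plant_id, capture_datetime) with that day's weather features."""
    paired = []
    for plant_id, captured_at in image_records:
        features = daily.get(captured_at.date())
        if features is not None:  # skip images with no weather coverage
            paired.append((plant_id, captured_at.date(), features))
    return paired
```

Dropping images that have no matching weather record keeps the multimodal dataset strictly paired; a real pipeline might prefer to flag or impute such gaps instead.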

Protocol 2: Model Training and Few-Shot Inference

This protocol outlines the procedure for training the core model and adapting it to new environments using few-shot learning.

Key Materials:

- Computing Infrastructure: A high-performance computing workstation or server with one or more GPUs suitable for deep learning.

- Software Frameworks: Standard deep learning libraries such as PyTorch or TensorFlow.

Methodology:

- Base Model Training:

- Initialize a Swin V2 or ConvNeXt model as the image encoder.

- Train the model on a source dataset containing paired RGB images, weather data, and annotated anthesis dates. The training objective is the classification task (binary or three-class) defined in the architecture.

- Use a comparator module (FC or TF) to fuse the image-derived features with the vector of meteorological data.

- Few-Shot Adaptation:

- For a new target environment, select a very small number (e.g., 1 to 5) of labeled examples (the "support set") from the new environment.

- The model uses a metric-based learning approach to compare the new examples with its existing knowledge, adjusting its internal representations without full retraining. This allows it to generalize from the source domain to the target domain efficiently [2].

- Model Evaluation:

- Evaluate the adapted model on a separate "query set" from the target environment.

- Use F1 score as the primary metric to assess performance for each pre-anthesis day, validating the model's predictive capability 7-14 days in advance of flowering.
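
The per-day evaluation in the last step can be sketched in plain Python. The record layout `(days_before_anthesis, true_label, predicted_label)` and the choice of "before" as the positive class are assumptions for illustration.

```python
from collections import defaultdict


def f1_score(y_true, y_pred, positive="before"):
    """Binary F1 for one positive class; returns 0.0 when there are no true positives."""
    tp = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    fp = sum(t != positive and p == positive for t, p in zip(y_true, y_pred))
    fn = sum(t == positive and p != positive for t, p in zip(y_true, y_pred))
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def f1_by_lead_time(records):
    """Group (days_before_anthesis, truth, prediction) records and score each group."""
    grouped = defaultdict(lambda: ([], []))
    for days, truth, pred in records:
        grouped[days][0].append(truth)
        grouped[days][1].append(pred)
    return {days: f1_score(t, p) for days, (t, p) in sorted(grouped.items())}
```

Reporting one score per lead time makes it easy to see where performance degrades as the prediction horizon moves from 7 toward 14 days.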

Workflow Visualization

Figure: Integrated workflow for wheat flowering prediction (data acquisition, processing, model training, and prediction).

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Technologies for Implementation

| Item / Solution | Specification / Example | Primary Function in Protocol |

|---|---|---|

| High-Resolution RGB Camera | Allied Vision Technologies GT330; Specim FX10 (for hyperspectral) [3]. | Captures detailed top-view images of individual wheat plants for phenotypic analysis. |

| Automated Imaging System | LemnaTec 3D Scanalyzer; WIWAM hyperspectral system [3]. | Provides controlled, high-throughput image acquisition in controlled environments. |

| On-Site Weather Station | Standard meteorological sensors for temperature, humidity, solar radiation. | Logs micro-environmental data that drives plant development and is fused with image data. |

| Deep Learning Framework | PyTorch, TensorFlow. | Provides the software environment for implementing and training Swin V2, ConvNeXt, and comparator models. |

| Wheat Cultivar | 'Scepter' (mid-season maturing) [3]. | A consistent plant material for validating the model performance across experiments. |

| Few-Shot Learning Algorithm | Metric-based learning (e.g., prototypical networks). | Enables model adaptation to new growing conditions with minimal labeled data [2] [1]. |

Accurate prediction of wheat anthesis is critical for optimizing breeding programs and meeting regulatory requirements in genetically modified (GM) crop trials. This note details a practical framework for formulating anthesis prediction as either a binary or three-class classification problem. By integrating RGB imagery with in-situ meteorological data and employing few-shot learning techniques, this multimodal approach addresses the core challenge of predicting individual plant flowering times up to 16 days in advance, moving beyond field-scale averages to provide plant-level forecasts essential for modern precision agriculture [2] [1].

The system is designed to answer questions directly relevant to breeder workflows: Will a given plant flower within a critical one-day window? This formulation aligns with operational needs, such as finalizing hybridization plans 10 days before flowering or reporting to regulators 7–14 days before the first plant flowers, as mandated in the United States and Australia [1]. The model demonstrates robust performance, achieving F1 scores above 0.8 across diverse planting environments, with few-shot learning further enhancing adaptability to new conditions with minimal data [2].

Quantitative Performance Data

Table 1: Key Performance Metrics for Anthesis Prediction Models

| Prediction Task | Time Before Anthesis | Key Performance Metric | Impact of Weather Data Integration |

|---|---|---|---|

| Binary Classification | 8 days | F1 Score: 0.984 (with one-shot learning) [2] | --- |

| Binary Classification | 12-16 days | F1 Score: Improvement of 0.06–0.13 [2] | Significant boost when image cues are weak [2] |

| Binary Classification | Independent datasets | F1 Score: ~0.80 [2] [1] | --- |

| Three-Class Classification | Multiple time points | F1 Score: >0.60 [2] | --- |

| Growth Stage Classification (Z37, Z39, Z41) | Pre-anthesis | F1 Score: 0.832 (Hyperspectral & SVM) [3] | --- |

Table 2: Comparison of Classification Formulations for Wheat Phenology

| Aspect | Binary Classification | Three-Class Classification |

|---|---|---|

| Practical Question | Will the plant flower before or after a critical date? [2] [1] | Will the plant flower before, after, or within one day of a critical date? [2] |

| Operational Use | Suited for precise scheduling of pollination or reporting [1]. | Provides a more nuanced forecast for planning. |

| Model Complexity | Lower complexity, higher accuracy (F1 > 0.8) [2]. | Higher complexity, reduced accuracy (F1 > 0.6) but still informative [2]. |

| Typical F1 Score | Above 0.8 [2] [1] | Above 0.6 [2] |

Experimental Protocols

Protocol 1: Multimodal Few-Shot Learning for Anthesis Prediction

Objective: To predict the anthesis of individual wheat plants by integrating RGB images and weather data into a robust, adaptable classification model [2] [1].

Figure 1: Multimodal few-shot learning workflow.

Procedure:

- Data Acquisition:

- Data Pre-processing and Problem Formulation:

- Model Architecture and Training:

- Few-Shot Inference for Model Adaptation:

- To adapt the pre-trained model to a new environment or cultivar with limited data, use a few-shot learning approach based on metric similarity [2] [1].

- Provide the model with a very small number of labeled examples (e.g., one or five plants, known as "one-shot" or "five-shot" learning) from the new environment to achieve high performance without extensive re-training [2].

- Validation:

Protocol 2: Hyperspectral-Based Growth Stage Classification

Objective: To automatically classify individual wheat plants into key pre-anthesis growth stages (Zadoks Z37, Z39, Z41) using hyperspectral imaging and machine learning [3].

Figure 2: Hyperspectral classification protocol.

Procedure:

- Image Acquisition:

- Use a hyperspectral imaging system (e.g., Specim FX10 camera) in a controlled environment with uniform halogen lighting to capture top-down images [3].

- Collect hyperspectral reflectance data in semi-natural field conditions to test real-world applicability [3].

- Capture dark (black) and white reference images for radiometric calibration and correction [3].

- Spectral Data Transformation:

- Apply spectral transformations to the raw data to enhance features and reduce noise. Standard techniques include:

- Standard Normal Variate (SNV)

- Hyper-hue

- Principal Component Analysis (PCA) [3].

- Feature Selection:

- Systematically compare the performance of different transformations.

- Identify the most informative wavelengths. Studies show that after feature selection, high classification accuracy (F1 score of 0.752) can be maintained with as few as five wavelengths, creating a low-cost approach [3].

- Model Training and Classification:

The Scientist's Toolkit

Table 3: Essential Research Reagents and Solutions

| Item | Function/Application | Specifications/Notes |

|---|---|---|

| RGB Camera | Captures high-resolution visual images of plant morphology and color [2] [3]. | Used for top-down plant imaging; can be mounted on UAVs or ground-based systems [3]. |

| Hyperspectral Imager (e.g., Specim FX10) | Captures spectral reflectance data across numerous wavelengths (e.g., 400-1000 nm) [3]. | Reveals biochemical and pigment-related changes preceding visual stage changes; 5.5 nm FWHM resolution [3]. |

| Meteorological Station | Provides in-situ weather data (e.g., temperature, humidity) for integration with image data [2]. | Critical for capturing micro-environmental variations affecting individual plants [2]. |

| Swin V2 / ConvNeXt | Advanced neural network architectures for extracting complex features from RGB images [2]. | Form the visual backbone of the multimodal prediction model [2]. |

| Support Vector Machine (SVM) | A conventional machine learning algorithm for classification tasks [3]. | Effective for hyperspectral data, achieving F1 scores of 0.832 for growth stage classification [3]. |

| Standard Normal Variate (SNV) | A spectral transformation that centers and scales each reflectance spectrum by its own mean and standard deviation to reduce scatter-related variation [3]. | Demonstrates robust performance and strong generalizability under limited training conditions [3]. |
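
As a concrete illustration of the SNV transformation listed in the table, the sketch below applies the standard definition to a single spectrum: subtract the spectrum's own mean and divide by its own standard deviation. The function name `snv` and the use of the sample standard deviation are choices made for this example; the cited study's exact preprocessing code is not reproduced here.

```python
from statistics import mean, stdev


def snv(spectrum):
    """Standard Normal Variate: center and scale one spectrum by its own statistics."""
    mu = mean(spectrum)
    sigma = stdev(spectrum)  # sample standard deviation (n - 1 denominator)
    if sigma == 0:
        raise ValueError("flat spectrum: SNV is undefined")
    return [(value - mu) / sigma for value in spectrum]
```

Because each spectrum is normalized independently, a multiplicative or additive offset applied to the whole spectrum (for example from illumination differences) leaves the SNV output unchanged.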

The accurate prediction of wheat flowering time, or anthesis, is a critical challenge in agricultural science with direct implications for crop yield, breeding programs, and climate adaptation strategies. Conventional models relying solely on genetic markers or environmental variables often fail to capture the micro-environmental variations affecting individual plants. Modern research now leverages advanced computer vision to extract phenotypic data from RGB imagery, integrating it with meteorological information for more precise, individualized plant forecasting.

Within this domain, two advanced neural network architectures have emerged as particularly powerful backbones: Swin Transformer V2 and ConvNeXt. These models represent the culmination of different evolutionary paths in computer vision—the transformer-based approach and the modernized convolutional network. This article provides a detailed comparison of these architectures, framed within the context of a multimodal system for wheat flowering prediction, and offers explicit application notes and experimental protocols for researchers in agricultural science and phenotyping.

Swin Transformer V2: Hierarchical Vision Transformer

Swin Transformer V2 is a hierarchical Vision Transformer designed to serve as a general-purpose backbone for computer vision. Its core innovation is a shifted windowing scheme, which keeps self-attention computation local and efficient while still letting information propagate across windows in successive layers.

Key Architectural Components:

- Patch Partition: The input image is divided into non-overlapping patches (typically 4x4), which are treated as tokens [18].

- Hierarchical Feature Maps: The model employs a pyramid structure with four stages, progressively reducing the number of tokens while increasing feature dimensions through patch merging layers [18] [19].

- Shifted Window Multi-Head Self-Attention (SW-MSA): Instead of computing global self-attention (which is computationally expensive), attention is calculated within non-overlapping local windows. Consecutive blocks use shifted window partitions, allowing cross-window connection and capturing long-range dependencies with linear computational complexity relative to image size [18] [20].

- Enhanced Scalability: V2 introduces improvements for extreme scalability, supporting models of up to 3 billion parameters and high-resolution images (up to 1,536×1,536 pixels) through improved normalization techniques such as residual post-normalization [19] [20].
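
The linear-complexity claim for windowed attention can be checked with simple counting. The sketch below counts query-key pairs for a single attention layer on the first-stage token grid; the helper name `attention_pairs` is hypothetical, and the count ignores attention heads, channel width, and later stages.

```python
def attention_pairs(height, width, patch=4, window=None):
    """Count query-key pairs for one self-attention layer over a patch grid.

    window=None models global attention; otherwise attention is restricted
    to non-overlapping window x window groups of tokens.
    """
    rows, cols = height // patch, width // patch
    tokens = rows * cols
    if window is None:
        return tokens * tokens  # every token attends to every token
    if rows % window or cols % window:
        raise ValueError("token grid must be divisible by the window size")
    return tokens * window * window  # each token attends only within its window
```

Doubling the image side from 256 to 512 pixels multiplies the global count by 16 but the windowed count by only 4, which is the same factor by which the number of tokens grows.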

ConvNeXt: Modernized Convolutional Network

ConvNeXt is a pure convolutional model that re-evaluates the ResNet architecture by incorporating modern training techniques and structural ideas from Vision Transformers. It demonstrates that carefully engineered CNNs can match or surpass Transformer performance while retaining the operational advantages of convolutions on current hardware [21] [22].

Key Architectural Components:

- Patchify Stem: Replaces the aggressive 7x7 convolution and max pool of ResNet with a non-overlapping 4×4 convolution with stride 4, similar to ViT's patch embedding [21] [22].

- Depthwise Separable Convolutions: Uses large-kernel (7x7) depthwise convolutions for spatial mixing, followed by pointwise 1×1 convolutions for channel mixing. This separates spatial and channel processing, mirroring the transformer approach [21].

- Inverted Bottleneck: Adopts an inverted bottleneck design that expands channel dimensions within each block (typically 4x), prioritizing parameter efficiency [22].

- Modernization Elements: Employs Layer Normalization instead of Batch Normalization, GELU activations instead of ReLU, and reduces the frequency of activation/normalization layers to streamline information flow [21] [22].
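
To make the block structure above more tangible, the following sketch counts the parameters of one ConvNeXt-style block (depthwise 7×7 convolution, Layer Normalization, 1×1 expansion, 1×1 projection) and compares it with a dense 7×7 convolution of the same width. The function names are hypothetical, and the count omits the per-channel layer-scale parameter used in the reference ConvNeXt implementation.

```python
def convnext_block_params(channels, kernel=7, expansion=4):
    """Parameter count of one ConvNeXt-style block (weights and biases)."""
    hidden = expansion * channels
    depthwise = channels * kernel * kernel + channels  # one k x k filter per channel
    layer_norm = 2 * channels                          # scale and shift
    expand = channels * hidden + hidden                # 1x1 conv, C -> 4C
    project = hidden * channels + channels             # 1x1 conv, 4C -> C
    return depthwise + layer_norm + expand + project


def dense_conv_params(channels, kernel=7):
    """Parameter count of a standard k x k convolution with C input and C output channels."""
    return channels * channels * kernel * kernel + channels
```

For 96 channels, the whole block needs 79,200 parameters while a single dense 7×7 convolution would need 451,680, which shows why the large kernel is only affordable in depthwise form.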

Quantitative Comparison of Model Characteristics

Table 1: Architectural and Performance Specifications of Swin V2 and ConvNeXt

| Feature | Swin Transformer V2 | ConvNeXt |

|---|---|---|

| Core Operator | Shifted Window Self-Attention | Depthwise Separable Convolutions |

| Primary Inductive Bias | Local windowed attention with cross-window shifts | Locality & translation equivariance |

| Hierarchical Structure | Yes (4 stages) | Yes (4 stages) |

| Complexity Relative to Image Size | Linear | Linear |

| Typical Base Model Parameters | ~88M (SwinV2-B) [19] | ~89M (ConvNeXt-B) [21] |

| ImageNet-1K Top-1 Accuracy (Base Model) | 84.2% (SwinV2-B, 256x256) [19] | 83.8% (ConvNeXt-B, 224x224) [21] |

| Inference Throughput | Lower due to attention overhead [21] | Higher, maps well to optimized convolution kernels [21] |

| Memory Footprint | Higher for high-resolution inputs [21] | Lower, scales gently with image size [21] |

| Hardware Optimization | Specialized kernel support (e.g., FasterTransformer) [19] | Broad support (cuDNN, TensorRT, CoreML) [21] |

Performance in Agricultural Phenotyping

Table 2: Model Performance in Wheat Flowering Prediction (Based on Xie & Liu, 2025 [2])

| Metric | Swin V2 | ConvNeXt |

|---|---|---|

| Anthesis Prediction F1 Score (8 days in advance) | >0.8 [2] | >0.8 [2] |

| Few-Shot Learning Adaptability | High (with transformer comparator) [2] | High (with FC comparator) [2] |

| Cross-Dataset Generalization F1 | ~0.80 [2] | ~0.80 [2] |

| Benefit from Weather Data Integration | +0.06-0.13 F1 boost [2] | +0.06-0.13 F1 boost [2] |

| One-Shot Learning Performance (F1) | 0.984 at 8 days before anthesis [2] | 0.984 at 8 days before anthesis [2] |

Application to Wheat Flowering Prediction: A Multimodal Framework

System Architecture and Workflow

The integration of Swin V2 and ConvNeXt within a multimodal framework for wheat anthesis prediction involves a sophisticated pipeline that processes both visual (RGB) and meteorological data. The system reformulates flowering prediction as a classification problem, predicting whether a plant will flower before, after, or within one day of a critical date [2].

Figure 1: Multimodal workflow for wheat flowering prediction integrating RGB and weather data.

Logical Architecture of the Multimodal Comparator

The comparator mechanism forms the core of the multimodal fusion process, enabling effective integration of visual features extracted by Swin V2 or ConvNeXt with meteorological data for precise anthesis prediction.

Figure 2: Logical architecture of the multimodal comparator for feature fusion.

Experimental Protocols

Data Acquisition and Preprocessing Protocol

RGB Image Collection:

- Equipment: Use standardized RGB cameras (DSLR or high-resolution smartphone cameras) with consistent lighting conditions

- Timing: Capture daily images of individual wheat plants from emergence through flowering, preferably at consistent times of day (e.g., mid-morning)

- Framing: Maintain consistent distance and angle to ensure individual plants are clearly visible and occupy a substantial portion of the frame

- Resolution: Acquire images at minimum 224×224 resolution (higher resolutions preferred, e.g., 512×512 or 1024×1024 for larger models)

Meteorological Data Collection:

- Parameters: Record daily temperature (min, max, average), photoperiod, solar radiation, and precipitation at the field site

- Source: Use on-site weather stations or validated local meteorological station data

- Temporal Alignment: Precisely align weather data with image capture dates to ensure accurate temporal correspondence

Image Preprocessing Pipeline:

- Background Removal: Apply segmentation algorithms to isolate plant tissue from background soil and debris

- Standardization: Resize images to model-appropriate dimensions (e.g., 224×224, 256×256, or 384×384)

- Data Augmentation: Apply RandAugment, MixUp, and CutMix strategies to improve model robustness [21] [22]

- Normalization: Apply channel-wise normalization using ImageNet statistics (mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225]) or dataset-specific values

Meteorological Data Preprocessing:

- Normalization: Apply z-score normalization to continuous weather variables

- Encoding: Use sinusoidal encoding for cyclical variables (day of year)

- Temporal Alignment: Precisely align weather data with image capture dates
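
The normalization and encoding steps above can be sketched with the standard library. The function names and the 365.25-day period are assumptions for illustration; in practice the mean and standard deviation would be estimated on the training split only and reused for validation and test data.

```python
import math
from statistics import mean, pstdev


def zscore(values):
    """Z-score normalize a list of values using its own mean and population std."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]


def encode_day_of_year(day, period=365.25):
    """Map day of year onto the unit circle so late December sits next to early January."""
    angle = 2 * math.pi * day / period
    return math.sin(angle), math.cos(angle)
```

The two-component encoding avoids the artificial jump between day 365 and day 1 that a raw integer feature would introduce.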

Model Training and Implementation Protocol

Backbone Configuration:

- Swin Transformer V2: Implement with window sizes 8×8 or 16×16, depending on image resolution and computational constraints [19]

- ConvNeXt: Utilize appropriate variant (Tiny, Small, Base, Large) based on dataset size and computational resources [21]

Training Recipe:

- Optimizer: AdamW with decoupled weight decay (0.05) [21] [22]

- Learning Rate: Cosine decay schedule with warmup (10% of total epochs)

- Batch Size: Maximize based on available GPU memory (typical range: 32-128)

- Epochs: 300+ epochs with early stopping based on validation performance

- Regularization: Stochastic depth (0.1-0.3), label smoothing (0.1), and weight decay (0.05) [21]
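
The learning-rate schedule in this recipe (linear warmup over the first 10% of epochs, then cosine decay) can be written as a small function. The base learning rate of `1e-3` and the minimum of `0.0` are placeholder assumptions, since the recipe above does not state them.

```python
import math


def learning_rate(epoch, total_epochs, base_lr=1e-3, warmup_fraction=0.1, min_lr=0.0):
    """Linear warmup followed by cosine decay, evaluated per epoch (0-indexed)."""
    warmup_epochs = max(1, int(total_epochs * warmup_fraction))
    if epoch < warmup_epochs:
        return base_lr * (epoch + 1) / warmup_epochs
    progress = (epoch - warmup_epochs) / max(1, total_epochs - warmup_epochs)
    return min_lr + 0.5 * (base_lr - min_lr) * (1 + math.cos(math.pi * progress))
```

With 300 epochs this gives 30 warmup epochs, after which the rate falls smoothly toward `min_lr`. Deep learning frameworks ship equivalent schedulers, so a hand-written version like this is mainly useful for inspecting or plotting the curve.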

Few-Shot Learning Implementation (for rapid adaptation to new environments):

- Base Model: Pre-train on large, diverse wheat phenology dataset

- Feature Extraction: Use frozen backbone to extract features from target environment samples

- Similarity Learning: Apply metric-based learning (prototypical networks) with cosine similarity [2]

- Fine-Tuning: Optionally fine-tune final layers on limited target environment data (1-5 samples per class)
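
The similarity-learning step can be illustrated with a prototype classifier over precomputed embeddings. This is a simplified stand-in, not the comparator from the cited work: it assumes embeddings have already been extracted by the frozen backbone and are given as plain lists of floats, and the function names are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def class_prototypes(support):
    """support: dict mapping class label -> list of k embedding vectors (the k shots)."""
    return {
        label: [sum(dim) / len(vectors) for dim in zip(*vectors)]
        for label, vectors in support.items()
    }


def classify(query, prototypes):
    """Assign the query embedding to the class whose prototype is most similar."""
    return max(prototypes, key=lambda label: cosine_similarity(query, prototypes[label]))
```

With one example per class the prototype is simply that example's embedding (one-shot); with five examples it is their mean (five-shot).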

Multimodal Fusion Implementation:

- Architecture: Implement fully connected (FC) or transformer-based comparator heads

- Feature Integration: Concatenate visual features (from Swin V2/ConvNeXt) with meteorological features before the classification head

- Training: Joint optimization of visual and meteorological pathways
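
The concatenation-based fusion described above can be reduced to a few lines for illustration. The sketch below uses a single linear layer followed by a softmax as a stand-in for the FC comparator head; the function name, the argument layout, and the single-layer design are assumptions, and a real implementation would use a deep learning framework with trained weights.

```python
import math


def fuse_and_classify(visual, weather, weights, biases):
    """Concatenate both feature vectors, apply one linear layer, return class probabilities."""
    fused = list(visual) + list(weather)
    if any(len(row) != len(fused) for row in weights):
        raise ValueError("each weight row must match the fused feature length")
    logits = [
        sum(w * x for w, x in zip(row, fused)) + b
        for row, b in zip(weights, biases)
    ]
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]
```

Because both modalities enter the same linear layer, the weather features can shift the decision when the visual features are close to uninformative, which is the situation the early prediction window describes.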

Evaluation and Validation Protocol

Performance Metrics:

- Primary: F1-score (accounts for class imbalance in phenological stages)

- Secondary: Accuracy, Precision, Recall, Mean Absolute Error (days from actual anthesis)

Validation Strategies:

- Cross-Validation: Implement k-fold cross-validation (k=5) with stratification by environment and genotype

- Temporal Validation: Train on earlier seasons, validate on subsequent seasons

- Geographical Validation: Train on one location, validate on distinct geographical locations
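
The temporal and geographical strategies both amount to splitting by a grouping variable rather than at random. A minimal sketch follows, assuming each record is a dictionary with hypothetical `site` and `season` keys.

```python
def leave_one_group_out(records, group_key):
    """Yield (held_out_group, train, test) splits, one per distinct group value."""
    groups = sorted({record[group_key] for record in records})
    for held_out in groups:
        train = [r for r in records if r[group_key] != held_out]
        test = [r for r in records if r[group_key] == held_out]
        yield held_out, train, test


def temporal_split(records, first_test_season):
    """Train on seasons before first_test_season, test on that season and later ones."""
    train = [r for r in records if r["season"] < first_test_season]
    test = [r for r in records if r["season"] >= first_test_season]
    return train, test
```

Keeping every plant from a held-out site or season out of training prevents the optimistic bias that arises when near-identical images of the same environment appear on both sides of a split.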

Statistical Analysis:

- Significance Testing: Apply ANOVA with post-hoc tests to compare model performance across environments and genotypes [2]

- Confidence Intervals: Report performance metrics with 95% confidence intervals based on multiple training runs

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Tools for Implementing Wheat Flowering Prediction Systems

| Tool/Category | Specific Examples | Function/Application |

|---|---|---|

| Model Implementations | Swin Transformer V2 (official GitHub) [19], ConvNeXt (TIMM library) | Pre-trained model weights and reference implementations for transfer learning |

| Training Frameworks | PyTorch, PyTorch Lightning, Hugging Face Transformers | Flexible frameworks for implementing and training multimodal architectures |

| Data Augmentation | RandAugment, MixUp, CutMix, Albumentations | Increase dataset diversity and improve model generalization [21] [22] |

| Few-Shot Learning | Prototypical Networks (ProtoNets), Matching Networks, Model-Agnostic Meta-Learning (MAML) | Adapt models to new environments with limited data [2] |

| Similarity Metrics | Cosine Similarity, Euclidean Distance, Contrastive Loss | Compare feature embeddings for few-shot learning and retrieval [2] |

| Evaluation Metrics | F1-Score, Accuracy, Mean Absolute Error, mIoU | Quantify model performance for classification and regression tasks |

| Visualization Tools | Grad-CAM, Attention Visualization, t-SNE plots | Interpret model decisions and understand feature representations |

Swin Transformer V2 and ConvNeXt represent two powerful but philosophically distinct approaches to visual feature extraction that demonstrate remarkable effectiveness in wheat flowering prediction when integrated with meteorological data. The experimental results from recent research [2] indicate that both architectures can achieve F1 scores exceeding 0.8 for anthesis prediction 8-10 days in advance, with significant improvements when incorporating weather data.

The choice between these architectures involves important trade-offs: Swin Transformer V2 offers potentially stronger global context modeling through its self-attention mechanism, while ConvNeXt provides computational efficiency and easier deployment on diverse hardware. Critically, both models benefit substantially from few-shot learning approaches, enabling adaptation to new environments with minimal data.

This multimodal framework, combining advanced visual feature extraction with meteorological data, represents a significant advancement over traditional phenological models. It offers wheat breeders and researchers a powerful tool for optimizing hybridization schedules and complying with regulatory reporting requirements, ultimately contributing to improved crop productivity and food security in the face of climate variability.

Enhancing Adaptability with Few-Shot Learning for New Environments

Accurately predicting key phenological stages like flowering (anthesis) is critical in wheat breeding and production, directly impacting hybridization planning and regulatory compliance. A significant challenge in deploying artificial intelligence (AI) for this task is the inability of models trained in one environment to generalize effectively to new locations with different climatic conditions, a problem exacerbated by the high cost and labor involved in collecting extensive new labeled datasets for every target environment [1] [23]. This application note addresses this challenge by detailing the integration of few-shot learning with multimodal data—specifically RGB imagery and meteorological information—to create adaptable and data-efficient predictive models for wheat flowering. Framed within broader research on RGB and weather data fusion, this document provides researchers and scientists with structured quantitative comparisons and detailed experimental protocols for implementing these advanced techniques.

Core Framework and Data Integration

The proposed framework reformulates the complex problem of predicting exact flowering dates into a more manageable classification task. The model is designed to predict whether an individual wheat plant will flower before, after, or within a single day of a critical target date, providing breeders with the 7–14 day advance notice required for pollination planning and regulatory reporting [1] [2].

Multimodal Data Fusion

The model's robustness stems from the synergistic use of multiple data types, which compensates for the limitations of any single data source, especially when visual cues are subtle.

- RGB Image Data: Serves as the primary input, capturing the visual phenological state of individual wheat plants. Advanced deep learning architectures are used to extract relevant features from this imagery [2].

- Meteorological Data: In-situ weather data, including temperature and solar radiation, is incorporated as a crucial supplementary feature set. Ablation studies have demonstrated that integrating this data boosts prediction accuracy by 0.06–0.13 F1 points, a particularly significant improvement during the critical 12–16 days before anthesis when visual changes in images are minimal [2].

- Phenological Information: Integrating known phenological stages or growing degree days (GDD) can further contextualize the model's predictions, aligning them with the plant's physiological development [24].
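
Growing degree days, mentioned in the last item, are commonly computed with the simple averaging method shown below. The base temperature of 0 °C is an assumption often used for wheat and is not taken from the cited sources; the function names are illustrative.

```python
def growing_degree_days(t_min, t_max, t_base=0.0):
    """Daily GDD by the averaging method, floored at zero."""
    return max(0.0, (t_min + t_max) / 2.0 - t_base)


def cumulative_gdd(daily_min_max, t_base=0.0):
    """Running GDD total for a sequence of (t_min, t_max) pairs in degrees Celsius."""
    total, series = 0.0, []
    for t_min, t_max in daily_min_max:
        total += growing_degree_days(t_min, t_max, t_base)
        series.append(total)
    return series
```

The cumulative series can be appended to the meteorological feature vector for each imaging day, giving the model a compact summary of thermal time accumulated so far.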

Few-Shot Learning Adaptation

To achieve adaptability with minimal data, a metric-based few-shot learning approach is employed. This method allows a model pre-trained on a source dataset to rapidly adapt to a new target environment using only a very small number of labeled examples (e.g., 1 to 5 images per class from the new environment) [1]. The core of this adaptation involves fine-tuning the model's comparative mechanism—often a fully connected or transformer-based comparator—to recognize similarities between new, unseen examples and the limited labeled set, thereby enabling accurate prediction in the novel context [2].

Table 1: Key Performance Metrics of the Multimodal Few-Shot Learning Framework

| Metric / Scenario | Performance | Context / Condition |
|---|---|---|
| Overall F1 Score | > 0.8 | Achieved across all tested planting environments [1]. |
| Cross-Dataset F1 | ~0.80 | On independent datasets, demonstrating strong generalization [2]. |
| One-Shot Learning F1 | 0.984 | Achieved 8 days before anthesis [2]. |
| Five-Shot Learning F1 | 0.889 (up from 0.75) | Demonstrating rapid performance improvement with minimal data [2]. |
| Three-Class Prediction F1 | > 0.6 | A more complex task (before/within/after critical date) [2]. |

Experimental Protocols and Validation

This section outlines the key experiments required to develop, validate, and deploy the described few-shot learning framework.

Model Architecture and Training Protocol

Objective: To train a base model on a source dataset that is inherently adaptable to new environments.

Materials: A curated dataset of RGB images of individual wheat plants paired with local meteorological data, annotated with days to anthesis.

Methodology:

- Base Model Selection: Employ advanced vision architectures such as Swin V2 or ConvNeXt as the image feature backbone [2].

- Comparator Integration: Pair the image backbone with a comparator module (e.g., Fully Connected or Transformer layers) that learns to compute similarity scores between image features and class prototypes [2].

- Multimodal Fusion: Integrate the meteorological data by projecting it into a feature space that can be combined with the image-derived features, typically via concatenation or attention mechanisms.

- Pre-training: Train the entire model on the source dataset. The loss function should combine classification error (e.g., cross-entropy) and a metric-learning objective (e.g., prototypical loss).
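The concatenation variant of the multimodal fusion step can be sketched in a few lines. This is a toy illustration in plain Python rather than the architecture used in the cited work: the helper names `linear` and `fuse`, the ReLU activation, and all weights and feature values are assumptions. In practice the image features would come from a backbone such as Swin V2 or ConvNeXt, and the projection weights would be learned.

```python
def linear(x, weights, bias):
    """Dense layer: one output value per weight row."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, bias)]

def fuse(image_features, weather, weights, bias):
    """Project the weather vector, apply ReLU, and concatenate with image features."""
    projected = [max(v, 0.0) for v in linear(weather, weights, bias)]
    return image_features + projected  # list concatenation

# Hypothetical inputs: a 4-d image embedding and 2 normalized weather values.
image_features = [0.2, -0.1, 0.7, 0.4]
weather = [0.5, -1.0]  # e.g. normalized temperature and solar radiation
weights = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]  # projects 2-d weather to 3-d
bias = [0.0, 0.0, 0.5]

fused = fuse(image_features, weather, weights, bias)
```

The fused vector is what a comparator module would receive. An attention-based fusion would replace the concatenation with a learned weighting of the two modalities.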

Few-Shot Adaptation Protocol

Objective: To adapt the pre-trained model to a new target environment using only k labeled examples per class (the "k-shot" setting).

Materials: A "support set" from the target environment containing k labeled examples per class.

Methodology:

- Prototype Calculation: For each class in the target environment, compute a prototype vector by averaging the feature embeddings of its k support images. These features are extracted using the pre-trained model [25].

- Comparator Fine-tuning: While keeping the feature backbone frozen, fine-tune the comparator module using the new prototype vectors from the target environment. This allows the model to recalibrate its similarity assessment for the new context [2].

- Inference: For a new query image from the target environment, extract its feature embedding and score it against each class prototype using the fine-tuned comparator. The class with the highest similarity score is assigned.
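The prototype and inference steps can be illustrated as follows. Note the substitution: the protocol uses a learned comparator (fully connected or transformer-based), whereas this sketch stands in a fixed cosine similarity so that it stays self-contained. The embeddings are made-up two-dimensional vectors and the function names are hypothetical.

```python
import math

def prototype(embeddings):
    """Average the k support embeddings of one class, dimension by dimension."""
    k = len(embeddings)
    return [sum(dim) / k for dim in zip(*embeddings)]

def cosine(a, b):
    """Cosine similarity, used here in place of the learned comparator."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def classify(query, prototypes):
    """Return the label whose prototype is most similar to the query embedding."""
    return max(prototypes, key=lambda label: cosine(query, prototypes[label]))

# Hypothetical 2-shot support set with toy 2-d embeddings.
support = {
    "before": [[1.0, 0.0], [0.8, 0.2]],
    "after": [[0.0, 1.0], [0.2, 0.8]],
}
prototypes = {label: prototype(vectors) for label, vectors in support.items()}
predicted = classify([0.7, 0.3], prototypes)
```

With k = 1 each prototype is simply the single support embedding, which corresponds to the one-shot setting.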

Diagram 1: Few-shot adaptation workflow for a new environment.

Robustness Validation Protocol

Objective: To rigorously evaluate the model's performance and generalization capability.

Experiments:

- Cross-Dataset Validation: Test the model on completely independent datasets from different geographic locations or seasons. Target: an F1 score of approximately 0.80 or higher, in line with the reported cross-dataset result [2].

- Ablation on Weather Integration: Systematically remove the meteorological input to quantify its contribution to accuracy, especially in the early prediction phase [2].

- Anchor-Transfer Tests: Validate whether environmental alignment is more critical than dataset size by transferring model "anchors" (the small sets of labeled reference examples used for few-shot comparison) from one environment to another and observing the change in performance [2].
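Each of these experiments reduces to comparing F1 scores between two model configurations. The sketch below computes a binary F1 score from scratch and applies it to an ablation-style comparison. The label vectors are invented toy data, not results from the cited studies, and the variable names are hypothetical.

```python
def f1_score(y_true, y_pred, positive=1):
    """Binary F1: harmonic mean of precision and recall for the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    if tp == 0:
        return 0.0  # no true positives: precision or recall is zero or undefined
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)

# Toy labels: 1 = "flowers before the critical date", 0 = otherwise.
y_true = [1, 1, 1, 0, 0, 1, 0, 1]
pred_with_weather = [1, 1, 1, 0, 0, 1, 1, 1]   # hypothetical RGB + weather model
pred_image_only = [1, 0, 1, 0, 1, 1, 1, 0]     # hypothetical RGB-only model

f1_with = f1_score(y_true, pred_with_weather)
f1_without = f1_score(y_true, pred_image_only)
weather_gain = f1_with - f1_without  # contribution attributed to weather input
```

Running the same comparison separately for each lead time (for example 8, 12, and 16 days before anthesis) shows where the weather input matters most.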

Table 2: Comparison of Model Architectures and Data Modalities

| Model Architecture | Comparator Type | Data Modalities | Reported Performance | Key Advantage |
|---|---|---|---|---|
| Swin V2 | Transformer (TF) | RGB + Weather | F1 > 0.8 [2] | Strong capture of global contextual features. |
| ConvNeXt | Fully Connected (FC) | RGB + Weather | F1 > 0.8 [2] | Modernized CNN with high efficiency. |
| Vision Transformer (ViT) | Self-attention | RGB (Grain) | Precision 0.99 [26] | High accuracy on grain image classification. |
| Random Forest | N/A | Spectral & Phenological | High (vs. traditional ML) [27] | Handles multi-modal feature data well. |

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Item / Resource | Function / Purpose | Example / Specification |
|---|---|---|
| RGB Imaging System | High-resolution capture of plant visual phenotypes. | UAV-mounted or field-based digital cameras [27]. |
| Meteorological Sensors | Recording in-situ temperature, solar radiation, humidity. | On-site weather stations providing hourly data [1]. |
| Swin V2 / ConvNeXt | Deep learning backbones for image feature extraction. | Pre-trained models fine-tuned on plant imagery [2]. |
| Few-Shot Learning Library | Implementing metric-based learning algorithms. | Frameworks supporting prototypical networks [25]. |
| Stacking Ensemble Algorithm | Integrating multiple models for robust biomass prediction. | Combines Random Forest, Lasso, K-NN [27]. |
| WheatGrain Dataset | Benchmarking grain filling stage prediction. | Contains images from 6 to 39 days after anthesis (DAA) [26]. |

The integration of few-shot learning with multimodal RGB and weather data presents a transformative approach for creating adaptable and data-efficient AI models in agricultural science. The protocols outlined herein provide a clear pathway for researchers to develop systems that can generalize across environments, reducing the dependency on large, costly labeled datasets. For successful implementation, it is crucial to prioritize environmental alignment between source and target domains; anchor-transfer experiments have shown this to be more critical for performance than the absolute size of the target dataset [2]. Future work should focus on standardizing data collection protocols to facilitate model sharing and further explore the fusion of additional data sources, such as hyperspectral imagery and detailed soil data, to push the boundaries of predictive accuracy and robustness in precision agriculture.

This document outlines an operational workflow for predicting wheat flowering time (anthesis) by integrating RGB imagery and meteorological data. The protocol addresses a critical bottleneck in wheat breeding and biotechnology trials, where regulators in countries like the United States and Australia require anthesis reporting 7–14 days in advance [2] [1]. Traditional methods are manual, costly, and inefficient, failing to account for micro-environmental variations affecting individual plants [2].

The framework detailed here employs a multimodal few-shot learning approach, enabling accurate, automated, and non-destructive anthesis prediction for individual wheat plants. This application note provides a step-by-step protocol for implementing the system, designed to help researchers plan hybridization schedules and meet regulatory requirements for genetically modified (GM) crop trials [2] [1].

Accurate anthesis prediction is fundamental for successful wheat breeding and regulatory adherence. Hybrid breeders must finalize pollination plans at least 10 days before flowering, a challenging task given that individual plants of the same cultivar within the same field can exhibit substantial variations in anthesis timing due to micro-environmental differences [1]. Existing models predicting anthesis at the field scale are insufficient for these precision-demanding applications [2] [1].

Integrating proximal sensing (RGB cameras) and environmental monitoring (weather stations) with advanced machine learning offers a practical solution. The workflow reformulates the prediction problem as a classification task: determining whether a plant will flower before, after, or within one day of a critical date [2] [1]. Few-shot learning allows the model to adapt to new growth environments with minimal additional training data, which improves its practical utility and scalability across diverse breeding programs [2].

The following tables summarize the core quantitative findings from the validation of this anthesis prediction framework.

Table 1: Overall Model Performance Metrics for Anthesis Prediction

| Metric | Performance | Notes |
|---|---|---|
| F1 Score (Binary Classification) | > 0.8 [2] [1] | Achieved across different planting environments. |
| F1 Score (Cross-Dataset Validation) | ~0.80 [2] | Demonstrates strong generalization to independent datasets. |
| F1 Score (Three-Class Classification) | > 0.6 [2] | For predicting "before", "after", or "within one day" of a critical date. |
| Impact of Weather Data Integration | +0.06 to +0.13 F1 points [2] | Most significant 12–16 days pre-anthesis when visual cues are weak. |

Table 2: Performance of Few-Shot Learning for Model Adaptation

| Few-Shot Scenario | Performance (F1 Score) | Context |
|---|---|---|
| One-Shot Learning | 0.984 [2] | Achieved at 8 days before anthesis. |
| Five-Shot Learning | Improved from 0.75 to 0.889 [2] | Example of performance boost with minimal data. |

Detailed Experimental Protocols

Protocol 1: Multimodal Data Acquisition and Preprocessing

This protocol covers the collection and preparation of image and weather data.

I. Materials and Equipment

- RGB imaging system (e.g., ground-based camera, UAV-mounted sensor)

- On-site weather station

- Data storage and processing unit (e.g., computer with adequate GPU)

II. Step-by-Step Procedure

- Image Acquisition: Capture high-resolution RGB images of individual wheat plants at regular intervals (e.g., daily) from early development stages until post-anthesis. Ensure consistent lighting conditions and camera angle where possible [2] [3].

- Weather Data Acquisition: Concurrently, collect local meteorological data, including temperature, solar radiation, and precipitation [2] [24]. The data logger should be located as close to the plant phenotyping site as feasible.

- Data Labeling: For each plant, record the ground-truth anthesis date, and annotate every image of that plant with its days to anthesis. This labeled dataset is essential for supervised model training.

- Data Preprocessing:

  - Images: Resize images to a uniform resolution (e.g., 640x640). Apply data augmentation techniques like rotation and flipping to increase dataset diversity and model robustness [28].

  - Weather Data: Clean the data and normalize the meteorological parameters to a common scale for model input.
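One common way to bring meteorological parameters onto a common scale is z-score standardization. The sketch below is a minimal example under that assumption; the protocol does not specify which normalization was used, and the function names and temperature values are hypothetical. The statistics are fitted on the source data and reused for the target environment so that both are scaled consistently.

```python
from statistics import mean, pstdev

def fit_scaler(values):
    """Return the mean and population standard deviation of one weather variable."""
    return mean(values), pstdev(values)

def apply_scaler(values, mu, sigma):
    """Standardize values with previously fitted statistics."""
    if sigma == 0:
        return [0.0 for _ in values]  # constant series carries no information
    return [(v - mu) / sigma for v in values]

# Hypothetical daily mean temperatures (deg C) from the source environment.
source_temps = [10.0, 12.0, 14.0]
mu, sigma = fit_scaler(source_temps)
scaled_source = apply_scaler(source_temps, mu, sigma)

# A reading from a new environment is scaled with the source statistics.
scaled_target = apply_scaler([16.0], mu, sigma)
```

Each variable (temperature, solar radiation, precipitation) would be scaled separately, since their units and ranges differ.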

Protocol 2: Model Training and Few-Shot Adaptation

This protocol details the training of the core prediction model and its adaptation to new environments.

I. Materials and Equipment

- Preprocessed and labeled dataset from Protocol 1.

- Computing environment with deep learning frameworks (e.g., PyTorch, TensorFlow).

II. Step-by-Step Procedure

- Model Architecture Selection: Employ advanced vision architectures such as Swin V2 or ConvNeXt as feature extractors for the RGB images [2].

- Feature Fusion: Integrate the extracted image features with the processed weather data. This can be achieved using a Fully Connected (FC) or Transformer (TF) comparator to create a unified multimodal representation [2].

- Model Training (Initial): Train the model on a source dataset. Frame the task as a binary (e.g., will flower within X days: yes/no) or three-class classification problem [2] [1].

- Model Adaptation (Few-Shot Learning): To deploy the model in a new environment with limited data:

  - Anchor Selection: Use a small set of labeled examples (1–5 images per class, known as "anchors") from the new environment [2].

  - Metric-based Learning: Fine-tune the model by comparing new input images to these anchors based on feature similarity, allowing it to quickly adapt to new conditions without full retraining [2].
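Anchor selection amounts to drawing a k-shot support set from whatever labeled images exist in the new environment. The sketch below draws k anchors per class at random with a fixed seed. Random selection is an assumption made for illustration, since the source does not state how anchors are chosen, and the image identifiers and function name are hypothetical.

```python
import random
from collections import defaultdict

def sample_anchors(labeled, k, seed=0):
    """Pick k anchor images per class from (image_id, label) pairs."""
    by_class = defaultdict(list)
    for image_id, label in labeled:
        by_class[label].append(image_id)

    rng = random.Random(seed)  # fixed seed keeps the selection reproducible
    anchors = {}
    for label, ids in by_class.items():
        if len(ids) < k:
            raise ValueError(f"class {label!r} has fewer than {k} labeled images")
        anchors[label] = rng.sample(ids, k)
    return anchors

# Hypothetical labeled images from the target environment.
labeled_images = [
    ("plant01.jpg", "before"), ("plant02.jpg", "before"), ("plant03.jpg", "before"),
    ("plant04.jpg", "after"), ("plant05.jpg", "after"), ("plant06.jpg", "after"),
]
anchors = sample_anchors(labeled_images, k=2)
```

Because environmental alignment is reported to matter more than dataset size, choosing anchors imaged under conditions representative of the target field may be preferable to a purely random draw.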

Protocol 3: Model Inference and Decision Support

This protocol describes the operational use of the trained model for flowering prediction.

I. Materials and Equipment

- Trained and adapted model from Protocol 2.

- New, unlabeled RGB images and concurrent weather data from the target field.

II. Step-by-Step Procedure

- Data Input: Feed new data (RGB images + weather) into the prediction model.

- Inference: The model outputs a class prediction for each plant, for example that the plant will flower "before", "within one day of", or "after" the critical date.

- Decision Support:

Workflow and Signaling Pathways

The following diagram illustrates the complete operational workflow from data acquisition to decision-making.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Implementation

| Item Name | Function / Purpose | Specifications / Examples |

|---|---|---|

| High-Resolution RGB Camera | Captures visual phenotypic data of individual wheat plants for image analysis. | Ground-based or UAV-mounted sensors; used for top-view image capture [2] [3]. |

| On-Site Weather Station | Logs micro-environmental variables that significantly influence flowering time. | Measures temperature, solar radiation, precipitation [2] [24]. |