Smart Planting Sensors 2025: A Researcher's Guide to Next-Gen Agritech and Biomedical Applications


Abstract

This article provides a comprehensive overview of the current state and future trajectory of smart planting sensors, tailored for researchers and scientists. It explores the foundational principles of sensor technologies, from established soil moisture probes to emerging wearable plant sensors and AI-integrated systems. The scope includes methodological guides for deployment, critical troubleshooting for data integrity, and rigorous validation frameworks for sensor performance. By synthesizing insights from precision agriculture, the article highlights the significant cross-disciplinary potential of these technologies for inspiring novel approaches in biomedical monitoring, clinical research, and environmental diagnostics.

The Foundations of Smart Sensing: From Soil Probes to Plant Wearables

Smart agriculture represents a fundamental shift from blanket-method farming to a precise, data-driven paradigm. It leverages a suite of advanced technologies, including the Internet of Things (IoT), artificial intelligence (AI), robotics, and big data analytics, to observe, measure, and respond to inter- and intra-field variability in crops [1] [2]. This transition is critical: traditional farming methods waste approximately 60% of irrigation water and 30% of agricultural inputs, eroding profits and harming the environment [1]. In contrast, smart farming aims to optimize every aspect of production for efficiency, sustainability, and profitability.

The core of this revolution is data, acquired through a network of smart sensors that act as the "senses" of the modern farm [3]. These sensors provide the foundational data for intelligent decision-making, enabling real-time monitoring of crop growth conditions, internal plant physiology, and external environmental factors [3]. The global market growth reflects this shift, with the smart agriculture market projected to reach USD 55 billion by 2032, building on a strong compound annual growth rate (CAGR) of 13.7% [1]. This technical guide examines the core components, sensor technologies, and experimental frameworks that define smart agriculture, providing researchers and scientists with a comprehensive overview of its technical underpinnings.

Core Architectural Framework of Smart Agriculture

The technological architecture of a smart farming system rests on three interconnected, cyber-physical pillars that form a closed-loop system: Sensing, Insight, and Action.

The Sensing Layer: Data Acquisition

This layer comprises the physical sensors deployed throughout the agricultural environment. It is responsible for the continuous and real-time acquisition of raw data on crop, soil, and atmospheric conditions [1] [2]. These sensors form the backbone of the system, converting analog physical and chemical interactions in the environment into digital data streams. Key measured parameters include soil moisture, nutrient levels, temperature, humidity, and crop vitality indicators [1] [4].

The Insight Layer: Data Analysis and Intelligence

Raw data from the sensing layer is transmitted to this cloud-based or edge-based layer for processing, management, and analysis [1]. Here, data management platforms and AI-powered analytics transform raw data into actionable intelligence [1] [2] [5]. Machine learning models identify patterns, predict outcomes like yield or disease outbreaks, and generate precision prescriptions for farm management [6]. This is where data becomes insight, enabling proactive and predictive decision-making.

The Action Layer: Automated Execution

The intelligence generated is fed to automation systems that execute field operations with precision. This includes smart irrigation controllers, autonomous tractors and drones for targeted spraying, and robotic systems for weeding and harvesting [1] [2]. This layer physically intervenes in the farm environment based on data-driven insights, closing the loop and optimizing resource allocation in real-time.

The seamless integration of these three layers creates a robust, data-driven farming ecosystem capable of granular process control, minimized production risks, and enhanced operational efficiency [1].

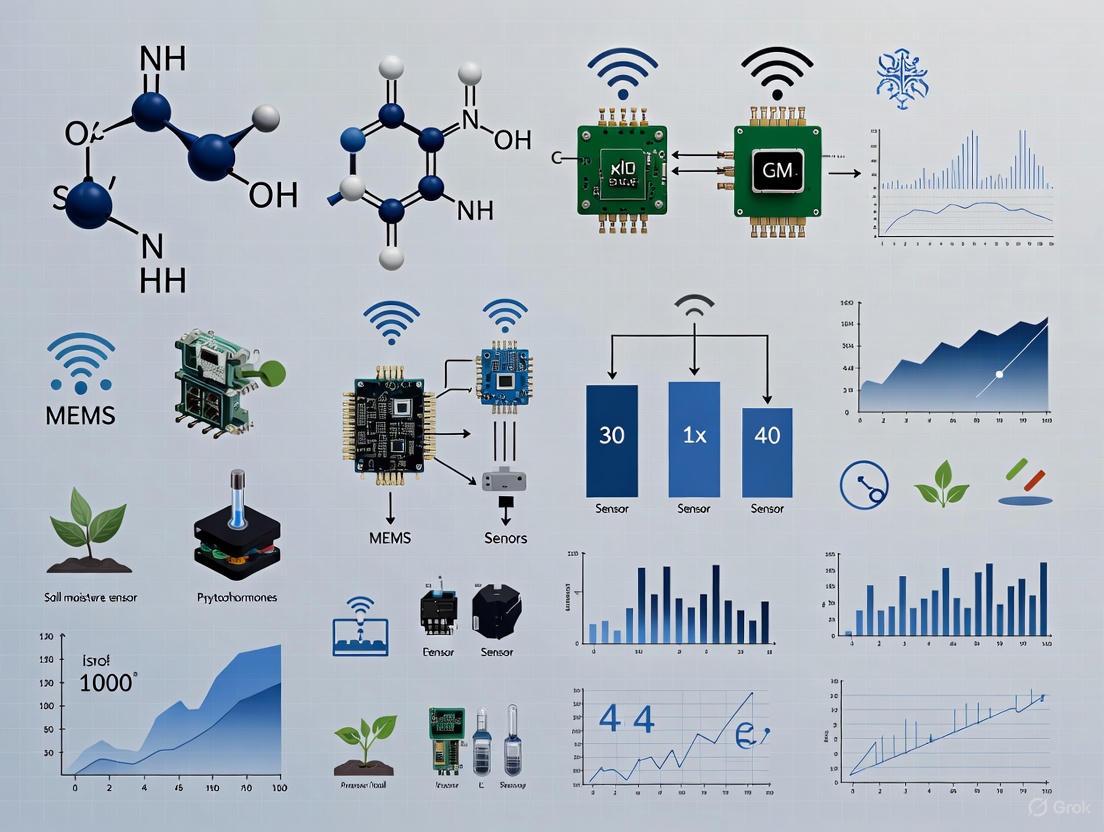

Figure 1: The core architecture of a smart agriculture system, showing the flow from data sensing to automated action.
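The closed loop of Sensing, Insight, and Action can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the `SoilReading` type, the node names, and the 20% dryness threshold are hypothetical placeholders introduced only for this example.

```python
from dataclasses import dataclass


@dataclass
class SoilReading:
    """One sample from the sensing layer (field names are illustrative)."""
    node_id: str
    vwc_percent: float  # volumetric water content, in percent


def insight(reading: SoilReading, dry_threshold: float = 20.0) -> bool:
    """Insight layer: decide whether a zone needs irrigation.

    The 20% default is a placeholder, not an agronomic recommendation.
    """
    return reading.vwc_percent < dry_threshold


def action(node_id: str, irrigate: bool) -> str:
    """Action layer: stand-in for a command sent to an irrigation controller."""
    return f"{node_id}: valve {'OPEN' if irrigate else 'CLOSED'}"


def control_cycle(readings: list[SoilReading]) -> list[str]:
    """Run one sense -> insight -> action pass over all nodes."""
    return [action(r.node_id, insight(r)) for r in readings]


if __name__ == "__main__":
    samples = [SoilReading("node-1", 14.2), SoilReading("node-2", 31.5)]
    for line in control_cycle(samples):
        print(line)
```

In a real deployment the insight step would typically be a trained model rather than a fixed threshold, but the data flow between the three layers stays the same.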

Advanced Smart Sensor Technologies

Smart sensors are the fundamental data acquisition units enabling this transition. They are evolving towards miniaturization, intelligence, and multi-modality, driven by advancements in micro-nano technology, flexible electronics, and MEMS (Micro-Electro-Mechanical Systems) [3].

In-Situ Soil and Plant Sensors

Soil Moisture and Nutrient Sensors: These probes are embedded in the soil root zone to provide continuous data on water content and key nutritional elements like Nitrogen (N), Phosphorus (P), and Potassium (K). They are critical for triggering precision irrigation and variable-rate fertilization, preventing over- and under-application [7] [5]. For instance, deployment of these sensors can lead to a 20-60% reduction in water use compared to traditional flood irrigation [1].

Plant Wearable Sensors: A groundbreaking advancement, these sensors leverage flexible electronics and micro-nano technology to adhere to the irregular surfaces of plant tissues (e.g., leaves, stems) for in-situ, real-time, and continuous monitoring of physiological signals [3]. They can detect internal plant signaling molecules, such as hydrogen peroxide (H₂O₂) emitted due to wounding stress, allowing for ultra-early intervention [3].

Microclimate and Air Quality Sensors: These sensors monitor the immediate environmental conditions surrounding the crop, including temperature, humidity, light intensity (PAR), and CO₂ concentration [7]. In greenhouse operations, this data is fed directly to automated climate control systems to maintain optimal growing conditions, maximizing photosynthetic efficiency [1].

Proximal and Remote Sensing Platforms

Drone-Based Remote Sensing: Equipped with multispectral, hyperspectral, and thermal cameras, drones provide high-resolution, high-frequency aerial scouting of large fields [2] [5]. They enable the creation of detailed spatial maps (e.g., NDVI for vegetation health) that reveal variability and stress hotspots long before they are visible to the naked eye, facilitating targeted interventions.

Satellite-Sensor Synergy: Macro-level satellite imagery is increasingly integrated with micro-level terrestrial sensor data to provide a comprehensive view of farm intelligence, from regional weather patterns to individual plant health [8] [6]. This synergy is also pivotal for blockchain-based traceability, where satellite data verifies crop origin and practices [2].
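The NDVI maps mentioned for drone imagery are derived per pixel from the near-infrared and red bands as NDVI = (NIR - Red) / (NIR + Red). The sketch below uses plain Python lists so that it stays dependency-free; the function names and sample reflectance values are illustrative assumptions, and a production pipeline would more likely operate on raster arrays.

```python
def ndvi(nir: float, red: float) -> float:
    """Normalized Difference Vegetation Index for one pixel.

    NDVI = (NIR - Red) / (NIR + Red), with reflectances in [0, 1].
    Returns 0.0 when both bands are zero to avoid division by zero.
    """
    total = nir + red
    if total == 0:
        return 0.0
    return (nir - red) / total


def ndvi_map(nir_band, red_band):
    """Apply ndvi() element-wise to two equally shaped 2-D lists."""
    return [
        [ndvi(n, r) for n, r in zip(nir_row, red_row)]
        for nir_row, red_row in zip(nir_band, red_band)
    ]


if __name__ == "__main__":
    # Hypothetical 2x2 reflectance tiles: healthy canopy on the left column,
    # stressed or bare patches on the right.
    nir = [[0.50, 0.30], [0.55, 0.20]]
    red = [[0.10, 0.25], [0.08, 0.20]]
    for row in ndvi_map(nir, red):
        print([round(v, 2) for v in row])
```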

Table 1: Taxonomy of Advanced Smart Sensors in Agriculture

| Sensor Category | Measured Parameters | Technology Principles | Key Applications |
|---|---|---|---|
| Soil Moisture | Volumetric Water Content | Capacitance, Time-Domain Reflectometry (TDR) | Precision Irrigation Management [7] |
| Soil Nutrient & pH | NPK levels, Soil Acidity | Ion-Selective Electrodes, Optical Spectroscopy | Variable-Rate Fertilization [7] |
| Plant Wearable | H₂O₂, Sap Flow, Biomarkers | Nanosensors (SWNTs), Flexible Electronics [3] | Real-time Plant Health & Stress Monitoring [3] |
| Drone-Based | NDVI, Canopy Temperature | Multispectral/Hyperspectral Imaging | Crop Health Mapping, Pest/Disease Early Detection [2] |
| Environmental | Temp, Humidity, PAR, CO₂ | MEMS, Electrochemical Sensors | Greenhouse Climate Control, Microclimate Optimization [7] |

Quantitative Impact and Performance Metrics

The adoption of smart agriculture technologies yields measurable, quantifiable returns across key performance indicators. The following table synthesizes performance data from field deployments as reported across multiple sources.

Table 2: Measured Impact of Core Smart Agriculture Technologies

| Technology / Practice | Impact on Yield | Resource Use Efficiency | Economic & Operational Impact |
|---|---|---|---|
| Smart Irrigation Systems | --- | 20-60% water use reduction [1] | --- |
| Precision Fertilization | --- | 15% reduction in fertilizer use [1] | --- |
| IoT & Precision Farming | 10-15% increase [1] | 20-30% reduction in input costs [1] | Labor savings of $15-20/acre [1] |
| Advanced Farming Methods | Up to 70% increase [1] | --- | --- |
| Autonomous Drones & Robotics | 15-22% increase [2] [6] | 30-40% reduction in chemical usage [2] [6] | Up to 40% labor cost reduction [6] |

Experimental Protocol: Deploying an Integrated Sensor Network for Crop Monitoring

This protocol details a methodology for establishing an in-field sensor network to monitor soil and plant parameters for research purposes, integrating proximal sensing with aerial imagery for validation.

Research Objective

To establish a correlated understanding of root-zone soil conditions and aerial crop health phenotypes for precision resource management.

Materials and Equipment (The Researcher's Toolkit)

Table 3: Essential Research Reagents and Materials for Sensor Deployment

| Item / Solution | Function / Specification | Research Application |
|---|---|---|
| IoT Soil Sensor Nodes | Measure soil moisture, temperature, salinity. Connectivity: LPWAN (LoRaWAN) or cellular. | In-situ, continuous root zone data logging. |
| Plant Wearable Sensor | Single-walled carbon nanotube (SWNT)-based sensor for H₂O₂ [3]. | Real-time detection of plant stress signaling molecules. |
| Agricultural Drone | UAV equipped with multispectral (RGB, NIR, RedEdge) camera. | High-resolution aerial mapping; calculation of NDVI/other indices. |
| Calibration Standards | Standard solutions for pH and NPK sensor calibration. | Ensuring accuracy and reliability of electrochemical sensor readings. |
| Edge Computing Gateway | Device for local data aggregation and preliminary analysis. | Reduces data latency; enables edge AI for immediate insights. |
| Cloud Analytics Platform | Software for data fusion, visualization, and machine learning. | Correlates soil sensor data with aerial imagery; generates insights. |

Methodology

Experimental Design and Sensor Placement:

- Delineate the study area and establish a georeferenced grid.

- Identify monitoring points that represent the field's variability (e.g., different soil types, slopes).

- Install soil sensors at each point according to manufacturer specifications, typically at multiple depths (e.g., 10cm, 20cm, 30cm) to profile root zone conditions.

- Attach plant wearable sensors to a representative sample of plants at each monitoring point, ensuring good contact with the tissue (e.g., leaf surface) without causing damage.

Data Acquisition and Workflow:

- Configure all sensors to log data at a fixed interval (e.g., every 15 minutes).

- Establish a communication link (e.g., LPWAN gateway) to transmit data to the edge device and cloud platform.

- Conduct bi-weekly drone flights at a consistent time of day (e.g., solar noon) under clear sky conditions to capture multispectral imagery.

- Perform ground-truthing during each drone flight to validate sensor and imagery data.

Data Integration and Analysis:

- In the cloud platform, synchronize the time-series data from soil and plant sensors with the spatially explicit drone imagery.

- Use statistical analysis and machine learning (e.g., regression models) to identify correlations between root-zone sensor data (e.g., low soil moisture) and aerial phenotyping data (e.g., decreasing NDVI).

- Develop a predictive model for crop stress, using the early physiological signals from plant wearables as a precursor to the spectral changes detected by drones.
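The correlation step in the analysis above can be prototyped with a Pearson coefficient and a least-squares line before moving to a full machine learning pipeline. The sketch below is one possible starting point under stated assumptions: the function names are illustrative, and the paired soil moisture and NDVI values are invented sample data, not field results.

```python
from math import sqrt


def _centered_sums(x, y):
    """Return (sxx, syy, sxy, mean_x, mean_y) for two equal-length series."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two series of equal length >= 2")
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return sxx, syy, sxy, mx, my


def pearson_r(x, y):
    """Pearson correlation coefficient between x and y."""
    sxx, syy, sxy, _, _ = _centered_sums(x, y)
    if sxx == 0 or syy == 0:
        raise ValueError("a constant series has no defined correlation")
    return sxy / sqrt(sxx * syy)


def linear_fit(x, y):
    """Ordinary least-squares line y = slope * x + intercept."""
    sxx, _, sxy, mx, my = _centered_sums(x, y)
    if sxx == 0:
        raise ValueError("x must vary to fit a line")
    slope = sxy / sxx
    return slope, my - slope * mx


if __name__ == "__main__":
    # Hypothetical per-point means: root-zone VWC (%) at flight time
    # and NDVI sampled from the drone mosaic at the same location.
    soil_vwc = [32.0, 28.0, 24.0, 20.0, 16.0, 12.0]
    ndvi = [0.80, 0.78, 0.74, 0.69, 0.61, 0.52]
    print("r =", round(pearson_r(soil_vwc, ndvi), 3))
    print("fit =", linear_fit(soil_vwc, ndvi))
```

A strong correlation in such a pairing does not by itself establish that soil moisture drives the spectral change; the ground-truthing step in the protocol remains necessary.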

Figure 2: Experimental workflow for deploying and validating an integrated smart sensor network.

Challenges and Future Research Directions

Despite its promise, the widespread adoption of smart agriculture faces significant barriers. High implementation costs remain prohibitive for smallholders, and a technical skills gap can prevent farmers from effectively utilizing the technology [8] [9]. Data security and privacy are major concerns as farms become increasingly connected, and connectivity issues in rural areas can hamper system reliability [9]. Furthermore, fragmented platforms and a lack of interoperability standards create integration headaches [8].

Future research and development are poised to address these challenges and push the boundaries further:

Multimodal and AI-Integrated Sensors: The next generation of sensors will move beyond single-parameter measurement. Research focuses on developing multimodal sensors that can simultaneously capture multiple data streams (e.g., a single device measuring soil moisture, NPK, and temperature) [3]. The integration of AI directly at the sensor level (AI-enabled sensors) will allow for on-device data processing and immediate, localized decision-making without constant cloud dependency [3] [5].

Advanced Nanobiotechnology: The use of nanomaterials like single-walled carbon nanotubes (SWNTs) is enabling the development of highly sensitive, miniaturized, and low-cost sensors for specific plant biomarkers and soil contaminants [4] [3]. This will allow researchers to understand plant physiology at an unprecedented molecular level.

Sustainable Power and Connectivity Solutions: Research into energy-harvesting techniques (e.g., solar, kinetic) for sensors and the expansion of low-power, wide-area network (LPWAN) technologies like LoRaWAN and NB-IoT are critical for making large-scale, long-term sensor deployments viable and maintenance-free [9].

Smart agriculture, centered on the deployment of advanced smart sensors and data analytics, is fundamentally redefining traditional farming. The transition to a data-driven framework—built on the core architecture of Sensing, Insight, and Action—enables unprecedented precision in resource management, leading to quantifiable gains in productivity, profitability, and environmental sustainability. For the research community, the path forward involves overcoming adoption barriers and pioneering developments in nanobiotechnology, AI, and multimodal sensing systems. By continuing to advance these key technologies, researchers and scientists will play a pivotal role in shaping a resilient and efficient global food system for the future.

Within the paradigm of smart planting, sensors function as the foundational sensory apparatus, enabling the transition from traditional practices to data-driven, precision agriculture [3]. This technological taxonomy delineates the core sensor types and their operating principles, providing a framework for researchers and scientists engaged in the development and application of advanced agricultural monitoring systems. These sensors facilitate the real-time acquisition of critical data pertaining to both plant biophysiology and the surrounding edaphic factors, forming the essential data pipeline for intelligent decision-support systems in crop management and drug development from plant-based compounds [3].

Sensor Taxonomy by Operating Principle and Measurand

The classification of sensors for smart planting can be primarily organized by their fundamental operating principles and the specific physical or chemical quantities they measure (measurands). The following table provides a comparative overview of core sensor technologies, their operating principles, key performance parameters, and primary applications in a research context.

Table 1: Technological Taxonomy of Core Smart Planting Sensors

| Sensor Category | Operating Principle | Key Measurands | Typical Accuracy/Performance | Primary Research Applications |
|---|---|---|---|---|
| Capacitive Moisture | Measures the dielectric permittivity of the soil, which changes with water content [10]. | Volumetric Water Content (VWC) | ≈ ±2% VWC [10] | Precision irrigation scheduling, water use efficiency studies [10]. |
| Time-Domain Reflectometry (TDR) | Measures the time for an electrical pulse to travel along a waveguide and reflect back; travel time increases with soil moisture [10]. | Volumetric Water Content (VWC) | ≈ ±1% VWC [10] | Soil science research, calibration standard for other sensors, high-precision irrigation studies [10]. |
| Digital Thermistor | Measures temperature-dependent electrical resistance [10]. | Soil Temperature, Air Temperature | ≈ ±0.5°C [10] | Seed germination studies, phenological modeling, frost protection systems [10]. |
| Micro-Nano Chemical Sensors | Employ nanomaterials (e.g., SWNTs) functionalized with specific recognition elements; transduction via optical or electrical signal changes upon target binding [3]. | NH4+, H2O2, Salicylic Acid, Ethylene [3] | Detection limits in ppm range (e.g., ~3 ppm for NH4+); high sensitivity (e.g., ≈8 nm/ppm for H2O2) [3]. | Real-time monitoring of plant stress signaling, soil nutrient dynamics, and hormone pathways [3]. |
| Flexible/Wearable Plant Sensors | Utilize flexible electronics and conductive inks/hydrogels to adhere to plant surfaces, measuring mechanical or biophysical properties [3]. | Leaf Turgor Pressure, Sap Flow, Growth Deformation [3] | Varies by design; enables in-situ, continuous monitoring [3]. | Plant hydration status, growth rate analysis, and disease progression studies [3]. |

Figure 1: A taxonomy of core sensor types for smart planting, categorized by their operating principle and primary measurands.

Detailed Operating Principles and Methodologies

Soil Moisture Sensors

Soil moisture sensors predominantly operate on electromagnetic principles, assessing the soil's dielectric properties. The soil-water-air matrix has a characteristic dielectric permittivity, with water exhibiting a value of approximately 80, significantly higher than that of soil minerals (3-5) and air (1). Capacitive sensors measure this property by assessing the charge-storage capacity between electrodes embedded in the soil, which correlates directly to volumetric water content [10]. Time-Domain Reflectometry (TDR) sensors represent a more advanced methodology, whereby a high-frequency electromagnetic pulse is propagated along a metal waveguide (probe) inserted into the soil. The velocity of this pulse is governed by the soil's dielectric permittivity. The system measures the time taken for the pulse to reflect back to its source, which is then algorithmically converted to soil moisture content with high accuracy [10].
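The "algorithmic conversion" from travel time to moisture can be illustrated in two steps. First, the apparent permittivity follows from the two-way travel time t over a probe of length L, since the pulse velocity is c/√ε: ε = (c·t / 2L)². Second, an empirical calibration maps ε to volumetric water content. The sketch below uses the widely cited Topp et al. (1980) polynomial for the second step; that choice is an assumption made here for illustration and is not taken from the cited source, and commercial instruments may use other calibrations. The probe length and travel time are hypothetical.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def apparent_permittivity(round_trip_s: float, probe_length_m: float) -> float:
    """Apparent dielectric permittivity from the two-way travel time.

    The pulse covers 2 * L at velocity c / sqrt(eps), so
    eps = (c * t / (2 * L)) ** 2.
    """
    if probe_length_m <= 0:
        raise ValueError("probe length must be positive")
    return (C * round_trip_s / (2.0 * probe_length_m)) ** 2


def topp_vwc(eps: float) -> float:
    """Volumetric water content (m^3/m^3) from the Topp et al. (1980) polynomial.

    Empirical fit for mineral soils; organic or saline soils may need
    a soil-specific calibration instead.
    """
    return -5.3e-2 + 2.92e-2 * eps - 5.5e-4 * eps ** 2 + 4.3e-6 * eps ** 3


if __name__ == "__main__":
    # Hypothetical measurement: 15 cm probe, 5 ns round-trip travel time.
    eps = apparent_permittivity(5e-9, 0.15)
    print(f"permittivity ~ {eps:.1f}, VWC ~ {topp_vwc(eps):.2f} m3/m3")
```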

Advanced Micro-Nano and Wearable Plant Sensors

Recent breakthroughs are anchored in micro-nano technology and flexible electronics. Micro-nano sensors often employ nanomaterials like single-walled carbon nanotubes (SWNTs) or specific nanoparticles. These nanomaterials are functionalized with bio-recognition elements (e.g., peptides, DNA oligos) that selectively bind to target analytes like hydrogen peroxide (H2O2) or ammonium ions (NH4+) [3]. The binding event induces a measurable change in the nanomaterial's properties, such as its fluorescence wavelength or electrical conductivity, enabling real-time, sensitive detection of plant stress signals or soil nutrients [3].
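As a worked example of the optical transduction described above, a measured shift in fluorescence peak wavelength can be converted to an analyte concentration by dividing by the sensor's sensitivity. The sketch assumes a linear response and uses the ≈8 nm/ppm H2O2 sensitivity quoted in the taxonomy table [3]; the linearity assumption, the function names, and the example wavelengths are illustrative and would need to be checked against a real calibration curve.

```python
def peak_shift_nm(baseline_nm: float, measured_nm: float) -> float:
    """Wavelength shift relative to the analyte-free baseline peak."""
    return measured_nm - baseline_nm


def concentration_ppm(shift_nm: float, sensitivity_nm_per_ppm: float = 8.0) -> float:
    """Estimate concentration from a wavelength shift.

    Assumes a linear response: shift = sensitivity * concentration.
    Real sensors usually saturate at high concentrations.
    """
    if sensitivity_nm_per_ppm <= 0:
        raise ValueError("sensitivity must be positive")
    return shift_nm / sensitivity_nm_per_ppm


if __name__ == "__main__":
    # Hypothetical peaks: 1130 nm without analyte, 1134 nm after wounding.
    shift = peak_shift_nm(1130.0, 1134.0)
    print(f"shift = {shift} nm, estimate = {concentration_ppm(shift)} ppm")
```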

Flexible or wearable plant sensors are fabricated using flexible substrates (e.g., polymers) and conductive, stretchable materials (e.g., hydrogels, liquid metal inks). They are designed to conform to the irregular and dynamic surfaces of plant tissues, such as leaves or stems. These sensors can transcribe biophysical phenomena—like dimensional changes from growth or sap flow, or variations in mechanical pressure from turgor—into quantifiable electrical signals (e.g., resistance, capacitance), allowing for continuous, in-situ monitoring of plant physiological status [3].

Figure 2: Workflow for detecting plant stress signals using micro-nano sensors.

The Researcher's Toolkit: Essential Reagents and Materials

The development and deployment of advanced plant sensors, particularly in experimental settings, require a suite of specialized reagents and materials.

Table 2: Key Research Reagent Solutions for Sensor Development and Application

| Reagent/Material | Function/Application | Research Context |
|---|---|---|
| Functionalized Nanomaterials (e.g., SWNTs, Graphene) | Serve as the core transduction element in micro-nano sensors; functionalization provides specificity to target analytes [3]. | Fabrication of novel sensors for plant metabolites, hormones, and stress markers. |
| Flexible Polymer Substrates (e.g., PDMS, Polyimide) | Provide a flexible, stretchable, and often biocompatible base for mounting conductive elements in wearable sensors [3]. | Development of non-invasive sensors that adhere to plant surfaces for long-term monitoring. |
| Conductive Inks/Hydrogels | Form the stretchable conductive traces and sensing elements in flexible sensors [3]. | Creating electrodes and circuits that maintain conductivity under mechanical deformation on growing plants. |
| Recognition Elements (e.g., DNA oligos, Peptides) | Engineered to bind specifically to a target molecule, conferring high selectivity to the sensor [3]. | Designing the molecular recognition interface for detecting specific biochemical signals in planta. |
| Calibration Standards (e.g., Specific conductivity solutions, Known VWC soils) | Used to establish a quantitative relationship between the sensor's signal output and the actual measurand concentration or value. | Critical for validating sensor accuracy and ensuring reliable data in both lab and field experiments. |

The technological taxonomy of smart planting sensors reveals a trajectory from bulk environmental monitoring towards miniaturized, intelligent, and multi-modal sensing systems. Core operating principles—ranging from electromagnetic and resistive to nanomaterial-based optical/electronic transduction—underpin the accurate measurement of critical agronomic parameters. The ongoing integration of advanced manufacturing techniques like micro-nano technology and flexible electronics is pushing the boundaries of sensor capabilities, enabling unprecedented access to real-time plant physiological data. For the research community, this expanding toolkit promises to deepen the fundamental understanding of plant-environment interactions and accelerate the development of precision frameworks for both crop cultivation and plant-based pharmaceutical development.

Soil-based sensors represent a cornerstone of precision agriculture, enabling the data-driven transformation of traditional farming into a sustainable, efficient, and highly productive endeavor. This whitepaper provides an in-depth technical examination of sensor technologies for monitoring three fundamental soil parameters: moisture, key nutrients (Nitrogen, Phosphorus, Potassium), and pH. Within the broader context of smart planting sensor research, we detail the operating principles of prominent sensor types, from capacitance-based moisture probes to electrochemical nutrient sensors, and present standardized methodologies for their field calibration and validation. Supported by quantitative data comparisons and visual workflows, this guide serves as a reference for researchers and agricultural scientists developing next-generation monitoring systems to optimize crop management and enhance resource use efficiency.

The evolution from Agriculture 3.0 to Agriculture 4.0 and 5.0 is characterized by the integration of Internet of Things (IoT) devices, artificial intelligence (AI), and robust sensor networks that enable real-time, data-driven decision-making [11]. At the core of this transformation are soil-based sensors, which provide the critical data required for precision agriculture—a management strategy that optimizes resource use, enhances productivity, and minimizes environmental impact [11] [12]. These sensors deliver real-time insights into the soil's dynamic conditions, allowing for targeted interventions in irrigation, fertilization, and soil amendment practices.

Monitoring soil moisture, nutrients, and pH is paramount for sustainable crop production. Soil nutrient monitoring enables the assessment of soil health for long-term productivity and supports environmental protection by minimizing agricultural non-point source pollution [12]. Imbalances in key nutrients like nitrogen (N), phosphorus (P), and potassium (K) can lead to weakened plant development, soil erosion, nutrient leaching, and groundwater contamination [12]. Similarly, precise soil moisture management through sensors has been demonstrated to enable water savings of 35-65% compared to flood irrigation systems, a critical adaptation in the face of global water scarcity [13]. This technical guide delves into the operating principles, performance metrics, and application protocols of the sensors that make such precision possible.

Sensor Operating Principles and Technical Specifications

Soil Moisture Sensors

Soil moisture sensors measure the Volumetric Water Content (VWC) of the soil, which is essential for optimizing irrigation schedules and preventing water stress in crops.

Capacitance Sensors: These sensors operate by measuring the dielectric permittivity of the soil. The sensor's electrodes form a capacitor whose capacitance changes with the soil's water content, as water has a high dielectric constant (~80) compared to dry soil (2-6) and air (1) [13] [10]. The output is typically a voltage that decreases as moisture content rises: a high voltage (e.g., ~3V) indicates dry soil, while a low voltage (e.g., ~1.5V) indicates wet soil [13]. Capacitance sensors are popular due to their cost-effectiveness, low power consumption, and reasonable robustness [13] [10].

Time-Domain Reflectometry (TDR) Sensors: TDR sensors determine soil moisture by analyzing the propagation speed of an electromagnetic pulse along a waveguide embedded in the soil. The travel time is related to the soil's dielectric permittivity, which is dominated by the water content [10]. TDR sensors are known for their high accuracy (±1% VWC) but come at a higher cost, making them suitable for research and large commercial operations [10].

Resistance Sensors: These sensors, including gypsum blocks, measure the electrical resistance between electrodes, which varies with soil moisture and salinity. They are simple and affordable but can be less accurate, particularly in saline soils, and require good soil contact to function correctly [10].

Soil Nutrient and pH Sensors

The real-time monitoring of soil macronutrients (NPK) and pH is vital for precision fertilization and maintaining optimal soil health.

Electrochemical Sensors for pH: These sensors measure the activity of hydrogen ions in the soil solution using ion-selective electrodes. The potential difference between a reference electrode and the ion-selective electrode is converted to a pH value [14] [12].

Optical and Electrochemical Sensors for NPK: Optical methods often leverage the interaction between light and soil properties. For instance, the reflectance or absorbance of light at specific wavelengths can be correlated with nutrient concentrations [12]. Electrochemical sensors, on the other hand, use ion-selective membranes to detect specific ions like nitrate (NO₃⁻), potassium (K⁺), and phosphate (PO₄³⁻) in the soil solution, generating a voltage that varies with the logarithm of the ion's activity [14] [12]. Recent advances include the use of nanomaterials like graphene to enhance the sensitivity and binding capabilities of these sensors [12].
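For ion-selective electrodes, the conversion from electrode potential to pH is conventionally based on the Nernst relation, which predicts an ideal slope of about 59.16 mV per pH unit at 25 °C. The sketch below computes that slope and applies a two-point buffer calibration. It is an illustration under stated assumptions: the function names and the buffer readings (0 mV at pH 7, 177.5 mV at pH 4) are hypothetical, and real electrodes deviate from the ideal slope as they age.

```python
import math

R = 8.314462618  # molar gas constant, J/(mol K)
F = 96485.33212  # Faraday constant, C/mol


def nernst_slope_mv(temp_c: float = 25.0) -> float:
    """Ideal electrode slope magnitude in mV per pH unit at temp_c."""
    return 1000.0 * math.log(10) * R * (temp_c + 273.15) / F


def two_point_calibration(mv_a: float, ph_a: float, mv_b: float, ph_b: float):
    """Return (slope, offset) such that pH = slope * mV + offset."""
    if mv_a == mv_b:
        raise ValueError("buffer readings must differ")
    slope = (ph_b - ph_a) / (mv_b - mv_a)
    return slope, ph_a - slope * mv_a


def ph_from_mv(mv: float, slope: float, offset: float) -> float:
    """Convert a measured potential to pH using a prior calibration."""
    return slope * mv + offset


if __name__ == "__main__":
    # Hypothetical buffer readings for a pH 7 and a pH 4 standard.
    slope, offset = two_point_calibration(0.0, 7.0, 177.5, 4.0)
    print(f"ideal slope at 25 C: {nernst_slope_mv():.2f} mV/pH")
    print(f"sample at 59.2 mV -> pH {ph_from_mv(59.2, slope, offset):.2f}")
```

Comparing the calibrated slope against the ideal Nernst value is a common quick check on electrode health before field use.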

Table 1: Comparative Analysis of Primary Soil Moisture Sensor Technologies

| Sensor Type | Principle of Operation | Estimated Accuracy | Typical Cost per Unit | Power Consumption | Key Applications |
|---|---|---|---|---|---|
| Capacitance | Measures dielectric permittivity of soil | ±2% VWC [10] | $50-$100 [10] | Low [13] [10] | Irrigation scheduling, water use efficiency [10] |
| TDR | Measures travel time of an electromagnetic pulse along a waveguide, which increases with VWC | ±1% VWC [10] | $200-$500 [10] | Medium [10] | Research, precision irrigation, soil health [10] |
| Resistance | Electrical resistance between electrodes | ±4% VWC [10] | $15-$30 [10] | Low [10] | Basic irrigation management [10] |

Table 2: Monitoring Technologies for Soil Nutrients and pH

| Technology / Sensor Type | Target Parameters | Key Strengths | Notable Limitations |
|---|---|---|---|
| Ion-Selective Electrodes (ISEs) | NPK, pH [12] | Real-time analysis, potential for in-situ use [12] | Sensitivity to soil conditions, cross-interference [12] |
| Optical Sensors | N, P, K [12] | Non-destructive measurement [12] | Affected by soil moisture, surface roughness [12] |
| Remote Sensing (RS) | Nitrogen (N) [12] | Large-scale coverage, historical data available [12] | Indirect measurement, requires ground-truthing [12] |
| AI/ML-based Models | NPK, pH [15] [12] | High predictive accuracy from fused data sets [15] [12] | Dependent on quality and quantity of training data [12] |

Experimental Protocols and Methodologies

Field Calibration of Low-Cost Capacitive Soil Moisture Sensors

Objective: To develop a regression model for predicting soil moisture from the output voltage of a low-cost capacitive sensor (e.g., SEN0193) and validate its accuracy against a commercial-grade sensor (e.g., SM150T) [13].

Materials and Reagents:

- Low-cost capacitive soil moisture sensor (e.g., SEN0193)

- Microcontroller unit (e.g., ESP8266) with Analog-to-Digital Converter (ADC)

- Commercial reference sensor (e.g., SM150T)

- Soil sampling tools (auger, cores)

- Drying oven and balance for gravimetric analysis

Procedure:

- Sensor Deployment: Install the low-cost sensor and the commercial reference sensor at the same depth and proximity within the crop's root zone, ensuring good soil-sensor contact [13].

- Data Collection:

  - Record the output voltage (V) from the low-cost sensor as captured by the microcontroller's ADC across a range of soil moisture conditions from wet to dry [13].

  - Simultaneously, record the Volumetric Water Content (VWC) readings from the commercial SM150T sensor [13].

  - For a subset of data points, collect undisturbed soil samples using cores adjacent to the sensors for standard gravimetric analysis to determine actual VWC [13].

- Model Development:

  - Perform regression analysis between the low-cost sensor's output voltage (independent variable) and the VWC from the gravimetric method or the commercial sensor (dependent variable) [13].

  - Establish a calibration curve (e.g., linear or polynomial) to convert sensor voltage to VWC.

- Validation:

  - Evaluate the performance of the calibrated low-cost sensor using metrics such as Mean Absolute Error (MAE), Root Mean Square Error (RMSE), and correlation coefficients (e.g., Spearman rank correlation) by comparing its predictions with the commercial sensor's readings over an extended validation period [13]. A Spearman correlation coefficient exceeding 0.98 indicates strong agreement [13].
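The model development and validation steps of this procedure can be sketched with the standard library alone. The example fits a linear calibration from voltage to VWC, then reports MAE, RMSE, and a Spearman rank correlation. It is a minimal sketch, not the method used in [13]: the function names are illustrative, the paired voltage and reference values are invented, and for brevity the same points are reused for fitting and scoring, whereas the protocol calls for a separate validation period.

```python
from math import sqrt


def fit_line(x, y):
    """Least-squares calibration line: vwc = slope * voltage + intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


def mae(pred, ref):
    """Mean absolute error."""
    return sum(abs(p - r) for p, r in zip(pred, ref)) / len(ref)


def rmse(pred, ref):
    """Root mean square error."""
    return sqrt(sum((p - r) ** 2 for p, r in zip(pred, ref)) / len(ref))


def ranks(values):
    """1-based ranks; tied values share their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            result[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return result


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    rx, ry = ranks(x), ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    num = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    den = sqrt(sum((a - mx) ** 2 for a in rx) * sum((b - my) ** 2 for b in ry))
    return num / den


if __name__ == "__main__":
    # Hypothetical paired samples: low-cost sensor voltage (V) and
    # reference VWC (%) from the commercial sensor or gravimetric analysis.
    volts = [3.0, 2.7, 2.4, 2.1, 1.8, 1.5]
    ref_vwc = [5.0, 12.0, 18.5, 26.0, 33.0, 40.5]
    slope, intercept = fit_line(volts, ref_vwc)
    pred = [slope * v + intercept for v in volts]
    print(f"MAE={mae(pred, ref_vwc):.2f} RMSE={rmse(pred, ref_vwc):.2f} "
          f"Spearman={spearman(pred, ref_vwc):.3f}")
```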

Integration and Data Workflow for an IoT-Based Soil Monitoring System

Objective: To deploy a multi-parameter soil sensing system that provides real-time data for an AI-driven decision support platform [15].

Materials and Reagents:

- Multi-parameter sensor node (capable of measuring temperature, moisture, salinity, EC, pH, N, P, K) [15]

- Microcontroller/Gateway device (e.g., Arduino)

- Wireless communication modules (LoRaWAN, Wi-Fi, or NB-IoT) [13] [10]

- Cloud computing platform

- Power supply (solar panel, battery)

Procedure:

- Sensor Node Configuration: Deploy multi-parameter sensor nodes at representative locations in the field, configured to log data at pre-defined intervals (e.g., every 15 minutes) [15].

- Data Transmission: The microcontroller unit collects readings from the sensors and transmits them wirelessly via a long-range, low-power protocol like LoRaWAN to a cloud gateway [13] [10].

- Cloud Data Processing: In the cloud, data is stored, cleaned, and processed. Predictive algorithms and AI models are employed for tasks such as soil moisture forecasting or nutrient recommendation [15] [16].

- Insight Delivery: Processed data and actionable recommendations (e.g., irrigation schedules, fertilizer applications) are delivered to the end-user via a web dashboard or mobile application [15].
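One way to picture the transmission and cloud-processing steps is as a compact binary payload that the node encodes and the cloud decodes and range-checks. The sketch below is illustrative only: the 9-byte layout, the field scaling, and the plausibility limits are assumptions made for this example and do not come from [13], [10], or [15]. LoRaWAN payloads are small, which is why fixed-point integers are used instead of text.

```python
import struct

# Hypothetical 9-byte uplink layout (big-endian):
#   uint16 node id | int16 temperature (0.01 degC) | uint16 VWC (0.01 %)
#   | uint16 EC (uS/cm) | uint8 pH (0.1)
PAYLOAD_FORMAT = ">HhHHB"

def encode_reading(node_id, temp_c, vwc_pct, ec_us_cm, ph):
    """Pack one sensor reading into a fixed-size binary payload (node side)."""
    return struct.pack(
        PAYLOAD_FORMAT,
        node_id,
        round(temp_c * 100),
        round(vwc_pct * 100),
        round(ec_us_cm),
        round(ph * 10),
    )

def decode_reading(payload):
    """Unpack a payload back into engineering units (cloud side)."""
    node_id, temp, vwc, ec, ph = struct.unpack(PAYLOAD_FORMAT, payload)
    return {
        "node_id": node_id,
        "temp_c": temp / 100,
        "vwc_pct": vwc / 100,
        "ec_us_cm": ec,
        "ph": ph / 10,
    }

def is_plausible(reading):
    """Basic cleaning step: reject physically implausible values.

    The limits are illustrative assumptions, not sensor specifications.
    """
    return (
        -40 <= reading["temp_c"] <= 85
        and 0 <= reading["vwc_pct"] <= 100
        and 0 <= reading["ph"] <= 14
    )

payload = encode_reading(node_id=7, temp_c=21.37, vwc_pct=23.5, ec_us_cm=412, ph=6.8)
reading = decode_reading(payload)
print(len(payload), reading, is_plausible(reading))
```

A fixed layout keeps airtime and energy use low at the cost of flexibility; adding nutrient (N, P, K) fields would mean extending the format string on both ends.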

The following diagram illustrates this integrated workflow.

The Scientist's Toolkit: Research Reagent Solutions

For researchers embarking on field experiments or the development of soil-based sensor systems, the following table details essential materials and their functions.

Table 3: Essential Research Tools for Soil Sensor Deployment

| Item / Solution | Function in Research Context |

|---|---|

| Arduino Microcontroller | An open-source electronics platform used to read analog signals from sensors, process data, and manage communication modules. Ideal for prototyping low-cost sensor systems [17]. |

| SKU: CE09640 / SEN0193 Sensor | Low-cost, capacitive soil moisture sensors. They require field-specific calibration but offer a viable and accessible solution for irrigation management studies, especially in resource-limited contexts [17] [13]. |

| Gravimetric Analysis Kit | The standard method for soil moisture measurement. It involves weighing soil samples before and after oven-drying. It is used as ground truth to calibrate and validate all other soil moisture sensors [13] [17]. |

| LoRaWAN Communication Module | A long-range, low-power wireless communication protocol. It is essential for transmitting sensor data from remote agricultural fields to a central gateway with minimal energy consumption [13] [10]. |

| Commercial Reference Sensor (e.g., SM150T) | A high-accuracy, commercially available sensor used as a benchmark to validate the performance and accuracy of newly developed or low-cost sensor systems in field conditions [13]. |

Soil-based sensors for moisture, nutrients, and pH are pivotal technologies driving the advancement of precision agriculture and sustainable resource management. This technical guide has outlined the fundamental principles, performance characteristics, and standardized experimental protocols for these sensors, providing a foundation for researchers and developers. The integration of these sensing technologies with IoT platforms and AI-driven analytics, as visualized in the system architecture, marks a significant leap toward fully automated, data-informed farming systems. Future progress will hinge on overcoming challenges related to sensor interoperability, cost reduction for wider adoption, and the development of more robust models that fuse multi-sensor data for predictive decision-making. Continued research and development in this field are essential to address global challenges of food security, water scarcity, and environmental sustainability.

Wearable plant sensors represent a transformative frontier in precision agriculture, enabling real-time, in-situ monitoring of plant physiological status. These flexible, non-invasive devices adhere directly to plant surfaces, facilitating continuous data acquisition on physical, chemical, and electrophysiological signals. This section provides a technical examination of wearable plant sensor technologies, detailing their operational mechanisms, implementation methodologies, and application frameworks within smart planting systems. By synthesizing current research and experimental approaches, it serves as a foundational resource for researchers and professionals advancing plant science and agricultural technology.

The transition from traditional to smart farming necessitates advanced technologies for precise plant health monitoring [3]. Wearable plant sensors function as the "senses" of smart agriculture, providing critical data for intelligent decision-making [3]. These devices stand out for their non-invasive nature, high sensitivity, and integration capability, allowing them to provide continuous, real-time monitoring without significantly disrupting normal plant growth or function [18]. Unlike remote sensing technologies that capture canopy-level information, wearable sensors attach directly to stems, leaves, or fruits, enabling detection of micro-scale physiological changes and early stress indicators before visible symptoms appear [3]. This capability for early intervention is crucial for addressing biotic and abiotic stresses, optimizing resource inputs, and ultimately enhancing crop productivity and sustainability [19].

The fundamental operational premise of these sensors lies in detecting plant-emitted signals that reflect health status under stress conditions [20]. By closely attaching to irregular plant surfaces, these devices monitor critical parameters including growth rate, leaf surface temperature and humidity, organic volatiles, and electrophysiological signals in real time [20]. Their development draws on crop physiology, electronics, materials science, and computer science, and this multidisciplinary integration is essential for their continued evolution [3].

Classification and Operating Principles

Wearable plant sensors are systematically categorized based on the type of signals they detect and the physiological phenomena they monitor. The classification framework encompasses three primary sensor types: physical, chemical, and electrophysiological, each with distinct sensing mechanisms and target parameters.

Table 1: Fundamental Classification of Wearable Plant Sensors

| Sensor Category | Detected Signals/Parameters | Sensing Mechanism | Key Applications |

|---|---|---|---|

| Physical Sensors | Growth deformation, strain, temperature, humidity, light | Response to physical property changes; measures morphological and environmental variations [18] [20] | Monitoring plant growth rates, environmental stress, water status |

| Chemical Sensors | Volatile organic compounds (VOCs), reactive oxygen species (ROS), ions, pigments, pesticide residues | Detection of specific chemical molecules or ions; often uses functionalized nanomaterials [18] [20] | Early disease detection, nutrient deficiency identification, pollution monitoring |

| Electrophysiological Sensors | Action potentials, variation potentials | Measurement of electrical potential differences and signal propagation in plant tissues [18] [20] | Monitoring plant responses to stimuli, stress signaling pathways |

Physical Sensors

Physical sensors monitor morphological and environmental parameters including growth deformation, strain, temperature, humidity, and light intensity [18] [20]. These sensors typically operate on mechanisms that translate physical property changes into measurable electrical signals. For instance, flexible strain sensors can detect micron-scale growth variations through changes in electrical resistance or capacitance when the sensor substrate deforms with plant tissue expansion or contraction. Temperature sensors often utilize the predictable relationship between temperature and electrical resistance in specific materials, while humidity sensors commonly measure dielectric constant changes in hygroscopic materials as water vapor absorption varies.
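The resistance-based mechanisms described above reduce to two short formulas. For a resistive strain sensor, the relative resistance change is ΔR/R₀ = GF × ε, where GF is the gauge factor and ε the strain. For a resistive temperature element, a linear approximation R(T) = R₀(1 + αT) is commonly used over moderate ranges. The sketch below applies both; the numeric values are hypothetical, and the default α of 0.00385 per °C is the standard coefficient for a Pt100 element, assumed here purely for illustration.

```python
def strain_from_resistance(r_ohm, r0_ohm, gauge_factor):
    """Strain (dimensionless) from a resistive strain sensor: dR/R0 = GF * strain."""
    return (r_ohm - r0_ohm) / r0_ohm / gauge_factor

def elongation_um(strain, gauge_length_mm):
    """Absolute length change in micrometres over the sensor's gauge length."""
    return strain * gauge_length_mm * 1000.0

def temperature_from_rtd(r_ohm, r0_ohm=100.0, alpha_per_c=0.00385):
    """Temperature in degC from the linear model R(T) = R0 * (1 + alpha * T)."""
    return (r_ohm / r0_ohm - 1.0) / alpha_per_c

# Hypothetical stem sensor: 1000 ohm at rest, 1010 ohm after growth, GF = 2.
strain = strain_from_resistance(1010.0, 1000.0, gauge_factor=2.0)
print(f"strain = {strain:.4f}, elongation = {elongation_um(strain, 10.0):.1f} um")
print(f"temperature = {temperature_from_rtd(109.625):.1f} degC")
```

Flexible strain sensors for plants often have nonlinear responses at large deformation, so a single gauge factor should be treated as valid only over the range in which it was calibrated.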

Chemical Sensors

Chemical sensors detect specific molecules or ions associated with plant physiological status. These include sensors for volatile organic compounds (VOCs) released during stress responses, reactive oxygen species (ROS) generated under abiotic stress, ion fluctuations indicative of nutrient status, and pigment changes signaling health degradation [18]. Advanced chemical sensors often employ functionalized nanomaterials like single-walled carbon nanotubes (SWNTs) that exhibit changes in fluorescence or electrical properties when binding target analytes [3]. For example, Lew et al. (2020) developed a nanosensor using SWNTs for real-time detection of hydrogen peroxide (H2O2) induced by plant wounds, demonstrating high sensitivity (approximately 8 nm ppm⁻¹) and compatibility with portable electronic devices for field monitoring [3].

Electrophysiological Sensors

Electrophysiological sensors measure electrical potential variations in plant tissues, including action potentials and variation potentials that propagate through plant vascular systems in response to stimuli [18]. These sensors typically consist of flexible electrodes that maintain intimate contact with plant surfaces to detect minute electrical signals. Unlike physical and chemical sensors that monitor specific parameters, electrophysiological sensors capture integrated systemic responses to environmental stresses, mechanical damage, or other perturbations, providing insights into plant signaling networks and systemic communication.
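As a rough illustration of how such recordings might be screened, the sketch below flags the onset of excursions that depart from a slowly tracked baseline by more than a chosen threshold. It is a simplified assumption-laden example, not a method from [18]: the threshold, the smoothing window, and the trace values are hypothetical, and it does not distinguish action potentials from variation potentials.

```python
def detect_events(samples_mv, threshold_mv, baseline_window=5):
    """Return the sample indices at which the signal first leaves its baseline.

    The baseline starts as the mean of the first `baseline_window` samples and
    is then updated only from quiet samples, so an excursion does not drag it.
    """
    onsets = []
    in_event = False
    baseline = sum(samples_mv[:baseline_window]) / baseline_window
    for i in range(baseline_window, len(samples_mv)):
        deviation = abs(samples_mv[i] - baseline)
        if deviation > threshold_mv:
            if not in_event:
                onsets.append(i)  # first sample of a new excursion
                in_event = True
        else:
            in_event = False
            # Exponential smoothing of the baseline using quiet samples only.
            baseline += (samples_mv[i] - baseline) / baseline_window
    return onsets

# Hypothetical surface-potential trace in millivolts.
trace = [0, 0, 0, 0, 0, 0, 1, 30, 45, 20, 2, 0, 0, -25, -28, 0]
print(detect_events(trace, threshold_mv=10))
```

Real recordings usually need filtering for mains interference and electrode drift before any thresholding, which this example leaves out.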

Key Enabling Technologies and Fabrication

The development of advanced wearable plant sensors relies on several cutting-edge technologies that enable miniaturization, flexibility, and enhanced functionality.

Micro-Nano Sensing Technology

Micro-nano sensing technology integrates nanomaterials and nanoprocesses with traditional sensing technologies to achieve high-precision recognition and monitoring of small signals [3]. This approach is particularly valuable for capturing critical information about plant responses to environmental stresses and changes in internal physiological signals at the micro-nano scale, which traditional sensing technologies cannot detect [3]. Nanotechnology enhances sensor performance by improving detection range, sensitivity, selectivity, and response speed, thereby aiding intuitive understanding of plants' physiological states and their dynamic responses to environmental changes [3]. Fabrication processes for micro-nano sensors include:

- Modification and assembly of nanoparticle probes [3]

- Printable electronics and transfer printing techniques [3]

- Nanomaterials-DNA composite assembly [3]

- Film coating of interdigitated electrodes [3]

Flexible Electronics Technology

Flexible electronics technology enables the development of sensors that can conform to irregular plant surfaces, maintaining reliable contact during plant growth and movement [3]. This technology employs flexible substrates, stretchable conductive materials, and novel device architectures to create sensors with mechanical properties compatible with biological tissues. These advances support wearable crop sensors that adhere flexibly to irregular tissue surfaces and provide in-situ, real-time, continuous, and precise monitoring [3].

Micro-Electro-Mechanical Systems (MEMS) Technology

MEMS technology integrates mechanical elements, sensors, actuators, and electronics on a common silicon substrate through microfabrication technology. This approach enables batch fabrication of miniaturized, low-power sensors with integrated sensing and signal processing capabilities. MEMS-based sensors are particularly valuable for plant wearables due to their small size, low power consumption, and potential for multi-parameter sensing on a single chip.

Experimental Methodologies and Implementation

Sensor Deployment and Integration

Successful implementation of wearable plant sensors requires careful consideration of deployment methodologies to ensure reliable data acquisition while minimizing plant impact.

Table 2: Experimental Protocols for Sensor Deployment

| Experimental Phase | Key Procedures | Technical Considerations |

|---|---|---|

| Pre-deployment Preparation | Sensor calibration, power system verification, communication testing | Calibrate sensors for specific plant species and environmental conditions; verify low-power operation modes |

| Field Installation | Surface cleaning, sensor attachment, connection establishment | Ensure intimate contact without restricting growth; minimize surface preparation that damages cuticle |

| Data Acquisition | Signal sampling, data logging, wireless transmission | Optimize sampling intervals to balance data resolution and battery life; implement error checking |

| Maintenance | Periodic inspection, power management, performance validation | Monitor for physical damage, attachment integrity, and signal drift over extended deployments |

Representative Experimental Protocol: Hydrogen Peroxide Detection

A detailed experimental methodology for detecting hydrogen peroxide (H2O2) using nanosensors exemplifies the precision required for chemical sensing applications [3]:

Sensor Fabrication:

- Prepare single-walled carbon nanotubes (SWNTs) through catalytic chemical vapor deposition

- Functionalize SWNTs with specific polymers or DNA sequences to create recognition sites for H2O2

- Deposit functionalized SWNTs onto flexible substrate using transfer printing techniques

- Pattern electrodes using microfabrication techniques to create complete sensor devices

Calibration Procedure:

- Expose sensors to standard H2O2 solutions of known concentrations (0-100 ppm)

- Measure optical or electrical response (fluorescence shift or resistance change)

- Generate calibration curve correlating sensor response to H2O2 concentration

- Determine the sensitivity (slope of the calibration curve, reported as ≈ 8 nm ppm⁻¹ fluorescence shift) and the detection limit [3]
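The calibration steps above can be sketched as a least-squares fit followed by inversion of the curve. The data points below are hypothetical and were chosen to reproduce the reported slope of about 8 nm ppm⁻¹; the detection limit uses the common 3σ-of-blank convention, which is an assumption here and not necessarily the definition used in [3].

```python
from statistics import stdev

def fit_calibration(conc_ppm, shift_nm):
    """Least-squares line shift = slope * conc + intercept; slope is the sensitivity."""
    n = len(conc_ppm)
    mean_c, mean_s = sum(conc_ppm) / n, sum(shift_nm) / n
    scc = sum((c - mean_c) ** 2 for c in conc_ppm)
    scs = sum((c - mean_c) * (s - mean_s) for c, s in zip(conc_ppm, shift_nm))
    slope = scs / scc
    return slope, mean_s - slope * mean_c

def concentration_from_shift(shift_nm, slope, intercept):
    """Invert the calibration curve to estimate concentration in ppm."""
    return (shift_nm - intercept) / slope

def detection_limit_ppm(blank_shifts_nm, slope):
    """3-sigma convention: three blank standard deviations divided by sensitivity."""
    return 3 * stdev(blank_shifts_nm) / slope

# Hypothetical standards (ppm) and measured fluorescence shifts (nm).
standards_ppm = [0.0, 0.5, 1.0, 2.0, 4.0]
shifts_nm = [0.0, 4.0, 8.0, 16.0, 32.0]
blank_nm = [0.1, -0.1, 0.0, 0.2, -0.2]  # repeated blank measurements

sensitivity, offset = fit_calibration(standards_ppm, shifts_nm)
print(f"sensitivity = {sensitivity:.2f} nm/ppm")
print(f"12 nm shift -> {concentration_from_shift(12.0, sensitivity, offset):.2f} ppm")
print(f"detection limit = {detection_limit_ppm(blank_nm, sensitivity):.3f} ppm")
```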

Plant Integration:

- Select appropriate plant organs (leaves, stems) based on experimental objectives

- Gently clean surface without damaging cuticle or epidermal cells

- Apply sensor using biocompatible adhesive ensuring full contact

- Shield sensor from direct sunlight if measuring optical signals

Data Collection and Analysis:

- Monitor sensor response continuously following mechanical wounding or stress application

- Record signal changes with time resolution appropriate for H2O2 dynamics (seconds to minutes)

- Correlate H2O2 fluctuations with stress responses or physiological events

Research Reagent Solutions and Materials

The development and implementation of wearable plant sensors requires specialized materials and reagents that enable specific sensing functionalities while maintaining biocompatibility.

Table 3: Essential Research Reagents and Materials for Wearable Plant Sensors

| Material/Reagent | Function/Application | Technical Specifications |

|---|---|---|

| Single-Walled Carbon Nanotubes (SWNTs) | Transducer element for chemical sensing; provides high surface area and sensitive electronic properties | Functionalized with specific polymers or DNA for target recognition; diameter 0.8-2 nm, length 100-1000 nm [3] |

| Flexible Polymer Substrates | Base material for sensor fabrication; provides mechanical flexibility and conformal contact | Polyimide, PDMS, or PET films; thickness 10-100 μm; Young's modulus matching plant tissues (0.1-2 GPa) |

| Stretchable Conductive Inks | Forming electrodes and interconnects; maintain conductivity under mechanical deformation | Silver nanowires, graphene, or PEDOT:PSS composites; sheet resistance <100 Ω/sq; stretchability >20% strain |

| Biocompatible Adhesives | Sensor attachment to plant surfaces; secure bonding without phytotoxicity | Silicone-based or hydrogel adhesives; water vapor permeable; minimal interference with gas exchange [20] |

| Ion-Selective Membranes | Enable specific ion detection in chemical sensors; provide selectivity against interfering ions | PVC or polyurethane matrices with ionophores; specific for K⁺, Na⁺, Ca²⁺, NO₃⁻, or NH₄⁺ ions |

| Fluorescent Probe Molecules | Optical detection of specific analytes; provide signal transduction through emission changes | ROS-sensitive dyes (e.g., H2O2-sensitive boronate probes); compatible with incorporation into nanomaterial systems |

Performance Metrics and Comparative Analysis

Evaluating wearable plant sensors requires standardized performance metrics to enable comparison across different sensing modalities and technological approaches.

Table 4: Performance Metrics for Wearable Plant Sensors

| Performance Parameter | Target Range/Values | Measurement Methodology |

|---|---|---|

| Sensitivity | Chemical: ≈8 nm ppm⁻¹ for H2O2 [3]; Physical: strain gauge factor >2 | Measured as output signal change per unit input change (slope of calibration curve) |

| Accuracy | VWC: 0.5-3% for soil moisture (reference for plant sensors) [21] | Comparison against standardized reference methods under controlled conditions |

| Measurement Range | Physical: 0-100% strain; Temperature: -20 to 50°C [21] | Documented operating range where specified performance is maintained |

| Response Time | 10-100 ms for electronic readout [21] | Time to reach 90% of final output after stimulus application |

| Operating Lifetime | Battery life: 3-15 years for comparable systems [21] | Continuous operation time until power depletion or performance degradation >10% |

| Sampling Interval | Programmable: 10-60 minutes [21] | Minimum time between consecutive measurements in continuous monitoring |
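The response-time definition in Table 4 (time to reach 90% of the final output) can be computed directly from a recorded step response. The sketch below assumes the stimulus is applied at the first sample and that the last sample represents the settled output; both are simplifying assumptions, and the trace is hypothetical.

```python
def response_time_t90(times_s, signal):
    """Time from the first sample until the signal reaches 90% of its total change.

    Assumes the stimulus is applied at times_s[0] and signal[-1] is the settled
    value. Returns None if the 90% level is never reached.
    """
    start, final = signal[0], signal[-1]
    target = start + 0.9 * (final - start)
    rising = final >= start
    for t, s in zip(times_s, signal):
        reached = s >= target if rising else s <= target
        if reached:
            return t - times_s[0]
    return None

# Hypothetical step response sampled every 10 ms.
times = [0.00, 0.01, 0.02, 0.03, 0.04, 0.05]
output = [0.00, 0.50, 0.80, 0.92, 0.99, 1.00]
print(f"t90 = {response_time_t90(times, output) * 1000:.0f} ms")
```

The resolution of the result is limited by the sampling period; interpolating between the two samples that bracket the 90% level would give a finer estimate.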

Implementation Challenges and Future Perspectives

Despite significant advancements, wearable plant sensors face several technical and practical challenges that require further research and development.

Current Challenges

Sensor Attachment and Interface: Maintaining reliable contact during plant growth while minimizing interference with natural plant functions like gas exchange and photosynthesis remains challenging [20]. The complex, dynamic surfaces of plants require innovative attachment strategies that accommodate growth without sensor detachment or tissue damage.

Environmental Resilience: Field-deployed sensors must withstand varying environmental conditions including rain, UV exposure, temperature fluctuations, and mechanical disturbances without performance degradation. Packaging and encapsulation strategies must protect sensitive electronic components while maintaining sensing functionality.

Power Management: Continuous monitoring requires efficient power solutions balancing operational lifetime with sensor performance. While battery lives of 3-15 years are reported for some systems [21], energy harvesting approaches or ultra-low-power designs remain active research areas.
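The trade-off between sampling rate and operating lifetime can be estimated with simple duty-cycle arithmetic: the average current is the time-weighted mean of the active and sleep currents, and the lifetime is the usable battery capacity divided by that average. All figures below (currents, wake time, capacity, usable fraction) are hypothetical values for illustration and are not taken from [21].

```python
def average_current_ma(active_ma, active_s, sleep_ma, interval_s):
    """Time-weighted mean current over one measurement interval."""
    sleep_s = interval_s - active_s
    return (active_ma * active_s + sleep_ma * sleep_s) / interval_s

def battery_life_years(capacity_mah, avg_current_ma, usable_fraction=0.8):
    """Estimated lifetime; usable_fraction derates for self-discharge and cutoff."""
    hours = capacity_mah * usable_fraction / avg_current_ma
    return hours / (24 * 365)

# Hypothetical node: 40 mA for 2 s per measurement, 10 uA asleep, 15-minute interval.
avg_ma = average_current_ma(active_ma=40.0, active_s=2.0, sleep_ma=0.01, interval_s=900.0)
years = battery_life_years(capacity_mah=8500.0, avg_current_ma=avg_ma)
print(f"average current = {avg_ma:.4f} mA, estimated life = {years:.1f} years")
```

Because the active phase dominates the energy budget in this example, halving the sampling interval roughly halves the estimated lifetime.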

Multi-parameter Integration: Most current sensors focus on single parameters, but understanding complex plant physiology requires simultaneous monitoring of multiple signals. Developing integrated, multimodal sensing platforms presents significant design and fabrication challenges.

Future Outlook

The future development of wearable plant sensors is likely to focus on several key directions:

Multimodal Sensing Platforms: Combining physical, chemical, and electrophysiological sensing capabilities on integrated platforms will provide comprehensive understanding of plant status and responses [3]. These systems will capture correlated parameters to elucidate complex physiological processes.

Artificial Intelligence Integration: Incorporating AI and machine learning for data analysis will enable pattern recognition, anomaly detection, and predictive modeling based on sensor data [3]. On-device intelligence could facilitate real-time decision making for precision agriculture applications.

Biodegradable and Sustainable Materials: Developing sensors from biodegradable or environmentally benign materials will address end-of-life disposal concerns and reduce electronic waste in agricultural environments.

Energy Harvesting Solutions: Integrating plant-based energy harvesting mechanisms (such as biochemical fuel cells or biomechanical energy harvesters) could enable self-powered sensor systems for long-term deployment without battery replacement.

Wearable plant sensors represent a rapidly advancing technology with transformative potential for precision agriculture, plant science research, and environmental monitoring. By enabling real-time, in-situ monitoring of plant physiological status, these devices provide unprecedented insights into plant health, stress responses, and growth dynamics. Current research has demonstrated sophisticated sensing capabilities for physical, chemical, and electrophysiological parameters, with continued advancements in sensitivity, specificity, and reliability.

The successful implementation of these technologies requires interdisciplinary collaboration across materials science, electrical engineering, plant physiology, and data science. As these fields continue to converge, wearable plant sensors will become increasingly sophisticated, robust, and accessible, supporting the transition toward more intelligent, data-driven agricultural systems that optimize resource use while enhancing crop productivity and sustainability.

The advent of precision agriculture is fundamentally changing how we approach crop management and environmental monitoring. This management strategy gathers, processes, and analyzes temporal, spatial, and individual data to support management decisions according to estimated variability for improved resource use efficiency, productivity, quality, profitability, and sustainability [22]. Sensor technologies provide the foundational data for these decisions, enabling a shift from uniform field treatment to site-specific management that accounts for in-field variability.

Sensors deployed in agricultural environments are broadly categorized into two types: proximal sensors, which are placed close to or in contact with plants or soil, and remote sensors, which operate at a distance, typically aboard satellites or aircraft [22]. Proximal sensing includes technologies that directly measure soil electrical conductivity, canopy characteristics, and microclimate conditions, while remote sensing utilizes satellite or aerial imagery to capture spectral information across large areas. The integration of these complementary data streams creates a powerful monitoring system that captures both granular detail and broad spatial patterns—a capability essential for tracking microclimate and canopy health in complex agricultural landscapes [23].

This technical guide explores the principles, applications, and implementation methodologies for proximal and remote environmental sensing systems, with particular focus on their role in monitoring the critical agricultural parameters of microclimate and canopy health within the broader context of smart planting research.

Fundamental Principles of Environmental Sensing

Proximal Sensing Technologies

Proximal sensing encompasses technologies that measure environmental parameters at or near the plant or soil. These sensors provide high-resolution, localized data that is crucial for understanding micro-scale variations in environmental conditions. Key proximal sensing applications in agriculture include:

Soil Sensing: Electromagnetic sensors like the EM38-MK2 measure apparent electrical conductivity (aEC) of soil, which correlates with properties including clay content, soil moisture, and salinity [24] [25]. These sensors can be mounted on vehicles and deployed across fields to generate high-density soil maps.

Canopy Sensing: Active sensors such as the Crop Circle ACS-430 measure Normalized Difference Vegetation Index (NDVI) and other vegetation indices by emitting their own light source and measuring the reflectance from plant canopies [25]. This enables assessment of canopy architecture, biomass, and chlorophyll content.

Microclimate Monitoring: Wireless sensor networks deployed across landscapes capture fine-scale variations in air temperature, relative humidity, solar radiation, and leaf wetness [26]. These parameters are crucial for understanding plant-environment interactions and disease risk.

Ultrasonic Sensing: Instruments like the PaddockTrac system utilize ultrasonic sensors at 10 kHz to measure vegetation height above the ground with sub-centimeter precision based on echo-ranging principles [23]. These systems also capture parameters including canopy density and vertical leaf distribution.
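The echo-ranging principle behind ultrasonic height measurement is straightforward: the sensor times a pulse's round trip to the canopy top, converts it to distance using the speed of sound, and subtracts that distance from its own mounting height. The sketch below uses the standard linear approximation for the speed of sound in air; the mounting height and echo time are hypothetical, and this is not a description of PaddockTrac's internal processing.

```python
def speed_of_sound_m_s(air_temp_c):
    """Linear approximation for the speed of sound in air near ambient temperatures."""
    return 331.3 + 0.606 * air_temp_c

def canopy_height_cm(echo_time_s, sensor_height_cm, air_temp_c=20.0):
    """Vegetation height = sensor mounting height minus the one-way echo distance."""
    one_way_m = speed_of_sound_m_s(air_temp_c) * echo_time_s / 2
    return sensor_height_cm - one_way_m * 100

# Hypothetical reading: sensor mounted 100 cm above ground, 4 ms round-trip echo.
print(f"{canopy_height_cm(0.004, 100.0, air_temp_c=20.0):.1f} cm")
```

Because the speed of sound changes by about 0.6 m/s per °C, ignoring air temperature introduces a systematic error that matters when millimetre-level accuracy is claimed.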

Remote Sensing Technologies

Remote sensing utilizes platforms at varying altitudes to capture spectral information about agricultural systems:

Satellite-Based Systems: Platforms including Landsat 7, Landsat 8, and Sentinel-2 provide systematic global coverage with specific spectral bands optimized for vegetation monitoring [23] [24]. Landsat 7 features the Enhanced Thematic Mapper Plus (ETM+) sensor with a 16-day revisit cycle, while Sentinel-2's MultiSpectral Instrument (MSI) includes red-edge bands specifically designed for vegetation monitoring with a 5-day revisit cycle [23].

Aerial and UAV-Based Systems: Manned aircraft and unmanned aerial vehicles (UAVs) capture higher spatial resolution imagery than satellites, providing detailed information about canopy structure and health.

Vegetation Indices: Mathematical combinations of different spectral bands that highlight specific vegetation properties:

- NDVI (Normalized Difference Vegetation Index): Quantifies vegetation greenness using near-infrared and red reflectance.

- MSAVI2 (Modified Soil-Adjusted Vegetation Index 2): Minimizes soil background influence on vegetation signals.

- EVI (Enhanced Vegetation Index): Improves sensitivity in high-biomass regions.
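For reference, the three indices listed above can be computed per pixel from surface reflectance values (0 to 1) as follows. The formulas are the standard published definitions; the EVI coefficients shown are the commonly used defaults, and the sample reflectances are hypothetical.

```python
import math

def ndvi(nir, red):
    """Normalized Difference Vegetation Index."""
    return (nir - red) / (nir + red)

def msavi2(nir, red):
    """Second Modified Soil-Adjusted Vegetation Index (self-adjusting soil factor)."""
    return (2 * nir + 1 - math.sqrt((2 * nir + 1) ** 2 - 8 * (nir - red))) / 2

def evi(nir, red, blue, gain=2.5, c1=6.0, c2=7.5, soil_l=1.0):
    """Enhanced Vegetation Index with the commonly used default coefficients."""
    return gain * (nir - red) / (nir + c1 * red - c2 * blue + soil_l)

# Hypothetical reflectances for a moderately dense canopy.
nir, red, blue = 0.50, 0.10, 0.05
print(f"NDVI={ndvi(nir, red):.3f}  MSAVI2={msavi2(nir, red):.3f}  EVI={evi(nir, red, blue):.3f}")
```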

Table 1: Comparison of Remote Sensing Platforms for Agricultural Monitoring

| Platform | Spatial Resolution | Revisit Time | Key Bands | Primary Applications |

|---|---|---|---|---|

| Landsat 7 | 30 m (multispectral) | 16 days | Blue, Green, Red, NIR, SWIR, Thermal | Vegetation monitoring, land cover classification, biomass estimation |

| Sentinel-2 | 10 m, 20 m, 60 m (depending on band) | 5 days | Blue, Green, Red, Red-edge, NIR, SWIR | Vegetation health assessment, chlorophyll content, water stress detection |

| UAV-based | 1-10 cm (customizable) | On-demand | Customizable multispectral and thermal | High-resolution canopy monitoring, disease detection, precision management |

Sensor Integration and Data Fusion Methodologies

Data Fusion Approaches

The integration of proximal and remote sensing data creates a powerful synergy that leverages the strengths of both approaches. Data fusion methodologies enable researchers to combine high-resolution proximal measurements with extensive spatial coverage from remote sensing:

Kriging with External Drift (KED): A geostatistical technique that integrates sparse proximal sensor data (e.g., soil aEC) with spatially dense remote sensing covariates (e.g., satellite imagery, terrain indices) to create accurate prediction maps. KED has been reported to achieve R² values of 0.78 for soil property mapping even with sparse proximal data inputs [24].

Geographically Weighted Regression (GWR): A spatial regression technique that models relationships between variables that change across geographic space, allowing for the integration of proximal and remote sensing data while accounting for spatial non-stationarity.

Machine Learning Integration: Algorithms such as Random Forest and XGBoost can handle complex, multi-source data and identify nonlinear relationships between sensor inputs and agricultural parameters. XGBoost has been reported to achieve R² values of 0.86 for biomass estimation by integrating ultrasonic pasture height measurements with satellite vegetation indices [23].
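To make the idea of spatial non-stationarity concrete, the following sketch implements the core step of Geographically Weighted Regression for a single covariate: a weighted least-squares fit at a target location, with weights that decay with distance. The Gaussian kernel, the fixed bandwidth, and the data are assumptions chosen for illustration; production analyses would normally use a dedicated GWR package with bandwidth selection.

```python
import math

def gwr_local_fit(points, target_xy, bandwidth):
    """Fit response = intercept + slope * covariate, weighted around target_xy.

    points: iterable of (x, y, covariate, response) tuples.
    Returns (intercept, slope) for the local model at the target location.
    """
    tx, ty = target_xy
    sw = swx = swy = swxx = swxy = 0.0
    for x, y, cov, resp in points:
        dist_sq = (x - tx) ** 2 + (y - ty) ** 2
        w = math.exp(-0.5 * dist_sq / bandwidth ** 2)  # Gaussian distance kernel
        sw += w
        swx += w * cov
        swy += w * resp
        swxx += w * cov * cov
        swxy += w * cov * resp
    slope = (sw * swxy - swx * swy) / (sw * swxx - swx ** 2)
    intercept = (swy - slope * swx) / sw
    return intercept, slope

# Hypothetical field: the NDVI-to-biomass slope is 2 in the west and 5 in the east.
samples = [
    (0, 0, 0.2, 1.4), (1, 1, 0.4, 1.8), (2, 0, 0.6, 2.2),        # west
    (100, 0, 0.2, 2.0), (101, 1, 0.4, 3.0), (99, 0, 0.6, 4.0),   # east
]
print(gwr_local_fit(samples, (0, 0), bandwidth=10.0))
print(gwr_local_fit(samples, (100, 0), bandwidth=10.0))
```

A single global regression over these six points would return one compromise slope; the local fits recover the two regimes, which is the property that motivates GWR for fusing proximal and remote data.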

Sensor Deployment Frameworks

Effective sensor deployment requires systematic approaches to capture environmental variability:

Stratified Random Sampling: Dividing the study area into homogeneous strata before randomly placing sensors within each stratum ensures coverage of different environmental conditions [25].

Iterative Workflow for Microclimate Sensors: A comprehensive step-by-step guide integrating Geographic Information Systems (GIS) tools, local knowledge, and statistical methods for optimal sensor placement. This adaptive approach involves preliminary remote sensing analysis, stakeholder consultation, statistical optimization, and field validation [26].
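The stratified random sampling approach described above can be sketched as follows: candidate sites are grouped by stratum, and a fixed number of sensor locations is drawn at random within each. The stratum names and grid-cell identifiers are hypothetical, and in a real deployment the strata would come from the GIS and remote sensing analysis rather than being typed in by hand.

```python
import random

def stratified_sample(candidates_by_stratum, sensors_per_stratum, seed=None):
    """Randomly choose sensor sites within each stratum.

    candidates_by_stratum: dict mapping stratum name -> list of candidate site ids.
    Returns a dict mapping stratum name -> list of selected site ids.
    """
    rng = random.Random(seed)  # a seed makes the layout reproducible
    placement = {}
    for stratum, sites in candidates_by_stratum.items():
        count = min(sensors_per_stratum, len(sites))
        placement[stratum] = rng.sample(sites, count)
    return placement

# Hypothetical strata derived from elevation and vegetation cover classes.
candidates = {
    "low_slope_dense_canopy": ["A1", "A2", "A3", "A4", "A5"],
    "low_slope_sparse_canopy": ["B1", "B2", "B3"],
    "ridge_top": ["C1", "C2"],
}
layout = stratified_sample(candidates, sensors_per_stratum=2, seed=42)
for stratum, sites in layout.items():
    print(stratum, sites)
```

Allocating sensors in proportion to each stratum's area or variability, instead of a fixed count, is a common refinement.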

The following workflow diagram illustrates the systematic process for deploying environmental sensor networks:

Technical Protocols for Microclimate and Canopy Monitoring

Microclimate Sensor Deployment Protocol

Objective: Establish a wireless sensor network to capture spatial and temporal variability in microclimate parameters across an agricultural landscape.

Materials:

- Wireless microclimate sensors (temperature, relative humidity, solar radiation)

- GNSS receiver for geolocation

- Data logging platform

- Sensor mounting equipment

Methodology:

- Site Selection: Utilize an iterative workflow integrating GIS analysis of remote sensing data (e.g., topography, vegetation cover) with statistical optimization to identify locations representing environmental variability [26].

- Sensor Configuration: Calibrate all sensors according to manufacturer specifications. Set logging intervals appropriate for the phenomenon of interest (typically 5-60 minutes).

- Field Deployment: Install sensors at standardized heights (typically 1-2 m above ground for air measurements) using radiation shields for temperature sensors. Ensure secure mounting to minimize disturbance.

- Georeferencing: Record precise coordinates of each sensor using GNSS with differential correction for accurate spatial referencing.

- Data Collection: Implement automated data retrieval systems with regular quality checks. Include protocols for handling sensor failures or data gaps.

Data Analysis:

- Calculate derived climate parameters (e.g., growing degree days, vapor pressure deficit)

- Conduct spatial interpolation (kriging) to create continuous microclimate maps

- Analyze temporal patterns in relation to plant development stages
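The derived parameters named in the first analysis step can be calculated from logged temperature and humidity. The sketch below uses the Tetens-type saturation vapour pressure equation (as given in FAO-56) for vapour pressure deficit and the simple averaging method for growing degree days; the 10 °C base temperature is a crop-dependent assumption, and the input values are hypothetical.

```python
import math

def saturation_vapor_pressure_kpa(temp_c):
    """Saturation vapour pressure in kPa from air temperature (Tetens-type equation)."""
    return 0.6108 * math.exp(17.27 * temp_c / (temp_c + 237.3))

def vapor_pressure_deficit_kpa(temp_c, rh_pct):
    """VPD = saturation vapour pressure minus actual vapour pressure."""
    return saturation_vapor_pressure_kpa(temp_c) * (1 - rh_pct / 100)

def growing_degree_days(t_max_c, t_min_c, t_base_c=10.0):
    """Daily GDD by the averaging method, floored at zero."""
    return max(0.0, (t_max_c + t_min_c) / 2 - t_base_c)

# Hypothetical sensor readings.
print(f"VPD at 25 degC, 60% RH = {vapor_pressure_deficit_kpa(25.0, 60.0):.2f} kPa")
daily_extremes = [(28.0, 14.0), (30.0, 16.0), (8.0, 2.0)]  # (Tmax, Tmin) per day
print("accumulated GDD =", sum(growing_degree_days(hi, lo) for hi, lo in daily_extremes))
```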

Canopy Health Assessment Protocol

Objective: Quantify spatial and temporal variability in canopy health using proximal and remote sensing technologies.

Materials:

- Active canopy sensor (e.g., Crop Circle ACS-430 for NDVI)

- EM38-MK2 for soil electrical conductivity

- GNSS receiver for geolocation

- Satellite imagery (e.g., Sentinel-2 with red-edge bands)

Methodology:

- Proximal Sensor Data Collection:

- Utilize active canopy sensors to measure NDVI across the study area

- Conduct measurements with the EM38-MK2 in vertical dipole mode to assess soil EC to a depth of 1.5 m [25]

- Georeference all measurements with high-precision GNSS

- Perform temporal measurements throughout growing season to capture dynamics

- Remote Sensing Data Acquisition:

- Acquire satellite imagery coincident with proximal sensing dates

- Preprocess imagery for atmospheric correction and cloud masking

- Calculate vegetation indices (NDVI, MSAVI2) from processed imagery

- Ground Truthing:

- Collect complementary plant physiological measurements (e.g., stem water potential, leaf photosynthesis)

- Sample berry chemistry for quality parameters (e.g., anthocyanins, flavonoids) [25]

- Measure yield components in relation to sensor data

- Data Integration:

- Apply data fusion techniques (KED or GWR) to combine proximal and remote sensing datasets

- Develop predictive models using machine learning algorithms

- Establish management zones based on multivariate analysis of sensor data

Table 2: Key Research Reagent Solutions for Sensor-Based Agricultural Research

| Reagent/Equipment | Technical Specification | Primary Function | Application Context |

|---|---|---|---|

| EM38-MK2 | Electromagnetic induction sensor, 1.5 m depth | Measures apparent soil electrical conductivity (aEC) | Spatial assessment of soil moisture, clay content, salinity [24] [25] |

| Crop Circle ACS-430 | Active canopy sensor, own light source | Measures NDVI independent of ambient light | Canopy health assessment, biomass estimation, vegetation monitoring [25] |

| PaddockTrac | Ultrasonic sensor, 10 kHz, 2 mm accuracy | Measures vegetation height with sub-centimeter precision | Pasture biomass estimation, canopy structure analysis [23] |

| GeoSCOUT X Datalogger | GNSS-integrated data logging | Georeferences and records sensor measurements | Spatial data collection for precision agriculture applications [25] |

Case Studies in Precision Agriculture

Precision Viticulture Applications

Vineyards represent an ideal application for integrated sensing approaches due to their high economic value and sensitivity to microclimate variations. Research demonstrates that proximal sensing of soil EC and canopy NDVI can explain spatial variability in plant physiology and berry chemistry [25].

In a commercial vineyard study in Napa Valley, California, researchers continuously monitored spatial and temporal patterns in soil EC and NDVI across three grape varieties. Soil EC assessments conducted with an EM38-MK2 sensor revealed significant relationships with stem water potential integrals and total skin anthocyanins. NDVI values showed correlation with yield components, though cultivar effects sometimes weakened these relationships, highlighting the importance of variety-specific calibration [25].

The integrated analysis demonstrated that repeated proximal sensing over the season could effectively monitor plant water status, primary metabolism, yield, and berry secondary metabolism, enabling spatially variable management of both plant physiology and berry chemistry.

Biomass Estimation in Pasture Systems

Research integrating proximal ultrasonic sensing with satellite remote sensing demonstrates the power of data fusion for agricultural monitoring. The PaddockTrac system, utilizing ultrasonic sensors to measure vegetation height, was combined with Landsat 7 and Sentinel-2 derived vegetation indices to develop machine learning models for pasture biomass estimation [23].

The XGBoost algorithm consistently performed best, achieving an R² of 0.86, MAE of 414 kg ha⁻¹, and RMSE of 538 kg ha⁻¹ using Landsat 7 data across multiple years. This integrated approach proved more accurate than using either sensing method alone, demonstrating that detailed ground-based measurements complement the spatial coverage of satellite imagery [23].
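
For readers reproducing this kind of evaluation, the three reported metrics can be computed directly from observed and predicted biomass. This is a minimal sketch with hypothetical values in kg ha⁻¹; the function name and data are illustrative and unrelated to the cited study's dataset.

```python
import numpy as np

def regression_metrics(y_true, y_pred):
    """Return R², MAE, and RMSE for paired observations and predictions."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    resid = y_true - y_pred
    ss_res = np.sum(resid ** 2)                       # residual sum of squares
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)    # total sum of squares
    return {
        "r2": 1.0 - ss_res / ss_tot,
        "mae": np.mean(np.abs(resid)),
        "rmse": np.sqrt(np.mean(resid ** 2)),
    }

# Hypothetical observed vs. predicted pasture biomass (kg/ha).
observed = [1000, 2000, 3000, 4000]
predicted = [1200, 1800, 3300, 3900]
metrics = regression_metrics(observed, predicted)
```

RMSE is never smaller than MAE because it weights large errors more heavily, which is consistent with the reported 538 versus 414 kg ha⁻¹.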

Irrigation Management Zoning

A study in Brazil explored the fusion of remote and proximal sensing for defining irrigation management zones (MZs). Researchers collected exhaustive apparent electrical conductivity (aEC) data using an EM38-MK2 sensor, then simulated sparse sampling scenarios to evaluate the potential of combining limited proximal data with remote sensing covariates [24].

The Kriging with External Drift (KED) method, integrating sparse aEC data with satellite imagery and terrain covariates, showed a relatively good fit (R² = 0.78) and delineated MZs that closely matched those derived from exhaustive sampling. This approach demonstrates how strategic integration of limited proximal measurements with readily available remote sensing data can create accurate management zones while reducing data collection costs [24].
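
KED can be sketched as solving one linear system per prediction location: the variogram matrix of the sparse aEC samples is bordered by the drift covariates, which forces the kriging weights to reproduce the covariate trend. The following is a minimal sketch, not the cited study's implementation; the exponential variogram and its parameters, the function names, and the sample values are all assumptions for illustration (in practice the variogram would be fitted to the data).

```python
import numpy as np

def exp_variogram(h, sill=1.0, range_m=100.0):
    """Exponential variogram without nugget; parameters are placeholders."""
    return sill * (1.0 - np.exp(-h / range_m))

def ked_predict(xy, z, drift, xy0, drift0, variogram=exp_variogram):
    """Predict z at xy0 by kriging with external drift.

    xy:     (n, 2) sample coordinates      z:      (n,) sampled values (e.g., aEC)
    drift:  (n,) or (n, p) covariates      drift0: covariate value(s) at xy0
    """
    xy = np.asarray(xy, dtype=float)
    z = np.asarray(z, dtype=float)
    n = len(z)
    drift = np.asarray(drift, dtype=float).reshape(n, -1)
    F = np.column_stack([np.ones(n), drift])  # constant term plus covariates
    p = F.shape[1]

    # Bordered kriging matrix: variogram block plus unbiasedness constraints.
    h = np.linalg.norm(xy[:, None, :] - xy[None, :, :], axis=2)
    A = np.zeros((n + p, n + p))
    A[:n, :n] = variogram(h)
    A[:n, n:] = F
    A[n:, :n] = F.T

    h0 = np.linalg.norm(xy - np.asarray(xy0, dtype=float), axis=1)
    f0 = np.concatenate([[1.0], np.atleast_1d(np.asarray(drift0, dtype=float))])
    b = np.concatenate([variogram(h0), f0])

    weights = np.linalg.solve(A, b)[:n]  # discard the Lagrange multipliers
    return float(weights @ z)

# Hypothetical sparse aEC samples with one remote-sensing covariate.
xy = [(0, 0), (100, 0), (0, 100), (100, 100), (50, 50)]
cov = np.array([0.2, 0.5, 0.3, 0.8, 0.4])
aec = 10.0 + 20.0 * cov  # exactly linear in the covariate, for illustration
pred = ked_predict(xy, aec, cov, xy0=(30, 70), drift0=0.6)  # close to 22.0
```

Because the weights are constrained to sum to one and to reproduce the covariate, a perfectly linear aEC-covariate relationship is recovered exactly; with real data the variogram term also contributes spatially correlated residuals.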

[Diagram: data fusion process for creating irrigation management zones]

Emerging Technologies and Future Directions

Environmental sensing for agriculture is evolving rapidly, with several emerging technologies poised to transform monitoring capabilities:

Nanotechnology-Enhanced Sensors: Development of miniaturized sensors with improved sensitivity and selectivity through micro-nano technology, flexible electronics, and micro-electromechanical systems (MEMS) [27]. These advancements enable new sensing modalities for plant metabolites, pathogens, and stress biomarkers.

Wearable Plant Sensors: Flexible, non-invasive sensors that can be directly attached to plants for continuous monitoring of physiological parameters including sap flow, stem diameter variations, and fruit development [27].

Explainable Machine Learning: Advanced ML approaches that not only predict agricultural parameters but provide interpretable insights into the relationships between sensor data and plant physiology. Research demonstrates the ability to quantify the specific value added by proximal sensing variables in predicting latent energy flux, with models capturing 77-88% of variability using just 2-4 predictors [28].

Digital Twins: Virtual representations of agricultural systems that continuously update based on sensor inputs, enabling simulation of different management scenarios and prediction of system responses [24].

Multimodal Sensor Fusion: Integration of diverse sensing modalities (spectral, thermal, structural, meteorological) through advanced algorithms that extract synergistic information beyond what any single sensor type can provide.

These technological advances, coupled with improved data analytics and decision support systems, are creating unprecedented opportunities for understanding and managing the complex interactions between plants and their environment. As sensor technologies continue to evolve toward greater miniaturization, intelligence, and multi-modality, they will fundamentally transform our approach to crop management and environmental monitoring [27].

The Role of IoT and Wireless Networks in Creating Connected Farm Ecosystems

Modern agriculture is shifting from reliance on tradition and instinct to data-driven decision-making. This shift is powered by connected farm ecosystems: sophisticated networks of Internet of Things (IoT) devices, sensors, and automated machinery integrated through robust wireless networks [29]. These ecosystems enable precision agriculture, an approach that manages fields not as uniform blocks but on a per-square-meter or even per-plant basis, delivering precisely the right inputs at the right time [30]. This approach is critical for addressing challenges in global food security, including the need to feed a projected population of 10 billion by 2050 amid climate change and resource depletion [30].

The operational backbone of these ecosystems is a continuous stream of real-time data collected from every corner of a farming operation. This data flow, which requires new skills in data science and IT management, enables predictive rather than reactive farm management [30]. By converting everyday farm data into actionable intelligence, these systems allow farmers to optimize irrigation, reduce waste, protect animal welfare, and make faster, better decisions [29]. The architectural framework rests on three interconnected pillars: sensor networks for data acquisition, wireless connectivity for data transmission, and data management platforms for analysis and automation [1].

Core IoT Technologies in Agriculture

Sensor Networks and Data Acquisition

IoT sensor networks form the digital nervous system of the connected farm, harvesting raw information from the physical environment. These networks consist of flexible arrays of small, rugged, and increasingly autonomous devices that act as the digital eyes and ears of agricultural operations [30]. The sensors are specialized for specific agricultural monitoring tasks, each measuring critical environmental or biological parameters.

Table: Primary IoT Sensor Types in Agriculture

| Sensor Type | Measured Parameters | Primary Applications | Data Output |
|---|---|---|---|
| Soil Sensors | Moisture levels, nutrient content (Nitrogen, Phosphorus, Potassium), pH balance, electrical conductivity, temperature [30] [31] | Precision fertilization, irrigation scheduling, soil health management | Quantitative values (%, mg/kg, pH, dS/m, °C) |
| Climatic Sensors | Air temperature, humidity, wind speed, precipitation, atmospheric pressure, Photosynthetically Active Radiation (PAR) [30] | Microclimate monitoring, frost prevention, disease prediction | Quantitative values (°C, %, km/h, mm, kPa, μmol/m²/s) |
| Plant Health Sensors | Leaf wetness, chlorophyll content, spectral reflectance patterns, canopy temperature [32] | Early disease detection, pest infestation alerts, nutrient deficiency identification | Quantitative values & spectral signatures |
| Livestock Biometric Sensors | Location, body temperature, heart rate, feeding behavior, movement patterns, digestive activity [30] | Health monitoring, estrus detection, pasture optimization, theft prevention | Location coordinates, physiological parameters |

Advanced sensing platforms are evolving toward greater affordability and sophistication. Research initiatives are creating innovative, low-cost sensors that can detect changes in soil and water, helping farmers fine-tune fertilizer use [33]. These developments are crucial for democratizing precision agriculture, making it accessible to small- and medium-sized farms that contribute significantly to global food production [30].

Wireless Connectivity Infrastructure

The agricultural environment presents unique connectivity challenges that require a hybrid approach to network design. Most farms operate in remote or rural areas where traditional networks are unreliable or unavailable [29]. Effective connected ecosystems therefore demand software-defined, hybrid connectivity that combines multiple technologies to ensure seamless, secure communication across devices and regions [29].

Table: Wireless Communication Technologies for Agriculture

| Technology | Range | Power Consumption | Data Rate | Best-Suited Applications | Cost Factor |
|---|---|---|---|---|---|
| LPWAN (LoRaWAN, NB-IoT) | Very Long (up to 20 km) [30] | Very Low [30] | Low | Soil moisture sensors, environmental monitoring, livestock tracking [30] | Low [30] |
| Cellular (4G/LTE) | Medium | Medium [30] | Medium to High | Mobile machinery, livestock tags, video monitoring [30] | Medium [30] |
| 5G | Medium-High | Low [30] | Very High | Real-time drone control, autonomous machinery, HD video analytics [30] | High [30] |
| Satellite | Global | Medium to High | Low to Medium | Remote area connectivity, backup for critical communications [29] | High |
| Wi-Fi | Short (≤100 m) | High [30] | High | Greenhouse automation, farm office applications, fixed infrastructure [30] | Low [30] |
| Zigbee | Short (<100 m) | Very Low [32] | Low | Device-to-device communication in confined areas like greenhouses [32] | Low [32] |

The emergence of eSIM technology represents a significant advancement for agricultural IoT, solving the problem of patchy rural coverage by enabling devices to switch between network operators seamlessly [30]. Global multi-network SIMs spanning 550+ networks across more than 180 countries provide uninterrupted coverage through a single platform, bringing cloud connectivity to every corner of the farm [29].

Data Architecture and Processing Frameworks

Cloud and Edge Computing Integration

The intelligence of connected farm ecosystems emerges from a sophisticated data processing architecture that combines cloud platforms with edge computing. This hybrid approach creates the control layer of the modern farm, handling everything from immediate on-site automation to long-term strategic pattern recognition [30].

Edge computing processes data as close to the source as possible—at the 'edge' of the network—using local devices such as gateways installed in farm buildings, tractors' onboard computers, or the sensors themselves [30]. This architecture provides three critical advantages for agricultural applications:

- Low-latency response: Essential for automated applications like driverless tractors, crop-dusting drones, and GPS-guided harvesters where safety commands must execute in fractions of a second [30].

- Operational autonomy: Enables core automated systems (irrigation, climate control, livestock feeding) to function intelligently even when internet connectivity is interrupted, addressing the historical challenge of unreliable rural internet [30].

- Data filtration: Edge devices can be programmed to analyze data streams locally and transmit only significant events or summaries to the cloud, dramatically reducing network traffic volume and connectivity costs [30].
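
The data filtration idea above can be sketched as a small filter running on a gateway: it forwards a reading only when it differs enough from the last value sent, and otherwise contributes to a periodic summary. The class name, the threshold, the summary interval, and the message format are hypothetical choices for illustration.

```python
from dataclasses import dataclass, field
from statistics import mean
from typing import List, Optional

@dataclass
class EdgeFilter:
    threshold: float            # minimum change that counts as a significant event
    summary_every: int = 10     # number of readings per periodic summary
    _last_sent: Optional[float] = field(default=None, repr=False)
    _buffer: List[float] = field(default_factory=list, repr=False)

    def process(self, reading: float) -> list:
        """Return the messages to uplink for this reading (often none)."""
        messages = []
        # Event: first reading, or a change of at least `threshold` since the last event.
        if self._last_sent is None or abs(reading - self._last_sent) >= self.threshold:
            messages.append({"type": "event", "value": reading})
            self._last_sent = reading
        # Summary: aggregate every `summary_every` readings, then reset the buffer.
        self._buffer.append(reading)
        if len(self._buffer) >= self.summary_every:
            messages.append({
                "type": "summary",
                "min": min(self._buffer),
                "max": max(self._buffer),
                "mean": mean(self._buffer),
                "n": len(self._buffer),
            })
            self._buffer.clear()
        return messages

# Hypothetical soil moisture readings (%); only three messages leave the gateway.
edge = EdgeFilter(threshold=2.0, summary_every=6)
sent = []
for value in [30.0, 30.2, 30.1, 29.9, 27.0, 27.1]:
    sent.extend(edge.process(value))
```

Here six readings produce two events and one summary; with longer summary intervals and stable conditions the reduction in uplink traffic grows accordingly.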

Cloud computing complements edge processing by providing massive data storage, powerful analytics, and sophisticated data visualization capabilities [30]. Cloud platforms enable the application of AI and machine learning algorithms to both archived and live data streams, comparing real-time information with years of historical data and cross-referenced information from other farms or government resources [30].

Data Management and Analytics

The data architecture for smart farming must support scalable growth while maintaining robust security measures and data accessibility [1]. Effective systems employ standardized APIs for cross-system compatibility, creating automated data pipelines that streamline operational workflows [1].
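
Cross-system compatibility typically starts with an agreed record format that every pipeline stage validates. The sketch below shows one possible shape; the field names, the parameter-to-unit mapping, and the helper functions are assumptions for illustration and do not correspond to any specific agricultural data standard.

```python
import json
from dataclasses import dataclass, asdict

# Hypothetical mapping from measured parameter to its required unit.
ALLOWED_UNITS = {
    "soil_moisture": "%",
    "soil_ec": "dS/m",
    "soil_temperature": "°C",
    "soil_ph": "pH",
}

@dataclass(frozen=True)
class SensorReading:
    sensor_id: str
    parameter: str
    value: float
    unit: str
    timestamp: str  # ISO 8601 string, assumed to be UTC

    def __post_init__(self):
        # Reject records whose parameter or unit is not part of the shared schema.
        expected = ALLOWED_UNITS.get(self.parameter)
        if expected is None:
            raise ValueError(f"unknown parameter: {self.parameter}")
        if self.unit != expected:
            raise ValueError(f"{self.parameter} must be reported in {expected}")

def to_json(reading: SensorReading) -> str:
    """Serialize a reading for transmission between systems."""
    return json.dumps(asdict(reading), ensure_ascii=False)

def from_json(payload: str) -> SensorReading:
    """Parse and validate a reading received from another system."""
    return SensorReading(**json.loads(payload))

reading = SensorReading("probe-07", "soil_ec", 1.4, "dS/m", "2025-05-01T06:00:00Z")
roundtrip = from_json(to_json(reading))
```

Validating at the boundary means a mislabelled unit is caught when the record enters the pipeline rather than after it has skewed downstream analytics.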

The integration of edge and cloud creates a self-improving feedback loop that enhances network intelligence over time. Edge devices in the field collect and pre-process vast amounts of data, which is curated and sent to the cloud for aggregation with results from diverse sources [30]. The cloud's AI platform analyzes this massive dataset to refine its predictive models, with benefits cascading to every participant in the network [30]. This form of collective intelligence, where each farm benefits from the experiences of others, represents the digital evolution of traditional farming knowledge-sharing [30].

Implementation Framework and Experimental Protocols

Methodology for Sensor Network Deployment