Robust Multimodal Learning: Advanced Strategies for Handling Missing Data in AI Systems

Abstract

This comprehensive review explores cutting-edge methodologies and frameworks designed to enhance the robustness of multimodal learning systems when faced with missing data. As multimodal AI increasingly transforms fields from healthcare to autonomous systems, the critical challenge of performance degradation under incomplete modality scenarios demands innovative solutions. We examine foundational concepts, methodological advances including dynamic fusion strategies and cross-modal representation learning, optimization techniques for real-world applications, and rigorous validation approaches. By synthesizing the latest research breakthroughs and empirical findings, this article provides researchers and drug development professionals with actionable insights for developing resilient multimodal systems capable of maintaining accuracy and reliability despite missing or incomplete data inputs.

Understanding the Missing Data Challenge in Multimodal AI Systems

Frequently Asked Questions (FAQs)

1. Why does model performance deteriorate when a modality is missing? Multimodal models are often designed with a multi-branch architecture, where each branch processes a specific modality. During training, these models develop a dependency on having a complete set of modalities to make predictions. When one modality is absent during inference, the architecture lacks the expected input, leading to significant performance drops because the model cannot properly execute its fused decision-making process [1].

2. What are the main real-world causes of missing modalities? In clinical and real-world settings, modalities can be missing due to several factors: sensor malfunctions or hardware limitations, privacy concerns that restrict data access, cost constraints in data collection, environmental interference during acquisition, and data transmission or storage issues. In healthcare, for example, it is common that not every patient has all types of tests (like genomic data or specific images) available [2] [3].

3. Is it a good solution to simply discard samples with missing modalities? While discarding samples with missing modalities is a common pre-processing step, it is generally not the optimal solution. This approach wastes the valuable information contained in the partially available data and reduces the effective training dataset size, which can increase the risk of model overfitting. Furthermore, a model trained only on complete data will not be equipped to handle missing modalities during testing [2].

4. What is the core idea behind making models robust to missing modalities? The overarching goal is to design models that can dynamically and robustly handle information from any number of available modalities during both training and testing. The aim is to maintain performance comparable to what is achieved with full-modality samples, without requiring retraining or significant architectural changes for every possible missing-modality scenario [2].

5. Can a model be robust to missing modalities even if it's trained only on complete data? Yes, with the right architectural choices, this is possible. Frameworks like Chameleon are designed to be trained using a complete set of modalities but remain resilient when modalities are missing during testing. This is achieved by unifying all input modalities into a common representation space (e.g., encoding everything into a visual format), which eliminates the dependency on modality-specific branches [1].
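As a rough illustration of the "encode everything into a visual format" idea, the sketch below reshapes a flat embedding vector into a square 2D map that an image network could consume. The helper name `embedding_to_grid`, the square layout, and the zero-padding rule are assumptions made for illustration only; the text above does not specify how the encoding in [1] is implemented.

```python
import math

def embedding_to_grid(embedding):
    """Reshape a flat embedding into the smallest square grid that holds it.

    Hypothetical helper: zero-padding and the square layout are illustrative
    choices, not the encoding used by any specific framework.
    """
    side = math.isqrt(len(embedding))
    if side * side < len(embedding):
        side += 1
    padded = list(embedding) + [0.0] * (side * side - len(embedding))
    return [padded[r * side:(r + 1) * side] for r in range(side)]

# A 5-dimensional text embedding becomes a 3x3 "image" with zero padding.
grid = embedding_to_grid([0.1, 0.2, 0.3, 0.4, 0.5])
```

Because every modality ends up in the same 2D format, the downstream network never needs a dedicated branch per modality, which is the property the answer above relies on.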

Troubleshooting Guides

Issue 1: Performance Drop with a Specific Missing Modality

Problem: Your multimodal model's accuracy falls significantly when one particular modality (e.g., text) is unavailable at test time.

Solutions:

- Implement Feature Modulation: Adapt a pre-trained multimodal network using a parameter-efficient method. This involves adding a small number of trainable parameters (e.g., fewer than 1% of the total model parameters) that modulate intermediate features to compensate for the missing modality. This approach has been shown to partially bridge the performance gap [4] [5].

- Use a Unified Representation Framework: Adopt a framework like Chameleon, which encodes all non-visual modalities (like text or audio) into a visual format. This allows a single visual network (like a CNN or Vision Transformer) to process any combination of available modalities, making the model inherently robust to missing inputs [1].

- Apply a Model Combination Strategy: Train an ensemble of networks, where each network is an expert for a specific combination of available modalities. During inference, you can select the network that corresponds to the available modalities [2].
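The model combination strategy can be sketched as a lookup keyed by the set of modalities that are present. This is a minimal sketch: the `select_expert` function and the placeholder lambdas are hypothetical stand-ins for trained networks, and the convention that a missing modality is represented by `None` is an assumption.

```python
def select_expert(experts, sample):
    """Pick the expert registered for exactly the modalities present in `sample`."""
    available = frozenset(name for name, value in sample.items() if value is not None)
    if available not in experts:
        raise KeyError(f"no expert trained for modalities {sorted(available)}")
    return experts[available]

# Placeholder "experts": real ones would be trained networks.
experts = {
    frozenset({"image", "text"}): lambda s: "image+text expert",
    frozenset({"image"}): lambda s: "image-only expert",
    frozenset({"text"}): lambda s: "text-only expert",
}

sample = {"image": [0.2, 0.7], "text": None}  # text is missing at test time
prediction = select_expert(experts, sample)(sample)
```

One practical caveat: covering every non-empty combination of M modalities requires 2^M - 1 experts, so this strategy scales poorly as the number of modalities grows.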

Issue 2: Missing Modalities with Limited Labeled Data

Problem: You are working on a task that suffers from both missing modalities and a very small number of annotated training samples (the "low-data regime").

Solutions:

- Leverage In-Context Learning (ICL): Employ a retrieval-augmented in-context learning framework. This method retrieves relevant, full-modality samples from a support set and uses them as context for a transformer model to make predictions on a target sample that may have missing modalities. This data-dependent approach is highly sample-efficient [6].

- Explore Data Imputation Techniques: Generate or impute the missing modality data from the available modalities. This can be done at the raw data level (modality generation) or at the feature representation level (representation generation). Once the modality is imputed, downstream tasks can proceed as if all modalities were available [2].

Issue 3: Handling Arbitrary and Dynamic Modality Combinations

Problem: You need a single model that can handle unpredictable and constantly changing patterns of missing modalities across different clients or data samples, such as in a federated learning setting.

Solutions:

- Implement Reconfigurable Representations: As proposed for multimodal federated learning, use learnable client-side embedding controls. These embeddings act as reconfiguration signals that encode each client's specific data-missing pattern. They align a globally aggregated representation with the local client's context, allowing for dynamic adaptation [7].

- Utilize Coordinated Representation Learning: Train your model with specific constraints that align the representations of different modalities in a shared semantic space. This way, even if one modality is missing, its correlated representation can be inferred or approximated by the others, maintaining performance [2].

Experimental Protocols & Data

The following table summarizes the performance improvements achieved by various robust learning methods on different datasets.

Table 1: Performance Improvements of Robust Multimodal Methods

| Method / Approach | Key Metric | Dataset(s) | Performance Result |
|---|---|---|---|
| ICL-CA (In-Context Learning) [6] | Accuracy gain over best baseline with only 1% training data | Four multimodal datasets | 5.9% to 10.8% improvement across various missing states |
| Chameleon Framework [1] | Robustness to missing modalities | Six benchmark datasets (e.g., Hateful Memes, VoxCeleb) | Outperforms standard multimodal methods and shows superior resilience without data-centric optimization |
| Parameter-Efficient Adaptation [4] | Number of new parameters required | Five tasks across seven datasets | Achieves robustness with <1% of total model parameters |
| Multimodal Federated Learning [7] | Performance improvement under severe data incompleteness | Multiple federated benchmarks | Up to 36.45% performance improvement |

Detailed Experimental Protocol: Parameter-Efficient Adaptation

This protocol is based on the method described in "Robust Multimodal Learning With Missing Modalities via Parameter-Efficient Adaptation" [4] [5].

1. Objective: To bridge the performance gap caused by missing modalities during inference by adapting a pre-trained multimodal network with minimal trainable parameters.

2. Methodology:

- Pre-trained Model: Start with a multimodal network that has been pre-trained on a dataset with all modalities present.

- Adaptation Mechanism: Introduce lightweight adaptation modules (e.g., feature modulation layers) into the pre-trained network. These modules are designed to adjust the intermediate features of the available modalities to compensate for the missing one(s).

- Training Procedure:

- Artificially create training scenarios with missing modalities by masking out one or more modalities from the input data.

- Keep the vast majority of the pre-trained network's weights frozen.

- Only update the parameters of the newly introduced adaptation modules.

- Use the original task's loss function (e.g., cross-entropy for classification) to train the model.

3. Evaluation:

- Test the adapted model on a held-out test set where modalities are intentionally missing.

- Compare its performance against (a) the original pre-trained model (which suffers a large drop) and (b) independently trained networks dedicated to specific modality combinations.
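The adaptation mechanism in this protocol can be illustrated with a per-channel scale-and-shift, which is one common form of feature modulation. This is a sketch under stated assumptions: the text above does not give the exact modulation used in [4] [5], and the function names, feature values, and parameter counts below are hypothetical.

```python
def modulate(features, gamma, beta):
    """Apply a per-channel scale and shift to frozen intermediate features."""
    return [g * f + b for f, g, b in zip(features, gamma, beta)]

def trainable_fraction(num_frozen, num_new):
    """Share of parameters that receive gradient updates."""
    return num_new / (num_frozen + num_new)

# Frozen backbone output for the available modality (illustrative values).
hidden = [0.5, -1.0, 2.0]

# Adaptation parameters start at the identity (gamma=1, beta=0), so the
# adapted network initially reproduces the pre-trained one.
gamma = [1.0, 1.0, 1.0]
beta = [0.0, 0.0, 0.0]
assert modulate(hidden, gamma, beta) == hidden

# After training on masked inputs, only gamma and beta would have changed.
gamma, beta = [1.2, 0.8, 1.0], [0.1, 0.0, -0.3]
adapted = modulate(hidden, gamma, beta)

# Hypothetical sizes: a 100M-parameter backbone with 500k new parameters.
fraction = trainable_fraction(100_000_000, 500_000)
```

Starting from the identity is a design choice that keeps the full-modality behaviour of the pre-trained network intact before any adaptation step is taken.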

Detailed Experimental Protocol: In-Context Learning for Data Scarcity

This protocol is based on the method described in "Borrowing treasures from neighbors: In-context learning for multimodal learning with missing modalities and data scarcity" [6].

1. Objective: To address the dual challenge of missing modalities and limited annotated data by leveraging the in-context learning ability of transformer models.

2. Methodology:

- Model Architecture: Utilize a transformer-based model capable of in-context learning.

- Retrieval-Augmented Setup:

- Maintain a support set of full-modality data samples.

- For a given target sample with missing modalities, retrieve the most relevant full-modality samples from the support set.

- Input Formulation: Construct the input to the transformer by concatenating the retrieved full-modality samples (context) with the target sample that has missing modalities.

- Training/Learning:

- The model learns to use the context from the retrieved full-modality samples to understand the task and fill in the informational gaps for the target sample.

- This approach is highly sample-efficient as it relies on a data-dependent paradigm rather than intensive parameter updates.

3. Evaluation:

- Evaluate the model in a low-data regime where only a small fraction (e.g., 1%) of the training data is available.

- Measure classification accuracy on both full-modality and missing-modality test data.

- Assess the reduction in the performance gap between full-modality and missing-modality data compared to baseline methods.
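The retrieval step of this protocol can be sketched as a nearest-neighbour search over the support set, comparing samples on a modality the target still has. The cosine-similarity ranking, the `retrieve_context` name, and the toy feature vectors are assumptions for illustration; the text above does not specify how [6] measures relevance.

```python
import math

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def retrieve_context(target, support_set, shared_modality, k=2):
    """Return the k support samples most similar to `target`,
    compared on a modality that the target actually has."""
    query = target[shared_modality]
    ranked = sorted(support_set,
                    key=lambda s: cosine(query, s[shared_modality]),
                    reverse=True)
    return ranked[:k]

support_set = [
    {"id": "a", "image": [1.0, 0.0], "text": [0.9, 0.1], "label": 0},
    {"id": "b", "image": [0.0, 1.0], "text": [0.1, 0.9], "label": 1},
    {"id": "c", "image": [0.9, 0.1], "text": [0.8, 0.2], "label": 0},
]
target = {"image": [1.0, 0.1], "text": None}  # text modality is missing

context = retrieve_context(target, support_set, "image", k=2)
# Retrieved full-modality samples come first, the incomplete target last.
model_input = context + [target]
```

The transformer would then receive `model_input` as a sequence, with the retrieved neighbours supplying the text information that the target lacks.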

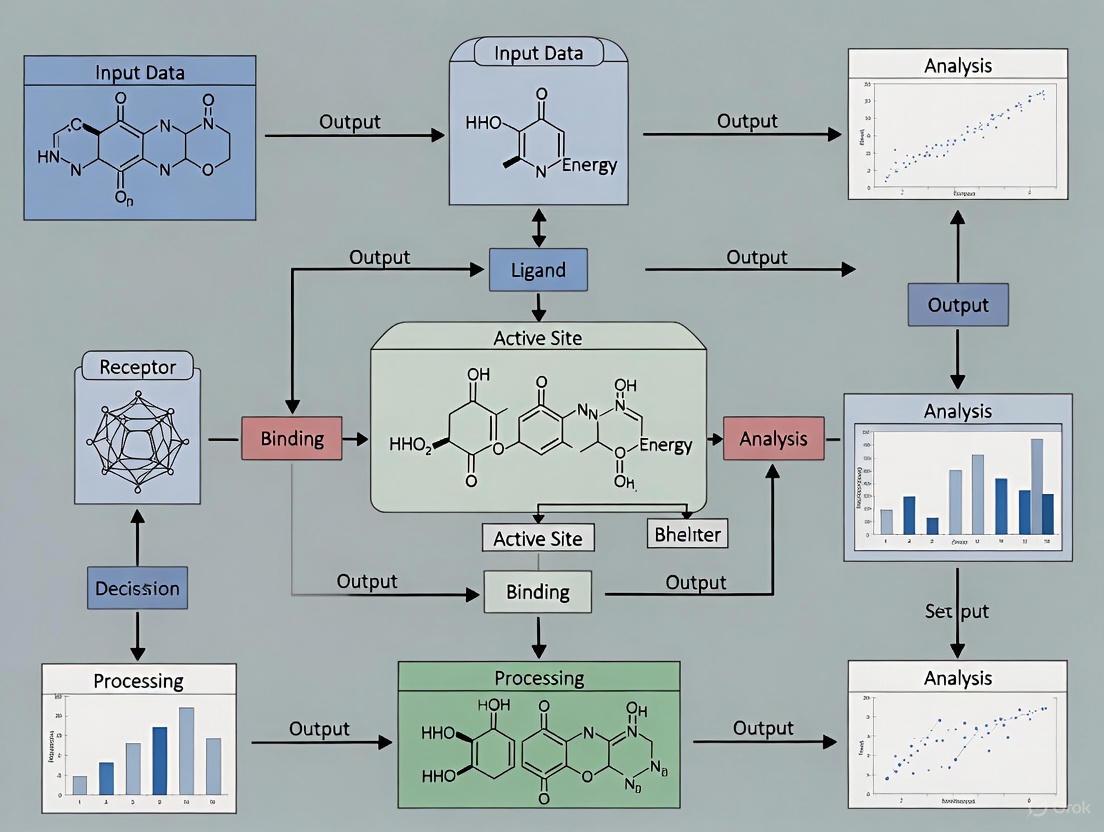

Workflow Diagrams

Diagram 1: Chameleon Framework Workflow

Chameleon Framework Flow

Diagram 2: In-Context Learning for Missing Modalities

In-Context Learning Flow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Methods for Robust Multimodal Learning

| Item / Reagent | Function / Purpose | Example Use Case |
|---|---|---|
| Parameter-Efficient Adaptation Modules | Lightweight neural network components added to a pre-trained model to adjust features and compensate for missing inputs with minimal new parameters. | Fine-tuning large pre-trained multimodal models (e.g., ViLT) to be robust to missing modalities without full retraining [4] [5]. |
| Modality Encoding Scheme | An algorithm that transforms non-visual data (text, audio) into a visual format (e.g., a 2D feature map), enabling a unified visual processing pipeline. | The core of the Chameleon framework, allowing a single visual network to process any combination of text, audio, and images [1]. |
| In-Context Learning (ICL) with Retrieval | A data-dependent framework that uses a support set of full-modality examples to provide context for a transformer model making predictions on incomplete samples. | Tackling multimodal tasks in data-scarce regimes where collecting large annotated datasets is expensive or impractical [6]. |
| Multimodal Datasets with Natural Missingness | Real-world datasets where a significant portion of samples have one or more modalities missing, essential for training and evaluating model robustness. | TCGA cancer datasets (genomic & image data) [3], social media datasets (text & image) [1], and audio-visual datasets [1]. |
| Reconfigurable Representation Framework | A set of learnable embeddings that encode a client's specific data-missing pattern, allowing a global model to adapt to local data heterogeneity. | Multimodal federated learning scenarios where different clients possess different and incomplete subsets of modalities [7]. |

Frequently Asked Questions (FAQs)

1. What are the most common causes of missing data in real-world multimodal experiments? Missing data in multimodal experiments frequently arises from sensor malfunctions (e.g., device failure, battery drain), costly or invasive data collection procedures (e.g., skipping expensive PET scans in Alzheimer's studies), privacy concerns, data loss during transmission, and human error (e.g., patients forgetting to fill out surveys) [8] [2]. In pharmaceutical manufacturing, equipment malfunctions and unplanned downtime are significant contributors [9].

2. Why is simply removing samples with missing data a problematic strategy? Deleting records with missing data, known as listwise deletion, is a common but flawed approach. It wastes valuable information present in the available modalities and can introduce significant bias if the missingness is not random, thereby reducing the reliability and generalizability of the resulting model [8] [2]. It also fails to prepare the model for real-world scenarios where missing data occurs at test time.

3. What is the fundamental difference between 'random missing' data and a 'missing modality'?

- Random Missing: Refers to sporadic, isolated missing values within a single modality (e.g., a few time-series sensor readings are lost) [8].

- Missing Modality: Describes the scenario where an entire modality is absent for a data sample (e.g., a patient completely lacks MRI data) [8] [2]. This presents a more significant architectural challenge for multimodal systems.

4. How can I make my multimodal model robust to a modality being entirely absent during testing? Several advanced methodological families are designed for this purpose, moving beyond simple imputation. Key strategies include:

- Modality Imputation: Generating plausible data for the missing modality based on the available ones [2].

- Representation-Focused Models: Learning a shared semantic space where representations from different modalities are aligned, or generating the missing modality's representation directly [2].

- Architecture-Focused Models: Designing flexible networks (e.g., using parameter-efficient adaptation) that can dynamically adjust to whichever modalities are present [4] [2].

- Unification Frameworks: Encoding all modalities into a common format (e.g., transforming text and audio into a visual representation) so the model always processes a single, consistent input type, making it inherently robust to absence [1].

5. What is a 'data gap' and how does it differ from typical missing data? A data gap does not refer to a few missing values in an otherwise populated dataset. Instead, it describes a situation where an entire data series was never collected or is not available at a useful granularity, for a price, or with acceptable timeliness [10]. For example, a complete lack of data on the nutritional content of school meals in a region is a data gap, which fundamentally limits the analysis that can be performed.

Troubleshooting Guides

Issue 1: Handling Missing Modalities During Model Inference

Problem: Your trained multimodal model experiences a severe performance drop when one or more modalities are missing during deployment, which was not accounted for during training.

Diagnosis: This is a classic symptom of a model that has developed a dependency on a complete set of modalities due to its multi-branch design and training procedure [1].

Solution Strategies:

| Solution Category | Description | Key Techniques | Consideration |
|---|---|---|---|
| Parameter-Efficient Adaptation [4] | Fine-tunes a small subset of parameters (e.g., <1% of total) in a pre-trained model to compensate for missing inputs. | Feature modulation, adapter layers. | Highly parameter-efficient; applicable to a wide range of modality combinations. |
| Unification via Visual Encoding [1] | Encodes all non-visual modalities (text, audio) into a visual format (e.g., via embeddings reshaped into 2D), enabling a single visual network. | Embedding extraction, 2D reshaping. | Simplifies architecture; inherently robust; may require modality-specific encoders. |
| Fusion-Based Imputation [8] | Uses information from available modalities to impute the missing one before fusion. | Early, Intermediate, or Late Fusion strategies. | Can be computationally expensive; risk of introducing noise if imputation is poor. |

Experimental Protocol for Robustness Validation:

- Dataset Preparation: Use a multimodal dataset (e.g., from healthcare [8] or affective computing [2]).

- Create Test Splits: Generate multiple test sets where modalities are systematically ablated (e.g., text-only, image-only, audio-only).

- Model Training: Train your proposed robust model (e.g., using the Chameleon framework [1] or parameter-efficient adaptation [4]).

- Benchmarking: Compare your model's performance against a baseline model (trained on complete data only) across all test splits.

- Metrics: Report key metrics (e.g., Accuracy, F1-Score) for each test condition to demonstrate robustness. The goal is to minimize the performance gap between complete and missing-modality scenarios.
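The validation steps above can be sketched as a small harness that builds one ablated test split per modality combination and reports accuracy for each. This is a minimal sketch: `toy_model`, the scalar "features", and the `None`-for-missing convention are hypothetical stand-ins for a trained network and real data.

```python
from itertools import combinations

def modality_subsets(modalities):
    """All non-empty combinations of modalities, largest first."""
    return [set(c) for r in range(len(modalities), 0, -1)
            for c in combinations(modalities, r)]

def ablate(sample, keep):
    """Copy a sample, setting every modality outside `keep` to None."""
    return {k: (v if k in keep or k == "label" else None) for k, v in sample.items()}

def accuracy(model, samples):
    return sum(model(s) == s["label"] for s in samples) / len(samples)

def robustness_report(model, test_set, modalities):
    report = {}
    for keep in modality_subsets(modalities):
        split = [ablate(s, keep) for s in test_set]
        report["+".join(sorted(keep))] = accuracy(model, split)
    return report

# Toy stand-in for a trained model: it trusts text when present, else image.
def toy_model(sample):
    source = sample["text"] if sample["text"] is not None else sample["image"]
    return int(source > 0.5)

test_set = [
    {"image": 0.9, "text": 0.8, "label": 1},
    {"image": 0.6, "text": 0.2, "label": 0},
    {"image": 0.1, "text": 0.3, "label": 0},
    {"image": 0.4, "text": 0.7, "label": 1},
]
report = robustness_report(toy_model, test_set, ["image", "text"])
# Gap between the complete condition and the worst ablated condition.
gap = report["image+text"] - min(report.values())
```

Running the same harness on the baseline and on the robust model gives directly comparable per-condition numbers, and `gap` is the quantity the protocol aims to minimize.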

The logical flow for diagnosing and addressing missing modality robustness is outlined below:

Issue 2: Addressing Fundamental Data Gaps in a Research Domain

Problem: You identify a critical lack of data necessary to investigate your research question (e.g., no data on childhood obesity drivers in a specific region) [10].

Diagnosis: This is a data gap, not a simple missing data problem. The required information was never systematically collected.

Solution Strategy: A Five-Step Data Gap Mapping Process [10]

This methodology provides a structured way to identify and prioritize missing data at a macro level.

- Map Routes to Impact: Define the key areas of focus for your research mission (e.g., reducing obesity by improving school meals, reducing unhealthy advertising).

- Find the Purpose of Data: For each focus area, identify the specific data needed to track progress towards your intermediate goals (e.g., data to track the healthiness of school meals over time).

- Build a Vision for Ideal Data: Imagine the perfect dataset that would fulfill your data needs, without considering current limitations (e.g., a national database of all school meal menus with nutritional information).

- Uncover Existing Data: Critically assess available data against your "ideal" vision. Ask: Is it available at the right granularity? Is it accurate? Is it timely? This exposes partial or complete gaps.

- Describe and Prioritize Gaps: For each identified gap, assign a priority (Low/Medium/High) based on:

- Time Sensitivity: Is it needed for a current policy decision?

- Potential Impact: How much could this data advance the field?

- Effort Required: How difficult is it to collect?

The following chart visualizes this iterative process:

The Scientist's Toolkit: Research Reagent Solutions

This table details key methodological "reagents" for building robust multimodal systems.

| Research Reagent | Function in Experiment | Key Characteristics |
|---|---|---|
| Fusion Strategies [8] | Defines how and when information from different modalities is combined, which is crucial for imputation. | Early Fusion: Combine raw data. Intermediate Fusion: Merge features in hidden layers. Late Fusion: Fuse model outputs/predictions. |
| Modality Imputation Methods [2] | Generates plausible data for a missing modality, allowing standard full-modality models to be used. | Modality Composition: Combines available modalities. Modality Generation: Uses generative models (e.g., VAEs, GANs). |
| Shared Representation Learning [2] [1] | Aligns features from different modalities into a common semantic space, enabling cross-modal understanding. | Uses constraints (e.g., contrastive loss) to ensure representations of the same concept are close, regardless of modality. |
| Parameter-Efficient Adaptation [4] | Fine-tunes a minimal number of parameters in a pre-trained network to adapt it to missing modality scenarios. | Methods include feature modulation or adapter layers. Requires <1% of total parameters, making it highly efficient. |
| Unification Encoding [1] | Transforms all input modalities into a single, consistent format (e.g., images) for processing by a single model. | Encodes non-visual data (text, audio) as 2D representations. Makes the model inherently robust to modality absence. |

Troubleshooting Guide: Diagnosing Multimodal Learning Issues

This guide helps you diagnose and address two common challenges in multimodal learning research: modality missingness (the absence of entire data modalities) and modality imbalance (where one modality dominates the learning process).

Issue: Poor Model Performance with Incomplete Data

- Problem Description: Your model's performance degrades significantly when one or more data modalities (e.g., audio from a video file, a tabular clinical feature set) are missing during inference, even if it performs well on complete data.

- Diagnosis Check: This is a classic symptom of Modality Missingness. It indicates that your model makes reliable predictions only when all data streams are present and fails to exploit the information that remains available when one is absent [11] [12].

- Recommended Solution: Implement a modality-resilient framework. The DREAM framework uses a sample-level dynamic modality assessment to direct the selective reconstruction of missing modalities and a soft masking fusion strategy for adaptive integration [11]. Alternatively, employ improved modality dropout during training. Using learnable modality tokens instead of fixed zero placeholders enhances the model's awareness and handling of missing inputs [12].
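The modality-dropout idea with placeholder tokens can be sketched as follows. This is an illustration under stated assumptions: in a real model the tokens would be trainable parameters updated by the optimizer, whereas here they are fixed vectors, and the function names, drop probability, and keep-at-least-one rule are hypothetical choices rather than details taken from [12].

```python
import random

def apply_modality_dropout(sample, tokens, drop_prob, rng):
    """Randomly replace modalities with their placeholder token.

    At least one modality is always kept so the sample stays informative.
    """
    names = list(sample)
    dropped = [n for n in names if rng.random() < drop_prob]
    if len(dropped) == len(names):
        dropped.remove(rng.choice(dropped))
    return {n: (tokens[n] if n in dropped else sample[n]) for n in names}

def fill_missing(sample, tokens):
    """At inference, substitute the token for any modality that is None."""
    return {n: (tokens[n] if v is None else v) for n, v in sample.items()}

# Tokens would be trainable parameters in a real model; here they are
# fixed illustrative vectors, distinct from an all-zero placeholder.
tokens = {"audio": [0.05, -0.02], "video": [0.01, 0.03]}

rng = random.Random(0)
train_sample = {"audio": [0.4, 0.6], "video": [0.7, 0.1]}
augmented = apply_modality_dropout(train_sample, tokens, drop_prob=0.5, rng=rng)

test_sample = {"audio": None, "video": [0.7, 0.1]}
filled = fill_missing(test_sample, tokens)
```

Using the same token at training and inference time means the network sees an explicit, consistent signal for "this modality is absent" instead of an all-zero input that could be confused with real data.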

Issue: Model Over-Reliance on a Single Modality

- Problem Description: Your model appears to "ignore" one or more modalities, basing its decisions primarily on a single, dominant data type (e.g., relying on audio over visual cues in emotion recognition). Performance may not surpass, and could even be worse than, a unimodal model using only the dominant modality [13] [14].

- Diagnosis Check: This indicates Modality Imbalance. The model's optimization process has been skewed, allowing a modality that is easier to learn from to overshadow others. Recent research shows this imbalance manifests not just during training but also systematically at the decision layer due to intrinsic disparities in feature-space and decision-weight distributions [13].

- Recommended Solution: Move beyond simply equalizing modality contributions. Pursue a dataset-aware Utopia Contribution Distribution (UCD), which estimates the optimal contribution ratio for each modality based on the specific dataset, rather than forcing an equal balance. Align the model's Factual Contribution Distribution (FCD) with this UCD during training [14]. For Large Multimodal Models (LMMs), techniques like Modality-Balancing Preference Optimization (MBPO) can be used, which generates hard negatives to counteract the model's inherent linguistic bias [15].

Issue: Handling Data that is Both Missing and Imbalanced

- Problem Description: You are working with a real-world dataset where some samples have missing modalities, and the available modalities have inherently different levels of predictive power.

- Diagnosis Check: You are facing the combined challenge of missingness and imbalance. This is a common scenario in clinical and real-world data [16] [12].

- Recommended Solution: Adopt a unified framework that addresses both issues simultaneously. The DREAM framework is explicitly designed for this, as it dynamically recognizes missing or underperforming modalities and adaptively fuses the available ones based on their estimated contributions [11]. Another approach is to combine simultaneous modality dropout for robustness with contrastive multimodal fusion that binds unimodal and fused representations to improve learning from all modalities [12].

Experimental Protocols for Robust Multimodal Research

Integrating the following methodologies into your experimental pipeline can systematically enhance model robustness.

Protocol 1: Improved Modality Dropout with Contrastive Learning

This protocol enhances robustness to missingness and improves unimodal representations [12].

- Model Setup: Use a standard multimodal architecture with pretrained, frozen unimodal encoders, a lightweight fusion MLP, and a task head.

- Simultaneous Dropout Training: Instead of randomly sampling modality subsets, explicitly supervise all combinations. The loss function is:

  $$\mathcal{L}_{\mathrm{smd}} = -\log p(y_i \mid x_{c,i}, x_{t,i}, \theta) - \lambda \sum_{j \in M} \log p(y_i \mid x_{j,i}, \theta)$$

  where $M$ is the set of modalities and $\lambda$ is a balancing hyperparameter.

- Integrate Learnable Tokens: Replace the traditional fixed zero matrices ($0_c$, $0_t$) used for missing modalities with learnable modality tokens ($E_c$, $E_t$). This helps the model generalize better to missingness.

- Apply Contrastive Learning: Use a supervised contrastive loss that includes not only unimodal representations ($z_c$, $z_t$) but also the fused multimodal representation ($z_f$). This encourages better alignment and binding of concepts across representations.
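The simultaneous-dropout loss from this protocol can be evaluated numerically for a single sample as shown below. This is a sketch under stated assumptions: the probabilities are made-up values standing in for model outputs, the modality keys `"c"` and `"t"` mirror the subscripts in the formula, and the function name `smd_loss` is hypothetical.

```python
import math

def smd_loss(p_full, p_unimodal, lam):
    """L_smd = -log p(y | all modalities) - lam * sum_j log p(y | modality j).

    p_full:      probability the model assigns to the true label given all modalities
    p_unimodal:  dict mapping modality name -> probability of the true label
                 when only that modality is provided
    lam:         balancing hyperparameter (lambda in the formula)
    """
    return -math.log(p_full) - lam * sum(math.log(p) for p in p_unimodal.values())

# Illustrative probabilities for one training sample.
loss = smd_loss(p_full=0.9, p_unimodal={"c": 0.6, "t": 0.7}, lam=0.5)
```

Because every unimodal term appears in the same loss, a single backward pass pushes the model to predict well from each modality on its own as well as from the full input.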

Protocol 2: Estimating and Aligning with Utopia Contribution

This protocol addresses imbalance by finding the optimal contribution target for a dataset [14].

- Estimate Utopia Contribution Distribution (UCD):

  - For each modality $m$, train a model with and without that modality.

  - The UCD for modality $m$, $\pi_m^*$, is proportional to the performance change (e.g., increase in accuracy or decrease in loss) when the modality is included. Formally, it's derived from the modality's impact on population risk.

  - Normalize the contributions so that $\sum_m \pi_m^* = 1$.

- Estimate Factual Contribution Distribution (FCD):

  - For a given model, estimate the factual contribution of each modality to a prediction using a model-agnostic method like mutual information.

  - The FCD for modality $m$ is the proportion of information its representation contributes to the final fused representation.

- Training Objective: Add a Kullback–Leibler (KL) divergence loss term to the main task loss to align the FCD with the pre-defined UCD:

  $$\mathcal{L}_{\mathrm{total}} = \mathcal{L}_{\mathrm{task}} + \mathcal{L}_{\mathrm{KL}}(\mathrm{FCD} \,\|\, \mathrm{UCD})$$
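The normalization and KL alignment steps of this protocol can be worked through with small numbers. This is a sketch under stated assumptions: the accuracy gains, the factual contributions, and the task-loss value are invented for illustration, the helper names are hypothetical, and the sketch assumes all gains are positive.

```python
import math

def normalize(values):
    """Scale positive contributions so they sum to 1."""
    total = sum(values.values())
    return {k: v / total for k, v in values.items()}

def kl_divergence(p, q, eps=1e-12):
    """KL(p || q) for two distributions over the same modality names."""
    return sum(p[m] * math.log((p[m] + eps) / (q[m] + eps)) for m in p)

# Hypothetical accuracy gains from adding each modality (UCD estimation).
gains = {"audio": 0.12, "video": 0.04}
ucd = normalize(gains)  # roughly 0.75 for audio and 0.25 for video

# Hypothetical factual contributions measured on the current model (FCD).
fcd = {"audio": 0.9, "video": 0.1}

task_loss = 0.42  # placeholder value for the main task loss
total_loss = task_loss + kl_divergence(fcd, ucd)
```

In this toy case the model leans on audio more than the target suggests (0.9 versus about 0.75), so the KL term is positive and training would be nudged toward using video more.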

Protocol 3: Agentic Framework for Missing Modality Generation (AFM2)

This protocol uses foundation models to reconstruct missing modalities in a training-free manner [17].

- Agent Design: Deploy three collaborative agents:

- Miner: A foundation model (e.g., GPT-4o) that adaptively extracts fine-grained semantic cues from the available modalities.

- Generator: A generative model (e.g., Stable Diffusion) that synthesizes the missing modality using the miner's guidance.

- Verifier: A model (e.g., ImageBind) that evaluates the generated candidates for semantic alignment with the input.

- Self-Refinement Loop:

- The miner produces a detailed description from the available context.

- The generator produces multiple candidate outputs.

- The verifier selects the best candidate. If no candidate meets a quality threshold, the guidance is refined and the process repeats.
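The self-refinement loop can be sketched as plain control flow around three callables. This is a minimal sketch, not the implementation from [17]: the `reconstruct_missing` signature, the threshold, the feedback string, and the toy miner, generator, and verifier are all hypothetical stand-ins that let the loop run without any foundation model.

```python
def reconstruct_missing(available, miner, generator, verifier,
                        threshold=0.8, num_candidates=3, max_rounds=3):
    """Mine cues, generate candidates, verify; refine guidance until accepted."""
    feedback = None
    best, best_score = None, float("-inf")
    for _ in range(max_rounds):
        guidance = miner(available, feedback)
        candidates = [generator(guidance, i) for i in range(num_candidates)]
        scored = [(verifier(available, c), c) for c in candidates]
        score, candidate = max(scored, key=lambda pair: pair[0])
        if score > best_score:
            best, best_score = candidate, score
        if score >= threshold:
            break
        # No candidate passed: ask the miner for richer guidance next round.
        feedback = f"best score {score:.2f} below {threshold}; add detail"
    return best, best_score

# Toy stand-ins so the loop can run without any foundation model.
def toy_miner(available, feedback):
    detail = 1 if feedback is None else 2
    return {"caption": available["text"], "detail": detail}

def toy_generator(guidance, seed):
    return {"image_for": guidance["caption"], "detail": guidance["detail"], "seed": seed}

def toy_verifier(available, candidate):
    return 0.45 * candidate["detail"] + 0.01 * candidate["seed"]

result, score = reconstruct_missing({"text": "a red bus"}, toy_miner,
                                    toy_generator, toy_verifier)
```

With these toy components the first round falls below the threshold, the feedback triggers more detailed guidance, and the second round produces an accepted candidate. Capping the number of rounds keeps the cost bounded when no candidate ever passes.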

The tables below summarize key experimental findings from recent studies on modality imbalance and missingness.

Table 1: Decision-Layer Imbalance Measurements on Audio-Visual Datasets (CREMAD & Kinetic-Sounds). This data quantifies the inherent disparity in decision weights and output logits between audio and video modalities, even after sufficient pre-training, demonstrating that imbalance is a fundamental property beyond optimization dynamics [13].

| Dataset | Modality | Avg. Weight (×10⁻²) | Avg. Logits (×10⁻²) |
|---|---|---|---|
| CREMAD | Audio | 3.56 | 2.14 |
| CREMAD | Video | 1.81 | 1.48 |
| Kinetic-Sounds | Audio | 3.63 | 2.47 |
| Kinetic-Sounds | Video | 2.73 | 2.02 |

Table 2: Performance of the DREAM Framework on Benchmark Datasets. The results demonstrate the framework's effectiveness in handling both modality missingness and imbalance, showing superior performance compared to other models, especially under the challenging condition of a single available modality [11].

| Dataset | Model | Full Modality Accuracy | Single Modality Accuracy |
|---|---|---|---|
| IEMOCAP | DREAM | 68.9 | 63.5 |
| IEMOCAP | MISA | 65.1 | 58.3 |
| IEMOCAP | MulT | 66.7 | 59.8 |
| CMU-MOSEI | DREAM | 83.4 | 79.2 |
| CMU-MOSEI | MISA | 80.5 | 74.1 |
| CMU-MOSEI | MulT | 81.6 | 75.0 |
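To make the robustness comparison in Table 2 concrete, the snippet below computes the accuracy drop from the full-modality to the single-modality condition for each model. The numbers are copied from the table; reading the rows without a dataset label as belonging to the dataset above them is an assumption, as are the variable and function names.

```python
# Accuracies copied from Table 2 as (full-modality, single-modality).
results = {
    "IEMOCAP": {"DREAM": (68.9, 63.5), "MISA": (65.1, 58.3), "MulT": (66.7, 59.8)},
    "CMU-MOSEI": {"DREAM": (83.4, 79.2), "MISA": (80.5, 74.1), "MulT": (81.6, 75.0)},
}

def accuracy_drop(full, single):
    """Absolute drop, in percentage points, from full to single modality."""
    return round(full - single, 1)

drops = {
    dataset: {model: accuracy_drop(*scores) for model, scores in models.items()}
    for dataset, models in results.items()
}
# Model with the smallest drop on each dataset.
smallest = {dataset: min(d, key=d.get) for dataset, d in drops.items()}
```

On these figures DREAM loses 5.4 points on IEMOCAP and 4.2 on CMU-MOSEI, while the other two models lose between 6.4 and 6.9 points, which is the gap the table caption refers to.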

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential materials and methods for building robust multimodal models.

| Research Reagent | Function & Explanation |
|---|---|
| Learnable Modality Tokens [12] | A replacement for fixed zero-placeholders in modality dropout. These learnable parameters improve the model's "awareness" of which modality is missing, leading to more robust representations when data is incomplete. |
| Utopia Contribution Distribution (UCD) [14] | A dataset-aware optimization target that defines the ideal contribution proportion for each modality. It prevents the suboptimal performance that can result from blindly forcing all modalities to contribute equally. |
| Adversarial Negative Mining [15] | A data curation method for preference optimization. It generates "hard negative" responses that are misled by a dominant modality's bias (e.g., language), teaching the model to rely more on the neglected modality (e.g., vision). |
| Agentic Framework (AFM2) [17] | A training-free, planner-based system that uses foundation models as "agents" to mine cross-modal cues, generate missing data, and verify output quality. It is particularly useful for reconstructing raw missing modalities. |
| Simultaneous Modality Dropout [12] | A training strategy that explicitly calculates loss for every possible combination of available modalities in a single iteration. This ensures the model is directly optimized for all missing-data scenarios, leading to more stable training. |

Visual Workflows for Multimodal Learning

The following diagrams illustrate key workflows and relationships for tackling modality imbalance and missingness.

Diagram 1: Analyzing Decision-Layer Imbalance

This workflow outlines the experimental procedure for identifying and diagnosing modality imbalance at the decision layer of a model [13].

Diagram 2: Handling Missing Modalities with AFM2

This diagram shows the iterative, self-refining process of the Agentic Framework for generating missing modalities [17].

Diagram 3: Utopia vs. Factual Contribution Alignment

This chart illustrates the core principle of aligning a model's actual modality use with the ideal target for a given dataset [14].

Frequently Asked Questions (FAQs)

Q1: Is it always bad if one modality contributes more than others?

A: No. Forcing equal contribution can be counterproductive [14]. The goal is a relative balance aligned with the "Utopia Contribution" for your dataset. A modality with inherently higher predictive power should often have a larger weight. The problem is a systematic bias that prevents weaker modalities from contributing effectively, even in contexts where they are informative [13].

Q2: Can't I just discard samples with missing data?

A: "Complete-case analysis" (dropping samples with any missing data) is rarely appropriate [18]. It assumes the remaining data is representative, which is often false, and can introduce severe bias, reduce statistical power, and exclude marginalized populations whose data is more likely to be missing [18]. Using robust methods like modality dropout or imputation is statistically and ethically preferable.

Q3: Are modality imbalance and missingness independent problems?

A: No, they are deeply interconnected. Missingness can exacerbate imbalance (e.g., if the dominant modality is frequently missing), and solutions must often address both [11] [12]. Frameworks like DREAM are explicitly designed to handle this combined challenge through dynamic assessment and fusion.

Q4: What is the biggest limitation in using foundation models to generate missing modalities?

A: Current foundation models often struggle with fine-grained semantic extraction and lack robust verification mechanisms, which can lead to semantically misaligned or low-quality generated content [17]. The proposed agentic framework (AFM2) with its miner and verifier agents is a step toward mitigating these issues.

Frequently Asked Questions (FAQs)

1. Why does model performance deteriorate when a modality is missing? Multimodal models typically rely on a multi-branch architecture, where each branch processes a specific modality. During training, these models develop a dependency on having a complete set of modalities to form accurate joint representations. When one branch receives no input due to a missing modality, the model cannot function as designed, leading to significant performance drops [1]. Furthermore, models may learn shortcuts from spurious correlations present only in the complete training data, failing to generalize to incomplete data scenarios [11].

2. What are the common types of modality missingness encountered in real-world data? Missing modalities can occur in various patterns:

- Missing Completely at Random (MCAR): The absence of a modality is independent of any observed or unobserved data. This is often the simplest pattern to handle.

- Missing at Random (MAR): The probability of a modality being missing depends on other observed variables in the data.

- Missing Not at Random (MNAR): The missingness is related to the unobserved values of the missing modality itself. This is the most challenging scenario, common in clinical settings where a specific test is not performed because a patient's symptoms suggest it is unnecessary [19] [3].
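
To make the three mechanisms above concrete, the following sketch generates a per-sample "modality missing" mask under each one. The function name, the median threshold, and the doubled rate for the affected half are illustrative assumptions, not part of any cited method.

```python
import numpy as np


def simulate_missing_mask(mechanism, observed, target, rate=0.3, seed=0):
    """Return a boolean mask (True = modality missing) for each sample.

    observed: 1D array of a fully observed covariate (e.g., age).
    target:   1D array summarizing the modality that may go missing.
    """
    rng = np.random.default_rng(seed)
    n = len(target)
    if mechanism == "MCAR":
        # Missingness ignores all data.
        prob = np.full(n, rate)
    elif mechanism == "MAR":
        # Missingness depends only on the observed covariate.
        prob = np.where(observed > np.median(observed), 2 * rate, 0.0)
    elif mechanism == "MNAR":
        # Missingness depends on the value that would go missing.
        prob = np.where(target > np.median(target), 2 * rate, 0.0)
    else:
        raise ValueError(f"unknown mechanism: {mechanism}")
    return rng.random(n) < np.clip(prob, 0.0, 1.0)
```

Under MAR and MNAR the mask looks identical in aggregate; the difference is only whether the variable driving it is available to the analyst, which is why MNAR cannot be confirmed from the observed data alone.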

3. Can't I just discard samples with missing modalities during training? While common, this practice is suboptimal. Discarding samples wastes valuable data and can drastically reduce your training dataset size, increasing the risk of overfitting. In clinical studies, this can also introduce selection bias, as the "complete" dataset may no longer be representative of the real patient population [3]. Modern methods aim to utilize all available data.

4. How does modality imbalance differ from modality missingness? Modality missingness refers to the complete absence of one or more modalities for a given data sample. Modality imbalance, however, occurs when all modalities are present but contribute unequally to the final prediction. A dominant modality can cause the model to overlook subtle but important signals from weaker modalities, also leading to suboptimal performance [11].

5. What is a common baseline approach to handle a missing modality during inference? A simple baseline is zero-imputation, where the missing modality is replaced with a zero vector. However, this can create a distribution shift between training and inference, as the model encounters an input it was not trained on. More advanced methods dynamically adjust the fusion strategy or reconstruct a placeholder for the missing modality [11].
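
A minimal sketch of the zero-imputation baseline described above, assuming each modality has already been encoded into a fixed-length feature vector. The function name and the extra presence flags are assumptions added for illustration; plain zero-imputation would return only the fused vector.

```python
import numpy as np


def zero_impute(features, dims):
    """Concatenate modality features, zero-filling any that are missing.

    features: dict name -> 1D array, or None when the modality is absent.
    dims:     dict name -> expected feature length for each modality.
    Returns the fused vector and a 0/1 presence flag per modality.
    """
    parts, present = [], []
    for name in sorted(dims):
        value = features.get(name)
        if value is None:
            parts.append(np.zeros(dims[name]))
            present.append(0.0)
        else:
            parts.append(np.asarray(value, dtype=float))
            present.append(1.0)
    return np.concatenate(parts), np.array(present)
```

Feeding the presence flags to the downstream network is one way to let it distinguish a genuinely zero feature from an absent modality, although it does not remove the distribution shift unless missing inputs are also seen during training.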

Troubleshooting Guides

Issue: Performance Drop with Modally-Incomplete Test Data

Problem: Your model, which was trained on a complete multimodal dataset, suffers a significant drop in accuracy when one or more modalities are missing during testing.

Solution: Implement a robust multimodal learning framework designed to handle missingness. Below is a comparison of strategies documented in recent literature.

Table 1: Comparison of Robust Multimodal Learning Frameworks

| Framework / Method | Core Principle | Handling Missing Modalities During... | Key Advantage(s) |

|---|---|---|---|

| DREAM [11] | Dynamic modality assessment & selective reconstruction; soft masking fusion. | Training & Inference | Sample-level dynamic adaptation; no need for explicit missing-modality annotations. |

| Chameleon [1] | Unifies all modalities into a visual common space via encoding. | Training & Inference | Single-branch network eliminates dependency on modality-specific branches. |

| CPM-Nets Fusion [3] | Learns a complete, structured joint representation via reconstruction and classification loss. | Training & Inference | Can handle arbitrary missing patterns; uses available modalities to reconstruct the hidden representation. |

| Ma et al. Strategy [1] | Multi-task optimization to improve Transformer robustness. | Training & Inference | Reduces dependency on complete modality set without complex fusion schemes. |

Experimental Protocol for Robust Training: A common protocol to evaluate these methods involves artificially creating missing data in a complete dataset.

- Dataset: Start with a benchmark dataset where all samples have all modalities (e.g., a multimodal medical dataset [3] or a text-visual dataset [1]).

- Data Splitting: Split the data into training, validation, and test sets.

- Simulate Missingness: For the training set, randomly discard one modality (e.g., the text or image) from a fixed percentage of the samples (e.g., 25%, 50%, or 75%). Keep the validation and test sets complete for initial evaluation.

- Model Training: Train the robust model (e.g., Chameleon, DREAM) using all available data, including the samples with missing modalities.

- Evaluation:

- Test the model on a complete-modality test set.

- Test the model on a missing-modality test set, where modalities are systematically dropped to simulate real-world inference scenarios.

- Metrics: Compare accuracy, F1-score, etc., against a baseline model trained only on complete data.
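
The "Simulate Missingness" step of the protocol above can be sketched as follows. The function name and the availability-table layout are assumptions for illustration; the only behavior taken from the protocol is that one modality is randomly removed from a given percentage of samples.

```python
import numpy as np


def drop_modality(n_samples, modalities, percent, seed=0):
    """Build an availability table with one modality removed from `percent`% of samples.

    Returns a dict name -> boolean array (True = modality available).
    Each affected sample loses exactly one randomly chosen modality,
    so no sample ends up with zero modalities.
    """
    rng = np.random.default_rng(seed)
    available = {m: np.ones(n_samples, dtype=bool) for m in modalities}
    n_affected = int(round(n_samples * percent / 100))
    affected = rng.choice(n_samples, size=n_affected, replace=False)
    dropped = rng.integers(0, len(modalities), size=n_affected)
    for idx, which in zip(affected, dropped):
        available[modalities[which]][idx] = False
    return available
```

For example, `drop_modality(1000, ["image", "text"], 50)` marks 500 samples as missing either the image or the text. Fixing the seed keeps the simulated pattern identical across the methods being compared.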

The following diagram illustrates the core architectural difference between a standard multimodal model and a robust framework like Chameleon.

Robust vs. Standard Multimodal Architecture

Issue: Modality Imbalance and Dominance

Problem: Even when all modalities are present, one modality (e.g., image) dominates the prediction, causing the model to underutilize other important modalities (e.g., genomic data).

Solution: Implement a dynamic fusion strategy that adaptively weights the contribution of each modality based on the input sample.

Table 2: Quantitative Results on Benchmark Datasets (Classification Accuracy)

| Dataset | Task | Complete Modality | Missing Modality (Text) | Missing Modality (Image) |

|---|---|---|---|---|

| Hateful Memes [1] | Binary Classification | 76.5 (Chameleon) | 73.1 (Chameleon) | 70.2 (Chameleon) |

| UPMC Food-101 [1] | Food Classification | 91.2 (Chameleon) | 89.8 (Chameleon) | 90.5 (Chameleon) |

| TCGA Glioma [3] | Grade Classification (3-way) | 84.4 (Pathomic Fusion w/ CPM) | 80.1 (Pathomic Fusion w/ CPM) | 82.9 (Pathomic Fusion w/ CPM) |

Experimental Protocol for Dynamic Fusion (DREAM framework):

- Modality Assessment: For each input sample, a lightweight network estimates the quality or "contribution score" of each available modality.

- Selective Reconstruction: If a modality is identified as missing or of very low quality, the model can trigger a generator to reconstruct a plausible version of it, guided by the available modalities.

- Soft Masking Fusion: Instead of a fixed fusion rule (e.g., concatenation), a soft mask is generated based on the contribution scores. This mask adaptively modulates the features from each modality before they are fused, allowing the model to focus on more reliable modalities.

- Joint Training: The assessment, reconstruction, and fusion modules are trained end-to-end with the main task objective.
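
The soft masking step above can be sketched as a weighted sum of modality features. Using a softmax over the contribution scores is an assumption made for this example; the text above does not specify the gating function DREAM uses, and the function and parameter names are hypothetical.

```python
import numpy as np


def soft_mask_fusion(features, scores, present, temperature=1.0):
    """Weight modality features by a softmax over contribution scores.

    features: (n_modalities, dim) array of encoded (or reconstructed) features.
    scores:   (n_modalities,) contribution scores from an assessment network.
    present:  (n_modalities,) boolean array; modalities with no usable
              feature get zero weight.
    """
    scores = np.asarray(scores, dtype=float) / temperature
    present = np.asarray(present, dtype=bool)
    if not present.any():
        raise ValueError("at least one modality must be present")
    masked = np.where(present, scores, -np.inf)
    weights = np.exp(masked - masked[present].max())
    weights /= weights.sum()
    fused = (weights[:, None] * np.asarray(features, dtype=float)).sum(axis=0)
    return fused, weights
```

A lower `temperature` pushes the mask toward selecting a single modality, while a higher one approaches uniform averaging over the usable modalities.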

The workflow for a dynamic fusion framework like DREAM is illustrated below.

Dynamic Fusion Workflow in DREAM

The Scientist's Toolkit

Table 3: Research Reagent Solutions for Multimodal Experiments

| Reagent / Material | Function in Experiment |

|---|---|

| Convolutional Neural Network (CNN) [3] | Extracts localized, hierarchical features from image-based data (e.g., histological slides, MRI scans). |

| Graph Convolutional Network (GCN) [3] | Models relational and structural information within data, such as cell-to-cell interactions in tissue graphs or social networks. |

| Self-Normalizing Network (SNN) [3] | A type of feedforward network that is robust to overfitting and is effective for processing tabular data, such as genomic features. |

| Kronecker Product [3] | A mathematical operation used for multimodal fusion that captures all pairwise interactions between feature vectors of different modalities. |

| Canonical Correlation Analysis (CCA) Loss [3] | A supervision signal that encourages the model to learn maximally correlated representations across different modalities. |

| Reconstruction Network (in CPM-Nets) [3] | A module that learns to reconstruct all modalities from a common hidden representation, enforcing the representation to be complete and informative. |

| Modality Encoder (in Chameleon) [1] | Transforms non-visual modalities (text, audio) into a visual format (e.g., 2D feature maps), enabling processing by a single visual network. |

Frequently Asked Questions (FAQs)

Q1: What are the fundamental types of missing data patterns I might encounter?

The foundational taxonomy of missing data mechanisms, as defined by Rubin, is crucial for diagnosing and treating incomplete data. While recent research suggests moving beyond these for complex, multivariable missingness, they remain essential knowledge [20]. The table below summarizes the three core types.

Table 1: Fundamental Missing Data Mechanisms

| Mechanism | Acronym | Formal Definition | Simple Explanation | Common Example |

|---|---|---|---|---|

| Missing Completely at Random | MCAR | Missingness is independent of both observed and unobserved data. | The fact that a value is missing is a purely random event. | A lab sample is dropped, or a survey form is lost in the mail [21]. |

| Missing at Random | MAR | Missingness depends on observed data but not on unobserved data. | Missingness can be explained by other complete variables in your dataset. | In a health study, older patients are more likely to have missing blood pressure readings; age is fully observed [22] [20]. |

| Missing Not at Random | MNAR | Missingness depends on the unobserved value itself. | The reason for the missing value is directly linked to what that value would have been. | Individuals with very high income are less likely to report it in a survey [20]. |

Q2: My data has multiple missing variables. What patterns should I look for beyond MCAR, MAR, and MNAR?

With multiple incomplete variables, the overall pattern of missingness becomes critical. These patterns describe which variables are missing together and influence which imputation methods are most effective [23].

Table 2: Common Missing Data Patterns in Multivariable Datasets

| Pattern | Description | Implication for Analysis |

|---|---|---|

| Univariate | Only a single variable has missing data. | A simpler special case of monotone missingness [23]. |

| Monotone | Variables can be ordered so that if Y~j~ is missing, all subsequent variables Y~k~ (k>j) are also missing. | Common in longitudinal studies with patient drop-out. Allows for computational savings in imputation [23]. |

| Non-Monotone (General) | Missing data occurs in an arbitrary, non-systematic way across variables. | The most common and complex pattern. Requires general imputation methods like Multiple Imputation by Chained Equations (MICE) [23]. |
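
Whether a dataset's pattern is monotone can be checked directly from its missingness indicators. The sketch below assumes missing cells are encoded as NaN in a numeric array; the function name is hypothetical.

```python
import numpy as np


def is_monotone(data):
    """Return True if the missingness pattern of a 2D array is monotone.

    Columns are ordered from most to least observed; the pattern is monotone
    when, in every row, nothing is observed to the right of a missing cell.
    """
    observed = ~np.isnan(np.asarray(data, dtype=float))
    order = np.argsort(-observed.sum(axis=0), kind="stable")
    ordered = observed[:, order].astype(int)
    # A 0 -> 1 step along a row means a later variable is observed after a gap.
    return bool((np.diff(ordered, axis=1) <= 0).all())
```

Sorting columns by observed count is sufficient here because a monotone pattern implies nested sets of observed rows, so any valid ordering must run from the most to the least observed variable.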

The following diagram illustrates the logical relationship between these patterns and their characteristics.

Q3: How can I visually diagnose the missingness pattern in my dataset?

Visual diagnostics are a powerful first step in understanding the structure and scale of your missing data problem [21]. They help answer how much data is missing, where it is missing, and whether the gaps are isolated or systematic.

Table 3: Essential Visual Diagnostics for Missing Data

| Visualization Technique | What It Shows | How It Helps |

|---|---|---|

| Missingness Bar Chart | The amount of missing data (count or percentage) for each variable. | Provides immediate triage, showing which columns dominate the missing-data problem [21]. |

| Missingness Matrix | A pixel-based view where each row is a record and each column is a variable; white pixels indicate missing values. | Reveals if missingness is clustered in specific records (horizontal bands) or variables (vertical bands), hinting at systematic issues [21]. |

| Heatmap of Missingness Correlation | Pair-wise correlations between the "is missing" indicators of different variables. | Identifies groups of variables that tend to be missing together (e.g., all basement-related features in a housing dataset) [21]. |

| UpSet Plot | The frequency of specific combinations of missing columns. | Goes beyond pairs to show exact sets of variables that are missing together in the same rows, confirming blocks of missingness [21]. |
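
The quantities behind three of these plots (bar chart, correlation heatmap, UpSet plot) can be computed without any plotting library, which is handy for logging or automated checks. This sketch assumes a numeric array with NaN marking missing cells; the function name and return layout are hypothetical.

```python
from collections import Counter

import numpy as np


def missingness_summary(data, columns):
    """Summarize missingness in a 2D float array with NaN for missing cells."""
    missing = np.isnan(np.asarray(data, dtype=float))
    # Bar-chart equivalent: fraction of missing cells per column.
    fraction = dict(zip(columns, missing.mean(axis=0)))
    # UpSet-plot equivalent: how often each exact set of columns is missing together.
    combos = Counter(
        tuple(c for c, flag in zip(columns, row) if flag) for row in missing
    )
    # Heatmap equivalent: correlation between "is missing" indicators,
    # restricted to columns whose indicator actually varies.
    varying = [
        i for i in range(missing.shape[1])
        if 0 < missing[:, i].sum() < len(missing)
    ]
    if len(varying) < 2:
        corr = np.ones((len(varying), len(varying)))
    else:
        corr = np.corrcoef(missing[:, varying].astype(float), rowvar=False)
    return fraction, combos, [columns[i] for i in varying], corr
```

Columns that are never or always missing are excluded from the correlation because their indicator has zero variance, which would otherwise produce undefined entries.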

Q4: What quantitative metrics can I use to assess how connected my missing data is?

Beyond visuals, statistics like influx and outflux coefficients provide quantitative measures of how each variable is connected to the observed and missing data, informing predictor selection for imputation [23].

- Influx Coefficient (I~j~): Measures how well the missing entries in a variable are connected to the observed data in other variables. A higher influx means the variable is better connected to the observed data and might be easier to impute accurately [23].

- Outflux Coefficient (O~j~): Measures the potential usefulness of a variable for imputing other variables. A higher outflux indicates that the variable is observed in many records where other variables are missing, making it a good candidate predictor [23].
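
Both coefficients can be computed from the response indicator matrix alone. The sketch below uses the pairwise-count form in which these coefficients are commonly defined (influx normalized by the total number of observed cells, outflux by the total number of missing cells); treat the exact normalization as an assumption to verify against [23]. NaN is assumed to mark missing cells.

```python
import numpy as np


def flux(data):
    """Return (influx, outflux) per column for a 2D array with NaN as missing."""
    r = (~np.isnan(np.asarray(data, dtype=float))).astype(float)  # 1 = observed
    m = 1.0 - r                                                    # 1 = missing
    observed_per_row = r.sum(axis=1)
    missing_per_row = m.sum(axis=1)
    # Influx: pairs (j missing, k observed) in the same row, over all observed cells.
    influx = (m * observed_per_row[:, None]).sum(axis=0) / max(r.sum(), 1.0)
    # Outflux: pairs (j observed, k missing) in the same row, over all missing cells.
    outflux = (r * missing_per_row[:, None]).sum(axis=0) / max(m.sum(), 1.0)
    return influx, outflux
```

For a two-column dataset in which the first column is complete and the second is missing in one of two rows, the first column has outflux 1 and influx 0, marking it as a useful predictor that itself needs no imputation.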

Troubleshooting Guides

Problem: My multimodal model's performance drops severely when one or more modalities (e.g., text, audio) are missing at test time.

Background

Multimodal learning methods often use a multi-branch design that becomes reliant on having a complete set of modalities, leading to significant performance deterioration during inference if a modality is missing [11] [1].

Diagnosis

This is a classic symptom of a model architecture that is not robust to missing modalities. The design assumes that all modalities are present at both training and inference time, and the model has not learned to adapt when this assumption is violated [1].

Solution Protocols

Several modern frameworks have been proposed to create models that are inherently more robust to missing modalities.

Apply a Unification and Alignment Framework (e.g., Chameleon)

- Core Idea: Encode all input modalities (both visual and non-visual) into a common visual representation space. This allows a single visual network to process any combination of available modalities [1].

- Methodology:

- Encoding: Transform non-visual modalities (e.g., text, audio) into a visual format. This is often done by extracting modality-specific embeddings and reshaping them into a 2D image-like structure [1].

- Training: Train a standard visual network (e.g., CNN, Vision Transformer) on these unified representations.

- Inference: The model can now accept any subset of modalities, as each is processed through the same common interface.

Implement a Dynamic Recognition and Enhancement Framework (e.g., DREAM)

- Core Idea: Use a sample-level dynamic modality assessment mechanism to identify missing or underperforming modalities and direct their selective reconstruction. Then, use a soft masking fusion strategy to adaptively integrate modalities based on their estimated contributions [11].

- Methodology:

- Assessment: A lightweight network component evaluates the presence and quality of each input modality for every sample.

- Reconstruction: Missing or low-quality modalities are reconstructed based on the available, reliable modalities.

- Fusion: A fusion network incorporates the original and reconstructed modalities using soft masks that weight each modality's contribution, leading to more robust predictions [11].

Utilize Learnable Client-Side Embeddings (e.g., for Federated Learning)

- Core Idea: In federated learning where data missingness patterns can vary greatly across clients, use learnable client-side embedding controls to reconfigure a global model to align with each client's local data context [7].

- Methodology:

- Each client learns a set of embeddings that encodes its unique data-missing pattern.

- These embeddings act as signals to adjust the globally aggregated model, aligning it with the client's specific available modalities.

- Embeddings from clients with similar missingness patterns can be aggregated to create more robust reconfiguration signals [7].

The workflow below illustrates how these solutions integrate into a robust multimodal learning pipeline.

Problem: I need to handle missing confounder data in a real-world evidence study (e.g., using EHR data), and I'm unsure how to choose an analysis method.

Background

Real-world data, like Electronic Health Records (EHR), frequently contain missing confounding variables (e.g., lab values, BMI). Simply using Complete Case Analysis is common but often inappropriate, as it assumes MCAR and can lead to biased results [22].

Diagnosis

The choice of analysis method should be informed by a systematic investigation of the missing data pattern and its likely mechanism, rather than defaulting to the simplest approach [22].

Solution Protocol: Apply a Structured Missing Data Investigation (SMDI) Toolkit

This protocol is based on a real-world pharmacoepidemiology study that used the SMDI R package to handle missing HbA1c and BMI data in an EHR-Medicare linked dataset [22].

Table 4: Protocol for Handling Missing Confounders using the SMDI Toolkit

| Step | Action | Details from Case Study [22] |

|---|---|---|

| 1. Characterize | Use descriptive functions to visualize missingness proportions and patterns. | The study noted high missingness for key confounders: HbA1c (63.6%) and BMI (16.5%). |

| 2. Diagnose | Run diagnostic tests to understand the missingness mechanism. | Tests compared patient characteristics and outcomes between those with and without observed values. They assessed if missingness could be predicted from observed data and if it was differential with respect to the outcome. |

| 3. Decide | Based on diagnostics, select a missingness mitigation approach. | The study found evidence that missingness could be described using observed data (suggestive of MAR). This justified the use of Multiple Imputation by Chained Equations (MICE) using random forests. |

| 4. Implement & Validate | Execute the chosen method and check its impact. | The use of multiple imputation resulted in effect estimates that showed improved alignment with previous clinical studies, validating the approach. |
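
The "Diagnose" step compares patient characteristics between rows with and without an observed value. One simple way to quantify such a comparison is an absolute standardized mean difference, sketched below. This is an illustrative stand-in written for this article, not the SMDI package's API, and the function name is hypothetical.

```python
import numpy as np


def missingness_smd(covariate, partially_observed):
    """Absolute standardized mean difference of a covariate between
    rows where another variable is missing and rows where it is observed."""
    covariate = np.asarray(covariate, dtype=float)
    is_missing = np.isnan(np.asarray(partially_observed, dtype=float))
    a, b = covariate[is_missing], covariate[~is_missing]
    if len(a) < 2 or len(b) < 2:
        raise ValueError("need at least two rows in each group")
    pooled_sd = np.sqrt((a.var(ddof=1) + b.var(ddof=1)) / 2.0)
    return float(abs(a.mean() - b.mean()) / pooled_sd) if pooled_sd > 0 else 0.0
```

A value above roughly 0.1 is a common rule of thumb for a meaningful difference. Large differences on observed covariates argue against MCAR, but they cannot by themselves distinguish MAR from MNAR.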

Problem: The traditional MCAR/MAR/MNAR classification feels insufficient for guiding my analysis of a complex dataset with multiple missing variables.

Background

You are correct. With multiple incomplete variables, the plausibility of the MAR assumption is difficult to assess and is more stringent than often appreciated. Furthermore, this classification does not provide a direct guide to the best analytical method, as MAR/MCAR are not always necessary conditions for consistent estimation with methods like Complete Records Analysis [20].

Diagnosis

You are dealing with multivariable missingness, and a more nuanced approach is needed to determine if your target estimand (the parameter you want to estimate) can be reliably recovered from the incomplete data.

Solution Protocol: A Recoverability-Focused Approach using m-DAGs

This modern approach uses causal diagrams to map assumptions and determine if your target estimand is "recoverable" [24] [20].

- Define the Estimand: Precisely specify the population parameter you wish to estimate and how you would estimate it with complete data [20].

- Draw a Missingness DAG (m-DAG): Create a Directed Acyclic Graph that includes:

- All analysis variables (exposure, outcome, confounders).

- Missingness indicators (e.g., R~Y~, R~X~) for each incomplete variable.

- Arrows showing assumed causal relationships, including common causes of variables and their missingness [20].

- Determine Recoverability: Use graphical rules and theoretical results to determine if the estimand can be consistently estimated from the observed data patterns without external information. If it is recoverable, a method like Multiple Imputation or a carefully conducted Complete Records Analysis may be valid. If not, a sensitivity analysis is required [24] [20].
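
An m-DAG can be written down as a plain edge list before any formal analysis. The sketch below only validates that the graph is acyclic and flags variables with a direct arrow into their own missingness indicator; it does not implement the recoverability rules from [24] [20]. The `R_` naming convention and the function name are assumptions.

```python
from graphlib import CycleError, TopologicalSorter


def check_mdag(edges, incomplete):
    """Validate a missingness DAG and flag self-masking variables.

    edges:      iterable of (cause, effect) pairs; the missingness indicator
                of variable "X" is named "R_X" (a naming assumption).
    incomplete: names of variables that have missing values.
    """
    predecessors = {}
    for cause, effect in edges:
        predecessors.setdefault(effect, set()).add(cause)
        predecessors.setdefault(cause, set())
    try:
        order = list(TopologicalSorter(predecessors).static_order())
    except CycleError as err:
        raise ValueError("graph contains a cycle, so it is not a DAG") from err
    # An arrow X -> R_X means missingness depends on the unobserved value itself.
    self_masking = sorted(
        v for v in incomplete if v in predecessors.get(f"R_{v}", set())
    )
    return order, self_masking
```

A flagged variable is a warning sign rather than a verdict: whether a given estimand remains recoverable depends on the full graph, so flagged cases are natural candidates for sensitivity analysis.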

The Scientist's Toolkit

Table 5: Key Research Reagents and Solutions for Missing Data Research

| Tool / Reagent | Type | Primary Function | Example Use Case |

|---|---|---|---|

| SMDI Toolkit | R Package | Provides an integrated interface to characterize missing data patterns and conduct diagnostic tests for identifying missingness mechanisms [22]. | Informing the choice between complete-case analysis or multiple imputation in observational studies [22]. |

| mice R Package | R Package | A comprehensive library for performing Multiple Imputation by Chained Equations (MICE), a robust method for handling missing data under the MAR assumption [23]. | Imputing missing confounders like HbA1c and BMI in clinical datasets to reduce bias in treatment effect estimates [22] [23]. |

| missingno Python Library | Python Library | Provides a suite of visualizations (matrix, heatmap, dendrogram) to quickly diagnose and explore the patterns of missingness in a dataset [21]. | Initial exploratory data analysis to identify blocks of variables that are missing together (e.g., all basement-related features in a housing dataset) [21]. |

| Chameleon Framework | Deep Learning Framework | A multimodal learning framework that unifies different modalities into a common visual representation, making the model robust to missing modalities during inference [1]. | Building a classifier for hateful memes that still works if the text or image component is unavailable at test time [1]. |

| DREAM Framework | Deep Learning Framework | Employs dynamic modality assessment and selective reconstruction to handle both missing and imbalanced modalities in multimodal learning [11]. | Creating a robust multimodal sentiment analysis model that can function even when audio data is corrupted or missing from input samples [11]. |

Frequently Asked Questions

Q1: Why does my multimodal model's performance degrade significantly with missing modalities? Multimodal models often rely on a complete set of modalities to make accurate predictions. This dependency arises from the fundamental multi-branch design used in many architectures, where each modality is processed by a dedicated branch. When one branch receives no input, the entire model's performance deteriorates because it was trained expecting complementary information from all modalities. Studies have shown that baseline models can experience significant performance drops; for instance, the ViLT transformer demonstrated notable degradation when the textual modality was missing during testing [1].

Q2: What is the difference between "block-wise" and "random-wise" missing data, and why does it matter? The pattern of missing data significantly impacts the effectiveness of mitigation strategies. Block-wise missingness occurs when an entire modality (and all its associated features) is absent for a given sample, which is common in clinical datasets where a patient might miss an entire MRI scan. In contrast, random-wise missingness refers to the absence of random, individual features across different modalities. Research indicates that sophisticated imputation techniques, which may work well with random-wise missing data, often show shortcomings when confronted with the more challenging block-wise missing pattern commonly found in real-world multimodal datasets [25].

Q3: How can I improve my model's robustness to missing modalities during training? A highly effective strategy is to explicitly train your model with incomplete data. This can be achieved by:

- Artificial Masking: Intentionally dropping one or more modalities during training, forcing the model to learn from whatever data is available [26].

- Multi-task Optimization: Framing the problem to jointly learn from both complete and incomplete data samples [1].

- Specialized Architectures: Using frameworks like Chameleon, which unifies all modalities into a common visual representation. This allows a single model to process any combination of inputs, making it inherently robust to missing modalities without requiring modality-specific branches [1].

Q4: My dataset has very few full-modality samples. Are there solutions for this "low-data regime"? Yes, this is a common and practical challenge. Recent research has explored using retrieval-augmented in-context learning (ICL) to address this. This method leverages a small set of available full-modality data points as reference "context." When making a prediction for a new sample with missing data, the model retrieves the most relevant full-modality examples from this set and uses them to inform its decision. This data-dependent approach has been shown to enhance performance in low-data regimes, outperforming baselines by up to 10.8% when only 1% of the training data was available [6].
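
The retrieval step of this approach can be sketched as a nearest-neighbor lookup over embeddings of the modality that is still available. Cosine similarity, the function name, and the embedding layout are assumptions for illustration; the actual retrieval and cross-attention design in [6] may differ.

```python
import numpy as np


def retrieve_context(query, bank, k=3):
    """Return indices of the k full-modality examples most similar to `query`.

    query: (dim,) embedding of the available modality for the new sample.
    bank:  (n, dim) embeddings of the same modality for full-modality examples.
    """
    query = np.asarray(query, dtype=float)
    bank = np.asarray(bank, dtype=float)
    q = query / (np.linalg.norm(query) + 1e-12)
    b = bank / (np.linalg.norm(bank, axis=1, keepdims=True) + 1e-12)
    similarity = b @ q
    return np.argsort(-similarity, kind="stable")[:k], similarity
```

The retrieved examples, including their other modalities and labels, would then be passed to the model as context alongside the incomplete sample.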

Q5: Are some machine learning algorithms inherently better at handling missing data? Yes. Some tree-based ensemble methods, particularly Gradient Boosting (GB) implementations such as XGBoost, LightGBM, and scikit-learn's histogram-based estimators, can handle missing values natively without a separate imputation step. Empirical evaluations on clinical datasets have shown that GB performance is highly resilient to missing values compared to algorithms like Support Vector Machines (SVM) or standard Random Forest (RF) implementations, which typically require the data to be complete or pre-processed with imputation [25].

Quantitative Data on Performance Impact

The tables below summarize documented performance drops and recoveries across various applications and methods, providing a concrete basis for impact assessment.

Table 1: Performance Degradation with Missing Modalities

| Application Domain | Model / Framework | Test Condition | Performance Metric | Result | Citation |

|---|---|---|---|---|---|

| General Multimodal Classification | ViLT (Baseline) | Text Modality Missing | Accuracy | Significant performance drop | [1] |

| Alzheimer's Disease (AD) Classification | Standard Classifiers (SVM, RF) | High % of missing data points | Classification Accuracy | Reduced accuracy, requires imputation | [25] |

Table 2: Performance Recovery with Robust Methods

| Application Domain | Robust Method / Framework | Key Technique | Performance Gain | Citation |

|---|---|---|---|---|

| Alzheimer's Disease (AD) Classification | Full Information LICA (FI-LICA) | Leverages all available data to recover missing latent info | Showcased better classification of MCI-to-AD transition | [27] |

| Low-Data Regime Multimodal Tasks | In-Context Learning with Cross-Attention (ICL-CA) | Retrieval-augmented in-context learning | Outperformed best baseline by up to 10.8% with only 1% training data | [6] |

| Benchmark Multimodal Datasets | DREAM Framework | Dynamic modality assessment & soft masking fusion | Outperformed state-of-the-art models on three benchmarks | [11] |

| Textual-Visual & Audio-Visual Tasks | Chameleon Framework | Unifies modalities into a common visual space | Outperformed SOTA on complete data & superior robustness | [1] |

Detailed Experimental Protocols

To ensure reproducible results in robustness research, follow these structured protocols for key experiments.

Protocol 1: Evaluating Robustness to Artificially Induced Missingness

This protocol tests a model's resilience when modalities are systematically dropped during testing.

- Dataset Preparation: Select a benchmark dataset with complete modalities (e.g., Hateful Memes for text-image, avMNIST for audio-image).

- Baseline Training: Train your multimodal model on the complete training set.

- Test Set Creation: From the complete test set, create multiple test subsets:

- Subset 1: All modalities present.

- Subset 2: Modality A missing (e.g., all text set to zero or a placeholder).

- Subset 3: Modality B missing (e.g., all images set to black).

- Evaluation: Run inference on all subsets and record performance metrics (e.g., accuracy, F1-score) for each.

- Analysis: Quantify the performance drop for each missing-modality scenario compared to the full-modality test subset.
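
Steps 3 to 5 of Protocol 1 can be sketched as a small evaluation helper. Zero-filling is used as the "missing" placeholder, as in the protocol's examples; the function name and the `predict` interface are assumptions.

```python
import numpy as np


def robustness_report(predict, inputs, labels):
    """Accuracy with all modalities and with each modality zeroed out in turn.

    predict: callable taking a dict name -> (n, dim) array, returning (n,) labels.
    inputs:  dict name -> (n, dim) array for the complete test set.
    """
    labels = np.asarray(labels)
    report = {"complete": float((predict(inputs) == labels).mean())}
    for name in inputs:
        ablated = dict(inputs)
        ablated[name] = np.zeros_like(inputs[name])  # placeholder for "missing"
        accuracy = float((predict(ablated) == labels).mean())
        report[f"missing_{name}"] = accuracy
    drops = {k: report["complete"] - v for k, v in report.items() if k != "complete"}
    return report, drops
```

The `drops` dictionary gives the per-modality accuracy loss relative to the full-modality subset, which is the quantity the Analysis step asks for.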

Protocol 2: Training with the DREAM Framework

This protocol outlines how to implement the DREAM framework, which dynamically handles missing and imbalanced modalities [11].

- Dynamic Modality Assessment:

- For each input sample, pass the available modalities through their respective encoders.

- Use a lightweight, sample-level assessment network to analyze the encoded representations. This network outputs a contribution score for each modality, estimating its reliability and importance for the current task and sample.

- Selective Modality Reconstruction:

- If a modality is entirely missing or its contribution score is below a threshold, trigger a reconstruction module.

- The reconstruction module uses available modalities (via cross-modal attention or generative models) to synthesize a plausible representation for the missing modality.

- Soft Masking Fusion:

- Instead of simply concatenating all modality representations, use the contribution scores from Step 1 to create a soft mask.

- This mask adaptively weights each modality's feature vector before fusion, allowing the model to rely more on present or reliable modalities and less on missing or noisy ones.

- Joint Training: Train the encoders, assessment network, reconstruction module, and classifier end-to-end using a combined loss function (e.g., task loss like cross-entropy plus possible auxiliary losses for reconstruction).

Protocol 3: Implementing the Chameleon Framework

This protocol describes how to use the Chameleon framework, which converts all modalities into a unified visual format for inherent robustness [1].

- Modality Unification via Encoding:

- Visual Modality: Use the image as is.

- Non-Visual Modality (Text/Audio): First, extract feature embeddings from the raw data (e.g., using BERT for text, a pre-trained audio network for audio). Then, reshape the resulting 1D embedding vector T ∈ R^d into a 2D grid Î ∈ R^(h×w) that resembles an image, where h × w ≈ d.

- Model Architecture:

- Use a single visual backbone network (e.g., a Vision Transformer or CNN) as the core processor.

- Feed both the native visual data and the encoded non-visual "images" through this same network. This eliminates the need for modality-specific branches.

- Training:

- Train the model on a mix of data samples: some with both modalities, some with only the original image, and some with only the encoded non-visual "image."

- Inference:

- During testing, the model can accept any combination of modalities. If a modality is missing, its encoded version is simply omitted, and the single backbone processes whatever is available.
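
The reshaping in the modality unification step can be sketched as follows. The near-square grid and the zero-padding of unused cells are choices made for this example; the protocol above only states that the embedding is reshaped into an image-like 2D grid.

```python
import math

import numpy as np


def embedding_to_grid(embedding, height=None):
    """Reshape a 1D embedding of length d into an (h, w) grid with h * w >= d.

    Unused trailing cells are zero-padded (a choice made for this sketch).
    """
    vec = np.asarray(embedding, dtype=float).ravel()
    d = vec.size
    h = height or max(1, math.isqrt(d))
    w = math.ceil(d / h)
    padded = np.zeros(h * w)
    padded[:d] = vec
    return padded.reshape(h, w)
```

A 768-dimensional text embedding becomes a 27 × 29 grid under these defaults. In practice the grid would also need resizing or tiling to match the input resolution the visual backbone expects.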

Visual Workflows for Robust Multimodal Learning

The following diagrams illustrate the logical flow and architecture of key robustness-enhancing methods.

Diagram: DREAM Framework Workflow (Dynamic Modality Assessment and Fusion in DREAM)

Diagram: Chameleon's Unification Approach (Modality Unification in Chameleon Framework)

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Materials for Robust Multimodal Research

| Research Reagent | Function & Explanation | Example Use Case |
|---|---|---|
| Gradient Boosting (GB) Models | A tree-based ensemble algorithm with inherent missing data handling. It learns to split on available data points, avoiding the need for explicit imputation during model training. | Direct classification on multimodal clinical datasets (e.g., ADNI) with missing block-wise features [25]. |
| Multiple Imputation by Chained Equations (MICE) | A statistical technique that creates multiple plausible versions of the complete dataset by imputing missing values based on the distributions of observed data. Reduces bias compared to single imputation. | Preparing incomplete clinical datasets for use with classifiers that require complete data, such as SVM or RF [28]. |
| Linked Independent Component Analysis (LICA) | A multimodal fusion technique that identifies hidden, independent components shared across different data types. | Integrating MRI, PET, and cognitive scores to identify latent factors associated with Alzheimer's disease progression [27]. |
| Modality-Specific Encoders | Separate neural network branches, each designed to process one specific type of data (e.g., CNN for images, Transformer for text). This modularity allows the system to function even if one encoder's input is missing. | Building a flexible multimodal architecture where the image encoder can still process inputs if the text stream is unavailable [26]. |
| Cross-Attention Mechanisms | Allows representations from one modality to directly attend to, and influence, representations of another. This enables the model to use information from an available modality to "explain" or "compensate for" a missing one. | Within the DREAM framework, used for reconstructing features of a missing modality based on available ones [11]. |
| Soft Masking / Gating | A fusion technique that dynamically weights the contribution of each modality's feature vector before combining them. Weights can be based on the estimated reliability or presence of the modality. | Adaptively reducing the influence of a noisy or missing modality and increasing the reliance on a clean, available one during prediction [11]. |

Foundational Concepts in Multimodal Robustness

Q: What does "robustness" mean in the context of multimodal learning? A: Robustness refers to a model's ability to maintain high performance even when input data is imperfect. A key challenge is handling missing modalities, where one or more data types (e.g., text, audio) are absent during training or testing. Traditional multi-branch networks often fail in this scenario, but newer approaches aim to create architectures that are resilient to such incomplete data [1].

Q: Why is handling missing data so critical for real-world applications? A: In real-world scenarios, data acquisition pipelines can fail, or certain data types may not always be available. For example, a social media post might contain only an image without descriptive text. If a model is only trained on complete data (image + text), its performance will significantly deteriorate when faced with this missing modality, limiting its practical utility [29] [1].

Troubleshooting Common Experimental Challenges

Q: My model's performance drops drastically when a modality is missing at test time. What is the root cause? A: This is a classic symptom of a model architecture that has developed a dependency on a complete set of modalities. This is often attributed to the commonly used multi-branch design with modality-specific components. During training, the model relies on all branches being active, so it fails to make reliable predictions when one branch is unavailable [1].

Q: What are some strategic solutions to improve robustness against missing modalities? A: Research points to several promising architectural strategies:

- Unified Representation Learning: Encode all modalities into a common feature space. The Chameleon framework, for instance, transforms non-visual modalities like text and audio into a visual format, allowing a single visual network (e.g., a CNN or Vision Transformer) to process any combination of inputs, making it inherently robust to missing modalities [1].

- Cross-Modal Transfer Learning: Train your model using techniques that allow knowledge from one modality to compensate for gaps in another. This can involve designing loss functions that encourage the model to learn shared representations across modalities [29].

- Data-Centric Fusion Strategies: Incorporate modal-incomplete data directly into your training procedure. This explicitly teaches the model how to handle various missing data scenarios, though it can be cumbersome to optimize [1].
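One common way to incorporate modal-incomplete data into training is to randomly drop modalities from complete samples. The sketch below illustrates that idea; the function name `drop_modalities` and the default `drop_prob` value are hypothetical and are not taken from the cited work.

```python
import random


def drop_modalities(sample, drop_prob=0.3, rng=random):
    """Randomly remove modalities from a sample, always keeping at least one.

    sample: dict mapping modality name -> data for the modalities present.
    rng:    any object with random() and choice(), e.g. random.Random(seed).
    """
    kept = {name: data for name, data in sample.items()
            if rng.random() >= drop_prob}
    if not kept:
        # Never return an empty sample: keep one modality chosen at random.
        name = rng.choice(sorted(sample))
        kept = {name: sample[name]}
    return kept
```

Applying this to each training sample exposes the model to complete, image-only, and text-only inputs within the same training run. Passing a seeded `random.Random` instance keeps the dropped modalities reproducible across experiments.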

Q: How can I effectively fuse information from different modalities? A: Multimodal fusion is challenging due to the heterogeneous nature of the data. Key considerations include [30]:

- Fusion Type: Choose between joint representation (projecting all modalities into one common space, best when all modalities are usually present) and coordinated representation (projecting each modality into its own space but enforcing similarity constraints, better for when modalities are often missing).

- Handling Heterogeneity: Different modalities have different noise levels, temporal alignment issues, and generalization rates. Your fusion strategy, such as using specific neural network architectures, must account for this.
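The difference between the two fusion types can be sketched in a few lines. This is a deliberately simplified illustration: plain concatenation stands in for a learned joint projection, and `1 - cosine similarity` stands in for whatever similarity constraint a real coordinated model would use. All function names are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b + 1e-12)  # epsilon avoids division by zero


def joint_representation(image_vec, text_vec):
    """Joint: one combined vector, so both inputs must be available."""
    return list(image_vec) + list(text_vec)


def coordination_loss(image_vec, text_vec):
    """Coordinated: each modality keeps its own vector; paired vectors are
    pulled together during training by penalizing low similarity."""
    return 1.0 - cosine_similarity(image_vec, text_vec)
```

Because a coordinated model keeps a separate vector per modality, either vector can be used on its own at inference time, which is why that option tends to suit settings where modalities are often missing.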

Q: My model trains well but does not generalize. What might be happening? A: Multimodal models are particularly prone to overfitting. One reason is that different modalities learn and generalize at different rates, so a single joint training schedule may not be optimal for every modality. In addition, if the training data does not represent the noise and variability of real-world data (including missing modalities), the model will not generalize well [30].

Experimental Protocols for Robust Multimodal Learning

The following table summarizes a key robust learning methodology, the Chameleon framework, as presented in a 2025 study [1].

| Protocol Component | Description |
|---|---|
| Core Idea | A framework that adapts a common-space visual learning network to align all input modalities, making it robust to missing modalities. |
| Key Innovation | Unification of input modalities into a single visual format by encoding non-visual modalities (text, audio) into visual representations. |
| Encoding Scheme | 1. Extract modality-specific embeddings (e.g., using a pre-trained model for text or audio). 2. Reshape the embedding vector into a 2D image-like format (e.g., a square matrix). 3. Feed this generated "image" into a visual network. |
| Proposed Architecture | A single visual network (e.g., Convolutional Neural Network or Vision Transformer) that processes both genuine images and encoded non-visual "images," using shared weights. |
| Evaluation Datasets | Textual-Visual: Hateful Memes, UPMC Food-101, MM-IMDb, Ferramenta. Audio-Visual: avMNIST, VoxCeleb. |
| Reported Outcome | Achieved superior performance with complete modalities and demonstrated notable resilience when modalities were missing during testing, outperforming baseline methods like ViLT. |

The workflow for this methodology can be visualized as follows:

Chameleon Framework Workflow: Transforming non-visual modalities into a common visual space for processing by a single, robust visual network.

The Scientist's Toolkit: Research Reagent Solutions

The table below lists essential computational "reagents" and their functions for building robust multimodal models.

| Research Reagent | Function / Explanation |
|---|---|
| Modality Embedding Models | Pre-trained models (e.g., BERT for text, VGGish for audio) that convert raw modality data into a dense vector representation (embeddings), which is essential for creating a common input format [1]. |
| Vision Transformer (ViT) | A visual network architecture that leverages self-attention mechanisms. It is highly effective as a backbone for processing both images and encoded non-visual modalities in a unified framework [1]. |
| Convolutional Neural Network (CNN) | A standard neural network for visual processing. Can serve as a robust and efficient visual network backbone in multimodal frameworks, especially when computational resources are a constraint [1]. |
| Cross-Modal Loss Functions | Objective functions (e.g., contrastive loss) designed to minimize the distance between representations of the same concept from different modalities in a shared space, strengthening cross-modal connections [30]. |
| Benchmark Datasets with Missing-Modality Splits | Datasets like Hateful Memes or avMNIST that are specifically curated or split to evaluate model performance in the presence of missing modalities, providing a standard for benchmarking robustness [1]. |

Advanced Technical Frameworks for Missing Modality Robustness

The DREAM (Dynamic modality Recognition and Enhancement for Adaptive Multimodal fusion) framework is a novel approach designed to tackle two critical challenges in multimodal machine learning: modality missingness and modality imbalance [11]. These issues often significantly degrade the performance of multimodal models in real-world scenarios where complete data is rarely available. DREAM introduces a dynamic, sample-level adaptation mechanism that selectively reconstructs missing or underperforming modalities and employs a soft masking strategy to fuse modalities according to their estimated contributions, leading to more robust and accurate predictions [11].

This technical support guide provides researchers and drug development professionals with essential troubleshooting and methodological support for implementing DREAM within their experimental pipelines, particularly in contexts focused on improving robustness in multimodal learning with missing data.

Frequently Asked Questions & Troubleshooting

Q1: The performance of my multimodal model drops significantly when one sensor modality is missing during testing. How does DREAM address this?

A1: DREAM employs a dynamic modality assessment and reconstruction mechanism to handle missing modalities. Unlike traditional models that require full-modality data or explicit missing-modality annotations, DREAM uses a sample-level assessment to identify missing or underperforming modalities and triggers a selective reconstruction process [11]. Furthermore, its soft masking fusion strategy adaptively integrates the available modalities based on their estimated contribution to the task, which compensates for the missing information and maintains robust performance [11].

Q2: In my heterogeneous patient data, modalities are often imbalanced, where one data type (e.g., lab results) is much more predictive than others (e.g., patient images). How can I prevent the model from ignoring weaker modalities?

A2: This is a classic issue of modality imbalance. The DREAM framework's fusion strategy is specifically designed to counter this. Instead of using static fusion rules, it applies dynamic, adaptive weighting. The soft masking fusion strategy assigns importance weights to each modality in a sample-specific manner, ensuring that even "weaker" modalities contribute meaningfully to the final prediction when they contain relevant information [11].

Q3: When implementing the training workflow, what is a common pitfall that leads to unstable learning?

A3: A common pitfall is improper handling of the dynamic assessment mechanism. Identify missing or underperforming modalities at the sample level, not the dataset level, and condition the reconstruction and fusion steps on that assessment for each individual sample. Applying the assessment once per batch loses the per-sample granularity the framework needs to adapt effectively.
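To make the sample-level requirement concrete, the sketch below decides separately for each sample which modalities need reconstruction. The data layout (a contribution score per modality, with `None` marking an absent input), the threshold value, and the name `assess_batch` are assumptions for illustration only, not part of the DREAM codebase.

```python
def assess_batch(batch, threshold=0.5):
    """Return, for every sample, the modalities that need reconstruction.

    batch: list of samples; each sample maps modality name -> contribution
           score in [0, 1], or None when the modality is absent.
    """
    decisions = []
    for sample in batch:
        # The decision is made independently for each sample.
        needs = sorted(name for name, score in sample.items()
                       if score is None or score < threshold)
        decisions.append(needs)
    return decisions
```

For a batch in which the first sample lacks text and the second has a weak image score, this flags `text` for the first sample and `image` for the second. A single batch-wide decision, for example one based on average scores, could not express that difference.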

Q4: Are there any specific constraints on the type or number of modalities DREAM can support?

A4: The core innovation of DREAM is its flexibility. The framework is not limited to specific modalities. Its architecture relies on a dynamic assessment and a parameter-efficient adaptation that can be applied to a wide range of modality combinations and tasks [11] [5]. This makes it suitable for diverse applications, from integrating imaging, genomic, and clinical data in drug development to processing data from various IoT health sensors.

Experimental Protocols & Benchmarking

Core DREAM Workflow Protocol

The following diagram illustrates the primary data flow and adaptive integration process of the DREAM framework.

Implementation Steps: