Powering Discovery: Statistical Strategies for Rare Variant Analysis in Newborn Screening and Drug Development


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on managing statistical power in the analysis of rare genetic variants, with a specific focus on applications in newborn screening (NBS) and therapeutic development. It covers foundational concepts of rare variant association studies, explores advanced methodological frameworks including burden tests and variable selection, and offers practical strategies for optimizing power through study design, weighting schemes, and meta-analysis. The content also addresses critical validation techniques and comparative performance of statistical methods, synthesizing key takeaways to enhance the detection and interpretation of rare variant signals in biomedical research.

The Rare Variant Challenge: Foundational Concepts and Power Limitations in Genetic Analysis

Understanding the 'Missing Heritability' Problem and the Role of Rare Variants

Frequently Asked Questions (FAQs)

What is the "Missing Heritability" problem?

Missing heritability refers to the gap between the heritability of a trait or disease estimated from family-based studies (pedigree-based heritability, \(h_{PED}^{2}\)) and the heritability explained by common genetic variants identified through Genome-Wide Association Studies (GWAS), \(h_{SNP}^{2}\) [1] [2]. While GWAS has successfully identified thousands of common variant associations, these often explain only a fraction of the total genetic influence. For instance, early GWAS for type 2 diabetes and Crohn's disease explained only ~11% and ~23% of heritability, respectively [3]. This gap suggests that other genetic factors, including rare variants, play a crucial role.

Why are rare variants believed to account for a portion of this missing heritability?

Rare variants (typically defined as those with a minor allele frequency, MAF, below 1%) are strong candidates for explaining missing heritability for two main reasons:

- Evolutionary Pressure: Evolutionary theory predicts that deleterious alleles which negatively impact health or fitness are kept at low frequencies in the population through purifying selection [3] [4]. Variants with large effects on disease are therefore expected to be disproportionately rare.

- Empirical Evidence: Recent large-scale sequencing studies have directly quantified this contribution. An analysis of whole-genome sequencing (WGS) data from 347,630 individuals in the UK Biobank found that, averaged over 34 complex traits, rare variants (MAF < 1%) accounted for 20% of the pedigree-based heritability and common variants for 68%, so that WGS data captured 88% in total [1].

What is the fundamental difference between analyzing common variants and rare variants?

The analysis strategies differ significantly due to allele frequencies and the number of variants involved.

Table: Comparison of Common Variant vs. Rare Variant Association Analysis

| Feature | Common Variant Analysis (CVAS) | Rare Variant Analysis (RVAS) |
|---|---|---|
| Target Variants | Common (MAF ≥ 1-5%) | Rare (MAF < 1-5%) |
| Primary Method | Single-variant tests | Aggregation (set-based) tests |
| Typical Array Design | GWAS chips | Exome chips, custom arrays |
| Ideal Technology | Genotyping arrays | Sequencing (WES, WGS) |
| Key Challenge | Multiple testing burden for millions of variants | Low statistical power for individual variants |
| Typical Output | Individual SNP associations | Gene- or region-based associations |

Because individual rare variants are too uncommon to test one-by-one with sufficient power, researchers aggregate them into sets, typically within a gene or functional region, and test for a collective association with the trait [3] [4] [2].

My rare variant association study is underpowered. What are the primary factors affecting power?

Statistical power in RVAS is the probability of detecting a true association. The key factors are interconnected [5]:

- Sample Size (N): Larger sample sizes are crucial for detecting rare variant effects. Well-powered RVAS for common diseases may require 25,000 cases or more [4].

- Effect Size: The strength of the association between the variant and the trait. Rare variants linked to Mendelian diseases often have large effects, whereas their contributions to complex traits are often more modest [6].

- Minor Allele Frequency (MAF): The rarer the variant, the more difficult it is to detect.

- Number and Proportion of Causal Variants: Within a tested gene, power is higher when a larger proportion of the aggregated variants are truly causal and have effects in the same direction [7].

- Significance Threshold (α): The stringent p-value threshold required to claim statistical significance after correcting for multiple tests across the genome.
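To make the interplay of sample size and MAF concrete, the following minimal sketch counts how many carriers a study can expect for a single variant. It assumes Hardy-Weinberg proportions; the function names and the example cohort size are illustrative and not taken from any cited tool.

```python
def expected_carriers(n_samples: int, maf: float) -> float:
    """Expected number of individuals carrying at least one minor allele,
    assuming Hardy-Weinberg proportions (an assumption of this sketch)."""
    return n_samples * (1.0 - (1.0 - maf) ** 2)


def expected_minor_alleles(n_samples: int, maf: float) -> float:
    """Expected total minor allele count across 2 * n_samples chromosomes."""
    return 2 * n_samples * maf


# Hypothetical cohort of 10,000 individuals at several allele frequencies.
for maf in (0.05, 0.01, 0.001, 0.0001):
    carriers = expected_carriers(10_000, maf)
    alleles = expected_minor_alleles(10_000, maf)
    print(f"MAF={maf:<7} expected carriers={carriers:8.1f} minor alleles={alleles:8.1f}")
```

At a MAF of 0.1% a cohort of 10,000 is expected to contain only about 20 carriers, which is why a single-variant test has so little information to work with and why aggregation across variants is attractive.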

Troubleshooting Guides

Problem: Inflated Type I Error (False Positives) in Case-Control Studies

- Issue: Your analysis produces statistically significant associations that are false, often due to unbalanced case-control ratios or population stratification.

- Solution: Employ methods that specifically account for case-control imbalance and relatedness. The Meta-SAIGE method uses a two-level saddlepoint approximation (SPA) to accurately estimate the null distribution and effectively control Type I error rates, even for low-prevalence binary traits [8].

Problem: Low Statistical Power in RVAS

- Issue: Failure to detect a true genetic association.

- Solutions:
  - Increase Sample Size: Collaborate and perform meta-analyses. Combining summary statistics from multiple cohorts (e.g., using Meta-SAIGE) can boost power substantially, identifying associations not seen in individual studies [8].
  - Optimize Study Design: Use extreme phenotype sampling. Selecting individuals from the very high and low ends of a trait distribution can enrich for rare, causal variants and increase power [6] [4].
  - Refine Variant Filtering and Weighting:
    - Use functional annotations (e.g., prioritize protein-truncating or predicted deleterious missense variants) to focus on variants more likely to be causal [4] [7].
    - Apply variable selection methods (e.g., Lasso, Elastic Net) or "statistical annotation" to assign optimal weights to variants in the absence of high-quality functional data [9].
  - Choose the Right Statistical Test: Select an aggregation test that matches the underlying genetic architecture.
    - Use burden tests (e.g., CAST, weighted-sum) when you expect most rare variants in a gene to influence the trait in the same direction.
    - Use variance-component tests (e.g., SKAT) when you expect a mixture of risk and protective variants, or only a small proportion of variants are causal.
    - Use an omnibus test (e.g., SKAT-O) that combines burden and variance-component approaches for robustness [3] [2] [7].

Problem: Choosing Between a Single-Variant Test and an Aggregation Test

- Issue: Uncertainty about which testing strategy will yield more discoveries.

- Solution: The optimal choice depends on the genetic model. Aggregation tests are more powerful than single-variant tests when a moderate-to-high proportion (e.g., >20-30%) of the rare variants in a gene are causal and have similar effect directions. If only one or a very few variants in a region are causal, single-variant tests may be more powerful, especially with very large sample sizes [7]. When in doubt, run both and use methods that combine their evidence.

Experimental Protocols & Data

Protocol: Gene-Based Rare Variant Burden Test using Whole-Genome Sequencing Data

This protocol outlines a standard burden test workflow for a quantitative or binary trait.

- Step 1: Data Quality Control (QC) & Phasing
  - Perform standard QC on WGS data: filter by call rate, Hardy-Weinberg equilibrium, etc.
  - Phase haplotypes using tools like SHAPEIT or Eagle.
- Step 2: Variant Filtering and Set Definition
  - Restrict analysis to autosomal variants.
  - Define "rare" by a MAF threshold (e.g., MAF < 0.01 or 0.001).
  - Group variants by gene boundaries based on a standard annotation (e.g., GENCODE).
- Step 3: Calculate Genetic Burden
  - For each individual \(i\) and gene, calculate a burden score \(B_i\). A common approach is the weighted sum \(B_i = \sum_{j=1}^{M} w_j \cdot G_{ij}\), where \(M\) is the number of rare variants in the gene, \(G_{ij}\) is the allele count (0, 1, 2) for individual \(i\) and variant \(j\), and \(w_j\) is a weight for variant \(j\) (e.g., based on MAF or functional prediction) [2] [9].
- Step 4: Association Testing
  - Fit a regression model: \(Y_i = \alpha + \beta B_i + \gamma X_i + \epsilon_i\) (for a binary trait, use the logistic analogue with the same linear predictor).
    - \(Y_i\) is the trait value.
    - \(B_i\) is the burden score.
    - \(X_i\) is a vector of covariates (e.g., age, sex, principal components).
  - Test the null hypothesis that \(\beta = 0\).
- Step 5: Significance Testing and Multiple Test Correction
  - Apply a gene-based Bonferroni threshold (e.g., \(p < 2.5 \times 10^{-6}\), i.e. 0.05 / 20,000 for ~20,000 genes) to account for multiple testing.
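Steps 3 to 5 can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the implementation of any cited package: the genotypes and trait are simulated, covariates are omitted, the weights are a simple inverse-standard-deviation frequency weighting chosen for illustration, and the p-value uses a normal approximation to the t statistic. All function names are hypothetical.

```python
import math
import random


def frequency_weights(genotypes):
    """Weight each variant by 1 / sqrt(q(1 - q)), q = smoothed allele frequency.
    (Illustrative choice; any MAF- or annotation-based weight could be used.)"""
    n = len(genotypes)
    weights = []
    for j in range(len(genotypes[0])):
        allele_count = sum(row[j] for row in genotypes)
        q = (allele_count + 1) / (2 * n + 2)  # add-one smoothing keeps q > 0
        weights.append(1.0 / math.sqrt(q * (1.0 - q)))
    return weights


def burden_scores(genotypes, weights):
    """Step 3: B_i = sum_j w_j * G_ij for every individual i."""
    return [sum(w * g for w, g in zip(weights, row)) for row in genotypes]


def simple_burden_test(burden, trait):
    """Step 4 without covariates: OLS of trait on burden.
    Returns (beta, z, two-sided p) using a normal approximation."""
    n = len(trait)
    b_mean = sum(burden) / n
    y_mean = sum(trait) / n
    sxx = sum((b - b_mean) ** 2 for b in burden)
    sxy = sum((b - b_mean) * (y - y_mean) for b, y in zip(burden, trait))
    beta = sxy / sxx
    alpha = y_mean - beta * b_mean
    sse = sum((y - alpha - beta * b) ** 2 for b, y in zip(burden, trait))
    se = math.sqrt(sse / (n - 2) / sxx)
    z = beta / se
    p = math.erfc(abs(z) / math.sqrt(2.0))
    return beta, z, p


# Simulated example: 2,000 individuals, 10 rare variants in one gene, all causal.
rng = random.Random(0)
n_individuals, n_variants, maf = 2000, 10, 0.005
genotypes = [
    [sum(rng.random() < maf for _ in range(2)) for _ in range(n_variants)]
    for _ in range(n_individuals)
]
trait = [0.8 * sum(row) + rng.gauss(0.0, 1.0) for row in genotypes]

weights = frequency_weights(genotypes)
beta, z, p = simple_burden_test(burden_scores(genotypes, weights), trait)

threshold = 0.05 / 20000  # Step 5: Bonferroni over ~20,000 genes
print(f"beta={beta:.4f} z={z:.2f} p={p:.3g} significant={p < threshold}")
```

In a real analysis the regression would include the covariates \(X_i\) and, for biobank-scale or related samples, would be run with dedicated software rather than a hand-written OLS.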

Quantitative Data on Rare Variant Heritability

Table: WGS-Based Heritability Partitioning for 34 Complex Traits (UK Biobank, N=347,630) [1]

| Variant Category | Sub-Category | Average Contribution to Heritability | Notes |
|---|---|---|---|
| All WGS Variants (MAF > 0.01%) | - | ~88% of \(h_{PED}^{2}\) | Gap with pedigree heritability nearly closed for 15 traits. |
| Common Variants (MAF ≥ 1%) | - | 68% of \(h_{PED}^{2}\) | Together with the rare-variant share, sums to the ~88% total. |
| Rare Variants (MAF < 1%) | - | 20% of \(h_{PED}^{2}\) | - |
| Rare Variants (MAF < 1%) | Coding Variants | 21% of rare-variant \(h_{WGS}^{2}\) | Confirms importance of non-coding genome. |
| Rare Variants (MAF < 1%) | Non-coding Variants | 79% of rare-variant \(h_{WGS}^{2}\) | Highlights need for WGS over WES. |

Visualizations

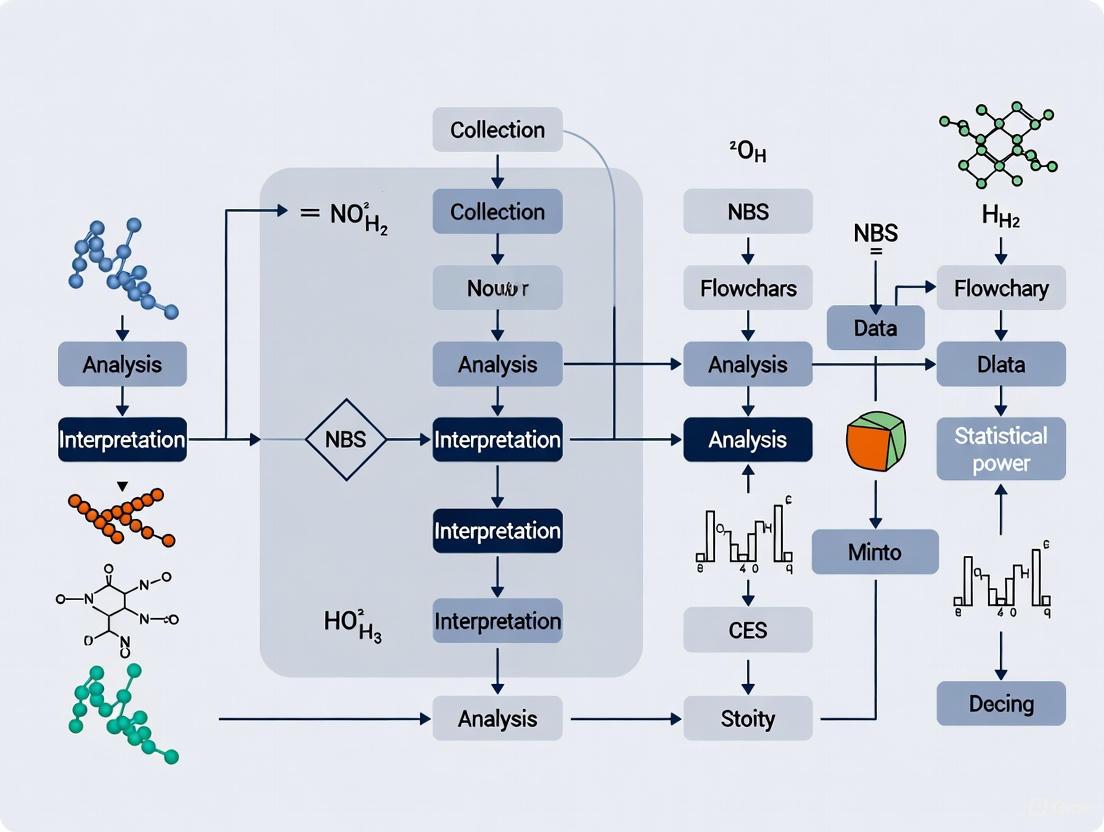

Rare Variant Association Study (RVAS) Workflow

Partitioning the Heritability Gap

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Resources for Rare Variant Research

| Tool / Resource | Function / Description | Example Use Case |
|---|---|---|
| Whole-Genome Sequencing (WGS) | Comprehensively identifies genetic variation across the entire genome, including non-coding regions. | Capturing the 79% of rare-variant heritability attributed to non-coding variants [1]. |
| Whole-Exome Sequencing (WES) | Identifies variants within the protein-coding regions (exons) of the genome. Cost-effective for focused analysis. | Initial studies of rare coding variation; accounts for ~21% of rare-variant heritability [1] [6]. |
| Exome Chip Array | Genotyping array designed to assay hundreds of thousands of known coding variants. Very low cost per sample. | Rapidly and cost-effectively genotyping previously identified coding variants in very large cohorts [6]. |
| SAIGE / SAIGE-GENE+ | Software for set-based rare variant association tests. Accounts for sample relatedness and case-control imbalance. | Conducting gene-based tests in biobank data with unbalanced case-control ratios [8]. |
| Meta-SAIGE | Scalable method for meta-analyzing rare variant association results from multiple cohorts. | Combining summary statistics from different biobanks to increase power and discover new associations [8]. |
| Functional Annotation Tools (e.g., SIFT, PolyPhen-2) | Bioinformatics tools that predict the functional impact of genetic variants (e.g., benign vs. deleterious). | Prioritizing damaging missense variants for inclusion in a burden test [3] [9]. |

Frequently Asked Questions

Q1: Why can't I trust low-frequency variants called by standard NGS methods? Standard Next-Generation Sequencing (NGS) technologies, like Illumina, have a background error rate of approximately 0.5% per nucleotide (VAF ~5 × 10⁻³) [10] [11]. True, biologically relevant low-frequency mutations are expected at an average of 10⁻⁷ to 10⁻⁵ per nt for a gene region, and up to 10⁻⁶ to 10⁻⁴ per nt for mutation hotspots [10]. Even at the upper ends of those ranges, the error rate is 50 to 500 times higher than the true signal. This means that without specialized methods, mutations reported with a Variant Allele Frequency (VAF) of 0.5% to 1% are often spurious sequencing artifacts [10].

Q2: What is the difference between Mutation Frequency (MF) and Variant Allele Frequency (VAF), and why does it matter? Precise terminology is critical for interpreting rare variant studies [10].

- Variant Allele Frequency (VAF) is the proportion of sequencing reads at a specific genomic position that contain a particular variant [10]. It is a direct measurement from the sequencing data but conflates independent mutation events with clonal expansions.

- Mutation Frequency (MF) should be used to describe the rate at which new, independent mutations occur. It is essential to distinguish between:

- MFminI: The minimum independent-mutation frequency, which counts each unique mutation only once.

- MFmaxI: The maximum independent-mutation frequency, which counts all observed mutations, including recurrences that may represent a single event that has clonally expanded [10].

Sequencing alone cannot distinguish between a site with a high rate of independent mutation and a site where a single mutation has expanded clonally [10].
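The difference between the three quantities is easiest to see on a toy example. The sketch below is illustrative only: it assumes that both mutation frequencies are expressed per sequenced base (the denominator is not specified in the text above), and the function names and input layout are hypothetical.

```python
from collections import Counter


def variant_allele_frequency(alt_reads: int, total_reads: int) -> float:
    """VAF at one position: fraction of reads carrying the variant."""
    return alt_reads / total_reads


def mutation_frequencies(observed_mutations, total_bases_sequenced):
    """Return (MFminI, MFmaxI) per sequenced base.

    observed_mutations holds one (position, ref, alt) tuple per observation.
    MFminI counts each distinct mutation once; MFmaxI counts every observation.
    Using total sequenced bases as the denominator is an assumption of this sketch.
    """
    counts = Counter(observed_mutations)
    mf_min = len(counts) / total_bases_sequenced
    mf_max = sum(counts.values()) / total_bases_sequenced
    return mf_min, mf_max


# One C>T mutation seen three times (possibly a clone) plus one G>A seen once.
observations = [(100, "C", "T")] * 3 + [(250, "G", "A")]
mf_min, mf_max = mutation_frequencies(observations, total_bases_sequenced=1_000_000)
print(f"MFminI={mf_min:.1e} MFmaxI={mf_max:.1e}")
print(f"VAF at position 100 with 3 alt reads of 1500: {variant_allele_frequency(3, 1500):.4f}")
```

The true independent-mutation frequency lies somewhere between the two bounds, since the three identical C>T observations could be one clonally expanded event or three separate ones.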

Q3: My analysis involves testing millions of rare variants. How do I avoid being overwhelmed by false positives? When performing millions of statistical tests (e.g., across the genome), the probability of obtaining false positives (Type I errors) increases dramatically [12]. Standard significance thresholds (like p < 0.05) are no longer appropriate. You must use multiple testing corrections:

- Family-Wise Error Rate (FWER) controls the probability of at least one false positive. The Bonferroni correction is a common FWER method where the significance threshold α is divided by the number of tests performed (α/m). This is very stringent [13] [12].

- False Discovery Rate (FDR) controls the expected proportion of false discoveries among all significant tests. The Benjamini-Hochberg procedure is a popular method to control the FDR. This is less stringent than FWER and is often preferred for large-scale exploratory studies, as it helps identify a set of "candidate positives" for follow-up [13] [12].
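The two corrections can be compared side by side on a small set of p-values. The following is a minimal pure-Python sketch; the p-values are made up for illustration and the function names are hypothetical.

```python
def bonferroni_reject(pvalues, alpha=0.05):
    """FWER control: reject p-values at or below alpha / m."""
    threshold = alpha / len(pvalues)
    return [p <= threshold for p in pvalues]


def benjamini_hochberg_reject(pvalues, q=0.05):
    """FDR control (step-up): find the largest rank k with p_(k) <= k * q / m
    and reject the k smallest p-values."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    largest_k = 0
    for rank, idx in enumerate(order, start=1):
        if pvalues[idx] <= rank * q / m:
            largest_k = rank
    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= largest_k:
            rejected[idx] = True
    return rejected


# Illustrative p-values from ten hypothetical tests.
pvals = [0.001, 0.008, 0.039, 0.041, 0.042, 0.06, 0.074, 0.205, 0.212, 0.216]
n_bonferroni = sum(bonferroni_reject(pvals))
n_bh = sum(benjamini_hochberg_reject(pvals))
print(f"Bonferroni rejections: {n_bonferroni}, Benjamini-Hochberg rejections: {n_bh}")
```

On these values Bonferroni keeps one test and Benjamini-Hochberg keeps two, which illustrates the trade-off: FDR control admits more candidates at the price of tolerating a controlled fraction of false discoveries.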

Q4: What are the trade-offs between different rare-variant association study designs? Choosing a study design involves balancing cost, coverage, and accuracy [3].

| Design | Advantages | Disadvantages |
|---|---|---|
| High-depth WGS | Identifies nearly all variants with high confidence [3]. | Very expensive [3]. |
| Low-depth WGS | Cost-effective for large sample sizes [3]. | Higher genotyping error rates for rare variants; requires imputation; less power [3]. |
| Whole-Exome Sequencing | Less expensive than WGS; focuses on protein-coding regions [3]. | Limited to the exome [3]. |
| Exome Chip | Very cheap for genotyping known variants [3]. | Poor coverage for very rare or novel variants and in non-European populations [3]. |

Q5: Which statistical tests are robust for rare-variant association with binary traits in related samples? Analyzing binary traits with related samples is complex. Simulations have shown that [14]:

- Logistic regression with a Likelihood Ratio Test (LRT) applied to related samples was the only method evaluated that did not show inflated Type I error rates in both single-variant and gene-based tests.

- Firth logistic regression also performed well, with only minor inflation in certain gene-based tests under low prevalence conditions.

- Methods like SAIGE can be inflated for single-variant tests at lower prevalence unless a minor allele count filter (e.g., ≥5) is applied [14].

There is no single most powerful method across all scenarios; the choice depends on the specific test and data structure [14].

Experimental Protocols & Methodologies

Protocol for Ultrasensitive Mutation Detection Using Duplex Sequencing

Objective: To detect mutations with a frequency as low as 10⁻⁷ to 10⁻⁹ per base pair, far below the error rate of standard NGS [10].

Principle: This method sequences both strands of the original DNA duplex independently. A true mutation is only called when it is found in both strands, originating from the same original DNA molecule, thereby filtering out errors from PCR amplification or DNA damage on a single strand [10].


Key Reagent Solutions:

| Research Reagent | Function |
|---|---|
| Duplex Sequencing Adapters | Uniquely tags and barcodes each individual double-stranded DNA molecule before PCR amplification [10]. |
| High-Fidelity DNA Polymerase | Minimizes errors introduced during the PCR amplification step [10]. |
| Bioinformatic Pipeline (e.g., DuplexSeq) | Software to group sequenced reads by their original molecule, build single-strand consensus sequences (SSCS), and then compare SSCS to form a duplex consensus sequence (DCS) for variant calling [10]. |
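The consensus logic described above (reads grouped by molecule tag, one consensus per strand, then agreement between strands) can be sketched as follows. This is a simplified illustration, not the algorithm of any specific pipeline: it assumes reads are already aligned, equal in length, and expressed in reference orientation, and the family-size and agreement thresholds are hypothetical defaults.

```python
from collections import Counter, defaultdict


def single_strand_consensus(reads, min_family_size=3, min_agreement=0.6):
    """SSCS: per-position majority base across reads from one strand family.
    Returns None if the family is too small; 'N' marks positions without
    sufficient agreement."""
    if len(reads) < min_family_size:
        return None
    consensus = []
    for column in zip(*reads):
        base, count = Counter(column).most_common(1)[0]
        consensus.append(base if count / len(column) >= min_agreement else "N")
    return "".join(consensus)


def duplex_consensus(sscs_plus, sscs_minus):
    """DCS: keep a base only where both strand consensuses agree."""
    return "".join(
        a if a == b and a != "N" else "N" for a, b in zip(sscs_plus, sscs_minus)
    )


def call_duplex(tagged_reads):
    """Group (tag, strand, sequence) reads by tag and build one DCS per molecule."""
    families = defaultdict(lambda: {"+": [], "-": []})
    for tag, strand, sequence in tagged_reads:
        families[tag][strand].append(sequence)
    result = {}
    for tag, strands in families.items():
        plus = single_strand_consensus(strands["+"])
        minus = single_strand_consensus(strands["-"])
        if plus is not None and minus is not None:
            result[tag] = duplex_consensus(plus, minus)
    return result


reads = (
    # Molecule AAT: one PCR error on the plus strand is outvoted.
    [("AAT", "+", "ACGT"), ("AAT", "+", "ACGT"), ("AAT", "+", "ACTT")]
    + [("AAT", "-", "ACGT")] * 3
    # Molecule GGC: a change present on one strand only (e.g., DNA damage).
    + [("GGC", "+", "ATGT")] * 3
    + [("GGC", "-", "ACGT")] * 3
    # Molecule TTA: a change present on both strands, i.e. a candidate true mutation.
    + [("TTA", "+", "ACAT")] * 3
    + [("TTA", "-", "ACAT")] * 3
)
duplex_calls = call_duplex(reads)
print(duplex_calls)
```

Only the change seen on both strands of the same tagged molecule survives as a called base; the single-strand change is masked, which is the property that lets the method reach below the raw sequencing error rate.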

Protocol for Gene-Based Rare-Variant Association Testing

Objective: To increase statistical power for association studies by aggregating the effects of multiple rare variants within a functional unit (e.g., a gene).

Principle: Instead of testing each variant individually (which requires severe multiple testing corrections and has low power), groups of rare variants are tested collectively for an association with a phenotype [3] [14].


Key Reagent Solutions:

| Research Reagent | Function |
|---|---|
| Variant Annotation Databases (e.g., dbSNP, ClinVar) | Provides information on known variants and their population frequency [3]. |
| Functional Prediction Tools (e.g., SIFT, PolyPhen-2) | Bioinformatic tools to predict the potential deleteriousness of coding variants (e.g., missense, nonsense) [3]. |
| Association Software (e.g., SAIGE, RVFam, seqMeta) | Statistical packages that implement burden tests, variance-component tests (like SKAT), and omnibus tests for rare-variant association in both unrelated and related samples [14]. |

Protocol for Multiple Testing Correction in Genome-Wide Analyses

Objective: To control the rate of false positive findings when testing hundreds of thousands to millions of genetic variants.

Principle: Adjust the significance threshold to account for the number of hypotheses tested. The choice of method depends on the goal of the study: strict control of any false positives (FWER) or a more exploratory approach that tolerates some false positives but controls their proportion (FDR) [13] [12].


Key Reagent Solutions:

| Research Reagent | Function |
|---|---|
| Statistical Software (e.g., R, Python) | Provides built-in functions and packages (e.g., p.adjust in R) to perform Bonferroni, Benjamini-Hochberg, and other multiple testing corrections [13]. |
| Genomic Relationship Matrix (GRM) | A matrix used in mixed models to account for population stratification and relatedness among samples, which is a source of confounding that can exacerbate multiple testing problems [14]. |

Frequently Asked Questions (FAQs)

FAQ 1: Why is statistical power especially challenging in rare variant research? Statistical power is the probability that a test will detect a true effect, and in rare variant studies, it is challenging due to the very low frequency of the variants of interest [15]. Standard statistical methods have low power for low minor allele frequency (MAF) SNPs unless the effect size is very large [15] [16]. Because individual rare variants are so uncommon, it is statistically difficult to identify the effect of a single variant, which necessitates specialized methods like collapsing or burden tests that group multiple variants together to boost power [15] [16] [17].

FAQ 2: What are the key factors I need to consider for a sample size calculation? Calculating an appropriate sample size requires balancing several factors to achieve both scientific validity and practical feasibility. The key parameters are:

- Statistical Power (1-β): The probability of rejecting a false null hypothesis. A power of 80% or 90% is commonly targeted [18] [19].

- Significance Level (α): The probability of a Type I error (false positive). This is typically set at 0.05 or lower [19].

- Effect Size: The magnitude of the difference or relationship you expect to find. A smaller, more subtle effect requires a larger sample size to detect [20] [19].

- Baseline Rate or Variance: For binary outcomes, the underlying rate in the control group influences sample size: for a given relative effect, very low baseline rates require more subjects than rates near 50%. For continuous outcomes, the variance of the measurement is a key factor [20] [19].
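These four parameters combine in the standard normal-approximation formula for comparing two proportions. The sketch below applies it to a carrier frequency in controls versus cases; treating carrier status as the binary outcome is an assumption made here for illustration, and the function name and example frequencies are hypothetical.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(p_control: float, p_case: float,
                alpha: float = 0.05, power: float = 0.80) -> int:
    """Subjects needed per group for a two-sided two-proportion z-test
    (normal approximation, equal group sizes)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p_control * (1 - p_control) + p_case * (1 - p_case)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p_control - p_case) ** 2)


# Hypothetical carrier frequencies: 1% in controls vs. 2% in cases.
n_nominal = n_per_group(0.01, 0.02)                  # single test, alpha = 0.05
n_gene_wide = n_per_group(0.01, 0.02, alpha=2.5e-6)  # gene-based Bonferroni level
print(f"per-group n at alpha=0.05: {n_nominal}")
print(f"per-group n at alpha=2.5e-6: {n_gene_wide}")
```

Moving from a nominal threshold to a gene-based Bonferroni threshold multiplies the required sample size several times over, which is one reason rare variant studies need such large cohorts.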

FAQ 3: My sample size is limited. How can I increase my study's power? When sample size is constrained, you can increase statistical power by modifying your experimental protocol and analysis strategy.

- Optimize the Experimental Design: You can reduce the probability of succeeding by chance (the chance level) or increase the number of trials or measurements per subject [21].

- Adjust Statistical Parameters: Consider raising your Minimum Detectable Effect (MDE) to target larger, more easily detectable effects. In some circumstances, using statistical analyses suited for discrete values can also help [21] [20].

- Utilize Family-Based Designs: Studying large families can be a powerful alternative. Rare variants can be enriched in extended pedigrees, and the segregation of a variant with a phenotype within a family provides strong evidence [15] [22].

FAQ 4: What are collapsing methods for rare variant analysis? Collapsing methods, also known as burden tests, are statistical approaches that overcome the power problem by pooling or grouping multiple rare variants within a defined genetic region (like a gene or pathway) for analysis [15] [16]. The two fundamental coding approaches are:

- Indicator Coding: Creates a dichotomous variable indicating the presence or absence of any rare variant within the region for a subject [15].

- Proportion Coding: Counts the number of rare variants a subject carries across all sites in the region [15].

These methods often incorporate weighting schemes where variants are up- or down-weighted based on their frequency, with the idea that rarer variants might have larger effects [15] [17].
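The two coding schemes differ only in how a subject's genotypes across the region are summarized. A minimal sketch, assuming genotypes are stored as minor allele counts (0, 1, 2) per site and that the count-based coding counts variant sites rather than alleles; both function names are hypothetical.

```python
def indicator_coding(genotype_row) -> int:
    """1 if the subject carries a minor allele at any site in the region, else 0."""
    return 1 if any(g > 0 for g in genotype_row) else 0


def count_coding(genotype_row) -> int:
    """Number of sites in the region at which the subject carries a minor allele.
    Dividing by len(genotype_row) would turn the count into a proportion."""
    return sum(1 for g in genotype_row if g > 0)


# Rows are subjects, columns are rare variant sites within one gene.
region_genotypes = [
    [0, 0, 0, 0],
    [0, 1, 0, 0],
    [0, 2, 1, 0],
]
for row in region_genotypes:
    print(row, "indicator:", indicator_coding(row), "count:", count_coding(row))
```

The second and third subjects are indistinguishable under indicator coding but differ under count coding, which matters when a dosage effect of multiple variants is plausible.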

FAQ 5: What is the "winner's curse" and how does it affect rare variant research? The winner's curse refers to the phenomenon where the estimated effect size of a genetic variant is biased upward (overestimated) when it is discovered in a study with limited statistical power or sample size [17]. This occurs because hypothesis testing and effect estimation are performed on the same data, and a variant is more likely to pass the significance threshold if its effect is overestimated in that particular sample [17]. In rare variant analyses that pool multiple variants, this upward bias can compete with a downward bias caused by including non-causal variants or variants with opposing effect directions in the same test [17].
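A small simulation makes the selection effect behind the winner's curse visible. This is a sketch under simplifying assumptions: each "study" yields a normally distributed effect estimate with a known standard error, and significance is declared with a one-sided z threshold. The parameter values and function name are hypothetical.

```python
import random
from statistics import mean


def simulate_winners_curse(true_effect=0.1, n=200, n_studies=2000,
                           z_threshold=1.96, seed=1):
    """Simulate many underpowered studies of the same true effect and compare
    the average estimate overall with the average among significant studies."""
    rng = random.Random(seed)
    se = 1 / n ** 0.5  # standard error of a mean of n unit-variance observations
    all_estimates, significant_estimates = [], []
    for _ in range(n_studies):
        estimate = rng.gauss(true_effect, se)
        all_estimates.append(estimate)
        if estimate / se > z_threshold:
            significant_estimates.append(estimate)
    return mean(all_estimates), mean(significant_estimates), len(significant_estimates)


avg_all, avg_significant, n_significant = simulate_winners_curse()
print(f"true effect: 0.1, mean of all estimates: {avg_all:.3f}")
print(f"mean among {n_significant} significant studies: {avg_significant:.3f}")
```

Across all simulated studies the estimate is unbiased, but every study that crosses the threshold must have an estimate above 1.96 standard errors, which here exceeds the true effect, so the average among "winners" is necessarily inflated.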

Troubleshooting Guides

Problem: Low Statistical Power for Detecting Rare Variant Associations

Potential Causes and Solutions:

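- Cause: Inadequate sample size.

- Solution: Where more samples cannot be collected, enrich the sample for rare variants through extreme-phenotype sampling [16] or family-based designs [15] [22].

- Cause: Using a standard single-variant analysis method.

- Solution: Group variants within a gene or region with collapsing (burden) methods, which have more power than testing each rare variant on its own [15] [16].

- Cause: High variant heterogeneity or effects in opposite directions.

- Solution: If variants within a gene have both risk and protective effects, a pure burden test may lose power. Consider using a quadratic test like SKAT or a hybrid test like SKAT-O, which are more robust to bidirectional effects [17].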

Problem: Inflated False-Positive Findings in Family-Based Studies

Potential Causes and Solutions:

- Cause: Founders in extended pedigrees are not genotyped.

- Solution: Standard methods can have inflated false-positive rates when founders are missing. Use methods specifically designed to handle this, such as the RareIBD approach, which accounts for missing founder genotypes and maintains controlled type I error [22].

- Cause: Population stratification.

- Solution: Rare variants can be unique to specific geoethnic groups. Genotype individuals on a sufficient number of additional markers to assess and control for population structure using standard stratification correction techniques [16].

Data Presentation

Table 1: Comparison of Rare Variant Collapsing and Weighting Strategies

| Method | Description | Key Feature | Best Suited For |
|---|---|---|---|
| Indicator Coding [15] | Creates a binary variable (carrier/non-carrier) for any rare variant in a region. | Simplicity; does not consider number of variants. | Initial screening where any rare variant is hypothesized to increase risk. |
| Proportion Coding [15] | Counts the total number of rare variants a subject has in a region. | Additive model; assumes each variant contributes equally. | Scenarios where a "dosage" effect of multiple variants is expected. |
| Frequency-Based Weighting (e.g., WSS) [15] | Weights each variant inversely proportional to its estimated frequency. | Up-weights rarer variants, which may have larger effects. | General use when rarer variants are presumed to have larger effect sizes. |
| Burden Test (Linear) [17] | Aggregates variants into a single score, often with weights. | High power when most variants are causal and have effects in the same direction. | Genes where all rare variants are predicted to be deleterious. |
| Variance Component Test (Quadratic) [17] | Assesses the distribution of variant-specific test statistics. | Robust to the presence of both risk and protective variants. | Genomic regions with suspected bidirectional effects. |

Table 2: Strategies to Enhance Power Under Sample Size Constraints

| Strategy | Mechanism | Example in Rare Variant Research |
|---|---|---|
| Increase Measurements per Subject [21] | Reduces outcome variance by averaging over repeated trials. | Using the average success rate from multiple behavioral trials in a mouse model rather than a single yes/no outcome. |
| Study Extreme Phenotypes [16] | Enriches the sample for genetic factors of large effect. | Sequencing individuals from the extreme ends of a biochemical trait distribution (e.g., very high vs. very low levels). |
| Utilize Family-Based Designs [15] [22] | Enriches rare variants and leverages segregation with phenotype. | Studying large extended pedigrees where a rare variant is segregating with a severe, early-onset disease. |
| Employ Advanced Sequencing [23] | Identifies variant types missed by standard approaches. | Using whole-genome or long-read sequencing to detect structural variants or repeat expansions after negative exome sequencing. |

Experimental Protocols & Workflows

Workflow 1: Statistical Analysis of Rare Variants via Collapsing Methods

Workflow 2: Leveraging Family-Based Designs for Rare Variant Discovery

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Research |
|---|---|
| High-Throughput Sequencer | Enables whole-exome or whole-genome sequencing to identify rare variants not captured by genotyping arrays [15] [23]. |
| Trios (Proband & Parents) | Allows segregation analysis to eliminate hundreds of non-causative variants, dramatically reducing the search space for causative mutations [23]. |
| Bioinformatics Pipelines (e.g., GATK) | Tools for processing raw sequencing data, including variant calling, genotyping, and quality control, which are essential for accurate rare variant identification [16]. |
| Functional Prediction Tools (e.g., PolyPhen-2, SIFT) | Used to predict the functional impact of missense variants (e.g., benign, deleterious), helping to prioritize variants for further analysis [15]. |
| Structural Variant Callers (e.g., GangSTR, Manta) | Specialized software to detect structural variants and short tandem repeats from sequencing data, which are often missed by exome sequencing [23]. |
| Gene-Based Test Software (e.g., SKAT, RareIBD) | Implements statistical methods for collapsing and testing groups of rare variants for association with phenotypes [22] [17]. |

Frequently Asked Questions (FAQs)

Q1: What are region-based and gene-based aggregation tests, and why are they crucial for rare variant research? Region-based and gene-based aggregation tests are statistical methods that analyze the collective effect of multiple genetic variants within a predefined genomic region (like a gene or pathway) rather than testing each variant individually [24] [3]. They are crucial for rare variant research because the power of single-variant tests is often limited for rare variants due to their low frequency [25] [3]. By aggregating signals from multiple rare variants, these tests can significantly increase the statistical power to detect associations with complex diseases [22] [7].

Q2: When should I use a burden test versus a variance-component test like SKAT? The choice depends on the underlying genetic architecture you expect, such as the proportion of causal variants and the direction of their effects.

- Burden Tests are more powerful when a large proportion of the rare variants in a region are causal and their effects on the trait are in the same direction (all deleterious or all protective) [26]. They work by collapsing variants into a single aggregate score.

- Variance-Component Tests (e.g., SKAT) are more robust and powerful when a small proportion of variants are causal or when the variants have effects in opposite directions (a mix of risk and protective alleles) [3] [26]. SKAT tests for the presence of any effect within the region without assuming a uniform direction.

Q3: What is an omnibus test, and when should I use it? Omnibus tests, such as SKAT-O, combine the advantages of burden and variance-component tests [26]. Since the true genetic model is usually unknown beforehand, SKAT-O provides a powerful and robust approach by adaptively weighting the burden and SKAT statistics, often achieving high power across a wide range of scenarios [27] [26].
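The contrast between the three test families shows up directly in how they combine per-variant score statistics. The sketch below computes only the test statistics on toy data, without covariates and without p-values (which, for the quadratic statistic, require a mixture of chi-square distributions and are best left to dedicated software). The function names and data are hypothetical; the forms used are the weighted squared sum for the burden statistic, the weighted sum of squares for the SKAT-type statistic, and a convex combination of the two for a fixed mixing parameter rho.

```python
def score_statistics(genotypes, phenotypes):
    """Per-variant scores S_j = sum_i G_ij * (y_i - mean(y)), no covariates."""
    y_mean = sum(phenotypes) / len(phenotypes)
    n_variants = len(genotypes[0])
    return [
        sum(row[j] * (y - y_mean) for row, y in zip(genotypes, phenotypes))
        for j in range(n_variants)
    ]


def burden_statistic(scores, weights):
    """Linear (burden) form: square of the weighted sum of scores."""
    return sum(w * s for w, s in zip(weights, scores)) ** 2


def skat_statistic(scores, weights):
    """Quadratic (variance-component) form: weighted sum of squared scores."""
    return sum((w * s) ** 2 for w, s in zip(weights, scores))


def omnibus_statistic(scores, weights, rho):
    """Combination for a fixed rho in [0, 1]; an omnibus test such as SKAT-O
    searches over rho, which this sketch does not do."""
    return (1 - rho) * skat_statistic(scores, weights) + rho * burden_statistic(scores, weights)


# Toy gene with one risk-increasing and one protective variant.
genotypes = [[1, 0], [1, 0], [0, 1], [0, 1], [0, 0], [0, 0]]
phenotypes = [2.0, 2.0, -2.0, -2.0, 0.0, 0.0]
weights = [1.0, 1.0]

scores = score_statistics(genotypes, phenotypes)
q_burden = burden_statistic(scores, weights)
q_skat = skat_statistic(scores, weights)
q_mixed = omnibus_statistic(scores, weights, rho=0.5)
print(f"scores={scores} burden={q_burden} skat={q_skat} mixed(rho=0.5)={q_mixed}")
```

With opposite effect directions the two scores cancel in the burden statistic but add in the quadratic one, which is the behavior behind the guidance in Q2 and the motivation for combining them in Q3.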

Q4: How do I define a "region" for my analysis? A "region" can be defined in several ways, often based on biological or statistical considerations [24]:

- Gene-based: The most common approach, using gene boundaries from databases like NCBI or UCSC. Upstream and downstream regions can be included to cover regulatory elements.

- Pathway-based: Grouping genes by shared biological function or pathway using databases like KEGG or GO.

- Variant Function: Grouping variants with similar predicted functional impact (e.g., all protein-truncating variants).

- Statistically-defined: Using segments of constant copy number or clusters of significant SNPs identified by scan statistics.

Q5: My study has a small sample size. Are aggregation tests still useful? Yes, but the choice of test is critical. In small studies, methods like RareIBD, which leverage familial relationships, can be particularly powerful as they exploit the enrichment of rare variants within families [22]. For unrelated individuals, the power of aggregation tests is inherently limited by sample size, but combined tests like SKAT-O or newer ensemble methods like Excalibur are designed to be more robust across different sample sizes and genetic models [26].

Troubleshooting Guides

Issue 1: Low Statistical Power in Rare Variant Analysis

Problem: Your analysis fails to identify significant associations, potentially due to insufficient power.

Solutions:

- Consider Study Design: Family-based designs can be more powerful for rare variants as they are often enriched in extended pedigrees. The RareIBD method is designed for such scenarios [22].

- Optimize Variant Filtering and Weighting: Use functional annotations to prioritize likely causal variants. Apply appropriate weights (e.g., based on allele frequency or predicted functionality) to increase the signal from causal variants [27] [3].

- Choose the Right Statistical Test: If you suspect a mix of protective and risk variants, avoid pure burden tests. Use variance-component (SKAT) or omnibus tests (SKAT-O) [26]. For the highest robustness, consider ensemble methods that combine multiple tests [26].

- Increase Sample Size: If possible, consider meta-analysis by pooling data from multiple studies or using extreme-phenotype sampling to enrich for rare variants [3].

Issue 2: Handling Population Stratification and Relatedness

Problem: Population structure or relatedness among samples can lead to spurious associations.

Solutions:

- For Population Stratification: Use principal components (PCs) of genetic variation as covariates in your regression model. Software like Eigenstrat is designed for this purpose [24].

- For Related Samples: Use methods specifically designed for familial data. The famFLM package implements a functional linear model that incorporates a random polygenic effect to account for relatedness [28]. RareIBD also correctly handles extended pedigrees, even when founders are missing [22].

Issue 3: Managing Data Quality and Missing Genotypes

Problem: Missing genotypes or low-quality variant calls can introduce bias and reduce power.

Solutions:

- Pre-processing and Quality Control: Perform stringent QC. Standard filters include removing SNPs with low call rates (e.g., <97%), those deviating from Hardy-Weinberg equilibrium (HWE), and those with very low minor allele frequency (MAF) [24].

- Imputation: Use imputation tools like MACH or FastPhase to infer missing genotypes. This is also crucial for combining data from different genotyping platforms [24]. For rare variants, ensure your imputation approach is accurate for low-frequency sites.

Issue 4: Interpreting Significant Gene-Based Findings

Problem: You have a significant gene-based result, but you are unsure of the biological implication.

Solutions:

- Incorporate Functional Data: Integrate your findings with expression Quantitative Trait Locus (eQTL) data to see if the variants are associated with gene expression. Tools like AeQTL or methods like PrediXcan and TWAS can facilitate this [29] [27].

- Conduct Pathway Enrichment Analysis: Test if the significant genes are enriched in known biological pathways (e.g., using GO or KEGG). This can provide a higher-level biological context [24].

- Replicate Findings: Plan a replication study in an independent cohort to confirm the association. For rare variants, this often requires large sample sizes or collaborative efforts [3].

Experimental Protocols & Workflows

Protocol: A Standard Workflow for Gene-Based Aggregation Analysis

Variant Calling and Quality Control:

- Perform sequencing and initial variant calling.

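- Apply quality filters: Remove variants with call rate < 97%, HWE p-value < 5.7 × 10^-7 in controls, and MAF < 0.01 (thresholds can be adjusted) [24]. Note that a MAF floor of this kind removes the rare variants that aggregation tests target, so for rare-variant analyses it is typically relaxed or replaced by a minor allele count filter.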

- Check for sample contamination and relatedness.
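The call-rate and HWE filters above could be sketched as follows. This is an illustrative sketch only: the function names are hypothetical, missing calls are assumed to be encoded as `None`, and the HWE check uses the asymptotic 1-df chi-square test rather than an exact test, which is usually preferred when genotype counts are small.

```python
import math


def call_rate(genotypes):
    """Fraction of non-missing genotype calls (None marks a missing call)."""
    return sum(g is not None for g in genotypes) / len(genotypes)


def hwe_chisq_pvalue(n_aa, n_ab, n_bb):
    """Asymptotic 1-df chi-square HWE test from genotype counts."""
    n = n_aa + n_ab + n_bb
    p = (2 * n_aa + n_ab) / (2 * n)
    q = 1 - p
    expected = [n * p * p, 2 * n * p * q, n * q * q]
    observed = [n_aa, n_ab, n_bb]
    chisq = sum((o - e) ** 2 / e for o, e in zip(observed, expected) if e > 0)
    # Survival function of a chi-square variable with 1 degree of freedom.
    return math.erfc(math.sqrt(chisq / 2))


def passes_qc(genotypes, control_counts, min_call_rate=0.97, min_hwe_p=5.7e-7):
    """Apply the call-rate and HWE thresholds quoted in the protocol."""
    return (
        call_rate(genotypes) >= min_call_rate
        and hwe_chisq_pvalue(*control_counts) >= min_hwe_p
    )


# Hypothetical variant: 99 of 100 calls present, controls in perfect HWE.
print(passes_qc([0] * 99 + [None], (25, 50, 25)))
```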

Define Analysis Units:

- Map SNPs to genes using a database like NCBI or UCSC. A common approach is to include the gene body plus a defined flanking region (e.g., 5-20 kb upstream/downstream) to cover regulatory elements [24].
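A minimal sketch of the gene-plus-flank mapping is shown below. The gene name, coordinates, and the 10 kb default are hypothetical placeholders; a real analysis would read gene boundaries from an annotation source such as NCBI or UCSC.

```python
def assign_variants_to_genes(variants, genes, flank_bp=10_000):
    """Map each variant to every gene whose padded interval contains it.

    variants: list of (variant_id, chrom, pos)
    genes:    list of (gene_name, chrom, start, end)
    """
    assignments = {name: [] for name, _chrom, _start, _end in genes}
    for variant_id, chrom, pos in variants:
        for name, gene_chrom, start, end in genes:
            if chrom == gene_chrom and start - flank_bp <= pos <= end + flank_bp:
                assignments[name].append(variant_id)
    return assignments


# Hypothetical coordinates, for illustration only.
genes = [("GENE_A", "chr1", 100_000, 200_000)]
variants = [
    ("v1", "chr1", 95_000),   # inside the 10 kb upstream flank
    ("v2", "chr1", 150_000),  # inside the gene body
    ("v3", "chr1", 215_000),  # beyond the downstream flank
    ("v4", "chr2", 150_000),  # different chromosome
]
print(assign_variants_to_genes(variants, genes))
```

A variant that falls within the padded intervals of two overlapping genes is assigned to both, which is one possible design choice rather than a requirement.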

Bioinformatic Annotation:

- Annotate variants for functional impact (e.g., synonymous, missense, loss-of-function) using tools like ANNOVAR or SnpEff. This helps in creating functionally informed variant masks [3].

Imputation (if necessary):

- Use software like MACH or FastPhase to impute missing genotypes, especially when merging data from different studies or platforms [24].

Association Testing:

- Choose and run one or more aggregation tests (see Table 1). For an initial analysis, SKAT-O is a robust default choice. Covariates like age, sex, and principal components should be included to control for confounding.

Interpretation and Validation:

- Correct for multiple testing (e.g., using Bonferroni or False Discovery Rate).

- Interpret significant genes in the context of biological pathways and prior literature.

- Seek replication in an independent dataset.
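The two multiple-testing corrections named above can be sketched in a few lines. This is a plain-Python illustration with hypothetical function names and made-up gene-level p-values, not a replacement for an established statistics library.

```python
def bonferroni(pvalues, alpha=0.05):
    """Reject tests whose p-value is at most alpha divided by the number of tests."""
    threshold = alpha / len(pvalues)
    return [p <= threshold for p in pvalues]


def benjamini_hochberg(pvalues, alpha=0.05):
    """Benjamini-Hochberg step-up procedure controlling the false discovery rate."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    # Largest rank k such that p_(k) <= (k / m) * alpha.
    max_rank = 0
    for rank, idx in enumerate(order, start=1):
        if pvalues[idx] <= rank / m * alpha:
            max_rank = rank
    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= max_rank:
            rejected[idx] = True
    return rejected


# Hypothetical gene-level p-values.
gene_pvalues = [0.001, 0.008, 0.039, 0.041, 0.042, 0.06, 0.074, 0.205]
print(bonferroni(gene_pvalues))
print(benjamini_hochberg(gene_pvalues))
```

Bonferroni is the more conservative choice; in gene-based scans the number of tests is the number of genes or regions analyzed, not the number of variants.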

Diagram Title: Gene-Based Aggregation Analysis Workflow

Data Presentation: Statistical Test Comparison

Table 1: Comparison of Key Aggregation Tests and Their Properties

| Test Name | Test Category | Key Assumption | Best Use Case Scenario | Software/Tool |

|---|---|---|---|---|

| Burden Test [3] | Burden | All variants are causal with effects in the same direction. | When you expect a high proportion of causal variants with uniform effect direction. | PLINK, SKAT R package |

| SKAT [26] | Variance-Component | Only a small proportion of variants are causal; effects can be bi-directional. | When you expect a mix of risk and protective variants, or a small fraction of causal variants. | SKAT R package |

| SKAT-O [26] | Omnibus | Adapts to the underlying genetic model, whether it favors burden or SKAT. | The recommended default when the true genetic model is unknown. | SKAT R package |

| RareIBD [22] | Family-Based | Only one founder carries the rare variant in a family. | Family-based studies with related individuals, especially with missing founder genotypes. | RareIBD |

| famFLM [28] | Functional Data Analysis | Genotypes in a region can be treated as a continuous stochastic function. | Family-based samples; powerful for quantitative traits in related individuals. | famFLM R function |

| Overall [27] | Summary Statistics | Combines information from multiple tests and eQTL weights using GWAS summary data. | When only GWAS summary statistics are available and you want to incorporate eQTL data. | Overall method (R) |

| Excalibur [26] | Ensemble Method | Combines 36 different aggregation tests to overcome individual test limitations. | For maximum robustness and the best average power across diverse genetic models. | Excalibur |

Table 2: Power Scenarios: Aggregation Tests vs. Single-Variant Tests (based on simulations from [7] and [26])

| Scenario | Recommended Test Type | Rationale |

|---|---|---|

| High proportion of causal variants (>30%) with uniform effects | Burden Test | Aggregation is highly favorable; burden tests pool effects efficiently [7]. |

| Low proportion of causal variants (<20%) or mixed effect directions | SKAT or SKAT-O | Single-variant tests may outperform burden tests; variance-component tests are robust to these models [7] [26]. |

| Very large sample sizes (e.g., >50,000) | Both single-variant and aggregation tests | Single-variant tests can detect strong individual signals, while aggregation tests find genes with polygenic rare-variant contributions [7]. |

| Small sample sizes and unknown genetic model | SKAT-O or Excalibur | Omnibus and ensemble tests are designed to maintain robust performance across models without prior knowledge [26]. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Data Resources for Aggregation Analysis

| Tool / Resource Name | Type | Primary Function | Reference |

|---|---|---|---|

| PLINK | Software Tool | Whole-genome association analysis; can perform basic burden tests and data management. | [24] |

| SKAT R Package | Software Tool | A comprehensive suite for running SKAT, SKAT-O, and various burden tests. | [27] [26] |

| MACH / Minimac | Software Tool | Software for genotype imputation to infer missing genotypes or combine datasets. | [24] |

| Eigenstrat | Software Tool | Detects and corrects for population stratification in genetic association studies. | [24] |

| AeQTL | Software Tool | Performs eQTL analysis on aggregated variants in user-specified regions. | [29] |

| sumFREGAT | Software Tool | R package for gene-based association tests using GWAS summary statistics. | [27] |

| GTEx Portal | Data Resource | Provides reference data on tissue-specific gene expression and eQTLs for functional interpretation. | [27] |

| ANNOVAR | Software Tool | Functional annotation of genetic variants from sequencing data. | [3] |

Diagram Title: Aggregation Test Selection Logic

Technical Support Center

Frequently Asked Questions (FAQs)

FAQ 1: How can we improve the statistical power of a study investigating rare genetic variants in newborn screening?

Statistical power in rare variant research is limited by low allele frequencies and small expected case numbers. To address this, consider the following strategies:

- Utilize Large, Diverse Cohorts: Collaborate with consortia or biobanks to access large sample sizes. A 2025 study screened 33,894 newborns to establish reliable carrier rates and disease prevalence, providing a model for achieving meaningful results [30].

- Employ Case-Enrichment Designs: Instead of population-wide screening initially, focus on "screen-positive" infants identified by traditional methods. A 2025 analysis used this approach, performing genome sequencing on 119 screen-positive cases to efficiently identify true positives and false positives [31].

- Implement Advanced Analytical Techniques: Integrate artificial intelligence and machine learning (AI/ML) with multi-omics data. One study demonstrated that a Random Forest classifier trained on metabolomic data achieved 100% sensitivity in identifying true positive cases, which can help validate genomic findings [31].

- Leverage Public Data and Cross-Study Harmonization: Consult resources like the International Consortium on Newborn Sequencing (ICoNS), which compares gene lists and inclusion criteria from 27 global research programs to inform your own gene selection and variant interpretation [32].
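To see why cohort size matters so much, the sketch below estimates how many carriers of a single rare allele a cohort is expected to contain. It assumes Hardy-Weinberg equilibrium and independent individuals; the allele frequencies are hypothetical, and only the cohort size of 33,894 comes from the study cited above.

```python
def carrier_probability(allele_freq):
    """Probability that one individual carries at least one copy (HWE assumed)."""
    return 1 - (1 - allele_freq) ** 2


def expected_carriers(n_individuals, allele_freq):
    """Expected number of carriers in a cohort of unrelated individuals."""
    return n_individuals * carrier_probability(allele_freq)


def prob_no_carriers(n_individuals, allele_freq):
    """Chance that the cohort contains no carrier at all."""
    return (1 - carrier_probability(allele_freq)) ** n_individuals


cohort_size = 33_894  # cohort size reported in [30]
for allele_freq in (1e-3, 1e-4, 1e-5):  # hypothetical allele frequencies
    print(
        allele_freq,
        round(expected_carriers(cohort_size, allele_freq), 2),
        round(prob_no_carriers(cohort_size, allele_freq), 4),
    )
```

Even in a cohort of this size, a variant at the lowest of these frequencies is expected in fewer than one individual, which is the practical motivation for aggregation tests and multi-cohort collaboration.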

FAQ 2: What are the primary causes of false positive results in genomic newborn screening, and how can they be mitigated?

False positives in genomic newborn screening (gNBS) arise from several sources and require a multifaceted mitigation strategy.

- Carrier Status for Recessive Disorders: A significant source of false positives in biochemical assays is the infant's status as a heterozygous carrier for a condition. A 2025 study found that for VLCADD, half of the false-positive cases were, in fact, carriers of a single ACADVL variant, which caused elevated biomarker levels [31].

- Variants of Uncertain Significance (VUS) and Off-Target Findings: Interpreting variants with unknown clinical significance is a major challenge. Furthermore, gNBS can reveal "off-target" findings. For example, the Early Check program identified a pathogenic variant in the MITF gene associated with melanoma risk, which was not the primary target of the screening panel [33].

- Incomplete Penetrance: Many genetically identified infants do not show symptoms in infancy. The Early Check program found that most infants with screen-positive results were asymptomatic, making it difficult to confirm the clinical diagnosis early on [33].

Mitigation Strategies:

- Integrate Orthogonal Testing: Combine genome sequencing with expanded metabolite profiling or other functional assays to validate findings [31].

- Implement Robust Bioinformatic Filtering and AI: Use AI/ML models to differentiate true positives from false positives based on multi-parametric data [31].

- Develop Standardized Terminology and Actionable Panels: Focus screening on genes with high actionability and established treatments. The Early Check program used a panel of 169 high-actionability genes to maximize clinical utility [33]. Adopt standardized nomenclature to accurately characterize gNBS outcomes [33].

FAQ 3: Our NGS library yields are consistently low. What are the most common culprits and solutions?

Low library yield is a common technical hurdle that can derail sequencing projects. The root causes and solutions are summarized in the table below.

| Common Cause | Mechanism of Yield Loss | Corrective Action |

|---|---|---|

| Poor Input Quality | Enzyme inhibition from contaminants (salts, phenol, EDTA) or degraded nucleic acids. | Re-purify input sample; use fluorometric quantification (e.g., Qubit); check purity ratios (260/230 > 1.8) [34]. |

| Fragmentation Issues | Over- or under-fragmentation produces fragments outside the optimal size range for adapter ligation. | Optimize fragmentation parameters (time, energy); verify fragment size distribution pre- and post-fragmentation [34]. |

| Suboptimal Adapter Ligation | Poor ligase performance, incorrect adapter-to-insert molar ratio, or suboptimal reaction conditions. | Titrate adapter:insert ratios; ensure fresh ligase and buffer; maintain correct incubation temperature [34]. |

| Overly Aggressive Cleanup | Desired library fragments are accidentally removed during bead-based purification or size selection. | Optimize bead-to-sample ratios; avoid over-drying beads; use techniques like "waste plates" to prevent accidental discarding of samples [34]. |

Troubleshooting Guides

Guide 1: Troubleshooting Variant Interpretation in a Population Screening Context

Problem: A high number of Variants of Uncertain Significance (VUS) and findings in genes with incomplete penetrance are complicating the return of results and overwhelming genetic counseling resources.

Background: In the context of population screening of healthy newborns, variant interpretation must be more stringent than in a diagnostic setting for a sick child. The high frequency of VUS and the discovery of variants in individuals who may never develop symptoms (incomplete penetrance) are significant challenges [33].

Step-by-Step Solution:

- Filter Against Population Databases: Filter variants against large population frequency databases (e.g., gnomAD). Variants with a population frequency significantly higher than the expected disease prevalence are unlikely to be pathogenic [31].

- Apply Strict, Actionability-Focused Gene Panels: Do not screen for genes associated with adult-onset conditions or those without clear, actionable outcomes in childhood. Use a data-driven approach to select genes. A 2025 analysis of 27 NBSeq programs used a machine learning model to prioritize genes based on characteristics like actionability and availability of treatments, creating a ranked list for inclusion in panels [32].

- Require Strong Evidence for Reporting: In a screening context, consider reporting only pathogenic and likely pathogenic variants, and refrain from reporting VUS. The Early Check program established specific criteria for their gene-condition pairs to maximize clinical utility and minimize uncertainty [33].

- Implement a Multidisciplinary Review Board: Establish a dedicated team including clinical geneticists, genetic counselors, molecular pathologists, and bioinformaticians to review all positive findings before they are returned to families. This ensures consistent application of reporting rules [35].
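The population-frequency filter in the first step can be illustrated with a simple ceiling calculation. The sketch assumes a fully recessive condition, Hardy-Weinberg equilibrium, and a single causal allele, so that prevalence is approximately q² × penetrance and no pathogenic allele can be more frequent than sqrt(prevalence / penetrance). These modelling assumptions, the function names, and the example numbers are illustrative and are not taken from the cited studies.

```python
import math


def max_recessive_allele_freq(prevalence, penetrance=1.0):
    """Upper bound on a causal allele's frequency under the stated assumptions."""
    return math.sqrt(prevalence / penetrance)


def frequency_filter(variants, prevalence, penetrance=1.0):
    """Keep variants whose population frequency does not exceed the ceiling."""
    ceiling = max_recessive_allele_freq(prevalence, penetrance)
    return [v for v in variants if v["af"] <= ceiling]


# Hypothetical candidates with population allele frequencies (e.g., from gnomAD).
candidates = [
    {"id": "var_rare", "af": 0.0004},
    {"id": "var_common", "af": 0.03},
]
# Hypothetical prevalence of 1 in 10,000 gives a ceiling of about 0.01.
kept = frequency_filter(candidates, prevalence=1e-4)
print([v["id"] for v in kept])
```

Real programs generally use a stricter ceiling that also accounts for allelic and genetic heterogeneity, so this bound should be read as a permissive first pass.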

Workflow for Interpreting gNBS Variants

Guide 2: Implementing an Integrated Sequencing and Metabolomics Workflow

Problem: Our newborn screening program faces high false-positive rates with traditional MS/MS, leading to parental anxiety and inefficient use of follow-up resources.

Background: Tandem mass spectrometry (MS/MS) is a powerful tool but can lack specificity. Second-tier testing can significantly improve accuracy. A 2025 study validated a workflow combining genome sequencing (GS) and targeted metabolomics with AI/ML to resolve screen-positive cases more effectively [31].

Step-by-Step Protocol:

A. Genome Sequencing Protocol:

1. DNA Extraction: Extract genomic DNA from a single 3-mm DBS punch using a magnetic bead-based system (e.g., KingFisher Apex with MagMax DNA Multi-Sample Ultra 2.0 kit) [31].

2. Library Preparation: Shear 50 ng of DNA to ~300 bp fragments. Prepare sequencing libraries using a kit designed for low-input or cfDNA/FFPE-derived DNA (e.g., xGen cfDNA and FFPE DNA Library Prep Kit). Perform PCR amplification with custom dual-indexed primers [31].

3. Sequencing: Sequence on a platform such as Illumina NovaSeq X Plus to achieve a minimum of 160 Gbp of data per sample with 151 bp paired-end reads [31].

4. Bioinformatic Analysis: Align to a reference genome (GRCh37). Use GATK for variant calling. Annotate variants with ANNOVAR/Ensembl VEP. Filter to a pre-defined list of condition-related genes and apply frequency and pathogenicity filters per ACMG guidelines [31].

B. Targeted Metabolomics & AI/ML Protocol:

1. Metabolite Profiling: Perform targeted LC-MS/MS analysis on the DBS samples to quantify an expanded panel of metabolic analytes beyond the primary MS/MS panel [31].

2. Classifier Training: Use a machine learning framework (e.g., Random Forest in R or Python) to train a classifier. Use the quantified metabolite levels as features and the confirmed clinical diagnosis (True Positive/False Positive) as the label [31].

3. Integration and Resolution: Integrate the genomic and metabolomic results.

- A case with two reportable variants in trans and a positive AI/ML metabolomic classification is a confirmed true positive.

- A case with no variants and a negative AI/ML classification is a confirmed false positive.

- A case with a single variant (carrier) and an intermediate metabolite level can be classified as a carrier, explaining the initial false positive [31].
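The three resolution rules in the integration step can be written as a small decision function. This is a sketch of the logic as described in the text, not the cited study's code: the argument names, the string labels for metabolite level, and the "unresolved" fallback for combinations the text does not cover are all assumptions.

```python
def resolve_case(n_reportable_variants, variants_in_trans, ml_positive, metabolite_level):
    """Classify a screen-positive case from genomic and metabolomic evidence.

    metabolite_level is a hypothetical label: "normal", "intermediate", or "elevated".
    """
    if n_reportable_variants == 2 and variants_in_trans and ml_positive:
        return "true positive"
    if n_reportable_variants == 0 and not ml_positive:
        return "false positive"
    if n_reportable_variants == 1 and metabolite_level == "intermediate":
        return "carrier"
    # Any other combination is left for manual review (assumption, not from the source).
    return "unresolved"


print(resolve_case(2, True, True, "elevated"))
print(resolve_case(0, False, False, "normal"))
print(resolve_case(1, False, False, "intermediate"))
print(resolve_case(2, False, True, "elevated"))
```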

Integrated Workflow for Resolving Screen-Positive NBS Cases

The Scientist's Toolkit: Research Reagent Solutions

This table details key materials and resources essential for conducting robust genomic newborn screening research.

| Item | Function in Research | Example/Specification |

|---|---|---|

| Dried Blood Spots (DBS) | The primary source material for DNA extraction in public health NBS programs. Using residual DBS allows for integration with existing infrastructure [31] [33]. | Residual punches from state NBS cards, collected on standardized filter paper. |

| Magnetic Bead DNA Extraction Kit | High-throughput, automated nucleic acid extraction from DBS punches, maximizing DNA yield and purity from a limited sample [31]. | KingFisher Apex system with MagMax DNA Multi-Sample Ultra 2.0 kit. |

| Low-Input DNA Library Prep Kit | Preparation of sequencing libraries from the low quantities of fragmented DNA typically obtained from DBS. Kits for cfDNA/FFPE DNA are often optimized for this [31]. | xGen cfDNA and FFPE DNA Library Prep Kit (IDT). |

| Custom Targeted Sequencing Panel | A curated set of genes associated with early-onset, actionable disorders, allowing for focused analysis and reduced incidental findings [30] [32]. | Panel of 465 genes for early-onset monogenic disorders [30]. |

| Bioinformatic Pipelines & Guidelines | Standardized workflows for variant calling, annotation, and interpretation ensure consistency and reproducibility across studies and clinical programs [31] [35]. | GATK for variant calling; ANNOVAR for annotation; ACMG/AMP guidelines for variant classification [31]. |

| Reference Materials | Essential for analytical validation of the NGS test, ensuring variant calling accuracy and assay performance [35]. | Commercially available genomic DNA controls with known variants in relevant genes. |

Methodological Arsenal: Statistical Frameworks for Rare Variant Association Testing

Frequently Asked Questions

What is the fundamental principle behind phenotype-independent weighting? Phenotype-independent weighting assigns weights to genetic variants based solely on their frequency and/or predicted functional impact, without using any information from the trait or phenotype being studied. The core principle is that variants are up-weighted or down-weighted based on the assumption that rarer, more deleterious variants are more likely to have a larger biological effect [15].

When should I choose the Madsen-Browning WSS over a simple count method? The Madsen-Browning Weighted Sum Statistic (WSS) is often preferable when you have a cohort with a well-defined set of controls (unaffected individuals). Because it calculates variant frequencies exclusively from controls, it can provide a more robust estimate of the population allele frequency, which is less likely to be biased by the presence of disease cases. A simple count method, which weights all variants equally, may be sufficient when such a control group is not available or when all variants in a region are assumed to have similar effect sizes regardless of frequency [15].

My gene-based test failed to converge or produced an error. What are the most common causes? Non-convergence in statistical tests for rare variants is often caused by separation or sparsity, where a particular rare variant is found only in cases or only in controls. In that situation a finite maximum likelihood estimate does not exist (the coefficient diverges), so iterative fitting fails to converge. This is a known challenge for methods like Firth logistic regression and generalized linear mixed models (GLMM) when analyzing very rare variants [14].

| Problem | Potential Causes | Suggested Solutions |

|---|---|---|

| Model non-convergence | Low minor allele count (MAC), complete or quasi-complete separation in data [14]. | Apply a MAC filter (e.g., MAC ≥5), combine variants from the same functional class, use Firth regression [14]. |

| Inflated type I error | Extremely rare variants, highly unbalanced case-control ratios, inadequate population stratification control [14]. | Use saddle point approximation (e.g., SAIGE), apply stricter MAC filters, include genetic principal components as covariates [14]. |

| Low statistical power | Small sample size, too few variants in the unit, heterogeneous variant effects [3] [15]. | Consider variable threshold tests, increase sample size via collaboration or meta-analysis, use variance-component tests like SKAT [3]. |
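Two of the checks in the table, the minor allele count (MAC) filter and detection of separation, can be sketched as below. The function names and toy data are hypothetical, genotypes are assumed to be coded as 0/1/2 alternate-allele counts with no missing values, and separation is checked for a single carrier indicator by looking for an empty cell in the carrier-by-status 2×2 table.

```python
def minor_allele_count(genotypes):
    """Count of the less common allele across all genotyped subjects."""
    alt_count = sum(genotypes)
    return min(alt_count, 2 * len(genotypes) - alt_count)


def has_empty_cell(genotypes, case_status):
    """True if any cell of the carrier-by-case/control 2x2 table is zero."""
    table = {(carrier, status): 0 for carrier in (0, 1) for status in (0, 1)}
    for g, status in zip(genotypes, case_status):
        table[(1 if g > 0 else 0, status)] += 1
    return any(count == 0 for count in table.values())


def passes_mac_filter(genotypes, min_mac=5):
    """Apply the MAC >= 5 style filter suggested in the table."""
    return minor_allele_count(genotypes) >= min_mac


# Toy variant (hypothetical): both carriers are cases, so the log-odds estimate diverges.
genotypes = [1, 1, 0, 0, 0, 0]
case_status = [1, 1, 1, 0, 0, 0]
print(minor_allele_count(genotypes), has_empty_cell(genotypes, case_status))
```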

How do I determine the optimal minor allele frequency (MAF) threshold for collapsing? There is no universal optimal threshold. Standard choices in the literature are a MAF of 0.01 (1%) or 0.05 (5%) [15]. The choice depends on the specific disease hypothesis and study design. It is considered good practice to perform analyses using multiple thresholds to assess the robustness of the findings. Some methods also employ a variable threshold approach that data-adaptively selects the frequency cut-off [15].

Can these methods be applied to family-based studies? While methods like the count, CMC, and Madsen-Browning were initially designed for unrelated individuals, the core principle of collapsing variants remains valid for family data. However, the association tests themselves must account for relatedness to avoid inflated false-positive rates. This is typically done using mixed models that incorporate a genetic relationship matrix (GRM) or pedigree structure [14].

Experimental Protocols & Workflows

Protocol: Implementing a Basic Collapsing Analysis

- Define the Region of Interest (ROI): Typically, this is a gene, but it can also be a gene cluster, a pathway, or a defined genomic interval [15].

- Variant Quality Control (QC): Filter variants based on standard QC metrics (call rate, Hardy-Weinberg equilibrium p-value, etc.).

- Select and Group Variants: Within the ROI, select variants based on your chosen MAF threshold (e.g., MAF < 1%). You may further refine this by functional annotation (e.g., include only non-synonymous or loss-of-function variants) [15].

- Calculate the Collapsed Variable:

- Count Method: For each subject \( j \), calculate \( x_j' = \frac{1}{2K} \sum_{i=1}^{K} x_{ij}' \), where \( K \) is the number of variant sites and \( x_{ij}' \) is the number of minor alleles (0, 1, or 2) carried by subject \( j \) at site \( i \) [15].

- Indicator Coding: For each subject \( j \), create a binary variable \( x_j = 1 \) if they carry any rare variant in the ROI, and \( x_j = 0 \) otherwise [15].

- Madsen-Browning WSS: Calculate a weight \( \hat{w}_i \) for each variant \( i \) from the controls: \( \hat{w}_i = 1/\sqrt{n_i \hat{p}_{iu}'(1 - \hat{p}_{iu}')} \), where \( \hat{p}_{iu}' \) is the estimated MAF in unaffected subjects and \( n_i \) is the number of subjects genotyped at variant \( i \). The burden score for subject \( j \) is then \( \sum_{i=1}^{K} \hat{w}_i x_{ij}' \) [15].

- Association Testing: Use the collapsed variable as the predictor in a regression model (e.g., logistic for case-control) to test for association with the phenotype.
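The three collapsed variables from the protocol can be computed as in the following sketch. It assumes complete genotype data, so the number of genotyped subjects is the same for every variant, and it estimates the control MAF with the pseudo-count form (m + 1) / (2n + 2) used by Madsen and Browning, which keeps the weight finite when a variant is absent from controls. Function names and toy genotypes are hypothetical.

```python
import math


def count_score(genotype_row):
    """Count method: minor alleles carried, scaled by 2K."""
    return sum(genotype_row) / (2 * len(genotype_row))


def indicator_score(genotype_row):
    """Indicator coding: 1 if the subject carries any rare variant in the ROI."""
    return 1 if any(g > 0 for g in genotype_row) else 0


def madsen_browning_weights(genotypes, is_control):
    """Weights 1 / sqrt(n * p_u * (1 - p_u)), with p_u estimated in controls."""
    n_subjects = len(genotypes)
    controls = [row for row, ctrl in zip(genotypes, is_control) if ctrl]
    n_controls = len(controls)
    weights = []
    for i in range(len(genotypes[0])):
        minor_in_controls = sum(row[i] for row in controls)
        # Pseudo-count estimator avoids p_u = 0 for variants unseen in controls.
        p_u = (minor_in_controls + 1) / (2 * n_controls + 2)
        weights.append(1 / math.sqrt(n_subjects * p_u * (1 - p_u)))
    return weights


def weighted_sum_score(genotype_row, weights):
    """Burden score: weighted sum of minor allele counts."""
    return sum(w * g for w, g in zip(weights, genotype_row))


# Toy data (hypothetical): rows are subjects, columns are variants.
genotypes = [[0, 1], [1, 0], [0, 0], [0, 0]]
is_control = [False, False, True, True]
weights = madsen_browning_weights(genotypes, is_control)
print([round(weighted_sum_score(row, weights), 3) for row in genotypes])
```

Any of these per-subject scores can then serve as the single predictor in the regression described in the association-testing step.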

Comparative Analysis of Weighting Schemes

The table below summarizes the key characteristics of three phenotype-independent weighting schemes.

| Feature | Count / CMC | Madsen-Browning WSS | Variable-Threshold (VT) |

|---|---|---|---|

| Core Principle | Collapses variants into a single burden score; all variants weighted equally [15]. | Weights each variant inversely proportional to its standard deviation in controls [15]. | Data-adaptively selects the MAF threshold that maximizes evidence for association. |

| Variant Weight | 1 (equal weight for all variants) | \( \hat{w}_i = 1/\sqrt{\hat{p}_i(1-\hat{p}_i)} \), where \( \hat{p}_i \) is the MAF in controls [15]. | Not applicable (uses a frequency threshold). |

| Advantages | Simple and intuitive; does not require a separate control group. | Up-weights rarer variants, which may have larger effects; can be more powerful when this assumption holds. | Avoids the need for a pre-specified, fixed MAF threshold. |

| Disadvantages | May lose power if both protective and risk variants are collapsed, or if effect sizes correlate with frequency. | Relies on accurate allele frequency estimation from a control set; performance can suffer if controls are not representative. | More computationally intensive due to testing multiple thresholds; requires multiple-testing correction. |

| Ideal Use Case | Initial scan where variant effect sizes are assumed to be independent of frequency. | Case-control studies with a high-quality control group, seeking rarer, higher-penetrance variants. | Exploring data with unknown allelic architecture, where the causal MAF spectrum is not known a priori. |

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Resource | Function / Application |

|---|---|

| Rare-Variant Association Software (e.g., RVFam, SAIGE, seqMeta) | Provides implementations of various collapsing and weighting methods, often with options to account for relatedness and population structure [14]. |

| Variant Functional Annotation Tools (e.g., PolyPhen-2, SIFT) | Used to prioritize and group variants (e.g., synonymous vs. nonsynonymous, benign vs. deleterious) before collapsing, refining the Region of Interest [15]. |

| Genetic Relationship Matrix (GRM) / Pedigree Information | Essential for accounting for relatedness among samples in family-based or population-based studies to control for inflated type I error [14]. |

| Large, Public Reference Panels (e.g., gnomAD) | Provides population-level allele frequency data, which can be used for quality control and, in some cases, for estimating weights externally. |

| High-Performance Computing (HPC) Cluster | Necessary for running computationally intensive rare-variant association analyses, especially for whole-genome data or large sample sizes. |

Troubleshooting Guides and FAQs

FAQ: Core Concepts and Workflow

Q1: What is the fundamental difference between marginal and multiple regression weights in the context of rare variant analysis? Marginal regression weights assess the effect of each genetic variant individually on the phenotype. In contrast, multiple regression weights evaluate the effect of a variant while simultaneously accounting for the effects of other variants, typically within a gene or pathway. The latter is central to "collapsing" or "burden" tests, where multiple rare variants are aggregated into a single genetic score for association testing. These aggregated approaches are often more powerful than single-variant tests for detecting effects when individual causal variants are very rare within a population [36].

Q2: Why is statistical power a major concern in rare variant studies, and what are the primary strategies to improve it? Power is a key issue because the low frequency of rare variants means very few individuals in a study carry them. Large samples are required to detect their typically small effect sizes [36]. Primary strategies to boost power include:

- Increasing sample size, for instance, through meta-analysis [8].

- Employing powerful study designs, such as extreme phenotype sampling [36].

- Refining phenotypic definitions, for example, by disaggregating controls into subthreshold and asymptomatic groups to create a more informative ordinal trait [37].

- Using gene- or region-based tests (e.g., burden tests) that aggregate variants [36] [8].

Q3: How does population structure impact weighted burden analysis, and how can this be corrected? In ethnically heterogeneous populations, structure can cause spurious associations if unaccounted for. A method using multiple linear regression within a ridge regression framework can correct for this by including principal components of the genetic data as covariates. This approach has been shown to effectively control for confounding due to population structure in burden analyses [38].

Troubleshooting Guide: Common Analysis Problems

Q4: My rare variant analysis appears to have inflated test statistics. What could be the cause? Inflation can arise from several sources. In binary traits, especially those with low prevalence (imbalanced case-control ratios), type I error inflation is a known issue for some meta-analysis methods [8]. In multi-ethnic cohorts, a primary cause is population stratification. This can be "almost completely corrected" by including principal components from the genetic data as covariates in the regression model [38]. For meta-analyses, ensure the method uses robust error-control techniques like saddlepoint approximation [8].

Q5: What should I do if my model selection strategy lacks power to detect interactive effects? The power of model selection strategies (marginal, exhaustive, forward search) depends heavily on the underlying genetic model. If you suspect strong interaction effects (epistasis) with weak marginal effects, a marginal search will be underpowered [39]. In such cases, an exhaustive search, while computationally intensive, is the only way to find influential genes. For a model with purely additive effects, marginal or forward search will be more effective and efficient [39]. Systematically evaluate strategies across a range of genetic models to select an optimal one.

Q6: My dataset has a limited number of diagnosed patients per rare disease. How can I perform a meaningful analysis? For very small sample sizes (a "few-shot" learning problem), consider knowledge-guided deep learning approaches like SHEPHERD. This method is trained primarily on simulated rare disease patients and incorporates existing medical knowledge (phenotype-gene-disease associations) via a graph neural network. It can perform causal gene discovery even with few or zero real labeled examples of a specific disease [40].

Summarized Data and Protocols

Table 1: Statistical Power of Alternative Phenotype Definitions

This table summarizes findings on how redefining case-control outcomes into an ordinal variable impacts statistical power in genetic association studies [37].

| Analysis Model | Relative Statistical Power | Key Application Context | Important Considerations |

|---|---|---|---|

| Standard Case-Control | Baseline (Least Power) | Standard GWAS design; easy to implement and meta-analyze. | Unbiased in large samples but underpowered for rare variants. |

| Ordinal (Case-Subthreshold-Asymptomatic) | Greatest Power (≈10% effective sample size increase) | When data allows subdivision of controls based on symptom severity. | Maintains clinical validity of cases; interprets associations with underlying genetic liability. |

| Case-Asymptomatic Control | Variable (Can match ordinal or case-control power) | When seeking to maximize effect size difference by excluding subthreshold individuals. | Can inflate effect size estimates; power depends on population prevalence and subthreshold group size. |

Table 2: Comparison of Rare Variant Meta-Analysis Methods

This table compares features of meta-analysis methods for rare variant association tests, critical for boosting power by combining cohorts [8].

| Method Feature | Meta-SAIGE | MetaSTAAR | Weighted Fisher's Method |
|---|---|---|---|
| Type I Error Control | Controlled via two-level saddlepoint approximation (SPA) | Can be inflated for low-prevalence binary traits | Varies by implementation |
| Computational Efficiency | High (Reuses LD matrix across phenotypes) | Lower (Requires phenotype-specific LD matrices) | High |
| Statistical Power | Comparable to joint analysis of individual-level data | High when error is controlled | Significantly lower |
| Key Innovation | SPA adjustment for combined score statistics in meta-analysis | Incorporates variant functional annotations | Combines p-values from cohort-level gene tests |

Experimental Protocol: Weighted Burden Analysis in Heterogeneous Populations

Objective: To perform a weighted burden analysis of rare coding variants for a quantitative phenotype in an ethnically heterogeneous cohort while controlling for population structure.

Methodology:

- Variant Annotation and Filtering: Focus on rare and very rare coding variants within a gene or pathway. Weights can be assigned based on allele frequency and predicted functional impact [38].

- Burden Score Calculation: Derive a weighted burden score for each subject. This score is an aggregate of the genotypes for the variants in the gene set, with each variant's contribution modified by its assigned weight [38].

- Regression Model: Use a multiple linear regression framework. Include the weighted burden score as the primary variable of interest.

- Covariate Adjustment: To correct for population stratification, calculate genetic principal components (PCs) from the genome-wide data. Include the top PCs (e.g., 20) as covariates in the regression model [38].

- Significance Testing: Assess the association between the burden score and the phenotype, conditional on the covariates.
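The protocol steps above can be sketched in a few lines of NumPy. This is a minimal illustration on simulated data, not the implementation used in [38]: the weight formula (functional-impact score divided by the square root of MAF × (1 − MAF)), the `impact` scores, and all dimensions are assumptions chosen for the example.

```python
import numpy as np

rng = np.random.default_rng(0)
n, m, n_pcs = 500, 12, 20   # subjects, rare variants in the gene, PCs (illustrative)

# Simulated stand-ins for real inputs (assumptions, not data from the source).
maf = rng.uniform(0.001, 0.01, size=m)
G = rng.binomial(2, maf, size=(n, m)).astype(float)  # genotype dosages 0/1/2
impact = rng.uniform(0.5, 1.5, size=m)               # hypothetical functional-impact score
pcs = rng.normal(size=(n, n_pcs))                    # stand-in for genetic PCs
y = rng.normal(size=n)                               # quantitative phenotype

# Variant weights: rarer and more damaging variants count more (assumed form).
weights = impact / np.sqrt(maf * (1.0 - maf))

# Weighted burden score per subject.
burden = G @ weights

# Multiple linear regression: phenotype ~ intercept + burden + PCs.
X = np.column_stack([np.ones(n), burden, pcs])
beta, *_ = np.linalg.lstsq(X, y, rcond=None)
resid = y - X @ beta
dof = n - X.shape[1]
sigma2 = resid @ resid / dof
cov_beta = sigma2 * np.linalg.inv(X.T @ X)

# t statistic for the burden coefficient, conditional on the covariates.
t_burden = beta[1] / np.sqrt(cov_beta[1, 1])
print(f"burden effect = {beta[1]:.4f}, t = {t_burden:.3f}, dof = {dof}")
```

The t statistic would be compared against a t distribution with `dof` degrees of freedom. Because the simulated phenotype here is pure noise, no association is expected; the point is only the order of operations.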

The Scientist's Toolkit

Table 3: Research Reagent Solutions for Rare Variant Analysis

Essential materials, software, and data resources for implementing the described methodologies.

| Research Reagent | Category | Primary Function in Analysis |
|---|---|---|
| SAIGE / SAIGE-GENE+ [8] | Software Tool | Performs efficient rare variant association tests for large-scale biobank data, controlling for case-control imbalance and sample relatedness. |
| Meta-SAIGE [8] | Software Tool | Extends SAIGE-GENE+ for scalable rare variant meta-analysis across multiple cohorts with accurate type I error control. |
| SHEPHERD [40] | Software / AI Model | A few-shot learning approach for rare disease diagnosis; uses knowledge graphs and simulated patient data for causal gene discovery. |
| 1000 Genomes Project Data [36] | Reference Data | Provides a public reference of human genetic variation and haplotype information; often used for quality control and imputation. |
| Human Phenotype Ontology (HPO) [40] | Vocabulary / Tool | A standardized vocabulary of phenotypic abnormalities; essential for describing and computing patient phenotypes in rare disease studies. |
| Genetic Principal Components [38] | Derived Variable | Numerical summaries of population genetic structure; used as covariates in regression models to correct for population stratification. |
| Exomiser [40] | Software Tool | A variant prioritization tool that filters and ranks candidate genes based on genotype and phenotype data. |

Troubleshooting Common Implementation Issues

My Lasso model is unstable, selecting different features each time I run it, especially with correlated predictors. What is wrong?

This is a known limitation of Lasso in the presence of correlated predictor variables. When irrelevant variables are highly correlated with relevant ones, Lasso may struggle to distinguish between them, leading to unstable selection [41].

Solutions:

- Stable Lasso: Consider using an enhanced method that integrates a weighting scheme into the Lasso penalty. The weights are defined as an increasing function of a correlation-adjusted ranking that reflects the predictive power of predictors, which helps improve selection stability without significantly increasing computational cost [41].

- Elastic Net: Switch to Elastic Net regression, which is specifically designed to handle groups of correlated features. It mixes Lasso's feature selection with Ridge regression's ability to handle related features, tending to keep or remove correlated variables as a group instead of picking one randomly [42].

- Stability Selection: Employ the Stability Selection framework, which uses resampling to assess the selection frequency of variables, helping to identify more stable features. However, note that it may not fully resolve Lasso's instability with correlated predictors [41].
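The resampling idea behind stability selection can be sketched as follows. This is a simplified illustration using scikit-learn's `Lasso` on simulated data; the subsample size, the number of resamples, and the fixed `alpha` are assumptions, and the full framework also prescribes how to choose a selection threshold.

```python
import numpy as np
from sklearn.linear_model import Lasso

rng = np.random.default_rng(1)
n, p = 200, 20
X = rng.normal(size=(n, p))
X[:, 1] = X[:, 0] + 0.1 * rng.normal(size=n)  # feature 1 is a near-copy of feature 0
y = 3.0 * X[:, 0] + rng.normal(size=n)        # only feature 0 is truly causal

n_resamples, alpha = 100, 0.1                  # illustrative settings
counts = np.zeros(p)
for _ in range(n_resamples):
    # Fit Lasso on a random half of the data and record which features survive.
    idx = rng.choice(n, size=n // 2, replace=False)
    model = Lasso(alpha=alpha, max_iter=10_000).fit(X[idx], y[idx])
    counts += model.coef_ != 0

selection_freq = counts / n_resamples
for j in np.argsort(-selection_freq)[:5]:
    print(f"feature {j}: selected in {selection_freq[j]:.0%} of resamples")
```

Inspecting the frequencies of features 0 and 1 shows how the selection is shared between a causal predictor and its correlated proxy, which is the limitation noted in the last bullet above.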

Should I scale my data before using Lasso or Elastic Net?

Yes, feature scaling is essential before applying any penalized regression model [43]. These methods penalize the size of the coefficients, and the size of a coefficient depends on the units of its feature. A feature measured on a small numerical scale needs a large coefficient to express the same effect and is therefore penalized more heavily, while a feature on a large scale is penalized less. Scaling puts all features on a common footing so that the penalty treats them equally.

Protocol:

- Standardize Numerical Features: Rescale each numerical feature to have a mean of zero and a standard deviation of one. This is typically done using a StandardScaler [42] [43].

- Encode Categorical Features: Convert categorical variables into a numerical format using one-hot encoding, as the models' penalties are sensitive to feature scales [42].

- Use a Pipeline: Implement scaling and encoding within a pipeline to ensure the same transformation is applied to both training and test data, preventing data leakage [43].
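A minimal sketch of this protocol with scikit-learn is shown below. The column names (`age_days`, `analyte_level`, `site`), the simulated data, and the `alpha` value are hypothetical and chosen only to make the example self-contained.

```python
import numpy as np
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.linear_model import Lasso
from sklearn.model_selection import train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import OneHotEncoder, StandardScaler

rng = np.random.default_rng(0)
n = 300
df = pd.DataFrame({
    "age_days": rng.uniform(1, 30, n),          # hypothetical numeric feature
    "analyte_level": rng.normal(50, 10, n),     # hypothetical numeric feature
    "site": rng.choice(["A", "B", "C"], n),     # hypothetical categorical feature
})
y = 0.05 * df["analyte_level"] + rng.normal(0, 0.5, n)

numeric_cols = ["age_days", "analyte_level"]
categorical_cols = ["site"]

# Scale numeric columns and one-hot encode categorical ones in a single step.
preprocess = ColumnTransformer([
    ("num", StandardScaler(), numeric_cols),
    ("cat", OneHotEncoder(handle_unknown="ignore"), categorical_cols),
])
pipe = Pipeline([("prep", preprocess), ("model", Lasso(alpha=0.05))])

X_train, X_test, y_train, y_test = train_test_split(
    df, y, test_size=0.25, random_state=0
)
pipe.fit(X_train, y_train)          # scaler statistics are learned from training rows only
r2_test = pipe.score(X_test, y_test)
print(f"test R^2 = {r2_test:.3f}")
```

Because the scaler lives inside the pipeline, its means and standard deviations are estimated from the training split and then reused unchanged on the test split, which is what prevents leakage.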

How do I choose the right tuning parameters (alpha, lambda, l1_ratio) for my model?

Selecting tuning parameters is crucial for model performance. The most common method is cross-validation (CV).

Experimental Protocol for Hyperparameter Tuning with GridSearchCV:

- For Lasso: Tune the alpha (or lambda) parameter, which controls the strength of the L1 penalty. A higher alpha increases regularization, forcing more coefficients to zero [43].

- For Elastic Net: You need to tune two parameters: alpha (overall penalty strength) and l1_ratio (the mix between L1 and L2 penalty). An l1_ratio of 1 is equivalent to Lasso, while 0 is equivalent to Ridge [42].
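A cross-validated grid search for Elastic Net might look like the sketch below. The data are simulated and the grid values are arbitrary assumptions; in practice the grid should span the range relevant to your data. Note the naming: scikit-learn's `alpha` is the overall penalty strength (called lambda in some other packages), and `l1_ratio` is the L1/L2 mix.

```python
import numpy as np
from sklearn.linear_model import ElasticNet
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
X = rng.normal(size=(200, 15))
y = 2.0 * X[:, 0] - 1.5 * X[:, 1] + rng.normal(size=200)  # two informative features

# Scaling sits inside the pipeline so it is refit within every CV fold.
pipe = Pipeline([
    ("scale", StandardScaler()),
    ("model", ElasticNet(max_iter=10_000)),
])

# Parameters of a pipeline step are addressed as "<step>__<parameter>".
param_grid = {
    "model__alpha": [0.01, 0.1, 1.0],
    "model__l1_ratio": [0.2, 0.5, 0.8, 1.0],
}
search = GridSearchCV(pipe, param_grid, cv=5, scoring="neg_mean_squared_error")
search.fit(X, y)

best = search.best_params_
print("best parameters:", best)
print("best CV MSE:", -search.best_score_)
```

The grid deliberately stops short of `l1_ratio = 0`; for a pure Ridge penalty, a dedicated Ridge estimator is usually the more reliable choice.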

In the context of rare genetic variants, when should I use a single-variant test versus an aggregation test?

The choice depends on the underlying genetic model and the set of rare variants being aggregated [44].

Decision Guide:

- Use Aggregation Tests (like Burden or SKAT) when a substantial proportion of the rare variants in your gene or region are causal and have effects in the same direction. They are more powerful in this scenario as they pool signals from multiple variants [44] [17].

- Use Single-Variant Tests when only a small fraction of the aggregated variants are causal, or when the causal variants have effects in opposite directions (bidirectional effects). Aggregation tests, and burden tests in particular, can lose power in these situations [44].

Table: Comparison of Test Types for Rare Variants

| Feature | Aggregation Tests (e.g., Burden, SKAT) | Single-Variant Tests |
|---|---|---|
| Best Use Case | Many causal variants with similar effect directions [17] | Few causal variants or variants with opposing effects [44] |
| Power | Higher when a large proportion of variants are causal [44] | Higher when a small proportion of variants are causal [44] |
| Key Consideration | Sensitive to the proportion of causal variants and effect direction heterogeneity [44] [17] | Less powerful for individual rare variants due to low minor allele frequency [8] |
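The trade-off in this comparison can be explored with a small simulation. The sketch below assumes the scenario most favorable to aggregation (every variant causal, same direction) and uses a simple correlation-based z statistic as a stand-in for a formal score test; the sample size, allele frequency, and effect size are arbitrary assumptions.

```python
import math
import numpy as np

def z_to_p(z):
    """Two-sided p-value from a standard normal z statistic."""
    return math.erfc(abs(z) / math.sqrt(2.0))

def assoc_z(x, y):
    """Approximate association z statistic: sqrt(n) times the Pearson correlation."""
    xc, yc = x - x.mean(), y - y.mean()
    denom = math.sqrt(float(xc @ xc) * float(yc @ yc))
    return 0.0 if denom == 0 else math.sqrt(len(y)) * float(xc @ yc) / denom

rng = np.random.default_rng(42)
n, m = 5000, 10                                   # subjects, rare variants
G = rng.binomial(2, 0.005, size=(n, m)).astype(float)
beta = np.full(m, 0.5)                            # all causal, same direction
y = G @ beta + rng.normal(size=n)

# Single-variant tests with Bonferroni correction across the m variants.
p_single = np.array([z_to_p(assoc_z(G[:, j], y)) for j in range(m)])
p_single_min_bonf = min(1.0, m * p_single.min())

# Unweighted burden test on the per-subject count of rare alleles.
p_burden = z_to_p(assoc_z(G.sum(axis=1), y))

print(f"best single-variant p (Bonferroni): {p_single_min_bonf:.3g}")
print(f"burden p: {p_burden:.3g}")
```

Changing `beta` so that only one or two entries are non-zero, or so that the signs alternate, lets you see the burden test's advantage shrink in the way the decision guide describes.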

Experimental Protocols for Rare Variant Research

Gene-Based Rare Variant Association Analysis Workflow

This protocol outlines a meta-analysis approach for identifying gene-trait associations using rare variants, based on methods like Meta-SAIGE [8].

1. Preparation of Summary Statistics per Cohort:

- Input: Individual-level genetic and phenotypic data from each cohort (e.g., UK Biobank, All of Us).

- Method: Use software (e.g., SAIGE) to perform single-variant score tests for each variant. This generates:

  - Per-variant score statistics (S).

  - Their variances and p-values.

  - A sparse linkage disequilibrium (LD) matrix (Ω) for genetic variants in the region [8].

2. Combining Summary Statistics:

- Input: Summary statistics and LD matrices from all participating cohorts.

- Method: Combine the score statistics from different studies into a single superset. To control for type I error inflation, especially for binary traits with case-control imbalance, apply statistical adjustments like the genotype-count-based saddlepoint approximation (SPA) [8].

3. Gene-Based Association Testing:

- Input: The combined summary statistics and covariance matrix.

- Method: Conduct set-based rare variant tests (e.g., Burden, SKAT, SKAT-O) on genes or genomic regions. Variants can be grouped and weighted using various functional annotations and minor allele frequency (MAF) cutoffs. The final gene-based p-values are then calculated [8].
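The core arithmetic of steps 2 and 3 can be sketched as a fixed-effects combination of per-cohort score statistics followed by a burden test. This is a deliberately simplified illustration: it assumes independent cohorts, takes the covariance matrix of the scores as given for each cohort, and omits the saddlepoint adjustments that Meta-SAIGE applies [8]. The function name and the numbers are hypothetical.

```python
import math
import numpy as np

def meta_burden_test(scores, covariances, weights):
    """Combine per-cohort score statistics, then run a 1-df burden test.

    scores:      list of length-m arrays of per-variant score statistics
    covariances: list of m x m covariance matrices of those scores
    weights:     length-m array of variant weights
    """
    S = np.sum(scores, axis=0)        # combined score vector
    V = np.sum(covariances, axis=0)   # combined covariance (independent cohorts assumed)
    num = float(weights @ S) ** 2
    den = float(weights @ V @ weights)
    chi2 = num / den                  # approximately chi-square with 1 df under the null
    p_value = math.erfc(math.sqrt(chi2 / 2.0))
    return chi2, p_value

# Hypothetical summary statistics for two cohorts and three variants.
S1, S2 = np.array([1.2, 0.4, 0.9]), np.array([0.8, 0.7, 0.3])
V1, V2 = np.diag([1.0, 0.5, 0.8]), np.diag([0.9, 0.6, 0.4])
w = np.ones(3)

chi2, p = meta_burden_test([S1, S2], [V1, V2], w)
print(f"chi2 = {chi2:.3f}, p = {p:.4f}")
```

The normal-approximation p-value used here is the part that becomes unreliable for rare variants and imbalanced binary traits, which is why the protocol calls for SPA-based adjustments in practice.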

The following workflow diagram illustrates the key steps of this protocol:

Workflow for Identifying Rare Variant Subgroups using the Causal Pivot Method

This protocol uses the Causal Pivot method to subgroup patients by the true biological causes of their illnesses, differentiating between polygenic and monogenic drivers [45].

1. Calculate Polygenic Risk Score (PRS):

- Compute a PRS for each patient, which summarizes the combined effect of many common genetic variants [45].

2. Test for Rare Variant Carriers:

- Among patients with the disease, compare the PRS of those who carry a specific rare, harmful variant (or a burden of variants in a pathway) against those who do not.

- Expected Signal: If the rare variant is a true driver, carriers will, on average, have a lower PRS than non-carriers, because the rare variant itself provides a strong push into the disease state [45].

3. Formal Statistical Test:

- The Causal Pivot formalizes this comparison into a rigorous statistical test to identify rare variant-driven subgroups and estimate their effect size. This method can work with cases-only data, which is advantageous when control samples are unavailable [45].
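The expected signal in step 2 can be illustrated with a simple cases-only comparison. The sketch below is not the Causal Pivot test itself, whose formal construction is described in [45]; it is a Welch-type z test on simulated PRS values, and the function name, group sizes, and PRS distributions are all assumptions.

```python
import math
import numpy as np

def carrier_prs_shift(prs, is_carrier):
    """Mean PRS of carriers minus non-carriers among cases, with a one-sided test."""
    prs = np.asarray(prs, dtype=float)
    is_carrier = np.asarray(is_carrier, dtype=bool)
    a, b = prs[is_carrier], prs[~is_carrier]
    diff = a.mean() - b.mean()
    se = math.sqrt(a.var(ddof=1) / len(a) + b.var(ddof=1) / len(b))
    z = diff / se
    # One-sided p-value for the alternative "carriers have lower PRS".
    p_lower = 0.5 * math.erfc(-z / math.sqrt(2.0))
    return diff, z, p_lower

# Simulated cases: non-carriers reached disease through high polygenic risk,
# carriers needed less polygenic risk because of the rare variant.
rng = np.random.default_rng(7)
prs_noncarriers = rng.normal(1.0, 1.0, size=900)
prs_carriers = rng.normal(0.2, 1.0, size=100)

prs = np.concatenate([prs_carriers, prs_noncarriers])
is_carrier = np.concatenate([np.ones(100, dtype=bool), np.zeros(900, dtype=bool)])

diff, z, p_lower = carrier_prs_shift(prs, is_carrier)
print(f"mean PRS difference = {diff:.3f}, z = {z:.2f}, one-sided p = {p_lower:.3g}")
```

A negative difference with a small one-sided p-value is the pattern described above; a formal analysis would also need to account for confounders such as ancestry that affect both PRS and carrier status.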

The logical relationship and expected signal in this analysis are shown below:

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials and Tools for Advanced Genetic Association Studies

| Item / Method | Function / Purpose | Relevance to Rare Variant Analysis |
|---|---|---|
| SAIGE / SAIGE-GENE+ | Software for accurate single-variant and gene-based tests. | Controls for sample relatedness and case-control imbalance in large biobanks [8]. |
| Meta-SAIGE | Scalable rare variant meta-analysis method. | Combines summary statistics from cohorts; boosts power for discovery [8]. |
| Causal Pivot | Statistical method for subgrouping patients. | Detects hidden genetic drivers by pivoting rare variants against polygenic risk scores (PRS) [45]. |
| Stable Lasso | Enhanced variable selection method. | Improves feature selection stability in the presence of correlated predictors [41]. |
| Polygenic Risk Score (PRS) | Summary of common variant effects. | Serves as a pivot in the Causal Pivot method to identify rare variant subgroups [45]. |
| Elastic Net | Penalized regression model. | Handles correlated features effectively, useful for clinical or transcriptomic predictors [42]. |
| SKAT / Burden Tests | Gene-based rare variant association tests. | Aggregates signals from multiple rare variants to increase statistical power [8] [17]. |
| Linkage Disequilibrium (LD) Matrix | Describes correlation between genetic variants. | Critical for accurate meta-analysis and controlling type I error [8]. |

Frequently Asked Questions (FAQs)

Q1: What is the key difference between a burden test and a variance component test like SKAT?

Burden tests (e.g., CAST, weighted sum test) collapse genetic information from multiple variants in a region into a single score per individual and test for association with this combined score. A core assumption is that all rare variants influence the trait in the same direction and with similar effect sizes [46]. In contrast, variance component tests like SKAT model the effect of each variant as random, drawn from a distribution with a mean of zero and a variance that is tested. This allows variants to have effects in different directions and magnitudes, making SKAT more robust and powerful when both risk and protective variants exist in the same gene or region [46] [7].
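The difference is easy to see numerically. In their score-statistic form, a burden statistic squares the weighted sum of per-variant scores, while the SKAT statistic sums the squared weighted scores. The sketch below uses made-up score values and unit weights purely for illustration.

```python
import numpy as np

def burden_stat(scores, weights):
    """Burden statistic: square of the weighted sum of per-variant scores."""
    return float(np.dot(weights, scores) ** 2)

def skat_stat(scores, weights):
    """SKAT statistic: sum of squared weighted per-variant scores."""
    return float(np.sum((weights * scores) ** 2))

w = np.ones(4)
same_direction = np.array([2.0, 2.0, 2.0, 2.0])     # all variants push the trait up
mixed_direction = np.array([2.0, -2.0, 2.0, -2.0])  # risk and protective variants

for label, s in [("same direction", same_direction), ("mixed direction", mixed_direction)]:
    print(f"{label}: burden = {burden_stat(s, w):.1f}, SKAT = {skat_stat(s, w):.1f}")
```

With mixed directions the scores cancel inside the burden sum and the statistic collapses to zero, while the SKAT statistic is unchanged. The two statistics follow different null distributions, so their raw values are not directly comparable; only the cancellation behavior is the point here.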

Q2: When should I use SKAT-O instead of SKAT or a burden test?

SKAT-O is an adaptive test that optimally combines the burden test and SKAT. You should use SKAT-O when you are uncertain about the underlying genetic architecture of the trait [8]. If you suspect a mix of scenarios—where some genes have mostly causal variants acting in the same direction (favoring the burden test) and others have a mix of causal and neutral or opposing variants (favoring SKAT)—then SKAT-O is the recommended choice as it will automatically adapt to the scenario without a priori knowledge, often at a minimal cost to power [46] [7].
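In its standard formulation, the combination can be written as a weighted average of the two statistics, with the mixing parameter chosen by the data. The notation below (per-variant scores S_j, weights w_j, mixing parameter ρ) is introduced here for illustration and is not taken from the cited sources.

```latex
Q_{\text{burden}} = \Bigl(\sum_{j=1}^{m} w_j S_j\Bigr)^{2},
\qquad
Q_{\text{SKAT}} = \sum_{j=1}^{m} w_j^{2} S_j^{2},
\qquad
Q_{\rho} = (1-\rho)\,Q_{\text{SKAT}} + \rho\,Q_{\text{burden}},
\quad 0 \le \rho \le 1 .
```

Setting ρ = 1 recovers the burden test and ρ = 0 recovers SKAT. SKAT-O evaluates the p-value of Q_ρ over a grid of ρ values and uses the smallest one as its test statistic, with a final p-value that accounts for having searched over ρ.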

Q3: My SKAT analysis for a low-prevalence binary trait shows inflated type I error. What could be the cause and solution?