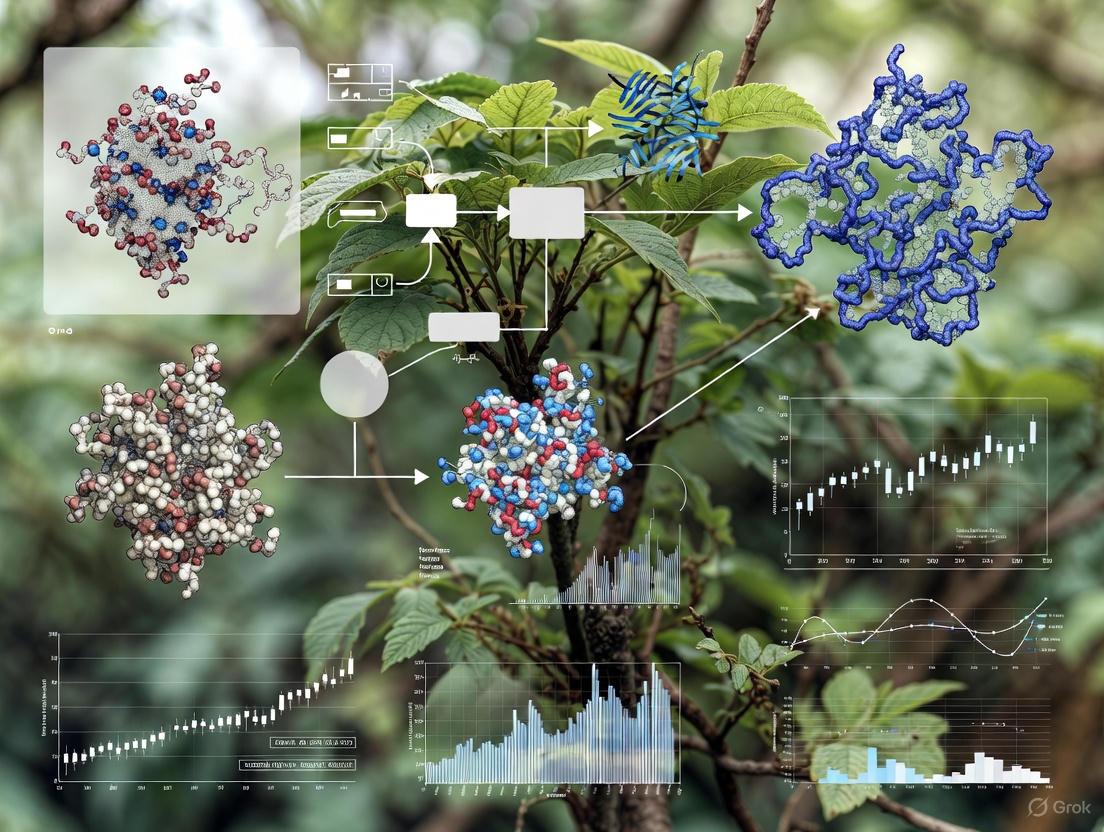

Overcoming the Green Veil: A Comprehensive Guide to Automatic Occlusion Detection in Plant Canopy Imaging

Abstract

This article provides a systematic review of automatic occlusion detection technologies for plant canopy imaging, a critical challenge in high-throughput plant phenotyping and precision agriculture. We explore the fundamental principles behind occlusion in complex plant structures and detail state-of-the-art solutions, including advanced deep learning models, 3D reconstruction techniques, and novel sensor fusion approaches. The content covers practical implementation methodologies, performance benchmarking across different agricultural environments, and optimization strategies for overcoming common deployment constraints. Aimed at researchers, scientists, and agricultural technology developers, this guide synthesizes current research trends and validation frameworks to enable more accurate yield prediction, growth monitoring, and disease detection by effectively addressing the persistent problem of occluded plant organs in imaging systems.

The Invisible Challenge: Understanding Occlusion in Plant Canopies

FAQs: Understanding Occlusion in Agricultural Imaging

Q1: What is occlusion in the context of plant canopy imaging? Occlusion occurs when plant organs, such as leaves, stems, or fruits, overlap and obscure each other, or when environmental structures shade the target plant, preventing a clear, complete view for imaging systems. In major soybean-growing regions that use vertical planting systems, for example, canopy shading from taller crops severely restricts the acquisition of phenotypic information from the lower-growing soybeans [1]. This is a fundamental challenge for automatic canopy imaging research.

Q2: What are the primary types of occlusion encountered in field conditions? Occlusion can be categorized based on its cause. The table below summarizes the common types and their impacts.

Table 1: Types of Occlusion in Agricultural Imaging

| Occlusion Type | Description | Common Impact on Imaging |

|---|---|---|

| Inter-Plant Occlusion | Leaves or fruits from one plant obscure those of a neighboring plant [2]. | Prevents accurate individual plant counting and phenotypic trait measurement. |

| Intra-Plant (Self-Occlusion) | Different parts of the same plant (e.g., leaves hiding stems or fruits) obscure each other [3]. | Hampers complete 3D reconstruction and organ-level phenotypic analysis. |

| Environmental Occlusion | Shading from taller crops in intercropping systems or from infrastructure [1]. | Alters light conditions, causing data deviation and masking true plant coloration. |

| Background Occlusion | Complex backgrounds like soil, mulch, or neighboring plants complicate target isolation [4]. | Reduces object detection confidence and model accuracy. |

Q3: How does occlusion impact high-throughput plant phenotyping? Occlusion directly constrains the accuracy and throughput of phenotypic data collection. It leads to the loss of critical morphological information, which can cause significant errors in measuring key traits. For instance, in 3D plant reconstruction, mutual occlusion between plant organs makes it difficult to obtain a complete 3D point cloud from a single-viewpoint scan [3]. In fruit harvesting robots, occlusion can result in a fruit detection failure rate of up to 30% [5].

Q4: What are the main technical strategies to mitigate occlusion? Researchers employ several strategies to tackle occlusion, often in combination:

- Multi-View Imaging: Capturing images from multiple viewpoints around the plant and fusing the data to create a complete model [3].

- Active Sensing: The system actively moves the camera to a new position to gather more information from a different angle when occlusion is detected [6] [5].

- Advanced Deep Learning Models: Using specialized neural network architectures that are more robust to occlusions, often incorporating context and edge information to "imagine" obscured parts [6] [7].

- Multi-Modal Data Fusion: Combining data from different sensors (e.g., RGB, LiDAR, hyperspectral) to gain complementary information that can partially see through certain types of occlusion [8].

Troubleshooting Guides

Guide 1: Diagnosing Occlusion-Related Inaccuracies in Plant Counts

Problem: A model trained for plant counting on early-growth-stage UAV imagery shows a significant drop in accuracy during later growth stages with high canopy coverage.

Symptoms:

- Decreasing precision and recall metrics as plant canopy density increases.

- Missed detections of smaller or completely obscured plants.

- Low confidence scores for detected plants.

Solution: Integrate plant location information from multiple growth stages. This method uses the known plant positions from earlier, less-occluded stages to guide the detection model in the high-coverage stage.

Table 2: Workflow for Improving Plant Counts Under High Coverage

| Step | Action | Protocol Details |

|---|---|---|

| 1. Data Acquisition | Capture co-registered UAV RGB imagery. | Use a UAV with RTK module for precise geotagging. Fly at 30m altitude with 80% forward and 70% side overlap [2]. |

| 2. Early-Stage Mapping | Generate an orthomosaic and detect plants. | Use software like Agisoft Metashape to create an orthomosaic. Train a YOLOv5 model to detect and log the geographic positions of plants at the early-growth stage [2]. |

| 3. Later-Stage Analysis | Use early-stage positions to inform later-stage counting. | When analyzing high-coverage imagery, use the pre-mapped plant locations as regions of interest to focus the detection model, significantly improving counting accuracy [2]. |

Workflow for troubleshooting plant counting inaccuracies under high-coverage occlusion.
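One way to picture Step 3 is as a matching problem between late-stage detections and the early-stage position map. The sketch below is an illustration under stated assumptions, not the procedure of [2]: coordinates are taken to be in a shared projected system in metres, and the function name `count_with_prior`, the 0.15 m matching radius, and both confidence thresholds are hypothetical. Detections close to a known plant are accepted at a lower confidence than detections elsewhere.

```python
import math


def count_with_prior(early_positions, late_detections,
                     max_dist=0.15, relaxed_conf=0.25, strict_conf=0.6):
    """Count late-stage plants using early-stage positions as a prior.

    early_positions: list of (x, y) plant positions in metres.
    late_detections: list of (x, y, confidence) from the late-stage model.
    All thresholds are illustrative assumptions, not values from [2].
    """
    confirmed = set()  # indices of early-stage plants found again
    new_plants = 0     # confident detections far from any known plant

    for x, y, conf in late_detections:
        # Find the nearest early-stage plant to this detection.
        nearest, dist = None, float("inf")
        for i, (ex, ey) in enumerate(early_positions):
            d = math.hypot(x - ex, y - ey)
            if d < dist:
                nearest, dist = i, d

        if dist <= max_dist and conf >= relaxed_conf:
            # Near a known plant: accept even a weak detection.
            confirmed.add(nearest)
        elif dist > max_dist and conf >= strict_conf:
            # Far from every known plant: require a strong detection.
            new_plants += 1

    return len(confirmed) + new_plants
```

Each early-stage plant is counted at most once, so overlapping late-stage boxes on the same plant do not inflate the count.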

Guide 2: Addressing Occlusion for Robotic Fruit Harvesting

Problem: A robotic harvester fails to detect fruits that are severely obscured by leaves, branches, or other fruits.

Symptoms:

- The robot's vision system cannot locate the fruit or its stem (peduncle).

- The detection confidence for occluded fruits is very low.

- The robotic arm aborts the picking sequence or moves to an incorrect position.

Solution: Implement an active sensing paradigm where the robot actively changes its viewpoint to find an unobstructed perspective of the target.

Detailed Protocol:

- Initial Detection & Occlusion Assessment:

- Viewpoint Re-planning:

- If the occlusion ratio (Ro) exceeds a set threshold, trigger the viewpoint planner.

- An imitation learning-based planner (e.g., using the Action Chunking with Transformers (ACT) algorithm) can be employed. This system learns from human expert demonstrations how to move the camera (mounted on a 6-DoF robotic arm) to a better viewpoint [5].

- Re-sampling and Re-detection:

- Move the camera to the new viewpoint and capture a new image.

- Perform the detection and occlusion assessment again with the new image.

- Repeat this process until the target is sufficiently visible or a maximum number of attempts is reached [6].
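The detect, re-plan, and re-detect cycle described above can be sketched as a simple control loop. This is a hedged outline rather than the implementation from [5] or [6]: the occlusion ratio is assumed here to be the hidden fraction of the target's expected area, and `detect`, `plan_next_view`, `move_camera`, the 0.3 threshold, and the attempt limit are placeholder names and values.

```python
from typing import Any, Callable, Optional, Tuple

Detection = Optional[Tuple[float, float]]  # (visible_area, expected_area) or None


def occlusion_ratio(visible_area: float, expected_area: float) -> float:
    """Hidden fraction of the target (assumed definition of Ro)."""
    if expected_area <= 0:
        raise ValueError("expected_area must be positive")
    return min(1.0, max(0.0, 1.0 - visible_area / expected_area))


def active_sensing(detect: Callable[[], Detection],
                   plan_next_view: Callable[[Detection], Any],
                   move_camera: Callable[[Any], None],
                   threshold: float = 0.3,
                   max_attempts: int = 5) -> Optional[int]:
    """Return the attempt number at which the target became visible, else None."""
    for attempt in range(1, max_attempts + 1):
        detection = detect()
        if detection is not None:
            visible, expected = detection
            if occlusion_ratio(visible, expected) <= threshold:
                return attempt  # target is sufficiently visible
        if attempt < max_attempts:
            # Still occluded (or not found): ask the planner for a new pose.
            move_camera(plan_next_view(detection))
    return None  # attempt budget exhausted
```

Capping the number of attempts keeps the cycle time bounded when no unobstructed view exists.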

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Occlusion Research

| Tool / Technology | Primary Function | Role in Mitigating Occlusion |

|---|---|---|

| Binocular Stereo Vision Camera | Captures synchronized images from two viewpoints to compute depth and generate 3D point clouds. | Serves as the core sensor for 3D reconstruction. Multi-viewpoint clouds are fused to overcome self-occlusion [3]. |

| LiDAR (Light Detection and Ranging) | An active remote sensor that uses laser pulses to generate high-precision 3D point cloud data of the canopy structure. | Penetrates light foliage to provide structural data independent of ambient light, reducing the impact of shading and some occlusion [8]. |

| Multi-View Reconstruction Workflow | A processing pipeline involving Structure from Motion (SfM) and Multi-View Stereo (MVS) algorithms. | Reconstructs high-fidelity 3D plant models from images taken around the plant, explicitly designed to overcome occlusion from any single view [3]. |

| Occlusion-Robust DL Models (e.g., Chicken-YOLO) | Deep learning models with specialized modules for feature extraction in occluded scenes. | Enhances perception of occluded areas by strengthening local-global information coordination and edge feature extraction [7]. |

| Imitation Learning Viewpoint Planner | A policy that controls a robotic arm to move a camera to the "next-best-view" to see an occluded target. | Actively reduces occlusion by mimicking human expert behavior to find a viewpoint that reveals the hidden target [5]. |

Experimental Protocols for Key Cited Studies

Protocol 1: Multi-View 3D Plant Reconstruction for Fine-Grained Phenotyping

This protocol is based on work published in Frontiers in Plant Science [3], which aims to create accurate 3D models to overcome self-occlusion.

1. Image Acquisition:

- System: Use a system with a binocular camera (e.g., ZED 2) mounted on a programmable, rotating U-shaped arm.

- Process: Capture high-resolution RGB images from at least six viewpoints around the plant. At each viewpoint, capture multiple images.

2. Single-View Point Cloud Generation (Phase 1):

- Bypass built-in depth estimation. Instead, apply Structure from Motion (SfM) to the captured high-resolution images to estimate camera positions.

- Apply Multi-View Stereo (MVS) algorithms to the aligned images to generate a high-fidelity, distortion-free point cloud for each viewpoint.

3. Multi-View Point Cloud Registration (Phase 2):

- Coarse Alignment: Use a marker-based Self-Registration (SR) method. Place a calibration object (e.g., a sphere) in the scene to quickly align the six single-view point clouds into a common coordinate system.

- Fine Alignment: Apply the Iterative Closest Point (ICP) algorithm to the coarsely aligned point clouds to create a unified, complete, and accurate 3D plant model.

4. Phenotypic Trait Extraction:

- Automatically extract key parameters like plant height, crown width, leaf length, and leaf width from the complete 3D model. Validation with manual measurements showed R² values exceeding 0.92 for plant height and crown width [3].

Workflow for multi-view 3D plant reconstruction to overcome self-occlusion.
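The fine-alignment step in Phase 2 can be illustrated with a compact point-to-point ICP. This is a minimal sketch, assuming the coarse marker-based alignment has already been applied; it uses brute-force nearest-neighbour search, which is only practical for small clouds, and the function names are illustrative rather than taken from [3].

```python
import numpy as np


def rigid_transform(src, dst):
    """Least-squares rotation R and translation t with R @ src_i + t ~ dst_i."""
    src_c, dst_c = src.mean(axis=0), dst.mean(axis=0)
    H = (src - src_c).T @ (dst - dst_c)   # 3 x 3 cross-covariance
    U, _, Vt = np.linalg.svd(H)
    R = Vt.T @ U.T
    if np.linalg.det(R) < 0:              # avoid returning a reflection
        Vt[-1, :] *= -1
        R = Vt.T @ U.T
    t = dst_c - R @ src_c
    return R, t


def icp(src, dst, iterations=20, tol=1e-9):
    """Align `src` (N x 3) to `dst` (M x 3); returns the transformed copy of src."""
    aligned = src.copy()
    prev_err = np.inf
    for _ in range(iterations):
        # Squared distance from every aligned point to every target point.
        d2 = ((aligned[:, None, :] - dst[None, :, :]) ** 2).sum(axis=2)
        nn = d2.argmin(axis=1)            # index of the closest target point
        err = np.sqrt(d2[np.arange(len(aligned)), nn]).mean()
        if abs(prev_err - err) < tol:     # mean distance stopped improving
            break
        prev_err = err
        R, t = rigid_transform(aligned, dst[nn])
        aligned = aligned @ R.T + t
    return aligned
```

ICP only converges to the correct pose when the starting misalignment is small, which is why the protocol performs the coarse marker-based registration first.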

Protocol 2: Active Sensing for Fruit Peduncle Localization Under Occlusion

This protocol, derived from research on truss tomatoes [6], uses active camera movement to handle severe occlusion.

1. Initial Recognition and Occlusion Calculation:

- Capture an initial image of the target (e.g., a tomato cluster).

- Use a deep neural network to identify the regions of the fruit and the stem (peduncle).

- Based on the visible portion of the fruit, calculate its occlusion ratio (Ro).

2. Active Viewpoint Adjustment:

- If the occlusion ratio is too high, plan a new camera viewpoint. The new viewpoint can be determined by calculating a vector based on the relative positions of the target fruit and the occluding object [6].

- Alternatively, an imitation learning policy can be used to output a continuous 6-DoF movement command for the robotic arm holding the camera [5].

3. Iterative Recognition:

- Move the camera to the new viewpoint and capture a new image.

- Perform the recognition and occlusion calculation again with this new image.

- Iterate until the peduncle is successfully located and its inclination angle can be estimated, or until a time limit is reached.

Validation: This method showed a 33% increase in precision and a 43% increase in efficiency compared to non-active methods, with an overall picking success rate of 90% in real-world tests [6].

Economic and Research Consequences of Unseen Canopy Elements

Frequently Asked Questions (FAQs)

Q1: My canopy images appear consistently darker than expected. What are the primary causes and solutions?

A1: Dark images typically result from incorrect exposure settings or limitations of the imaging environment.

- Cause 1: Automatic Exposure Setting. Using automatic exposure in variable field conditions leads to inconsistent results, often darkening images in denser canopies [9].

- Solution: Use manual exposure. Determine the correct setting by first taking a reference photo in an open sky area, then overexposing by 1-3 shutter speed stops when imaging under the canopy [9].

- Cause 2: Insufficient Indoor Lighting. For standardized imaging chambers, indoor lights are often not strong enough to provide adequate illumination, resulting in dark or black images [10].

- Solution: Ensure the imaging system is equipped with controlled, adjustable light sources to provide consistent and sufficient brightness for capture [11].
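The manual-exposure rule under Cause 1 is simple arithmetic: each stop doubles the exposure time. The helper below is a small worked example, assuming aperture and ISO are held fixed; the function name is illustrative.

```python
def shutter_time(reference_time_s: float, stops: float) -> float:
    """Exposure time after overexposing by `stops` stops relative to the reference.

    Each full stop doubles the light reaching the sensor, so the time
    scales by 2 ** stops when aperture and ISO stay fixed (assumption).
    """
    return reference_time_s * 2.0 ** stops


# Open-sky reference of 1/1000 s, overexposed by 2 stops under the canopy.
under_canopy = shutter_time(1 / 1000, 2)  # 0.004 s, i.e. 1/250 s
```

Overexposing by the full range of 1 to 3 stops from a 1/1000 s reference therefore spans 1/500 s to 1/125 s.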

Q2: How can I ensure color accuracy in my plant images when light conditions change throughout the day?

A2: Achieving color constancy across different illumination conditions requires a hardware-assisted software correction.

- Solution: Integrate a standard color checker chart (e.g., X-rite Colour Checker) into every image [12]. Use a post-processing algorithm to fit a transformation model that aligns the observed color values of the chart tiles with their known true values. A quadratic model has been shown to be more effective than a linear one for field conditions, significantly reducing the standard deviation of mean canopy color across multiple imaging sessions [12].

Q3: What methods can improve the detection and counting of plants during high-coverage growth stages when occlusion is severe?

A3: Relying on imagery from a single growth stage is often insufficient. A multi-temporal approach significantly improves accuracy.

- Solution: Integrate plant location information obtained from early-growth-stage imagery with images from the high-coverage stage [2]. A deep learning model (e.g., YOLOv5) can be applied to the early-stage imagery to establish a baseline plant count and position map. This positional data then serves as a guide to enhance the recognition and counting of plants in the later, more occluded stage, saving annotation effort and improving precision [2].

Q4: My hemispherical photography analysis seems inaccurate. What are the critical camera settings to check?

A4: Accurate digital hemispherical photography depends on specific technical configurations.

- Exposure: Always use manual exposure, as automatic settings will incorrectly estimate the gap fraction [9].

- Gamma Function: Digital cameras apply a gamma correction (typically 2.0-2.5) that lightens midtones. For scientific analysis, it is recommended to correct images back to a gamma value of 1.0 to accurately represent light intensity [9].

- Channel Selection: For pixel classification (separating sky from canopy), using the blue channel of the RGB image often provides better results because foliage has low reflectivity in the blue spectrum, improving contrast [9].
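The gamma and channel-selection points above can be combined into a short gap-fraction estimate. This is a sketch under simplifying assumptions: the image is 8-bit with channels ordered R, G, B, the camera encoding is treated as a plain power law (real cameras often use a piecewise curve), and the fixed threshold of 0.5 is a placeholder that [9] does not prescribe.

```python
import numpy as np


def gap_fraction(rgb, gamma=2.2, threshold=0.5):
    """Fraction of pixels classified as sky from the blue channel.

    rgb: H x W x 3 uint8 array, channel order R, G, B (assumption).
    gamma: encoding gamma applied by the camera; raising the encoded value
           to this power brings the data back to gamma 1.0 (linear).
    threshold: linear-intensity cut-off between canopy and sky (assumption).
    """
    blue = rgb[..., 2].astype(float) / 255.0
    linear_blue = blue ** gamma          # undo the camera's gamma encoding
    sky = linear_blue > threshold        # bright blue pixels are treated as sky
    return float(sky.mean())
```

A pixel with a blue value of 180 illustrates why linearization matters: it sits above the 0.5 cut-off before correction (180/255 is about 0.71) but below it after correction with gamma 2.2 (about 0.46), so uncorrected images tend to overestimate the gap fraction.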

Q5: How can I adapt a phenotyping platform for use in complex planting systems like vertical (3D) or intercropping systems?

A5: Traditional platforms struggle with the occlusion and access challenges in these environments. A dedicated system design is required.

- Solution: Implement a rail-based transportation system that automatically moves potted plants from the field to a centralized, standardized imaging chamber [11]. This design avoids operating bulky equipment between narrow rows and eliminates shading from taller companion crops during image capture. The system should support an automated rotating stage to acquire images from multiple angles, capturing the full 3D plant architecture [11].

Troubleshooting Guides

Table 1: Common Canopy Imaging Issues and Resolutions

| Problem Symptom | Possible Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|---|

| Dark/Black Images | Incorrect exposure; Low light in imaging chamber [10] [9] | Check camera settings; Verify illuminance in chamber. | Use manual exposure, overexposing relative to open sky [9]; Install adjustable light sources [11]. |

| Inconsistent Color Values | Varying illumination conditions (sunny vs. overcast) [12] | Compare color values of a neutral object across images. | Include a color checker chart in every image and apply a quadratic color correction model [12]. |

| Low Plant Detection Accuracy in Dense Canopies | Severe leaf occlusion and canopy overlap [2] | Check detection performance against manual counts. | Integrate early-growth-stage plant location data with later-stage imagery for analysis [2]. |

| Inaccurate Gap Fraction Analysis | Automatic camera settings; Uncorrected gamma [9] | Review image acquisition protocol and software settings. | Use manual exposure and correct gamma function to 1.0 during image processing [9]. |

| Blurry Images | Camera out of focus; Plant or platform movement | Inspect image sharpness; check platform stability. | Use manual focus set to the plant distance; ensure imaging stage is stable during capture [11]. |

Table 2: Quantitative Performance of Phenotyping Solutions

| Technology / Method | Key Performance Metric | Result / Accuracy | Reference Application |

|---|---|---|---|

| Rail-based Field Phenotyping Platform | Plant Height Extraction (R²) | 0.99 | Soybean in vertical planting system [11] |

| Rail-based Field Phenotyping Platform | Canopy Fresh Weight Prediction (R²) | 0.965 (Vegetative stage) | Soybean in vertical planting system [11] |

| Integrated UAV & Deep Learning (YOLOv5) | Konjac Plant Counting (F1-score) | 92.3% | High-coverage crop stage [2] |

| Color Correction with Quadratic Model | Standard Deviation of Mean Canopy Color | Significant reduction | Consistent canopy characterization under inconsistent field illumination [12] |

Experimental Protocols

Protocol 1: Color Correction for Consistent Canopy Characterization

Objective: To standardize color values in plant images captured under inconsistent field illumination conditions.

Materials:

- Imaging system (e.g., digital camera)

- Standard color checker chart (e.g., X-rite Colour Checker)

- Image processing software (e.g., MATLAB, Python with OpenCV)

Methodology:

- Setup: Mount the color checker chart in a fixed position within the camera's field of view so that it appears in every image captured [12].

- Image Acquisition: Capture images of plant canopies according to your standard protocol, ensuring the color chart is visible in each frame.

- Pre-processing: For each image, automatically detect and extract the region of interest (ROI) containing the plants and the color chart [12].

- Model Fitting: For each image, use a least-squares approach to fit a quadratic transformation model. This model maps the observed RGB values of the color chart tiles to their known reference values [12].

- Color Correction: Apply the derived transformation model to all pixels within the image, including the plant canopy ROI. This corrects the color values to align with the ground truth provided by the chart [12].

- Validation: The success of the method can be confirmed by the reduced error between observed and reference chart values and a significant decrease in the standard deviation of mean canopy color across multiple days [12].
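The model-fitting and correction steps can be sketched with an ordinary least-squares fit. This is one plausible form of a quadratic model, assuming RGB values scaled to the range 0 to 1 and a ten-term basis (constant, linear, squared, and cross terms); the exact terms used in [12] are not stated here, and the function names are illustrative.

```python
import numpy as np


def quadratic_features(rgb):
    """Expand N x 3 RGB values into N x 10 quadratic terms (assumed basis)."""
    r, g, b = rgb[:, 0], rgb[:, 1], rgb[:, 2]
    return np.column_stack(
        [np.ones_like(r), r, g, b, r * r, g * g, b * b, r * g, r * b, g * b]
    )


def fit_color_correction(observed_tiles, reference_tiles):
    """Least-squares fit mapping observed chart colors to reference colors.

    Both inputs are N x 3 float arrays (one row per chart tile).
    Returns a 10 x 3 coefficient matrix.
    """
    design = quadratic_features(observed_tiles)
    coeffs, *_ = np.linalg.lstsq(design, reference_tiles, rcond=None)
    return coeffs


def apply_color_correction(image, coeffs):
    """Apply the fitted model to every pixel of an H x W x 3 float image."""
    flat = image.reshape(-1, 3).astype(float)
    corrected = quadratic_features(flat) @ coeffs
    return np.clip(corrected, 0.0, 1.0).reshape(image.shape)
```

With a 24-tile chart the fit has 24 equations for 10 unknowns per output channel, so it is overdetermined and some residual error on the tiles is expected in real images.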

Protocol 2: Enhanced Plant Counting in High-Coverage Stages

Objective: To accurately detect and count crop plants during later growth stages with high canopy coverage and occlusion.

Materials:

- Unmanned Aerial Vehicle (UAV) with RGB camera

- GNSS/RTK module for precise geolocation

- Software for orthomosaic generation (e.g., Agisoft Metashape)

- Deep learning framework (e.g., PyTorch, TensorFlow) with YOLOv5 implementation

Methodology:

- Early-Stage Imaging: Conduct a UAV flight over the field during an early growth stage (e.g., leaf-spreading phase). Use an RTK module to geotag images for high spatial accuracy [2].

- Early-Stage Processing: Generate an orthomosaic from the captured images. Train a YOLOv5 model to detect and count individual plants, generating a map of plant locations with geographic coordinates [2].

- Late-Stage Imaging: Conduct a second UAV flight during the high-coverage stage (e.g., production of new corms), following the same georeferencing procedure [2].

- Data Integration: Use the precise plant location map from the early stage to inform and constrain the detection process in the late-stage imagery. This helps distinguish individual plants within the overlapping canopy [2].

- Validation: Compare the final plant counts against manual ground-truth counts. Metrics such as Precision, Recall, and F1-score should be used for evaluation [2].
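The validation metrics named above follow directly from the counts of true positives, false positives, and false negatives. The helper below is a minimal sketch; how a detection is matched to a ground-truth plant (for example by distance or box overlap) is left open and would need to follow the evaluation protocol of the study being reproduced.

```python
def detection_metrics(true_positives: int, false_positives: int, false_negatives: int):
    """Return (precision, recall, f1) for a plant-counting evaluation."""
    detected = true_positives + false_positives
    actual = true_positives + false_negatives
    precision = true_positives / detected if detected else 0.0
    recall = true_positives / actual if actual else 0.0
    denom = precision + recall
    # F1 is the harmonic mean of precision and recall.
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1
```

For example, 90 correctly detected plants with 10 spurious detections and 10 missed plants give a precision, recall, and F1-score of 0.9 each.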

Workflow Visualization

Diagram 1: Canopy Imaging Issue Resolution

Diagram 2: Multi-temporal Plant Counting

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagents and Materials for Canopy Imaging Research

| Item | Function / Application | Key Considerations |

|---|---|---|

| Standard Color Checker Chart | Provides a ground truth for color calibration and correction in image analysis under varying light [12]. | Essential for achieving color constancy across different times of day and weather conditions. |

| Hemispherical (Fish-eye) Lens | Enables canopy imaging over a 150°+ angle to calculate gap fraction, LAI, and light regimes [10] [9]. | Requires careful control of exposure and gamma settings for accurate results [9]. |

| Rail-Based Transport System | Automates the movement of plants from field growth plots to a centralized imaging chamber, minimizing human error [11]. | Particularly useful in complex planting systems (e.g., intercropping) where access is limited [11]. |

| Standardized Imaging Chamber | Provides a controlled environment with stable lighting and background for consistent, high-quality image acquisition [11]. | Balances the benefits of field growth with the precision of lab-based phenotyping [11]. |

| UAV with RTK Module | Captures high-resolution, georeferenced aerial imagery for plant counting and monitoring over large areas [2]. | RTK (Real-Time Kinematic) provides centimeter-level positioning accuracy crucial for tracking individual plants over time [2]. |

This technical support center provides troubleshooting guides and FAQs for researchers working on automatic occlusion detection in plant canopy imaging. The content is designed to help you overcome common experimental challenges and implement state-of-the-art methodologies.

Frequently Asked Questions (FAQs)

Q1: What are the primary technical challenges in segmenting plant organs from canopy images? The main challenges include severe leaf occlusion and overlap, the irregular and complex morphology of plant structures like rapeseed inflorescences, and blurred organ boundaries [13]. Additionally, substantial variation in organ size, condition, and color across different growth stages complicates feature extraction. In aerial imagery, targets are often very small, providing limited visual features for detection algorithms to learn [14].

Q2: How can I generate accurate ground truth data without exhaustive manual annotation? Generative Adversarial Networks (GANs) offer a viable solution. A two-stage GAN-based approach can be employed: first, use FastGAN to augment original RGB images using intensity and texture transformations. Then, use a Pix2Pix model, trained on a limited set of RGB images and their corresponding segmentations, to generate binary segmentation masks for the synthetic images [15]. This method has achieved Dice coefficients between 0.88 and 0.95 for greenhouse-grown plants.

Q3: What imaging setup is recommended for robust 3D canopy reconstruction in field conditions? For stereo vision in field conditions, a system with two nadir cameras is effective. One study used two GO-5000C-USB cameras (2560 × 2048 CMOS sensors) with 16 mm focal length objectives, a baseline of 50 mm, and parallel optical axes, capturing images from about 1 meter above the canopy [16]. Binning images from 2560 × 2048 to 1280 × 1024 pixels can improve matching and leaf area computation.
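The stereo geometry quoted above can be checked with the standard pinhole relation between depth and disparity, Z = f · B / (d · p), where p is the pixel pitch. The sketch below assumes a 5 µm pixel pitch, which is not given in the text and should be confirmed against the sensor datasheet; the function names are illustrative.

```python
def expected_disparity_px(focal_mm, baseline_mm, distance_mm, pixel_pitch_mm):
    """Disparity in pixels for a point at `distance_mm` from a parallel stereo pair."""
    return focal_mm * baseline_mm / (distance_mm * pixel_pitch_mm)


def depth_mm(focal_mm, baseline_mm, disparity_px, pixel_pitch_mm):
    """Depth in millimetres recovered from a measured disparity."""
    return focal_mm * baseline_mm / (disparity_px * pixel_pitch_mm)


# 16 mm lens, 50 mm baseline, canopy about 1 m below the cameras.
full_res = expected_disparity_px(16, 50, 1000, 0.005)  # assumed 5 um pitch
binned = expected_disparity_px(16, 50, 1000, 0.010)    # 2x2 binning doubles the pitch
```

Under these assumptions the disparity is about 160 pixels at full resolution and about 80 pixels after binning, so binning halves the disparity range the matcher has to search.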

Q4: Which deep learning architectures are most effective for handling occlusion in plant images? Semi-supervised frameworks like DM_CorrMatch, which combine strongly and weakly augmented data views, show superior performance [13]. Architectures like Mamba-Deeplabv3+ integrate the global feature extraction of Mamba with the local feature extraction of CNNs. For a more accessible approach, combining YOLOv11 for detection with the Segment Anything Model (SAM) for zero-shot segmentation is highly effective, achieving IoU scores over 0.92 [17].

Q5: How does environmental variability impact model performance, and how can this be mitigated? Models trained in controlled laboratory conditions often show a significant performance drop (e.g., from 95-99% to 70-85% accuracy) when deployed in the field [18]. Environmental factors like wind can induce motion, affecting the variability of leaf area measurement by 3% or more [16]. Mitigation strategies include using semi-supervised learning that leverages unlabeled field data [13] and designing models with realistic data augmentation that accounts for factors like variable lighting and complex backgrounds [18].

Troubleshooting Guides

Issue 1: Poor Segmentation Accuracy in Dense Canopies

Problem: Your model fails to accurately segment individual leaves or flowers in a dense, occluded canopy.

Solution:

- Step 1: Implement a semi-supervised learning framework like DM_CorrMatch. This uses a teacher-student model where the teacher generates pseudo-labels for unlabeled data, and the student is trained on both labeled and pseudo-labeled data [13].

- Step 2: Employ an automatic update strategy for the labeled dataset to gradually reduce the proportion of erroneous labels from initial manual segmentation [13].

- Step 3: Utilize a network architecture capable of capturing both global and local context. The Mamba-Deeplabv3+ architecture is designed for this purpose [13].

- Verification: Expect a significant improvement in metrics. The referenced study achieved an Intersection over Union (IoU) of 0.886, Precision of 0.942, and Recall of 0.940 on a challenging rapeseed flower dataset [13].
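The pseudo-labeling idea in Step 1 can be illustrated in a framework-free way. The sketch below shows generic confidence-based pseudo-label selection, which is a common ingredient of teacher-student training; it is not the DM_CorrMatch algorithm from [13], and the 0.9 confidence threshold is an assumption.

```python
def select_pseudo_labels(teacher_probs, confidence=0.9):
    """Turn teacher foreground probabilities into pseudo-labels.

    teacher_probs: iterable of per-pixel probabilities in [0, 1].
    Returns a list with 1 (foreground), 0 (background), or None for
    pixels the teacher is unsure about, which the student's loss
    would ignore.
    """
    labels = []
    for p in teacher_probs:
        if p >= confidence:
            labels.append(1)
        elif p <= 1.0 - confidence:
            labels.append(0)
        else:
            labels.append(None)  # too uncertain to supervise the student
    return labels
```

Ignoring uncertain pixels limits how much label noise from occluded or blurred organ boundaries reaches the student model.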

Issue 2: Lack of Sufficient Annotated Data for Training

Problem: You cannot train a supervised model effectively due to a small set of manually segmented images.

Solution:

- Step 1: Use a Generative Adversarial Network (GAN) to create synthetic data. Begin by training FastGAN on your limited set of original RGB plant images to generate new, realistic synthetic plant images [15].

- Step 2: Train a Pix2Pix model (a conditional GAN) on your small set of paired RGB and ground truth segmentation images.

- Step 3: Use the trained Pix2Pix model to predict segmentation masks for the synthetic RGB images generated by FastGAN. This creates a large dataset of synthetic image-mask pairs [15].

- Step 4: Fine-tune your segmentation models using this augmented dataset.

- Verification: Manually annotate a subset of the synthetic images and calculate the Dice coefficient between the manual and GAN-predicted masks. A score above 0.90 indicates high-quality synthetic data [15].
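The verification step relies on the Dice coefficient, and other parts of this guide report IoU for the same purpose. Both can be computed from a pair of binary masks as sketched below; treating two empty masks as a perfect match is a convention chosen for this example.

```python
import numpy as np


def dice_and_iou(pred_mask, true_mask):
    """Return (Dice coefficient, IoU) for two binary masks of equal shape."""
    pred = np.asarray(pred_mask, dtype=bool)
    true = np.asarray(true_mask, dtype=bool)
    intersection = np.logical_and(pred, true).sum()
    total = pred.sum() + true.sum()      # |A| + |B|
    union = total - intersection         # |A or B|
    dice = 2 * intersection / total if total else 1.0
    iou = intersection / union if union else 1.0
    return float(dice), float(iou)
```

Dice is never lower than IoU for the same pair of masks, so a Dice threshold of 0.90 corresponds to an IoU of roughly 0.82.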

Issue 3: Inaccurate Canopy Size and Volume Estimation

Problem: Your 2D segmentation does not translate into an accurate 3D understanding of canopy structure and volume.

Solution:

- Step 1: Accurately detect and segment the plant in 2D. An integrated YOLOv11 and SAM pipeline is recommended. YOLOv11 provides precise bounding box detections, which are then used as prompts for SAM to perform zero-shot segmentation [17].

- Step 2: Implement a prompt selection algorithm to guide SAM. The "refined" approach uses a hollow concentric structure to select background points from regions overlapping fruit detections, improving segmentation reliability [17].

- Step 3: Transition from 2D to 3D by integrating a depth estimation model like Depth Anything v2 (DAv2). DAv2 generates a depth map, which can be combined with the 2D segmentation mask to calculate canopy volume [17].

- Verification: This workflow has achieved IoU scores of 0.924 for 2D segmentation, providing a solid foundation for robust 3D volume estimation [17].
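Step 3 combines a depth map with a segmentation mask. One simple way to do this, shown below as a sketch rather than the method of [17], is to sum canopy height over the masked pixels. It assumes a nadir view, a depth map already scaled to metres, a known camera-to-ground distance, and a constant ground area per pixel; all parameter names are illustrative.

```python
import numpy as np


def canopy_volume_m3(depth_m, mask, ground_depth_m, pixel_area_m2):
    """Approximate canopy volume as the sum of per-pixel height columns.

    depth_m: H x W camera-to-surface distances in metres (assumed metric).
    mask: H x W binary canopy segmentation.
    ground_depth_m: camera-to-ground distance in metres (assumption).
    pixel_area_m2: ground area covered by one pixel (assumed constant).
    """
    depth = np.asarray(depth_m, dtype=float)
    canopy = np.asarray(mask, dtype=bool)
    # Height of the canopy surface above the ground, never negative.
    heights = np.clip(ground_depth_m - depth, 0.0, None)
    return float((heights * canopy).sum() * pixel_area_m2)
```

This treats the canopy as solid beneath its visible surface, so it gives an upper-bound style estimate that ignores gaps hidden by the top layer of leaves.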

Experimental Protocols & Performance Data

Table 1: Performance Metrics of Advanced Segmentation Models

| Model / Method | Dataset / Application | Key Metric | Reported Score | Challenge Addressed |

|---|---|---|---|---|

| DM_CorrMatch [13] | Rapeseed Flower (RFSD) | IoU | 0.886 | Occlusion, Complex Morphology |

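| DM_CorrMatch [13] | Rapeseed Flower (RFSD) | Precision | 0.942 | Occlusion, Complex Morphology |

| DM_CorrMatch [13] | Rapeseed Flower (RFSD) | Recall | 0.940 | Occlusion, Complex Morphology |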

| YOLOv11 + SAM (Refined) [17] | Strawberry Canopy | IoU | 0.924 | Occlusion, Size Estimation |

| Plant-MAE [19] | Plant Organ Point Clouds | Average IoU | 0.840 | 3D Organ Segmentation |

| Pix2Pix (Sigmoid Loss) [15] | Arabidopsis (Synthetic Masks) | Dice Coefficient | 0.95 | Lack of Annotated Data |

| Stereo Vision (Calibrated) [16] | Winter Wheat (Leaf Area) | RMSE | 0.37 | 3D Field Measurement |

Table 2: Essential Research Reagent Solutions

| Item | Specification / Example | Primary Function in Experiment |

|---|---|---|

| Imaging Sensor | RGB CMOS (e.g., 2560 × 2048) [16] | Captures high-resolution 2D color images for segmentation and 3D reconstruction. |

| Stereo Vision System | Two nadir cameras, 50 mm baseline [16] | Enables 3D point cloud reconstruction via triangulation for measuring plant architecture. |

| Depth Estimation Model | Depth Anything v2 (DAv2) [17] | Converts 2D segmentations into 3D depth maps for canopy volume estimation. |

| Zero-Shot Segmenter | Segment Anything Model (SAM) [17] | Performs image segmentation without task-specific training, reducing annotation needs. |

| Object Detector | YOLOv11 [17] | Provides precise bounding box detections to guide and prompt the segmentation model. |

| Self-Supervised Framework | Plant-MAE [19] | Segments plant organs from 3D point clouds with reduced reliance on annotated data. |

Workflow Visualization

Diagram 1: Semi-Supervised Plant Segmentation Workflow

Diagram 2: Synthetic Ground Truth Generation with GANs

Sensor Limitations and Environmental Factors Affecting Visibility

Troubleshooting Guides & FAQs

This technical support resource addresses common challenges in automatic occlusion detection for plant canopy imaging research. The guidance is based on current methodologies and experimental findings.

FAQ: Environmental & Technical Interference

Q1: How does changing sunlight throughout the day affect my canopy reflectance measurements, and how can I correct for it?

Solar altitude changes cause significant diurnal variation in nadir reflectance, typically following a U-shaped pattern with the smallest values observed at solar noon [20]. This occurs because the sun's position affects the angle of sunlight and the amount of specular reflection from the canopy [20].

- Solution: For the most stable readings, collect data around midday (e.g., 10:00-14:00) when solar altitude changes are minimal [20]. For all-day monitoring, use a sensor with a built-in solar altitude correction model. One study developed a vegetation canopy reflectance (VCR) sensor that reduced the intra-day coefficient of variation (CV) at 710 nm from 10.86% before correction to 2.93% after correction [20].

- Experimental Protocol: To validate your sensor's performance, conduct a diurnal experiment. Measure a standard reflectance gray scale board and a consistent vegetation target (e.g., Bermuda grass) hourly from sunrise to sunset. The root mean square error (RMSE) for a calibrated VCR sensor was 1.07% at 710 nm and 0.94% at 870 nm [20].
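The two statistics used in this validation, the intra-day coefficient of variation and the RMSE against a reference, can be computed as sketched below. The use of the sample standard deviation is an assumption, since [20] is not quoted here on that point, and the function names are illustrative.

```python
import math
import statistics


def coefficient_of_variation_pct(values):
    """CV in percent: sample standard deviation divided by the mean."""
    return statistics.stdev(values) / statistics.mean(values) * 100.0


def rmse(measured, reference):
    """Root mean square error between paired measurements."""
    if len(measured) != len(reference):
        raise ValueError("inputs must have the same length")
    squared = [(m - r) ** 2 for m, r in zip(measured, reference)]
    return math.sqrt(sum(squared) / len(squared))
```

Computing the CV over the hourly readings before and after correction gives a direct measure of how much diurnal variation the correction model removes.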

Q2: My research involves soybean plants shaded by taller crops. How can I phenotype these occluded plants effectively?

Vertical (three-dimensional) planting systems create classic occlusion where taller crops (e.g., maize) shade lower crops (e.g., soybean), limiting equipment access and imaging quality [11]. Standard platforms like UAVs or gantries struggle with this due to fixed viewing angles, insufficient resolution, or inability to penetrate the upper canopy [11].

- Solution: Implement an integrated system that combines field growth with standardized indoor imaging. A rail-based transport system can automatically move potted plants from the field to a controlled imaging chamber [11].

- Experimental Protocol:

- Platform Setup: Establish a fixed imaging chamber with a high-precision imaging system (e.g., RGB camera, infrared camera, LiDAR) and an automated rail transport system for potted plants [11].

- Data Acquisition: Use the rail system to transport plants from the intercropping field to the chamber for imaging. This avoids shading and environmental interference during data capture [11].

- Validation: Correlate digitally extracted traits with manual measurements. One platform achieved an R² of 0.99 for plant height and 0.95 for plant width against manual measurements [11].
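As a sketch of the validation step, the agreement between digitally extracted and manually measured traits can be summarized as the R² of a simple linear relationship (the squared Pearson correlation). The function name and the sample heights below are hypothetical.

```python
def r_squared(extracted, manual):
    """Squared Pearson correlation between two paired measurement series."""
    n = len(extracted)
    mean_x = sum(extracted) / n
    mean_y = sum(manual) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(extracted, manual))
    sxx = sum((x - mean_x) ** 2 for x in extracted)
    syy = sum((y - mean_y) ** 2 for y in manual)
    return sxy ** 2 / (sxx * syy)


# Hypothetical plant heights in cm (image-derived vs. ruler), for illustration only.
image_height = [31.2, 44.8, 52.1, 60.3, 71.9]
manual_height = [30.5, 45.0, 53.0, 59.8, 72.4]
print(f"R^2 = {r_squared(image_height, manual_height):.3f}")
```

R² alone does not reveal systematic bias, so it is usually worth reporting RMSE or a Bland-Altman style difference alongside it.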

Q3: Can I detect plant stress before visible symptoms like discoloration occur?

Yes. Non-visible cellular and subcellular changes precede visible symptoms. Advanced spectroscopic and imaging techniques can detect these early stress responses [21] [22].

- Solution: Use hyperspectral imaging to detect subtle shifts in leaf absorbance spectra. Under drought stress, specific red-shifted and broadened absorbance features appear in the red-edge region (~695 nm), indicating conformational changes in the photosynthetic antenna as the plant dissipates excess energy as heat [21]. Alternatively, Optical Coherence Tomography (OCT) can non-destructively quantify internal leaf structural changes caused by stressors like ozone, which first damages the palisade tissue [22].

- Experimental Protocol for Hyperspectral Detection [21]:

- Grow plants (e.g., tomato) under controlled conditions.

- Subject them to incremental light levels (e.g., PAR from 100 to 1500 µmol m⁻² s⁻¹) and drought stress.

- Use a top-view VNIR hyperspectral camera in an automated screening system to capture canopy reflectance daily.

- Analyze the derivative of the reflectance spectrum in the 520 nm (green) and 680-750 nm (red-edge) regions to identify stress-linked absorbance features.
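One simple way to work with the spectral derivative in the last step is to compute a central-difference first derivative and locate its maximum inside the red-edge window. The sketch below assumes evenly or unevenly spaced bands given as plain lists; the synthetic sigmoid spectrum is an illustration only and is not taken from [21].

```python
import math


def first_derivative(wavelengths, reflectance):
    """Central-difference dR/d(lambda) at every interior band."""
    out = []
    for i in range(1, len(wavelengths) - 1):
        slope = (reflectance[i + 1] - reflectance[i - 1]) / (
            wavelengths[i + 1] - wavelengths[i - 1]
        )
        out.append((wavelengths[i], slope))
    return out


def red_edge_position(wavelengths, reflectance, lo=680.0, hi=750.0):
    """Wavelength of the steepest reflectance rise inside the red-edge window."""
    window = [(w, d) for w, d in first_derivative(wavelengths, reflectance)
              if lo <= w <= hi]
    return max(window, key=lambda pair: pair[1])[0]


# Synthetic spectrum: a sigmoid red edge centred at 715 nm (hypothetical values).
bands = list(range(650, 801, 5))
spectrum = [0.05 + 0.45 / (1.0 + math.exp(-(w - 715) / 10.0)) for w in bands]
print("Red-edge position (nm):", red_edge_position(bands, spectrum))
```

Tracking how this position and the derivative shape change from day to day is one way to flag the spectral shifts described above; real data would typically need smoothing before differentiation.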

Troubleshooting Guide: Sensor Limitations

Problem: 2D imaging provides inaccurate morphological data for complex, occluded canopies.

- Issue: 2D imaging discards depth information and depends on specific shooting angles, so measurements taken at arbitrary angles can deviate substantially [23]. It also cannot resolve overlapping leaves in a dense canopy.

- Solution: Adopt 3D sensing technology. A hand-held 3D laser scanner can reconstruct a high-precision 3D mesh model of the plant in real time, enabling the extraction of individual leaves from an occluded canopy [23].

- Performance Data: For plants with heavy canopy occlusion, this method automatically extracted 87.61% of typical leaf samples, with estimated morphological traits highly correlated to manual measurements (modeling efficiency above 0.8919 for scale-related traits) [23].

Problem: My sensor data is contaminated by cloud cover, creating gaps in evapotranspiration (ET) time series.

- Issue: Thermal infrared sensors used to derive ET apply cloud masking, which removes affected pixels and creates data gaps. The impact varies by cloud type [24].

- Solution: Incorporate cloud-type classification into data analysis. Research shows that cloud presence generally reduces instantaneous ET, but the effect is not uniform. For example, ET under dense Cumulonimbus clouds did not differ significantly from clear-sky conditions, unlike other cloud types [24].

- Protocol: Use Cloud Optical Depth (COD) and Cloud Top Pressure (CTP) data from sources like GOES-18 to classify clouds. Integrate this classification into data gap-filling models to improve ET estimation accuracy during cloudy periods [24].
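A cloud-type label can be derived from COD and CTP with a simple lookup. The sketch below assumes ISCCP-style class boundaries (cloud top pressure at 440 and 680 hPa, optical depth at 3.6 and 23); the scheme used in [24] may differ, and the function name is hypothetical.

```python
# Assumed ISCCP-style thresholds; adjust to match the classification actually used.
CTP_LOW_HPA = 680.0   # cloud tops at or below this altitude (higher pressure) are "low"
CTP_HIGH_HPA = 440.0  # cloud tops above this altitude (lower pressure) are "high"
COD_THIN = 3.6
COD_THICK = 23.0

CLOUD_TYPES = {
    "low": ("Cumulus", "Stratocumulus", "Stratus"),
    "middle": ("Altocumulus", "Altostratus", "Nimbostratus"),
    "high": ("Cirrus", "Cirrostratus", "Deep convection (cumulonimbus)"),
}


def classify_cloud(cod, ctp_hpa):
    """Map cloud optical depth and cloud top pressure (hPa) to a cloud type."""
    if ctp_hpa >= CTP_LOW_HPA:
        level = "low"
    elif ctp_hpa >= CTP_HIGH_HPA:
        level = "middle"
    else:
        level = "high"
    if cod < COD_THIN:
        thickness = 0
    elif cod < COD_THICK:
        thickness = 1
    else:
        thickness = 2
    return CLOUD_TYPES[level][thickness]


print(classify_cloud(cod=30.0, ctp_hpa=300.0))
```

The resulting label can then be added as a categorical feature to a gap-filling model so that each cloud type receives its own ET adjustment.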

The following tables summarize key performance metrics from cited experiments.

Table 1: Platform & Sensor Performance Metrics

| Platform / Sensor | Key Performance Metric | Reported Value | Application Context |

|---|---|---|---|

| Vegetation Canopy Reflectance (VCR) Sensor [20] | RMSE at 710 nm / 870 nm | 1.07% / 0.94% | Diurnal reflectance monitoring |

| Vegetation Canopy Reflectance (VCR) Sensor [20] | CV after solar correction (710 nm) | 2.93% (from 10.86%) | Diurnal reflectance monitoring |

| Field Soybean Phenotyping Platform [11] | R² vs. manual (Plant Height/Width) | 0.99 / 0.95 | Vertical planting system |

| Field Soybean Phenotyping Platform [11] | R² for Canopy Fresh Weight Prediction | 0.965 | Vegetative stage |

| Hand-held 3D Laser Scanner [23] | Typical Leaf Sample Extraction Rate | 87.61% | Heavy canopy occlusion |

| Hand-held 3D Laser Scanner [23] | Avg. Time per Plant Measurement | 196.37 seconds | High-throughput phenotyping |

Table 2: Early Stress Detection Signatures

| Stress Type | Detection Technique | Spectral / Structural Signature | Biological Meaning |

|---|---|---|---|

| Drought & Excessive Light [21] | Hyperspectral Imaging (Canopy) | Red-shifted & broadened absorbance at ~695 nm | Stepwise tuning of regulated energy dissipation (heat) in the photosynthetic antenna. |

| Ozone Stress [22] | Optical Coherence Tomography (Leaf) | Decreased signal intensity, increased thickness, and increased "Energy" texture in palisade tissue. | Structural damage to the palisade tissue from ozone entering stomata. |

Experimental Workflow Diagrams

Diagram 1: Integrated Phenotyping for Occluded Plants

Diagram 2: OCT-Based Environmental Stress Diagnosis

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Advanced Plant Stress Sensing

| Item / Reagent | Function / Explanation | Example Application |

|---|---|---|

| Hyperspectral Imaging Camera (VNIR) | Captures spectral data across many contiguous bands, enabling detection of subtle biochemical and physiological changes based on reflectance. | Detecting red-shifted absorbance features associated with drought stress in tomato canopies [21]. |

| Portable Optical Coherence Tomography (OCT) | A non-destructive, non-contact technique that uses low-coherence light to generate cross-sectional images of internal tissue structure. | Quantifying ozone-induced damage to the palisade tissue in white clover leaves [22]. |

| 3D Laser Scanner (Hand-held) | Reconstructs high-precision 3D mesh models of plants in real-time, allowing for the segmentation and measurement of individual organs in occluded canopies. | Automatically measuring morphological traits of typical leaf samples from heavily occluded plants [23]. |

| Fluorescent Proteins (e.g., RFP) | Used to genetically engineer pathogens, allowing for real-time, in vivo tracking of infection progression and host-pathogen interactions. | Monitoring growth of Phytophthora capsici in cucumber and pepper plants to evaluate host resistance [25] [26]. |

| Enzyme-Linked Immunosorbent Assay (ELISA) Kits | Immunoassays that use antigen-antibody interactions to detect and quantify specific pathogens or stress-related host proteins (e.g., heat shock proteins). | Detecting plant viral infections or quantifying stress-related hormonal responses [25] [26]. |

The Fundamental Role of Occlusion Detection in Precision Agriculture

Troubleshooting Common Occlusion Detection Issues

FAQ: My deep learning model performs well in the lab but poorly in the field. What is wrong?

This is a common problem resulting from the domain gap between laboratory and field data. Laboratory conditions are controlled, while field environments introduce significant variability in lighting, plant orientation, and background elements [4].

- Solution: Implement domain adaptation strategies and augment your training data with field-condition images. Research shows transformer-based architectures like SWIN demonstrate superior robustness in field conditions, achieving 88% accuracy compared to 53% for traditional CNNs on real-world datasets [4].

- Prevention: During model development, train with datasets containing realistic field variations, including multiple lighting conditions (sunny, overcast), growth stages, and complex backgrounds including soil and other plant species.

FAQ: How can I accurately count plants during high-coverage growth stages when leaves severely occlude target plants?

High canopy coverage presents a significant challenge, as traditional detection methods experience substantial accuracy decreases during later growth stages [2].

- Solution: Integrate multi-temporal positional information. For Konjac plants, researchers achieved 98.7% precision by combining a YOLOv5 model with plant location data from both early-stage and high-coverage stage UAV imagery. This approach leverages the consistent positional information of plants despite changing canopy morphology [2].

- Alternative Method: The Count Crops tool in ENVI software provides a non-deep learning alternative that requires no annotation and has demonstrated promising recognition precision for high-coverage scenarios [2].
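The idea of reusing early-stage plant positions can be sketched as a nearest-neighbour match between georeferenced early-stage locations and late-stage detections. This is a hypothetical illustration of the principle, not the procedure from [2]; the function name, the greedy matching rule, and the distance tolerance are all assumptions.

```python
import math


def match_to_reference(reference_xy, detections_xy, max_dist):
    """Greedily pair each early-stage position with its nearest unused detection.

    Returns (matches, unmatched_detections), where matches maps a reference
    index to a detection index and unmatched_detections lists leftover indices.
    """
    unused = set(range(len(detections_xy)))
    matches = {}
    for i, (rx, ry) in enumerate(reference_xy):
        best, best_d = None, max_dist
        for j in sorted(unused):
            dx, dy = detections_xy[j]
            d = math.hypot(rx - dx, ry - dy)
            if d <= best_d:
                best, best_d = j, d
        if best is not None:
            matches[i] = best
            unused.discard(best)
    return matches, sorted(unused)


# Hypothetical coordinates in metres (early-stage map vs. high-coverage detections).
early = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
late = [(0.05, 0.02), (2.10, -0.05), (5.0, 5.0)]
matches, extras = match_to_reference(early, late, max_dist=0.3)
print("confirmed plants:", len(matches), "| occluded or missing:", len(early) - len(matches))
print("detections with no early-stage plant (possible false positives):", extras)
```

Early-stage positions without a late-stage match are candidates for plants hidden by neighbouring canopy, while unmatched late-stage detections can be reviewed as likely false positives.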

FAQ: My 3D reconstruction of plant architecture is missing occluded leaves and structures. Which sensor should I use?

This limitation often stems from using 2.5D depth sensors that capture only a single surface layer. True 3D reconstruction requires multiple viewing angles [27].

- Solution: Implement multi-angle scanning using laser scanners or structured light systems. Research indicates that multiple scanners mounted at different angles (e.g., above the plant at an oblique angle) can capture even overlapping leaves, producing a complete 3D point cloud rather than a partial 2.5D depth map [27].

- Sensor Selection: Consider laser line scanners, which offer high precision (up to 0.2 mm) in all dimensions and are robust for field use, though they must be moved over the plants [27].

FAQ: How can I estimate yield in grapevines when leaves occlude a significant portion of fruit bunches?

Vineyard yield estimation faces the challenge of vine-occlusions, particularly leaf-occlusions in dense canopies [28].

- Solution: Develop a multiple regression model using canopy features as proxies. One study achieved R² = 0.80 for estimating visible bunch percentage using canopy porosity and visible bunch area as predictors, providing a non-invasive yield estimation approach without requiring defoliation [28].

- Implementation: Capture 2D images of 1m vine segments and extract canopy porosity (proportion of gaps with no plant material) and visible bunch area using image analysis software. The regression model then estimates total bunch area and ultimately yield [28].
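The regression step can be sketched as an ordinary least squares fit with two predictors. The code below solves the normal equations in plain Python; the training values are synthetic, and the coefficients are not those reported in [28]. The final conversion from visible to total bunch area assumes that the visible percentage is defined as visible area divided by total area.

```python
def fit_linear(X, y):
    """Ordinary least squares; returns [intercept, coef_1, coef_2, ...]."""
    rows = [[1.0, *r] for r in X]
    k = len(rows[0])
    # Augmented normal-equation matrix [X^T X | X^T y].
    A = [
        [sum(r[i] * r[j] for r in rows) for j in range(k)]
        + [sum(r[i] * t for r, t in zip(rows, y))]
        for i in range(k)
    ]
    # Gauss-Jordan elimination with partial pivoting.
    for c in range(k):
        p = max(range(c, k), key=lambda r: abs(A[r][c]))
        A[c], A[p] = A[p], A[c]
        pivot = A[c][c]
        A[c] = [v / pivot for v in A[c]]
        for r in range(k):
            if r != c:
                f = A[r][c]
                A[r] = [vr - f * vc for vr, vc in zip(A[r], A[c])]
    return [A[i][k] for i in range(k)]


def predict(coeffs, features):
    return coeffs[0] + sum(c * f for c, f in zip(coeffs[1:], features))


# Synthetic training data: (canopy porosity, visible bunch area in cm^2).
X = [(0.10, 100.0), (0.20, 150.0), (0.30, 120.0), (0.40, 200.0), (0.25, 90.0)]
y = [10.0 + 50.0 * p + 0.2 * a for p, a in X]  # visible bunch percentage (made up)

coeffs = fit_linear(X, y)
visible_pct = predict(coeffs, (0.35, 160.0))
total_bunch_area = 160.0 / (visible_pct / 100.0)  # assumed definition, see lead-in
print(f"visible: {visible_pct:.1f}%  estimated total bunch area: {total_bunch_area:.0f} cm^2")
```

With real measurements, the fit should be evaluated on held-out vine segments, since a model tuned to one training system may not transfer to another.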

Experimental Protocols for Occlusion Research

Protocol: Instance Segmentation and Leaf Completion for Occluded Canopies

This protocol, validated on butterhead lettuce, provides a robust pipeline for extracting leaf morphological traits under occlusion [29].

Step-by-Step Methodology:

Data Acquisition and Preprocessing

- Capture high-resolution RGB images of occluded canopies in controlled lighting conditions.

- Create paired datasets by carefully extracting leaves and imaging them individually against a neutral background to establish in vivo–ex vivo correspondences.

Instance Segmentation for Leaf Extraction

- Implement YOLOv8s-Seg as the optimal model for leaf instance segmentation.

- Train the model on annotated canopy images with bounding boxes and segmentation masks for individual leaves.

- Use segmented leaf instances as input for the completion network.

Supervised Conditional GAN for Leaf Completion

- Employ pix2pix as the conditional GAN architecture for leaf completion.

- Train the network using paired data where inputs are occluded leaf segments and targets are corresponding complete leaves.

- Configure training parameters: batch size of 16, Adam optimizer with learning rate of 0.0002.

Performance Validation

- Validate completion accuracy using R² and RMSE for leaf area estimation (target: R² > 0.94, RMSE < 2.851 cm²).

- Assess morphological reconstruction accuracy using SAMScore for semantic similarity (target: >0.97).

- Note that optimal performance occurs at approximately 60% leaf completeness [29].

Protocol: Hemispherical Imaging for Canopy Light Interception Assessment

This cost-effective method provides an alternative to ceptometers for precision irrigation in orchards and vineyards [30].

Step-by-Step Methodology:

Image Acquisition

- Use action cameras with hemispherical lenses mounted beneath the canopy.

- Capture images throughout the day to assess diurnal patterns of light interception, particularly in orchards where single midday measurements are insufficient.

- Maintain consistent camera positioning and settings across measurements.

Image Processing

- Process images automatically to analyze canopy occlusion along the sun's trajectory.

- Calculate the fraction of Intercepted Photosynthetically Active Radiation (fIPAR) by determining the proportion of occluded versus open sky in the hemisphere.
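A simplified version of this calculation samples a binary canopy mask at the pixels where the sun passes during the day. The sketch assumes an equidistant fisheye projection with north at the top of the image and a mask where 1 marks canopy and 0 marks open sky; the function names are hypothetical, and the processing in [30] may weight samples differently (for example by irradiance).

```python
import math


def sun_to_pixel(zenith_deg, azimuth_deg, center, radius):
    """Project a sun position onto an equidistant hemispherical image.

    Assumes zenith 0 maps to the image centre, zenith 90 to the rim,
    and azimuth is measured clockwise from north (image top).
    """
    r = radius * zenith_deg / 90.0
    az = math.radians(azimuth_deg)
    row = center[0] - r * math.cos(az)
    col = center[1] + r * math.sin(az)
    return int(round(row)), int(round(col))


def fipar_along_path(canopy_mask, sun_positions, center, radius):
    """Fraction of sampled sun positions that fall on canopy pixels."""
    hits = 0
    for zenith, azimuth in sun_positions:
        row, col = sun_to_pixel(zenith, azimuth, center, radius)
        hits += canopy_mask[row][col]
    return hits / len(sun_positions)


# Toy 21x21 mask: canopy covers the eastern half of the hemisphere.
mask = [[1 if c > 10 else 0 for c in range(21)] for _ in range(21)]
path = [(45.0, 90.0), (45.0, 270.0)]  # morning (east) and afternoon (west) sun
print("fIPAR along path:", fipar_along_path(mask, path, center=(10, 10), radius=10))
```

In practice the sun positions would come from a solar ephemeris for the site and date, and the mask from thresholding the hemispherical photograph.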

Validation and Application

- Validate against ceptometer measurements (target R² between 0.88–0.92).

- Use the daily fIPAR pattern to inform precision irrigation scheduling, adapting to diverse canopy structures and training systems [30].

Technical Specifications for Occlusion Detection Systems

Table 1: Performance Comparison of 3D Sensing Technologies for Plant Phenotyping

| Technology | Spatial Resolution | Key Advantages | Limitations for Occlusion Handling | Representative Accuracy |

|---|---|---|---|---|

| LiDAR | 1-10 cm [27] | Light independent; Long scanning range (2-100m) [27] | Poor edge detection; Single viewpoint creates occlusion [27] | Plant height: R² = 0.99 [1] |

| Laser Line Scanner | Up to 0.2 mm [27] | High precision in all dimensions; Robust with no moving parts [27] | Requires movement; Limited to calibrated range (0.2-3m) [27] | Leaf area: RMSE = 2.851 cm² [29] |

| Structured Light (Kinect) | ~0.2% of object size [31] | Inexpensive; No movement required; Color and depth [27] | Sensitive to sunlight; Limited outdoor use [27] | Suitable for coarse plant structure [31] |

| Stereo Vision | Varies with distance | Lower cost than LiDAR; Simultaneous color and geometry [32] | Sensitive to lighting and texture; Calibration intensive [32] | Dependent on matching algorithm quality [32] |

| Multi-view RGB Reconstruction | Sub-millimeter potential | Low-cost hardware; Rich texture information [33] | Computationally intensive; Requires significant post-processing [31] | Leaf area: R² = 0.972 [1] |

Table 2: Deep Learning Architectures for Occlusion Scenarios

| Model Architecture | Application Context | Performance Metrics | Strengths for Occlusion | Limitations |

|---|---|---|---|---|

| SWIN Transformer | General plant disease detection [4] | 88% accuracy (real-world datasets) [4] | Superior robustness to environmental variability [4] | Computational complexity [4] |

| YOLOv8s-Seg | Instance segmentation in occluded lettuce canopies [29] | Optimal balance of speed and accuracy [29] | Effective leaf extraction despite occlusion [29] | Requires extensive annotation [29] |

| pix2pix (CGAN) | Leaf completion from occluded contours [29] | R² = 0.948 leaf area; SAMScore = 0.974 [29] | Reconstructs full leaf morphology from partial data [29] | Requires paired training data [29] |

| Faster R-CNN | Multi-temporal plant detection [2] | High detection accuracy for visible objects [2] | Reliable for early growth stages [2] | Performance decreases with high coverage [2] |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Occlusion Detection Experiments

| Research Reagent | Function in Occlusion Research | Example Implementation | Technical Considerations |

|---|---|---|---|

| Programmable Rail System | Automated plant transport for multi-angle imaging [1] | X-Y dual-directional tracks moving plants to imaging chamber [1] | Enables standardized imaging of field-grown plants; Modular design [1] |

| Multi-sensor Imaging Chamber | Standardized data acquisition under controlled conditions [1] | Fixed chamber with adjustable sensors, lighting, rotating stage [1] | Balances field growth requirements with imaging stability [1] |

| UAV with RGB Camera | High-throughput field image acquisition [2] | DJI Phantom 4 RTK at 30m altitude, 80% forward overlap [2] | Provides georeferenced imagery for multi-temporal analysis [2] |

| Hemispherical Action Camera | Canopy light interception assessment [30] | Mounted beneath canopy to capture occlusion patterns [30] | Cost-effective alternative to ceptometers; images are processed automatically [30] |

| Paired Plant Dataset | Training supervised CGANs for leaf completion [29] | In vivo–ex vivo leaf correspondences for butterhead lettuce [29] | Enables accurate reconstruction of occluded morphology [29] |

| Canopy Porosity Metric | Proxy for fruit exposure in occluded canopies [28] | Proportion of gaps with no plant material in fruit zone [28] | Combined with visible bunch area to estimate visible bunch percentage (R² = 0.80) [28] |

Beyond the Visible: Technological Solutions for Occlusion Detection

Frequently Asked Questions

Q1: My model performs well in the lab but fails in real-world field conditions. What could be wrong? This is a common issue often caused by the domain gap between controlled lab images and variable field environments. Performance drops are typical, with accuracy often falling from 95-99% in the lab to 70-85% in the field [34]. To improve robustness:

- Utilize Data Augmentation: Introduce variations in lighting, background, and occlusion during training to simulate field conditions [34].

- Leverage Transformer Architectures: Consider models like SWIN Transformers, which have demonstrated superior robustness, achieving 88% accuracy on real-world datasets compared to 53% for traditional CNNs [34].

- Implement Image Quality Filtering: Use an automated pre-processing step, like an XGBoost classifier, to filter out poor-quality images (e.g., with motion blur or bad exposure) before they are processed by your deep learning model [35].

Q2: Should I use a CNN or a Transformer for my plant canopy imaging project? The choice depends on your specific needs for accuracy, computational resources, and robustness. The table below summarizes a systematic comparison from a phenological classification study [35].

| Model Type | Example Architectures | Key Strengths | Considerations |

|---|---|---|---|

| Classical CNNs | ResNet50, VGG16, ConvNeXt Tiny | High robustness, excellent performance with lower computational cost [35]. | May struggle with long-range dependencies in complex canopy structures [34]. |

| Transformers | ViT, Swin Transformer | Superior at capturing global context and relationships; state-of-the-art on many benchmarks [34] [36]. | Higher computational demand; can be sensitive to small datasets without proper pre-training [34]. |

Note: In a direct benchmark, classical CNNs like ResNet50 and ConvNeXt Tiny achieved top performance (F1-score: ~0.988) with 2-3x less computation than transformer models [35].

Q3: How can I detect diseases or occlusions before they are visibly apparent? For pre-symptomatic detection, the imaging modality is crucial.

- RGB Imaging is effective for identifying visible symptoms but is limited to after-damage detection [34].

- Hyperspectral Imaging (HSI) can identify physiological changes before symptoms become visible to the human eye by capturing data across a broad spectral range [34]. The main constraint is cost, with systems ranging from $20,000 to $50,000 [34].

Q4: My model is biased toward a common disease or plant species. How can I improve its performance on rare classes? This is caused by imbalanced class distribution in your dataset. To address it:

- Apply Technical Mitigations: Use weighted loss functions, data augmentation specifically for rare classes, or specialized sampling methods to balance the influence of each class during training [34].

- Re-evaluate Your Data: Ensure your dataset has adequate representation for all species or conditions you want the model to recognize, as models struggle to generalize to species not seen during training [34].
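As an illustration of a weighted loss, per-class weights can be set inversely proportional to class frequency. The sketch below uses the common "balanced" heuristic, weight = n_samples / (n_classes × class_count); the label names and counts are hypothetical.

```python
from collections import Counter


def inverse_frequency_weights(labels):
    """Per-class weights so that rare classes contribute more to the loss."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {cls: n_samples / (n_classes * cnt) for cls, cnt in counts.items()}


# Hypothetical imbalanced training set: 90 healthy images, 10 rust images.
labels = ["healthy"] * 90 + ["rust"] * 10
weights = inverse_frequency_weights(labels)
print(weights)
```

These weights can be passed to the class-weight argument that most deep learning frameworks expose on their cross-entropy loss, or used as sampling probabilities for a balanced sampler.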

Q5: How can I understand why my model is making a specific prediction? Use eXplainable AI (XAI) techniques to interpret model decisions.

- Grad-CAM: Generates heatmaps highlighting the image regions most influential to the prediction, allowing you to verify if the model is focusing on biologically relevant features like leaves or stems [36].

- SHAP & LIME: Help explain the contribution of different features to the final output [36].

The Scientist's Toolkit

This section details key software models, hardware, and analytical tools used in modern plant canopy research.

Software & Deep Learning Models

| Name / Category | Specific Examples | Function & Application |

|---|---|---|

| Convolutional Neural Networks (CNNs) | VGG16, ResNet50, EfficientNetB3, MobileNetV3, ConvNeXt [35] | Foundation models for image feature extraction; effective for hierarchical local pattern recognition. Proven robust for phenological phase classification [37] [35]. |

| Transformer Architectures | Vision Transformer (ViT), Swin Transformer, DeiT, PVTv2 [36] [35] | Use self-attention mechanisms to model global dependencies in an image. Excellent for capturing complex spatial relationships in canopy structures [34] [36]. |

| Ensemble & Hybrid Models | ViT + MLP-based local feature extractor [36] | Combines global context modeling (from ViT) with fine-grained local texture analysis (from MLP/CNN). Achieved 97.29% accuracy in landscape classification [36]. |

| Image Analysis Software | LemnaGrid [38] | A programmable image processing toolbox for analyzing plant phenotyping data, enabling the creation of custom analytical workflows. |

| Explainable AI (XAI) Tools | Grad-CAM, SHAP, LIME [36] | Provides visual and quantitative explanations for model predictions, crucial for validating and debugging models in a scientific context. |

Imaging Hardware for Data Acquisition

| Imaging Modality | Example Device | Key Specifications | Primary Research Application |

|---|---|---|---|

| Multi-Sensor Canopy System | LemnaTec CanopyAIxpert [38] | Gantry system with interchangeable sensors (RGB, IR, Hyperspectral, 3D laser). | Automated high-throughput plant phenotyping in glasshouses and growth rooms. |

| Portable Canopy Imager | CID Bio-Science CI-110 Plant Canopy Imager [10] [39] | Self-leveling 8MP hemispherical lens, 150° view, integrated PAR sensor. | Instantaneous in-field calculation of Leaf Area Index (LAI) and light analysis for crop and forest studies. |

Experimental Protocols & Data

Table 1: Benchmarking Model Performance on a Phenological Task

A comparative evaluation of models classifying the flowering phase of Tilia cordata from real-world images. Data curated from a rigorous cross-validation study [35].

| Model Architecture | F1-Score (Mean ± Std) | Balanced Accuracy (Mean ± Std) |

|---|---|---|

| ResNet50 (CNN) | 0.9879 ± 0.0077 | 0.9922 ± 0.0054 |

| ConvNeXt Tiny (CNN) | 0.9860 ± 0.0073 | 0.9927 ± 0.0042 |

| VGG16 (CNN) | 0.9852 ± 0.0076 | 0.9912 ± 0.0055 |

| EfficientNetB3 (CNN) | 0.9841 ± 0.0081 | 0.9906 ± 0.0059 |

| Swin Transformer Tiny | 0.9824 ± 0.0083 | 0.9896 ± 0.0062 |

| Vision Transformer (ViT-B/16) | 0.9811 ± 0.0085 | 0.9891 ± 0.0064 |

| MobileNetV3 Large | 0.9803 ± 0.0087 | 0.9885 ± 0.0066 |

Table 2: Performance Gap Between Laboratory and Field Conditions

Summary of findings from a systematic review on plant disease detection, highlighting the challenge of deploying models in practice [34].

| Environment | Typical Reported Accuracy | Key Challenges |

|---|---|---|

| Laboratory Conditions | 95% - 99% | Controlled lighting, uniform background, minimal occlusion. |

| Field Deployment | 70% - 85% | Environmental variability (light, weather), complex backgrounds, occlusion by other plant parts. |

Protocol 1: Methodology for Benchmarking Deep Learning Models

This protocol is based on the comparative study presented in [35].

Data Curation & Annotation:

- Collect a time-series of images under natural field conditions.

- Annotate images into relevant classes (e.g., "Flowering" vs. "Non-flowering") using standardized scales like the BBCH scale.

Automated Image Quality Filtering:

- Extract features related to exposure and sharpness from each image.

- Train a classifier (e.g., XGBoost) to automatically filter out low-quality images to create a robust dataset for training.
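The feature-extraction step can be sketched with two simple descriptors: exposure statistics and the variance of a Laplacian response as a sharpness proxy. The specific features and thresholds here are assumptions for illustration and are not necessarily those used in [35]; images are represented as nested lists of 8-bit grayscale values.

```python
def laplacian_variance(gray):
    """Variance of the 4-neighbour Laplacian; low values suggest blur."""
    h, w = len(gray), len(gray[0])
    responses = []
    for r in range(1, h - 1):
        for c in range(1, w - 1):
            responses.append(
                gray[r - 1][c] + gray[r + 1][c] + gray[r][c - 1] + gray[r][c + 1]
                - 4 * gray[r][c]
            )
    mean = sum(responses) / len(responses)
    return sum((v - mean) ** 2 for v in responses) / len(responses)


def exposure_features(gray, low=10, high=245):
    """Mean brightness plus the fractions of near-black and near-white pixels."""
    pixels = [p for row in gray for p in row]
    n = len(pixels)
    return {
        "mean": sum(pixels) / n,
        "frac_dark": sum(p <= low for p in pixels) / n,
        "frac_bright": sum(p >= high for p in pixels) / n,
    }


# Toy images: a high-contrast checkerboard versus a flat grey frame.
sharp = [[255 if (r + c) % 2 else 0 for c in range(8)] for r in range(8)]
flat = [[128] * 8 for _ in range(8)]
print(laplacian_variance(sharp), laplacian_variance(flat))
print(exposure_features(sharp))
```

The resulting feature vector per image, together with a manual good/bad label, is the kind of tabular input a gradient-boosted classifier such as XGBoost can be trained on.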

Model Training & Evaluation:

- Select a set of state-of-the-art CNN and Transformer models.

- Fine-tune all models under an identical training protocol (optimizer, learning rate, epochs).

- Evaluate performance using a rigorous cross-validation scheme and report metrics like F1-score and Balanced Accuracy.
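The two reported metrics can be computed from a confusion matrix as follows. This is a minimal sketch for the binary case (e.g., "Flowering" = 1 vs. "Non-flowering" = 0); the function name and example labels are hypothetical.

```python
def binary_metrics(y_true, y_pred, positive=1):
    """Return F1-score and balanced accuracy for a binary classification task."""
    tp = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    fp = sum(t != positive and p == positive for t, p in zip(y_true, y_pred))
    fn = sum(t == positive and p != positive for t, p in zip(y_true, y_pred))
    tn = sum(t != positive and p != positive for t, p in zip(y_true, y_pred))

    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0        # sensitivity
    specificity = tn / (tn + fp) if tn + fp else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    balanced_accuracy = (recall + specificity) / 2
    return {"f1": f1, "balanced_accuracy": balanced_accuracy}


# Hypothetical predictions for one cross-validation fold.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 0, 0, 0, 0, 1]
print(binary_metrics(y_true, y_pred))
```

Computing these per fold and reporting the mean and standard deviation gives the format used in Table 1.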

Protocol 2: Building an Ensemble Model for Improved Accuracy

This protocol follows the approach used to achieve 97.29% classification accuracy on landscape images [36].

Architectural Design:

- Global Feature Encoder: Employ a Vision Transformer (ViT) to process the entire image and capture long-range, global contextual relationships.

- Local Feature Extractor: Use an MLP-based network to extract fine-grained, local textural details from image patches.

- Adaptive Gating Mechanism: Fuse the global and local features using a gating mechanism that dynamically weights their contributions.
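One common way to realize an adaptive gate is a sigmoid over a learned linear projection of the concatenated features, with the output fused = g · f_global + (1 − g) · f_local. The sketch below shows that formulation in plain Python; it is an assumed form for illustration, since the exact gating used in [36] is not specified here, and in practice the weights would be learned inside a deep learning framework.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def gated_fusion(f_global, f_local, weights, bias):
    """Blend two equal-length feature vectors with a per-dimension gate.

    weights[i] is the gate's weight row over the concatenated features
    [f_global; f_local], and bias[i] is its bias for output dimension i.
    """
    concat = list(f_global) + list(f_local)
    fused = []
    for i, (g_feat, l_feat) in enumerate(zip(f_global, f_local)):
        z = sum(w * v for w, v in zip(weights[i], concat)) + bias[i]
        gate = sigmoid(z)  # 1 favours the global branch, 0 the local branch
        fused.append(gate * g_feat + (1.0 - gate) * l_feat)
    return fused


# With zero weights and bias the gate is 0.5, so the output is a plain average.
zero_w = [[0.0] * 4 for _ in range(2)]
print(gated_fusion([1.0, 2.0], [3.0, 4.0], zero_w, [0.0, 0.0]))
```

Because the gate depends on the input features, the model can lean on global context for some images and on local texture for others.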

Training & Interpretation:

- Train the entire ensemble end-to-end.

- Apply eXplainable AI (XAI) techniques like Grad-CAM and SHAP to visualize and validate the model's decision-making process.

Workflow Diagrams

Diagram Title: Deep Learning Pipeline for Automatic Occlusion Detection

Diagram Title: Experimental Framework for Model Benchmarking

Troubleshooting Common 3D Reconstruction Issues in Plant Canopy Research

Q: What can I do when my 3D reconstruction of dense plant canopies has significant missing data due to leaf occlusion?

A: For severe occlusion in dense canopies, consider integrating multi-view stereo vision with active structured light. The multi-view approach captures the plant from numerous angles to minimize blind spots, while structured light projects known patterns onto the foliage to help reconstruct surfaces lacking natural texture. Research shows that combining binocular structured light with gray code patterns can achieve robust 3D measurements even in complex scenes with varying surface reflectivity. Implement an error point filtering strategy to retain pixels with decoding errors of less than two bits for improved robustness [40].

Q: Why does my binocular vision system fail to reconstruct accurate 3D models of plant canopies with minimal texture?

A: Binocular stereo vision relies on matching corresponding points between images, which becomes challenging with minimally textured surfaces like uniform green leaves. This limitation can be addressed by:

- Using active structured light systems that project patterns onto the canopy to create artificial texture

- Implementing multi-view stereo with more than two cameras to increase matching opportunities

- Applying deep learning models trained on plant datasets to infer 3D structure from limited texture cues [41] [42]

One study achieved higher matching precision by using absolute phase information from left and right cameras instead of relying on surface color and texture [40].

Q: How can I improve the accuracy of plant height measurements from 3D reconstructions?

A: For accurate height measurements:

- Ensure proper camera calibration using standardized targets

- Implement subpixel matching algorithms to refine disparity values

- Use controlled lighting conditions to minimize shadows

- Validate against manual measurements regularly

Recent research on soybean phenotyping demonstrated extremely high agreement between extracted plant height from 3D reconstructions and manual measurements (R² = 0.99) through careful system design and validation [1].

Q: What approaches help with 3D reconstruction of plants in outdoor field conditions with varying lighting?

A: Field conditions present challenges like changing sunlight and wind movement:

- Use fast acquisition systems (structured light with high frame rates)

- Implement HDR imaging to handle high contrast between sunlit and shaded areas

- Employ active illumination that overpowers ambient light

- Consider robotic transport systems that move plants to controlled imaging environments [1]

Advanced systems address field challenges by combining natural field growth conditions with standardized indoor imaging chambers [1].

Experimental Protocols for Canopy Reconstruction

Multi-View Stereo Reconstruction for Plant Phenotyping

Objective: Generate detailed 3D models of plant shoots from multiple color images for quantitative trait analysis.

Materials:

- DSLR or high-resolution industrial cameras (2+ units)

- Turntable or camera positioning system

- Calibration chessboard pattern

- Diffuse lighting setup

- Computer with 3D reconstruction software (e.g., Canopy Reconstruction tool [43])

Procedure:

- System Calibration: Capture 15-20 images of calibration pattern from different orientations. Use calibration algorithm to determine intrinsic and extrinsic camera parameters.

- Image Acquisition: Place plant specimen on turntable. Capture images at 10° intervals (36 images total) ensuring 60-80% overlap between consecutive images.

- Feature Detection & Matching: Detect SIFT/SURF features across image set. Establish feature correspondences between overlapping images.

- Sparse Reconstruction: Apply structure from motion (SfM) to generate initial point cloud and camera pose estimation.

- Dense Reconstruction: Perform multi-view stereo matching to generate dense point cloud (500,000+ points).

- Surface Reconstruction: Apply Poisson surface reconstruction or marching cubes algorithm to convert point cloud to mesh.

- Model Refinement: Use level set method to optimize surface boundaries based on image information and neighboring surfaces [43].

Validation: Compare extracted morphological parameters (leaf area, plant height) with manual measurements.

Binocular Structured Light for High-Precision Canopy Measurement

Objective: Achieve high-precision 3D measurement of plant structures in complex growth environments.

Materials:

- Two synchronized industrial cameras

- Digital light projector (DLP)

- Computer with custom reconstruction software

- Tripods and mounting equipment

Procedure:

- System Setup: Arrange cameras in stereo configuration with 20-40cm baseline. Position projector to illuminate target area.

- Camera-Projector Calibration: Determine projective relationships between cameras and projector using calibration patterns.

- Pattern Projection: Project gray code and phase-shifted sinusoidal patterns onto plant canopy. For 5-step phase shifting, project 5 sinusoidal patterns plus n gray code patterns (for 2^n strips).

- Image Capture: Synchronously capture images from both cameras for each projected pattern.

- Phase Computation: Calculate wrapped phase using phase-shifting algorithm. Unwrap phase using gray code to obtain absolute phase.

- Stereo Matching: Match pixels between left and right images using absolute phase information.

- 3D Reconstruction: Triangulate 3D coordinates using camera parameters and matched points [40].

- Error Filtering: Apply error point filtering strategy to retain pixels with decoding errors of less than two bits.
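The phase computation and unwrapping steps can be illustrated for a single pixel. The sketch below assumes the standard N-step model I_k = A + B·cos(φ + 2πk/N) and Gray code bits ordered most significant first; the simulation helper and its parameter values are hypothetical and noise-free, so it omits the error filtering described in [40].

```python
import math

TWO_PI = 2.0 * math.pi


def wrapped_phase(intensities):
    """N-step phase shifting: recover the phase in [0, 2*pi) from N samples."""
    n = len(intensities)
    s = sum(i_k * math.sin(TWO_PI * k / n) for k, i_k in enumerate(intensities))
    c = sum(i_k * math.cos(TWO_PI * k / n) for k, i_k in enumerate(intensities))
    return math.atan2(-s, c) % TWO_PI


def gray_to_order(bits):
    """Decode Gray code bits (MSB first) into the integer fringe order."""
    bit, order = 0, 0
    for g in bits:
        bit ^= g                    # running XOR yields the next binary bit
        order = (order << 1) | bit
    return order


def absolute_phase(intensities, gray_bits):
    """Unwrap: absolute phase = wrapped phase + 2*pi * fringe order."""
    return wrapped_phase(intensities) + TWO_PI * gray_to_order(gray_bits)


def simulate_pixel(true_phase, steps=5, n_bits=3, a=0.5, b=0.4):
    """Generate ideal camera samples for one pixel (illustration only)."""
    samples = [a + b * math.cos(true_phase + TWO_PI * k / steps) for k in range(steps)]
    order = int(true_phase // TWO_PI)
    gray = order ^ (order >> 1)
    bits = [(gray >> (n_bits - 1 - i)) & 1 for i in range(n_bits)]
    return samples, bits


truth = TWO_PI * 5 + 1.234
samples, bits = simulate_pixel(truth)
print("recovered:", absolute_phase(samples, bits), "expected:", truth)
```

Applying the same computation to every pixel of the left and right images yields two absolute phase maps, and pixels with equal absolute phase along an epipolar line are the stereo correspondences used for triangulation.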

Troubleshooting: If reconstruction fails on shiny leaves, implement adaptive stripe projection that dynamically adjusts brightness based on surface reflectivity [40].

Performance Comparison of 3D Reconstruction Techniques

Table 1: Quantitative Performance of 3D Reconstruction Methods in Agricultural Research

| Technique | Accuracy | Resolution | Speed | Occlusion Handling | Best For |
|---|---|---|---|---|---|
| Binocular Structured Light [40] | Sub-millimeter | High (0.1 mm) | Medium (seconds) | Good with patterns | Individual leaves, controlled environments |
| Multi-View Stereo [43] | 1-5 mm | Medium-High | Slow (minutes-hours) | Excellent with sufficient views | Whole plant architecture, complex canopies |
| UAV RGB + Deep Learning [2] | Plant-level | Low-Medium | Fast (real-time processing) | Poor under high coverage | Field-scale plant counting, early growth stages |
| Plant Canopy Imager [10] | Canopy-level | Low | Fast (<1 second) | N/A (2.5D) | Gap fraction, LAI estimation |

Table 2: Validation Metrics for Plant Phenotyping Reconstruction Methods

| Application | Method | Validation Metric | Reported Performance | Reference |
|---|---|---|---|---|
| Soybean phenotyping | Transport + imaging chamber | Plant height correlation | R² = 0.99 | [1] |
| Soybean phenotyping | Transport + imaging chamber | Canopy width correlation | R² = 0.95 | [1] |
| Konjac counting | UAV RGB + YOLOv5 | Precision | 98.7% | [2] |
| Konjac counting | UAV RGB + YOLOv5 | Recall | 86.7% | [2] |
| Canopy fresh weight prediction | Imaging chamber | Predictive accuracy (R²) | 0.965 | [1] |
| Leaf area prediction | Imaging chamber | Predictive accuracy (R²) | 0.972 | [1] |

The Researcher's Toolkit: Essential Materials for Plant 3D Reconstruction

Table 3: Key Research Equipment for Plant Canopy 3D Reconstruction

| Equipment | Specifications | Function | Example Use Cases |
|---|---|---|---|
| Industrial Cameras [1] | Resolution: 8+ MP; Interface: USB 3.0/GigE | High-resolution image capture for detailed reconstruction | Multi-view stereo, binocular vision systems |
| Structured Light Projector [40] | Pattern rate: 60+ Hz; Resolution: 1024×768 | Project known patterns for surface reconstruction | Active 3D scanning of leaves and stems |
| UAV with RGB Camera [2] | Resolution: 20 MP; GPS: RTK | Large-scale field data collection | Field phenotyping, plant counting |
| Plant Canopy Imager [10] | Fish-eye lens: 150°; PAR sensors | Hemispherical photography for canopy metrics | Gap fraction analysis, LAI estimation |
| Robotic Transport System [1] | X-Y dual-directional tracks; Programmable carts | Automated plant positioning for consistent imaging | High-throughput phenotyping of potted plants |
| Calibration Target | Chessboard pattern; Known dimensions | Camera calibration for accurate measurements | All 3D reconstruction systems |

Workflow Diagrams for 3D Reconstruction Techniques

3D Canopy Reconstruction Workflow

Structured Light 3D Measurement Process

Advanced Methodologies for Complex Canopy Environments

Integrated UAV and Deep Learning Approach for High-Coverage Periods

Recent research demonstrates that integrating deep learning models with plant location information from multiple growth stages significantly improves detection and counting accuracy during high-coverage periods, when occlusion is most severe. One study achieved 98.7% precision and 86.7% recall for konjac plants during high-coverage stages by combining YOLOv5 detection with positional data from early growth stages [2]. The approach also reduces the time spent annotating samples and training deep learning models for later growth stages.

Automated In-Field Transport Systems for Controlled Imaging

For precise phenotyping of plants grown in vertical planting systems where shading causes significant occlusion, automated transport systems can move potted plants from field growing areas to controlled imaging chambers. This approach effectively integrates natural field growth conditions with the stability requirements of indoor imaging, eliminating data deviations caused by environmental factors like wind, rain, and mutual plant shading [1]. These systems typically include X and Y dual-directional tracks with programmable rail carts for fully automated plant movement.

Multi-Temporal Analysis for Occlusion Reduction

Because plant positions remain consistent across growth stages, positional information from early stages can be used to improve later-stage analysis, when canopy coverage has increased. This multi-temporal approach provides more comprehensive information than single-date imagery for classification and detection tasks [2]. Combining early-stage detection results with plant positions from multiple stages improves detection and counting accuracy while reducing annotation workload.
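One way to picture the multi-temporal idea is to treat early-stage plant positions as a fixed reference and to match late-stage detections against them. The sketch below is an illustrative stand-in, not the method used in [2]. It assumes both sets of coordinates are expressed in the same georeferenced frame, uses a simple greedy nearest-neighbor assignment, and relies on a hypothetical distance tolerance `max_dist`.

```python
import numpy as np

def match_to_reference(reference_xy, detections_xy, max_dist):
    """Match each early-stage plant position to at most one late-stage detection.

    Returns an int array with the index of the matched detection per reference
    plant, or -1 where no detection lies within max_dist (likely occluded).
    """
    ref = np.asarray(reference_xy, dtype=float).reshape(-1, 2)
    det = np.asarray(detections_xy, dtype=float).reshape(-1, 2)
    matches = np.full(len(ref), -1, dtype=int)
    if len(det) == 0:
        return matches
    dists = np.linalg.norm(ref[:, None, :] - det[None, :, :], axis=2)
    used = set()
    # Greedy one-to-one assignment, closest pairs first
    for flat in np.argsort(dists, axis=None):
        i, j = np.unravel_index(flat, dists.shape)
        if dists[i, j] > max_dist:
            break  # all remaining pairs are even farther apart
        if matches[i] == -1 and int(j) not in used:
            matches[i] = j
            used.add(int(j))
    return matches

# Hypothetical coordinates in metres (illustrative values only)
early_positions = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0)]
late_detections = [(0.05, 0.02), (2.1, -0.05), (3.0, 0.1)]
matches = match_to_reference(early_positions, late_detections, max_dist=0.3)
occluded = int(np.sum(matches == -1))
print("matches:", matches.tolist())
print("plants counted:", len(early_positions), "of which occluded:", occluded)
```

In this sketch, a reference plant without a nearby detection is still counted, which is how early-stage positions can compensate for occlusion in later imagery.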

FAQs: Core Concepts and Technology

1. What is the primary advantage of using multi-modal sensor fusion for canopy imaging? Multi-modal sensor fusion overcomes the fundamental limitations of individual sensing technologies. It combines data from different modalities to provide a more comprehensive picture, enhancing detection robustness. For instance, while RGB cameras offer high-resolution color information, they fail to detect components occluded by leaves. Ultrasound can penetrate foliage to identify these hidden structures, and spectral imaging can reveal plant health information not visible to the human eye. This synergy allows for more accurate and complete canopy characterization, especially in complex, real-world field conditions. [44] [45]

2. My RGB images of the canopy appear too dark or have inconsistent color. How can I correct for this? Inconsistent illumination and dark images are common challenges in field-based phenotyping. Solutions include:

- Color Calibration: Use an industry-standard color checker (e.g., X-Rite Color Checker) placed within every image. A quadratic model can then be applied to transform the RGB values in the image so that the known color values of the chart are accurately reproduced, effectively correcting for varying light conditions. [12]

- Optimal Capture Conditions: For upward-looking canopy images, avoid direct sunlight in the frame. Capture images during uniformly overcast conditions, early in the morning, or late in the day to minimize glare and high contrast. [46]

- Camera Settings: Ensure proper exposure settings. If the image is consistently too dark, the camera's exposure may need manual adjustment to allow more light, as auto-exposure modes can be unreliable in the variable light of a canopy. [10]

3. Can ultrasonic sensors reliably detect objects hidden within a plant canopy? Yes, research demonstrates that low-frequency, highly directional ultrasonic arrays can be used to image through leaves and identify occluded grape clusters. Techniques such as using chirp excitation waveforms and near-field focusing of the array improve resolution and detail. A fan can be employed to help differentiate between stationary grape clusters and moving leaves based on their ultrasonic reflections, enhancing detection accuracy. [45]

4. What is the role of spectral imaging in this multi-modal context? Spectral imaging, often deployed via vegetation indices, provides critical information on plant physiology and health that is not available from RGB or ultrasound. It measures the reflectance of light at specific wavelengths. Healthy vegetation has a distinct spectral signature, with low reflectance in the visible spectrum and high reflectance in the near-infrared. These indices act as proxies for key traits like chlorophyll content, plant nutrition, and water stress, offering a top-down view of canopy function. [39] [47]

5. How do I handle data from sensors that are not perfectly aligned? Spatial misalignment between different sensors (e.g., RGB and thermal) is a common practical challenge due to different fields of view and resolutions. Instead of manual alignment, you can use fusion algorithms designed for unaligned data. One approach is a Multi-modal Dynamic Local Fusion Network (MDLNet), which uses a set of dynamic boxes to selectively fuse local features from one modality (e.g., high-resolution RGB) with the corresponding information from another (e.g., thermal), without requiring global pixel-level alignment. [48]
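As a concrete example of the vegetation indices mentioned in question 4, the sketch below computes the Normalized Difference Vegetation Index (NDVI) from red and near-infrared reflectance. The text does not name a specific index, so NDVI is an assumption chosen because it directly uses the contrast between low visible and high near-infrared reflectance. The reflectance values are hypothetical.

```python
import numpy as np

def ndvi(nir, red):
    """NDVI = (NIR - Red) / (NIR + Red), computed per pixel; 0 where both bands are 0."""
    nir = np.asarray(nir, dtype=float)
    red = np.asarray(red, dtype=float)
    denom = nir + red
    safe = np.where(denom == 0, 1.0, denom)     # avoid division by zero
    return np.where(denom == 0, 0.0, (nir - red) / safe)

# Hypothetical reflectance values: healthy leaf, stressed leaf, bare soil
nir_band = np.array([0.50, 0.35, 0.25])
red_band = np.array([0.05, 0.12, 0.20])
print(np.round(ndvi(nir_band, red_band), 3))
```

Values close to 1 indicate dense, healthy vegetation, while values near 0 are typical of soil or senescent material.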

Troubleshooting Guides

Table 1: Common Data Collection Issues and Solutions

| Problem | Possible Cause | Solution |
|---|---|---|
| Dark RGB Images | Low light under canopy; incorrect camera exposure. | Use color checker for post-processing correction [12]; manually adjust camera exposure settings [10]. |
| Inconsistent Color Between Images | Changing illumination (sunny vs. overcast). | Place a color checker in every image for consistent post-hoc color correction across all data. [12] |
| Sun Flare/Glare in Images | Direct sun is visible in the image or filtering through canopy. | Retake images when the sun is not in the frame; capture during overcast conditions or at dawn/dusk. [46] |
| Ultrasound Fails to Discern Targets | Inability to separate clutter from leaves and target objects. | Introduce a fan to create leaf movement; use advanced signal processing like chirp waveforms to improve resolution. [45] |
| Poor GPS Lock | GPS requires a clear view of the sky and time to connect to satellites. | Operate the instrument outdoors with an unobstructed view of the sky; allow up to 15 minutes for initial satellite acquisition. [10] |
| High Occlusion Error in Yield Estimation | Reliance on counting yield components (e.g., bunches) visible only in RGB. | Shift from counting to measuring bunch projected area in RGB, which remains highly correlated with yield even under occlusion. [49] |

Table 2: Multi-Modal Fusion and Analysis Challenges

| Problem | Possible Cause | Solution |
|---|---|---|
| Model Fails on Occluded Objects | RGB-based model cannot see through foliage. | Fuse with ultrasound data to detect occluded grape clusters [45] or use deep learning (e.g., Faster R-CNN) trained to identify specific stress patterns on visible canopy parts. [50] |
| Low Spatial/Temporal Resolution | Limitations of individual modalities (e.g., ultrasound, thermal). | Leverage fusion to achieve higher effective resolution by combining high-spatial-resolution RGB with functional data from other sensors. [44] |
| Fusion Algorithm Performs Poorly | Sensors are not spatially aligned at the pixel level. | Employ fusion methods like MDLNet that are specifically designed for unaligned multi-modal image pairs. [48] |
| Inaccurate Leaf Area Index (LAI) | User subjectivity in thresholding hemispherical photos. | Use alternative instruments like a ceptometer, which estimates LAI based on light transmittance (PAR inversion technique) according to Beer's law. [47] |
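The Beer's law inversion referred to in the last table row can be illustrated with a simplified calculation. The sketch assumes the basic form τ = exp(-k · LAI), where τ is the ratio of below-canopy to above-canopy PAR and k is a canopy extinction coefficient. The default k = 0.5 is an assumption (a value often used for a spherical leaf angle distribution), and commercial ceptometers typically apply a more elaborate inversion than this one.

```python
import math

def lai_from_par(par_below, par_above, k=0.5):
    """Estimate LAI from PAR transmittance using Beer's law: LAI = -ln(tau) / k."""
    if par_above <= 0 or par_below <= 0:
        raise ValueError("PAR readings must be positive")
    if par_below > par_above:
        raise ValueError("below-canopy PAR cannot exceed above-canopy PAR")
    if k <= 0:
        raise ValueError("extinction coefficient k must be positive")
    tau = par_below / par_above                 # fraction of light transmitted
    return -math.log(tau) / k

# Hypothetical readings in µmol m^-2 s^-1 (illustrative values only)
print(f"LAI = {lai_from_par(300.0, 1500.0):.2f}")
```

Because the estimate depends only on measured light levels, it avoids the manual thresholding step that makes hemispherical photo analysis subjective.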

Experimental Protocols for Key Tasks

Protocol 1: Field-Based Canopy Image Acquisition and Color Standardization

This protocol ensures consistent and comparable RGB image data across multiple time points and lighting conditions. [12]

Key Materials:

- Digital RGB camera (e.g., Canon EOS 60D).

- Industry-standard color checker (e.g., X-Rite Color Checker).

- Fixed platform or vehicle for consistent camera positioning.

Methodology:

- Setup: Mount the camera on a fixed platform at a predetermined height and angle. Securely attach the color checker so it is visible within the frame of every image.

- Camera Settings: Use manual focus and a fixed aperture (e.g., f/9.0) to maintain consistency. A fast shutter speed (e.g., 1/500 s) is recommended to minimize motion blur.

- Image Capture: Capture images of your canopy plots, ensuring the color checker is fully visible in each shot.

- Pre-processing: In software (e.g., MATLAB), detect the region of interest (ROI), such as the plot area, and extract the color checker from each image.

- Color Correction:

  - For each image, record the observed RGB values of the color checker tiles.

  - Using a least-squares approach, fit a quadratic model that transforms the observed values to match the chart's known reference values.

  - Apply this transformation to all pixels in the image.
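The color correction step can be sketched with NumPy's least-squares solver. The protocol mentions MATLAB only as an example, so Python is used here for illustration. The exact form of the quadratic model in [12] is not given in this guide; the 10-term expansion below (constant, linear, squared, and cross terms) is an assumption, as are the function names, the synthetic chart values, and the convention that pixel values are scaled to the range 0 to 1.

```python
import numpy as np

def quadratic_features(rgb):
    """Expand RGB triplets into the terms [1, R, G, B, R², G², B², RG, RB, GB]."""
    rgb = np.asarray(rgb, dtype=float).reshape(-1, 3)
    r, g, b = rgb[:, 0], rgb[:, 1], rgb[:, 2]
    return np.column_stack(
        [np.ones_like(r), r, g, b, r * r, g * g, b * b, r * g, r * b, g * b]
    )

def fit_color_correction(observed, reference):
    """Least-squares fit mapping observed chart colors to their reference values."""
    target = np.asarray(reference, dtype=float).reshape(-1, 3)
    coeffs, *_ = np.linalg.lstsq(quadratic_features(observed), target, rcond=None)
    return coeffs                               # shape (10, 3)

def apply_color_correction(image, coeffs):
    """Apply the fitted transform to every pixel of an H x W x 3 image."""
    img = np.asarray(image, dtype=float)
    corrected = quadratic_features(img) @ coeffs
    return np.clip(corrected, 0.0, 1.0).reshape(img.shape)

# Synthetic 24-tile chart: the "observed" tiles are dimmed and offset copies
rng = np.random.default_rng(0)
reference_tiles = rng.random((24, 3))
observed_tiles = 0.6 * reference_tiles + 0.05

coeffs = fit_color_correction(observed_tiles, reference_tiles)
test_image = observed_tiles.reshape(4, 6, 3)    # stand-in for a captured photo
restored = apply_color_correction(test_image, coeffs)
print("max residual:", float(np.max(np.abs(restored.reshape(-1, 3) - reference_tiles))))
```

Fitting a separate transform per image, as the protocol describes, lets each photo be corrected for its own lighting conditions.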

Protocol 2: Ultrasonic Detection of Occluded Canopy Components

This protocol outlines a method for detecting grape clusters hidden by foliage using airborne ultrasound. [45]

Key Materials:

- Ultrasonic phased array composed of air-coupled transducers and microphones.

- Signal generator and data acquisition system.

- Fan (to induce leaf movement).

Methodology:

- System Configuration: Set up a highly directional, low-frequency ultrasonic array. Configure the array for near-field focusing to improve resolution at close ranges.

- Signal Excitation: Use chirp excitation waveforms instead of single pulses. This technique, combined with pulse-compression processing, improves signal-to-noise ratio and resolution.

- Data Acquisition: Position the array to scan the target canopy area. Acquire reflection data.

- Movement Discrimination: Activate a fan to create air movement. This causes leaves to move while grape clusters remain relatively stationary. Subsequent data processing can help differentiate between the static targets (grapes) and moving clutter (leaves).
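Two of the steps above, chirp excitation with pulse compression and movement discrimination, can be sketched on synthetic data. This is a simplified illustration and not the processing chain of [45]. The 20-40 kHz sweep, the 200 kHz sampling rate, the matched-filter implementation, and the variance-based rule for separating static from moving echoes are all assumptions, and the function names are hypothetical.

```python
import numpy as np

SPEED_OF_SOUND = 343.0  # m/s in air at roughly 20 °C

def linear_chirp(f0, f1, duration, fs):
    """Linear frequency sweep from f0 to f1 (Hz) lasting `duration` seconds."""
    t = np.arange(int(round(duration * fs))) / fs
    sweep_rate = (f1 - f0) / duration
    return np.sin(2 * np.pi * (f0 * t + 0.5 * sweep_rate * t ** 2))

def pulse_compress(received, chirp):
    """Matched filter: correlate the received signal with the transmitted chirp.

    Index i of the result corresponds to an echo delay of i samples.
    """
    return np.correlate(received, chirp, mode="full")[len(chirp) - 1:]

def static_mask(envelopes, rel_threshold=0.2):
    """Flag range bins whose echo amplitude stays stable across repeated scans.

    envelopes has shape (n_scans, n_range_bins) and is recorded while the fan
    runs. A bin counts as static when its temporal standard deviation is small
    relative to its mean amplitude.
    """
    env = np.asarray(envelopes, dtype=float)
    mean = env.mean(axis=0)
    std = env.std(axis=0)
    return (mean > 0) & (std <= rel_threshold * mean)

# Pulse compression on a synthetic noisy echo delayed by 600 samples
fs = 200_000
chirp = linear_chirp(20_000, 40_000, 1e-3, fs)
rng = np.random.default_rng(0)
received = 0.2 * rng.standard_normal(2000)
received[600:600 + len(chirp)] += 0.5 * chirp
compressed = np.abs(pulse_compress(received, chirp))
peak = int(np.argmax(compressed))
range_m = SPEED_OF_SOUND * peak / fs / 2        # two-way travel time
print(f"echo at sample {peak}, range about {range_m:.3f} m")

# Movement discrimination on hypothetical envelopes (4 scans x 3 range bins)
scans = np.array([[1.00, 0.2, 0.0],
                  [1.05, 0.9, 0.0],
                  [0.95, 0.1, 0.0],
                  [1.00, 0.6, 0.0]])
print("static bins:", static_mask(scans).tolist())
```

In this toy example the first range bin behaves like a stationary grape cluster, the second like a fluttering leaf, and the third contains no echo at all.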