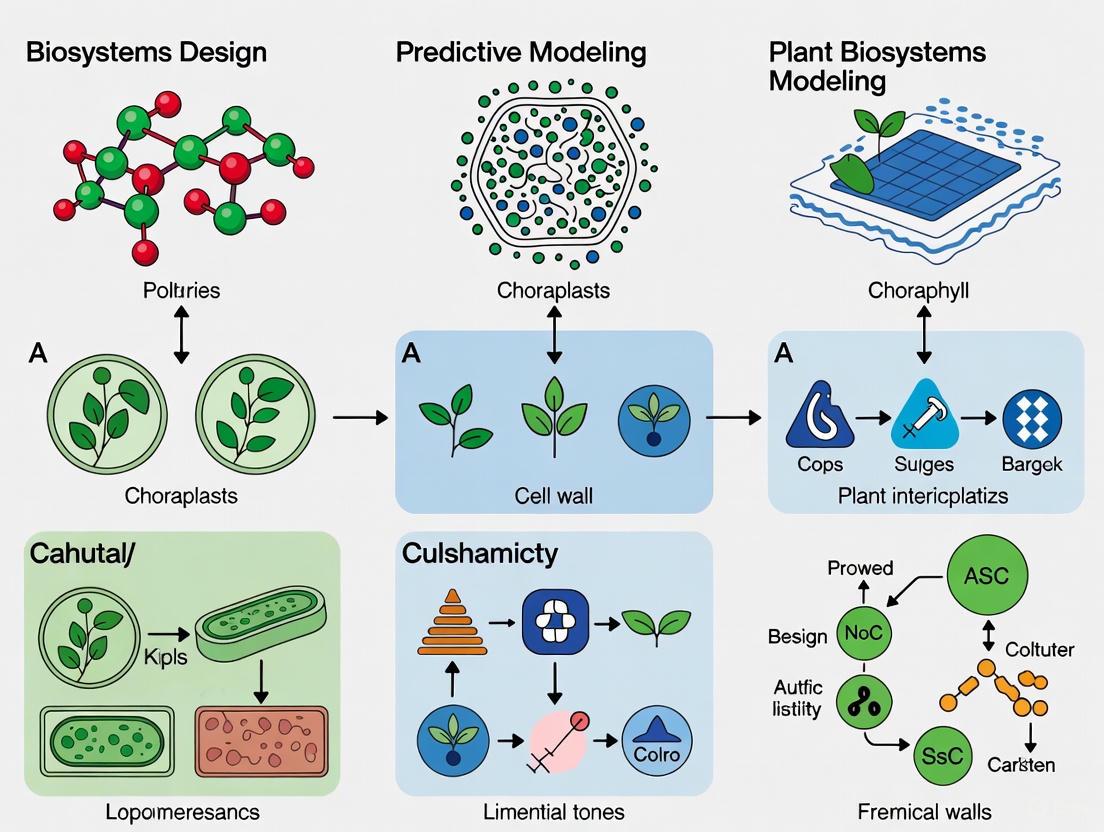

Overcoming Predictive Modeling Challenges in Plant Biosystems Design: From AI Foundations to Biomedical Applications

Predictive modeling is revolutionizing plant biosystems design, yet researchers and drug development professionals face significant challenges in model accuracy, biological relevance, and clinical translation.

Abstract

Predictive modeling is revolutionizing plant biosystems design, yet researchers and drug development professionals face significant challenges in model accuracy, biological relevance, and clinical translation. This article provides a comprehensive analysis of current methodologies, from foundational graph theory and mechanistic models to cutting-edge foundation models and machine learning applications. We explore troubleshooting strategies for data scarcity and model generalizability, alongside rigorous validation frameworks essential for credible biomedical application. By synthesizing advances across computational biology, systems pharmacology, and plant science, this work offers a strategic roadmap for enhancing predictive capabilities in plant-based drug discovery and biosystems engineering.

Theoretical Foundations and Emerging Paradigms in Plant Biosystems Modeling

Troubleshooting Guides

Common Computational Challenges in Plant Network Analysis

Table 1: Troubleshooting Common Network Analysis Issues

| Problem Category | Specific Symptoms | Possible Causes | Recommended Solutions | Verification Methods |

|---|---|---|---|---|

| Network Construction | Incomplete network with missing interactions; Low connectivity | Sparse biological data; Incorrect correlation thresholds; Missing node types | Use multiple data sources (multi-omics integration); Adjust statistical cutoffs carefully; Validate with literature mining [1] [2] | Check scale-free property (power-law degree distribution); Compare network density to known benchmarks |

| Model Accuracy | Predictions don't match experimental validation; Poor phenotypic prediction | Incorrect edge weighting; Missing underground metabolism; Compartmentalization errors | Incorporate enzyme promiscuity data; Use cell-type specific data; Apply constraint-based modeling (FBA) [2] | Perform cross-validation; Compare flux predictions with 13C-labeling experiments |

| Tool Implementation | Long computation times for large networks; Memory overflow errors | Inefficient data structures; O(V²) memory complexity for dense matrices | Use adjacency lists for sparse networks (O(V+E) memory); Apply community detection before full analysis [3] | Profile code performance; Test on network subsets first |

| Visualization | Cluttered, unreadable diagrams; Important nodes not highlighted | Too many nodes displayed; Poor layout algorithm choice; Insufficient visual encoding | Use hierarchical layouts (dot) for directed graphs; Apply centrality-based filtering; Use color schemes strategically [4] | Conduct readability tests with domain experts |

| Data Integration | Inconsistent results across omics layers; Network motifs not detected | Batch effects between datasets; Different temporal/spatial scales | Apply network alignment algorithms; Use multi-layer network approaches; Normalize data properly [5] | Validate with known pathway conservation |
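The memory trade-off named in the Tool Implementation row can be illustrated with a minimal sketch. The node names are hypothetical placeholders, and the comparison counts stored entries rather than measuring real memory use.

```python
from collections import defaultdict

def build_adjacency_list(edges):
    """Return an undirected adjacency list: node -> set of neighbours."""
    adjacency = defaultdict(set)
    for source, target in edges:
        adjacency[source].add(target)
        adjacency[target].add(source)
    return dict(adjacency)

# Hypothetical toy interactions (identifiers are placeholders, not real genes).
edges = [("geneA", "geneB"), ("geneB", "geneC"), ("geneC", "metX")]
adjacency = build_adjacency_list(edges)

n_nodes = len(adjacency)
dense_cells = n_nodes ** 2  # entries a V x V adjacency matrix would store
sparse_entries = sum(len(nbrs) for nbrs in adjacency.values())  # 2 * E entries
print(f"dense matrix cells: {dense_cells}, adjacency-list entries: {sparse_entries}")
```

For sparse biological networks the number of edges is usually far smaller than V², so the gap between the two counts widens as the network grows.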

Experimental Protocol: Constructing a Gene-Metabolite Network from Multi-omics Data

Purpose: To create an integrated network representing molecular relationships in plant systems for identifying key regulatory elements.

Materials and Reagents:

- Plant tissue samples at multiple developmental stages

- RNA extraction kit (e.g., TRIzol-based methods)

- LC-MS/MS system for metabolomics

- Computational resources with minimum 16GB RAM

- Network analysis software (Cytoscape, Graphviz, or custom Python/R scripts)

Procedure:

Data Collection:

- Extract RNA and sequence for transcriptome data

- Perform metabolite profiling using LC-MS/MS

- Record environmental conditions and developmental stages

Network Initialization:

- Create node lists: genes (from transcriptomics) and metabolites (from metabolomics)

- Calculate correlation matrices (e.g., Pearson correlation between gene expression and metabolite abundance)

- Apply significance thresholds (e.g., adjusted p < 0.05 after multiple-testing correction such as Benjamini-Hochberg)

Edge Definition:

- Treat significant positive correlations as putative promotional relationships

- Treat significant negative correlations as putative inhibitory relationships

- Assign edge weights based on correlation strength

Network Analysis:

- Calculate degree distribution to identify hubs

- Perform community detection to find functional modules

- Compute centrality measures (betweenness, eigenvector) to find key nodes

Validation:

- Compare identified hubs with known essential genes

- Test network robustness with permutation tests

- Validate predictions with mutant phenotype data

Troubleshooting Notes:

- If network is too dense, increase correlation thresholds gradually

- If biological interpretation is difficult, incorporate prior knowledge from databases

- For large networks, use sampling approaches or divide into subnetworks
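The network initialization and edge definition steps of the protocol above can be sketched in a few lines. This is a simplified illustration, not the protocol's reference implementation: it uses a plain |r| cutoff (the hypothetical `r_cutoff` parameter) in place of a p-value with multiple-testing correction, and the expression and abundance values are invented toy data.

```python
import math
from collections import Counter

def pearson(x, y):
    """Pearson correlation of two equal-length numeric sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    denom = math.sqrt(var_x * var_y)
    return cov / denom if denom > 0 else 0.0  # constant profiles get r = 0

def build_edges(gene_expr, metab_abund, r_cutoff=0.8):
    """Keep gene-metabolite pairs with |r| >= r_cutoff as signed, weighted edges."""
    edges = []
    for gene, g_vals in gene_expr.items():
        for metab, m_vals in metab_abund.items():
            r = pearson(g_vals, m_vals)
            if abs(r) >= r_cutoff:
                relation = "positive" if r > 0 else "negative"
                edges.append((gene, metab, relation, abs(r)))
    return edges

# Toy data: five samples per profile (values are illustrative only).
gene_expr = {
    "gene1": [1.0, 2.0, 3.0, 4.0, 5.0],
    "gene2": [5.0, 4.0, 3.0, 2.0, 1.0],
    "gene3": [2.0, 1.0, 2.0, 1.0, 2.0],
}
metab_abund = {"metA": [2.1, 3.9, 6.2, 8.0, 9.9]}

edges = build_edges(gene_expr, metab_abund)

# Degree per node, the first step toward identifying hubs.
degree = Counter()
for gene, metab, _, _ in edges:
    degree[gene] += 1
    degree[metab] += 1

for edge in edges:
    print(edge)
```

Raising `r_cutoff` is the code-level equivalent of the first troubleshooting note: it thins out an overly dense network.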

Frequently Asked Questions (FAQs)

Q1: What are the main types of biological networks used in plant biosystems design, and when should I use each type?

Table 2: Network Types and Their Applications in Plant Research

| Network Type | Structural Features | Plant Science Applications | Tools & Algorithms | Example Use Cases |

|---|---|---|---|---|

| Protein-Protein Interaction (PPI) | Undirected graph; Nodes: proteins; Edges: physical interactions [5] | Identify protein complexes; Map signaling pathways | Markov Clustering (MCL); Affinity Propagation | Stress response pathways; Growth regulator complexes |

| Gene Regulatory | Directed graph; Nodes: genes/TFs; Edges: regulatory relationships [2] | Understand developmental programs; Map transcriptional cascades | Path finding (Dijkstra's); Motif detection | Flowering time control; Root development networks |

| Metabolic | Directed/Bipartite graph; Nodes: metabolites/reactions [2] [5] | Engineer metabolic pathways; Predict flux distributions | Flux Balance Analysis (FBA); Elementary Mode Analysis | Biofortification strategies; Secondary metabolite production |

| Co-expression | Undirected, weighted graph; Nodes: genes; Edges: expression similarity [3] | Identify functionally related genes; Find novel pathway components | Weighted Correlation Network Analysis | Abiotic stress responses; Tissue-specific expression programs |

| Signal Transduction | Directed graph; Nodes: signaling molecules; Edges: signal transmission [5] | Map information flow; Identify signaling hubs | Network alignment; Perturbation analysis | Hormone signaling networks; Defense response pathways |

Q2: How can I identify essential genes or proteins in my plant network using graph theory concepts?

Essential elements can be identified through several graph theoretical measures [5] [3]:

- Degree Centrality: Nodes with an unusually high number of connections (hubs) often indicate essential elements. In plant PPI networks, these may be key signaling proteins.

- Betweenness Centrality: Nodes that appear on many shortest paths (bottlenecks) control information flow. In metabolic networks, these often correspond to key regulatory metabolites.

- Eigenvector Centrality: Nodes connected to other well-connected nodes have high influence. In gene regulatory networks, these may be master transcription factors.

- Experimental Validation: Always combine computational predictions with experimental validation using mutant analysis or knockdown experiments.
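Two of the measures above can be computed without external libraries. The sketch below implements degree centrality and unnormalised betweenness centrality (Brandes' algorithm) for an unweighted, undirected graph. The toy network and node names are hypothetical, and eigenvector centrality is omitted for brevity.

```python
from collections import deque

def degree_centrality(adj):
    """Fraction of the other nodes that each node is connected to."""
    n = len(adj)
    return {v: len(nbrs) / (n - 1) for v, nbrs in adj.items()}

def betweenness_centrality(adj):
    """Brandes' algorithm for unweighted, undirected graphs (unnormalised)."""
    bc = {v: 0.0 for v in adj}
    for s in adj:
        stack = []
        preds = {v: [] for v in adj}   # predecessors on shortest paths from s
        sigma = {v: 0 for v in adj}    # number of shortest paths from s
        dist = {v: -1 for v in adj}
        sigma[s], dist[s] = 1, 0
        queue = deque([s])
        while queue:                   # breadth-first search from s
            v = queue.popleft()
            stack.append(v)
            for w in adj[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        delta = {v: 0.0 for v in adj}
        while stack:                   # accumulate dependencies, farthest first
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                bc[w] += delta[w]
    # Each undirected shortest path was counted from both endpoints.
    return {v: b / 2 for v, b in bc.items()}

# Hypothetical toy network: hub H with neighbours A, B, C; D hangs off C.
adj = {
    "H": {"A", "B", "C"},
    "A": {"H"},
    "B": {"H"},
    "C": {"H", "D"},
    "D": {"C"},
}
dc = degree_centrality(adj)
bc = betweenness_centrality(adj)
print(dc["H"], bc["H"], bc["C"])
```

In this toy graph H is both a hub and a bottleneck, while C has a low degree but still controls every path to D, which is the distinction the two measures are meant to capture.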

Q3: What are the most common pitfalls when applying graph theory to plant systems, and how can I avoid them?

Common pitfalls include:

- Oversimplification: Plant networks are multi-scale (molecular to organismal). Solution: Use multi-layer network approaches [2].

- Temporal Dynamics: Plant responses unfold over time. Solution: Incorporate time-series data and dynamic network models.

- Compartmentalization: Plant cells have unique organelles. Solution: Include subcellular localization data [2].

- Species-Specificity: Network properties may vary between species. Solution: Use comparative network analysis across species.

- Data Quality: Incomplete interactions lead to fragmented networks. Solution: Integrate multiple data types and use quality controls.

Q4: How do I choose the right layout algorithm for visualizing my plant biological network?

Table 3: Graph Layout Algorithms for Biological Networks

| Layout Algorithm | Best For Network Types | Key Strengths | Plant-Specific Applications | Graphviz Command |

|---|---|---|---|---|

| dot | Hierarchical, directed graphs [4] | Clear flow visualization; Efficient for large graphs | Gene regulatory hierarchies; Signaling cascades | dot -Tpng input.dot -o output.png |

| neato | Undirected graphs; Small to medium networks [4] | Natural node distribution; Force-directed placement | Protein interaction networks; Co-expression networks | neato -Tpng input.dot -o output.png |

| fdp | Large undirected graphs [4] | Scalable force-directed; Minimal edge crossings | Metabolic networks; Large-scale PPI networks | fdp -Tpng input.dot -o output.png |

| circo | Cyclic structures; Circular relationships [4] | Highlights cycles and loops | Feedback loops in signaling; Cyclic metabolic pathways | circo -Tpng input.dot -o output.png |

| sfdp | Very large graphs (1000+ nodes) [4] | Scalability; Memory efficiency | Genome-scale networks; Multi-omics integration | sfdp -Tpng input.dot -o output.png |
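The commands in Table 3 expect a DOT file as input. A minimal sketch of producing one from an edge list is shown below. The function name `to_dot`, the node names, and the highlight color are illustrative assumptions, and only basic DOT syntax is used.

```python
def to_dot(edges, directed=True, hubs=()):
    """Serialise an edge list to Graphviz DOT text, highlighting chosen hub nodes."""
    graph_type = "digraph" if directed else "graph"
    connector = "->" if directed else "--"
    lines = [f"{graph_type} network {{"]
    for node in hubs:
        lines.append(f'    "{node}" [style=filled, fillcolor=lightblue];')
    for source, target in edges:
        lines.append(f'    "{source}" {connector} "{target}";')
    lines.append("}")
    return "\n".join(lines)

# Hypothetical regulatory edges (placeholder identifiers).
edges = [("TF1", "geneA"), ("TF1", "geneB"), ("geneB", "TF1")]
dot_text = to_dot(edges, directed=True, hubs=["TF1"])
print(dot_text)
```

Writing `dot_text` to `input.dot` makes it usable with any command in the table, for example `dot -Tpng input.dot -o output.png` for a hierarchical layout.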

Q5: What experimental techniques can validate computational predictions from plant network analysis?

Validation strategies include:

- Mutant Analysis: Knock out predicted essential genes and observe phenotypes

- Protein-DNA Interaction: Use ChIP-seq to validate transcription factor targets

- Metabolic Flux Analysis: Employ 13C-labeling to test predicted flux distributions

- Protein Complex Validation: Use co-immunoprecipitation for predicted interactions

- Spatial Validation: Apply in situ hybridization or GFP fusions for spatial predictions

Diagram: Plant Gene Regulatory Network with Feedback Loops

Diagram: Multi-omics Data Integration Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Resources for Plant Network Biology Research

| Category | Specific Reagent/Tool | Function/Application | Key Features | Plant-Specific Considerations |

|---|---|---|---|---|

| Data Generation | RNA-seq kits (e.g., Illumina) | Transcriptome profiling for gene nodes | High sensitivity; Quantitative | Optimize for plant secondary metabolites |

| Data Generation | LC-MS/MS systems | Metabolite detection and quantification | Broad metabolite coverage | Requires plant-specific spectral libraries |

| Data Generation | Yeast two-hybrid systems | Protein-protein interaction detection [5] | High-throughput capability | May miss plant-specific post-translational modifications |

| Computational Tools | Graphviz software [4] | Network visualization and layout | Multiple layout algorithms | Essential for large plant genomes |

| Computational Tools | Cytoscape with plugins | Network analysis and integration | Extensible architecture | Plant-specific databases available |

| Computational Tools | R/Bioconductor packages | Statistical network analysis | Reproducible workflows | Packages for plant omics data |

| Database Resources | Plant-specific databases (e.g., PlantCyc) | Metabolic pathway information | Curated plant content | Species-specific data critical |

| Database Resources | AraNet (Arabidopsis) | Reference interaction networks | Validated interactions | Model system for translation |

| Validation Reagents | CRISPR-Cas9 systems | Gene knockout for hub validation | Precise genome editing | Efficient transformation protocols needed |

| Validation Reagents | Antibody libraries | Protein detection and localization | Target specificity | Limited availability for plant proteins |

| Validation Reagents | Stable isotope labels (13C) | Metabolic flux analysis [2] | Quantitative flux measurements | Plant-specific labeling strategies |

Foundational Principles & Frequently Asked Questions

What is the core difference between mechanistic and empirical modeling?

Answer: Mechanistic models are theory-based, built upon established scientific principles and physical laws to describe the underlying causal relationships in a system. In contrast, empirical (or data-driven) models are primarily constructed to find statistical relationships within a specific dataset without attempting to describe the underlying mechanisms [6].

| Feature | Mechanistic Models | Empirical Models |

|---|---|---|

| Basis | Theory, first principles, biological/physical laws [6] [7] | System data, statistical correlations [6] |

| Predictive Scope | Can extrapolate beyond the original data to predict system behavior under new, untested conditions [8] [7] | Limited to interpolation within the scope and range of the data used for training [8] |

| Interpretability | High; model components (parameters, equations) have biological meaning [6] | Low; often function as "black boxes" with limited insight into causal mechanisms [8] |

| Primary Challenge | Requires expert knowledge; parameter estimation can be complex and computationally intensive [6] [9] | Susceptible to variance unless large datasets are available; may not reveal underlying biology [8] [6] |

When should I use an ODE-based model versus a Genome-Scale Model (GEM)?

Answer: The choice depends on the biological scale of your research question and the required level of detail.

- Use ODE-based Kinetic Models when you need a dynamic, detailed view of a specific pathway or network. They are ideal for studying the temporal behavior of a well-defined system, such as a signaling cascade or a metabolic pathway with known regulatory mechanisms [9]. The key challenge is parameter identifiability—ensuring the available experimental data is sufficient to reliably estimate the model's parameters [9].

- Use Genome-Scale Models (GEMs) when you require a system-wide, comprehensive overview of an organism's metabolic capabilities. GEMs are particularly powerful for exploring metabolic fluxes at a steady state and understanding the interactions between different tissues in a multicellular organism [8] [10]. They are less suited for modeling the transient, second-by-second dynamics of a specific pathway.
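To make the ODE-based option concrete, the sketch below simulates a hypothetical two-step pathway (substrate → intermediate → product) with first-order kinetics and a fixed-step fourth-order Runge-Kutta integrator. The rate constants, initial state, and step size are illustrative assumptions, not values from any cited model.

```python
import math

def pathway_rhs(state, k_first, k_second):
    """Rates of change for substrate -> intermediate -> product (first-order steps)."""
    substrate, intermediate, product = state
    return (
        -k_first * substrate,
        k_first * substrate - k_second * intermediate,
        k_second * intermediate,
    )

def rk4_step(rhs, state, dt, *params):
    """Advance the state by one classical fourth-order Runge-Kutta step."""
    a = rhs(state, *params)
    b = rhs(tuple(x + 0.5 * dt * s for x, s in zip(state, a)), *params)
    c = rhs(tuple(x + 0.5 * dt * s for x, s in zip(state, b)), *params)
    d = rhs(tuple(x + dt * s for x, s in zip(state, c)), *params)
    return tuple(
        x + dt / 6.0 * (sa + 2 * sb + 2 * sc + sd)
        for x, sa, sb, sc, sd in zip(state, a, b, c, d)
    )

def simulate(state, k_first, k_second, dt=0.01, t_end=10.0):
    """Return the list of states from t = 0 to t_end."""
    trajectory = [state]
    for _ in range(int(round(t_end / dt))):
        state = rk4_step(pathway_rhs, state, dt, k_first, k_second)
        trajectory.append(state)
    return trajectory

trajectory = simulate((1.0, 0.0, 0.0), k_first=1.0, k_second=0.5)
final = trajectory[-1]
analytic_substrate = math.exp(-1.0 * 10.0)  # closed-form substrate decay at t = 10
print(final, analytic_substrate)
```

Every rate constant in such a model is a parameter that must be estimated from data, which is why identifiability becomes the central difficulty as the pathway grows.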

Troubleshooting Common Modeling Challenges

My model parameters are unidentifiable. What should I do?

Answer: Parameter unidentifiability means the available data cannot uniquely determine the values of some parameters, often because a parameter has little influence on the model outputs or because parameters are interdependent [9]. The methods below outline a systematic approach to diagnosing and addressing this issue.

Detailed Methodologies:

- Diagnosis with VisId Toolbox: Use the VisId MATLAB toolbox to calculate a collinearity index for groups of parameters. This index quantifies the degree of correlation between parameters, helping to identify the largest groups of uncorrelated (identifiable) parameters and smaller groups of highly correlated (non-identifiable) ones [9].

- Parameter Estimation with Regularization: Combine global optimization metaheuristics (e.g., enhanced Scatter Search, eSS) with efficient local search methods (e.g., NL2SOL) and regularization techniques. Regularization adds a penalty term to the objective function (e.g., weighted sum-of-squares), which helps to avoid over-fitting and can improve parameter estimation, especially in large models [9].
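The role of the penalty term can be seen in a small sketch of a regularized objective. It assumes a Tikhonov (ridge-style) penalty that pulls parameters toward a reference vector `theta_ref`. The penalty form, the weighting `lam`, and the toy linear model are illustrative choices, not the specific formulation of the cited toolchain.

```python
def regularized_objective(theta, theta_ref, residual_fn, weights, lam):
    """Weighted sum of squared residuals plus a Tikhonov penalty on the parameters."""
    residuals = residual_fn(theta)
    data_term = sum(w * r ** 2 for w, r in zip(weights, residuals))
    penalty = lam * sum((t - t0) ** 2 for t, t0 in zip(theta, theta_ref))
    return data_term + penalty

# Toy observations generated by y = 2x + 1 (illustrative only).
x_obs = [0.0, 1.0, 2.0]
y_obs = [1.0, 3.0, 5.0]

def residuals(theta):
    """Difference between the observations and a linear model with parameters theta."""
    slope, intercept = theta
    return [y - (slope * x + intercept) for x, y in zip(x_obs, y_obs)]

weights = [1.0, 1.0, 1.0]
theta = (2.0, 1.0)       # parameters that fit the toy data exactly
theta_ref = (0.0, 0.0)   # hypothetical prior guess for the parameters

unpenalized = regularized_objective(theta, theta_ref, residuals, weights, lam=0.0)
penalized = regularized_objective(theta, theta_ref, residuals, weights, lam=0.1)
print(unpenalized, penalized)
```

A global optimizer would minimize this function over `theta`. A larger `lam` trades goodness of fit for parameters that stay closer to the reference values, which is how the penalty discourages over-fitting.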

How can I integrate models across different biological scales?

Answer: Multiscale modeling links processes across levels of biological organization (e.g., gene → protein → metabolism → whole-plant physiology) to predict emergent properties [8]. A common challenge is managing complexity.

Experimental Protocol: Constructing a Multi-Tissue Metabolic Framework

This protocol is based on the extension of the AraGEM model for Arabidopsis thaliana to a multi-tissue context [10].

- Define Tissue Compartments: Create distinct tissue compartments (e.g., leaf, stem, root), each with its own instance of the metabolic model, reflecting tissue-specific metabolic capabilities [10].

- Establish Common Pools (CP): Define shared metabolite pools that allow for translocation between tissues. A common pool has no storage capacity; transport into the pool from one tissue must be matched by transport out to another tissue [10].

- Incorporate Storage Pools (SP): Introduce storage pools to manage temporal dynamics (e.g., diurnal cycle). A key assumption is no net accumulation across all periods; compounds stored in one period (e.g., starch during the day) must be retrieved in another (e.g., night) [10].

- Build the Stoichiometric Matrix: Assemble an integrated stoichiometric matrix that includes the internal reactions for each tissue and the transport reactions to/from the common and storage pools [10].

- Apply Constraints and Solve: Apply tissue-specific constraints (e.g., biomass composition, energy demands) and use a constraint-based optimization approach, such as Flux Balance Analysis (FBA), with an appropriate objective function (e.g., minimization of total photon usage for plant growth) [10].
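The common-pool rule in the protocol above follows directly from the steady-state condition on the integrated stoichiometric matrix. The sketch below uses a deliberately tiny, hypothetical two-tissue system with a single sucrose pool, not the actual AraGEM reactions, and checks the balance for two candidate flux vectors.

```python
# Hypothetical reactions: metabolite -> stoichiometric coefficient.
reactions = {
    "leaf_photosynthesis": {"sucrose_leaf": 1},
    "leaf_to_pool": {"sucrose_leaf": -1, "sucrose_CP": 1},
    "pool_to_root": {"sucrose_CP": -1, "sucrose_root": 1},
    "root_growth": {"sucrose_root": -1},
}
metabolites = ["sucrose_leaf", "sucrose_CP", "sucrose_root"]

def build_stoichiometric_matrix(metabolites, reactions):
    """Rows are metabolites, columns are reactions (in dictionary order)."""
    return [[reactions[r].get(m, 0) for r in reactions] for m in metabolites]

def steady_state_residuals(S, fluxes):
    """Return S . v for each metabolite; all zeros means steady state."""
    return [sum(coef * v for coef, v in zip(row, fluxes)) for row in S]

S = build_stoichiometric_matrix(metabolites, reactions)

balanced = [2.0, 2.0, 2.0, 2.0]    # export into the pool equals import out of it
unbalanced = [2.0, 2.0, 1.5, 1.5]  # the pool would accumulate sucrose

balanced_residuals = steady_state_residuals(S, balanced)
unbalanced_residuals = steady_state_residuals(S, unbalanced)
print(balanced_residuals, unbalanced_residuals)
```

Because the common-pool row must equal zero, any flux vector in which the leaf exports more than the root imports is infeasible. This is the "no storage capacity" assumption expressed as a constraint.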

How do I incorporate omics data into a mechanistic model?

Answer: Integration can be achieved through several strategies, from constraining existing models to building new hybrid models.

| Integration Strategy | Methodology | Application Example |

|---|---|---|

| Constraining GEMs | Use condition-specific transcriptomic or proteomic data to activate/deactivate reactions in a genome-scale metabolic model [8]. | Study metabolic shifts in Arabidopsis under low and high CO₂ conditions by integrating transcriptome data with a GEM [8]. |

| Multi-Omics Data Fusion | Combine genomic, transcriptomic, proteomic, and metabolomic datasets to inform a unified model, often leveraging AI/ML to handle data complexity [11]. | Develop predictive models for complex plant traits by using ML to find patterns across multiple omics layers [11]. |

| Scientific Machine Learning (SciML) | Embed mechanistic structures (e.g., ODEs) directly into machine learning models, or use ML to learn unknown terms or parameters within a mechanistic framework [12]. | Use a biologically-constrained neural network, where network connections represent known gene-protein interactions, to predict signaling outcomes [12]. |

The Scientist's Toolkit: Research Reagent Solutions

| Item / Resource | Function in Mechanistic Modeling |

|---|---|

| VisId (MATLAB Toolbox) | A computational tool for practical identifiability analysis, helping to detect and visualize correlated parameters in large-scale kinetic models [9]. |

| AraGEM (Genome-Scale Model) | A genome-scale metabolic reconstruction of Arabidopsis thaliana; serves as a base for building tissue-specific and multi-tissue plant models [10]. |

| Systems Biology Markup Language (SBML) | A standard format for representing computational models in systems biology; enables model exchange and reuse between different software tools [13]. |

| GNU MCSim | Software for performing Monte Carlo simulations for statistical inference; useful for model calibration and uncertainty analysis [13]. |

| Stable Isotope Labeling (e.g., ¹³C) | An experimental method for measuring intracellular metabolic fluxes, providing critical data for validating and refining constraint-based metabolic models [2]. |

| Biologically-Constrained Neural Networks | A type of SciML model where the architecture of a neural network is sparsified based on prior biological knowledge (e.g., known gene interactions), enhancing interpretability and preventing overfitting [12]. |

Advanced Applications & Emerging Paradigms

What is Scientific Machine Learning (SciML) and how is it applied?

Answer: Scientific Machine Learning (SciML) is an emerging field that synergistically combines the pattern-finding strengths of Machine Learning (ML) with the interpretability and causal reasoning of mechanistic modeling [12]. It is particularly useful when systems are partially understood or when simulating a full mechanistic model is computationally prohibitive.

Key Integration Approaches:

- ML Informing Mechanics: Using machine learning to learn unknown terms or parameters within mechanistic models. For example, a neural network can be trained to learn a missing rate law within a system of ODEs from experimental data [12].

- Mechanics Informing ML: Constraining the structure of machine learning models with mechanistic knowledge. This can be done by sparsifying the connections in a neural network to only include biologically plausible interactions, which improves generalizability and interpretability [12].

- Hybrid Modeling: Creating models where some components are represented by ODEs and others by ML, allowing for the integration of well-characterized subsystems with less-understood ones [12].
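The "mechanics informing ML" idea of sparsifying connections can be sketched as a single masked layer, in which a binary mask zeroes out every weight that does not correspond to a known interaction. The mask, weights, and tanh activation below are invented for illustration and do not reproduce any published architecture.

```python
import math

def masked_layer(inputs, weights, mask, biases):
    """Dense layer where mask[i][j] = 0 removes the connection input i -> output j."""
    outputs = []
    for j in range(len(biases)):
        total = biases[j]
        for i, x in enumerate(inputs):
            total += mask[i][j] * weights[i][j] * x
        outputs.append(math.tanh(total))
    return outputs

# Three hypothetical input genes feeding two hypothetical proteins.
weights = [[0.8, -0.4], [0.3, 0.9], [-0.6, 0.5]]
mask = [[1, 0],   # gene 0 is only known to act on protein 0
        [1, 1],   # gene 1 acts on both proteins
        [0, 1]]   # gene 2 is only known to act on protein 1
biases = [0.0, 0.1]

out_a = masked_layer([1.0, 0.5, -0.3], weights, mask, biases)
out_b = masked_layer([5.0, 0.5, -0.3], weights, mask, biases)  # only gene 0 changed
print(out_a, out_b)
```

Changing gene 0 alters protein 0 but leaves protein 1 untouched, so each remaining weight can be read as the strength of a specific, biologically plausible interaction.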

How can mechanistic modeling guide plant engineering?

Answer: Multiscale mechanistic models serve as in silico testbeds for evaluating genetic engineering strategies before conducting costly and time-consuming wet-lab experiments [8] [2].

- Predicting Outcomes: Models can predict the phenotypic consequences of genetic perturbations, such as gene knockouts or overexpression. For example, a multiscale model of lignin biosynthesis in poplar was used to explore gene knockdown strategies for improving bioenergy traits while mitigating negative impacts on growth [8].

- Identifying Key Regulators: Integrated models can identify critical control points in regulatory networks. A model coupling gene regulatory networks with photosynthesis models helped identify key regulatory controls for improving photosynthetic efficiency in soybean under elevated CO₂ [8].

Theoretical Foundation FAQ

Q1: What is Evolutionary Dynamics Theory in the context of plant biosystems design?

Evolutionary Dynamics Theory provides a framework for predicting the genetic stability and evolvability of genetically modified or de novo synthesized plant systems. It helps researchers understand how designed biological systems will behave over multiple generations, assessing whether introduced traits will persist or degrade. This is crucial for ensuring the long-term viability and safety of engineered plants [2].

Q2: Why is predicting genetic stability a major challenge in plant biosystems design?

A primary challenge is the inherent conflict between design objectives and natural evolutionary pressures. A designed trait that is beneficial in a controlled lab environment might impose a fitness cost in a natural ecosystem, creating selective pressure for the plant to mutate or inactivate the engineered genetic circuit. Furthermore, a full understanding of the principles that govern genetic stability across different spatial and temporal scales in complex, multicellular plants is still developing [2].

Q3: How can concepts like selective pressure be measured in engineered plants?

Selective pressure can be quantified by analyzing the rates of non-synonymous (Ka) and synonymous (Ks) nucleotide substitutions. The Ka/Ks ratio is a key metric:

- Ka/Ks > 1: Indicates positive selection, where genetic changes are advantageous.

- Ka/Ks ≈ 1: Suggests neutral evolution.

- Ka/Ks < 1: Indicates purifying selection, which removes deleterious mutations [14].

For example, in a study of tea plants, genes like CsJAZ1, CsJAZ8, and CsJAZ9 showed signs of positive selection (Ka/Ks > 1), indicating their adaptive roles [14].
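The interpretation rules for the Ka/Ks ratio can be written as a small helper. The `tolerance` band around 1 is a hypothetical stand-in for a proper statistical test, and the example values are invented rather than taken from the tea plant study.

```python
def classify_selection(ka, ks, tolerance=0.1):
    """Return (Ka/Ks, label) using a simple band around 1 to call neutrality."""
    if ks <= 0:
        raise ValueError("Ks must be positive to form the Ka/Ks ratio")
    ratio = ka / ks
    if ratio > 1 + tolerance:
        return ratio, "positive selection"
    if ratio < 1 - tolerance:
        return ratio, "purifying selection"
    return ratio, "approximately neutral"

# Illustrative substitution rates (not measured values).
examples = {"geneX": (0.30, 0.10), "geneY": (0.02, 0.20), "geneZ": (0.10, 0.10)}
labels = {name: classify_selection(ka, ks)[1] for name, (ka, ks) in examples.items()}
print(labels)
```

In practice the call should rest on whether the ratio differs significantly from 1 and not on a fixed band, and very small Ks values make the ratio unstable.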

Troubleshooting Guide: Common Experimental Challenges

Table 1: Troubleshooting Genetic Instability in Designed Plant Systems

| Problem | Potential Cause | Recommended Solution |

|---|---|---|

| Rapid Loss of Engineered Trait | The trait imposes a high fitness cost (e.g., metabolic burden) [2]. | Refactor the genetic circuit to minimize energy consumption; use endogenous promoters with appropriate strength instead of strong constitutive ones. |

| Unstable Gene Expression Across Generations | Epigenetic silencing or positional effects due to random DNA insertion [2]. | Use genome editing to insert constructs into genomic "safe harbors"; include genetic insulators in the design. |

| Variable Performance in Different Environments | Conditional neutrality, where the trait is only advantageous in specific conditions [15]. | Conduct multi-environment trials; design systems that are only activated under specific, target environmental cues. |

| Emergence of Inactive Rearranged Sequences | Presence of repetitive DNA sequences leading to homologous recombination [2]. | Avoid repeats in the original design; use bioinformatics tools to scan for and eliminate such sequence elements. |

Experimental Protocols for Stability Assessment

Protocol 1: Quantifying Selection Pressure on Engineered Genes

Objective: To determine if an introduced gene is under positive, neutral, or purifying selection.

Methodology:

- Sequence Alignment: For the gene of interest, obtain coding sequences (CDS) from multiple related cultivars or from the engineered plant line over several generations. For pan-genomic studies, use high-quality genome assemblies from multiple individuals [14].

- Calculation of Substitution Rates: Use bioinformatics software (e.g., the wgd toolkit) to calculate the number of non-synonymous substitutions per non-synonymous site (Ka) and synonymous substitutions per synonymous site (Ks) [14].

- Statistical Analysis: Compute the Ka/Ks ratio.

- A Ka/Ks significantly greater than 1 suggests the gene is undergoing positive selection, which may be desirable for adaptive traits.

- A Ka/Ks not significantly different from 1 suggests neutral evolution.

- A Ka/Ks significantly less than 1 suggests purifying selection, indicating that most mutations are harmful and are being removed [14].

Protocol 2: Pan-Genomic Analysis of Gene Presence-Absence Variation (PAV)

Objective: To understand the core and dispensable genome and assess how PAV affects the stability of engineered pathways.

Methodology:

- Genome Assembly & Annotation: Assemble and annotate high-quality genomes for a population of individuals (e.g., 22 tea plant genomes in the JAZ gene study) [14].

- Gene Family Identification: Identify all genes belonging to the target family (e.g., JAZ genes) across all genomes.

- Categorize Genes:

- Core Genes: Present in all (or nearly all) genomes.

- Dispensable Genes: Present in a subset of genomes.

- Private Genes: Unique to a single genome [14].

- Correlate with Phenotype: Correlate the presence or absence of specific genes with phenotypic outcomes, such as stress resistance or metabolite production, to identify critical, stable components for biosystems design.
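The gene categorization step can be sketched from a presence-absence table. The gene and genome identifiers are placeholders, and "core" is taken strictly as presence in every genome. The protocol's looser "nearly all" threshold would need an explicit cutoff.

```python
def categorize_genes(presence, n_genomes):
    """Label each gene as core, dispensable, or private from its genome set."""
    categories = {}
    for gene, genomes in presence.items():
        count = len(genomes)
        if count == n_genomes:
            categories[gene] = "core"
        elif count == 1:
            categories[gene] = "private"
        else:
            categories[gene] = "dispensable"
    return categories

# Hypothetical presence-absence data across four genomes (G1..G4).
presence = {
    "JAZ_a": {"G1", "G2", "G3", "G4"},
    "JAZ_b": {"G1", "G3"},
    "JAZ_c": {"G2"},
}
categories = categorize_genes(presence, n_genomes=4)
print(categories)
```

Genes labeled core are the more conservative choice when an engineered pathway must behave consistently across cultivars, since dispensable and private genes are absent from some genetic backgrounds.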

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Research Reagent Solutions for Evolutionary Dynamics Studies

| Reagent / Material | Function / Application |

|---|---|

| Pan-Genome Dataset | A collection of genome sequences from multiple individuals of a species; serves as the foundational data for analyzing gene presence-absence variation (PAV) and structural variants [14]. |

| Software for Ka/Ks Calculation (e.g., wgd) | Bioinformatics toolkits used to perform whole-genome duplication analysis and calculate non-synonymous (Ka) and synonymous (Ks) substitution rates to infer selection pressure [14]. |

| Multiple Sequence Alignment Tools (e.g., MAFFT) | Software used to align three or more biological sequences (DNA, RNA, protein) to identify regions of similarity, which is a prerequisite for phylogenetic analysis and calculating substitution rates [14]. |

| Phylogenetic Analysis Software (e.g., RAxML) | Tools used to infer evolutionary relationships among genes or species, helping to trace the origin and diversification of engineered genetic modules [14]. |

Key Conceptual Diagrams

Diagram 1: Evolutionary Forces on a Designed Genetic Module

Diagram 2: Experimental Workflow for Stability Analysis

Technical Support Center

Troubleshooting Guide: Common Modeling Challenges

This guide addresses specific issues you might encounter when developing and using pattern and mechanistic mathematical models in plant biology research.

Table 1: Troubleshooting Common Model Implementation Issues

| Problem Scenario | Underlying Issue | Diagnostic Steps | Recommended Solution |

|---|---|---|---|

| Pattern models (e.g., from RNA-seq data) show high false-positive correlations. | Overfitting due to high-dimensional data (many genes, few samples) or unaccounted-for batch effects. | (1) Check the sample-size-to-variable ratio. [16] (2) Perform principal component analysis (PCA) to identify hidden batch effects. (3) Validate on a held-out test dataset. | (1) Apply regularization techniques (e.g., Lasso, Ridge regression). [16] (2) Use a tool like DESeq2 that employs a negative binomial distribution to model over-dispersed count data. [16] (3) Increase biological replicates. |

| Mechanistic model simulations do not converge or produce unrealistic results. | Model stiffness, incorrect parameter scaling, or violation of mass/energy conservation laws. | (1) Check units and scaling of all parameters. [2] (2) Perform a local stability analysis around steady states. (3) Verify mass balance in metabolic models. [2] | (1) Use a solver designed for stiff systems of ODEs. (2) Re-estimate parameters using Bayesian inference or profile likelihood. [17] (3) Simplify the model to a core, well-understood module first. |

| Inability to select an appropriate model type for a new research question. | Unclear research objective: is the goal hypothesis generation (pattern) or hypothesis testing (mechanistic)? | (1) Define the primary goal: finding associations or understanding causality. [16] [18] (2) Audit available data (type, quantity, quality). (3) Evaluate the need for temporal dynamics prediction. | Use the Model Selection Workflow diagrammed below. For spatial patterns, leverage machine learning for model selection from images. [17] |

| Mechanistic model parameters cannot be estimated from available data. | Lack of identifiability: different parameter sets yield equally good fits to the data. | (1) Conduct a structural (theoretical) identifiability analysis. (2) Perform a practical identifiability analysis (e.g., profile likelihood). | (1) Redesign experiments to capture informative dynamics. [16] (2) Use approximate Bayesian inference methods that work with steady-state data, such as Simulation-Decoupled Neural Posterior Estimation. [17] |

| Model predictions fail under novel conditions (e.g., new environment). | Pattern model: Learned correlations are not transferable. [19] Mechanistic model: Missing a key biological process. | (1) Test the model on a new, independent dataset from the novel conditions. (2) For mechanistic models, perform a global sensitivity analysis. | Pattern model: Retrain with data from the new conditions. Mechanistic model: Refactor the model to include the missing environmental response mechanism, as done in plant biosystems design. [2] [19] |

Experimental Protocols for Model Development and Validation

Protocol 1: Constructing a Gene Co-expression Network (Pattern Model)

Objective: To infer a gene co-expression network from RNA-seq data, as a proxy for functional and regulatory relationships among genes, and to identify candidate genes for further study. [16]

Materials:

- RNA-seq data (count matrix) from multiple samples.

- Computational tools: R/Bioconductor with packages such as DESeq2 for normalization and WGCNA for network construction. [16]

Methodology:

- Data Preprocessing: Normalize raw read counts using a method like DESeq2's median-of-ratios to correct for library size and RNA composition. [16]

- Filtering: Filter out lowly expressed genes to reduce noise.

- Network Construction: Use the Weighted Gene Co-expression Network Analysis (WGCNA) package. [16]

- Construct a correlation matrix of all gene pairs across all samples.

- Transform the correlation matrix into an adjacency matrix using a soft power threshold to emphasize strong correlations.

- Convert the adjacency matrix into a Topological Overlap Matrix (TOM) to measure network interconnectedness.

- Identify modules of highly co-expressed genes using hierarchical clustering on the TOM-based dissimilarity.

- Validation: Relate modules to external traits (e.g., physiological measurements) to identify biologically significant modules. Perform functional enrichment analysis (e.g., GO, KEGG) on module genes.
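The network-construction steps above can be sketched in plain Python. This is only an illustration of the arithmetic (correlation, soft-threshold adjacency, topological overlap), not the WGCNA implementation itself; the gene names, expression values, and the power `beta=6` are hypothetical, and the unsigned form of the adjacency is assumed.

```python
import math

def pearson(x, y):
    """Pearson correlation between two equal-length expression profiles."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)

def soft_threshold_adjacency(expr, beta=6):
    """Unsigned adjacency a_ij = |cor(i, j)| ** beta; the diagonal is set to 0 here."""
    genes = list(expr)
    return {
        g: {h: 0.0 if g == h else abs(pearson(expr[g], expr[h])) ** beta for h in genes}
        for g in genes
    }

def topological_overlap(adj):
    """TOM_ij = (sum_u a_iu * a_uj + a_ij) / (min(k_i, k_j) + 1 - a_ij)."""
    genes = list(adj)
    k = {g: sum(adj[g].values()) for g in genes}  # connectivity of each gene
    tom = {}
    for i in genes:
        tom[i] = {}
        for j in genes:
            if i == j:
                tom[i][j] = 1.0
                continue
            shared = sum(adj[i][u] * adj[u][j] for u in genes if u not in (i, j))
            tom[i][j] = (shared + adj[i][j]) / (min(k[i], k[j]) + 1.0 - adj[i][j])
    return tom

# Hypothetical normalized expression values for four genes across five samples.
expr = {
    "geneA": [1.0, 2.0, 3.0, 4.0, 5.0],
    "geneB": [1.1, 2.1, 2.9, 4.2, 4.8],
    "geneC": [5.0, 3.9, 3.1, 2.0, 1.2],
    "geneD": [2.0, 2.1, 1.9, 2.0, 2.1],
}
adj = soft_threshold_adjacency(expr, beta=6)
tom = topological_overlap(adj)
# 1 - TOM is the dissimilarity that hierarchical clustering would operate on.
dissimilarity = {g: {h: 1.0 - tom[g][h] for h in tom[g]} for g in tom}
```

Raising the correlation to a power pushes weak correlations toward zero while leaving strong ones nearly intact, which is why `geneD` ends up almost disconnected from the other three genes in this toy data.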

Protocol 2: Building and Analyzing a Genome-Scale Metabolic Model (Mechanistic Model)

Objective: To create a constraint-based mechanistic model of plant cell metabolism to predict metabolic fluxes and phenotypic outcomes. [2]

Materials:

- Annotated plant genome sequence.

- Biochemical, genomic, and literature-derived data for metabolic reactions.

- Software: A constraint-based modeling platform like COBRApy.

Methodology:

- Network Reconstruction: [2]

- Assemble a draft network from genome annotation and databases.

- Define the network's biochemical reactions and their stoichiometry.

- Assign reactions to specific cellular compartments (e.g., cytosol, chloroplast).

- Define a biomass reaction that represents the composition of the plant cell.

- Constraint-Based Analysis: [2]

- Formulate the model as S · v = 0, where S is the stoichiometric matrix and v is the flux vector.

- Apply constraints on reaction fluxes (upper and lower bounds) based on enzyme capacity and nutrient uptake rates.

- Phenotype Prediction: Use Flux Balance Analysis (FBA) to predict optimal growth or metabolite production by solving for the flux distribution that maximizes a defined objective function (e.g., biomass yield). [2]

- Model Validation: Compare model predictions (e.g., growth rates, essential genes, byproduct secretion) with experimental data from literature or new experiments.
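As a rough illustration of the constraint-based steps in this protocol, the sketch below solves a flux balance problem for a five-reaction toy network with `scipy.optimize.linprog`. The network, reaction names, and bounds are invented for the example and do not represent a real plant model; a platform such as COBRApy would normally handle this bookkeeping.

```python
import numpy as np
from scipy.optimize import linprog

# Hypothetical toy network (not a real plant model):
#   R_uptake:  -> A      R_growth: A -> B      R_side: A -> C
#   R_biomass: B ->      R_export: C ->
reactions = ["R_uptake", "R_growth", "R_side", "R_biomass", "R_export"]

# Stoichiometric matrix S: rows are metabolites, columns are reactions.
S = np.array([
    [1, -1, -1,  0,  0],   # metabolite A
    [0,  1,  0, -1,  0],   # metabolite B
    [0,  0,  1,  0, -1],   # metabolite C
])

# Lower and upper flux bounds per reaction (assumed values).
# Uptake is capped at 10; R_export >= 2 mimics a fixed maintenance drain.
bounds = [(0, 10), (0, 1000), (0, 1000), (0, 1000), (2, 1000)]

# Objective: maximize biomass flux. linprog minimizes, so the coefficient is negated.
c = np.zeros(len(reactions))
c[reactions.index("R_biomass")] = -1.0

# Steady state S . v = 0 enters as an equality constraint.
res = linprog(c, A_eq=S, b_eq=np.zeros(S.shape[0]), bounds=bounds, method="highs")
fluxes = dict(zip(reactions, res.x))
print(fluxes)
```

With these bounds the uptake limit and the maintenance drain together cap the biomass flux at 8, which is the kind of prediction that the validation step would compare against measured growth.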

Frequently Asked Questions (FAQs)

Q1: When should I use a pattern model versus a mechanistic mathematical model in my research?

A: The choice is dictated by your research goal and available data. Use pattern models when your goal is hypothesis generation, you have large, high-dimensional datasets (e.g., transcriptomics, phenomics), and you want to identify correlations and potential relationships without specifying underlying processes. [16] [18] Use mechanistic mathematical models when your goal is hypothesis testing, you have prior knowledge about the system's biology and kinetics, and you want to understand causality, make quantitative predictions, or explore emergent properties under novel conditions. [16] [2] [19]

Q2: How can I overcome the mathematical barrier to entering mechanistic modeling?

A: This is a common challenge. Several pathways exist: [16]

- Use Easy-to-Use Tools: Start with high-level software and modeling environments that provide graphical user interfaces or scripting in accessible languages (e.g., Python libraries, COPASI).

- Interdisciplinary Collaboration: Actively collaborate with mathematicians, physicists, or computational biologists. Frame your biological question clearly for them. [16]

- Targeted Training: Engage with workshops and online courses focused on mathematical biology.

Q3: Our inferred Gene Regulatory Network (GRN) is static. How can we make it dynamic and more predictive?

A: A static network is a valuable first step. To add dynamics:

- Use the static network as a topological scaffold to define potential interactions. [16] [18]

- Translate this topology into a dynamic system, typically using Ordinary Differential Equations (ODEs), where the rate of change of each component (e.g., mRNA) is a function of its regulators. [16] [18]

- Parameterize the ODEs using kinetic data from literature or parameter estimation techniques applied to time-series data. [17] This creates a mechanistic model that can simulate temporal responses.
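A minimal sketch of the last two steps, assuming a single target gene activated by one transcription factor. The Hill-type activation, the parameter values (`alpha`, `delta`, `K`, `n`), and the forward-Euler integrator are illustrative choices, not a method prescribed by the cited sources.

```python
def hill_activation(tf, K=1.0, n=2):
    """Fraction of maximal transcription at activator level `tf`."""
    return tf ** n / (K ** n + tf ** n)

def simulate_mrna(tf_level, alpha=10.0, delta=0.5, m0=0.0, dt=0.01, t_end=20.0):
    """Integrate dm/dt = alpha * hill(tf) - delta * m with forward Euler."""
    steps = int(round(t_end / dt))
    m = m0
    trajectory = [m]
    for _ in range(steps):
        dm_dt = alpha * hill_activation(tf_level) - delta * m
        m += dt * dm_dt
        trajectory.append(m)
    return trajectory

# The same edge (TF -> target) now yields a time course for any TF level.
low = simulate_mrna(tf_level=0.5)
high = simulate_mrna(tf_level=2.0)
print(round(low[-1], 2), round(high[-1], 2))
```

Each edge in the static scaffold becomes one regulatory term in an equation like this; the trajectories settle near `alpha * hill(tf) / delta`, so fitting `alpha`, `delta`, `K`, and `n` to time-series data is what turns the topology into a predictive model.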

Q4: Why would I choose a complex mechanistic model over a simpler empirical/pattern model for applied problems like disease forecasting?

A: While simpler initially, empirical models (like the "3-10 rule" for grape downy mildew) often lack accuracy and robustness, especially under changing conditions like new climates. They require recalibration for new environments. [19] While more complex to build, mechanistic models, which encode the underlying biology (e.g., pathogen life cycle, host plant response, environment), are more accurate and robust. Their complexity is in the construction, not necessarily the output, which can be designed to be simple and easy-to-use for growers within a Decision Support System. [19]

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Computational Modeling in Plant Biology

| Item | Function/Application | Example Use Case |

|---|---|---|

| DESeq2 / edgeR | R/Bioconductor packages for differential expression analysis from RNA-seq data. [16] | Identifying genes whose expression is significantly changed in response to a stress treatment (Pattern Modeling). |

| WGCNA | R package for constructing weighted gene co-expression networks. [16] | Finding clusters (modules) of highly correlated genes to link to a phenotype of interest (Pattern Modeling). |

| COBRA Toolbox / COBRApy | MATLAB (COBRA Toolbox) and Python (COBRApy) suites for constraint-based reconstruction and analysis of metabolic networks. [2] | Building a genome-scale metabolic model (GEM) of a plant cell to predict growth requirements or metabolic engineering targets (Mechanistic Modeling). |

| COPASI | Software application for simulating and analyzing biochemical networks and their dynamics. [16] | Simulating a small, well-defined gene regulatory circuit using ODEs to study its dynamic behavior (Mechanistic Modeling). |

| CLIP-based Model Selector | A machine learning tool using Contrastive Language-Image Pre-training to select appropriate mathematical models from spatial pattern images. [17] | Automatically suggesting that a leaf patterning phenotype may be explained by a Turing model based on an image alone (Model Selection). |

| NGBoost for Parameter Estimation | A method using Natural Gradient Boosting for approximate Bayesian inference of model parameters. [17] | Estimating the parameters of a pattern formation model from a small number of steady-state images without time-series data (Parameter Estimation). |

Workflow Visualization Diagrams

Diagram 1: A workflow for selecting between pattern and mechanistic modeling approaches based on research goals and data availability. [16] [18] [19]

Diagram 2: A logical flowchart for diagnosing and correcting a model that produces failed or unrealistic predictions. [2] [17]

This technical support center is designed to assist researchers and scientists in navigating the transition from traditional plant genetic modification to advanced predictive biosystems design. Plant biosystems design represents a fundamental shift from trial-and-error approaches to innovative strategies based on predictive models of biological systems [2]. This emerging interdisciplinary field seeks to accelerate plant genetic improvement using genome editing and genetic circuit engineering, or create novel plant systems through de novo synthesis of plant genomes [20]. As you engage in this complex research, you will inevitably encounter challenges related to computational modeling, experimental automation, and data integration. The following troubleshooting guides and FAQs address specific, common issues in plant biosystems design predictive modeling research, providing practical solutions and detailed methodologies to advance your work.

Troubleshooting Guides for Predictive Modeling Research

Troubleshooting Genome-Scale Model (GEM) Construction

Problem: Incomplete Metabolic Network Reconstruction

- Symptoms: Missing reactions in key pathways, inability to model metabolic fluxes accurately, and failure to predict phenotypic outcomes.

- Root Causes: Lack of comprehensive knowledge of gene functions, undefined underground metabolism due to enzyme promiscuity, and insufficient data on metabolites in different cellular compartments [2].

- Solutions & Protocols:

- Utilize Advanced Computational Tools: Employ tools like MAGI (Metabolite Annotation and Gene Integration) to facilitate the integration of metabolic and genetic networks by reconciling metabolomic and genomic data [2].

- Implement Single-Cell Omics: Address compartmentalization challenges by applying single-cell/single-cell-type omics technologies to decipher metabolites, reactions, and pathways specific to different cell types [2].

- Leverage CoralME Platform: For microbial plant symbionts or algal systems, use the coralME tool to automatically reconstruct nearly finished ME-models (Metabolism and Expression models) from existing genome-scale metabolic models (M-models). This can reduce reconstruction time from months to minutes [21].

Table 1: Solutions for Incomplete GEM Construction

| Solution | Primary Use Case | Technical Approach | Key Outcome |

|---|---|---|---|

| MAGI Tool | Integrating genetic and metabolic networks | Algorithmic reconciliation of metabolomic and genomic datasets | Improved network curation and gap filling |

| Single-Cell Omics | Cell-type specific metabolism | High-resolution separation and analysis of distinct cell types | Compartmentalized reaction and metabolite data |

| CoralME Platform | Rapid ME-model generation | Automated draft reconstruction from M-models | Accelerated modeling of metabolism and gene expression |

Troubleshooting the Design-Build-Test-Learn (DBTL) Cycle

Problem: Low Efficiency in Optimizing Biological Systems

- Symptoms: Requiring an excessive number of experimental rounds to achieve desired traits (e.g., high metabolite production), inconsistent results between experimental batches, and failure to identify optimal genetic constructs.

- Root Causes: High-dimensional optimization spaces, experimental noise and variability, and traditional one-factor-at-a-time approaches that miss synergistic effects [22].

- Solutions & Protocols:

- Implement Bayesian Optimization: Integrate a fully automated algorithm-driven platform like BioAutomata to close the DBTL cycle. This approach is ideal for expensive, noisy experiments with black-box optimization problems [22].

- Experimental Protocol for BioAutomata:

- Step 1: Initial Setup: Define the biological system's inputs (e.g., gene expression levels) and the objective output (e.g., lycopene titer).

- Step 2: Model Selection: Choose a probabilistic model; a Gaussian Process (GP) is recommended for its flexibility in assigning expected value and confidence levels to unevaluated points.

- Step 3: Acquisition Policy: Employ the Expected Improvement (EI) function to guide the algorithm toward experiments that balance exploration of new regions and exploitation of promising ones.

- Step 4: Automated Execution: The robotic foundry (e.g., iBioFAB) performs the batch of experiments selected by the algorithm.

- Step 5: Iterative Learning: The model updates its predictions based on new data, and the cycle repeats, requiring minimal human intervention [22].

- Utilize Flux Analysis Tools: Apply tools like FreeFlux, an open-source Python package for efficient 13C-Metabolic Flux Analysis (MFA), to obtain reliable intracellular flux data for validating and informing models [21].

The following workflow diagram illustrates the fully automated, algorithm-driven DBTL cycle:

Diagram 1: BioAutomata DBTL Workflow
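The acquisition policy in Step 3 of the protocol above can be written down compactly. The sketch below computes Expected Improvement for a maximization problem from a Gaussian posterior mean and standard deviation; the candidate designs, their posterior values, and the exploration parameter `xi` are hypothetical, and the Gaussian Process that would supply these values is not implemented here.

```python
import math

def normal_pdf(z):
    """Standard normal density."""
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def normal_cdf(z):
    """Standard normal cumulative distribution."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def expected_improvement(mu, sigma, best_so_far, xi=0.0):
    """EI = (mu - best - xi) * Phi(z) + sigma * phi(z), with z = (mu - best - xi) / sigma."""
    improvement = mu - best_so_far - xi
    if sigma <= 0.0:
        return max(improvement, 0.0)  # no uncertainty left at this point
    z = improvement / sigma
    return improvement * normal_cdf(z) + sigma * normal_pdf(z)

# Hypothetical GP posterior (mean, standard deviation) of the titer for three untested designs.
candidates = {
    "design_1": (1.10, 0.05),  # slightly better than the best, low uncertainty
    "design_2": (0.95, 0.40),  # worse on average, but highly uncertain
    "design_3": (1.00, 0.01),  # worse and almost certain
}
best_titer = 1.05  # best titer measured so far (assumed)

scores = {name: expected_improvement(mu, sd, best_titer) for name, (mu, sd) in candidates.items()}
next_design = max(scores, key=scores.get)
print(next_design)
```

In this toy case the uncertain `design_2` scores highest even though its predicted mean is below the current best, which shows how the criterion trades exploitation against exploration.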

Frequently Asked Questions (FAQs)

FAQ 1: What theoretical frameworks are most critical for transitioning from simple genetic modification to predictive plant biosystems design?

Three core theoretical approaches are fundamental for this transition [2]:

- Graph Theory: This approach uses networks (graphs) to represent complex plant systems. Nodes represent biological components (genes, proteins, metabolites), and edges represent interactions between them. This provides a holistic, systems-level view crucial for understanding and engineering biological complexity.

- Mechanistic Modeling: Based on the law of mass conservation, this theory uses ordinary differential equations (ODEs) and constraint-based analyses like Flux Balance Analysis (FBA) to link genes to phenotypic traits. It allows for quantitative prediction of cellular phenotypes in response to genetic perturbations.

- Evolutionary Dynamics Theory: This framework helps predict the genetic stability and evolvability of genetically modified plants or de novo plant systems, ensuring the long-term viability and safety of designed biosystems.

FAQ 2: How can I improve the predictive accuracy of my models when experimental data is limited and costly to obtain?

The most effective strategy is to employ a Bayesian optimization framework within an automated DBTL platform [22]. This machine learning method is specifically designed for scenarios where data acquisition is expensive and noisy. It uses a probabilistic model (like a Gaussian Process) to predict outcomes, with an associated confidence level, across the entire experimental landscape. Instead of testing all possible variants, the algorithm actively selects the next most informative experiments to run, dramatically reducing the number of trials needed. For example, in optimizing a lycopene biosynthetic pathway, this approach evaluated fewer than 1% of all possible variants while outperforming random screening by 77% [22].

FAQ 3: We have successfully edited a key transcription factor (e.g., an R2R3-MYB gene), but the resulting metabolite profiles (e.g., glucosinolates, flavonoids) are not as predicted. What are the potential causes?

Unexpected metabolic outcomes, such as a decrease in target glucosinolates (GSLs) and an unexpected increase in flavonoids, have been observed in studies on Isatis indigotica [23]. Potential causes and investigation paths include:

- Cross-Pathway Regulation: The transcription factor may have unanticipated roles in multiple metabolic pathways. For instance, IiMYB34 was found to regulate both aliphatic and indolic GSL biosynthesis, and its overexpression also impacted flavonoid and anthocyanin content [23].

- Feedback Loops and Network Motifs: Examine your system for inherent regulatory network motifs, such as feed-forward or feed-back loops, which can create non-intuitive, emergent behaviors that disrupt simple predictions [2].

- Investigation Protocol:

- Expand your transcriptomic analysis (e.g., RNA-Seq) to profile a broader set of genes beyond the immediate target pathway.

- Use Elementary Mode Analysis (EMA) or similar tools on your GEM to identify all possible metabolic phenotypes and check if the observed outcome is an alternative steady state [2].

- Validate protein-DNA interactions for the edited transcription factor (e.g., using ChIP-Seq) to confirm its binding targets in vivo.

The diagram below maps the complex regulatory network that can lead to such unexpected outcomes:

Diagram 2: MYB Regulatory Network Complexity

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Reagents and Platforms for Plant Biosystems Design

| Item Name | Type/Category | Key Function in Research | Example Application |

|---|---|---|---|

| CoralME | Computational Software Platform | Automates reconstruction of Metabolism and Expression models (ME-models) from genome-scale metabolic models (M-models). | Rapidly generated highly curated ME-models for Synechocystis sp. and Pseudomonas putida [21]. |

| FreeFlux | Computational Package (Python) | Performs comprehensive and time-efficient 13C-Metabolic Flux Analysis (MFA). | Provides reliable intracellular flux estimates to validate model predictions and understand metabolic pathway activity [21]. |

| EMUlator2ML | Machine Learning Framework | Accelerates metabolic flux estimation by "learning" relationships between metabolite labeling patterns and flux. | Enables large-scale strain screening and fluxomic phenotyping from metabolomic data [21]. |

| 6-Benzylaminopurine (BAP) with Cefotaxime | Plant Tissue Culture Reagents | BAP is a cytokinin for shoot regeneration; cefotaxime is an antibiotic that also stimulates regeneration and reduces genetic instability. | Efficient in vitro shoot regeneration in Cucumis melo with reduced tetraploidy [23]. |

| Maxent Software | Ecological Modeling Tool | Uses environmental variables to predict species habitat distribution via Species Distribution Models (SDMs). | Identified potential conservation areas for the near-threatened Silene marizii [23]. |

This technical support center is designed to assist researchers in overcoming common challenges in predictive modeling for plant biosystems design. The field aims to accelerate plant genetic improvement and create novel systems by moving from trial-and-error approaches to strategies based on predictive models of biological systems [2] [24]. A core challenge in this endeavor is understanding and modeling emergent properties—the novel functions that arise from the multi-scale interactions of individual biological components, where the whole becomes greater than the sum of its parts [25]. The following guides and FAQs address specific experimental and computational issues encountered in this interdisciplinary research.

Troubleshooting Guides

Model-Experiment Discrepancies in Predictive Modeling

Problem: In silico model predictions consistently diverge from observed experimental results for plant phenotypes.

| Potential Cause | Diagnostic Steps | Solution |

|---|---|---|

| Incomplete Network Annotation | Compare model's metabolic/genetic network scope with recent literature and omics data. | Curate and update the model using genome-scale metabolic network (GEM) tools and single-cell omics data [2]. |

| Inadequate Error Control | Audit experimental design for sources of lack of uniformity (e.g., environmental gradients). | Implement controlled environments and use clones or inbred lines to reduce genetic variation [26]. |

| Hidden "Underground" Metabolism | Conduct enzyme promiscuity assays and analyze metabolomic profiles for unexpected products. | Incorporate enzyme promiscuity data and use computational tools like MAGI to integrate metabolic and genetic networks [2]. |

Experimental Protocol: Constraint-Based Metabolic Flux Analysis

- Objective: Predict cellular phenotypes under steady-state conditions.

- Procedure:

- Reconstruct Network: Build a genome-scale metabolic network from the plant genome sequence and omics datasets, defining metabolites and reactions as nodes and edges [2].

- Formulate Model: Express mass conservation for each metabolite as a system of linear equations: S · v = 0, where S is the stoichiometric matrix and v is the flux vector [2].

- Apply Constraints: Incorporate physiological constraints, such as substrate uptake rates or ATP maintenance requirements.

- Solve with FBA: Use Flux Balance Analysis (FBA) to predict flux distributions by optimizing an objective function (e.g., maximization of biomass production) [2].

- Troubleshooting: If the model is underdetermined, perform stable isotope-labeling experiments (e.g., with 13C-labeled CO2) to measure fluxes and constrain the system [2].
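Taken together, the steps of this protocol amount to a linear program. A compact way to write it is shown below, where S and v are as defined in the protocol, and the objective weights c and the per-reaction bounds v^min and v^max are notation introduced here for illustration:

```latex
\begin{aligned}
\max_{v} \quad & c^{\top} v \\
\text{subject to} \quad & S\,v = 0, \\
& v^{\min}_{j} \le v_{j} \le v^{\max}_{j} \quad \text{for every reaction } j .
\end{aligned}
```

For biomass maximization, c is zero everywhere except at the biomass reaction. Measured fluxes from isotope-labeling experiments enter by tightening the corresponding bounds, which shrinks the set of flux vectors that satisfy the constraints.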

Challenges in Multi-Scale Integration

Problem: Inability to effectively integrate data and models across molecular, cellular, and organ scales to predict emergent organ-level functions.

| Potential Cause | Diagnostic Steps | Solution |

|---|---|---|

| Data Scale Mismatch | Audit the spatial (cell, tissue) and temporal (seconds, days) resolution of all input data. | Employ multi-scale computational models that explicitly link scales and use data from histology, tissue clearing, and light sheet microscopy [27]. |

| Neglect of Spatial Compartmentalization | Check if the model accounts for different cell types and intracellular compartments. | Utilize single-cell/single-cell-type omics data to decipher metabolites, reactions, and pathways in specific compartments [2]. |

| Overlooking Physical Forces | Review if model includes biomechanical cues (e.g., pressure, shear stress). | Integrate biomechanical models with molecular networks; use techniques like AFM to measure physical properties [27]. |

Frequently Asked Questions (FAQs)

General Concepts

Q1: What are emergent properties in the context of plant biosystems design? A1: Emergent properties are novel functions that arise from the interaction of individual cellular components in a multicellular plant [25]. In plant biosystems design, this means that complex traits like drought tolerance or yield emerge from the synergistic interactions of genes, proteins, metabolites, and cells across different spatial and temporal scales, and cannot be predicted by studying individual parts in isolation.

Q2: Why is a multi-scale understanding critical for predictive modeling in plant biosystems? A2: Biophysical processes at different scales are deeply interconnected [27]. Molecular-level interactions (e.g., protein-DNA binding) trigger cascades that affect cellular, tissue, and organ function. Conversely, organ-level physical forces (e.g., pressure and shear stress) influence cellular behavior and gene expression [27]. Accurate prediction requires models that integrate these cross-scale interactions.

Technical & Computational Challenges

Q3: My mechanistic model of a genetic circuit fails when transferred from a model plant to a crop species. What could be wrong? A3: This is often due to undefined species-specific interactions. The "graph theory approach" in plant biosystems design suggests that a biological system is a dynamic network of thousands of interconnected nodes (genes, metabolites) [2]. The network topology, including key regulatory motifs like feed-forward or feedback loops, likely differs between species. You should map the target crop's relevant subnetwork and compare its structure and parameters to your original model.
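One way to make the comparison of network structure concrete is to enumerate a motif in both species and look at what differs. The sketch below finds feed-forward loops (X regulates Y, Y regulates Z, and X also regulates Z directly) in two small directed edge sets; the regulator and gene names and the edges themselves are hypothetical.

```python
from itertools import permutations

def feed_forward_loops(edges):
    """Return all (X, Y, Z) triples with edges X->Y, Y->Z and X->Z."""
    edge_set = set(edges)
    nodes = {node for edge in edge_set for node in edge}
    return {
        (x, y, z)
        for x, y, z in permutations(sorted(nodes), 3)
        if (x, y) in edge_set and (y, z) in edge_set and (x, z) in edge_set
    }

# Hypothetical regulator -> target edges for the same subnetwork in two species.
model_plant = {("TF1", "TF2"), ("TF2", "geneX"), ("TF1", "geneX"), ("TF2", "geneY")}
crop = {("TF1", "TF2"), ("TF2", "geneX"), ("TF2", "geneY")}  # direct TF1 -> geneX edge absent

motifs_lost = feed_forward_loops(model_plant) - feed_forward_loops(crop)
print(motifs_lost)
```

A motif present in the model plant but missing in the crop, as here, is a candidate explanation for a circuit whose dynamics no longer match the original model. The brute-force enumeration is only practical for small subnetworks.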

Q4: How can I handle the inherent stochasticity (noise) in gene expression when designing a predictable genetic circuit? A4: Stochasticity is a key source of experimental error and can be a design feature [26]. At the molecular level, techniques like single-molecule microscopy and optical tweezers can quantify this noise [27]. To counter it, design circuits with built-in robustness, such as incorporating negative feedback loops, which are a common regulatory network motif that can stabilize system output [2].

Experimental & Practical Issues

Q5: How do I distinguish between a biotic (living) and an abiotic (non-living) stress factor when my engineered plants show poor growth? A5: This is a classic diagnostic problem.

- Biotic factors (pests, diseases) often show a progression over time, specific damage to one plant species/cultivar, and a gradual transition between healthy and damaged areas [28].

- Abiotic factors (drought, nutrient deficiency) often cause damage that appears suddenly, affects multiple plant species, and has sharp margins between affected and unaffected tissue [28].

Remember, biotic factors often attack plants already stressed by abiotic factors [28].

Q6: What are the key considerations for designing a valid experiment to test a new plant genetic construct? A6:

- Define Variables: Clearly specify your independent variable (e.g., genetic construct presence/absence) and dependent variables (e.g., plant growth, metabolite levels) [26].

- Include Controls: Use both negative controls (null treatment, e.g., wild-type plants) and positive controls (a construct with a known effect) to provide a baseline and validate your assay [26].

- Replication and Randomization: Include sufficient biological replicates to compute experimental error and randomize treatments to ensure a valid measure of that error [26]. Control for natural variation by using inbred lines or clones where possible [26].
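The replication and randomization steps can be illustrated with a completely randomized design, in which every treatment gets the same number of replicate plants and pot positions are assigned at random. The treatment labels, the choice of an empty-vector line as a second negative control, and the replicate count are assumptions made for the example.

```python
import random
from collections import Counter

def completely_randomized_design(treatments, replicates, seed=0):
    """Map pot positions to treatments, with `replicates` pots per treatment in random order."""
    rng = random.Random(seed)  # fixed seed so the layout can be reproduced and reported
    layout = [t for t in treatments for _ in range(replicates)]
    rng.shuffle(layout)
    return {f"pot_{i + 1:02d}": t for i, t in enumerate(layout)}

# Hypothetical treatment set: two negative controls, a positive control, and the test construct.
treatments = ["wild_type", "empty_vector", "positive_control", "new_construct"]
layout = completely_randomized_design(treatments, replicates=5, seed=42)

print(Counter(layout.values()))
```

Randomizing positions keeps environmental gradients on the bench or in the growth chamber from lining up with a treatment. Recording the seed makes the layout part of the experimental record.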

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Plant Biosystems Design |

|---|---|

| Genome-Scale Metabolic Models (GEMs) | Mathematical frameworks that allow constraint-based analysis (e.g., FBA) to predict plant cellular phenotypes from metabolic networks [2]. |

| Stable Isotope Labeling (e.g., 13C-CO2) | Enables experimental measurement of metabolic fluxes within the plant, which is critical for constraining and validating metabolic models [2]. |

| Single-Cell Omics Technologies | Provides high-resolution data on gene expression and metabolism from specific cell types, addressing challenges of cellular compartmentalization in models [2]. |

| CRISPR/Cas9 Genome Editing | Allows precise modification of plant genomes to test predictions from biosystems design models and implement new genetic circuits [2] [24]. |

| Constraint-Based Reconstruction and Analysis (COBRA) | A suite of computational methods used to simulate and analyze genome-scale metabolic networks [2]. |

Essential Experimental Protocols

Protocol 1: Establishing a Multi-Scale Observation Framework

Objective: To collect coordinated data from molecular to organ scales for model building.

Workflow:

- Molecular Scale: Use NMR spectroscopy or Cryo-EM to determine the 3D structure of key protein targets [27].

- Cellular Scale: Employ confocal or super-resolution fluorescence microscopy to visualize the spatial localization and dynamics of these proteins within living plant cells [27].

- Tissue Scale: Apply tissue clearing methods (e.g., CLARITY) followed by light-sheet microscopy to map the 3D architecture and cellular interactions within the tissue of interest [27].

- Data Integration: Correlate the multi-scale data temporally and spatially using computational modeling to identify cross-scale interaction rules.

Protocol 2: De Novo Synthesis of a Synthetic Gene Circuit

Objective: To implement and test a small, predictive genetic circuit in a plant model system.

Workflow:

- In Silico Design: Model the circuit (e.g., a feed-forward loop) using graph theory and ODEs to predict its dynamic behavior [2].

- Part Assembly: Synthesize or select well-characterized genetic parts (promoters, coding sequences, terminators) and assemble the circuit using Golden Gate or similar methods.

- Plant Transformation: Introduce the construct into the plant via Agrobacterium-mediated transformation or biolistics.

- Phenotypic Validation: Quantify circuit performance using reporters (e.g., fluorescence) and assess its impact on the host system via transcriptomics and metabolomics.

- Model Refinement: Compare empirical data with predictions to refine the initial model and improve its predictive power for future designs.

Visualizations

Diagram 1: Multi-Scale Hierarchy in Plants

Diagram 2: Gene-Metabolite Network Motifs

Diagram 3: Model-Driven Design Workflow

Advanced Computational Methods and Cross-Disciplinary Applications

Foundation Models (FMs), large machine learning models pre-trained on vast datasets, are revolutionizing predictive modeling in plant biology. These models, including Large Language Models (LLMs) adapted for biological sequences, learn fundamental patterns from data, allowing them to be fine-tuned for specific tasks with exceptional accuracy. In plant biosystems design—an interdisciplinary field aiming to accelerate genetic improvement and create novel plant systems through predictive design—FMs offer a transformative approach [2] [20]. They address core challenges in linking complex plant genotypes to observable phenotypes by deciphering the "language" of DNA, RNA, and proteins, thereby enabling more accurate predictions of gene regulation, protein function, and cellular behavior across different biological scales [29] [30]. This technical support guide addresses frequent experimental challenges and provides actionable protocols for researchers integrating these powerful tools into their plant biology workflows.

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: Our research involves predicting the impact of non-coding genetic variants in cassava. Traditional bioinformatics tools have been inconclusive. What FM approach can provide deeper insights?

A1: Leveraging a domain-specific LLM like the Agronomic Nucleotide Transformer (AgroNT) is recommended for this task. AgroNT, pre-trained on the genomes of 48 plant species and used to score over 10 million cassava mutations in silico, has demonstrated a capability to uncover non-obvious regulatory patterns in promoter regions and predict the functional impacts of non-coding variants with high accuracy [31].

- Troubleshooting Guide:

- Problem: Inability to identify causal variants in non-coding regions.

- Solution: Utilize AgroNT to score how sequence variants affect the model's inferred regulatory grammar, prioritizing variants that most significantly alter the predicted binding affinity for transcription factors.

- Problem: Lack of species-specific model.

- Solution: Fine-tune a pre-trained, general DNA FM (e.g., DNABERT-2) on your target plant species' genomic data if a dedicated model like AgroNT is unavailable. This transfers learned sequence knowledge to a new organism [30].

Q2: We need to predict gene expression levels from DNA sequence in tomato under various stress conditions. Which FM methodology is most suitable?

A2: Deep learning models based on convolutional neural networks (CNNs) have shown high efficacy in predicting gene expression from sequence. The ExPecto model architecture, for instance, uses a CNN to analyze DNA sequence features and predict expression levels across different tissues and conditions [32]. By training on RNA-seq data from tomato under stress, the model can learn the regulatory code and identify key sequence motifs associated with stress-responsive expression.

- Experimental Protocol:

- Data Preparation: Compile a dataset of paired genomic sequences (e.g., promoter regions) and corresponding gene expression values (from RNA-seq) for tomato across your stress conditions of interest.

- Model Adaptation: Adapt an existing ExPecto-style model architecture for plant genomes.

- Training & Validation: Train the model on your dataset, holding out a subset for validation. Use cross-validation to ensure robustness.

- Interpretation: Analyze the model's learned features to identify predictive sequence motifs and potential new cis-regulatory elements involved in the stress response [32].
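The data preparation and validation steps above can be sketched without any deep learning framework. The example one-hot encodes promoter sequences, which is the usual input representation for sequence CNNs, and builds k-fold train/validation splits; the sequences and fold count are hypothetical, and the CNN itself is not shown.

```python
import random

BASES = "ACGT"

def one_hot(seq):
    """Encode a DNA string as rows of 4 values; unknown bases (e.g., N) become all zeros."""
    return [[1 if base == b else 0 for b in BASES] for base in seq.upper()]

def k_fold_indices(n_items, k=5, seed=0):
    """Yield (train, validation) index lists so that each item is held out exactly once."""
    idx = list(range(n_items))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    for i, validation in enumerate(folds):
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, validation

# Hypothetical promoter fragments; real inputs would be much longer.
promoters = ["ACGTN", "TTGAC", "CACGT", "GGGCC", "ATATA", "CGCGC"]
encoded = [one_hot(p) for p in promoters]
splits = list(k_fold_indices(len(promoters), k=3))

print(encoded[0][0], len(splits))
```

Random splitting is the simplest option. When closely related sequences such as paralogs are present, grouping them into the same fold is a common precaution against overly optimistic validation scores.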

Q3: For a high-throughput phenotyping project, we are struggling with accurately segmenting and classifying diseased leaf areas from images. How can FMs help?

A3: While not LLMs, Convolutional Neural Networks (CNNs) pre-trained on large image collections play a comparable role for image analysis. State-of-the-art CNN models for tasks like classification, object detection, and semantic segmentation have reported >95% accuracy in identifying and segmenting plant diseases from leaf images [33] [34]. These models automatically learn hierarchical features, eliminating the need for manual feature engineering.

- Troubleshooting Guide:

- Problem: Low accuracy due to small or imbalanced dataset.

- Solution: Employ data augmentation techniques (random rotation, flipping, contrast adjustment) to enlarge your effective dataset and improve model generalization [31]. Use transfer learning by starting with a model pre-trained on a large dataset like PlantVillage [31].

- Problem: Model fails to generalize to images taken in field conditions.

- Solution: Incorporate preprocessing steps like color normalization and background suppression to reduce external interference. Ensure your training dataset includes images with complex backgrounds and varied lighting [31].
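The augmentation techniques named in this guide (rotation, flipping, contrast adjustment) can be shown on a tiny grayscale image stored as nested lists. This is a dependency-free sketch for illustration only; the image values and the contrast range are hypothetical, and a real pipeline would use an image library.

```python
import random

def flip_horizontal(img):
    """Mirror the image left to right."""
    return [row[::-1] for row in img]

def rotate_90_clockwise(img):
    """Rotate the image by 90 degrees clockwise."""
    return [list(col) for col in zip(*img[::-1])]

def adjust_contrast(img, factor):
    """Scale pixel values around the image mean and clip to the 0-255 range."""
    pixels = [p for row in img for p in row]
    mean = sum(pixels) / len(pixels)
    return [[min(255, max(0, round(mean + factor * (p - mean)))) for p in row] for row in img]

def augment(img, rng):
    """Apply a random flip, a random number of quarter turns, and a random contrast change."""
    if rng.random() < 0.5:
        img = flip_horizontal(img)
    for _ in range(rng.randrange(4)):
        img = rotate_90_clockwise(img)
    return adjust_contrast(img, rng.uniform(0.8, 1.2))

leaf = [[10, 20, 30], [40, 50, 60]]  # hypothetical 2x3 grayscale leaf patch
rng = random.Random(0)
augmented_batch = [augment(leaf, rng) for _ in range(4)]
```

For segmentation tasks, the geometric transforms (flip, rotation) have to be applied identically to the lesion mask, whereas contrast changes apply to the image only.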

Q4: We aim to integrate multi-omics data (transcriptomics, proteomics, metabolomics) to model a plant's stress response. What FM architectures can handle such complex, heterogeneous data?

A4: Graph Neural Networks (GNNs) and Variational Autoencoders (VAEs) are powerful for multi-omics integration. GNN-based models can explicitly model interactions between biological entities (genes, proteins, metabolites), while DeepOmix (a VAE) can integrate multiple data types to analyze regulatory relationships and predict phenotypic outcomes [32].

- Experimental Protocol:

- Network Construction: For a GNN, construct a biological network where nodes represent molecules and edges represent known interactions (e.g., from protein-protein interaction databases).

- Feature Attribution: Attach omics data (e.g., expression levels) as features to the nodes.

- Model Training: Train the GNN or VAE to learn a compressed, integrative representation of the multi-omics data that predicts a stress phenotype.

- Analysis: The model can identify key hub genes or metabolites in the stress response network that might be missed when analyzing single-omics datasets in isolation [32].
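
The network construction and feature attribution steps above can be illustrated with a toy graph and a single round of neighbourhood aggregation, which is the core operation a GNN layer builds on. This is a sketch under stated assumptions: the molecule names, edges, and feature values are invented, and the update is an unweighted mean with no learned weights or nonlinearity, so it is not a trained GNN.

```python
def build_graph(edges):
    """Undirected adjacency sets from (node_a, node_b) interaction pairs."""
    adj = {}
    for a, b in edges:
        adj.setdefault(a, set()).add(b)
        adj.setdefault(b, set()).add(a)
    return adj

def message_pass(adj, features):
    """One aggregation round: average each node's vector with its neighbours'."""
    updated = {}
    for node, neighbours in adj.items():
        group = [features[node]] + [features[n] for n in sorted(neighbours)]
        dim = len(features[node])
        updated[node] = [sum(vec[i] for vec in group) / len(group) for i in range(dim)]
    return updated

def degree_ranking(adj):
    """Nodes ordered by number of interaction partners (simple hub proxy)."""
    return sorted(adj, key=lambda n: (-len(adj[n]), n))

# Hypothetical interactions and two omics features per node
# (e.g. transcript fold change, metabolite or protein abundance change)
edges = [("TF1", "geneA"), ("TF1", "geneB"), ("TF1", "metX"), ("geneB", "metX")]
features = {
    "TF1": [2.0, 0.0],
    "geneA": [0.0, 1.0],
    "geneB": [1.0, 1.0],
    "metX": [1.0, 2.0],
}
adj = build_graph(edges)
hidden = message_pass(adj, features)
hubs = degree_ranking(adj)
```

After one round, each node's representation mixes its own measurements with those of its direct interaction partners; stacking further rounds propagates information across longer paths in the network.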

Experimental Protocols & Data Presentation

Protocol: Using DNA Foundation Models for Regulatory Element Discovery

Objective: Identify novel cis-regulatory elements in a plant genome (e.g., Arabidopsis) using a pre-trained DNA FM.

Materials:

- Genomic sequences of interest (e.g., promoter regions upstream of co-expressed genes).

- A pre-trained DNA FM like DNABERT or a plant-specific variant [30].

- Computational resources (GPU recommended).

Methodology:

- Sequence Preprocessing: Extract and format your DNA sequences into the input format required by the FM (e.g., k-mers).

- Model Inference: Pass the sequences through the FM to obtain sequence embeddings—numerical representations that capture functional and evolutionary patterns [29] [30].

- Motif Discovery: Apply clustering algorithms (e.g., k-means) to the embeddings of sequences that drive similar expression patterns. Sequences clustering together are likely to share functional motifs.

- Sequence Analysis: Use in-silico mutagenesis within the model to pinpoint nucleotides critical for the predicted regulatory function. Validate top candidates with wet-lab experiments like EMSA or reporter assays.
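
Two steps of this methodology, k-mer preprocessing and in-silico mutagenesis, can be sketched in a few lines. The scoring function here is a hypothetical stand-in for a model's predicted regulatory activity (it simply counts an arbitrary placeholder motif); in practice the score would come from the pre-trained model's output for each mutated sequence.

```python
def kmer_tokens(seq, k=6):
    """Split a DNA sequence into overlapping k-mers."""
    seq = seq.upper()
    return [seq[i:i + k] for i in range(len(seq) - k + 1)]

def toy_score(seq):
    """Hypothetical stand-in for a model prediction: counts a placeholder motif."""
    return float(seq.upper().count("ACGT"))

def in_silico_mutagenesis(seq, score_fn):
    """Per position, the largest absolute score change from any single substitution."""
    seq = seq.upper()
    base = score_fn(seq)
    impact = []
    for i, ref in enumerate(seq):
        deltas = [
            abs(score_fn(seq[:i] + alt + seq[i + 1:]) - base)
            for alt in "ACGT"
            if alt != ref
        ]
        impact.append(max(deltas))
    return impact

promoter = "TTACGTTT"  # hypothetical promoter fragment
tokens = kmer_tokens(promoter, k=3)
impact = in_silico_mutagenesis(promoter, toy_score)
critical = [i for i, d in enumerate(impact) if d > 0]  # 0-based positions
```

Positions with a large impact are the candidates to carry forward to EMSA or reporter assays; positions with no impact under any substitution are unlikely to be part of the predicted element.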

Protocol: Implementing a CNN Foundation Model for Plant Disease Detection

Objective: Fine-tune a pre-trained CNN to accurately detect and segment disease lesions in wheat leaf images.

Materials:

- A dataset of annotated wheat leaf images (e.g., from the PlantVillage dataset or custom-collected) [31].

- A pre-trained CNN model (e.g., VGGNet, InceptionNet) [34].

- Deep learning framework (e.g., TensorFlow, PyTorch).

Methodology:

- Data Preparation: Split your image dataset into training, validation, and test sets. Apply preprocessing (resizing, normalization) and augmentation (rotation, flipping) [31].

- Model Fine-tuning: Load the pre-trained CNN, replace its final classification layer with a new one matching your number of disease classes, and train the network on your data. Earlier layers can be frozen to leverage general feature detectors.

- Model Evaluation: Evaluate the model on the held-out test set using metrics like accuracy, precision, recall, and F1-score. For segmentation, use Intersection over Union (IoU).

- Deployment: The trained model can be deployed in mobile apps or integrated with IoT systems for real-time field diagnostics [33].
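
The evaluation metrics named in the methodology have simple definitions, shown here in plain Python so that each quantity is explicit. The labels and masks are made-up examples; the choice to return 0.0 when a denominator is zero, and 1.0 for the IoU of two empty masks, is a convention assumed by this sketch.

```python
def classification_metrics(y_true, y_pred, positive):
    """Accuracy, precision, recall, and F1 for one class treated as positive."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    accuracy = sum(t == p for t, p in pairs) / len(pairs)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)) if (precision + recall) else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}

def iou(mask_true, mask_pred):
    """Intersection over Union of two binary masks given as nested lists."""
    inter = union = 0
    for row_t, row_p in zip(mask_true, mask_pred):
        for t, p in zip(row_t, row_p):
            inter += int(bool(t) and bool(p))
            union += int(bool(t) or bool(p))
    return inter / union if union else 1.0

# Hypothetical test-set labels and a 2x3 lesion mask
y_true = ["rust", "rust", "healthy", "healthy", "rust"]
y_pred = ["rust", "healthy", "healthy", "rust", "rust"]
metrics = classification_metrics(y_true, y_pred, positive="rust")
lesion_iou = iou([[1, 1, 0], [0, 1, 0]], [[1, 0, 0], [0, 1, 1]])
```

On imbalanced disease datasets, precision, recall, and F1 for the minority class are usually more informative than overall accuracy.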

Performance Data of Foundation Models in Plant Biology

Table 1: Summary of quantitative performance for various foundation models and deep learning applications in plant biology.

| Model / Application | Model Type | Task | Reported Performance | Key Features |
|---|---|---|---|---|
| AgroNT [31] | LLM (Transformer) | Predict TF binding & variant effect in crops | Unprecedented accuracy across species; discovered novel gene-stress associations. | Pre-trained on 48 crop species and 10M+ cassava mutations. |
| CNN-based Models [33] [34] | CNN | Plant disease classification | >95% accuracy; >90% precision for detection/segmentation. | Hierarchical feature learning; outperforms traditional feature engineering. |
| DeepPheno [32] | CNN | High-throughput plant phenotyping | >95% accuracy in trait measurement (leaf size, stem height). | Tracks plant development from standard color images. |
| 3D CNN [32] | 3D-CNN | Early plant stress detection | 95% accuracy in detecting charcoal rot in soybeans 2 days before visual symptoms. | Analyzes hyperspectral image data. |
| ExPecto (adapted) [32] | CNN | Predict gene expression from sequence | Successfully predicted tissue-specific expression in maize. | Identifies key regulatory sequence motifs. |

Table 2: Essential research reagents and resources for working with biological foundation models.

| Resource Type | Name / Example | Function / Application | Reference / Source |
|---|---|---|---|
| Pre-trained Model | DNABERT-2, HyenaDNA | General-purpose DNA sequence analysis and understanding. | [35] [30] |
| Pre-trained Model | AgroNT, FloraBERT | Domain-specific analysis for agronomic plants and crops. | [31] [30] |
| Software/Repository | Awesome-Bio-Foundation-Models | A curated collection of papers and models for DNA, RNA, protein, and single-cell FMs. | [35] |
| Dataset | PlantVillage Dataset | Large-scale, public dataset of plant images for disease diagnosis model training. | [31] |
| Dataset | >788 Sequenced Plant Genomes | Foundational data for pre-training or fine-tuning genomic FMs. | [30] |

Visualizations: Workflows and Logical Structures

[Diagram panels: Foundation Model Analysis; Multi-scale Plant Data]

The field of plant biosystems design seeks to address global challenges in food security, sustainable biomaterials, and environmental health by moving beyond traditional plant breeding toward predictive design of plant systems [2] [24]. This represents a fundamental shift from trial-and-error approaches to innovative strategies based on predictive models of biological systems. Within this broader context, machine learning (ML) has emerged as a transformative technology for predictive biocatalysis, enabling researchers to understand and optimize enzyme function and metabolic pathways with unprecedented speed and accuracy.

Predictive biocatalysis focuses on using computational models to forecast enzyme behavior, reaction outcomes, and pathway performance before experimental validation. For plant biosystems design, this capability is crucial for engineering plants with enhanced traits such as improved nutrient utilization, stress resistance, or production of valuable compounds [2]. The integration of ML methods addresses key limitations in traditional biocatalysis research, including the vastness of protein sequence space, the complexity of metabolic networks, and the difficulty in predicting how genetic modifications will affect overall system behavior.

This technical support center provides practical guidance for researchers applying ML-enabled biocatalysis within plant biosystems design projects. The following sections offer troubleshooting advice, experimental protocols, and resource recommendations to address common challenges encountered when implementing these advanced methodologies.

Core Concepts and Importance

Frequently Asked Questions

Q: How can machine learning specifically advance enzyme engineering for plant biosystems design?

A: ML accelerates multiple aspects of enzyme engineering: (1) Functional annotation of the vast number of uncharacterized protein sequences in databases, helping identify enzymes with useful activities [36]; (2) Fitness landscape navigation by predicting the effects of multiple mutations, including non-additive (epistatic) effects that are difficult to identify through traditional directed evolution [36] [37]; and (3) De novo enzyme design by generating completely novel protein sequences with desired functions [36]. For plant biosystems design, this enables creation of specialized enzymes that can introduce novel metabolic pathways or enhance existing ones in plants.

Q: What types of machine learning models are most effective for predicting enzyme kinetics?

A: Current research indicates that gradient-boosted decision tree frameworks like RealKcat can achieve >85% test accuracy for predicting catalytic turnover (kcat) and >89% for substrate affinity (KM) when trained on rigorously curated datasets [38]. These models are particularly valuable because they can capture mutation effects on catalytically essential residues, including complete loss of function when catalytic residues are altered – a capability where previous models struggled [38]. Other effective approaches include convolutional neural networks (CNNs) and graph neural networks (GNNs) for predicting enzyme turnover across diverse enzyme-substrate pairs [38].

Q: What are the main data-related challenges in applying ML to biocatalysis?

A: The primary challenges include: (1) Data scarcity – experimental datasets are typically small and resource-intensive to generate [36]; (2) Data quality and consistency – inconsistencies in kinetic parameters, enzyme sequences, and substrate identity require rigorous curation [38]; and (3) Data complexity – enzyme function depends on multiple factors beyond sequence, including stability, solubility, and environmental conditions [36]. For plant research specifically, additional challenges include the complexity of plant metabolic networks and the partitioning of metabolites across different cellular compartments [2].

Q: How can researchers overcome the limitation of small datasets in specialized enzyme families?

A: Several strategies can address data scarcity: (1) Transfer learning – pre-training models on large general protein datasets then fine-tuning on smaller, task-specific datasets [36]; (2) Data augmentation – generating synthetic data points, such as creating inactive variants by mutating catalytic residues to alanine [38]; and (3) Zero-shot predictors – using general knowledge from large datasets to make predictions about novel variants without task-specific training data [36]. For example, RealKcat improved its sensitivity to catalytic residues by adding ~17,000 synthetic negative examples to its training set [38].
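
The data augmentation strategy in (2) can be illustrated with a short sketch that generates alanine mutants at annotated catalytic positions and labels them as inactive. The sequence, the positions, and the record layout are hypothetical, and this is not the RealKcat implementation; it only shows the general idea of adding synthetic negative examples.

```python
def alanine_negatives(sequence, catalytic_positions):
    """One synthetic 'inactive' variant per catalytic residue (1-based positions)."""
    variants = []
    for pos in catalytic_positions:
        ref = sequence[pos - 1]
        if ref == "A":
            continue  # already alanine, so no substitution is possible
        mutant = sequence[:pos - 1] + "A" + sequence[pos:]
        variants.append(
            {"mutation": f"{ref}{pos}A", "sequence": mutant, "label": "inactive"}
        )
    return variants

# Hypothetical 6-residue fragment with catalytic His3 and Asp4
negatives = alanine_negatives("MSHDKE", catalytic_positions=[3, 4])
```

Labelling every such mutant as inactive is itself an assumption; it is most defensible for residues with experimentally confirmed catalytic roles.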

Technical Troubleshooting Guide

Problem: Poor model generalization to unseen enzyme variants

Symptoms: High training accuracy but low test accuracy; inaccurate predictions for mutations distant from training set sequences.

Solutions:

- Implement K-fold cross-validation during training to detect overfitting and ensure robust performance [39].

- Balance sequence diversity in training sets to prevent overrepresentation of specific enzyme families [39].

- Use sequence similarity partitioning to ensure training and test sets have controlled similarity levels [39].

- Incorporate evolutionary context using protein language model embeddings (e.g., ESM-2) to improve generalization [38].
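
Sequence similarity partitioning and K-fold cross-validation can be combined by clustering sequences first and then assigning whole clusters to folds, so that close homologues never sit on both sides of a split. The sketch below assumes pre-aligned, equal-length sequences and a simple greedy clustering against cluster representatives; the function names and the 0.75 threshold are illustrative, and dedicated clustering tools such as CD-HIT or MMseqs2 are the usual choice for real datasets.

```python
def identity(a, b):
    """Fraction of matching positions between two pre-aligned sequences."""
    if len(a) != len(b):
        raise ValueError("sequences must be aligned to equal length")
    return sum(x == y for x, y in zip(a, b)) / len(a)

def greedy_clusters(seqs, threshold):
    """Put each sequence in the first cluster whose representative it matches."""
    clusters = []  # lists of indices; the first index is the representative
    for i, seq in enumerate(seqs):
        for cluster in clusters:
            if identity(seqs[cluster[0]], seq) >= threshold:
                cluster.append(i)
                break
        else:
            clusters.append([i])
    return clusters

def cluster_kfold(clusters, k):
    """Assign whole clusters to k folds, largest cluster first, smallest fold first."""
    folds = [[] for _ in range(k)]
    for cluster in sorted(clusters, key=len, reverse=True):
        min(folds, key=len).extend(cluster)
    return folds

# Hypothetical aligned variants
seqs = ["AAAA", "AAAT", "CCCC", "CCCG", "GGGG"]
clusters = greedy_clusters(seqs, threshold=0.75)
folds = cluster_kfold(clusters, k=2)
```

Each fold then serves once as the test set while the remaining folds are used for training. Because similarity is only checked against cluster representatives, this controls train/test similarity approximately rather than strictly.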

Problem: Inaccurate prediction of mutation effects on catalytic residues

Symptoms: Failure to predict complete loss of function when catalytic residues are mutated; similar predictions for active site and non-active site mutations.

Solutions:

- Include negative training data by incorporating catalytically inactive variants (e.g., catalytic residue alanine mutants) [38].

- Use structure-aware features that incorporate spatial relationships and residue conservation patterns [38].

- Frame kinetics prediction as classification by clustering kcat and KM values into orders of magnitude rather than predicting exact values [38].
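
Framing kinetics prediction as classification amounts to replacing each measured value with its order-of-magnitude bin. A minimal sketch follows; giving zero-activity variants a dedicated "inactive" label is an assumption of this example, not a detail confirmed by the source, and the kcat values are invented.

```python
import math

def magnitude_class(value, inactive_label="inactive"):
    """Order-of-magnitude bin, floor(log10(value)); values in [10, 100) map to 1."""
    if value < 0:
        raise ValueError("kinetic parameters cannot be negative")
    if value == 0:
        return inactive_label  # assumed convention for complete loss of function
    return math.floor(math.log10(value))

# Hypothetical kcat values in s^-1
kcat_values = [0.5, 35.0, 4200.0, 0.0]
kcat_classes = [magnitude_class(v) for v in kcat_values]
```

The classifier is then trained on these bin labels instead of raw values, which trades numerical resolution for robustness to the measurement noise typical of kinetic assays.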

Problem: Difficulty in predicting pathway-level effects of enzyme modifications

Symptoms: Accurate enzyme-level predictions that fail to translate to expected metabolic flux changes in vivo.

Solutions:

- Integrate constraint-based metabolic models like Flux Balance Analysis (FBA) with enzyme kinetics predictions [2] [38].

- Incorporate multi-scale modeling that links molecular-level enzyme properties to tissue-scale and whole-plant metabolic networks [2].

- Use tools like FreeFlux for metabolic flux analysis that can validate predicted pathway performance [21].

Experimental Protocols & Methodologies

ML-Guided Enzyme Engineering Workflow

The protocol below describes a comprehensive machine learning-guided workflow for enzyme engineering, integrating computational and experimental approaches.

Detailed Protocol:

Reaction Identification and Substrate Scope Evaluation

- Identify target chemical transformation based on plant metabolic pathway requirements [37].

- Evaluate native enzyme substrate promiscuity using diverse substrate arrays (e.g., 1100+ unique reactions) to identify potential starting points [37].

- Reaction conditions: Use low enzyme concentration (~1 µM) and high substrate concentration (25 mM) to mimic industrially relevant conditions [37].

Hot Spot Screen Implementation

- Select residues completely enclosing the active site and substrate tunnels (within 10 Å of docked native substrates) [37].

- Perform site-saturation mutagenesis on selected positions (e.g., 64 residues × 19 amino acids = 1216 variants) [37].

- Use structure-guided selection to prioritize regions with potential functional impact.
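
The residue selection and library size arithmetic above can be checked with a short sketch. It assumes one representative coordinate per residue and a list of ligand atom coordinates, both of which are hypothetical inputs here; a real workflow would read them from a docked structure file.

```python
import math

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 canonical residues

def residues_within(residue_coords, ligand_coords, cutoff=10.0):
    """Residue positions whose coordinate lies within cutoff (Å) of any ligand atom."""
    return sorted(
        pos
        for pos, xyz in residue_coords.items()
        if any(math.dist(xyz, atom) <= cutoff for atom in ligand_coords)
    )

def saturation_library(sequence, positions):
    """All substitutions to the 19 other amino acids at each 1-based position."""
    library = []
    for pos in positions:
        ref = sequence[pos - 1]
        library.extend(f"{ref}{pos}{alt}" for alt in AMINO_ACIDS if alt != ref)
    return library

# Hypothetical coordinates: residues 1 and 3 are near the ligand, residue 2 is not
coords = {1: (0.0, 0.0, 0.0), 2: (20.0, 0.0, 0.0), 3: (0.0, 9.0, 0.0)}
hot_spots = residues_within(coords, ligand_coords=[(0.0, 0.0, 1.0)])

# Library size for 64 selected positions on a placeholder 80-residue sequence
placeholder_seq = AMINO_ACIDS * 4
library = saturation_library(placeholder_seq, range(1, 65))
```

With 64 positions and 19 substitutions each, the library contains 64 × 19 = 1216 single-site variants, matching the figure quoted in the protocol.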

High-Throughput Screening with Cell-Free Expression

- Implement cell-free DNA assembly and gene expression to rapidly generate sequence-defined protein libraries [37].