Overcoming Parallax: Advanced 3D Registration Techniques for Multimodal Plant Phenotyping


Abstract

This article addresses the critical challenge of parallax effects in close-range multimodal plant imaging, a significant obstacle for researchers and scientists in high-throughput phenotyping and drug development from natural products. We explore the foundational principles of parallax and its impact on data alignment across different camera modalities. The scope encompasses a detailed examination of 3D registration methodologies that leverage depth information, practical troubleshooting for common imaging artifacts, and a comparative validation of state-of-the-art techniques. By synthesizing current research, this guide provides a comprehensive framework for achieving pixel-precise alignment in complex plant canopies, enabling more reliable extraction of physiological and morphological traits for biomedical and agricultural research.

Understanding Parallax: The Core Challenge in Multimodal Plant Imaging

A technical support resource for plant phenotyping researchers

Understanding the Core Problem

What is parallax and why does it cause misalignment in my multimodal plant images?

Parallax is a displacement or difference in the apparent position of an object viewed along two different lines of sight, measured by the angle or half-angle of inclination between those two lines [1]. In practical terms for plant phenotyping, this means that when you use multiple cameras from different positions to capture the same plant, objects appear to shift position relative to the background depending on the camera's viewpoint [1] [2].

This occurs due to foreshortening, where nearby objects show a larger parallax than farther objects [1]. In a complex plant canopy with leaves at varying distances from your cameras, this effect creates significant misalignment when you try to combine images from different sensors. Exploiting cross-modal patterns in plant phenotyping depends on image registration that achieves pixel-precise alignment, and the parallax and occlusion effects inherent in plant canopy imaging are what make that alignment difficult [3] [4].

How does parallax specifically affect plant phenotyping research?

In multimodal plant imaging, parallax introduces several specific problems:

- Pixel Misalignment: Different cameras capture different spatial information for the same plant features, preventing accurate data fusion [5]

- Occlusion Variations: Leaves and stems that are visible to one camera may be hidden from another camera's viewpoint [3]

- Measurement Errors: Quantitative traits like leaf area, growth measurements, and spectral analysis become inaccurate when images aren't properly aligned [5]

- Algorithm Failure: Computer vision algorithms for trait extraction assume consistent spatial relationships across modalities [3]

The problem is particularly acute in close-range imaging systems, where the distance between cameras and plants is not much larger than the baseline distance between the cameras in your array [5].
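The dependence on depth can be made concrete with the standard pinhole stereo relation for two parallel cameras, disparity = focal length x baseline / depth. The sketch below is illustrative only: the function name and the rig values (focal length in pixels, baseline, leaf distances) are assumptions and are not taken from the cited studies.

```python
def disparity_px(focal_px: float, baseline_m: float, depth_m: float) -> float:
    """Horizontal pixel shift of a point between two parallel pinhole cameras."""
    if depth_m <= 0:
        raise ValueError("depth_m must be positive")
    return focal_px * baseline_m / depth_m


# Hypothetical rig (illustrative values, not from the cited studies).
FOCAL_PX = 1000.0    # focal length expressed in pixels
BASELINE_M = 0.15    # distance between the two camera centers

near_leaf = disparity_px(FOCAL_PX, BASELINE_M, 0.40)  # leaf 40 cm from the cameras
far_leaf = disparity_px(FOCAL_PX, BASELINE_M, 0.60)   # leaf 60 cm from the cameras

# A single global shift can cancel only one of these two disparities;
# the difference remains as misalignment between the two leaves.
residual_px = near_leaf - far_leaf
print(f"near: {near_leaf:.0f} px, far: {far_leaf:.0f} px, residual: {residual_px:.0f} px")
```

Because the shift depends on depth, leaves at different heights in the same canopy need different corrections, which is why the global transformations discussed later fall short.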

Troubleshooting Guide

How can I determine if parallax is causing issues in my experimental setup?

Use this diagnostic checklist to identify parallax-related problems:

| Symptom | Likelihood That Parallax Is the Cause | Quick Verification Test |
|---|---|---|
| Misalignment increases toward image edges | High | Image stationary objects at different depths |
| Different cameras show different leaf arrangements | Moderate to High | Check occlusion patterns in canopy |
| Registration works only in image center | High | Use gridded target at angles |
| Thermal/RGB alignment varies by distance | High | Image heated target at known distances |
| Feature matching fails despite good calibration | Moderate | Test with planar calibration target |

What are the most effective strategies to minimize parallax errors?

Based on current research, implement these proven approaches:

Hardware Solutions:

- Increase subject-to-camera distance where possible [6]

- Use single-optics multi-spectral cameras instead of separate cameras [6]

- Implement nodal slide systems to rotate cameras around their optical center [2]

- Utilize 3D depth sensors (Time-of-Flight cameras) to capture spatial information [3]

Software Solutions:

- Implement local transformation algorithms instead of global transformations [5]

- Use depth-aware registration methods that incorporate 3D information [3]

- Apply adaptive local registration that accounts for subject movement [6]

- Employ ray casting techniques that model the actual camera geometry [3]

Experimental Protocols

Depth-Enhanced Multimodal Registration Protocol

This methodology leverages 3D information to address parallax in plant phenotyping, adapted from recent research [3] [4]:

Step-by-Step Implementation:

Equipment Setup

- Position Time-of-Flight (ToF) camera with multimodal cameras (RGB, thermal, spectral)

- Ensure overlapping fields of view with known baseline distances

- Calibrate all cameras using standard procedures

Data Acquisition

- Capture synchronized depth data and multimodal images

- Maintain consistent lighting conditions

- Include reference targets for validation

Ray Casting Registration

- Project 2D image pixels into 3D space using depth information

- Account for each camera's specific position and orientation

- Transform 3D points back to 2D coordinates of target camera

Occlusion Handling

- Automatically identify regions hidden from certain viewpoints

- Flag these areas in final registered images

- Implement interpolation only where scientifically valid

Validation

- Measure registration accuracy using known ground control points

- Quantify alignment errors across different plant structures

- Verify biological measurements against manual assessments
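The ray casting and occlusion steps of this protocol can be sketched with NumPy as follows. This is a simplified stand-in, not the algorithm published in [3]: it assumes ideal pinhole cameras without lens distortion, and it uses forward point projection with a z-buffer rather than casting rays against a reconstructed surface. The function name `reproject_depth`, its parameters, and the toy calibration values are all hypothetical.

```python
import numpy as np


def reproject_depth(depth, K_src, K_dst, R, t, dst_shape, z_tol=1e-6):
    """Map depth-camera pixels into a target camera and flag occluded ones.

    depth     : (h, w) depth map in metres, 0 where no measurement exists
    K_src/dst : 3x3 pinhole intrinsics of the depth and target cameras
    R, t      : rotation (3x3) and translation (3,) from depth to target frame
    dst_shape : (height, width) of the target image
    Returns integer maps u_dst, v_dst and a boolean visibility mask, all (h, w).
    """
    h, w = depth.shape
    v, u = np.mgrid[0:h, 0:w]
    z = depth.ravel().astype(float)

    # 1) Back-project each pixel along its viewing ray to a 3D point.
    pix = np.stack([u.ravel(), v.ravel(), np.ones(h * w)])
    pts_src = (np.linalg.inv(K_src) @ pix) * z

    # 2) Express the points in the target camera frame.
    pts_dst = R @ pts_src + np.asarray(t, float).reshape(3, 1)
    z_dst = pts_dst[2]
    valid = (z > 0) & (z_dst > 0)

    # 3) Project the valid points into the target image.
    u_dst = np.full(h * w, -1, dtype=int)
    v_dst = np.full(h * w, -1, dtype=int)
    idx = np.flatnonzero(valid)
    proj = K_dst @ pts_dst[:, idx]
    u_dst[idx] = np.rint(proj[0] / proj[2]).astype(int)
    v_dst[idx] = np.rint(proj[1] / proj[2]).astype(int)

    # 4) Z-buffer: of all points landing on one target pixel, keep the nearest.
    H, W = dst_shape
    inside = valid & (u_dst >= 0) & (u_dst < W) & (v_dst >= 0) & (v_dst < H)
    ii = np.flatnonzero(inside)
    zbuf = np.full((H, W), np.inf)
    np.minimum.at(zbuf, (v_dst[ii], u_dst[ii]), z_dst[ii])
    visible = np.zeros(h * w, dtype=bool)
    visible[ii] = z_dst[ii] <= zbuf[v_dst[ii], u_dst[ii]] + z_tol
    return u_dst.reshape(h, w), v_dst.reshape(h, w), visible.reshape(h, w)


# Toy example with made-up calibration: background at 1 m, one pixel at 0.5 m,
# target camera offset by 1 cm along x.
K = np.array([[100.0, 0.0, 2.0], [0.0, 100.0, 2.0], [0.0, 0.0, 1.0]])
depth = np.ones((5, 5))
depth[2, 1] = 0.5
u_map, v_map, vis = reproject_depth(
    depth, K, K, np.eye(3), np.array([0.01, 0.0, 0.0]), (5, 5)
)
```

In the toy scene the near pixel and its background neighbour land on the same target pixel, so the background one is flagged as not visible. Pixels flagged this way are the ones to mark in the registered output instead of filling them with interpolated values.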

Local Transformation Registration Protocol

For setups without depth cameras, this method provides improved parallax handling compared to global approaches [5]:

Transformation Comparison:

| Transformation Type | Parameters | Parallax Handling | Best Use Case |
|---|---|---|---|
| Translation | 2 (x,y shift) | Poor | Strictly 2D scenes |
| Euclidean | 3 (x,y,rotation) | Poor | Single-plane objects |
| Affine | 6 (linear transform) | Moderate | Distant subjects |
| Homography | 8 (planar projection) | Good | Flat canopies |
| Local/Elastic | Variable (per-patch) | Excellent | Complex 3D canopies |

Implementation Steps:

Feature Detection

- Identify distinctive keypoints across multimodal images

- Use modality-invariant features where possible

- Account for different texture representations

Patch-Based Alignment

- Divide images into smaller patches

- Compute local displacements for each patch

- Use optical flow or similar techniques

Non-Linear Warping

- Apply smooth deformation field

- Maintain biological structural integrity

- Preserve metric measurements
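The patch-based alignment step can be illustrated with a minimal block-matching sketch. It is an assumption-laden stand-in for the optical flow methods named above: it searches integer shifts only and scores them with the sum of squared differences, which is appropriate for images of the same modality but would need a modality-invariant score for RGB versus thermal data. The function name `patch_shifts` and its parameters are hypothetical.

```python
import numpy as np


def patch_shifts(fixed, moving, patch=16, search=4):
    """Estimate one integer (dy, dx) shift per patch.

    For each patch of `fixed` starting at (y, x), find the offset such that
    moving[y + dy, x + dx] matches best (lowest sum of squared differences).
    """
    h, w = fixed.shape
    rows, cols = h // patch, w // patch
    shifts = np.zeros((rows, cols, 2), dtype=int)
    for r in range(rows):
        for c in range(cols):
            y0, x0 = r * patch, c * patch
            ref = fixed[y0:y0 + patch, x0:x0 + patch]
            best = np.inf
            for dy in range(-search, search + 1):
                for dx in range(-search, search + 1):
                    y1, x1 = y0 + dy, x0 + dx
                    # Skip candidate windows that leave the image.
                    if y1 < 0 or x1 < 0 or y1 + patch > h or x1 + patch > w:
                        continue
                    cand = moving[y1:y1 + patch, x1:x1 + patch]
                    ssd = float(np.sum((ref - cand) ** 2))
                    if ssd < best:
                        best = ssd
                        shifts[r, c] = (dy, dx)
    return shifts


# Synthetic test image: the upper and lower parts move by different amounts,
# mimicking two canopy layers at different depths.
rng = np.random.default_rng(0)
fixed = rng.random((48, 48))
moving = fixed.copy()
moving[:24] = np.roll(fixed[:24], 3, axis=1)    # upper layer shifted 3 px right
moving[24:] = np.roll(fixed[24:], -2, axis=1)   # lower layer shifted 2 px left
shifts = patch_shifts(fixed, moving)
```

The per-patch shifts would then be smoothed into a dense deformation field before warping, which is what the non-linear warping step refers to.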

Performance Data

Quantitative Registration Accuracy

Recent studies provide these performance benchmarks for parallax correction methods [5]:

| Registration Method | Average Error (mm) | Applicable to Thermal | Handles Local Distortion |
|---|---|---|---|
| REAL-TIME (camera position) | >10 | Limited | No |
| FAST strategy | ~5 | Yes | No |
| ACCURATE strategy | ~3 | Limited | Partial |
| HIGHLY ACCURATE (local transform) | ~2 | Limited | Yes |
| 3D Depth-Based Method [3] | <2 | Yes | Yes |

Parallax Error Magnitude in Practical Scenarios

Experimental data reveals how different factors affect parallax-induced errors [6]:

| Scenario | Baseline Distance | Subject Distance | Error Without Correction | Error With Local Registration |
|---|---|---|---|---|
| Laboratory close-range | 15 cm | 50 cm | 12.4% | 5.1% |
| Field phenotyping | 20 cm | 1 m | 8.7% | 3.2% |
| Greenhouse setup | 25 cm | 1.5 m | 6.2% | 2.1% |
| Controlled conditions | 10 cm | 2 m | 3.5% | 1.3% |
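One way to read the table above is to put every scenario on a common scale, the ratio of baseline to subject distance, and to express the benefit of local registration as a relative error reduction. The short script below does only that arithmetic on the tabulated values; the variable names are illustrative.

```python
# (scenario, baseline in m, subject distance in m,
#  error without correction in %, error with local registration in %)
scenarios = [
    ("Laboratory close-range", 0.15, 0.50, 12.4, 5.1),
    ("Field phenotyping", 0.20, 1.00, 8.7, 3.2),
    ("Greenhouse setup", 0.25, 1.50, 6.2, 2.1),
    ("Controlled conditions", 0.10, 2.00, 3.5, 1.3),
]

summary = []
for name, baseline_m, distance_m, err_raw, err_local in scenarios:
    ratio = baseline_m / distance_m                   # larger ratio, stronger parallax
    reduction = 100.0 * (err_raw - err_local) / err_raw
    summary.append((name, ratio, reduction))
    print(f"{name:24s} baseline/distance = {ratio:.2f}   error reduction = {reduction:.0f}%")
```

The uncorrected error falls in step with the baseline-to-distance ratio (from 0.30 down to 0.05), while the relative reduction from local registration stays in a similar range across all four scenarios.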

The Scientist's Toolkit

Essential Research Reagents and Equipment

| Item | Function in Parallax Mitigation | Technical Specifications |
|---|---|---|
| Time-of-Flight (ToF) Camera | Captures 3D depth information for geometry-aware registration | Resolution: VGA to 1 MP, Range: 0.1-5 m, Accuracy: ~1 cm [3] |
| Nodal Slide Assembly | Enables rotation around lens nodal point to eliminate parallax in panoramas | Precision: <0.1 mm, Load capacity: 3-5 kg, Compatibility: Standard tripods [2] |
| Multi-Spectral Camera Array | Simultaneous capture at different wavelengths; each camera has its own optical center, so the bands must be registered to one another | 6 monochrome cameras with different filters, synchronized acquisition [5] |
| Optical Flow Software | Estimates per-pixel displacement between different viewpoints | Algorithms: Lucas-Kanade, Farneback, Horn-Schunck, or deep learning variants [6] |
| Calibration Target | Provides known geometry for quantifying and correcting parallax errors | Chessboard pattern with precise dimensions, multi-spectral visibility [5] |

Frequently Asked Questions

Can I use software alone to fix parallax problems, or do I need hardware changes?

While software approaches can significantly reduce parallax errors, complete elimination often requires both hardware and software strategies. Local transformation algorithms can achieve approximately 2 mm alignment accuracy in complex wheat canopies [5], but 3D depth-based methods using Time-of-Flight cameras generally provide superior results by addressing the fundamental geometric issue [3]. For new experimental setups, invest in proper camera geometry during design; for existing setups, focus on advanced registration algorithms.

How much does subject distance affect parallax in plant imaging?

Subject distance has an inverse relationship with parallax errors. As documented in remote monitoring research, increasing subject-to-camera distance significantly reduces parallax effects [6]. However, this comes at the cost of spatial resolution and signal strength. The optimal balance depends on your specific trait measurement requirements and the size of target plant features.

Are some plant species more susceptible to parallax problems than others?

Yes, canopy structure complexity directly influences parallax severity. Species with:

- High leaf area density (e.g., lettuce, spinach) exhibit more occlusion variations

- Complex 3D architecture (e.g., tomato, wheat with multiple tillers) show greater misalignment

- Fine structural details (e.g., fern fronds, compound leaves) demonstrate more registration challenges

Recent studies validated methods on six species with varying leaf geometries, finding robust performance across types [3].

What's the practical accuracy limit for parallax correction in plant phenotyping?

Current state-of-the-art methods achieve approximately 2 mm alignment accuracy in field conditions [5]. With 3D depth-based approaches, accuracy can potentially reach <1 mm under controlled conditions [3]. However, the biological relevance of higher precision depends on your specific application: for whole-canopy measurements, 2 mm may suffice, while for individual leaf trait analysis, sub-millimeter accuracy might be necessary.

How do I validate parallax correction effectiveness in my specific setup?

Implement a multi-level validation protocol:

- Geometric validation: Use reference objects with known dimensions at different depths

- Biological validation: Compare trait measurements before/after correction using manual measurements as ground truth

- Algorithm validation: Test whether downstream analysis algorithms perform better with corrected data

- Inter-modal consistency: Verify that features appear in consistent positions across different modalities

This comprehensive approach ensures both mathematical and biological relevance of your parallax correction method.
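For the geometric and inter-modal checks, a simple starting point is to mark the same reference points in the reference image and in the registered image and to report the distance between them. The sketch below assumes the landmark coordinates are already available as pixel pairs; the function names and the `mm_per_px` scale factor are hypothetical.

```python
import math


def landmark_errors(points_ref, points_reg, mm_per_px=1.0):
    """Euclidean distance between corresponding landmarks, scaled to millimetres."""
    if len(points_ref) != len(points_reg):
        raise ValueError("both lists must contain the same number of landmarks")
    return [
        math.hypot(xr - xg, yr - yg) * mm_per_px
        for (xr, yr), (xg, yg) in zip(points_ref, points_reg)
    ]


def summarize(errors):
    """Mean, root-mean-square and maximum of a list of landmark errors."""
    n = len(errors)
    mean = sum(errors) / n
    rmse = math.sqrt(sum(e * e for e in errors) / n)
    return {"mean": mean, "rmse": rmse, "max": max(errors)}


# Two hypothetical landmarks: one is off by (3, 4) px, the other is aligned.
errors = landmark_errors([(0, 0), (10, 0)], [(3, 4), (10, 0)])
stats = summarize(errors)
print(stats)
```

Reporting the maximum alongside the mean helps, because parallax errors tend to concentrate on the structures nearest the cameras rather than spreading evenly.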

Frequently Asked Questions (FAQs)

FAQ 1: What is parallax in the context of multimodal plant imaging?

Parallax is the apparent displacement of an object's position when viewed from two different lines of sight. In plant phenotyping, it occurs when cameras of different modalities (e.g., RGB, spectral, depth sensors) capture images of a complex plant canopy from slightly different positions. This misalignment makes it difficult to correlate data patterns across modalities, obscuring crucial cross-modal relationships for a comprehensive phenotype assessment [3].

FAQ 2: Why is parallax particularly problematic for plant canopy imaging?

Plant canopies have complex, multi-layered structures with significant self-occlusion. Parallax effects are amplified in these non-solid, detailed architectures, causing severe misalignment between images from different sensors. This hinders the accurate fusion of structural and functional data, which is essential for advanced phenotyping tasks [3] [7].

FAQ 3: What are the main technical solutions for mitigating parallax errors?

The primary solutions involve using 3D information to correct for the differing camera viewpoints. This includes:

- 3D Registration Algorithms: Using depth data (e.g., from Time-of-Flight cameras) to achieve pixel-precise alignment of 2D images from different modalities [3].

- Structure from Motion (SfM): Reconstructing a 3D model from multiple 2D images taken from different angles, which can then be used to map functional data onto the 3D structure [8].

- Multi-view Point Cloud Registration: Combining multiple 3D scans (point clouds) from different viewpoints to create a complete, occlusion-free model, often using coarse alignment followed by fine-tuning with algorithms like Iterative Closest Point (ICP) [9].

FAQ 4: My multimodal setup uses a low-cost stereo camera, but I get distorted point clouds. How can I improve accuracy?

A common issue with binocular stereo cameras on low-texture plant surfaces is point cloud distortion and drift [9]. An effective workflow to overcome this is:

- Bypass On-Board Depth Estimation: Do not rely on the camera's integrated depth calculation.

- Acquire High-Resolution RGB Images: Use the camera to capture high-resolution images from multiple viewpoints.

- Apply SfM-MVS Processing: Use computational photogrammetry pipelines (Structure from Motion with Multi-View Stereo) on these high-res images to generate high-fidelity, single-view point clouds, effectively avoiding the distortion caused by the camera's hardware-based disparity calculation [9].

FAQ 5: How can I validate that my parallax correction method is working effectively?

Validation should involve both quantitative and qualitative assessments:

- Quantitative: Extract key phenotypic parameters (e.g., plant height, crown width, leaf length) from your registered 3D models and compare them with manual measurements. A strong correlation (e.g., R² > 0.9 for plant height and crown width) indicates high accuracy [9].

- Qualitative: Visually inspect the alignment of fine-scale features, such as leaf edges and veins, across the registered multimodal data (e.g., structural vs. fluorescence imagery) to ensure there is no ghosting or misalignment [8].
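The quantitative check can be computed directly from paired measurements. The sketch below takes R² to be the squared Pearson correlation between manual and model-derived values, which is one common reading of the term; the function name and the plant heights are made up for illustration.

```python
def r_squared(manual, estimated):
    """Squared Pearson correlation between manual and model-derived values."""
    if len(manual) != len(estimated) or len(manual) < 2:
        raise ValueError("need two equally long sequences with at least 2 values")
    mean_x = sum(manual) / len(manual)
    mean_y = sum(estimated) / len(estimated)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(manual, estimated))
    sxx = sum((x - mean_x) ** 2 for x in manual)
    syy = sum((y - mean_y) ** 2 for y in estimated)
    if sxx == 0 or syy == 0:
        raise ValueError("values must not be constant")
    return (sxy * sxy) / (sxx * syy)


# Hypothetical plant heights in cm: ruler measurements vs. values from the 3D model.
manual_height = [32.0, 41.5, 38.2, 55.0, 47.3]
model_height = [31.1, 42.0, 37.5, 56.2, 46.0]
r2_height = r_squared(manual_height, model_height)
print(f"R^2 for plant height: {r2_height:.3f}")
```

A high R² shows that the model tracks the manual values but does not reveal a constant offset or scale error, so it is worth reporting an absolute error measure next to it.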

Troubleshooting Guides

Issue 1: Misalignment of Multimodal Images After Basic Registration

Problem: Images from different cameras (e.g., RGB and thermal) are not pixel-precise after using a standard 2D feature-based registration tool, making cross-modal analysis unreliable.

Solution: Implement a 3D-based multimodal registration algorithm.

- Integrate a Depth Sensor: Use a Time-of-Flight (ToF) camera or other depth sensor in your setup to capture 3D information [3].

- Leverage Ray Casting: Utilize the 3D geometry and ray casting techniques to project images from all modalities into a common coordinate system. This mitigates parallax by accounting for the different physical positions of the cameras [3].

- Automated Occlusion Handling: Employ an integrated method to automatically detect and filter out pixels that are occluded in one camera's view but visible in another's [3].

Required Materials:

- Multimodal camera setup

- Time-of-Flight (ToF) camera or other depth sensor [3]

- Computing hardware capable of 3D processing

Issue 2: Incomplete 3D Reconstruction Due to Occlusion

Problem: The 3D model of the plant has missing parts because leaves and stems hide each other from a single viewpoint.

Solution: Perform multi-view acquisition and point cloud registration.

- Multi-view Acquisition: Capture images or point clouds from multiple viewpoints around the plant (e.g., every 60 degrees for a full rotation) [9].

- Coarse Alignment: Use a marker-based method for initial, rapid alignment. Place spherical markers with known properties around the plant and use them to roughly align the different point clouds [9].

- Fine Registration: Apply a precise registration algorithm like the Iterative Closest Point (ICP) to refine the alignment and merge the point clouds into a complete, occlusion-free 3D model [9].

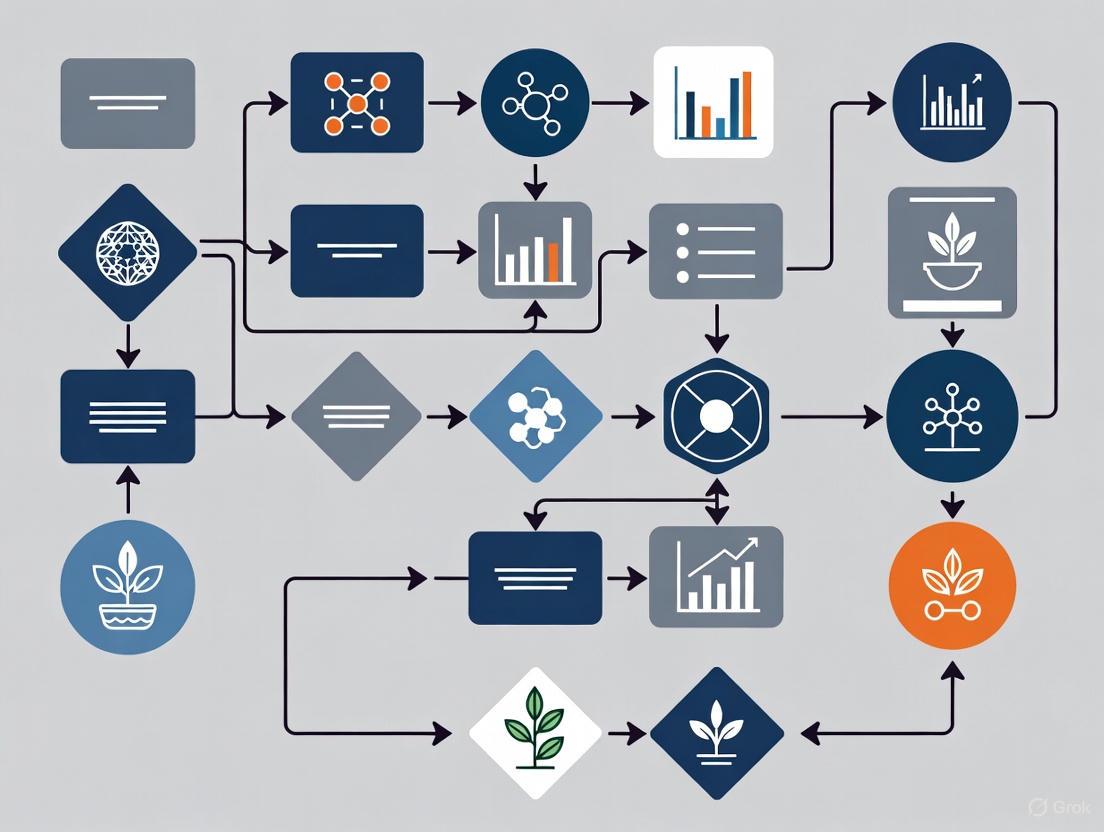

Workflow Diagram: Multi-View 3D Plant Reconstruction
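The marker-based coarse alignment amounts to estimating one rigid transform from matched marker centres. A minimal sketch using the Kabsch (SVD) solution is given below; it assumes the marker centres have already been detected and paired between two views, and the function name and marker coordinates are hypothetical rather than taken from [9].

```python
import numpy as np


def rigid_from_markers(src, dst):
    """Least-squares rotation R and translation t with R @ src_i + t ~ dst_i (Kabsch)."""
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    c_src, c_dst = src.mean(axis=0), dst.mean(axis=0)
    # Cross-covariance of the centred marker sets.
    H = (src - c_src).T @ (dst - c_dst)
    U, _, Vt = np.linalg.svd(H)
    # Guard against a reflection so that R is a proper rotation.
    d = np.sign(np.linalg.det(Vt.T @ U.T))
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = c_dst - R @ c_src
    return R, t


# Four non-coplanar marker centres (metres) seen from view A, and the same
# markers after the plant stage has turned by 60 degrees (view B).
angle = np.deg2rad(60.0)
R_true = np.array([
    [np.cos(angle), -np.sin(angle), 0.0],
    [np.sin(angle), np.cos(angle), 0.0],
    [0.0, 0.0, 1.0],
])
t_true = np.array([0.10, -0.05, 0.02])
markers_a = np.array([[0.0, 0.0, 0.0], [0.3, 0.0, 0.0], [0.0, 0.3, 0.0], [0.0, 0.0, 0.2]])
markers_b = markers_a @ R_true.T + t_true

R_est, t_est = rigid_from_markers(markers_a, markers_b)
aligned = markers_a @ R_est.T + t_est
```

The same transform is then applied to the whole point cloud of view A, which gives ICP a starting pose close enough to refine.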

Issue 3: Low Accuracy in Reconstructed Plant Morphology

Problem: The extracted phenotypic traits (e.g., leaf area, plant height) from your 3D model do not match manual measurements.

Solution: Optimize the image-based 3D reconstruction pipeline for complex plant structures.

- Image Pre-processing:

- Feature Detection & Matching:

Protocol 1: Multimodal 3D Image Registration for Parallax Mitigation

This protocol is based on a novel algorithm designed to achieve pixel-precise alignment across camera modalities using depth information [3].

1. Experimental Setup:

- Equipment: Arrange an arbitrary multimodal camera setup (e.g., combining RGB, hyperspectral, and fluorescence cameras) with a Time-of-Flight (ToF) depth camera. The setup is applicable for any plant species [3].

- Data Acquisition: Simultaneously capture images from all modalities along with the depth map from the ToF camera.

2. Image Processing Workflow:

- Input: Multimodal 2D images and a co-acquired 3D point cloud from the ToF camera.

- Core Algorithm: Utilize ray casting to project pixels from all 2D images onto the 3D structure, effectively aligning them into a common 3D space and mitigating parallax effects [3].

- Occlusion Handling: Automatically detect and filter out pixels that are occluded in the view of a specific camera using the integrated 3D information [3].

- Output: Registered multimodal images and a consolidated 3D point cloud of the plant.

Multimodal Registration Workflow

Protocol 2: Combined Structural and Functional 3D Imaging via SfM

This protocol details a cost-effective method using a single monocular camera to reconstruct both 3D structure and functional information, mapping fluorescence onto the 3D model [8].

1. Materials and Setup:

- Imaging System: A monochrome camera mounted with an objective lens (e.g., 8mm focal length) and a filter wheel holding red, green, and blue spectral filters.

- Lighting: A white-light source for structural imaging and a UV light source for inducing blue-green fluorescence as a functional biomarker for infection.

- Sample Mount: A rotation stage to hold the plant pot and change the perspective for multi-view imaging.

2. Image Acquisition:

- Structural Imaging: Under white light, sequentially capture images of the plant through the red, green, and blue filters.

- Functional Imaging: Under UV light, capture fluorescent images through the same set of filters.

- Multi-view Capture: Rotate the plant stage to a new angle and repeat the structural and functional imaging sequence. Continue until the plant has been fully captured from all sides (e.g., 360° rotation).

3. Data Processing:

- Camera Calibration: Use a checkerboard pattern to calibrate the camera and correct for lens distortion.

- 3D Reconstruction with SfM: a. Convert RGB images to ExG for better feature contrast. b. Upsample images and detect key points using the SIFT algorithm. c. Match key points across image pairs using a FLANN-based matcher. d. Reconstruct the 3D coordinates of the key points using perspective transformation, creating the structural model.

- Functional Data Mapping: Map the fluorescence image data (a functional biomarker) onto the corresponding locations of the reconstructed 3D structural model [8].
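Step (a) of the reconstruction uses the ExG index, computed per pixel as 2*Green - Red - Blue. A minimal NumPy sketch follows; the function name is illustrative and the two test pixels are made up.

```python
import numpy as np


def excess_green(rgb):
    """Per-pixel ExG = 2*G - R - B for an (..., 3) RGB array."""
    # Cast to float first: 8-bit arithmetic would wrap around on overflow.
    rgb = np.asarray(rgb, dtype=float)
    r, g, b = rgb[..., 0], rgb[..., 1], rgb[..., 2]
    return 2.0 * g - r - b


# One leaf-like pixel and one neutral grey background pixel (8-bit values).
image = np.array([[[10, 200, 20], [100, 100, 100]]], dtype=np.uint8)
exg = excess_green(image)
print(exg)
```

Green tissue yields large positive values while neutral backgrounds sit near zero, which is the contrast the feature detector benefits from.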

The Scientist's Toolkit: Research Reagent Solutions

The following table details key materials and equipment used in advanced 3D plant phenotyping experiments as cited in the research.

| Item | Function / Application | Key Specification / Note |
|---|---|---|
| Time-of-Flight (ToF) Camera | An active 3D imaging sensor that provides depth information by measuring the round-trip time of a light pulse. Used to mitigate parallax in multimodal registration [3]. | Integrated into multimodal setups for direct depth data [3]. |
| Binocular Stereo Camera | A passive depth sensor that uses two lenses to calculate 3D structure from pixel disparities. Can be used for 3D reconstruction [9]. | Prone to distortion on low-texture surfaces; often used with SfM-MVS post-processing for higher accuracy [9]. |
| Monochrome Camera with Filter Wheel | A cost-effective system for capturing both structural (RGB) and functional (e.g., fluorescence) information in multiple spectral bands using a single sensor [8]. | Allows sequential image capture with different filters; acquisition speed is often limited by the filter wheel rotation [8]. |
| Structure from Motion (SfM) Software | A computational photogrammetry technique that reconstructs 3D models from multiple 2D images. Core to many 3D plant phenotyping pipelines [8] [9]. | Outputs a 3D point cloud; performance depends on the number and quality of key points detected [8]. |
| Iterative Closest Point (ICP) Algorithm | A standard algorithm for fine alignment and registration of multiple 3D point clouds into a single, complete model [9]. | Used after coarse alignment to minimize the distance between points in overlapping clouds [9]. |
| Excess Green (ExG) Index | An image processing formula used to enhance the contrast between green plant material and the background, improving feature detection for 3D reconstruction [8]. | Calculated as 2*Green - Red - Blue from RGB images [8]. |
| Spherical Markers | Passive calibration objects placed around the plant to provide known reference points for the coarse alignment of multi-view point clouds [9]. | Should have a known diameter and matte, non-reflective surfaces to facilitate detection [9]. |

Table 1: Performance of 3D Reconstruction Workflow on Ilex Species

These data validate a two-phase reconstruction workflow (SfM-MVS + point cloud registration) by comparing traits extracted from the 3D model against manual measurements [9].

| Phenotypic Trait | Coefficient of Determination (R²) |
|---|---|
| Plant Height | > 0.92 |
| Crown Width | > 0.92 |
| Leaf Length | 0.72 - 0.89 |
| Leaf Width | 0.72 - 0.89 |

Table 2: Comparison of 3D Imaging Techniques for Plant Phenotyping

A summary of common methods for acquiring 3D plant data, highlighting their advantages and limitations [7].

| Method | Type | Key Advantages | Key Disadvantages / Challenges |
|---|---|---|---|
| Time of Flight (ToF) | Active | Easy setup; high-speed; wide measurement range; insensitive to ambient light [7]. | Lower resolution can miss fine details; high cost [7]. |
| Binocular Stereo Vision | Passive | Can directly capture depth images (point clouds); lower cost than ToF [9]. | Prone to point cloud distortion and drift on low-texture or smooth surfaces [9]. |
| Structure from Motion (SfM) | Passive | Produces detailed point clouds with low-cost equipment (standard cameras) [9]. | Time-consuming and computationally intensive; not ideal for high-throughput [9]. |
| LiDAR | Active | High-precision; suitable for high-volume scanning; relatively insensitive to lighting [7]. | High cost; requires multi-site scanning and fusion for complete models [9]. |

FAQs: Understanding Core Concepts

What is the fundamental difference between affine transformations and homography?

Affine transformations are a specific class of geometric transformation that preserve lines and parallelism but do not necessarily maintain Euclidean distances or angles [10]. They include operations like scaling, rotation, shearing, and translation. In contrast, a homography (or projective transformation) is a more general model that describes the projection of points from one plane to another, capable of handling perspective changes [11]. A homography is represented by a 3x3 matrix defined only up to scale, which leaves eight degrees of freedom; it contains the affine transformations (and therefore translation) as a special case and adds perspective terms [11].
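The difference can be checked numerically by mapping the corners of a square with both kinds of matrix. In the sketch below the matrix entries are arbitrary illustrative values and the helper names are hypothetical; the only structural difference between the two matrices is the last row.

```python
import numpy as np


def apply_homography(H, pts):
    """Map (N, 2) points with a 3x3 matrix, including the perspective division."""
    pts = np.asarray(pts, dtype=float)
    homog = np.hstack([pts, np.ones((len(pts), 1))])
    mapped = homog @ H.T
    return mapped[:, :2] / mapped[:, 2:3]


def edge_cross(quad):
    """2D cross product of the bottom and top edges; zero means they are parallel."""
    bottom = quad[1] - quad[0]
    top = quad[2] - quad[3]
    return float(bottom[0] * top[1] - bottom[1] * top[0])


square = np.array([[0.0, 0.0], [1.0, 0.0], [1.0, 1.0], [0.0, 1.0]])

# Affine: last row is [0, 0, 1], so six free parameters remain.
A = np.array([[1.2, 0.3, 5.0], [-0.1, 0.9, 2.0], [0.0, 0.0, 1.0]])
# Projective: same upper rows, but a non-zero perspective term in the last row.
P = np.array([[1.2, 0.3, 5.0], [-0.1, 0.9, 2.0], [0.2, 0.0, 1.0]])

affine_cross = edge_cross(apply_homography(A, square))
projective_cross = edge_cross(apply_homography(P, square))
print(affine_cross, projective_cross)
```

The affine map keeps the opposite edges of the square parallel, while the perspective term makes them converge, which is the extra freedom a homography adds.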

Why do these traditional transformations fail with parallax in plant imaging?

Traditional transformations like affine and single homography models operate under the assumption of a planar scene or purely rotational camera motion [12]. In close-range plant phenotyping, the scene (e.g., a plant canopy) has non-negligible relief and a complex 3D structure [3] [13]. This depth variation causes parallax effects, where the relative position of objects appears to shift when viewed from different angles. A single global transformation cannot model these displacement variations across different parts of the image, leading to misalignment and ghosting artifacts in tasks like image stitching [12].

What does "multimodal" mean in the context of plant imaging?

In plant phenotyping, multimodal imaging involves using multiple camera technologies or sensors to capture different aspects of the plant phenotype [3]. For example, a system might combine a standard RGB camera with a depth camera (time-of-flight), multispectral sensors, or other specialized cameras [3] [13]. Each modality captures distinct information, and the cross-modal patterns that emerge when the modalities are combined provide a more comprehensive assessment of plant health and structure.

Troubleshooting Guides

Problem: Severe Ghosting or Misalignment in Stitched Plant Images

Description: After applying a traditional homography to stitch images of a plant canopy, the resulting panorama shows severe ghosting or double edges, particularly around leaves or stems.

Diagnosis: This is a classic symptom of parallax error caused by the 3D structure of the plant canopy. A single homography cannot account for the different depths of foreground leaves and background stems [12].

Solution: Implement a multi-homography warping approach guided by image segmentation [12].

- Segment the Target Image: Use a powerful segmentation model like the Segment Anything Model (SAM) to partition the target image into numerous distinct contents (e.g., individual leaves, stems) [12].

- Multi-Homography Fitting: Instead of a single homography, compute multiple homographies from the feature matches using a robust, energy-based fitting algorithm that is more stable than iterative RANSAC [12].

- Assign Homographies to Segments: For each segmented content in the overlapping region, select the homography that yields the lowest photometric error. For non-overlapping regions, calculate a weighted combination of homographies [12].

- Warp and Blend: Warp the target image using the selected homographies for each segment and blend it with the reference image.

Experimental Workflow: The following diagram illustrates the workflow for a parallax-tolerant stitching method.

Problem: Failed Multimodal Registration Due to Intensity Differences

Description: When trying to align images from different sensors (e.g., RGB and multispectral), the registration algorithm fails to find correspondences due to vastly different intensity profiles and textures.

Diagnosis: Traditional intensity-based similarity metrics (like Mean Squared Error) fail because they assume that corresponding pixels have equal, or at least linearly related, intensities in both images, an assumption that does not hold in multimodal plant imaging [14].
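A toy experiment makes this failure mode visible. In the sketch below, an intensity-inverted copy stands in for a second modality that is perfectly aligned, and a shifted copy stands in for a misaligned image of the same modality. Both images are synthetic and the names are illustrative.

```python
import numpy as np


def mse(a, b):
    """Mean squared intensity difference between two equally sized images."""
    return float(np.mean((a - b) ** 2))


# Horizontal intensity ramp as a stand-in for a textured scene.
ramp = np.tile(np.linspace(0.0, 1.0, 32), (32, 1))

inverted = 1.0 - ramp               # perfectly aligned, but intensities reversed
shifted = np.roll(ramp, 4, axis=1)  # same intensities, misaligned by 4 px

mse_aligned_other_modality = mse(ramp, inverted)
mse_misaligned_same_modality = mse(ramp, shifted)
print(mse_aligned_other_modality, mse_misaligned_same_modality)
```

The misaligned copy scores better than the correctly aligned one, so an optimizer that minimizes this score would move away from the true alignment.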

Solution: Use a semantic similarity metric that leverages deep features instead of raw pixel intensities [14].

- Feature Extraction: Pass both the fixed and moving images through a large-scale pre-trained model (e.g., Segment Anything Model (SAM) or TotalSegmentator) to extract deep feature maps [14]. These features capture high-level semantic information.

- Similarity Calculation: Define a new similarity measure (e.g., IMPACT metric) that compares these deep feature maps instead of the original image intensities [14].

- Optimization: Integrate this semantic similarity measure into your registration framework (algorithmic like Elastix or learning-based like VoxelMorph) and optimize the spatial transformation to maximize the feature similarity [14].

Solution Workflow: The diagram below outlines the process of using a semantic similarity metric for robust multimodal registration.

Quantitative Comparison of Registration Techniques

The table below summarizes the performance of different image registration techniques as evaluated in a medical imaging context, providing a proxy for their potential performance in complex plant imaging scenarios with multimodal data [10].

Table 1: Performance Comparison of Registration Techniques (Optimized for PET/CT Alignment)

| Registration Technique | Key Principle | Reported Optimal RMSE | Best Use Case |
|---|---|---|---|
| MATLAB Intensity-Based (Affine) | Intensity-based affine transformation with contrast enhancement [10]. | 0.1317 | Flexible processing for large 2D datasets with minimal initial deformation [10]. |
| Demons Algorithm | Non-rigid, fluid-like model based on optical flow [10]. | 0.1529 | Time-sensitive tasks requiring computational efficiency [10]. |
| Free-Form Deformation (MIRT) | B-spline-based deformation for highly flexible, smooth transformations [10]. | 0.1725 | Precision-driven applications with complex anatomical (or plant structure) deformations [10]. |

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Components for a Multimodal Plant Phenotyping Setup

| Item | Function / Explanation |
|---|---|
| Time-of-Flight (ToF) / Depth Camera | Integrates 3D information into the registration process, mitigating parallax effects by providing depth data for each pixel [3]. |
| Multispectral Camera (e.g., Airphen) | Captures spectral images at different wavelengths via multiple lenses, allowing for the assessment of plant health beyond visible light [13]. |
| Segment Anything Model (SAM) | A foundation model for computer vision used to generate accurate segmentation masks of plant contents, which can guide multi-homography warping or feature extraction [12] [14]. |
| TotalSegmentator | A large-scale pre-trained model for segmenting multiple anatomical structures; can be repurposed as a powerful feature extractor for defining semantic similarity in registration tasks [14]. |
| Robust Feature Descriptors (e.g., MIND, SSC) | Handcrafted local descriptors that capture stable spatial patterns across different imaging modalities, providing an alternative to deep features for similarity measurement [14]. |

FAQ and Troubleshooting Guide

Q1: In my multimodal imaging setup, I am encountering parallax errors that prevent precise pixel alignment between my RGB and hyperspectral cameras. How can I resolve this?

Parallax errors occur because cameras placed at different physical locations capture the plant from slightly different viewpoints, causing misalignment. This is a common challenge in multimodal registration [3].

- Troubleshooting Steps:

- Integrate a 3D Depth Sensor: Incorporate a Time-of-Flight (ToF) camera or other depth-sensing technology into your setup. The 3D information can be used to model and correct for parallax effects, leading to significantly more accurate pixel alignment across different camera modalities [3].

- Employ a 3D Registration Algorithm: Utilize a registration algorithm specifically designed to leverage depth data. Such methods can mitigate parallax by understanding the 3D structure of the plant canopy, thereby improving the fusion of data from RGB, hyperspectral, and fluorescence imagers [3].

- Verify Camera Calibration: Ensure all cameras are intrinsically and extrinsically calibrated. A well-calibrated system is the foundation for any successful image registration pipeline [15].

Q2: My 3D plant point clouds have significant gaps and missing data, likely due to leaf occlusion. How can I complete these models for accurate phenotypic parameter extraction?

Occlusion is a major bottleneck in 3D plant phenotyping, as leaves often hide other plant organs from the sensor's view, resulting in incomplete data [16] [9].

- Troubleshooting Steps:

- Implement Multi-View Scanning: Capture data from multiple viewpoints around the plant (e.g., 0°, 60°, 120°, 180°, 240°, 300°) to minimize blind spots [9].

- Fuse Point Clouds: Use a point cloud registration algorithm, such as a marker-based Self-Registration (SR) for coarse alignment followed by the Iterative Closest Point (ICP) algorithm for fine alignment, to merge the point clouds from different angles into a single, complete 3D model [9].

- Apply Deep Learning for Completion: For persistent, localized gaps, consider using a point cloud completion network like Point Fractal Network (PF-Net). These networks are trained on datasets of plant leaves and can intelligently predict and fill in missing geometry, which has been shown to significantly improve the accuracy of leaf area estimation [16].

Q3: I am using a binocular stereo camera, but my 3D reconstructions of plants suffer from distortion and drift, especially on leaf edges. What is the cause and solution?

This issue is often due to the inherent limitations of stereo camera hardware and its texture-based matching. Low-texture or smooth surfaces on leaves, combined with complex geometries and occlusions, challenge the feature matching process, leading to errors [9].

- Troubleshooting Steps:

- Bypass Integrated Depth Estimation: Instead of using the camera's onboard depth calculation, capture high-resolution images and process them offline using Structure from Motion (SfM) and Multi-View Stereo (MVS) techniques. This method avoids the distortion and drift common in single-shot stereo imaging and produces higher-fidelity point clouds [9].

- Ensure Adequate Texture and Lighting: Improve the imaging conditions to aid feature matching. However, be cautious of introducing shadows or reflections that could complicate the process.

- Validate with Known Objects: Test your reconstruction pipeline on objects with known geometry (e.g., calibration spheres) to quantify the distortion and calibrate your system accordingly [9].

Q4: My automated leaf detection and positioning system performs poorly in dense foliage. What computer vision techniques are suitable for such intricate structures?

Common depth mapping techniques such as standard block-matching or IR-based sensors (e.g., Kinect) struggle with dense vegetation because of their wide field of view, their sensitivity to ambient light, and the lack of distinctive features in foliage [17].

- Troubleshooting Steps:

- Leverage a Lightweight Neural Network: Use a computationally efficient convolutional neural network (CNN) to reliably detect and classify individual leaves in 2D RGB images, even in complex canopies [17].

- Apply the Principle of Parallax for Depth: After leaf detection in 2D, calculate the 3D position of each leaf by capturing images from multiple known camera positions along a linear axis. The apparent movement (parallax) of the leaf between images can be used to triangulate its precise depth [17].

- Simplify the Problem: Focus the depth calculation only on the detected leaf positions rather than trying to generate a full depth map for the entire scene, which reduces computational complexity [17].
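The parallax-based depth step above can be sketched as follows. This is a minimal illustration, not the method of [17]: it assumes an ideal pinhole camera that translates along its own x-axis, and the function name `depth_from_parallax` and all numeric values are hypothetical.

```python
def depth_from_parallax(cam_positions_m, pixel_columns, focal_px):
    """Estimate the depth (m) of one detected leaf from its image column
    observed at several known camera positions along a linear axis.

    Assumed pinhole model: a point at lateral position X and depth Z is seen
    at column u = cx + f * (X - c) / Z when the camera sits at position c.
    The slope du/dc is therefore -f / Z, which a least-squares line fit recovers.
    """
    n = len(cam_positions_m)
    if n < 2 or n != len(pixel_columns):
        raise ValueError("need at least two matching position/column pairs")
    mean_c = sum(cam_positions_m) / n
    mean_u = sum(pixel_columns) / n
    sxx = sum((c - mean_c) ** 2 for c in cam_positions_m)
    sxy = sum((c - mean_c) * (u - mean_u)
              for c, u in zip(cam_positions_m, pixel_columns))
    if sxx == 0 or sxy == 0:
        raise ValueError("no camera motion or no parallax observed")
    slope = sxy / sxx
    return -focal_px / slope


# Synthetic example (all values are made up for illustration).
focal_px = 1400.0
cx = 960.0
true_depth_m = 0.7
leaf_x_m = 0.05
positions = [0.00, 0.02, 0.04, 0.06]  # camera stops along the gantry, in metres
columns = [cx + focal_px * (leaf_x_m - c) / true_depth_m for c in positions]

estimated_depth_m = depth_from_parallax(positions, columns, focal_px)
print(round(estimated_depth_m, 3))
```

Using more than two camera positions lets the line fit average out detection jitter in the leaf centre, at the cost of a longer acquisition.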

Experimental Protocols for Key Challenges

Protocol 1: Multi-View 3D Plant Reconstruction for Occlusion Mitigation

This protocol outlines a method to create a complete 3D model of a plant by fusing data from multiple viewpoints, effectively overcoming occlusion [9].

- Materials and Setup:

- A binocular stereo camera (e.g., ZED 2).

- A robotic or gantry system with a rotating arm capable of positioning the camera at six viewpoints around the plant (e.g., 0°, 60°, 120°, 180°, 240°, 300°).

- Six passive spherical markers (calibration spheres) placed around the plant.

- A computer with an NVIDIA GPU for processing.

- Methodology:

- Image Acquisition: At each of the six viewpoints, capture high-resolution RGB images. The camera's vertical position can also be adjusted to capture the plant from different heights.

- Single-View Point Cloud Generation: For each viewpoint, process the captured images using Structure from Motion (SfM) and Multi-View Stereo (MVS) algorithms instead of the camera's native depth output. This generates a high-quality, but partial, 3D point cloud from that perspective.

- Point Cloud Registration:

- Coarse Alignment: Use a Self-Registration (SR) method that automatically detects the spherical markers in each point cloud. The known position and geometry of these markers allow for an initial, rough alignment of all six point clouds into a common coordinate system.

- Fine Alignment: Apply the Iterative Closest Point (ICP) algorithm to the coarsely aligned model. ICP iteratively refines the alignment by minimizing the distances between points in the different clouds, resulting in a precise and complete 3D model.

- Phenotyping: Extract key phenotypic parameters such as plant height, crown width, leaf length, and leaf width from the complete 3D model [9].
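The fine-alignment step can be illustrated with a deliberately small ICP loop. The sketch below works in 2D with brute-force nearest-neighbour matching to stay short; it is an assumption-laden toy, not the implementation used in [9], and the helper names (`best_rigid_2d`, `apply_rigid`, `icp_2d`) are hypothetical.

```python
import math


def best_rigid_2d(src, dst):
    """Closed-form least-squares rotation and translation for paired 2D points."""
    n = len(src)
    sx = sum(p[0] for p in src) / n
    sy = sum(p[1] for p in src) / n
    dx = sum(q[0] for q in dst) / n
    dy = sum(q[1] for q in dst) / n
    # Sums of dot and cross products of the centred point pairs.
    a = sum((p[0] - sx) * (q[0] - dx) + (p[1] - sy) * (q[1] - dy)
            for p, q in zip(src, dst))
    b = sum((p[0] - sx) * (q[1] - dy) - (p[1] - sy) * (q[0] - dx)
            for p, q in zip(src, dst))
    theta = math.atan2(b, a)
    c, s = math.cos(theta), math.sin(theta)
    tx = dx - (c * sx - s * sy)
    ty = dy - (s * sx + c * sy)
    return theta, tx, ty


def apply_rigid(points, theta, tx, ty):
    """Rotate points by theta (radians) about the origin, then translate."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + tx, s * x + c * y + ty) for x, y in points]


def icp_2d(source, target, iterations=20):
    """Iteratively match each source point to its nearest target point and re-fit."""
    current = list(source)
    for _ in range(iterations):
        matched = [min(target, key=lambda q: (q[0] - p[0]) ** 2 + (q[1] - p[1]) ** 2)
                   for p in current]
        theta, tx, ty = best_rigid_2d(current, matched)
        current = apply_rigid(current, theta, tx, ty)
    return current


# Synthetic example: the "coarse alignment" leaves a small residual misalignment.
target = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0), (0.0, 1.0), (0.0, 2.0)]
source = apply_rigid(target, math.radians(3.0), 0.10, -0.05)
aligned = icp_2d(source, target)
rmse = math.sqrt(
    sum(min((q[0] - p[0]) ** 2 + (q[1] - p[1]) ** 2 for q in target) for p in aligned)
    / len(aligned)
)
print(rmse)
```

ICP only converges to the right answer when the starting pose is already close, which is why the protocol performs the marker-based coarse alignment first.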

Protocol 2: Multi-Modal Image Registration for Parallax Correction

This protocol describes how to achieve pixel-precise alignment between images from different sensor modalities (e.g., RGB, Hyperspectral, Chlorophyll Fluorescence) to facilitate data fusion and analysis [15].

- Materials and Setup:

- A multi-modal imaging system (e.g., integrating an RGB camera, a hyperspectral imager (HSI), and a chlorophyll fluorescence (ChlF) imager).

- A controlled platform to position plants (e.g., Multi-well plates).

- Calibration targets for all cameras.

- Methodology:

- Data Acquisition: Capture image data of the same plant sample using all sensor modalities. While the plant position should be kept constant, the different imagers may be oriented slightly differently.

- Pre-processing and Camera Calibration: Correct for lens distortion, geometric misalignment, and other non-linear effects in each camera system using calibration data. This step is crucial for accurate registration [15].

- Reference Image Selection: Choose one modality (e.g., the high-contrast ChlF image) as the fixed target. The other images will be transformed to align with this reference.

- Global Affine Registration: Perform an initial, image-wide affine transformation to align the "moving" images to the "target" reference image. This transformation can be calculated using methods like Phase-Only Correlation (POC) or feature-based algorithms (e.g., ORB) with RANSAC.

- Fine-Scale Object Registration: Since a single global transformation may not perfectly align all plant parts, perform an additional fine registration on individually segmented objects (e.g., single leaves or rosettes) within the image. This step ensures a high overlap ratio across all regions of interest [15].
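Once feature matching (for example ORB with RANSAC) has produced inlier point pairs, the global affine step reduces to a small least-squares problem. The sketch below shows only that fitting step under simplifying assumptions; the matched points are synthetic, and `fit_affine`, `solve3` and `warp_point` are hypothetical helper names rather than part of the pipeline in [15].

```python
def solve3(m, v):
    """Solve the 3x3 linear system m * x = v with Cramer's rule."""
    def det(a):
        return (a[0][0] * (a[1][1] * a[2][2] - a[1][2] * a[2][1])
                - a[0][1] * (a[1][0] * a[2][2] - a[1][2] * a[2][0])
                + a[0][2] * (a[1][0] * a[2][1] - a[1][1] * a[2][0]))

    d = det(m)
    if abs(d) < 1e-12:
        raise ValueError("degenerate point configuration (collinear points?)")
    solution = []
    for col in range(3):
        mc = [row[:] for row in m]
        for r in range(3):
            mc[r][col] = v[r]
        solution.append(det(mc) / d)
    return solution


def fit_affine(moving_pts, fixed_pts):
    """Least-squares 2D affine transform mapping moving points onto fixed points.

    Returns two rows [a, b, tx] and [c, d, ty] so that
    u = a*x + b*y + tx and v = c*x + d*y + ty.
    """
    rows = [(x, y, 1.0) for x, y in moving_pts]
    # Normal equations: (A^T A) p = A^T u, solved once per output coordinate.
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    atu = [sum(r[i] * q[0] for r, q in zip(rows, fixed_pts)) for i in range(3)]
    atv = [sum(r[i] * q[1] for r, q in zip(rows, fixed_pts)) for i in range(3)]
    return solve3(ata, atu), solve3(ata, atv)


def warp_point(affine, pt):
    """Apply the fitted affine transform to a single (x, y) point."""
    (a, b, tx), (c, d, ty) = affine
    return (a * pt[0] + b * pt[1] + tx, c * pt[0] + d * pt[1] + ty)


# Synthetic matches: moving-image points and where they appear in the reference image.
moving = [(10.0, 10.0), (200.0, 30.0), (50.0, 180.0), (220.0, 210.0)]
fixed = [(1.02 * x - 0.03 * y + 12.0, 0.04 * x + 0.98 * y - 7.0) for x, y in moving]

affine = fit_affine(moving, fixed)
print(warp_point(affine, (100.0, 100.0)))
```

A single affine transform cannot absorb depth-dependent parallax, which is the reason the protocol follows it with a per-object fine registration.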

Technical Specifications of 3D Imaging Techniques

The table below summarizes the key characteristics of different 3D imaging methods used in plant phenotyping, highlighting their suitability for various challenges [18] [7].

Table 1: Comparison of 3D Imaging Technologies for Plant Phenotyping

| Method | Principle | Advantages | Disadvantages | Best for Overcoming |

|---|---|---|---|---|

| Laser Triangulation (LT) [18] | Pairs a laser line with a camera; uses triangulation for distance. | High accuracy & resolution at close range; insensitive to ambient light [18]. | Small measurement volume; trade-off between resolution and volume [18]. | Complex Geometry (high-resolution organ-level detail) |

| Structure from Motion (SfM) [18] [9] | Reconstructs 3D from multiple 2D images with overlapping viewpoints. | Low cost (uses RGB cameras); provides color information; high detail [18] [7]. | Computationally intensive; slower; sensitive to lighting and wind [18] [7]. | Occlusion (via multi-view capture) |

| Time of Flight (ToF) [18] [7] | Measures round-trip time of a projected light pulse. | Fast acquisition; small sensor size; less sensitive to ambient light [18] [7]. | Lower resolution; can miss fine details; difficulties with shiny surfaces [9] [7]. | Leaf Movement (fast capture) & Parallax (in multimodal setups [3]) |

| Structured Light (SL) [18] | Projects known light patterns and measures their deformation. | High accuracy and speed [18]. | Vulnerable to ambient light; accuracy decreases with distance [18]. | Complex Geometry in controlled environments |

| Terrestrial Laser Scanning (TLS) [18] | A ground-based LiDAR system using time-of-flight or phase-shift. | High accuracy over large volumes; measures dense canopies [18]. | High cost; complex scanning and data processing [18]. | Complex Geometry of large plants/canopies |

Research Reagent Solutions: Essential Materials for 3D Plant Phenotyping

This table lists key materials and equipment essential for experiments in 3D plant phenotyping, as featured in the cited research.

Table 2: Essential Research Materials and Equipment

| Item | Function / Application | Example Use-Case |

|---|---|---|

| Binocular Stereo Camera | Captures synchronized image pairs for 3D reconstruction via stereo vision. | Used as the primary image acquisition device in multi-view plant reconstruction protocols [9]. |

| Time-of-Flight (ToF) Camera | Provides depth information by measuring the time for light to return from an object. | Integrated into multimodal setups to provide 3D data for parallax correction during image registration [3]. |

| Spherical Markers (Calibration Spheres) | Serve as known geometric references in a 3D scene. | Placed around a plant to enable coarse automatic registration (alignment) of point clouds from different viewpoints [9]. |

| Robotic Linear Gantry / Rotating Arm | Provides precise, automated positioning of sensors around a plant. | Enables repeatable image acquisition from multiple, predefined angles for occlusion-free 3D modeling [17] [9]. |

| Point Cloud Completion Software (e.g., PF-Net) | Uses deep learning to predict and fill in missing 3D data in incomplete point clouds. | Applied to recover the geometry of leaves that were partially occluded during scanning, improving phenotypic trait accuracy [16]. |

| Multi-Modal Registration Algorithm | Computes the transformation needed to align images from different sensors at the pixel level. | Crucial for fusing RGB, hyperspectral, and fluorescence images into a coherent dataset for analysis [3] [15]. |

3D Registration Methodologies: From Depth Cameras to Ray Casting

This technical support center is designed for researchers working with active 3D sensing technologies in multimodal plant phenotyping. A primary challenge in such setups is achieving pixel-accurate alignment between different camera modalities (e.g., RGB, thermal, hyperspectral) due to parallax effects caused by differing camera viewpoints. This guide provides targeted troubleshooting and methodologies to leverage Time-of-Flight (ToF) and Structured Light cameras to overcome these challenges, ensuring precise and reliable data for your research.

Troubleshooting Guides & FAQs

General Hardware & Data Integrity

Q: My 3D scan data shows significant noise or wrong depth values. What could be the cause?

A: This is a common issue often linked to the scanning environment, object properties, or hardware setup.

- Actionable Checklist:

- Control Ambient Light: For both ToF and Structured Light systems, strong ambient light, especially sunlight, can interfere with the projected light patterns or emitted signals, degrading point cloud quality. Perform scans in a controlled, diffusely lit environment [19].

- Prepare Object Surfaces: Surfaces that are highly reflective (e.g., glossy leaves) or absorbent (very dark soils) can cause data gaps or noise. For reflective or dark objects, applying a temporary matte spray can create a scan-friendly surface [19].

- Verify Hardware Calibration: An uncalibrated scanner can produce precise but systematically inaccurate data, known as "measurement bias." Ensure your scanner is regularly calibrated according to the manufacturer's protocols [19].

- Check for Physical Damage: Ensure all flex cables are correctly seated and locked in place. Gently handle circuit boards by the edges to avoid electrostatic discharge [20].

Q: What is the optimal workflow for setting up a multimodal imaging experiment?

A: A systematic setup is crucial for success, particularly when integrating a depth camera to mitigate parallax.

1. System Calibration: Precisely calibrate all cameras (ToF/Structured Light, RGB, thermal, etc.) together. This involves capturing multiple images of a calibration pattern (like a checkerboard) from different distances and angles to determine the intrinsic and extrinsic parameters of each camera [21].

2. Synchronized Data Capture: Acquire images from all modalities simultaneously or under tightly controlled conditions to minimize temporal discrepancies.

3. 3D Data Processing: Use the depth data to generate a 3D mesh or point cloud of the plant canopy [21] [22].

4. Multimodal Registration: Employ a ray-casting algorithm that projects pixels from the other cameras onto the 3D mesh. This effectively maps information from all modalities into a common 3D space, directly addressing parallax [21].

5. Occlusion Handling: Automatically identify and mask areas where plant parts occlude each other from different camera views to minimize registration errors [21].

The following workflow diagram illustrates this process for integrating a ToF camera:

Time-of-Flight (ToF) Specific Issues

Q: My ToF camera exhibits abnormal performance like interlacing, point cloud failures, or consistently wrong depth data.

A: This is frequently a software, not hardware, issue.

- Solution: Update the camera's Software Development Kit (SDK) to the latest version. For instance, one documented issue with Arducam ToF cameras was resolved by updating the SDK to version 0.0.7. Always check the manufacturer's documentation for the latest firmware and SDK updates, including the exact update commands [20].

Q: How can I change the measurement mode (e.g., from 2m to 4m range) on my ToF camera?

A: The measurement range is typically controlled via the API. The general code logic involves setting the control parameter for the range, which often also defines the MAX_DISTANCE variable used in processing.

Always consult your specific SDK's API documentation for the exact function calls [20].

Structured Light Specific Issues

Q: My Structured Light scanner performs poorly on dark or shiny plant leaves.

A: This is an expected challenge, as these surfaces interfere with the projected light pattern.

- Solutions:

- Use Matte Spray: As with ToF, a temporary matte coating can neutralize challenging surfaces [19].

- Leverage Advanced Hardware: Some modern structured light scanners use specific technologies, like blue light or infrared VCSELs, which are more robust against ambient light and can perform better on dark surfaces than standard white light projectors [19].

- Software Correction: Use post-processing software to fill small data gaps and clean the point cloud [19].

The Scientist's Toolkit: Essential Research Reagent Solutions

The table below details key hardware and software components for building a robust multimodal 3D phenotyping system.

Table 1: Essential Materials for Multimodal 3D Plant Phenotyping

| Item Name | Type | Primary Function | Key Considerations |

|---|---|---|---|

| Time-of-Flight (ToF) Camera [21] [22] | Hardware | Measures distance for each pixel by calculating light roundtrip time. Provides the 3D data to resolve parallax. | Optimal working distance, resolution (point density), frame rate, resistance to ambient light. |

| Structured Light Camera [19] [22] | Hardware | Projects a light pattern and calculates 3D shape via triangulation. Provides high-resolution 3D data. | Works best in controlled light; performance can vary with surface texture and color. |

| Calibration Target (Checkerboard) [21] | Hardware | Enables geometric calibration of all cameras in the setup for precise spatial alignment. | High-contrast, precise printing, size appropriate for the camera's field of view. |

| Matte Aerosol Spray [19] | Lab Consumable | Temporarily creates a scan-friendly surface on reflective or dark leaves by reducing specular reflections. | Must be non-toxic to plants and easily removable if long-term plant health is a concern. |

| Ray-Casting Registration Software [21] | Software/Algorithm | Core algorithm for parallax correction. Projects pixels from various cameras onto the 3D mesh to achieve pixel-precise alignment. | Requires a calibrated system and a generated 3D mesh. Custom development is often needed. |

| 3D Scanning & Processing Suite (e.g., EINSTAR) [19] | Software | Provides a unified platform for point cloud cleaning, editing, alignment, and mesh generation from raw scan data. | Look for features like automatic alignment, hole filling, mesh simplification, and color adjustment. |

Experimental Protocols for Multimodal Registration

This protocol details the method for using a ToF camera to enable parallax-free multimodal image registration, as validated on six distinct plant species [21].

Detailed Methodology

System Setup and Calibration:

- Rigidly mount all cameras (Hyperspectral, Thermal, RGB, ToF) in a fixed arrangement overlooking the plant scene.

- Calibration: Use a checkerboard pattern. Capture at least 15-20 images of the pattern from different orientations and distances with each camera. Use these images to compute:

- Intrinsic Parameters: Focal length, optical center, and lens distortion for each camera.

- Extrinsic Parameters: The relative rotation and translation between every camera and the ToF camera.
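To make the role of the two parameter sets concrete, the sketch below moves a 3D point from the ToF frame into a second camera's frame (extrinsics) and projects it to a pixel (intrinsics). It assumes an ideal pinhole model without lens distortion; the `(fx, fy, cx, cy)` tuple layout and all numbers are illustrative assumptions, not calibration results from [21].

```python
def to_camera_frame(R, t, point_tof):
    """Apply extrinsics: p_cam = R * p_tof + t (R is a 3x3 nested list)."""
    return tuple(sum(R[i][j] * point_tof[j] for j in range(3)) + t[i]
                 for i in range(3))


def project(K, point_cam):
    """Apply intrinsics K = (fx, fy, cx, cy) to a point in the camera frame."""
    fx, fy, cx, cy = K
    x, y, z = point_cam
    if z <= 0:
        raise ValueError("point is behind the camera")
    return (fx * x / z + cx, fy * y / z + cy)


# Hypothetical RGB camera mounted 5 cm to the right of the ToF camera, same orientation.
R_rgb = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
t_rgb = (-0.05, 0.0, 0.0)
K_rgb = (1200.0, 1200.0, 640.0, 480.0)

# Two leaf points on the ToF optical axis: they share one ToF pixel but sit at
# different depths, so they land on different RGB columns (parallax).
near_leaf = (0.0, 0.0, 0.4)
far_leaf = (0.0, 0.0, 0.8)
near_px = project(K_rgb, to_camera_frame(R_rgb, t_rgb, near_leaf))
far_px = project(K_rgb, to_camera_frame(R_rgb, t_rgb, far_leaf))
print(near_px, far_px)
```

The gap between the two projected columns is exactly the depth-dependent shift that a single 2D transform cannot remove and that the depth data is used to correct.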

Data Acquisition:

- Trigger all cameras simultaneously to capture a synchronized dataset of the plant.

- From the ToF camera, you will obtain a depth map (a 2D image where each pixel value represents distance) and often a corresponding 3D point cloud.

3D Mesh Generation:

- Process the raw ToF point cloud to create a 3D mesh (a surface model) of the plant canopy. This may involve:

- Removing statistical outliers to filter noise.

- Applying surface reconstruction algorithms (e.g., Poisson surface reconstruction) to create a continuous mesh from the discrete points [22].

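The outlier-removal step is commonly implemented as a statistical filter on nearest-neighbour distances. The following is a minimal brute-force sketch of that idea, assuming a small point list; the function name, the parameters `k` and `std_ratio`, and the data are hypothetical, and a real pipeline would normally use a library routine with a spatial index instead of this O(n²) loop.

```python
import math


def remove_statistical_outliers(points, k=3, std_ratio=1.0):
    """Drop points whose mean distance to their k nearest neighbours is
    more than std_ratio standard deviations above the cloud-wide average."""
    if len(points) <= k:
        raise ValueError("need more points than neighbours")
    mean_dists = []
    for i, p in enumerate(points):
        dists = sorted(math.dist(p, q) for j, q in enumerate(points) if j != i)
        mean_dists.append(sum(dists[:k]) / k)
    mu = sum(mean_dists) / len(mean_dists)
    sigma = math.sqrt(sum((m - mu) ** 2 for m in mean_dists) / len(mean_dists))
    threshold = mu + std_ratio * sigma
    return [p for p, m in zip(points, mean_dists) if m <= threshold]


# Synthetic leaf patch: a 3x3 grid at 0.5 m depth plus one stray "flying pixel".
leaf_patch = [(0.01 * ix, 0.01 * iy, 0.5) for ix in range(3) for iy in range(3)]
stray = (0.0, 0.0, 2.0)
cloud = leaf_patch + [stray]

filtered = remove_statistical_outliers(cloud, k=3, std_ratio=1.0)
print(len(cloud), len(filtered))
```

Filtering before surface reconstruction matters because isolated points far from the canopy otherwise pull the reconstructed mesh away from the true leaf surface.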

Ray-Casting-Based Registration (Core Parallax Handling):

- For every pixel in the non-3D cameras (e.g., thermal camera), cast a ray from the camera's focal point through the pixel into the 3D scene.

- Calculate the intersection point of this ray with the 3D mesh generated from the ToF data.

- This 3D intersection point is the true spatial location of that pixel's information. It can now be accurately projected onto any other camera's view, effectively aligning the pixels from different modalities in 3D space and eliminating parallax errors [21].
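The three steps above can be sketched end to end for a single pixel. This example uses a plain Möller-Trumbore ray/triangle test in place of a full ray-casting library, assumes axis-aligned pinhole cameras expressed in the ToF frame, and uses made-up intrinsics, camera positions and a two-triangle "leaf"; it is an illustration of the principle, not the implementation from [21].

```python
def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def ray_triangle_t(origin, direction, tri, eps=1e-9):
    """Moller-Trumbore test: return ray parameter t of the hit, or None on a miss."""
    v0, v1, v2 = tri
    e1, e2 = sub(v1, v0), sub(v2, v0)
    h = cross(direction, e2)
    a = dot(e1, h)
    if abs(a) < eps:          # ray is parallel to the triangle plane
        return None
    f = 1.0 / a
    s = sub(origin, v0)
    u = f * dot(s, h)
    if u < 0.0 or u > 1.0:
        return None
    q = cross(s, e1)
    v = f * dot(direction, q)
    if v < 0.0 or u + v > 1.0:
        return None
    t = f * dot(e2, q)
    return t if t > eps else None


def pixel_ray(pixel, K):
    """Direction of the ray through a pixel for intrinsics K = (fx, fy, cx, cy)."""
    fx, fy, cx, cy = K
    return ((pixel[0] - cx) / fx, (pixel[1] - cy) / fy, 1.0)


def cast(origin, direction, triangles):
    """Return the closest intersection point with the mesh, or None."""
    hits = [t for t in (ray_triangle_t(origin, direction, tri) for tri in triangles)
            if t is not None]
    if not hits:
        return None
    t = min(hits)
    return tuple(o + t * d for o, d in zip(origin, direction))


def project(point, cam_pos, K):
    """Project a 3D point into an axis-aligned pinhole camera located at cam_pos."""
    fx, fy, cx, cy = K
    x, y, z = sub(point, cam_pos)
    return (fx * x / z + cx, fy * y / z + cy)


# Hypothetical setup, all coordinates in the ToF frame (metres).
thermal_pos, K_thermal = (0.06, 0.0, 0.0), (400.0, 400.0, 160.0, 120.0)
rgb_pos, K_rgb = (-0.04, 0.0, 0.0), (1200.0, 1200.0, 640.0, 480.0)
A, B, C, D = (-0.1, -0.1, 0.5), (0.1, -0.1, 0.5), (0.1, 0.1, 0.5), (-0.1, 0.1, 0.5)
leaf_mesh = [(A, B, C), (A, C, D)]   # flat leaf at 0.5 m, standing in for the ToF mesh

hit = cast(thermal_pos, pixel_ray((160.0, 120.0), K_thermal), leaf_mesh)
rgb_pixel = project(hit, rgb_pos, K_rgb)
miss = cast(thermal_pos, pixel_ray((0.0, 120.0), K_thermal), leaf_mesh)
print(hit, rgb_pixel, miss)
```

Repeating this for every thermal pixel yields a dense thermal-to-RGB correspondence map; rays that return `None` are candidates for the occlusion mask.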

Occlusion Detection and Masking:

- During ray-casting, automatically detect occlusions. If a ray from a secondary camera does not intersect the mesh, or intersects it at a point that is not visible from the primary (ToF) camera's perspective, label that pixel as "occluded" [21].

- These occluded regions can be masked in the final registered images to prevent erroneous data fusion.
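One simple way to approximate the visibility test is to compare each intersection point against the ToF depth map. The sketch below assumes the point is already expressed in the ToF camera frame and that the depth map is a nested list indexed `[row][col]`; the tolerance, the intrinsics and the tiny 5x5 map are hypothetical, and [21] may classify occlusions differently.

```python
def is_occluded(point_tof, depth_map, K, tol_m=0.01):
    """Return True if the point is hidden from the ToF camera.

    The point is projected into the ToF image; if the measured depth at that
    pixel is clearly smaller than the point's own depth, something else
    (for example an upper leaf) sits in front of it.
    """
    fx, fy, cx, cy = K
    x, y, z = point_tof
    if z <= 0:
        return True
    col = int(round(fx * x / z + cx))
    row = int(round(fy * y / z + cy))
    inside = 0 <= row < len(depth_map) and 0 <= col < len(depth_map[0])
    if not inside:
        return True  # outside the ToF field of view: no geometry to trust
    return z > depth_map[row][col] + tol_m


# Toy depth map: lower leaf at 0.8 m everywhere, an upper leaf at 0.5 m in the centre.
depth_map = [[0.8] * 5 for _ in range(5)]
depth_map[2][2] = 0.5
K_tof = (10.0, 10.0, 2.0, 2.0)

hidden_point = (0.0, 0.0, 0.8)    # on the lower leaf, directly under the upper leaf
visible_point = (0.16, 0.0, 0.8)  # on the lower leaf, off to the side

print(is_occluded(hidden_point, depth_map, K_tof),
      is_occluded(visible_point, depth_map, K_tof))
```

The tolerance has to exceed the depth noise of the sensor, otherwise visible surface points are masked as occluded.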

Performance & Specification Comparison

When selecting a 3D sensor, understanding the key specifications and their practical implications is critical. The table below compares active 3D sensing technologies based on common performance metrics.

Table 2: Performance Comparison of Active 3D Sensing Technologies

| Specification | Time-of-Flight (ToF) | Structured Light | Considerations for Plant Phenotyping |

|---|---|---|---|

| Working Principle | Measures light pulse roundtrip time [22]. | Triangulation of a deformed projected pattern [22]. | ToF is less sensitive to baseline distance than Structured Light. |

| Resolution | Typically medium (e.g., VGA) [22]. | Can be high (e.g., 1080p and above) [19]. | Structured light may capture finer leaf venation. |

| Scan Speed | Very high (frame rates suitable for real-time) [22]. | Varies; can be fast, but high-res scans take longer. | ToF is advantageous for tracking dynamic plant movement. |

| Ambient Light Sensitivity | Sensitive to strong infrared light (e.g., sunlight) [19]. | Sensitive to broad-spectrum ambient light which can wash out the pattern [19]. | Both require controlled lighting; Structured Light is often more vulnerable. |

| Performance on Challenging Surfaces | Can struggle with very dark, absorbent surfaces [19]. | Struggles with reflective, shiny, or transparent surfaces [19]. | Plant leaves often present both challenges (glossy and dark). Preparation with matte spray may be needed. |

| Primary Parallax Role | Provides the 3D geometry for ray-casting registration [21]. | Provides high-resolution 3D geometry for ray-casting registration [22]. | Both are excellent for generating the required 3D mesh. |

Troubleshooting Guide: Common Issues in the 3D Plant Phenotyping Pipeline

Problem 1: Parallax-Induced Misalignment in Multimodal Images

Issue: Pixel-level misalignment occurs when fusing data from multiple cameras (e.g., RGB, thermal, hyperspectral) due to parallax error, where the same plant feature appears at different positions from various viewpoints [4].

Solution:

- Integrate Depth Information: Utilize a time-of-flight camera to capture depth data. This allows the registration algorithm to account for and mitigate parallax effects by understanding the 3D structure of the plant canopy [4].

- Automated Occlusion Handling: Implement algorithms that automatically identify and differentiate between different types of occlusions (e.g., leaf-over-leaf), preventing the introduction of errors during the registration process [4].

Problem 2: Inaccurate Mesh Reconstruction from Multi-View Images

Issue: The reconstructed 3D plant mesh is noisy, contains holes, or inaccurately represents fine structures like thin stems, leading to poor ray-casting results.

Solution:

- Optimize Image Acquisition: Ensure high-resolution images (e.g., 10 Megapixels) are taken from a sufficient number of viewing angles (e.g., 64 images per plant) [23]. A controlled, automated rotation platform is ideal for consistency.

- Validate Reconstruction Accuracy: Cross-validate the automated mesh by comparing it against a small set of manual measurements. The mean absolute error for key parameters like leaf length and width should ideally be below 10% [23].

Problem 3: Ray Casting Yields No Intersections (t_hit = inf)

Issue: When casting rays into a RaycastingScene, the result shows t_hit as inf (infinity) and geometry_ids as INVALID_ID, indicating the rays are missing the mesh [24].

Solution:

- Verify Ray Origin and Direction: Ensure the ray's origin is placed appropriately relative to the mesh. For a pinhole camera model, the `eye` (camera position) should be placed so that the mesh falls within the camera's field of view [24].

- Check Mesh Preprocessing: Confirm the mesh has been correctly loaded into the `RaycastingScene` and that the `add_triangles()` method was successful. The mesh should be watertight and located at the expected 3D coordinates [24].
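Before debugging the scene itself, it can help to check whether a ray can reach the mesh at all. The sketch below is a library-independent diagnostic using a slab test against the mesh's axis-aligned bounding box; it does not call the `RaycastingScene` API, and the function name and the bounding box values are hypothetical.

```python
import math


def ray_hits_aabb(origin, direction, box_min, box_max):
    """Slab test: does the ray (t >= 0) pass through the axis-aligned box?"""
    t_near, t_far = 0.0, math.inf
    for o, d, lo, hi in zip(origin, direction, box_min, box_max):
        if d == 0.0:
            # Ray is parallel to this pair of slabs: origin must lie between them.
            if o < lo or o > hi:
                return False
            continue
        t0, t1 = (lo - o) / d, (hi - o) / d
        if t0 > t1:
            t0, t1 = t1, t0
        t_near = max(t_near, t0)
        t_far = min(t_far, t1)
        if t_near > t_far:
            return False
    return True


# Hypothetical mesh bounds (metres) and a camera eye at the origin.
mesh_min, mesh_max = (-0.1, -0.1, 0.4), (0.1, 0.1, 0.6)
eye = (0.0, 0.0, 0.0)

looking_at_mesh = ray_hits_aabb(eye, (0.0, 0.0, 1.0), mesh_min, mesh_max)
looking_away = ray_hits_aabb(eye, (0.0, 0.0, -1.0), mesh_min, mesh_max)
print(looking_at_mesh, looking_away)
```

If even the central viewing ray misses the bounding box, the usual suspects are a flipped viewing direction, a camera placed inside or behind the plant, or a unit mismatch (for example a mesh in millimetres and a camera pose in metres).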

Problem 4: Incorrect Organ Segmentation on the 3D Mesh

Issue: The mesh segmentation algorithm fails to correctly identify and label different plant organs (stem, leaves), preventing accurate trait measurement.

Solution:

- Employ Advanced Morphological Segmentation: Use a mesh segmentation algorithm designed for plant phenotyping that can partition the mesh into morphological regions based on the structure and connectivity of the mesh vertices [23].

- Implement Temporal Tracking: For time-series data, develop an organ-tracking feature that follows individual leaves across growth time-points, which can achieve accuracy rates of 95% or higher [23].

Frequently Asked Questions (FAQs)

Q1: Why is precise multimodal image registration so critical for my plant phenotyping research?

Precise registration is the foundation for any cross-modal analysis. It enables the accurate fusion of data, for instance aligning a thermal signature directly with a specific leaf region on an RGB model. Without pixel-accurate alignment, any subsequent analysis correlating data from different sensors will be fundamentally flawed. A novel 3D registration method that uses depth information has been shown to achieve robust alignment across six distinct plant species with varying leaf geometries [4].

Q2: What are the key advantages of a 3D mesh-based analysis over traditional 2D image processing?

2D techniques suffer from a loss of crucial spatial and volumetric information. A 3D mesh-based approach allows for accurate, non-destructive measurement of specific morphological features, including:

- Spatial Traits: True leaf orientation, inclination, and stem curvature.

- Volumetric Traits: Biomass estimation and leaf thickness.

- Occlusion Handling: The ability to reason about and model hidden structures [23].

This leads to more accurate and exhaustive phenotypic data, with 3D methods demonstrating high correlation (up to 0.96) with manual measurements [23].

Q3: How do I create a virtual point cloud from my plant's 3D mesh using ray casting?

You can simulate a virtual laser scan using a RaycastingScene [24]. The process is:

- Generate a set of rays, often from a pinhole camera model, covering the desired viewpoint.

- Cast these rays against the scene containing your plant mesh.

- For each ray that hits (`t_hit` is a finite number), calculate the 3D intersection point using the formula: `point = ray_origin + t_hit * ray_direction`.

- Collect all these 3D points to form a virtual point cloud. This is useful for simulating sensor data or for further analysis [24].
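The last two steps amount to a filter plus one multiply-add per ray. The sketch below works on plain Python lists so it runs without any 3D library; it assumes each ray is stored as six numbers (origin followed by direction) and that misses are reported as `inf`, as described above. The ray and `t_hit` values are invented for illustration.

```python
import math


def hits_to_points(rays, t_hits):
    """Convert per-ray hit distances into 3D points, skipping rays that missed.

    rays   : sequence of (ox, oy, oz, dx, dy, dz)
    t_hits : sequence of floats, math.inf where the ray missed the mesh
    """
    points = []
    for (ox, oy, oz, dx, dy, dz), t in zip(rays, t_hits):
        if math.isfinite(t):
            # point = ray_origin + t_hit * ray_direction
            points.append((ox + t * dx, oy + t * dy, oz + t * dz))
    return points


# Made-up casting result: the second ray missed the plant mesh.
rays = [
    (0.0, 0.0, 0.0, 0.0, 0.0, 1.0),
    (0.0, 0.0, 0.0, 1.0, 0.0, 0.0),
    (0.1, 0.0, 0.0, 0.0, 0.0, 1.0),
]
t_hits = [0.5, math.inf, 0.75]

virtual_cloud = hits_to_points(rays, t_hits)
print(virtual_cloud)
```

Note that `t_hit` is measured in units of the direction vector's length, so it equals metric distance only when the ray directions are normalised.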

Q4: What is the typical accuracy and throughput I can expect from an automated 3D mesh phenotyping pipeline?

Validation studies on cotton plants report the following performance metrics for a mesh-processing pipeline [23]:

- Accuracy: Mean absolute errors of 5.75% for leaf width and 8.78% for leaf length when compared to manual measurements.

- Speed: An average execution time of 4.9 minutes to analyze a single plant across four time-points, including segmentation and trait extraction.

- Reliability: Correlation coefficients with manual measurements can reach 0.96 for leaf dimensions and 0.88 for stem height [23].
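To run the same kind of validation on your own data, the two headline metrics can be computed in a few lines. The sketch below assumes that the percentage error is the mean of per-sample absolute errors relative to the manual value (the exact definition used in [23] may differ), and the leaf-width measurements are invented purely for illustration.

```python
import math


def mean_absolute_percentage_error(manual, automated):
    """Mean of |automated - manual| / manual, expressed in percent."""
    return 100.0 * sum(abs(a - m) / m for m, a in zip(manual, automated)) / len(manual)


def pearson_r(x, y):
    """Pearson correlation coefficient between two equally long sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical leaf widths in cm: ruler measurements vs. values from the mesh pipeline.
manual_cm = [4.0, 5.0, 6.0, 8.0]
automated_cm = [4.2, 4.8, 6.3, 8.4]

mape = mean_absolute_percentage_error(manual_cm, automated_cm)
r = pearson_r(manual_cm, automated_cm)
print(round(mape, 2), round(r, 3))
```

Reporting both numbers is useful because a pipeline can correlate well with manual values while still carrying a systematic scale error, and the percentage error exposes that.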

Experimental Protocol: Multimodal 3D Plant Phenotyping with Parallax Mitigation

This protocol details the steps for acquiring and processing multimodal plant images to create an accurately aligned 3D model, specifically addressing parallax challenges.

1. Plant Material and Growth Conditions

- Grow plants (e.g., Gossypium hirsutum for initial testing) in a thoroughly controlled environment [23].

- Subject plants to the desired environmental stresses (e.g., drought, salt) for the study duration [23].

2. Multi-Technology Image Acquisition

- Setup: Arrange multiple cameras (RGB, time-of-flight for depth, etc.) in a fixed rig, ensuring their fields of view overlap the plant canopy [4].

- Procedure: For each time-point, simultaneously or near-simultaneously capture images from all sensors. If using a single camera, place the plant on a rotating tray and capture multiple views (e.g., one image every few degrees over a 360° rotation) [23].

- Key Parameter: Use high-resolution cameras (e.g., 3872x2592 pixels) to capture sufficient detail for reconstruction [23].

3. 3D Mesh Reconstruction

- Use 3D reconstruction software (e.g., 3DSOM or a custom algorithm) to generate a triangle mesh from the multi-view images [23].

- Expect meshes to consist of 120,000 to 270,000 polygons for a detailed plant model [23].

4. Multimodal Image Registration

- Inputs: The reconstructed 3D mesh and the set of 2D images from all modalities.

- Process: Execute a registration algorithm that integrates the depth information from the time-of-flight camera. This step projects pixels from the 2D images onto the 3D mesh surface, explicitly correcting for parallax effects [4].

- Output: A pixel-accurate, multimodal 3D model where data from all sensors is aligned to the mesh geometry [4].

5. Ray Casting for Phenotypic Trait Extraction

- Initialize Scene: Create a `RaycastingScene` and add the plant mesh to it [24].

- Cast Rays: Generate rays to analyze the model. This can be for creating a depth map from a virtual camera view or for systematically probing the mesh to measure distances [24].

- Extract Data: Use the intersection results (`t_hit`, `geometry_ids`, `primitive_normals`) to calculate phenotypic parameters such as leaf area, stem height, and leaf angles [24].
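Two of the traits named above follow directly from mesh geometry. The sketch below computes leaf area by summing triangle areas and a leaf inclination angle from a surface normal; it assumes the z-axis is vertical and defines 0° as a horizontal leaf blade, which is a convention chosen here for illustration. The mesh and normals are made up, and the helper names are hypothetical.

```python
import math


def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def norm(a):
    return math.sqrt(a[0] ** 2 + a[1] ** 2 + a[2] ** 2)


def mesh_area(vertices, triangles):
    """Sum of triangle areas; triangles are index triples into the vertex list."""
    total = 0.0
    for i, j, k in triangles:
        e1 = sub(vertices[j], vertices[i])
        e2 = sub(vertices[k], vertices[i])
        total += 0.5 * norm(cross(e1, e2))
    return total


def inclination_deg(normal, up=(0.0, 0.0, 1.0)):
    """Angle between the leaf surface and the horizontal plane, in degrees.

    Equivalent to the angle between the surface normal and the vertical axis;
    the absolute value makes the result independent of normal orientation.
    """
    cos_a = abs(normal[0] * up[0] + normal[1] * up[1] + normal[2] * up[2]) / norm(normal)
    return math.degrees(math.acos(min(1.0, cos_a)))


# Hypothetical segmented leaf: a flat 10 cm x 5 cm rectangle made of two triangles.
leaf_vertices = [(0.0, 0.0, 0.3), (0.1, 0.0, 0.3), (0.1, 0.05, 0.3), (0.0, 0.05, 0.3)]
leaf_triangles = [(0, 1, 2), (0, 2, 3)]

leaf_area_m2 = mesh_area(leaf_vertices, leaf_triangles)
flat_angle = inclination_deg((0.0, 0.0, 1.0))
tilted_angle = inclination_deg((0.0, 1.0, 1.0))
print(leaf_area_m2, flat_angle, tilted_angle)
```

In practice the normals would come from the ray-casting results for rays that hit a given leaf, averaged per organ after segmentation.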

Table 1: Quantitative Validation of 3D Mesh-Based Phenotyping vs. Manual Measurement

| Phenotypic Trait | Mean Absolute Error | Correlation Coefficient (r) |

|---|---|---|

| Main Stem Height | 9.34% | 0.88 |

| Leaf Width | 5.75% | 0.96 |

| Leaf Length | 8.78% | 0.95 |

Data validated on cotton plants (Gossypium hirsutum) over four time-points [23].

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Software and Hardware for 3D Plant Phenotyping

| Item Name | Function / Purpose |

|---|---|

| Open3D Library | An open-source library that provides the RaycastingScene class and related functions for 3D data processing, ray intersection tests, and virtual point cloud generation [24]. |

| Time-of-Flight (ToF) Camera | A depth-sensing camera that is integrated into the multimodal registration process to mitigate parallax effects and achieve pixel-accurate alignment of images from different modalities [4]. |

| High-Resolution SLR Camera | Used for capturing high-quality multi-view images (e.g., 10 Megapixels) necessary for detailed and accurate 3D mesh reconstruction of plant structures [23]. |

| 3DSOM Software | A commercial 3D digitisation software package used to reconstruct a 3D triangle mesh from a series of high-resolution images taken from multiple viewing angles around the plant [23]. |

| Morphological Mesh Segmentation Algorithm | A custom algorithm that partitions the reconstructed plant mesh into its constituent organs (stem, leaves), which is a critical step before quantitative trait extraction can be performed [23]. |

Workflow and Signaling Diagrams

Diagram Title: 3D Plant Phenotyping and Ray Casting Workflow

Diagram Title: Troubleshooting Guide for Common Pipeline Failures

This technical support center provides targeted guidance for researchers implementing camera-agnostic systems in multimodal plant imaging. A camera-agnostic approach utilizes hardware and software that can interface with various camera types and brands without custom engineering for each device. This is particularly valuable in plant phenotyping and drug development research, where combining data from multiple imaging sensors (RGB, hyperspectral, thermal, fluorescence) is essential for non-destructive growth analysis and physiological trait monitoring [25]. The protocols and FAQs below are framed within the specific challenge of managing parallax effects when fusing data from these different modalities.

Troubleshooting Guides

Parallax Misalignment in Multimodal Registration

Problem: Images captured from different cameras (e.g., RGB and thermal) cannot be accurately overlaid or registered due to parallax error. This occurs because each camera samples the scene from a slightly different physical position.

Diagnosis Checklist:

- Confirm that the plant target is positioned at the designed working distance from the camera array.

- Verify that the mechanical mounting of all cameras is secure and within the tolerances specified in the system's calibration procedure.

- Check the calibration files to ensure they are current and were generated for the exact lens and focal length currently in use.

Resolution Protocol:

- Mechanical Alignment: Physically adjust the cameras so that their optical axes are as parallel as possible, using precision spirit levels and alignment jigs. Mount the sensors as close together as their housings allow, because parallax grows with the baseline distance between them [25].

- Software Correction: Re-run the system's geometric calibration routine using a multi-modal calibration target (e.g., a checkerboard visible in all target wavelengths). This yields the transformation matrices used to align the images. Note that a single 2D transformation is exact only for points at the depth where it was estimated.

- Reference Plane Selection: Define a fixed reference plane (e.g., the top of the plant canopy) in your processing software and perform image fusion and analysis relative to it. Parallax artifacts are smallest at this plane and increase for plant parts that lie above or below it.
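To illustrate why a reference plane reduces but does not remove parallax, the following minimal sketch applies the standard pinhole relation for two parallel cameras, disparity = f · B / Z. The focal length, baseline, and depths are illustrative assumptions rather than values from any cited system, and the function names are hypothetical.

```python
def disparity_px(focal_px: float, baseline_m: float, depth_m: float) -> float:
    """Pixel disparity between two parallel pinhole cameras for a point at depth_m."""
    if depth_m <= 0:
        raise ValueError("depth must be positive")
    return focal_px * baseline_m / depth_m


def residual_parallax_px(focal_px: float, baseline_m: float,
                         depth_m: float, ref_depth_m: float) -> float:
    """Misalignment that remains after the images are aligned at the reference plane."""
    return (disparity_px(focal_px, baseline_m, depth_m)
            - disparity_px(focal_px, baseline_m, ref_depth_m))


if __name__ == "__main__":
    focal_px = 1200.0   # assumed focal length, in pixels
    baseline_m = 0.05   # assumed 5 cm spacing between the two sensors
    ref_depth_m = 1.0   # assumed reference plane (e.g., top of canopy)
    for depth_m in (0.8, 0.9, 1.0, 1.2):
        r = residual_parallax_px(focal_px, baseline_m, depth_m, ref_depth_m)
        print(f"leaf at {depth_m:.1f} m -> residual shift {r:+.1f} px")
```

The residual is zero only at the reference depth. Leaves closer to or farther from the cameras keep a shift that scales with the baseline, which is why depth-aware registration is needed for canopies with large height variation.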

Inconsistent Lighting Across Camera Modalities

Problem: A lighting source optimal for one camera (e.g., a flash for RGB) creates glare, is invisible, or interferes with another camera (e.g., a thermal camera).

Diagnosis Checklist:

- Identify the active wavelengths of all lighting sources (e.g., LED grow lights, flash units, laser guides).

- Check for temporal synchronization conflicts between camera exposure and lighting triggers.

- Assess the impact of ambient light conditions, which can vary throughout the day.

Resolution Protocol:

- Spectral Separation: Use lighting with narrow, non-overlapping spectral bands for different cameras where possible. For instance, use near-infrared (NIR) lights for an NIR camera and turn them off when capturing with an RGB sensor.

- Temporal Separation: Sequentially trigger cameras and their dedicated light sources. This requires precise electronic control to ensure one camera's light does not pollute another's image [25].

- Active vs. Passive Sensing: For critical 3D data, use active sensing modalities like LiDAR or structured light, which are less susceptible to ambient light variations and supply the depth maps needed for depth-based parallax correction.
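The temporal separation step can be sketched as a simple sequential schedule. This is a minimal illustration, assuming each camera has one dedicated light, a settling time, and a fixed exposure. `Channel` and `build_schedule` are hypothetical names, and no real camera or trigger SDK is called.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Channel:
    """One camera and its dedicated light source (hypothetical description)."""
    camera: str
    light: str
    exposure_ms: float
    settle_ms: float  # time for the light to stabilise before exposure starts


def build_schedule(channels, guard_ms=5.0):
    """Return (camera, light_on_ms, exposure_start_ms, light_off_ms) tuples
    such that no two light sources are on at the same time."""
    schedule = []
    t = 0.0
    for ch in channels:
        light_on = t
        exposure_start = light_on + ch.settle_ms
        light_off = exposure_start + ch.exposure_ms
        schedule.append((ch.camera, light_on, exposure_start, light_off))
        t = light_off + guard_ms  # dark gap before the next light turns on
    return schedule


if __name__ == "__main__":
    channels = [
        Channel(camera="rgb", light="white_led", exposure_ms=10.0, settle_ms=20.0),
        Channel(camera="nir", light="nir_led", exposure_ms=30.0, settle_ms=20.0),
    ]
    for cam, on, start, off in build_schedule(channels):
        print(f"{cam}: light on {on:.0f} ms, expose at {start:.0f} ms, light off {off:.0f} ms")
```

In a real rig the computed times would drive hardware trigger lines. The guard interval is an assumed safety margin for light decay and should be measured for the actual sources.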

Low Color Contrast for Automated Plant Analysis

Problem: Automated image analysis algorithms (e.g., for leaf area estimation or disease spotting) perform poorly due to insufficient contrast between the plant and its background or within the plant itself.

Diagnosis Checklist:

- Use a color contrast analyzer tool to check the contrast ratio between key regions (e.g., leaf and soil) [26] [27].

- Simulate the image using a color blindness simulator to see if the color differentiations are perceptible under different vision deficiencies [28] [29].

- Check if the issue persists in grayscale versions of the image, which helps isolate luminance-based contrast problems.

Resolution Protocol:

- Use Color Blind-Friendly Palettes: For false-color visualizations, use palettes designed for accessibility. Avoid red-green combinations. Instead, use a blue-red palette or sequential palettes with a single hue [28] [29].

- Employ Non-Color Cues: Enhance algorithms to rely not just on color but also on texture, shape, and morphological features. In visuals, use direct labels, patterns, or shapes in addition to color coding [28] [29].

- Optimize Backgrounds: In controlled environments, use a uniform, high-contrast backdrop (e.g., a blue screen) to simplify plant segmentation. Ensure the backdrop's reflectance properties are consistent across the wavelengths used by all cameras.

Frequently Asked Questions (FAQs)

Q1: What does "camera-agnostic" mean in practice for our imaging rig? A1: It means your software control, data acquisition, and calibration pipelines are designed to work with a wide range of cameras from different manufacturers (e.g., Emergent, FLIR, Basler) and across different modalities (RGB, hyperspectral, thermal) without requiring fundamental changes to the codebase. The system abstracts camera communication through standards such as GigE Vision or GenICam [30].
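As a rough illustration of the abstraction described in A1, the sketch below defines a vendor-neutral driver interface and a simulated camera. All class and function names are hypothetical. A real driver would wrap a GenICam or GigE Vision SDK behind the same methods, and none of those SDK calls are shown here.

```python
from abc import ABC, abstractmethod


class CameraDriver(ABC):
    """Hypothetical vendor-neutral interface used by the acquisition code."""

    def __init__(self, name: str, modality: str):
        self.name = name
        self.modality = modality

    @abstractmethod
    def grab(self) -> list:
        """Return one frame as a list of pixel rows."""


class SimulatedCamera(CameraDriver):
    """Stand-in driver that returns a constant frame, for testing the pipeline."""

    def __init__(self, name, modality, width, height, fill=0):
        super().__init__(name, modality)
        self._frame = [[fill] * width for _ in range(height)]

    def grab(self):
        return [row[:] for row in self._frame]  # copy so callers cannot mutate state


def acquire_all(cameras):
    """Grab one frame per camera; this loop never inspects vendor or model."""
    return {cam.name: cam.grab() for cam in cameras}


if __name__ == "__main__":
    rig = [
        SimulatedCamera("rgb", "RGB", width=4, height=3),
        SimulatedCamera("thermal", "thermal", width=2, height=2, fill=7),
    ]
    for name, frame in acquire_all(rig).items():
        print(name, f"{len(frame[0])}x{len(frame)}")
```

Adding a new camera model then means writing one new `CameraDriver` subclass, while acquisition and calibration code stays unchanged.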

Q2: Why is parallax a more significant problem in plant imaging compared to industrial inspection? A2: Plant structures are complex, three-dimensional, and change over time. A slight parallax error can cause a leaf tip in one image to be misregistered as a separate leaf in another modality, leading to incorrect data fusion and flawed analysis of plant architecture or health [25].

Q3: How can we ensure our visualized data (e.g., heat maps of plant stress) are accessible to all team members, including those with color vision deficiency? A3:

- For Heatmaps: Use a single-hue sequential palette (e.g., light blue to dark blue) or a grayscale palette instead of a red-green diverging palette [29].

- For Line Charts: Use dashed lines, different line weights, and direct data labels instead of relying solely on color to distinguish lines [28].

- Validation: Always test your visualizations with a color blindness simulator tool (e.g., Color Oracle) and check that contrast ratios meet WCAG guidelines (at least 4.5:1 for normal text) [28] [27] [31].

Q4: We are building a low-cost, linear robotic camera system for automated plant photography. What is the most critical factor for success? A4: The most critical factor is mechanical precision and repeatability. The system must move the camera to the "exact same spot" for each capture to ensure consistent viewpoint, distance, and shooting angle over the plant's lifecycle. This consistency is paramount for reliable time-series analysis and minimizing alignment problems in post-processing [25].

The following tables summarize key quantitative metrics relevant to designing and troubleshooting camera-agnostic imaging systems.

Table 1: WCAG Color Contrast Requirements for Scientific Imagery

Adhering to these standards ensures your data visualizations and software interfaces are accessible to a wider audience, including those with visual impairments [26] [27].

| Text/Element Type | Minimum Ratio (Level AA) | Enhanced Ratio (Level AAA) | Example Use Case in Research |
|---|---|---|---|
| Normal Text | 4.5:1 | 7:1 | Labels, axis values, and legends on graphs |
| Large Text (18pt+ or 14pt+ Bold) | 3:1 | 4.5:1 | Graph titles, section headers in dashboards |
| User Interface Components | 3:1 | - | Buttons, slider tracks, form input borders |
| Graphical Objects | 3:1 | - | Data points, lines in a chart, icons |
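The ratios in the table can be checked programmatically. The sketch below follows the WCAG 2.x definitions of relative luminance and contrast ratio for 8-bit sRGB colors. The function names and the example colors are illustrative assumptions.

```python
def _linearize(channel_8bit: int) -> float:
    """Convert one 8-bit sRGB channel to linear light (WCAG 2.x formula)."""
    c = channel_8bit / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb) -> float:
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(rgb_a, rgb_b) -> float:
    """Contrast ratio between two colors, from 1 (identical) up to 21."""
    la, lb = relative_luminance(rgb_a), relative_luminance(rgb_b)
    lighter, darker = max(la, lb), min(la, lb)
    return (lighter + 0.05) / (darker + 0.05)


def meets_aa(rgb_a, rgb_b, large_or_graphic: bool = False) -> bool:
    """Level AA check: 4.5:1 for normal text, 3:1 for large text and graphics."""
    threshold = 3.0 if large_or_graphic else 4.5
    return contrast_ratio(rgb_a, rgb_b) >= threshold


if __name__ == "__main__":
    label, background = (118, 118, 118), (255, 255, 255)  # example grey on white
    print(f"ratio = {contrast_ratio(label, background):.2f}")
    print("AA normal text:", meets_aa(label, background))
```

The same check applies to plot lines and markers by passing `large_or_graphic=True`, which uses the 3:1 threshold from the table.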

Table 2: Color Palette Guidelines for Accessible Data Visualization

Choosing the right type of color palette for your data is crucial for clear and accurate communication [28].

| Data Type | Recommended Palette Type | Color Blind-Safe Recommendation | Maximum Recommended Colors |
|---|---|---|---|
| Qualitative (Distinct Categories) | Categorical | Blue/Red/Orange palette; use patterns/shapes | 4-5 [28] |
| Sequential (Low to High Values) | Single-Hue Sequential | Light to dark blue; grayscale | 9 [28] |
| Diverging (Values relative to a midpoint) | Two-Hue Diverging | Blue (low) to white to red (high) | 11 [28] |

Experimental Protocols

Protocol: System Calibration for Parallax Correction

Objective: To generate a set of transformation matrices that allow for accurate spatial alignment of images captured from multiple cameras in an agnostic array.

Materials:

- Multi-camera imaging rig with fixed relative positions.

- Multi-modal calibration target (e.g., a checkerboard with materials visible in RGB, thermal, and NIR spectra).

- Calibration software (e.g., OpenCV, MATLAB Camera Calibrator, or custom scripts).

Methodology:

- Positioning: Place the calibration target within the system's working volume, ensuring it is visible to all cameras. For 3D correction, capture images of the target at different angles and depths.

- Image Acquisition: Trigger all cameras simultaneously to capture a set of images of the calibration target. Repeat this process for at least 10-15 different target poses.

- Feature Detection: For each camera, the software automatically detects key points (e.g., checkerboard corners) in all captured images.

- Parameter Calculation: The software computes the intrinsic parameters (focal length, optical center, lens distortion) for each camera individually.

- Extrinsic Calculation: Using the known 3D position of the target points and their 2D locations in each camera's image, the software calculates the extrinsic parameters (rotation and translation) that define the position and orientation of each camera relative to a global coordinate system and to each other.

- Validation: Capture a new set of images of the target in new poses. Apply the calculated transformations and measure the re-projection error to validate the calibration accuracy.
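The validation step can be sketched as follows. This is a minimal example assuming a distortion-free pinhole model and a synthetic planar target. The helpers `project_points` and `rms_reprojection_error` are illustrative and are not OpenCV functions, and the intrinsic and extrinsic values are made up for demonstration.

```python
import numpy as np


def project_points(points_3d, K, R, t):
    """Project Nx3 target points into pixel coordinates (pinhole, no distortion)."""
    cam = points_3d @ R.T + t        # target frame -> camera frame
    uv = cam @ K.T                   # apply intrinsics
    return uv[:, :2] / uv[:, 2:3]    # perspective divide


def rms_reprojection_error(points_3d, observed_px, K, R, t):
    """Root-mean-square pixel distance between projected and detected points."""
    residual = project_points(points_3d, K, R, t) - observed_px
    return float(np.sqrt(np.mean(np.sum(residual ** 2, axis=1))))


if __name__ == "__main__":
    # Assumed calibration result for one camera
    K = np.array([[1200.0, 0.0, 640.0],
                  [0.0, 1200.0, 480.0],
                  [0.0, 0.0, 1.0]])
    R = np.eye(3)
    t = np.array([0.0, 0.0, 1.0])    # target 1 m in front of the camera

    # Synthetic 7x5 checkerboard corners with 30 mm spacing, lying in the z = 0 plane
    xs, ys = np.meshgrid(np.arange(7) * 0.03, np.arange(5) * 0.03)
    target = np.column_stack([xs.ravel(), ys.ravel(), np.zeros(xs.size)])

    # Simulated detections: ideal projections plus a small constant offset
    detected = project_points(target, K, R, t) + np.array([0.3, -0.4])
    print(f"RMS reprojection error: {rms_reprojection_error(target, detected, K, R, t):.2f} px")
```

In practice the detected points come from the corner detector and the parameters from the calibration software. A low RMS value on poses that were not used for calibration suggests that the estimated parameters generalize across the working volume.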

Protocol: Automated Plant Health Monitoring via Robotic Camera

Objective: To non-destructively monitor plant growth and health by repeatedly capturing top-view images of plants at predefined locations over time [25].

Materials:

- 1-DOF (Degree of Freedom) linear robotic actuator.

- Standard RGB or multispectral camera mounted on the actuator.

- Laboratory or greenhouse setup with potted plants (e.g., lettuce).

- Image processing software (e.g., Fiji/ImageJ, Python with OpenCV).

Methodology:

- System Setup: Program the linear robot to move to predefined stop points corresponding to each plant's location. Ensure consistent lighting for each capture session [25].

- Image Acquisition: The system automatically moves to each stop point and triggers the camera to capture a top-view image. This process is repeated according to a set schedule (e.g., daily).

- Image Processing:

  - Color Segmentation: Isolate the plant from the background (soil, pot) using color thresholding in a suitable color space (e.g., HSV).

  - Contour Analysis: Detect the contour of the plant. From this, calculate metrics like total projected leaf area and perimeter [25].

  - Feature Extraction: Compute other relevant features, such as color histograms or texture metrics, which can be correlated with health indicators.

- Data Logging: Store the calculated metrics for each plant and each time point to generate growth curves and monitor health trends over time.
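The color segmentation and area steps can be sketched with the Python standard library alone. This is a simplified stand-in for an OpenCV pipeline: it thresholds pixels in HSV and counts them, and it does not compute contours or perimeter. The hue, saturation, and value thresholds and the pixel scale are assumptions that would need tuning for a real setup.

```python
import colorsys


def is_plant_pixel(rgb, hue_range=(0.20, 0.45), min_sat=0.25, min_val=0.15):
    """Return True for green-ish pixels; thresholds are illustrative only."""
    r, g, b = (v / 255.0 for v in rgb)
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    return hue_range[0] <= h <= hue_range[1] and s >= min_sat and v >= min_val


def projected_leaf_area(image, mm_per_px):
    """Count plant pixels and convert to mm^2 using the pixel size at the canopy plane."""
    n_pixels = sum(is_plant_pixel(px) for row in image for px in row)
    return n_pixels, n_pixels * mm_per_px ** 2


if __name__ == "__main__":
    soil = (120, 80, 50)    # example brown background pixel
    leaf = (40, 160, 60)    # example green plant pixel
    image = [
        [soil, soil, soil, soil],
        [soil, leaf, leaf, soil],
        [soil, leaf, leaf, leaf],
        [soil, soil, leaf, soil],
    ]
    n, area = projected_leaf_area(image, mm_per_px=0.5)
    print(f"{n} plant pixels, projected area {area:.2f} mm^2")
```

Logging the returned area per plant and time point gives the growth curve described in the data logging step. The conversion to physical units holds only at the distance for which `mm_per_px` was measured.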

System Diagrams

[Diagram: Multimodal Imaging Workflow]

[Diagram: Camera Agnostic Software Architecture]

The Scientist's Toolkit

Table 3: Research Reagent Solutions for Plant Imaging Systems

| Item | Function in Experimental Setup | Application Note |
|---|---|---|
| Linear Robotic Actuator | Provides precise 1-DOF movement for a camera to sequentially image multiple plants in a row from a consistent viewpoint and distance [25]. | Critical for longitudinal studies to ensure data consistency and eliminate variability introduced by manual positioning. |
| GigE Vision Cameras | Standardized interface cameras (e.g., Emergent Eros series) that ensure interoperability in an agnostic system. They offer high-speed data transfer and are often compact and low-power [30]. | The "agnostic" part of the system relies on such standards to abstract away manufacturer-specific details. |
| Color Calibration Target | A physical card with known color patches (e.g., X-Rite ColorChecker) used to calibrate cameras for accurate color reproduction across different sessions and lighting. | Essential for quantitative color analysis, such as tracking chlorophyll levels or identifying nutrient deficiencies. |
| Multi-Modal Calibration Target | A calibration target designed to be visible in multiple wavelengths (e.g., a checkerboard with heated elements for thermal, reflective material for RGB/NIR). | The cornerstone for performing parallax correction and spatial alignment between different camera modalities. |
| Accessible Color Palettes | Pre-defined sets of colors (e.g., from Paul Tol or ColorBrewer) that are perceptible to individuals with color vision deficiencies [28] [29]. | Must be used for all scientific figures, heatmaps, and software UI elements to ensure accessibility and clear communication of data. |

Troubleshooting Guides

Common Registration Errors and Solutions

Problem: Persistent misalignment and blurring in specific plant regions despite successful global affine transformation.

| Symptom | Likely Cause | Recommended Solution |

|---|---|---|

| Local misalignment, "ghosting" | Parallax effects from complex plant canopy geometry [21] | Transition from 2D affine to a 3D registration framework using depth data [21]. |