Optimizing Voxel Classification for 3D Plant Imaging: Advanced Methods for Biomedical and Phenotyping Research


Abstract

This article provides a comprehensive guide to optimizing voxel classification for 3D plant imaging, tailored for researchers, scientists, and drug development professionals. It explores the foundational principles of voxel-based 3D reconstruction and its critical importance in plant phenotyping. The content delves into advanced methodological approaches, including deep learning and multi-view imaging, for precise plant structure analysis. It addresses common computational and data challenges, offering practical optimization strategies. Finally, the article covers rigorous validation techniques and comparative analyses of different voxel classification methods, highlighting their applications and performance in biomedical and agricultural research.

Voxel-Based 3D Reconstruction: Core Principles and Significance in Plant Phenotyping

Frequently Asked Questions (FAQs)

Q1: What is a voxel grid and how is it used in 3D plant phenotyping?

A1: A voxel grid is a three-dimensional matrix of values, analogous to a 2D pixel image, that digitally represents an object's geometry in 3D space. In plant phenotyping, voxel grids are created from multiple 2D images or laser scans to reconstruct a 3D model of a plant. This model enables the accurate computation of phenotypic traits, such as canopy volume, leaf area, and plant architecture, which are difficult to measure precisely from 2D images due to plant self-occlusions and leaf crossover [1].

Q2: What are the main technical challenges when creating voxel grids for plant analysis?

A2: A primary challenge is setting the appropriate voxel size. A size that is too large fails to capture fine plant structures, leading to inaccurate volume calculations, while a very small size significantly increases computational load without substantial gain in precision [2]. Another common issue is the occurrence of "holes" or gaps in the reconstructed voxel grid, which can be caused by exceeding the effective boundaries of the scanning system or by insufficient data points from certain viewing angles [3].

Q3: What is the difference between active and passive 3D imaging methods?

A3: Active methods, such as LiDAR and structured light scanners, project their own light source (e.g., a laser or pattern) onto the plant and measure the reflection to directly capture 3D point clouds. Passive methods, like Structure from Motion (SfM), rely on ambient light and use multiple 2D images from different angles to reconstruct the 3D model computationally [4] [5]. Active methods often provide higher accuracy but can be more expensive, whereas passive methods are generally more cost-effective but may require more computational processing [4].

Troubleshooting Guides

Issue 1: Inaccurate Canopy Volume Estimation

- Problem: The calculated canopy volume is significantly larger than the expected physical volume.

- Diagnosis: This is a frequent problem where the voxel grid or reconstruction algorithm accounts for the entire spatial envelope of the canopy, including empty spaces (porosity) between leaves and branches [2].

- Solution:

- Employ an improved alpha-shape algorithm: Instead of basic methods like Convex Hull (CH) or standard Voxel-Based (VB), use an algorithm that can better fit the concave surfaces of the canopy. An improved alpha-shape algorithm can model the canopy more accurately and allows for the calculation of an "effective volume" that discounts internal porosity [2].

- Optimize voxel and partition size: For the best performance, set the voxel size to the average nearest neighbor distance of your point cloud. The partition size should be approximately five times the voxel size. This configuration has been shown to achieve high predictive accuracy (R² of 0.9720) [2].

- Validate with simulated data: Before applying the method to real-world experiments, test your pipeline on simulated tree models with known volumes to calibrate parameters [2].
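The voxel and partition sizing rule above can be sketched in a few lines. This is a minimal illustration rather than the cited study's implementation: the function names and the toy lattice are assumptions, and the brute-force neighbor search is only practical for small clouds (a spatial index such as a k-d tree would be the usual choice for real scans).

```python
import math

def mean_nearest_neighbor_distance(points):
    """Average distance from each point to its closest other point (brute force)."""
    if len(points) < 2:
        raise ValueError("need at least two points")
    total = 0.0
    for i, p in enumerate(points):
        total += min(math.dist(p, q) for j, q in enumerate(points) if j != i)
    return total / len(points)

def suggest_grid_sizes(points, partition_factor=5.0):
    """Voxel size = mean nearest-neighbor distance; partition = factor x voxel size."""
    voxel_size = mean_nearest_neighbor_distance(points)
    return voxel_size, partition_factor * voxel_size

# Toy cloud (assumption): points on a regular 1 cm lattice, coordinates in meters,
# so every nearest neighbor is about 0.01 m away.
cloud = [(0.01 * x, 0.01 * y, 0.01 * z)
         for x in range(4) for y in range(4) for z in range(4)]
voxel_size, partition_size = suggest_grid_sizes(cloud)
print(f"voxel size = {voxel_size:.4f} m, partition size = {partition_size:.4f} m")
```

The default `partition_factor=5.0` mirrors the "approximately five times" guidance; it is worth treating as a starting point and re-checking against simulated trees with known volume.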

Issue 2: Holes or Gaps in the Voxel Grid Reconstruction

- Problem: The reconstructed 3D plant model has erroneous holes, making it incomplete.

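- Diagnosis: This can be caused by two main factors: scanning beyond the effective spatial boundaries of the imaging system, or collecting too few data points from certain viewing angles [3].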

- Solution:

- Verify system boundaries: Empirically determine the valid spatial dimensions for your specific scanning platform. For instance, one study found that a cube of 250x250x250 units worked without errors, while a 300x300x300 cube introduced holes [3].

- Increase data coverage: Ensure comprehensive scanning or image capture from multiple, overlapping viewpoints. For multiview image reconstruction, using a "camera to plant" video acquisition system can provide a dense set of keyframes for a more complete 3D model [5].

- Use space carving with consistency checks: Implement advanced space carving techniques supplemented with voxel overlapping consistency checks to solidify the reconstruction and fill in spurious gaps [1].

Issue 3: Slow Processing Speed for Large or Complex Plants

- Problem: The voxel grid reconstruction process is computationally intensive and time-consuming, especially for large plants at advanced vegetative stages.

- Diagnosis: High-resolution voxel grids of complex plant architectures create a massive computational load for standard CPUs [1] [5].

- Solution:

- Leverage modern neural rendering: For image-based reconstruction, consider using deep learning methods like Neural Radiance Fields (NeRF). Improved versions such as Object-Based NeRF (OB-NeRF) can reduce reconstruction time from over 10 hours to just 250 seconds while maintaining high quality [5].

- Implement efficient data structures: Use an octree-based volume carving method, which is a tree data structure that efficiently represents 3D space and can be processed faster than a uniform grid [1].

- Apply voxel downsampling: Use voxel grid filtering to rearrange points uniformly in space, which reduces the total number of points to process without critically compromising the model's integrity [6].
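To make the octree idea from the list above concrete, the sketch below subdivides a cubic bounding volume only where points are present and compares the number of occupied leaves with the number of cells in a uniform grid of the same resolution. It is an illustrative toy, not the carving method of [1]; the function name, the half-open cell convention, and the synthetic stem are assumptions.

```python
def occupied_leaves(points, origin, size, depth):
    """Return the occupied leaf cells of an octree as (origin, size) pairs.

    Empty octants are never subdivided, which is where the savings over a
    uniform grid come from. Cells are half-open, so points lying exactly on
    the upper faces of the root cube are ignored.
    """
    if not points:
        return []
    if depth == 0:
        return [(origin, size)]
    half = size / 2
    leaves = []
    for dx in (0, 1):
        for dy in (0, 1):
            for dz in (0, 1):
                child = (origin[0] + dx * half,
                         origin[1] + dy * half,
                         origin[2] + dz * half)
                inside = [p for p in points
                          if all(child[a] <= p[a] < child[a] + half for a in range(3))]
                leaves.extend(occupied_leaves(inside, child, half, depth - 1))
    return leaves

# Toy "stem" (assumption): eight points stacked along z inside an 8 x 8 x 8 cube.
stem = [(0.5, 0.5, z + 0.5) for z in range(8)]
depth = 3  # leaf edge = 8 / 2**3 = 1 unit
leaves = occupied_leaves(stem, origin=(0.0, 0.0, 0.0), size=8.0, depth=depth)
uniform_cells = (2 ** depth) ** 3
print(f"{len(leaves)} occupied octree leaves vs {uniform_cells} uniform grid cells")
```

For sparse structures such as stems and petioles, most octants are empty at a coarse level and are pruned early, so the work scales with the occupied space rather than with the full bounding volume.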

Experimental Protocols for Voxel-Based Plant Analysis

Protocol 1: 3D Plant Reconstruction via Multiview Images and Voxel-Grid

This protocol details the creation of a 3D voxel grid from multiple 2D images for computing plant phenotypes [1].

- Image Acquisition: Capture multiple visible light images of the target plant from different viewpoints. In high-throughput platforms, this is often done automatically as the plant rotates on a turntable or as a camera moves around it.

- Camera Calibration: Use a method like Zhang Zhengyou's calibration to estimate the intrinsic camera parameters (focal length, optical center) and lens distortion coefficients [5].

- Voxel-Grid Reconstruction via Space Carving:

- Define a bounding volume around the plant and subdivide it into a grid of small cubic voxels.

- For each camera viewpoint, project the voxels into the 2D image plane.

- Carve away (remove) any voxel that projects outside the plant's silhouette in that image.

- Repeat this process for all images. The remaining set of voxels after multi-view consistency checking forms the 3D reconstruction of the plant.

- Organ Segmentation:

- Apply a voxel overlapping consistency check to group voxels into cohesive clusters.

- Use point cloud clustering techniques (e.g., Euclidean clustering) on the voxel data to detect and isolate individual leaves and the stem.

- Phenotype Extraction: Compute holistic phenotypes (e.g., total plant volume, height) from the entire voxel grid. Calculate component phenotypes (e.g., individual leaf area, stem angle) from the segmented organs.
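The carving rule in the reconstruction step can be illustrated with a deliberately simplified setup. The sketch below assumes three orthographic views aligned with the grid axes, so "projecting" a voxel just means dropping one coordinate; a real pipeline would instead use the calibrated perspective projection of each camera. All names and the synthetic stem are hypothetical.

```python
from itertools import product

def project(voxel, axis):
    """Orthographic projection along `axis`: drop that coordinate."""
    u, v = [c for a, c in enumerate(voxel) if a != axis]
    return u, v

def silhouette(voxels, axis, n):
    """Binary n x n mask of the object as seen along `axis`."""
    mask = [[False] * n for _ in range(n)]
    for voxel in voxels:
        u, v = project(voxel, axis)
        mask[u][v] = True
    return mask

def carve(n, silhouettes):
    """Keep voxels whose projection lies inside the silhouette of every view."""
    kept = set()
    for voxel in product(range(n), repeat=3):
        inside_all = True
        for axis, mask in silhouettes.items():
            u, v = project(voxel, axis)
            if not mask[u][v]:
                inside_all = False  # projects outside this silhouette: carve it away
                break
        if inside_all:
            kept.add(voxel)
    return kept

n = 8
# Synthetic "plant" (assumption): a 2 x 2 voxel stem running along the z axis.
stem = {(x, y, z) for x in (3, 4) for y in (3, 4) for z in range(n)}
views = {axis: silhouette(stem, axis, n) for axis in (0, 1, 2)}
reconstruction = carve(n, views)
print(len(reconstruction), "voxels kept; matches stem:", reconstruction == stem)
```

The box-shaped stem is recovered exactly here, but silhouette-based carving generally returns the visual hull, which over-estimates concave regions; that is one reason more viewpoints and consistency checks are recommended.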

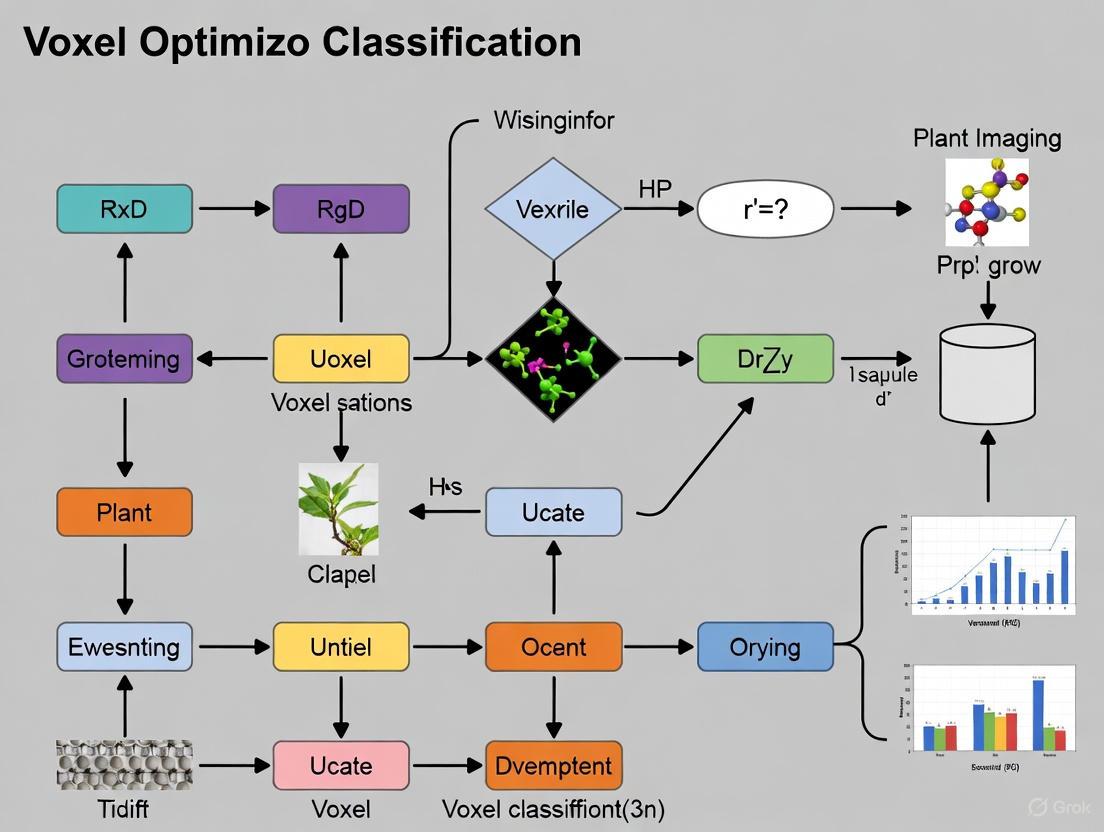

The following workflow illustrates the core steps of this voxel-grid reconstruction process:

Protocol 2: Calculating Canopy Effective Volume from LiDAR Point Clouds

This protocol describes a method to calculate the effective volume of a fruit tree canopy from LiDAR data, specifically addressing the overestimation caused by internal porosity [2].

- Data Acquisition: Collect a dense 3D point cloud of the target tree using a Terrestrial Laser Scanner (TLS) or Mobile Laser Scanner (MLS). Ensure scans are taken from multiple positions around the tree for full coverage.

- Preprocessing: Register and merge individual scans into a single, aligned point cloud. Apply noise filtering to remove outliers.

- Canopy Model Reconstruction:

- Use an improved alpha-shape algorithm to reconstruct a surface model of the canopy from the point cloud. The alpha parameter controls the tightness of the fit; a smaller alpha value better captures concavities.

- Calculate the total enclosed volume ($V_\alpha$) of this model.

- Calculate Canopy Effective Volume Coefficient ($C_{ev}$):

- Partition the point cloud space into a 3D grid of larger partitions. The optimal partition size is about five times the voxel size (where voxel size is set to the point cloud's average nearest neighbor distance).

- Within each partition, create a finer voxel grid and calculate the ratio of voxels containing points to the total number of voxels in that partition. This ratio is the local density ($\rho_i$).

- The overall effective volume coefficient is the average of these local densities over the $n$ partitions: $C_{ev} = \frac{1}{n} \sum_{i=1}^{n} \rho_i$.

- Compute Canopy Effective Volume (EV): Multiply the total enclosed volume by the effective volume coefficient: $EV = V_\alpha \cdot C_{ev}$. This product represents the canopy volume after accounting for internal porosity.
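The coefficient and effective volume defined above can be computed as follows. This is a sketch under stated assumptions: only partitions that contain at least one point are averaged (the protocol does not spell out which partitions count toward n), the alpha-shape volume is supplied as a plain number rather than computed, and the toy canopy is invented for illustration.

```python
import math
from collections import defaultdict

def effective_volume_coefficient(points, voxel_size, voxels_per_side=5):
    """C_ev: mean occupied-voxel fraction over partitions that contain points."""
    occupied = defaultdict(set)  # partition index -> occupied voxel indices
    for p in points:
        voxel = tuple(math.floor(c / voxel_size) for c in p)
        partition = tuple(v // voxels_per_side for v in voxel)
        occupied[partition].add(voxel)
    voxels_per_partition = voxels_per_side ** 3
    densities = [len(v) / voxels_per_partition for v in occupied.values()]  # rho_i
    return sum(densities) / len(densities)

def effective_volume(v_alpha, c_ev):
    """EV = V_alpha * C_ev."""
    return v_alpha * c_ev

# Toy canopy (assumption): unit voxels, two partitions of 5 x 5 x 5 voxels.
# Partition A has one filled layer (25 / 125 = 0.2),
# partition B has two filled layers (50 / 125 = 0.4).
part_a = [(x + 0.5, y + 0.5, 0.5) for x in range(5) for y in range(5)]
part_b = [(5 + x + 0.5, y + 0.5, z + 0.5)
          for x in range(5) for y in range(5) for z in range(2)]
c_ev = effective_volume_coefficient(part_a + part_b, voxel_size=1.0)
ev = effective_volume(v_alpha=2.0, c_ev=c_ev)  # v_alpha is a placeholder value
print(f"C_ev = {c_ev:.2f}, EV = {ev:.2f}")
```

With local densities of 0.2 and 0.4 the coefficient is their mean, 0.3, so a placeholder enclosed volume of 2.0 shrinks to an effective volume of 0.6.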

Table 1: Performance of different voxel-based volume calculation methods compared with the proposed Effective Volume (EV) method.

| Method | R² Value | RMSE (m³) | Volume Reduction Rate of EV vs. Method |
|---|---|---|---|
| Effective Volume (EV) (Proposed) | 0.9720 | 0.0203 | - |
| Alpha-Shape by Slices (ASBS) | - * | - | 0.5101 |
| Convex Hull by Slices (CHBS) | - * | - | 0.6953 |
| Voxel-Based (VB) | - * | - | 0.6213 |

*The source study primarily used the Volume Reduction Rate to demonstrate the EV method's improvement over existing methods, highlighting its success in removing porosity-related overestimation [2].

Table 2: Performance of different 3D reconstruction algorithms in reconstructing a high-quality model of a plant.

| Algorithm | Reconstruction Time | PSNR (Quality) | Key Advantage |
|---|---|---|---|
| OB-NeRF | 250 seconds | High | Fast, automated, high geometric & textural fidelity |
| Traditional NeRF | > 10 hours | High | High-fidelity implicit representation |
| SfM-MVS (e.g., COLMAP) | Long | Medium | Cost-effective, widely used |
| Kinect-based | Short | Low | Low-cost, real-time active sensing |

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Equipment for 3D Plant Imaging and Voxel Analysis

| Item & Example | Function in Voxel-Based Plant Research |
|---|---|
| Terrestrial Laser Scanner (TLS) (e.g., RIEGL VZ-400i) | Captures high-precision, dense 3D point clouds of plants and canopies from the ground level [7] [8]. |
| Multispectral 3D Scanner (e.g., PlantEye F600) | A phenotyping-specific sensor that captures synchronized 3D geometry and multispectral (RGB, NIR) data for each point [6]. |
| Depth Camera (e.g., Microsoft Kinect) | A low-cost active sensor that provides real-time depth images, which can be converted into a 3D point cloud [4]. |
| Unmanned Aerial Vehicle (UAV) | Platforms for mounting cameras or lightweight scanners to capture top-down and oblique views of canopies [8]. |
| High-Throughput Phenotyping Platform (e.g., LeasyScan) | An automated system that integrates sensors and conveyors for imaging large numbers of plants with minimal human intervention [6]. |

Technical Workflow for Voxel Classification Optimization

For projects focused on optimizing voxel classification, the following workflow integrates advanced deep learning techniques to improve accuracy and efficiency. It addresses two key challenges: the need for extensive annotated data and the computational complexity of 3D models.

Workflow Stages:

- Input and Preprocessing: Begin with a raw 3D point cloud acquired from TLS, a depth camera, or derived from multiview images [4] [6]. Apply voxelization and downsampling to structure the data and reduce computational load.

- Self-Supervised Learning: This is the core optimization step. Instead of relying solely on manually annotated data, use a framework like Plant-MAE, which employs a masked autoencoder. It learns robust feature representations from unlabeled plant point cloud data by learning to reconstruct masked parts of the input. This approach alleviates the data annotation bottleneck and helps the model learn general plant structures [9].

- Fine-Tuning for Specific Tasks: The pre-trained model from the previous step is then fine-tuned on a smaller set of labeled data for a specific task, such as semantic segmentation of plant organs (leaves, stem, etc.) [9].

- Organ-Level Segmentation and Phenotype Extraction: The fine-tuned model performs accurate segmentation of the voxel/point cloud into individual plant organs. This enables the precise computation of advanced phenotypic traits, such as individual leaf area and stem angle [1].

- Output: The result is an optimized, accurate, and efficient voxel classification model that is foundational for high-throughput 3D plant phenotyping.

The Role of 3D Phenotyping in Precision Agriculture and Drug Discovery

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between a 2.5D depth map and a true 3D point cloud for plant phenotyping, and why does it matter for voxel classification?

A1: A 2.5D depth image provides a single distance value for each x-y location, meaning it cannot detect overlapping leaves or structures behind the projected surface. In contrast, a true 3D point cloud consists of x-y-z coordinates that can represent the entire plant structure from multiple angles, including occluded parts. For voxel classification, this distinction is critical: 2.5D data provides insufficient information for accurate segmentation of complex plant architectures, while 3D point clouds enable robust voxel-based analysis of overlapping structures, leading to more accurate morphological trait extraction [10].
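A small experiment makes the difference tangible. The sketch below collapses a toy point cloud of two overlapping leaves into a top-view height map that keeps one z value per x-y cell, then counts how many 3D points have no counterpart in the 2.5D representation. The leaf geometry, cell size, and function name are assumptions chosen for illustration.

```python
def to_depth_map(points, cell=1.0):
    """Collapse a point cloud to a top-view 2.5D map: one z value per (x, y) cell."""
    depth = {}
    for x, y, z in points:
        key = (int(x // cell), int(y // cell))
        # A sensor looking down from above only sees the highest surface.
        depth[key] = max(z, depth.get(key, float("-inf")))
    return depth

# Two overlapping leaves (assumption): the upper leaf at z = 10 partly covers
# the lower leaf at z = 5.
upper_leaf = [(x + 0.5, y + 0.5, 10.0) for x in range(0, 4) for y in range(0, 4)]
lower_leaf = [(x + 0.5, y + 0.5, 5.0) for x in range(2, 6) for y in range(0, 4)]
cloud = upper_leaf + lower_leaf

depth_map = to_depth_map(cloud)
hidden = len(cloud) - len(depth_map)
print("3D points:", len(cloud))
print("2.5D samples:", len(depth_map))
print("points hidden under another surface:", hidden)
```

The eight points of the lower leaf that sit beneath the upper leaf disappear from the depth map, which is exactly the information a voxel classifier would need to separate the two organs.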

Q2: Our LIDAR system produces blurry edges on plant organs. Is this a calibration issue or a fundamental technology limitation?

A2: This is primarily a fundamental limitation of LIDAR technology. The blurry edges occur because the laser dot projected on leaf edges is partly reflected from the border and partly from the background, creating an averaged height signal. While calibration can improve overall data quality, this specific issue is inherent to the technology. For applications requiring precise leaf boundary detection, consider supplementing with a laser light section system, which offers higher precision in the X-Y plane (up to 0.2mm) and better edge detection capabilities [10].

Q3: How can we generate high-quality 3D plant data for training voxel classification algorithms when labeled real-world data is scarce?

A3: Implement a generative AI approach that creates synthetic yet biologically accurate 3D leaf point clouds. One validated methodology involves:

- Extract skeleton representations (petiole and vein axes) from existing real leaf data

- Train a 3D convolutional neural network (U-Net architecture) to expand these skeletons into dense point clouds using Gaussian mixture models

- Use combined loss functions to ensure generated leaves match geometric and statistical properties of real data

This approach has demonstrated high similarity to real leaves (validated by Fréchet Inception Distance) and significantly improves trait estimation accuracy when used to fine-tune existing algorithms [11].

Q4: What are the practical considerations for implementing a low-cost 3D phenotyping system suitable for field use?

A4: Structure-from-motion (SfM) techniques using standard RGB cameras offer the most cost-effective solution. Key considerations include:

- Equipment: Use consumer-grade cameras capable of capturing high-resolution video from multiple angles

- Processing: Apply SfM algorithms to reconstruct 3D geometry from video sequences

- Analysis: Train machine learning models to extract features from the resulting 3D point clouds

This approach has successfully estimated total leaf area in tomato plants with high accuracy, outperforming traditional 2D methods even with leaf overlap and plant movement challenges. The open-source nature of many SfM implementations further enhances accessibility [12].

Q5: What specific advantages do 3D spheroid models offer over 2D cultures in drug discovery phenotyping?

A5: 3D spheroid models provide superior physiological relevance through:

- Architecture: Better mimic in vivo tissue microenvironment and cellular interactions

- Functionality: Exhibit nutrient and oxygen gradients similar to real tissues

- Drug Response: Generate more clinically predictive compound profiling data

Live-cell analysis of 3D spheroids captures temporal changes in both size and viability, providing kinetic information that endpoint 2D assays miss. This enables identification of compound mechanisms that might be overlooked in traditional screening [13].

Troubleshooting Guide

Issue 1: Poor Voxel Classification Accuracy on Complex Plant Structures

Symptoms: Inconsistent segmentation of overlapping leaves, failure to distinguish adjacent organs, high error rates in morphological trait extraction.

Solution: Implement a multi-view acquisition system with skeleton-based processing.

| Step | Procedure | Technical Specification |
|---|---|---|
| 1. Data Acquisition | Capture multiple overlapping viewpoints using laser scanners mounted at angles | Minimum 2 scanners at 30-45° angles; scan rate ≥25 Hz [10] |
| 2. Pre-processing | Apply branch junction detection algorithm to point cloud data | Use subgraph matching for correspondence estimation [14] |
| 3. Feature Enhancement | Train 3D U-Net with combined reconstruction and distribution losses | Input: Leaf skeletons; Output: Dense point clouds [11] |
| 4. Validation | Compare with synthetic datasets using FID and CMMD metrics | Target FID score: <20.0 for high similarity [11] |

Issue 2: Sensor Selection for Specific Plant Phenotyping Applications

Problem: Choosing between active 3D imaging technologies for optimal voxel data quality.

Solution: Select sensors based on resolution requirements, environmental conditions, and target species.

Table: Comparative Analysis of Active 3D Imaging Technologies for Plant Phenotyping

| Technology | Spatial Resolution | Optimal Range | Key Advantages | Major Limitations | Voxel Classification Suitability |
|---|---|---|---|---|---|
| LiDAR | 10-100 mm | 2-100 m | Fast acquisition; Light independent; Long range | Poor X-Y resolution; Blurry edges; Requires warm-up | Low - insufficient detail for fine structures [10] |
| Laser Light Section | 0.2-1.0 mm | 0.2-3 m | High precision; Robust hardware; Light independent | Requires movement; Defined range only | High - excellent for detailed organ classification [10] |
| Structured Light | 0.5-5.0 mm | 0.5-5 m | No movement required; Low cost; Color capability | Sensitive to sunlight; Limited outdoor use | Medium - good for controlled environments [10] [4] |
| Time of Flight (ToF) | 1-10 mm | 0.5-10 m | Real-time reconstruction; Cost-effective | Lower resolution; Ambient light sensitivity | Medium - balance of speed and detail [4] |

Issue 3: Real-time Plant Growth Monitoring for Dynamic Voxel Analysis

Symptoms: Inability to capture diurnal growth patterns, motion artifacts in time-series data, insufficient temporal resolution.

Solution: Deploy an automated gantry system with near-infrared laser scanners.

Protocol:

- System Setup: Install 7-degree-of-freedom gantry robot with roof mounting in growth chamber [14]

- Sensor Configuration: Program robot trajectory for multiple overlapping viewpoints around plant

- Data Acquisition: Capture dense depth maps (raw 3D coordinates in mm) at regular intervals (e.g., every 6 hours)

- Processing: Align sequence of overlapping images into full 3D point cloud, then triangulate to mesh representation

- Analysis: Compute growth metrics from temporal changes in surface area and volume of plant meshes

This system has proven capable of capturing diurnal growth patterns across multiple plant species, providing essential data for optimizing voxel classification across growth stages [14].

Experimental Protocols

Protocol 1: 3D Spheroid Drug Screening with Live-Cell Analysis

Application: Phenotypic screening in drug discovery for evaluating compound efficacy.

Table: Research Reagent Solutions for 3D Spheroid Assays

| Reagent/Equipment | Function in Protocol | Specification |
|---|---|---|
| PrimeSurface ULA Plates | Enable spheroid formation through ultra-low attachment surface | 96-well or 384-well U-bottom format [13] |
| Incucyte Nuclight Red Lentivirus | Labels nuclei for viability tracking | EF1α promoter, Puromycin resistance [13] |
| Incucyte Live-Cell Analysis System | Enables kinetic imaging without disrupting environment | 4X magnification, brightfield and fluorescence [13] |
| Camptothecin & Cycloheximide | Positive controls for cytotoxic and cytostatic effects | 10 µM final concentration [13] |

Methodology:

- Cell Preparation: Harvest and seed A549-NR cells (5,000 cells/well in 100µL) into 96-well ULA plates [13]

- Spheroid Formation: Centrifuge plates (125 × g, 10 minutes, RT), monitor formation until 200-500µm diameter (3 days)

- Compound Treatment: Add library compounds (100µL/well at 2X final concentration), include DMSO vehicle control

- Live-Cell Imaging: Acquire brightfield and fluorescence images every 6 hours using Incucyte system

- Analysis: Quantify spheroid area and nuclear fluorescence using spheroid analysis software module

Key Metrics:

- Largest Brightfield Object Area (µm²) for growth/shrinkage

- Largest Brightfield Object Red Integrated Intensity (RCU × µm²) for viability

- Kinetic response profiles over full assay duration

Protocol 2: AI-Enhanced 3D Leaf Reconstruction for Trait Estimation

Application: Generating synthetic training data to improve voxel classification algorithms.

Methodology:

- Data Collection: Acquire real 3D leaf data from multiple species (sugar beet, maize, tomato)

- Skeleton Extraction: Extract petiole, main axis, and lateral veins from point clouds

- Network Training: Train 3D U-Net to predict per-point offsets from skeletons to complete leaf shapes

- Synthetic Generation: Apply Gaussian mixture models to generate dense leaf point clouds

- Validation: Compare with real data using Fréchet Inception Distance (FID) and CLIP Maximum Mean Discrepancy (CMMD)

- Algorithm Enhancement: Fine-tune existing trait estimation models with synthetic dataset

Performance Metrics: This approach has demonstrated significant improvement in leaf length and width estimation accuracy with lower error variance when tested on BonnBeetClouds3D and Pheno4D datasets [11].

Comparing Active vs. Passive 3D Imaging Techniques for Voxel Acquisition

Technical Comparison: Active vs. Passive 3D Imaging

The choice between active and passive 3D imaging techniques is fundamental to voxel acquisition quality and subsequent classification. The table below summarizes their core characteristics for plant phenotyping applications.

| Feature | Active 3D Imaging | Passive 3D Imaging |
|---|---|---|
| Basic Principle | Uses a controlled emission source (laser, structured light); based on triangulation or Time-of-Flight (ToF) [4]. | Relies on ambient or controlled external lighting; analyzes images from multiple viewpoints [4] [15]. |
| Primary Technologies | LiDAR (3D Laser Scanners, Terrestrial Laser Scanners), Time-of-Flight (ToF) Cameras, Structured Light systems [4] [16]. | Structure from Motion (SfM) with Multi-View Stereo (MVS), Binocular Stereo Cameras [16] [17] [15]. |
| Typical Data Output | Directly generates 3D point clouds representing object surface coordinates [4]. | Produces 3D point clouds via computational processing of 2D image features [16] [17]. |
| Key Advantages | Higher accuracy; less affected by ambient lighting or low surface texture; can penetrate vegetation to some extent (e.g., waveform LiDAR) [4] [18]. | Lower equipment cost; preserves spectral (RGB) information; capable of producing highly detailed textured models [4] [17] [15]. |
| Key Limitations | Higher equipment cost; specialized hardware; laser scanners can be slow; may miss fine details at high speed [4] [16]. | Sensitive to lighting variations and low-texture surfaces; computationally intensive processing; struggles with occlusions and reflective surfaces [4] [16] [15]. |
| Best Suited For | High-precision structural mapping, complex canopies, large-scale field applications, and when ambient light control is difficult [4] [18] [19]. | Cost-sensitive projects, detailed morphological studies on smaller plants, and when color/texture information is critical for classification [16] [17] [15]. |

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: Our SfM-MVS reconstruction of a plant has large, missing areas and a sparse point cloud. What could be the issue?

- A: This is a common problem often related to insufficient features for the software to match. Please check the following:

- Plant Texture: Plants often have low-texture, repetitive surfaces (e.g., many similar green leaves). Solution: Increase image contrast by using an excess green (ExG) channel during image processing, which can help isolate plant features from the background and improve keypoint detection [17].

- Number and Overlap of Images: The coverage is inadequate. Solution: Ensure a sufficient number of images are captured from multiple viewpoints with ample overlap (typically >60-80%). One optimized protocol uses 120 images from three height levels [16] [15].

- Lighting Conditions: Inconsistent shadows or highlights confuse the feature matching algorithm. Solution: Perform imaging in a controlled, diffuse lighting environment to minimize shadows and reflections [15].
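The ExG channel mentioned above is simple to compute per pixel. The sketch below uses the common definition ExG = 2g - r - b on chromatic coordinates (each channel divided by R + G + B); the pixel values and the 0.2 threshold are illustrative assumptions, and the exact variant used in [17] may differ.

```python
def excess_green(r, g, b):
    """ExG = 2g - r - b on chromatic coordinates (channels divided by R + G + B)."""
    total = r + g + b
    if total == 0:
        return 0.0  # black pixel: no chromatic information
    return (2 * g - r - b) / total

def exg_image(rgb_rows):
    """Apply ExG to every pixel of an image given as rows of (R, G, B) tuples."""
    return [[excess_green(*pixel) for pixel in row] for row in rgb_rows]

# Tiny 2 x 2 test image (assumption): two leaf-like pixels, two background pixels.
image = [
    [(40, 160, 40), (120, 120, 120)],   # leaf pixel, grey background pixel
    [(200, 180, 160), (30, 90, 30)],    # soil-like pixel, darker leaf pixel
]
exg = exg_image(image)
threshold = 0.2  # hypothetical cut-off; tune on your own images
plant_mask = [[value > threshold for value in row] for row in exg]
for row in exg:
    print([round(value, 2) for value in row])
print(plant_mask)
```

Feeding the ExG image (or the masked RGB image) to the keypoint detector instead of plain grayscale emphasizes vegetation against neutral backgrounds, which is the effect the solution above relies on.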

Q2: Our LiDAR-derived voxel grid seems to miss fine structural details like thin stems or petioles. How can we improve this?

- A: This is typically a limitation of the sensor's resolution and the chosen voxel size.

- Voxel Size Sensitivity: The voxel size is too large. Solution: Perform a voxel size sensitivity analysis. Note that while smaller voxels (e.g., 0.25 m) capture more detail, they also introduce higher variability and computational cost. There is a trade-off between resolution and the stability of voxel content estimation [18].

- Sensor Capability: The scanner's intrinsic resolution is too low for the target details. Solution: Consider using a higher-resolution laser scanner or complementing the data with a close-range photogrammetry setup for the specific regions of interest [4] [15].

Q3: When combining multiple 3D point clouds from different viewpoints, the registration is inaccurate, leading to a "blurred" or duplicated plant model.

- A: Accurate registration is critical for a unified 3D model.

- Coarse Alignment: Registration tends to fail when it relies solely on fine-alignment algorithms like Iterative Closest Point (ICP) without a good initial position. Solution: Implement a two-phase registration workflow. First, use a marker-based (e.g., calibration spheres) or feature-based method for rapid coarse alignment. Then, apply the ICP algorithm for fine alignment [16].

- Targetless Registration: For environments where placing markers is difficult, ensure sufficient overlap between point clouds and use feature descriptors that are robust to the complex geometry of plants.

Q4: How do we choose the optimal voxel size for our plant phenotyping study?

- A: The optimal voxel size is application-dependent and involves a trade-off.

- Rule of Thumb: Larger voxel sizes (e.g., 2 meters) reduce errors and computational load but lose fine-scale structural information. Smaller voxel sizes (e.g., 0.25-0.5 meters) capture more detail but result in higher estimation errors, particularly within dense canopies, and require more processing power [18].

- Recommendation: Conduct a sensitivity analysis on a subset of your data. Test a range of voxel sizes and evaluate the performance based on your downstream task, such as the accuracy of voxel content classification or correlation with manually measured phenotypic traits [18].
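A sensitivity analysis of this kind can start as a simple sweep. The sketch below counts occupied voxels and the resulting occupied volume for several candidate sizes on a synthetic cloud; the random points stand in for real data, and the occupied volume is only a placeholder metric that would be replaced by classification accuracy or correlation with manual measurements in an actual study.

```python
import math
import random

def occupied_voxels(points, voxel_size):
    """Set of integer voxel indices that contain at least one point."""
    return {tuple(math.floor(c / voxel_size) for c in p) for p in points}

def sweep(points, sizes):
    """Return (voxel size, occupied voxel count, occupied volume) per size."""
    rows = []
    for size in sizes:
        count = len(occupied_voxels(points, size))
        rows.append((size, count, count * size ** 3))
    return rows

random.seed(0)
# Synthetic canopy (assumption): 2000 random points inside a 4 m cube.
cloud = [(random.uniform(0, 4), random.uniform(0, 4), random.uniform(0, 4))
         for _ in range(2000)]
results = sweep(cloud, sizes=[0.25, 0.5, 1.0, 2.0])
for size, count, volume in results:
    print(f"voxel {size:4.2f} m: {count:5d} occupied voxels, "
          f"occupied volume {volume:6.2f} m^3")
```

Because the candidate grids are nested, the occupied voxel count can only fall and the occupied volume can only rise as the voxel size grows, which shows how coarse voxels absorb empty space; the size at which the downstream metric stops improving is a reasonable candidate for the study.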

Experimental Protocols for Voxel Acquisition

Protocol 1: High-Fidelity Plant Reconstruction Using SfM-MVS

This passive method is ideal for creating detailed 3D models for fine-grained morphological trait extraction [16] [15].

Image Acquisition:

- Setup: Mount a high-resolution RGB camera on a robotic arm or a gantry system for flexible viewpoint control. Use a uniform, non-reflective background.

- Parameters: Use diffuse lighting to eliminate sharp shadows. An optimized configuration includes an exposure time of 50 milliseconds and a camera-to-object distance of 16 cm [15].

- Procedure: Capture images from multiple heights and angles around the plant. An effective strategy is to use 3 height levels, capturing 40 images per level, for a total of 120 images per plant [15].

3D Reconstruction Processing:

- Software: Use photogrammetric software (e.g., Metashape, RealityCapture) or open-source SfM-MVS pipelines.

- Keypoint Detection: Convert RGB images to grayscale or ExG for enhanced feature detection. Digitally upsample images using cubic interpolation to increase the number of keypoints [17].

- Dense Cloud Generation: After SfM computes camera positions, run the MVS algorithm to generate a dense point cloud. A key optimization is to adjust the "parameter tweak" (e.g., to a value of 0.9) to improve the reconstruction of thin plant parts like petioles [15].

Voxelization:

- Import the dense point cloud into a computational environment (e.g., Python, CloudCompare).

- Define a 3D grid over the point cloud. The grid cell size defines the voxel resolution.

- Assign points to their corresponding voxels. Each voxel can be assigned properties based on the points it contains, such as average color or density.
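The voxelization steps above can be sketched without any external library. The example bins colored points into a regular grid and stores a point count, a point density, and the mean color per voxel; the function name, the attribute keys, and the three sample points are assumptions, and a production pipeline would more likely use NumPy or a point cloud library for speed.

```python
import math
from collections import defaultdict

def voxelize(points, colors, voxel_size):
    """Bin points into voxels; return {index: {"count", "density", "mean_rgb"}}."""
    bins = defaultdict(list)
    for point, color in zip(points, colors):
        index = tuple(math.floor(c / voxel_size) for c in point)
        bins[index].append(color)
    grid = {}
    for index, members in bins.items():
        count = len(members)
        grid[index] = {
            "count": count,
            "density": count / voxel_size ** 3,  # points per unit volume
            "mean_rgb": tuple(sum(channel) / count for channel in zip(*members)),
        }
    return grid

# Sample data (assumption): two green leaf points and one brown stem point.
points = [(0.2, 0.2, 0.2), (0.8, 0.4, 0.6), (1.5, 0.5, 0.5)]
colors = [(30, 120, 30), (50, 140, 50), (90, 60, 40)]
grid = voxelize(points, colors, voxel_size=1.0)
for index, attributes in sorted(grid.items()):
    print(index, attributes)
```

Storing the grid as a dictionary keyed by voxel index keeps only occupied voxels in memory, which suits plants because most of the bounding volume is empty.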

Protocol 2: Structural and Functional Mapping with Multimodal Imaging

This advanced protocol combines multiple imaging modalities to enhance voxel classification by providing complementary structural and functional data [17] [19].

Multimodal Data Acquisition:

- Structural Data: Acquire a 3D point cloud using either an active (LiDAR, ToF) or passive (SfM-MVS) method as described above.

- Functional Data: Using the same or a co-registered setup, capture functional images. For example:

- Fluorescence Imaging: Illuminate the plant with UV light and capture images through blue and green spectral filters. The blue-green fluorescence intensity can serve as a biomarker for infection or physiological status [17].

Data Fusion and Voxel Classification:

- Registration: Use an automatic 3D registration pipeline to align the structural point cloud with the functional image data into a unified multimodal 4D image [19].

- Feature Extraction: For each voxel in the structural grid, extract features from the co-registered functional data (e.g., mean fluorescence intensity).

- Machine Learning Classification: Train a model (e.g., a random forest or deep learning model) to classify voxels into categories such as "intact," "degraded," and "white rot" based on the combined structural and functional features. This approach has achieved mean global accuracy of over 91% in discriminating grapevine trunk tissues [19].

Workflow Visualization

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table lists key hardware and software solutions essential for implementing the described 3D imaging protocols.

| Item | Function / Application | Examples / Specifications |

|---|---|---|

| Binocular Stereo Camera | Captures synchronized image pairs for depth perception and 3D reconstruction in passive imaging. | ZED 2, ZED mini [16]. |

| Robotic Arm & Turntable | Provides precise, automated control of camera viewpoint or plant rotation for comprehensive multi-view image acquisition. | UR5 robot arm, high-precision turntable [20] [15]. |

| LiDAR Sensor | An active sensor that measures distance by illuminating the target with laser light, ideal for high-precision structural mapping. | Terrestrial Laser Scanners (TLS); low-cost active depth sensors such as Microsoft Kinect are a common alternative [4] [1]. |

| Monochrome Camera & Filter Wheel | Used for high-quality functional imaging (e.g., fluorescence) by capturing light in specific spectral bands. | Basler acA1440 with BP525/BP470 filters [17]. |

| SfM-MVS Software | Processes multiple overlapping 2D images to reconstruct a 3D point cloud model. | Metashape, RealityCapture, Pix4Dmapper [15]. |

| Calibration Targets | Essential for determining the intrinsic (lens distortion) and extrinsic (position) parameters of the camera(s). | Checkerboard pattern with known square dimensions [20] [17]. |

Frequently Asked Questions (FAQs) & Troubleshooting Guides

This technical support center addresses common challenges in 3D plant imaging experiments, with a specific focus on optimizing voxel classification for accurate biomass estimation and morphological analysis.

Data Acquisition & Imaging

Q1: My 3D point cloud has poor resolution for small plant organs like thin stems or ears. What are my options?

A: Poor resolution for fine structures is often a sensor limitation.

- Root Cause: The laser footprint or sensor resolution is too large to capture delicate plant organs effectively [10].

- Solutions:

- Consider Laser Line Scanners: Technologies like laser light section scanners can offer higher precision (up to 0.2 mm) in all dimensions compared to LIDAR, making them more suitable for small plant structures [10].

- Evaluate Scanner Specifications: When choosing a 3D scanner, pay close attention to the point accuracy and the scanner's ability to handle deep holes and slender structures. Scanners with higher point accuracy (e.g., 0.02 mm) and better hole depth ratios will capture more complete data for thin stems [21].

- Explore Photogrammetry: For root systems or other complex structures, photogrammetry-based 3D reconstruction from overlapping 2D images can be a cost-effective alternative that captures fine details, though it may require significant computational processing [22] [4].

Q2: How can I mitigate the impact of plant movement (e.g., from wind) during 3D scanning?

A: Movement introduces blur and errors into the point cloud.

- Root Cause: Scanner-based systems (LIDAR, laser line) require constant movement over the plant. If the plant moves during this process, the data quality is reduced [10].

- Solutions:

- Use a Camera-Based System: Structured light cameras, like the Microsoft Kinect, are insensitive to movement as they capture data in a single shot, making them suitable for environments where plant movement is a concern [10].

- Control the Environment: Whenever possible, perform scanning in controlled indoor environments without direct sunlight and where airflow can be minimized to reduce leaf movement [21].

- Ensure Fast Acquisition: Select sensors with high scan rates to minimize the time window during which movement can occur [10].

Point Cloud Processing & Segmentation

Q3: What is the most significant bottleneck in achieving organ-level 3D segmentation of plants?

A: The primary challenge is bridging the data–algorithm–computing gap [23].

- The Problem:

- Data Scarcity: A major limitation is the lack of large-scale, annotated 3D plant datasets required for training robust deep learning models [23] [24].

- Technical Adaptation: Adapting advanced deep neural networks designed for general point clouds (like those from autonomous driving) to the complex, non-solid architecture of plants is technically difficult [23].

- Standardization: The field lacks standardized benchmarks and evaluation protocols tailored to plant phenotyping [23].

- Solutions & Future Directions:

- Leverage Synthetic Data: Use sim-to-real learning strategies, where models are pre-trained on realistic synthetic plant data and then fine-tuned on smaller real-world datasets. This reduces the annotation burden [23].

- Utilize Open-Source Frameworks: Employ frameworks like Plant Segmentation Studio (PSS) to streamline benchmarking and ensure reproducible comparisons of different segmentation algorithms [23].

- Adopt Advanced Networks: Sparse convolutional backbones and transformer-based instance segmentation networks have shown high efficacy in plant point cloud tasks [23].

Q4: My voxel classification model struggles to distinguish between different internal wood degradation stages. How can I improve accuracy?

A: This is a complex classification problem that can be addressed with a multimodal imaging approach.

- Root Cause: A single imaging modality may not provide enough contrasting information to differentiate between tissue types that have similar structural or density properties [19].

- Solutions:

- Implement Multimodal Imaging: Combine complementary imaging techniques. For example, fuse X-ray CT data, which excels at discriminating advanced degradation stages based on tissue density loss, with MRI protocols (T1-, T2-, PD-weighted), which are better at assessing tissue functionality and early-stage physiological changes [19].

- Employ Machine Learning: Train a model, such as a random forest or deep learning classifier, on the combined multimodal data. Research has shown that this can discriminate between intact, degraded, and white rot tissues with over 91% global accuracy [19].

- Ensure Proper Data Registration: Use an automatic 3D registration pipeline to precisely align the 3D data from each imaging modality into a unified 4D-multimodal image for joint voxel-wise analysis [19].

Data Analysis & Quantification

Q5: How can I non-destructively estimate plant biomass from 3D images?

A: Digital biomass can be modeled as a function of plant volume derived from images.

- Methodology: A generalized linear model can estimate biomass more accurately than a simple projection of plant area. The model incorporates:

- Projected Shoot Area (A): The sum of plant pixel areas from top and side views.

- Plant Compactness: The square of plant border length divided by the projected area, which provides information on plant architecture and density.

- Plant Age: The growth stage of the plant [25].

- Experimental Protocol:

- Image Acquisition: Capture top-view and multiple side-view images of plants daily using a high-throughput phenotyping system [25].

- Feature Extraction: Use image analysis software (e.g., IAP) to segment the plant from the background and extract the projected shoot area from each view [25].

- Calculate Digital Biomass: Compute an initial digital biomass value as average_pixel_side_area² × top_area [25].

- Build Model: Fit a linear model where Digital Biomass = a₀ + a₁ × A + a₂ × Compactness + a₃ × (A × Days) + e, with A the projected shoot area, Days the plant age in days, and e the residual error term. This model explains most of the observed variance and shows a small difference between actual and estimated digital biomass [25].
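The quantities in this protocol can be written out as a short worked example. The sketch below follows the formulas exactly as stated above; the function names, pixel areas, border length, and coefficients a₀ to a₃ are hypothetical placeholders, since real coefficients come from fitting the model to measured data.

```python
def compactness(border_length, projected_area):
    """Square of the plant border length divided by the projected area."""
    return border_length ** 2 / projected_area


def initial_digital_biomass(side_areas, top_area):
    """Average side-view pixel area squared, times the top-view pixel area."""
    mean_side = sum(side_areas) / len(side_areas)
    return mean_side ** 2 * top_area


def modelled_digital_biomass(coeffs, area, compact, days):
    """Linear predictor a0 + a1*A + a2*Compactness + a3*(A*Days).

    The residual term e is what remains after fitting, so it is not part
    of the prediction itself.
    """
    a0, a1, a2, a3 = coeffs
    return a0 + a1 * area + a2 * compact + a3 * (area * days)


# Hypothetical measurements in pixels for one plant on day 20.
side_areas = [1200, 1000, 1100]
top_area = 900
projected_area = sum(side_areas) + top_area  # A: sum of top and side views
plant_compactness = compactness(border_length=400, projected_area=projected_area)

baseline = initial_digital_biomass(side_areas, top_area)
estimate = modelled_digital_biomass(
    coeffs=(1.0, 2.0, 0.5, 0.1),  # placeholder coefficients, not fitted values
    area=projected_area,
    compact=plant_compactness,
    days=20,
)
```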

Experimental Protocols & Workflows

This protocol details the workflow for non-destructive diagnosis of inner tissues in living plants, such as grapevine trunks, using multimodal imaging and machine learning.

- Application: In vivo phenotyping of the condition of internal woody tissues, specifically for diagnosing wood degradation and trunk diseases.

- Key Materials:

- Living plants (e.g., grapevines with and without foliar symptoms).

- Equipment:

- X-ray Computed Tomography (CT) scanner.

- Magnetic Resonance Imaging (MRI) scanner capable of T1-, T2-, and PD-weighted protocols.

- Equipment for molding plant samples and slicing them into thin cross-sections.

- High-resolution digital camera for photographing cross-sections.

- Step-by-Step Methodology:

- Sample Collection & Preparation: Collect plants based on external symptom history. Prepare them for imaging in the clinical facility.

- Multimodal Image Acquisition: For each plant, acquire 3D images using:

- X-ray CT.

- Multiple MRI protocols (T1-w, T2-w, PD-w).

- Expert Annotation & Ground Truthing: Post-imaging, mold and physically slice the plant trunk. Photograph both sides of each cross-section. Have experts manually annotate the photographs into tissue classes (e.g., healthy, necrosis, white rot) based on visual inspection.

- 3D Data Registration: Use an automatic 3D registration pipeline to align all MRI, CT, and photograph data into a single, coherent 4D-multimodal image dataset.

- Signature Identification: Jointly explore the multimodal signals in the registered data to identify quantitative structural and physiological markers (signatures) for each tissue class.

- Model Training & Voxel Classification: Train a machine learning model (e.g., random forest) using the expert annotations and the identified multimodal signatures. The model will learn to classify each 3D voxel in the plant trunk into the defined tissue classes.

- Quantification & Diagnosis: Use the model's output to automatically quantify the volume of intact, degraded, and white rot tissues within the entire trunk. Correlate these internal measurements with the plant's external symptom history to build a diagnostic model.

The following diagram illustrates the core workflow of this multimodal imaging and analysis pipeline.

This protocol describes an end-to-end workflow for detecting Sweetpotato Virus Disease (SPVD) at the plant level using a 3D-CNN on UAV-acquired hyperspectral data.

- Application: Early, accurate, and non-destructive detection of plant virus diseases at field scale.

- Key Materials:

- Sweetpotato plants in a field setting.

- Equipment:

- Unmanned Aerial Vehicle (UAV).

- Hyperspectral camera mounted on the UAV.

- Step-by-Step Methodology:

- Data Acquisition: Fly the UAV over the field to capture high-resolution hyperspectral imagery of the sweetpotato canopy during early growth stages.

- Feature Selection: Process the hyperspectral data to select the most informative features.

- Use algorithms like Random Forest (RF) to identify optimal spectral bands.

- Calculate relevant Vegetation Indices (VIs).

- Perform Variance Inflation Factor (VIF) analysis on the combined bands and VIs to remove multicollinearity and create an optimized, non-redundant feature set.

- Deep Feature Extraction: Input the selected feature bands into a 3D Convolutional Neural Network (3D-CNN). The 3D-CNN will automatically extract deep spectral-spatial features for classification.

- Plant-Level Classification: Implement a two-stage post-processing pipeline to convert pixel-level predictions into coherent plant-level labels.

- Perform Connected-Component Analysis to identify individual plants in the geospatial data.

- Apply Majority Voting within each connected component to assign a single, consistent label to the entire plant.

- Validation: Compare the framework's classification results against ground-truthed plant health data to evaluate performance.
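The two-stage post-processing step in this protocol (connected-component analysis followed by majority voting) can be sketched as follows. This is an illustrative stand-in rather than the cited framework's code: it assumes a binary plant mask, 4-connectivity, and string labels, and the function name `plant_level_labels` is hypothetical.

```python
from collections import Counter, deque


def plant_level_labels(mask, pixel_labels):
    """Give every connected plant region the majority label of its pixels.

    mask: 2D list of 0/1 plant pixels; pixel_labels: 2D list of class labels.
    """
    rows, cols = len(mask), len(mask[0])
    result = [[None] * cols for _ in range(rows)]
    seen = [[False] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            if not mask[r][c] or seen[r][c]:
                continue
            # Breadth-first search over 4-connected plant pixels.
            component, queue = [], deque([(r, c)])
            seen[r][c] = True
            while queue:
                y, x = queue.popleft()
                component.append((y, x))
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and mask[ny][nx] and not seen[ny][nx]):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            # Majority vote: the most frequent pixel label wins the component.
            votes = Counter(pixel_labels[y][x] for y, x in component)
            winner = votes.most_common(1)[0][0]
            for y, x in component:
                result[y][x] = winner
    return result


# Hypothetical 3x4 scene with two plants and noisy pixel-level predictions.
mask = [[1, 1, 0, 1],
        [1, 0, 0, 1],
        [0, 0, 0, 1]]
pixel_labels = [["healthy", "SPVD", None, "SPVD"],
                ["healthy", None, None, "SPVD"],
                [None, None, None, "healthy"]]
plants = plant_level_labels(mask, pixel_labels)
```

Here the left plant becomes "healthy" and the right plant "SPVD", each by a 2-to-1 vote. Ties are resolved in favor of the label encountered first, which a production pipeline would probably want to handle explicitly.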

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table details key technologies and their functions in 3D plant imaging research, as discussed in the cited experiments.

| Technology / Material | Primary Function in 3D Plant Imaging | Key Considerations & Applications |

|---|---|---|

| Laser Line Scanner [10] [21] | Projects a laser line onto the plant; measures the line's distortion to calculate distance and create a high-precision 3D profile. | High accuracy (up to 0.2 mm), robust with no moving parts. Ideal for detailed morphological analysis of shoots and leaves. Sensitive to ambient sunlight. |

| LIDAR [10] [4] | Measures the round-trip time of a laser dot to calculate distance, creating a 3D point cloud by scanning the dot across the scene. | Fast acquisition, long-range, light-independent. Lower X-Y resolution makes it less suitable for fine plant structures. Used for large canopies and field phenotyping. |

| Structured Light Camera (e.g., Kinect) [10] [4] | Projects a light pattern onto the plant; calculates depth from the pattern's deformation in a single shot. | Inexpensive, insensitive to movement, provides color information. A good option for cost-effective 3D reconstruction in controlled environments. |

| X-ray CT [19] | Uses X-rays to create cross-sectional images, revealing the internal structure and density of tissues non-destructively. | Excellent for visualizing internal wood degradation, graft unions, and occluded vessels. Reveals structural markers of disease. |

| MRI [19] | Uses magnetic fields and radio waves to image internal structures based on water content and tissue physiology. | Excellent for functional assessment. Can detect early-stage wood degradation (reaction zones) before structural collapse is visible in CT. |

| Hyperspectral Camera [26] | Captures hundreds of narrow, contiguous spectral bands, revealing subtle changes in plant physiology and biochemistry. | Mounted on UAVs for field-scale disease detection. Sensitive to changes in chlorophyll, water content, and cellular structure caused by stress or disease. |

| 3D-CNN Model [26] | A deep learning architecture that can process 3D data (e.g., hyperspectral cubes) to extract complex spectral-spatial features. | Used for voxel classification and plant-level disease identification from hyperspectral imagery, outperforming traditional classifiers. |

Comparative Data Tables

This table compares the pros and cons of different active 3D imaging methods to help select the appropriate technology.

| Technology | Key Advantages | Key Disadvantages / Challenges | Best Suited For |

|---|---|---|---|

| LIDAR | Fast acquisition; works in ambient light; long range. | Poor X-Y resolution; blurry edges; requires warm-up and calibration. | Field phenotyping; canopy-level measurements; large-scale architecture. |

| Laser Line Scanning | High precision in all dimensions; robust with no moving parts. | Requires movement of sensor/plant; defined, limited working range. | High-resolution shoot architecture; detailed leaf morphology in controlled settings. |

| Structured Light | Single-shot capture (insensitive to movement); low-cost systems available. | Sensitive to ambient light (especially sunlight). | Indoor plant phenotyping; real-time growth monitoring of single plants. |

| Photogrammetry | Cost-effective (uses standard cameras); good for complex structures like roots. | High computational demand; significant processing time. | Root system architecture; creating detailed 3D models where cost is a constraint [22] [4]. |

This table summarizes the typical signal responses for different tissue types in X-ray CT and MRI, which serve as the basis for training a voxel classification model.

| Tissue Class | X-ray CT Absorbance | T1-weighted MRI | T2-weighted MRI | PD-weighted MRI |

|---|---|---|---|---|

| Intact / Functional | High | High | High | High |

| Necrotic / Degraded | Medium (approx. -30%) | Medium to Low | Very Low (close to zero) | Very Low (close to zero) |

| White Rot (Decay) | Very Low (approx. -70%) | Very Low | Extremely Low | Extremely Low |

| Reaction Zones | Similar to healthy | Similar to healthy | Strong Hypersignal | Similar to healthy |

| Dry Tissues | Medium | Very Low | Very Low | Very Low |

Technical Support & Troubleshooting Hub

Frequently Asked Questions (FAQs)

FAQ 1: How can I improve the trajectory efficiency of my robotic system for 3D plant data collection?

Challenge: Inefficient view planning leads to long trajectory paths and redundant data, increasing processing time and cost.

Solution: Implement a self-supervised local view planning method like SSL-Local-NBV. This approach selects camera views within a local neighborhood rather than the entire global space, which has been shown to reduce trajectory distance per reconstruction cycle by 56%–70% and improve overall trajectory efficiency by 267%–300% compared to global next-best-view (NBV) methods [27]. Incorporating a View Trajectory Network (VTN) helps prevent redundant visits to the same locations [27].

FAQ 2: My voxel-based LAD (Leaf Area Density) predictions are inaccurate, especially in dense canopy centers. How can I fix this?

Challenge: Major deviations in LAD prediction occur in the crown center where branches are dense but leaves are few, leading to overestimation [28].

Solution:

- Algorithm Selection: Employ a Histogram-based Gradient Boosting Regressor (HGBR) model, which has demonstrated a mean absolute error of 16.33% for LAD prediction in such complex scenarios [28].

- Input Data: Use Quantitative Structure Models (QSM) derived from Terrestrial Laser Scanning (TLS) data. Convert these QSMs into novel QSM indexes that describe branch distribution within each voxel as input for the regression model [28].

FAQ 3: What is the optimal voxel size to balance accuracy and computational cost in forest studies?

Challenge: The choice of voxel size involves a trade-off; smaller voxels capture more detail but are computationally expensive and can show higher error rates, especially within the canopy [18].

Solution: The optimal voxel size is application-dependent. A sensitivity analysis reveals that:

- For lower errors and reduced computational cost, especially in large-scale studies, larger voxel sizes (e.g., 2 meters) are effective [18].

- To capture fine-scale structural information, smaller voxel sizes (e.g., 0.25 or 0.5 meters) are necessary, but you must account for higher error variability within the canopy [18]. Testing a range of voxel sizes on a representative sample of your data is recommended.
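The computational side of this trade-off is easy to probe before committing to a voxel size. The sketch below counts occupied voxels at each candidate size as a rough proxy for memory and processing cost; it says nothing about estimation error, which still has to be measured against reference data. The function names and the synthetic point lattice are assumptions for illustration.

```python
from math import floor


def occupied_voxels(points, voxel_size):
    """Return the set of voxel indices that contain at least one point."""
    return {tuple(floor(c / voxel_size) for c in p) for p in points}


def sweep(points, sizes):
    """Count occupied voxels for each candidate voxel size (in metres)."""
    return {size: len(occupied_voxels(points, size)) for size in sizes}


# Hypothetical cloud: a 10 x 10 x 10 lattice with 0.3 m spacing (0 to 2.7 m).
points = [(x * 0.3, y * 0.3, z * 0.3)
          for x in range(10) for y in range(10) for z in range(10)]
counts = sweep(points, sizes=[0.25, 0.5, 1.0, 2.0])
```

For this lattice the count falls from 1000 occupied voxels at 0.25 m to 8 at 2 m, which shows how quickly the number of voxels to classify grows as resolution increases.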

FAQ 4: How can I generate high-quality 3D leaf data without costly and time-consuming manual labeling?

Challenge: Acquiring accurate, labeled 3D data for leaf trait estimation is a major bottleneck due to the need for manual work by experts [11].

Solution: Use a generative AI model to create synthetic, lifelike 3D leaf point clouds. Train a 3D convolutional neural network (e.g., a 3D U-Net) to expand leaf skeletons into dense point clouds. This method has been validated on sugar beet, maize, and tomato plants and can improve the accuracy of leaf trait estimation algorithms like polynomial fitting [11].

Troubleshooting Guides

Problem: Poor Correlation Between Simulated and Measured Light Extinction

Application Context: Validating radiative transfer models (RTM) using voxel-based reconstructions against in-situ PAR measurements [29].

| Possible Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|

| Occlusion from TLS system | Check for data gaps or inconsistent point density in the original scan data, particularly in the inner canopy. | Use a terrestrial laser scanner with high penetration capability (e.g., RIEGL VZ-400i) and scan from multiple positions (e.g., 8) around the subject to mitigate occlusion [29]. |

| Imprecise leaf-wood separation | Visually inspect the classified point cloud to see if wooden structures are misclassified as leaves or vice versa. | Implement a direct reconstruction method that extracts the geometry of woody features and foliage as explicit polygons (e.g., leaf and wood polygons) from TLS data, rather than relying solely on a turbid voxel approach [29]. |

| Overly simplified voxel representation | Compare the spatial resolution of your voxels (e.g., 1m) to the size of the leaves and branches. | Use a finer voxel size or shift to a polygon-based reconstruction method. One study achieved a correlation coefficient (r) of 0.92 with in-situ PAR measurements using polygons, outperforming a 1m voxel-based approach (r = 0.73) [29]. |

Problem: High Estimation Error for Voxel Content in Dense Canopies

Application Context: Using deep learning for multi-target regression to estimate the percentage occupancy of bark, leaf, and soil within each voxel [18].

| Possible Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|

| Inherent class imbalance | Analyze the distribution of target values (e.g., percentage occupancy) in your training dataset. You will likely find a high imbalance, with many "empty" or low-occupancy voxels. | Apply cost-sensitive learning techniques to handle the imbalanced regression problem. Use an instance weighting technique like Density-Based Relevance (DBR) and a loss function that combines Weighted MSE and Focal Regression (FocalR) to focus on harder-to-learn samples [18]. |

| Insufficient model capacity | Benchmark your model against a state-of-the-art architecture like Kernel Point Convolution (KPConv), adapted for multi-target regression. | Utilize a dedicated deep learning architecture like KPConv, which is designed for 3D point cloud and voxel data, to better capture the complex structural nuances of a forest canopy [18]. |

| Inappropriate voxel size | Perform a sensitivity analysis on your model's performance with different voxel sizes (e.g., 0.25m, 0.5m, 1m, 2m). | Choose a voxel size suited to your application. Acknowledge that smaller voxels within the canopy will have higher error; if overall plot-level accuracy is the goal, a larger voxel size may be more effective and computationally efficient [18]. |
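The idea behind instance weighting for imbalanced regression can be shown with a simplified example. The sketch below is not the DBR or FocalR formulation from [18]: it uses plain histogram frequencies as a stand-in for density-based relevance and omits the focal term entirely. The function names, bin count, and occupancy values are hypothetical.

```python
def inverse_density_weights(targets, n_bins=10, lo=0.0, hi=100.0):
    """Weight each sample by the inverse frequency of its target-value bin.

    Weights are rescaled so that their mean is 1.
    """
    width = (hi - lo) / n_bins
    bins = [min(int((t - lo) / width), n_bins - 1) for t in targets]
    counts = {}
    for b in bins:
        counts[b] = counts.get(b, 0) + 1
    raw = [1.0 / counts[b] for b in bins]
    scale = len(raw) / sum(raw)
    return [w * scale for w in raw]


def weighted_mse(predictions, targets, weights):
    """Mean squared error in which rare target values count for more."""
    total = sum(w * (p - t) ** 2 for p, t, w in zip(predictions, targets, weights))
    return total / sum(weights)


def plain_mse(predictions, targets):
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)


# Hypothetical leaf occupancy (%) per voxel: mostly near-empty voxels.
targets = [0, 0, 0, 0, 2, 1, 80, 95]
weights = inverse_density_weights(targets)

# A degenerate model that always predicts "empty" looks better under plain MSE
# than under the weighted loss, because its errors sit on the rare dense voxels.
always_empty = [0] * len(targets)
unweighted = plain_mse(always_empty, targets)
weighted = weighted_mse(always_empty, targets, weights)
```

The weighted loss penalizes the always-empty predictor far more heavily than plain MSE does, which is the behavior that cost-sensitive training relies on.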

Experimental Protocols & Methodologies

Protocol: Self-Supervised Local View Planning for 3D Reconstruction

This protocol outlines the method for efficient robotic 3D plant reconstruction, which directly addresses the challenge of occlusion by actively planning views to maximize information gain [27].

Workflow Diagram: SSL-Local-NBV for Plant Reconstruction

Key Materials & Equipment:

- Robotic Platform: A mobile robotic arm or gantry system capable of precise camera positioning.

- Depth Sensor: An RGB-D camera (e.g., a structured light or Time-of-Flight camera like Microsoft Kinect) or a laser scanner for capturing 3D data [4].

- Computing Unit: A GPU-equipped computer for running the self-supervised deep learning model in near real-time.

- View Trajectory Network (VTN): A software module to memorize the history of visited views and prevent revisiting [27].

Protocol: Voxel-Based Leaf Area Density (LAD) Estimation from TLS

This protocol describes an indirect method for estimating LAD using tree QSMs, which is useful when direct leaf scanning is impractical [28].

Workflow Diagram: LAD Estimation from Winter Scans

Key Materials & Equipment:

- Terrestrial Laser Scanner (TLS): A high-precision TLS system (e.g., RIEGL VZ-400i) [29].

- QSM Extraction Software: Software for reconstructing quantitative structure models from point clouds (e.g., SimpleTree, TreeQSM).

- Hemispherical Camera: For measuring Leaf Area Index (LAI) to validate the predicted LAD values [28].

Table 1: Performance Comparison of 3D Reconstruction & Voxel Classification Methods

| Method / Approach | Key Performance Metric | Reported Performance | Primary Application Context |

|---|---|---|---|

| SSL-Local-NBV (Robotic View Planning) [27] | Trajectory Efficiency (vs. Global NBV) | 267% - 300% higher efficiency | Efficient 3D reconstruction of plants of varying sizes |

| SSL-Local-NBV (Robotic View Planning) [27] | Trajectory Distance Reduction per cycle | 56% - 70% reduction | Efficient 3D reconstruction of plants of varying sizes |

| HGBR Model for LAD Estimation [28] | Mean Absolute Error (MAE) | 16.33% | Predicting voxel-based Leaf Area Density (LAD) in plane trees |

| HGBR Model for LAD Estimation [28] | R-squared Score | 0.56 | Predicting voxel-based Leaf Area Density (LAD) in plane trees |

| Polygon vs. Voxel Reconstruction [29] | Correlation (r) with in-situ PAR measurements | Polygon: 0.92, Voxel (1m): 0.73 | Radiative transfer modeling for light extinction |

| AI-Generated 3D Leaf Models [11] | Coefficient of Determination (R²) for leaf area | 0.96 (on tomato plants) | Estimating total leaf area from 3D point clouds |

Table 2: Effect of Voxel Size on Estimation Error [18]

| Voxel Size | Relative Error Trend | Key Observation / Rationale |

|---|---|---|

| 0.25 / 0.5 meter | Significantly Higher | Higher errors, particularly within the canopy where structural variability is greatest. Fine details increase model complexity. |

| 2 meters | Significantly Lower | Reduced variability within each voxel leads to lower errors, but at the cost of losing fine-scale structural information. |

| General Rule | Application-Dependent | The choice represents a trade-off between predictive accuracy and computational complexity. Larger voxels are more efficient but less detailed. |

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Materials and Solutions for Plant Voxel Classification Research

| Item Name | Function / Purpose | Specific Example / Note |

|---|---|---|

| Terrestrial Laser Scanner (TLS) | Captures high-density 3D point clouds of plant and canopy structure. | RIEGL VZ-400i; used for direct reconstruction and deriving QSMs [28] [29]. |

| RGB-D Camera | Provides cost-effective 3D data capture for robotic view planning and smaller-scale phenotyping. | Microsoft Kinect (structured light or Time-of-Flight, depending on the version); used in controlled environments [4]. |

| Histogram-based Gradient Boosting Regressor (HGBR) | A machine learning model for predicting continuous variables like Leaf Area Density (LAD) from voxel features. | Demonstrates best performance for LAD prediction from QSM indexes [28]. |

| Kernel Point Convolution (KPConv) | A deep learning architecture for processing 3D point clouds and voxelized data. | Can be adapted for multi-target regression to estimate voxel content (bark, leaf, soil %) [18]. |

| View Trajectory Network (VTN) | A software component that memorizes the history of camera views visited by a robot. | Prevents redundant data collection, crucial for improving trajectory efficiency [27]. |

| DIRSIG Software | Physics-based simulation software for generating synthetic, radiometrically accurate LiDAR data. | Creates digital forest twins with precise ground truth for voxel content, overcoming the lack of real-world labeled data [18]. |

Advanced Methodologies for High-Fidelity Voxel Classification and Analysis

Multi-View Image Capture and Voxel-Grid Reconstruction Techniques

Troubleshooting Guides

Common Issues and Solutions in Multi-View Plant Phenotyping

Problem 1: Incomplete 3D Reconstruction with Missing Plant Parts

- Symptoms: Holes or missing sections in the reconstructed voxel-grid, particularly in areas with dense foliage or self-occluding leaves.

- Root Causes:

- Insufficient Viewpoints: Too few images fail to capture all angles of complex plant architectures [1].

- Inadequate Image Overlap: Adjacent images share less than the recommended 60% overlap, causing failures in feature matching [30].

- Poor Feature Matching: Lack of distinctive textures on leaves or inconsistent lighting can prevent successful feature matching in SfM pipelines [31].

- Solutions:

- Increase the number of viewpoints. For a rotating plant setup, decrease the rotation interval between shots (e.g., from 10° to 5°) [32].

- Ensure >60% overlap between consecutive images [30].

- Improve imaging conditions using controlled, diffuse LED lighting to create consistent textures and minimize shadows [30].

Problem 2: Poor Alignment of Multimodal Data (e.g., RGB with Depth/3D)

- Symptoms: Misalignment between different data modalities, such as spectral data not correctly mapped onto the corresponding parts of the 3D model.

- Root Cause: Parallax errors and occlusion effects complicate pixel-precise registration between different sensors [31].

- Solutions:

- Integrate depth information from a Time-of-Flight (ToF) camera into the registration process to mitigate parallax [31].

- Use an automated algorithm to identify and filter out various types of occlusions before final registration [31].

- Employ ray-casting techniques that leverage 3D information for more robust multimodal registration, making the process less reliant on plant-specific image features [31].

Problem 3: Voxel-Grid Reconstruction is Noisy or Over-Carved

- Symptoms: The reconstructed 3D model appears fragmented, with thin structures like stems disappearing, or contains significant noise.

- Root Cause: Traditional binary voxel carving is sensitive to small vibrations of leaves and imperfections in the image segmentation process. Even minor errors can cause large regions to be incorrectly carved away [33].

- Solutions:

- Implement a probabilistic voxel carving algorithm. Instead of binary decisions, this method assigns a probability to each voxel representing its likelihood of being part of the plant. A user-defined probability threshold is then used to determine the final geometry, making the process robust to noise [33].

- Apply morphological operations (e.g., dilation) to the binary plant silhouette masks before carving to reduce the chance of over-carving thin structures [33].

- Leverage GPU computing to allow for high-resolution voxel grids (e.g., 1024³), which can better capture fine details without being prohibitively slow [33].
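The difference between binary and probabilistic carving can be seen in a toy example. This is a deliberately simplified sketch and not the algorithm from [33]: it scores each voxel by the fraction of views whose silhouette contains its projection, and it assumes three axis-aligned orthographic views instead of calibrated perspective cameras. The function name `carve` and the 2 x 2 x 2 scene are hypothetical.

```python
def carve(grid_shape, views, threshold):
    """Keep voxels whose silhouette-hit fraction reaches the threshold.

    views: list of (silhouette, project) pairs, where silhouette is a 2D list
    of 0/1 values and project(x, y, z) returns the (row, col) pixel of a voxel.
    """
    nx, ny, nz = grid_shape
    kept = {}
    for x in range(nx):
        for y in range(ny):
            for z in range(nz):
                hits = 0
                for silhouette, project in views:
                    row, col = project(x, y, z)
                    inside = (0 <= row < len(silhouette)
                              and 0 <= col < len(silhouette[0]))
                    if inside and silhouette[row][col]:
                        hits += 1
                probability = hits / len(views)
                if probability >= threshold:
                    kept[(x, y, z)] = probability
    return kept


# Hypothetical scene: a vertical stem occupying voxels (0, 0, 0) and (0, 0, 1).
front = [[1, 0], [1, 0]]  # rows = z, cols = x
side = [[1, 0], [0, 0]]   # rows = z, cols = y; the z = 1 row was lost to a segmentation error
top = [[1, 0], [0, 0]]    # rows = y, cols = x
views = [
    (front, lambda x, y, z: (z, x)),
    (side, lambda x, y, z: (z, y)),
    (top, lambda x, y, z: (y, x)),
]

binary_result = carve((2, 2, 2), views, threshold=1.0)  # every view must agree
robust_result = carve((2, 2, 2), views, threshold=0.6)  # two of three views suffice
```

With a threshold of 1.0 (equivalent to binary carving) the upper stem voxel is carved away because one mask missed it; lowering the threshold to 0.6 recovers it without admitting any of the empty voxels.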

Problem 4: Inaccurate Scale and Dimensional Measurements

- Symptoms: The 3D model is geometrically distorted, and extracted phenotypic traits (e.g., plant height, leaf area) do not match physical measurements.

- Root Cause: The reconstruction process does not have an accurate real-world scale reference.

- Solutions:

- Include a calibration object of known dimensions, like a measurement bar with coded targets, in the scene during image capture. The known distance between targets provides a scale for the entire model [32].

- Perform rigorous internal and external camera calibration to correct for lens distortion and determine precise camera positions and orientations [32].
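Recovering metric scale from a calibration object reduces to a single ratio: the known distance between two targets divided by their distance in the unscaled model. The sketch below illustrates that arithmetic; the function names, target coordinates, and the 0.5 m bar length are hypothetical.

```python
from math import dist


def scale_factor(target_a, target_b, known_distance):
    """Ratio of the real-world distance to the distance measured in the model."""
    return known_distance / dist(target_a, target_b)


def apply_scale(points, factor):
    """Rescale model coordinates into real-world units."""
    return [tuple(c * factor for c in p) for p in points]


# Hypothetical coded targets, 5 model units apart, on a bar known to be 0.5 m long.
factor = scale_factor((0.0, 0.0, 0.0), (0.0, 3.0, 4.0), known_distance=0.5)

# A plant whose tip lies 12 model units above its base.
base, tip = apply_scale([(0.0, 0.0, 0.0), (0.0, 0.0, 12.0)], factor)
plant_height_m = tip[2] - base[2]
```

Because a single factor is applied to all axes, this corrects scale only; geometric distortion from poor camera calibration has to be addressed separately.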

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between "plant to camera" and "camera to plant" imaging modes, and which should I choose?

A1: The choice involves a trade-off between accuracy and practicality.

- Plant to Camera: The plant is placed on a rotating turntable with fixed cameras. This is simpler and requires less space but can cause plant vibration, leading to motion blur and poorer reconstruction quality for tall or flexible plants [30].

- Camera to Plant: The plant remains static while one or more cameras move around it. This mode is generally more accurate and robust because it eliminates plant movement, supporting in-situ and non-destructive measurement. However, it requires a more complex setup, such as a robotic arm, and must address potential cable management issues [30].

- Recommendation: For small, sturdy plants like seedlings, a turntable ("plant to camera") is sufficient. For larger plants or those with complex, flexible architectures like wheat or mature maize, the "camera to plant" mode is superior [30].

Q2: How many images are typically required for a high-quality 3D reconstruction of a plant?

A2: The required number of images depends on plant architectural complexity rather than a fixed count.

- Basic Guideline: Capture images with at least 60% overlap on the same level and 50% overlap between different levels [30].

- Example Setup: One study achieved successful reconstruction of maize plants by rotating the plant 360 degrees in 5-degree intervals, resulting in 72 images per camera [32]. Another study using a robotic system generated camera poses on a sphere around the object, ensuring comprehensive coverage [34]. The key is to ensure all plant organs, especially those prone to self-occlusion, are visible from multiple points of view [1].
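The relationship between rotation interval and image count in the turntable example is simple arithmetic, sketched below. The function names are hypothetical, and the sketch assumes the interval divides 360 evenly.

```python
def views_per_camera(step_degrees):
    """Number of images from one full turntable rotation at a fixed interval."""
    if step_degrees <= 0 or 360 % step_degrees != 0:
        raise ValueError("step must be a positive divisor of 360")
    return 360 // step_degrees


def turntable_angles(step_degrees):
    """Turntable angles (in degrees) at which images are captured."""
    return list(range(0, 360, step_degrees))


coarse = views_per_camera(10)  # 36 images per camera
fine = views_per_camera(5)     # 72 images per camera, as in the maize example
angles = turntable_angles(5)
```

Halving the interval doubles the number of images per camera, so processing time and storage grow accordingly.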

Q3: My voxel-grid reconstruction is computationally expensive and slow. How can I improve efficiency?

A3: Consider the following optimizations:

- Algorithm Choice: Use a probabilistic voxel carving approach, which can be more efficient and robust than traditional methods [33].

- Hardware Acceleration: Leverage GPU computing to parallelize the voxel carving process. For very high-resolution grids, implement space partitioning to process the data in manageable batches [33].

- Data Structures: Employ efficient data structures like octrees to speed up computations by orders of magnitude, as demonstrated in 3D reconstruction of plant shoots [33].
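
The space-partitioning idea can be sketched as a generator that splits a large grid into fixed-size blocks, each of which could then be carved separately (for example, one block per GPU batch). The grid shape and block size are hypothetical; this is not the partitioning scheme of the cited pipeline.

```python
from itertools import product

def chunk_ranges(length, chunk):
    """Split [0, length) into consecutive (start, stop) ranges."""
    return [(start, min(start + chunk, length)) for start in range(0, length, chunk)]

def grid_chunks(shape, chunk):
    """Yield ((x0, x1), (y0, y1), (z0, z1)) index ranges covering the grid."""
    per_axis = [chunk_ranges(length, chunk) for length in shape]
    yield from product(*per_axis)

# Hypothetical 512 x 512 x 1024 grid processed in 256-voxel blocks.
chunks = list(grid_chunks((512, 512, 1024), 256))
```

Each block can be processed independently, so peak memory is bounded by the block size rather than by the full grid.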

Q4: What are the key considerations for choosing a voxel size?

A4: Voxel size represents a trade-off between detail and computational load.

- Small Voxels (e.g., 0.25-0.5 m): Capture more structural detail and nuance but result in higher computational cost, storage requirements, and greater estimation error, particularly within dense canopies where variability is high [18].

- Large Voxels (e.g., 2 m): Lead to lower errors due to averaged values and reduced variability, but this comes at the cost of losing fine-scale structural information [18].

- Recommendation: The choice is application-dependent. If the goal is to analyze overall canopy structure, larger voxels may suffice. If analyzing fine-grained organ-level architecture, smaller voxels are necessary, acknowledging the associated increase in computational complexity and potential error [18].
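
To make the computational side of this trade-off concrete, the sketch below counts voxels for a fixed bounding volume at several voxel sizes. The 10 m x 10 m x 20 m extent and the one-byte-per-voxel storage are assumptions chosen purely for illustration.

```python
import math

def grid_stats(extent_m, voxel_size_m, bytes_per_voxel=1):
    """Return (grid dimensions, voxel count, memory in bytes)."""
    dims = tuple(math.ceil(edge / voxel_size_m) for edge in extent_m)
    count = dims[0] * dims[1] * dims[2]
    return dims, count, count * bytes_per_voxel

extent = (10.0, 10.0, 20.0)  # hypothetical scene extent in metres
for size in (2.0, 0.5, 0.25):
    dims, count, memory = grid_stats(extent, size)
    print(f"{size} m voxels: grid {dims}, {count} voxels, {memory} bytes")
```

Halving the voxel edge length multiplies the voxel count by eight, which is why fine grids become expensive so quickly.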

Experimental Protocols & Methodologies

This protocol is designed for efficient and robust 3D model reconstruction of plants from multi-view images, specifically addressing noise and scalability issues.

Step 1: Multi-view Image Acquisition

- Mount the plant on a rotating platform.

- Use a fixed, calibrated camera to capture a video of the plant completing a full rotation.

- Extract frames from the video at regular intervals to obtain an arbitrary number of views.

Step 2: Image Pre-processing

- For each extracted image, generate a binary silhouette mask of the plant against a contrasting background.

- Apply morphological dilation to the masks to reduce the risk of over-carving thin structures like leaves.
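
A minimal sketch of the dilation step, written with NumPy only and assuming a 3x3 structuring element (the protocol does not specify one). Libraries such as SciPy provide an equivalent `scipy.ndimage.binary_dilation`.

```python
import numpy as np

def dilate(mask, iterations=1):
    """Binary dilation of a 2D mask with a 3x3 structuring element."""
    out = mask.astype(bool)
    height, width = out.shape
    for _ in range(iterations):
        padded = np.pad(out, 1, mode="constant", constant_values=False)
        grown = np.zeros_like(out)
        # OR together the nine shifted copies of the mask.
        for dy in (0, 1, 2):
            for dx in (0, 1, 2):
                grown |= padded[dy:dy + height, dx:dx + width]
        out = grown
    return out

# Hypothetical silhouette: a one-pixel-wide vertical leaf edge.
thin_leaf = np.zeros((7, 7), dtype=bool)
thin_leaf[1:6, 3] = True
dilated = dilate(thin_leaf)
```

Dilation widens thin structures so that small silhouette errors in one view are less likely to carve them away, at the cost of a slightly inflated model.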

Step 3: Probabilistic Voxel Carving

- Define a 3D voxel grid encompassing the entire plant volume.

- For each voxel, compute the probability that it belongs to the plant, based on the number of camera views in which the voxel projects onto a plant pixel of the silhouette mask.

- Apply a user-defined probability threshold to the grid to obtain the final, carved voxelized plant model.
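
The carving step can be sketched as follows. This is a simplified illustration, not the cited pipeline: it assumes that the probability is the fraction of views whose silhouette contains the projected voxel centre, that each camera is described by a known 3x4 projection matrix, and that the two toy orthographic views are hypothetical.

```python
import numpy as np

def carve(voxel_centers, projections, masks, threshold=0.8):
    """Return (probability, occupied) for each voxel centre.

    voxel_centers: (N, 3) array of world coordinates.
    projections:   list of 3x4 camera matrices (assumed known from calibration).
    masks:         list of (H, W) boolean silhouette masks, one per camera.
    """
    n = len(voxel_centers)
    homogeneous = np.hstack([voxel_centers, np.ones((n, 1))])
    hits = np.zeros(n)
    for P, mask in zip(projections, masks):
        uvw = homogeneous @ P.T
        w = uvw[:, 2]
        front = w > 0  # ignore voxels behind the camera
        u = np.full(n, -1)
        v = np.full(n, -1)
        u[front] = np.round(uvw[front, 0] / w[front]).astype(int)
        v[front] = np.round(uvw[front, 1] / w[front]).astype(int)
        height, width = mask.shape
        inside = front & (u >= 0) & (u < width) & (v >= 0) & (v < height)
        hits[inside] += mask[v[inside], u[inside]]
    probability = hits / len(masks)
    return probability, probability >= threshold

# Toy scene: a 4x4x4 grid seen by two orthographic cameras.
axis = np.arange(4)
centers = np.stack(np.meshgrid(axis, axis, axis, indexing="ij"), axis=-1)
centers = centers.reshape(-1, 3).astype(float)
view_along_z = np.array([[1, 0, 0, 0], [0, 1, 0, 0], [0, 0, 0, 1]], dtype=float)
view_along_x = np.array([[0, 0, 1, 0], [0, 1, 0, 0], [0, 0, 0, 1]], dtype=float)
silhouette = np.zeros((4, 4), dtype=bool)
silhouette[1:3, 1:3] = True
views = [view_along_z, view_along_x]
probability, occupied = carve(centers, views, [silhouette, silhouette], threshold=1.0)
```

Lowering the threshold keeps voxels that are supported by only some of the views, which tolerates noisy masks but yields a looser model.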

Step 4: Trait Extraction

- Use the resulting voxel-grid to compute morphological traits such as the number of leaves, leaf angles, plant height, and biomass.
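
Two of the simpler traits can be read directly from a boolean occupancy grid. The sketch below assumes the third array axis is vertical and uses occupied volume only as a crude stand-in for biomass. Leaf counts and leaf angles require organ-level analysis that is not shown here.

```python
import numpy as np

def height_and_volume(occupancy, voxel_size):
    """Return (plant height, occupied volume) in units of voxel_size."""
    z_indices = np.nonzero(occupancy)[2]
    if z_indices.size == 0:
        return 0.0, 0.0
    height = float(z_indices.max() - z_indices.min() + 1) * voxel_size
    volume = float(occupancy.sum()) * voxel_size ** 3
    return height, volume

# Hypothetical carved model: a 2 x 2 x 5 block of occupied voxels.
grid = np.zeros((4, 4, 10), dtype=bool)
grid[1:3, 1:3, 2:7] = True
height, volume = height_and_volume(grid, voxel_size=0.5)
```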

This protocol outlines a method for generating high-quality, concentric multi-view datasets ideal for 3D reconstruction models like NeRF and 3D Gaussian Splatting, using robotic arms for precise camera positioning.

Step 1: Scene Configuration

- Define the object's size and its position in the robot's World Coordinate System (WCS).

- Set the capture radius, determining the distance of the camera from the object's center.

Step 2: Camera Pose Generation

- Execute a sphere generation algorithm. This creates a set of target camera poses on a sphere around the object, using "rings" (horizontal levels) and "sectors" (vertical slices). All poses are oriented towards the object's center.
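
One way to generate such poses is sketched below. It assumes evenly spaced rings in polar angle (excluding the poles) and evenly spaced sectors in azimuth; the actual algorithm in the cited system may differ, and a real setup would typically discard poses the robot cannot reach.

```python
import math

def sphere_poses(center, radius, rings, sectors):
    """Return (position, look_direction) pairs on a sphere around center."""
    poses = []
    for ring in range(rings):
        polar = math.pi * (ring + 1) / (rings + 1)  # skip the two poles
        for sector in range(sectors):
            azimuth = 2.0 * math.pi * sector / sectors
            offset = (
                radius * math.sin(polar) * math.cos(azimuth),
                radius * math.sin(polar) * math.sin(azimuth),
                radius * math.cos(polar),
            )
            position = tuple(c + o for c, o in zip(center, offset))
            # Unit vector pointing from the camera back to the object centre.
            look_direction = tuple(-o / radius for o in offset)
            poses.append((position, look_direction))
    return poses

# Hypothetical configuration: object centred 0.5 m above the robot base.
object_center = (0.0, 0.0, 0.5)
poses = sphere_poses(object_center, radius=1.2, rings=3, sectors=8)
```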

Step 3: Robot Traversal and Image Capture

- The system assigns each camera pose to the most suitable robot based on reachability.

- Robots traverse to their assigned poses in parallel or sequence.

- At each target pose, the system captures an RGB image and, if supported, a depth map.

Step 4: Camera Pose Refinement with COLMAP

- Feature Extraction & Matching: Use COLMAP to extract features from all images and match them based on pre-defined image pairs (adjacent poses).

- Point Cloud Triangulation: Triangulate matched features to generate a sparse 3D point cloud.

- Bundle Adjustment: Refine the recorded camera poses through bundle adjustment based on the point cloud. This step is iterative, with each iteration using the refined poses for better triangulation.

- The final output is a set of images and highly accurate, refined camera poses.

Workflow Visualization

[Figure: Multi-View 3D Plant Phenotyping Workflow]

Research Reagent Solutions: Essential Materials for 3D Plant Imaging

The following table details key hardware and software components used in advanced multi-view plant phenotyping setups.

| Item Name | Type | Function/Application | Key Specifications |
|---|---|---|---|
| PlantEye F500/F600 [35] | Integrated 3D Scanner | Automated, non-destructive plant phenotyping; combines 3D laser scanning with multispectral imaging. | 3D + 4 spectral bands (RGB & NIR), IP65 rating, operational in direct sunlight. |
| Multi-View Robotic Imaging Setup [34] | Hardware & Software Platform | Captures concentric multi-view images for high-quality 3D reconstruction (NeRF, 3DGS). | ROS/MoveIt control, support for multiple robots/turntables, integrated COLMAP refinement. |
| MVS-Pheno V2 Platform [30] | Phenotyping Platform | High-throughput phenotyping for low plants using "camera-to-plant" mode. | Controlled imaging box, wireless communication, automated data processing pipeline. |
| All-Around 3D Modeling Studios [32] | Custom Imaging System | Non-contact 3D modeling of plants from a few mm to 2.4 m height using SfM-MVS. | Scalable design (2-8 cameras), integrated measurement bar for scale/calibration. |
| COLMAP [34] | Software | A state-of-the-art Structure-from-Motion (SfM) and Multi-View Stereo (MVS) pipeline. | Used for feature matching, sparse/dense reconstruction, and camera pose refinement. |
| 3D Gaussian Splatting (3DGS) [36] | Software / Algorithm | A state-of-the-art method for high-fidelity 3D reconstruction from multi-view images. | Real-time rendering, explicit scene representation, superior to NeRF in speed/quality. |
| Probabilistic Voxel Carving Pipeline [33] | Software / Algorithm | Robust 3D voxel-grid reconstruction from multi-view images, resistant to noise. | GPU-accelerated, handles arbitrary number of views, open-source. |

Troubleshooting Guide: FAQs for 3D Plant Imaging

This section addresses common technical challenges researchers face when implementing 3D-CNNs and Neural Architecture Search (NAS) for voxel classification in plant phenotyping.

Q1: My 3D-CNN model for plant organ segmentation is overfitting, despite using data augmentation and dropout. What else can I do?

Overfitting in 3D-CNNs is a common issue, often due to the high model complexity relative to the available 3D plant data [37]. Beyond the steps you've taken, consider these strategies:

- Incorporate Stronger Regularization: In addition to dropout, apply L1 or L2 weight regularization (e.g., weight decay) in your convolutional and fully connected layers to penalize overly complex models [38].

- Use Architectural Best Practices: Integrate batch normalization layers after convolutions and before activation functions. This stabilizes training and acts as a regularizer, often improving generalization [37].

- Simplify the Model: Manually reduce the number of parameters by decreasing the number of layers or filters in your 3D-CNN. A simpler model is less prone to overfitting, especially with limited datasets [37].

- Leverage Synthetic Data: Augment your training set with AI-generated 3D leaf models. Generative models can create lifelike 3D leaf point clouds with known geometric traits, providing diverse and effectively unlimited training data that closely mimics real-world variability [11].
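
To illustrate the weight-regularization item above, the sketch below shows how an L2 penalty enters a plain gradient-descent update. It is framework-agnostic and the numbers are hypothetical; deep learning frameworks usually expose the same idea as a weight-decay option on the optimizer.

```python
import math

def l2_penalty(weights, weight_decay):
    """Penalty term added to the loss: (weight_decay / 2) * sum(w^2)."""
    return 0.5 * weight_decay * sum(w * w for w in weights)

def sgd_step(weights, grads, learning_rate, weight_decay):
    """One SGD update; the penalty's gradient is weight_decay * w."""
    return [
        w - learning_rate * (g + weight_decay * w)
        for w, g in zip(weights, grads)
    ]

# With zero data gradient, the update only shrinks the weights toward zero.
weights = [1.0, -2.0]
updated = sgd_step(weights, grads=[0.0, 0.0], learning_rate=0.1, weight_decay=0.5)
penalty = l2_penalty(weights, weight_decay=0.5)
```

The shrinkage toward zero is what discourages the large weights typical of an overfitted model.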

Q2: I have limited computational resources. How can I implement Neural Architecture Search (NAS) for my plant phenotyping project?

Traditional NAS can be computationally expensive, but several strategies make it feasible with limited resources:

- Adopt a Weight-Sharing Supernetwork: Instead of training each candidate architecture from scratch, use a framework where all architectures share weights within a single, over-parameterized "supernetwork." This reduces the search cost dramatically [39].

- Apply Evolutionary Search with Constraints: Use evolutionary algorithms to search for optimal architectures while incorporating direct constraints on the memory footprint and latency (inference time). This allows you to find a model that balances performance with your specific resource limits [39].

- Explore Bi-Level Optimization: Consider an Evolutionary Bi-Level NAS framework. This approach simultaneously optimizes the network's architecture (upper level) and its weights (lower level), efficiently discovering compact and effective models that can achieve up to a 99.66% reduction in model size while maintaining competitive performance [38].
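
The constrained evolutionary search can be sketched on a toy search space. Everything here is hypothetical: the (depth, width) encoding, the memory and latency proxies, and the `score` function, which stands in for a validation metric that a real system would obtain from a supernetwork or surrogate model.

```python
import math
import random

DEPTHS = [2, 3, 4, 5, 6]
WIDTHS = [16, 32, 64, 128]

def memory_mb(arch):
    depth, width = arch
    return depth * width * width / 1024  # hypothetical memory proxy

def latency_ms(arch):
    depth, width = arch
    return depth * width / 16  # hypothetical latency proxy

def score(arch):
    depth, width = arch
    return depth * math.log2(width)  # stand-in for validation mIoU

def mutate(arch, rng):
    """Resample either the depth or the width of a candidate."""
    depth, width = arch
    if rng.random() < 0.5:
        return (rng.choice(DEPTHS), width)
    return (depth, rng.choice(WIDTHS))

def evolve(max_memory_mb, max_latency_ms, generations=30, pop_size=8, seed=0):
    rng = random.Random(seed)

    def feasible(arch):
        return memory_mb(arch) <= max_memory_mb and latency_ms(arch) <= max_latency_ms

    # Start from the smallest architecture, assumed to satisfy the constraints.
    population = [(DEPTHS[0], WIDTHS[0])]
    for _ in range(generations):
        children = [mutate(rng.choice(population), rng) for _ in range(pop_size)]
        children = [child for child in children if feasible(child)]
        # Elitist selection: keep the best feasible candidates seen so far.
        population = sorted(set(population + children), key=score, reverse=True)[:pop_size]
    return population[0]

best = evolve(max_memory_mb=32, max_latency_ms=25)
```

Rejecting infeasible children before selection means every architecture that survives already fits the stated memory and latency budget.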

Q3: My 3D plant segmentation model performs well on synthetic data but poorly on real-world point clouds. How can I improve sim-to-real generalization?

This "sim-to-real" gap is a significant challenge in 3D plant phenotyping [23]. To bridge it:

- Use High-Fidelity Synthetic Data: Ensure your synthetic data is generated with high biological accuracy. Methods that use real plant skeletons and statistical models of leaf shapes (like Gaussian mixture models) create more realistic point clouds than purely rule-based generators [11].

- Incorporate Realistic Noise and Occlusion: Your synthetic data generation pipeline should model the specific noise patterns, occlusions, and point density variations found in your real-world sensor data (e.g., from LiDAR or multi-view stereo) [16].

- Employ Sim-to-Real Learning Strategies: Combine modeling-based and augmentation-based synthetic data generation. Fine-tune a model pre-trained on high-quality synthetic data using a smaller set of real, annotated plant point clouds. This leverages the abundance of synthetic data while adapting to real-world distributions [23].
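
As a minimal sketch of degrading clean synthetic point clouds toward sensor-like data, the function below adds Gaussian jitter and random point dropout. It models only noise and density variation, not structured occlusion, and the parameter values are hypothetical and would need to be fitted to the real sensor.

```python
import random

def degrade(points, sigma, drop_fraction, seed=0):
    """Randomly drop points, then jitter the survivors with Gaussian noise."""
    rng = random.Random(seed)
    kept = [p for p in points if rng.random() >= drop_fraction]
    return [tuple(c + rng.gauss(0.0, sigma) for c in p) for p in kept]

# Hypothetical synthetic leaf: 100 points along a straight midrib.
clean = [(0.01 * i, 0.0, 0.0) for i in range(100)]
noisy = degrade(clean, sigma=0.002, drop_fraction=0.3)
```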

Experimental Protocols for Key Tasks

Protocol 1: Neural Architecture Search for Plant Part Segmentation

This protocol outlines the method to automatically design a 3D neural network for segmenting plant parts from point cloud data [39].

- Objective: To automatically find an optimal 3D deep learning architecture for segmenting individual plant parts (e.g., leaves, stems) from LiDAR or other 3D point cloud data.

- Materials: 3D point cloud dataset of plants (e.g., cotton plants) with annotated plant parts.

Methodology:

- Define Search Space: Use Point Voxel Convolution (PVConv) as the fundamental building block for the network. The search space consists of various stacks and configurations of these blocks.

- Construct Supernetwork: Build a single, weight-sharing supernetwork that encompasses all possible architectures within the defined search space.

- Perform Evolutionary Search: Run an evolutionary algorithm to explore candidate architectures. Use surrogate models to predict each candidate's performance (e.g., mean Intersection-over-Union, mIoU), latency, and memory usage.

- Select and Evaluate: Select the best-performing architecture that meets any predefined resource constraints. Finally, train the selected architecture from scratch on the target dataset for evaluation.

Expected Outcome: A tailored neural network that outperforms manually designed models, achieving high accuracy (>94%) and mean IoU (>90%) for plant part segmentation while respecting hardware limitations [39].
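
The two metrics named in the expected outcome can be computed from per-point labels as sketched below. The label lists are hypothetical, and classes absent from both prediction and ground truth are skipped when averaging, which is one common convention rather than the cited study's stated choice.

```python
def accuracy(pred, truth):
    """Fraction of points whose predicted label matches the ground truth."""
    return sum(p == t for p, t in zip(pred, truth)) / len(truth)

def mean_iou(pred, truth, num_classes):
    """Mean Intersection-over-Union across classes present in pred or truth."""
    ious = []
    for cls in range(num_classes):
        intersection = sum(p == cls and t == cls for p, t in zip(pred, truth))
        union = sum(p == cls or t == cls for p, t in zip(pred, truth))
        if union > 0:
            ious.append(intersection / union)
    return sum(ious) / len(ious) if ious else 0.0

# Hypothetical labels: 0 = stem, 1 = leaf, 2 = boll.
truth = [0, 0, 1, 1, 1, 2]
pred = [0, 1, 1, 1, 2, 2]
miou = mean_iou(pred, truth, num_classes=3)
acc = accuracy(pred, truth)
```

Accuracy can look high when one class dominates the point cloud, which is why mIoU is usually reported alongside it.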

Protocol 2: Generating Synthetic 3D Leaf Point Clouds for Trait Estimation

This protocol describes a generative approach to create synthetic 3D leaf data to overcome the bottleneck of manual annotation [11].

- Objective: To generate realistic, labeled 3D leaf point clouds for training and improving leaf trait estimation algorithms.

- Materials: A dataset of real plant leaves (e.g., sugar beet, maize, tomato) with extracted leaf skeletons (petiole and main/lateral axes).

Methodology:

- Skeleton Extraction: Process real leaf point clouds to extract their central skeletons, which define the underlying shape and structure.

- Model Training: Train a 3D U-Net convolutional neural network to learn the mapping from a leaf skeleton to a complete, dense leaf point cloud. The model predicts per-point offsets to expand the skeleton.

- Synthetic Data Generation: Use the trained model to generate diverse, lifelike leaf point clouds. The generation can be conditioned on user-defined traits (e.g., length, width) to create a controlled dataset.

- Validation: Validate the synthetic data by comparing it to real leaf data using metrics like Fréchet Inception Distance (FID). Use the synthetic data to fine-tune existing trait estimation models and measure the improvement in accuracy on real-world test data.

Expected Outcome: A scalable source of high-quality 3D leaf data that improves the accuracy and precision of algorithms for estimating traits like leaf length and width when real annotated data is scarce [11].

Table 1: Performance of Neural Architecture Search (NAS) in 3D Plant and Medical Imaging Applications