Navigating the Data Deluge: Overcoming Key Data Handling Challenges in High-Throughput Plant Phenotyping

Abstract

High-throughput phenotyping (HTP) generates massive, complex datasets, creating significant bottlenecks in data management, standardization, and analysis that can hinder crop improvement and breeding programs. This article analyzes the core data challenges for researchers and scientists, from foundational volume and variety issues to methodological applications of AI and cloud platforms. It offers practical troubleshooting strategies for data complexity and cost barriers, and explores validation frameworks and comparative technology assessments to guide investment and implementation, with the ultimate aim of unlocking the full potential of HTP for developing climate-resilient crops.

The Scale of the Challenge: Understanding the Data Bottleneck in Modern Phenotyping

Modern plant phenotyping, which involves the comprehensive assessment of complex plant traits such as development, growth, architecture, and yield, is generating unprecedented amounts of data [1]. The shift from traditional, manual phenotyping to automated high-throughput phenotyping (HTP) systems has ushered in a "big data" era characterized by three fundamental challenges: Volume, Variety, and Velocity [2]. These "Three Vs" present both tremendous opportunities and significant hurdles for researchers aiming to enhance crop improvement and ensure global food security [3] [2]. Effectively managing these dimensions is crucial for unlocking the potential of predictive breeding and precision agriculture [3].

The following table summarizes the key quantitative aspects of the plant phenotyping landscape, illustrating the scale and growth of this field:

Table 1: The Scale of High-Throughput Plant Phenotyping

| Aspect | Quantitative Metric | Context & Significance |
|---|---|---|
| Market Value | Projected to reach USD 161.6 million by 2025 with a CAGR of 6.3% (2019-2033) [4] | Indicates substantial and growing investment in phenotyping technologies and research. |
| Global Phenotyping Platforms | Nearly 200 large-scale facilities worldwide (as of 2016 statistics) [5] | Includes both indoor (≈82) and European field (≈81) mechanized platforms, forming the physical infrastructure for data generation. |
| Research Focus | Only 23% of phenotyping research publications focus on dicotyledonous crops [5] | Highlights a significant research gap despite dicots comprising 4/5 of angiosperm species and including major crops like soybean and cotton. |
| Data Generation Context | A single drone flight over a crop can generate gigabytes of data [2]. Genomic data involves thousands or millions of DNA SNPs [2]. | Provides concrete examples of the massive Volume of data generated by modern phenotyping and genomic tools. |

FAQ: Core Concepts and Troubleshooting

FAQ 1: What exactly do the "Three Vs" mean in the context of my phenotyping research?

- Volume refers to the immense quantity of data generated. For example, imaging from a drone flying over a crop weekly or genomic information based on millions of DNA markers creates datasets that are challenging to store, process, and analyze with traditional methods and infrastructure [2].

- Variety describes the diversity of data types. A single research project might combine genomic sequences, hyperspectral images, geospatial coordinates, farm management metadata, and climate data. This complexity requires different tools and procedures for each data type and poses challenges for integration [2] [6].

- Velocity has two key aspects: the speed at which new data is generated and must be analyzed, and the need for rapid computational processing to deliver timely insights for breeding decisions. Slow analysis can bottleneck the entire research pipeline [2].

FAQ 2: I am overwhelmed by the Volume of my image data. What are the first steps to manage this?

- Infrastructure Planning: Anticipate data growth and invest in adequate storage and computing resources. The solution is not to collect less data but to improve infrastructure to handle it [2].

- Data Sampling: Employ intelligent data sampling strategies during initial analysis to make large datasets more manageable without losing critical information [2].

- Automated Processing: Utilize automated image analysis pipelines and machine learning models to extract phenotypic traits from thousands of images non-destructively, replacing manual, labor-intensive methods [1].
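The data sampling step can be illustrated with a minimal sketch. It assumes each image is tagged with a plot identifier and draws the same number of images from every plot, so that an exploratory subset still covers the whole trial. The function name, the `(plot_id, path)` record layout, and the file names are hypothetical, not taken from any cited tool.

```python
import random
from collections import defaultdict


def sample_images_per_plot(image_records, per_plot, seed=0):
    """Pick up to `per_plot` images from every plot, reproducibly.

    image_records: iterable of (plot_id, image_path) tuples (assumed layout).
    Returns a dict mapping plot_id -> sorted list of sampled paths.
    """
    rng = random.Random(seed)  # fixed seed so the subset can be regenerated
    by_plot = defaultdict(list)
    for plot_id, path in image_records:
        by_plot[plot_id].append(path)

    sampled = {}
    for plot_id in sorted(by_plot):
        paths = sorted(by_plot[plot_id])
        k = min(per_plot, len(paths))  # small plots contribute all they have
        sampled[plot_id] = sorted(rng.sample(paths, k))
    return sampled


# Hypothetical example: 3 plots with 10 images each, keep 2 per plot.
records = [(f"plot_{p}", f"plot_{p}/img_{i:03d}.png")
           for p in range(3) for i in range(10)]
subset = sample_images_per_plot(records, per_plot=2)
```

Sampling within each plot, rather than across the pooled image set, keeps rare plots or genotypes from dropping out of the subset by chance.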

FAQ 3: How can I handle the Variety of data from different sensors and platforms?

- Adopt FAIR Principles: Make your data Findable, Accessible, Interoperable, and Reusable. This is especially important for phenotypic data, which often lacks global standards [6].

- Use Integrated Tools: Invest in software and platforms that help consolidate multiple data sources into a unified dataset for analysis [2].

- Leverage Machine Learning: Machine and deep learning approaches are adept at handling large, heterogeneous datasets and can automatically extract features and identify patterns across different data types [1].

FAQ 4: The Velocity of my data analysis is too slow, hindering breeding decisions. What can I do?

- Optimize Computational Speed: Ensure your statistical software and algorithms are efficient and capable of handling large arrays of data. This might require upgrading hardware or using cloud-based computing resources [2].

- Implement Real-time Analytics: For precision breeding applications, explore streaming data platforms and models that can analyze data as it is collected, enabling immediate intervention [2].

- Focus on Robust Data Collection: Build comprehensive and well-documented datasets. This allows for faster reaction to new challenges, as the necessary information is already available to explore and develop solutions rapidly [2].

Experimental Protocols for Managing the Three Vs

Protocol 1: An Integrated Workflow for Field-Based High-Throughput Phenotyping

This protocol outlines a methodology for collecting and managing multi-source data in a field environment, directly addressing the Three Vs.

1. Experimental Design and Platform Selection:

- Select a phenotyping platform appropriate for the scale and traits of interest. Options include ground-based vehicles for high-resolution data, UAVs (drones) for rapid coverage of large fields, and sensor networks for continuous monitoring [5] [1].

- Establish a rigorous schedule for data capture across key plant developmental stages to ensure temporal consistency.

2. Multi-Sensor Data Acquisition:

- Equip the chosen platform with a suite of sensors to capture data Variety:

- RGB Cameras: For capturing morphological traits and plant architecture [5] [1].

- Hyperspectral Sensors: For assessing physiological and biochemical indicators such as chlorophyll and water content [1].

- Thermal Cameras: For monitoring plant temperature as an indicator of water stress [1].

- LiDAR: For creating 3D models of plant canopy structure [5].

- Geotag all data captures using onboard GPS to add spatial context.

3. Data Management and Pre-processing:

- Establish a centralized data repository to handle the data Volume. Use automated pipelines for basic pre-processing:

- Image stitching and orthomosaicking for UAV imagery.

- Sensor calibration and geo-referencing.

- Data cleaning to handle errors and inconsistencies inherent in complex datasets [2].

4. Trait Extraction and Data Analysis:

- Use machine learning (e.g., Random Forests for tabular data) and deep learning models (e.g., Convolutional Neural Networks for image data) to automatically extract phenotypic traits from the raw sensor data [1].

- Integrate the extracted phenotypic data with genomic and environmental data to build models for genetic gain [3].

Protocol 2: Implementing a Machine Learning Pipeline for Phenotypic Trait Extraction

This protocol provides a detailed methodology for using AI to manage data Volume and Velocity in image-based phenotyping.

1. Data Preparation and Annotation:

- Image Collection: Compile a large dataset of images from your phenotyping platform (Volume).

- Annotation: Manually label a subset of images to create ground truth data for training. For example, annotate individual leaves, fruits, or diseased areas. This is a labor-intensive but critical step.

2. Model Selection and Training:

- Model Choice: For image-based tasks, select a Deep Convolutional Neural Network (CNN) architecture, which is the state-of-the-art for image classification and segmentation [1].

- Training: Train the CNN on the annotated dataset. This process allows the model to learn hierarchical features directly from the data, bypassing the need for manual feature engineering [1].

- Validation: Use a held-out portion of the data to validate the model's accuracy and avoid overfitting.

3. Deployment and High-Throughput Analysis:

- Deployment: Run the trained model on the entire, non-annotated image dataset. This enables the rapid, automated analysis of thousands of images, dramatically increasing analysis Velocity [1].

- Result Export: The model outputs quantitative traits (e.g., leaf count, disease severity percentage) into a structured format for further statistical analysis.
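The validation step in this protocol relies on a held-out portion of the annotated data. A minimal sketch of such a split is shown below, assuming the annotated images are available as a list of file names; the function name, the 20% default, and the file names are illustrative choices rather than values from the cited work.

```python
import random


def train_validation_split(items, validation_fraction=0.2, seed=42):
    """Shuffle annotated items reproducibly and hold out a validation set.

    Returns (train_items, validation_items); the two lists never overlap.
    """
    if not 0.0 < validation_fraction < 1.0:
        raise ValueError("validation_fraction must be between 0 and 1")
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # seeded so the split is repeatable
    n_val = max(1, round(len(shuffled) * validation_fraction))
    return shuffled[n_val:], shuffled[:n_val]


# Hypothetical annotated image names.
annotated = [f"leaf_{i:02d}.png" for i in range(10)]
train_set, val_set = train_validation_split(annotated)
```

If several images show the same plant or plot, splitting by plant or plot instead of by image is usually safer, because near-duplicate images on both sides of the split can make validation accuracy look better than it is.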

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Technologies and Platforms for High-Throughput Phenotyping

| Tool Category | Specific Examples | Primary Function |
|---|---|---|
| Phenotyping Platforms | LemnaTec 3D Scanalyzer, PHENOPSIS, PHENOVISION, "Plant Accelerator" [5] [1] | Automated, non-invasive systems for imaging and monitoring plants in controlled environments or fields. They form the core infrastructure for HTP. |
| Sensor Technologies | RGB, Hyperspectral, Thermal, and LiDAR sensors [5] [1] | Capture a wide Variety of morphological, physiological, and structural data from plants. |
| Software & Analytical Tools | AI/ML models (CNNs, Random Forests), Cloud-based analytics platforms [1] [4] | Process the high Volume of data, extract traits, and accelerate analysis Velocity. |
| Data Management Solutions | Platforms adhering to FAIR principles, Geospatial data infrastructure (e.g., for precision ag) [6] | Store, manage, and standardize heterogeneous data, enabling sharing and reuse. |

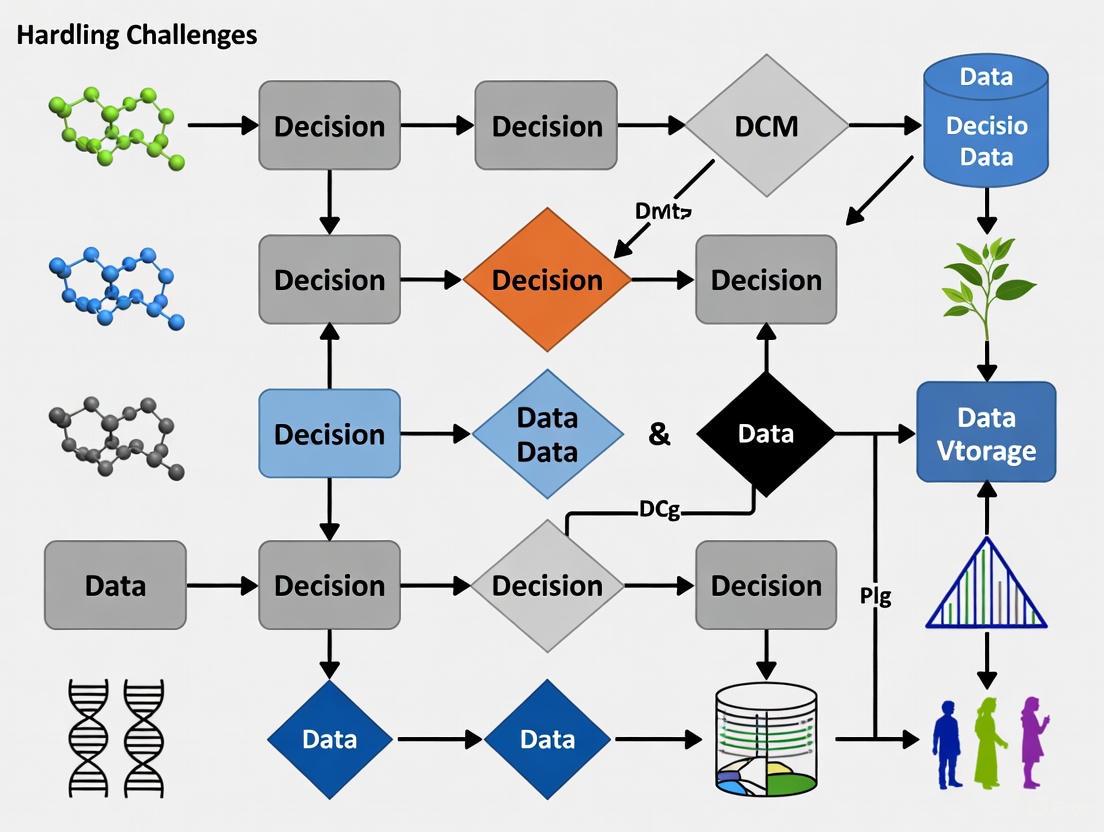

Visualizing the Big Data Challenges in Plant Phenotyping

The following diagram illustrates the logical relationships between the Three Vs, their drivers, and the solutions required to manage them in a plant phenotyping workflow.

Research Reagent Solutions: Essential Materials for HTPP

The following table details key hardware and software solutions essential for conducting high-throughput plant phenotyping (HTPP) research.

| Item Category | Specific Examples | Primary Function | Key Applications in HTPP |
|---|---|---|---|
| Platforms | Unmanned Aerial Vehicles (UAVs), Ground-Based Robotic Platforms (e.g., Scanalyzer [7]), Stationary Field Systems [8] | Automated, mobile, or fixed-position carriers for sensor deployment, enabling high-frequency, non-destructive data acquisition. [9] [10] | Large-scale field monitoring; precise, controlled-environment phenotyping. [8] [7] |
| Sensors | RGB, Multispectral (e.g., SMICGS [9]), Hyperspectral, Thermal, LiDAR, RGB-D Cameras [11] [10] | Capture various physical and chemical properties of plants across visible, non-visible, and 3D spatial domains. [9] [11] | Estimating biomass, chlorophyll content, water stress, canopy structure, and plant architecture. [9] [11] [7] |
| Computational Algorithms | Neural Radiance Fields (NeRF), SegVoteNet [12], Random Forest, other Machine/Deep Learning models (e.g., DarkNet53 [7]) | Process raw sensor data to reconstruct 3D models, segment plant organs, detect objects, and predict traits. [12] [9] | 3D canopy reconstruction, panicle detection, growth indicator modeling, and stress classification. [12] [9] [7] |
| Data Fusion & Registration Tools | Novel multimodal 3D registration algorithms [13], Multi-source sensor data fusion systems [10] | Align and integrate data from multiple sensors to create unified, information-rich datasets and correct for parallax. [13] [10] | Generating 3D multispectral point clouds, achieving pixel-precise alignment across camera modalities. [13] [10] |

Experimental Protocols for High-Throughput Phenotyping

Protocol 1: UAV-Based 3D Canopy Reconstruction and Panicle Phenotyping

This protocol outlines the methodology for efficient 3D reconstruction of sorghum canopies and phenotyping of panicle morphology using UAVs and advanced computer vision. [12]

- Data Acquisition: Employ a low-altitude UAV to capture videos (not just still images) of the crop canopy. This ensures efficient coverage and collects a continuous stream of data from multiple viewpoints. [12]

- 3D Model Generation: Process the video data using a Neural Radiance Fields (NeRF) algorithm. This deep learning technique generates high-quality, detailed 3D point clouds of the sorghum canopies from the 2D video frames. [12]

- Model Training & Semantic Segmentation:

- Create a synthetic, annotated dataset of 3D sorghum canopies to train deep learning models where real-world annotated data is scarce. [12]

- Employ the SegVoteNet model, a multi-task deep learning architecture that integrates VoteNet and PointNet++. This model is designed for semantic segmentation and 3D object detection directly on point cloud data. [12]

- The model's voting and sampling module leverages segmentation results to refine the generation of object proposals, improving the accuracy of detecting individual panicles. [12]

- Validation: Validate the model's performance using metrics like Mean Average Precision (mAP) at a 0.5 Intersection over Union (IoU) threshold. The referenced study achieved 0.850 mAP on real point cloud datasets. [12]
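The 0.5 IoU threshold used in the validation step can be made concrete with a short sketch. It assumes axis-aligned 3D bounding boxes given as `(xmin, ymin, zmin, xmax, ymax, zmax)`; the referenced study may use a different box parameterization (for example oriented boxes), so this illustrates the metric's matching rule rather than reproducing the study's evaluation code.

```python
def iou_3d(box_a, box_b):
    """Intersection over Union of two axis-aligned 3D boxes.

    Each box is (xmin, ymin, zmin, xmax, ymax, zmax).
    """
    intersection = 1.0
    for axis in range(3):
        low = max(box_a[axis], box_b[axis])
        high = min(box_a[axis + 3], box_b[axis + 3])
        if high <= low:  # no overlap along this axis
            return 0.0
        intersection *= high - low

    def volume(box):
        return (box[3] - box[0]) * (box[4] - box[1]) * (box[5] - box[2])

    union = volume(box_a) + volume(box_b) - intersection
    return intersection / union


def is_match(predicted_box, truth_box, threshold=0.5):
    """A prediction counts as a hit when its IoU reaches the threshold."""
    return iou_3d(predicted_box, truth_box) >= threshold


# Hypothetical panicle boxes in metres.
truth = (0.0, 0.0, 0.0, 1.0, 1.0, 1.0)
shifted = (0.5, 0.0, 0.0, 1.5, 1.0, 1.0)
overlap = iou_3d(truth, shifted)
```

Mean Average Precision then summarizes precision over recall levels using these hit-or-miss decisions, averaged over object classes.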

Protocol 2: Multimodal Image Registration for Plant Phenotyping

This protocol describes a method for accurately aligning images from different camera technologies, which is crucial for leveraging complementary data from multimodal systems. [13]

- Data Collection: Set up a system with multiple cameras (e.g., RGB, hyperspectral) along with a depth camera (e.g., Time-of-Flight camera) that provides 3D information for the scene. [13]

- Leverage Depth Information: Integrate the depth data into the registration process. This additional spatial information is key to mitigating parallax effects, a major challenge when aligning images taken from different viewpoints. [13]

- Occlusion Handling: Apply an integrated, automated mechanism to identify and filter out various types of occlusions. This step minimizes registration errors caused by leaves or other plant parts that are hidden from one camera but visible to another. [13]

- Registration Execution: Use a ray-casting-based algorithm to achieve pixel-precise alignment of the images from the different modalities. This method is not reliant on detecting plant-specific image features, making it robust across different plant species. [13]
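To show why depth information helps against parallax, the sketch below maps one pixel from a depth camera into a second camera using a simple pinhole model. This is a simplified illustration, not the ray-casting algorithm of [13]: it assumes ideal pinhole cameras without lens distortion, and the intrinsics `(fx, fy, cx, cy)`, the rotation matrix, and the 10 cm baseline are made-up values.

```python
def reproject_pixel(u, v, depth, src_intrinsics, dst_intrinsics,
                    rotation, translation):
    """Map pixel (u, v) with known depth from a source camera to a target camera.

    Intrinsics are (fx, fy, cx, cy); rotation is a 3x3 nested list and
    translation a 3-tuple taking source-camera coordinates to target-camera
    coordinates. Returns (u2, v2), or None if the point lies behind the target.
    """
    fx, fy, cx, cy = src_intrinsics
    # Back-project the pixel to a 3D point in the source camera frame.
    point = ((u - cx) * depth / fx, (v - cy) * depth / fy, depth)
    # Rigid transform into the target camera frame.
    x, y, z = [sum(rotation[r][c] * point[c] for c in range(3)) + translation[r]
               for r in range(3)]
    if z <= 0:
        return None
    fx2, fy2, cx2, cy2 = dst_intrinsics
    # Project onto the target image plane.
    return (fx2 * x / z + cx2, fy2 * y / z + cy2)


identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
intrinsics = (500.0, 500.0, 320.0, 240.0)   # assumed values
baseline = (0.1, 0.0, 0.0)                  # cameras 10 cm apart (assumed)

near = reproject_pixel(400, 300, 1.0, intrinsics, intrinsics, identity, baseline)
far = reproject_pixel(400, 300, 2.0, intrinsics, intrinsics, identity, baseline)
```

In this setup the same source pixel lands at a different column in the second image depending on its depth, which is the parallax effect a single 2D image transform cannot correct.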

Sensor Performance and Calibration Data

Calibration and validation are critical steps to ensure data quality. The following table summarizes key performance metrics from a novel sensor system.

| Calibration Parameter | Metric | Value/Outcome |
|---|---|---|
| Spectral Accuracy [9] | Max. deviation between preset and measured wavelengths | 0.43 nm |
| Crosstalk Correction [9] | Reflectance error (before vs. after correction) | Reduced from 26.49% to 6.47% |
| System Robustness [9] | Signal-to-Noise Ratio (SNR) | > 100 dB |
| Prediction Accuracy (Rice) [9] | R² for Above-Ground Biomass (AGB) | 0.93 |
| Prediction Accuracy (Rice) [9] | R² for Leaf Area Index (LAI) | 0.89 |
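For readers who want to compute a comparable R² on their own validation data, the sketch below uses the common coefficient-of-determination definition, 1 minus the ratio of residual to total sum of squares. Whether [9] used exactly this definition (as opposed to, for example, a squared Pearson correlation) is not stated here, so treat it as one reasonable convention; the biomass values are invented.

```python
def r_squared(observed, predicted):
    """Coefficient of determination: 1 - SS_residual / SS_total."""
    if len(observed) != len(predicted) or not observed:
        raise ValueError("inputs must be non-empty and of equal length")
    mean_observed = sum(observed) / len(observed)
    ss_residual = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_total = sum((o - mean_observed) ** 2 for o in observed)
    if ss_total == 0:
        raise ValueError("observed values are constant; R² is undefined")
    return 1.0 - ss_residual / ss_total


# Invented ground-truth and predicted biomass values.
measured = [1.0, 2.0, 3.0]
perfect_fit = r_squared(measured, [1.0, 2.0, 3.0])
mean_only = r_squared(measured, [2.0, 2.0, 2.0])
```

A model that always predicts the mean of the observations scores 0 under this definition, and a perfect model scores 1.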

Troubleshooting Guides and FAQs

Data Acquisition & Sensor Issues

Q: My multispectral sensor data shows inconsistent reflectance values, even from the same plot. What could be wrong?

A: This is often caused by spectral crosstalk and a lack of proper calibration.

- Solution: Implement a spectral crosstalk correction method. One study reduced reflectance errors from 26.49% to 6.47% by applying such a correction to a snapshot multispectral sensor. [9]

- Prevention: Regularly perform radiometric calibration using a standardized reference panel, especially when using snapshot sensors with mosaic filters. Ensure consistent sunlight conditions during data capture or use an integrated calibrated light source. [9]
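The reference-panel calibration mentioned under Prevention can be sketched as a single-panel conversion from raw digital numbers (DN) to reflectance. This assumes a linear sensor response and a panel of known reflectance imaged under the same illumination as the target. It is a generic one-point method, not the crosstalk correction described in [9], and all numeric values are illustrative.

```python
def calibrate_reflectance(target_dn, panel_dn, panel_reflectance, dark_dn=0.0):
    """Convert a raw digital number to reflectance using one reference panel.

    target_dn: raw value measured on the plant pixel or plot.
    panel_dn: raw value measured on the reference panel in the same band.
    panel_reflectance: known reflectance of the panel in that band (0 to 1).
    dark_dn: sensor dark offset, subtracted from both readings.
    """
    panel_signal = panel_dn - dark_dn
    if panel_signal <= 0:
        raise ValueError("panel reading must exceed the dark offset")
    return (target_dn - dark_dn) / panel_signal * panel_reflectance


# Illustrative numbers: a 50% panel reads 2400 DN, the canopy reads 1200 DN.
canopy_reflectance = calibrate_reflectance(1200.0, 2400.0, 0.5)
```

Repeating the panel measurement whenever illumination changes keeps readings from different flights or times of day comparable.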

Q: I am using multiple sensors, but the data does not align spatially, leading to flawed analysis.

A: This is a classic multimodal registration problem, exacerbated by parallax in complex plant canopies.

- Solution: Integrate a 3D depth camera (e.g., Time-of-Flight) into your setup. Use a registration algorithm that leverages this 3D information and ray casting to achieve pixel-precise alignment across different camera modalities. This approach automatically detects and filters out occlusion effects. [13]

- Alternative Solution: For a robotic or UGV platform, develop a unified data fusion system that integrates RGB-D, multispectral, thermal, and LiDAR sensors with pre-calibrated extrinsic parameters to ensure synchronized and aligned data acquisition. [10]

Q: My UAV-based imagery is not producing high-quality 3D models for trait extraction.

A: The issue may lie in the data capture method and processing algorithm.

- Solution: Instead of capturing still images, try collecting video data. Process this video with advanced reconstruction techniques like Neural Radiance Fields (NeRF), which are capable of generating high-fidelity 3D point clouds suitable for detailed phenotyping. [12]

Data Processing & Analysis Issues

Q: How can I accurately detect and count specific organs, like sorghum panicles, from 3D point cloud data?

A: Traditional image processing methods may fail due to occlusion and complexity.

- Solution: Train a dedicated deep learning model like SegVoteNet on your 3D point clouds. This model performs semantic segmentation and 3D detection simultaneously. Its architecture uses a shared backbone and a voting mechanism that refines object detection based on segmentation results; the referenced study reported 0.850 mAP at a 0.5 IoU threshold on real point clouds. [12]

- Pre-processing: If real annotated data is limited, consider building a 3D model to simulate your crop and generate a large, synthetic, annotated dataset for initial model training. [12]

Q: My AI model for stress detection is not generalizing well to new field data.

A: This is typically due to insufficient or non-representative training data.

- Solution: Ensure you have a sufficiently large and varied dataset. A common guideline is to have at least 100 images per object class or genotype. [14] Employ data augmentation techniques or use a patch-based classification approach, which divides high-resolution images into smaller sub-regions to artificially increase the number of training samples. [14]

- Solution: Integrate multiple data sources. Fusing data from RGB, thermal, and multispectral sensors can create more robust models that capture a wider range of physiological responses, improving generalizability. [10] [7]
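The patch-based approach mentioned above can be sketched as a function that lists the crop boxes for fixed-size patches of a high-resolution image. The function name and the `(left, top, right, bottom)` box convention are illustrative assumptions; the boxes can be passed to whatever image library is used for the actual cropping.

```python
def patch_boxes(width, height, patch_size, stride=None):
    """List (left, top, right, bottom) boxes of square patches in an image.

    stride defaults to patch_size (non-overlapping patches); a smaller
    stride yields overlapping patches and therefore more samples.
    Edge regions that do not fit a full patch are skipped.
    """
    if patch_size <= 0:
        raise ValueError("patch_size must be positive")
    stride = stride or patch_size
    boxes = []
    for top in range(0, height - patch_size + 1, stride):
        for left in range(0, width - patch_size + 1, stride):
            boxes.append((left, top, left + patch_size, top + patch_size))
    return boxes


tiles = patch_boxes(100, 100, patch_size=50)                  # 2 x 2 grid
overlapping = patch_boxes(100, 100, patch_size=50, stride=25)  # 3 x 3 grid
```

Patches cut from the same image are not independent samples, so all patches from one image should stay on the same side of any train/validation split.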

HTPP Experimental Workflow

The following diagram visualizes the core workflow and common troubleshooting points in a high-throughput plant phenotyping experiment.

High-throughput plant phenotyping (HTP) has emerged as a transformative tool in agricultural research, enabling the non-destructive, rapid assessment of plant traits across large populations using advanced imaging, sensors, and automated platforms [15] [8]. However, the immense data volumes generated by these technologies—from hyperspectral imagery, unmanned aerial vehicles, and IoT sensors—present significant bottlenecks in data storage, transfer, and management [16] [17]. These challenges complicate efforts to bridge the genotype-to-phenotype gap and develop climate-resilient crops [8]. Adhering to the FAIR principles—making data Findable, Accessible, Interoperable, and Reusable—is no longer optional but a scientific imperative to maximize research reproducibility, collaboration, and insight [18] [19]. This guide addresses common data management issues in HTP research, providing troubleshooting and protocols to overcome these hurdles.

Core Data Bottlenecks in HTP Research

The transition from data collection to actionable insight in HTP is fraught with technical challenges. The table below summarizes the primary bottlenecks and their practical implications for researchers.

Table 1: Key Data Management Bottlenecks in High-Throughput Plant Phenotyping

| Bottleneck Category | Specific Challenges | Impact on Research |
|---|---|---|
| Data Storage & Volume | Massive data flows from imaging sensors (RGB, hyperspectral, thermal); complex data types (3D point clouds, time-series) [16] [8]. | Overwhelmed storage infrastructure; difficulty in data centralization and backup; high costs [17]. |
| Data Transfer & Access | Moving large datasets from field to lab or between collaborators; data siloed in incompatible formats or systems [20]. | Delays in analysis; impeded collaboration and data sharing; failure to leverage collected data [19]. |
| Data Findability | Poor metadata practices; datasets not indexed in searchable resources; lack of persistent identifiers [19] [21]. | Inability for researchers (and machines) to discover existing datasets, leading to duplication of effort [18]. |
| Data Interoperability | Use of inconsistent data formats, vocabularies, and ontologies across labs and platforms [22] [20]. | Inability to integrate datasets for meta-analysis; errors in automated data processing [23]. |
| Data Reusability | Inadequate documentation about protocols, provenance, and data licensing [21] [20]. | Prevents validation of results and reuse of data in new studies, reducing the long-term value of research [18]. |

FAQs and Troubleshooting Guides

Data Storage and Handling

Q: Our phenotyping platform generates terabytes of image data. How can we manage storage costs without losing data?

A: Implementing a tiered storage strategy is key to balancing cost and accessibility.

- Issue: Raw data from hyperspectral and 3D sensors can quickly exhaust local server capacity [16].

- Solution:

- Immediate Tier (Hot Storage): Retain raw and actively processed data from current experiments on high-performance network-attached storage (NAS) or institutional servers.

- Intermediate Tier (Warm Storage): After primary analysis, move processed data (e.g., extracted trait measurements, analyzed images) to larger, more cost-effective storage systems.

- Archive Tier (Cold Storage): Use tape drives or cloud archive services (e.g., Amazon S3 Glacier, Google Cloud Storage Coldline) for raw data that must be kept for long-term preservation but is rarely accessed. Always keep derived, analysis-ready data more accessible than the bulky raw data.

- Prevention: Plan storage needs and costs as part of your experimental design and grant proposals. Budget for data management as a necessary research cost [19].
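The tiering rules above can be expressed as a small decision function. This is a sketch under stated assumptions: the `kind` labels, the function name, and the 180-day idle threshold are hypothetical policy choices that each lab would set for itself.

```python
from datetime import date


def storage_tier(kind, last_accessed, today, experiment_active,
                 cold_after_days=180):
    """Suggest a storage tier ('hot', 'warm', or 'cold') for a dataset.

    kind: 'raw' or 'derived' (assumed labels).
    cold_after_days: idle period after which raw data is archived
    (hypothetical default; set according to local policy).
    """
    if kind not in ("raw", "derived"):
        raise ValueError("kind must be 'raw' or 'derived'")
    if experiment_active:
        return "hot"    # current experiments stay on fast storage
    if kind == "derived":
        return "warm"   # analysis-ready data stays readily accessible
    idle_days = (today - last_accessed).days
    return "cold" if idle_days >= cold_after_days else "warm"


today = date(2024, 12, 1)
old_raw = storage_tier("raw", date(2024, 1, 1), today, experiment_active=False)
```

Keeping the rule in code makes the policy auditable and lets a scheduled job apply it uniformly across experiments.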

Q: How can we efficiently centralize data from multiple sources (drones, field sensors, lab instruments)?

A: Dedicated agricultural trial management software is designed for this specific task.

- Issue: Manually merging data from spreadsheets, various image analysis outputs, and sensor feeds is error-prone and time-consuming [17].

- Solution: Utilize platforms like Bloomeo or GnpIS that support automated data integration via API feeds, flat file imports, and mobile app data entry [22] [17]. These systems structure data upon entry, linking it to the correct trial subplot and timestamp, which minimizes manual errors and speeds up the validation process.

- Prevention: Establish a data management plan before the experiment starts. Ensure all instruments and software can export data in a format compatible with your central system.

FAIR Data Implementation

Q: What are the most critical first steps to make our phenotyping data FAIR?

A: Focus on findability and reusability through rich metadata and persistent identifiers.

- Issue: Datasets with minimal description are effectively lost, even if stored, because they cannot be found or understood by others (or yourself in the future) [19].

- Solution:

- Create Rich Metadata: At a minimum, describe your dataset using the MIAPPE (Minimal Information About a Plant Phenotyping Experiment) standard, which covers experimental design, growth conditions, plant material, and observed variables [22].

- Use a Public Repository: Deposit your dataset in a repository such as FigShare, Dataverse, or a disciplinary repository like GnpIS that assigns a Digital Object Identifier (DOI). This makes your data findable and citable [22] [21].

- Apply a Clear License: Attach a license (e.g., Creative Commons) to your data so others know the terms of reuse [21].
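A lightweight completeness check before deposit can catch missing metadata early. The sketch below only tests that the four areas named above (experimental design, growth conditions, plant material, observed variables) plus a license are present. The dictionary keys are hypothetical placeholders, not official MIAPPE field names, and the check is no substitute for validating against the standard itself.

```python
# Hypothetical section keys; consult the MIAPPE specification for real fields.
REQUIRED_SECTIONS = (
    "experimental_design",
    "growth_conditions",
    "plant_material",
    "observed_variables",
    "license",
)


def missing_metadata(record):
    """Return the required sections that are absent or empty in `record`."""
    return [key for key in REQUIRED_SECTIONS if not record.get(key)]


# Invented example record with two gaps.
draft = {
    "experimental_design": "randomized complete block, 3 replicates",
    "growth_conditions": "field, rainfed",
    "plant_material": "",
    "observed_variables": ["plant height"],
}
gaps = missing_metadata(draft)
```

Running such a check at data entry, rather than at publication time, is usually cheaper because the people who know the missing details are still available.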

Q: How can we ensure our data is interoperable with other studies?

A: Standardize your data using community-agreed vocabularies and formats.

- Issue: The same trait (e.g., "plant height") might be labeled and measured differently across studies, preventing integration [20].

- Solution:

- Use Ontologies: Describe traits and methodologies using terms from controlled vocabularies like the Crop Ontology (CO) or Plant Ontology (PO). For instance, instead of "plant height," use the formal ontology term (e.g., CO_323:0000010) with its precise definition [22].

- Adopt Standardized Protocols: Follow established phenotyping protocols where available. Document any custom protocols in detail.

- Use Open, Machine-Readable Formats: Store data in standardized, non-proprietary formats like CSV for tables or HDF5 for complex, hierarchical data, rather than in proprietary Excel formats [21].
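As a small illustration of combining ontology terms with an open format, the sketch below writes trait observations to CSV in a long (one observation per row) layout with an explicit trait identifier and unit column. The column names and the plot and value data are hypothetical; the identifier is the example term quoted above and is used purely as a placeholder.

```python
import csv
import io

# Hypothetical column layout: one observation per row.
FIELDS = ["plot_id", "date", "trait_id", "trait_label", "value", "unit"]


def observations_to_csv(rows):
    """Serialize observation dicts to CSV text with a fixed header."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS, lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


rows = [
    {"plot_id": "P001", "date": "2024-06-01", "trait_id": "CO_323:0000010",
     "trait_label": "plant height", "value": 84.5, "unit": "cm"},
    {"plot_id": "P002", "date": "2024-06-01", "trait_id": "CO_323:0000010",
     "trait_label": "plant height", "value": 79.0, "unit": "cm"},
]
csv_text = observations_to_csv(rows)
```

Storing the identifier alongside the human-readable label lets other tools match the trait unambiguously even if labels differ between labs.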

Data Transfer and Collaboration

Q: We need to share large HTP datasets with an international collaborator. What is the most effective method?

A: For large volumes, cloud-based repositories or high-speed transfer protocols are preferable to email or standard cloud drives.

- Issue: Files are too large for email attachments, and consumer cloud storage can be slow and unreliable for sync.

- Solution:

- Data Repository: The best practice is to use the same repository where your data is published (e.g., FigShare, Dataverse). This ensures version control and permanence.

- High-Speed Transfer Services: For unpublished data, use services like Globus or Aspera, which are designed for secure, high-speed movement of large scientific datasets. SCP is a simpler secure alternative but is typically slower over long-distance links.

- Prevention: Include data sharing plans and associated costs in your collaborative grant proposals.

Essential Research Reagent Solutions

The following tools and resources are critical for implementing effective and FAIR data management in HTP research.

Table 2: Key Reagents and Tools for FAIR Plant Phenotyping Data Management

| Tool / Resource | Function | Relevance to HTP Data Challenges |
|---|---|---|
| MIAPPE Standard | A metadata standard defining the minimal information required to describe a plant phenotyping experiment [22]. | Ensures Reusability by providing essential context about the experiment, plant material, and environment. |
| Crop Ontology (CO) | A set of controlled, standardized vocabularies for describing plant traits and measurement methods [22]. | Ensures Interoperability by allowing different systems and studies to unambiguously understand the meaning of traits. |
| Breeding API (BrAPI) | A standardized RESTful API specification for plant breeding data [22]. | Enables Accessibility and Interoperability by allowing different software tools and databases to communicate and exchange data seamlessly. |
| GnpIS / PHIS | Plant phenomics-specific data repositories [22]. | Provides a structured environment for data Storage, making data Findable (via indexing) and Accessible, while supporting FAIR principles. |
| Persistent Identifier (DOI) | A permanent unique identifier for a digital object, such as a dataset. | Makes data Findable and citable, ensuring it can always be located and credited, even if the underlying URL changes. |
| Dedicated Agronomy Software (e.g., Bloomeo) | Centralized platforms for managing agricultural trial data [17]. | Addresses data Storage and Transfer bottlenecks by providing a structured hub for data from multiple sources, streamlining validation and analysis. |

Experimental Workflow for FAIR HTP Data Management

The diagram below outlines a recommended experimental workflow, from data acquisition to publication, incorporating FAIR principles at every stage to mitigate bottlenecks.

The Impact of Unmanaged Data on Research Reproducibility and Breeding Cycles

Technical Support Center

Frequently Asked Questions (FAQs)

FAQ 1: What are the most common data-related causes of irreproducible results in high-throughput plant phenotyping (HTP) experiments?

Irreproducible results often stem from inadequate metadata collection, improper data annotation, and a lack of standardized experimental protocols [24]. Without detailed metadata on environmental conditions and imaging sensors, it is impossible to recreate the experiment accurately. Furthermore, batch-to-batch phenotypic variation, even in highly standardized environments, is a significant but often unrecorded factor [25].

FAQ 2: How can we manage the massive volume of image data generated by HTP platforms without compromising data integrity?

The key is implementing dedicated data management frameworks and standardized ontologies. Platforms like the Plant Genomics and Phenomics (PGP) repository and PIPPA (PSB Interface for Plant Phenotype Analysis) are designed to handle HTP data from the moment it is generated [24]. They facilitate proper data annotation, storage, and traceability, which are crucial for long-term data usability and sharing.

FAQ 3: Our multi-laboratory study produced conflicting results. How can we improve consistency in the future?

Counterintuitively, embracing variability through systematic heterogenization in your experimental design can improve reproducibility. Studies show that implementing a multi-laboratory approach with as few as two sites significantly increases the reproducibility of findings without increasing the total sample size [25]. This approach tests the robustness of your results across diverse genetic and environmental backgrounds.

FAQ 4: What are the biggest pitfalls in analyzing HTP data, and how can we avoid them?

A major pitfall is the high dimensionality of data, which can lead to spurious correlations and noise accumulation [26]. This occurs when unrelated covariates incidentally correlate with the outcome, leading to false discoveries. Using robust statistical methods designed for high-dimensional data, such as regularization and feature selection, is essential to mitigate this risk [26].

Troubleshooting Guides

Problem: Inconsistent phenotypic measurements across replicated experiments.

- Potential Cause: Unrecorded micro-environmental fluctuations or subtle differences in imaging sensor settings [25] [24].

- Solution:

- Systematize Metadata: Implement the Minimal Information About a Plant Phenotyping Experiment (MIAPPE) standard to ensure all environmental conditions, camera properties, and sample details are meticulously recorded [24].

- Introduce Controlled Variation: Design experiments across multiple independent batches or slightly varied conditions to ensure your findings are robust and not idiosyncratic to a single, highly specific environment [25].

Problem: Inability to integrate or compare your phenotyping data with public datasets.

- Potential Cause: Use of custom, non-standardized data formats and a lack of common ontologies for data annotation [24].

- Solution:

- Adopt Common Ontologies: Use established plant ontologies for trait naming and description.

- Utilize Public Repositories: Deposit and share data through structured repositories like AraPheno or the PGP repository, which encourage the use of standardized formats and vocabularies, enabling data fusion and meta-analysis [24].

Problem: High-dimensional phenotyping data leads to false positive associations.

- Potential Cause: Spurious correlations and incidental endogeneity, which are common in Big Data analysis [26].

- Solution:

- Apply Robust Statistics: Utilize statistical methods designed for high-dimensional data, such as penalized regression (e.g., Lasso) or sure independence screening, to reduce noise accumulation and improve variable selection [26].

- Independent Validation: Always validate identified features or markers using a hold-out validation dataset or through an independent replication study [26].
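The risk described above can be made concrete with a small simulation. The following is a minimal sketch, not taken from the cited work: it generates an outcome and 1000 pure-noise "traits" for 20 plants (all numbers and variable names are illustrative assumptions) and reports the strongest correlation found by chance alone.

```python
import math
import random


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


def max_abs_correlation(outcome, covariates):
    """Largest |r| between the outcome and any single covariate."""
    return max(abs(pearson(outcome, c)) for c in covariates)


rng = random.Random(0)  # fixed seed so the run is repeatable
n_plants = 20           # illustrative sample size
outcome = [rng.gauss(0, 1) for _ in range(n_plants)]

# 1000 simulated traits that are pure noise: none is related to the outcome.
noise_traits = [[rng.gauss(0, 1) for _ in range(n_plants)] for _ in range(1000)]

max_r_10 = max_abs_correlation(outcome, noise_traits[:10])
max_r_1000 = max_abs_correlation(outcome, noise_traits)
print(f"max |r| over 10 noise traits:   {max_r_10:.2f}")
print(f"max |r| over 1000 noise traits: {max_r_1000:.2f}")
```

Because the first 10 traits are a subset of the 1000, the second value can never be smaller than the first. With many more traits than plants, a seemingly strong correlation appears even though every trait is noise, which is why validation on held-out data matters.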

Data and Experimental Protocols

Table 1: Common High-Throughput Plant Phenotyping Platforms and Applications

This table summarizes key HTP platforms, the traits they record, and their application in stress phenotyping, aiding researchers in selecting appropriate technology [1].

| Platform Name | Primary Traits Recorded | Crop Example(s) | Application in Stress Research |
|---|---|---|---|
| PHENOPSIS | Plant responses to soil water deficit | Arabidopsis thaliana | Drought stress analysis [1] |
| LemnaTec 3D Scanalyzer | Non-invasive trait screening | Rice (Oryza sativa) | Salinity tolerance traits [1] |
| GROWSCREEN FLUORO | Leaf growth, Chlorophyll fluorescence | Arabidopsis thaliana | Detection of multiple abiotic stress tolerances [1] |
| HyperART | Leaf chlorophyll content, Disease severity | Barley, Maize, Tomato, Rapeseed | Quantification of disease severity and leaf health [1] |
| PHENOVISION | Drought response traits | Maize (Zea mays) | Detection of drought stress and recovery [1] |

Table 2: Key Challenges of Big Data in Plant Phenotyping and Their Impacts on Breeding

This table outlines core data challenges and how they directly impact the efficiency and success of breeding programs [26].

| Data Challenge | Impact on Research Reproducibility | Impact on Breeding Cycles |
|---|---|---|
| High Dimensionality & Noise Accumulation | Reduces statistical power; true signals are obscured by noise, leading to false negatives. | Slows down identification of reliable marker-trait associations, delaying selection. |
| Spurious Correlation | Generates false positive associations between traits and genetic markers. | Leads to breeding for incorrect traits, wasting time and resources on dead-end crosses. |
| Data Heterogeneity | Makes it difficult to combine datasets from multiple trials or locations, reducing statistical power. | Prevents effective genomic selection across environments, limiting genetic gain. |
| Heavy Computational Cost | Makes complex, robust analyses inaccessible, forcing researchers to use less rigorous methods. | Slows down the data analysis pipeline, preventing rapid, data-driven decisions in the field. |

Experimental Protocol: Implementing a Multi-Laboratory Phenotyping Study

This protocol is designed to enhance reproducibility by systematically incorporating variation, based on the findings of Voelkl et al. as cited in [25].

- Objective: To validate the effect of a specific treatment (e.g., a new fertilizer or drought regimen) on plant growth.

- Design:

- Collaboration: Conduct the experiment simultaneously in at least two independent laboratories.

- Harmonization: Agree on a core set of protocols (e.g., treatment definition, primary outcome measure).

- Heterogenization: Allow for "real-world" variation in other factors (e.g., plant growth chamber models, minor variations in watering schedules, technicians).

- Data Collection:

- Standardized Metadata: All labs must adhere to the MIAPPE standard, rigorously recording all environmental and procedural metadata [24].

- Imaging: Use HTP platforms (e.g., from Table 1) to collect non-destructive image data over time.

- Data Analysis:

- Data Integration: Combine data from all laboratories, using the laboratory identifier as a blocking factor in the statistical model.

- Statistical Testing: Analyze the data using a mixed model that accounts for the variation introduced by the different laboratory environments. A treatment effect that remains significant across labs is considered robust and reproducible [25].
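The idea of treating the laboratory as a blocking factor can be illustrated with a deliberately simplified calculation. The sketch below is not a mixed model: it only estimates the treatment effect within each lab and then averages those estimates, so that baseline differences between labs cancel out. The record layout, function names, and example values are illustrative assumptions; a real analysis would fit a mixed model with a dedicated statistics package.

```python
from collections import defaultdict
from statistics import mean


def per_lab_effects(records):
    """Return {lab: treated mean - control mean} from (lab, group, value) records."""
    values = defaultdict(lambda: {"control": [], "treated": []})
    for lab, group, value in records:
        values[lab][group].append(value)
    return {lab: mean(g["treated"]) - mean(g["control"]) for lab, g in values.items()}


def blocked_effect(records):
    """Average the within-lab effects so lab baselines do not bias the estimate."""
    return mean(per_lab_effects(records).values())


# Hypothetical growth measurements: lab_B has a much higher baseline than lab_A.
records = [
    ("lab_A", "control", 10.0), ("lab_A", "control", 11.0),
    ("lab_A", "treated", 12.0), ("lab_A", "treated", 13.0),
    ("lab_B", "control", 20.0), ("lab_B", "control", 21.0),
    ("lab_B", "treated", 22.0), ("lab_B", "treated", 23.0),
]

print(per_lab_effects(records))  # effect estimated separately in each lab
print(blocked_effect(records))   # block-adjusted treatment effect
```

An effect that has the same sign and similar size in every lab, as in this toy data, is the pattern the protocol treats as robust.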

Workflow and Data Relationship Visualizations

HTP Data Impact on Breeding

Troubleshooting Data Issues

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Managing HTP Data

| Category | Item / Solution | Function |
|---|---|---|
| Data Management | MIAPPE Standards | Provides a checklist to ensure all critical experimental metadata is captured, enabling replication and data sharing [24]. |
| Data Repositories | AraPheno, PGP Repository | Centralized, structured databases for publishing and accessing plant phenotyping data, facilitating meta-analysis [24]. |
| Analysis Platforms | PlantCV, IAP | Open-source image analysis software that allows for customizable pipelines to extract phenotypic traits from HTP image data [24]. |
| Statistical Methods | Regularization (Lasso) | A class of regression analysis methods that reduces model complexity and mitigates false positives in high-dimensional data [26]. |
| Experimental Design | Multi-laboratory Trials | A study design that introduces systematic variation to test the robustness of findings, thereby enhancing reproducibility and external validity [25]. |

From Raw Data to Actionable Insights: Methodologies for Effective Data Management

Leveraging AI and Machine Learning for Automated Image Analysis and Trait Extraction

Technical Support Center

Troubleshooting Guides

Guide 1: Resolving Common Image Analysis Errors in GRABSEEDS

Problem: High rate of false positives in object (seed) identification due to noisy background. Solution: Adjust the sigma values for Gaussian de-noising within the Canny edge detector.

- Procedure:

- Access the command-line interface for GRABSEEDS.

- Increase the `sigma` value (default is 1) to better handle background noise from materials like cloth.

- If the issue persists, use the feature to change the background to a color that contrasts with the seed color [27].

Problem: Blurred edges in low-light conditions prevent proper object enclosure. Solution: Adjust the closing morphology kernel size to mend gaps in object outlines.

- Procedure:

- In the GRABSEEDS parameters, locate the closing morphology setting.

- Increase the `kernel size` (default is 2 pixels) to close larger 'cracks' in the edges.

- Be aware that increasing the kernel size also raises the risk of falsely connecting objects that are close together [27].
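To see why a larger closing kernel mends bigger cracks, and why it can also merge neighbouring objects, consider a one-dimensional toy version of morphological closing (dilation followed by erosion). This is a generic sketch, not GRABSEEDS code: the function names are hypothetical, and it uses odd kernel sizes so that the window is symmetric around each pixel.

```python
def dilate(bits, kernel_size):
    """Set a pixel to 1 if any pixel inside its window is 1."""
    r = kernel_size // 2
    n = len(bits)
    return [max(bits[max(0, i - r):min(n, i + r + 1)]) for i in range(n)]


def erode(bits, kernel_size):
    """Keep a pixel at 1 only if every pixel inside its window is 1."""
    r = kernel_size // 2
    n = len(bits)
    return [min(bits[max(0, i - r):min(n, i + r + 1)]) for i in range(n)]


def close_gaps(bits, kernel_size):
    """Morphological closing: dilation followed by erosion."""
    return erode(dilate(bits, kernel_size), kernel_size)


# A row of edge pixels with a 4-pixel crack in the middle.
edge_row = [1, 1, 0, 0, 0, 0, 1, 1]

print(close_gaps(edge_row, 3))  # small kernel: the crack stays open
print(close_gaps(edge_row, 5))  # larger kernel: the crack is closed
```

The same 4-pixel gap could equally be the space between two separate seeds, which is the trade-off noted above: a kernel large enough to mend the crack is also large enough to join objects that sit that close together.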

Problem: Failure to separate touching or overlapping seeds. Solution: Utilize the watershed segmentation feature or a deep learning model.

- Procedure:

- For moderately overlapping seeds, activate the watershed segmentation function. This uses the furthest points from detected edges as markers to separate objects [27].

- For more complex cases, employ the integrated Segment Anything Model (SAM) by specifying the appropriate command-line flag. Note that this requires higher computational resources [27].

Problem: Inaccurate text label recognition during batch processing. Solution: Leverage the consistent location of labels in batch images.

- Procedure:

- Use the image cropping function to define a specific area of the image that contains the text label.

- GRABSEEDS will then focus the Google tesseract-OCR engine on this pre-defined area, significantly speeding up and improving the accuracy of label extraction [27].

Table 1: GRABSEEDS Parameter Adjustments for Common Issues

| Problem | Key Parameter to Adjust | Default Value | Adjusted Value | Trade-off Consideration |
|---|---|---|---|---|
| Noisy background | Sigma (σ) in Canny edge detector | 1 | Increase (e.g., to 2 or 3) | May overlook smaller seeds due to increased smoothing [27]. |
| Blurred edges | Closing morphology kernel size | 2 pixels | Increase (e.g., to 3-5 pixels) | Risk of falsely connecting closely spaced objects [27]. |
| Incorrect object size | Minimum/Maximum size threshold | Not specified | Set based on known object size | Effectively filters out background noise mistakenly identified as targets [27]. |

Guide 2: Addressing Data Management and Model Performance Challenges

Problem: Inefficient or unsuccessful integration of multi-dimensional datasets from different sources (a core data challenge in plant phenotyping) [28]. Solution: Implement standardized data management and annotation practices.

- Procedure:

- Standardize Metadata: Create and use a common set of metadata descriptors for all images (e.g., growth stage, imaging conditions, stress treatment) [28].

- Centralize Data Storage: Utilize centralized data repositories that support rich metadata and are accessible to all collaborators [28].

- Systematic Annotation: For ground truth data, establish a clear protocol for manual annotation to ensure consistency across different annotators [29].

Problem: Limited availability of high-quality ground truth data for training deep learning models. Solution: Use Generative Adversarial Networks (GANs) to synthesize realistic training data.

- Procedure:

- Stage 1 - Image Augmentation: Use a model like FastGAN on your original RGB images to perform non-linear intensity and texture transformations, creating a larger set of augmented images [29].

- Stage 2 - Mask Generation: Train a conditional GAN (e.g., Pix2Pix) on a limited set of original RGB images and their corresponding manually created binary segmentation masks. Then, apply this trained model to the augmented RGB images from Stage 1 to automatically generate their segmentation masks [29].

- Validation: Manually annotate a subset of the generated images to calculate the Dice coefficient and validate the accuracy of the synthetic masks, which has been shown to range between 0.88 and 0.95 [29].

Problem: Poor performance of a YOLO-based model in detecting small plant structures (e.g., petioles) under varying stress conditions. Solution: Enhance the model architecture with modules that improve small-object detection.

- Procedure:

- Integrate AKConv: Incorporate Adaptive Kernel Convolution (AKConv) into the backbone's C3 module (C3k2) to enhance the model's ability to capture features from small and irregularly shaped objects [30].

- Redesign Feature Pyramid: Implement a recalibration feature pyramid detection head based on the P2 layer, which helps preserve fine-grained details from earlier feature maps that are crucial for detecting small structures [30].

- A study using this approach reported performance increases of 4.1% in recall, 2.7% in mAP50, and 5.4% in mAP50-95 for tomato phenotype recognition [30].

Frequently Asked Questions (FAQs)

FAQ 1: What are the most critical data management challenges when applying AI in plant phenotyping research?

Eight key data management challenges have been identified:

- Data Quality: Ensuring consistency and reliability of data from diverse sources and platforms [28].

- Data Integration: Combining multi-dimensional datasets (genomic, phenotypic, environmental) from different scientific approaches [28].

- Data Sharing: Facilitating the exchange of data across institutions and disciplines while respecting ethical and legal constraints [28].

- Metadata Standards: Developing and implementing validated, meaningful, and usable metadata descriptors [28].

- Data Curation: The labor-intensive process of annotating and maintaining large datasets, especially for ground truth generation [28] [29].

- Computational Infrastructure: Access to sufficient processing power and storage for large-scale AI model training and data analysis [28].

- Reproducibility: Ensuring that AI applications and analyses can be reliably reproduced, which depends on well-managed data and code [28].

- Workflow Management: Handling the complexity of integrated data analysis pipelines that span from data generation to model interpretation [28].

FAQ 2: My model performs well on validation data but poorly on new field images. What could be the cause?

This is a common issue often stemming from the domain shift between controlled validation environments and complex field conditions. Key factors include:

- Variable Lighting and Backgrounds: Field images have unpredictable illumination and cluttered backgrounds, unlike uniform lab settings [27] [30].

- Overfitting to Training Data: The model may have learned features specific to your training set (e.g., a particular background texture) that are not relevant in the field [28].

- Lack of Real-World Variability: Your training dataset may not encompass the full morphological and phenotypic diversity present in field conditions [30].

Mitigation Strategies:

- Use data augmentation techniques that specifically simulate field variations (e.g., random shadows, background swaps, noise injection) [30].

- Incorporate a diverse set of field images into your training and validation cycles.

- Consider using GANs to generate synthetic field images with accurate ground truth to expand your training dataset's domain coverage [29].

FAQ 3: How can I efficiently validate the accuracy of traits extracted by an automated image analysis tool like GRABSEEDS?

A multi-faceted validation approach is recommended:

- Visual Debugging: Use GRABSEEDS' built-in visual debugging tool, which generates a PDF document overlaying object contours, detected edges, and a list of identified objects on the original image for manual inspection [27].

- Comparison with Manual Measurements: Conduct a small-scale study where traits (e.g., seed count, leaf area) are measured manually and compared to the automated outputs using statistical metrics like correlation coefficients or mean absolute error [30].

- Geometric Analysis Validation: For traits like plant height calculated from bounding boxes, calculate the average relative error. For example, one deep learning-based study reported an average relative error of 6.9% for plant height and 10.12% for petiole count, which was deemed acceptable for non-destructive analysis [30].
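The comparison metrics mentioned above are straightforward to compute. The following minimal sketch shows mean absolute error and average relative error for paired automated and manual measurements; the function names and the example numbers are illustrative assumptions, not values from the cited studies.

```python
def mean_absolute_error(predicted, measured):
    """Average absolute difference between automated and manual values."""
    return sum(abs(p - m) for p, m in zip(predicted, measured)) / len(measured)


def average_relative_error(predicted, measured):
    """Average of |automated - manual| / |manual| (manual values must be non-zero)."""
    return sum(abs(p - m) / abs(m) for p, m in zip(predicted, measured)) / len(measured)


# Hypothetical plant heights in cm: automated estimates vs. manual ruler readings.
automated = [10.5, 19.0]
manual = [10.0, 20.0]

print(mean_absolute_error(automated, manual))     # in cm
print(average_relative_error(automated, manual))  # as a fraction; multiply by 100 for %
```

Relative error is convenient when traits differ in scale (for example, height versus petiole count), whereas mean absolute error keeps the original units.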

Experimental Protocols & Workflows

Protocol 1: High-Throughput Phenotyping Pipeline for Stress Response Analysis

This protocol outlines a methodology for using automated image analysis to quantify plant phenotypic responses to abiotic stress (e.g., water stress).

1. Image Acquisition:

- Platform: Utilize high-throughput phenotyping platforms (e.g., LemnaTec systems) or standardized handheld imaging setups [31] [29].

- Settings: Maintain consistent lighting, camera angle, and resolution throughout the experiment. For time-series studies, images should be captured at regular intervals [30].

- Replication: Image multiple plants per treatment group to ensure statistical robustness.

2. Image Preprocessing:

- Format Standardization: Convert all images to a consistent format (e.g., PNG).

- Resizing: Resize images to a uniform dimension required by the analysis model (e.g., 1024x1024 pixels) [29].

- Normalization: Perform per-channel normalization of pixel values to a standard range (e.g., [0, 1]) [29].

3. Automated Trait Extraction with an Improved YOLO Model:

- Model Selection: Start with a base object detection model like YOLOv11n [30].

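- Architectural Improvements: Integrate AKConv into the backbone's C3k2 module and add a recalibration feature pyramid detection head based on the P2 layer to improve small-object detection, as described in Guide 2 above [30].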

- Trait Calculation: Use the bounding box information output by the model to calculate key phenotypic parameters through geometric analysis (e.g., plant height from the vertical bounding box axis) [30].
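The geometric step can be sketched in a few lines. This example assumes detections arrive as (class name, bounding box) pairs with boxes given as (x_min, y_min, x_max, y_max) in pixels, and that a millimetre-per-pixel scale is known from camera calibration; both the data layout and the scale value are assumptions made for illustration.

```python
def plant_height_mm(box, mm_per_pixel):
    """Plant height from the vertical extent of a bounding box."""
    x_min, y_min, x_max, y_max = box
    return (y_max - y_min) * mm_per_pixel


def count_class(detections, class_name):
    """Number of detected objects of one class (e.g., petioles)."""
    return sum(1 for name, _box in detections if name == class_name)


# Hypothetical detector output for a single image.
detections = [
    ("plant", (120, 80, 300, 580)),
    ("petiole", (150, 200, 180, 260)),
    ("petiole", (220, 310, 250, 370)),
]

plant_box = next(box for name, box in detections if name == "plant")
print(plant_height_mm(plant_box, mm_per_pixel=0.5))  # height in mm
print(count_class(detections, "petiole"))            # petiole count
```

The accuracy of such estimates depends on the plant being upright and roughly parallel to the image plane, which is one reason the acquisition settings need to stay consistent.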

4. Data Integration and Statistical Analysis:

- Data Aggregation: Compile extracted traits into a structured data table.

- Stress Classification: Use the extracted traits as input features for machine learning classifiers (e.g., Random Forest, Support Vector Machine) to differentiate between stress conditions. Random Forest has been shown to achieve up to 98% accuracy in classifying water stress in tomatoes [30].

Workflow for Stress Response Phenotyping

Protocol 2: Generative Adversarial Training for Ground Truth Data Expansion

This protocol describes a two-stage GAN-based approach to generate synthetic plant images and their corresponding segmentation masks, addressing the data bottleneck.

1. Data Preparation:

- Seed Collection: Gather a limited set of original RGB plant images and their corresponding manually created binary segmentation masks (ground truth). Example sizes: 80-100 image-mask pairs [29].

- Preprocessing: Resize all images to a uniform size (e.g., 1024x1024 pixels) and normalize pixel values [29].

2. Stage 1: RGB Image Augmentation with FastGAN:

- Model: Train a FastGAN model on the original RGB images.

- Output: Generate a larger set of novel, realistic RGB plant images through non-linear intensity and texture transformations [29].

3. Stage 2: Segmentation Mask Generation with Pix2Pix:

- Model Training: Train a Pix2Pix conditional GAN on the paired original RGB images and their manual segmentation masks.

- Mask Synthesis: Apply the trained Pix2Pix model to the synthetic RGB images from Stage 1 to automatically generate their corresponding binary segmentation masks [29].

- Loss Function: Use Sigmoid Loss for efficient model convergence, which has been shown to achieve high Dice coefficients (0.94-0.95) [29].

4. Validation:

- Manual Annotation: Manually annotate a subset of the FastGAN-generated images to create a validation set.

- Accuracy Calculation: Compute the Dice coefficient between the Pix2Pix-predicted masks and the manual annotations for the validation set. Target accuracy: >0.88 Dice coefficient [29].
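For reference, the Dice coefficient between two binary masks is twice the size of their overlap divided by the sum of their sizes. The sketch below assumes masks are supplied as flat sequences of 0/1 values of equal length; treating two empty masks as a perfect match (1.0) is a convention chosen here, not something specified in the source.

```python
def dice_coefficient(pred_mask, true_mask):
    """Dice = 2 * |A and B| / (|A| + |B|) for two flat binary masks."""
    if len(pred_mask) != len(true_mask):
        raise ValueError("masks must have the same number of pixels")
    intersection = sum(1 for p, t in zip(pred_mask, true_mask) if p and t)
    total = sum(1 for p in pred_mask if p) + sum(1 for t in true_mask if t)
    if total == 0:
        return 1.0  # convention: two empty masks agree perfectly
    return 2.0 * intersection / total


# Tiny illustrative masks (a real mask would be a flattened image).
predicted = [1, 1, 1, 0]
manual = [1, 1, 0, 0]
print(dice_coefficient(predicted, manual))
```

Averaging this value over the manually annotated validation subset gives the figure that is compared against the target threshold.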

Two-Stage GAN Data Generation Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Software and Analytical Tools for AI-Based Plant Phenotyping

| Tool Name | Type/Function | Key Features | Application in Research |
|---|---|---|---|
| GRABSEEDS [27] | Image Analysis Software | Command-line tool for batch processing; extracts dimension, shape, and color traits; robust to variable lighting and overlapping objects. | Phenotyping of seeds, leaves, and flowers; QTL mapping and GWAS studies [27]. |
| PlantCV [27] | Image Analysis Toolkit | Comprehensive, flexible open-source toolkit for complex plant image analysis. | General-purpose plant phenotyping across laboratory and field conditions [27]. |
| YOLO Models (e.g., YOLOv11) [30] | Deep Learning Object Detection | Real-time performance; high accuracy for detecting small objects and complex plant structures; enables automated bounding-box-level trait extraction. | Automatic identification and counting of plant organs (leaves, petioles, fruits); structural phenotyping under stress [30]. |
| Pix2Pix & FastGAN [29] | Generative Adversarial Networks | FastGAN generates realistic RGB images. Pix2Pix generates segmentation masks from RGB images in a paired manner. | Automated generation of synthetic ground truth data to overcome the limited annotated data bottleneck [29]. |
| DIRT/3D [27] | Root Phenotyping Platform | Image-based 3D technology for phenotyping root architecture. | Non-destructive analysis of root system traits and their responses to environmental cues [27]. |

The Role of Cloud-Based Platforms and Data Hubs for Centralized Analysis

Technical Support Center: FAQs & Troubleshooting Guides

Frequently Asked Questions (FAQs)

Q1: How can we structure our research organization to best accelerate innovation using cloud platforms? Research leaders indicate that organizational change is often more complex than technological change. A successful strategy involves adopting agile operating models that differentiate research IT from central IT. Some institutions create dedicated hubs, such as the RMIT AWS Cloud Supercomputing Hub (RACE), to provide scalable High Performance Computing (HPC) services, freeing research IT staff from manual tasks to focus on enabling researchers [32].

Q2: How can we maintain open, collaborative research networks while meeting security and compliance requirements? The increase in cyber-attacks and the use of sensitive data in research necessitates robust governance. Data spaces are one solution, built with interoperability, data governance, and security in mind to facilitate organizing, accessing, and sharing data across different organizations and systems in a compliant manner [32].

Q3: What is the best way to create a consistent and seamless experience for researchers who are not cloud experts? There is a tension between making tools easy to use and training researchers to be cloud engineers. A solution is to use platforms like the Research and Engineering Studio on AWS (RES), which provides a web-based portal for administrators to create and manage secure cloud-based research environments. This allows scientists to visualize data and run interactive applications without needing deep cloud expertise [32].

Q4: How can we ensure our cloud adoption strategy is financially sustainable? Research institutions struggle to democratize cloud access in a financially sustainable way. Key practices include:

- Implementing FinOps: Using mechanisms to track project costs for both charge-back and show-back to ensure financial transparency [32].

- Utilizing Cost Tools: Leveraging frameworks like the Cloud Value Framework and Cost Optimization Flywheel for cost control and forecasting [32].

- Exploring Waivers: Investigating programs like the Global Data Egress Waiver to help predictably budget monthly cloud spend [32].

Q5: Our dataset was generated in a controlled environment. Will it work for field conditions? Models trained solely on controlled-environment data (e.g., greenhouses) may not perform accurately in the field. A dataset from a cloud-based automatic data acquisition system (CADAS) specifically notes this limitation. It is recommended to combine your controlled-environment dataset with field data to enhance model robustness and reduce performance gaps [33].

Troubleshooting Common Experimental Issues

Issue 1: Data Integration Errors from Multiple Sensors

- Problem: Inability to fuse data in real-time from multiple imaging sensors (e.g., RGB, spectral) on a phenotyping platform, leading to misaligned or unusable data.

- Solution: Implement a data registration and fusion method using established algorithms. One proven methodology involves using Zhang's calibration and a feature point extraction algorithm to calculate a homography matrix, which aligns the images. Experimental validation of this method shows a registration RMSE that does not exceed 3 pixels [34].

- Prevention: Ensure consistent sensor positioning and regular calibration checks as part of the experimental protocol.
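Once a homography matrix has been estimated, the registration error can be checked by mapping matched feature points from one sensor image into the other and measuring how far they land from their counterparts. The sketch below covers only this checking step and assumes the 3x3 matrix and the matched point pairs are already available; the matrix and points shown are made up for illustration.

```python
import math


def apply_homography(h, point):
    """Map an (x, y) pixel through a 3x3 homography given as nested lists."""
    x, y = point
    xh = h[0][0] * x + h[0][1] * y + h[0][2]
    yh = h[1][0] * x + h[1][1] * y + h[1][2]
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    return (xh / w, yh / w)  # divide by w to return to pixel coordinates


def registration_rmse(h, source_points, reference_points):
    """Root-mean-square distance (pixels) between mapped and reference points."""
    squared = []
    for src, ref in zip(source_points, reference_points):
        mx, my = apply_homography(h, src)
        squared.append((mx - ref[0]) ** 2 + (my - ref[1]) ** 2)
    return math.sqrt(sum(squared) / len(squared))


# Illustrative case: the second sensor is offset by (3, 4) pixels.
shift = [[1, 0, 3], [0, 1, 4], [0, 0, 1]]
rgb_points = [(0, 0), (10, 5)]
spectral_points = [(3, 4), (13, 9)]

print(registration_rmse(shift, rgb_points, spectral_points))  # well aligned
```

Tracking this value over time is one way to implement the regular calibration checks recommended above: a rising RMSE suggests that a sensor has moved.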

Issue 2: "Camera Busy" Errors in Automated Image Acquisition Systems

- Problem: During automated, multi-camera image acquisition, the system throws a "camera busy" error, halting data collection.

- Solution: This is a known issue in systems like those using the gPhoto2 library. The solution implemented in the Cloud-based Automatic Data Acquisition System (CADAS) is to terminate, within each capture loop, the process (identified by its process ID, PID) that is preventing the camera from operating. This reliably resolves the conflict [33].

- Prevention: Use a robust bash script that includes this PID termination step as a standard part of the image capture cycle.
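The capture cycle can be sketched as follows. The source describes a bash script; the version below uses Python only to keep the examples in this article in one language, and it is an illustration rather than the CADAS implementation. It builds `gphoto2` and `pkill` command lines and takes the name pattern of the blocking process as a parameter, because which process holds the camera depends on the system (a desktop volume monitor is a common candidate, but that is an assumption here). Output directories are assumed to exist already.

```python
import re
import subprocess

# Port identifiers such as "usb:001,005" in the output of `gphoto2 --auto-detect`.
PORT_PATTERN = re.compile(r"usb:\d+,\d+")


def parse_camera_ports(auto_detect_output):
    """Extract the USB port identifiers from `gphoto2 --auto-detect` output."""
    return PORT_PATTERN.findall(auto_detect_output)


def build_release_command(process_pattern):
    """Command that terminates processes matching the given name pattern."""
    return ["pkill", "-f", process_pattern]


def build_capture_command(port, filename):
    """Command that captures one image on the given port and downloads it."""
    return ["gphoto2", "--port", port,
            "--capture-image-and-download", "--filename", filename]


def capture_cycle(ports, output_dir, process_pattern, run=subprocess.run):
    """Run one capture per camera, releasing the camera before each capture."""
    commands = []
    for index, port in enumerate(ports):
        release = build_release_command(process_pattern)
        capture = build_capture_command(
            port, f"{output_dir}/camera_{index:02d}_%Y%m%d-%H%M%S.jpg")
        for command in (release, capture):
            run(command, check=False)  # a failed step should not stop the loop
            commands.append(command)
    return commands
```

In use, `parse_camera_ports` would be fed the text returned by `gphoto2 --auto-detect`, and `capture_cycle` would be called at the chosen interval; passing a different `run` function makes it possible to dry-run the loop without cameras attached.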

Issue 3: Managing and Analyzing Extremely Large Phenotyping Datasets

- Problem: High-throughput phenotyping (HTP) platforms generate massive amounts of temporal and spatial data, impeding analysis and storage [1].

- Solution: Deploy a big data pipeline based on a robust architecture like the Lambda architecture. This is specifically designed for HTP data and can handle both real-time and batch processing for comprehensive analysis [35]. Furthermore, leverage machine learning (ML) and deep learning (DL) approaches to automatically extract useful information and phenotypes from these large datasets [1].

Experimental Protocols & Methodologies

Protocol 1: Cloud-Based Automatic Data Acquisition for Weed and Crop Imaging

This protocol details the methodology for setting up an automated system to capture plant images for deep learning-based weed detection [33].

- 1. Objective: To automate the acquisition of crop and weed images at fixed time intervals, accounting for different plant growth stages, thereby overcoming the labor-intensive nature of manual data collection.

- 2. Materials:

- Cameras: Twelve Canon EOS T7 and three EOS 90D visible spectrum digital cameras.

- Computer: A desktop computer (e.g., Dell with Intel Core i7 processor) running a Linux operating system, to act as the central control unit.

- Software: gPhoto2 image acquisition software (IAS) and a custom bash script.

- Storage: A 4TB external hard drive and an Amazon Web Services (AWS) S3 bucket for cloud storage.

- Connectivity: USB extension cables, a USB hub, and a Verizon wireless device for internet.

- Power: Power adapters for all cameras to enable continuous operation.

- 3. Method:

- System Setup: Mount the 15 cameras over the plant benches. Connect all cameras to the desktop computer via the USB hub and extension cables. Connect the external hard drive and ensure internet connectivity.

- Script Configuration: Develop and deploy a custom bash script on the control computer. The script should:

- Scan all cameras connected to the USB hub.

- Create a dedicated directory for each camera.

- In a loop for all 15 cameras, execute a command to capture an image.

- Terminate any process causing a "camera busy" error.

- Download the image to the local system.

- Move the image to the external hard drive and copy it to the AWS S3 bucket.

- Wait for a set time interval (e.g., 30 minutes) before repeating the loop.

- Data Post-Processing:

- Data Cleaning: Manually review the acquired images and remove poor-quality images from the dataset.

- Data Labeling: Use labeling software (e.g., LabelImg) on a single image from each day to designate each crop and weed plant as a distinct object, generating bounding box information.

- Automated Labeling: Use a Python script to automatically apply the labels from the reference image to all other images from that day, leveraging the fixed camera position.

- Image Cropping: Use a Python cropping script with the labeled text files to create individual, class-specific images of crops and weeds.
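The automated labeling and cropping steps rely on the cameras being fixed, so one day's reference labels apply to every image from that day. The following is a minimal sketch of that idea, not the authors' script. It assumes the label files are plain text in the YOLO convention (one line per object: class id, then box centre x, centre y, width, and height as fractions of the image size); the file names are hypothetical.

```python
from pathlib import PurePosixPath


def label_name_for(image_name):
    """Label file name that pairs with an image (same stem, .txt suffix)."""
    return str(PurePosixPath(image_name).with_suffix(".txt"))


def propagate_labels(reference_label_text, image_names):
    """Assign the reference image's label text to every other image of the day."""
    return {label_name_for(name): reference_label_text for name in image_names}


def yolo_to_pixel_box(label_line, image_width, image_height):
    """Convert one normalized label line into (class_id, (left, top, right, bottom))."""
    class_id, xc, yc, w, h = label_line.split()
    xc, w = float(xc) * image_width, float(w) * image_width
    yc, h = float(yc) * image_height, float(h) * image_height
    box = (round(xc - w / 2), round(yc - h / 2),
           round(xc + w / 2), round(yc + h / 2))
    return int(class_id), box


reference_labels = "0 0.5 0.5 0.2 0.4\n"
labels = propagate_labels(reference_labels, ["day1_0900.jpg", "day1_0930.jpg"])
print(sorted(labels))
print(yolo_to_pixel_box("0 0.5 0.5 0.2 0.4", 1000, 500))
```

The pixel box returned by `yolo_to_pixel_box` is in the (left, top, right, bottom) order that common image libraries expect for cropping. Because plants grow between reference days, propagated boxes are worth spot-checking during the data cleaning step.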

Protocol 2: High-Throughput Field Phenotyping using a Gantry-Style Robot

This protocol describes the use of an adjustable phenotyping robot for high-throughput data collection in field conditions [34].

- 1. Objective: To perform non-destructive, high-throughput phenotyping in both dry and paddy fields, adapting to different row spacing and carrying a high-payload sensor gimbal.

- 2. Materials:

- Phenotyping Robot: A gantry-style chassis with an adjustable wheel track (1400–1600 mm).

- Sensor Gimbal: A six-degree-of-freedom gimbal with high payload capacity, allowing precise height (1016–2096 mm) and angle adjustments.

- Imaging Sensors: Multiple integrated imaging sensors (e.g., RGB, hyperspectral).

- 3. Method:

- Robot Calibration: Adjust the robot's wheel track to match the row spacing of the experimental field to minimize crop damage.

- Sensor Registration and Fusion:

- Use Zhang's calibration method to calibrate each imaging sensor.

- Employ a feature point extraction algorithm to identify common points across the different sensor images.

- Calculate a homography matrix to enable the registration and fusion of data from the multiple sensors.

- Data Acquisition: Navigate the robot to fixed positions within the field. At each position, adjust the gimbal's height and angle as required, and trigger the multi-sensor data acquisition system.

- Validation: Validate the data quality by comparing the robot's sensor readings with handheld instruments. A strong correlation (e.g., r² > 0.90) confirms practicality and reliability.

Data Presentation

Quantitative Market Data for Plant Phenotyping

Table 1: Global Plant Phenotyping Market Forecast [36]

| Metric | Value (2025) | Value (2035) | Compound Annual Growth Rate (CAGR) |
|---|---|---|---|
| Market Size | USD 216.7 Million | USD 601.7 Million | 11.0% |

Table 2: Plant Phenotyping Market CAGR by Segment (2025-2035) [36]

| Segment | Example Technology | Projected CAGR |
|---|---|---|
| Sensors | Hyperspectral & Multispectral Sensors | 12.8% |
| Software | Data Management & Integration Software | 12.5% |
| Equipment | Growth Chambers / Phytotrons | 11.8% |

Table 3: Key Regional Focus Areas in Plant Phenotyping [36]

| Region | Primary Investment Focus | Key Driver |
|---|---|---|
| USA | AI-driven automation and high-throughput imaging. | Speed and precision for crop breeding. |
| Western Europe | Multi-sensor fusion and carbon-neutral technologies. | EU Green Deal and sustainability policies. |
| Japan / South Korea | Compact, cost-effective, lab-scale systems. | Space efficiency and affordability. |

Workflow Visualization

Data Flow in a Cloud-Based Phenotyping System

Cloud phenotyping data workflow.

High-Throughput Phenotyping Protocol

Automated image acquisition protocol.

The Scientist's Toolkit

Essential Research Reagents & Platforms

Table 4: Key Platforms and Software for Plant Phenotyping

| Item Name | Category | Function / Description |
|---|---|---|
| LemnaTec Scanalyzer System | High-Throughput Platform | An automated platform used for non-invasive, high-throughput phenotyping of various stresses in controlled environments [1]. |
| gPhoto2 Library | Software Library | A set of software applications and libraries for controlling digital cameras on Unix-like systems, enabling automated image capture [33]. |
| LabelImg | Software Tool | Used for the manual labeling and annotation of images to generate bounding box information for object detection models [33]. |
| Research & Engineering Studio (RES) on AWS | Cloud Platform | An open-source, web-based portal that allows administrators to create and manage secure cloud-based research environments without requiring deep cloud expertise from scientists [32]. |
| Hyperspectral Sensors | Sensor | Advanced sensors that capture data across many wavelengths, used for detecting plant health, chlorophyll content, and disease stress non-invasively [36]. |

FAQs and Troubleshooting for Plant Phenotyping Data Management

This technical support center addresses common challenges researchers face when implementing data standards in high-throughput plant phenotyping. These questions and solutions are framed within the broader context of overcoming data handling challenges to ensure findable, accessible, interoperable, and reusable (FAIR) data.

FAQ 1: What is the first step to make my phenotyping data MIAPPE-compliant?

Answer: The foundational step is to collect the minimum required metadata about your study. MIAPPE v1.2 provides a clear checklist for this purpose [37]. The core information you must provide includes:

- Study Description: A clear title, unique identifier, and description of the study [38].

- Investigation Details: The associated investigation's unique ID, title, and contact information [38].

- Experimental Context: The study's start and end date, geographic location (country, site, latitude, longitude), and a description of the growth facility [38].

- Biological Material: Unambiguous information about the germplasm (e.g., genotype, species) used in the experiment [39].

- Data File Links: A clear link and description for the data file generated by the study [38].
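A completeness check along these lines can catch missing metadata before submission. The sketch below is illustrative only: the section and field names are hypothetical labels for the items listed above, not the official MIAPPE v1.2 field identifiers, and the example record is made up.

```python
# Hypothetical field names chosen for illustration; they are NOT the
# official MIAPPE v1.2 identifiers. Adapt them to the checklist you use.
REQUIRED_FIELDS = {
    "study": ["study_id", "study_title", "study_description",
              "start_date", "end_date", "country", "site",
              "latitude", "longitude", "growth_facility"],
    "investigation": ["investigation_id", "investigation_title", "contact"],
    "biological_material": ["genotype", "species"],
    "data_file": ["link", "description"],
}


def missing_metadata(record):
    """Return 'section.field' names that are absent or empty in `record`."""
    missing = []
    for section, fields in REQUIRED_FIELDS.items():
        values = record.get(section, {})
        for field in fields:
            if values.get(field) in (None, ""):
                missing.append(f"{section}.{field}")
    return missing


# Made-up example record with two gaps (empty end date, no species).
example = {
    "study": {"study_id": "STU-001", "study_title": "Wheat drought trial",
              "study_description": "Field trial under two water regimes",
              "start_date": "2023-03-01", "end_date": "",
              "country": "FR", "site": "Site A",
              "latitude": 43.6, "longitude": 3.9,
              "growth_facility": "open field"},
    "investigation": {"investigation_id": "INV-001",
                      "investigation_title": "Drought tolerance",
                      "contact": "pi@example.org"},
    "biological_material": {"genotype": "Line-17"},
    "data_file": {"link": "data/plots.csv",
                  "description": "Plot-level traits"},
}

print(missing_metadata(example))
```

Running the check at the point of data entry, rather than at submission time, keeps gaps from accumulating across a season.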

FAQ 2: My data is spread across multiple files and formats. How can PHIS help integrate it?

Answer: The Phenotyping Hybrid Information System (PHIS) is specifically designed to integrate multi-source and multi-scale data through its ontology-driven architecture [39]. Its key features address integration challenges:

- Unambiguous Identification: PHIS assigns unique identities to all objects in an experiment (e.g., plants, sensors, plots) and establishes their relationships using ontologies and semantics [39].

- Event Association: It links events, such as plant positions or annotations, to the relevant objects, making them easily traceable [39].

- Web Service Interoperability: PHIS can interoperate with external resources via web services, allowing data to be integrated into modeling platforms or other databases [39].

Troubleshooting Guide: If you encounter issues while importing data into PHIS, use the provided OpenSILEX Python tool, which offers programmable methods for creating experiments and importing data, ensuring consistency and saving time [40].

FAQ 3: I am using ISA-Tab. How do I correctly represent my experimental design for a field trial?

Answer: In the ISA-Tab format, the experimental design is primarily described in the Investigation file's "Study Design Descriptors" section [38].

- Step 1: In the Study Design Type field, provide a term from a controlled ontology. For a field trial, you would use a class from the Crop Research Ontology (CO), such as CO_715:0000145 for a "complete block design" [38].

- Step 2: Use the Comment[Study Design Description] field to provide a detailed, human-readable description of the design (e.g., "Lines were repeated twice at each location using a complete block design...") [38].

- Step 3: Define your Observation Unit Level Hierarchy (e.g., field > block > plot > plant) and describe the Observation Unit in the respective comment fields [38].
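The three steps above can be sketched as a small script that writes the design block of an Investigation file as tab-delimited rows. This is a minimal illustration, not a full ISA-Tab writer. The helper name `study_design_rows`, the `CO_715` source reference, and the exact `Comment[Observation Unit Level Hierarchy]` label are assumptions, so check them against the ISA-Tab template you are using.

```python
import csv
import io


def study_design_rows(design_label, accession, source_ref, description, hierarchy):
    """Build label/value rows for the design block of an Investigation file.

    Hypothetical helper for illustration; the comment labels below should be
    verified against your MIAPPE ISA-Tab template.
    """
    return [
        ["STUDY DESIGN DESCRIPTORS"],
        ["Study Design Type", design_label],
        ["Study Design Type Term Accession Number", accession],
        ["Study Design Type Term Source REF", source_ref],
        ["Comment[Study Design Description]", description],
        ["Comment[Observation Unit Level Hierarchy]", " > ".join(hierarchy)],
    ]


rows = study_design_rows(
    design_label="complete block design",
    accession="CO_715:0000145",
    source_ref="CO_715",  # assumed ontology source name
    description=("Lines were repeated twice at each location "
                 "using a complete block design..."),
    hierarchy=["field", "block", "plot", "plant"],
)

# ISA-Tab is plain text with tab-separated values, one labelled row per field.
buffer = io.StringIO()
csv.writer(buffer, delimiter="\t", lineterminator="\n").writerows(rows)
print(buffer.getvalue())
```

Generating these rows from code rather than typing them by hand keeps the ontology accession and the free-text description consistent across studies.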

FAQ 4: What are the most common data quality issues in phenotyping, and how can I fix them?

Answer: High-throughput phenotyping generates vast amounts of data that are prone to specific quality issues. The table below summarizes common problems and their solutions.

| Data Quality Issue | Description | Recommended Solution |

|---|---|---|

| Duplicate Data | Redundant records from multiple sources or system silos that skew analytics [41]. | Implement rule-based data quality management and de-duplication tools to detect and merge records [42]. |

| Non-Standardized Data | Inconsistent formats, units, or terminologies across data sources hamper analysis [42]. | Enforce standardization at the point of collection. Specify required formats and naming conventions [42]. |

| Missing Values | Gaps in the data that can severely impact analyses and lead to misleading insights [42]. | Employ data imputation techniques to estimate missing values or flag gaps for future collection [42]. |

| Outdated Information | Data that decays over time and misguides strategic decisions [41]. | Establish a regular data update schedule and use automated systems to flag old data for review [42]. |

| Inaccurate Data | Typos, misinformation, or incorrect entries that lead to flawed insights [42]. | Implement validation rules and data verification processes during data entry [42]. |
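Several of the fixes in the table can be combined into a single rule-based cleaning pass. The sketch below is a simplified illustration under stated assumptions: the records, column names, unit table, and plausible height range are all made up, duplicates are detected on a (plot, date) key and dropped rather than merged, and missing values are flagged rather than imputed.

```python
# Made-up plot records; column names and units are illustrative assumptions.
records = [
    {"plot_id": "P1", "date": "2023-05-01", "height": "52", "unit": "cm"},
    {"plot_id": "P1", "date": "2023-05-01", "height": "52", "unit": "cm"},   # duplicate
    {"plot_id": "P2", "date": "2023-05-01", "height": "0.48", "unit": "m"},  # other unit
    {"plot_id": "P3", "date": "2023-05-01", "height": "", "unit": "cm"},     # missing
    {"plot_id": "P4", "date": "2023-05-01", "height": "940", "unit": "cm"},  # implausible
]

TO_CM = {"cm": 1.0, "m": 100.0}   # unit standardization table
PLAUSIBLE_CM = (0.0, 300.0)       # assumed validation range for canopy height


def clean(rows):
    """Return (clean_rows, issues) after de-duplication, unit
    standardization, missing-value flagging, and range validation."""
    seen, cleaned, issues = set(), [], []
    for row in rows:
        key = (row["plot_id"], row["date"])
        if key in seen:                      # repeated plot/date record
            issues.append((row["plot_id"], "duplicate"))
            continue
        seen.add(key)
        if row["height"] == "":              # flag the gap, do not guess
            issues.append((row["plot_id"], "missing"))
            continue
        height_cm = float(row["height"]) * TO_CM[row["unit"]]
        if not PLAUSIBLE_CM[0] <= height_cm <= PLAUSIBLE_CM[1]:
            issues.append((row["plot_id"], "out_of_range"))
            continue
        cleaned.append({"plot_id": row["plot_id"], "date": row["date"],
                        "height_cm": height_cm})
    return cleaned, issues


cleaned, issues = clean(records)
print(cleaned)
print(issues)
```

Keeping the issue log separate from the cleaned rows preserves a record of what was removed and why, which matters when the same dataset is later reused.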

FAQ 5: How do PHIS, MIAPPE, and ISA-Tab work together?

Answer: These standards and tools form a complementary ecosystem for managing phenotyping data.

- MIAPPE is the content standard. It defines what metadata and data need to be reported to adequately describe a phenotyping experiment [37].

- ISA-Tab is a data exchange format. It is one of the implementations that can be used to structure and format your data and metadata according to the MIAPPE specification in a plain-text, tab-delimited format [38] [43].

- PHIS is an active information system. It is an ontology-driven platform that you can use to manage your experiments, integrating both the MIAPPE-standardized metadata and the actual phenotypic data, and making them accessible via a web interface and APIs [39] [40].

The following workflow diagram illustrates how these components interact in a typical data management pipeline.

Standardized Experimental Protocols for Data Collection

Adopting standardized protocols is critical for ensuring the consistency, reproducibility, and reusability of phenotyping data. Below are detailed methodologies for key experiments cited in the field.

Protocol 1: Canopy Height Estimation using UAS and SfM-MVS

This protocol details the high-throughput estimation of canopy height, a key architectural trait [44].

- 1. Experimental Setup: Establish ground control points (GCPs) throughout the field for georeferencing and model accuracy validation.

- 2. Image Acquisition: Use a UAS (drone) equipped with a high-resolution RGB camera. Fly the UAS over the field plot at a consistent altitude to capture overlapping images (≥80% front and side overlap) throughout the growing season. Conduct flights at consistent times of day to minimize shadow effects.

- 3. 3D Reconstruction: Upload the images to a photogrammetric software suite (e.g., Agisoft Metashape). Use the Structure from Motion and Multi-View Stereo (SfM-MVS) algorithm to generate a dense 3D point cloud of the canopy and a digital elevation model (DEM) of the ground surface.

- 4. Height Calculation: Generate a digital surface model (DSM) from the canopy point cloud. Subtract the DEM from the DSM to create a crop surface model (CSM), where each point represents the height of the canopy above the ground [44].

- 5. Data Output: The CSM provides a raster map of canopy height. Average values can be extracted for individual plots for statistical analysis and genotype comparison.
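Steps 4 and 5 reduce to a cell-by-cell raster subtraction followed by a per-plot average. The following sketch shows that arithmetic on toy rasters stored as nested lists. The values are made up, plots are assumed to be rectangular index ranges, and clamping negative heights to zero is an added assumption to absorb reconstruction noise, not part of the cited protocol.

```python
def crop_surface_model(dsm, dem):
    """Subtract ground elevation (DEM) from canopy surface elevation (DSM),
    cell by cell. Both rasters must share the same grid."""
    if len(dsm) != len(dem) or any(len(a) != len(b) for a, b in zip(dsm, dem)):
        raise ValueError("DSM and DEM must have identical dimensions")
    # Assumption: clamp negative heights, which can arise from noise, to zero.
    return [[max(s - g, 0.0) for s, g in zip(srow, grow)]
            for srow, grow in zip(dsm, dem)]


def plot_mean_height(csm, rows, cols):
    """Average canopy height over a rectangular plot given as index ranges."""
    cells = [csm[r][c] for r in rows for c in cols]
    return sum(cells) / len(cells)


# Toy 2 x 4 rasters in metres (made-up values): two plots side by side.
dsm = [[101.0, 101.5, 100.5, 100.75],
       [101.25, 101.25, 100.5, 100.5]]
dem = [[100.0, 100.5, 100.0, 100.25],
       [100.25, 100.25, 100.0, 100.0]]

csm = crop_surface_model(dsm, dem)
left_plot = plot_mean_height(csm, rows=range(2), cols=range(0, 2))
right_plot = plot_mean_height(csm, rows=range(2), cols=range(2, 4))
print(left_plot, right_plot)  # 1.0 0.5
```

In practice the rasters would come from the photogrammetry export and plot boundaries from a georeferenced field map, but the subtraction and averaging stay the same.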

Protocol 2: Canopy Coverage Analysis using EasyPCC

This protocol measures canopy coverage, an indicator of crop growth and ground cover, using a robust segmentation method [44].

- 1. Image Collection: Capture high-resolution RGB images of the plots using a UAS or a ground-based vehicle. Ensure images are taken under uniform lighting conditions where possible.

- 2. Segmentation Model Application: Process the images using the EasyPCC application, which is based on the Decision Tree Segmentation Model (DTSM). This machine learning-based method is robust to varying illumination and shadows [44].

- 3. Pixel Classification: The DTSM classifies each pixel in the image as either "plant" or "background" (soil, etc.).

- 4. Coverage Calculation: The software calculates the percentage of "plant" pixels relative to the total number of pixels in the region of interest (e.g., a single plot).

- 5. Data Output: The primary output is a quantitative canopy coverage percentage for each plot. By conducting sequential imaging, a growth curve can be constructed to track development over time [44].
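The coverage calculation in steps 3 and 4 can be illustrated with a few lines of code. Note that the classifier below is a deliberately simple stand-in, an excess-green threshold on normalized RGB, and is not the DTSM used by EasyPCC. The threshold value and the toy pixels are assumptions.

```python
def is_plant(pixel, threshold=0.1):
    """Stand-in pixel classifier: excess green index on normalized RGB.

    This is NOT the DTSM used by EasyPCC; the threshold is an assumption.
    """
    r, g, b = pixel
    total = r + g + b
    if total == 0:          # pure black pixel: treat as background
        return False
    exg = (2 * g - r - b) / total
    return exg > threshold


def canopy_coverage(image):
    """Percentage of pixels in the region of interest classified as plant."""
    pixels = [p for row in image for p in row]
    plant = sum(1 for p in pixels if is_plant(p))
    return 100.0 * plant / len(pixels)


# Toy 2 x 2 region of interest (RGB, 0-255): two green, two soil-like pixels.
roi = [[(40, 160, 30), (120, 100, 80)],
       [(50, 180, 40), (130, 110, 90)]]

print(canopy_coverage(roi))  # 50.0
```

Applying `canopy_coverage` to the same plot on successive imaging dates yields the points of the growth curve described in step 5.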

The following table details key resources and tools essential for implementing data standards in plant phenotyping research.

| Resource/Tool | Function |

|---|---|

| MIAPPE Checklist | The core specification document that provides a list of mandatory and recommended metadata to describe a phenotyping experiment [37]. |

| ISA-Tab Templates | Pre-formatted text file templates (Investigation, Study, Assay) that guide the structured reporting of MIAPPE-compliant metadata and data [38]. |

| PHIS (Phenotyping Hybrid Information System) | An open-source, ontology-driven information system for integrating, managing, and sharing multi-source phenotyping data from field and controlled conditions [39]. |

| Breeding API (BrAPI) | A standardized web service API that facilitates interoperability between different phenotyping databases and tools, and implements MIAPPE standards [37] [43]. |

| OpenSILEX Python Tool | A programmable tool for interacting with the PHIS system, allowing researchers to create experiments and import data via scripts for automation [40]. |

High-throughput phenotyping (HTP) using unmanned aerial vehicles (UAVs) has emerged as a transformative technology for plant research and breeding, capable of generating massive volumes of spectral and imagery data across large experimental areas [45] [46]. While this approach enables rapid, non-destructive measurements of plant health, architecture, and physiology, it simultaneously creates significant data handling challenges that can bottleneck research progress [47] [14]. The integration of robust data analytics pipelines with UAV-based data collection is therefore not merely advantageous but essential for translating raw sensor data into biologically meaningful insights.

This case study examines the successful implementation of an end-to-end phenotyping pipeline within a wheat breeding program, focusing specifically on the data management architecture and troubleshooting strategies employed to overcome common integration challenges. The methodologies and solutions presented serve as a replicable model for researchers facing similar hurdles in managing the complex data lifecycle from acquisition to analysis in high-throughput plant phenotyping research.

Experimental Framework & Design

Plant Materials and Growth Conditions

The case study involved a wheat mapping population consisting of 180 recombinant inbred lines (RILs) developed from a cross between the heat-tolerant 'Halberd' and moderately heat-susceptible 'Len' cultivars [46]. These were planted in an alpha lattice design with two replications, creating 364 individual plots. The experiment was conducted under both well-watered (WW) and drought (DR) conditions to evaluate drought resistance traits, with soil moisture content monitored regularly throughout the reproductive growth stages (jointing, heading, flowering, and grain filling) [45].

UAV Platform and Sensor Configuration

The data acquisition platform utilized a UAV equipped with multiple sensors to capture different aspects of plant physiology and structure:

- Multispectral sensors for capturing vegetation indices related to canopy structure, chlorophyll content, and water status

- RGB sensors for high-resolution color imagery and morphological assessment

- LiDAR for creating detailed 3D structural models of plant architecture [46]

Flights were conducted regularly throughout the growing season with careful attention to flight altitude, image overlap, and sensor calibration to ensure consistent, high-quality data collection [48].

Data Analytics Pipeline Architecture

The integrated analytics pipeline transformed raw UAV data into actionable insights through a multi-stage process.

Key Research Reagents and Computational Tools

Table 1: Essential research reagents and computational tools for UAV-based phenotyping pipelines

| Category | Specific Tool/Platform | Function in Pipeline | Application Example |

|---|---|---|---|

| UAV Platforms | DJI Enterprise Drones | Reliable flight platform for sensor deployment | Consistent data acquisition across growing season [48] |

| Sensor Technologies | Multispectral, RGB, LiDAR | Capture canopy structure, color, and reflectance | Measuring vegetation indices (NDVI, EVI, NDRE) [45] [46] |

| Data Management | Laboratory Information Management Systems (LIMS) | Centralized data repository and version control | Creating single source of truth for experimental data [47] |

| Analytical Software | R, Python with scikit-learn | Statistical analysis and machine learning implementation | Yield prediction models from spectral features [45] [49] |

| Cloud Platforms | Hiphen Cloverfield, Custom solutions | Data processing, storage, and collaboration | Automated extraction of agronomic traits from UAV imagery [48] |
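Two of the vegetation indices named in Table 1, NDVI and NDRE, are normalized band differences and can be computed directly from plot-level reflectance. The sketch below uses the standard definitions; the reflectance values are made up, and the dictionary keys are illustrative names rather than the output format of any particular sensor.

```python
def normalized_difference(a, b):
    """Return (a - b) / (a + b), or None when the denominator is zero."""
    denom = a + b
    return None if denom == 0 else (a - b) / denom


def ndvi(nir, red):
    """Normalized Difference Vegetation Index: (NIR - Red) / (NIR + Red)."""
    return normalized_difference(nir, red)


def ndre(nir, red_edge):
    """Normalized Difference Red Edge: (NIR - RedEdge) / (NIR + RedEdge)."""
    return normalized_difference(nir, red_edge)


# Made-up plot-mean reflectance values (unitless, 0-1).
plot = {"nir": 0.5, "red": 0.125, "red_edge": 0.25}

print(ndvi(plot["nir"], plot["red"]))
print(ndre(plot["nir"], plot["red_edge"]))
```

Both indices are computed after radiometric calibration, so that values from different flight dates remain comparable.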

Implementation Challenges and Troubleshooting Guide

Frequently Asked Questions (FAQs)

Table 2: Common technical challenges and their solutions in UAV phenotyping workflows

| Challenge Category | Specific Issue | Root Cause | Solution | Preventive Measures |

|---|---|---|---|---|

| Data Acquisition | Insufficient image resolution for analysis | Incorrect flight altitude or sensor choice | Reflight with optimized parameters | Calculate ground sampling distance pre-flight; match sensor to trait [48] |

| Data Quality | Inaccurate georeferencing between timepoints | Lack of permanent Ground Control Points (GCPs) | Implement stable, surveyed GCPs | Place and maintain GCPs before first flight; use RTK/PPK GPS [48] |

| Data Processing | Gaps in field maps (orthomosaics) | Inadequate front/side overlap (e.g., <70%) | Reacquire data with proper overlap (80/70% recommended) | Validate flight parameters using mission planning software [48] |

| Sensor Configuration | Inconsistent vegetation indices across dates | Varying weather conditions and sun angles | Use radiometric calibration panels | Include calibration targets in every flight; standardize timing [48] |