Navigating Protocol Variation in Quantitative Plant Experiments: A Guide to Robust and Reproducible Research

This article addresses the critical challenge of protocol variation in quantitative plant experiments, a key factor affecting the reproducibility and robustness of research findings in plant biology and related fields.

Abstract

This article addresses the critical challenge of protocol variation in quantitative plant experiments, a key factor affecting the reproducibility and robustness of research findings in plant biology and related fields. It explores the foundational principles of quantitative plant biology, from historical precedents to modern computational modeling. The content provides methodological guidance for high-throughput phenotyping and standardized procedures, offers troubleshooting strategies for common experimental variations, and discusses validation frameworks for comparing outcomes across studies. Aimed at researchers, scientists, and development professionals, this comprehensive resource synthesizes current best practices to enhance experimental reliability and facilitate knowledge transfer from basic plant research to applied biomedical contexts.

The Foundations of Quantitative Plant Biology: From Mendel to Modern Modeling

Frequently Asked Questions (FAQs)

Q1: What are the common reasons for not observing Mendel's expected 3:1 ratio in F2 offspring? Deviations from the expected 3:1 ratio can occur due to insufficient sample size, as Mendel himself used thousands of plants to establish this average [1]. Other factors include reduced viability or germination failure of certain genotypes, or the presence of non-Mendelian inheritance patterns like epistasis, which Mendel himself inferred in later bean experiments [2]. Ensuring pure-breeding parental lines (homozygous) and controlling cross-pollination are critical.

Q2: How can environmental variation be minimized in quantitative plant experiments? Environmental variation can be minimized by using controlled growth conditions, standardized protocols for growth substrate and watering, and employing experimental designs that account for spatial inhomogeneities [3]. This includes using randomized complete block designs (RCBD) or augmented designs, and monitoring microclimatic conditions with sensor networks to account for fluctuations [3] [4].

Q3: What strategies can be used to evaluate a large number of genotypes with limited seeds? Augmented experimental designs are highly efficient for this purpose. These designs involve replicating a limited number of check or control genotypes throughout the experiment, while a large number of new test genotypes are included only once. This allows for control of environmental variability across the field while maximizing the number of genotypes that can be evaluated with limited seed [4].

Q4: How is Mendel's work relevant to modern crop improvement? Mendel's principles form the basis for understanding the inheritance of quantitative traits. Modern techniques like QTL mapping and genome editing (e.g., CRISPR/Cas9) rely on the fundamental concepts of segregation and independent assortment to identify and engineer genes controlling complex traits such as yield and plant architecture [2] [5]. This allows for the precise manipulation of allelic variation to enhance crop performance [6].

Troubleshooting Guides

Issue: Unexpected Phenotypic Ratios in Progeny

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Insufficient sample size | Calculate if the deviation from the expected ratio is statistically significant using a chi-square test. | Increase the number of plants in the crossing experiment to reduce sampling error [1]. |

| Impure parental lines (not true-breeding) | Self-cross parental plants for another generation; if traits are not uniform, the line is not homozygous. | Generate new, genetically pure parental lines through repeated self-fertilization and selection [1] [7]. |

| Accidental cross-pollination | Review physical isolation procedures during cultivation and crossing. | In plants like peas, ensure flowers are properly emasculated and bagged to prevent unwanted pollen transfer [7]. |

| Biological interactions (e.g., epistasis) | Perform test crosses to isolate the trait of interest. | Consult literature for known gene interactions; treat the interacting gene complex as a single locus in analysis [2]. |

Issue: High Phenotypic Variability Obscuring Genotypic Effects

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Environmental micro-variation | Monitor and record environmental parameters (light, temperature, humidity) across the growth area. | Use a randomized block or augmented row-column experimental design to account for spatial trends [3] [4]. |

| Variation in seed quality or size | Measure seed size/weight and test germination rates before planting. | Use seeds from the same propagation batch; consider seed size as a covariate in data analysis [3]. |

| Unaccounted genotype-by-environment interaction (GEI) | Grow the same genotypes in multiple, distinct environments (e.g., different chambers or fields). | Characterize the stability of genotypes across environments; select for stable genotypes if the goal is broad adaptation [4]. |

Table 1: Quantitative Data from Mendel's Experiments

| Characteristic | Dominant Phenotype (Count) | Recessive Phenotype (Count) | Ratio (Dominant:Recessive) |

|---|---|---|---|

| Seed Color | Yellow (6,022) | Green (2,001) | 3.01:1 |

| Seed Shape | Round (5,474) | Wrinkled (1,850) | 2.96:1 |

| Pod Color | Green (428) | Yellow (152) | 2.82:1 |

| Pod Shape | Inflated (882) | Constricted (299) | 2.95:1 |

| Flower Color | Violet (705) | White (224) | 3.15:1 |

| Flower Position | Axial (651) | Terminal (207) | 3.14:1 |

| Plant Height | Tall (787) | Dwarf (277) | 2.84:1 |

Table 2: Expected Genotypic and Phenotypic Ratios in Mendelian Crosses

| Cross Type | Parental Genotypes | F1 Genotype | F2 Genotypic Ratio | F2 Phenotypic Ratio |

|---|---|---|---|---|

| Monohybrid | AA x aa | Aa | 1 AA : 2 Aa : 1 aa | 3 Dominant : 1 Recessive [1] |

| Dihybrid | AABB x aabb | AaBb | 1 AABB : 2 AABb : 1 AAbb : 2 AaBB : 4 AaBb : 2 Aabb : 1 aaBB : 2 aaBb : 1 aabb | 9 A_B_ : 3 A_bb : 3 aaB_ : 1 aabb [1] |
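The expected F2 ratios in the table can be checked by enumerating every gamete combination from the F1 parents. The following is a minimal sketch, assuming complete dominance and independent assortment; the function names are illustrative and not taken from any cited source. Phenotype classes are written with an underscore for "either allele" (for example, `A_` covers both AA and Aa).

```python
from collections import Counter
from itertools import product


def gametes(genotype):
    """All gametes for a genotype given as a list of allele pairs, e.g. ["Aa", "Bb"]."""
    return ["".join(combo) for combo in product(*genotype)]


def phenotype(offspring):
    """Dominant class at a locus if at least one uppercase allele is present."""
    return "".join(
        (pair[0].upper() + "_") if any(a.isupper() for a in pair) else pair.lower()
        for pair in offspring
    )


def f2_phenotype_counts(f1):
    """Self the F1 genotype and count F2 phenotype classes over all gamete pairings."""
    counts = Counter()
    for g1, g2 in product(gametes(f1), repeat=2):
        offspring = [a + b for a, b in zip(g1, g2)]
        counts[phenotype(offspring)] += 1
    return counts


mono = f2_phenotype_counts(["Aa"])
di = f2_phenotype_counts(["Aa", "Bb"])
print(dict(mono))
print(dict(di))
```

The enumeration treats all gametes as equally likely, which is exactly the assumption that linkage or reduced gamete viability would violate.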

Experimental Protocols

Protocol 1: Performing a Controlled Cross

- Plant Material: Use true-breeding (homozygous) parental lines with contrasting traits.

- Selection: Identify young flower buds on the female parent plant where the petals are still closed.

- Emasculation: Gently open the petal sheath and carefully remove all anthers using fine forceps. This prevents self-pollination.

- Bagging: Cover the emasculated flower with a small bag to prevent contamination from foreign pollen.

- Pollination: After 24-48 hours, transfer pollen from the mature anthers of the male parent flower to the stigma of the emasculated female flower.

- Re-bagging: Label the cross and re-bag the flower to protect the developing pod.

- Seed Collection: Allow the pod to mature fully on the plant before harvesting the F1 seeds.

Protocol 2: Generating an F2 Population and Scoring Traits

- Plant F1 Seeds: Sow the F1 seeds obtained from the initial cross. All plants in this generation should display the dominant phenotype and are heterozygous (Aa) [7].

- Self-pollination: Allow the F1 plants to self-fertilize naturally or through controlled methods to produce the F2 generation.

- Plant F2 Population: Sow a sufficiently large population of F2 seeds (e.g., >200 plants) to ensure accurate ratio estimation [1].

- Data Collection: As the plants develop, carefully score each individual for the trait(s) of interest (e.g., seed shape, flower color). Classify them into clear, discrete categories.

- Statistical Analysis: Compare the observed counts of each phenotype to the expected Mendelian ratio using a statistical test like chi-square (χ²).
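The chi-square comparison in the final step can be sketched as follows, using the seed shape counts from Mendel's data (5,474 round, 1,850 wrinkled) against a 3:1 expectation. This is a minimal standard-library sketch rather than a prescribed analysis; the function names are illustrative, and the p-value shortcut shown is valid only for one degree of freedom (two phenotype classes).

```python
import math


def chi_square_gof(observed, expected_ratio):
    """Chi-square goodness-of-fit statistic for observed counts vs. an expected ratio."""
    total = sum(observed)
    ratio_sum = sum(expected_ratio)
    expected = [total * r / ratio_sum for r in expected_ratio]
    stat = sum((o - e) ** 2 / e for o, e in zip(observed, expected))
    return stat, expected


def p_value_df1(stat):
    """Upper-tail p-value of a chi-square statistic with 1 degree of freedom."""
    return math.erfc(math.sqrt(stat / 2.0))


# Seed shape: round vs. wrinkled, tested against 3:1
stat, expected = chi_square_gof([5474, 1850], [3, 1])
p = p_value_df1(stat)
print(f"chi2 = {stat:.3f}, p = {p:.3f}")

# Critical value for alpha = 0.05 with 1 degree of freedom
CRITICAL_DF1_05 = 3.841
print("consistent with 3:1" if stat < CRITICAL_DF1_05 else "deviates from 3:1")
```

For crosses with more than two phenotype classes (for example, a dihybrid 9:3:3:1), the statistic is computed the same way, but the p-value needs a general chi-square distribution function from a statistics library.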

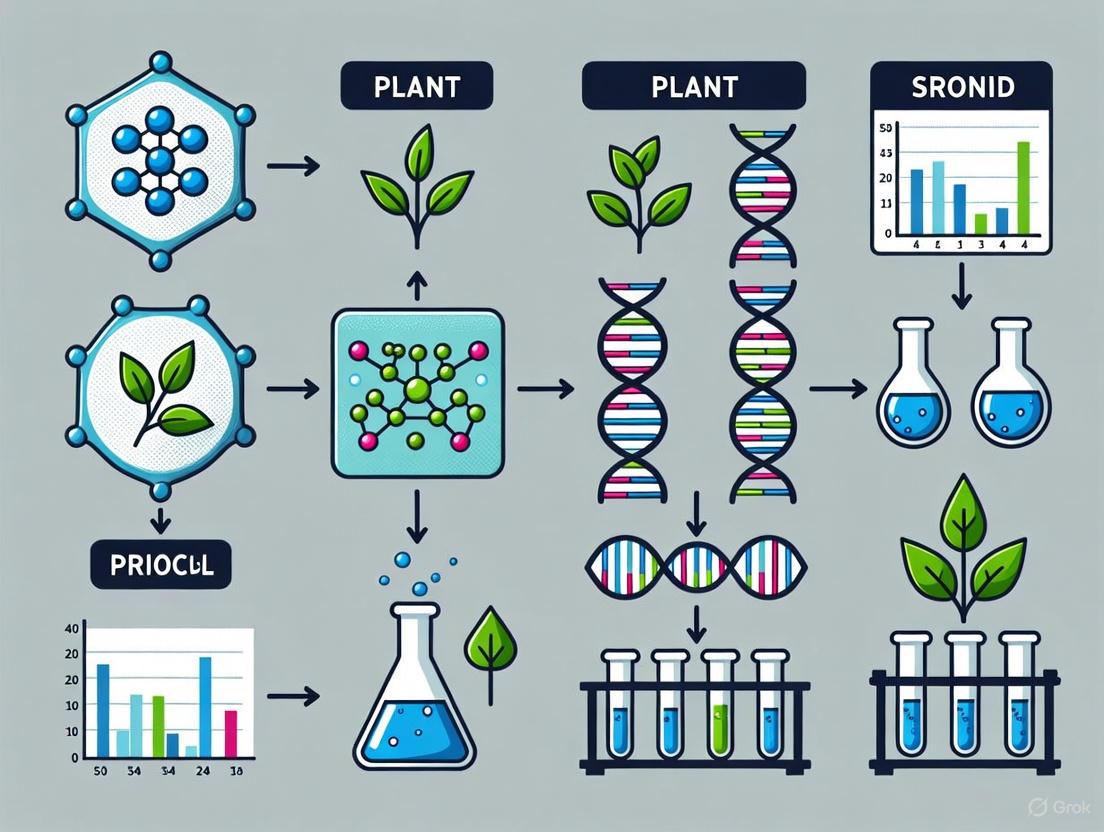

Visualized Workflows and Relationships

Mendel's Monohybrid Cross Workflow

From Genotype to Phenotype

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Mendelian Genetics Experiments

| Item | Function in Experiment |

|---|---|

| True-breeding Plant Lines | Serve as homozygous parental generations (P0) with consistent, predictable inheritance of traits [1] [7]. |

| Growth Chambers/Greenhouses | Provide controlled environmental conditions to minimize unwanted environmental variance and confounding genotype-by-environment (GxE) effects [3]. |

| Fine Forceps & Dissecting Scopes | Essential tools for precise emasculation and cross-pollination of plant flowers [7]. |

| Pollen Transfer Brushes | Used for applying pollen from the male parent to the stigma of the female parent during controlled crosses [1]. |

| Isolation Bags | Prevent accidental cross-pollination by wind or insects, ensuring the purity of the generated crosses [7]. |

| Molecular Markers | Modern tool for genotyping plants, allowing direct confirmation of homozygosity/heterozygosity without phenotyping [8] [6]. |

In quantitative plant experiments, protocol variation refers to any non-compliance with, or divergence from, the approved study design and procedures. This variation can be unintentional or planned and is defined as "any change, divergence, or departure from the study design or procedures defined in the protocol" [9]. Understanding, managing, and minimizing these variations is crucial because they can significantly affect the completeness, accuracy, and reliability of study data [9] [10]. For plant researchers, controlling technical variance is paramount, as the biological variance in protein expression can only be accurately assessed if the technical variance of the quantification method is low in comparison [11] [12]. This guide provides troubleshooting and FAQs to help you identify, manage, and reduce protocol variation in your work.

FAQs: Common Questions on Protocol Variation

What is the difference between a protocol deviation and a protocol violation?

A protocol deviation is a broad term for any non-compliance with the approved protocol. Deviations may or may not affect a participant's eligibility or the data's integrity [10].

A significant or serious protocol deviation is a specific subset that increases the potential risk to participants or affects the integrity of the study data. The significance can increase with numerous deviations of the same nature [10]. The term "violation" is often used interchangeably with "significant deviation."

Why is protocol harmonization important in multi-laboratory plant studies?

Protocol harmonization—aligning experimental procedures across different laboratories—is critical for ensuring the replicability of results. A major multi-lab study found that harmonizing protocols across laboratories substantially reduced between-lab variability compared to each lab using its own local protocol [13]. This reduction in technical variance is essential for detecting true biological signals in collaborative plant science research.

How can I distinguish between biological and technical replicates to avoid pseudo-replication?

Confusing biological and technical replicates is a common source of error in statistical analysis [14].

- Biological replicates are independent samples from different biological sources (e.g., different plants grown separately). They capture biological variation.

- Technical replicates are repeated measurements of the same sample (e.g., loading the same plant extract multiple times on a gel). They assess the measurement precision of your protocol.

Troubleshooting Tip: Using technical replicates as if they were biological replicates is called pseudo-replication and artificially inflates your sample size, leading to spurious statistical significance [14]. Always base your primary statistical tests on biological replicates.
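One way to guard against pseudo-replication is to collapse technical replicates to a single value per plant before any statistical test, so that the sample size reflects the number of independent plants. The sketch below assumes a simple record layout of (plant ID, treatment, measured value); the data and names are hypothetical and for illustration only.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical readings: three technical replicates for each of four plants
readings = [
    ("plantA", "control", 10.0), ("plantA", "control", 10.2), ("plantA", "control", 9.8),
    ("plantB", "control", 12.0), ("plantB", "control", 12.1), ("plantB", "control", 11.9),
    ("plantC", "treated", 14.0), ("plantC", "treated", 14.3), ("plantC", "treated", 13.7),
    ("plantD", "treated", 15.0), ("plantD", "treated", 15.1), ("plantD", "treated", 14.9),
]


def collapse_technical_replicates(rows):
    """Average repeated measurements so each plant contributes one value."""
    grouped = defaultdict(list)
    for plant_id, treatment, value in rows:
        grouped[(plant_id, treatment)].append(value)
    return {key: mean(values) for key, values in grouped.items()}


def biological_n(per_plant):
    """Count independent plants (biological replicates) per treatment."""
    counts = defaultdict(int)
    for _plant_id, treatment in per_plant:
        counts[treatment] += 1
    return dict(counts)


per_plant = collapse_technical_replicates(readings)
n = biological_n(per_plant)
print(per_plant)
print(n)  # sample size per treatment is the number of plants, not the number of readings
```

Here each treatment has n = 2, not n = 6. The spread among the three readings of one plant still has a use: it estimates the measurement precision of the protocol.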

What are the reporting requirements for a protocol deviation?

Requirements vary, but generally, the site investigator or designee must report deviations promptly. For example, one guideline requires reporting within ten working days of the site becoming aware of the issue [10]. The specific workflow for reporting and reviewing a deviation can be mapped as follows:

Troubleshooting Guide: Identifying and Resolving Common Issues

Problem: High Technical Variance Obscuring Biological Results

- Symptoms: Inconsistent quantitative results (e.g., from mass spectrometry or phenotyping), inability to replicate findings, high variability between experimental replicates.

- Possible Cause & Solution:

- Cause: Samples are being combined too late in the experimental workflow, leading to differential losses and handling errors.

- Solution: Implement a metabolic labelling technique (e.g., ¹⁵N labelling) that allows labelled and unlabelled plant tissues to be combined immediately at harvest. One study demonstrated that this early combination minimizes technical variance more effectively than methods where samples are processed separately and combined later [11] [12]. The core principle of this method is to introduce an internal standard at the very beginning of the workflow:

Problem: Low Reproducibility Between Labs

- Symptoms: The same experiment, when run in different laboratories or by different researchers, produces conflicting results.

- Possible Cause & Solution:

- Cause: Subtle differences in lab-specific protocols (e.g., watering regimes, light intensity, handling) introduce uncontrolled variation [13] [3].

- Solution: Harmonize the protocol across all participating labs. The multi-lab study on preclinical research showed that moving from local protocols to a fully harmonized protocol drastically reduced between-lab variability [13]. Furthermore, ensure detailed, step-by-step protocols are documented and shared.

Problem: Unaccounted Environmental Variation in High-Throughput Phenotyping

- Symptoms: Unexplained growth variability among plant replicates in a controlled environment, trends related to plant position in the growth chamber.

- Possible Cause & Solution:

- Cause: Microclimatic fluctuations (light, temperature, humidity) within growth chambers and greenhouses, even in controlled settings [3].

- Solution: Use a wireless sensor network (WSN) to continuously monitor environmental conditions at the level of individual plants. Incorporate this data into your experimental design with sufficient randomization and replication to account for spatial inhomogeneities [3].

Quantitative Data on Protocol Deviations and Variance

Understanding the scale and impact of protocol deviations is easier with benchmarking data. The following table summarizes findings from a large analysis of clinical trials, which illustrates the pervasive nature of protocol deviations [15].

Table 1: Benchmarking Protocol Deviation Incidence

| Protocol Phase | Mean Number of Deviations per Protocol | Percentage of Patients Affected |

|---|---|---|

| Phase II | 75 | ~33% |

| Phase III | 119 | ~33% |

| Disease Area | | |

| Oncology | Highest relative number | >40% |

Furthermore, a systematic study dissecting the sources of technical variance in quantitative proteomics provides a blueprint for evaluating your own workflows. The key finding was that the lowest technical variance was achieved when samples were combined at the tissue stage [12]. The variance components from a multi-lab animal study further highlight the impact of harmonization, as shown in the table below.

Table 2: Impact of Protocol Harmonization on Between-Lab Variance [13]

| Experimental Protocol | Variance Due to Between-Lab Differences | Variance Due to Drug-Treatment-by-Lab Interaction |

|---|---|---|

| Local Protocol (Non-Harmonized) | 33.19% | 25.23% |

| Harmonized Protocol (Standardized) | 18.67% | 7.57% |
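To make the size of the effect in Table 2 easier to read, the relative reduction in each variance component can be computed directly from the tabulated percentages. This is simple arithmetic on the values reported above, not a re-analysis of the underlying study; the variable names are illustrative.

```python
# Variance components (percent of total) as reported in Table 2
variance_pct = {
    "between_lab": {"local": 33.19, "harmonized": 18.67},
    "treatment_by_lab": {"local": 25.23, "harmonized": 7.57},
}


def relative_reduction(before, after):
    """Percent reduction of a component relative to its starting value."""
    return 100.0 * (before - after) / before


reductions = {
    name: relative_reduction(values["local"], values["harmonized"])
    for name, values in variance_pct.items()
}

for name, pct in reductions.items():
    print(f"{name}: {pct:.1f}% lower under the harmonized protocol")
```

On these figures, the between-lab component falls by roughly 44% and the treatment-by-lab interaction by roughly 70% in relative terms.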

The Scientist's Toolkit: Key Research Reagent Solutions

Selecting the right reagents and tools is fundamental to controlling protocol variation.

Table 3: Essential Research Reagents and Materials for Managing Protocol Variation

| Reagent / Material | Function in Managing Variation | Application Example |

|---|---|---|

| ¹⁵N Isotope Labeled Salts | Enables metabolic labelling for creating an internal standard. Allows samples to be combined at the start of the workflow, minimizing technical variance from sample processing. | Quantitative plant proteomics using ¹⁵N-enriched potassium nitrate as the sole nitrogen source in growth media [11] [12]. |

| Standardized Growth Media | Provides a uniform and controlled nutritional environment, reducing variability in plant growth and development between experiments and labs. | Using precisely defined media, such as Gamborg B5 or Murashige and Skoog, for plant callus cultures [12]. |

| Wireless Sensor Networks (WSN) | Monitors microclimatic conditions (light, temperature, humidity) in real-time, allowing researchers to account for environmental inhomogeneities in their experimental design and data analysis. | High-throughput phenotyping systems in greenhouses or phytochambers [3]. |

| Automated Image Analysis Software | Provides objective, high-throughput quantification of phenotypic traits from images, reducing observer bias and increasing reproducibility. | Software like IAP or PhenoPhyte for analyzing plant growth in HT phenotyping systems [3]. |

FAQs and Troubleshooting Guides

This section addresses common challenges researchers face when selecting and implementing computational models in quantitative plant experiments.

FAQ 1: How do I choose between a mechanistic model and a pattern/statistical model for my plant biology study?

Your choice should be guided by your research goal, the availability of prior knowledge on the system's mechanisms, and the amount of data you have.

- Solution: Use the following decision table to guide your experimental design.

| Criterion | Mechanistic Model | Pattern Model (e.g., Machine Learning) |

|---|---|---|

| Primary Goal | To understand underlying causal mechanisms and generate hypotheses [16]. | To predict outcomes based on patterns in data, without needing causal insight [16]. |

| Data Requirements | Can be calibrated and validated with relatively small datasets [16]. | Requires large amounts of data to train and validate [16]. |

| Handling Complexity | Difficult to accurately incorporate information from multiple space and time scales [16]. | Can tackle problems with multiple space and time scales effectively [16]. |

| Predictive Capability | Once validated, can predict system behavior under new, untested conditions (deductive capability) [16]. | Predictions are limited to patterns within the scope of the supplied data; cannot extrapolate to entirely new conditions (inductive capability) [16]. |

| Ideal Application | Modeling specific physiological processes like nutrient uptake or hormone signaling [16]. | High-throughput phenotyping analysis, image-based classification of plant health, and genomic selection [16] [17]. |

FAQ 2: My high-throughput phenotyping experiment is producing noisy data with high variability. How can I ensure my model is reliable?

Variation in automated plant cultivation and imaging systems can be introduced by environmental inhomogeneities [17].

- Troubleshooting Steps:

- Optimize Growth Protocols: Standardize and precisely document growth substrate, soil coverage, and watering regimes to minimize non-biological variation [17].

- Experimental Design: Use experimental designs that account for environmental gradients within growth chambers (e.g., randomized complete block designs) [17]. The use of an F-protected Least Significant Difference (LSD) test, where mean comparison tests are only conducted after a significant F-test in the ANOVA, is a more conservative and valid approach to identify true treatment effects [18].

- Validation: Correlate the variation observed in the high-throughput system with field data to ensure the results are biologically relevant [17].

FAQ 3: When comparing multiple treatment means, what is the most appropriate statistical method to avoid false positives?

Using pairwise comparison procedures indiscriminately to locate any chance difference greatly increases the probability of a Type I error (falsely declaring a significant difference) [18].

- Solution: Select a mean comparison procedure based on your experimental design.

- Pre-planned Comparisons (Contrasts): If your treatment structure suggests specific, meaningful comparisons (e.g., Treatment A vs. Control, Group X vs. Group Y), use planned t-tests or F-tests (contrasts). This approach does not require a significant overall F-test and provides more sensitive tests [18].

- Multiple Comparison Procedures: If you are exploring a large number of qualitative treatments (e.g., many different cultivars) with no pre-specified hypotheses, use a multiple comparison test like Tukey's Honestly Significant Difference (HSD), which is more conservative. The F-protected LSD is also appropriate here but should be used primarily to compare adjacent means in an ordered array [18].

- Trend Analysis: For quantitative treatments like fertilizer rates, trend analysis or regression techniques are more appropriate than multiple comparisons for examining functional relationships [18].
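The inflation of Type I error from indiscriminate pairwise testing can be illustrated with a short calculation. The sketch below uses the textbook approximation 1 - (1 - alpha)^m for m tests, which assumes the tests are independent; pairwise comparisons among the same treatment means are not strictly independent, so the figure should be read as an illustration of scale rather than an exact rate.

```python
def n_pairwise(k):
    """Number of distinct pairwise comparisons among k treatment means."""
    return k * (k - 1) // 2


def familywise_error_rate(alpha, m):
    """Approximate chance of at least one false positive across m independent tests."""
    return 1.0 - (1.0 - alpha) ** m


alpha = 0.05
for k in (3, 5, 10):
    m = n_pairwise(k)
    fwer = familywise_error_rate(alpha, m)
    print(f"{k} treatments -> {m} comparisons -> familywise error ~ {fwer:.2f}")

m10 = n_pairwise(10)
fwer10 = familywise_error_rate(alpha, m10)
```

With ten cultivars there are 45 possible pairs, which is why unprotected pairwise testing across a large cultivar set is so prone to chance findings.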

Experimental Protocols for Key Modeling Approaches

Protocol 1: Developing a Mechanistic Model (e.g., for Nutrient Uptake)

Objective: To create a mathematical model that represents the causal relationship between soil nutrient concentration and plant uptake based on known physicochemical principles.

Methodology:

- Hypothesis Formulation: Define the causal mechanisms to be tested. For example, "Nutrient uptake is driven by a combination of diffusion and active transport across the root membrane."

- Model Construction: Translate the biological hypotheses into a system of mathematical equations (e.g., differential equations) that represent the rates of change for key variables [16].

- Parameter Calibration: Use a subset of experimental data to estimate the model's parameters (e.g., transport rates, binding constants).

- Model Validation: Test the model's predictions against a separate, independent dataset not used in calibration. The model is validated if its predictions are consistent with experimental observations [16].
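The calibrate-then-validate sequence above can be sketched with a deliberately small example. It assumes a saturating (Michaelis-Menten-type) uptake law, chosen here only as an illustrative stand-in for a mechanistic rate equation; the observations are made-up numbers, and the grid search is a simple substitute for a proper optimizer.

```python
def uptake_rate(conc, vmax, km):
    """Saturating uptake: rate rises with concentration and levels off at vmax."""
    return vmax * conc / (km + conc)


def sse(data, vmax, km):
    """Sum of squared errors between observed and predicted uptake."""
    return sum((obs - uptake_rate(c, vmax, km)) ** 2 for c, obs in data)


def calibrate(data, vmax_grid, km_grid):
    """Pick the (vmax, km) pair on the grid with the smallest squared error."""
    candidates = ((v, k) for v in vmax_grid for k in km_grid)
    return min(candidates, key=lambda params: sse(data, *params))


def rmse(data, vmax, km):
    """Root-mean-square prediction error on a dataset."""
    return (sse(data, vmax, km) / len(data)) ** 0.5


# Hypothetical (concentration, uptake) observations, for illustration only
calibration = [(5, 1.7), (10, 2.8), (20, 4.1), (40, 5.2), (80, 6.2)]
validation = [(15, 3.6), (60, 5.8)]  # held out, never used for fitting

vmax_grid = [5.0 + 0.1 * i for i in range(51)]  # 5.0 to 10.0
km_grid = [5.0 + 0.5 * i for i in range(51)]    # 5.0 to 30.0

vmax_hat, km_hat = calibrate(calibration, vmax_grid, km_grid)
validation_rmse = rmse(validation, vmax_hat, km_hat)
print(f"vmax = {vmax_hat:.1f}, km = {km_hat:.1f}, validation RMSE = {validation_rmse:.3f}")
```

The important design point is the split: parameters are estimated only from the calibration set, and the model is judged only on the held-out validation set.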

Protocol 2: Implementing a Pattern Recognition Model (e.g., for Disease Prediction from Leaf Images)

Objective: To train a machine learning model to accurately classify plant health status from leaf images without specifying the underlying biological mechanisms.

Methodology:

- Data Acquisition & Curation: Collect a large, labeled dataset of leaf images (e.g., healthy, nutrient-deficient, diseased). Ensure consistent imaging protocols to minimize technical noise [17].

- Feature Extraction: Identify and quantify relevant features from the images. This can be manual (e.g., color histograms, texture) or automated (e.g., using deep learning convolutional layers).

- Model Training: Select a machine learning algorithm (e.g., Random Forest, Support Vector Machine, Neural Network) and "train" it on a portion of your data to learn the patterns that map image features to health status [16].

- Model Testing: Evaluate the trained model's performance on a held-out test set of data that it has never seen before, reporting metrics like accuracy, precision, and recall.
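The metrics named in the testing step can be computed directly from true and predicted labels on the held-out set. The following is a minimal sketch for a two-class case; the label strings and example predictions are hypothetical.

```python
def binary_metrics(y_true, y_pred, positive="diseased"):
    """Accuracy, precision, and recall, treating `positive` as the class of interest."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)
    return {
        "accuracy": (tp + tn) / len(pairs),
        # Guard against division by zero when a class is never predicted or never present
        "precision": tp / (tp + fp) if (tp + fp) else float("nan"),
        "recall": tp / (tp + fn) if (tp + fn) else float("nan"),
    }


# Hypothetical held-out test labels and model predictions
y_true = ["diseased", "diseased", "healthy", "healthy", "diseased", "healthy"]
y_pred = ["diseased", "healthy", "healthy", "diseased", "diseased", "healthy"]

metrics = binary_metrics(y_true, y_pred)
print(metrics)
```

Reporting precision and recall alongside accuracy matters when classes are imbalanced, for example when diseased leaves are rare in the dataset.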

The Scientist's Toolkit: Research Reagent Solutions

| Essential Material / Resource | Function in Computational Modeling |

|---|---|

| R with Bioconductor [19] | An open-source software environment for the statistical analysis and comprehension of high-throughput genomic data. Essential for processing omics data for both mechanistic and pattern models. |

| High-Throughput Phenotyping System [17] | Automated plant cultivation and imaging systems that generate the large-scale, quantitative data on plant growth and performance required for training robust pattern recognition models. |

| Gene Ontology (GO) Resource [19] | A knowledgebase used to inform mechanistic models by providing structured, computable information on the functions of genes, such as those identified as important in a machine learning analysis. |

| The Arabidopsis Information Resource (TAIR) [19] | A curated database of genetic and molecular biology data for the model plant Arabidopsis thaliana. Serves as a key source of information for building and parameterizing mechanistic models. |

| Experimental Design & Data Analysis for Biologists [19] | Reference texts that provide the foundational statistical principles for designing valid experiments and analyzing the resulting data, which is critical for generating high-quality data for any model. |

A Synergistic Workflow: Integrating Both Paradigms

The most powerful approach often combines the strengths of both modeling paradigms. A common synergistic workflow is outlined below.

FAQs: Core Concepts and Definitions

What is the difference between 'repeatability,' 'replicability,' and 'reproducibility'? In agricultural and plant research, these terms describe different levels of research confirmation. The definitions below are synthesized from common usage in the field, which can sometimes differ from other scientific disciplines [20].

- Repeatability: The ability of a single research group to obtain consistent results when an experiment or analysis is repeated under the same conditions, using the same methods and equipment. This is often assessed within a single study [20].

- Replicability: The ability of the same research team to obtain consistent results across multiple studies directed at the same question, often conducted in different environments (e.g., multiple seasons or locations). This involves collecting new data using the same methods [20].

- Reproducibility: The ability of an independent research team to obtain consistent results using its own methods and data. This can involve a new field experiment with different conditions or using different models to confirm prior findings. It is the strongest form of external validation [20].

Why is there a "reproducibility crisis" in science, and how does it affect plant research? The term "replication crisis" originated in psychology in the early 2010s and has since been recognized in fields like biology, medicine, and economics [21]. It refers to widespread difficulties in independently replicating or reproducing published scientific findings. In plant science, this is driven by several factors:

- Pressure to Publish: "Publish or perish" culture can lead to cutting corners and reduced internal confirmation before publication [20].

- Questionable Research Practices: This includes flexible data analysis ("p-hacking"), formulating hypotheses after results are known ("HARKing"), and selective publication of only positive results ("publication bias") [20].

- Inadequate Experimental Design: Low statistical power, insufficient replication, and incomplete documentation of protocols and environmental conditions [3] [20].

- Complexity of Systems: Plant research involves complex interactions between genetics, environment, and management, making it inherently variable and difficult to reproduce perfectly [3].

How can I determine if my experimental results are robust and reproducible? Robustness is increased by integrating key principles into your experimental design from the start [22].

- Replication: Repeating treatments on multiple experimental units (e.g., plots) to increase the accuracy of your results and measure consistency [22].

- Randomization: Randomly assigning treatments to experimental units to avoid unintentional bias. For example, randomizing which hybrid is planted in which field ensures that soil effects are left to chance [22].

- Design Control: Using techniques like "blocking" to group experimental units into homogenous sets (blocks). This helps account for underlying heterogeneity (e.g., a fertility gradient across a field) and reduces unwanted error variation [22].
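Randomization and blocking can be combined in a randomized complete block layout, where every treatment appears once per block in an independently shuffled order. The sketch below is one simple way to generate such a layout; the treatment names and the fixed seed are illustrative, and recording the seed is a suggestion for making the layout itself reproducible.

```python
import random


def rcbd_layout(treatments, n_blocks, seed=None):
    """Return one independently shuffled treatment order per block."""
    rng = random.Random(seed)  # a recorded seed lets the layout be regenerated later
    layout = []
    for _ in range(n_blocks):
        order = list(treatments)
        rng.shuffle(order)
        layout.append(order)
    return layout


# Hypothetical hybrids assigned to plots within four blocks
treatments = ["hybrid_1", "hybrid_2", "hybrid_3", "hybrid_4"]
layout = rcbd_layout(treatments, n_blocks=4, seed=2024)

for block_number, order in enumerate(layout, start=1):
    print(f"Block {block_number}: {order}")
```

Blocks should be placed so that conditions are as uniform as possible within a block, for example along rather than across a known fertility gradient.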

Troubleshooting Guide: Common Experimental Pitfalls and Solutions

Problem: Inconsistent Results Between Field Trials

Symptoms: A treatment shows a significant effect in one growing season or location but fails to do so in another.

Potential Causes and Solutions:

Cause 1: Unaccounted Environmental Variation

Plant phenotype (Pt) is a function of initial field conditions (Ft=0), genetics (G), environment (Et), and management (Mt) [20]. Natural variation in Et (weather, soil micro-variability) is often the largest source of inconsistency.

- Solution:

- Monitor Microclimate: Use wireless sensor networks to continuously track temperature, humidity, light intensity, and soil conditions within your experiment area [3].

- Characterize Soils: Document soil properties (texture, pH, organic matter) at the beginning of the experiment (Ft=0) [20].

- Report Fully: Use standardized vocabularies like the ICASA standards to thoroughly document Et and Mt in your methods section [20].

Cause 2: Inadequate Replication and Randomization

- Solution:

- Increase Replication: More replicates increase precision and allow for a better measure of repeatability. Ensure your replication is sufficient to detect the effect size you expect [22].

- Randomize Rigorously: Use a randomized complete block design (RCBD) or similar to control for spatial variation. Blocking treatments within homogenous areas of the field accounts for soil heterogeneity [22].

Problem: Inability to Reproduce a Published Study

Symptoms: You cannot achieve results comparable to a previously published study, even when following the described methods.

Potential Causes and Solutions:

Cause 1: Incomplete Methodological Documentation

Published methods often lack critical details on plant cultivation, measurement protocols, or data analysis [3] [21].

- Solution:

- Contact the Authors: Request detailed protocols directly.

- Use Protocol Repositories: For your own work, publish detailed protocols on platforms like protocols.io, which can be assigned a DOI for permanent, citable access [20].

- Share Code and Data: Ensure computational reproducibility by publishing analysis scripts and raw data where possible.

Cause 2: Uncontrolled Parental and Seed History

The phenotype is influenced by the genotype (G), environment (E), and the phenotype (vitality) of its parents (GxExP). Seed size, quality, and the environmental conditions of the parental generation can add variability [3].

- Solution:

- Use Simultaneously Propagated Seed: For a given experiment series, use seed material that was produced in the same parental generation under uniform conditions [3].

- Measure and Account for Seed Size: Record seed size and consider it as a covariate in your statistical analysis to adjust for its effect on early growth [3].

Quantitative Data on Reproducibility

The table below summarizes quantitative findings related to reproducibility and replication efforts in scientific research.

| Metric | Field | Value | Source |

|---|---|---|---|

| Replication Rate | Psychology | 58% of registered reports are replication studies | [21] |

| Publication Rate of Replications | Psychology | Only ~3% of published papers are replications | [21] |

| Publication Rate of Replications | Education | Less than 1% of published papers are replications | [21] |

| Publication Rate of Replications | Marketing | 1.2% of published papers are replications | [21] |

Experimental Protocols for Enhancing Reproducibility

Protocol 1: Standardized Documentation for Field Experiments

Adopting a standardized framework is crucial for ensuring that your experiments can be understood, replicated, and reproduced by others. The following workflow outlines the key information to document at each stage of a plant science experiment [3] [20].

Detailed Procedures:

Document Initial Field Conditions (Ft=0) [20]:

- Soil Analysis: Test for pH, texture, organic matter, and key nutrient levels at the start of the experiment.

- Site History: Record previous crops, amendments, and any treatments applied to the area.

Standardize Plant Genetics (G) and History [3]:

- Use seeds from a single, well-documented propagation cycle.

- Record seed size and quality metrics. If possible, use seeds that have been quality-tested (e.g., for germination rate).

Precisely Define Management Practices (M~t~) [20]:

- Document all activities: sowing date and depth, fertilization (product, rate, date), irrigation (volumes, timing, method), and pest control.

- Adhere to the ICASA data standards for a consistent vocabulary when reporting these practices.

Monitor Environment (E~t~) Continuously [3] [20]:

- Deploy sensors for light intensity, air temperature, humidity, and soil moisture.

- Note that environmental gradients exist even in controlled growth chambers; sensor networks help quantify this variation.

Implement Robust Phenotyping (P~t~) Protocols [3] [20]:

- Use automated, high-throughput phenotyping systems where available to reduce human error and increase throughput.

- Provide detailed measurement protocols. For yield, this includes the harvested plot area, handling of border rows, threshing methods, and moisture content determination.

Protocol 2: Utilizing Registered Reports for Replication Studies

A Registered Report is a publication format where the study plan is peer-reviewed and accepted before data is collected. This format is ideal for replication studies, as it removes the bias against publishing null or non-significant results [21].

Workflow:

Phase 1: Protocol Development

- Develop a detailed study plan including introduction, hypotheses, methods, and analysis plan.

- Submit the plan to a journal offering Registered Reports.

Phase 1: Peer Review

- Reviewers assess the importance of the research question and the rigor of the proposed methodology.

- If the plan is approved, the journal commits to publishing the final paper regardless of the outcome.

Phase 2: Data Collection & Analysis

- Conduct the experiment and analyze the data exactly as described in the approved plan.

- Any deviations from the pre-registered plan must be reported and justified.

Phase 2: Manuscript Completion

- Write the full manuscript, including results and discussion.

- The final manuscript is reviewed again to ensure it adheres to the pre-registered plan.

The Disease Triangle: A Framework for Diagnosing Plant Problems

A fundamental concept in plant pathology is the "Disease Triangle," which states that for an infectious disease to occur, three factors must be present simultaneously: a susceptible host, a virulent pathogen, and a favorable environment. You can use this model to diagnose issues and break the triangle to protect your plants [23].

Strategies for Intervention:

- Break the "Susceptible Host" Side: Choose disease-resistant plant varieties when available [23].

- Break the "Virulent Pathogen" Side: Practice good sanitation by removing diseased plant material and disinfecting tools to reduce pathogen levels [23].

- Break the "Favorable Environment" Side: Modify the environment to make it less hospitable to the pathogen. This can include improving air circulation via plant spacing, avoiding overhead watering, and ensuring proper soil drainage [23].

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key materials and reagents used in modern quantitative plant experiments, particularly those focused on phenotyping and genetic analysis.

| Item Name | Function / Application | Key Considerations |

|---|---|---|

| High-Throughput Phenotyping Systems (e.g., LemnaTec Scanalyzer) [3] | Automated, non-invasive monitoring of plant growth and performance over time. Captures morphological and physiological data from large populations. | Systems can be "sensor-to-plant" or "plant-to-sensor." Critical to standardize growth protocols to maximize data reproducibility. |

| CRISPR/Cas9 Genome Editing Tools [5] | Used to create precise mutations in plant genomes, allowing researchers to engineer quantitative trait variation (e.g., in promoters to fine-tune gene expression). | Enables the generation of novel genetic diversity for crop improvement in a targeted manner, beyond relying on natural variation. |

| Wireless Sensor Networks (WSN) [3] | Continuous, spatially dense monitoring of environmental conditions (light, temperature, humidity, soil moisture) within experiments. | Essential for quantifying microclimatic fluctuations that contribute to phenotypic variation and are a major source of non-reproducibility. |

| ICASA/AgMIP Data Standards [20] | A standardized vocabulary and data architecture for documenting field experiments, including management practices, environmental data, and measurements. | Promotes data interoperability and ensures that experiments are described with sufficient detail for reproduction. |

| Automated Image Analysis Software (e.g., IAP, Rosette Tracker) [3] | Software pipelines that extract quantitative phenotypic traits (e.g., leaf area, plant height) from images captured by phenotyping systems. | Replaces subjective, manual scoring. The choice of software and its settings must be documented for analysis reproducibility. |

Foundational Concepts: Defining the Triad of Research Reliability

This section defines the core concepts of robustness, replicability, and reproducibility, which are fundamental to ensuring the reliability of scientific research in experimental biology. Precise terminology is critical, as these terms are often used inconsistently across disciplines [24].

What is the difference between replicability and reproducibility?

The terms "replicability" and "reproducibility" are frequently conflated, but making a distinction is crucial for diagnosing where issues in an experiment may lie [24]. The definitions below synthesize usage from computational, biological, and agricultural sciences to provide a clear framework.

- Replicability refers to the ability of a researcher to obtain consistent results when repeating a study using the original data, code, and computational methods [24]. It focuses on verifying the computational analysis of the same dataset. In some fields, this is termed "repeatability" or "computational reproducibility" [20].

- Reproducibility refers to the ability of an independent research team to obtain consistent results by conducting a new, independent study directed at the same scientific question [24] [20]. This involves collecting new data, often under different but related conditions (e.g., a new season, a new location, or with a slightly different model organism). A reproducible finding holds true beyond the narrow context of the original experiment.

What is robustness, and how does it differ from reproducibility?

Robustness is a related but distinct concept that describes how broadly a scientific conclusion holds true.

- Narrow Robustness: A result is narrowly robust if it can be confirmed by precisely replicating the original experiment. The effect is only observed when the experimental conditions are duplicated exactly [25].

- Broad Robustness: A result is broadly robust if it can be confirmed by performing different experiments that test the same underlying hypothesis under a range of different circumstances, with varying covariates and sources of noise [25]. Findings with broad robustness have greater explanatory power and are more likely to be fundamental.

Why are these concepts especially critical in quantitative plant experiments?

Research in plant science is particularly vulnerable to challenges in reproducibility and replicability due to the complex interaction of genotype (G), environment (E), and management (M), which collectively determine a plant's phenotype (P~t~). This can be expressed as [20]:

P~t~ = f(F~t=0~, G, E~t~, M~t~) + ε~t~

where F~t=0~ represents initial field conditions and ε~t~ represents random error. The inherent variability in E~t~ (environment) across seasons and locations, combined with often incomplete reporting of M~t~ (management practices) and F~t=0~, makes independent confirmation of results a significant challenge [20].

Troubleshooting Guide: Ensuring Reliability in Your Experiments

Pre-Experiment Checklist

What are the most common sources of irreproducibility in plant biology?

| Category | Specific Issue | Preventive Action |
|---|---|---|
| Experimental Design | Inadequate sample size or replication [20] | Perform a priori power analysis; consult a statistician. |
| Experimental Design | Unaccounted-for environmental gradients [3] | Use randomized block designs; map and measure environmental inhomogeneities. |
| Protocol Documentation | Vague or incomplete methods [20] | Use detailed, standardized protocols (e.g., on protocols.io); specify all reagents and equipment. |
| Protocol Documentation | Uncontrolled parental plant and seed history [3] | Standardize seed propagation; record and account for seed size and quality. |
| Data & Analysis | Flexibility in data analysis ("p-hacking") [20] | Pre-register analysis plans; blind researchers to treatment groups during data collection and initial analysis. |
| Data & Analysis | Selective reporting of results [24] | Report all experimental outcomes, including non-significant results. |
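The a priori power analysis recommended in the checklist can be approximated with a few lines of code. The sketch below is a minimal illustration, not a substitute for consulting a statistician: it assumes a two-sided comparison of two treatment means with equal variance and uses the normal approximation n = 2·(z₁₋α/₂ + z₁₋β)²·σ²/δ², which slightly underestimates the replicate number an exact t-based calculation would give. The function name and the example effect size and standard deviation are hypothetical.

```python
from math import ceil
from statistics import NormalDist


def replicates_per_group(effect: float, sd: float,
                         alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate replicates per treatment for a two-sided, two-group comparison.

    effect: smallest difference between treatment means worth detecting
    sd:     expected standard deviation among experimental units
    """
    if effect == 0 or sd <= 0:
        raise ValueError("effect must be non-zero and sd must be positive")
    standard_normal = NormalDist()
    z_alpha = standard_normal.inv_cdf(1 - alpha / 2)  # two-sided significance level
    z_beta = standard_normal.inv_cdf(power)           # desired statistical power
    n = 2 * ((z_alpha + z_beta) * sd / effect) ** 2
    return ceil(n)


# Hypothetical planning values: detect a 5 mg difference when the SD is 4 mg.
n_needed = replicates_per_group(effect=5.0, sd=4.0)
print(f"Replicates per treatment: {n_needed}")
```

Because the required replicate number scales with (σ/δ)², halving the detectable effect roughly quadruples the number of plants needed, which is why pilot estimates of variance are worth collecting before the main experiment.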

Frequently Asked Questions (FAQ)

Q: My lab cannot replicate a published study's findings. Where should I start troubleshooting? A: Begin by systematically checking for protocol variation. First, contact the corresponding author to request the original protocol, and ask specific questions about details often omitted from publications, such as the exact brand of growth substrate, the specific watering regime, and the precise settings for environmental chambers [3]. Second, scrutinize your own seed source and quality, as the physiological status of the parental plants can significantly affect offspring phenotype [3].

Q: We followed the protocol exactly, but our results are still inconsistent. What could be wrong? A: "Hidden variables" in your experimental environment are a likely culprit. Even in controlled growth chambers, microclimatic fluctuations occur. Implement a wireless sensor network (WSN) to continuously monitor light intensity, spectrum, temperature, humidity, and CO~2~ levels at the level of individual plants or plots [3]. This data can reveal environmental inhomogeneities that introduce variability and can be used as covariates in your statistical analysis to increase detection power.

Q: How can I design my experiment to maximize its broader robustness from the start? A: To ensure your findings are broadly robust, deliberately introduce controlled variation at the experimental design stage. This could include:

- Using multiple, genetically distinct lines of your model plant or crop.

- Repeating the experiment across more than one growing season or in multiple controlled-environment chambers with slightly different settings.

- Testing your hypothesis under a range of relevant abiotic stresses (e.g., mild drought, nutrient limitation) rather than only in optimal conditions [25]. A finding that holds across this variation is more likely to be fundamental.

Q: What is the minimum level of methodological detail required for my paper to be reproducible? A: A reproducible methods section must allow an independent researcher to recreate your study system precisely. For plant research, this requires detailed reporting on:

- Plant Material: Genus, species, cultivar/accession name, seed source, and propagation history [3].

- Growth Conditions: A full description of the growth environment, including media/substrate, pot size, temperature, photoperiod, light intensity and quality, watering regime, and fertilization schedule [3] [20].

- Experimental Setup: A detailed layout, including randomization and replication schemes.

- Data Collection: Precise descriptions of how and when each phenotypic trait was measured, including instrument make and model, software, and version.
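One way to make this reporting checklist enforceable is to keep the methods metadata in a structured record and check it for gaps before submission. The sketch below is only an illustration: the class name, the field names, and the example values are hypothetical and do not correspond to any formal standard.

```python
from dataclasses import dataclass, fields


@dataclass
class MethodsRecord:
    """Hypothetical container mirroring the reporting checklist above."""
    # Plant material
    genus: str = ""
    species: str = ""
    accession: str = ""
    seed_source: str = ""
    propagation_history: str = ""
    # Growth conditions
    substrate: str = ""
    pot_size: str = ""
    temperature: str = ""
    photoperiod: str = ""
    light_intensity_and_quality: str = ""
    watering_regime: str = ""
    fertilization_schedule: str = ""
    # Experimental setup and data collection
    layout_and_randomization: str = ""
    instruments_and_software: str = ""


def missing_fields(record: MethodsRecord) -> list[str]:
    """Return the names of all checklist items that are still empty."""
    return [f.name for f in fields(record)
            if not str(getattr(record, f.name)).strip()]


# Illustrative, incomplete record.
draft = MethodsRecord(genus="Arabidopsis", species="thaliana")
print("Still to document:", ", ".join(missing_fields(draft)))
```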

Standardized Experimental Protocols for Reproducible Research

This section provides a detailed methodology, adapted from a multi-laboratory ring trial, for conducting reproducible plant-microbiome experiments [26] [27]. The use of such standardized protocols is critical for minimizing inter-laboratory variation.

Protocol: Reproducible Plant-Microbiome Interactions in EcoFAB 2.0

Background: This protocol uses a fabricated ecosystem (EcoFAB 2.0) and a defined synthetic microbial community (SynCom) to create a highly controlled and reproducible system for studying plant-microbiome interactions [26] [27].

Key Research Reagent Solutions

| Item | Function in the Experiment | Specific Example / Notes |

|---|---|---|

| EcoFAB 2.0 Device | A sterile, transparent growth chamber that allows for root imaging and controlled nutrient delivery [26] [27]. | Provides a standardized habitat. |

| Synthetic Community (SynCom) | A defined mixture of bacterial strains that reduces the complexity of natural microbiomes for mechanistic studies [26]. | Example: A 17-member SynCom for the grass Brachypodium distachyon available from a public biobank (DSMZ). |

| Model Plant | A well-characterized plant species with established genetic tools. | Brachypodium distachyon (model grass) or Arabidopsis thaliana. |

| Growth Chamber | Provides controlled environmental conditions (light, temperature, humidity). | Data loggers are essential to continuously monitor and record actual conditions [3]. |

Step-by-Step Workflow:

- Device Assembly & Plant Preparation: Assemble the sterile EcoFAB 2.0 devices. Dehusk and surface-sterilize Brachypodium distachyon seeds, then stratify them at 4°C for 3 days [26] [27].

- Germination: Germinate the sterilized seeds on agar plates for 3 days [26].

- Transfer to EcoFAB: Aseptically transfer the seedlings to the EcoFAB 2.0 device containing a defined growth medium. Allow plants to grow for an additional 4 days [26] [27].

- Sterility Check & Inoculation: Test the sterility of the system by incubating spent medium on LB agar plates. Inoculate the plants with the prepared SynCom (e.g., 1 × 10^5^ bacterial cells per plant). Maintain a set of axenic (non-inoculated) control plants [26].

- Growth & Maintenance: Grow plants under controlled conditions, refilling water as needed. Conduct root imaging at predetermined timepoints [26] [27].

- Harvest & Sampling: At the end of the experiment (e.g., 22 days after inoculation), harvest plant shoots and roots for biomass measurement. Collect root and media samples for downstream analyses such as 16S rRNA amplicon sequencing and metabolomics via LC-MS/MS [26].
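When several laboratories run this workflow in parallel, it helps to derive all key dates from a single start date so that every site inoculates and harvests at the same plant age. The sketch below takes the durations from the steps above (3 days stratification, 3 days germination, 4 days in the EcoFAB before inoculation, harvest 22 days after inoculation). The function and stage names are hypothetical, and placing the sterility check and inoculation on the same day is an assumption.

```python
from datetime import date, timedelta

# (stage name, duration in days), taken from the workflow steps above
STAGES = [
    ("stratification", 3),
    ("germination", 3),
    ("transfer_to_ecofab", 4),
]
HARVEST_DAYS_AFTER_INOCULATION = 22  # example value given in the protocol


def build_schedule(start: date) -> dict[str, date]:
    """Map each stage to its start date, plus inoculation and harvest dates."""
    schedule: dict[str, date] = {}
    current = start
    for stage, duration_days in STAGES:
        schedule[stage] = current
        current += timedelta(days=duration_days)
    schedule["inoculation"] = current  # assumed to coincide with the sterility check
    schedule["harvest"] = current + timedelta(days=HARVEST_DAYS_AFTER_INOCULATION)
    return schedule


for stage, day in build_schedule(date(2024, 3, 4)).items():
    print(f"{stage:20s} {day.isoformat()}")
```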

Visual Guide to Experimental Workflow

Troubleshooting Common Issues:

- Problem: Microbial Contamination.

- Solution: Strictly adhere to surface sterilization protocols. Always include axenic controls and test for sterility by plating spent medium on nutrient-rich agar at multiple time points [26].

- Problem: High variability in plant growth phenotypes between replicates.

- Solution: Standardize seed size and quality, as these are major sources of variation. Control for the life cycle history of the parental generation. Use environmental sensor data to account for microclimatic fluctuations [3].

- Problem: Inconsistent microbiome assembly, especially in the absence of a dominant bacterial strain.

- Solution: As demonstrated in the ring trial, the final community structure can be highly variable without a strong dominant colonizer. Including a positive control community with a known dominant strain (e.g., SynCom17 with Paraburkholderia sp.) can help benchmark system performance [26].

Quantitative Data & Benchmarking

Table: Representative Data from a Multi-Laboratory Reproducibility Study [26]

This table summarizes key results from a ring trial conducted across five independent laboratories (A-E) using the standardized protocol above. It demonstrates the level of consistency that can be achieved for various data types.

| Data Type / Metric | Axenic Control (Mean ± SD) | SynCom16 Inoculated (Mean ± SD) | SynCom17 Inoculated (Mean ± SD) | Consistency Across Labs? |

|---|---|---|---|---|

| Shoot Fresh Weight (mg) | 25.5 ± 4.2 | 22.1 ± 3.8 | 18.3 ± 3.5 | Yes (Significant decrease with SynCom17) |

| Root Biomass (mg) | 12.8 ± 2.5 | 11.5 ± 2.1 | 9.1 ± 1.9 | Yes (Significant decrease with SynCom17) |

| Dominant Root Colonizer | N/A | Rhodococcus sp. (68% ± 33%) | Paraburkholderia sp. (98% ± 0.03%) | Yes (Highly consistent for SynCom17) |

| Sterility Test Failure Rate | <1% of all control tests | - | - | Yes (High sterility achieved) |

Implementing Standardized Protocols for High-Throughput Plant Phenotyping

Optimizing Experimental Design for High-Throughput Phenotyping Systems

Frequently Asked Questions (FAQs) and Troubleshooting Guides

Section 1: Experimental Design and Statistical Analysis

FAQ 1.1: What are the fundamental principles of experimental design I must follow in a high-throughput plant phenotyping (HTPP) experiment?

The fundamental principles of replication, randomization, and blocking are non-negotiable for generating reliable and reproducible data [17] [28].

- Replication: A replicate is a copy of a treatment applied to a different experimental unit. It helps estimate the inherent variability in your experiment, allowing you to determine if observed differences between treatments are genuine. The number of replicates needed depends on the expected variance and the size of the effect you wish to detect [28].

- Randomization: This is the process of randomly allocating treatments to experimental units (e.g., pots, field plots). It minimizes bias by ensuring that every treatment has an equal chance of being assigned to any unit. This accounts for unanticipated environmental gradients, such as subtle differences in light or temperature in a growth chamber [28].

- Blocking: This technique controls for known or anticipated variability. You group experimental units into blocks that are internally homogeneous. For example, in a greenhouse, a bench might be one block, and the treatments are randomized within it. This accounts for local variation, reducing the residual error and increasing the precision of your treatment comparisons [28].

FAQ 1.2: My phenotyping system produces massive amounts of image data. How do I ensure my data remains usable and valuable long-term?

Proper data management is critical to avoid "drowning" in the data generated by automated systems [29]. Adherence to the FAIR principles—Findable, Accessible, Interoperable, and Reusable—is recommended [30].

- Troubleshooting Guide:

- Problem: Data is stored in an ad-hoc manner on personal hard drives without consistent annotation.

- Solution: Implement a robust data management plan from the start. Use dedicated platforms (e.g., GnpIS, PIPPA) that support the MIAPPE (Minimal Information About a Plant Phenotyping Experiment) standard for describing your experiments [29] [30]. This ensures that all necessary metadata about the genotype, environment, and experimental design is captured, enabling data sharing and meta-analyses.

FAQ 1.3: After my ANOVA shows a significant treatment effect, how should I compare individual treatment means?

Using a protected Fisher's Least Significant Difference (LSD) test is a common approach. This means you only proceed with pairwise mean comparisons if the initial ANOVA F-test is significant [18].

- Troubleshooting Guide:

- Problem: Indiscriminately comparing all possible pairs of means without a significant F-test dramatically increases the probability of a Type I error (falsely declaring a significant difference).

- Solution: Use the F-protected LSD. The formula for the LSD is:

$$\mathrm{LSD} = t \cdot \sqrt{\frac{2 \cdot \text{Error Mean Square}}{r}}$$

where 't' is the critical t-value for your chosen significance level and the error degrees of freedom, 'Error Mean Square' comes from your ANOVA table, and 'r' is the number of replications [18]. For more complex treatment structures, consider using planned contrasts or a more conservative test like Tukey's HSD [18].
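As a worked illustration of the LSD formula, the sketch below computes the LSD and applies the "protection" rule: pairwise comparisons are made only if the ANOVA F-test was significant. All numbers are hypothetical. The critical t-value (2.447, two-sided α = 0.05 with 6 error degrees of freedom) is read from a t-table and passed in by hand, because the Python standard library has no t-distribution quantile function.

```python
from itertools import combinations
from math import sqrt


def fisher_lsd(t_crit: float, error_mean_square: float, replications: int) -> float:
    """LSD = t * sqrt(2 * Error Mean Square / r)."""
    return t_crit * sqrt(2 * error_mean_square / replications)


def significant_pairs(means: dict[str, float], lsd: float,
                      f_test_significant: bool) -> list[tuple[str, str]]:
    """Return treatment pairs whose mean difference exceeds the LSD.

    No comparisons are made unless the overall F-test was significant.
    """
    if not f_test_significant:
        return []
    return [(a, b) for a, b in combinations(means, 2)
            if abs(means[a] - means[b]) > lsd]


# Hypothetical example: 3 treatments, 4 blocks, Error Mean Square = 8.0.
lsd = fisher_lsd(t_crit=2.447, error_mean_square=8.0, replications=4)
treatment_means = {"control": 25.5, "A": 22.1, "B": 18.3}
print(f"LSD = {lsd:.3f}")
print(significant_pairs(treatment_means, lsd, f_test_significant=True))
```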

The table below summarizes key statistical tests for mean comparisons.

Table 1: Statistical Methods for Comparing Treatment Means in Phenotyping Experiments

| Method | Best Use Case | Key Consideration |

|---|---|---|

| F-protected LSD [18] | Planned comparisons of adjacent means or comparisons against a control after a significant ANOVA F-test. | Less conservative; using it for unplanned, multiple comparisons increases Type I error risk. |

| Tukey's HSD [18] | Unplanned, all-pairwise comparisons of several means. | More conservative than LSD, better controlling the family-wise error rate across all comparisons. |

| Planned Contrasts [18] | Testing specific, pre-defined hypotheses (e.g., "urea vs. nitrate sources"). | Does not require a significant overall F-test and provides more sensitive tests for specific questions. |

| Trend Analysis [18] | Analyzing the response to quantitative treatment levels (e.g., fertilizer rates, time series). | Fits a functional relationship (linear, quadratic) to describe the response curve. |

Section 2: Technical and Practical Challenges

FAQ 2.1: How reliable are the proxy traits (like "digital biomass" from images) that my HTPP system provides?

Proxy traits are useful for high-throughput screening but require rigorous calibration against ground-truth data [31].

- Troubleshooting Guide:

- Problem: Assuming a simple linear relationship between projected leaf area (from top-view images) and total plant biomass.

- Solution: Establish calibration curves by destructively harvesting a representative subset of plants throughout the experiment and across the full range of observed sizes. Research shows that the relationship between projected leaf area and total leaf area can be curvilinear, and neglecting this can lead to significant errors, even if the R² of a linear model appears high [31]. Furthermore, check if different genotypes or treatments require separate calibration curves.

FAQ 2.2: My plant size estimates from top-view images seem to fluctuate drastically throughout the day. Why?

This is a common issue caused by diurnal changes in plant physiology, specifically leaf movements like paraheliotropism [31].

- Troubleshooting Guide:

- Problem: Plant size estimates from top-view RGB images can deviate by more than 20% over a single day due to changes in leaf angle [31].

- Solution: Standardize the timing of your image acquisitions. Always capture images at the same time of day, preferably when leaf angles are most stable and consistent, to ensure data comparability across time points and treatments.
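If images were already acquired at irregular times, the same standardization can be applied after the fact by keeping only images taken within a fixed daily window. The sketch below shows this filter. The function name and the 10:00 to 11:00 window are hypothetical, and the appropriate window depends on the species and lighting schedule.

```python
from datetime import datetime, time


def within_window(timestamps: list[datetime],
                  start: time, end: time) -> list[datetime]:
    """Keep only acquisition times that fall inside the daily window (inclusive)."""
    return [ts for ts in timestamps if start <= ts.time() <= end]


# Hypothetical acquisition log from an imaging system.
acquired = [
    datetime(2024, 5, 1, 9, 55),
    datetime(2024, 5, 1, 10, 15),
    datetime(2024, 5, 1, 14, 0),
    datetime(2024, 5, 2, 10, 30),
]
comparable = within_window(acquired, start=time(10, 0), end=time(11, 0))
print([ts.isoformat() for ts in comparable])
```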

FAQ 2.3: What are the key factors to consider before investing in or using an HTPP system?

Acquiring and operating an HTPP system requires significant investment and expertise [31].

- Troubleshooting Guide:

- Problem: Underestimating the total cost of ownership and operational complexity.

- Solution: Carefully consider the following before proceeding:

- Financial and Time Investment: Account for costs beyond the initial hardware, including maintenance, software, data storage, and the specialized personnel required for operation and data analysis [31].

- Research Need: The system must be tailored to your specific research questions. A platform designed for a compromise between multiple research groups may not satisfy any single one effectively [31].

- Growth Conditions: Automated systems can impose constraints on plant handling and pot size, which may influence plant growth and the phenotype being measured [31].

The following diagram outlines the key decision points and workflow for optimizing an HTPP experiment.

Section 3: Data Management and Integration

FAQ 3.1: What is MIAPPE and why is it important for my research?

MIAPPE (Minimal Information About a Plant Phenotyping Experiment) is an emerging community standard for describing plant phenotyping experiments [29].

- Troubleshooting Guide:

- Problem: Inability to compare or integrate your phenotypic data with datasets from other research groups or public repositories.

- Solution: Adopt the MIAPPE standard to structure your metadata. This ensures that all critical information about the biological source, experimental design, and environmental conditions is captured in a consistent way, which is fundamental for data interoperability, sharing, and re-use [29] [30].

FAQ 3.2: How can I handle the integration of phenotypic data with other data types, like genomic information?

This requires a structured, ontology-driven approach to data annotation [29] [30].

- Troubleshooting Guide:

- Problem: Phenotypic data exists in isolated spreadsheets with inconsistent naming, making integration with genomic databases for genome-wide association studies (GWAS) difficult.

- Solution: Use controlled vocabularies and ontologies (e.g., Crop Ontology) to annotate your data uniquely and unambiguously. Data repositories like GnpIS are built on such models, enabling the integration and interoperability of phenotyping datasets with genotyping data, which is essential for bridging the genotype-to-phenotype gap [29] [30].

Table 2: Key Resources for High-Throughput Plant Phenotyping Experiments

| Resource Category | Specific Tool / Standard | Function and Explanation |
|---|---|---|
| Data Standards | MIAPPE [29] [30] | Provides a checklist of minimal metadata required to properly describe a phenotyping experiment, ensuring data is interpretable and reusable. |
| Ontologies | Crop Ontology [30] | Provides standardized, controlled terms for describing phenotypic traits and experimental conditions, enabling data integration across studies. |
| Data Repositories | GnpIS [29] [30] | An integrative information system for storing, sharing, and publishing plant phenotypic and genomic data in a FAIR manner. |
| Image Analysis Software | PlantCV [29], IAP [29] | Open-source image analysis software tools that allow users to extract phenotypic traits from image data. |
| Sensor Technologies | RGB Imaging [31] [32] | Used for measuring morphological traits like projected leaf area, plant architecture, and color. |
| Sensor Technologies | Thermal Infrared Imaging [29] [32] | Measures canopy temperature as a proxy for stomatal conductance and plant water status. |
| Sensor Technologies | Chlorophyll Fluorescence Imaging [32] | Assesses the photosynthetic performance and efficiency of photosystem II. |
| Sensor Technologies | Hyperspectral Imaging [32] | Captures spectral reflectance across many wavelengths, providing information on plant biochemical composition. |
| Statistical Methods | Protected LSD Test [18] | A statistical method for comparing treatment means after a significant result is found in the ANOVA. |
| Statistical Methods | Random Forests / LASSO [32] | Machine learning techniques used for classifying treatments (e.g., drought-stressed vs. control) and predicting complex harvest-related traits from high-dimensional phenotypic data. |

Troubleshooting Guide: Resolving Environmental Swings

Step 1: Validate Your Sensor Readings

- Action: Compare your primary sensor data with a certified, third-party handheld sensor.

- Purpose: Confirms the accuracy of your installed sensors and helps identify if a micro-climate around the sensor is causing erratic readings [33].
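The comparison in Step 1 can be summarized with two numbers: the mean offset of the installed sensor relative to the reference (systematic bias) and the largest single deviation. The sketch below assumes paired readings taken at the same time and place. The function name and the example temperatures are hypothetical.

```python
from statistics import mean


def sensor_offset(primary: list[float], reference: list[float]) -> tuple[float, float]:
    """Return (mean bias, largest absolute deviation) of primary vs. reference."""
    if len(primary) != len(reference) or not primary:
        raise ValueError("need equally long, non-empty lists of paired readings")
    differences = [p - r for p, r in zip(primary, reference)]
    return mean(differences), max(abs(d) for d in differences)


# Hypothetical paired temperature readings in °C.
installed = [24.8, 25.1, 25.6]
handheld = [24.5, 24.9, 25.0]
bias, worst = sensor_offset(installed, handheld)
print(f"Mean bias: {bias:+.2f} °C, largest deviation: {worst:.2f} °C")
```

A consistent bias suggests drift or miscalibration of the installed sensor, whereas a small bias with occasional large deviations points more toward a local micro-climate around the sensor.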

Step 2: Identify Patterns in Historical Data

- Action: Analyze historical data logs to correlate environmental swings with specific events.

- Common Patterns:

- Coupled decreases in humidity and temperature during intense HVAC cooling cycles.

- Swings occurring only during day-cycles due to higher heat and humidity loads from lighting.

- Increasing variation as a plant growth cycle progresses and the biological load increases [33].

Step 3: Audit Controlled Device Activity

- Action: Review activity logs for all devices controlling the environmental parameter in question.

- Devices to Investigate:

- For Temperature Swings: HVAC cooling and heating stages.

- For Humidity Swings: Dehumidifiers, humidifiers, and HVAC systems (as cooling also removes moisture) [33].

Step 4: Review and Optimize Control Logic

- Action: Examine the rule logic and setpoints for your control systems.

- Solution: Look for overlapping activation of opposing devices (e.g., a humidifier and dehumidifier running simultaneously). To resolve this, increase the deadband—the margin between the on/off setpoints—to prevent devices from working against each other and creating oscillations [33].
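The effect of a deadband can be illustrated with a small hysteresis controller. The sketch below is a simplified model under stated assumptions, not the logic of any particular control system: the deadband is treated as a margin on either side of a single setpoint, each device switches on only outside that margin, and it switches off once humidity has returned to the setpoint. The function names, setpoint, and readings are hypothetical.

```python
def control_step(rh: float, humidifier_on: bool, dehumidifier_on: bool,
                 setpoint: float = 60.0, deadband: float = 5.0) -> tuple[bool, bool]:
    """Return the new (humidifier_on, dehumidifier_on) state for one RH reading."""
    if rh <= setpoint - deadband:   # too dry: only the humidifier may run
        return True, False
    if rh >= setpoint + deadband:   # too humid: only the dehumidifier may run
        return False, True
    # Inside the deadband: a running device continues until RH reaches the setpoint.
    if humidifier_on and rh >= setpoint:
        humidifier_on = False
    if dehumidifier_on and rh <= setpoint:
        dehumidifier_on = False
    return humidifier_on, dehumidifier_on


def run_controller(readings: list[float]) -> list[tuple[bool, bool]]:
    """Apply control_step to a series of readings, starting with both devices off."""
    state = (False, False)
    history = []
    for rh in readings:
        state = control_step(rh, *state)
        history.append(state)
    return history


for rh, (hum, dehum) in zip([52, 56, 61, 63, 66, 62, 59, 58],
                            run_controller([52, 56, 61, 63, 66, 62, 59, 58])):
    print(f"RH {rh}%: humidifier={'on' if hum else 'off'}, dehumidifier={'on' if dehum else 'off'}")
```

With this structure the two devices can never be active at the same time, and widening `deadband` reduces how often either one cycles.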

Step 5: Isolate Impactful Devices

- Action: Systematically remove or stage devices from the control sequence to determine their individual impact.

- Examples:

- Prevent secondary HVAC cooling stages from activating to see if rapid cooling causes swings.

- Stage dehumidifiers to run a few at a time instead of all at once to prevent over-dehumidification and overshooting the target range [33].

Frequently Asked Questions (FAQs)

Q1: My temperature and humidity readings are erratic. What is the first thing I should check? The first and most critical step is to validate your sensor readings with a certified reference sensor. This confirms whether the swings are real or a result of sensor drift or miscalibration [33] [34].

Q2: How can I prevent my humidifier and dehumidifier from fighting each other? This is typically caused by control logic that is too tight. Review your sequence of operations and implement a larger deadband between their activation setpoints. This creates a buffer zone that prevents both devices from being active in the same humidity range [33].

Q3: Why is it crucial to report detailed environmental conditions in my research? Careful measurement and reporting of environmental variables like light, temperature, and humidity are fundamental to the replicability and interpretability of plant science experiments. Inconsistent reporting hinders cross-disciplinary progress and can invalidate comparative analyses [35].

Q4: What are the most common causes of failure in an environmental chamber? Common failures include worn-out door seals, compromised insulation, failing sensors, and miscalibrated control systems. A structured maintenance plan is essential to prevent unreliable test results and unplanned downtime [36].

Q5: How often should I calibrate the humidity sensors in my growth chambers? While a common baseline is annual calibration, the ideal frequency depends on a risk assessment. Consider the sensor's historical stability, the criticality of your measurements, the operating environment's harshness, and any specific regulatory requirements (e.g., GMP, ISO) [34].

Maintenance and Calibration Schedules

Table 1: Quarterly Maintenance Tasks for Environmental Chambers

| Task | Purpose | Procedure |

|---|---|---|

| Compressor & Condenser Check | Maintains cooling efficiency and prevents overheating. | Measure refrigeration system pressures; clean condenser coils of dust and debris [36]. |

| Humidity System Inspection | Prevents blockages, corrosion, and microbial growth. | Check water filters; clear out drains and water trays [36]. |

| Electrical Systems Test | Ensures safe and reliable operation. | Test switches and verify amp draws on electrical components [36]. |

| Seal and Gasket Cleaning | Maintains chamber integrity and prevents leaks. | Clean door seals, gaskets, hinges, and air registers [36]. |

Table 2: Annual Maintenance and Calibration Tasks

| Task | Purpose | Standard/Procedure |
|---|---|---|
| Sensor Calibration | Ensures measurement accuracy and data integrity. | Calibrate all temperature and humidity sensors against NIST-traceable standards [36] [34]. |
| Performance Verification | Confirms the chamber meets its specified uniformity and ramp-rate specifications. | Assess performance across multiple setpoints and check ramp-rate capabilities [36]. |
| Mechanical Wear Assessment | Identifies and addresses wear before it causes failure. | Inspect lubrication points on bearings and other mechanical systems [36]. |
| Control System Update | Ensures operational stability and access to latest features. | Review and install firmware/software updates for digital control systems [36]. |

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagents and Materials for Environmental Management

| Item | Function | Application Notes |
|---|---|---|
| High-Accuracy Reference Hygrometer | Provides a traceable standard for calibrating in-situ humidity sensors. | Essential for validating primary sensor readings; should be calibrated to ISO/IEC 17025 standards [34]. |
| Chilled Mirror Dew Point Sensor | A highly accurate method for measuring absolute humidity (dew point). | Often used as a primary reference in professional calibration setups due to its fundamental measurement principle [34]. |
| IoT Environmental Sensors | Enable real-time, remote monitoring of conditions like temperature, humidity, and light. | Facilitates proactive management and data logging; integrates with control systems for automated responses [37]. |
| Saturated Salt Solutions | Create known, stable relative humidity levels in a sealed container. | Useful for basic verification of sensor function, though with higher uncertainty than professional calibration methods [34]. |
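
The saturated salt check in the last row can be scripted as a simple pass/fail comparison. The sketch below is illustrative only: the reference values are approximate, commonly tabulated equilibrium humidities near 25 °C, and the function name, the 3% RH tolerance, and the example readings are assumptions rather than values from the cited sources.

```python
# Approximate equilibrium RH (%) over saturated salt solutions near 25 °C.
# Check these against the reference table you actually use; they shift with temperature.
SALT_REFERENCE_RH = {
    "lithium_chloride": 11.3,
    "magnesium_chloride": 32.8,
    "sodium_chloride": 75.3,
}


def verify_sensor(readings, tolerance=3.0):
    """Compare sensor readings (% RH) with salt reference points.

    readings: dict mapping salt name -> stabilised sensor reading.
    tolerance: hypothetical acceptance limit in % RH.
    """
    report = {}
    for salt, measured in readings.items():
        error = measured - SALT_REFERENCE_RH[salt]
        report[salt] = {"error": round(error, 2), "ok": abs(error) <= tolerance}
    return report


# Hypothetical readings taken after the sealed container has equilibrated.
example = verify_sensor({"magnesium_chloride": 34.1, "sodium_chloride": 79.0})
for salt, result in example.items():
    print(salt, result)
```

A failed point here is a prompt for professional calibration, not a substitute for it.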

Experimental Protocol: Systematic Isolation of Environmental Drivers

Objective: To identify which controlled devices are the primary drivers of observed environmental variation.

Methodology:

- Baseline Establishment: With all control systems active, log environmental data (e.g., temperature, RH) for a full 24-hour cycle to establish the swing pattern [33].

- Device Isolation: One at a time, remove individual devices from the control sequence or change how they are staged, leaving everything else unchanged. For example:

  - Disable stage 2 of HVAC cooling.

  - Set dehumidifiers to run in a staggered sequence rather than simultaneously [33].

- Data Collection: For each isolated configuration, log environmental data for another full cycle, ensuring plant load and other conditions are comparable.

- Comparative Analysis: Overlay the data logs to visualize how isolating each device alters the amplitude and frequency of the environmental swings. The device whose isolation most smooths the oscillations is likely a primary driver.
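
The comparative analysis step can be reduced to a small ranking routine. The following is a minimal sketch that compares only swing amplitude (frequency is not analysed); the function names, configuration labels, and the synthetic sine-wave logs are assumptions made for illustration, not part of the cited procedure.

```python
import math


def swing_amplitude(series):
    """Peak-to-peak amplitude of a logged variable over one cycle."""
    return max(series) - min(series)


def rank_drivers(baseline, isolated_logs):
    """Rank isolated configurations by how much they reduce the swing.

    baseline: readings logged with all devices active.
    isolated_logs: dict mapping configuration name -> readings.
    Returns (name, amplitude reduction) pairs, largest reduction first.
    """
    base_amp = swing_amplitude(baseline)
    reductions = {
        name: base_amp - swing_amplitude(log)
        for name, log in isolated_logs.items()
    }
    return sorted(reductions.items(), key=lambda item: item[1], reverse=True)


def synthetic_log(amplitude, period_samples=12, mean=60.0, n=288):
    """Synthetic 24-hour RH log at 5-minute resolution (illustration only)."""
    return [
        mean + amplitude * math.sin(2 * math.pi * i / period_samples)
        for i in range(n)
    ]


baseline = synthetic_log(amplitude=8.0)
logs = {
    "hvac_stage2_disabled": synthetic_log(amplitude=7.0),
    "dehumidifiers_staggered": synthetic_log(amplitude=3.0),
}
ranking = rank_drivers(baseline, logs)
for name, reduction in ranking:
    print(f"{name}: swing reduced by {reduction:.1f} % RH")
```

With real logs, the comparison is only meaningful if the sampling interval, plant load, and outdoor conditions are comparable across runs.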

Workflow Diagram: Troubleshooting Environmental Variation

Troubleshooting Common Experimental Protocols

FAQ: Seed Selection and Sourcing

Q: What strategies can I employ during seed selection to minimize experimental variability in my plant trials?

A: Minimizing variability begins with a strategic approach to seed selection. Key strategies include:

- Genetic Diversity: Do not rely on a single hybrid or variety. Planting a range of genotypes with diverse characteristics, such as different silking or pollination dates, spreads risk and ensures that a single stressor does not compromise your entire experiment [38].

- Data-Driven Selection: Base selections on replicated, unbiased performance data from university or third-party trials rather than marketing claims. Yield potential between hybrids of the same maturity can vary by 50–70 bushels per acre [39].

- Trait-Specific Selection: Match genetic traits to your specific experimental conditions. For drought-prone environments, select drought-tolerant hybrids, which have been shown to out-yield conventional hybrids by an average of 6 bushels per acre under water stress [39].

- Early Sourcing: Secure your seed early to ensure access to the most consistent and high-quality genetic material, as the best-performing varieties often become limited [40].

Q: How do I choose the right seed treatment for my controlled environment study?

A: The choice of seed treatment should be dictated by your experimental objectives and known biotic pressures.

- Robust Protection: Avoid bare-bones treatment packages. For studies involving early planting or in soils with known pathogen loads, a robust seed treatment with multiple modes of action is crucial to ward off subterranean insects and pathogens [38].

- Targeted Application: Upgrade from standard treatments when investigating specific diseases. For example, if your experimental system involves sudden death syndrome (SDS) in soybeans, a seed treatment with documented efficacy against SDS should be selected [38] [39].

- Control Considerations: Remember that treatments are an experimental variable. Ensure your design includes appropriate untreated controls to isolate the effect of the treatment from the intrinsic performance of the seed.

FAQ: Growth Substrate Formulation and Optimization

Q: What is a systematic, data-driven method for optimizing soilless substrate compositions?

A: Moving beyond empirical, trial-and-error methods is key to reproducibility. A practical framework is the Design–Build–Test–Learn (DBTL) cycle [41]:

- Design: Generate a wide range of substrate formulations by randomly varying the volume ratios of components (e.g., peat, vermiculite, perlite) within defined constraints.

- Build: Prepare the substrates and characterize their physical (e.g., porosity, water-holding capacity) and chemical properties.

- Test: Cultivate your model plant (e.g., garden lettuce, Arabidopsis) in the substrates under controlled conditions and collect phenotypic data.

- Learn: Use regression and machine learning models (e.g., random forest) to identify which substrate properties are key predictors of plant performance. This model then informs the next, refined Design phase for further optimization. This approach has been shown to significantly increase biomass and chlorophyll content in lettuce [41].

Q: Which non-destructive phenotyping techniques are most useful for monitoring plant responses to different substrates?

A: Imaging-based technologies are ideal for longitudinal studies as they allow repeated measurements on the same plant.

- Hyperspectral Imaging (HSI): This technique captures detailed spectral data across hundreds of wavelengths. Machine learning analysis can identify specific vegetative indices (e.g., NDVI705, mNDVI705, mSR705) that are highly responsive to changes in substrate formulation and serve as proxies for plant health and biomass [41].
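
For orientation, the red-edge indices named above are simple band ratios. The sketch below uses the commonly cited definitions based on reflectance at 445, 705, and 750 nm; the exact band centres used in [41] are not stated here, so treat the wavelengths, the function name, and the example reflectances as assumptions.

```python
def red_edge_indices(r445, r705, r750):
    """Compute red-edge vegetation indices from reflectance values (0 to 1).

    r445, r705, r750: mean reflectance of the plant pixels near 445, 705,
    and 750 nm (assumed band centres).
    """
    ndvi705 = (r750 - r705) / (r750 + r705)
    mndvi705 = (r750 - r705) / (r750 + r705 - 2 * r445)
    msr705 = (r750 - r445) / (r705 - r445)
    return {"NDVI705": ndvi705, "mNDVI705": mndvi705, "mSR705": msr705}


# Hypothetical reflectances for a healthy leaf region.
indices = red_edge_indices(r445=0.05, r705=0.15, r750=0.45)
for name, value in indices.items():
    print(f"{name}: {value:.3f}")
```

The two modified indices subtract the 445 nm reflectance to correct for specular reflection from the leaf surface, which is one reason they may respond differently from NDVI705 across substrates.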

- RGB Imaging: Standard color imaging can be used with automated phenotyping platforms to track growth dynamics, leaf area, and color changes non-destructively over time [41] [17].

Q: During substrate optimization, how should I handle watering and fertilization to isolate the substrate effect?

A: To accurately test the intrinsic properties of your substrates, the protocol must control for other variables.

- Fertilization: To isolate the nutrient contribution of the substrate components themselves, you may choose to irrigate with tap water only, applying no additional fertilizer during the trial period [41].

- Watering: The watering regime should be standardized. A common approach is to water daily to maintain substrate moisture near field capacity, using water with a known and consistent electrical conductivity (EC) and pH [41]. This ensures differences in plant growth are due to the substrate's water-holding and nutrient-providing capacity, not variation in water availability.

Experimental Protocols & Data Presentation

Protocol 1: Data-Driven Optimization of Soilless Substrates

This protocol outlines a reproducible method for formulating and testing growth substrates, adapted from a study on garden lettuce (Lactuca sativa L.) [41].

1. Experimental Design and Substrate Formulation

- Objective: To identify the optimal volume-based mixture of peat, vermiculite, and perlite for maximizing lettuce biomass.

- Formulation Space: Generate 100–200 substrate formulations using a randomized design in which the volume percentage of each component is allowed to vary (e.g., 0–100% for each, subject to the constraint that the three percentages sum to 100%).

- Preparation: Calculate the mass of each component required to fill your standard pot volume. Mix components thoroughly to ensure homogeneity.
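
The formulation and preparation steps above can be sketched as follows. This is one possible approach under stated assumptions, not the procedure used in [41]: mixtures are drawn uniformly from all peat/vermiculite/perlite combinations that sum to 100%, and the bulk densities, pot volume, and function names are hypothetical placeholders.

```python
import random

COMPONENTS = ("peat", "vermiculite", "perlite")


def random_formulations(n, seed=0):
    """Sample n volume-percentage mixtures that each sum to 100%."""
    rng = random.Random(seed)
    mixes = []
    for _ in range(n):
        # Normalised exponential draws are uniformly distributed over
        # all non-negative mixtures that sum to one.
        draws = [rng.expovariate(1.0) for _ in COMPONENTS]
        total = sum(draws)
        mixes.append({c: 100.0 * d / total for c, d in zip(COMPONENTS, draws)})
    return mixes


def component_masses(mix, pot_volume_l, bulk_density_g_per_l):
    """Convert volume percentages into component masses (g) for one pot."""
    return {
        c: mix[c] / 100.0 * pot_volume_l * bulk_density_g_per_l[c]
        for c in COMPONENTS
    }


# Hypothetical bulk densities (g/L); measure your own batches before use.
BULK_DENSITY = {"peat": 110.0, "vermiculite": 100.0, "perlite": 90.0}

formulations = random_formulations(150)
masses = component_masses(
    formulations[0], pot_volume_l=1.0, bulk_density_g_per_l=BULK_DENSITY
)
print(formulations[0])
print(masses)
```

Fixing the seed makes the formulation list reproducible, which matters when a second laboratory needs to rebuild the same set of substrates.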

2. Growth Conditions and Plant Material

- Plant Model: Garden lettuce (e.g., Romaine-type 'Speedy Crisp No.1').

- Environment: Controlled climate chamber with set photoperiod (e.g., 16h light/8h dark), light intensity (~220 µmol·m⁻²·s⁻¹), temperature (e.g., 25/20°C day/night), and relative humidity (e.g., 85%).

- Cultivation: Sow seeds in plug trays and transplant seedlings at the two true-leaf stage into the experimental pots.

3. Data Collection and Analysis

- Destructive Measurements: After a set growth period (e.g., 4 weeks), harvest plants to measure shoot fresh weight, shoot dry weight, root dry weight, and chlorophyll content.

- Non-Destructive Phenotyping: Use hyperspectral (HSI) and RGB imaging weekly to track growth.

- Statistical Learning: Employ linear regression to identify relationships between component volume and physical properties (e.g., peat content reduces porosity). Use random forest or other machine learning models to identify key spectral indices predictive of biomass.
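
The linear regression part of the statistical learning step can be illustrated with a plain least-squares fit. The numbers below are synthetic values invented for this example and are not data from the cited study; the random forest step is not shown and would normally rely on a machine learning library.

```python
def fit_line(x, y):
    """Ordinary least-squares fit y = slope * x + intercept, with R^2."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((yi - (slope * xi + intercept)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return slope, intercept, 1.0 - ss_res / ss_tot


# Synthetic values for illustration only.
peat_percent = [0, 20, 40, 60, 80, 100]
porosity_percent = [92.0, 89.5, 88.0, 85.0, 83.5, 81.0]

slope, intercept, r_squared = fit_line(peat_percent, porosity_percent)
print(f"slope={slope:.4f} % porosity per % peat, R^2={r_squared:.3f}")
```

A negative slope in such a fit corresponds to the relationship described above, in which higher peat content reduces porosity.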

The workflow for this cyclic optimization process is detailed in the diagram below.

Protocol 2: Standardized Cultivation for High-Throughput Phenotyping

This protocol provides guidelines for establishing consistent plant growth conditions essential for generating reliable quantitative data in automated systems [17].

1. Pre-Experimental Setup

- Growth Substrate: Select a standardized, well-defined substrate (e.g., a specific soil-sand mixture or a commercial soilless mix). Ensure uniform filling of pots and consistent soil coverage.

- Experimental Design: Account for environmental inhomogeneities within growth chambers (e.g., light gradients, temperature fluctuations) by using randomized block designs and regularly rotating plant positions.
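
A randomized complete block layout of the kind described above can be generated in a few lines. This is a minimal sketch; the treatment labels, block count, and function name are hypothetical, and a block is assumed to be a group of positions with similar conditions (for example, one shelf).

```python
import random


def randomized_complete_block(treatments, n_blocks, seed=0):
    """Assign every treatment once per block, in an independent random order."""
    rng = random.Random(seed)
    layout = []
    for block in range(1, n_blocks + 1):
        order = list(treatments)
        rng.shuffle(order)  # new random order within each block
        for position, treatment in enumerate(order, start=1):
            layout.append(
                {"block": block, "position": position, "treatment": treatment}
            )
    return layout


treatments = ["substrate_A", "substrate_B", "substrate_C", "substrate_D"]
layout = randomized_complete_block(treatments, n_blocks=3)
for row in layout:
    print(row)
```

Recording the seed alongside the layout allows the same plan to be regenerated later, and rerunning with a new seed gives a fresh arrangement when positions are rotated.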

2. Cultivation and Monitoring

- Watering Regime: Implement a standardized, weight-based watering protocol to maintain consistent soil moisture levels across all plants and avoid drought or waterlogging stress.

- Validation: Confirm that the growth and physiological status of plants grown under these controlled conditions correspond to those observed in natural environments. Metabolite profiling can be used to verify that the plants' physiological status is not adversely affected by handling or movement within the system [17].
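
The weight-based watering step above comes down to a target-weight calculation per pot. The sketch below assumes that the pot tare, dry substrate mass, and water held at pot capacity were measured beforehand; the 80% target, the example weights, and the function name are hypothetical, and plant fresh weight is ignored.

```python
def water_to_add(current_weight_g, tare_g, dry_substrate_g,
                 water_at_capacity_g, target_fraction=0.8):
    """Grams of water needed to bring a pot back to its target weight.

    target_fraction: share of the water held at pot capacity to maintain
    (hypothetical default).
    """
    target_weight = (
        tare_g + dry_substrate_g + target_fraction * water_at_capacity_g
    )
    # Never return a negative volume if the pot is already above target.
    return max(0.0, target_weight - current_weight_g)


# Hypothetical pot: 50 g tare, 120 g dry substrate, 300 g water at capacity.
dose = water_to_add(current_weight_g=365.0, tare_g=50.0,
                    dry_substrate_g=120.0, water_at_capacity_g=300.0)
print(f"Add {dose:.1f} g of water")
```

For larger plants, the growing biomass adds to pot weight, so the target may need periodic correction using an estimate of fresh weight.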

Table 1: Impact of Sequential Substrate Optimization on Lettuce Growth

Data generated from two rounds of a randomized substrate experiment, showing significant improvement in key growth metrics after data-driven optimization [41].

| Growth Metric | Initial Trial Performance | Optimized Trial Performance | Percent Increase | P-value |
|---|---|---|---|---|
| Shoot Biomass | Baseline | +57.5% | 57.5% | \(9.2 \times 10^{-8}\) |
| Root Biomass | Baseline | +89.8% | 89.8% | \(8.24 \times 10^{-10}\) |
| Chlorophyll Content | Baseline | +43.3% | 43.3% | \(< 2.0 \times 10^{-16}\) |

Table 2: Research Reagent Solutions for Substrate and Phenotyping Experiments

Essential materials and their functions for establishing reproducible cultivation and phenotyping assays [41] [17] [39].

| Reagent/Material | Specification/Function in Experiment |
|---|---|
| Peat Moss | Primary organic component of many substrates; influences water-holding capacity, porosity, and provides some nutrients. |
| Perlite & Vermiculite | Inorganic components used to adjust physical properties: aeration, drainage (perlite) and water retention (vermiculite). |
| Hyperspectral Imaging (HSI) System | Non-destructive tool for capturing detailed spectral data; used to calculate vegetation indices (e.g., NDVI705) as proxies for biomass and plant health. |
| Controlled Environment Chamber | Provides standardized, reproducible conditions for light, temperature, and humidity, critical for eliminating environmental noise. |
| Model Plant Seeds (Arabidopsis thaliana, Lactuca sativa L.) | Fast-growing species with short life cycles, ideal for high-throughput phenotypic screening of substrates or treatments. |

Diagnostic Guide: Systemic Workflow for Problem-Solving

The following diagnostic tree provides a logical pathway for investigating sub-optimal plant growth in standardized experiments, integrating principles from plant pathology and agronomy [42] [43].

High-Throughput Plant Phenotyping (HTPP) has emerged as a vital technological bridge, connecting plant genomics with agricultural performance by enabling the quantitative assessment of complex traits. As defined in recent research, plant phenotyping refers to "the determination of quantitative or qualitative values for morphological, physiological, biochemical, and performance-related properties, which act as observable proxies between gene(s) expression and environment" [44]. With the rapid growth of global population and increasing challenges in sustainable agriculture, image-based phenotyping has become indispensable for advancing crop breeding and precision agriculture [45].