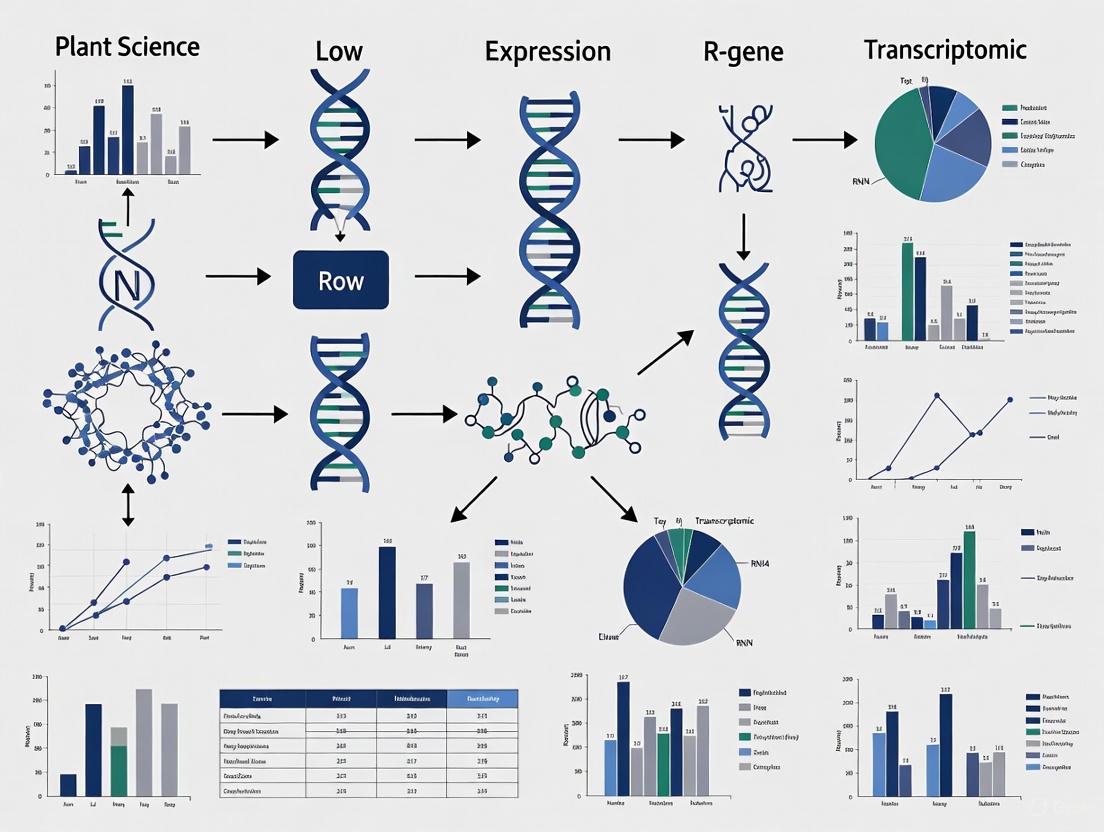

Navigating Low Expression in RNA-seq: An R/Bioconductor Guide for Robust Gene Detection and Analysis


Abstract

This article provides a comprehensive guide for researchers and drug development professionals tackling the challenge of lowly expressed genes in transcriptomic studies. Covering the full analytical workflow, we detail foundational concepts on why low expression matters, methodological pipelines using established R/Bioconductor tools like DESeq2 and edgeR, strategies for troubleshooting and optimizing power, and finally, methods for validating findings and comparing tool performance. The content synthesizes current best practices to enhance the reliability and biological relevance of differential expression analysis, with a focus on applications in biomarker discovery and precision medicine.

Understanding the Impact and Detection of Low Expression Genes in Transcriptomic Data

Biological Significance: FAQs

FAQ: Why are low-expression genes biologically important if they are present in such small quantities?

Despite low abundance, low-expression genes are critical for controlling fundamental biological processes. Their importance is demonstrated through several key roles:

- Regulation of Critical Functions: They often encode signaling molecules, such as those in the Wnt signaling pathway, which control cell fate and development through the precise expression of minute amounts of specific products [1].

- Dosage Sensitivity: Many are essential genes or genes that encode subunits of protein complexes. In these cases, even small fluctuations in expression can disrupt cellular function and reduce organism fitness, which is why they are under strong selective pressure for low expression noise [1].

- Noise Control: To maintain this precise expression, dosage-sensitive genes are frequently marked with specific epigenetic modifications, such as gene-body-localized histone marks (e.g., H3K79 methylation), which are associated with suppressing expression noise by regulating transcriptional burst frequency [1].

FAQ: How does gene expression noise differ between high- and low-expression genes?

There is a fundamental, inverse relationship between expression level and noise, but this relationship is strategically modulated based on gene function.

- The General Rule: A strong negative correlation exists between expression level and noise (cell-to-cell expression variability) across organisms. This means low-expression genes generally have higher noise [1].

- Strategic Exceptions: Biological systems overcome this constraint for critical genes. Essential genes and protein complex subunits consistently exhibit low noise despite potentially having low expression levels, ensuring their protein products are consistently available [1]. Conversely, some high-expression genes involved in processes like energy generation and autophagy can exhibit high noise, potentially benefiting the organism by enabling rapid responses to environmental changes [1].

FAQ: What is the relationship between expression noise and a gene's response to environmental changes (plasticity)?

The relationship between stochastic noise (variability under the same conditions) and plasticity (variability in response to environmental changes) depends on how essential a gene is to survival.

- In Nonessential Genes: A positive correlation exists between noise and plasticity. Genes sensitive to one type of perturbation tend to be sensitive to others [2].

- In Essential Genes: This coupling is broken. Essential genes exhibit both lower noise and lower plasticity, ensuring their stable function despite internal fluctuations or external challenges [2].

Troubleshooting Guide: Technical Noise in Low Expression Gene Analysis

Problem: I cannot detect or accurately quantify low-abundance transcripts in my RNA-seq experiment.

This is a common challenge often stemming from issues with experimental design, sample quality, or data analysis. The following workflow outlines a systematic approach to diagnosing and resolving this problem.

Diagnosis and Solutions

1. Check RNA Quality and Input

- Potential Cause: Degraded RNA or insufficient input material leads to poor library complexity and loss of low-abundance transcripts [3].

- Solutions:

- Quality Control: Use an Agilent TapeStation or Bioanalyzer to ensure a high RNA Integrity Number (RIN > 7) [4].

- Purity Check: Verify sample purity with NanoDrop. Aim for 260/280 ratio ~2.0 and 260/230 ratio between 2.0-2.2 [4].

- Input Material: Use the maximum recommended input RNA for your library prep kit. For very low-input samples (e.g., single cells), use specialized kits like the SMARTer Stranded Total RNA-Seq Kit v2 - Pico Input Mammalian [4].

2. Verify Library Preparation and Sequencing Depth

- Potential Cause: Inefficient library preparation or insufficient sequencing reads result in inadequate coverage of rare transcripts [5] [6].

- Solutions:

- rRNA Depletion: Use ribosomal RNA depletion kits (e.g., QIAseq FastSelect) instead of poly(A) selection alone, especially if RNA is partially degraded [5] [4].

- Sequencing Depth: Increase sequencing depth. While 5-25 million reads per sample may suffice for highly expressed genes, detecting low-abundance transcripts often requires 30-200 million reads or more, depending on the complexity of the transcriptome [6].

- Read Length: For transcriptome analysis, use paired-end reads (e.g., 2x75 bp or 2x100 bp) to improve mappability and identification of splice variants [5] [6].

3. Inspect Bioinformatics Analysis Pipeline

- Potential Cause: Suboptimal read trimming, alignment, or quantification methods increase technical noise and mask true low-level expression [5] [4].

- Solutions:

- Quality Trimming: Use tools like Trimmomatic or cutadapt to remove low-quality bases and adapter sequences from raw reads [4].

- Alignment: Choose a splice-aware aligner like STAR or HISAT2 for accurate mapping across exon junctions [4].

- Quantification: For the most accurate quantification of lowly expressed genes, use pseudo-alignment tools like Salmon or Kallisto. These are fast, avoid alignment biases, and perform well for isoform-level quantification [4].

Recommended Sequencing Depth for Different Study Goals

Table 1: Guidance on sequencing read depth for RNA-seq experiments, based on project aims [6].

| Study Goal | Recommended Reads Per Sample | Key Considerations |
|---|---|---|
| Gene Expression Profiling (snapshot of highly expressed genes) | 5 - 25 million | Suitable for high multiplexing of samples. Inadequate for comprehensive low-expression gene analysis. |
| Standard Differential Expression & Splicing | 30 - 60 million | A standard for most published mRNA-seq studies. Provides a more global view of gene expression. |
| In-depth Transcriptome Analysis (novel transcript assembly, low-expression detection) | 100 - 200 million+ | Required for comprehensive coverage and reliable detection of rare transcripts. |

Research Reagent Solutions

Table 2: Essential reagents and tools for studying low-expression genes, from sample prep to data analysis.

| Item | Function | Example Products/Tools |
|---|---|---|
| RNA Quality Control Kits | Assess RNA integrity (RIN) and purity for reliable library prep. | Agilent TapeStation, Bioanalyzer [4] |
| Low-Input Library Prep Kits | Generate sequencing libraries from minimal RNA input. | SMART-Seq v4 Ultra Low Input RNA kit, SMARTer Stranded Total RNA-Seq Kit v2 - Pico Input Mammalian [4] |
| rRNA Depletion Kits | Remove abundant ribosomal RNA to increase coverage of mRNA, including low-expression transcripts. | QIAseq FastSelect [4] |
| Splice-Aware Aligners | Accurately map RNA-seq reads that span exon-intron boundaries. | STAR, HISAT2 [4] |
| Pseudo-alignment Quantification Tools | Rapid and accurate quantification of transcript abundance from raw reads. | Salmon, Kallisto [4] |

Essential QC and Exploratory Data Analysis (EDA) for Low Expression

Troubleshooting Guide: FAQs on Low Expression Data

Q1: Why is my dataset dominated by low-count transcripts, and how should I handle this?

It is common for RNA-seq datasets to contain a substantial proportion of low-count transcripts. In one study on prairie grasses, approximately 60% of the 25,582 detected transcripts were classified as low-count [7]. Rather than applying arbitrary expression thresholds for filtering, modern statistical methods like DESeq2 and edgeR robust are recommended. These methods are designed to handle the inherent noisiness of low-count data by using information sharing across transcripts (shrinkage in DESeq2) or robust regression techniques (edgeR robust) [7]. This approach helps avoid discarding biologically relevant low-expression genes, such as transcription factors.

Q2: How do I differentiate true low-expression signals from technical artifacts during quality control?

Effective Quality Control (QC) for identifying low-quality libraries involves calculating specific per-cell metrics [8]. The key is to identify outliers relative to your entire dataset, as absolute thresholds vary between experiments.

The table below summarizes the essential QC metrics and their interpretation for identifying problematic cells or samples.

Table 1: Key QC Metrics for Identifying Low-Quality Libraries

| QC Metric | Description | Indication of Low Quality |
|---|---|---|
| Library Size | Total sum of counts across all endogenous genes per cell/sample. | Abnormally low values suggest RNA loss during library prep (e.g., cell lysis, inefficient cDNA capture) [8]. |
| Number of Expressed Features | Number of endogenous genes with non-zero counts per cell/sample. | Very few expressed genes indicate a failure to capture the diverse transcript population [8]. |
| Mitochondrial Read Proportion | Percentage of reads mapped to genes in the mitochondrial genome. | High proportions (outliers) suggest cell damage, as mitochondrial RNA can be enriched in perforated cells [8] [9]. |
| Spike-in Read Proportion | Percentage of reads mapped to spike-in transcripts. | High proportions relative to other samples indicate loss of endogenous RNA [8]. |
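The first three metrics in the table are simple column summaries of a count matrix. The sketch below is a plain-Python illustration of that arithmetic, not the scater implementation. The function name `qc_metrics`, the gene names, and the counts are hypothetical, and the set of mitochondrial genes is assumed to be supplied by the user.

```python
def qc_metrics(counts, mito_genes):
    """Per-sample library size, number of expressed features, and mitochondrial fraction.

    counts: dict mapping gene name -> list of raw counts (one entry per sample).
    mito_genes: set of gene names treated as mitochondrial (an assumption of this sketch).
    """
    n_samples = len(next(iter(counts.values())))
    metrics = []
    for j in range(n_samples):
        library_size = sum(row[j] for row in counts.values())
        n_features = sum(1 for row in counts.values() if row[j] > 0)
        mito = sum(row[j] for gene, row in counts.items() if gene in mito_genes)
        metrics.append({
            "library_size": library_size,
            "n_features": n_features,
            "mito_fraction": mito / library_size if library_size else float("nan"),
        })
    return metrics


# Hypothetical counts for two samples; the second resembles a damaged cell.
counts = {
    "ACTB": [100, 5],
    "GAPDH": [200, 0],
    "MT-CO1": [30, 45],
    "TP53": [0, 0],
}
metrics = qc_metrics(counts, mito_genes={"MT-CO1"})
```

In this toy input the second sample combines a small library, few detected genes, and a high mitochondrial fraction, which is the pattern the table associates with low quality.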

Q3: What is the best normalization method for datasets with many low-expression genes?

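The choice of normalization method can significantly impact the interpretation of low-expression genes. For bulk RNA-seq data, DESeq2 offers two transformations, the variance stabilizing transformation (VST) and the regularized log transformation (rlog). Both produce data that is roughly homoscedastic and both minimize the differences between samples for genes with small counts [10]. According to the DESeq2 documentation, rlog tends to work well on small datasets (fewer than about 30 samples) and can outperform VST when sequencing depth varies widely across samples, whereas VST is much faster and is the practical choice for large datasets. Neither transformation was designed for the abundance of zeros that characterizes single-cell RNA-seq data, where dedicated single-cell normalization methods are generally more appropriate. The selection should be guided by the characteristics of your dataset.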

Q4: How can I visually explore my data to assess the impact of low-expression genes?

Exploratory Data Analysis (EDA) is an iterative process that uses visualization and transformation to understand data [11]. For low-expression data, the following visualizations are crucial:

- Histograms or Frequency Polygons of gene expression (e.g., log counts) across samples can reveal the overall distribution and the proportion of genes in low-expression bins [11].

- Principal Component Analysis (PCA) plots help visualize global sample relationships. If the first principal components are driven by QC metrics (like mitochondrial read proportion) rather than biological conditions, it indicates that low-quality cells are dominating the variation and should be removed [12] [8].

- Heatmaps of sample-to-sample distances or correlation matrices can help identify outliers and assess technical versus biological variability [10].

Experimental Protocol: Differential Expression Analysis for Low-Count Transcripts

This protocol is adapted from established RNA-seq workflows [13] [5] and specific considerations for low-count data [7].

1. Data Import and Preparation

- Import quantification data (e.g., from Salmon) into R using the `tximeta` or `tximport` packages. These tools correctly handle transcript-level estimates and summarize to the gene level, also providing average transcript lengths for use as offsets [13].

- Create a metadata object (`colData`) that includes sample names, experimental conditions, and paths to quantification files [13].

2. Rigorous Quality Control

- Calculate per-cell QC metrics (library size, number of features, mitochondrial proportion) using functions like `perCellQCMetrics()` from the `scater` package or similar utilities for bulk data [8] [9].

- Identify and remove outlier cells or samples using adaptive thresholds (e.g., 3 median absolute deviations (MADs) from the median) rather than fixed cut-offs. The `perCellQCFilters()` function can automate this [8].

- Visualize QC metrics with histograms and PCA plots to confirm the removal of technical outliers.
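The adaptive-threshold idea in this step can be illustrated independently of any package. The following is a minimal Python sketch, not the scater implementation: the function names `median_abs_dev` and `mad_outliers`, the example library sizes, and the choices to work on log10 library size and to flag only the low tail are assumptions made for illustration. The 1.4826 constant rescales the MAD so that it is comparable to a standard deviation for normally distributed data.

```python
import math
import statistics


def median_abs_dev(values, scale=1.4826):
    """Scaled median absolute deviation of a list of numbers."""
    med = statistics.median(values)
    return scale * statistics.median(abs(v - med) for v in values)


def mad_outliers(values, nmads=3.0, log=True, tail="lower"):
    """Flag values more than `nmads` MADs from the median on the chosen tail."""
    x = [math.log10(v) for v in values] if log else list(values)
    med = statistics.median(x)
    mad = median_abs_dev(x)
    lower, upper = med - nmads * mad, med + nmads * mad
    flags = []
    for v in x:
        too_low = tail in ("lower", "both") and v < lower
        too_high = tail in ("higher", "both") and v > upper
        flags.append(too_low or too_high)
    return flags


# Hypothetical library sizes (total counts) for eight samples.
library_sizes = [3_200_000, 3_000_000, 2_900_000, 3_600_000,
                 3_400_000, 4_000_000, 4_200_000, 150_000]
flags = mad_outliers(library_sizes)
```

Because the threshold is derived from the dataset itself, the same code flags the 150,000-read library here without anyone having to choose an absolute cut-off in advance.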

3. Pre-processing and Filtering

- Filter genes to remove those with very low counts across the majority of samples. However, avoid overly stringent thresholds. A sensible approach is to filter out genes that do not have a minimum number of counts in a minimum number of samples, where these parameters are determined by the median library size and the experimental design [12] [7].
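One way to make "a minimum number of counts in a minimum number of samples" concrete is to convert the count threshold into a counts-per-million (CPM) cutoff using the median library size. The sketch below is a simplified Python illustration of that idea, loosely in the spirit of edgeR's `filterByExpr` but not a reimplementation of it. The function name `keep_genes`, the default thresholds, and the toy counts are assumptions; `min_samples` would typically be set to the size of the smallest experimental group.

```python
import statistics


def keep_genes(counts, min_count=10, min_samples=3):
    """Return genes whose CPM reaches the cutoff in at least `min_samples` samples.

    counts: dict mapping gene name -> list of raw counts (one entry per sample).
    """
    n_samples = len(next(iter(counts.values())))
    lib_sizes = [sum(row[j] for row in counts.values()) for j in range(n_samples)]
    # CPM value equivalent to `min_count` reads in a library of median size.
    cpm_cutoff = min_count / statistics.median(lib_sizes) * 1e6
    kept = []
    for gene, row in counts.items():
        cpm = [c / lib * 1e6 for c, lib in zip(row, lib_sizes)]
        if sum(v >= cpm_cutoff for v in cpm) >= min_samples:
            kept.append(gene)
    return kept


# Hypothetical counts: three control samples followed by three treated samples.
counts = {
    "geneA": [500, 480, 510, 900, 950, 920],
    "geneB": [0, 1, 0, 12, 15, 11],   # low, but consistently detected after treatment
    "geneC": [0, 0, 1, 0, 2, 0],      # sporadic counts only
    "geneD": [99500, 99519, 99489, 99088, 99033, 99069],
}
kept = keep_genes(counts)
```

With these toy values, `geneB` is retained because it passes the cutoff in all three treated samples, while `geneC` is dropped. This is the behavior the protocol aims for: removing uninformative rows without discarding low-expression genes that respond to the condition.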

4. Normalization and Exploratory Data Analysis

- Perform normalization using methods suited for low-count data, such as the methods in DESeq2 or edgeR, which account for library size and composition biases [10] [5].

- Conduct EDA using PCA and clustering after normalization and filtering. This step verifies that the largest sources of variation in the data are biological and that sample groups replicate well [12] [10].

5. Differential Expression Analysis

- Use statistical methods that are robust for low-count transcripts. DESeq2 and edgeR robust have shown promising performance for this task [7].

- Specify the experimental design correctly in the model (e.g., ~ cell_line + treatment for a paired design) [13].

- Be cautious when specifying the degrees of freedom parameter in edgeR robust, as it can non-trivially impact inference [7].

The following workflow diagram summarizes the key steps in this protocol.

The Scientist's Toolkit: Key Research Reagents & Materials

The following table details essential materials and computational tools for conducting robust QC and EDA on studies involving low-expression transcripts.

Table 2: Essential Research Reagents and Computational Tools for RNA-seq Analysis

| Item/Tool Name | Function/Brief Explanation |
|---|---|
| Salmon | Alignment-free tool for fast and accurate transcript-level quantification from raw RNA-seq reads [13]. |
| tximeta / tximport | R/Bioconductor packages to import transcript-level quantifications into R and summarize to the gene level, correctly handling inferential replicates and transcript length [13]. |
| scater / SingleCellExperiment | R/Bioconductor packages providing a framework and functions for comprehensive calculation and visualization of QC metrics for single-cell and bulk RNA-seq data [8] [9]. |
| DESeq2 | An R/Bioconductor package for differential expression analysis that uses shrinkage estimators for dispersion and fold change, making it suitable for low-count transcripts [13] [10] [7]. |
| edgeR / edgeR robust | An R/Bioconductor package for differential expression. The "robust" option uses robust regression to dampen the effect of outliers, improving performance for low-counts [12] [10] [7]. |
| ERCC Spike-in RNA | A set of synthetic, known-length RNAs added to samples before library prep to monitor technical variability and assist in normalization, especially useful for low-input protocols [8] [9]. |
| FASTQC | A popular tool for quality control of raw sequencing reads, assessing sequence quality, GC content, adaptor contamination, and overrepresented sequences [5]. |
| IRIS-EDA | A user-friendly, interactive web platform that integrates multiple discovery-driven and differential expression analysis tools, facilitating EDA for users with limited computational experience [10]. |

Workflow for Informed Analysis Decisions

The diagram below outlines the logical decision process for handling low-expression data, from QC to analysis.

A technical support guide for researchers navigating RNA-Seq data analysis

Frequently Asked Questions

1. What is compositional bias in RNA-Seq data, and why is it a problem?

Compositional bias occurs when a few extremely highly expressed genes take up a large share of the reads in a sample, skewing the apparent abundance of every other gene in that sample. Imagine two samples where most genes are expressed at the same level, but one sample has a single gene that is massively over-expressed. This highly expressed gene "soaks up" a large proportion of the sequencing reads, making it appear as if all the other genes in that sample are under-expressed by comparison. Normalization methods that do not account for this, such as simple total count normalization, will incorrectly identify these other genes as differentially expressed [14].
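A toy calculation makes the "soaks up" effect concrete. The Python sketch below uses hypothetical molecule numbers and an idealized, noise-free sequencing step (reads allocated exactly in proportion to abundance); the names `sequence` and `cpm` are illustrative. Three genes have identical abundance in both samples, and only `geneX` increases.

```python
def sequence(molecules, depth):
    """Idealized sequencing: reads are allocated in proportion to abundance."""
    total = sum(molecules.values())
    return {gene: m / total * depth for gene, m in molecules.items()}


def cpm(reads):
    """Total-count normalization to counts per million."""
    total = sum(reads.values())
    return {gene: r / total * 1e6 for gene, r in reads.items()}


# Hypothetical true abundances: only geneX differs (3.5-fold higher in sample B).
molecules_a = {"gene1": 100, "gene2": 200, "gene3": 300, "geneX": 400}
molecules_b = {"gene1": 100, "gene2": 200, "gene3": 300, "geneX": 1400}

# Both samples are sequenced to the same depth.
cpm_a = cpm(sequence(molecules_a, depth=1000))
cpm_b = cpm(sequence(molecules_b, depth=1000))
apparent_fold = {gene: cpm_b[gene] / cpm_a[gene] for gene in molecules_a}
```

Under total-count normalization the three unchanged genes appear two-fold down in sample B, and the true 3.5-fold increase in `geneX` is reported as 1.75-fold. Methods that estimate a scaling factor from the bulk of unchanged genes are intended to remove exactly this distortion.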

2. When should I use TMM normalization over the DESeq2 method, and vice versa?

The choice can depend on your data and experimental design. For a simple two-condition experiment without replicates, the choice of normalization method (TMM, RLE, or MRN) may have minimal impact on your results [15]. However, for more complex designs, consider the following:

- TMM is considered robust against high-count genes and differences in RNA composition, making it suitable for datasets where a few genes are very highly expressed or when comparing different tissues or conditions [16].

- DESeq2's Median of Ratios (RLE) is robust to outliers and can handle large variations in expression levels. It is ideal for datasets with substantial differences in library sizes or when some genes are only expressed in a subset of samples [16]. Some large-scale comparisons have found that normalizing with the DESeq method, in combination with a negative binomial generalized linear model, provided a superior analysis approach [17].

3. My data has many lowly expressed R-genes. How does this affect normalization?

Most normalization methods, including TMM and DESeq2, operate under the key assumption that the majority of genes are not differentially expressed [14] [16]. If you are studying a specific set of genes, like R-genes, that are known to be lowly expressed and potentially differentially regulated under your experimental conditions, this assumption could theoretically be challenged. However, because these methods use data from all genes in the genome to calculate a single scaling factor per sample, the impact of a small set of low-expression genes is typically minimal. The greater risk with low-expression genes is not their effect on normalization, but the statistical challenge they pose in reliably calling them differentially expressed due to higher relative variability [17].

4. I've heard TPM and RPKM should not be used for differential expression. Why is this?

TPM and RPKM are problematic for between-sample comparisons in differential expression analysis because they do not account for RNA composition bias [18] [19]. Furthermore, these normalized counts "flatten out" the raw read data, normalizing away the sampling variance information that is critical for statistical tests in tools like DESeq2 and edgeR. A difference of a few TPM units could represent a change of 2 reads or 20 reads; you cannot tell from the normalized values alone, which severely impacts the statistical power of differential expression testing [19].
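The loss of count-scale information can be seen in a small example. This Python sketch computes TPM from raw counts and gene lengths; the function name `tpm`, the gene lengths, and the counts are hypothetical. The two samples have the same composition but differ ten-fold in depth.

```python
def tpm(counts, lengths_kb):
    """Transcripts per million: length-normalize, then scale rates to sum to 1e6."""
    rates = [c / length for c, length in zip(counts, lengths_kb)]
    total = sum(rates)
    return [r / total * 1e6 for r in rates]


lengths_kb = [1.0, 2.0, 4.0]   # hypothetical gene lengths in kilobases
shallow = [2, 40, 400]         # the first gene is supported by only 2 reads
deep = [20, 400, 4000]         # same composition, ten times the depth

tpm_shallow = tpm(shallow, lengths_kb)
tpm_deep = tpm(deep, lengths_kb)
```

Both samples yield the same TPM for the first gene even though one estimate rests on 2 reads and the other on 20. A count-based model can weigh that difference in evidence; a test run on TPM values alone cannot.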

Comparison of Normalization Methods

Table 1: Key characteristics and recommendations for common RNA-Seq normalization methods. Methods like TMM and DESeq2 are designed to handle compositional bias, while TPM/RPKM are not recommended for differential expression analysis.

| Method | Implemented In | Accounts for Sequencing Depth? | Accounts for RNA Composition? | Recommended Use |
|---|---|---|---|---|
| TMM (Trimmed Mean of M-values) | edgeR [20] | Yes [16] | Yes [16] | Differential expression between samples; robust to a few highly expressed genes [16]. |
| RLE / Median of Ratios | DESeq2 [18] | Yes [16] | Yes [16] | Differential expression between samples; robust to large variations in expression [16]. |
| TPM | General use | Yes [16] | No [19] | Not for DE; comparing expression of different genes within a sample [19] [16]. |
| RPKM/FPKM | General use | Yes [16] | No [19] | Not for DE; legacy method, superseded by TPM [16]. |
| CPM | General use | Yes [16] | No | Not for DE; simple comparisons when gene length is not a factor [16]. |

Table 2: A quantitative example of how different normalization methods calculate scaling factors on the same dataset. Note how TMM factors are less correlated with library size than RLE/MRN factors [21].

| Sample | Library Size | TMM Factor [21] | RLE (DESeq2) Factor [21] | MRN Factor [21] |
|---|---|---|---|---|
| Bud 1 | ~3.2 million | 0.980 | 1.017 | 0.871 |
| Bud 2 | ~3.0 million | 0.922 | 0.809 | 0.754 |
| Bud 3 | ~2.3 million | 0.720 | 0.727 | 0.914 |
| Ant 1 | ~3.6 million | 1.058 | 0.866 | 0.793 |
| Ant 2 | ~3.4 million | 0.981 | 1.236 | 1.201 |
| Pos 1 | ~4.0 million | 1.130 | 1.282 | 1.340 |
| Pos 2 | ~4.2 million | 1.194 | 1.272 | 1.253 |
| Pos 3 | ~4.4 million | 1.241 | 1.373 | 1.293 |

Experimental Protocols

Protocol 1: Performing TMM Normalization with edgeR

This protocol outlines the steps to calculate normalization factors using the Trimmed Mean of M-values (TMM) method as implemented in the edgeR package [20].

- Load Data into DGEList: Create a DGEList object from your matrix of raw counts, specifying the experimental groups.

- Calculate Normalization Factors: Use the `calcNormFactors` function. This function calculates a scaling factor for each library.

- Access Results: The normalization factors are stored in `dge_object$samples$norm.factors`. The effective library size used in downstream modeling is the product of the original library size and this factor.
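To show what a TMM scaling factor represents, the following Python sketch computes a simplified version for one sample against a fixed reference. It is an illustration of the published idea (trim extreme log-ratios and extreme abundances, then take a precision-weighted mean of the remaining log-ratios), not edgeR's code. edgeR additionally selects the reference sample automatically, handles ties and trimming bounds differently, and rescales the factors so that their product is one. The function name `tmm_factor` and the toy counts are assumptions.

```python
import math


def tmm_factor(sample, ref, m_trim=0.30, a_trim=0.05):
    """Simplified TMM scaling factor of `sample` relative to `ref` (raw count lists)."""
    n_s, n_r = sum(sample), sum(ref)
    stats = []
    for y_s, y_r in zip(sample, ref):
        if y_s == 0 or y_r == 0:
            continue  # log-ratios are undefined for zero counts
        p_s, p_r = y_s / n_s, y_r / n_r
        m = math.log2(p_s / p_r)          # M-value: log fold change of proportions
        a = 0.5 * math.log2(p_s * p_r)    # A-value: average log abundance
        # Inverse of the approximate variance of the M-value.
        w = 1.0 / ((n_s - y_s) / (n_s * y_s) + (n_r - y_r) / (n_r * y_r))
        stats.append((m, a, w))

    def kept_indices(values, trim):
        """Indices that survive trimming `trim` of the genes from each end."""
        order = sorted(range(len(values)), key=values.__getitem__)
        k = int(len(values) * trim)
        return set(order[k:len(order) - k])

    keep = (kept_indices([s[0] for s in stats], m_trim)
            & kept_indices([s[1] for s in stats], a_trim))
    weighted_m = (sum(stats[i][2] * stats[i][0] for i in keep)
                  / sum(stats[i][2] for i in keep))
    return 2.0 ** weighted_m


# Hypothetical counts: the sample matches the reference except for one dominant gene.
ref = [10, 20, 30, 40, 50, 60, 70, 80, 90, 100]
sample = [10, 20, 30, 40, 50, 60, 70, 80, 90, 1000]
factor = tmm_factor(sample, ref)
effective_library_size = factor * sum(sample)
```

Here the dominant gene inflates the raw library size from 550 to 1,450, but it falls in the trimmed tail, so the factor brings the effective library size back to about 550. This is the sense in which the effective library size is "the product of the original library size and this factor".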

Protocol 2: Performing Median of Ratios Normalization with DESeq2

This protocol outlines the steps for the Relative Log Expression (RLE) normalization, also known as the median of ratios method, used by DESeq2 [18].

- Create DESeqDataSet: Generate a DESeqDataSet object from your count matrix and colData (sample information).

- Estimate Size Factors: The `estimateSizeFactors` function automatically performs the median of ratios normalization.

- Access Results: The estimated size factors can be accessed using `sizeFactors(dds)`. DESeq2 automatically uses these factors in all subsequent steps, such as `DESeq()` and `results()`.
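The arithmetic behind the median of ratios method is short enough to write out. The sketch below is a plain-Python illustration, not DESeq2's code: each gene's counts are divided by that gene's geometric mean across samples, and a sample's size factor is the median of its ratios. The function name and the toy counts are hypothetical, and genes with a zero in any sample are skipped because their geometric mean is zero.

```python
import math
import statistics


def median_of_ratios_size_factors(counts):
    """counts: list of gene rows, each a list of raw counts (one entry per sample)."""
    n_samples = len(counts[0])
    # Work on the log scale; keep only genes with non-zero counts in every sample.
    log_rows = [[math.log(c) for c in row] for row in counts if all(c > 0 for c in row)]
    log_geo_means = [sum(row) / n_samples for row in log_rows]
    factors = []
    for j in range(n_samples):
        log_ratios = [row[j] - gm for row, gm in zip(log_rows, log_geo_means)]
        factors.append(math.exp(statistics.median(log_ratios)))
    return factors


# Hypothetical counts for five genes in two samples. Sample 2 was sequenced
# twice as deeply, and the last gene is strongly up-regulated in sample 2.
counts = [
    [10, 20],
    [100, 200],
    [30, 60],
    [0, 5],       # skipped: zero count in sample 1
    [50, 1000],   # differentially expressed outlier
]
size_factors = median_of_ratios_size_factors(counts)
```

Because the median ignores the outlying gene, the two factors differ by exactly the two-fold depth difference. Dividing by total counts instead would be pulled toward the up-regulated gene.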

Workflow Visualization

The following diagram illustrates the logical steps and key differences between the TMM and DESeq2 normalization workflows.

The Scientist's Toolkit

Table 3: Essential research reagents and software solutions for implementing normalization strategies in R-gene transcriptomic studies.

| Item / Resource | Function / Description | Example / Source |
|---|---|---|
| edgeR | Bioconductor package for differential expression analysis; implements the TMM normalization method [20]. | https://bioconductor.org/packages/edgeR |
| DESeq2 | Bioconductor package for differential expression analysis; implements the median of ratios (RLE) normalization [18]. | https://bioconductor.org/packages/DESeq2 |
| R/Bioconductor | Open-source software environment for statistical computing and graphics; essential platform for running edgeR and DESeq2 [21]. | https://www.r-project.org/ |
| Tomato Fruit Set Dataset | A real RNA-Seq dataset used in methodological comparisons; consists of 34,675 genes across 9 samples from 3 stages [21]. | Maza et al. (2016) [21] |
| ERCC Spike-In Controls | Synthetic RNA molecules added to samples to help evaluate technical variation and normalization performance [17]. | Thermo Fisher Scientific |
| DGEList Object | The S4 object class used by edgeR to store read counts, sample information, and normalization factors [20]. | DGEList() function in edgeR |
| DESeqDataSet Object | The S4 object class used by DESeq2 to store read counts, sample information, and intermediate statistics like size factors [18]. | DESeqDataSetFromMatrix() function in DESeq2 |

Diagnostic Plots for Library Size, Zeros, and Dispersion

Why is there a high percentage of zero counts in my single-cell RNA-seq data, and how can I diagnose the causes?

A high proportion of zero counts, or dropout, is a well-known characteristic of single-cell RNA-seq (scRNA-seq) data, which can be substantially higher than in bulk RNA-seq data [22]. To diagnose the causes, you should investigate both technical and biological factors.

Key Factors Influencing Dropout Rates:

- Expression Level: Lowly expressed genes are more susceptible to dropout due to lower sequencing depth per cell [22].

- Gene Features: Technical factors such as gene length, GC content, and the length of the 3' UTR (UTR3) can influence whether a transcript is successfully captured and sequenced [22].

- Biological Properties: The number of transcripts per gene and RNA integrity are biological factors that also contribute to the occurrence of zeros [22].

- Library Size: A low total number of reads per cell increases the chance that a transcript is not detected [22].

Diagnostic Steps:

- Create a Pseudo-bulk Profile: Aggregate counts from all cells in a sample to create a pseudo-bulk sample. Compare the zero rates between the true single-cell data and the pseudo-bulk data [22].

- Investigate Gene-Level Factors: Use machine learning models (e.g., random forest) to evaluate the influence of factors like gene length and GC content on the dropout rate for your specific dataset [22].

- Visualize Data Sparsity: Use quality control tools like Loupe Browser to visualize the distribution of UMI counts and the number of features (genes) per cell barcode. Filter out low-quality cells that are likely empty droplets or multiplets [23].
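The pseudo-bulk comparison in the first diagnostic step amounts to summing each gene across cells and comparing zero rates before and after. The following Python sketch illustrates this on a tiny hypothetical genes-by-cells matrix; the function names are illustrative and not taken from any package.

```python
def zero_fraction(matrix):
    """Fraction of zero entries in a genes x cells count matrix (list of rows)."""
    n_entries = sum(len(row) for row in matrix)
    return sum(v == 0 for row in matrix for v in row) / n_entries


def pseudo_bulk(matrix):
    """Sum the counts of each gene across all cells."""
    return [sum(row) for row in matrix]


def gene_dropout_rates(matrix):
    """Per-gene proportion of cells with a zero count."""
    return [sum(v == 0 for v in row) / len(row) for row in matrix]


# Hypothetical counts: 4 genes (rows) x 5 cells (columns).
cells = [
    [0, 0, 1, 0, 0],   # lowly expressed, detected in one cell
    [5, 3, 0, 4, 6],   # well expressed, one dropout
    [0, 0, 0, 0, 0],   # never detected
    [1, 0, 0, 2, 0],   # lowly expressed
]
sc_zero_fraction = zero_fraction(cells)
bulk = pseudo_bulk(cells)
bulk_zero_fraction = sum(v == 0 for v in bulk) / len(bulk)
dropout = gene_dropout_rates(cells)
```

In this toy matrix 65% of single-cell entries are zero, but only one of four genes is zero after aggregation. A large gap of this kind suggests that many zeros reflect per-cell sampling depth rather than genes that are truly silent in the sample.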

My log-ratio analysis (e.g., PCA) is dominated by library size differences. How can I identify and correct for this?

Log-ratio analysis, such as principal component analysis (PCA) on centred log-ratio (clr) transformed data, can become dependent on library size when the data contains many zero values and substantial variability in library size between samples [24]. This occurs because adding a pseudo-count (like 1) to handle zeros destroys the proportionality to the library size for low counts [24].

Diagnostics for Library Size Dependence:

You can use the following diagnostics to check if your analysis is affected by library size [24]:

Diagnostic 1: Correlation Plot

- Calculate the correlation between each taxon's (or gene's) clr-transformed values and the row means (r).

- Plot this correlation against the log of the mean abundance per taxon/gene.

- Interpretation: If low-abundance features show a strong negative correlation with r, it indicates that the analysis is being unduly influenced by library size effects.

Diagnostic 2: Contribution Plot

- For a specific PCA axis, plot the log contribution of each feature against the log of its mean abundance.

- Interpretation: If all low-abundance features have a relatively high and roughly equal contribution to the axis, it suggests the axis primarily captures the library size effect. This often creates a V-shaped pattern in the plot.
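The mechanism behind both diagnostics can be reproduced with a toy example. The Python sketch below is not the published diagnostic from [24]; it only demonstrates why a pseudo-count couples clr values to library size. Three hypothetical samples share exactly the same composition and differ only in depth, so an ideal log-ratio analysis would treat them as identical. The helper names `clr` and `pearson` are illustrative.

```python
import math


def clr(counts, pseudocount=1.0):
    """Centred log-ratio transform of one sample after adding a pseudo-count."""
    logs = [math.log(c + pseudocount) for c in counts]
    mean_log = sum(logs) / len(logs)
    return [v - mean_log for v in logs]


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length lists."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


# Same composition (70% / 30% / undetected) at three different sequencing depths.
samples = [[70, 30, 0], [700, 300, 0], [7000, 3000, 0]]
log_library_size = [math.log(sum(s)) for s in samples]
clr_rows = [clr(s) for s in samples]

rare = [row[2] for row in clr_rows]       # feature that is zero everywhere
abundant = [row[0] for row in clr_rows]   # dominant feature
r_rare = pearson(rare, log_library_size)
r_abundant = pearson(abundant, log_library_size)
```

Although the composition never changes, the clr value of the zero-count feature falls steadily as depth increases and the abundant feature rises, so a PCA on these values would separate the samples by library size alone.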

Solutions and Best Practices:

- Consider Alternative Normalization: Methods like rarefying (subsampling to an even depth) can mitigate library size differences, though they come with the drawback of discarding valid data [25].

- Account for Sampling Fractions: Recognize that observed counts are compositional. Use methods that explicitly account for sample-specific sampling fractions to make data comparable across samples [25].

- Leverage Statistical Models: For differential expression analysis, use methods that incorporate library size normalization directly into their model, such as the median-of-ratios method used in DESeq2 [26].

How can I obtain stable dispersion estimates for differential expression analysis with low replicate numbers?

With a small number of biological replicates (e.g., 2-3 per condition), gene-wise dispersion estimates from RNA-seq count data are highly variable. This can compromise the power and accuracy of differential expression tests [26]. The standard solution is to "share information" across genes to stabilize dispersion estimates.

Methodology: Shrinkage Estimation

DESeq2 employs an empirical Bayes approach for shrinkage estimation, which is a common and effective strategy [26]:

- Gene-wise Estimation: A dispersion estimate is calculated for each gene independently using maximum likelihood (black dots in the plot below).

- Modeling the Trend: A smooth curve is fitted to the gene-wise dispersions as a function of the mean normalized count (red line). This represents the expected dispersion value for a gene with a given expression strength.

- Shrinkage: The gene-wise estimates are shrunk towards the curve-fitted values (blue arrows) to obtain the final dispersion estimates. The strength of shrinkage depends on:

- The spread of the gene-wise estimates around the curve.

- The number of replicates (shrinkage is stronger with fewer replicates).
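The three steps above can be written compactly. The following is a sketch of the model as formulated in the DESeq2 publication [26]. The notation is introduced here for illustration and is not used elsewhere in this guide: K_ij is the count for gene i in sample j, s_j is the sample's size factor, q_ij is proportional to the true expression level, and alpha_i is the gene's dispersion. Counts are modeled as negative binomial, so the variance exceeds the mean by a term that grows quadratically with expression:

```latex
K_{ij} \sim \mathrm{NB}(\mu_{ij}, \alpha_i), \qquad
\mu_{ij} = s_j \, q_{ij}, \qquad
\operatorname{Var}(K_{ij}) = \mu_{ij} + \alpha_i \, \mu_{ij}^{2}
```

The fitted trend supplies an expected dispersion for a gene with mean normalized count \bar{\mu}_i, and that value is used as the center of a log-normal prior whose width \sigma_d reflects the spread of true dispersions around the trend:

```latex
\log \alpha_i \sim \mathcal{N}\!\left( \log \alpha_{\mathrm{tr}}(\bar{\mu}_i),\; \sigma_d^{2} \right)
```

The final dispersion is the maximum a posteriori estimate under this prior. For genes with low counts the \mu_{ij} term dominates the variance, so the data carry little information about \alpha_i and the estimate relies more heavily on the trend.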

Diagram: Dispersion Shrinkage in DESeq2

Assessing the Dispersion Fit:

The level of residual variation around the dispersion trend is crucial. A simple statistic, sigma (σ), can quantify this unexplained variation [27]. A larger σ indicates that the fitted trend does not capture all the dispersion variation, which can affect the power of differential expression tests.

Recommendations:

- Use Established Tools: Employ robust differential expression tools like DESeq2 [26] or edgeR [27] that automatically perform dispersion shrinkage.

- Review the Dispersion Plot: Always check the plot of final dispersions versus the mean (e.g., `plotDispEsts` in DESeq2) to ensure the trend fits the data reasonably well.

- Consider High-Dispersion Genes: Be aware that some genes may have biological or technical reasons for being high-dispersion "outliers." Some methods, like DESeq2, will use the gene-wise estimate instead of the shrunken one for such genes to avoid false positives [26].

Research Reagent Solutions

Table: Key Computational Tools for Transcriptomic Diagnostics

| Tool / Resource Name | Primary Function | Key Application in Diagnostics |
|---|---|---|
| DESeq2 [26] | Differential expression analysis | Provides shrinkage estimators for dispersion and fold change to improve stability and interpretability. Essential for analyzing data with low replicate numbers. |
| edgeR [12] | Differential expression analysis | Uses a weighted conditional likelihood approach to moderate dispersion estimates across genes, powerful for count data. |
| Cell Ranger [23] | Primary analysis of 10x Genomics data | Performs initial read alignment, filtering, and UMI counting. Generates crucial QC metrics (e.g., median genes per cell) in its web_summary.html. |
| Loupe Browser [23] | Interactive data visualization | Allows for manual filtering of cell barcodes based on QC metrics like UMI counts, genes detected, and mitochondrial read percentage. |
| BatchI R package [22] | Batch effect quantification | Calculates a p-value to quantify the batch effect between samples using guided PCA. |

Experimental Protocol: Investigating Zero-Count Factors

This protocol outlines a methodology for systematically investigating the sources of zero counts in single-cell RNA-seq data, as derived from the research of [22].

1. Data Collection and Pre-processing:

- Obtain publicly available paired scRNA-seq and bulk RNA-seq datasets from the same biological source. Examples include datasets from human cell lines (e.g., MDA-MB-468, Rh41) or primary tissues (e.g., bone marrow, prostate) [22].

- Map all data to the same transcriptome annotation (e.g., ENSEMBL) to ensure gene-level comparability.

- Create pseudo-bulk samples from the scRNA-seq data by summing the counts for each gene across all cells within a sample [22].

- Normalize all data (bulk, single-cell, and pseudo-bulk) using a consistent method, such as Counts Per Million (CPM) with log2 transformation [22].

2. Factor Analysis using Machine Learning:

- Calculate the dropout rate for each gene in the scRNA-seq data as the proportion of cells with zero counts [22].

- Compile a set of technical and biological factors for each gene, such as:

- GC content

- Gene length

- Number of transcripts per gene

- UTR3 length [22]

- Train two separate machine learning models (e.g., random forest) to identify which factors most strongly influence the occurrence of zero counts [22].

3. Downstream Analysis and Validation:

- Identify genes that are consistently discordant between the bulk and single-cell platforms across multiple datasets.

- Perform pathway enrichment analysis on these discordant genes to determine if any biological pathways are systematically affected by the platform difference [22].
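The pre-processing and dropout steps of this protocol can be sketched in base R. The toy matrix, the sample labels, and the +1 pseudocount in the log transform are assumptions made for illustration; the machine learning and enrichment steps are not shown.

```r
set.seed(42)
# Toy scRNA-seq count matrix: 5 genes x 8 cells (hypothetical values)
sc_counts <- matrix(rpois(40, lambda = 0.8), nrow = 5,
                    dimnames = list(paste0("gene", 1:5), paste0("cell", 1:8)))
sample_of_cell <- rep(c("sampleA", "sampleB"), each = 4)

# Pseudo-bulk: sum the counts of each gene across all cells of a sample
pseudo_bulk <- t(rowsum(t(sc_counts), group = sample_of_cell))

# log2(CPM + 1), applied identically to bulk, single-cell, and pseudo-bulk data
log_cpm <- function(counts) {
  log2(sweep(counts, 2, colSums(counts), "/") * 1e6 + 1)
}
pb_logcpm <- log_cpm(pseudo_bulk)

# Dropout rate: proportion of cells with a zero count, per gene
dropout_rate <- rowMeans(sc_counts == 0)
```

The per-gene dropout_rate vector is what would then be modelled against factors such as GC content and gene length.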

Diagram: Workflow for Zero-Count Investigation

Building Robust Analysis Pipelines for Low Expression with R/Bioconductor

Frequently Asked Questions (FAQs)

What is the primary advantage of using Salmon and tximport for RNA-seq quantification?

The Salmon/tximport pipeline provides several key advantages for accurate transcript quantification, especially important for studies focusing on lowly expressed transcripts like R-genes. This alignment-free or "quasi-mapping" approach is significantly faster and requires less memory and disk space compared to traditional alignment-based methods [28]. More importantly, it corrects for potential changes in gene length across samples (e.g., from differential isoform usage) and can avoid discarding fragments that align to multiple genes with homologous sequence, thereby capturing more biological information [28]. For low-expression studies, this means retaining more data from fragile expression signals.

Why might my gene-level counts from tximport not match expectations for low-abundance genes?

Discrepancies, particularly for low-abundance genes or small RNAs, can arise from several sources. First, alignment-free tools like Salmon can systematically show poorer performance for quantifying lowly-abundant and small RNAs compared to alignment-based methods [29]. Second, if you are quantifying transcript types (like lncRNA) separately instead of using a comprehensive transcriptome, you may get less accurate correlations for those genes [30]. Finally, ensure you are using the correct tx2gene file during the tximport step; a mismatch between transcript identifiers in your quantification files and this mapping file will cause errors and missing counts [31].

What is the correct way to use Salmon output with DESeq2 for differential expression?

There are two recommended methods, and both require using the tximport package to prepare the data for DESeq2 [32]. You should not manually sum the transcript-level counts into a gene-level matrix and provide it directly to DESeq2.

- Method 1 (Recommended): Use tximport with the default countsFromAbundance="no". Then, pass the resulting txi object directly to DESeqDataSetFromTximport. This method allows DESeq2 to internally create a normalization offset to account for length biases and differential isoform usage [32].

- Method 2: Use tximport with countsFromAbundance="lengthScaledTPM" and then use the gene-level count matrix txi$counts with DESeqDataSetFromMatrix as you would a regular count matrix [32].

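The first method is often preferred because it keeps the estimated counts on their original scale and handles length differences through a per-gene, per-sample offset rather than by rescaling the counts.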
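Both routes can be sketched end to end on mock data. The example below assumes tximport and DESeq2 are installed; the transcript names, sample names, and quant.sf values are hypothetical and written to a temporary directory only so that the calls have something to read. Counts are rounded in the second route because DESeqDataSetFromMatrix expects integer values.

```r
library(tximport)
library(DESeq2)

set.seed(1)
# Mock Salmon outputs (hypothetical transcripts, samples, and values)
tx_ids  <- c("tx1", "tx2", "tx3", "tx4")
tx2gene <- data.frame(TXNAME = tx_ids,
                      GENEID = c("geneA", "geneA", "geneB", "geneC"))
samples <- data.frame(condition = factor(rep(c("ctrl", "trt"), each = 2)),
                      row.names = paste0("s", 1:4))
files <- vapply(rownames(samples), function(s) {
  dir.create(file.path(tempdir(), s), showWarnings = FALSE)
  len   <- c(1500, 900, 2000, 1200)
  reads <- rpois(4, lambda = c(50, 5, 200, 20)) + 1
  rate  <- reads / (len - 100)
  quant <- data.frame(Name = tx_ids, Length = len, EffectiveLength = len - 100,
                      TPM = rate / sum(rate) * 1e6, NumReads = reads)
  path <- file.path(tempdir(), s, "quant.sf")
  write.table(quant, path, sep = "\t", quote = FALSE, row.names = FALSE)
  path
}, character(1))

# Method 1: original counts plus an average-transcript-length offset
txi  <- tximport(files, type = "salmon", tx2gene = tx2gene, dropInfReps = TRUE)
dds1 <- DESeqDataSetFromTximport(txi, colData = samples, design = ~ condition)

# Method 2: length-scaled counts passed in as an ordinary (rounded) matrix
txi_ls <- tximport(files, type = "salmon", tx2gene = tx2gene,
                   countsFromAbundance = "lengthScaledTPM", dropInfReps = TRUE)
dds2 <- DESeqDataSetFromMatrix(countData = round(txi_ls$counts),
                               colData = samples, design = ~ condition)
```

In the first object the length information travels with the dataset as the avgTxLength assay; in the second it has already been folded into the counts.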

How can I resolve the "None of the transcripts in the quantification files are present in the first column of tx2gene" error?

This common error in tximport occurs when the transcript identifiers in your Salmon output (e.g., quant.sf files) do not match the identifiers in the first column of your tx2gene mapping file [31]. To fix this:

- Check Identifier Consistency: Compare the transcript IDs from an example quant.sf file (e.g., ENSG00000210194) with those in your tx2gene file (e.g., ENST00000456328). Ensure you are using the same annotation source (e.g., GENCODE vs. Ensembl) and version.

- Use tximport Arguments: Utilize the ignoreTxVersion and/or ignoreAfterBar arguments in the tximport() function. These will strip version numbers (e.g., .1) or suffixes after a delimiter (e.g., |) to find matches [31].
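Before re-running tximport, the mismatch can be diagnosed in base R. The sketch below uses illustrative identifiers (the version suffixes and the text after the bar are made up, not copied from a real index) and mimics what the two arguments do with plain regular expressions; it does not call tximport itself.

```r
# Illustrative IDs: the quantification file carries "|"-delimited fields and
# version suffixes, while tx2gene holds bare transcript IDs
quant_ids <- c("ENST00000456328.2|ENSG00000223972.5|example-202",
               "ENST00000450305.2|ENSG00000223972.5|example-201")
tx2gene <- data.frame(TXNAME = c("ENST00000456328", "ENST00000450305"),
                      GENEID = c("ENSG00000223972", "ENSG00000223972"))

# Fraction of quantified transcripts found in the first column of tx2gene
match_rate <- function(ids) mean(ids %in% tx2gene$TXNAME)

raw_rate   <- match_rate(quant_ids)        # nothing matches as-is
no_bar     <- sub("\\|.*", "", quant_ids)  # mimics ignoreAfterBar = TRUE
no_version <- sub("\\.[0-9]+$", "", no_bar) # mimics ignoreTxVersion = TRUE
fixed_rate <- match_rate(no_version)
```

If fixed_rate is still low after both substitutions, the two files most likely come from different annotation sources rather than different ID formats.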

Troubleshooting Guide

Issue: Poor Correlation for Specific Gene Types

- Symptoms: Good correlation between Salmon and other methods (e.g., featureCounts) for protein-coding genes, but extremely low correlation for other types like lncRNA genes [30].

- Cause: Quantifying different transcript types (e.g., lncRNA and protein-coding) with separate, incomplete transcriptome FASTA files, rather than a single, comprehensive transcriptome [30].

- Solution:

- Action: Always build the Salmon index using a complete reference transcriptome that includes all known transcripts [30].

- Verification: Re-run quantification and check that the correlation for the problematic gene types has improved.

Issue: Transcript ID Mismatch in tximport

- Symptoms: tximport fails with the error: "None of the transcripts in the quantification files are present in the first column of tx2gene" [31].

- Cause: Inconsistent transcript identifiers between the Salmon output and the provided tx2gene mapping file.

- Solution:

- Action 1: Visually inspect the IDs in both files to identify systematic differences (e.g., version numbers, prefixes).

- Action 2: Re-run tximport with the ignoreTxVersion = TRUE argument. This is a common fix [31].

- Prevention: Generate your tx2gene file from the same FASTA file or GTF annotation that was used to build the Salmon index.
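One way to follow the prevention advice is to derive the mapping directly from the GTF. The base R sketch below parses three mock GTF records (hypothetical contents); for a real annotation the lines would be read from the file that was used to build the index, and dedicated Bioconductor annotation packages are an alternative to hand parsing.

```r
# Mock GTF records (hypothetical); real lines would come from readLines()
gtf_lines <- c(
  'chr1\tsrc\ttranscript\t100\t900\t.\t+\t.\tgene_id "G1"; transcript_id "T1.1";',
  'chr1\tsrc\ttranscript\t100\t700\t.\t+\t.\tgene_id "G1"; transcript_id "T2.1";',
  'chr1\tsrc\texon\t100\t300\t.\t+\t.\tgene_id "G1"; transcript_id "T1.1";'
)
fields <- strsplit(gtf_lines, "\t", fixed = TRUE)
is_tx  <- vapply(fields, function(f) f[3] == "transcript", logical(1))
attrs  <- vapply(fields[is_tx], function(f) f[9], character(1))

# Pull a quoted attribute value out of the ninth GTF column
get_attr <- function(x, key) sub(paste0('.*', key, ' "([^"]+)".*'), "\\1", x)

# Transcript ID first, gene ID second, as tximport expects
tx2gene <- unique(data.frame(TXNAME = get_attr(attrs, "transcript_id"),
                             GENEID = get_attr(attrs, "gene_id")))
```

Because the IDs are taken verbatim from the annotation, any version suffix present in the index is present in the mapping as well.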

Issue: Choosing the Right tximport Method for Downstream Analysis

- Symptoms: Confusion about whether to use DESeqDataSetFromTximport or DESeqDataSetFromMatrix after running tximport.

- Cause: The tximport vignette describes two valid methods, leading to uncertainty about which is correct [32].

- Solution: The following table outlines the two primary workflows. For most users, Workflow A is the standard and recommended approach.

| Workflow | tximport countsFromAbundance Argument | DESeq2 Data Import Function | Key Consideration |

|---|---|---|---|

| A: Original Counts + Offset | "no" (Default) | DESeqDataSetFromTximport(txi, ...) | DESeq2 uses an internal offset for gene-level bias correction. Recommended method [32]. |

| B: Bias-Corrected Counts | "lengthScaledTPM" | DESeqDataSetFromMatrix(countData = txi$counts, ...) | Provides pre-corrected counts; use if explicitly required by your analysis strategy [32]. |

Workflow Visualization

The following diagram illustrates the complete, recommended pathway from raw sequencing data to a gene-count matrix ready for differential expression analysis, incorporating best practices for accuracy.

Research Reagent Solutions

The table below lists the essential materials and computational tools required to implement this workflow successfully.

| Item Name | Function/Brief Explanation |

|---|---|

| Reference Transcriptome (FASTA) | A comprehensive file of all known transcript sequences for the organism. Used by salmon index to create a quantification reference. Do not use a genome sequence [33]. |

| Transcript-to-Gene Map (tx2gene) | A two-column tab-separated file mapping transcript IDs to gene IDs. Crucial for tximport to correctly summarize transcript-level abundances to the gene level [28]. |

| Salmon | A fast and accurate alignment-free tool for quantifying transcript abundances from RNA-seq reads [33] [28]. |

| tximport (R Package) | An R package that imports transcript-level abundance estimates from tools like Salmon and summarizes them to the gene-level, while also incorporating normalization offsets for accurate downstream analysis [32] [28]. |

| DESeq2 (R Package) | A widely-used R package for differential gene expression analysis based on a negative binomial model. It works directly with the output from tximport [32] [28]. |

Within the context of R-gene transcriptomic studies, a primary challenge researchers face is the robust identification of differentially expressed genes amidst high levels of biological noise and low-count features. Resistance genes (R-genes) are often expressed at low basal levels, making their accurate quantification and statistical validation particularly sensitive to the choice of analysis parameters. Tools like DESeq2 and edgeR, which model count data using a negative binomial distribution, provide powerful frameworks for this task, but their effectiveness hinges on appropriate parameter configuration to control false discovery rates and enhance sensitivity for biologically relevant transcripts. This guide addresses specific parameter-tuning scenarios to optimize your differential expression analysis for R-gene studies.

Key Parameter Reference Tables

Table 1: Core Parameter Comparison for DESeq2 and edgeR

| Function/Aspect | DESeq2 | edgeR |

|---|---|---|

| Default Normalization | Relative Log Expression (RLE) [15] | Trimmed Mean of M-values (TMM) [15] |

| Default Test | Wald test or LRT [34] | GLM Quasi-Likelihood F-test (glmQLFTest) or Exact test [34] [35] |

| Dispersion Estimation | Empirical Bayes shrinkage [35] | Common, trended, or tagwise dispersion [35] |

| Fit Type | parametric, local, or mean [34] | Not Applicable |

| Handling of Low Counts | Independent filtering via results() function | Filtering by prior count or specified rowsum.filter [34] |

Table 2: Impact of Experimental Design on Parameter Selection

| Experimental Factor | Recommendation | Rationale |

|---|---|---|

| Low Replicate Number (n<5) | Prioritize edgeR's robust=TRUE or DESeq2's fitType="local" | Enhanced dispersion estimation stability with limited degrees of freedom [36]. |

| Presence of Batch Effects | Include as a blocking factor in the design matrix [37] | Corrects for technical variance without absorbing biological signal of interest. |

| Very Low Expressing R-genes | Apply minimal pre-filtering (e.g., rowsum.filter=1) | Retains critical low-count genes for the model, allowing statistical filtering post-test [34]. |

| High Global Dispersion | In edgeR, use trended or tagwise dispersion; in DESeq2, use fitType="mean" | Accommodates gene-specific variability while maintaining stable mean-dispersion trends [35]. |

Frequently Asked Questions & Troubleshooting

Q1: In my R-gene study, many candidate genes have low counts. What is the most appropriate way to filter the data before running DESeq2 or edgeR to avoid losing these signals?

A: Avoid aggressive pre-filtering based on counts-per-million. Both DESeq2 and edgeR incorporate internal filtering that is more statistically sound. For DESeq2, rely on the independent filtering automatically performed by the results() function, which removes genes with low counts that have little chance of showing significant evidence. In edgeR, use the filterByExpr() function which creates a filter based on the experimental design, keeping genes that have a minimum number of counts in a minimum number of samples. This is preferable to an arbitrary count threshold and is more likely to retain meaningful, lowly expressed R-genes [35] [36].

Q2: The diagnostic plots for my dataset indicate a problem with dispersion estimates. When should I change the default fitType in DESeq2?

A: You should change fitType from the default "parametric" to "local" or "mean" when the dispersion trend does not follow the parametric model. This is often evident in the plotDispEsts() plot, where the fitted dispersion curve poorly matches the gene-wise estimates. The "local" fit type uses a local regression to fit the dispersion trend, which can be more flexible for datasets where the mean-variance relationship is irregular—a situation not uncommon in stressed or treated plant samples in R-gene studies. The "mean" fit type uses the mean of gene-wise estimates, which serves as a conservative alternative when the parametric fit fails [34].

Q3: How do I choose between the Wald test and the Likelihood Ratio Test (LRT) in DESeq2 for my experimental design?

A: Use the Wald test for standard pairwise comparisons or when testing individual coefficients in a model. It is computationally efficient and is the default test in DESeq(). Choose the Likelihood Ratio Test (LRT) for more complex comparisons, such as testing the significance of a multi-level factor, evaluating nested models, or for time-series analyses. The LRT is well suited to testing multiple parameters at once and is specified by calling DESeq() with test="LRT" and a reduced model formula [34]. For most R-gene studies involving simple condition contrasts, the Wald test is sufficient.
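The two tests can be compared side by side on simulated data. This is a minimal sketch, assuming DESeq2 is installed; the three-level condition labels (mock, early, late) are hypothetical and are assigned to a simulated dataset that contains no true differential expression.

```r
library(DESeq2)

set.seed(1)
# Simulated data relabelled into three groups (hypothetical design)
dds <- makeExampleDESeqDataSet(n = 1000, m = 9)
dds$condition <- factor(rep(c("mock", "early", "late"), each = 3),
                        levels = c("mock", "early", "late"))

# Wald test: one coefficient at a time, here late vs. mock
dds_wald <- DESeq(dds, quiet = TRUE)
res_wald <- results(dds_wald, contrast = c("condition", "late", "mock"))

# LRT: does condition, with all levels taken jointly, improve on ~ 1?
dds_lrt <- DESeq(dds, test = "LRT", reduced = ~ 1, quiet = TRUE)
res_lrt <- results(dds_lrt)  # p-values come from the LRT, not a single contrast
```

With the LRT, the reported log2 fold change still refers to a single coefficient, while the p-value reflects the whole factor, so the two columns answer different questions.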

Q4: What is the practical impact of choosing between TMM (edgeR) and RLE (DESeq2) normalization for my R-gene transcriptome?

A: While both TMM and RLE methods are highly effective and produce similar results by assuming most genes are not differentially expressed [15], their impact can be noticeable in R-gene studies. If your treatment condition (e.g., pathogen challenge) triggers a massive transcriptional reprogramming where a large fraction of genes are truly differentially expressed, these assumptions can be violated. In such cases, the TMM method in edgeR may be slightly more robust due to its trimming of extreme log-fold changes. If you suspect this, you can compare the results from both pipelines using a tool like SARTools [37], which provides diagnostic plots to assess normalization quality.
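To make the shared assumption concrete, the median-of-ratios (RLE) size factors can be written out in base R. This is an illustrative re-implementation on toy counts, not a replacement for the DESeq2 function; the simulated samples are hypothetical, with s2 sequenced at twice the depth of s1 and 10% of genes strongly induced in s3.

```r
# Median-of-ratios (RLE) size factors, written out in base R
rle_size_factors <- function(counts) {
  log_geo_mean <- rowMeans(log(counts))  # -Inf for genes with any zero count
  usable <- is.finite(log_geo_mean)
  apply(counts, 2, function(col) {
    exp(median(log(col[usable]) - log_geo_mean[usable]))
  })
}

set.seed(7)
base <- rpois(200, lambda = 100) + 1      # toy expression profile
counts <- cbind(s1 = base, s2 = 2 * base, s3 = base)
counts[1:20, "s3"] <- counts[1:20, "s3"] * 10  # 10% of genes induced in s3

sf <- rle_size_factors(counts)
```

Because the median ignores the induced minority, s3 receives the same size factor as s1. If more than half of the genes changed in one direction, the median itself would shift, which is the failure mode described above.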

Detailed Experimental Protocols

Protocol 1: Tuning DESeq2 for Studies with Low-Expressing R-genes

This protocol is optimized for maximizing sensitivity without inflating false positives when analyzing low-count R-genes.

Data Import and Design:

- Read the raw count matrix into R. Ensure the data are un-normalized counts, as DESeq2 models raw counts and performs its own normalization [38].

- Construct the DESeqDataSet with a correct design formula (e.g., ~ condition).

Parameter Tuning for Pre-filtering:

- Apply a minimal pre-filter to remove genes with zero counts across all samples. This reduces computational load without sacrificing sensitivity.

Dispersion Estimation and Fit Type:

- Execute the core analysis with a specified fitType.

- Inspect the mean-dispersion relationship with plotDispEsts(dds). If the red fitted line fails to follow the cloud of black gene-wise estimates, re-run with fitType="mean".

Results Extraction with Independent Filtering:

- Extract results using the results() function. The independent filtering is applied by default (parameter alpha=0.1) to automatically filter out genes with low mean counts, optimizing the number of genes passing multiple testing correction.

- For low-count genes, you can adjust the alpha threshold, though this is generally not recommended.
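The four stages of Protocol 1 can be sketched as follows, assuming DESeq2 is installed. The simulated counts stand in for a real raw count matrix, and fitType="local" is chosen here only as an example of a specified fit type.

```r
library(DESeq2)

set.seed(1)
# Stand-in for a real raw count matrix and sample table (assumption)
sim     <- makeExampleDESeqDataSet(n = 2000, m = 6)
cts     <- counts(sim)
coldata <- as.data.frame(colData(sim))

# 1. Build the dataset from un-normalized integer counts
dds <- DESeqDataSetFromMatrix(countData = cts, colData = coldata,
                              design = ~ condition)

# 2. Minimal pre-filter: drop genes with zero counts in every sample
dds <- dds[rowSums(counts(dds)) > 0, ]

# 3. Fit with a local-regression dispersion trend, then inspect it
dds <- DESeq(dds, fitType = "local", quiet = TRUE)
plotDispEsts(dds)

# 4. Extract results; independent filtering is on by default
res <- results(dds, alpha = 0.1)
filter_threshold <- metadata(res)$filterThreshold  # mean-count cutoff chosen
summary(res)
```

Inspecting filter_threshold shows how many low-mean genes the independent filtering step set aside, which is useful to check before concluding that a low-count R-gene was tested at all.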

Protocol 2: Configuring edgeR's GLM for Complex R-gene Study Designs

This protocol leverages edgeR's flexibility for experiments with multiple factors or batches.

Data Import and Normalization:

- Create a DGEList object from the raw count matrix and sample information.

- Apply TMM normalization using calcNormFactors().

Experimental Design and Filtering:

- Create a design matrix using model.matrix(), including all relevant factors (e.g., condition, batch).

- Filter out lowly expressed genes using filterByExpr(), which uses the design matrix to determine a sensible threshold.

Dispersion Estimation and Modeling:

- Estimate dispersions. For complex designs with many factors, the robust=TRUE option can prevent the dispersion estimates from being dominated by a few outliers.

- Examine the dispersion estimates with plotBCV(y).

Differential Expression Testing:

- For most R-gene studies, the quasi-likelihood F-test (glmQLFTest) is recommended as it accounts for the uncertainty in dispersion estimation and provides stricter error control.
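Protocol 2 can be sketched on simulated counts, assuming edgeR is installed. The gene count, the batch layout, and the negative binomial parameters are hypothetical. Filtering is placed before TMM normalization here, following the order used in the edgeR user's guide.

```r
library(edgeR)

set.seed(1)
# Simulated counts: 1000 genes, 2 conditions x 2 batches (hypothetical)
n_genes <- 1000
meta <- data.frame(condition = factor(rep(c("ctrl", "trt"), each = 4)),
                   batch     = factor(rep(c("b1", "b2"), times = 4)))
mu <- rgamma(n_genes, shape = 0.5, scale = 40)  # many low-mean genes
counts <- matrix(rnbinom(n_genes * 8, mu = rep(mu, 8), size = 5),
                 nrow = n_genes,
                 dimnames = list(paste0("gene", seq_len(n_genes)),
                                 paste0("s", 1:8)))

y <- DGEList(counts = counts, samples = meta)
design <- model.matrix(~ batch + condition, data = meta)

# Design-aware filtering, then TMM normalization on the retained genes
keep <- filterByExpr(y, design)
y <- y[keep, , keep.lib.sizes = FALSE]
y <- calcNormFactors(y)

# Robust dispersion estimation and a diagnostic BCV plot
y <- estimateDisp(y, design, robust = TRUE)
plotBCV(y)

# Quasi-likelihood F-test for the condition effect, adjusting for batch
fit <- glmQLFit(y, design, robust = TRUE)
qlf <- glmQLFTest(fit, coef = "conditiontrt")
topTags(qlf)
```

Putting batch before condition in the formula keeps the coefficient of interest last, so it can also be selected by position instead of by name.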

Workflow Visualization with Graphviz

Diagram 1: DESeq2 Parameter Tuning Workflow

Diagram 2: edgeR Parameter Tuning Workflow

Table 3: Key Research Reagent Solutions for RNA-seq in R-gene Studies

| Reagent / Resource | Function / Purpose | Notes for R-gene Studies |

|---|---|---|

| HTSeq-count / featureCounts | Generates the raw count matrix from aligned sequencing reads (BAM files) by assigning fragments to genomic features [37]. | Essential for producing the un-normalized count matrix required as input for both DESeq2 and edgeR. |

| SARTools R Package | A comprehensive pipeline that wraps DESeq2 and edgeR, providing systematic quality control (QC) and a HTML report for tracking the analysis process [37]. | Highly recommended for beginners and for ensuring reproducible, well-documented analyses. |

| DEGreport R Package | Assists in creating QC figures and reports from DESeq2/edgeR results, including checks for covariate effects and p-value distribution [39]. | Useful for diagnosing potential issues with model fits or uncovering hidden batch effects. |

| AnnotationHub / GenomicFeatures | Bioconductor packages to load and manage gene annotations (e.g., creating TxDb objects from GTF files) [40]. | Critical for accurately defining R-gene loci and other genomic features during count generation and results annotation. |

| Galaxy Wrapper for SARTools | Provides a user-friendly, web-based interface to run the SARTools pipeline without requiring extensive R knowledge [37]. | Lowers the barrier to entry for performing standardized, robust differential expression analysis. |

Leveraging edgeRun's Unconditional Exact Test for Increased Power in Low-Count Genes

In transcriptomic studies, a significant challenge is the reliable detection of differentially expressed genes (DEGs) with low expression levels. These genes often escape detection using standard statistical approaches despite their potential biological importance in regulatory networks and disease mechanisms. The unconditional exact test implemented in the edgeRun R package addresses this limitation by providing increased statistical power for genes with low total expression counts, especially in experiments with limited replication [41]. This technical support guide provides comprehensive troubleshooting and methodological support for researchers implementing this approach in their gene expression studies.

edgeRun Fundamentals: Q&A

What is the fundamental difference between edgeRun's test and traditional exact tests?

EdgeRun implements an unconditional exact test that eliminates the nuisance mean parameter by maximizing the exact p-value over all possible values for the mean, without conditioning on the total count [41]. This contrasts with the conditional exact test in edgeR, which conditions on a sufficient statistic for the mean. While the unconditional approach requires more computation, it provides significantly enhanced power for detecting differential expression in low-count genes and in experiments with as few as two replicates per condition [41].

In what experimental scenarios does edgeRun provide the greatest advantage?

EdgeRun demonstrates particular strength in these specific scenarios:

- Low-expression genes with limited read counts

- Experiments with minimal biological replication (as few as 2 replicates)

- Studies where genes exhibit large biological coefficients of variation

- Research focusing on functionally relevant pathways where comprehensive gene detection is critical [41]

The increased power is especially pronounced for genes with low total expression and with large biological coefficient of variation [41].

Troubleshooting Common Implementation Issues

Performance and Computational Optimization

Issue: Long computation times with large datasets.

Solution: The UCexactTest function includes an upper parameter that controls the number of iterations. For production analyses, use at least 50,000 iterations, though the analysis will take longer to run [42]. For initial testing, you may use 10,000 iterations to verify the pipeline functionality.

Issue: Memory limitations with whole-transcriptome data.

Solution: Process data in chunks by chromosome or functional gene sets. Ensure your system has adequate RAM for the intended analysis scale.

Interpretation and Validation Challenges

Issue: Concern about potential false positives with increased detection sensitivity.

Solution: Implement stringent false discovery rate (FDR) correction and validate findings using functional relevance metrics. Research has demonstrated that edgeRun consistently captures functionally similar DEGs, with 33% of genes unique to edgeRun showing co-expression with consensus networks compared to only 17% for other methods [41].

Issue: Determining appropriate expression thresholds for low-count genes.

Solution: While edgeRun improves power for low-expression genes, apply minimum expression filters appropriate to your sequencing depth. The package interfaces directly with edgeR objects, allowing use of edgeR's filtering functions [41].

Performance Comparison: Statistical Methods for Low-Count RNA-Seq Data

Table 1: Comparative performance of statistical tests for differential expression detection in low-count genes

| Statistical Method | Power for Low Counts | Small Sample Performance | Computational Efficiency | Best Application Context |

|---|---|---|---|---|

| edgeRun (Unconditional) | High [41] | Excellent (2+ replicates) [41] | Moderate [41] | Low-expression genes, small n |

| Conditional Exact Test | Low [43] | Poor with small samples [43] | High | Large sample sizes |

| Wald-Log Test | Moderate-High [43] | Moderate | High | General use with adequate counts |

| Fisher Exact Test | Low [43] | Poor with small samples [43] | High | Large sample sizes, high counts |

| Likelihood Ratio Test | Moderate [43] | Moderate | High | Balanced designs |

Table 2: Empirical performance comparison based on simulation studies

| Condition (Mean λ) | edgeRun Power | Conditional Exact Power | Fisher Exact Power | Wald-Log Power |

|---|---|---|---|---|

| λ1=5, λ2=9 | 0.0522 | 0.0066 | 0.0066 | 0.0199 |

| λ1=10, λ2=15 | 0.0256 | 0.0082 | 0.0082 | 0.0114 |

| λ1=15, λ2=25 | 0.0689 | 0.0314 | 0.0314 | 0.0456 |

Note: Simulation conditions based on Poisson distributions with nominal significance level 0.001, demonstrating edgeRun's enhanced power for low expression values [43].

Experimental Protocol for edgeRun Implementation

Sample Size Planning and Experimental Design

For reliable detection of differential expression:

- Include at least 3 biological replicates per condition whenever possible

- Ensure adequate sequencing depth (20-30 million reads per sample)

- Balance experimental groups to avoid confounding technical and biological effects [44]

While edgeRun provides advantages with minimal replication, power increases substantially with additional replicates. With only two replicates, DGE analysis is technically possible, but the ability to estimate variability and control false discovery rates is greatly reduced [44].

Complete edgeRun Analysis Workflow

edgeRun Analysis Workflow

Code Implementation Example

Validation and Functional Relevance Assessment

To assess the biological relevance of additional genes detected by edgeRun:

- Perform Gene Ontology enrichment analysis on the full result set

- Use GRAIL with co-expression networks (COXPRESdb) to evaluate functional connections [41]

- Compare the proportion of genes co-expressed with established consensus signatures

Research has demonstrated that genes uniquely identified by edgeRun show significantly higher functional relevance, with 33% of edgeRun-unique genes showing co-expression with consensus networks compared to 17% for other methods (p < 0.001) [41].

Essential Research Reagent Solutions

Table 3: Key computational tools and packages for edgeRun implementation

| Tool/Package | Function | Application Context |

|---|---|---|

| edgeRun R Package | Implements unconditional exact test | Primary differential expression analysis [41] |

| edgeR | Data preprocessing and normalization | Creation of DGEList objects and data conditioning [41] |

| GRAIL + COXPRESdb | Functional relevance assessment | Validation of biological significance of findings [41] |

| ClusterProfiler | Gene Ontology enrichment analysis | Functional interpretation of results [45] |

| DESeq2 | Alternative differential expression method | Comparative analysis and validation [45] |

Advanced Technical Considerations

Statistical Foundation of Unconditional Testing

Statistical Testing Approaches

The unconditional approach was initially proposed by Barnard (1945) for binomial distributions and has been adapted for RNA-seq data [41]. This method eliminates the nuisance mean parameter via maximizing the exact p-value over all possible values for the mean without conditioning, addressing a key limitation of Fisher's exact test which tends to be conservative and underpowered outside specific settings [46].
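The difference between conditioning and maximizing can be illustrated with a toy Poisson example in base R. This is not edgeRun's implementation, which works with negative binomial counts; the standardized test statistic, the equal library sizes, and the finite grid of candidate means are all simplifying assumptions made for this sketch.

```r
# Toy two-sample Poisson comparison with equal library sizes (illustration only)
t_stat <- function(a, b) ifelse(a + b == 0, 0, abs(a - b) / sqrt(a + b))

conditional_p <- function(x1, x2) {
  # Condition on the total: X1 | X1 + X2 = n is Binomial(n, 1/2) under the null
  binom.test(x1, x1 + x2, p = 0.5)$p.value
}

unconditional_p <- function(x1, x2, lambda_grid = seq(0.1, 30, by = 0.1)) {
  k <- 0:qpois(1 - 1e-12, max(lambda_grid))  # truncated support
  extreme <- outer(k, k, t_stat) >= t_stat(x1, x2) - 1e-12
  # Null tail probability at each candidate mean, then take the worst case
  p_at <- vapply(lambda_grid, function(l) {
    d <- dpois(k, l)
    sum(outer(d, d) * extreme)
  }, numeric(1))
  max(p_at)
}

p_cond   <- conditional_p(0, 5)
p_uncond <- unconditional_p(0, 5)
```

The conditional test only ever considers outcomes with the same total count, which leaves very few possible outcomes when counts are low. The unconditional version ranks every pair of counts and guards against the unknown mean by reporting the largest tail probability over the grid.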

Integration with Transcriptomic Analysis Pipelines

EdgeRun is designed to interface seamlessly with existing edgeR objects, accepting inputs and generating output in the same format [41]. This facilitates incorporation into comprehensive RNA-seq analysis workflows including quality control, normalization, and functional enrichment. For educational applications or users requiring graphical interfaces, tools like ERSAtool provide Shiny-based implementations while maintaining analytical rigor [45].

EdgeRun's unconditional exact test represents a statistically rigorous approach for enhancing detection power in transcriptomic studies, particularly for the challenging but biologically important category of low-expression genes. By implementing the protocols and troubleshooting guides presented here, researchers can significantly improve their ability to detect functionally relevant differential expression while maintaining appropriate false discovery control.

Integrating Functional Relevance Assessment with Co-Expression Networks

Transcriptomic studies of resistance genes (R-genes) present a unique analytical challenge. Research on tomato and potato has revealed that the majority of R-genes are expressed at low levels, with only approximately 10% being differentially expressed during pathogen infection [47]. This low-expression profile can significantly impact the construction and interpretation of co-expression networks, potentially obscuring biologically relevant relationships. This guide addresses the specific methodological issues that arise when integrating functional relevance assessment with co-expression networks in the context of R-gene research, providing troubleshooting and experimental solutions for researchers and drug development professionals.

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: Why are many of my identified R-genes excluded or poorly connected in my co-expression network?

A: This is a common issue stemming from the biological nature of R-genes rather than a technical failure.

- Primary Cause: A core set of R-genes, including well-known genes like EDS1, Pto, and NRC2/3/4, are constitutively expressed, while most others show consistently low expression levels [47]. Most network inference methods rely on expression variance; genes with near-constant, low counts contribute little to correlation metrics and are often filtered out or appear as peripheral nodes.

- Solution: Before network construction, conduct an exploratory analysis of your expression matrix. For R-genes that are critical to your study but show low expression, consider validating their presence via alternative methods like qPCR. In your network analysis, focus on the core set of consistently expressed R-genes as anchor points.

Q2: How can I determine if a co-expression module containing R-genes is functionally relevant?

A: Functional relevance is established through a multi-step validation process.

- Enrichment Analysis: Perform Gene Ontology (GO) and pathway enrichment analysis on genes within the module. While not all co-expressed genes are functionally related, significant enrichment for immune-related terms (e.g., "inflammatory response," "defense response") strengthens biological plausibility [48] [49].

- Cross-Validation with External Data: Integrate your findings with existing protein-protein interaction (PPI) data or regulatory element databases. If the genes in your module are known to physically interact or share regulatory elements (e.g., enhancers), this provides strong evidence of functional coherence [50] [48].

- Prioritize Hub Genes: Within a module, genes with the highest connectivity (hub genes) are often critical for the module's function. Their experimental perturbation is most likely to disrupt the network and the associated biological process [51].

Q3: My co-expression network is too dense and uninterpretable. What filtering strategies are recommended?

A: Dense networks are a frequent challenge. Moving from an unweighted to a weighted network representation is a key strategy.

- Use Weighted Networks: Instead of using a hard correlation cutoff to create a binary (connected/not connected) network, use a weighted correlation network like WGCNA. This preserves the continuous nature of co-expression relationships and leads to more robust and interpretable modules [50] [52] [51].

- Apply Soft Thresholding: WGCNA uses a soft-power threshold to emphasize strong correlations and penalize weak ones, resulting in a network that follows a scale-free topology, which is more biologically realistic [52].

- Filter by Significance: Use permutation testing or false discovery rate (FDR) controls to retain only statistically significant co-expression links, rather than relying on an arbitrary correlation cutoff [48].

Q4: What is the advantage of using RNA-seq over microarray data for R-gene co-expression studies?

A: RNA-seq offers several critical advantages for this specific application:

- Discovery of Non-Coding Regulators: RNA-seq can quantify over 70,000 non-coding RNAs, including miRNAs (like the miR482/2118 superfamily) that are known to post-transcriptionally regulate R-genes. These are typically not measured by microarrays [50] [47].

- Resolution of Splice Variants: Many R-genes undergo alternative splicing to produce distinct protein isoforms with different functions. RNA-seq can distinguish between these splice variants, allowing for isoform-specific co-expression analysis that would be aggregated in microarray data [50] [47].

- Improved Accuracy: RNA-seq has higher accuracy for low-abundance transcripts and better distinguishes between closely related paralogues [50].

Troubleshooting Common Experimental Issues

Table 1: Troubleshooting Common Problems in Co-expression Network Analysis of R-genes

| Problem | Potential Cause | Solution |
|---|---|---|
| No coherent modules are formed. | Incorrect soft-thresholding power; high heterogeneity in samples. | Use the pickSoftThreshold function in WGCNA to choose the appropriate power. Consider if the dataset contains multiple cell types or tissues and use a differential co-expression approach [50] [52]. |
| Key R-genes appear in unrelated modules. | Spurious correlation due to batch effects or indirect interactions. | Check for and correct for batch effects before network construction. Use algorithms like ARACNE that can prune indirect connections [51]. |
| Network fails to validate with known pathways. | The co-expression does not reflect a functional relationship. | Integrate other data types (e.g., shared regulatory elements from ATAC-seq, protein interactions) to confirm functional links [50] [48]. |
| Low statistical power to detect co-expression. | Too few samples (low n) for the number of genes (high p). | Increase sample size or leverage large public repositories. For R-genes, ensure samples include relevant tissues and infection time points [47] [49]. |

Essential Experimental Protocols

Protocol: Weighted Gene Co-expression Network Analysis (WGCNA) for R-gene Studies

This protocol is adapted for handling the specific challenges of R-gene transcriptomics [47] [52].

1. Data Preparation and Input

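- Input Format: Use normalized, log-transformed expression values with one measurement per gene per sample, such as log2(FPKM+1); log2FC values from a differential expression analysis can be used when a per-contrast matrix is the intended input. Do not supply raw counts. The log transformation keeps a handful of highly expressed genes from dominating the correlations.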

- Data Cleaning: Filter genes with excessive missing values. For R-genes, avoid filtering too stringently on overall variance to retain critical low-expression genes.

2. Network Construction and Module Detection

- Soft-Thresholding: Choose the lowest soft-thresholding power (β) at which the scale-free topology fit index (R²) reaches roughly 0.8 to 0.9.

- Module Identification: Construct a topological overlap matrix (TOM) and use hierarchical clustering with dynamic tree cutting to identify modules of highly co-expressed genes.
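As a rough illustration of the topological overlap step, the sketch below computes the standard unsigned TOM from a small adjacency matrix: TOM_ij = (Σ_u a_iu·a_uj + a_ij) / (min(k_i, k_j) + 1 − a_ij), where k_i is the connectivity of gene i. The 4×4 adjacency matrix is a made-up example, and plain Python is used only for self-containment; WGCNA provides this computation in R.

```python
def topological_overlap(adj):
    """Unsigned topological overlap matrix from a symmetric adjacency matrix.

    adj is a list of lists with values in [0, 1]; the diagonal is ignored.
    """
    n = len(adj)
    # Connectivity k_i: sum of adjacencies to all other genes.
    k = [sum(adj[i][u] for u in range(n) if u != i) for i in range(n)]
    tom = [[1.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if i == j:
                continue
            # Strength of connections routed through shared neighbours.
            shared = sum(adj[i][u] * adj[u][j]
                         for u in range(n) if u not in (i, j))
            tom[i][j] = (shared + adj[i][j]) / (min(k[i], k[j]) + 1.0 - adj[i][j])
    return tom

# Hypothetical adjacency: genes 0-2 are tightly linked, gene 3 is peripheral.
adj = [
    [1.0, 0.8, 0.7, 0.0],
    [0.8, 1.0, 0.6, 0.0],
    [0.7, 0.6, 1.0, 0.1],
    [0.0, 0.0, 0.1, 1.0],
]
tom = topological_overlap(adj)
```

Genes 0 and 3 have zero direct adjacency yet a small non-zero overlap, because they share gene 2 as a neighbour. Hierarchical clustering is then typically run on the dissimilarity 1 − TOM.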

3. Integration with Functional Traits

- Relate Modules to Traits: Correlate module eigengenes (the first principal component of a module) with external traits (e.g., infection status, pathogen load, tissue type).

- Functional Enrichment: Perform GO enrichment analysis on genes within significant modules to assess biological relevance.

Protocol: Differential Co-expression Analysis for Condition-Specific Responses

This method identifies genes whose co-expression partners change between conditions (e.g., healthy vs. infected), which is powerful for finding regulatory genes [50].

1. Data Stratification: Construct separate co-expression networks for each condition of interest (e.g., mock-treated vs. pathogen-infected samples).

2. Network Comparison: Compare the network topology or connection strengths between conditions. Identify genes that are hub genes in one condition but not the other, or genes that switch modules.

3. Regulatory Inference: Genes with significant changes in their co-expression patterns are likely to be underlying regulators of the phenotypic difference. These candidates can be prioritized for further validation.
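One simple way to sketch the three steps above is to build a soft-thresholded network per condition and rank genes by how much their connectivity changes. This is only one of several possible comparison statistics and is an assumption of this example, as are the gene names, expression values, and β = 6. Significance would still need to be assessed, for instance by permuting condition labels.

```python
import math

def pearson(x, y):
    """Pearson correlation of two equal-length numeric sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def connectivity(expr, beta=6):
    """Per-gene connectivity: sum of |cor| ** beta to every other gene."""
    genes = list(expr)
    return {
        g: sum(abs(pearson(expr[g], expr[h])) ** beta for h in genes if h != g)
        for g in genes
    }

def connectivity_change(expr_a, expr_b, beta=6):
    """Connectivity in condition B minus connectivity in condition A."""
    k_a = connectivity(expr_a, beta)
    k_b = connectivity(expr_b, beta)
    return {g: k_b[g] - k_a[g] for g in k_a}

# Hypothetical data: geneR gains co-expression partners only after infection.
mock = {
    "geneR": [1, 2, 3, 4, 5, 6],
    "geneX": [3, 1, 4, 1, 5, 2],
    "geneY": [2, 6, 1, 5, 3, 4],
}
infected = {
    "geneR": [1, 2, 3, 4, 5, 6],
    "geneX": [1.2, 1.9, 3.1, 4.2, 4.8, 6.1],
    "geneY": [0.9, 2.2, 2.8, 4.1, 5.3, 5.9],
}
diff = connectivity_change(mock, infected)
```

A large positive value for a gene indicates that it becomes far more connected in the second condition, which makes it a candidate for follow-up.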

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for Co-expression Network Analysis

| Item / Resource | Function / Application | Example / Note |
|---|---|---|
| WGCNA R Package | A comprehensive collection of R functions for performing weighted correlation network analysis. | Primary tool for network construction, module detection, and functional integration [52]. |
| Cytoscape | An open-source platform for complex network visualization and integration with other data types. | Used for visualizing co-expression networks and hub genes; has >200 apps for extended analysis [52] [53]. |
| hdWGCNA Package | An R package for performing WGCNA on single-cell or high-dimensional transcriptomics data. | Useful for analyzing R-gene expression in heterogeneous tissues at single-cell resolution [53]. |
| Public Data Repositories | Sources of additional transcriptomic data for validation and meta-analysis. | NCBI GEO, Sequence Read Archive (SRA). Integrating multiple datasets increases statistical power [47] [49]. |
| Functional Annotation Databases | Resources for interpreting the biological meaning of co-expression modules. | Gene Ontology (GO), KEGG Pathways. Critical for functional relevance assessment [49] [51]. |

Visualizing Workflows and Signaling Pathways

Figure: Co-expression Network Construction and Analysis Workflow

Figure: R-gene Co-expression and Regulatory Mechanisms

Solving Common Pitfalls and Enhancing Power for Low Expression Signals

Frequently Asked Questions (FAQs)

Q1: Why do my transcriptomic analyses keep identifying the same generic Gene Ontology (GO) categories (e.g., "chemical synaptic transmission") as significant, even for very different neural phenotypes?

A1: This is a classic symptom of false-positive bias in Gene-Category Enrichment Analysis (GCEA). When applied to spatially embedded transcriptomic data (e.g., from brain atlases), standard GCEA methods are affected by two key factors that inflate false positives:

- Within-category gene-gene coexpression: Genes within the same functional category are often co-expressed, violating the assumption of independence in many statistical tests [54].

- Spatial autocorrelation: Both gene expression maps and many neural phenotypes are spatially autocorrelated, meaning nearby regions have more similar values. Two spatially autocorrelated maps have a higher chance of appearing correlated by chance alone [54].

Together, these biases can produce a more than 500-fold average inflation of false-positive associations with random neural phenotypes. The solution is to use ensemble-based null models that account for these spatial dependencies, rather than standard "random-gene" null models [54].

Q2: What is the fundamental difference between "offline" and "online" FDR control, and when should I use the latter for my RNA-seq studies?

A2:

- Offline FDR Control: This is the classical paradigm. An FDR correction method (like Benjamini-Hochberg) is applied once to a single set of gene-level p-values from one experiment or from a single pooled family of experiments [55].

- Online FDR Control: This is used when analyzing multiple families of RNA-seq experiments conducted over time (e.g., testing different drug compounds across a research program). The online approach controls the global FDR across all past, present, and future experiments without changing decisions made on historical data [55].

You should use online FDR control when your research program involves multiple related but distinct RNA-seq experiment families spread over calendar time, and you require that FDR-controlled decisions made early in the program remain valid as new data arrives [55].

Q3: How can I increase the power of my differential expression analysis without inflating the false discovery rate?

A3: You can use modern FDR-controlling methods that leverage an "informative covariate." These methods use additional information about each hypothesis test (e.g., a gene's average expression level or its variance) to prioritize tests that are more likely to be true positives.

- These methods are modestly more powerful than classic approaches (BH, Storey's q-value) when the covariate is informative.

- Crucially, they do not underperform classic approaches even when the covariate is completely uninformative [56].

Examples of such methods include Independent Hypothesis Weighting (IHW) and AdaPT, which can use a gene's mean expression as a covariate to improve power in RNA-seq analyses [56].
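The idea behind hypothesis weighting can be sketched with a weighted Benjamini-Hochberg procedure: each p-value is divided by a weight (weights average 1) before the usual BH step. This is not the IHW or AdaPT algorithm itself; those methods learn the weights or thresholds from the covariate in a way that protects FDR control. Here the weights are simply assumed, and the p-values are made up.

```python
def benjamini_hochberg(pvalues, alpha=0.05):
    """Return a list of booleans, True where BH rejects the hypothesis."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    k_max = 0
    # Largest rank k with p_(k) <= alpha * k / m.
    for rank, idx in enumerate(order, start=1):
        if pvalues[idx] <= alpha * rank / m:
            k_max = rank
    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= k_max:
            rejected[idx] = True
    return rejected

def weighted_bh(pvalues, weights, alpha=0.05):
    """BH applied to p_i / w_i, with weights rescaled to average 1."""
    mean_w = sum(weights) / len(weights)
    scaled = [w / mean_w for w in weights]
    adjusted = [min(1.0, p / w) if w > 0 else 1.0
                for p, w in zip(pvalues, scaled)]
    return benjamini_hochberg(adjusted, alpha)

# Hypothetical p-values; the first four genes are assumed to be well
# expressed (higher prior weight), the last four weakly expressed.
pvalues = [0.001, 0.012, 0.02, 0.035, 0.3, 0.6, 0.8, 0.9]
weights = [1.6, 1.6, 1.6, 1.6, 0.4, 0.4, 0.4, 0.4]

plain = benjamini_hochberg(pvalues)
weighted = weighted_bh(pvalues, weights)
```

In this toy case plain BH rejects two hypotheses and the weighted version rejects four. The gain is only valid when the weights are chosen without looking at the p-values they are applied to, which is what the covariate-based methods are designed to guarantee.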

Troubleshooting Guides

Issue 1: High False Positives in Gene-Category Enrichment with Spatial Data

Problem: Gene-category enrichment analysis (GCEA) of spatial transcriptomic brain data yields many significant GO categories, but they are often biologically implausible or overly generic and are replicated even with random simulated phenotypes.

Diagnosis: This indicates a severe false-positive bias driven by the spatial structure of the data, specifically spatial autocorrelation and gene-gene coexpression within categories [54].

Solution: Implement an Ensemble-Based Null Model.

- Step 1: Instead of using a "random-gene" null model, generate an ensemble of randomized surrogate phenotypes that preserve the spatial autocorrelation structure of your original phenotype [54].

- Step 2: For each surrogate phenotype, perform the same GCEA and calculate the enrichment score for each GO category.

- Step 3: For each GO category in your real analysis, compute an empirical p-value by comparing its enrichment score to the null distribution of scores generated from the surrogate phenotypes [54].

- Step 4: Apply FDR correction to these empirical p-values.
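Step 3 can be sketched as follows, assuming the enrichment scores for the surrogate phenotypes have already been computed (generating spatially constrained surrogates is not shown). The category names and scores are hypothetical, and the "+1" in numerator and denominator is a common convention that prevents an empirical p-value of exactly zero.

```python
def empirical_pvalue(observed, null_scores):
    """One-sided empirical p-value of an observed score against a null ensemble.

    Counts null scores at least as large as the observed score and applies
    the (count + 1) / (N + 1) correction.
    """
    exceed = sum(1 for s in null_scores if s >= observed)
    return (exceed + 1) / (len(null_scores) + 1)

# Hypothetical enrichment scores from nine surrogate phenotypes.
null_scores = [0.05, 0.10, 0.12, 0.08, 0.20, 0.15, 0.11, 0.09, 0.30]

# Hypothetical observed scores for two GO categories.
observed = {"category_A": 0.42, "category_B": 0.10}

pvals = {name: empirical_pvalue(score, null_scores)
         for name, score in observed.items()}
```

With only nine surrogates the smallest attainable p-value is 0.1, so a real analysis needs a much larger ensemble before the FDR correction in Step 4 can yield any discoveries.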

Required Tools/Scripts: The authors of the Nature Communications study provide a software toolbox to implement this [54]. Alternatively, spatial permutation methods like "spin tests" can be adapted to create the surrogate maps [54].

Issue 2: Global FDR Inflation Across Multiple RNA-seq Experiments

Problem: Your research program involves multiple RNA-seq studies (e.g., testing different drug compounds). While each study's FDR is controlled individually, you suspect the overall proportion of false discoveries across all studies is higher than desired.

Diagnosis: Repeated application of "offline" FDR correction to each experiment family separately inflates the global FDR across the entire research program [55].

Solution: Apply Online Multiple Hypothesis Testing.

- Step 1: Formulate the differential expression results from each family of RNA-seq experiments as a sequence of hypothesis tests arriving over time.

- Step 2: Choose an online FDR algorithm, such as onlineBH or onlineStoreyBH [55].

- Step 3: As each new family of experiments is analyzed, apply the chosen online algorithm to the new p-values. The algorithm uses information from previous hypothesis tests to determine an appropriate significance threshold for the new batch, ensuring global FDR control [55].

- Step 4: Proceed with downstream validation and research decisions based on the rejections provided by the online procedure.
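To show how an online rule uses past decisions to set the next threshold, the sketch below implements LOND, a simpler online procedure for a stream of individual hypotheses. It is swapped in here for illustration and is not one of the batch algorithms named in Step 2. LOND was designed for independent p-values; the p-values and the γ sequence (proportional to 1/i²) are assumptions of this example.

```python
import math

def lond(pvalues, alpha=0.05):
    """LOND online testing: level_i = alpha * gamma_i * (discoveries so far + 1).

    gamma_i = 6 / (pi^2 * i^2) is a non-negative sequence summing to 1.
    Returns a list of booleans, True where the hypothesis is rejected.
    Earlier decisions are never revised when later p-values arrive.
    """
    norm = 6.0 / math.pi ** 2
    decisions = []
    discoveries = 0
    for i, p in enumerate(pvalues, start=1):
        gamma_i = norm / i ** 2
        level = alpha * gamma_i * (discoveries + 1)
        reject = p <= level
        decisions.append(reject)
        if reject:
            discoveries += 1
    return decisions

# Hypothetical stream of p-values arriving over time.
stream = [0.0001, 0.02, 0.0005, 0.5, 0.001]
decisions = lond(stream)
```

Each discovery raises the level available to later tests, while the shrinking γ sequence keeps the overall error budget bounded. For batches of RNA-seq results, the onlineFDR package cited below is the appropriate tool.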

Required Tools/Scripts: The onlineFDR R package available on Bioconductor implements these algorithms [55].

Issue 3: Poor Normalization Leading to Inaccurate Differential Expression

Problem: Differential expression analysis yields a high number of significant results that may be driven by technical artifacts rather than true biological change, often due to improper normalization.

Diagnosis: Standard normalization methods (e.g., based on pre-defined housekeeping genes) can perform poorly if the reference genes are not stable under your specific experimental conditions [57].

Solution: Utilize Custom-Selected Reference Genes.

- Step 1: Normalize your raw count data to TPM (Transcripts per Million) to account for sequencing depth and gene length [57].

- Step 2: Filter out weakly expressed genes. The DAFS script can be used to calculate a robust cut-off [57].

- Step 3: From the remaining genes, select the 0.5% of genes with the lowest coefficient of variation (CV) in TPM across your samples as your custom reference set [57].

- Step 4: Use these stable, custom-selected genes for normalization in downstream differential expression analysis with tools like DESeq2 or edgeR.
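Steps 1 to 3 can be sketched in a few lines. This is not the CustomSelection package; it is a minimal illustration under stated assumptions: gene lengths are given in kilobases, the expression cut-off is a fixed hypothetical value rather than one computed by DAFS, and the toy table uses a large selection fraction because it contains only four genes (the text above specifies 0.5%).

```python
import statistics

def counts_to_tpm(counts, lengths_kb):
    """Convert one sample's raw counts to TPM.

    counts and lengths_kb map gene name -> count and gene length in kb.
    """
    rate = {g: counts[g] / lengths_kb[g] for g in counts}
    total = sum(rate.values())
    return {g: r / total * 1e6 for g, r in rate.items()}

def coefficient_of_variation(values):
    """Sample standard deviation divided by the mean."""
    mean = statistics.mean(values)
    return statistics.stdev(values) / mean if mean > 0 else float("inf")

def select_reference_genes(tpm_by_gene, min_mean_tpm, fraction):
    """Keep expressed genes, then return the lowest-CV fraction of them."""
    expressed = {g: v for g, v in tpm_by_gene.items()
                 if statistics.mean(v) >= min_mean_tpm}
    ranked = sorted(expressed,
                    key=lambda g: coefficient_of_variation(expressed[g]))
    n_keep = max(1, round(len(ranked) * fraction))
    return ranked[:n_keep]

# Hypothetical single-sample counts: equal counts per kb give equal TPM.
sample_tpm = counts_to_tpm({"g1": 100, "g2": 200, "g3": 50},
                           {"g1": 1.0, "g2": 2.0, "g3": 0.5})

# Hypothetical TPM table across four samples.
tpm_table = {
    "stable":   [100, 102, 98, 101],
    "variable": [50, 150, 20, 200],
    "weak":     [0.1, 0.2, 0.1, 0.0],
    "moderate": [30, 36, 27, 33],
}
reference = select_reference_genes(tpm_table, min_mean_tpm=1.0, fraction=0.34)
```

Filtering weak genes first matters because near-zero genes can have misleading CV values. The selected genes would then serve as the control set for normalization in Step 4.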

Required Tools/Scripts: An R package called "CustomSelection" was developed for this purpose [57]. The tximeta and tximport packages can also be used to import and summarize transcript-level quantifications for robust gene-level analysis [13] [58].

Key Methodologies and Data

Table 1: Comparison of FDR Control Paradigms

| Paradigm | Description | Best Use Case | Key Advantage |
|---|---|---|---|
| Classic Offline (BH, q-value) [56] | Applies FDR correction to a single batch of p-values. | A single, self-contained RNA-seq differential expression analysis. | Simplicity; widely understood and implemented. |
| Modern Covariate-Assisted [56] | Uses an informative covariate (e.g., mean expression) to weight hypotheses in a single batch. | Any analysis where a prior covariate is informative of power or null probability (e.g., RNA-seq, GWAS). | Increased power without FDR inflation. |
| Online FDR Control [55] | Controls FDR across a stream of hypothesis tests (multiple experiments) over time. | A research program with multiple sequential RNA-seq experiment families. | Guarantees global FDR control; decisions are immutable to future data. |
| Ensemble-Based Null [54] | Uses spatial permutation of phenotypes to generate a valid null for spatial transcriptomics. | Gene-category enrichment analysis with spatially-resolved data. | Directly accounts for spatial autocorrelation, drastically reducing false positives. |

Table 2: Research Reagent Solutions for FDR Control

| Reagent / Resource | Type | Function in Addressing False Positives |
|---|---|---|
| Negative Control Probes (NCPs) [59] | Wet-lab reagent | Target non-biological sequences to empirically measure and calculate the background false discovery rate in single-cell spatial imaging experiments. |