Multimodal Imaging in Plant Phenomics: A Comprehensive Guide to Technologies, Applications, and Data Integration

Abstract

This article provides a comprehensive overview of multimodal imaging in plant phenomics, an interdisciplinary field that integrates multiple imaging technologies to achieve a holistic understanding of plant structure and function. Aimed at researchers and scientists, we explore the foundational principles of combining diverse imaging modalities—from RGB and hyperspectral to MRI and CT—to overcome the limitations of single-technique approaches. The scope spans from core concepts and sensor technologies to methodological workflows for data registration and fusion, alongside practical troubleshooting for common technical challenges. Furthermore, we examine validation frameworks and comparative analyses that demonstrate the transformative potential of multimodal imaging for quantifying complex traits, assessing plant health, and accelerating crop improvement, with cross-cutting implications for biomedical research.

Defining Multimodal Imaging: Core Concepts and Technological Pillars in Plant Phenomics

Multimodal imaging is defined as the integration of multiple imaging techniques to examine the same biological subject, with the resulting images registered in both space and time [1]. In the context of plant phenomics, this approach leverages the complementary strengths of different imaging modalities to provide a more comprehensive and accurate visualization of plant systems than any single modality can achieve alone. The fundamental principle is to overcome individual limitations of standalone techniques by combining structural, functional, and physiological information into a unified data product [1].

This methodology has transformed how researchers visualize and understand biological processes in plants, from molecular interactions to whole-organism systems. By bridging structural and functional assessment, multimodal imaging enables more precise phenotypic characterization and deeper insights into plant-environment interactions [2]. The effective utilization of cross-modal patterns depends on precise image registration to achieve pixel-accurate alignment, a challenge often complicated by parallax and occlusion effects inherent in plant canopy imaging [3] [4].

Technical Foundations: Imaging Modalities and Their Synergies

Core Imaging Technologies in Plant Phenomics

Table 1: Primary Imaging Modalities Used in Multimodal Plant Phenotyping

| Modality Type | Physical Principle | Key Applications in Plant Science | Spatial Resolution | Penetration Depth |
|---|---|---|---|---|
| X-ray CT | X-ray attenuation | Internal structure, vascular system, wood degradation | Micrometers to millimeters | Centimeters to meters |
| MRI | Nuclear magnetic resonance | Physiological status, water distribution, functional imaging | Tens of micrometers | Centimeters |
| Optical Imaging | Light reflectance/absorption | Canopy structure, chlorophyll content, leaf area | Millimeters to centimeters | Surface to thin tissues |
| Thermal Imaging | Infrared radiation | Canopy temperature, stomatal conductance, stress response | Millimeters | Surface only |
| Hyperspectral/Multispectral | Spectral reflectance | Biochemical composition, pigment content, stress indicators | Millimeters to centimeters | Surface to shallow penetration |

The Integration Workflow: From Data Acquisition to Registration

The process of multimodal imaging involves a sophisticated workflow that transforms raw data from multiple sources into integrated, actionable information.

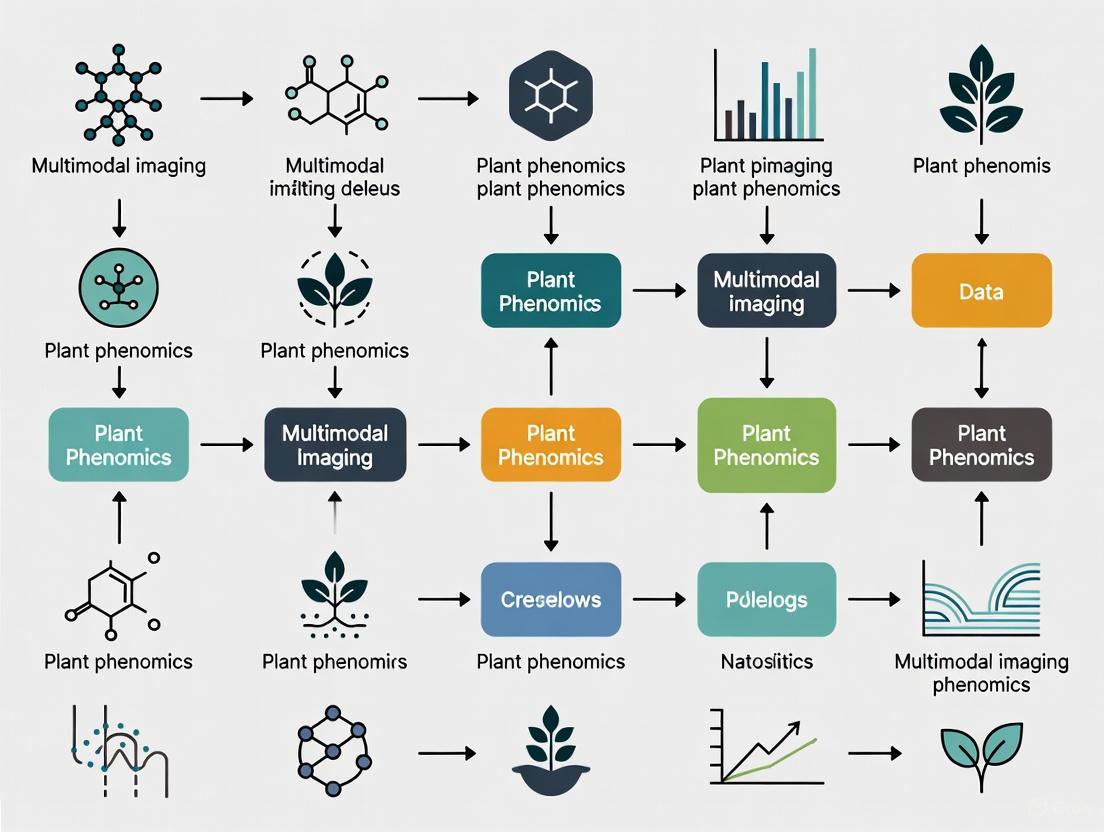

Figure 1: The Multimodal Imaging Workflow for Plant Phenotyping

A key technical challenge in this workflow is image registration, particularly for complex plant structures. Recent advances have introduced 3D multimodal image registration algorithms that integrate depth information from time-of-flight cameras to mitigate parallax effects [3] [4]. These methods utilize ray casting for registration and include integrated mechanisms to automatically detect and filter out occlusion effects, facilitating more accurate pixel alignment across camera modalities [4].

The registration approach can scale to arbitrary numbers of cameras with varying resolutions and wavelengths, making it suitable for a wide range of applications in plant sciences [3]. This scalability is particularly valuable for cross-scale studies that aim to connect phenomena from microscopic to macroscopic levels [2].

Experimental Protocols: Implementing Multimodal Imaging

Case Study: Non-Destructive Diagnosis of Grapevine Trunk Diseases

Table 2: Quantitative Tissue Classification Accuracy Using Multimodal Imaging

| Tissue Type | MRI Alone Accuracy | X-ray CT Alone Accuracy | Multimodal Combination Accuracy | Key Discriminating Features |
|---|---|---|---|---|
| Intact Tissue | 85% | 78% | 94% | High X-ray absorbance, high MRI values |
| Degraded Tissue | 72% | 81% | 89% | Medium X-ray absorbance, low MRI values |
| White Rot | 88% | 95% | 98% | Low X-ray absorbance (-70%), very low MRI values |
| Reaction Zones | 65% | 42% | 87% | T2-w hypersignal near necrosis boundaries |

A comprehensive experimental protocol for multimodal imaging of plant diseases was demonstrated in grapevine trunk disease assessment [5]. The methodology proceeded through these critical stages:

Sample Preparation and Imaging: Twelve vines (both symptomatic and asymptomatic) were collected from a vineyard and imaged using four different modalities: X-ray CT and three MRI protocols (T1-, T2-, and PD-weighted). Following non-destructive imaging, vines were destructively sampled for ground truth validation.

Multimodal Data Registration: 3D data from each imaging modality were aligned into 4D-multimodal images using an automatic 3D registration pipeline. This enabled voxel-wise joint exploration of modality information and comparison with empirical annotations.

Expert Annotation and Signature Identification: Experts manually annotated eighty-four random cross-sections based on visual inspection of tissue appearance, defining six distinct classes from healthy tissue to various degradation stages. This preliminary analysis identified general signal trends distinguishing tissue types.

Machine Learning Classification: A segmentation model was trained to detect degradation levels voxel-wise using the non-destructive imaging data. The model achieved a mean global accuracy of over 91% in discriminating intact, degraded, and white rot tissues [5].
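The registration and classification stages above can be illustrated with a minimal sketch. It assumes the four volumes are already registered to a common voxel grid, and it substitutes a simple nearest-centroid classifier for the segmentation model, whose architecture is not described here. The function names (`stack_modalities`, `fit_centroids`, `classify_voxels`) are hypothetical and chosen only for illustration.

```python
import numpy as np

def stack_modalities(volumes):
    """Stack co-registered 3D volumes into one 4D array of shape (Z, Y, X, M)."""
    shapes = {v.shape for v in volumes}
    if len(shapes) != 1:
        raise ValueError("volumes must already be registered to a common grid")
    return np.stack(volumes, axis=-1)

def fit_centroids(features, labels):
    """Compute the mean multimodal signature of each annotated class.

    features: (N, M) array of annotated voxels, labels: (N,) class ids.
    """
    classes = np.unique(labels)
    centroids = np.stack([features[labels == c].mean(axis=0) for c in classes])
    return classes, centroids

def classify_voxels(stack, classes, centroids):
    """Assign every voxel the class whose centroid is nearest (Euclidean)."""
    flat = stack.reshape(-1, stack.shape[-1])
    # Distance of every voxel to every centroid: shape (N, C).
    dist = np.linalg.norm(flat[:, None, :] - centroids[None, :, :], axis=2)
    return classes[dist.argmin(axis=1)].reshape(stack.shape[:-1])

# Toy example: two modalities, one "intact" slab (label 1) and one "degraded" slab (label 0).
ct = np.zeros((2, 2, 2)); ct[0] = 1.0
mri = np.zeros((2, 2, 2)); mri[0] = 1.0
stack = stack_modalities([ct, mri])
labels = np.array([1, 1, 1, 1, 0, 0, 0, 0])
classes, centroids = fit_centroids(stack.reshape(-1, 2), labels)
prediction = classify_voxels(stack, classes, centroids)
```

The distance matrix holds one entry per voxel and class, so a real trunk scan would need to be processed in chunks; the sketch only shows how voxel-wise joint signatures feed a classifier.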

Multimodal Registration for Plant Canopies

For above-ground plant phenotyping, a specialized protocol has been developed utilizing 3D information from a depth camera and ray casting for registration [3]. This method:

- Automates Occlusion Handling: Integrates an automated mechanism to identify and differentiate various types of occlusions, thereby minimizing registration errors in dense canopies.

- Species-Independent Analysis: Does not rely on detecting plant-specific image features, making it suitable for a wide range of plant species with varying leaf geometries.

- Validates Across Diverse Species: Testing on six distinct plant species with varying leaf geometries demonstrated robustness across different plant types and camera compositions [3] [4].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Multimodal Plant Imaging

| Reagent/Equipment Category | Specific Examples | Function in Multimodal Imaging | Application Notes |
|---|---|---|---|
| Multimodal Contrast Agents | MRI-CT dual contrast agents | Enhance visibility across multiple modalities | Limited use in plants; under development |
| Depth Sensing Cameras | Time-of-flight cameras | Provide 3D information for registration | Mitigates parallax in canopy imaging [3] |
| Annotation Software | Custom manual annotation tools | Generate ground truth for training | Requires domain expertise [5] |
| Image Registration Algorithms | 3D registration with ray casting | Align images from different modalities | Handles parallax and occlusion [4] |
| Machine Learning Frameworks | Voxel classification models | Automatic tissue segmentation | Achieves >91% accuracy in tissue classification [5] |
| Multimodal Imaging Platforms | MVS-Pheno V2, Scanalyzer | Integrated data acquisition | Optimized for specific plant types [6] [7] |

Data Integration and Analysis: From Images to Biological Insights

Computational Approaches for Multimodal Data Fusion

The integration of multimodal imaging data requires sophisticated computational approaches to extract meaningful biological insights.

Figure 2: Computational Pathways for Multimodal Data Analysis

Cross-Scale Integration: From Microscopic to Macroscopic

A particularly powerful application of multimodal imaging lies in its ability to integrate information across biological scales. As noted in a recent review, "A complete plant body consists of elements on different scales, including microscopic molecules, mesoscopic multicellular structures, and macroscopic tissues and organs, which are interconnected to form complex biological networks" [2].

Multimodal cross-scale imaging technologies enable researchers to study these connections from microscopic, mesoscopic, and macroscopic levels, which is crucial for understanding the complex internal connections behind biological functions [2]. This approach provides the foundation for creating comprehensive 'digital twin' models of plants, representing a significant advancement in computational plant science [5].

Future Directions and Implementation Challenges

While multimodal imaging offers transformative potential for plant phenomics, several challenges remain for widespread implementation:

Technical Integration Complexity: Co-location of instruments for direct correlative imaging is rarely feasible, creating registration challenges [1]. Different imaging modalities often have conflicting requirements for sample preparation and imaging conditions.

Data Management and Computation: Multimodal imaging generates massive datasets that require sophisticated computational resources for co-registration, fusion, and analysis [5] [8]. Development of efficient algorithms for handling these large datasets remains an active research area.

Cost and Accessibility: Advanced multimodal imaging systems are expensive to acquire and maintain, limiting their availability, particularly in resource-constrained settings [1]. This has spurred development of more accessible alternatives, including smartphone-based sensing platforms [8].

Expertise Requirements: Operating and interpreting multimodal imaging requires specialized expertise across multiple imaging domains, creating training and staffing challenges [1]. The field needs more interdisciplinary researchers comfortable with both biological questions and technical methodologies.

Future developments will likely focus on enhanced integration across imaging domains, improved data analysis through machine learning, development of more sophisticated hybrid imaging systems, and the creation of multimodal contrast agents that can be detected by multiple imaging modalities [1]. As these technological advances progress, multimodal imaging will play an increasingly important role in bridging structure and function in plant systems, ultimately enabling more precise and comprehensive phenotyping capabilities.

Plant phenomics is an emerging research field that focuses on the quantitative description of the physiological and biochemical properties of plants, addressing the critical challenge of linking plant genotypes to their observable traits, or phenotypes [9] [10]. Traditionally, plant phenotyping relied heavily on visual scoring by experts, a method that is laborious, time-consuming, and susceptible to bias [9]. Modern high-throughput plant phenotyping aims to sense and quantify plant traits rapidly, non-destructively, and regularly with sufficient precision [9]. The effective utilization of cross-modal patterns in plant phenotyping depends on image registration to achieve pixel-precise alignment, a challenge often complicated by parallax and occlusion effects inherent in plant canopy imaging [3]. This technical guide explores the core imaging modalities driving innovation in plant phenomics research, with particular emphasis on integrated multimodal approaches that provide more comprehensive phenotypic assessment than any single technology can deliver alone.

Core Imaging Modalities in Plant Phenotyping

Visible Light (RGB) Imaging

Visible light imaging, also referred to as RGB imaging, forms the foundation of most plant phenotyping systems. This modality utilizes cameras sensitive to the visible spectral range (approximately 400-700 nm) to capture digital representations of plant scenes [10]. The electronic devices most commonly used for image capture are charge-coupled device (CCD) and complementary metal oxide semiconductor (CMOS) sensors [11]. While CCD sensors generally produce less noise and higher-quality images, particularly under suboptimal lighting conditions, CMOS sensors offer faster image processing, lower power consumption, and lower cost [11].

In plant phenotyping applications, RGB imaging is primarily employed to measure architectural traits such as projected shoot area, growth dynamics, shoot biomass, yield traits, panicle characteristics, root architecture, and germination rates [10]. The advantages of RGB systems include excellent spatial and temporal resolution, portability, low cost, and numerous available software tools for image processing [11]. Limitations primarily involve organ overlap during growth phases and sensitivity to illumination variations, particularly in outdoor environments [11].
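As an illustration of how projected shoot area can be derived from an RGB image, the sketch below segments plant pixels with the excess green index (ExG = 2g - r - b on chromatic coordinates) and counts them. The threshold of 0.1 and the `mm2_per_pixel` scale are assumptions that would need tuning and calibration for a real setup; the function name is hypothetical.

```python
import numpy as np

def projected_shoot_area(rgb, threshold=0.1, mm2_per_pixel=1.0):
    """Return a plant mask and the projected area from an (H, W, 3) RGB image."""
    rgb = rgb.astype(np.float64)
    total = rgb.sum(axis=2)
    total[total == 0] = 1.0  # avoid division by zero on black pixels
    # Chromatic coordinates reduce sensitivity to overall brightness.
    r, g, b = (rgb[..., i] / total for i in range(3))
    exg = 2.0 * g - r - b
    mask = exg > threshold  # threshold is an assumed value, not a standard
    return mask, mask.sum() * mm2_per_pixel

# Toy scene: brown background with a 2x2 green patch.
image = np.empty((4, 4, 3), dtype=np.uint8)
image[...] = (120, 80, 40)
image[1:3, 1:3] = (40, 160, 40)
mask, area_mm2 = projected_shoot_area(image, mm2_per_pixel=0.25)
```

Working on chromatic coordinates rather than raw intensities is one way to soften the illumination sensitivity noted above, although it does not remove it.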

Imaging Spectroscopy: Multispectral and Hyperspectral Imaging

Imaging spectroscopy encompasses both multispectral and hyperspectral imaging technologies, with the key distinction being spectral resolution. Multispectral cameras capture images at a number of discrete spectral bands (typically 3-25 bands), while hyperspectral cameras capture contiguous spectral bands across a specific range, generating a full spectrum for each pixel [11]. This detailed spectral information provides insight into the biochemical composition of plant tissues.

Hyperspectral imaging enables quantification of vegetation indices, water content, composition parameters of seeds, and pigment composition [10]. The technology has proven valuable for assessing leaf and canopy water status, health status, panicle health, leaf growth, and coverage density [10]. The main advantage of hyperspectral imaging is the rich spectral data that can be correlated with specific plant physiological and biochemical parameters. Challenges include large data volumes, computational complexity, and the need for specialized calibration and processing techniques [12] [11].
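A vegetation index is a simple example of turning per-pixel spectra into a trait map. The sketch below computes NDVI, (NIR - Red) / (NIR + Red), from a hyperspectral cube by picking the bands closest to two target wavelengths. The choices of 670 nm and 800 nm are assumptions, as are the function names.

```python
import numpy as np

def band_index(wavelengths, target_nm):
    """Index of the band whose centre wavelength is closest to target_nm."""
    return int(np.abs(np.asarray(wavelengths) - target_nm).argmin())

def ndvi(cube, wavelengths, red_nm=670.0, nir_nm=800.0):
    """Per-pixel NDVI from a reflectance cube of shape (H, W, bands)."""
    red = cube[..., band_index(wavelengths, red_nm)].astype(np.float64)
    nir = cube[..., band_index(wavelengths, nir_nm)].astype(np.float64)
    denom = nir + red
    out = np.zeros_like(denom)
    # Leave pixels with zero signal at 0 instead of dividing by zero.
    np.divide(nir - red, denom, out=out, where=denom != 0)
    return out

# Toy cube: 61 bands from 400 to 1000 nm, one vegetated and one flat-spectrum pixel.
wavelengths = np.linspace(400.0, 1000.0, 61)
cube = np.zeros((1, 2, 61))
cube[0, 0, band_index(wavelengths, 670.0)] = 0.05
cube[0, 0, band_index(wavelengths, 800.0)] = 0.45
cube[0, 1, :] = 0.2
index_map = ndvi(cube, wavelengths)
```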

Thermal Infrared Imaging

Thermal imaging captures the infrared radiation emitted by plants to create pixel-based maps of surface temperature [10]. This modality characterizes plant temperature to detect differences in stomatal conductance as a measure of plant response to water status and transpiration rate, particularly for abiotic stress adaptation [10]. Thermal imaging has been applied to studies of barley, wheat, maize, grapevine, and rice for detecting water stress and insect infestation [10]. The primary strength of thermal imaging is its ability to detect pre-visual stress responses related to plant water relations, though it provides limited structural information.

3D Imaging Technologies

3D imaging technologies capture the three-dimensional structure of plants through various approaches, including stereo vision systems, time-of-flight (TOF) cameras, and light detection and ranging (LIDAR) [9] [10]. These systems generate depth maps that enable quantification of shoot structure, leaf angle distributions, canopy architecture, root architecture, and plant height [10].

Stereo vision systems emulate human binocular vision, using two monocular cameras to compute distances and produce depth maps [11]. This approach has evolved into multi-view stereo (MVS) and has found significant application in plant phenotyping [11]. Time-of-flight techniques measure the time taken for a light signal to travel to an object and back to the sensor, calculating distance from this measurement [9]. The main advantages of 3D imaging include accurate volumetric assessments and architectural measurements, while challenges can include computational demands and limited resolution for complex plant structures.
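The two distance principles described above reduce to short formulas. For a rectified stereo pair, depth is Z = f · B / d (focal length in pixels, baseline in meters, disparity in pixels); for time of flight, distance is c · t / 2 because the signal travels to the object and back. The sketch below is illustrative only; the function names and example values are assumptions.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0

def stereo_depth_m(disparity_px, focal_length_px, baseline_m):
    """Depth of a point seen by a rectified stereo pair."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a finite depth")
    return focal_length_px * baseline_m / disparity_px

def tof_distance_m(round_trip_s):
    """Distance from the round-trip travel time of a light pulse."""
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0

# Example values (assumed): 1000 px focal length, 10 cm baseline, 50 px disparity.
depth = stereo_depth_m(50.0, 1000.0, 0.1)
# A 10 ns round trip corresponds to roughly 1.5 m.
distance = tof_distance_m(10e-9)
```

Because depth is inversely proportional to disparity, a fixed disparity error produces a depth error that grows with distance, which is one reason resolution becomes limiting for fine plant structures far from the cameras.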

Table 1: Comparison of Core Imaging Modalities in Plant Phenotyping

| Imaging Technique | Primary Sensor Types | Measured Parameters | Example Applications | Key Advantages | Main Limitations |
|---|---|---|---|---|---|
| Visible (RGB) | CCD, CMOS cameras | Projected area, growth dynamics, shoot biomass, yield traits, root architecture | Rosette geometry time courses, seed morphology, germination rates | High spatial/temporal resolution, low cost, numerous software tools | Organ overlap, illumination sensitivity |
| Hyperspectral | Imaging spectrometers, pushbroom scanners | Vegetation indices, water content, pigment composition, panicle health status | Drought stress detection, chlorophyll content, nutrient status | Rich spectral data, biochemical specificity | Large data volumes, computational complexity |
| Thermal | Thermal infrared cameras | Canopy/leaf temperature, stomatal conductance | Water stress detection, insect infestation | Pre-visual stress detection, water relation assessment | Limited structural information |
| 3D | Stereo cameras, TOF, LIDAR | Shoot structure, leaf angles, canopy architecture, height | Plant architecture analysis, biomass estimation, growth monitoring | Volumetric assessment, structural detail | Computational demands, potential resolution limits |

Fluorescence Imaging

Chlorophyll fluorescence imaging captures the light re-emitted by chlorophyll molecules during photosynthesis, providing functional information on photosynthetic efficiency [10] [13]. This modality produces pixel-based maps of emitted fluorescence in the red and far-red region, enabling quantification of photosynthetic status, quantum yield, non-photochemical quenching, and leaf health status [10]. Fluorescence imaging has been applied to studies of wheat, Arabidopsis, barley, bean, sugar beet, tomato, and chicory plants [10]. The technology is particularly valuable for early stress detection and photosynthetic performance assessment, though it requires specific excitation light sources and specialized cameras.
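Two of the quantities named above have widely used definitions that can be computed per pixel: the maximum quantum yield of photosystem II, Fv/Fm = (Fm - F0) / Fm, and non-photochemical quenching, NPQ = (Fm - Fm') / Fm'. The sketch below assumes dark-adapted F0 and Fm images and a light-adapted Fm' image are already available as arrays; the function names are hypothetical.

```python
import numpy as np

def fv_fm(f0, fm):
    """Maximum PSII quantum yield per pixel from dark-adapted F0 and Fm images."""
    f0 = np.asarray(f0, dtype=np.float64)
    fm = np.asarray(fm, dtype=np.float64)
    out = np.zeros_like(fm)
    # Background pixels with no fluorescence signal stay at 0.
    np.divide(fm - f0, fm, out=out, where=fm > 0)
    return out

def npq(fm, fm_prime):
    """Non-photochemical quenching per pixel from Fm and light-adapted Fm'."""
    fm = np.asarray(fm, dtype=np.float64)
    fm_prime = np.asarray(fm_prime, dtype=np.float64)
    out = np.zeros_like(fm)
    np.divide(fm - fm_prime, fm_prime, out=out, where=fm_prime > 0)
    return out

# Toy 1x2 images: one leaf pixel and one background pixel.
f0_img = np.array([[200.0, 0.0]])
fm_img = np.array([[1000.0, 0.0]])
fm_prime_img = np.array([[500.0, 0.0]])
yield_map = fv_fm(f0_img, fm_img)
npq_map = npq(fm_img, fm_prime_img)
```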

Multimodal Image Registration: Methodologies and Challenges

Registration Algorithms and Performance

The fusion of data from multiple imaging modalities requires precise image registration to achieve pixel-level alignment across different sensor outputs [13]. This process involves geometric transformation of images from different modalities so that their pixels correspond to the same physical points in the scene. Recent research has investigated various automated image registration algorithms, including:

- Phase-only correlation (POC): A frequency-based method that transforms images into the Fourier domain and estimates transformation parameters using phase information, providing robustness to intensity differences and noise [13].

- Feature-based methods: These identify key points such as edges, corners, or gradients in pixel neighborhoods, then calculate transformation matrices through feature matching and filtering algorithms like RANSAC (Random Sample Consensus) [13].

- Enhanced correlation coefficient (ECC): A similarity metric that extends normalized cross-correlation (NCC), measuring correlation between zero-mean and variance-normalized image values [13].

In experimental evaluations using Arabidopsis thaliana and Rosa × hybrida test sets, researchers have achieved high overlap ratios of 98.0 ± 2.3% for RGB-to-chlorophyll fluorescence registration and 96.6 ± 4.2% for HSI-to-chlorophyll fluorescence registration through affine transformation approaches [13].
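To make the phase-only correlation idea concrete, the sketch below estimates a pure integer translation between two images using NumPy's FFT. It is a simplified illustration rather than the pipeline evaluated in [13]: it handles translation only, and the full affine case would need additional steps (for example, a log-polar stage for rotation and scale). The function name is hypothetical.

```python
import numpy as np

def phase_only_correlation_shift(fixed, moving):
    """Estimate the integer (dy, dx) shift that maps `fixed` onto `moving`."""
    spectrum_fixed = np.fft.fft2(fixed)
    spectrum_moving = np.fft.fft2(moving)
    cross = spectrum_moving * np.conj(spectrum_fixed)
    # Keep only the phase; this is what makes the method tolerant to
    # intensity differences between modalities.
    cross /= np.maximum(np.abs(cross), 1e-12)
    correlation = np.fft.ifft2(cross).real
    peak = np.unravel_index(np.argmax(correlation), correlation.shape)
    # Peaks past the midpoint correspond to negative shifts (FFT wrap-around).
    shift = [p if p <= s // 2 else p - s for p, s in zip(peak, correlation.shape)]
    return int(shift[0]), int(shift[1])

# Toy check: circularly shift a random image and recover the shift.
rng = np.random.default_rng(0)
reference = rng.random((32, 32))
shifted = np.roll(reference, (3, -5), axis=(0, 1))
estimated = phase_only_correlation_shift(reference, shifted)
```

Real image pairs are not circular shifts of each other, so windowing the images before the transform is a common refinement that this sketch omits.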

3D Multimodal Registration with Depth Information

Advanced registration approaches incorporate 3D information from depth cameras to address challenges of parallax and occlusion effects in plant canopy imaging [3]. One novel method utilizes a ray casting technique that integrates depth information from a time-of-flight camera directly into the registration process [3]. This approach:

- Mitigates parallax effects by leveraging 3D structural information

- Automatically detects and filters out various types of occlusions

- Is applicable for arbitrary multimodal camera setups and diverse plant species

- Can compute both registered images and point clouds of plants [3]

This method demonstrates particular robustness across different plant types and camera compositions, as validated through experiments on six distinct plant species with varying leaf geometries [3].
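The general idea of depth-based registration with occlusion filtering can be sketched as follows. This is not the algorithm from [3]: it is a simplified point-projection variant with a z-buffer visibility test, assuming pinhole cameras with known intrinsics and a known pose such that X_cam = R · X_depth + t. All names and the `z_tol` tolerance are assumptions.

```python
import numpy as np

def register_depth_to_camera(depth, K_depth, K_cam, R, t, cam_shape, z_tol=0.01):
    """Map every depth pixel into a second camera and flag occluded pixels.

    Returns integer column/row coordinates in the second camera (-1 if invalid)
    and a boolean mask of depth pixels that are visible from that camera.
    """
    h, w = depth.shape
    v, u = np.mgrid[0:h, 0:w]
    pixels = np.stack([u.ravel(), v.ravel(), np.ones(h * w)]).astype(np.float64)
    d = depth.ravel().astype(np.float64)
    # Back-project each pixel along its viewing ray to a 3D point.
    points = (np.linalg.inv(K_depth) @ pixels) * d
    points_cam = R @ points + np.asarray(t, dtype=np.float64).reshape(3, 1)
    z = points_cam[2]
    valid = (d > 0) & (z > 0)
    projected = K_cam @ points_cam
    uc = np.full(h * w, -1, dtype=np.int64)
    vc = np.full(h * w, -1, dtype=np.int64)
    uc[valid] = np.round(projected[0, valid] / z[valid]).astype(np.int64)
    vc[valid] = np.round(projected[1, valid] / z[valid]).astype(np.int64)
    inside = valid & (uc >= 0) & (uc < cam_shape[1]) & (vc >= 0) & (vc < cam_shape[0])
    # Z-buffer: remember the nearest surface seen by each target pixel.
    zbuf = np.full(cam_shape, np.inf)
    np.minimum.at(zbuf, (vc[inside], uc[inside]), z[inside])
    visible = np.zeros(h * w, dtype=bool)
    # Points clearly behind the nearest surface are treated as occluded.
    visible[inside] = z[inside] <= zbuf[vc[inside], uc[inside]] + z_tol
    return uc.reshape(h, w), vc.reshape(h, w), visible.reshape(h, w)

# Toy case: two points on the same viewing ray of the second camera.
depth_map = np.array([[1.0, 2.0]])
identity = np.eye(3)
cols, rows, visible = register_depth_to_camera(
    depth_map, identity, identity, identity, np.array([2.0, 0.0, 0.0]), (1, 3)
)
```

In the toy case both points project to the same pixel of the second camera, and only the nearer one is kept as visible, which is the kind of occlusion a feature-free, geometry-driven registration has to filter out.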

Table 2: Multimodal Image Registration Techniques in Plant Phenotyping

| Registration Approach | Core Methodology | Transformation Type | Reported Performance | Advantages |
|---|---|---|---|---|
| Affine Transformation | Global transformation matrix accounting for translation, rotation, scaling, shearing | Linear | 98.0% overlap (RGB-ChlF), 96.6% overlap (HSI-ChlF) | Computational efficiency, reversibility, minimal data alteration |
| 3D Ray Casting | Integration of depth information from TOF camera, ray casting for projection | Projective | Robust across 6 plant species | Handles parallax and occlusion, suitable for complex canopies |
| Feature-Based (ORB) | Detection of keypoints (edges, corners), feature matching with RANSAC | Variable | Dependent on feature similarity | Handles complex transformations, robust to illumination changes |
| Phase-Only Correlation | Fourier domain transformation, phase information utilization | Linear | Robust to intensity differences | Effective for multimodal data with different representations |

Experimental Workflows and Visualization

Workflow for Multimodal Data Acquisition and Registration

The integration of multiple imaging modalities requires carefully designed experimental workflows to ensure accurate spatial and temporal correlation of data. The following diagram illustrates a generalized workflow for multimodal image acquisition and registration in plant phenotyping:

Diagram 1: Workflow for multimodal image acquisition and registration in plant phenotyping

Sensor Integration Platform Architecture

Multimodal imaging platforms require careful engineering to coordinate multiple sensors with different operational characteristics. The following diagram illustrates the architecture of a coordinated hyperspectral and RGB imaging system:

Diagram 2: Architecture of a coordinated hyperspectral and RGB imaging platform

Research Reagent Solutions: Essential Materials for Multimodal Plant Phenotyping

Table 3: Essential Research Reagents and Materials for Multimodal Plant Phenotyping Experiments

| Category | Specific Item | Technical Function | Application Example |
|---|---|---|---|
| Imaging Sensors | CCD/CMOS RGB cameras | Capture high-spatial-resolution visible spectrum images | Plant architecture analysis, growth monitoring [11] |
| Imaging Sensors | Hyperspectral line-scanning cameras | Acquire full spectral information for each pixel (e.g., 400-1000 nm) | Biochemical composition analysis, stress detection [13] |
| Imaging Sensors | Thermal infrared cameras | Measure canopy temperature variations | Stomatal conductance assessment, water stress monitoring [10] |
| Imaging Sensors | Time-of-flight (TOF) 3D cameras | Capture depth information through light pulse time measurement | 3D plant structure reconstruction, occlusion handling [3] |
| Calibration Tools | Spectraflect/Spectralon panels | Provide known reflectance reference (5%, 50%, 99%) | Radiometric calibration of hyperspectral/thermal sensors [12] |
| Calibration Tools | Chessboard calibration targets | Enable geometric correction for lens distortion | Image registration accuracy improvement [13] |
| Software Libraries | OpenCV, Scikit-image | Computer vision and image processing algorithms | Feature detection, image transformation [11] |
| Software Libraries | PlantCV | Plant-specific image analysis pipeline | High-throughput phenotypic trait extraction [11] |
| Platform Components | Motorized gantry systems | Provide precise camera positioning and movement | Automated multi-view image acquisition [11] |
| Platform Components | Controlled illumination systems | Ensure consistent lighting conditions | Standardized image acquisition across time points [11] |
| Platform Components | GPS synchronization units | Coordinate temporal alignment of multi-sensor data | Fusion of hyperspectral and RGB video streams [12] |
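The reflectance panels listed among the calibration tools are typically used in a flat-field style correction, where raw sensor counts are converted to reflectance with a white reference and a dark reference: R = (raw - dark) / (white - dark) × panel reflectance. The sketch below assumes per-band mean references and a 99% panel; these inputs and the function name are illustrative assumptions, not a description of any specific platform.

```python
import numpy as np

def to_reflectance(raw, white, dark, panel_reflectance=0.99):
    """Convert a raw cube (H, W, bands) to reflectance using per-band references."""
    raw, white, dark = (np.asarray(a, dtype=np.float64) for a in (raw, white, dark))
    span = white - dark
    out = np.zeros_like(raw)
    # Bands where the white and dark references coincide carry no usable signal.
    np.divide(raw - dark, span, out=out, where=span > 0)
    return out * panel_reflectance

# Toy cube with one pixel and two bands.
raw_cube = np.array([[[600.0, 1100.0]]])
white_ref = np.array([1100.0, 2100.0])
dark_ref = np.array([100.0, 100.0])
reflectance = to_reflectance(raw_cube, white_ref, dark_ref)
```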

Multimodal imaging represents a paradigm shift in plant phenomics, enabling comprehensive assessment of plant traits through the integration of complementary sensing technologies. The core imaging modalities (RGB, multispectral and hyperspectral, thermal, 3D, and chlorophyll fluorescence imaging) each contribute unique information about plant structure, function, and composition. The true power of these technologies emerges when they are strategically combined through robust image registration techniques, creating datasets richer than the sum of their parts.

Future developments in plant phenotyping will likely focus on enhancing computational frameworks for managing and extracting knowledge from large multimodal datasets, developing more sophisticated registration algorithms that handle complex plant architectures, and creating standardized protocols for sensor calibration and data validation. The fusion of 3D geometric information with spectral data holds particular promise for advanced analysis such as organ segmentation and disease detection [9]. As these technologies mature and become more accessible, they will play an increasingly vital role in accelerating crop improvement and addressing challenges in sustainable agriculture under changing environmental conditions.

Plant phenomics represents a paradigm shift in plant sciences, enabling the high-throughput, non-invasive measurement of plant traits across their entire life cycle [14]. At the heart of this revolution lies multimodal imaging—the integration of diverse sensor technologies and imaging techniques to capture comprehensive phenotypic information across multiple spatial and temporal scales. This integrated approach is essential because plants possess an inherently multiscale organization, with complex 3D structures spanning from molecular components within cells to entire canopies in field conditions [14]. The central challenge in modern plant phenomics is bridging these scales through computational and sensor fusion techniques that can connect cellular processes to whole-plant physiology and performance.

Multimodal imaging addresses fundamental limitations of single-scale approaches by combining anatomical and functional information from complementary techniques. For instance, a modality with high spatial resolution (e.g., providing anatomical information) can be registered with another modality offering functional data (e.g., metabolic activity), enabling researchers to analyze specific anatomical compartments with precise functional correlations [14]. This integrative capability is particularly valuable for understanding complex plant responses to environmental stresses such as drought and heat, which involve coordinated mechanisms across biological scales from gene expression to canopy-level physiology [15]. As climate change intensifies abiotic stresses on global crop production, multimodal phenomics approaches become increasingly critical for developing climate-resilient crop varieties through advanced breeding strategies.

Multiscale Imaging Technologies: From Cells to Canopies

Imaging Modalities Across Biological Scales

Table 1: Imaging techniques spanning biological scales in plant phenomics

| Biological Scale | Imaging Technique | Spatial Resolution | Key Applications in Plant Sciences |
|---|---|---|---|
| Molecular to Cellular | PALM/STORM | ~20-30 nm | Single-molecule imaging, protein localization [14] |
| Molecular to Cellular | STED | ~30-80 nm | Subcellular structure visualization [14] |
| Molecular to Cellular | 3D-SIM | ~100 nm | 3D cellular architecture [14] |
| Molecular to Cellular | TIRF | ~100 nm | Surface-associated processes [14] |
| Tissue to Organ | OCT | ~1-10 μm | Seedling elongation, cell discrimination [14] |
| Tissue to Organ | LSFM | ~1-5 μm | Entire seedling growth cell-by-cell [14] |
| Tissue to Organ | X-ray PCT | ~1-10 μm | Seed microstructure analysis [14] |
| Tissue to Organ | OPT | ~5-20 μm | Entire leaf imaging with cell resolution [14] |
| Root System | μX-ray CT | ~10-50 μm | 3D root architecture in soil [14] |
| Root System | Rhizotron | ~50-100 μm | 2D root growth dynamics [14] |
| Whole Shoot | 3D Photogrammetry | ~0.1-1 mm | Shoot architecture, biomass estimation [14] |
| Whole Shoot | Multiview Stereo | ~0.1-0.5 mm | 3D plant morphology [14] |
| Canopy to Field | UAV/Satellite | ~1 cm - 10 m | Canopy temperature, vegetation indices [14] [15] |
| Canopy to Field | Thermal Imaging | ~0.5-5 cm | Canopy temperature depression [15] |
| Canopy to Field | Hyperspectral | ~1-10 cm | Chlorophyll content, stress detection [15] |

Experimental Protocols for Multimodal Imaging

The effective implementation of multiscale imaging requires standardized protocols to ensure data quality and cross-comparability. For microscopy techniques at cellular scales, sample preparation must minimize physiological disruption while maintaining structural integrity. For super-resolution techniques like PALM/STORM, protocols typically involve chemical fixation, permeabilization, and specific fluorescent labeling, with particular attention to preserving plant cell wall architecture [14]. For live-cell imaging, environmental control maintaining appropriate temperature, humidity, and minimal phototoxic exposure is crucial, especially given that plants are sensitive to light quality and duration during development [14].

At the whole-plant level, multimodal imaging protocols often combine 3D imaging systems with controlled growth environments. For example, optical coherence tomography (OCT) of Arabidopsis thaliana seedlings can be performed using systems integrated with microstage translation systems, enabling 3D capture of hundreds of entire seedlings at cellular resolution in a single run [14]. A critical consideration is the non-invasiveness of imaging, particularly for long-term time-lapse acquisitions capturing developmental processes like seed imbibition (hours) or seedling elongation (days) [14].

For field-based phenotyping, standardized protocols must account for environmental variability. Unmanned aerial vehicle (UAV) imaging should be conducted under consistent illumination conditions (e.g., solar noon ±2 hours) with calibrated sensors and precise geo-referencing [15]. Multimodal field imaging typically combines RGB, thermal, hyperspectral, and LiDAR sensors, requiring rigorous cross-calibration and synchronized data acquisition [15]. The integration of ground-based control plots with known phenotypes provides essential reference data for validating aerial measurements and translating between scales.

Data Processing and Visualization Challenges

Multimodal Image Registration

The integration of images from different modalities and scales necessitates sophisticated registration approaches to achieve pixel-precise alignment—a challenge often complicated by parallax and occlusion effects in complex plant structures [3]. Recent advances address this through 3D registration methods that integrate depth information to mitigate parallax effects [3]. One novel algorithm utilizes 3D information from depth cameras and employs ray casting for registration, with integrated methods to automatically detect and filter out occlusion effects [3]. This approach is particularly valuable as it is not reliant on detecting plant-specific image features, making it suitable for diverse species and camera configurations [3].

Registration workflows typically involve both rigid and non-rigid transformations computed on regions of interest containing landmarks, which can be selected manually or detected automatically with the scale-invariant feature transform (SIFT) or its variants, as implemented in tools like the ImageJ plugin TrakEM2 [14]. For large datasets, computational efficiency is achieved by calculating transformation matrices on landmark-rich regions rather than entire images, then applying these transformations to the full datasets [14]. This keeps memory requirements manageable for high-resolution multiscale images, which can reach gigabytes for a single 3D scan of hundreds of seedlings at cellular resolution [14].
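The idea of estimating a transformation on a small landmark-rich region and then applying it to the full dataset can be illustrated with a least-squares affine fit. This is a sketch under stated assumptions: it uses NumPy rather than the ImageJ tools cited above, covers only the affine case (rigid transforms are a special case, non-rigid ones are not handled), and the function names and landmark coordinates are hypothetical.

```python
import numpy as np

def fit_affine_2d(src, dst):
    """Least-squares 2D affine transform mapping src landmarks onto dst.

    src, dst: (N, 2) arrays with N >= 3 non-collinear points.
    Returns a 3x3 homogeneous matrix.
    """
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    A = np.hstack([src, np.ones((len(src), 1))])       # (N, 3) homogeneous coords
    params, *_ = np.linalg.lstsq(A, dst, rcond=None)   # (3, 2) solution
    M = np.eye(3)
    M[:2, :] = params.T
    return M

def apply_affine_2d(M, pts):
    """Apply a 3x3 homogeneous affine matrix to (N, 2) points."""
    pts = np.asarray(pts, dtype=float)
    homog = np.hstack([pts, np.ones((len(pts), 1))])
    return (homog @ M.T)[:, :2]

# Synthetic landmarks in a small region of interest (illustrative only).
true_M = np.array([[0.0, -1.0, 5.0],
                   [1.0, 0.0, -3.0],
                   [0.0, 0.0, 1.0]])   # 90-degree rotation plus translation
roi_src = np.array([[0, 0], [10, 0], [0, 10], [10, 10]], dtype=float)
roi_dst = apply_affine_2d(true_M, roi_src)

# Estimate on the ROI, then reuse the matrix for coordinates anywhere in the image.
M_est = fit_affine_2d(roi_src, roi_dst)
full_pts = apply_affine_2d(M_est, [[100.0, 200.0]])
```

Because only the landmark coordinates enter the fit, the cost of estimation is independent of image size; the full-resolution data are touched only once, when the matrix is applied.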

Visualization Frameworks for Multimodal Data

The high dimensionality of multimodal phenomics data presents significant visualization challenges. Interactive frameworks like Vitessce have been developed specifically for exploring multimodal and spatially resolved data, enabling simultaneous visualization of millions of data points across coordinated views [16]. These tools support diverse data types including cell-type annotations, gene expression quantities, spatially resolved transcripts, and cell segmentations, bridging traditional gaps between image viewers and genome browsers [16].

Effective visualization of multiscale plant data requires principles that maximize the "data-ink ratio"—ensuring most pixels display actual data rather than decorative elements [17]. Strategic color usage is particularly important, with sequential palettes for continuous data (e.g., light to dark blue for intensity gradients), diverging palettes for data with meaningful midpoints (e.g., red-white-blue for temperature variations), and categorical palettes with distinct hues for discrete groups [17]. Accessibility considerations mandate avoiding problematic color combinations like red-green and using simulation tools to verify interpretations for viewers with color vision deficiencies [17].

Table 2: Essential tools for multiscale plant image analysis

| Tool Category | Specific Tools | Primary Function | Applicable Scale |
|---|---|---|---|
| Image Processing | ImageJ with TurboReg | Image registration using landmark-based transformation [14] | Cellular to Whole-Plant |
| Image Processing | TrakEM2 | Automatic landmark detection with SIFT [14] | Cellular to Tissue |
| Visualization | Vitessce | Integrative visualization of multimodal data [16] | Molecular to Organ |
| Visualization | Cellxgene | Interactive exploration of large cell datasets [16] | Cellular |
| Visualization | TissUUmaps | Spatial data visualization [16] | Tissue to Organ |
| Data Integration | SpatialData | Standardized spatial data handling [16] | All Scales |
| Data Integration | OME-TIFF/OME-Zarr | Standardized file formats for imaging data [16] | All Scales |

Signaling Pathways in Abiotic Stress Response

Plant responses to environmental stresses involve complex signaling networks that operate across biological scales. Under combined drought and heat stress—a growing concern in climate change scenarios—several core pathways mediate plant adaptation. The abscisic acid (ABA) signaling pathway is central to drought tolerance: under water deficit, ABA accumulates and initiates a cascade via PYR/PYL receptors, PP2C inactivation, and SnRK2 kinase activation, leading to stomatal closure and expression of drought-responsive genes [15]. Concurrently, the heat shock factor–heat shock protein (HSF-HSP) network responds to elevated temperatures through activation of molecular chaperones that prevent protein unfolding and aggregation [15]. These pathways interact through cross-talk mechanisms, where ABA-responsive elements can regulate heat resistance genes, and heat stress can elevate ABA levels that modulate stress-responsive genes [15]. Both stresses converge on reactive oxygen species (ROS) signaling, inducing accumulation of molecules like hydrogen peroxide that serve as secondary messengers at moderate levels but cause oxidative damage at high concentrations if not scavenged by antioxidant enzymes [15].

Abiotic Stress Signaling Network

Integrated Experimental Workflow for Multiscale Phenomics

A comprehensive multiscale phenomics workflow integrates data acquisition across platforms, multimodal registration, and data analysis to connect phenotypic observations with underlying biological mechanisms. The workflow begins with experimental design that considers the appropriate imaging modalities for target biological questions, ensuring coverage of relevant spatial and temporal scales. For investigating drought-heat stress interactions, this typically combines remote sensing for canopy-level responses with microscopy for cellular reactions, linked through molecular analyses [15].

Multiscale Phenomics Workflow

Research Reagent Solutions for Plant Phenomics

Table 3: Essential research reagents and materials for multimodal plant imaging

| Reagent/Material Category | Specific Examples | Function in Multimodal Imaging |
|---|---|---|
| Fluorescent Labels & Probes | GFP variants, Synthetic dyes | Labeling specific cellular structures for super-resolution microscopy [14] |
| Fluorescent Labels & Probes | Immunofluorescence markers | Antibody-based protein localization in fixed tissues [14] |
| Molecular Biology Reagents | RNA sequencing kits | Transcriptomic profiling correlated with phenotypic traits [15] |
| Molecular Biology Reagents | Metabolite extraction kits | Analysis of stress-responsive compounds [15] |
| Fixation & Preservation | Chemical fixatives (formaldehyde, glutaraldehyde) | Tissue preservation for structural imaging [14] |
| Fixation & Preservation | Cryopreservation solutions | Maintaining native state for in situ molecular analysis [14] |
| Growth Media & Substrates | Agar compositions, Soil substitutes | Standardized growth conditions for reproducible phenotyping [18] |
| Growth Media & Substrates | Hydroponic nutrients | Controlled nutrient delivery for stress studies [15] |
| Sensor Calibration Standards | Reflectance standards, Thermal references | Cross-platform calibration for quantitative imaging [15] |
| Sensor Calibration Standards | Color calibration charts | Standardized color reproduction across imaging systems [17] |

Multimodal imaging in plant phenomics represents a transformative approach for bridging biological scales from cellular processes to canopy-level performance. The integration of diverse imaging technologies—from super-resolution microscopy to satellite remote sensing—enables comprehensive characterization of plant responses to environmental challenges [14] [15]. However, the full potential of these approaches requires addressing significant computational challenges in data management, multimodal registration, and visualization [14] [3]. Future advances will depend on developing scalable computational frameworks that can handle the enormous data volumes generated by multiscale imaging while providing intuitive interfaces for biological discovery [16].

The emerging "pixels-to-proteins" paradigm exemplifies the power of integrated multiscale approaches, connecting field-level phenotypes with molecular responses through advanced analytics and machine learning [15]. This integration is particularly crucial for addressing pressing agricultural challenges, such as developing crop varieties with enhanced resilience to compound drought-heat stress events that are increasingly common under climate change [15]. As multimodal phenomics continues to evolve, cross-disciplinary collaboration among plant scientists, computer vision specialists, and data scientists will be essential for realizing the promise of climate-smart agriculture through digital innovation [18].

In the field of plant phenomics, the pursuit of a comprehensive understanding of plant growth, structure, and function has led to a fundamental challenge: no single imaging technology can capture the full complexity of a plant's phenotype. Multimodal imaging addresses this by integrating complementary data from multiple sensors to create a holistic view that is greater than the sum of its parts. This approach is essential for bridging the gap between plant genotype and its expressed phenotype under varying environmental conditions [19]. The core objective is to synergistically combine anatomical, structural, and functional data to uncover relationships that remain invisible to single-mode sensors, thereby accelerating crop improvement and biological discovery.

The Fundamental Principles of Multimodal Imaging

Multimodal phenomics is driven by the inherent limitations of individual imaging technologies. Each modality possesses unique strengths and weaknesses in terms of spatial resolution, sensitivity, and the specific plant traits it can measure.

The Complementarity of Sensor Data

No single sensor can provide a complete picture of plant health and architecture. For instance, while RGB cameras offer excellent spatial detail for morphological assessment, they provide limited information on physiological status. The integration of multiple sensors allows researchers to overcome the constraints of any single system.

- Spatial and Spectral Synergy: A standard RGB (red, green, blue) camera captures high-resolution morphological data, such as plant size, shape, and color [11]. When combined with a hyperspectral camera, which captures data across hundreds of narrow spectral bands, researchers can derive detailed information on plant physiology, including water content, chlorophyll levels, and other biochemical constituents [11]. This synergy links what a plant looks like with how it is functioning.

- 2D and 3D Fusion: Two-dimensional imaging often struggles with complex plant canopies due to occlusion and overlap of leaves. Stereo vision systems or depth cameras generate 3D models and depth maps, allowing for accurate calculation of plant volume, leaf area index, and canopy structure [3] [11]. This 3D structural information is crucial for accurately interpreting 2D data from other sensors, as it provides spatial context and mitigates parallax errors [3].

- Structural and Physiological Alignment: Thermal imaging cameras measure leaf temperature, which is a proxy for stomatal conductance and water stress [11]. When these data are precisely aligned with 3D structural models, researchers can determine how different layers of the canopy contribute to overall plant transpiration and water use efficiency [20].

Overcoming the Parallax and Occlusion Challenge

A significant technical hurdle in multimodal imaging is the precise alignment of images from different sensors, especially given the complex and often self-occluding nature of plant canopies. Advanced registration algorithms are required to achieve pixel-precise alignment. Novel methods now use 3D information from a depth camera and ray-casting techniques to mitigate parallax effects and automatically detect and filter out occluded areas, ensuring accurate data fusion from multiple viewpoints and camera technologies [3].

Experimental Evidence: Quantifying Multimodal Advantages

The theoretical benefits of multimodal imaging are best demonstrated through concrete experimental applications. The following case studies and data syntheses illustrate its power to provide insights unattainable through single-modality approaches.

Case Study: Decoding Light-Use Efficiency in Lettuce

A key study on lettuce employed multimodal phenotyping to unravel the complex relationships between canopy structure and photosynthetic efficiency [20]. Researchers combined 3D imaging to capture structural traits with chlorophyll fluorescence imaging and spectral analysis to assess physiological status.

Key Findings:

- Structural-Physiological Coordination: The study revealed that specific canopy architectural traits, such as compactness and voxel volume (canopy volume estimated from occupied voxels, the 3D analogue of pixels), were directly coordinated with physiological traits like the maximum net photosynthetic rate.

- Predictive Modeling: Machine learning models, including partial least squares regression and random forest, were trained on the multimodal dataset. These models successfully predicted light-use efficiency from the integrated phenotypic data, demonstrating that the combination of structural and physiological data provides a reliable basis for forecasting plant performance [20].
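To make the notion of voxel volume concrete, the sketch below counts occupied grid cells in a 3D point cloud and multiplies by the cell volume. It is an illustration only: the exact definition used in the lettuce study is not given here, and the function name, voxel size, and coordinates are assumptions.

```python
import numpy as np

def voxel_volume(points, voxel_size):
    """Estimate volume as (number of occupied voxels) * voxel_size**3.

    points: (N, 3) array of 3D coordinates in the same unit as voxel_size.
    """
    pts = np.asarray(points, dtype=float)
    idx = np.floor(pts / voxel_size).astype(int)   # integer voxel index per point
    occupied = np.unique(idx, axis=0)              # each occupied voxel counted once
    return len(occupied) * voxel_size ** 3

# Illustrative point cloud: two points share a voxel, one lies in a neighbouring voxel.
cloud = [[0.1, 0.1, 0.1], [0.2, 0.2, 0.2], [1.5, 0.1, 0.1]]
volume = voxel_volume(cloud, voxel_size=1.0)
```

The estimate depends strongly on the chosen voxel size, so the same size should be used for every plant and time point that will be compared.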

Case Study: Robust Root Phenotyping

Research on root systems highlights the critical importance of selecting appropriate imaging and metrics. A comparative analysis showed that 2D projection methods can introduce significant measurement errors for critical traits like root growth angle [21].

Key Findings:

- Limits of Aggregate Metrics: Metrics that aggregate multiple underlying "phenes" (elementary phenotypic components), such as total root length or bushiness index, can be misleading. Different root architectures can produce similar aggregate scores, obscuring important biological variation [21].

- Superiority of Elementary Phenes: The study concluded that direct measurements of elementary phenes—such as root number, root diameter, and lateral root branching density—are more stable and reliable because they are not affected by the imaging method and provide unambiguous information about the underlying plant architecture [21]. This underscores the need for imaging modalities that can resolve fine, three-dimensional structures rather than relying on 2D approximations.

Comparative Table: Unlocking Trait Visibility through Multimodal Integration

The table below summarizes how combining different imaging modalities makes visible a wider range of plant traits than any single modality could achieve.

Table: Complementary Trait Acquisition Through Different Imaging Modalities

| Imaging Modality | Primary Data Output | Key Measurable Traits | Inferred Plant Properties |
|---|---|---|---|
| RGB / Stereo Vision [11] | 2D color images, 3D point clouds | Projected leaf area, plant height, compactness, color patterns | Biomass accumulation, canopy architecture, developmental stage |
| Hyperspectral Imaging [11] | Spectral reflectance across numerous bands | Vegetation indices (e.g., NDVI), chlorophyll, water content | Photosynthetic capacity, nutrient status, drought stress |
| Thermal Imaging [11] | Canopy temperature map | Leaf surface temperature | Stomatal conductance, water use efficiency, drought stress response |
| 3D Depth Sensing [3] [11] | Depth maps, 3D voxel models | Canopy volume, leaf angle distribution, 3D biomass | Light interception efficiency, structural adaptation to environment |
| X-ray CT / MRI [19] | Cross-sectional images of internal structures | Root architecture, seed morphology, vascular tissue | Resource uptake efficiency, seed quality, hydraulic properties |
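As an example of how a vegetation index listed in the table is derived, NDVI is computed per pixel as (NIR - Red) / (NIR + Red) from near-infrared and red reflectance. The sketch below assumes the two bands have already been extracted from the hyperspectral cube as reflectance arrays; the band selection step, the function name, and the sample values are illustrative assumptions.

```python
import numpy as np

def ndvi(nir, red, eps=1e-12):
    """Normalized Difference Vegetation Index, (NIR - Red) / (NIR + Red).

    Pixels where NIR + Red is (near) zero are returned as NaN.
    """
    nir = np.asarray(nir, dtype=float)
    red = np.asarray(red, dtype=float)
    denom = nir + red
    out = np.full(denom.shape, np.nan)
    valid = np.abs(denom) > eps
    out[valid] = (nir[valid] - red[valid]) / denom[valid]
    return out

# Illustrative 2x2 reflectance patches (not measured data).
nir_band = np.array([[0.5, 0.4], [0.0, 0.3]])
red_band = np.array([[0.1, 0.4], [0.0, 0.1]])
index_map = ndvi(nir_band, red_band)
```

Returning NaN for empty pixels keeps background or masked regions from being mistaken for vegetation with an index of zero.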

Experimental Protocol for a Multimodal Study

The following workflow outlines a generalized protocol for conducting a multimodal phenotyping experiment, synthesizing methodologies from the cited research.

System Setup and Calibration:

- Arrange multiple sensors (e.g., RGB, hyperspectral, thermal, depth camera) in a controlled or field-based platform.

- Ensure precise geometric and radiometric calibration across all sensors. For 3D registration, this involves calculating the relative position and orientation of each camera to a common coordinate system [3].

Synchronized Data Acquisition:

- Capture images of the plant subjects from all sensors simultaneously or in rapid sequence to minimize temporal discrepancies, especially for dynamic physiological traits.
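When sensors cannot be hardware-triggered together, frames are often paired afterwards by timestamp. The following sketch matches each frame of a reference sensor to the nearest frame of another sensor and rejects pairs whose time gap exceeds a tolerance; the function name, timestamps, and tolerance are hypothetical values chosen for illustration.

```python
from bisect import bisect_left

def match_nearest(ref_times, other_times, tolerance):
    """Pair each reference timestamp with the nearest one in other_times.

    other_times must be sorted ascending. Returns a list with, for each
    reference timestamp, the index of its match or None if the gap
    exceeds the tolerance.
    """
    matches = []
    for t in ref_times:
        i = bisect_left(other_times, t)
        candidates = [j for j in (i - 1, i) if 0 <= j < len(other_times)]
        best = min(candidates, key=lambda j: abs(other_times[j] - t), default=None)
        if best is not None and abs(other_times[best] - t) <= tolerance:
            matches.append(best)
        else:
            matches.append(None)
    return matches

# Illustrative timestamps in seconds.
rgb_t = [0.00, 1.00, 2.00]
thermal_t = [0.02, 1.30, 2.01]
pairs = match_nearest(rgb_t, thermal_t, tolerance=0.05)
```

A suitable tolerance depends on how quickly the trait of interest changes; slow structural traits tolerate larger gaps than fast physiological responses.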

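Multimodal Image Registration:

- Align the images from all sensors in the common coordinate system established during calibration. Depth-based 3D registration with ray casting can mitigate parallax and flag occluded regions where no reliable correspondence exists [3].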

Trait Extraction and Data Fusion:

- Apply modality-specific algorithms to extract traits: segmentation and mesh reconstruction from 3D data [11], vegetation indices from hyperspectral data [11], and temperature statistics from thermal data.

- Fuse the extracted traits into a unified data matrix where each plant has associated structural, physiological, and spectral descriptors.
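The fusion step can be as simple as assembling per-modality trait records into one plant-by-trait matrix. The sketch below shows one possible layout, with missing measurements stored as NaN; the nested-dictionary input format, the function name, and the trait names are assumptions made for this example.

```python
import numpy as np

def fuse_traits(per_modality):
    """Build a plant-by-trait matrix from {modality: {plant_id: {trait: value}}}.

    Returns (plant_ids, column_names, matrix); missing values are NaN.
    """
    plant_ids = sorted({pid for traits in per_modality.values() for pid in traits})
    columns = sorted({f"{mod}.{name}"
                      for mod, traits in per_modality.items()
                      for rec in traits.values()
                      for name in rec})
    row = {pid: i for i, pid in enumerate(plant_ids)}
    col = {c: j for j, c in enumerate(columns)}
    matrix = np.full((len(plant_ids), len(columns)), np.nan)
    for mod, traits in per_modality.items():
        for pid, rec in traits.items():
            for name, value in rec.items():
                matrix[row[pid], col[f"{mod}.{name}"]] = value
    return plant_ids, columns, matrix

# Illustrative trait records (not measured data).
traits = {
    "depth": {"P1": {"height_cm": 12.0}, "P2": {"height_cm": 15.5}},
    "thermal": {"P1": {"mean_temp_c": 24.1}},
}
ids, cols, X = fuse_traits(traits)
```

Prefixing each column with its modality keeps the origin of every trait traceable once the matrix is passed to downstream models.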

Integrated Data Analysis:

- Use multivariate statistical analysis or machine learning models (e.g., Partial Least Squares Regression, Random Forest, or Artificial Neural Networks) to discover relationships between structural and physiological traits, as demonstrated in the lettuce study [20].

- Build phenotypic networks to visualize and quantify the coordination between different trait modules.
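One simple way to build such a phenotypic network is to connect traits whose pairwise correlation exceeds a threshold. The sketch below uses Pearson correlation and an arbitrary threshold of 0.7; both choices, along with the trait names and values, are assumptions for illustration and do not reproduce the method or data of the lettuce study.

```python
import numpy as np

def trait_network(X, names, threshold=0.7):
    """Return edges (trait_a, trait_b, r) for pairs with |Pearson r| >= threshold.

    X: (n_plants, n_traits) matrix without missing values.
    """
    X = np.asarray(X, dtype=float)
    r = np.corrcoef(X, rowvar=False)   # trait-by-trait correlation matrix
    edges = []
    for i in range(len(names)):
        for j in range(i + 1, len(names)):
            if abs(r[i, j]) >= threshold:
                edges.append((names[i], names[j], float(r[i, j])))
    return edges

# Synthetic values for five plants (illustrative only).
names = ["canopy_volume", "net_photosynthesis", "leaf_temp"]
X = np.array([
    [1.0, 2.1, 3.0],
    [2.0, 3.9, 1.0],
    [3.0, 6.2, 4.0],
    [4.0, 8.0, 1.0],
    [5.0, 9.8, 5.0],
])
edges = trait_network(X, names)
```

In this synthetic example only canopy volume and net photosynthesis are linked; with real data, the threshold should be justified statistically, for instance by correcting for multiple comparisons.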

Implementation and Workflow

Successfully deploying a multimodal imaging system requires careful planning of the technical workflow and an understanding of the logical relationships between different data streams.

The Multimodal Imaging and Analysis Workflow

The diagram below illustrates the sequential process of a multimodal phenotyping experiment, from data acquisition to biological insight.

Diagram 1: Multimodal phenotyping workflow, from data acquisition to biological insight.

The Conceptual Framework of Multimodal Integration

The following diagram maps the logical relationship between the core challenges in phenomics, the imaging solutions, and the ultimate holistic view.

Diagram 2: Conceptual framework linking phenomics challenges to multimodal solutions.

The Scientist's Toolkit: Essential Research Solutions

Implementing a successful multimodal phenotyping strategy requires a suite of technological and analytical tools. The following table details key components of a modern multimodal phenomics pipeline.

Table: Essential Research Reagents and Solutions for Multimodal Phenotyping

| Category | Item / Technology | Specific Function in Multimodal Research |
|---|---|---|
| Imaging Hardware | RGB & Stereo Vision Cameras [11] | Captures high-resolution 2D color images and enables 3D reconstruction via depth maps for morphological analysis. |
| Imaging Hardware | Hyperspectral Imaging Sensors [11] | Measures spectral reflectance across hundreds of narrow bands to quantify biochemical and physiological plant properties. |
| Imaging Hardware | 3D Time-of-Flight (ToF) Depth Camera [3] | Provides real-time 3D point cloud data of the plant canopy, used for registration and structural trait extraction. |
| Imaging Hardware | Thermal Imaging Camera [11] | Maps canopy temperature as a proxy for stomatal conductance and transpirational water loss. |
| Analytical Software & Algorithms | 3D Multimodal Registration Algorithm [3] | Aligns images from different sensors pixel-precisely using depth data and ray casting, while filtering occlusions. |
| Analytical Software & Algorithms | Machine Learning Models (PLSR, RF, ANN) [20] | Discovers complex, non-linear relationships between fused multimodal traits (e.g., structure and physiology). |
| Analytical Software & Algorithms | PlantCV / OpenCV [11] | Open-source software libraries for image analysis and trait extraction from plant images. |
| Experimental Materials | Controlled Environment Growth Chambers | Standardizes environmental conditions to minimize noise and isolate genetic effects on phenotype. |
| Experimental Materials | Robotic or Gantry-Based Platforms [19] | Automates the movement of sensors or plants for high-throughput, consistent data acquisition over time. |
| Experimental Materials | Calibration Targets (e.g., Color, Spectral, Geometric) | Ensures data consistency and accuracy across imaging sessions and between different sensors. |

Combining imaging modalities is not merely a technical exercise; it is a fundamental requirement for achieving a holistic and mechanistic understanding of plant phenotype. By fusing complementary data streams—morphological with physiological, and structural with functional—researchers can overcome the limitations of single-sensor systems. This integrated approach, powered by advanced registration techniques and machine learning, is transforming plant phenomics from a descriptive science to a predictive one. It enables the deconvolution of complex traits, reveals the hidden coordination between plant architecture and performance, and ultimately provides the robust data needed to link genotype to phenotype for the improvement of future crops.

Methodologies and Real-World Applications: From Data Acquisition to Phenotypic Insight

Multimodal imaging represents a paradigm shift in plant phenomics, enabling a comprehensive assessment of plant phenotypes by synergistically combining data from multiple camera technologies. This approach allows researchers to capture cross-modal patterns that provide deeper insights into plant growth, physiology, and responses to environmental stresses than single-modality systems. However, the effective utilization of these cross-modal patterns hinges on robust image registration techniques capable of achieving pixel-accurate alignment across different imaging modalities—a significant challenge complicated by parallax and occlusion effects inherent in plant canopy imaging. This technical guide outlines a systematic workflow for multimodal image acquisition and analysis, with particular emphasis on emerging 3D registration methodologies that leverage depth information to overcome traditional limitations. By providing detailed protocols and technical specifications, this work aims to standardize practices in a rapidly evolving field and facilitate more accurate, high-throughput plant phenotyping.

Plant phenomics has emerged as a crucial discipline bridging the genotype-phenotype gap, essential for addressing global food security challenges in the face of climate change and population growth. The development of high-throughput phenotyping platforms has become increasingly important as traditional visual assessment methods prove inadequate for large-scale genetic studies and breeding programs. Multimodal imaging refers to the integrated use of multiple imaging technologies—including visible, fluorescence, thermal, hyperspectral, and 3D imaging—to capture complementary aspects of plant phenotype that cannot be observed with any single modality alone [10].

The fundamental advantage of multimodal systems lies in their ability to simultaneously monitor diverse plant characteristics across different spectral ranges and spatial resolutions. For instance, while visible imaging can quantify morphological parameters like leaf area and plant architecture, thermal imaging reveals stomatal conductance and water status, and fluorescence imaging provides insights into photosynthetic efficiency [10]. When these datasets are precisely aligned, researchers can identify novel correlations between structural, physiological, and functional traits, enabling a more holistic understanding of plant performance under varying environmental conditions.

Recent advances in imaging sensors and computational methods have made multimodal approaches increasingly accessible, though significant technical challenges remain. The effective integration of multimodal data requires solving complex image registration problems, managing large datasets, and developing analytical frameworks that can extract biologically meaningful information from multiple image streams. This guide addresses these challenges by presenting a standardized workflow for multimodal image acquisition and analysis, with particular focus on a novel 3D registration method that substantially improves alignment accuracy across modalities.

Core Principles of Multimodal Image Registration

The Parallax and Occlusion Challenges

Plant canopy imaging presents unique challenges for image registration due to its complex three-dimensional structure. Traditional 2D registration methods based on affine transformations or homography estimation fail to account for parallax effects—the apparent displacement of objects when viewed from different positions—leading to misalignment in multimodal image stacks [22]. This problem is particularly pronounced in close-range imaging scenarios where leaf arrangement creates significant depth variation. Additionally, occlusion effects, where plant organs hide each other from certain viewing angles, create regions that cannot be properly aligned using 2D methods [3].

The limitations of 2D approaches become especially evident when integrating modalities with fundamentally different characteristics, such as RGB and thermal cameras. Without accounting for the 3D structure of the plant, precise alignment of features like leaf veins, margins, or disease patterns becomes impossible, thereby limiting the potential for correlating information across modalities [22]. These challenges necessitate a paradigm shift toward 3D-aware registration methods that explicitly model plant geometry to achieve accurate pixel-level correspondence.
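A short calculation shows why a single 2D transformation cannot align a canopy with depth variation. For two parallel, rectified cameras, the pixel shift of a point is approximately focal length (in pixels) times baseline divided by depth. The sketch below applies this standard stereo relation; the camera parameters and leaf distances are hypothetical.

```python
def parallax_px(focal_px, baseline_m, depth_m):
    """Pixel shift between two parallel, rectified cameras for a point at depth_m."""
    return focal_px * baseline_m / depth_m

# Hypothetical setup: 1000 px focal length, cameras mounted 10 cm apart.
focal_px, baseline_m = 1000.0, 0.10
upper_leaf = parallax_px(focal_px, baseline_m, 0.50)   # leaf 0.5 m from the cameras
lower_leaf = parallax_px(focal_px, baseline_m, 0.80)   # leaf 0.8 m from the cameras
mismatch = upper_leaf - lower_leaf                      # residual if aligned to one depth
```

With these numbers the two leaves shift by roughly 200 and 125 pixels respectively, so a transformation tuned to one leaf layer leaves the other misaligned by about 75 pixels. Only a depth-aware method can correct both at once.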

The 3D Registration Paradigm

One approach to multimodal plant image registration leverages 3D information obtained from depth cameras to overcome the limitations of 2D methods [3] [22]. This methodology uses a time-of-flight camera to capture depth information, from which a mesh representation of the plant canopy is generated. Ray casting against this 3D representation then enables precise pixel mapping between different cameras regardless of their positions, orientations, or spectral characteristics [22].

The principal advantage of this approach is its independence from plant-specific image features, making it applicable across diverse species with varying leaf geometries and architectural patterns [3]. Furthermore, the method incorporates an automated mechanism to identify and classify different types of occlusions, allowing researchers to mask regions where reliable registration cannot be achieved [4]. This transparency about limitations is crucial for ensuring the biological validity of subsequent analyses.

Workflow for Multimodal Image Acquisition and Analysis

System Setup and Calibration

The initial phase involves configuring a multimodal imaging system typically comprising multiple cameras with complementary capabilities. A recommended setup includes a hyperspectral camera, a thermal camera, and a combined RGB + infrared + depth camera (such as the Intel RealSense D435) [23]. The system should be designed to minimize parallax errors through careful spatial arrangement of components, though the subsequent registration process will address residual misalignments.

Calibration is a critical step that establishes the geometric relationship between all cameras in the system. This process involves recording multiple images of a checkerboard pattern from different distances and orientations [22]. These calibration images enable computation of intrinsic parameters (focal length, principal point, lens distortion) and extrinsic parameters (rotation and translation) for each camera, creating a unified coordinate system that forms the foundation for subsequent registration steps. Regular recalibration is recommended to maintain system accuracy, particularly when cameras are subject to mechanical stress or environmental fluctuations.
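The role of the intrinsic and extrinsic parameters can be seen in the pinhole projection they define: extrinsics move a 3D point into the camera frame, and intrinsics map it onto the pixel grid. The sketch below implements this projection in NumPy under simplifying assumptions: lens distortion is ignored, and the matrix values are placeholders rather than calibration results.

```python
import numpy as np

def project_points(points_world, K, R, t):
    """Project (N, 3) world points to pixel coordinates with a pinhole model.

    K: 3x3 intrinsic matrix; R (3x3), t (3,): extrinsic rotation and translation.
    Lens distortion is not modelled.
    """
    pts = np.asarray(points_world, dtype=float)
    cam = pts @ R.T + t                  # world frame -> camera frame (extrinsics)
    uvw = cam @ K.T                      # camera frame -> image plane (intrinsics)
    return uvw[:, :2] / uvw[:, 2:3]      # perspective division

# Placeholder parameters: 800 px focal length, principal point at (320, 240).
K = np.array([[800.0, 0.0, 320.0],
              [0.0, 800.0, 240.0],
              [0.0, 0.0, 1.0]])
R = np.eye(3)
t = np.zeros(3)
pixels = project_points([[0.0, 0.0, 1.0], [0.1, -0.05, 2.0]], K, R, t)
```

In practice these parameters would be estimated from the checkerboard images with a calibration routine, and each camera in the rig would have its own `K`, `R`, and `t` relative to the shared coordinate system.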

Image Acquisition Protocol

Standardized acquisition protocols are essential for generating consistent, comparable multimodal datasets. The following procedure ensures optimal data quality:

- Environmental Control: Conduct imaging under consistent lighting conditions where applicable. For modalities sensitive to ambient conditions (e.g., thermal imaging), stabilize environmental factors such as air temperature and humidity [10].

- Synchronization: Trigger all cameras simultaneously or implement precise timestamping to minimize temporal discrepancies between modalities, particularly important for capturing dynamic plant processes.

- Parameter Optimization: Adjust camera-specific settings (exposure, gain, etc.) for each modality to ensure optimal signal-to-noise ratio without sensor saturation.

- Reference Standards: Include color and spatial reference targets in the scene where possible to facilitate post-processing validation and radiometric calibration.

- Data Management: Implement a systematic naming convention and metadata structure to track experimental conditions, plant identifiers, and acquisition parameters across modalities.

Following this protocol ensures that subsequent registration and analysis steps begin with high-quality input data, maximizing the reliability of final results.
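The data management item above can be made concrete with a small record type that generates file names and a metadata sidecar from the same fields. This is one possible convention, not a standard: the field set, the underscore-separated layout, and all names below are assumptions, and the scheme requires that individual fields contain no underscores.

```python
from dataclasses import dataclass, asdict
import json

@dataclass(frozen=True)
class AcquisitionRecord:
    experiment: str
    plant_id: str
    modality: str
    timestamp_utc: str    # compact ISO 8601, e.g. 20240621T120000Z
    exposure_ms: float

    def filename(self, ext="tif"):
        """File name built from the identifying fields, joined by underscores."""
        return (f"{self.experiment}_{self.plant_id}_"
                f"{self.modality}_{self.timestamp_utc}.{ext}")

    def sidecar_json(self):
        """Full metadata as a JSON string, to be stored next to the image."""
        return json.dumps(asdict(self), sort_keys=True)

def parse_filename(name):
    """Recover the identifying fields from a name produced by filename()."""
    stem = name.rsplit(".", 1)[0]
    experiment, plant_id, modality, timestamp_utc = stem.split("_")
    return {"experiment": experiment, "plant_id": plant_id,
            "modality": modality, "timestamp_utc": timestamp_utc}

# Illustrative record.
rec = AcquisitionRecord("EXP01", "P0042", "thermal", "20240621T120000Z", 8.0)
name = rec.filename()
fields = parse_filename(name)
```

Keeping acquisition parameters such as exposure in the sidecar rather than the file name keeps names short while still recording everything needed to reproduce the capture.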

3D Reconstruction and Registration

The core registration process transforms acquired images into aligned multimodal datasets using the following steps:

- Depth Data Processing: Process raw data from the time-of-flight camera to generate a dense depth map of the plant canopy [22].

- Mesh Generation: Convert the depth map into a 3D mesh representation that captures the plant's geometric structure.

- Ray Casting: For each pixel in every camera, cast a ray through the 3D mesh to establish correspondence between image coordinates and 3D points [22].

- Occlusion Detection: Automatically identify and classify occlusion types (self-occlusion, inter-occlusion) to flag regions where accurate registration is not possible [4].

- Multimodal Projection: Project image data from all modalities onto the 3D model or transfer to a common image plane using the established ray-mesh intersections.

This process outputs both registered 2D images with precise pixel-level alignment and registered 3D point clouds that integrate geometric and multispectral measurements [22]. The approach scales to arbitrary numbers of cameras with different resolutions and wavelengths, making it adaptable to diverse experimental requirements.
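The geometry behind these steps can be sketched with a simplified, point-based stand-in. Instead of building a mesh and casting rays as in the cited method, the code below back-projects a depth map to 3D points, reprojects them into a second camera, and uses a z-buffer to mark points hidden behind nearer ones. It assumes distortion-free pinhole cameras; the function names, tolerance, and test scene are hypothetical.

```python
import numpy as np

def backproject(depth, K):
    """Convert a depth map (H, W) to (H*W, 3) points in the depth camera frame."""
    h, w = depth.shape
    v, u = np.mgrid[0:h, 0:w]
    z = depth.ravel().astype(float)
    x = (u.ravel() - K[0, 2]) * z / K[0, 0]
    y = (v.ravel() - K[1, 2]) * z / K[1, 1]
    return np.column_stack([x, y, z])

def reproject_with_occlusion(points, K2, R, t, shape, tol=0.01):
    """Project 3D points into a second camera and flag which ones are visible.

    Returns pixel columns u, pixel rows v, and a boolean visibility mask.
    A point is occluded if another point lands on the same pixel
    and is closer to the camera by more than tol.
    """
    cam = np.asarray(points, dtype=float) @ R.T + t
    z = cam[:, 2]
    u = np.full(len(cam), -1)
    v = np.full(len(cam), -1)
    front = z > 0                                    # skip invalid or behind-camera points
    u[front] = np.round(K2[0, 0] * cam[front, 0] / z[front] + K2[0, 2]).astype(int)
    v[front] = np.round(K2[1, 1] * cam[front, 1] / z[front] + K2[1, 2]).astype(int)
    inside = front & (u >= 0) & (u < shape[1]) & (v >= 0) & (v < shape[0])
    zbuf = np.full(shape, np.inf)
    np.minimum.at(zbuf, (v[inside], u[inside]), z[inside])   # nearest depth per pixel
    visible = np.zeros(len(cam), dtype=bool)
    visible[inside] = z[inside] <= zbuf[v[inside], u[inside]] + tol
    return u, v, visible

# Tiny synthetic scene: two points on the same viewing ray and one neighbour.
K2 = np.array([[100.0, 0.0, 2.0], [0.0, 100.0, 2.0], [0.0, 0.0, 1.0]])
pts = np.array([[0.0, 0.0, 1.0],     # near leaf
                [0.0, 0.0, 2.0],     # far leaf on the same ray
                [0.01, 0.0, 1.0]])   # neighbouring pixel
u, v, visible = reproject_with_occlusion(pts, K2, np.eye(3), np.zeros(3), shape=(5, 5))
```

The far point is flagged as not visible because the near point covers the same pixel. A mesh-based ray caster handles gaps between samples more robustly than this per-point test, which is one reason the cited method builds a surface first.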

Data Analysis and Phenotype Extraction

Once images are registered, researchers can extract quantitative phenotypic traits that integrate information across modalities:

- Feature Extraction: Apply computer vision algorithms to measure morphological (leaf area, plant height), physiological (chlorophyll content, water status), and health-related (disease severity, stress response) parameters [10].

- Cross-Modal Correlation: Identify relationships between features extracted from different modalities, such as correlating thermal patterns with hyperspectral indices.

- Temporal Analysis: Track trait evolution over time by aligning data from consecutive imaging sessions, enabling growth rate calculation and dynamic response quantification.

- Statistical Modeling: Integrate multimodal phenotypic data with genomic and environmental information to develop predictive models of plant performance.

The resulting datasets provide unprecedented insights into plant structure-function relationships and their responses to genetic and environmental factors.
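For the temporal analysis step, growth between two imaging sessions is commonly summarized as a relative growth rate, RGR = (ln S2 - ln S1) / (t2 - t1), where S is a size trait such as projected leaf area. This classical growth-analysis formula is not taken from the cited sources; the function name and example values are illustrative.

```python
import math

def relative_growth_rate(size_t1, size_t2, t1_days, t2_days):
    """Mean relative growth rate (per day) between two imaging sessions."""
    if size_t1 <= 0 or size_t2 <= 0:
        raise ValueError("sizes must be positive")
    if t2_days <= t1_days:
        raise ValueError("t2_days must be later than t1_days")
    return (math.log(size_t2) - math.log(size_t1)) / (t2_days - t1_days)

# Illustrative case: projected leaf area doubles from 10 to 20 cm^2 in 7 days.
rgr = relative_growth_rate(10.0, 20.0, 0, 7)
```

Because the rate is based on the logarithm of size, it allows plants of different initial size to be compared on the same scale.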

Visual Documentation of Workflow

The following diagram illustrates the complete multimodal image registration pipeline, from image acquisition to the generation of registered outputs:

Multimodal Image Registration Workflow

Technical Specifications of Imaging Modalities

Table 1: Imaging Modalities in Plant Phenotyping

| Imaging Technique | Sensor Type | Spectral Range | Primary Applications | Phenotypic Parameters |
|---|---|---|---|---|
| Visible Imaging | RGB cameras | 400-700 nm | Morphological analysis, growth monitoring | Projected leaf area, plant architecture, color analysis [10] |
| Fluorescence Imaging | Fluorescence cameras | 400-800 nm | Photosynthetic efficiency, stress detection | Quantum yield, non-photochemical quenching [10] |
| Thermal Imaging | Thermal infrared cameras | 7-14 μm | Stomatal conductance, water status | Canopy temperature, transpiration rate [10] |
| Hyperspectral Imaging | Imaging spectrometers | 400-2500 nm | Biochemical composition, disease detection | Vegetation indices, pigment composition, water content [10] |
| 3D Imaging | Time-of-flight, stereo cameras | N/A (depth) | Plant architecture, biomass estimation | Leaf angle distribution, canopy structure, biomass [10] |
| Multimodal 3D Registration | Combined RGB-D + other sensors | Multiple ranges | Comprehensive phenotype assessment | Integrated structural, physiological and health parameters [3] |

Research Reagent Solutions and Essential Materials

Table 2: Essential Research Materials for Multimodal Plant Phenotyping

| Item | Specifications | Function in Workflow |
|---|---|---|
| Multimodal Imaging System | RGB, thermal, hyperspectral, and depth cameras (e.g., Intel RealSense D435) [23] | Simultaneous acquisition of complementary plant data across multiple spectra |
| Calibration Target | Standardized checkerboard pattern with precise dimensions [22] | Geometric calibration and alignment of multiple cameras in the system |
| Depth Sensing Camera | Time-of-flight camera with sufficient resolution for plant structures [3] | Capture of 3D information essential for parallax correction and occlusion handling |
| Controlled Environment Chamber | Adjustable lighting, temperature, and humidity control [10] | Standardization of imaging conditions to minimize environmental variability |
| Data Processing Unit | High-performance computing system with adequate GPU resources [22] | Execution of computationally intensive 3D reconstruction and registration algorithms |
| Reference Standards | Color charts and spatial reference objects [10] | Radiometric calibration and spatial validation across imaging modalities |
| Plant Handling System | Automated conveyor or positioning system [11] | High-throughput processing of multiple plants with consistent positioning |

Experimental Protocols and Methodologies

Protocol for 3D Multimodal Registration

Based on the method described by Stumpe et al. [22], the following protocol enables robust multimodal image registration:

- System Configuration: Mount all cameras in fixed positions relative to the imaging area. Ensure overlapping fields of view and minimize lens distortion through appropriate focal length selection.

- Checkerboard Calibration: Acquire at least 20 images of a checkerboard pattern from different orientations and distances with each camera. Use these to compute intrinsic and extrinsic camera parameters.

- Multimodal Image Acquisition: Simultaneously capture images of plant subjects with all cameras using the synchronization method appropriate for your setup.

- Depth Map Generation: Process raw data from the time-of-flight camera to generate a high-quality depth map. Apply noise reduction filters while preserving edge details.

- Mesh Reconstruction: Convert the depth map into a 3D mesh using surface reconstruction algorithms. Optimize mesh complexity to balance detail and computational efficiency.

- Ray Casting Registration: For each camera, cast rays through the 3D mesh to establish correspondence between image pixels and 3D coordinates.

- Occlusion Handling: Identify occluded regions by detecting rays that intersect with multiple surfaces or fail to intersect with the mesh. Classify occlusion types and generate corresponding mask layers.

- Validation: Assess registration accuracy using ground control points or by visually inspecting alignment of distinctive features across modalities.

This protocol has been validated on six distinct plant species with varying leaf geometries, demonstrating its robustness across different plant architectures [3].
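The geometric core of the registration and occlusion steps can be sketched in a few lines. The example below is a simplification and not the mesh-based ray casting of the cited method: it forward-projects 3D points (as would be obtained from the depth map) into a second camera with a pinhole model, then uses a z-buffer to flag points hidden behind nearer surfaces. The intrinsics `K`, the pose `(R, t)`, the tolerance `depth_tol`, and all function names are hypothetical values chosen for illustration.

```python
def project(point, K, R, t):
    """Project a 3D point from the reference frame into a pinhole camera.

    Returns (u, v, depth) in the target camera, or None if the point lies behind it.
    """
    # Transform into the target camera frame: Xc = R @ X + t
    xc = [sum(R[i][j] * point[j] for j in range(3)) + t[i] for i in range(3)]
    if xc[2] <= 0:
        return None
    u = K[0][0] * xc[0] / xc[2] + K[0][2]
    v = K[1][1] * xc[1] / xc[2] + K[1][2]
    return u, v, xc[2]


def register_points(points, K, R, t, width, height, depth_tol=0.01):
    """Map each 3D point to a pixel in the target camera and classify its visibility."""
    zbuf = {}       # nearest depth seen so far at each pixel
    projected = []
    for p in points:
        result = project(p, K, R, t)
        if result is None:
            projected.append(None)
            continue
        u, v, z = result
        px = (int(round(u)), int(round(v)))
        if not (0 <= px[0] < width and 0 <= px[1] < height):
            projected.append(None)
            continue
        projected.append((px, z))
        zbuf[px] = min(z, zbuf.get(px, float("inf")))

    results = []
    for item in projected:
        if item is None:
            results.append({"pixel": None, "status": "out_of_view"})
        else:
            px, z = item
            # A point is occluded if something nearer projects to the same pixel.
            status = "visible" if z <= zbuf[px] + depth_tol else "occluded"
            results.append({"pixel": px, "status": status})
    return results


# Hypothetical calibration: 640x480 camera shifted 0.1 m along x from the depth camera.
K = [[500.0, 0.0, 320.0], [0.0, 500.0, 240.0], [0.0, 0.0, 1.0]]
R = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
t = [-0.1, 0.0, 0.0]
points = [(0.1, 0.0, 1.0), (0.1, 0.0, 2.0), (0.0, 0.0, 1.0), (5.0, 0.0, 1.0)]

registered = register_points(points, K, R, t, width=640, height=480)
statuses = [r["status"] for r in registered]
print(statuses)
```

The first two points lie on the same viewing ray of the second camera, so the farther one is flagged as occluded; the status labels play the role of the mask layers mentioned in the occlusion handling step.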

Application to Plant Disease Assessment

Multimodal imaging enables sophisticated plant disease assessment through the correlation of symptoms across modalities. The following protocol, adapted from Fernandez et al. [24], outlines a multimodal approach for non-destructive disease diagnosis:

- Multimodal Symptom Detection: Capture registered images across visible, thermal, and hyperspectral modalities to detect complementary disease symptoms including color changes, temperature variations, and biochemical alterations.

- Feature Fusion: Extract features from each modality that indicate disease presence or severity, such as lesion area from visible images, canopy temperature anomalies from thermal images, and specific spectral indices from hyperspectral data.

- Machine Learning Classification: Train a classifier (e.g., random forest, support vector machine) on the multimodal feature set to distinguish between healthy and diseased tissue, or to classify different disease stages.

- Quantitative Assessment: Calculate disease severity metrics based on the classified regions, providing objective measures for resistance screening.

This approach has been successfully applied to grapevine trunk diseases, achieving over 91% accuracy in discriminating intact, degraded, and white rot tissues [24].
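The fusion, classification, and severity steps above can be sketched end to end on toy data. This is a minimal illustration under stated assumptions, not the pipeline of the cited study: a nearest-centroid classifier stands in for the random forest or support vector machine named in the protocol, and the three features per region (lesion fraction, temperature anomaly, spectral index) and all values are hypothetical.

```python
def fuse(rgb_feats, thermal_feats, hsi_feats):
    """Feature-level fusion: concatenate per-modality features into one vector."""
    return list(rgb_feats) + list(thermal_feats) + list(hsi_feats)


def fit_scaler(rows):
    """Per-feature mean and standard deviation (a zero std is replaced by 1.0)."""
    cols = list(zip(*rows))
    means = [sum(c) / len(c) for c in cols]
    stds = [(sum((x - m) ** 2 for x in c) / len(c)) ** 0.5 or 1.0
            for c, m in zip(cols, means)]
    return means, stds


def scale(row, means, stds):
    return [(x - m) / s for x, m, s in zip(row, means, stds)]


def fit_centroids(rows, labels):
    """Mean feature vector per class (stand-in for a trained classifier)."""
    sums, counts = {}, {}
    for row, lab in zip(rows, labels):
        acc = sums.setdefault(lab, [0.0] * len(row))
        for i, x in enumerate(row):
            acc[i] += x
        counts[lab] = counts.get(lab, 0) + 1
    return {lab: [s / counts[lab] for s in acc] for lab, acc in sums.items()}


def predict(row, centroids):
    """Assign the class whose centroid is nearest in squared Euclidean distance."""
    return min(centroids,
               key=lambda lab: sum((a - b) ** 2 for a, b in zip(row, centroids[lab])))


def severity_percent(labels, diseased="diseased"):
    """Share of regions classified as diseased, in percent."""
    return 100.0 * sum(lab == diseased for lab in labels) / len(labels)


# Hypothetical training regions: (lesion fraction), (temp anomaly in K), (spectral index)
train = [fuse([0.02], [0.1], [0.80]), fuse([0.01], [-0.2], [0.78]),
         fuse([0.03], [0.0], [0.82]), fuse([0.30], [1.5], [0.45]),
         fuse([0.25], [1.2], [0.50]), fuse([0.40], [1.8], [0.40])]
train_labels = ["healthy"] * 3 + ["diseased"] * 3

means, stds = fit_scaler(train)
centroids = fit_centroids([scale(r, means, stds) for r in train], train_labels)

# Hypothetical new regions from one plant, scaled with the training statistics.
new_regions = [fuse([0.28], [1.4], [0.48]), fuse([0.02], [0.05], [0.79]),
               fuse([0.35], [1.6], [0.42]), fuse([0.015], [-0.1], [0.81])]
predictions = [predict(scale(r, means, stds), centroids) for r in new_regions]
severity = severity_percent(predictions)
print(predictions, severity)
```

Standardizing features before fusion matters here because the modalities have very different numeric ranges; without it, the thermal anomaly would dominate the distance computation.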

Implementation Considerations

Technical Requirements and Limitations

Implementing multimodal imaging systems requires careful consideration of several technical factors. Depth cameras have specific operating ranges and may perform differently across plant species with varying canopy densities [23]. Computational requirements for 3D reconstruction and ray casting can be significant, particularly when processing large datasets or operating at high spatial and temporal resolutions [22]. Researchers should also consider the trade-offs between system complexity and biological insights, as overly complex setups may introduce technical artifacts without corresponding scientific benefits.

The 3D registration method described requires at least one depth camera in the setup, which may represent an additional hardware investment. However, this approach eliminates the need for specialized feature detection algorithms tailored to specific plant species or camera types, potentially simplifying the implementation for diverse research applications [3].

Future Directions and Emerging Technologies

The field of multimodal plant phenotyping is rapidly evolving, with several promising research directions emerging. Deep learning approaches are being increasingly applied to 3D plant phenomics, offering potential improvements in feature extraction, classification, and segmentation tasks [25]. Integration of multimodal imaging with other sensing technologies, such as molecular markers or environmental sensors, could provide even more comprehensive insights into plant function. Additionally, the development of lightweight models and edge computing approaches aims to make sophisticated analysis more accessible and deployable in field conditions [23].

Future advancements will likely focus on improving the scalability of multimodal systems, enhancing automated analysis pipelines, and developing standardized data formats to facilitate collaboration and data sharing across research institutions. As these technologies mature, multimodal imaging is poised to become an increasingly central tool in plant phenomics and precision agriculture.

Multimodal imaging in plant phenomics research represents a paradigm shift from single-source data analysis to an integrated approach that combines diverse sensing technologies. This methodology, often termed multi-mode analytics (MMA) or sensor fusion, involves the synergistic use of multiple imaging and sensing modalities to capture comprehensive information on plant structure, physiology, and function [26]. By integrating data from various sources, researchers can overcome the limitations inherent in any single technology, enabling a more holistic understanding of plant growth, stress responses, and health status.

The foundational principle of multimodal phenomics lies in the complementary nature of different sensing technologies. RGB imaging captures visible morphological characteristics, hyperspectral imaging reveals physiological status through spectral signatures, thermal imaging provides data on plant water status and transpiration, and 3D imaging and LiDAR quantify structural attributes [27] [19]. When fused, these data streams create a multidimensional representation of plant phenotypes that more accurately reflects the complex interplay between genetics, environment, and management practices. This integrated approach is particularly valuable for deciphering quantitative traits governed by multiple genes and strongly influenced by environmental factors [19].

Sensor fusion operates at multiple technical levels—from early data layer fusion to feature-level integration and decision-level combinations—each offering distinct advantages for specific applications [28]. The implementation of these fusion strategies has become increasingly critical as plant phenomics addresses global challenges in food security, climate change adaptation, and sustainable agricultural intensification. This technical guide examines current applications, methodologies, and implementations of sensor fusion across three critical domains: plant stress response, disease detection, and growth modeling.
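The difference between the three fusion levels is easiest to see side by side. The sketch below assumes two co-registered images of identical shape (visible and thermal), represented as nested lists; the feature choice (mean and range) and the weighted averaging of per-modality probabilities are illustrative assumptions, not the specific methods evaluated in [28].

```python
def data_level_fusion(visible_img, thermal_img):
    """Data-level: stack co-registered pixels into multi-channel pixels before any analysis."""
    return [[(v, t) for v, t in zip(vrow, trow)]
            for vrow, trow in zip(visible_img, thermal_img)]


def mean_and_range(img):
    """Toy feature extractor: mean intensity and intensity range of one image."""
    flat = [x for row in img for x in row]
    return [sum(flat) / len(flat), max(flat) - min(flat)]


def feature_level_fusion(visible_img, thermal_img):
    """Feature-level: extract features per modality, then concatenate them."""
    return mean_and_range(visible_img) + mean_and_range(thermal_img)


def decision_level_fusion(probabilities, weights=None):
    """Decision-level: combine per-modality model outputs by a weighted average."""
    weights = weights or [1.0] * len(probabilities)
    return sum(p * w for p, w in zip(probabilities, weights)) / sum(weights)


# Hypothetical 2x2 images: visible reflectance and canopy temperature in degrees C.
visible = [[0.2, 0.4], [0.6, 0.8]]
thermal = [[24.0, 25.0], [26.0, 27.0]]

stacked = data_level_fusion(visible, thermal)           # one (visible, thermal) tuple per pixel
fused_features = feature_level_fusion(visible, thermal) # one 4-element feature vector
stress_probability = decision_level_fusion([0.9, 0.6])  # outputs of two separate models
print(stacked[0][1], fused_features, stress_probability)
```

Data-level fusion requires pixel-accurate registration, feature-level fusion only requires that features describe the same plant or region, and decision-level fusion lets each modality keep its own model.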

Sensor Fusion for Plant Stress Response Analysis

Technical Approaches and Fusion Methodologies

The application of sensor fusion for plant stress response monitoring typically employs multiple data processing methods, each with distinct advantages for specific applications. Research on poplar trees under gradient drought stress has demonstrated that feature layer fusion, in which features are extracted from each modality before integration, delivers the best performance for monitoring drought severity and duration, with average accuracy, precision, recall, and F1 scores of 0.85, 0.86, 0.85, and 0.85, respectively [28]. This approach outperforms data decomposition, data layer fusion, and decision layer fusion by making more effective use of the complementary information in visible and thermal infrared imagery.

Table 1: Performance Comparison of Data Fusion Methods in Poplar Drought Monitoring

| Fusion Method | Average Accuracy | Average Precision | Average Recall | Average F1 Score |
|---|---|---|---|---|
| Feature Layer Fusion | 0.85 | 0.86 | 0.85 | 0.85 |
| Data Decomposition | 0.54 | 0.54 | 0.54 | 0.54 |
| Data Layer Fusion | Varies by algorithm | Varies by algorithm | Varies by algorithm | Varies by algorithm |
| Decision Layer Fusion | Lower than feature layer | Lower than feature layer | Lower than feature layer | Lower than feature layer |

Multi-mode analytics integrates data from multiple detection modes and spectral bands to model plant stress responses. Real-time data capture distinguishes transient from prolonged stress and detects early biochemical shifts in photosynthesis before visible symptoms appear [26]. This early detection is crucial for timely interventions that can prevent significant yield losses. MMA systems can also track recurrent stress patterns, distinguishing adaptive responses from new stressors and identifying concurrent deficiencies such as combined nutrient and water stress [26].

Experimental Protocol: Poplar Drought Stress Monitoring

Objective: Monitor drought severity and duration in poplar trees using multimodal data fusion with visible and thermal infrared imaging.

Materials and Equipment:

- High-resolution visible light camera

- Thermal infrared imaging sensor

- Controlled environment growth facilities

- Four poplar species with varying drought tolerance

- Computing hardware for data processing and machine learning

Methodology:

- Experimental Setup: Apply gradient drought stress treatments to multiple poplar species in controlled environments.

- Data Acquisition: Collect synchronized visible and thermal infrared images throughout the stress progression period.

- Feature Extraction: For feature layer fusion, extract texture features and grayscale channel values from both imaging modalities.

- Feature Selection: Apply Recursive Feature Elimination with Cross-Validation (RFE-CV) to identify optimal feature combinations.

- Model Training: Implement multiple machine learning algorithms (Random Forest, XGBoost, GBDT, Decision Tree, CatBoost) with Bayesian hyperparameter optimization.

- Model Evaluation: Validate model performance using five-fold cross-validation with accuracy, precision, recall, and F1 score metrics.

Key Findings: Texture features from thermal infrared image decomposition were more sensitive to poplar drought stress than visible light image features: 15 of the 24 optimal features identified came from thermal imagery [28].
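The evaluation step of the methodology (five-fold cross-validation with accuracy, precision, recall, and F1 score) can be sketched without any external library. The example below is an illustration under assumptions: precision, recall, and F1 are macro-averaged over classes, folds are contiguous rather than shuffled or stratified, and a nearest-class-mean rule on a single hypothetical thermal texture feature stands in for the tree-based models used in the study.

```python
def k_fold_indices(n, k=5):
    """Split range(n) into k contiguous folds of near-equal size."""
    folds, start = [], 0
    for i in range(k):
        size = n // k + (1 if i < n % k else 0)
        folds.append(list(range(start, start + size)))
        start += size
    return folds


def macro_metrics(y_true, y_pred):
    """Accuracy plus macro-averaged precision, recall, and F1 over all classes."""
    labels = sorted(set(y_true) | set(y_pred))
    precisions, recalls, f1s = [], [], []
    for lab in labels:
        tp = sum(t == lab and p == lab for t, p in zip(y_true, y_pred))
        fp = sum(t != lab and p == lab for t, p in zip(y_true, y_pred))
        fn = sum(t == lab and p != lab for t, p in zip(y_true, y_pred))
        prec = tp / (tp + fp) if tp + fp else 0.0
        rec = tp / (tp + fn) if tp + fn else 0.0
        precisions.append(prec)
        recalls.append(rec)
        f1s.append(2 * prec * rec / (prec + rec) if prec + rec else 0.0)
    n = len(labels)
    return {"accuracy": sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true),
            "precision": sum(precisions) / n,
            "recall": sum(recalls) / n,
            "f1": sum(f1s) / n}


def cross_validate(X, y, fit_predict, k=5):
    """Average each metric over k folds; fit_predict(X_train, y_train, X_test) -> labels."""
    scores = []
    for test_idx in k_fold_indices(len(y), k):
        held_out = set(test_idx)
        train_idx = [i for i in range(len(y)) if i not in held_out]
        y_pred = fit_predict([X[i] for i in train_idx],
                             [y[i] for i in train_idx],
                             [X[i] for i in test_idx])
        scores.append(macro_metrics([y[i] for i in test_idx], y_pred))
    return {m: sum(s[m] for s in scores) / k for m in scores[0]}


def nearest_mean_fit_predict(X_train, y_train, X_test):
    """Stand-in classifier: assign the class whose training mean is closest."""
    means = {}
    for lab in set(y_train):
        vals = [x[0] for x, l in zip(X_train, y_train) if l == lab]
        means[lab] = sum(vals) / len(vals)
    return [min(means, key=lambda lab: abs(x[0] - means[lab])) for x in X_test]


# Hypothetical single-feature samples (e.g., one thermal texture value per plant).
X = [[0.1], [0.9], [0.2], [0.8], [0.15], [0.85], [0.05], [0.95], [0.25], [0.75]]
y = ["control", "drought"] * 5

cv_scores = cross_validate(X, y, nearest_mean_fit_predict, k=5)
example = macro_metrics(["mild", "mild", "severe", "severe", "none", "none"],
                        ["mild", "severe", "severe", "severe", "none", "mild"])
print(cv_scores, example)
```

For real datasets, shuffling or stratifying the folds is usually advisable so that each fold contains every stress class.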

Figure 1: Workflow for multimodal poplar drought stress monitoring

Multimodal Imaging for Plant Disease Detection

Comparative Analysis of Imaging Modalities

Plant disease detection has evolved significantly with advances in imaging technologies and artificial intelligence. Systematic comparisons between RGB (visible) imaging and hyperspectral imaging (HSI) reveal distinct advantages and limitations for each modality, creating opportunities for synergistic fusion approaches. RGB imaging is accessible and inexpensive (systems cost roughly 500-2,000 USD) and detects visible disease symptoms using conventional deep learning architectures [27]. However, its accuracy drops from 95-99% in controlled laboratory settings to 70-85% in field conditions, primarily due to environmental variability and illumination effects.

Hyperspectral imaging systems, though more expensive (20,000-50,000 USD), enable pre-symptomatic disease detection by capturing physiological changes before visible symptoms manifest. The cited review gives a spectral range of 250 to 15,000 nanometers for the technology [27], although the imaging spectrometers commonly used in plant phenotyping cover roughly 400-2500 nm. This capability for early detection provides a critical window for intervention before disease establishment and spread. On the modeling side, Transformer-based architectures such as SWIN have proven more robust on real-world datasets, achieving 88% accuracy compared to 53% for traditional CNNs [27].

Table 2: Performance Comparison of RGB vs. Hyperspectral Imaging for Disease Detection

| Imaging Modality | Laboratory Accuracy | Field Accuracy | Early Detection Capability | Cost Range (USD) |

|---|---|---|---|---|