Multimodal Imaging in Genotype-Phenotype Association Studies: Advanced Methods, Applications, and Future Directions

Abstract

This comprehensive review explores the transformative role of multimodal imaging in genotype-phenotype association studies, a rapidly evolving field bridging computational biology, medical imaging, and genetics. We examine foundational principles of integrating diverse data modalities—from neuroimaging and retinal scans to single-cell RNA sequencing—to uncover complex genetic architectures underlying human diseases. The article details cutting-edge methodological frameworks including adversarial mutual learning, dirty multi-task sparse canonical correlation analysis (SCCA), and multimodal foundation models that address critical challenges like missing data and high-dimensional integration. Through applications across neurological disorders, inherited retinal diseases, and cancer research, we demonstrate how these approaches enhance diagnostic precision, enable early intervention, and accelerate therapeutic development. The synthesis of validation strategies, comparative analyses, and future directions provides researchers and drug development professionals with essential insights for implementing these advanced methodologies in both research and clinical settings.

The Genotype-Phenotype Landscape: Foundations of Multimodal Data Integration

Core Conceptual Framework

Multimodal imaging genetics is an advanced research framework that investigates the genetic underpinnings of brain structure, function, and disease by integrating heterogeneous data types. This approach simultaneously analyzes high-dimensional datasets from neuroimaging and genomics to uncover how genetic variations influence biological systems observable through imaging technologies [1] [2].

The foundational premise is that imaging-derived phenotypes serve as crucial intermediate traits (endophenotypes) that bridge the gap between genetic variation and clinical disease expression [2] [3]. Unlike traditional genetics studies that focus directly on disease diagnosis, imaging genetics examines how genetic variants influence quantitative biological traits measurable through various imaging modalities [2]. This provides a more powerful approach for understanding the biological pathways from genotype to phenotype to clinical symptom manifestation [2].

Multimodal AI has emerged as a transformative force in this domain, with systems capable of jointly learning from diverse data streams to create richer representations and significantly boost the discovery of genetic links to disease [4] [5]. By combining complementary and overlapping information from different modalities, these approaches enhance biological signals, reduce noise, and enable more powerful genetic discoveries than unimodal methods [5].

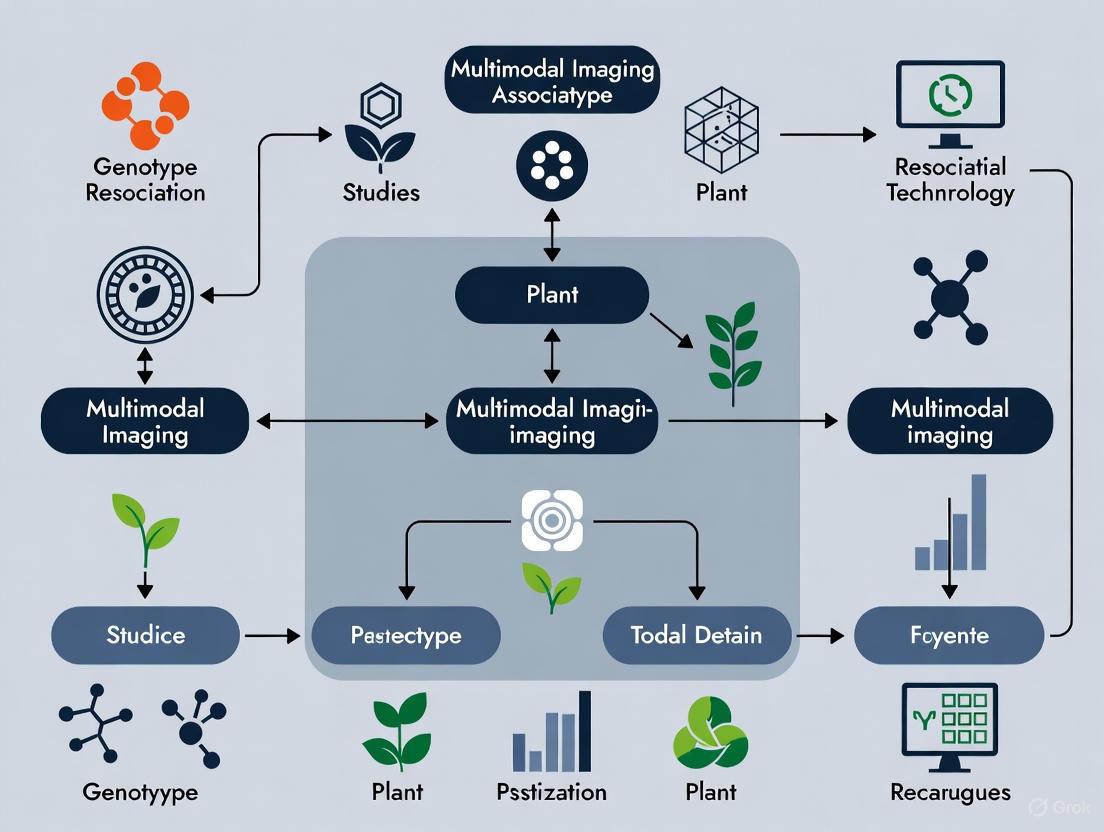

Figure 1: Core Conceptual Framework of Multimodal Imaging Genetics. This diagram illustrates how imaging phenotypes serve as intermediate traits bridging genetic variation and clinical disease manifestation.

Key Biological Significance and Applications

Enhancing Discovery of Disease Mechanisms

Multimodal imaging genetics has proven particularly valuable for unraveling the complex biological mechanisms underlying neurological and psychiatric disorders. In Alzheimer's disease research, this approach has successfully identified robust and consistent regions of interest across multiple imaging modalities associated with known genetic risk factors like the APOE rs429358 SNP [2]. Studies have discovered cerebellar-mediated mechanisms common to multiple neuropsychiatric disorders and identified genes involved in iron transport, extracellular matrix formation, and midline axon development that influence brain structure and susceptibility to disease [3].

Advancing Drug Discovery and Development

The pharmaceutical and biotechnology industries are increasingly leveraging multimodal imaging genetics to accelerate therapeutic development. This approach helps identify novel biological targets, predict treatment response, and increase clinical trial success rates by identifying patient subpopulations most likely to respond to treatments [4]. AI-driven predictive analytics can also flag potential side effects and toxicity issues before clinical testing, which may improve the safety profiles of drug candidates [4].

Powering Personalized Medicine

By integrating multi-omics data with imaging and clinical information, multimodal approaches facilitate comprehensive understanding of disease mechanisms at the individual level [4]. The development of improved polygenic risk scores using genetic variants identified through multimodal analysis has demonstrated significantly better prediction of cardiac diseases like atrial fibrillation, enabling better identification of at-risk individuals [5].

Genomic Data Types

Table 1: Genomic Data Types in Multimodal Imaging Genetics

| Data Type | Description | Research Applications |
|---|---|---|
| Single Nucleotide Polymorphisms (SNPs) | Common genetic variations occurring throughout the genome | Genome-wide association studies (GWAS) to identify genetic loci associated with imaging phenotypes [2] [3] |
| APOE Variants | Specific genetic polymorphisms in the apolipoprotein E gene | Investigation of Alzheimer's disease risk and brain changes [2] |
| DNA Methylation Patterns | Epigenetic modifications that regulate gene expression | Studying environmental influences on gene expression and brain structure [6] |
| Expression Quantitative Trait Loci (eQTLs) | Genomic loci that regulate expression levels of mRNAs | Linking genetic variants to gene expression changes in specific tissues [7] |

Imaging Modalities and Derived Phenotypes

Table 2: Common Imaging Modalities and Derived Phenotypes

| Imaging Modality | Biological Information | Representative Derived Phenotypes |
|---|---|---|
| Structural MRI | Brain anatomy and morphology | Regional grey matter volume, cortical thickness, surface area [3] |
| Functional MRI (fMRI) | Brain activity and connectivity | Functional connectivity between brain regions, network properties [3] |
| Diffusion MRI | White matter microstructure | Fractional anisotropy, mean diffusivity, tract integrity [3] |
| FDG-PET | Cerebral glucose metabolism | Metabolic rates in specific brain regions [2] |
| Amyloid PET (AV45) | Amyloid plaque deposition | Amyloid burden in Alzheimer's disease vulnerable regions [2] |
| Susceptibility-weighted MRI | Iron deposition and venous vasculature | Iron content in subcortical structures, microbleeds [3] |

Methodological Approaches and Experimental Protocols

Multimodal Data Integration Frameworks

Hypergraph-Based Multi-modal Data Fusion (HMF) represents an advanced approach that captures high-order relationships among subjects beyond simple pairwise interactions [6]. This method generates a hypergraph similarity matrix to represent complex relationships and enforces regularization based on both inter- and intra-modality relationships [6]. The mathematical formulation extends standard joint learning models by incorporating hypergraph-based manifold regularization, which helps circumvent overfitting problems common in high-dimension, low-sample-size data [6].
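To make the idea of a hypergraph similarity structure concrete, the following is a minimal sketch, not the HMF formulation from [6]. It assumes hyperedges built from k-nearest neighbours and uses the widely used normalized hypergraph Laplacian; the function names `knn_hypergraph_incidence` and `hypergraph_laplacian` are hypothetical.

```python
import numpy as np

def knn_hypergraph_incidence(X, k=3):
    """Build an incidence matrix H (subjects x hyperedges).

    Assumption: one hyperedge per subject, containing that subject and
    its k nearest neighbours in feature space (Euclidean distance).
    """
    n = X.shape[0]
    # Pairwise squared Euclidean distances between subjects.
    d2 = ((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1)
    H = np.zeros((n, n))
    for e in range(n):
        members = np.argsort(d2[e])[: k + 1]  # the subject itself plus k neighbours
        H[members, e] = 1.0
    return H

def hypergraph_laplacian(H, w=None):
    """Normalized Laplacian L = I - Dv^-1/2 H W De^-1 H^T Dv^-1/2."""
    n, m = H.shape
    w = np.ones(m) if w is None else np.asarray(w, dtype=float)
    dv = H @ w          # weighted vertex degrees
    de = H.sum(axis=0)  # number of subjects in each hyperedge
    dv_inv_sqrt = 1.0 / np.sqrt(dv)
    theta = (dv_inv_sqrt[:, None] * H) @ np.diag(w / de) @ (H.T * dv_inv_sqrt[None, :])
    return np.eye(n) - theta
```

A regularizer of the form `f.T @ L @ f` then penalizes solutions `f` that differ strongly among subjects sharing a hyperedge, which is one common way such a Laplacian is used to limit overfitting when features far outnumber subjects.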

Diagnosis-Guided Multi-Modality (DGMM) frameworks incorporate subjects' clinical diagnosis information to discover disease-specific imaging genetic associations [2]. This approach ensures that identified quantitative traits are associated with both genetic markers and disease status, providing more biologically relevant findings for understanding pathways from genetic data to brain changes to clinical symptoms [2].

AI and Machine Learning Approaches

Multimodal REpresentation learning for Genetic discovery on Low-dimensional Embeddings (M-REGLE) employs a convolutional variational autoencoder (CVAE) to learn compressed, combined "signatures" from multiple data streams [5]. The methodology involves combining modalities, using the CVAE to learn latent factors, applying principal component analysis (PCA) so that the resulting factors are uncorrelated, and conducting genome-wide association studies on these factors [5]. In the reported results, this approach identified 19.3% more genetic loci for 12-lead ECG data than unimodal methods [5].
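The PCA step in this pipeline can be sketched as follows. This is an illustration under stated assumptions rather than the M-REGLE code: the latent factors `Z` stand in for the output of a trained autoencoder, and `decorrelate_with_pca` is a hypothetical helper name. Note that PCA guarantees uncorrelated components, which is weaker than statistical independence.

```python
import numpy as np

def decorrelate_with_pca(Z):
    """Rotate latent factors Z (subjects x factors) onto principal components.

    The returned scores have a diagonal sample covariance, so each column
    can be used as a separate phenotype in a downstream association scan.
    """
    Zc = Z - Z.mean(axis=0)                       # centre each latent factor
    _, _, Vt = np.linalg.svd(Zc, full_matrices=False)
    return Zc @ Vt.T                              # principal component scores
```

Each column of the result would then be tested against genotypes as its own quantitative trait.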

Transformer-based models and graph neural networks (GNNs) represent cutting-edge approaches for handling complex multimodal data [8]. Transformers utilize self-attention mechanisms to assign weighted importance to different parts of input data, while GNNs model data in graph-structured formats that can naturally represent relationships between different data types without forcing them into grid-like structures [8].
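The self-attention weighting described above can be illustrated with a single-head, scaled dot-product sketch. The projection matrices `Wq`, `Wk`, and `Wv` are placeholders that a real model would learn, and treating each row of `X` as one input token (for example, one modality embedding) is an assumption made for illustration.

```python
import numpy as np

def softmax(z, axis=-1):
    """Numerically stable softmax along the given axis."""
    z = z - z.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(X, Wq, Wk, Wv):
    """Single-head scaled dot-product self-attention.

    X: (tokens x features). Returns the attended outputs and the
    (tokens x tokens) weight matrix whose rows sum to one.
    """
    Q, K, V = X @ Wq, X @ Wk, X @ Wv
    scores = Q @ K.T / np.sqrt(K.shape[-1])  # pairwise relevance, scaled by key dimension
    weights = softmax(scores, axis=-1)       # importance of every token for each token
    return weights @ V, weights
```

Row `i` of the weight matrix shows how much each input contributes to the updated representation of input `i`.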

Figure 2: Multimodal Imaging Genetics Experimental Workflow. This diagram outlines the key stages in a comprehensive multimodal imaging genetics study, highlighting the integration of diverse data sources.

Image-Mediated Association Study (IMAS) Protocol

The Image-Mediated Association Study (IMAS) protocol provides an innovative methodology for leveraging borrowed imaging/genomics data to conduct association mapping in legacy GWAS cohorts [7]. This approach is particularly valuable when imaging data is unavailable for large GWAS datasets due to cost constraints. The protocol utilizes an integrated feature selection/aggregation model to discover genetic bases underlying neuropsychiatric disorders by leveraging image-derived phenotypes from resources like the UK Biobank [7]. Simulations demonstrate that IMAS can be more powerful than hypothetical protocols with complete imaging data, offering significant cost savings for integrated analysis of genetics and imaging [7].

Key Experimental Steps:

- Data Acquisition and Quality Control: Obtain genomic data and multimodal imaging from coordinated initiatives like UK Biobank or ADNI [3] [2]

- Image-Derived Phenotype Extraction: Process raw images to generate quantitative traits using standardized pipelines [3]

- Genotype Processing and Imputation: Perform quality control, phasing, and imputation of genetic data [3]

- Multimodal Data Integration: Apply HMF, DGMM, or M-REGLE approaches to integrate data types [6] [2] [5]

- Association Mapping: Conduct genome-wide analyses to identify genetic variants associated with multimodal phenotypes [3]

- Replication and Validation: Verify findings in independent datasets and through biological pathway analyses [3]
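In its simplest form, the association-mapping step above is a regression of one phenotype on one variant at a time. The sketch below assumes an additive model on allele dosage (0, 1, or 2), no covariates, and a normal approximation for the p-value, which is only reasonable for large samples. Real analyses add covariates and population-structure controls, and `single_snp_test` is a hypothetical name.

```python
import math

def single_snp_test(dosages, phenotype):
    """Simple linear regression of a quantitative phenotype on allele dosage.

    Returns (beta, standard_error, two_sided_p) for the additive effect.
    """
    n = len(dosages)
    mean_g = sum(dosages) / n
    mean_y = sum(phenotype) / n
    sxx = sum((g - mean_g) ** 2 for g in dosages)
    if sxx == 0:
        raise ValueError("SNP is monomorphic in this sample")
    sxy = sum((g - mean_g) * (y - mean_y) for g, y in zip(dosages, phenotype))
    beta = sxy / sxx
    intercept = mean_y - beta * mean_g
    rss = sum((y - intercept - beta * g) ** 2 for g, y in zip(dosages, phenotype))
    se = math.sqrt(rss / (n - 2) / sxx)
    z = beta / se
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided p-value, normal approximation
    return beta, se, p
```

Running this over every variant and every image-derived phenotype, with a multiple-testing threshold, gives the basic shape of a genome-wide scan.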

Major Cohort Studies and Datasets

Table 3: Essential Research Resources in Multimodal Imaging Genetics

| Resource | Type | Key Features | Applications |
|---|---|---|---|
| UK Biobank | Large-scale cohort | 500,000 participants; multimodal imaging; genome-wide genetics; extensive phenotyping [3] | Genome-wide association studies of 3,144 brain imaging phenotypes [3] |
| Alzheimer's Disease Neuroimaging Initiative (ADNI) | Longitudinal cohort | Multimodal imaging (MRI, FDG-PET, AV45); genetic data; cognitive assessment [2] | Studying genetic associations with brain changes in Alzheimer's disease [2] |
| MIND Clinical Imaging Consortium (MCIC) | Clinical cohort | Structural and functional MRI; genetic data; schizophrenia and healthy controls [6] | Schizophrenia classification and biomarker detection [6] |

Computational Tools and Methods

Multimodal imaging genetics relies on a toolkit of specialized computational resources:

- Hypergraph-Based Algorithms: For capturing high-order relationships among subjects in multimodal data [6]

- Convolutional Variational Autoencoders (CVAE): For learning compressed representations from multiple data streams [5]

- Transformer Architectures: For handling sequential data and assigning importance weights to different input features [8]

- Graph Neural Networks (GNNs): For modeling non-Euclidean relationships in multimodal data [8]

- Image Processing Pipelines: Standardized tools for deriving quantitative phenotypes from raw images [3]

- GWAS Software: Specialized tools for genome-wide association testing with appropriate population structure controls [3]

Key Findings and Quantitative Results

Multimodal imaging genetics has yielded substantial insights into the genetic architecture of brain structure and function. Large-scale studies have identified hundreds of significant associations between genetic variants and imaging phenotypes, with many findings replicating across independent datasets [3].

Table 4: Quantitative Findings from Major Multimodal Imaging Genetics Studies

| Study/Approach | Sample Size | Key Quantitative Results | Significance |
|---|---|---|---|
| UK Biobank Imaging Genetics [3] | 8,428 participants | 1,262 significant SNP-imaging phenotype associations; 844 replicated; 38 genomic regions with strong associations | Demonstrates extensive genetic influence on brain structure and function |
| M-REGLE for Cardiovascular Traits [5] | UK Biobank participants | 19.3% more loci identified for 12-lead ECGs; 72.5% reduction in reconstruction error; improved AFib prediction | Validates superiority of multimodal over unimodal approaches |
| DGMM for Alzheimer's Disease [2] | 913 ADNI participants | Identified consistent ROIs across MRI, FDG-PET, and AV45-PET associated with APOE risk | Discovers robust cross-modal biomarkers for genetic risk |

These findings collectively demonstrate that multimodal approaches substantially enhance discovery power compared to single-modality studies. The identification of consistent regional patterns across multiple imaging modalities provides stronger evidence for biological mechanisms linking genetic variants to brain structure and function [2]. Furthermore, the improved genetic risk prediction achieved through multimodal integration has important implications for personalized medicine approaches in neurological and psychiatric disorders [5].

The integration of neuroimaging, genomics, and clinical phenotypes represents a transformative approach in biomedical research, enabling the deconstruction of disease heterogeneity and illuminating the pathways linking genetic predisposition to clinical manifestation. Genotype-phenotype association studies aim to connect an organism's genetic makeup with its observable characteristics, a task of immense complexity for neurological and psychiatric disorders. These studies are increasingly relying on multimodal data integration to bridge the gap between identified genetic risk loci and their functional, systems-level consequences in the brain. This paradigm leverages high-dimensional datasets to identify dimensional intermediate phenotypes that provide a more direct and mechanistically informative link to genetic architecture than broad clinical diagnoses alone.

Central to this approach is the concept of the endophenotype, a heritable, quantitative trait that lies on the causal pathway between genes and complex clinical syndromes [9]. In brain disorders, neuroimaging-derived phenotypes serve as powerful endophenotypes, capturing the expression of genetic risk in brain structure and function before it manifests as a full-blown clinical entity. Simultaneously, advances in sequencing technologies have generated a tsunami of genomic data, necessitating sophisticated bioinformatic annotation to distinguish causal variants from a sea of correlative findings [10] [11]. When these annotated genomic profiles are combined with deeply phenotyped clinical cohorts using machine learning frameworks, researchers can identify reproducible disease subtypes with distinct genetic drivers and clinical trajectories, paving the way for precision medicine in neurology and psychiatry [12] [9].

Technical Foundations of Core Data Modalities

Neuroimaging Modalities

Neuroimaging provides non-invasive windows into brain structure, function, and connectivity, generating rich quantitative phenotypes essential for genotype-phenotype mapping.

- Structural Magnetic Resonance Imaging (sMRI) measures brain macrostructure, including cortical thickness, surface area, and subcortical volume. These measures serve as stable, heritable markers of neurodevelopmental and neurodegenerative processes.

- Functional MRI (fMRI) captures brain activity and functional connectivity by measuring blood-oxygen-level-dependent (BOLD) signals, revealing networks implicated in cognitive processes and their disruption in disease.

- Diffusion Tensor Imaging (DTI) maps white matter tract microstructure by measuring the directionality of water diffusion, providing indices of structural connectivity between brain regions.

- Advanced Neuroimaging Analytics: Modern frameworks like Surreal-GAN and HYDRA use artificial intelligence to identify complex dimensional neuroimaging endophenotypes (DNEs) from high-dimensional imaging data [9]. These DNEs capture distinct, co-varying neuroanatomical patterns that cut across traditional diagnostic boundaries, offering a more nuanced representation of disease liability in the general population.

Genomic Modalities

Genomic technologies identify DNA sequence variations and facilitate the interpretation of their functional impact on molecular and systems-level biology.

- Whole Genome Sequencing (WGS) interrogates the complete DNA sequence of an organism, capturing variation across both coding and non-coding regions [10] [11]. This is critical for comprehensive variant discovery.

- Whole Exome Sequencing (WES) targets protein-coding exons, which constitute approximately 1-2% of the genome but harbor the majority of known pathogenic variants for Mendelian disorders [10].

- Genome-Wide Association Studies (GWAS) test hundreds of thousands to millions of single nucleotide polymorphisms (SNPs) across many individuals to identify genetic variants associated with specific traits or diseases [11]. A key challenge is that the majority of associated variants lie in non-coding regions, implicating regulatory elements in disease pathogenesis.

- Functional Genomic Annotation: This critical step translates raw variant calls into biological insights using tools like Ensembl's Variant Effect Predictor (VEP) and ANNOVAR [11]. The process involves determining a variant's genomic context (e.g., coding, intronic, intergenic), predicting its functional impact on genes and proteins, and overlaying regulatory information from epigenomic maps to prioritize putative causal mechanisms.

Table 1: Key Genomic Technologies for Association Studies

| Technology | Genomic Coverage | Primary Application | Key Limitations |
|---|---|---|---|
| Whole Genome Sequencing (WGS) | Complete genome (~99%) | Discovery of coding and non-coding variants; structural variation | Higher cost; substantial data storage; complex interpretation of non-coding variants |
| Whole Exome Sequencing (WES) | Protein-coding exons (~1-2%) | Identifying causal variants for Mendelian diseases | Misses regulatory and deep intronic variants |
| Genome-Wide Association Study (GWAS) | Common variants across the genome | Identifying genetic loci associated with complex traits | Identifies association signals, not causal variants; most hits are in non-coding regions |

Clinical Phenotyping Modalities

Clinical phenotyping involves the systematic characterization of a patient's disease status, symptoms, and trajectory, moving beyond simple diagnostic labels to capture multidimensional heterogeneity.

- Electronic Health Records (EHR) provide large-scale, real-world data on diagnoses, medications, laboratory values, and procedures. Phenotype algorithms are used to define patient cohorts from EHRs with high precision and recall [13].

- Standardized Clinical Assessments include structured interviews, cognitive batteries, and behavioral rating scales that provide quantitative, domain-specific measures of symptom severity and function.

- Biomarker Panels integrate laboratory measures across pathophysiological domains (e.g., inflammation, metabolism). Machine learning can then be applied to these panels to identify distinct clinical phenotypes. For example, a study of 1,207 hemodialysis patients used K-means clustering on 22 clinical indicators to reveal three metabolic phenotypes: "high retention-inflammatory," "optimal clearance," and "intermediate-stable" [12]. This data-driven subtyping provides a more granular view of clinical heterogeneity.

- Composite Clinical Indicators: These mathematically integrate related pathophysiological measures to enhance discriminatory power. For instance, the Middle-Small Molecule Clearance Index (β2-microglobulin reduction ratio × Kt/V) provides a more comprehensive assessment of dialysis adequacy than traditional metrics alone [12]. Similarly, the Inflammation–nutrition ratio (CRP/albumin) quantitatively captures the malnutrition–inflammation complex syndrome.
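The two composite indicators above are simple arithmetic, sketched here. The source gives only the products and ratios; computing the reduction ratio as a fraction, (pre - post) / pre, is an assumption, as are the function names and the choice of units. The example values are invented for illustration.

```python
def reduction_ratio(pre, post):
    """Fractional reduction of a solute over a session (assumed definition)."""
    return (pre - post) / pre

def clearance_index(b2m_pre, b2m_post, kt_v):
    """Middle-Small Molecule Clearance Index: beta2-microglobulin reduction ratio x Kt/V."""
    return reduction_ratio(b2m_pre, b2m_post) * kt_v

def inflammation_nutrition_ratio(crp, albumin):
    """Inflammation-nutrition ratio: CRP divided by albumin."""
    return crp / albumin

# Illustrative values only: a 60% beta2-microglobulin reduction with Kt/V = 1.4.
index = clearance_index(b2m_pre=30.0, b2m_post=12.0, kt_v=1.4)   # about 0.84
ratio = inflammation_nutrition_ratio(crp=8.0, albumin=40.0)      # 0.2
```

Whether the reduction ratio is expressed as a fraction or a percentage changes the scale of the index, so any thresholds should be taken from the original study [12] and not from this sketch.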

Methodologies for Multimodal Data Integration

Experimental and Analytical Workflows

A robust genotype-phenotype association study follows a multi-stage workflow, from data generation to integrated analysis, with each step requiring rigorous quality control.

Statistical and Machine Learning Approaches

The integration of multimodal data requires sophisticated analytical frameworks designed to handle high dimensionality and uncover complex relationships.

- Unsupervised Clustering (K-means): This approach identifies naturally occurring subgroups within a dataset without pre-defined labels. As applied in clinical phenotyping, it groups patients based on similarity across multiple biomarkers, revealing distinct subtypes such as the "high retention-inflammatory" phenotype in hemodialysis patients [12]. The stability of these clusters is then validated using metrics like the Adjusted Rand Index.

- Polygenic Risk Scoring (PRS): PRS aggregate the effects of many genetic variants (often from GWAS) into a single individual-level score that quantifies genetic liability for a trait. These scores can be tested for association with neuroimaging endophenotypes to establish a genetic-neurobiological link [9].

- Mendelian Randomization (MR): This method uses genetic variants as instrumental variables to test for causal relationships between a modifiable risk factor (e.g., an imaging phenotype) and a clinical outcome, helping to mitigate confounding in observational data [14].

- Multimodal Machine Learning: AI models, including semi-supervised representation learning frameworks like Surreal-GAN and HYDRA, are used to derive dimensional neuroimaging endophenotypes (DNEs) from complex data [9]. These DNEs can subsequently be integrated with genomic data in phenome-wide association studies to elucidate their genetic architecture and relationship to systemic health.
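As a minimal sketch of the polygenic risk score described above, the score for one individual is the weighted sum of effect-allele counts, with weights taken from GWAS effect sizes. The SNP identifiers and weights below are invented, and `polygenic_risk_score` is a hypothetical helper. Practical pipelines also handle allele alignment, linkage disequilibrium, and variant selection.

```python
def polygenic_risk_score(dosages, weights):
    """Weighted sum of effect-allele dosages for one individual.

    dosages: dict mapping SNP id -> effect-allele count (0, 1, or 2)
    weights: dict mapping SNP id -> per-allele effect size from a GWAS
    Only SNPs present in both dictionaries contribute to the score.
    """
    shared = dosages.keys() & weights.keys()
    return sum(weights[snp] * dosages[snp] for snp in shared)

# Hypothetical identifiers and effect sizes, for illustration only.
weights = {"snp_a": 0.20, "snp_b": -0.10, "snp_c": 0.05}
person = {"snp_a": 2, "snp_b": 1, "snp_d": 2}
score = polygenic_risk_score(person, weights)  # 0.20*2 + (-0.10)*1, about 0.3
```

Scores computed this way across a cohort can then be tested for association with an imaging endophenotype using ordinary regression.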

Table 2: Core Analytical Methods for Multimodal Integration

| Method | Primary Function | Application in Genotype-Phenotype Research |
|---|---|---|
| K-means Clustering | Unsupervised discovery of data-driven subgroups | Identifying distinct clinical or neuroanatomical subtypes within a heterogeneous patient population [12] |
| Polygenic Risk Score (PRS) | Aggregation of genetic liability | Testing if genetic risk for a disorder is associated with alterations in specific brain-based endophenotypes [9] |
| Mendelian Randomization | Causal inference using genetic instruments | Testing causal hypotheses about whether an endophenotype mediates the path from a gene to a disease [14] |
| Multimodal AI (e.g., HYDRA) | Semi-supervised pattern discovery | Identifying dimensional neuroimaging endophenotypes (DNEs) that capture disease-related brain patterns [9] |
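The Adjusted Rand Index mentioned for cluster stability compares two labelings of the same subjects, for example cluster assignments from two resampled K-means runs. The sketch below implements only the index itself from its standard pair-counting definition, not the clustering. Returning 1.0 in the degenerate single-cluster case is a convention chosen here.

```python
from collections import Counter
from math import comb

def adjusted_rand_index(labels_a, labels_b):
    """Chance-corrected agreement between two clusterings of the same items."""
    if len(labels_a) != len(labels_b):
        raise ValueError("label lists must have the same length")
    n = len(labels_a)
    if n < 2:
        return 1.0
    # Count item pairs that fall together in both, in A only, and in B only.
    together_both = sum(comb(c, 2) for c in Counter(zip(labels_a, labels_b)).values())
    together_a = sum(comb(c, 2) for c in Counter(labels_a).values())
    together_b = sum(comb(c, 2) for c in Counter(labels_b).values())
    expected = together_a * together_b / comb(n, 2)   # agreement expected by chance
    max_index = 0.5 * (together_a + together_b)
    if max_index == expected:                          # degenerate: nothing to compare
        return 1.0
    return (together_both - expected) / (max_index - expected)
```

Values near 1 indicate that the two runs recover essentially the same subgroups regardless of how the cluster labels are numbered, while values near 0 indicate agreement no better than chance.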

Validation and Causal Inference Frameworks

Robust validation is paramount to ensure that findings from integrative analyses are reproducible and biologically meaningful.

- Cross-Validation and Replication: Machine learning models must be rigorously validated in independent cohorts to ensure generalizability. This involves partitioning data into training, validation, and test sets, or using k-fold cross-validation.

- Genetic Validation: The genetic architecture of identified endophenotypes can be characterized through genome-wide association studies of the endophenotypes themselves. Identifying associated genomic loci provides orthogonal biological validation [9].

- Phenome-Wide Association Study (PheWAS): This approach tests the association of a specific variable (e.g., a polygenic risk score for a DNE) with a wide array of clinical phenotypes and health outcomes available in biobanks like the UK Biobank, establishing the broader health relevance of the discovered endophenotype [9].

- Prospective Clinical Validation: The ultimate test for a genotype-phenotype model is its ability to predict future disease onset or clinical progression in longitudinal studies, a necessary step for translating research findings into clinical practice.
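On the causal-inference side, the Mendelian randomization approach described earlier is often summarized with a fixed-effect inverse-variance weighted (IVW) estimate computed from GWAS summary statistics. The sketch below assumes independent instruments and ignores uncertainty in the SNP-exposure effects. The numbers in the example are invented, and `ivw_estimate` is a hypothetical name.

```python
import math

def ivw_estimate(beta_exposure, beta_outcome, se_outcome):
    """Fixed-effect IVW estimate of the causal effect of exposure on outcome.

    Each SNP contributes a Wald ratio beta_outcome / beta_exposure,
    weighted by beta_exposure**2 / se_outcome**2.
    """
    num = sum(bx * by / se ** 2
              for bx, by, se in zip(beta_exposure, beta_outcome, se_outcome))
    den = sum(bx ** 2 / se ** 2
              for bx, se in zip(beta_exposure, se_outcome))
    return num / den, math.sqrt(1.0 / den)

# Illustrative summary statistics for three instruments (invented values).
effect, se = ivw_estimate(
    beta_exposure=[0.1, 0.2, 0.4],   # SNP effects on the imaging phenotype
    beta_outcome=[0.05, 0.1, 0.2],   # SNP effects on the clinical outcome
    se_outcome=[0.01, 0.02, 0.02],
)
```

Because every Wald ratio in this toy example equals 0.5, the pooled estimate is also 0.5. With real data the ratios differ, and large heterogeneity among them is one warning sign of pleiotropy.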

Essential Research Reagents and Computational Tools

Successful execution of multimodal integration studies requires a comprehensive suite of computational tools and resources for data processing, analysis, and management.

Table 3: The Scientist's Toolkit for Multimodal Studies

| Tool/Resource | Category | Primary Function | Application Context |
|---|---|---|---|
| Ensembl VEP [11] | Genomic Annotation | Predicts functional consequences of genetic variants on genes, transcripts, and protein sequence | Critical first step in prioritizing deleterious variants from WGS/WES data |
| ANNOVAR [11] | Genomic Annotation | Functionally annotates genetic variants from sequencing data | Used similarly to VEP for annotating SNPs and indels in large-scale studies |
| UK Biobank [9] | Data Resource | Large-scale biomedical database containing deep genetic, imaging, and clinical data from half a million participants | Provides the population-scale data essential for discovering and validating genotype-phenotype associations |
| OHDSI/OMOP [13] | Phenotyping Platform | Open-source community and data model for standardizing analysis of observational health data | Enables large-scale, reproducible phenotype algorithm development and validation across international datasets |
| HYDRA [9] | Neuroimaging AI | Machine learning tool for semi-supervised clustering of heterogeneous brain disorders | Used to derive dimensional neuroimaging endophenotypes (DNEs) from structural MRI data |
| Surreal-GAN [9] | Neuroimaging AI | Semi-supervised representation learning via Generative Adversarial Networks | Discovers heterogeneous disease-related imaging patterns without the need for extensive labeled data |
| Databricks [15] | Data Management | Unified data analytics platform for massive-scale data processing | Provides a compliant cloud-based "Data LakeHouse" for managing and analyzing multimodal clinical and genomic data |
| Apache Atlas [16] | Data Governance | Provides data lineage and governance capabilities within a unified data platform | Ensures data integrity, traceability, and auditability for GxP-compliant research environments |

The synergistic integration of neuroimaging, genomics, and deep clinical phenotyping is fundamentally advancing our understanding of the biological pathways that connect genetic predisposition to complex clinical disorders. The methodologies outlined in this guide—from AI-driven derivation of dimensional neuroimaging endophenotypes and robust functional annotation of genetic variants to machine learning-based clinical subtyping—provide a powerful framework for deconstructing disease heterogeneity. The key to unlocking the full potential of this multimodal paradigm lies in the continued development of scalable computational infrastructures, standardized phenotyping platforms like OHDSI [13], and robust analytical frameworks that can handle the immense scale and complexity of the data. As these tools and resources mature, they will accelerate the translation of genetic discoveries into a mechanistic understanding of disease pathophysiology, ultimately paving the way for personalized diagnostic and therapeutic strategies in neurology and psychiatry.

Genome-wide association studies (GWAS) are a foundational methodology in human genetics for identifying genetic variants associated with complex traits and diseases. A landmark 2005 study on age-related macular degeneration catalyzed the field, leading to thousands of published GWAS and the identification of tens of thousands of genomic loci associated with human traits ranging from established biological parameters to complex behavioral phenotypes [17]. The conventional GWAS approach tests associations between single-nucleotide polymorphisms (SNPs) and phenotypes one marker at a time under a linear, additive model of genetic effects [18]. While this paradigm has produced valuable discoveries, including IL6R as a drug target for inflammatory conditions and CYP2C19 as a pharmacogenomic marker, several fundamental limitations persist [17].

The March 2025 bankruptcy of 23andMe serves as a stark reminder of the limited translational value of traditional GWAS findings for the general public [17]. This reality check highlights four persistent obstacles that continue to hinder GWAS progress: technological inertia in genomic reference standards, the linkage disequilibrium (LD) bottleneck complicating causal inference, a research focus that prioritizes heritability over clinical actionability, and inadequate sample diversity that limits equity and generalizability [17]. These challenges have stimulated the development of more integrated analytical frameworks that combine multiple data modalities to bridge the gap between genetic association and biological mechanism.

Theoretical Foundations: From Single Modality to Multi-Modal Integration

Limitations of Traditional GWAS Frameworks

Traditional GWAS face several theoretical and methodological constraints that limit their explanatory power. The approach primarily identifies statistical associations rather than causal mechanisms, providing limited insight into the biological pathways linking genetic variants to phenotypic outcomes [17]. This problem is compounded by the issue of horizontal pleiotropy, where genetic variants influence multiple traits through different pathways, creating challenges for inferring direct biological relationships [19] [20].

The "omnigenic" model of complex traits suggests that most heritability is explained by genes with indirect effects on phenotypes, necessitating analytical frameworks that can account for these complex network relationships [17]. Furthermore, the predominant focus on European ancestry populations (over 80% of GWAS participants) creates major limitations for generalizability and equity, potentially overlooking population-specific genetic architectures and gene-environment interactions [17].

The Rise of Integrated Imaging Genomics

Imaging genomics has emerged as a powerful integrative framework that combines imaging-derived phenotypes (IDPs) with genetic data to bridge the gap between genotype and phenotype [21]. This approach leverages the ability of multi-modal imaging to provide non-invasive physiological and functional phenotypes that serve as intermediate markers between genetic variation and clinical disease states [21].

The theoretical advancement of this field has progressed through several stages:

- Initial Correlation Studies (circa 2007): Early imaging genomics focused primarily on identifying genetic variants associated with quantitative imaging features, treating IDPs as endophenotypes closer to biological mechanisms than clinical diagnoses [21].

- Causal Inference Frameworks: Methodological advances incorporated Mendelian randomization (MR) and instrumental variable (IV) approaches to test causal relationships between imaging phenotypes and disease outcomes [19] [21].

- Multi-Modal Integration: Contemporary frameworks simultaneously incorporate multiple imaging modalities (structural, functional, and diffusion MRI) to account for pleiotropic effects across different aspects of brain structure and function [19] [20].

This evolution reflects a fundamental theoretical shift from analyzing isolated associations to modeling complex networks of biological influence.

Methodological Frameworks for Integrated Analysis

Extensions of GWAS for Intermediate Phenotypes

Several methodological frameworks have extended traditional GWAS to incorporate intermediate phenotypes and enable causal inference:

Transcriptome-Wide Association Studies (TWAS) integrate gene expression data with GWAS through a two-stage approach. First, SNPs within a gene are used to predict gene expression levels via machine learning methods. Second, the genetically regulated component of gene expression is associated with the outcome trait [19]. This approach can be statistically interpreted through the lens of causal inference using instrumental variable analysis [19].

Imaging-Wide Association Studies (IWAS) extend the TWAS framework by substituting neuroimaging features for gene expression as intermediate phenotypes [19]. Univariate IWAS (UV-IWAS) tests individual IDPs, while Multivariable IWAS (MV-IWAS) accounts for horizontal pleiotropy by modeling multiple IDPs simultaneously [19]. The mathematical foundation of IWAS can be represented as:

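Stage 1 (Prediction Model): $E[m] = \sum_j g_j \alpha_j$

Stage 2 (Outcome Model): $h(E[y]) = \hat{m}\beta$

Where $g_j$ represents the SNPs, $\alpha_j$ are the weights from penalized regression, $\hat{m} = \sum_j g_j \hat{\alpha}_j$ is the genetically imputed IDP, $y$ is the outcome trait, $h$ is a link function, and $\beta$ is the effect of the imputed IDP on the outcome [19].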
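The two stages can be sketched in a few lines of code. This is a minimal illustration rather than the implementation used in [19]: it assumes an identity link for h, ridge regression as the penalized model, and separate reference and GWAS samples. The function names `fit_stage1` and `fit_stage2` and the `ridge` parameter are hypothetical.

```python
import numpy as np


def fit_stage1(G_ref, m_ref, ridge=1.0):
    """Stage 1: ridge weights alpha for predicting one IDP from SNP dosages."""
    Gc = G_ref - G_ref.mean(axis=0)          # center each SNP column
    mc = m_ref - m_ref.mean()                # center the IDP
    p = Gc.shape[1]
    # Closed-form ridge solution: (G'G + ridge * I)^-1 G'm
    return np.linalg.solve(Gc.T @ Gc + ridge * np.eye(p), Gc.T @ mc)


def fit_stage2(G_gwas, y, alpha):
    """Stage 2: regress the outcome on the imputed IDP (identity link assumed)."""
    m_hat = (G_gwas - G_gwas.mean(axis=0)) @ alpha   # genetically imputed IDP
    yc = y - y.mean()
    # Slope of a simple linear regression of y on m_hat
    return float(m_hat @ yc / (m_hat @ m_hat))
```

In practice stage 2 would also return a standard error and test statistic for the slope, and a logistic link would replace the identity link for binary outcomes such as case-control status.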

Methodological Comparisons

Table 1: Comparison of Genotype-Phenotype Mapping Frameworks

| Framework | Primary Inputs | Analytical Approach | Key Outputs | Limitations |

|---|---|---|---|---|

| Traditional GWAS | Genotypes, Clinical Phenotypes | Single-marker association testing | SNP-trait associations | Limited biological insight; Susceptible to confounding |

| TWAS | Genotypes, Gene Expression, Clinical Phenotypes | Two-stage instrumental variable | Gene-trait associations mediated by expression | Dependent on expression reference panels |

| UV-IWAS | Genotypes, Imaging Phenotypes, Clinical Phenotypes | Two-stage instrumental variable | IDP-trait associations | Vulnerable to horizontal pleiotropy |

| MV-IWAS | Genotypes, Multi-modal Imaging, Clinical Phenotypes | Multivariable MR controlling for pleiotropy | Modality-level causal pathways | Computational complexity; Requires large sample sizes |

Advanced Computational Frameworks

G-P Atlas represents a novel neural network framework that transforms genetic analysis by simultaneously modeling multiple phenotypes and capturing complex nonlinear relationships between genes [18]. This approach uses a two-tiered denoising autoencoder architecture that first learns a low-dimensional representation of phenotypes and then maps genetic data to these representations [18]. Unlike traditional linear models, G-P Atlas can identify causal genes acting through non-additive interactions that conventional approaches miss [18].

BrainXcan adopts a polygenic scoring approach to implement instrumental variable analysis for imaging genetics, using the whole genome as potential instruments to identify IDPs that causally influence psychiatric traits under MR assumptions [19]. This method addresses the high dimensionality of imaging features but may lose gene-level resolution [19].

Experimental Protocols and Workflows

Multimodal Neuroimaging Causal Inference Pipeline

Table 2: Research Reagent Solutions for Integrated Genotype-Imaging Studies

| Research Reagent | Function/Application | Specification Considerations |

|---|---|---|

| UK Biobank IDPs | Standardized imaging-derived phenotypes | Structural, functional, and diffusion MRI metrics; Quality control protocols essential |

| GWAS Summary Statistics | Pre-computed genetic associations for method validation | Must include effect sizes, standard errors, and p-values; LD reference panels needed |

| LD Reference Panels | Account for correlation between genetic variants | 1000 Genomes Project or population-specific references; Impact portability of results |

| TWAS/IWAS Software | Implement instrumental variable methods | Summary statistics compatibility; Pleiotropy robustness features; GitHub availability |

A representative experimental protocol for modality-level causal testing in Alzheimer's disease integrates the following components [19] [20]:

Data Acquisition and Preprocessing:

- Genotype Data: Obtain GWAS summary statistics from consortium studies (e.g., International Genomics of Alzheimer's Project) and process using standard quality control pipelines, including imputation to a unified reference panel.

- Imaging Data: Acquire multi-modal neuroimaging (structural MRI, functional MRI, diffusion MRI) from cohorts such as UK Biobank, extracting IDPs through established processing pipelines (e.g., FSL, FreeSurfer).

- Phenotype Data: Collect clinical diagnostic information for Alzheimer's disease using standardized criteria (e.g., NINCDS-ADRDA).

Analytical Workflow:

- Genetic Instrument Construction: For each gene, select SNPs that meet genome-wide significance thresholds, and apply clumping procedures so that the selected instruments are independent.

- Modality-Level Testing: Implement multivariable MR to test causal effects of each brain modality (structural, functional, diffusion) while controlling for pleiotropic effects of IDPs from other modalities.

- Sensitivity Analyses: Conduct robustness checks using different MR assumptions (e.g., MR-Egger, weighted median) to assess consistency of causal estimates.
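The sensitivity-analysis step can be illustrated with summary statistics alone. The sketch below is a univariable simplification of the multivariable setting described above, not the pipeline of [19] [20]: it computes an inverse-variance weighted (IVW) estimate and an MR-Egger slope and intercept from per-SNP effects. The argument names `bx`, `by`, and `se_y` (SNP-exposure effects, SNP-outcome effects, and outcome standard errors) are illustrative, and SNP-exposure effects are assumed to be oriented positive.

```python
from typing import Sequence, Tuple


def ivw_estimate(bx: Sequence[float], by: Sequence[float],
                 se_y: Sequence[float]) -> float:
    """Inverse-variance weighted estimate: weighted regression through the origin."""
    num = sum(x * y / s ** 2 for x, y, s in zip(bx, by, se_y))
    den = sum(x * x / s ** 2 for x, s in zip(bx, se_y))
    return num / den


def egger_estimate(bx: Sequence[float], by: Sequence[float],
                   se_y: Sequence[float]) -> Tuple[float, float]:
    """MR-Egger: weighted regression of by on bx with an intercept.

    Returns (slope, intercept). A non-zero intercept suggests directional
    pleiotropy across the instruments.
    """
    w = [1.0 / s ** 2 for s in se_y]
    sw = sum(w)
    mx = sum(wi * x for wi, x in zip(w, bx)) / sw
    my = sum(wi * y for wi, y in zip(w, by)) / sw
    sxy = sum(wi * (x - mx) * (y - my) for wi, x, y in zip(w, bx, by))
    sxx = sum(wi * (x - mx) ** 2 for wi, x in zip(w, bx))
    slope = sxy / sxx
    return slope, my - slope * mx
```

When the two estimators agree and the Egger intercept is close to zero, the causal estimate is less likely to be driven by directional pleiotropy; a large discrepancy is a signal to inspect the instruments.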

The following diagram illustrates the core analytical workflow for multimodal causal inference:

Workflow for Multimodal Causal Inference

Neural Network Framework Implementation

The G-P Atlas framework implements a sophisticated neural network architecture with the following experimental protocol [18]:

Phase 1: Phenotype Autoencoder Training

- Data Corruption: Introduce Gaussian-distributed noise and missing values to phenotypic data.

- Encoder Training: Train a three-layer encoder network with leaky ReLU activation and batch normalization to create a low-dimensional latent representation.

- Decoder Training: Train a symmetrical decoder network to reconstruct uncorrupted phenotypic data from the latent representation.

- Hyperparameter Tuning: Optimize latent space size, hidden layer dimensions, and noise levels using grid search with 80/20 train-test splits.
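The data corruption step of Phase 1 can be sketched as follows. This is an illustrative, library-free version rather than the G-P Atlas code: it assumes missing values are encoded by a fill value of 0.0, and the names `corrupt`, `noise_sd`, and `missing_rate` are hypothetical. The autoencoder is then trained to map the corrupted vector back to the clean one.

```python
import random
from typing import List


def corrupt(values: List[float], noise_sd: float, missing_rate: float,
            rng: random.Random, fill: float = 0.0) -> List[float]:
    """Return a corrupted copy of one phenotype vector.

    Each entry is either replaced by `fill` (simulating a missing
    measurement) with probability `missing_rate`, or perturbed with
    zero-mean Gaussian noise of standard deviation `noise_sd`.
    """
    out = []
    for v in values:
        if rng.random() < missing_rate:
            out.append(fill)
        else:
            out.append(v + rng.gauss(0.0, noise_sd))
    return out


# Example: the denoising objective compares decode(encode(noisy)) with `clean`.
rng = random.Random(42)
clean = [0.5, -1.2, 3.0, 0.0]
noisy = corrupt(clean, noise_sd=0.1, missing_rate=0.2, rng=rng)
```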

Phase 2: Genotype-to-Phenotype Mapping

- Network Architecture: Fix the trained phenotypic decoder weights and create a new network mapping genotypic data to the phenotype latent space.

- Regularization: Apply combined L1 (weight=0.8) and L2 (weight=0.01) norm regularization to prevent overfitting.

- Training Protocol: Use Adam optimizer with learning rate=0.001, β₁=0.5, β₂=0.999 for 250 epochs with batch size=16.

- Variable Importance: Calculate permutation-based feature importance using the Captum library to identify candidate causal genetic loci.
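The idea behind the variable-importance step is generic: shuffle one input feature across samples and measure how much the prediction error grows. The sketch below shows that idea without any library. It is not Captum's API and not the G-P Atlas code, and `permutation_importance`, `n_repeats`, and `seed` are hypothetical names.

```python
import random
from typing import Callable, List, Sequence


def mse(pred: Sequence[float], target: Sequence[float]) -> float:
    """Mean squared error between predictions and targets."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(target)


def permutation_importance(model: Callable[[Sequence[float]], float],
                           X: List[List[float]], y: List[float],
                           n_repeats: int = 10, seed: int = 0) -> List[float]:
    """Mean increase in MSE when each feature column is shuffled across samples."""
    rng = random.Random(seed)
    baseline = mse([model(row) for row in X], y)
    importances = []
    for j in range(len(X[0])):
        increases = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)  # break the link between feature j and y
            X_perm = [row[:j] + [column[i]] + row[j + 1:]
                      for i, row in enumerate(X)]
            increases.append(mse([model(r) for r in X_perm], y) - baseline)
        importances.append(sum(increases) / n_repeats)
    return importances
```

A locus that the model ignores receives an importance of zero, while loci the model relies on receive positive scores. High importance indicates predictive relevance in the fitted model; establishing causality still requires follow-up evidence.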

The architecture and information flow of this framework are visualized below:

G-P Atlas Two-Tiered Architecture

Applications in Disease Research

Alzheimer's Disease Case Study

The integration of multimodal neuroimaging and genetics has proven particularly valuable in Alzheimer's disease research, where a 2025 study demonstrated the application of modality-level causal testing [20]. Using GWAS data from UK Biobank and the International Genomics of Alzheimer's Project, researchers implemented a multivariable IWAS framework to disentangle the causal contributions of different brain imaging modalities [20].

This analysis revealed distinct genetic pathways influencing Alzheimer's risk through specific neuroimaging modalities, with structural MRI features (particularly hippocampal volume) showing the strongest causal relationship with disease progression, followed by diffusion tensor imaging metrics of white matter integrity [20]. The methodological innovation allowed researchers to control for horizontal pleiotropy, in which genetic variants influence multiple imaging modalities simultaneously, and thereby provided more specific insights into the neurobiological pathways of Alzheimer's disease [19].

Multi-Omics Integration in Epilepsy Research

Beyond neuroimaging, integrated frameworks are expanding to incorporate multiple omics technologies in complex neurological disorders. In epilepsy research, multi-omics approaches enable comprehensive characterization of molecular dysregulation networks underlying different epilepsy phenotypes [22]. The integration of genomics, transcriptomics, proteomics, and metabolomics has catalyzed a paradigm shift from hypothesis-driven to data-driven research architectures [22].

Spatial transcriptomics technologies, recognized as "Method of the Year" by Nature Methods in 2020, have been particularly transformative by enabling visualization and quantitative analysis of the full transcriptome with spatial distribution in tissue sections [22]. This advancement addresses a critical limitation of conventional transcriptomics, which sacrifices crucial spatial information during tissue homogenization.

Future Directions and Conceptual Innovations

Emerging Theoretical Concepts

The field continues to evolve with several emerging conceptual frameworks that address persistent challenges:

The "trait efficiency locus (TEL)" has been proposed as a complement to the quantitative trait locus framework, providing a new lens for evaluating genetic discoveries that emphasizes efficiency rather than mere association [17]. This concept reframes genetic effects in terms of their functional impact on biological systems.

Pangenomic references represent another conceptual shift from single reference genomes to collections that capture all DNA sequence information in a species [17]. This approach enables presence/absence variation-based GWAS (PAV-GWAS), vital for assessing population structure, analyzing diversity, and identifying important functional genes across diverse human populations [22].

Methodological Frontiers

Future methodological development will likely focus on several key frontiers:

Deep Learning for LD Modeling: As sequencing resolution improves, the reliance on massive, explicitly enumerated LD matrices is becoming computationally burdensome. Future approaches may adopt deep learning models that learn LD patterns without explicit enumeration [17].

Enhanced Causal Inference: Methods that strengthen causal claims while requiring fewer statistical assumptions will be particularly valuable, especially those that integrate multiple lines of evidence from different experimental paradigms.

Scalable Multi-Modal Fusion: The development of computationally efficient algorithms for fusing high-dimensional data from genomics, imaging, and other omics technologies will enable more comprehensive biological models.

The trajectory from traditional GWAS to integrated analysis represents a fundamental maturation of genetic epidemiology, moving from cataloguing associations to understanding biological mechanisms through sophisticated multi-modal integration. This evolution promises to enhance both the scientific insights and clinical translation of genetic studies in complex human diseases.

Challenges in High-Dimensional Data Integration and Interpretation

In the field of genotype-phenotype association studies, the integration of high-dimensional data from multimodal sources, such as genomics and neuroimaging, presents a formidable frontier. Modern research increasingly relies on combining diverse data types—including genome-wide association studies (GWAS), structural and functional magnetic resonance imaging (sMRI/fMRI), and electronic health records (EHR)—to build a comprehensive understanding of complex disease mechanisms [23] [24] [25]. However, this multimodal approach introduces significant challenges in data integration, interpretation, and analysis. This technical guide examines the core challenges and outlines sophisticated computational strategies developed to address them, providing researchers with actionable methodologies for advancing precision medicine.

Core Technical Challenges

The path to effectively merging and interpreting high-dimensional biological data is fraught with technical hurdles. The table below summarizes the primary challenges and their impacts on research outcomes.

Table 1: Key Challenges in High-Dimensional Data Integration

| Challenge | Description | Impact on Research |

|---|---|---|

| Dimensionality Imbalance [26] | Marked differences in feature dimensions across modalities (e.g., millions of SNPs vs. thousands of imaging voxels). | Complicates model training, risks having one modality dominate the analysis, and can obscure subtle but biologically significant signals. |

| Multimodal Fusion [23] [26] | The technical difficulty of combining disparate data types (e.g., image, genotype, clinical text) into a coherent model. | Suboptimal fusion leads to significant loss of complementary information, reducing the power to detect genuine associations. |

| Missing Modalities [26] | The frequent absence of one or more data types for certain subjects in a cohort. | Introduces bias, reduces effective sample size, and complicates the use of standardized analytical pipelines. |

| Interpretability [23] [26] | The "black-box" nature of complex AI/ML models used for integration, making it hard to understand how predictions are made. | Hinders clinical translation, as biological insight and trust in model predictions are compromised. |

| Data Alignment & Noise [27] | The problem of ensuring data from different sources are synchronized and comparable, while mitigating inherent noise. | Misaligned or noisy data produces unreliable results and can lead to the detection of spurious associations. |

Methodologies for Data Integration and Analysis

Multimodal Fusion Strategies

Choosing the right fusion strategy is critical and depends on the research question and data structure.

Table 2: Comparison of Data Fusion Techniques

| Fusion Type | Description | Best Used For | Advantages | Limitations |

|---|---|---|---|---|

| Early Fusion [26] | Raw data from different modalities are combined directly before feature extraction. | Highly correlated modalities with similar dimensionality and sampling rates. | Simple; can capture basic cross-modal relationships at the raw data level. | Struggles with heterogeneous data; sensitive to noise and missing data. |

| Intermediate Fusion [23] [26] | Modality-specific features are extracted first, then integrated in a shared model layer (e.g., using neural networks). | Integrating fundamentally different data types (e.g., images with genetic or clinical data). | Highly flexible; resilient to dimensionality imbalance and missing modalities. | Model architecture becomes more complex. |

| Late Fusion [26] | Separate models are trained for each modality, and their predictions are combined at the final stage. | Scenarios with weak correlations between modalities or when prioritizing specific information sources. | Robust to missing data and heterogeneous formats. | Fails to capture complex, high-level interactions between modalities during learning. |

| Hybrid Fusion [26] | Combines elements of early, intermediate, and late fusion at multiple processing stages. | Complex analyses requiring a nuanced approach, such as integrating closely related and distinct data types. | Highly adaptable to specific data and task requirements. | Highest architectural and computational complexity. |
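As a minimal illustration of the late-fusion row above, the sketch below averages per-modality predicted probabilities and simply skips modalities that are missing for a subject, which is one reason late fusion tolerates incomplete cohorts. The modality names and weights are hypothetical, and weighted averaging is only one of several possible combination rules.

```python
from typing import Dict, Optional


def late_fusion(probs: Dict[str, Optional[float]],
                weights: Dict[str, float]) -> float:
    """Weighted average of per-modality probabilities, skipping missing ones."""
    available = {k: p for k, p in probs.items() if p is not None}
    if not available:
        raise ValueError("no modality available for this subject")
    total = sum(weights[k] for k in available)  # renormalize over what is present
    return sum(weights[k] * p for k, p in available.items()) / total


# Example: a subject with imaging and genetic predictions but no EHR model output.
weights = {"mri": 2.0, "genetics": 1.0, "ehr": 1.0}
risk = late_fusion({"mri": 0.8, "genetics": 0.6, "ehr": None}, weights)
```

Because each modality is modeled separately, this scheme cannot learn interactions between, for example, a genetic variant and a specific imaging feature, which is the limitation noted in the table.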

Advanced Analytical Frameworks

Sparse Reduced-Rank Regression (sRRR)

For brain-wide, genome-wide association (BW-GWA) studies, the sparse Reduced-Rank Regression (sRRR) model offers a powerful alternative to the standard mass-univariate linear model (MULM) approach [28].

- Objective: To model high-dimensional imaging responses (e.g., voxels across the brain) from high-dimensional genetic covariates (e.g., SNPs) by enforcing sparsity in the regression coefficients.

- Protocol:

- Model Formulation: The standard regression model Y = XB + E is used, where Y is an n x q matrix of imaging phenotypes, X is an n x p matrix of genotypes, B is a p x q matrix of coefficients, and E is the error matrix.

- Rank Constraint: The coefficient matrix B is constrained to have a low rank, meaning it can be factorized into a product of two low-rank matrices. This effectively captures the underlying latent factors that drive the genotype-phenotype associations.

- Sparsity Penalty: L1-norm (lasso) penalties are applied to the two factor matrices of B, driving many of their entries to exactly zero. Because one factor indexes genetic markers and the other indexes phenotypes, this performs simultaneous variable selection on both the genotype and phenotype sides.

- Optimization: Specialized algorithms are used to solve the resulting optimization problem, which combines the least-squares loss with the rank constraint and sparsity penalty.

- Advantages: This method overcomes key limitations of MULM by (i) combining information across correlated phenotypes, (ii) identifying sets of genetic markers that jointly influence the brain, and (iii) providing a more computationally efficient framework for high-dimensional searches [28].
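The protocol above can be sketched for the rank-one case, where B is approximated by the outer product of a sparse SNP weight vector b and a sparse phenotype weight vector a. This is a simplified illustration, not the algorithm of [28]: it assumes standardized, approximately uncorrelated SNPs so that each update reduces to soft-thresholding, and the names `sparse_rank_one`, `lam_b`, and `lam_a` are hypothetical.

```python
import numpy as np


def soft_threshold(v, lam):
    """Lasso proximal operator: shrink toward zero and clip small entries to 0."""
    return np.sign(v) * np.maximum(np.abs(v) - lam, 0.0)


def sparse_rank_one(X, Y, lam_b, lam_a, n_iter=50):
    """Alternate sparse updates of b (SNPs) and a (phenotypes) so that B ~ b a^T."""
    M = X.T @ Y                                   # p x q cross-product matrix
    a = np.ones(Y.shape[1]) / np.sqrt(Y.shape[1])
    b = np.zeros(X.shape[1])
    for _ in range(n_iter):
        b = soft_threshold(M @ a, lam_b)          # select SNPs
        if not b.any():
            break                                 # penalty removed every SNP
        b /= np.linalg.norm(b)
        a = soft_threshold(M.T @ b, lam_a)        # select phenotypes
        if not a.any():
            break                                 # penalty removed every phenotype
        a /= np.linalg.norm(a)
    return b, a
```

The non-zero entries of b and a identify the selected SNPs and imaging phenotypes. Higher ranks can be obtained by deflating the data and repeating the procedure, and the penalty levels are typically chosen by cross-validation or stability selection.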

The TATES Method

The TATES (Trait-based Association Test that uses Extended Simes procedure) method provides a robust framework for multivariate genotype-phenotype analysis without requiring raw data integration [29].

- Objective: To gain power by combining univariate association p-values across multiple traits, while correcting for correlations among them.

- Protocol:

- Univariate GWAS: Perform a standard univariate GWAS for each of the m individual phenotype components.

- P-value Combination: For each SNP, combine the m resulting p-values into a single trait-based p-value using an Extended Simes procedure.

- Correction for Correlation: The method adjusts for the correlations between the phenotypic components, which is crucial for maintaining the correct false positive rate. The effective number of independent p-values (m_eff) is calculated based on the eigenvalue decomposition of the phenotypic correlation matrix.

- Significance Testing: The final TATES p-value is obtained and used to assess the genome-wide significance of the association between the SNP and the multivariate phenotype.

- Advantage: TATES has been shown to have higher statistical power to detect causal variants than both univariate tests on composite scores and standard multivariate methods like MANOVA, especially when a genetic variant affects only a subset of the phenotypes [29].
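The p-value combination for a single SNP can be sketched as below. This is a hedged illustration rather than the reference TATES implementation: it assumes the eigenvalue-based effective number m_eff = m - Σ I(λ_i > 1)(λ_i - 1), and it takes the correlation matrix among the p-values as an input (`corr`), whereas TATES derives that matrix from the phenotypic correlations. Function names are hypothetical.

```python
import numpy as np


def effective_number(corr):
    """Effective number of independent tests from correlation-matrix eigenvalues."""
    eig = np.linalg.eigvalsh(corr)
    return corr.shape[0] - np.sum(np.where(eig > 1.0, eig - 1.0, 0.0))


def tates_pvalue(pvals, corr):
    """Extended Simes combination: min over j of m_eff * p_(j) / m_eff(j)."""
    pvals = np.asarray(pvals, dtype=float)
    corr = np.asarray(corr, dtype=float)
    order = np.argsort(pvals)                 # ascending p-values
    m_eff = effective_number(corr)
    candidates = []
    for j in range(1, len(pvals) + 1):
        top = order[:j]                       # the j smallest p-values
        m_eff_j = effective_number(corr[np.ix_(top, top)])
        candidates.append(m_eff * pvals[order[j - 1]] / m_eff_j)
    return float(min(min(candidates), 1.0))
```

With uncorrelated traits the formula reduces to the standard Simes test, and with perfectly correlated traits it returns the smallest univariate p-value without any multiplicity penalty.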

Experimental Workflow for Multimodal Imaging Genetics

The following diagram outlines a standardized protocol for a multimodal imaging genetics study, from data collection to biological interpretation.

Diagram 1: Multimodal Imaging Genetics Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Analytical Tools and Resources

| Tool/Resource | Function | Application Context |

|---|---|---|

| Convolutional Neural Networks (CNN) [23] | Extracts spatial features from structural neuroimaging data (sMRI). | Quantifying cortical thickness, gray matter density, and other morphological biomarkers. |

| Gated Recurrent Units (GRU) [23] | Models temporal dynamics in functional neuroimaging data (fMRI). | Analyzing time-series data from functional connectivity networks. |

| Dynamic Cross-Modality Attention Module [23] | Weights the importance of features from different modalities, enhancing integration and interpretability. | Identifying which brain features and genetic variants are most salient for a model's prediction. |

| Polygenic Risk Score (PRS) [25] | Summarizes an individual's genetic liability for a trait/disease based on GWAS data. | Used as a genetic covariate in models integrating with clinical or imaging data for risk prediction. |

| Natural Language Processing (NLP) [25] | Generates latent phenotypes from unstructured clinical text in Electronic Health Records (EHR). | Creating rich, data-driven clinical risk scores (ClinRS) from diagnostic codes and clinical notes. |

| Canonical Correlation Analysis (CCA) [30] | Identifies linear relationships between two multivariate sets of variables. | Discovering maximal correlations between sets of genetic markers and neuroimaging phenotypes. |

| Sparse Reduced-Rank Regression (sRRR) [28] | Performs simultaneous variable selection and dimension reduction on both genotype and phenotype data. | Brain-wide, genome-wide association studies (BW-GWA) to find genetic variants influencing brain structure/function. |
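As one concrete instance from the table, a polygenic risk score reduces to a weighted sum of effect-allele dosages. The sketch below assumes dosages coded 0 to 2 and per-SNP effect sizes taken from GWAS summary statistics. The SNP identifiers and weights are made up for illustration, and real pipelines add steps such as LD-aware weight shrinkage and allele harmonization.

```python
from typing import Dict


def polygenic_risk_score(dosages: Dict[str, float],
                         weights: Dict[str, float]) -> float:
    """Sum of effect-allele dosages weighted by GWAS effect sizes.

    SNPs that have a weight but were not genotyped for this individual
    are skipped.
    """
    return sum(beta * dosages[snp]
               for snp, beta in weights.items() if snp in dosages)


# Hypothetical effect sizes and one individual's dosages.
weights = {"rs0001": 0.2, "rs0002": -0.1, "rs0003": 0.05}
individual = {"rs0001": 2.0, "rs0002": 1.0}
score = polygenic_risk_score(individual, weights)
```

The resulting score can then enter a downstream model as a single genetic covariate alongside imaging or clinical features.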

The integration and interpretation of high-dimensional multimodal data remain a central challenge in advancing genotype-phenotype research. While significant hurdles related to dimensionality, fusion, and interpretability persist, the development of sophisticated analytical frameworks like sRRR and TATES, coupled with strategic fusion approaches and explainable AI components, provides a powerful path forward. The continued refinement of these methodologies, underscored by a commitment to transparency and biological plausibility, is essential for unlocking the full potential of multimodal data in precision medicine.

The study of complex biological systems, particularly in genotype-phenotype association research, has historically relied on single-modality approaches that provide limited perspectives. The emerging paradigm recognizes that biological entities are multidimensional, requiring integrative analysis of complementary data types to capture their full complexity. This whitepaper examines the transformative potential of multimodal methodologies in biomedical research, with specific focus on their application in enhancing genetic discovery, improving diagnostic precision, and advancing therapeutic development. Multimodal integration represents a fundamental shift from isolated data analysis to holistic computational frameworks that simultaneously process diverse data types including medical images, physiological waveforms, clinical notes, and genomic information. This approach mirrors the clinical reality where diagnosticians integrate information from various sources to form a comprehensive assessment [31] [32].

The limitations of single-modality approaches become particularly evident in studying complex diseases where subtle phenotypic variations correlate with specific genetic mutations. In inherited retinal diseases (IRDs), for example, mutations in more than 300 genes contribute to an extreme diversity of clinical presentation and disease progression, with significant overlap between genetically distinct conditions [33]. This heterogeneity poses substantial diagnostic challenges that cannot be adequately addressed through unimodal analysis. Similarly, in cardiovascular genetics, individual physiological waveforms provide partial information, but their integration enables more powerful genetic association studies [34]. The multimodal paradigm addresses these limitations by combining complementary data sources to increase signal relative to noise, enabling researchers to capture both shared and unique biological signals across modalities.

Theoretical Foundations: Core Principles of Multimodal Integration

Complementary and Overlapping Information in Multimodal Data

Multimodal frameworks are predicated on the fundamental principle that different data modalities capture both complementary and overlapping information about biological systems. Complementary information refers to unique signals present in one modality but absent in another, while overlapping information represents shared signals across multiple modalities [34]. Effective multimodal integration leverages both types of information to construct more comprehensive representations of biological phenomena than can be derived from any single source.

In clinical settings, physicians naturally employ multimodal reasoning by combining imaging results, laboratory tests, patient history, and physical examination findings to form diagnostic conclusions [31]. Computational multimodal systems aim to replicate this integrative process at scale. When multiple clinical modalities pertain to a single organ system or disease process, they encode different perspectives on the same underlying biology. For instance, in cardiovascular research, electrocardiogram (ECG) and photoplethysmogram (PPG) waveforms capture complementary aspects of cardiac function that, when analyzed jointly, provide a more complete picture of cardiovascular health than either modality alone [34].

Multimodal Fusion Strategies

The architectural implementation of multimodal integration occurs primarily through three fusion strategies:

- Early Fusion: Combining raw or low-level features from different modalities before model processing. This approach enables rich cross-modal interactions but requires careful handling of heterogeneous data structures.

- Intermediate Fusion: Integrating modalities at intermediate processing stages through shared representations or attention mechanisms, balancing specificity and integration.

- Late Fusion: Processing each modality independently and combining outputs or decisions at the final stage, preserving modality-specific features while enabling cross-modal validation.

Research indicates that the choice of fusion strategy significantly impacts model performance. In radiology applications, for example, models using early or intermediate fusion have demonstrated substantial improvements in report generation compared to image-only approaches [32]. Similarly, in genetic studies of cardiovascular traits, joint representation learning (early fusion) has proven more effective than statistical combination of separate modality analyses (late fusion) [34].

Technical Implementation: Methodologies and Workflows

Multimodal Representation Learning for Genetic Discovery (M-REGLE)

The M-REGLE framework exemplifies the technical implementation of multimodal approaches for genotype-phenotype association studies. This method extends unimodal representation learning by jointly analyzing multiple complementary physiological waveforms to enhance genetic discovery [34].

Experimental Protocol: M-REGLE Workflow

- Data Acquisition and Preprocessing: Collect multimodal physiological data (e.g., 12-lead ECG, PPG) alongside genomic data. Preprocess signals to remove artifacts and normalize formats.

- Joint Representation Learning: Input concatenated multimodal data into a convolutional variational autoencoder (VAE) to learn a low-dimensional, largely uncorrelated joint representation. The VAE consists of an encoder that compresses multimodal inputs into latent embeddings and a decoder that reconstructs original data from these representations.

- Embedding Orthogonalization: Perform principal component analysis (PCA) on the VAE embeddings to ensure completely uncorrelated embeddings, addressing rotational indeterminacies and creating identifiable representations.

- Genetic Association Analysis: Use the orthogonalized embeddings as synthetic phenotypes for genome-wide association studies (GWAS). Perform GWAS on each latent factor, then combine results by summing chi-squared statistics across factors and deriving combined p-values.

- Polygenic Risk Scoring: Apply elastic net regression to identified hits to compute polygenic risk scores (PRS) for predicting clinical phenotypes across multiple biobanks.
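The combination rule in the genetic association step can be sketched for one SNP. The code assumes each latent factor yields a 1-degree-of-freedom chi-squared statistic and that the factors are uncorrelated after PCA, so that the sum follows a chi-squared distribution with k degrees of freedom under the null. The upper-tail probability is computed with a standard series and continued-fraction evaluation of the incomplete gamma function to keep the example dependency-free. Function names are illustrative and not taken from [34].

```python
import math
from typing import Sequence


def chi2_sf(x: float, df: int) -> float:
    """Upper-tail probability of a chi-squared variable, Q(df/2, x/2)."""
    a, z = df / 2.0, x / 2.0
    if z <= 0.0:
        return 1.0
    log_prefactor = -z + a * math.log(z) - math.lgamma(a)
    if z < a + 1.0:
        # Series expansion of the lower regularized incomplete gamma function
        term = total = 1.0 / a
        ap = a
        for _ in range(1000):
            ap += 1.0
            term *= z / ap
            total += term
            if abs(term) < abs(total) * 1e-15:
                break
        return 1.0 - total * math.exp(log_prefactor)
    # Continued fraction (modified Lentz method) for the upper tail
    tiny = 1e-300
    b = z + 1.0 - a
    c = 1.0 / tiny
    d = 1.0 / b
    h = d
    for i in range(1, 1000):
        an = -i * (i - a)
        b += 2.0
        d = an * d + b
        d = tiny if abs(d) < tiny else d
        c = b + an / c
        c = tiny if abs(c) < tiny else c
        d = 1.0 / d
        delta = d * c
        h *= delta
        if abs(delta - 1.0) < 1e-15:
            break
    return h * math.exp(log_prefactor)


def combine_factor_gwas(chi2_stats: Sequence[float]) -> float:
    """Sum per-factor 1-df chi-squared statistics and return the combined p-value."""
    return chi2_sf(sum(chi2_stats), df=len(chi2_stats))
```

The orthogonalization step matters here: if the latent factors were correlated, the summed statistic would no longer follow a chi-squared distribution with k degrees of freedom and the combined p-values would be miscalibrated.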

Figure 1: M-REGLE Multimodal Genetic Analysis Workflow

Quantitative Performance Advantages of Multimodal Approaches

Multimodal approaches demonstrate significant quantitative improvements across multiple metrics compared to unimodal methods, as evidenced by rigorous validation studies.

Table 1: Performance Comparison of M-REGLE vs. Unimodal Methods in Genetic Discovery [34]

| Metric | Dataset | M-REGLE (Multimodal) | U-REGLE (Unimodal) | Improvement |

|---|---|---|---|---|

| Loci Identified | 12-lead ECG | 19.3% more loci | Baseline | +19.3% |

| Loci Identified | ECG lead I + PPG | 13.0% more loci | Baseline | +13.0% |

| Expected χ² Statistics | 12-lead ECG | 22.0% higher | Baseline | +22.0% |

| Expected χ² Statistics | ECG lead I + PPG | 16.4% higher | Baseline | +16.4% |

| Atrial Fibrillation Prediction | Multiple Biobanks | Significant outperformance | Baseline | Improved prediction accuracy |

Table 2: Multimodal Imaging Performance in Inherited Retinal Disease Diagnosis [33]

| Imaging Modality | Primary Function in IRD Diagnosis | Key Biomarkers | Clinical Utility |

|---|---|---|---|

| Fundus Autofluorescence (FAF) | Snapshots of disease activity | Hyperautofluorescence (cellular stress), Hypoautofluorescence (RPE atrophy) | Dynamic monitoring of disease progression, clinical trial endpoint |

| Optical Coherence Tomography (OCT) | 3D dissection of retinal layers | Ellipsoid zone disruption, RPE atrophy, outer retinal layer loss | Disease staging, monitoring progression, detecting complications |

| Ultra-Widefield Imaging | Incorporation of peripheral pathology | Extension of pathology into periphery | Redefining grading systems for Stargardt disease and RP |

| OCT Angiography (OCTA) | Visualization of retinal vasculature | Reduced perfusion, enlarged foveal avascular zone | Monitoring CNV, identifying reduced perfusion in RP |

Domain-Specific Applications

Multimodal Imaging in Inherited Retinal Diseases

Inherited retinal diseases represent a compelling application for multimodal imaging approaches, where the combination of complementary imaging techniques enables more precise genotype-phenotype correlations. With mutations in more than 300 genes implicated in IRDs and extreme diversity in clinical presentation, single-modality imaging provides insufficient information for accurate diagnosis and monitoring [33].

Multimodal Imaging Protocol for IRD Characterization

- Initial Assessment: Begin with ultra-widefield fundus photography to establish visible retinal pathology and distinguish IRDs from more common conditions such as age-related macular degeneration. This provides staging information based on characteristic findings like retinal flecks in Stargardt disease or bony spicule pigment clumping in retinitis pigmentosa.

- Metabolic Activity Mapping: Apply fundus autofluorescence (FAF) to visualize perturbations in cellular homeostasis before overt damage appears on fundoscopy. Identify patterns such as the hyperautofluorescence ring in retinitis pigmentosa that constricts with disease progression, or the classic bull's eye appearance in Stargardt disease that progresses from central hypoautofluorescence outward.

- Cross-Sectional Analysis: Perform optical coherence tomography (OCT) to assess integrity of individual retinal layers. Evaluate ellipsoid zone disruption, RPE atrophy, and outer retinal layer loss as core components of IRD progression. Monitor complications including cystoid macular edema in retinitis pigmentosa.

- Vascular Assessment: Implement OCT angiography (OCTA) to visualize the superficial and deep retinal capillary plexus and choriocapillaris without dye injection. Identify reduced perfusion and enlarged foveal avascular zone in RP and degraded choriocapillaris in advanced Best disease and choroideremia.

This integrated protocol provides complementary information that enables more accurate disease staging, progression monitoring, and treatment response assessment than any single modality alone [33].

Integrative Multimodal Approaches in Radiology

Radiology represents a natural domain for multimodal integration, where the combination of imaging with non-imaging data significantly enhances diagnostic accuracy and clinical utility.

Experimental Protocol: Multimodal Chest X-Ray Report Generation [32]

- Data Collection: Gather chest x-ray images alongside structured patient data (vital signs, symptoms) and unstructured clinical notes. Ensure proper anonymization and ethical compliance.

- Feature Extraction: Utilize pre-trained convolutional neural networks (e.g., ResNet, VGG-19) to extract visual features from CXR images. Process textual data using natural language processing techniques to create embedded representations.

- Multimodal Fusion: Implement a conditioned cross-multi-head attention module to fuse heterogeneous data modalities, bridging the semantic gap between visual and textual data. This module enables the model to focus on relevant regions of both image and text data during report generation.

- Report Generation: Employ a transformer-based decoder to generate comprehensive radiology reports comprising both "Findings" and "Impressions" sections. Train the model using combined objective functions that optimize for both clinical accuracy and linguistic coherence.

- Validation: Conduct both automated evaluation (using metrics like ROUGE-L) and human evaluation by board-certified radiologists to assess clinical accuracy, completeness, and nuanced understanding.
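
The fusion step in the protocol above can be illustrated with a minimal single-head cross-attention sketch, in which text-token embeddings act as queries over image-region features. This is not the conditioned cross-multi-head attention module of [32]; the function name `cross_attention`, the dimensions, and the random projection weights are assumptions chosen only for illustration.

```python
import numpy as np

def softmax(x, axis=-1):
    # Numerically stable softmax along the given axis
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def cross_attention(text_tokens, image_regions, w_q, w_k, w_v):
    """Single-head cross-attention: text queries attend over image regions."""
    q = text_tokens @ w_q                      # (n_text, d)
    k = image_regions @ w_k                    # (n_regions, d)
    v = image_regions @ w_v                    # (n_regions, d)
    scores = q @ k.T / np.sqrt(q.shape[-1])    # scaled dot-product scores
    weights = softmax(scores, axis=-1)         # each row sums to 1
    fused = weights @ v                        # image context per text token
    return fused, weights

# Hypothetical sizes: 4 text tokens, 9 image regions, shared width d = 8
rng = np.random.default_rng(0)
n_text, n_regions, d_text, d_img, d = 4, 9, 16, 32, 8
text_tokens = rng.normal(size=(n_text, d_text))
image_regions = rng.normal(size=(n_regions, d_img))
w_q = rng.normal(size=(d_text, d)) * 0.1
w_k = rng.normal(size=(d_img, d)) * 0.1
w_v = rng.normal(size=(d_img, d)) * 0.1

fused, weights = cross_attention(text_tokens, image_regions, w_q, w_k, w_v)
```

Each row of `weights` shows how strongly one text token attends to each image region, which is the sense in which attention lets a model focus on relevant regions. A trained system would learn the projection matrices and typically use several heads.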

This multimodal approach has demonstrated substantial improvements compared to image-only models, achieving the highest reported performance on the ROUGE-L metric while generating more clinically accurate and contextually appropriate reports [32].
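
For readers unfamiliar with the metric, ROUGE-L scores a generated report by the longest common subsequence (LCS) it shares with a reference report. The sketch below is a simplified sentence-level version; lowercasing and whitespace tokenization are assumptions, and published evaluations normally rely on an established ROUGE implementation rather than hand-written code.

```python
def lcs_length(a, b):
    """Length of the longest common subsequence of two token lists."""
    prev = [0] * (len(b) + 1)
    for tok_a in a:
        curr = [0]
        for j, tok_b in enumerate(b, start=1):
            if tok_a == tok_b:
                curr.append(prev[j - 1] + 1)
            else:
                curr.append(max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]

def rouge_l(reference, candidate, beta=1.0):
    """Sentence-level ROUGE-L F-measure with whitespace tokenization."""
    ref, cand = reference.lower().split(), candidate.lower().split()
    if not ref or not cand:
        return 0.0
    lcs = lcs_length(ref, cand)
    if lcs == 0:
        return 0.0
    recall = lcs / len(ref)
    precision = lcs / len(cand)
    return (1 + beta**2) * precision * recall / (recall + beta**2 * precision)

score = rouge_l("no acute cardiopulmonary abnormality", "no acute abnormality")
```

In this toy example the LCS has three tokens, so recall is 3/4, precision is 3/3, and the F-measure is 6/7 (about 0.857). Because the score rewards word overlap rather than clinical correctness, the protocol pairs it with radiologist review.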

Figure 2: Multimodal Radiology Report Generation Framework

Essential Research Reagents and Computational Tools

Successful implementation of multimodal approaches requires specialized computational tools and methodological resources. The following table summarizes key solutions for multimodal research.

Table 3: Essential Research Reagent Solutions for Multimodal Studies

| Research Reagent | Type | Primary Function | Application Examples |
|---|---|---|---|
| hMRI Toolbox | Software Library | Estimation of quantitative parameter maps from MRI data | Processing multiparametric maps (R1, R2*, MTSat, PD) for microstructural analysis [35] |
| Multi-Parametric Mapping (MPM) | MRI Protocol | Simultaneous acquisition of quantitative MRI metrics | Capturing R1, R2*, MTSat, and PD images in a single protocol [35] |
| Convolutional Variational Autoencoders | Deep Learning Architecture | Learning non-linear, low-dimensional representations from complex data | Joint representation learning from multimodal physiological waveforms [34] |
| Conditioned Cross-Multi-Head Attention | Algorithmic Module | Fusing heterogeneous data modalities | Bridging semantic gaps between visual and textual data in radiology report generation [32] |
| UK Biobank | Data Resource | Large-scale multimodal biomedical database | Accessing paired genomic, imaging, and clinical data for multimodal association studies [34] |

Challenges and Future Directions

Despite significant advances, multimodal approaches face several technical and methodological challenges that represent opportunities for future research.

Data Heterogeneity and Standardization: The integration of fundamentally different data types (images, waveforms, text, genomics) presents substantial challenges in data alignment, normalization, and standardization. Future work should focus on developing flexible data architectures that can accommodate diverse modalities while preserving their unique informational content.

Interpretability and Biological Validation: As multimodal models increase in complexity, interpreting their findings and validating biological significance becomes more challenging. Research priorities should include developing explainable AI techniques specifically designed for multimodal contexts and establishing robust validation frameworks grounded in biological plausibility.

Computational Resource Requirements: The joint processing of multiple high-dimensional data modalities demands substantial computational resources, potentially limiting accessibility. Future developments in efficient model architectures, compression techniques, and distributed computing approaches will be essential for broader adoption.

Multimodal Foundation Models: Recent evaluations of general-purpose multimodal foundation models (e.g., GPT-4o, Gemini 1.5 Pro) in specialized domains such as neuroradiology reveal significant limitations in image interpretation and multimodal integration compared to human experts [36]. Given clinical context alone, these models outperformed radiologists (34.0% and 44.7% vs. 16.4% accuracy), but given images alone they performed far worse (3.8% and 7.5% vs. 42.0% for radiologists), and they failed to integrate multimodal inputs effectively [36]. This highlights the need for domain-specific multimodal architectures rather than reliance on general-purpose solutions.

The trajectory of multimodal research points toward increasingly sophisticated integration frameworks that will enable more comprehensive genotype-phenotype association studies, ultimately accelerating therapeutic development and personalized medicine approaches.

Advanced Computational Frameworks and Real-World Applications

Adversarial Mutual Learning for Longitudinal Prediction with Missing Data

Longitudinal prediction in genotype-phenotype association studies faces significant challenges from pervasive missing data and the complex integration of multimodal imaging and genetic information. This technical guide explores Adversarial Mutual Learning (AML) as a sophisticated framework designed to address these dual challenges. AML integrates the robust feature capture of adversarial training with the collaborative refinement of mutual learning, enabling researchers to model complex biological pathways despite incomplete data records. We provide an in-depth examination of AML's architectural components, present detailed experimental protocols for implementation in neuroimaging genomics, and quantitatively benchmark its performance against traditional methods. Within multimodal imaging genomics, this approach offers a promising pathway for enhancing the reliability of longitudinal predictions of brain structure and function, ultimately supporting more precise investigation of genetic influences on brain health and disease.

In multimodal imaging genomics, researchers seek to uncover the complex relationships between genetic variation and quantitative imaging-derived phenotypes (IDPs) to better understand brain structure, function, and the mechanisms of disease [37] [38]. A central goal is to model how genetic markers influence trajectories of brain aging or disease progression through longitudinal analysis. However, this endeavor is consistently hampered by two major methodological challenges: the prevalence of missing data and the complex integration of heterogeneous data modalities.

Missing data is a pervasive issue in longitudinal studies, arising from participant dropout, technical failures in data acquisition, or inconsistent quality control [39]. The mechanism of data loss, particularly whether data are Missing at Random (MAR) or Missing Not at Random (MNAR), significantly impacts the validity of statistical inferences. Traditional techniques like Full Information Maximum Likelihood (FIML) are reported to perform best with MNAR data [39], although FIML is formally derived under the MAR assumption, and they rely on normality assumptions that real-world, nonnormal neuroimaging phenotypes often violate [39]. Meanwhile, machine learning imputation methods like missForest show promise, but only under specific conditions: large sample sizes and low missingness rates [39].
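
To make the distinction between missingness mechanisms concrete, the simulation below compares complete-case means under three mechanisms. It is an illustrative sketch only: the generative model, the drop probabilities, and the inclusion of Missing Completely at Random (MCAR) as a baseline are assumptions, not settings taken from [39].

```python
import random
import statistics

random.seed(42)
n = 20_000
x = [random.gauss(0, 1) for _ in range(n)]          # fully observed covariate
y = [0.6 * xi + random.gauss(0, 0.8) for xi in x]   # phenotype subject to missingness

def complete_case_mean(values, drop_prob):
    """Mean of the values that survive a per-record drop probability."""
    kept = [v for v, p in zip(values, drop_prob) if random.random() >= p]
    return statistics.fmean(kept)

full = statistics.fmean(y)

# MCAR: every record has the same chance of being lost
mcar = complete_case_mean(y, [0.3] * n)

# MAR: loss depends only on the observed covariate x
mar = complete_case_mean(y, [0.6 if xi > 0 else 0.1 for xi in x])

# MNAR: loss depends on the unobserved phenotype value itself
mnar = complete_case_mean(y, [0.6 if yi > 0 else 0.1 for yi in y])

print(f"full={full:.3f} mcar={mcar:.3f} mar={mar:.3f} mnar={mnar:.3f}")
```

The complete-case mean stays close to the full-data mean under MCAR but is pulled downward under both MAR and MNAR. The practical difference is that the MAR bias can in principle be corrected by conditioning on `x`, whereas the MNAR bias depends on values that were never recorded.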

Simultaneously, the field requires advanced models to fuse high-dimensional genomic data (e.g., Single Nucleotide Polymorphisms or SNPs) with multi-modal neuroimaging features (e.g., from structural, functional, and diffusion MRI) [40] [37]. Adversarial Mutual Learning emerges as a powerful framework to address these intertwined challenges. It combines the representative power of adversarial networks—which learn to distinguish real from imputed data—with the collaborative, performance-boosting dynamic of mutual learning, where multiple neural networks teach each other throughout the training process [41]. This guide details the architecture, implementation, and application of AML for robust longitudinal prediction within multimodal imaging-genomics studies.

Technical Foundations

Adversarial Mutual Learning: Core Components

The Adversarial Mutual Learning framework consists of two primary, interacting components: a mutual learning synthesis system and an adversarial discrimination mechanism.

Mutual Learning Synthesis: This component typically involves two or more denoising networks that learn collaboratively to generate or impute missing data. Each network is often designed with a distinct specialization. For instance, in the MU-Diff model for MRI synthesis, one network focuses on capturing comprehensive structural information to preserve anatomical consistency, while the other emphasizes fine-grained texture details crucial for accurate lesion depiction [41]. A shared critic network facilitates knowledge exchange between them, enabling collaborative refinement of their respective feature representations and preventing over-specialization.

Adversarial Discrimination: A discriminator network works adversarially against the generative/synthesis networks. Its goal is to distinguish real, observed data from imputed or synthesized data. This adversarial process forces the generator networks to produce increasingly realistic imputations, thereby improving the quality of the completed dataset used for downstream longitudinal prediction tasks [41] [42].
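
The adversarial objective described above can be sketched with a mask-based formulation in the style of generative adversarial imputation [42]. The function names and the toy values are assumptions for illustration, and the discriminator probabilities are supplied by hand rather than produced by a trained network.

```python
import numpy as np

def combine(x_obs, mask, x_gen):
    """Keep observed entries (mask == 1) and fill missing ones from the generator."""
    return mask * x_obs + (1 - mask) * x_gen

def discriminator_loss(d_prob, mask, eps=1e-8):
    """Binary cross-entropy: D should output 1 for observed and 0 for imputed entries."""
    return -np.mean(mask * np.log(d_prob + eps)
                    + (1 - mask) * np.log(1 - d_prob + eps))

def generator_adv_loss(d_prob, mask, eps=1e-8):
    """Low when D assigns a high 'observed' probability to imputed entries."""
    n_missing = max((1 - mask).sum(), 1)
    return -np.sum((1 - mask) * np.log(d_prob + eps)) / n_missing

# Toy data: zeros in x_obs mark missing entries, as indicated by the mask
x_obs = np.array([[1.0, 0.0], [0.0, 2.0]])
mask = np.array([[1.0, 0.0], [0.0, 1.0]])
x_gen = np.array([[9.0, 5.0], [7.0, 9.0]])
completed = combine(x_obs, mask, x_gen)

sharp = np.where(mask == 1, 0.99, 0.01)   # D separates real from imputed
fooled = np.full_like(mask, 0.5)          # D cannot tell them apart

d_sharp, d_fooled = discriminator_loss(sharp, mask), discriminator_loss(fooled, mask)
g_sharp, g_fooled = generator_adv_loss(sharp, mask), generator_adv_loss(fooled, mask)
```

When the discriminator is fully fooled, both losses equal ln 2 (about 0.693), the chance level for a binary decision. Training alternates between lowering `discriminator_loss` and lowering `generator_adv_loss`, which is what pushes imputations toward the distribution of observed data.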

Comparison with Traditional Missing Data Techniques

Traditional and machine learning methods for handling missing data exhibit distinct strengths and weaknesses, making them suitable for different scenarios in imaging genomics.

Table 1: Comparison of Missing Data Analytical Techniques

| Technique | Mechanism | Strengths | Weaknesses | Optimal Use Case |
|---|---|---|---|---|
| FIML [39] | Uses all available data points under a specified likelihood model. | Most effective for MNAR data; does not require explicit imputation. | Relies on normal distribution assumptions; fails with nonnormal data. | MNAR mechanisms with approximately normal data. |
| TSRE [39] | Two-stage estimation robust to non-normality. | Excels with MAR data and nonnormal distributions. | Less effective for MNAR data; complex implementation. | MAR data with skewed distributions. |
| missForest [39] | Non-parametric imputation using random forests. | No distributional assumptions; handles complex interactions. | Advantageous only with very large samples (n ≥ 1,000) and low missing rates. | Large-sample studies with low missingness. |
| Generative Adversarial Imputation (GAIN/MGAIN) [42] | Adversarial training to generate plausible imputations. | No distributional assumptions; can capture complex data patterns. | Training instability; potential for mode collapse; architectural complexity. | High-dimensional data (e.g., imaging, sensors). |
| Adversarial Mutual Learning (AML) [41] | Mutual learning between networks guided by an adversarial critic. | Handles heterogeneous data; produces high-fidelity imputations/synthesis. | High computational demand; complex hyperparameter tuning. | Multimodal data fusion (e.g., imaging genomics). |

Experimental Design and Protocols

Workflow for Longitudinal Genotype-Phenotype Modeling

Implementing AML for longitudinal prediction involves a structured, multi-stage workflow that integrates data processing, imputation, and causal analysis.

Protocol 1: Adversarial Mutual Learning for Data Imputation and Fusion

This protocol is designed to handle missing data and fuse multimodal features using a modified MU-Diff architecture [41].

- Aim: To impute missing longitudinal imaging phenotypes and simultaneously fuse them with genetic variant data (e.g., SNPs) for subsequent prediction tasks.

- Materials:

- Procedure:

- Data Preprocessing: Process raw neuroimaging data to extract IDPs (e.g., regional gray matter volumes, white matter integrity measures, functional connectivity matrices). Standardize and normalize all phenotypic and genetic data.

- Architecture Configuration:

- Generator Networks (G1 & G2): Implement two distinct U-Net or ResNet-based generators. Configure G1 with a loss function (e.g., L1) that prioritizes overall structural similarity. Configure G2 with a loss function that emphasizes fine-grained texture details, using an adaptive feature selection mechanism.

- Discriminator Network (D): Implement a convolutional discriminator network (e.g., PatchGAN) to assess the realism of generated data.

- Shared Critic: Implement a shared critic network that evaluates features from both G1 and G2 to facilitate mutual learning through knowledge distillation [41].

- Model Training: Train the networks in an adversarial manner. The generators (G1, G2) try to minimize a combined loss (adversarial loss + structural loss + mutual learning loss), while the discriminator (D) is updated to maximize its ability to distinguish real from imputed data. Use techniques like gradient penalty to stabilize training.

- Imputation & Fusion: Use the trained generator ensemble to impute missing values in the longitudinal IDPs. The output is a complete, fused multimodal dataset ready for longitudinal modeling.
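
The combined generator loss named in the training step can be sketched as a weighted sum of three terms. This is a simplified stand-in rather than the MU-Diff objective [41]: the squared-disagreement penalty replaces the shared critic, and the weights `lam_struct` and `lam_mutual` are hypothetical values that would need tuning.

```python
import numpy as np

def l1_loss(pred, target, mask):
    """Mean absolute error on observed entries only (mask == 1)."""
    return np.sum(mask * np.abs(pred - target)) / max(mask.sum(), 1)

def mutual_loss(pred_a, pred_b, mask):
    """Mean squared disagreement between the two generators on missing entries."""
    missing = 1 - mask
    return np.sum(missing * (pred_a - pred_b) ** 2) / max(missing.sum(), 1)

def generator_objective(pred, peer_pred, target, mask, adv_loss,
                        lam_struct=10.0, lam_mutual=1.0):
    """Adversarial term + structural (L1) term + mutual learning term."""
    return (adv_loss
            + lam_struct * l1_loss(pred, target, mask)
            + lam_mutual * mutual_loss(pred, peer_pred, mask))

# Toy IDP matrix: rows are participants, columns are visits; mask == 0 is missing
target = np.array([[1.0, 0.0], [0.0, 2.0]])
mask = np.array([[1.0, 0.0], [0.0, 1.0]])
g1_out = np.array([[1.5, 4.0], [6.0, 2.0]])   # output of generator G1
g2_out = np.array([[1.0, 5.0], [6.0, 2.5]])   # output of generator G2

# adv_loss would come from the discriminator; 0.7 is a placeholder value
obj_g1 = generator_objective(g1_out, g2_out, target, mask, adv_loss=0.7)
```

Here the structural term is computed only where ground truth exists, while the mutual term acts only on missing entries, where the two generators have nothing but each other to agree with. In a gradient-based implementation the peer output would normally be treated as a constant when updating each generator.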

Protocol 2: Causal Analysis with Mendelian Randomization

After obtaining a complete dataset, this protocol assesses potential causal relationships between imaging phenotypes and disease outcomes.

- Aim: To test for causal effects of brain imaging modalities on disease traits (e.g., Alzheimer's disease) using genetic variants as instrumental variables [40].

- Materials:

- Procedure:

- Instrument Selection: For a given IDP, select SNPs that are strongly associated with it (p < 5 × 10⁻⁸) and are not associated with confounders.

- Two-Stage Regression: