Multimodal Dropout for Robust Plant Classification: Enhancing Agricultural AI with Missing Modality Resilience

Abstract

This article explores multimodal dropout and its role in building robust deep learning models for plant classification. Agricultural AI increasingly integrates diverse data sources, from images of leaves, flowers, fruits, and stems to agrometeorological sensor data and textual descriptions, but real-world conditions often leave one or more of these modalities incomplete or missing. This work synthesizes recent research showing how multimodal dropout acts as a regularization strategy during training, explicitly preparing models for such scenarios. We detail the foundational principles of multimodal learning in agriculture, present methodological implementations of dropout techniques, address key optimization challenges, and provide a comparative analysis of model performance. The findings indicate that automatically fused models trained with multimodal dropout maintain high accuracy when modalities are missing and outperform late fusion (82.61% versus 72.28% test accuracy), offering a path toward more reliable and deployable AI solutions for precision agriculture, species conservation, and ecological monitoring.

The What and Why: Foundations of Multimodal Learning and Dropout in Plant Science

Frequently Asked Questions (FAQs)

Q1: What is the core advantage of using a multimodal approach over a single-source model for plant classification?

Traditional deep learning models often rely on a single data source, such as leaf images. From a biological standpoint, a single organ is frequently insufficient for accurate classification, as the same species can have visual variations, and different species can appear similar [1]. Multimodal learning addresses this by integrating images from multiple plant organs—such as flowers, leaves, fruits, and stems—into a cohesive model, creating a more comprehensive representation of plant characteristics and significantly boosting classification accuracy [1] [2].

Q2: What is "multimodal dropout" and why is it critical for real-world applications?

Multimodal dropout is a training technique that makes a model robust to missing modalities [1]. In real-world scenarios, it might be impossible to obtain images of all plant organs (e.g., a plant may not be in fruit or flower at the time of observation). By randomly dropping modalities during training, the model learns to generate accurate classifications even with incomplete data, ensuring reliable performance in the field [1] [2].

Q3: How do I determine the optimal point to fuse data from different modalities?

Choosing where to fuse modalities (e.g., early, intermediate, or late fusion) is a classic challenge. The choice is often made subjectively by the model developer, which can introduce bias [1]. One solution is to use a Multimodal Fusion Architecture Search (MFAS) algorithm. This approach automates the search for the best fusion strategy by progressively merging pre-trained unimodal models at different layers, identifying a fusion point without relying on manual design [1].

Q4: A key challenge in agricultural AI is standardizing multimodal datasets. What are the essential criteria for creating such a resource?

For a multimodal dataset to be standardized and useful for the research community, it should satisfy four key criteria [3]:

- Inclusion of Critical Domain-Specific Knowledge: The data must cover essential agricultural concepts and tasks.

- Expert Curation: The dataset must be validated and annotated by domain experts.

- Consistent Structure: The data should be organized in a uniform format to facilitate processing.

- Peer Acceptance: The dataset should be vetted and accepted by the broader research community.

Troubleshooting Common Experimental Issues

Problem: Model Performance is Poor When One Modality is Missing

- Issue: Your fused multimodal model was trained with all modalities present, but its accuracy drops significantly during testing if, for example, fruit images are unavailable.

- Solution: Implement multimodal dropout during the training phase [1] [2].

- Protocol: During each training iteration, randomly set the input from one or more modalities to zero. This prevents the model from relying too heavily on any single data source and teaches it to make accurate predictions from any subset of the available organs. The research by Lapkovskis et al. demonstrated that this approach provides strong robustness to missing modalities [1].
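The zeroing step in the protocol above can be sketched in plain Python. This is a minimal illustration, not the implementation used in [1]: the function name `drop_modalities`, the dictionary-of-lists layout (a stand-in for image tensors), the default probability, and the rule that at least one modality is always kept are all assumptions made for the example.

```python
import random

ORGANS = ("flower", "leaf", "fruit", "stem")


def drop_modalities(sample, p_drop=0.2, rng=random):
    """Return a copy of `sample` with randomly chosen modalities zeroed.

    `sample` maps each organ name to a flat list of feature values.
    Each modality is dropped independently with probability `p_drop`.
    Assumption for this sketch: at least one modality is always kept,
    so the model never receives an all-zero input.
    """
    keep = {name: rng.random() >= p_drop for name in sample}
    if not any(keep.values()):
        # Everything was dropped: restore one modality at random.
        keep[rng.choice(list(sample))] = True
    return {
        name: list(values) if keep[name] else [0.0] * len(values)
        for name, values in sample.items()
    }
```

In a training loop, a function like this would be applied to each sample (or each batch) before the forward pass, and left out entirely at evaluation time.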

Problem: Uncertainty in Selecting a Fusion Strategy

- Issue: You are unsure whether to use early, intermediate, or late fusion for your specific dataset and model architecture.

- Solution: Employ an automated fusion search methodology [1].

- Protocol: Adapt the Multimodal Fusion Architecture Search (MFAS) algorithm. The process is as follows [1]:

  - Train Unimodal Models: First, train individual models (e.g., based on MobileNetV3) for each modality (leaf, flower, etc.).

  - Search for Fusion Architecture: Keep the pre-trained unimodal models static. Use the MFAS algorithm to iteratively search for the best joint architecture by testing fusion connections at different depths of the networks.

  - Train Fusion Layers: Only train the newly discovered fusion layers, which saves substantial computational time compared to searching the entire architecture from scratch.

Problem: Lack of Standardized Data Hinders Benchmarking

- Issue: It is difficult to fairly evaluate your new multimodal model because existing datasets are limited in scope or not designed for multimodal tasks.

- Solution: Contribute to the community by creating or utilizing a standardized, multi-scene dataset [3] [4].

- Protocol: Follow the example of benchmarks like AgroMind [4]. The pipeline involves:

  - Data Pre-processing: Integrate multiple public and/or private datasets. Perform format standardization and annotation refinement.

  - Systematic Task Definition: Generate a diverse set of agriculturally relevant questions that cover multiple dimensions like spatial perception, object understanding, and scene reasoning.

  - Evaluation: Use the curated set to perform a comprehensive evaluation of model capabilities, revealing strengths and limitations in agricultural remote sensing.

Experimental Protocols & Data

Performance Comparison of Fusion Strategies

The following table quantifies the performance gains achieved by automated multimodal fusion on the PlantCLEF2015 dataset.

Table 1: Quantitative results of automated fusion versus late fusion on plant classification. [1]

| Fusion Strategy | Number of Classes | Test Accuracy | Key Feature |
|---|---|---|---|
| Late Fusion (Averaging) | 979 | 72.28% | Simple to implement, but suboptimal [1] |
| Automatic Fusion (MFAS) | 979 | 82.61% | Discovers optimal fusion point; +10.33 percentage points over late fusion [1] |
| Automatic Fusion with Multimodal Dropout | 979 | ~82.61%* | Maintains high accuracy even with missing modalities [1] |

Note (*): The model trained with multimodal dropout maintains robust performance when tested on subsets of organs, though the exact accuracy on the full test set may vary slightly [1].
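The improvement reported in Table 1 is a difference in percentage points, which is not the same as a relative gain over the baseline. The short calculation below works through both figures using only the two accuracies from the table; the variable names are illustrative.

```python
late_fusion_acc = 72.28  # % test accuracy, late fusion (Table 1)
auto_fusion_acc = 82.61  # % test accuracy, automatic fusion (Table 1)

# Absolute gain, in percentage points.
absolute_gain = round(auto_fusion_acc - late_fusion_acc, 2)

# Relative gain, as a percentage of the late-fusion baseline.
relative_gain = round(100 * (auto_fusion_acc - late_fusion_acc) / late_fusion_acc, 2)

print(absolute_gain)  # 10.33 percentage points
print(relative_gain)  # 14.29 percent relative to late fusion
```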

AgroMind Benchmarking Dimensions

The AgroMind benchmark provides a framework for evaluating multimodal models across a wide range of agricultural tasks. The table below summarizes its core dimensions [4].

Table 2: Core task dimensions of the AgroMind benchmark for evaluating LMMs in agriculture. [4]

| Task Dimension | Description | Example Task Types |
|---|---|---|
| Spatial Perception | Understanding the location and layout of elements within a scene. | Geolocation, size estimation [4] |
| Object Understanding | Identifying and classifying specific objects or entities. | Crop identification, pest detection [4] |
| Scene Understanding | Interpreting the overall context and state of the agricultural environment. | Land use classification, health monitoring [4] |
| Scene Reasoning | Drawing inferences and making decisions based on the visual and contextual data. | Yield forecasting, environmental analysis [4] |

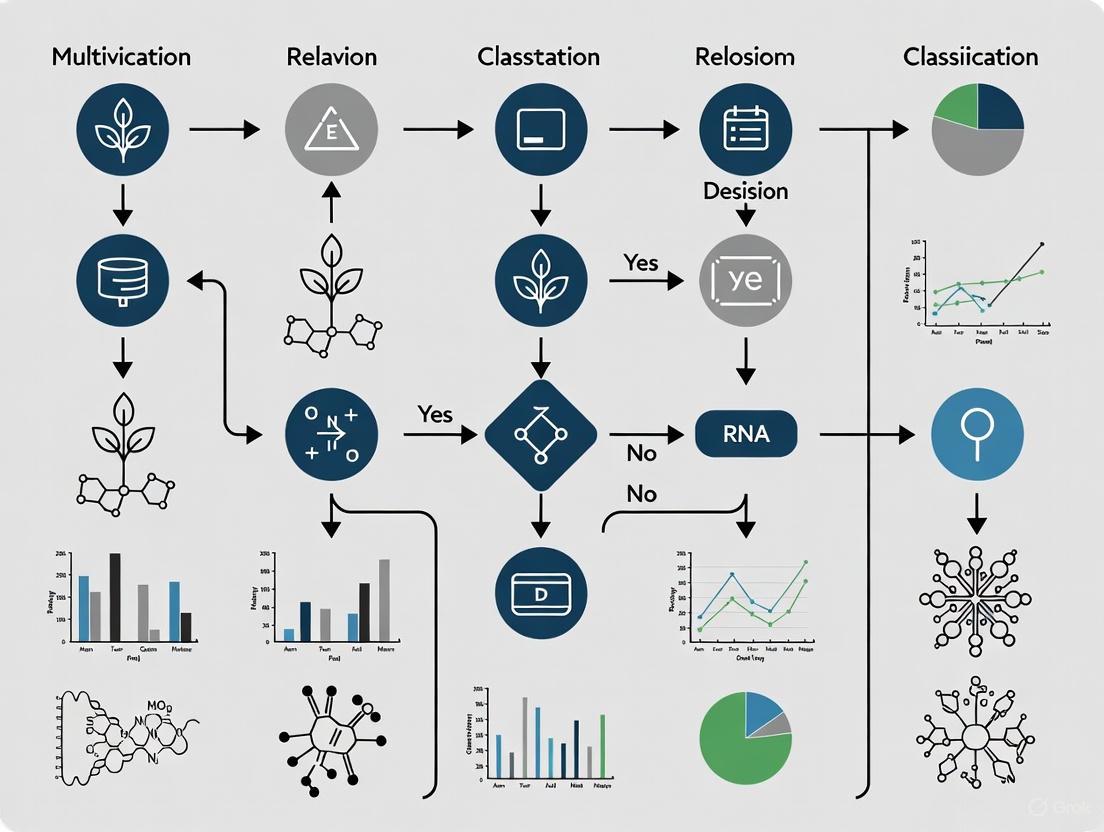

Research Workflow and System Diagrams

Multimodal Fusion with Dropout

AgroMind Evaluation Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential components for building a multimodal plant classification system. [1] [5] [4]

| Research Reagent / Resource | Type | Function / Description |
|---|---|---|
| Multimodal-PlantCLEF | Dataset | A restructured version of PlantCLEF2015 tailored for multimodal tasks, containing images of flowers, leaves, fruits, and stems for 979 plant species [2]. |
| AgroMind Benchmark | Evaluation Suite | A comprehensive benchmark for agricultural remote sensing, covering 13 tasks across 4 dimensions (spatial, object, scene, reasoning) to evaluate model capabilities systematically [4]. |
| MFAS Algorithm | Software/Method | The Multimodal Fusion Architecture Search algorithm automates the discovery of the optimal fusion point between pre-trained unimodal networks, saving computational resources [1]. |
| Multimodal Dropout | Training Technique | A regularization method that randomly ignores entire modalities during training, forcing the model to be robust to missing data sources in real-world deployments [1] [2]. |
| Pre-trained CNNs (e.g., MobileNetV3) | Model | Convolutional Neural Networks pre-trained on large-scale image datasets (e.g., ImageNet) serve as effective unimodal feature extractors for images of different plant organs [1]. |
| ESA WorldCereal | Remote Sensing Data | Provides global-scale, high-resolution (10m) annual and seasonal crop maps, useful for incorporating large-scale remote sensing context [5]. |

The Critical Challenge of Missing Modalities in Real-World Field Conditions

Troubleshooting Guide: Missing Modalities

Q1: What is the "missing modality" problem in plant classification?

A1: In real-world conditions, it is common for data from one or more sensors or sources (modalities) to be unavailable. For example, a plant classification model trained on images of flowers, leaves, fruits, and stems might be presented with a plant that has no visible flowers. This missing information can cause a severe performance drop in standard multimodal models that expect a complete set of data [6] [7].

Q2: What are the primary technical strategies to make models robust to missing modalities?

A2: Research has identified several core strategies:

- Multimodal Dropout: This technique, inspired by standard dropout, randomly omits entire modalities during model training. This forces the network to learn robust features that do not rely on any single, always-available data source, effectively simulating missing data scenarios in the lab [6] [8].

- Knowledge Distillation: A large, powerful "teacher" model is first trained using all available modalities. A smaller, more efficient "student" model is then trained to mimic the teacher's output using only a subset of modalities (e.g., without the missing one) [9].

- Prompt Learning: In this method, the model is first trained on a complete dataset. It is then fine-tuned using specialized, learnable "prompts" that instruct the model on how to adapt its processing when a specific modality is missing [7].

Q3: How do I evaluate my model's robustness to missing modalities?

A3: You should design an evaluation protocol that systematically withholds each modality during testing. The table below summarizes the performance of various methods under such conditions, providing a benchmark for comparison.

Table 1: Performance Comparison of Robust Multimodal Methods

| Model / Approach | Application Context | Performance with All Modalities | Performance with Missing Modalities |
|---|---|---|---|
| Automatic Fused Multimodal with Dropout [6] | Plant Identification (4 organs) | 82.61% accuracy | Strong robustness reported (specific metrics not available) |
| MMC with Prompt Learning [7] | Chemical Process Fault Diagnosis | High diagnosis accuracy (specific metrics not available) | Maintains improved performance and robustness |
| PlantIF [10] | Plant Disease Diagnosis | 96.95% accuracy | Not explicitly tested with missing modalities |

Q4: Our model uses a complex fusion strategy. Is there a way to automate the fusion design to better handle missing data?

A4: Yes. Instead of manually designing how modalities are combined (e.g., late or early fusion), you can use a Multimodal Fusion Architecture Search (MFAS). This approach automatically discovers how to combine features from different modalities, which can lead to more resilient architectures. In one study, this automated fusion outperformed late fusion by 10.33 percentage points [6] [11].

Q5: Where can I find a multimodal dataset for plant science to test these methods?

A5: A commonly used dataset is Multimodal-PlantCLEF, a restructured version of PlantCLEF2015. It provides images from multiple plant organs (flowers, leaves, fruits, and stems) formatted for fixed-input multimodal tasks [6] [8].

Experimental Protocol: Testing Multimodal Dropout Robustness

Objective: To quantitatively evaluate a multimodal deep learning model's classification accuracy and robustness when one or more input modalities are missing.

Materials:

- Dataset: Multimodal-PlantCLEF (979 plant classes) or an equivalent multimodal dataset [6].

- Model Architecture: A multimodal network (e.g., one found via MFAS) with a separate feature extractor for each modality [6].

- Software: Deep learning framework (e.g., PyTorch, TensorFlow).

Methodology:

- Baseline Training: Train the multimodal model on the complete dataset with all modalities present. Use a standard loss function like cross-entropy.

- Robustness Training (Multimodal Dropout): In a separate training run, incorporate multimodal dropout. For each training batch, randomly set the input of one or more modalities to zero with a predefined probability (e.g., 0.2 per modality) [6].

- Evaluation Protocol:

  - Fully Available Test: Evaluate the model on a test set where all modalities are present.

  - Systematically Missing Test: Create several corrupted versions of the test set, each with a different modality entirely missing.

- Comparison: Compare the classification accuracy of the baseline model against the model trained with multimodal dropout across all test scenarios. Use statistical tests like McNemar's test to confirm the significance of the results [6].
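For the comparison step, McNemar's test only looks at the test samples on which the two models disagree. The sketch below implements the exact (binomial) two-sided form using the standard library; the function name `mcnemar_exact` and the input format (one boolean "was this prediction correct" flag per test sample, per model) are assumptions for this example, and the source does not say which variant of the test was used in [6].

```python
from math import comb


def mcnemar_exact(correct_a, correct_b):
    """Two-sided exact McNemar test on paired per-sample correctness flags.

    Returns (only_a, only_b, p_value), where only_a counts samples that
    model A got right and model B got wrong, and only_b the reverse.
    """
    if len(correct_a) != len(correct_b):
        raise ValueError("both models must be scored on the same samples")
    only_a = sum(1 for a, b in zip(correct_a, correct_b) if a and not b)
    only_b = sum(1 for a, b in zip(correct_a, correct_b) if b and not a)
    n = only_a + only_b
    if n == 0:
        # The models never disagree, so there is no evidence of a difference.
        return only_a, only_b, 1.0
    # Under the null hypothesis, disagreements split 50/50 between models.
    tail = sum(comb(n, k) for k in range(min(only_a, only_b) + 1)) / 2 ** n
    return only_a, only_b, min(1.0, 2 * tail)
```

The same function can be run once per test scenario (all modalities present, then each modality withheld) to check whether the dropout-trained model differs significantly from the baseline in each case.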

The workflow for this experiment is outlined below.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for a Robust Multimodal Classification Pipeline

| Research Reagent / Component | Function / Explanation |
|---|---|
| Multimodal-PlantCLEF Dataset | A benchmark dataset restructured for multimodal plant identification, providing images of four distinct plant organs as separate modalities [6]. |
| Multimodal Fusion Architecture Search (MFAS) | An algorithm that automates the discovery of the optimal neural network architecture for combining different data modalities, moving beyond simple late or early fusion [6] [11]. |
| Multimodal Dropout | A regularization technique applied at the modality level during training. It randomly "drops" or ignores entire modalities to force the model to not become dependent on any single data source, enhancing real-world robustness [6] [8]. |
| Pre-trained Feature Extractors (e.g., MobileNetV3) | Foundation models pre-trained on large-scale image datasets (e.g., ImageNet). They serve as efficient and powerful encoders for transforming raw input images (of leaves, flowers, etc.) into rich feature representations, speeding up convergence and improving performance [6]. |
| Knowledge Distillation Framework | A training paradigm where a compact "student" model is trained to replicate the behavior of a larger "teacher" model. This is particularly useful for creating models that perform well even when a modality is missing, by distilling knowledge from a teacher that had access to all data [9]. |
| Prompt Learning Library | Software tools that enable the implementation of trainable prompt vectors. These prompts can be used to adapt a pre-trained multimodal model to handle specific scenarios, such as the absence of a particular input modality, without retraining the entire network [7]. |

Model Architecture & Data Flow for Robust Multimodal Learning

The following diagram illustrates a high-level architecture that integrates several of the discussed robust learning techniques, including automated fusion and knowledge distillation for handling missing inputs.

Multimodal dropout is an advanced regularization technique in deep learning that stochastically removes entire modality representations during training. This approach simulates realistic scenarios where input data from one or more sensors or sources may be missing, corrupted, or noisy. By preventing over-reliance on any single modality, multimodal dropout promotes balanced learning across all data sources and enhances model robustness for real-world deployment. This technical guide explores the implementation, troubleshooting, and experimental protocols for multimodal dropout within the context of robust plant classification research.

Core Concepts: Frequently Asked Questions (FAQs)

What is multimodal dropout and how does it differ from traditional dropout?

Traditional dropout operates at the neuron level, randomly deactivating individual neurons within a layer to prevent overfitting. In contrast, multimodal dropout operates at the modality level, stochastically removing entire modality representations (e.g., all image data from flowers, leaves, fruits, or stems) during training. This prevents the model from becoming dependent on any single data source and ensures it can maintain performance even when complete multimodal data isn't available [12].

Why is multimodal dropout particularly important for plant classification research?

From a biological standpoint, a single plant organ is often insufficient for accurate classification, as appearance can vary within the same species, while different species may share similar features. Multimodal models that integrate multiple organs (flowers, leaves, fruits, stems) provide more comprehensive representations. Multimodal dropout ensures these models remain effective even when certain organ images are unavailable during real-world deployment, which is common in field conditions [6].

What are the main technical challenges when implementing multimodal dropout?

The primary challenges include:

- Performance Trade-offs: Finding a dropout rate that provides robustness without significantly degrading full-modality performance [12].

- Computational Complexity: Supervising all possible modality combinations becomes computationally intensive with many modalities [12].

- Fusion Strategy Integration: Effectively combining dropout with multimodal fusion architectures to maintain information integration [12].
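The computational-complexity point can be made concrete by counting the modality combinations a model would have to be supervised on. With M modalities there are 2^M - 1 non-empty subsets, so the count roughly doubles with each added modality. The helper name `modality_subsets` below is illustrative.

```python
from itertools import combinations


def modality_subsets(modalities):
    """Yield every non-empty subset of the given modalities."""
    for size in range(1, len(modalities) + 1):
        yield from combinations(modalities, size)


organs = ("flower", "leaf", "fruit", "stem")
subsets = list(modality_subsets(organs))
print(len(subsets))  # 15, i.e. 2**4 - 1
```

Four plant organs give a manageable 15 combinations, while eight modalities would already give 255.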

Troubleshooting Guide: Common Experimental Issues

Problem: Model performance degrades when all modalities are present.

- Potential Cause: Excessively aggressive dropout rates are causing "modality bias" or preventing the model from learning effective fusion representations [12].

- Solution: Systematically reduce dropout probabilities and monitor performance on both complete and missing-modality validation sets. Consider implementing learnable or adaptive dropout that assesses modality relevance per sample [12].

Problem: Model collapses when a specific modality is missing at inference.

- Potential Cause: The model over-relied on the missing modality during training due to insufficient or improperly configured modality dropout [12].

- Solution: Re-train with more balanced modality dropout rates, ensuring each modality is dropped with sufficient frequency. Implement "Conditional Dropout" where distinct encoder branches are explicitly optimized for specific missing-modality scenarios [12].

Problem: Training becomes unstable or excessively slow with multimodal dropout.

- Potential Cause: High dropout rates or simultaneous dropping of multiple critical modalities [12] [13].

- Solution: Adjust the dropout distribution, potentially using a truncated geometric distribution to sample the number of dropped modalities. Consider gradually increasing dropout rates during training or using simultaneous supervision where all modality combinations are explicitly supervised each iteration [12].
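One way to read the truncated geometric suggestion is sketched below: first sample how many modalities to drop, with probabilities that decay geometrically and are cut off so that at least one modality always remains, then choose which ones to drop uniformly at random. The parameter `q`, the function names, and the exact parameterization are assumptions for this example; the cited work may define the distribution differently.

```python
import random


def truncated_geometric_pmf(num_modalities, q=0.5):
    """P(k dropped) for k = 0 .. num_modalities - 1, proportional to (1 - q)**k."""
    weights = [(1 - q) ** k for k in range(num_modalities)]
    total = sum(weights)
    return [w / total for w in weights]


def sample_dropped(modalities, q=0.5, rng=random):
    """Pick how many modalities to drop, then which ones.

    The count is capped at len(modalities) - 1, so at least one
    modality is always kept.
    """
    pmf = truncated_geometric_pmf(len(modalities), q)
    k = rng.choices(range(len(modalities)), weights=pmf, k=1)[0]
    return set(rng.sample(list(modalities), k))
```

Larger values of `q` concentrate the probability on dropping few or no modalities, which is one lever for reducing the instability caused by removing several critical inputs at once.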

Experimental Protocols & Methodologies

Standard Implementation Protocol

The following workflow details the standard methodology for implementing multimodal dropout in a plant classification system, based on successful applications documented in the literature [6] [12]:

Quantitative Performance Analysis

The table below summarizes key quantitative findings from multimodal dropout implementations across various domains, demonstrating its effectiveness for improving robustness:

Table 1: Quantitative Performance of Multimodal Dropout Across Applications

| Application Domain | Baseline Performance | With Multimodal Dropout | Key Improvement Metric |
|---|---|---|---|
| Plant Classification [6] | Late Fusion: 72.28% accuracy | 82.61% accuracy (automatic fusion with multimodal dropout) | +10.33 percentage points over late fusion, strong robustness to missing modalities |
| General Medical Image Segmentation [12] | U-Net Baseline | Superior Dice scores | Improved regularization even with full modalities |
| Action Recognition [12] | Various fusion methods | State-of-the-art on Kinetics400 | Outperformed gating & attention by several percentage points |
| Vision Tasks (RGB+D Dehazing) [12] | Standard processing | +3.6% PSNR improvement | Enhanced object detection mAP by ~19% at night |
| Emotion Recognition [12] | Standard multimodal | 90.15% test accuracy | Optimal with tuned dropout rate |

Advanced Implementation: Conditional Dropout Protocol

For challenging scenarios requiring maximum robustness, consider this advanced protocol based on recent research [12]:

- Duplicate encoder branches for each modality, creating dedicated pathways for full-modality and missing-modality scenarios.

- Freeze one branch after training it on full-modality data, then separately train the other branch with specific modalities replaced by zeros.

- Combine branches using zero-initialized convolutions to preserve full-modality performance while adding missing-modality robustness.

- Implement simultaneous supervision where all modality combinations receive explicit gradient updates each iteration (computationally feasible only with few modalities).

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Computational Tools for Multimodal Dropout Research

| Research Reagent / Tool | Function / Purpose | Example Implementation |
|---|---|---|
| Multimodal-PlantCLEF Dataset | Standardized dataset for multimodal plant classification research | Restructured version of PlantCLEF2015 with images from flowers, leaves, fruits, stems [6] |
| Modality Dropout Mask Generator | Stochastic system for removing modality representations during training | Generates mask vector r ~ Bernoulli(pₘ) for M modalities [12] |
| Multimodal Fusion Architecture Search (MFAS) | Automated system for discovering optimal fusion points | Modified MFAS algorithm to automatically fuse unimodal models [6] |
| Learnable Missing-Modality Tokens | Alternative to zero-replacement for dropped modalities | Learnable vectors that represent missing modalities, improving fusion [12] |
| Unified Representation Network (URN) | Maps variable modality combinations to consistent latent space | Fuses batch-normalized encoder outputs via f-mean with variance losses [12] |

Performance Optimization Guidelines

Dropout Rate Tuning Strategy

Systematically tune modality-specific dropout rates rather than using uniform values:

- Start with conservative rates (0.1-0.3) and incrementally increase based on validation performance with missing modalities.

- Monitor performance disparities between full-modality and missing-modality scenarios, aiming for gaps smaller than 5%.

- Consider modality reliability: assign higher dropout rates to more reliable or information-rich modalities to prevent over-reliance.

- Implement dynamic scheduling where dropout rates gradually increase during training to initially learn strong representations before adding regularization.
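The dynamic scheduling idea in the last item can be expressed as a simple ramp. The sketch below increases the modality-dropout rate linearly from a starting value to a final value over the first part of training and then holds it constant. The function name, the linear shape, and the default values (including the 0.3 ceiling, taken from the conservative range above) are assumptions for illustration.

```python
def dropout_rate(epoch, total_epochs, start=0.0, end=0.3, ramp_fraction=0.5):
    """Linearly ramp the modality-dropout rate from `start` to `end`.

    The ramp covers the first `ramp_fraction` of training; after that
    the rate stays at `end`.
    """
    if total_epochs <= 0:
        raise ValueError("total_epochs must be positive")
    ramp_epochs = max(1, int(total_epochs * ramp_fraction))
    progress = min(1.0, epoch / ramp_epochs)
    return start + (end - start) * progress
```

With the defaults and 100 epochs, the rate is 0.0 at epoch 0, 0.15 at epoch 25, and 0.3 from epoch 50 onward, so the network sees mostly complete inputs early on and progressively more missing-modality cases later.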

Integration with Fusion Strategies

Effectively combine multimodal dropout with your fusion approach:

- For early fusion: Apply dropout before modality concatenation to simulate completely missing feature streams.

- For intermediate fusion: Drop modalities before the fusion layer, forcing the network to learn cross-modal relationships with incomplete information.

- For late fusion: Apply dropout to individual modality branches before decision averaging, ensuring the system can handle missing classifiers.
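For the late-fusion case, one simple way to handle a missing classifier is to average only over the modalities that are present. The sketch below assumes each unimodal classifier returns a list of class scores and that a missing modality is marked with `None`; both conventions, and the function name `late_fusion`, are assumptions for this example rather than the method of any cited paper.

```python
def late_fusion(scores_by_modality):
    """Average class scores over the modalities that are present.

    `scores_by_modality` maps a modality name to a list of class scores,
    or to None when that modality is missing.
    """
    available = [s for s in scores_by_modality.values() if s is not None]
    if not available:
        raise ValueError("at least one modality is required")
    num_classes = len(available[0])
    return [
        sum(scores[c] for scores in available) / len(available)
        for c in range(num_classes)
    ]
```

Averaging over available modalities keeps the fused scores on the same scale regardless of how many classifiers contributed, whereas substituting zero scores for a missing classifier would pull every class score toward zero.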

FAQs: Multimodal Data and Plant Classification

Why is using multiple plant organs better than a single organ for classification?

From a biological standpoint, a single organ is often insufficient for accurate classification. Appearance can vary within the same species, while different species may exhibit similar features on a single organ. Using images from multiple plant organs (flowers, leaves, fruits, and stems) provides a more comprehensive representation of the plant's characteristics, leading to higher classification accuracy [6] [8]. One study achieved 82.61% accuracy on 979 plant classes by using multiple organs, outperforming single-organ methods [6] [11].

What is multimodal dropout and how does it improve model robustness?

Multimodal dropout is a technique that makes a deep learning model resilient to missing data. During training, the model randomly "drops" or ignores data from one or more plant organs. This forces the model to learn robust features that do not depend on any single organ type, ensuring reliable performance even when images of certain organs (e.g., fruits out of season) are unavailable for real-world identification [6] [8].

How do I create a multimodal dataset from existing plant image collections?

You can transform a unimodal dataset into a multimodal one through a data preprocessing pipeline. The process involves:

- Organ Identification: Sorting existing images based on the plant organ depicted (flower, leaf, fruit, stem).

- Sample Combination: For each plant specimen, creating data entries that combine images of its different available organs.

- Dataset Structuring: Formatting this data to support models with a fixed number of inputs, each corresponding to a specific organ. This approach was used to create the Multimodal-PlantCLEF dataset from PlantCLEF2015 [6].
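The three steps above can be sketched as a small grouping routine. The record format `(specimen_id, organ, image_path)`, the use of `None` for an organ with no image, and the choice to keep only the first image per organ are all assumptions made for this example; the actual pipeline behind Multimodal-PlantCLEF [6] may combine images differently.

```python
from collections import defaultdict

ORGANS = ("flower", "leaf", "fruit", "stem")


def build_multimodal_entries(records):
    """Group (specimen_id, organ, image_path) records into fixed-slot entries.

    Each entry has one slot per organ; a slot is None when no image of
    that organ exists for the specimen.
    """
    by_specimen = defaultdict(dict)
    for specimen_id, organ, image_path in records:
        if organ not in ORGANS:
            continue  # skip views that are not one of the four organs
        # Keep the first image seen for each organ (a simplification).
        by_specimen[specimen_id].setdefault(organ, image_path)
    return [
        {"specimen": sid, **{organ: images.get(organ) for organ in ORGANS}}
        for sid, images in sorted(by_specimen.items())
    ]
```

Each returned entry has the same four organ slots, which is what a model with a fixed number of inputs expects.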

What is the difference between 'late fusion' and 'automatic fusion'?

- Late Fusion is a simple strategy where separate models analyze each organ and make individual classifications. These decisions are combined at the final stage, typically by averaging the scores [6] [8].

- Automatic Fusion uses a neural architecture search to find the best way to combine information from different organs at intermediate layers of the networks. This automated approach has been shown to outperform late fusion by over 10 percentage points in accuracy [6] [11].

Troubleshooting Guides

Problem: Model Performance is Poor When a Plant Organ is Missing

Possible Cause: The model was likely trained only on complete sets of organ images and cannot handle incomplete data.

- Solution: Implement multimodal dropout during training.

- Protocol:

  - Model Setup: Use a multimodal deep learning model, such as one built with a MobileNetV3 backbone for each organ stream [6].

  - Training with Dropout: During each training iteration, randomly set the data from one or more organ modalities to zero with a predefined probability.

  - Validation: Test the trained model on a validation set with missing modalities to confirm improved robustness. The model should maintain high accuracy even when, for example, fruit or flower images are absent [8].
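The validation step can be sketched as a small harness that measures accuracy with all modalities present and then with each modality withheld in turn. Here `predict` stands for any callable that maps a sample to a predicted label, and each sample is a dictionary of per-organ feature lists; these interfaces and the function names are hypothetical and chosen only for this example.

```python
def accuracy_with_missing(predict, samples, labels, missing=()):
    """Accuracy of `predict` when the modalities in `missing` are zeroed out."""
    correct = 0
    for sample, label in zip(samples, labels):
        masked = {
            name: [0.0] * len(values) if name in missing else values
            for name, values in sample.items()
        }
        correct += predict(masked) == label
    return correct / len(samples)


def robustness_report(predict, samples, labels):
    """Accuracy with all modalities, then with each single modality removed."""
    report = {"all": accuracy_with_missing(predict, samples, labels)}
    for name in samples[0]:
        report[f"no_{name}"] = accuracy_with_missing(
            predict, samples, labels, missing=(name,)
        )
    return report
```

Comparing the `no_fruit` or `no_flower` entries against the `all` entry, for both the baseline and the dropout-trained model, shows directly how much each model depends on a given organ.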

Problem: Inconsistent Metabolite Profiling Results Across Tissue Samples

Possible Cause: The biosynthetic profiles of many bioactive compounds are highly organ-specific.

- Solution: Conduct tissue-specific metabolomic and transcriptomic analysis.

- Protocol:

  - Sample Collection: Separately harvest different organs (e.g., roots, stems, leaves, flowers) from healthy plants and immediately freeze them in liquid nitrogen to preserve metabolic states [14].

  - Metabolite Extraction and Analysis: Homogenize the tissues and extract metabolites using 70% methanol with internal standards. Analyze the extracts using UPLC-MS/MS to identify and quantify metabolites [14].

  - RNA Sequencing: In parallel, extract total RNA from each organ and perform RNA-seq to profile gene expression [14].

  - Data Integration: Correlate the accumulation of specific metabolites (e.g., flavonoids in flowers, terpenoids in roots) with the expression of key biosynthetic genes in those organs. This identifies the optimal organ for harvesting your target compound [14].

Data Presentation

Table 1: Performance Comparison of Plant Classification Fusion Strategies

| Fusion Strategy | Key Description | Advantages | Reported Accuracy on Multimodal-PlantCLEF |
|---|---|---|---|
| Late Fusion | Combines model decisions at the final prediction level (e.g., by averaging) [6]. | Simple to implement, modular | 72.28% [6] |
| Automatic Fusion (MFAS) | Uses architecture search to find the optimal point to fuse data from different organs [6]. | Higher accuracy, discovers more efficient architectures | 82.61% [6] [11] |

Table 2: Organ-Specific Biosynthesis of Bioactive Compounds in Bidens alba

| Plant Organ | Key Flavonoids Enriched | Key Terpenoids Enriched | Biosynthetic Genes Upregulated |
|---|---|---|---|
| Flowers | Quercetin, Kaempferol, Okanin glycosides [14] | Sesquiterpenes (regulated by BpTPS2/3) [14] | CHS, FLS, BpMYB2, BpbHLH1 [14] |
| Leaves | Apigenin, Isorhamnetin [14] | - | F3H, BpMYB1 [14] |
| Roots | - | Sesquiterpenes, Triterpenes [14] | HMGR, FPPS [14] |
| Stems | - | - | GGPPS [14] |

Experimental Protocols

Protocol 1: Automatically Fused Multimodal Deep Learning for Plant Identification

Objective: To build a high-accuracy plant classification model that automatically learns how to best combine information from images of flowers, leaves, fruits, and stems [6].

Methodology:

- Dataset Curation: Apply a preprocessing pipeline to create a multimodal dataset (e.g., Multimodal-PlantCLEF) where each data point consists of a set of images from the four plant organs for a single specimen [6].

- Unimodal Model Training: Train a separate convolutional neural network (CNN), such as MobileNetV3Small, for each organ modality using its corresponding images [6].

- Multimodal Fusion: Apply a Multimodal Fusion Architecture Search (MFAS) algorithm. This algorithm automatically explores different ways to fuse the feature maps from the unimodal networks, seeking the architecture that yields the highest validation accuracy [6].

- Robustness Training: Incorporate multimodal dropout during the training of the fused model to ensure it remains accurate even when some organ images are missing [6] [8].

- Model Evaluation: Evaluate the final model on a held-out test set using metrics like accuracy and compare it against baseline models (e.g., Late Fusion) using statistical tests like McNemar's test [6].

Protocol 2: Multi-Omics Analysis for Organ-Specific Metabolic Pathways

Objective: To identify which plant organ is most actively producing a target secondary metabolite and to uncover the genetic regulators of its biosynthesis [14].

Methodology:

- Tissue Sampling: Collect flowers, leaves, stems, and roots from multiple individual plants. Immediately freeze samples in liquid nitrogen to halt metabolic activity and preserve RNA integrity [14].

- Widely Targeted Metabolomics:

  - Grind tissues to a fine powder under liquid nitrogen.

  - Extract metabolites with 70% methanol containing internal standards.

  - Analyze using UPLC-MS/MS.

  - Identify and quantify metabolites by matching spectra to a self-built database or public libraries [14].

- Transcriptome Sequencing (RNA-seq):

  - Extract total RNA from each organ sample.

  - Prepare RNA-seq libraries and sequence on a platform like DNBSEQ-T7 or Illumina.

  - Map reads to a reference genome and quantify gene expression levels (e.g., FPKM) [14].

- Data Integration and Analysis:

  - Perform differential analysis to find metabolites and genes that are significantly enriched in one organ compared to others.

  - Conduct correlation analysis to link the expression of key biosynthetic genes (e.g., CHS, F3H for flavonoids; HMGR, FPPS for terpenoids) with the accumulation of target metabolites.

  - Identify candidate transcription factors (e.g., MYB, bHLH) that co-express with these pathways and may act as regulators [14].
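The correlation step in the data integration stage can be illustrated with a minimal sketch. It computes a Pearson coefficient between one gene's expression and one metabolite's abundance across organs. The numbers are made up for illustration, the variable names are hypothetical, and a real analysis would use biological replicates and correct for multiple testing.

```python
import math

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)

# Hypothetical values, one entry per organ: flower, leaf, stem, root.
chs_fpkm = [120.0, 45.0, 10.0, 5.0]      # made-up expression of one gene
flavonoid_level = [9.5, 4.0, 1.2, 0.8]   # made-up metabolite abundance

r = pearson(chs_fpkm, flavonoid_level)
print(f"gene/metabolite correlation across organs: r = {r:.3f}")
```

A coefficient close to 1 would flag the gene as a candidate for follow-up, not as a confirmed regulator.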

Experimental Workflow Visualization

[Figure: Multimodal Plant Classification with Dropout]

[Figure: Multi-Omics for Organ-Specific Metabolism]

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Experiment |

|---|---|

| Multimodal-PlantCLEF Dataset | A restructured dataset for multimodal plant identification tasks, providing aligned images of flowers, leaves, fruits, and stems for model training and evaluation [6]. |

| MobileNetV3 | A pre-trained, efficient convolutional neural network architecture often used as a backbone for feature extraction from images of individual plant organs [6]. |

| Multimodal Fusion Architecture Search (MFAS) | An algorithm that automates the discovery of the optimal neural network architecture for fusing data from different modalities (plant organs), replacing manual design [6]. |

| UPLC-MS/MS System | Ultra-Performance Liquid Chromatography coupled with Tandem Mass Spectrometry for high-sensitivity identification and quantification of hundreds to thousands of metabolites in plant tissue extracts [14]. |

| RNA-seq Library Prep Kit | Kits (e.g., VAHTS Universal V6) for converting extracted total RNA into sequencing-ready libraries, enabling transcriptome-wide gene expression profiling [14]. |

| DNBSEQ-T7 / Illumina Platforms | High-throughput sequencing platforms used for generating the massive amounts of sequence data required for transcriptomic studies [14]. |

FAQs & Troubleshooting Guides

How do I handle missing plant organ images during training and inference?

Issue: A common problem in real-world experiments is the lack of images for one or more plant organs (e.g., missing fruits or stems), which can cause standard multimodal models to fail.

Solution: Implement Multimodal Dropout during training. This technique, inspired by the automatic fused multimodal approach, artificially drops modalities during training to force the model to learn robust representations even when some data is missing [6]. For inference, ensure your model's architecture can handle variable inputs.

Experimental Protocol:

- Dataset: Use a structured multimodal dataset like Multimodal-PlantCLEF, which contains images of flowers, leaves, fruits, and stems for 979 plant species [6].

- Training Procedure:

  - Train your base unimodal models (e.g., pre-trained CNNs like MobileNetV3) on each organ separately.

  - During multimodal fusion training, randomly set the feature vector of one or more modalities to zero for each training batch, simulating missing data.

  - This practice enhances the model's resilience, allowing it to perform robust classification even when only a subset of organs is available for a given sample [6].
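The zeroing step in the training procedure above can be sketched in plain Python. This is an illustrative version, not the cited study's implementation: the function name, the default drop probability, and the rule that at least one modality is always kept are assumptions of this sketch. In a real pipeline the same logic would operate on framework tensors.

```python
import random

ORGANS = ("flower", "leaf", "fruit", "stem")

def multimodal_dropout(features, drop_prob=0.2, rng=random):
    """Zero out whole modality feature vectors for one training step.

    features: dict mapping organ name -> feature vector (list of floats).
    Each modality is dropped independently with probability drop_prob.
    If every modality would be dropped, one is restored at random so the
    model always sees at least one input (an assumption of this sketch).
    Returns (masked_features, names_of_dropped_modalities).
    """
    names = list(features)
    dropped = [name for name in names if rng.random() < drop_prob]
    if len(dropped) == len(names):
        dropped.remove(rng.choice(dropped))
    masked = {
        name: [0.0] * len(vec) if name in dropped else list(vec)
        for name, vec in features.items()
    }
    return masked, dropped

# Example: one sample with a tiny 3-dimensional feature vector per organ.
rng = random.Random(0)
sample = {organ: [1.0, 2.0, 3.0] for organ in ORGANS}
masked, dropped = multimodal_dropout(sample, drop_prob=0.5, rng=rng)
print("dropped modalities:", dropped)
```

Passing a seeded `random.Random` makes the masking reproducible, which helps when comparing training runs.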

What is the most effective strategy for fusing data from different plant organs?

Issue: Researchers often struggle to choose between early, intermediate, or late fusion strategies for combining features from images of leaves, flowers, stems, and fruits.

Solution: Leverage an automatic fusion strategy instead of relying on a fixed, pre-defined method. Manual fusion strategies like late fusion (averaging predictions from unimodal models) can be suboptimal, trailing automatic fusion by over 10 percentage points in accuracy [6].

Experimental Protocol:

- Baseline Comparison: Compare your proposed method against a standard late fusion baseline, which achieves 72.28% accuracy on 979-class Multimodal-PlantCLEF [6].

- Automated Fusion: Employ a Multimodal Fusion Architecture Search (MFAS). This algorithm automatically discovers the optimal fusion points and methods between unimodal neural networks, leading to a more effective and compact model [6].

- Validation: Use McNemar's statistical test to validate the superiority of the automatically fused model over the baseline [6].
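For the validation step, McNemar's test compares two classifiers on the same test samples using only the cases where they disagree. The sketch below implements the exact (binomial) two-sided form with the standard library. The function name and argument layout are illustrative, and the source does not say which variant of the test the study used.

```python
from math import comb

def mcnemar_exact(y_true, pred_a, pred_b):
    """Two-sided exact McNemar test for two classifiers on the same samples.

    Returns (b, c, p_value) where
      b = samples model A classifies correctly and model B does not,
      c = samples model B classifies correctly and model A does not.
    Under the null hypothesis the discordant pairs split 50/50.
    """
    b = c = 0
    for truth, a, m in zip(y_true, pred_a, pred_b):
        a_ok, m_ok = (a == truth), (m == truth)
        if a_ok and not m_ok:
            b += 1
        elif m_ok and not a_ok:
            c += 1
    n = b + c
    if n == 0:
        return b, c, 1.0  # no discordant pairs: no evidence of a difference
    # Probability of a split at least as lopsided as the observed one.
    tail = sum(comb(n, k) for k in range(min(b, c) + 1)) / 2 ** n
    return b, c, min(1.0, 2.0 * tail)

# Toy example: model B fixes 8 of model A's errors and introduces none.
y_true = [0] * 10
late_fusion_preds = [1] * 8 + [0] * 2
auto_fusion_preds = [0] * 10
b, c, p = mcnemar_exact(y_true, late_fusion_preds, auto_fusion_preds)
print(f"b={b}, c={c}, p={p:.4f}")
```

For large test sets, a chi-squared approximation or a statistics library is the more common choice.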

How can I create a multimodal dataset from existing unimodal plant image collections?

Issue: A significant bottleneck is the lack of dedicated multimodal datasets, as most existing resources are designed for unimodal classification.

Solution: Implement a data preprocessing pipeline to restructure a unimodal dataset. The creation of the Multimodal-PlantCLEF dataset from PlantCLEF2015 demonstrates a viable methodology [6].

Experimental Protocol:

- Source Data: Select a large-scale unimodal dataset (e.g., PlantCLEF2015).

- Organ Annotation: Identify and label images based on the specific plant organ depicted (flower, leaf, fruit, stem).

- Sample Alignment: For each plant specimen, group together images of its different organs from the dataset. This creates a multi-organ representation for each plant instance.

- Data Curation: Ensure that the final dataset contains a fixed number of inputs, with each input corresponding to a specific plant organ, ready for multimodal model training [6].
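The alignment and curation steps above can be sketched as a grouping operation over image records. The record keys (`specimen_id`, `species`, `organ`, `path`) and the choice to keep only the first image per organ are assumptions made for illustration. They are not the schema or the rules of the actual Multimodal-PlantCLEF pipeline.

```python
from collections import defaultdict

ORGANS = ("flower", "leaf", "fruit", "stem")

def build_multimodal_samples(records, require_all=False):
    """Group unimodal image records into one multi-organ sample per specimen.

    records: iterable of dicts with the hypothetical keys
             'specimen_id', 'species', 'organ', 'path'.
    Returns a list of dicts with one fixed slot per organ; a slot is None
    when the specimen has no image of that organ.
    """
    grouped = defaultdict(lambda: {"species": None, "images": {}})
    for rec in records:
        if rec["organ"] not in ORGANS:
            continue  # ignore views outside the four modalities
        entry = grouped[rec["specimen_id"]]
        entry["species"] = rec["species"]
        # Keep the first image seen for each organ; later ones are ignored.
        entry["images"].setdefault(rec["organ"], rec["path"])

    samples = []
    for specimen_id, entry in grouped.items():
        slots = {organ: entry["images"].get(organ) for organ in ORGANS}
        if require_all and any(path is None for path in slots.values()):
            continue  # drop incomplete specimens when a full set is required
        samples.append(
            {"specimen_id": specimen_id, "species": entry["species"], **slots}
        )
    return samples
```

Setting `require_all=False` keeps incomplete specimens, whose empty slots can then be treated as missing modalities during training.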

Performance Comparison of State-of-the-Art Models

The following table summarizes the performance of recent multimodal models on plant classification and diagnosis tasks.

Table 1: Performance of Multimodal Models in Plant Science

| Model Name | Modalities Used | Key Task | Reported Accuracy | Key Advantage |

|---|---|---|---|---|

| Automatic Fused Multimodal DL [6] | Images of 4 organs (flower, leaf, fruit, stem) | Plant species classification | 82.61% (on 979 classes) | Automatic fusion search & robustness to missing modalities |

| PlantIF [10] | Image, Text | Plant disease diagnosis | 96.95% | Semantic interactive fusion via graph learning |

| Interpretable Multimodal Model [15] | Image, Environmental data | Tomato disease diagnosis & severity estimation | 96.40% (classification), 99.20% (severity) | Explains decisions with LIME & SHAP |

| Hybrid ConvNet-ViT [16] | Leaf Images (single) | Multiclass leaf disease classification | 99.29% | Combines local (ConvNet) and global (ViT) features |

| TaxaBind [17] | 6 modalities (image, location, satellite, text, audio, environment) | Species classification & distribution | High zero-shot performance | General-purpose ecological foundational model |

Experimental Workflow for Multimodal Plant Classification

[Figure: Generalized experimental workflow for developing a robust multimodal plant classification system, incorporating automatic fusion and multimodal dropout]

Research Reagent Solutions

Table 2: Essential Resources for Multimodal Plant Classification Experiments

| Item | Function in Research | Example / Specification |

|---|---|---|

| Multimodal-PlantCLEF | Benchmark dataset for evaluating multimodal plant ID models; contains 4 organ types [6]. | Restructured from PlantCLEF2015; 979 species [6]. |

| Pre-trained CNN Models | Feature extraction backbones for processing images of individual plant organs. | MobileNetV3, EfficientNetB0, ResNet50 [6] [15]. |

| Multimodal Fusion Architecture Search (MFAS) | Algorithm to automatically find the optimal fusion strategy between modalities [6]. | Modified from Perez-Rua et al., 2019 [6]. |

| Explainable AI (XAI) Tools | Provides interpretability for model decisions, crucial for scientific validation and diagnostics [15]. | LIME (for images), SHAP (for tabular/weather data) [15]. |

| TaxaBind Framework | Foundational model for ecological tasks; supports fusion of 6 modalities for zero-shot learning [17]. | Unifies image, location, text, audio, satellite, and environmental data [17]. |

Implementation in Practice: Architectures and Fusion Strategies for Multimodal Dropout

Frequently Asked Questions (FAQs)

Q1: My multimodal model for plant classification performs well on training data but generalizes poorly to new species. What architectural components should I investigate?

A1: Poor generalization often stems from inadequate fusion strategies or overfitting on individual modalities. We recommend the following troubleshooting steps:

- Revisit Fusion Strategy: The choice between early, late, or intermediate fusion significantly impacts generalization. An automatically searched fusion strategy, like Multimodal Fusion Architecture Search (MFAS), has been shown to outperform simple late fusion by over 10 percentage points in accuracy on plant classification tasks [6] [8].

- Incorporate Multimodal Dropout: Systematically drop one or more modalities during training (e.g., images of flowers or leaves) to force the model to build robust cross-modal representations and not rely on a single dominant modality. This technique enhances resilience to missing data in real-world scenarios [6] [8].

- Analyze Modality-Specific Features: Ensure your unimodal encoders (e.g., a CNN for leaf images) are extracting meaningful, complementary features. From a botanical standpoint, a single plant organ is often insufficient for accurate classification [6].

Q2: During training, my model's loss becomes unstable and outputs NaNs. This seems to happen when fusing features from my image and text encoders. How can I resolve this?

A2: Instability and NaNs during fusion are frequently caused by mismatched feature scales or excessively large gradients.

- Normalize Inputs and Features: Confirm that input data for all modalities is properly normalized. For continuous values, a typical range is -1 to 1 or 0 to 1. Ensure the same normalization is applied to both training and testing data [18].

- Check Feature Dimensions: When concatenating features from different encoders (e.g., a 512-dim image vector and a 768-dim text vector), the resulting high-dimensional input can make training unstable. Consider projecting modalities into a shared, lower-dimensional latent space before fusion [19] [20].

- Apply Gradient Normalization: Use gradient normalization techniques, such as gradient clipping, to prevent the exploding gradient problem, which is common in networks that process sequential or multimodal data [18].
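Two of the remedies above, feature normalization and gradient clipping, can be sketched without any framework. The function names are illustrative. Deep learning libraries ship their own versions (for example, PyTorch provides `torch.nn.utils.clip_grad_norm_`), which should be preferred in practice.

```python
import math

def l2_normalize(vec, eps=1e-12):
    """Scale a feature vector to unit L2 norm (eps avoids division by zero)."""
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / max(norm, eps) for v in vec]

def clip_by_global_norm(grads, max_norm):
    """Rescale gradient vectors so their joint L2 norm is at most max_norm.

    grads: list of gradient vectors (lists of floats), one per parameter.
    Returns (clipped_grads, original_global_norm).
    """
    total = math.sqrt(sum(g * g for vec in grads for g in vec))
    if total <= max_norm or total == 0.0:
        return [list(vec) for vec in grads], total
    scale = max_norm / total
    return [[g * scale for g in vec] for vec in grads], total

# Bring an image embedding and a text embedding to the same scale before fusion.
image_feat = l2_normalize([30.0, 40.0])
text_feat = l2_normalize([0.003, 0.004])

# Shrink an oversized gradient before the optimizer step.
clipped, norm_before = clip_by_global_norm([[3.0, 4.0], [0.0, 12.0]], max_norm=1.0)
print("norm before clipping:", norm_before)
```

Clipping limits the size of an update but does not repair gradients that are already NaN, so it is worth checking the inputs and the loss for non-finite values first.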

Q3: In practical field deployment, I cannot guarantee all plant organ images will be available for every sample. How can I design an architecture that is robust to these missing modalities?

A3: Robustness to missing modalities is a core challenge addressed by multimodal dropout and specific architectural designs.

- Leverage Multimodal Dropout: Explicitly train your model with randomly missing modalities. This teaches the network to rely on any available combination of inputs, maintaining performance even when data is incomplete [6] [8].

- Implement Uncertainty Quantification: Incorporate a module that estimates the uncertainty of each modality's features. During fusion, these uncertainty estimates can be used to dynamically weight the contribution of each modality. A modality with high uncertainty (e.g., due to noise or absence) can be assigned a lower weight, as demonstrated in driver fatigue detection research using EEG and EOG signals [21].

- Utilize Knowledge Distillation: Train a robust student model that can handle missing modalities by distilling knowledge from a larger teacher model that was trained with all modalities present. This approach has shown success in maintaining performance even when one or two modalities are missing in medical data applications [22].
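The uncertainty-weighting idea can be illustrated with a small sketch. It uses a softmax over negative uncertainty as the weighting rule, which is an assumption chosen for simplicity and not the mechanism of the cited fatigue-detection model. How the per-modality uncertainty scores are produced is outside the scope of the example.

```python
import math

def uncertainty_weighted_fusion(features, uncertainties):
    """Fuse modality features with weights that shrink as uncertainty grows.

    features:      dict name -> feature vector (list of floats), or None
                   when the modality is missing.
    uncertainties: dict name -> non-negative scalar uncertainty estimate.
    Weights are a softmax over negative uncertainty, restricted to the
    modalities that are present. Returns (fused_vector, weights).
    """
    present = [name for name, vec in features.items() if vec is not None]
    if not present:
        raise ValueError("at least one modality must be present")
    scores = {name: math.exp(-uncertainties[name]) for name in present}
    total = sum(scores.values())
    weights = {name: score / total for name, score in scores.items()}
    dim = len(features[present[0]])
    fused = [
        sum(weights[name] * features[name][i] for name in present)
        for i in range(dim)
    ]
    return fused, weights

# The fruit image is missing; the flower features are judged less reliable.
feats = {"leaf": [1.0, 0.0], "flower": [0.0, 1.0], "fruit": None}
fused, weights = uncertainty_weighted_fusion(
    feats, {"leaf": 0.1, "flower": 2.0, "fruit": 0.0}
)
print(weights)
```

Missing modalities receive no weight at all, so the same fusion code path serves complete and incomplete samples.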

Performance Comparison of Multimodal Fusion Strategies

The following table summarizes quantitative results from recent research, highlighting the effectiveness of different architectural choices.

| Model / Strategy | Application Domain | Key Architectural Components | Performance |

|---|---|---|---|

| PlantIF [10] | Plant Disease Diagnosis | Graph learning; Self-attention graph convolution; Semantic space encoders | 96.95% accuracy on a dataset of 205,007 images and 410,014 texts. |

| Automatic Fusion (MFAS) [6] [8] | Plant Identification | Multimodal Fusion Architecture Search; Multimodal dropout; MobileNetV3Small encoders | 82.61% accuracy on 979 plant classes, outperforming late fusion by 10.33%. |

| Uncertainty-Weighted Fusion (TMU-Net) [21] | Driver Fatigue Detection | Cross-modal attention; Uncertainty-weighted gating; Transformer encoders | Achieved high robustness in cross-subject testing, leveraging complementary EEG and EOG signals. |

| Late Fusion (Baseline) [6] [8] | Plant Identification | Averaging predictions from unimodal models | 72.28% accuracy, demonstrating the limitation of non-joint decision-making. |

Experimental Protocol: Implementing Multimodal Dropout for Robust Plant Classification

This protocol provides a detailed methodology for training a robust multimodal plant classification model, as referenced in the FAQs.

1. Objective: To train a multimodal deep learning model that maintains high classification accuracy even when images of certain plant organs are missing at test time.

2. Dataset Preparation:

- Data Source: Utilize a multimodal plant dataset such as Multimodal-PlantCLEF, which contains images of flowers, leaves, fruits, and stems across 979 plant classes [6] [8].

- Data Preprocessing: For each modality (plant organ), use a pre-trained model like MobileNetV3Small as a feature extractor. Normalize the output feature vectors to have a consistent scale across modalities [18].

3. Model Architecture Setup:

- Unimodal Encoders: Use separate, pre-trained CNNs (e.g., MobileNetV3Small) for each plant organ modality (flower, leaf, fruit, stem). Do not include their final classification layers [6] [8].

- Fusion Module: The features from all available encoders are fused. An effective approach is to use an automatically discovered fusion strategy via Multimodal Fusion Architecture Search (MFAS) [6] [8].

- Classification Head: A fully connected layer takes the fused feature vector and outputs a probability distribution over the target plant classes.

4. Training with Multimodal Dropout:

- Procedure: For each batch of training data, randomly and independently set the input of one or more modalities to a zero vector with a predefined probability (e.g., 0.2 per modality). This simulates the absence of that organ's image [6] [8].

- Optimization: Use an optimizer like SGD or Adam. The learning rate should be tuned; a good starting point is between 1e-3 and 1e-1 [18], with the lower end of that range being the usual choice for Adam. The loss function is typically cross-entropy loss.
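As a quick check on the example drop probability from the procedure above, assume each of the M = 4 modalities is dropped independently with probability p = 0.2 (the independence assumption and the symbols M and p are introduced here for illustration):

$$
P(\text{none dropped}) = (1-p)^{M} = 0.8^{4} = 0.4096, \qquad
P(\text{all dropped}) = p^{M} = 0.2^{4} = 0.0016, \qquad
\mathbb{E}[\text{modalities kept}] = M(1-p) = 4 \times 0.8 = 3.2
$$

Under these assumptions roughly 41% of training samples keep all four organs, and about 1 in 625 would lose every input, which is why implementations commonly resample or force at least one modality to remain.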

5. Evaluation:

- Assess the final model on a held-out test set under two conditions:

  - Complete Data: All four plant organ modalities are available.

  - Missing Modalities: One or more modalities are systematically omitted to evaluate robustness.
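The two evaluation conditions can be covered in one sweep by scoring the model on every non-empty subset of organs. The sketch below assumes a hypothetical `predict` callable that accepts a dict of inputs with `None` for masked organs. That interface is invented for illustration and would be replaced by the real model's inference call.

```python
from itertools import combinations

ORGANS = ("flower", "leaf", "fruit", "stem")

def modality_subsets(organs=ORGANS):
    """Yield every non-empty subset of modalities, largest first."""
    for size in range(len(organs), 0, -1):
        for subset in combinations(organs, size):
            yield subset

def evaluate_robustness(predict, samples, organs=ORGANS):
    """Accuracy of `predict` for each subset of available modalities.

    predict: callable taking a dict organ -> input (None when masked)
             and returning a predicted label (hypothetical interface).
    samples: non-empty list of (inputs_dict, true_label) pairs.
    Returns a dict mapping each subset (tuple of organ names) to accuracy.
    """
    if not samples:
        raise ValueError("samples must not be empty")
    results = {}
    for subset in modality_subsets(organs):
        correct = 0
        for inputs, label in samples:
            masked = {o: (inputs.get(o) if o in subset else None) for o in organs}
            if predict(masked) == label:
                correct += 1
        results[subset] = correct / len(samples)
    return results
```

With four organs this produces 15 accuracy figures: one for the complete set and 14 for the reduced sets.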

Architectural and Workflow Visualizations

[Figure: Multimodal Classification with Dropout]

[Figure: Robust Fusion with Uncertainty]

The Scientist's Toolkit: Research Reagents & Essential Materials

The following table details key computational "reagents" and resources for building multimodal plant classification systems.

| Research Reagent / Material | Function / Explanation |

|---|---|

| Multimodal-PlantCLEF Dataset [6] [8] | A restructured version of PlantCLEF2015, providing aligned images of multiple plant organs (flowers, leaves, fruits, stems). It serves as the essential benchmark dataset for training and evaluating multimodal plant identification models. |

| Pre-trained Unimodal Encoders (e.g., MobileNetV3Small, ResNet) [6] [8] | These networks, pre-trained on large-scale image datasets like ImageNet, are used as feature extractors for each plant organ modality. They provide a strong foundation of visual knowledge, reducing the need for training from scratch. |

| Multimodal Fusion Architecture Search (MFAS) [6] [8] | An algorithmic tool that automates the discovery of the optimal fusion strategy for combining features from different modalities, leading to more accurate and efficient models than manually designed fusion. |

| Multimodal Dropout [6] [8] | A regularization technique applied during training that randomly "drops" or ignores entire modalities. This is crucial for forcing the model to learn cross-modal dependencies and build robustness against missing data in real-world deployments. |

| Uncertainty Quantification Module [21] | A component that estimates the reliability of the features from each modality. These uncertainty scores are used to dynamically weight the contribution of each modality during fusion, enhancing the model's resilience to noisy or incomplete inputs. |

FAQs on MFAS and Multimodal Dropout

Q1: What is Multimodal Fusion Architecture Search (MFAS) and why is it important for plant classification?

Multimodal Fusion Architecture Search (MFAS) is an automated approach that leverages neural architecture search (NAS) to find the optimal way to combine data from different sources, or modalities [23]. In plant classification, where modalities can be images of different plant organs like leaves, flowers, fruits, and stems [1], finding the right fusion strategy is critical. Different layers of a deep learning model capture different levels of features, and the highest levels are not necessarily the best for fusion [1]. MFAS efficiently explores a vast space of possible fusion architectures to discover how and when to fuse information from these distinct plant organs for a more accurate and robust model, outperforming manually designed fusion strategies like simple late fusion [8] [6].

Q2: How does MFAS integrate with a research pipeline focused on multimodal dropout for robustness?

MFAS and multimodal dropout are complementary techniques that enhance model robustness. In a typical research pipeline:

- Unimodal Backbone Training: First, a separate model (e.g., MobileNetV3Small) is pre-trained for each modality (e.g., leaf, flower) [8] [6].

- Architecture Search: The MFAS algorithm then searches for the optimal fusion points between these pre-trained unimodal networks, creating a unified, high-performance architecture [23] [1].

- Robustness Training with Multimodal Dropout: Finally, the discovered architecture is trained with multimodal dropout. During this phase, random modalities are "dropped" or set to zero, forcing the model to learn from any available organ and not become dependent on a single one [8]. This results in a model that maintains high accuracy even when images of certain plant organs are missing at test time [1] [6].

Q3: During the MFAS process, the search is slow and computationally expensive. How can this be mitigated?

A primary strategy to enhance the efficiency of MFAS is to use pre-trained models for each modality and keep their weights static during the architecture search [1]. The search process then focuses only on optimizing the fusion layers and connections between these fixed networks. This approach dramatically reduces the search space and computational cost compared to searching the entire multimodal architecture from scratch [1].

Q4: After implementing MFAS, the final fused model is overfitting to the training data. What steps can be taken?

Overfitting in a fused model can be addressed by:

- Incorporating Multimodal Dropout: Explicitly training the final model with multimodal dropout prevents over-reliance on any single modality and improves generalization [8] [6].

- Data Augmentation: Apply standard image augmentation techniques (e.g., rotation, flipping, color jitter) to each organ modality during training.

- Regularization: Use standard regularization techniques like L2 regularization or standard dropout within the fusion layers.

- Reviewing the Search Space: The MFAS search space itself might be too complex. Constraining it to prevent overly intricate fusion pathways could yield a simpler, more generalizable model.

Troubleshooting Guide for MFAS Experiments

| Problem | Possible Cause | Solution |

|---|---|---|

| Poor Search Performance | Search space is too large or poorly defined. | Redefine the search space to focus on biologically plausible fusion points (e.g., later layers for high-level features). Use a sequential model-based optimization (SMBO) approach for efficient exploration [23] [24]. |

| Model Performs Poorly with Missing Data | Model is dependent on a full set of modalities. | Integrate multimodal dropout during the training of the final MFAS-derived model. This mimics missing data and forces robustness [8] [6]. |

| High Computational Demand | Searching architectures for all modalities and their fusion is complex. | Leverage pre-trained models for each modality and freeze their weights during the search. The MFAS algorithm then only searches for the fusion architecture, significantly reducing compute time [1]. |

| Suboptimal Fusion Architecture | The chosen NAS algorithm is not effective for multimodal tasks. | Ensure the NAS method is specifically designed for multimodal fusion, like MFAS, which understands the heterogeneity of multimodal data, unlike generic NAS [1] [6]. |

Experimental Protocols and Performance Data

Protocol: Applying MFAS and Multimodal Dropout for Plant Identification

- Dataset Preparation: Use a multimodal plant dataset. For example, the Multimodal-PlantCLEF dataset was created from PlantCLEF2015, containing images of flowers, leaves, fruits, and stems for 979 plant species [8] [6].

- Unimodal Backbone Training: Train or fine-tune a separate convolutional neural network (e.g., MobileNetV3Small) on each individual plant organ modality [8] [6].

- MFAS Execution: Run the MFAS algorithm on the pre-trained unimodal backbones. The search will explore different fusion points and operations to find the architecture that maximizes validation accuracy [23] [1].

- Final Model Training with Dropout: Train the MFAS-discovered architecture from scratch on the full multimodal training set. During this phase, apply multimodal dropout, where each modality has a probability of being omitted in each training iteration [8].

- Evaluation: Evaluate the final model on a held-out test set. Critically, test its robustness by evaluating on subsets with missing modalities (e.g., leaves and flowers only) to validate the effect of multimodal dropout [6].

[Figure: Workflow for the MFAS and multimodal dropout protocol]

Quantitative Results from Plant Classification Study

The effectiveness of an automated MFAS approach is demonstrated by the following results from a plant identification study:

Table 1: Performance Comparison of Fusion Strategies on Multimodal-PlantCLEF (restructured from PlantCLEF2015; 979 classes) [8] [6]

| Fusion Strategy | Test Accuracy | Key Characteristic |

|---|---|---|

| Late Fusion (Averaging) | 72.28% | Simple but often suboptimal; combines decisions. |

| MFAS (Automated Fusion) | 82.61% | Searches for and discovers an optimal fusion architecture. |

| MFAS with Multimodal Dropout | Robust to missing modalities | Maintains high accuracy even when organs are missing. |

Table 2: Impact of Missing Modalities on Model Performance [6]

| Modalities Presented | Model Performance (qualitative) |

|---|---|

| All Four Organs | Highest |

| Three Organs | Maintains High Performance |

| Two Organs | Good Performance Sustained |

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Components for an MFAS and Multimodal Dropout Experiment

| Item | Function in the Experiment |

|---|---|

| Multimodal Plant Dataset (e.g., Multimodal-PlantCLEF) | Provides the core biological data; contains images of different plant organs (flowers, leaves, etc.) aligned by species [8] [6]. |

| Pre-trained CNN Models (e.g., MobileNetV3, ResNet) | Serve as feature extractors for each modality. Using models pre-trained on large datasets (e.g., ImageNet) saves time and computational resources [8] [6]. |

| MFAS Algorithm | The core "reagent" for automation. It searches for the optimal fusion architecture between the unimodal models, replacing manual design [23] [1]. |

| Multimodal Dropout | A regularization technique applied during training to make the final model robust to incomplete data, simulating real-world scenarios where not all plant organs are visible [8]. |

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: What is multimodal dropout, and how does it differ from standard dropout?

Standard dropout randomly deactivates neurons within a single neural network to prevent overfitting [25] [13]. Multimodal dropout instead randomly drops entire modalities (e.g., the whole "leaves" or "flowers" image input) during training. This forces the model not to become reliant on any single data type and to learn robust, complementary features from all available inputs, making it highly effective for tasks like plant classification where some plant organs might be missing in real-world scenarios [6] [26].

Q2: Why is my multimodal model's performance poor even when using dropout?

This often stems from an incorrect fusion strategy. If modalities are fused suboptimally, the model cannot learn effective joint representations. A solution is to automate the fusion process using a Multimodal Fusion Architecture Search (MFAS), which has been shown to outperform manual designs like simple late fusion by over 10 percentage points in accuracy [6] [8]. Furthermore, ensure that multimodal dropout is applied after the modality-specific feature extraction but before the fusion point to effectively simulate missing data.

Q3: How can I ensure my model works when one or more modalities are missing at inference?

This is the primary purpose of multimodal dropout. By randomly omitting different combinations of modalities during training, the model adapts to make accurate predictions with any available subset. For instance, a plant identification model trained with multimodal dropout can still perform well even if only leaf and stem images are provided, without the flower or fruit [6] [26].

Q4: What is the difference between early, late, and intermediate fusion?

- Early Fusion: Combines raw input data (e.g., concatenating pixel values) before feature extraction. It is simple but may not capture complex interactions [26] [20].

- Late Fusion: Processes each modality through separate models and combines their final predictions (e.g., averaging scores). It is simple and robust but misses early cross-modal interactions [6] [26].

- Intermediate Fusion: Merges modalities after they have been transformed into feature embeddings (latent representations). This is often the most powerful approach as it allows the model to learn rich, complex relationships between modalities [26] [20]. Automated fusion methods often search for the best intermediate fusion strategy [6].
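The difference between late and intermediate fusion can be made concrete with a short sketch. Late fusion averages the class probabilities produced by separate models, while the intermediate variant shown here concatenates embeddings for a joint classifier. Concatenation is only one of several intermediate fusion operators, and the function names and the zero-filling of missing modalities are assumptions of this example.

```python
def late_fusion(prob_vectors):
    """Average class-probability vectors from the available unimodal models."""
    available = [p for p in prob_vectors if p is not None]
    if not available:
        raise ValueError("no unimodal predictions to fuse")
    n_classes = len(available[0])
    return [sum(p[k] for p in available) / len(available) for k in range(n_classes)]

def intermediate_fusion_input(embeddings, dims):
    """Concatenate modality embeddings into one vector for a joint classifier.

    Missing modalities (None) are replaced by zero vectors so the fused
    vector keeps a fixed length.
    """
    fused = []
    for emb, dim in zip(embeddings, dims):
        fused.extend(emb if emb is not None else [0.0] * dim)
    return fused

# Late fusion: two models vote on two classes; the third modality is missing.
avg_probs = late_fusion([[0.7, 0.3], [0.5, 0.5], None])

# Intermediate fusion: a 2-dim leaf embedding plus a missing 3-dim flower embedding.
joint_input = intermediate_fusion_input([[1.0, 2.0], None], dims=[2, 3])
print(avg_probs, joint_input)
```

In late fusion the models never see each other's features, whereas the concatenated vector lets a downstream classifier learn interactions between organs.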

Troubleshooting Common Issues

| Problem | Possible Cause | Solution |

|---|---|---|

| Model fails to converge | Improperly scaled features from different modalities | Normalize the feature embeddings from each modality to a common scale before fusion. |

| Overfitting on training data | Dropout rate is too low; model is too complex | Increase the multimodal dropout rate; use weight constraints as recommended in the original dropout paper [25]. |

| Poor performance with missing modalities | Multimodal dropout was not used during training | Implement and rigorously apply multimodal dropout throughout the training process, randomly excluding each modality [6] [26]. |

| Model relies on only one modality | Fusion method does not encourage complementarity | Use an automated fusion search (MFAS) to find an architecture that balances modality use, and apply dropout to the dominant modality more frequently [6]. |

Experimental Protocols and Data

Key Experiment: Robust Plant Classification with Automatic Fusion and Multimodal Dropout

This protocol is based on a seminal study that introduced an automated multimodal deep learning approach for plant identification, achieving state-of-the-art results [6] [8] [11].

1. Objective: To develop a robust plant classification model that effectively integrates images from four plant organs (flowers, leaves, fruits, stems) and maintains high accuracy even when some organs are missing.

2. Dataset: Multimodal-PlantCLEF

- A restructured version of the PlantCLEF2015 dataset, tailored for multimodal tasks [6].

- Comprises 979 plant classes [6] [11].

- Contains images corresponding to the four specified plant organs.

3. Methodology:

- Step 1 - Unimodal Model Training: Independently train a feature extractor for each modality (flower, leaf, fruit, stem) using a pre-trained model like MobileNetV3Small [6] [8].

- Step 2 - Automated Fusion: Employ a Multimodal Fusion Architecture Search (MFAS) algorithm to automatically find the optimal way to combine the features from the four unimodal networks. This replaces manual, suboptimal fusion strategies [6].

- Step 3 - Multimodal Dropout: During the training of the fused model, randomly drop feature maps from entire modalities. This technique, called multimodal dropout, forces the network to learn with varying combinations of inputs, building robustness against missing data [6].

4. Quantitative Results: The following table summarizes the key performance metrics from the study, highlighting the effectiveness of the proposed method.

| Model / Fusion Strategy | Test Accuracy (%) | Notes |

|---|---|---|

| Late Fusion (Averaging) | 72.28 | Common baseline; combines model decisions at the end [6]. |

| Proposed (Auto-Fusion + Multimodal Dropout) | 82.61 | Outperforms late fusion by 10.33% [6] [11]. |

| Proposed Model with Missing Modalities | High Robustness | Maintains strong performance even when one or more plant organs are not available during testing [6]. |

[Figure: Experimental Workflow Diagram]

The Scientist's Toolkit: Research Reagent Solutions

This table details the essential computational "reagents" and tools required to implement the described multimodal dropout pipeline for plant classification.

| Research Reagent / Tool | Function in the Experiment | Specification / Notes |
|---|---|---|
| Multimodal-PlantCLEF Dataset | Provides the standardized, multi-organ image data required for training and evaluation. | Restructured from PlantCLEF2015; contains 979 plant classes with images of flowers, leaves, fruits, and stems [6]. |
| Pre-trained CNN Model (e.g., MobileNetV3) | Serves as the foundational feature extractor for each plant organ modality. | Models pre-trained on ImageNet provide a strong starting point and accelerate convergence [6] [8]. |
| Multimodal Fusion Architecture Search (MFAS) | Automatically discovers the optimal neural network architecture for combining features from different modalities. | Critical for surpassing the performance of manual fusion strategies like late fusion [6]. |
| Multimodal Dropout Layer | A regularization layer that randomly drops entire modalities during training. | Promotes robustness by preventing the model from over-relying on any single data source (e.g., only flowers) [6] [26]. |
| SHAP (SHapley Additive exPlanations) | Provides post-hoc interpretability, explaining the contribution of each modality to the final prediction. | Helps validate the model's logic and check that it uses a balanced set of features [27]. |
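To make the idea of per-modality contributions concrete, the sketch below computes exact Shapley values over the four organ modalities. It does not use the SHAP library; it applies the Shapley formula directly to a hypothetical scoring function (`toy_score`) whose numbers are invented for illustration.

```python
from itertools import combinations
from math import factorial

MODALITIES = ("flower", "leaf", "fruit", "stem")

def shapley_values(players, value):
    """Exact Shapley value of each player for a set function `value`."""
    n = len(players)
    result = {}
    for p in players:
        others = [q for q in players if q != p]
        total = 0.0
        for k in range(n):
            # Weight of a coalition of size k that does not contain p.
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                s = frozenset(subset)
                total += weight * (value(s | {p}) - value(s))
        result[p] = total
    return result

# Hypothetical additive "confidence gain" per organ, purely illustrative.
TOY_GAIN = {"flower": 0.40, "leaf": 0.25, "fruit": 0.10, "stem": 0.05}

def toy_score(available):
    """Stand-in for a model's score given the set of available modalities."""
    return sum(TOY_GAIN[m] for m in available)

contributions = shapley_values(MODALITIES, toy_score)
print(contributions)
```

With a real model, `toy_score` would be replaced by the model's output (for example, the probability of the true class) evaluated with only the given modalities present.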

Diagram: Multimodal Fusion and Dropout Logic

Frequently Asked Questions (FAQs)

Q1: What is the core innovation of the automatic fused multimodal learning approach?

The core innovation is the use of a Multimodal Fusion Architecture Search (MFAS) to automatically find the optimal way to combine features from images of different plant organs (flowers, leaves, fruits, stems). This automation outperforms commonly used but simplistic fusion strategies like late fusion, leading to a more effective and compact model [6] [8].

Q2: Why is the Multimodal-PlantCLEF dataset necessary?

Existing plant classification datasets are predominantly designed for unimodal tasks (e.g., a single image of a leaf). The Multimodal-PlantCLEF dataset is a restructured version of PlantCLEF2015 that provides organized image sets of multiple plant organs per species, which is essential for training and evaluating multimodal approaches [6] [11].

Q3: How does multimodal dropout enhance the model's robustness?

Multimodal dropout is a technique applied during training in which one or more input modalities (e.g., fruit or stem images) are randomly omitted. This forces the model to learn robust representations that do not over-rely on any single organ type, so it performs reliably even when some plant organ images are missing during real-world use [6] [2].

Q4: What quantitative performance gain does this method offer?

As shown in Table 1, the automated fusion method achieved a classification accuracy of 82.61% on the 979 plant classes of the Multimodal-PlantCLEF dataset. This is an absolute improvement of 10.33 percentage points over the common late fusion baseline [6] [8] [2].

Q5: What is the practical advantage of having a smaller model?

The automatically searched model architecture has a significantly smaller parameter count. This facilitates deployment on resource-limited devices like smartphones, enabling fast and accurate plant identification directly in the field for farmers, ecologists, and citizen scientists [6] [8].

Troubleshooting Guides

Issue 1: Poor Performance with Missing Plant Organ Images

Problem: Your model's accuracy drops significantly when images of certain plant organs (e.g., fruits or stems) are not available during testing. Solution: A large drop indicates that the model depends too heavily on specific modalities. Address this in both training and evaluation:

- During Training: Ensure that multimodal dropout is implemented correctly and enabled in your training pipeline. Randomly discard one or more modalities at each training step so that the network learns from every combination of available organs.

- During Evaluation: Verify that your evaluation protocol mirrors real-world conditions. If certain organs are seasonally unavailable, test your model's performance on relevant subsets using only the available organs.
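One way to follow the evaluation advice above is to measure accuracy separately for every combination of available organs. The sketch below assumes a hypothetical `predict` callable that accepts a dictionary containing only the visible modalities; the toy model and samples are invented for illustration.

```python
from itertools import combinations

MODALITIES = ("flower", "leaf", "fruit", "stem")

def accuracy_by_subset(samples, predict):
    """Accuracy for every non-empty combination of available modalities.

    samples: list of (features, label); features maps modality -> data.
    predict: callable taking a dict of visible modalities, returning a label.
    """
    report = {}
    for size in range(1, len(MODALITIES) + 1):
        for subset in combinations(MODALITIES, size):
            correct = 0
            for features, label in samples:
                visible = {m: features[m] for m in subset}
                correct += predict(visible) == label
            report[subset] = correct / len(samples)
    return report

# Hypothetical stand-in model that is only right when the flower is visible.
def toy_predict(visible):
    return visible.get("flower", "unknown")

toy_samples = [
    ({"flower": "rose", "leaf": "x", "fruit": "y", "stem": "z"}, "rose")
] * 4

report = accuracy_by_subset(toy_samples, toy_predict)
for subset, acc in report.items():
    print(subset, acc)
```

A report like this makes over-reliance visible: subsets that exclude the dominant organ show a sharp accuracy drop.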

Issue 2: Inability to Replicate Reported Baseline Results

Problem: You cannot reproduce the reported 82.61% accuracy or the improvement of 10.33 percentage points over the late fusion baseline. Solution:

- Data Verification: Confirm you are using the correct version of the Multimodal-PlantCLEF dataset. Ensure the data preprocessing pipeline for creating organ-specific image sets matches the one described in the original research [6].

- Baseline Implementation: Double-check your late fusion baseline. The baseline averages the outputs of the unimodal models, so a common pitfall is averaging scores from models that are not yet fully trained. Ensure the unimodal models (e.g., pre-trained MobileNetV3Small for each organ) are individually trained to convergence before fusion [6] [8].

- Hyperparameters: Review the training hyperparameters (learning rate, optimizer, batch size) used for both the unimodal models and the MFAS algorithm, as these are critical for performance.
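For reference when checking the baseline, here is a minimal sketch of late fusion by averaging. It assumes the per-modality scores are logits that are converted to probabilities before averaging, and that a missing modality is passed as `None`; whether the original study averaged probabilities or raw scores is not stated here, so treat that choice as an assumption.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def late_fusion_average(logits_by_modality):
    """Average class probabilities over the modalities that are present."""
    present = [softmax(l) for l in logits_by_modality.values() if l is not None]
    if not present:
        raise ValueError("at least one modality is required")
    n_classes = len(present[0])
    return [sum(p[c] for p in present) / len(present) for c in range(n_classes)]

# Made-up logits for three classes; the fruit image is missing.
logits = {
    "flower": [2.0, 0.5, 0.1],
    "leaf": [0.2, 1.5, 0.3],
    "fruit": None,
    "stem": [1.0, 1.0, 0.2],
}
probs = late_fusion_average(logits)
predicted = max(range(len(probs)), key=probs.__getitem__)
print(probs, predicted)
```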

Issue 3: Suboptimal Fusion Architecture Search

Problem: The MFAS algorithm is not converging or is producing a fusion architecture that performs worse than a simple late fusion. Solution:

- Search Space Design: Verify that the defined search space for potential fusion operations (e.g., concatenation, element-wise addition) is sufficiently expressive. An overly constrained search space can limit the discovery of optimal models.

- Computational Budget: Ensure the architecture search process is allocated enough time and computational resources (e.g., a sufficient number of epochs and network evaluations) to effectively explore the search space.
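The interaction between search space size and computational budget can be sketched as follows. This is plain random search over a hypothetical search space, not the MFAS algorithm itself; `LAYER_CHOICES`, `toy_evaluate`, and the configuration format are assumptions made for illustration.

```python
import random
from itertools import product

FUSION_OPS = ("concat", "add")   # the operations mentioned in the text
LAYER_CHOICES = (0, 1, 2)        # hypothetical tap points per backbone
MODALITIES = ("flower", "leaf", "fruit", "stem")

def search_space():
    """Yield every (layer per modality, fusion op) configuration."""
    for layers in product(LAYER_CHOICES, repeat=len(MODALITIES)):
        for op in FUSION_OPS:
            yield {"layers": dict(zip(MODALITIES, layers)), "op": op}

def random_search(evaluate, budget, seed=0):
    """Evaluate at most `budget` random configurations and return the best."""
    rng = random.Random(seed)
    candidates = list(search_space())
    tried = rng.sample(candidates, min(budget, len(candidates)))
    return max(tried, key=evaluate)

def toy_evaluate(config):
    """Hypothetical stand-in for the validation accuracy of a configuration."""
    bonus = 0.5 if config["op"] == "concat" else 0.0
    return sum(config["layers"].values()) + bonus

print(len(list(search_space())))          # size of the search space
best = random_search(toy_evaluate, budget=200)
print(best)
```

Even this small space has 3^4 x 2 = 162 configurations, so a budget that covers only a handful of evaluations will often miss the best one.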

Experimental Protocols & Data

Table 1: Key Performance Metrics on Multimodal-PlantCLEF

| Model / Approach | Fusion Strategy | Top-1 Accuracy (%) | Number of Parameters | Robustness to Missing Modalities |
|---|---|---|---|---|
| Proposed Model | Automatic (MFAS) | 82.61 | Low (Compact) | High (with Multimodal Dropout) |
| Baseline 1 | Late Fusion (Averaging) | 72.28 | Moderate | Low |
| Baseline 2 | Single Modality (Leaf-only) | ~65.00* | Low | Not Applicable |

*Note: The exact accuracy of the single leaf modality is not explicitly reported in the cited sources. The value shown is an approximation inferred from context and should be read only as being lower than the multimodal baselines [6] [8] [2].

Detailed Methodology: Automatic Fusion Workflow

The following workflow was used to achieve the reported results [6] [8]:

- Unimodal Model Pre-training:
  - Independently train a separate MobileNetV3Small model (pre-trained on ImageNet) for each of the four modalities: flower, leaf, fruit, and stem.
  - Use the standard cross-entropy loss for each classifier.
- Multimodal Fusion Architecture Search (MFAS):
  - Input: The pre-trained unimodal models serve as the foundation.
  - Process: A modified MFAS algorithm searches for the optimal combination of fusion operations (e.g., where and how to merge feature maps from different organ streams).
  - Output: A single, compact, and optimally fused multimodal model.
- Robustness Training with Multimodal Dropout:
  - During the training of the fused model, randomly drop (set to zero) entire feature maps from one or more modalities in each training batch.
  - This technique encourages the model to develop redundant and complementary features, enhancing its ability to handle incomplete data.
- Evaluation:
  - Evaluate the final model on the test set of Multimodal-PlantCLEF.
  - Use standard metrics (Accuracy) and statistical tests (McNemar's test) to validate superiority over baselines.
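The statistical comparison in the evaluation step can be sketched with an exact (binomial) McNemar's test. The helper names below are illustrative, and the exact variant is chosen here for simplicity; the study may have used the chi-squared approximation instead.

```python
from math import comb

def discordant_counts(labels, preds_a, preds_b):
    """Count samples where exactly one of the two models is correct."""
    triples = list(zip(labels, preds_a, preds_b))
    b = sum(pa == y and pb != y for y, pa, pb in triples)  # A right, B wrong
    c = sum(pa != y and pb == y for y, pa, pb in triples)  # A wrong, B right
    return b, c

def mcnemar_exact(b, c):
    """Two-sided exact McNemar p-value from the discordant counts."""
    n = b + c
    if n == 0:
        return 1.0
    # Under the null hypothesis, discordant pairs split as Binomial(n, 0.5).
    tail = sum(comb(n, i) for i in range(min(b, c) + 1)) / 2 ** n
    return min(1.0, 2 * tail)

# Toy predictions for two models on six samples (made-up data).
labels  = [0, 1, 1, 0, 2, 2]
model_a = [0, 1, 1, 0, 2, 1]
model_b = [0, 0, 1, 1, 0, 1]
b, c = discordant_counts(labels, model_a, model_b)
print(b, c, mcnemar_exact(b, c))
```

Only the samples on which the two models disagree in correctness affect the test, which is why it suits paired comparisons on a shared test set.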

Diagram: Automatic Fusion and Robustness Training Workflow

Diagram: Multimodal Dropout Logic for Robustness

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Reproducing the Experiment

| Item | Function / Role in the Experiment |
|---|---|
| Multimodal-PlantCLEF Dataset | The foundational dataset for training and evaluation, providing organized images of flowers, leaves, fruits, and stems for 979 plant species [6]. |
| PlantCLEF2015 Dataset | The original unimodal dataset that was restructured to create the Multimodal-PlantCLEF dataset, serving as the source of images and labels [6] [28]. |
| Pre-trained MobileNetV3Small | Serves as the backbone feature extractor for each plant organ modality (flower, leaf, fruit, stem), leveraging transfer learning to boost performance and efficiency [6] [8]. |
| Multimodal Fusion Architecture Search (MFAS) Algorithm | The core "reagent" that automates the discovery of the optimal neural network architecture for fusing information from the four different plant organ modalities [6] [11]. |
| Multimodal Dropout | A regularization technique used during training to improve model robustness by randomly ignoring one or more input modalities, simulating scenarios with missing data [6] [2]. |

Frequently Asked Questions

Q1: My multimodal model is overfitting to the image data and ignoring other modalities like weather or genomic data. What steps can I take?

This is a classic sign of modality imbalance: the model overfits to the dominant modality (here, images) and underuses the others. To address it:

- Implement Modality-Specific Dropout: Apply dropout layers to the deepest, most representative features of each modality before the fusion layer. This prevents any single modality, especially dominant ones like images, from overwhelming the learning process and forces the model to rely on a robust combination of all inputs. [6]

- Verify Dataset Diversity: Ensure your training dataset is diverse across all modalities. For instance, your image data should represent various geographic regions, plant varieties, and environmental conditions, while your agrometeorological data should cover different seasons and climate patterns. A model trained on a limited dataset will fail to generalize. [29]

- Analyze Feature Contributions: Use model interpretation techniques to quantify the contribution of each modality to the final prediction. This can help you confirm if other data streams are being underutilized.
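The modality-specific dropout suggested above can be sketched as standard inverted dropout applied to each modality's embedding before concatenation. The per-modality rates in `DROPOUT_RATES` are hypothetical; giving the dominant image stream a higher rate is an assumption for illustration, not a recommendation from the cited work.

```python
import random

def feature_dropout(vector, rate, rng):
    """Inverted dropout: zero each feature with probability `rate`
    and rescale the survivors so the expected value is unchanged."""
    if not 0.0 <= rate < 1.0:
        raise ValueError("rate must be in [0, 1)")
    scale = 1.0 / (1.0 - rate)
    return [0.0 if rng.random() < rate else x * scale for x in vector]

# Hypothetical rates: stronger regularization on the dominant modality.
DROPOUT_RATES = {"image": 0.5, "weather": 0.1, "genomic": 0.1}

def fuse_with_dropout(embeddings, rng, training=True):
    """Apply per-modality dropout, then concatenate into one fused vector."""
    fused = []
    for name, vec in embeddings.items():
        rate = DROPOUT_RATES[name] if training else 0.0
        fused.extend(feature_dropout(vec, rate, rng))
    return fused

emb = {"image": [1.0] * 8, "weather": [1.0] * 4, "genomic": [1.0] * 4}
print(fuse_with_dropout(emb, random.Random(0)))
```

Unlike multimodal dropout, this masks individual features rather than whole modalities, so the two techniques can be combined.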

Q2: I am missing genomic data for some plant samples in my dataset. Does this mean I have to discard them?

Not necessarily. Your model can be designed to be robust to missing modalities.

- Use Multimodal Dropout: During training, you can randomly "drop" or mask entire modalities. This technique, sometimes called multimodal dropout, trains the network to make accurate predictions even when some data types are unavailable, making it highly resilient to real-world incomplete data. [6] [11]

- Consider Hybrid Fusion: A flexible fusion strategy that can handle missing data is often more effective than a rigid one. For example, an intermediate fusion approach that allows for dynamic integration of available modalities would be suitable here.
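A simple form of fusion that tolerates missing modalities is to average only the embeddings that are present. The sketch below assumes all modality embeddings share one dimension and that a missing modality is represented by `None`; both are illustrative conventions rather than details from the cited work.

```python
def masked_mean_fusion(embeddings):
    """Average the embeddings of the modalities that are present (not None)."""
    present = [vec for vec in embeddings.values() if vec is not None]
    if not present:
        raise ValueError("every modality is missing")
    dim = len(present[0])
    if any(len(vec) != dim for vec in present):
        raise ValueError("embeddings must share one dimension")
    return [sum(vec[i] for vec in present) / len(present) for i in range(dim)]

# A sample without genomic data is still usable.
sample = {"image": [1.0, 3.0], "weather": [3.0, 5.0], "genomic": None}
print(masked_mean_fusion(sample))
```

Because the fused vector has the same shape regardless of which inputs are available, samples with missing genomic data can stay in the training set.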

Q3: What is the optimal point to fuse different data types (image, text, sensor data) in a neural network?

The choice of fusion strategy is a critical design decision and depends on the complexity of your data types and the relationships between them. [6]

- Early Fusion: Combine raw or low-level features from different modalities into a single input vector. This can allow the model to learn complex, cross-modal interactions from the start.

- Intermediate Fusion: This is often the most powerful approach. You extract high-level features from each modality using separate sub-networks and then fuse these features in a shared fusion layer. This balances the need for modality-specific learning with cross-modal interaction.

- Late Fusion: Train separate models for each modality and combine their final predictions. This is simple and robust to missing data but cannot capture fine-grained interactions between modalities.

- Automated Fusion Search: For optimal performance, you can employ a Neural Architecture Search (NAS) to automatically discover the best fusion strategy for your specific dataset and task, rather than relying on manual design. [6] [11]
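The difference between the three manual strategies lies in where the modalities are combined. The toy sketch below uses tiny bias-free linear layers with hand-picked weights; all names and numbers are hypothetical and serve only to show the data flow.

```python
def linear(vec, weights):
    """Tiny dense layer without bias: one output per weight row."""
    return [sum(w * x for w, x in zip(row, vec)) for row in weights]

def early_fusion(inputs, joint_weights):
    # Concatenate the raw inputs, then apply one shared model.
    joined = [x for vec in inputs.values() for x in vec]
    return linear(joined, joint_weights)

def intermediate_fusion(inputs, encoders, head_weights):
    # Encode each modality separately, concatenate features, then classify.
    features = [f for name, vec in inputs.items()
                for f in linear(vec, encoders[name])]
    return linear(features, head_weights)

def late_fusion(inputs, classifiers):
    # Classify each modality on its own, then average the per-class scores.
    scores = [linear(vec, classifiers[name]) for name, vec in inputs.items()]
    return [sum(col) / len(scores) for col in zip(*scores)]

inputs = {"image": [1.0, 2.0], "sensor": [3.0]}
print(early_fusion(inputs, [[1, 0, 0], [0, 0, 1]]))
print(intermediate_fusion(inputs,
                          {"image": [[1, 1]], "sensor": [[2]]},
                          [[1, 0], [0, 1]]))
print(late_fusion(inputs,
                  {"image": [[1, 0], [0, 1]], "sensor": [[1], [2]]}))
```

Only the late fusion variant can drop a modality without changing any weight shapes, which matches the robustness noted in the text.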

Q4: My model performs well in validation but fails on new field data from a different region. How can I improve its generalization?

Poor generalization is often tied to a lack of diversity in the training set. [29]

- Audit Your Training Data: Compare the environmental conditions, soil types, and plant species in your training data against those in the new field. The discrepancy is likely the source of the problem.

- Prioritize Diverse Data Collection: Actively source data from a wide range of geographic locations, agricultural practices, and genetic lineages. As shown in the table below, a highly diverse dataset is crucial for a model to perform reliably in new, unseen conditions. [29]

- Apply Data Augmentation: For image data, use techniques like rotation, color jittering, and noise addition. For agrometeorological data, consider adding slight variations to simulate different environmental conditions.
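For the agrometeorological side of the augmentation advice above, a minimal sketch is to add small Gaussian noise to each sensor series. The feature names, noise levels in `NOISE_STD`, and the physical bounds in `BOUNDS` are assumptions chosen for illustration and would need tuning to real sensor precision.

```python
import random

# Hypothetical per-feature noise levels and physical bounds.
NOISE_STD = {"temperature_c": 0.5, "humidity_pct": 2.0}
BOUNDS = {"humidity_pct": (0.0, 100.0)}

def jitter(values, std, rng, low=None, high=None):
    """Add zero-mean Gaussian noise and clamp to optional bounds."""
    out = []
    for v in values:
        noisy = v + rng.gauss(0.0, std)
        if low is not None:
            noisy = max(low, noisy)
        if high is not None:
            noisy = min(high, noisy)
        out.append(noisy)
    return out

def augment_record(record, copies=3, seed=0):
    """Create `copies` jittered variants of one multi-sensor record."""
    rng = random.Random(seed)
    variants = []
    for _ in range(copies):
        variant = {}
        for name, values in record.items():
            low, high = BOUNDS.get(name, (None, None))
            variant[name] = jitter(values, NOISE_STD[name], rng, low, high)
        variants.append(variant)
    return variants

record = {"temperature_c": [21.5, 23.0, 19.8], "humidity_pct": [60.0, 72.0, 99.5]}
augmented = augment_record(record)
print(augmented[0])
```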

Experimental Results on Dataset Diversity

The following table summarizes quantitative evidence from a study on rice blast disease identification, demonstrating the critical impact of dataset diversity on model generalization and performance. [29]

| Model Type | Training Data Diversity | Training Accuracy | Validation Accuracy | Generalization Assessment |
|---|---|---|---|---|
| High-Diverse Model | Images from different geographic regions, rice species, environmental conditions, growth stages, and disease severity levels. [29] | 95.26% | 94.43% | Excellent generalization with minimal overfitting. |
| Low-Diverse Model | Limited variability in geographic, species, and environmental factors. [29] | 98.37% | 35.38% | Severe overfitting; model failed to generalize. |
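The overfitting described in the table can be quantified as the gap between training and validation accuracy. The short calculation below uses only the figures from the table; the variable names are illustrative.

```python
# Accuracies (%) copied from the table above.
results = {
    "High-Diverse Model": {"train": 95.26, "val": 94.43},
    "Low-Diverse Model": {"train": 98.37, "val": 35.38},
}

# Generalization gap in percentage points: training minus validation accuracy.
gaps = {
    name: round(acc["train"] - acc["val"], 2)
    for name, acc in results.items()
}

for name, gap in gaps.items():
    print(f"{name}: gap = {gap} percentage points")
```

A gap below one percentage point for the high-diversity model versus more than sixty points for the low-diversity model is what the "Generalization Assessment" column summarizes in words.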

Detailed Experimental Protocol: Multimodal Fusion with Dropout

This protocol outlines the methodology for training a robust plant classification model using images, agrometeorological, and genomic data.

1. Data Preparation and Preprocessing

- Image Data: Collect high-resolution images of plant organs (leaves, stems, fruits). Resize images to a uniform dimension and apply augmentation (random flipping, rotation, brightness/contrast adjustments).

- Agrometeorological Data: Compile time-series data for temperature, humidity, rainfall, and soil moisture. Normalize each feature to a common scale.

- Genomic Data: Use DNA sequence or SNP (Single Nucleotide Polymorphism) data. Encode sequences numerically and apply feature scaling.
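The normalization and encoding steps above can be sketched with the standard library alone. Z-score scaling and one-hot encoding are shown as one reasonable choice among several; the helper names and the treatment of unknown bases as all-zero rows are assumptions for illustration.

```python
from statistics import mean, pstdev

def zscore(values):
    """Scale a feature to zero mean and unit variance."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]   # constant feature carries no signal
    return [(v - mu) / sigma for v in values]

NUCLEOTIDES = "ACGT"

def one_hot_dna(sequence):
    """One-hot encode a DNA string; unknown bases (e.g. N) become all zeros."""
    return [[1 if base == n else 0 for n in NUCLEOTIDES]
            for base in sequence.upper()]

temperature = [18.0, 21.0, 24.0]       # made-up readings in degrees Celsius
print(zscore(temperature))
print(one_hot_dna("acgn"))
```

In practice the mean and standard deviation should be computed on the training split only and reused for validation and test data, so that no information leaks across splits.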