Multimodal Data Fusion in Plant Science: Strategies, Applications, and Optimization for Enhanced Analysis

Abstract

This article provides a comprehensive analysis of fusion strategies for multimodal plant data, catering to researchers and scientists in plant biology and agricultural technology. It explores the foundational principles of multimodal learning, detailing various data fusion methodologies from early to late fusion and their specific applications in tasks such as species identification and health monitoring. The content further addresses critical troubleshooting aspects, including data alignment and model robustness, and offers a comparative validation of different fusion techniques against established benchmarks. By synthesizing current research and emerging trends, this review serves as a strategic guide for selecting and optimizing fusion strategies to improve accuracy and efficiency in plant science research and its biomedical implications.

The Fundamentals of Multimodal Plant Data: From Unimodal Limits to Fusion Principles

Defining Multimodality in Plant Science

In plant science, multimodal data refers to information that is captured across multiple, distinct types or formats—known as modalities—to provide a comprehensive representation of plant biology. Unlike traditional unimodal approaches that rely on a single data source, multimodal integration leverages the complementary strengths of diverse data types. This paradigm is crucial because a single data source, such as an image of a leaf, is often biologically insufficient for accurate classification or analysis, as variations can occur within the same species and different species can share similar visual features [1] [2].

The core value of multimodal data lies in three key characteristics [3]:

- Complementarity: Each modality captures unique and complementary aspects of a plant's phenotype, genotype, or environment. For instance, images of different organs provide visual cues, while genomic data reveals hereditary information.

- Redundancy: Multiple data types can provide corroborating evidence for a finding, enhancing the reliability of predictions even if one data source is missing or compromised.

- Enhanced Model Performance: Integrative models often outperform unimodal alternatives because they capture a more holistic view of complex plant traits and responses.

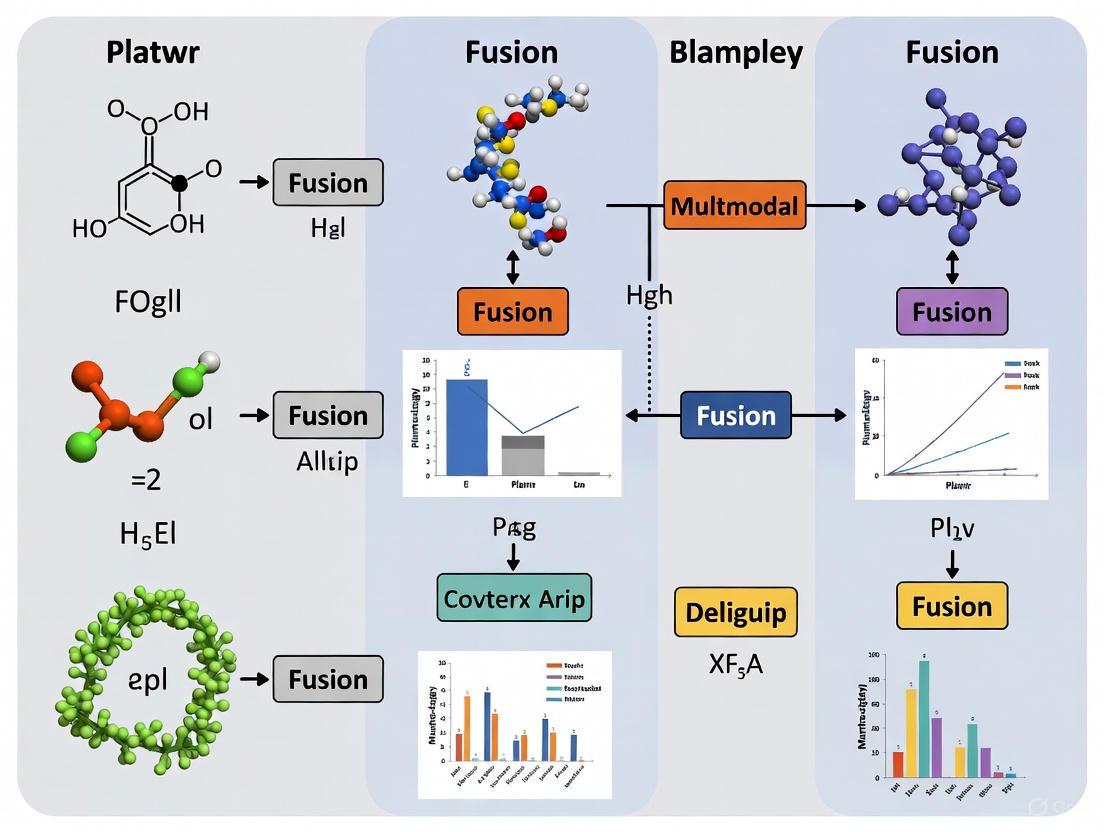

The following diagram illustrates the core logical relationship between the fundamental concepts in multimodal plant science, from raw data types to final application outcomes.

Core Concepts of Multimodal Data in Plant Science

Key Data Modalities and Fusion Strategies

Primary Modalities in Plant Research

The table below categorizes the primary data modalities used in modern plant science research.

Table 1: Core Data Modalities in Plant Science

| Modality Category | Specific Data Types | Description & Role | Example Applications |
|---|---|---|---|
| Visual Phenomics | Images of leaves, flowers, fruits, stems [1] [2] | Provides information on plant morphology, health, and organ-specific characteristics. | Plant species identification [1], disease diagnosis from leaf spots [4]. |
| Environmental & Climate | Temperature, humidity, rainfall, soil data [4] | Captures the abiotic conditions influencing plant growth, health, and disease spread. | Predicting disease severity [4], modeling trait distributions [5]. |
| Genomic & Multi-Omics | Genotypic (SNP), transcriptomic, epigenomic data [6] | Reveals the genetic blueprint and functional molecular activity within the plant. | Genomic selection for breeding [6], predicting complex traits [6]. |
| Text & Semantics | Scientific literature, curated database entries [7] [8] | Encodes structured and unstructured knowledge from domain experts and publications. | Enhancing knowledge bases (e.g., P3DB) [8], interpreting model results. |
| Geospatial Context | Satellite imagery, GPS coordinates, climate priors [5] | Provides location-based context, enabling scaling from individual plants to ecosystems. | Global-scale mapping of plant traits [5]. |

Comparing Multimodal Fusion Strategies

A central challenge in multimodal learning is data fusion—the method of integrating information from different modalities. The choice of strategy significantly impacts model performance and interpretability [1] [2].

Table 2: Comparison of Multimodal Fusion Strategies

| Fusion Strategy | Description | Technical Advantages | Limitations & Challenges |
|---|---|---|---|
| Early Fusion | Integration of raw data from different modalities into a single input tensor before feature extraction [1]. | Allows for modeling low-level interactions between modalities immediately. | Highly susceptible to noise and requires strict alignment between modalities [1]. |
| Intermediate Fusion | Features are extracted from each modality separately and then merged in intermediate layers of a model [1]. | Offers a balanced approach, enabling the model to learn complex cross-modal interactions [1]. | Designing the optimal architecture and fusion points is complex [1]. |
| Late Fusion | Combines modalities at the decision level, typically by averaging the predictions of separate models [1] [2]. | Simple to implement, robust to missing data, and allows for asynchronous training of unimodal models [1] [2]. | Cannot capture fine-grained, cross-modal correlations, potentially limiting performance gains [1]. |
| Hybrid/Automatic Fusion | Leverages Neural Architecture Search (NAS) to automatically discover the optimal fusion architecture [1] [2]. | Can outperform manually designed models by finding more efficient and effective fusion pathways [1] [2]. | Computationally intensive during the search phase [2]. |

Performance Comparison of Fusion Methods

Experimental data from recent studies provides a quantitative basis for comparing the performance of different fusion strategies in specific plant science tasks.

Table 3: Experimental Performance of Fusion Strategies on Benchmark Tasks

| Study & Task | Dataset | Fusion Method | Key Performance Metric | Result | Comparative Advantage |
|---|---|---|---|---|---|
| Plant Identification [1] [2] | Multimodal-PlantCLEF (979 classes) | Automatic Fusion (MFAS) | Accuracy | 82.61% | +10.33 percentage points over late fusion |
| | | Late Fusion (Averaging) | Accuracy | 72.28% | Baseline |
| Tomato Disease Diagnosis [4] | PlantVillage & Environmental Data | Late Fusion (EfficientNetB0 + RNN) | Disease Classification Accuracy | 96.40% | Integrates image and climate data; outperforms unimodal approaches |
| | | Unimodal (Image-only) | Disease Classification Accuracy | ~90% (estimated from context) | Baseline |
| Tomato Disease Severity [4] | PlantVillage & Environmental Data | Late Fusion (EfficientNetB0 + RNN) | Severity Prediction Accuracy | 99.20% | High-precision severity estimation |
| Plant Disease Diagnosis [7] | 205,007 images & 410,014 texts | Intermediate Fusion (PlantIF) | Accuracy | 96.95% | +1.49% over established models |

Detailed Experimental Protocols

The protocols behind two of the results in Table 3 are summarized below.

Protocol 1: Automatic Fusion for Plant Identification [1] [2]

- Dataset Preparation: The PlantCLEF2015 dataset was restructured into "Multimodal-PlantCLEF," comprising images of four distinct plant organs (flowers, leaves, fruits, stems) treated as separate modalities.

- Unimodal Model Training: A separate MobileNetV3Small model, initialized with pre-trained weights, was first trained on each organ modality.

- Fusion Architecture Search: The Multimodal Fusion Architecture Search (MFAS) algorithm was applied. This algorithm automatically searches for the optimal layers at which to merge the pre-trained unimodal networks, creating a single, cohesive architecture.

- Robustness Training: The model was trained with multimodal dropout, a technique that randomly drops subsets of modalities during training, forcing the model to become robust to missing data at test time.

- Evaluation: The final fused model was evaluated against a late fusion baseline using classification accuracy and McNemar's statistical test.
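The statistical comparison in the evaluation step can be sketched with the exact (binomial) form of McNemar's test, which only looks at test images where exactly one of the two models is correct. This is a stdlib-only illustration: the function name and the predictions are hypothetical, and the cited study may have used the chi-square variant or a library implementation instead.

```python
from math import comb


def mcnemar_exact(y_true, pred_a, pred_b):
    """Exact two-sided McNemar test for two classifiers scored on the same test set.

    Only discordant samples carry information:
      b = samples model A gets right and model B gets wrong
      c = samples model B gets right and model A gets wrong
    Under the null hypothesis of equal error rates, b ~ Binomial(b + c, 0.5).
    Returns (b, c, p_value).
    """
    if not (len(y_true) == len(pred_a) == len(pred_b)):
        raise ValueError("all inputs must cover the same test samples")
    b = sum(1 for t, a, o in zip(y_true, pred_a, pred_b) if a == t and o != t)
    c = sum(1 for t, a, o in zip(y_true, pred_a, pred_b) if a != t and o == t)
    n = b + c
    if n == 0:
        return b, c, 1.0  # the models never disagree on correctness
    # Two-sided p-value: double the smaller binomial tail, capped at 1.
    tail = sum(comb(n, k) for k in range(min(b, c) + 1)) / 2 ** n
    return b, c, min(1.0, 2 * tail)


# Hypothetical predictions on 10 test images (class indices).
labels = list(range(10))
fused_preds = list(range(10))                 # correct on every image
late_preds = [0, 1, 2, 3, 9, 9, 9, 9, 9, 0]    # wrong on the last six
print(mcnemar_exact(labels, fused_preds, late_preds))  # → (6, 0, 0.03125)
```

Because agreements are ignored, two models with similar overall accuracy can still differ significantly if their errors fall on different images, and vice versa.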

Protocol 2: Late Fusion for Tomato Disease Diagnosis and Severity [4]

- Data Collection: The study utilized two parallel data streams:

- Visual Modality: Leaf images from the PlantVillage dataset.

- Environmental Modality: Time-series weather data (e.g., temperature, humidity, rainfall).

- Unimodal Model Training:

- An EfficientNetB0 model was trained for image-based disease classification.

- A Recurrent Neural Network (RNN) was trained to predict disease severity from the environmental data sequences.

- Late Fusion: Predictions from the two independent models (EfficientNetB0 and RNN) were combined at the decision level to produce a final, integrated output.

- Interpretability Analysis: The model was made interpretable using LIME (for the image modality) and SHAP (for the weather modality) to explain the classification and severity predictions.

- Evaluation: The fused model was evaluated on separate test sets for classification accuracy and severity prediction accuracy.
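The SHAP analysis in the interpretability step rests on Shapley values from cooperative game theory. For a model with only a few inputs, such as a handful of weather variables, they can be computed exactly by enumerating feature coalitions. The sketch below does that for a hypothetical toy severity model; it is not the SHAP library's API, and it replaces "absent" features with a single baseline vector, whereas SHAP's explainers typically average over a background dataset.

```python
from itertools import combinations
from math import factorial


def shapley_values(model, x, baseline):
    """Exact Shapley values for a single prediction.

    Features outside a coalition are set to their baseline value, a
    single-reference simplification (SHAP's explainers usually average over a
    background dataset). Cost grows as 2**n_features, so keep n small.
    """
    n = len(x)

    def value(coalition):
        # Model output with coalition features taken from x, the rest from baseline.
        return model([x[j] if j in coalition else baseline[j] for j in range(n)])

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight |S|! (n - |S| - 1)! / n! for coalitions of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


def toy_severity(z):
    """Hypothetical severity score from (temperature, humidity, rainfall)."""
    temperature, humidity, rainfall = z
    return 2 * temperature + 3 * humidity + humidity * rainfall


phi = shapley_values(toy_severity, x=[1.0, 2.0, 3.0], baseline=[0.0, 0.0, 0.0])
print(dict(zip(["temperature", "humidity", "rainfall"], phi)))
```

For this toy model the attributions come out at approximately 2, 9, and 3. They sum to the prediction (14) minus the baseline prediction (0), the additivity property that SHAP explanations also satisfy, and the humidity-rainfall interaction term is split equally between the two features.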

The workflow for this late fusion protocol is detailed in the following diagram.

Tomato Disease Diagnosis via Late Fusion

The Scientist's Toolkit: Essential Research Reagents

Building and experimenting with multimodal plant data requires a suite of computational tools and data resources. The following table catalogs key "research reagent solutions" cited in the discussed studies.

Table 4: Essential Research Reagents for Multimodal Plant Science

| Reagent Category | Specific Tool / Resource | Function in Research | Example Use Case |
|---|---|---|---|
| Computational Frameworks | Multimodal Fusion Architecture Search (MFAS) [2] | Automates the discovery of optimal neural network architectures for fusing multiple data modalities. | Achieving state-of-the-art plant identification accuracy [1] [2]. |
| | MUFASA [2] | A more comprehensive NAS that searches for both unimodal and fusion architectures. | Potentially higher performance at the cost of greater computational resources [2]. |
| Pre-trained Models & Encoders | MobileNetV3Small [1] [2] | A lightweight, efficient convolutional neural network used as a feature extractor for plant organ images. | Serving as the base unimodal model in automatic fusion pipelines [1] [2]. |
| | EfficientNetB0 [4] | The compact baseline of the EfficientNet family of CNNs, offering a favorable accuracy-efficiency trade-off for image classification. | Serving as the visual backbone for tomato disease diagnosis [4]. |
| | Geospatial Foundation Models (e.g., SatCLIP, Climplicit) [5] | Encoders that provide rich, pre-trained representations of climate and satellite data. | Integrating geospatial context into global-scale plant trait prediction models [5]. |
| Key Datasets | Multimodal-PlantCLEF [1] | A restructured version of PlantCLEF2015 containing images from four plant organs for multimodal classification. | Benchmarking plant identification models and fusion strategies [1]. |
| | PlantVillage [4] | A large, public dataset of plant leaf images annotated with disease labels. | Training and evaluating disease classification models [4]. |
| | TRY Plant Trait Database [5] | A global database of plant traits, containing species-level trait measurements. | Providing weak labels for training trait prediction models from citizen science images [5]. |
| | P3DB (Plant Protein Phosphorylation Database) [8] | A curated knowledgebase of plant phosphorylation events. | Integrating structured biological knowledge with LLMs for enhanced querying [8]. |
| Interpretability Tools | LIME (Local Interpretable Model-agnostic Explanations) [4] | Explains the predictions of any classifier by perturbing the input and analyzing changes in the output. | Interpreting which parts of a leaf image contributed to a disease classification [4]. |
| | SHAP (SHapley Additive exPlanations) [4] | Determines the contribution of each input feature to a model's prediction based on game theory. | Explaining which weather variables were most important for disease severity prediction [4]. |

Plant classification and analysis are fundamental to agricultural productivity, ecological conservation, and understanding plant growth dynamics [1]. Traditional approaches to plant analysis have predominantly relied on single-source data, such as images of a single plant organ—typically leaves [1] [9]. From a biological standpoint, however, a single organ provides insufficient information for accurate classification and comprehensive analysis [1]. This limitation stems from the fact that variations in appearance can occur within the same species due to various environmental factors, while different species may exhibit remarkably similar features in a single organ type [1] [9].

The limitations of single-source data extend beyond morphological classification to physiological analysis. Traditional plant physiological measurements, such as detailed leaf gas exchange systems used to quantify photosynthetic performance, are often constrained to instantaneous point measurements that provide only a 'snap shot' of leaf photosynthetic status at a single point in time over a comparatively small area [10]. These methods introduce substantial measurement variability, with differences between lowest and highest rates often amounting to one or even two orders of magnitude [11], highlighting the critical need for more comprehensive analytical approaches that integrate multiple data sources.

Comparative Analysis: Single-Modality vs. Multimodal Fusion

Performance Comparison of Classification Approaches

Table 1: Comparison of plant classification approaches using Multimodal-PlantCLEF dataset

| Approach | Data Sources | Fusion Strategy | Accuracy | Key Limitations |
|---|---|---|---|---|
| Single-Source (Leaf-only) | Leaf images | Not applicable | ~60-65% (estimated) | Limited view of plant biology; struggles with species having similar leaves [1] [9] |
| Late Fusion | Flowers, leaves, fruits, stems | Decision-level averaging | 72.28% | Suboptimal architecture; relies on developer discretion [1] [9] |
| Automatic Fused Multimodal DL | Flowers, leaves, fruits, stems | Multimodal fusion architecture search | 82.61% | Requires multimodal dataset creation [1] [9] |

Comparison of Plant Analysis Methods

Table 2: Broader comparison of plant analysis methodologies

| Method Category | Primary Data Sources | Key Applications | Limitations |
|---|---|---|---|
| Traditional Physiological Measurements | Leaf gas exchange, chlorophyll fluorescence | Photosynthetic performance, biochemical efficiency | Time-consuming; low-throughput; specialized equipment required [10] |
| Optical Sensing & Remote Sensing | Multi/hyperspectral reflectance, infrared thermography, LiDAR | High-throughput phenotyping, stress detection | Requires calibration with direct empirical measurements [10] |
| Unimodal Deep Learning | Single organ images (typically leaves) | Automated plant identification | Fails to capture full biological diversity [1] [9] |
| Multimodal Deep Learning | Multiple plant organs (flowers, leaves, fruits, stems) | Comprehensive species identification, growth analysis | Dataset availability; fusion strategy optimization [1] [9] |

Experimental Evidence: Quantifying the Fusion Advantage

Multimodal Plant Identification Protocol

Experimental Objective: To develop and evaluate an automated fused multimodal deep learning approach for plant classification that integrates images from multiple plant organs and compares performance against single-modality and late fusion baselines [1] [9].

Dataset Preparation:

- Created Multimodal-PlantCLEF, a restructured version of PlantCLEF2015 tailored for multimodal tasks

- Comprised images of four distinct plant organs: flowers, leaves, fruits, and stems

- Contained 979 plant classes for classification [1] [9]

Methodology:

- Trained individual unimodal models for each plant organ using MobileNetV3Small pre-trained model

- Applied a modified Multimodal Fusion Architecture Search (MFAS) to automatically fuse unimodal models

- Incorporated multimodal dropout to enhance robustness to missing modalities

- Compared against late fusion baseline using averaging strategy [1] [9]

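Evaluation Metrics:

- Classification accuracy

- McNemar's test to assess the statistical significance of differences from the late fusion baseline [1]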

Cancer Survival Prediction Protocol (Cross-Domain Validation)

Experimental Objective: To evaluate the performance of multimodal data fusion versus single-modality approaches for predicting overall survival in cancer patients, providing cross-domain validation of fusion benefits [12].

Dataset:

- Utilized The Cancer Genome Atlas (TCGA) data

- Incorporated multiple modalities: transcripts, proteins, metabolites, and clinical factors [12]

Methodology:

- Addressed challenges of high dimensionality, small sample sizes, and data heterogeneity

- Applied various feature extraction and fusion strategies

- Specifically compared late fusion models against single-modality approaches across lung, breast, and pan-cancer datasets [12]

Evaluation Metrics:

- Concordance index (C-index) for survival prediction performance

- Robustness analysis through multiple training-test splits [12]
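The C-index listed above can be computed directly from follow-up times, event indicators, and predicted risk scores. Below is a minimal sketch of Harrell's C-index, assuming higher scores mean higher risk (an earlier expected event); the function name and data are made up for illustration, and established survival-analysis libraries handle tied times and other edge cases more carefully.

```python
def concordance_index(times, events, risk_scores):
    """Harrell's C-index for right-censored survival data.

    A pair (i, j) is comparable when subject i had an observed event and a
    shorter time than subject j. It is concordant when subject i also has the
    higher predicted risk; tied risk scores count as half. Pairs with tied
    times are skipped in this simplified version.
    """
    if not (len(times) == len(events) == len(risk_scores)):
        raise ValueError("inputs must have the same length")
    concordant, comparable = 0.0, 0
    for i in range(len(times)):
        if not events[i]:
            continue  # a censored subject cannot be the earlier member of a pair
        for j in range(len(times)):
            if times[i] < times[j]:
                comparable += 1
                if risk_scores[i] > risk_scores[j]:
                    concordant += 1.0
                elif risk_scores[i] == risk_scores[j]:
                    concordant += 0.5
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable


# Hypothetical follow-up times (months), event flags (1 = event observed), risk scores.
print(concordance_index([5, 12, 20, 30], [1, 1, 0, 1], [0.9, 0.4, 0.6, 0.1]))  # → 0.8
```

A value of 0.5 corresponds to random ranking and 1.0 to perfect ranking; repeating the computation over several train-test splits, as the protocol describes, yields a spread of C-index values rather than a single number.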

The Fusion Workflow: From Single-Source to Integrated Analysis

The following diagram illustrates the fundamental shift from traditional single-source analysis to automated multimodal fusion:

Technical Implementation: Fusion Architecture Strategies

Multimodal Fusion Architecture Comparison

Table 3: Technical comparison of multimodal fusion strategies

| Fusion Strategy | Integration Point | Key Advantages | Key Limitations | Representative Applications |
|---|---|---|---|---|
| Early Fusion | Data-level, before feature extraction | Simple implementation; preserves raw data correlations | Susceptible to overfitting with high-dimensional data; ignores data heterogeneity [13] [12] | Simple image-text concatenation [13] |
| Late Fusion | Decision-level, after individual processing | Resistant to overfitting; handles data heterogeneity naturally | May miss important cross-modal interactions; suboptimal for capturing complex relationships [1] [12] | Averaging predictions from separate organ classifiers [1] |
| Intermediate Fusion | Feature-level, after separate feature extraction | Balances flexibility and integration; enables cross-modal feature enrichment | Requires careful architecture design; can be computationally complex [13] [14] | Attention mechanisms between modality features [13] [14] |
| Hybrid Fusion | Multiple integration points | Maximizes benefits of different strategies; highly flexible | Complex to implement and optimize; risk of over-engineering [1] [13] | Combined feature and decision fusion [1] |
| Automated Fusion Search | Learned through architecture search | Discovers optimal fusion strategy automatically; adapts to specific data characteristics | Computationally intensive search process; requires specialized expertise [1] [9] | Multimodal Fusion Architecture Search (MFAS) [1] |

Table 4: Key research reagents and computational resources for multimodal plant analysis

| Resource Category | Specific Examples | Function/Application | Key Characteristics |
|---|---|---|---|
| Multimodal Datasets | Multimodal-PlantCLEF, PlantCLEF2015 | Training and evaluation of multimodal plant classification models | Contains images of multiple plant organs; 979 plant classes [1] [9] |
| Deep Learning Frameworks | TensorFlow, PyTorch | Implementation of neural network architectures | Support for convolutional networks; pre-trained model availability [1] |
| Pre-trained Models | MobileNetV3Small, VGGNet, ResNet | Feature extraction; transfer learning | Pre-trained on large datasets; enables efficient knowledge transfer [1] [13] |
| Neural Architecture Search Tools | MFAS implementations | Automated discovery of optimal fusion architectures | Reduces manual design bias; finds more efficient models [1] [9] |
| Physiological Measurement Systems | Photosynthetic gas exchange systems, chlorophyll fluorometers | Direct empirical measurement of plant physiological status | Quantifies photosynthetic CO2 assimilation, stomatal conductance [10] |
| Optical Sensors | Multi/hyperspectral reflectance sensors, infrared thermography, LiDAR | High-throughput phenotyping; indirect physiological assessment | Enables rapid screening over wide spatial scales [10] |

Integration Pathways: From Data to Decisions

The following diagram illustrates the complete multimodal fusion pipeline for plant analysis, highlighting the integration of complementary data sources:

The experimental evidence across domains consistently demonstrates that single-source data approaches introduce significant limitations in plant analysis, from classification inaccuracies to incomplete physiological characterization. The 10.33-percentage-point accuracy gap between automated multimodal fusion and conventional late fusion underscores that how modalities are fused matters, not merely how many data sources are added [1] [9]. The cross-domain validation from cancer research further strengthens this conclusion, with late fusion models consistently outperforming single-modality approaches despite the challenges of high dimensionality and data heterogeneity [12].

For researchers in plant science and agricultural technology, the path forward requires a fundamental shift from single-source to deliberately designed multimodal approaches. This transition encompasses both technical implementation—adopting advanced fusion strategies like automated architecture search—and philosophical orientation toward holistic plant characterization that respects the biological complexity of the subjects under study. As the field progresses, the development of standardized multimodal datasets, reusable processing pipelines, and validated fusion protocols will be essential to realizing the full potential of multimodal integration for addressing pressing challenges in food security, climate resilience, and sustainable agriculture.

In the field of artificial intelligence, multimodal data fusion is the process of integrating information from diverse data types—such as images, text, audio, and sensor data—to create richer, more comprehensive computational models [15]. For plant data research, this often involves combining visual data from different plant organs (e.g., leaves, flowers, stems, fruits) with textual descriptions, thermal imagery, or other sensor data to achieve more accurate classification, diagnosis, and phenotyping than would be possible with any single data source [1] [7]. The core challenge lies in determining the optimal strategy and timing for integrating these heterogeneous data streams to maximize performance while managing computational complexity [1].

The selection of fusion strategy significantly impacts model effectiveness, as each approach offers distinct trade-offs in how it handles inter-modal interactions, data synchronization, and robustness to missing information [15]. This guide provides a structured comparison of early, intermediate, late, and hybrid fusion strategies, with specific applications to multimodal plant data research, experimental protocols, and practical implementation guidelines for scientific teams.

Core Fusion Strategies

Early Fusion (Feature-Level Fusion)

Mechanism Overview: Early fusion, also known as feature-level fusion, integrates raw data or preliminary features from multiple modalities before they are fed into the main machine learning model [16] [15]. This approach combines data sources at the input level, typically through concatenation or similar methods, creating a unified feature representation that captures low-level interactions between modalities [17].

Technical Implementation: In practice, early fusion involves extracting basic features from each modality—such as pixel values from images or fundamental acoustic features from audio—then merging these features into a single composite vector before model training [16]. For plant research, this might involve combining raw pixel data from images of different plant organs into a single input tensor [1].
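A minimal NumPy sketch of the two early-fusion variants just described: stacking organ images into a single input tensor, and concatenating flattened per-modality features into one vector. The function names and array shapes are illustrative assumptions; the shape checks make the alignment requirement explicit.

```python
import numpy as np


def early_fuse_organ_images(organ_images):
    """Stack same-sized organ images along the channel axis into one input tensor.

    organ_images: list of arrays shaped (n_samples, H, W, C), one per organ,
    where row k of every array must come from the same specimen.
    """
    if len({img.shape[:3] for img in organ_images}) != 1:
        raise ValueError("early fusion needs aligned samples and identical H x W")
    return np.concatenate(organ_images, axis=-1)


def early_fuse_features(*blocks):
    """Flatten each modality per sample and concatenate into one feature vector."""
    n = blocks[0].shape[0]
    if any(b.shape[0] != n for b in blocks):
        raise ValueError("every modality must provide one row per sample")
    return np.concatenate([b.reshape(n, -1) for b in blocks], axis=1)


leaves = np.zeros((8, 64, 64, 3))   # placeholder leaf images
flowers = np.ones((8, 64, 64, 3))   # placeholder flower images, same specimens
weather = np.ones((8, 5))           # placeholder per-specimen sensor readings

stacked = early_fuse_organ_images([leaves, flowers])
flat = early_fuse_features(leaves, weather)
print(stacked.shape, flat.shape)  # → (8, 64, 64, 6) (8, 12293)
```

Either output feeds a single downstream model, which is why early fusion breaks down when one organ image is missing or when specimens are misaligned across arrays; the flattened vector also shows how quickly dimensionality grows.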

Table: Early Fusion Characteristics

| Aspect | Description |
|---|---|
| Integration Point | Input/feature level, before main model processing |
| Data Requirements | Precisely aligned and synchronized modalities |
| Computational Profile | Single training process, but potentially high-dimensional feature spaces |
| Key Advantage | Enables learning of complex cross-modal interactions at a granular level |
| Primary Limitation | Susceptible to the curse of dimensionality; requires strict data alignment |

Intermediate Fusion (Joint Representation Learning)

Mechanism Overview: Intermediate fusion represents a balanced approach where modalities are processed separately in initial stages, then integrated at intermediate model layers after each has been transformed into latent representations [15]. This strategy has gained significant traction as it balances modality-specific processing with joint representation learning [15].

Technical Implementation: In intermediate fusion, each modality passes through dedicated processing streams (often using specialized neural network architectures) to extract high-level features. These feature representations are then merged through concatenation, element-wise operations, or attention mechanisms before final prediction layers [15]. The PlantIF model for plant disease diagnosis exemplifies this approach, employing semantic space encoders to map visual and textual features into shared and modality-specific spaces before fusion through graph learning techniques [7].
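A forward-pass sketch of intermediate fusion in NumPy, under deliberately simplified assumptions: each modality has already been reduced to a feature vector, the encoders are single untrained dense layers, and concatenation is the merge operation. It is not PlantIF; that model's shared and modality-specific semantic spaces and graph-based fusion are not reproduced here. The class, function, and argument names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)


def dense(in_dim, out_dim):
    """Random weights and zero bias for one fully connected layer (untrained)."""
    return rng.normal(0.0, 0.1, size=(in_dim, out_dim)), np.zeros(out_dim)


def softmax(z):
    z = z - z.max(axis=1, keepdims=True)  # shift by row max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)


class IntermediateFusionNet:
    """One encoder per modality; latent features are merged before a shared head."""

    def __init__(self, input_dims, latent_dim, n_classes):
        self.names = sorted(input_dims)  # fixed order keeps the concatenation consistent
        self.encoders = {m: dense(input_dims[m], latent_dim) for m in self.names}
        self.head = dense(latent_dim * len(self.names), n_classes)

    def forward(self, inputs):
        latents = []
        for m in self.names:
            w, b = self.encoders[m]
            latents.append(np.maximum(inputs[m] @ w + b, 0.0))  # modality-specific ReLU encoder
        fused = np.concatenate(latents, axis=1)  # the intermediate fusion point
        w, b = self.head
        return softmax(fused @ w + b)


# Hypothetical feature sizes, e.g. pooled CNN image features and text embeddings.
net = IntermediateFusionNet({"image": 512, "text": 300}, latent_dim=64, n_classes=10)
batch = {"image": rng.normal(size=(4, 512)), "text": rng.normal(size=(4, 300))}
probs = net.forward(batch)
print(probs.shape)  # → (4, 10)
```

Replacing the concatenation with an element-wise sum or an attention-weighted combination changes only the fusion step and the head's input size, which is where most of the design effort in intermediate fusion concentrates.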

Table: Intermediate Fusion Characteristics

| Aspect | Description |
|---|---|
| Integration Point | Intermediate model layers, after modality-specific processing |
| Data Requirements | Modalities need semantic alignment but not precise low-level synchronization |
| Computational Profile | Balanced complexity; enables rich cross-modal interactions |
| Key Advantage | Captures complex modal interactions while allowing modality-specific processing |
| Primary Limitation | Increased architectural complexity and training requirements |

Late Fusion (Decision-Level Fusion)

Mechanism Overview: Late fusion, also called decision-level fusion, processes each modality independently through separate models and combines their predictions at the final decision stage [16] [17]. This approach resembles ensemble methods, where each modality-specific model contributes its specialized knowledge to a collective decision [15].

Technical Implementation: In late fusion systems, dedicated models are trained for each data modality—for example, one model for leaf images, another for flower images, and a third for textual descriptions [16]. The predictions from these specialized models are aggregated using techniques such as voting, averaging, or weighted summation based on confidence scores [16] [17]. This method's modularity allows researchers to incorporate new data sources without retraining existing components [16].
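The aggregation rules named above (averaging, weighted summation, voting) can be sketched in a few lines of NumPy. The function names, the use of `None` to mark a missing modality, and the example probabilities are assumptions for illustration; weights derived from confidence scores or validation accuracy could replace the fixed weights shown.

```python
import numpy as np


def late_fuse(prob_by_modality, weights=None):
    """Weighted average of per-modality class probabilities (decision-level fusion).

    prob_by_modality maps a modality name to a probability vector, or to None if
    that modality is missing for this sample. Missing modalities are skipped and
    the weights of the remaining ones are renormalised.
    """
    available = {m: np.asarray(p, dtype=float)
                 for m, p in prob_by_modality.items() if p is not None}
    if not available:
        raise ValueError("at least one modality must be present")
    if weights is None:
        weights = {m: 1.0 for m in available}  # plain averaging
    total = sum(weights[m] for m in available)
    return sum(weights[m] * p for m, p in available.items()) / total


def majority_vote(prob_by_modality):
    """Alternative rule: each available modality votes for its top class (ties -> lowest index)."""
    votes = [int(np.argmax(p)) for p in prob_by_modality.values() if p is not None]
    return int(np.bincount(votes).argmax())


# Hypothetical outputs of organ classifiers for one specimen (3 classes, no fruit image).
outputs = {"leaf": [0.6, 0.3, 0.1], "flower": [0.2, 0.7, 0.1], "fruit": None}
print(late_fuse(outputs))                                   # simple average of leaf and flower
print(late_fuse(outputs, weights={"leaf": 3.0, "flower": 1.0}))
print(majority_vote({**outputs, "stem": [0.1, 0.8, 0.1]}))  # → 1
```

Skipping a missing modality and renormalising the remaining weights is what makes late fusion tolerant of incomplete specimens: no model has to be retrained when, say, no fruit image is available.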

Table: Late Fusion Characteristics

| Aspect | Description |
|---|---|
| Integration Point | Decision/output level, after independent model processing |
| Data Requirements | Tolerant to asynchronous and heterogeneous data formats |
| Computational Profile | Multiple training processes but reduced dimensionality concerns |
| Key Advantage | High flexibility and robustness to missing modalities |
| Primary Limitation | Limited ability to capture complex cross-modal relationships |

Hybrid Fusion

Mechanism Overview: Hybrid fusion strategically combines elements from early, intermediate, and late fusion approaches to leverage their respective strengths while mitigating their limitations [1]. This adaptive framework enables researchers to customize integration strategies based on specific data characteristics and task requirements.

Technical Implementation: Hybrid approaches might employ early fusion for closely related modalities (e.g., different image types), intermediate fusion for semantically aligned representations, and late fusion for incorporating diverse information sources [1]. The automatic fusion approach described in multimodal plant classification research exemplifies this strategy, using neural architecture search to optimize fusion points throughout the model [1].
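The idea of searching for fusion points can be illustrated with a deliberately tiny, exhaustive version. Everything here is hypothetical: the two organ branches, the candidate layer indices, the activation choices, and `toy_evaluate`, which stands in for the expensive step of training and validating each fused network. MFAS as published does not enumerate exhaustively; it explores a far larger space progressively, using a learned surrogate to decide which candidates are worth training, which is why automatic fusion remains computationally intensive.

```python
from itertools import product

# Hypothetical search space: which layer of each pre-trained unimodal network
# feeds the fusion block, and which non-linearity the fusion block uses.
SEARCH_SPACE = {
    "leaf_layer": [2, 4, 6],
    "flower_layer": [2, 4, 6],
    "activation": ["relu", "sigmoid"],
}


def exhaustive_fusion_search(evaluate, space=SEARCH_SPACE):
    """Score every fusion configuration and return (best_config, best_score).

    `evaluate` stands in for: build the fused network from this configuration,
    train its fusion layers briefly, and return validation accuracy.
    """
    keys = list(space)
    best_cfg, best_score = None, float("-inf")
    for values in product(*(space[k] for k in keys)):
        cfg = dict(zip(keys, values))
        score = evaluate(cfg)
        if score > best_score:
            best_cfg, best_score = cfg, score
    return best_cfg, best_score


def toy_evaluate(cfg):
    """Made-up scoring function used only to exercise the search loop."""
    score = 0.80 - 0.02 * abs(cfg["leaf_layer"] - 4) - 0.03 * abs(cfg["flower_layer"] - 6)
    return score + (0.01 if cfg["activation"] == "relu" else 0.0)


best, score = exhaustive_fusion_search(toy_evaluate)
print(best, round(score, 2))
```

Even this toy space has 18 configurations; with realistic networks the count grows multiplicatively with every branch and option, and each evaluation is a training run.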

Comparative Analysis of Fusion Strategies

Performance Comparison in Plant Research

Experimental studies in plant data research provide quantitative insights into how different fusion strategies perform on practical classification tasks. Research on multimodal plant identification using images from multiple plant organs (flowers, leaves, fruits, and stems) demonstrated significant performance variations between fusion approaches [1].

Table: Experimental Performance Comparison in Plant Classification

| Fusion Strategy | Reported Accuracy | Key Advantages | Limitations |
|---|---|---|---|
| Late Fusion | 72.28% | Simple implementation; robust to missing modalities | Fails to capture cross-modal interactions |
| Automatic Hybrid Fusion | 82.61% | Automatically discovers optimal architecture | Complex implementation; computationally intensive search process |
| Early Fusion | Not specifically reported | Learns rich joint representations | Requires precisely aligned data; high-dimensional issues |

The automatic fusion approach, which employed multimodal fusion architecture search (MFAS), outperformed late fusion by 10.33 percentage points in accuracy (82.61% vs. 72.28%) on the Multimodal-PlantCLEF dataset comprising 979 plant classes [1]. This advantage is attributed to the method's ability to discover fusion points throughout the network automatically rather than relying on a predetermined integration strategy.

Strategic Trade-Off Analysis

Each fusion strategy presents distinct trade-offs that researchers must consider when designing multimodal plant data systems:

Early Fusion excels when modalities are closely related and precisely synchronized, but struggles with high-dimensional feature spaces and data alignment requirements [16]. The approach is particularly suitable when raw data from multiple sources need to be analyzed together, such as in audio-visual recognition systems [16].

Intermediate Fusion offers a balanced solution that captures rich cross-modal interactions while allowing for modality-specific processing [15]. This comes at the cost of increased architectural complexity and training requirements. Intermediate fusion has proven effective in plant disease diagnosis, where models like PlantIF use graph learning to capture spatial dependencies between plant phenotype and text semantics [7].

Late Fusion provides maximum flexibility and robustness to missing data, making it ideal for scenarios where modalities are asynchronous or have different sampling rates [16] [15]. However, this approach may miss important cross-modal interactions that could enhance model performance [16]. Its modular nature facilitates incorporation of new data sources without retraining existing models [16].

Hybrid Fusion strategies aim to combine the strengths of multiple approaches, as demonstrated by the automatic fusion method that achieved state-of-the-art performance in plant classification [1]. The trade-off involves increased implementation complexity and computational demands for architecture search or custom design.

Experimental Protocols and Methodologies

Multimodal Plant Classification Protocol

Dataset Preparation: The Multimodal-PlantCLEF dataset provides a benchmark for evaluating fusion strategies in plant research [1]. This dataset was created by restructuring the PlantCLEF2015 dataset into a multimodal format containing images of four distinct plant organs: flowers, leaves, fruits, and stems [1]. Each plant specimen is represented by multiple images capturing different biological features, enabling comprehensive multimodal learning.

Experimental Setup: In the referenced study, researchers first trained unimodal models for each plant organ using MobileNetV3Small pretrained weights [1]. They then applied a modified Multimodal Fusion Architecture Search (MFAS) algorithm to automatically discover optimal fusion points throughout the network [1]. The baseline was late fusion with an averaging strategy, a common approach in multimodal plant classification [1].

Evaluation Metrics: Performance was assessed with standard classification metrics, primarily accuracy, and statistical significance was verified with McNemar's test [1]. Multimodal dropout was applied during training to make the model robust to missing modalities [1].
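McNemar's test compares two classifiers evaluated on the same test samples by looking only at the cases where exactly one of them is correct. The sketch below implements the standard continuity-corrected chi-squared form using only the Python standard library; the toy labels and model names are invented for illustration and are not data from [1].

```python
import math
from typing import Sequence, Tuple


def mcnemar_test(y_true: Sequence[int], pred_a: Sequence[int], pred_b: Sequence[int]) -> Tuple[float, float]:
    """Continuity-corrected McNemar test for two classifiers on the same samples.

    b counts samples model A gets right and model B gets wrong; c counts the
    reverse. Samples both models get right (or both wrong) carry no information.
    Returns (chi-squared statistic, p-value) with 1 degree of freedom.
    """
    b = sum(1 for t, a, p in zip(y_true, pred_a, pred_b) if a == t and p != t)
    c = sum(1 for t, a, p in zip(y_true, pred_a, pred_b) if a != t and p == t)
    if b + c == 0:
        return 0.0, 1.0  # the models never disagree on correctness
    stat = max(abs(b - c) - 1, 0) ** 2 / (b + c)
    # Survival function of a chi-squared(1) variable: P(X > x) = erfc(sqrt(x / 2)).
    p_value = math.erfc(math.sqrt(stat / 2))
    return stat, p_value


if __name__ == "__main__":
    y = [0] * 20
    fused_model = [0] * 12 + [1] * 2 + [0] * 6      # hypothetical: wrong on 2 samples
    late_fusion = [1] * 12 + [0] * 2 + [0] * 6      # hypothetical: wrong on 12 samples
    stat, p = mcnemar_test(y, fused_model, late_fusion)
    print(f"chi2={stat:.3f}, p={p:.4f}")
```

Because only discordant samples enter the statistic, two models with similar overall accuracy can still differ significantly if their errors fall on different specimens, and vice versa.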

Implementation Workflow

Figure: Experimental workflow for multimodal plant classification with automatic fusion.

Advanced Fusion Technique: Modality Dropout

Concept and Implementation: Modality dropout is a training technique that randomly drops or obscures specific modalities during each training iteration, forcing the model to adapt to varying combinations of available data [17]. This approach enhances robustness in real-world scenarios where certain data sources may be missing or corrupted at inference time [17].

Application Protocol: In plant research, modality dropout can be implemented by randomly omitting images of specific plant organs during training batches. For example, a model might receive only flower and leaf images in one iteration, then fruit and stem images in another, learning to generate accurate predictions from incomplete multimodal data [1]. Studies have demonstrated that models trained with modality dropout maintain reasonable performance even when only one modality is available, a common occurrence in field applications [1].
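A minimal sketch of modality dropout at the batch level, assuming the four organ modalities are stacked into a `(batch, modalities, features)` array and that a dropped modality is represented by zeros. Zeroing is one common choice; the cited studies may represent absent modalities differently, and the drop probability `p_drop` is a hypothetical hyperparameter.

```python
import numpy as np


def modality_dropout_mask(batch_size: int, num_modalities: int, p_drop: float,
                          rng: np.random.Generator) -> np.ndarray:
    """Boolean mask of shape (batch, modalities); True means the modality is kept.

    Each modality is dropped independently with probability p_drop, but every
    sample keeps at least one modality so the model always receives some input.
    """
    keep = rng.random((batch_size, num_modalities)) >= p_drop
    empty_rows = np.flatnonzero(~keep.any(axis=1))
    # Restore one randomly chosen modality for samples that lost all of them.
    keep[empty_rows, rng.integers(0, num_modalities, size=empty_rows.size)] = True
    return keep


def apply_modality_dropout(features: np.ndarray, keep: np.ndarray) -> np.ndarray:
    """Zero the features of dropped modalities; features has shape (batch, modalities, dim)."""
    return features * keep[:, :, None]


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    organ_features = rng.normal(size=(8, 4, 16))   # flower, leaf, fruit, stem embeddings
    keep = modality_dropout_mask(8, 4, p_drop=0.5, rng=rng)
    batch = apply_modality_dropout(organ_features, keep)
    print(keep.astype(int))
```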

The Scientist's Toolkit: Research Reagent Solutions

Implementing effective multimodal fusion requires both computational resources and specialized datasets. The following table outlines essential components for plant data fusion research:

Table: Essential Research Resources for Multimodal Plant Data Fusion

| Resource Category | Specific Tools & Datasets | Research Function | Implementation Notes |
|---|---|---|---|
| Benchmark Datasets | Multimodal-PlantCLEF [1] | Standardized evaluation of fusion strategies | Restructured from PlantCLEF2015; covers four plant organs (flowers, leaves, fruits, stems) |
| Architecture Search | Multimodal Fusion Architecture Search (MFAS) [1] | Automatically discovers optimal fusion points | Modified from Perez-Rua et al. (2019); enables hybrid fusion |
| Pretrained Models | MobileNetV3Small [1] | Feature extraction for image-based modalities | Provides a strong baseline; transfer learning from ImageNet |
| Robustness Techniques | Modality Dropout [1] [17] | Enhances model resilience to missing data | Randomly omits modalities during training |
| Fusion Frameworks | PlantIF [7] | Graph-based fusion of image and text data | Uses semantic space encoders and self-attention graph convolution |
| Evaluation Metrics | McNemar's Test [1] | Statistical significance testing | Complementary to standard accuracy metrics |

Technical Implementation Guide

Data Preprocessing Pipeline

Effective multimodal fusion requires meticulous data preprocessing to ensure compatibility between modalities. For plant data research, this typically involves:

Image Normalization: Standardizing size, orientation, and color properties across all plant organ images to create consistent input representations [15]. This may include resizing to uniform dimensions, color normalization, and augmentation techniques to increase dataset diversity.
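A minimal NumPy sketch of the resizing and color-normalization step for a single organ image. The 224-pixel target size and the ImageNet channel statistics are common conventions for ImageNet-pretrained backbones, assumed here rather than taken from the cited studies; some backbone implementations (for example, Keras' MobileNetV3 application models) perform their own input rescaling, so the normalization should match whatever the chosen encoder expects. Nearest-neighbour resizing keeps the example dependency-free; an image library would normally provide higher-quality interpolation.

```python
import numpy as np

# Per-channel ImageNet statistics (RGB order), a common convention for
# ImageNet-pretrained encoders; replace with dataset-specific values if needed.
IMAGENET_MEAN = np.array([0.485, 0.456, 0.406])
IMAGENET_STD = np.array([0.229, 0.224, 0.225])


def resize_nearest(img: np.ndarray, size: int) -> np.ndarray:
    """Nearest-neighbour resize of an (H, W, C) array to (size, size, C)."""
    h, w = img.shape[:2]
    rows = (np.arange(size) * h / size).astype(int)
    cols = (np.arange(size) * w / size).astype(int)
    return img[rows[:, None], cols[None, :]]


def normalize_organ_image(img_uint8: np.ndarray, size: int = 224) -> np.ndarray:
    """Resize to a uniform square, scale to [0, 1], and standardize each channel."""
    img = resize_nearest(img_uint8, size).astype(np.float32) / 255.0
    return ((img - IMAGENET_MEAN) / IMAGENET_STD).astype(np.float32)


if __name__ == "__main__":
    leaf = np.random.default_rng(0).integers(0, 256, size=(480, 640, 3)).astype(np.uint8)
    x = normalize_organ_image(leaf)
    print(x.shape, x.dtype)   # (224, 224, 3) float32
```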

Feature Alignment: Creating semantic correspondence between different data types, such as aligning images of specific plant organs with relevant textual descriptions or thermal measurements [15]. In the PlantIF model, this involved mapping visual and textual features into shared semantic spaces to enable effective fusion [7].

Handling Missing Data: Developing strategies for incomplete multimodal samples, whether through interpolation, imputation, or robust fusion techniques that can accommodate partial inputs [15]. Modality dropout during training prepares models for such scenarios [1] [17].

Computational Considerations

Implementing fusion strategies requires careful attention to computational requirements and efficiency:

Resource Allocation: Early fusion often creates high-dimensional input spaces that increase computational demands [16]. Late fusion requires maintaining multiple models but with lower individual complexity [16]. Intermediate and hybrid approaches balance these factors but introduce architectural complexity [15].

Deployment Constraints: For field applications in agricultural research, model size and inference speed become critical factors. The automatically discovered fusion architecture in plant classification research achieved strong performance with compact parameter counts, facilitating deployment on resource-constrained devices [1].

The selection of fusion strategy represents a fundamental design decision in multimodal plant data research, with significant implications for model performance, robustness, and practical applicability. Experimental evidence demonstrates that automatically discovered hybrid fusion strategies can outperform conventional approaches, achieving state-of-the-art results in plant classification tasks [1].

Future research directions include developing more efficient neural architecture search methods for fusion optimization, creating standardized multimodal benchmarks for plant phenotyping, and advancing techniques for handling extreme data heterogeneity. As multimodal learning continues to evolve, plant data research stands to benefit substantially from these advancements, enabling more accurate species identification, disease diagnosis, and growth monitoring to support agricultural productivity and ecological conservation.

In modern research, particularly in fields like precision agriculture and environmental monitoring, relying on a single data source often proves insufficient for comprehensive analysis. The integration of multiple data types—a practice known as multimodal fusion—has emerged as a critical methodology for enhancing the accuracy and robustness of scientific observations [1]. This approach leverages the complementary strengths of different sensing technologies to overcome the inherent limitations of any single modality. For plant data research specifically, multimodal learning addresses a fundamental biological reality: a single plant organ is often insufficient for accurate classification, as variations can occur within the same species while different species may exhibit similar features in one organ type [1].

This guide provides a systematic comparison of four foundational sensor technologies—RGB, Hyperspectral, LiDAR, and Environmental Sensors—within the context of multimodal plant data research. By objectively analyzing the performance specifications, applications, and integration methodologies of these technologies, we aim to equip researchers and drug development professionals with the knowledge needed to design effective sensor fusion strategies. The subsequent sections will detail each sensor type's capabilities, present experimental data on their performance, and illustrate workflows for their synergistic application in research settings.

Sensor Fundamentals and Comparative Analysis

Core Sensor Types and Characteristics

RGB Sensors: These are conventional digital cameras capturing images in three broad spectral bands (red, green, blue). They provide high-resolution spatial information but limited spectral data, which makes them susceptible to metamerism, where materials with different spectral compositions appear identical in color [18]. Recent advances use deep learning to extract more value from RGB data, for example by reconstructing spectral information from standard images [19].

Hyperspectral Imaging (HSI) Sensors: HSI systems capture electromagnetic intensities across hundreds of narrow, contiguous spectral bands, typically from visible (VIS: 0.4-0.7μm) to near-infrared (NIR: 0.7-1μm) or shortwave infrared (SWIR: 1-2.5μm) regions [18]. This enables detailed material identification through unique spectral signatures, overcoming limitations of RGB imaging but generating high-dimensional data that poses computational challenges for real-time processing [18] [20].
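One widely used way to exploit per-pixel spectral signatures is the spectral angle mapper (SAM), which labels each pixel of the (x, y, λ) hypercube with the reference spectrum whose direction it most closely matches. The sketch below is a generic illustration, not a method from the cited studies; the five-band reference spectra are made-up values, whereas a real spectral library would hold hundreds of bands measured in the lab or field.

```python
import numpy as np


def spectral_angles(cube: np.ndarray, refs: np.ndarray) -> np.ndarray:
    """Angle (radians) between every pixel spectrum and every reference spectrum.

    cube: (x, y, bands) hypercube; refs: (k, bands). Returns (x, y, k).
    The angle ignores overall brightness, so shaded and sunlit pixels with the
    same spectral shape are treated as the same material.
    """
    flat = cube.reshape(-1, cube.shape[-1])
    cos = (flat @ refs.T) / (np.linalg.norm(flat, axis=1, keepdims=True)
                             * np.linalg.norm(refs, axis=1))
    angles = np.arccos(np.clip(cos, -1.0, 1.0))
    return angles.reshape(*cube.shape[:2], refs.shape[0])


def sam_classify(cube: np.ndarray, library: dict) -> np.ndarray:
    """Assign each pixel the name of the reference spectrum with the smallest angle."""
    names = list(library)
    refs = np.stack([np.asarray(library[n], dtype=float) for n in names])
    return np.array(names)[spectral_angles(cube, refs).argmin(axis=-1)]


if __name__ == "__main__":
    library = {  # illustrative reflectance values for five bands
        "healthy": [0.04, 0.08, 0.05, 0.45, 0.50],
        "stressed": [0.08, 0.12, 0.10, 0.25, 0.28],
    }
    h, s = np.array(library["healthy"]), np.array(library["stressed"])
    cube = np.stack([2 * h, 0.5 * s, 0.7 * h, s]).reshape(2, 2, 5)
    print(sam_classify(cube, library))
```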

LiDAR (Light Detection and Ranging) Sensors: These active sensors use laser pulses to measure distances and create detailed three-dimensional point clouds of surfaces and structures. Modern systems, such as the RIEGL VQ-1560 III-S, can achieve measurement rates up to 4.4 MHz and are often integrated with RGB or NIR cameras for complementary data collection [21]. LiDAR excels at capturing spatial geometry and surface topography but lacks biochemical information.
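Plant height, one of LiDAR's main uses in plant science, can be sketched as the difference between a high and a low percentile of point elevations within a plot. This assumes a small, roughly flat plot where the lowest returns come from the ground; over sloped terrain, elevations would first be normalized against a digital terrain model. The 1st/99th percentiles are illustrative choices, not values from the cited work.

```python
import numpy as np


def plot_canopy_height(points: np.ndarray, ground_pct: float = 1.0, top_pct: float = 99.0) -> float:
    """Estimate canopy height (same units as z) from an (N, 3) LiDAR point cloud of one plot.

    Using percentiles rather than min/max keeps isolated noise returns
    (birds, dust, multipath) from inflating or deflating the estimate.
    """
    z = points[:, 2]
    return float(np.percentile(z, top_pct) - np.percentile(z, ground_pct))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    xy = rng.random((1000, 2))
    ground = np.column_stack([xy[:500], np.zeros(500)])
    canopy = np.column_stack([xy[500:], np.full(500, 1.2)])
    outlier = np.array([[0.5, 0.5, 10.0]])              # spurious high return
    cloud = np.vstack([ground, canopy, outlier])
    print(round(plot_canopy_height(cloud), 2))          # 1.2, whereas max - min gives 10.0
```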

Environmental Sensors: This category encompasses sensors that monitor atmospheric and ambient conditions, including particulate matter (PM2.5), nitrogen dioxide (NO2), temperature, and humidity [22]. They provide crucial contextual data for interpreting other sensor readings and are increasingly deployed in networked systems for epidemiological and environmental studies.

Technical Specification Comparison

Table 1: Comparative technical specifications of key sensor types

| Sensor Type | Spatial Resolution | Spectral Resolution | Data Output | Key Measurables | Cost Level |
|---|---|---|---|---|---|
| RGB | High (e.g., 266 MP for FARO Focus Premium Max) [21] | 3 broad bands (R, G, B) | 2D raster images | Visual appearance, texture, morphology | Low |
| Hyperspectral | Medium (trade-off with spectral resolution) [18] | Hundreds of narrow bands (e.g., 128+ channels) [18] | 3D hypercube (x, y, λ) | Material composition, chemical properties | High |
| LiDAR | 3D point density (e.g., ~70 points/m² from 1500 ft AGL) [23] | N/A | 3D point cloud | Surface geometry, topography, structure | Medium-High |
| Environmental | Point measurements | N/A (gas/particle specific) | Time-series data | PM2.5, NO2, temperature, humidity [22] | Low |

Table 2: Performance characteristics and limitations across sensor types

| Sensor Type | Strengths | Limitations | Primary Applications |
|---|---|---|---|
| RGB | Low cost, high resolution, strong anti-interference ability, ease of integration [19] | Limited to visual spectrum, cannot distinguish metameric colors [18] | Plant morphology, visual documentation, object detection |
| Hyperspectral | Material-level discrimination, detects invisible features, measures chemical properties [18] [20] | High cost, large data volumes, computationally intensive, sensitivity to environmental conditions [18] | Plant stress detection, nutrient status assessment, disease identification |
| LiDAR | Accurate 3D mapping, works in darkness, penetrates vegetation to some degree [19] | High cost, limited by weather conditions, no chemical information | Plant height measurement, canopy structure, biomass estimation |
| Environmental | Continuous monitoring, provides contextual data, increasingly compact designs | Calibration drift, cross-sensitivities to environmental factors [22] | Microclimate monitoring, pollution exposure studies |

Experimental Protocols and Performance Validation

Multimodal Plant Classification Using Automated Fusion

Objective: To develop an automated multimodal deep learning approach for plant classification by integrating images from multiple plant organs [1].

Methodology: Researchers created a multimodal dataset (Multimodal-PlantCLEF) by restructuring the unimodal PlantCLEF2015 dataset to include images of four specific plant organs: flowers, leaves, fruits, and stems. They trained unimodal models for each organ type using the MobileNetV3Small pretrained model. A modified Multimodal Fusion Architecture Search (MFAS) algorithm was then employed to automatically determine the optimal fusion strategy rather than relying on manual design decisions. The approach incorporated multimodal dropout to enhance robustness to missing modalities [1].

Performance Metrics: The automated fusion model achieved 82.61% accuracy across 979 plant classes in the Multimodal-PlantCLEF dataset, outperforming traditional late fusion by 10.33%. The model maintained strong performance even with missing modalities, demonstrating the effectiveness of both multimodality and optimized fusion strategy [1].

UAV-Based Multisensor Fusion for Crop Parameter Estimation

Objective: To simultaneously estimate multiple crop growth parameters (plant height, leaf area index, and chlorophyll content) through UAV-borne sensor fusion [19].

Methodology: Researchers developed an integrated system comprising a LiDAR module and an RGB camera mounted on a UAV platform. The hardware system was controlled through ROS (Robot Operating System) to collaboratively generate color point clouds. A pixel-level co-registration algorithm aligned LiDAR and camera data without requiring special registration objects. An improved MST++ deep learning network reconstructed 31 spectral channels in the 400-700nm range from RGB images, creating simulated 3D hyperspectral data [19].
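The co-registration algorithm in [19] is not detailed here, but the basic operation behind coloring a point cloud is projecting each LiDAR point into the camera image with a calibrated pinhole model. The sketch below assumes known intrinsics `K` and LiDAR-to-camera extrinsics `R`, `t`, ignores lens distortion and occlusion, and uses nearest-pixel color lookup; these are simplifying assumptions, not details of the cited system.

```python
import numpy as np


def colorize_point_cloud(points: np.ndarray, image: np.ndarray, K: np.ndarray,
                         R: np.ndarray, t: np.ndarray) -> np.ndarray:
    """Attach RGB values to LiDAR points that project inside a camera image.

    points: (N, 3) in the LiDAR frame; image: (H, W, 3); K: (3, 3) intrinsics;
    R (3, 3) and t (3,) transform LiDAR coordinates into the camera frame.
    Returns an (M, 6) array of [x, y, z, r, g, b] rows.
    """
    cam = points @ R.T + t                      # LiDAR frame -> camera frame
    front = cam[:, 2] > 0                       # discard points behind the camera
    cam, pts = cam[front], points[front]
    uvw = cam @ K.T                             # homogeneous pixel coordinates
    u = np.round(uvw[:, 0] / uvw[:, 2]).astype(int)
    v = np.round(uvw[:, 1] / uvw[:, 2]).astype(int)
    h, w = image.shape[:2]
    inside = (u >= 0) & (u < w) & (v >= 0) & (v < h)
    colors = image[v[inside], u[inside]].astype(float)
    return np.hstack([pts[inside], colors])


if __name__ == "__main__":
    K = np.array([[100.0, 0, 50], [0, 100.0, 50], [0, 0, 1]])
    image = np.zeros((100, 100, 3), dtype=np.uint8)
    image[50, 50] = [255, 0, 0]
    pts = np.array([[0.0, 0.0, 2.0],    # projects to the image centre
                    [0.0, 0.0, -1.0],   # behind the camera
                    [10.0, 0.0, 1.0]])  # outside the field of view
    print(colorize_point_cloud(pts, image, K, np.eye(3), np.zeros(3)))
```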

Performance Metrics: The system demonstrated high accuracy in estimating all three growth parameters with R² values of 0.95 for plant height, 0.91 for leaf area index, and 0.89 for chlorophyll content. The fusion approach significantly outperformed single-sensor methods, particularly for chlorophyll content estimation where RGB-alone methods typically fail [19].

Hyperspectral Imaging for Air Pollution Classification

Objective: To classify air pollution severity using hyperspectral imaging converted from standard RGB images [24].

Methodology: Researchers developed a novel conversion algorithm (cHSI) to transform RGB images into hyperspectral images, extracting spectral information beyond standard three-band imagery. A dataset of 15,137 images was compiled across four regions (trees, roofs, roads, and other surfaces), captured by a drone at 100 meters altitude. The images were classified into "Good," "Normal," or "Severe" categories according to the Air Quality Index (AQI). Two separate 3D convolutional neural network (3DCNN) models were trained using traditional RGB images and the converted HSI images respectively [24].

Performance Metrics: Replacement of the RGB-3DCNN model with the cHSI-3DCNN model improved classification accuracy by up to 9% across all regions, demonstrating the value of enhanced spectral information for environmental monitoring applications [24].
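The cHSI conversion algorithm in [24] is not spelled out here, but a calibration-based idea underlies several RGB-to-spectrum approaches: photograph reference patches (such as a 24-color checker), measure the same patches with a spectrometer, and fit a mapping between the two. The sketch below fits the simplest such mapping, a linear least-squares matrix, as an assumption-laden stand-in rather than cHSI or MST++; a purely linear map from three channels can only produce spectra within a low-dimensional subspace, which is one reason learned nonlinear models are used in practice. The patch data are random placeholders.

```python
import numpy as np


def fit_rgb_to_spectrum(rgb: np.ndarray, spectra: np.ndarray) -> np.ndarray:
    """Least-squares matrix mapping camera RGB (plus a bias term) to spectra.

    rgb: (P, 3) mean camera values of P reference patches.
    spectra: (P, B) spectrometer readings of the same patches at B wavelengths.
    Returns M with shape (4, B).
    """
    X = np.hstack([rgb, np.ones((rgb.shape[0], 1))])
    M, *_ = np.linalg.lstsq(X, spectra, rcond=None)
    return M


def rgb_to_hypercube(image: np.ndarray, M: np.ndarray) -> np.ndarray:
    """Apply the fitted mapping to every pixel: (H, W, 3) -> (H, W, B)."""
    h, w, _ = image.shape
    X = np.hstack([image.reshape(-1, 3), np.ones((h * w, 1))])
    return (X @ M).reshape(h, w, -1)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    wavelengths = np.arange(400, 701, 10)               # 31 bands, 400-700 nm
    patch_rgb = rng.random((24, 3))                     # 24 reference patches
    patch_spectra = rng.random((24, wavelengths.size))  # placeholder measurements
    M = fit_rgb_to_spectrum(patch_rgb, patch_spectra)
    cube = rgb_to_hypercube(rng.random((4, 5, 3)), M)
    print(cube.shape)   # (4, 5, 31)
```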

Integration Workflows and Fusion Strategies

Figure: Multimodal data fusion framework.

Figure: Hardware integration architecture.

Research Reagent Solutions and Essential Materials

Table 3: Essential research materials and their functions in sensor-based studies

| Item | Function/Application | Example Use Case |
|---|---|---|
| Molecularly Imprinted Polymers (MIPs) | Selective targeting of small molecules for detection [25] | Colorimetric detection of specific compounds in aqueous solutions [25] |
| Standard 24-Color Checker | Reference target for camera calibration and color correction [24] | Establishing relationship matrix between camera and spectrometer [24] |
| 3D-Printed Opaque Enclosure | Housing for sensitive optical components to prevent light interference [25] | Creating controlled measurement environment for RGB sensor systems [25] |
| Reference-Equivalent Instruments (RIs) | Gold-standard measurement devices for sensor calibration [22] | Co-location studies to enhance low-cost sensor accuracy [22] |
| Alphasense OPC-N3 Particle Sensor | Low-cost particulate matter monitoring with high time resolution [22] | Indoor air quality studies in epidemiological research [22] |

The comparative analysis presented in this guide demonstrates that each sensor technology offers distinct advantages and suffers from specific limitations that can be effectively mitigated through strategic multimodal fusion. RGB sensors provide cost-effective high-resolution imaging but lack spectral discrimination capabilities. Hyperspectral imaging enables material-level analysis but at higher cost and computational complexity. LiDAR delivers precise structural information without biochemical context, while environmental sensors supply crucial ancillary data for interpreting primary measurements.

For researchers designing multimodal plant studies, the experimental protocols and fusion workflows outlined herein provide validated frameworks for implementation. The demonstrated performance improvements—from the 10.33% accuracy gain in automated plant classification to the high R² values (0.89-0.95) in crop parameter estimation—substantiate the value of integrated sensing approaches. Future advancements will likely focus on standardizing calibration methodologies, developing more efficient fusion algorithms, and creating increasingly compact and cost-effective multisensor platforms to further accelerate adoption across research domains.

The Role of Data Alignment and Preprocessing in Effective Multimodal Integration

In plant science research, accurately identifying complex traits—such as disease resistance or water stress response—requires integrating diverse data types, or modalities. This process of multimodal integration allows models to capture complementary information that a single data source might miss [15]. However, two technical challenges are central to its success: data alignment, which establishes semantic relationships across different modalities, and data preprocessing, which prepares raw data for integration [26]. Within the specific domain of multimodal plant data, the choice of fusion strategy—dictated by how well data is aligned and processed—directly impacts the performance, robustness, and interpretability of the resulting models. This guide objectively compares the performance of different fusion approaches, providing experimental data and methodologies to inform research decisions.

Core Concepts: Alignment and Preprocessing

Understanding Multimodal Alignment

Multimodal alignment focuses on establishing coherent semantic links between distinct data types, such as images, text, and sensor readings [26]. It can be broadly categorized into two approaches:

- Explicit Alignment directly measures inter-modal relationships, often using similarity matrices or graph structures to link specific elements (e.g., aligning a textual description of a leaf spot with its corresponding location in a leaf image) [26] [27].

- Implicit Alignment serves as an intermediate, latent step in a larger model. Techniques like crossmodal attention mechanisms allow models to automatically learn the relationships between modalities without requiring pre-aligned data [26] [28].
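Both alignment styles can be sketched with a few matrix operations. Below, a cosine-similarity matrix between text-token and image-region embeddings plays the role of explicit alignment, while scaled dot-product cross-attention, in which each text token softly attends over image regions, illustrates implicit alignment. The feature sizes and random projection matrices are placeholders; in a trained model they would be learned parameters.

```python
import numpy as np


def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    e = np.exp(x - x.max(axis=axis, keepdims=True))   # shift for numerical stability
    return e / e.sum(axis=axis, keepdims=True)


def similarity_matrix(a: np.ndarray, b: np.ndarray) -> np.ndarray:
    """Explicit alignment: cosine similarity between every row of a and every row of b."""
    a = a / np.linalg.norm(a, axis=1, keepdims=True)
    b = b / np.linalg.norm(b, axis=1, keepdims=True)
    return a @ b.T


def cross_attention(text: np.ndarray, image: np.ndarray, Wq: np.ndarray,
                    Wk: np.ndarray, Wv: np.ndarray):
    """Implicit alignment: each text token attends over image regions.

    text: (T, d_text); image: (R, d_img). Returns the attended image context for
    each token, shape (T, d), and the attention weights, shape (T, R).
    """
    Q, K, V = text @ Wq, image @ Wk, image @ Wv
    weights = softmax(Q @ K.T / np.sqrt(Q.shape[1]), axis=1)
    return weights @ V, weights


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    tokens = rng.normal(size=(4, 8))      # e.g. "brown", "spot", "on", "leaf"
    regions = rng.normal(size=(6, 16))    # e.g. six patches of a leaf image
    Wq, Wk, Wv = rng.normal(size=(8, 5)), rng.normal(size=(16, 5)), rng.normal(size=(16, 5))
    context, attn = cross_attention(tokens, regions, Wq, Wk, Wv)
    print(context.shape, attn.sum(axis=1))   # each token's weights sum to 1
    print(similarity_matrix(tokens @ Wq, regions @ Wk).shape)   # (4, 6)
```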

A critical consideration is that the utility of forced alignment is not universal. Recent research indicates that the optimal level of alignment depends on the inherent redundancy between the modalities; forcing alignment between modalities with little shared information can even hinder performance [29].

The Preprocessing Pipeline

Effective fusion is built on a foundation of meticulous data preprocessing, which ensures that different modalities can be accurately integrated [15]. This stage involves modality-specific transformations.

Table: Essential Preprocessing Techniques by Modality

| Modality | Preprocessing Techniques | Key Functions |
|---|---|---|
| Image (RGB/Thermal) | Resizing, Normalization, Augmentation [15] | Standardizes dimensions, enhances contrast, increases data diversity |
| Text | Tokenization, Stopword Removal, Embedding Conversion (e.g., BERT) [15] | Breaks text into units, removes noise, converts to numerical vectors |
| Environmental Sensor Data | Handling missing values, Temporal Alignment [30] [15] | Ensures data continuity, synchronizes with other temporal streams |

Beyond these techniques, temporal and spatial alignment is often crucial. For instance, in plant stress monitoring, a thermal image of a canopy must be accurately matched with the corresponding sensor readings for soil moisture and air temperature from the same point in time [30] [15].
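A minimal sketch of the temporal matching step: for each thermal image, take the soil-moisture reading closest in time, but only if it falls within a tolerance. The readings, timestamps, and 5-minute tolerance are hypothetical. With pandas, `pandas.merge_asof` with `direction="nearest"` and a `tolerance` performs the same matching on whole tables.

```python
from bisect import bisect_left
from datetime import datetime, timedelta
from typing import List, Optional, Tuple

Reading = Tuple[datetime, float]


def nearest_reading(readings: List[Reading], when: datetime,
                    tolerance: timedelta) -> Optional[float]:
    """Value of the reading closest in time to `when`, or None if none is within tolerance.

    `readings` must be sorted by timestamp.
    """
    times = [t for t, _ in readings]
    i = bisect_left(times, when)
    # The closest reading is either just before or just after the insertion point.
    candidates = [j for j in (i - 1, i) if 0 <= j < len(readings)]
    if not candidates:
        return None
    best = min(candidates, key=lambda j: abs(times[j] - when))
    return readings[best][1] if abs(times[best] - when) <= tolerance else None


if __name__ == "__main__":
    day = datetime(2024, 7, 1)
    soil_moisture = [(day.replace(hour=10, minute=m), v)
                     for m, v in [(0, 0.31), (10, 0.29), (20, 0.27)]]
    for shot in [day.replace(hour=10, minute=8), day.replace(hour=11)]:
        print(shot.time(), nearest_reading(soil_moisture, shot, timedelta(minutes=5)))
```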

Comparative Analysis of Fusion Strategies

The stage at which modalities are combined—known as the fusion strategy—is a primary differentiator among multimodal models. The following table summarizes the core characteristics of the three main strategies.

Table: Comparison of Multimodal Fusion Strategies

| Fusion Strategy | Description | Best-Use Context | Advantages | Limitations |
|---|---|---|---|---|
| Early Fusion | Combines raw or low-level features from multiple modalities before model input [15]. | Modalities are naturally synchronized and share a low-level semantic space [15]. | Allows model to learn dense, joint representations from the onset [15]. | Highly sensitive to noise and misalignment; requires precise data synchronization [15]. |
| Intermediate Fusion | Processes each modality separately initially, then combines features at an intermediate model layer [15]. | A balance is needed between modality-specific processing and joint learning [7] [31]. | Balances specificity and interaction; highly flexible with architectures like transformers [7] [28]. | Increased model complexity; requires careful design of fusion modules [7]. |
| Late Fusion | Processes each modality independently, combining their final predictions or decisions [15] [4]. | Modalities are asynchronous, or when some modalities may be missing at inference time [15] [31]. | Robust to missing data and easy to implement; leverages state-of-the-art unimodal models [15] [31]. | May miss crucial, fine-grained cross-modal interactions [15]. |

Performance Comparison in Plant Science Tasks

Quantitative results from recent studies demonstrate how the choice of fusion strategy and alignment technique directly impacts model performance on specific plant science tasks.

Table: Experimental Performance of Multimodal Models in Plant Research

| Model / Study | Task | Modalities Used | Fusion & Alignment Approach | Reported Accuracy |
|---|---|---|---|---|
| PlantIF [7] | Plant Disease Diagnosis | Image, Text | Intermediate fusion using a graph learning module for semantic alignment [7]. | 96.95% |
| Sweet Potato Water Stress [30] | Water Stress Classification | RGB-Thermal Imagery, Growth Indicators | Late fusion of Vision Transformer-CNN model with growth indicator analysis [30]. | Not reported here (stress classes simplified from five to three levels) |
| Automatic Fusion [31] | Plant Identification | Images of multiple organs (flowers, leaves, etc.) | Neural Architecture Search for optimal intermediate fusion [31]. | 82.61% |
| Tomato Disease Diagnosis [4] | Disease Classification & Severity Estimation | Image, Environmental Data | Late fusion of EfficientNetB0 (image) and RNN (environmental data) predictions [4]. | 96.40% (Classification), 99.20% (Severity) |

Detailed Experimental Protocols

To ensure reproducibility and provide a clear blueprint for research, this section details the methodologies from two key studies cited in the performance comparison.

Protocol 1: Multimodal Plant Disease Diagnosis with PlantIF

This protocol outlines the methodology for the PlantIF model, which uses graph networks for semantic alignment [7].

Data Acquisition and Preparation:

- Collect a dataset of 205,007 plant images alongside 410,014 textual descriptions.

- Employ pre-trained image and text feature extractors (e.g., ResNet, BERT) to obtain initial visual and textual feature vectors enriched with prior knowledge.

Semantic Space Encoding:

- Map the extracted features into both a shared semantic space (to capture common patterns) and modality-specific spaces (to preserve unique information).

Multimodal Feature Fusion and Alignment:

- The core of the model is a graph-based fusion module.

- Model the relationships between plant phenotypes and text semantics as a graph.

- Use a Self-Attention Graph Convolutional Network (SA-GCN) to process this graph. The self-attention mechanism dynamically weights the importance of different connections, extracting spatial dependencies and achieving deep semantic alignment between the visual and textual data.
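The exact PlantIF layer is not specified in this protocol, so the following is a generic sketch of one self-attention graph-convolution step, assuming image-region and text-token features have already been projected into a shared space of the same dimension (the semantic space encoding step). Self-attention scores serve as a dense, learned adjacency matrix over the combined node set, followed by a standard graph-convolution update (aggregate, transform, ReLU). All weights are random placeholders for learned parameters.

```python
import numpy as np


def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    e = np.exp(x - x.max(axis=axis, keepdims=True))
    return e / e.sum(axis=axis, keepdims=True)


def sa_graph_conv(nodes: np.ndarray, Wq: np.ndarray, Wk: np.ndarray, W: np.ndarray) -> np.ndarray:
    """One graph-convolution step with an adjacency matrix computed by self-attention.

    nodes: (N, d) node features (image-region nodes stacked with text nodes).
    The (N, N) attention matrix acts as edge weights, so each phenotype node can
    aggregate from every text node and vice versa, weighted by learned relevance.
    """
    Q, K = nodes @ Wq, nodes @ Wk
    A = softmax(Q @ K.T / np.sqrt(Q.shape[1]), axis=1)   # row-normalized edge weights
    return np.maximum(A @ nodes @ W, 0.0)               # aggregate, transform, ReLU


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    image_nodes = rng.normal(size=(5, 16))   # shared-space image features
    text_nodes = rng.normal(size=(3, 16))    # shared-space text features
    graph = np.vstack([image_nodes, text_nodes])
    hidden = sa_graph_conv(graph, rng.normal(size=(16, 8)), rng.normal(size=(16, 8)),
                           rng.normal(size=(16, 12)))
    pooled = hidden.mean(axis=0)                   # graph-level representation
    logits = pooled @ rng.normal(size=(12, 4))     # e.g. 4 hypothetical disease classes
    print(hidden.shape, int(np.argmax(logits)))
```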

Model Training and Output:

- Train the entire network end-to-end for the task of disease diagnosis.

- The final output is a diagnostic classification (e.g., healthy, specific disease).

Protocol 2: Classifying Water Stress in Sweet Potatoes

This protocol details the experiment that fused low-altitude imagery with environmental data [30].

Field Setup and Data Collection:

- Cultivar and Plot: Use the 'Jinyulmi' sweet potato cultivar, grown in experimental field plots.

- Sensor and Imagery Data:

  - Capture RGB and thermal imagery from low-altitude platforms.

  - Collect various growth indicators from the plants.

  - Record environmental variables such as temperature and humidity.

Data Preprocessing and Index Calculation:

- Preprocess the RGB and thermal images (e.g., resizing, registration).

- Calculate a redefined Crop Water Stress Index (CWSI) using the newly defined environmental variables to make it more applicable to open-field conditions.
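The redefined index in [30] is not given here. For orientation, the widely used empirical form of the Crop Water Stress Index normalizes canopy temperature between a wet (fully transpiring) and a dry (non-transpiring) reference: CWSI = (T_canopy - T_wet) / (T_dry - T_wet), where 0 indicates no stress and 1 maximal stress. The sketch applies that standard form pixel-wise; the percentile-based reference helper is a hypothetical convenience, and how the references are actually obtained is left to the caller.

```python
import numpy as np


def cwsi(canopy_temp: np.ndarray, t_wet: float, t_dry: float) -> np.ndarray:
    """Empirical Crop Water Stress Index, clipped to [0, 1].

    0 means the canopy is as cool as the wet (fully transpiring) reference;
    1 means it is as warm as the dry (non-transpiring) reference.
    """
    if t_dry <= t_wet:
        raise ValueError("dry reference temperature must exceed the wet reference")
    return np.clip((canopy_temp - t_wet) / (t_dry - t_wet), 0.0, 1.0)


def references_from_image(thermal: np.ndarray, wet_pct: float = 5.0, dry_pct: float = 95.0):
    """Hypothetical helper: take wet/dry references as low/high percentiles of canopy pixels."""
    t_wet, t_dry = np.percentile(thermal, [wet_pct, dry_pct])
    return float(t_wet), float(t_dry)


if __name__ == "__main__":
    leaf_temps = np.array([25.0, 28.0, 31.0, 35.0])   # degrees C
    print(cwsi(leaf_temps, t_wet=25.0, t_dry=31.0))   # CWSI values: 0, 0.5, 1, 1 (last is clipped)
```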

Model Development and Fusion:

- Machine Learning Pathway: Input the leaf temperature and growth indicators into traditional ML models (e.g., K-Nearest Neighbors).

- Deep Learning Pathway: Develop a Vision Transformer-Convolutional Neural Network (ViT-CNN) model to process the RGB-thermal imagery.

- Fusion Strategy: The design is effectively late fusion: the ML models classify stress from tabular data while the DL model classifies it from imagery, and their outputs can be combined or used separately. The study also simplified the original five-level stress classification into a more practical three-level system.

Interpretation and Application:

- Employ Explainable AI (XAI) techniques like Grad-CAM to interpret model predictions.

- Integrate the best-performing models into a graphical user interface (GUI) for actionable decision-making.

Workflow Visualization: Multimodal Plant Data Analysis

Figure: Multimodal plant data analysis workflow, summarizing the common stages and decision points of the experimental protocols above.

The Scientist's Toolkit: Key Reagents and Algorithms

This section catalogs essential computational "reagents" and techniques that form the foundation of modern multimodal fusion pipelines in plant research.

Table: Essential Research Reagents and Algorithms for Multimodal Fusion

| Category | Item/Algorithm | Function in the Experimental Pipeline |
|---|---|---|
| Core Algorithms | Graph Convolutional Network (GCN) / Self-Attention GCN (SA-GCN) [7] [27] | Models relationships and dependencies between entities (e.g., plant phenotypes and text semantics) for advanced alignment. |
| | Capsule Networks [27] | Enhances feature extraction from images by preserving hierarchical spatial relationships, improving robustness. |
| | Contrastive Learning [29] | A training objective that forces the model to learn a shared representation space by pulling related data pairs closer and pushing unrelated pairs apart. |
| Architectures & Models | Transformer / Crossmodal Attention [28] | Dynamically weighs the relevance of features across different, potentially unaligned, modalities (e.g., vision → language). |
| | Multimodal Fusion Architecture Search [31] | Automates the process of finding the optimal neural network architecture and fusion point for a given multimodal task and dataset. |
| Data & Explainability | Explainable AI (XAI) Tools (LIME, SHAP, Grad-CAM) [30] [4] | Provides post-hoc interpretations of model predictions, crucial for building trust and validating models in a biological context. |
| | Pre-trained Feature Extractors (EfficientNet, BERT) [4] [7] | Provides strong, generic feature representations from raw data, serving as a powerful starting point for task-specific models. |
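As an illustration of the contrastive learning entry, the sketch below computes a symmetric InfoNCE loss of the kind popularized by CLIP for a batch of paired image and text embeddings. The temperature of 0.07 is a common default rather than a value from the cited work, and during training the embeddings would come from the image and text encoders rather than a random generator.

```python
import numpy as np


def log_softmax(x: np.ndarray, axis: int) -> np.ndarray:
    x = x - x.max(axis=axis, keepdims=True)          # shift for numerical stability
    return x - np.log(np.exp(x).sum(axis=axis, keepdims=True))


def symmetric_info_nce(img: np.ndarray, txt: np.ndarray, temperature: float = 0.07) -> float:
    """Contrastive loss for B paired embeddings: row i of img matches row i of txt.

    Matching pairs (the diagonal of the similarity matrix) are pulled together;
    every other pairing in the batch acts as a negative and is pushed apart.
    """
    img = img / np.linalg.norm(img, axis=1, keepdims=True)
    txt = txt / np.linalg.norm(txt, axis=1, keepdims=True)
    logits = img @ txt.T / temperature               # (B, B) scaled cosine similarities
    idx = np.arange(len(img))
    loss_img_to_txt = -log_softmax(logits, axis=1)[idx, idx].mean()
    loss_txt_to_img = -log_softmax(logits, axis=0)[idx, idx].mean()
    return float((loss_img_to_txt + loss_txt_to_img) / 2)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    emb = rng.normal(size=(8, 32))
    aligned = symmetric_info_nce(emb, emb + 0.05 * rng.normal(size=emb.shape))
    shuffled = symmetric_info_nce(emb, np.roll(emb, 1, axis=0))
    print(f"aligned pairs: {aligned:.3f}, mismatched pairs: {shuffled:.3f}")
```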

The experimental data and protocols presented in this guide consistently demonstrate that there is no single "best" fusion strategy for all scenarios in plant science. The performance of a multimodal model is intrinsically linked to how data alignment and preprocessing challenges are addressed. Late fusion offers a robust and practical starting point, especially when data is noisy or asynchronous. In contrast, intermediate fusion with sophisticated alignment mechanisms, such as graph networks or attention, can achieve superior performance when data relationships are complex and precise semantic integration is required. As the field advances, the automated discovery of fusion strategies and the principled application of alignment based on data redundancy will become increasingly critical for developing accurate, robust, and interpretable models that address the complex challenges of modern plant science.

Advanced Fusion Techniques and Their Practical Applications in Plant Research

The integration of diverse data types, or modalities, is revolutionizing plant phenotyping and disease diagnosis. Multimodal fusion addresses the critical limitation of single-source data by combining multiple inputs, such as images of different plant organs or environmental sensor data, to create a more comprehensive biological representation [1]. However, a central challenge lies in determining the optimal strategy for fusing these modalities. Manually designing fusion architectures is complex and often leads to suboptimal performance. Multimodal Fusion Architecture Search (MFAS) has emerged as a solution, automating the discovery of effective fusion strategies and significantly enhancing model accuracy and efficiency [1]. This guide provides a comparative analysis of MFAS against other prominent fusion strategies, detailing their experimental protocols, performance, and practical applications for agricultural research.

Comparative Analysis of Fusion Strategies

The following table summarizes the core characteristics and performance outcomes of the primary fusion strategies employed in multimodal deep learning for plant science.

Table 1: Comparison of Multimodal Fusion Strategies in Plant Science Research

| Fusion Strategy | Core Methodology | Reported Performance | Key Advantages | Key Limitations |
|---|---|---|---|---|
| MFAS (Automated Intermediate) | Uses neural architecture search to automatically find the optimal fusion points and operations between encoder backbones [1]. | 82.61% accuracy on Multimodal-PlantCLEF (979 classes), outperforming late fusion by 10.33% [1]. | Optimized for a specific task/dataset; achieves high performance with compact models (e.g., 3.51M parameters) [32] [1]. | Computationally intensive search phase; requires technical expertise for implementation. |
| Late Fusion | Combines modalities at the decision level by averaging or concatenating predictions from separate models [1] [4]. | Serves as a common baseline; MFAS showed significant improvement over this method [1]. | Simple to implement; robust to missing modalities; allows separate models to be trained independently [1]. | Fails to model rich, intermediate feature interactions between modalities, limiting performance. |
| Manual Intermediate Fusion | A manually designed network architecture integrates features from different modalities before the final classification layer [33] [4]. | An optimized multi-path CNN achieved a noise robustness of 0.931 on a medical dataset [33]. | More flexible than late fusion; allows custom, interpretable design of fusion layers [33]. | Architecture design is labor-intensive, requires expert knowledge, and may not be optimal. |
| Multimodal with XAI | Integrates explainable AI (XAI) techniques such as LIME and SHAP with a fusion model to interpret predictions [4]. | Achieved 96.40% disease classification and 99.20% severity prediction accuracy for tomatoes [4]. | High transparency and trust; provides insights into model decisions for both image and environmental data [4]. | Adds computational overhead; explanations are post-hoc and may not reflect the true model reasoning. |
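To make the late-fusion baseline and its tolerance of missing modalities concrete, here is a minimal sketch that averages class-probability vectors over whichever organ-specific models produced an output. The function name `late_fusion_average`, the dictionary layout and the probability values are illustrative assumptions, not code from the cited studies.

```python
import numpy as np

def late_fusion_average(probs_by_modality):
    """Average class-probability vectors from whichever modalities are present.

    probs_by_modality: dict mapping a modality name (e.g. "flower", "leaf") to a
    1-D probability vector, or None when that organ was not photographed.
    """
    available = [np.asarray(p, dtype=float)
                 for p in probs_by_modality.values() if p is not None]
    if not available:
        raise ValueError("at least one modality must be present")
    fused = np.mean(available, axis=0)
    return fused / fused.sum()  # renormalise to guard against rounding drift

# Hypothetical softmax outputs of organ-specific classifiers over 3 classes;
# no fruit image is available for this observation, so it is simply skipped.
outputs = {
    "flower": [0.7, 0.2, 0.1],
    "leaf":   [0.5, 0.3, 0.2],
    "fruit":  None,
    "stem":   [0.3, 0.4, 0.3],
}
fused = late_fusion_average(outputs)
print(fused.round(3), int(fused.argmax()))
```

Because each modality contributes only a final prediction, dropping one leaves the others untouched. The same design is why late fusion cannot exploit interactions between intermediate features, which is the gap that intermediate fusion and MFAS target.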

Detailed Experimental Protocols

To ensure reproducibility and provide a deeper understanding of the comparative data, this section outlines the experimental methodologies employed in the cited studies.

Multi-Organ Plant Identification Protocol (MFAS)

The MFAS approach demonstrated state-of-the-art performance on a complex plant identification task [1]. The key steps of its protocol are as follows:

- Dataset and Preprocessing: The study used the PlantCLEF2015 dataset, restructured into "Multimodal-PlantCLEF". This new dataset comprises images of four distinct plant organs (flowers, leaves, fruits, and stems), each treated as a separate modality. The input images were preprocessed and normalized.
- Model Training and Search:
  - Unimodal Model Pre-training: Individual pre-trained MobileNetV3Small models were first fine-tuned as encoders for each specific organ modality (flower, leaf, fruit, stem).
  - Fusion Architecture Search: A modified Multimodal Fusion Architecture Search (MFAS) algorithm was applied. This algorithm automatically explores different ways to combine the feature maps from the four unimodal encoders, searching for the most effective fusion points and operations to build a unified, high-performance model.
- Evaluation: The final fused model was evaluated on the test set of Multimodal-PlantCLEF and compared against a strong late-fusion baseline using averaging. The evaluation metric was classification accuracy across 979 plant classes.

Table 2: Key Experimental Conditions for MFAS Study [1]

| Parameter | Specification |
|---|---|
| Dataset | Multimodal-PlantCLEF (restructured from PlantCLEF2015) |
| Modalities | 4 (Flower, Leaf, Fruit, Stem images) |
| Number of Classes | 979 |
| Unimodal Backbone | MobileNetV3Small (pre-trained) |
| Fusion Method | Automated MFAS |
| Key Metric | Classification Accuracy |
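The search step can be illustrated with a deliberately simplified sketch. It draws random fusion configurations (here, which encoder depth to tap for each organ), fuses the selected features by concatenation, and scores each candidate on a validation split. Everything in it is an assumption made for illustration: the synthetic "encoder features", the ridge-regression head used as a cheap proxy for training a fusion network, and random search as the strategy. As we understand it, MFAS itself also searches the activations of the fusion layers and uses a sequential, surrogate-guided search rather than random sampling, so this sketch does not reproduce [1].

```python
import itertools
import numpy as np

rng = np.random.default_rng(0)
MODALITIES = ["flower", "leaf", "fruit", "stem"]
N_LAYERS, N_CLASSES, DIM = 3, 3, 8

# Hypothetical class "prototypes" per (modality, encoder depth); deeper layers
# are scaled to carry a stronger class signal, mimicking more abstract features.
PROTOTYPES = {(m, l): rng.normal(size=(N_CLASSES, DIM)) * 0.5 * (l + 1)
              for m in MODALITIES for l in range(N_LAYERS)}

def encoder_features(labels):
    """Synthetic stand-in for frozen unimodal encoders: one DIM-vector per layer."""
    one_hot = np.eye(N_CLASSES)[labels]
    return {key: one_hot @ proto + rng.normal(size=(len(labels), DIM))
            for key, proto in PROTOTYPES.items()}

def fuse(feats, config):
    """Intermediate fusion: concatenate the chosen layer of each modality."""
    return np.hstack([feats[(m, l)] for m, l in zip(MODALITIES, config)])

def fit_ridge(X, y, lam=1.0):
    """Closed-form ridge regression onto one-hot targets (proxy for a fusion head)."""
    Xb = np.hstack([X, np.ones((len(X), 1))])
    Y = np.eye(N_CLASSES)[y]
    return np.linalg.solve(Xb.T @ Xb + lam * np.eye(Xb.shape[1]), Xb.T @ Y)

def accuracy(W, X, y):
    Xb = np.hstack([X, np.ones((len(X), 1))])
    return float(((Xb @ W).argmax(axis=1) == y).mean())

y_train = rng.integers(0, N_CLASSES, 300)
y_val = rng.integers(0, N_CLASSES, 100)
F_train, F_val = encoder_features(y_train), encoder_features(y_val)

# Search space: which encoder layer to tap for each modality (3^4 = 81 candidates).
search_space = list(itertools.product(range(N_LAYERS), repeat=len(MODALITIES)))
sampled = [search_space[i] for i in rng.choice(len(search_space), size=20, replace=False)]

results = []
for config in sampled:
    W = fit_ridge(fuse(F_train, config), y_train)
    results.append((accuracy(W, fuse(F_val, config), y_val), config))

best_acc, best_config = max(results)
print("best layer per modality:", dict(zip(MODALITIES, best_config)), f"val acc = {best_acc:.3f}")
```

The expensive part of a real search is the inner loop. Each candidate normally requires training a fusion network, which is why the search phase is listed as a key limitation in Table 1.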

Interpretable Tomato Disease Diagnosis Protocol

This experiment [4] focused on tomato disease diagnosis and severity estimation, emphasizing model interpretability.

- Dataset: The study used the widely adopted PlantVillage dataset, which contains images of diseased and healthy tomato leaves.
- Multimodal Model Design:
  - Image Modality: An EfficientNetB0 model was used to classify diseases from leaf images. LIME (Local Interpretable Model-agnostic Explanations) was applied to this branch to generate visual explanations, highlighting the image regions most influential to the classification decision.
  - Weather Modality: A Recurrent Neural Network (RNN) was designed to predict disease severity from a sequence of environmental data (e.g., humidity, temperature, rainfall). SHAP (SHapley Additive exPlanations) was used to interpret this branch, quantifying the contribution of each weather feature to the severity prediction.
- Fusion Strategy: A late-fusion approach was employed, combining the independent predictions from the image and weather models into a final decision.
- Evaluation: The model was evaluated on its accuracy for both disease classification and severity prediction tasks. The primary value of the study was the demonstration of a functional, interpretable multimodal framework.
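The quantity SHAP estimates for the weather branch is the Shapley value of each input feature. For a handful of features it can be computed exactly by enumerating every coalition, with absent features replaced by a baseline value. The sketch below does this for a hypothetical static severity score over three weather variables. It is an assumption for illustration: the study's RNN consumed weather sequences, and the SHAP library relies on approximations such as KernelSHAP because exact enumeration grows exponentially with the number of features.

```python
from itertools import combinations
from math import factorial

def shapley_values(predict, x, baseline):
    """Exact Shapley values by enumerating all feature subsets.

    Features outside a coalition are replaced by their baseline value.
    """
    n = len(x)

    def value(subset):
        z = [x[i] if i in subset else baseline[i] for i in range(n)]
        return predict(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for k in range(n):  # coalition sizes 0 .. n-1 drawn from the other features
            for S in combinations(others, k):
                weight = factorial(k) * factorial(n - k - 1) / factorial(n)
                phi[i] += weight * (value(set(S) | {i}) - value(set(S)))
    return phi

def severity_model(z):
    """Hypothetical severity score: humid, warm, wet conditions raise it."""
    humidity, temperature, rainfall = z
    return (0.03 * humidity + 0.05 * temperature + 0.02 * rainfall
            + 0.001 * humidity * temperature)  # humidity-temperature interaction

x = [90.0, 25.0, 12.0]         # observed conditions (% RH, deg C, mm)
baseline = [60.0, 20.0, 2.0]   # hypothetical reference, e.g. dataset means
phi = shapley_values(severity_model, x, baseline)
for name, v in zip(["humidity", "temperature", "rainfall"], phi):
    print(f"{name:12s} {v:+.3f}")
```

The attributions sum to the difference between the prediction for `x` and for the baseline (the efficiency property). The interaction term is split between humidity and temperature, while rainfall, which enters additively, receives exactly its own effect.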

Workflow and Architecture Diagrams

Diagrams of the two core fusion architectures discussed above:

Figure: MFAS workflow for multi-organ plant identification

Figure: Interpretable multimodal network for disease diagnosis

The Scientist's Toolkit: Essential Research Reagents & Materials

For researchers aiming to implement similar multimodal fusion experiments, the following table details key computational "reagents" and their functions.

Table 3: Essential Resources for Multimodal Plant Data Research

| Resource Name | Type | Primary Function in Research | Example in Context |
|---|---|---|---|
| PlantVillage Dataset | Image Dataset | Provides a large, labeled benchmark of plant disease images for training and evaluating models [32] [4]. | Served as the primary data source for the tomato disease diagnosis model [4]. |
| MobileNetV3 | Pre-trained CNN Architecture | Serves as a lightweight, efficient feature extractor for images, suited to mobile deployment and to use as a backbone for encoder networks [32] [1]. | Used as the unimodal encoder for each plant organ (flower, leaf, etc.) in the MFAS experiment [1]. |
| EfficientNetB0 | Pre-trained CNN Architecture | Provides a strong balance between accuracy and computational efficiency for image-based classification tasks [4]. | Formed the core of the image classification branch in the interpretable tomato disease model [4]. |
| LIME (XAI Tool) | Explainable AI Library | Generates post-hoc, human-interpretable visual explanations for predictions made by any classifier [32] [4]. | Used to highlight which parts of a leaf image were most important for the disease classification [4]. |
| SHAP (XAI Tool) | Explainable AI Library | Explains the output of any machine learning model by computing the marginal contribution of each feature to the prediction [4]. | Used to quantify the impact of weather features such as humidity and temperature on disease severity prediction [4]. |
| Grad-CAM/Grad-CAM++ | Explainable AI Technique | Produces visual explanations from CNNs without requiring architectural changes, highlighting important regions in the image [32]. | Integrated into the Mob-Res model to provide visual insights into the neural regions influencing disease predictions [32]. |
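The Grad-CAM computation itself is short once a convolutional layer's activations and the gradients of the target class score with respect to them are available. The sketch below assumes those arrays have already been captured, which in practice is done with framework hooks. Each channel is weighted by its spatially averaged gradient, the weighted maps are summed, negative evidence is clipped, and the result is scaled to [0, 1]. The toy arrays are hypothetical. Grad-CAM++ differs in how the channel weights are computed and is not shown.

```python
import numpy as np

def grad_cam(activations, gradients):
    """Grad-CAM heat map for one image from one convolutional layer.

    activations: (K, H, W) feature maps A^k
    gradients:   (K, H, W) gradients of the target class score w.r.t. A^k
    """
    weights = gradients.mean(axis=(1, 2))                  # alpha_k: pooled gradients
    cam = np.tensordot(weights, activations, axes=(0, 0))  # sum_k alpha_k * A^k -> (H, W)
    cam = np.maximum(cam, 0.0)                             # ReLU keeps positive evidence
    if cam.max() > 0:
        cam = cam / cam.max()                              # scale to [0, 1] for display
    return cam

# Toy example: channel 0 fires on the top-left patch and supports the class,
# channel 1 fires on the bottom-right patch and argues against it.
A = np.zeros((2, 4, 4))
A[0, :2, :2] = 1.0
A[1, 2:, 2:] = 1.0
G = np.zeros((2, 4, 4))
G[0] = 0.5
G[1] = -0.5
heatmap = grad_cam(A, G)
print(heatmap)
```

Upsampled to the input resolution and overlaid on the leaf image, such a map highlights the regions that pushed the prediction towards the chosen disease class.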

Multimodal data fusion has become a key methodology in agricultural technology for extracting meaningful insights from diverse sensor inputs. It combines information from multiple sources, including RGB imagery, thermal imaging, spectral data, and environmental sensors, to build comprehensive digital representations of crop health, stress status, and phenotypic traits. For researchers and drug development professionals working with plant-based systems, understanding how fusion strategies map onto specific application goals is essential for designing effective experimental protocols and analytical frameworks.

The premise of sensor-to-application mapping is that no single fusion methodology performs best in every research context. The efficacy of a fusion strategy depends on the analytical goals, sensor characteristics, and environmental constraints of the application domain. This comparative guide examines the performance characteristics of the predominant fusion strategies in agricultural research, with particular emphasis on experimental frameworks for assessing abiotic stress in crop species, a domain where multimodal approaches have demonstrated significant utility for both basic research and applied pharmaceutical development.

Comparative Analysis of Fusion Methodologies

Multi-sensor fusion strategies are commonly grouped into three architectural paradigms according to the stage at which data are integrated: data-level, feature-level, and decision-level fusion [34]. Each offers characteristic advantages and limitations that must be weighed against the research requirements, including computational efficiency, robustness to sensor noise, and interpretability of results.

Table 1: Comparative Analysis of Data Fusion Strategies in Agricultural Research

| Fusion Strategy | Technical Approach | Performance Advantages | Application Context | Key Limitations |
|---|---|---|---|---|
| Data-Level Fusion | Raw data aggregation from multiple sensors into a unified dataset [34] | Increased signal-to-noise ratio; enhanced data precision [34] | Low-altitude RGB-thermal imaging for water stress classification [30] | High computational load; sensitivity to sensor misalignment [34] |
| Feature-Level Fusion | Feature extraction followed by concatenation into high-dimensional vectors [34] | Eliminates redundancy; increases calculation efficiency [35] | Tea grade discrimination combining NIR spectra and GC-MS features [35] | Potential information loss during feature selection [35] |
| Decision-Level Fusion | Combination of outputs from multiple classifiers or decision processes [34] | Robust to sensor failure; compatible with heterogeneous sensor types [34] | Voting, multi-view stacking, and AdaBoost methods [34] | Dependent on individual classifier performance [34] |
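The three levels differ only in where the sensor streams meet, which a few lines of array code can show side by side. In the sketch below the 8x8 "images", the mean/standard-deviation features and the per-sensor votes are all hypothetical placeholders. Data-level stacking assumes the RGB and thermal frames are already co-registered on the same pixel grid, which is the source of its sensitivity to misalignment.

```python
import numpy as np

rng = np.random.default_rng(42)
rgb = rng.random((4, 8, 8))      # hypothetical: 4 single-channel RGB-derived images
thermal = rng.random((4, 8, 8))  # matching thermal frames on the same pixel grid

# Data-level: stack raw, aligned sensor arrays as channels before any modelling.
data_level = np.stack([rgb, thermal], axis=1)  # (4, 2, 8, 8)

# Feature-level: extract per-sensor features, then concatenate per sample.
def simple_features(img):
    """Two toy features per image: mean and standard deviation of pixel values."""
    return np.stack([img.mean(axis=(1, 2)), img.std(axis=(1, 2))], axis=1)  # (n, 2)

feature_level = np.hstack([simple_features(rgb), simple_features(thermal)])  # (4, 4)

# Decision-level: each sensor-specific classifier votes; the majority wins
# (np.argmax breaks ties in favour of the lowest class label).
votes = np.array([[0, 1, 1, 2],   # hypothetical classifier on RGB
                  [0, 1, 2, 2],   # hypothetical classifier on thermal
                  [1, 1, 2, 0]])  # hypothetical classifier on environmental sensors
decision_level = np.array([np.bincount(col, minlength=3).argmax() for col in votes.T])
print(data_level.shape, feature_level.shape, decision_level)
```

Only the decision-level path tolerates a missing sensor gracefully (drop its row of votes). The data-level path cannot even be formed unless every sensor delivers an aligned frame.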

The choice among these fusion methodologies is a critical determinant of experimental success in plant research. Data-level fusion excels where maximal information must be preserved from raw sensor inputs, particularly when complementary sensing modalities such as RGB-thermal imaging systems are deployed for water stress assessment [30]. Feature-level fusion offers greater computational efficiency for high-dimensional datasets, as demonstrated in tea quality evaluation platforms that combine near-infrared spectroscopy with gas chromatography-mass spectrometry data [35]. Decision-level fusion provides robustness in heterogeneous sensor networks, making it particularly valuable for field-based agricultural monitoring systems where sensor reliability may vary considerably [34].

Experimental Performance Comparison

To quantitatively evaluate the practical performance of different fusion strategies in plant science applications, we examined two representative experimental frameworks from recent literature. These case studies illustrate how fusion methodology selection directly impacts classification accuracy, model robustness, and operational efficiency in real-world research scenarios.

Table 2: Experimental Performance Metrics Across Fusion Strategies

| Experimental Context | Fusion Method | Classification Outcome | Key Performance Metrics | Implementation Considerations |
|---|---|---|---|---|
| Sweet Potato Water Stress Classification [30] | K-Nearest Neighbors (KNN) with feature-level fusion | Outperformed other ML models at all growth stages | Simplified 5-level to 3-level stress classification for extreme conditions | Low-altitude platform with RGB-thermal imagery and growth indicators |
| Sweet Potato Water Stress Classification [30] | Vision Transformer-CNN (ViT-CNN), deep learning with data-level fusion | High sensitivity to extreme stress conditions | Enhanced applicability to practical agricultural management | Integrated Grad-CAM and XAI for interpretability |
| Vine Tea Grade Discrimination [35] | Random Forest (RF) with mid-level fusion | Excellent classification results with ensemble decisions | Specificity: 0.974; Sensitivity: 0.965 | Resistance to overfitting; simplicity of implementation |
| Vine Tea Grade Discrimination [35] | Partial Least Squares Discriminant Analysis (PLS-DA) with low-level fusion | Suited to linear classification problems | Effectively handled fused NIR and GC-MS data | Concatenated raw data from the different analytical technologies |
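A KNN classifier on feature-level fused inputs, as in the sweet potato study, can be sketched in a few lines. The per-plot features (an RGB greenness value, canopy temperature from thermal imagery, plant height as a growth indicator) and all numbers are hypothetical, and the study's actual features and KNN settings are not given here. The design choice worth noting is z-scoring with training statistics after concatenation. Without it, the feature with the largest units would dominate the Euclidean distance.

```python
import numpy as np

def zscore_fit(X):
    """Per-feature mean and std from the training set (constant features keep std 1)."""
    mu, sd = X.mean(axis=0), X.std(axis=0)
    sd[sd == 0] = 1.0
    return mu, sd

def knn_predict(X_train, y_train, X_query, k=3):
    """Majority vote among the k nearest training points (Euclidean distance)."""
    d = np.linalg.norm(X_query[:, None, :] - X_train[None, :, :], axis=2)  # (q, n)
    nearest = np.argsort(d, axis=1)[:, :k]
    return np.array([np.bincount(y_train[idx]).argmax() for idx in nearest])

# Hypothetical per-plot sensor features; stress levels 0 = none, 1 = moderate, 2 = severe.
rgb_greenness = np.array([0.62, 0.60, 0.64, 0.48, 0.50, 0.47, 0.31, 0.33, 0.30])
canopy_temp_c = np.array([24.1, 24.5, 23.9, 27.8, 28.2, 27.5, 31.6, 32.0, 31.2])
plant_height_cm = np.array([41.0, 39.5, 42.0, 35.0, 34.2, 36.1, 28.3, 27.9, 29.0])
y_train = np.array([0, 0, 0, 1, 1, 1, 2, 2, 2])

# Feature-level fusion: one concatenated vector per plot.
X_train = np.column_stack([rgb_greenness, canopy_temp_c, plant_height_cm])
mu, sd = zscore_fit(X_train)

X_new = np.array([[0.61, 24.8, 40.2],   # resembles unstressed plots
                  [0.34, 31.0, 29.5]])  # resembles severely stressed plots
pred = knn_predict((X_train - mu) / sd, y_train, (X_new - mu) / sd, k=3)
print(pred)
```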

The two case studies reveal distinct strengths. In sweet potato water stress monitoring, the K-Nearest Neighbors algorithm with feature-level fusion achieved the best classification performance across all growth stages, while the Vision Transformer-CNN architecture with data-level fusion was more sensitive to extreme stress conditions [30]. For vine tea grade discrimination, both Random Forest and Partial Least Squares Discriminant Analysis models delivered high classification accuracy, and the ensemble-based Random Forest approach proved particularly robust to overfitting, a critical consideration for research applications with limited sample sizes [35].
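Table 2 reports a specificity of 0.974 and a sensitivity of 0.965 for the Random Forest tea-grade model. For multi-class problems such figures are usually derived one-vs-rest from a confusion matrix and then averaged. Whether [35] used exactly this averaging is not stated in this section, so the sketch below, with a hypothetical three-grade confusion matrix, is an assumption about the convention rather than a reproduction of the study.

```python
import numpy as np

def sensitivity_specificity(cm):
    """Per-class sensitivity (recall) and specificity, one-vs-rest.

    cm: confusion matrix with rows = true class, columns = predicted class.
    """
    cm = np.asarray(cm, dtype=float)
    tp = np.diag(cm)
    fn = cm.sum(axis=1) - tp      # true class i, predicted as something else
    fp = cm.sum(axis=0) - tp      # predicted i, but actually another class
    tn = cm.sum() - tp - fn - fp
    return tp / (tp + fn), tn / (tn + fp)

cm = [[18, 2, 0],   # hypothetical counts for three tea grades
      [1, 19, 0],
      [0, 1, 19]]
sens, spec = sensitivity_specificity(cm)
print("sensitivity per grade:", sens.round(3), "macro:", round(float(sens.mean()), 3))
print("specificity per grade:", spec.round(3), "macro:", round(float(spec.mean()), 3))
```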

These performance comparisons highlight the context-dependent nature of fusion strategy efficacy. The integration of explainable AI (XAI) components, such as gradient-weighted class activation mapping (Grad-CAM) in the sweet potato study, further enhanced the practical utility of these systems by providing researchers with interpretable diagnostic visualizations to support scientific decision-making [30].

Detailed Experimental Protocols

Sweet Potato Water Stress Classification Protocol

The experimental framework for sweet potato water stress assessment combines proximal sensing technologies with machine learning classification. The methodology comprised several distinct phases, from sensor data acquisition through model development and validation [30].