Molecular Mechanisms of Phenotypic Robustness: From Genetic Networks to Therapeutic Applications

Abstract

This article analyzes the molecular mechanisms that confer phenotypic robustness, the ability of biological systems to maintain stable outcomes despite genetic and environmental perturbations. Written for researchers, scientists, and drug development professionals, it covers the foundational principles of genetic buffering and canalization, examines current methodologies for quantifying robustness in experimental and clinical settings, addresses the challenges of overcoming and exploiting this robustness for therapeutic gain, and reviews comparative frameworks for validating robustness across biological systems. By synthesizing insights from developmental biology, genetics, and computational modeling, it aims to connect fundamental knowledge with practical applications in drug target identification and validation, and so to inform strategies for more resilient therapeutic interventions.

The Core Principles of Biological Robustness: Buffering, Canalization, and Cryptic Variation

Defining Phenotypic Robustness and Canalization in Biological Systems

Phenotypic robustness, often termed canalization, is a fundamental property of biological systems whereby developmental processes produce consistent outcomes despite genetic or environmental perturbations [1] [2]. This phenomenon ensures phenotypic stability in the face of mutations, stochastic events, and environmental fluctuations. First conceptualized by Waddington, canalization represents the evolutionary tuning of developmental pathways to suppress phenotypic variation [3]. Robustness enables species to maintain fitness across generations while preserving genetic diversity that may become advantageous under changing conditions. Understanding the mechanisms governing phenotypic robustness provides crucial insights for evolutionary biology, systems biology, and therapeutic development [1] [4].

Theoretical Foundations and Definitions

Conceptual Framework

Robustness describes the insensitivity of phenotypic traits to various perturbations, while plasticity represents the ability to adjust phenotypes predictably in response to specific environmental stimuli [1]. These concepts exist on a spectrum, with traits displaying varying degrees of stability versus responsiveness throughout an organism's life history.

- Genetic Robustness: Insensitivity of a trait to variation in the genome, measured as stability against mutational effects or natural genetic polymorphisms [5] [2].

- Environmental Robustness: Insensitivity of a trait to variation in environmental conditions, ranging from subtle microenvironmental fluctuations to major environmental shifts [5] [2].

- Developmental Canalization: The suppression of phenotypic variation through stabilized developmental pathways, resulting in consistent trait outcomes despite perturbations [3].

Quantitative Genetic Perspectives

Quantitative genetics provides frameworks for measuring and distinguishing different forms of robustness. The following table summarizes key concepts in quantitative robustness assessment:

Table 1: Quantitative Framework for Assessing Phenotypic Robustness

| Concept | Definition | Measurement Approach | Biological Interpretation |
|---|---|---|---|
| Genetic Robustness (GR) | Insensitivity to genetic variation | Between-strain variation in identical environments | High GR indicates suppression of genetic variation effects |
| Environmental Robustness (ER) | Insensitivity to environmental variation | Within-strain variation across environmental gradients | High ER indicates stability across environmental conditions |
| Canalization | Suppression of phenotypic variation | Combined assessment of GR and ER | Overall developmental stability |
| Reaction Norm | Pattern of phenotypic expression across environments | Slope of phenotype-environment relationship | Flat reaction norms indicate high robustness |

Polymorphic robustness, wherein robustness levels themselves vary between individuals, can be mapped to quantitative trait loci (QTL), revealing that polymorphisms buffering genetic variation are often distinct from those buffering environmental variation [5]. This distinction suggests these two robustness forms may have different mechanistic bases and evolutionary trajectories.
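As a rough illustration of the measurement approaches in Table 1, the following sketch computes within-strain variance, between-strain variance of strain means, and a reaction-norm slope. The function names, strain labels, and trait values are hypothetical and chosen only to show the arithmetic; they do not come from any of the cited studies.

```python
from statistics import mean, variance

def within_strain_variance(replicates):
    """Average within-strain variance (lower values suggest higher environmental robustness)."""
    return mean(variance(values) for values in replicates.values())

def between_strain_variance(replicates):
    """Variance of strain means measured in a shared environment
    (lower values suggest higher genetic robustness)."""
    return variance([mean(values) for values in replicates.values()])

def reaction_norm_slope(environment, phenotype):
    """Least-squares slope of phenotype on an environmental gradient
    (a slope near zero corresponds to a flat reaction norm)."""
    mx, my = mean(environment), mean(phenotype)
    sxx = sum((x - mx) ** 2 for x in environment)
    sxy = sum((x - mx) * (y - my) for x, y in zip(environment, phenotype))
    return sxy / sxx

# Hypothetical replicate measurements of one trait in two isogenic strains.
toy = {"strainA": [10.0, 10.2, 9.8], "strainB": [12.0, 12.5, 11.5]}
er_proxy = within_strain_variance(toy)
gr_proxy = between_strain_variance(toy)
# Hypothetical trait means of one strain at three temperatures.
slope = reaction_norm_slope([15, 20, 25], [10.0, 10.1, 10.2])
```

Variances are used here as inverse proxies for robustness; a real analysis would also need to account for measurement error and for scaling of variance with the trait mean.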

Molecular Mechanisms of Phenotypic Robustness

Systems-Level Features: Redundancy and Network Topology

Biological systems employ multiple architectural strategies to achieve robustness. At the systems level, redundancy provides a fundamental robustness mechanism through duplicate components that can compensate for each other's failure [1].

Morphological redundancy in plants demonstrates how repeating structural units (leaves, branches, roots) create robustness through compensatory growth and continuous development. For example, the reticulated network of leaf venation provides multiple alternative pathways for solute transport if damage occurs, ensuring continued function despite physical injury [1].

Genetic redundancy through whole-genome duplication, tandem gene duplication, and hybridization provides robustness through backup genetic elements. Plants in particular use this strategy, tolerating extensive genetic redundancy through mechanisms such as RNA-directed DNA methylation, which manages the complications of increased gene dosage [1].

Specific Molecular Mechanisms

Several specific molecular mechanisms have been identified as key contributors to phenotypic robustness:

- Hsp90 Chaperone System: The heat-shock protein Hsp90 promotes maturation and stability of key regulatory proteins, maintaining them above functional threshold levels despite stochastic fluctuations. Hsp90 capacity can be overwhelmed by stress, potentially serving as an environmental sensor that modulates phenotypic diversity in response to conditions [2].

- Transcriptional and Translational Control: Gene-specific noise control occurs through promoter architecture tuning, with frequent promoter activation reducing cell-to-cell variation even at equivalent average expression levels. Similarly, increased mRNA abundance coupled with decreased translation rates can reduce protein-expression noise without altering average abundance [2].

- Chromatin Modifiers: Chromatin-modifying enzymes contribute to robustness through epigenetic regulation of gene expression, though detailed mechanisms remain under investigation [1].

- rDNA Copy Number Variation: Ribosomal DNA copy number may influence phenotypic robustness, though the specific pathways require further elucidation [1].
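The point about transcriptional and translational control in the list above can be made concrete with the standard two-stage (mRNA, then protein) stochastic model of gene expression. This is a textbook model used here as an assumption, not a model taken from the cited work, and the rate constants are arbitrary illustrative values. In that model the squared coefficient of variation of protein number is 1/⟨p⟩ + (1/⟨m⟩)·γ_p/(γ_m + γ_p), so raising transcription while lowering translation keeps the mean fixed but lowers noise.

```python
def mean_protein(k_tx, k_tl, g_m, g_p):
    """Steady-state mean protein number in the two-stage model."""
    return (k_tx / g_m) * (k_tl / g_p)

def protein_noise_cv2(k_tx, k_tl, g_m, g_p):
    """Squared coefficient of variation of protein number.

    k_tx: transcription rate, k_tl: translation rate per mRNA,
    g_m / g_p: mRNA / protein degradation rates (all hypothetical units).
    """
    mean_m = k_tx / g_m
    mean_p = mean_m * k_tl / g_p
    return 1.0 / mean_p + (1.0 / mean_m) * g_p / (g_m + g_p)

# Same mean protein level reached in two ways (illustrative numbers only).
few_mrna_high_translation = protein_noise_cv2(k_tx=1.0, k_tl=10.0, g_m=0.1, g_p=0.01)
many_mrna_low_translation = protein_noise_cv2(k_tx=10.0, k_tl=1.0, g_m=0.1, g_p=0.01)
```

With these numbers both configurations have the same mean, while the configuration with more mRNA and less translation per mRNA has the smaller noise value.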

Nonlinear Developmental Processes

Robustness emerges from inherent nonlinearities in developmental systems rather than exclusively through dedicated buffering mechanisms. Research manipulating Fgf8 gene dosage in mouse craniofacial development demonstrated that variation in Fgf8 expression has a nonlinear relationship to phenotypic variation [3]. The genotype-phenotype map follows a sigmoidal curve where Fgf8 expression above approximately 40% of wild-type levels produces minimal phenotypic effects, while below this threshold, variation causes increasingly severe morphological consequences. This nonlinear relationship directly predicts robustness differences among genotypes without requiring changes in gene expression variance [3].
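A minimal sketch of this argument, assuming a Hill-type sigmoid as a stand-in for the genotype-phenotype curve: to first order, phenotypic variance is the squared local slope of the curve times the expression variance, so the same expression variance produces very different phenotypic variance depending on where a genotype sits on the curve. The curve shape and the parameters `K` and `n` are hypothetical and are not fitted to the Fgf8 data.

```python
def gp_map(x, K=0.25, n=4):
    """Hypothetical sigmoidal genotype-phenotype map; x is expression as a fraction of wild type."""
    return x ** n / (K ** n + x ** n)

def gp_slope(x, K=0.25, n=4):
    """Analytic derivative of gp_map with respect to expression level."""
    return n * K ** n * x ** (n - 1) / (K ** n + x ** n) ** 2

def phenotypic_variance(x, expr_sd, K=0.25, n=4):
    """First-order (delta-method) approximation: Var(phenotype) ~ slope^2 * Var(expression)."""
    return (gp_slope(x, K, n) * expr_sd) ** 2

# Identical expression noise at two positions on the curve.
var_low_dose = phenotypic_variance(0.2, expr_sd=0.05)   # steep part of the curve
var_wild_type = phenotypic_variance(1.0, expr_sd=0.05)  # plateau
```

In this toy curve the low-dose genotype shows far more phenotypic variance than the wild-type genotype even though the expression variance passed in is identical, which is the qualitative behavior described above.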

Table 2: Experimental Evidence for Mechanisms of Phenotypic Robustness

| Mechanism | Experimental System | Key Findings | Reference |
|---|---|---|---|
| Nonlinear G-P Map | Fgf8 allelic series in mice | Phenotypic variance increases below Fgf8 expression threshold (~40% wild-type) | [3] |
| Hsp90 Chaperone | Arabidopsis, yeast, Drosophila | Decreased Hsp90 activity increases within-strain phenotypic variation | [2] |
| Genotype Networks | Synthetic GRNs in E. coli | Multiple GRN architectures produce identical phenotypes, enabling mutational robustness | [6] |
| Expression Noise Control | Yeast gene expression | Essential genes show lower cell-to-cell variability than non-essential genes | [2] |

Experimental Approaches and Methodologies

Quantitative Genetic Mapping of Robustness

Identifying robustness modifiers requires specialized genetic approaches that differ from standard quantitative trait locus (QTL) mapping:

- Environmental Robustness QTL Mapping: Within-strain trait variances substitute for typically used trait means in QTL analysis. Significantly different within-strain variances between genotype groups indicate polymorphic environmental robustness [5].

- Genetic Robustness QTL Mapping: Between-strain variation comparisons across genotype groups reveal genetic robustness modifiers. Differences in dispersion of strain medians between genotype classes indicate polymorphic buffering of genetic effects [5].

These approaches applied to genome-wide gene expression data enable systematic characterization of robustness architecture across thousands of molecular traits simultaneously [5].
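The two mapping strategies above can be sketched for a single marker as follows. This is an illustrative permutation test, not the procedure used in [5]: the genotype labels "A" and "B", the choice of test statistics, and the number of permutations are all assumptions.

```python
import random
from statistics import mean, median

def _split(values, labels):
    a = [v for v, g in zip(values, labels) if g == "A"]
    b = [v for v, g in zip(values, labels) if g == "B"]
    return a, b

def diff_mean(values, labels):
    """Difference in group means; apply to per-strain within-strain variances (ER mapping)."""
    a, b = _split(values, labels)
    return mean(a) - mean(b)

def diff_dispersion(values, labels):
    """Difference in mean absolute deviation from the group median;
    apply to per-strain medians (GR mapping)."""
    a, b = _split(values, labels)
    def mad(xs):
        m = median(xs)
        return mean(abs(x - m) for x in xs)
    return mad(a) - mad(b)

def permutation_p(values, labels, stat, n_perm=2000, seed=0):
    """Two-sided permutation p-value for stat(values, labels) at one marker."""
    rng = random.Random(seed)
    observed = stat(values, labels)
    shuffled = list(labels)
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(shuffled)  # break any association between genotype and trait
        if abs(stat(values, shuffled)) >= abs(observed):
            hits += 1
    return observed, (hits + 1) / (n_perm + 1)
```

For environmental robustness, `values` would hold one within-strain variance per strain and `stat` would be `diff_mean`; for genetic robustness, `values` would hold one strain median per strain and `stat` would be `diff_dispersion`. A genome-wide scan would repeat this per marker and correct for multiple testing.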

High-Throughput Phenotypic Screening

Standardized methodologies enable robust quantification of phenotypic variation across large sample collections. For microbial systems, key steps include:

- Inoculum Standardization: Grow isolates to mid-log phase and prepare identical aliquots in multi-well plates with 50% glycerol at predetermined optical densities [7].

- Controlled Pattern Arrangement: Arrange isolates in set patterns across multiple plates with control strains on each plate to control for intra-assay variability [7].

- Phenotypic Assessment: Implement quantitative phenotypic assays (e.g., biofilm formation via crystal violet staining) across the standardized inoculum array [7].

- Data Integration: Couple phenotypic data with genomic analyses to identify genetic determinants of phenotypic variability [7].

This approach generates realistic standard deviation estimates for multiple isolates, enabling power calculations for genotyping studies [7].
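As a sketch of how such standard deviation estimates could feed a power calculation, the snippet below uses the common normal-approximation formula for comparing two group means, n = 2(z₁₋α/₂ + z_power)²σ²/δ² per group. The two-group design and the function name are assumptions; a real genotyping study may call for a different design and calculation.

```python
from math import ceil
from statistics import NormalDist

def samples_per_group(sd, effect, alpha=0.05, power=0.8):
    """Approximate isolates needed per genotype group to detect a mean
    difference `effect` given phenotype standard deviation `sd`."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    return ceil(2 * (z_alpha + z_power) ** 2 * (sd / effect) ** 2)

# Example: a difference of one standard deviation.
n_needed = samples_per_group(sd=1.0, effect=1.0)  # 16 per group
```

Because the required sample size scales with (σ/δ)², halving the detectable effect roughly quadruples the number of isolates needed.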

Synthetic Biology Approaches

Synthetic gene regulatory networks (GRNs) provide powerful experimental systems for directly testing robustness principles. Construction of genotype networks using CRISPR interference (CRISPRi) in Escherichia coli enables precise manipulation of network properties:

- Network Construction: Implement three-node GRNs with CRISPRi-based repression using modular cloning strategies [6].

- Perturbation Introduction: Apply qualitative changes (gaining/losing interactions) and quantitative changes (modifying interaction strengths) through promoter substitutions and sgRNA variants [6].

- Phenotypic Characterization: Measure expression patterns across inducer concentration gradients using fluorescent reporters [6].

- Network Mapping: Identify interconnected GRNs producing identical phenotypes despite architectural differences, revealing genotype network organization [6].

This approach experimentally confirms that extensive genotype networks exist for GRNs, providing mutational robustness while enabling evolutionary innovation through access to novel phenotypic neighborhoods [6].
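The idea of a genotype network can be illustrated with a deliberately small toy model. The sketch below assumes a feed-forward three-node repression circuit in which each of three possible repression edges is either present or absent, uses simple Boolean logic in place of measured expression, and defines the phenotype as the output state with the inducer off and on. None of this reproduces the CRISPRi constructs of [6]; it only shows how genotypes with the same phenotype can be linked by single-edge changes.

```python
from itertools import product

# Genotype = (a, b, c): presence (1) or absence (0) of repression edges
# a: node0 -| node1, b: node0 -| node2, c: node1 -| node2.

def output(genotype, inducer_on):
    """Boolean state of the output node (node2) for a given inducer state."""
    a, b, c = genotype
    node0 = inducer_on
    node1 = not (a and node0)
    node2 = not ((b and node0) or (c and node1))
    return int(node2)

def phenotype(genotype):
    """Output with inducer off and with inducer on."""
    return (output(genotype, False), output(genotype, True))

def genotype_networks():
    """Group genotypes by phenotype, then split each group into components
    connected by gaining or losing a single edge."""
    groups = {}
    for g in product((0, 1), repeat=3):
        groups.setdefault(phenotype(g), []).append(g)
    networks = {}
    for phen, members in groups.items():
        unseen, components = set(members), []
        while unseen:
            start = unseen.pop()
            comp, stack = {start}, [start]
            while stack:
                g = stack.pop()
                for k in range(3):  # flip one edge at a time
                    nb = tuple(x ^ (i == k) for i, x in enumerate(g))
                    if nb in unseen:
                        unseen.remove(nb)
                        comp.add(nb)
                        stack.append(nb)
            components.append(sorted(comp))
        networks[phen] = components
    return networks

nets = genotype_networks()
```

In this toy space, three different architectures share the always-off phenotype and form one connected genotype network, while a single architecture produces the inducer-activated phenotype.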

Research Reagent Solutions

Table 3: Essential Research Tools for Phenotypic Robustness Investigation

| Reagent/Tool | Function | Application Examples |
|---|---|---|
| Allelic Series | Generate gradations in gene dosage | Fgf8neo and Fgf8;Crect series in mice [3] |
| CRISPRi GRN Platform | Programmable gene repression | Synthetic genotype network construction in E. coli [6] |
| Geometric Morphometrics | Quantitative shape analysis | 3D landmark-based craniofacial phenotyping [3] |
| Multi-Assay Stock Plates | Standardized phenotypic screening | High-throughput microbial phenotyping [7] |
| Hsp90 Inhibitors | Perturb chaperone function | Test capacitance effects on phenotypic variance [2] |
| sgRNA Variants | Tune repression strength | Parameter modification in synthetic GRNs [6] |

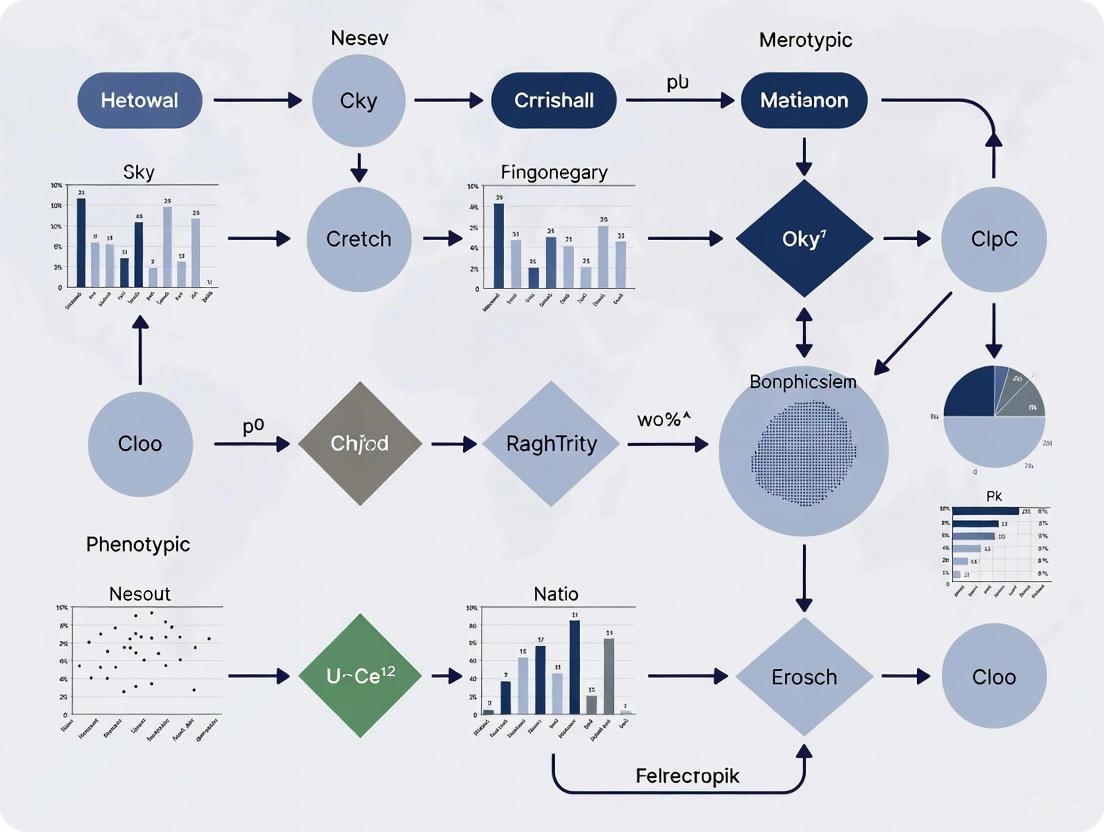

Signaling Pathway and Experimental Workflow Diagrams

Fgf8-Mediated Craniofacial Development Pathway

Diagram Title: Fgf8 Robustness Mechanism

High-Throughput Phenotypic Screening Workflow

Diagram Title: Phenotypic Screening Workflow

Synthetic Genotype Network Construction

Diagram Title: Genotype Network Construction

Implications for Evolutionary Biology and Drug Discovery

Evolutionary Implications

Phenotypic robustness facilitates evolutionary innovation through genotype networks—connected sets of genotypes producing identical phenotypes despite architectural differences [6]. These networks enable extensive exploration of genotypic space while maintaining phenotypic function, allowing populations to accumulate cryptic genetic variation that may prove advantageous under changing conditions [2] [6].

The capacity for phenogenetic drift—where genotypes evolve while phenotypes remain constant—underscores how robustness paradoxically enhances evolutionary adaptability. Theoretical and empirical evidence confirms that genotype networks provide access to distinct phenotypic neighborhoods, facilitating evolutionary transitions when environmental conditions change [6].

Therapeutic Development Applications

Concepts from phenotypic robustness increasingly inform drug discovery. Phenotypic drug discovery (PDD) identifies therapeutic compounds based on functional effects in disease-relevant models rather than predetermined molecular targets [4] [8]. This approach has yielded first-in-class medicines for diverse conditions including cystic fibrosis, spinal muscular atrophy, and hepatitis C [4].

Modern PDD leverages advanced tools including high-content screening, CRISPR-based functional genomics, and artificial intelligence platforms like PhenoModel, which connects molecular structures with phenotypic information through multimodal learning [9]. These approaches identify compounds with novel mechanisms of action that modulate complex biological systems rather than single targets, potentially addressing polygenic diseases more effectively than reductionist strategies [4] [9].

Understanding robustness mechanisms also informs therapeutic resistance management, as robust biological systems tend to resist targeted interventions. Combinatorial therapies that simultaneously engage multiple targets may overcome robustness barriers in cancer and infectious diseases [4] [8].

Genetic Redundancy and Gene Duplication as Foundational Buffering Mechanisms

Phenotypic robustness, the insensitivity of biological systems to genetic and environmental perturbations, is a fundamental property of complex life [2] [3]. This robustness ensures consistent phenotypic outcomes despite stochastic fluctuations in internal microenvironments, genetic variation, or external environmental challenges [2]. Within the molecular mechanisms underlying phenotypic robustness, genetic redundancy—particularly that arising from gene duplication—serves as a foundational buffering mechanism. While originally considered primarily through an evolutionary genetics lens, contemporary research has illuminated how these mechanisms operate at the molecular, network, and systems levels to stabilize phenotypic outputs [10] [11]. For researchers and drug development professionals, understanding these mechanisms provides crucial insights into disease etiology, evolutionary constraints, and potential therapeutic strategies that leverage or disrupt these buffering systems.

The conceptual framework for robustness dates to Waddington's "canalization" hypothesis, which proposed that selection stabilizes development along particular paths [3]. Contemporary definitions characterize robustness as a genotype's ability to endure random mutations with minimal phenotypic effects [11]. An ongoing debate concerns whether robustness to mutations is itself a selected trait or emerges as a byproduct of selection for robustness to environmental perturbations [2]. This section synthesizes current understanding of how genetic redundancy and gene duplication contribute to phenotypic robustness, examining molecular mechanisms, experimental evidence, and implications for biomedical research.

Molecular Mechanisms of Genetic Redundancy

Origins and Evolutionary Maintenance

Genetic redundancy arises when two or more genes can perform overlapping functions, creating a backup system that buffers against functional loss [10]. The simplest origin of redundancy is gene duplication, which immediately creates identical gene copies [12] [10]. Contrary to early expectations that redundancy would be evolutionarily transient, phylogenetic analyses reveal that genetic redundancy can be remarkably stable, with many redundant gene pairs maintaining overlapping functions for over 100 million years in model organisms like Saccharomyces cerevisiae and Caenorhabditis elegans [10].

Several evolutionary models explain the maintenance of genetic redundancy:

- Piggyback mechanism: Overlapping redundant functions are co-selected with non-redundant ones

- Dosage maintenance: Both copies are required to maintain optimal expression levels

- Subfunctionalization: Complementary degeneration of regulatory elements preserves essential functions across duplicates

- Stable heterosis: Heterozygote advantage maintains duplicate loci in populations

The stability of genetic redundancy contrasts with the more rapid evolution of genetic interactions between unrelated genes and demonstrates that redundancy is not merely a transient state but can be an evolutionarily selected feature of genomes [10].

Gene Duplication Mechanisms and Their Functional Consequences

Gene duplication occurs through several distinct mechanisms, each with different implications for genomic architecture and functional outcomes:

Table 1: Mechanisms of Gene Duplication

| Mechanism | Process | Genomic Scale | Functional Consequences |
|---|---|---|---|
| Whole Genome Duplication (WGD) | Duplication of the complete chromosome set; includes autopolyploidization and allopolyploidization | Entire genome | Creates ohnologs; high initial redundancy followed by extensive gene loss (fractionation) and diploidization [12] |
| Tandem Duplication | Unequal crossing over between homologous chromosomes or sister chromatids | Single genes to small genomic segments | Produces tandemly arrayed genes (TAGs); common for rapidly evolving gene families (e.g., pathogen resistance) [12] [13] |
| Segmental Duplication | Non-allelic homologous recombination, replication slippage, or transposable element activity | 1-200 kb segments | Often associated with duplication-inducing elements (e.g., long tandem repeats); generates copy number variations [13] |
| Transposition-Mediated | Transposable element activity moving gene copies to new locations | Single genes | Creates dispersed duplicates; may place genes under different regulatory control [13] |

The mechanism of duplication significantly influences the fate of gene duplicates. WGD events, particularly prevalent in plants, often lead to massive but temporary redundancy with subsequent gene loss (fractionation) and genomic reorganization (diploidization) [12]. In contrast, small-scale duplications like tandem and segmental duplications frequently expand gene families involved in adaptive processes, such as pathogen defense in plants [13].

Robustness Through Gene Duplication: Experimental Evidence

Direct Evidence from Gene Dosage Studies

Experimental manipulation of gene dosage provides direct evidence for how duplication affects phenotypic robustness. A landmark study on Fgf8 gene dosage in mice demonstrated that nonlinear relationships between gene expression and phenotypic outcomes directly modulate robustness [3]. Researchers created an allelic series with nine Fgf8 dosage genotypes ranging from 14% to 110% of wild-type expression levels. The resulting genotype-phenotype map revealed a nonlinear relationship where phenotypic variance depended on position along the expression curve:

Diagram Title: Nonlinear Genotype-Phenotype Map in Fgf8 Study

This research demonstrated that genotypes with Fgf8 expression above 40% of wild-type showed minimal phenotypic variance, while those below this threshold exhibited dramatically increased variance. Crucially, these differences in robustness emerged directly from the nonlinearity of the genotype-phenotype curve rather than from changes in gene expression variance or dysregulation [3].

Network-Level Buffering in Gene Regulatory Networks

Computational models of gene regulatory networks (GRNs) provide system-level insights into how duplication affects robustness. Research using Boolean network models has shown that gene duplication generally enhances mutational robustness—the ability to maintain phenotypic stability despite mutations [11]. Key findings include:

- Networks better at maintaining original phenotypes after duplication are also better at buffering single interaction mutations

- Duplication further enhances this buffering capacity beyond mere increases in gene number

- The effect of mutations after duplication depends on both mutation type and the specific genes involved

- Phenotypes accessible through mutation before duplication remain more accessible after duplication

Table 2: Network-Level Effects of Gene Duplication on Robustness and Evolvability

| Property | Effect of Duplication | Research Evidence |
|---|---|---|
| Mutational Robustness | Generally enhanced | Networks show reduced sensitivity to interaction mutations after duplication [11] |
| Phenotypic Stability | Often maintained | Many networks endure duplication without phenotypic effects, especially when few or nearly all genes duplicate [11] |
| Phenotypic Accessibility | Preserved or enhanced | Previously accessible phenotypes remain accessible through mutation after duplication [11] |
| Environmental Robustness | Positively correlated | Species with more duplicates tolerate wider environmental ranges [11] |

This research indicates that gene duplication contributes to distributed robustness, where robustness emerges from system properties rather than simple one-to-one backup relationships [10]. The network architecture determines how duplication affects phenotypic stability, with some network positions more tolerant of duplication than others.
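To make the modeling approach concrete, here is a minimal sketch of a threshold Boolean network in which gene states are +1 or -1, the phenotype is the fixed-point expression state reached from an initial state, duplication copies a gene together with its incoming and outgoing interactions, and mutational robustness is the fraction of single-interaction mutations that leave the phenotype of the original genes unchanged. This is a generic model of the kind used in such studies, not the specific model of [11]; the weight matrix and all function names are hypothetical.

```python
import random

def step(W, s):
    """Synchronous update: each gene takes the sign of its summed regulatory input."""
    new = []
    for i, row in enumerate(W):
        h = sum(w * x for w, x in zip(row, s))
        new.append(1 if h > 0 else -1 if h < 0 else s[i])
    return new

def phenotype(W, s0, max_steps=100):
    """Fixed-point state reached from s0, or None if no fixed point is found."""
    s = list(s0)
    for _ in range(max_steps):
        nxt = step(W, s)
        if nxt == s:
            return tuple(s)
        s = nxt
    return None

def duplicate(W, s0, g):
    """Append a copy of gene g with the same incoming and outgoing interactions."""
    idx = list(range(len(W))) + [g]
    return [[W[i][j] for j in idx] for i in idx], [s0[i] for i in idx]

def mutational_robustness(W, s0, n_ref, trials=500, seed=0):
    """Fraction of single-interaction mutations preserving the first n_ref genes' fixed point."""
    rng = random.Random(seed)
    ref = phenotype(W, s0)
    if ref is None:
        return None
    edges = [(i, j) for i, row in enumerate(W) for j, w in enumerate(row) if w != 0]
    unchanged = 0
    for _ in range(trials):
        i, j = rng.choice(edges)
        Wm = [row[:] for row in W]
        Wm[i][j] = rng.gauss(0, 1)  # redraw one interaction strength
        p = phenotype(Wm, s0)
        unchanged += p is not None and p[:n_ref] == ref[:n_ref]
    return unchanged / trials

# Hypothetical three-gene network; W[i][j] is the effect of gene j on gene i.
W = [[1.0, 0.0, 0.0], [0.5, 0.0, -0.2], [0.0, 1.0, 0.5]]
s0 = [1, 1, 1]
W_dup, s0_dup = duplicate(W, s0, g=1)
robustness_before = mutational_robustness(W, s0, n_ref=3)
robustness_after = mutational_robustness(W_dup, s0_dup, n_ref=3)
```

Comparing `robustness_before` and `robustness_after` across many random networks, rather than the single toy network shown here, would be needed to say anything general about the effect of duplication.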

Cryptic Genetic Variation and Evolutionary Contingency

Recent research shows that while paralogs may maintain functional redundancy, they accumulate cryptic genetic variation: sequence differences that do not affect current function but alter future evolutionary potential [14]. A study of the redundant myosin genes MYO3 and MYO5 in yeast demonstrated how cryptic divergence shapes evolutionary trajectories:

Diagram Title: Cryptic Variation in Paralogs Creates Evolutionary Contingency

Using saturation mutagenesis and CRISPR-Cas9, researchers introduced all possible single-amino acid substitutions in the SH3 domains of both paralogs and quantified effects on protein-protein interactions [14]. They found that ~15% of mutations had significantly different effects between paralogs due to cryptic sequence divergence, and ~9% of mutations would allow only one paralog to subfunctionalize. The higher-expressing paralog also buffered mutations that impaired function in the lower-expressing duplicate, demonstrating how expression differences interact with coding sequence changes to shape evolutionary outcomes [14].

Experimental Approaches and Research Tools

Key Methodologies for Studying Genetic Redundancy

Research on genetic redundancy combines molecular biology techniques with high-throughput functional assays:

Saturation Mutagenesis and CRISPR-Cas9 Screening

- Approach: Create comprehensive mutant libraries using CRISPR-Cas9-mediated homology-directed repair to introduce all possible single-amino acid substitutions [14]

- Application: Systematically quantify functional effects of mutations in redundant paralogs

- Measurement: Bulk competition assays with deep sequencing readout
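The size of such a library follows directly from the design: each position can be changed to 19 other amino acids, so a domain of length L yields 19 × L single substitutions. The sketch below enumerates them for an arbitrary placeholder sequence; the sequence and the function name are hypothetical and unrelated to the actual SH3 domains.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard amino acids

def single_substitutions(seq):
    """Yield (label, variant) for every single-amino-acid substitution of seq.

    Labels use the conventional wild-type / 1-based position / mutant form, e.g. 'M1A'.
    """
    for pos, wt in enumerate(seq, start=1):
        for aa in AMINO_ACIDS:
            if aa != wt:
                yield f"{wt}{pos}{aa}", seq[:pos - 1] + aa + seq[pos:]

# Placeholder peptide, not a real domain sequence.
library = list(single_substitutions("MKV"))
```

For the three-residue placeholder this gives 57 variants; the same function applied to a full domain sequence gives the complete single-substitution design space.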

Protein-Protein Interaction Mapping

- Technique: Dihydrofolate reductase protein-fragment complementation assay (DHFR-PCA) in bulk competition format [14]

- Output: Quantitative measurement of protein complex formation through growth rates in selective media

- Advantage: Enables high-throughput quantification of binding affinity changes across mutant libraries
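In bulk competition assays read out by sequencing, a common way to score a variant is the log2 ratio of its read-count change to that of the wild type across the selection. The sketch below shows that calculation; it is a generic enrichment score and not necessarily the analysis used in [14], and the read counts and pseudocount are made up.

```python
from math import log2

def enrichment_score(mut_before, mut_after, wt_before, wt_after, pseudocount=0.5):
    """log2 change in variant frequency relative to wild type over the selection.

    Negative scores indicate a variant that grew more slowly than wild type,
    which in a complementation assay suggests a weakened interaction.
    """
    mut_ratio = (mut_after + pseudocount) / (mut_before + pseudocount)
    wt_ratio = (wt_after + pseudocount) / (wt_before + pseudocount)
    return log2(mut_ratio / wt_ratio)

# Hypothetical read counts before and after selection.
score = enrichment_score(mut_before=100, mut_after=50, wt_before=1000, wt_after=1000,
                         pseudocount=0)
```

The pseudocount avoids division by zero for variants that drop out of the pool; setting it to zero, as in the example, is only safe when all counts are positive.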

Gene Dosage Manipulation Series

- Method: Create allelic series with graded expression levels (e.g., through hypomorphic alleles and tissue-specific deletion) [3]

- Phenotyping: Geometric morphometrics for quantitative shape analysis

- Analysis: Genotype-phenotype mapping using quantitative models (e.g., Morrissey model)

Computational Modeling of Gene Regulatory Networks

- Framework: Boolean network models simulating gene activity patterns [11]

- Perturbations: Simulate gene duplications and mutation effects

- Output: Quantify robustness through phenotypic stability across perturbations

Essential Research Reagents and Tools

Table 3: Key Research Reagents for Studying Genetic Redundancy

| Reagent/Tool | Function/Application | Example Use |
|---|---|---|
| CRISPR-Cas9 Mutagenesis System | Precise genome editing for creating mutant libraries | Saturation mutagenesis of SH3 domains in myosin paralogs [14] |
| DHFR Protein-Fragment Complementation Assay | Quantitative measurement of protein-protein interactions | Bulk competition assays to measure binding effects of mutations [14] |
| Allelic Series (Hypomorphic alleles) | Graded reduction in gene function | Fgf8 neo insertion and conditional deletion series [3] |
| Geometric Morphometrics | Quantitative shape analysis | 3D landmark-based analysis of craniofacial phenotypes [3] |
| Boolean Network Models | Computational simulation of gene regulatory dynamics | Studying robustness in gene regulatory networks after duplication [11] |
| Phylogenetic Dating Tools | Evolutionary analysis of duplication events | Determining age of redundant gene pairs across eukaryotes [10] |

Implications for Biomedical Research and Therapeutic Development

The principles of genetic redundancy and duplication-induced buffering have significant implications for understanding disease mechanisms and developing therapeutic interventions:

Disease Resistance and Drug Target Identification

Genetic redundancy presents both challenges and opportunities for therapeutic development. The buffering provided by redundant genes can confer resistance to targeted therapies when parallel pathways compensate for inhibited targets [10]. However, understanding these redundant networks also reveals new therapeutic opportunities:

- Synthetic lethal approaches: Identify redundant pairs where simultaneous inhibition of both paralogs is lethal while single inhibition is tolerated [10]

- Network-based target identification: Map redundant modules to predict resistance mechanisms and design combination therapies

- Expression-based targeting: Leverage differential expression of paralogs to selectively target specific tissues or cell states
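The synthetic lethal criterion in the list above can be expressed with the widely used multiplicative model of genetic interaction, in which the expected fitness of a double perturbation is the product of the single-perturbation fitnesses and the interaction score is the deviation from that expectation. The cutoffs `tolerated` and `lethal` below are arbitrary illustrative thresholds, and the fitness values are invented.

```python
def epistasis(f_a, f_b, f_ab):
    """Interaction score under the multiplicative model: observed minus expected double fitness.

    Fitness values are relative to wild type (wild type = 1.0).
    """
    return f_ab - f_a * f_b

def is_synthetic_lethal(f_a, f_b, f_ab, tolerated=0.8, lethal=0.1):
    """True if each single inhibition is tolerated but the combination is (near) lethal."""
    return f_a >= tolerated and f_b >= tolerated and f_ab <= lethal

# Hypothetical paralog pair: single losses are mild, the double loss is lethal.
score = epistasis(0.9, 0.95, 0.0)
pair_is_sl = is_synthetic_lethal(0.9, 0.95, 0.0)
```

A strongly negative score with well-tolerated single perturbations is the pattern expected for a redundant pair that buffers each other's loss.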

Harnessing Duplication for Crop Improvement and Pathogen Resistance

In agricultural biotechnology, understanding how duplication drives the expansion of pathogen resistance genes enables more targeted crop improvement strategies [13]. Research in barley has demonstrated that pathogen defense genes are statistically associated with duplication-prone genomic regions, particularly those rich in kilobase-scale tandem repeats [13]. This non-random association suggests evolutionary selection for lineages where arms-race genes are physically linked to duplication-inducing elements, creating a natural diversity-generating system that can be harnessed for crop improvement.

Conclusion

Genetic redundancy arising from gene duplication serves as a foundational buffering mechanism that enhances phenotypic robustness at multiple biological levels. Rather than being merely a transient evolutionary state, stable redundancy emerges from complex interactions between gene dosage effects, network topology, and evolutionary constraints. The nonlinear nature of developmental systems amplifies the robustness benefits of duplication, particularly through distributed robustness mechanisms that extend beyond simple gene-for-gene backup.

Future research directions should focus on:

- Quantitative mapping of genotype-phenotype relationships across diverse biological systems

- Single-cell resolution studies of how redundancy buffers stochastic variation in gene expression

- Engineering redundant systems to test theoretical predictions about robustness and evolvability

- Clinical translation of redundancy principles for overcoming therapeutic resistance

For researchers and drug development professionals, recognizing the pervasive role of genetic redundancy provides powerful explanatory frameworks for understanding disease penetrance, therapeutic resistance, and evolutionary constraints. By incorporating these principles into experimental design and therapeutic development, the scientific community can better navigate the complexity of biological systems and develop more effective interventions that work with, rather than against, evolved buffering mechanisms.

The Role of Protein Interaction Networks and Topology in System Stability

Protein-protein interaction (PPI) networks are fundamental to cellular processes, and their topological structure is a critical determinant of system stability and phenotypic robustness. The annotation of protein functions constitutes a key connection between genetic sequences, molecular conformations, and biochemical roles, driving progress in biomedical studies [15]. Biological networks display high robustness against random failures but are vulnerable to targeted attacks on central nodes, making network topology analysis a powerful tool for investigating network susceptibility [16]. The robustness of these networks—their ability to maintain functionality despite perturbations—is intricately linked to their topological properties [17]. Understanding this relationship provides crucial insights for deciphering disease mechanisms, identifying therapeutic targets, and explaining the molecular mechanisms underlying phenotypic robustness [15] [18] [16].

Analytical Frameworks for Network Topology

Key Topological Metrics

The stability and function of PPI networks can be quantified through specific topological metrics that capture different aspects of network organization. Node degree represents the number of direct links a node has, while betweenness centrality is the fraction of shortest paths between all pairs of nodes passing through a specific node [16]. Highly connected nodes (hubs) and those with high betweenness often play essential roles in maintaining network integrity [16] [19]. Research has demonstrated that the correlation between gene essentiality and gene centrality is substantially higher when network features are derived from local module topology rather than global network topology; for degree, the reported correlation rises from 0.352 to 0.497 (Table 1) [17].

Table 1: Key Topological Metrics for PPI Network Analysis

| Metric | Definition | Biological Interpretation | Correlation with Essentiality |
|---|---|---|---|
| Degree | Number of direct connections | Highly connected hubs; essential for network integrity | 0.497 (module), 0.352 (global) [17] |
| Betweenness Centrality | Fraction of shortest paths passing through a node | Bottleneck proteins controlling information flow | 0.385 (module), 0.314 (global) [17] |
| Product of Degree and Betweenness (PDB) | Combined local and global centrality | Nodes with both high connectivity and strategic positioning | Most effective for network fragmentation [16] |
| Algebraic Connectivity | Second smallest eigenvalue of Laplacian matrix | Overall connectedness and resilience to perturbations [19] | Predictive of network robustness [19] |
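The first three metrics in Table 1 can be illustrated on a toy network. The sketch below is a minimal, standard-library-only example: it computes degree, normalised betweenness (using Brandes' algorithm, which is a choice made here and not necessarily the tooling used in [16] or [17]), and their product (PDB). The six-node graph and all node names are hypothetical.

```python
from collections import deque


def degree(adj):
    """Number of direct neighbours for every node."""
    return {v: len(nbrs) for v, nbrs in adj.items()}


def betweenness(adj):
    """Normalised betweenness for an unweighted, undirected graph (Brandes)."""
    bc = dict.fromkeys(adj, 0.0)
    for s in adj:
        # BFS from s, counting shortest paths (sigma) and predecessors.
        dist = {s: 0}
        sigma = dict.fromkeys(adj, 0)
        sigma[s] = 1
        preds = {v: [] for v in adj}
        order = []
        queue = deque([s])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in adj[v]:
                if w not in dist:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        # Back-propagate pair dependencies in reverse BFS order.
        delta = dict.fromkeys(adj, 0.0)
        for w in reversed(order):
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1.0 + delta[w])
            if w != s:
                bc[w] += delta[w]
    n = len(adj)
    # Each undirected pair is visited twice, so divide by (n-1)(n-2).
    scale = 1.0 / ((n - 1) * (n - 2)) if n > 2 else 1.0
    return {v: b * scale for v, b in bc.items()}


# Hypothetical toy network: two triangles joined by the bridge C-D.
edges = [("A", "B"), ("A", "C"), ("B", "C"), ("C", "D"),
         ("D", "E"), ("D", "F"), ("E", "F")]
adj = {}
for a, b in edges:
    adj.setdefault(a, set()).add(b)
    adj.setdefault(b, set()).add(a)

deg = degree(adj)
btw = betweenness(adj)
pdb_score = {v: deg[v] * btw[v] for v in adj}  # product of degree and betweenness

for v in sorted(adj, key=pdb_score.get, reverse=True):
    print(v, deg[v], round(btw[v], 3), round(pdb_score[v], 3))
```

In this toy graph the two bridge nodes (C and D) have only one more neighbour than the others but carry every shortest path between the two triangles, which is why they dominate the betweenness and PDB rankings.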

Advanced Topological Analysis Methods

Persistent homology captures multi-scale topological features of data, identifying robust topological features including connected components, loops, and voids characterized by their "birth" and "death" across varying scales [19]. This approach provides a quantitative description of the underlying data's shape and stability, offering a deeper understanding of structural organization beyond conventional graph-theoretic approaches [19].

Contrast subgraphs identify the most important structural differences between two networks while preserving node identity awareness [20]. This method extracts gene/protein modules whose connectivity is most altered between two conditions or experimental techniques, providing new insights in functional genomics by identifying differentially connected modules that represent separate biological processes [20].

Experimental and Computational Methodologies

Topology-Aware Functional Similarity (TAFS) Framework

The TAFS framework addresses limitations in traditional functional similarity algorithms by integrating both local neighborhood information and global topological information [15]. This method introduces a distance-dependent functional attenuation factor to dynamically adjust the weights of distant nodes and constructs a bidirectional joint co-function probability model [15].

Protocol: TAFS Calculation

- Input: PPI network G = (V, E), proteins u and v, decay factor β = 0.5

- Calculate co-functional probability from u to v: p(u,v) = Σ_{i∈N(u)} β^{d(i,v)} / |N(u)| where d(i,v) is shortest path length

- Calculate bidirectional probability: p(v,u) similarly from v's perspective

- Compute final TAFS metric: TAFS(u,v) = √[p(u,v) × p(v,u)]

- Output: Functional similarity score between u and v [15]
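The protocol above can be sketched directly from its formulas. This is a minimal illustration, not the reference implementation from [15]: the function names and the toy network are hypothetical, distances are unweighted breadth-first-search path lengths, and two details the protocol does not specify are handled by assumption (neighbours with no path to the target contribute zero, and the target itself is counted when it is a neighbour, with d = 0).

```python
from collections import deque
from math import sqrt


def shortest_path_lengths(adj, source):
    """BFS distances from `source` to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        v = queue.popleft()
        for w in adj[v]:
            if w not in dist:
                dist[w] = dist[v] + 1
                queue.append(w)
    return dist


def co_function_probability(adj, u, v, beta=0.5):
    """p(u, v): mean of beta ** d(i, v) over the neighbours i of u."""
    neighbours = adj[u]
    if not neighbours:
        return 0.0
    # The graph is undirected, so d(i, v) equals d(v, i).
    dist_from_v = shortest_path_lengths(adj, v)
    # Assumption: neighbours that cannot reach v contribute 0.
    total = sum(beta ** dist_from_v[i] for i in neighbours if i in dist_from_v)
    return total / len(neighbours)


def tafs(adj, u, v, beta=0.5):
    """Geometric mean of the two directional co-function probabilities."""
    return sqrt(co_function_probability(adj, u, v, beta)
                * co_function_probability(adj, v, u, beta))


# Hypothetical toy PPI network: triangle A-B-C with a pendant node D on C.
adj = {
    "A": {"B", "C"},
    "B": {"A", "C"},
    "C": {"A", "B", "D"},
    "D": {"C"},
}

score_ab = tafs(adj, "A", "B")
score_ad = tafs(adj, "A", "D")
print(round(score_ab, 4), round(score_ad, 4))
```

Because the final score is a geometric mean, it is symmetric in u and v, and a pair scores highly only if each protein's neighbourhood lies close to the other protein.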

Experimental Validation: TAFS was systematically evaluated on PPI networks from four model organisms (Saccharomyces cerevisiae, Arabidopsis thaliana, Drosophila melanogaster, and Caenorhabditis elegans) using data from STRING database (v12.0) and protein function annotations from Gene Ontology Consortium [15]. The framework outperformed traditional baseline methods in both single-species and cross-species evaluations [15].

Topological Robustness Analysis via Targeted Attack

This methodology assesses network vulnerability through intentional removal of central nodes, revealing structural weaknesses and essential components [16].

Protocol: Centrality-Based Attack Strategy

- Network Construction: Build the PPI network from interaction databases (GeneMANIA, STRING, BioGRID)

- Centrality Calculation: Compute degree, betweenness, and PDB for all nodes

- Attack Simulation: Remove nodes in descending order of one centrality measure:

  - Degree-based: Remove nodes with highest degree first

  - Betweenness-based: Remove nodes with highest betweenness first

  - PDB-based: Remove nodes with highest degree×betweenness product first

- Robustness Assessment: After each removal, calculate:

  - Network diameter (d) and average shortest path length (a)

  - Size of largest connected component (S)

  - Number of edges (e) and clustering coefficient (cc)

- Validation: Compare with gene essentiality data and functional enrichment [16]
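A degree-based version of this attack can be sketched in a few lines. The example below is illustrative only: it tracks a single robustness parameter, the size of the largest connected component (S), and it assumes that degree is recomputed on the surviving network after every removal, which the protocol does not state. A betweenness- or PDB-based attack would replace only the ranking step. The ten-node two-hub network is hypothetical.

```python
def largest_component_size(adj, removed):
    """Size of the largest connected component, ignoring `removed` nodes."""
    seen = set(removed)
    best = 0
    for start in adj:
        if start in seen:
            continue
        seen.add(start)
        stack, size = [start], 0
        while stack:
            v = stack.pop()
            size += 1
            for w in adj[v]:
                if w not in seen:
                    seen.add(w)
                    stack.append(w)
        best = max(best, size)
    return best


def degree_attack(adj, fraction=0.2):
    """Remove the highest-degree surviving node repeatedly and record S.

    Assumption: degree is recomputed after each removal; ties are broken
    by node name so the run is deterministic.
    """
    removed = set()
    n_remove = int(fraction * len(adj))
    trajectory = [largest_component_size(adj, removed)]
    for _ in range(n_remove):
        alive = [v for v in adj if v not in removed]
        target = max(
            alive,
            key=lambda v: (sum(w not in removed for w in adj[v]), v),
        )
        removed.add(target)
        trajectory.append(largest_component_size(adj, removed))
    return trajectory


# Hypothetical network: two connected hubs, each with four leaf partners.
edges = ([("H1", "H2")]
         + [("H1", leaf) for leaf in "abcd"]
         + [("H2", leaf) for leaf in "efgh"])
adj = {}
for a, b in edges:
    adj.setdefault(a, set()).add(b)
    adj.setdefault(b, set()).add(a)

trajectory = degree_attack(adj, fraction=0.2)
print(trajectory)  # S before the attack and after each removal
```

In this deliberately hub-dominated example, removing 20% of the nodes (the two hubs) leaves only isolated proteins, the same qualitative collapse that a targeted attack is designed to expose.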

Application in Glioma Research: In temozolomide-resistant glioma networks, the PDB-based attack strategy was the most effective: the networks were almost totally disconnected after removal of the 20% most central nodes [16]. This approach identified known and novel targets for overcoming chemotherapy resistance, with central nodes participating in the PI3K-Akt-mTOR and Ras-Raf-Erk pathways [16].

Table 2: Network Robustness Parameters for Attack Assessment

| Parameter | Definition | Interpretation in Attack Simulation |
|---|---|---|
| Diameter (d) | Longest shortest path between any two nodes | Increases as network fragments, then decreases |
| Average Shortest Path Length (a) | Mean distance between all node pairs | Measures network efficiency; increases during attack |
| Size of Largest Component (S) | Number of nodes in largest connected subgraph | Decreases as network disintegrates |
| Number of Edges (e) | Total remaining connections | Decreases monotonically during node removal |
| Clustering Coefficient (cc) | Measure of local connectivity | Indicates preservation of modular structure |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for PPI Network and Topology Research

| Resource | Type | Function | Application Example |
|---|---|---|---|
| STRING | Database | Comprehensive PPI datasets with confidence scores | Source for physical interactions (confidence score ≥0.7) [15] |
| Cytoscape | Software Platform | Network visualization and analysis | Layout optimization, cluster identification, centrality calculation [16] [21] |
| GeneMANIA | Database/Cytoscape Plugin | Protein interactions from multiple sources | Building condition-specific PPI networks [16] |
| clusterMaker2 | Algorithm | Identifies densely interconnected node groups | Detecting functional modules in resistance networks [16] |
| BioGRID | Database | Biological interaction repository | Curated PPI data for network construction [15] |
| Gene Ontology Consortium | Database | Standardized functional annotations | Functional enrichment of network modules [15] |

The topological analysis of protein interaction networks provides fundamental insights into system stability and phenotypic robustness. The framework connecting network topology to stability has significant implications for understanding disease mechanisms and developing therapeutic strategies, particularly in complex diseases like cancer where network robustness contributes to therapy resistance [16]. The integration of topological analysis with artificial intelligence approaches represents the future frontier for understanding PPIs at unprecedented resolution [22].


This whitepaper examines the role of the heat shock protein 90 (Hsp90) molecular chaperone as a capacitor of phenotypic variation, a key mechanism underlying phenotypic robustness in evolving biological systems. We synthesize evidence from diverse eukaryotic models—including Drosophila, Arabidopsis, Tribolium castaneum, and yeast—demonstrating that Hsp90 buffers cryptic genetic variation and modulates developmental trajectories in response to environmental stress. By epistatically interacting with numerous client proteins, Hsp90 stabilizes the genotype-phenotype map under normal conditions while permitting phenotypic diversification under stress. This review details the molecular mechanisms of Hsp90 function, presents quantitative analyses of its effects, and explores implications for evolutionary biology, disease pathogenesis, and therapeutic development. Technical protocols for experimental manipulation of Hsp90 are provided to facilitate continued investigation into this global modifier of biological variation.

Biological systems exhibit remarkable stability in phenotypic output despite genetic and environmental fluctuations, a phenomenon termed canalization. First conceptualized by Conrad Hal Waddington, canalization represents the buffering of developmental pathways to produce consistent phenotypes [23]. Waddington observed that environmental stress (heat shock) applied to Drosophila pupae could induce crossveinless wing phenotypes. Through selective breeding, this initially rare, stress-induced trait was assimilated into populations even without the original environmental trigger [23]. This foundational work demonstrated that developmental systems possess inherent robustness while retaining the capacity for evolutionary change when buffering mechanisms are compromised.

The contemporary molecular understanding of canalization identifies Hsp90 as a central mechanism underlying this phenomenon. Hsp90 functions as a potent buffer of phenotypic variation due to several distinctive characteristics:

- Abundant expression: Hsp90 constitutes 1-2% of cellular protein under normal conditions, rising to 4-6% during stress [24].

- Client specificity: Hsp90 interacts with ~10% of the eukaryotic proteome [25] [24], preferentially binding "hub" proteins in signaling networks including kinases, transcription factors, and E3 ubiquitin ligases [23] [26].

- Stress sensitivity: Hsp90 expression and function are modulated by environmental fluctuations, creating a molecular link between stress and phenotypic plasticity [23] [24].

As a "global modifier" of the genotype-phenotype-fitness map [26], Hsp90 determines the phenotypic visibility of genetic variation, thereby influencing evolutionary trajectories and disease manifestations.

Hsp90 Structure and Molecular Mechanism

Structural Domains and Functional Motifs

Hsp90 functions as a homodimer with each monomer comprising three structurally and functionally distinct domains:

- N-terminal domain: Contains an ATP-binding pocket and a "lid" segment that undergoes conformational changes during the chaperone cycle. This domain is connected to the middle domain by a flexible charged linker [24].

- Middle domain: Contributes to client protein binding and contains a catalytic loop with a conserved arginine residue (Arg380 in yeast) essential for ATP hydrolysis [24].

- C-terminal domain: Mediates the inherent dimerization of Hsp90 and contains a second nucleotide-binding site with regulatory functions [23] [24].

The following diagram illustrates the structural organization and conformational cycle of Hsp90:

Figure 1: Hsp90 chaperone cycle and conformational states. ATP binding and hydrolysis drive conformational changes that enable client protein folding and maturation.

The Hsp90 Chaperone Cycle

Hsp90 undergoes a tightly regulated ATP-dependent cycle to facilitate client protein maturation:

- Client recognition: Partially folded client proteins are transferred to Hsp90 from Hsp70/Hsp40 complexes, often facilitated by co-chaperones like Hop [23].

- ATP binding and N-terminal dimerization: ATP binding induces transient dimerization of the N-terminal domains and lid closure over the nucleotide-binding pocket [24].

- Middle domain engagement and ATP hydrolysis: The catalytic loop of the middle domain repositions to contact the ATP γ-phosphate, which strongly stimulates Hsp90's intrinsic ATPase activity; the co-chaperone Aha1 often enhances this stimulation further [24].

- Client protein release: ATP hydrolysis and subsequent ADP release reset Hsp90 to its open conformation, releasing the properly folded client protein [23] [24].

This chaperone cycle enables Hsp90 to stabilize metastable client proteins, particularly those involved in signal transduction and developmental regulation.

Hsp90 as an Evolutionary Capacitor: Empirical Evidence

The capacitor hypothesis posits that Hsp90 buffers cryptic genetic variation under normal conditions while releasing this variation as phenotypic diversity under environmental stress. The following table summarizes key experimental evidence across diverse eukaryotic systems:

Table 1: Empirical evidence for Hsp90's capacitor function across model organisms

| Organism | Experimental Manipulation | Phenotypic Effects | Heritability | Citation |
|---|---|---|---|---|
| Drosophila melanogaster | Hsp83 heterozygosity or pharmacological inhibition | Crossveinless wings, eye deformities, leg malformations | Selectable and assimilable over generations | [23] [27] |
| Arabidopsis thaliana | Pharmacological inhibition with geldanamycin | Diverse morphological abnormalities in leaves, flowers, roots | Dependent on underlying genetic variation | [27] |
| Tribolium castaneum | RNAi knock-down or 17-DMAG inhibition | Reduced-eye phenotype (44% size reduction, ~75% fewer ommatidia) | Fixed as monomorphic line after selection | [25] |
| Saccharomyces cerevisiae | Genetic reduction or chemical inhibition | Morphological heterogeneity, filamentous growth | Environmentally contingent, not genetically fixed | [28] |
| Astyanax mexicanus (cavefish) | Pharmacological inhibition | Eye size variation | Suggested role in adaptive evolution | [25] |

Case Study: Adaptive Eye Reduction in Tribolium castaneum

A recent investigation in the red flour beetle provides compelling evidence for Hsp90's role in adaptive evolution. The experimental workflow and key findings are summarized below:

Figure 2: Experimental workflow for Hsp90 capacitor study in Tribolium castaneum demonstrating the release, inheritance, and assimilation of a reduced-eye phenotype.

This study demonstrated that Hsp90 inhibition released a previously cryptic reduced-eye phenotype, which persisted in subsequent generations without continued Hsp90 disruption [25]. Under constant light conditions, reduced-eye beetles exhibited higher reproductive success than normal-eyed siblings, providing direct evidence of context-dependent fitness benefits [25]. Whole-genome sequencing identified the transcription factor atonal as the underlying gene, offering the first direct genetic link between an Hsp90-buffered trait and adaptive evolution in animals [25].

Methodologies for Experimental Manipulation of Hsp90

Research Reagent Solutions

Table 2: Essential reagents for Hsp90 functional studies

| Reagent | Type | Mechanism of Action | Application Examples | Considerations |
|---|---|---|---|---|
| 17-DMAG | Chemical inhibitor | Binds ATP pocket, blocks chaperone activity | Tribolium: 10-100 µg/mL induces reduced-eye phenotype [25] | Water-soluble analog of geldanamycin; suitable for in vivo studies |
| Geldanamycin | Chemical inhibitor | Competitive inhibition of ATP binding | Arabidopsis: induces morphological variation [27] [29] | Limited solubility; toxic at high concentrations |
| Radicicol | Chemical inhibitor | Binds ATP pocket with different structural motif | Yeast: induces morphological heterogeneity [28] | Natural product; useful for verifying Hsp90-specific effects |
| Hsp83-targeting dsRNA | RNA interference | Sequence-specific mRNA degradation | Tribolium: paternal RNAi induces heritable phenotypes [25] | Species-specific design required; efficiency varies by tissue |
| Hsp90 mutant alleles | Genetic manipulation | Reduced function or expression | Drosophila: Hsp83 heterozygotes show developmental defects [23] [27] | Essential gene; null alleles often lethal |

Quantitative Assessment of Hsp90 Inhibition Effects

Table 3: Quantitative metrics for Hsp90 perturbation across studies

| Parameter | Drosophila | Arabidopsis | Tribolium | Yeast |
|---|---|---|---|---|
| Phenotype incidence | Varies by genetic background | Widespread across accessions | 4.2% (RNAi), 5.1% (17-DMAG) | Heterogeneous in population |
| Developmental timing | Pupal stages sensitive | Throughout development | Larval treatment, adult phenotype | Continuous during growth |
| Heritability rate | High after selection | Dependent on genetic background | Fixed after 5 generations | Not genetically fixed |
| Environmental interaction | Heat stress mimics effect | Morphological plasticity | Enhanced under constant light | Various stresses induce |

Protocol: Hsp90-Dependent Phenotypic Screening in Model Organisms

Objective: To identify and characterize Hsp90-buffered phenotypic variation in a model organism population.

Materials:

- Wild-type population with genetic diversity (e.g., Tribolium Cro1 strain)

- Hsp90 inhibitor (e.g., 17-DMAG stock solution at 1 mg/mL in DMSO)

- RNAi reagents for Hsp83 gene targeting

- Appropriate environmental chambers for controlled maintenance

Procedure:

Experimental Group Setup:

- Chemical inhibition group: Treat larvae with 17-DMAG (10-100 µg/mL) in culture medium for the full developmental period.

- Genetic inhibition group: Perform paternal RNAi injection targeting Hsp83 transcripts in adults.

- Control groups: Include vehicle-only (DMSO) and scrambled-sequence RNAi controls.

Phenotypic Screening:

- Monitor F1 generation for developmental abnormalities and survival rates.

- In F2 generation (from crosses between F1 individuals), conduct systematic screening for novel phenotypes.

- For Tribolium eye reduction: Quantify eye surface area and ommatidia count in adults.

Heritability Assessment:

- Cross individuals exhibiting novel phenotypes with naive wild-types.

- Track phenotype incidence over multiple generations without continued Hsp90 disruption.

- For stable phenotypes, perform selection to establish fixed lines.

Fitness Analysis:

- Under different environmental conditions, compare reproductive success between phenotypic variants.

- In Tribolium: Assess fecundity and offspring survival under constant light vs. dark conditions.

Genetic Mapping:

- For assimilated traits, employ whole-genome sequencing of fixed lines vs. original population.

- Conduct linkage analysis to identify loci underlying the Hsp90-buffered variation.

- Validate candidate genes via functional complementation or CRISPR manipulation.

Troubleshooting:

- Low phenotype incidence may require larger population sizes or optimized inhibitor concentrations.

- Failure of phenotypic assimilation suggests insufficient genetic basis or strong counterselection.

- Variable expressivity indicates modifier loci or environmental interactions requiring controlled conditions.
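The first troubleshooting point can be made concrete with a back-of-the-envelope calculation. The sketch below estimates how many individuals must be screened to see at least one affected animal with a given probability. It assumes each individual independently shows the phenotype at the incidences quoted for Tribolium above (4.2% for RNAi, 5.1% for 17-DMAG); real screens may deviate from that assumption because of family structure and genetic background. The function name is hypothetical.

```python
from math import ceil, log


def min_population_size(incidence, detection_prob=0.95):
    """Smallest n with P(at least one affected individual) >= detection_prob.

    Assumes individuals are independent, each affected with probability
    `incidence`, so P(none affected) = (1 - incidence) ** n.
    """
    return ceil(log(1.0 - detection_prob) / log(1.0 - incidence))


n_rnai = min_population_size(0.042)  # incidence reported for RNAi
n_dmag = min_population_size(0.051)  # incidence reported for 17-DMAG
print(n_rnai, n_dmag)
```

Under these assumptions a screen of a few dozen individuals is the minimum needed to expect a single affected animal, and considerably more are needed to recover enough founders for selection.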

The Network Basis of Hsp90 Capacitor Function

Hsp90's global modifier capability stems from its privileged position in cellular networks. Systematic interaction mapping reveals Hsp90 as a "hub of hubs" with several distinctive network properties [26]:

- High connectivity: Hsp90 ranks as the fourth most interconnected protein in yeast protein-protein interaction networks, with 1,251 combined physical and genetic interactions [26].

- Client preference: Hsp90 clients are themselves highly connected proteins, disproportionately representing kinases, transcription factors, and other regulatory molecules [23] [26].

- Pleiotropic buffering: Through its effects on these key regulators, Hsp90 can simultaneously modulate the phenotypic expression of numerous genetic variants across the genome [26].

This network positioning explains Hsp90's disproportionate influence on the genotype-phenotype map and its capacity to shape evolutionary trajectories through environment-dependent alteration of selective landscapes.

Implications for Disease and Therapeutic Development

The Hsp90 capacitor mechanism has significant implications for human disease and pharmaceutical intervention:

- Disease pathogenesis: Hsp90 buffering may maintain asymptomatic states in individuals carrying deleterious variants until environmental stress or secondary hits overwhelm protective capacity [29]. This model explains incomplete penetrance and variable expressivity in many genetic disorders.

- Cancer evolution: Many oncoproteins are Hsp90 clients, and cancer cells often exhibit increased Hsp90 dependence [26]. Hsp90 inhibition can simultaneously compromise multiple cancer pathways while revealing cryptic genetic variation that may influence therapeutic resistance [26].

- Neuropsychiatric disorders: Emerging evidence implicates Hsp90 in major depressive disorder through regulation of the HPA axis stress response and neuroinflammatory pathways [30].

- Antifungal therapy: Hsp90 enables the evolution of drug resistance in fungal pathogens by buffering resistance mutations until drug exposure [26].

The following diagram illustrates Hsp90's role in disease manifestation and therapeutic response:

Figure 3: Hsp90-mediated transition from asymptomatic to disease state through buffer failure. Environmental stress or therapeutic inhibition compromises Hsp90 function, revealing previously cryptic variation as pathological phenotypes or drug resistance.

Hsp90 represents a paradigm-shifting concept in evolutionary and developmental biology: a specific molecular mechanism that governs the visibility of genetic variation to natural selection. As a stress-sensitive capacitor, Hsp90 provides a dynamic interface between environment and genome, enabling phenotypic robustness in stable conditions while facilitating rapid adaptation when circumstances change. The mechanistic dissection of Hsp90 function—from atomic-level chaperone cycle to network-level influences on the genotype-phenotype map—reveals fundamental principles of biological system regulation.

Future research directions should prioritize:

- Systematic identification of Hsp90-dependent genetic variants in natural populations

- Elucidation of tissue-specific and developmental stage-specific capacitor functions

- Development of therapeutic strategies that modulate Hsp90 buffering capacity for selective phenotype control

- Exploration of potential capacitors beyond Hsp90 that might buffer different forms of biological variation

The study of Hsp90 continues to illuminate the intricate molecular negotiations between genetic inheritance, developmental processes, and environmental factors that collectively shape biological diversity and evolutionary trajectories.

Cryptic genetic variation (CGV) represents a reservoir of genetic polymorphisms that remain phenotypically silent under normal conditions but can generate heritable phenotypic variation when revealed by genetic or environmental perturbations [31]. This phenomenon operates through two primary conditional mechanisms: gene-by-gene interactions (epistasis), where an allele's effect depends on the genetic background, and gene-by-environment interactions, where an allele's effect depends on environmental conditions [31]. From a molecular perspective, CGV accumulates within buffered biological systems—including gene regulatory networks, protein interaction networks, and metabolic pathways—that stabilize phenotypes against genetic and environmental fluctuations [32]. This phenotypic robustness, maintained through homeostatic and canalization mechanisms, enables populations to accumulate genetic diversity without compromising fitness, thereby creating a hidden substrate for evolutionary adaptation and disease pathogenesis.

The Waddingtonian concept of canalization provides a foundational framework for understanding CGV. C.H. Waddington proposed that evolved buffering mechanisms dampen phenotypic variation under normal conditions, with this variation becoming exposed only when these mechanisms are overwhelmed [32] [31]. Modern research has identified specific molecular implementations of this buffering capacity, including regulatory network redundancy, protein-folding chaperones, and allosteric feedback in metabolic pathways [32]. The clinical and evolutionary significance of CGV stems from its dual potential: it can serve as a cache of adaptive potential for rapid evolution when environments change, or as a pool of deleterious alleles that may contribute to disease under specific genetic or environmental contexts [31].

Molecular Mechanisms and Buffering Systems

Architectural Buffering in Gene Regulatory Networks

Gene regulatory networks with inherent redundancy provide robust buffering capacity for CGV accumulation. Research in tomato inflorescence development demonstrates how paralogous gene pairs maintain phenotypic stability while accumulating cryptic sequence variation. The JOINTLESS2 (J2) and ENHANCER OF JOINTLESS2 (EJ2) MADS-box transcription factors function redundantly in inflorescence development [33]. Individual loss-of-function mutations in either gene remain phenotypically cryptic, while simultaneous disruption reveals their redundant functions through dramatic increases in branching complexity [33].

This buffering capacity extends to cis-regulatory elements, where natural and engineered promoter variants in EJ2 exhibit branching phenotypes only in specific genetic backgrounds. CRISPR-Cas9-mediated dissection of the EJ2 promoter identified transcription factor binding sites (TFBSs) for DOF and PLETHORA (PLT) families that modulate expression [33]. These cis-regulatory variants accumulated cryptically in wild tomato species (S. habrochaites and S. pennellii) and were only phenotypically revealed when combined with the j2 mutant background [33]. The hierarchical epistasis observed within this network demonstrates how buffering architecture enables CGV accumulation: dose-dependent synergistic interactions within paralogue pairs are counterbalanced by antagonistic interactions between paralogue pairs, creating a complex genotype-phenotype map with multiple thresholds for phenotypic revelation [33].

Protein-Level Buffering and Chaperone Systems

Cellular protein homeostasis mechanisms represent another crucial layer for CGV accumulation. The heat shock protein Hsp90 exemplifies a generic buffering mechanism that suppresses the phenotypic effects of protein-folding variants by assisting in the folding of metastable proteins [31]. When Hsp90 function is compromised by environmental stress or pharmacological inhibition, pre-existing genetic variation affecting protein stability becomes phenotypically expressed [31]. This chaperone system functions as an evolutionary capacitor, storing and releasing CGV in response to proteomic stress.

Beyond Hsp90, metabolic pathway architecture provides targeted buffering through allosteric regulation. The one-carbon metabolism network demonstrates how feedback and feedforward loops stabilize flux against enzymatic variation [32]. In this network, metabolites allosterically regulate enzyme activity to maintain stable reaction rates (e.g., thymidylate synthesis) despite variation in enzyme concentrations or activities [32]. Elimination of these regulatory interactions through mutation exposes previously buffered genetic variation, dramatically increasing phenotypic variance in metabolic output [32].
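The buffering effect of allosteric feedback can be illustrated with a deliberately simple toy model. This is not the one-carbon metabolism model of [32]: it is a single hypothetical step in which the product P inhibits its own synthesis, with every parameter value (vmax, k_out, ki, hill) chosen for illustration. The sketch compares how steady-state flux responds to enzyme levels of 0.2x and 1.5x wild-type with and without the feedback term.

```python
def steady_state_flux(enzyme, vmax=10.0, k_out=1.0, ki=1.0, hill=4.0,
                      feedback=True):
    """Steady-state flux of a one-step toy pathway.

    Production:  enzyme * vmax / (1 + (P / ki) ** hill)  (product inhibition)
    Consumption: k_out * P
    """
    if not feedback:
        # Without inhibition, production is independent of P.
        return enzyme * vmax
    # Net rate is positive at P = 0 and non-positive at P = enzyme*vmax/k_out,
    # and it decreases monotonically in between, so bisection finds the root.
    lo, hi = 0.0, enzyme * vmax / k_out
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        net = enzyme * vmax / (1.0 + (mid / ki) ** hill) - k_out * mid
        if net > 0:
            lo = mid
        else:
            hi = mid
    return k_out * 0.5 * (lo + hi)


def relative_flux(enzyme, feedback):
    """Flux at a given enzyme level relative to the wild-type level (1.0)."""
    return (steady_state_flux(enzyme, feedback=feedback)
            / steady_state_flux(1.0, feedback=feedback))


buffered = [relative_flux(e, True) for e in (0.2, 1.5)]
unbuffered = [relative_flux(e, False) for e in (0.2, 1.5)]
print([round(x, 3) for x in buffered], [round(x, 3) for x in unbuffered])
```

With feedback, the flux stays much closer to its wild-type value across the same range of enzyme levels. Removing the feedback term makes flux directly proportional to enzyme level, which is the toy analogue of exposing previously buffered variation.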

Table 1: Molecular Buffering Mechanisms for Cryptic Genetic Variation

| Buffering Mechanism | Molecular Implementation | Phenotypic Revelation Trigger |
|---|---|---|
| Gene Regulatory Redundancy | Paralogous transcription factors (J2/EJ2) with overlapping functions [33] | Simultaneous disruption of multiple network components [33] |
| Protein Chaperone Systems | Hsp90-assisted folding of metastable protein variants [31] | Environmental stress or pharmacological inhibition of chaperone function [31] |
| Metabolic Homeostasis | Allosteric feedback regulation in one-carbon metabolism [32] | Disruption of regulatory interactions while maintaining catalytic function [32] |
| Cis-Regulatory Compensation | Shadow enhancers and redundant transcription factor binding sites [31] | Specific promoter mutations or transcription factor depletion [33] |

Experimental Dissection and Revelation of CGV

Systematic Mutagenesis in Protein Interaction Networks

Recent research on yeast type-I myosins (Myo3 and Myo5) demonstrates how cryptic sequence divergence shapes evolutionary potential in redundant paralogs. Despite maintaining identical binding preferences for 100 million years, these paralogs accumulated cryptic amino acid substitutions (6/59 residues in Myo3 and 5/59 in Myo5) [14]. Saturation mutagenesis of their SH3 domains, coupled with CRISPR-Cas9-mediated homology repair, enabled systematic quantification of how all possible single-amino acid substitutions affect binding to eight cognate partners [14].

The experimental workflow involved:

- Library Construction: Creating complete single-amino acid mutant libraries for both SH3 domains

- Genomic Integration: Inserting variant libraries at native genomic loci using CRISPR-Cas9

- Binding Quantification: Measuring protein-protein interactions via dihydrofolate reductase protein-fragment complementation assay (DHFR-PCA)

- Functional Effect Calculation: Scaling log₂ fold changes to derive mutation effects (ΔF), where 1 represents silent mutation effects and 0 represents termination codon effects [14]

This approach revealed that ~15% of mutations had significantly different effects between paralogs due to epistatic interactions with cryptic divergent sites [14]. Furthermore, expression-level differences created additional contingency, with the higher-expressed paralog buffering mutations that impaired binding in the lower-expressed duplicate [14]. This demonstrates how cryptic variation at both sequence and expression levels creates evolutionary potential for subfunctionalization and neofunctionalization.
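The scaling step in the workflow above can be sketched as a linear rescaling. The text specifies only the two anchor points (silent mutations at 1, termination codons at 0); using the medians of the silent and stop-codon classes as anchors is an assumption made here, and the variant names and log₂ fold-change values are hypothetical.

```python
from statistics import median


def scale_functional_effects(log2fc, silent_ids, stop_ids):
    """Rescale log2 fold changes so silent variants map to 1 and stops to 0.

    Assumption: the anchors are the medians of the silent and
    stop-codon variant classes.
    """
    silent_ref = median(log2fc[m] for m in silent_ids)
    stop_ref = median(log2fc[m] for m in stop_ids)
    span = silent_ref - stop_ref
    if span == 0:
        raise ValueError("silent and stop-codon references coincide")
    return {m: (x - stop_ref) / span for m, x in log2fc.items()}


# Hypothetical log2 fold changes from a bulk competition assay.
log2fc = {
    "syn_1": 0.25, "syn_2": -0.25,     # silent (synonymous) variants
    "stop_1": -4.25, "stop_2": -3.75,  # premature termination codons
    "A12V": -2.0,                      # missense variant of interest
}
delta_f = scale_functional_effects(
    log2fc, silent_ids=["syn_1", "syn_2"], stop_ids=["stop_1", "stop_2"]
)
print(delta_f["A12V"])
```

On this scale a variant near 1 behaves like wild-type, a variant near 0 behaves like a loss of the domain, and intermediate values indicate partial loss of binding.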

Figure 1: Experimental workflow for systematic dissection of cryptic genetic variation effects on protein-protein interactions

Environmental Induction in Natural Populations

Spadefoot toad tadpole cannibalism provides a compelling natural example of CGV revelation. While cannibalism is a constitutive behavior in Spea bombifrons, Scaphiopus holbrookii, a member of the sister genus, exhibits this behavior only under specific environmental conditions [34]. High conspecific density induces cannibalistic behavior in S. holbrookii, revealing cryptic genetic variation in brain gene expression patterns [34].

The experimental protocol for quantifying this CGV involved:

- Environmental Manipulation: Raising tadpoles under factorial combinations of density levels (low/high) and diet types (detritus/shrimp)

- Behavioral Quantification: Measuring cannibalism rates through survival assays and direct observation

- Brain Region Dissection: Microdissecting telencephalon and diencephalon regions for RNA sequencing

- Variance Partitioning: Quantifying contributions of genetic background, environment, and gene-by-environment interactions to transcriptional variance

- Heritability Estimation: Calculating broad-sense heritability of gene expression profiles under different conditions [34]

This approach demonstrated that novel environments (high density, shrimp diet) increase heritable variance in brain gene expression, with gene-by-environment interactions accounting for approximately 20% of transcriptional variance in both brain regions [34]. Such environmentally released variation is the raw material for genetic accommodation of cannibalistic behavior in derived lineages.
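The variance-partitioning step can be illustrated with a sums-of-squares decomposition for a balanced genotype-by-environment design. This is a descriptive sketch only: it is not the statistical model used in [34], it does not estimate heritability, and the family labels, environments, and expression values are hypothetical.

```python
from statistics import mean


def partition_variance(cells):
    """Fraction of total sum of squares due to G, E, GxE, and residual.

    `cells` maps (genotype, environment) to a list of replicate values
    and must describe a balanced, fully crossed design.
    """
    genotypes = sorted({g for g, _ in cells})
    envs = sorted({e for _, e in cells})
    reps = len(next(iter(cells.values())))
    if (len(cells) != len(genotypes) * len(envs)
            or any(len(v) != reps for v in cells.values())):
        raise ValueError("design must be balanced and fully crossed")

    grand = mean(x for v in cells.values() for x in v)
    g_mean = {g: mean(x for e in envs for x in cells[(g, e)]) for g in genotypes}
    e_mean = {e: mean(x for g in genotypes for x in cells[(g, e)]) for e in envs}
    c_mean = {k: mean(v) for k, v in cells.items()}

    ss = {
        "G": len(envs) * reps * sum((g_mean[g] - grand) ** 2 for g in genotypes),
        "E": len(genotypes) * reps * sum((e_mean[e] - grand) ** 2 for e in envs),
        "GxE": reps * sum(
            (c_mean[(g, e)] - g_mean[g] - e_mean[e] + grand) ** 2
            for g in genotypes for e in envs
        ),
        "residual": sum((x - c_mean[k]) ** 2 for k, v in cells.items() for x in v),
    }
    total = sum(ss.values())
    return {k: s / total for k, s in ss.items()}


# Hypothetical expression values: two families, two density treatments.
cells = {
    ("family_1", "low"): [4, 6],
    ("family_1", "high"): [9, 11],
    ("family_2", "low"): [4, 6],
    ("family_2", "high"): [5, 7],
}
fractions = partition_variance(cells)
print({k: round(v, 3) for k, v in fractions.items()})
```

In this toy data set the two families differ only under high density, so a substantial share of the variance falls in the GxE term, the pattern expected when a novel environment reveals otherwise cryptic genetic differences.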

Table 2: Quantitative Analysis of Cryptic Genetic Variation Across Experimental Systems

| Experimental System | Phenotype Assayed | Measurement Approach | Key Quantitative Finding |
|---|---|---|---|
| Tomato Inflorescence [33] | Branching complexity | Quantification of >35,000 inflorescences across 216 genotypes | Hierarchical epistasis with dose-dependent interactions within paralogue pairs |
| Yeast Myosin SH3 Domains [14] | Protein-protein binding | DHFR-PCA bulk competition with deep sequencing | 15% of mutations showed paralog-specific effects due to epistasis with cryptic sites |
| Spadefoot Toad Tadpoles [34] | Brain gene expression | RNA-seq of diencephalon and telencephalon regions | GxE interactions accounted for 20.5% of transcriptional variance in telencephalons |
| One-Carbon Metabolism [32] | Metabolic flux stability | Computational modeling of reaction kinetics | Enzymatic activities could vary 0.2-1.5x wild-type with minimal effect on flux |

Evolutionary Trajectories and Disease Implications

From Cryptic Variation to Evolutionary Innovation

The evolutionary significance of CGV lies in its capacity to facilitate rapid adaptation through genetic assimilation, the process by which environmentally induced phenotypes become genetically fixed without the original inducing stimulus [32] [31]. Waddington's original experiments demonstrated this principle by selecting for crossveinless wings in Drosophila after heat shock treatment, eventually establishing stable lines that expressed the trait without environmental induction [31]. The molecular era has identified specific instances where CGV provides the substrate for evolutionary innovation.

In the tomato inflorescence system, hierarchical epistasis within the J2-EJ2-PLT regulatory network structures the available phenotypic space, creating both strongly buffered phenotypic regions and thresholds permitting sudden bursts of phenotypic change [33]. Similarly, in yeast myosin evolution, cryptic divergence in both protein sequence and expression levels creates evolutionary contingency, where the same mutation would nonfunctionalize one paralog while having minimal impact on the other [14]. This contingency biases evolutionary trajectories, facilitating subfunctionalization and neofunctionalization outcomes.

The genetic accommodation of spadefoot toad tadpole cannibalism demonstrates how CGV enables behavioral evolution. Ancestral Scaphiopus populations exhibited conditional cannibalism with heritable variation in plasticity, while derived Spea lineages evolved constitutive expression through changes in environmental responsiveness [34]. This progression from cryptic genetic variation to evolutionary innovation illustrates how CGV facilitates the origins of novel traits.

Figure 2: Evolutionary progression from cryptic genetic variation to novel trait stabilization

Clinical Implications for Complex Disease

Cryptic genetic variation has profound implications for human disease susceptibility and manifestation. As environments change—through dietary shifts, toxin exposures, or lifestyle alterations—previously cryptic genetic variants may become phenotypically expressed, potentially increasing disease incidence [31]. This model provides a plausible explanation for the increasing prevalence of complex diseases in modern human populations.

From a therapeutic perspective, understanding CGV dynamics offers opportunities for intervention. Potential strategies include:

- Network Stabilization: Reinforcing buffering mechanisms to suppress deleterious variant expression

- Precision Environmental Matching: Identifying and avoiding individual-specific triggers for variant revelation

- Epistatic Interaction Mapping: Accounting for genetic background effects in therapeutic target identification

The protein interaction network findings from yeast myosins have direct translational relevance [14]. As human genomes contain numerous redundant paralogs, understanding how cryptic sequence variation affects mutation impact could improve variant interpretation in clinical genetics. Similarly, the metabolic buffering principles identified in one-carbon metabolism [32] inform how nutritional interventions might stabilize metabolic flux in inborn errors of metabolism.

Research Toolkit: Experimental Approaches and Reagents

Table 3: Essential Research Reagents and Methodologies for CGV Investigation

| Research Tool | Specific Application | Experimental Function | Exemplary Use |
|---|---|---|---|
| CRISPR-Cas9 Genome Editing | Saturation mutagenesis; promoter dissection; paralog manipulation [33] [14] | Precise genomic modifications to test specific hypotheses about variant effects | Engineering EJ2 promoter alleles to test cis-regulatory cryptic variation [33] |
| DHFR Protein-Fragment Complementation Assay | Quantitative protein-protein interaction measurement [14] | High-throughput quantification of binding affinity changes across mutant libraries | Measuring SH3 domain mutation effects on eight cognate partners [14] |
| RNA Sequencing | Transcriptional variance partitioning; brain gene expression analysis [34] | Genome-wide expression quantification under different environmental conditions | Identifying cryptic genetic variation in spadefoot toad brain responses [34] |
| Pan-Genome Analysis | Natural variation mining in non-model systems [33] | Identification of structural and regulatory variants across populations | Discovering natural EJ2 promoter variants in wild tomato species [33] |
| Computational Modeling | Metabolic flux analysis; epistasis mapping [32] | Predicting system behavior from component interactions and identifying buffering mechanisms | Modeling one-carbon metabolism stability and vulnerability [32] |

Quantifying Robustness: Experimental and Computational Approaches for Research and Drug Discovery

Robust Parameter Design (RPD) and Statistical Modeling for Protocol Optimization

Robust Parameter Design (RPD) is a statistical engineering methodology for optimizing processes and protocols so that performance variation is minimized while target outcomes are maintained. This section examines RPD's fundamental principles, methodological frameworks, and emerging applications within biological research, with particular emphasis on the molecular mechanisms underlying phenotypic robustness. By integrating traditional RPD with modern risk optimization strategies, researchers can develop experimental protocols that remain stable against both genetic and environmental perturbations, supporting drug development and fundamental biological discovery.

RPD is an experimental design methodology that exploits interactions between control factors and uncontrollable noise factors to find control-factor settings at which the noise factors induce the least variation in the response [35]. Introduced by Genichi Taguchi, RPD distinguishes between control factors (variables researchers can set and maintain) and noise factors (variables difficult or impossible to control during actual process implementation) [35] [36].

In biological contexts, RPD enables scientists to develop protocols that function reliably despite experimental variations. For instance, a polymerase chain reaction (PCR) protocol optimized through RPD would maintain high performance despite fluctuations in reagent quality, operator technique, or equipment calibration [36]. This approach contrasts with traditional one-factor-at-a-time optimization, which fails to account for factor interactions and often produces protocols sensitive to minor variations [36].

The fundamental principle of RPD aligns with the biological concept of phenotypic robustness—the insensitivity of physiological and developmental processes to genetic and environmental perturbations [2]. Both systems aim to achieve consistent outcomes despite underlying variability, whether in engineered processes or evolved biological systems.

Theoretical Foundations and Statistical Framework

Core Mathematical Formulations

RPD operates through response function modeling, where a response is quantitatively modeled as a function of control and noise factors [36]. The general model form can be represented as:

\[ g(x,z,w,e) = f(x,z,\beta) + w^T u + e \]

Where:

- \(x\) represents control factors

- \(z\) and \(w\) represent different classes of noise factors

- \(\beta\) represents fixed effects

- \(u\) and \(e\) represent random effects [36]

This model structure allows researchers to distinguish between different sources of variation and identify control factor settings that make the system minimally sensitive to noise factors.
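To make the roles of these terms concrete, the following is a minimal Python sketch rather than the formulation used in [36]. It assumes a scalar control factor `x`, a single standard-normal noise factor `z`, a binary uncontrollable factor `w`, and a linear `f` with a control-by-noise term. All coefficients and variances are hypothetical values chosen only for illustration.

```python
import random
import statistics

def simulate_response(x, n_draws=20000, seed=0):
    """Monte Carlo draws of g(x, z, w, e) = f(x, z, beta) + w*u + e for one control setting x."""
    rng = random.Random(seed)
    # Hypothetical fixed effects: intercept, control, noise, control-by-noise.
    b0, b_x, b_z, b_xz = 10.0, 2.0, 1.5, -1.5
    draws = []
    for _ in range(n_draws):
        z = rng.gauss(0.0, 1.0)      # noise factor that can be varied in the experiment
        w = rng.choice([0.0, 1.0])   # uncontrollable noise factor (e.g. a batch indicator)
        u = rng.gauss(0.0, 0.5)      # random effect attached to w
        e = rng.gauss(0.0, 0.3)      # residual error
        f = b0 + b_x * x + b_z * z + b_xz * x * z
        draws.append(f + w * u + e)
    return statistics.mean(draws), statistics.variance(draws)

mean_lo, var_lo = simulate_response(x=0.0)
mean_hi, var_hi = simulate_response(x=1.0)
print(f"x=0: mean={mean_lo:.2f}, variance={var_lo:.2f}")
print(f"x=1: mean={mean_hi:.2f}, variance={var_hi:.2f}")
```

Under these assumed coefficients the control-by-noise term cancels the effect of `z` at `x = 1`, so the simulated variance there should fall to roughly the contribution of the random effect and the residual error alone.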

Key Design Criteria and Aliasing Considerations

RPD builds upon fractional factorial designs (FFDs) but introduces modified priority schemes to account for control-by-noise (CN) interactions [35]. The extended priority scale in RPD increases the importance of effects involving noise factors:

Table: Effect Priority in RPD vs. Traditional Fractional Factorial Designs

| Effect Type | Traditional FFD Priority | RPD Priority | Rationale |
|---|---|---|---|
| Main Effects (Control) | 1 | 1 | Primary interest remains |
| Main Effects (Noise) | 1 | 1 | Direct noise impact |
| Control-Control (CC) 2FI | 2 | 2 | Standard interaction |
| Control-Noise (CN) 2FI | 2 | 2.5 | Critical for robustness |
| Control-Control-Noise (CCN) 3FI | 3 | 2.5 | Includes noise component |

This reprioritization reflects RPD's focus on identifying control factor settings that mitigate the impact of noise factors through significant CN interactions [35].
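A short worked derivation illustrates why CN interactions are the lever for robustness. It is a sketch under simplifying assumptions that are not taken from [35]: one control factor \(x\), one noise factor \(z\) with mean zero and variance \(\sigma_z^2\), an independent error \(e\) with variance \(\sigma^2\), and a first-order model with a single CN term whose coefficient is written \(\delta\).

\[ \begin{aligned} y &= \beta_0 + \beta_1 x + \gamma z + \delta x z + e \\ \mathrm{E}[y \mid x] &= \beta_0 + \beta_1 x \\ \mathrm{Var}[y \mid x] &= (\gamma + \delta x)^2 \sigma_z^2 + \sigma^2 \end{aligned} \]

When \(\delta \neq 0\), the transmitted noise variance is smallest at \(x^* = -\gamma / \delta\), where it reduces to \(\sigma^2\). When \(\delta = 0\), the variance equals \(\gamma^2 \sigma_z^2 + \sigma^2\) for every \(x\), so no control setting can reduce sensitivity to \(z\). A design that cannot estimate \(\delta\) therefore cannot identify a robust setting.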

Advanced Optimization Formulations

Modern RPD implementations employ robust optimization with risk-averse criteria such as conditional value-at-risk (CVaR) [36]. The optimization problem can be formulated as:

\[ \begin{aligned} \text{minimize} \quad & g_0(x) \\ \text{subject to} \quad & g(x,z,w,e) \geq t \\ & x \in \mathcal{S} \end{aligned} \]

Where \(g_0(x) = c^T x\) represents the protocol cost, and the constraint ensures performance meets or exceeds threshold \(t\) despite randomness in \(z\), \(w\), and \(e\) [36]. This formulation explicitly balances cost efficiency with robustness, particularly valuable in resource-constrained research environments [37].
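One way to make the random constraint operational is to require that the conditional value-at-risk of the shortfall, the threshold minus the achieved performance, be non-positive. The Python sketch below illustrates that idea over a small candidate set. It is not the solver or model used in [36]: the `performance` function, the candidate settings, the unit cost, and the confidence level `alpha` are all hypothetical.

```python
import math
import random

def cvar_shortfall(shortfalls, alpha=0.9):
    """Empirical CVaR: mean of the worst (1 - alpha) fraction of shortfall samples."""
    ordered = sorted(shortfalls, reverse=True)
    # round() guards against floating-point drift before taking the ceiling.
    k = max(1, math.ceil(round((1.0 - alpha) * len(ordered), 9)))
    return sum(ordered[:k]) / k

def performance(x, rng):
    """Hypothetical model: a larger x raises mean performance and damps the noise."""
    z = rng.gauss(0.0, 1.0)
    return 4.0 + 3.0 * x + (1.0 - 0.4 * x) * z

def cheapest_robust_setting(candidates, threshold, cost_per_unit=1.0,
                            alpha=0.9, n=5000, seed=1):
    """Return (cost, x) for the cheapest candidate whose shortfall CVaR is <= 0."""
    feasible = []
    for x in candidates:
        rng = random.Random(seed)
        shortfalls = [threshold - performance(x, rng) for _ in range(n)]
        # CVaR <= 0 means the average of the worst cases still meets the threshold.
        if cvar_shortfall(shortfalls, alpha) <= 0.0:
            feasible.append((cost_per_unit * x, x))
    return min(feasible) if feasible else None

best = cheapest_robust_setting(candidates=[0.0, 0.5, 1.0, 1.5, 2.0], threshold=5.5)
print(best)
```

With these assumed values the cheapest candidate that satisfies the constraint is `x = 1.0`. The setting `x = 0.5` meets the threshold on average but fails once the worst 10% of outcomes are considered, which is the distinction a risk-averse criterion is meant to capture.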

RPD in Biological Context: Connecting to Phenotypic Robustness

Conceptual Alignment with Biological Robustness Mechanisms

RPD's engineering framework mirrors evolved robustness mechanisms in biological systems. Biological robustness—the insensitivity of phenotypes to genetic or environmental perturbations—shares fundamental principles with RPD's goals [2]. Both systems employ specific strategies to buffer against variability:

- Redundancy: Biological systems use gene duplication; RPD uses backup factors

- Feedback control: Biological systems use regulatory networks; RPD uses control loops

- Nonlinear responses: Both exploit threshold effects and saturation kinetics [2] [3]

Molecular chaperones like Hsp90 represent biological analogs to RPD, buffering against phenotypic effects of genetic variation and environmental stress [2]. Hsp90 maintains the stability of key regulatory proteins, ensuring consistent phenotypic outcomes despite stochastic fluctuations in cellular environments [2].

Nonlinearity in Genotype-Phenotype Maps

A critical connection between RPD and phenotypic robustness emerges in nonlinear genotype-phenotype (G-P) relationships. Research on Fgf8 signaling demonstrates how nonlinearities in developmental systems dictate robustness levels [3]. When Fgf8 expression exceeds a threshold (~40% of wild-type), variation has minimal phenotypic impact, whereas below this threshold, the same variation produces dramatically different phenotypes [3].

Table: Nonlinear Response Characteristics in Biological Systems

| System | Nonlinear Relationship | Robustness Mechanism | Experimental Evidence |
|---|---|---|---|
| Fgf8 Signaling | Sigmoidal (threshold) | Canalization above threshold | Mouse allelic series showing increased shape variance below 40% Fgf8 [3] |
| Hsp90 Chaperone | Capacity saturation | Molecular buffering | Yeast morphology variation when Hsp90 overwhelmed [2] |
| Transcriptional Regulation | Hill function | Noise suppression | Promoter architecture optimization for reduced variability [2] |

This nonlinear behavior directly informs RPD strategy: identifying control factor settings in flatter regions of response surfaces where noise factors have minimal impact, analogous to biological systems operating above sensitivity thresholds.
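The contrast between flat and steep regions of a response curve can be sketched numerically with a Hill-type function. The parameters below (`half_max`, `n`) are illustrative assumptions chosen so that the curve is nearly flat above roughly 40% of wild-type expression. They are not fitted to the Fgf8 data in [3].

```python
def hill(dose, half_max=0.2, n=4.0):
    """Hill-type dose-response: phenotype as a saturating function of relative expression."""
    return dose**n / (half_max**n + dose**n)

def local_sensitivity(dose, delta=1e-4):
    """Central-difference slope of the phenotype with respect to expression level."""
    return (hill(dose + delta) - hill(dose - delta)) / (2.0 * delta)

for dose in (0.2, 0.4, 0.8, 1.0):
    print(f"expression={dose:.1f}  phenotype={hill(dose):.3f}  "
          f"slope={local_sensitivity(dose):.3f}")
```

The same absolute fluctuation in expression changes the phenotype far more near the half-maximal point than near wild-type levels, which is the property an RPD search exploits when it selects operating points on the plateau of a response surface.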

Methodological Implementation

Experimental Design Workflow

Implementing RPD follows a structured, often iterative workflow:

The process begins with screening designs to eliminate unimportant factors, followed by fractional factorial designs to explore response spaces [36]. Center points assess curvature, with potential augmentation using center-face composite designs to estimate quadratic effects [36]. Model adequacy requires the estimated response variance to be at least three-fold greater than residual error variance before proceeding to optimization [36].
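As a minimal illustration of the fractional factorial step, the sketch below builds an eight-run half fraction of a four-factor, two-level design and appends replicated center points. The generator D = ABC, the factor labels, and the number of center runs are assumptions made for the example and are not the design reported in [36].

```python
from itertools import product

def half_fraction_2_4():
    """Build a 2^(4-1) design: full factorial in A, B, C with D set equal to ABC."""
    runs = []
    for a, b, c in product((-1, 1), repeat=3):
        runs.append((a, b, c, a * b * c))
    return runs

def is_orthogonal(runs):
    """Check that every pair of columns has a zero dot product."""
    n_cols = len(runs[0])
    for i in range(n_cols):
        for j in range(i + 1, n_cols):
            if sum(run[i] * run[j] for run in runs) != 0:
                return False
    return True

design = half_fraction_2_4()
center_points = [(0, 0, 0, 0)] * 3   # replicated center runs used to check for curvature
for run in design + center_points:
    print(run)
print("orthogonal:", is_orthogonal(design))
```

Orthogonal columns allow the four main effects to be estimated independently in eight runs, while a gap between the center-point mean and the factorial-point mean signals curvature that would justify augmenting the design.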

Three-Stage RPD Implementation for Biological Protocols

A comprehensive RPD application involves three integrated stages:

Stage 1: Factor Classification and Experimental Design

- Classify factors as control \(x\), experimentally controllable noise \(z\), or uncontrollable noise \(w\) [36]

- Design experiments using fractional factorial structures with distinct control and noise arrays

- Incorporate sufficient replication to estimate variance components

Stage 2: Mixed Effects Modeling

- Fit combined models with fixed effects for control factors and random effects for noise

- Use Bayesian Information Criterion (BIC) for parsimonious model selection

- Validate models through leave-one-out cross-validation

Stage 3: Risk-Averse Robust Optimization

- Apply conditional value-at-risk (CVaR) criteria for optimization

- Minimize cost function subject to probabilistic performance constraints

- Verify optimal settings through independent validation experiments [36]
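The design step in Stage 1 can be sketched as a crossed array in which every control setting is run against every noise setting and each control setting is then summarized by its mean and variance. The example below is a simplified illustration with a hypothetical deterministic `response` function and two factors of each type. It stands in for, and does not reproduce, the mixed effects modeling and CVaR optimization of Stages 2 and 3.

```python
from itertools import product
import statistics

def response(control, noise):
    """Hypothetical response with control-by-noise terms, used only for illustration."""
    x1, x2 = control
    z1, z2 = noise
    return (20.0 + 3.0 * x1 + 1.0 * x2 + 2.0 * z1 + 1.0 * z2
            - 2.0 * x1 * z1 + 1.0 * x2 * z2)

control_array = list(product((-1, 1), repeat=2))   # control factor settings
noise_array = list(product((-1, 1), repeat=2))     # noise factor settings

summary = []
for control in control_array:
    ys = [response(control, noise) for noise in noise_array]
    # Variance across the noise array measures sensitivity to noise at this setting.
    summary.append((statistics.pvariance(ys), statistics.mean(ys), control))

most_robust = min(summary)   # the setting with the smallest variance across noise runs
for variance, mean, control in sorted(summary):
    print(f"control={control}  mean={mean:.1f}  variance across noise={variance:.1f}")
```

In this assumed response the setting `(1, -1)` cancels both noise effects through the control-by-noise terms, so it shows zero variance across the noise array. With real data the per-setting variances would be noisy estimates, which is why the staged approach fits a model before optimizing.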

Case Study: PCR Protocol Optimization

Experimental Implementation

A demonstrated application of RPD optimized a polymerase chain reaction (PCR) protocol for cost-effectiveness and robustness [36]. The implementation included:

Table: Research Reagent Solutions for RPD Experimental Implementation

| Reagent/Resource | Function in RPD Context | Experimental Role |
|---|---|---|
| Hadamard Matrices | Design construction | Orthogonal array basis for factor combinations [35] |
| Mixed Effects Models | Variance component analysis | Separating control, noise, and random error effects [36] |
| Conditional Value-at-Risk (CVaR) | Risk-averse optimization | Protecting against worst-case performance scenarios [36] |
| Fractional Factorial Designs | Efficient screening | Identifying significant factors with minimal runs [35] [36] |
| Center-Face Composite Designs | Curvature detection | Modeling nonlinear response surfaces [36] |

Control factors included reagent concentrations, cycling conditions, and enzyme sources, while noise factors included template quality, technician experience, and equipment calibration [36]. The experimental design systematically varied these factors according to a fractional factorial structure.