Genomic vs. Transcriptomic Prediction Models: A Comprehensive Performance Comparison for Biomedical Research

Abstract

This article provides a comprehensive performance comparison of genomic and transcriptomic prediction models, tailored for researchers, scientists, and drug development professionals. It explores the foundational principles of both genomic selection and transcriptomic data, detailing how they capture different layers of biological information. The content covers a wide array of methodological approaches, from traditional BLUP models to advanced multi-omics integration and machine learning techniques. It addresses key challenges in model implementation, including data redundancy and technical complexity, while offering optimization strategies. Through rigorous validation and comparative analysis across diverse applications—from agriculture to drug response prediction and personalized medicine—this article synthesizes evidence on the complementary strengths of each approach and provides actionable insights for selecting and refining predictive models in research and development.

Core Concepts: Understanding Genomic and Transcriptomic Data for Predictive Modeling

Genomic Selection (GS) has revolutionized animal and plant breeding by enabling the prediction of an individual's genetic merit based on genome-wide molecular markers. First proposed by Meuwissen et al. in 2001, GS bypasses the need for direct phenotypic selection, allowing for earlier and more efficient selection decisions that shorten breeding cycles and enhance genetic gain [1] [2]. This methodology represents a fundamental shift from phenotype-based to genotype-driven decision-making in breeding programs. The core principle involves developing a prediction model using genotypic and phenotypic data from a training population, which is then applied to estimate Genomic Estimated Breeding Values (GEBVs) for individuals in a breeding population based solely on their genomic profiles [2].
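
The train-then-predict principle described above can be illustrated with a minimal sketch. It assumes a ridge-regression (SNP-BLUP style) estimate of marker effects, simulated genotypes, and a hand-picked shrinkage parameter `lam`; the function names, data sizes, and effect scales are all hypothetical and are not taken from the cited studies.

```python
import numpy as np

def train_marker_effects(Z_train, y_train, lam):
    """Estimate marker effects by ridge regression (SNP-BLUP style)."""
    mu = y_train.mean()
    p = Z_train.shape[1]
    # Solve (Z'Z + lam * I) u = Z'(y - mu) for the marker effects u.
    lhs = Z_train.T @ Z_train + lam * np.eye(p)
    rhs = Z_train.T @ (y_train - mu)
    return mu, np.linalg.solve(lhs, rhs)

def predict_gebv(Z_new, mu, u):
    """Genomic estimated breeding values computed from genotypes alone."""
    return mu + Z_new @ u

# Simulated data (hypothetical sizes and effect scales).
rng = np.random.default_rng(0)
n_train, n_cand, p = 400, 50, 200
freqs = rng.uniform(0.1, 0.9, p)
geno = rng.binomial(2, freqs, size=(n_train + n_cand, p)).astype(float)
Z = geno - geno[:n_train].mean(axis=0)       # centre on training allele means
true_u = rng.normal(0.0, 0.1, p)
y = 10.0 + Z @ true_u + rng.normal(0.0, 1.0, n_train + n_cand)

# Training population: genotypes and phenotypes.
mu, u_hat = train_marker_effects(Z[:n_train], y[:n_train], lam=50.0)
# Breeding candidates: genotypes only, no phenotypes used.
gebv = predict_gebv(Z[n_train:], mu, u_hat)
accuracy = np.corrcoef(gebv, 10.0 + Z[n_train:] @ true_u)[0, 1]
print(f"correlation with simulated true merit: {accuracy:.2f}")
```

In practice the shrinkage parameter would be derived from estimated variance components rather than fixed by hand, and accuracy would be assessed against observed phenotypes because true merit is unknown.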

In recent years, attention has turned toward other omics layers seen as promising for improving prediction accuracy. Transcriptomic data, which provides insights into gene expression patterns shaped by both genetic and environmental factors, offers a more comprehensive understanding of phenotype expression [3]. This article provides a comprehensive comparison between traditional genomic prediction and emerging transcriptomic prediction approaches, examining their relative performances across species, traits, and experimental conditions to guide researchers in selecting appropriate strategies for genetic improvement.

Performance Comparison: Genomic vs. Transcriptomic Prediction

Direct comparisons between genomic and transcriptomic prediction models across multiple studies reveal a complex performance landscape influenced by species, trait characteristics, and environmental conditions. The table below summarizes key findings from recent research:

Table 1: Comparison of Genomic and Transcriptomic Prediction Accuracies Across Studies

| Species | Traits Assessed | Genomic Prediction Accuracy | Transcriptomic Prediction Accuracy | Combined Model Accuracy | Reference |
|---|---|---|---|---|---|
| Japanese Quail | Efficiency-related traits (P utilization, body weight) | Moderate | Higher than genomic | Highest | [3] |
| Wheat | Flowering time, height | Moderate | Superior in controlled environments | Best performing | [4] |
| Barley | Agricultural traits | 0.73-0.78 (50K SNP array) | Comparable to genomic | 0.73-0.78 (consensus SNP) | [5] |
| Dairy Cattle | Lactation traits | Baseline | Functional variants from RNA-seq improved accuracy | Varied by trait | [6] |
| Maize & Rice | Complex agronomic traits | Variable | Complementary to genomic | Consistently improved | [1] |

In several of these studies, transcriptomic data explained a larger portion of phenotypic variance than host genetics. In Japanese quail, transcript abundances from intestinal tissue explained more phenotypic variance of efficiency-related traits than genetic markers [3]. Similarly, in wheat grown under controlled environments, transcript abundance outperformed genomic data when each was considered independently for predicting flowering time and height [4].

However, the superior predictive ability of transcriptomic data is context-dependent. In field conditions with greater environmental variability, the relative advantage of transcriptomic data diminishes, and models combining genomic and environmental data often provide comparable gains at lower cost [4]. For some traits, particularly those with well-characterized genetic architecture, genomic data may remain superior, as seen with yield traits in maize, where genomic data outperformed the transcriptomic and metabolomic layers [4].

Methodological Approaches and Experimental Protocols

Statistical Models for Prediction

Various statistical approaches have been employed for genomic and transcriptomic prediction:

- GBLUP (Genomic Best Linear Unbiased Prediction): Uses a genomic relationship matrix derived from SNP markers to capture additive genetic effects [3] [4]

- TBLUP (Transcriptomic BLUP): Applies the BLUP framework to transcript abundance data to predict phenotypes [3]

- GTBLUP: Incorporates both genomic and transcriptomic data as independent random effects [3]

- GTCBLUP/GTCBLUPi: Advanced models that account for redundant information between genomic and transcriptomic data by conditioning transcriptomic effects on genetics [3]

- RKHS (Reproducing Kernel Hilbert Spaces): A semi-parametric method using Gaussian kernel functions to capture non-linear relationships [7] [4]

- Bayesian Models (e.g., BayesA, BayesB, BayesCπ): Allow for different prior distributions of marker effects [7] [6]

- Machine Learning Approaches: Including random forests, gradient boosting, and deep learning architectures [1] [7]
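
Two of the building blocks named in the list above, the genomic relationship matrix used by GBLUP and the Gaussian kernel used by RKHS, can be sketched as follows. The sketch assumes the widely used VanRaden-style scaling for the relationship matrix and a mean-scaled squared Euclidean distance for the kernel; the cited studies may use different scalings, and the function names and the bandwidth `h` are illustrative.

```python
import numpy as np

def vanraden_grm(M):
    """Genomic relationship matrix from an n x p matrix of allele counts (0/1/2)."""
    p_freq = M.mean(axis=0) / 2.0              # observed allele frequencies
    W = M - 2.0 * p_freq                       # centre each marker column
    return W @ W.T / (2.0 * np.sum(p_freq * (1.0 - p_freq)))

def gaussian_kernel(X, h=1.0):
    """Gaussian kernel exp(-h * d_ij^2) on scaled squared Euclidean distances."""
    sq = np.sum(X ** 2, axis=1)
    d2 = np.maximum(sq[:, None] + sq[None, :] - 2.0 * X @ X.T, 0.0)
    d2 = d2 / d2.mean()                        # make h comparable across data sets
    return np.exp(-h * d2)

rng = np.random.default_rng(0)
M = rng.binomial(2, 0.3, size=(20, 100)).astype(float)   # simulated genotypes
G = vanraden_grm(M)        # linear (additive) similarity for GBLUP
K = gaussian_kernel(M)     # non-linear similarity for RKHS
```

Applying the same two functions to a matrix of normalized transcript abundances instead of allele counts gives the kind of relationship matrix a TBLUP-style model would use, although the centring in `vanraden_grm` is specific to allele counts and would normally be replaced by column standardization.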

Experimental Workflows

Standardized experimental protocols have emerged for comparative studies:

Table 2: Key Methodological Components in Prediction Studies

| Component | Genomic Prediction Approach | Transcriptomic Prediction Approach |
|---|---|---|
| Data Generation | SNP arrays, GBS, WGS | RNA-Seq, microarrays, Fluidigm BioMark |
| Data Processing | Quality control, imputation, MAF filtering | Normalization, quality control, transformation |
| Model Training | Training population with genotypes and phenotypes | Training population with transcriptomes and phenotypes |
| Validation | Cross-validation, independent validation sets | Cross-validation, independent validation sets |
| Assessment | Correlation between predicted and observed phenotypes | Correlation between predicted and observed phenotypes |
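
The validation and assessment rows of the table can be sketched as a k-fold cross-validation loop that reports the correlation between predicted and observed phenotypes. The sketch assumes a kernel-ridge predictor that works with any relationship matrix (genomic or transcriptomic), a fixed shrinkage value `lam`, and simulated data; none of these choices comes from the cited studies.

```python
import numpy as np

def kernel_blup_predict(K, y, train, test, lam):
    """Predict test phenotypes from a relationship matrix K (kernel ridge)."""
    mu = y[train].mean()
    K_tt = K[np.ix_(train, train)]
    alpha = np.linalg.solve(K_tt + lam * np.eye(len(train)), y[train] - mu)
    return mu + K[np.ix_(test, train)] @ alpha

def cv_predictive_ability(K, y, k=5, lam=1.0, seed=0):
    """Mean and per-fold Pearson correlation of predicted vs observed values."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), k)
    cors = []
    for f in range(k):
        test = folds[f]
        train = np.concatenate([folds[j] for j in range(k) if j != f])
        pred = kernel_blup_predict(K, y, train, test, lam)
        cors.append(float(np.corrcoef(pred, y[test])[0, 1]))
    return float(np.mean(cors)), cors

# Simulated features standing in for markers or transcript abundances.
rng = np.random.default_rng(1)
n, p = 150, 50
X = rng.normal(size=(n, p))
K = X @ X.T / p
y = X @ rng.normal(size=p) + rng.normal(size=n)

mean_r, fold_r = cv_predictive_ability(K, y, k=5, lam=1.0)
print(f"mean predictive ability over 5 folds: {mean_r:.2f}")
```

Running the same function with a genomic and then a transcriptomic relationship matrix, using identical fold assignments (same `seed`), gives a like-for-like comparison of the two data types.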

The typical workflow begins with careful experimental design. For transcriptomic studies, this includes standardized cultivation conditions, precise timing of tissue collection, and high-throughput RNA extraction methods [5]. In barley research, researchers cultivated all recombinant inbred lines under controlled conditions in vertically stacked square Petri dishes for seven days in reach-in growth chambers with fixed temperature, humidity, and light intensity [5]. RNA extraction typically uses TRIzol reagent with adaptations for 96-well formats to enable high-throughput processing [5].

Library preparation for RNA-Seq has been miniaturized to reduce costs, with studies successfully reducing reagent volumes to 25% of original amounts without compromising data quality [5]. For genomic studies, DNA extraction followed by genotyping using platforms such as Illumina SNP chips or genotyping-by-sequencing represents the standard approach.

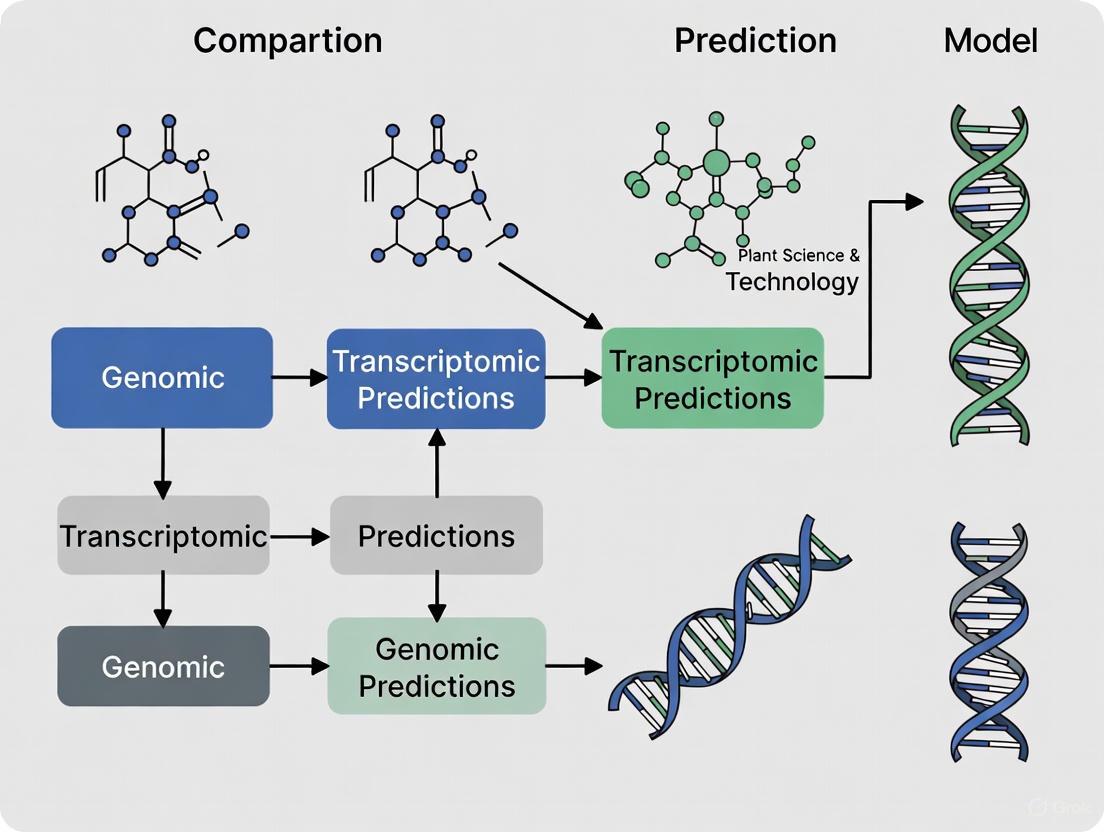

Figure 1: Experimental workflow for genomic and transcriptomic prediction studies

The Scientist's Toolkit: Essential Research Reagents and Platforms

Successful implementation of genomic and transcriptomic prediction requires specific research reagents and platforms:

Table 3: Essential Research Reagents and Platforms for Prediction Studies

| Category | Specific Tools/Reagents | Function | Example Applications |
|---|---|---|---|
| Genotyping Platforms | Illumina SNP chips, Genotyping-by-Sequencing | Genome-wide marker identification | Genetic relationship matrix construction [5] [2] |
| Transcriptomics Technologies | RNA-Seq, Fluidigm BioMark HD system | Gene expression quantification | Transcript abundance measurement [3] [5] |
| Library Preparation Kits | VAHTS Universal V6 RNA-seq Library Prep Kit | cDNA library construction | Preparation for sequencing [5] |
| RNA Extraction Reagents | TRIzol reagent | High-quality RNA isolation | Tissue RNA extraction [5] |
| Sequencing Platforms | Illumina systems | High-throughput sequencing | Genotype and expression data generation [5] |
| Analysis Software | ASReml R, JWAS, EasyGeSe | Statistical modeling and prediction | Implementation of BLUP and Bayesian models [3] [7] [6] |

The Fluidigm BioMark HD system has been particularly valuable for high-throughput transcriptomic studies, enabling efficient quantification of candidate transcripts across hundreds of individuals [3]. For RNA extraction, TRIzol reagent with adaptations for 96-well formats allows processing of large sample sizes essential for robust prediction modeling [5].

Recent advances in benchmarking tools such as EasyGeSe provide standardized datasets and evaluation procedures for comparing prediction methods across diverse species [7]. This resource encompasses data from multiple species including barley, maize, rice, and wheat, enabling more reproducible comparisons of genomic prediction methods [7].

Integration Strategies and Multi-Omics Approaches

The integration of genomic and transcriptomic data often outperforms models using either data type alone. Several integration strategies have been developed:

- Early Fusion (Data Concatenation): Combining genomic and transcriptomic data before model construction [1]

- Model-Based Integration: Advanced frameworks that capture non-additive, nonlinear, and hierarchical interactions across omics layers [1]

- Conditioned Models (GTCBLUPi): Models that explicitly account for redundancy between genomic and transcriptomic information [3]

Research comparing 24 integration strategies combining genomics, transcriptomics, and metabolomics found that model-based fusion methods consistently improved predictive accuracy over genomic-only models, particularly for complex traits [1]. In contrast, several commonly used concatenation approaches did not yield consistent benefits and sometimes underperformed [1].
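
To make the early-fusion (concatenation) strategy concrete, the following sketch stacks a genomic and a transcriptomic feature matrix column-wise before any model is fitted. It assumes column standardization and a per-block scaling by the square root of the number of features so that the larger block does not dominate; that scaling is an illustrative choice, not a procedure reported in [1], and all names and sizes are hypothetical.

```python
import numpy as np

def standardize(A):
    """Centre and scale columns; constant columns are left at zero."""
    sd = A.std(axis=0)
    sd[sd == 0] = 1.0
    return (A - A.mean(axis=0)) / sd

def early_fusion(genomic, transcriptomic):
    """Concatenate omics blocks after standardizing and block-scaling each one."""
    blocks = [standardize(B) / np.sqrt(B.shape[1]) for B in (genomic, transcriptomic)]
    return np.hstack(blocks)

rng = np.random.default_rng(2)
n, p_snp, p_rna = 60, 300, 40
snps = rng.binomial(2, 0.4, size=(n, p_snp)).astype(float)   # simulated genotypes
expr = rng.normal(size=(n, p_rna))                           # simulated abundances

fused = early_fusion(snps, expr)

# The linear kernel of the fused matrix is the sum of the per-block kernels.
K_g = standardize(snps) @ standardize(snps).T / p_snp
K_t = standardize(expr) @ standardize(expr).T / p_rna
print(np.allclose(fused @ fused.T, K_g + K_t))
```

The final check highlights one limitation of this form of fusion: a linear model on the concatenated matrix weights both layers through a single shared variance, whereas model-based approaches can assign each layer its own variance component.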

The GTCBLUPi model, which addresses redundant information between genomic and transcriptomic data, has proven to be a suitable framework for integration [3]. This approach conditions transcriptomic effects on genetic effects, ensuring that the transcriptomic components captured are purely non-genetic, thereby avoiding collinearity problems [3].

Figure 2: Multi-omics integration strategies for enhanced prediction accuracy

Genomic selection using genome-wide markers has established itself as a powerful tool for predicting complex traits in plant and animal breeding. The integration of transcriptomic data provides complementary information that often enhances prediction accuracy, particularly for traits influenced by gene regulation and environmental responses. While transcriptomic data alone frequently explains more phenotypic variance than genomic data, the most effective strategies combine both data types using advanced integration methods that account for their redundant information.

The choice between genomic and transcriptomic approaches depends on multiple factors including trait architecture, environmental influence, resource availability, and breeding objectives. For commercial breeding programs, the higher costs and complexity of generating transcriptomic data may currently limit its feasibility, though combining genomic data with well-characterized environmental covariates provides a practical alternative with similar gains. As sequencing costs continue to decline and multi-omics integration methods improve, the combined use of genomic and transcriptomic information holds significant promise for accelerating genetic improvement across agricultural species.

The transition from static genetic blueprints to dynamic, observable traits represents one of the most significant challenges in modern biology. While genomic data provides a comprehensive catalog of inherited variants, it offers limited insight into how these variants dynamically orchestrate molecular processes that ultimately manifest as phenotypes. Transcriptomic data, which captures the full complement of RNA molecules in a cell, serves as a crucial functional intermediary that bridges this fundamental gap between genotype and phenotype [8]. This comparative analysis examines the relative performance of genomic and transcriptomic prediction models across multiple biological contexts, demonstrating how transcriptomics provides a more direct, functional readout of cellular states that enhances phenotypic prediction accuracy.

The limitations of single-omics approaches have become increasingly apparent in complex trait prediction. Genomics alone cannot quantify the spatiotemporal specificity of gene expression or its regulatory mechanisms [8]. Furthermore, genetic variants often exert their effects through subtle changes in gene regulation rather than through direct protein-coding changes. Transcriptomics addresses these limitations by capturing the integrated effects of genetic variation, environmental influences, and regulatory mechanisms, providing a more comprehensive understanding of the molecular networks underlying phenotypic diversity [8] [9].

Theoretical Foundations: Molecular Hierarchies from Genome to Phenome

The Central Dogma and Transcriptomic Positioning

The flow of genetic information follows a fundamental pathway from DNA to RNA to protein, with transcriptomics occupying the critical intermediate position in this biological cascade. While DNA represents the static code, the transcriptome reflects dynamically regulated processes including transcription, RNA processing, and degradation that collectively determine functional outputs [8]. This positioning enables transcriptomic data to capture both genetic influences and environmental perturbations that collectively shape phenotypic outcomes.

Transcriptomic profiling moves beyond mere sequence information to reveal how genes are quantitatively regulated across different conditions, tissues, and timepoints. This regulatory dimension provides critical functional context that static DNA sequences lack. As noted in epilepsy research, "genomics identify candidate disease-causing genes for epilepsy, but it cannot quantify their expression levels" [8]. The integration of transcriptomics elucidates the spatiotemporal specificity of gene expression and its regulatory mechanisms, providing a more complete picture of molecular networks underlying different epilepsy phenotypes.

Transcriptional Regulation of Complex Traits

The relationship between transcript abundance and phenotypic outcomes is governed by complex regulatory networks involving transcription factors, non-coding RNAs, and epigenetic modifications. Studies in mango fruit development demonstrated how transcription factors MibZIP66 and MibHLH45 activate MiPSY1 transcription by directly binding to the CACGTG motif of the MiPSY1 promoter, thereby regulating β-carotene biosynthesis and affecting fruit flesh color [10]. Such mechanistic insights are only possible through integrated analysis that includes transcriptomic data.

The transcriptome's responsiveness to both internal genetic programs and external environmental cues makes it particularly valuable for predicting dynamic traits. In quail research, transcript abundances from the ileum explained a larger portion of the phenotypic variance for efficiency-related traits than host genetics alone [3]. This demonstrates how transcriptomics captures the functional integration of multiple influences that collectively determine phenotypic outcomes.

Experimental Comparison: Genomic vs. Transcriptomic Prediction Models

Direct Performance Comparison in Avian Models

A comprehensive study in Japanese quail (Coturnix japonica) provides compelling direct evidence comparing genomic and transcriptomic prediction models for efficiency-related traits [3]. Researchers utilized various statistical methods including GBLUP (genomic best linear unbiased prediction), TBLUP (transcriptomic BLUP), and integrated models to predict phenotypes including phosphorus utilization (PU), body weight gain (BWG), feed intake (FI), feed conversion ratio (FCR), tibia ash amount (TA), and calcium utilization (CaU).

Table 1: Prediction Accuracy Comparison of Genomic and Transcriptomic Models for Quail Efficiency Traits

| Trait | GBLUP (Genomic Only) | TBLUP (Transcriptomic Only) | GTBLUP (Combined) |
|---|---|---|---|
| Phosphorus Utilization (PU) | Lower accuracy | Higher accuracy | Highest accuracy |
| Body Weight Gain (BWG) | Lower accuracy | Higher accuracy | Highest accuracy |
| Feed Intake (FI) | Lower accuracy | Higher accuracy | Highest accuracy |
| Feed Conversion Ratio (FCR) | Lower accuracy | Higher accuracy | Highest accuracy |
| Tibia Ash (TA) | Lower accuracy | Higher accuracy | Highest accuracy |
| Calcium Utilization (CaU) | Lower accuracy | Higher accuracy | Highest accuracy |

The study demonstrated that "transcript abundances from the ileum explain a larger portion of the phenotypic variance of the traits than host genetics" across all measured efficiency traits [3]. Importantly, models incorporating both genetic and transcriptomic information (GTBLUP) consistently outperformed models using either data type alone, confirming that transcriptomic information complements genetic data effectively rather than simply replicating it.

Methodological Framework for Model Comparison

The experimental protocol for direct model comparison followed rigorous statistical standards [3]:

Population Design: 480 F2 cross Japanese quail selected from an initial total of 920 animals, raised under controlled conditions with standardized diet during the strong growing phase between days 10-15 of life.

Phenotyping: Comprehensive efficiency measurements including PU based on total P intake and P excretion, BWG between days 10-15, FI during the 5-day period, FCR as FI divided by BWG, TA in mg, and CaU based on total Ca intake and Ca excretion.
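
As a worked example of the derived phenotypes, the sketch below computes BWG, FCR (FI divided by BWG, as stated above), and a utilization percentage for one animal. The text only says that PU is based on total P intake and P excretion, so the formula used here (retained P as a percentage of intake) is an assumption, and all numeric values and field names are invented for illustration.

```python
from dataclasses import dataclass

@dataclass
class BirdRecord:
    """One animal's records for the 5-day test period (hypothetical fields)."""
    weight_day10_g: float
    weight_day15_g: float
    feed_intake_g: float
    p_intake_mg: float
    p_excretion_mg: float

    @property
    def bwg_g(self) -> float:
        # Body weight gain between day 10 and day 15.
        return self.weight_day15_g - self.weight_day10_g

    @property
    def fcr(self) -> float:
        # Feed conversion ratio: feed intake divided by body weight gain.
        return self.feed_intake_g / self.bwg_g

    @property
    def p_utilization_pct(self) -> float:
        # Assumed definition: retained P as a percentage of P intake.
        return 100.0 * (self.p_intake_mg - self.p_excretion_mg) / self.p_intake_mg

bird = BirdRecord(weight_day10_g=50.0, weight_day15_g=90.0,
                  feed_intake_g=80.0, p_intake_mg=400.0, p_excretion_mg=120.0)
print(bird.bwg_g, bird.fcr, bird.p_utilization_pct)
```

Calcium utilization could be computed the same way from Ca intake and excretion under the same assumed definition.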

Genotyping: 4k SNPs after filtering using a 6k Illumina iSelect chip with established genetic linkage map.

Transcriptomic Profiling: Ileal miRNA and mRNA sequencing followed by candidate assessment with 96.96 dynamic arrays on a Fluidigm BioMark HD system.

Statistical Analysis: Box-Cox transformation of phenotypic data with trait-specific lambda parameters followed by BLUP model comparisons including:

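- GBLUP: $\mathbf{y} = \mathbf{X}\mathbf{b} + \mathbf{Z}_g\mathbf{g} + \mathbf{e}$

- TBLUP: $\mathbf{y} = \mathbf{X}\mathbf{b} + \mathbf{Z}_t\mathbf{t} + \mathbf{e}$

- GTBLUP: $\mathbf{y} = \mathbf{X}\mathbf{b} + \mathbf{Z}_g\mathbf{g} + \mathbf{Z}_t\mathbf{t} + \mathbf{e}$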

- GTCBLUP: Integrated model addressing redundancy between genomic and transcriptomic information

The mathematical framework for the integrated GTCBLUP model was specifically derived to handle the overlapping nature of genomic and transcriptomic data layers, preventing collinearity problems that would arise from treating them as independent random effects [3].
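
The Box-Cox step and the GTBLUP model listed above can be sketched as follows. The sketch assumes one record per animal (so the design matrices for the genomic and transcriptomic effects are identity matrices), treats the variance components as known, and uses simulated relationship matrices. In a real analysis the variance components would be estimated, for example by REML in software such as ASReml; the conditioned GTCBLUP model of [3] is not implemented here.

```python
import numpy as np

def box_cox(y, lam):
    """Box-Cox transform: log(y) if lam == 0, else (y**lam - 1) / lam."""
    y = np.asarray(y, dtype=float)
    if np.any(y <= 0):
        raise ValueError("Box-Cox requires strictly positive values")
    return np.log(y) if lam == 0 else (y ** lam - 1.0) / lam

def gtblup(y, X, G, T, var_g, var_t, var_e):
    """BLUP of genomic (g) and transcriptomic (t) effects as independent
    random effects, for known variance components and Z_g = Z_t = I."""
    n = len(y)
    V = var_g * G + var_t * T + var_e * np.eye(n)
    V_inv = np.linalg.inv(V)
    b = np.linalg.solve(X.T @ V_inv @ X, X.T @ V_inv @ y)   # GLS fixed effects
    r = V_inv @ (y - X @ b)
    g_hat = var_g * (G @ r)
    t_hat = var_t * (T @ r)
    e_hat = var_e * r
    return b, g_hat, t_hat, e_hat

rng = np.random.default_rng(3)
n = 80
W = rng.normal(size=(n, 200)); G = W @ W.T / 200    # stand-in genomic matrix
E = rng.normal(size=(n, 30));  T = E @ E.T / 30     # stand-in transcriptomic matrix
X = np.ones((n, 1))                                 # intercept only
y = box_cox(rng.gamma(shape=5.0, scale=2.0, size=n), lam=0.5)

b, g_hat, t_hat, e_hat = gtblup(y, X, G, T, var_g=0.3, var_t=0.5, var_e=0.2)
# The fitted components add back up to the transformed phenotype.
print(np.allclose(y, X @ b + g_hat + t_hat + e_hat))
```

Setting `var_t` to zero reduces this to GBLUP, and setting `var_g` to zero reduces it to TBLUP, which mirrors the nesting of the three model equations.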

Diagram 1: Transcriptomic data bridges DNA and phenotype, capturing dynamic functional information that static genetic data misses. The bold pathway highlights transcriptomics' direct predictive power for phenotypic outcomes.

Case Studies Across Biological Systems

Neurological Disorders: Epilepsy Research Applications

In epilepsy research, multi-omics approaches have revealed the complex molecular dysregulation networks underlying different epilepsy phenotypes [8]. The transition from traditional hypothesis-driven research to data-driven architectures has been catalyzed by multi-omics methods, with transcriptomics playing a crucial role in understanding the functional consequences of genetic variants associated with epilepsy susceptibility.

Despite the availability of over 20 anti-seizure medications, about one-third of epilepsy patients develop drug-resistant epilepsy [8]. Transcriptomic profiling has helped identify molecular subtypes that may explain this treatment resistance, moving beyond the limitations of purely genetic classification. The integrated analysis of transcriptomic data with genomic findings has provided insights into the spatiotemporal specificity of gene expression and its regulatory mechanisms in neurological tissues.

Agricultural Genomics: Crop Improvement Applications

In mango fruit research, chromosome-scale genome assembly combined with comparative transcriptomic analysis identified transcriptional regulators of β-carotene biosynthesis [10]. Researchers compared β-carotene content in two different cultivars ("Irwin" and "Baixiangya") across growth periods, finding that variation in β-carotene content mainly affected fruit flesh color.

Transcriptome analysis identified MiPSY1 as a key gene regulating β-carotene biosynthesis, with subsequent functional validation confirming that transcription factors MibZIP66 and MibHLH45 activate MiPSY1 transcription by directly binding to the CACGTG motif of the MiPSY1 promoter [10]. This mechanistic understanding of fruit quality traits demonstrates how transcriptomics bridges the gap between genomic sequences and commercially relevant phenotypic traits.

Parasitology: Helminth Genome Biology

In Haemonchus contortus research, genomic and transcriptomic variation analysis defined the chromosome-scale assembly of this model gastrointestinal worm [11]. The integration of transcriptomic data allowed researchers to define coordinated transcriptional regulation throughout the parasite's life cycle and refine understanding of cis- and trans-splicing.

The remarkable pattern of chromosome content conservation with Caenorhabditis elegans, despite almost no conservation of gene order, highlights the importance of transcriptomic data for understanding functional genomics in parasitic species [11]. This comparative approach provides insights into evolutionarily conserved operons and regulatory mechanisms that would be inaccessible through genomic analysis alone.

Technical Considerations in Transcriptomic Experimentation

Methodological Best Practices and Pitfalls

Transcriptomic experimentation requires careful consideration of multiple technical factors to ensure data quality and biological relevance [12]:

Experimental Design: Statistical countermeasures must be implemented throughout experimentation, including proper randomization, sufficient replicates, and appropriate statistical methods such as false discovery rate correction. Inadequate implementation due to budget constraints or lack of statistical expertise frequently undermines experimental outcomes.

Sample Pooling Decisions: While pooling samples intuitively seems to average out differences between individuals, it actually eliminates the variation needed for statistical power and inference. Pooling substantially different cells creates artificial in-between cell types that can hamper biological interpretation.

Perturbation Severity: Severe perturbations often trigger generic stress responses that obscure specific reactions to the perturbation of interest. Range-finding experiments help determine optimal experimental settings that elicit specific responses without overwhelming generic stress pathways.

Technical vs Biological Replication: Biological variation heavily outweighs technological variation in transcriptomics, making biological replicates generally more valuable than technical replicates despite lingering preferences from early microarray technology.
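
The false discovery rate correction mentioned under Experimental Design can be illustrated with the Benjamini-Hochberg procedure, one common FDR method. The source does not say which FDR method is intended, so this choice is an assumption, and the p-values are invented.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * m / rank)
        adjusted[i] = running_min
    return adjusted

raw = [0.01, 0.04, 0.03, 0.005]          # hypothetical per-gene p-values
adj = benjamini_hochberg(raw)
significant = [q < 0.05 for q in adj]
print(adj, significant)
```

With thousands of genes tested at once, reporting adjusted rather than raw p-values keeps the expected share of false positives among the reported genes under the chosen threshold.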

Analytical Frameworks and Visualization Approaches

Effective visualization of transcriptomic data is essential for exploring large datasets and uncovering hidden patterns [13]. Different visualization approaches serve distinct analytical purposes:

Table 2: Transcriptomic Data Visualization Methods and Applications

| Visualization Method | Data Type | Primary Application | Strengths |
|---|---|---|---|
| Volcano Plot | Differential expression | Significance vs magnitude of change | Identifies statistically significant large-effect changes |
| Heatmap | Gene expression matrix | Multi-sample expression patterns | Visualizes expression patterns across many samples/genes |
| Violin Plot | Single-cell expression | Distribution of expression values | Shows full distribution rather than summary statistics |
| Network Visualization | Gene interactions | Regulatory relationships | Maps complex interaction networks between genes |
| Pathway Diagrams | Enrichment results | Biological process visualization | Contextualizes results within known biological pathways |
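
The volcano plot row can be made concrete by computing the plotted coordinates. The sketch below takes a log2 fold change and an adjusted p-value per gene, returns the x and y values (log2 fold change against negative log10 of the adjusted p-value), and labels each gene. The cut-offs of 1 and 0.05 are common conventions used here as assumptions, and the gene names and values are invented.

```python
import math

def volcano_points(genes, lfc_cut=1.0, padj_cut=0.05):
    """genes: iterable of (name, log2_fold_change, adjusted_p).
    Returns (name, x, y, label) with y = -log10(adjusted_p)."""
    points = []
    for name, log2fc, padj in genes:
        y = -math.log10(padj)                 # requires padj > 0
        if padj < padj_cut and log2fc >= lfc_cut:
            label = "up"
        elif padj < padj_cut and log2fc <= -lfc_cut:
            label = "down"
        else:
            label = "ns"                      # not significant or small effect
        points.append((name, log2fc, y, label))
    return points

demo = [("geneA", 2.3, 0.001), ("geneB", -1.8, 0.01),
        ("geneC", 0.4, 0.0005), ("geneD", 3.0, 0.2)]
for name, x, y, label in volcano_points(demo):
    print(f"{name}: x={x:+.1f}, y={y:.2f}, {label}")
```

The third and fourth genes show why both axes matter: one is highly significant but changes little, the other changes a lot but is not significant.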

Space-filling layouts such as Hilbert curves preserve the sequential nature of genomic features while allowing visual integration of multiple datasets [13]. Circular layouts like Circos plots efficiently display sequences and interactions in a space-saving manner, enabling simultaneous visualization of multiple data types including mutations, copy number changes, and translocations.
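
The Hilbert-curve layout can be sketched with the standard index-to-coordinate conversion. The example assumes that a genomic region has been divided into 4^order equal bins, each carrying one value (for instance mean expression), and places consecutive bins on neighbouring grid cells. The function names and the toy values are illustrative.

```python
def hilbert_d2xy(order, d):
    """Map position d along a Hilbert curve to (x, y) on a 2**order grid."""
    n = 1 << order
    x = y = 0
    t = d
    s = 1
    while s < n:
        rx = 1 & (t // 2)
        ry = 1 & (t ^ rx)
        if ry == 0:                     # rotate the quadrant if needed
            if rx == 1:
                x, y = s - 1 - x, s - 1 - y
            x, y = y, x
        x += s * rx
        y += s * ry
        t //= 4
        s *= 2
    return x, y

def layout_bins(values, order):
    """Place a 1-D sequence of per-bin values on a 2-D Hilbert grid."""
    n = 1 << order
    if len(values) != n * n:
        raise ValueError("need exactly 4**order values")
    grid = [[None] * n for _ in range(n)]
    for d, value in enumerate(values):
        x, y = hilbert_d2xy(order, d)
        grid[y][x] = value
    return grid

grid = layout_bins(list(range(16)), order=2)   # 16 consecutive genomic bins
for row in grid:
    print(row)
```

Because consecutive bins always land on adjacent cells, features that are close on the chromosome stay close in the image, which is the property that makes this layout useful for overlaying several tracks.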

Research Reagent Solutions for Transcriptomic Studies

Table 3: Essential Research Reagents for Transcriptomic Experiments

| Reagent/Category | Specific Examples | Function | Application Context |
|---|---|---|---|
| Sequencing Platforms | Illumina NovaSeq X, Oxford Nanopore | High-throughput sequencing | Whole transcriptome sequencing, targeted RNA-seq |
| Single-cell RNA-seq | 10X Genomics, Fluidigm | Cellular resolution transcriptomics | Tumor heterogeneity, developmental biology |
| Spatial Transcriptomics | 10X Genomics Visium | Tissue context preservation | Brain mapping, tumor microenvironment |
| qPCR Validation | Fluidigm BioMark HD | Targeted expression validation | Candidate gene verification, biomarker confirmation |
| Library Preparation | Illumina, Takara Bio | RNA library construction | Strand-specific RNA-seq, small RNA sequencing |

The quail study used 96.96 dynamic arrays on a Fluidigm BioMark HD system to assess miRNA and mRNA candidates [3]. For spatial transcriptomics, the 10X Genomics Visium platform has been commercialized and widely adopted for preserving spatial context in transcriptomic measurements [8].

Integrated Multi-Omics Prediction Frameworks

Statistical Models for Data Integration

The redundancy between different molecular data layers presents statistical challenges for integrated models. The GTCBLUP model addresses this by conditioning transcriptomic effects on genetic effects to remove shared variation [3]. This approach models genotype data and omics data conditioned on the genotypes simultaneously in a one-step approach, ensuring that the modeled omics effects are purely non-genetic.
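
The idea of conditioning transcriptomic information on genotypes can be illustrated with a deliberately simplified two-stage sketch: each transcript is first smoothed against the genomic relationship matrix, and only the residual is kept to build the transcriptomic relationship matrix. This is not the one-step derivation of [3]; the kernel-ridge smoother, the parameter `lam`, and the simulated data are assumptions made purely for illustration.

```python
import numpy as np

def condition_on_genotypes(E, G, lam=1.0):
    """Remove the part of each transcript's abundance that is predictable
    from the genomic relationship matrix G (kernel-ridge smoother)."""
    n = G.shape[0]
    E_c = E - E.mean(axis=0)
    H = G @ np.linalg.inv(G + lam * np.eye(n))    # genetic smoother matrix
    return E_c - H @ E_c

rng = np.random.default_rng(4)
n, p_snp, p_rna = 100, 300, 25
M = rng.binomial(2, 0.5, size=(n, p_snp)).astype(float)
W = M - M.mean(axis=0)
G = W @ W.T / p_snp                                # stand-in genomic matrix

# Simulated transcripts with a heritable part plus non-genetic noise.
E = W @ rng.normal(0.0, 0.1, size=(p_snp, p_rna)) + rng.normal(size=(n, p_rna))

E_resid = condition_on_genotypes(E, G, lam=1.0)
T_cond = E_resid @ E_resid.T / p_rna               # conditioned transcriptomic matrix
T_raw = (E - E.mean(axis=0)) @ (E - E.mean(axis=0)).T / p_rna
print(np.trace(T_cond) <= np.trace(T_raw))
```

A model that fits `G` together with `T_cond` instead of `T_raw` asks the transcriptomic term to explain only variation that the genotypes do not already account for, which is the intuition behind avoiding collinearity between the two layers.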

Alternative approaches include the two-step procedure proposed by Christensen et al. that first estimates the total effect of omics data on phenotypes and then explicitly models the genetic portion of these omics effects in a second step [3]. The optimal approach depends on the specific research question and the nature of the genetic control over transcriptomic features.

Functional Genomics in Psychiatric Research

Novel approaches in psychiatric research have begun incorporating functional molecular phenotypes that are closer to genetic variation and less penalized by multiple testing burdens [9]. Moving from genotype-disease to genotype–gene regulation frameworks, these approaches incorporate prior knowledge regarding biological processes involved in disease and aggregate estimates for the association of genotypes and phenotypes using multi-omics data modalities.

This shift from traditional polygenic risk scores to functionally informed risk assessment demonstrates how transcriptomic data provides biological context for genetic signals, helping generate biologically driven hypotheses that can ultimately serve as potential biomarkers of disease susceptibility [9].

Diagram 2: Multi-omics integration framework with transcriptomic data as a central component. Statistical models like GTCBLUP handle redundancy between data layers to enhance phenotypic prediction accuracy.

The comparative analysis of genomic and transcriptomic prediction models shows that transcriptomic data frequently outperforms genomic data for predicting complex phenotypes in the biological systems reviewed here. Transcriptomics serves as a functional intermediary that captures the dynamic integration of genetic predispositions, environmental influences, and regulatory mechanisms that collectively determine phenotypic outcomes.

While transcriptomic data alone explains a larger portion of phenotypic variance than genetic data alone, the most accurate predictions come from integrated models that leverage both data types [3]. The complementary nature of genomic and transcriptomic information reflects the biological reality that DNA sequence provides the template, while RNA expression reflects the functional implementation of that template in specific contexts.

Future directions in transcriptomic prediction will likely include greater incorporation of single-cell and spatial resolution data, longitudinal profiling to capture dynamic processes, and integration with emerging omics layers including proteomics and metabolomics [8] [14]. As analytical methods continue to evolve, transcriptomic data will remain a cornerstone of predictive biology, providing the critical functional link between genetic inheritance and observable traits across basic research, clinical applications, and agricultural improvement.

In the field of modern genetics, two powerful paradigms offer distinct yet complementary insights into the complex journey from genotype to phenotype. The static genetic blueprint, explored through genomics, provides a comprehensive map of an organism's entire DNA sequence, including its genes and regulatory regions. This blueprint is largely fixed throughout an organism's lifetime. In contrast, dynamic expression profiles, studied via transcriptomics, capture the ever-changing set of RNA transcripts present in a cell at a specific moment, reflecting real-time gene activity in response to developmental cues, environmental stimuli, and disease states [15].

The primary distinction lies in their fundamental nature: genomics offers a static inventory of potential, while transcriptomics reveals the dynamic execution of that potential [15]. For researchers and drug development professionals, understanding the comparative strengths of these approaches is crucial for selecting the appropriate methodology for specific applications, from predicting complex traits in breeding programs to unraveling disease mechanisms for therapeutic discovery. This guide objectively compares their performance, supported by experimental data and detailed methodologies.

Fundamental Characteristics and Comparative Strengths

The table below summarizes the core characteristics that differentiate genomics and transcriptomics.

Table 1: Fundamental Characteristics of Genetic Blueprints and Expression Profiles

| Feature | Static Genetic Blueprint (Genomics) | Dynamic Expression Profiles (Transcriptomics) |
|---|---|---|
| Definition | Study of the complete set of DNA (genome) in an organism [15] | Study of the complete set of RNA transcripts (transcriptome) in a cell at a given time [15] |
| Primary Focus | Genetic structure, sequence, variation, and coding potential [15] | Gene expression levels, activity, and regulation [15] |
| Temporal Nature | Largely static and constant throughout life [15] | Highly dynamic, changing rapidly in response to conditions [15] |
| Key Data Type | DNA sequence, single nucleotide polymorphisms (SNPs), structural variants | RNA sequence counts (mRNA, non-coding RNA), expression levels |
| Information Provided | Genetic blueprint and predisposition | Functional, real-time view of cellular state and response |

Performance Comparison in Predictive Modeling

Genomic Selection (GS), which uses genome-wide markers to predict breeding values, has revolutionized plant and animal breeding [16] [17]. However, its accuracy can be limited for complex traits influenced by regulation and environment. Integrating transcriptomic data aims to capture this missing information, bridging the gap between DNA and phenotype [16] [17].

Recent studies have directly compared the predictive power of genomic and transcriptomic data. The following table summarizes key findings from experiments in animal and plant models.

Table 2: Experimental Comparison of Prediction Accuracy for Complex Traits

| Study Model | Trait Category | Prediction Model | Key Finding on Predictive Power |

|---|---|---|---|

| Japanese Quail [16] | Efficiency traits (e.g., Phosphorus Utilization, Feed Conversion Ratio) | GBLUP (Genomic) | Genomic data explained a portion of the phenotypic variance. |

| Japanese Quail [16] | Efficiency traits (e.g., Phosphorus Utilization, Feed Conversion Ratio) | TBLUP (Transcriptomic) | Transcript abundances explained a larger portion of the phenotypic variance than host genetics. |

| Japanese Quail [16] | Efficiency traits (e.g., Phosphorus Utilization, Feed Conversion Ratio) | GTCBLUPi (Combined) | Models combining both data types outperformed those using only one type of information. |

| Barley RIL Population [5] | Agronomic traits (8 traits across up to 7 environments) | SNP Array (Genomic) | Served as a benchmark for prediction ability. |

| Barley RIL Population [5] | Agronomic traits (8 traits across up to 7 environments) | RNA-Seq SNP Data (Transcriptomic) | Achieved prediction ability comparable to or better than the traditional SNP array. |

| Barley RIL Population [5] | Agronomic traits (8 traits across up to 7 environments) | Consensus SNP (RNA-Seq + WGS) | Performed best, with significant improvements for 5 out of 8 traits and in inter-population predictions. |

Interpretation of Experimental Data

In these studies, transcriptome-derived information accounted for a substantial share of phenotypic variance, and in the quail study a larger share than static genomic markers alone [16] [5]. A plausible explanation is that gene expression is shaped by both genetic makeup and environmental factors, so it reflects more of the biological processes leading to the final phenotype [16].

Furthermore, the most accurate predictions are consistently achieved by models that integrate both genomic and transcriptomic data [16] [17]. This synergy occurs because the two data layers capture complementary information: the static blueprint provides the underlying genetic potential, while the dynamic profile reveals how that potential is being executed in a specific context.

Detailed Experimental Protocols

To ensure reproducibility and a deep understanding of the compared data, here are the detailed methodologies from the key studies cited.

Protocol: Genomic and Transcriptomic Prediction in Japanese Quail

This protocol is adapted from the study that developed the GTCBLUPi model [16].

- Experimental Population and Phenotyping: Create an F2 cross population (e.g., 480 Japanese quail). Raise animals under controlled conditions. Record efficiency-related phenotypes such as Phosphorus Utilization (PU), Body Weight Gain (BWG), and Feed Conversion Ratio (FCR) during a phase of rapid growth [16].

- Genotyping: Collect blood samples. Genotype all animals using a platform like a 6k Illumina iSelect chip. Filter SNPs for quality, resulting in a final set of several thousand markers [16].

- Transcriptome Sampling: On a predetermined day (e.g., day 15), sacrifice animals and collect tissue samples of interest (e.g., ileum mucosa). Immediately preserve samples for RNA extraction [16].

- RNA Sequencing and Candidate Selection: Extract total RNA. Perform miRNA and mRNA sequencing. Identify differentially expressed transcripts between animals with high and low phenotypes to create a set of candidate miRNAs and mRNAs [16].

- High-Throughput Transcript Quantification: Quantify the selected candidate transcripts across all individuals in the study population using a high-throughput system like a Fluidigm BioMark HD with dynamic arrays [16].

- Data Transformation: Apply a Box-Cox transformation to phenotypic data to normalize distributions and stabilize variance [16].

- Statistical Modeling and Prediction: Construct and compare multiple prediction models using mixed linear models in a software environment like ASReml-R:

- GBLUP: Uses the genomic relationship matrix (G) derived from SNP data.

- TBLUP: Uses a transcriptomic relationship matrix derived from miRNA or mRNA abundance data.

- GTCBLUPi: An integrated model that uses both genomic and transcriptomic matrices, explicitly accounting for the redundancy between them to isolate the non-genetic transcriptomic effects [16].

- Model Validation: Compare models based on the proportion of phenotypic variance explained and the accuracy of predicting phenotypes in validation sets [16].
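
The prediction step shared by GBLUP and TBLUP can be sketched in a few lines. The example below is a minimal illustration, not the cited study's ASReml-R analysis: it assumes an intercept-only fixed effect and a known variance ratio (`var_ratio`, a hypothetical input that a real analysis would estimate by REML), and the function name `blup_predict` and the simulated data are invented for illustration. Passing a genomic relationship matrix gives a GBLUP-style prediction; passing a transcriptomic one gives a TBLUP-style prediction.

```python
import numpy as np

def blup_predict(y, K, train_idx, test_idx, var_ratio=1.0):
    """Predict phenotypes of test individuals from a relationship matrix K.

    var_ratio is sigma2_e / sigma2_u and is ASSUMED known here; in practice
    it would be estimated (e.g., by REML) rather than supplied by hand.
    """
    y = np.asarray(y, dtype=float)
    K_tt = K[np.ix_(train_idx, train_idx)]   # training x training relationships
    K_vt = K[np.ix_(test_idx, train_idx)]    # test x training relationships
    V = K_tt + var_ratio * np.eye(len(train_idx))
    ones = np.ones(len(train_idx))
    # Generalized least squares estimate of the overall mean
    mu = ones @ np.linalg.solve(V, y[train_idx]) / (ones @ np.linalg.solve(V, ones))
    # BLUP of random effects, carried over to test individuals via K_vt
    alpha = np.linalg.solve(V, y[train_idx] - mu)
    return mu + K_vt @ alpha

# Simulated toy data (hypothetical, for illustration only)
rng = np.random.default_rng(3)
markers = rng.integers(0, 3, size=(30, 200)).astype(float)
Zc = markers - markers.mean(axis=0)
K = Zc @ Zc.T / markers.shape[1]
y = Zc @ rng.normal(scale=0.1, size=200) + rng.normal(scale=0.5, size=30)
train_idx = np.arange(0, 24)
test_idx = np.arange(24, 30)
predicted = blup_predict(y, K, train_idx, test_idx, var_ratio=1.0)
```

As `var_ratio` grows, the predictions shrink toward the training mean, which is the expected behaviour when the relationship matrix carries little information about the trait.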

Protocol: Transcriptome-Based Prediction in Barley

This protocol is adapted from the study on a barley multi-parent RIL population [5].

- Plant Cultivation: Cultivate Recombinant Inbred Lines (RILs) in a randomized, controlled environment. Use standardized conditions for light, temperature, and humidity. Harvest whole seedlings at a specific developmental stage (e.g., 7 days) as a bulk sample for each line [5].

- High-Throughput RNA Extraction: Freeze and grind plant material. Perform total RNA extraction using a miniaturized, high-throughput protocol (e.g., a 96-well format with reduced reagent volumes like a TRIzol-based method) [5].

- Library Preparation and Sequencing: Construct mRNA sequencing libraries using a poly-A tail capture method and a kit like the VAHTS Universal V6 RNA-seq Library Prep Kit. Miniaturize the library preparation process to reduce costs. Sequence the libraries on an Illumina platform [5].

- Data Processing and Genotype Calling:

- Gene Expression Matrix: Map RNA-Seq reads to a reference genome and quantify read counts per gene to create a gene expression matrix.

- RNA-Seq SNP Dataset: Call sequence variants (SNPs) from the RNA-Seq data itself.

- Consensus SNP Dataset: Integrate the RNA-Seq SNPs with a high-quality Whole-Genome Sequencing (WGS) dataset from the parents to create a refined, consensus SNP set [5].

- Phenotypic Data Collection: Measure agronomic traits (e.g., yield components, disease resistance) across multiple field environments to obtain robust phenotypic values [5].

- Genomic Prediction Modeling: Use the different data types (Gene Expression, RNA-Seq SNPs, Consensus SNPs) to build genomic prediction models. A standard benchmark is comparison against predictions from a traditional SNP array [5].

- Model Evaluation: Use cross-validation (e.g., fivefold) to evaluate prediction ability (correlation between predicted and observed phenotypes). Assess both within-population and inter-population prediction scenarios [5].
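
The evaluation step above can be sketched as follows. This is a minimal illustration that assumes ridge regression on the predictor matrix as a stand-in for the study's prediction model; the helper names (`kfold_indices`, `ridge_fit_predict`, `cv_prediction_ability`), the penalty `lam`, and the simulated data are all hypothetical. Prediction ability is computed as the Pearson correlation between predicted and observed phenotypes in each held-out fold, then averaged.

```python
import numpy as np

def kfold_indices(n, k=5, seed=0):
    """Randomly partition indices 0..n-1 into k folds."""
    rng = np.random.default_rng(seed)
    return np.array_split(rng.permutation(n), k)

def ridge_fit_predict(X_train, y_train, X_test, lam=1.0):
    """Ridge regression in dual form (convenient when predictors outnumber lines)."""
    mu_x = X_train.mean(axis=0)
    mu_y = y_train.mean()
    Xc = X_train - mu_x
    alpha = np.linalg.solve(Xc @ Xc.T + lam * np.eye(len(y_train)), y_train - mu_y)
    return mu_y + (X_test - mu_x) @ (Xc.T @ alpha)

def cv_prediction_ability(X, y, k=5, lam=1.0, seed=0):
    """Mean correlation between predicted and observed values over k folds."""
    folds = kfold_indices(len(y), k, seed)
    abilities = []
    for i, test in enumerate(folds):
        train = np.concatenate([f for j, f in enumerate(folds) if j != i])
        pred = ridge_fit_predict(X[train], y[train], X[test], lam)
        abilities.append(np.corrcoef(pred, y[test])[0, 1])
    return float(np.mean(abilities))

# Simulated toy data (hypothetical): 60 lines, 30 predictors, strong signal
rng = np.random.default_rng(4)
X = rng.integers(0, 3, size=(60, 30)).astype(float)
y = X @ rng.normal(size=30) + rng.normal(scale=0.1, size=60)
ability = cv_prediction_ability(X, y, k=5, lam=1.0)
```

The same function can be called with SNP codes, RNA-Seq-derived SNPs, or a gene expression matrix as `X`, so that the data types are compared on identical folds.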

Visualizing the Workflows

The following diagram illustrates the core workflows for generating and using static genetic blueprints and dynamic expression profiles in predictive modeling, highlighting their convergence in multi-omics integration.

The Scientist's Toolkit: Essential Research Reagents and Materials

The table below lists key reagents and materials essential for conducting experiments in genomics and transcriptomics, as derived from the cited protocols.

Table 3: Essential Research Reagents and Solutions for Genomic and Transcriptomic Studies

| Item Name | Function/Application | Example Use Case |

|---|---|---|

| Illumina iSelect Chip | A genotyping array for high-throughput genome-wide SNP profiling. [16] | Genotyping Japanese quail for genomic prediction models (GBLUP). [16] |

| TRIzol Reagent | A ready-to-use monophasic solution for the isolation of high-quality total RNA from cells and tissues. [5] | High-throughput RNA extraction from barley seedling tissue. [5] |

| Fluidigm BioMark HD System | A high-throughput microfluidic platform for targeted gene expression analysis using nano-scale quantitative PCR. [16] | Quantifying candidate mRNA and miRNA transcripts across hundreds of quail samples. [16] |

| VAHTS Universal V6 RNA-seq Library Prep Kit | A kit for preparing sequencing-ready mRNA libraries from total RNA for Illumina platforms. [5] | Constructing miniaturized, cost-effective RNA-Seq libraries for barley RILs. [5] |

| Poly-A Tail Magnetic Beads | Beads that bind the poly-adenylated tail of mRNA to selectively isolate mRNA from total RNA. [5] | mRNA selection during library preparation for transcriptome sequencing. [5] |

Both static genetic blueprints and dynamic expression profiles are powerful tools in modern biological research and product development. The static genetic blueprint is foundational for understanding inherited variation and predisposition. However, as the experimental data show, dynamic expression profiles often provide superior predictive power for complex traits because they capture the functional, real-time activity of genes as influenced by both genetics and environment.

The most robust and accurate predictions are achieved not by choosing one over the other, but by strategically integrating both data types using sophisticated models like GTCBLUPi [16] or consensus SNP approaches [5]. This multi-omics paradigm leverages the complementary strengths of both worlds—the constant potential of the genome and the context-specific execution of the transcriptome—offering researchers and drug developers a more complete framework for accelerating genetic gain and unraveling complex disease mechanisms.

In the evolving landscape of predictive biology, a key performance comparison between genomic and transcriptomic models reveals a fundamental distinction: while genomic prediction relies on static DNA sequences, transcriptomics captures the dynamic interplay of environmental and regulatory influences that directly shape phenotypic outcomes. Transcriptomics, the study of the complete set of RNA transcripts in a cell or tissue, provides a crucial functional readout of cellular activity by quantifying gene expression levels. This molecular layer reflects both the genetic blueprint and the organism's real-time response to its environment, offering a more comprehensive understanding of phenotypic expression. For researchers and drug development professionals, this translational capability positions transcriptomic data as a powerful predictor for complex traits, often outperforming traditional genomic approaches by accounting for the regulatory mechanisms and biological processes that intervene between genes and final phenotypes [3] [16].

The fundamental advantage of transcriptomics lies in its ability to measure active biological processes rather than just genetic potential. Where genomic selection uses genome-wide single nucleotide polymorphisms (SNPs) to predict breeding values for phenotypic traits, transcriptomic data provides insights into gene expression patterns that are shaped by both genetic and environmental factors [3]. This captures a more direct reflection of the biological state, including responses to environmental stressors, disease conditions, or developmental stages that pure DNA sequence analysis cannot detect. Evidence across multiple species—from Japanese quail to barley and poplar—consistently demonstrates that models incorporating transcriptomic information achieve superior prediction accuracy for efficiency, performance, and complex disease-related traits compared to those relying solely on genetic markers [3] [5] [18].

How Transcriptomics Captures Environmental Cues

Transcriptomic profiling functions as a highly sensitive recorder of environmental influence by detecting expressional changes in response to external conditions. When an organism encounters environmental stressors, these stimuli trigger signal transduction pathways that ultimately activate specific transcription factors, leading to measurable changes in mRNA expression levels. This molecular responsiveness enables transcriptomics to reveal how environmental factors shape biological outcomes.

A compelling example comes from research on Davidia involucrata Baill., a rare and endangered plant species sensitive to environmental stressors. Under high-light stress conditions, transcriptome analysis revealed that the plant significantly activated pathways related to reactive oxygen species and heat stress responses. Notably, the specific response pathways differed depending on soil moisture conditions: under moist soil conditions, the plant primarily utilized reactive oxygen species-related pathways, while under dry soil conditions, it predominantly relied on heat stress response pathways [19]. This demonstrates how transcriptomics can capture not just the response to a single environmental factor, but the nuanced interplay between multiple environmental variables.

Further evidence comes from studies showing that under non-humidified air conditions, Davidia involucrata Baill. responded to high-light stress by activating the MAPK signaling pathway and processes related to indole-containing compound biosynthesis [19]. These molecular responses would remain invisible to purely genomic analysis but are readily detectable through transcriptomic profiling. The study also found that when high-light stress and drought stress occurred simultaneously, the plant prioritized mitigating damage from high-light stress, a strategic response clearly reflected in its transcriptomic signature [19].

Table 1: Environmental Factors and Their Transcriptomic Signatures

| Environmental Factor | Transcriptomic Response | Biological Consequence |

|---|---|---|

| High-light stress | Activation of ROS and heat stress response pathways | Protection from photodamage |

| Dry soil conditions | Shift to heat stress response pathways | Enhanced stress tolerance |

| Non-humidified air | Activation of MAPK signaling pathway | Cellular stress response |

| Combined light/drought stress | Prioritization of light-stress response genes | Strategic resource allocation |

Transcriptomics in Gene Regulation Networks

Beyond environmental responsiveness, transcriptomics provides a window into the complex regulatory networks that control gene expression, including transcription factors, non-coding RNAs, and epigenetic regulators. These regulatory mechanisms fine-tune phenotypic expression without altering the underlying DNA sequence, explaining why models that incorporate both genomic and transcriptomic data often achieve superior predictive performance.

Research on genomic prediction in Japanese quail demonstrated that transcript abundances from intestinal tissue explained a larger portion of the phenotypic variance for efficiency-related traits than host genetics alone [3] [16]. This finding indicates that transcriptomic data captures crucial regulatory information that mediates the relationship between genotype and phenotype. The study employed specialized statistical models (GTCBLUP and GTCBLUPi) that specifically addressed the redundant information between genomic and transcriptomic data, allowing for more accurate estimation of their respective contributions to phenotypic variation [3].

The regulatory capacity captured by transcriptomics extends to non-coding RNA species, including microRNAs (miRNAs), which play important roles in post-transcriptional gene regulation. Studies in Japanese quail identified specific miRNAs and mRNAs that were differentially expressed in relation to phosphorus utilization efficiency [3]. Similarly, research on sex differentiation in gastropods revealed critical regulatory genes, including DMRT1, FOXL2, and various SOX genes, that showed sexually dimorphic expression patterns during gonadal development [20]. These regulatory factors would not be fully captured by genomic analysis alone but are readily detected through transcriptomic profiling.

Table 2: Key Regulatory Genes Identified Through Transcriptomics

| Regulatory Gene | Function in Gene Regulation | Biological Role |

|---|---|---|

| DMRT1 | Key transcription factor in sex determination | Testis development and differentiation |

| FOXL2 | Forkhead transcription factor | Ovarian function and maintenance |

| SOX genes | HMG-box transcription factors | Multiple roles in sex determination |

| β-catenin | Signaling molecule in Wnt pathway | Ovarian differentiation and oogenesis |

| VASA | RNA helicase | Germ cell development and differentiation |

Comparative Performance: Genomic vs. Transcriptomic Prediction

Direct comparisons between genomic and transcriptomic prediction models across several species and trait types indicate that transcriptomic data often explain more phenotypic variance than genomic data alone, and that integrated models combining both data types typically achieve the highest prediction accuracy. The size of the advantage varies by species and trait, as the studies below show.

A comprehensive study on Japanese quail evaluated different prediction models for efficiency-related traits including phosphorus utilization, body weight gain, and feed conversion ratio. The research demonstrated that models incorporating both genetic and transcriptomic information (GTBLUP and GTCBLUPi) consistently outperformed those using only one type of information [3]. The derived GTCBLUPi model, which specifically addresses redundancy between genomic and transcriptomic information, proved to be a suitable framework for integration, resulting in higher trait prediction accuracies [16].

Similarly, research in barley demonstrated that RNA sequencing (RNA-Seq) data for recombinant inbred lines (RILs) achieved genomic prediction performance comparable to or better than traditional SNP array datasets [5]. This study utilized cost-efficient RNA-Seq data generation through small-footprint plant cultivation and miniaturized library preparation. Notably, the consensus SNP dataset derived from combining RNA-Seq with parental whole-genome sequencing data performed best, with five out of eight traits showing significantly better prediction compared to a 50K SNP array benchmark [5].

In poplar trees, a study using 241 genotypes with xylem and cambium RNA sequencing compared prediction models based on genomic data (G), transcriptomic data (T), and integrated data (G+T). The multi-omic model displayed performance advantages for specific functional types of traits, particularly those related to growth, pathogen tolerance, and phenology [18]. This research provided important insights into the factors affecting prediction accuracy during integration, highlighting how beneficial integration occurs when redundancy of predictors is decreased, allowing complementary predictors to contribute to model performance [18].

Table 3: Performance Comparison of Prediction Models Across Species

| Species | Genomic Model Accuracy | Transcriptomic Model Accuracy | Integrated Model Accuracy |

|---|---|---|---|

| Japanese quail (Efficiency traits) | Moderate | Higher than genomic | Highest |

| Barley (Agronomic traits) | 50K SNP array benchmark | Comparable or better | Best with consensus SNPs |

| Poplar (Growth traits) | Variable by trait | Variable by trait | Superior for specific trait types |

Experimental Approaches in Transcriptomics

Transcriptomics Technologies and Workflows

Modern transcriptomics relies primarily on two complementary technologies: microarrays and RNA sequencing (RNA-Seq). Microarrays quantify a predefined set of transcripts through hybridization to complementary probes, while RNA-Seq uses high-throughput sequencing to capture sequences across the entire transcriptome without prior knowledge of gene sequences [21]. The comprehensive nature of RNA-Seq has made it the preferred method for most transcriptomic studies, as it can detect novel transcripts, alternative splicing events, and sequence variants in addition to quantifying gene expression levels [5].

A typical RNA-Seq workflow begins with RNA extraction from tissues or cells of interest, followed by enrichment for messenger RNA using poly-A affinity methods or ribosomal RNA depletion [21]. The isolated RNA is then converted to cDNA through reverse transcription, and sequencing libraries are prepared with platform-specific adapters. After high-throughput sequencing, the resulting reads are processed through a bioinformatics pipeline that includes quality control, alignment to a reference genome or transcriptome, and quantification of transcript abundances [22].
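
Before quantified counts are used as predictors, they are usually scaled for sequencing depth. The sketch below shows one simple option, a log2 counts-per-million transform with a pseudocount. It is an assumption made for illustration, not a step reported by the cited studies, and the function name `log_cpm` and the `prior_count` default are hypothetical.

```python
import numpy as np

def log_cpm(counts, prior_count=1.0):
    """Simple log2 counts-per-million with a pseudocount.

    counts: (n_samples, n_genes) array of raw read counts.
    The pseudocount avoids log(0); the library size is adjusted so that
    the back-transformed values still sum to one million per sample.
    """
    counts = np.asarray(counts, dtype=float)
    lib_size = counts.sum(axis=1, keepdims=True)
    adjusted_lib = lib_size + prior_count * counts.shape[1]
    return np.log2((counts + prior_count) / adjusted_lib * 1e6)

# Hypothetical toy matrix: 2 samples x 3 genes with different depths
toy_counts = np.array([[10, 0, 90],
                       [200, 100, 700]])
expression = log_cpm(toy_counts)
```

Dedicated packages offer more refined normalizations; this version only removes the most obvious depth effect so that samples sequenced to different depths become comparable.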

Recent methodological advances have focused on increasing throughput and reducing costs. For example, studies in barley have implemented miniaturized library preparation protocols that reduce reagent volumes to 25% of original amounts while maintaining data quality [5]. Such innovations make transcriptomic profiling feasible for larger sample sizes required in genomic prediction applications.

Key Experimental Considerations

Robust transcriptomics experimentation requires careful planning at each step to ensure biologically meaningful results:

Experimental Design: Proper statistical design is crucial, including sufficient biological replicates, randomization, and appropriate controls. Pooling samples should be a deliberate choice, because pooled material can produce artificial intermediate expression profiles that do not correspond to any real cell type and that hamper biological interpretation [12].

Sample Quality: RNA integrity significantly impacts downstream results. Snap-freezing of tissues prior to RNA isolation is standard practice, and care must be taken to minimize RNase activity during extraction [21]. For gene expression studies, poly-A-based mRNA enrichment from degraded samples depletes 5' mRNA ends and produces uneven transcript coverage.

Technology Selection: The choice between 3' mRNA-Seq and whole transcriptome methods depends on research goals. 3' mRNA-Seq is cost-effective for gene expression profiling but cannot detect alternative splicing, while whole transcriptome methods provide comprehensive coverage but at higher cost and complexity [22].

Pilot Experiments: Before large-scale studies, conducting pilot experiments with representative samples helps validate chosen parameters and allows for workflow optimization [22].

Diagram 1: RNA-Seq Experimental Workflow

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful transcriptomics research requires specialized reagents and platforms tailored to specific experimental goals. The selection of appropriate tools impacts data quality, reproducibility, and the biological insights that can be derived.

Table 4: Essential Research Reagents and Solutions for Transcriptomics

| Reagent/Solution | Function | Application Notes |

|---|---|---|

| TRIzol Reagent | RNA isolation from cells and tissues | Effective for simultaneous isolation of RNA, DNA, and proteins; adapted for 96-well formats [5] |

| Poly-A Selection Beads | mRNA enrichment from total RNA | Captures polyadenylated transcripts; not suitable for non-polyA RNA or degraded samples [22] |

| Ribosomal RNA Depletion Probes | Removal of abundant rRNA | Alternative to poly-A selection; preserves non-coding RNAs and degraded samples [22] |

| DNase Treatment | DNA removal from RNA preparations | Prevents genomic DNA contamination in downstream applications [21] |

| Reverse Transcriptase | cDNA synthesis from RNA templates | Creates stable cDNA for library preparation [21] |

| VAHTS Universal V6 RNA-seq Library Prep Kit | Library preparation for Illumina | Compatible with miniaturization to 25% reagent volumes for cost savings [5] |

| Fluidigm BioMark HD System | High-throughput transcript quantification | Enables targeted analysis of candidate genes across many samples [3] |

| Illumina Stranded mRNA Prep | Library preparation for expression analysis | Streamlined solution for comprehensive transcriptome analysis [23] |

| Illumina Total RNA Prep with Ribo-Zero Plus | Analysis of coding and noncoding RNA | Provides exceptional performance for multiple RNA forms [23] |

The integration of transcriptomic data into predictive models represents a significant advancement beyond traditional genomic approaches. By capturing both environmental influences and regulatory mechanisms, transcriptomics provides a dynamic view of biological systems that more accurately reflects phenotypic outcomes. Evidence across multiple species consistently demonstrates that transcriptomic data often explains a larger portion of phenotypic variance than genetic markers alone, and integrated models that combine both data types achieve the highest prediction accuracy for complex traits [3] [5] [18].

For researchers and drug development professionals, transcriptomic profiling offers tangible benefits for understanding disease mechanisms, identifying therapeutic targets, and predicting treatment responses. The ability to detect expressional changes in response to environmental stimuli, developmental stages, or pathological conditions provides insights that remain inaccessible to purely genomic approaches. As transcriptomic technologies continue to evolve with decreasing costs and improved throughput, their integration into standard research and development pipelines promises to enhance our understanding of complex biological systems and improve predictive modeling across diverse applications.

The future of transcriptomics will likely see increased integration with other omics technologies, refined single-cell approaches, and more sophisticated computational methods for data analysis. These advances will further solidify the position of transcriptomic profiling as an essential tool for capturing the complex interplay between genes, environment, and regulatory networks that ultimately determines phenotypic outcomes.

The Heritability of Gene Transcripts and Its Implications for Model Accuracy

The field of genetic prediction has been revolutionized by genomic selection, which uses genome-wide markers to predict breeding values and accelerate genetic gain [3]. However, attention is now turning to other biological data layers, particularly transcriptomics, which captures dynamic gene expression patterns shaped by both genetics and environment [3] [17]. This guide provides an objective comparison of genomic and transcriptomic prediction models, examining their respective capabilities, optimal applications, and performance across diverse biological contexts.

Understanding the heritability of gene transcripts—the proportion of expression variation attributable to genetic factors—is fundamental to appreciating why transcriptomic data can enhance prediction models. While genomic data provide a static blueprint of an organism's DNA sequence, transcriptomic data offer a dynamic snapshot of active biological processes, capturing regulatory mechanisms and environmental responses that ultimately shape phenotypic outcomes [17]. This biological distinction has profound implications for prediction accuracy across different trait types and species.

Quantitative Performance Comparison: Genomics vs. Transcriptomics

Prediction Accuracy Across Models and Species

Table 1: Comparative performance of genomic and transcriptomic prediction models across multiple studies

| Species | Trait Category | GBLUP Accuracy | TBLUP Accuracy | Combined Model Accuracy | Key Findings | Citation |

|---|---|---|---|---|---|---|

| Japanese quail | Efficiency traits (PU, BWG, FCR) | 0.21-0.61 | 0.32-0.69 | 0.41-0.74 (GTBLUP) | Transcripts explained larger variance portion than genetics | [3] [16] |

| Barley | Agronomic traits | 0.73-0.78 (SNP array) | 0.73-0.78 (RNA-Seq) | 0.73-0.78 (consensus SNP) | RNA-Seq matched/exceeded SNP array performance | [5] |

| Maize & Rice | Complex agronomic traits | Varies by dataset | Varies by dataset | +5-15% with multi-omics | Model-based fusion outperformed simple concatenation | [17] |

Variance Components Explained by Different Data Types

Table 2: Proportion of phenotypic variance explained by genomic and transcriptomic data

| Variance Component | Genomic Data (SNPs) Only | Transcriptomic Data Only | Combined Models | Notes |

|---|---|---|---|---|

| Additive Genetic | 20-40% (varies by trait) | 15-30% (heritable transcripts) | 25-45% | Portion of transcriptome has high heritability |

| Transcriptomic | Not captured | 40-65% | 35-55% (conditioned) | Captures regulatory and environmental influences |

| Residual | 60-80% | 35-60% | 20-40% | Combined models reduce unexplained variance |

| Key Insight | Captures stable inheritance | Captures functional activity | Maximizes explained variance | Complementary information |

The data consistently demonstrate that transcript abundances often explain a larger portion of phenotypic variance than host genetics alone. In Japanese quail studies, models incorporating both genetic and transcriptomic information (GTBLUP) consistently outperformed single-data models, with transcriptomic data particularly valuable for efficiency-related traits like phosphorus utilization and feed conversion ratio [3] [16]. Similarly, in barley, RNA-Seq data achieved prediction accuracies comparable to or better than traditional SNP arrays, with the consensus SNP dataset (integrating RNA-Seq and parental whole-genome sequencing) showing particular advantage for inter-population predictions [5].

Key Experimental Protocols and Methodologies

Model Formulations and Statistical Approaches

GBLUP (Genomic Best Linear Unbiased Prediction)

The standard GBLUP model follows the formulation:

$$y = Xb + Zg + e$$

Where y is the vector of phenotypes, X is the incidence matrix for fixed effects (e.g., test day), b is the vector of fixed effects, Z is the incidence matrix for random genetic effects, g is the vector of random additive genetic effects with $g \sim N(0, G\sigma^2_g)$, and e is the vector of residuals with $e \sim N(0, I\sigma^2_e)$. The genomic relationship matrix G is calculated following VanRaden's first method as $G = ZZ' / \left(2\sum_j p_j(1-p_j)\right)$, where, in this formula only, Z denotes the matrix of centered genotype codes (not the incidence matrix above) and $p_j$ is the allele frequency at SNP j [3] [16].
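
The relationship matrix described above can be computed directly from a genotype table. The following is a minimal sketch, assuming genotypes coded 0/1/2 and allele frequencies estimated from the sample itself (base-population frequencies are another common choice); the function name `vanraden_g` and the random toy genotypes are hypothetical.

```python
import numpy as np

def vanraden_g(genotypes):
    """Genomic relationship matrix following VanRaden's first method.

    genotypes: (n_individuals, n_snps) array coded 0/1/2 copies of one allele.
    Allele frequencies are ASSUMED to be estimated from this sample.
    """
    M = np.asarray(genotypes, dtype=float)
    p = M.mean(axis=0) / 2.0               # allele frequency p_j per SNP
    Z = M - 2.0 * p                        # centered genotype codes
    denom = 2.0 * np.sum(p * (1.0 - p))    # 2 * sum_j p_j (1 - p_j)
    if denom == 0:
        raise ValueError("all SNPs are monomorphic")
    return Z @ Z.T / denom

# Hypothetical toy genotypes: 6 individuals, 50 SNPs
rng = np.random.default_rng(0)
toy_genotypes = rng.integers(0, 3, size=(6, 50))
G = vanraden_g(toy_genotypes)
```

Because the genotype codes are centered by sample frequencies, each row of G sums to zero, which is a quick sanity check on the centering step.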

TBLUP (Transcriptomic BLUP)

The TBLUP model replaces genomic relationships with transcriptomic similarities:

$$y = Xb + Zt + e$$

Where t is the vector of random transcriptomic effects with $t \sim N(0, T\sigma^2_t)$, and T is the transcriptomic relationship matrix derived from transcript abundance data [3]. This model can be constructed using different transcript types (e.g., miRNA or mRNA data).
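
The source does not spell out how T is built, so the sketch below uses one common construction as an assumption: standardize each transcript across individuals and take the average cross-product, by analogy with the genomic relationship matrix. The function name `transcriptomic_relationship` and the simulated abundances are hypothetical.

```python
import numpy as np

def transcriptomic_relationship(expr):
    """Similarity matrix among individuals from transcript abundances.

    expr: (n_individuals, n_transcripts) array of normalized abundances.
    ASSUMED construction: T = W W' / m with W column-standardized.
    """
    X = np.asarray(expr, dtype=float)
    sd = X.std(axis=0, ddof=1)
    keep = sd > 0                                   # drop invariant transcripts
    W = (X[:, keep] - X[:, keep].mean(axis=0)) / sd[keep]
    return W @ W.T / W.shape[1]

# Hypothetical toy data: 6 individuals, 80 transcripts
rng = np.random.default_rng(1)
toy_expr = rng.normal(size=(6, 80))
T = transcriptomic_relationship(toy_expr)
```

Standardizing gives every transcript equal weight, so highly expressed genes do not dominate the similarity between individuals.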

Integrated Models (GTBLUP and GTCBLUPi)

The combined model (GTBLUP) incorporates both information sources as separate random effects:

$$y = Xb + Zg + Zt + e$$

Advanced formulations like GTCBLUPi address redundancy between data layers by conditioning transcriptomic effects on genetics:

$$y = Xb + Zg + Zt_c + e$$

Where $t_c$ represents transcriptomic effects conditioned on genetic effects to remove shared variation, thereby capturing purely non-genetic transcriptomic influences [3] [16]. This approach prevents collinearity issues when both SNP genotypes and omics data are used as independent random effects.
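
One way to picture the conditioning idea is to remove from each transcript the part that is predictable from the genomic relationship matrix and keep only the residual. The sketch below does this with a kernel-ridge (GBLUP-like) fit per transcript. It is an illustrative assumption, not the estimator used in the cited GTCBLUPi work; the function name `condition_on_genetics`, the shrinkage value `lam`, and the simulated data are hypothetical.

```python
import numpy as np

def condition_on_genetics(expr, G, lam=1.0):
    """Residualize transcript abundances on a genomic relationship matrix.

    expr: (n, m) transcript abundances; G: (n, n) relationship matrix.
    lam is an ASSUMED residual-to-genetic variance ratio, shared by all
    transcripts here for simplicity.
    """
    X = np.asarray(expr, dtype=float)
    Xc = X - X.mean(axis=0)
    n = G.shape[0]
    # Fitted genetic part of each transcript: G (G + lam I)^-1 x
    H = G @ np.linalg.inv(G + lam * np.eye(n))
    return Xc - H @ Xc

# Simulated toy data (hypothetical): transcripts with a genetic component
rng = np.random.default_rng(2)
snps = rng.integers(0, 3, size=(20, 100)).astype(float)
Zc = snps - snps.mean(axis=0)
G_toy = Zc @ Zc.T / snps.shape[1]
genetic_signal = Zc @ rng.normal(scale=0.1, size=(100, 5))
expr_toy = genetic_signal + rng.normal(size=(20, 5))
expr_cond = condition_on_genetics(expr_toy, G_toy, lam=1.0)
```

A relationship matrix built from `expr_cond` then reflects transcript variation that is not already explained by the markers, which is the sense in which the two random effects stop competing for the same signal.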

Experimental Workflows in Model Organisms

Experimental Workflow for Genomic-Transcriptomic Prediction Studies

Japanese Quail Efficiency Traits Protocol

The quail study comparing genomic and transcriptomic prediction models used 480 Japanese quail from an F2 cross, raised under controlled conditions. Birds were allocated to metabolism units during peak growth (days 10-15) and fed a corn-soybean meal-based diet with marginal phosphorus to maximize expression of genetic potential for phosphorus utilization [3] [16].

Key phenotypic traits measured included:

- Phosphorus utilization (PU): Based on total P intake and excretion (%)

- Body weight gain (BWG): Measured between days 10-15 (g)

- Feed intake (FI): During the 5-day period (g)

- Feed conversion ratio (FCR): FI divided by BWG (g/g)

- Tibia ash (TA): Total amount (mg)

- Calcium utilization (CaU): Based on total Ca intake and excretion (%)

Molecular data collection:

- Genotyping: 6k Illumina iSelect chip, filtered to 4k high-quality SNPs

- Transcriptomics: Ileum mucosa sampling with miRNA and mRNA sequencing, focused on top differentially expressed transcripts (77 miRNAs and 80 mRNAs) related to PU

- Tissue-specific focus: Intestinal tissue selected for relevance to nutrient utilization traits

All phenotypes underwent Box-Cox transformation with trait-specific λ parameters (ranging from -3.147 to 5.015) to address distributional skewness before model fitting [3].
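As a rough illustration of this transformation step, the sketch below applies a Box-Cox transform to a simulated right-skewed trait and picks λ by maximizing the profile log-likelihood over a grid. The grid range, the helper names, and the simulated data are assumptions made for the example; the source reports only the resulting λ values, not how they were estimated.

```python
import numpy as np

def boxcox(x, lam):
    """Box-Cox transform of strictly positive values at a fixed lambda."""
    x = np.asarray(x, dtype=float)
    if np.any(x <= 0):
        raise ValueError("Box-Cox requires strictly positive values")
    if abs(lam) < 1e-12:
        return np.log(x)                           # limiting case lambda -> 0
    return (x ** lam - 1.0) / lam

def boxcox_loglik(x, lam):
    """Profile log-likelihood of lambda assuming normal transformed data."""
    x = np.asarray(x, dtype=float)
    y = boxcox(x, lam)
    return (lam - 1.0) * np.log(x).sum() - 0.5 * x.size * np.log(y.var())

def estimate_lambda(x, grid=np.linspace(-6.0, 6.0, 1201)):
    """Return the grid value of lambda with the highest profile log-likelihood."""
    scores = [boxcox_loglik(x, lam) for lam in grid]
    return float(grid[int(np.argmax(scores))])

def skewness(v):
    """Sample skewness (third standardized moment)."""
    z = (v - v.mean()) / v.std()
    return float((z ** 3).mean())

# Simulated right-skewed trait (placeholder for a real phenotype vector)
rng = np.random.default_rng(0)
trait = rng.lognormal(mean=0.5, sigma=0.4, size=200)

lam_hat = estimate_lambda(trait)
trait_bc = boxcox(trait, lam_hat)
print(f"lambda = {lam_hat:.2f}, skewness before = {skewness(trait):.2f}, "
      f"after = {skewness(trait_bc):.2f}")
```

`scipy.stats.boxcox` provides the same transform with a maximum-likelihood λ in a single call; the grid search is written out here only to make the estimation step explicit.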

Barley Multi-Parent RIL Population Protocol

The barley study employed a different approach using 237 recombinant inbred lines (RILs) from three connected spring barley populations (HvDRR13, HvDRR27, HvDRR28) derived from pairwise crosses of diverse parental inbreds [5].

Innovative cost-saving measures included:

- Low-cost RNA-Seq: Small-footprint plant cultivation, high-throughput RNA extraction, and library preparation miniaturization

- Reduced sequencing depth: Testing depth reduction as cost-saving strategy while maintaining prediction accuracy

- Multiple data types: Comparison of gene expression datasets, RNA-Seq SNP datasets, and consensus SNP datasets integrating RNA-Seq with parental whole-genome sequencing

Evaluation framework:

- Fivefold cross-validation: Within and across populations

- Benchmarking: Against traditional 50K SNP array

- Trait measurement: Eight agronomic traits across up to seven environments

Biological Mechanisms and Relationship Visualization

Transcripts as Phenotypic Intermediates

The diagram illustrates how transcriptomic data captures both heritable regulatory mechanisms (red arrow) and environmental influences (dashed lines), serving as functional intermediates between genotype and phenotype. This dual capture explains why transcriptomic data often accounts for larger portions of phenotypic variance than genomic data alone, particularly for traits influenced by environmental conditions or complex regulatory networks [3] [17].

The high heritability of many gene transcripts enables TBLUP models to effectively capture polygenic backgrounds underlying complex traits. Transcriptomic correlations between traits often reveal shared biological pathways, providing both predictive advantages and biological insights beyond what pure genomic models can offer [3].

Essential Research Tools and Reagents

Table 3: Key research reagent solutions for genomic-transcriptomic prediction studies

| Category | Specific Tools/Platforms | Application in Prediction Studies | Performance Considerations |
|---|---|---|---|
| Genotyping Platforms | Illumina iSelect chip, Genotyping-by-sequencing | SNP discovery, genomic relationship matrix | Density, missing data rates, MAF spectrum |
| Transcriptomics | RNA-Seq (Illumina), Fluidigm BioMark HD | Gene expression quantification, transcriptome profiling | Tissue specificity, normalization, batch effects |
| Library Preparation | VAHTS Universal V6 RNA-seq Kit, Poly-A selection | cDNA library construction for sequencing | Cost, throughput, reproducibility |
| Sequencing Platforms | Illumina NovaSeq X, Oxford Nanopore | High-throughput data generation | Read length, accuracy, coverage depth |
| Statistical Software | ASReml-R, sommer, custom R/Python scripts | Model fitting, variance component estimation | Computational efficiency, scalability |
| Data Integration Tools | EasyGeSe, BreedBase, GPCP tool | Benchmarking, cross-prediction, multi-omics fusion | Standardization, interoperability |

The selection of appropriate research tools depends on species-specific considerations, trait complexity, and resource constraints. For plants, low-cost RNA-Seq methods with miniaturized library preparation have proven effective without sacrificing prediction accuracy [5]. In animal studies, tissue-specific sampling (e.g., intestinal mucosa for efficiency traits) is critical for biological relevance [3].

The comparative analysis reveals that neither genomic nor transcriptomic data universally outperforms the other across all contexts. Instead, the optimal approach depends on trait architecture, biological context, and research objectives.

Guidelines for Model Selection

- Choose genomic models for traits with strong additive genetic architecture and when prediction stability across environments is prioritized

- Select transcriptomic models for traits influenced by environmental factors, regulatory mechanisms, or when seeking biological interpretation of predictive features

- Implement combined models when maximal prediction accuracy is essential and computational resources allow, using conditioning approaches (GTCBLUPi) to address collinearity

- Consider cost-effectiveness where transcriptomic data may provide dual benefits (variant discovery + expression quantification), particularly in species with limited genomic resources

Future Directions

Emerging methodologies like deep learning integration of multi-omics data and temporal transcriptomic profiling show promise for further enhancing prediction accuracy [17]. However, challenges remain in standardizing data integration protocols and developing computationally efficient implementations accessible to breeding programs with limited resources.

The heritability of gene transcripts provides a biological foundation for their predictive utility, but their greatest value emerges when combined with genomic data in models that respect their complementary nature and overlapping information content.

Model Architectures and Real-World Applications Across Industries

Statistical models for predicting complex traits have evolved considerably in genetics and drug development. Traditional approaches rely on genomic data through models such as Genomic Best Linear Unbiased Prediction (GBLUP). Transcriptomic data, which reflect actual gene expression, capture both genetic and environmental influences and can therefore offer a more direct link to phenotypic outcomes; this motivated the development of Transcriptomic BLUP (TBLUP). More recent integrated frameworks such as GTCBLUPi combine genomic and transcriptomic information while explicitly addressing the redundancy between these data layers. Together, these models mark a progression from single-omics to multi-omics approaches aimed at improving prediction accuracy for complex traits in fields ranging from animal and plant breeding to human disease research and pharmacogenomics.

Model Frameworks and Methodologies

GBLUP (Genomic Best Linear Unbiased Prediction)

Mathematical Foundation and Workflow: GBLUP is a cornerstone method in genomic selection that uses genome-wide markers to predict breeding values [24] [25]. The core model is represented as:

y = 1μ + Zg + e

Where y is the vector of phenotypic values, 1 is a vector of ones, μ is the overall mean, Z is an incidence matrix linking observations to genetic values, g is the vector of random additive genetic effects assumed to follow a normal distribution \( g \sim N(0, G\sigma_g^2) \), and e is the vector of random residuals \( e \sim N(0, I\sigma_e^2) \) [24]. The G matrix is the genomic relationship matrix, calculated from marker data following methods described by VanRaden [16]. This matrix quantifies the genetic similarity between individuals based on their SNP profiles, replacing the pedigree-based relationship matrix used in traditional BLUP.

Key Characteristics:

- Computational Efficiency: GBLUP is generally preferred for routine genomic evaluations because of its relatively low computational demand compared to Bayesian variable selection models [26].

- Implementation Simplicity: The model assumes all marker effects follow a normal distribution with equal variance, simplifying implementation [25].

- Data Requirements: Requires genotype data typically from SNP chips, with standard quality control procedures including filters for call rate, minor allele frequency, and Hardy-Weinberg equilibrium [24] [27].

TBLUP (Transcriptomic Best Linear Unbiased Prediction)

Mathematical Foundation and Workflow: TBLUP adapts the BLUP framework to utilize transcriptomic data instead of genomic markers [16]. The model structure is analogous to GBLUP:

y = Xb + Zt + e

Where the components are similar to the GBLUP model, except that t represents the vector of random transcriptomic effects assumed to follow \( t \sim N(0, T\sigma_t^2) \), where T is the transcriptomic relationship matrix. This matrix is constructed from transcript abundance data (e.g., mRNA or miRNA expression levels) rather than SNP genotypes, capturing similarities based on gene expression profiles.

Key Characteristics:

- Biological Insight: Transcriptomic data provide insights into gene expression patterns shaped by both genetic and environmental factors, offering a more comprehensive understanding of phenotypic expression [28] [16].

- Tissue Specificity: Transcriptomic profiles are often tissue-specific, requiring collection from relevant tissues for the trait of interest (e.g., intestinal tissue for efficiency traits) [16].

- Data Processing: Requires normalization and transformation of expression data, often involving log-transformation and scaling to ensure comparability across samples [29].
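The data-processing step and the resulting relationship matrix can be sketched as follows. The construction used here (log2 with a pseudocount, column-wise standardization, then T = WW'/m) is a common convention assumed for illustration; the source states only that T is derived from transcript abundance data. The function name, the pseudocount, and the simulated counts are hypothetical.

```python
import numpy as np

def transcriptomic_relationship(expr, pseudocount=1.0):
    """Build a transcriptomic relationship matrix from abundance data.

    expr: (n_individuals, n_transcripts) array of non-negative abundances.
    Steps: log2(x + pseudocount), standardize each transcript across
    individuals, then T = W W' / m with m the number of retained transcripts.
    """
    W = np.log2(np.asarray(expr, dtype=float) + pseudocount)
    sd = W.std(axis=0)
    keep = sd > 0                                  # drop transcripts with no variation
    W = (W[:, keep] - W[:, keep].mean(axis=0)) / sd[keep]
    return W @ W.T / W.shape[1]

# Simulated counts: 40 individuals, 157 transcripts (placeholder data)
rng = np.random.default_rng(4)
counts = rng.poisson(lam=50, size=(40, 157))
T = transcriptomic_relationship(counts)
```

With this scaling the average diagonal element of T equals 1, which keeps the variance component for t on a scale comparable to that of a genomic relationship matrix.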

GTCBLUPi (Integrated Genomic-Transcriptomic BLUP)

Mathematical Foundation and Workflow: GTCBLUPi is an advanced framework that integrates both genomic and transcriptomic information while explicitly addressing their redundancy [16]. The model can be represented as:

y = Xb + Zg + Zt + e

The key innovation in GTCBLUPi lies in how the random effects are structured to avoid double-counting the genetic component already captured by the genomic data. The transcriptomic effects (t) are modeled as being conditioned on the genotypes, ensuring they represent predominantly non-genetic influences. This addresses the collinearity problems that arise when both SNP genotypes and other omics data are used as independent random effects in a mixed linear model.

Key Characteristics:

- Redundancy Management: The model specifically accounts for the overlapping information between genomic and transcriptomic data layers, as transcripts often have high heritability [16].

- Variance Component Partitioning: Allows estimation of the proportion of phenotypic variance explained by genomics versus transcriptomics, providing biological insights into trait architecture [16].

- One-Step Integration: Unlike two-step procedures that first estimate total omics effects and then model their genetic components, GTCBLUPi implements a simultaneous analysis in a single step [16].

Performance Comparison Across Models

Prediction Accuracy for Various Traits

Table 1: Comparison of Prediction Accuracy Across Models and Traits

| Species | Trait Category | GBLUP Accuracy | TBLUP Accuracy | GTCBLUPi Accuracy | Notes | Citation |
|---|---|---|---|---|---|---|
| Beijing-You Chicken | Immune Traits (SRBC, H/L) | 0.281 (heritability) | - | - | Small reference population | [24] |
| Japanese Quail | Efficiency Traits (Phosphorus Utilization) | Moderate | Higher than GBLUP | Highest | Transcriptomics explained larger variance than genomics | [16] |
| Nordic Holstein | Milk Production Traits | 0.3% lower than GBLUP+polygenic | - | - | Comparison with polygenic effect model | [25] |
| Maize & Rice | Complex Agronomic Traits | Baseline | Variable | Consistently improved over GBLUP | Multi-omics integration beneficial for complex traits | [1] |

Variance Components Explained

Table 2: Variance Components Explained by Different Omics Layers

| Model | Genomic Variance (%) | Transcriptomic Variance (%) | Residual Variance (%) | Trait Context | Citation |
|---|---|---|---|---|---|
| GBLUP | 0-28% (immune traits) | - | 72-100% | Poultry immune traits | [24] |
| TBLUP | - | Up to 47.2% | Varies | Efficiency traits in quail | [16] |
| GTCBLUPi | 12.5% (avg) | 35.3% (avg) | 52.2% (avg) | Combined explanation for efficiency traits | [16] |

Experimental Protocols and Methodologies

Standard GBLUP Implementation Protocol

Data Preparation and Quality Control:

- Genotyping: Utilize SNP chips (e.g., Illumina 60K SNP chips for chickens) to genotype all individuals in the reference population [24].

- Quality Control: Use software such as PLINK to remove markers with a call rate below the chosen threshold (typically 90-95%), a minor allele frequency below the chosen threshold (typically 1-5%), or a significant deviation from Hardy-Weinberg equilibrium (p < 0.00001) [24] [27].

- Relationship Matrix Construction: Compute the genomic relationship matrix G following VanRaden's first method: \( G = \frac{ZZ^T}{\sum_j 2p_j(1-p_j)} \), where Z is a matrix of centered genotype codes and \( p_j \) is the frequency of the reference allele at SNP j [16].
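The quality-control and relationship-matrix steps above can be sketched in a few lines of NumPy. This is a simplified illustration, not a replacement for PLINK: it applies only call-rate and minor-allele-frequency filters (the Hardy-Weinberg test is omitted), the default thresholds are example values, and missing calls are mean-imputed, which is an assumption of this sketch. The 0/1/2 coding with `np.nan` for missing genotypes and the function names are likewise hypothetical.

```python
import numpy as np

def qc_filter(genotypes, min_call_rate=0.95, min_maf=0.01):
    """Boolean mask of SNP columns passing call-rate and MAF filters.

    genotypes: (n_individuals, n_snps) array coded 0/1/2, np.nan if missing.
    """
    M = np.asarray(genotypes, dtype=float)
    call_rate = 1.0 - np.isnan(M).mean(axis=0)
    p = np.nanmean(M, axis=0) / 2.0                # frequency of the counted allele
    maf = np.minimum(p, 1.0 - p)
    return (call_rate >= min_call_rate) & (maf >= min_maf)

def vanraden_g(genotypes):
    """VanRaden method 1: G = ZZ' / sum_j 2 p_j (1 - p_j)."""
    M = np.asarray(genotypes, dtype=float)
    p = np.nanmean(M, axis=0) / 2.0
    Z = M - 2.0 * p                                # center each SNP by 2 p_j
    Z = np.where(np.isnan(Z), 0.0, Z)              # mean-impute missing calls
    return Z @ Z.T / np.sum(2.0 * p * (1.0 - p))

# Simulated genotypes: 100 individuals, 500 SNPs, about 2% missing calls
rng = np.random.default_rng(1)
freqs = rng.uniform(0.05, 0.5, size=500)
M = rng.binomial(2, freqs, size=(100, 500)).astype(float)
M[rng.random(M.shape) < 0.02] = np.nan

mask = qc_filter(M)
G = vanraden_g(M[:, mask])
print(f"{mask.sum()} SNPs retained, mean diagonal of G = {np.trace(G) / G.shape[0]:.3f}")
```

Because allele frequencies are estimated from the same sample, the mean diagonal of G is expected to sit close to 1 and each row of G sums to approximately zero.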

Model Fitting and Validation:

- Cross-Validation: Implement repeated k-fold cross-validation (e.g., 5-fold CV repeated 50 times) to assess prediction accuracy [24].

- Variance Component Estimation: Use restricted maximum likelihood (REML) approaches to estimate genetic and residual variances.

- Breeding Value Prediction: Solve the mixed model equations to obtain genomic estimated breeding values (GEBVs).
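The fitting and validation steps above can be sketched as a repeated k-fold loop around a BLUP-type predictor. Several simplifications are assumed: the variance ratio `lam` (residual over genetic variance) is supplied rather than estimated by REML, the overall mean is estimated by the training-set average rather than by generalized least squares, and accuracy is taken as the Pearson correlation between predicted and observed phenotypes in each test fold. All function names and the simulated data are hypothetical.

```python
import numpy as np

def gblup_predict(K, y, train, test, lam):
    """Predict test phenotypes from relationship matrix K.

    Uses mu + K[test, train] (K[train, train] + lam I)^-1 (y_train - mu),
    with lam = sigma_e^2 / sigma_g^2 supplied by the caller.
    """
    mu = y[train].mean()
    A = K[np.ix_(train, train)] + lam * np.eye(train.size)
    alpha = np.linalg.solve(A, y[train] - mu)
    return mu + K[np.ix_(test, train)] @ alpha

def repeated_kfold_accuracy(K, y, lam, k=5, repeats=50, seed=0):
    """Mean and SD of fold-wise correlations over repeated k-fold CV."""
    rng = np.random.default_rng(seed)
    accs = []
    for _ in range(repeats):
        folds = np.array_split(rng.permutation(y.size), k)
        for i in range(k):
            test = folds[i]
            train = np.concatenate([folds[j] for j in range(k) if j != i])
            pred = gblup_predict(K, y, train, test, lam)
            accs.append(np.corrcoef(pred, y[test])[0, 1])
    return float(np.mean(accs)), float(np.std(accs))

# Simulated example: 150 individuals, 200 SNPs, heritability of about 0.6
rng = np.random.default_rng(3)
n, m = 150, 200
M = rng.binomial(2, rng.uniform(0.1, 0.5, m), size=(n, m)).astype(float)
p_hat = M.mean(axis=0) / 2.0
Z = M - 2.0 * p_hat
G = Z @ Z.T / np.sum(2.0 * p_hat * (1.0 - p_hat))
g = Z @ rng.normal(size=m)
g = g / g.std()                                    # genetic values with unit variance
var_g, var_e = 1.0, 2.0 / 3.0
y = 10.0 + g + rng.normal(scale=np.sqrt(var_e), size=n)

mean_acc, sd_acc = repeated_kfold_accuracy(G, y, lam=var_e / var_g, k=5, repeats=10)
print(f"accuracy = {mean_acc:.3f} (SD {sd_acc:.3f})")
```

The same loop works for TBLUP by passing a transcriptomic relationship matrix as `K`; only the relationship matrix and the variance ratio change.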

TBLUP Implementation Protocol

Transcriptomic Data Collection:

- Tissue Sampling: Collect relevant tissue samples (e.g., ileum mucosa for efficiency traits) under standardized conditions [16].

- Expression Profiling: Extract RNA and quantify expression by RNA sequencing or with platforms such as the Illumina Ref-8 BeadChip (an expression microarray) or the Fluidigm BioMark HD system (microfluidic qPCR) [27] [16].