Evaluating Deep Learning Architectures for 3D Plant Phenotyping: A Comprehensive Review from Data Acquisition to Clinical Translation

Abstract

This article provides a systematic evaluation of deep learning (DL) architectures for 3D plant phenotyping, a field crucial for advancing plant science and precision agriculture. It explores the foundational shift from 2D to 3D phenotyping, detailing the capabilities of various DL models in processing complex 3D plant data like point clouds. The review covers methodological applications for trait extraction, discusses significant challenges such as data redundancy and model interpretability, and presents optimization strategies. Furthermore, it offers a comparative analysis of model performance and validation techniques. Aimed at researchers and scientists, this synthesis serves as a guide for selecting, optimizing, and validating DL architectures to accelerate phenotyping research and its downstream applications, including in biomedical contexts such as plant-based drug development.

From 2D to 3D: Foundations of Deep Learning in Plant Phenotyping

Plant phenotyping, the quantitative assessment of plant structural and physiological characteristics, has traditionally relied on 2D imaging approaches. However, these methods project the complex three-dimensional architecture of plants onto a two-dimensional plane, resulting in significant information loss. 2D image-based analysis methods inherently suffer from occlusion, perspective distortion, and the loss of depth information, failing to accurately capture the plant's true morphological features [1]. These limitations become particularly problematic when analyzing complex plant architectures with overlapping leaves, stems, and other organs, where crucial phenotypic data remains hidden or distorted.

The emergence of 3D plant phenotyping addresses these fundamental limitations by capturing the complete spatial geometry and topological structure of plants [2] [3]. This paradigm shift enables researchers to move beyond proxy measurements to direct assessment of complex traits. In some cases, 3D sensing methods that incorporate data from multiple viewing angles provide insights that are hard or impossible to get from a 2D model alone, such as resolving occlusions and accurately characterizing plant architecture [1] [2]. This capability is revolutionizing plant research, breeding programs, and precision agriculture by providing a more comprehensive understanding of the relationship between plant structure and function.

Comparative Analysis: Quantitative Evidence of 3D Superiority

Direct experimental comparisons between 2D and 3D phenotyping methodologies consistently demonstrate the superior accuracy and information content of 3D approaches across multiple plant species and phenotypic traits.

Table 1: Performance Comparison of 2D vs. 3D Phenotyping Methods

| Phenotypic Trait | Plant Species | 2D Method Performance | 3D Method Performance | Reference |
|---|---|---|---|---|
| Leaf Parameters | Ilex species | N/A | R² = 0.72-0.89 vs. manual measurements | [1] |
| Plant Height/Crown | Ilex species | N/A | R² > 0.92 vs. manual measurements | [1] |
| Tissue Segmentation | Apple Fruit | Benchmark AJI: 0.715 | 3D Model AJI: 0.889 | [4] |
| Tissue Segmentation | Pear Fruit | Benchmark AJI: 0.631 | 3D Model AJI: 0.773 | [4] |
| New Organ Detection | Tobacco, Tomato, Sorghum | Limited by occlusion | Mean F1-score: 88.13% | [5] |
| Plant Segmentation | Tomato | N/A | Similar accuracy with 5x less training data | [6] |

The performance advantages extend beyond simple morphological measurements. In a study of fruit tissue microstructure, a 3D deep learning model achieved an Aggregated Jaccard Index (AJI) of 0.889 for apple and 0.773 for pear, compared with benchmark AJIs of 0.715 and 0.631, respectively, outperforming previous 2D approaches and traditional algorithms [4]. The model segmented pore spaces and cell matrices and identified vasculature, with Dice Similarity Coefficients reaching 0.789 in pear.

Data efficiency is a further practical consideration. Research on tomato plant segmentation showed that a 2D-to-3D reprojection method trained on only five annotated plants matched the performance of state-of-the-art 3D segmentation algorithms such as Swin3D-s, which required twenty-five annotated plants [6]. This five-fold reduction in annotation requirements lowers labeling costs and shortens research cycles.

Deep Learning Architectures for 3D Plant Phenotyping

The advancement of 3D phenotyping is inextricably linked to sophisticated deep learning architectures capable of processing complex spatial data. Unlike 2D computer vision that utilizes Convolutional Neural Networks (CNNs) applied to images, 3D phenotyping requires specialized networks designed for point clouds, voxels, and multi-view representations.

Core Architectural Approaches

Point-based Networks (e.g., PointNet++, Point Transformer v3, DGCNN) directly process unstructured 3D point clouds, making them ideal for data acquired from LiDAR or stereo cameras [6] [5]. These networks learn features from the spatial arrangement of points, enabling tasks like organ segmentation and growth tracking. For example, the 3D-NOD framework for detecting new plant organs utilizes DGCNN as its backbone to achieve an F1-score of 88.13% across multiple crop species [5].
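To give a rough sense of what graph-based point processing involves, the sketch below builds a k-nearest-neighbour graph over a point cloud and forms the edge features (x_i, x_j - x_i) that EdgeConv-style layers operate on. It is a NumPy illustration for intuition only: the function name `knn_edge_features` is hypothetical, the neighbour search is brute force, and the learned MLP and max aggregation of an actual DGCNN layer are omitted.

```python
import numpy as np

def knn_edge_features(points, k):
    """Return neighbour indices (N, k) and edge features (N, k, 6).

    Each edge feature concatenates the centre point x_i with the
    offset x_j - x_i to one of its k nearest neighbours.
    """
    diff = points[:, None, :] - points[None, :, :]
    d2 = (diff ** 2).sum(axis=2)           # squared pairwise distances
    np.fill_diagonal(d2, np.inf)           # a point is not its own neighbour
    nbr = np.argsort(d2, axis=1)[:, :k]    # indices of the k closest points
    centre = np.repeat(points[:, None, :], k, axis=1)
    edge = np.concatenate([centre, points[nbr] - centre], axis=2)
    return nbr, edge
```

In a dynamic graph network, the neighbour search would be repeated in feature space at every layer, which is what distinguishes it from a fixed spatial graph.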

Voxel-based Networks (e.g., MinkUNet34C, Swin3D-s) convert point clouds into a 3D grid of voxels, allowing the application of 3D convolutions [6]. While effective, these methods can be computationally intensive because plant point clouds are sparse, so most voxels in a dense grid are empty.

Projection-based Methods leverage well-developed 2D networks by projecting 3D data into 2D image space. One 2D-to-3D reprojection method segments images using Mask2Former and then reprojects the predictions onto the point cloud, achieving accuracy comparable to state-of-the-art 3D algorithms with higher training efficiency [6].

Table 2: Deep Learning Architectures for 3D Plant Phenotyping

| Architecture Type | Representative Models | Input Data Format | Key Applications | Advantages |
|---|---|---|---|---|
| Point-based | PointNet++, Point Transformer v3, DGCNN | Point Cloud | Organ segmentation, new organ detection | Direct processing, preserves geometry |
| Voxel-based | Swin3D-s, MinkUNet34C | Voxel Grid | Semantic segmentation, trait extraction | Structured data format, uses 3D convolutions |
| Projection-based | 2D-to-3D (Mask2Former) | Multiple 2D Images | Plant segmentation, trait extraction | Leverages pre-trained 2D models, data efficient |
| Hybrid | 3D Residual U-Net, 3D Cellpose | Voxel/Point Cloud | Tissue segmentation, microscopic analysis | High accuracy for complex structures |

Specialized Frameworks for Growth Monitoring

The 3D-NOD framework exemplifies an architecture designed specifically for temporal 3D phenotyping. It incorporates novel Backward & Forward Labeling (BFL) and Humanoid Data Augmentation (HDA) strategies to boost sensitivity in detecting tiny new organs [5]. This framework enables real-time growth monitoring by detecting budding events in tobacco, tomato, and sorghum with a mean Intersection over Union (IoU) of 80.68%.

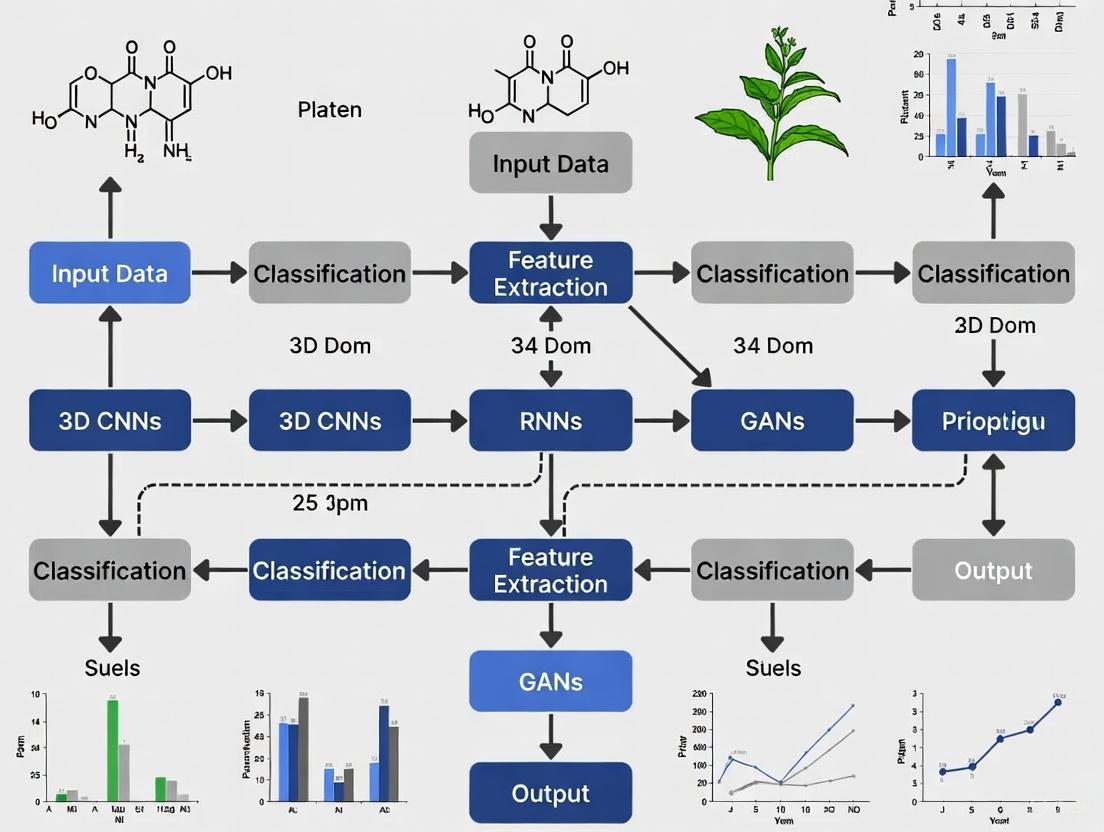

Figure 1: Deep Learning Workflow for 3D Plant Phenotyping

Experimental Protocols and Methodologies

Implementing robust 3D phenotyping requires carefully designed experimental protocols spanning data acquisition, processing, and analysis. Below are detailed methodologies for key experiments cited in this review.

High-Fidelity Plant Reconstruction Protocol

Research by Nanjing Forestry University established an integrated, two-phase workflow for accurate 3D reconstruction of plants [1]:

Phase 1: Single-View Point Cloud Generation

- Image Acquisition: Capture high-resolution RGB images using binocular cameras (e.g., ZED 2) from multiple viewpoints. The recommended setup uses six viewpoints around the plant at a camera resolution of 2208×1242.

- 3D Reconstruction: Bypass integrated depth estimation and instead apply Structure from Motion (SfM) and Multi-View Stereo (MVS) techniques to the captured images.

- Output: Production of high-fidelity, single-view point clouds that effectively avoid distortion and drift common in direct depth estimation.

Phase 2: Multi-View Point Cloud Registration

- Coarse Alignment: Rapid initial alignment using a marker-based Self-Registration (SR) method with calibration spheres.

- Fine Alignment: Apply the Iterative Closest Point (ICP) algorithm for precise registration of point clouds from multiple viewpoints.

- Result: Unified and complete 3D plant model enabling extraction of key phenotypic parameters.
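The two registration stages above can be sketched in NumPy as follows. This is a minimal illustration, not the cited authors' Self-Registration implementation: it assumes the calibration-sphere centres have already been detected and matched between views (which allows a closed-form rigid fit for the coarse stage) and uses a brute-force nearest-neighbour ICP for the fine stage. The function names `best_rigid_transform` and `icp` are hypothetical.

```python
import numpy as np

def best_rigid_transform(src, dst):
    """Least-squares rotation R and translation t so that dst ~ src @ R.T + t.

    src and dst are (N, 3) arrays of corresponding points (Kabsch method).
    """
    src_c, dst_c = src.mean(axis=0), dst.mean(axis=0)
    H = (src - src_c).T @ (dst - dst_c)      # 3x3 cross-covariance matrix
    U, _, Vt = np.linalg.svd(H)
    # Guard against a reflection so that R is a proper rotation.
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = dst_c - R @ src_c
    return R, t

def icp(src, dst, n_iter=20):
    """Fine alignment: alternate nearest-neighbour matching and rigid fitting."""
    cur = src.copy()
    for _ in range(n_iter):
        d2 = ((cur[:, None, :] - dst[None, :, :]) ** 2).sum(axis=2)
        matched = dst[d2.argmin(axis=1)]     # closest target point per source point
        R, t = best_rigid_transform(cur, matched)
        cur = cur @ R.T + t
    return cur
```

A typical use would first call `best_rigid_transform` on the matched marker centres, apply the result to the whole view, and then pass the coarsely aligned cloud to `icp`. The brute-force matching scales quadratically with the number of points, so a spatial index would be needed for full-resolution plant scans.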

This protocol was validated on Ilex species, showing strong correlation with manual measurements (R² > 0.92 for plant height and crown width) [1].
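For readers reproducing this kind of validation, the R² between automated and manual measurements can be computed as the squared Pearson correlation, which equals the R² of a simple linear fit. The sketch below assumes that definition (the source does not state which variant was used); `r_squared` is a hypothetical helper and the numbers in the usage note are placeholders, not data from the cited study.

```python
import numpy as np

def r_squared(measured, reference):
    """R^2 of an ordinary least-squares line relating two measurement series."""
    r = np.corrcoef(measured, reference)[0, 1]   # Pearson correlation
    return float(r ** 2)
```

For example, `r_squared([31.0, 42.5, 55.1], [30.2, 43.0, 54.8])` would compare three hypothetical point-cloud heights against manual readings in the same units.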

2D-to-3D Reprojection Segmentation Method

An approach that applies 2D segmentation networks to 3D analysis was developed as follows [6]:

- Multi-view Image Capture: Acquire images from multiple virtual cameras surrounding the plant.

- 2D Segmentation: Process each image using advanced 2D segmentation networks (e.g., Mask2Former).

- Reprojection to 3D: Reproject the 2D segmentation predictions to the 3D point cloud using camera transformation parameters.

- Majority Vote Fusion: Apply a majority vote algorithm to merge multiple predictions from different viewpoints for each point in the 3D cloud.

This method demonstrated no significant performance difference compared to state-of-the-art 3D segmentation algorithms like Swin3D-s and Point Transformer v3, while achieving significantly higher training efficiency [6]. The approach achieved similar performance with only five annotated plants compared to twenty-five plants required for training Swin3D-s, highlighting its data efficiency.
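The reprojection and voting steps listed above can be sketched as follows. This is an illustrative NumPy version under simplifying assumptions that are not taken from the cited work: a pinhole camera with intrinsics `K` and world-to-camera pose `(R, t)`, nearest-pixel lookup, and no occlusion test (a real pipeline would need a depth check so that hidden points do not inherit labels from the surface in front of them). Function names are hypothetical.

```python
import numpy as np

def labels_from_view(points, mask, K, R, t):
    """Look up a per-point label in one 2D segmentation mask.

    Returns -1 for points behind the camera or outside the image.
    """
    cam = points @ R.T + t                       # world -> camera coordinates
    labels = np.full(len(points), -1, dtype=np.int64)
    front = cam[:, 2] > 1e-9
    uvw = cam[front] @ K.T                       # pinhole projection
    u = np.round(uvw[:, 0] / uvw[:, 2]).astype(int)
    v = np.round(uvw[:, 1] / uvw[:, 2]).astype(int)
    h, w = mask.shape
    inside = (u >= 0) & (u < w) & (v >= 0) & (v < h)
    idx = np.nonzero(front)[0][inside]
    labels[idx] = mask[v[inside], u[inside]]
    return labels

def fuse_labels_by_majority_vote(view_labels, n_classes):
    """Fuse (n_views, n_points) labels; -1 marks 'not observed in this view'."""
    n_points = view_labels.shape[1]
    votes = np.zeros((n_points, n_classes), dtype=np.int64)
    for labels in view_labels:
        seen = labels >= 0
        np.add.at(votes, (np.nonzero(seen)[0], labels[seen]), 1)
    fused = votes.argmax(axis=1)
    fused[votes.sum(axis=1) == 0] = -1           # never observed in any view
    return fused
```

Ties are resolved in favour of the lowest class index here; a production implementation might instead weight votes by prediction confidence.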

Microscopic Tissue Segmentation Protocol

For microscopic analysis of fruit tissues, researchers employed a distinct protocol [4]:

- Image Acquisition: Use X-ray micro-CT for non-destructive 3D imaging of plant samples without extensive sample preparation.

- 3D Panoptic Segmentation Framework: Implement a dual-path approach:

  - Instance Segmentation: Predict intermediate gradient fields in X, Y, and Z directions using a 3D extension of Cellpose to separate individual parenchyma cells.

  - Semantic Segmentation: Employ a 3D Residual U-Net to classify voxels into cell matrix, pore space, vasculature, or stone cell clusters.

- Data Augmentation: Apply synthetic data augmentation involving morphological dilation and erosion, grey-value assignment, and Gaussian noise addition to enhance model robustness.

- Performance Validation: Evaluate using Aggregated Jaccard Index (AJI) and Dice Similarity Coefficient (DSC), achieving AJIs of 0.889 for apple and 0.773 for pear.
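The two validation metrics named in the last step can be computed as sketched below. The Dice coefficient is standard; the AJI follows the commonly used definition (each ground-truth instance is paired with the prediction of highest IoU, and unmatched predictions are added to the union), which may differ in detail from the cited study's implementation. Label 0 is assumed to be background, and the function names are hypothetical.

```python
import numpy as np

def dice(a, b):
    """Dice Similarity Coefficient between two binary masks."""
    a, b = np.asarray(a, dtype=bool), np.asarray(b, dtype=bool)
    denom = a.sum() + b.sum()
    return 1.0 if denom == 0 else float(2.0 * np.logical_and(a, b).sum() / denom)

def aggregated_jaccard_index(gt, pred):
    """AJI between two integer instance-label volumes (0 = background)."""
    used = set()
    inter_sum, union_sum = 0, 0
    for i in np.unique(gt):
        if i == 0:
            continue
        g = gt == i
        best_iou, best_j = 0.0, None
        best_inter, best_union = 0, int(g.sum())
        for j in np.unique(pred[g]):             # only predictions that overlap g
            if j == 0:
                continue
            p = pred == j
            inter = int(np.logical_and(g, p).sum())
            union = int(np.logical_or(g, p).sum())
            if inter / union > best_iou:
                best_iou, best_j = inter / union, int(j)
                best_inter, best_union = inter, union
        inter_sum += best_inter
        union_sum += best_union
        if best_j is not None:
            used.add(best_j)
    for j in np.unique(pred):                    # penalise unmatched predictions
        if j != 0 and int(j) not in used:
            union_sum += int((pred == j).sum())
    return inter_sum / union_sum if union_sum else 1.0
```

Because unmatched predictions enlarge the denominator, AJI penalises both over-segmentation and spurious detections, which pixel-level Dice does not.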

Figure 2: 3D Plant Reconstruction Experimental Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of 3D plant phenotyping requires specific hardware, software, and computational resources. The table below details key solutions used in the featured research.

Table 3: Essential Research Reagents and Solutions for 3D Plant Phenotyping

| Category | Specific Tool/Solution | Function/Application | Key Features | Reference |
|---|---|---|---|---|
| Imaging Hardware | ZED 2 Binocular Camera | Stereo image acquisition for 3D reconstruction | 2208×1242 resolution, built-in depth sensing | [1] |
| Imaging Hardware | Terrestrial Laser Scanner (TLS) | High-precision point cloud acquisition | Millimetric accuracy, large scanning volume | [2] |
| Imaging Hardware | Microsoft Kinect | Low-cost depth sensing using structured light | RGB-D data, accessible SDK | [2] |
| Imaging Hardware | X-ray Micro-CT | Non-destructive 3D imaging of tissue microstructure | High-resolution internal structure visualization | [4] |
| Software & ML Models | Mask2Former | 2D image segmentation for projection-based methods | State-of-the-art segmentation performance | [6] |
| Software & ML Models | DGCNN (Dynamic Graph CNN) | Point cloud processing for organ detection | Edge convolution, dynamic graph updating | [5] |
| Software & ML Models | 3D Residual U-Net | Volumetric segmentation of microscopic tissues | Skip connections, high precision for bio-images | [4] |
| Algorithms | Structure from Motion (SfM) | 3D reconstruction from multiple 2D images | Feature point matching, camera pose estimation | [1] |
| Algorithms | Iterative Closest Point (ICP) | Precise point cloud registration | Fine alignment, iterative error minimization | [1] |
| Platforms | Plant Phenomics Platforms | Integrated systems for high-throughput phenotyping | Automated imaging, data management | [5] [3] |

The paradigm shift from 2D to 3D plant phenotyping represents a fundamental transformation in how researchers quantify and analyze plant architecture. The evidence consistently demonstrates that 3D approaches provide superior accuracy, enable measurement of previously inaccessible traits, and offer greater data efficiency compared to traditional 2D methods. The ability to accurately resolve occlusions, capture spatial relationships, and track temporal changes in three dimensions has opened new frontiers in plant science, from gene function analysis to precision breeding.

Future advancements in 3D phenotyping will likely focus on several key areas. First, the development of more efficient deep learning architectures that can process 3D data with reduced computational requirements will make these technologies more accessible. Second, the integration of multi-modal data (e.g., combining 3D structural information with hyperspectral and thermal data) will provide unprecedented insights into plant structure-function relationships [3]. Finally, the move toward real-time, field-based 3D phenotyping using unmanned aerial vehicles and portable systems will bridge the gap between controlled environment research and agricultural production settings [1] [3].

As these technologies continue to mature, 3D plant phenotyping is poised to become the standard approach for understanding and leveraging the relationship between plant architecture and function, ultimately accelerating crop improvement and sustainable agricultural production.

The adoption of three-dimensional data is revolutionizing plant phenotyping by enabling researchers to capture intricate structural traits of plants non-destructively and with high precision. Moving beyond the limitations of two-dimensional imaging, 3D data provides comprehensive spatial information that is crucial for analyzing complex plant architectures, tracking growth over time, and understanding genotype-to-phenotype relationships. Among the various 3D representation formats, three core data types have emerged as fundamental for deep learning applications in plant phenotyping: point clouds, voxels, and multi-view representations. Each of these data types possesses distinct characteristics, advantages, and limitations that make them suitable for different experimental setups and research objectives in plant sciences. This guide provides a comprehensive comparison of these core 3D data types, with a specific focus on their application in evaluating deep learning architectures for 3D plant phenotyping research, offering researchers evidence-based guidance for selecting appropriate methodologies for their specific needs.

Core 3D Data Types: Technical Foundations

Point Clouds

Point clouds are collections of data points in a three-dimensional coordinate system, directly representing the external surface of an object or environment. Each point in the cloud has its own set of X, Y, and Z coordinates, and may optionally include additional information such as color, intensity, or reflectance values. In plant phenotyping, point clouds are typically acquired through active sensing techniques like LiDAR (Light Detection and Ranging) or passive methods such as Structure from Motion (SfM) from multiple 2D images [7]. The primary advantage of point clouds lies in their ability to preserve the exact geometric information of plant structures without any discretization or conversion, making them highly suitable for capturing intricate details of leaves, stems, and other plant organs.

From a deep learning perspective, point clouds present unique challenges due to their unstructured and unordered nature. Unlike pixel arrays in images, point clouds lack a regular grid structure, making them incompatible with conventional convolutional neural networks (CNNs). Pioneering architectures like PointNet and PointNet++ address this challenge by using shared multi-layer perceptrons (MLPs) and symmetric functions to maintain permutation invariance [8]. More recent advancements include dynamic graph CNNs (DGCNN), point transformers, and stratified transformers that better capture local geometric features and long-range dependencies in plant structures [7]. These approaches have demonstrated remarkable success in organ-level segmentation tasks, enabling precise identification and measurement of individual plant components in complex canopy environments.
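The permutation-invariance idea behind PointNet-style networks can be shown in a few lines. The sketch below applies the same two-layer MLP to every point and then max-pools over the point dimension. The weights are random placeholders rather than a trained model, and the function name is hypothetical; the point is only that reordering the input does not change the output.

```python
import numpy as np

def shared_mlp_max_pool(points, W1, b1, W2, b2):
    """Global feature from an (N, 3) cloud via a shared MLP and max-pooling."""
    h = np.maximum(points @ W1 + b1, 0.0)   # same weights applied to every point
    h = np.maximum(h @ W2 + b2, 0.0)
    return h.max(axis=0)                    # symmetric function: order-independent

rng = np.random.default_rng(0)
W1, b1 = rng.normal(size=(3, 16)), np.zeros(16)
W2, b2 = rng.normal(size=(16, 32)), np.zeros(32)
cloud = rng.normal(size=(128, 3))
shuffled = cloud[rng.permutation(len(cloud))]
same = np.allclose(shared_mlp_max_pool(cloud, W1, b1, W2, b2),
                   shared_mlp_max_pool(shuffled, W1, b1, W2, b2))
```

Any symmetric aggregation (sum, mean, max) would give the same invariance; max-pooling is the choice described for PointNet.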

Voxels

Voxels (volumetric pixels) represent three-dimensional space as a regular grid of discrete cells, analogous to how pixels represent 2D images. Each voxel contains information about whether it is occupied by the object or empty, and may include additional properties about the contained region. This structured representation bridges the gap between unstructured point cloud data and the requirement of deep learning architectures for regular input formats. Voxel-based methods convert raw point clouds into a 3D grid through a process called voxelization, where the spatial resolution is determined by the size of the voxels [8].

The primary advantage of voxel representations is their compatibility with well-established 3D convolutional neural networks (3D CNNs), which can systematically extract hierarchical features from the structured grid. This enables researchers to leverage extensively studied CNN architectures and optimization techniques originally developed for 2D image analysis. However, voxel representations face significant challenges in plant phenotyping applications due to the trade-off between resolution and computational efficiency. The high-resolution voxel grids needed to capture fine plant structures like thin leaves or stems cause memory requirements and computational cost to grow cubically with resolution, and much of that cost is wasted on empty space in typically sparse plant point clouds [7]. Techniques like sparse convolutions and octrees have been developed to mitigate these issues, but they add implementation complexity.
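A minimal voxelization sketch makes the resolution trade-off concrete. A dense grid with r cells per side stores r³ cells, so going from 32³ (32,768 cells) to 64³ (262,144 cells) multiplies memory by eight, while the number of occupied voxels in a sparse plant scan grows far more slowly. The helper names and the 4 bytes per voxel are assumptions for illustration.

```python
import numpy as np

def voxelize(points, voxel_size):
    """Return the occupied voxel indices and the bounding grid shape."""
    origin = points.min(axis=0)
    idx = np.floor((points - origin) / voxel_size).astype(np.int64)
    occupied = np.unique(idx, axis=0)        # one row per non-empty voxel
    grid_shape = idx.max(axis=0) + 1
    return occupied, grid_shape

def dense_grid_bytes(resolution, bytes_per_voxel=4):
    """Memory of a dense cubic grid with `resolution` cells per side."""
    return resolution ** 3 * bytes_per_voxel
```

Storing only the `occupied` index list, as sparse-convolution libraries do, avoids paying for the empty cells.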

Multi-View Representations

Multi-view representations bridge 2D and 3D analysis by rendering a 3D object or scene from multiple viewpoints and applying well-established 2D deep learning techniques to the resulting images. This approach typically involves projecting 3D point clouds onto 2D planes from various perspectives (often six orthogonal views or a spherical arrangement of viewpoints) to create depth images or silhouettes, which are then processed using standard 2D CNNs [9]. The features extracted from individual views are subsequently aggregated using view-pooling operations or more sophisticated fusion mechanisms to form a comprehensive 3D representation.
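The six-orthogonal-view projection can be sketched as follows. This is an assumption-laden illustration rather than any published method's exact renderer: points are scaled into a unit cube, each view keeps the nearest depth per pixel, and empty pixels are flagged with -1. The function name and the default resolution are hypothetical.

```python
import numpy as np

def orthographic_depth_views(points, resolution=32):
    """Render six axis-aligned depth images, shape (6, resolution, resolution)."""
    mins = points.min(axis=0)
    extent = (points.max(axis=0) - mins).max()
    p = (points - mins) / (extent if extent > 0 else 1.0)   # into [0, 1]^3
    pix = np.minimum((p * resolution).astype(int), resolution - 1)
    views = []
    for axis in range(3):
        u_ax, v_ax = [a for a in range(3) if a != axis]
        for flip in (False, True):           # look along +axis, then -axis
            depth = 1.0 - p[:, axis] if flip else p[:, axis]
            img = np.full((resolution, resolution), np.inf)
            # Keep the smallest depth (closest surface) falling in each pixel.
            np.minimum.at(img, (pix[:, v_ax], pix[:, u_ax]), depth)
            img[np.isinf(img)] = -1.0        # mark pixels no point projects to
            views.append(img)
    return np.stack(views)
```

The resulting stack can be fed to any 2D CNN one view at a time, with the per-view features pooled afterwards.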

The significant advantage of multi-view representations lies in their ability to leverage the maturity, efficiency, and powerful feature extraction capabilities of 2D CNNs pre-trained on massive image datasets like ImageNet. This is particularly valuable in plant phenotyping, where annotated 3D datasets are scarce and computationally expensive to process. Research has demonstrated that multi-view methods "exhibit superior noise robustness and require lower resolution compared to direct 3D point-cloud processing" [10]. However, this approach faces challenges in preserving complete 3D spatial information and handling self-occlusions, where parts of the plant hide other parts from certain viewpoints, potentially leading to information loss.

Table 1: Technical Characteristics of Core 3D Data Types

| Characteristic | Point Clouds | Voxels | Multi-View Representations |
|---|---|---|---|
| Data Structure | Unstructured set of 3D points | Regular 3D grid | Multiple 2D projections |
| Information Preservation | High (raw 3D geometry) | Medium (discretized) | Variable (view-dependent) |
| Memory Efficiency | High for sparse structures | Low (memory grows cubically with resolution) | Medium (depends on number of views) |
| Compatibility with DL Architectures | Requires specialized networks (PointNet++, DGCNN, Point Transformer) | Compatible with 3D CNNs | Compatible with standard 2D CNNs (ResNet, VGG) |
| Handling Occlusions | Good (direct 3D structure) | Good (volumetric representation) | Poor (view-dependent occlusions) |
| Implementation Complexity | High (custom architectures needed) | Medium (standard 3D CNNs, but optimized versions are complex) | Low (leverages mature 2D DL frameworks) |

Comparative Performance Analysis in Plant Phenotyping

Performance Metrics Across Data Types

Evaluating the performance of 3D data types requires multiple metrics that capture different aspects of model effectiveness. In plant phenotyping applications, the most relevant metrics include accuracy (correctness of predictions), computational efficiency (inference time and memory usage), robustness to noise and occlusions, and data efficiency (performance with limited training data). Experimental comparisons across these metrics reveal distinct trade-offs that inform method selection for specific phenotyping tasks.

Recent comprehensive evaluations of deep learning models on plant point clouds provide valuable insights into these trade-offs. A 2024 study comparing nine classical point cloud segmentation models on plants collected under different scenarios revealed that the Stratified Transformer (ST) "achieved optimal performance across almost all environments and sensors, albeit at a significant computational cost" [7]. The transformer architecture for points demonstrated considerable advantages over traditional feature extractors by accommodating features over longer ranges, which is particularly beneficial for capturing extended plant structures like stems and branches. Additionally, PAConv, which constructs weight matrices in a data-driven manner, enabled better adaptation to various scales of plant organs [7].

For multi-view representations, research has demonstrated exceptional performance in classification tasks while maintaining computational efficiency. The SimpleView approach, which projects a point cloud onto just six orthogonal planes and processes these projections through ResNet, has shown particularly strong performance [9]. In domain generalization settings where models trained on synthetic data must perform well on real-world data, multi-view approaches have outperformed point-based methods, demonstrating better robustness to the geometric variations commonly encountered in plant phenotyping applications [9].

Table 2: Performance Comparison of 3D Data Types on Plant Phenotyping Tasks

| Performance Metric | Point Clouds | Voxels | Multi-View Representations |
|---|---|---|---|
| Classification Accuracy | High (Point Transformer: ~93% on ModelNet) | Medium (VoxNet: ~85% on ModelNet) | High (MVCNN: ~90% on ModelNet) |
| Segmentation Accuracy (mIoU) | High (Stratified Transformer: 78.4% on plant datasets) | Medium (VCNN: ~70% on maize datasets) | Low-Medium (projection-based: ~65%) |
| Inference Speed (frames/second) | Medium (15-25 FPS on complex models) | Low (5-15 FPS for high-resolution grids) | High (30+ FPS with 2D CNNs) |
| Memory Consumption | Low-Medium (depends on number of points) | High (especially for high-resolution grids) | Medium (depends on number and resolution of views) |
| Robustness to Noise | Medium (varies with architecture) | High (voxelization averages noise) | High (2D CNNs are naturally robust) |
| Data Efficiency | Low (requires more training data) | Medium | High (benefits from 2D pre-training) |

Domain Generalization Capabilities

Domain generalization—the ability of models trained on one dataset to perform well on data from different distributions—is particularly important in plant phenotyping due to the significant differences between controlled laboratory environments and field conditions. A critical challenge arises from the domain shift between synthetic point clouds from CAD models (which are easy to annotate) and real-world point clouds captured by sensors, with the latter often suffering from occlusion, missing points, and noise [9].

Research has revealed that point-based methods exhibit limitations in domain generalization because of their reliance on max-pooling: "a large number of point features are discarded by point-based methods through the max-pooling operation" [9]. This waste of information is particularly problematic for domain generalization, where the data is already challenging, and for plant phenotyping applications, where fine structural details may be essential for distinguishing phenotypes.

In contrast, multi-view representations have demonstrated superior domain generalization capabilities. The DG-MVP framework, which uses multiple 2D projections of point clouds, has outperformed point-based methods on standard domain generalization benchmarks like PointDA-10 and Sim-to-Real [9]. The approach remains robust because certain projections maintain consistency even when point clouds have missing regions or deformations, making it particularly valuable for plant phenotyping applications where complete 3D data is difficult to acquire.

Experimental Protocols and Methodologies

Standardized Evaluation Protocols

To ensure fair comparisons across different 3D data types and deep learning architectures, researchers have established standardized evaluation protocols using benchmark datasets. For plant phenotyping applications, these protocols typically involve:

Data Preparation: Experiments should use publicly available plant datasets such as the Arabidopsis thaliana dataset from CVPPP (Computer Vision Problems in Plant Phenotyping) or maize datasets that include 3D point clouds with organ-level annotations [7]. Data should be split into training, validation, and test sets using standard ratios (typically 70:15:15) with stratified sampling to maintain class distribution.
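A stratified 70:15:15 split can be sketched without external dependencies as below. The per-class rounding rule is an assumption (small classes may deviate slightly from the nominal ratios), and `stratified_split` is a hypothetical helper rather than a function from any of the cited toolkits.

```python
import numpy as np

def stratified_split(labels, rng, ratios=(0.70, 0.15, 0.15)):
    """Split sample indices into train/val/test while preserving class balance."""
    labels = np.asarray(labels)
    train, val, test = [], [], []
    for c in np.unique(labels):
        idx = rng.permutation(np.nonzero(labels == c)[0])   # shuffle within class
        n_train = int(round(ratios[0] * len(idx)))
        n_val = int(round(ratios[1] * len(idx)))
        train.extend(idx[:n_train])
        val.extend(idx[n_train:n_train + n_val])
        test.extend(idx[n_train + n_val:])                  # remainder goes to test
    return np.array(train), np.array(val), np.array(test)
```

For plant datasets it is usually safer to split by plant or by genotype rather than by individual scan, so that repeated scans of the same plant do not leak across subsets.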

Preprocessing: For point-based methods, input is typically normalized by centering and scaling. For voxel-based methods, point clouds are voxelized at multiple resolutions (e.g., 32³, 64³) to evaluate resolution impact. For multi-view methods, standard protocols involve rendering 6 or 12 views using orthogonal projection [9].
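The centering-and-scaling step for point-based inputs is commonly implemented as a unit-sphere normalization, sketched below. Whether a given study scales to a unit sphere or a unit cube is not stated in the source, so the choice here is an assumption.

```python
import numpy as np

def normalize_unit_sphere(points):
    """Centre a cloud at its centroid and scale it to fit inside a unit sphere."""
    centred = points - points.mean(axis=0)
    scale = np.linalg.norm(centred, axis=1).max()   # distance of farthest point
    return centred / scale if scale > 0 else centred
```

Note that this removes absolute size, so traits such as plant height must be measured before normalization or the scale factor must be stored.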

Data Augmentation: Standard augmentation techniques include random rotation, scaling, jittering (adding noise to point coordinates), and simulated occlusion. For domain generalization experiments, additional augmentations simulate missing points and variations in scanning density to better represent real-world conditions [9].
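The augmentations listed above can be combined in a single function, as in the following sketch. All parameter defaults are illustrative assumptions, rotation is restricted to the vertical axis (a common choice for upright plants), and occlusion is simulated crudely by deleting the points closest to a randomly chosen seed point.

```python
import numpy as np

def augment(points, rng, max_scale=0.1, jitter_sigma=0.01, drop_fraction=0.1):
    """Random z-rotation, isotropic scaling, jitter, and a simulated occlusion."""
    theta = rng.uniform(0.0, 2.0 * np.pi)
    c, s = np.cos(theta), np.sin(theta)
    rot_z = np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])
    out = points @ rot_z.T
    out = out * rng.uniform(1.0 - max_scale, 1.0 + max_scale)
    out = out + rng.normal(0.0, jitter_sigma, size=out.shape)
    # Simulated occlusion: remove the n_drop points nearest to a random seed.
    n_drop = int(drop_fraction * len(out))
    seed = out[rng.integers(len(out))]
    order = np.argsort(np.linalg.norm(out - seed, axis=1))
    keep = np.sort(order[n_drop:])           # preserve the original point order
    return out[keep]
```

When per-point labels are used for segmentation, the same `keep` indices would also have to be applied to the label array.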

Evaluation Metrics: Primary metrics include overall accuracy for classification tasks, mean Intersection over Union (mIoU) for segmentation tasks, inference time (FPS), and memory consumption. For plant-specific applications, additional metrics like leaf counting accuracy and projected leaf area (PLA) estimation error are recommended [11].
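For reference, mIoU over per-point labels can be computed as sketched below. Classes that are absent from both prediction and ground truth are skipped here, which is one common convention; benchmarks differ on this point, so the choice is an assumption.

```python
import numpy as np

def mean_iou(y_true, y_pred, n_classes):
    """Mean Intersection over Union across classes present in either array."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    ious = []
    for c in range(n_classes):
        inter = np.sum((y_true == c) & (y_pred == c))
        union = np.sum((y_true == c) | (y_pred == c))
        if union > 0:                        # skip classes that never occur
            ious.append(inter / union)
    return float(np.mean(ious)) if ious else float("nan")
```

With `y_true = [0, 0, 1, 1]` and `y_pred = [0, 1, 1, 1]`, class 0 has IoU 1/2 and class 1 has IoU 2/3, giving an mIoU of 7/12.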

Implementation Details for Comparative Studies

Hardware Configuration: Most studies utilize high-performance GPUs (NVIDIA RTX 3080/3090 or Tesla V100) with 11-32GB memory. CPU and system RAM specifications should be reported as they significantly impact voxel-based methods.

Software Framework: Standard implementations use PyTorch or TensorFlow with dedicated 3D deep learning libraries like Open3D, PyTorch3D, or TorchPoints3D.

Training Protocols: Models should be trained with consistent epochs (typically 200-300) with batch sizes adjusted according to memory constraints. Standard optimization uses Adam or SGD with momentum, with learning rate scheduling and early stopping based on validation performance.
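Early stopping on validation performance can be expressed framework-independently, as in this sketch. The class name, the patience of 20 epochs, and the assumption that a higher metric is better (e.g., validation mIoU) are illustrative choices, not values from the source.

```python
class EarlyStopping:
    """Signal a stop when the validation metric has not improved for `patience` epochs."""

    def __init__(self, patience=20, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = -float("inf")
        self.bad_epochs = 0

    def step(self, val_metric):
        """Record one epoch's metric; return True if training should stop."""
        if val_metric > self.best + self.min_delta:
            self.best = val_metric
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience
```

In a training loop, `step` would be called once per epoch after validation, and the checkpoint with the best metric would be the one reported.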

Model Selection: For fair comparisons, studies should include representative models for each data type: PointNet++, DGCNN, and Point Transformer for point clouds; VoxNet and 3D-CNN for voxels; MVCNN and SimpleView for multi-view representations.

Diagram 1: Experimental protocol for evaluating 3D data types

The Scientist's Toolkit: Research Reagent Solutions

Successful implementation of 3D plant phenotyping requires both computational resources and specialized experimental equipment. The following table details essential "research reagent solutions" for establishing a comprehensive 3D plant phenotyping pipeline.

Table 3: Essential Research Reagents and Tools for 3D Plant Phenotyping

| Tool/Resource | Function | Application Examples | Considerations |
|---|---|---|---|
| LiDAR Sensors | Active 3D data acquisition using laser scanning | Terrestrial laser scanning for field phenotyping; portable scanners for laboratory use | Varying resolution and accuracy; eye-safety requirements for certain classes |
| Photometric Stereo Systems | 3D reconstruction from 2D images under different lighting conditions | PS-Plant system for tracking Arabidopsis thaliana growth with high temporal resolution | Requires controlled lighting conditions; excellent for fine details [12] |
| Multi-View Camera Rigs | Simultaneous image capture from multiple angles for 3D reconstruction | Structure from Motion (SfM) for plant architecture analysis | Camera synchronization critical; calibration required for accurate reconstruction [7] |
| Deep Learning Frameworks | Software infrastructure for developing 3D analysis models | PyTorch3D, TensorFlow 3D, Open3D-ML | Varying levels of 3D data type support; community support important |
| Annotation Tools | Manual or semi-automatic labeling of 3D plant data | Custom tools for organ-level segmentation; CloudCompare with plugins | Time-consuming process; inter-annotator agreement important for reliability |
| Benchmark Datasets | Standardized data for method comparison | CVPPP Plant Segmentation Dataset; RoPlant for robotics | Essential for reproducible research; domain gaps between datasets |

Integration Strategies and Future Directions

Hybrid Approaches

Rather than relying exclusively on a single data type, emerging research demonstrates the advantages of hybrid approaches that combine multiple representations to leverage their complementary strengths. Point-voxel frameworks represent a promising direction, such as PV-MM3D, which uses "point-based and voxel-based methods in parallel to aggregate features from virtual and LiDAR point clouds, respectively" [13]. This design preserves the high accuracy and flexibility of point-based methods for feature aggregation, while simultaneously leveraging the benefits of voxel-based methods in data compression and computational efficiency.

For plant phenotyping applications, where both structural precision and computational tractability are important, such hybrid frameworks offer significant potential. The integration can be implemented through various fusion strategies, including early fusion (combining raw data), intermediate fusion (merging extracted features), or late fusion (combining predictions). The Dual-Attention Region Adaptive Fusion Module (DARAFM) represents an advanced implementation that "integrates a self-attention mechanism and a cross-attention mechanism to capture intra-feature correlations and inter-feature complementarities, respectively" [13].
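The difference between intermediate and late fusion can be illustrated with two small functions. This sketch is deliberately simple and is not an implementation of DARAFM or PV-MM3D: intermediate fusion is shown as feature concatenation and late fusion as a weighted average of class probabilities, with hypothetical function names and an assumed equal weighting.

```python
import numpy as np

def intermediate_fusion(point_features, voxel_features):
    """Merge per-sample feature vectors from two branches by concatenation."""
    return np.concatenate([point_features, voxel_features], axis=1)

def late_fusion(probs_a, probs_b, weight_a=0.5):
    """Combine two branches' class probabilities and return predicted classes."""
    fused = weight_a * probs_a + (1.0 - weight_a) * probs_b
    return fused.argmax(axis=1)
```

Attention-based modules replace the fixed concatenation or averaging with learned, input-dependent weights, at the cost of additional parameters.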

Multitask Learning Frameworks

Multitask learning (MTL) has emerged as a powerful paradigm for plant phenotyping, enabling simultaneous prediction of multiple plant traits from a shared representation. Research has demonstrated that MTL frameworks can predict "three traits simultaneously: (i) leaf count, (ii) projected leaf area (PLA), and (iii) genotype classification" with improved performance compared to single-task models [11]. Importantly, MTL allows leveraging more easily obtainable annotations (like PLA and genotype) to improve performance on harder-to-predict tasks like leaf counting, addressing annotation scarcity challenges in plant phenotyping.

The information sharing inherent in MTL increases generalization capability and reduces overfitting, particularly valuable when working with limited plant datasets. Implementation-wise, MTL enables a unified model instead of separate models for each task, reducing "storage space, decreasing training times and making deployment and maintenance easier" [11].
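
The shared-representation idea is usually trained with a single weighted objective. The sketch below assumes mean squared error for the two regression traits (leaf count and PLA) and cross-entropy for genotype classification; these loss choices, the task weights, and all function names are assumptions for illustration and are not confirmed by [11].

```python
import math


def mse(pred, target):
    """Mean squared error over a batch of scalar predictions."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)


def cross_entropy(probs, label):
    """Negative log-likelihood of the true class for one sample."""
    return -math.log(max(probs[label], 1e-12))


def multitask_loss(leaf_pred, leaf_true, pla_pred, pla_true,
                   geno_probs, geno_true, weights=(1.0, 1.0, 1.0)):
    """Weighted sum of the three task losses computed from one shared model."""
    w_leaf, w_pla, w_geno = weights
    leaf_loss = mse(leaf_pred, leaf_true)
    pla_loss = mse(pla_pred, pla_true)
    geno_loss = sum(cross_entropy(p, y)
                    for p, y in zip(geno_probs, geno_true)) / len(geno_true)
    return w_leaf * leaf_loss + w_pla * pla_loss + w_geno * geno_loss


# Hypothetical batch of two plants.
loss = multitask_loss(
    leaf_pred=[5.0, 7.0], leaf_true=[6.0, 7.0],
    pla_pred=[0.4, 0.6], pla_true=[0.5, 0.6],
    geno_probs=[[0.5, 0.5], [0.25, 0.75]], geno_true=[0, 1],
)
```

In practice the task weights are a tuning choice, since regression and classification losses can differ in scale by orders of magnitude.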

Future Research Directions

The field of 3D plant phenotyping continues to evolve rapidly, with several promising research directions emerging. Self-supervised learning approaches that learn representations from unlabeled data show particular promise for addressing the data annotation bottleneck. Lightweight models optimized for deployment on resource-constrained devices will be essential for field applications. Multimodal data fusion that integrates 3D structural data with spectral, thermal, and genetic information will enable more comprehensive phenotype characterization. Domain adaptation techniques that explicitly address the gap between controlled environment and field data will be crucial for real-world applications. Finally, interpretable deep learning approaches that provide biological insights alongside predictions will increase adoption by plant scientists.

Each of these directions presents unique opportunities for leveraging the complementary strengths of point clouds, voxels, and multi-view representations to advance plant phenotyping research and applications.

The ability to perceive and interpret the three-dimensional world is fundamental to advancing fields ranging from autonomous systems to biomedical science. For plant phenotyping research, which seeks to quantitatively understand the relationship between a plant's genotype and its observable characteristics, mastering 3D vision is particularly transformative. Traditional phenotyping methods are often labor-intensive, subjective, and limited in throughput [14]. Deep learning technologies now enable researchers to automatically extract precise morphological and structural traits from complex plant architectures, uncovering insights previously inaccessible through manual observation [14] [15]. This guide provides a comprehensive comparison of deep learning capabilities across four fundamental 3D vision tasks—classification, detection, segmentation, and generation—with specific evaluation of their performance, experimental protocols, and applications within plant phenotyping research.

Core 3D Data Representations and Their Characteristics

Before examining specific tasks, it is essential to understand the various ways 3D data can be represented in computational systems, as this choice fundamentally influences algorithm selection and performance.

Table 1: Comparison of Primary 3D Data Representations

| Representation | Description | Advantages | Disadvantages | Common Applications in Phenotyping |

|---|---|---|---|---|

| Point Clouds | Sets of 3D points (X,Y,Z coordinates) potentially with additional features (RGB, intensity) [16]. | Direct sensor output; preserves original precision; efficient storage for sparse data [17]. | Irregular structure; requires specialized networks; no explicit topology [17]. | Plant organ segmentation [14]; canopy structure analysis [14]. |

| Voxels | 3D volumetric pixels representing space on a regular grid [16]. | Regular structure compatible with 3D CNNs; explicit occupancy/geometry [17]. | Computational/memory cost increases cubically with resolution; discrete quantization artifacts [17]. | Root system architecture analysis [14]. |

| Meshes | Networks of vertices, edges, and faces (typically triangles) defining object surfaces [16]. | Efficient surface representation; well-established graphics pipeline. | Complex learning operations; topology changes are challenging. | Leaf surface modeling [14]; fruit morphology. |

| Multi-view Images | Multiple 2D renderings of a 3D object from different viewpoints [17]. | Leverages mature 2D CNNs; memory efficient. | Dependent on view selection; may lose 3D spatial relationships. | Plant shape classification [17]. |

| Neural Fields | Neural networks (e.g., NeRFs, SDFs) that continuously represent shape/appearance [18]. | High-resolution; continuous representation; memory efficient. | Computationally intensive training; slow inference. | High-fidelity plant reconstruction [18]. |

Deep Learning Architectures for 3D Vision Tasks

3D Classification

3D classification involves categorizing entire 3D objects or scenes into predefined classes, such as identifying plant species or stress types from whole-plant 3D scans.

Architectural Approaches:

- Volumetric CNNs: Apply 3D convolutional kernels to voxel grids. Early architectures like 3D ShapeNets demonstrated feasibility but face computational limitations at high resolutions [17].

- Multi-view CNNs: Render multiple 2D images of 3D objects from different viewpoints and aggregate features using view-pooling layers [17]. This approach leverages well-established 2D CNNs and has achieved state-of-the-art results on benchmark datasets.

- Point-based Networks: Directly process point clouds using architectures like PointNet++ that hierarchically extract features while respecting permutation invariance [17].

- Transformer-based Models: Apply self-attention mechanisms to point clouds or patches for global context modeling [19].

Table 2: Performance Comparison of 3D Classification Methods on Benchmark Datasets

| Method | Representation | ModelNet10 Accuracy (%) | ScanObjectNN Accuracy (%) | Computational Efficiency | Remarks |

|---|---|---|---|---|---|

| Volumetric CNN [17] | Voxel (32³) | 89.5 | 80.2 | Low | Pioneering but struggles with resolution vs. memory trade-off |

| PointNet++ [17] | Point Cloud | 90.7 | 82.3 | Medium | Robust to input perturbations; hierarchical feature learning |

| Multi-view CNN [17] | 80 Views | 92.8 | 85.1 | Medium | Leverages pre-trained 2D CNNs; view selection crucial |

| Vision Transformer [19] | Point Cloud | 91.5 | 84.7 | Low | Requires extensive data; strong global context modeling |

Experimental Protocol for Plant Classification:

- Data Acquisition: Capture 3D point clouds of various plant species using terrestrial LiDAR or photogrammetry.

- Data Preprocessing: Normalize point clouds to a consistent number of points (e.g., 1024 points via farthest point sampling) and scale to unit sphere.

- Model Training: Train PointNet++ architecture with multi-scale grouping for 100 epochs using Adam optimizer with initial learning rate of 0.001 and step decay.

- Evaluation: Use 5-fold cross-validation with stratified sampling to ensure balanced class representation across splits. Report overall accuracy and per-class F1 scores.
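
The preprocessing step in this protocol can be sketched in plain Python as follows. This is a minimal, unoptimized illustration: the function names are hypothetical, the input is a synthetic random cloud, and the demo samples 64 points rather than the 1024 mentioned above only to keep the pure-Python loop fast.

```python
import math
import random


def normalize_to_unit_sphere(points):
    """Centre the cloud at its centroid and scale so the farthest point has radius 1."""
    n = len(points)
    centroid = [sum(p[i] for p in points) / n for i in range(3)]
    centred = [[p[i] - centroid[i] for i in range(3)] for p in points]
    radius = max(math.sqrt(sum(c * c for c in p)) for p in centred)
    if radius == 0.0:
        return centred
    return [[c / radius for c in p] for p in centred]


def farthest_point_sampling(points, k, start_index=0):
    """Greedy FPS: repeatedly pick the point farthest from the already selected set."""
    if k > len(points):
        raise ValueError("cannot sample more points than the cloud contains")
    selected = [start_index]
    # Squared distance from every point to its nearest selected point so far.
    min_dist = [math.inf] * len(points)
    while len(selected) < k:
        last = points[selected[-1]]
        for i, p in enumerate(points):
            d = sum((p[j] - last[j]) ** 2 for j in range(3))
            if d < min_dist[i]:
                min_dist[i] = d
        selected.append(max(range(len(points)), key=min_dist.__getitem__))
    return selected


rng = random.Random(0)
cloud = [[rng.uniform(-1, 1) for _ in range(3)] for _ in range(500)]

sampled_idx = farthest_point_sampling(cloud, k=64)
normalized = normalize_to_unit_sphere([cloud[i] for i in sampled_idx])
```

For real scans with hundreds of thousands of points, a vectorized or GPU implementation of the same greedy procedure would be needed.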

3D Object Detection

3D object detection involves identifying and localizing objects in 3D space, typically with oriented 3D bounding boxes. In plant phenotyping, this could mean detecting individual fruits or leaves within a canopy.

Architectural Approaches:

- Voxel-based Methods: Convert point clouds to voxel grids and apply 3D CNNs with region proposal networks (RPNs). The SECOND algorithm improves efficiency with sparse convolutions [20].

- Point-based Methods: Process raw point clouds directly. PointRCNN generates 3D proposals from point clouds and refines them in a second stage [20].

- Multi-view Methods: Project 3D data to 2D and leverage 2D detection frameworks, then lift predictions back to 3D.

- Fusion-based Methods: Combine multiple data modalities (e.g., camera images with LiDAR point clouds) [20]. VirConv uses virtual sparse convolution for multimodal 3D object detection [20].

Table 3: Performance Comparison of 3D Object Detection Methods on KITTI Dataset (Car Class; Rows Ordered by Moderate-Difficulty AP) [20]

| Method | Representation | Moderate AP (%) | Easy AP (%) | Hard AP (%) | Runtime (s) | Data Modalities |

|---|---|---|---|---|---|---|

| VirConv-S [20] | Point Cloud + Virtual Features | 87.20 | 92.48 | 82.45 | 0.09 | LiDAR + Image |

| UDeerPEP [20] | Point Cloud | 86.72 | 91.77 | 82.57 | 0.10 | LiDAR |

| PointPillars [20] | Pseudo-image | 82.58 | 88.35 | 77.10 | 0.016 | LiDAR |

| MV3D [20] | Multi-view | 74.97 | 86.62 | 68.78 | 0.36 | LiDAR + Image |

Experimental Protocol for Fruit Detection in Canopy:

- Data Collection: Acquire 3D point clouds of orchard trees using mobile LiDAR systems or depth cameras, with annotated 3D bounding boxes around fruits.

- Data Preparation: Split point clouds into overlapping blocks of 20×20×5 meters with 5-meter stride to manage density variations.

- Model Training: Implement a voxel-based detection pipeline with 0.1m voxel size, sparse 3D CNN backbone, and RPN head. Train with Adam optimizer for 50 epochs.

- Evaluation Metrics: Calculate Average Precision (AP) with 3D IoU threshold of 0.5, with separate analysis for different occlusion levels and ranges.
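
The IoU test behind the AP metric can be illustrated with a short sketch. It assumes axis-aligned boxes given as `(min_corner, max_corner)` pairs, which is a simplification: the oriented boxes mentioned earlier would need a rotated-box intersection routine. Function names and the example boxes are hypothetical.

```python
def box_volume(box):
    """Volume of an axis-aligned box given as (min_corner, max_corner)."""
    (x0, y0, z0), (x1, y1, z1) = box
    return max(0.0, x1 - x0) * max(0.0, y1 - y0) * max(0.0, z1 - z0)


def iou_3d(box_a, box_b):
    """Intersection-over-Union of two axis-aligned 3D boxes."""
    (a_min, a_max), (b_min, b_max) = box_a, box_b
    intersection = 1.0
    for i in range(3):
        overlap = min(a_max[i], b_max[i]) - max(a_min[i], b_min[i])
        if overlap <= 0.0:
            return 0.0  # boxes do not overlap along this axis
        intersection *= overlap
    union = box_volume(box_a) + box_volume(box_b) - intersection
    return intersection / union


# Hypothetical predicted and annotated fruit boxes (arbitrary units).
predicted = ((0.0, 0.0, 0.0), (2.0, 2.0, 2.0))
annotated = ((0.5, 0.0, 0.0), (2.5, 2.0, 2.0))

iou = iou_3d(predicted, annotated)
is_true_positive = iou >= 0.5  # threshold used in the protocol above
```

Average Precision is then obtained by ranking detections by confidence, marking each as a true or false positive with this test, and integrating the resulting precision-recall curve.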

3D Segmentation

3D segmentation partitions 3D data into semantically meaningful regions and can be categorized into semantic segmentation (labeling each point with a class), instance segmentation (identifying distinct object instances), and part segmentation (labeling components of instances).

Architectural Approaches:

- Point-based Methods: PointNet and PointNet++ architectures process raw point clouds while respecting permutation invariance [16]. More recent methods like PointTransformer use self-attention for improved context modeling [16].

- Sparse Convolutional Methods: Process voxelized point clouds efficiently using submanifold sparse convolutions that ignore empty space, as in MinkowskiEngine [16].

- Hybrid Methods: Combine multiple representations, such as using point features within a voxel framework (e.g., PVCNN) [16].

- Projection-based Methods: Project 3D data to 2D (e.g., range views) and apply 2D CNNs, then project predictions back to 3D [16].

Table 4: Performance Comparison of 3D Segmentation Methods on Benchmark Datasets

| Method | Representation | S3DIS (mIoU %) | ScanNet (mIoU %) | Instance Segmentation (mAP) | Remarks |

|---|---|---|---|---|---|

| PointNet++ [16] | Point Cloud | 54.5 | 63.4 | 35.8 (AP₅₀) | Pioneering point-based method; limited context |

| SparseConvNet [16] | Voxels | 65.4 | 72.1 | 48.6 (AP₅₀) | High accuracy; memory intensive at high resolutions |

| PointTransformer [16] | Point Cloud | 70.4 | 76.5 | 52.7 (AP₅₀) | State-of-the-art; global context modeling |

| Mask R-CNN (3D) [21] | Voxels/Points | - | - | 55.9 (AP₅₀) | Adapts 2D instance segmentation paradigm to 3D |

Experimental Protocol for Plant Organ Segmentation:

- Data Annotation: Collect 3D point clouds of plants and manually annotate points with semantic labels (stem, leaf, fruit, background) and instance IDs for individual organs.

- Data Augmentation: Apply random rotation, scaling, jittering, and elastic deformation to improve model robustness.

- Model Training: Implement a U-Net style architecture with sparse 3D convolutions, training with combined cross-entropy and dice loss for 200 epochs.

- Evaluation: Calculate mean Intersection-over-Union (mIoU) for semantic segmentation and mean Average Precision (mAP) at IoU threshold 0.5 for instance segmentation.
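
The semantic segmentation metric in this protocol can be computed per point as in the sketch below. The class indices (0 = stem, 1 = leaf, 2 = fruit, 3 = background) and the toy labels are assumptions for illustration; classes absent from both prediction and ground truth are skipped when averaging, which is one common convention.

```python
def mean_iou(pred_labels, true_labels, num_classes):
    """Return (mIoU, per-class IoU list) for per-point integer labels."""
    intersections = [0] * num_classes
    unions = [0] * num_classes
    for p, t in zip(pred_labels, true_labels):
        if p == t:
            intersections[t] += 1
            unions[t] += 1
        else:
            # A mislabelled point enlarges the union of both classes involved.
            unions[p] += 1
            unions[t] += 1
    per_class = [i / u if u > 0 else None for i, u in zip(intersections, unions)]
    valid = [v for v in per_class if v is not None]
    return sum(valid) / len(valid), per_class


# Toy example with eight points.
true_labels = [0, 0, 1, 1, 1, 2, 2, 3]
pred_labels = [0, 1, 1, 1, 1, 2, 3, 3]

miou, per_class_iou = mean_iou(pred_labels, true_labels, num_classes=4)
```

Reporting the per-class values alongside the mean helps reveal whether small organs such as stems are being absorbed into the dominant leaf class.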

3D Generation

3D generation involves creating novel 3D shapes or scenes, with applications in synthetic data generation for training models or simulating plant growth under various conditions.

Architectural Approaches:

- Autoregressive Models: Treat 3D shapes as sequences of tokens (e.g., in octrees) and predict tokens sequentially [18].

- Generative Adversarial Networks (GANs): Train generator and discriminator networks in adversarial framework to produce realistic 3D shapes [18].

- Diffusion Models: Progressively denoise random initial distributions to generate coherent 3D structures [18]. The Unifi3D framework provides a unified benchmark for various 3D representations in generation tasks [18].

- Hybrid Methods: Combine neural fields with diffusion processes (e.g., DiffRF) for high-quality generation [18].

Table 5: Performance Comparison of 3D Generation Methods on ShapeNet Dataset

| Method | Representation | MMD (×10⁻³) ↓ | COV (%) ↑ | JSD ↓ | Jaccard ↑ | Remarks |

|---|---|---|---|---|---|---|

| 3D-GAN [18] | Voxels (64³) | 5.82 | 42.5 | 0.185 | 0.675 | Pioneering but limited resolution |

| ShapeGF [18] | Point Cloud (2048 points) | 4.15 | 48.3 | 0.162 | 0.712 | Continuous flow-based generation |

| Diffusion Fields [18] | Neural SDF | 3.28 | 53.7 | 0.138 | 0.748 | High-quality surfaces; slow sampling |

| Unifi3D (SDF) [18] | SDF | 2.95 | 56.2 | 0.126 | 0.769 | Unified framework; balanced performance |

Experimental Protocol for Synthetic Plant Generation:

- Data Preparation: Preprocess 3D plant meshes to ensure watertightness and consistent topology where needed.

- Representation Conversion: Convert meshes to signed distance functions (SDFs) with 128³ resolution for balanced detail and computational efficiency.

- Model Training: Train latent diffusion model with VQ-VAE compression, using classifier-free guidance to condition on plant species or trait parameters.

- Evaluation: Use Minimum Matching Distance (MMD) to assess quality, Coverage (COV) to measure diversity, and 1-Nearest Neighbor Accuracy (1-NNA) to evaluate distribution matching.
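
A minimal sketch of two of these metrics is given below, assuming the Chamfer distance as the underlying shape distance (Earth Mover's Distance is another common choice). The definitions follow the usual convention: MMD averages, over reference shapes, the distance to the closest generated shape, and COV is the fraction of reference shapes that are the nearest neighbour of at least one generated shape. The toy point sets are hypothetical.

```python
def chamfer_distance(cloud_a, cloud_b):
    """Symmetric Chamfer distance using mean squared nearest-neighbour distances."""
    def sq_dist(p, q):
        return sum((a - b) ** 2 for a, b in zip(p, q))

    def one_way(src, dst):
        return sum(min(sq_dist(p, q) for q in dst) for p in src) / len(src)

    return one_way(cloud_a, cloud_b) + one_way(cloud_b, cloud_a)


def mmd_and_cov(generated, reference, distance=chamfer_distance):
    """Compute Minimum Matching Distance and Coverage between two shape sets."""
    # dist[i][j] is the distance between reference shape i and generated shape j.
    dist = [[distance(r, g) for g in generated] for r in reference]
    mmd = sum(min(row) for row in dist) / len(reference)
    matched = set()
    for j in range(len(generated)):
        nearest_ref = min(range(len(reference)), key=lambda i: dist[i][j])
        matched.add(nearest_ref)
    cov = len(matched) / len(reference)
    return mmd, cov


# Two reference shapes; both generated shapes resemble only the first one.
reference = [
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    [(0.0, 0.0, 5.0), (1.0, 0.0, 5.0)],
]
generated = [
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    [(0.0, 0.0, 0.1), (1.0, 0.0, 0.1)],
]

mmd, cov = mmd_and_cov(generated, reference)
```

In this toy case COV is 0.5 because the generator never produces anything close to the second reference shape, which is the kind of mode collapse the metric is meant to expose.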

Table 6: Essential Research Reagents and Computational Resources for 3D Plant Phenotyping

| Resource Category | Specific Tools/Solutions | Function/Purpose | Application Examples in Phenotyping |

|---|---|---|---|

| Data Acquisition Hardware | Terrestrial LiDAR (e.g., FARO Focus), Depth Cameras (e.g., Intel RealSense), RGB-D sensors (e.g., Microsoft Kinect) [14] | Capture 3D point clouds of plant structures and canopies | High-resolution scanning of root systems [14]; canopy architecture measurement [14] |

| Annotation Software | CloudCompare, MeshLab, custom web-based tools with 3D canvas | Manual and semi-automated labeling of 3D data for ground truth generation | Segmenting individual leaves [14]; marking disease regions on 3D plant models [14] |

| Deep Learning Frameworks | PyTorch3D, TensorFlow Graphics, MinkowskiEngine (sparse tensors), Open3D-ML | Specialized libraries for 3D deep learning with optimized operations | Implementing sparse convolutional networks for large-scale plant point clouds [14] |

| Benchmark Datasets | Plant phenotyping-specific datasets (e.g., RoPlant, Lemnatec), general 3D datasets (ShapeNet, ScanNet, S3DIS, KITTI) [16] | Model training, benchmarking, and comparative evaluation | Transfer learning from general objects to plant structures [14] |

| Computational Infrastructure | High-end GPUs (e.g., NVIDIA A100/V100 with 32GB+ VRAM), distributed training frameworks, high-speed storage | Handle memory-intensive 3D data and model training | Training transformer models on high-resolution 3D plant voxel grids [19] |

Workflow and Architectural Visualizations

Generalized 3D Deep Learning Pipeline for Plant Phenotyping

3D Representation Conversion and Generation Pipeline

Deep learning for 3D vision has matured significantly, offering robust solutions for classification, detection, segmentation, and generation tasks relevant to plant phenotyping research. Point-based methods generally excel for fine-grained structural analysis of plant organs, while voxel-based approaches provide strong performance for more volumetric analyses. Multi-view methods offer a practical compromise when computational resources are limited. For 3D generation, diffusion models combined with neural field representations are emerging as the most promising approach for high-fidelity synthetic plant generation.

Key challenges remain, including the need for large annotated 3D plant datasets, development of more efficient architectures to handle the complexity of plant structures, and improved generalization across growth stages and environmental conditions [14] [17]. Future research should focus on self-supervised learning to reduce annotation burden, multimodal fusion combining 3D structure with spectral and temporal information, and development of more interpretable models that can link 3D architectural traits to biological function [14]. As these technologies continue to evolve, they will increasingly enable high-throughput, precise, and automated phenotyping solutions that accelerate plant breeding and sustainable agricultural innovation.

Plant phenotyping, the comprehensive assessment of plant traits, is crucial for understanding the intricate relationships between genotypes and environmental conditions [14]. Traditional phenotyping has relied on manual measurements, which are labor-intensive, destructive, and prone to subjective bias. Two-dimensional imaging offered initial digital advancements but fundamentally fails to capture the complete complexity of plant morphology, as projecting 3D structures onto a 2D plane results in the loss of critical information such as leaf curvature, surface area, and plant volume [22] [23]. The advent of three-dimensional phenotyping technologies has revolutionized this field by enabling non-destructive, precise, and automated measurements of plant architecture [24]. This overview explores the complete 3D phenotyping pipeline, from image acquisition to trait analysis, providing a comparative evaluation of the underlying deep learning architectures and reconstruction techniques that power modern plant science.

Core Components of a 3D Phenotyping Pipeline

A complete 3D phenotyping pipeline integrates several sequential technological components, each with distinct methodological choices that influence the final output quality and applicability.

Image Acquisition Systems

The initial phase involves capturing digital representations of plants using various sensor technologies, each with distinct advantages and limitations:

Multi-view RGB Systems: Utilize multiple standard RGB cameras or a single camera moved around the plant to capture images from different viewpoints. The MVS-Pheno V2 platform, for instance, employs two Raspberry Pi cameras (8 megapixels) with a motorized turntable to automate image capture [25]. These systems benefit from low sensor costs and high image quality but may struggle with heavily occluded regions.

Active Sensing Systems: Technologies like LiDAR (Light Detection and Ranging) and depth cameras (Time-of-Flight or stereo vision) directly capture 3D spatial information. LiDAR offers high precision but at a higher cost [1], while consumer-grade depth cameras can be affected by environmental conditions like scattered sunlight in greenhouses [22].

Robotic Acquisition Platforms: Advanced systems incorporate mobility for field operation. One greenhouse implementation uses an unmanned robot platform with a 6-degrees-of-freedom (6-DoF) robotic arm equipped with a machine-vision camera (4200 × 3120 pixel resolution) to capture images from 64 different poses arranged on a virtual sphere around the target plant [22] [26].

3D Reconstruction Techniques

The captured 2D images or depth readings are processed to reconstruct 3D models of plants, primarily through these computational approaches:

Structure from Motion with Multi-View Stereo (SfM-MVS): This classical computer vision approach reconstructs 3D point clouds by identifying and matching feature points across multiple 2D images taken from different viewpoints [1]. While cost-effective, it can be computationally intensive and may produce incomplete models for plants with severe self-occlusion [23].

Neural Radiance Fields (NeRF): An emerging deep learning technique that uses a fully connected neural network to model volumetric scene features. NeRF synthesizes photorealistic images from novel viewpoints and can generate dense point clouds, demonstrating robustness even with limited and sparsely distributed input images [22] [24]. Advancements like Instant-NGP with hash-encoding have reduced training times from hours to minutes [22].

3D Gaussian Splatting (3DGS): A novel paradigm that represents scene geometry through Gaussian primitives, offering potential benefits in reconstruction efficiency and scalability [24].

Organ Segmentation and Tracking

Once 3D models are reconstructed, identifying and labeling individual plant organs is essential for detailed trait extraction:

Semantic and Instance Segmentation: Deep learning models, particularly those with Transformer-based architectures, have shown remarkable success in segmenting complex plant structures. For peanut plants, which feature multiple branches and dense foliage, such models enable the identification of individual leaves, stems, and petioles [25].

Temporal Organ Tracking: For growth analysis, methods like PhenoTrack3D employ multiple sequence alignment algorithms to track individual maize leaves over time, associating successive segmentations of the same leaf despite significant morphological changes and occlusions [27].

Trait Extraction and Analysis

The final component involves quantifying specific phenotypic parameters from the segmented 3D models:

- Plant-scale Traits: Including plant height, crown width, and overall biomass or volume.

- Organ-level Traits: Encompassing leaf length, width, area, and angle; stem diameter; internode length; and fruit volume, often calculated through geometric fitting approaches like ellipsoid approximation for fruits [22].
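
The plant-scale traits above reduce to simple geometry once a clean point cloud is available. The sketch below assumes the z-axis points upward, that soil and pot points have already been removed, and that crown width is defined as the largest horizontal distance between any two points; definitions of crown width vary between studies, so this is one assumed convention.

```python
import math


def plant_height(points, up_axis=2):
    """Vertical extent of the cloud along the assumed up axis."""
    values = [p[up_axis] for p in points]
    return max(values) - min(values)


def crown_width(points, up_axis=2):
    """Largest horizontal distance between any two points (brute force, O(n^2))."""
    horizontal = [tuple(c for i, c in enumerate(p) if i != up_axis) for p in points]
    best = 0.0
    for i in range(len(horizontal)):
        for j in range(i + 1, len(horizontal)):
            best = max(best, math.dist(horizontal[i], horizontal[j]))
    return best


# Hypothetical plant points in metres.
plant = [
    (0.0, 0.0, 0.0),
    (0.3, 0.0, 0.5),
    (-0.3, 0.4, 0.6),
    (0.0, 0.0, 1.2),
]

height = plant_height(plant)
width = crown_width(plant)
```

On noisy reconstructions, replacing the minimum and maximum with low and high percentiles is a common way to reduce sensitivity to outlier points.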

Table 1: Quantitative Performance of Different 3D Phenotyping Pipelines

| Crop Species | Reconstruction Method | Trait Category | R² Value | MAPE | Reference |

|---|---|---|---|---|---|

| Tomato | NeRF | Internode length | 0.973 | 0.089 | [22] |

| Tomato | NeRF | Leaf area | 0.953 | 0.090 | [22] |

| Tomato | NeRF | Fruit volume | 0.96 | 0.135 | [22] |

| Ilex species | SfM-MVS + Multi-view registration | Plant height/Crown width | >0.92 | - | [1] |

| Ilex species | SfM-MVS + Multi-view registration | Leaf parameters | 0.72-0.89 | - | [1] |

| Potted plants | SfM-NeRF | Various organ parameters | 0.89-0.98 | - | [23] |
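
The two accuracy measures reported in this table can be computed from paired manual and pipeline measurements as sketched below. The sketch assumes R² is the coefficient of determination of the estimates against the manual values (some studies instead report the squared correlation of a fitted regression line) and that MAPE is expressed as a fraction, consistent with the values in the table. The measurement values are made up for illustration.

```python
def r_squared(measured, estimated):
    """Coefficient of determination of estimates against reference measurements."""
    mean_measured = sum(measured) / len(measured)
    ss_res = sum((m - e) ** 2 for m, e in zip(measured, estimated))
    ss_tot = sum((m - mean_measured) ** 2 for m in measured)
    return 1.0 - ss_res / ss_tot


def mape(measured, estimated):
    """Mean absolute percentage error, returned as a fraction (0.09 means 9%)."""
    return sum(abs((m - e) / m) for m, e in zip(measured, estimated)) / len(measured)


# Hypothetical internode lengths in millimetres.
manual = [10.0, 20.0, 30.0, 40.0]
pipeline = [11.0, 19.0, 31.0, 38.0]

r2 = r_squared(manual, pipeline)
error = mape(manual, pipeline)
```

Reporting both values is useful because a high R² can coexist with a systematic bias that only the error term reveals.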

Comparative Analysis of Reconstruction Methodologies

Classical vs. Emerging Reconstruction Approaches

Each 3D reconstruction technique offers distinct advantages and limitations for plant phenotyping applications:

Structure from Motion with Multi-View Stereo (SfM-MVS) has been widely adopted due to its relatively simple implementation and flexibility in representing plant structures. However, it typically requires numerous input images (50-100 depending on plant complexity) and suffers from challenges with data density, noise, and computational scalability, particularly for plants with severe occlusion [24] [1]. The method's performance is also dependent on feature matching, which can be problematic for plants with repetitive structures or low-texture surfaces.

Neural Radiance Fields (NeRF) represents a significant advancement through its use of deep learning to interpolate and extrapolate novel views from sparse input data. A key advantage is its ability to generate high-quality reconstructions from limited viewpoints, which aligns well with the practical constraints of greenhouse environments where full 360-degree access may be impossible [22]. The technology has demonstrated impressive quantitative performance, with R² values exceeding 0.95 for various tomato plant traits [22]. However, its computational requirements, although decreasing, and its applicability in complex outdoor environments remain active research areas [24].

3D Gaussian Splatting (3DGS) has emerged as a promising alternative that represents geometry through Gaussian primitives, potentially offering benefits in both efficiency and scalability compared to previous approaches [24]. While comprehensive validation on diverse plant types is still ongoing, initial results suggest strong potential for high-throughput phenotyping applications.

Deep Learning Architectures for Segmentation

The segmentation of plant point clouds into individual organs has evolved from traditional unsupervised methods to sophisticated deep learning approaches:

Traditional unsupervised segmentation methods required laborious parameter tuning and exhibited relatively low accuracy, especially for plants with complex morphological structures [25]. These methods often necessitated manual intervention, reducing processing efficiency for large datasets.

Modern deep learning models have dramatically improved segmentation automation and accuracy. Voxel-based approaches using 3D sparse convolutions have demonstrated good performance in semantic and instance segmentation tasks, though their limited kernel size can constrain further model improvement [25].

Transformer-based architectures have recently shown exceptional performance across various segmentation tasks by leveraging robust attention mechanisms and global feature processing capabilities [25]. For peanut plants with dense foliage, such models have enabled effective identification and separation of individual leaves and other organs, facilitating organ-level phenotypic measurements previously challenging with traditional methods [25].

Table 2: Deep Learning Approaches for 3D Plant Point Cloud Analysis

| Model Architecture | Application Example | Advantages | Limitations |

|---|---|---|---|

| Voxel-based 3D Sparse Convolutions | Semantic/instance segmentation of peanut plants [25] | Good performance on structured data | Limited scalability with kernel size |

| Transformer-based Architectures | Leaf instance segmentation in dense peanut canopies [25] | Powerful attention mechanisms, global feature processing | Computational complexity for large point clouds |

| Multi-Layer Perceptron (MLP) Networks | NeRF-based 3D reconstruction [22] | Interpolation from sparse views, high-quality novel view synthesis | Significant training computational requirements |

Experimental Protocols and Methodologies

Protocol 1: NeRF-Based Reconstruction for Greenhouse Crops

The NeRF-based pipeline for tomato crops exemplifies a modern approach to 3D phenotyping in controlled environments [22]:

Image Acquisition: A robotic system captures images from 64 predetermined poses arranged on a virtual dome surrounding the target plant, with an average distance of 60cm between camera and plant.

Camera Pose Estimation: Structure from Motion software (COLMAP) processes the acquired images to estimate precise camera pose information (position and orientation), which is essential for NeRF training.

NeRF Training: The images and corresponding camera poses are used to train a NeRF model, which learns the volumetric scene representation using a fully connected neural network. With modern implementations like Instant-NGP, this process requires only minutes rather than hours.

Point Cloud Extraction: The trained NeRF model generates dense point clouds through depth rendering from multiple viewpoints.

Organ Segmentation: Point clouds are segmented using clustering algorithms and geometric feature analysis to identify stems, leaves, and fruits.

Trait Extraction:

- Length Parameters: Skeletonization algorithms identify connections between plant organs to measure internode lengths.

- Leaf Area: Surface reconstruction on segmented leaf point clouds enables accurate area calculation.

- Fruit Volume: Ellipsoid fitting to segmented fruit point clouds allows volume estimation through geometric calculation.
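
The geometric calculations behind the last two traits can be sketched as follows. The sketch assumes that surface reconstruction has already produced a triangle mesh for the leaf and that ellipsoid fitting has already produced three semi-axis lengths for the fruit; neither fitting step is shown, and the function names and example values are hypothetical rather than taken from [22].

```python
import math


def triangle_area(a, b, c):
    """Area of a 3D triangle from half the norm of the edge cross product."""
    ab = [b[i] - a[i] for i in range(3)]
    ac = [c[i] - a[i] for i in range(3)]
    cross = [
        ab[1] * ac[2] - ab[2] * ac[1],
        ab[2] * ac[0] - ab[0] * ac[2],
        ab[0] * ac[1] - ab[1] * ac[0],
    ]
    return 0.5 * math.sqrt(sum(x * x for x in cross))


def mesh_area(vertices, faces):
    """Total surface area of a triangle mesh (faces are vertex index triples)."""
    return sum(triangle_area(vertices[i], vertices[j], vertices[k])
               for i, j, k in faces)


def ellipsoid_volume(a, b, c):
    """Volume of an ellipsoid with semi-axes a, b, c: V = 4/3 * pi * a * b * c."""
    return 4.0 / 3.0 * math.pi * a * b * c


# A flat unit-square "leaf" split into two triangles.
leaf_vertices = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (1.0, 1.0, 0.0), (0.0, 1.0, 0.0)]
leaf_faces = [(0, 1, 2), (0, 2, 3)]
leaf_area = mesh_area(leaf_vertices, leaf_faces)

# A fruit with assumed semi-axes of 3.0, 3.0 and 2.5 cm.
fruit_volume = ellipsoid_volume(3.0, 3.0, 2.5)
```

Summing triangle areas captures leaf curvature that a 2D projected area would miss, which is the main argument for measuring leaf area in 3D.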

Protocol 2: Multi-View Registration for Fine-Scale Traits

A two-phase workflow developed for Ilex species addresses the challenge of obtaining complete 3D models for detailed organ-level phenotyping [1]:

High-Fidelity Single-View Reconstruction:

- Bypass the built-in depth estimation of stereo cameras

- Apply SfM and MVS algorithms to high-resolution RGB images captured by stereo cameras (ZED 2 and ZED Mini)

- Generate distortion-free point clouds for individual viewpoints

Multi-View Point Cloud Registration:

- Capture point clouds from six different viewpoints around the plant

- Perform rapid coarse alignment using a marker-based Self-Registration (SR) method with calibration spheres

- Execute fine alignment with the Iterative Closest Point (ICP) algorithm

- Merge registered point clouds into a complete 3D plant model

Phenotypic Parameter Extraction:

- Automatically compute plant height, crown width, leaf length, and leaf width from the unified model

- Validate measurements through correlation analysis with manual measurements

This approach demonstrates that multi-view fusion can achieve accuracy comparable to image-based methods while enabling the extraction of fine-scale phenotypic traits rarely addressed in prior registration-based studies [1].

Visualization of Pipeline Architectures

Generalized 3D Phenotyping Workflow

NeRF-Specific Implementation Architecture

Table 3: Essential Resources for 3D Plant Phenotyping Research

| Resource Category | Specific Examples | Function/Application | Reference |

|---|---|---|---|

| Image Acquisition Systems | MVS-Pheno V2 platform (Raspberry Pi cameras, turntable) | Automated multi-view image capture for potted plants | [25] |

| Image Acquisition Systems | Robotic platform with 6-DoF arm (UR-5e), IDS U3-36L0XC camera | Flexible image acquisition in greenhouse environments | [22] |

| Image Acquisition Systems | PlantEye F600 multispectral 3D scanner | Combines 3D scanning with multispectral imaging | [28] |

| Reconstruction Software | COLMAP | Structure from Motion camera pose estimation | [22] |

| Reconstruction Software | Nerfstudio | User-friendly framework for NeRF application and training | [22] |

| Reconstruction Software | Instant-NGP | Hash-encoding accelerated NeRF training | [22] |

| Annotation Platforms | Segments.ai | Online platform for point cloud annotation | [28] |

| Datasets | Annotated 3D point cloud dataset of broad-leaf legumes | Training and validation for segmentation models | [28] |

| Datasets | Potted peanut point cloud dataset (188 samples) | Model training and phenotypic accuracy assessment | [25] |

| Segmentation Algorithms | Transformer-based architectures | Semantic and instance segmentation of complex plant structures | [25] |

| Segmentation Algorithms | Density-based spatial clustering (DBSCAN) | Leaf instance separation in occlusion conditions | [23] |

The field of 3D plant phenotyping has evolved from basic volumetric assessments to sophisticated organ-level trait extraction capable of capturing dynamic growth processes. The comparative analysis presented in this overview demonstrates that no single pipeline architecture universally outperforms others across all applications. Rather, the optimal selection depends on specific research constraints including target crop species, required throughput, environmental conditions, and measurement precision requirements.

Future advancements in 3D phenotyping will likely focus on several key areas: (1) construction of comprehensive benchmark datasets through synthetic data generation and generative artificial intelligence to address the current scarcity of annotated plant point clouds [14]; (2) development of more accurate and efficient 3D point cloud analysis methods leveraging multitask learning, lightweight models, and self-supervised learning [14]; and (3) enhanced interpretation of deep learning models for improved extensibility and multimodal data utilization [14]. The integration of these technologies will continue to transform plant phenotyping from a descriptive practice to a predictive science, ultimately accelerating crop improvement and sustainable agricultural production.

Architectures in Action: A Deep Dive into 3D Deep Learning Models and Their Phenotyping Applications

The accurate extraction of plant phenotypic traits is crucial for modern agriculture, enabling growth monitoring, cultivar selection, and scientific management practices [29]. Traditional manual measurement methods are time-consuming, labor-intensive, and unsuitable for large-scale, high-throughput field phenotyping [29]. While 3D imaging technologies can overcome these limitations by capturing complete plant geometry, processing the resulting point cloud data presents significant computational challenges [30].

PointNet and PointNet++ represent pioneering deep learning architectures that process raw 3D point clouds directly, avoiding the information loss associated with voxel-based or projection-based methods [29]. This capability is particularly valuable for plant phenotyping applications where preserving fine-grained local geometric features of stems and leaves is essential for accurate organ segmentation [29]. This guide provides a comprehensive comparison of these architectures within the context of 3D plant phenotyping research, evaluating their performance against contemporary alternatives across multiple crop species.

Core Innovation and Technical Approach

PointNet introduced a foundational approach to direct point cloud processing using shared multi-layer perceptrons (MLPs) and symmetric aggregation functions [31]. The architecture learns a feature encoding for each point independently, and these encodings are then aggregated into a global signature using a symmetric function (typically max pooling) [31]. Because the aggregation ignores input order, the network is invariant to permutations of the point set. Robustness to rigid transformations is not built into the aggregation itself; PointNet encourages it through a learned input alignment network (T-Net).
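The shared-MLP-plus-max-pooling idea can be illustrated with a minimal NumPy sketch. The layer sizes, random weights, and function names below are hypothetical choices for illustration and are not taken from any cited implementation; the point is only that shuffling the input points leaves the global signature unchanged.

```python
import numpy as np

def shared_mlp(points, weights, biases):
    """Apply the same small MLP (with ReLU) to every point independently."""
    features = points
    for w, b in zip(weights, biases):
        features = np.maximum(features @ w + b, 0.0)
    return features

def global_signature(points, weights, biases):
    """Per-point features followed by a channel-wise max over all points."""
    return shared_mlp(points, weights, biases).max(axis=0)

rng = np.random.default_rng(0)
# Hypothetical layer sizes: 3 input coordinates -> 16 -> 32 feature channels.
weights = [rng.normal(size=(3, 16)), rng.normal(size=(16, 32))]
biases = [np.zeros(16), np.zeros(32)]

cloud = rng.normal(size=(128, 3))               # toy point cloud, 128 points
shuffled = cloud[rng.permutation(len(cloud))]   # same points, different order

sig_a = global_signature(cloud, weights, biases)
sig_b = global_signature(shuffled, weights, biases)
print(np.allclose(sig_a, sig_b))
```

Replacing the max with an order-dependent operation (for example, concatenating per-point features) would break this property, which is why a symmetric function is used.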

PointNet++ addresses PointNet's limitation in capturing local structures by introducing a hierarchical neural network that applies PointNet recursively to partitioned point sets [29]. This multi-layer feature extraction strategy enables the model to learn local features with increasing contextual scales, making it particularly suitable for complex plant structures [29].
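A rough sketch of one hierarchical level may help make this concrete. It assumes farthest point sampling for choosing centroids and a fixed-radius ball query for grouping, which are the usual choices in PointNet++-style networks; the learned mini-PointNet applied to each group is replaced here by a plain max over existing features, so this is a simplification rather than the full layer. All function names and parameter values are illustrative.

```python
import numpy as np

def farthest_point_sampling(points, n_samples):
    """Greedily pick n_samples indices so the chosen points are spread out."""
    chosen = [0]  # start from the first point (arbitrary choice)
    min_dist = np.linalg.norm(points - points[0], axis=1)
    for _ in range(n_samples - 1):
        nxt = int(np.argmax(min_dist))
        chosen.append(nxt)
        min_dist = np.minimum(min_dist, np.linalg.norm(points - points[nxt], axis=1))
    return np.array(chosen)

def ball_query(points, centroids, radius):
    """For each centroid, return the indices of points within `radius`."""
    dists = np.linalg.norm(points[None, :, :] - centroids[:, None, :], axis=2)
    return [np.flatnonzero(row <= radius) for row in dists]

def set_abstraction(points, features, n_samples, radius):
    """One simplified level: sample centroids, group neighbours, max-pool features."""
    idx = farthest_point_sampling(points, n_samples)
    groups = ball_query(points, points[idx], radius)
    pooled = np.stack([features[g].max(axis=0) for g in groups])
    return points[idx], pooled

rng = np.random.default_rng(0)
cloud = rng.uniform(size=(256, 3))
feats = rng.normal(size=(256, 8))

# Two stacked levels: fewer centroids and a larger radius at each step.
xyz1, f1 = set_abstraction(cloud, feats, n_samples=64, radius=0.2)
xyz2, f2 = set_abstraction(xyz1, f1, n_samples=16, radius=0.4)
print(xyz1.shape, f1.shape, xyz2.shape, f2.shape)
```

Stacking levels with a growing radius is what gives the "increasing contextual scales" described above: the second level summarizes regions that already summarize local neighbourhoods.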

Enhancements for Plant Phenotyping

Recent research has introduced specialized modules to enhance PointNet++ for plant-specific applications. The Local Spatial Encoding (LSE) module captures intricate local spatial relationships within plant structures, while the Density-Aware Pooling (DAP) module adaptively selects pooling strategies based on neighborhood point cloud density [29]. These improvements address challenges posed by non-uniform density and complex organ morphology in plant point clouds.
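The exact design of the DAP module is not given here, so the following is only a hypothetical illustration of the general idea of choosing a pooling strategy from neighbourhood density. The rule used (max pooling for dense neighbourhoods, mean pooling for sparse ones) and the threshold are assumptions made for this sketch, not the method of [29].

```python
import numpy as np

def density_aware_pool(neighbor_feats, density_threshold):
    """Pool a list of (n_i, C) neighbourhood feature arrays into an (M, C) array.

    Hypothetical rule: neighbourhoods with at least `density_threshold` points
    use max pooling; sparser neighbourhoods use mean pooling.
    """
    pooled = []
    for feats in neighbor_feats:
        if len(feats) >= density_threshold:
            pooled.append(feats.max(axis=0))
        else:
            pooled.append(feats.mean(axis=0))
    return np.stack(pooled)

dense = np.array([[1.0, 5.0], [3.0, 2.0], [2.0, 2.0]])   # 3 neighbours
sparse = np.array([[1.0, 5.0], [3.0, 1.0]])              # 2 neighbours
result = density_aware_pool([dense, sparse], density_threshold=3)
print(result)
```

In this toy case the dense neighbourhood is summarized by its channel-wise maximum and the sparse one by its average, which is less sensitive to a single outlying point.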

Performance Comparison in Plant Phenotyping

Quantitative Benchmarking

Table 1: Semantic Segmentation Performance Comparison Across Architectures

| Architecture | Overall Accuracy (%) | Mean IoU (%) | Crop Species | Key Limitations / Notes |

|---|---|---|---|---|

| Original PointNet | 90.13 [29] | 88.42 [29] | Tobacco [29] | Limited local feature capture [29] |

| Original PointNet++ | 92.47 [29] | 91.65 [29] | Tobacco [29] | Sensitive to point density variations [29] |

| Improved PointNet++ (with LSE & DAP) | 95.25 [29] | 93.97 [29] | Tobacco [29] | Higher computational complexity [29] |

| PVSegNet (Point-Voxel Fusion) | 96.38 [31] | 92.10 [31] | Soybean [31] | Balance of performance and computational cost [31] |

| Dual-Task Segmentation Network (DSN) | 99.16 [32] | 93.64 [32] | Caladium bicolor [32] | Complex multi-head attention design [32] |

| SCNet (Dual-Representation) | >10% improvement over SOTA [33] | Not Reported | 20 plant species [33] | Cylindrical and sequential slice processing [33] |

Table 2: Phenotypic Trait Extraction Accuracy Using PointNet++

| Phenotypic Trait | Coefficient of Determination (R²) | Root Mean Square Error (RMSE) | Segmentation Architecture |

|---|---|---|---|

| Plant Height | 0.95 [29] | 0.31 cm [29] | Improved PointNet++ [29] |

| Leaf Length | 0.86 [29] | 2.27 cm [29] | Improved PointNet++ [29] |

| Leaf Width | 0.91 [29] | 1.84 cm [29] | Improved PointNet++ [29] |

| Internode Length | 0.89 [29] | 1.12 cm [29] | Improved PointNet++ [29] |

| Pod Length | 0.918 [31] | Not Reported | PVSegNet [31] |

| Pod Width | 0.949 [31] | Not Reported | PVSegNet [31] |

Algorithm Comparison and Suitability Analysis

Table 3: Architecture Selection Guide for Plant Phenotyping Tasks

| Research Requirement | Recommended Architecture | Rationale | Experimental Evidence |

|---|---|---|---|

| High-throughput stem-leaf segmentation | Improved PointNet++ (with LSE & DAP) | Superior accuracy for complex plant structures [29] | 95.25% OA, 93.97% mIoU for tobacco [29] |

| Fine-grained organ segmentation | PVSegNet or DSN | Enhanced feature capture through point-voxel fusion or multi-head attention [31] [32] | 96.38% precision for soybean pods [31] |

| Multi-species applications | SCNet or Plant-MAE | Dual-representation learning or self-supervised adaptability [33] [34] | >10% accuracy improvement across 20 species [33] |

| Limited annotated data | Plant-MAE with self-supervised learning | Reduces dependency on exhaustive annotations [34] | State-of-the-art performance across multiple crops [34] |

| Instance-level leaf segmentation | DSN with MV-CRF | Joint optimization for instance and semantic segmentation [32] | 87.94% average precision for leaf instances [32] |

Experimental Protocols and Methodologies

Standardized Workflow for 3D Plant Phenotyping

Implementation Details for PointNet++ Evaluation

Dataset Construction: Multi-view images of tobacco plants were captured using a DJI Inspire 2 UAV equipped with a Zenmuse X5s camera (20.8 effective megapixels), flown at a height of 5 m with images taken at 30°, 60°, and 90° angles [29]. The resulting 2,220 images were processed using Structure from Motion (SfM) and Multi-View Stereo (MVS) algorithms to generate high-fidelity 3D point clouds [29].

Network Training: The improved PointNet++ model was trained with a local spatial encoding module to capture spatial relationships and a density-aware pooling module to handle non-uniform point density [29]. Data augmentation techniques including cropping, jittering, scaling, and rotation were applied to enhance model robustness [34].
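The four augmentations named above can be sketched for an (N, 3) point cloud as follows. The parameter values, the choice of rotating about the vertical axis, and the interpretation of cropping as keeping the points nearest to a random seed point are assumptions made for this illustration; the cited studies may define them differently.

```python
import numpy as np

def augment(points, rng, jitter_sigma=0.01, scale_range=(0.9, 1.1), keep_ratio=0.9):
    """Return a cropped, rotated, scaled, and jittered copy of an (N, 3) cloud."""
    # Crop: keep the fraction of points closest to a randomly chosen seed point.
    n_keep = max(1, int(round(len(points) * keep_ratio)))
    seed = points[rng.integers(len(points))]
    order = np.argsort(np.linalg.norm(points - seed, axis=1))
    out = points[order[:n_keep]]

    # Rotation about the vertical (z) axis by a random angle.
    theta = rng.uniform(0.0, 2.0 * np.pi)
    c, s = np.cos(theta), np.sin(theta)
    rot = np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])
    out = out @ rot.T

    # Isotropic scaling by a random factor.
    out = out * rng.uniform(*scale_range)

    # Jitter: small Gaussian noise added to every coordinate.
    return out + rng.normal(scale=jitter_sigma, size=out.shape)

rng = np.random.default_rng(0)
cloud = rng.uniform(size=(100, 3))
augmented = augment(cloud, rng)
print(augmented.shape)
```

Rotating only about the vertical axis keeps plants upright, which is a common choice when the growth direction carries information; a full 3D rotation would be an alternative if orientation is arbitrary.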

Performance Metrics: Segmentation accuracy was evaluated using overall accuracy (OA) and mean intersection over union (mIoU), while phenotypic extraction performance was quantified through coefficients of determination (R²) and root mean square errors (RMSE) compared to manual measurements [29].
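For reference, the four metrics can be computed as in the sketch below. It assumes integer class labels per point and uses the definition R² = 1 − SS_res/SS_tot; some studies instead report the squared correlation of a fitted regression line, so reported values may not be directly comparable. The toy labels and measurements are made up for illustration.

```python
import numpy as np

def overall_accuracy(y_true, y_pred):
    """Fraction of points whose predicted label matches the ground truth."""
    return float(np.mean(y_true == y_pred))

def mean_iou(y_true, y_pred, n_classes):
    """Average of per-class intersection over union, skipping absent classes."""
    ious = []
    for c in range(n_classes):
        inter = np.sum((y_true == c) & (y_pred == c))
        union = np.sum((y_true == c) | (y_pred == c))
        if union > 0:
            ious.append(inter / union)
    return float(np.mean(ious))

def r_squared(measured, estimated):
    """Coefficient of determination of estimates against manual measurements."""
    ss_res = np.sum((measured - estimated) ** 2)
    ss_tot = np.sum((measured - np.mean(measured)) ** 2)
    return float(1.0 - ss_res / ss_tot)

def rmse(measured, estimated):
    """Root mean square error, in the same unit as the measurements."""
    return float(np.sqrt(np.mean((measured - estimated) ** 2)))

# Toy example: 0 = stem, 1 = leaf.
labels = np.array([0, 0, 1, 1, 1, 0])
preds = np.array([0, 1, 1, 1, 0, 0])
manual_cm = np.array([10.0, 20.0, 30.0])
extracted_cm = np.array([11.0, 19.0, 31.0])

print(overall_accuracy(labels, preds))    # 4 of 6 points correct
print(mean_iou(labels, preds, 2))         # 0.5 for each class
print(r_squared(manual_cm, extracted_cm))
print(rmse(manual_cm, extracted_cm))
```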

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential Research Materials for 3D Plant Phenotyping Experiments

| Item Category | Specific Examples | Research Function | Application Context |

|---|---|---|---|

| Imaging Systems | DJI Inspire 2 UAV with Zenmuse X5s [29], ZED 2 stereo camera [1], Custom MVS platforms [31] | 3D data acquisition via multi-view image capture | Field-based (UAV) and controlled environment (stationary systems) phenotyping |

| Reconstruction Software | Structure from Motion (SfM), Multi-View Stereo (MVS) [1] | 3D point cloud generation from 2D images | Converting multi-view images to precise plant models |

| Annotation Tools | Semantic Segmentation Editor [5], Manual labeling protocols | Ground truth generation for training and evaluation | Precise labeling of stems, leaves, and other organs |

| Computational Framework | PointNet++ implementation with LSE & DAP modules [29], PVSegNet [31] | Deep learning-based organ segmentation | Segmenting plant point clouds into constituent organs |

| Validation Metrics | OA, mIoU, R², RMSE [29] | Performance quantification | Objective evaluation of segmentation and trait extraction accuracy |

| Plant Materials | Tobacco, soybean, tomato, caladium varieties [29] [31] [32] | Experimental subjects | Species-specific phenotypic analysis |

PointNet and PointNet++ have established themselves as foundational architectures for direct point cloud processing in plant phenotyping research. While the original PointNet++ demonstrates significant advantages over its predecessor in capturing local features, enhanced versions incorporating spatial encoding and density-aware modules achieve state-of-the-art performance for tobacco phenotyping, with overall accuracy of 95.25% and mIoU of 93.97% [29].

The evolving landscape of plant phenotyping architectures shows that point-based methods like PointNet++ maintain distinct advantages for preserving fine geometric details compared to voxel-based approaches [29]. However, emerging frameworks combining point and voxel representations (PVSegNet) or incorporating dual-representation learning (SCNet) demonstrate promising results for specific applications such as soybean pod segmentation or multi-species panoptic recognition [31] [33].

For researchers selecting architectures, the choice depends critically on specific research goals: improved PointNet++ variants for high-throughput stem-leaf segmentation, specialized networks like PVSegNet for fine-grained organ analysis, or self-supervised approaches like Plant-MAE when annotated data is limited [29] [31] [34]. As the field advances, reducing annotation dependency through self-supervised learning and improving model interpretability will be crucial for broadening adoption across plant science research communities.

The accurate analysis of three-dimensional (3D) plant structures is crucial for modern agricultural research, enabling scientists to non-destructively monitor growth, identify diseases, and predict yield. In this domain, Dynamic Graph Convolutional Neural Networks (DGCNN) have emerged as a powerful framework for processing 3D plant point cloud data. Unlike traditional convolutional neural networks designed for structured grid data, DGCNN excels at capturing local point-point relationships in unstructured 3D space by dynamically constructing graphs in each feature space [35]. This capability is particularly valuable for plant phenotyping applications, where accurately segmenting individual organs like leaves, stems, and panicles from complex, overlapping plant architectures remains a fundamental challenge.

DGCNN belongs to a broader family of graph-based deep learning models that have demonstrated significant potential across various plant science applications. These range from genomic prediction using graph pangenomes [36] [37] to 3D organ segmentation [38] [35]. The core strength of DGCNN lies in its ability to model the inherent geometric relationships between spatially proximate points in a 3D scan, effectively learning both local patterns and global context from plant point clouds. This article provides a comprehensive performance comparison between DGCNN and alternative approaches for 3D plant phenotyping tasks, supported by experimental data and implementation protocols.

Core Architectural Principles of DGCNN

Dynamic Graph Construction

The foundational innovation of DGCNN is its dynamic graph learning approach. While traditional graph convolutional networks operate on a fixed graph structure, DGCNN constructs a new graph in the feature space at each layer of the network. This dynamic construction allows the model to adaptively learn semantic relationships between points that may not be immediately adjacent in Euclidean space but share similar features [35]. For plant point clouds, this means the network can identify organ-level structures based on both spatial arrangement and feature similarity, enabling more accurate segmentation of complex plant architectures.
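A small example shows why rebuilding the graph in feature space matters. The sketch below builds a k-nearest-neighbour graph twice, once from coordinates and once from a made-up one-dimensional "learned" feature; the values are hypothetical, and self-loops are excluded here as a simplifying choice.

```python
import numpy as np

def knn_graph(features, k):
    """Return an (N, k) array of nearest-neighbour indices in feature space."""
    diff = features[:, None, :] - features[None, :, :]
    dist = np.sum(diff * diff, axis=2)   # squared Euclidean distances
    np.fill_diagonal(dist, np.inf)       # exclude self-loops
    return np.argsort(dist, axis=1)[:, :k]

# Four points along a line: two spatial pairs, (0, 1) and (2, 3).
xyz = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [5.0, 0.0, 0.0], [6.0, 0.0, 0.0]])
# Hypothetical learned features: points 0 and 2 look alike, as do 1 and 3.
learned = np.array([[0.0], [5.0], [0.1], [5.1]])

spatial_graph = knn_graph(xyz, k=1)       # neighbours by position
feature_graph = knn_graph(learned, k=1)   # neighbours by feature similarity
print(spatial_graph.ravel(), feature_graph.ravel())
```

In the spatial graph each point connects to its physical neighbour, while in the feature graph points 0 and 2 become neighbours despite being far apart, which mirrors how two leaves on opposite sides of a plant can be linked in deeper layers.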

Local Feature Aggregation

DGCNN employs an edge convolution (EdgeConv) operation that generates features for each point by applying a channel-wise symmetric aggregation to edge features computed between the point and its nearest neighbors in the feature space [38] [35]. This operation captures local geometric structures while maintaining permutation invariance, a critical requirement for processing unordered point sets. The EdgeConv operation can be formally represented as:

\[ x_i' = \mathop{\square}_{j:(i,j)\in\mathcal{E}} h_\Theta(x_i, x_j) \]

where \(\square\) represents a channel-wise symmetric aggregation function (typically max pooling), \(h_\Theta\) denotes a nonlinear function with learnable parameters \(\Theta\), and \(\mathcal{E}\) represents the dynamically constructed graph edges. For plant phenotyping, this enables the network to learn distinctive features for different plant organs based on their local geometric properties, such as the curvature of leaf surfaces or the cylindrical structure of stems.
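The EdgeConv operation can be sketched in NumPy as below. The sketch assumes one common form of the edge function, a single linear layer with ReLU applied to the concatenation of x_i and (x_j − x_i), with max as the aggregation; the weights are random, self-loops are excluded, and the names are illustrative rather than taken from a specific library.

```python
import numpy as np

def knn_indices(features, k):
    """Return an (N, k) array of nearest-neighbour indices in feature space."""
    diff = features[:, None, :] - features[None, :, :]
    dist = np.sum(diff * diff, axis=2)
    np.fill_diagonal(dist, np.inf)   # exclude self-loops
    return np.argsort(dist, axis=1)[:, :k]

def edge_conv(features, k, weight, bias):
    """x_i' = max over neighbours j of ReLU(W [x_i, x_j - x_i] + b)."""
    nbrs = knn_indices(features, k)                        # (N, k)
    x_i = np.repeat(features[:, None, :], k, axis=1)       # (N, k, d)
    x_j = features[nbrs]                                   # (N, k, d)
    edge_in = np.concatenate([x_i, x_j - x_i], axis=2)     # (N, k, 2d)
    edge_feat = np.maximum(edge_in @ weight + bias, 0.0)   # (N, k, d_out)
    return edge_feat.max(axis=1)                           # (N, d_out)

rng = np.random.default_rng(0)
feats = rng.normal(size=(50, 3))     # toy input: 50 points with xyz features
W = rng.normal(size=(6, 16))         # hypothetical weights: 2*3 inputs -> 16 channels
b = np.zeros(16)

out = edge_conv(feats, k=8, weight=W, bias=b)

# Reordering the input points reorders the output rows in the same way.
perm = rng.permutation(len(feats))
out_perm = edge_conv(feats[perm], k=8, weight=W, bias=b)
print(out.shape, np.allclose(out_perm, out[perm]))
```

Feeding the output of one such layer into the next, and recomputing the neighbours from that output, gives the dynamic graph behaviour: the neighbourhood of each point is redefined by the features learned so far.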

Performance Comparison with Alternative Architectures

Segmentation Accuracy Across Plant Species

DGCNN has been evaluated against multiple alternative architectures across various plant species and dataset modalities. The following table summarizes the performance of DGCNN compared to other prominent models on 3D plant point cloud segmentation tasks:

Table 1: Performance comparison of DGCNN against alternative architectures on plant organ segmentation

| Model | Dataset | Plant Species | Accuracy (%) | mIoU (%) | Inference Time |

|---|---|---|---|---|---|

| DGCNN | Plant3D | Multiple species | 95.46 [35] | 90.41 [35] | Competitive [35] |

| PointNet | Plant3D | Multiple species | Lower than DGCNN [35] | Lower than DGCNN [35] | Faster than DGCNN [35] |