Ensuring Robustness in High-Throughput Screening: A Comprehensive Guide for Reliable Phenotyping and Drug Discovery

Abstract

This article provides a comprehensive framework for establishing robust high-throughput screening (HTS) protocols in biomedical research and drug development. It explores the foundational principles of HTS, detailing key methodological approaches including biochemical, cell-based, and phenotypic assays. The content delivers practical strategies for troubleshooting common pitfalls, optimizing assay performance through metrics like Z'-factor, and implementing rigorous validation and comparative statistical analyses. Aimed at researchers and drug development professionals, this guide synthesizes current best practices to enhance the reliability, efficiency, and predictive power of high-throughput phenotyping campaigns, ultimately accelerating the journey from hit identification to viable lead compounds.

The Pillars of Robust High-Throughput Screening: Core Principles and Evolving Landscape

In the field of modern drug discovery and phenotyping research, screening methodologies form the cornerstone of initial candidate identification. High-Throughput Screening (HTS) is an automated, robotic assay process that rapidly conducts hundreds to thousands of biochemical or cell-based tests to identify active compounds, or "hits," from extensive chemical libraries [1]. It serves as a critical jump-start to the discovery pipeline, allowing researchers to quickly narrow thousands of possibilities down to a manageable number of promising leads for further investigation [1]. The evolution of this technology has given rise to Ultra-High-Throughput Screening (uHTS), which pushes the boundaries of scale and speed, enabling the screening of millions of compounds to exhaustively explore chemical space and significantly increase the probability of discovering novel therapeutic candidates [2] [3].

The drive toward uHTS is fueled by the increasing complexity of drug targets and the desire to screen ever-larger libraries. This is particularly relevant to robustness screening in high-throughput phenotyping, where the goal is not only to identify hits but also to ensure that the screening process itself is reproducible, reliable, and generates physiologically relevant data. The core distinction between HTS and uHTS lies in throughput capacity, the level of miniaturization, and the sophistication of the integrated automation and data analysis systems required.

Comparative Analysis: HTS vs. uHTS

The quantitative and qualitative differences between HTS and uHTS can be summarized in terms of throughput, technological requirements, and data output. The table below provides a structured comparison of their key attributes.

Table 1: Key Characteristics of HTS and uHTS

| Attribute | High-Throughput Screening (HTS) | Ultra-High-Throughput Screening (uHTS) |
|---|---|---|
| Throughput Range | Up to 100,000 compounds per day [4] | Over 300,000, potentially millions, of compounds per day [2] [4] |
| Typical Assay Format | 96-well, 384-well, and 1536-well microplates [5] [4] | 1536-well plates and higher-density formats; microfluidic droplets and chips [5] [6] |
| Screening Volume | Low microliter (µL) range | Nanoliter (nL) to low microliter (µL) range [4] |
| Primary Goal | Rapid identification of active compounds ("hits") from large libraries [1] | Comprehensive exploration of massive chemical libraries; genome-wide studies [2] [7] |
| Automation Level | Robotic automation for liquid handling and detection [1] | Highly sophisticated, integrated automated systems with minimal manual intervention [3] |
| Cost & Infrastructure | High initial investment in equipment and maintenance [2] | Significantly greater complexity and cost, requiring specialized infrastructure [4] |

The progression from HTS to uHTS is not merely a linear increase in speed but a fundamental shift in approach, facilitated by advances in miniaturization and fluid handling. While HTS is a powerful tool for many discovery campaigns, uHTS becomes essential when the chemical or genetic library is so vast that a lower-throughput method would be impractical or too time-consuming.

Quantitative Market and Technology Outlook

The adoption and development of screening technologies are reflected in the market's growth and segment analysis. The global HTS market, valued at an estimated $26.12 billion in 2025, is projected to grow at a compound annual growth rate (CAGR) of 10.7%, reaching $53.21 billion by 2032 [7]. Another analysis estimates the market will grow from $32.0 billion in 2025 to $82.9 billion by 2035 [2]. This robust growth is underpinned by continuous technological innovation and rising R&D investments in the pharmaceutical and biotechnology sectors [2] [8].
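The two forecasts above can be put on a common footing with the standard compound-growth formula. The sketch below is illustrative only: the function names `cagr` and `project` are hypothetical, and the 7-year and 10-year horizons are taken from the stated start and end years.

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10% per year)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


def project(start_value: float, rate: float, years: float) -> float:
    """Value after compounding `rate` for `years` years."""
    return start_value * (1.0 + rate) ** years


# First forecast: $26.12B in 2025 growing at 10.7% per year until 2032 (7 years).
value_2032 = project(26.12, 0.107, 7)

# Second forecast: $32.0B in 2025 to $82.9B in 2035 (10 years); implied rate.
implied_rate = cagr(32.0, 82.9, 10)

print(f"Projected 2032 value: ${value_2032:.2f}B")
print(f"Implied CAGR of second forecast: {implied_rate:.1%}")
```

Under these assumptions the first forecast reproduces the quoted 2032 figure, and the second forecast implies a growth rate of roughly 10% per year, so the two analyses differ mainly in their 2025 baseline rather than in their growth expectations.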

Within this expanding market, specific segments demonstrate particularly strong growth potential, indicating future trends. The following table summarizes the forecasted growth for key technologies and applications.

Table 2: High-Growth Segments in the Screening Market (2025-2035 Forecast)

| Segment | Category | Projected CAGR or 2025 Market Share | Key Driver |
|---|---|---|---|
| Technology | Ultra-High-Throughput Screening | 12% CAGR [2] | Unprecedented ability to screen millions of compounds quickly for comprehensive exploration [2]. |
| Technology | Cell-Based Assays | Leading segment with 39.4% share in 2025 [2] | Demand for physiologically relevant data that more accurately replicates complex biological systems [2] [7]. |
| Application | Target Identification | 12% CAGR [2] | Capacity to rapidly assess large chemical libraries against diverse biological targets, accelerating early discovery [2]. |
| Application | Primary Screening | Leading segment with 42.7% share in 2025 [2] | Essential role in identifying active compounds from large libraries in the initial drug discovery phase [2]. |

Experimental Protocol: A Typical uHTS Workflow for Drug Discovery

The following protocol details a standard uHTS workflow for primary screening in drug discovery, which can be adapted for various phenotyping applications.

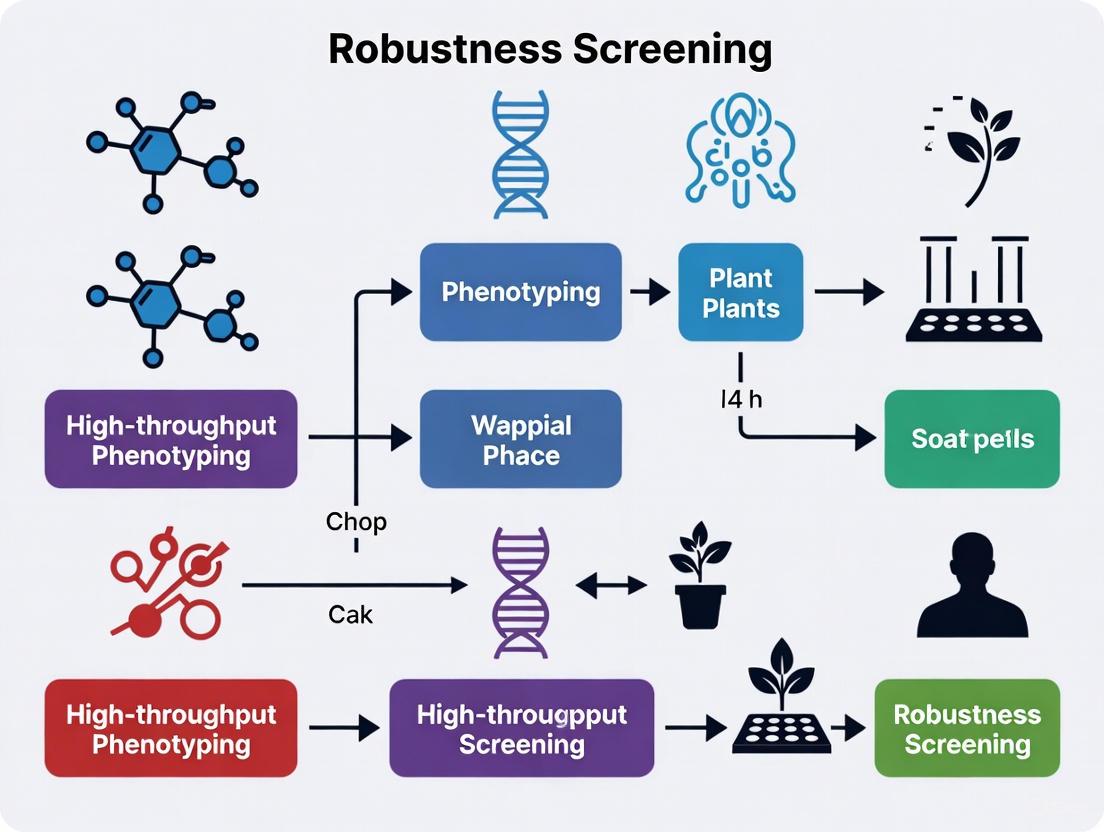

The diagram below illustrates the key stages of a uHTS campaign, from library preparation to hit confirmation.

Detailed Protocol Steps

Step 1: Library and Assay Preparation

- Compound Library Management: uHTS begins with the preparation of a diverse chemical library stored in high-density microplates (e.g., 384 or 1536-well format). Compound management systems use highly automated procedures for retrieval, nanoliter liquid dispensing, and quality control [4] [9]. Libraries can contain hundreds of thousands to millions of compounds.

- Assay Design and Miniaturization: The biological assay must be robust, reproducible, and miniaturized for 1536-well plates or microfluidic volumes (1-2 µL) to conserve reagents [4] [5]. Assays are fully validated for their pharmacological relevance and statistical robustness (e.g., calculating Z'-factor > 0.5) before the primary screen [4].

- Reagent Dispensing: Using non-contact acoustic or solenoid-based liquid handlers, nanoliter volumes of compounds are transferred to assay plates. Subsequently, assay reagents, buffers, and cells (for cell-based assays) are dispensed automatically [4].
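The Z'-factor criterion mentioned under assay design is computed from the positive and negative control wells of a validation plate. The following is a minimal sketch using the commonly cited definition Z' = 1 - 3(σp + σn) / |μp - μn|; the function name `z_prime` and the control readings are hypothetical, and sample standard deviations are assumed.

```python
from statistics import mean, stdev


def z_prime(positive_controls, negative_controls):
    """Z'-factor from control-well signals (sample standard deviations assumed)."""
    mu_p, mu_n = mean(positive_controls), mean(negative_controls)
    sd_p, sd_n = stdev(positive_controls), stdev(negative_controls)
    window = abs(mu_p - mu_n)  # dynamic range between the two control means
    if window == 0:
        raise ValueError("control means are identical; Z' is undefined")
    return 1.0 - 3.0 * (sd_p + sd_n) / window


# Hypothetical raw signals from one validation plate.
pos = [100, 102, 98, 101, 99]
neg = [10, 12, 8, 11, 9]

score = z_prime(pos, neg)
print(f"Z' = {score:.3f}, passes 0.5 threshold: {score > 0.5}")
```

In practice the calculation would be repeated per plate and across days, since a single passing plate says little about run-to-run stability.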

Step 2: Primary Ultra-High-Throughput Screening

- Automated Incubation and Processing: The assay plates are incubated under controlled conditions (e.g., temperature, CO₂) using robotic arms that shuttle plates between incubators and detectors. The entire process is managed by scheduling software to maintain assay timing consistency [3].

- Signal Detection and Readout: After incubation, plates are moved to high-speed detectors. Common detection methods include:

  - Fluorescence/Luminescence Intensity: Highly sensitive and adaptable for enzymatic and cell-based assays [4].

  - High-Content Imaging: Provides multiparametric data on cellular morphology and subcellular localization [8].

  - Label-Free Technologies: Such as mass spectrometry, which is increasingly used for unlabeled biomolecules [4].

Step 3: Hit Identification and Data Analysis

- Raw Data Processing: Automated data analysis pipelines process the raw signal data, normalizing it against positive and negative controls on each plate to account for inter-plate variability.

- Hit Selection: "Hits" are identified based on a pre-defined activity threshold, commonly set using statistical measures like a percentage of control activity or a certain number of standard deviations from the mean of negative controls [4].

- Data Triage and Cheminformatics: Advanced analysis is critical due to the high potential for false positives from assay interference (e.g., chemical reactivity, autofluorescence, colloidal aggregation) [4]. Machine learning models and expert-rule-based filters are applied to triage the output, ranking compounds by their probability of being true positives [4].
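The per-plate normalization and threshold-based hit selection described in this step can be sketched as follows. This assumes an inhibition assay in which negative controls give the full (uninhibited) signal and positive controls give the fully inhibited signal; the function names, the 3-SD cutoff, and all readings are illustrative assumptions rather than a prescribed pipeline.

```python
from statistics import mean, stdev


def percent_inhibition(raw, neg_ctrl, pos_ctrl):
    """Scale raw signals so the negative-control mean is 0% and the positive-control mean is 100%."""
    neg_mean, pos_mean = mean(neg_ctrl), mean(pos_ctrl)
    span = neg_mean - pos_mean
    if span == 0:
        raise ValueError("control means are identical; cannot normalize")
    return [100.0 * (neg_mean - x) / span for x in raw]


def call_hits(raw, neg_ctrl, n_sd=3.0):
    """Indices of wells whose signal falls more than n_sd SDs below the negative-control mean."""
    cutoff = mean(neg_ctrl) - n_sd * stdev(neg_ctrl)
    return [i for i, x in enumerate(raw) if x < cutoff]


# Hypothetical readings from a single plate.
neg = [1000, 1020, 980, 1010, 990]   # vehicle-only wells
pos = [100, 110, 90, 105, 95]        # reference-inhibitor wells
samples = [995, 400, 970, 940, 1005]

inhibition = percent_inhibition(samples, neg, pos)
hits = call_hits(samples, neg)
print([round(v, 1) for v in inhibition])
print("hit well indices:", hits)
```

Because both functions take the controls of one plate as input, applying them plate by plate gives the inter-plate correction described above. Hits flagged this way would still pass through the interference filters before confirmation.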

Step 4: Hit Confirmation

- Compound Re-testing: The primary "hit" compounds are re-tested from the original screening samples, often as dose-response curves, to confirm their activity.

- Counter-Screening: Confirmed hits are tested against unrelated targets or assays to evaluate their specificity and rule out promiscuous or non-selective compounds [3] [4]. This step is crucial for ensuring the robustness of the screening outcomes before committing resources to secondary screening.

The Scientist's Toolkit: Essential Research Reagents and Materials

The successful execution of an HTS/uHTS campaign relies on a suite of specialized reagents, instruments, and consumables.

Table 3: Essential Research Reagent Solutions for HTS/uHTS

| Item | Function/Description | Application Note |
|---|---|---|
| Liquid Handling Systems | Automated robots for precise, non-contact dispensing of nanoliter to microliter volumes of samples and reagents [7] [4]. | Essential for assay miniaturization and reproducibility. Systems like the firefly platform use positive displacement for high-density pipetting [7]. |
| HTS-Optimized Assay Kits | Ready-to-use, validated reagent kits (e.g., reporter assays, viability assays) that simplify setup and ensure consistency [2]. | The reagents and kits segment holds a leading 36.5% market share due to demand for reliability and reproducibility [2]. |
| High-Density Microplates | Microplates with 384, 1536, or even 3456 wells, designed for low-volume assays and compatible with automation [5] [1]. | The foundation of assay miniaturization; 1536-well plates are standard for uHTS to enable screening of >300,000 compounds per day [4]. |
| Cell Lines for Phenotypic Screening | Engineered or disease-relevant cell lines (e.g., iPSCs) used in cell-based assays to provide physiologically relevant data [5] [7]. | Cell-based assays are the leading technology segment; 3D cell cultures and organoids are gaining traction for better mimicking in vivo conditions [8] [5]. |
| Detection Reagents & Probes | Fluorescent, luminescent, or label-free probes that generate a measurable signal upon biological activity (e.g., substrate cleavage, ion flux) [4]. | Fluorescence-based methods are most common due to sensitivity and ease of use. Mass spectrometry is an emerging label-free alternative [4]. |

Advanced Applications and Future Perspectives

The application of HTS and uHTS has expanded far beyond conventional small-molecule drug discovery, becoming pivotal in advanced research domains. In functional genomics, HTS is combined with CRISPR-Cas9 or RNAi technologies for genome-wide screens to study gene function and identify novel therapeutic targets [10] [7]. In toxicology, initiatives like the Tox21 program use HTS with in vitro assays in a high-throughput, concentration-responsive manner to predict chemical toxicity and reduce reliance on animal studies [4]. Furthermore, HTS is instrumental in protein engineering, facilitating the directed evolution of enzymes with enhanced properties or novel functions through ultrahigh-throughput screening toolkits like fluorescence-activated cell sorting (FACS) and microfluidics-based droplet sorting [6].

The future of screening is being shaped by several key technological integrations. The use of 3D cell models, such as organoids and spheroids, in HTS platforms is rapidly advancing to provide more physiologically relevant data that can bridge the gap between in vitro models and in vivo situations [5] [8] [1]. The integration of Artificial Intelligence (AI) and Machine Learning is reshaping the market by enhancing data analysis, predicting molecular interactions, optimizing compound libraries, and streamlining assay design, thereby reducing time and costs [2] [7]. Finally, microfluidic and droplet-based technologies are enabling unprecedented miniaturization and speed, allowing for single-cell analysis and the screening of vast libraries with minimal reagent consumption [5] [6]. These innovations collectively ensure that HTS and uHTS will remain at the forefront of accelerating scientific discovery and therapeutic development.

The Strategic Shift from Manual to Automated, Miniaturized Systems

The field of biological screening is undergoing a profound transformation, marked by a strategic shift from manual, low-throughput methods to automated, miniaturized, and data-rich systems. This evolution is particularly critical in high-throughput phenotyping robustness screening, where the ability to maintain stable performance across diverse conditions is essential for advancing drug discovery and basic research. High-throughput phenotyping enables the comprehensive assessment of complex traits—including development, growth, resistance, and physiology—across vast numbers of biological samples [11]. The move toward automation addresses a significant bottleneck in research, as traditional phenotyping is often costly, laborious, and destructive, limiting the scale and precision of experimental outcomes [11].

Robustness, defined as a system's ability to maintain stable performance despite internal or external challenges, has emerged as a crucial phenotypic property [12]. In the context of high-throughput screening, robustness ensures that results are reproducible and reliable across different perturbation spaces—sets of relevant environmental or experimental conditions [12]. Quantifying robustness provides researchers with valuable insights into the genetic and metabolic mechanisms underlying stable performance, enabling the development of more predictable and effective therapeutic interventions [12].

The Evolution of Screening Methodologies

Quantitative Comparison of Screening Approaches

Table 1: Evolution from Manual to Automated, Miniaturized Screening Systems

| Screening Characteristic | Traditional Manual Methods | Modern Automated Systems |
|---|---|---|
| Throughput Capacity | Low (3-8 plants per treatment, full day for harvest) [13] | High (hundreds of genotypes, thousands of plants) [13] |
| Data Points per Experiment | Limited by human capacity | Extensive, automated data collection |
| Measurement Standardization | Prone to operator variability | Highly standardized through automation |
| Temporal Resolution | Limited by labor constraints | Frequent, non-destructive monitoring [13] |
| Financial Investment | Lower initial cost | High initial investment ($250M market in 2025) [14] |
| Operational Costs | High labor requirements | Reduced labor, higher maintenance |
| Data Complexity | Simple, manageable datasets | Complex, requires advanced analytics [14] |

Key Drivers for the Strategic Shift

The transition to automated systems is propelled by multiple converging factors. The phenotyping bottleneck has become particularly pronounced in plant sciences and drug discovery, where molecular tools have advanced rapidly while phenotypic characterization lagged behind [13]. Technological advancements in sensor technology, robotics, and data analytics have created enabling infrastructures for automation [13]. Additionally, the growing demand for improved crop yields and climate-resilient crops in agriculture, alongside the need for accelerated drug development timelines in pharmaceuticals, has increased the value proposition of high-throughput approaches [14] [15].

The integration of artificial intelligence and machine learning has been particularly transformative, allowing researchers to extract meaningful patterns from massive datasets generated by automated systems [11]. These technologies enable automated image analysis, predictive modeling, and the identification of subtle phenotypic patterns that would be imperceptible through manual observation [11].

Core Technologies Enabling the Transition

Automated Phenotyping Platforms

Various automated platforms have been developed to address specific research needs across biological domains. In plant phenotyping, systems like "PHENOPSIS" automate the assessment of plant responses to soil water stress, while "LemnaTec 3D Scanalyzer" systems enable non-invasive screening of salinity tolerance traits in rice [11]. For microbial studies, automated cultivation systems facilitate the high-throughput quantification of robustness across different perturbation spaces [12].

These platforms typically incorporate multiple sensor technologies, including digital RGB cameras, hyperspectral imagers, thermal cameras, and fluorescence imaging systems [13] [11]. The combination of diverse sensing modalities provides comprehensive phenotypic profiles that capture various aspects of biological response to environmental challenges.

Miniaturization and Experimental Standardization

Miniaturization represents a critical component of the strategic shift, enabling researchers to increase throughput while reducing resource consumption. The development of methods for creating multi-assay stock plates containing standardized inocula of multiple isolates has demonstrated how miniaturization can be achieved without sacrificing data quality [16]. This approach utilizes cultures grown to mid-log phase, aliquoted in set patterns into multi-well plates containing cryoprotectants like 50% glycerol, and stored at -80°C for future use [16].

Standardization protocols are essential for ensuring the robustness of miniaturized systems. Including control strains on each plate controls for intra-assay variability, while standardizing optical densities and growth conditions ensures reproducible starting points across experiments [16]. These methodological advances enable researchers to estimate realistic standard deviations for multiple isolates in phenotypic assays and to generate the data needed for power calculations in genotyping studies [16].

Application Notes: Experimental Protocols for Robustness Screening

Protocol 1: High-Throughput Phenotypic Screening for Microbial Robustness

Objective: To quantify robustness of microbial strains across multiple perturbation spaces.

Materials and Reagents:

- Microbial strains (wild-type and mutants)

- Multi-well culture plates (96 or 384-well)

- Liquid growth media appropriate for strains

- Chemical perturbagens or stress conditions

- Plate reader with temperature and shaking control

- Automated liquid handling system

Procedure:

- Strain Preparation: Grow overnight cultures of all strains to stationary phase in appropriate media.

- Inoculum Standardization: Dilute cultures to standardized optical density (OD600 = 0.1) using automated liquid handling.

- Plate Setup: Dispense 100 μL of standardized inoculum into each well of multi-well plates.

- Perturbation Application: Add chemical perturbagens or apply environmental stresses (temperature, pH, osmotic) using concentration gradients.

- Cultivation and Monitoring: Incubate plates with continuous shaking and monitor growth kinetics through OD measurements every 15-60 minutes.

- Data Collection: Record growth curves for 24-48 hours or until stationary phase is reached.

- Robustness Calculation: Quantify robustness using the Fano factor method, which is dimensionless, frequency-independent, and free from arbitrary controls [12].
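The inoculum standardization step reduces to a C1·V1 = C2·V2 dilution that a liquid handler can execute per strain. A minimal sketch, assuming OD600 scales linearly with cell density over the measured range (dense overnight cultures usually need a pre-dilution before reading for this to hold); the function name and the example values are hypothetical.

```python
def dilution_volumes(measured_od, target_od, final_volume_ul):
    """Return (culture_ul, medium_ul) needed to reach target_od in final_volume_ul."""
    if measured_od <= 0 or target_od <= 0 or final_volume_ul <= 0:
        raise ValueError("all inputs must be positive")
    if target_od > measured_od:
        raise ValueError("culture is already below the target OD; cannot dilute upward")
    culture_ul = final_volume_ul * target_od / measured_od
    return culture_ul, final_volume_ul - culture_ul


# Hypothetical overnight cultures, all diluted to OD600 = 0.1 in 1000 µL.
overnight_od = {"wild_type": 2.5, "mutant_1": 1.8, "mutant_2": 3.2}
for strain, od in overnight_od.items():
    culture, medium = dilution_volumes(od, 0.1, 1000.0)
    print(f"{strain}: {culture:.1f} µL culture + {medium:.1f} µL medium")
```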

Data Analysis:

- Calculate maximum growth rate and yield for each strain under each condition.

- Compute robustness as R = σ²/μ, where σ² is the variance of the phenotype across perturbations and μ is the mean phenotype across the same perturbations [12].

- Compare robustness scores across strains to identify genetic determinants of stable performance.
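One common way to obtain the maximum growth rate from plate-reader curves is to take the steepest slope of ln(OD) over a sliding time window. The sketch below assumes blank-corrected, strictly positive OD values, a hypothetical window of four time points, and a simple definition of yield as the gain in OD over the run; none of these choices is prescribed by the protocol above.

```python
import math


def _slope(xs, ys):
    """Ordinary least-squares slope of ys against xs."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx


def max_growth_rate(times_h, od, window=4):
    """Largest slope of ln(OD) versus time (per hour) over any `window` consecutive points."""
    if len(times_h) != len(od) or len(od) < window:
        raise ValueError("need matching series with at least `window` points")
    log_od = [math.log(v) for v in od]  # requires OD > 0 after blank subtraction
    return max(
        _slope(times_h[i:i + window], log_od[i:i + window])
        for i in range(len(od) - window + 1)
    )


def growth_yield(od):
    """Yield approximated as maximum OD minus starting OD (an assumed definition)."""
    return max(od) - od[0]


# Synthetic curve: exponential growth at 0.5 per hour for 5 h, then a plateau.
times = [0, 1, 2, 3, 4, 5, 6, 7]
curve = [0.1 * math.exp(0.5 * t) for t in range(6)] + [1.25, 1.26]

mu_max = max_growth_rate(times, curve)
print(f"max growth rate: {mu_max:.3f} per hour, yield: {growth_yield(curve):.2f} OD units")
```

The window length trades noise suppression against the risk of averaging over the transition into stationary phase, so it is worth checking against a few curves by eye.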

Protocol 2: Automated Plant Phenotyping for Stress Response

Objective: To non-invasively phenotype plant growth and stress responses under controlled conditions.

Materials and Reagents:

- Plant genotypes (wild-type and variants)

- Soil or hydroponic growth systems

- Automated phenotyping platform (e.g., LemnaTec, WIWAM, PhenoBox)

- RGB, fluorescence, and hyperspectral imaging sensors

- Automated watering and weighing systems

- Environmental control chambers

Procedure:

- Experimental Design: Randomize plant positions to account for micro-environmental variation.

- Plant Growth: Grow plants under controlled conditions with standardized light, temperature, and humidity.

- Automated Imaging: Program regular intervals for automated imaging using multiple sensor types.

- Stress Application: Implement controlled drought, salinity, or nutrient stress through automated environmental control.

- Data Acquisition: Collect images and sensor data automatically throughout the experiment.

- Image Analysis: Extract phenotypic traits using machine learning algorithms for image segmentation and analysis.

- Calibration: Establish calibration curves between digital biomass estimates and destructive measurements [13].
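The randomization called for in the experimental design step can be generated reproducibly before plants are loaded onto the platform. This is a minimal sketch of a completely randomized layout on a rectangular tray grid; the function name, grid dimensions, and genotype labels are hypothetical, and blocked designs may be preferable when known gradients exist.

```python
import random
from collections import Counter


def randomize_layout(genotypes, replicates, n_rows, n_cols, seed=0):
    """Assign each genotype replicate to a random (row, col) position; unused positions stay empty."""
    plants = [g for g in genotypes for _ in range(replicates)]
    positions = [(r, c) for r in range(n_rows) for c in range(n_cols)]
    if len(plants) > len(positions):
        raise ValueError("not enough positions for all plants")
    rng = random.Random(seed)  # fixed seed so the layout can be regenerated later
    rng.shuffle(positions)
    return dict(zip(positions, plants))


layout = randomize_layout(["WT", "mutA", "mutB"], replicates=4, n_rows=3, n_cols=4, seed=42)
for row in range(3):
    print(" ".join(f"{layout[(row, col)]:>5}" for col in range(4)))
print(Counter(layout.values()))
```

Recording the seed alongside the experiment metadata lets the position of every plant be recovered during image analysis.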

Data Analysis:

- Extract time-series data for growth-related traits (projected leaf area, digital biomass).

- Calculate stress response indices based on temporal changes in physiological traits.

- Account for diurnal variations in leaf angle and other periodic phenomena [13].

- Develop genotype-phenotype associations through genome-wide association studies.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Research Reagents and Materials for High-Throughput Phenotyping

| Reagent/Material | Function | Application Notes |
|---|---|---|
| Multi-well Plates (96/384-well) | Miniaturized cultivation platform | Enable high-throughput screening; black walls with clear bottoms ideal for imaging [16] |
| Standardized Glycerol Stocks (50%) | Cryopreservation of standardized inocula | Ensure reproducible starting material across experiments [16] |
| Crystal Violet Stain | Biofilm quantification | Used in high-throughput biofilm assays; requires washing steps [16] |
| Fluorescent Dyes (e.g., FRET probes) | Molecular interaction reporting | Enable target-based screening; require specific filter sets [17] |
| Chemical Perturbagen Libraries | Induction of phenotypic diversity | Used to create perturbation spaces for robustness screening [12] |
| Sensor Solutions (e.g., pH, O₂ probes) | Environmental monitoring | Integrated into automated systems for real-time condition assessment |
| Cell Culture Media Formulations | Support of diverse microbial growth | Must be optimized for specific strains and conditions [12] |

Quantifying and Analyzing Robustness

Robustness Quantification Methodologies

The Fano factor-based robustness metric (R = σ²/μ) provides a powerful approach for quantifying phenotypic stability [12]. This method offers several advantages over traditional approaches: it is dimensionless, allowing comparison across different phenotypic measures; frequency-independent, making it suitable for diverse experimental designs; and free from arbitrary controls, as it doesn't require designation of a reference condition [12].
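As a worked illustration of the metric as written here (R = σ²/μ), the sketch below scores two hypothetical strains from their growth rates across four perturbations. The use of population variance and the example values are assumptions; with this form of the metric, lower values indicate more stable performance and R = 0 means no variation at all.

```python
from statistics import mean, pvariance


def fano_robustness(phenotype_values):
    """R = variance / mean of one phenotype measured across a perturbation space."""
    mu = mean(phenotype_values)
    if mu == 0:
        raise ValueError("mean phenotype is zero; R is undefined")
    return pvariance(phenotype_values) / mu


# Hypothetical maximum growth rates (per hour) under four perturbations.
growth_rates = {
    "strain_A": [0.40, 0.41, 0.39, 0.40],  # stable across conditions
    "strain_B": [0.50, 0.20, 0.45, 0.10],  # strongly condition-dependent
}

scores = {name: fano_robustness(vals) for name, vals in growth_rates.items()}
for name, r in sorted(scores.items(), key=lambda item: item[1]):
    print(f"{name}: R = {r:.5f}")
```

Note that strain_B has the higher peak growth rate but the worse score, which is the performance-versus-stability distinction the metric is meant to expose.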

Application of this method to a dataset of Saccharomyces cerevisiae mutants identified 67 strains with maximal robustness (R = 0, meaning no variance in the phenotype across the perturbation space), including seven with mutations in temperature-sensitive alleles and 60 with non-essential gene deletions [12]. Further analysis revealed that genes associated with increased robustness were primarily linked to "cell polarity," "mitosis," or "unknown" functional regions, providing insights into the genetic architecture of robust performance [12].

Data Analysis and Integration Challenges

The shift to automated, high-throughput systems generates massive datasets that present significant analytical challenges. Machine learning and deep learning approaches have become essential tools for extracting meaningful biological insights from these complex data streams [11]. Convolutional neural networks (CNNs) now achieve state-of-the-art performance for image classification, object recognition, and image segmentation tasks in phenotypic analysis [11].

A critical consideration in data analysis is the need for appropriate calibration curves between proxy measurements and actual biological traits. For example, studies have demonstrated curvilinear relationships between projected leaf area and total leaf area in plants, where neglecting this non-linearity can result in significant errors despite high coefficients of determination [13]. Similarly, diurnal changes in leaf angle can cause deviations of more than 20% in plant size estimates from top-view cameras over the course of a day [13].
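One simple way to capture such a curvilinear calibration is a power law, total = a · projected^b, fitted by least squares on log-transformed data. The power-law form is an assumption made here for illustration (the appropriate curve depends on species and growth stage), and the function names and paired measurements are hypothetical.

```python
import math


def fit_power_law(projected, total):
    """Fit total = a * projected**b by linear regression of log(total) on log(projected)."""
    log_x = [math.log(v) for v in projected]
    log_y = [math.log(v) for v in total]
    n = len(log_x)
    mean_x, mean_y = sum(log_x) / n, sum(log_y) / n
    sxx = sum((x - mean_x) ** 2 for x in log_x)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(log_x, log_y))
    b = sxy / sxx
    a = math.exp(mean_y - b * mean_x)
    return a, b


def predict_total_area(a, b, projected_area):
    """Apply the fitted calibration to a new projected-area measurement."""
    return a * projected_area ** b


# Hypothetical paired measurements (cm²): image-derived vs. destructive harvest.
projected_area = [10.0, 20.0, 40.0, 80.0]
total_area = [2.0 * p ** 1.2 for p in projected_area]  # synthetic data, a=2.0, b=1.2

a, b = fit_power_law(projected_area, total_area)
print(f"a = {a:.3f}, b = {b:.3f}")
print(f"predicted total area at 60 cm² projected: {predict_total_area(a, b, 60.0):.1f} cm²")
```

An exponent b different from 1 is what a straight-line calibration would miss, even when its coefficient of determination looks high.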

Visualization of High-Throughput Robustness Screening Workflows

Workflow for Microbial Robustness Screening

Diagram 1: Microbial robustness screening workflow for identifying genetic determinants of stable performance across perturbation spaces.

Automated Plant Phenotyping Data Pipeline

Diagram 2: Automated plant phenotyping data pipeline from image acquisition to genetic association studies.

The strategic shift from manual to automated, miniaturized systems represents a fundamental transformation in biological research methodology. This transition enables unprecedented scale in phenotypic characterization while introducing new capabilities for quantifying robustness—a critical phenotypic property that had been challenging to assess systematically. The integration of advanced sensor technologies, robotics, and computational analytics has created a new paradigm where researchers can simultaneously screen thousands of biological entities across multiple environmental conditions, generating rich datasets that reveal both performance and stability characteristics.

Future advancements in this field will likely focus on several key areas. The integration of artificial intelligence will continue to evolve, with deep learning approaches becoming increasingly sophisticated in their ability to extract subtle phenotypic patterns from complex data streams [11]. The development of more accessible systems will address current barriers to adoption, particularly for smaller research institutions and agricultural operations in developing economies [14] [18]. Additionally, the standardization of robustness metrics across different biological domains will facilitate comparative analyses and meta-studies, potentially revealing universal principles of biological stability [12].

As these technologies mature, they will progressively transform from specialized tools used in advanced research facilities to mainstream methodologies employed across diverse biological disciplines. This democratization of high-throughput phenotyping capabilities will accelerate discoveries in basic research while enabling practical applications in drug development, agricultural improvement, and environmental sustainability. The ongoing strategic shift toward automated, miniaturized systems thus represents not merely a methodological improvement, but a fundamental reimagining of how biological research is conducted in the pursuit of understanding and harnessing living systems.

High-Throughput Screening (HTS) is a foundational methodology in modern scientific research and drug discovery, enabling the rapid and efficient testing of thousands of chemical compounds or genetic constructs. By automating experimental processes, HTS accelerates the identification of "hits" – compounds or conditions that produce a desired biological effect. In the context of robustness screening for high-throughput phenotyping, these systems provide the scale and precision necessary to dissect complex biological mechanisms and identify potential therapeutic interventions [19]. The core value of HTS lies in its ability to transform a needle-in-a-haystack search into a manageable, data-driven discovery process, thereby bridging critical gaps in genomics and functional analysis [11].

A robust HTS framework integrates three synergistic components: automation for standardized sample handling, robotics for precise physical manipulation, and advanced detection systems for quantitative phenotypic measurement. This integration allows researchers to conduct large-scale screens with minimal human intervention, reducing labor-intensive processes and increasing experimental reproducibility. For phenotyping applications, this means tracking subtle morphological and physiological changes in response to genetic or chemical perturbations, providing insights into gene function, metabolic pathways, and disease mechanisms [20] [11].

Core Component 1: Automation Systems and Workflow Integration

Automation forms the backbone of any HTS operation, encompassing the hardware and software that standardize and expedite experimental procedures. The primary objective of automation is to enable the parallel processing of vast numbers of samples while minimizing variability and human error.

Liquid Handling and Microplate Platforms

Modern HTS relies on miniaturized assay formats to maximize throughput and minimize reagent consumption. Automated liquid handling systems manage the transfer of nanoliter to microliter volumes of samples and reagents across standardized microplate formats [19]. These systems are programmable for diverse protocols, including serial dilutions, reagent additions, and compound library management.

Table 1: Standard Microplate Formats in HTS

| Well Format | Typical Working Volume | Primary Applications | Throughput Consideration |
|---|---|---|---|
| 96-well | 50-200 µL | Assay development, secondary screening | Moderate |
| 384-well | 10-50 µL | Primary compound screening | High |
| 1536-well | 5-10 µL | Large-library primary screening | Very High |
| 3456-well | < 5 µL | Ultra-High-Throughput Screening (uHTS) | Ultra High |
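Each format in the table corresponds to a fixed row-by-column grid (8×12, 16×24, 32×48, and 48×72), which is what liquid-handling scripts and analysis code index against. The sketch below converts a zero-based, row-major well index into a conventional label and estimates how many plates a library needs; the function names and the figure of 32 control wells per plate are hypothetical.

```python
import math

# Rows x columns for the standard microplate formats.
PLATE_DIMS = {96: (8, 12), 384: (16, 24), 1536: (32, 48), 3456: (48, 72)}


def row_name(row_index):
    """0 -> 'A', 25 -> 'Z', 26 -> 'AA', 31 -> 'AF' (spreadsheet-style lettering)."""
    name = ""
    n = row_index + 1
    while n > 0:
        n, rem = divmod(n - 1, 26)
        name = chr(65 + rem) + name
    return name


def well_label(index, plate_format):
    """Label for a zero-based, row-major well index, e.g. 0 -> 'A1'."""
    rows, cols = PLATE_DIMS[plate_format]
    if not 0 <= index < rows * cols:
        raise ValueError("well index out of range for this plate format")
    r, c = divmod(index, cols)
    return f"{row_name(r)}{c + 1}"


def plates_needed(n_compounds, plate_format, control_wells=32):
    """Plates required when `control_wells` wells per plate are reserved for controls."""
    usable = plate_format - control_wells
    if usable <= 0:
        raise ValueError("no wells left for compounds")
    return math.ceil(n_compounds / usable)


print(well_label(0, 96), well_label(95, 96), well_label(383, 384), well_label(1535, 1536))
print("384-well plates for 100,000 compounds:", plates_needed(100_000, 384))
print("1536-well plates for 100,000 compounds:", plates_needed(100_000, 1536))
```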

Integrated Workflow Automation

Beyond liquid handling, a fully automated HTS platform may include plate hotels for sample storage, robotic arms for plate transfer between stations, incubators for controlling environmental conditions, and plate readers for endpoint detection. This integration creates a continuous workflow from sample preparation to data acquisition. Software orchestration is critical, linking the various hardware components and scheduling their operations to maximize efficiency and prevent bottlenecks. This level of automation is essential for phenotypic screens that require temporal monitoring of plant or cell growth over days or weeks [20] [21].

Core Component 2: Robotic Systems for Phenotyping and Manipulation

Robotics provide the physical interface between the automated system and the biological samples, enabling precise manipulation and non-destructive phenotyping over time. In contrast to simple automation, robotic systems incorporate sensing and decision-making capabilities for adaptive interactions.

Mobile Robotic Platforms for Phenotyping

Autonomous ground vehicles are increasingly deployed for high-throughput phenotyping, particularly in agricultural and plant biology research. These mobile robots are equipped with multi-sensor suites to navigate field or greenhouse environments and collect phenotypic data from large plant populations non-invasively [22] [23]. Key advantages include the ability to monitor dynamic changes in plant growth and architecture throughout the development cycle, providing richer datasets than single time-point measurements [23] [11].

Table 2: Robotic Platforms for High-Throughput Phenotyping

| Platform Type | Key Features | Advantages | Common Applications |

|---|---|---|---|

| Autonomous Ground Robots | Self-navigating, multi-sensor suites (cameras, LiDAR, hyperspectral imagers) | High-resolution data, capable of operating in dense canopies | Field-based plant phenotyping, morphological trait measurement [22] [23] |

| Gantry Systems | Fixed infrastructure, sensor arrays mounted on moving gantries | Highly precise positional control, repeatable measurements | Controlled environment phenotyping, high-resolution imaging of small plots [22] |

| Collaborative Robots (Cobots) | Power and force limiting, hand-guiding, safety-rated monitored stop | Safe human-robot interaction, flexible deployment | Laboratory automation, sample processing, intricate manipulations [24] [25] |

Robotic Manipulators and End-Effectors

For more intricate operations, robotic manipulators (arms) with specialized end-effectors are used. These systems can perform tasks such as seed planting, leaf sampling, or tissue harvesting with sub-millimeter precision. In drug discovery, robotic arms are integral to compound management systems, where they retrieve and store chemical library plates from high-density archives. The emergence of collaborative robots (cobots) that can work safely alongside human technicians has further expanded applications, particularly in sample preparation and quality control workflows [25]. Modern robotic safety standards, such as ANSI/A3 R15.06-2025, now emphasize the safety of the collaborative application rather than categorizing the robot itself as collaborative, reflecting a more nuanced approach to human-robot interaction [24].

Core Component 3: Detection and Imaging Systems

Detection systems are the sensory organs of HTS platforms, converting biological responses into quantifiable data. The selection of appropriate detection technologies is critical for capturing relevant phenotypic information with sufficient sensitivity and specificity.

Sensor and Imaging Modalities

A diverse array of sensors is available for phenotyping applications, each capturing different aspects of biological samples:

- 2D and 3D Vision Systems: High-resolution cameras capture morphological information. 3D vision systems have become particularly valuable, offering up to 25% greater accuracy in tasks like part-picking and inspection compared to traditional 2D systems [26].

- Hyperspectral and Multispectral Imaging: These sensors capture data across numerous wavelength bands, providing information on plant health, pigment composition, and water status that is not visible to the naked eye [11].

- Fluorescence Imaging: Used to monitor physiological processes such as photosynthetic efficiency, gene expression, or protein localization [11].

- Thermal Imaging: Measures canopy temperature as an indicator of stomatal conductance and water stress [11].

Performance Metrics for Detection Systems

The quality of an HTS detection system is quantified using several key performance metrics that ensure data reliability and robustness:

- Z'-factor: A statistical measure of assay quality that accounts for both the dynamic range of the signal and the data variation. A Z'-factor between 0.5 and 1.0 indicates an excellent assay robust enough for HTS [21] [19].

- Signal-to-Noise Ratio (S/N): Measures the strength of the desired signal relative to background noise.

- Signal Window: The separation between positive and negative controls, with values >2 generally considered robust [21].

- Coefficient of Variation (CV): Measures well-to-well and plate-to-plate reproducibility, with lower values indicating higher consistency.

Machine vision systems have demonstrated remarkable efficiency improvements, reducing inspection errors by over 90% and lowering defect rates by up to 80% compared to manual inspection [26].

Integrated Experimental Protocol: A Differential Growth Chemical Screen

The following detailed protocol exemplifies the integration of automation, robotics, and detection systems in a high-throughput differential chemical genetic screen, as adapted from a study identifying genotype-specific chemical regulators of plant growth [20].

Experimental Workflow

Materials and Reagents

Table 3: Essential Research Reagent Solutions

| Reagent/Item | Function/Application | Specifications |

|---|---|---|

| Prestwick Chemical Library | FDA-approved drug library for repurposing screens | 2320 compounds, known safety profiles [20] |

| Arabidopsis Seeds | Plant model for differential screening | Wild-type (WT) and mus81 DNA repair mutant [20] |

| Microtiter Plates | Sample housing and processing | 24-well format optimal for seedling growth and imaging [20] |

| Liquid Growth Medium | Support plant growth under controlled conditions | Optimized for robust phenotypic expression [20] |

| Mitomycin C (MMC) | Genotoxic agent for positive control | Induces altered growth in mus81 mutant [20] |

| DMSO | Solvent control for normal growth phenotype | Standardized concentration [20] |

Step-by-Step Procedure

Assay Development and Optimization (2-3 weeks)

- Systematically test growth condition parameters including medium composition (solid vs. liquid), seed density, and plate format (96-well vs. 24-well).

- Select 24-well plates with liquid medium based on superior growth (3.6 true leaves/plant vs. 2.2 in 96-well) and facilitated imaging.

- Establish optimal positive (MMC) and negative (DMSO) control conditions that robustly differentiate mutant and wild-type phenotypes.

Plant Material Preparation and Compound Dispensing (1 day)

- Surface-sterilize Arabidopsis seeds (wild-type and mus81 mutant).

- Using automated liquid handlers, dispense compounds from the Prestwick library into 24-well microtiter plates, including appropriate controls.

- Add optimized liquid growth medium to all wells.

Seed Sowing and Growth Conditions (10 days)

- Sow three seeds per well to ensure biological replicates while accounting for low germination rates.

- Seal plates and transfer to controlled environment growth chambers with standardized light, temperature, and humidity conditions.

- Grow for 10 days, allowing development beyond cotyledon stage to true leaf formation.

Automated Imaging and Data Acquisition (1 day)

- Using robotic systems, transport plates to an imaging station equipped with a light macroscope.

- Capture high-resolution images of each well at consistent focal points to ensure root and leaf visibility.

- Automate image naming and storage with metadata linking to plate coordinates and treatment conditions.
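One way to link image files to plate coordinates and treatments is to encode them in the filename. The sketch below assumes a 24-well plate laid out as 4 rows by 6 columns with row-major well indexing; the function names and the filename pattern are hypothetical and are not taken from the referenced study.

```python
import string


def well_id(index: int, n_rows: int = 4, n_cols: int = 6) -> str:
    """Convert a 0-based, row-major well index to an ID such as 'A1' (24-well by default)."""
    if not 0 <= index < n_rows * n_cols:
        raise ValueError("well index out of range for this plate format")
    row, col = divmod(index, n_cols)
    return f"{string.ascii_uppercase[row]}{col + 1}"


def image_filename(plate: str, index: int, genotype: str, treatment: str) -> str:
    """Build a filename that records plate, well, genotype, and treatment."""
    return f"{plate}_{well_id(index)}_{genotype}_{treatment}.tif"


if __name__ == "__main__":
    print(image_filename("P001", 7, "mus81", "DMSO"))
```

Keeping the well ID in the name makes it possible to trace any image back to its treatment even if a separate metadata table is lost.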

Machine Learning-Based Image Analysis (1-2 days)

- Process images using two complementary convolutional neural network (CNN) approaches:

- Image Classification: Employ a Residual Neural Network (ResNet) architecture trained to classify seedling images as "normal" or "altered" growth with probability scoring.

- Image Segmentation: Segment images into background, leaf, and root pixels for quantitative measurement of tissue-specific growth parameters.

- Train models using datasets of 240 images (equally distributed between DMSO and MMC treatments) split into training (80%), validation (10%), and testing (10%) sets.

- Validate model robustness across different plate arrangements to ensure predictions are not based on positional artifacts.
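The 80/10/10 split described above can be sketched as a stratified split, so that DMSO and MMC images stay equally represented in every subset. The file names, the fixed random seed, and the function name are illustrative assumptions; the original study's splitting code is not reproduced here.

```python
import random


def stratified_split(items_by_class, fractions=(0.8, 0.1, 0.1), seed=0):
    """Split each class separately into train/val/test so class balance is preserved."""
    rng = random.Random(seed)
    splits = {"train": [], "val": [], "test": []}
    for label, items in items_by_class.items():
        shuffled = list(items)
        rng.shuffle(shuffled)
        n_train = round(len(shuffled) * fractions[0])
        n_val = round(len(shuffled) * fractions[1])
        splits["train"] += [(x, label) for x in shuffled[:n_train]]
        splits["val"] += [(x, label) for x in shuffled[n_train:n_train + n_val]]
        # Whatever remains after train and validation goes to the test set.
        splits["test"] += [(x, label) for x in shuffled[n_train + n_val:]]
    return splits


# Hypothetical file names: 120 images per treatment, 240 in total.
images = {
    "DMSO": [f"dmso_{i:03d}.tif" for i in range(120)],
    "MMC": [f"mmc_{i:03d}.tif" for i in range(120)],
}
splits = stratified_split(images)
print({name: len(subset) for name, subset in splits.items()})
```

With 240 images this gives 192 training, 24 validation, and 24 test images, with each subset split evenly between the two treatments.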

Hit Identification and Data Analysis (2-3 days)

- Compare growth patterns between wild-type and mus81 mutant for each compound.

- Identify "hits" as compounds that selectively affect mus81 growth while showing minimal effect on wild-type.

- Apply statistical thresholds (e.g., ≥3-fold growth effect in mutant specifically) to prioritize candidates.

- In the referenced study, approximately 10% of molecules caused altered growth in both genotypes (general toxicity), while three compounds specifically affected mus81.
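The selection logic above can be sketched as a simple rule on fold changes relative to the DMSO control. The 3-fold threshold follows the example in the text; the wild-type tolerance of 1.5-fold, the label strings, and the function names are assumptions made for illustration only.

```python
def fold_effect(treated: float, control: float) -> float:
    """Fold change relative to control, treating increases and decreases symmetrically."""
    if treated <= 0 or control <= 0:
        raise ValueError("growth values must be positive")
    ratio = treated / control
    return max(ratio, 1.0 / ratio)


def classify(wt_treated, wt_control, mut_treated, mut_control,
             hit_fold=3.0, wt_tolerance=1.5):
    """Label a compound from growth measurements in wild-type and mutant seedlings."""
    wt = fold_effect(wt_treated, wt_control)
    mut = fold_effect(mut_treated, mut_control)
    if mut >= hit_fold and wt < wt_tolerance:
        return "mutant-specific hit"   # strong effect in mus81, minimal effect in WT
    if mut >= hit_fold and wt >= hit_fold:
        return "general effect"        # both genotypes affected, e.g. general toxicity
    return "no call"


if __name__ == "__main__":
    print(classify(95, 100, 30, 100))   # mutant-specific hit
    print(classify(25, 100, 20, 100))   # general effect
    print(classify(100, 100, 90, 100))  # no call
```

Compounds with a strong mutant effect and an intermediate wild-type effect fall into "no call" here; in practice such borderline cases would likely be resolved in dose-response follow-up.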

Hit Validation (1-2 weeks)

- Conduct confirmation assays with hit compounds using dose-response experiments.

- Validate dose-dependent effects, with promising compounds (e.g., "Compound 21" in the original study) showing doubled activity at 100-250 µM compared to untreated controls.

- Perform secondary assays to elucidate mechanism of action, potentially including molecular docking studies to predict compound binding to target proteins.

Critical Performance Parameters

- Assay Robustness: Achieve Z'-factor >0.4 and signal window >2 during validation [21].

- Model Accuracy: CNN models should achieve >95% accuracy in classifying normal vs. altered growth phenotypes [20].

- Throughput: The protocol enables screening of 2320 compounds with dual genotypes in a time-efficient manner.

- Data Quality: Segmentation algorithms must accurately delineate roots and aerial structures for precise quantification.

Advanced Applications and Future Directions

The integration of automation, robotics, and detection systems continues to evolve, enabling increasingly sophisticated phenotyping applications. Emerging trends include the incorporation of artificial intelligence for real-time decision making, the development of more complex 3D tissue and organoid models, and the implementation of edge computing to process data closer to the source [27]. In plant phenotyping, autonomous robotic systems are poised to make significant advances, moving from mere data collection platforms to intelligent systems that can interpret and respond to phenotypic information in real-time [23].

The future of HTS lies in the seamless integration of these core components with advanced data analytics, particularly deep learning approaches that can extract subtle phenotypic patterns from complex image data [11]. As these technologies mature, they will further accelerate the pace of discovery in both basic research and drug development, enabling more comprehensive robustness screening across diverse biological systems.

The Critical Link between HTS Robustness and Accelerated Drug Discovery Timelines

High-Throughput Screening (HTS) has established itself as a cornerstone of modern drug discovery, enabling the rapid evaluation of thousands to millions of chemical or biological compounds for therapeutic potential [28]. The primary value proposition of HTS lies in its ability to accelerate the early stages of drug discovery by providing massive amounts of screening data in a condensed timeframe. However, the mere speed of screening provides little advantage if the generated data lacks reliability or biological relevance. Robustness—defined as the assay's consistency, reproducibility, and reliability under normal operating conditions—serves as the critical bridge between rapid screening and truly accelerated drug discovery timelines. A robust HTS campaign minimizes false positives and negatives, enhances reproducibility between batches and sites, and provides high-quality data that enables confident decision-making, thereby reducing costly cycles of re-testing and validation [28]. The industry is now shifting from a focus on sheer throughput to a more practical emphasis on data quality, integration, and biological relevance, recognizing that robust assays are fundamental to shortening the overall path from target identification to clinical candidate [29].

Quantifying Robustness: Key Metrics and Their Impact

The robustness of a cell-based HTS assay is not a qualitative trait but can be quantitatively measured using specific statistical parameters. These metrics allow researchers to objectively evaluate assay performance before committing to a full-scale screen, thereby de-risking the campaign.

Essential Statistical Parameters for Assay Quality

The following table summarizes the key metrics used to quantify and assure HTS assay robustness:

Table 1: Key Metrics for Quantifying HTS Assay Robustness

| Metric | Calculation/Definition | Optimal Value | Interpretation and Impact on Drug Discovery |

|---|---|---|---|

| Z'-Factor | \( Z' = 1 - \frac{3(\sigma_{p} + \sigma_{n})}{\lvert \mu_{p} - \mu_{n} \rvert} \), where σ = standard deviation; μ = mean; p = positive control; n = negative control. | \( Z' \geq 0.5 \) | A Z'-Factor ≥ 0.5 indicates an excellent assay with a large signal window. This robust separation between positive and negative controls minimizes misclassification of compounds, directly reducing the number of false leads that require downstream follow-up, thus saving time and resources [28]. |

| Signal-to-Noise Ratio (S/N) | \( S/N = \frac{\lvert \mu_{p} - \mu_{n} \rvert}{\sqrt{\sigma_{p}^2 + \sigma_{n}^2}} \) | \( > 10 \) | A high S/N ratio indicates that the measured signal (the biological response) is strong relative to the background noise of the assay system. This ensures that observed compound effects are real and not artifacts, increasing confidence in hit selection [28]. |

| Signal Window (SW) | \( SW = \frac{\lvert \mu_{p} - \mu_{n} \rvert}{3\sqrt{\sigma_{p}^2 + \sigma_{n}^2}} \) | \( > 2 \) | Similar to Z'-Factor, this provides a measure of the assay's dynamic range. A larger window allows for better discrimination between active and inactive compounds. |

| Coefficient of Variation (CV) | \( CV = \frac{\sigma}{\mu} \times 100\% \) | \( < 10\% \) | The CV measures the precision of the assay signal across replicates (e.g., across a plate or between plates). A low CV indicates high reproducibility, which is essential for comparing data from screens run over multiple days or by different operators [28]. |
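The four metrics in the table can be computed directly from control-well readings. The sketch below uses only the Python standard library and sample standard deviations; the control values are invented for illustration. The signal window follows the definition given in the table, and other definitions exist in the literature.

```python
from math import sqrt
from statistics import mean, stdev


def z_prime(pos, neg):
    """Z' = 1 - 3(sd_p + sd_n) / |mean_p - mean_n|."""
    return 1.0 - 3.0 * (stdev(pos) + stdev(neg)) / abs(mean(pos) - mean(neg))


def signal_to_noise(pos, neg):
    """S/N = |mean_p - mean_n| / sqrt(sd_p^2 + sd_n^2)."""
    return abs(mean(pos) - mean(neg)) / sqrt(stdev(pos) ** 2 + stdev(neg) ** 2)


def signal_window(pos, neg):
    """SW as defined in the table: the S/N expression divided by 3."""
    return signal_to_noise(pos, neg) / 3.0


def cv_percent(values):
    """Coefficient of variation in percent."""
    return stdev(values) / mean(values) * 100.0


# Hypothetical control readings (arbitrary signal units).
pos = [100, 102, 98, 101, 99]
neg = [10, 12, 8, 11, 9]

print(f"Z' = {z_prime(pos, neg):.3f}")
print(f"S/N = {signal_to_noise(pos, neg):.1f}")
print(f"SW = {signal_window(pos, neg):.1f}")
print(f"CV (positive controls) = {cv_percent(pos):.2f}%")
```

For these made-up values all four metrics clear the thresholds in the table, so a plate with such controls would pass quality control.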

The application of robust statistical analysis is critical for interpreting HTS data. Methods that account for heteroscedasticity (variance that changes with dose) and outliers, such as M-estimation procedures, have been shown to provide better control of false discovery rates (FDR) while maintaining statistical power, which is paramount when making decisions on thousands of compounds simultaneously [30].
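To illustrate the idea behind M-estimation, the sketch below computes a Huber M-estimate of location for a set of replicate readings, using iteratively reweighted averaging with a fixed scale based on the median absolute deviation. This is a deliberately simplified stand-in, not the dose-response procedure of [30]; the tuning constant 1.345 is a conventional choice, and the replicate values are invented.

```python
from statistics import mean, median


def huber_location(values, k=1.345, tol=1e-6, max_iter=100):
    """Huber M-estimate of location via iteratively reweighted averaging."""
    mu = median(values)
    # Median absolute deviation, scaled to match the standard deviation under normality.
    scale = 1.4826 * median([abs(v - mu) for v in values])
    if scale == 0:
        return mu
    for _ in range(max_iter):
        weights = []
        for v in values:
            r = abs(v - mu) / scale
            # Full weight for small residuals, down-weighting for large ones.
            weights.append(1.0 if r <= k else k / r)
        new_mu = sum(w * v for w, v in zip(weights, values)) / sum(weights)
        if abs(new_mu - mu) < tol:
            return new_mu
        mu = new_mu
    return mu


# Hypothetical replicate readings with one outlier.
replicates = [100, 101, 99, 102, 98, 160]
print(f"mean = {mean(replicates):.1f}, Huber estimate = {huber_location(replicates):.1f}")
```

The ordinary mean is pulled toward the outlier, while the Huber estimate stays close to the bulk of the replicates, which is the property that helps control false calls when thousands of compounds are analyzed at once.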

A Practical Protocol for Developing a Robust Cell Viability HTS Assay

This protocol outlines the development of a robust, ATP-based cell viability assay suitable for HTS, detailing the steps to ensure reproducibility and physiological relevance.

Protocol: Development of a Robust, ATP-based Cell Viability Assay for HTS

Principle: This assay quantifies cellular ATP levels, a direct indicator of metabolically active cells, using luciferase-based detection. The generation of light is proportional to the ATP concentration, providing a sensitive, homogeneous (no-wash) readout amenable to automation [28].

Key Reagent Solutions:

- CellTiter-Glo Reagent: Luminescent substrate for ATP quantification.

- Cell Line: Choose a disease-relevant cell line (e.g., HepG2 for liver toxicity).

- Cell Culture Media: Standard media (e.g., DMEM) supplemented with FBS and antibiotics.

- Compound Library: e.g., Prestwick library of off-patent drugs [31].

- Controls: Positive control (e.g., 10 µM staurosporine for cytotoxicity), negative control (e.g., 0.1% DMSO vehicle).

- Automation Equipment: Automated liquid handlers, multichannel pipettors, robotic dispensers, and a luminescence microplate reader [29] [28].

Procedure:

- Plate Selection: Use tissue culture-treated, multi-well plates (384-well or 1536-well) compatible with your automation systems and plate readers.

- Cell Seeding Optimization:

- Harvest cells in the logarithmic growth phase and resuspend in complete medium.

- Critical Step: Titrate the cell seeding density to determine the optimal density that provides a linear ATP signal response after the desired incubation period (e.g., 48-72 hours) without causing over-confluence. A common starting range is 1,000-5,000 cells per well for a 384-well plate.

- Use an automated liquid handler or a multichannel pipettor to dispense the cell suspension uniformly across the assay plate.

- Incubate plates at 37°C, 5% CO₂ for 24 hours to allow cell adhesion and recovery.

- Compound Addition:

- Prepare compound library plates via serial dilution in DMSO, then further dilute in medium immediately before use to ensure the final DMSO concentration is non-cytotoxic (e.g., ≤0.5%).

- Using a robotic liquid handler or acoustic dispenser, transfer a precise volume of each compound from the library source plate to the assay plates. Include positive and negative control wells on every plate.

- Incubation: Incubate the compound-treated plates for the predetermined optimal duration (e.g., 48 hours) at 37°C, 5% CO₂.

- Viability Measurement:

- Equilibrate CellTiter-Glo Reagent to room temperature.

- Add an equal volume of reagent to each well of the assay plate using an automated dispenser.

- Shake the plate on an orbital shaker for 2 minutes to induce cell lysis.

- Incubate the plate at room temperature for 10 minutes to stabilize the luminescent signal.

- Detection:

- Read luminescence using a microplate reader integrated with robotic plate handling for high-throughput processing.

- Data Analysis:

- Normalize raw data: % Viability = (Compound Signal - Positive Control Signal) / (Negative Control Signal - Positive Control Signal) * 100.

- Calculate assay robustness metrics (Z'-factor, CV) for each plate. Plates failing quality control (e.g., Z' < 0.5) should be flagged or repeated.

- Apply robust statistical methods, such as preliminary test estimation (PTE) or M-estimation, to fit dose-response curves (e.g., Hill model) and classify compounds as active, inactive, or inconclusive, controlling for false discovery rates [30].
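The normalization and plate-level quality control steps can be sketched as follows. The luminescence values are hypothetical; the positive control is the cytotoxic compound (low signal) and the negative control is the vehicle (high signal), matching the controls listed above. The function names are illustrative.

```python
from statistics import mean, stdev


def percent_viability(signal, pos_mean, neg_mean):
    """% Viability = (signal - positive control) / (negative control - positive control) * 100."""
    return (signal - pos_mean) / (neg_mean - pos_mean) * 100.0


def plate_qc(pos_wells, neg_wells, z_cutoff=0.5):
    """Return the plate Z'-factor and whether it meets the cutoff."""
    z = 1.0 - 3.0 * (stdev(pos_wells) + stdev(neg_wells)) / abs(
        mean(pos_wells) - mean(neg_wells)
    )
    return z, z >= z_cutoff


# Hypothetical raw luminescence counts from one plate.
pos = [1000, 1100, 900, 1050, 950]            # cytotoxic positive control wells
neg = [50000, 51000, 49000, 50500, 49500]     # DMSO vehicle wells
compound_wells = {"A3": 25500, "A4": 48000, "A5": 1500}

z, passed = plate_qc(pos, neg)
print(f"Z' = {z:.3f}, plate {'passes' if passed else 'flagged for repeat'}")
for well, signal in compound_wells.items():
    print(well, f"{percent_viability(signal, mean(pos), mean(neg)):.1f}% viability")
```

Running the QC check before normalization keeps data from failed plates out of downstream curve fitting.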

Troubleshooting Notes:

- Poor Z'-Factor: Re-optimize cell seeding density or positive control concentration. Check liquid handler precision for consistent dispensing.

- High CV Across Replicates: Confirm cell suspension is homogeneous during dispensing. Check for contamination or edge effects in plates; consider using edge-well reservoirs.

- Weak Signal: Ensure reagent is fresh and has been properly equilibrated. Confirm cell viability is high at time of seeding.

The workflow for this protocol, from cell preparation to hit identification, is visualized below.

Diagram 1: HTS viability assay workflow with quality control.

Advanced Applications: Enhancing Robustness through Integration and AI

Beyond foundational wet-lab techniques, robustness is increasingly achieved through the integration of advanced technologies that reduce human variability and enhance data quality.

Automation and Human-Relevant Biology

Modern automation extends beyond mere speed. Ergonomic and accessible automation systems, such as flexible liquid handlers, empower scientists to use automation confidently, saving time for analysis rather than manual pipetting [29]. This "automation of process" enhances reproducibility. Furthermore, robustness is bolstered by using more physiologically relevant models. Automated platforms like the MO:BOT standardize 3D cell culture (organoids), producing consistent, human-derived tissue models that provide more predictive safety and efficacy data, thereby building regulatory confidence and shortening development timelines [29].

The Role of AI and Data Integration

Artificial Intelligence (AI) is transforming HTS by improving the robustness of data analysis and experimental design. A major challenge in HTS is fragmented, siloed data with inconsistent metadata, which prevents automation and AI from delivering full value [29]. Solving this data foundation is a prerequisite for robust AI application. When built on quality data, AI can:

- Improve Hit Identification: Apply robust statistical models to analyze dose-response curves, effectively controlling false discovery rates (FDR) while maintaining power to identify true actives [30].

- Enable Phenotypic Screening: Use convolutional neural networks (CNN) for high-throughput image analysis of plant or cell phenotypes, allowing for accurate quantification of complex traits like growth in a genotype-specific manner [31].

- Generate Predictive Insights: Foundation models can extract features from complex imaging data (e.g., histopathology slides) to identify new biomarkers and link them to clinical outcomes, making AI-driven decisions more explainable and reproducible [29].

The synergy between robust data generation, structured data management, and intelligent analysis creates a virtuous cycle that accelerates discovery.

The critical link between HTS robustness and accelerated drug discovery timelines is undeniable. Robustness, quantified by rigorous metrics and achieved through careful assay optimization, advanced automation, and human-relevant models, is the key to generating high-quality, reliable data. This reliability, in turn, enables confident decision-making, reduces the costly repetition of experiments, and increases the translational potential of early-stage hits. As the industry moves towards a more integrated and AI-driven future, the focus on building robustness into every step of the HTS process—from assay design to data analysis—will remain the most critical factor in shortening the path from the lab to life-saving medicines.

The convergence of artificial intelligence (AI), three-dimensional (3D) cell cultures, and organoid models is fundamentally transforming high-throughput phenotyping in biomedical research. This integration addresses critical limitations of traditional two-dimensional (2D) cultures and animal models, which often fail to accurately predict human physiological responses [32] [33]. High-throughput screening (HTS) has evolved from simple compound screening to sophisticated systems capable of evaluating complex phenotypes at single-cell resolution within physiologically relevant environments [32] [34]. The emerging paradigm leverages AI-driven image analysis to interpret massive, multi-parametric datasets generated from 3D models, enabling unprecedented accuracy in predicting drug efficacy, toxicity, and patient-specific treatment responses [35] [36]. This technological synergy is particularly crucial for robustness screening, where reproducibility and physiological relevance are paramount for successful translation of preclinical findings. These advanced platforms now provide the necessary tools to incorporate human biological diversity into the earliest stages of drug development, potentially reducing the high attrition rates that have long plagued the pharmaceutical industry [37] [38].

Quantitative Comparison of Advanced Screening Platforms

The table below summarizes key performance metrics for cutting-edge screening platforms that integrate AI, 3D cultures, and organoid technologies.

Table 1: Performance Metrics of Advanced High-Throughput Screening Platforms

| Platform Name | Core Technology | Throughput Capacity | Key Applications | Spatial Resolution | Notable Advantages |

|---|---|---|---|---|---|

| HCS-3DX [36] | AI-driven micromanipulator, LSFM, FEP foil multiwell plates | 384-well format | Tumor biology, drug discovery, personalized medicine | Single-cell level within 3D structures | Automated selection of morphologically homogeneous 3D-oids; Reduced operator-induced variability |

| Digital Colony Picker (DCP) [34] | Microfluidic chip (16,000 chambers), AI-image analysis, Laser-induced bubble export | 16,000 picoliter-scale microchambers | Microbial cell factory development, functional gene discovery | Single-cell resolution | Contact-free clone export; Dynamic medium exchange capability; Spatiotemporal monitoring |

| Automated 3D HCS Platform [39] | Robotic liquid handling, confocal imaging | 384-well format | Organoid phenotyping, drug response assessment | Organoid-level (phenotypic changes) | More consistent than manual pipetting; Non-destructive imaging; Compatible with downstream analysis |

Table 2: Analysis of 3D Model Variability and AI Classification Performance

| Parameter | Monoculture Spheroids (Expert 1 vs. 2 vs. 3) | Co-culture Spheroids (Expert 1 vs. 2 vs. 3) | AI Classification Accuracy (ResNet) |

|---|---|---|---|

| Size/Area | Significant differences (Expert 1 generated larger spheroids) | Increased variability compared to monocultures | 100% (n=24) for both WT and mus81 genotypes [20] |

| Shape/Circularity | No significant differences between experts and batches | Twice as many seeding cells, more compact spheroids | Robust to different plate arrangements (100% correct classification) [20] |

| Correlation (Circularity vs. Diameter) | -0.69 (60.5% most similar samples) | -0.54 (55.1% most similar samples) | Training set: 80% of images; Validation: 10%; Testing: 10% [20] |

Application Notes: Integrated AI-3D Screening Systems

HCS-3DX: A Next-Generation AI-Driven Screening System

The HCS-3DX platform represents a comprehensive solution for single-cell phenotyping within 3D microenvironments, addressing critical bottlenecks in standardization and analysis [36]. The system integrates three innovative components: (1) an AI-driven SpheroidPicker for automated selection and transfer of morphologically homogeneous 3D-oids, which substantially reduces operator-induced variability; (2) custom Fluorinated Ethylene Propylene (FEP) foil multiwell plates optimized for high-resolution light-sheet fluorescence microscopy (LSFM), enabling exceptional imaging penetration with minimal phototoxicity; and (3) a dedicated AI-based image analysis workflow implemented in Biology Image Analysis Software (BIAS) for quantitative single-cell data extraction from complex 3D structures [36]. This integrated approach demonstrates particular utility in cancer research, where it enables precise quantification of tissue composition in both monoculture and co-culture tumor models, providing unprecedented resolution for drug screening applications.

Digital Colony Picker: AI-Powered Microbial Phenotyping

The Digital Colony Picker (DCP) platform revolutionizes phenotype-based screening of microbial cell factories through an addressable static droplet system that eliminates the need for agar or physical contact [34]. Its core innovation lies in a microfluidic chip containing 16,000 addressable picoliter-scale microchambers that enable single-cell compartmentalization, dynamic monitoring, and AI-guided export via a laser-induced bubble technique. When applied to Zymomonas mobilis for lactate tolerance screening, the DCP platform identified a mutant with 19.7% increased lactate production and 77.0% enhanced growth under lactate stress [34]. This system enables multi-modal phenotyping with spatiotemporal precision, offering a generalizable strategy for accelerated strain engineering and functional gene discovery in synthetic biology applications.

Automated Organoid Screening Platforms

Fully automated screening platforms specifically designed for organoid models are bridging the gap between physiological relevance and screening robustness. These systems incorporate robotic liquid handling that demonstrates superior consistency compared to manual pipetting, with automated randomization capabilities that enhance experimental reproducibility [39]. Integrated confocal imaging systems provide greater sensitivity for detecting phenotypic changes within organoid cultures compared to traditional biochemical viability assays, enabling more nuanced assessment of drug responses [39]. Furthermore, these platforms facilitate single-well co-cultures of organoids derived from primary human biopsies and patient-derived xenograft (PDX) models, allowing for complex disease modeling while maintaining compatibility with high-content screening workflows [39].

Experimental Protocols

Protocol: AI-Driven 3D Spheroid Screening Using HCS-3DX

Objective: To perform high-content drug screening on 3D tumor spheroids with single-cell resolution using the HCS-3DX system.

Materials:

- HCS-3DX system (SpheroidPicker, LSFM, FEP foil multiwell plates)

- BIAS image analysis software

- HeLa Kyoto human cervical cancer cells

- MRC-5 human fibroblast cells (for co-cultures)

- 384-well U-bottom cell-repellent plates

- Test compounds

Procedure:

- Spheroid Generation: Seed 100 HeLa Kyoto cells per well in 384-well U-bottom cell-repellent plate for monocultures. For co-cultures, seed 40 HeLa Kyoto cells first, then add 160 MRC-5 human fibroblast cells after 24 hours.

- Incubation: Incubate plates for 48 hours (monocultures) or 24+24 hours (co-cultures) to allow spheroid formation.

- Spheroid Selection: Use the AI-driven SpheroidPicker to automatically select and transfer morphologically homogeneous spheroids to FEP foil multiwell plates based on predefined size and circularity parameters.

- Compound Treatment: Apply test compounds using robotic liquid handling systems to ensure precision and reproducibility.

- High-Content Imaging: Acquire images using LSFM with 20x objective for optimal resolution (adjust to 5x or 10x for faster screening where appropriate).

- AI-Based Analysis: Process images through the BIAS software for single-cell segmentation, classification, and feature extraction.

- Data Quantification: Extract 2D and 3D morphological features (Diameter, Perimeter, Area, Circularity, Sphericity, Convexity) for statistical analysis and hit identification.
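Two of the shape descriptors named in the final step have widely used closed-form definitions, sketched below. These are the standard textbook formulas; whether BIAS computes them in exactly this way is an assumption, and the example measurements are invented.

```python
import math


def circularity(area: float, perimeter: float) -> float:
    """2D circularity, 4*pi*A / P^2: equals 1 for a perfect circle, lower otherwise."""
    return 4.0 * math.pi * area / perimeter ** 2


def sphericity(volume: float, surface_area: float) -> float:
    """3D sphericity, pi^(1/3) * (6V)^(2/3) / A: equals 1 for a perfect sphere."""
    return math.pi ** (1.0 / 3.0) * (6.0 * volume) ** (2.0 / 3.0) / surface_area


if __name__ == "__main__":
    r = 10.0
    print(f"circle: {circularity(math.pi * r ** 2, 2 * math.pi * r):.3f}")
    print(f"square: {circularity(100.0, 40.0):.3f}")
    print(f"sphere: {sphericity(4.0 / 3.0 * math.pi * r ** 3, 4.0 * math.pi * r ** 2):.3f}")
```

Thresholds on such descriptors are one plausible way to express the "predefined size and circularity parameters" used for spheroid selection.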

Validation: The platform achieves single-cell resolution within 3D structures and reliably quantifies tissue composition in mono- and co-culture tumor models [36].

Protocol: Single-Cell Microbial Phenotyping Using Digital Colony Picker

Objective: To identify microbial mutants with enhanced stress tolerance and metabolite production using the AI-powered Digital Colony Picker.

Materials:

- DCP platform (microfluidic chip, optical module, droplet location module, export/collection module)

- Zymomonas mobilis cell suspension (1 × 10⁶ cells/mL)

- PDMS mold layer microfluidic chips with ITO film

- Culture media with stressor (e.g., 30 g/L lactate)

- 96-well collection plates

Procedure:

- Chip Preparation: Pre-vacuum the microfluidic chip to remove air from microchambers.

- Single-Cell Loading: Introduce cell suspension (1 × 10⁶ cells/mL) into microchannels allowing passive loading into 16,000 microchambers via vacuum assistance.

- Incubation: Place chip in water-filled centrifuge tube (10% filled) to maintain humidified environment and incubate at controlled temperature.

- Dynamic Monitoring: Use AI-driven image analysis to track single-cell morphology, proliferation, and metabolic activities over time.

- Liquid Replacement (Optional): Exchange culture media through chip inlet as needed using gas gaps between microchambers.

- Target Identification: Apply AI algorithms to identify microchambers containing clones with desired phenotypic signatures.

- Clone Export: Generate microbubbles via laser-induced bubble technique to propel selected single-clone droplets toward outlet.

- Collection: Transfer exported clones to 96-well collection plates using cross-surface microfluidic printing method.

- Validation: Culture selected clones for further phenotypic validation (e.g., lactate production assay).
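The choice of loading density can be reasoned about with Poisson statistics, a common assumption for random cell loading into small compartments. The sketch below estimates how many of the 16,000 microchambers would be empty, hold one cell, or hold several. The chamber volume of 300 pL is a hypothetical value, since the text specifies only "picoliter-scale", and the Poisson model itself is an assumption rather than something stated in the source.

```python
import math


def poisson_occupancy(cells_per_ml: float, chamber_volume_pl: float, n_chambers: int = 16_000):
    """Expected chamber counts by occupancy, assuming Poisson-distributed loading."""
    lam = cells_per_ml * chamber_volume_pl * 1e-9  # 1 pL = 1e-9 mL
    p_empty = math.exp(-lam)
    p_single = lam * math.exp(-lam)
    p_multiple = 1.0 - p_empty - p_single
    return {
        "mean_cells_per_chamber": lam,
        "empty": p_empty * n_chambers,
        "single": p_single * n_chambers,
        "multiple": p_multiple * n_chambers,
    }


if __name__ == "__main__":
    # 1e6 cells/mL from the protocol; 300 pL chamber volume is an assumed value.
    for key, value in poisson_occupancy(1e6, 300.0).items():
        print(f"{key}: {value:.2f}")
```

Under these assumptions most occupied chambers contain a single cell, at the cost of many empty chambers; a denser suspension would fill more chambers but raise the share holding multiple cells.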

Validation: The protocol successfully identified a Zymomonas mobilis mutant with 19.7% increased lactate production and 77.0% enhanced growth under lactate stress [34].

Workflow Visualization

Diagram 1: HCS-3DX Screening Workflow

Diagram 2: Digital Colony Picker Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Materials for AI-Integrated 3D Screening

| Item | Function/Application | Specific Examples/Notes |
|---|---|---|
| FEP Foil Multiwell Plates [36] | Optimized for high-resolution LSFM imaging of 3D models | Provides exceptional optical clarity for deep tissue imaging; Minimal autofluorescence |
| Microfluidic Chips with ITO Film [34] | Picoliter-scale single-cell cultivation and manipulation | 16,000 addressable microchambers; Enables laser-induced bubble export |
| Cell-Repellent U-Bottom Plates [36] | Standardized spheroid formation | Promotes consistent 3D aggregation; 384-well format for HTS |
| BIAS Analysis Software [36] | AI-powered 3D image analysis | Enables single-cell segmentation and feature extraction in complex 3D structures |
| SpheroidPicker [36] | Automated selection of homogeneous 3D-oids | AI-driven micromanipulator; Reduces operator-induced variability |
| Patient-Derived Organoids [33] | Personalized disease modeling and drug testing | Retains original tumor genetics and heterogeneity; Predictive of clinical response |

HTS Assay Platforms in Action: From Design to Hit Identification

High-throughput screening (HTS) serves as a foundational pillar in modern drug discovery and biological research, enabling the rapid evaluation of thousands to millions of chemical compounds or genetic modifiers. The selection of an appropriate screening strategy is paramount to the success of any campaign aimed at identifying novel therapeutic agents or probing biological mechanisms. This choice fundamentally hinges on the research question: whether to target a specific, isolated protein; to observe effects within a living cellular environment; or to discover new biology through observable changes in cell or organism phenotype. Within the context of high-throughput phenotyping and robustness screening, each paradigm—biochemical, cell-based, and phenotypic—offers distinct advantages, limitations, and technical considerations. This application note delineates these three primary screening approaches, providing structured comparisons, detailed protocols, and strategic guidance to empower researchers in selecting and implementing the optimal assay for their specific investigative goals.

The following table summarizes the core characteristics, advantages, and challenges of the three primary screening platforms.

Table 1: Comparison of High-Throughput Screening Platforms

| Feature | Biochemical Screening | Cell-Based Screening (Target-Based) | Phenotypic Screening |
|---|---|---|---|
| System Complexity | Simplified, purified components (e.g., enzymes, substrates) [40] | Living cells, often engineered with specific reporters or targets [41] [42] | Living cells or organisms, un-engineered or disease-model contexts [43] [44] |
| Primary Readout | Molecular interaction or enzymatic activity (e.g., binding, inhibition) [40] [42] | Pathway activity, reporter signal, or cellular response [45] [41] | Macroscopic or morphological phenotype (e.g., growth, shape, differentiation) [20] [43] |
| Key Advantage | High target specificity; controlled conditions; high throughput [42] | Cellular context; assesses membrane permeability; measures pathway modulation [45] [40] | Biologically unbiased; discovers novel mechanisms of action (nMoA); clinically relevant models [43] [45] |
| Main Challenge | Lack of biological context; may identify non-cell-permeable compounds [45] [46] | Can be complex to develop and interpret; false positives from cytotoxicity [45] | Target identification (deconvolution) can be challenging and time-consuming [43] |
| Throughput | Very High [42] | High [45] | Moderate to High [20] |
| Hit-to-Lead Focus | Potency, selectivity, structure-activity relationships (SAR) [40] | Cellular efficacy, permeability, cytotoxicity [40] | Efficacy in disease-relevant models, novel biology [43] |

The decision-making workflow for selecting an appropriate screening method based on the research objective can be visualized as follows:

Biochemical Screening

Biochemical screening utilizes purified target proteins in a well-defined in vitro system to directly measure compound binding or inhibition of enzymatic activity [40] [42]. This approach is ideal when the molecular target is known and can be isolated.

Key Experimental Protocol: Fluorescence Polarization (FP) Assay for Kinase Inhibition

This protocol is adapted for a 384-well plate format to screen for inhibitors of a recombinant kinase enzyme [40] [42].

Workflow:

Reagent Preparation:

- Prepare assay buffer (e.g., 50 mM HEPES pH 7.5, 10 mM MgCl₂, 1 mM DTT, 0.01% Tween-20).

- Dilute the recombinant kinase to a working concentration in assay buffer.

- Prepare a fluorescently-labeled peptide substrate.

- Prepare ATP solution at a concentration near its Km value.

- Dilute compound library in DMSO, then further in assay buffer (typical final DMSO concentration 1%).

Assay Assembly (384-well plate):

- Step 1: Transfer 5 µL of compound solution (or buffer/DMSO for controls) to each well.

- Step 2: Add 10 µL of kinase/substrate mixture to all wells.

- Step 3: Initiate the reaction by adding 5 µL of ATP solution.

- Controls: Include positive controls (no inhibitor, giving the uninhibited signal) and negative controls (no enzyme, or a known potent inhibitor, giving the fully inhibited signal).
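The volumes in the assembly steps imply a 4-fold dilution of each 5 µL addition into the 20 µL reaction. The short calculation below works through that arithmetic. It assumes the 1% DMSO figure refers to the final 20 µL reaction, and it uses a hypothetical ATP Km of 10 µM purely for illustration.

```python
def final_concentration(stock: float, added_ul: float, total_ul: float) -> float:
    """Concentration after diluting `added_ul` of stock into a total of `total_ul`."""
    return stock * added_ul / total_ul


# Volumes taken from the assay assembly steps (µL per well).
COMPOUND_UL = 5.0
KINASE_SUBSTRATE_UL = 10.0
ATP_UL = 5.0
TOTAL_UL = COMPOUND_UL + KINASE_SUBSTRATE_UL + ATP_UL  # 20 µL reaction

# ASSUMPTION: the 1% DMSO target applies to the final reaction volume.
TARGET_FINAL_DMSO_PCT = 1.0
dmso_in_compound_solution_pct = TARGET_FINAL_DMSO_PCT * TOTAL_UL / COMPOUND_UL

# ASSUMPTION: hypothetical Km, used only to show the working-stock calculation.
ATP_KM_UM = 10.0
atp_working_um = ATP_KM_UM * TOTAL_UL / ATP_UL

print(f"Reaction volume: {TOTAL_UL} µL")
print(f"DMSO in the 5 µL compound addition: {dmso_in_compound_solution_pct}%")
print(f"ATP working stock for final [ATP] = Km: {atp_working_um} µM")
print(f"Check, final DMSO: {final_concentration(dmso_in_compound_solution_pct, COMPOUND_UL, TOTAL_UL)}%")
```

The same 4-fold factor applies to the compound itself, so the intermediate dilution in assay buffer should be prepared at four times the intended final test concentration.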

Incubation and Reading:

- Incubate the plate at room temperature for 60 minutes.

- Stop the reaction if necessary (e.g., with EDTA).

- Read fluorescence polarization (in mP units) using a plate reader equipped with appropriate FP optics.

Data Analysis:

- Calculate % inhibition for each compound:

  % inhibition = (1 - (mP_compound - mP_negative_control) / (mP_positive_control - mP_negative_control)) * 100

- Fit dose-response curves for hit compounds to determine IC₅₀ values.
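The % inhibition calculation can be sketched in a few lines of Python. The example also computes the Z'-factor, the assay-quality metric named in the abstract, from the same control wells using its standard definition, 1 - 3(σ_pos + σ_neg) / |μ_pos - μ_neg|. All mP values and compound names below are invented for illustration.

```python
from statistics import mean, stdev


def percent_inhibition(mp_compound: float, mp_pos: float, mp_neg: float) -> float:
    """% inhibition relative to positive (uninhibited) and negative (fully inhibited) controls."""
    return (1 - (mp_compound - mp_neg) / (mp_pos - mp_neg)) * 100


def z_prime(pos_wells: list, neg_wells: list) -> float:
    """Z'-factor from the spread and separation of the two control populations."""
    separation = abs(mean(pos_wells) - mean(neg_wells))
    return 1 - 3 * (stdev(pos_wells) + stdev(neg_wells)) / separation


# Illustrative mP readings only; not data from any real plate.
pos_wells = [250.0, 255.0, 245.0, 252.0]   # no inhibitor
neg_wells = [80.0, 82.0, 78.0, 81.0]       # no enzyme / potent inhibitor
compound_wells = {"cmpd_001": 240.0, "cmpd_002": 165.0, "cmpd_003": 90.0}

mp_pos, mp_neg = mean(pos_wells), mean(neg_wells)
inhibition = {
    name: percent_inhibition(mp, mp_pos, mp_neg) for name, mp in compound_wells.items()
}

print(f"Z' = {z_prime(pos_wells, neg_wells):.3f}")
for name, value in inhibition.items():
    print(f"{name}: {value:.1f}% inhibition")
```

A compound reading at the positive-control mean returns 0% and one at the negative-control mean returns 100%, which is a quick sanity check on the sign convention.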

Research Reagent Solutions for Biochemical Screening

Table 2: Essential Reagents for Biochemical Assays

| Item | Function | Example Application |
|---|---|---|
| Purified Target Protein | The isolated protein of interest (e.g., kinase, protease, receptor). | Source of enzymatic activity or binding for the assay [42]. |
| Fluorescent Tracer | A fluorescently-labeled ligand or substrate whose properties change upon binding or conversion. | FP and TR-FRET assays; displacement indicates compound binding [40]. |
| Co-factors / Substrates | Essential molecules for the target's function (e.g., ATP for kinases). | Provides necessary components for a functional enzymatic reaction [46]. |
| HTS-Optimized Buffer | A chemically defined buffer to maintain protein stability and function. | Creates a consistent and reproducible in vitro environment [42]. |
| Microtiter Plates (384-well) | Miniaturized assay vessels for high-throughput processing. | Standard format for HTS, balancing volume, throughput, and cost [42]. |

Cell-Based Screening

Cell-based assays use live cells to measure a compound's effect in a more physiologically relevant context than biochemical assays [45] [41]. They can be target-based, focusing on a specific pathway, or phenotypic.

Key Experimental Protocol: Reporter Gene Assay in a 96-Well Format

This protocol is used to identify compounds that modulate the activity of a specific signaling pathway or transcription factor [42].

Workflow:

Cell Culture and Plating:

- Culture reporter cells (e.g., HEK-293T engineered with a luciferase gene under a response element of interest).

- Harvest cells during logarithmic growth and seed in a 96-well tissue culture-treated plate at a density of 10,000-20,000 cells per well in 100 µL of complete growth medium.

- Incubate plates for 18-24 hours at 37°C, 5% CO₂ to allow cells to adhere and stabilize.

Compound Treatment:

- Prepare serial dilutions of test compounds in culture medium.

- Remove the old medium from the cell plate and add 100 µL of compound-containing medium to each well. Include vehicle (DMSO) and controls for maximal and minimal pathway activation.
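A serial dilution series for the compound treatment step can be generated programmatically. The sketch below assumes a 3-fold, 8-point series starting at 10 µM; none of these values come from the protocol, and they should be replaced with whatever range suits the compound and assay.

```python
def serial_dilution(top_conc_um: float, fold: float, n_points: int) -> list:
    """Concentrations for an n-point series, each `fold` times lower than the previous."""
    if fold <= 1 or n_points < 1:
        raise ValueError("fold must be > 1 and n_points must be >= 1")
    return [top_conc_um / fold ** i for i in range(n_points)]


# ASSUMPTION: illustrative series parameters, not taken from the protocol.
series = serial_dilution(top_conc_um=10.0, fold=3.0, n_points=8)

for well, conc in enumerate(series, start=1):
    print(f"dilution {well}: {conc:.4f} µM")
```

Keeping the vehicle (DMSO) concentration identical across every point of the series, including the vehicle-only control, avoids confounding the dose-response with solvent effects.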

Incubation and Assay:

- Incubate the plate for a predetermined time (e.g., 6-24 hours) at 37°C, 5% CO₂.

- Equilibrate the plate and a luciferase assay reagent to room temperature.

- Add the luciferase reagent to each well according to the manufacturer's instructions (e.g., 50 µL per well).

- Measure luminescence signal immediately using a plate reader.

Data Analysis:

- Normalize raw luminescence values to vehicle and control wells.

- Calculate % activation or inhibition and generate dose-response curves to determine EC₅₀ or IC₅₀ values.
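The normalization and EC₅₀ steps can be illustrated with a small stdlib-only sketch. It scales luminescence between the minimal and maximal pathway controls, then estimates EC₅₀ by log-linear interpolation between the two doses that bracket 50%. This interpolation is a rough stand-in chosen for brevity; a four-parameter logistic fit is the more usual choice for reported values. All readings are invented.

```python
import math


def percent_activation(signal: float, min_ctrl: float, max_ctrl: float) -> float:
    """Scale a raw luminescence value to 0-100% between the two pathway controls."""
    return (signal - min_ctrl) / (max_ctrl - min_ctrl) * 100


def interpolate_ec50(concs, responses, level: float = 50.0):
    """Concentration at which the response crosses `level`, interpolated on a log10 axis."""
    points = sorted(zip(concs, responses))
    for (c_lo, r_lo), (c_hi, r_hi) in zip(points, points[1:]):
        crosses = (r_lo - level) * (r_hi - level) <= 0
        if crosses and r_lo != r_hi:
            frac = (level - r_lo) / (r_hi - r_lo)
            log_c = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_c
    return None  # the curve never crosses the requested level


# Illustrative values only (concentrations in µM, luminescence in arbitrary units).
concs = [0.01, 0.1, 1.0, 10.0]
luminescence = [1200.0, 3000.0, 7000.0, 9000.0]
MIN_CTRL, MAX_CTRL = 1000.0, 9000.0

activation = [percent_activation(s, MIN_CTRL, MAX_CTRL) for s in luminescence]
ec50 = interpolate_ec50(concs, activation)
print("activation (%):", activation)
print(f"estimated EC50: {ec50:.4f} µM")
```

Returning `None` when the curve never crosses 50% makes incomplete dose-response curves explicit instead of silently extrapolating.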

The interconnected workflow for cell-based and phenotypic screening, from cell culture to data analysis, is outlined below:

Phenotypic Screening

Phenotypic screening involves testing compounds for their ability to produce a desired change in cell or organism phenotype without a preconceived molecular target [43]. This approach is powerful for discovering novel mechanisms of action (nMoA).

Key Experimental Protocol: Image-Based Screening for Altered Plant Seedling Growth

This protocol, based on a high-throughput screen in Arabidopsis thaliana, uses convolutional neural networks (CNNs) to quantify genotype-specific chemical effects [20].

Workflow:

Biological System Setup:

- Plant Materials: Use wild-type Arabidopsis and a DNA repair mutant (mus81).

- Growth Conditions: Surface-sterilize seeds and sow them separately in liquid medium within 24-well microtiter plates (3 seedlings per well). Incorporate positive (e.g., Mitomycin C, MMC) and negative (DMSO) controls.

Chemical Treatment and Growth:

- Add small molecules from the screening library (e.g., Prestwick library) to the respective wells.

- Incubate the plates under controlled light and temperature conditions for 10 days.

Image Acquisition:

- Capture high-resolution images of seedlings in each well using a light macroscope. Ensure consistent lighting and focal plane across all wells.

Machine Learning-Based Image Analysis:

- Training: Train a Residual Neural Network (ResNet) CNN model using images of control seedlings (DMSO for normal growth, MMC for altered growth). Use 80% of images for training, 10% for validation, and 10% for testing [20].

- Classification: Use the trained model to classify seedling images from the screen as "normal growth" or "altered growth," outputting a probability score.

- Segmentation (Complementary): Use a second CNN to segment images into background, leaves, and roots. Quantify the size of each tissue for detailed phenotypic profiling [20].
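The 80/10/10 split described in the training step can be sketched as a seeded shuffle followed by slicing. The file names below are hypothetical placeholders, and this is not the cited study's code; it only illustrates the partitioning.

```python
import random


def split_dataset(items, train_frac: float = 0.8, val_frac: float = 0.1, seed: int = 0):
    """Shuffle reproducibly, then slice into train / validation / test partitions."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n_train = round(len(shuffled) * train_frac)
    n_val = round(len(shuffled) * val_frac)
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]  # whatever remains (about 10%)
    return train, val, test


# ASSUMPTION: hypothetical image file names standing in for control-seedling images.
image_paths = [f"control_well_{i:03d}.png" for i in range(200)]

train_set, val_set, test_set = split_dataset(image_paths)
print(len(train_set), len(val_set), len(test_set))
```

In practice it may be preferable to split each class (DMSO and MMC) separately so that all three partitions keep the same class balance, and to keep images from the same well in a single partition.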

Hit Identification:

- Identify "hits" as molecules that induce a significant alteration in the mutant (mus81) growth phenotype while showing minimal effect on the wild-type.
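The hit criterion can be expressed as a simple filter over the classifier's probability scores for each genotype. The thresholds and compound names below are illustrative assumptions; the protocol does not state numeric cut-offs.

```python
def is_hit(p_altered_mutant: float, p_altered_wildtype: float,
           mutant_min: float = 0.8, wildtype_max: float = 0.2) -> bool:
    """True when the mutant is called 'altered growth' but the wild-type is not."""
    return p_altered_mutant >= mutant_min and p_altered_wildtype <= wildtype_max


# ASSUMPTION: made-up scores as (P(altered) in mus81, P(altered) in wild-type).
scores = {
    "cmpd_A": (0.95, 0.05),  # genotype-specific effect
    "cmpd_B": (0.90, 0.85),  # affects both genotypes (general toxicity)
    "cmpd_C": (0.10, 0.08),  # inactive
}

hits = [name for name, (mut, wt) in scores.items() if is_hit(mut, wt)]
print(hits)
```

Requiring a low wild-type score is what separates genotype-specific compounds from generally toxic ones, which would otherwise dominate the hit list.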

Research Reagent Solutions for Phenotypic Screening

Table 3: Essential Reagents for Phenotypic Screens

| Item | Function | Example Application |
|---|---|---|
| Disease-Relevant Cell Model | Primary cells, stem cell-derived lineages, or engineered organoids that mimic human disease. | Provides a physiologically relevant context for observing phenotypic changes [43] [41]. |
| Viability/Cytotoxicity Dyes | Fluorescent probes that distinguish live/dead cells (e.g., propidium iodide, calcein-AM). | A basic phenotypic readout for compound toxicity or selective cell death [41]. |
| High-Content Imaging Reagents | Fluorescent dyes or antibodies for labeling cellular structures (nuclei, cytoskeleton, organelles). | Enables multiparametric analysis of complex phenotypes like morphology and protein localization [42]. |
| 3D Culture Matrices | Hydrogels (e.g., Matrigel, synthetic alternatives) to support three-dimensional cell growth. | Creates in vivo-like architecture and cell-cell interactions for more predictive screening [41]. |
| Label-Free Detection Kits | Reagents for measuring metabolic activity or confluence without fluorescent labels. | Reduces assay artifacts and simplifies workflow for certain phenotypic endpoints [45]. |

Mechanism of Action Deconvolution

A significant challenge following a phenotypic screen is identifying the molecular target of hit compounds—a process known as target deconvolution or mechanism of action (MoA) studies [43]. The following diagram illustrates the primary methodologies employed.

The main strategies include:

- Affinity Chromatography: The hit compound is immobilized on a solid support and used as bait to pull down direct binding proteins from cell lysates, which are then identified by mass spectrometry [43] [45].

- Genetic Modifier Screening: Genome-wide CRISPR knockout or RNAi screens are used to identify genes whose loss-of-function either enhances or suppresses the activity of the hit compound [43] [42].

- Gene Expression Profiling: Comparing the global gene expression signature (e.g., by RNA-Seq) induced by the hit compound to databases of signatures from compounds with known MoA can infer the pathway or target involved [43].

- Resistance Mutation Selection: Treating cells with low doses of the compound to select for resistant clones, followed by sequencing to identify the acquired mutations that confer resistance, can pinpoint the drug target [43].
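The gene expression profiling strategy amounts to ranking reference signatures by their similarity to the hit compound's signature. The sketch below uses Pearson correlation over a shared gene list as one simple similarity measure; real signature databases use their own scoring schemes. The reference names and log2 fold-change values are invented for illustration.

```python
import math


def pearson(x, y) -> float:
    """Pearson correlation between two equal-length expression vectors."""
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)


def rank_references(query, references: dict) -> list:
    """Reference signatures sorted from most to least similar to the query."""
    scored = [(name, pearson(query, signature)) for name, signature in references.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# ASSUMPTION: toy log2 fold changes for five shared genes; names are hypothetical.
query_signature = [2.1, -1.5, 0.3, 1.8, -0.9]
reference_signatures = {
    "DNA_damage_agent": [1.9, -1.2, 0.1, 2.0, -1.1],
    "proteasome_inhibitor": [-0.5, 1.4, 0.2, -1.0, 0.8],
    "tubulin_binder": [0.2, 0.1, -1.3, 0.4, 1.6],
}

ranking = rank_references(query_signature, reference_signatures)
for name, score in ranking:
    print(f"{name}: r = {score:.3f}")
```

A high correlation with a reference class is only a hypothesis about the mechanism; it would still need confirmation by one of the direct methods above, such as affinity pull-down or resistance mutation mapping.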