Dynamic Climate Control Strategies: Cutting Energy Load in Biomedical and Research Facilities

Abstract

This article explores the transformative potential of dynamic climate control systems in reducing the substantial energy load of research greenhouses and laboratory environments. Tailored for researchers, scientists, and drug development professionals, it provides a comprehensive analysis from foundational principles to advanced applications. The content covers the core mechanisms of real-time sensor-driven HVAC optimization, details methodological implementations for precision environmental control, addresses common operational challenges with targeted solutions, and presents validation data from comparative case studies. The synthesis of these areas offers a roadmap for achieving stringent climate stability for sensitive research while simultaneously advancing sustainability goals and reducing operational costs.

The Science of Dynamic Control: Foundations for Reducing Research Facility Energy Load

Dynamic Climate Control (DCC) replaces the static setpoints of traditional Heating, Ventilation, and Air Conditioning (HVAC) systems with adaptive, data-driven strategies that adjust setpoints and equipment operation to real-time conditions. The aim is to keep thermal regulation precise while reducing energy consumption. In greenhouse and controlled-environment research, DCC frameworks are particularly valuable because they can lower energy load without compromising the stable conditions that experiments, including drug development and plant science research, require. This document outlines the core principles, quantitative benefits, and practical implementation protocols for DCC systems, providing researchers with the tools to integrate these strategies into energy-efficient research operations.

Quantitative Data Comparison of Climate Control Strategies

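The table below summarizes performance data reported in the cited studies: energy savings for the control and integration strategies, prediction accuracy for the two residential AC energy-estimation methods, and temperature-fluctuation reduction for the VO₂ device.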

Table 1: Quantitative comparison of energy performance for different climate control strategies.

| Control Strategy | Application Context | Key Performance Metric | Reported Energy Saving/Effect | Source |
|---|---|---|---|---|
| Dynamic Heating Control | Apartment Building, District Heating | Heating Power Reduction | 13.7% total heating power reduction | [1] |
| Rooftop Greenhouse (RTG) Integration | Building with RTG (Forced Ventilation) | Annual Energy Savings for Top Floor | 44.9% energy savings | [2] |
| Rooftop Greenhouse (RTG) Integration | Building with RTG (Cultivation Suspension) | Annual Energy Savings for Top Floor | 60.2% energy savings | [2] |
| Top-Down Energy Disaggregation | Residential AC Prediction | Prediction Accuracy | ~2.89 times overestimation of measured consumption | [3] |
| Bottom-Up Energy Simulation | Residential AC Prediction | Prediction Accuracy | ~2.76 times overestimation of measured consumption | [3] |
| VO₂-based Thermal Regulation | Passive Solid-State Device (Space Conditions) | Temperature Fluctuation Reduction | Halved thermal fluctuations vs. constant-emissivity sample | [4] |

Experimental Protocols for Key Dynamic Control Strategies

Protocol: Dynamic Heating Control in a District-Heated Building

This protocol is adapted from a study demonstrating power reduction via dynamic supply temperature control [1].

1. Objective: To reduce peak heating power demand in a multi-apartment building with district heating by dynamically adjusting the heating curve, without compromising indoor thermal comfort.

2. Materials and Equipment:

- Building with district heating and a central heating controller.

- Array of indoor air temperature sensors deployed in representative apartments.

- Outdoor air temperature sensor.

- Data acquisition system for collecting temperature and power data.

- Controller capable of implementing a dynamic heating curve algorithm.

3. Methodology:

- Baseline Measurement: Over a significant period (e.g., one heating season), operate the building with a conventional, static heating curve. Collect data on space heating power, total heating power, and indoor temperatures.

- Algorithm Development: Develop a dynamic heating curve control algorithm incorporating:

  - DHW Compensation: A reduction in the space heating supply temperature is applied during peaks in Domestic Hot Water (DHW) draw to reduce total simultaneous power demand.

  - Thermal Comfort Safeguard: A feedback mechanism that triggers a supply temperature uplift if any apartment's indoor temperature drops below a defined lower limit (e.g., 21 °C).

- Intervention Measurement: Over a subsequent, comparable period, operate the building with the new dynamic control algorithm. Collect the same dataset as during the baseline measurement.

- Data Analysis: Compare the total heating power and space heating power between the two periods. Analyze indoor temperature data to confirm that thermal comfort was maintained.
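The control logic in the Algorithm Development step can be sketched as a function that returns the space-heating supply temperature. This is a minimal illustration, not the algorithm from [1]: the linear heating curve, every numeric parameter, and the rule that the comfort safeguard overrides DHW compensation are assumptions chosen for readability, and all names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class HeatingCurveParams:
    # All values are hypothetical placeholders, not taken from [1].
    base_supply_c: float = 35.0     # supply temperature when outdoor air is at 20 °C
    slope: float = 1.2              # supply °C added per °C of outdoor air below 20 °C
    dhw_peak_kw: float = 40.0       # DHW draw above which compensation is applied
    dhw_reduction_c: float = 5.0    # supply reduction during DHW peaks
    comfort_min_c: float = 21.0     # lower indoor limit (the protocol's example value)
    comfort_uplift_c: float = 3.0   # uplift when any apartment is below the limit


def static_supply_temp(outdoor_c: float, p: HeatingCurveParams) -> float:
    """Conventional (baseline) heating curve: supply rises as outdoor air cools."""
    return p.base_supply_c + p.slope * max(0.0, 20.0 - outdoor_c)


def dynamic_supply_temp(outdoor_c: float, dhw_kw: float,
                        indoor_temps_c: list[float], p: HeatingCurveParams) -> float:
    """Dynamic curve: DHW compensation plus a thermal comfort safeguard."""
    supply = static_supply_temp(outdoor_c, p)
    if min(indoor_temps_c) < p.comfort_min_c:
        # Assumed priority: the comfort safeguard wins over DHW compensation.
        return supply + p.comfort_uplift_c
    if dhw_kw > p.dhw_peak_kw:
        # Lower the space-heating supply while DHW draw peaks to cap total demand.
        return supply - p.dhw_reduction_c
    return supply
```

For example, `dynamic_supply_temp(0.0, 55.0, [21.8, 22.4], HeatingCurveParams())` returns 54.0: the static curve gives 59.0 °C and the DHW peak lowers it by 5 °C, because no apartment is below 21 °C.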

Protocol: Evaluating Passive Thermal Regulation with VO₂ Thin Films

This protocol is based on an experimental demonstration of dynamic thermal regulation using phase-change materials [4].

1. Objective: To experimentally characterize the temperature regulation performance of a vanadium dioxide (VO₂) thin-film device under a time-varying heat load.

2. Materials and Equipment:

- Fabricated VO₂/Si/Au device (VO₂ thickness ~62-75 nm).

- Vacuum chamber to minimize convective heat loss.

- Ceramic heater with an embedded thermocouple.

- Ice bath to maintain a stable, low ambient temperature.

- Power supply for the heater.

- Data logger for temperature and power.

3. Methodology:

- Setup Calibration:

  - Mount the sample on the heater and suspend the assembly in the vacuum chamber.

  - Submerge the chamber in the ice bath (e.g., 0.5 °C).

  - Calibrate parasitic heat losses by conducting a steady-state experiment with a low-emissivity sample (e.g., gold mirror). Measure the temperature at incremental, steady-state heat loads.

- Device Testing:

  - Replace the calibration sample with the VO₂ device.

  - Repeat the steady-state experiment through a complete heating and cooling cycle, allowing 45 minutes at each step to reach equilibrium.

  - Record the heater temperature at each applied heat load.

- Data Analysis:

  - Plot the temperature as a function of applied heat load for both heating and cooling cycles. A hysteresis window around the VO₂ phase transition temperature (~68 °C) will be evident.

  - Calculate the radiative heat flux from the sample using the calibrated data.

  - The regulation capability appears as a temperature difference at the same heat load between the heating and cooling paths: within the hysteresis window, the film stays in its low-temperature (insulating) phase longer on heating and in its high-temperature (metallic) phase longer on cooling, so its emissivity, and hence its radiative heat loss, differs between the two paths.
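The radiative-flux calculation in the Data Analysis step can be sketched as follows. This is a minimal illustration under stated assumptions, not the procedure of [4]: it treats the heater power measured with the gold mirror as pure parasitic loss (neglecting the mirror's own small emission), interpolates that loss linearly in temperature, and converts the remaining power into an effective emissivity with the Stefan-Boltzmann law for a small sample radiating to a large enclosure at the ice-bath temperature. Function names are hypothetical.

```python
STEFAN_BOLTZMANN = 5.670374419e-8  # W m^-2 K^-4


def interp(x: float, xs: list[float], ys: list[float]) -> float:
    """Piecewise-linear interpolation; xs ascending, clamped outside the range."""
    if x <= xs[0]:
        return ys[0]
    if x >= xs[-1]:
        return ys[-1]
    for i in range(1, len(xs)):
        if x <= xs[i]:
            frac = (x - xs[i - 1]) / (xs[i] - xs[i - 1])
            return ys[i - 1] + frac * (ys[i] - ys[i - 1])
    raise ValueError("xs must be ascending")


def radiative_power_w(heat_load_w: float, temp_c: float,
                      calib_temps_c: list[float], calib_loss_w: list[float]) -> float:
    """Sample's radiative loss = applied heat load minus calibrated parasitic loss."""
    return heat_load_w - interp(temp_c, calib_temps_c, calib_loss_w)


def effective_emissivity(heat_load_w: float, temp_c: float,
                         calib_temps_c: list[float], calib_loss_w: list[float],
                         area_m2: float, ambient_c: float) -> float:
    """Solve q_rad = eps * sigma * A * (T^4 - T_amb^4) for eps (temperatures in K)."""
    q_rad = radiative_power_w(heat_load_w, temp_c, calib_temps_c, calib_loss_w)
    t_k, t_amb_k = temp_c + 273.15, ambient_c + 273.15
    return q_rad / (area_m2 * STEFAN_BOLTZMANN * (t_k**4 - t_amb_k**4))
```

Applied point by point to the heating and cooling branches, this yields emissivity-versus-temperature curves whose separation traces the hysteresis window.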

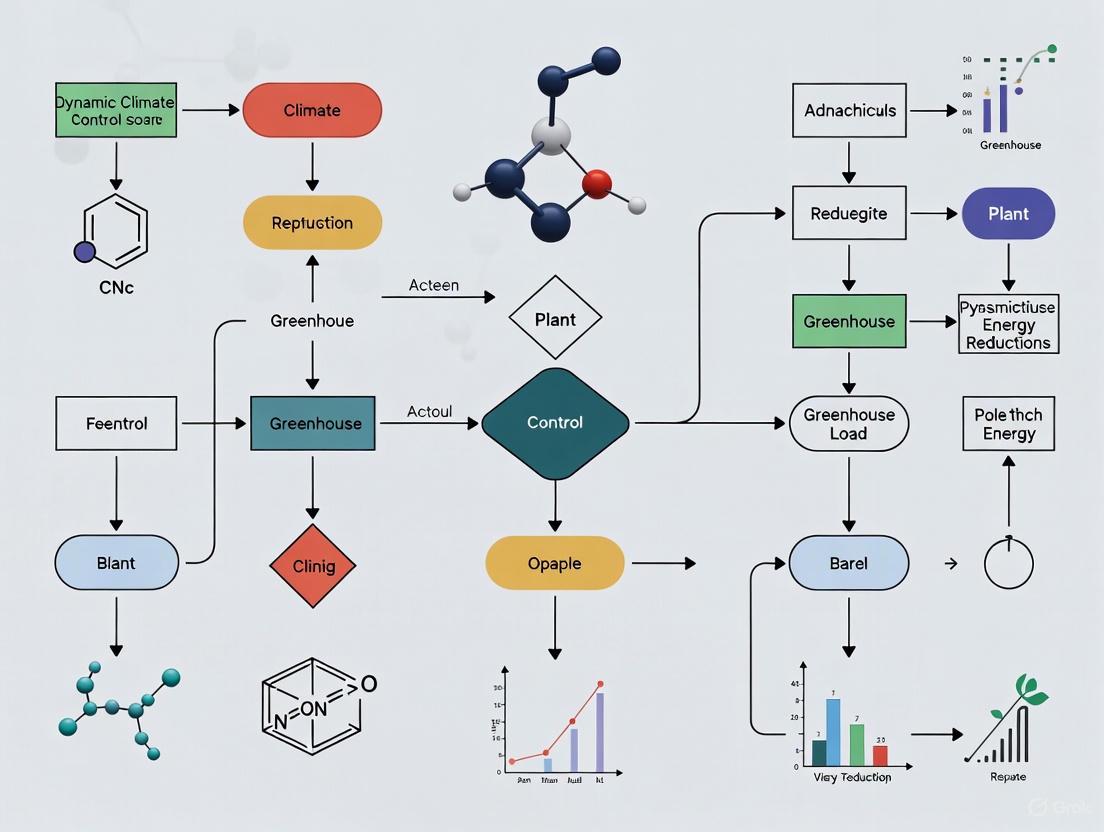

Visualization of a Dynamic Climate Control Framework

The diagram below illustrates the core logical workflow and feedback mechanisms of a DCC system.

Diagram 1: Dynamic climate control system logic and feedback.

The Scientist's Toolkit: Key Research Reagents & Materials

The following table details essential materials and their functions for experiments in dynamic thermal regulation and energy control.

Table 2: Essential research reagents and materials for dynamic climate control studies.

| Item Name | Function / Application | Specific Example / Note |
|---|---|---|
| Vanadium Dioxide (VO₂) Thin Films | Passive thermal regulation; acts as a phase-change material that switches thermal emissivity with temperature, providing automatic cooling at high temperatures. | Fabricated via Atomic Layer Deposition (ALD) on Si substrate with Au back reflector for optimal performance [4]. |
| Wireless Sensor Network | Onsite measurement of temperature, humidity, and energy consumption in field studies; enables high-resolution data collection. | Used for longitudinal measurement in residences to collect AC energy consumption data [3]. |
| Data Logging Thermocouples | Precise temperature measurement inside test assemblies or buildings for calibration and performance validation. | Embedded in a ceramic heater to measure core temperature in a vacuum chamber experiment [4]. |
| Building Energy Simulation (BES) Tool | Bottom-up modeling of energy consumption in buildings; used for predicting performance and optimizing control strategies. | Used to create models of typical reference buildings for annual AC energy prediction [3]. |
| Dynamic Heating Controller | The central unit that implements advanced control algorithms (e.g., dynamic heating curves) for a building's heating system. | Programmed with a DHW compensation differential and a thermal comfort feedback loop [1]. |
| Rooftop Greenhouse (RTG) Model | A validated simulation model to analyze the energy-saving effects of integrating a greenhouse with a building. | Used to simulate scenarios like forced ventilation or cultivation suspension for maximum energy savings [2]. |

The Energy Burden of Precision Climate in Bio-labs and Greenhouses

Precision climate control is a foundational element in both modern agricultural greenhouses and pharmaceutical bio-labs, essential for ensuring crop yield and research integrity. However, the energy required to maintain these precise environments constitutes a significant operational cost and environmental burden. This document details the energy consumption profiles of these facilities and presents dynamic control strategies, drawn from the cited studies, that can reduce energy load without compromising environmental setpoints. The protocols herein belong to a broader line of research showing that advanced, algorithm-driven climate management can substantially lower energy use. Hierarchical control frameworks, model-predictive strategies, and equipment-level optimizations of the kind described here have been reported to cut greenhouse climate-control energy use by up to 27% [5], to maintain energy-efficient operation under actuator faults and weather uncertainty [6], and to achieve major reductions in the HVAC-dominated energy load of bio-labs [7].

Quantitative Energy Burden Analysis

The tables below summarize the primary energy consumers and documented savings from optimized control strategies in greenhouse and bio-lab environments.

Table 1: Energy Consumption Profile of Precision Climate-Controlled Facilities

| Facility Type | Primary Energy-Consuming System | Typical Energy Cost Contribution | Key Energy Burden Factors |
|---|---|---|---|
| Agricultural Greenhouse | Heating, Ventilation, & Cooling (HVAC) | 30-50% of total production cost [5] | Climate extremes, 24/7 operation, high-volume space conditioning |
| Agricultural Greenhouse | Lighting & CO₂ Enrichment | Significant portion of operational energy [8] | High-intensity electric lighting, CO₂ generation/compression |
| Pharmaceutical Bio-Lab | Cleanroom HVAC Systems | 60-75% of total facility energy [7] | Continuous 24/7 operation, high air-exchange rates, strict humidity/temperature control |
| Pharmaceutical Bio-Lab | Process Cooling & Refrigeration | Notable secondary load [9] | Laboratory equipment, sample and reagent storage |

Table 2: Documented Energy Savings from Advanced Climate Control Strategies

| Strategy | Application Context | Documented Energy Saving | Key Metric |
|---|---|---|---|
| Global Setpoint Optimization [5] | Greenhouse Climate Control | Up to 27% | Reduction in total energy consumption for climate regulation |
| Hierarchical MPC & DRL Control [6] | Semi-Closed Greenhouse | Energy-efficient operation reported | Maintained performance with actuator faults and weather uncertainty |
| Targeted Rootzone Heating [10] | Greenhouse Production | Over 30% | Reduction in fuel bills for heating |
| Demand-Controlled Filtration & VAV [7] | Pharmaceutical Cleanroom HVAC | Over 90% in exemplary cases | Reduction in HVAC energy usage |

Dynamic Climate Control Protocols for Energy Reduction

Protocol: Hierarchical Control for Greenhouse Climate and Energy Management

This protocol describes a dual-loop control system that separates slow economic optimization from fast, robust climate tracking, maximizing energy efficiency and adaptability.

I. Experimental Principle and Objective

A hierarchical controller combines the long-term planning of Model Predictive Control (MPC) with the real-time resilience of Deep Reinforcement Learning (DRL). The objective is to minimize the total energy cost of greenhouse or growth chamber operation while maintaining climate conditions within a predefined optimal range for the specimen, even under equipment malfunction or variable external weather [6].

II. Research Reagent Solutions

- Greenhouse/Bio-Lab Digital Twin: A validated mathematical model simulating the internal climate dynamics (energy, moisture, CO₂ balances) in response to weather, actuator states, and crop/lab processes. Function: Serves as a virtual environment for training the DRL agent and testing MPC without risking real specimens [6] [11].

- Historical Weather Data Dataset: High-resolution (e.g., hourly) local data for temperature, solar radiation, humidity, and wind speed. Function: Provides the external disturbance input for both the MPC's forecasts and the DRL's training environment [6].

- Particle Swarm Optimization (PSO) Algorithm: An optimization tool. Function: Used to fine-tune the parameters of the inner-loop controller or to solve the MPC's optimization problem to find the most economical setpoints [5] [11].

III. Step-by-Step Workflow

System Identification & Modeling: Develop a dynamic model of the facility's microclimate. For a greenhouse, this includes heat transfer, vapor balance, and CO₂ flux. For a bio-lab, this focuses on HVAC dynamics and internal heat loads [11].

Upper-Level Controller (Economic Optimization):

a. Input: Forecasted weather, dynamic energy pricing, and crop growth stage or lab protocol requirements.

b. Process: The MPC uses the dynamic model to predict the facility's behavior over a 24-48 hour horizon. It calculates the sequence of climate setpoints (e.g., temperature, humidity) that minimizes energy cost while satisfying the specimen's constraints.

c. Output: A trajectory of optimal daily setpoints [6].

Lower-Level Controller (Robust Tracking):

a. Input: The desired setpoint trajectory from the upper level and real-time sensor data from the facility.

b. Process: A DRL-based controller, pre-trained in the digital twin environment, translates the setpoints into real-time commands for actuators (heaters, chillers, vents, lights). The DRL agent is trained to be robust to disturbances like sudden cloud cover or an actuator failure.

c. Output: Precise, real-time control signals to the physical hardware [6].

Validation and Deployment:

a. Validate the entire control hierarchy in simulation under various failure and extreme weather scenarios.

b. Deploy in the physical facility with a phased approach, starting with monitoring mode to compare proposed actions with existing control, before full handover.

Diagram 1: Hierarchical Control Framework. The MPC (Upper Level) performs economic optimization, generating a setpoint trajectory for the robust DRL controller (Lower Level) to track.
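The upper-level economic optimization in the workflow above can be illustrated with a toy receding-horizon controller. This sketch rests on simplifying assumptions that are not from [6]: a single-zone first-order thermal model with hypothetical coefficients, heating only, a few discrete heater power levels, and exhaustive search over the horizon in place of a numerical solver or PSO. The lower-level DRL tracker is not shown; only the first planned move would be handed down at each step before re-planning.

```python
from itertools import product


def simulate(t0_c, powers_kw, outdoor_c, dt_h=1.0, ua_kw_per_k=2.0, cap_kwh_per_k=10.0):
    """First-order model C dT/dt = Q - UA (T - T_out), forward-Euler discretized."""
    temps, t = [], t0_c
    for q, t_out in zip(powers_kw, outdoor_c):
        t = t + dt_h / cap_kwh_per_k * (q - ua_kw_per_k * (t - t_out))
        temps.append(t)
    return temps


def economic_mpc(t0_c, prices, outdoor_c, t_min_c, t_max_c,
                 levels_kw=(0.0, 20.0, 40.0, 60.0), dt_h=1.0):
    """Search every heater power sequence; keep the cheapest one that stays in band."""
    best_plan, best_cost = None, float("inf")
    for plan in product(levels_kw, repeat=len(prices)):
        temps = simulate(t0_c, plan, outdoor_c, dt_h=dt_h)
        if all(t_min_c <= t <= t_max_c for t in temps):
            cost = sum(price * q * dt_h for price, q in zip(prices, plan))
            if cost < best_cost:
                best_plan, best_cost = plan, cost
    return best_plan, best_cost


def receding_horizon_step(t0_c, prices, outdoor_c, t_min_c, t_max_c):
    """Apply only the first move of the optimal plan; re-plan at the next step."""
    plan, _ = economic_mpc(t0_c, prices, outdoor_c, t_min_c, t_max_c)
    if plan is None:
        raise RuntimeError("no feasible plan; widen the band or add capacity")
    return plan[0]
```

For example, with hourly prices [0.1, 0.1, 1.0, 1.0], outdoor air at 0 °C, a 20 °C start and an 18 to 24 °C band, the search heats at 60 kW in both cheap hours and costs 52 price units, against 88 for holding a constant 40 kW. Exhaustive search grows exponentially with the horizon, which is why practical MPC relies on a solver or a metaheuristic such as PSO.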

Protocol: Precision Zone and HVAC Control for Bio-Lab Energy Load Reduction

This protocol focuses on reducing the dominant HVAC energy burden in pharmaceutical cleanrooms and laboratories through dynamic airflow control and system-level optimization.

I. Experimental Principle and Objective

The protocol aims to replace constant-volume HVAC operation with demand-based control. By dynamically adjusting air change rates and conditioning setpoints based on real-time occupancy and process load, significant energy can be saved without compromising the sterile or controlled environment [7].

II. Research Reagent Solutions

- Variable Air Volume (VAV) Terminal Units: HVAC components that can modulate airflow to a specific zone. Function: Reduce airflow to individual lab spaces or cleanrooms when they are unoccupied or have lower contamination risk [7].

- Occupancy Sensors and Particle Counters: Real-time monitoring devices. Function: Provide the data input for demand-controlled systems, signaling when a space is occupied or if air quality degrades, triggering increased ventilation [7].

- High-Efficiency Particulate Air (HEPA) Filters with Demand-Controlled Filtration (DCF): A system that modulates fan speed based on real-time air purity readings. Function: Reduces the fan energy required to push air through the HEPA filter system when full filtration is not required [7].

III. Step-by-Step Workflow

Facility Zoning and Sensor Deployment:

a. Divide the lab facility into discrete climate control zones based on function and occupancy patterns.

b. Install networked sensors in each zone for temperature, relative humidity, differential pressure, CO₂, and particulate matter.

Baseline Profiling:

a. Monitor and log the environmental data and HVAC energy consumption for a minimum of two weeks under standard operating procedures to establish a baseline.

Control Logic Implementation:

a. Integrate VAV terminals with the Building Management System (BMS).

b. Program the BMS with the following dynamic control rules:

- For occupied modes: Maintain standard design setpoints for temperature, humidity, and air changes per hour (ACH).

- For unoccupied modes: Implement setback strategies, such as relaxing temperature and humidity bounds and reducing ACH to a pre-validated safe minimum.

- Continuous demand control: Use particle counters to modulate ACH in real-time, increasing ventilation only when needed to maintain cleanliness class.

Validation and Commissioning:

a. Execute a performance qualification (PQ) protocol to demonstrate that all zones maintain compliance with their environmental specifications (e.g., ISO 14644) under the new dynamic control scheme.

b. Continuously meter HVAC energy consumption and compare it to the baseline to calculate and report energy savings.
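The dynamic control rules programmed into the BMS can be expressed as a small decision function. This is a hedged sketch: the ACH values and particle alert threshold are hypothetical placeholders that would have to come from the facility's validated design and PQ data, the class and function names are illustrative, and a real BMS would implement the logic in its own control language.

```python
from dataclasses import dataclass


@dataclass
class ZonePolicy:
    # Hypothetical values; replace with validated design figures.
    design_ach: float = 20.0         # occupied-mode air changes per hour
    setback_ach: float = 6.0         # pre-validated safe minimum when unoccupied
    max_ach: float = 30.0            # ventilation used when particle counts rise
    particle_alert: float = 3000.0   # counts per m^3 that trigger extra ventilation


def target_ach(occupied: bool, particles_per_m3: float, policy: ZonePolicy) -> float:
    """Occupancy sets the baseline ACH; particle counts can only raise it."""
    ach = policy.design_ach if occupied else policy.setback_ach
    if particles_per_m3 > policy.particle_alert:
        ach = max(ach, policy.max_ach)
    return ach
```

Letting the particle rule override the occupancy setback, rather than the reverse, keeps the cleanliness class as the binding constraint.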

Integrated Climate Control Strategy Diagram

The following diagram synthesizes the key strategies from greenhouse and bio-lab contexts into a unified workflow for reducing energy burden.

Diagram 2: Integrated Energy Reduction Strategy. A multi-pronged approach combining strategic planning, intelligent control, and efficient hardware.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents and Solutions for Climate-Energy Research

| Item Name | Function/Application in Research | Specification Notes |
|---|---|---|
| Data-Driven Greenhouse Model | Serves as a virtual testbed for simulating and optimizing control algorithms before real-world deployment. | Must include validated sub-models for energy, water vapor, CO₂, and crop growth [5] [11]. |
| Digital Twin for HVAC Systems | Allows for simulation and optimization of cleanroom HVAC control strategies like VAV and DCF. | Should be calibrated against a physical lab facility's performance data [9] [7]. |
| Particle Swarm Optimization (PSO) | A computational method for finding optimal parameters in complex, non-linear models, such as greenhouse setpoints. | Used to solve the high-dimensional optimization problems in MPC and global setpoint planning [5] [11]. |
| Deep Reinforcement Learning (DRL) Algorithm | Provides a framework for developing control policies that can adapt to uncertainties and faults. | Requires a carefully designed reward function that balances energy use against climate tracking error [6]. |
| IoT Sensor Network | Enables high-resolution, real-time data acquisition for model validation and direct feedback control. | Sensors for Temperature, Relative Humidity, CO₂, PAR Light, and Soil/Substrate Moisture are critical [8] [11]. |

Application Notes: System Integration for Dynamic Climate Control

Integrating smart thermostats, IoT sensors, and motorized dampers creates a dynamic control system capable of significantly reducing the energy load in greenhouse environments. This synergy allows for real-time, zone-specific climate adjustments that optimize growing conditions while minimizing energy consumption from Heating, Ventilation, and Air Conditioning (HVAC) systems.

The core principle involves using IoT sensors to collect real-time data on environmental parameters such as temperature, humidity, and light intensity. This data is processed by a central controller, which then issues commands to the smart thermostat to adjust HVAC operation and to motorized dampers to modulate airflow into specific zones. Research indicates that such smart control systems can achieve substantial HVAC operational energy and greenhouse gas (GHG) emissions savings, which offset the initial embodied energy of deploying the additional technology [12]. The U.S. indoor air quality market is projected to grow from $9.8 billion in 2022 to $11.9 billion by 2027, underscoring the importance of advanced, efficient climate control systems [13].
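The sensor-to-damper step described above can be sketched as a proportional rule in heating mode. The gain, minimum damper opening, deadband, and function names below are hypothetical; a production zone controller would also handle cooling mode, rate limits, and duct static-pressure control.

```python
def damper_positions(setpoints_c: dict[str, float], temps_c: dict[str, float],
                     gain_pct_per_k: float = 25.0,
                     min_open_pct: float = 10.0) -> dict[str, float]:
    """Heating mode: open each zone damper in proportion to its temperature deficit."""
    positions = {}
    for zone, setpoint in setpoints_c.items():
        deficit_k = setpoint - temps_c[zone]
        # Clamp between an assumed minimum opening (for ventilation) and fully open.
        positions[zone] = min(100.0, max(min_open_pct, gain_pct_per_k * deficit_k))
    return positions


def heating_call(setpoints_c: dict[str, float], temps_c: dict[str, float],
                 deadband_k: float = 0.5) -> bool:
    """Ask the thermostat for heat only if some zone is below setpoint minus deadband."""
    return any(temps_c[z] < sp - deadband_k for z, sp in setpoints_c.items())
```

With both zones set to 20 °C and readings of 18 °C and 21 °C, the cool zone's damper opens to 50% while the warm zone stays at the 10% minimum, and the thermostat is asked for heat.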

Quantitative Performance Data

The following table summarizes key performance metrics and characteristics of the core components as established in current research and market analyses.

Table 1: Performance Metrics and Characteristics of Dynamic Climate Control Components

| Component | Key Function | Performance/Characteristic | Impact on Energy Load |
|---|---|---|---|
| Smart Thermostat | Learns schedules, adjusts setpoints remotely, uses occupancy and weather data for predictive control [13]. | Market valued at $1.2B (2022), projected $3.8B by 2029 [13]. | Saves energy by adjusting temperatures based on occupancy and preferences [13]. |
| IoT Sensor Network | Measures real-time temperature, humidity, CO₂, and light levels across zones. | Enables data-driven control logic for HVAC and damper systems [12]. | Provides critical data to prevent over-conditioning unoccupied or self-regulating zones. |
| Motorized Damper | Modulates airflow to specific zones (VAV systems) based on sensor input. | Integral to Zone Control systems, allowing independent area conditioning [13]. | Reduces energy waste by directing conditioned air only where needed. |
| Variable-Speed Compressor | Adjusts motor speed to meet precise thermal demand [13]. | Often paired with smart control systems for maximum efficiency. | Provides energy savings and quieter operation compared to single-stage units [13]. |
| Complete Smart HVAC Control System | Integrates all components for optimized, holistic climate management. | Life cycle assessment shows net operational energy and GHG savings offset embodied impacts [12]. | Quantified net reduction in total life cycle energy and GHG emissions [12]. |

Experimental Protocols

Protocol: Life Cycle Energy and GHG Emission Analysis of Smart vs. Traditional HVAC Control

This methodology quantifies the environmental impact of deploying a smart climate control system versus a traditional system, providing a holistic view of its efficacy in reducing greenhouse energy loads [12].

2.1.1. Objective: To perform a comparative life cycle assessment (LCA) quantifying the embodied and operational energy needs and greenhouse gas (GHG) emissions of a traditional HVAC control system versus a smart HVAC control system with thermostats, IoT sensors, and motorized dampers in a research greenhouse.

2.1.2. Materials and Reagents:

- Control System Configurations: One traditional thermostat-based control system; one smart control system with a programmable central controller, IoT sensor network, and motorized dampers.

- Software: Life cycle inventory (LCI) database (e.g., Ecoinvent), building energy simulation software (e.g., EnergyPlus).

2.1.3. Procedure:

- System Scoping: Define the boundaries of the LCA to include the embodied impacts of all components (production, manufacturing) and the operational energy use over a defined lifespan (e.g., 15 years).

- Component Inventory: Create a detailed bill of materials for both the traditional and smart control systems, specifying all electronic components, sensors, dampers, and housing materials.

- Embodied Impact Calculation: Use a hybrid life cycle inventory approach to calculate the total embodied energy and GHG emissions for each system based on the component inventory [12].

- Operational Energy Simulation:

  a. Develop a calibrated energy model of the target greenhouse.

  b. Model the HVAC operational energy for the traditional system using its standard control logic.

  c. Model the HVAC operational energy for the smart system, implementing its dynamic control logic (e.g., zone-based setpoints, occupancy-driven setbacks, predictive adjustments based on sensor data).

- Data Synthesis: Calculate the net life cycle energy and GHG emissions by summing the embodied and operational impacts for each system. Compare the results to determine the payback period and net benefit of the smart system.
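The Data Synthesis step reduces to simple arithmetic once embodied and annual operational impacts are known. The sketch below assumes constant annual operational use and ignores discounting and equipment replacement; the function names and the inputs in the usage note are hypothetical, not results from [12]. The same functions apply to energy (e.g., GJ) or GHG emissions (e.g., kg CO₂e), provided the units are consistent.

```python
import math


def life_cycle_total(embodied: float, annual_operational: float, years: int) -> float:
    """Net life cycle impact = embodied impact + operational impact over the lifespan."""
    return embodied + annual_operational * years


def payback_years(embodied_smart: float, embodied_trad: float,
                  annual_op_smart: float, annual_op_trad: float) -> float:
    """Years until the smart system's operational savings offset its extra embodied impact."""
    extra_embodied = embodied_smart - embodied_trad
    annual_saving = annual_op_trad - annual_op_smart
    if annual_saving <= 0:
        return math.inf  # the smart system never pays back
    return max(0.0, extra_embodied / annual_saving)
```

For instance, `payback_years(1500, 500, 9000, 10000)` gives 1.0 year, and over a 15-year lifespan `life_cycle_total(1500, 9000, 15)` is 136,500 versus 150,500 for the traditional system.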

Protocol: Real-World Efficacy of Zone Control via IoT Sensors and Motorized Dampers

This experiment measures the direct energy savings and climate stability achieved by implementing a dynamic zoning strategy.

2.2.1. Objective: To evaluate the energy consumption and temperature/humidity uniformity achieved by a zoned HVAC system using IoT sensors and motorized dampers compared to a single-zone system in a heterogeneous greenhouse environment.

2.2.2. Materials and Reagents:

- Test Setup: A greenhouse compartment with varying solar exposure and plant canopy densities.

- IoT Sensors: A network of at least three temperature/RH sensors per zone, calibrated and logging at 5-minute intervals.

- Actuators: Motorized dampers installed in the air distribution ducts serving each defined zone.

- Control System: A central controller (e.g., a smart thermostat with expansion capability) programmed with zone-specific setpoints.

- Data Logger: A system to record total HVAC system energy consumption.

2.2.3. Procedure:

- Baseline Phase: Operate the HVAC system in a single-zone mode for one week. Record total energy consumption and spatial distribution of temperature and humidity.

- Intervention Phase: Activate the zoned control system for one week. Program the controller to adjust damper positions and HVAC setpoints based on real-time readings from the IoT sensors in each zone.

- Data Analysis: Calculate the total energy consumption during both phases. To compare climate uniformity, compute the standard deviation of temperature and humidity across sensors at each logging interval and compare these spatial spreads between the two phases.
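The two comparisons in the Data Analysis step can be computed as below. This sketch assumes the readings are already aligned into per-timestamp snapshots (one value per sensor) and uses the population standard deviation across sensors as the uniformity metric; the function names are hypothetical. Because the two phases run in different weeks, the energy figures would ideally also be normalized for weather (for example, by heating degree-days) before drawing conclusions.

```python
from statistics import mean, pstdev


def energy_saving_pct(baseline_kwh: float, intervention_kwh: float) -> float:
    """Percentage reduction of the intervention phase relative to the baseline."""
    return 100.0 * (baseline_kwh - intervention_kwh) / baseline_kwh


def spatial_spread(snapshots: list[list[float]]) -> float:
    """Mean over timestamps of the across-sensor standard deviation (lower = more uniform)."""
    return mean(pstdev(snapshot) for snapshot in snapshots)
```

For example, `energy_saving_pct(1000.0, 850.0)` is 15.0, and `spatial_spread([[20.0, 22.0], [21.0, 21.0]])` is 0.5.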

System Architecture and Experimental Visualization

Dynamic Climate Control Data Flow

Life Cycle Assessment Experimental Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for Dynamic Climate Control Research

| Item Name | Function/Application in Research |
|---|---|
| Calibrated IoT Sensor Array | Provides high-fidelity, research-grade data on environmental parameters (T, RH, CO₂, PAR) essential for validating control algorithms and model calibration. |
| Programmable Central Controller | The computational core for implementing and testing custom dynamic control logics, such as model predictive control or zone-based optimization rules. |
| Life Cycle Inventory (LCI) Database | A database containing embodied energy and emission factors for materials and electronic components, required for conducting a comprehensive Life Cycle Assessment (LCA) [12]. |
| Building Energy Modeling (BEM) Software | Software platform used to create a virtual model of the greenhouse to simulate HVAC energy use under different control scenarios before physical implementation [12]. |
| Data Acquisition System (DAQ) | Hardware and software for aggregating, timestamping, and storing time-series data from all sensors and the energy meter for subsequent analysis. |
| Variable Refrigerant Flow (VRF) System | An advanced, electrically-driven HVAC system capable of simultaneous heating and cooling in different zones, ideal for testing high-efficiency control strategies. |
| Protocol for Hybrid LCI | A standardized methodology for combining process-based and input-output data to comprehensively quantify the embodied energy of complex electronic systems [12]. |

Principles of Real-Time Data Utilization for Load Balancing and Adaptation

In modern greenhouse agriculture, dynamic climate control is paramount for optimizing plant growth while minimizing energy consumption. The core challenge lies in managing the significant energy load required for heating, cooling, and humidity control—a major contributor to operational costs and environmental footprint. This document outlines the principles of using real-time data for dynamic load balancing and system adaptation, framing them within the context of reducing greenhouse energy load. By treating a greenhouse as a complex system of interconnected zones, principles from computer science such as adaptive load balancing and resource scheduling can be applied to achieve substantial energy savings and improve climate stability [14] [15]. These strategies enable a responsive and efficient climate management system that dynamically allocates resources—such as heated air, coolant, or shade—based on real-time sensor readings and predictive models.

Core Principles of Real-Time Data Utilization

The effective use of real-time data for adaptive control in greenhouses is governed by several key principles:

Real-Time Monitoring and Data Acquisition: The foundation of any adaptive system is a robust sensor network. This involves the continuous collection of high-fidelity data on climatic parameters including air temperature, relative humidity, photosynthetically active radiation (PAR), soil moisture, and carbon dioxide concentration [14] [15]. For energy load monitoring, sensors must also track the status and power consumption of actuators like HVAC systems, lights, and pumps. The data acquisition must be designed for low latency to enable timely system responses.

Dynamic Resource Allocation as Load Balancing: The greenhouse environment can be conceptualized as a distributed computing system where environmental control resources (e.g., heat, cool air, dehumidification) are the "services," and the individual zones or plant trays are the "clients" making requests. An adaptive load balancing algorithm, similar to those used in web server clusters, can be employed to distribute these resources efficiently [16] [15]. Instead of a static schedule, the system dynamically routes resources to zones with the highest demand, thereby preventing some areas from being over-conditioned while others are under-served. This maximizes the efficiency of each unit of energy consumed.
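One simple way to realize this allocation is demand-proportional sharing of a limited capacity, analogous to weighted load balancing. In the sketch below, each zone's demand is assumed proportional to its temperature deficit (a hypothetical gain), and when total demand exceeds plant capacity every zone is scaled back by the same fraction; other policies, such as serving the largest deficit first, are equally possible. Function names are illustrative.

```python
def zone_demands(setpoints_c: dict[str, float], temps_c: dict[str, float],
                 gain_kw_per_k: float = 10.0) -> dict[str, float]:
    """Heating demand per zone, proportional to its deficit below setpoint."""
    return {z: max(0.0, gain_kw_per_k * (sp - temps_c[z])) for z, sp in setpoints_c.items()}


def allocate_capacity(capacity_kw: float, demands_kw: dict[str, float]) -> dict[str, float]:
    """Grant full demand if it fits; otherwise scale every zone by the same factor."""
    total = sum(demands_kw.values())
    if total <= 0:
        return {z: 0.0 for z in demands_kw}
    scale = min(1.0, capacity_kw / total)
    return {z: d * scale for z, d in demands_kw.items()}
```

With three zones at a 20 °C setpoint reading 18, 18 and 21 °C, the two cold zones each request 20 kW; a 30 kW plant then grants each 15 kW and gives nothing to the zone already above setpoint.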

Predictive Scaling Based on Forecasts: Proactive adaptation is superior to purely reactive responses. By integrating external weather forecasts and historical climate patterns, the system can anticipate future energy demands [14]. For instance, if a sudden drop in external temperature is predicted, the heating system can be pre-emptively engaged in a gradual manner, avoiding a high-power surge later. This smooths the energy load profile and reduces peak demand charges.
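Gradual pre-emptive engagement can be sketched as a ramp-rate limit applied to a forecast heat-demand profile. The backward pass below starts raising heating power early enough that it never climbs by more than `max_step_kw` per interval while still meeting every forecast interval. The steady-state demand model (UA times the indoor-outdoor difference), the function names, and all numbers are hypothetical, and because thermal storage is not modeled, this smooths the rise in load rather than lowering the peak itself.

```python
def forecast_demand(setpoint_c: float, outdoor_forecast_c: list[float],
                    ua_kw_per_k: float) -> list[float]:
    """Steady-state heating demand for each forecast interval."""
    return [max(0.0, ua_kw_per_k * (setpoint_c - t)) for t in outdoor_forecast_c]


def ramp_limited_schedule(demand_kw: list[float], max_step_kw: float) -> list[float]:
    """Raise earlier intervals so power never rises faster than max_step_kw per step."""
    schedule = list(demand_kw)
    # Walk backward: each interval must be within max_step_kw of the next one.
    for k in range(len(schedule) - 2, -1, -1):
        schedule[k] = max(schedule[k], schedule[k + 1] - max_step_kw)
    return schedule
```

A forecast demand of [10, 10, 10, 40] kW with `max_step_kw=10` becomes [10, 20, 30, 40]: heating starts ramping two intervals before the cold spell instead of jumping by 30 kW at once.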

System Stability and Fault Tolerance: An adaptive system must be resilient to sensor failures, communication dropouts, or actuator malfunctions. Principles from fault-tolerant distributed systems should be incorporated [14] [17]. This includes implementing heartbeat mechanisms to monitor device health, maintaining system stability by avoiding control oscillations (similar to cache thrashing [14]), and having fallback strategies to default, safe operating modes when critical failures are detected.
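The heartbeat and fallback logic, together with a deadband to suppress on/off oscillation, can be sketched as follows. The timeout, deadband width, mode names, and class and function names are hypothetical; the injectable clock exists only so the behavior can be tested without waiting.

```python
import time
from typing import Callable, Iterable


class HeartbeatMonitor:
    """Tracks when each device last reported and flags those that went silent."""

    def __init__(self, timeout_s: float, clock: Callable[[], float] = time.monotonic):
        self.timeout_s = timeout_s
        self.clock = clock
        self.last_seen: dict[str, float] = {}

    def beat(self, device_id: str) -> None:
        self.last_seen[device_id] = self.clock()

    def is_alive(self, device_id: str) -> bool:
        seen = self.last_seen.get(device_id)
        return seen is not None and self.clock() - seen <= self.timeout_s


def choose_mode(monitor: HeartbeatMonitor, critical: Iterable[str]) -> str:
    """Fall back to a safe default if any critical sensor or actuator is silent."""
    return "ADAPTIVE" if all(monitor.is_alive(d) for d in critical) else "SAFE_DEFAULT"


def deadband_heater(on: bool, temp_c: float, setpoint_c: float, band_k: float) -> bool:
    """Hysteresis switch: change state only outside setpoint +/- band to avoid chatter."""
    if temp_c < setpoint_c - band_k:
        return True
    if temp_c > setpoint_c + band_k:
        return False
    return on
```

A device that has never reported counts as silent, so a misconfigured sensor list also drives the system into the safe default rather than into adaptive control on missing data.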

Application Notes and Protocols

Protocol 1: Deployment of a Real-Time Sensor Network

Objective: To establish a reliable sensor network for continuous monitoring of greenhouse microclimates and energy consumption.

Materials:

- Environmental sensors (temperature, humidity, PAR, CO2)

- Power meters (for HVAC, lighting, irrigation pumps)

- Microcontrollers (e.g., Arduino, Raspberry Pi) or programmable logic controllers (PLCs)

- Central data aggregation server (local or cloud-based)

- Secure communication infrastructure (e.g., wired Ethernet, Wi-Fi, LoRaWAN)

Methodology:

- Sensor Placement Strategy: Deploy sensors in a grid formation across the greenhouse, ensuring coverage for all distinct zones and vertical strata (canopy level, root zone). Place sensors away from direct HVAC airflow or sunlight to prevent biased readings.

- Data Acquisition and Transmission: Configure microcontrollers/PLCs to sample sensor data at a high frequency (e.g., every 10 seconds). Implement data smoothing (e.g., moving average filters) at the edge to filter out noise, and transmit the smoothed values at a lower rate than the raw sampling rate to reduce network traffic.

- Data Aggregation and Ingestion: Transmit the processed data packets to a central server using a lightweight protocol such as MQTT. The central server should timestamp and ingest each data point into a time-series database (e.g., InfluxDB).

- Data Validation: Implement a data validation routine on the server to identify and flag outliers or physically impossible values (e.g., a relative humidity of 110%). Flagged data can be excluded from control decisions and trigger maintenance alerts.
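The edge smoothing and server-side validation steps above can be sketched as follows. Several choices here are assumptions: the window of 6 samples (one minute at a 10 s sampling rate), publishing every 6th smoothed value, the function names, and the plausibility limits in `PLAUSIBLE_RANGES` are all hypothetical. Transmission over MQTT is omitted so the sketch is not tied to a particular client library.

```python
from collections import deque
from statistics import mean

# Hypothetical plausibility limits per measurement type; adjust to the sensors' rated ranges.
PLAUSIBLE_RANGES = {
    "temperature_c": (-40.0, 60.0),
    "relative_humidity_pct": (0.0, 100.0),
    "co2_ppm": (0.0, 10_000.0),
    "par_umol_m2_s": (0.0, 2_500.0),
}


class MovingAverage:
    """Fixed-window moving average used for edge smoothing."""

    def __init__(self, window: int = 6):
        self.buffer = deque(maxlen=window)  # oldest sample drops out automatically

    def update(self, value: float) -> float:
        self.buffer.append(value)
        return mean(self.buffer)


def edge_pipeline(raw_samples, window=6, publish_every=6):
    """Smooth raw 10 s samples and keep only every Nth smoothed value for transmission."""
    smoother = MovingAverage(window)
    to_publish = []
    for i, value in enumerate(raw_samples, start=1):
        smoothed = smoother.update(value)
        if i % publish_every == 0:
            to_publish.append(smoothed)
    return to_publish


def is_plausible(kind: str, value: float) -> bool:
    """Server-side check: False for physically impossible readings (e.g., 110 % RH)."""
    low, high = PLAUSIBLE_RANGES[kind]
    return low <= value <= high
```

With these defaults, each sensor sends one smoothed value per minute instead of six raw values. Readings for which `is_plausible` returns `False` would be flagged and excluded from control decisions.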

Protocol 2: Adaptive Load Balancing for Zone-Based Climate Control

Objective: To dynamically allocate thermal energy and ventilation resources across greenhouse zones based on real-time load.

Materials:

- Central climate control computer running the load balancing algorithm.

- Actuators: Modulating valves for heating/cooling, variable-speed fans, and adjustable vents.

- Real-time data feed from the sensor network (Protocol 1).

Methodology:

- Quantify Zone "Load": For each zone i, calculate a dynamic load metric L_i. This metric can be a weighted function of the deviation from the setpoint:

$$L_i = w_t \, |T_{\text{setpoint}} - T_{\text{actual}}| + w_h \, |H_{\text{setpoint}} - H_{\text{actual}}|$$

where w_t and w_h are weights for temperature and humidity importance, respectively [15].

- Collect System-Wide Metrics: The central controller periodically (e.g., every 1-5 minutes) polls the load L_i and current actuator state for all zones.

- Execute Load Balancing Algorithm: Employ a variant of the Power of Two Choices (P2C) adaptive load balancing algorithm [16].

- a. Selection: Randomly select two zones.

- b. Evaluation: Query the real-time load metric for the two selected zones.

- c. Decision: Direct the next available resource (e.g., a burst of warm air) to the zone with the higher load metric L_i.

- Integrate Predictive Scaling: Adjust the overall capacity of the system (e.g., boiler output, chiller setpoint) based on forecasts. If a cold night is predicted, the boiler's base temperature can be raised proactively to meet the anticipated increase in demand across all zones.
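The load metric and the P2C selection step can be sketched in a few lines. This is a minimal illustration, not the controller used in the cited work. The function names, the example weights (w_t = 1.0, w_h = 0.05) and the readings are hypothetical. Sampling two zones at random means the least-loaded zone can never win a round, while the controller avoids scanning every zone on each decision.

```python
import random


def zone_load(t_set, t_act, h_set, h_act, w_t=1.0, w_h=0.05):
    """Load metric L_i = w_t*|T_set - T_act| + w_h*|H_set - H_act|.

    The default weights are illustrative: w_h = 0.05 makes a 1 %RH deviation
    count like a 0.05 °C deviation.
    """
    return w_t * abs(t_set - t_act) + w_h * abs(h_set - h_act)


def p2c_pick(loads, rng):
    """Power of Two Choices: sample two distinct zones, return the more loaded one."""
    a, b = rng.sample(sorted(loads), 2)
    return a if loads[a] >= loads[b] else b


# One control cycle with hypothetical readings: (T_set, T_act, RH_set, RH_act)
readings = {
    "zone_1": (22.0, 21.8, 65.0, 64.0),
    "zone_2": (22.0, 19.5, 65.0, 72.0),
    "zone_3": (24.0, 23.1, 70.0, 70.0),
}
loads = {zone: zone_load(*r) for zone, r in readings.items()}
target = p2c_pick(loads, random.Random(42))
print(loads, "-> next heating burst to", target)
```

In a running system, `loads` would be refreshed from the sensor feed after each allocation, so a zone that has just been served is less likely to win the next round.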

Data Presentation and Analysis

Table 1: Performance Comparison of Load Balancing Strategies in a Simulated Greenhouse Cluster

| Strategy | Average Temperature Deviation (°C) | Energy Consumption (kWh/day) | CPU Load (Central Controller) |
|---|---|---|---|
| Static Round-Robin | 1.2 | 105 | Low |
| Adaptive Load Balancing (P2C) | 0.3 | 89 | Moderate |
| Predictive + Adaptive | 0.2 | 78 | High |
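Relative to the Static Round-Robin baseline, the daily energy figures in Table 1 correspond to savings of roughly 15% for P2C and 26% for the predictive + adaptive strategy. The arithmetic is:

```python
# Energy figures from Table 1 (kWh/day); savings are relative to Static Round-Robin.
energy_kwh_day = {
    "Static Round-Robin": 105,
    "Adaptive Load Balancing (P2C)": 89,
    "Predictive + Adaptive": 78,
}
baseline = energy_kwh_day["Static Round-Robin"]
savings_pct = {
    name: round(100 * (baseline - e) / baseline, 1) for name, e in energy_kwh_day.items()
}
print(savings_pct)
```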

Table 2: Key Real-Time Data Sources for Greenhouse Load Balancing

| Data Source | Measurement Frequency | Primary Function in Load Calculation | Latency Requirement |
|---|---|---|---|
| Zone Air Temperature | Every 10 seconds | Core component of load metric L_i | Low (< 5 s) |
| Zone Relative Humidity | Every 10 seconds | Core component of load metric L_i | Low (< 5 s) |
| HVAC Power Draw | Every 1 second | System-wide capacity monitoring & energy reporting | Medium (< 30 s) |
| External Weather Forecast | Every 1 hour | Predictive scaling of system capacity | Relaxed (< 1 h) |
| Soil Moisture Tension | Every 5 minutes | Influencing humidity load weights | Medium (< 1 min) |

The Scientist's Toolkit

Table 3: Research Reagent Solutions for Climate Control Research

| Item | Function/Description | Application in Protocol |
|---|---|---|
| Programmable Logic Controller (PLC) | An industrial computer adapted for robust control of machinery and processes. | Serves as the ruggedized hardware for executing the adaptive load balancing algorithm (Protocol 2) in a greenhouse environment. |
| MQTT Broker | A server that implements the MQTT protocol, a lightweight publish-subscribe network for messaging. | Acts as the central nervous system for real-time data exchange between sensors, actuators, and the control logic (Protocols 1 & 2). |
| Time-Series Database (e.g., InfluxDB) | A database optimized for storing and querying data points associated with timestamps. | Used for ingesting and storing all historical sensor and control data for analysis, model training, and system performance validation. |
| Eulerian Video Magnification (EVM) Algorithm | A computational technique that amplifies subtle color variations and small motions in video sequences that are invisible to the naked eye [18]. | Can be repurposed to non-invasively monitor plant physiological stress (e.g., water stress via subtle leaf movements) as an early indicator for pre-emptive climate adjustment. |

System Architecture and Workflow Visualization

Figure 1: High-Level Architecture for Adaptive Climate Control

Figure 2: Adaptive Load Balancing (P2C) Workflow

The integration of photovoltaic (PV) solar and geothermal energy systems represents a transformative approach for achieving ultra-high efficiency in dynamic climate control. This synergy leverages the complementary operational profiles of both technologies: PV systems provide peak electrical generation during daylight hours, while geothermal heat pumps offer consistent, high-efficiency thermal energy exchange 24/7, independent of weather conditions [19] [20]. For research focused on reducing greenhouse energy loads, this combination is particularly potent. A geothermal system drastically reduces the electrical demand for heating and cooling, since it can use up to 50% less energy than conventional HVAC systems [21]. This reduced and predictable energy load can then be served more effectively by an appropriately sized on-site PV array, creating a closed-loop, resilient, and low-carbon energy system for maintaining precise indoor environmental conditions [19].

Quantitative System Comparison and Performance Characteristics

A critical step in designing an integrated system is understanding the distinct yet complementary technical profiles of PV and geothermal technologies. The following tables summarize their key characteristics and roles within a synergistic system.

Table 1: Performance and Economic Comparison of Solar PV and Geothermal Systems

| Factor | Solar Photovoltaic (PV) Systems | Geothermal Systems |
|---|---|---|
| Primary Energy Source | Sunlight [22] | Subsurface thermal energy [22] |
| Energy Output | Electricity [22] | Thermal energy for heating/cooling [21] |
| Capacity Factor | 20-30% (weather and daylight dependent) [20] | >90% (consistent, 24/7 operation) [20] |
| Typical Efficiency | Up to ~22.8% for commercial panels; up to 47.6% for lab-scale multi-junction cells [23] | Up to 50% less energy used than conventional HVAC [21] |
| Key Advantage | Zero fuel cost, scalable, reduces grid electricity purchases [22] [20] | Highly efficient, stable baseload thermal power, low operating costs [22] [20] |
| Key Limitation | Intermittent generation [22] [24] | High initial installation cost [22] |
| Land Use | Significant surface area required for panels [22] | Smaller surface footprint; subsurface land use for ground loops [20] |

Table 2: Complementary Roles in an Integrated Climate Control System

| Characteristic | Solar PV Contribution | Geothermal Contribution |
|---|---|---|
| Generation Profile | Intermittent, peak output during daytime [20] | Consistent, baseload operation 24/7 [20] |
| Grid Interaction | Can feed excess electricity to the grid [19] | Reduces overall building grid electricity demand [21] |
| Role in Energy Load Reduction | Offsets electricity consumption from the grid, including for auxiliary systems [19] | Directly reduces the largest component of a building's thermal energy load [21] [19] |
| Synergistic Benefit | Provides carbon-free electricity to run the geothermal heat pump's compressor and circulation pumps [19] | Lowers the total electrical load, making it easier for a PV system to meet a significant portion of a building's net energy needs [19] |

Experimental Protocols for System Integration and Performance Validation

Protocol 1: Pre-Installation Site Assessment and System Sizing

Objective: To quantitatively assess the site-specific conditions and accurately size the integrated PV-geothermal system components to meet the target energy load reduction.

Materials: Pyranometer, ground temperature sensors, thermal response test (TRT) rig, data logger, building energy modeling software (e.g., EnergyPlus), geological survey reports.

Methodology:

- Solar Resource Assessment:

- Install a pyranometer on-site for a minimum of one month to measure solar irradiance (W/m²).

- Calculate the average daily and seasonal solar energy potential (kWh/m²/day) [25].

- Analyze historical weather data to account for seasonal variations and cloud cover.

- Geological and Thermal Properties Assessment:

- Conduct borehole drilling to the planned depth of the ground loops (e.g., 200-400 feet for vertical systems) [21].

- Perform a Thermal Response Test (TRT) by circulating a fluid through a test borehole while applying a constant heat load. Monitor the fluid's inlet and outlet temperatures to determine the ground's thermal conductivity (W/m·K) and thermal resistance.

- Based on TRT results and the building's calculated heating/cooling loads, design the ground loop configuration (vertical vs. horizontal, required loop length) [21].

- Building Energy Load Profiling:

- Using building plans and HVAC specifications, model the building's hourly heating and cooling loads (in kWh) across all four seasons.

- Isolate the load that will be serviced by the geothermal heat pump.

- Integrated System Sizing:

- Size the geothermal heat pump capacity (in tons) to meet the peak thermal load.

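- Size the PV array (in kW DC) to generate at least the annual electricity consumption of the geothermal heat pump, plus a target fraction of the building's remaining electrical load. Utilize the formula:

$$P_{\text{PV}}\ (\text{kW}) = \frac{E_{\text{geo}} + f \times E_{\text{other}}}{H_{\text{sun}} \times 365 \times \eta_{\text{sys}}}$$

where E_geo is the annual electricity consumption of the geothermal heat pump (kWh), E_other is the building's remaining annual electrical load (kWh), f is the target fraction of that load to be covered, H_sun is the local average daily peak sun hours (h/day), and η_sys is the overall system efficiency (performance ratio, accounting for inverter, wiring, temperature, and soiling losses).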
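Three calculations from this protocol can be sketched as small functions:

- Integrating pyranometer readings into daily insolation.
- Estimating ground thermal conductivity from a TRT.
- Sizing the PV array.

The TRT estimate uses the common infinite line-source approximation, under which the mean fluid temperature rises linearly with ln(t). In practice the early hours of the test are usually excluded from the fit. The sizing function treats the peak-sun-hours term as the local average daily value, so multiplying by 365 gives annual hours. All function names and example inputs are hypothetical.

```python
import math


def daily_insolation_kwh_m2(irradiance_w_m2, interval_min):
    """Integrate one day of pyranometer samples (W/m^2) into kWh/m^2/day.

    Numerically this equals the day's peak sun hours (hours at 1 kW/m^2).
    """
    return sum(irradiance_w_m2) * (interval_min / 60.0) / 1000.0


def trt_conductivity(times_s, mean_fluid_temp_c, heat_rate_w, borehole_length_m):
    """Infinite line-source estimate of ground thermal conductivity (W/m·K).

    Mean fluid temperature grows roughly as (Q / (4*pi*k*H)) * ln(t) + C,
    so k = Q / (4*pi*H*m), where m is the least-squares slope of T_f vs ln(t).
    """
    x = [math.log(t) for t in times_s]
    y = list(mean_fluid_temp_c)
    x_bar = sum(x) / len(x)
    y_bar = sum(y) / len(y)
    slope = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y)) / sum(
        (xi - x_bar) ** 2 for xi in x
    )
    return heat_rate_w / (4 * math.pi * borehole_length_m * slope)


def pv_size_kw_dc(geo_kwh_per_year, other_kwh_per_year, target_fraction,
                  daily_peak_sun_hours, system_efficiency):
    """PV array size covering the heat pump plus a fraction of the remaining load."""
    annual_target_kwh = geo_kwh_per_year + target_fraction * other_kwh_per_year
    return annual_target_kwh / (daily_peak_sun_hours * 365 * system_efficiency)


# Illustrative inputs only (not measured values).
size_kw = pv_size_kw_dc(20_000, 50_000, 0.30, 4.5, 0.80)
print(f"PV array: {size_kw:.1f} kW DC")
```

Consider a heat pump using 20,000 kWh/yr, a target of 30% of a 50,000 kWh/yr remaining load, 4.5 peak sun hours per day, and a system efficiency of 0.8. These hypothetical inputs give an array of about 26.6 kW DC.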

Protocol 2: Integrated System Operation and Data Acquisition Workflow

Objective: To establish a controlled operational workflow for the integrated system and implement a robust data acquisition protocol to measure key performance indicators (KPIs).

Materials: Integrated system controller (e.g., Building Management System with custom algorithms), power meters (for PV production and building consumption), flow meters and thermocouples for the geothermal ground loop, data acquisition system (DAQ), indoor environmental quality (IEQ) sensors (temperature, humidity).

Methodology:

- System Control Logic:

- Program the system controller to prioritize the use of self-generated PV electricity for the geothermal heat pump's operation.

- Implement setpoints for indoor temperature and humidity that can be dynamically adjusted based on forecasted PV generation (e.g., pre-cooling the building during periods of high solar generation) [26].

- Data Acquisition Setup:

- Install and calibrate all sensors. Key measurement points include:

- PV System: DC and AC power output (kW), energy yield (kWh).

- Geothermal System: Fluid flow rate (L/s), inlet and outlet temperatures (°C) to the heat pump, electricity consumption (kW).

- Building: Total electricity import/export (kW), indoor temperature and humidity in controlled zones.

- Configure the DAQ to log all data at 5-minute intervals.


- Performance Monitoring Period:

- Run the integrated system for a full calendar year to capture seasonal variations.

- Continuously log all operational data as per the setup above.
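The control logic in this protocol can be sketched as two small rule-based functions: PV-first supply to the heat pump, and forecast-driven pre-cooling. This is an illustration only, not a BMS implementation. The function names, the proportional pre-cooling rule, and the parameters `max_offset_c` and `full_offset_surplus_kw` are hypothetical. `max_offset_c` should stay within the temperature tolerance of the experiments in the controlled zones.

```python
def dispatch(pv_kw, heat_pump_kw, other_kw):
    """Serve the heat pump from PV first, then other loads; return grid import/export (kW)."""
    pv_to_hp = min(pv_kw, heat_pump_kw)
    pv_left = pv_kw - pv_to_hp
    pv_to_other = min(pv_left, other_kw)
    grid_import = (heat_pump_kw - pv_to_hp) + (other_kw - pv_to_other)
    grid_export = pv_left - pv_to_other
    return {"pv_to_heat_pump": pv_to_hp, "grid_import": grid_import, "grid_export": grid_export}


def cooling_setpoint(base_c, forecast_pv_kw, forecast_load_kw,
                     max_offset_c=1.0, full_offset_surplus_kw=20.0):
    """Lower the cooling setpoint (pre-cool) in proportion to the forecast PV surplus.

    The offset grows linearly from 0 (no surplus) to max_offset_c once the surplus
    reaches full_offset_surplus_kw; both parameters are illustrative.
    """
    surplus_kw = max(0.0, forecast_pv_kw - forecast_load_kw)
    fraction = min(1.0, surplus_kw / full_offset_surplus_kw)
    return base_c - max_offset_c * fraction
```

A linear ramp keeps the behavior easy to audit against logged data. A model-predictive controller could replace it once the system model is calibrated.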

Protocol 3: Data Analysis and Performance Validation

Objective: To analyze the acquired data and validate the performance of the integrated system against predefined KPIs, including energy efficiency, GHG reduction, and load flexibility.

Materials: Collected annual dataset, statistical analysis software (e.g., Python, R), baseline energy consumption data from a pre-installation period or a reference building.

Methodology:

- Calculate Key Performance Indicators (KPIs):

- On-Site Energy Fraction (OEF):

$$\text{OEF} = \frac{\text{Total PV Generation}}{\text{Total Building Electricity Consumption}} \times 100\%$$

- Geothermal System Coefficient of Performance (COP):

$$\text{COP} = \frac{\text{Thermal Energy Provided}}{\text{Electrical Energy Consumed by Heat Pump}}$$

- GHG Emission Reduction: Compare grid electricity consumption before and after installation, using local grid emission factors.

- Peak Load Reduction: Analyze the reduction in peak power demand from the grid during extreme weather events.

- Statistical Analysis:

- Perform regression analysis to correlate PV generation with geothermal system operation and building load.

- Conduct a paired t-test to determine if the reduction in grid energy consumption is statistically significant compared to the baseline.

- Model Validation:

- Compare the actual measured performance data with the predictions from the pre-installation energy model. Calibrate the model for future accuracy.
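The KPI formulas and the paired t-test above can be sketched with the Python standard library. This assumes the baseline and post-installation consumption values are matched pairs, for example the same calendar months, ideally weather-normalized. The function names are hypothetical. `scipy.stats.ttest_rel` computes the same statistic together with a p-value; this version returns only the t statistic and degrees of freedom, which are then compared against a t table.

```python
import math
from statistics import mean, stdev


def on_site_energy_fraction_pct(pv_generation_kwh, building_consumption_kwh):
    return 100.0 * pv_generation_kwh / building_consumption_kwh


def heat_pump_cop(thermal_energy_kwh, heat_pump_electricity_kwh):
    return thermal_energy_kwh / heat_pump_electricity_kwh


def ghg_reduction_kg(baseline_grid_kwh, post_grid_kwh, emission_factor_kg_per_kwh):
    return (baseline_grid_kwh - post_grid_kwh) * emission_factor_kg_per_kwh


def paired_t(baseline, post):
    """Paired t statistic and degrees of freedom for matched baseline/post periods."""
    diffs = [b - p for b, p in zip(baseline, post)]
    n = len(diffs)
    t_stat = mean(diffs) / (stdev(diffs) / math.sqrt(n))
    return t_stat, n - 1
```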

Diagram 1: Integrated System Experimental Workflow. This diagram outlines the sequential and parallel processes for assessing, operating, and validating a synergistic PV-Geothermal system.

The Researcher's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagents and Materials for PV-Geothermal Integration Studies

| Item | Function/Application |

|---|---|

| Pyranometer | Measures solar irradiance (W/m²) for accurate assessment of on-site PV potential and real-time generation validation [25]. |

| Thermal Response Test (TRT) Rig | A mobile unit used to inject a known heat load into a test borehole and measure the thermal response of the ground, critical for determining ground loop design parameters [21]. |

| Building Energy Modeling Software (e.g., EnergyPlus) | Platforms used to create a digital twin of the building for simulating energy loads and predicting the performance of integrated systems under various scenarios [26]. |

| Integrated System Controller | A programmable logic controller or Building Management System (BMS) that executes dynamic control algorithms to optimize the synergy between PV generation and geothermal load [26]. |

| Power Analyzers/Meters | High-precision instruments installed at critical points (PV inverter output, geothermal heat pump supply) to measure real and reactive power, energy consumption, and power quality [25]. |

| Data Acquisition System (DAQ) | Hardware and software system that aggregates, logs, and time-synchronizes data from all sensors (temperature, flow, power) at high frequency for post-processing and analysis. |

The integration of photovoltaic and geothermal systems presents a robust, data-driven pathway for achieving deep energy load reductions in climate-controlled environments. The protocols outlined herein provide a framework for researchers to quantitatively assess, implement, and validate this synergy. By leveraging the continuous baseload capacity of geothermal systems and the peak daytime generation of PV, this integrated approach can significantly decouple building operation from the carbon-intensive grid, contributing directly to the decarbonization goals of modern energy and agricultural research. Future work should focus on the optimization of AI-driven predictive controllers that can further enhance this synergy by forecasting energy generation and load profiles [26].

Implementing Dynamic Systems: Methodologies for Precision and Efficiency

Dynamic climate control is a cornerstone of modern research facility management, directly supporting scientific integrity while presenting a significant opportunity for reducing greenhouse energy loads. In multi-purpose research spaces, maintaining distinct environmental conditions simultaneously is not merely a convenience but a critical requirement for experimental validity and for running experiments in parallel. Traditional, building-wide HVAC systems operate on static setpoints and uniform control strategies, leading to substantial energy waste by conditioning entire volumes to suit the most demanding local process. This application note details a system architecture that moves beyond this paradigm. By implementing a data-driven, dynamically zoned control system, research facilities can achieve precise environmental management tailored to specific experimental protocols, which directly supports the core thesis that dynamic strategies can significantly reduce energy consumption. The proposed framework leverages digital twin technology and machine learning optimization to create a responsive, efficient, and robust system capable of meeting the stringent demands of modern scientific research [27] [28].

Core System Architecture

The proposed architecture is a closed-loop system that integrates physical sensing, computational intelligence, and actuation. It is designed to be adaptive, scalable, and capable of self-optimization based on real-time data and predictive models.

The following diagram illustrates the high-level logical flow of data and control decisions within the zoned system.

Quantitative Performance Metrics

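Implementing a dynamic zoned control system can yield measurable improvements in both energy and operational performance. The following table summarizes quantitative gains reported in related applications (e-commerce supply chain optimization and data-driven building thermal zoning). Because these figures come from other contexts, they should be read as indicative rather than as direct measurements in research facilities.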

Table 1: Quantitative Benefits of Dynamic Zoned Control Systems

| Performance Metric | Reported Improvement | Source Context |
|---|---|---|
| Comprehensive Energy Efficiency | Increase of 19.7% | E-commerce supply chain optimization [27] |
| Carbon Emission Intensity | Reduction of 14.3% | E-commerce supply chain optimization [27] |
| Peak Electricity Load (Warehousing) | Reduction of 23% | E-commerce supply chain optimization [27] |
| Zoning Consistency Score | Over 91% | Data-driven building thermal zoning [28] |
| Inventory Turnover Efficiency | Increase of 12% | E-commerce supply chain optimization [27] |

Experimental Protocols for System Validation

Rigorous experimental protocols are essential to validate the performance of the zoned control architecture against the thesis objective of reducing energy load. The following procedures outline key methodologies for quantifying system efficacy.

Protocol 1: Data-Driven Thermal Zoning and Model Calibration

This protocol details the process of defining the thermal zones, which forms the foundational step for the entire control strategy.

- Objective: To identify spatially contiguous rooms with similar thermal dynamics using statistical clustering of sensor data, thereby creating a high-fidelity digital twin for control.

- Background: Static or rule-based zoning often fails to account for dynamic conditions, leading to inefficient control. A data-driven approach balances model accuracy with implementation cost [28].

- Materials:

- IoT sensor network (e.g., temperature, relative humidity, CO₂ sensors).

- Data historian or building management system (BMS) with time-series database.

- Computational environment (e.g., Python with scikit-learn).

- Procedure:

- Data Collection: Collect time-series data from all sensors (e.g., 168 rooms with 262 sensors) at a 15-minute interval for a minimum of one month, covering diverse operational conditions [28].

- Feature Engineering: For each room, calculate key features from the data, including:

- Average, maximum, and minimum daily temperature.

- Correlation of room temperature with ambient outdoor temperature.

- Thermal response time constant (estimated from step-response data).

- Dimensionality Reduction: Apply Principal Component Analysis (PCA) to the feature set to reduce dimensionality while retaining the most significant variance in the data.

- Cluster Analysis: Apply the k-means clustering algorithm on the principal components to group rooms with similar thermal characteristics.

- Zone Validation: Validate the derived zones against qualitative criteria from literature and standards, such as physical inspection of building layout, orientation, and HVAC system topology [28].

- Digital Twin Visualization: Visualize the finalized, color-coded thermal zones in a 3D digital twin environment alongside real-time sensor data for operational monitoring [28].
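The feature-engineering, PCA, and k-means steps of this protocol can be sketched with NumPy alone. In practice, scikit-learn's `PCA` (`sklearn.decomposition`) and `KMeans` (`sklearn.cluster`) would be the usual choice; NumPy is used here only to keep the sketch self-contained. Several parts are illustrative assumptions:

- The helper names are hypothetical.
- The thermal time-constant feature is omitted.
- The number of clusters k is supplied by the user.
- The deterministic farthest-point initialization is a simplification, not k-means++.

```python
import numpy as np


def room_features(room_temp, outdoor_temp, samples_per_day=96):
    """Mean, mean daily max, mean daily min, and correlation with outdoor temperature.

    96 samples/day corresponds to the 15-minute interval in the protocol.
    """
    n_days = len(room_temp) // samples_per_day
    days = np.asarray(room_temp[: n_days * samples_per_day]).reshape(n_days, samples_per_day)
    corr = np.corrcoef(room_temp, outdoor_temp)[0, 1]
    return np.array([days.mean(), days.max(axis=1).mean(), days.min(axis=1).mean(), corr])


def standardize(features):
    sigma = features.std(axis=0)
    sigma[sigma == 0] = 1.0  # constant features would otherwise divide by zero
    return (features - features.mean(axis=0)) / sigma


def pca_scores(x, n_components):
    """Project data onto its leading principal components via SVD."""
    xc = x - x.mean(axis=0)
    _, s, vt = np.linalg.svd(xc, full_matrices=False)
    explained = s**2 / np.sum(s**2)
    return xc @ vt[:n_components].T, explained[:n_components]


def kmeans(points, k, n_iter=100):
    """Lloyd's k-means with deterministic farthest-point initialization."""
    centers = [points[0]]
    for _ in range(1, k):
        d2 = np.min([np.sum((points - c) ** 2, axis=1) for c in centers], axis=0)
        centers.append(points[np.argmax(d2)])
    centers = np.array(centers, dtype=float)
    for _ in range(n_iter):
        dist = ((points[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = dist.argmin(axis=1)
        new_centers = np.array([
            points[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
            for j in range(k)
        ])
        if np.allclose(new_centers, centers):
            break
        centers = new_centers
    return labels, centers
```

A typical call chain is to stack one `room_features(...)` row per room, then pass the standardized matrix to `pca_scores` and the scores to `kmeans`. The resulting labels are candidate zones to be checked against layout, orientation, and HVAC topology as described above.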

Protocol 2: Dynamic Control Strategy Performance Benchmarking

This protocol tests the core hypothesis by comparing the dynamic controller against traditional baselines.

- Objective: To quantify the energy savings and thermal comfort improvements achieved by a Deep Reinforcement Learning (DRL) controller compared to a conventional Proportional-Integral-Derivative (PID) controller.

- Background: Advanced control strategies like DRL can adapt to dynamic complexities that challenge traditional methods, leading to significant energy efficiency improvements [27].

- Materials:

- A calibrated digital twin of the testbed research facility.

- A simulated or physical test environment with the zoned architecture implemented.

- Energy metering for relevant HVAC subsystems.

- Procedure:

- Baseline Establishment: Operate the system using a traditional PID control strategy for a two-week period. Log total HVAC energy consumption, and measure thermal comfort using metrics like Predicted Mean Vote (PMV) or temperature setpoint deviation.

- DRL Controller Deployment: Implement an improved Deep Deterministic Policy Gradient (DDPG) algorithm. The agent's objective is to minimize energy cost while maintaining environmental setpoints [27].

- Training Phase: Allow the DRL agent to interact with the digital twin (or the real system in a safe, supervised manner) to learn optimal control policies. The state space should include zone temperatures, humidity, schedules, and weather forecasts. The action space comprises setpoints for variable air volume (VAV) boxes and air handling units (AHUs).

- Testing Phase: Run the trained DRL controller for a two-week period under similar external weather conditions as the baseline test. Log the same performance metrics.

- Data Analysis: Perform a comparative statistical analysis (e.g., t-test) on the energy consumption and comfort metrics from the baseline and DRL test periods to determine significant differences.
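The DDPG agent is too large to sketch meaningfully here, but the PID baseline it is compared against is compact. The following is a minimal discrete PID controller in positional form, as one possible baseline implementation. It uses conditional-integration anti-windup and takes the derivative on the measurement to avoid a kick when the setpoint changes. The class name and any gains used with it are hypothetical, and gains would need tuning for each zone.

```python
class PID:
    """Discrete PID controller with output limits and conditional-integration anti-windup."""

    def __init__(self, kp, ki, kd, dt, out_min=float("-inf"), out_max=float("inf")):
        self.kp, self.ki, self.kd, self.dt = kp, ki, kd, dt
        self.out_min, self.out_max = out_min, out_max
        self.integral = 0.0
        self.prev_measurement = None

    def update(self, setpoint, measurement):
        error = setpoint - measurement
        # Derivative on measurement: no spike when the setpoint is changed.
        derivative = 0.0
        if self.prev_measurement is not None:
            derivative = -(measurement - self.prev_measurement) / self.dt
        self.prev_measurement = measurement
        candidate_integral = self.integral + error * self.dt
        output = self.kp * error + self.ki * candidate_integral + self.kd * derivative
        # Integrate only if the resulting output stays within the actuator limits.
        if self.out_min <= output <= self.out_max:
            self.integral = candidate_integral
        return min(self.out_max, max(self.out_min, output))
```

Logging the same energy and comfort metrics for this baseline and the DRL controller keeps the comparison like-for-like.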

Protocol 3: Quantifying Physiological and Psychological Responses

This protocol ensures that the environmental conditions created by the dynamic system are conducive to occupant health and cognitive performance, a critical factor in research environments.

- Objective: To quantitatively analyze the effects of different thermal environments on physiological and psychological responses of occupants during activities simulating research work.

- Background: The indoor environment significantly affects human health and comfort. Quantitative relationships between temperature and subjective responses provide effective indicators for refining comfort models [29].

- Materials:

- Climate chamber capable of precise temperature control.

- Physiological monitors (heart rate (HR) sensors and skin temperature probes for computing mean skin temperature (mTsk)).

- Subjective questionnaire forms (Thermal Sensation Vote (TSV), Fatigue Level Vote (FLV)).

- Procedure:

- Subject Recruitment: Recruit a cohort of subjects (e.g., n=32), ensuring a balance of genders, as significant differences in physiological and psychological responses have been observed [29].

- Experimental Conditions: Expose subjects to a sequence of temperature conditions (e.g., 22°C, 24°C, 26°C, 28°C) in a randomized order to control for learning effects.

- Activity and Measurement: Subjects perform standardized, light cognitive tasks. Continuously monitor and record HR and mTsk. At regular intervals, subjects complete TSV and FLV questionnaires.

- Data Analysis:

- Calculate peak HR and mean mTsk for each subject at each temperature.

- Analyze TSV and FLV scores.

- Perform correlation analysis (e.g., Pearson correlation) to establish significant relationships between temperature, HR, mTsk, and subjective votes (p < 0.05) [29].

- Model Integration: Use the results to define optimal temperature setpoint ranges that minimize fatigue and maximize comfort within specific zones, integrating these human-factors data into the dynamic control logic.

Data Analysis and Workflow

The analysis of data from both the system operation and human-subject experiments follows a structured workflow to ensure robust conclusions.

Data Analysis Workflow

The following diagram outlines the key stages of data analysis for the system.

Key Research Reagent Solutions

The "reagents" for this systems engineering research consist of the essential computational tools and datasets required to build and validate the architecture.

Table 2: Essential Research Reagents and Materials

| Item | Function / Explanation |
|---|---|
| IoT Sensor Network | A suite of connected sensors (temperature, humidity, CO₂, occupancy) deployed throughout the facility to provide the real-time data stream essential for the digital twin and closed-loop control. |
| Digital Twin Platform | A virtual mirror of the physical research space (e.g., built on platforms like Siemens Xcelerator or IBM Maximo) that hosts the 3D geometry, dynamic thermal models, and real-time data integration for simulation and analysis [27] [28]. |
| Long Short-Term Memory (LSTM) Network | A type of Recurrent Neural Network (RNN) used to process time-series data; its function is to accurately predict short-term dynamic thermal loads and occupant behavior based on historical data [27]. |
| Improved Deep Deterministic Policy Gradient (DDPG) Algorithm | A deep reinforcement learning algorithm suited for continuous action spaces; its function is to learn the optimal control policy for the HVAC system, balancing energy use against environmental precision [27]. |
| Principal Component Analysis (PCA) & k-means Algorithms | Standard unsupervised machine learning algorithms; their function is to reduce the dimensionality of sensor data and identify natural clusters of rooms with similar thermal behavior, forming the basis for the zoning map [28]. |

The precision control of indoor climates is a cornerstone of modern agricultural and pharmaceutical research, directly impacting the viability of biological specimens, the reproducibility of experimental conditions, and the energy footprint of research facilities. This document outlines detailed application notes and protocols for the strategic deployment of sensor networks to monitor the critical triumvirate of temperature, humidity, and occupancy. Framed within broader research on dynamic climate control strategies for reducing greenhouse energy loads, these guidelines are designed to equip researchers and scientists with the methodologies to collect high-fidelity environmental data. Such data is indispensable for developing AI-driven control models, validating energy-saving protocols, and ensuring the integrity of long-term studies in controlled environment agriculture and drug development.

Quantitative Foundations: Sensor Performance and System Costs

A strategic deployment begins with a quantitative understanding of sensor capabilities and market trajectories. The following tables summarize key performance metrics from recent studies and the evolving market for enabling technologies.

Table 1: Performance Metrics from Recent Sensor Deployment and Occupancy Forecasting Studies

| Study Focus / Metric | Reported Performance Value | Context and Methodology |
|---|---|---|
| Indoor Temperature Estimation (Steady-State) [30] | Average RMSE: 0.199 °C | User-centric deployment using IMOPSO and WiFi occupancy data. |
| Indoor Temperature Estimation (Dynamic-State, Heating) [30] | Average RMSE: 0.298 °C | User-centric deployment using IMOPSO and WiFi occupancy data. |
| Optimal Placement Error vs. Volume-Averaged Temperature [31] | Maximum RMSE: 0.35 °C | CFD-based OSP in radiant floor heating; most values < 0.3 °C. |
| Occupancy Prediction (LSTM Model) [32] | R²: 0.982, RMSE: 2.724 occupants | Privacy-friendly approach using CO₂, temperature, and humidity data. |
| Appropriate Sensor Density for Large Spaces [33] | 20-30 sensors (1 per 300-450 m²) | GA-optimized deployment for air temperature monitoring in an airport terminal. |
| Energy Reduction from Predictive ML Control [34] | 15.8% reduction vs. traditional thermostat | AI-powered blockchain framework for smart home temperature control. |
| Radiator Heat-On Event Detection [34] | 28.5% accuracy | Dynamic event detection in an AI-blockchain smart home system. |

Table 2: Wireless Sensor Market Overview and Communication Protocols

| Aspect | Detail | Relevance to Research Deployment |
|---|---|---|
| Global Market Size (2024) [35] | USD 4.56 Billion | Indicates technology maturity and availability. |
| Projected Market Size (2034) [35] | USD 11.13 Billion (CAGR 9.33%) | Highlights long-term viability and continued innovation. |
| Dominating Sensor Type [35] | Semiconductor (IC) Temperature Sensors (35% share) | Low-cost, small form factor, ideal for dense deployments. |
| Fastest Growing Sensor Type [35] | RTD (Resistance Temperature Detector) Wireless Sensors | High accuracy for critical applications in labs and storage. |
| Dominating Communication Protocol [35] | Short-range Wi-Fi (2.4/5 GHz) (30% share) | Ease of integration with existing IT infrastructure. |
| Fastest Growing Communication Protocol [35] | LPWAN (LoRaWAN, Sigfox) | Long-range, low-power, ideal for large greenhouses and campuses. |

Experimental Protocols for Strategic Sensor Deployment

This section provides a detailed, step-by-step methodology for planning and executing a sensor network deployment aimed at optimizing dynamic climate control and reducing energy load.

Protocol: Multi-Objective Optimization for User-Centric Sensor Placement

This protocol leverages occupancy information to place sensors that maximize both thermal accuracy and user satisfaction, reducing the need for dense, costly deployments [30].

- Objective: To determine the optimal number and location of temperature sensors that minimize estimation error while maximizing coverage of occupied zones and overall user satisfaction.

- Experimental Workflow:

- Step-by-Step Procedure:

- Data Collection:

- WiFi Connection Logs: Collect timestamped data from WiFi access points to map spatial and temporal occupancy patterns [30].

- Building Floor Plan: Obtain a digital plan of the facility (e.g., greenhouse, research lab). Discretize the space into a grid of candidate sensor locations.

- Historical User Satisfaction: If available, gather data relating environmental conditions to occupant feedback to calibrate the satisfaction model.

- Model Establishment:

- Coverage Model: Develop a model that quantifies how well a set of sensor locations "covers" the occupied zones identified by WiFi data [30].

- Satisfaction Model: Establish a metric that predicts user satisfaction based on the accuracy of temperature estimation in occupied areas [30].

- Multi-Objective Formulation: Formally define the optimization problem with objectives to 1) Maximize Coverage, 2) Maximize Satisfaction, and 3) Minimize the number of sensors (cost).

- Optimization Execution:

- Algorithm Selection: Implement a multi-objective optimization algorithm such as the Improved Multi-Objective Particle Swarm Optimization (IMOPSO) or the Non-dominated Sorting Genetic Algorithm II (NSGA-II) [30].

- Parameter Tuning: Set algorithm parameters (e.g., population size, iteration count). In IMOPSO, initialize the population with a tent map (a chaotic map) so that the initial particles are spread more uniformly across the search space [30].

- Pareto Frontier: Run the optimization until a set of non-dominated solutions (Pareto frontier) is identified, representing the trade-offs between the objectives.

- Solution Validation:

- Final Selection: From the Pareto-optimal solutions, select the one that best aligns with the project's budget and performance priorities.

- Field Deployment & RMSE Validation: Physically deploy sensors at the chosen locations. Measure the Root Mean Square Error (RMSE) between the sensor readings and ground-truth measurements (e.g., from a temporary, high-density sensor array) to validate performance against targets like 0.2-0.3 °C RMSE [30] [31].

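The multi-objective search and the RMSE check in this protocol can be prototyped on a toy grid before committing to IMOPSO or NSGA-II. The sketch below is a minimal illustration under stated assumptions: the 4 × 4 grid, the occupancy weights (standing in for Wi-Fi-derived occupancy) and the fixed coverage radius are hypothetical, only two objectives (occupancy coverage and sensor count) are modeled, and exhaustive enumeration replaces the metaheuristic, which is only feasible for very small grids. The helper names (`coverage`, `pareto_front`, `rmse`) are illustrative, not taken from [30].

```python
"""Sketch: Pareto-optimal sensor subsets (coverage vs. sensor count) plus an RMSE check.

Assumptions: hypothetical grid, occupancy weights and coverage radius; brute-force
enumeration stands in for IMOPSO/NSGA-II and only scales to tiny grids.
"""
import math
from itertools import combinations


def coverage(subset, weights, radius):
    """Occupancy-weighted share of grid cells lying within `radius` of any sensor."""
    total = sum(weights.values())
    covered = sum(
        w for cell, w in weights.items()
        if any(math.dist(cell, s) <= radius for s in subset)
    )
    return covered / total if total else 0.0


def enumerate_solutions(cells, weights, radius, max_sensors):
    """Score every subset of up to `max_sensors` candidate cells."""
    return [
        (k, coverage(subset, weights, radius), subset)
        for k in range(1, max_sensors + 1)
        for subset in combinations(cells, k)
    ]


def dominates(b, a):
    """b dominates a: no more sensors, no less coverage, strictly better in one objective."""
    return b[0] <= a[0] and b[1] >= a[1] and (b[0] < a[0] or b[1] > a[1])


def pareto_front(solutions):
    """Non-dominated (n_sensors, coverage, subset) tuples, sorted by sensor count."""
    return sorted(a for a in solutions if not any(dominates(b, a) for b in solutions))


def rmse(estimates, truth):
    """Root mean square error between deployed-sensor estimates and ground truth (°C)."""
    if len(estimates) != len(truth) or not truth:
        raise ValueError("need two equally long, non-empty sequences")
    return math.sqrt(sum((e - t) ** 2 for e, t in zip(estimates, truth)) / len(truth))


# Hypothetical 4 x 4 grid; occupancy is assumed highest around the lower-left benches.
cells = [(x, y) for x in range(4) for y in range(4)]
weights = {c: 5.0 if c[0] < 2 and c[1] < 2 else 1.0 for c in cells}
for n, cov, subset in pareto_front(enumerate_solutions(cells, weights, 1.5, 3)):
    print(n, round(cov, 3), subset)
```

Each printed row is one point on the coverage-versus-cost Pareto frontier; the final selection step then picks the row that fits the budget, and `rmse` is applied to field readings against the temporary high-density reference array.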

Protocol: CFD-Assisted Optimal Sensor Placement for Complex Environments

This protocol uses computational modeling to determine sensor placements that best represent the volume-averaged operative temperature in spaces with complex airflow and radiant effects, such as those with radiant floor heating [31].

- Objective: To identify sensor locations that minimize the error between the point sensor reading and the volume-averaged operative temperature in a zone, accounting for factors like solar radiation and floor heating.

- Experimental Workflow:

- Step-by-Step Procedure:

- Define Scenarios:

- Identify representative operational scenarios for the space. For a greenhouse or lab, this includes different times of day, seasons (e.g., typical winter day), and varying external conditions (solar load, outdoor temperature) [31].

- CFD Simulation:

- Model Development: Create a detailed 3D CFD model of the space, including geometry, material properties, heat sources (radiant floors, lights), and boundary conditions (solar load, outdoor temperature, HVAC inlets/outlets) [31].

- Simulation Execution: Run steady-state or transient CFD simulations for each defined scenario to generate a high-resolution spatial data set of air temperature and mean radiant temperature.

- Post-Processing:

- Operative Temperature Calculation: For each zone or occupied sub-space, calculate the volume-averaged operative temperature, which combines air and mean radiant temperature, for each simulation scenario [31].

- Difference Calculation: For every candidate sensor location $j$ in the CFD grid, calculate the absolute temperature difference for each time scenario $\tau$ [31]:

$$\Delta T_j(x,y,h,\tau) = \left| T_{\mathrm{sensor},j}(\tau) - T_{\mathrm{operative,zone}}(\tau) \right|$$

- Index Computation: Compute a comprehensive evaluation index $R_{\Delta T_{\mathrm{avg}},j}(x,y,h)$ that considers both the mean and the maximum $\Delta T$ over all scenarios, to find locations that are robust across varying conditions [31].

- Identify Optimal Zones:

- Apply a threshold (e.g., $\Delta T < 0.25$ °C) to filter candidate points [31].

- The location with the smallest comprehensive evaluation index $G_{\Delta T_{\mathrm{avg}}}$ represents the point where the sensor reading most accurately reflects the true zone operative temperature across all scenarios. Final placement should be in the 1.0 m to 1.7 m height range (occupied zone) [31].

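The post-processing and selection steps of this protocol can be expressed compactly with NumPy. This is a sketch, not the procedure of [31]: it assumes CFD results exported as per-scenario arrays, an equal-weight operative temperature ($a = 0.5$, the common still-air weighting), a point sensor that reads local air temperature, the 0.25 °C threshold applied to the worst-case $\Delta T$, and a hypothetical evaluation index equal to a weighted sum of the mean and maximum $\Delta T$. The exact index definition in [31] may differ.

```python
import numpy as np


def operative_temperature(t_air, t_mrt, a=0.5):
    """Weighted mean of air and mean radiant temperature.

    a = 0.5 is the usual still-air weighting; it is an assumption here, not from [31].
    """
    return a * np.asarray(t_air, float) + (1.0 - a) * np.asarray(t_mrt, float)


def rank_sensor_locations(t_air, t_mrt, heights, volumes,
                          threshold=0.25, w=0.5, z_range=(1.0, 1.7)):
    """Rank candidate CFD grid points as sensor locations.

    t_air, t_mrt: (n_scenarios, n_points) fields from CFD post-processing (°C).
    heights, volumes: (n_points,) candidate height (m) and cell volume.
    Returns admissible point indices (best first) and the per-point index values.
    """
    t_air, t_mrt = np.asarray(t_air, float), np.asarray(t_mrt, float)
    heights, volumes = np.asarray(heights, float), np.asarray(volumes, float)
    t_op = operative_temperature(t_air, t_mrt)
    # Volume-averaged zone operative temperature, one value per scenario tau.
    t_op_zone = (t_op * volumes).sum(axis=1) / volumes.sum()
    # Delta T_j(tau); a point sensor is assumed to read the local air temperature.
    d_t = np.abs(t_air - t_op_zone[:, None])
    # Hypothetical combined index: weighted mix of average and worst-case error.
    index = w * d_t.mean(axis=0) + (1.0 - w) * d_t.max(axis=0)
    # Keep points under the threshold in every scenario and inside the occupied zone.
    ok = ((d_t.max(axis=0) < threshold)
          & (heights >= z_range[0]) & (heights <= z_range[1]))
    candidates = np.flatnonzero(ok)
    return candidates[np.argsort(index[candidates], kind="stable")], index
```

The first returned index is the recommended sensor location; an empty result means no candidate meets the threshold in every scenario, which suggests relaxing the threshold or adding scenarios to the CFD set.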

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Reagents for Sensor Network Deployment

| Item / Solution | Function / Application | Specification / Notes |
|---|---|---|
| Integrated IoT Sensor Node [36] | Measures multiple parameters (Temp, Humidity, CO₂, Light, Noise). | Essential for dense deployments; should support roles as sensor, router, and hub [36]. |
| Wireless Temperature Sensors (RTD) [35] | High-accuracy temperature measurement for critical areas. | Preferred over semiconductors for applications requiring high precision and stability [35]. |
| CO₂ Sensor (NDIR type) [32] | Serves as a proxy for human occupancy and indicates ventilation status. | Key for privacy-friendly occupancy forecasting models; ceiling-mounted for stable readings [32]. |
| Computational Fluid Dynamics (CFD) Software [31] | Models complex indoor climate dynamics for virtual optimal sensor placement (OSP). | Used to generate high-resolution temperature field data without physical intrusion [31]. |
| Multi-Objective Optimization Algorithm (IMOPSO/NSGA-II) [30] | Solves the sensor placement problem balancing cost, coverage, and satisfaction. | IMOPSO combines PSO's efficiency with genetic algorithm variation for better convergence [30]. |
| LPWAN Communication Module (LoRaWAN) [35] | Long-range, low-power connectivity for large-scale deployments. | Ideal for greenhouses and large facilities where Wi-Fi coverage is impractical [35]. |
| Data Logging & Cloud Analytics Platform [35] [34] | Aggregates sensor data, runs AI models, and enables predictive control. | Integrated cloud-based SaaS platforms are a dominant market solution [35]. |

Application Notes

Algorithmic control represents a paradigm shift in greenhouse climate management, moving from static setpoints to dynamic, intelligent systems that optimize environmental parameters in real-time. These frameworks are central to a thesis on dynamic climate control strategies, as they directly contribute to reducing greenhouse energy loads while maintaining optimal crop growth conditions. The integration of predictive models and adaptive control mechanisms allows for precise management of complex, spatially distributed environmental factors, leading to significant energy savings and improved crop yields. [37]

The core principle involves using data-driven models to forecast future climate conditions and dynamically adjust control actuators. This proactive approach contrasts with traditional reactive systems, enabling preemptive adjustments that smooth energy consumption peaks and minimize waste. For greenhouse operations, this translates to a substantial reduction in the energy required for heating, cooling, and ventilation, which constitutes a major portion of the operational energy load. [26] [37]

Core Framework Components

The predictive and adaptive control framework is built upon three interconnected pillars:

- Forecasting and Modeling: Utilizing historical and real-time data to predict future states of the greenhouse environment and crop growth. Artificial Intelligence (AI) techniques have shown higher forecasting accuracy and stronger adaptive control capabilities than conventional approaches, enabling more responsive and efficient management. [26]

- Optimization and Decision-Making: Employing algorithms to determine the optimal control actions that balance competing objectives, such as maximizing crop production versus minimizing energy consumption. [37]

- Adaptive Execution and Learning: Implementing control actions and using sensor feedback to continuously refine and improve the models and strategies, creating a closed-loop system that adapts to changing conditions and uncertainties. [37]

Experimental Protocols

Protocol: Rolling-Horizon Optimal Control for Spatial Climate Management

This protocol details a method for achieving high spatiotemporal accuracy in greenhouse climate control, which is critical for reducing energy load without compromising crop health. [37]

1. Objective: To dynamically control greenhouse actuators (e.g., shading, fans) to maximize crop production and energy efficiency, accounting for the spatial distribution of environmental parameters.

2. Prerequisites:

- A computational model of the greenhouse structure.

- Sensor network measuring temperature, humidity, CO₂, and light levels at multiple locations.

- Actuators for ventilation, shading, and heating/cooling.

- Access to a local weather forecast service.

3. Procedure:

Step 1: Offline Reduced-Order Model Development

- 1.1. Develop a high-fidelity Computational Fluid Dynamics (CFD) model of the greenhouse that simulates the climate (temperature, humidity, airflow) under various external weather and control actuator settings. [37]

- 1.2. Use the Proper Orthogonal Decomposition (POD) method to extract dominant features from a wide range of CFD simulations ("snapshots"). This process projects the high-dimensional model onto a low-dimensional, orthogonal basis. [37]

- 1.3. Obtain a low-dimensional feature subspace by energy truncation, creating a fast and computationally inexpensive surrogate model that can reconstruct the dynamic climate variation with high spatial resolution. [37]
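Step 1 can be sketched with a singular value decomposition of the mean-subtracted snapshot matrix, which yields the POD basis directly; the number of retained modes follows from the cumulative energy criterion. The function names and the 0.99 default energy level are illustrative assumptions, and coupling the reduced coordinates to weather and actuator inputs (needed for Step 2) is not shown.

```python
import numpy as np


def build_pod(snapshots, energy=0.99):
    """POD of a snapshot matrix with energy truncation (illustrative helper).

    snapshots: (n_points, n_snapshots); each column is one CFD field, e.g. temperature.
    energy: share of snapshot variance (sum of squared singular values) to retain.
    Returns the mean field, an orthonormal basis of shape (n_points, r), and r.
    """
    snapshots = np.asarray(snapshots, float)
    mean = snapshots.mean(axis=1)
    u, s, _ = np.linalg.svd(snapshots - mean[:, None], full_matrices=False)
    cumulative = np.cumsum(s**2) / np.sum(s**2)
    # Smallest r whose cumulative energy reaches the target (clamped for round-off).
    r = min(int(np.searchsorted(cumulative, energy)) + 1, len(s))
    return mean, u[:, :r], r


def project(field, mean, basis):
    """Reduced coordinates (POD coefficients) of one full-resolution field."""
    return basis.T @ (field - mean)


def reconstruct(coeffs, mean, basis):
    """Full-resolution field rebuilt from r POD coefficients."""
    return mean + basis @ coeffs
```

Once the basis is built, a field with thousands of CFD nodes is represented by `r` coefficients, which is what makes the repeated predictions in Step 2 inexpensive.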

Step 2: Online Rolling-Horizon Control

- 2.1. Initialize the control cycle. At the start of each finite time horizon (e.g., 1-3 hours), update the system with the latest external meteorological forecast. [37]

- 2.2. Using the reduced-order POD model, quickly calculate the predicted response of the greenhouse environment (temperature, humidity) across the entire crop area for the upcoming horizon. [37]

- 2.3. Define a performance criterion $J$ that balances crop growth rate against energy consumption. [37]

- 2.4. Employ an optimization algorithm (e.g., Particle Swarm Optimization) to find the optimal settings for control variables (e.g., shading rate, fan speed) that maximize $J$ over the forecast horizon. [37]

- 2.5. Implement the optimized control sequence for the first step of the horizon.

- 2.6. Roll the horizon forward by one step, receive new sensor measurements and updated weather data, and repeat the process from Step 2.1. This continuous re-calculation corrects for external disturbances and model inaccuracies. [37]
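The rolling-horizon loop in Steps 2.1 to 2.6 can be illustrated with a deliberately simple plant. In this sketch the single-zone temperature model, its coefficients, the discrete shading and fan levels, the growth proxy and the energy weight `lam` are all hypothetical stand-ins for the POD surrogate and the criterion $J$ of [37], and exhaustive search over action sequences replaces Particle Swarm Optimization, which is only tractable for short horizons and few actuator levels.

```python
import itertools
import math

# Hypothetical discrete actuator settings: shading fraction and normalized fan speed.
SHADE_LEVELS = (0.0, 0.5, 0.8)
FAN_LEVELS = (0.0, 0.5, 1.0)
ACTIONS = list(itertools.product(SHADE_LEVELS, FAN_LEVELS))


def step(temp, action, t_out, solar):
    """Toy single-zone model for one 1-h step (illustrative coefficients only)."""
    shade, fan = action
    gain = 4.0 * (1.0 - shade) * solar             # solar gain, solar in [0, 1]
    exchange = (0.2 + 0.8 * fan) * (t_out - temp)  # envelope losses + ventilation
    return temp + gain + exchange


def stage_score(temp, action, solar, lam=0.3, t_opt=24.0, width=6.0):
    """Stage contribution to J: hypothetical growth proxy minus weighted fan energy."""
    shade, fan = action
    growth = (1.0 - shade) * solar * math.exp(-((temp - t_opt) / width) ** 2)
    return growth - lam * fan


def plan(temp, forecast):
    """Exhaustive search over all action sequences for the forecast horizon."""
    best_j, best_seq = -math.inf, None
    for seq in itertools.product(ACTIONS, repeat=len(forecast)):
        t, j = temp, 0.0
        for action, (t_out, solar) in zip(seq, forecast):
            j += stage_score(t, action, solar)
            t = step(t, action, t_out, solar)
        if j > best_j:
            best_j, best_seq = j, seq
    return best_seq


def rolling_horizon(temp0, weather, horizon=3):
    """weather: list of (t_out, solar) per hour; returns applied actions and final temp."""
    temp, applied = temp0, []
    for k in range(len(weather)):
        seq = plan(temp, weather[k:k + horizon])  # re-plan with the latest forecast
        applied.append(seq[0])                    # apply only the first action
        # Stand-in for a new sensor measurement (same model here, so no mismatch).
        temp = step(temp, seq[0], *weather[k])
    return applied, temp
```

Only `seq[0]` is applied before re-planning; in a real deployment the next `temp` would come from the sensor network rather than from the model, which is how the loop absorbs disturbances and model error.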

4. Data Analysis:

- Compare the spatial uniformity of temperature and humidity against a control period using traditional thermostatic control.

- Calculate total energy consumption (kWh) for HVAC and actuator operations.

- Measure crop yield and quality metrics at harvest.
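The first two analysis items reduce to simple statistics over logged data. The sketch below assumes temperature logs arranged as a time-by-sensor array and electrical power sampled in kW with timestamps in hours; using the spatial standard deviation and the max-min spread as uniformity metrics is an illustrative choice, not a metric prescribed by [37].

```python
import numpy as np


def spatial_uniformity(temps):
    """temps: (n_timesteps, n_sensors) in °C.

    Returns the time-averaged spatial standard deviation and the time-averaged
    max-min spread across sensors (lower values mean a more uniform climate).
    """
    temps = np.asarray(temps, float)
    spread = temps.max(axis=1) - temps.min(axis=1)
    return float(temps.std(axis=1).mean()), float(spread.mean())


def energy_kwh(power_kw, hours):
    """Trapezoidal integral of sampled electrical power (kW) over timestamps (h)."""
    p, t = np.asarray(power_kw, float), np.asarray(hours, float)
    return float(np.sum(0.5 * (p[1:] + p[:-1]) * np.diff(t)))


def percent_reduction(baseline, dynamic):
    """Relative saving of the dynamic-control period versus the thermostatic baseline."""
    return 100.0 * (baseline - dynamic) / baseline
```

Computing the same metrics for the dynamic-control period and the thermostatic control period, then applying `percent_reduction` to the energy totals, gives the comparison described above.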

Protocol: AI-Driven Predictive Maintenance for HVAC Systems

This protocol aims to minimize energy waste and downtime by transitioning from reactive to proactive maintenance of greenhouse HVAC equipment. [38]

1. Objective: To predict potential failures in HVAC systems before they occur, reducing downtime and maintenance costs.

2. Prerequisites:

- IoT-enabled HVAC system with sensors for vibration, temperature, electrical consumption, and pressure.

- Data acquisition and storage system.

3. Procedure:

- Step 1: Continuously monitor and log time-series data from all critical HVAC components (compressors, fans, pumps). [38]

- Step 2: Train machine learning algorithms (e.g., regression models, neural networks) on the collected operational data to establish a baseline "healthy" performance profile. [38]

- Step 3: Use the trained models to analyze real-time data streams. The algorithms will identify anomalies and deviations from the normal performance baseline that indicate potential impending failures. [38]

- Step 4: Generate automatic alerts for technicians when the system predicts a failure with a high degree of confidence, allowing for scheduled, proactive maintenance. [38]
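Steps 2 to 4 can be illustrated with the simplest regression baseline: a linear fit of electrical power against load on data known to be healthy, residual-based anomaly flags, and an alert only after several consecutive anomalies as a crude stand-in for "a high degree of confidence". The class name, the 3σ threshold and the three-sample rule are assumptions for illustration that [38] does not specify; practical systems would typically combine several signals (vibration, pressure, temperature) in a richer model.

```python
import numpy as np


class HealthyBaseline:
    """Hypothetical regression baseline of power vs. load, fitted on healthy data."""

    def __init__(self, k_sigma=3.0, consecutive=3):
        self.k_sigma = k_sigma          # residual threshold in standard deviations
        self.consecutive = consecutive  # anomalies in a row required before alerting

    def fit(self, load, power):
        load, power = np.asarray(load, float), np.asarray(power, float)
        self.slope, self.intercept = np.polyfit(load, power, deg=1)
        residuals = power - self.predict(load)
        self.sigma = residuals.std(ddof=2)  # two fitted parameters
        return self

    def predict(self, load):
        """Expected healthy power draw for the given load."""
        return self.slope * np.asarray(load, float) + self.intercept

    def anomalies(self, load, power):
        """Boolean mask of samples deviating more than k_sigma from the baseline."""
        residuals = np.asarray(power, float) - self.predict(load)
        return np.abs(residuals) > self.k_sigma * self.sigma

    def alerts(self, load, power):
        """Indices at which `consecutive` anomalous samples in a row are first reached."""
        run, out = 0, []
        for i, flag in enumerate(self.anomalies(load, power)):
            run = run + 1 if flag else 0
            if run == self.consecutive:
                out.append(i)
        return out
```

Requiring consecutive anomalies trades a short detection delay for far fewer false alarms than alerting on every single outlier.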

Table 1: Performance Outcomes of Algorithmic Control Strategies in Agricultural and Building Contexts

| Strategy / Metric | Reported Performance Improvement | Context / Conditions | Source |
|---|---|---|---|
| Rolling-Horizon Optimal Control (POD-based) | Improved spatiotemporal accuracy of climate management; Lower computational cost vs. full CFD. | Greenhouse case study; controlled shading and ventilation. | [37] |
| AI Predictive Maintenance | 92% accuracy in predicting system failures; 35% reduction in downtime; 28% decrease in maintenance costs. | General HVAC systems analysis. | [38] |
| Variable Refrigerant Flow (VRF) Systems | Achieved 15% to 42% energy savings. | Analysis across various climate zones. | [38] |
| Smart Thermostats with AI | Reduced energy consumption by up to 47%. | Residential HVAC using predictive learning. | [38] |
| Portfolio-wide Net-zero Strategies | On-site carbon-free energy reduced 51% of emissions; Efficiency measures reduced 19% of emissions. | Analysis of 16 diverse federal sites. | [39] |

Table 2: APCA Readability Contrast Criteria for Interface Design (Reference)

| Lightness Contrast (Lc) Value | Recommended Use Case | Minimum Font Example |
|---|---|---|
| Lc 90 | Preferred for fluent body text. | 14px / 400 weight |
| Lc 75 | Minimum for body text (readability important). | 18px / 400 weight |
| Lc 60 | Minimum for non-body content text. | 24px / 400 or 16px / 700 |
| Lc 45 | Larger, heavier text (e.g., headlines), fine-detail pictograms. | 36px / 400 or 24px / 700 |
| Lc 30 | Absolute minimum for any other text. | Not specified |

Visualization Diagrams

Greenhouse Algorithmic Control Workflow

Predictive Maintenance Data Pipeline

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions and Computational Tools

| Item / Tool | Function / Application in Research |

|---|---|