Domain-Based Methods vs. Machine Learning: The New Frontier in Protein Function Prediction

Abstract

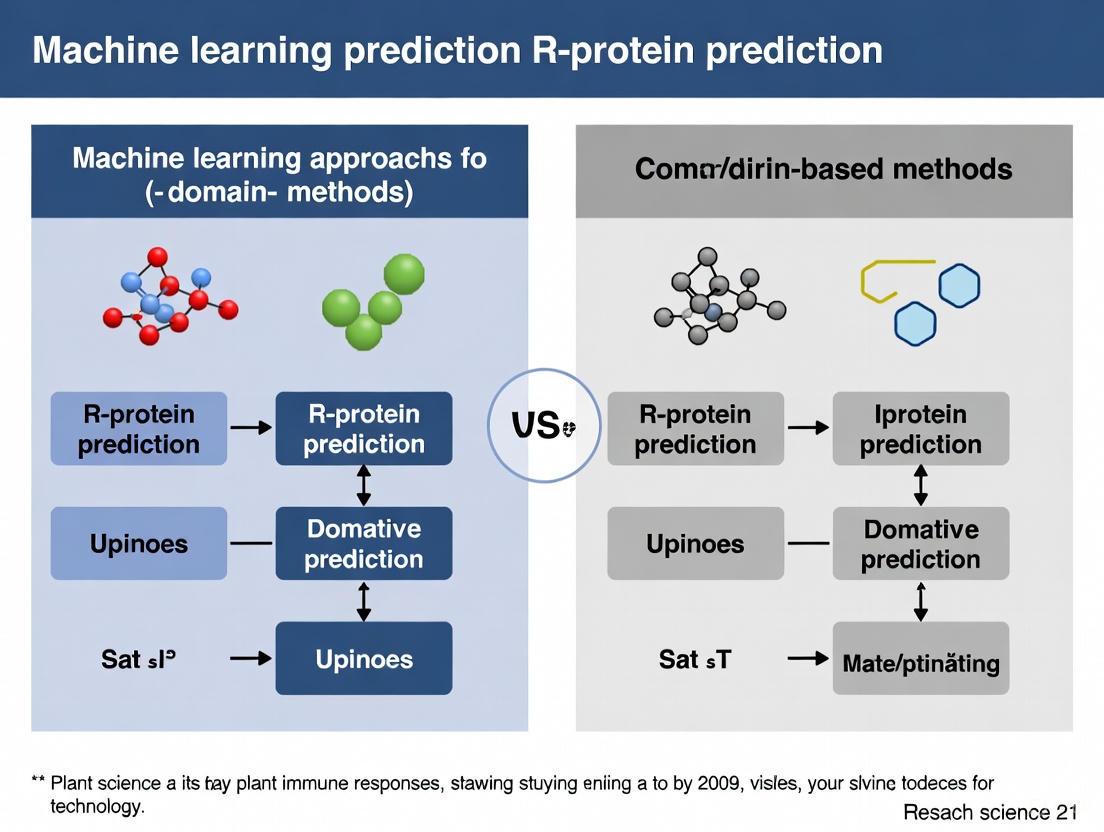

Accurately predicting protein function is a central challenge in biology, with direct implications for understanding disease mechanisms and drug discovery. This article provides a comprehensive analysis for researchers and drug development professionals, comparing traditional domain-based methods with emerging machine learning (ML) and deep learning (DL) approaches. We explore the foundational principles of both paradigms, detail the core architectures of modern ML models like Graph Neural Networks (GNNs) and protein language models, and address critical challenges such as data scarcity and model interpretability. Through a rigorous validation of performance metrics and real-world applications, we demonstrate that the most powerful solutions often emerge from the integration of domain-guided insights with the predictive power of deep learning, paving the way for more accurate and interpretable functional annotation at a proteome-wide scale.

From Sequence to Function: Core Principles of Domain Analysis and Machine Learning

Protein domains are widely recognized as the fundamental structural, functional, and evolutionary units of proteins [1]. These conserved segments of polypeptide chains fold into distinct three-dimensional structures independently and serve as the essential building blocks that combine to form multidomain proteins [1]. Through evolutionary processes, domain families have expanded into multiple members that appear in diverse configurations with other domains, continually evolving new specificities for interacting partners [2]. This combinatorial expansion explains much of the functional diversity observed in modern proteomes, with domains acting as evolutionary modular units that can be reused, repurposed, and recombined through genetic mechanisms [1].

The Domain Hypothesis provides a powerful framework for understanding protein evolution, suggesting that the staggering diversity of protein functions arises not predominantly from the de novo creation of entirely new sequences, but from the strategic recombination of a finite set of stable, folded domains [1]. This modular paradigm has transformed computational biology, enabling researchers to predict protein function through domain architecture analysis and develop machine learning approaches that leverage domain properties for protein classification [3] [4]. Nowhere is this more evident than in the study of plant resistance (R) proteins, where domain combinations directly determine pathogen recognition capabilities and immune signaling functions [5].

Domain Architecture of Plant Resistance Proteins

Major R Protein Classes and Their Domain Composition

Plant resistance proteins constitute a critical component of the plant immune system, recognizing pathogen effector molecules and initiating defense responses [5]. These proteins typically contain characteristic domain arrangements that define their mechanistic class and function. The major classes include:

- TNL proteins (TIR-NBS-LRR): Contain Toll/Interleukin-1 Receptor (TIR), Nucleotide-Binding Site (NBS), and Leucine-Rich Repeat (LRR) domains

- CNL proteins (CC-NBS-LRR): Feature Coiled-Coil (CC), NBS, and LRR domains

- RLK proteins (Receptor-Like Kinases): Comprise an extracellular LRR region, a transmembrane (TM) domain, and an intracellular kinase (KIN) domain

- RLP proteins (Receptor-Like Proteins): Contain an extracellular LRR region and a transmembrane domain but lack an intracellular kinase domain

- Kinase proteins: Consist primarily of kinase domains, like the tomato PTO gene product [5]

Beyond these well-characterized classes, genomic analyses have revealed numerous atypical domain associations that may represent evolutionary innovations in plant immunity [5]. Architectural complexity is inversely correlated with frequency: simpler domain associations are more common than complex multidomain arrangements [5].

Table 1: Major Plant R Protein Classes and Their Domain Architectures

| R Protein Class | Domain Architecture | Localization | Recognition Mechanism |
|---|---|---|---|
| TNL (TIR-NBS-LRR) | TIR-NBS-LRR | Cytoplasmic | Intracellular pathogen effector recognition |
| CNL (CC-NBS-LRR) | CC-NBS-LRR | Cytoplasmic | Intracellular pathogen effector recognition |
| RLK (Receptor-like kinase) | eLRR-TM-KIN | Membrane-bound | Surface recognition of PAMPs/MAMPs |
| RLP (Receptor-like protein) | eLRR-TM | Membrane-bound | Surface recognition without signaling domain |
| Kinase (e.g., PTO) | KIN | Cytoplasmic | Kinase-mediated signaling cascades |

Evolutionary Patterns in R Protein Domain Organization

The evolutionary history of R protein domains reveals clear patterns in how domains combine. Genomic analyses of 33 plant species identified 4,409 putative R proteins that could be classified into 22 distinct subfamilies based on domain composition [5]. Approximately 40% of these proteins consisted of a single domain, while associations of two to five domains became less frequent as complexity increased [5]. This distribution is consistent with the domain hypothesis and suggests that certain domain combinations are favored while others are avoided: only 22 of 31 theoretically possible domain combinations were actually observed [5].

The NBS domain emerged as the most versatile, appearing in 13 different domain classes, followed by LRR (12 classes), KIN (9 classes), and TIR (8 classes) [5]. Certain domain pairs showed preferential associations, particularly LRR-NBS and LRR-KIN, which appeared in 8 and 6 domain classes respectively [5]. These combinatorial preferences reflect functional constraints and evolutionary trajectories that have shaped the plant immune repertoire.
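The counting behind these figures can be sketched in a few lines of Python. Two assumptions are made here: the 31 "theoretically possible" combinations are read as the non-empty subsets of five domain types (2^5 - 1 = 31), and those five types are taken to be TIR, CC, NBS, LRR, and KIN, which the text above does not state explicitly. The observed classes in the example are a small hypothetical toy set, not the data from [5].

```python
from collections import Counter
from itertools import combinations

# Assumed five-domain vocabulary (hypothetical; see lead-in)
DOMAINS = ("TIR", "CC", "NBS", "LRR", "KIN")


def all_architectures(domains):
    """Every non-empty combination of domain types, ignoring order and copy number."""
    return [frozenset(c)
            for k in range(1, len(domains) + 1)
            for c in combinations(domains, k)]


def domain_versatility(classes):
    """Number of domain classes in which each domain type appears."""
    counts = Counter()
    for cls in classes:
        counts.update(cls)
    return counts


def pair_cooccurrence(classes):
    """Number of domain classes containing each (alphabetically ordered) domain pair."""
    counts = Counter()
    for cls in classes:
        counts.update(combinations(sorted(cls), 2))
    return counts


print(len(all_architectures(DOMAINS)))  # 31

# Hypothetical toy set of observed classes (illustrative only, not the data from [5])
observed = [frozenset(s) for s in (
    {"TIR", "NBS", "LRR"}, {"CC", "NBS", "LRR"}, {"NBS", "LRR"},
    {"LRR", "KIN"}, {"NBS"}, {"LRR"}, {"KIN"},
)]
print(domain_versatility(observed).most_common(2))  # [('LRR', 5), ('NBS', 4)]
print(pair_cooccurrence(observed)[("LRR", "NBS")])  # 3
```

Applied to a real table of domain architectures, `domain_versatility` yields per-domain class counts of the kind quoted above (NBS in 13 classes, LRR in 12), and `pair_cooccurrence` yields pair counts like those for LRR-NBS and LRR-KIN.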

Computational Approaches for R Protein Prediction

Domain-Centric Methodologies

Traditional computational approaches for identifying R proteins have relied heavily on domain-centric methodologies that leverage sequence alignment and domain fingerprinting. These methods include:

- NLR-parser: Uses the Motif Alignment and Search Tool (MAST) to identify NLR-like sequences based on conserved domain motifs [3] [4]

- RGAugury: Integrates multiple computational tools including BLAST, HMMER3, Phobius, and TMHMM to predict various R protein subclasses [3] [4]

- Restrepo-Montoya pipeline: Classifies RLK and RLP proteins using SignalP, TMHMM, and PfamScan for domain identification [3]

While these methods provide valuable insights, they suffer from inherent limitations. Sequence alignment-based approaches typically exhibit low sensitivity and are computationally intensive, making them poorly suited for identifying divergent R proteins with low sequence similarity to known counterparts [3] [4]. Furthermore, these methods fundamentally rely on pre-existing knowledge of domain signatures, potentially missing novel or highly divergent resistance protein families.

Machine Learning Paradigms

To overcome the limitations of domain-centric methods, researchers have developed sophisticated machine learning approaches that can identify R proteins based on sequence features beyond known domain signatures:

- DRPPP: A support vector machine (SVM)-based tool that achieved 91.11% prediction accuracy by integrating 10,270 features extracted using 16 different methods [6]

- prPred: Uses the composition of k-spaced amino acid pairs (CKSAAP) and of k-spaced amino acid group pairs (CKSAAGP) with SVM classification, achieving 93.5% accuracy [4]

- prPred-DRLF: Employs bi-directional long short-term memory (BiLSTM) and unified representation (UniRep) embedding with light gradient boosting machine (LGBM) classification, reaching 95.6% accuracy [3]

- StackRPred: A recently developed method that uses residue energy content matrices (RECM) with a stacking ensemble framework, outperforming previous state-of-the-art methods [3]
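As an example of the hand-crafted encodings these tools rely on, CKSAAP features of the kind used by prPred can be sketched as follows: for each gap k, count ordered residue pairs separated by k intervening residues and divide by the number of such positions. This is a minimal sketch; the gap range (k = 0 to 3 by default here) and the handling of non-standard residues are assumptions, and prPred's exact settings and the grouped CKSAAGP variant are not reproduced.

```python
from itertools import product

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
PAIRS = ["".join(p) for p in product(AMINO_ACIDS, repeat=2)]  # 400 ordered residue pairs


def cksaap(seq, k_max=3):
    """Composition of k-spaced amino acid pairs for gaps k = 0..k_max.

    For gap k, pairs are (seq[i], seq[i + k + 1]); each pair count is divided by the
    number of such positions, giving 400 * (k_max + 1) features. Pairs involving
    non-standard residues are skipped but still counted in the denominator
    (a simplifying assumption).
    """
    seq = seq.upper()
    features = []
    for k in range(k_max + 1):
        counts = dict.fromkeys(PAIRS, 0)
        n_positions = len(seq) - k - 1
        for i in range(max(n_positions, 0)):
            pair = seq[i] + seq[i + k + 1]
            if pair in counts:
                counts[pair] += 1
        features.extend(counts[p] / n_positions if n_positions > 0 else 0.0 for p in PAIRS)
    return features


vector = cksaap("MKTAYIAKQRQISFVKSHFSRQ")
print(len(vector))  # 1600
```

Within each gap block the 400 values sum to 1 for sequences of standard residues, so sequences of different lengths map to comparable fixed-length vectors that an SVM can consume directly.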

Table 2: Performance Comparison of Machine Learning Methods for R Protein Prediction

| Method | Algorithm | Key Features | Accuracy | Strengths |
|---|---|---|---|---|
| DRPPP | Support Vector Machine | 10,270 features from 16 methods | 91.11% | Comprehensive feature coverage |
| prPred | Support Vector Machine | CKSAAP, CKSAAGP | 93.5% | Two-step feature selection |
| prPred-DRLF | BiLSTM + LightGBM | UniRep embeddings | 95.6% | Handles long-range dependencies |
| StackRPred | Stacking Ensemble | RECM, PsePSSM, DWT | Reported as highest [3] | Captures structural energy properties |
| NBSPred | Support Vector Machine | Electronic annotation | Not reported | Early machine learning approach |

These machine learning methods are reported to outperform traditional domain-based approaches, particularly for identifying R proteins with low sequence similarity to known families. However, they face their own challenges, including limited interpretability (the "black box" problem) and the substantial computational resources required for training [3].

Integrated Domain-ML Approaches and Protocols

The CoDIAC Framework for Domain Interface Analysis

Recent advances have begun to bridge the gap between domain-centric and machine learning approaches. The CoDIAC (Comprehensive Domain Interface Analysis of Contacts) framework represents a novel structure-based interface analysis method that maps domain interfaces from experimental and predicted structures [2]. This Python-based tool performs contact mapping of domains to yield insights into domain selectivity, conservation of domain-domain interfaces across proteins, and conserved posttranslational modifications relative to interaction interfaces [2].

When applied to the human Src homology 2 (SH2) domains, CoDIAC revealed coordinated regulation of SH2 domain binding interfaces by tyrosine and serine/threonine phosphorylation and acetylation, suggesting that multiple signaling systems can jointly regulate protein activity and domain interactions [2]. Because CoDIAC analyzes predicted as well as experimental structures, it shows how structure-based analysis, increasingly supplied by machine-learning structure predictions, can extend our understanding of domain function beyond what sequence-level domain annotation provides.
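CoDIAC's own interface definitions and API are not reproduced here. The sketch below shows only the generic idea behind domain contact mapping: given per-residue coordinates (for example C-alpha atoms from an experimental or predicted structure) and two domain boundaries, list the residue pairs closer than a distance cutoff. The 8 Å cutoff, the one-atom-per-residue simplification, and the function name are assumptions.

```python
import numpy as np


def interface_contacts(coords, dom_a, dom_b, cutoff=8.0):
    """Residue pairs (i in domain A, j in domain B) closer than `cutoff` angstroms.

    coords: (N, 3) array with one representative atom per residue (e.g. C-alpha).
    dom_a, dom_b: (start, end) residue index ranges, end exclusive.
    """
    coords = np.asarray(coords, dtype=float)
    a_idx = np.arange(*dom_a)
    b_idx = np.arange(*dom_b)
    # Pairwise distances between every residue of A and every residue of B
    diff = coords[a_idx][:, None, :] - coords[b_idx][None, :, :]
    dist = np.linalg.norm(diff, axis=-1)
    rows, cols = np.nonzero(dist < cutoff)
    return [(int(a_idx[r]), int(b_idx[c])) for r, c in zip(rows, cols)]


# Toy chain of six residues spaced 3 angstroms apart along x; two 3-residue "domains"
toy = np.array([[3.0 * i, 0.0, 0.0] for i in range(6)])
print(interface_contacts(toy, dom_a=(0, 3), dom_b=(3, 6)))  # [(1, 3), (2, 3), (2, 4)]
```

Running the same function across many structures that share a domain family, and overlaying known modification sites on the returned residue indices, is the kind of comparison described above for SH2 interfaces.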

Experimental Protocol: Predicting R Proteins Using StackRPred

Protocol Objective: Identify plant resistance proteins from protein sequences using the StackRPred framework [3]

Step-by-Step Procedure:

Data Preparation

- Obtain protein sequences in FASTA format

- Curate training data with known R proteins and non-R proteins at an appropriate ratio (for example, 1:2 positive:negative)

- For the standard StackRPred dataset: 152 R proteins and 304 non-R proteins [3]

Feature Extraction

- Calculate Residue Energy Content Matrices (RECM) based on physicochemical properties

- RECM captures energy information between residue pairs that ensures protein structural stability [3]

- Extract Pseudo Position-Specific Score Matrix (PsePSSM) features from RECM

- Apply Discrete Wavelet Transform (DWT) to extract multi-resolution features
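The two RECM-derived encodings can be sketched in NumPy under stated assumptions. The RECM itself is not constructed here (its residue-pair energy values are not given in this text), so a random L x 20 matrix stands in for it, assuming it is laid out like a PSSM. The PsePSSM-style transform uses the common column-mean plus lagged squared-difference form without a normalization step, and a hand-written Haar wavelet stands in for whatever wavelet StackRPred actually uses (PyWavelets' `pywt.wavedec` is a library alternative).

```python
import numpy as np


def pse_pssm(matrix, max_lag=3):
    """PsePSSM-style features from an (L, 20) per-residue matrix.

    Returns the 20 column means followed, for each lag 1..max_lag, by the 20
    column-wise mean squared differences between residues `lag` positions apart.
    """
    m = np.asarray(matrix, dtype=float)
    if m.shape[0] <= max_lag:
        raise ValueError("need more rows (residues) than max_lag")
    feats = [m.mean(axis=0)]
    for lag in range(1, max_lag + 1):
        feats.append(((m[:-lag] - m[lag:]) ** 2).mean(axis=0))
    return np.concatenate(feats)  # length 20 * (max_lag + 1)


def haar_levels(signal, levels=2):
    """Multi-level Haar DWT: returns [detail_1, ..., detail_n, approx_n]."""
    a = np.asarray(signal, dtype=float)
    bands = []
    for _ in range(levels):
        if len(a) % 2:  # pad odd lengths by repeating the last value
            a = np.append(a, a[-1])
        bands.append((a[0::2] - a[1::2]) / np.sqrt(2))  # detail coefficients
        a = (a[0::2] + a[1::2]) / np.sqrt(2)            # approximation coefficients
    bands.append(a)
    return bands


def dwt_features(matrix, levels=2):
    """Mean and standard deviation of every Haar sub-band, column by column."""
    feats = []
    for column in np.asarray(matrix, dtype=float).T:
        for band in haar_levels(column, levels):
            feats.extend([band.mean(), band.std()])
    return np.array(feats)  # length 20 * (levels + 1) * 2


rng = np.random.default_rng(0)
recm_like = rng.normal(size=(150, 20))  # stand-in for a real RECM (assumption)
features = np.concatenate([pse_pssm(recm_like), dwt_features(recm_like)])
print(features.shape)  # (200,)
```

Both transforms turn a variable-length matrix into a fixed-length vector; the lag terms keep some sequence-order information that plain column means discard, and the wavelet bands summarize it at coarser resolutions.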

Feature Selection

- Implement two-step feature selection using SVM-Recursive Feature Elimination (SVM-RFE)

- Apply Correlation-Based feature selection (CBR) to remove redundant features

- Generate optimized feature set for model training
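The two-step selection can be sketched with scikit-learn's `RFE` wrapped around a linear-kernel SVM, followed by a simple correlation filter. The greedy filter and its 0.9 threshold are assumptions standing in for the correlation-based step, whose exact criterion is not described here, and the synthetic data replace real RECM-derived features.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.feature_selection import RFE
from sklearn.svm import SVC


def drop_correlated(X, threshold=0.9):
    """Keep a feature only if its |Pearson r| with every already-kept feature is < threshold."""
    corr = np.abs(np.corrcoef(X, rowvar=False))
    kept = []
    for j in range(X.shape[1]):
        if all(corr[j, k] < threshold for k in kept):
            kept.append(j)
    return kept


def two_step_selection(X, y, n_rfe=50, threshold=0.9):
    """Step 1: SVM-RFE ranking; step 2: remove redundant (highly correlated) survivors."""
    rfe = RFE(SVC(kernel="linear"), n_features_to_select=n_rfe, step=0.1).fit(X, y)
    rfe_idx = np.flatnonzero(rfe.support_)       # column indices kept by RFE
    kept = drop_correlated(X[:, rfe_idx], threshold)
    return rfe_idx[kept]                         # indices into the original X


X, y = make_classification(n_samples=200, n_features=120, n_informative=15,
                           n_redundant=30, random_state=0)
selected = two_step_selection(X, y, n_rfe=40)
print(len(selected), "features kept")
```

Running RFE first keeps the expensive correlation matrix small; the filter then removes near-duplicates that a linear SVM can rank highly together.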

Model Training - Base Layer

- Train multiple base classifiers including:

  - eXtreme Gradient Boosting (XGBoost)

  - Support Vector Machine (SVM)

  - K-Nearest Neighbors (KNN)

  - Gradient Boosting Decision Tree (GBDT)

  - Light Gradient Boosting Machine (LightGBM)

  - Random Forest (RF)

- Use 5-fold cross-validation for parameter optimization

Model Training - Meta Layer

- Use predictions from base classifiers as input features

- Train SVM classifier as meta-learner to make final predictions

- Validate model using independent test set (typically 20% of data)

Prediction and Validation

- Apply trained StackRPred model to unknown protein sequences

- Output probability scores for R protein classification

- Validate predictions with domain analysis (Pfam, InterProScan) for biological interpretation

Technical Notes:

- StackRPred specifically leverages pairwise energy content information that captures structural stability constraints [3]

- The stacking ensemble framework can help mitigate overfitting and improve generalization

- For optimal performance, ensure training data covers diverse R protein classes and plant species

Experimental Protocol: Domain-Centric R Protein Identification

Protocol Objective: Identify resistance proteins through systematic domain architecture analysis [5]

Step-by-Step Procedure:

Comprehensive Domain Scanning

- Perform batch domain analysis using PfamScan against Pfam domain database

- Identify key R protein domains: NBS, LRR, TIR, CC, KIN, TM domains

- Use HMMER3 for sensitive domain detection based on hidden Markov models

- Run InterProScan for integrated domain signature analysis

Architecture Classification

- Classify sequences into major R protein classes based on domain combinations:

  - TNL: TIR-NBS-LRR

  - CNL: CC-NBS-LRR

  - RLK: eLRR-TM-KIN

  - RLP: eLRR-TM (no intracellular kinase)

  - Kinase: predominantly KIN domains

- Identify atypical domain associations beyond these major classes
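The classification rules above, together with the NL case from the NBS-LRR subtyping step, can be written as a small rule table. This is a hypothetical sketch, not a published pipeline. The Pfam-name mapping covers only a few well-known families (NB-ARC for NBS, TIR and TIR_2, Pkinase and Pkinase_Tyr, and any family name beginning with "LRR"). The CC and TM flags are assumed to come from separate predictors such as nCoil and TMHMM, and the rule order is an illustrative choice.

```python
# Illustrative, incomplete mapping from Pfam family names to coarse domain labels
PFAM_TO_LABEL = {
    "NB-ARC": "NBS",
    "TIR": "TIR",
    "TIR_2": "TIR",
    "Pkinase": "KIN",
    "Pkinase_Tyr": "KIN",
}


def domain_labels(pfam_names, has_cc=False, has_tm=False):
    """Collapse Pfam hits plus CC/TM predictor flags into a set of coarse labels."""
    labels = set()
    for name in pfam_names:
        if name.startswith("LRR"):  # LRR_1, LRR_8, ... all count as LRR
            labels.add("LRR")
        elif name in PFAM_TO_LABEL:
            labels.add(PFAM_TO_LABEL[name])
    if has_cc:
        labels.add("CC")
    if has_tm:
        labels.add("TM")
    return labels


def classify_architecture(labels):
    """Assign a major R protein class from a set of domain labels."""
    d = set(labels)
    if {"NBS", "LRR"} <= d:
        if "TIR" in d:
            return "TNL"
        if "CC" in d:
            return "CNL"
        return "NL"
    if {"LRR", "TM", "KIN"} <= d:
        return "RLK"
    if {"LRR", "TM"} <= d:  # reached only when no kinase is present
        return "RLP"
    if d == {"KIN"}:
        return "Kinase"
    return "atypical"


print(classify_architecture(domain_labels(["TIR", "NB-ARC", "LRR_8"])))  # TNL
print(classify_architecture(domain_labels(["LRR_1"], has_tm=True)))      # RLP
```

Anything that falls through to "atypical" is a candidate for the atypical association analysis described below.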

NBS-LRR Subtyping

- Apply coiled-coil prediction (nCoil) to distinguish CNL from NL proteins

- Use TIR domain signatures to identify TNL proteins

- Differentiate between CC-NBS-LRR and NBS-LRR architectures

Receptor Protein Differentiation

- Implement transmembrane domain prediction using TMHMM or Phobius

- Apply Fritz-Laylin method to distinguish RLK from RLP proteins

- Filter RLP proteins based on homology to known resistance-related RLPs

Atypical Association Analysis

- Identify unusual domain combinations not fitting major classes

- Assess conservation of atypical architectures across plant species

- Evaluate potential functional innovations in atypical associations

Validation and Interpretation:

- Cross-reference predictions with PRGdb (Plant Resistance Gene Database)

- Perform phylogenetic analysis of domain architectures

- Evaluate genomic context and syntenic relationships for novel candidates

Table 3: Key Research Reagents and Computational Resources for Domain-Centric Protein Analysis

| Resource | Type | Function | Application in R Protein Research |
|---|---|---|---|
| PRGdb | Database | Curated repository of known and putative R genes | Reference data for validation and comparison [5] [4] |
| Pfam | Domain Database | Collection of protein domain families | Domain fingerprinting and architecture analysis [4] |
| CDD | Domain Database | Conserved Domain Database for sequence classification | Identification of R protein-specific domain variants [1] |
| HMMER | Software Tool | Profile hidden Markov model implementation | Sensitive domain detection and family classification [4] |
| InterProScan | Software Tool | Integrated domain and signature recognition | Comprehensive domain architecture analysis [4] |
| TMHMM | Software Tool | Transmembrane helix prediction | Membrane protein classification (RLK vs RLP) [4] |
| nCoil | Software Tool | Coiled-coil domain prediction | CC domain identification in CNL proteins [4] |
| Phobius | Software Tool | Transmembrane topology and signal peptide prediction | Subcellular localization prediction [4] |
| CATH | Database | Hierarchical classification of protein structures | Structural domain evolutionary analysis [7] |
| SCOPe | Database | Structural Classification of Proteins extended | Fold-based domain classification and analysis [1] |

The Domain Hypothesis continues to provide a powerful conceptual framework for understanding protein evolution and function. By viewing proteins as modular assemblies of domain building blocks, researchers can decipher evolutionary histories and predict functional capabilities. This perspective is particularly valuable in the study of plant resistance proteins, where domain combinations directly determine pathogen recognition specificities and signaling capabilities.

The integration of machine learning with traditional domain analysis represents the future frontier in protein bioinformatics. Methods like StackRPred that incorporate residue energy information and structural constraints demonstrate how ML can enhance our ability to identify proteins based on fundamental principles beyond sequence similarity [3]. Meanwhile, approaches like CoDIAC show how computational methods can reveal new insights into domain function and regulation [2].

As structural prediction methods like AlphaFold continue to advance [8], the research community will increasingly leverage high-accuracy protein models to inform domain analyses and identify novel functional relationships. The emerging paradigm combines evolutionary principles embodied in the Domain Hypothesis with the pattern recognition power of machine learning, creating synergistic approaches that advance both basic knowledge and practical applications in crop improvement and disease resistance breeding programs.

The field of protein structure and function prediction has undergone a revolutionary shift, moving from reliance on manual feature engineering and domain-based knowledge to the adoption of deep learning systems capable of automated pattern discovery. This transition is central to modern computational biology, particularly in the critical area of R-protein prediction, where accurately modeling resistance protein structures is essential for understanding plant immunity and developing sustainable crop protection strategies. Whereas traditional methods depended on expert-defined features and homology-based modeling, contemporary artificial intelligence (AI) pipelines now integrate multisource deep learning potentials and iterative physical simulations to achieve unprecedented accuracy in predicting protein tertiary structures and their functional interactions [9] [10]. This paradigm shift not only enhances our predictive capabilities but also fundamentally changes the workflow from a heavily human-dependent process to an automated, data-driven discovery engine. These advances are pushing the boundaries of drug discovery, protein engineering, and functional annotation, establishing a new foundation for precision medicine and therapeutic development [11].

The Evolution of Methodologies in Protein Prediction

From Manual Feature Extraction to Deep Learning

The initial approach to protein prediction relied heavily on manual feature extraction, where scientists identified and quantified specific protein characteristics based on domain knowledge.

Table: Traditional Manual Feature Extraction Techniques in Protein Science

| Feature Category | Specific Examples | Application in Protein Prediction |
|---|---|---|
| Sequence-based Features | Amino acid composition, physicochemical properties (e.g., hydrophobicity, charge), sequence motifs | Primary structure analysis, homology detection |
| Structural Features | Secondary structure propensities, solvent accessibility, contact maps | Template-based modeling, fold recognition |
| Evolutionary Features | Position-Specific Scoring Matrix (PSSM), co-evolutionary signals | Threading, identifying remote homologs |

These manually curated features were then used as input for conventional machine learning models, such as support vector machines or hidden Markov models. The limitations were evident: the process was labor-intensive, required deep expertise, and could easily miss complex, non-linear relationships within the data [12] [13].
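To make the manual end of this spectrum concrete, here are two classic hand-engineered sequence features from the table: amino acid composition and the grand average of hydropathy (GRAVY) on the Kyte-Doolittle scale. The scale values are the published Kyte-Doolittle values; ignoring non-standard residues is a simplifying choice made for this sketch.

```python
from collections import Counter

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

# Kyte-Doolittle hydropathy scale
KYTE_DOOLITTLE = {
    "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
    "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
    "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
}


def aa_composition(seq):
    """Fraction of each standard amino acid (non-standard residues ignored)."""
    residues = [aa for aa in seq.upper() if aa in KYTE_DOOLITTLE]
    counts = Counter(residues)
    n = len(residues) or 1
    return {aa: counts[aa] / n for aa in AMINO_ACIDS}


def gravy(seq):
    """Grand average of hydropathy: mean Kyte-Doolittle value over standard residues."""
    values = [KYTE_DOOLITTLE[aa] for aa in seq.upper() if aa in KYTE_DOOLITTLE]
    return sum(values) / len(values) if values else 0.0


# 21-dimensional hand-crafted feature vector: 20 composition values + GRAVY
features = list(aa_composition("MKTAYIAKQR").values()) + [gravy("MKTAYIAKQR")]
```

Vectors like this were the typical inputs to SVMs and similar models; each dimension is interpretable, but any interaction between residues has to be engineered explicitly.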

The advent of deep learning marked a decisive turn towards automated pattern discovery. Modern architectures, including deep residual convolutional networks and self-attention transformers, now directly ingest raw or minimally pre-processed data—such as amino acid sequences and multiple sequence alignments (MSAs)—to autonomously learn hierarchical feature representations. This capability is exemplified by models like ProtT5, which generates context-aware embeddings for each amino acid in a sequence, capturing complex biochemical properties without human guidance [7]. This shift has enabled the development of end-to-end prediction systems that seamlessly map sequence to structure, moving beyond the constraints of manual feature design [9] [10].

Comparative Analysis: Domain-Based vs. Machine Learning Approaches

The distinction between domain-based methods and modern machine learning is not merely technical but philosophical, reflecting a fundamental shift in how biological knowledge is encoded and applied.

Domain-based methods, such as template-based modeling (TBM), operate on the principle of homology. Tools like MODELLER and Swiss-PdbViewer rely on identifying known protein structures (templates) with significant sequence similarity to the target. The process involves sequence alignment, model building by transferring coordinates from the template, and subsequent refinement. While effective for targets with clear homologs, TBM performs poorly for proteins with novel folds or minimal sequence similarity to any known structure [9].

In contrast, machine learning approaches, particularly template-free modeling (TFM) and ab initio methods, learn the underlying principles of protein folding from vast datasets. AlphaFold2 demonstrated the power of this paradigm by using an end-to-end deep learning model to achieve atomic accuracy. Subsequent innovations, such as D-I-TASSER, have further integrated these learned potentials with physics-based simulations, creating hybrid models that outperform purely AI-based or purely physical approaches [10]. These methods excel where domain-based methods struggle, particularly on "hard" targets that lack close structural templates.

Application Notes: Machine Learning in Action

High-Accuracy Protein Structure Prediction with D-I-TASSER

The D-I-TASSER (deep-learning-based iterative threading assembly refinement) pipeline represents a state-of-the-art hybrid approach that synergizes deep learning with physics-based simulations. Its performance on a benchmark of 500 non-redundant "Hard" protein domains underscores the success of this integrated paradigm. D-I-TASSER achieved an average TM-score of 0.870, significantly outperforming AlphaFold2 (TM-score = 0.829) and AlphaFold3 (TM-score = 0.849) [10]. The advantage was most pronounced on difficult targets, where D-I-TASSER's ability to leverage iterative physical simulations provided a critical edge over purely deep learning-based end-to-end systems.

Table: Performance Benchmark of Protein Structure Prediction Methods [10]

| Method | Average TM-score (500 Hard Targets) | Correct Folds (TM-score > 0.5) | Key Innovation |
|---|---|---|---|
| I-TASSER (Physics-based) | 0.419 | 145 | Template threading & physical force fields |
| C-I-TASSER (Hybrid) | 0.569 | 329 | Integration of deep-learning-predicted contacts |
| AlphaFold2.3 | 0.829 | N/A | End-to-end deep learning with MSAs |
| AlphaFold3 | 0.849 | N/A | Diffusion model & multimodality |
| D-I-TASSER (Hybrid-AI) | 0.870 | 480 | Multisource deep learning potentials + iterative physics simulations |
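Because TM-score is the yardstick for these benchmarks, a worked version of its standard definition may help. For a target of length L_target, TM-score = (1/L_target) × Σ_i 1 / (1 + (d_i/d0)^2), summed over aligned residue pairs whose distance after superposition is d_i, with d0 = 1.24 × (L_target - 15)^(1/3) - 1.8 Å. The sketch scores one given superposition only; the actual TM-score programs also search for the superposition that maximizes the score. The 0.5 Å floor on d0 for very short chains is an assumed convention here.

```python
import numpy as np


def tm_d0(length):
    """Distance scale d0 (angstroms) for a target of the given length."""
    d0 = 1.24 * np.cbrt(length - 15) - 1.8
    return max(float(d0), 0.5)  # floor for very short chains (assumed convention)


def tm_score(distances, target_length):
    """TM-score for ONE fixed superposition.

    distances: per-pair distances (angstroms) between aligned residues after superposition.
    Unaligned target residues contribute nothing but still count in the denominator.
    """
    d = np.asarray(distances, dtype=float)
    d0 = tm_d0(target_length)
    return float(np.sum(1.0 / (1.0 + (d / d0) ** 2)) / target_length)


print(tm_score(np.zeros(100), 100))  # 1.0: every residue superimposed exactly
print(tm_score(np.zeros(50), 100))   # 0.5: only half the target aligned
```

Normalizing by the target length is why partial models are penalized, and the length-dependent d0 is what makes the 0.5 "correct fold" threshold in the table roughly comparable across proteins of different sizes.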

A critical innovation in D-I-TASSER is its specialized protocol for multidomain protein structure prediction. Unlike many earlier models focused on single domains, D-I-TASSER incorporates a domain partition and assembly module. It iteratively identifies domain boundaries, generates domain-level MSAs and spatial restraints, and then reassembles the full-chain model using hybrid domain-level and interdomain restraints. This capability is vital for accurately modeling the complex architectures of R-proteins and other eukaryotic proteins, over 80% of which contain multiple domains [10].

Predicting Functional Relationships from Sequence and Structure

Beyond tertiary structure, machine learning is transforming the prediction of protein function and interactions. A key application is predicting de novo protein-protein interactions (PPIs), that is, interactions with no natural precedent. Existing methods, including AlphaFold2, excel at predicting endogenous interactions but show a performance drop on de novo PPIs [14]. Novel algorithms are now tackling this challenge using graph-based atomistic models and methods that learn from molecular surface features, opening new avenues for drug discovery, such as designing molecular glues that rewire cellular functions [14].

Furthermore, integrating sequence and structural features significantly enhances protein function prediction. A LightGBM-based machine learning model demonstrated that combining features like full-length sequence identity, domain structural similarity, and pocket similarity outperforms models based on sequence alone. Feature importance analysis revealed that domain sequence identity, calculated through structural alignment, was the most influential predictor, highlighting the critical role of structural information in determining functional identity [15].

Experimental Protocols

Protocol 1: Predicting Protein Structural Similarity with Rprot-Vec

Purpose: To rapidly predict the structural similarity (TM-score) between two proteins using only their primary sequences, bypassing the need for resource-intensive 3D structure prediction or alignment.

Principle: The Rprot-Vec model is a deep learning framework that employs a ProtT5 encoder for context-aware sequence embedding, followed by Bidirectional Gated Recurrent Units (Bi-GRU) and multi-scale Convolutional Neural Networks (CNN) to extract global and local features. The final protein representations are used to compute a TM-score via cosine similarity [7].

Steps:

- Input Preparation: Prepare the two protein sequences of interest in standard FASTA format.

- Sequence Encoding: Pass each sequence through the ProtT5-XL-U50 model. This generates a 1024-dimensional vector representation for each amino acid, capturing its context within the entire sequence.

- Feature Extraction:

  - The sequence of embeddings is processed by a Bidirectional GRU layer to capture long-range, bidirectional dependencies in the sequence.

  - An attention layer is applied to weight the importance of different amino acid positions.

  - Multi-scale CNN blocks, using convolution kernels of sizes 3 and 7, are applied in parallel to capture local sequence motifs of varying lengths.

- Vector Generation: The outputs are pooled and passed through a fully connected layer to produce a single, fixed-dimensional vector representation for each protein.

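- Similarity Calculation: Compute the cosine similarity between the two protein vectors and treat it as the predicted TM-score. TM-score ranges from 0 to 1: values near 1 indicate nearly identical structures, values above about 0.5 generally indicate the same fold, and values below about 0.17 are typical of unrelated structures. (Cosine similarity itself can range from -1 to 1, so negative values must be clipped or avoided by design.)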
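The encoder described in these steps can be sketched in PyTorch. This is an illustrative, untrained sketch, not the published Rprot-Vec code: the hidden sizes, the single-layer attention scoring, the max-pooling of CNN outputs, and clamping the cosine to [0, 1] are all assumptions, and random tensors stand in for ProtT5-XL-U50 embeddings (1024 values per residue). In practice the encoder would be trained so that the cosine of the two vectors regresses onto reference TM-scores.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class RprotVecLikeEncoder(nn.Module):
    """Sketch of a Bi-GRU + attention + multi-scale CNN sequence encoder (hypothetical sizes)."""

    def __init__(self, emb_dim=1024, hidden=256, out_dim=512):
        super().__init__()
        self.gru = nn.GRU(emb_dim, hidden, batch_first=True, bidirectional=True)
        self.attn = nn.Linear(2 * hidden, 1)  # one score per residue position
        self.conv3 = nn.Conv1d(2 * hidden, hidden, kernel_size=3, padding=1)
        self.conv7 = nn.Conv1d(2 * hidden, hidden, kernel_size=7, padding=3)
        self.fc = nn.Linear(4 * hidden, out_dim)

    def forward(self, x):
        # x: (batch, L, emb_dim) per-residue embeddings, e.g. from ProtT5
        h, _ = self.gru(x)                                  # (B, L, 2H) bidirectional context
        weights = torch.softmax(self.attn(h), dim=1)        # (B, L, 1) attention over positions
        global_vec = (weights * h).sum(dim=1)               # (B, 2H) attention-pooled summary
        hc = h.transpose(1, 2)                              # (B, 2H, L) layout for Conv1d
        local = torch.cat([F.relu(self.conv3(hc)), F.relu(self.conv7(hc))], dim=1)
        local_vec = local.max(dim=2).values                 # (B, 2H) strongest local motifs
        return self.fc(torch.cat([global_vec, local_vec], dim=1))  # (B, out_dim)


def predicted_tm(encoder, emb_a, emb_b):
    """Cosine similarity of the two protein vectors, clamped to the TM-score range [0, 1]."""
    return F.cosine_similarity(encoder(emb_a), encoder(emb_b), dim=1).clamp(0.0, 1.0)


torch.manual_seed(0)
encoder = RprotVecLikeEncoder().eval()
emb_a = torch.randn(1, 120, 1024)  # random stand-ins for ProtT5-XL-U50 embeddings
emb_b = torch.randn(1, 95, 1024)
with torch.no_grad():
    score = predicted_tm(encoder, emb_a, emb_b)
print(score.item())  # meaningless until the encoder is trained
```

Because each protein is reduced to one fixed-length vector, vectors for a whole proteome can be computed once and compared pairwise cheaply, which is what makes this kind of model attractive for large-scale pre-screening.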

Applications: This protocol is ideal for large-scale protein homology detection, function inference for unannotated proteins, and pre-screening candidate proteins before detailed structural analysis [7].

Protocol 2: Hybrid Structure Prediction for Multidomain Proteins using D-I-TASSER

Purpose: To construct atomic-level structural models for complex multidomain proteins by integrating deep learning predictions with physics-based folding simulations.

Principle: D-I-TASSER combines multisource spatial restraints (from DeepPotential, AttentionPotential, and AlphaFold2) with replica-exchange Monte Carlo (REMC) simulations for structure assembly. Its specialized domain-splitting protocol handles the inherent complexity of multidomain proteins [10].

Steps:

- Deep MSA Construction: Iteratively search genomic and metagenomic databases (e.g., UniRef90) to construct deep multiple sequence alignments for the target protein.

- Domain Partition: The input sequence is analyzed to predict potential domain boundaries.

- Domain-Level Processing: For each identified domain, the pipeline generates domain-specific MSAs, threading alignments (using LOMETS3), and spatial restraints independently.

- Spatial Restraint Generation: Multisource deep learning potentials are used to predict inter-residue distance maps, contact maps, and hydrogen-bonding networks for both intra-domain and inter-domain regions.

- Iterative Threading Assembly Simulation:

  - Template fragments from threading alignments are assembled using Replica-Exchange Monte Carlo (REMC) simulations.

  - The simulation is guided by a hybrid force field that combines the deep learning restraints with a knowledge-based physical force field.

  - For multidomain proteins, the assembly process uses the hybrid domain-level and inter-domain restraints to correctly orient and pack the domains.

- Model Selection and Refinement: The lowest energy models from the simulation are selected and refined at the atomic level to produce the final 3D structure.
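The replica-exchange idea at the core of the assembly step can be illustrated on a one-dimensional toy energy landscape. This is not D-I-TASSER's implementation: there are no fragments, restraints, or force field here, and the function names, temperature ladder, and step size are hypothetical choices. Each replica runs Metropolis moves at its own temperature, and neighbouring replicas periodically swap states, so that low-energy states found by hot, freely exploring replicas can migrate down to the cold ones.

```python
import math
import random


def remc(energy, x0, temps, n_sweeps=2000, step=0.5, seed=0):
    """Replica-exchange Monte Carlo over a 1-D toy landscape.

    One replica runs at each temperature in `temps` (sorted low to high). Each sweep
    performs one Metropolis move per replica, then attempts one swap between a
    randomly chosen pair of neighbouring temperatures. Returns the lowest-energy
    state seen by any replica.
    """
    rng = random.Random(seed)
    xs = [x0] * len(temps)
    es = [energy(x0)] * len(temps)
    best_x, best_e = x0, es[0]
    for _ in range(n_sweeps):
        for k, temp in enumerate(temps):
            x_new = xs[k] + rng.uniform(-step, step)
            e_new = energy(x_new)
            # Metropolis criterion at this replica's temperature
            if e_new <= es[k] or rng.random() < math.exp(-(e_new - es[k]) / temp):
                xs[k], es[k] = x_new, e_new
                if e_new < best_e:
                    best_x, best_e = x_new, e_new
        # Exchange: accept with probability min(1, exp((1/T_k - 1/T_k+1) * (E_k - E_k+1)))
        k = rng.randrange(len(temps) - 1)
        delta = (1 / temps[k] - 1 / temps[k + 1]) * (es[k] - es[k + 1])
        if delta >= 0 or rng.random() < math.exp(delta):
            xs[k], xs[k + 1] = xs[k + 1], xs[k]
            es[k], es[k + 1] = es[k + 1], es[k]
    return best_x, best_e


def double_well(x):
    """Toy energy with a local minimum near x = +2 and a lower one near x = -2."""
    return (x * x - 4) ** 2 + x


best_x, best_e = remc(double_well, x0=2.0, temps=[0.1, 0.5, 2.0, 8.0])
print(f"lowest energy {best_e:.2f} at x = {best_x:.2f}")
```

Started in the higher local minimum, a single cold Metropolis chain would rarely cross the barrier at x = 0; the hot replicas cross it easily, which is the property that makes REMC useful for rugged conformational landscapes.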

Applications: This protocol is particularly suited for predicting the structures of large, multidomain proteins, such as many R-proteins, where accurate domain orientation is critical for understanding function and mechanism. Benchmark tests confirm its superior performance over other leading methods on such targets [10].

Table: Key Computational Tools and Databases for AI-Driven Protein Prediction

| Resource Name | Type | Primary Function | Relevance to R-protein Prediction |
|---|---|---|---|
| AlphaSync Database [16] | Database | Provides continuously updated, pre-computed protein structures from AlphaFold2. | Ensures researchers work with the most current structural models, including for plant proteomes, and provides data in a 2D tabular format ideal for machine learning. |
| D-I-TASSER [10] | Software Suite | Hybrid deep learning and physics-based protein structure prediction server. | Accurately models single-domain and multidomain R-protein structures, often outperforming end-to-end deep learning methods on difficult targets. |
| Rprot-Vec [7] | Software/Model | A deep learning model for fast protein structural similarity calculation from sequence. | Enables rapid homology detection and functional inference for novel R-protein sequences without requiring 3D structure prediction. |
| UniProt Knowledgebase | Database | Central repository of protein sequence and functional information. | The primary source for obtaining canonical and isoform sequences for R-proteins and related proteins for analysis. |
| CATH Database [7] | Database | Hierarchical classification of protein domain structures. | Used for training and benchmarking structure prediction models; provides evolutionary and functional insights into R-protein domains. |

The expansion of biological data has created a critical need for robust computational methods to predict protein function, a task central to understanding biological mechanisms and developing treatments for complex diseases. Although experimental methods for determining protein function are reliable, they are time-consuming and costly, leaving the vast majority of protein sequences functionally uncharacterized [17]. Within the broader comparison of machine learning approaches that model whole proteins against more traditional domain-based methods, domain-based strategies are gaining traction because proteins are composed of specific functional domains that are closely related to their structures and functions [17]. Selecting appropriate training data is paramount for building accurate, generalizable predictive models and for choosing the most informative computational tools.

A suite of public databases provides the foundational data for training protein function prediction models. These resources can be broadly categorized into those providing protein-protein interaction (PPI) networks, experimentally determined structures, and computationally predicted structures. The table below summarizes the core data sources relevant to this field.

Table 1: Key Data Sources for Predictive Model Training

| Resource Name | Primary Content | Data Type | Scale (as of latest update) | Key Application in Model Training |
|---|---|---|---|---|
| STRING [18] [19] | Functional protein association networks | Predicted & curated interactions (physical, functional, pathway) | >20 billion interactions across 59.3 million proteins from 12,535 organisms [18] | Feature engineering for network-based and context-aware models |
| BioGRID [20] [21] | Physical and genetic interactions, PTMs, chemical associations | Manually curated interactions from literature | ~2.25 million non-redundant interactions from over 87,000 publications [20] | Gold-standard training sets and validation for high-confidence interaction prediction |
| RCSB PDB [22] | Experimentally determined 3D structures of proteins and nucleic acids | Curated atomic coordinates from X-ray, Cryo-EM, NMR | ~200,000 structures (implied) [23] | Source of ground-truth structural data for structure-based function prediction |
| AlphaFold DB [24] [23] | AI-predicted protein structures | Computationally predicted 3D models | Over 200 million entries, covering nearly the entire UniProt proteome [24] | Large-scale input features for structure-based models where experimental structures are absent |
| ModelArchive [22] | Repository of theoretical macromolecular structure models | Computationally predicted 3D models | Variable (community-contributed) | Supplementary source of structural models for training and analysis |

Experimental Protocols for Data Utilization

Protocol 1: Building a Domain-Guided Function Prediction Model with DPFunc

Application Note: This protocol details the use of protein structure and domain information to train DPFunc, a deep learning model that exemplifies the advantage of integrating domain guidance over whole-protein (R-protein) approaches for predicting Gene Ontology (GO) terms [17].

Workflow Diagram: DPFunc Model Architecture

Materials & Reagents:

- Input Data: Protein amino acid sequences and their corresponding 3D structures (can be experimentally derived from PDB or computationally predicted by AlphaFold2).

- Software Tools: InterProScan for domain detection, ESM-1b pre-trained protein language model, PyTorch/TensorFlow deep learning framework.

- Training Labels: Gene Ontology (GO) annotations for proteins, typically sourced from UniProt-GOA.

Methodology:

- Residue-Level Feature Learning: For a target protein sequence, initial residue-level features are generated using the pre-trained ESM-1b protein language model. Simultaneously, a contact map is constructed from the protein's 3D structure. Both are fed into Graph Convolutional Network (GCN) layers to update and learn the final residue-level features that incorporate structural context [17].

- Domain-Guided Feature Extraction: The target protein sequence is scanned with InterProScan to detect functional domains. These domain entries are converted into dense numerical representations via an embedding layer [17].

- Domain-Guided Attention: An attention mechanism, inspired by transformer architecture, integrates the protein-level domain features with the residue-level features. This step identifies and weights the importance of different residues in the structure with respect to the detected domains [17].

- Function Prediction & Post-Processing: The weighted residue features are aggregated into a protein-level feature vector and passed through fully connected layers to predict GO terms. A final post-processing step ensures the predictions are consistent with the hierarchical structure of the GO graph [17].
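The domain-guided attention step can be sketched without a deep learning framework. This minimal sketch assumes three things. First, residue features already exist (for example from ESM-1b plus GCN layers). Second, detected InterPro domains have been mapped to integer IDs. Third, a plain dict stands in for the learned embedding layer. The averaging of domain embeddings into a single query and the scaled dot-product scoring are illustrative choices. They are not DPFunc's published implementation.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def domain_guided_pooling(residue_feats, domain_ids, domain_table):
    """Pool residue features into one protein vector, weighted by domain attention.

    residue_feats: list of n residue vectors, each of length d.
    domain_ids: integer IDs of detected domains (e.g. from InterProScan).
    domain_table: dict domain ID -> length-d vector; a hypothetical stand-in
                  for a learned embedding layer.
    Returns (protein_vector, residue_weights).
    """
    if not domain_ids:
        raise ValueError("this sketch assumes at least one detected domain")
    d = len(residue_feats[0])
    # Protein-level domain query: mean of the detected domains' embeddings.
    query = [0.0] * d
    for dom in domain_ids:
        for k, x in enumerate(domain_table[dom]):
            query[k] += x / len(domain_ids)
    # Scaled dot-product score per residue, normalised into attention weights.
    scores = [dot(query, h) / math.sqrt(d) for h in residue_feats]
    weights = softmax(scores)
    # Attention-weighted sum of residue features -> protein-level feature vector.
    protein = [sum(w * h[k] for w, h in zip(weights, residue_feats)) for k in range(d)]
    return protein, weights


# Toy usage: 6 residues with random 4-dimensional features and two detected domains.
rng = random.Random(0)
residues = [[rng.gauss(0, 1) for _ in range(4)] for _ in range(6)]
domain_table = {101: [1.0, 0.0, 0.0, 0.0], 202: [0.0, 1.0, 0.0, 0.0]}  # hypothetical IDs
protein_vec, residue_weights = domain_guided_pooling(residues, [101, 202], domain_table)
```

The returned residue weights are the quantity that lets this kind of model highlight residues it considers functionally relevant. The protein vector would then feed the fully connected GO classifier.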

Expected Outcomes: DPFunc has been shown to outperform state-of-the-art sequence-based and structure-based methods. On a benchmark dataset, it achieved significant improvements in Fmax scores (e.g., 16% in Molecular Function, 27% in Cellular Component) over the next best structure-based method, GAT-GO [17].
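The quoted gains are relative Fmax improvements. As a quick arithmetic check, the sketch below recomputes them from the PDB-benchmark Fmax values tabulated in the performance section of this article (GAT-GO versus DPFunc with post-processing). It assumes those are the figures the percentages refer to.

```python
# Fmax values copied from the PDB benchmark table in this article.
gat_go = {"MF": 0.635, "CC": 0.584, "BP": 0.532}
dpfunc_post = {"MF": 0.737, "CC": 0.741, "BP": 0.654}


def relative_gain(new, old):
    """Relative improvement of `new` over `old`, in percent."""
    return 100.0 * (new - old) / old


gains = {k: round(relative_gain(dpfunc_post[k], gat_go[k])) for k in gat_go}
print(gains)  # {'MF': 16, 'CC': 27, 'BP': 23}
```

The same calculation gives roughly 23% for Biological Process. In absolute terms, the MF and CC gains are about 0.10 and 0.16 Fmax points.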

Protocol 2: Constructing a Protein-Protein Interaction Network for Functional Inference

Application Note: This protocol describes the use of STRING and BioGRID to build and analyze a PPI network, which can serve as input features for network-based ML models or for direct biological interpretation.

Workflow Diagram: PPI Network Construction & Analysis

Materials & Reagents:

- Input Data: A list of seed protein identifiers (e.g., UniProt IDs, gene names) for the organism of interest.

- Software/Tools: STRING web interface or API, BioGRID web interface or downloadable files, network analysis tools (e.g., Cytoscape, NetworkX in Python).

Methodology:

- Data Retrieval: Query the STRING database using seed protein names and the target organism. Separately, query the BioGRID database for the same proteins to obtain high-confidence, manually curated physical and genetic interactions [18] [20] [21].

- Data Filtering and Merging: In STRING, set a minimum interaction score threshold (e.g., high confidence > 0.7) to reduce false positives [19]. In BioGRID, all interactions are experimentally supported. Merge the interaction lists from both sources, removing duplicate entries.

- Network Construction and Analysis: Build a network graph from the merged data, with proteins as nodes and interactions as edges. Analyze the network's topology (e.g., identify highly connected proteins) and perform functional enrichment analysis to find GO terms or pathways that are statistically over-represented in the network [19].
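The filtering, merging and de-duplication steps can be sketched in plain Python. This assumes interactions have already been downloaded and parsed into tuples, with STRING scores rescaled to 0 to 1; STRING's bulk files use a 0 to 1000 integer scale. The tuple layout and the placeholder protein names are illustrative assumptions, not either database's file format. Self-interactions are dropped here, which is also a choice of this sketch.

```python
from collections import defaultdict


def build_ppi_network(string_edges, biogrid_edges, min_score=0.7):
    """Merge STRING and BioGRID interactions into one undirected network.

    string_edges: iterable of (protein_a, protein_b, combined_score in [0, 1]).
    biogrid_edges: iterable of (protein_a, protein_b); curated, so not score-filtered.
    Returns a dict mapping each protein to the set of its neighbours.
    """
    edges = set()
    for a, b, score in string_edges:
        if score > min_score and a != b:
            edges.add(frozenset((a, b)))  # frozenset makes A-B and B-A the same edge
    for a, b in biogrid_edges:
        if a != b:
            edges.add(frozenset((a, b)))  # duplicates of STRING edges collapse here
    adjacency = defaultdict(set)
    for edge in edges:
        a, b = tuple(edge)
        adjacency[a].add(b)
        adjacency[b].add(a)
    return dict(adjacency)


def degree_centrality(adjacency):
    """Normalised degree centrality: degree / (n - 1)."""
    n = len(adjacency)
    if n < 2:
        return {p: 0.0 for p in adjacency}
    return {p: len(nbrs) / (n - 1) for p, nbrs in adjacency.items()}


# Toy records with placeholder names and invented scores.
string_edges = [("P1", "P2", 0.99), ("P2", "P1", 0.99), ("P1", "P3", 0.65)]
biogrid_edges = [("P1", "P3"), ("P2", "P4")]
net = build_ppi_network(string_edges, biogrid_edges)
centrality = degree_centrality(net)
```

In this toy example the low-scoring STRING edge P1-P3 is rejected but re-enters through BioGRID. The reversed duplicate P2-P1 collapses into a single edge.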

Expected Outcomes: This process generates a high-confidence PPI network that can reveal functional modules. The network itself, along with node centrality measures and enrichment results, can be used as features for machine learning models to predict protein function or to prioritize new candidate proteins for further study.
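The functional enrichment step is commonly scored with a one-sided hypergeometric test, which is equivalent to a one-sided Fisher's exact test. The sketch below computes that p-value with the standard library only. The counts in the example are invented. Real analyses also correct for multiple testing across many terms, and this sketch omits that step.

```python
from math import comb


def enrichment_p_value(k, n, K, N):
    """P(X >= k) for X ~ Hypergeometric(population N, successes K, draws n).

    N: proteins in the background (e.g. the organism's annotated proteome)
    K: background proteins annotated with the GO term or pathway
    n: proteins in the network or module
    k: network proteins annotated with the term
    """
    upper = min(n, K)
    hits = sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1))
    return hits / comb(N, n)


# Invented counts: 3 of 4 network proteins carry a term found in 5 of 20 background proteins.
p = enrichment_p_value(k=3, n=4, K=5, N=20)  # 155 / 4845, about 0.032
```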

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table catalogs key computational and data "reagents" essential for research in protein function and structure prediction.

Table 2: Key Research Reagent Solutions for Predictive Modeling

| Item Name | Function / Application | Relevant Database/Tool |
|---|---|---|
| Pre-trained Protein Language Model (ESM-1b) | Generates evolutionarily informed, residue-level feature embeddings from amino acid sequences. | DPFunc [17]; its successor, ESM-2, underlies ESMFold [22] |
| InterProScan | Scans protein sequences against signatures from multiple databases to detect functional domains and sites. | DPFunc protocol [17] |
| AlphaFold2 Predicted Structure | Provides atomic-level 3D protein models for sequences lacking experimental structures; input for structure-based models. | AlphaFold DB [24] [23] |
| CRISPR Phenotype Data (ORCS) | Provides curated gene-phenotype relationships from genome-wide CRISPR screens for functional validation. | BioGRID ORCS [20] [21] |
| Gene Ontology (GO) Annotations | Provides standardized functional terms (Molecular Function, Biological Process, Cellular Component) for model training and evaluation. | Model benchmarking [17] |
| Graph Neural Network (GNN) | Deep learning architecture for learning from graph-structured data like PPI networks or protein contact maps. | DPFunc, DeepFRI [17] |

Discussion and Future Perspectives

The integration of data from STRING, BioGRID, PDB, and AlphaFold DB provides a multi-faceted evidence stream that is crucial for advancing protein function prediction. The comparative analysis between R-protein and domain-based methods, as exemplified by DPFunc, strongly indicates that guiding models with domain information unlocks greater accuracy and interpretability by pinpointing key functional residues within the structure [17].

Future developments will likely focus on the seamless integration of these massive databases into end-to-end prediction pipelines. The accurate prediction of multi-domain protein structures also remains a challenge. Hybrid approaches such as D-I-TASSER, which integrate deep learning with physical simulations, have shown promise in surpassing end-to-end ML systems like AlphaFold2 on these complex targets [10]. As curated interaction data in resources like BioGRID continue to grow monthly and structure prediction databases expand, it should become possible to train even more powerful and generalizable models, with substantial impact on biological discovery and drug development.

The rapid accumulation of protein sequences from genome sequencing projects has dramatically outpaced experimental function annotation, leaving over 30% of protein-coding genes with unknown functions and creating a vast "Unknome" [25] [26]. This annotation gap represents a critical challenge and opportunity in biological research, as many valuable proteins potentially catalyzing novel enzymatic reactions remain undiscovered among the vast number of function-unknown proteins [15]. Traditional computational methods that rely solely on sequence homology often fail to predict functions accurately for proteins with no evolutionary precedent. They also struggle with proteins in which small sequence variations correspond to different functions, and with pseudoenzymes that lack key catalytic residues [25].

This application note examines advanced machine learning strategies to address this challenge, focusing particularly on the emerging paradigm of integrating domain-guided and structure-based information to improve functional inference. We frame these methodologies within the context of a broader thesis contrasting general R-protein prediction (methods relying on sequence-level representations from protein language models) with domain-based methods (approaches that explicitly incorporate structural domain information) [26] [15]. The following sections provide detailed protocols for implementing these approaches, along with performance benchmarks and practical reagent solutions for researchers tackling the Unknome.

Core Methodologies and Experimental Protocols

Domain-Guided Deep Learning with DPFunc

Principle: DPFunc addresses the Unknome challenge by leveraging domain information within protein sequences to guide the model toward learning the functional relevance of amino acids in their corresponding structures, highlighting structure regions closely associated with functions [26]. This approach is particularly valuable for detecting key residues or regions in protein structures that exhibit strong functional correlations, even when overall sequence similarity to training data is low.

Experimental Protocol:

Input Data Preparation:

Domain Information Extraction:

- Process target protein sequences with InterProScan to identify functional domains by comparing them to background databases [26].

- Convert identified domains into dense vector representations using embedding layers that capture their unique characteristics.

Feature Learning and Integration:

- Extract initial residue-level features using pre-trained protein language models (ESM-1b, ProtT5) [26] [27].

- Construct protein contact maps based on 3D coordinates from protein structures.

- Process contact maps and residue-level features through Graph Convolutional Network (GCN) layers to update and learn final residue-level features, implementing a residual learning framework to maintain gradient flow [26].

- Implement attention mechanisms to interweave protein-level domain features and residue-level features, assessing the importance of each residue for functional prediction.
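The contact-map and GCN steps above can be sketched without a deep learning framework. Four elements are assumptions for illustration, and DPFunc's exact cutoff and layer definition may differ:

- an 8 Å Cα cutoff,
- symmetric normalisation with self-loops, Â = D^(-1/2)(A + I)D^(-1/2),
- a ReLU nonlinearity,
- the residual form H + ReLU(ÂHW).

```python
import math


def contact_map(ca_coords, cutoff=8.0):
    """Binary contact map: 1 if two C-alpha atoms lie within `cutoff` angstroms (assumed 8)."""
    n = len(ca_coords)
    return [[1 if i != j and math.dist(ca_coords[i], ca_coords[j]) <= cutoff else 0
             for j in range(n)] for i in range(n)]


def normalize_adjacency(adj):
    """A_hat = D^-1/2 (A + I) D^-1/2, where D is the degree matrix of A + I."""
    n = len(adj)
    a_tilde = [[adj[i][j] + (1 if i == j else 0) for j in range(n)] for i in range(n)]
    deg = [sum(row) for row in a_tilde]
    return [[a_tilde[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)] for i in range(n)]


def matmul(a, b):
    """Plain list-of-lists matrix product."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def residual_gcn_layer(a_hat, h, w):
    """H_next = H + ReLU(A_hat @ H @ W); W must be square so the residual shapes match."""
    msg = matmul(matmul(a_hat, h), w)
    return [[hv + max(0.0, mv) for hv, mv in zip(h_row, m_row)]
            for h_row, m_row in zip(h, msg)]


# Toy chain: three residues in contact and one isolated residue.
ca = [(0.0, 0.0, 0.0), (3.8, 0.0, 0.0), (7.6, 0.0, 0.0), (30.0, 0.0, 0.0)]
cmap = contact_map(ca)
h0 = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, 0.5]]  # e.g. reduced pLM features
w = [[1.0, 0.0], [0.0, 1.0]]  # identity weights, for illustration only
h1 = residual_gcn_layer(normalize_adjacency(cmap), h0, w)
```

The residual term keeps each residue's input features in the output. This is one common way to preserve gradient flow when several GCN layers are stacked.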

Function Prediction and Validation:

- Generate protein-level features as a weighted sum of residue-level features, using each residue's importance score as its weight.

- Annotate functions through fully connected layers using Gene Ontology (GO) terms across molecular function (MF), cellular component (CC), and biological process (BP) ontologies [26].

- Apply post-processing to ensure consistency with the hierarchical structure of GO terms.

- Validate predictions against held-out test sets with experimental annotations, using standard metrics from Critical Assessment of Functional Annotation (CAFA) challenges [26].
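One common way to make predictions consistent with the GO hierarchy is the true-path rule: propagate scores upward so that no parent term scores lower than any of its descendants. The sketch below assumes that rule and uses a toy parent map with hypothetical term names. It is not necessarily DPFunc's exact post-processing procedure.

```python
def enforce_go_consistency(scores, parents):
    """Raise each term's score to at least the maximum score of its descendants.

    scores: dict GO term -> predicted probability.
    parents: dict GO term -> list of direct parent terms (the GO graph is a DAG).
    """
    fixed = dict(scores)
    changed = True
    while changed:  # scores only increase, so this loop terminates
        changed = False
        for term, term_parents in parents.items():
            for p in term_parents:
                if fixed.get(term, 0.0) > fixed.get(p, 0.0):
                    fixed[p] = fixed[term]
                    changed = True
    return fixed


# Hypothetical mini-hierarchy: T_leaf -> T_mid -> T_root, and T_other -> T_root.
parents = {"T_leaf": ["T_mid"], "T_mid": ["T_root"], "T_other": ["T_root"]}
scores = {"T_leaf": 0.9, "T_mid": 0.4, "T_root": 0.6, "T_other": 0.2}
result = enforce_go_consistency(scores, parents)
```

After propagation, both ancestors of `T_leaf` score at least 0.9. The unrelated term `T_other` is left unchanged.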

Sequence-Structure Integration for Functional Identity Prediction

Principle: This method predicts whether two proteins catalyze the same enzymatic reaction by integrating multiple similarity metrics derived from both sequence and structural features [15]. The approach utilizes predicted structural models from AlphaFold2, performing pocket detection and domain decomposition to extract features that are more conserved than full-sequence similarity.

Experimental Protocol:

Structure Prediction and Processing:

- Generate 3D structural models for all query sequences using AlphaFold2 [15].

- Perform pocket detection on structural models using tools like Fpocket or DeepPocket to identify potential binding sites [27] [15].

- Conduct domain decomposition for each structural model to identify functionally distinct regions.

Multi-Feature Similarity Calculation:

- Calculate full-length sequence identity using standard alignment tools (BLAST, DIAMOND) [26] [15].

- Compute domain sequence identity through structural alignment of decomposed domains.

- Determine pocket similarity by comparing geometric and chemical properties of detected binding pockets.

- Calculate overall structural similarity using TM-align or US-align to generate TM-scores [15] [7].
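For a fixed superposition, TM-score averages a distance-dependent score over the target length. It uses the length-dependent scale d0 = 1.24·(L - 15)^(1/3) - 1.8, where L is the target length. TM-align and US-align also search for the alignment and superposition that maximise this value. The sketch below assumes that search has already been done and only evaluates the score from per-residue distances. The 0.5 floor on d0 for very short chains is an assumption borrowed from common TM-score implementations.

```python
def tm_score(distances, target_length):
    """TM-score for one given superposition.

    distances: distances (angstroms) between aligned residue pairs after superposition.
    target_length: number of residues in the reference (target) structure.
    """
    d0 = 1.24 * max(target_length - 15, 0) ** (1.0 / 3.0) - 1.8
    d0 = max(d0, 0.5)  # assumed floor for very short chains
    return sum(1.0 / (1.0 + (d / d0) ** 2) for d in distances) / target_length


perfect = tm_score([0.0] * 100, 100)  # every residue aligned and superposed exactly
half = tm_score([0.0] * 50, 100)      # only half the target residues aligned
```

Because unaligned target residues still count in the denominator, partial alignments are penalised. This is why TM-score > 0.5 is used as the "correct fold" threshold in the benchmark tables of this article.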

Model Training and Prediction:

- Compile feature set including full-length sequence identity, domain structural similarity, and pocket similarity for protein pairs [15].

- Train LightGBM classifier on known enzyme pairs with verified functional identity.

- Perform feature importance analysis to identify most predictive features (domain sequence identity typically shows highest importance) [15].

- Predict functional identity for novel protein pairs by feeding extracted features into trained model.

Workflow Visualization

Domain-Guided vs. R-Protein Prediction Workflow - This diagram contrasts the two primary approaches for Unknome protein function prediction, showing their shared feature extraction layers and divergent integration strategies.

Performance Benchmarks

Function Prediction Accuracy

Table 1: Performance comparison of protein function prediction methods on PDB dataset (Fmax scores)

| Method | Molecular Function (MF) | Cellular Component (CC) | Biological Process (BP) |
|---|---|---|---|
| Blast | 0.432 | 0.381 | 0.321 |
| DeepGO | 0.541 | 0.502 | 0.453 |
| DeepFRI | 0.612 | 0.558 | 0.507 |
| GAT-GO | 0.635 | 0.584 | 0.532 |
| DPFunc (w/o post) | 0.686 | 0.613 | 0.574 |
| DPFunc (with post) | 0.737 | 0.741 | 0.654 |

DPFunc demonstrates significant performance improvements over existing state-of-the-art methods, with particularly notable gains in cellular component prediction after implementing post-processing procedures to ensure consistency with GO term hierarchies [26]. The method outperforms both sequence-based approaches (Blast, DeepGO) and structure-based methods (DeepFRI, GAT-GO), highlighting the value of domain guidance in structure-based function prediction.
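Fmax, the CAFA-style metric in this table, is the maximum over decision thresholds t of the harmonic mean of average precision and average recall. The minimal protein-centric sketch below assumes predictions and true annotations are given as Python dicts, and it uses a simple grid of 100 thresholds. It follows the usual CAFA convention: precision is averaged only over proteins with at least one prediction at or above t, while recall is averaged over all benchmark proteins.

```python
def fmax(predictions, truth, thresholds=None):
    """Protein-centric Fmax.

    predictions: dict protein -> dict GO term -> score in [0, 1].
    truth: dict protein -> non-empty set of true GO terms (the benchmark proteins).
    """
    if thresholds is None:
        thresholds = [i / 100 for i in range(1, 101)]
    best = 0.0
    for t in thresholds:
        precisions, recall_sum = [], 0.0
        for protein, true_terms in truth.items():
            predicted = {g for g, s in predictions.get(protein, {}).items() if s >= t}
            hits = len(predicted & true_terms)
            if predicted:  # precision only over proteins with a prediction at t
                precisions.append(hits / len(predicted))
            recall_sum += hits / len(true_terms)  # recall over all benchmark proteins
        if not precisions:
            continue
        pr = sum(precisions) / len(precisions)
        rc = recall_sum / len(truth)
        if pr + rc > 0:
            best = max(best, 2 * pr * rc / (pr + rc))
    return best
```

Because thresholds are chosen per method, Fmax rewards well-ranked scores rather than any single cut-off. This is one reason post-processing that reorders scores along the GO hierarchy can shift it noticeably.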

Structural Modeling Performance

Table 2: Protein structure prediction accuracy on "Hard" targets (TM-score)

| Method | Average TM-score | Correct Folds (TM-score > 0.5) | Parameters |
|---|---|---|---|
| I-TASSER | 0.419 | 145/500 (29%) | - |
| C-I-TASSER | 0.569 | 329/500 (66%) | - |
| AlphaFold2.3 | 0.829 | 452/500 (90%) | ~93 million |
| AlphaFold3 | 0.849 | 465/500 (93%) | - |
| D-I-TASSER | 0.870 | 480/500 (96%) | - |
| Rprot-Vec | - | 65.3% (TM-score > 0.8) | 41% of TM-vec |

Advanced hybrid approaches like D-I-TASSER, which integrate deep learning with physics-based folding simulations, demonstrate superior performance on challenging protein targets, particularly for non-homologous and multidomain proteins [10]. For large-scale applications, sequence-based structural similarity predictors like Rprot-Vec offer efficient alternatives, achieving 65.3% accuracy in identifying homologous proteins (TM-score > 0.8) using only sequence information [7].

Research Reagent Solutions

Table 3: Essential tools and databases for Unknome protein function prediction

| Resource | Type | Function | Access |
|---|---|---|---|
| AlphaFold2/3 | Structure Prediction | Predicts 3D protein structures from sequence | https://alphafold.ebi.ac.uk/ |
| ESMFold | Structure Prediction | High-speed structure prediction for large datasets | https://esmatlas.com/ |
| InterProScan | Domain Analysis | Identifies functional domains in protein sequences | https://www.ebi.ac.uk/interpro/ |
| DPFunc | Function Prediction | Domain-guided deep learning for function annotation | https://github.com/ [26] |
| Rprot-Vec | Similarity Prediction | Sequence-based structural similarity calculation | https://github.com/ [7] |
| UniProt | Database | Comprehensive protein sequence and functional data | https://www.uniprot.org/ |
| CATH | Database | Protein structure classification for benchmarking | http://www.cathdb.info/ [7] |
| STRING | Database | Known and predicted protein-protein interactions | https://string-db.org/ [28] |
| ProtT5 | Feature Extraction | Protein language model for sequence representations | https://github.com/ [27] [7] |
| TM-align | Structure Alignment | Protein structural similarity calculation | https://zhanggroup.org/TM-align/ [7] |

The challenge of the Unknome requires moving beyond traditional homology-based approaches toward integrated methodologies that leverage both sequence and structural information. Domain-guided methods like DPFunc demonstrate that explicitly modeling functional units within proteins significantly enhances prediction accuracy for poorly characterized proteins [26]. Meanwhile, hybrid structure prediction approaches like D-I-TASSER show that combining deep learning with physics-based simulations improves modeling of complex multidomain proteins [10].

For researchers investigating the Unknome, the experimental protocols outlined here provide practical pathways for implementing these advanced methods. The continuing development of protein language models, geometric deep learning, and multi-scale modeling promises to further accelerate our ability to illuminate the functional dark matter of the proteome, with profound implications for drug discovery and protein engineering [29] [14].

Architectures in Action: Deep Learning Models and Domain-Integrated Pipelines

Graph Neural Networks (GNNs) for Modeling Protein Structures and Interaction Networks

Graph Neural Networks (GNNs) have emerged as transformative tools in computational biology, providing a natural framework for modeling the inherent graph structures of biological systems. For protein-related tasks, GNNs can represent a protein either as a residue contact network or as an atom-level graph, with edges encoding spatial relationships. This enables them to capture complex structural patterns and interaction dynamics [28] [30]. The approach has been applied successfully across diverse tasks, including protein-protein interaction prediction, protein function analysis, and molecular property prediction for drug discovery [28] [31] [32].

The integration of GNNs into structural bioinformatics represents a significant advancement over traditional domain-based prediction methods and sequence-only machine learning approaches. The I-TASSER series of pipelines has successfully integrated deep learning with physics-based simulations for high-accuracy protein structure prediction [10]. GNNs, however, offer distinct advantages for modeling interaction networks and structural relationships that are challenging for conventional approaches.

Performance Benchmarking of Computational Methods

Quantitative Comparison of Protein Structure Prediction Methods

Table 1: Benchmark performance of protein structure prediction methods on 500 non-redundant "Hard" domains from SCOPe, PDB, and CASP 8-14 experiments

| Method | Average TM-Score | Correctly Folded Targets (TM-score > 0.5) | Key Characteristics |
|---|---|---|---|
| D-I-TASSER | 0.870 | 480 | Hybrid approach integrating multisource deep learning potentials with iterative threading assembly simulations [10] |
| AlphaFold2.3 | 0.829 | N/R | End-to-end deep learning architecture [10] |
| AlphaFold3 | 0.849 | N/R | Enhanced with diffusion samples [10] |
| C-I-TASSER | 0.569 | 329 | Uses deep-learning-predicted contact restraints [10] |
| I-TASSER | 0.419 | 145 | Traditional template-based folding simulations [10] |

Performance of Specialized Protein Prediction Tools

Table 2: Performance metrics of specialized deep learning tools for protein prediction tasks

| Tool | Application Domain | Accuracy | Dataset | Key Innovation |
|---|---|---|---|---|
| PRGminer | Plant resistance gene prediction | 98.75% (training), 95.72% (independent testing) [33] | Plant R-genes from Phytozome, Ensemble Plants, NCBI [33] | Deep learning with dipeptide composition features [33] |
| Plant RBP Predictor | RNA-binding protein prediction in plants | 97.20% (5-fold CV), 99.72% (independent set) [34] | 4,992 balanced sequences [34] | Ensemble learning integrating shallow and deep learning with KPC encoding [34] |
| Domain-Disease Association | Protein domain-disease association | AUC: 0.94 [35] | Heterogeneous network of domains, proteins, diseases [35] | XGBOOST classifier with meta-path topological features [35] |

GNN Architectures for Protein Analysis

Core GNN Variants and Their Protein Applications

The field has developed several specialized GNN architectures tailored to protein data:

Graph Convolutional Networks (GCNs) apply convolutional operations to aggregate information from neighboring nodes, effectively capturing local structural patterns in protein residue networks [28] [30].

Graph Attention Networks (GATs) incorporate attention mechanisms to adaptively weight the importance of neighboring nodes, particularly useful for identifying critical interaction sites in protein complexes [28] [30].

Graph Autoencoders (GAE) utilize encoder-decoder frameworks to generate compact, low-dimensional node embeddings for tasks like protein function prediction and interaction characterization [28].

Kolmogorov-Arnold GNNs (KA-GNNs) represent a recent innovation integrating Fourier-based KAN modules into GNN components, enhancing expressivity and interpretability for molecular property prediction [32].

Experimental Protocol: Protein-Protein Interaction Prediction Using GNNs

Objective: Predict binary protein-protein interactions from structural information and sequence features [30].

Workflow:

Graph Construction:

- Input: Protein Data Bank (PDB) files containing 3D atomic coordinates

- Node definition: Represent each amino acid residue as a node

- Edge definition: Connect two nodes if any pair of their atoms lies within a threshold distance (typically 4-8 Å), creating a residue contact network

- Graph representation: Undirected graph G = (V, E) where V = {v₁, v₂, ..., vₙ} represents residues and E = {e₁, e₂, ..., eₘ} represents spatial proximity [30]
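The graph-construction step can be sketched as follows. The sketch assumes atoms have already been parsed from a PDB file into (residue_index, x, y, z) tuples; the parsing itself, for example with Biopython, is omitted. The 5 Å default is one choice within the 4-8 Å range quoted above. The brute-force pairwise loop is quadratic in the number of atoms, so real pipelines typically use a spatial index instead.

```python
import math
from itertools import combinations


def residue_contact_graph(atoms, threshold=5.0):
    """Undirected residue graph G = (V, E) built from atom coordinates.

    atoms: list of (residue_index, x, y, z) tuples.
    Two residues are joined if any pair of their atoms is within `threshold` angstroms.
    Returns (sorted list of residue indices, set of (i, j) edges with i < j).
    """
    nodes = sorted({atom[0] for atom in atoms})
    edges = set()
    for (ri, *pi), (rj, *pj) in combinations(atoms, 2):
        if ri != rj and math.dist(pi, pj) <= threshold:
            edges.add((min(ri, rj), max(ri, rj)))
    return nodes, edges


# Toy input: residue 0 has two atoms, residues 1 and 2 have one each.
atoms = [(0, 0.0, 0.0, 0.0), (0, 1.5, 0.0, 0.0), (1, 5.0, 0.0, 0.0), (2, 20.0, 0.0, 0.0)]
nodes, edges = residue_contact_graph(atoms)
```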

Feature Extraction:

- Utilize protein language models (SeqVec or ProtBert) to generate feature vectors for each residue directly from protein sequences

- Alternative: Physicochemical properties or one-hot encoding of amino acids

- Output: Feature matrix X ∈ R^(n×d) where n is number of residues and d is feature dimension [30]

Model Architecture:

- Implement either GCN or GAT framework

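- GCN layer operation: H⁽ˡ⁺¹⁾ = σ(ÃH⁽ˡ⁾W⁽ˡ⁾), where Ã = D^(-1/2)(A + I)D^(-1/2) is the normalized adjacency matrix with self-loops (D is the degree matrix of A + I), H⁽ˡ⁾ holds the node features at layer l, W⁽ˡ⁾ is a trainable weight matrix, and σ is a nonlinearity such as ReLU [30]

- GAT layer: Compute attention coefficients αᵢⱼ = softmaxⱼ(LeakyReLU(aᵀ[Whᵢ ∥ Whⱼ])), with the softmax taken over the neighbors j ∈ N(i) of node i, to weight neighbor contributions [30]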
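The GAT attention coefficients can be computed as shown below for a single attention head. In this minimal pure-Python sketch, W and the attention vector a are fixed toy values standing in for learned parameters. The LeakyReLU slope of 0.2 follows the original GAT formulation. Each node's neighbour list is assumed to include the node itself.

```python
import math


def leaky_relu(x, slope=0.2):
    return x if x > 0 else slope * x


def matvec(w, h):
    return [sum(wij * hj for wij, hj in zip(row, h)) for row in w]


def gat_attention(features, neighbors, w, a):
    """Single-head GAT attention coefficients alpha[i][j].

    features: list of node feature vectors h_i.
    neighbors: dict i -> list of neighbour indices (including i itself).
    w: weight matrix as a list of rows; a: attention vector of length 2 * len(w).
    """
    wh = [matvec(w, h) for h in features]
    alpha = {}
    for i, nbrs in neighbors.items():
        # e_ij = LeakyReLU(a^T [W h_i || W h_j]); list + list concatenates.
        e = {j: leaky_relu(sum(ak * v for ak, v in zip(a, wh[i] + wh[j]))) for j in nbrs}
        m = max(e.values())  # subtract the max for a numerically stable softmax
        z = sum(math.exp(v - m) for v in e.values())
        alpha[i] = {j: math.exp(v - m) / z for j, v in e.items()}
    return alpha


features = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
neighbors = {0: [0, 1, 2], 1: [0, 1], 2: [0, 2]}
w = [[1.0, 0.0], [0.0, 1.0]]  # placeholder for a learned weight matrix
a = [0.5, 0.5, 1.0, -1.0]     # placeholder for a learned attention vector
alpha = gat_attention(features, neighbors, w, a)
```

Unlike the fixed normalisation in a GCN, each node's weights here depend on the features of both endpoints. This is what lets a GAT emphasise particular interface residues.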

Classification:

- Concatenate feature vectors of protein pairs

- Feed to classifier with two hidden layers and output layer with sigmoid activation

- Use binary cross-entropy loss for training [30]
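The classification stage concatenates the two protein embeddings, passes them through a small MLP with two hidden layers and a sigmoid output, and scores the result with binary cross-entropy. The sketch below shows the forward pass and loss only, with no training loop. The embedding size, layer widths and random weights are arbitrary placeholders, not values from [30].

```python
import math
import random


def dense(x, weights, bias):
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def relu(v):
    return [max(0.0, x) for x in v]


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def init_layer(n_in, n_out, rng):
    """Random placeholder weights (rows = output units) and zero biases."""
    return [[rng.gauss(0, 0.5) for _ in range(n_in)] for _ in range(n_out)], [0.0] * n_out


def predict_interaction(emb_a, emb_b, layers):
    """Two hidden ReLU layers, then a single sigmoid output unit."""
    x = emb_a + emb_b  # concatenate the two protein-level embeddings
    for weights, bias in layers[:-1]:
        x = relu(dense(x, weights, bias))
    out_w, out_b = layers[-1]
    return sigmoid(dense(x, out_w, out_b)[0])


def binary_cross_entropy(p, y, eps=1e-12):
    p = min(max(p, eps), 1 - eps)  # clip to avoid log(0)
    return -(y * math.log(p) + (1 - y) * math.log(1 - p))


rng = random.Random(42)
d = 8  # per-protein embedding size (placeholder)
layers = [init_layer(2 * d, 16, rng), init_layer(16, 8, rng), init_layer(8, 1, rng)]
emb_a = [rng.random() for _ in range(d)]
emb_b = [rng.random() for _ in range(d)]
p = predict_interaction(emb_a, emb_b, layers)
loss = binary_cross_entropy(p, y=1)  # label 1 = interacting pair
```

Note that simple concatenation makes the prediction depend on pair order. Some implementations symmetrise, for example by averaging predictions over both orders.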

Datasets: Human PPI dataset (36,545 interacting pairs from HPRD) and S. cerevisiae dataset (22,975 interacting pairs from DIP) [30].

Advanced GNN Frameworks

Kolmogorov-Arnold GNNs (KA-GNNs) for Molecular Property Prediction

Recent advances have integrated Kolmogorov-Arnold Networks (KANs) with GNNs to create more expressive and efficient architectures. KA-GNNs replace standard multilayer perceptrons (MLPs) in GNN components with KAN modules based on learnable univariate functions [32].

Architecture Variants:

- KA-GCN: Integrates Fourier-based KAN modules into Graph Convolutional Networks

- KA-GAT: Enhances Graph Attention Networks with KAN-based transformations

Key Innovations:

- Fourier-series-based univariate functions to capture both low-frequency and high-frequency structural patterns

- Integration into all three GNN components: node embedding, message passing, and readout

- Theoretical guarantees of strong approximation capabilities based on Carleson's convergence theorem [32]
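A Fourier-based KAN unit replaces a fixed activation with a learnable univariate function written as a truncated Fourier series, φ(x) = Σₖ (aₖ cos kx + bₖ sin kx) for k = 1..K. A KAN layer then sums one such function per input-output edge. The sketch below is a minimal illustration of that idea with placeholder coefficients. The published KA-GNN modules [32] may add bias terms, normalisation, or different frequency handling.

```python
import math


def fourier_phi(x, a, b):
    """Learnable univariate function: sum over k = 1..K of a_k cos(k x) + b_k sin(k x)."""
    return sum(ak * math.cos(k * x) + bk * math.sin(k * x)
               for k, (ak, bk) in enumerate(zip(a, b), start=1))


def fourier_kan_layer(x, coeffs):
    """KAN layer: output_q = sum over inputs p of phi_{q,p}(x_p).

    x: input vector of length P.
    coeffs[q][p] = (a, b): Fourier coefficients of the edge function from input p to output q.
    """
    return [sum(fourier_phi(xp, a, b) for xp, (a, b) in zip(x, row)) for row in coeffs]


# One output, two inputs, K = 2 frequencies; coefficients are placeholders for learned values.
coeffs = [[([1.0, 0.0], [0.0, 0.0]), ([0.0, 0.0], [1.0, 0.0])]]
out = fourier_kan_layer([0.0, math.pi / 2], coeffs)  # cos(0) + sin(pi/2)
```

Higher k terms let φ represent high-frequency variation, and low k terms capture smooth trends. This matches the low- and high-frequency motivation given above. In a KA-GCN-style layer, a function like this would take the place of the MLP applied after neighbourhood aggregation.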

Experimental Results: KA-GNNs consistently outperform conventional GNNs across seven molecular benchmarks in both prediction accuracy and computational efficiency [32].

Visualization: GNN Workflow for Protein-Protein Interaction Prediction

Graph Workflow for PPI Prediction: From structural data to interaction prediction

Research Reagent Solutions

Table 3: Essential research reagents and computational tools for GNN-based protein analysis

| Resource Category | Specific Tools/Databases | Primary Function | Application Context |

|---|---|---|---|

| Protein Databases | PDB, UniProtKB, Phytozome [34] [33] | Source of protein structures, sequences, and annotations | Data acquisition for model training and validation |

| PPI Databases | STRING, BioGRID, IntAct, DIP, HPRD [28] [30] | Repository of known and predicted protein-protein interactions | Ground truth data for PPI prediction models |

| Domain Databases | Pfam, InterPro [33] | Protein domain families and functional domains | Feature extraction and functional annotation |

| Language Models | SeqVec, ProtBert, ESM [30] | Generate residue-level feature vectors from sequences | Node feature initialization for GNNs |

| GNN Frameworks | PyTorch Geometric, DGL, TensorFlow GNN | Implement GCN, GAT, and other GNN architectures | Model development and training |

| Specialized Tools | D-I-TASSER, PRGminer, RBPLight [10] [34] [33] | Domain-specific prediction pipelines | Benchmarking and comparative analysis |

Integration with Domain-Based Methods

The relationship between emerging GNN approaches and traditional domain-based methods represents a critical research frontier. Domain-based protein prediction methods, which identify structural and functional units through techniques like I-TASSER, have demonstrated remarkable success, with D-I-TASSER achieving 81% coverage of protein domains in the human proteome [10]. However, GNNs offer complementary strengths, particularly for modeling higher-order interactions and complex relationships in protein networks.

Recent research indicates that hybrid approaches integrating domain knowledge with graph-based learning show particular promise. For instance, heterogeneous network methods that incorporate domain information have achieved AUC scores of 0.94 for predicting domain-disease associations [35]. Similarly, the AG-GATCN framework integrates GAT and temporal convolutional networks to provide robust solutions against noise interference in protein-protein interaction analysis [28].

Visualization: Domain-Based vs. GNN Approaches

Comparative Methodologies: Domain-based and GNN approaches to protein analysis

GNNs have established themselves as powerful frameworks for modeling protein structures and interaction networks, offering distinct advantages for capturing complex relational patterns in biological data. While domain-based methods continue to excel at fundamental structure prediction tasks, GNNs provide complementary capabilities for understanding higher-order interactions and network-level properties. The integration of these approaches, along with emerging innovations such as KA-GNNs and multimodal learning frameworks, represents the most promising direction for future research. As these methodologies continue to evolve, they will increasingly enable researchers to unravel the complex relationship between protein structure, interaction networks, and biological function, with significant implications for drug discovery and therapeutic development.

Protein Language Models (ESM, ProtBERT) for Sequence-Based Functional Inference

Protein Language Models (pLMs), such as ESM (Evolutionary Scale Modeling) and ProtBERT, represent a transformative advance in computational biology, leveraging architectures from natural language processing to infer protein function directly from amino acid sequences. These models are trained on millions of protein sequences through self-supervised learning, learning the underlying "grammar" and "syntax" of proteins, which allows them to capture complex biological properties including evolutionary relationships, structural constraints, and functional motifs [36] [37]. This capability is challenging the long-standing dominance of methods that rely on evolutionary information derived from multiple sequence alignments (MSAs) [37] [38]. For researchers focused on R-proteins or any protein class, pLMs offer a powerful, fast, and MSA-free alternative for functional annotation, often achieving state-of-the-art performance, particularly for proteins with few known homologs [38] [39]. This application note details the use of ESM and ProtBERT for sequence-based functional inference, providing structured experimental protocols, performance comparisons, and practical toolkits for scientists and drug development professionals.

Prominent Protein Language Models

- ESM Model Family: A series of transformer-based models developed by Meta. The ESM-2 series, which succeeded ESM-1b, scales from 8 million to 15 billion parameters [40] [39]. These models are pre-trained on millions of protein sequences from the UniProt database using a masked language modeling objective, where the model learns to predict randomly masked amino acids in a sequence [39]. The recently introduced ESM3 is a generative model with 98 billion parameters [40].

- ProtBERT Model Family: Another prominent class of transformer-based pLMs, pre-trained on large datasets including UniProtKB and the BFD (Big Fantastic Database) [38]. ProtBERT models are also widely used as feature extractors for downstream prediction tasks.
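The masked language modeling objective can be illustrated by the corruption step that produces training examples. This sketch uses a 15% masking rate and the BERT-style 80/10/10 replacement split, which ESM models are generally described as following. Treat the exact settings, and the `<mask>` token string, as assumptions. The model is then trained to predict the original residue at each selected position.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
MASK = "<mask>"  # assumed token string


def mask_sequence(seq, rng, mask_rate=0.15):
    """BERT-style corruption for masked language modeling.

    Returns (tokens, targets): targets[i] holds the original residue at each
    selected position and None elsewhere; only selected positions enter the loss.
    """
    tokens, targets = list(seq), [None] * len(seq)
    for i, aa in enumerate(seq):
        if rng.random() < mask_rate:
            targets[i] = aa
            r = rng.random()
            if r < 0.8:
                tokens[i] = MASK  # 80%: replace with the mask token
            elif r < 0.9:
                tokens[i] = rng.choice(AMINO_ACIDS)  # 10%: random residue
            # remaining 10%: keep the original residue
    return tokens, targets


seq = "MKTAYIAKQRQISFVKSHFSRQ"  # arbitrary toy sequence
tokens, targets = mask_sequence(seq, random.Random(0))
```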

Primary Applications in Functional Inference

pLMs excel in several key areas of protein functional inference, which are critical for research and drug development:

- Enzyme Commission (EC) Number Prediction: Accurately classifying enzymes into the hierarchical EC number system which defines the chemical reactions they catalyze [38].

- Gene Ontology (GO) Term Prediction: Predicting molecular functions, biological processes, and cellular components associated with a protein sequence [36] [41].

- Protein-Protein Interaction (PPI) Prediction: Identifying whether two proteins physically interact, a crucial aspect of understanding cellular signaling and disease mechanisms [42].

- Binding Site Prediction: Locating specific residues involved in binding small molecules, DNA, or other proteins, which is fundamental for drug design [39] [43].

- Variant Effect Prediction: Assessing the functional impact of missense mutations on protein function and interactions [42].

Performance Benchmarking and Quantitative Comparison

Performance on Enzyme Commission (EC) Prediction

Table 1: Comparative performance of pLMs and traditional methods on EC number prediction.

| Method | Input Type | Key Performance Insight | Relative Performance |
|---|---|---|---|
| BLASTp | Sequence & Homology | Slightly better overall performance; relies on sequence homologs in the database [38]. | Benchmark |
| ESM2 (with DNN) | Sequence Embedding | Excels on difficult annotations and enzymes without close homologs (identity <25%) [38]. | Complementary to BLASTp |
| ProtBERT (with DNN) | Sequence Embedding | Surpasses one-hot encoding models; performance is strong but can be lower than ESM2 [38]. | Lower than ESM2 |
| One-hot Encoding DL Models | Raw Sequence | Suboptimal performance compared to pLM-based models [38]. | Lower than pLMs |
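The complementarity in Table 1 suggests a simple hybrid policy: transfer the annotation of a close BLASTp homolog when one exists, and otherwise use the pLM-based prediction. The rule below is a hypothetical sketch, not a method described in [38]; the 25% identity cutoff is taken from the table's description of where ESM2 helps most.

```python
def combine_ec_predictions(blast_hit, plm_prediction, identity_threshold=25.0):
    """Hypothetical hybrid rule for EC assignment.

    blast_hit: (ec_number, percent_identity) for the top BLASTp hit, or None.
    plm_prediction: EC number from a pLM-embedding classifier.
    Returns (ec_number, source).
    """
    if blast_hit is not None:
        ec_number, identity = blast_hit
        # A sufficiently close homolog exists: transfer its annotation.
        if identity >= identity_threshold:
            return ec_number, "BLASTp"
    # No hit, or only remote homologs: fall back to the embedding-based model.
    return plm_prediction, "pLM"


print(combine_ec_predictions(("3.4.21.4", 62.0), "3.4.21.5"))  # ('3.4.21.4', 'BLASTp')
print(combine_ec_predictions(("3.4.21.4", 18.0), "3.4.21.5"))  # ('3.4.21.5', 'pLM')
```

In practice the threshold would be tuned on held-out data rather than fixed at 25%.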

Performance on Protein-Protein Interaction (PPI) Prediction

Table 2: Performance of PLM-interact, a fine-tuned ESM-2 model, on cross-species PPI prediction (trained on human data).

| Test Species | PLM-interact (AUPR) | TUnA (AUPR) | TT3D (AUPR) |
|---|---|---|---|
| Mouse | 0.852 | 0.835 | 0.734 |
| Fly | 0.783 | 0.725 | 0.647 |
| Worm | 0.772 | 0.728 | 0.642 |
| Yeast | 0.706 | 0.641 | 0.553 |
| E. coli | 0.722 | 0.675 | 0.605 |

AUPR: Area Under the Precision-Recall Curve. A higher value indicates better performance. Results show PLM-interact achieves state-of-the-art cross-species generalization [42].
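AUPR is often estimated as average precision: rank predictions by score, and average the precision at each rank where a true interaction is recovered. The sketch below implements that step-wise estimate; tied scores are simply kept in input order here, whereas library implementations (for example scikit-learn's `average_precision_score`) handle ties as a block.

```python
def average_precision(labels, scores):
    """Step-wise AUPR estimate (average precision).

    labels: 1 for an interacting pair, 0 otherwise.
    scores: predicted interaction probabilities, same order as labels.
    """
    n_pos = sum(labels)
    if n_pos == 0:
        raise ValueError("AUPR is undefined without positive examples")
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    true_positives = 0
    total = 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            true_positives += 1
            total += true_positives / rank  # precision at this recall step
    return total / n_pos


print(average_precision([1, 0, 1, 0], [0.9, 0.8, 0.7, 0.1]))  # ≈ 0.833
```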

Impact of Model Size and Embedding Compression

Table 3: Practical considerations for selecting and using pLMs in research.

| Factor | Impact and Recommendation |
|---|---|
| Model Size | Larger models (e.g., ESM-2 15B) capture more complex patterns but are computationally expensive. Medium-sized models (ESM-2 650M, ESM C 600M) offer a good balance, performing nearly as well as larger models, especially when data is limited [40]. |
| Embedding Compression | For transfer learning, mean pooling (averaging embeddings across all residues of a sequence) consistently outperforms other compression methods (e.g., max pooling, PCA) across diverse tasks [40]. |
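Both pooling strategies in Table 3 reduce an L × D matrix of per-residue embeddings to a single D-dimensional vector. This sketch uses plain Python lists in place of tensors, and the function names are hypothetical. It also handles one detail explicitly: ESM tokenizers add a beginning-of-sequence and an end-of-sequence token, and the ESM repository's own example slices these off before averaging, so the `keep` mask here drops them. In PyTorch the equivalent is slicing out the residue positions and calling `.mean(0)`.

```python
def mean_pool(per_residue, keep=None):
    """Average an L x D list of per-token embeddings into one D-dimensional vector.

    keep: optional 0/1 flag per token; tokens flagged 0 (e.g. beginning/end-of-
    sequence or padding tokens) are left out of the average.
    """
    rows = [row for i, row in enumerate(per_residue) if keep is None or keep[i]]
    if not rows:
        raise ValueError("nothing to pool")
    return [sum(col) / len(rows) for col in zip(*rows)]


def max_pool(per_residue, keep=None):
    """Element-wise maximum over the same tokens, shown for comparison."""
    rows = [row for i, row in enumerate(per_residue) if keep is None or keep[i]]
    if not rows:
        raise ValueError("nothing to pool")
    return [max(col) for col in zip(*rows)]


# Toy 2-D embeddings for the tokens <cls> M K <eos>; ESM-2 650M embeddings
# have 1280 dimensions. The special tokens are excluded via `keep`.
embeddings = [[9.0, 9.0], [1.0, 2.0], [3.0, 4.0], [9.0, 9.0]]
keep = [0, 1, 1, 0]
print(mean_pool(embeddings, keep))  # [2.0, 3.0]
print(max_pool(embeddings, keep))   # [3.0, 4.0]
```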

Experimental Protocols

Protocol 1: Gene Ontology (GO) Term Prediction Using Transfer Learning

This protocol describes how to use pLM embeddings as input features to a classifier to predict GO terms for a protein sequence.

1. Feature Extraction (Embedding Generation)

* Input: Protein amino acid sequence in FASTA format.

* Model Selection: Choose a pre-trained pLM, such as esm2_t33_650M_UR50D (ESM-2 with 650 million parameters).

* Software: Use the esm Python library for ESM-2, or the Hugging Face transformers library for ProtBERT (ESM-2 checkpoints are also available through transformers).

* Procedure:

  * Load the pre-trained model and its corresponding tokenizer.

  * Tokenize the input protein sequence.

  * Pass the tokens through the model to extract the hidden representations (embeddings).

* Compression: Apply mean pooling along the sequence dimension to convert the per-residue embeddings (L × 1280) into a single global protein embedding vector (1 × 1280), where L is the sequence length and 1280 is the embedding dimension of the 650M-parameter ESM-2 model [40].

2. Classifier Training and Prediction

* Input Features: The pooled protein embedding vector.

* Model Architecture: Use a lightweight classifier, such as a fully connected Deep Neural Network (DNN) or a Multi-Layer Perceptron (MLP). If the per-residue embeddings are kept instead of pooled, a BiLSTM network can also be effective [39].

* Training:

  * Use a dataset of protein sequences with known GO term annotations (e.g., from UniProt).

  * Frame the task as a multi-label classification problem, since a protein can have multiple GO terms.

  * Train the classifier on the pLM embeddings to predict a binary label for each GO term.

* Output: A list of predicted GO terms, with their association probabilities, for the query sequence.
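Step 2 of this protocol can be sketched as a multi-label model with one independent sigmoid output per GO term, trained with binary cross-entropy. To stay self-contained, this sketch swaps the DNN/MLP for a single linear layer (one logistic regression per term, trained by stochastic gradient descent) and uses 2-dimensional toy vectors with placeholder term names (`TERM_A`, `TERM_B`) instead of 1280-dimensional ESM-2 embeddings and real GO identifiers.

```python
import math
import random


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def train_multilabel(X, Y, epochs=500, lr=0.5, seed=0):
    """Fit one logistic output per GO term with SGD on binary cross-entropy.

    X: pooled protein embeddings (lists of floats).
    Y: 0/1 label vectors, one column per GO term.
    Returns per-term weight vectors W and biases b.
    """
    rng = random.Random(seed)
    n_features, n_terms = len(X[0]), len(Y[0])
    W = [[rng.uniform(-0.01, 0.01) for _ in range(n_features)] for _ in range(n_terms)]
    b = [0.0] * n_terms
    for _ in range(epochs):
        for x, y in zip(X, Y):
            for j in range(n_terms):
                p = sigmoid(sum(w * xi for w, xi in zip(W[j], x)) + b[j])
                grad = p - y[j]  # gradient of BCE with respect to the logit
                W[j] = [w - lr * grad * xi for w, xi in zip(W[j], x)]
                b[j] -= lr * grad
    return W, b


def predict_terms(x, W, b, terms, threshold=0.5):
    """Return (term, probability) pairs whose probability reaches the threshold."""
    results = []
    for j, term in enumerate(terms):
        p = sigmoid(sum(w * xi for w, xi in zip(W[j], x)) + b[j])
        if p >= threshold:
            results.append((term, round(p, 3)))
    return results


# Toy data: TERM_A applies when the first feature is on, TERM_B when the second
# is; a protein can carry both labels (multi-label).
TERMS = ["TERM_A", "TERM_B"]
X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.0, 0.0]]
Y = [[1, 0], [0, 1], [1, 1], [0, 0]]
W, b = train_multilabel(X, Y)
print(predict_terms([1.0, 1.0], W, b, TERMS))
```

A real pipeline would use an MLP in a deep learning framework, and the 0.5 threshold is a tunable choice rather than a fixed rule.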

Protocol 2: Fine-tuning for Protein-Protein Interaction (PPI) Prediction

This protocol involves adapting a pre-trained pLM to the specific task of predicting interactions between two proteins, as exemplified by PLM-interact [42].

1. Model Architecture and Input Preparation

* Base Model: Start with a pre-trained ESM-2 model (e.g., the 650M parameter version).

* Input Format: Concatenate the amino acid sequences of the two candidate interacting proteins (Protein A and Protein B) into a single sequence string, separated by a special separator token.

* Architecture Modification: The model must be configured to accept this longer, paired-sequence input.

2. Fine-tuning Procedure

* Task Formulation: Treat PPI prediction as a binary classification task (interacting vs. non-interacting).

* Training Objective: Use a combined loss function:

  * Next Sentence Prediction (NSP) Loss: A classification loss that teaches the model to predict the binary interaction label.

  * Masked Language Modeling (MLM) Loss: The original pre-training objective, which helps maintain the model's understanding of protein sequence semantics.

  * Balanced Loss: A weighting of 1:10 between the NSP classification loss and the MLM loss has been shown to be effective [42].

* Data: Fine-tune the model on a dataset of known interacting and non-interacting protein pairs (e.g., from human data in the Multi-Species PPI dataset).

3. Inference

* Input the paired sequence of a novel protein pair into the fine-tuned PLM-interact model.

* The model outputs a probability score indicating the likelihood of interaction.
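The input preparation and training objective of Protocol 2 can be sketched as follows. Everything here is an assumption for illustration: the special-token strings, the `max_len` default, the proportional truncation policy, and the reading of the 1:10 ratio as weights on the NSP and MLM terms respectively (the direction of the ratio should be checked against [42]). The loss functions take the probabilities that a real model would produce.

```python
import math

# Hypothetical special-token strings; use the model tokenizer's own tokens in practice.
CLS, SEP, EOS = "<cls>", "<sep>", "<eos>"


def build_pair_input(seq_a, seq_b, max_len=1000):
    """Lay out a protein pair as CLS + A + SEP + B + EOS, truncating if needed."""
    budget = max_len - 3  # positions left after the three special tokens
    total = len(seq_a) + len(seq_b)
    if total > budget:
        # Trim both sequences from the end, in proportion to their lengths.
        keep_a = budget * len(seq_a) // total
        seq_a, seq_b = seq_a[:keep_a], seq_b[: budget - keep_a]
    return [CLS, *seq_a, SEP, *seq_b, EOS]


def bce(prob, label):
    """Binary cross-entropy for the interaction (NSP-style) classification head."""
    prob = min(max(prob, 1e-12), 1 - 1e-12)  # clamp to avoid log(0)
    return -(label * math.log(prob) + (1 - label) * math.log(1 - prob))


def mlm_nll(target_probs):
    """Mean negative log-likelihood of the true residues at the masked positions."""
    return -sum(math.log(p) for p in target_probs) / len(target_probs)


def combined_loss(interaction_prob, label, target_probs, nsp_weight=1.0, mlm_weight=10.0):
    """Weighted sum of the classification and MLM terms (weights are assumptions)."""
    return nsp_weight * bce(interaction_prob, label) + mlm_weight * mlm_nll(target_probs)


print(build_pair_input("MKT", "AC"))
# ['<cls>', 'M', 'K', 'T', '<sep>', 'A', 'C', '<eos>']
print(round(combined_loss(0.5, 1, [0.5]), 4))  # 11 * ln 2 ≈ 7.6246
```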

Table 4: Key resources for implementing pLM-based functional inference.

| Resource Name | Type | Function and Application |
|---|---|---|
| ESM-2 / ProtBERT Pre-trained Models | Software Model | Foundational pLMs for generating protein sequence embeddings. Available via Hugging Face transformers or dedicated esm Python packages [40] [39]. |
| UniRef50 Database | Dataset | A non-redundant protein sequence cluster database used for pre-training pLMs and as a source of evolutionary information [41]. |
| UniProtKB | Dataset | A comprehensive repository of protein sequence and functional information, used for training and benchmarking prediction models [36] [39]. |
| PLM-interact | Software Model | A specialized, fine-tuned model for predicting protein-protein interactions, built upon ESM-2 [42]. |
| ESM-DBP | Software Model | A domain-adapted pLM, fine-tuned on DNA-binding proteins, which improves performance on DBP-related prediction tasks [39]. |
| BridgeNet | Software Model | A pre-trained framework that integrates sequence and structural information during training but only requires sequence for inference, enhancing property prediction [41]. |

Workflow and Conceptual Diagrams

Diagram: pLM Functional Annotation Workflow

Diagram: Fine-tuning pLM for PPI Prediction

The field of computational protein structure prediction has been transformed by advances in deep learning. For over 50 years, predicting the three-dimensional structure that a protein will adopt based solely on its amino acid sequence was one of the most important open challenges in biology [8]. Experimental methods for determining protein structures, such as X-ray crystallography, nuclear magnetic resonance (NMR), and cryo-electron microscopy, are often costly and time-consuming [9]. The resulting gap between the number of known protein sequences and the number of experimentally determined structures has created an urgent need for accurate computational approaches. This application note examines two influential approaches, AlphaFold2 and D-I-TASSER, which address this challenge through fundamentally different methodologies and provide researchers with powerful tools for structure-based prediction in drug discovery and basic research.

Methodological Foundations

AlphaFold2: End-to-End Deep Learning Architecture

AlphaFold2 represents a purely deep learning-based approach to protein structure prediction. The system employs an entirely redesigned neural network-based model that incorporates physical and biological knowledge about protein structure into its deep learning algorithm [8]. The network directly predicts the 3D coordinates of all heavy atoms for a given protein using the primary amino acid sequence and aligned sequences of homologues as inputs.

The AlphaFold2 architecture comprises two main stages. First, the trunk of the network processes the inputs through repeated layers of a novel neural network block termed the "Evoformer," which produces representations for both the multiple sequence alignment (MSA) and residue pairs [8]. The Evoformer blocks allow continuous exchange of information between the evolving MSA representation and the pair representation through attention-based mechanisms and triangular multiplicative updates, which encourage the pair representation to stay geometrically consistent with a 3D structure (for example, with the triangle inequality on inter-residue distances).
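The triangular multiplicative update can be illustrated on a toy pair representation. In the "outgoing edges" form described for AlphaFold2, the update for the pair (i, j) combines the edges (i, k) and (j, k) over every third residue k. This simplified sketch keeps a single scalar channel and omits the learned projections, gating, and layer normalization that the real Evoformer applies.

```python
def triangle_update_outgoing(a, b):
    """Simplified 'outgoing edges' triangle multiplicative update.

    a, b: N x N lists of floats standing in for two projections of the pair
    representation (one scalar channel). For each pair (i, j):
        out[i][j] = sum_k a[i][k] * b[j][k]
    so edge (i, j) is updated from the two other edges of each triangle (i, j, k).
    """
    n = len(a)
    return [[sum(a[i][k] * b[j][k] for k in range(n)) for j in range(n)] for i in range(n)]


pair = [[1.0, 2.0], [3.0, 4.0]]
print(triangle_update_outgoing(pair, pair))  # [[5.0, 11.0], [11.0, 25.0]]
```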

The second stage consists of the structure module, which introduces an explicit 3D structure in the form of a rotation and translation for each residue of the protein. Over successive iterations, these per-residue frames are refined into a highly accurate protein structure with precise atomic details. A key innovation is "recycling," in which the network's outputs are fed back into the same modules, enabling iterative refinement that significantly improves accuracy [8].
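A per-residue rotation and translation is a rigid-body frame: a point given in the residue's local coordinates is mapped into the global structure as x = R·p + t. The sketch below shows only that mapping; the rotation angle, the translation, and the 1.5-unit local offset are hypothetical values, not AlphaFold2's idealized backbone geometry.

```python
import math


def rotation_z(theta):
    """3x3 rotation matrix about the z-axis (angle in radians)."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]


def apply_frame(rotation, translation, local_point):
    """Map a point from a residue's local frame to global coordinates: x = R p + t."""
    return [
        sum(rotation[r][c] * local_point[c] for c in range(3)) + translation[r]
        for r in range(3)
    ]


# Hypothetical frame: rotated 90 degrees about z and centred at (10, 0, 0).
R = rotation_z(math.pi / 2)
t = [10.0, 0.0, 0.0]
print(apply_frame(R, t, [1.5, 0.0, 0.0]))  # approximately [10.0, 1.5, 0.0]
```

Because every atom of a residue is placed through the same frame, updating one rotation and one translation moves the whole residue rigidly.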

D-I-TASSER: Hybrid Deep Learning and Physics-Based Approach

D-I-TASSER (Deep learning-based Iterative Threading ASSEmbly Refinement) employs a hybrid methodology that integrates multisource deep learning potentials with iterative threading fragment assembly simulations [10]. Unlike the end-to-end learning approach of AlphaFold2, D-I-TASSER combines deep learning predictions with classical physics-based folding simulations.

The D-I-TASSER pipeline begins by constructing deep multiple sequence alignments through iterative searches of genomic and metagenomic sequence databases [10]. Spatial structural restraints are then created by multiple deep learning systems, including DeepPotential, AttentionPotential, and AlphaFold2, which utilize deep residual convolutional, self-attention transformer, and end-to-end neural networks, respectively.

Full-length models are constructed by assembling template fragments from multiple threading alignments through replica-exchange Monte Carlo simulations, guided by an optimized deep learning and knowledge-based force field [10]. A critical innovation in D-I-TASSER is its domain partition and assembly module, which iteratively creates domain boundary splits, domain-level MSAs, threading alignments, and spatial restraints, enabling effective modeling of large multidomain protein structures.
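Replica-exchange Monte Carlo runs several copies (replicas) of the simulation at different temperatures and periodically tries to swap conformations between them. The sketch below shows the generic Metropolis swap criterion, min(1, exp((β_i − β_j)(E_i − E_j))) with β = 1/T; D-I-TASSER's actual temperature ladder, energy terms, and swap schedule are not described here, and the example energies and temperatures are hypothetical.

```python
import math
import random


def swap_probability(energy_i, energy_j, temp_i, temp_j):
    """Acceptance probability for exchanging the conformations of replicas i and j.

    Uses min(1, exp((beta_i - beta_j) * (E_i - E_j))) with beta = 1 / T, where the
    Boltzmann constant is absorbed into the energy units.
    """
    delta = (1.0 / temp_i - 1.0 / temp_j) * (energy_i - energy_j)
    return 1.0 if delta >= 0 else math.exp(delta)


def attempt_swap(energies, temps, i, j, rng):
    """Randomly decide whether replicas i and j swap conformations."""
    return rng.random() < swap_probability(energies[i], energies[j], temps[i], temps[j])


# A colder replica (T = 1.0) holding a higher-energy conformation than a hotter one
# (T = 2.0): the swap is always accepted, moving the lower energy to the colder replica.
print(swap_probability(10.0, 5.0, 1.0, 2.0))  # 1.0
print(attempt_swap([5.0, 10.0], [1.0, 2.0], 0, 1, random.Random(0)))
```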

Table 1: Core Methodological Comparison between AlphaFold2 and D-I-TASSER

| Feature | AlphaFold2 | D-I-TASSER |
|---|---|---|
| Core Approach | End-to-end deep learning | Hybrid deep learning and physics-based simulation |
| Architecture | Evoformer blocks with structure module | Monte Carlo assembly with deep learning restraints |
| Multiple Sequence Alignment | Integrated into initial processing | DeepMSA2 with iterative database search |
| Template Use | Direct incorporation of templates as inputs | LOMETS3 meta-threading for template identification |
| Domain Handling | Single end-to-end processing | Explicit domain splitting and reassembly module |
| Refinement Mechanism | Internal recycling of representations | Replica-exchange Monte Carlo simulations |
| Force Field | Implicit through training | Explicit force field combining deep learning restraints and knowledge-based terms |

Performance Benchmarking

Accuracy Metrics and Comparative Performance

Benchmarking experiments allow a direct comparison of AlphaFold2 and D-I-TASSER. In the 14th Critical Assessment of protein Structure Prediction (CASP14), AlphaFold2 achieved a median backbone accuracy of 0.96 Å RMSD₉₅ (Cα root-mean-square deviation at 95% residue coverage), an error roughly one-third that of the next best method and competitive with experimental structures in many cases [8]. The all-atom accuracy of AlphaFold2 was 1.5 Å RMSD₉₅, compared with 3.5 Å RMSD₉₅ for the best alternative method at the time.
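RMSD₉₅ builds on the ordinary root-mean-square deviation between corresponding Cα atoms. The sketch below computes plain RMSD for two structures that are assumed to be already superposed, plus a simplified coverage variant that keeps only the best-fitting 95% of residues. It omits the optimal superposition step (for example, the Kabsch algorithm) that a full implementation needs, and it does not re-superpose after discarding residues.

```python
import math


def rmsd(pred, ref):
    """RMSD between two equally long lists of (x, y, z) points (already superposed)."""
    sq = [sum((p - r) ** 2 for p, r in zip(a, b)) for a, b in zip(pred, ref)]
    return math.sqrt(sum(sq) / len(sq))


def rmsd_at_coverage(pred, ref, coverage=0.95):
    """Simplified RMSD_95: RMSD over the best-fitting `coverage` fraction of residues."""
    sq = sorted(sum((p - r) ** 2 for p, r in zip(a, b)) for a, b in zip(pred, ref))
    keep = max(1, round(coverage * len(sq)))
    return math.sqrt(sum(sq[:keep]) / keep)


# 20 toy Cα positions; one residue is displaced by 10 Å.
ref = [(float(i), 0.0, 0.0) for i in range(20)]
pred = list(ref)
pred[5] = (15.0, 0.0, 0.0)
print(rmsd(pred, ref))              # sqrt(5) ≈ 2.236
print(rmsd_at_coverage(pred, ref))  # 0.0: the outlier falls outside the best 95%
```

The example shows why a coverage-based RMSD is less sensitive to a small number of badly placed residues, such as a flexible terminus.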

Recent evaluations indicate that D-I-TASSER is competitive with, and in certain contexts superior to, AlphaFold2. On a benchmark set of 500 nonredundant "Hard" domains with no significant templates detectable, D-I-TASSER achieved an average TM-score of 0.870, 5.0% higher than AlphaFold2's 0.829 [10]. The difference was most pronounced for difficult targets: on the 148 more challenging domains where at least one method performed poorly, D-I-TASSER achieved a TM-score of 0.707, compared with 0.598 for AlphaFold2.
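TM-score can be sketched from its standard definition, TM = (1/L) Σᵢ 1 / (1 + (dᵢ/d₀)²), with d₀ = 1.24 (L − 15)^(1/3) − 1.8, where L is the target length and dᵢ is the distance between the i-th aligned residue pair. This sketch scores a single, fixed superposition (the real score takes the maximum over superpositions), and the 0.5 Å floor for short chains follows a convention in common TM-score implementations, which is an assumption here. The `relative_gain` helper reproduces the percentage comparison for the difficult domains.

```python
def d0_for_length(target_length):
    """TM-score distance scale d0 (in Å) for a target with `target_length` residues."""
    if target_length <= 21:
        return 0.5  # assumed short-chain floor, as in common TM-score implementations
    return 1.24 * (target_length - 15) ** (1 / 3) - 1.8


def tm_score(distances, target_length):
    """TM-score for one fixed superposition.

    distances: Å distances between aligned model/target residue pairs; target
    residues without an aligned partner simply contribute nothing to the sum.
    """
    d0 = d0_for_length(target_length)
    return sum(1.0 / (1.0 + (d / d0) ** 2) for d in distances) / target_length


def relative_gain(new, baseline):
    """Percentage by which `new` exceeds `baseline`."""
    return 100.0 * (new - baseline) / baseline


print(tm_score([0.0] * 100, 100))              # 1.0: perfect match
print(round(relative_gain(0.707, 0.598), 1))  # 18.2
```

Normalizing by the target length, rather than by the number of aligned pairs, is what penalizes models that cover only part of the target.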

For multidomain proteins, D-I-TASSER shows particular advantages. On a dataset of 230 multidomain proteins, D-I-TASSER generated full-chain models with an average TM-score 12.9% higher than AlphaFold2 [10]. In the community-wide CASP15 experiment, D-I-TASSER achieved the highest modeling accuracy in both single-domain and multidomain structure prediction categories, with average TM-scores 18.6% and 29.2% higher than AlphaFold2, respectively [44].

Table 2: Performance Comparison on Benchmark Datasets

| Benchmark Dataset | AlphaFold2 Performance | D-I-TASSER Performance | Relative Difference (D-I-TASSER vs. AlphaFold2) |
|---|---|---|---|
| CASP14 Domains | 0.96 Å RMSD₉₅ (backbone) | Not available | Not applicable |
| 500 Hard Domains | TM-score = 0.829 | TM-score = 0.870 | +5.0% |
| 148 Difficult Domains | TM-score = 0.598 | TM-score = 0.707 | +18.2% |