Digital Twin Technology in Healthcare: Optimizing Clinical Trial Design and Drug Development

This article provides a comprehensive overview of digital twin technology and its transformative potential for optimizing Clinical Evaluation Activities (CEA) in biomedical research.

Digital Twin Technology in Healthcare: Optimizing Clinical Trial Design and Drug Development

Abstract

This article provides a comprehensive overview of digital twin technology and its transformative potential for optimizing Clinical Evaluation Activities (CEA) in biomedical research. Tailored for researchers, scientists, and drug development professionals, it explores the foundational concepts of digital twins, details their methodological application in creating virtual patients and predicting drug efficacy, addresses key implementation challenges and optimization strategies, and examines rigorous validation frameworks. By synthesizing the latest research and real-world case studies, this article serves as a strategic guide for leveraging digital twins to enhance trial efficiency, reduce costs, and accelerate the delivery of new therapies.

What Are Digital Twins? Core Concepts and Their Emergence in Biomedicine

A Digital Twin (DT) is a dynamic, virtual replica of a physical object, process, or system that is continuously updated with real-time data from its physical counterpart [1]. These models integrate sensor data, advanced algorithms, and machine learning to simulate, monitor, and predict the behavior of the physical entity they represent, enabling real-time study, monitoring, and optimization of performance and operations [1]. The core value of DT technology lies in its ability to facilitate precise analysis and optimization, supporting informed decision-making across a vast array of applications without the risks and costs associated with physical testing [2].

The fundamental architecture of a DT has evolved from basic three-dimensional models to more sophisticated frameworks. One prominent five-dimensional model consists of the physical system, the digital system, an updating engine for data flow, a prediction engine for forecasting, and an optimization engine for decision-making [3]. This structure emphasizes a cyclical, bidirectional data flow, allowing the digital replica to not only mirror the current state of its physical twin but also to predict future states and recommend optimal actions. Initially gaining significant traction in industrial design and production management, DT technology is now revolutionizing fields as diverse as supply chain logistics, healthcare, energy systems, and agriculture [2] [4].

Table 1: Global Digital Twin Market Overview

| Attribute | Value | Source/Timeframe |

|---|---|---|

| Market Size (2024) | USD 13.6 Billion | [5] |

| Projected Market Size (2034) | USD 428.1 Billion | [5] |

| Forecast CAGR (2025-2034) | 41.4% | [5] |

| Expert-Assessed Technology Readiness Level (TRL) | 4.8 out of 9 | [4] |

| Key Market Driver | Demand for asset optimization & reduced downtime | [5] |

Digital Twin Fundamentals and Definitions

At its simplest, a Digital Twin is a digital replica of a system such as a plant, a piece of equipment, or a process that closely resembles its real-life counterpart by being fed with data from the real world [2]. This close linkage allows a DT to test and validate new scenarios quickly, without risk, and at a lower cost than physical testing, leading to more informed and rational decision-making prior to taking action in the real world [2]. The technology relies on a seamless, two-way data exchange between the virtual and physical entities, enabling targeted interventions based on predictive simulations and real-time updates to the digital model [1].

A critical distinction exists between general Digital Twins and their specialized counterpart in medicine, the Digital Human Twin (DHT). While DTs are virtual models of physical systems used to simulate, monitor, and optimize non-human entities like industrial machines, Digital Human Twins (DHTs) are a specialized form focused on replicating human physiology for healthcare applications [1]. DHTs leverage patient-specific data to simulate biological systems, enabling personalized medical interventions, such as predicting how a patient’s body will respond to a specific drug [1]. DHTs can represent the full body, organ- or tissue-specific systems, and even cellular and molecular models, and can be customized to represent specific diseases or circumstances [1].

The core of the DT concept is the digital thread—the connected data flow that links the physical and digital realms. The integration of the DT with this digital thread is identified as one of the most significant current challenges in the field [4]. This integration requires a confluence of technologies, including the Internet of Things (IoT) for data collection from physical assets, cloud computing for data storage and processing, and Artificial Intelligence (AI) and machine learning for advanced analytics, predictive modeling, and generating actionable insights from the vast amounts of data [5] [1].

Applications in Engineering and Supply Chain

The engineering and industrial sectors represent the traditional and most mature application areas for Digital Twin technology. In these fields, DTs have emerged as a powerful in silico method for the design, operation, and maintenance of real-world assets [4]. Key sectors include manufacturing, aerospace, automotive and transportation, and construction and building management [4]. A primary driver for adoption is the demand for asset optimization and reduced downtime, with organizations reporting an average 15% improvement in operational efficiency and up to a 20% reduction in unexpected work stoppages through DT implementations [5].

In the realm of supply chain and logistics, DTs offer a transformative tool for addressing modern pressures such as ever-shorter delivery times, tougher just-in-time requirements, and increasingly demanding end customers [2]. For instance, the Sonaris project (Digital Optimization Solution for Integrated Supply Chain Analysis and Redesign) in France developed a functional DT demonstrator specifically for logistics use cases [2]. This platform handles large-scale scenarios simulating realistic situations like port operations, warehousing, and the management of massive logistics flows, providing companies with a risk-free environment to assess the advantages, risks, and costs of reconfiguring their supply chains [2].

The value proposition in engineering is further amplified by cost reduction through virtual commissioning, which enables virtual testing and commissioning, thereby reducing the need for physical prototypes and shortening development time [5]. Furthermore, over 70% of organizations cite sustainability as a key motivator for digital twin investments, with implementations achieving measurable reductions in building carbon emissions [5]. This aligns with the global trend of Industry 4.0, where the integration of IoT, AI, and DTs is creating a new paradigm of smart, connected, and efficient industrial operations.

Table 2: Digital Twin Applications in Engineering & Supply Chain

| Sector | Primary Application | Reported Benefit |

|---|---|---|

| Manufacturing | Virtual commissioning, predictive maintenance | Reduced prototypes, 15% operational efficiency improvement [5] |

| Supply Chain & Logistics | Scenario simulation for port, warehouse, and flow management | Assessment of reconfiguration costs/risks, enhanced flexibility [2] |

| Aerospace & Automotive | Product design, system-level development | Cost and time savings in complex product development cycles [5] |

| Energy & Utilities | Grid optimization, asset management | Enhanced reliability and integration of renewable sources [3] |

| Construction & Building Management | Design optimization, operational efficiency | Reduction in building carbon emissions [5] |

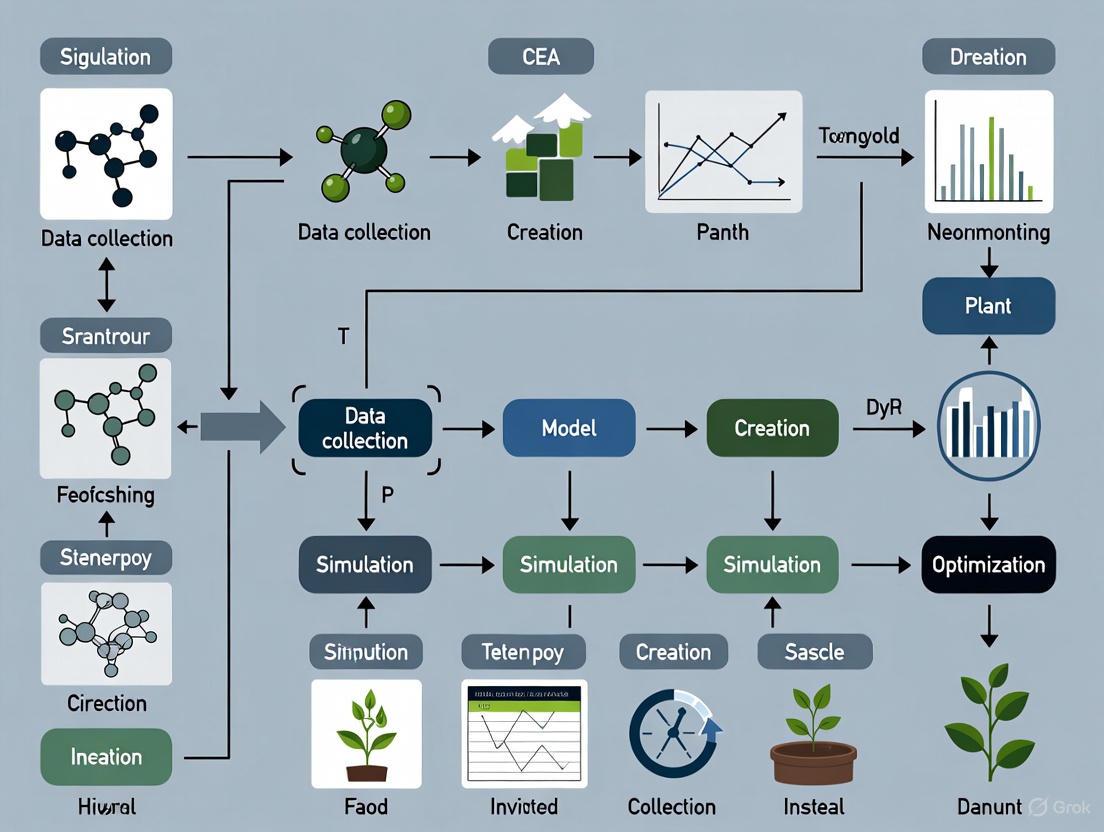

Protocols for Digital Twins in Controlled Environment Agriculture (CEA)

For research focused on CEA optimization, Digital Twin technology presents a pathway to address major challenges like high energy intensity and carbon footprints [6]. A monitoring Digital Twin (mDT) can be developed to provide services for monitoring the different subsystems of a CEA facility [7]. The following protocol outlines the key phases for implementing a DT in a CEA context, such as a greenhouse or indoor vertical farm.

Protocol: Development of a Monitoring DT for CEA Facilities

Objective: To create a dynamic digital replica of a CEA system that integrates real-time data to enable monitoring, optimization, and predictive control of the environment for improved sustainability and productivity.

Research Reagent Solutions & Essential Materials:

- Sensors (IoT Hardware): Function: To collect real-time data on environmental parameters (e.g., light intensity/spectrum, temperature, humidity, CO2 concentration) and plant status (e.g., canopy temperature, substrate moisture). This forms the data acquisition layer. [7] [6]

- Edge Computing Devices: Function: To perform preliminary data processing and filtering at the source, reducing latency and bandwidth requirements for cloud transmission. [5]

- Cloud Computing Infrastructure: Function: To provide scalable data storage, high-performance computing for complex model simulations, and a centralized platform for data fusion and analysis. [1]

- Data Analytics Pipeline (Software): Function: A suite of software tools for data validation, feature extraction, and model calibration. This is crucial for transforming raw data into actionable insights. [5] [7]

- Simulation Models: Function: Physics-based or AI-driven models that simulate CEA processes, such as crop growth models, computational fluid dynamics (CFD) for climate distribution, and energy models. These form the core of the DT's predictive capability. [6]

Methodology:

System Scoping and Data Source Identification:

- Define the boundary of the DT (e.g., single growth room, entire greenhouse).

- Map all relevant physical assets (HVAC, LED lighting, irrigation systems, sensors).

- Identify all data streams, including real-time sensor data, management practices, and external data (e.g., weather forecasts, energy pricing). [6]

Architecture and Platform Deployment:

- Select an on-premise, cloud, or hybrid deployment mode based on data security needs and computational requirements. The cloud segment is growing due to scalability and lower upfront costs. [5]

- Establish a robust data infrastructure capable of ingesting, storing, and managing heterogeneous data streams with time-stamping. Open-source tools can be integrated to build this architecture. [7]

Model Development and Integration:

- Develop or integrate sub-models for key processes:

- Energy Model: Simulates energy consumption of lighting, HVAC, and other systems.

- Crop Growth Model: Predicts plant development, yield, and quality in response to environmental conditions.

- Climate Model: Simulates the spatial and temporal distribution of temperature, humidity, and air movement within the facility.

- These models should be coupled to capture interactions (e.g., the effect of LED heat on room temperature). [6]

- Develop or integrate sub-models for key processes:

Digital Thread Implementation and Calibration:

- Implement the data pipelines that form the "digital thread," ensuring a continuous, bidirectional flow of information between the physical CEA system and the digital model.

- Use real-time data to automatically calibrate and update the simulation models, improving their accuracy over time (model calibration). This is a core challenge and requirement for a true DT. [4] [5]

Interface Development and Validation:

- Develop human-machine interfaces (HMIs) tailored for CEA operators and researchers to visualize data, model outputs, and receive decision support. [2]

- Validate the DT by comparing its predictions against measured outcomes from the physical system. Iteratively refine the models until a satisfactory level of accuracy is achieved.

Protocols for Digital Twins in Chemical Science

In chemical sciences, Digital Twins are being developed to bridge the critical gap between theoretical simulation and experimental characterization, enabling autonomous, adaptive experimentation. The following protocol is based on the framework of the Digital Twin for Chemical Science (DTCS), which integrates theory, experiment, and their bidirectional feedback loops. [8]

Protocol: Applying DTCS for Surface Reaction Characterization

Objective: To utilize a DT for the real-time interpretation of spectroscopic data and to guide experiments toward understanding the kinetics and mechanism of a chemical reaction, such as water interactions on a Ag(111) surface.

Research Reagent Solutions & Essential Materials:

- Physical Twin Instrumentation: Function: The physical apparatus where the reaction occurs, coupled with characterization tools (e.g., Ambient-Pressure X-ray Photoelectron Spectroscopy - APXPS). It provides the experimental data. [8]

- Electronic Structure Theory Software: Function: To perform quantum mechanical calculations (e.g., using Density Functional Theory - DFT) to precompute core electron binding energies (CEBEs) and reaction pathway energetics. [8]

- Chemical Reaction Network (CRN) Solver: Function: A software module (e.g.,

dtcs.sim) that simulates the kinetics of the proposed reaction network, converting precomputed rates into time-evolving concentration profiles. [8] - AI-Powered Inverse Solver: Function: An algorithm (e.g., tailored Gaussian process) that compares simulated spectra with experimental data and iteratively infers new mechanisms or refines kinetic parameters to find the best match. [8]

- Spectral Simulation Module: Function: A software module (e.g.,

dtcs.spec) that translates the concentration profiles from the CRN solver into predicted spectra, incorporating instrument-specific broadening and other experimental specializations. [8]

Methodology:

Define Chemical Species and Precompute Properties:

- Enumerate all potential chemical species involved in the system (e.g., H2Og, H2O, O, OH*).

- Use electronic structure theory (e.g., ΔSCF method) to precompute key properties like Core Electron Binding Energies (CEBEs) for each species. This creates a library of spectral fingerprints. [8]

Propose and Encode the Chemical Reaction Network (CRN):

- Based on expert knowledge and literature, propose a CRN that connects the enumerated chemical species via reactions (e.g., adsorption, desorption, surface diffusion, reaction).

- Encode this CRN, including precomputed transition state barriers and rate constants, into the DT using a standardized syntax. Ensure mass and site balances are enforced. [8]

Execute the Forward Problem (Theory Twin):

- Use the CRN solver to simulate the time-evolving concentration profiles of all species under the set experimental conditions (temperature, pressure).

- Employ the spectral simulation module to generate a predicted spectrum from these concentrations, applying appropriate instrumental broadening. This creates the "Theory Twin" for comparison. [8]

Run Experiment and Acquire Physical Twin Data:

- Conduct the actual experiment using the Physical Twin (the APXPS instrument) to collect the measured spectroscopic data under identical conditions. [8]

Solve the Inverse Problem and Refine the Model:

- Compare the Theory Twin spectrum with the Physical Twin spectrum.

- If the match is poor, deploy the AI-powered inverse solver. This algorithm will vary the parameters of the CRN (e.g., rate constants, or even the network structure itself) to minimize the discrepancy between simulation and experiment, thereby inferring the most probable mechanism. [8]

- This step continues iteratively until a stopping condition (e.g., based on accuracy) is met, yielding a validated model and a fundamental mechanistic understanding.

The Emergence of Digital Human Twins (DHTs) in Healthcare

In healthcare, Digital Human Twins (DHTs) represent a pioneering approach to achieve a complete digital representation of patients, aiming to enhance disease prevention, diagnosis, and treatment [1]. DHTs leverage patient-specific data—including genetic information, medical records, imaging, and even social habits—to create dynamic models that simulate human physiology and the complex interactions between genetic factors and environmental influences [1]. The ultimate goal is to enable in silico testing and comparison of different treatment or preventive interventions to explore the optimum option for a specific individual before any real-world application. [1]

The construction and operation of a DHT rely on a confluence of advanced technologies. Digital health sensors and IoT devices gather information directly from the patient and their surroundings. Cloud computing infrastructures store and manage the vast amounts of generated data. AI and machine learning algorithms are then essential to extract meaningful information from this data, powering the sophisticated simulations and predictive decision support systems that characterize DHTs [1]. This integration facilitates applications across precision medicine, including person-centered risk stratification, rapid diagnosis, disease modeling, surgical planning, targeted therapies, and drug discovery [1].

Despite the remarkable potential, the integration of DHTs into clinical practice faces significant challenges. Key hurdles include ensuring data security, privacy, and accessibility, mitigating data bias, and guaranteeing the high quality and completeness of the input data [1]. Addressing these obstacles is crucial to realizing the full potential of DHTs and heralding a new era of personalized, precise, and accurate medicine. The technology is still in its relative infancy, with many research teams focusing on digital replicas for specific body parts or physiological systems, while the development of a complete, full-body DHT remains a goal for the future. [1]

Table 3: Digital Twin Applications in Healthcare & Ecology

| Field | Application Scope | Key Technologies & Challenges |

|---|---|---|

| Healthcare (Digital Human Twins) | Personalized medicine, treatment optimization, surgical planning, drug discovery. | AI, IoT, cloud computing, multi-omics data. Challenges: Data security, bias, quality. [1] |

| Ecology | Biodiversity conservation, ecosystem management, dynamic simulation of biosphere changes. | Dynamic Data-Driven Application Systems (DDDAS), observational data, modular software frameworks (e.g., TwinEco). [9] |

| Smart Grids | Asset management, system operation and optimization, disaster response and recovery. | IoT sensor integration, real-time simulation, predictive analytics. Challenges: Data management, interoperability. [3] |

The name "Apollo" evokes a legacy of monumental human achievement, first in the audacious mission to land on the moon and now in the equally complex endeavor of conquering disease. This application note explores the evolution of this concept, tracing a path from the systemic, mission-oriented engineering of the NASA Apollo program to the collaborative, data-driven model of Apollo Therapeutics and, finally, to its convergence with the predictive power of digital twin technology. Framed within the context of Controlled Environment Agriculture (CEA) optimization research, this document provides detailed protocols for implementing digital twins to accelerate and de-risk the drug discovery pipeline, offering researchers a blueprint for creating more sustainable and efficient translational science ecosystems.

The Modern Apollo Model: Collaborative Drug Discovery

Apollo Therapeutics represents a paradigm shift in translational medicine. Established as a collaboration between world-leading universities and global pharmaceutical companies, its mission is to navigate the "Valley of Death"—the critical gap between promising academic research and the development of attractive, investable drug candidates [10]. The model is built on strategic partnerships, such as its July 2024 collaboration with the University of Oxford, which aims to translate breakthroughs in biology into new medicines for oncology and immunological disorders [11] [12].

The following table quantifies the key stakeholders and outcomes of this collaborative model.

Table 1: Quantitative Profile of the Apollo Therapeutics Collaborative Model

| Aspect | Description | Quantitative Data / Impact |

|---|---|---|

| Founding Universities | Cambridge, Imperial College London, University College London [10] | 3 original institutions [10] |

| Expanded Network | Includes King's College London, Institute of Cancer Research, University of Oxford [11] [12] | 6 total world-class research institutions [11] |

| Pharmaceutical Partners | AstraZeneca, GSK, Johnson & Johnson [10] | 3 global companies [10] |

| Funding | Initial fund and total raised [11] [10] | Initial £40m collaboration; over $450m raised since inception [11] [10] |

| Project Throughput | Number of projects selected for funding [10] | 8 projects initially identified across the three founding universities [10] |

Experimental Protocol: Establishing an Academic-Industry Translational Partnership

This protocol outlines the methodology for creating a structured pipeline to identify and advance early-stage therapeutic research, based on the Apollo model.

- Objective: To systematically identify, fund, and de-risk novel therapeutic programs from academic research institutions.

Materials:

- Research Repository: Access to internal research outputs from partner universities.

- Project Management Software: e.g., JIRA or Asana for tracking project milestones.

- Funding Mechanism: A dedicated fund for early-stage drug discovery.

Procedure:

- Program Identification (Weeks 1-12):

- Conduct iterative review meetings (e.g., over 100 meetings annually) between the internal drug discovery team and academic scientists [10].

- Criteria for Selection: Focus on programs with a strong understanding of disease biology and mechanisms, and the potential to transform the standard of care in major commercial markets [11] [10].

- Aggressive Filtering (Ongoing):

- Apply rigorous selection criteria to maximize the chance of success. The Apollo team reports being "very picky" and filtering "very aggressively" [10].

- Project Activation (Within Weeks):

- For selected projects, quickly design a work package and commit to collaboration. Apollo demonstrates the ability to begin work "in a matter of weeks," avoiding traditional stop-start funding delays [10].

- Program Execution (1-3 Years):

- Execute the project work package, which may involve activities in the academic lab, a pharma company, or a contract research organization (CRO) [10].

- The goal is to generate a robust package of data (e.g., more potent and drug-like compounds) that is attractive for licensing by an industry partner [10].

- Licensing and Output:

- Developed therapeutics are first offered to the founding pharmaceutical partners.

- Capital gains from licensing are divided between the university and pharma investors [10].

- Program Identification (Weeks 1-12):

Diagram 1: Apollo Translational Workflow. Illustrates the staged pipeline for translating academic research into licensed drug programs.

The Digital Twin Revolution in Biopharmaceutical Research

Digital twins are dynamic virtual replicas of physical entities or processes, continuously updated with real-time data to enable simulation, diagnostics, and predictive analytics [13]. In biopharmaceuticals, they are emerging as a transformative tool for creating 'virtual patients' and simulating biological systems, thereby reducing the need for costly and time-consuming physical experiments [14]. The core value lies in their ability to run "what-if" scenarios without risk, leading to more informed and rational decision-making [2].

The integration of digital twins into research workflows demands a sophisticated toolkit. The table below details essential research reagents and computational solutions for building and utilizing digital twins in a drug discovery context.

Table 2: Research Reagent Solutions for Digital Twin Implementation

| Category / Item | Function in Digital Twin Workflow | Specific Example / Technology |

|---|---|---|

| Computational Framework | Provides the core environment for building and running the digital twin. | Azure Digital Twins 3D Scenes Studio [15] |

| AI Agent for Model Exploration | Facilitates interaction with complex biomedical models via natural language. | Talk2Biomodels (Open-source AI agent) [14] |

| AI Agent for Knowledge Management | Interrogates and connects disparate biomedical data points. | Talk2KnowledgeGraph (Open-source AI agent) [14] |

| Data Integration Layer | Gathers and stores diverse data types from experimental systems. | CEA mDT Architecture [7] |

| 3D Visualization Engine | Provides an immersive environment for interacting with the digital twin. | 3D Scenes Studio; iot-cardboard-js library [15] |

| Predictive Analytics Algorithm | Processes historical and real-time data to forecast future system states. | AI-driven predictive insights [13] |

Experimental Protocol: Developing a Monitoring Digital Twin (mDT) for a Preclinical Research Ecosystem

This protocol is adapted from CEA research, where monitoring digital twins are used to integrate data from various subsystems (e.g., environmental sensors, nutrient delivery) to optimize conditions and predict outcomes [7]. It provides a methodology for applying the same principles to a preclinical research environment, such as a laboratory studying compound efficacy in a CEA-like controlled system.

- Objective: To build a monitoring digital twin that integrates real-time data from a controlled research environment to track key parameters and enable predictive optimization.

Materials:

- Sensor Network: Physically compatible sensors for data acquisition (e.g., temperature, pH, metabolite concentration in cell cultures).

- Data Storage & Compute: A storage account (e.g., Azure Storage) and computing instance capable of handling time-series data [15].

- Digital Twin Platform: An instance of a digital twin service (e.g., Azure Digital Twins) [15].

- Visualization Interface: A tool for displaying data, such as 3D Scenes Studio or a custom dashboard [15].

Procedure:

- System Architecture and Data Ingestion:

- Deploy an architecture capable of gathering different types of data from the research facility [7]. For example, connect pH meters, mass spectrometers, and cell culture analyzers to a central data hub.

- Configure CORS (Cross-Origin Resource Sharing) on the storage account to allow the visualization interface to access data, using required headers:

Authorization,x-ms-version, andx-ms-blob-type[15].

- Digital Twin Modeling:

- Within the digital twin platform, create digital models (twins) of key assets in the research environment (e.g., individual bioreactors, analytical instruments, sample lines). Each twin should have properties corresponding to its physical counterpart's telemetry (e.g.,

temperature,dissolved_O2) [15].

- Within the digital twin platform, create digital models (twins) of key assets in the research environment (e.g., individual bioreactors, analytical instruments, sample lines). Each twin should have properties corresponding to its physical counterpart's telemetry (e.g.,

- 3D Scene Creation and Element Mapping:

- Initialize a 3D scene using a segmented 3D file (.GLB or .GLTF format) of the laboratory layout [15].

- Create Elements within the scene by linking 3D meshes (e.g., a virtual model of a bioreactor) to their corresponding digital twins via the

$dtId[15]. This creates a visual representation of the research system.

- Behavior Configuration for Predictive Monitoring:

- Define Behaviors to create interactive scenarios. For example, create a "Nutrient Alert" behavior that targets the bioreactor element [15].

- Use a rule-based or AI-driven expression to analyze the

glucose_levelproperty from the bioreactor's digital twin. Configure the behavior to trigger a visual alert (e.g., the 3D model turns red) when the level drops below a critical threshold, enabling proactive intervention [13].

- Deployment and Interaction:

- The mDT is now operational, generating a database of research data. This database can later be used by predictive algorithms to suggest actions for improving research productivity and sustainability [7].

- Researchers can view the scene in View mode to see real-time data and alerts, or use the Data history explorer to analyze property values over time [15].

- System Architecture and Data Ingestion:

Diagram 2: mDT Architecture for Research. Shows the data flow in a monitoring Digital Twin for a controlled research environment.

Quantitative Data Analysis and Visualization for Digital Twin Outputs

The effectiveness of a digital twin hinges on translating its complex, quantitative outputs into actionable insights. Effective quantitative data visualization is the bridge between raw data and human decision-making, enabling researchers to quickly uncover patterns, trends, and relationships [16] [17].

Table 3: Quantitative Data Analysis Methods for Digital Twin Insights

| Analysis Method | Application in Digital Twin Research | Recommended Visualization |

|---|---|---|

| Descriptive Statistics | Summarizes the central tendency and dispersion of key parameters (e.g., mean metabolite production, standard deviation of growth rates). | Bar Chart (for comparisons), Line Chart (for trends over time) [16] [17] |

| Cross-Tabulation | Analyzes relationships between categorical variables (e.g., the relationship between nutrient regimen and cell viability outcome). | Stacked Bar Chart [16] |

| Gap Analysis | Compares actual performance (e.g., experimental yield) against potential or target performance. | Progress Chart, Radar Chart [16] |

| Regression Analysis | Examines relationships between variables to predict outcomes (e.g., predicting final titer based on early-process parameters). | Scatter Plot [16] [17] |

| Time-Series Analysis | Tracks changes in key metrics over the duration of an experiment or process. | Line Chart [17] |

The following table presents hypothetical quantitative data from a digital twin simulating a bioproduction process, demonstrating how different visualizations can be applied.

Table 4: Simulated Digital Twin Output for a Bioproduction Optimization Study

| Bioreactor ID | Temperature (°C) | pH | Final Yield (g/L) | Energy Consumed (kWh) | Optimal Run |

|---|---|---|---|---|---|

| BR-01 | 36.5 | 7.0 | 12.5 | 1550 | No |

| BR-02 | 37.0 | 7.1 | 14.2 | 1480 | Yes |

| BR-03 | 36.0 | 6.9 | 11.8 | 1620 | No |

| BR-04 | 37.2 | 7.2 | 15.1 | 1450 | Yes |

| BR-05 | 35.8 | 6.8 | 10.5 | 1700 | No |

Integrated Case Study: Optimizing a Therapeutic Protein Pipeline

This case study synthesizes the Apollo model with a CEA-inspired digital twin to outline a protocol for optimizing the production of a novel therapeutic protein (e.g., an enzyme for Alpha-1 antitrypsin deficiency, as referenced in Apollo's portfolio [10]) in a controlled plant or cell-based system.

Experimental Protocol: Digital Twin-Driven Optimization of a Bioproduction Workflow

- Objective: To use a process digital twin to model, predict, and prescriptively optimize the yield and sustainability of a therapeutic protein production system.

Materials:

- Pilot-scale bioreactor or controlled plant growth system.

- Sensor suite for environmental and process data.

- Digital twin platform configured per Protocol 3.1.

- Analytics and visualization tools (e.g., ChartExpo, Ajelix BI, Python Pandas) [16].

Procedure:

- Historical Data Integration:

- Ingest historical production data (e.g., from Table 4) into the digital twin's database. This data will train initial predictive algorithms.

- Process Twin Calibration:

- Create a Process Twin that mirrors the full bioproduction workflow, from inoculation to harvest [13]. Link this twin to real-time data feeds from the physical system.

- Predictive Simulation and Prescriptive Action:

- Run "what-if" simulations using the digital twin to identify parameters that maximize yield while minimizing energy consumption [13]. For instance, the twin might predict that a temperature of 37.2°C and a pH of 7.2 will lead to optimal yield.

- Configure prescriptive alerts that automatically suggest parameter adjustments to operators, closing the loop from insight to action.

- UX-Centric Monitoring and Validation:

- Implement a personalized, role-based interface for the digital twin. Technicians see alert dashboards, while process engineers access advanced simulation models [13].

- Validate the model's predictions by running a physical batch using the twin's recommended parameters. Compare the final yield and energy consumption against historical controls (e.g., data from Table 4) to quantify improvement.

- Historical Data Integration:

The evolution from the Apollo program to Apollo Therapeutics illustrates a consistent theme: overcoming grand challenges through integrated systems, collaboration, and cutting-edge technology. This application note demonstrates that the next logical step in this evolution is the incorporation of digital twins, a technology with profound implications for CEA optimization and drug discovery alike. By adopting the detailed protocols herein—from establishing collaborative frameworks to building and interacting with dynamic digital replicas—research organizations can build more resilient, efficient, and predictive pipelines. This convergence promises to accelerate the journey of therapeutics from the researcher's bench to the patient's bedside.

A digital twin is an integrated, data-driven virtual representation of a physical object or system that is dynamically updated with real-time data and uses simulation to enable forecasting and informed decision-making [18]. The National Academies of Science, Engineering, and Medicine (NASEM) defines it as a "set of virtual information constructs that mimics the structure, context, and behavior of a natural, engineered, or social system (or system-of-systems), is dynamically updated with data from its physical twin, has a predictive capability, and informs decisions that realize value" [19]. This architecture is foundational to achieving cost-effectiveness analysis (CEA) optimization in research, particularly in drug development, where it enables the virtual testing of therapies, reduces trial costs, and accelerates time-to-market.

The core value of a digital twin, especially for CEA optimization, lies in the bidirectional interaction between its physical and virtual components. This closed-loop system allows researchers not only to monitor but also to proactively optimize systems and interventions. Evidence from supply chain research indicates that digital twin implementation can reduce operational costs by 30-40% and decrease disruption times by up to 60% [20]. In healthcare, the digital twin market is projected to grow at a compound annual growth rate (CAGR) of 24.35%, expected to reach $4.69 billion by 2030, underscoring its economic and operational impact [21].

The Three Core Components

The functional architecture of any digital twin is built upon three interdependent components: the physical entity, the virtual model, and the bidirectional data flow that connects them [22] [23].

The Physical Entity

The physical entity is the real-world system, object, or process that the digital twin aims to mirror. In the context of CEA optimization for drug development, this could range from a specific piece of laboratory equipment to a complex biological system or an entire clinical trial process.

- In Drug Development Contexts:

- Individual Patients: For personalized medicine, a patient can be a physical entity. Data sources include medical imaging (CT, MRI), genomic sequencing, wearable sensor data (tracking activity, heart rate), and electronic health records (EHRs) [23] [21].

- Biological Systems: An organ (e.g., a heart), a physiological pathway, or a cellular mechanism can serve as the physical entity for researching disease progression or drug effects [21].

- Laboratory and Clinical Assets: This includes high-throughput screening machines, bioreactors, or other instruments used in R&D and manufacturing [21].

- Key Requirement – Instrumentation: The physical entity must be equipped with or be the subject of data sources such as Internet of Things (IoT) sensors, medical devices, or clinical data feeds. These are critical for capturing its performance, condition, and operating environment [22] [18].

The Virtual Model

The virtual model is the computational counterpart of the physical entity. It is more than a static 3D model or a one-off simulation; it is a "living" dynamic entity that evolves [24]. Its purpose is to emulate the behavior, characteristics, and functionality of the physical twin.

- Composition and Fidelity: The model synthesizes data from the physical entity with physics-based simulations, machine learning (ML) algorithms, and artificial intelligence (AI) to create a high-fidelity replica [23] [25]. For instance, a virtual patient model might integrate computational models of physiology with AI to predict individual responses to a drug candidate [23].

- Analytical Capabilities: The virtual model serves as a risk-free digital laboratory. Researchers can run "what-if" scenarios, such as simulating disease progression or testing the efficacy and safety of a new therapeutic intervention under various conditions without risking the physical asset (e.g., a patient in a clinical trial) [22] [18]. This predictive capability is central to optimizing CEA, as it allows for the identification of the most efficient and effective research pathways.

The Bidirectional Data Flow

This is the central nervous system of the digital twin, enabling real-time, two-way communication between the physical and virtual components [22] [19]. This bidirectional flow is what distinguishes a digital twin from a simple simulation.

- Data Collection to Physical Action: The cycle involves:

- Live Data Integration: Sensors and data feeds on the physical entity continuously transmit data to the virtual model [22] [18].

- Synchronization: The virtual model updates itself to accurately reflect the current state of its physical twin [23].

- Analysis and Simulation: The updated model runs analyses and simulations, often powered by AI, to detect patterns, predict future states, and prescribe actions [22] [25].

- Feedback and Informed Decision-Making: Insights, control signals, or optimized parameters are sent from the virtual model back to the physical world. This could manifest as an alert to adjust a patient's treatment plan, a recommendation to reconfigure a laboratory instrument, or a prediction about a clinical trial's outcome, directly informing CEA [22] [23].

The following diagram illustrates this continuous, bidirectional information flow.

Quantitative Performance Data for CEA

For researchers and scientists, the value of a digital twin is quantified through its impact on key performance indicators. The table below summarizes data from implemented systems, which can be directly used in cost-effectiveness analyses.

Table 1: Digital Twin Performance Metrics for CEA Optimization

| Metric Area | Reported Improvement | Application Context | Source |

|---|---|---|---|

| Operational Costs | Reduction of 30-40% | Supply Chain Optimization | [20] |

| Disruption Time | Decrease of up to 60% | Supply Chain Disruption Mitigation | [20] |

| Predictive Accuracy | 12% reduction in Root Mean Squared Error (RMSE) for Remaining Useful Life (RUL) | Predictive Maintenance of Industrial Assets | [25] |

| Failure Prediction | Precision: 94%, Recall: 88% | Predictive Maintenance of Industrial Assets | [25] |

| Maintenance Cost Savings | Anticipated 18% reduction | Predictive Maintenance with Hybrid Digital Twin | [25] |

| Market Growth (CAGR) | 24.35% (2024-2030) | Healthcare Digital Twin Market | [21] |

Experimental Protocol: Implementing a Hybrid Digital Twin for Predictive Maintenance

This protocol details the methodology from a study on a Hybrid Digital Twin (HDT) integrated with Quantum-Inspired Bayesian Optimization (QBO), a approach relevant for maintaining critical laboratory and manufacturing equipment in drug development [25].

1. Objective: To establish a predictive maintenance framework that forecasts asset failures and optimizes maintenance schedules, maximizing operational lifespan and minimizing unplanned downtime for CEA optimization.

2. Hybrid Digital Twin Architecture:

- Physics-Based Simulation (PBS) Layer: Utilizes Finite Element Analysis (FEA) and Computational Fluid Dynamics (CFD) to model fundamental physical processes (e.g., stress distribution, thermal transfer). This layer provides high fidelity but is computationally intensive.

- Machine Learning (ML) Layer: Employs Long Short-Term Memory (LSTM) networks, a type of Recurrent Neural Network (RNN), trained on real-time sensor data (vibration, temperature, acoustic emissions) to learn temporal degradation patterns.

- Dynamic Model Switching: The HDT's state transition is governed by the function Sn+1 = Φ(Sn, un, θPBS, θML). The system dynamically weights the use of PBS and ML models based on operational conditions, triggering high-fidelity PBS during high-stress scenarios and relying on faster ML models for normal operations [25].

3. Data Acquisition and Preprocessing:

- Data Sources: Collect time-series sensor data from the physical asset (e.g., industrial pump, centrifuge). Key metrics include 3-axis vibration, temperature, pressure, and acoustic emissions.

- Data Splitting: Partition data into training (70%), validation (15%), and testing (15%) sets.

- Normalization: Apply Z-Score normalization to all sensor data streams to ensure stable model training [25].

4. Optimization via Quantum-Inspired Bayesian Optimization (QBO):

- Purpose: To efficiently explore the high-dimensional parameter space for optimal maintenance schedules and operating conditions, overcoming the scalability limits of traditional Bayesian Optimization.

- Algorithm: A Quantum-inspired Monte Carlo Tree Search (QMCTS) is used, which employs a quantum annealing-inspired schedule to refine node evaluation. The acquisition function A(x) = β(x) + κσ(x) guides the search, where

β(x)is an exploration term,σ(x)is the model uncertainty, andκis a dynamically adjusted scale parameter [25].

5. Performance Validation:

- Compare the HDT-QBO framework against a baseline system (e.g., using Gaussian Process Regression) on the test dataset.

- Key Metrics:

- Root Mean Squared Error (RMSE) of Remaining Useful Life (RUL) predictions.

- Precision and Recall for failure event prediction.

- Projected Maintenance Cost Savings based on reduced downtime and optimized scheduling [25].

The workflow for this experimental protocol is visualized in the following diagram.

The Scientist's Toolkit: Research Reagent Solutions

For researchers building digital twins in a drug development environment, the following "reagents" or core components are essential.

Table 2: Essential Components for a Biomedical Digital Twin Lab

| Item / Technology | Function & Application |

|---|---|

| IoT Sensor Kits | Instrument physical assets (lab equipment, wearables) to capture real-time operational and physiological data (temperature, vibration, heart rate). |

| Cloud Computing Platform (e.g., AWS, Azure, Google Cloud) | Provides the scalable infrastructure for data storage, running computationally intensive simulations (PBS), and training complex ML models. |

| Simulation Software (e.g., ANSYS, COMSOL) | Enables the creation of physics-based models (FEA, CFD) to simulate mechanical, thermal, and fluid dynamics processes. |

| AI/ML Frameworks (e.g., TensorFlow, PyTorch) | Provides the libraries and tools to develop, train, and deploy machine learning models, such as LSTM networks, for predictive analytics. |

| Data Integration Middleware | Acts as a bridge to ensure interoperability and seamless data flow between disparate systems, such as Electronic Health Records (EHRs), laboratory equipment, and the virtual model. |

Digital Twins vs. Traditional Computational and Simulation Models

In the context of Controlled Environment Agriculture (CEA) optimization research, the selection of a modeling paradigm is a critical strategic decision. Traditional simulation models have long been used for analysis and design, but digital twin technology represents a fundamental shift toward dynamic, data-driven virtual representations [26]. This evolution is particularly relevant for CEA, where integrating agricultural processes with industrial automation demands real-time responsiveness and cross-domain insights [27] [28].

Digital twins are moving from simply representing physical entities toward a more comprehensive approach of general knowledge representation, which is essential for managing the complex interactions within CEA systems [27]. This document provides detailed application notes and experimental protocols to guide researchers and drug development professionals in implementing these technologies for CEA optimization.

Conceptual Differentiation and Comparative Analysis

Fundamental Definitions and Characteristics

Traditional Simulation: Traditional simulations are predictive models that forecast outcomes under specific, controlled conditions and constraints [29]. They are typically scenario-based, time-bounded, and hypothesis-driven, creating virtual environments where variables can be manipulated to observe their impact on system behavior without real-world consequences [29]. These models often rely on historical data and predefined scenarios, making them inherently static as they won't change or develop unless a designer introduces new elements [26] [30].

Digital Twin: A digital twin is a virtual model created to accurately reflect an existing physical object, system, or process [30]. It is characterized by its persistent, bi-directional connections with its physical counterpart [29]. Unlike static simulations, digital twins are living mirrors that reflect not only the current state but also the history and predicted future of real-world systems [29]. They integrate live data streams from sensors, IoT devices, and enterprise systems to construct a continuously evolving 'digital shadow' of its real-world counterpart [26].

Key Technical and Operational Differences

Table 1: Comparative Analysis of Digital Twins vs. Traditional Simulations

| Aspect | Traditional Simulation | Digital Twin |

|---|---|---|

| Data Elements & Interaction | Static data, mathematical formulas, scenario-based inputs [26] [30] | Active, real-time data streams from IoT sensors and enterprise systems [26] [30] |

| Temporal Nature | Time-bounded, capturing snapshots of potential futures [29] | Persistent and evolving, existing throughout the asset lifecycle [29] |

| Connectivity | Typically standalone with limited external integration [29] | Bi-directionally connected, enabling two-way data flow [29] |

| Simulation Basis | Represents what could happen based on potential parameters [30] | Replicates what is actually happening to a specific product/process [30] |

| Scope of Use | Narrow – primarily design and engineering analysis [30] | Wide – cross-business applications including operations and maintenance [30] |

| Computational Processing | Batch processing models performing intensive calculations on complete datasets [29] | Real-time processing architectures with minimal latency [29] |

| Integration Requirements | May import external data but minimal live integration needed [29] | Deep integration with ERP, MES, SCADA, and field devices [29] |

Application in CEA Optimization Research

CEA-Specific Use Cases and Benefits

The integration of digital twins in CEA represents a convergence of agricultural science with industrial automation, enabling unprecedented control and optimization [28].

Crop Growth and Environmental Modeling: Digital twins enable operators to simulate crop growth, energy loads, and maintenance schedules before planting [28]. By creating a virtual replica of the entire growing environment, researchers can model plant development under various environmental conditions, enabling predictive yield analysis and resource optimization.

Energy-Smart Farm Management: CEA systems are often energy-intensive, creating a significant optimization challenge [28]. Digital twins facilitate grid-responsive designs that can flex electricity use based on availability and price. This allows CEA facilities to function as intelligent energy nodes rather than fixed consumers, aligning agricultural production with clean energy availability and smart grid strategies [28].

Lifecycle-Aware System Design: Digital twins support the design of CEA systems that minimize total energy and water use throughout their operational lifecycle [28]. This is particularly valuable for modular food infrastructure deployments in diverse environments, from urban centers to low-resource settings [28].

Quantitative Benefits and Business Impact

Table 2: Quantitative Benefits of Digital Twin Implementation

| Metric | Traditional Simulation Impact | Digital Twin Impact |

|---|---|---|

| Operational Efficiency | Moderate improvements through pre-design optimization | Up to 1,000x more efficient than traditional methods [31] |

| Resource Optimization | Theoretical savings based on modeled scenarios | Up to 90% reduction in water use in CEA applications [28] |

| Predictive Maintenance | Limited to scheduled maintenance based on historical data | Proactive failure prediction, significantly reducing downtime [26] |

| Design and Prototyping | Reduced physical prototyping expenses through virtual testing [29] | Capable of improving part quality by up to 40% in production [30] |

| Market Growth | Mature technology with stable adoption | Projected expansion from $21.14B (2025) to $149.81B (2030) - 47.9% CAGR [31] |

Experimental Protocols for Digital Twin Implementation

Protocol 1: Digital Twin Development for CEA Environmental Optimization

Objective: Create a functional digital twin for real-time monitoring and optimization of growth parameters in a controlled environment agriculture facility.

Diagram 1: CEA Digital Twin Data Workflow

Materials and Equipment:

- IoT Sensor Array: Temperature, humidity, CO₂, light intensity, and soil moisture sensors for continuous environmental monitoring [31].

- Edge Computing Device: For initial data processing and reducing latency in data transmission [29].

- Data Integration Platform: Middleware capable of handling MQTT, AMQP, or RESTful APIs for connecting heterogeneous systems [31].

- Modeling Software: Platform supporting multi-physics, multi-scale simulation (e.g., Simio, Siemens NX) [26] [31].

- Visualization Dashboard: For real-time monitoring and interaction with the digital twin [29].

Methodology:

- System Boundary Definition: Identify the specific CEA components to be twinned (e.g., growth chamber, irrigation system, climate control) [31].

- Sensor Deployment and Calibration: Install and calibrate IoT sensors according to manufacturer specifications, establishing baseline measurements.

- Data Architecture Implementation: Set up time-series databases and establish data pipelines using appropriate protocols (DDS, MQTT, or AMQP) [31].

- Model Development: Create the initial virtual model incorporating:

- Physical layout and equipment specifications

- Plant growth models specific to the crops being cultivated

- Environmental control algorithms

- Verification and Validation: Apply the Digital Twin Consortium's framework for verification and validation to build trust in model predictions [31].

- Integration and Deployment: Establish bidirectional data flows between physical systems and the digital twin, implementing control loops.

- Continuous Calibration: Implement machine learning algorithms for ongoing model refinement based on actual system performance [32].

Protocol 2: Traditional Simulation for CEA Facility Design

Objective: Use traditional simulation methods to evaluate different facility layouts and operational strategies for a new CEA installation.

Materials and Equipment:

- CAD Software: For creating detailed 2D/3D models of the proposed facility layout [30].

- Discrete Event Simulation Software: Tools like Simio, AnyLogic, or Arena for process modeling [26].

- Historical Data: Operational data from similar facilities for parameter estimation [26].

- High-Performance Computing Resources: For running multiple complex scenarios [29].

Methodology:

- Scenario Definition: Identify specific "what-if" questions to be explored (e.g., layout alternatives, staffing models, production schedules) [29].

- Data Collection: Gather historical operational data, equipment specifications, and process flow information [26].

- Model Construction: Build a static simulation model incorporating:

- Facility geometry and resource locations

- Process flows and material handling

- Equipment performance characteristics

- Staffing models and shift patterns

- Parameter Configuration: Set input parameters for each scenario to be tested.

- Simulation Execution: Run multiple replications for each scenario to account for variability.

- Output Analysis: Compare key performance indicators (throughput, resource utilization, bottlenecks) across scenarios.

- Recommendation Development: Identify optimal configuration based on simulation results.

Research Reagents and Essential Materials

Table 3: Essential Research Reagents and Solutions for Digital Twin Experiments

| Category | Specific Items | Function/Application | Implementation Considerations |

|---|---|---|---|

| Data Acquisition | IoT Sensors (Temperature, Humidity, CO₂, PAR, Soil Moisture) [31] | Collect real-time environmental and operational data | Calibration frequency, communication protocol compatibility |

| Communication Protocols | MQTT, DDS, AMQP, RESTful APIs [31] | Enable bidirectional data exchange | Bandwidth requirements, latency constraints, security |

| Modeling Platforms | Simio, Siemens NX, MATLAB/Simulink [26] [28] | Digital twin creation and simulation | Multi-physics capabilities, real-time processing performance |

| Data Management | Time-Series Databases (InfluxDB, TimescaleDB) [29] | Store and manage temporal operational data | Query performance, compression efficiency, retention policies |

| Analytical Frameworks | Machine Learning Libraries (TensorFlow, PyTorch) [32] | Predictive analytics and pattern recognition | Training data requirements, computational resource needs |

| Visualization Tools | Grafana, Tableau, Custom Dashboards [29] | Present operational insights intuitively | Real-time update capability, multi-user access controls |

Implementation Framework and Decision Pathway

Diagram 2: Digital Twin Implementation Decision Pathway

Strategic Implementation Considerations

For researchers embarking on CEA optimization projects, the following strategic considerations should guide technology selection:

Organizational Readiness Assessment: Evaluate existing capabilities across three key areas: technical infrastructure (IoT connectivity, data processing), organizational competencies (digital literacy, cross-functional collaboration), and financial resources [29]. Digital twins typically require greater investment in sensors, connectivity, and computational infrastructure [30].

Data Governance Framework: Establish robust data quality standards addressing accuracy, completeness, consistency, and timeliness [31]. Implement regular monitoring as data quality naturally degrades over time, potentially compromising digital twin accuracy.

Interoperability Standards: For CEA applications that require integration across multiple systems (environmental control, irrigation, energy management, logistics), prioritize solutions that support semantic interoperability through knowledge graphs and standardized ontologies [27].

Phased Implementation Approach: Begin with a well-defined pilot project targeting a high-value use case before expanding to facility-wide implementation. The Sonaris project demonstrates the value of developing demonstrators for realistic scenarios before full deployment [2].

Clinical drug development is characterized by an exceptionally high attrition rate, with approximately 90% of drug candidates failing to progress from clinical trials to approval [33]. This staggering failure rate represents a fundamental challenge for the pharmaceutical industry, resulting in massive financial losses, wasted scientific resources, and delayed patient access to novel therapies. Recent analyses of clinical trial success rates (ClinSR) reveal that this rate has been declining since the early 21st century, though it has recently shown signs of plateauing and beginning to increase [34]. This comprehensive analysis examines the root causes of clinical development failure and presents a structured framework for implementing digital twin technology—adapted from Controlled Environment Agriculture (CEA) optimization principles—to address this persistent challenge.

Table 1: Clinical Trial Success Rate (ClinSR) Analysis Across Therapeutic Areas

| Therapeutic Area | Historical Success Rate | Key Challenges | Recent Trends |

|---|---|---|---|

| Oncology | Variable | High biological complexity, tumor heterogeneity | Emerging improvements with targeted therapies |

| Anti-COVID-19 drugs | Extremely low | Rapidly evolving pathogen, compressed timelines | Limited success despite urgent need |

| Infectious Diseases | Variable | Pathogen resistance, complex trial designs | Post-pandemic reset to pre-COVID levels |

| Neurology | Improving | Blood-brain barrier, disease heterogeneity | Increasing number of novel launches |

| Metabolic Diseases | High in GLP-1 class | Chronic nature requiring long trials | Significant activity in obesity/diabetes |

The Clinical Development Landscape: Quantitative Analysis of Failure

Understanding the magnitude and distribution of clinical trial failure requires examination of comprehensive datasets. A recent systematic analysis of 20,398 clinical development programs (CDPs) involving 9,682 molecular entities provides revealing insights into the dynamic nature of clinical success rates [34]. The data demonstrates significant variations in success probabilities across different disease categories, drug modalities, and developmental strategies.

Methodological Framework for ClinSR Assessment

The dynamic clinical trial success rate (ClinSR) calculation methodology employed in this analysis incorporates:

- Data Standardization Procedures: Rigorous exclusion criteria for clinical trials, removing those with no clinical status provided, unclear trial timelines, non-drug interventions, and vague drug names (approximately 2.3% of all trials) [34]

- Multi-source Data Integration: Aggregation from ClinicalTrials.gov, Drugs@FDA, Therapeutic Target Database, and DrugBank to ensure comprehensive coverage [34]

- Temporal Dynamics Analysis: Continuous evaluation of success rates from 2001-2023 to identify trends and patterns [34]

- Stratification Parameters: Assessment across disease classes, developmental strategies, and drug modalities [34]

Table 2: Clinical Trial Attrition Rates by Development Phase

| Development Phase | Probability of Advancement | Primary Failure Drivers | Digital Twin Mitigation Strategies |

|---|---|---|---|

| Phase 1 | 65-70% | Safety profiles, pharmacokinetics | Predictive toxicity modeling, in silico ADMET |

| Phase 2 | 30-35% | Efficacy signals, biomarker validation | Patient stratification, biomarker digital twins |

| Phase 3 | 55-60% | Superiority demonstration, safety in large populations | Synthetic control arms, trial optimization |

| Regulatory Review | 85-90% | Manufacturing, labeling, risk-benefit profile | Process analytical technology, in silico cohorts |

Emerging Therapeutic Modalities and Success Patterns

Recent clinical development has seen substantial investment in novel therapeutic modalities with distinct success patterns:

- Antibody-Drug Conjugates (ADCs): 551 active trials globally, with 36% investigating new ADC entities [33]

- Radiopharmaceuticals: 80 clinical trials at Phase II or beyond, combining therapeutic and diagnostic applications [33]

- Cell and Gene Therapies: Expansion beyond oncology into inflammatory diseases, with autologous cell therapies showing transformational impact in lupus and advanced liver disease [33]

- GLP-1 Agonists: 157 active clinical assets in obesity, including seven at pre-registration phase, with 20% representing GLP-1 mono-therapies [33]

Digital Twin Technology: A Transdisciplinary Framework

Digital twin technology represents a transformative approach for addressing clinical development inefficiencies. Originally developed for industrial applications and refined in CEA optimization, digital twins are virtual representations of physical entities, processes, or systems that enable real-time monitoring, predictive analytics, and in silico experimentation [35] [36] [37].

Core Principles of Digital Twin Technology

The implementation of digital twins in clinical development builds upon several foundational principles:

- Two-Way Data Synchronization: Unlike digital models or shadows, true digital twins maintain continuous, bidirectional data flow between physical and virtual entities [37]

- Dynamic System Representation: Capability to model complex, living systems including biological processes and patient physiology [37]

- Predictive Analytics: Integration with artificial intelligence and machine learning to forecast system behavior and optimize outcomes [35] [36]

- Multi-scale Integration: Capacity to represent systems at varying scales, from cellular processes to population-level dynamics [36]

CEA-Inspired Optimization Frameworks

Controlled Environment Agriculture has pioneered the use of digital twins for managing complex biological systems under constrained conditions, offering valuable paradigms for clinical development:

- Environmental Control Optimization: CEA systems use digital twins to maintain optimal growing conditions through continuous monitoring and adjustment of environmental parameters [6]—a approach directly applicable to maintaining protocol adherence in clinical trials

- Resource Efficiency Maximization: CEA operations focus on maximizing productivity while minimizing resource inputs [6], analogous to optimizing trial efficiency and cost-effectiveness

- Predictive Cultivation Models: CEA employs growth prediction models that inform harvest scheduling and resource allocation [6], similar to patient recruitment and retention forecasting needed for clinical trials

- Transdisciplinary Integration: Successful CEA implementation requires collaboration across engineering, plant science, data analytics, and economics [6], mirroring the cross-functional expertise needed for successful clinical development

Digital Twin Clinical Framework

Application Note: Digital Twin Implementation for Protocol Optimization

Experimental Protocol: SPIRIT 2025-Compliant Digital Trial Framework

The recently updated SPIRIT 2025 statement provides enhanced guidance for clinical trial protocols, emphasizing open science principles and patient involvement [38]. This protocol outlines the implementation of a digital twin framework aligned with these updated standards.

Methodology

Study Design and Digital Twin Architecture

- Implement a prospective, randomized controlled trial with an integrated digital twin component

- Develop patient-specific digital twins using multi-omics data, electronic health records, and continuous monitoring data from wearable devices

- Establish a control arm using standard protocol development methods versus digital twin-optimized protocol development

Data Integration and Standardization

- Collect baseline patient characteristics including demographics, clinical history, and biomarker data

- Implement FASTQ standards for genomic data, ISO/IEEE 11073 for device data, and CDISC standards for clinical data

- Establish a unified data model incorporating FHIR (Fast Healthcare Interoperability Resources) for healthcare data exchange

Model Validation and Calibration

- Conduct historical data validation using previous trial datasets

- Perform prospective validation using the first 20% of enrolled patients

- Establish model calibration protocols with monthly reconciliation against observed data

Endpoint Evaluation

Primary Endpoints

- Protocol amendment frequency compared to historical controls

- Patient recruitment rate and screen failure reduction

- Overall trial duration from first patient first visit to last patient last visit

Secondary Endpoints

- Patient retention and adherence measures

- Data quality metrics including query rates and missing data

- Site performance variability and protocol deviation frequency

Research Reagent Solutions

Table 3: Essential Research Reagents for Digital Twin Implementation

| Reagent/Technology | Specifications | Application in Digital Twin Framework |

|---|---|---|

| Multi-omics Assay Kits | Whole genome sequencing, RNA-seq, proteomics, metabolomics | Comprehensive biological profiling for twin initialization |

| Wearable Biomonitors | FDA-cleared devices with continuous ECG, activity, sleep tracking | Real-world data acquisition for twin calibration |

| Cloud Computing Platform | HIPAA-compliant, HITRUST-certified infrastructure with GPU acceleration | Digital twin deployment and computational modeling |

| AI/ML Framework | TensorFlow/PyTorch with specialized biomedical libraries | Predictive analytics and model training |

| Data Standardization Tools | CDISC validator, FHIR converter, terminology service | Interoperability and regulatory compliance |

| API Integration Suite | RESTful APIs with OAuth2 authentication, HL7 FHIR support | System integration and data exchange |

| Visualization Dashboard | Web-based, interactive analytics with real-time updating | Clinical operations monitoring and decision support |

Application Note: Predictive Patient Stratification Digital Twin

Experimental Protocol: Biomarker-Driven Cohort Optimization

This application note details the implementation of a digital twin framework for predictive patient stratification, potentially reducing Phase 2 failure due to insufficient efficacy signals.

Methodology

Digital Twin Development

- Collect multi-dimensional baseline data: genomic, transcriptomic, proteomic, and metabolomic profiles

- Incorporate clinical phenotype data including medical imaging, histopathology, and clinical assessments

- Develop mechanism-of-action specific response prediction algorithms using deep neural networks

- Train models on historical clinical trial data with known outcomes

Validation Framework

- Conduct internal validation using k-fold cross-validation (k=10) with stratified sampling

- Perform external validation using independent dataset from previous failed trials

- Establish prospective validation in ongoing clinical trials with adaptive design elements

Implementation Protocol

- Screen potential participants using preliminary biomarker assessment

- Generate individual digital twins for each screened patient

- Simulate treatment response for each twin under experimental and control conditions

- Stratify patients into high-probability and low-probability response cohorts

- Enrich study population with high-probability responders while maintaining diversity

Predictive Patient Stratification

Experimental Outcomes and Validation Metrics

Table 4: Digital Twin Performance in Predictive Stratification

| Performance Metric | Traditional Methods | Digital Twin Approach | Improvement |

|---|---|---|---|

| Positive Predictive Value | 32% | 68% | 112% increase |

| Negative Predictive Value | 71% | 89% | 25% increase |

| Screen Failure Reduction | Baseline | 44% reduction | 44% improvement |

| Enrollment Duration | 100% (reference) | 62% | 38% reduction |

| Phase 2 Success Rate | 31% | 57% | 84% relative improvement |

Implementation Roadmap and Integration Framework

Successful implementation of digital twin technology in clinical development requires systematic adoption across multiple organizational domains and operational functions.

Technical Infrastructure Requirements

Data Management Architecture

- Establish secure, scalable data lake infrastructure with appropriate governance

- Implement standardized APIs for interoperability between clinical systems

- Deploy robust identity and access management protocols for data security

- Create data quality monitoring and validation frameworks

Computational Infrastructure

- Provision high-performance computing resources for model training and simulation

- Implement containerized deployment for model reproducibility and scaling

- Establish version control systems for model management and audit trails

- Develop continuous integration/continuous deployment pipelines for model updates

Organizational Change Management

Stakeholder Engagement and Training

- Develop comprehensive training programs for clinical operations staff

- Create specialized digital twin roles including twin operators and model curators

- Establish cross-functional governance committees with executive sponsorship

- Implement change management protocols with clear communication strategies

Quality Management and Regulatory Compliance

- Develop validation frameworks for digital twin platforms aligned with FDA guidelines

- Establish model risk management protocols with regular auditing

- Create documentation standards meeting regulatory requirements

- Implement robust data provenance and lineage tracking

The implementation of digital twin technology, inspired by CEA optimization principles and aligned with SPIRIT 2025 guidelines, represents a transformative approach to addressing the persistent 90% failure rate in clinical development. By creating virtual representations of clinical trial processes, patient populations, and biological mechanisms, pharmaceutical developers can significantly improve protocol design, patient stratification, and outcome prediction. The structured frameworks and experimental protocols presented in this document provide a foundation for systematic adoption of these advanced analytical capabilities. Through transdisciplinary integration of digital twin methodologies, the pharmaceutical industry can potentially reduce clinical development costs, accelerate therapeutic advancement, and ultimately deliver innovative treatments to patients more efficiently and reliably.

Building and Applying Digital Twins: From Virtual Populations to Trial Optimization

The implementation of digital twin technology in clinical and research settings represents a paradigm shift towards more predictive and personalized medicine. A medical digital twin is a dynamic virtual replica of a patient's physiology, powered by the bidirectional flow of data from its physical counterpart. It enables in-silico simulation of health trajectories and intervention outcomes, facilitating a move from reactive treatment to preemptive healthcare. The fidelity of these models is entirely dependent on their data foundations—specifically, the robust and sophisticated integration of multi-omics data, clinical records, and real-world evidence (RWE). This integration creates a comprehensive biological narrative, turning fragmented data points into a coherent, actionable digital representation critical for optimizing clinical research and therapeutic development.

Multi-Omics Data: Capturing Biological Complexity

Multi-omics profiling dissects the biological continuum from genetic blueprint to functional phenotype, providing orthogonal yet interconnected insights into disease mechanisms. The primary omics layers form a hierarchical view of biological systems, from static DNA-level information to dynamic functional readouts.

Table 1: Core Multi-Omics Layers and Their Clinical Utility

| Omics Layer | Key Components Analyzed | Analytical Technologies | Primary Clinical/Research Utility |

|---|---|---|---|

| Genomics | DNA sequence; SNVs, CNVs, structural rearrangements [39] | Next-Generation Sequencing (NGS), Whole Genome/Exome Sequencing [40] | Identifying inherited and somatic driver mutations (e.g., EGFR, KRAS); target discovery [39] [40] |

| Transcriptomics | RNA expression levels; mRNA isoforms, fusion transcripts [39] | RNA Sequencing (RNA-seq), single-cell RNA-seq [40] | Revealing active transcriptional programs, pathway activity, and regulatory networks [39] [40] |

| Epigenomics | DNA methylation, histone modifications, chromatin accessibility [39] | Bisulfite sequencing, ChIP-seq | Uncovering gene expression regulators; diagnostic biomarkers (e.g., MLH1 hypermethylation) [39] |

| Proteomics | Protein abundance, post-translational modifications, interactions [39] | Mass spectrometry, multiplex immunofluorescence [40] | Mapping functional effectors of cellular processes and signaling pathway activities [39] [40] |

| Metabolomics | Small-molecule metabolites [39] | LC-MS, NMR spectroscopy [39] | Providing a real-time snapshot of physiological state and metabolic reprogramming (e.g., Warburg effect) [39] [41] |

Spatial omics technologies, such as spatial transcriptomics and multiplex immunohistochemistry, are increasingly critical. They preserve tissue architecture, enabling the mapping of RNA and protein expression within the context of the tumor microenvironment. This reveals cellular neighborhoods and immune contexture, which are essential for understanding therapy response in complex diseases like cancer [42] [40].

Clinical and Real-World Evidence: Contextualizing the Phenotype

Omics data alone provides an incomplete picture without rich phenotypic context. Clinical and real-world evidence ground molecular findings in patient reality.

- Electronic Health Records (EHRs): EHRs contain structured data (e.g., lab values, ICD codes) and unstructured data (e.g., physician notes), offering a historical view of diagnoses, treatments, and outcomes. Natural Language Processing (NLP) is often required to unlock insights from unstructured text [41].

- Medical Imaging and Radiomics: MRIs, CT scans, and digital pathology slides provide structural and spatial information. Radiomics extends this by extracting thousands of quantitative features from these images, turning pictures into mineable, high-dimensional data [39] [41].

- Patient-Generated Data: IoT devices, including wearables and smartphones, enable continuous, periodic monitoring of physiological parameters (e.g., activity, heart rate), providing a dynamic, real-world view of an individual's health status outside the clinic [43].

- Real-World Data (RWD) from Trials and Health Systems: This broader category includes data from patient registries, claims databases, and clinical trials, which, when analyzed, become RWE. RWE supports biomarker discovery and trial optimization by providing insights into treatment patterns and outcomes in diverse, real-world populations [42].

Data Integration Challenges and AI-Driven Strategies

The integration of these disparate data types presents formidable computational and analytical hurdles, often described by the "four Vs" of big data: Volume, Velocity, Variety, and Veracity [39].

Table 2: Key Data Integration Challenges and Mitigation Strategies

| Challenge Category | Specific Challenges | Potential AI/Technical Mitigations |

|---|---|---|

| Technical & Analytical | Data heterogeneity and high dimensionality ("curse of dimensionality") [39] [41] | Feature reduction, autoencoders for dimensionality reduction [41] |

| Batch effects and platform-specific technical noise [39] [41] | Statistical correction methods (e.g., ComBat), rigorous quality control pipelines [39] [41] | |

| Missing data from technical limitations or biological constraints [39] [41] | Advanced imputation (e.g., k-NN, matrix factorization, deep learning reconstruction) [39] [41] | |

| Computational & Operational | Petabyte-scale data storage and processing demands [39] | Cloud computing, distributed computing architectures (e.g., Galaxy, DNAnexus) [39] [41] |

| Data fragmentation across multiple vendors and systems [42] [44] | Unified data platforms, centralized biospecimen services, federated learning [42] [39] |