Deep Learning vs. Traditional Methods for R-Gene Prediction: A Comprehensive Benchmark and Future Outlook

Abstract

This article provides a systematic evaluation of deep learning (DL) approaches against traditional methods for predicting plant resistance (R) genes, a critical task for advancing disease-resistant crop breeding. We explore the foundational principles of R-gene architecture and the limitations of alignment-based techniques, followed by an in-depth analysis of state-of-the-art DL tools like PRGminer and their performance advantages. The content addresses key challenges such as data scarcity and model interpretability, offering practical optimization strategies. Finally, we present a rigorous comparative framework for model validation, synthesizing evidence from cross-validation and independent benchmarks to guide researchers and biotech professionals in selecting the most effective strategies for precision breeding and agricultural biotechnology.

The Genetic Basis of Plant Immunity: Understanding R-Genes and Traditional Prediction Pitfalls

Plant innate immunity is a sophisticated, multi-layered system that enables plants to defend themselves against a vast array of pathogens, including bacteria, fungi, viruses, and nematodes. This immune system is built upon two primary tiers of pathogen recognition: PAMP-Triggered Immunity (PTI) and Effector-Triggered Immunity (ETI) [1] [2]. PTI constitutes the first line of defense, where cell-surface pattern recognition receptors (PRRs) identify conserved pathogen-associated molecular patterns (PAMPs) [3]. The second line, ETI, involves intracellular resistance (R) proteins that detect specific pathogen effector proteins, leading to a robust immune response [1] [2]. Plant R-genes are the cornerstone of ETI, and their identification and characterization are critical for understanding plant immunity and breeding disease-resistant crops. This guide compares the performance of traditional bioinformatics methods with modern deep learning (DL) approaches in predicting and classifying these crucial R-genes.

The following table details key reagents, databases, and computational tools essential for research in R-gene prediction and plant immunity.

Table 1: Key Research Reagent Solutions for R-gene Studies

| Item Name | Type/Category | Primary Function in Research |
|---|---|---|
| PRGdb | Curated Database | A specialized repository for plant resistance genes that supports annotation and comparative genomic studies [2]. |
| InterProScan | Bioinformatics Software | A tool for scanning protein sequences against multiple databases to identify functional domains and motifs [2]. |
| HMMER3 | Bioinformatics Software | Uses profile hidden Markov models for sensitive protein domain detection and sequence homology searches [2]. |
| PRGminer | Deep Learning Tool | A high-throughput tool for predicting and classifying plant resistance genes from protein sequences [1]. |
| NLR-Annotator | Bioinformatics Pipeline | A computational pipeline designed for the genome-wide identification and annotation of NLR-type resistance genes [2]. |

Core Signaling Pathways in Plant Innate Immunity

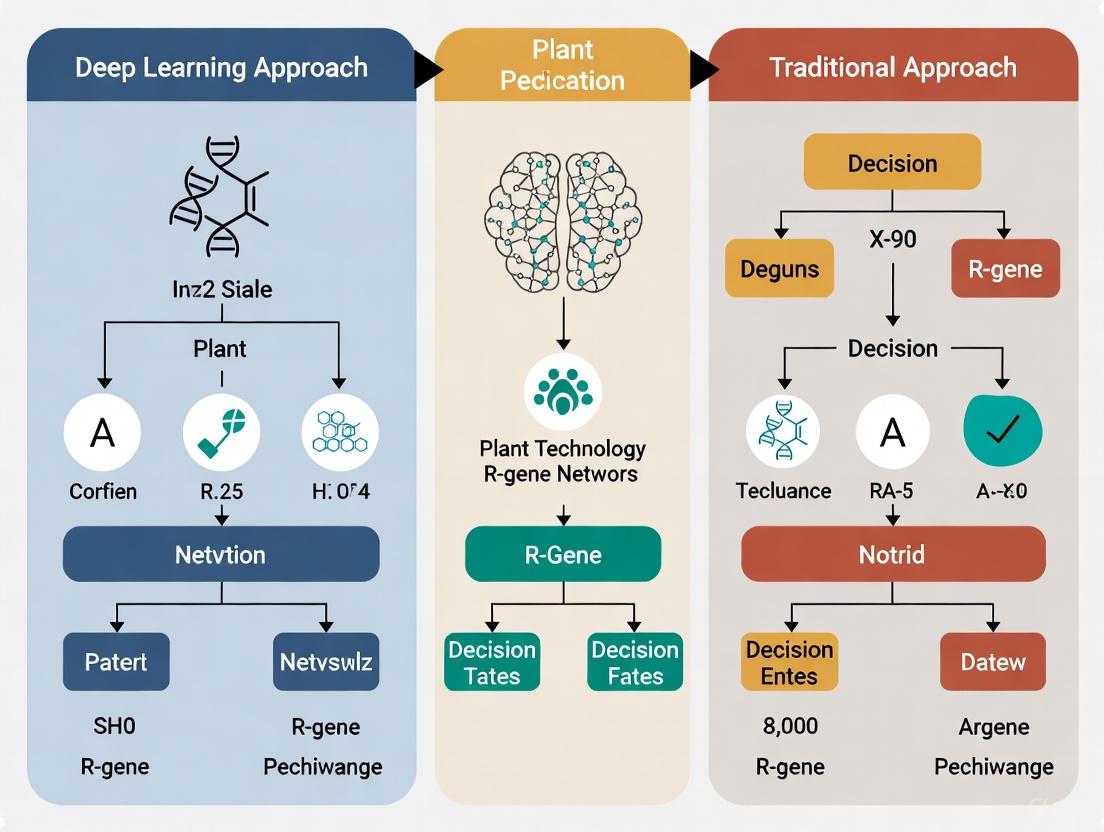

The plant immune system operates through a structured surveillance mechanism. The diagram below illustrates the logical sequence of pathogen recognition and immune activation, from initial detection at the cell surface to the induction of defense responses.

Methodologies for R-gene Identification: A Comparative Analysis

The prediction of resistance genes in plants has evolved from traditional, alignment-based methods to modern, artificial intelligence-driven approaches. The following sections detail the experimental protocols for these two primary methodologies.

Experimental Protocol 1: Traditional Domain-Based Bioinformatics

This approach relies on the identification of conserved structural domains characteristic of known R-proteins [2].

- Data Input: Provide the tool with a protein sequence or a whole proteome/genome.

- Domain Scanning: The sequence is scanned against profile hidden Markov models (HMMs) of known R-gene domains (e.g., NBS, LRR, TIR, CC) using tools like HMMER3 or InterProScan [2].

- Architecture Analysis: The tool checks for the presence and order of specific domain combinations that define R-gene classes (e.g., CNL, TNL, RLK).

- Classification & Output: Based on the domain architecture, the sequence is classified into an R-gene class or rejected as a non-R-gene.
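The architecture-analysis and classification steps can be sketched as a small rule table applied to the domains found in one protein. The sketch assumes that domain hits have already been reduced to an N-to-C ordered list of labels such as `"TIR"`, `"CC"`, `"NBS"`, `"LRR"`, `"TM"` and `"KINASE"`. The function name `classify_architecture`, the `"TM"`/`"KINASE"` labels, and the rules themselves are hypothetical simplifications. They do not reproduce the logic of any published pipeline.

```python
from typing import List, Optional


def classify_architecture(domains: List[str]) -> Optional[str]:
    """Map an N-to-C ordered list of domain labels to a coarse R-gene class.

    Simplified, hypothetical rules for illustration; returns None when no
    recognized R-gene architecture is found.
    """
    # Collapse consecutive repeats (e.g. many LRR units) into a single label.
    collapsed: List[str] = []
    for d in domains:
        if not collapsed or collapsed[-1] != d:
            collapsed.append(d)

    if "NBS" in collapsed:
        nbs = collapsed.index("NBS")
        n_term, c_term = collapsed[:nbs], collapsed[nbs + 1:]
        has_lrr = "LRR" in c_term  # LRR must lie C-terminal to the NBS
        if "TIR" in n_term:
            return "TNL" if has_lrr else "TN"
        if "CC" in n_term:
            return "CNL" if has_lrr else "CN"
        return "NL" if has_lrr else "N"

    # Receptor-like kinase: extracellular LRR, transmembrane helix, kinase.
    if collapsed == ["LRR", "TM", "KINASE"]:
        return "RLK"
    # Receptor-like protein: extracellular LRR and transmembrane helix only.
    if collapsed == ["LRR", "TM"]:
        return "RLP"
    return None


print(classify_architecture(["CC", "NBS", "LRR", "LRR", "LRR"]))  # CNL
```

Collapsing consecutive repeats means a protein with 20 LRR units is classified the same way as one with 10. Checking which side of the NBS each domain falls on implements the "order" part of the architecture check.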

Experimental Protocol 2: Modern Deep Learning-Based Prediction

Deep learning models like PRGminer bypass sequence alignment and instead learn complex, hierarchical patterns directly from numerically encoded sequence data [1].

- Data Preprocessing: Protein sequences are converted into numerical feature vectors. Common encodings include dipeptide composition, which captures the frequency of adjacent amino acid pairs [1].

- Model Architecture: The encoded sequences are fed into a deep learning network, typically a Convolutional Neural Network (CNN) or a Multi-Layer Perceptron (MLP). These networks automatically extract relevant features for classification [1] [2].

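- Two-Phase Prediction: In Phase I, the model predicts whether a sequence is an R-gene or a non-R-gene. In Phase II, sequences predicted as R-genes are assigned to one of eight R-gene classes (CNL, TNL, Kinase, RLP, LECRK, RLK, LYK, TIR) [1].

- Output: The tool provides the prediction result and the classification category.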
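The dipeptide-composition encoding from the preprocessing step can be sketched in a few lines. The sketch follows a common definition: 400 pair frequencies, each normalized by the number of counted pairs. The source does not specify how PRGminer normalizes the vector or handles non-standard residues, so treat those details as assumptions.

```python
from itertools import product
from typing import List

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard residues
DIPEPTIDES = ["".join(p) for p in product(AMINO_ACIDS, repeat=2)]  # 400 pairs
INDEX = {dp: i for i, dp in enumerate(DIPEPTIDES)}


def dipeptide_composition(seq: str) -> List[float]:
    """Return the 400-dimensional dipeptide composition of a protein sequence.

    Each entry is the count of one adjacent residue pair divided by the total
    number of counted pairs. Pairs containing non-standard residues (X, B, U,
    ...) are skipped, which is an assumption of this sketch.
    """
    seq = seq.upper()
    counts = [0] * len(DIPEPTIDES)
    total = 0
    for i in range(len(seq) - 1):
        idx = INDEX.get(seq[i:i + 2])
        if idx is not None:
            counts[idx] += 1
            total += 1
    if total == 0:
        return [0.0] * len(DIPEPTIDES)
    return [c / total for c in counts]


vec = dipeptide_composition("MKVLAAGLL")
print(len(vec), vec[INDEX["AA"]])  # 400 0.125
```

The output always has 400 entries, so proteins of any length map to fixed-size vectors that a CNN or MLP can consume.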

The workflow below contrasts the logical steps of these two primary methodologies.

Performance Comparison: Deep Learning vs. Traditional Methods

Quantitative benchmarks are essential for evaluating the efficacy of computational tools. The table below summarizes the performance of deep learning models against traditional methods and baselines in various prediction tasks.

Table 2: Performance Benchmarking of R-gene Prediction and Related Tasks

| Method Category | Representative Tool / Model | Key Performance Metric | Reported Result | Experimental Context |
|---|---|---|---|---|
| Deep Learning | PRGminer (Phase I) [1] | Prediction Accuracy | 98.75% | k-fold training/test on R-genes |
| Deep Learning | PRGminer (Phase I) [1] | Independent Test Accuracy | 95.72% | Validation on a separate dataset |
| Deep Learning | PRGminer (Phase II) [1] | Overall Classification Accuracy | 97.55% | k-fold training/test for 8 R-gene classes |
| Deep Learning | Hybrid ML/DL Models [4] | GRN Prediction Accuracy | >95% | Holdout test on Arabidopsis, poplar, maize |
| Traditional Baseline | Simple Additive Model [5] | Perturbation Effect Prediction | Outperformed DL models | Benchmark on transcriptome change prediction |
| Traditional Baseline | 'No Change' Model [5] | Perturbation Effect Prediction | Outperformed or matched DL models | Benchmark on transcriptome change prediction |

The data presented allows for a direct comparison between traditional and deep learning-based approaches for R-gene discovery. Deep learning tools like PRGminer demonstrate exceptional accuracy, exceeding 95% in both identifying and classifying R-genes [1]. Their ability to learn complex sequence patterns without relying solely on pre-defined domain rules makes them particularly powerful for discovering novel R-genes that may have low sequence homology to known genes [1] [2]. Furthermore, hybrid models that combine deep learning with machine learning have shown over 95% accuracy in constructing gene regulatory networks, which are vital for understanding the immune signaling cascades initiated by R-proteins [4].

However, the performance of deep learning is not universal. In the challenging task of predicting gene expression changes from genetic perturbations, simple linear baselines and even a "no change" model have been shown to match or outperform sophisticated deep learning foundation models [5]. This highlights that the superiority of a method is highly task-dependent. Deep learning models typically require large, high-quality datasets and significant computational resources, and their predictions are often harder to interpret than the results of straightforward domain-based analysis [2].

In conclusion, deep learning represents a transformative advance for high-throughput R-gene identification and classification, offering high accuracy and the potential for novel discovery. Traditional methods and simple baselines, however, remain relevant for specific tasks and provide a valuable benchmark. The optimal research strategy often involves a synergistic approach, leveraging the strengths of both methodologies to accelerate the discovery of R-proteins and deepen our understanding of plant immunity, ultimately contributing to the development of disease-resistant crops [2].

Plant resistance (R) genes encode proteins that are crucial components of the plant immune system, providing defense against a diverse array of pathogens including bacteria, fungi, viruses, and nematodes [6] [2]. These genes enable plants to recognize specific pathogen-derived molecules and initiate robust defense responses, such as the hypersensitive response and systemic acquired resistance [6]. Among the various classes of R genes, the most predominant are the nucleotide-binding site leucine-rich repeat (NBS-LRR) proteins, which constitute approximately 80% of all known R genes [6] [2]. The identification and characterization of these genes have been transformed by computational approaches, creating a fundamental divide between traditional domain-based methods and emerging deep learning techniques.

The computational prediction of R genes represents a critical frontier in plant genomics and disease resistance breeding. As pathogens continuously evolve to overcome plant defenses, the rapid identification of novel R genes has become increasingly important for developing durable disease-resistant crop varieties [2]. This article provides a comprehensive comparison of traditional and deep learning-based methods for R-gene prediction, focusing on their approaches to deciphering the complex architecture of key domains including NBS, LRR, TIR, and CC. We evaluate these methodologies through the lens of performance metrics, experimental protocols, and practical applicability for researchers and breeders.

Fundamental Domains of R-Gene Architecture

Core Structural Domains and Their Functions

R proteins, particularly the NBS-LRR class, contain specific domain architectures that define their functional mechanisms in pathogen recognition and signal transduction. The central nucleotide-binding site (NBS) domain is a highly conserved region of approximately 300 amino acids that plays a critical role in signal transduction through its ATPase activity [6] [2]. This domain contains several conserved motifs (P-loop, RNBS-A, kinase-2, RNBS-B, RNBS-C, and GLPL) essential for ATP/GTP binding and hydrolysis, which regulate the activation of defense signaling [7]. The C-terminal leucine-rich repeat (LRR) domain typically consists of 10-40 repeating units that provide pathogen recognition specificity through protein-protein interactions [2] [7]. The remarkable variability of the LRR domain enables plants to recognize a vast repertoire of evolving pathogen effectors.

The N-terminal domains define the major subclasses of NBS-LRR proteins. The Toll/interleukin-1 receptor (TIR) domain characterizes the TNL subclass and is involved in signal recognition and transduction [8] [7]. In contrast, the coiled-coil (CC) domain defines the CNL subclass and facilitates protein-protein interactions [8] [7]. A less common RPW8 domain appears in some NBS-LRR proteins and is associated with broad-spectrum resistance [6] [7]. Additionally, truncated forms lacking complete domains exist, such as TN (TIR-NBS), CN (CC-NBS), and N (NBS-only) proteins, which may function as adaptors or regulators for typical NBS-LRR proteins [8].

Genomic Distribution and Structural Diversity

NBS-LRR genes demonstrate distinctive genomic organization patterns across plant species. They are frequently distributed unevenly across chromosomes, often forming physical clusters driven by tandem duplications and genomic rearrangements [7]. Research on pepper (Capsicum annuum) revealed that 54% of NBS-LRR genes form 47 gene clusters, with the largest cluster containing eight genes on chromosome 3 [7]. Similarly, studies in Perilla citriodora identified 535 NBS-LRR genes with notable clusters on chromosomes 2, 4, and 10 [6]. This clustering pattern facilitates the rapid evolution of novel recognition specificities through gene duplication and sequence exchange.

The relative abundance of NBS-LRR subclasses varies significantly across plant lineages. Angiosperms generally show a predominance of nTNL (non-TIR-NBS-LRR) genes over TNL genes, with complete loss of TNL genes observed in the Poaceae family of monocots and occasionally in some dicots like Mimulus guttatus [9]. In Nicotiana benthamiana, from 156 identified NBS-LRR homologs, researchers classified 5 as TNL-type, 25 as CNL-type, 23 as NL-type, 2 as TN-type, 41 as CN-type, and 60 as N-type proteins [8]. This structural diversity reflects lineage-specific adaptations and evolutionary pressures from pathogen communities.

Traditional Computational Methods for R-Gene Identification

Domain-Based Bioinformatics Pipelines

Traditional methods for R-gene identification rely primarily on sequence similarity and domain architecture analysis using established bioinformatics tools. These approaches utilize Hidden Markov Models (HMMs) to scan protein sequences for characteristic R-gene domains [6] [8] [2]. The standard workflow involves searching for the conserved NBS domain (NB-ARC: PF00931 in the Pfam database) using tools like HMMER, followed by identification of associated domains (TIR, CC, LRR) using complementary approaches [6] [8]. The CC domain is often identified using NLR-Annotator, a motif-based tool, or the coiled-coil predictor COILS, while TIR domains are detected through HMM profiles [6].

These domain-based pipelines have been successfully applied across numerous plant species. For example, in a study of Nicotiana benthamiana, researchers used hmmsearch with an E-value cutoff of 1e-20 to identify 156 NBS-LRR homologs, which were subsequently validated using SMART, CDD, and Pfam domain analysis [8]. Similarly, the PRGA database system employs a prediction pipeline that applies different E-value thresholds for different domains: 1e-20 for NBS, 1e-10 for TIR/LZ, 1e-5 for STK, and 1e-1 for LRR domains [9].
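The per-domain thresholds reported for PRGA can be expressed as a simple filter over domain hits. The sketch assumes that hits have already been parsed from HMMER output (for example a `hmmsearch --domtblout` table) into `(protein_id, domain, evalue)` tuples. The parsing step, the function name `filter_domain_hits`, and the domain labels are illustrative assumptions. The cutoff values are the ones reported for PRGA [9].

```python
from typing import Dict, Iterable, List, Set, Tuple

# Per-domain E-value cutoffs as reported for the PRGA pipeline.
EVALUE_CUTOFFS: Dict[str, float] = {
    "NBS": 1e-20,
    "TIR": 1e-10,
    "LZ": 1e-10,
    "STK": 1e-5,
    "LRR": 1e-1,
}


def filter_domain_hits(
    hits: Iterable[Tuple[str, str, float]]
) -> Dict[str, List[str]]:
    """Keep (protein_id, domain, evalue) hits that pass their domain's cutoff.

    Returns a mapping protein_id -> sorted list of supported domain labels.
    Domains without a configured cutoff are ignored.
    """
    kept: Dict[str, Set[str]] = {}
    for protein_id, domain, evalue in hits:
        cutoff = EVALUE_CUTOFFS.get(domain)
        if cutoff is not None and evalue < cutoff:
            kept.setdefault(protein_id, set()).add(domain)
    return {pid: sorted(doms) for pid, doms in kept.items()}


hits = [
    ("p1", "NBS", 1e-35), ("p1", "LRR", 0.02),
    ("p2", "NBS", 1e-12), ("p2", "TIR", 1e-15),  # p2's NBS fails the 1e-20 cutoff
]
print(filter_domain_hits(hits))  # {'p1': ['LRR', 'NBS'], 'p2': ['TIR']}
```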

Several specialized databases support traditional R-gene identification and comparative analysis. These include PRGdb, the NBS-LRR Receptor database, SolRgene, RiceMetaSysB, LDRGDb, PlantNLRatlas, and RefPlantNLR [2]. These resources compile experimentally validated R-genes and predicted R-genes from public databases, enabling researchers to perform cross-species comparisons and evolutionary analyses. The PRGA database further provides RGA annotations, prediction tools, and domain profile analysis for 22 sequenced plant species, offering insights into R-gene evolution across the plant kingdom [9].

Table 3: Traditional Domain-Based Tools for R-Gene Prediction

| Tool/Database | Methodology | Application | Reference |
|---|---|---|---|
| HMMER | Hidden Markov Models | Domain identification (NBS, TIR, LRR) | [6] [8] |
| PfamScan | Domain database search | Conserved domain identification | [8] [1] |
| COILS | Coiled-coil prediction | CC domain identification | [7] [9] |
| MEME | Motif discovery | Conserved motif analysis | [6] [8] |
| PRGdb | Curated database | Experimentally validated R-genes | [2] |
| NLR-Annotator | Motif-based approach | CC domain and NLR identification | [6] |

Figure 1: Traditional Domain-Based R-Gene Prediction Workflow

Deep Learning Approaches for R-Gene Prediction

Neural Network Architectures for Sequence Analysis

Deep learning approaches represent a paradigm shift in R-gene prediction, moving from similarity-based methods to classification-based frameworks that learn complex patterns directly from sequence data. Convolutional Neural Networks (CNNs) have demonstrated particular effectiveness for this task, excelling at capturing local motif-level features in protein sequences [1] [10]. These architectures process encoded protein sequences through multiple layers to extract hierarchical features, with early layers capturing basic sequence patterns and deeper layers integrating these into higher-order representations relevant to R-gene function [10].
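The data flow described above can be illustrated with a deliberately tiny forward pass in plain Python: residues are one-hot encoded, convolutional filters act as motif detectors, and pooling produces a fixed-length representation. The sketch uses untrained, randomly initialized weights and a single convolutional layer. It is not PRGminer's architecture, and a real model would be built and trained in a framework such as PyTorch or JAX. All function names are hypothetical.

```python
import math
import random
from typing import List

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
Matrix = List[List[float]]


def one_hot(seq: str) -> Matrix:
    """Encode a protein as an L x 20 one-hot matrix; unknown residues map to zeros."""
    rows = []
    for aa in seq.upper():
        row = [0.0] * len(AMINO_ACIDS)
        j = AMINO_ACIDS.find(aa)
        if j >= 0:
            row[j] = 1.0
        rows.append(row)
    return rows


def conv1d_relu(x: Matrix, filters: List[Matrix], biases: List[float]) -> Matrix:
    """'Valid' 1D convolution along the sequence followed by ReLU.

    x is L x C, each filter is K x C; the result is (L - K + 1) x F.
    """
    k = len(filters[0])
    out = []
    for start in range(len(x) - k + 1):
        window = x[start:start + k]
        row = []
        for f, b in zip(filters, biases):
            s = b + sum(fv * xv for f_row, x_row in zip(f, window)
                        for fv, xv in zip(f_row, x_row))
            row.append(max(0.0, s))
        out.append(row)
    return out


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def cnn_forward(seq: str, filters: List[Matrix], biases: List[float],
                w_out: List[float], b_out: float) -> float:
    """One conv layer -> global max pooling -> logistic output probability."""
    if len(seq) < len(filters[0]):
        raise ValueError("sequence shorter than the filter width")
    feature_map = conv1d_relu(one_hot(seq), filters, biases)
    pooled = [max(col) for col in zip(*feature_map)]  # strongest match per filter
    return sigmoid(b_out + sum(w * p for w, p in zip(w_out, pooled)))


# Untrained random weights: 8 filters of width 5 over 20 channels.
rng = random.Random(0)
filters = [[[rng.gauss(0, 0.1) for _ in range(20)] for _ in range(5)] for _ in range(8)]
p = cnn_forward("MGGSKVLLLAAGKTTLA", filters, [0.0] * 8,
                [rng.gauss(0, 0.1) for _ in range(8)], 0.0)
print(f"P(R-gene) = {p:.3f}")  # arbitrary value, since the weights are untrained
```

Global max pooling records whether each filter's motif occurs anywhere in the protein, regardless of position. This is why CNNs capture local motif-level features well but need additional depth or wider receptive fields to relate distant residues.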

More recently, Transformer-based architectures have been applied to genomic sequences, offering enhanced capacity to capture long-range dependencies in DNA and protein sequences [10]. Models such as DNABERT and Nucleotide Transformer employ self-supervised pre-training on large-scale genomic sequences before fine-tuning for specific prediction tasks [10]. However, comparative analyses suggest that CNN models currently outperform Transformer-based architectures for variant effect prediction in enhancer regions, though fine-tuning significantly narrows this performance gap [10].
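Self-attention is the mechanism that gives Transformers their reach over long sequences: every position is scored against every other position in a single step. The sketch below shows single-head scaled dot-product attention in plain Python. The projection matrices are hypothetical inputs. The sketch omits the multi-head projections, positional encodings, layer normalization, and pre-training used by models such as DNABERT or Nucleotide Transformer.

```python
import math
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain-Python matrix product of an n x m and an m x p matrix."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def softmax(row: List[float]) -> List[float]:
    m = max(row)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x: Matrix, wq: Matrix, wk: Matrix, wv: Matrix) -> Matrix:
    """Single-head scaled dot-product self-attention over an L x d input."""
    q, k, v = matmul(x, wq), matmul(x, wk), matmul(x, wv)
    d_k = len(k[0])
    k_t = [list(col) for col in zip(*k)]  # transpose: d_k x L
    scores = matmul(q, k_t)  # L x L: every position scored against every other
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, v)  # each output row mixes values from all positions


x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
zeros = [[0.0, 0.0], [0.0, 0.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]
# With zero query/key projections every position attends uniformly to all
# positions, so each output row is the mean of the value rows.
print(self_attention(x, zeros, zeros, identity))
```

The score matrix is L x L, so memory and compute grow quadratically with sequence length.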

Implementation Frameworks and Performance Metrics

The PRGminer tool exemplifies the deep learning approach to R-gene prediction, implementing a two-phase classification framework [1]. In Phase I, the model distinguishes R-genes from non-R-genes using dipeptide composition features, achieving 98.75% accuracy in k-fold testing and 95.72% on independent validation with a Matthews correlation coefficient of 0.91 [1]. Phase II further classifies predicted R-genes into eight specific classes (CNL, TNL, Kinase, RLP, LECRK, RLK, LYK, TIR) with 97.21% accuracy on independent testing [1].
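The two-phase design can be sketched as a gate. Phase I scores every protein, and only sequences that pass it are sent to the Phase II class predictor. In this sketch `phase1` and `phase2` are hypothetical stand-ins for trained models, and the 0.5 threshold is an assumption. The eight class labels are those listed for PRGminer [1].

```python
from typing import Callable, Dict, Sequence

# The eight Phase II classes listed for PRGminer.
R_GENE_CLASSES = ("CNL", "TNL", "Kinase", "RLP", "LECRK", "RLK", "LYK", "TIR")


def two_phase_predict(
    features: Sequence[float],
    phase1: Callable[[Sequence[float]], float],
    phase2: Callable[[Sequence[float]], Dict[str, float]],
    threshold: float = 0.5,
) -> Dict[str, object]:
    """Run Phase I (R-gene vs non-R-gene); only positives reach Phase II."""
    p_r = phase1(features)
    if p_r < threshold:
        return {"is_r_gene": False, "p_r_gene": p_r, "r_class": None}
    class_scores = phase2(features)
    r_class = max(class_scores, key=class_scores.get)  # highest-scoring class
    return {"is_r_gene": True, "p_r_gene": p_r, "r_class": r_class}


# Hypothetical stand-ins for trained models, for illustration only.
def toy_phase1(f: Sequence[float]) -> float:
    return 0.9 if f[0] > 0 else 0.1


def toy_phase2(f: Sequence[float]) -> Dict[str, float]:
    return {c: (1.0 if c == "TNL" else 0.0) for c in R_GENE_CLASSES}


print(two_phase_predict([1.0], toy_phase1, toy_phase2))
# {'is_r_gene': True, 'p_r_gene': 0.9, 'r_class': 'TNL'}
```

The gate prevents Phase II from assigning a class to proteins that Phase I rejects. As a result, Phase II errors are confined to sequences already predicted to be R-genes.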

Hybrid models that combine convolutional neural networks with traditional machine learning have also demonstrated superior performance. In gene regulatory network prediction, hybrid CNN-ML models consistently outperformed traditional methods, achieving over 95% accuracy on holdout test datasets and more effectively ranking key regulatory transcription factors [4]. These approaches benefit from the feature learning capabilities of deep learning combined with the classification strength and interpretability of machine learning.

Table 4: Deep Learning Tools for R-Gene Prediction and Related Genomic Tasks

| Tool/Model | Architecture | Reported Performance or Task | Application Scope |
|---|---|---|---|
| PRGminer | Deep Learning (Two-phase) | 98.75% accuracy (k-fold), 95.72% (independent) | Plant R-gene identification and classification |
| Hybrid CNN-ML | Convolutional Neural Network + Machine Learning | >95% accuracy | Gene regulatory network prediction |
| DeepSEA | CNN | Variant effect prediction | Enhancer activity and regulatory variants |
| DNABERT | Transformer | Cell-type-specific regulatory effects | Noncoding variant interpretation |
| TREDNet | CNN | Regulatory impact prediction | Enhancer variant effects |

Comparative Analysis: Performance Evaluation

Accuracy and Efficiency Metrics

Direct comparisons between traditional and deep learning approaches reveal significant differences in prediction accuracy and efficiency. While traditional domain-based methods typically achieve 70-85% accuracy for NBS-LRR gene identification, deep learning models like PRGminer demonstrate substantially higher performance, achieving 95-98% accuracy in controlled evaluations [1]. This performance advantage is particularly evident for sequences with low homology to known R-genes, where similarity-based methods often fail [1].

The performance differential varies according to the specific prediction task. For enhancer variant prediction, CNN models such as TREDNet and SEI consistently outperform other architectures, while hybrid CNN-Transformer models excel at causal variant prioritization within linkage disequilibrium blocks [10]. However, a comprehensive evaluation of polygenic scores found that neural network models provided only minimal improvements over linear regression models, suggesting that the advantage of deep learning may be task-dependent [11].

Handling of Low-Homology Sequences and Novel Discoveries

A critical limitation of traditional methods is their reliance on sequence similarity, which impedes the identification of novel R-gene classes with divergent sequences [1]. Deep learning approaches overcome this constraint by learning fundamental characteristics of R-genes directly from sequence data, enabling the discovery of previously unrecognized R-gene families [1]. This capability is particularly valuable for wild plant species and crop relatives, where limited prior annotation exists.

Transfer learning strategies further enhance the applicability of deep learning models to non-model species. By leveraging knowledge from data-rich species like Arabidopsis thaliana, models can be effectively applied to species with limited training data [4]. This cross-species learning approach demonstrates the potential for deep learning to accelerate R-gene discovery in less-characterized plant genomes.

Table 5: Performance Comparison of R-Gene Prediction Methods

| Method Category | Representative Tools | Accuracy Range | Strengths | Limitations |
|---|---|---|---|---|
| Traditional Domain-Based | HMMER, PfamScan, COILS | 70-85% | Interpretable, well-established | Limited to known domains, lower accuracy |
| Machine Learning | SVM, Random Forests | 80-90% | Handles complex features | Depends on hand-engineered features |
| Deep Learning | PRGminer, CNN models | 95-98% | High accuracy, discovers novel genes | Data hungry, computationally intensive |
| Hybrid Models | CNN-ML combinations | >95% | Balances performance and interpretability | Implementation complexity |

Figure 2: Deep Learning-Based R-Gene Prediction Workflow

Experimental Protocols and Validation Frameworks

Standardized Benchmarking Approaches

Rigorous evaluation of R-gene prediction methods requires standardized benchmarking frameworks that control for dataset composition and evaluation metrics. Comparative analyses should employ consistent training and testing datasets, such as the compendium datasets described for Arabidopsis thaliana (22,093 genes across 1,253 samples), poplar (34,699 genes across 743 samples), and maize (39,756 genes across 1,626 samples) [4]. Performance metrics should include accuracy, precision, recall, F1-score, and Matthews correlation coefficient to provide a comprehensive assessment of prediction quality [1].
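All of the recommended metrics derive from the four cells of a binary confusion matrix. The sketch below computes them in plain Python for 0/1 labels (1 = R-gene). The function name is hypothetical, and returning 0 when a denominator is zero is a convention assumed here.

```python
import math
from typing import Dict, Sequence


def binary_metrics(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, float]:
    """Accuracy, precision, recall, F1 and MCC for 0/1 labels (1 = R-gene)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    n = tp + tn + fp + fn
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    # Matthews correlation coefficient; defined as 0 when any margin is empty.
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {"accuracy": (tp + tn) / n, "precision": precision,
            "recall": recall, "f1": f1, "mcc": mcc}


print(binary_metrics([1, 1, 1, 1, 0, 0, 0, 0], [1, 1, 1, 0, 0, 0, 0, 1]))
# {'accuracy': 0.75, 'precision': 0.75, 'recall': 0.75, 'f1': 0.75, 'mcc': 0.5}

# Class imbalance: predicting "non-R-gene" for everything scores 95% accuracy.
print(binary_metrics([1] * 5 + [0] * 95, [0] * 100))
```

The second call shows why MCC is worth reporting alongside accuracy. On an imbalanced set, a model that never predicts an R-gene still reaches 95% accuracy, but its MCC is 0.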

For variant effect prediction, benchmarking should utilize diverse experimental datasets including MPRA (Massively Parallel Reporter Assays), raQTL (reporter assay quantitative trait loci), and eQTL (expression quantitative trait loci) data, which collectively profile thousands of single-nucleotide polymorphisms across multiple cell lines [10]. These datasets enable evaluation of model performance for distinct but related tasks: predicting the direction and magnitude of regulatory impact, and identifying causal variants within linkage disequilibrium blocks [10].

Experimental Validation Strategies

Computational predictions require experimental validation to confirm biological functionality. Yeast one-hybrid (Y1H) assays, DNA electrophoretic mobility shift assays (EMSA), chromatin immunoprecipitation and sequencing (ChIP-seq), and DNA affinity purification and sequencing (DAP-seq) provide experimental confirmation of transcription factor-target gene relationships [4]. However, these approaches are labor-intensive and low-throughput, limiting their application to prioritized candidate genes.

Functional validation through transgenic expression or gene silencing remains the gold standard for confirming R-gene activity. The successful transfer of R genes between species demonstrates the practical value of R-gene discovery. For example, Rpi-blb2 from Solanum bulbocastanum, introduced into cultivated potato, provides broad-spectrum protection against Phytophthora infestans [2]. Such validation is essential for translating computational predictions into breeding applications.

Research Reagent Solutions for R-Gene Studies

Table 6: Essential Research Reagents and Resources for R-Gene Analysis

| Reagent/Resource | Category | Function/Application | Examples/Sources |
|---|---|---|---|
| Pfam Database | Bioinformatics Database | Domain identification and annotation | NB-ARC (PF00931) domain profiles |
| HMMER Suite | Bioinformatics Tool | Hidden Markov Model searches | Domain identification, RGA prediction |
| MEME Suite | Bioinformatics Tool | Motif discovery and analysis | Conserved motif identification in NBS domains |
| PRGminer | Deep Learning Tool | R-gene prediction and classification | Webserver and standalone tool |
| PRGdb | Specialized Database | Curated R-gene information | Experimentally validated R-genes |
| PlantNLRatlas | Specialized Database | NLR gene resource | Comparative analysis of NLR genes |
| Phytozome | Genomic Database | Plant genomic sequences | Multi-species gene data |
| NCBI SRA | Data Repository | RNA-seq and genomic data | Training data for machine learning models |
| Trimmomatic | Bioinformatics Tool | Read preprocessing | Adapter removal, quality control |
| STAR | Bioinformatics Tool | RNA-seq alignment | Reference-based read mapping |

The computational prediction of R genes has evolved significantly from traditional domain-based methods to sophisticated deep learning approaches. While traditional methods provide interpretable results based on biologically meaningful domains, deep learning models offer superior accuracy, particularly for sequences with low homology to known R-genes. The integration of these approaches through hybrid models represents a promising direction, combining the strengths of both methodologies.

Future advances in R-gene prediction will likely focus on several key areas: improved model interpretability to extract biological insights from deep learning predictions, expansion of curated training datasets encompassing diverse plant species, development of specialized architectures adapted to genomic sequence analysis, and implementation of transfer learning frameworks to enable knowledge transfer between well-characterized and non-model species [4] [2] [12]. As these computational methods continue to mature, they will play an increasingly vital role in accelerating the development of disease-resistant crops, supporting sustainable agriculture, and enhancing global food security.

A Comparative Analysis for Genomic Prediction

In the field of genomics and protein function prediction, researchers are equipped with a diverse toolkit. Traditional alignment-based methods like BLAST and HMMER have long been the standard for sequence analysis and homology detection. Alongside them, machine learning approaches, particularly Support Vector Machines (SVM), have emerged as powerful tools for classification and prediction tasks. This guide provides an objective comparison of their performance, supported by experimental data, to inform method selection for research and development.

Performance Comparison at a Glance

The table below summarizes the performance of these methods as reported in various genomic studies.

Table 7: Comparative performance of BLAST, HMMER, and SVM across different biological applications.

| Method | Reported Accuracy/Performance | Application Context | Key Strengths |
|---|---|---|---|
| BLASTp | Consistently high performance for GO term prediction [13] | Protein Gene Ontology (GO) term prediction [13] | High sensitivity, reliable homology detection [13] |
| HMMER (phmmer) | Lower performance compared to BLASTp and MMseqs2 in some assessments [13] | Protein Gene Ontology (GO) term prediction [13] | Powerful for detecting remote homology [14] |
| SVM | F1 score = 0.934, Accuracy = 0.939 [15] | Flowering-time gene prediction in plants [15] | High accuracy for complex classification, handles non-linear relationships [15] [16] |
| SVM | ~89% accuracy (binary), >97% accuracy (multi-class) [17] | Herbicide-resistant gene prediction [17] | Effective with k-mer features for nucleotide sequences [17] |
| SVM | Competitive with GBLUP and BayesR, best in 2 of 8 datasets [16] | Genomic prediction in pig and maize populations [16] | Flexible with different kernels, robust performance [16] |

Detailed Experimental Protocols

Understanding the methodology behind performance benchmarks is crucial for interpretation and replication.

Protocol: Homology-Based Function Prediction with BLAST & HMMER

This protocol outlines the standard workflow for transferring Gene Ontology (GO) terms to a query protein via sequence homology [13].

Sequence Search:

- Tool Selection: Choose a sequence search tool (e.g., BLASTp, DIAMOND, MMseqs2, phmmer/HMMER).

- Database: Search the query protein sequence against a database of annotated template proteins.

- Parameter Settings: Note that default parameters may not be optimal. Performance can be significantly improved with correct parameter settings [13].

- Output: Generate a list of homologous hits with alignment scores and E-values.

Scoring and Function Transfer:

- Scoring Function: Derive a prediction score for each GO term based on the homologous hits. A scoring function that aggregates information from multiple hits (e.g., the S1 function used in tools like GOLabeler and DeepGOPlus) often outperforms relying solely on the top hit [13].

- Assignment: Assign GO terms to the query protein that exceed a predetermined score threshold.
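The scoring step can be sketched as a bitscore-weighted vote over all hits rather than a top-hit lookup. The sketch assumes score(term) = (sum of bitscores of hits annotated with the term) / (sum of bitscores of all hits), in the spirit of the DIAMOND-based component of DeepGOPlus. The exact S1 definition benchmarked in [13] may differ. The function names, template IDs, and threshold are illustrative.

```python
from collections import defaultdict
from typing import Dict, Iterable, Set, Tuple


def score_go_terms(
    hits: Iterable[Tuple[str, float]],
    annotations: Dict[str, Set[str]],
) -> Dict[str, float]:
    """Score GO terms for one query from its homology hits.

    hits: (template_id, bitscore) pairs for the query.
    annotations: template_id -> set of GO terms.
    Score(term) = sum of bitscores of hits annotated with term
                  / sum of bitscores of all hits.
    """
    hits = list(hits)
    total = sum(b for _, b in hits)
    if total == 0:
        return {}
    scores: Dict[str, float] = defaultdict(float)
    for template, bitscore in hits:
        for term in annotations.get(template, set()):
            scores[term] += bitscore / total
    return dict(scores)


def assign_terms(scores: Dict[str, float], threshold: float) -> Set[str]:
    """Keep terms whose aggregated score reaches the threshold."""
    return {t for t, s in scores.items() if s >= threshold}


annotations = {"T1": {"GO:0006952", "GO:0005524"}, "T2": {"GO:0006952"}}
scores = score_go_terms([("T1", 300.0), ("T2", 100.0)], annotations)
print(sorted(assign_terms(scores, threshold=0.8)))  # ['GO:0006952']
```

Under this aggregation, a term supported by several moderately similar templates can outscore a term that appears only on the single best hit. Top-hit transfer cannot produce that outcome.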

Protocol: Flowering-Time Gene Prediction with SVM

This protocol details the specific workflow used to develop the FTGD (Flowering-Time Gene) prediction tool [15].

Data Preparation:

- Positive Set: Retrieve 628 known flowering-time associated protein sequences from the FLOR-ID database for Arabidopsis thaliana.

- Negative Set: Define non-flowering-time genes.

- Feature Extraction: Encode protein sequences using a combination of K-mer composition and Pseudo Amino Acid Composition (PseAAC) to generate numeric feature vectors [15].
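The PseAAC part of the feature set can be sketched as a type-1 (Chou-style) pseudo amino acid composition. The sketch substitutes a single physicochemical property, the Kyte-Doolittle hydropathy scale. Chou's original formulation averages over several properties (hydrophobicity, hydrophilicity, side-chain mass), and tools such as Pse-in-One offer many options. The `lam` and `w` values are illustrative and are not the settings used for FTGD [15].

```python
from typing import Dict, List

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

# Kyte-Doolittle hydropathy, used here as a single stand-in property.
KD: Dict[str, float] = {
    "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
    "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
    "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
}


def _standardize(scale: Dict[str, float]) -> Dict[str, float]:
    """Z-score a property over the 20 residues, as in Chou's PseAAC."""
    vals = [scale[a] for a in AMINO_ACIDS]
    mean = sum(vals) / len(vals)
    sd = (sum((v - mean) ** 2 for v in vals) / len(vals)) ** 0.5
    return {a: (scale[a] - mean) / sd for a in AMINO_ACIDS}


H = _standardize(KD)


def pseaac(seq: str, lam: int = 3, w: float = 0.05) -> List[float]:
    """Type-1 pseudo amino acid composition: 20 + lam features summing to 1."""
    seq = "".join(a for a in seq.upper() if a in H)  # drop non-standard residues
    if len(seq) <= lam:
        raise ValueError("sequence must be longer than lam")
    freqs = [seq.count(a) / len(seq) for a in AMINO_ACIDS]
    # theta_j: mean squared property difference between residues j positions apart.
    thetas = []
    for j in range(1, lam + 1):
        diffs = [(H[seq[i + j]] - H[seq[i]]) ** 2 for i in range(len(seq) - j)]
        thetas.append(sum(diffs) / len(diffs))
    denom = sum(freqs) + w * sum(thetas)
    return [f / denom for f in freqs] + [w * t / denom for t in thetas]


v = pseaac("MKTAYIAKQRQISFVKSHFSRQ")
print(len(v), round(sum(v), 6))  # 23 1.0
```

The first 20 entries are reweighted amino acid frequencies, and the last `lam` entries are sequence-order correlation factors. In a setup like the one described, this vector would be combined with k-mer composition features.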

Model Training and Validation:

- Algorithm: Implement a Support Vector Machine (SVM) model. The specific model reported as best-performing was the SVM-Kmer-PC-PseAAC.

- Hyperparameter Tuning: Optimize model parameters (e.g., kernel type, regularization) for best performance.

- Validation: Evaluate the model using standard metrics, achieving an F1 score of 0.934 and accuracy of 0.939 [15].
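The training step can be illustrated with a minimal linear SVM trained by Pegasos-style stochastic sub-gradient descent on the hinge loss. This technique is swapped in to keep the example dependency-free. The kernel and solver of the reported SVM-Kmer-PC-PseAAC model are not given here, and a library such as LibSVM would normally be used. Folding the bias into the weight vector, so that it is also regularized, is a simplification. Function names and hyperparameter values are hypothetical.

```python
import random
from typing import List, Sequence


def train_linear_svm(X: Sequence[Sequence[float]], y: Sequence[int],
                     lam: float = 0.01, epochs: int = 200, seed: int = 0) -> List[float]:
    """Pegasos: stochastic sub-gradient descent on the L2-regularized hinge loss.

    Labels must be +1/-1. A constant 1.0 feature is appended to model the bias.
    """
    rng = random.Random(seed)
    data = [(list(x) + [1.0], label) for x, label in zip(X, y)]
    w = [0.0] * len(data[0][0])
    t = 0
    for _ in range(epochs):
        for _ in range(len(data)):
            t += 1
            x, label = data[rng.randrange(len(data))]
            eta = 1.0 / (lam * t)  # decreasing step size
            margin = label * sum(wi * xi for wi, xi in zip(w, x))  # uses the old w
            w = [(1 - eta * lam) * wi for wi in w]  # shrink (regularization)
            if margin < 1:  # hinge-loss violation: step toward the sample
                w = [wi + eta * label * xi for wi, xi in zip(w, x)]
    return w


def predict(w: Sequence[float], x: Sequence[float]) -> int:
    score = sum(wi * xi for wi, xi in zip(w, list(x) + [1.0]))
    return 1 if score >= 0 else -1


X = [[2.0, 2.0], [3.0, 3.0], [-2.0, -2.0], [-3.0, -3.0]]
y = [1, 1, -1, -1]
w = train_linear_svm(X, y)
print([predict(w, x) for x in X])  # [1, 1, -1, -1]
```

Here `lam` plays the role of 1/(nC) in the usual C-parameterized SVM. The hyperparameter-tuning step would search over it, and for kernel SVMs also over the kernel type and its parameters, ideally using cross-validation on redundancy-reduced data.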

Prediction and Deployment:

- Tool Creation: Package the trained model into a prediction tool called FTAGs_Find.

- Database Construction: Use the tool to predict FTAGs across 81 plant species and create a public database (FTAGdb).

Workflow Visualization

The following diagram illustrates the typical workflows for alignment-based methods and SVM, highlighting their distinct approaches.

The Scientist's Toolkit: Key Research Reagents & Materials

Successful implementation of these computational methods relies on several key resources.

Table 8: Essential research reagents and resources for genomic prediction studies.

| Resource Name | Type | Primary Function in Research |
|---|---|---|
| FLOR-ID Database [15] | Biological Database | Provides curated data on flowering-time genes for training and validating prediction models. |
| Annotated Protein Sequence Database (e.g., UniProt, NCBI) | Biological Database | Serves as the reference for homology-based function transfer using BLAST/HMMER. |
| Pfam Database [15] | Protein Family Database | Contains hidden Markov models (HMMs) for identifying protein domains and families. |
| CD-HIT Suite [15] | Computational Tool | Reduces sequence dataset redundancy to minimize bias in model training and evaluation. |
| Pse-in-One [15] | Computational Tool | Generates various modes of pseudo components for representing DNA, RNA, and protein sequences as feature vectors. |
| SVM Library (e.g., LibSVM) | Software Library | Provides the core algorithms and functions for implementing Support Vector Machine models. |

When evaluating the presented data, consider the following to guide your method selection:

- For Well-Defined Homology: BLAST remains a highly robust and sensitive choice for tasks where strong sequence similarity exists, and its performance can be optimized with proper parameters [13].

- For Complex Classification: SVM excels in tasks where the relationship between sequence and function is complex and not easily captured by direct alignment, often achieving high accuracy (e.g., >90%) [15] [17].

- Consider the Trade-Offs: BLAST and HMMER are conceptually straightforward and provide interpretable results based on homologous matches. SVM models can capture non-linear relationships but may require more effort in feature engineering and model tuning [16].

- No Universal Winner: The performance can be dataset-dependent. As one study on genomic prediction concluded, "there is no universal prediction model" [16].

In the context of R-gene prediction, these traditional methods form a solid baseline. The choice between them hinges on the specific biological question, the nature of the available data, and the desired balance between interpretability and predictive power.

The accurate prediction of resistance genes (R-genes) is crucial for understanding plant defense mechanisms and advancing disease resistance breeding. For years, traditional bioinformatics approaches have served as the backbone for genome annotation and R-gene identification. These methods primarily rely on sequence homology and protein domain analysis, employing tools such as BLAST, InterProScan, and HMMER to identify characteristic domain architectures in nucleotide-binding leucine-rich repeat (NB-LRR) genes [18] [1]. While these conventional methods have contributed significantly to our understanding of plant genomes, they face two fundamental challenges that limit their effectiveness: low homology in rapidly evolving R-gene families and systematic issues with fragmented annotations caused by complex genomic architectures [19]. These limitations become particularly problematic when studying non-model organisms or recently sequenced species where reference data is sparse. This review objectively examines these critical limitations through comparative experimental data and highlights how emerging deep learning approaches address these specific challenges.

Experimental Comparison: Traditional vs. Modern Methods for R-gene Prediction

Quantitative Performance Assessment

Table 1: Comparative performance of traditional and homology-based methods for NB-LRR gene prediction in the tomato genome

| Method | Total Full-length NB-LRR Genes Identified | CC-NB-LRR (CNL) Genes | TIR-NB-LRR (TNL) Genes | Key Limitations |
|---|---|---|---|---|
| Protein Domain Search (PDS) | ~170 | 151 | 19 | High false negatives due to repeat masking; fragmented predictions |
| Manual RenSeq Annotation | 221 | 193 | 26 | Labor-intensive; requires specialized expertise |
| Homology-based R-gene Prediction (HRP) | 231 | 198 | 31 | Limited by quality of initial gene set |
| Deep Learning (PRGminer) | N/A | N/A | N/A | No counts for this genome; separately reports 95.72% independent-test accuracy and 0.91 MCC on its own dataset [1] |

Table 2: Method capability comparison for addressing key challenges

| Method Type | Handles Low Homology | Avoids Fragmentation | Automation Level | Computational Efficiency |
|---|---|---|---|---|
| Traditional PDS | Limited | Poor | Medium | High |
| HRP Method | Moderate | Good | Medium | Medium |
| Deep Learning | Excellent | Excellent | High | Variable |

Experimental Protocols for Key Studies

Homology-Based R-gene Prediction (HRP) Protocol

The HRP method employs a two-level homology search strategy to overcome limitations of traditional approaches [19]. The experimental workflow consists of:

Initial Domain Search: An initial set of R-genes is identified within the automated gene prediction set using protein domain-based search (PDS) with standard domain databases.

Full-length Homology Search: These identified R-genes serve as queries for comprehensive homology searches against the entire genome assembly using tools such as BLAST.

Gene Model Reconstruction: The genomic regions identified through homology searches are subjected to specialized gene prediction algorithms to reconstruct complete gene models, bypassing the limitations of automated annotation pipelines.

Validation: Performance is assessed through comparison with manually curated gold-standard datasets such as the tomato RenSeq annotation, measuring recovery of known genes and identification of novel candidates.

This protocol was validated on multiple plant genomes including tomato (Solanum lycopersicum), three Beta species, and five Cucurbita species, demonstrating consistent improvements over conventional PDS approaches [19].
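The full-length homology search in HRP yields scattered genomic hits that must be grouped into candidate loci before gene models are rebuilt. The sketch below shows one simple way to do that grouping from BLAST tabular output (`-outfmt 6`, 12 standard columns). The e-value cutoff, the 5 kb merge distance, and the helper names are assumptions for illustration, not parameters from [19]; the downstream gene-prediction step is not shown.

```python
from collections import defaultdict


def parse_blast_tab(lines, max_evalue=1e-5):
    """Parse BLAST -outfmt 6 lines into (chrom, start, end, strand) genomic hits."""
    hits = []
    for line in lines:
        fields = line.rstrip("\n").split("\t")
        # Columns: qseqid sseqid pident length mismatch gapopen qstart qend sstart send evalue bitscore
        if len(fields) < 12 or float(fields[10]) > max_evalue:
            continue
        chrom, sstart, send = fields[1], int(fields[8]), int(fields[9])
        strand = "+" if sstart <= send else "-"  # reversed subject coordinates = minus strand
        hits.append((chrom, min(sstart, send), max(sstart, send), strand))
    return hits


def merge_hits(hits, max_gap=5000):
    """Merge same-strand hits closer than max_gap bp into candidate R-gene loci."""
    by_key = defaultdict(list)
    for chrom, start, end, strand in hits:
        by_key[(chrom, strand)].append((start, end))
    regions = []
    for (chrom, strand), intervals in sorted(by_key.items()):
        intervals.sort()
        cur_start, cur_end = intervals[0]
        n_hits = 1
        for start, end in intervals[1:]:
            if start - cur_end <= max_gap:
                cur_end = max(cur_end, end)
                n_hits += 1
            else:
                regions.append((chrom, cur_start, cur_end, strand, n_hits))
                cur_start, cur_end, n_hits = start, end, 1
        regions.append((chrom, cur_start, cur_end, strand, n_hits))
    return regions
```

In dense, tandemly duplicated clusters a large `max_gap` can fuse neighboring paralogs into one region, so the merge distance trades fragmentation against over-merging.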

Deep Learning (PRGminer) Experimental Protocol

PRGminer implements a two-phase deep learning framework for R-gene identification and classification [1]:

Data Collection and Preparation: R-gene and non-R-gene protein sequences are collected from public databases including Phytozome, Ensembl Plants, and NCBI.

Sequence Representation: Protein sequences are encoded using dipeptide composition and other feature representation methods optimized for deep learning architectures.

Model Architecture: A deep neural network is implemented with:

- Phase I: Binary classification of input protein sequences as R-genes or non-R-genes

- Phase II: Multi-class classification of predicted R-genes into eight specific classes (CNL, KIN, RLP, LECRK, RLK, LYK, TIR, TNL)

Training and Validation: The model is trained using k-fold cross-validation and evaluated on independent test sets using accuracy, Matthews correlation coefficient (MCC), and other statistical measures.

The protocol achieves 98.75% accuracy in k-fold testing and 95.72% on independent testing for Phase I, with MCC values of 0.98 and 0.91 respectively [1].
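MCC is reported alongside accuracy because it remains informative when the two classes are imbalanced. The sketch below computes binary MCC and generates shuffled k-fold splits. The choice of k=5, the seed, and the absence of stratification are assumptions, since the fold construction used by PRGminer is not described here; the function names are hypothetical.

```python
import math
import random


def mcc(y_true, y_pred):
    """Matthews correlation coefficient for binary labels (1 = R-gene)."""
    tp = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
    tn = sum(t == 0 and p == 0 for t, p in zip(y_true, y_pred))
    fp = sum(t == 0 and p == 1 for t, p in zip(y_true, y_pred))
    fn = sum(t == 1 and p == 0 for t, p in zip(y_true, y_pred))
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return (tp * tn - fp * fn) / denom if denom else 0.0


def k_fold_indices(n, k=5, seed=0):
    """Yield (train, test) index lists for k shuffled, roughly equal folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    for i in range(k):
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, folds[i]
```

Sequences in an independent test set are never used for training or model selection, which may partly explain why independent-test scores sit below k-fold scores.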

Critical Analysis of Traditional Approach Limitations

The Low Homology Challenge

Traditional homology-based methods face fundamental limitations when analyzing R-genes due to their exceptional evolutionary dynamics:

Rapid Sequence Diversification: R-genes evolve rapidly to counter adapting pathogens, resulting in sequences with low conservation across species [19]. Standard similarity thresholds used in BLAST and other alignment tools often fail to detect these distantly related homologs.

Species-Specific Diversification: NB-LRR genes have diversified in a species-specific manner, preventing the establishment of universal detection standards that work effectively across diverse plant taxa [19].

Limited Representation in Databases: Conventional methods depend on reference databases that underrepresent R-gene diversity, particularly for non-model organisms or recently sequenced species.

Experimental evidence demonstrates that traditional domain search methods identify significantly fewer full-length NB-LRR genes compared to more sophisticated approaches. In tomato, conventional PDS methods identified only 170 full-length NB-LRR genes compared to 231 found by the HRP method [19].

The Fragmented Annotation Problem

The genomic architecture of R-gene clusters creates systematic issues in automated annotation pipelines:

Complex Gene Organization: R-genes are typically organized in clusters of tandemly duplicated genes, which can cause assembly collapse and fragmentation during genome assembly processes [19] [1].

Repeat Masking Interference: Standard annotation pipelines employ repeat masking using transposable element databases, which often mistakenly mask R-gene loci due to their repetitive nature [19].

Low Expression Levels: Many R-genes exhibit low or condition-specific expression, providing insufficient evidence for expression-based gene prediction algorithms that rely on RNA-Seq data [19].

Multi-Domain Architecture Complexity: The complex exon-intron structure of multi-domain R-genes challenges ab initio gene predictors, which frequently produce incomplete or fragmented models [19].

These limitations collectively result in annotation sets that miss substantial portions of the R-gene repertoire or contain fragmented gene models that obscure functional analysis.
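One practical consequence is that a single NLR can appear as two adjacent gene models, one carrying the NB-ARC domain and the other the LRRs. The heuristic below flags such pairs for manual review. It is a hypothetical sketch: the domain labels stand in for whatever a domain scanner such as InterProScan reports, the 2 kb gap is arbitrary, and models split into three or more pieces are not handled.

```python
def flag_split_nlr_models(models, max_gap=2000):
    """Flag adjacent same-strand gene models that may be one NLR split in two.

    Each model is a dict with keys: id, chrom, start, end, strand ("+" or "-"),
    and domains (a set of labels such as "CC", "TIR", "NB-ARC", "LRR").
    """
    flagged = []
    ordered = sorted(models, key=lambda m: (m["chrom"], m["strand"], m["start"]))
    for left, right in zip(ordered, ordered[1:]):
        if (left["chrom"], left["strand"]) != (right["chrom"], right["strand"]):
            continue
        if right["start"] - left["end"] > max_gap:
            continue
        # NB-ARC precedes the LRRs in the protein, so on the minus strand the
        # N-terminal piece is the model with the higher coordinates.
        first, second = (left, right) if left["strand"] == "+" else (right, left)
        nb_only = "NB-ARC" in first["domains"] and "LRR" not in first["domains"]
        lrr_only = "LRR" in second["domains"] and "NB-ARC" not in second["domains"]
        if nb_only and lrr_only:
            flagged.append((first["id"], second["id"]))
    return flagged
```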

Diagram 1: Fragmentation challenges and solutions in R-gene annotation. Traditional approaches struggle with repeat-induced fragmentation, while deep learning methods can recognize patterns despite masking and assembly artifacts.

Table 3: Key experimental resources for R-gene identification and validation

| Resource Type | Specific Tools/Databases | Primary Function | Key Applications |
|---|---|---|---|
| Genome Annotation Tools | Maker, Blast2GO, InterProScan, GeneMark | Automated gene prediction and functional annotation | Initial genome annotation; functional inference |
| Specialized R-gene Databases | OMA database, Phytozome, Ensembl Plants | Reference data for homologous gene families | Comparative genomics; evolutionary analysis |
| Deep Learning Frameworks | PRGminer, custom TensorFlow/PyTorch implementations | R-gene prediction using neural networks | Novel R-gene discovery; classification |
| Quality Assessment Tools | OMArk, BUSCO | Proteome quality assessment and completeness evaluation | Annotation validation; error detection |
| Experimental Validation Resources | RenSeq, AgrenSeq | Targeted sequencing for resistance gene enrichment | Experimental confirmation; allele mining |

The critical limitations of traditional bioinformatics approaches—particularly their vulnerability to low homology and fragmented annotations—represent significant barriers to comprehensive R-gene discovery. Experimental evidence demonstrates that homology-based methods like HRP can identify up to 45% more full-length NB-LRR genes compared to conventional domain search approaches [19]. Meanwhile, deep learning frameworks such as PRGminer achieve prediction accuracy exceeding 95% on independent test sets [1], largely by overcoming the dependency on sequence similarity that plagues traditional methods. As the field progresses, integration of these advanced computational approaches with experimental validation will be essential for unlocking the complete R-gene repertoire in diverse plant species, ultimately accelerating disease resistance breeding and sustainable crop protection strategies.

Harnessing Deep Learning Architectures for High-Throughput R-Gene Discovery

The field of genomics is undergoing a profound transformation driven by the integration of deep learning methodologies. As high-throughput sequencing technologies continue to generate vast amounts of complex biological data, researchers are increasingly turning to sophisticated computational approaches to decipher the intricate language of DNA, RNA, and proteins. Among these approaches, Convolutional Neural Networks (CNNs) and Transformer-based architectures have emerged as particularly powerful tools for tackling diverse genomic challenges. These deep learning models have demonstrated remarkable capabilities in identifying subtle patterns in nucleotide sequences, predicting regulatory elements, annotating gene functions, and elucidating protein structures.

The shift from traditional bioinformatics methods to deep learning represents a fundamental change in how we extract meaning from biological sequences. While conventional approaches often rely on manually curated features and predefined rules, deep learning models can automatically discover relevant features directly from raw genomic data, capturing complex, non-linear relationships that might escape human experts or traditional algorithms. This paradigm shift is particularly evident in plant genomics and resistance gene (R-gene) prediction, where the exceptional diversity of gene families and the challenge of limited annotated data have motivated the development of specialized architectures.

This guide provides a comprehensive comparison of CNN and Transformer architectures applied to genomic tasks, with particular emphasis on their utility for R-gene prediction research. We examine their performance across standardized benchmarks, detail their experimental protocols, and provide practical guidance for researchers seeking to leverage these powerful tools in their genomic investigations.

Architectural Foundations: CNNs, Transformers, and Emerging Alternatives

Convolutional Neural Networks (CNNs) in Genomics

CNNs employ a hierarchical structure of convolutional layers that systematically scan input sequences to detect increasingly complex features. In genomic applications, their local connectivity and translation invariance make them exceptionally well-suited for identifying conserved motifs and regulatory elements regardless of their position in a sequence. Lower layers typically recognize basic nucleotide patterns, while deeper layers integrate these into more complex representations of functional elements. Architectures such as DeepSEA, DeepBind, and TREDNet exemplify the CNN approach in genomics, demonstrating particular strength in tasks involving localized sequence features including transcription factor binding sites and chromatin accessibility profiles [20] [12].
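To make the convolution step concrete, the sketch below one-hot encodes a DNA sequence, slides a single 4-bp filter along it, applies ReLU, and takes a global maximum. The filter weights are hand-set for an arbitrary motif (`TGAC`) purely for illustration; trained CNNs such as DeepSEA learn many filters from data, and all names here are hypothetical.

```python
BASES = "ACGT"


def one_hot(seq):
    """Encode DNA as a list of 4-element vectors (A, C, G, T); N becomes all zeros."""
    return [[1.0 if base == b else 0.0 for b in BASES] for base in seq.upper()]


def conv1d_relu(x, kernel, bias=0.0):
    """Valid 1D convolution of one-hot input x (L x 4) with kernel (w x 4), then ReLU."""
    w = len(kernel)
    out = []
    for i in range(len(x) - w + 1):
        s = bias + sum(x[i + j][c] * kernel[j][c] for j in range(w) for c in range(4))
        out.append(max(0.0, s))
    return out


# Hypothetical hand-set filter for "TGAC": +1 for the motif base, -1 otherwise.
# With bias -3, only an exact 4/4 match gives a positive activation.
kernel = [[1.0 if b == m else -1.0 for b in BASES] for m in "TGAC"]

activations = conv1d_relu(one_hot("AATGACTTTGACA"), kernel, bias=-3.0)
max_pooled = max(activations)  # global max pooling: position-invariant motif score
```

Because the same filter is applied at every offset, both motif copies (positions 2 and 8) activate it equally, and global max pooling converts that into a single score that does not depend on where the motif occurs.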

Transformer Architectures and Genomic Language Models

Transformers utilize a self-attention mechanism to weigh the importance of different sequence elements when making predictions. This architecture enables the model to capture long-range dependencies throughout genomic sequences, effectively considering interactions between distant nucleotides that may collaboratively influence function. Models like DNABERT, Nucleotide Transformer, and Enformer represent nucleotides or k-mers as tokens, applying transformer blocks to build contextualized representations [21]. The pre-training phase often employs masked language modeling, where the model learns to predict hidden portions of sequences based on surrounding context, enabling the acquisition of fundamental biological principles from unlabeled data [21].
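The sketch below shows the two ingredients named above at toy scale: overlapping k-mer tokenization of the kind DNABERT uses, and single-head scaled dot-product self-attention. The embeddings and projection matrices are random and untrained (hypothetical parameters); real models add multiple heads, positional information, and many stacked layers.

```python
import math
import random


def overlapping_kmers(seq, k=3):
    """DNABERT-style tokenization: every overlapping k-mer becomes one token."""
    return [seq[i:i + k] for i in range(len(seq) - k + 1)]


def matmul(a, b):
    return [[sum(a[i][t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def softmax(row):
    m = max(row)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x, wq, wk, wv):
    """Single-head scaled dot-product self-attention over token embeddings x (L x d)."""
    q, k, v = matmul(x, wq), matmul(x, wk), matmul(x, wv)
    scale = math.sqrt(len(k[0]))
    k_t = [list(col) for col in zip(*k)]
    weights = [softmax([s / scale for s in row]) for row in matmul(q, k_t)]
    return matmul(weights, v), weights


# Hypothetical, untrained parameters: random embeddings and projections (d = 4).
rng = random.Random(0)
d = 4
tokens = overlapping_kmers("ACGTACGGA", k=3)
vocab = {tok: [rng.gauss(0, 1) for _ in range(d)] for tok in sorted(set(tokens))}
x = [vocab[tok] for tok in tokens]
wq, wk, wv = ([[rng.gauss(0, 1) for _ in range(d)] for _ in range(d)] for _ in range(3))
out, weights = self_attention(x, wq, wk, wv)
```

Each row of `weights` is a probability distribution over all tokens, so every position can draw on every other position regardless of distance; the price is an attention matrix that grows quadratically with sequence length.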

Beyond CNNs and Transformers: Selective State Space Models

Recent architectural innovations have introduced potential alternatives to CNN and Transformer dominance. Selective State Space Models (SSSMs), such as Mamba, have shown promising results in genomic applications. In benchmark evaluations, models combining convolutional layers with bidirectional Mamba achieved 3-4% improvements in Pearson R correlation for predicting RNA-seq read coverage compared to attention-based models [22]. These architectures demonstrate particular efficiency in handling long sequences while effectively capturing complex genomic dependencies, suggesting they may offer advantages for specific genomic prediction tasks.
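As a rough illustration of what "selective" means, the sketch below runs a toy state-space recurrence over a scalar input in which the step size and the input and output projections depend on the current input. It is a simplified, hypothetical version of the general Mamba-style recurrence (zero-order hold for A, an Euler-style step for B), not the convolution plus bidirectional Mamba model from [22]; real implementations use vector inputs, learned projections, and a parallel scan.

```python
import math


def softplus(z):
    return math.log1p(math.exp(z)) if z < 30 else z  # avoid overflow for large z


def selective_scan(xs, a, w_b, w_c, w_delta, b_delta=0.0):
    """Toy selective state-space scan over a scalar input sequence.

    a: diagonal state-matrix entries (negative for stability), length N.
    w_b, w_c: per-state weights that make B_t and C_t depend on the input.
    w_delta, b_delta: make the step size Delta_t input-dependent ("selective").
    """
    h = [0.0] * len(a)
    ys = []
    for x in xs:
        delta = softplus(w_delta * x + b_delta)
        b_t = [wb * x for wb in w_b]
        c_t = [wc * x for wc in w_c]
        # h_t = exp(Delta * A) * h_{t-1} + Delta * B_t * x_t, elementwise over states.
        h = [math.exp(delta * a_n) * h_n + delta * b_n * x for a_n, h_n, b_n in zip(a, h, b_t)]
        ys.append(sum(c_n * h_n for c_n, h_n in zip(c_t, h)))  # y_t = C_t . h_t
    return ys
```

Each step updates a fixed-size state, so cost grows linearly with sequence length, in contrast to the quadratic attention matrix of a Transformer.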

Table 1: Core Architectural Components in Genomic Deep Learning

| Component | CNN-Based Models | Transformer Models | Hybrid Models |
|---|---|---|---|
| Primary Strength | Local pattern recognition | Long-range dependency modeling | Combines local and global context |
| Typical Applications | Motif discovery, enhancer prediction, variant effect prediction | Regulatory element identification, gene expression prediction | Causal variant prioritization, multi-task genomic learning |
| Sequence Processing | Sliding convolutional filters | Self-attention across entire sequence | Convolutional feature extraction + attention |
| Example Models | DeepSEA, TREDNet, SEI, ChromBPNet | DNABERT, Nucleotide Transformer, Enformer, Geneformer | Borzoi, StripedMamba |

Performance Benchmarking: Quantitative Comparisons Across Genomic Tasks

Regulatory Variant Prediction

Standardized benchmarking studies have revealed distinct performance patterns across architectures for predicting the effects of non-coding variants. When evaluating models on datasets derived from MPRA, raQTL, and eQTL experiments encompassing 54,859 enhancer SNPs across four human cell lines, CNN models like TREDNet and SEI demonstrated superior performance for predicting the direction and magnitude of regulatory impact in enhancers [20]. In contrast, hybrid CNN-Transformer models (e.g., Borzoi) excelled at causal variant prioritization within linkage disequilibrium blocks, suggesting architectural strengths for distinct but related tasks [20].
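Variant-effect scores of this kind are commonly obtained by running a model on the reference and alternative sequences and taking the difference: the sign gives the direction and the absolute value the magnitude of the predicted regulatory impact. In the sketch below a toy PWM scorer stands in for a trained network such as TREDNet or SEI; the motif, scoring rule, and helper names are hypothetical, and the exact scoring used in [20] may differ.

```python
BASES = "ACGT"

# Hypothetical PWM for an arbitrary motif "TGAC": +1 for the motif base, -1 otherwise.
PWM = [{b: (1.0 if b == m else -1.0) for b in BASES} for m in "TGAC"]


def max_window_score(seq, pwm):
    """Toy sequence-to-activity model: best PWM match score over all windows."""
    w = len(pwm)
    return max(sum(pwm[j][seq[i + j]] for j in range(w)) for i in range(len(seq) - w + 1))


def toy_model(seq):
    return max_window_score(seq, PWM)


def variant_effect(ref_seq, pos, alt_base, model):
    """Signed SNP effect: model(alt) - model(ref); negative means predicted loss of activity."""
    if ref_seq[pos] == alt_base:
        raise ValueError("alt allele equals reference")
    alt_seq = ref_seq[:pos] + alt_base + ref_seq[pos + 1:]
    return model(alt_seq) - model(ref_seq)
```

For example, `variant_effect("AATGACTT", 3, "T", toy_model)` breaks the only motif copy and returns a negative score, while the reverse substitution restores it and returns a positive one.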

Gene Expression Prediction

The evaluation of architectural performance extends to predicting gene expression from histology images, where a benchmark of eleven methods revealed nuanced strengths. For spatial gene expression prediction from H&E-stained tissue images, EGNv2 achieved the highest overall performance on spatial transcriptomics (ST) datasets (PCC = 0.28, SSIM = 0.22, AUC = 0.65), while DeepPT performed best on higher-resolution Visium data [23]. These results suggest that optimal architecture selection may depend on data resolution and experimental context.

Plant Resistance Gene Prediction

For R-gene prediction in plants, the PRGminer tool demonstrates how deep learning approaches can achieve remarkable accuracy. Using dipeptide composition representations with deep learning architectures, PRGminer attained 98.75% accuracy in k-fold validation and 95.72% on independent testing for Phase I classification (R-gene vs. non-R-gene), with an MCC of 0.91 on independent tests [1]. For Phase II classification into eight R-gene classes, the tool maintained 97.55% accuracy in k-fold validation and 97.21% on independent testing [1]. These results significantly outperform traditional alignment-based methods, especially for sequences with low homology.

Table 2: Performance Benchmarks Across Genomic Tasks

| Task | Best Performing Architecture | Key Metric | Performance | Reference Dataset |
|---|---|---|---|---|
| Enhancer Variant Effect Prediction | CNN (TREDNet, SEI) | Direction/Magnitude Accuracy | Superior to Transformers | 54,859 enhancer SNPs from MPRA, raQTL, eQTL [20] |
| Causal SNP Prioritization in LD Blocks | Hybrid CNN-Transformer (Borzoi) | Prioritization Accuracy | Superior to pure CNNs/Transformers | LD blocks from GWAS loci [20] |
| R-gene Identification | Deep Learning (PRGminer) | Accuracy | 95.72% (independent test) | Plant genomes from Phytozome, Ensembl Plants, NCBI [1] |
| R-gene Classification | Deep Learning (PRGminer) | Accuracy | 97.21% (independent test) | 8 R-gene classes [1] |
| Spatial Gene Expression Prediction | EGNv2 (ST data), DeepPT (Visium) | Pearson Correlation | 0.28 (ST), superior on Visium | HER2+ breast cancer and cutaneous squamous cell carcinoma [23] |
| RNA-seq Read Coverage | Convolutional + Bidirectional Mamba | Pearson R | 3-4% improvement over attention models | GTEx eQTL dataset [22] |

Experimental Protocols and Methodologies

Standardized Model Evaluation Framework

Robust evaluation of deep learning models in genomics requires standardized benchmarks and consistent training conditions. The benchmarking approach used for regulatory variant prediction exemplifies this principle, where models were evaluated under identical training and evaluation conditions on nine integrated datasets derived from MPRA, raQTL, and eQTL experiments [20]. This methodology enabled direct comparison of architectural performance while controlling for confounding factors. The evaluation addressed three distinct tasks: (1) predicting fold-changes in enhancer activity, (2) classifying SNPs by regulatory impact, and (3) identifying causal SNPs within LD blocks [20]. Performance was assessed using metrics including Pearson Correlation Coefficient, Mutual Information, Structural Similarity Index, and Area Under the Curve, providing a multidimensional view of model capabilities.
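Two of the metrics listed above are simple to compute directly. The sketch below gives Pearson correlation and ROC AUC, the latter via its rank-probability definition (quadratic in the number of examples, so suitable only for small illustrations); mutual information and SSIM are omitted, and the helper names are hypothetical.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def roc_auc(labels, scores):
    """AUC = probability that a random positive outranks a random negative (ties count 0.5)."""
    pos = [s for lab, s in zip(labels, scores) if lab == 1]
    neg = [s for lab, s in zip(labels, scores) if lab == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))
```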

Data Preprocessing and Integration

The construction of effective deep learning models for genomics requires meticulous data curation and preprocessing. For gene regulatory network prediction, researchers retrieved raw sequencing data from the Sequence Read Archive (SRA) database, then performed quality control including adapter sequence removal, low-quality base trimming, and alignment to reference genomes using STAR [4]. Normalization employed the weighted trimmed mean of M-values (TMM) method from edgeR to account for compositional differences between samples [4]. For plant R-gene prediction, datasets were compiled from multiple public databases including Phytozome, Ensembl Plants, and NCBI, with careful attention to domain architecture annotations [1].
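The idea behind TMM can be sketched in a few lines: compute each gene's log-ratio (M) between a sample and a reference, trim extreme M and abundance (A) values, and take a precision-weighted mean of what remains. The version below is a simplified illustration using edgeR's default trim fractions (30% for M, 5% for A). It omits details such as reference-sample selection and rescaling the factors to a geometric mean of 1, so edgeR's `calcNormFactors` remains the reference implementation; `tmm_factor` is a hypothetical name.

```python
import math


def tmm_factor(sample, ref, m_trim=0.3, a_trim=0.05):
    """Simplified TMM scaling factor of `sample` relative to `ref` (raw counts per gene)."""
    n_s, n_r = sum(sample), sum(ref)
    genes = [(y_s, y_r) for y_s, y_r in zip(sample, ref) if y_s > 0 and y_r > 0]
    m = [math.log2((y_s / n_s) / (y_r / n_r)) for y_s, y_r in genes]  # log-ratio
    a = [0.5 * math.log2((y_s / n_s) * (y_r / n_r)) for y_s, y_r in genes]  # abundance
    # Approximate variance of each log-ratio; its inverse is the precision weight.
    v = [(n_s - y_s) / (n_s * y_s) + (n_r - y_r) / (n_r * y_r) for y_s, y_r in genes]
    n = len(genes)
    m_rank = sorted(range(n), key=lambda i: m[i])
    a_rank = sorted(range(n), key=lambda i: a[i])
    keep_m = set(m_rank[int(n * m_trim):n - int(n * m_trim)])
    keep_a = set(a_rank[int(n * a_trim):n - int(n * a_trim)])
    keep = keep_m & keep_a
    log_factor = sum(m[i] / v[i] for i in keep) / sum(1 / v[i] for i in keep)
    return 2 ** log_factor


# One highly expressed gene inflates the sample's library size.
ref = [100] * 10
sample = [100] * 9 + [1000]
factor = tmm_factor(sample, ref)  # 1000 / 1900, i.e. about 0.526
```

A factor below 1 shrinks the sample's effective library size, so the nine unchanged genes are not wrongly reported as down-regulated.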

Transfer Learning for Cross-Species Generalization

A significant challenge in plant genomics is the limited availability of annotated training data for non-model species. Transfer learning strategies have proven effective in addressing this limitation by leveraging knowledge from data-rich species. In gene regulatory network construction, models trained on Arabidopsis thaliana were successfully applied to poplar and maize, with hybrid CNN-machine learning approaches achieving over 95% accuracy on holdout test datasets [4]. This approach identified more known transcription factors regulating biosynthetic pathways and demonstrated higher precision in ranking key master regulators compared to traditional methods [4].

Diagram 1: Genomic Deep Learning Experimental Workflow

Application Focus: Deep Learning for Plant Resistance Gene Prediction

The R-gene Prediction Challenge

Plant resistance genes encode proteins that recognize specific pathogen effectors and initiate powerful immune responses through effector-triggered immunity (ETI) and pathogen-associated molecular pattern (PAMP)-triggered immunity (PTI) [1]. Accurate identification and classification of these genes is crucial for understanding plant immunity and developing disease-resistant crops. However, conventional identification methods face significant challenges due to the exceptional diversity of R-genes, their organization in clusters of closely duplicated genes, difficulties in genome assembly and annotation caused by numerous similar sequences, low expression levels complicating RNA-seq-based prediction, and potential misclassification as repetitive elements [1].

Deep Learning Solutions for R-gene Prediction

The PRGminer framework exemplifies how deep learning approaches address these challenges through a two-phase prediction system. In Phase I, input protein sequences are classified as R-genes or non-R-genes using dipeptide composition features processed through deep learning architectures [1]. Sequences identified as R-genes proceed to Phase II, where they are classified into eight structural categories: CNL (Coiled-coil, Nucleotide-binding site, Leucine-rich repeat), KIN (Kinase domain), RLP (Receptor-like protein), LECRK (Lectin Receptor-like Kinase), RLK (Receptor-like Kinase), LYK (LysM Receptor-like Kinase), TIR (Toll/Interleukin-1 Receptor domain), and TNL (TIR-NBS-LRR) [1]. This structured approach demonstrates how domain-aware architectural design can effectively capture the complex features defining resistance gene families.
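The two-phase control flow can be sketched as follows. `phase1` and `phase2` are hypothetical stand-ins for the trained networks (any callables returning an R-gene probability and eight class scores), the 0.5 threshold is an assumption, and this is not PRGminer's actual code or interface.

```python
from typing import Callable, Dict, List, Optional

R_GENE_CLASSES = ["CNL", "KIN", "RLP", "LECRK", "RLK", "LYK", "TIR", "TNL"]


def two_phase_predict(
    sequences: Dict[str, str],
    phase1: Callable[[str], float],
    phase2: Callable[[str], List[float]],
    threshold: float = 0.5,
) -> Dict[str, Optional[str]]:
    """Phase I gates each protein as R-gene / non-R-gene; only R-genes reach Phase II."""
    results: Dict[str, Optional[str]] = {}
    for seq_id, seq in sequences.items():
        if phase1(seq) < threshold:
            results[seq_id] = None  # predicted non-R-gene: Phase II is skipped
            continue
        scores = phase2(seq)
        best = max(range(len(R_GENE_CLASSES)), key=lambda i: scores[i])
        results[seq_id] = R_GENE_CLASSES[best]
    return results
```

Gating this way means Phase II only ever sees sequences already judged to be R-genes, so its errors are conditional on Phase I being correct.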

Diagram 2: PRGminer Two-Phase R-gene Prediction

Comparative Performance Against Traditional Methods

Deep learning approaches significantly outperform traditional methods for R-gene prediction, particularly for sequences with low homology where alignment-based methods struggle. While conventional tools rely on BLAST, InterProScan, HMMER3, and PfamScan for domain prediction, these methods frequently miss novel or divergent resistance genes [1]. Deep learning models excel at capturing complex, non-linear relationships in protein sequences without requiring explicit domain annotation, enabling identification of structural features that may evade traditional motif-based searches. This capability is particularly valuable for predicting resistance genes in wild species and crop relatives where limited prior annotation exists.

Practical Implementation: The Researcher's Toolkit

Essential Research Reagent Solutions

Table 3: Key Research Reagents and Computational Tools

| Resource Category | Specific Tools/Databases | Primary Function | Application Context |
|---|---|---|---|
| Genomic Databases | Phytozome, Ensembl Plants, NCBI SRA | Source of annotated genomic sequences and expression data | Training data for model development; performance benchmarking [1] [4] |
| Sequence Processing | Trimmomatic, FastQC, STAR | Quality control, adapter trimming, sequence alignment | Data preprocessing pipeline; feature extraction [4] |
| Domain Annotation | InterProScan, HMMER, Pfam | Protein domain identification and annotation | Traditional baseline method; feature engineering for model input [1] |
| Deep Learning Frameworks | Python, Jax/Flax, TensorFlow | Model implementation and training | Architecture development; performance optimization [22] |
| Specialized Models | DNABERT, Nucleotide Transformer, PRGminer | Task-specific genomic predictions | Benchmark comparisons; specialized applications [1] [21] |
| Evaluation Benchmarks | CAGI5, GenBench, NT-Bench | Standardized performance assessment | Model validation; comparative analysis [21] |

Implementation Considerations

Successful implementation of deep learning approaches for genomic research requires careful consideration of several practical factors. Computational resources must be adequate for model training, with transformer architectures typically demanding more memory and processing power than CNNs. Data quality and curation profoundly impact model performance, with consistent preprocessing pipelines being essential for reproducible results. Model interpretability remains challenging, though attention mechanisms in transformers can provide insights into important sequence regions. For plant R-gene prediction specifically, evolutionary relationships between source and target species should be considered to enhance transfer learning effectiveness [4].

The comparative analysis of deep learning architectures for genomic applications reveals a complex landscape where no single architecture universally dominates. Instead, optimal model selection depends heavily on the specific biological question, data characteristics, and performance requirements. CNN-based architectures demonstrate particular strength for tasks requiring local pattern recognition, such as motif discovery and enhancer variant effect prediction [20]. Transformer models excel at capturing long-range dependencies and contextual sequence information, making them valuable for gene expression prediction and regulatory element identification [21] [24]. Hybrid approaches that combine convolutional and attention mechanisms frequently achieve state-of-the-art performance by leveraging the complementary strengths of both architectures [20] [4].

For plant resistance gene prediction, deep learning methods have demonstrated substantial advantages over traditional alignment-based approaches, particularly through tools like PRGminer that achieve exceptional accuracy in both identification and classification tasks [1]. The integration of transfer learning strategies further enhances their utility for non-model species with limited annotated data [4]. As the field advances, emerging architectures including selective state space models show promise for improved efficiency and performance on certain genomic tasks [22].

Future progress in genomic deep learning will likely be driven by several key developments: more sophisticated model architectures specifically designed for genomic data characteristics, improved strategies for leveraging unlabeled data through self-supervised learning, enhanced interpretability methods to extract biological insights from complex models, and standardized benchmarking frameworks that enable robust comparison across studies. For plant R-gene prediction specifically, the integration of multi-omics data and expansion to diverse crop species will further enhance the utility of these powerful computational approaches for agricultural biotechnology and crop improvement programs.

Plant Resistance genes (R-genes) form the cornerstone of a plant's innate immune system, enabling recognition of pathogens and activation of defense mechanisms. Accurate identification and classification of these genes are crucial for developing disease-resistant crops and ensuring global food security. This case study examines PRGminer, a deep learning-based tool for high-throughput R-gene prediction, and evaluates its performance against traditional identification methods. We analyze quantitative performance metrics, detail experimental protocols, and contextualize PRGminer within the broader landscape of computational biology tools for plant immunity research. The analysis demonstrates that PRGminer achieves exceptional accuracy rates exceeding 95% in both identification and classification tasks, significantly outperforming traditional alignment-based approaches, particularly for sequences with low homology.

Plant resistance genes (R-genes) encode proteins that specifically recognize pathogen-derived molecular patterns and initiate robust immune responses [1] [25]. When activated, these genes trigger a cascade of molecular processes culminating in defensive responses including synthesis of antimicrobial compounds, cell wall reinforcement, and programmed cell death in infected cells [26]. The plant immune system operates through two primary layers: PAMP-triggered immunity (PTI) involving membrane-bound pattern recognition receptors (PRRs), and effector-triggered immunity (ETI) mediated primarily by intracellular resistance receptors such as NLR proteins [2].

The identification of novel R-genes represents a critical component of disease resistance breeding programs [1]. However, traditional methods for R-gene discovery face significant challenges due to their complex genomic architecture, low expression levels, and presence in repetitive regions that complicate genome assembly and annotation [25]. These difficulties are particularly pronounced when working with wild species and near relatives of cultivated plants, where rapid identification could provide valuable genetic resources for breeding programs [26].

Computational Landscape: Traditional vs. Modern R-Gene Prediction Methods

Traditional Methods and Their Limitations

Traditional computational approaches for R-gene identification have primarily relied on alignment-based methods and domain search algorithms [2]. These methods utilize tools such as BLAST, InterProScan, HMMER3, and PfamScan to identify conserved domains and motifs characteristic of R-proteins [25]. The typical workflow involves scanning protein sequences for known R-gene domains such as nucleotide-binding sites (NBS), leucine-rich repeats (LRRs), coiled-coil (CC) domains, and Toll/interleukin-1 receptor (TIR) domains [2].
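A rule-based classifier of this kind reduces to mapping the set of detected domains onto an architecture class. The simplified, hypothetical rules below cover only a few classes named in this article; the domain labels stand in for hits from tools such as InterProScan or PfamScan plus a coiled-coil or transmembrane predictor. They also show the failure mode discussed next: if a divergent domain is not detected, the sequence falls through to `None`.

```python
def classify_by_domains(domains):
    """Assign a coarse R-gene class from detected domain labels (simplified rules)."""
    has = set(domains)
    if "NB-ARC" in has and "LRR" in has:
        if "TIR" in has:
            return "TNL"
        if "CC" in has:
            return "CNL"
        return "NL"  # NB-LRR without a recognised N-terminal domain
    if "NB-ARC" in has:
        # Truncated NB-encoding forms lacking LRRs.
        return "TN" if "TIR" in has else "CN" if "CC" in has else "N"
    if "TM" in has and "LRR" in has:
        # Membrane-anchored LRR receptors: kinase domain present -> RLK, absent -> RLP.
        return "RLK" if "KINASE" in has else "RLP"
    if "TIR" in has:
        return "TIR"
    return None  # no recognised R-gene signature
```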

While these methods have successfully identified numerous R-genes, they possess inherent limitations. Similarity-based methods frequently fail when sequence homology is low, a particular challenge when annotating newly sequenced plant genomes [25]. Additionally, traditional automated gene annotation pipelines often produce incomplete and fragmented annotations of R-gene loci due to their unique genomic organization into clusters of closely duplicated genes [25]. The dependence on predefined domain libraries further limits the discovery of novel or highly divergent R-gene classes.

The Emergence of Deep Learning in Genomics

Deep learning approaches represent a paradigm shift in genomic analysis, employing multiple nonlinear processing layers to automatically learn hierarchical feature representations from raw biological sequences [18]. Unlike traditional methods that require explicit domain knowledge and manual feature engineering, deep learning models can capture complex patterns and relationships directly from sequence data [18]. This capability is particularly valuable for R-gene prediction, where the relevant features may be distributed across multiple sequence regions or involve complex contextual relationships.

The application of deep learning to genome annotation has accelerated recently, with models such as convolutional neural networks (CNNs) and recurrent neural networks (RNNs) demonstrating remarkable success in identifying various genomic elements including promoters, enhancers, and coding regions [18]. PRGminer builds upon these advances by implementing a specialized deep learning framework specifically optimized for plant R-gene identification and classification [1].
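To make "learning features from raw sequence" concrete, the sketch below shows what a single convolutional filter computes over a one-hot-encoded protein: it slides along the sequence, scores each window, applies a ReLU, and max-pools to the strongest match. In a trained CNN the kernel weights are learned; here they are hand-set to the tripeptide GKT (part of the Walker A/P-loop motif, GxxxxGK[ST], found in NBS domains) purely for illustration. This is a generic CNN building block, not PRGminer's architecture.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def one_hot(seq):
    """Encode a protein sequence as a list of 20-dimensional one-hot vectors."""
    return [[1.0 if aa == res else 0.0 for aa in AMINO_ACIDS] for res in seq.upper()]


def motif_kernel(motif):
    """Hand-built (width x 20) kernel scoring +1 per matching residue.

    A trained CNN would learn these weights instead of having them set by hand.
    """
    return [[1.0 if aa == res else 0.0 for aa in AMINO_ACIDS] for res in motif]


def conv1d_maxpool(encoded, kernel):
    """Slide the kernel along the sequence, apply ReLU, and global-max-pool.

    Returns (max activation, start position); (0.0, -1) if nothing fires.
    """
    width = len(kernel)
    best, best_pos = 0.0, -1
    for start in range(len(encoded) - width + 1):
        score = sum(
            kernel[k][j] * encoded[start + k][j]
            for k in range(width)
            for j in range(len(AMINO_ACIDS))
        )
        score = max(score, 0.0)  # ReLU non-linearity
        if score > best:
            best, best_pos = score, start
    return best, best_pos


print(conv1d_maxpool(one_hot("MAGLGKTTLAQ"), motif_kernel("GKT")))  # → (3.0, 4)
```

Stacking many such filters, and further convolutional layers on top of their outputs, is what lets a CNN build up the hierarchical representations described above.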

Table 1: Comparison of R-Gene Prediction Methodologies

| Method Type | Examples | Key Features | Advantages | Limitations |
|---|---|---|---|---|
| Alignment-Based | BLAST, InterProScan, HMMER3 | Domain search, motif identification | Well-established, interpretable | Fails with low homology, limited to known domains |
| Traditional Machine Learning | SVM, Random Forest | Feature extraction, statistical learning | Better than alignment for some cases | Limited feature learning capability |
| Deep Learning | PRGminer, CNNs, RNNs | Automated feature learning, hierarchical representation | High accuracy, handles complex patterns | Computational intensity, data requirements |

PRGminer: Architecture and Implementation

PRGminer implements a sophisticated two-phase analytical workflow designed to first identify potential R-genes from protein sequences, then classify them into specific functional categories [1] [27]. This structured approach enables comprehensive characterization of plant resistance genes with high precision and accuracy.

Phase I: R-gene Identification

The initial phase functions as a binary classification system that distinguishes R-genes from non-R-genes using dipeptide composition features extracted from protein sequences [1]. This feature representation captures local sequence patterns that are discriminative for resistance proteins. The model employs a deep learning architecture, likely incorporating convolutional layers for local feature detection and fully connected layers for classification, though the exact architecture details are not fully specified in the available literature [25].
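Dipeptide composition counts how often each of the 400 ordered pairs of the 20 standard amino acids occurs between adjacent residues, then normalizes by the number of pairs. A minimal sketch follows. Skipping pairs that contain non-standard residues (such as X or U) is an assumption made here; PRGminer's exact preprocessing may differ.

```python
from itertools import product

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
DIPEPTIDES = ["".join(p) for p in product(AMINO_ACIDS, repeat=2)]  # 400 ordered pairs


def dipeptide_composition(seq):
    """Return the 400-dim frequency vector of adjacent residue pairs.

    Pairs containing non-standard residues are skipped (an assumption), so for
    a sequence of only standard residues the denominator equals len(seq) - 1.
    """
    seq = seq.upper()
    counts = dict.fromkeys(DIPEPTIDES, 0)
    total = 0
    for i in range(len(seq) - 1):
        pair = seq[i:i + 2]
        if pair in counts:
            counts[pair] += 1
            total += 1
    if total == 0:
        return [0.0] * len(DIPEPTIDES)  # too short or no valid pairs
    return [counts[d] / total for d in DIPEPTIDES]


vec = dipeptide_composition("MKKM")  # pairs MK, KK, KM, each with frequency 1/3
print(len(vec), vec[DIPEPTIDES.index("KK")])
```

Because the vector has a fixed length regardless of protein size, it can feed directly into a fixed-width neural network input layer, which is one practical reason this representation is popular.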

Phase II: R-gene Classification

Following successful identification, Phase II categorizes confirmed R-genes into eight distinct classes based on their domain architecture and functional characteristics [27]. These classes represent the major known categories of plant resistance genes:

- Coiled-coil-NBS-LRR (CNL): Characterized by a coiled-coil domain at the N-terminal region, a central nucleotide-binding site (NBS) domain, and a C-terminal leucine-rich repeat (LRR) domain [27].

- TIR-NBS-LRR (TNL): Features a Toll/interleukin-1 receptor (TIR) domain at the N-terminus instead of the coiled-coil domain found in CNL proteins [27].

- Receptor-like kinase (RLK): Contains an extracellular leucine-rich repeat region and an intracellular kinase domain [27].

- Receptor-like protein (RLP): Consists of an extracellular leucine-rich repeat region and a transmembrane domain but lacks the intracellular kinase domain present in RLKs [27].

- Kinase (KIN): Contains a kinase domain involved in resistance processes [27].

- Lectin receptor-like kinase (LECRK): Features lectin, kinase, and potentially transmembrane domains [27].

- Lysin motif receptor kinase (LYK): Contains lysin motif (LysM), kinase, and potentially transmembrane domains [27].

- Toll-interleukin receptor domain (TIR): Contains only the TIR domain without LRR or NBS domains [27].
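For contrast with a learned classifier, the class definitions above can be written as explicit domain-architecture rules, which is roughly how domain-based pipelines assign categories. The sketch below uses hypothetical domain labels (`CC`, `TIR`, `NBS`, `LRR`, `KINASE`, `TM`, `LECTIN`, `LYSM`) that would have to be mapped from real tool output (coiled-coil regions, for example, are usually predicted by dedicated coiled-coil tools rather than Pfam profiles). The rule order is an assumption of this sketch, not PRGminer's method, which learns the categories from sequence features.

```python
def classify_architecture(domains):
    """Map a set of hypothetical domain labels to one of the eight R-gene classes.

    Rules are checked from most to least specific; returns None for
    architectures outside the eight classes (e.g. NBS-LRR with no N-terminal domain).
    """
    d = set(domains)
    if {"NBS", "LRR", "TIR"} <= d:
        return "TNL"
    if {"NBS", "LRR", "CC"} <= d:
        return "CNL"
    if {"LECTIN", "KINASE"} <= d:
        return "LECRK"
    if {"LYSM", "KINASE"} <= d:
        return "LYK"
    if {"LRR", "KINASE"} <= d:
        return "RLK"
    if {"LRR", "TM"} <= d:  # kinase-containing cases already returned above
        return "RLP"
    if "KINASE" in d:
        return "KIN"
    if "TIR" in d and not d & {"NBS", "LRR"}:
        return "TIR"
    return None


print(classify_architecture({"CC", "NBS", "LRR"}))  # → CNL
```

Rules like these are transparent but brittle: a truncated gene model or a missed domain call changes the label, which is part of the motivation for learning the mapping from sequence instead.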

Experimental Analysis and Performance Benchmarking

Dataset Composition and Preprocessing

The development and validation of PRGminer used comprehensive datasets derived from multiple public databases, including Phytozome, Ensembl Plants, and NCBI [1] [25]. The initial dataset underwent rigorous preprocessing to ensure data quality and minimize bias:

- Redundancy Reduction: CD-HIT was employed to eliminate redundant sequences at appropriate identity thresholds [25].

- Domain-Based Filtering: Sequences were filtered based on known R-gene domain information (NB-ARC, TIR, CC, kinase, LRR, etc.) from Ensembl BioMart and Phytozome BioMart [25].

- Dataset Partitioning: For Phase I, the dataset containing 18,952 R-genes and 19,212 non-R-genes was divided into training and independent testing sets in a 9:1 ratio [25]. This partitioning strategy supported robust model training while reserving a substantial independent set for unbiased performance evaluation.
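The two preprocessing steps above, redundancy reduction followed by a 9:1 split, can be sketched as follows. The redundancy filter mimics CD-HIT's greedy incremental strategy (longest sequences become cluster representatives first; a sequence is dropped if it is too similar to an existing representative), but it uses `difflib.SequenceMatcher.ratio()` as a crude stand-in for CD-HIT's alignment-based identity and is only practical at toy scale. The 0.9 threshold and the seed are hypothetical, since the source does not state the thresholds used.

```python
import random
from difflib import SequenceMatcher


def greedy_nonredundant(seqs, threshold=0.9):
    """Greedy incremental redundancy removal in the spirit of CD-HIT.

    `seqs` maps IDs to sequences. SequenceMatcher.ratio() is a rough stand-in
    for percent identity; the 0.9 threshold is a hypothetical choice.
    """
    kept = []
    # Longest first, as in CD-HIT; Python's sort is stable for equal lengths.
    for sid, seq in sorted(seqs.items(), key=lambda kv: len(kv[1]), reverse=True):
        if all(SequenceMatcher(None, seq, rep).ratio() < threshold for _, rep in kept):
            kept.append((sid, seq))
    return dict(kept)


def stratified_split(labels, test_fraction=0.1, seed=42):
    """Split IDs into train/test lists (9:1 by default), preserving label proportions."""
    rng = random.Random(seed)
    by_label = {}
    for sid, label in labels.items():
        by_label.setdefault(label, []).append(sid)
    train, test = [], []
    for label in sorted(by_label):
        ids = sorted(by_label[label])  # deterministic order before shuffling
        rng.shuffle(ids)
        n_test = round(len(ids) * test_fraction)
        test.extend(ids[:n_test])
        train.extend(ids[n_test:])
    return train, test


nr = greedy_nonredundant({"a": "MKTAYIAKQR", "b": "MKTAYIAKQR", "c": "GGGGGWWWWW"})
print(sorted(nr))  # the duplicate "b" is removed
```

Removing redundancy before splitting matters because near-identical sequences on both sides of the split would inflate independent-test scores.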

For Phase II classification, the R-gene dataset was divided into the eight target classes, with the CNL class containing 1,883 sequences and the Kinase class containing 8,591 sequences (a roughly 4.6-fold difference), indicating significant class imbalance that required appropriate handling during model development [25].
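The source does not say how PRGminer handled this imbalance. One common option is to weight the training loss by inverse class frequency; the sketch below uses the same formula as scikit-learn's `class_weight="balanced"` heuristic, n_samples / (n_classes × n_class). Only the two class sizes quoted above are used, so the example covers a two-class subset rather than the full eight-class Phase II dataset.

```python
def inverse_frequency_weights(class_counts):
    """Weight each class by n_samples / (n_classes * n_class).

    After weighting, every class contributes the same total weight to the loss.
    """
    total = sum(class_counts.values())
    n_classes = len(class_counts)
    return {label: total / (n_classes * n) for label, n in class_counts.items()}


# Only the two class sizes quoted in the text; the other six classes are omitted.
weights = inverse_frequency_weights({"CNL": 1883, "KIN": 8591})
print(weights)
```

With these weights each CNL example counts roughly 4.6 times as much as a Kinase example, so the minority class is not drowned out during training. Oversampling minority classes is an alternative with a similar effect.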

Performance Metrics and Comparative Analysis

PRGminer demonstrates strong performance across standard evaluation metrics, substantially outperforming traditional methods, particularly for sequences with low homology [1].

Table 2: Quantitative Performance Metrics of PRGminer

| Evaluation Metric | Phase I (Cross-Validation) | Phase I (Independent Testing) | Phase II (Cross-Validation) | Phase II (Independent Testing) |
|---|---|---|---|---|
| Accuracy | 98.75% | 95.72% | 97.55% | 97.21% |
| Matthews Correlation Coefficient (MCC) | 0.98 | 0.91 | 0.93 | 0.92 |

The dipeptide composition representation yielded the best prediction performance of all tested feature representations [1]. The consistently high Matthews Correlation Coefficient values across both phases indicate robust performance even when accounting for class imbalance, a common challenge in biological sequence classification.
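Why MCC is more informative than accuracy under imbalance can be shown with a small calculation. The confusion-matrix counts below are hypothetical (1,000 proteins, 50 of them R-genes) and are not taken from the PRGminer evaluation.

```python
import math


def accuracy_and_mcc(tp, tn, fp, fn):
    """Return (accuracy, Matthews correlation coefficient) for a binary confusion matrix."""
    total = tp + tn + fp + fn
    accuracy = (tp + tn) / total
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    # Convention: MCC is reported as 0 when any marginal is empty (undefined ratio).
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return accuracy, mcc


# Hypothetical: a classifier that calls every protein "non-R-gene".
print(accuracy_and_mcc(tp=0, tn=950, fp=0, fn=50))  # → (0.95, 0.0)
# Hypothetical: a classifier that finds 45 of the 50 R-genes with 10 false alarms.
print(accuracy_and_mcc(tp=45, tn=940, fp=10, fn=5))
```

The degenerate classifier reaches 95% accuracy while learning nothing, and its MCC of 0 exposes that; the second classifier's MCC of about 0.85 reflects genuine discrimination.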

In the broader field, recent benchmarks of deep learning models in genomics have shown mixed results. A 2025 study in Nature Methods found that, for predicting transcriptome changes after genetic perturbations, deep learning foundation models did not outperform simple linear baselines [5]. This contrast suggests that PRGminer's success may stem from an architecture optimized specifically for R-gene prediction rather than from a general-purpose genomic framework, although the two studies address different tasks and are not directly comparable.

Comparison with Alternative Tools

Several alternative computational tools exist for R-gene prediction, employing diverse methodologies from alignment-based approaches to traditional machine learning:

- Domain-Based Pipelines: Tools such as DRAGO2/3, RGAugury, and NLR-Annotator utilize InterProScan, HMMER, and other domain prediction algorithms to identify R-genes based on conserved domains [2].

- Traditional Machine Learning: Methods like RFPDR (Random Forest), DualF-PBR (feature-based), and StackRPred (ensemble) employ conventional machine learning with manually engineered features [28].

- Hybrid Approaches: Some recent tools combine multiple approaches, such as prPred-DRLF, which uses deep representation learning features with LightGBM classification [28].

PRGminer distinguishes itself through its comprehensive two-phase deep learning architecture and demonstrated superior accuracy metrics compared to these alternatives [1]. The tool's specific advantage appears most pronounced for identifying divergent R-genes that lack strong homology to previously characterized sequences.

Implementation and Practical Application

Implementing PRGminer in research environments requires specific computational resources and data preparation tools:

Table 3: Essential Research Reagents and Computational Tools for R-Gene Analysis

| Tool/Resource | Type | Primary Function | Application in R-gene Research |
|---|---|---|---|
| PRGminer Web Server | Deep Learning Tool | R-gene identification & classification | Primary analysis tool accessible without local installation |
| PRGminer Standalone | Downloadable Software | Local R-gene prediction | Large-scale analyses and proprietary data processing |
| Phytozome | Database | Plant genomic data | Source of reference sequences and annotation data |
| Ensembl Plants | Database | Plant genomic information | Supplementary data for training and validation |
| NCBI Databases | Data Repository | Public sequence data | Access to experimentally validated R-gene sequences |
| CD-HIT | Bioinformatics Tool | Sequence redundancy reduction | Preprocessing of training and query datasets |

Accessibility and Implementation Options

PRGminer is publicly accessible through multiple modalities to accommodate diverse research needs and computational environments [1] [26]:

- Web Server: Freely available at https://kaabil.net/prgminer/, requiring no local installation or computational expertise [27].

- Standalone Tool: Downloadable from https://github.com/usubioinfo/PRGminer for local installation and batch processing of large datasets [1].

The web server typically processes input sequences within approximately two minutes, enabling rapid analysis of candidate genes [27]. This accessibility lowers the barrier to entry for plant researchers without specialized bioinformatics training, while the downloadable version supports large-scale genome-wide analyses.
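Before submitting a genome-scale protein set to either interface, it can help to check the FASTA input and split it into manageable batches. The sketch below assumes protein FASTA input and treats any residue outside the 20 standard amino acids as a reason to set a record aside; both are assumptions, since PRGminer's exact input requirements are not described here and should be checked in its documentation. The `batch_size` of 100 is likewise hypothetical.

```python
STANDARD = set("ACDEFGHIKLMNPQRSTVWY")


def read_fasta(text):
    """Parse FASTA text into {id: sequence}, using the first word of each header as the ID."""
    records, header, chunks = {}, None, []
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        if line.startswith(">"):
            if header is not None:
                records[header] = "".join(chunks)
            parts = line[1:].split()
            header, chunks = (parts[0] if parts else ""), []
        else:
            chunks.append(line.upper())
    if header is not None:
        records[header] = "".join(chunks)
    return records


def validate_and_batch(records, batch_size=100):
    """Drop a trailing '*' stop symbol, reject non-standard residues, and batch the rest.

    Returns (list of {id: seq} batches, {rejected id: sorted offending residues}).
    """
    clean, rejected = {}, {}
    for sid, seq in records.items():
        seq = seq.rstrip("*")
        bad = set(seq) - STANDARD
        if bad or not seq:
            rejected[sid] = sorted(bad)
        else:
            clean[sid] = seq
    ids = list(clean)
    batches = [{i: clean[i] for i in ids[k:k + batch_size]} for k in range(0, len(ids), batch_size)]
    return batches, rejected


batches, rejected = validate_and_batch(read_fasta(">p1 desc\nMKT\nAYI*\n>p2\nMXB\n"))
print(batches, rejected)
```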

PRGminer represents a significant advancement in computational methods for plant R-gene discovery, demonstrating how specialized deep learning architectures can overcome limitations of traditional homology-based approaches. Its two-phase classification system provides both high-level identification and detailed functional categorization, offering plant researchers a comprehensive tool for accelerating resistance gene characterization.

The integration of deep learning in plant genomics continues to evolve, with emerging trends including hybrid models that combine convolutional neural networks with traditional machine learning, which have shown promise in gene regulatory network prediction [4]. Future developments in R-gene prediction will likely focus on improving model interpretability, expanding taxonomic coverage, and integrating multimodal data including expression profiles and epigenetic information [2].

As the field progresses, critical benchmarking against appropriate baselines remains essential, as shown by recent findings that simple linear models can sometimes outperform complex deep learning frameworks in genomic prediction tasks [5]. PRGminer's strong results on independent test sets indicate that it generalizes beyond its training data; a head-to-head comparison with simple baselines trained on the same features would confirm more directly that it avoids this pitfall. Together with its specialized design and rigorous evaluation, this positions PRGminer as a valuable resource for the plant research community.
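What might an "appropriate baseline" look like for R-gene identification? One deliberately simple choice is a nearest-centroid classifier on the same dipeptide composition features: it averages the feature vectors of each class and assigns a query to the closest average (for two classes this decision boundary is linear). The sketch below is an illustration of that idea, not a baseline reported for PRGminer, and the toy training sequences are invented. Comparing such a baseline's independent-test MCC with a deep model's, on identical splits, is one way to check whether the architecture adds value beyond the features.

```python
import math
from itertools import product

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
INDEX = {"".join(p): i for i, p in enumerate(product(AMINO_ACIDS, repeat=2))}


def dpc(seq):
    """400-dim dipeptide composition; pairs with non-standard residues are skipped."""
    seq = seq.upper()
    v = [0.0] * len(INDEX)
    pairs = [seq[i:i + 2] for i in range(len(seq) - 1) if seq[i:i + 2] in INDEX]
    for p in pairs:
        v[INDEX[p]] += 1.0 / len(pairs)
    return v


def fit_centroids(seqs, labels):
    """Average the dipeptide vectors of each class."""
    sums, counts = {}, {}
    for s, y in zip(seqs, labels):
        acc = sums.setdefault(y, [0.0] * len(INDEX))
        for i, x in enumerate(dpc(s)):
            acc[i] += x
        counts[y] = counts.get(y, 0) + 1
    return {y: [x / counts[y] for x in sums[y]] for y in sums}


def predict(centroids, seq):
    """Assign the class whose centroid is nearest in Euclidean distance."""
    v = dpc(seq)
    return min(centroids, key=lambda y: math.dist(v, centroids[y]))


# Invented toy data, for illustration only.
centroids = fit_centroids(
    ["GKTGKTGKT", "GKSGKTGKS", "AAAAAAAA", "AAAVAAAA"], ["R", "R", "N", "N"]
)
print(predict(centroids, "GKTGKS"), predict(centroids, "AAAAVAA"))
```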

In the field of computational genomics, the accurate prediction of resistance genes (R-genes) is crucial for understanding plant defense mechanisms and advancing agricultural biotechnology. While deep learning models frequently demonstrate exceptional performance on internal validation sets, their true practical value is determined by their performance on independent test sets—data completely separate from and unseen during the training process. Independent testing provides an unbiased assessment of a model's generalizability and predictive power when faced with novel data, simulating real-world application scenarios. Metrics such as accuracy and the Matthews Correlation Coefficient (MCC) are particularly informative; accuracy offers an intuitive measure of overall correctness, while the MCC provides a more robust evaluation that accounts for all four categories of a confusion matrix, especially valuable when dealing with imbalanced datasets. This guide objectively compares the performance of contemporary deep learning tools against traditional methods for R-gene prediction, with a specific focus on benchmarking results from independent testing to provide researchers with a clear framework for methodological selection.
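For reference, the binary MCC combines all four confusion-matrix cells. Phase II of PRGminer is an eight-class problem, so a multiclass generalization (the form implemented, for example, in scikit-learn's `matthews_corrcoef`) presumably applies there; the source does not state which variant was reported. In the multiclass form below, $c$ is the number of correctly classified samples, $s$ the total number of samples, $p_k$ the number of times class $k$ was predicted, and $t_k$ the number of times class $k$ truly occurs. With two classes it reduces to the binary expression.

```latex
\mathrm{MCC} = \frac{TP \cdot TN - FP \cdot FN}
                    {\sqrt{(TP+FP)\,(TP+FN)\,(TN+FP)\,(TN+FN)}}
\qquad
\mathrm{MCC}_{K} = \frac{c\,s - \sum_{k=1}^{K} p_k\, t_k}
                        {\sqrt{\left(s^{2} - \sum_{k=1}^{K} p_k^{2}\right)\left(s^{2} - \sum_{k=1}^{K} t_k^{2}\right)}}
```

Both forms range from $-1$ to $1$, with $0$ corresponding to chance-level agreement, which is why MCC remains meaningful when one class dominates.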

Performance Comparison: Deep Learning vs. Traditional Methods

The following tables summarize the benchmarking performance of various tools, with an emphasis on their results during independent testing.

Table 1: Benchmarking Performance of R-gene Prediction Tools

| Tool / Method | Methodology | Independent Test Accuracy | Independent Test MCC | Key Strengths |
|---|---|---|---|---|
| PRGminer | Deep Learning (CNN) | 95.72% (Phase I), 97.21% (Phase II) | 0.91 (Phase I), 0.92 (Phase II) | High accuracy & MCC, 2-phase classification [1] [25] |
| Alignment-Based Tools | BLAST, HMMER, InterProScan | Varies; generally lower on novel sequences | Not Reported | Effective for high-homology sequences [1] [25] |
| Traditional ML (SVM) | Support Vector Machines | Varies | Not Reported | Improved over alignment-based methods [1] [25] |

Table 2: Benchmarking Insights from Other Genomic Domains

| Domain / Tool | Finding | Implication for R-gene Research |
|---|---|---|
| Foundation Cell Models (scGPT, scFoundation) | Simple mean baseline or Random Forests with GO features could outperform complex foundation models in predicting post-perturbation RNA-seq [29]. | Highlights the need for rigorous baselines and the potential of biologically-informed features. |
| Single-Cell Integration (16 deep-learning methods) | No single loss function excelled in all aspects; performance depended on the specific balance between batch-effect removal and biological conservation [30]. | Model performance is multi-faceted; benchmarking must align with the specific biological question. |
| DNALONGBENCH Suite | Highly parameterized expert models, specially designed for a specific task, consistently outperformed more general DNA foundation models across five long-range prediction tasks [31]. | For focused tasks like R-gene prediction, a specialized model may be superior to a general-purpose one. |

Experimental Protocols for Rigorous Benchmarking

PRGminer's Two-Phase Deep Learning Framework

The high performance of PRGminer is underpinned by a meticulously designed experimental protocol [1] [25].