Deep Learning for 3D Plant Phenomics: A Comprehensive Review of Technologies, Applications, and Challenges

Abstract

This article provides a comprehensive overview of the transformative role of deep learning in three-dimensional (3D) plant phenomics. It explores the foundational concepts of 3D imaging and data acquisition, detailing the shift from traditional 2D methods to more accurate 3D representations. The review systematically covers the capabilities of deep learning for various 3D computer vision tasks, including segmentation, classification, and trait extraction, highlighting state-of-the-art methodologies and their practical applications in plant science. It further addresses critical challenges such as data scarcity, model optimization, and troubleshooting, while presenting validation frameworks and performance comparisons. Finally, the article synthesizes future directions, including the use of synthetic data and multimodal learning, offering researchers and scientists a roadmap for implementing robust deep learning solutions in phenotyping pipelines.

From 2D to 3D: Foundations of Plant Phenomics and Deep Learning

Plant phenomics, the quantitative measurement of plant traits, has emerged as a critical discipline bridging the gap between genetics and observable characteristics. For years, traditional phenotyping relying on manual, destructive measurements created a significant bottleneck in plant breeding and crop science. The advent of image-based techniques promised to alleviate this constraint, yet initial reliance on two-dimensional imaging introduced new limitations. Two-dimensional approaches, while valuable for estimating basic features like shoot area, struggle with the inherent complexity of plant architecture, often failing to accurately capture critical morphological traits such as leaf angle, stem height, and three-dimensional canopy structure due to issues with occlusion, perspective, and lack of volumetric data [1] [2].

The transition to three-dimensional plant phenomics represents a paradigm shift, enabling researchers to move beyond simple projections to detailed volumetric and architectural analysis. Compared to two-dimensional methods, 3D reconstruction models are more data-intensive but give rise to more accurate results, allowing for the precise geometry of the plant to be reconstructed [2]. This capability is fundamental for morphological classification, tracking plant movement and growth over time, and estimating yield—tasks that are challenging with 2D approaches alone [2]. By incorporating data from multiple viewing angles, 3D methods resolve occlusions and crossings of plant structures, reconstructing distance, orientation, and illumination in a way that provides insights impossible to achieve from a single 2D image [2]. This in-depth technical guide explores the core technologies, computational methodologies, and practical applications defining the rise of 3D plant phenomics, framed within the broader context of deep learning's transformative role in this field.

Core 3D Imaging Technologies in Plant Phenomics

Three-dimensional imaging techniques for plant phenotyping can be broadly classified into active and passive approaches, each with distinct operational principles, advantages, and limitations. The choice between these technologies depends on the specific application requirements, including desired accuracy, portability, cost, and environmental conditions [2] [3].

Active 3D Imaging Approaches

Active approaches utilize a controlled source of structured energy emissions, such as lasers or projected light patterns, to directly capture 3D point clouds representing object surface coordinates [2]. These methods generally provide high accuracy and are less susceptible to ambient light variations.

Laser Scanning (LiDAR): This high-precision method measures the time of flight or phase shift of a reflected laser beam to calculate distance. Terrestrial Laser Scanners (TLS) measure large plant volumes with high accuracy, but the large datasets they produce make processing time-consuming [2]. Low-cost depth sensors such as the Microsoft Kinect offer lower resolution, which is adequate for less demanding applications, and have been widely used for plant characterization in controlled conditions [2]. For example, Chebrolu et al. used a laser scanner to record time-series data of tomato and maize plants over two weeks, enabling detailed growth tracking [2].

Structured Light: This method projects a known light pattern (e.g., grids or stripes) onto the plant surface and calculates depth information by analyzing the pattern deformation using optical triangulation [3]. Its advantages include high precision in large fields of view, resistance to ambient light interference, and good real-time performance suitable for dynamic scenes [3]. A notable application demonstrated that the relative measurement error of fruit dimensions through structured light 3D reconstruction was within 3.32%, with deformation index of apples achieving an R² of 0.97 [3].

Time-of-Flight (ToF): ToF cameras measure the round-trip time of a light pulse between emission and reflection to determine distance for thousands of points, building a 3D image [2]. This technology supports depth measurement under various lighting conditions and is particularly effective for large-scale scenes. Vázquez-Arellano et al. developed a 3D reconstruction method for maize plants using ToF cameras, combining the Iterative Closest Point (ICP) algorithm for point cloud registration with Random Sample Consensus (RANSAC) for soil point removal, and reported an average deviation of 3.4 cm from ground-truth measurements [2].

Passive 3D Imaging Approaches

Passive techniques rely on ambient light and typically use commodity hardware to capture multiple 2D images from different viewpoints, which are then processed to reconstruct 3D models [2]. These methods are generally more cost-effective but may require significant computational processing.

Structure from Motion (SfM): This photogrammetric technique reconstructs 3D structures from multiple overlapping 2D images taken from different viewpoints. It establishes correspondences between features in multiple images to estimate both camera positions and 3D structure simultaneously [4]. Multi-view reconstruction of this kind has been applied in phenotyping to extract difficult-to-measure traits such as phyllotaxy in sorghum. In one study, a voxel-carving-based approach generated 3D reconstructions from calibrated 2D images of 366 sorghum plants representing 236 genotypes, enabling automated phyllotaxy measurements with a repeatability of R² = 0.41 across imaging timepoints separated by two days [4].

Stereo Vision: This method mimics human binocular vision using two or more cameras to capture simultaneous images from slightly different viewpoints. By matching corresponding points between images and calculating disparities, depth information can be derived through triangulation [3]. While effective for many applications, it may struggle with textureless plant surfaces where finding correspondences is challenging.
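The triangulation step described above can be illustrated for the simplest case, a rectified stereo pair, where depth follows from disparity as Z = f · B / d. The sketch below is a minimal illustration under that assumption; the function name, the 1000-pixel focal length, and the 0.12 m baseline are hypothetical values chosen for the example, not parameters from any cited system.

```python
import numpy as np

def depth_from_disparity(disparity_px, focal_px, baseline_m):
    """Convert disparities (pixels) to depth (metres) via Z = f * B / d."""
    disparity = np.asarray(disparity_px, dtype=float)
    depth = np.full(disparity.shape, np.nan)
    # A disparity of zero means no match was found (or a point at infinity).
    valid = disparity > 0
    depth[valid] = focal_px * baseline_m / disparity[valid]
    return depth

# Hypothetical rig: 1000 px focal length, 0.12 m baseline.
disparities = np.array([120.0, 60.0, 0.0])
depths = depth_from_disparity(disparities, focal_px=1000.0, baseline_m=0.12)
print(depths)
```

Because depth is inversely proportional to disparity, halving the disparity doubles the estimated depth, which is also why depth resolution degrades for distant plant parts.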

Table 1: Comparison of Primary 3D Imaging Technologies for Plant Phenotyping

| Technology | Operating Principle | Accuracy/Resolution | Advantages | Limitations |
|---|---|---|---|---|
| Laser Scanning (LiDAR) | Measures time/phase of reflected laser beam | High precision (sub-mm to cm) | High accuracy; works in various light conditions; captures detailed structure | Expensive equipment; slow scanning; complex data processing |
| Structured Light | Analyzes deformation of projected light patterns | High precision (relative error <3.32%) [3] | Works under natural light; good real-time performance; high accuracy | Requires precise calibration; limited outdoor use |
| Time-of-Flight (ToF) | Measures round-trip time of light pulses | Medium accuracy (e.g., ~3.4 cm deviation) [2] | Fast response; low cost; effective for large scenes | Affected by highly reflective/dark surfaces |
| Structure from Motion (SfM) | Reconstructs 3D from multiple 2D images | Varies with camera quality and algorithm | Cost-effective (standard cameras); flexible setup | Computationally intensive; requires feature matching |
| Stereo Vision | Triangulation from multiple camera viewpoints | Medium to high (depends on baseline) | Real-time capability; mimics human vision | Struggles with textureless surfaces |

Multi-Source Data Fusion

A growing trend in 3D plant phenomics involves multi-source fusion, which combines data from various sensors and integrates 3D models with plant growth physical models to enhance accuracy and completeness [3]. This approach addresses challenges such as occlusion, wind-induced disturbances, and growth variability. For instance, combining depth sensors with optical sensors, and integrating these with physiological data, yields more detailed and reliable plant models. The application of high-speed imaging systems and event cameras further advances real-time capabilities for reconstructing dynamic plant scenes [3].

Deep Learning for 3D Plant Data Analysis

The complexity of 3D plant data has rendered traditional image processing pipelines inadequate for advanced phenotyping tasks. Deep learning approaches have emerged as powerful solutions for extracting meaningful information from 3D point clouds and reconstructions, enabling automated, high-throughput analysis of complex plant structures.

Fundamental Architectures and Approaches

Deep convolutional neural networks (CNNs) represent a class of deep learning methods particularly suited to computer vision problems. In contrast to classical approaches that first measure statistical image properties as features, CNNs actively learn filter parameters during model training, typically using raw images directly as input without hand-tuned pre-processing steps [1]. A typical CNN architecture comprises convolutional layers (applying filters to input volumes), pooling layers (spatial downsampling), and fully connected layers (for final classification or regression) [1].

For 3D plant data, specialized architectures have been developed to handle point clouds and 3D representations:

PointNet++ Architecture: This hierarchical neural network directly processes point clouds, capturing local structures at multiple scales. It has been successfully adapted for plant organ segmentation. An optimized implementation named PSCSO incorporated an SCConv module to reduce feature redundancy and used the Sophia optimizer to improve convergence efficiency, achieving segmentation accuracies of 0.926 on the training set and 0.861 on the testing set, with a mean Intersection over Union (mIoU) of 0.843, while significantly reducing training time [5].

Two-Stage Deep Learning Frameworks: Advanced approaches combine semantic segmentation with instance segmentation for precise organ discrimination. A two-stage method utilizing the PointNeXt deep learning framework first performs stem-leaf semantic segmentation, then employs the Quickshift++ clustering algorithm for leaf instance segmentation [6]. This approach achieved high accuracy across multiple crops, with mIoU values of 89.21%, 89.19%, and 83.05% for sugarcane, maize, and tomato, respectively, and mean overall accuracies above 94% [6].
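Hierarchical point cloud networks in the PointNet++ family, on which PointNeXt builds, typically choose the centre points of their local neighbourhoods with farthest point sampling (FPS). The sketch below is a plain NumPy illustration of that sampling step only, not the implementation used in [5] or [6]; the function name and the toy coordinates are assumptions, and it expects `n_samples` to be no larger than the number of points.

```python
import numpy as np

def farthest_point_sampling(points, n_samples, start_index=0):
    """Return indices of n_samples points chosen by greedy farthest point sampling."""
    points = np.asarray(points, dtype=float)
    selected = np.empty(n_samples, dtype=int)
    selected[0] = start_index
    # Distance from every point to its nearest already-selected point.
    min_dist = np.full(points.shape[0], np.inf)
    for i in range(1, n_samples):
        last = points[selected[i - 1]]
        dist = np.linalg.norm(points - last, axis=1)
        min_dist = np.minimum(min_dist, dist)
        # The next centre is the point farthest from all centres chosen so far.
        selected[i] = int(np.argmax(min_dist))
    return selected

# Toy cloud: a tight cluster near the origin plus two distant points.
cloud = np.array([
    [0.0, 0.0, 0.0],
    [0.1, 0.0, 0.0],
    [2.0, 0.0, 0.0],
    [0.0, 1.0, 0.0],
    [0.05, 0.05, 0.0],
])
centres = farthest_point_sampling(cloud, n_samples=3)
print(centres)
```

Compared with uniform random sampling, FPS spreads the centres across the whole plant, so thin structures such as stems are less likely to be missed.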

Implementation Protocols for 3D Plant Analysis

Successful implementation of deep learning for 3D plant phenotyping requires careful attention to computational environment, data preparation, and training strategies:

Computational Environment Setup: Research implementations typically use Linux environments with powerful GPU acceleration. For example, one reported setup used PyTorch 1.11 on Ubuntu 18.04, supported by an Intel i9-10900X CPU, 120 GB of memory, and an NVIDIA RTX 3090 GPU [6].

Data Preparation and Labeling: Models require precisely labeled 3D data with classes defined according to target organs (e.g., stems and leaves). Dataset size and diversity significantly affect model generalizability. In the reported study, sugarcane, which had the largest training set, achieved the highest mIoU (89.21%), whereas tomato, with its dense and irregular leaf structure, proved more challenging (83.05%) [6].

Training Configuration and Optimization: Optimal performance requires careful hyperparameter tuning. Researchers have found that cross-entropy loss with label smoothing, combined with the AdamW optimizer, an initial learning rate of 0.001, and cosine decay, works effectively for plant point clouds [6]. Experiments with multilayer perceptron channel sizes showed that 64 channels provide the best balance between accuracy and efficiency for plant organ segmentation [6].
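To make the loss and schedule concrete, the following sketch implements label-smoothed cross-entropy and a cosine learning-rate decay in plain NumPy. It is an illustration rather than the training code from [6]: the smoothing factor of 0.1, the zero minimum learning rate, and the function names are assumptions, and the AdamW update itself is not shown.

```python
import math
import numpy as np

def smoothed_cross_entropy(logits, labels, smoothing=0.1):
    """Mean cross-entropy against label-smoothed one-hot targets."""
    logits = np.asarray(logits, dtype=float)
    labels = np.asarray(labels)
    n_points, n_classes = logits.shape
    # Numerically stable log-softmax.
    shifted = logits - logits.max(axis=1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    # Smoothed target: (1 - s) on the true class plus s / K spread over all K classes.
    targets = np.full((n_points, n_classes), smoothing / n_classes)
    targets[np.arange(n_points), labels] += 1.0 - smoothing
    return float(-(targets * log_probs).sum(axis=1).mean())

def cosine_lr(step, total_steps, base_lr=1e-3, min_lr=0.0):
    """Cosine decay from base_lr at step 0 to min_lr at total_steps."""
    progress = min(step, total_steps) / total_steps
    return min_lr + 0.5 * (base_lr - min_lr) * (1.0 + math.cos(math.pi * progress))

# Two points with per-class scores; classes: 0 = stem, 1 = leaf.
logits = np.array([[2.0, 0.5], [0.1, 1.7]])
labels = np.array([0, 1])
loss = smoothed_cross_entropy(logits, labels, smoothing=0.1)
schedule = [cosine_lr(step, total_steps=100) for step in (0, 50, 100)]
print(loss, schedule)
```

Label smoothing penalizes over-confident predictions, which can help when manual stem and leaf annotations are noisy near organ boundaries.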

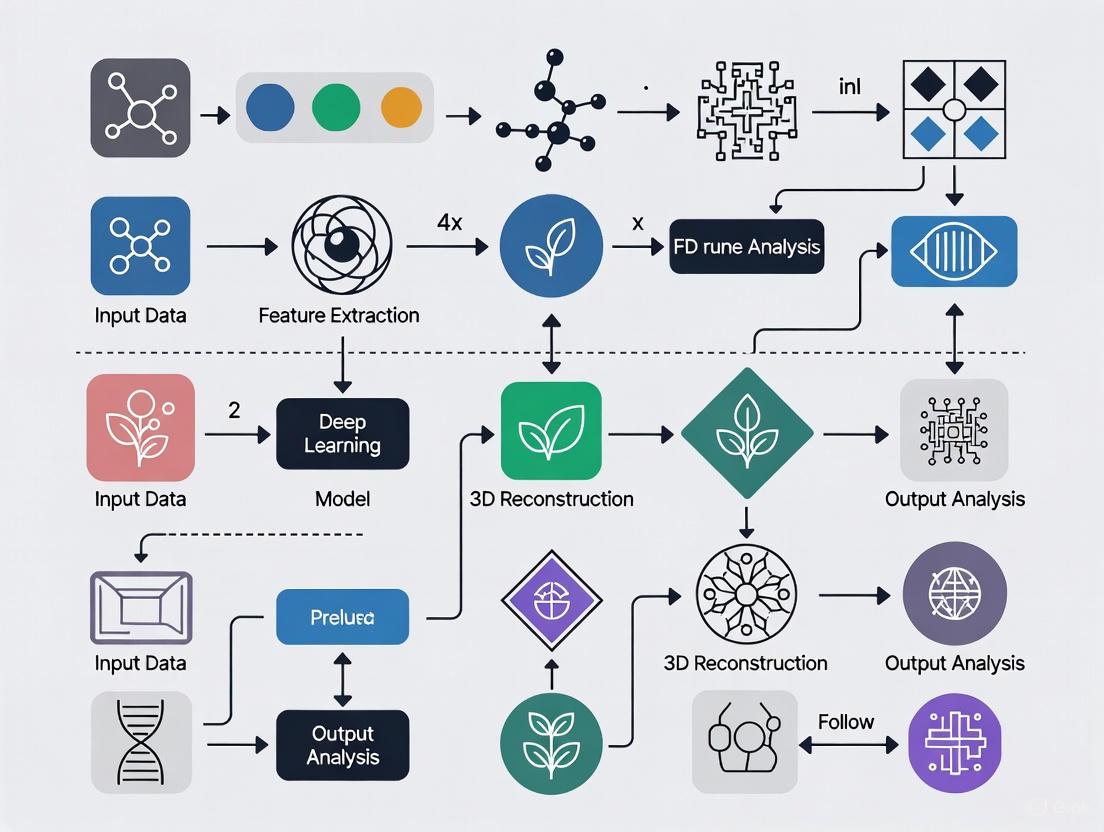

The following workflow diagram illustrates the complete pipeline from 3D data acquisition to phenotypic trait extraction:

Experimental Applications and Validation Protocols

Automated Phyllotaxy Measurement in Sorghum

Phyllotaxy (leaf arrangement) represents one of the most challenging architectural traits to measure accurately across large plant populations. Traditional approaches often approximate this trait rather than measuring it directly. A voxel-carving-based 3D reconstruction approach from multiple calibrated 2D images has enabled high-throughput phenotyping of this complex trait in sorghum [4].

Experimental Protocol:

- Image Acquisition: Capture multiple calibrated 2D images of sorghum plants (366 plants representing 236 genotypes) from various angles under controlled lighting conditions.

- 3D Reconstruction: Generate 3D reconstructions using voxel carving to create detailed plant architecture models.

- Automated Phyllotaxy Extraction: Implement algorithms to extract phyllotactic parameters directly from 3D reconstructions.

- Validation: Compare automated measurements with manual measurements collected by multiple human raters to establish accuracy and reliability.

Results and Validation: The correlation between automated and manual phyllotaxy measurements was only modestly lower than the correlation between manual measurements generated by two different individuals. The automated method exhibited a repeatability of R² = 0.41 across imaging timepoints separated by two days, demonstrating reasonable consistency for genetic studies [4]. This approach enabled a resampling-based genome-wide association study (GWAS) that identified several putative genetic associations with lower-canopy phyllotaxy in sorghum.
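A repeatability figure of this kind can be computed by correlating the measurements of the same plants at two timepoints. The sketch below assumes that R² denotes the squared Pearson correlation, which the source does not state explicitly; the angle values are made up for illustration and are not data from [4].

```python
import numpy as np

def repeatability_r2(first, second):
    """Squared Pearson correlation between two paired measurement series."""
    r = np.corrcoef(np.asarray(first, dtype=float), np.asarray(second, dtype=float))[0, 1]
    return float(r ** 2)

# Made-up phyllotaxy angles (degrees) for five plants imaged two days apart.
day_0 = [170.0, 165.0, 178.0, 150.0, 172.0]
day_2 = [168.0, 171.0, 174.0, 158.0, 166.0]
print(f"R^2 = {repeatability_r2(day_0, day_2):.2f}")
```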

Multi-Species Organ Segmentation and Phenotypic Trait Extraction

Accurate plant organ segmentation remains a fundamental challenge in plant phenomics, particularly across diverse species with varying architectures. A comprehensive study evaluated a two-stage deep learning approach across sugarcane, maize, and tomato plants at different growth stages [6].

Experimental Protocol:

- Data Collection: Acquire 3D point clouds for 35 sugarcane, 14 maize, and 22 tomato plants across developmental stages.

- Data Labeling: Manually annotate point clouds with stem and leaf labels to create training and validation datasets.

- Model Training: Implement the PointNeXt framework with optimized hyperparameters (64 MLP channels, B=(1,1,2,1) InvResMLP block configuration) using cross-entropy loss with label smoothing and AdamW optimizer.

- Instance Segmentation: Apply Quickshift++ clustering algorithm to distinguish individual leaves and stems.

- Performance Evaluation: Assess using overall accuracy, mean Intersection over Union (mIoU), precision, recall, and F1 scores across species.

- Trait Extraction: Calculate phenotypic parameters from segmented organs using point cloud algorithms including linear regression, PCA, and Delaunay triangulation.
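The evaluation metrics named in the protocol can all be derived from a per-point confusion matrix. The following is a minimal NumPy sketch with hypothetical labels (0 = stem, 1 = leaf); it shows how the metrics are defined and is not the evaluation script from [6].

```python
import numpy as np

def segmentation_metrics(y_true, y_pred, n_classes):
    """Overall accuracy, mIoU, and per-class precision, recall, and F1."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    # Confusion matrix: rows are true classes, columns are predicted classes.
    conf = np.zeros((n_classes, n_classes), dtype=np.int64)
    np.add.at(conf, (y_true, y_pred), 1)
    tp = np.diag(conf).astype(float)
    fp = conf.sum(axis=0) - tp
    fn = conf.sum(axis=1) - tp
    # Classes absent from both labels and predictions yield NaN and are skipped by nanmean.
    with np.errstate(divide="ignore", invalid="ignore"):
        iou = tp / (tp + fp + fn)
        precision = tp / (tp + fp)
        recall = tp / (tp + fn)
        f1 = 2 * precision * recall / (precision + recall)
    return {
        "overall_accuracy": float(tp.sum() / conf.sum()),
        "miou": float(np.nanmean(iou)),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }

# Hypothetical per-point labels for eight points: 0 = stem, 1 = leaf.
y_true = [0, 0, 0, 1, 1, 1, 1, 1]
y_pred = [0, 0, 1, 1, 1, 1, 1, 0]
metrics = segmentation_metrics(y_true, y_pred, n_classes=2)
print(metrics["overall_accuracy"], metrics["miou"])
```

Because leaves usually contribute far more points than stems, overall accuracy can look high even when the stem class is poorly segmented, which is why mIoU is reported alongside it.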

Results and Validation: The optimized model achieved high accuracy across all crops, with mIoU values of 89.21%, 89.19%, and 83.05% for sugarcane, maize, and tomato, respectively [6]. Sugarcane performed slightly better due to a larger training set, while tomato proved more challenging because of its dense and irregular leaf structure. Quantitative scores exceeded 90% precision and recall for sugarcane and maize, though tomato lagged due to overlapping leaflets. Comparative tests against four state-of-the-art networks confirmed the two-stage method consistently outperformed existing models [6].

Table 2: Performance Metrics of Two-Stage Deep Learning for 3D Plant Organ Segmentation

| Crop Species | Number of Plants | Overall Accuracy | mIoU | F1 Score | Precision | Recall |
|---|---|---|---|---|---|---|
| Sugarcane | 35 | >94% | 89.21% | 93.98% | >90% | >90% |
| Maize | 14 | >94% | 89.19% | N/A | >90% | >90% |
| Tomato | 22 | >94% | 83.05% | N/A | <90% | <90% |

High-Throughput Phenotyping System for Greenhouse Applications

The MARVIN (Multi-Angle Robotic Vision and Inspection Node) Gen2 system developed by Wageningen University & Research represents an integrated approach to high-throughput 3D plant phenotyping in controlled environments [7].

System Configuration:

- Hardware Setup: Multiple Photoneo MotionCam-3D Color cameras attached to a robotic arm capable of 360-degree rotation around plants with vertical movement capability.

- Image Acquisition: Cameras slowly rotate around plants at constant speed, capturing high-resolution 3D data with color information for both small plants (young cabbage) and taller plants (flowering orchids).

- 3D Reconstruction: Photoneo 3D Instant Meshing software creates detailed 3D models from continuous streams of 3D scans, integrating color and texture information.

- Trait Extraction: Algorithms analyze 3D models to determine size, shape of leaves and stems, and overall plant architecture in milliseconds.

Technical Advantages: This system overcomes limitations of previous solutions that could only scan certain plant types. The flexibility of camera positioning allows optimization for different species with varying architectural complexity. The continuous scanning approach saves time compared to systems that require stopping to capture individual scans [7]. The resulting high-quality 3D models enable tracking of plant growth over time with minimal human intervention.

Essential Research Tools and Reagents

Implementing 3D plant phenomics requires specialized hardware and software tools. The following table summarizes key components of a comprehensive 3D phenotyping workflow:

Table 3: Research Reagent Solutions for 3D Plant Phenomics

| Category | Specific Tool/Technology | Function/Application | Example Use Cases |
|---|---|---|---|
| 3D Scanning Hardware | Photoneo MotionCam-3D Color | High-resolution 3D scanning with color information | MARVIN system for plant architecture analysis [7] |
| LiDAR Sensors | Terrestrial Laser Scanners (TLS) | High-precision point cloud acquisition for large volumes | Canopy parameter measurement in field conditions [2] |
| Low-Cost 3D Sensors | Microsoft Kinect, HP 3D Scan | Cost-effective 3D reconstruction for controlled environments | Plant characterization in laboratory settings [2] |
| Software Platforms | Photoneo 3D Instant Meshing | Fast 3D model creation from continuous scan streams | High-throughput greenhouse phenotyping [7] |
| Deep Learning Frameworks | PointNeXt, PointNet++ | 3D point cloud processing and organ segmentation | Stem-leaf segmentation across multiple crops [6] |
| Clustering Algorithms | Quickshift++ | Instance segmentation of plant organs | Distinguishing individual leaves in dense canopies [6] |
| Optimization Algorithms | Sophia Optimizer | Improved convergence efficiency in deep learning | Training acceleration for point cloud segmentation [5] |
| Point Cloud Processing | Iterative Closest Point (ICP) | Point cloud registration from multiple views | 3D reconstruction of maize plants from ToF data [2] |

The rise of 3D plant phenomics represents a fundamental transformation in how researchers quantify and analyze plant traits, effectively overcoming the limitations inherent in 2D approaches. By providing precise volumetric data and resolving occlusions through multi-angle reconstruction, 3D phenotyping enables accurate measurement of complex architectural traits such as phyllotaxy, leaf angle, and biomass distribution—features that were previously challenging or impossible to quantify at scale [2] [4].

The integration of deep learning with 3D data acquisition has been particularly transformative, creating automated pipelines that can segment plant organs, quantify phenotypic traits, and track growth dynamics with minimal human intervention [6] [5]. These advances are closing the genotype-to-phenotype knowledge gap that has long constrained plant breeding and crop science [1]. The ability to perform non-destructive, high-throughput phenotyping supports sustainable research practices while enabling longitudinal studies of plant development [6].

Future developments in 3D plant phenomics will likely focus on several key areas: enhanced multi-sensor fusion for improved reconstruction completeness [3], more efficient deep learning architectures requiring less annotated training data [5], and increased integration with genetic analysis platforms to accelerate trait discovery and breeding programs [4]. As these technologies continue to mature and become more accessible, 3D phenomics will play an increasingly central role in addressing fundamental challenges in plant biology, crop improvement, and agricultural sustainability.

In the field of plant phenomics, which aims to quantitatively measure plant traits and their interactions with the environment, three-dimensional (3D) reconstruction technologies have emerged as powerful tools for capturing detailed plant morphology and structure [8]. The transition from traditional two-dimensional (2D) image analysis to 3D methods represents a significant advancement, enabling researchers to overcome limitations associated with 2D approaches, such as information loss from projecting 3D structures onto a 2D plane and difficulties in resolving occlusions between plant organs [2] [9]. Understanding the core methodologies for acquiring 3D data—categorized broadly as active and passive sensing—is fundamental for advancing plant phenomics research, particularly as it integrates with deep learning to create high-throughput, automated phenotyping systems [10] [11].

Active and passive sensing techniques differ primarily in their use of an external energy source. Active methods utilize controlled, emitted signals (e.g., laser or patterned light) to directly measure distance and form 3D point clouds, while passive methods rely on ambient light to capture multiple 2D images from which 3D structure is computationally inferred [2] [3]. The choice between these approaches involves critical trade-offs concerning cost, accuracy, resolution, and applicability to controlled versus field environments [12]. This guide provides a technical examination of these core methods, their operational principles, and their integration within modern deep learning-driven plant phenomics research.

Active 3D Sensing Techniques

Active 3D sensing techniques involve the use of a controlled source of structured energy emissions, such as a scanning laser or a projected pattern of light, to directly capture 3D information of an object's surface [2] [3]. These methods are known for their high precision and effectiveness in various lighting conditions.

Core Principles and Methodologies

- Structured Light: This method projects a known pattern, such as a grid or series of lines, onto the object of interest. One or more cameras then observe the deformation of this pattern when it falls on the object's surface. Using optical triangulation, the 3D coordinates of the surface are calculated based on the distortion of the pattern [3]. A well-known example is the first-generation Microsoft Kinect sensor, which projects an infrared pattern [12].

- Laser Scanning (LiDAR): LiDAR (Light Detection and Ranging) measures the distance to a target by illuminating it with a laser beam and analyzing the reflected light. The two primary approaches are:

  - Time of Flight (ToF): This approach calculates distance by measuring the round-trip time of a laser pulse between the sensor and the object [2] [3]. The distance \(d\) is calculated using the formula \(d = \frac{c \cdot t}{2}\), where \(c\) is the speed of light and \(t\) is the measured time [3].

  - Triangulation: In this approach, a laser dot or line is projected onto the object. A camera, positioned at a known distance and angle from the laser source, detects the location of the laser point. The displacement of the laser point in the camera's field of view is used to calculate the depth via triangulation [2].

- Laser Light Section: This method is a specific form of laser triangulation that projects a thin laser line, rather than a single point, onto the object. A camera captures the profile of this line as it appears on the contoured surface, and the resulting shift is used to generate a depth profile of the entire cross-section in a single capture, making it faster than point-by-point scanning [12].
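As a worked example of the time-of-flight relation d = c · t / 2 given above, the short sketch below converts a round-trip time to a distance and back. The function names and sample values are illustrative only.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0

def tof_distance_m(round_trip_time_s):
    """Distance to the target from the round-trip time of a light pulse: d = c * t / 2."""
    return SPEED_OF_LIGHT_M_PER_S * round_trip_time_s / 2.0

def round_trip_time_s(distance_m):
    """Inverse relation: round-trip time for a target at the given distance."""
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_PER_S

# A pulse that returns after 10 ns corresponds to a target about 1.5 m away.
print(f"{tof_distance_m(10e-9):.4f} m")
# Resolving a 1 mm depth difference requires timing on the order of picoseconds.
print(f"{round_trip_time_s(0.001) * 1e12:.2f} ps")
```

The second figure shows why ToF sensors need very precise timing electronics to resolve fine plant structures, and why triangulation-based methods are often preferred at close range.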

Experimental Protocols and Workflows

A typical workflow for 3D reconstruction of plants using an active Time-of-Flight (ToF) camera, as detailed in a study on maize plants, involves several key stages [3]:

- Data Acquisition: Capture high-resolution 3D images (point clouds) of the plant from multiple viewpoints to overcome the issue of self-occlusion.

- Point Cloud Registration: Use algorithms like the Iterative Closest Point (ICP) to align the individual point clouds from different viewpoints into a unified coordinate system.

- Noise Removal: Fit the soil surface with a robust model-fitting algorithm such as Random Sample Consensus (RANSAC) and discard the points that belong to it, removing non-plant points (e.g., soil) along with residual noise.

- Phenotypic Trait Extraction: Analyze the clean, registered point cloud to extract quantitative morphological traits, such as plant height and leaf area.
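The soil-removal stage of this workflow can be sketched as a RANSAC plane fit followed by discarding the plane's inliers. The code below is a self-contained NumPy illustration on synthetic data, not the pipeline from the cited maize study; the function name, the 1 cm inlier threshold, the iteration count, and the synthetic scene are all assumptions.

```python
import numpy as np

def ransac_plane(points, n_iters=200, threshold=0.01, seed=0):
    """Fit the plane n.x + d = 0 with RANSAC; return (normal, d, inlier_mask)."""
    points = np.asarray(points, dtype=float)
    rng = np.random.default_rng(seed)
    best_normal, best_d = None, None
    best_mask = np.zeros(len(points), dtype=bool)
    for _ in range(n_iters):
        # Three random points define a candidate plane.
        p0, p1, p2 = points[rng.choice(len(points), size=3, replace=False)]
        normal = np.cross(p1 - p0, p2 - p0)
        length = np.linalg.norm(normal)
        if length < 1e-12:  # degenerate (collinear) sample, skip it
            continue
        normal = normal / length
        d = -float(normal @ p0)
        # Inliers lie within `threshold` (metres here) of the candidate plane.
        mask = np.abs(points @ normal + d) < threshold
        if mask.sum() > best_mask.sum():
            best_normal, best_d, best_mask = normal, d, mask
    if best_normal is None:
        raise ValueError("no valid plane found")
    return best_normal, best_d, best_mask

# Synthetic scene: 500 soil points near z = 0 and a 200-point vertical stem.
rng = np.random.default_rng(42)
soil = np.column_stack([rng.uniform(-0.5, 0.5, (500, 2)), rng.uniform(-0.002, 0.002, 500)])
stem = np.column_stack([rng.uniform(-0.01, 0.01, (200, 2)), np.linspace(0.05, 1.0, 200)])
cloud = np.vstack([soil, stem])

normal, d, is_soil = ransac_plane(cloud)
plant_only = cloud[~is_soil]
print(f"removed {is_soil.sum()} soil points, kept {len(plant_only)} points")
```

In practice the threshold has to be matched to sensor noise and soil roughness: too small a value leaves soil points behind, while too large a value removes the base of the stem.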

Advantages and Limitations in Plant Phenotyping

Table 1: Comparison of Active 3D Sensing Technologies for Plant Phenotyping

| Method | Key Principle | Typical Accuracy/Resolution | Primary Advantages | Primary Limitations |
|---|---|---|---|---|
| LiDAR (ToF) | Laser pulse runtime measurement [3] | Varies with range; cm-level accuracy possible [12] | Works in various lighting conditions; suitable for long ranges (2 m to 100 m) [12] | Lower X-Y resolution; blurry edges on leaves; may require warm-up [12] |
| Laser Triangulation | Optical triangulation of a laser point/line [2] | High precision (up to sub-mm) [12] | High accuracy in all dimensions; robust with no moving parts [12] | Requires movement for scanning; sensitive to plant movement (e.g., wind) [12] |
| Structured Light | Triangulation of a deformed projected pattern [3] | Sub-mm to mm level (e.g., <3.32% error reported) [3] | Single-shot capture; insensitive to plant movement; cost-effective (e.g., Kinect) [12] | Performance degrades in strong sunlight; limited outdoor use [12] |

Passive 3D Sensing Techniques

Passive 3D sensing techniques rely on ambient light to form images and do not emit any energy themselves. They use computational methods to reconstruct 3D geometry from multiple 2D images [2] [13].

Core Principles and Methodologies

- Stereo Vision: This technique mimics human binocular vision by using two or more cameras to capture the same scene from slightly different viewpoints. The 3D structure is recovered by identifying corresponding pixels in the different images and calculating their disparities, which are inversely proportional to the depth [13] [9]. The result is often a depth map [12].

- Multi-View Stereo (MVS) and Structure from Motion (SfM): This is a more advanced and widely used approach in plant phenomics.

  - Structure from Motion (SfM): The process begins by taking dozens to hundreds of overlapping 2D images of a plant from different angles [9]. SfM algorithms automatically detect distinctive features across these images and simultaneously compute the 3D positions of these features (the "structure") and the camera poses for each image (the "motion") [9].

  - Multi-View Stereo (MVS): Following SfM, MVS techniques are applied to densify the sparse 3D point cloud generated by SfM. MVS uses the known camera parameters to match pixels across all images, resulting in a dense, detailed 3D point cloud of the plant [9].
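A core building block of both SfM and MVS is triangulation: recovering a 3D point from its pixel positions in two or more images with known camera matrices. The sketch below shows linear (DLT) triangulation for two views in NumPy. It assumes the camera poses are already known, whereas a full SfM pipeline also estimates them and refines everything with bundle adjustment; the intrinsics, the 0.2 m baseline, and the test point are hypothetical.

```python
import numpy as np

def project(P, X):
    """Project a 3D point X with a 3x4 camera matrix P; return pixel coordinates."""
    x = P @ np.append(X, 1.0)
    return x[:2] / x[2]

def triangulate(P1, P2, x1, x2):
    """Linear (DLT) triangulation of one 3D point from two pixel observations."""
    # Each observation contributes two linear constraints on the homogeneous point.
    A = np.stack([
        x1[0] * P1[2] - P1[0],
        x1[1] * P1[2] - P1[1],
        x2[0] * P2[2] - P2[0],
        x2[1] * P2[2] - P2[1],
    ])
    # The solution is the right singular vector with the smallest singular value.
    _, _, vt = np.linalg.svd(A)
    X_h = vt[-1]
    return X_h[:3] / X_h[3]

# Hypothetical intrinsics and two cameras separated by 0.2 m along the x-axis.
K = np.array([[800.0, 0.0, 320.0], [0.0, 800.0, 240.0], [0.0, 0.0, 1.0]])
P1 = K @ np.hstack([np.eye(3), np.zeros((3, 1))])
P2 = K @ np.hstack([np.eye(3), np.array([[-0.2], [0.0], [0.0]])])

X_true = np.array([0.1, -0.05, 1.5])  # a point on the plant, in metres
X_est = triangulate(P1, P2, project(P1, X_true), project(P2, X_true))
print(X_est)
```

With noise-free observations the point is recovered almost exactly; with real feature matches the estimate is noisy, which is one reason SfM pipelines rely on many overlapping images.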

Experimental Protocols and Workflows

A validated integrated workflow for high-fidelity plant reconstruction using passive sensing involves a two-phase approach [9]:

- Multi-View Image Acquisition: A custom system (e.g., a U-shaped rotating arm with binocular cameras) captures high-resolution RGB images of the plant from multiple fixed viewpoints (e.g., 0°, 60°, 120°, 180°, 240°, 300°). Dozens of images are taken per viewpoint.

- High-Fidelity SfM-MVS Reconstruction: The captured images are processed using SfM and MVS algorithms to produce a high-quality, dense point cloud for each viewpoint, avoiding the distortion common in direct stereo vision.

- Multi-View Point Cloud Registration: The individual point clouds are aligned into a complete 3D model. This involves:

  - Coarse Alignment: Using a marker-based Self-Registration (SR) method with calibration spheres.

  - Fine Alignment: Applying the Iterative Closest Point (ICP) algorithm for precise registration.

- Phenotypic Trait Extraction: Key parameters like plant height, crown width, leaf length, and leaf width are automatically extracted from the unified 3D model.
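The coarse alignment step can be illustrated with the Kabsch algorithm, which computes the least-squares rigid transform between two sets of corresponding 3D points, such as calibration sphere centres detected in two viewpoints. This is a generic sketch rather than the Self-Registration method from [9]; the sphere positions, rotation angle, and translation are made up, and the sphere centres are assumed to be already detected and matched. ICP refinement would follow and is not shown.

```python
import numpy as np

def rigid_transform(source, target):
    """Least-squares rotation R and translation t such that R @ source_i + t ~ target_i."""
    source = np.asarray(source, dtype=float)
    target = np.asarray(target, dtype=float)
    src_c = source.mean(axis=0)
    tgt_c = target.mean(axis=0)
    # Cross-covariance of the centred point sets.
    H = (source - src_c).T @ (target - tgt_c)
    U, _, Vt = np.linalg.svd(H)
    # Correct for a possible reflection so that det(R) = +1.
    D = np.diag([1.0, 1.0, np.sign(np.linalg.det(Vt.T @ U.T))])
    R = Vt.T @ D @ U.T
    t = tgt_c - R @ src_c
    return R, t

# Hypothetical sphere centres (metres) seen from viewpoint A.
spheres_a = np.array([[0.0, 0.0, 0.0], [0.5, 0.0, 0.0], [0.0, 0.5, 0.0], [0.0, 0.0, 0.3]])

# The same spheres in viewpoint B: rotated 60 degrees about z and shifted.
angle = np.deg2rad(60.0)
R_true = np.array([
    [np.cos(angle), -np.sin(angle), 0.0],
    [np.sin(angle), np.cos(angle), 0.0],
    [0.0, 0.0, 1.0],
])
t_true = np.array([0.2, -0.1, 0.05])
spheres_b = spheres_a @ R_true.T + t_true

R_est, t_est = rigid_transform(spheres_a, spheres_b)
aligned = spheres_a @ R_est.T + t_est
print(np.abs(aligned - spheres_b).max())
```

At least three non-collinear markers are needed for a unique solution. Starting ICP from such a marker-based estimate helps it avoid converging to a poor local minimum.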

Advantages and Limitations in Plant Phenotyping

Table 2: Comparison of Passive 3D Sensing Technologies for Plant Phenotyping

| Method | Key Principle | Data/Image Requirements | Primary Advantages | Primary Limitations |
|---|---|---|---|---|
| Stereo Vision | Depth from pixel disparity between two images [13] [9] | Two calibrated images from known positions | Simplicity of setup; real-time potential; lower cost than active sensors [13] | Sensitive to lighting; poor depth resolution; requires sufficient texture [13] [9] |
| SfM-MVS | 3D structure from feature tracking across many images [9] | Dozens to hundreds of overlapping images (e.g., 50-100 for a plant) [9] | Produces highly detailed models; uses low-cost RGB cameras; creates photorealistic textures [9] | Computationally intensive and time-consuming; not suitable for real-time applications [9] |

The Scientist's Toolkit: Essential Research Reagents & Materials

The following table details key equipment and computational tools essential for conducting 3D plant phenotyping experiments.

Table 3: Essential Research Toolkit for 3D Plant Phenotyping

| Item Name | Type | Critical Function in Experimentation |
|---|---|---|
| Binocular/Stereo Camera (e.g., ZED 2) [9] | Hardware - Sensor | Captures synchronized image pairs for stereo vision or provides raw images for high-quality SfM-MVS reconstruction [9]. |
| Time-of-Flight (ToF) Camera (e.g., Microsoft Kinect v2) [2] [3] | Hardware - Sensor | Directly captures depth maps by measuring the round-trip time of a modulated light signal, useful for real-time applications [2]. |
| LiDAR Sensor (e.g., Terrestrial Laser Scanner) [2] | Hardware - Sensor | Captures high-precision, long-range 3D point clouds, suitable for large canopies and field-scale phenotyping [2] [14]. |
| Calibration Spheres/Markers [9] | Hardware - Accessory | Serve as known geometric references in a scene, enabling coarse alignment and registration of point clouds from multiple viewpoints [9]. |
| Automated Gantry/Turntable System [9] [14] | Hardware - Platform | Enables automated, precise positioning of sensors or plants for multi-view data acquisition, which is crucial for high-throughput phenotyping [14]. |
| Structure from Motion (SfM) Software (e.g., COLMAP, OpenMVG) | Software - Algorithm | The computational core for reconstructing 3D geometry from unordered 2D images, generating sparse and dense point clouds [9]. |
| Iterative Closest Point (ICP) Algorithm [9] [3] | Software - Algorithm | A standard method for the fine alignment (registration) of multiple 3D point clouds into a single, coherent model [9]. |

Integration with Deep Learning for 3D Plant Phenomics

The field of 3D plant phenomics is increasingly leveraging deep learning to overcome the bottlenecks of traditional 3D data processing and analysis. Deep learning models excel at automating complex tasks such as semantic segmentation of plant organs (leaves, stems), tracking growth over time, and directly estimating phenotypic traits from raw or pre-processed 3D data [10] [11].

Data Preprocessing for Deep Learning

Before 3D data can be fed into deep learning models, several preprocessing steps are critical:

- Point Cloud Annotation: Manual or semi-automated tools are used to label points or segments in 3D data for supervised learning tasks like segmentation [10].

- Downsampling: Raw 3D point clouds can be massive. Downsampling strategies reduce data density while preserving structural information, making model training computationally feasible [10].

- Dataset Organization: Curating large-scale, benchmark datasets is a fundamental challenge. Techniques like generative AI and unsupervised learning are being explored to create synthetic 3D plant data to supplement limited real-world datasets [10].
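As an illustration of the downsampling step listed above, the following is a minimal sketch of voxel-grid downsampling, one common strategy among several. The function name and the voxel size are assumptions chosen for the example; production pipelines typically rely on an optimized point cloud library instead.

```python
import math
from collections import defaultdict


def voxel_downsample(points, voxel_size):
    """Replace all points that fall into the same voxel by their centroid."""
    buckets = defaultdict(list)
    for x, y, z in points:
        key = (math.floor(x / voxel_size),
               math.floor(y / voxel_size),
               math.floor(z / voxel_size))
        buckets[key].append((x, y, z))
    # One centroid per occupied voxel keeps the overall shape at lower density.
    return [tuple(sum(axis) / len(pts) for axis in zip(*pts))
            for pts in buckets.values()]


cloud = [(0.01, 0.01, 0.01), (0.02, 0.02, 0.02), (1.0, 1.0, 1.0)]
print(len(cloud), "->", len(voxel_downsample(cloud, voxel_size=0.1)))
```

A larger voxel size reduces the point count more aggressively but also blurs thin structures such as petioles, so the value is usually tuned to the smallest organ of interest.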

Advanced Deep Learning Architectures for 3D Data

- Convolutional Neural Networks (CNNs): While traditionally used for 2D images, CNNs have been adapted for 3D data. 3D-CNNs can process volumetric data (voxels) or be applied to multi-view 2D renderings of a 3D model to extract features for classification or regression tasks [11].

- Emerging Neural Representations:

  - Neural Radiance Fields (NeRF): This novel method represents a 3D scene as a continuous volumetric function, parameterized by a neural network. It can generate highly photorealistic novel views of a plant from a sparse set of input images, offering a powerful alternative to traditional SfM [8] [3].

  - 3D Gaussian Splatting (3DGS): A more recent technique that represents scene geometry explicitly with a set of 3D Gaussians. It offers extremely high-quality reconstructions and real-time rendering speeds, showing great promise for efficient and scalable plant phenotyping [8] [3].

- Transformers and Self-Supervised Learning: The Transformer architecture, with its self-attention mechanism, is being applied to point clouds and sequences of plant growth images to model long-range dependencies [11]. Self-supervised learning methods are also being developed to learn meaningful 3D representations from unlabeled data, reducing the dependency on large annotated datasets [10].

The adoption of three-dimensional (3D) plant phenotyping represents a significant advancement over traditional two-dimensional methods, enabling researchers to capture complex plant morphology, resolve occlusions, and accurately track growth and movement over time [2]. Plant phenomics, the comprehensive study of plant phenotypes, has gained prominence as a vital tool for understanding the intricate relationships between genotypes and the environment [10]. As the field marks a decade since the first applications of deep learning began to appear in the literature, a new research community has established connections between computer vision and biology [15].

This technical guide provides an in-depth examination of the three primary 3D representation techniques—point clouds, Gaussian splats, and meshes—within the context of modern plant phenomics research. We explore the fundamental principles, comparative strengths, and practical applications of each method, with a particular focus on their integration with deep learning frameworks that are revolutionizing the extraction of phenotypic traits from 3D data [10].

3D Representation Techniques: Core Principles and Methodologies

Point Clouds

Fundamental Principles: Point clouds represent one of the most fundamental forms of 3D representation in plant science, where an object's surface is encoded as a set of discrete points with 3D positional coordinates (x, y, z) and optionally additional attributes such as RGB color values [16]. This data structure directly maps the surfaces of real-world objects or environments, typically captured by 3D scanners, LiDAR, or photogrammetric techniques [17].

Methodological Approaches: Point cloud acquisition can be broadly classified into active and passive approaches [2]. Active methods use controlled emission sources like scanning lasers (LiDAR) or structured light patterns to directly measure surface distances through triangulation or time-of-flight (ToF) principles. Terrestrial Laser Scanners (TLS) allow for large volumes of plants to be measured with relatively high accuracy, while lower-cost devices such as the Microsoft Kinect sensor have been widely adopted for plant characterization in agricultural research [2]. Passive methods, such as Structure from Motion (SfM), generate point clouds through software-based triangulation of features across multiple 2D images, requiring only ambient light and conventional cameras [2] [16].

Gaussian Splatting

Fundamental Principles: Gaussian splatting (3D Gaussian Splatting - 3DGS) introduces a novel paradigm for creating and rendering 3D scenes by representing geometry through thousands of overlapping 3D Gaussian primitives—essentially, blobs of data placed in space with different orientations, densities, colors, and transparencies to match the appearance of real objects [17] [8]. Unlike point clouds composed of discrete points, Gaussian splats produce a smooth, continuous scene that can be rendered directly with photorealistic quality and realistic lighting effects [17].

Methodological Approaches: The 3DGS technique utilizes a collection of 3D Gaussians that are optimized through gradient descent to fit captured images, with each Gaussian defined by its position, color, transparency, and shape [17] [18]. Because the renderer is differentiable, the Gaussians can be fitted using only 2D images as supervision; training requires substantial GPU resources, but the optimized scene renders efficiently [19]. The emerging application of Gaussian splatting to plant science is exemplified by frameworks like GrowSplat, which combines 3DGS with a robust sample alignment pipeline to build temporal digital twins of plants through a two-stage registration approach: coarse alignment through feature-based matching and Fast Global Registration, followed by fine alignment with Iterative Closest Point (ICP) [18].

3D Meshes

Fundamental Principles: 3D meshes are composed of vertices, edges, and faces that form a structured surface representation of objects [19]. This polygonal modeling approach provides explicit geometric definitions that support precise spatial operations and topological manipulations. The clear surface representation enables accurate calculations of geometric properties, spatial relationships, and physical simulations [19].

Methodological Approaches: Mesh generation typically begins with point cloud data acquired through LiDAR, photogrammetry, or other 3D scanning techniques, which then undergoes surface reconstruction algorithms to create a continuous mesh surface [19]. Common reconstruction methods include Poisson surface reconstruction, Delaunay triangulation, and ball-pivoting algorithms. The resulting meshes can be optimized using Level of Detail (LOD) techniques to reduce computational load in less important areas while retaining detail in critical regions, making them suitable for large-scale applications [19].
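To make the point about geometric properties concrete, the sketch below computes the surface area of a triangle mesh by summing per-face areas. It assumes the mesh is given as a vertex list plus triangular faces expressed as index triples; the tiny two-triangle "leaf" is hypothetical.

```python
import math


def triangle_area(a, b, c):
    """Area of a 3D triangle: half the norm of the cross product of two edges."""
    ab = [b[i] - a[i] for i in range(3)]
    ac = [c[i] - a[i] for i in range(3)]
    cross = (ab[1] * ac[2] - ab[2] * ac[1],
             ab[2] * ac[0] - ab[0] * ac[2],
             ab[0] * ac[1] - ab[1] * ac[0])
    return 0.5 * math.sqrt(sum(v * v for v in cross))


def mesh_surface_area(vertices, faces):
    """Sum of triangle areas over all faces (faces are vertex-index triples)."""
    return sum(triangle_area(vertices[i], vertices[j], vertices[k])
               for i, j, k in faces)


# Hypothetical flat leaf: a unit square split into two triangles.
leaf_vertices = [(0, 0, 0), (1, 0, 0), (1, 1, 0), (0, 1, 0)]
leaf_faces = [(0, 1, 2), (0, 2, 3)]
print(mesh_surface_area(leaf_vertices, leaf_faces))
```

For a reconstructed leaf that is modeled as a closed, thin solid, the one-sided leaf area would be roughly half of this value; for an open single-layer surface the sum can be used directly.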

Comparative Analysis of 3D Representation Techniques

Table 1: Technical Comparison of 3D Representation Methods for Plant Phenotyping

| Feature | Point Clouds | Gaussian Splatting | 3D Meshes |

|---|---|---|---|

| Data Structure | Individual data points in 3D space [17] | Overlapping 3D Gaussians ('splats') [17] | Vertices, edges, and faces forming structured surfaces [19] |

| Visual Quality | Can appear sparse or 'dotty'; limited realism [17] | Smooth, continuous, photo-realistic with realistic lighting [17] | Varies with polygon count; can achieve high realism with textures [20] |

| Measurement Accuracy | High precision for mapping and measurement [17] | Limited measurement accuracy; optimized for appearance [17] [19] | High precision for spatial analysis and geometric operations [19] |

| Processing Time | Slower; often requires further processing [17] | Faster; renders directly from images/video [17] | Moderate to high; requires surface reconstruction from raw data [19] |

| Editing & Manipulation | Limited editing capabilities | Primarily a rendering technique; difficult to edit [19] | Highly editable using standard 3D modeling software [19] |

| Spatial Analysis Capability | Suitable for basic measurements | Challenging for traditional GIS algorithms [19] | Excellent for spatial analysis, intersections, buffering [19] |

| Interoperability | Widely supported in professional software | Limited support in industry-standard GIS/BIM platforms [19] | Excellent interoperability with industry software [19] |

| Best Applications | Measurement, mapping, engineering surveys [17] | Visual inspections, virtual tours, VFX, growth visualization [17] [18] | GIS analysis, BIM, architectural design, simulations [19] |

Table 2: Performance Metrics in Plant Phenotyping Applications (Based on Experimental Data)

| Metric | Point Clouds (SfM) | Point Clouds (LiDAR) | Gaussian Splatting | NeRF | 3D Meshes |

|---|---|---|---|---|---|

| Reconstruction Accuracy (mm error) | 7.23 mm [16] | ~2.32 mm (MVS) [16] | 0.74 mm [16] | 1.43 mm [16] | Varies with reconstruction method |

| Data Collection Requirements | Multiple 2D images from different angles [16] | Direct 3D scanning [2] | Sparse multi-view images (15+ views) [18] [16] | Sparse multi-view images [16] | Derived from point clouds or direct scanning |

| Computational Requirements | Moderate | Low to moderate | High GPU power for training, efficient rendering [19] | Very high computational cost [8] | Moderate to high, depending on complexity |

| Real-time Rendering | Limited | Limited | Excellent [17] | Limited | Good with LOD optimization [19] |

| Handling of Plant Complexity | Struggles with fine details and occlusions [16] | Good for gross structure, may miss fine details | Excellent for complex geometries and fine details [18] | Good for complex geometries [8] | Good with sufficient resolution |

Experimental Protocols and Methodologies

3D Gaussian Splatting for Temporal Plant Reconstruction

The GrowSplat framework demonstrates a cutting-edge methodology for constructing temporal digital twins of plants using Gaussian splatting [18]. The experimental workflow involves:

Data Acquisition: Plants are imaged using multi-view camera systems such as the Maxi-Marvin setup at the Netherlands Plant Eco-phenotyping Centre (NPEC), which consists of 15 static cameras arranged in three layers of five cameras each [18]. The system captures synchronized images from multiple viewpoints as plants are moved through the imaging system on a conveyor belt.

Camera Calibration and Pose Estimation: For each camera, calibration determines the 3D pose parameters (rotation angles and translation vector) and the internal camera parameters, or intrinsics (focal length, radial distortion coefficient, image dimensions, image center coordinates, and scale factors) [18].

Data Preprocessing for Nerfstudio: The captured data is prepared for Gaussian splatting reconstruction by converting the distortion parameters: the single radial distortion coefficient (κ) of the division model is mapped to the six-parameter polynomial model required by modern reconstruction pipelines (K1 = -κ/√(w²+h²), K2 = K1² = (-κ/√(w²+h²))², P1 = 0.0, P2 = 0.0, with K3 and K4 set to 0.0 by default), where w and h are the image width and height [18].
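The conversion described above can be written as a short helper. This is a sketch that simply transcribes the formulas as stated in the text; the function name and the dictionary keys are assumptions for illustration and are not confirmed to match the GrowSplat code or any particular configuration schema.

```python
import math


def division_to_polynomial(kappa: float, width: int, height: int) -> dict:
    """Map a single division-model coefficient to six polynomial parameters.

    Follows the relations quoted in the text:
    K1 = -kappa / sqrt(w^2 + h^2), K2 = K1^2, and the rest set to 0.0.
    """
    diagonal = math.sqrt(width ** 2 + height ** 2)
    k1 = -kappa / diagonal
    k2 = k1 ** 2
    return {"k1": k1, "k2": k2, "k3": 0.0, "k4": 0.0, "p1": 0.0, "p2": 0.0}


# Hypothetical calibration values, chosen only to exercise the function.
print(division_to_polynomial(kappa=-0.05, width=2048, height=1536))
```

Keeping the conversion in one function makes it easy to apply the same mapping to every camera in a multi-camera array before the parameters are handed to the reconstruction pipeline.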

3D Gaussian Optimization: The Gaussian splatting process optimizes the positions, shapes, colors, and transparencies of thousands of 3D Gaussian primitives through gradient descent to minimize the difference between rendered views and captured images [18].

Temporal Registration: A two-stage registration approach aligns sequential plant models: (1) coarse alignment through feature-based matching and Fast Global Registration, followed by (2) fine alignment with Iterative Closest Point (ICP) algorithms to create consistent 4D models of plant development [18].

High-Fidelity Plant Reconstruction Using Robotic Imaging Systems

Advanced imaging systems have been developed specifically for 3D plant reconstruction, such as the dual-robot setup described by Lewis-Stuart et al. [16]:

Robotic Imaging Configuration: Two robotic arms are combined with a turntable, controlled by a flexible image capture framework compatible with the Robot Operating System (ROS). This configuration enables the capture of a wide range of views with logged camera positions in metric units, ensuring measurements from reconstructed models correspond to real-world dimensions [16].

Multiview Data Collection: Each plant is captured from numerous viewpoints to ensure complete coverage. For wheat plants, this involves capturing 20 individual plants across 6 different time frames over a 15-week growth period, resulting in 112 plant instances and over 35,000 RGB-D images [16].

Model Training and Validation: Both 3D Gaussian Splatting (3DGS) and Neural Radiance Fields (NeRF) models are trained on the captured data. Reconstruction accuracy is validated by comparing against ground-truth scans from a handheld structured light scanner (Einstar), with point cloud comparisons measuring average distance between model and ground-truth points [16].
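The point cloud comparison used for validation can be approximated by a mean nearest-neighbor distance. The sketch below is a brute-force, one-directional version under the assumption that both clouds are already registered in the same metric frame; it is not the evaluation code of the cited study, and real pipelines would normally use a spatial index such as a k-d tree.

```python
import math


def mean_nearest_distance(model_points, ground_truth_points):
    """Average distance from each model point to its closest ground-truth point."""
    if not model_points or not ground_truth_points:
        raise ValueError("both point sets must be non-empty")
    total = 0.0
    for p in model_points:
        total += min(math.dist(p, q) for q in ground_truth_points)
    return total / len(model_points)


# Hypothetical clouds in millimetres.
reconstruction = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
scan = [(0.0, 0.0, 1.0), (1.0, 0.0, 0.0)]
print(mean_nearest_distance(reconstruction, scan), "mm")
```

Because the measure is one-directional, it penalizes spurious geometry in the reconstruction but not missing regions; evaluating it in both directions gives a more complete picture.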

Trait Extraction: The reconstructed 3D models enable extraction of key phenotypic traits such as plant height, projected leaf area, convex hull volume, leaf orientation, and biomass estimates through computational analysis of the 3D representation [16].
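Two of the simpler traits can be sketched directly from a point cloud. The example assumes the z axis points upward and the cloud is in metric units; the convex hull footprint shown here is only a proxy that upper-bounds the true projected leaf area, and all function names are illustrative.

```python
def plant_height(points):
    """Vertical extent of the cloud, assuming z is the up axis."""
    zs = [p[2] for p in points]
    return max(zs) - min(zs)


def convex_hull_2d(points_2d):
    """Andrew's monotone chain; returns hull vertices in counter-clockwise order."""
    pts = sorted(set(points_2d))
    if len(pts) <= 2:
        return pts

    def cross(o, a, b):
        return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

    lower, upper = [], []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]


def polygon_area(polygon):
    """Shoelace formula for a simple polygon."""
    total = 0.0
    for i, (x1, y1) in enumerate(polygon):
        x2, y2 = polygon[(i + 1) % len(polygon)]
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0


def footprint_area(points):
    """Area of the convex hull of the cloud projected onto the ground plane."""
    return polygon_area(convex_hull_2d([(p[0], p[1]) for p in points]))


# Hypothetical plant: four ground points and one apex point.
plant = [(0, 0, 0), (1, 0, 0), (1, 1, 0), (0, 1, 0), (0.5, 0.5, 2.0)]
print(plant_height(plant), footprint_area(plant))
```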

Workflow Visualization

Figure: 3D Plant Phenotyping Workflow. This diagram illustrates the comprehensive pipeline for creating digital plant models, from multi-view image acquisition through 3D reconstruction to phenotypic trait extraction.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Equipment and Software for 3D Plant Phenotyping

| Tool Category | Specific Examples | Function and Application | Key Considerations |

|---|---|---|---|

| Imaging Hardware | Maxi-Marvin multi-camera array [18] | High-throughput plant imaging with 15 synchronized cameras | Enables efficient data collection for multiple plant specimens |

| Imaging Hardware | XGRIDS Lixel K1/L2 Pro handheld scanners [17] | Capture data for both point clouds and Gaussian splats | Portable solution for field and greenhouse applications |

| Imaging Hardware | DJI Matrice 350/400 with Zenmuse L2/P1 [17] | Aerial data collection for large-scale phenotyping | Provides complementary aerial perspective for complete 3D models |

| Imaging Hardware | Structured light scanners (Einstar) [16] | High-accuracy ground truth data for validation | Essential for quantifying reconstruction accuracy |

| Robotic Systems | Dual-robot imaging setup [16] | Automated multi-view image capture with precise camera control | Ensures metric accuracy and reproducible imaging conditions |

| Robotic Systems | Turntable systems [16] | Controlled rotation of plant specimens for comprehensive coverage | Enables full 360-degree plant reconstruction |

| Software Platforms | Nerfstudio [18] | Pipeline for Gaussian splatting and NeRF reconstruction | Requires conversion of distortion parameters for specific cameras |

| Software Platforms | XGRIDS Lixel Cyber Color Studio [17] | Processing and export of Gaussian splat and mesh models | Enables sharing of lightweight, viewable models without specialist software |

| Software Platforms | DJI Terra [17] | Photogrammetric processing and Gaussian splat generation from drone data | Supports generation of photorealistic, high-precision 3DGS models |

| Analysis Frameworks | GrowSplat [18] | Temporal reconstruction and growth tracking | Implements two-stage registration for 4D plant modeling |

| Analysis Frameworks | Plant-specific trait extraction algorithms [16] | Automated measurement of morphological traits | Enables high-throughput phenotypic screening |

Integration with Deep Learning in Plant Phenomics

The revolution in deep learning has profoundly impacted 3D plant phenotyping, addressing previous challenges in feature extraction from high-dimensional 3D data [10]. Deep learning techniques have enabled remarkable progress in 3D computer vision tasks including classification, detection, tracking, semantic segmentation, instance segmentation, and generation of plant models [10].

The integration of deep learning with 3D representations involves several critical approaches:

Point Cloud Processing Networks: Architectures such as PointNet++ and dynamic graph CNNs enable direct processing of point cloud data for tasks including plant organ segmentation, species classification, and growth stage prediction [10]. These networks can handle the irregular, unordered nature of point clouds because their outputs are invariant to the ordering of the input points.

Differentiable Rendering for Gaussian Splats: 3D Gaussian Splatting relies on a differentiable rendering pipeline, so the scene representation can be optimized end to end from 2D images alone [8] [18]. Because only 2D supervision is needed, the approach is particularly valuable for plant phenotyping, where 3D ground truth data is difficult to obtain.

Multi-task Learning Frameworks: Advanced deep learning frameworks simultaneously address multiple phenotyping tasks such as plant segmentation, leaf counting, and biomass estimation from 3D representations [10]. These approaches leverage shared feature representations across related tasks, improving data efficiency and model robustness.

Self-supervised and Weakly Supervised Learning: To address the scarcity of annotated 3D plant data, self-supervised methods leverage unlabeled data by constructing pretext tasks, while weakly supervised approaches utilize partial annotations or image-level labels to reduce annotation burden [10].

Future Perspectives and Challenges

The field of 3D plant phenomics faces several important challenges and opportunities for advancement:

Benchmark Dataset Construction: A critical need exists for comprehensive benchmark datasets that enable fair comparison across methods and facilitate development of more robust algorithms [10]. Future efforts should focus on creating datasets using synthetic data generation, generative AI, and unsupervised or weakly supervised learning approaches to overcome annotation bottlenecks [10].

Model Efficiency and Accuracy: While current 3D representation methods offer impressive capabilities, opportunities remain for developing more accurate and efficient analysis techniques through multitask learning, lightweight models, and self-supervised learning [10]. This is particularly important for deployment in resource-constrained environments such as field applications.

Interpretability and Extensibility: As deep learning models become more complex, enhancing their interpretability will be crucial for gaining trust from plant scientists and breeders [10]. Additionally, improving model extensibility across plant species, growth stages, and environmental conditions will broaden the impact of 3D phenotyping technologies.

Multimodal Data Integration: Future frameworks should leverage complementary information from multiple data sources including RGB, hyperspectral, thermal, and fluorescence imaging to provide more comprehensive phenotypic profiles [10]. Such multimodal approaches will enable deeper insights into plant structure-function relationships.

The exploration of deep learning in 3D plant phenomics, particularly through emerging techniques like Gaussian splatting, is poised to spur breakthroughs in a new dimension of plant science, ultimately accelerating crop improvement and sustainable agricultural production [10] [8].

Plant phenomics, the comprehensive study of plant growth, performance, and composition, has emerged as a vital discipline for understanding the intricate relationships between genotypes and the environment [10]. While image-based plant phenotyping has progressed rapidly, traditional two-dimensional approaches often fail to fully capture the complex three-dimensional architecture of plants, limiting their accuracy in measuring traits like biomass, leaf area, and canopy structure [2]. The advent of 3D phenotyping represents a valuable extension beyond 2D methods, enabling researchers to overcome fundamental challenges such as occlusion, leaf overlap, and the inability to accurately capture depth and volume [10] [2].

Deep learning has recently revolutionized 3D plant phenotyping by providing powerful tools for extracting meaningful information from complex 3D data [10]. This technical guide explores the fundamental principles, methods, and applications of deep learning for 3D vision tasks within the specific context of plant phenomics research. We examine how various 3D representations—from point clouds to volumetric grids—can be processed using specialized neural network architectures to solve critical phenotyping challenges including organ segmentation, growth tracking, and morphological analysis. By providing a comprehensive overview of this rapidly evolving field, this article aims to equip researchers with the foundational knowledge needed to leverage 3D deep learning in their plant science investigations.

Fundamental 3D Data Representations for Plant Phenotyping

The choice of 3D representation is fundamental to any computer vision pipeline, as each format possesses distinct characteristics that influence computational requirements, processing algorithms, and applicability to specific phenotyping tasks [21] [22]. Unlike 2D images that have a dominant representation as pixel arrays, 3D data exhibits multiple popular representations, each with unique properties that pose both challenges and opportunities for deep architecture design [21].

Table 1: Comparison of Primary 3D Data Representations in Plant Phenotyping

| Representation | Data Structure | Advantages | Limitations | Common Applications in Phenotyping |

|---|---|---|---|---|

| Point Cloud | Unordered set of 3D coordinates (x,y,z) | Simple structure; preserves exact geometry; direct sensor output | Irregular format; no connectivity information | Leaf segmentation [23]; organ detection [23]; plant architecture analysis [2] |

| Voxel | Regular 3D grid of volumetric pixels | Compatible with 3D CNNs; structured format | Computational/memory intensive at high resolutions; discretization artifacts | Biomass estimation; volumetric growth measurement [2] |

| Mesh | Vertices, edges, and faces defining surface | Efficient representation; precise surface modeling | Complex processing; requires reconstruction | Detailed morphological analysis; synthetic plant models [2] |

| Multi-view Images | Multiple 2D images from different viewpoints | Leverages pre-trained 2D CNNs; simple acquisition | Requires view pooling; potential information loss between views | Plant classification; trait estimation from camera arrays [21] |

| Depth Images (RGB-D) | Pixels with color and depth information | Combines appearance and geometry; real-time acquisition | Limited field of view; depth sensor constraints | Real-time growth monitoring [2]; robotic harvesting guidance [2] |

In plant phenomics, each representation offers distinct advantages depending on the specific application requirements, available hardware, and processing constraints [2]. Point clouds have gained particular prominence in plant phenotyping due to their direct acquisition from popular 3D sensors like LiDAR and structured light systems, while multi-view images provide a practical alternative that leverages the maturity of 2D deep learning approaches [21] [2].

Deep Learning Architectures for 3D Vision Tasks

The development of specialized deep learning architectures has been crucial for processing the various 3D representations outlined in the previous section. These architectures can be broadly categorized according to the data representation they are designed to handle.

Point Cloud-Based Networks

Point clouds represent one of the most common 3D data formats in plant phenotyping, directly obtained from 3D scanners such as LiDAR [2]. Several pioneering architectures have been developed specifically for processing this irregular data format:

- PointNet: A groundbreaking architecture that directly processes unordered point sets using shared multi-layer perceptrons (MLPs) and a symmetric aggregation function (max pooling) to maintain permutation invariance [22]. Because each point is transformed independently before the global pooling step, however, the network has limited ability to capture local structures.

- PointNet++: An extension that addresses PointNet's limitations by applying the network hierarchically to progressively enlarged local regions, enabling the learning of features at multiple scales [22]. This significantly improves the capture of fine-grained geometric patterns.

- Dynamic Graph CNN (DGCNN): Constructs local graph structures based on point neighborhoods in the feature space and applies graph convolutional networks to extract features, allowing dynamic updating of the graph throughout network layers [22]. This approach has demonstrated superior performance for complex plant structures with intricate branching patterns [23].
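The permutation invariance that PointNet obtains from a shared per-point function followed by max pooling can be demonstrated with a toy example. This is a sketch with a single hand-written linear layer and fixed, hypothetical weights; it is not a trained network and omits everything else in the real architecture.

```python
import random


def shared_mlp(point, weights, bias):
    """One linear layer plus ReLU, applied identically to every point."""
    return [max(0.0, sum(w * x for w, x in zip(row, point)) + b)
            for row, b in zip(weights, bias)]


def global_feature(points, weights, bias):
    """Channel-wise max over all per-point features (symmetric aggregation)."""
    per_point = [shared_mlp(p, weights, bias) for p in points]
    return [max(channel) for channel in zip(*per_point)]


# Hypothetical weights: 3 input coordinates -> 4 feature channels.
WEIGHTS = [[1, 0, 0], [0, 1, 0], [0, 0, 1], [1, 1, 1]]
BIAS = [0.0, 0.0, 0.0, -1.0]

cloud = [(0.1, 0.2, 0.3), (0.5, 0.1, 0.0), (0.0, 0.9, 0.4)]
shuffled = cloud[:]
random.shuffle(shuffled)

# The global feature does not depend on the order of the points.
print(global_feature(cloud, WEIGHTS, BIAS) == global_feature(shuffled, WEIGHTS, BIAS))
```

The same toy also shows the limitation noted for PointNet: each point is processed in isolation, so no neighborhood information enters the feature until the final pooling step.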

Volumetric and Voxel-Based Networks

Voxel-based representations organize 3D space into a regular grid, enabling the application of 3D convolutional neural networks (3D CNNs) that extend the concepts of their 2D counterparts:

- 3D Convolutional Neural Networks: Utilize 3D kernels that convolve across spatial dimensions to learn hierarchical feature representations [21]. While conceptually straightforward, they suffer from substantial computational and memory demands that limit resolution.

- Sparse Convolutional Networks: Employ specialized convolutions that operate only on non-empty voxels, dramatically reducing computational requirements for sparse scenes like plant architectures [22]. This approach has enabled higher-resolution processing of complex plant structures.

Transformer-Based Architectures

Inspired by their success in natural language processing, transformers have recently been adapted for 3D vision:

- Vision Transformers for 3D: Process 3D data by dividing it into patches, embedding these patches into tokens, and processing them through multi-head self-attention mechanisms [24]. This global receptive field enables capturing long-range dependencies in complex plant canopies.

- Point Cloud Transformers: Adapt transformer architectures to operate directly on point clouds by treating points as tokens and computing attention based on their spatial relationships [22]. These have shown promising results for plant organ segmentation tasks.
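The self-attention operation underlying these architectures can be sketched in a few lines. The example treats each point (or patch embedding) as a token and, as a simplifying assumption, uses identity query, key, and value projections with a single head; real models learn these projections and add positional encodings.

```python
import math


def softmax(values):
    """Numerically stable softmax over a list of scores."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens):
    """Single-head scaled dot-product self-attention with identity projections."""
    dim = len(tokens[0])
    outputs = []
    for query in tokens:
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
                  for key in tokens]
        weights = softmax(scores)
        # Each output token is a weighted average of all value vectors.
        outputs.append([sum(w * value[j] for w, value in zip(weights, tokens))
                        for j in range(dim)])
    return outputs


# Hypothetical 3D point tokens.
tokens = [[0.0, 0.0, 1.0], [0.0, 0.1, 1.0], [1.0, 0.0, 0.0]]
for row in self_attention(tokens):
    print([round(v, 3) for v in row])
```

Every token attends to every other token, which is what gives the global receptive field mentioned above and also why the cost grows quadratically with the number of tokens.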

Core 3D Vision Tasks in Plant Phenomics

Deep learning approaches enable several fundamental 3D vision tasks that are critical for comprehensive plant phenotyping. These tasks form the building blocks for extracting biologically meaningful information from 3D plant data.

3D Semantic Segmentation

3D semantic segmentation involves assigning a categorical label (e.g., stem, leaf, fruit) to each point or voxel in a 3D representation [22]. This represents one of the most valuable yet challenging tasks in plant phenomics, given the complex morphology and self-occluding nature of plant structures.

Multiple methodological approaches have been developed for 3D semantic segmentation, categorized by their underlying data representation [22]:

- RGB-D based methods leverage both color and depth information from depth sensors

- Projected image-based methods project 3D data onto 2D planes and apply 2D CNNs

- Voxel-based methods employ 3D convolutional networks on volumetric grids

- Point-based methods operate directly on point clouds using architectures like PointNet++ and DGCNN

- Hybrid methods combine multiple representations to leverage their complementary strengths

In plant phenomics, point-based methods have shown particular promise due to their ability to preserve the precise geometry of plant organs while handling the irregular sampling typical of botanical specimens [23].

3D Instance Segmentation

Going beyond semantic segmentation, 3D instance segmentation distinguishes between different instances of the same class (e.g., individual leaves, separate fruits) [22]. This represents a significantly more challenging task that is essential for quantifying traits such as leaf count, fruit yield, and branching patterns.

The two primary paradigms for 3D instance segmentation are [22]:

- Proposal-based methods: Generate region proposals followed by classification and refinement

- Proposal-free methods: Typically employ a semantic segmentation followed by clustering or embedding-based approaches to group points into instances

For plant phenotyping, proposal-free methods have demonstrated advantages in handling the complex topology and touching structures common in plant architectures [23].
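The clustering stage of a proposal-free pipeline can be illustrated with simple Euclidean clustering, applied to points that a semantic network has already labeled as one class (for example, "leaf"). This is a brute-force sketch under that assumption; the radius is hypothetical, and learned embedding-based grouping is a common alternative that is not shown here.

```python
import math
from collections import deque


def euclidean_clusters(points, radius):
    """Label points so that points linked by hops shorter than radius share an id."""
    labels = [-1] * len(points)
    next_label = 0
    for seed in range(len(points)):
        if labels[seed] != -1:
            continue
        labels[seed] = next_label
        queue = deque([seed])
        while queue:  # breadth-first region growing from the seed point
            i = queue.popleft()
            for j in range(len(points)):
                if labels[j] == -1 and math.dist(points[i], points[j]) <= radius:
                    labels[j] = next_label
                    queue.append(j)
        next_label += 1
    return labels


# Hypothetical leaf points forming two spatially separated leaves.
leaf_points = [(0.0, 0.0, 0.0), (0.1, 0.0, 0.0), (5.0, 0.0, 0.0), (5.1, 0.0, 0.0)]
print(euclidean_clusters(leaf_points, radius=0.5))
```

Purely distance-based grouping merges leaves that touch, which is one reason methods for plant data often learn per-point embeddings before clustering.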

3D Object Detection and Classification

3D object detection involves identifying and localizing plant organs or entire plants in 3D space, typically with bounding boxes or other spatial encodings [10]. Classification assigns categorical labels to entire 3D models or scenes, such as species identification or stress classification [10].

Common approaches include:

- Voting-based methods that generate object proposals through point grouping and sampling

- Region proposal networks that extend 2D detection frameworks to 3D

- End-to-end architectures that directly regress detection outputs from input data

3D Reconstruction

3D reconstruction from 2D images represents a crucial capability for plant phenotyping, as it enables the creation of detailed 3D models from conventional camera systems [25]. Recent advances in feed-forward 3D modeling have emerged as promising approaches for rapid and high-quality 3D reconstruction [25].

Notably, iterative Large 3D Reconstruction Models (iLRM) have demonstrated significant progress by generating 3D Gaussian representations through an iterative refinement mechanism [25]. These models address scalability issues in traditional transformer-based approaches by decoupling scene representation from input-view images and decomposing fully-attentional multi-view interactions into a two-stage attention scheme [25]. This approach has shown particular promise for reconstructing complex plant structures with higher fidelity and reduced computational requirements.

Experimental Protocols and Methodologies

Implementing robust experimental protocols is essential for successful application of deep learning to 3D plant phenotyping. This section outlines key methodological considerations and presents specific experimental frameworks from recent literature.

3D-NOD Framework for New Organ Detection

The 3D-NOD framework provides a comprehensive pipeline for detecting new plant organs from time-series 3D data, addressing the critical challenge of spatiotemporal phenotyping [23]. The methodology consists of several key components:

Data Acquisition and Annotation:

- Acquire time-series 3D point clouds using high-precision 3D scanners at regular intervals

- Annotate point clouds using the Semantic Segmentation Editor under Ubuntu

- Implement Backward & Forward Labeling strategy to annotate points into "old organ" and "new organ" classes

- Divide data into training (25 sequences) and test sets (12 sequences)

Data Preprocessing and Augmentation:

- Apply Registration & Mix-up to align consecutive point clouds

- Implement Humanoid Data Augmentation to generate ten variants for each mixed point cloud

- Use DGCNN as backbone network architecture

Training Protocol:

- Train model on augmented dataset with standard cross-entropy loss

- Optimize using Adam optimizer with initial learning rate of 0.001

- Implement learning rate scheduling with step-wise decay

- Train for 200 epochs with batch size of 24
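
As a rough illustration of the step-wise decay in the training protocol, the following Python sketch computes the learning rate for each epoch. The initial rate of 0.001 and the 200-epoch horizon come from the protocol above; the decay interval (`step_size=50`) and decay factor (`gamma=0.5`) are assumptions chosen for illustration, since they are not reported here.

```python
def step_decay_lr(epoch, base_lr=1e-3, step_size=50, gamma=0.5):
    """Learning rate for a given epoch under step-wise decay.

    base_lr follows the protocol (0.001); step_size and gamma are
    illustrative assumptions, not values reported for 3D-NOD.
    """
    if epoch < 0:
        raise ValueError("epoch must be non-negative")
    # The rate is multiplied by gamma once every step_size epochs.
    return base_lr * gamma ** (epoch // step_size)


# Learning rate for each of the 200 training epochs.
schedule = [step_decay_lr(epoch) for epoch in range(200)]

# Print the rate at the start of each decay stage.
for epoch in (0, 50, 100, 150):
    print(epoch, schedule[epoch])
```

In a deep learning framework the same behaviour would normally come from a built-in scheduler; the plain function only makes the arithmetic explicit.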

Evaluation Metrics:

- Assess performance using Precision, Recall, F1-score, and Intersection over Union

- Report class-specific metrics, particularly for "new organ" class

- Conduct ablation studies to validate component contributions

In experimental evaluations, the framework achieved a mean F1-score of 88.13% and a mean IoU of 80.68% across multiple crop species, including tobacco, tomato, and sorghum [23]. Detection performance was highest in sorghum, likely because of its faster bud growth [23].
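
The evaluation metrics listed above can be computed directly from per-point labels. The sketch below is a minimal, framework-free version for a single class; the label encoding (0 for "old organ", 1 for "new organ") and the toy label lists are assumptions for illustration, not data from [23].

```python
def binary_metrics(y_true, y_pred, positive=1):
    """Precision, recall, F1 and IoU for one class from per-point labels."""
    if len(y_true) != len(y_pred):
        raise ValueError("label sequences must have equal length")
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    iou = tp / (tp + fp + fn) if tp + fp + fn else 0.0
    return {"precision": precision, "recall": recall, "f1": f1, "iou": iou}


# Toy per-point labels (assumed encoding): 0 = "old organ", 1 = "new organ".
y_true = [0, 0, 1, 1, 1, 0, 1, 0]
y_pred = [0, 1, 1, 1, 0, 0, 1, 0]

metrics = binary_metrics(y_true, y_pred, positive=1)
print(metrics)
```

For a single class, IoU and F1 are linked by IoU = F1 / (2 - F1), so the two metrics always rank predictions for that class in the same order. Averages taken over several classes or species need not satisfy this identity.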

Iterative Large 3D Reconstruction Model Protocol

The iLRM framework introduces an iterative approach for feed-forward 3D reconstruction that addresses scalability limitations in previous methods [25]:

Model Architecture Design:

- Implement iterative refinement mechanism where each layer updates scene representation

- Decouple scene representation from input-view images to enable compact 3D representations

- Decompose multi-view interactions into a two-stage attention scheme:

  - Cross-attention between viewpoint embeddings and corresponding images

  - Self-attention across all viewpoint embeddings

- Inject high-resolution information at every layer for high-fidelity reconstruction
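
To see why the two-stage scheme helps with scalability, it can be useful to count query-key pairs, which dominate attention cost. The sketch below is a back-of-the-envelope comparison only. The token counts (8 views, 1024 image tokens per view, 256 tokens per viewpoint embedding) are hypothetical, and the count ignores head dimension, layer count, and other details of the actual iLRM configuration, which are not given here.

```python
def full_attention_pairs(num_views, tokens_per_image):
    """Query-key pairs when all image tokens of all views attend to each other."""
    total_tokens = num_views * tokens_per_image
    return total_tokens * total_tokens


def two_stage_attention_pairs(num_views, tokens_per_image, tokens_per_embedding):
    """Query-key pairs for per-view cross-attention plus global self-attention."""
    # Stage 1: each viewpoint embedding attends only to its own image.
    cross = num_views * tokens_per_embedding * tokens_per_image
    # Stage 2: all viewpoint-embedding tokens attend to each other.
    embedding_tokens = num_views * tokens_per_embedding
    self_attention = embedding_tokens * embedding_tokens
    return cross + self_attention


# Hypothetical sizes, chosen only to illustrate the scaling behaviour.
views, image_tokens, embedding_tokens = 8, 1024, 256

full = full_attention_pairs(views, image_tokens)
staged = two_stage_attention_pairs(views, image_tokens, embedding_tokens)
print(full, staged, round(full / staged, 2))
```

Under these assumed sizes the two-stage scheme evaluates roughly a tenth of the pairs. The gap widens as image resolution grows, because image tokens enter the two-stage count only linearly.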

Training Methodology:

- Train on large-scale datasets (RealEstate10K and DL3DV)

- Use combination of photometric and perceptual losses

- Employ progressive training strategy

- Optimize using AdamW optimizer with weight decay

Evaluation Framework:

- Assess reconstruction quality using PSNR, SSIM, and LPIPS metrics

- Compare rendering speed and computational efficiency

- Evaluate scalability with varying numbers of input views

- Test generalization across diverse scenes

Experimental results demonstrated that iLRM outperformed existing methods in both reconstruction quality and speed, achieving an improvement of approximately 3 dB in PSNR on the RealEstate10K dataset with less than half the computation time of comparable methods [25].
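
To put the reported PSNR gain in context, the following sketch computes PSNR from the mean squared error (MSE) between a reference and a rendered image. Images are represented as flat lists of intensities in [0, 1], and the toy pixel values are assumptions for illustration; a real evaluation would operate on full image arrays.

```python
import math


def mse(reference, rendered):
    """Mean squared error between two equally sized, flattened images."""
    if len(reference) != len(rendered) or not reference:
        raise ValueError("images must be non-empty and of equal size")
    return sum((r - x) ** 2 for r, x in zip(reference, rendered)) / len(reference)


def psnr(reference, rendered, max_value=1.0):
    """Peak signal-to-noise ratio in dB; higher means closer to the reference."""
    error = mse(reference, rendered)
    if error == 0:
        return math.inf
    return 10.0 * math.log10(max_value ** 2 / error)


def mse_ratio_for_gain(gain_db):
    """Factor by which the MSE shrinks for a given PSNR gain in dB."""
    return 10.0 ** (gain_db / 10.0)


# Toy 4-pixel "images": every rendered pixel is off by about 0.1.
reference = [0.0, 0.5, 1.0, 0.25]
rendered = [0.1, 0.6, 0.9, 0.35]

print(round(psnr(reference, rendered), 2))
print(round(mse_ratio_for_gain(3.0), 3))
```

Because PSNR is logarithmic in the MSE, a gain of about 3 dB corresponds to roughly halving the mean squared reconstruction error.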

Diagram 1: 3D Deep Learning Pipeline for Plant Phenomics

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of 3D deep learning for plant phenotyping requires both computational resources and specialized hardware for data acquisition. The following table catalogs essential components of the research toolkit.

Table 2: Essential Research Reagents and Materials for 3D Plant Phenotyping

| Category | Item | Specifications | Function/Purpose |
|---|---|---|---|
| 3D Sensing Hardware | LiDAR Scanner | High-precision; time-of-flight or phase-shift | Direct 3D point cloud acquisition of plant structure [2] |
| 3D Sensing Hardware | Structured Light System | Pattern projection with stereo cameras | High-resolution 3D reconstruction of plant surfaces [2] |
| 3D Sensing Hardware | Time-of-Flight (ToF) Camera | e.g., Microsoft Kinect; real-time capability | Cost-effective 3D data acquisition for real-time monitoring [2] |
| 3D Sensing Hardware | Multi-view Camera Array | Synchronized RGB cameras with calibration | 3D reconstruction via photogrammetry [21] |
| Computational Resources | DGCNN Backbone | Dynamic Graph CNN architecture | Point cloud segmentation for plant organ detection [23] |
| Computational Resources | 3D-NOD Framework | With BFL and HDA components | New organ detection in time-series 3D data [23] |
| Computational Resources | iLRM Model | Iterative Large Reconstruction Model | Feed-forward 3D reconstruction from multi-view images [25] |
| Computational Resources | Vision Transformers | Pre-trained on large datasets (e.g., DINO) | Image classification and segmentation with transfer learning [24] |
| Datasets & Annotation | RealEstate10K | Large-scale video dataset | Training data for 3D reconstruction models [25] |
| Datasets & Annotation | DL3DV Dataset | Diverse 3D vision dataset | Benchmark for 3D reconstruction quality [25] |
| Datasets & Annotation | Semantic Segmentation Editor | Ubuntu-compatible | Annotation of 3D point clouds for training [23] |
| Datasets & Annotation | Backward & Forward Labeling | Strategy for temporal data | Annotation of growth sequences for new organ detection [23] |

Diagram 2: iLRM Iterative 3D Reconstruction Workflow

Future Perspectives and Challenges

Despite significant advances in deep learning for 3D plant phenomics, several challenges remain that present opportunities for future research and development.

Data-Related Challenges

- Benchmark Dataset Construction: Developing comprehensive 3D plant phenotyping datasets remains challenging due to the extensive annotation requirements [10]. Future directions include using synthetic datasets generated through generative AI and methods leveraging unsupervised or weakly supervised learning to reduce annotation burdens [10].

- Multimodal Data Integration: Effectively combining 3D structural data with other modalities such as hyperspectral imagery, thermal data, and genetic information represents a promising frontier for obtaining more comprehensive phenotypic profiles [10].

Technical and Methodological Challenges

- Computational Efficiency: Many state-of-the-art 3D deep learning models suffer from severe scalability issues due to prohibitive computational costs as the number of views or image resolution increases [25]. Future research should focus on developing more efficient architectures through approaches like multitask learning, lightweight models, and self-supervised learning [10].

- Interpretability and Explainability: As deep learning models grow in complexity, understanding their decision-making processes becomes increasingly important for building trust and extracting biological insights [10]. Developing interpretable AI systems for plant phenomics represents a critical research direction.

Application-Oriented Challenges

- Field-Based Phenotyping: Most current 3D deep learning approaches have been developed and validated in controlled environments [2]. Adapting these methods for robust field application under varying lighting, weather, and occlusion conditions remains a significant challenge.

- Generalization Across Species and Growth Stages: Developing models that generalize across diverse plant architectures, species, and developmental stages is essential for broad applicability but remains challenging due to the tremendous variability in plant morphology [2] [23].

The exploration of deep learning in 3D plant phenomics is poised to spur breakthroughs in a new dimension of plant science, enabling unprecedented insights into plant growth, development, and response to environmental factors [10]. By addressing these challenges, the research community can unlock the full potential of 3D vision technologies for advancing both fundamental plant biology and agricultural innovation.

Deep Learning in Action: Architectures and Applications for 3D Plant Analysis

The field of plant phenomics, which aims to comprehensively study plant phenotypes, has gained prominence as a vital tool for understanding the intricate relationships between genotypes and the environment [10]. In the past decade, image-based plant phenotyping has progressed rapidly, with three-dimensional (3D) phenotyping emerging as a valuable extension of traditional two-dimensional (2D) approaches that can more accurately capture plant architecture and spatial relationships [10] [15]. However, this increased data dimensionality poses significant challenges for feature extraction and phenotyping analysis, creating a pressing need for advanced computational solutions [10].

Deep learning has driven substantial progress in 3D phenotyping by automatically learning hierarchical features from complex plant data [10] [1]. These techniques are particularly important for bridging the genotype-to-phenotype gap, one of the most important problems in modern plant breeding [1]. While genomics research has yielded extensive information about plant genetic structures, sequencing techniques and the data they generate have far outstripped traditional phenotyping capacity, creating a "phenotyping bottleneck" that limits comprehensive analysis of traits within single plants and across cultivars [1].

This technical guide provides an in-depth examination of deep learning capabilities for 3D plant data analysis, focusing on the core tasks of classification, detection, and segmentation. By synthesizing recent advances and practical methodologies, we aim to equip researchers and scientists with the knowledge needed to implement these technologies in plant phenomics research.

Foundations of 3D Plant Data Analysis

Data Acquisition Modalities

The foundation of any successful 3D plant phenotyping pipeline lies in appropriate data acquisition. Multiple technologies enable the capture of 3D plant structural information, each with distinct advantages and limitations:

- LiDAR (Light Detection and Ranging): Utilizes laser scanning to capture detailed spatial and structural data of plants, particularly effective for outdoor applications and investigating plant morphology and growth patterns [26].

- Structured Light Scanning: Employs projected light patterns to capture plant spatial structure, allowing creation of detailed 3D models of plant morphology, typically in indoor controlled environments [26].

- 3D Reconstruction from Multi-view Images: Generates 3D models through photogrammetric techniques using multiple 2D images captured from different angles, offering a more accessible alternative to specialized hardware [1].

- Spectral Imaging Technology: Captures plant images in specific wavelengths to gather critical information about plant health status, enabling analysis of photosynthetic efficiency, water content, and nutritional status [26].

Table 1: Comparison of 3D Plant Data Acquisition Technologies

| Technology | Spatial Resolution | Cost Range | Primary Applications | Key Advantages |
|---|---|---|---|---|
| LiDAR | Medium-High | $20,000-$50,000+ | Field-based plant architecture, canopy volume | Works well in outdoor conditions, captures large areas |
| Structured Light Scanning | High | $5,000-$20,000 | Detailed organ-level morphology, indoor phenotyping | High precision, controlled environment accuracy |
| Multi-view Reconstruction | Medium | $500-$2,000 (RGB cameras) | Greenhouse phenotyping, growth monitoring | Lower cost, uses accessible hardware |
| Spectral Imaging | Variable (spectral > spatial) | $20,000-$100,000+ | Pre-symptomatic stress detection, physiological traits | Early stress detection, functional trait analysis |

3D Data Representations

Each acquisition modality produces data in different formats, requiring specialized deep learning approaches:

- Point Clouds: Unstructured sets of 3D points representing the plant surface, typically generated by LiDAR and structured light scanners [10] [27]. This representation preserves the original measurement data but requires specialized neural network architectures that can handle permutation invariance and irregular sampling.

- Voxels: Regular 3D grids representing space with volumetric elements, analogous to pixels in 2D images. While compatible with standard 3D convolutional neural networks, this representation can be computationally intensive for high-resolution data due to cubic memory growth [10].

- Mesh Models: Interconnected polygons (typically triangles) forming a continuous surface, often derived from point clouds through reconstruction algorithms. These provide efficient representation but may lose some geometric details during the conversion process [10].

- Multi-view Images: Collections of 2D images captured from different viewpoints that implicitly contain 3D information, enabling the use of well-established 2D deep learning architectures with specialized fusion mechanisms for 3D reasoning [1].
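
The cubic memory growth of dense voxel grids, and the sparsity of plant data within them, can be made concrete with a small sketch. The example below voxelizes a synthetic, stem-like point cloud at three resolutions and compares the number of occupied voxels with the size of the dense grid. The point cloud (a 1 m vertical line sampled every 10 mm, in integer millimetres) and the voxel sizes are assumptions chosen for illustration.

```python
def voxelize(points_mm, voxel_mm):
    """Map integer-millimetre 3D points to the set of occupied voxel indices."""
    if voxel_mm <= 0:
        raise ValueError("voxel size must be positive")
    return {tuple(coord // voxel_mm for coord in point) for point in points_mm}


def dense_grid_cells(extent_mm, voxel_mm):
    """Number of cells in a dense cubic grid spanning extent_mm on each axis."""
    cells_per_axis = -(-extent_mm // voxel_mm)  # ceiling division
    return cells_per_axis ** 3


# Synthetic "stem": a vertical line 1 m tall, sampled every 10 mm (assumed data).
stem = [(500, 500, z * 10) for z in range(100)]
extent_mm = 1000

for voxel_mm in (100, 50, 25):
    occupied = len(voxelize(stem, voxel_mm))
    dense = dense_grid_cells(extent_mm, voxel_mm)
    print(voxel_mm, occupied, dense)
```

Halving the voxel size multiplies the dense grid by eight, while the number of occupied voxels for this thin structure only doubles. That gap is what sparse voxel representations exploit.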

Deep Learning Architectures for 3D Plant Data

Point Cloud Processing Networks

Plant organs naturally exhibit irregular structures that are well-represented by point clouds, making specialized architectures essential for effective analysis:

- PointNet++: Builds upon the foundational PointNet architecture by incorporating hierarchical feature learning that captures local structures at multiple scales, enabling better handling of non-uniform point densities common in plant scans [6] [23].

- Dynamic Graph CNN (DGCNN): Utilizes dynamic graph updates to capture local geometric structures while maintaining permutation invariance. As the backbone of the 3D-NOD framework, it achieved a mean F1-score of 88.13% in new organ detection [23].

- PointNeXt: A refinement of the PointNet++ framework that enhances feature propagation through improved multilayer perceptron designs and InvResMLP blocks, demonstrating high accuracy across multiple crops with mIoU values of 89.21%, 89.19%, and 83.05% for sugarcane, maize, and tomato respectively [6].
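
The two ideas these architectures share, local neighbourhood features and order-independent aggregation, can be sketched without any deep learning framework. The example below builds k-nearest-neighbour edge features in the style of DGCNN's EdgeConv (each edge described by the centre point and the offset to its neighbour) and aggregates them with max pooling. It is a simplified illustration: the learned MLP that a real network applies to each edge feature is omitted, and the random point cloud, `k=3`, and all function names are assumptions.

```python
import math
import random


def knn_indices(points, k):
    """For each point, the indices of its k nearest neighbours (excluding itself)."""
    result = []
    for i, p in enumerate(points):
        dists = sorted((math.dist(p, q), j) for j, q in enumerate(points) if j != i)
        result.append([j for _, j in dists[:k]])
    return result


def edge_features(points, k):
    """EdgeConv-style inputs: for each edge (i, j), concatenate x_i and x_j - x_i."""
    feats = []
    for i, neighbours in enumerate(knn_indices(points, k)):
        xi = points[i]
        feats.append([
            tuple(xi) + tuple(b - a for a, b in zip(xi, points[j]))
            for j in neighbours
        ])
    return feats


def max_pool(vectors):
    """Channel-wise maximum, a symmetric (order-independent) aggregation."""
    return tuple(max(channel) for channel in zip(*vectors))


def global_descriptor(points, k=3):
    """Max-pool edge features per point, then max-pool across all points."""
    per_point = [max_pool(edges) for edges in edge_features(points, k)]
    return max_pool(per_point)


# Random toy point cloud (assumed data) and a shuffled copy of it.
rng = random.Random(0)
cloud = [(rng.gauss(0, 1), rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(32)]
shuffled = cloud[:]
rng.shuffle(shuffled)

print(global_descriptor(cloud) == global_descriptor(shuffled))
```

Because both aggregation steps use a maximum, reordering the input points leaves the descriptor unchanged. This is the permutation invariance that point cloud networks rely on.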

3D Convolutional Neural Networks

For voxel-based representations, 3D CNNs extend the successful principles of 2D CNNs to volumetric data:

- Sparse Convolutional Networks: Address the computational inefficiency of standard 3D CNNs by operating only on non-empty voxels, significantly reducing memory consumption while maintaining representational power. This is particularly valuable given the sparse nature of plant point clouds [27].

- U-Net 3D Variants: Adapt the successful encoder-decoder architecture with skip connections for volumetric segmentation, enabling precise voxel-wise labeling of plant organs while requiring substantial computational resources for high-resolution data [28].

Transformer-Based Architectures

Recent advances have incorporated transformer architectures with self-attention mechanisms for 3D plant data: