Deep Learning and Computer Vision in Plant Phenotyping: Methods, Applications, and Future Directions


Abstract

This article provides a comprehensive review of modern plant phenotyping methods leveraging deep learning and computer vision. It explores the foundational principles driving the shift from manual to automated, high-throughput systems and details the application of specific neural network architectures like CNNs, RNNs, and Transformers for tasks ranging from disease detection to morphological analysis. The content addresses critical challenges such as data scarcity, model generalization, and interpretability, offering troubleshooting and optimization strategies. Finally, it presents a comparative analysis of model performance across different conditions and datasets, benchmarking state-of-the-art approaches to guide researchers and professionals in selecting and validating methods for robust, real-world deployment.

From Manual Measurements to AI-Driven Insights: The Foundations of Modern Plant Phenotyping

Defining Plant Phenotyping and Its Critical Role in Food Security and Crop Improvement

Plant phenotyping is the scientific discipline concerned with the quantitative assessment of plant traits across different hierarchical scales, from the cellular level to the whole canopy [1] [2]. It encompasses the measurement and analysis of a plant's anatomical, ontogenetical, physiological, and biochemical properties to understand how its genetic makeup (genotype) interacts with environmental conditions and management practices to determine its observable characteristics and performance [1] [2]. The core objective is to establish a reliable link between the genotype and the resulting phenotype, which is crucial for selecting superior genotypes that will become future cultivars well-adapted to different environments [3].

Historically, phenotyping relied on labour-intensive manual methods in which experts visually scored plant samples and recorded characteristics, often requiring destructive harvesting for laboratory tests [3]. The low throughput of this approach limited both data accuracy and the number of traits that could be practically characterized [3]. The contemporary revolution in phenotyping lies in the adoption of high-throughput, non-destructive methods that use automated sensors, robotics, and data analytics to characterize plants rapidly and objectively [3] [1]. These modern platforms can now collect in hours what previously took field experts months, allowing researchers to focus more on data analysis and decision-making [3].

The Imperative for Advanced Phenotyping in Global Agriculture

The global plant phenotyping market, valued at approximately USD 242.9 million in 2023, is projected to grow steadily, reflecting its increasing importance in addressing core agricultural challenges [4]. This growth is fundamentally driven by the escalating global demand for food, with a population projected to exceed 9.7 billion by 2050, which necessitates a substantial increase in agricultural output without a proportional expansion of arable land or water resources [4]. Furthermore, there is an urgent need for climate-resilient crops capable of withstanding extreme weather patterns, including prolonged droughts, heatwaves, and emerging disease outbreaks [4] [5]. Phenotyping technologies are indispensable for rapidly identifying plant traits that confer resistance and tolerance to these abiotic and biotic stresses, thereby accelerating the development and deployment of robust crop varieties [4] [6].

Table 1: Primary Drivers of the Plant Phenotyping Market

| Driver | Impact |
|---|---|
| Food Demand | Necessary to increase agricultural output for a growing global population [4]. |
| Climate Change | Requires development of crops resilient to drought, heat, and new diseases [4] [5]. |
| Technology Integration | AI, ML, and robotics enable automated, high-throughput systems that replace manual measurements [4]. |

A significant bottleneck in crop improvement has been the disparity between the rapid advancements in genotyping technologies and our ability to collect high-quality phenotypic data at a similar scale and speed [6] [7]. Effective phenotyping is the essential bridge that connects genomic information to real-world plant performance, making it a cornerstone for modern genetic crop improvement, molecular breeding, and transgenic studies [6] [7]. By providing precise measurements of complex traits related to growth, yield, and stress adaptation, phenotyping empowers breeders and researchers to make data-driven selections, ultimately shortening the breeding cycle and enhancing crop productivity [6].

High-Throughput Phenotyping Technologies and Platforms

High-throughput phenotyping (HTP) leverages a suite of non-destructive imaging techniques and automated platforms to characterize plant traits rapidly and accurately. These technologies operate on the principle of measuring the interaction of electromagnetic radiation with plant tissues, which varies depending on the plant's physiological status [6] [7]. The data acquired from these sensors provide digital insights into plant health, structure, and function.

Table 2: Core Imaging Techniques in Modern Plant Phenotyping

| Imaging Technique | Measured Parameters | Key Applications |
|---|---|---|
| Visible Light Imaging | Plant biomass, architecture, height, color, growth dynamics [6] [7]. | Morphological analysis, growth monitoring, yield trait estimation [7]. |
| Thermal Imaging | Canopy/leaf temperature, stomatal conductance [6] [7]. | Assessment of plant water status and transpiration for drought stress detection [7]. |
| Fluorescence Imaging | Photosynthetic efficiency, quantum yield, leaf health status [6] [7]. | Detection of biotic and abiotic stresses before visual symptoms appear [6]. |
| Hyperspectral Imaging | Leaf/canopy water content, pigment composition, phytochemical levels [6] [7]. | Detailed health status assessment, nutrient content analysis, specific disease identification [6]. |
| 3D Imaging | Canopy and shoot structure, root architecture, leaf angle distribution [6] [7]. | Detailed architectural analysis for light interception and plant development studies [7]. |

These imaging techniques are deployed across various platforms, ranging from controlled environments (growth chambers, greenhouses) to field conditions [6]. In controlled settings, sophisticated robotics and conveyor systems enable the automated phenotyping of hundreds of plants per day under defined conditions [2]. For field-based phenotyping, which is critical for validating traits in real-world agricultural scenarios, platforms include Unmanned Aerial Vehicles (UAVs or drones), Unmanned Ground Vehicles (UGVs), and tractor-mounted systems [3] [4]. These field platforms, equipped with various sensors, capture canopy-level data over large acreages, directly contributing to precision agriculture models [4].

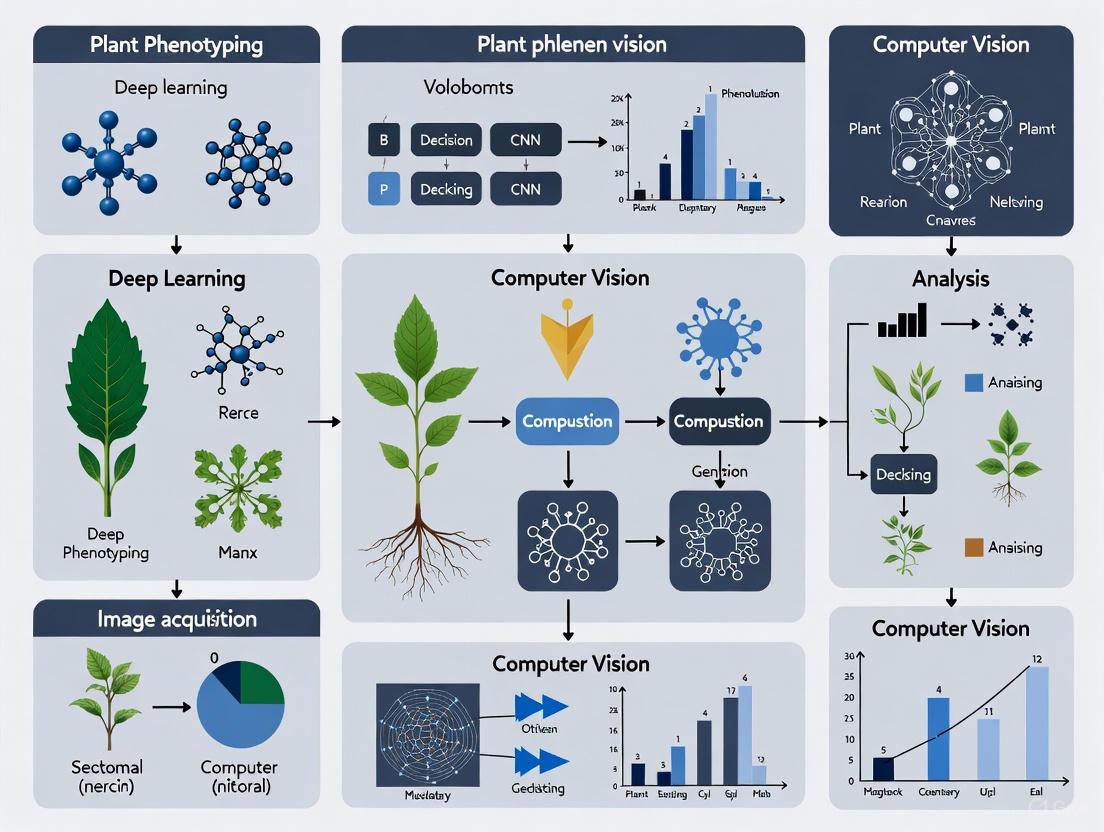

Figure 1: Workflow of a High-Throughput Phenotyping System. The process begins with the selection of an environment, which determines the appropriate platform. These platforms are equipped with various imaging sensors that collect raw data, which is subsequently analyzed to extract meaningful plant traits.

Application Note: Protocol for Multi-Spectral Phenotyping of Drought Stress Response

This protocol outlines a standardized procedure for using multi-spectral imaging to quantify the physiological response of cereal crops to progressive drought stress. The method is designed for high-throughput applications in a controlled greenhouse environment.

Research Reagent and Material Solutions

Table 3: Essential Materials for Drought Stress Phenotyping

| Item | Specification/Function |
|---|---|
| Plant Material | 20 genotypes of wheat (Triticum aestivum), with 10 plants per genotype [6]. |
| Growth System | Pot-based with standardized potting mix; automated irrigation system for initial well-watered phase [6]. |
| Multi-Spectral Camera | Sensor sensitive in visible (RGB) and near-infrared (NIR) bands, mounted on a movable gantry or UGV [6] [7]. |
| Thermal Camera | For simultaneous capture of canopy temperature, a proxy for stomatal conductance and water status [6] [7]. |
| Environmental Sensors | To continuously monitor and record light, air temperature, and relative humidity [6]. |
| Data Storage & Compute | Robust system for handling large image datasets; software for calculating vegetation indices (e.g., NDVI) [6] [7]. |

Experimental Procedure

Plant Growth and Experimental Design:

- Sow seeds in a randomized complete block design to account for microenvironmental variation within the greenhouse.

- Grow all plants under well-watered conditions (maintaining soil moisture at field capacity) until the tillering stage (Zadoks growth stage 25-29).

- Implement the drought stress treatment by withholding water from the designated stress group. The control group continues to receive regular irrigation.

Image Acquisition Protocol:

- Frequency: Acquire images every day at the same time (e.g., mid-morning, 10:00 AM) to minimize diurnal variation effects.

- Settings: Use fixed camera settings (aperture, ISO, shutter speed) and consistent lighting (or use camera flash) for the entire experiment to ensure data comparability.

- Capture: For each plant, capture co-registered multi-spectral (RGB and NIR) and thermal images from a nadir (top-down) view. Ensure the entire plant is within the frame.

Data Processing and Trait Extraction:

- Upload images to a data management platform (e.g., Hiphen's Cloverfield) for automated processing [3].

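- Calculate vegetation indices and related traits algorithmically from the images. Key metrics include the Normalized Difference Vegetation Index (NDVI), computed as (NIR − Red) / (NIR + Red), and the projected shoot area (PSA).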

- Extract mean canopy temperature from the thermal images.
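
The trait-extraction steps above can be illustrated with a minimal sketch. It assumes the NIR, red, and thermal images are already co-registered and have been reduced to small grids of reflectance and temperature values; the pixel values, the NDVI threshold of 0.4 used to pick plant pixels, and the function names are illustrative assumptions, not part of the protocol.

```python
from statistics import mean

def ndvi(nir, red):
    """Per-pixel NDVI = (NIR - Red) / (NIR + Red) for two equally sized 2D grids."""
    return [
        [(n - r) / (n + r) if (n + r) else 0.0 for n, r in zip(nir_row, red_row)]
        for nir_row, red_row in zip(nir, red)
    ]

def masked_mean(values, mask):
    """Mean of the pixels where the mask is True (plant pixels only)."""
    selected = [
        v
        for value_row, mask_row in zip(values, mask)
        for v, keep in zip(value_row, mask_row)
        if keep
    ]
    if not selected:
        raise ValueError("mask selects no pixels")
    return mean(selected)

# Toy 2x2 co-registered grids (hypothetical values).
nir = [[0.60, 0.55], [0.20, 0.58]]        # near-infrared reflectance
red = [[0.10, 0.12], [0.18, 0.09]]        # red reflectance
thermal_c = [[24.1, 24.5], [29.8, 24.3]]  # surface temperature, deg C

ndvi_map = ndvi(nir, red)
# Assumed threshold: treat pixels with NDVI above 0.4 as plant, the rest as background.
plant_mask = [[value > 0.4 for value in row] for row in ndvi_map]

mean_ndvi = masked_mean(ndvi_map, plant_mask)
mean_canopy_temp = masked_mean(thermal_c, plant_mask)
print(f"mean NDVI: {mean_ndvi:.3f}, mean canopy temperature: {mean_canopy_temp:.1f} C")
```

Restricting both averages to the plant mask keeps soil and pot pixels from biasing the canopy temperature. For full-resolution images an array library would normally replace the nested lists.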

Data Analysis:

- Plot the temporal trajectory of NDVI, PSA, and canopy temperature for each genotype under both control and stress conditions.

- Genotypes that maintain higher NDVI and PSA values and lower canopy temperatures under drought conditions are identified as possessing superior drought tolerance.
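
One possible way to turn this comparison into a ranking is sketched below. The genotype labels, trait values, and the choice to sort first by NDVI retention and then by canopy temperature rise are assumptions made for illustration; the protocol itself does not prescribe a scoring rule.

```python
from dataclasses import dataclass

@dataclass
class TraitMeans:
    """Per-genotype trait means at one time point (hypothetical units)."""
    ndvi: float
    psa_cm2: float
    canopy_temp_c: float

def drought_response(control: TraitMeans, stress: TraitMeans) -> dict:
    """Express the stressed plants relative to their well-watered controls."""
    return {
        "ndvi_retention": stress.ndvi / control.ndvi,
        "psa_retention": stress.psa_cm2 / control.psa_cm2,
        "temp_rise_c": stress.canopy_temp_c - control.canopy_temp_c,
    }

# Hypothetical (control, stress) means for three genotypes.
data = {
    "G01": (TraitMeans(0.82, 150.0, 24.0), TraitMeans(0.74, 130.0, 25.5)),
    "G02": (TraitMeans(0.80, 160.0, 24.2), TraitMeans(0.52, 95.0, 28.9)),
    "G03": (TraitMeans(0.78, 140.0, 23.9), TraitMeans(0.66, 112.0, 26.4)),
}

responses = {g: drought_response(c, s) for g, (c, s) in data.items()}

# Assumed rule: higher NDVI retention first, smaller temperature rise as tie-breaker.
ranking = sorted(
    responses,
    key=lambda g: (-responses[g]["ndvi_retention"], responses[g]["temp_rise_c"]),
)
for genotype in ranking:
    print(genotype, {k: round(v, 3) for k, v in responses[genotype].items()})
```

Normalizing each stressed value by its own control separates drought tolerance from baseline differences in vigor between genotypes.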

The Integration of Deep Learning and Computer Vision

The massive volume of image data generated by high-throughput phenotyping platforms presents a significant challenge in data analysis, creating a new bottleneck [8] [9]. Deep Learning (DL), a subset of artificial intelligence, has emerged as a transformative technology to address this challenge by automating the extraction of meaningful information from plant images [8] [9].

Deep learning, particularly Convolutional Neural Networks (CNNs), reduces the need for manual feature engineering by learning hierarchical representations directly from raw pixel data [8]. These algorithms are now crucial for a wide range of phenotyping tasks, including:

- Image Segmentation: Automatically distinguishing plant pixels from background soil or other objects [8].

- Classification and Counting: Identifying and counting specific organs, such as leaves, flowers, or kernels [8].

- Disease and Stress Detection: Identifying subtle patterns indicative of biotic or abiotic stress long before they are visible to the human eye [4] [8].

- Predictive Modeling: Unraveling complex genotype-phenotype-environment relationships to predict plant performance [8] [9].

The integration of DL into phenotyping pipelines is a key trend that significantly boosts both the scale and precision of plant research, enabling more powerful and predictive analyses for crop improvement [4] [9].

Figure 2: Role of Deep Learning in Image Analysis. Raw plant images are processed by deep learning models, which automate the extraction of complex phenotypic traits, enabling tasks such as organ counting, stress detection, and yield prediction.

Challenges and Future Perspectives

Despite its promising potential, the widespread adoption of advanced plant phenotyping faces several hurdles. A significant challenge is the high initial capital investment required for advanced phenotyping infrastructure, which can be a barrier for smaller institutions and developing economies [4]. Furthermore, the complexity of data management and analysis remains a major constraint; phenotyping generates petabytes of multi-dimensional data, and extracting actionable insights demands advanced computational resources and a highly skilled workforce [4]. The lack of standardized protocols across different platforms and institutions also hinders data comparability and collaborative progress [4].

The future of plant phenotyping will be shaped by the continued integration of Artificial Intelligence (AI) and Machine Learning (ML) to enhance data analysis and predictive power [4] [9]. There is also a strong trend toward scaling up field-based phenotyping to validate traits in real-world conditions using UAVs and UGVs [4]. Another critical frontier is the move towards multi-modal data fusion, combining imaging data with other 'omics' data (genomics, metabolomics) and environmental records to build a more holistic understanding of plant function and resilience [10] [5]. Overcoming current challenges and leveraging these trends will be paramount to unlocking the full potential of plant phenotyping in safeguarding global food security and accelerating crop improvement for a sustainable future.

Plant phenotyping, the science of measuring plant structural and physiological characteristics, is fundamental to crop improvement and agricultural research [11] [12]. Traditional methods for obtaining these measurements have historically relied on manual visual assessments and tools like rulers and calipers [11] [12]. While these approaches have provided valuable data, they introduce significant bottlenecks that impair the scalability, accuracy, and efficiency of modern breeding programs and physiological studies. This application note details the core limitations of traditional phenotyping—manual labor intensiveness, destructive sampling, and inherent subjectivity—and frames them within the context of a shifting research paradigm that leverages deep learning and computer vision to overcome these constraints. The transition to high-throughput, non-destructive, and automated phenotyping is crucial for accelerating the development of crops resilient to climate change and for supporting global food security [11] [12].

Core Limitations of Traditional Phenotyping

The table below summarizes the three primary limitations of traditional phenotyping methods and their impacts on research and breeding programs.

Table 1: Core Limitations of Traditional Plant Phenotyping Methods

| Limitation | Description | Impact on Research |
|---|---|---|
| Manual Labor | Relies on human effort for visual observations and physical measurements using tools like rulers and calipers [11]. | Time-consuming and labor-intensive, making it unsuitable for large-scale field operations [11]. Creates a bottleneck in data acquisition, limiting the number of individuals and traits that can be assessed [12]. |
| Destructive Sampling | Often requires plants to be damaged or uprooted to study internal properties, such as root architecture or biomass [11]. | Makes it impossible to monitor the same plant throughout its life cycle, capturing only a single moment in time [11]. Prevents longitudinal studies on the same individual, which is critical for understanding growth dynamics [13]. |
| Subjectivity | Measurements and scoring are influenced by the individual researcher's perception and interpretation [11] [12]. | Introduces inconsistency and error, as different people may observe and interpret the same plant traits differently [11]. Data accuracy and reliability cannot be guaranteed, compromising the validity of downstream analyses [12]. |

Transition to Modern High-Throughput Phenotyping

The limitations of traditional methods are being addressed by high-throughput plant phenotyping (HTP), which leverages a suite of non-destructive imaging technologies and automated analysis. The following workflow illustrates how modern phenotyping integrates these technologies to create an efficient, data-driven pipeline.

Experimental Protocol: A Case Study in Non-Destructive Vigor Assessment

This protocol details a specific experiment that demonstrates the transition from a destructive traditional method to a non-destructive, image-based technique for assessing early seedling vigor in rice—a critical trait for direct-seeded cultivation systems [13].

Application Note: Early Seedling Vigor Phenotyping in Direct-Seeded Rice

1. Background and Objective: Early seedling vigor helps young plants compete with weeds and establish successfully. Traditional screening relies on destructive harvests to measure biomass, preventing the tracking of individual plants over time and making the selection of superior genotypes in breeding programs slow and inefficient [13]. This protocol establishes a non-destructive, image-based method to quantify seedling vigor using whole-plant area (WPA) as a key proxy metric.

2. Experimental Setup and Workflow: The following diagram contrasts the traditional destructive method with the modern image-based protocol.

3. Key Findings and Validation:

- Strong Correlation: The whole-plant area estimated from images (WPAi) showed a strong positive correlation with the whole-plant area measured by a destructive flatbed scanner (WPAs), with regression analysis showing WPAs explained 83.11% and 87.33% of the variation in WPAi at 14 and 28 days after sowing (DAS), respectively [13].

- Growth Rate Validation: The crop growth rate calculated from WPAi (CGR-WPAi) was strongly correlated with the CGR of shoot dry weight with tillers (R² = 74.26%) and root dry weight (R² = 45.20%) from destructive sampling [13].

- Novel Geometric Traits: The study identified new non-destructive metrics like convex hull and top view area, which effectively differentiated vigorous genotypes and reduced labor time by 80% while halving labor costs [13].
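
To make the geometric traits concrete, the sketch below derives a top-view area and a convex hull area from a binary plant mask. It is an illustration only: the toy mask, the use of pixel corners as hull input, and the monotone-chain hull routine are assumptions and not the procedure used in the cited study [13].

```python
def cross(o, a, b):
    """Z-component of the cross product of vectors OA and OB."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

def convex_hull(points):
    """Andrew's monotone chain; returns hull vertices without collinear points."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts
    lower, upper = [], []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    return lower[:-1] + upper[:-1]

def polygon_area(vertices):
    """Shoelace formula for the area of a simple polygon."""
    total = 0
    for i, (x1, y1) in enumerate(vertices):
        x2, y2 = vertices[(i + 1) % len(vertices)]
        total += x1 * y2 - x2 * y1
    return abs(total) / 2

# Toy top-view segmentation mask (1 = plant pixel), hypothetical.
mask = [
    [0, 0, 1, 0, 0],
    [0, 1, 1, 1, 0],
    [1, 1, 1, 1, 1],
    [0, 1, 1, 1, 0],
    [0, 0, 1, 0, 0],
]

# Top-view area: number of plant pixels.
top_view_area_px = sum(sum(row) for row in mask)

# Use the four corners of every plant pixel so the hull encloses whole pixels.
corners = {
    (x + dx, y + dy)
    for y, row in enumerate(mask)
    for x, value in enumerate(row)
    if value
    for dx in (0, 1)
    for dy in (0, 1)
}
hull = convex_hull(corners)
hull_area_px = polygon_area(hull)
solidity = top_view_area_px / hull_area_px  # compactness of the plant outline

print(top_view_area_px, hull_area_px, round(solidity, 3))
```

Multiplying pixel areas by the squared ground sampling distance of the camera would convert them to physical units.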

The Scientist's Toolkit: Research Reagent Solutions

The following table outlines key technologies and materials that form the foundation of a modern, computer vision-based phenotyping setup.

Table 2: Essential Tools for Modern High-Throughput Plant Phenotyping

| Category | Tool / Technology | Function in Phenotyping |
|---|---|---|
| Imaging Sensors | RGB Camera | Captures standard color images for morphological analysis, leaf counting, and flower detection [11] [14]. |
| | Hyperspectral Imager | Captures a wide range of spectral bands to infer chemical composition, chlorophyll levels, water content, and nutrient deficiencies [11] [15]. |
| | LiDAR / 3D Scanner | Laser-based scanning to create detailed 3D models of plants for analyzing complex structures, biomass, and canopy architecture [11] [15]. |
| | Thermal Camera | Measures infrared radiation to assess plant surface temperature, useful for monitoring water stress and health [11] [16]. |
| Data Acquisition Platforms | Unmanned Aerial Vehicle (UAV) / Drone | Enables high-throughput, aerial-based phenotyping of large field populations, often carrying multiple sensors [11] [15] [14]. |
| | Ground Robot (e.g., BoniRob) | Provides ground-level, automated phenotyping screening for detailed organ-level data [16]. |
| Software & Algorithms | Deep Learning Models (YOLO11, CNN, ViT) | Performs automated image analysis for tasks like object detection, classification, and segmentation to extract phenotypic information [11] [17] [14]. |
| | Image Analysis Software (PlantCV, ImageJ) | Provides user-friendly platforms for applying image processing techniques and quantifying traits without extensive computational expertise [14]. |

The limitations of traditional phenotyping—its reliance on manual labor, its destructive nature, and its inherent subjectivity—have long been a bottleneck in plant science and breeding. The integration of high-throughput phenotyping techniques, powered by computer vision and deep learning, presents a transformative solution. As demonstrated by the rice seedling vigor protocol, modern methods can provide non-destructive, objective, and highly scalable alternatives that yield data with strong correlations to traditional metrics while enabling dynamic trait analysis. Adopting these tools and protocols allows researchers to overcome historical constraints, accelerate the breeding cycle, and contribute more effectively to global food security efforts.

High-throughput phenotyping (HTP) represents a paradigm shift in agricultural and biological research, addressing a major bottleneck in crop improvement pipelines: the ability to phenotype crops quickly and efficiently [9]. This shift is characterized by the integration of automation, non-destructive imaging, and advanced computational analysis to quantitatively measure plant structural and functional characteristics [18] [19]. Plant phenotyping, defined as the assessment of complex plant traits such as growth, development, stress tolerance, architecture, physiology, and yield, plays a crucial role in informing both crop breeding and crop management decisions [18]. The move from labor-intensive, destructive, and low-throughput manual methods to automated, scalable solutions enables researchers to analyze plant traits under diverse environmental conditions with minimal manual input, thereby accelerating strain screening and optimization for applications in biofuels, bioremediation, and nutraceuticals [20].

Core Imaging Technologies for Non-Destructive Analysis

Non-destructive imaging forms the foundation of high-throughput phenotyping, allowing repeated measurements of the same plants throughout their lifecycle. The primary imaging modalities each provide unique insights into plant health and performance.

Table 1: Core Imaging Modalities in High-Throughput Plant Phenotyping

| Imaging Modality | Measured Parameters | Applications in Phenotyping | Technical Considerations |
|---|---|---|---|
| RGB Imaging | Projected leaf area, shoot biomass, plant architecture, colour analysis [19] | Growth rate analysis, morphology assessment, phenology tracking [19] [21] | Multiple views (top, side) improve accuracy; affected by leaf overlapping and circadian movements [19] |
| Chlorophyll Fluorescence Imaging (CFIM) | Quantum yields of photochemistry, non-photochemical energy dissipation [19] | Photosynthetic efficiency, early stress detection, photosynthetic function analysis [19] | Requires dark adaptation; kinetic CFIM provides most comprehensive data [19] |
| Thermal Imaging | Leaf surface temperature [19] | Water stress detection, stomatal conductance assessment [19] | Requires careful environmental control; temperature differences indicate transpiration rates [19] |
| Hyperspectral Imaging | Reflectance across numerous spectral bands [19] | Chlorophyll content, nutrient status, pigment composition [19] | Provides chemical composition data through spectral signatures [19] |

Protocol: Multi-Modal Imaging for Stress Response Analysis

Purpose: To non-destructively monitor plant responses to abiotic stress using integrated imaging sensors.

Materials:

- Plant samples subjected to stress treatments and controls

- Automated phenotyping platform with integrated RGB, chlorophyll fluorescence, and thermal cameras

- Image analysis software (commercial or open-source)

- Data processing workstation

Procedure:

- Plant Preparation: Establish a minimum of 10 biological replicates per genotype and treatment. For controlled environments, use randomized complete block designs.

- Imaging Schedule: Capture images at consistent intervals (e.g., daily or every other day) at the same time of day to minimize diurnal variation effects.

- RGB Imaging: Acquire images from multiple angles (top and at least two side views) to accurately estimate biomass and projected leaf area [19].

- Chlorophyll Fluorescence: Dark-adapt plants for 20 minutes prior to measurement. Capture both minimal (F₀) and maximal (Fₘ) fluorescence levels to calculate Fᵥ/Fₘ = (Fₘ - F₀)/Fₘ, which estimates the maximum quantum yield of PSII photochemistry [19].

- Thermal Imaging: Ensure consistent environmental conditions during capture. Use reference surfaces of known temperature for calibration.

- Data Extraction: Use automated image analysis to extract phenotypic traits from all imaging modalities.

- Data Integration: Correlate data across imaging platforms to build comprehensive phenotypic profiles.
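
The chlorophyll fluorescence step reduces to a one-line calculation, sketched here under stated assumptions: the F₀ and Fₘ readings and the treatment labels are hypothetical, and the input checks are an illustrative safeguard rather than part of the protocol.

```python
def fv_fm(f0: float, fm: float) -> float:
    """Maximum quantum yield of PSII photochemistry: Fv/Fm = (Fm - F0) / Fm."""
    if fm <= 0:
        raise ValueError("Fm must be positive")
    if not 0 <= f0 <= fm:
        raise ValueError("F0 must lie between 0 and Fm")
    return (fm - f0) / fm

# Hypothetical dark-adapted (F0, Fm) readings per plant, in arbitrary units.
readings = {
    "control": [(310.0, 1550.0), (295.0, 1475.0)],
    "drought": [(420.0, 1200.0), (450.0, 1250.0)],
}

# Average Fv/Fm per treatment group.
mean_yield = {
    treatment: sum(fv_fm(f0, fm) for f0, fm in pairs) / len(pairs)
    for treatment, pairs in readings.items()
}

for treatment, value in mean_yield.items():
    print(f"{treatment}: mean Fv/Fm = {value:.3f}")
```

Rejecting readings where F₀ exceeds Fₘ catches swapped or mis-exposed measurements before they enter the integrated phenotypic profile.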

Automated and Scalable Phenotyping Platforms

Recent advances in phenotyping platforms focus on integrating robotics with multiple sensing technologies to achieve unprecedented throughput and data integration. The PhenoSelect system exemplifies this approach, combining robotics, spectroscopy, fluorometry, flow cytometry, and data analytics for high-throughput, multi-trait phenotyping [20]. Such systems can profile multiple algal species across 96 different environmental and chemical conditions simultaneously, quantitatively measuring parameters such as photosynthetic efficiency, growth rate, and cell size with minimal manual intervention [20].

A key innovation in automated phenotyping is the quantification of phenotypic plasticity through computational approaches like convex hull volume calculation, which helps characterize how species respond to varying environmental conditions [20]. For example, automated systems have revealed that Haematococcus pluvialis exhibits the largest phenome size (indicating broad plasticity), while Nannochloropsis australis shows the smallest among studied species [20]. Visualization tools such as Ranked Spider Plots and heatmaps enable researchers to identify patterns across multiple traits and conditions [20].

Protocol: Automated System Operation for High-Throughput Screening

Purpose: To operate an automated phenotyping platform for scalable screening of plant populations.

Materials:

- Automated phenotyping platform with robotic handling system

- Multi-sensor array (e.g., RGB, fluorescence, spectral sensors)

- Environmental control system

- Data management and analysis infrastructure

Procedure:

- System Calibration: Perform daily calibration of all sensors using standardized reference materials. Verify robotic positioning accuracy.

- Experimental Setup: Program the experimental layout into the system software, assigning specific positions to different genotypes and treatments.

- Automated Scheduling: Configure the imaging schedule to maximize throughput while avoiding measurement interference (e.g., sufficient dark adaptation for fluorescence measurements).

- Quality Control Checks: Implement automated quality checks for focus, exposure, and sensor performance during data collection.

- Data Management: Use automated pipelines to transfer, store, and pre-process acquired data. Implement backup protocols to prevent data loss.

- Trait Extraction: Apply computer vision algorithms to extract quantitative traits from images. Use batch processing for large datasets.

- Data Validation: Periodically validate automated measurements with manual assessments to ensure data quality and reliability.
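
The data validation step can be sketched as a simple agreement check between automated and manual measurements. The paired values below are hypothetical, and the choice of the squared Pearson correlation and root-mean-square error as agreement metrics is an assumption; a platform may define its own acceptance criteria.

```python
from math import sqrt

def pearson_r(x, y):
    """Pearson correlation coefficient between two equally long sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

def rmse(reference, estimate):
    """Root-mean-square error of the estimate against the reference."""
    return sqrt(sum((r - e) ** 2 for r, e in zip(reference, estimate)) / len(reference))

# Hypothetical plant heights in cm: ruler measurements vs. image-derived values.
manual = [10.0, 20.0, 30.0, 40.0]
automated = [12.0, 19.0, 33.0, 41.0]

r_squared = pearson_r(manual, automated) ** 2
error_cm = rmse(manual, automated)
print(f"R^2 = {r_squared:.3f}, RMSE = {error_cm:.2f} cm")
```

Reporting an error in trait units alongside the correlation is useful because a high R² alone does not reveal a systematic offset between the two methods.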

Deep Learning and Computer Vision in Phenotyping Analysis

Deep learning has emerged as a transformative technology for analyzing the large image datasets generated by high-throughput phenotyping systems [9]. Convolutional Neural Networks (CNNs) have demonstrated remarkable success in extracting phenotypic traits from imaging data, including leaf count, shape, size, and disease severity [22]. These approaches have evolved from traditional machine learning methods that struggled with generalization to new conditions or crop types [22].

More recently, hybrid architectures that combine transformer-based models with lightweight convolutional modules have shown improved performance for phenotyping tasks [22]. These frameworks incorporate three key elements: (1) a hybrid generative model to capture complex spatial and temporal phenotypic patterns; (2) a biologically-constrained optimization strategy to improve prediction accuracy and interpretability; and (3) an environment-aware module to address environmental variability [22].

Protocol: Deep Learning Implementation for Image-Based Phenotyping

Purpose: To implement a deep learning pipeline for automated trait extraction from plant images.

Materials:

- High-performance computing workstation with GPU acceleration

- Curated dataset of plant images with corresponding manual annotations

- Deep learning frameworks (e.g., TensorFlow, PyTorch)

- Data augmentation utilities

Procedure:

- Data Preparation: Collect and annotate a minimum of 100 images per object class or genotype to ensure robust model training [21]. For limited data scenarios, employ patch-based classification to increase effective dataset size [21].

- Data Augmentation: Apply transformations including rotation, scaling, colour adjustment, and flipping to increase dataset diversity and improve model generalization.

- Model Selection: Choose appropriate network architectures based on the phenotyping task:

- Biologically-Constrained Optimization: Incorporate domain knowledge as constraints during training to ensure biologically plausible predictions [22].

- Model Training: Implement transfer learning when possible by fine-tuning pre-trained models on plant-specific datasets to reduce training time and data requirements [22].

- Validation: Evaluate model performance with k-fold cross-validation on the training data and confirm it on an independent held-out test set. Employ metrics appropriate to the task, such as accuracy, F1-score, and mean average precision.

- Deployment: Integrate the trained model into the phenotyping pipeline for automated trait extraction.
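
The validation step of this procedure can be sketched without any deep learning framework. The example below shows only how fold indices might be generated and how an F1-score is computed; the function names, the fixed random seed, and the toy labels are assumptions, and an independent test set is presumed to have been set aside beforehand.

```python
import random

def k_fold_indices(n_samples, k, seed=0):
    """Yield (train, validation) index lists for k shuffled, non-overlapping folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    for i, validation in enumerate(folds):
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, validation

def f1_score(y_true, y_pred, positive=1):
    """F1 = harmonic mean of precision and recall for the positive class."""
    tp = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    fp = sum(t != positive and p == positive for t, p in zip(y_true, y_pred))
    fn = sum(t == positive and p != positive for t, p in zip(y_true, y_pred))
    if tp == 0:
        return 0.0
    precision, recall = tp / (tp + fp), tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)

# Ten annotated images split into five folds (image indices only).
splits = list(k_fold_indices(n_samples=10, k=5))
for fold_id, (train, validation) in enumerate(splits):
    print(f"fold {fold_id}: train={sorted(train)} validation={sorted(validation)}")

# Toy labels: 1 = diseased leaf, 0 = healthy leaf (hypothetical predictions).
print("F1:", f1_score([1, 1, 0, 0], [1, 0, 1, 0]))
```

When several images come from the same plant or plot, splitting by plant rather than by image avoids leaking near-duplicates between training and validation folds.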

Explainable AI and Interpretability in Phenotyping

As deep learning models become more complex, their "black box" nature presents challenges for plant scientists who need to understand the relationship between model predictions and plant physiology [18]. Explainable AI (XAI) addresses this issue by providing tools and techniques that help researchers interpret, understand, and trust AI model decisions [18] [24]. The adoption of XAI in plant phenotyping is still in its early stages but growing in importance [18].

XAI methods can be categorized as either model-specific (applicable to specific model architectures) or model-agnostic (applicable to any model) [18]. Popular techniques include saliency maps that highlight image regions most influential in model decisions, feature visualization that reveals what patterns models have learned to detect, and surrogate models that approximate complex models with simpler, interpretable ones [18].

Protocol: Implementing Explainable AI for Phenotyping Models

Purpose: To apply XAI techniques for interpreting deep learning models in plant phenotyping.

Materials:

- Trained deep learning models for phenotyping tasks

- XAI libraries (e.g., SHAP, LIME, Captum)

- Visualization tools

- Domain knowledge of plant biology

Procedure:

- Model Selection: Choose appropriate XAI techniques based on model architecture and interpretation goals.

- Saliency Map Generation: Apply gradient-based methods to identify image regions most influential for model predictions.

- Feature Importance Analysis: Use permutation-based methods to quantify the importance of different input features.

- Biological Validation: Correlate model explanations with known biological knowledge to validate that models are learning meaningful features.

- Comparative Analysis: Compare explanations across different genotypes, treatments, or growth stages to identify patterns.

- Model Refinement: Use insights from XAI to identify potential model biases or errors and refine training data or architecture accordingly.

- Visualization: Create clear visualizations that communicate model decisions to domain experts without technical backgrounds.
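
The feature importance step in the procedure above can be sketched with a plain permutation test: shuffle one input feature at a time and measure how much the prediction error grows. The three trait columns and the `toy_model` function are hypothetical; in practice a trained model's prediction function would be passed in, and scikit-learn's `sklearn.inspection.permutation_importance` offers a maintained implementation of the same idea.

```python
import numpy as np

def permutation_importance(predict, X, y, n_repeats=10, seed=0):
    """Mean increase in MSE when each feature column is shuffled."""
    rng = np.random.default_rng(seed)

    def mse(pred):
        return float(np.mean((pred - y) ** 2))

    baseline = mse(predict(X))
    importances = np.zeros(X.shape[1])
    for j in range(X.shape[1]):
        increases = []
        for _ in range(n_repeats):
            X_perm = X.copy()
            X_perm[:, j] = rng.permutation(X_perm[:, j])
            increases.append(mse(predict(X_perm)) - baseline)
        importances[j] = np.mean(increases)
    return importances

# Hypothetical traits: column 0 matters most, column 2 is ignored by the model.
data_rng = np.random.default_rng(42)
X = data_rng.normal(size=(200, 3))
y = 3.0 * X[:, 0] + 0.5 * X[:, 1]

def toy_model(features):
    return 3.0 * features[:, 0] + 0.5 * features[:, 1]

imp = permutation_importance(toy_model, X, y)
```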

Emerging Technologies and Future Directions

High-throughput phenotyping continues to evolve, and several emerging technologies promise to change how it is practiced. Large Language Models (LLMs) and multi-modal approaches show potential for simplifying interaction with complex vision models [25]. Systems like PhenoGPT use LLMs to invoke the most appropriate pre-trained vision models for plant tasks specified in free text, lowering the barrier for plant scientists without an extensive computational background [25].

Another significant trend is the move toward field-based high-throughput phenotyping to capture trait expression under real-world conditions [21]. For perennial crops like grapevines, field phenotyping is particularly important for evaluating the full phenotypic variability of traits like yield or plant vigour throughout the season [21].

Table 2: Application of High-Throughput Phenotyping Across Scales and Environments

| Phenotyping Scale | Technological Requirements | Measurable Traits | Applications |

|---|---|---|---|

| Laboratory / Controlled Environment | Automated imaging systems, environmental control, robotic handling [19] [21] | Detailed morphological traits, precise physiological responses [21] | Fundamental research, gene function analysis, early screening [21] |

| Greenhouse | Semi-controlled environments, mobile gantries or conveyor systems [19] | Disease progression, growth patterns under semi-controlled conditions [21] | Pre-breeding screening, preliminary yield assessment [21] |

| Field | UAVs, ground vehicles, weather-proof sensors, GPS [21] | Yield components, canopy architecture, stress responses under natural conditions [21] | Breeding selection, agronomic management, genotype × environment interaction studies [21] |

Protocol: Field-Based High-Throughput Phenotyping

Purpose: To implement high-throughput phenotyping under field conditions for perennial crops.

Materials:

- UAVs with multi-spectral or hyperspectral cameras

- Ground vehicles with sensors

- GPS and geotagging capability

- Weather monitoring stations

- Data processing pipeline for large datasets

Procedure:

- Experimental Design: Establish field trials with appropriate replication and randomization. Include reference genotypes with known characteristics.

- Sensor Selection: Choose sensors appropriate for target traits (e.g., multispectral for vegetation indices, thermal for water stress).

- Flight Planning: For UAV-based phenotyping, program automated flight paths with consistent altitude, speed, and overlap.

- Temporal Scheduling: Plan capture times to coincide with key growth stages and optimal environmental conditions (e.g., midday for water stress assessment).

- Data Management: Implement robust data management systems for large volumes of field data, including metadata on environmental conditions.

- Spatial Analysis: Apply geospatial analysis to account for field heterogeneity and positional effects.

- Data Integration: Combine field phenotyping data with environmental and genomic data for comprehensive analysis.
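
The flight planning step can be made concrete with a small calculation. The sketch below assumes a nadir-pointing pinhole camera over flat terrain, with the sensor width across the flight track and the sensor height along it; the camera numbers in the example call are illustrative, not taken from the cited studies.

```python
def plan_flight(altitude_m, focal_mm, sensor_w_mm, sensor_h_mm,
                image_w_px, front_overlap, side_overlap, speed_m_s):
    """Derive ground resolution and spacing from camera geometry and overlap."""
    footprint_w = altitude_m * sensor_w_mm / focal_mm  # across-track, metres
    footprint_h = altitude_m * sensor_h_mm / focal_mm  # along-track, metres
    photo_spacing = footprint_h * (1.0 - front_overlap)
    return {
        "gsd_cm_per_px": 100.0 * footprint_w / image_w_px,
        "line_spacing_m": footprint_w * (1.0 - side_overlap),
        "photo_spacing_m": photo_spacing,
        "trigger_interval_s": photo_spacing / speed_m_s,
    }

# Illustrative values only: 30 m altitude, 80% front and 70% side overlap.
plan = plan_flight(altitude_m=30, focal_mm=8.8, sensor_w_mm=13.2,
                   sensor_h_mm=8.8, image_w_px=5472,
                   front_overlap=0.8, side_overlap=0.7, speed_m_s=3.0)
```

Keeping altitude and speed fixed across flights keeps the ground sample distance comparable between capture dates.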

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagent Solutions for High-Throughput Phenotyping

| Tool/Category | Specific Examples | Function/Application |

|---|---|---|

| Imaging Sensors | RGB cameras, Chlorophyll fluorescence imagers, Thermal cameras, Hyperspectral sensors [19] | Non-destructive measurement of plant morphology, physiological status, and chemical composition [19] |

| Automation Systems | Robotic handlers, Conveyor systems, Automated liquid handlers [20] | Enable high-throughput, reproducible sample processing and measurement with minimal manual intervention [20] |

| AI Models | CNN architectures (U-Net, ResNet), Transformer models, Hybrid architectures [22] [23] | Automated trait extraction, pattern recognition, and prediction from image data [22] |

| Data Analysis Platforms | PhenoSelect [20], Deep learning frameworks (TensorFlow, PyTorch) [22] | Data integration, visualization (Ranked Spider Plots, heatmaps), and trait quantification [20] |

| Reference Materials | Colour standards, Thermal references, Fluorescence standards [19] | Sensor calibration and data normalization across measurement sessions [19] |

Plant phenotyping is the comprehensive assessment of complex plant traits such as growth, development, tolerance, resistance, architecture, physiology, ecology, and yield [7]. The advancement of high-throughput phenotyping platforms using non-destructive imaging techniques has revolutionized plant biology research and breeding programs by enabling automated, quantitative measurement of plant traits [26]. These technologies are particularly valuable for dissecting the genetics of quantitative traits and studying plant responses to biotic and abiotic stresses [7] [19].

Imaging plants extends beyond simply "taking pictures": it quantitatively measures phenotypes through the interaction between light and plant tissues, including reflected, absorbed, or transmitted photons [7]. Each plant component has wavelength-specific properties; for instance, chlorophyll absorbs photons primarily in the blue and red spectral regions, while water has specific absorption features in the near-infrared and short-wave infrared regions [7]. This review provides a technical analysis of four core imaging technologies (RGB, hyperspectral, thermal, and 3D imaging) within the context of modern plant phenotyping pipelines that integrate deep learning and computer vision.

Core Imaging Modalities

RGB Imaging utilizes cameras sensitive to the visible spectral range (400-700 nm) to capture red, green, and blue channel data [7] [26]. It serves as a fundamental tool for quantifying morphological and architectural traits, providing high-contrast images that align with human visual perception [27] [19].

Hyperspectral Imaging (HSI) captures both spectral (λ) and spatial (x, y) information, merging these into a 3D data matrix termed a "hyperspectral data cube" or "hypercube" [28]. This technology collects hundreds of contiguous narrow spectral bands across ultraviolet (UV), visible (VIS), near-infrared (NIR), and short-wave infrared (SWIR) regions (250-2500 nm), enabling detailed biochemical characterization [28].

Thermal Imaging employs infrared cameras to detect electromagnetic radiation in the thermal infrared range (3-5 μm or 7-14 μm), producing pixel-based maps of surface temperature [7] [26]. This modality provides insights into plant physiological status by measuring canopy or leaf temperature variations [26].

3D Imaging utilizes technologies such as stereo camera systems, time-of-flight cameras, laser scanning, and photogrammetry to capture spatial depth information and reconstruct three-dimensional plant architecture [7] [29]. These systems generate detailed depth maps for analyzing complex structural traits [7].

Technical Specifications and Applications

Table 1: Comparative Analysis of Core Imaging Technologies for Plant Phenotyping

| Imaging Technique | Spectral Range | Spatial / Temporal Scale | Primary Measurable Parameters | Plant Phenotyping Applications | Key Limitations |

|---|---|---|---|---|---|

| RGB Imaging | 400-700 nm (visible light) | Whole organs or organ parts, time series | Projected area, growth dynamics, shoot biomass, color, texture, architecture | Biomass estimation [26] [19], growth rate analysis [26] [30], disease quantification [26], yield traits [7] | Limited to structural assessment; affected by lighting conditions [26] |

| Hyperspectral Imaging | 250-2500 nm (UV-VIS-NIR-SWIR) | Crop vegetation cycles, indoor time series | Continuous spectra per pixel, vegetation indices, pigment composition, water content | Early disease detection [28], pigment composition analysis [7] [28], water status monitoring [28], nutrient assessment | High instrument cost [28]; complex data processing [28]; large data volumes [28] |

| Thermal Imaging | 3-5 μm or 7-14 μm (thermal infrared) | Whole shoot or leaf tissue, time series | Canopy/leaf temperature, stomatal conductance, transpiration rate | Water stress detection [26] [19], stomatal conductance monitoring [26], irrigation management | Affected by ambient conditions; requires reference measurements for calibration |

| 3D Imaging | N/A (geometry-focused) | Whole-shoot time series at various resolutions | Depth maps, plant height, leaf angle distributions, canopy structure | Shoot architecture analysis [7], root system modeling [29], biomass estimation, growth modeling in 3D space | Computational intensity; occlusion challenges [29] |
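
To illustrate how a parameter such as projected area can be derived from an RGB image, the sketch below segments vegetation with the excess green index (ExG = 2g - r - b on normalized channels) and converts the pixel count to an area. The fixed threshold of 0.1 and the `mm_per_px` scale are assumptions; real pipelines typically calibrate both per imaging setup.

```python
import numpy as np

def projected_area(rgb, mm_per_px, threshold=0.1):
    """Return a plant mask and its projected area in square millimetres."""
    rgb = rgb.astype(float)
    total = rgb.sum(axis=2)
    total[total == 0] = 1.0  # avoid division by zero on black pixels
    r, g, b = (rgb[..., i] / total for i in range(3))
    exg = 2 * g - r - b      # excess green index on chromatic coordinates
    mask = exg > threshold
    return mask, float(mask.sum() * mm_per_px ** 2)

# Synthetic scene: brownish background with a 5 x 4 pixel green patch.
img = np.empty((20, 20, 3), dtype=np.uint8)
img[:] = (120, 90, 60)
img[5:10, 8:12] = (40, 160, 40)
plant_mask, area_mm2 = projected_area(img, mm_per_px=0.5)
```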

Experimental Protocols

Multi-Modal Image Registration Protocol

Objective: To achieve pixel-perfect registration of multi-modal plant imaging data (RGB, hyperspectral, and chlorophyll fluorescence) for enhanced feature extraction in machine learning applications [27].

Materials and Equipment:

- Sensor system (e.g., HAIP BlackBox V2) with HSI push broom line scanner (500-1000 nm)

- RGB camera (slightly tilted mounting position)

- Chlorophyll fluorescence imager (e.g., PhenoVation Plant Explorer XS)

- Multi-well plates or rhizoboxes for plant cultivation

- Calibration targets for geometric and radiometric correction

Procedure:

- Camera Calibration: Perform geometric calibration for each imaging modality using calibration targets. Calculate mean reprojection errors for accuracy assessment (target: subpixel range) [27].

- Data Acquisition: Acquire images from all sensor systems while maintaining consistent plant positioning. For HSI systems, account for push broom scanner characteristics and potential geometric distortions [27].

- Transformation Restriction: Restrict image registration to affine transformation to balance computational efficiency and robustness while minimizing original data alteration [27].

- Reference Image Selection: Systematically evaluate which sensor system provides optimal registration performance as a reference/target image [27].

- Algorithm Application: Test multiple automated image registration algorithms:

  - Feature-based ORB (Oriented FAST and Rotated BRIEF)

  - Phase-only correlation (POC) of Fourier transform

  - Normalized cross-correlation (NCC)-based approach

  - Enhanced correlation coefficient (ECC) maximization [27]

- Performance Evaluation: Calculate overlap ratios (ORConvex) to quantify registration accuracy. Target performance: >95% overlap for RGB-to-ChlF and HSI-to-ChlF registrations [27].

- Fine Registration: Implement additional fine registration on object-separated image data to address heterogeneity across different image regions that may not be fully corrected by a single global transformation matrix [27].
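
One of the registration algorithms listed in the procedure, phase-only correlation, can be sketched in a few lines of NumPy. This minimal version recovers only an integer translation between two same-sized single-channel images and assumes circular shifts; a full affine registration as described in the protocol would need rotation and scale handling on top of it.

```python
import numpy as np

def phase_correlation_shift(reference, moving):
    """Integer (dy, dx) roll that aligns `moving` with `reference`."""
    f_ref = np.fft.fft2(reference)
    f_mov = np.fft.fft2(moving)
    cross = f_ref * np.conj(f_mov)
    cross /= np.abs(cross) + 1e-12        # keep phase, discard magnitude
    corr = np.fft.ifft2(cross).real
    peak = np.unravel_index(np.argmax(corr), corr.shape)
    # Peaks past the midpoint correspond to negative shifts.
    return tuple(int(p) if p <= s // 2 else int(p) - s
                 for p, s in zip(peak, corr.shape))

# Synthetic test: shift a random image and recover the correction.
rng = np.random.default_rng(0)
reference = rng.random((64, 64))
moving = np.roll(reference, (3, -5), axis=(0, 1))
shift = phase_correlation_shift(reference, moving)
aligned = np.roll(moving, shift, axis=(0, 1))
```

The returned shift is the correction to apply to the moving image, so it has the opposite sign of the displacement that produced it.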

Validation: Assess registration quality through overlap metrics and subsequent analysis performance in machine learning applications for stress detection and trait quantification [27].
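
The exact computation of the ORConvex metric is not spelled out here, so the sketch below uses a simple stand-in: the share of the reference plant mask that is covered by the registered mask. The function name, the masks, and the way the 95% target is applied are assumptions for illustration.

```python
import numpy as np

def overlap_ratio(reference_mask, registered_mask):
    """Fraction of the reference mask covered by the registered mask."""
    ref = reference_mask.astype(bool)
    reg = registered_mask.astype(bool)
    if ref.sum() == 0:
        return 0.0
    return float(np.logical_and(ref, reg).sum() / ref.sum())

# Two 10 x 10 pixel objects, the second offset by one column.
ref_mask = np.zeros((20, 20), dtype=bool)
ref_mask[5:15, 5:15] = True
reg_mask = np.zeros((20, 20), dtype=bool)
reg_mask[5:15, 6:16] = True

ratio = overlap_ratio(ref_mask, reg_mask)
meets_target = ratio > 0.95  # target from the protocol above
```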

Hyperspectral Imaging and Analysis Protocol

Objective: To acquire and analyze hyperspectral data for detecting plant physiological status, stress responses, and biochemical composition [28].

Materials and Equipment:

- Hyperspectral imaging system (push broom or snapshot type)

- Controlled illumination system (consistent lighting conditions)

- Calibration standards (white reference and dark current)

- Computer with hyperspectral data processing capabilities

- Plant samples in controlled growth environment

Procedure:

- System Setup: Configure HSI system appropriate for experimental scale (lab, greenhouse, or field). For field applications, portable HSI devices are recommended [28].

- Illumination Control: Implement standardized lighting conditions. For indoor systems, supplemental blue LED lighting arrays can improve signal quality [28].

- Data Acquisition: Capture hyperspectral data cubes across the sensor's available spectral range (within 250-2500 nm). Maintain consistent distance and angle between sensor and plant samples [28].

- Data Calibration: Convert raw data to reflectance using white and dark reference measurements to account for sensor characteristics and illumination conditions [28].

- Hypercube Processing: Organize data into spatial (x, y) and spectral (λ) dimensions for subsequent analysis [28].

- Feature Extraction: Apply appropriate algorithms for:

  - Vegetation indices calculation (e.g., NDVI, PRI)

  - Spectral signature analysis for specific biochemical compounds

  - Spatial pattern recognition for stress detection

  - Dimension reduction techniques for large datasets [28]

- Model Development: Implement machine learning approaches (traditional or deep learning) to correlate spectral features with phenotypic traits of interest [28].
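
The calibration and vegetation index steps above can be sketched as follows. Reflectance is computed as (raw - dark) / (white - dark), and NDVI and PRI are both normalized differences of two bands. The band centres used here (800/670 nm for NDVI, 531/570 nm for PRI) are common choices but should be treated as assumptions, as should the synthetic cube.

```python
import numpy as np

def to_reflectance(raw, white, dark):
    """Convert raw counts to reflectance using white and dark references."""
    span = np.clip(white - dark, 1e-9, None)
    return (raw - dark) / span

def normalized_difference(cube, wavelengths, a_nm, b_nm):
    """(A - B) / (A + B) using the bands nearest to a_nm and b_nm."""
    a = cube[..., int(np.argmin(np.abs(wavelengths - a_nm)))]
    b = cube[..., int(np.argmin(np.abs(wavelengths - b_nm)))]
    return (a - b) / (a + b + 1e-9)

# Synthetic 4 x 4 hypercube, 500-1000 nm in 10 nm steps.
wavelengths = np.arange(500, 1001, 10, dtype=float)
dark = np.full(wavelengths.size, 100.0)
white = np.full(wavelengths.size, 1100.0)
true_reflectance = np.where(wavelengths < 700, 0.05, 0.50)
raw = np.broadcast_to(dark + true_reflectance * (white - dark),
                      (4, 4, wavelengths.size))

cube = to_reflectance(raw, white, dark)
ndvi = normalized_difference(cube, wavelengths, 800, 670)
pri = normalized_difference(cube, wavelengths, 531, 570)
```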

Validation: Compare HSI-derived parameters with ground truth measurements from laboratory analyses (e.g., chlorophyll content, water potential, nutrient levels) [28].
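
A minimal way to quantify this comparison is to report RMSE and the coefficient of determination between HSI-derived estimates and laboratory measurements. The chlorophyll values below are made-up numbers used only to exercise the functions.

```python
import math

def rmse(predicted, observed):
    """Root mean squared error between estimates and ground truth."""
    n = len(observed)
    return math.sqrt(sum((p - o) ** 2 for p, o in zip(predicted, observed)) / n)

def r_squared(predicted, observed):
    """Coefficient of determination of the estimates against ground truth."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for p, o in zip(predicted, observed))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot

# Hypothetical chlorophyll readings: lab reference vs. HSI-derived estimate.
lab_values = [30.0, 35.0, 40.0, 45.0, 50.0]
hsi_values = [31.0, 34.0, 41.0, 44.0, 50.0]
error = rmse(hsi_values, lab_values)
fit = r_squared(hsi_values, lab_values)
```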

Diagram 1: Multi-modal plant phenotyping workflow integrating RGB, hyperspectral, thermal, and 3D imaging technologies.

The Scientist's Toolkit

Research Reagent Solutions

Table 2: Essential Materials and Software for Imaging-Based Plant Phenotyping

| Category | Item | Specifications | Application in Phenotyping |

|---|---|---|---|

| Imaging Hardware | RGB Camera | Visible spectrum (400-700 nm), high spatial resolution | Basic morphological assessment, growth tracking, architecture analysis [7] [26] |

| Imaging Hardware | Hyperspectral Imaging System | Spectral range: 250-2500 nm, spatial resolution: sensor-dependent | Biochemical composition analysis, early stress detection, pigment quantification [28] |

| Imaging Hardware | Thermal Infrared Camera | Thermal range: 3-5 μm or 7-14 μm, temperature sensitivity: <0.1°C | Stomatal conductance monitoring, water stress detection, transpiration measurement [7] [26] |

| Imaging Hardware | 3D Imaging System | Stereo cameras, time-of-flight, or laser scanning | Plant architecture modeling, biomass estimation, root system analysis [7] [29] |

| Experimental Systems | Rhizoboxes | Transparent growth containers (e.g., 300 mm × 1000 mm) with mineral glass front | Root system imaging in soil environment, non-destructive root growth monitoring [31] |

| Experimental Systems | Multi-well Plates (PhenoWell) | Space-efficient culture system with multiple wells | High-throughput screening of various abiotic stress factors on small plants [27] |

| Software & Algorithms | Image Registration Tools | Python packages (OpenCV, scikit-image), affine transformation methods | Multi-modal image fusion, coordinate system alignment [27] |

| Software & Algorithms | Root Image Analysis | Rhizobox image processing pipelines, segmentation algorithms | Root architecture quantification, root-soil interaction studies [31] |

| Software & Algorithms | Deep Learning Frameworks | TensorFlow, PyTorch with custom plant imaging modules | Automated trait extraction, disease identification, growth prediction [32] [28] |

Diagram 2: Information flow in multi-modal plant phenotyping, showing how different imaging technologies contribute to comprehensive trait assessment through data fusion and deep learning analysis.

Applications in Plant Stress Response and Breeding

Biotic and Abiotic Stress Detection

The integration of multi-modal imaging technologies has significantly advanced the detection and quantification of plant stress responses [27] [19]. Hyperspectral imaging enables early detection of fungal pathogens such as Zymoseptoria tritici in wheat before visible symptoms manifest, allowing for timely intervention strategies [28]. By analyzing specific spectral signatures in the 500-900 nm range, HSI can distinguish between healthy and infected tissues with high accuracy [28]. Thermal imaging provides sensitive measurement of stomatal closure in response to drought stress through increased leaf temperature detection, often revealing water deficit conditions before visible wilting occurs [26] [19]. RGB imaging combined with advanced computer vision algorithms enables quantitative assessment of disease severity through lesion counting and discoloration area measurement, replacing subjective visual scoring systems [26] [33].
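
As an example of turning leaf temperature into a stress indicator, the sketch below computes the widely used Crop Water Stress Index, CWSI = (T_canopy - T_wet) / (T_dry - T_wet), where the wet and dry values come from reference surfaces or baselines. The temperatures in the example are invented, and clamping the result to [0, 1] is a choice made for this sketch.

```python
def cwsi(t_canopy, t_wet, t_dry):
    """Crop Water Stress Index: 0 = fully transpiring, 1 = no transpiration."""
    if t_dry <= t_wet:
        raise ValueError("dry reference must be warmer than wet reference")
    value = (t_canopy - t_wet) / (t_dry - t_wet)
    return min(1.0, max(0.0, value))

# Hypothetical plot temperatures in degrees Celsius from a thermal image.
plot_temperatures = {"plot_A": 26.0, "plot_B": 29.0, "plot_C": 34.0}
stress = {name: cwsi(t, t_wet=25.0, t_dry=35.0)
          for name, t in plot_temperatures.items()}
```

Plots can then be ranked by index value rather than by raw temperature, which reduces the influence of ambient conditions at capture time.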

High-Throughput Trait Quantification

Modern imaging platforms enable automated quantification of complex phenotypic traits essential for breeding programs [30] [19]. Root system architecture analysis using rhizobox-based RGB and hyperspectral imaging provides non-destructive assessment of root growth dynamics and spatial distribution in soil environments [31]. The combination of RGB time-series imaging with chemometric information from hyperspectral scans offers comprehensive insights into root-soil interactions and functional root responses to environmental conditions [31]. Canopy structure and growth dynamics are quantified through 3D imaging and photogrammetry approaches, enabling precise measurement of leaf area index, plant height, and biomass accumulation over time [30] [29]. These automated trait extraction pipelines significantly accelerate the phenotyping of large breeding populations, overcoming previous bottlenecks in genotype-to-phenotype studies [30].

Future Perspectives and Challenges

The field of imaging-based plant phenotyping faces several important challenges and opportunities for advancement. Data management and processing remain a significant hurdle, particularly for hyperspectral and 3D imaging technologies that generate massive datasets requiring specialized computational resources and analysis expertise [28]. Future developments in automated preprocessing pipelines, cloud computing integration, and machine learning-based feature extraction will be essential for broader adoption [32] [28]. Multi-modal data fusion represents another critical frontier, with current research demonstrating improved stress detection accuracy through integrated analysis of complementary imaging modalities [27]. The development of standardized registration protocols and fusion algorithms will enhance the synergistic potential of combined imaging technologies [27].

Instrument accessibility and cost continue to limit widespread implementation, particularly for advanced technologies like hyperspectral and high-resolution 3D imaging [28]. Future directions should focus on developing lower-cost systems, portable devices for field applications, and user-friendly software interfaces to make these technologies accessible to a broader range of researchers and breeding programs [28]. The integration of artificial intelligence and deep learning will further transform plant phenotyping by enabling automated trait identification, predictive modeling of growth patterns, and discovery of novel phenotypic indicators from complex multi-modal datasets [32] [28]. As these technologies mature, they will increasingly support the development of climate-resilient crops and sustainable agricultural systems through accelerated identification of optimal genotypes for challenging environments.

The Phenotyping Bottleneck and the Promise of Deep Learning

Plant phenotyping, the quantitative assessment of plant traits, is recognized as a major bottleneck in improving the efficiency of breeding programs, understanding plant-environment interactions, and managing agricultural systems [34] [35]. Traditional methods, which rely heavily on manual observation and data collection, are labor-intensive, time-consuming, and prone to human error, hindering the understanding of correlations between genetic factors, environmental conditions, and expressed phenotypes [36] [32]. This creates a significant impediment to addressing global challenges such as food security, climate change, and resource constraints [32] [34].

Deep learning (DL), a subset of machine learning characterized by its ability to learn hierarchical data representations automatically, is revolutionizing image-based plant phenotyping [34] [9] [35]. Unlike conventional machine learning that requires manual feature design, DL models, particularly Convolutional Neural Networks (CNNs), can learn relevant features directly from raw image data, breaking down analytical barriers and enabling the development of intelligent solutions for high-throughput phenotyping [34]. This capability is transforming phenotyping from a slow, subjective exercise into a rapid, data-driven process, empowering researchers and breeders with objective insights [37]. This article details the specific CNN architectures overcoming these challenges and provides application-focused protocols for their implementation.

Core Deep Learning Architectures in Plant Phenotyping

Different computer vision tasks in phenotyping require specialized CNN architectures. The table below summarizes the primary architectures and their applications.

Table 1: Core CNN Architectures and Their Applications in Plant Phenotyping

| CNN Architecture | Primary Computer Vision Task | Key Innovation Concept | Exemplar Phenotyping Application |

|---|---|---|---|

| AlexNet/ZFNet [34] | Image Classification | Early deep CNNs demonstrating breakthrough performance on large datasets. | Plant stress classification; developmental stage identification. |

| VGGNet [34] | Image Classification | Use of small (3x3) convolutional filters to increase network depth (up to 19 layers). | Detailed feature extraction for trait analysis. |

| U-Net [36] [32] | Image Segmentation | Encoder-decoder architecture with skip connections for precise pixel-wise segmentation. | Leaf and plant organ segmentation from complex backgrounds. |

| SegNet [36] | Image Segmentation | Encoder-decoder network using pooling indices for upsampling. | Leaf segmentation for accurate counting and morphological analysis. |

| DeepLab V3+ [36] | Image Segmentation | Uses atrous convolution to capture multi-scale contextual information. | Fine-grained segmentation of plant structures. |

| Transformer-based Models [32] | Text Generation / Multi-task Learning | Self-attention mechanisms for contextual understanding and sequence generation. | Generating natural language descriptions of phenotyping data. |

| LC-Net [36] | Leaf Counting (Custom Pipeline) | Integrates segmented leaf images with original RGB images to enhance counting accuracy. | Accurate leaf counting in rosette plants, even with overlapping leaves. |
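
Two of the design ideas in the table lend themselves to a quick worked calculation: stacking small 3x3 filters (VGGNet) and atrous convolution (DeepLab V3+). The sketch below assumes stride-1 convolutions with equal input and output channel counts and ignores biases; the channel count of 64 is an arbitrary example.

```python
def stacked_receptive_field(n_layers, kernel=3):
    """Receptive field of n stacked stride-1 convolutions."""
    return 1 + n_layers * (kernel - 1)

def conv_weights(n_layers, kernel, channels):
    """Weight count for n conv layers with `channels` in and out (no bias)."""
    return n_layers * kernel * kernel * channels * channels

def atrous_effective_kernel(kernel, rate):
    """Effective kernel size of a dilated (atrous) convolution."""
    return kernel + (kernel - 1) * (rate - 1)

# Three 3x3 layers see the same 7x7 window as one 7x7 layer, with fewer weights.
rf_three_3x3 = stacked_receptive_field(3)
weights_three_3x3 = conv_weights(3, 3, channels=64)
weights_one_7x7 = conv_weights(1, 7, channels=64)

# A 3x3 kernel with dilation rate 6 spans a 13x13 window at no extra weights.
span_rate_6 = atrous_effective_kernel(3, 6)
```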

Specialized Architectures and Emerging Trends

Beyond standard architectures, the field is advancing through specialized designs and hybrid models:

LC-Net for Leaf Counting: LC-Net represents a tailored pipeline rather than a single architecture. It leverages a SegNet model for initial leaf segmentation. The key innovation is the use of both the original RGB image and the segmented leaf image as a combined input to a subsequent counting model, which employs convolution blocks and max-pooling layers. This dual-input approach significantly enhances accuracy by providing the model with both raw pixel data and pre-processed structural information [36].

Hybrid and Multimodal Frameworks: Emerging frameworks combine different deep learning models to handle diverse data sources. For instance, a hybrid generative model can capture complex spatial and temporal phenotypic patterns, while an environment-aware module dynamically adapts to varying environmental factors, ensuring reliable predictions across different agricultural settings [32].

Text Generation for Phenotyping: Transformer-based models like GPT are being fine-tuned on agricultural datasets to automate the generation of textual reports, summarize experimental findings, and provide actionable insights in natural language, thereby improving communication between researchers and practitioners [32].

Experimental Protocols for Key Phenotyping Tasks

This section provides detailed methodologies for implementing deep learning for two critical phenotyping tasks: leaf counting and disease severity assessment.

Protocol 1: Leaf Counting in Rosette Plants Using LC-Net

This protocol is adapted from the LC-Net model, which demonstrated superior performance on datasets like CVPPP and KOMATSUNA [36].

Workflow Overview:

Diagram 1: LC-Net leaf counting workflow.

Step-by-Step Procedure:

Data Acquisition and Preparation:

- Imaging: Capture top-view RGB images of rosette plants (e.g., Arabidopsis, cabbage) against a consistent background.

- Dataset: Utilize public benchmarks like the Plant Phenotyping Datasets [38] (e.g., CVPPP, KOMATSUNA) or collect your own.

- Preprocessing: Resize all images to a uniform size (e.g., 256x256 pixels). Apply data augmentation techniques including rotation, flipping, and brightness adjustment to improve model robustness.
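
The augmentation step can be sketched with NumPy alone. The version below applies a random 90-degree rotation, an optional horizontal flip, and a brightness scaling; the brightness range of 0.8 to 1.2 is an assumption. Resizing is left out because it needs an image library.

```python
import numpy as np

def augment(image, rng):
    """Random 90-degree rotation, horizontal flip, and brightness change."""
    out = np.rot90(image, k=int(rng.integers(0, 4)), axes=(0, 1))
    if rng.random() < 0.5:
        out = np.flip(out, axis=1)
    factor = rng.uniform(0.8, 1.2)  # assumed brightness range
    return np.clip(out.astype(float) * factor, 0, 255).astype(np.uint8)

rng = np.random.default_rng(0)
image = rng.integers(0, 256, size=(256, 256, 3), dtype=np.uint8)
augmented = augment(image, rng)
```

When training the segmentation model, the same rotation and flip must also be applied to the annotation mask, while the brightness change applies to the image only.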

Leaf Segmentation Model Training:

- Model Selection: Implement a SegNet architecture, which was chosen for its superior performance in the original study [36].

- Ground Truth: Prepare pixel-wise annotated masks where each leaf is distinctly labeled.

- Training: Train the SegNet model using the original RGB images as input and the annotated masks as the target. Use a loss function like categorical cross-entropy.

- Validation: Evaluate segmentation quality using metrics such as Intersection over Union (IoU) and Dice Score [36].
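
For reference, the two segmentation metrics named above can be computed for a binary leaf/background mask as follows. This is a minimal sketch; for masks with one label per leaf, the same computation would be repeated per label and averaged.

```python
import numpy as np

def iou_and_dice(pred_mask, true_mask):
    """Intersection over Union and Dice score for two binary masks."""
    pred = pred_mask.astype(bool)
    true = true_mask.astype(bool)
    inter = np.logical_and(pred, true).sum()
    union = np.logical_or(pred, true).sum()
    total = pred.sum() + true.sum()
    iou = inter / union if union else 1.0
    dice = 2 * inter / total if total else 1.0
    return float(iou), float(dice)

# Two 4 x 4 pixel masks that overlap on half their area.
pred = np.zeros((8, 8), dtype=bool)
pred[0:4, 0:4] = True
true = np.zeros((8, 8), dtype=bool)
true[2:6, 0:4] = True
iou, dice = iou_and_dice(pred, true)
```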

LC-Net Counting Model Training:

- Input Preparation: For each training image, generate the corresponding segmented image using the trained SegNet model. The input to the counting model is the concatenation of the original RGB image and the segmented image.

- Architecture: The LC-Net counting model consists of convolution blocks (CB). Each CB contains convolution layers, batch normalization, and an activation function (e.g., ReLU), followed by max-pooling layers [36].

- Training: Train the model using the actual leaf count as the regression target. Use Mean Squared Error (MSE) as the loss function.
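
The dual-input preparation described above amounts to stacking the segmentation output onto the RGB channels. The sketch below assumes the segmented image is a single-channel binary mask and that channels are concatenated last; the original study may use a different layout.

```python
import numpy as np

def build_counting_input(rgb, leaf_mask):
    """Stack a normalized RGB image and a leaf mask into one H x W x 4 array."""
    rgb = rgb.astype(np.float32) / 255.0
    mask = leaf_mask.astype(np.float32)[..., np.newaxis]
    return np.concatenate([rgb, mask], axis=-1)

# Placeholder data standing in for a plant image and a SegNet prediction.
rgb_image = np.full((256, 256, 3), 128, dtype=np.uint8)
segmented = np.zeros((256, 256), dtype=bool)
segmented[100:150, 100:150] = True

counting_input = build_counting_input(rgb_image, segmented)
```

The first convolution block of the counting model would then accept four input channels instead of three.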

Model Deployment and Inference:

- Validation: Test the entire pipeline on a held-out test set.

- Evaluation Metrics: Report Mean Squared Error (MSE), absolute Difference in Count (|DiC|), and percentage agreement between predicted and actual leaf counts [36].
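
These counting metrics are simple to compute once predictions are rounded to integers. The sketch below also reports the signed difference in count, which shows whether a model tends to over- or under-count; the example counts are invented.

```python
def counting_metrics(predicted, actual):
    """MSE, signed and absolute difference in count, and exact agreement."""
    diffs = [p - a for p, a in zip(predicted, actual)]
    n = len(diffs)
    return {
        "mse": sum(d * d for d in diffs) / n,
        "dic": sum(diffs) / n,                       # signed bias
        "abs_dic": sum(abs(d) for d in diffs) / n,
        "agreement_pct": 100.0 * sum(d == 0 for d in diffs) / n,
    }

# Hypothetical predicted and true leaf counts for four plants.
metrics = counting_metrics(predicted=[5, 7, 6, 8], actual=[5, 6, 6, 9])
```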

Protocol 2: In-Field Phenotyping for Disease Severity Assessment

This protocol is inspired by large-scale, mobile-based initiatives like CIMMYT's ImageSafari project [37].

Workflow Overview:

Diagram 2: In-field phenotyping pipeline.

Step-by-Step Procedure:

Standardized Image Collection:

- Equipment: Use smartphones or tablets equipped with standardized imaging protocols. The ImageSafari project uses QED.ai tools for this purpose [37].

- Protocol: Capture geo-referenced images at multiple growth stages and from multiple angles (e.g., top-down, side-view). Ensure consistent lighting and distance where possible. Use barcode-based workflows to link images to specific plots and genetic metadata from breeding systems like CIMMYT's Enterprise Breeding System (EBS) [37].

Data Curation and Annotation:

- Curation: Build a high-quality dataset by removing blurry or otherwise unusable images.

- Annotation: Expert annotators label images with traits of interest, such as disease severity scores (e.g., on a 0-5 scale) or percent leaf area affected. This creates the ground-truth dataset for supervised learning.
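
Where annotations include leaf and lesion masks, percent leaf area affected and an ordinal score can be derived from them directly. The sketch below is illustrative only: the class boundaries that map a percentage to a 0-5 score are hypothetical, since scoring scales differ between crops and diseases.

```python
import bisect
import numpy as np

# Hypothetical upper bounds (percent) for scores 0 to 4; above 50% scores 5.
SEVERITY_UPPER_BOUNDS = (0.0, 5.0, 15.0, 30.0, 50.0)

def percent_area_affected(leaf_mask, lesion_mask):
    """Share of leaf pixels that are also marked as lesion, in percent."""
    leaf = leaf_mask.astype(bool)
    if leaf.sum() == 0:
        raise ValueError("leaf mask is empty")
    lesion = np.logical_and(lesion_mask.astype(bool), leaf)
    return float(100.0 * lesion.sum() / leaf.sum())

def severity_score(percent):
    """Map percent area affected to an ordinal 0-5 score."""
    return bisect.bisect_left(SEVERITY_UPPER_BOUNDS, percent)

# Synthetic leaf of 100 pixels with 20 lesion pixels.
leaf = np.zeros((12, 12), dtype=bool)
leaf[1:11, 1:11] = True
lesion = np.zeros((12, 12), dtype=bool)
lesion[1:3, 1:11] = True

affected = percent_area_affected(leaf, lesion)
score = severity_score(affected)
```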

AI Model Development and Validation:

- Model Selection: Employ a CNN architecture suitable for image classification (e.g., VGGNet, ResNet) or segmentation (U-Net), depending on whether the output is a severity class or a segmented diseased area.

- Training: Train the model on the annotated dataset. Incorporate biologically constrained optimization so that predictions remain biologically realistic [32].

- Validation: Perform rigorous validation across different environments, seasons, and genetic backgrounds to ensure accuracy, consistency, and fairness. This step is critical for model generalizability [37].
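
One way to organize validation across environments is a leave-one-environment-out split, where every image from one site or season is held out in turn. The record fields and file names below are hypothetical; the same grouping could be applied to seasons or genetic backgrounds.

```python
from collections import defaultdict

def leave_one_environment_out(records, key="environment"):
    """Yield (held_out, train_indices, test_indices) for each environment."""
    groups = defaultdict(list)
    for index, record in enumerate(records):
        groups[record[key]].append(index)
    for held_out in sorted(groups):
        test_idx = groups[held_out]
        train_idx = [i for env, idx in groups.items()
                     if env != held_out for i in idx]
        yield held_out, train_idx, test_idx

# Hypothetical image records collected at three sites.
records = [
    {"image": "img_001.jpg", "environment": "site_A"},
    {"image": "img_002.jpg", "environment": "site_A"},
    {"image": "img_003.jpg", "environment": "site_B"},
    {"image": "img_004.jpg", "environment": "site_C"},
    {"image": "img_005.jpg", "environment": "site_C"},
]
folds = list(leave_one_environment_out(records))
```

A model that scores well only when the test environment is also present in training is unlikely to generalize to new fields.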

Deployment and Scaling:

- Integration: Deploy the best-performing model via user-friendly mobile apps or cloud-based APIs.

- Use Case: Breeders and technicians in the field can use the app to take a new picture and receive an instant, in-field prediction of disease severity, enabling rapid, data-driven decisions [37].

Successful implementation of deep learning phenotyping requires a suite of computational and data resources.

Table 2: Essential Research Reagents and Resources for Deep Learning Phenotyping

| Resource Category | Specific Examples | Function and Utility |

|---|---|---|

| Public Benchmark Datasets | CVPPP Dataset; KOMATSUNA Dataset [36] [38] | Provide annotated imaging data for developing, training, and benchmarking algorithms for tasks like leaf segmentation and counting. |

| Software Libraries & Frameworks | TensorFlow; PyTorch; Scikit-learn [36] | Open-source libraries used to build, train, and evaluate deep learning models (e.g., implementing CNN architectures). |

| Pre-trained Models | Models from ImageNet; SegNet; U-Net [36] [34] | Models pre-trained on large datasets enable transfer learning, reducing the computational cost and labeled data requirements for new tasks. |

| Hardware for Model Training | NVIDIA GeForce GPUs (e.g., GTX 1650) [36] | Graphics Processing Units (GPUs) are essential for accelerating the computationally intensive process of training deep neural networks. |

| Field Imaging & Data Collection Tools | Smartphones with QED.ai apps; Standardized Imaging Protocols [37] | Enable systematic, geo-referenced, high-volume image collection in the field, which is the foundational step for any data-driven pipeline. |

Performance Benchmarks and Quantitative Outcomes

The effectiveness of deep learning models is validated through quantitative benchmarks on standard datasets.

Table 3: Performance Benchmarks of Deep Learning Models in Phenotyping

| Model / Architecture | Task | Dataset | Key Performance Metrics |

|---|---|---|---|

| LC-Net [36] | Leaf Counting | CVPPP & KOMATSUNA (merged) | Demonstrated superior performance in accurate leaf counting, outperforming existing state-of-the-art techniques, with robust performance on overlapping leaves. |

| SegNet (within LC-Net) [36] | Leaf Segmentation | CVPPP & KOMATSUNA (merged) | Achieved superior segmentation results visually and numerically, as measured by Accuracy, IoU, and Dice Score. |

| SHEPHERD [39] | Rare Disease Diagnosis (Medical) | Undiagnosed Diseases Network (UDN) | Identified correct causal gene in 40% of patients across 299 diseases, demonstrating high performance in a low-data regime. |

| AI-Powered Phenotyping (CIMMYT Pipeline) [37] | In-Field Trait Prediction | >1 Million images (sorghum, millet, etc.) | Enabled rapid, scalable, and objective trait prediction, transforming a slow, subjective process into a data-driven one. |

Deep learning, particularly CNNs and emerging transformer-based architectures, is easing the plant phenotyping bottleneck. By automating the extraction of meaningful information from large quantities of image data, these technologies enable high-throughput, accurate, and objective measurement of plant traits, from leaf counting in controlled environments to disease assessment in the field [36] [37] [9].

Future research will likely focus on several key areas: improving model performance on noisy images and in complex field conditions, exploring 3D convolution models for richer structural analysis, and developing optimizations using diverse algorithms [36]. Furthermore, the integration of multimodal data (e.g., combining imagery with genomic and environmental data) and the use of knowledge-grounded learning to incorporate existing biological knowledge will be crucial for enhancing predictive accuracy and biological interpretability [32] [39]. As these tools become more accessible through mobile platforms, they promise to democratize advanced phenotyping, accelerating crop improvement and sustainable agricultural production on a global scale.

Architectures in Action: A Deep Dive into Deep Learning Models for Phenotyping Tasks

Plant phenotyping, the quantitative assessment of plant traits, is crucial for understanding plant behavior, improving crop yields, and advancing precision agriculture [22]. This field has been revolutionized by the adoption of deep learning, particularly Convolutional Neural Networks (CNNs), which enable the automated, high-throughput analysis of plant images [40] [24]. CNNs have become the dominant approach for tackling key phenotyping tasks such as leaf counting and disease identification, offering superior performance over traditional image processing and machine learning methods [41] [42]. These applications are vital for addressing global challenges in food security by helping to breed more resilient crops and enabling more effective disease management [24]. This section provides detailed application notes and experimental protocols for implementing CNN-based solutions in leaf counting and plant disease detection.

Application Note 1: CNN-Based Leaf Counting

Background and Significance

Accurate leaf counting is a fundamental component of plant phenotyping, as it provides direct insights into plant growth and development [43]. Manual counting is labor-intensive, time-consuming, and subject to human error and bias [44]. Automated leaf counting using CNNs offers a rapid, reliable, and scalable alternative, allowing researchers to monitor plant health and growth stages efficiently [43] [44].

Key Models and Performance

Recent research has produced several specialized CNN architectures for leaf counting. The following table summarizes the performance of key models on standard datasets.

Table 1: Performance of CNN-Based Leaf Counting Models

| Model Name | Dataset | Key Metric | Performance | Reference |
|---|---|---|---|---|
| LC-Net | Combined CVPPP & KOMATSUNA | Subjective & Numerical Evaluation | Outperformed other recent CNN-based models | [43] |
| Eff-U-Net++ | CVPPP | Absolute Difference in Count (AbsDiC) | 0.21 | [43] |
| Eff-U-Net++ | MSU-PID | Absolute Difference in Count (AbsDiC) | 0.38 | [43] |
| Eff-U-Net++ | KOMATSUNA | Absolute Difference in Count (AbsDiC) | 1.27 | [43] |
| Regression Model (AlexNet) | LCC/LSC (Ara2012, Ara2013-Canon) | Pearson Correlation (r) | 0.76 (with augmented data) | [44] |
| YOLO V3-based | CVPPP | Absolute Difference in Count (AbsDiC) | 0.48 | [43] |
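
The metrics named in Table 1 can be computed from paired predicted and ground-truth counts. The following is a minimal, dependency-free sketch, assuming AbsDiC is the mean absolute difference between predicted and true counts over a set of images; the function name `count_metrics` and the sample values are illustrative and do not come from the cited studies.

```python
from math import sqrt

def count_metrics(predicted, actual):
    """Return AbsDiC, MSE and Pearson r for paired leaf counts."""
    if len(predicted) != len(actual) or not predicted:
        raise ValueError("need two non-empty lists of equal length")
    n = len(predicted)
    diffs = [p - a for p, a in zip(predicted, actual)]

    abs_dic = sum(abs(d) for d in diffs) / n   # mean absolute count error
    mse = sum(d * d for d in diffs) / n        # mean squared count error

    # Pearson correlation between predicted and true counts.
    mean_p = sum(predicted) / n
    mean_a = sum(actual) / n
    cov = sum((p - mean_p) * (a - mean_a) for p, a in zip(predicted, actual))
    var_p = sum((p - mean_p) ** 2 for p in predicted)
    var_a = sum((a - mean_a) ** 2 for a in actual)
    r = cov / sqrt(var_p * var_a) if var_p > 0 and var_a > 0 else float("nan")

    return {"AbsDiC": abs_dic, "MSE": mse, "pearson_r": r}

# Illustrative values only (not taken from any dataset).
metrics = count_metrics([5, 7, 6, 8], [5, 6, 6, 10])
```

AbsDiC and MSE measure the size of the counting error, while Pearson r only measures how well predictions track the true counts, so a model can score a high r while being systematically off by a constant.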

Experimental Protocol: LC-Net for Rosette Plant Leaf Counting

Principle: The LC-Net model leverages a convolutional neural network that takes both the original plant image and a pre-segmented image of the leaves as dual inputs. This provides the model with additional spatial information, improving its counting accuracy [43].

Materials and Reagents:

- Dataset: The combined dataset from the Leaf Segmentation Challenge (LSC) and Leaf Counting Challenge (LCC), specifically the 'Ara2012' and 'Ara2013-Canon' sets, which contain top-down images of Arabidopsis plants [44].

- Segmentation Model: A pre-trained SegNet model for generating the segmented leaf input, which has been shown to outperform other models like DeepLab V3+, U-Net, and RefineNet for this task [43].

- Software: Python 3.6+, PyTorch or TensorFlow deep learning frameworks.

Procedure:

- Data Preparation:
  - Obtain the LSC and LCC datasets.
  - Use the SegNet model to generate segmented binary images from the original plant images. These highlight the leaf regions.

- Data Pre-processing:
  - Resize all original and segmented images to a uniform size compatible with the LC-Net input layer (e.g., 128x128 or 256x256 pixels).
  - Normalize pixel values to a [0, 1] range.

- Model Training:
  - Construct the LC-Net architecture, which is designed to process the two input streams.
  - Define a regression loss function, such as Mean Squared Error (MSE).
  - Use an optimizer like Adam with an initial learning rate of 1e-4.
  - Train the model on the training set, using the ground truth leaf counts as labels.

- Validation and Testing:
  - Evaluate the model's performance on the validation and test sets using metrics such as Absolute Difference in Count (AbsDiC) and Mean Squared Error (MSE).
  - Compare the performance against other state-of-the-art models to benchmark results.
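
The pre-processing step above can be sketched without any external library. This is an illustration only, assuming single-channel 8-bit images stored as nested lists; in practice the resizing would normally be done with OpenCV or the deep learning framework's own transforms. The helper names `resize_nearest`, `normalize`, and `prepare_pair` are hypothetical.

```python
def resize_nearest(image, out_h, out_w):
    """Nearest-neighbour resize of a 2D list of pixel values."""
    in_h, in_w = len(image), len(image[0])
    return [
        [image[r * in_h // out_h][c * in_w // out_w] for c in range(out_w)]
        for r in range(out_h)
    ]

def normalize(image, max_value=255):
    """Scale integer pixel values into the [0, 1] range."""
    return [[px / max_value for px in row] for row in image]

def prepare_pair(original, mask, size=128):
    """Bring an image and its segmentation mask to one size and value range.

    The two returned arrays correspond to the two input streams of a
    dual-input counting model (assumed layout: one 2D array per stream).
    """
    img = normalize(resize_nearest(original, size, size))
    seg = normalize(resize_nearest(mask, size, size))
    return img, seg

# Tiny 2x2 example upsampled to 4x4.
img, seg = prepare_pair([[0, 255], [128, 64]], [[0, 255], [255, 0]], size=4)
```

Applying the same resize to the image and its mask keeps the two inputs spatially aligned, which is what lets the segmented input add spatial information to the original image.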

Application Note 2: CNN-Based Plant Disease Identification

Background and Significance

Plant diseases cause significant economic losses and threaten global food security [45]. Early and accurate detection is critical for effective management. CNN-based disease identification systems provide a rapid, scalable, and accessible tool for farmers and researchers, potentially surpassing the accuracy of manual diagnosis by experts [46] [41]. These models can be deployed via mobile applications or integrated into autonomous agricultural vehicles for continuous field monitoring [46].

Key Models and Performance

Disease identification models typically focus on classification or detection. The following table summarizes the performance of representative models.

Table 2: Performance of CNN-Based Plant Disease Identification Models

| Model / Approach | Plant/Disease | Key Metric | Performance | Reference |
|---|---|---|---|---|
| Stepwise Detection Model | Bell pepper, Potato, Tomato | Overall Accuracy | 97.09% | [45] |
| Stepwise (Crop Classification) | Bell pepper, Potato, Tomato | Accuracy | 99.33% (EfficientNet) | [45] |
| Stepwise (Disease Detection) | Bell pepper | Accuracy | 100.00% (GoogLeNet) | [45] |
| Stepwise (Disease Detection) | Potato | Accuracy | 100.00% (VGG19) | [45] |
| Stepwise (Disease Detection) | Tomato | Accuracy | 99.75% (ResNet50) | [45] |
| PiTLiD (Transfer Learning) | Multiple (Small Datasets) | Comparative Accuracy | Superior performance on small-scale datasets | [47] |
| Faster R-CNN, YOLOv3 | Apple Leaf Disease | Mean Average Precision (mAP) | Feasible for real-field detection | [42] |

Experimental Protocol: Stepwise Disease Detection and Classification

Principle: This protocol uses a three-step CNN-based model to first identify the plant species, then detect the presence of disease, and finally classify the specific disease type. This stepwise approach improves accuracy and modularity [45].
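
The routing logic of such a three-step pipeline can be sketched as follows. This is a minimal illustration of the control flow only, not the implementation from [45]: the three models are represented by plain callables, and the stand-in "models" and disease labels below are placeholders that simply read the answer from a test record.

```python
def diagnose(image, crop_model, detectors, disease_models):
    """Route one image through the three stages and return a diagnosis."""
    crop = crop_model(image)                    # step 1: identify the crop
    if not detectors[crop](image):              # step 2: healthy or diseased?
        return {"crop": crop, "status": "healthy", "disease": None}
    disease = disease_models[crop](image)       # step 3: name the disease
    return {"crop": crop, "status": "diseased", "disease": disease}

# Hypothetical stand-ins: each "image" is a dict that carries its own labels,
# so the example runs without trained networks.
CROPS = ("bell pepper", "potato", "tomato")
crop_model = lambda img: img["crop"]
detectors = {c: (lambda img: img["disease"] is not None) for c in CROPS}
disease_models = {c: (lambda img: img["disease"]) for c in CROPS}

healthy = diagnose({"crop": "potato", "disease": None},
                   crop_model, detectors, disease_models)
sick = diagnose({"crop": "tomato", "disease": "placeholder_disease"},
                crop_model, detectors, disease_models)
```

Because each stage is looked up by crop name, a crop-specific detector or classifier can be retrained or replaced without touching the other stages. The same structure also means an error in step 1 sends the image to the wrong downstream models, so the pipeline should be evaluated end to end as well as stage by stage.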

Materials and Reagents:

- Dataset: A curated dataset of diseased and healthy leaf images. Public datasets like PlantVillage are commonly used. For real-field applications, custom datasets similar to the apple leaf disease dataset mentioned in [42] are necessary.

- CNN Models: Pre-trained models such as EfficientNet, GoogLeNet, VGG19, and ResNet50, which can be fine-tuned for specific tasks [45].

- Software: Python with deep learning libraries (PyTorch, TensorFlow), and image processing tools (OpenCV).

Procedure:

- Data Curation:
  - Assemble a dataset with images labeled by crop species and disease state (healthy/diseased). For diseased samples, include the specific disease name.
  - Split the dataset into training, validation, and test sets (e.g., 70%/15%/15%).

- Step 1 - Crop Classification Model:
  - Training: Fine-tune a pre-trained EfficientNet model using the training images, with the crop species (e.g., bell pepper, potato, tomato) as the label.
  - Validation: Validate the model on the validation set and select the model with the highest accuracy.

- Step 2 - Disease Detection Model:
  - Training: For each crop species, train a dedicated binary classification model (e.g., GoogLeNet for bell pepper, VGG19 for potato, ResNet50 for tomato) to distinguish between healthy and diseased leaves.
  - Validation: Validate each crop-specific model to ensure high detection accuracy.

- Step 3 - Disease Classification Model:
  - Training: For each crop, train a multi-class classification model (e.g., EfficientNet for tomato diseases, VGG19 for potato diseases) on the diseased subset of the data to identify the specific disease type.
  - Validation: Validate the model's ability to correctly classify different diseases.

- Integrated System Testing:
  - Test the entire pipeline on the held-out test set, feeding an input image through all three steps to obtain a final diagnosis.
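
The 70%/15%/15% split in the data curation step can be sketched as below. This is one possible approach, assuming a stratified split (each label is divided separately so that rare classes appear in all three sets); the protocol itself does not specify stratification, and the function name `stratified_split` is illustrative.

```python
import random
from collections import defaultdict

def stratified_split(samples, fractions=(0.70, 0.15, 0.15), seed=0):
    """Split (item, label) pairs into train/val/test lists, label by label."""
    rng = random.Random(seed)          # fixed seed for a reproducible split
    by_label = defaultdict(list)
    for item, label in samples:
        by_label[label].append((item, label))

    train, val, test = [], [], []
    for label in sorted(by_label):
        group = by_label[label]
        rng.shuffle(group)
        n_train = round(len(group) * fractions[0])
        n_val = round(len(group) * fractions[1])
        train += group[:n_train]
        val += group[n_train:n_train + n_val]
        test += group[n_train + n_val:]   # remainder goes to the test set
    return train, val, test

# 20 healthy and 20 diseased placeholder file names.
samples = [(f"img_{i}.jpg", "healthy" if i < 20 else "diseased") for i in range(40)]
train, val, test = stratified_split(samples)
```

If several images come from the same plant or the same field plot, splitting by plant or plot rather than by image avoids near-duplicate images leaking between the training and test sets.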

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents and Computational Tools for CNN-based Plant Phenotyping

| Item Name | Function/Application | Specification Notes |
|---|---|---|
| PlantVillage Dataset | A large, public benchmark dataset for training and validating disease identification models. | Contains over 87,000 images across 25 plant species and 58 disease classes [46] [41]. |
| LSC/LCC Dataset | Standard dataset for leaf segmentation and counting challenges. | Comprises top-down images of Arabidopsis thaliana (e.g., Ara2012, Ara2013-Canon) with ground-truth annotations [44]. |
| Pre-trained CNN Models (ResNet, VGG, EfficientNet) | Base architectures for transfer learning, reducing data and computational requirements. | Pre-trained on ImageNet; can be fine-tuned for specific phenotyping tasks [47] [45]. |
| SegNet | Deep convolutional encoder-decoder architecture for robust pixel-wise leaf segmentation. | Used to generate segmented leaf images as input for advanced models like LC-Net [43]. |
| Data Augmentation Pipeline | Artificially expands training datasets to improve model generalization and prevent overfitting. | Techniques include random cropping, rotation, flipping, and color jittering [44]. |
| Explainable AI (XAI) Tools | Provides insights into model decision-making, increasing trust and aiding biological discovery. | Techniques like Grad-CAM can highlight image regions most influential to a model's prediction [24]. |
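
Two of the augmentation techniques listed in Table 3, flipping and rotation, can be sketched in a few lines. This is a dependency-free illustration on nested lists, not the pipeline used in [44]; random cropping and color jittering are left out, and the function names are hypothetical.

```python
import random

def flip_horizontal(image):
    """Mirror a 2D image left to right."""
    return [row[::-1] for row in image]

def rotate_90(image):
    """Rotate a 2D image 90 degrees clockwise."""
    return [list(col) for col in zip(*image[::-1])]

def augment(image, leaf_count, rng):
    """Apply a random flip and a random multiple of 90 degrees of rotation.

    The leaf count is returned unchanged: flips and rotations move leaves
    around but never add or remove any, so the label stays valid.
    """
    if rng.random() < 0.5:
        image = flip_horizontal(image)
    for _ in range(rng.randrange(4)):
        image = rotate_90(image)
    return image, leaf_count

rng = random.Random(0)
augmented, count = augment([[1, 2], [3, 4]], leaf_count=6, rng=rng)
```

Label preservation is what makes these two transforms safe for counting tasks on top-down rosette images. Random cropping needs more care there, because a crop can cut leaves out of the frame and invalidate the count.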

Recurrent Neural Networks (RNNs), particularly Long Short-Term Memory (LSTM) architectures, are well suited to analyzing temporal patterns in plant phenotyping. By learning long-range dependencies in time-series data from high-throughput phenotyping platforms, these models can represent dynamic growth processes and developmental stage transitions that static image analysis cannot capture. This protocol details methodologies for implementing LSTM networks to quantify phenological stage transitions and growth dynamics, giving researchers practical tools for improving precision in agricultural research and crop management.

Plant phenotyping, the quantitative assessment of plant traits, faces significant challenges in capturing the temporal dynamics of growth and development. Traditional methods that rely on manual observations or static image analysis cannot adequately model the sequential nature of plant development, where current states are intrinsically linked to previous physiological conditions [48]. Automated phenotyping platforms now generate vast time-series datasets, creating an urgent need for analytical frameworks capable of modeling these temporal sequences.

Recurrent Neural Networks (RNNs) represent a class of neural networks specifically designed for sequential data, making them ideally suited for temporal phenotyping applications. Unlike feedforward networks, RNNs maintain an internal state that serves as a memory of previous inputs, allowing them to model time-dependent processes [48]. However, standard RNNs suffer from vanishing gradient problems that limit their ability to capture long-range dependencies. Long Short-Term Memory (LSTM) networks address this limitation through specialized gating mechanisms that regulate information flow, enabling learning of long-term dependencies in phenotypic time-series data spanning weeks or months [48] [49].
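
The gating idea can be made concrete with a single LSTM step written for scalar inputs. This is a minimal sketch of the standard LSTM update equations using only the standard library; real models use vector-valued states and a framework implementation, and the `params` layout (gate name mapped to input weight, recurrent weight, bias) is an assumption made for this example.

```python
from math import exp, tanh

def sigmoid(x):
    return 1.0 / (1.0 + exp(-x))

def lstm_step(x, h_prev, c_prev, params):
    """One step of a scalar LSTM cell.

    params maps each gate name to a (w_x, w_h, b) tuple.
    Returns the new hidden state h and cell state c.
    """
    def pre(name):
        w_x, w_h, b = params[name]
        return w_x * x + w_h * h_prev + b

    f = sigmoid(pre("forget"))      # how much of the old cell state to keep
    i = sigmoid(pre("input"))       # how much new information to write
    g = tanh(pre("candidate"))      # the new information itself
    o = sigmoid(pre("output"))      # how much of the cell state to expose

    c = f * c_prev + i * g          # additive update of the memory
    h = o * tanh(c)
    return h, c

# Gates biased to "keep everything, write nothing": the memory persists.
keep = {
    "forget": (0.0, 0.0, 20.0),
    "input": (0.0, 0.0, -20.0),
    "candidate": (1.0, 0.0, 0.0),
    "output": (0.0, 0.0, 0.0),
}
h, c = 0.0, 0.8
for x in [0.3, -1.2, 0.5] * 10:     # 30 arbitrary time steps
    h, c = lstm_step(x, h, c, keep)
```

With the forget gate near 1 and the input gate near 0, the cell state is carried almost unchanged across all 30 steps. That additive path is what lets an LSTM retain information over long sequences, where a standard RNN's state would be repeatedly squashed and overwritten.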

Within plant phenotyping, LSTM applications include classification of plant genotypes based on growth patterns, prediction of biomass accumulation, and identification of phenological stage transitions through analysis of time-lapse imagery and sensor data [48] [49]. This protocol provides comprehensive methodologies for implementing these approaches in plant research.

Core Concepts: Temporal Modeling in Plant Phenology

Phenological Stages as Sequential Processes

Plant development occurs through an ordered sequence of phenological stages, each characterized by distinct morphological and physiological changes. These stages include dormancy, bud break, leaf development, stem elongation, flowering, fruiting, and senescence [50]. The timing and duration of these stages are influenced by complex interactions between genetic factors and environmental conditions, particularly temperature and photoperiod [51].

The sequential nature of these developmental transitions makes them particularly amenable to temporal modeling approaches. Each stage both influences and constrains subsequent developmental possibilities, creating dependencies that span the entire growth cycle [50]. For example, the timing of bud break affects subsequent leaf development, which in turn influences the plant's capacity for photosynthesis and biomass accumulation.

LSTM Architecture for Temporal Phenotyping

LSTM networks address the vanishing gradient problem through a sophisticated gating mechanism that regulates information flow. The key components of an LSTM unit include:

- Forget Gate: Determines which information from the previous cell state should be discarded

- Input Gate: Controls which new information should be stored in the current cell state