Decoding Plant Genomes: How AI and Machine Learning Sequence Models Are Predicting Variant Effects for Precision Breeding and Drug Discovery

Abstract

This article explores the transformative role of machine learning sequence models in predicting the effects of genetic variants in plants. Aimed at researchers, scientists, and drug development professionals, it provides a comprehensive analysis spanning from the foundational concepts of in silico variant effect prediction to its methodological applications in both coding and non-coding genomic regions. The review contrasts emerging AI approaches with traditional genomic techniques, addresses key challenges in model training and validation specific to plant genomes, and evaluates the practical integration of these tools for precision plant breeding and the sustainable sourcing of plant-derived therapeutics. By synthesizing the latest research, this article serves as a critical resource for understanding how these computational tools are shaping the future of agricultural and medicinal plant science.

From Phenotype to Sequence: The Foundational Shift to In Silico Variant Effect Prediction in Plants

Traditional plant breeding, driven by phenotypic selection and mutagenesis screens, has been the cornerstone of crop improvement for centuries. However, these approaches are hampered by significant limitations, including high costs, time-intensive cycles, and the complexity of accurately linking genotypic variations to phenotypic outcomes. This article details these bottlenecks through structured data and protocols, and frames them within the emerging context of machine learning sequence models, which promise to revolutionize variant effect prediction and pave the way for precision plant breeding.

Quantitative Limitations of Traditional Approaches

The reliance on observable traits (phenotypes) and random mutagenesis in traditional breeding presents substantial bottlenecks. The tables below summarize the core quantitative constraints of these methods.

Table 1: Key Bottlenecks in Phenotype-Driven Breeding

| Bottleneck | Quantitative/Limiting Factor | Impact on Breeding |
|---|---|---|
| Time-Consuming Cycles | Relies on multi-year, multi-location field trials for phenotypic evaluation [1]. | Dramatically extends the time from cross to cultivar release. |
| Complex Trait Heritability | Low heritability traits are strongly influenced by environmental factors (GxE interaction), masking true genetic value [1]. | Lowers selection accuracy, leading to slow genetic gain for critical yield and resilience traits. |
| Genetic Diversity Erosion | Intensive phenotypic selection inevitably reduces genetic variance in the breeding population [1]. | Diminishes long-term potential for genetic gain and resilience to new stresses. |
| Phenotyping Costs | Requires extensive field trials, sophisticated phenotyping equipment, and labor [2]. | Consumes a significant portion of program resources, limiting scale and scope. |

Table 2: Limitations of Mutagenesis Screens

| Limitation | Description | Consequence |
|---|---|---|
| Random Mutation Generation | Mutations are untargeted, creating a vast number of random genetic changes [2]. | Requires screening immense populations to identify rare, desirable mutations; low signal-to-noise ratio. |
| Experimental Burden | The process of creating and phenotyping mutant populations is costly and time-consuming [2]. | Not scalable for rapid improvement of multiple traits or in multiple genetic backgrounds. |
| Pleiotropic Effects | Uncontrolled mutations can disrupt essential genes or have negative effects on other traits [2]. | Can render an otherwise beneficial mutation agronomically useless. |
| Limited Resolution | Traditionally used to identify large-effect genes; struggles to resolve the impact of specific single-nucleotide variants (SNVs) [2]. | Offers limited insights for precise fine-tuning of gene function or regulatory elements. |

Experimental Protocols for Traditional and Emerging Methods

Protocol: Traditional Phenotypic Selection for Quantitative Traits

This protocol outlines the standard, phenotype-driven breeding cycle for complex traits like yield.

- Objective: To select superior genotypes based on multi-environment field performance.

- Materials: Diverse germplasm, target field locations, standard agronomic equipment, data collection tools.

- Procedure:

  - Crossing and Generation Advancement: Create genetic variation by crossing parental lines with complementary traits. Advance generations through self-pollination to achieve genetic fixation (e.g., to F5-F7), a process that can take several years [1].

  - Multi-Environment Trial (MET) Establishment: Plant offspring lines (e.g., 200-500 genotypes) in replicated field trials across multiple locations and over 2-3 years to account for Genotype-by-Environment (GxE) interactions [1].

  - Phenotypic Data Collection: Measure target agronomic traits (e.g., grain yield, plant height, disease resistance) at appropriate developmental stages. This process is labor-intensive and can be influenced by subjective scoring [1] [3].

  - Statistical Analysis and Selection: Analyze data using mixed models to separate genetic effects from environmental noise. Select the top 5-10% of performing lines based on estimated breeding values [1].

  - Recycling and Re-evaluation: Use selected lines as parents for the next breeding cycle or advance them for further testing, repeating the MET process [1].

Limitations Illustrated: This protocol is inherently slow (one cycle can take 5-7 years) and has low accuracy for traits with low heritability, as the phenotype is a poor predictor of the underlying genetic value [1].
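
The selection step of this protocol can be sketched in a few lines. This is a minimal illustration only: it ranks lines by their plain mean across environments, which is a simplification of the mixed-model breeding values the protocol calls for, and the line names, yield values, and the `select_top_lines` helper are all hypothetical.

```python
from statistics import mean

def select_top_lines(trial_yields, fraction=0.10):
    """Rank genotypes by mean performance across environments; keep the top fraction.

    trial_yields maps a genotype name to its yields, one value per environment.
    Plain means are a simplification of the mixed-model breeding values
    described in the protocol.
    """
    line_means = {line: mean(values) for line, values in trial_yields.items()}
    n_keep = max(1, round(len(line_means) * fraction))
    ranked = sorted(line_means, key=line_means.get, reverse=True)
    return ranked[:n_keep], line_means

# Hypothetical yields (t/ha) for ten lines grown in three environments.
trial_yields = {
    "L01": [5.1, 4.8, 5.5],
    "L02": [4.9, 5.0, 5.2],
    "L03": [6.0, 4.1, 5.0],
    "L04": [5.6, 5.7, 5.9],
    "L05": [4.2, 4.4, 4.0],
    "L06": [5.3, 5.1, 5.4],
    "L07": [6.1, 6.0, 6.3],
    "L08": [5.0, 5.8, 4.7],
    "L09": [4.6, 4.9, 5.1],
    "L10": [5.8, 5.2, 5.6],
}

selected, line_means = select_top_lines(trial_yields, fraction=0.10)
print(selected)
```

Line L03 shows why a single environment can mislead: it ranks near the top in the first environment but falls to the middle once all three are averaged.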

Protocol: Forward Genetic Mutagenesis Screen

This protocol describes a classical forward genetics approach to identify genes underlying a specific phenotype.

- Objective: To discover genes responsible for a particular trait by screening a population with random mutations.

- Materials: Mutagen (e.g., ethyl methanesulfonate - EMS), target plant seeds, facilities for mutant generation and cultivation, phenotyping platforms.

- Procedure:

  - Population Mutagenesis: Treat a large population of seeds (e.g., 10,000-50,000) with a chemical mutagen to induce random point mutations. Grow the treated seeds to obtain the M1 generation of plants [2].

  - Mutant Population Development: Self-pollinate M1 plants and harvest M2 seeds individually. The M2 generation is the first where recessive mutations are segregating and can be observed [2].

  - Phenotypic Screening: Grow the M2 population and systematically screen for individuals exhibiting alterations in the target trait (e.g., altered flowering time, disease susceptibility, or morphology).

  - Genetic Validation and Mapping: Cross confirmed mutants to the original wild-type line. Study the inheritance of the trait and use genetic mapping (e.g., using molecular markers) to identify the genomic region and ultimately the causal gene responsible for the mutant phenotype.

Limitations Illustrated: This is a "needle-in-a-haystack" approach. The high cost and time required to generate, grow, and meticulously phenotype thousands of plants is prohibitive. Furthermore, identifying the single causal nucleotide change among thousands of background mutations is a complex and tedious process [2].

Visualization of Bottlenecks and Emerging Solutions

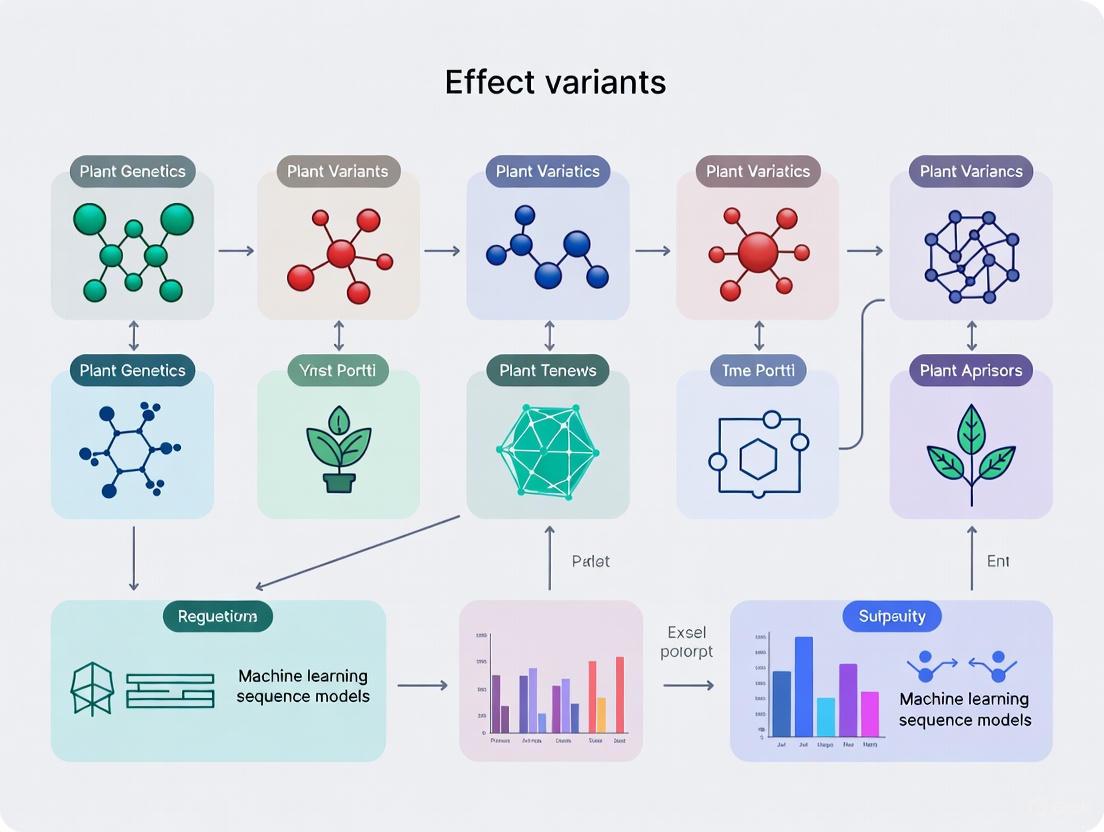

The following diagrams, generated using DOT language, illustrate the comparative workflows of traditional methods versus the emerging machine learning paradigm.

Diagram: Traditional vs. ML-Accelerated Breeding

Diagram: High-Resolution Variant Effect Mapping

Emerging technologies now enable the direct, high-throughput measurement of variant effects, generating the gold-standard data needed to train machine learning models and overcome traditional bottlenecks.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Reagents and Technologies for Variant Effect Research

| Reagent/Technology | Function in Research | Context in ML-Driven Breeding |
|---|---|---|
| NanoSeq | An ultra-low error duplex sequencing method for accurately detecting somatic mutations in any tissue, even at single-molecule resolution [4]. | Provides high-fidelity data on mutation rates and selection landscapes, serving as a rich data source for training and validating predictive models of variant impact in plants. |
| Variant-EFFECTS | A high-throughput method combining pooled prime editing with FACS to quantitatively measure the effects of hundreds of designed DNA edits on endogenous gene expression [5]. | Generates gold-standard, context-aware datasets on regulatory DNA function, which are critical for overcoming the limitations of traditional association studies and training accurate sequence-to-function models. |
| Prime Editing Guide RNA (pegRNA) Libraries | Designed oligonucleotide libraries that encode specific sequence edits for the prime editor to introduce into the genome [5]. | Enable systematic perturbation of the genome at scale, from single-nucleotide changes to motif insertions, for functional characterization of coding and non-coding regions. |
| Crop Growth Models (CGM) | Mathematical models that simulate crop growth and yield based on genotype, environment, and management interactions [3]. | Can be integrated with G2P models to form hybrid CGM-G2P frameworks, allowing prediction of how sequence variants influence complex, yield-defining traits through intermediate physiological processes. |
| Ensemble G2P Models | A framework that combines diverse genome-to-phenome prediction models to improve accuracy and robustness [3]. | Leverages the "Diversity Prediction Theorem" to capture different dimensions of trait genetic architecture, mitigating the risk of model failure and providing more reliable predictions for breeding selection. |
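
The Diversity Prediction Theorem mentioned in the last row states that the squared error of an averaged prediction equals the average squared error of the individual models minus the variance of their predictions around the ensemble mean. The sketch below checks that identity numerically for one trait value; the three model predictions and the observed value are made-up numbers, and the function name is an illustrative choice rather than part of any G2P framework.

```python
def diversity_prediction_terms(predictions, truth):
    """Return (collective error, average individual error, prediction diversity).

    All three terms are squared-error quantities for a single target value.
    """
    n = len(predictions)
    ensemble = sum(predictions) / n
    collective_error = (ensemble - truth) ** 2
    average_error = sum((p - truth) ** 2 for p in predictions) / n
    diversity = sum((p - ensemble) ** 2 for p in predictions) / n
    return collective_error, average_error, diversity

# Hypothetical yield predictions (t/ha) from three G2P models for one line.
predictions = [4.2, 5.0, 6.1]
observed = 5.0

collective, average, diversity = diversity_prediction_terms(predictions, observed)
print(collective, average - diversity)  # the two values agree up to rounding
```

Because the diversity term is never negative, the ensemble can do no worse than the average individual model, which is the property the ensemble framework relies on.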

Precision breeding represents a paradigm shift in modern plant improvement, moving from traditional phenotype-based selection to the direct targeting of specific causal genetic variants. This approach leverages advanced genomic technologies to make precise, targeted changes to a plant's DNA with the goal of introducing desirable traits. A core principle, as defined by UK legislation, is that these genetic changes must be of a type that "could have occurred naturally or through conventional breeding" [6]. This differentiates precision-bred organisms (PBOs) from traditional genetically modified organisms (GMOs), which may contain transgenes from unrelated species [6].

The targeting of causal variants—the specific DNA sequences responsible for phenotypic traits—is fundamental to this process. Unlike traditional breeding or marker-assisted selection, which often rely on linking traits to broader genomic segments, precision breeding aims to directly introduce or modify the precise nucleotides controlling agronomically important traits. This strategy requires a deep understanding of genotype-phenotype relationships and is increasingly supported by machine learning models that can predict the effects of genetic variants, thereby enabling more informed breeding decisions [2].

Machine Learning Sequence Models for Variant Effect Prediction

The accurate prediction of variant effects is critical for successful precision breeding. Machine learning sequence models have emerged as powerful tools for this purpose, offering a unified framework to understand how genetic changes influence plant form and function.

Contrasting Traditional and Modern Approaches

Traditional methods for identifying causal variants have primarily relied on association mapping, such as quantitative trait loci (QTL) mapping and genome-wide association studies (GWAS). These approaches fit separate statistical models for each genomic locus to estimate genotype-phenotype correlations [2]. While useful, they suffer from limitations including moderate to low resolution due to linkage disequilibrium, low power for detecting rare variants, and an inability to predict the effects of unobserved variants [2].

In contrast, modern sequence models fit a single, unified model across the entire genome to predict variant effects based on their genomic context [2]. These models fall into two main categories:

- Supervised learning in functional genomics: These models are trained on experimentally labeled sequences, often derived from functional genomics data, to predict molecular traits (e.g., gene expression) or complex phenotypes [2].

- Unsupervised learning in comparative genomics: These models leverage evolutionary sequence conservation across species or populations to identify functionally important regions and predict deleterious variants, often without requiring experimental labels [2].

Model Architectures and Applications in Plants

Deep learning architectures have shown particular promise for plant genomics applications. The general workflow involves processing DNA, RNA, or protein sequences through neural networks—including convolutional neural networks (CNNs), recurrent neural networks (RNNs), and more recently, transformer-based large language models (LLMs)—to extract meaningful biological patterns [7]. Plant-specific models such as PDLLMs and AgroNT are being developed to address the unique challenges of plant genomes, which often feature large repetitive sequences, rapid functional turnover, and comparatively scarce experimental data relative to mammalian systems [2] [7].

These sequence models extend traditional methods by generalizing across genomic contexts, enabling them to address inherent limitations of quantitative and evolutionary comparative genetics techniques [2]. Their primary application in precision breeding includes identifying candidate causal variants for precise gene editing and purging deleterious alleles that may have accumulated during domestication and intensive breeding [2].

Table 1: Comparison of Approaches for Variant Effect Prediction

| Feature | Traditional Association Mapping | Modern Sequence Models |
|---|---|---|
| Core Approach | Fits separate model for each locus [2] | Fits unified model across genomic contexts [2] |
| Resolution | Moderate to low (confounded by linkage disequilibrium) [2] | High (can achieve base-level resolution) [2] |
| Key Limitation | Cannot predict effects of unobserved variants [2] | Accuracy depends heavily on training data [2] |
| Primary Application | Discovery of genomic segments associated with traits [2] | Prediction of effects for specific nucleotide changes [2] |

Experimental Validation and Current Limitations

Despite their promise, sequence models for variant effect prediction are "not yet mature for in silico-driven precision breeding" and require rigorous experimental validation [2]. Validation procedures range from computational cross-validation and functional enrichment analyses to direct laboratory experiments that confirm predicted phenotypic effects [2]. Key challenges include the limited availability of well-annotated genomic data for many plant species, computational resource requirements, model interpretability, and difficulties in modeling regulatory regions where most causal variants are located [2] [7].

Machine Learning-Guided Precision Breeding Workflow

Regulatory Framework and Detection Methodologies

The implementation of precision breeding operates within evolving regulatory landscapes that directly influence methodological and analytical approaches.

Regulatory Framework for Precision-Bred Organisms

The United Kingdom has established a distinct regulatory pathway for precision-bred organisms through the Genetic Technology (Precision Breeding) Act 2023 and the implementing Genetic Technology (Precision Breeding) Regulations 2025 [6]. This framework creates a streamlined approval process for PBOs in England, marking a significant departure from the prior EU-derived GMO regime [6]. The regulatory process involves three key stages:

- Release Notice: Prior to environmental release, applicants must submit a notice to the Department for Environment, Food and Rural Affairs (Defra) containing contact information and a description of the PBO, including the species, intended alterations, genetic modifications introduced, and the technique used [6].

- Marketing Notice: Before commercial sale, a marketing notice must be submitted to Defra with detailed information about genetic changes, any unintended changes, analytical methods used for detection, and techniques employed. An advisory committee then issues a statement confirming the organism as precision-bred [6].

- Food and Feed Authorization: To market food or feed products from PBOs, applicants must obtain authorization from the Food Standards Agency, requiring disclosure of how genetic changes affect edible parts and demonstration that changes do not adversely alter nutritional quality, toxicity, or allergenicity [6].

Detection and Identification of Precision-Bred Products

Detection of precision-bred products presents distinct analytical challenges since the genetic alterations mimic changes that can occur naturally. Generalizations across all PBO products are inappropriate, and each edited product may need assessment on a case-by-case basis [8].

Current scientific opinion indicates that modern molecular biology techniques—including quantitative real-time PCR (qPCR), digital PCR (dPCR), and Next Generation Sequencing (NGS)—can detect small genomic alterations when specific prerequisites are met, particularly when a priori information exists about the DNA sequence of interest and its flanking regions [8]. However, these techniques alone may be insufficient to unequivocally determine whether a variation resulted from precision breeding or traditional processes unless additional information confirms the sequence is unique to a specific genome-edited line [8].

A "weight of evidence" approach is recommended, incorporating multiple indicators beyond the single mutation of interest. This may include analysis of the genetic background, flanking regions, off-target mutations, potential CRISPR/Cas activity, epigenetic changes, and supplementary documentation from suppliers [8]. The development of comprehensive pan-genomic databases is also recommended as an invaluable resource for confirming mutations resulting from genome editing and designing reliable detection methods [8].

Table 2: Key Research Reagent Solutions for Precision Breeding

| Reagent/Category | Function/Application | Examples/Specifications |
|---|---|---|
| Genome Editing Tools | Introduction of targeted genetic changes [9] | CRISPR-Cas9, CRISPR-Cas12a (Cpf1), TALENs, ZFNs [9] |
| Precision Editing Systems | Fine-tuning genetic changes without double-strand breaks [9] | Base editing, Prime editing [9] |
| Detection & Validation | Confirmation of intended edits and off-target effects [8] | qPCR, dPCR, NGS with appropriate bioinformatics pipelines [8] |
| Speed Breeding Systems | Acceleration of plant generation cycles [10] | Controlled environment growth chambers with extended photoperiod (22 h light/2 h dark) [10] |

Detailed Experimental Protocols

Protocol: In Silico Prediction of Variant Effects Using Sequence Models

Objective: To predict the functional impact of genetic variants in plants using machine learning sequence models.

Materials:

- Genomic sequence data for target species and related taxa

- High-performance computing infrastructure with GPU acceleration

- Plant-specific pre-trained models (e.g., PDLLMs, AgroNT) or resources to train custom models

- Validation dataset with known variant effects

Methodology:

- Data Preparation and Preprocessing:

  - Collect and curate whole-genome sequencing data for the target population or diversity panel.

  - Perform quality control, sequence alignment, and variant calling to identify single nucleotide polymorphisms (SNPs) and insertions/deletions (indels).

  - Annotate variants using existing genome annotations to identify coding, regulatory, and intergenic regions.

- Model Selection and Training:

  - For supervised learning: Utilize labeled functional genomics data (e.g., expression QTL, chromatin accessibility QTL) to train models predicting molecular phenotypes from sequence [2].

  - For unsupervised learning: Apply models trained on comparative genomics to infer evolutionary conservation and predict deleterious variants [2].

  - Fine-tune plant-specific models on target species data when available.

- Variant Effect Prediction:

  - Input target DNA sequences with introduced variants into the trained model.

  - Generate effect scores predicting impact on molecular or organismal phenotypes.

  - Rank variants based on predicted effect sizes for downstream experimental prioritization.

- Validation and Interpretation:

  - Validate predictions through cross-validation against held-out experimental data.

  - For novel predictions, design functional experiments to confirm phenotypic effects.

  - Perform interpretability analyses (e.g., attention mapping) to identify sequence features driving predictions.
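
The variant effect prediction step follows a common pattern: score the reference sequence, score the same sequence carrying the variant, and take the difference. The sketch below illustrates that pattern under strong assumptions. The `toy_model_score` function is a hypothetical four-base motif scanner standing in for a trained sequence model; it does not reproduce the interface of PDLLMs, AgroNT, or any other real model, and the sequence and variants are invented.

```python
# Hypothetical 4-bp motif (ACGT) with illustrative match/mismatch weights.
MOTIF = [
    {"A": 1.0, "C": -1.0, "G": -1.0, "T": -1.0},
    {"A": -1.0, "C": 1.0, "G": -1.0, "T": -1.0},
    {"A": -1.0, "C": -1.0, "G": 1.0, "T": -1.0},
    {"A": -1.0, "C": -1.0, "G": -1.0, "T": 1.0},
]

def toy_model_score(seq):
    """Stand-in for a trained model: best motif match over all windows."""
    k = len(MOTIF)
    return max(
        sum(MOTIF[i][seq[start + i]] for i in range(k))
        for start in range(len(seq) - k + 1)
    )

def apply_snv(seq, pos, alt):
    """Return seq with the base at 0-based position pos replaced by alt."""
    return seq[:pos] + alt + seq[pos + 1:]

def score_variants(ref_seq, variants):
    """Score each (pos, alt) as model(alt sequence) - model(ref sequence).

    Results are sorted by absolute effect size, largest first.
    """
    ref_score = toy_model_score(ref_seq)
    scored = []
    for pos, alt in variants:
        delta = toy_model_score(apply_snv(ref_seq, pos, alt)) - ref_score
        scored.append((pos, ref_seq[pos], alt, delta))
    return sorted(scored, key=lambda row: abs(row[3]), reverse=True)

ref = "TTACGTTT"                             # hypothetical promoter fragment
variants = [(0, "G"), (3, "A"), (7, "C")]    # hypothetical SNVs
ranked = score_variants(ref, variants)
for pos, ref_base, alt_base, delta in ranked:
    print(pos, ref_base, alt_base, delta)
```

Only the variant that disrupts the motif receives a non-zero score here. With a real model the same difference would be computed on its predicted expression, accessibility, or likelihood output.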

Protocol: CRISPR-Cas9 Mediated Precision Breeding in Plants

Objective: To introduce precise genetic modifications in plants using CRISPR-Cas9 genome editing.

Materials:

- Plant material: Sterilized seeds or tissue explants

- CRISPR-Cas9 constructs: Vectors containing Cas9 nuclease and guide RNA(s)

- Transformation reagents: Agrobacterium strains or biolistic delivery system

- Tissue culture media and plant growth facilities

- Molecular biology reagents for genotyping

Methodology:

- Target Selection and gRNA Design:

  - Identify causal variant or genomic region for modification based on prior knowledge or in silico predictions.

  - Design and validate 2-3 guide RNAs with high on-target efficiency and minimal off-target potential using specialized software.

  - Clone validated gRNA sequences into appropriate plant transformation vectors.

- Plant Transformation:

  - For Agrobacterium-mediated transformation: Introduce CRISPR construct into disarmed Agrobacterium tumefaciens strain and inoculate plant explants [9].

  - For biolistic transformation: Coat gold or tungsten microparticles with DNA constructs and bombard plant tissues using a gene gun [9].

  - Transfer treated explants to selection media containing appropriate antibiotics to regenerate transformed shoots.

- Molecular Characterization:

  - Extract genomic DNA from regenerated plantlets (T0 generation).

  - Perform PCR amplification of target regions and sequence to confirm precise edits.

  - Use NGS for comprehensive analysis of potential off-target effects in closely related genomic sequences.

- Phenotypic Validation and Breeding:

  - Grow edited plants to maturity and evaluate for expected phenotypic changes.

  - Self-pollinate primary transformants to segregate out the Cas9 transgene while maintaining the desired edit in subsequent generations (T1+).

  - Backcross edited lines into elite breeding material if necessary to improve agronomic performance.
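
As an illustration of the first step in guide RNA design, the sketch below enumerates candidate target sites on both strands of a sequence. It assumes the commonly used SpCas9 enzyme, which recognizes a 20-nt protospacer followed by an NGG PAM. It performs no on-target efficiency or off-target scoring, which is what the specialized design software in the protocol provides, and the function names and example sequence are hypothetical.

```python
import re

COMPLEMENT = str.maketrans("ACGT", "TGCA")

def reverse_complement(seq):
    """Return the reverse complement of an uppercase DNA string."""
    return seq.translate(COMPLEMENT)[::-1]

def find_spcas9_targets(seq, protospacer_len=20):
    """List candidate SpCas9 sites as (strand, start, protospacer, pam).

    Assumes an NGG PAM immediately 3' of the protospacer. For the "-" strand,
    start is a 0-based index into the reverse-complemented sequence.
    """
    seq = seq.upper()
    # A lookahead is used so that overlapping candidate sites are all reported.
    pattern = re.compile(r"(?=([ACGT]{%d})([ACGT]GG))" % protospacer_len)
    hits = []
    for strand, strand_seq in (("+", seq), ("-", reverse_complement(seq))):
        for match in pattern.finditer(strand_seq):
            hits.append((strand, match.start(), match.group(1), match.group(2)))
    return hits

# Hypothetical 23-bp target region: a 20-nt protospacer followed by a TGG PAM.
region = "GATTACAGATTACAGATTAC" + "TGG"
targets = find_spcas9_targets(region)
for strand, start, protospacer, pam in targets:
    print(strand, start, protospacer, pam)
```

Candidates from a scan like this would still need to be filtered for predicted efficiency and genome-wide specificity before cloning.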

Precision Breeding Experimental Pipeline

Emerging Technologies and Future Directions

Precision breeding continues to evolve beyond foundational CRISPR-Cas9 technology through several emerging techniques that offer enhanced precision and expanded capabilities:

- CRISPR-Cas12a (Cpf1): This system offers advantages including smaller size for easier delivery and staggered DNA cuts that may reduce off-target effects compared to Cas9 [9].

- Base Editing: Enables precise single-base changes without creating double-strand breaks by fusing catalytically impaired Cas9 with a deaminase enzyme, reducing unintended alterations [9].

- Prime Editing: Allows for precise genetic changes by directly writing new genetic information into the genome using a fusion of impaired Cas9 and reverse transcriptase, offering high accuracy for correcting mutations [9].

- Epigenome Editing: Targets the regulation of gene expression without altering the underlying DNA sequence by modifying epigenetic marks such as DNA methylation and histone modifications, enabling temporary or reversible trait modifications [9].

The integration of speed breeding protocols—which use extended photoperiods (22 hours light/2 hours dark) and controlled environments to accelerate plant generation cycles—with precision breeding techniques creates a powerful synergy for rapid crop improvement [10]. This combination allows researchers to not only introduce precise genetic changes but also to rapidly advance these edits through multiple generations, significantly compressing the breeding timeline [10].

Future advancements in precision breeding will require continued interdisciplinary collaboration to develop more sophisticated deep learning applications, improve model interpretability, expand the range of editable crops, and address regulatory and societal considerations. As these technologies mature, they hold immense potential for addressing global food security challenges through the development of crops with improved yield, nutrition, and resilience to environmental stresses.

The integration of artificial intelligence into genomics represents a paradigm shift in how researchers decipher the functional elements of genomes and the effects of genetic variation. In plant variant effect research, two complementary machine learning approaches have emerged as fundamental: supervised learning in functional genomics and unsupervised learning in comparative genomics. These computational frameworks enable scientists to move beyond traditional association studies toward predictive models that can generalize across genomic contexts [2].

Supervised methods rely on labeled training data, typically from experimental measurements, to build predictive models that directly link genotype to phenotype. In contrast, unsupervised approaches discover inherent patterns and structures within unlabeled genomic sequences, often leveraging evolutionary conservation to infer functional constraints [2] [11]. The distinction is more than technical: one paradigm is guided by known experimental outcomes, the other by the intrinsic statistical properties of genomes themselves.

Conceptual Foundations

Supervised Learning in Functional Genomics

Supervised learning operates on the principle of learning a mapping function from input variables (genomic sequences) to output variables (functional measurements) based on labeled training data [11]. In functional genomics, this typically involves training models on experimentally determined phenotypes, molecular traits, or functional annotations. The algorithm learns patterns from these examples, then generalizes to make predictions on unseen data. Common supervised algorithms include regularized regression methods (Ridge, LASSO), support vector machines, random forests, and deep neural networks [12] [13] [11]. These models are particularly valuable for predicting variant effects on specific molecular traits like gene expression, chromatin accessibility, or protein function, where direct experimental measurements are available for training [2] [14].

The strength of supervised approaches lies in their ability to make precise, quantitative predictions about variant effects when sufficient high-quality labeled data exists. However, they face limitations in scenarios where labeled data is scarce, expensive to generate, or incomplete—common challenges in plant genomics research where functional annotations lag behind mammalian systems [2]. Additionally, supervised models may struggle to generalize beyond the specific conditions and variants represented in their training data, potentially limiting their predictive power for novel genomic contexts or species.
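
To make the supervised setting concrete, the sketch below fits ridge regression, one of the regularized methods named above, to a small marker matrix using the closed-form solution beta = (X'X + lambda I)^-1 X'y. Everything in it is an illustrative assumption: genotypes are coded as allele dosages (0/1/2), the phenotype is noise-free and generated from made-up marker effects, no intercept is fitted, and the linear system is solved with a small pure-Python routine rather than a numerical library.

```python
def solve_linear_system(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        x[i] = (M[i][n] - sum(M[i][j] * x[j] for j in range(i + 1, n))) / M[i][i]
    return x

def ridge_fit(X, y, lam):
    """Closed-form ridge estimate: (X'X + lam * I)^-1 X'y, without an intercept."""
    p = len(X[0])
    XtX = [[sum(row[i] * row[j] for row in X) + (lam if i == j else 0.0)
            for j in range(p)] for i in range(p)]
    Xty = [sum(row[i] * yi for row, yi in zip(X, y)) for i in range(p)]
    return solve_linear_system(XtX, Xty)

# Hypothetical allele dosages for six plants at three markers.
X = [[0, 1, 2], [1, 0, 2], [2, 1, 0], [0, 2, 1], [1, 1, 1], [2, 0, 1]]
true_effects = [0.5, -0.2, 0.0]          # made-up marker effects
y = [sum(g * b for g, b in zip(row, true_effects)) for row in X]

beta = ridge_fit(X, y, lam=1e-8)         # almost no shrinkage
shrunk = ridge_fit(X, y, lam=10.0)       # strong shrinkage toward zero
print(beta)
print(shrunk)
```

With a negligible penalty the fit recovers the simulated effects, while a larger penalty shrinks all estimates toward zero. That shrinkage is what keeps such models stable when markers far outnumber phenotyped plants.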

Unsupervised Learning in Comparative Genomics

Unsupervised learning algorithms identify inherent patterns, structures, and relationships within unlabeled genomic data without pre-specified output variables [11]. In comparative genomics, these methods excel at discovering evolutionary constraints, identifying functional elements through conservation patterns, and clustering sequences based on intrinsic properties [2]. Common unsupervised approaches include clustering algorithms (K-means, hierarchical clustering), dimensionality reduction techniques (PCA, t-SNE), and generative models that learn the underlying distribution of genomic sequences [11].

The power of unsupervised methods lies in their ability to leverage the vast amount of unlabeled genomic sequence data available across species and populations. By learning the statistical regularities and evolutionary constraints embedded in genomic sequences, these models can identify functionally important elements and predict deleterious variants without requiring explicit functional annotations [2]. Modern genomic language models like Evo represent a cutting-edge application of unsupervised learning, where models trained on billions of nucleotides can learn the "grammar" of genomes and generate functional sequences through approaches like semantic design [15].
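
A very small version of the conservation idea can be written without any labels: estimate base frequencies at each alignment column and score a variant by the log-ratio of the alternate to the reference allele frequency. This is a sketch under stated assumptions, not how genomic language models such as Evo work internally; they condition on surrounding sequence context, whereas this per-column model does not. The alignment, the pseudocount, and the function names are hypothetical.

```python
import math
from collections import Counter

def column_frequencies(alignment, col, pseudocount=1.0):
    """Base frequencies at one alignment column, smoothed with a pseudocount."""
    counts = Counter(seq[col] for seq in alignment if seq[col] in "ACGT")
    total = sum(counts.values()) + 4 * pseudocount
    return {base: (counts.get(base, 0) + pseudocount) / total for base in "ACGT"}

def conservation_score(alignment, col, ref, alt):
    """Log-likelihood ratio log(P(alt) / P(ref)) at a column.

    Strongly negative values mean the alternate base is rarely tolerated at a
    position where the reference base is conserved.
    """
    freqs = column_frequencies(alignment, col)
    return math.log(freqs[alt] / freqs[ref])

# Hypothetical alignment of orthologous sequences from five species.
alignment = ["ACGTA", "ACGTC", "ACGAG", "ACGTT", "ACGCA"]

conserved = conservation_score(alignment, 1, "C", "T")   # column is all C
variable = conservation_score(alignment, 4, "A", "C")    # column varies freely
print(conserved, variable)
```

The variant at the fully conserved column receives the more negative score, matching the intuition that changes at constrained positions are more likely to be deleterious.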

Table 1: Core Characteristics of Supervised vs. Unsupervised Learning in Genomics

| Characteristic | Supervised Learning | Unsupervised Learning |
|---|---|---|
| Data Requirements | Labeled data (e.g., phenotypes, expression values) | Unlabeled sequence data |
| Primary Objectives | Prediction, classification, regression | Pattern discovery, clustering, density estimation |
| Common Algorithms | Linear regression, random forests, neural networks, SVM | K-means, PCA, autoencoders, genomic language models |
| Key Applications in Genomics | Variant effect prediction on molecular traits, genomic selection | Conservation analysis, functional element discovery, sequence generation |
| Validation Approach | Performance on held-out test sets with known labels | Coherence, biological relevance of discovered patterns |
| Main Strengths | Direct phenotype prediction, interpretable models for some algorithms | No need for labeled data, discovery of novel patterns |
| Main Limitations | Dependency on quality/quantity of labeled data | Results can be harder to interpret biologically |

Quantitative Comparison of Method Performance

The practical utility of different AI paradigms must be evaluated through rigorous empirical testing across diverse genomic prediction tasks. Studies comparing machine learning methods for genomic prediction provide valuable insights into the relative performance of these approaches.

Table 2: Performance Comparison of Machine Learning Methods for Genomic Prediction

| Method Category | Specific Methods | Prediction Accuracy (Range) | Computational Efficiency | Best-Suited Scenarios |
|---|---|---|---|---|
| Linear Models | gBLUP, RR-BLUP | Moderate to High (0.4-0.7 correlation) | High | Additive genetic architectures, large sample sizes |
| Regularized Regression | LASSO, Ridge, Elastic Net | Moderate to High (0.45-0.75 correlation) | Moderate to High | Polygenic traits, feature selection needed |
| Ensemble Methods | Random Forests, XGBoost | Variable (0.35-0.65 correlation) | Moderate | Non-linear relationships, interaction effects |
| Neural Networks | CNNs, MLPs | High for some traits (0.5-0.8 correlation) | Low to Moderate | Complex architectures, large datasets |
| Genomic Language Models | Evo, DNABERT | Promising, but evidence is still emerging | Very Low | Sequence design, function prediction |

Research on Arabidopsis thaliana has demonstrated that the optimal model choice depends on both the genetic architecture of the target trait and the availability of training data. For traits with high heritability, neural network approaches have shown superior performance, achieving correlation coefficients exceeding 0.7 between predicted and measured values for flowering time traits [13]. However, linear models like gBLUP remain competitive, particularly for traits with predominantly additive genetic architectures, while offering greater computational efficiency and interpretability [12] [13].

The performance of unsupervised approaches is more difficult to quantify, as evaluation metrics often focus on the biological relevance of discovered patterns rather than prediction accuracy. For genomic language models like Evo, performance can be measured through sequence recovery rates (e.g., 85% amino acid sequence recovery with just 30% input prompt) and experimental validation of generated functional elements [15].

Application Notes for Plant Variant Effects Research

Supervised Learning Protocols for Gene Expression Prediction

Objective: Predict variant effects on gene expression levels using supervised learning on functional genomics data.

Workflow Overview:

- Data Collection: Acquire genotype data (SNP arrays, WGS) and transcriptome data (RNA-seq) from a population of interest. For plant studies, ensure samples represent diverse genetic backgrounds and relevant growth conditions [2].

- Feature Engineering: Convert genomic sequences into predictive features. For cis-regulatory models, focus on regions proximal to transcription start sites (e.g., ±5kb); include both sequence-based features and epigenetic markers if available [14].

- Model Training: Implement supervised algorithms using nested cross-validation to prevent overfitting. For expression QTL mapping, consider ensemble methods that capture non-linear relationships between regulatory variants and expression levels [13].

- Validation: Evaluate model performance on independent validation sets using correlation between predicted and observed expression values. Perform experimental validation through reporter assays for top predictions [2].

Key Considerations:

- Population structure can confound expression predictions; include principal components or kinship matrices as covariates [2].

- For non-model plant species, transfer learning from well-annotated species may improve performance when training data is limited.

- Model interpretation tools like SHAP can identify predictive sequence features and potential causal variants [14].
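The nested cross-validation mentioned in the Model Training step can be sketched as follows. The inner loop selects a hyperparameter using training samples only, and the outer loop reports accuracy on samples that played no part in that selection. The ridge learner, the fold counts, the candidate `lambdas`, and the simulated data are all illustrative assumptions rather than settings from the cited work.

```python
import numpy as np

def ridge_fit_predict(X_tr, y_tr, X_te, lam):
    mu_x, mu_y = X_tr.mean(axis=0), y_tr.mean()
    Xc = X_tr - mu_x
    beta = np.linalg.solve(Xc.T @ Xc + lam * np.eye(X_tr.shape[1]),
                           Xc.T @ (y_tr - mu_y))
    return (X_te - mu_x) @ beta + mu_y

def k_folds(n, k, rng):
    """Split shuffled indices 0..n-1 into k roughly equal folds."""
    return np.array_split(rng.permutation(n), k)

def nested_cv(X, y, lambdas, outer_k=5, inner_k=3, seed=0):
    rng = np.random.default_rng(seed)
    n = len(y)
    outer_scores, chosen = [], []
    for test_idx in k_folds(n, outer_k, rng):
        train_idx = np.setdiff1d(np.arange(n), test_idx)
        inner = k_folds(len(train_idx), inner_k, rng)
        inner_err = []
        for lam in lambdas:  # inner loop: tune lambda on training data only
            errs = []
            for val_pos in inner:
                val = train_idx[val_pos]
                fit = np.setdiff1d(train_idx, val)
                pred = ridge_fit_predict(X[fit], y[fit], X[val], lam)
                errs.append(np.mean((pred - y[val]) ** 2))
            inner_err.append(np.mean(errs))
        best = lambdas[int(np.argmin(inner_err))]
        chosen.append(best)
        # outer loop: refit on all training data, score on the untouched fold
        pred = ridge_fit_predict(X[train_idx], y[train_idx], X[test_idx], best)
        outer_scores.append(float(np.corrcoef(pred, y[test_idx])[0, 1]))
    return outer_scores, chosen

# Simulated genotypes and expression values (assumption)
rng = np.random.default_rng(1)
X = rng.integers(0, 3, size=(120, 40)).astype(float)
y = X[:, :5].sum(axis=1) + rng.normal(0, 1.0, 120)
scores, chosen = nested_cv(X, y, lambdas=[1.0, 10.0, 100.0])
print("outer-fold correlations:", [round(s, 2) for s in scores])
```

Because the outer test fold never influences the choice of `lam`, the outer-fold correlations are a less optimistic estimate than a single cross-validation loop that tunes and evaluates on the same folds.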

Unsupervised Learning Protocols for Conservation-Based Variant Effect Prediction

Objective: Identify functional elements and deleterious variants using unsupervised learning on multi-species sequence alignments.

Workflow Overview:

- Data Compilation: Collect whole-genome sequences from multiple related species with varying evolutionary distances. For crops, include both wild relatives and domesticated varieties [2].

- Sequence Embedding: Utilize unsupervised models like genomic language models to learn sequence representations without functional labels. Models like Evo process sequences at single-nucleotide resolution to capture evolutionary constraints [15].

- Constraint Identification: Apply clustering and anomaly detection algorithms to identify conserved regions and deviations from expected evolutionary patterns.

- Variant Prioritization: Flag variants falling in constrained elements as potentially functional or deleterious, with priority increasing with conservation level [2].

Key Considerations:

- Evolutionary rate variation across lineages can affect conservation metrics; adjust for phylogenetic relationships.

- For recently domesticated species, contrast wild and cultivated genomes to identify selection signatures rather than deep conservation.

- Integration with functional genomics data from supervised approaches can validate predictions and improve precision [2].
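As a simplified illustration of the constraint identification and variant prioritization steps, the sketch below scores each alignment column by one minus its normalized Shannon entropy and flags variants that fall in highly conserved columns. This is a deliberately basic stand-in: it ignores phylogeny, and it is neither a genomic language model nor one of the tools cited above. The toy alignment and the `threshold` value are assumptions.

```python
import math
from collections import Counter

def column_conservation(alignment):
    """Return 1 - normalized Shannon entropy per column (1.0 = invariant)."""
    scores = []
    for j in range(len(alignment[0])):
        col = [seq[j] for seq in alignment if seq[j] in "ACGT"]  # skip gaps/Ns
        if not col:
            scores.append(0.0)
            continue
        counts = Counter(col)
        entropy = -sum((c / len(col)) * math.log2(c / len(col))
                       for c in counts.values())
        scores.append(1.0 - entropy / 2.0)  # 2 bits is the maximum for 4 bases
    return scores

def prioritize(variant_positions, scores, threshold=0.8):
    """Keep variants in conserved columns, most conserved first."""
    flagged = [(p, scores[p]) for p in variant_positions if scores[p] >= threshold]
    return sorted(flagged, key=lambda t: -t[1])

# Toy alignment of four species (assumption); positions are 0-based columns
alignment = [
    "ACGTAC",
    "ACGAAC",
    "ACGCAC",
    "ACGGTC",
]
scores = column_conservation(alignment)
flagged = prioritize([0, 3, 4], scores)
print(flagged)
```

Adjusting for lineage-specific rate variation, as noted in the considerations above, would require a phylogeny-aware score in place of raw column entropy.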

Experimental Protocols

Protocol 1: Supervised Learning for Expression QTL Mapping

Goal: Identify variants affecting gene expression levels using supervised machine learning.

Materials and Reagents:

- Plant materials with diverse genotypes

- RNA extraction kits (e.g., TRIzol)

- Sequencing library preparation kits

- SNP genotyping arrays or whole-genome sequencing services

Procedure:

- Sample Preparation: Grow plant cohorts under controlled conditions. Harvest tissues at consistent developmental stages for RNA extraction. Preserve samples immediately in liquid nitrogen [2].

- Genotype and Transcriptome Profiling: Extract DNA for genotyping and RNA for transcriptome sequencing. Perform quality control on sequencing data [16].

- Data Preprocessing: Process RNA-seq data to obtain normalized expression values (e.g., TPM, FPKM). Impute missing genotypes if using SNP arrays. Perform quality control to remove low-quality samples and markers [2].

- Feature Selection: For each gene, extract variants in cis-regulatory regions. Include epigenetic features if available (e.g., chromatin accessibility, histone modifications) [14].

- Model Training: Implement supervised learners (gradient boosting machines, neural networks) using expression values as targets and genotypes as features. Use k-fold cross-validation to assess performance [13].

- Variant Effect Estimation: Extract feature importance scores to identify variants with largest impact on expression. Calculate predicted effect sizes for significant variants [2].

- Experimental Validation: Select top variants for functional validation using dual-luciferase reporter assays in plant protoplasts [2].
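The TPM normalization named in the Data Preprocessing step can be written in a few lines. This sketch assumes raw read counts and gene lengths in base pairs are already available. The function name and example values are hypothetical.

```python
def counts_to_tpm(counts, lengths_bp):
    """Convert raw read counts to transcripts per million (TPM)."""
    # Step 1: length-normalize to reads per kilobase
    rates = [c / (length / 1000.0) for c, length in zip(counts, lengths_bp)]
    total = sum(rates)
    if total == 0:
        return [0.0 for _ in rates]
    # Step 2: scale so that values sum to one million within the sample
    return [r / total * 1e6 for r in rates]

# Three genes whose counts are proportional to their lengths (assumption)
tpm = counts_to_tpm([100, 200, 300], [1000, 2000, 3000])
print([round(v, 1) for v in tpm])
```

Because every sample sums to the same total, TPM values are comparable across samples in a way that raw counts are not, which matters when expression is used as the regression target.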

Protocol 2: Unsupervised Semantic Design for Plant Regulatory Elements

Goal: Generate novel functional regulatory sequences using unsupervised genomic language models.

Materials and Reagents:

- Genomic language model (e.g., Evo) [15]

- Plant genome sequences

- Cloning reagents for synthetic DNA

- Plant transformation materials

- Reporter constructs (e.g., GFP, luciferase)

Procedure:

- Model Selection: Access pretrained genomic language model with demonstrated capability on prokaryotic or eukaryotic sequences. For plant-specific applications, consider fine-tuning on plant genomes [15].

- Prompt Engineering: Curate sequence prompts based on known functional elements. For enhancer design, use sequences flanking strong enhancers or binding sites of key transcription factors [15] [14].

- Sequence Generation: Perform conditional sampling from the model using temperature scaling to control diversity. Generate thousands of candidate sequences [15].

- In Silico Filtering: Filter generated sequences for specific characteristics (e.g., motif presence, length, complexity). Use predictive models to prioritize candidates with high predicted activity [15] [14].

- Synthesis and Cloning: Synthesize top candidate sequences and clone into reporter vectors. Include positive and negative controls in experimental design [15].

- Functional Testing: Transform constructs into plant systems (e.g., Arabidopsis protoplasts, stable transformants). Quantify reporter gene expression relative to controls [15] [14].

- Model Refinement: Incorporate experimental results to fine-tune generation process, increasing success rate in subsequent iterations [15].
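The temperature scaling used in the Sequence Generation step can be illustrated without access to a real model. In this sketch, `toy_logits` is a hypothetical stand-in for a genomic language model's next-base output and does not reflect the Evo API. Only the sampling logic is the point.

```python
import numpy as np

BASES = "ACGT"

def softmax_with_temperature(logits, temperature):
    """Lower temperature sharpens the distribution; higher flattens it."""
    z = np.asarray(logits, dtype=float) / temperature
    z -= z.max()  # numerical stability
    p = np.exp(z)
    return p / p.sum()

def toy_logits(seq):
    # Hypothetical model: mildly prefers repeating the previous base
    logits = np.zeros(4)
    logits[BASES.index(seq[-1])] = 2.0
    return logits

def sample_sequence(next_base_logits, prompt, length, temperature, rng):
    seq = prompt
    for _ in range(length):
        probs = softmax_with_temperature(next_base_logits(seq), temperature)
        seq += BASES[rng.choice(4, p=probs)]
    return seq

rng = np.random.default_rng(0)
candidates = [sample_sequence(toy_logits, "TATA", 20, t, rng) for t in (0.5, 1.0, 2.0)]
for c in candidates:
    print(c)
```

Low temperatures yield conservative candidates close to the model's most likely continuation, while high temperatures trade predicted plausibility for diversity, so generating candidates at several temperatures before in silico filtering is one reasonable design choice.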

Visualization of Methodologies

Diagram: Supervised learning workflow for genomic prediction.

Diagram: Unsupervised genomic sequence design workflow.

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Genomic AI Studies

| Reagent/Tool | Category | Function | Example Applications |

|---|---|---|---|

| Evo Genomic Language Model | Computational Model | Generative AI for DNA sequence design | Semantic design of functional elements [15] |

| BRAIN-MAGNET | Specialized AI Tool | CNN for non-coding variant interpretation | Prioritize functional non-coding variants [14] |

| ChIP-STARR-seq | Experimental Assay | High-throughput enhancer validation | Functional annotation of regulatory elements [14] |

| gBLUP | Statistical Model | Genomic best linear unbiased prediction | Genomic selection, breeding value prediction [12] [13] |

| Nested Cross-Validation | Validation Framework | Robust model performance estimation | Prevent overfitting in genomic prediction [13] |

| SynGenome Database | AI-Generated Resource | Database of AI-designed sequences | Access to functional sequence designs [15] |

| CRISPR-Cas9 | Genome Editing | Functional validation of variants | Experimental testing of AI predictions [16] |

| RNA-seq | Transcriptomics | Genome-wide expression profiling | Training data for expression prediction models [2] [16] |

The integration of supervised and unsupervised machine learning paradigms represents a transformative development in plant genomics and variant effects research. While supervised approaches excel at leveraging labeled functional genomics data to make precise predictions about variant effects, unsupervised methods unlock the potential of vast unlabeled sequence data to discover novel functional elements and generate synthetic biological components. The most powerful research programs will strategically combine both approaches, using unsupervised learning to explore sequence space and generate hypotheses, then applying supervised methods for precise functional predictions. As these technologies mature, they promise to accelerate precision plant breeding by enabling in silico prediction of variant effects before costly field trials, ultimately compressing breeding cycles and enhancing crop improvement efforts. However, rigorous validation through experimental studies remains essential to translate computational predictions into practical breeding applications [2] [17].

Plant genomics is pivotal for advancements in drug development, yet researchers face significant challenges due to the structural complexity of plant genomes and limitations in available data. High levels of heterozygosity, polyploidy, and abundant repetitive sequences complicate the assembly of high-quality reference genomes [18]. These obstacles directly impact the identification of genes responsible for synthesizing valuable secondary metabolites, which are the foundation for many therapeutic compounds [18]. Meanwhile, the scarcity of well-annotated genomic resources constrains the application of powerful machine learning models for predicting variant effects and gene function [17] [7]. These challenges are particularly acute in medicinal plants, where understanding the genetic basis of specialized metabolite biosynthesis is a primary research goal [19]. This Application Note details current methodologies and protocols to help researchers navigate these complexities, enabling more effective genomic analysis and accelerating the discovery of plant-derived bioactive compounds.

Current Landscape and Quantitative Data

The field of medicinal plant genomics has seen rapid expansion, yet significant gaps in quality and representation remain. As of February 2025, 431 medicinal plant genomes spanning 203 species have been sequenced and assembled, with nearly half (205 assemblies, 47.56%) released in just the past three years, demonstrating accelerated progress [18]. However, the quality of these genomes varies considerably, and taxonomic coverage is uneven across different plant orders.

Table 1: Current Status of Medicinal Plant Genomes (as of February 2025)

| Metric | Value | Significance |

|---|---|---|

| Total Medicinal Plant Genome Assemblies | 431 assemblies across 203 species | Foundation for genomic studies of medicinal species [18] |

| Recent Growth (Past 3 Years) | 205 assemblies (47.56%) | Rapid acceleration in sequencing efforts [18] |

| Telomere-to-Telomere (T2T) Assemblies | 11 genomes | Gold standard for completeness; represents only a small fraction [18] |

| Chromosome-Level Assemblies | 267 of 304 TGS genomes | Most modern assemblies achieve high contiguity [18] |

| BUSCO Completeness Range | 60% to 99% | Wide variation in assessed genome completeness [18] |

| Leading Contributor to Assemblies | China (69.9%) | Geographic imbalance in genomic resource generation [18] |

Table 2: Sequencing Technology Adoption and Outcomes in Medicinal Plants

| Technology | Usage Dominance | Key Contribution |

|---|---|---|

| Third-Generation Sequencing (TGS) | 98.04% in past 3 years | Long reads span complex/repetitive regions [18] |

| Hi-C Chromosome Conformation Capture | 89.3% adoption | Enables chromosome-length scaffolding [18] |

| PacBio HiFi Sequencing | Transformative impact | Concurrently provides sequence and epigenetic data; high accuracy in variant calling and complex regions [20] |

| Hybrid Approaches (Illumina + TGS) | Prevalent strategy | Combines short-read accuracy with long-range spanning [18] |

Experimental Protocols for Genome Sequencing and Analysis

Protocol: Chromatin-Assisted Genome Assembly with CiFi

Principle: The CiFi method (Hi-C with HiFi) combines chromosome conformation capture with HiFi sequencing to generate haplotype-resolved, chromosome-scale assemblies from a single technology, even from low-input samples [20].

Reagents and Equipment:

- Fresh plant tissue

- Formaldehyde for crosslinking

- Restriction enzymes (e.g., DpnII, HindIII)

- Biotin-labeled nucleotides

- PacBio Revio or Vega system with AmpliFi workflow

- LACHESIS or 3D-DNA scaffolding software

Procedure:

- Crosslinking: Fix 1-2 g of fresh plant tissue in formaldehyde to preserve chromatin structure.

- Digestion: Lyse cells and digest DNA with a restriction enzyme.

- Proximity Ligation: Dilute and ligate crosslinked DNA fragments, creating chimeric molecules representing spatial proximity.

- Biotin Capture: Purify biotin-labeled ligation products using streptavidin beads.

- Library Preparation and Sequencing: Construct HiFi libraries using the PacBio low-input AmpliFi workflow and sequence on a Revio or Vega system. This workflow is reported to be more than 500-fold more efficient than previous approaches [20].

- Data Integration: Assemble the genome using HiFi reads with Hifiasm or Canu, then scaffold using CiFi data with LACHESIS to achieve haplotype-resolved connectivity across scales exceeding 100 Mb [18] [20].

Protocol: Functional Gene Cluster Identification Using Multi-Omics

Principle: Integrated analysis of genomic, transcriptomic, and metabolomic data enables the discovery of Biosynthetic Gene Clusters (BGCs) responsible for producing valuable secondary metabolites [19] [21].

Reagents and Equipment:

- High-quality plant genomic DNA and RNA

- LC-MS/MS system for metabolomics

- PacBio Iso-Seq or RNA-Seq kits for full-length transcriptome

- antiSMASH, plantiSMASH software

Procedure:

- Genome Annotation: Annotate the assembled genome using BRAKER or MAKER pipelines, incorporating protein homology and transcriptomic evidence.

- Metabolite Profiling: Extract metabolites from different plant tissues and developmental stages. Analyze using LC-MS/MS to identify specialized metabolites of interest.

- Transcriptome Sequencing: Perform RNA-Seq or Iso-Seq across the same tissues to quantify gene expression.

- BGC Prediction: Use antiSMASH or plantiSMASH to scan the genome for colocalized biosynthetic genes.

- Correlation Analysis: Integrate expression data with metabolite abundance to identify co-expression patterns, prioritizing candidate BGCs for functional validation [21].
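The Correlation Analysis step can be sketched as a ranking of genes by the Pearson correlation between their expression and the abundance of a target metabolite across the same tissues. The gene names and values below are hypothetical. A real analysis would also require multiple-testing control and would combine this ranking with the genomic colocalization reported by the BGC prediction step.

```python
import numpy as np

def rank_genes_by_metabolite_correlation(expr, metabolite, gene_ids):
    """expr: genes x samples matrix; metabolite: one abundance per sample."""
    expr = np.asarray(expr, dtype=float)
    m = np.asarray(metabolite, dtype=float)
    e_c = expr - expr.mean(axis=1, keepdims=True)
    m_c = m - m.mean()
    denom = np.sqrt((e_c ** 2).sum(axis=1) * (m_c ** 2).sum())
    # Genes with constant expression get r = 0 instead of a division error
    r = np.divide(e_c @ m_c, denom, out=np.zeros(len(expr)), where=denom > 0)
    order = np.argsort(-r)
    return [(gene_ids[i], float(r[i])) for i in order]

# Five tissues; three hypothetical candidate genes (assumption)
metabolite = [1.0, 2.0, 3.0, 4.0, 5.0]
expr = [
    [2.0, 4.0, 6.0, 8.0, 10.0],  # geneA tracks the metabolite
    [5.0, 4.0, 3.0, 2.0, 1.0],   # geneB is anti-correlated
    [3.0, 3.0, 3.0, 3.0, 3.0],   # geneC is constant
]
ranked = rank_genes_by_metabolite_correlation(expr, metabolite, ["geneA", "geneB", "geneC"])
print(ranked)
```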

Computational Analysis and Machine Learning Approaches

Protocol: Applying Foundation Models for Variant Effect Prediction in Plants

Principle: Foundation models (FMs) pre-trained on large-scale biological sequences can predict the functional impact of genetic variants in plants, overcoming limitations of traditional association studies [17] [22].

Software and Resources:

- FMs applicable to plants (AgroNT, PlantCaduceus; GPN-MSA where a suitable multi-species alignment is available)

- VCF files from population sequencing

- Computing resources with GPU acceleration

Procedure:

- Model Selection: Choose a plant-appropriate FM based on target variants:

  - AgroNT: For non-coding regulatory elements in crop plants

  - GPN-MSA: For functional variant prediction using multi-species alignment data [22]

  - PlantCaduceus: For general-purpose plant genomic analysis

- Variant Encoding: Convert VCF files to sequence windows, incorporating variants in their genomic context.

- Effect Prediction:

  - For coding variants, use the model to calculate evolutionary probabilities and predict deleteriousness.

  - For non-coding variants, predict changes in regulatory scores (e.g., promoter activity, transcription factor binding).

- Validation: Correlate high-impact predictions with phenotypic data from mutant lines or GWAS cohorts to refine model accuracy [17].
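The Variant Encoding step can be sketched as follows: each VCF record is turned into a pair of sequence windows, one carrying the reference allele and one carrying the alternative allele, which a model can then score. The helper names, the toy reference sequence, and the flank size are assumptions. Real pipelines would read the reference from an indexed FASTA and use windows matching the model's context length.

```python
def parse_vcf_line(line):
    """Extract CHROM, POS (1-based), REF and the first ALT allele."""
    chrom, pos, _id, ref, alt = line.rstrip("\n").split("\t")[:5]
    return chrom, int(pos), ref, alt.split(",")[0]

def variant_windows(reference, pos, ref, alt, flank=5):
    """Return (ref_window, alt_window) centered on a 1-based variant position."""
    start = pos - 1  # VCF positions are 1-based
    if reference[start:start + len(ref)] != ref:
        raise ValueError("REF allele does not match the reference sequence")
    left = reference[max(0, start - flank):start]
    right = reference[start + len(ref):start + len(ref) + flank]
    return left + ref + right, left + alt + right

# Toy reference sequence and record (assumption)
reference = "ACGTACGTACGTACGT"
chrom, pos, ref, alt = parse_vcf_line("chr1\t8\t.\tT\tC\t.\tPASS\t.")
ref_window, alt_window = variant_windows(reference, pos, ref, alt, flank=3)
print(ref_window, alt_window)
```

Checking the REF allele against the reference before building windows catches coordinate or assembly-version mismatches early, which are a common source of silently wrong scores.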

Table 3: Research Reagent Solutions for Plant Genomic Studies

| Reagent/Resource | Function | Application Example |

|---|---|---|

| PacBio HiFi Reads | Generate long, accurate reads | Resolve complex regions, detect base modifications concurrently [20] |

| Hi-C Kit | Capture 3D chromatin architecture | Scaffold genomes to chromosome-scale [18] |

| antiSMASH/plantiSMASH | Identify biosynthetic gene clusters | Discover secondary metabolite pathways [19] |

| DNABERT, AgroNT | DNA foundation models | Predict regulatory elements and variant effects [22] |

| ESM3, SaProt | Protein foundation models | Predict protein structure and function [22] |

| BUSCO | Assess genome completeness | Benchmark assembly quality using universal single-copy orthologs [18] |

Navigating the challenges of large, repetitive plant genomes requires an integrated approach combining advanced sequencing technologies, multi-omics integration, and cutting-edge computational methods. While significant progress has been made in medicinal plant genomics, with over 400 genome assemblies released to date, the road ahead demands a concerted focus on achieving more complete, Telomere-to-Telomere assemblies and leveraging machine learning models specifically adapted to plant genomic peculiarities [18]. The protocols and methodologies detailed in this Application Note provide a framework for researchers to overcome these challenges, ultimately accelerating the discovery and characterization of valuable plant-derived compounds for drug development. As foundation models continue to evolve and incorporate more plant-specific data, their ability to predict variant effects will become an increasingly indispensable part of the plant genomics toolkit [17] [7] [22].

The AI Toolbox: Methodologies and Real-World Applications of Sequence Models in Plant Genomics

The application of large language models (LLMs) to biological sequences represents a paradigm shift in computational genomics, enabling unprecedented capability in predicting variant effects. These foundation models (FMs), trained on massive-scale genomic data using self-supervised learning, have demonstrated remarkable performance in understanding the complex language of DNA [22]. Unlike traditional machine learning approaches that required task-specific feature engineering, genomic FMs learn contextual representations directly from sequence data, allowing them to capture complex biological patterns including evolutionary constraints, regulatory syntax, and structure-function relationships [23] [7]. For plant genomics specifically, models such as GPN-MSA and AgroNT address unique challenges including polyploidy, high repetitive sequence content, and environment-responsive regulatory elements that complicate analysis of plant genomes [22]. This architectural deep dive examines the transformer-based foundations, model-specific innovations, and practical applications of these cutting-edge models in plant variant effect research.

Architectural Foundations: From Transformers to Genomic Sequences

Core Transformer Architecture Adapted for Genomics

The transformer architecture, originally developed for natural language processing (NLP), provides the fundamental building blocks for modern genomic foundation models through its self-attention mechanism [22]. This mechanism allows the model to weigh the importance of different nucleotide positions when processing a genomic sequence, enabling it to capture long-range dependencies and contextual relationships that are crucial for understanding regulatory elements and their interactions [23]. Unlike convolutional neural networks that process sequences with localized filters, self-attention creates direct connections between all positions in the sequence, allowing it to learn complex grammatical rules in the "language of DNA" [23].
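A minimal sketch of single-head scaled dot-product self-attention over a one-hot encoded DNA sequence is shown here to make the "direct connections between all positions" concrete. The projection matrices are random rather than trained, and the dimensions are arbitrary assumptions. Real genomic models add learned embeddings, multiple heads, positional information, and many stacked layers.

```python
import numpy as np

def one_hot(seq):
    idx = {"A": 0, "C": 1, "G": 2, "T": 3}
    x = np.zeros((len(seq), 4))
    for i, base in enumerate(seq):
        x[i, idx[base]] = 1.0
    return x

def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product attention; returns outputs and weights."""
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = q @ k.T / np.sqrt(k.shape[1])        # every position vs every position
    scores -= scores.max(axis=1, keepdims=True)   # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=1, keepdims=True) # softmax over positions
    return weights @ v, weights

rng = np.random.default_rng(0)
x = one_hot("ACGTTGCA")
d = 8  # projection dimension (assumption)
w_q, w_k, w_v = (rng.normal(size=(4, d)) for _ in range(3))
out, weights = self_attention(x, w_q, w_k, w_v)
print(weights.shape, out.shape)
```

The `weights` matrix has one row per position and one column per position, so its size grows quadratically with sequence length, which is one reason long context windows are costly for standard transformers.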

Genomic adaptations of transformers require specialized tokenization strategies to convert DNA sequences into model-readable inputs. Early models like DNABERT used k-mer tokenization (segmenting sequences into overlapping subsequences of length k), whereas newer approaches tokenize more efficiently: DNABERT-2 adopted Byte Pair Encoding (BPE), and Nucleotide Transformer uses non-overlapping 6-mers [22]. The context window (the length of sequence a model can process at once) has substantially increased from early models supporting 1-6 kb to recent architectures like HyenaDNA that can handle sequences spanning up to a million base pairs [22].
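Overlapping k-mer tokenization of the kind described for early models can be sketched in a few lines. The function names and the toy vocabulary construction are illustrative assumptions and do not reproduce any specific model's tokenizer. BPE is not shown because it requires a learned merge table.

```python
def kmer_tokenize(seq, k=3, stride=1):
    """Split a DNA sequence into k-mers; stride=1 gives overlapping tokens."""
    seq = seq.upper()
    return [seq[i:i + k] for i in range(0, len(seq) - k + 1, stride)]

def build_vocab(tokens):
    """Map each distinct token to an integer id in order of first appearance."""
    vocab = {}
    for tok in tokens:
        vocab.setdefault(tok, len(vocab))
    return vocab

tokens = kmer_tokenize("ACGTAC", k=3)
vocab = build_vocab(tokens)
ids = [vocab[t] for t in tokens]
print(tokens, ids)

# Non-overlapping tokens: set the stride equal to k
print(kmer_tokenize("ACGTAC", k=3, stride=3))
```

With stride 1, a sequence of length L yields L - k + 1 tokens, so overlapping k-mers barely shorten the input. Non-overlapping k-mers or BPE reduce the token count several-fold, which is the efficiency gain referred to above.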

Key Architectural Variations in Genomic Foundation Models

Table: Architectural Comparison of Major Genomic Foundation Models

| Model | Base Architecture | Key Innovation | Context Window | Training Data | Primary Application |

|---|---|---|---|---|---|

| GPN-MSA | Transformer + CNN | MSA integration | Variable | Vertebrate genome alignments [24] | Genome-wide variant effect prediction [24] |

| AgroNT | Transformer | Plant-specific pre-training | 6-12 kb | Plant genomes [22] [7] | Plant variant effect prediction [22] |

| Nucleotide Transformer | Transformer | Cross-species training | 6-12 kb [22] | Human, model organisms [22] | General genomic tasks |

| HyenaDNA | Hyena operator | Long-range dependencies | 1 Mb+ [22] | Reference genomes | Long-range regulatory analysis |

| DNABERT-2 | BERT + BPE | Efficient tokenization | 1-3 kb | Reference genomes | Regulatory element identification [22] |

Model-Specific Architectural Innovations

GPN-MSA: Multiple Sequence Alignment Integration

GPN-MSA introduces a biologically-motivated framework that integrates multiple sequence alignment (MSA) information using a flexible Transformer architecture [24]. Unlike standard DNA language models trained on single reference genomes, GPN-MSA processes whole-genome MSAs across diverse species, allowing it to learn nucleotide probability distributions conditioned on both surrounding sequence context and evolutionary information from related species [24]. This approach draws inspiration from the MSA Transformer protein model but addresses the substantial complexities of whole-genome DNA alignments, which comprise small, fragmented synteny blocks with highly variable conservation levels [24].

The model architecture extends the original Genomic Pre-trained Network (GPN) by incorporating aligned sequences from related species that provide critical information about evolutionary constraints and adaptation [24]. Essential differences from the protein MSA Transformer include adaptations to handle the more complex genomic alignments and specialized training procedures optimized for DNA sequences. GPN-MSA achieved state-of-the-art deleteriousness prediction for both coding and non-coding variants across multiple benchmarks, including clinical databases (ClinVar, COSMIC, OMIM), experimental functional assays, and population genomic data (gnomAD) [24].

AgroNT: Plant-Specialized Architecture

AgroNT represents a specialized foundation model trained specifically on plant genomic sequences to address challenges pervasive in plant genomes [22]. Plant genomes often exhibit characteristics that complicate analysis, including polyploidy (e.g., hexaploid wheat), extensive structural variation, and high proportions of repetitive sequences and transposable elements (over 80% in maize) [22]. AgroNT's architecture incorporates adaptations to handle these plant-specific genomic characteristics while effectively capturing environment-responsive regulatory elements that are crucial for understanding plant adaptation and trait variation [22].

The model demonstrates excellent performance in plant variant effect prediction, building on the initial success of GPN in Arabidopsis thaliana [23]. Unlike general-purpose genomic language models trained primarily on human or animal data, AgroNT captures plant-specific regulatory patterns and evolutionary constraints, making it particularly valuable for crop improvement applications [22] [7].

Experimental Protocols for Model Evaluation

Protocol 1: Benchmarking Variant Effect Prediction

Purpose: To evaluate model performance on classifying pathogenic versus benign variants across different genomic contexts.

Materials:

- Variant Datasets: Curated sets from ClinVar [24], COSMIC [24], gnomAD [24], and plant-specific databases

- Computational Resources: 4 NVIDIA A100 GPUs (or equivalent) [24]

- Software Framework: Python with PyTorch or TensorFlow, model-specific inference code

Procedure:

- Data Preparation:

  - Obtain benchmark datasets of labeled pathogenic and benign variants

  - For plant models, compile species-specific variant sets with phenotypic annotations

  - Split data into training (if fine-tuning) and test sets, ensuring no overlap

- Model Inference:

  - Generate model scores for each variant using log-likelihood ratios (LLRs)

  - For MSA-based models (GPN-MSA), provide appropriate alignment data

  - Compute scores for both reference and alternative alleles

- Performance Evaluation:

  - Calculate Area Under the Receiver Operating Characteristic curve (AUROC)

  - Compute Area Under the Precision-Recall Curve (AUPRC), especially for imbalanced datasets

  - Assess performance separately for coding and non-coding variants

- Comparative Analysis:

  - Compare against baseline methods (CADD, phyloP, ESM-1b) [24]

  - Evaluate statistical significance of performance differences

  - Assess computational efficiency and inference speed

Expected Outcomes: GPN-MSA substantially outperforms other DNA language models including Nucleotide Transformer and HyenaDNA, as well as established predictors like CADD and phyloP on human clinical benchmarks [24]. Similar performance advantages are observed for plant-specific models on agricultural trait-associated variants.
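The scoring and evaluation steps of this protocol can be sketched as follows. The LLR compares the model's probability for the alternative allele with that for the reference allele at the variant position, and AUROC is computed directly from its definition as the probability that a random positive outranks a random negative. The allele probabilities and labels below are invented for illustration and do not come from any real model or database.

```python
import math

def llr(p_ref, p_alt):
    """Log-likelihood ratio; negative when the alt allele is less likely."""
    return math.log(p_alt) - math.log(p_ref)

def auroc(scores, labels):
    """P(random positive scores higher than random negative); ties count 0.5."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# (p_ref, p_alt, label) with label 1 = pathogenic, 0 = benign (hypothetical)
variants = [
    (0.9, 0.01, 1),
    (0.8, 0.05, 1),
    (0.5, 0.40, 0),
    (0.6, 0.30, 0),
    (0.7, 0.02, 0),
]
# Use -LLR so that higher scores mean "more likely deleterious"
scores = [-llr(p_ref, p_alt) for p_ref, p_alt, _ in variants]
labels = [label for _, _, label in variants]
auc = auroc(scores, labels)
print(f"AUROC = {auc:.3f}")
```

The pairwise definition is quadratic in the number of variants and is used here only for clarity. For large benchmarks a rank-based implementation from an established library is preferable, and AUPRC should be reported alongside it when classes are imbalanced.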

Protocol 2: In Silico Saturation Mutagenesis for Regulatory Element Characterization

Purpose: To identify functional regulatory elements and quantify the functional impact of all possible mutations within a region of interest.

Materials:

- Genomic Regions: Candidate regulatory sequences (promoters, enhancers, UTRs)

- Reference Genome: Species-appropriate reference sequence

- Analysis Pipeline: Custom scripts for variant simulation and score aggregation

Procedure:

- Region Selection:

  - Identify genomic regions of interest (e.g., promoters, enhancers) based on chromatin accessibility or evolutionary conservation

  - For plants, consider tissue-specific regulatory contexts

- Variant Simulation:

  - Generate all possible single-nucleotide variants within the target region

  - Include insertion-deletion variants if supported by the model architecture

- Effect Prediction:

  - Compute deleteriousness scores for each simulated variant using the model's LLR

  - For sequence-to-activity models (DeepWheat), predict effects on gene expression or epigenomic features [25]

- Functional Mapping:

  - Aggregate scores to create a functional profile of the regulatory element

  - Identify specific positions and motifs with high constraint scores

  - Compare profiles across tissue types or developmental stages

- Experimental Validation:

  - Select high-impact variants for functional assays (e.g., reporter gene assays, deep mutational scanning (DMS))

  - Correlate computational predictions with experimental measurements

Expected Outcomes: The protocol identifies nucleotides under functional constraint within regulatory elements and predicts the directional effect of mutations on regulatory activity. In wheat, models like DeepWheat can predict tissue-specific expression changes resulting from regulatory variants with Pearson correlation coefficients of 0.82-0.88 [25].
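The variant simulation and functional mapping steps can be sketched as follows. Every possible single-nucleotide substitution in a region is enumerated, each is scored, and the scores are averaged per position to give a constraint profile. The `toy_score` function is a hypothetical placeholder for a model's LLR (it simply penalizes changes inside a TATA motif) and would be replaced by real model inference.

```python
def all_snvs(region):
    """Yield (position, ref, alt) for every single-nucleotide substitution."""
    for i, ref in enumerate(region):
        for alt in "ACGT":
            if alt != ref:
                yield i, ref, alt

def toy_score(region, pos, alt):
    # Hypothetical scorer standing in for a model LLR (assumption)
    start = region.find("TATA")
    in_motif = start != -1 and start <= pos < start + 4
    return -3.0 if in_motif else -0.1

def constraint_profile(region, score_fn):
    """Mean score over the three alternative alleles at each position."""
    profile = [0.0] * len(region)
    for pos, _ref, alt in all_snvs(region):
        profile[pos] += score_fn(region, pos, alt) / 3.0
    return profile

region = "GCTATAGC"
profile = constraint_profile(region, toy_score)
constrained = [i for i, s in enumerate(profile) if s < -1.0]
print(constrained)
```

A region of length L produces 3L substitutions, so saturation mutagenesis of a 1 kb promoter requires 3,000 model evaluations, which is usually batched on a GPU.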

Diagram: Architectural workflow of genomic foundation models showing both single-sequence and MSA-based approaches.

Performance Benchmarks and Comparative Analysis

Quantitative Performance Across Benchmark Tasks

Table: Performance Comparison of Genomic Foundation Models on Key Tasks

| Model | ClinVar Pathogenic vs. gnomAD Common (AUROC) | COSMIC vs. gnomAD (AUPRC) | Regulatory Variant Prediction (AUROC) | Training Time | Inference Speed |

|---|---|---|---|---|---|

| GPN-MSA | 0.95 [24] | 0.89 [24] | 0.91 (OMIM) [24] | 3.5 hours [24] | Moderate |

| Nucleotide Transformer | 0.82 [24] | 0.71 [24] | 0.76 (OMIM) [24] | 28 days [24] | Fast |

| CADD | 0.93 [24] | 0.75 [24] | 0.84 (OMIM) [24] | N/A | Fast |

| PhyloP | 0.89 [24] | 0.69 [24] | 0.79 (OMIM) [24] | N/A | Fast |

| AgroNT | N/A (evaluated on plant-specific benchmarks) | N/A (evaluated on plant-specific benchmarks) | N/A (evaluated on plant-specific benchmarks) | Not reported here | Not reported here |

Plant-Specific Model Performance

For agricultural applications, models like DeepWheat demonstrate the capability to predict gene expression from sequence and epigenomic features with remarkable accuracy (Pearson correlation coefficients of 0.82-0.88 across wheat tissues) [25]. This performance substantially exceeds sequence-only models, particularly for tissue-specific genes where sequence-only approaches show notable performance drops [25]. The integration of epigenomic data enables these models to capture dynamic regulatory states rather than just static sequence features, making them particularly valuable for predicting context-dependent variant effects in crops [25].

Essential Research Reagents and Computational Solutions

Table: Key Research Reagents and Resources for Genomic Foundation Model Applications

| Resource Type | Specific Examples | Function/Application | Availability |

|---|---|---|---|

| Pre-trained Models | GPN-MSA, AgroNT, Nucleotide Transformer, PlantCaduceus | Zero-shot variant effect prediction without task-specific fine-tuning | GitHub repositories, model hubs [26] |

| Benchmark Datasets | ClinVar, gnomAD, COSMIC, plant-specific variation databases | Model evaluation and comparative performance assessment | Public data portals, specialized archives |

| MSA Resources | Zoonomia alignment, vertebrate genome alignments, plant pan-genomes | Providing evolutionary context for MSA-based models | UCSC Genome Browser, specialized databases [24] |

| Variant Annotation Suites | Ensembl VEP, SnpEff, plant-specific annotation tools | Functional annotation of predicted deleterious variants | Bioinformatics toolkits, public servers |

| Expression Prediction Models | DeepEXP (from DeepWheat), Basenji2, Xpresso | Predicting tissue-specific expression changes from sequence | GitHub repositories, custom implementations [25] |

| Epigenomic Prediction | DeepEPI (from DeepWheat), Enformer, Basenji2 | Predicting chromatin features from DNA sequence | Specialized implementations [25] |

Diagram: Research ecosystem for genomic foundation models showing data inputs, model types, applications, and research outputs.

Implementation Considerations and Future Directions

Practical Implementation Guidelines

Successful implementation of genomic foundation models requires careful consideration of several practical factors. Computational resources vary significantly between models, with GPN-MSA requiring just 3.5 hours on 4 NVIDIA A100 GPUs compared to 28 days on 128 GPUs for some large nucleotide transformers [24]. This substantial difference in training requirements makes certain models more accessible for research groups with limited computational infrastructure.
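To put the two training budgets quoted above on a common scale, wall-clock time can be converted to GPU-hours. The short calculation below uses only the figures stated in the text and assumes that all GPUs are used for the full duration and that the GPU types are comparable, which the source does not confirm.

```python
def gpu_hours(wall_clock_hours, n_gpus):
    return wall_clock_hours * n_gpus

gpn_msa = gpu_hours(3.5, 4)           # 3.5 hours on 4 GPUs
large_nt = gpu_hours(28 * 24, 128)    # 28 days on 128 GPUs
ratio = large_nt / gpn_msa

print(f"GPN-MSA: {gpn_msa:.0f} GPU-hours")
print(f"Large nucleotide transformer: {large_nt:,.0f} GPU-hours")
print(f"Ratio: about {ratio:,.0f}x")
```

Under these assumptions the difference is 14 versus 86,016 GPU-hours, roughly a 6,000-fold gap.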

For plant genomics applications, species-specific adaptation is crucial. Plant genomes often contain unique characteristics including polyploidy, high repetitive content, and environment-responsive regulatory elements that require specialized models [22]. While universal models like GENERator and Evo 2 leverage extensive cross-species training data, plant-specific models like AgroNT and PlantCaduceus typically outperform them on agricultural tasks [22].

Data integration approaches represent another key consideration. Models that incorporate multiple data modalities—such as DeepWheat's integration of sequence with epigenomic features—consistently outperform sequence-only approaches, particularly for tissue-specific prediction tasks [25]. This advantage comes with increased data requirements, as high-quality epigenomic data remains expensive and challenging to obtain, especially in plants [25].

Emerging Research Directions

Future developments in genomic foundation models will likely focus on several key areas. Cross-species generalization capabilities are being enhanced through more diverse training datasets and architectural improvements that better capture evolutionary relationships [22]. Multi-modal integration is another active research frontier, with models increasingly incorporating diverse data types including epigenomic profiles, chromatin conformation, and protein interaction data to create more comprehensive functional representations [22].

For agricultural applications, a critical challenge remains the scarcity and limited diversity of plant datasets compared with mammalian systems [22]. Future research should prioritize the development of more comprehensive plant genomic resources to support model training and validation. Improvements in computational efficiency will also be essential to make these models accessible to plant research groups with limited computational resources [22].

As these models mature, they are poised to become integral components of the plant breeder's toolbox, enabling more precise identification of functional variants and accelerating the development of improved crop varieties through in silico prediction of variant effects [17] [27]. While not yet mature for fully in silico-driven precision breeding, current models already show strong potential to enhance traditional approaches and reduce dependence on costly phenotypic screening [27].

The shift toward precision plant breeding requires moving from traditional, phenotype-driven selection to approaches that directly target causal genetic variants. A significant challenge is developing models that accurately predict the effects of these variants across all functional parts of the genome: not only within protein-coding sequences but also throughout the vast and complex non-coding regulatory landscape [17]. Modern machine learning (ML) and deep learning models are emerging as powerful tools to meet this challenge. These in silico methods serve as efficient alternatives or complements to costly mutagenesis screens, with the potential to generalize predictions across diverse genomic contexts by fitting a single unified model to all loci rather than a separate model for each one [17]. This application note details the protocols for applying these models, with a specific focus on plant systems, and provides a framework for their validation in a breeding context. These models have strong potential to become an integral part of the modern breeder's toolbox, accelerating the development of improved crop varieties [17].

Quantitative Comparison of Genomic Constraint and Model Performance

Selecting the right metric and model is crucial for prioritizing functional elements. The tables below summarize key quantitative descriptors for genomic constraint and model performance.

Table 1: Comparison of Genomic Constraint Metrics for Variant Prioritization

| Metric Name | Genomic Scope | Core Principle | Key Application |
|---|---|---|---|
| gwRVIS [28] | Genome-wide (sliding window) | Intolerance to variation within the human lineage, agnostic to conservation. | Identifies regions depleted of variation due to purifying selection in humans. |
| ncRVIS [28] | Proximal non-coding (promoters, UTRs) | Constraint in specific regulatory regions near genes. | Prioritizes potentially pathogenic variants in well-defined non-coding elements. |
| JARVIS [28] | Non-coding regions | Deep learning model integrating gwRVIS, functional annotations, and primary sequence. | Comprehensive pathogenicity prediction for non-coding single-nucleotide and structural variants. |

Table 2: Performance Characteristics of Genomic Models and Elements

| Model / Genomic Class | Key Performance Differentiator | Pathogenic Variant Classification (AUC or similar) | Notable Strength |
|---|---|---|---|
| JARVIS Model [28] | Integrates multiple data types; human-lineage specific. | Comparable or superior to conservation-based scores. | Captures previously inaccessible human-lineage constraint information. |
| Ultraconserved Noncoding Elements (UCNEs) [28] | Most intolerant non-coding class per gwRVIS. | N/A | Highest median intolerance (gwRVIS: -0.99), despite no conservation data in gwRVIS calculation. |
| CCDS (Protein-Coding) [28] | Benchmark for disease-gene intolerance. | N/A | High intolerance (median gwRVIS: -0.55), but less than UCNEs. |
| VISTA Enhancers [28] | Developmental enhancers. | N/A | High intolerance (median gwRVIS: -0.77). |

Protocol for Genome-Wide Constraint Analysis and Variant Effect Prediction

This protocol outlines the steps for generating a genome-wide constraint profile and using it to train a deep learning model for variant effect prediction, adapted for plant genomes.

Stage 1: Calculation of Genome-Wide Residual Variation Intolerance Score (gwRVIS)

Objective: To identify genomic regions intolerant to variation using a population-scale dataset.

Inputs: Whole genome sequencing (WGS) data from a large population (e.g., >60,000 individuals) [28].

Outputs: A single-nucleotide resolution gwRVIS score for the entire genome.

Variant Calling and Quality Control (QC): Perform variant calling on the WGS dataset. Apply stringent QC filters, including:

- Coverage depth to exclude poorly sequenced regions.

- Variant-calling confidence metrics.

- Masking of simple repeat regions to avoid artifacts [28].
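
The three QC filters above can be sketched as a single predicate applied to each called variant. This is a minimal illustration rather than a production pipeline: the `Variant` record, the `passes_qc` helper, and the thresholds (`min_depth=10`, `min_qual=30.0`) are all assumptions chosen for the example, and real cutoffs should be tuned to the sequencing data at hand.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Variant:
    chrom: str
    pos: int      # 1-based position
    depth: int    # read depth at the site
    qual: float   # variant-calling confidence (e.g. a Phred-scaled score)

def in_masked_region(v: Variant, masked: dict) -> bool:
    """True if the variant falls inside a masked (e.g. simple-repeat) interval.

    `masked` maps chromosome -> list of (start, end) inclusive, 1-based intervals.
    """
    return any(start <= v.pos <= end for start, end in masked.get(v.chrom, []))

def passes_qc(v: Variant, masked: dict, min_depth: int = 10, min_qual: float = 30.0) -> bool:
    """Apply the depth, confidence, and repeat-mask filters (thresholds are hypothetical)."""
    return v.depth >= min_depth and v.qual >= min_qual and not in_masked_region(v, masked)

# Toy input: one clean variant and one failing each filter.
variants = [
    Variant("chr1", 100, depth=25, qual=50.0),  # passes all filters
    Variant("chr1", 200, depth=4, qual=50.0),   # fails: low coverage
    Variant("chr1", 300, depth=25, qual=12.0),  # fails: low calling confidence
    Variant("chr1", 450, depth=25, qual=50.0),  # fails: inside a masked repeat
]
masked = {"chr1": [(400, 500)]}

kept = [v for v in variants if passes_qc(v, masked)]
print([v.pos for v in kept])
```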

Sliding-Window Analysis: Scan the entire genome using a sliding window approach.

- Window Size: Use a tuned window length (e.g., 3 kb as used in human studies [28]). The optimal size may vary for plant genomes based on diversity and data availability.

- Step Size: Use a 1-nucleotide step to achieve single-nucleotide resolution.

- Variant Tally: For each window, record:

  - Total Variants: The count of all observed variants.

  - Common Variants: The count of variants with a minor allele frequency (MAF) above a set threshold (e.g., 0.1% [28]).

Regression Modeling: Fit an ordinary linear regression model to predict the number of common variants in a window based on the total number of variants in that same window.

gwRVIS Calculation: For each window, calculate the gwRVIS as the studentized residual from the regression model [28].

- Interpretation: Lower (negative) gwRVIS values indicate greater intolerance to variation (purifying selection), while higher (positive) values indicate tolerance or potential positive selection.
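
The sliding-window tally, regression, and residual steps of Stage 1 can be sketched with NumPy as follows. This is a simplified illustration on simulated data, not the published implementation: the function names are hypothetical, the toy genome and its "constrained" region are made up, and the residuals are internally studentized, which is an assumption since the text does not specify the variant of studentization.

```python
import numpy as np

def window_counts(flags, window):
    """Count True entries in every length-`window` window, sliding 1 nt at a time."""
    csum = np.concatenate(([0], np.cumsum(np.asarray(flags, dtype=int))))
    return csum[window:] - csum[:-window]

def studentized_residuals(x, y):
    """Internally studentized residuals of the ordinary least-squares fit y ~ a + b*x."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    n = x.size
    design = np.column_stack([np.ones(n), x])
    beta, *_ = np.linalg.lstsq(design, y, rcond=None)
    resid = y - design @ beta
    centered = x - x.mean()
    leverage = 1.0 / n + centered**2 / np.sum(centered**2)  # hat-matrix diagonal
    sigma2 = resid @ resid / (n - 2)                         # residual variance
    return resid / np.sqrt(sigma2 * (1.0 - leverage))

# Simulated genome; every number here is illustrative.
rng = np.random.default_rng(0)
genome_len, window = 5000, 200
is_variant = rng.random(genome_len) < 0.05        # any observed variant
p_common = np.full(genome_len, 0.4)               # chance that a variant is common
p_common[2000:2500] = 0.05                        # simulated constrained region
is_common = is_variant & (rng.random(genome_len) < p_common)

total = window_counts(is_variant, window)         # total variants per window
common = window_counts(is_common, window)         # common variants per window
gwrvis = studentized_residuals(total, common)     # one score per window start

print("mean score, constrained windows:", gwrvis[2000:2301].mean())
print("mean score, all windows:        ", gwrvis.mean())
```

Windows that lie inside the simulated constrained region carry fewer common variants than the regression predicts from their total variant count, so their scores fall below the genome-wide average. Overlapping windows are not statistically independent, which a real analysis would need to account for when interpreting the scores.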

Stage 2: Training a Deep Learning Model for Non-Coding Variant Effect Prediction (e.g., JARVIS)

Objective: To build a comprehensive model that predicts the pathogenicity of non-coding variants.

Inputs: Primary genomic sequence, functional genomic annotations (e.g., chromatin accessibility, transcription factor binding sites), and the gwRVIS score [28].

Outputs: A pathogenicity score for non-coding variants.

Data Integration and Preprocessing:

- Compile a training set of known pathogenic and benign non-coding variants.

- Integrate multiple data types for each variant's genomic context, including the calculated gwRVIS score and other functional annotations. Intentionally exclude evolutionary conservation information to capture human-lineage-specific signals [28].
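
One possible way to assemble these inputs for a single variant is to stack the one-hot encoded sequence window with per-base gwRVIS and annotation tracks. The sketch below is an assumed layout for illustration only; it is not the input format of JARVIS or of any specific published model, and the names `one_hot` and `build_features` are hypothetical.

```python
import numpy as np

BASES = "ACGT"

def one_hot(seq: str) -> np.ndarray:
    """Return a (4, len(seq)) one-hot matrix; non-ACGT bases (e.g. N) stay all-zero."""
    index = {base: i for i, base in enumerate(BASES)}
    out = np.zeros((4, len(seq)), dtype=np.float32)
    for j, base in enumerate(seq.upper()):
        i = index.get(base)
        if i is not None:
            out[i, j] = 1.0
    return out

def build_features(seq, gwrvis, annotations):
    """Stack sequence, gwRVIS, and annotation tracks into one (channels, length) array."""
    tracks = [one_hot(seq), np.asarray(gwrvis, dtype=np.float32)[None, :]]
    tracks += [np.asarray(a, dtype=np.float32)[None, :] for a in annotations]
    if any(t.shape[1] != len(seq) for t in tracks):
        raise ValueError("all tracks must have the same length as the sequence")
    return np.concatenate(tracks, axis=0)

# Toy 5-bp window centred on a variant; the values are made up.
seq = "ACGTN"
gwrvis_track = [-0.9, -0.8, -1.1, -0.7, -0.6]
accessibility = [0, 1, 1, 1, 0]   # e.g. a binary open-chromatin annotation

features = build_features(seq, gwrvis_track, [accessibility])
print(features.shape)  # 4 sequence channels + 1 gwRVIS + 1 annotation
```

Keeping conservation scores out of the annotation list mirrors the design choice described above.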

Model Architecture and Training:

- Employ a deep learning framework (e.g., a convolutional neural network) capable of processing sequential genomic data and integrating diverse feature sets.

- Train the model to distinguish between pathogenic and benign variants.

Model Validation:

- Validate the model's performance using held-out test sets and independent benchmarks.

- Assess its ability to classify pathogenic single-nucleotide and structural variants and compare its performance against conservation-based metrics [28].
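
A comparison against a conservation-based metric is typically summarized with the area under the ROC curve (AUC). The sketch below computes AUC directly from its pairwise definition, so it needs only NumPy; the labels and scores are invented for illustration and do not represent results from any model.

```python
import numpy as np

def roc_auc(labels, scores) -> float:
    """AUC as the fraction of (pathogenic, benign) pairs ranked correctly; ties count half."""
    labels = np.asarray(labels, dtype=bool)
    scores = np.asarray(scores, dtype=float)
    pos, neg = scores[labels], scores[~labels]
    if pos.size == 0 or neg.size == 0:
        raise ValueError("need at least one pathogenic and one benign variant")
    greater = (pos[:, None] > neg[None, :]).sum()
    ties = (pos[:, None] == neg[None, :]).sum()
    return float((greater + 0.5 * ties) / (pos.size * neg.size))

# Held-out toy test set: 1 = pathogenic, 0 = benign (made-up values).
labels = [1, 1, 1, 0, 0, 0]
model_scores = [0.91, 0.75, 0.40, 0.55, 0.20, 0.10]         # hypothetical model output
conservation_scores = [0.80, 0.30, 0.35, 0.60, 0.50, 0.10]  # hypothetical baseline

print("model AUC:       ", roc_auc(labels, model_scores))
print("conservation AUC:", roc_auc(labels, conservation_scores))
```

The pairwise form uses memory proportional to the number of pathogenic-benign pairs, so for large benchmarks a rank-based implementation from an established library would be the more practical choice.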

Stage 3: Experimental Validation of Predicted Variant Effects in Plants

Objective: To functionally validate the impact of high-priority variants identified by in silico models.

Inputs: Plant lines (e.g., mutant lines created via CRISPR-Cas9 with introduced variants).

Outputs: Quantitative data on phenotypic and molecular changes.

Phenotypic Characterization:

- Imaging: Acquire high-resolution 2D or 3D images of key morphological traits (e.g., leaf shape, root architecture) in control and mutant plants [29].

- Morphometric Analysis: Extract quantitative descriptors of plant morphology from the acquired images.

- Adhere to Minimum Information About a Plant Phenotyping Experiment (MIAPPE) standards for data reporting to ensure reproducibility [29].

Molecular Phenotyping:

- Gene Expression Analysis: Use RNA-seq or qPCR to measure expression changes in the gene putatively regulated by the non-coding element harboring the variant.

- Epigenomic Profiling: Perform assays such as ATAC-seq or ChIP-seq to confirm changes in chromatin accessibility or transcription factor binding resulting from the variant.
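
For the qPCR route, one common way to express the expression change between mutant and control plants is the relative fold change from the 2^(-ΔΔCt) calculation. The text does not prescribe this method, so the sketch below is an assumption: it presumes roughly 100% amplification efficiency and a stable reference gene, and all Ct values are invented.

```python
from statistics import mean

def delta_ct(target_ct, reference_ct) -> float:
    """Mean Ct of the target gene minus mean Ct of the reference gene."""
    return mean(target_ct) - mean(reference_ct)

def fold_change(mutant_target, mutant_ref, control_target, control_ref) -> float:
    """Relative expression (mutant vs control) via 2^(-ddCt); assumes ~100% efficiency."""
    ddct = delta_ct(mutant_target, mutant_ref) - delta_ct(control_target, control_ref)
    return 2.0 ** (-ddct)

# Made-up Ct values from three technical replicates per condition.
control_target = [24.0, 24.0, 24.0]
control_ref = [18.0, 18.0, 18.0]
mutant_target = [26.0, 26.0, 26.0]   # target amplifies two cycles later in the mutant
mutant_ref = [18.0, 18.0, 18.0]

fc = fold_change(mutant_target, mutant_ref, control_target, control_ref)
print(f"fold change (mutant / control): {fc:.2f}")
```

A value below 1 indicates reduced expression of the target gene in the edited line, which would be consistent with a variant that disrupts an activating regulatory element.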

Diagram: Integrated protocol workflow from genomic data to functional validation.

Table 3: Essential Research Reagents and Computational Tools

| Tool / Resource | Category | Function in Workflow |
|---|---|---|
| PacBio HiFi / Oxford Nanopore [30] | Sequencing Technology | Generate long-read sequencing data to resolve complex genomic regions and structural variations. |
| TOPMed-like Dataset [28] | Genomic Data | Provides a large-scale, population-level WGS dataset for calculating genomic constraint metrics. |
| Plant Image Analysis Repository [29] | Software/Toolkit | A curated resource of tools for quantifying plant morphology from images. |
| MIAPPE Standards [29] | Reporting Guideline | Ensures reproducibility and minimum reporting standards for plant phenotyping experiments. |
| CRISPR-Cas9 | Genome Editing | Enables the introduction of specific variants into plant lines for functional validation. |
| ATAC-seq / ChIP-seq | Functional Assay | Measures chromatin accessibility or transcription factor binding to assess the molecular impact of non-coding variants. |

Concluding Remarks

The integration of genome-wide constraint metrics like gwRVIS with deep learning models such as JARVIS represents a significant advance in our ability to interpret the function of the non-coding genome. While these approaches have proven powerful in human genomics, their application to plant research is still maturing [17]. Success in plant variant effect research will depend on the availability of large-scale plant WGS data, the development of plant-specific functional annotations, and rigorous validation through experiments like those outlined in this protocol. By adopting this integrated in silico and empirical framework, researchers can systematically bridge the gap between genomic variation and observable traits, ultimately accelerating precision plant breeding.

In plant breeding, the pursuit of higher yields and improved fitness is persistently challenged by the accumulation of deleterious variants throughout the genome. These mutations, which negatively impact plant growth, development, and ultimately crop productivity, are often inadvertently fixed in populations during intense phenotypic selection [27]. Traditional methods for identifying these detrimental variants have relied on comparative genomics techniques that analyze conservation across sequence alignments from multiple related species [27]. However, these alignment-based methods face significant limitations, including the scarce availability of closely related plant genomes and difficulties in generating accurate homologous alignments [27].