Co-optimization of Environmental Variables: A Systems Approach to Maximizing Resource Use Efficiency

Abstract

This article explores the transformative potential of co-optimization frameworks for simultaneously managing multiple environmental variables to enhance resource use efficiency. Aimed at researchers and scientists, it moves beyond single-variable optimization to address the complex, interdependent nature of modern systems—from controlled environment agriculture to energy grids and industrial processes. We provide a foundational understanding of co-optimization principles, detail cutting-edge methodological approaches, analyze real-world applications and troubleshooting strategies, and present rigorous validation techniques. By synthesizing insights across sectors, this review serves as a critical resource for developing integrated, sustainable, and high-performance systems in research and development.

Defining Co-optimization: The Principles and Imperatives of Multi-Variable Systems

What is Co-optimization? Contrasting with Traditional Single-Objective Optimization

Technical FAQ: Understanding Co-optimization

Q1: What is co-optimization and how does it differ from traditional single-objective optimization?

Co-optimization is an advanced decision-support approach that simultaneously identifies the best solutions for two or more different yet related systems or objectives within a single planning or operational framework [1]. Unlike traditional single-objective optimization that seeks the best outcome for one isolated objective, co-optimization considers the interconnectedness and synergies between multiple systems, leading to more holistic and efficient solutions [1] [2].

In practical terms, while traditional optimization might separately optimize generation planning and then transmission planning in the energy sector, a co-optimization model assesses both simultaneously to identify integrated solutions that yield lower overall costs and improved resource usage [1]. This approach has proven particularly valuable in complex, interconnected systems where decisions in one domain significantly impact others.
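The difference between sequential and joint planning can be illustrated with a toy example. The sketch below is not drawn from the cited studies: the option names, costs, and feasibility rule are hypothetical and chosen only to show how optimizing generation first and transmission second can miss the cheaper combined plan.

```python
from itertools import product

# Hypothetical toy costs (arbitrary units), for illustration only.
GEN_OPTIONS = {"gas_near_load": 60, "wind_remote": 40}   # generation cost
LINE_OPTIONS = {"no_new_line": 0, "new_line": 35}        # transmission cost


def feasible(gen, line):
    """Assumed rule: remote wind can only serve the load if a new line is built."""
    return not (gen == "wind_remote" and line == "no_new_line")


def total_cost(gen, line):
    return GEN_OPTIONS[gen] + LINE_OPTIONS[line]


def sequential_plan():
    """Pick the cheapest generator first, then the cheapest line that makes it feasible."""
    gen = min(GEN_OPTIONS, key=GEN_OPTIONS.get)
    line = min((l for l in LINE_OPTIONS if feasible(gen, l)), key=LINE_OPTIONS.get)
    return gen, line, total_cost(gen, line)


def co_optimized_plan():
    """Search generation and transmission choices jointly."""
    gen, line = min(
        (pair for pair in product(GEN_OPTIONS, LINE_OPTIONS) if feasible(*pair)),
        key=lambda pair: total_cost(*pair),
    )
    return gen, line, total_cost(gen, line)


print(sequential_plan())    # ('wind_remote', 'new_line', 75)
print(co_optimized_plan())  # ('gas_near_load', 'no_new_line', 60)
```

In this toy case the sequential plan commits to the cheapest generator and then pays for the line it needs, while the joint search finds a lower total cost. Realistic models replace the brute-force search with mathematical programming.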

Q2: What are the primary computational challenges when implementing co-optimization?

The main computational challenge lies in the dramatic increase in decision variables, which can lead to complexity that becomes intractable on networks of realistic scale [2]. As one research panel highlighted, "we are not yet capable of detailed and dynamic system-wide co-optimization" despite recognizing it as a "potentially game-changing objective" [2].

Specific technical hurdles include:

- Problem complexity grows exponentially with added systems

- Limited efficacy of parallelization on truly co-optimized problems

- Need to maintain the physical reality of all interconnected systems

- Requirements for massive stores of data often unavailable to researchers [2]

Q3: What algorithmic approaches help overcome co-optimization challenges?

Researchers have developed several technical approaches to manage co-optimization complexity:

Table: Algorithmic Solutions for Co-optimization Challenges

| Challenge | Algorithmic Solution | Technical Approach |
|---|---|---|
| Computational complexity | Simulation-based optimization | Embeds system physics into simulation within a heuristic-based optimization framework |
| System interdependence | Decomposition with iterative trading | Solves systems separately with iterative feedback exchange |
| Model fidelity vs. scale | Hybrid algorithms | Combines physical network reality with structural flexibility of heuristic and AI methods [2] |
| Nonlinear complexities | Relaxation and linear approaches | Reduces inherent nonlinear model complexities through mathematical transformations [1] |
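As an illustration of the "solve separately, exchange feedback iteratively" idea in the table, the following sketch uses a price-based (dual) decomposition on a toy problem. This specific technique is swapped in for illustration and is not claimed to be the method used in the cited work; the cost coefficients, demand, and step size are hypothetical.

```python
# Hypothetical quadratic cost coefficients for two subsystems (illustrative only).
COST_COEFF = {"system_a": 1.0, "system_b": 2.0}
DEMAND = 3.0  # shared requirement the two subsystems must jointly meet


def best_response(coeff, price):
    """Each subsystem minimizes coeff * x**2 - price * x on its own.

    Setting the derivative to zero gives x = price / (2 * coeff).
    """
    return price / (2.0 * coeff)


def coordinate(step=1.0, iterations=50):
    """Iteratively adjust a shared price until total output matches demand."""
    price = 0.0
    for _ in range(iterations):
        supply = sum(best_response(c, price) for c in COST_COEFF.values())
        price += step * (DEMAND - supply)  # feedback exchanged between rounds
    outputs = {name: best_response(c, price) for name, c in COST_COEFF.items()}
    return price, outputs


price, outputs = coordinate()
print(round(price, 6), {k: round(v, 6) for k, v in outputs.items()})
```

For this toy problem the iteration settles at a price of 4 with outputs of 2 and 1, which matches the solution of the joint problem (minimize x_a² + 2·x_b² subject to x_a + x_b = 3). Convergence for larger, non-convex systems is not guaranteed by this simple scheme.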

Q4: What real-world applications demonstrate co-optimization benefits?

Successful co-optimization implementations span multiple sectors:

- Energy and Natural Gas: Co-optimized planning of electricity and natural gas infrastructure helps maximize efficiency, reliability, and cost-effectiveness, given natural gas's critical role in power generation [3].

- Fuels and Engines: The U.S. Department of Energy's Co-Optima initiative simultaneously developed advanced engine technologies and fuel components, identifying blendstocks with potential to improve passenger vehicle fuel economy by 10% [4] [5].

- Transmission and Distribution: Bi-level optimization coordinates transmission system operations with distribution system capabilities, enabling effective integration of distributed energy resources [2].

Troubleshooting Guide: Common Co-optimization Implementation Issues

Table: Co-optimization Implementation Issues and Solutions

| Observed Problem | Potential Causes | Recommended Solutions |
|---|---|---|
| Suboptimal solutions that neglect key constraints | Over-simplified system representations; inadequate fidelity in modelling | Increase spatial granularity; enhance modelling fidelity while balancing computational demands [1] |
| Inability to handle uncertainty in dynamic systems | Failure to account for weather-dependent resources and flexible loads | Implement robust optimization techniques; incorporate uncertainty treatment methods [1] [2] |
| Computational intractability with realistic-scale networks | Excessive decision variables; inadequate algorithmic efficiency | Apply decomposition techniques; utilize simulation-based optimization; employ hybrid algorithms [2] |
| Limited practical adoption despite technical feasibility | Regulatory and policy limitations; data sharing barriers between organizations | Address regulatory separation of systems; develop cooperative decision-making frameworks; establish data sharing protocols [2] |
| Inadequate coordination across voltage levels | Traditional siloed operational models | Implement bi-level optimization with iterative feedback; develop coordinated market participation mechanisms [2] |

Experimental Protocol: Implementing a Co-optimization Framework

For researchers designing co-optimization experiments for environmental variables and resource use efficiency, follow this methodological workflow:

Phase 1: Conceptualization

- Clearly define the interconnected systems and their relationships

- Identify shared constraints and objective functions

- Establish evaluation metrics for success

Phase 2: Data and Modeling

- Collect coordinated data from multiple systems [1]

- Formulate integrated mathematical model representing all systems

- Identify key variables and constraints across systems

Phase 3: Computational Implementation

- Select appropriate algorithm based on problem structure

- Implement decomposition strategies if needed

- Validate model against known test cases

Phase 4: Evaluation and Refinement

- Analyze solutions for cross-system impacts

- Refine model based on sensitivity analysis

- Document trade-offs and synergies identified

The Researcher's Toolkit: Co-optimization Methods and Applications

Table: Essential Co-optimization Research Tools and Applications

| Method Category | Specific Techniques | Primary Applications | Resource Efficiency Benefits |
|---|---|---|---|
| Mathematical Formulations | Mixed-integer programming; Stochastic optimization; Decomposition methods | Generation and transmission planning; Multi-energy system coordination | Identifies synergies that yield 10%+ efficiency improvements in tested systems [5] |
| Computational Frameworks | Simulation-based optimization; Bi-level optimization; Hybrid algorithms | Transmission-distribution coordination; Power-gas network optimization | Enables leveraging demand-side flexibility, reducing supply-side investment needs [1] [2] |
| Domain Integration Methods | Co-planning; Joint optimization; Simultaneous optimization | Fuels and engines design; Water-energy nexus; Infrastructure planning | Improves overall resource usage compared to traditional decoupled approaches [1] |
| Uncertainty Management | Robust optimization; Stochastic programming; Chance constraints | Systems with high renewable energy shares; Climate-impacted resource planning | Mitigates variability from weather-dependent resources through coordinated flexibility [1] [2] |

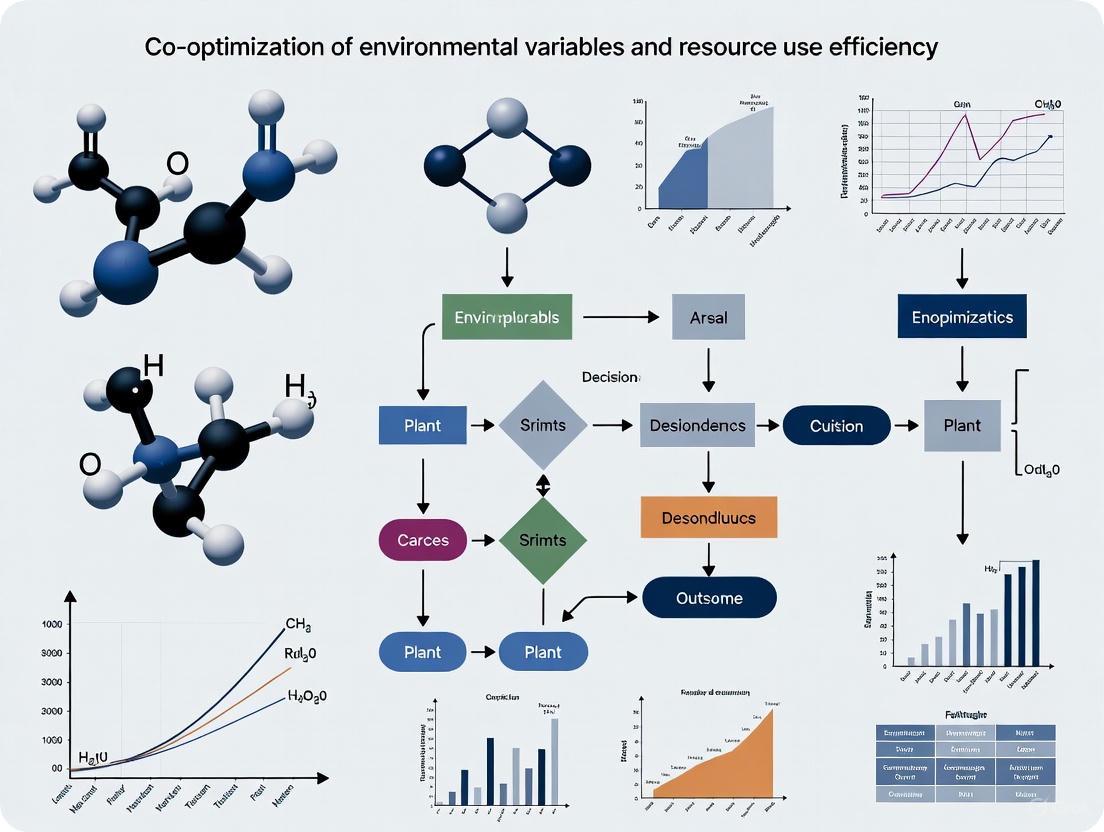

System Architecture for Co-optimization Implementation

This architecture illustrates how co-optimization frameworks integrate data, models, and algorithms across multiple resource systems and objectives to achieve superior outcomes compared to traditional siloed approaches. The framework emphasizes the simultaneous consideration of all interconnected systems, enabling identification of synergies and trade-offs that would be missed in sequential or isolated optimization processes [1] [2]. For researchers in environmental variables and resource efficiency, this approach provides a structured methodology for addressing complex, multi-system challenges in a holistic manner.

In controlled environment agriculture (CEA) research, achieving optimal resource use efficiency requires navigating the complex interdependencies between environmental variables. The core challenge lies in managing the inherent trade-offs between system stability, resource consumption, and productivity, while leveraging potential synergies between environmental factors and crop responses [6] [7]. This technical support guide provides frameworks and methodologies for troubleshooting common experimental challenges in this domain.

Frequently Asked Questions (FAQs)

FAQ 1: Our experimental data shows a persistent trade-off between yield and energy efficiency in our climate-controlled growth chambers. Is this unavoidable?

Recent research suggests this trade-off is fundamental but manageable. A 2024 study on complex systems revealed that systems evolved for high synergy (representing maximum information integration and potential yield) tend to be unstable and chaotic, whereas redundant systems are stable but lack integration capacity [6]. The solution lies in targeting a balanced "complex" state, akin to Tononi-Sporns-Edelman complexity, which offers greater stability than chaotic systems while maintaining a better capacity to integrate information than purely redundant systems [6].

- Troubleshooting Steps:

- Diagnose System State: Analyze your control system's response to minor perturbations. Over-damped, slow responses indicate excessive redundancy; oscillatory or unpredictable responses suggest chaotic instability.

- Adjust Control Parameters: Implement adaptive control strategies that move your system toward the complex regime, balancing sensitivity and robustness.

- Monitor Multiple Outcomes: Simultaneously track yield, energy input, and quality markers to identify the Pareto front—the set of solutions where one metric cannot be improved without worsening another.
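The Pareto front mentioned in the last step can be extracted from trial data with a simple dominance check. The sketch below assumes two objectives only (maximize yield, minimize energy) and uses made-up trial values; the labels and numbers are hypothetical.

```python
def pareto_front(trials):
    """Return the trials that are not dominated by any other trial.

    Each trial is (label, yield_kg, energy_kwh).
    Higher yield is better; lower energy is better.
    """
    def dominates(a, b):
        no_worse = a[1] >= b[1] and a[2] <= b[2]
        strictly_better = a[1] > b[1] or a[2] < b[2]
        return no_worse and strictly_better

    return [t for t in trials if not any(dominates(other, t) for other in trials)]


# Hypothetical chamber trials: (label, yield in kg, energy in kWh)
trials = [
    ("A", 10.0, 100.0),
    ("B", 12.0, 150.0),
    ("C", 9.0, 120.0),
    ("D", 12.0, 140.0),
]
front = pareto_front(trials)
print([label for label, _, _ in front])  # ['A', 'D']
```

Here trial B is dominated by D (same yield, less energy) and C is dominated by A, so only A and D represent genuine trade-offs. Adding a quality marker as a third objective would require extending the dominance test accordingly.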

FAQ 2: We observe conflicting plant responses when co-optimizing light and nutrient solutions. How can we deconvolve these interdependent effects?

This is a classic manifestation of interdependence. Plant responses are emergent properties of multiple interacting variables, not simply the sum of individual factors [8].

- Troubleshooting Steps:

- Implement a Factorial Experimental Design: Systematically vary light intensity, spectrum, and nutrient concentration (e.g., electrical conductivity - EC) in a crossed design. This allows you to isolate main effects and interaction effects.

- Construct a Response Surface: Model the yield or quality response across the multi-dimensional space of your input variables (e.g., Light x Nutrients). This visualization will reveal regions of positive synergy (where the combined effect is greater than the sum of parts) and trade-offs.

- Refer to Table 2 (Interaction Matrix for Common Environmental Variables in CEA) for a summary of common interactions.
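For the simplest crossed design (two levels of each factor), the interaction effect can be computed directly from the four treatment means. The sketch below is a minimal illustration; the function name and the response values are hypothetical, and a full response surface over more levels would need a regression model rather than this single contrast.

```python
def interaction_contrast(y_ll, y_hl, y_lh, y_hh):
    """Interaction effect in a 2x2 factorial.

    Arguments are mean responses at (light, EC) =
    (low, low), (high, low), (low, high), (high, high).
    Returns the light effect at high EC minus the light effect at low EC:
    positive suggests synergy, negative suggests antagonism, zero means additive.
    """
    light_effect_at_low_ec = y_hl - y_ll
    light_effect_at_high_ec = y_hh - y_lh
    return light_effect_at_high_ec - light_effect_at_low_ec


# Hypothetical mean shoot fresh mass (g) per treatment
print(interaction_contrast(y_ll=100, y_hl=130, y_lh=95, y_hh=160))   # 35
print(interaction_contrast(y_ll=100, y_hl=130, y_lh=110, y_hh=140))  # 0
```

In the first hypothetical case, raising light adds 30 g at low EC but 65 g at high EC, so the two factors are not simply additive. Whether such a contrast is statistically meaningful still has to be tested against replicate variability.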

FAQ 3: Our IoT-based sensor system collects vast amounts of data, but we struggle to translate it into actionable co-optimization strategies. What analytical approaches are recommended?

The field of complex systems science offers tools specifically designed for this purpose. The key is to move from simple correlation to understanding the network of causal relationships [8].

- Troubleshooting Steps:

- Develop a Causal Model: Use tools like structural equation modeling or Bayesian networks to map hypothesized causal pathways between your environmental inputs and plant performance outputs.

- Quantify Information Transfer: Employ information-theoretic measures (e.g., transfer entropy) to detect directed influence from one variable (e.g., root-zone temperature) to another (e.g., transpiration rate), even in non-linear systems.

- Validate with Intervention: Use your model to predict the outcome of a specific change (e.g., "If we increase VPD by 0.2 kPa while decreasing EC by 0.5 mS/cm, yield should remain stable but energy use should drop by 10%"). Run a targeted experiment to test this prediction.
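As a rough illustration of the information-transfer step, the sketch below computes a plug-in estimate of transfer entropy for discrete series with a history length of one. These are simplifying assumptions: continuous sensor data would first need to be binned, and longer histories or bias corrections may be required in practice. The synthetic series are hypothetical.

```python
import math
import random
from collections import Counter


def transfer_entropy(source, target):
    """Plug-in estimate of TE(source -> target) in bits, history length 1.

    Both inputs are equal-length sequences of discrete symbols.
    """
    # Each triple is (target_next, target_now, source_now).
    triples = list(zip(target[1:], target[:-1], source[:-1]))
    n = len(triples)
    c_full = Counter(triples)
    c_now = Counter((y, x) for _, y, x in triples)      # (target_now, source_now)
    c_self = Counter((yn, y) for yn, y, _ in triples)   # (target_next, target_now)
    c_y = Counter(y for _, y, _ in triples)             # target_now
    te = 0.0
    for (yn, y, x), count in c_full.items():
        p_joint = count / n
        p_given_both = count / c_now[(y, x)]       # p(next | target_now, source_now)
        p_given_self = c_self[(yn, y)] / c_y[y]    # p(next | target_now)
        te += p_joint * math.log2(p_given_both / p_given_self)
    return te


# Synthetic check: y copies x with a one-step delay, so x should inform y.
rng = random.Random(0)
x = [rng.randint(0, 1) for _ in range(5000)]
y = [0] + x[:-1]
print(transfer_entropy(x, y), transfer_entropy(y, x))
```

On this synthetic pair the estimate from x to y is close to one bit and the reverse direction is close to zero, which is the asymmetry the measure is meant to detect.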

Experimental Protocols for Co-optimization Research

Protocol 1: Quantifying Light-Nutrient Synergies in Leafy Greens

Objective: To map the interaction between photosynthetic photon flux density (PPFD) and nutrient solution electrical conductivity (EC) on the growth of lettuce (Lactuca sativa).

Methodology:

- Experimental Design: A full-factorial (4 × 4) two-factor randomized complete block design.

- Factor A - PPFD: Four levels: 150, 250, 350, and 450 μmol·m⁻²·s⁻¹ (18-hour photoperiod).

- Factor B - EC: Four levels: 1.0, 1.8, 2.6, and 3.4 mS/cm.

- Replication: Five replications per treatment combination (Total of 80 experimental units).

- Culture: Deep-water culture hydroponic systems in identical, controlled environment chambers.

- Data Collection: Record fresh and dry mass (shoot and root), leaf area, chlorophyll content (SPAD), and tissue mineral analysis at harvest (28 days after transplanting).
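The treatment layout for this protocol can be generated programmatically. The sketch below assumes that each of the five replications is treated as one block and that every experimental unit can receive its own PPFD level; if light can only be set per chamber, a split-plot layout would be more appropriate. The function name and seed are illustrative.

```python
import random
from itertools import product

PPFD_LEVELS = [150, 250, 350, 450]   # umol m^-2 s^-1
EC_LEVELS = [1.0, 1.8, 2.6, 3.4]     # mS/cm
N_BLOCKS = 5                          # assumed: one replicate of each treatment per block


def rcbd_layout(seed=0):
    """Return (block, position, ppfd, ec) tuples, randomized within each block."""
    rng = random.Random(seed)
    layout = []
    for block in range(1, N_BLOCKS + 1):
        treatments = list(product(PPFD_LEVELS, EC_LEVELS))  # 16 combinations
        rng.shuffle(treatments)                              # independent shuffle per block
        for position, (ppfd, ec) in enumerate(treatments, start=1):
            layout.append((block, position, ppfd, ec))
    return layout


layout = rcbd_layout()
print(len(layout))  # 80
```

Fixing the seed keeps the randomization reproducible, so the layout can be archived alongside the data.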


Protocol 2: Evaluating the Energy-Yield Trade-off with IoT-based Dynamic Control

Objective: To compare the resource use efficiency and productivity of a conventional static-control greenhouse versus an IoT-equipped greenhouse with dynamic management of irrigation and fertilization [9].

Methodology:

- Setup: Two identical, adjacent greenhouse compartments cultivating zucchini, eggplant, and strawberry.

- Control Group (Conventional): Managed with timer-based irrigation and fixed-interval fertilization.

- Treatment Group (IoT): Equipped with soil moisture, temperature/humidity, and light sensors. Data feeds a control algorithm that triggers irrigation and injects fertilizer based on real-time substrate moisture depletion and predicted solar radiation.

- Key Metrics:

- Inputs: Total water (L), fertilizer (g), energy (kWh for climate control and lighting).

- Outputs: Total marketable fruit yield (kg/m²).

- Efficiency Indicators: Water use efficiency (kg yield/L H₂O), GHG emissions (kg CO₂-eq/kg yield) [9].


Data Presentation

| Metric | Conventional System | IoT-based System | Percent Change |
|---|---|---|---|
| Water Use (L/kg yield) | 45.2 | 26.7 | -41% |
| Fertilizer Input (g/kg yield) | 28.5 | 2.6 | -91% |
| Crop Yield (kg/m²) | 8.1 | 15.3 | +89% |
| GHG Emissions (kg CO₂-eq/kg yield) | 2.1 | 1.3 | -38% |
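The percent-change column follows from the two value columns as (IoT − conventional) / conventional × 100. The short sketch below reproduces the rounded figures from the table values; the function and variable names are illustrative.

```python
def percent_change(conventional, iot):
    """Relative change of the IoT system versus the conventional system, in percent."""
    return (iot - conventional) / conventional * 100.0


# Values taken from the table above: (conventional, IoT-based)
rows = {
    "Water Use (L/kg yield)": (45.2, 26.7),
    "Fertilizer Input (g/kg yield)": (28.5, 2.6),
    "Crop Yield (kg/m2)": (8.1, 15.3),
    "GHG Emissions (kg CO2-eq/kg yield)": (2.1, 1.3),
}

for name, (conv, iot) in rows.items():
    print(f"{name}: {percent_change(conv, iot):+.0f}%")
```

Running the same calculation on raw experimental data is a quick consistency check before reporting rounded percentages.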

Table 2: Interaction Matrix for Common Environmental Variables in CEA

| Variable Pair | Type of Interaction | Observed Effect on Crops | Context Notes |
|---|---|---|---|
| Light & CO₂ | Strong Synergy | Increasing both simultaneously dramatically boosts photosynthesis beyond their additive effects. | Saturation points exist; benefits are non-linear [7]. |
| Air Temperature & Root-zone Temperature | Interdependence | Suboptimal root-zone temp can negate benefits of optimal air temp, and vice-versa [7]. | Critical for cool-season crops in warm climates and heating strategies. |
| Light Intensity & Nutrient Concentration (EC) | Trade-off/Synergy | High light requires high EC for maximum growth, but at low light, high EC can cause toxicity. | The optimal EC is light-dependent [7]. |
| Vapor Pressure Deficit (VPD) & Irrigation | Strong Interdependence | High VPD increases transpirational demand, requiring more frequent irrigation to avoid water stress. | IoT systems can dynamically link climate and irrigation control [9]. |

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Material | Function in Co-optimization Research |
|---|---|
| IoT Sensor Suite (Soil moisture, PAR, T/RH, CO₂) | Enables real-time, non-destructive monitoring of environmental variables for dynamic control and data-driven model building [9]. |
| Programmable LED Lighting Systems | Allows precise manipulation of light intensity and spectrum (quantity and quality) to dissect its interaction with other abiotic factors [7]. |
| Organic Biostimulants (e.g., PGPR, Seaweed Extract) | Used to investigate the potential synergy between root-zone biology and abiotic resource use efficiency (water, nutrients) [7]. |
| Hydroponic Nutrient Solutions (Inorganic & Organic) | The primary tool for manipulating root-zone chemistry (EC, pH) to study plant nutrient uptake and its interdependence with the aerial environment [7]. |
| Data Integration & AI Analytics Platform | Critical for analyzing high-dimensional datasets from co-optimization experiments, identifying patterns, and building predictive models [7]. |

FREQUENTLY ASKED QUESTIONS (FAQs)

Q1: What is the difference between total carbon emissions and carbon emissions intensity, and why is intensity a more relevant metric for growing research facilities?

A1: Total carbon emissions represent the entire volume of your greenhouse gas emissions, while carbon emissions intensity measures emissions relative to a specific unit of activity or output, such as emissions per kilogram of cell culture produced or per square foot of laboratory space [10]. For a growing research facility, total emissions will likely increase as operations scale up. Tracking emissions intensity is more informative because it reveals the efficiency of your processes. A decreasing intensity shows you are decoupling economic growth from environmental impact, which is a core goal of sustainable science [10].
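The distinction can be made concrete with a small calculation. The figures below are hypothetical and only show how total emissions can rise while intensity falls; the function name and the choice of output unit are assumptions.

```python
def emissions_intensity(total_kg_co2e, output_units):
    """Emissions per unit of output (kg CO2e per unit)."""
    if output_units <= 0:
        raise ValueError("output_units must be positive")
    return total_kg_co2e / output_units


# Hypothetical facility data: (total emissions in kg CO2e, units of research output)
periods = {
    "year_1": (100_000, 1_000),
    "year_2": (120_000, 1_500),
}

for label, (total, units) in periods.items():
    print(label, total, emissions_intensity(total, units))
# year_1 100000 100.0
# year_2 120000 80.0
```

In this example total emissions grow by 20% while intensity drops from 100 to 80 kg CO₂e per unit, which is the pattern a scaling facility would aim to demonstrate.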

Q2: Our laboratory's energy consumption is high due to constant environmental control (temperature, humidity). What are the most effective first steps to reduce energy intensity?

A2: The most effective strategy is the co-optimization of environmental variables [11]. Instead of controlling parameters like temperature, CO₂, and humidity in isolation, an integrated system adjusts them in concert to maintain optimal conditions with minimal energy expenditure. Research in controlled environment agriculture has demonstrated that real-time sensing and control strategies designed for environmental uniformity can significantly enhance resource use efficiency [11]. Begin with an audit to identify zones of environmental variability (e.g., hot/cold spots) and consider implementing more granular sensor networks and automated controls.

Q3: How can we quantitatively track our progress in reducing the carbon footprint of our research and development activities?

A3: You should track both absolute emissions and emissions intensity [10]. Develop a baseline by calculating your total Scope 1 (direct) and Scope 2 (indirect from purchased energy) emissions. Then, select a relevant intensity metric, such as kg CO₂e per research unit (e.g., per assay run, per liter of media prepared, or per FTE scientist). The table below summarizes key metrics and reduction strategies.

Table: Key Carbon Emission Metrics and Strategies

| Metric | Definition | Application in Research | Primary Reduction Strategy |
|---|---|---|---|
| Total Emissions | Aggregate quantity of GHG emissions (Scope 1, 2, & 3) [10]. | Understanding the full environmental impact of the entire organization. | Transition to renewable energy; enhance supply chain sustainability [10]. |
| Carbon Emissions Intensity | Emissions per unit of economic output or activity [10]. | kg CO₂e per research unit (e.g., per assay, per kg of output). | Optimize processes for efficiency; adopt less carbon-intensive methods [10]. |

Q4: Are there documented cases where optimizing for sustainability also improved economic viability?

A4: Yes. Studies outside of traditional labs provide compelling evidence. For instance, in greenhouse agriculture, the integration of IoT systems for dynamic management of irrigation and fertilization led to a reduction in resource use (-41% water, -91% fertilizer) while simultaneously increasing crop yields (+89%) [9]. This demonstrates that precision management of environmental variables and resources can drastically cut costs and boost output, directly enhancing economic viability. These principles of sensor-based, data-driven optimization are transferable to controlled research environments.

TROUBLESHOOTING GUIDES

Problem: High and Unpredictable Energy Intensity in Environmental Control Systems

Symptoms:

- Spiking energy bills without a corresponding increase in research output.

- Inconsistent experimental results potentially linked to environmental fluctuations.

- HVAC systems constantly running and struggling to maintain setpoints.

Investigation and Resolution Protocol:

Baseline Energy Intensity Calculation:

- Action: Calculate your current energy intensity using the formula: Energy Consumption (kWh) / Research Activity Unit.

- Example: If your lab consumed 50,000 kWh in a month and processed 10,000 assay plates, your energy intensity is 5 kWh/plate.

- Note: This baseline is essential for measuring the impact of any interventions [10].

Sensor and Data Audit:

- Action: Verify the calibration and placement of sensors for temperature, humidity, and airflow. Identify zones with poor uniformity.

- Procedure: Use portable data loggers to map environmental conditions across the lab space over a 48-hour period. Compare this data to the readings from your central control system.

Implement Co-optimization Controls:

- Action: Move from independent setpoints to an integrated control strategy.

- Methodology: Based on sensor data, program your building management system (BMS) to use dynamic relationships between variables. For example, allow a slightly wider humidity range when temperature is precisely at its target, reducing the energy load from dehumidification [11].
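The humidity example can be sketched as a simple rule. Everything numeric here is a placeholder assumption (targets, tolerance, band widths), and the functions are hypothetical illustrations rather than any BMS vendor's API; real setpoints must come from the experimental requirements of the space.

```python
def humidity_band(temp_c, temp_target_c=22.0, temp_tolerance_c=0.5,
                  rh_target=60.0, narrow_halfwidth=5.0, wide_halfwidth=10.0):
    """Return (rh_low, rh_high), the acceptable relative humidity range in %.

    When temperature is within tolerance of its target, a wider RH band is
    allowed, so dehumidification is triggered less often.
    """
    on_target = abs(temp_c - temp_target_c) <= temp_tolerance_c
    half = wide_halfwidth if on_target else narrow_halfwidth
    return rh_target - half, rh_target + half


def dehumidifier_on(temp_c, rh, **band_settings):
    """Run the dehumidifier only when RH exceeds the temperature-dependent upper bound."""
    _, rh_high = humidity_band(temp_c, **band_settings)
    return rh > rh_high


print(humidity_band(22.2))          # (50.0, 70.0)
print(humidity_band(24.0))          # (55.0, 65.0)
print(dehumidifier_on(22.2, 68.0))  # False
print(dehumidifier_on(24.0, 68.0))  # True
```

The same 68% humidity reading triggers dehumidification only when temperature has drifted off target, which is the coupling between variables that independent setpoints cannot express.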

Problem: Elevated Carbon Footprint from Laboratory Operations

Symptoms:

- Corporate sustainability targets are being missed.

- A high proportion of emissions come from purchased electricity (Scope 2).

- Lack of granular data on emission sources.

Investigation and Resolution Protocol:

Emissions Inventory & Segmentation:

- Action: Conduct a detailed inventory to categorize emissions into Scope 1 (e.g., natural gas for sterilization), Scope 2 (electricity), and Scope 3 (supply chain, waste disposal) [10].

- Tool: Utilize the GHG Protocol Corporate Standard for guidance. This will reveal your largest emission "hotspots."

Target High-Intensity Processes:

- Action: Focus reduction efforts on processes with the highest carbon emissions intensity.

- Procedure: For example, if ultra-low temperature (ULT) freezers are a major energy consumer, implement a program to raise setpoints from -80°C to -70°C where scientifically valid, and ensure regular maintenance. The table below quantifies potential savings from similar interventions, inspired by agricultural precision studies.

Table: Quantitative Impact of Precision Resource Management

| Parameter | Conventional System | Optimized/IoT System | Percentage Change | Source |
|---|---|---|---|---|
| Water Use | Baseline | -41% | -41% | [9] |
| Fertilizer Inputs | Baseline | -91% | -91% | [9] |
| Crop Yields | Baseline | +89% | +89% | [9] |
| GHG Emissions | Baseline | -38% | -38% | [9] |

Transition to Renewable Energy and Optimize:

- Action: For Scope 2 emissions, source renewable energy through on-site generation, power purchase agreements (PPAs), or utility green tariffs.

- Parallel Action: Implement the process optimization strategies from the table above, such as streamlining operations to minimize waste, which directly reduces emissions per unit of output [10].

THE SCIENTIST'S TOOLKIT: RESEARCH REAGENT & SOLUTIONS FOR SUSTAINABILITY

Table: Essential Resources for Eco-Efficiency Research

| Item / Solution | Function & Relevance to Co-optimization |
|---|---|
| IoT Sensor Network | A system of connected sensors (temperature, humidity, CO₂, light) to provide real-time, granular data on environmental variables. This is the foundational hardware for data-driven resource optimization [9] [11]. |
| Data Integration Platform | Software that aggregates data from sensors, equipment, and utility meters. Enables the analysis of correlations between environmental conditions, resource consumption, and experimental outcomes. |
| Life Cycle Assessment (LCA) Software | A tool to quantify the environmental impacts (including carbon footprint) of a process or product throughout its life cycle, helping to identify key areas for improvement [9]. |
| Building Management System (BMS) | An automated control system for a building's equipment (HVAC, lighting). Can be programmed with advanced algorithms for the co-optimization of environmental parameters to achieve uniformity and efficiency [11]. |
| Energy Intensity Metric | A defined and tracked Key Performance Indicator (KPI), such as kWh per unit of output. It is a crucial analytical "reagent" for diagnosing inefficiency and proving the efficacy of new protocols [10]. |

Technical Support Center: FAQs for CEA Research Challenges

This section addresses frequently asked questions and provides targeted troubleshooting guidance for researchers working on the co-optimization of environmental variables to enhance resource use efficiency in Controlled Environment Agriculture (CEA).

FAQ 1: How can I diagnose and correct uneven crop growth in my vertical farming research setup?

Issue: Inconsistent plant size, color, or development across the growth area.

Troubleshooting Guide:

- Step 1: Assess Ambient Airflow: Check for inadequate or non-uniform air circulation, which can cause microclimates with varying temperature, humidity, and CO₂ levels. Verify that systems create gentle, consistent air movement to minimize stagnant zones [12].

- Step 2: Verify Multi-Level Airflow: In multi-tiered systems, ensure each layer has dedicated airflow. Microclimates can differ per tier, requiring targeted air supply to achieve environmental uniformity [12] [11].

- Step 3: Inspect for Condensation: Examine ceilings, joints, and gutters for condensation drip points. Condensate can carry debris and microorganisms, contaminating plants and causing localized disease or growth inhibition. Implement and maintain condensate deflection systems [13].

- Step 4: Check Floor Conditions: Ensure floors are dry and clean. Wet floors can lead to pathogen splash-up onto lower-growing plants, especially in densely optimized spaces. Require dedicated clean footwear for personnel [13].

FAQ 2: My resource use efficiency (water/fertilizer) is lower than expected in a recirculating hydroponic system. What are the likely causes?

Issue: High consumption of water and fertilizers without corresponding gains in biomass or yield.

Troubleshooting Guide:

- Step 1: Interrogate IoT Sensor Data: If using a sensor-based IoT system, verify sensor calibration and functionality. Dynamic management based on faulty data can lead to significant inefficiency [9].

- Step 2: Check for System Leaks: Physically inspect the entire closed-loop water delivery system, including reservoirs, pipes, and connectors, for leaks [13].

- Step 3: Assess Root Zone Management: Evaluate root zone temperature, dissolved oxygen, and pH. Suboptimal root zone temperatures can impact nutrient uptake and plant development, while low dissolved oxygen can occur with organic nutrient sources [7].

- Step 4: Test Nutrient Solution for Contamination: In a closed-loop system, a single contamination point (e.g., from a dirty reservoir) can affect the entire crop and disrupt the nutrient balance. Test water for pathogens and chemical contaminants prior to harvest cycles [13].

FAQ 3: What strategies can I employ to reduce the energy footprint of my artificial lighting in plant growth experiments?

Issue: High energy consumption from lighting systems, leading to increased carbon emissions and operational costs.

Troubleshooting Guide:

- Step 1: Develop Crop-Specific Light Recipes: Move beyond fixed lighting. Research and implement precise light intensities and spectra (light recipes) tailored to your specific crop and growth stage to avoid wasteful energy use [7].

- Step 2: Investigate Conversion Efficiency: Evaluate the photon efficiency of your electric light sources (e.g., LEDs). Newer technologies may offer higher conversion efficiencies, delivering more photosynthetically active radiation per unit of energy consumed [7].

- Step 3: Implement Co-optimization Strategies: Do not control light in isolation. Use an AI framework to co-optimize lighting with other environmental variables like CO₂, temperature, and humidity. This can achieve the same or better growth outcomes with lower overall energy inputs [7] [11].

- Step 4: Consider Wavelength-Selective Coverings: In greenhouse experiments, investigate coverings that manipulate sunlight spectrum to reduce the need for supplemental lighting for specific growth phases [7].

Quantitative Performance Data

The following table summarizes key experimental outcomes from a study on IoT-based irrigation and fertilization management, demonstrating the potential for significant resource efficiency gains through environmental variable co-optimization [9].

Table 1: Environmental and Agronomic Impacts of IoT-Based Management in Greenhouse Agriculture

| Performance Metric | Conventional Management | IoT-Based Management | Change |
|---|---|---|---|
| Greenhouse Gas Emissions | Baseline | n/a | Reduction of up to 38% |
| Water Use | Baseline | n/a | Reduction of 41% |
| Crop Yields (Average) | Baseline | n/a | Increase of 89% |
| Fertilizer Inputs (Average) | Baseline | n/a | Reduction of 91% |

Detailed Experimental Protocol: Sensor-Based Co-optimization for Resource Efficiency

Objective: To implement and validate a dynamic management system for co-optimizing environmental variables to maximize resource use efficiency in CEA.

Methodology:

This protocol is adapted from a comparative analysis of conventional versus IoT-equipped greenhouses [9] and principles from the NE2335 research project [7].

Materials:

- Plant Material: Select a model crop (e.g., zucchini, eggplant, melon, or strawberry).

- Experimental Setup: Two identical growth chambers or greenhouse bays.

- Sensor Suite: Install networked sensors for real-time monitoring of:

- Aerial Environment: Light (PPFD), CO₂ concentration, air temperature, relative humidity.

- Root Zone: Nutrient solution temperature, pH, electrical conductivity (EC), dissolved oxygen (DO).

- Actuators: Computer-controllable systems for LED lighting, nutrient dosing, irrigation valves, HVAC, and CO₂ injection.

- Data Platform: A central computer or cloud platform running control and data logging software, ideally incorporating artificial intelligence for decision-making.

Procedure:

- System Calibration: Calibrate all sensors and actuators prior to experiment initiation.

- Baseline Phase (Control): Manage one bay using conventional, fixed-setpoint strategies for irrigation and fertilization based on a predetermined schedule.

- Experimental Phase (IoT): Manage the second bay using the sensor-based IoT system. Program the system to dynamically adjust irrigation and fertilization based on real-time root zone and aerial sensor data. Implement AI techniques to co-optimize variables (e.g., increase CO₂ when light levels are high to enhance photosynthetic efficiency).

- Data Collection: Monitor and log the following data continuously for both systems throughout the crop cycle:

- Inputs: Total energy (kWh), water volume (L), fertilizer mass (g), CO₂ volume.

- Environmental Data: Time-series data from all sensors.

- Plant Response: Biomass (fresh and dry weight), yield, growth rate.

- Post-Harvest Analysis: Calculate resource use efficiency metrics for both systems, including:

- Water Use Efficiency (WUE) = Yield (kg) / Water Used (L)

- Fertilizer Use Efficiency (FUE) = Yield (kg) / Fertilizer Applied (g)

- Energy Efficiency = Yield (kg) / Energy Input (kWh)
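The three post-harvest metrics above are simple ratios, so they can be computed for both bays with one helper. The following is a minimal sketch; the class name, field names, and all numbers are illustrative placeholders, not data from [9].

```python
from dataclasses import dataclass


@dataclass
class SeasonTotals:
    """Season totals for one bay (field names are illustrative)."""
    yield_kg: float
    water_l: float
    fertilizer_g: float
    energy_kwh: float


def resource_use_efficiency(t: SeasonTotals) -> dict:
    """Return WUE, FUE and energy efficiency as defined in the protocol."""
    for name in ("water_l", "fertilizer_g", "energy_kwh"):
        if getattr(t, name) <= 0:
            raise ValueError(f"{name} must be positive")
    return {
        "WUE_kg_per_L": t.yield_kg / t.water_l,
        "FUE_kg_per_g": t.yield_kg / t.fertilizer_g,
        "EE_kg_per_kWh": t.yield_kg / t.energy_kwh,
    }


# Placeholder numbers, NOT measurements from the cited study.
control = SeasonTotals(yield_kg=100.0, water_l=5000.0, fertilizer_g=2000.0, energy_kwh=800.0)
iot = SeasonTotals(yield_kg=120.0, water_l=3000.0, fertilizer_g=1500.0, energy_kwh=750.0)

for label, totals in (("control", control), ("iot", iot)):
    print(label, resource_use_efficiency(totals))
```

Reporting the ratios side by side, rather than raw input totals, keeps the comparison fair when the two bays produce different yields.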

Workflow and System Relationship Diagrams

[Diagram: Co-optimization Framework]

[Diagram: Experimental Troubleshooting Workflow]

Research Reagent and Essential Materials Toolkit

Table 2: Key Research Reagents and Materials for CEA Co-optimization Experiments

| Item | Function/Application | Technical Notes |

|---|---|---|

| IoT Sensor Suite | Real-time monitoring of aerial and root zone environmental variables. | Includes sensors for PPFD, CO₂, air temp, RH, solution temp, pH, EC, and DO. Critical for data-driven control [9]. |

| Programmable LED Lighting | Providing precise light spectra and intensities for crop-specific "light recipes." | Enables research on photon efficiency and spectral effects on plant growth and resource use [7]. |

| Data Integration & AI Platform | Central system for data logging, analysis, and implementing control algorithms. | Allows for co-optimization of environmental variables and the development of predictive growth models [7] [11]. |

| Hydroponic System Components | Soilless cultivation infrastructure for precise root zone management. | Includes reservoirs, pumps, and dosing systems. Essential for studying water and nutrient use efficiency [7] [14]. |

| Water Testing Kit | Detecting chemical and biological contaminants in nutrient solutions. | Crucial for maintaining solution quality and diagnosing pathogen-related issues in recirculating systems [13]. |

| Organic Fertilizers & Biostimulants | Researching sustainable nutrient sources and plant growth promoters. | Used to investigate the efficacy of beneficial microorganisms (e.g., PGPR, AMF) in organic hydroponic production [7]. |

Core Concepts and Definitions

What is the fundamental definition of "Co-optimization" in a research context? Co-optimization refers to the simultaneous, joint optimization of multiple variables or objectives to produce a solution with the best overall outcome, typically the lowest operational cost or the highest efficiency [15]. In environmental research, this means managing several interacting factors together rather than optimizing them one after another.

How does "Resource Use Efficiency" relate to co-optimization? Resource Use Efficiency is a primary goal of co-optimization. It measures the output obtained per unit of resource input. Co-optimization strategies aim to maximize this efficiency by ensuring that multiple environmental variables are tuned to work together synergistically, thereby reducing waste and improving overall system performance [9] [16].

What does "Environmental Sustainability" mean in the context of controlled environment agriculture (CEA)? Environmental Sustainability in CEA involves adopting practices and technologies that significantly reduce the environmental footprint of agricultural production. This includes lowering greenhouse gas emissions, minimizing water and fertilizer use, and enhancing resource use efficiency, all of which can be achieved through the co-optimization of environmental variables [9].

Troubleshooting Guides & FAQs

FAQ: Our experimental co-optimization model is not converging on an efficient solution. What are potential causes?

- Problem: Incomplete Variable Set.

- Solution: Ensure your model includes the key interacting environmental variables. In CEA, these typically include Light (quantity and quality), Air Temperature, Carbon Dioxide (CO₂) concentration, Humidity, and Root-zone conditions (e.g., nutrient composition, temperature, pH) [16]. Omitting one can prevent finding a true co-optimal solution.

- Problem: Inadequate Real-time Data.

- Problem: Conflicting Objectives.

- Solution: Explicitly define and weight your objectives (e.g., maximizing yield vs. minimizing water and energy use). Use a framework that can handle multi-objective optimization, as improving one metric (e.g., light intensity) might negatively impact another (e.g., energy use) if not balanced correctly [16].
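One simple way to make the weighting of conflicting objectives explicit is a weighted-sum score. The sketch below assumes every metric has already been normalized to the range [0, 1]; the metric names, weights, and candidate values are hypothetical. A weighted sum is only one option and can miss trade-offs that a Pareto-based method would expose.

```python
def weighted_score(metrics, weights, directions):
    """Combine normalized metrics into a single score (higher is better).

    metrics    : dict of metric name -> value scaled to [0, 1]
    weights    : dict of metric name -> non-negative weight
    directions : dict of metric name -> "max" or "min"
    """
    score = 0.0
    for name, weight in weights.items():
        value = metrics[name]
        # Flip minimized metrics so that larger always means better.
        signed = value if directions[name] == "max" else 1.0 - value
        score += weight * signed
    return score


# Hypothetical weights and candidate control strategies.
weights = {"yield": 0.5, "water": 0.25, "energy": 0.25}
directions = {"yield": "max", "water": "min", "energy": "min"}
strategy_a = {"yield": 0.9, "water": 0.8, "energy": 0.9}  # high yield, high inputs
strategy_b = {"yield": 0.8, "water": 0.4, "energy": 0.5}  # slightly lower yield, lean inputs

best = max((strategy_a, strategy_b), key=lambda m: weighted_score(m, weights, directions))
print(best)
```

With these placeholder weights the leaner strategy scores higher, which illustrates how the weighting, not the yield alone, decides the outcome.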

FAQ: We are seeing high resource consumption despite our co-optimization efforts. Where should we look?

- Investigation 1: Audit System-Level Efficiency.

- Co-optimization should extend to the equipment level. For example, investigate the conversion efficiency of your electric light sources (e.g., LEDs) and the performance of your environmental control systems (e.g., dehumidifiers, HVAC). Inefficient hardware can undermine the best control algorithms [16].

- Investigation 2: Check for Sub-optimal Setpoints.

- The optimal setpoint for one variable depends on the levels of others. For instance, the ideal light intensity and spectrum are influenced by the prevailing CO₂ concentration and air temperature. Ensure your protocol uses crop-specific guidelines that account for these interactions [16].

- Investigation 3: Evaluate Nutrient Use Efficiency.

- A core area for improvement is the root zone. Monitor the efficacy of your fertilizer, whether organic or inorganic. Imbalanced nutrient content or poor dissolved oxygen levels in hydroponic systems can lead to high fertilizer inputs and low uptake, defeating co-optimization goals [16].

Quantitative Foundations of Co-optimization

The following table summarizes key quantitative findings from research implementing co-optimization strategies in controlled environments, providing a benchmark for experimental outcomes.

Table 1: Quantitative Impacts of IoT-Based Co-optimization in Greenhouse Agriculture

| Performance Metric | Conventional Practice | Co-optimized IoT System | Change | Research Context |

|---|---|---|---|---|

| Greenhouse Gas Emissions | Baseline | Reduced | -38% | Greenhouse cultivation of zucchini, eggplant, melon, strawberry [9] |

| Water Use | Baseline | Reduced | -41% | Same as above [9] |

| Crop Yields | Baseline | Increased | Average +89% | Same as above [9] |

| Fertilizer Inputs | Baseline | Reduced | Average -91% | Same as above [9] |

Experimental Protocols for Co-optimization Research

Protocol: Co-optimization of Aerial and Root-Zone Environmental Variables

1. Objective: To develop and validate a co-optimization protocol that simultaneously manages light, CO₂, air temperature, and nutrient solution temperature to enhance resource use efficiency and crop yield [16].

2. Materials and Reagent Solutions:

Table 2: Essential Research Reagents and Materials

| Item | Function / Explanation |

|---|---|

| IoT Sensor Network | A system of interconnected sensors for dynamic, real-time monitoring of environmental variables (e.g., soil moisture, ambient light, CO₂, nutrient pH/EC) [9]. |

| Inorganic Fertilizer | A standard nutrient solution with known and readily available nutrient concentrations, used as a control or baseline treatment [16]. |

| Organic Fertilizer | A nutrient source derived from organic materials; requires assessment of its efficacy and potential need for beneficial microorganisms to aid mineralization in hydroponics [16]. |

| Plant Biostimulants (PBs) | Products (e.g., humic substances, seaweed extract, beneficial bacteria/fungi) used to boost plant growth and stress tolerance, potentially improving nutrient use efficiency under co-optimized conditions [16]. |

| Data Logging & Control System | Hardware and software for collecting sensor data, running AI/optimization algorithms, and automatically adjusting environmental control actuators [9] [16]. |

3. Methodology:

- System Setup: Establish two identical growth chambers or greenhouse compartments. One serves as the control (conventional management), the other as the treatment (co-optimized system) [9].

- Sensor Integration: Equip the treatment system with a suite of sensors to continuously monitor: light intensity (PPFD) and spectrum, air temperature, relative humidity, CO₂ concentration, root-zone temperature, and nutrient solution pH/EC [16] [11].

- AI Controller Implementation: Develop or implement an artificial intelligence (AI) framework. This framework should:

- Model the complex plant interactions with the growing environment.

- Control the environmental actuators (LEDs, HVAC, CO₂ injectors, root-zone heaters/chillers) based on sensor feedback and predefined optimization goals (e.g., maximize yield per unit of energy/water) [16].

- Evaluation: Over multiple growth cycles, measure and compare the outcomes listed in Table 1 (emissions, water use, yield, fertilizer inputs) between the control and treatment systems to quantify the benefit of co-optimization.

Visualization of Co-optimization Workflows

[Diagram: core feedback loop of an AI-driven co-optimization system for controlled environments]

[Diagram: logical relationships between the key environmental variables that must be co-optimized in a controlled agriculture system]

Methodologies in Action: Frameworks and Algorithms for Real-World Co-optimization

Frequently Asked Questions (FAQs)

1. What are the main classes of Mathematical Programming (MP)-based heuristics and when should I use them? MP-based heuristics fall into four broad classes. Decomposition approaches break a complex problem into a sequence of subproblems, each modeled and solved optimally as a mathematical program [17]. Improvement heuristics, also known as Large-Scale Neighborhood Search, start from a feasible solution and solve a mathematical program to generate an improved one [17]. A third class runs exact MP algorithms, such as branch-and-bound, in a modified or truncated way to generate approximate solutions, which is useful when solving to proven optimality would take prohibitively long [17]. Finally, relaxation-based approaches solve a relaxation of the original problem (e.g., the Linear Programming relaxation of an Integer Program) and then use that solution to construct a good feasible solution, for instance via rounding [17].

2. How can AI, specifically Large Language Models (LLMs), be integrated into optimization frameworks? LLMs can be integrated to create more adaptive and explainable optimization systems. A novel framework like REMoH (Reflective Evolution of Multi-objective Heuristics) integrates LLMs with evolutionary algorithms like NSGA-II [18]. In this setup, the LLM generates domain-agnostic, human-readable heuristic operators. A key innovation is a reflection mechanism that uses clustering and search-space analysis to guide the creation of diverse and high-quality heuristics, improving convergence and diversity [18]. LLMs can also function as intrinsic optimizers, for example, through techniques like Optimization by PROmpting (OPRO), where the problem is formulated in natural language and the LLM iteratively proposes solutions [18].

3. My model has non-linear constraints that are difficult for traditional MILP solvers. What are my options? Frameworks that leverage AI, such as REMoH, show significant promise for handling complex, non-linear constraints [18]. Unlike traditional mathematical approaches that often require extensive reformulation, these AI-integrated frameworks can incorporate complex and context-sensitive constraints with relatively little reformulation effort, offering greater modeling flexibility and robustness [18].

4. What is a "matheuristic" and how does it differ from a metaheuristic? Matheuristics are problem-independent frameworks that use mathematical programming tools to find high-quality heuristic solutions [19]. They fit within the broader definition of metaheuristics, but they are distinguished by their grounding in a mathematical model of the problem. They are general enough in structure to be applied to different problems with little adaptation, and can be seen as hybrid metaheuristics built from components derived from the problem's mathematical model [19].
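The relaxation-based class described in FAQ 1 can be illustrated on a 0-1 knapsack problem, whose LP relaxation is solved exactly by a greedy fill in value-to-weight order. The sketch below solves that relaxation, rounds the single fractional item down, and then adds any leftover items that still fit. The instance and function names are illustrative assumptions and are not taken from [17].

```python
def lp_relaxation_knapsack(values, weights, capacity):
    """Solve the LP relaxation of 0-1 knapsack (items may be taken fractionally)."""
    order = sorted(range(len(values)), key=lambda i: values[i] / weights[i], reverse=True)
    x = [0.0] * len(values)
    remaining = capacity
    for i in order:
        if remaining <= 0:
            break
        take = min(1.0, remaining / weights[i])
        x[i] = take
        remaining -= take * weights[i]
    return x


def round_and_repair(x, values, weights, capacity):
    """Round fractional items down, then greedily add items that still fit."""
    chosen = [1 if xi >= 1.0 else 0 for xi in x]
    used = sum(w for w, c in zip(weights, chosen) if c)
    for i in sorted(range(len(values)), key=lambda i: values[i], reverse=True):
        if not chosen[i] and used + weights[i] <= capacity:
            chosen[i] = 1
            used += weights[i]
    return chosen


values, weights, capacity = [60, 100, 120], [10, 20, 30], 50
x_lp = lp_relaxation_knapsack(values, weights, capacity)
x_int = round_and_repair(x_lp, values, weights, capacity)
lp_bound = sum(v * xi for v, xi in zip(values, x_lp))
heuristic_value = sum(v * c for v, c in zip(values, x_int))
print(x_lp, lp_bound, x_int, heuristic_value)
```

On this instance the rounded solution is feasible but not optimal (the best integer solution takes the last two items), which shows why the relaxation value is best read as an upper bound on what the heuristic can achieve.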

Troubleshooting Guides

Problem 1: Algorithm Converging to a Poor Local Solution

Symptoms: Your optimization algorithm converges quickly, but the solution quality is unsatisfactory. You observe a lack of diversity in the solution pool.

Resolution:

- Implement a Reflection Mechanism: For population-based algorithms, incorporate a reflection step that analyzes the current population. Use clustering to identify groups of similar solutions or heuristics. Then, guide the search to explore underrepresented regions of the search space. This approach has been shown to improve both convergence and solution diversity in multi-objective problems [18].

- Utilize Very Large-Scale Neighborhood Search (VLNS): Instead of simple local moves, define a large neighborhood around your current solution and model the search for an improved solution within this neighborhood as a mathematical program (e.g., a MIP). Solving this model can lead to significantly better solutions [17] [19].

- Apply a Corridor Method: This method combines MP with heuristic search. It solves the original MP model but adds constraints that confine the search to a "corridor" around a reference solution (e.g., the incumbent). This limits the search space, allowing the solver to explore large, promising neighborhoods effectively [19].

Problem 2: High Computational Time for Large-Scale Problems

Symptoms: The model takes too long to solve, making it impractical for real-world application or rapid experimentation.

Resolution:

- Employ Decomposition Techniques: Break the large problem into smaller, more manageable subproblems. These subproblems are solved sequentially or in parallel, and their solutions are combined to form a solution to the original problem. This is a classic and powerful MP-based heuristic approach [17].

- Use a Kernel Search or Incremental Core: These are matheuristic frameworks designed for complex problems like Mixed-Integer Linear Programming (MILP). They work by iteratively solving a sequence of restricted MILP problems. The restriction is applied to a subset of decision variables (the "kernel" or "core"), which is updated at each iteration based on the solution of the previous restricted problem, focusing computational effort on the most promising variables [19].

- Leverage Hybrid AI Methods: Frameworks like REMoH that integrate LLMs can reduce the modeling and computational effort required to achieve competitive results, offering a different pathway to efficiency [18].

Problem 3: Translating a Real-World Problem into an Effective Mathematical Model

Symptoms: Difficulty in formulating the problem's objectives and constraints in a way that is both accurate and computationally tractable.

Resolution:

- Follow a Structured Modeling Process:

- Define Decision Variables: Clearly identify the questions you need to answer (e.g., "how much?", "should I?").

- Formulate the Objective Function: Mathematically express the goal (e.g., minimize cost, maximize efficiency).

- Specify Constraints: List all limitations and requirements as mathematical inequalities or equations [20].

- Use Knowledge-Based Systems: For ill-structured problems, leverage AI-based tools. These systems can help encode domain knowledge and modeling strategies, guiding the analyst in selecting appropriate model parameters, analysis strategies, and program options, which is particularly helpful for novice users [21].

- Consider an AI Co-Designer: Emerging tools like OptiMUS use LLMs to interpret natural language descriptions of a problem and automatically generate structured MILP formulations, which can then be debugged and solved [18].

Protocol 1: Benchmarking a Novel Multi-Objective Heuristic

Objective: To evaluate the performance of a new multi-objective optimization algorithm against state-of-the-art methods.

Methodology:

- Dataset Selection: Use standardized public datasets. For example, in the Flexible Job Shop Scheduling Problem (FJSSP), the Brandimarte, Barnes, and Dauzère-Pérès instance suites are widely used [18].

- Baseline Comparison: Compare your algorithm against:

- Mathematical Models: Mixed-Integer Linear Programming (MILP) and Constraint Programming solved with exact solvers.

- Learning-Based Methods: Such as Reinforcement Learning.

- Established Metaheuristics: Such as standard NSGA-II.

- Performance Metrics: Calculate established multi-objective metrics:

- Hypervolume (HV): Measures the volume of the objective space dominated by the solution set (higher is better).

- Inverted Generational Distance (IGD): Measures the average distance from the true Pareto front to the solution set (lower is better) [18].

- Ablation Study: If your method has a key component (e.g., a reflection mechanism), perform an ablation study by running the algorithm with and without that component to quantify its contribution to performance [18].
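Both metrics in the protocol above are straightforward to compute for two objectives. The following sketch assumes both objectives are minimized and that a reference point (for HV) and a reference Pareto front (for IGD) are available; the point sets are toy values chosen for illustration.

```python
import math


def hypervolume_2d(points, ref):
    """Area dominated by `points` and bounded by `ref` (both objectives minimized)."""
    # Only points that are strictly better than the reference point contribute.
    pts = sorted(p for p in points if p[0] < ref[0] and p[1] < ref[1])
    area, best_f2 = 0.0, ref[1]
    for f1, f2 in pts:
        if f2 < best_f2:  # skip dominated points
            area += (ref[0] - f1) * (best_f2 - f2)
            best_f2 = f2
    return area


def igd(reference_front, solutions):
    """Mean distance from each reference-front point to its nearest solution."""
    total = sum(min(math.dist(r, s) for s in solutions) for r in reference_front)
    return total / len(reference_front)


reference_front = [(1.0, 3.0), (2.0, 2.0), (3.0, 1.0)]
found = [(1.0, 3.0), (3.0, 1.0)]  # the algorithm missed the middle point

print(hypervolume_2d(reference_front, ref=(4.0, 4.0)))
print(hypervolume_2d(found, ref=(4.0, 4.0)))
print(igd(reference_front, found))
```

Missing the middle trade-off lowers the hypervolume and raises the IGD, which is the behavior an ablation study would look for when a diversity-preserving component is removed.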

Protocol 2: Analyzing Resource Use Efficiency (RUE) in Agricultural Production

Objective: To quantify and optimize the efficiency of various inputs (e.g., labor, fertilizer, water, energy) in a controlled agricultural system.

Methodology:

- Data Collection: Gather data on input quantities and output yield. This can be primary experimental data or secondary data from public agricultural databases [22] [23].

- Efficiency Analysis:

- Use a Cobb-Douglas production function to model the relationship between inputs and output.

- Calculate Resource Use Efficiency (RUE) by comparing the Marginal Value Product (MVP) of each input to its Marginal Factor Cost (MFC). If MVP > MFC, the input is underutilized; if MVP < MFC, it is overutilized [22].

- Optimization: Apply an optimization algorithm (e.g., the Imperialist Competitive Algorithm) to determine the input levels that maximize output or energy use efficiency while minimizing environmental impact [23].

- Impact Assessment: Evaluate environmental performance using metrics like emissions of nitrogen oxides, ammonia, heavy metals, CO₂, and Disability-Adjusted Life Years (DALY) [23].
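The efficiency analysis step can be written out as follows. This is the standard textbook form of the Cobb-Douglas approach; the symbols ($A$, $\beta_i$, $P_Y$, $r_i$) are notation chosen here and may differ from the notation used in [22].

```latex
\begin{align}
Y &= A \prod_{i=1}^{n} X_i^{\beta_i},
  \qquad \ln Y = \ln A + \sum_{i=1}^{n} \beta_i \ln X_i \\
\mathrm{MPP}_i &= \frac{\partial Y}{\partial X_i} = \beta_i \, \frac{Y}{X_i} \\
\mathrm{MVP}_i &= P_Y \cdot \mathrm{MPP}_i \\
r_i &= \frac{\mathrm{MVP}_i}{\mathrm{MFC}_i}
\end{align}
```

Here $Y$ is output, $X_i$ the quantity of input $i$, $\beta_i$ its estimated output elasticity (obtained by regressing the log-linear form), $P_Y$ the unit price of output, and $\mathrm{MFC}_i$ the unit cost of input $i$. A ratio $r_i > 1$ corresponds to an underutilized input, $r_i < 1$ to an overutilized one, and $r_i = 1$ to efficient use.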

The table below summarizes key metrics from resource optimization studies in agriculture.

Table 1: Comparative Energy and Resource Use in Crop Production

| Metric | Cotton [23] | Canola [23] | Notes |

|---|---|---|---|

| Total Labor (h/ha) | 120 | 79 | Indicates higher labor intensity for cotton |

| Machine Energy (MJ/ha) | 6,270 | 2,821.5 | Higher mechanization for cotton |

| Diesel Fuel (MJ/ha) | 5,631 | 6,757.21 | Canola is more diesel-dependent |

| Nitrogen Energy (MJ/ha) | 7,810 | 10,153 | Higher nitrogen energy input for canola |

| Total Energy Input (MJ/ha) | 26,083.80 | 25,747.04 | Comparable total energy |

| Output Yield (kg/ha) | 2,900 | 2,300 | Cotton has higher yield |

| Energy Use Efficiency | 1.31 | 2.23 | Canola converts energy to output more efficiently |

| Net Energy Gain (MJ/ha) | 8,136.20 | 31,752.96 | Canola has a significantly higher net gain |

| Resource Intensity (USD/ha) | 115.36 | 187.56 | Cotton has a lower resource cost per hectare |
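The energy rows of the table are linked by two common definitions, assumed here rather than quoted from [23]: net energy gain is output energy minus input energy, and energy use efficiency is output energy divided by input energy. The short check below recovers the efficiency values from the tabulated input and net gain figures.

```python
def energy_indicators(total_input_mj, net_gain_mj):
    """Derive output energy and energy use efficiency from input and net gain."""
    output_mj = total_input_mj + net_gain_mj  # net gain = output - input
    return {
        "output_MJ_per_ha": output_mj,
        "energy_use_efficiency": output_mj / total_input_mj,
    }


# (total energy input, net energy gain) in MJ/ha, taken from the table above.
crops = {"cotton": (26083.80, 8136.20), "canola": (25747.04, 31752.96)}

for name, (energy_in, net_gain) in crops.items():
    indicators = energy_indicators(energy_in, net_gain)
    print(name, round(indicators["energy_use_efficiency"], 2))
# cotton 1.31
# canola 2.23
```

Under these definitions the derived ratios match the tabulated Energy Use Efficiency row, so the three rows are mutually consistent.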

Framework Visualization

Matheuristic Algorithm Selection Workflow

AI-Enhanced Optimization Framework (REMoH)

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Optimization Research

| Tool / Framework | Type | Primary Function | Relevance to Co-Optimization Research |

|---|---|---|---|

| MILP Solver (e.g., Gurobi) [20] | Software | Solves Mixed-Integer Linear Programming models to optimality or heuristically. | Core engine for many matheuristics; used in decomposition, VLNS, and corridor methods. |

| Wolfram Language [24] | Programming Language | A knowledge-based language for expressing computational thinking and complex models. | Useful for rapid prototyping of models and heuristics, and for integrating real-world data. |

| LLM (e.g., GPT-4) [18] | AI Model | Generates and evolves heuristic operators, interprets problems, and assists in model formulation. | Enhances adaptability and explainability; helps handle non-linear structures and reduce modeling effort. |

| Cobb-Douglas Function [22] | Economic Model | A production function modeling output as a function of multiple inputs (e.g., labor, capital). | Foundational for quantifying Resource Use Efficiency (RUE) in agricultural and environmental studies. |

| Imperialist Competitive Algorithm (ICA) [23] | Metaheuristic | A socio-politically inspired algorithm for global optimization. | Applied to optimize energy inputs and environmental outputs in crop production systems. |

| Knowledge-Based System [21] | AI System | Encodes domain expertise and modeling strategies to guide users. | Assists in model generation, parameter selection, and interpretation of results for complex systems. |

Troubleshooting Guides and FAQs

FAQ 1: What are the most significant computational challenges in multi-parameter building optimization, and how can they be overcome?

Computational expense is a primary bottleneck, as conventional simulation methods can be prohibitively expensive for complex forms [25]. You can adopt hybrid workflows that integrate approximate evolutionary searches (like NSGA-II or NSGA-III) with local optimization techniques (such as Tabu search). One study demonstrated that coupling parametric modeling, evolutionary algorithms, and k-means clustering substantially reduced computational time and cost while achieving optimal results for façade patterns [25]. For operational optimization of energy systems, a Diagram-Driven Method (DDM) can reduce operational optimization time by more than 99.99% compared to Mixed Integer Linear Programming (MILP), with comparable accuracy [26].

FAQ 2: How can I improve the convergence speed and stability of multi-objective optimization algorithms?

A highly effective method is to replace full-scale simulations with surrogate models developed using machine learning. Research on optimizing high-rise residential buildings used Support Vector Machines (SVM) to create a surrogate model from EnergyPlus simulation data, which greatly improved the computational efficiency of the NSGA-II algorithm [27]. This multi-stage approach separates the process into surrogate model training and optimization execution. Because each evaluation becomes cheap, the algorithm can explore more of the design space, which speeds up convergence and reduces the risk of stalling in local minima.

FAQ 3: My optimization results show a conflict between visual comfort and energy performance. How should this trade-off be managed?

This is a common co-optimization challenge. Your parameter sensitivity analysis should guide you. In façade pattern optimization, studies found that while factors like pattern count, dispersion, and distance from windows significantly affected energy use (EUI), the material selection for these patterns primarily influenced visual comfort metrics [25]. You should first identify which parameters most strongly impact each objective. Then, use a Pareto-based multi-objective algorithm (like NSGA-III) to explore non-dominated solutions, allowing you to present a range of optimal trade-offs rather than a single solution.
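Extracting the non-dominated set is the core operation behind any Pareto-based comparison. The sketch below filters a handful of candidate designs scored on two minimized objectives; the interpretation of the two numbers (energy use intensity and a daylight shortfall score) and the values themselves are hypothetical.

```python
def dominates(a, b):
    """True if `a` is no worse than `b` in every objective and better in at least one."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def pareto_front(points):
    """Return the points that no other point dominates (all objectives minimized)."""
    return [p for p in points if not any(dominates(q, p) for q in points if q != p)]


# Hypothetical designs: (energy use intensity, daylight shortfall), lower is better.
designs = [(120, 40), (110, 55), (130, 30), (125, 45), (110, 60)]
front = pareto_front(designs)
print(front)
```

The surviving designs are the trade-off set to present to decision makers; the discarded ones are worse on both counts than at least one alternative.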

FAQ 4: What is the practical difference between multi-layer and multi-stage optimization frameworks?

A multi-stage framework typically breaks a single optimization process into sequential phases to improve efficiency. For example, a two-stage approach might first use a surrogate model for a global search before switching to precise simulations for local refinement [27]. A multi-layer framework, often called co-optimization, simultaneously handles different system levels. A three-layer co-optimization for Distributed Energy Systems (DES) simultaneously explores system design, component configuration, and operational decisions, which is superior to conventional two-layer frameworks that treat design as fixed [26].

Table 1: Common Optimization Workflow Failures and Solutions

| Problem | Root Cause | Solution |

|---|---|---|

| Prohibitively long computation time | High-fidelity simulation models are too costly for thousands of iterations [25]. | Implement surrogate modeling (e.g., SVM, MLR) or a hybrid approximate-accurate workflow [27]. |

| Algorithm fails to find good solutions | Isolated information between parameters or paths; inefficient feature fusion [28]. | Introduce path cooperation mechanisms and dynamic structure adjustments [28]. |

| Results are not applicable in real-world operations | Framework does not integrate all decision layers (design, configuration, operation) [26]. | Adopt a three-layer co-optimization framework that allows simultaneous exploration of diverse system designs [26]. |

| Model performs poorly with new, unseen data | Inadequate robustness to noise, occlusion, or data scale variations [28]. | Incorporate a dynamic path cooperation mechanism and leverage multi-path architecture for better feature representation [28]. |

FAQ 5: How can I validate that my multi-parameter optimization model is robust and generalizable?

Robustness should be tested against specific metrics, using dedicated datasets to evaluate key performance indicators. For instance, after optimizing a model, you can test its noise robustness, occlusion sensitivity, and resistance to sample attacks on a custom dataset. One study reported scores of 0.931, 0.950, and 0.709, respectively, for these metrics on a Medical Images dataset [28]. In addition, evaluate data scalability efficiency and resource scalability requirements on varied data types (e.g., E-commerce Data) to confirm that the model adapts efficiently without excessive computational demands [28].

Experimental Protocols and Workflows

Protocol 1: Hybrid Multi-Stage Optimization for Architectural Forms

This protocol is designed for optimizing intricate façade designs regarding visual comfort and energy performance [25].

- Parameterization and Initial Sampling: Define the parametric model of the façade, identifying all variable parameters (e.g., pattern geometry, density, rotation). Use a space-filling algorithm like Latin Hypercube Sampling to generate an initial set of design alternatives.

- Surrogate Model Development: Run accurate simulations (e.g., for spatial daylight autonomy (sDA) and Energy Use Intensity (EUI)) on the initial sample. Use this data to train an approximate meta-model (such as a Multiple Linear Regression model) that can predict performance without costly simulation.

- Evolutionary Approximate Search: Execute a multi-objective evolutionary algorithm (e.g., NSGA-III) using the surrogate model for fast fitness evaluation. This step identifies a promising region in the design space.

- Clustering and Local Refinement: Cluster the results from the previous step using the k-means algorithm to select representative candidates. Perform accurate simulations on these candidates. Then, initiate a local search (e.g., Tabu search) starting from the best-performing candidates to fine-tune the solutions.
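The initial sampling step of this protocol can be sketched in a few lines. The function below is a minimal, standard-library Latin Hypercube sampler; the parameter names and bounds in the example (a pattern density and a rotation angle) are hypothetical stand-ins for whatever the parametric façade model exposes.

```python
import random


def latin_hypercube(n_samples, bounds, seed=0):
    """Draw n_samples points so that each dimension has one point per equal-width stratum.

    bounds : list of (low, high) tuples, one per design parameter
    """
    rng = random.Random(seed)
    columns = []
    for low, high in bounds:
        # One random position inside each stratum, then shuffle the stratum order
        # independently per dimension.
        unit = [(k + rng.random()) / n_samples for k in range(n_samples)]
        rng.shuffle(unit)
        columns.append([low + u * (high - low) for u in unit])
    return list(zip(*columns))


# Hypothetical parameters: pattern density in [0.1, 0.9], rotation in [0, 90] degrees.
samples = latin_hypercube(8, [(0.1, 0.9), (0.0, 90.0)], seed=42)
for density, rotation in samples:
    print(round(density, 3), round(rotation, 1))
```

Compared with plain random sampling, the stratification guarantees that every part of each parameter's range is represented in the initial simulation batch, which helps the surrogate model generalize.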

Protocol 2: Three-Layer Co-optimization for Distributed Energy Systems (DES)

This protocol optimizes DES across design, configuration, and operation layers for superior energy, economic, and environmental performance [26].

- System Design Layer: Define multiple high-level system designs by selecting core equipment types (e.g., double-effect vs. single-effect absorption chillers, inclusion of heat pumps).

- Configuration Optimization Layer (Outer Loop): For each system design, use a multi-objective algorithm (e.g., NSGA-II) to determine the optimal capacities and sizes of key equipment (e.g., PGU, PV units, storage tanks).

- Operational Optimization Layer (Inner Loop): For each candidate configuration, determine the optimal hourly operational schedule. To avoid the computational cost of MILP, employ the Diagram-Driven Method (DDM), which uses targeted load-following strategies to make near-instantaneous operational decisions.

- Performance Evaluation and Selection: The operational results, Annual Total Cost (ATC) and Carbon Dioxide Emissions (CDE), are fed back to the outer layer. The process repeats until Pareto-optimal fronts are identified for each system design, allowing for a final comparison.
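The nesting of the three layers can be made concrete with a toy skeleton. This sketch deliberately swaps in simpler techniques: an exhaustive capacity grid replaces NSGA-II in the configuration layer, and a fixed load-following rule stands in for the Diagram-Driven Method of [26]. All cost and emission coefficients, design names, and loads are invented for illustration.

```python
GRID_COST, GRID_CO2 = 0.20, 0.60  # hypothetical USD/kWh and kg CO2/kWh for grid imports


def dominates(a, b):
    """`a` dominates `b` when it is no worse in both objectives and not identical."""
    return all(x <= y for x, y in zip(a, b)) and a != b


def operate(design, capacity_kw, load_kwh):
    """Operation layer: on-site unit follows the load up to capacity, grid covers the rest."""
    on_site = [min(demand, capacity_kw) for demand in load_kwh]
    grid = [demand - served for demand, served in zip(load_kwh, on_site)]
    cost = design["capex_per_kw"] * capacity_kw
    cost += sum(design["fuel_cost"] * s + GRID_COST * g for s, g in zip(on_site, grid))
    co2 = sum(design["co2_per_kwh"] * s + GRID_CO2 * g for s, g in zip(on_site, grid))
    return (cost, co2)


def co_optimize(designs, capacities_kw, load_kwh):
    """Design layer (outer loop) over configuration layer (grid) over operation layer."""
    fronts = {}
    for name, design in designs.items():
        scored = [(operate(design, c, load_kwh), c) for c in capacities_kw]
        fronts[name] = [(obj, c) for obj, c in scored
                        if not any(dominates(other, obj) for other, _ in scored)]
    return fronts


designs = {
    "gas_chp": {"capex_per_kw": 1.0, "fuel_cost": 0.08, "co2_per_kwh": 0.45},
    "pv_heavy": {"capex_per_kw": 2.5, "fuel_cost": 0.00, "co2_per_kwh": 0.00},
}
load = [30.0, 50.0, 80.0, 60.0]  # hourly demand in kWh
fronts = co_optimize(designs, capacities_kw=[0, 20, 40, 60, 80], load_kwh=load)
for name, front in fronts.items():
    print(name, front)
```

The point of the structure is that each design keeps its own cost-versus-emissions front, so designs are compared front against front rather than by a single fixed configuration.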

Table 2: Key Performance Indicators (KPIs) for DES Co-optimization

| Metric | Formula/Description | Target Outcome |

|---|---|---|

| Annual Total Cost (ATC) | Sum of operational and capital costs [26]. | Minimize |

| Carbon Dioxide Emissions (CDE) | Total annual CO₂ emissions in kg [26]. | Minimize |

| Relative Energy Efficiency | Comparison with a conventional system baseline [26]. | Maximize (e.g., 31.69% gain) |

| Primary Energy Consumption | Total primary energy used by the system [26]. | Minimize |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational and Modeling Tools for Optimization Research

| Tool / Solution | Function in Experimentation |

|---|---|

| NSGA-II / NSGA-III | Multi-objective evolutionary algorithms used to find a Pareto front of non-dominated solutions, balancing competing objectives like energy use and visual comfort [25] [27]. |

| Surrogate Models (SVM, MLR, ANN) | Machine-learning models trained on simulation data to create fast, approximate predictions of building performance, drastically reducing computational cost in optimization loops [27]. |

| Diagram-Driven Method (DDM) | A novel operational decision-making method for energy systems that replaces MILP with ultra-fast, rule-based strategies, enabling complex multi-layer co-optimization [26]. |

| K-means Clustering | An unsupervised learning algorithm used to group a large set of candidate solutions into representative clusters, reducing the number of designs that require costly accurate simulation [25]. |

| Tabu Search | A local search optimization technique that explores neighboring solutions while using a "tabu list" to avoid revisiting areas, helping to escape local optima and fine-tune results [25]. |

| EnergyPlus | A whole-building energy simulation program used to calculate energy consumption, lighting, and HVAC performance, often generating the data for training surrogate models [27]. |

The table below summarizes performance metrics and experimental configurations from recent studies on transmission-distribution coordination.

| Study Focus / Configuration | Key Performance Metrics | Reported Improvement/Outcome |

|---|---|---|

| Bi-level Stochastic Model (T&D Coordination) [29] | Solution time, solution optimality | 40% faster than decomposition methods; 20% faster than evolutionary methods; solution quality ~7% better [29]. |

| Reserve-Optimized T&D Coordination [30] | Total system operating costs, wind/solar curtailment | Reduced total operating costs and curtailment rates by exploiting regulation resources on both transmission and distribution sides [30]. |

| Integrated Energy Management with ESS [31] | Distribution network costs, transmission network costs | 13% cost reduction with ESS in distribution grid; 83% cost reduction with large batteries in transmission grid [31]. |

| Electricity-Hydrogen-Carbon IES [32] | Carbon emissions, total profit of IES operator, total cost of load aggregator | Carbon emissions reduced by ~40.12 tons/year (1.1%); operator profit enhanced by 14.07%; aggregator cost reduced by 10.06% [32]. |

| Two-Step Decoupling for IES [33] | CO₂ emissions, NOₓ emissions, primary energy consumption | CO₂ reduction: 153.8%; NOₓ reduction: 314.5%; primary energy consumption reduced by 82.67% compared to traditional system [33]. |

Detailed Experimental Protocols and Methodologies

Protocol 1: Bi-level Stochastic Optimization for T&D Coordination

This protocol is designed to coordinate unit commitment in the transmission network with the optimal operation of distribution networks featuring distributed resources [29] [31].

1. Problem Formulation:

- Upper-Level (Transmission System Operator - TSO): Formulate a Security-Constrained Unit Commitment (SCUC) problem. The objective is to minimize total operating costs, including generation costs, start-up/shutdown costs, no-load costs, and load shedding costs. This is typically modeled as a Mixed-Integer Linear Programming (MILP) problem [29] [31].

- Lower-Level (Distribution System Operator - DSO): Formulate an Optimal Power Flow (OPF) problem for each distribution network. The objective is to minimize the cost of purchasing power from the transmission network, maximize renewable energy use, and manage Electric Vehicle Charging Stations (EVCS). This can be modeled as a Linear Programming (LP) or Second-Order Cone Programming (SOCP) problem [29].

2. Model Solving with KKT Conditions:

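- To solve the bi-level problem efficiently, replace the lower-level optimization problem with its Karush-Kuhn-Tucker (KKT) optimality conditions. These conditions are necessary and sufficient when the lower-level problem is convex (as with the LP or SOCP formulations above) and satisfies a constraint qualification [29] [32].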

- This transformation converts the bi-level problem into a single-level Mathematical Program with Equilibrium Constraints (MPEC).

- For lower-level problems with integer variables, use a reformulation and decomposition technique to ensure globally optimal solutions [31].
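As a hedged illustration of the KKT step, consider a generic linear lower-level problem in which x is the upper-level (TSO) decision passed down to the DSO. The symbols c, A, B, b, x, y, and λ are illustrative assumptions and are not taken from [29] or [32]:

$$
\min_{y}\; c^{\top} y \quad \text{s.t.} \quad A y \le b - B x \quad (\lambda \ge 0)
$$

Under these assumptions, the KKT conditions that stand in for the lower-level problem in the single-level model are:

$$
\begin{aligned}
c + A^{\top}\lambda &= 0 && \text{(stationarity)} \\
A y &\le b - B x && \text{(primal feasibility)} \\
\lambda &\ge 0 && \text{(dual feasibility)} \\
\lambda_i \,(b - B x - A y)_i &= 0 \quad \forall i && \text{(complementary slackness)}
\end{aligned}
$$

The complementary slackness line is the equilibrium constraint that gives the MPEC its name; it is bilinear in λ and the primal variables, which is why the single-level problem is non-convex even though each level is linear on its own.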

3. Experimental Setup & Validation:

- Test Networks: Use standard test cases like the IEEE 30-bus system as the transmission network and multiple IEEE 33-bus systems connected to various transmission buses to represent distribution networks [29] [30].

- Coupling: Connect the transmission and distribution networks through tie transformers so that power can be exchanged dynamically, in either direction, across voltage levels [30].

- Scenarios for Comparison: Validate the model by comparing against benchmarks such as:

Protocol 2: Co-Optimization of Energy Storage Systems (ESS) in T&D Networks

This protocol provides a holistic framework for integrating ESS across both network levels to enhance flexibility and reduce costs [31].

1. Bi-level Stochastic Model Formulation:

- Upper-Level (TSO): Minimize total expected cost of the transmission network, including generation, reserve, and load curtailment, subject to SCUC constraints. The model considers scenarios s with probabilities σ_s to handle uncertainty [31].

- Lower-Level (DSO): For each scenario s, minimize distribution network operation costs, including energy purchasing, cost of non-participation of renewable resources, and network power losses. The model incorporates Demand Side Management (DSM) and the operation of distributed ESS [31].

2. Integration of Energy Storage:

- Model the ESS in both networks using constraints for charging power (p_{n,t}^{ch}), discharging power (p_{n,t}^{dis}), and state of energy (e_{n,t}^{ess}).

- Include charging/discharging efficiency (η_n^{ess}) and a binary variable (ζ_{n,t}^{ess}) to prevent simultaneous charging and discharging [31].
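One common way to write these storage constraints is sketched below. It reuses the symbols named above; the time step Δt, the power limits (overlined P) and the energy bounds (underlined and overlined E) are assumed symbols introduced here for illustration, and the exact form used in [31] may differ.

$$
\begin{aligned}
e_{n,t}^{ess} &= e_{n,t-1}^{ess} + \eta_n^{ess}\, p_{n,t}^{ch}\,\Delta t - \frac{p_{n,t}^{dis}}{\eta_n^{ess}}\,\Delta t \\
0 \le p_{n,t}^{ch} &\le \zeta_{n,t}^{ess}\,\overline{P}_n^{ch} \\
0 \le p_{n,t}^{dis} &\le \bigl(1-\zeta_{n,t}^{ess}\bigr)\,\overline{P}_n^{dis} \\
\underline{E}_n \le e_{n,t}^{ess} &\le \overline{E}_n, \qquad \zeta_{n,t}^{ess} \in \{0,1\}
\end{aligned}
$$

In this sketch, ζ = 1 permits charging only and ζ = 0 permits discharging only, so the two power variables can never be positive in the same period.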

3. Solution Technique:

- Employ a reformulation and decomposition algorithm to handle the binary variables and the stochastic, bi-level structure effectively [31].

Protocol 3: Electricity-Hydrogen-Carbon IES with Uncertainty and Demand Response

This protocol addresses supply-demand imbalance and carbon emissions by synergizing supply-side and demand-side optimization [32].

1. Upper-Level Model (Supply-Side Optimization):

- Uncertainty Modeling: Use robust optimization theory to model short-term (influenced by weather) and long-term (influenced by equipment performance) output errors of photovoltaic (PV) and wind turbine (WT) generation.

- Carbon Emission Control: Implement an improved stepwise carbon trading model that dynamically adjusts carbon prices based on actual emissions, providing more accurate incentives for reduction.

- Objective: Construct an electricity-hydrogen-carbon cooperative scheduling optimization model to minimize total cost, including wind curtailment and carbon emissions [32].
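The idea behind a stepwise (ladder) carbon price can be sketched as follows. This is a generic textbook-style ladder, not the improved model of [32]: the function name, the tier length `step_len`, the price growth rate `growth`, and the rule that unused quota is sold at the base price are all assumptions made for illustration.

```python
def stepwise_carbon_cost(emissions, quota, base_price, step_len, growth):
    """Carbon trading cost under a simple ladder price (illustrative only).

    Emissions above the free quota are charged tier by tier; each tier of
    length `step_len` costs `growth` * base_price more per unit than the
    previous one. Emissions below the quota earn revenue (negative cost)
    at the base price. All parameters are hypothetical.
    """
    excess = emissions - quota
    if excess <= 0:
        return base_price * excess  # surplus allowance sold at base price

    cost = 0.0
    tier = 0
    remaining = excess
    while remaining > 0:
        amount = min(remaining, step_len)            # emissions in this tier
        tier_price = base_price * (1 + growth * tier)
        cost += tier_price * amount
        remaining -= amount
        tier += 1
    return cost


if __name__ == "__main__":
    # 150 units above quota: 100 at price 10, then 50 at price 12.5
    print(stepwise_carbon_cost(250, 100, 10, 100, 0.25))
```

Because the marginal price rises with each tier, the cost function is convex and piecewise linear in emissions, which is what lets it sit inside a linear scheduling model through auxiliary tier variables.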

2. Lower-Level Model (Demand-Side Optimization):

- Objective: Minimize the annual consumption cost of the load aggregator.

- Mechanism: Implement an Integrated Demand Response (IDR) program, incentivizing users to adjust energy consumption patterns through dynamic energy pricing [32].

3. Solution Methodology:

- Solve the bi-level model by transforming the lower-level problem using KKT conditions and the Big-M method to handle complementarity constraints [32].
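To make the Big-M step concrete, the sketch below checks a single complementarity pair. The condition 0 ≤ λ ⊥ s ≥ 0 (multiplier λ, constraint slack s) is replaced by λ ≤ M·z and s ≤ M·(1 − z) with a binary z. The function names and numbers are hypothetical; a real model would hand these constraints to a MILP solver rather than enumerate z.

```python
def complementarity_holds(lam, slack, tol=1e-9):
    """Exact condition: lam >= 0, slack >= 0, and lam * slack == 0."""
    return lam >= -tol and slack >= -tol and abs(lam * slack) <= tol


def big_m_feasible(lam, slack, big_m, tol=1e-9):
    """True if some binary z satisfies the Big-M linearization:
    lam <= M * z  and  slack <= M * (1 - z).
    """
    if lam < -tol or slack < -tol:
        return False
    for z in (0, 1):
        if lam <= big_m * z + tol and slack <= big_m * (1 - z) + tol:
            return True
    return False


if __name__ == "__main__":
    # A complementary point is accepted when M is large enough ...
    print(big_m_feasible(lam=0.0, slack=5.0, big_m=10.0))
    # ... a non-complementary point is rejected ...
    print(big_m_feasible(lam=3.0, slack=5.0, big_m=10.0))
    # ... but an M that is too small wrongly cuts off a valid point.
    print(big_m_feasible(lam=0.0, slack=50.0, big_m=10.0))
```

The last call shows why M needs care: if it is smaller than the true bound on a multiplier or slack, valid solutions are excluded, while a very large M weakens the relaxation and can cause numerical trouble in the solver.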

Diagram Title: Bi-level Optimization Hierarchical Structure

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: When solving the bi-level model using KKT conditions, my solver struggles with numerical instability or fails to converge. What could be the issue?

A: This is a common challenge. Please check the following:

- Constraint Qualification: Ensure that the Lower-Level Problem (LLP) satisfies a constraint qualification (like Mangasarian-Fromovitz) for all feasible upper-level decisions. If not, the KKT conditions may not be necessary or sufficient.

- Non-Linearities: The KKT conditions of the LLP introduce complementarity constraints, which make the resulting single-level problem non-convex; this holds even when the LLP itself is linear. Use specialized solvers or reformulate using the Big-M method with carefully chosen M values to avoid numerical issues [32].

- Binary Variables: The presence of integer/binary variables in the LLP makes the problem extremely hard. Standard KKT conditions are not applicable. Consider reformulation and decomposition techniques specifically designed for such problems [31].

Q2: The proposed stochastic models consider uncertainty from renewables, but the computational cost is too high for my large-scale test system. Are there simpler alternatives?

A: Yes, you can consider the following alternatives, trading off some detail for computational tractability:

- Robust Optimization (RO): Instead of modeling many scenarios, RO optimizes against the worst-case realization within an uncertainty set. This can be less computationally intensive than large-scale stochastic programming [32].

- Typical Day Selection: Reduce the number of scenarios by using clustering algorithms (e.g., k-means) to select a few "typical days" that represent the annual load and renewable generation profile.

- Deterministic Equivalent: Use a deterministic model with fixed reserve requirements, but base these requirements on a probabilistic analysis of historical forecast errors (e.g., x% of peak load or y% of renewable capacity).
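The typical-day idea can be sketched with a small, dependency-free k-means (Lloyd's algorithm). The function names, the toy two-value "profiles", and the choice to represent each cluster by its member closest to the centroid are assumptions for illustration; in practice a library implementation and full 24-hour load and renewable profiles would be used.

```python
import random


def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length profiles."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def typical_days(profiles, k, iters=50, seed=0):
    """Cluster daily profiles with Lloyd's k-means.

    Returns (rep_indices, weights): for each non-empty cluster, the index of
    the real day closest to the centroid and the share of days it represents.
    """
    rng = random.Random(seed)
    centroids = [list(p) for p in rng.sample(profiles, k)]
    labels = [0] * len(profiles)
    for _ in range(iters):
        # Assignment step: each day joins its nearest centroid.
        labels = [min(range(k), key=lambda c: sq_dist(p, centroids[c]))
                  for p in profiles]
        # Update step: each centroid becomes the mean of its members.
        for c in range(k):
            members = [p for p, lab in zip(profiles, labels) if lab == c]
            if members:
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]

    reps, weights = [], []
    for c in range(k):
        idx = [i for i, lab in enumerate(labels) if lab == c]
        if not idx:
            continue  # skip clusters that ended up empty
        reps.append(min(idx, key=lambda i: sq_dist(profiles[i], centroids[c])))
        weights.append(len(idx) / len(profiles))
    return reps, weights


if __name__ == "__main__":
    # Toy data: three "low" days and two "high" days (hypothetical values).
    days = [[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [100.0, 100.0], [100.0, 101.0]]
    print(typical_days(days, k=2))
```

The returned weights can then serve as scenario probabilities, so a year of operation is approximated by a handful of weighted representative days.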

Q3: How can I effectively model and integrate Demand Side Management (DSM) and Energy Storage Systems (ESS) in the distribution network level?

A: Integration is key for flexibility.

- For DSM: Model it as a virtual power resource. In the DSO's objective function, include a cost term for load adjustments (d~_{n,t}^p, d~_{n,t}^q). In the constraints, limit the total adjusted load to a percentage (ε) of the original demand (d_{n,t}^p, d_{n,t}^q) to maintain user comfort [31].

- For ESS: Model the charging (p_{n,t}^{ch}) and discharging (p_{n,t}^{dis}) power, and the energy state (e_{n,t}^{ess}). Include constraints for capacity, efficiency (η), and a limit on daily discharge cycles (A). Use a binary variable to prevent simultaneous charge/discharge [31].

- Coordination: The TSO's commitment and dispatch signals (via the coupling variable) will influence the DSO's optimal scheduling of both DSM and ESS to minimize local costs.
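Before handing a model to a solver, it can help to verify candidate schedules against these rules directly. The checker below is a minimal sketch under stated assumptions: a single storage unit, one shared power limit `p_max`, a lower energy bound of zero, a per-period interpretation of the ε limit, and no cycle-count limit. None of these names or simplifications come from [31].

```python
def ess_schedule_ok(p_ch, p_dis, e0, e_max, p_max, eta, dt=1.0, tol=1e-9):
    """Check a charge/discharge schedule for one storage unit.

    Enforces power limits, no simultaneous charge and discharge, and that
    the state of energy stays within [0, e_max] in every period.
    """
    energy = e0
    for ch, dis in zip(p_ch, p_dis):
        if ch < -tol or dis < -tol or ch > p_max + tol or dis > p_max + tol:
            return False                      # power limit violated
        if ch > tol and dis > tol:
            return False                      # simultaneous charge/discharge
        energy += eta * ch * dt - dis * dt / eta
        if energy < -tol or energy > e_max + tol:
            return False                      # energy bound violated
    return True


def dsm_adjustment_ok(d_orig, d_adj, eps, tol=1e-9):
    """Check that each load adjustment stays within eps * original demand."""
    return all(abs(adj) <= eps * d + tol for d, adj in zip(d_orig, d_adj))


if __name__ == "__main__":
    print(ess_schedule_ok([2.0, 0.0], [0.0, 1.0], e0=0.0, e_max=5.0,
                          p_max=2.0, eta=0.9))
    print(dsm_adjustment_ok([10.0, 20.0], [1.0, -2.0], eps=0.1))
```

A check like this is also useful when debugging solver output, since a schedule that fails it points to a missing or mis-indexed constraint in the optimization model.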

Q4: My bi-level optimization model for T&D coordination does not lead to significant cost savings compared to separate operation. What might be wrong?

A: The benefits of coordination are most pronounced when certain conditions are met. Please verify:

- Adequate Distributed Resources: Ensure your distribution network model includes a sufficient penetration of flexible resources (e.g., dispatchable DG, ESS, responsive loads). Without these, the DSO has little flexibility to respond to TSO signals.

- Binding Coupling Constraints: Check if the constraints at the transmission-distribution interface (e.g., substation transformer capacity) are binding. If these constraints are never active, the systems can operate independently without issue.

- Proper Price Signals: Ensure the coordination mechanism (e.g., locational marginal prices or other dual variables from the TSO problem) accurately reflects congestion and marginal costs in the transmission network, providing the correct economic signals for the DSO.

The Scientist's Toolkit: Key Research Reagents & Solutions

This table catalogs the essential computational models, algorithms, and data required for experimental research in T&D co-optimization.

| Tool Category | Specific Tool / Technique | Primary Function in Research |
|---|---|---|
| Optimization Models | Mixed-Integer Linear Programming (MILP) [29] | Models upper-level Unit Commitment problems with discrete on/off decisions. |
| | Second-Order Cone Programming (SOCP) [29] | Relaxes and solves the non-convex DistFlow equations in distribution networks. |
| | Stochastic Programming [31] | Handles uncertainties in renewable generation and load via scenario-based analysis. |
| | Robust Optimization [32] | Optimizes system performance against the worst-case realization of uncertainty. |
| Solution Algorithms | Karush-Kuhn-Tucker (KKT) Conditions [29] [32] | Transforms a bi-level problem into a single-level Mathematical Program with Equilibrium Constraints (MPEC). |
| | Reformulation and Decomposition [31] | Breaks down large, complex problems with integer variables into manageable sub-problems. |
| | Big-M Method [32] | Linearizes complementarity constraints from KKT conditions for solver compatibility. |
| Test System Data | IEEE 30-Bus / 118-Bus Systems [30] | Standardized transmission network models for benchmarking and validation. |
| | IEEE 33-Bus / 69-Bus Radial Systems [30] | Standardized distribution network models for benchmarking and validation. |
| | Typical Meteorological Year (TMY) Data | Provides synthetic year of hourly solar irradiance and temperature for PV/wind generation modeling. |

This guide provides technical support for researchers applying Genetic Algorithms (GAs) to multi-objective optimization problems in environmental and agricultural research. It is framed within a broader thesis on co-optimizing environmental variables and resource use efficiency, using a recent case study on agricultural manure management in China as a central example [34]. The following sections offer detailed experimental protocols, troubleshooting for common GA challenges, and a toolkit of essential resources.

Experimental Protocol: Multi-Objective Manure Management Optimization

This protocol is based on a published study that employed GAs to determine the optimal manure substitution rate for major crops in China, balancing crop yield, nitrogen emissions, and climate impact [34].

Data Collection and Preprocessing

- Data Sources: The study synthesized data from 650 peer-reviewed studies, extracting 6,740 data pairs on agronomic and environmental responses to manure application [34]. This was combined with national census data from over 300,000 farm households and statistical sources to assess cropland manure capacity and spatial livestock production surplus.

- Key Variables: The meta-analysis focused on responses to manure application for nine major crops. The variables collected for each crop included:

- Agronomic Metrics: Crop yield, soil organic matter, soil pH.

- Environmental Emissions: Nitrous oxide (N₂O), ammonia (NH₃), nitrogen leaching, and nitrogen runoff.

- Objective Formulation: The multi-objective problem was defined to simultaneously optimize for:

- Maximizing crop yield.

- Maximizing economic benefits.

- Minimizing greenhouse gas emissions (GHGs).

- Minimizing water pollution (N leaching and runoff).

- Maximizing soil health (organic matter, pH).

- Minimizing ammonia (NH₃) emissions [34].
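With six objectives pulling in different directions, candidate substitution rates are usually compared by Pareto dominance rather than by a single score. The sketch below shows that comparison for the objectives listed above. The dictionary keys, the +1/-1 sense encoding, and the sample values are hypothetical and are not data from [34].

```python
# +1 means "maximize", -1 means "minimize" (keys are illustrative names).
SENSES = {
    "yield": +1,
    "profit": +1,
    "ghg": -1,
    "water_n": -1,
    "soil_health": +1,
    "nh3": -1,
}


def dominates(a, b, senses=SENSES):
    """True if candidate a is no worse than b on every objective
    and strictly better on at least one."""
    no_worse = all(s * a[k] >= s * b[k] for k, s in senses.items())
    better = any(s * a[k] > s * b[k] for k, s in senses.items())
    return no_worse and better


def pareto_front(candidates, senses=SENSES):
    """Return the candidates that no other candidate dominates."""
    return [c for c in candidates
            if not any(dominates(other, c, senses) for other in candidates)]


if __name__ == "__main__":
    a = {"yield": 5, "profit": 3, "ghg": 2, "water_n": 1, "soil_health": 4, "nh3": 1}
    b = {"yield": 4, "profit": 3, "ghg": 2, "water_n": 1, "soil_health": 4, "nh3": 1}
    c = {"yield": 6, "profit": 3, "ghg": 3, "water_n": 1, "soil_health": 4, "nh3": 1}
    front = pareto_front([a, b, c])
    print(len(front))  # b is dominated by a; a and c trade yield against GHG
```

A multi-objective GA typically applies a test of this kind in every generation to decide which candidates survive, so that improving one objective is never accepted at the hidden expense of another.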

Optimization Algorithm Configuration

- Algorithm Selection: A multi-objective genetic algorithm (GA) was used to obtain the optimal substitution rate (OPSR) [34].

- Fitness Function: The core of the GA was a fitness function designed to balance the six conflicting objectives listed above, with the principle that no single benefit should be diminished [34].

- Key Parameters: While the exact parameters from the case study are not fully detailed, the following table summarizes general best practices for GA parameter tuning, which can serve as a starting point for similar environmental optimization problems [35].

Table 1: Genetic Algorithm Parameter Tuning Guide

| Parameter | Recommended Range / Value | Function and Tuning Consideration |

|---|---|---|

| Population Size | 100 - 1000 [35] | Determines genetic diversity. Use larger populations for complex problems (e.g., national-scale optimization with multiple crops) [34] [35]. |