Building Robust Multimodal Plant Datasets: A Comprehensive Guide to Data Preprocessing Pipelines for Agricultural AI and Drug Discovery


Abstract

This article provides a comprehensive guide for researchers and scientists on constructing effective data preprocessing pipelines for multimodal plant datasets. It explores the foundational principles of plant multimodality, detailing methodological steps for integrating diverse data types such as images of different plant organs, textual descriptions, and environmental data. The content addresses critical challenges including data heterogeneity, missing modalities, and label noise, offering practical troubleshooting and optimization strategies. Furthermore, it outlines robust validation and comparative analysis frameworks to benchmark pipeline performance, emphasizing the pipeline's pivotal role in enhancing the accuracy and reliability of downstream applications in plant phenotyping, disease diagnosis, and drug discovery.

The Why and What: Understanding the Imperative for Multimodal Plant Data

Frequently Asked Questions (FAQs)

Q1: Why is analyzing multiple plant organs (multimodal) better than just using leaves for classification? Relying on a single organ, like a leaf, is often biologically insufficient for accurate classification. The same plant species can show different appearances, while different species can share similar features in a single organ. Using images from multiple organs—such as flowers, leaves, fruits, and stems—provides complementary biological data, leading to a more comprehensive and accurate representation of the plant species [1].

Q2: What is a key technical challenge when working with multimodal plant data, and how can it be addressed? A primary challenge is choosing how to fuse data from different modalities (organs). Simple methods like late fusion (averaging predictions from single-organ models) can be suboptimal. An automated approach such as Multimodal Fusion Architecture Search (MFAS) can discover more effective fusion strategies: in the reported experiments it reached 82.61% top-1 accuracy on 979 classes, compared with 72.28% for late fusion by averaging [1].

Q3: How can I make my multimodal model robust to missing data, for example, if fruit images are not available for a particular sample? You can incorporate multimodal dropout techniques during training. This approach teaches the model to perform classification effectively even when one or more input modalities (e.g., fruits or stems) are missing, making it more practical for real-world applications where data for all plant organs may not be available [1].
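
As a rough illustration of the idea, the sketch below masks whole organ modalities at random during training while always keeping at least one. It is a minimal sketch, not the implementation from [1]: the function name `multimodal_dropout`, the zero-vector masking, and the default `drop_prob` are assumptions made for this example.

```python
import random


def multimodal_dropout(sample, drop_prob=0.25, rng=random):
    """Randomly mask organ modalities in one training sample.

    `sample` maps an organ name to its feature vector (list of floats).
    A dropped modality is replaced by zeros of the same length, and at
    least one modality is always kept so the sample stays informative.
    (Zero masking and `drop_prob` are illustrative assumptions.)
    """
    organs = list(sample)
    kept = [organ for organ in organs if rng.random() >= drop_prob]
    if not kept:
        # Never drop everything: keep one modality chosen at random.
        kept = [rng.choice(organs)]
    return {
        organ: list(vec) if organ in kept else [0.0] * len(vec)
        for organ, vec in sample.items()
    }


features = {
    "flower": [0.2, 0.9],
    "leaf": [0.5, 0.1],
    "fruit": [0.7, 0.3],
    "stem": [0.4, 0.4],
}
masked = multimodal_dropout(features, drop_prob=0.5, rng=random.Random(42))
```

At inference time the same zero vector can stand in for an organ that was never photographed, which is why the model benefits from having seen masked inputs during training.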

Q4: My dataset was designed for single-organ analysis. How can I adapt it for multimodal research? You can create a multimodal dataset through a dedicated preprocessing pipeline. This involves restructuring an existing unimodal dataset. For instance, the Multimodal-PlantCLEF dataset was created from PlantCLEF2015 by grouping images of different organs (flowers, leaves, fruits, stems) from the same plant species into a single, multi-input sample [1].

Q5: What does single-cell analysis reveal that bulk tissue analysis cannot? Single-cell multi-omics can uncover that the biosynthesis of complex plant compounds (like the anti-cancer alkaloids vinblastine and vincristine) is organized across distinct, rare cell types. Bulk analysis dilutes these specific signals. Single-cell analysis allows researchers to discover new biosynthetic genes and understand that pathway intermediates accumulate at very high concentrations in specific, specialized cells [2].

Troubleshooting Guides

Issue 1: Poor Model Performance on Multimodal Plant Data

Problem: Your model's classification accuracy is low, potentially underperforming simpler, single-organ models.

Diagnosis & Solutions:

| Possible Cause | Diagnostic Check | Recommended Solution |
|---|---|---|
| Suboptimal Fusion Strategy | Check if you are using a simplistic fusion method (e.g., late fusion). | Implement an automated fusion search (e.g., Multimodal Fusion Architecture Search) to find a more effective integration method [1]. |
| Misalignment of Features | Examine feature vectors from different organ models for scale and semantic misalignment. | Introduce a feature alignment layer in your pipeline before fusion to project features into a common space [3]. |
| Missing Modalities | Evaluate if your model fails when an organ image is missing. | Use multimodal dropout during training to improve model robustness to incomplete data [1]. |

Issue 2: Challenges in Preprocessing and Pipelines for Heterogeneous Data

Problem: Managing and processing different data types (e.g., 3D organ images, text annotations, single-cell data) is complex and inefficient.

Diagnosis & Solutions:

| Possible Cause | Diagnostic Check | Recommended Solution |
|---|---|---|
| Non-Standardized Workflow | Check if processing steps for each data type are manual and disjointed. | Implement a graph-based, modular preprocessing pipeline (e.g., using a framework like Pliers) to standardize and chain operations [3]. |
| Difficulty in Cell-Type Identification | For single-cell analysis, check if you rely on manual annotation or transgenic markers. | Use a computational tool like 3DCellAtlas, which leverages the intrinsic geometric properties of cells for accurate, automated identification without needing reference atlases [4]. |

Experimental Protocols

Protocol 1: Constructing a Multimodal Plant Classification Model with Automated Fusion

Objective: To build a high-accuracy plant classification model that automatically fuses data from images of four plant organs (flower, leaf, fruit, stem).

Methodology:

- Dataset Preparation: Use a multimodal dataset like Multimodal-PlantCLEF. Ensure each data sample consists of image sets (flower, leaf, fruit, stem) for a single plant species [1].

- Unimodal Model Training: Train a separate Convolutional Neural Network (CNN), such as a pre-trained MobileNetV3Small, on each individual organ modality [1].

- Automated Fusion: Apply a Multimodal Fusion Architecture Search (MFAS) algorithm. This algorithm will automatically discover the optimal points and methods to combine the features extracted from the four unimodal models [1].

- Robustness Training: Incorporate multimodal dropout during the training of the fused model to enhance its performance when some organ images are missing [1].

Expected Outcome: A multimodal model that achieves higher classification accuracy than a late-fusion baseline (82.61% versus 72.28% top-1 accuracy on 979 classes in the reported experiments), with robustness to missing data [1].

Protocol 2: Single-Cell Multi-Omics Analysis for Plant Metabolic Pathway Elucidation

Objective: To map the cell-type-specific biosynthesis of a target plant natural product (e.g., vinblastine in Catharanthus roseus).

Methodology:

- Sample Preparation & Imaging: Prepare thin sections of the plant organ of interest (e.g., leaf). Use confocal microscopy to acquire 3D image z-stacks of the tissue [4].

- 3D Cell Segmentation & Identification: Process the 3D images with software like MorphoGraphX. Use the 3DCellAtlas pipeline to segment individual cells and identify distinct cell types (e.g., epidermis, idioblasts) based on their intrinsic 3D geometry [4].

- Single-Cell Omics: Isolate or profile the contents of the identified individual cell types. Perform single-cell RNA sequencing (scRNA-seq) to measure gene expression and metabolomics to measure metabolite levels in each cell type [2].

- Data Integration: Correlate the expression of biosynthetic genes with the accumulation of pathway intermediates across the different cell types. This integration reveals the complete, spatially organized metabolic pathway [2].

Expected Outcome: Identification of which specific cell types express different steps of a biosynthetic pathway and where key intermediates accumulate, leading to the discovery of new pathway genes [2].

Table 1: Performance Comparison of Plant Classification Models

| Model Type | Fusion Strategy | Number of Classes | Top-1 Accuracy | Key Advantage |
|---|---|---|---|---|
| Multimodal (4 organs) | Automated Fusion Search | 979 | 82.61% [1] | Optimal architecture discovery |
| Multimodal (4 organs) | Late Fusion (Averaging) | 979 | 72.28% [1] | Simplicity |
| Unimodal (Single organ) | N/A | 979 | (Lower than multimodal) | Reduces need for multiple images |

Table 2: Cell-Type-Specific Accumulation in Catharanthus roseus Alkaloid Pathway

| Cell Type | Role in Vinblastine Biosynthesis | Key Observation |
|---|---|---|
| IPAP cells (Specialized vascular) | Express the first stage of the pathway [2] | Confines initial steps to specific cells. |
| Epidermis | Express the second stage of the pathway [2] | Middle steps occur in a separate tissue layer. |
| Idioblasts (Rare leaf cells) | Express the final stages; site of precursor accumulation (catharanthine & vindoline) [2] | Precursors concentrated 1000x higher than in whole-leaf extract [2]. |

Research Reagent Solutions

Table 3: Essential Tools and Reagents for Advanced Plant Analysis

| Item | Function in Research | Application Context |
|---|---|---|
| 3DCellAtlas | A computational pipeline for semiautomated identification of cell types and quantification of 3D cellular anisotropy from 3D image data [4]. | Single-cell analysis of radially symmetric plant organs (roots, hypocotyls). |
| Multimodal Preprocessing Pipeline (e.g., Pliers) | A structured workflow to extract, transform, and align features from heterogeneous data (video, audio, images, text) into a standardized format [3]. | Building unified datasets from multiple sources for multimodal machine learning. |
| Confocal Microscopy Z-Stacks | High-resolution 3D imaging of plant tissues and cellular structures [4]. | Essential for accurate 3D segmentation and analysis of cell shape and size. |
| Single-cell RNA Sequencing (scRNA-seq) | Profiling the complete set of RNA transcripts in individual cells [2]. | Identifying gene expression patterns specific to rare or specialized cell types. |

Workflow and Pathway Visualizations

Diagram: Multimodal Plant Analysis Pipeline

Diagram: Cell-Type-Specific Alkaloid Biosynthesis

Frequently Asked Questions

Q1: What exactly is considered a "modality" in plant science research? A modality refers to a distinct type or source of data that provides unique information about a plant. In multimodal learning, these diverse data sources are integrated to provide a comprehensive representation, leveraging their complementary nature [1]. Common modalities in plant science include:

- Images: RGB images of plant organs (leaves, flowers, fruits, stems) [1], as well as data from multispectral, hyperspectral, and thermal sensors [5] [6].

- Climate & Weather Data: Historical and temporal data on temperature, precipitation, humidity, and solar radiation [5].

- Phenotypic & Tabular Data: Manually or automatically measured traits, such as plant density, height, date to anthesis, and parental line information [5].

- Molecular Data: Genomic sequences and genetic data, which can be analyzed with advanced tools like large language models (e.g., Agronomic Nucleotide Transformer or AgroNT) to uncover regulatory patterns [6].

- Text: Scientific literature, taxonomic descriptions, and curated knowledge from public databases [7].

Q2: Why should I use a multimodal approach instead of relying on a single data type? A single data source, such as an image of a leaf, is often insufficient for accurate classification or prediction as it cannot capture the full biological diversity of a plant species [1]. Multimodal deep learning models integrate complementary information from different sources, leading to significantly improved predictive power and explanatory capabilities compared to single-modality models [5]. For example, fusing images with weather data allows a model to understand the impact of meteorological events on maize growth, which images alone cannot capture [5].

Q3: What are the main strategies for fusing different data modalities? The main fusion strategies are early, intermediate (or feature-level), late (decision-level), and hybrid fusion [1]. The choice of fusion strategy is a critical challenge, and the optimal point for modality fusion can even be discovered automatically using algorithms like the multimodal fusion architecture search (MFAS) [1].

Q4: I have a unimodal dataset. Can I adapt it for multimodal research? Yes. One pioneering approach involves creating a data preprocessing pipeline to transform an existing unimodal dataset into a multimodal one. For instance, the PlantCLEF2015 dataset was restructured into the "Multimodal-PlantCLEF" dataset by grouping images of multiple plant organs (flowers, leaves, fruits, stems) for the same species [1].

Q5: What is a common pitfall when building a multimodal data pipeline, and how can it be avoided? A common pitfall is an inefficient pipeline that leaves GPUs idle because batches are dominated by padding, especially with variable-length text sequences [8]. A solution is to implement smarter batching strategies, such as "knapsack packing," which groups samples so that their combined lengths fill each batch with minimal padding, maximizing GPU utilization [8].
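
To make the batching idea concrete, here is a minimal sketch that packs variable-length sequences into fixed-capacity batches with a greedy first-fit-decreasing heuristic. This is a simplified stand-in for the knapsack packing described in [8], not that method itself; the names `pack_sequences` and `padding_fraction` and the token capacity are assumptions for illustration.

```python
def pack_sequences(lengths, capacity):
    """Greedily pack sequence lengths into bins of at most `capacity` tokens.

    Returns a list of bins, each a list of indices into `lengths`.
    Uses first-fit-decreasing: longest sequences are placed first, each
    into the first bin that still has room.
    """
    bins = []  # each entry: [remaining_capacity, [sequence indices]]
    order = sorted(range(len(lengths)), key=lambda i: lengths[i], reverse=True)
    for i in order:
        n = lengths[i]
        if n > capacity:
            raise ValueError(f"sequence {i} (length {n}) exceeds capacity")
        for entry in bins:
            if entry[0] >= n:
                entry[0] -= n
                entry[1].append(i)
                break
        else:
            # No existing bin has room, so open a new one.
            bins.append([capacity - n, [i]])
    return [indices for _, indices in bins]


def padding_fraction(lengths, packed, capacity):
    """Share of all batch slots that would be filled with padding."""
    return 1 - sum(lengths) / (len(packed) * capacity)


caption_lengths = [7, 3, 5, 5, 2, 8]
batches = pack_sequences(caption_lengths, capacity=10)
waste = padding_fraction(caption_lengths, batches, capacity=10)
```

Comparing `padding_fraction` before and after packing gives a quick estimate of how much compute the padding was costing.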

Troubleshooting Guides

Problem: My Multimodal Model is Overfitting

| Step | Action | Expected Outcome |
|---|---|---|
| 1 | Apply Data Augmentation | Increased effective dataset size and improved model generalization. |
| 2 | Incorporate Multimodal Dropout | A more robust model that maintains performance even if a modality is missing at test time [1]. |
| 3 | Leverage Transfer Learning | Faster training and better performance, especially when labeled multimodal data is limited [6]. |
| 4 | Validate on Diverse Environments | A model that generalizes better across different growing conditions and is less biased [5]. |

Problem: My Data Modalities Are Misaligned or Incompatible

| Step | Action | Expected Outcome |
|---|---|---|
| 1 | Implement a Standardized Preprocessing Pipeline | Clean, uniformly structured data for each modality, ready for integration. |
| 2 | Adopt a Modular, Graph-Based Pipeline | Simplified management of heterogeneous data and seamless inter-modal conversion (e.g., extracting text from audio) [3]. |
| 3 | Use a Unified Output Format | Simplified merging and joint analysis of features from different modalities [3]. |
| 4 | Address Spatial/Temporal Biases | A more reliable model with predictions that are not skewed by data collection biases [7]. |

Experimental Protocols for Multimodal Integration

Protocol 1: Creating a Multimodal Dataset from Unimodal Sources

This protocol is based on the methodology used to create the Multimodal-PlantCLEF dataset [1].

- Dataset Selection: Identify a public unimodal dataset with a wide array of plant species and images, such as PlantCLEF2015.

- Organ-Based Grouping: Restructure the dataset by grouping images of different organs (flower, leaf, fruit, stem) belonging to the same plant species and individual.

- Data Cleaning: Filter out species that do not have a sufficient number of images for each of the required organ types to ensure data completeness.

- Standardization: Resize and preprocess all images to a consistent resolution and format to facilitate model training.
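
The grouping and cleaning steps above can be sketched as follows. This is a minimal illustration under stated assumptions: the record layout `(image_path, species, organ)`, the function name `build_multimodal_samples`, and the rule of pairing the i-th image of each organ are all hypothetical and are not taken from [1], which may combine images differently.

```python
REQUIRED_ORGANS = ("flower", "leaf", "fruit", "stem")


def build_multimodal_samples(records):
    """Group unimodal records into multi-organ samples.

    `records` is an iterable of (image_path, species, organ) tuples.
    Species lacking any required organ are filtered out (data cleaning).
    Within a species, the i-th image of each organ is paired into one
    sample (an assumed pairing rule, chosen for simplicity).
    """
    by_species = {}
    for path, species, organ in records:
        if organ in REQUIRED_ORGANS:
            by_species.setdefault(species, {}).setdefault(organ, []).append(path)

    samples = []
    for species in sorted(by_species):
        organs = by_species[species]
        if any(organ not in organs for organ in REQUIRED_ORGANS):
            continue  # incomplete species: skip to keep samples complete
        n_samples = min(len(organs[organ]) for organ in REQUIRED_ORGANS)
        for i in range(n_samples):
            sample = {"species": species}
            sample.update({organ: organs[organ][i] for organ in REQUIRED_ORGANS})
            samples.append(sample)
    return samples
```

Image resizing (the standardization step) is left out here because it depends on the imaging library in use.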

Protocol 2: An Intermediate Fusion Workflow for Image and Weather Data

This protocol summarizes the approach used for early prediction of maize yield [5].

- Data Collection:

  - Imagery: Capture RGB images of crops at regular intervals using UAVs or ground platforms.

  - Weather Data: Obtain historical weather data (temperature, humidity, solar radiation) for the field location from sources like NASA POWER [5].

  - Phenotypic Data: Record tabular data such as plant density, hybrid parental line, and date to anthesis.

- Feature Extraction:

  - Images: Use a pre-trained Convolutional Neural Network (CNN) to extract deep feature representations from the images.

  - Tabular Data: Normalize phenotypic and weather data.

- Temporal Alignment: Align the image-derived features and weather data temporally with key growth stages (e.g., anthesis, silking).

- Feature Fusion: Concatenate the extracted image features with the normalized tabular data vectors at an intermediate layer of a deep neural network.

- Model Training & Interpretation: Train a multimodal DNN for regression (yield prediction) and use explainability tools like SHAP to identify the most influential features from each modality.
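
The normalization and concatenation steps can be illustrated on the data side as below. This is only a sketch: in the workflow described above the concatenation happens inside the network at an intermediate layer, whereas this example joins precomputed vectors. The function names and the z-score normalization are assumptions, since the source does not specify the normalization used.

```python
from statistics import mean, pstdev


def zscore_columns(rows):
    """Standardize each column of a table to zero mean and unit variance."""
    columns = list(zip(*rows))
    # Constant columns have zero spread; divide by 1.0 to avoid 0/0.
    stats = [(mean(col), pstdev(col) or 1.0) for col in columns]
    return [[(v - m) / s for v, (m, s) in zip(row, stats)] for row in rows]


def fuse_features(image_features, tabular_rows):
    """Concatenate per-sample image features with normalized tabular data."""
    if len(image_features) != len(tabular_rows):
        raise ValueError("modalities must describe the same samples")
    normalized = zscore_columns(tabular_rows)
    return [list(img) + tab for img, tab in zip(image_features, normalized)]


# Two plots: 2-D image embeddings plus (mean temperature, plant density).
embeddings = [[0.1, 0.2], [0.3, 0.4]]
tabular = [[10.0, 1.0], [20.0, 1.0]]
fused = fuse_features(embeddings, tabular)
```

Normalizing before concatenation keeps large-valued columns such as temperature from dominating the much smaller embedding values.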

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function |
|---|---|
| Public Data Platforms (e.g., G2F (Genomes to Fields), PlantVillage) | Provide large-scale, annotated plant image and phenotypic datasets for training and benchmarking models [5] [6]. |
| Darwin Core Standards | A standardized framework for sharing biodiversity data, crucial for achieving interoperability across different datasets and platforms [7]. |
| Pre-trained Models (e.g., MobileNetV3) | Provide a robust foundation for feature extraction, especially for image-based modalities, reducing the need for large, private datasets [1] [6]. |
| Neural Architecture Search (NAS) | Automates the design of optimal neural network architectures, which can be applied to find the best fusion strategy for a given multimodal problem [1]. |
| Modular Preprocessing Frameworks (e.g., Pliers) | Support the construction of structured workflows to extract, transform, and align features from heterogeneous data sources (video, audio, images, text) [3]. |

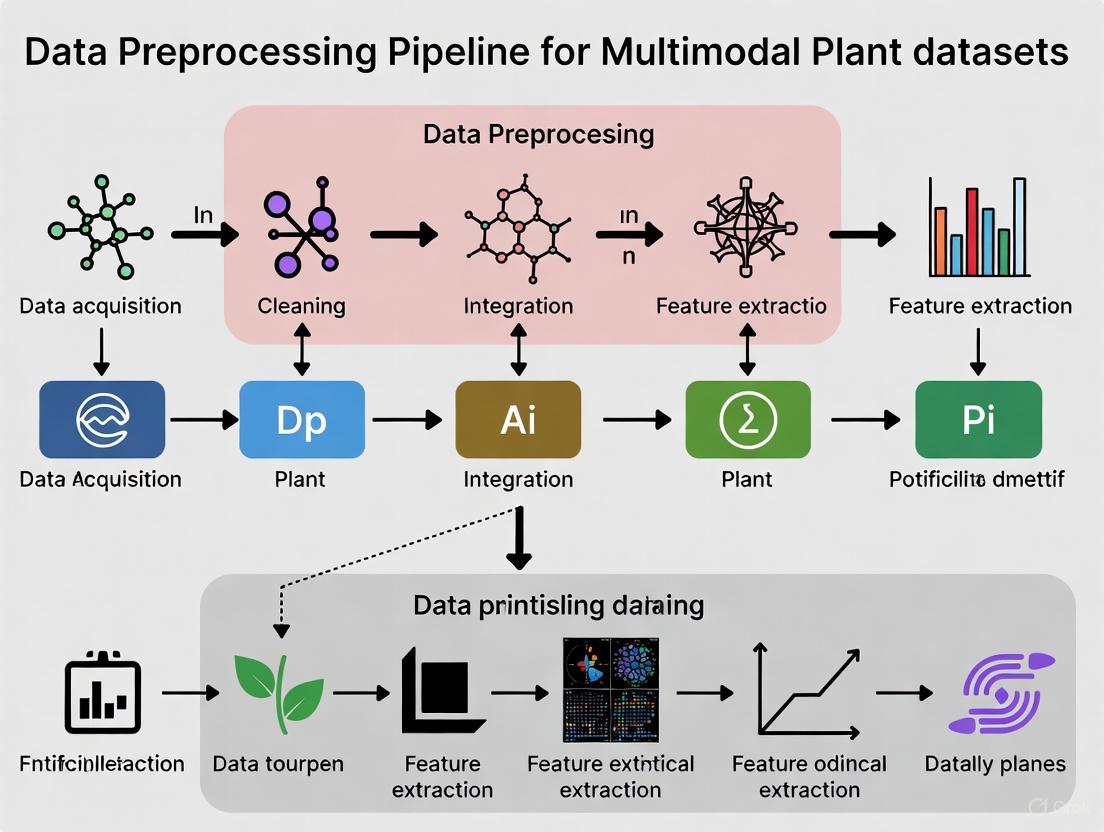

Multimodal Data Preprocessing Pipeline Workflow

The following diagram illustrates a generalized, modular workflow for preprocessing multimodal plant data, from raw data ingestion to model-ready features.

Diagram: Generalized Multimodal Preprocessing Pipeline

Key Quantitative Guidelines for Dataset Curation

The table below summarizes recommended dataset sizes for different machine learning tasks in plant image analysis, which is a critical component of multimodal studies.

| Task Complexity | Recommended Minimum Dataset Size | Key Considerations |
|---|---|---|
| Binary Classification | 1,000 - 2,000 images per class [6] | A balanced dataset with roughly equal samples for each class is ideal. |
| Multi-class Classification | 500 - 1,000 images per class [6] | Requirements increase with the number of classes. Data augmentation is highly recommended. |
| Object Detection | Up to 5,000 images per object [6] | Requires bounding box annotations, which are labor-intensive to create. |
| Deep Learning Models (CNNs) | 10,000 - 50,000+ images total [6] | Larger models require more data. Transfer learning can reduce this requirement significantly. |
| Using Transfer Learning | As few as 100 - 200 images per class [6] | Effective for small datasets by leveraging features from a model pre-trained on a large, general dataset. |

Overcoming the Limitations of Unimodal Datasets in Complex Real-World Conditions

Multimodal Data Fusion Troubleshooting FAQs

FAQ 1: What are the most significant performance gaps between laboratory and real-world conditions for plant disease detection, and how can multimodal data help?

Laboratory conditions often achieve 95-99% accuracy in controlled settings, while real-world field deployment typically yields only 70-85% accuracy [9]. This significant performance gap stems from environmental variability, lighting changes, background complexity, and diverse growth stages that unimodal systems struggle to handle.

Multimodal data directly addresses these limitations by combining complementary information sources. For instance, integrating RGB imaging (for visible symptoms) with hyperspectral data (for pre-symptomatic physiological changes) provides more robust detection capabilities [9]. Model architecture matters as well: transformer-based architectures like SWIN are reported to achieve 88% accuracy on real-world datasets, compared to just 53% for traditional CNNs [9].

Table: Performance Comparison Across Imaging Modalities and Environments

| Modality | Laboratory Accuracy | Field Accuracy | Key Strengths | Deployment Cost |
|---|---|---|---|---|
| RGB Imaging | 95-99% | 70-85% | Visible symptom detection, accessibility | $500-$2,000 USD |
| Hyperspectral Imaging | N/A reported | N/A reported | Pre-symptomatic detection, physiological analysis | $20,000-$50,000 USD |
| Multimodal Fusion (RGB+HSI) | N/A reported | 88% (SWIN transformers) | Combined strengths, robust to environmental variability | Cost-prohibitive for widespread use |

FAQ 2: How can I resolve synchronization issues between multiple data streams in field deployment?

Synchronization problems represent one of the most common technical challenges in multimodal research. These issues typically manifest as temporal misalignment between data streams, leading to inaccurate correlations and analysis errors [10].

Step-by-Step Resolution Protocol:

- Implement Hardware Synchronization: Use a master clock source with Precision Time Protocol (PTP) to maintain temporal alignment across all sensors [10]

- Address Clock Drift: Establish periodic re-synchronization routines, as internal device clocks can diverge by parts per million, causing significant misalignment over long recordings [10]

- Utilize Shared Event Markers: Incorporate visual or auditory markers (flashes, beeps) simultaneously recorded by all sensors for post-hoc alignment verification [10]

- Apply Post-Processing Correction: Deploy drift correction algorithms that estimate and compensate for temporal discrepancies based on timing signals [10]
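
One simple way to realize the post-processing correction is to fit a straight line between a device's timestamps and the master clock's timestamps for the shared event markers, then apply that line to every sample from the device. The sketch below assumes linear drift and uses ordinary least squares; the function names and the marker values are hypothetical, and [10] does not prescribe this particular algorithm.

```python
def fit_clock_map(device_times, master_times):
    """Least-squares fit of master_time = slope * device_time + offset.

    Inputs are timestamps (in seconds) of the same shared event markers
    as seen by the device clock and by the master clock.
    """
    n = len(device_times)
    if n < 2 or n != len(master_times):
        raise ValueError("need at least two paired markers")
    mean_x = sum(device_times) / n
    mean_y = sum(master_times) / n
    sxx = sum((x - mean_x) ** 2 for x in device_times)
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(device_times, master_times))
    slope = sxy / sxx            # 1 + drift rate (e.g. 1.0001 = 100 ppm)
    offset = mean_y - slope * mean_x
    return slope, offset


def to_master_time(device_time, slope, offset):
    """Convert one device timestamp to the master clock's timeline."""
    return slope * device_time + offset


# Hypothetical markers: the device runs 100 ppm fast and started 0.5 s late.
device_markers = [0.0, 100.0, 200.0]
master_markers = [0.5, 100.51, 200.52]
slope, offset = fit_clock_map(device_markers, master_markers)
corrected = to_master_time(150.0, slope, offset)
```

With more than two markers the residuals of the fit also serve as a check: large residuals suggest the drift was not linear or that a marker was misdetected.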

FAQ 3: What data preprocessing pipeline effectively handles multimodal dataset inconsistencies?

Effective preprocessing must address the "heterogeneous hardware landscape" where sensors from various manufacturers use proprietary formats and protocols [10]. A robust pipeline should systematically resolve format inconsistencies, sampling rate mismatches, and data quality issues.

Comprehensive Preprocessing Protocol:

The protocol has four phases: data cleaning, format standardization, temporal alignment, and quality validation.

Table: Multimodal Preprocessing Solutions for Common Data Issues

| Data Issue | Detection Method | Resolution Techniques | Quality Metrics |
|---|---|---|---|
| Missing Values | Descriptive statistics, data profiling | Imputation (mean/median/mode), deletion, indicator variables | Percentage of completeness, pattern analysis |
| Noisy Data | Range validation, domain rules | Filtering, smoothing algorithms, format standardization | Signal-to-noise ratio, validation against constraints |
| Format Inconsistency | Data type checking, pattern matching | Type conversion, standardization protocols | Format compliance rate, parsing success rate |
| Sampling Rate Mismatch | Temporal analysis, frequency detection | Resampling, interpolation, alignment algorithms | Temporal alignment precision, data point correlation |
| Outliers | Statistical methods (IQR, Z-score), visualization | Winsorizing, transformation, domain-expert validation | Distribution analysis, impact assessment on models |
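
Two of the rows above, missing values and outliers, can be sketched for a single numeric sensor channel as follows. This is a minimal illustration: median imputation and IQR-based winsorizing are only two of the listed options, the multiplier `k=1.5` is the conventional default rather than a value from the source, and the readings are made-up example data.

```python
from statistics import median, quantiles


def impute_median(values):
    """Replace missing readings (None) with the median of observed ones."""
    observed = [v for v in values if v is not None]
    fill = median(observed)
    return [fill if v is None else v for v in values]


def iqr_bounds(values, k=1.5):
    """Lower and upper fences at k interquartile ranges beyond Q1 and Q3."""
    q1, _, q3 = quantiles(values, n=4)
    spread = q3 - q1
    return q1 - k * spread, q3 + k * spread


def winsorize(values, k=1.5):
    """Clip values outside the IQR fences to the nearest fence."""
    low, high = iqr_bounds(values, k)
    return [min(max(v, low), high) for v in values]


# Made-up air temperature readings with one gap and one sensor spike.
readings = [21.0, 22.0, None, 23.0, 22.5, 80.0]
cleaned = winsorize(impute_median(readings))
```

Whether a flagged value is a sensor fault or a real event is a domain question, which is why the table also lists domain-expert validation alongside automatic clipping.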

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table: Critical Resources for Multimodal Plant Data Research

| Resource Category | Specific Tool/Solution | Function/Purpose | Implementation Considerations |
|---|---|---|---|
| Imaging Hardware | RGB Cameras | Capture visible spectrum symptoms | Cost-effective ($500-$2,000); suitable for initial deployment [9] |
| Imaging Hardware | Hyperspectral Sensors | Detect pre-symptomatic physiological changes | High cost ($20,000-$50,000); requires specialized expertise [9] |
| Synchronization | Lab Streaming Layer (LSL) | Resolve hardware compatibility and synchronization | Abstracts hardware-specific details; enables cross-platform data collection [10] |
| Data Management | TileDB Carrara | Multimodal data organization and governance | Manages data lake to warehouse transition; addresses governance challenges [14] |
| Fusion Algorithms | PlantIF Framework | Graph-based multimodal feature fusion | Achieves 96.95% accuracy on plant disease datasets; handles phenotype-text heterogeneity [15] |
| Annotation Software | Mangold INTERACT | Behavioral timeline creation and event annotation | Enables qualitative observation structuring; supports inter-rater reliability assessment [10] |

FAQ 4: How can I address the challenge of limited annotated datasets for multimodal plant pathology research?

The development of accurate plant disease detection models relies heavily on well-annotated datasets, which remain difficult to obtain at scale due to the need for expert plant pathologists to verify classifications [9]. This expert dependency creates bottlenecks in dataset expansion and diversification.

Experimental Protocol for Data Scarcity Mitigation:

Leverage Transfer Learning:

- Utilize pre-trained models on large-scale datasets (e.g., ImageNet) as feature extractors [9]

- Fine-tune final layers on limited domain-specific data

- Implement progressive unfreezing of layers during training

Apply Data Augmentation:

- Generate synthetic data using Generative Adversarial Networks (GANs) [9]

- Employ traditional augmentation techniques (rotation, flipping, color adjustment)

- Ensure augmentation reflects real-world environmental variations

Implement Few-Shot Learning:

- Utilize models like PlantIF that demonstrate strong performance with limited examples [15]

- Apply metric learning approaches to learn robust feature representations

- Use prototypical networks for classification with limited samples
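
The prototypical-network idea can be shown in a few lines: each class is represented by the mean of its support embeddings, and a query is assigned to the nearest prototype. The sketch assumes the embeddings have already been produced by some encoder; the function names, class labels, and 2-D vectors are illustrative only.

```python
from math import dist


def class_prototypes(support):
    """Average the support embeddings of each class into one prototype.

    `support` maps a class label to a list of embedding vectors.
    """
    return {
        label: [sum(column) / len(vectors) for column in zip(*vectors)]
        for label, vectors in support.items()
    }


def classify(query, prototypes):
    """Return the label whose prototype is closest in Euclidean distance."""
    return min(prototypes, key=lambda label: dist(query, prototypes[label]))


# Two labeled examples per class (toy 2-D embeddings).
support_set = {
    "healthy": [[0.0, 0.0], [0.0, 2.0]],
    "rust": [[4.0, 4.0], [6.0, 4.0]],
}
prototypes = class_prototypes(support_set)
predicted = classify([4.0, 5.0], prototypes)
```

In a full prototypical network the encoder is trained so that this nearest-prototype rule works well; only the classification step is shown here.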

Cross-Geographic Generalization:

- Incorporate data from multiple geographic regions during training [9]

- Apply domain adaptation techniques to address regional biases

- Implement test-time adaptation for new environments

FAQ 5: What fusion strategies work best for integrating heterogeneous data modalities in agricultural applications?

Effective multimodal fusion must address the fundamental challenge of heterogeneity between plant phenotypes and other modalities, such as textual descriptions or spectral data [15]. The optimal approach depends on the specific modalities involved and the agricultural application context.

Multimodal Fusion Experimental Protocol:

Early Fusion Strategy:

- Combine raw data from multiple modalities before feature extraction

- Suitable for highly correlated modalities with similar sampling rates

- Implement using concatenation or cross-modal attention mechanisms

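Intermediate Fusion Approach:

- Extract features from each modality with its own encoder, then merge them at an intermediate layer of the network (feature-level fusion) [1]

- Example: concatenating CNN image features with normalized weather and phenotypic vectors for maize yield prediction [5]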

Late Fusion Methodology:

- Process each modality through separate models to generate predictions

- Combine predictions using weighted averaging or meta-learning

- Effective for modalities with significant heterogeneity
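
A weighted-average late fusion step might look like the sketch below. The function name `late_fusion`, the dictionary layout, and the example weights are assumptions for illustration; in practice the weights would be tuned on validation data or replaced by a learned meta-model.

```python
def late_fusion(probs_by_modality, weights=None):
    """Combine per-modality class probabilities by weighted averaging.

    `probs_by_modality` maps a modality name to a list of class
    probabilities, or to None when that modality is missing.
    Returns (fused_probabilities, predicted_class_index).
    """
    available = [m for m, p in probs_by_modality.items() if p is not None]
    if not available:
        raise ValueError("at least one modality must be present")
    if weights is None:
        weights = {m: 1.0 for m in available}  # plain averaging
    total = sum(weights[m] for m in available)
    n_classes = len(probs_by_modality[available[0]])
    fused = [
        sum(weights[m] * probs_by_modality[m][k] for m in available) / total
        for k in range(n_classes)
    ]
    predicted = max(range(n_classes), key=fused.__getitem__)
    return fused, predicted


predictions = {"flower": [0.7, 0.3], "leaf": [0.4, 0.6], "fruit": None}
fused, label = late_fusion(predictions)
fused_w, label_w = late_fusion(predictions, weights={"flower": 1.0, "leaf": 3.0})
```

Because each modality is scored independently, a missing modality is handled by simply leaving it out of the average.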

Graph-Based Fusion Implementation:

The PlantIF framework demonstrates the effectiveness of graph-based fusion, achieving 96.95% accuracy on multimodal plant disease diagnosis—1.49% higher than existing models—by processing and fusing different modal semantic information through specialized attention mechanisms [15].

Troubleshooting Guides and FAQs

Data Complementarity

Q1: My multimodal plant dataset has missing organ images for many samples. How can I maintain data complementarity? A: Implement a robustness strategy directly within your deep learning model. Research on automatic fused multimodal deep learning for plant identification successfully addresses this by using multimodal dropout during training. This technique artificially drops certain modalities (e.g., flower or leaf images), forcing the model to learn robust representations and maintain performance even when some plant organ data is missing [1].

Q2: Are there specific plant organs that provide more complementary information than others? A: From a biological standpoint, a single organ is insufficient for accurate classification [1]. The most significant complementarity often comes from organs with distinct biological functions. For instance, integrating images of flowers, leaves, fruits, and stems provides a comprehensive representation of plant characteristics, as each organ encapsulates a unique set of biological features [1]. The optimal combination can be dataset-specific.

Data Alignment

Q3: What is the most common cause of temporal misalignment in continuously captured multimodal data, and how can it be corrected? A: The most pervasive cause is clock drift, where the internal clocks of different data collection devices gradually diverge over time [10]. This drift can accumulate over long recording sessions. Correction requires periodic re-synchronization using a master clock (e.g., via the Precision Time Protocol) or the use of post-hoc algorithms that estimate and correct for drift based on shared timing signals [10].

Q4: My data streams have different sampling rates (e.g., high-frequency sensors and lower-frequency images). How should I align them? A: This is a classic sampling rate mismatch challenge [10]. You have two main strategies:

- Downsampling: Reduce the higher-frequency data to match the lower frequency.

- Upsampling: Increase the lower-frequency data using interpolation techniques.

The choice depends on your analysis goals. Downsampling is computationally simpler but may lose temporal precision, whereas upsampling can introduce artifacts if not carefully validated [10].
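
Both strategies can be sketched with plain Python lists. The example assumes evenly spaced samples for downsampling (block averaging) and sorted timestamps for upsampling (linear interpolation); the function names are hypothetical, and other resampling schemes may suit a given sensor better.

```python
def downsample_mean(values, factor):
    """Average consecutive blocks of `factor` samples (incomplete tail dropped)."""
    return [
        sum(values[i:i + factor]) / factor
        for i in range(0, len(values) - factor + 1, factor)
    ]


def upsample_linear(times, values, new_times):
    """Linearly interpolate `values` (sampled at `times`) onto `new_times`.

    Both time lists must be sorted ascending, and `new_times` must lie
    within the original time range (no extrapolation).
    """
    result = []
    j = 0
    for t in new_times:
        if t < times[0] or t > times[-1]:
            raise ValueError("new_times must lie within the sampled range")
        # Advance to the segment [times[j], times[j + 1]] that contains t.
        while j < len(times) - 2 and times[j + 1] < t:
            j += 1
        t0, t1 = times[j], times[j + 1]
        w = (t - t0) / (t1 - t0)
        result.append(values[j] * (1 - w) + values[j + 1] * w)
    return result


# Sensor at 2 Hz reduced to 1 Hz; image-derived index at 1 Hz raised to 2 Hz.
sensor_1hz = downsample_mean([1, 2, 3, 4, 5, 6], factor=2)
index_2hz = upsample_linear([0.0, 1.0, 2.0], [0.0, 10.0, 20.0],
                            [0.0, 0.5, 1.0, 1.5, 2.0])
```

Interpolated points are estimates rather than measurements, so it is worth keeping a flag that distinguishes them from observed samples.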

Data Heterogeneity

Q5: I need to integrate data from different sensor manufacturers, each with a proprietary output format. What is the best approach? A: This issue of data format inconsistency is common [10]. The recommended strategy is to use a middleware solution or custom data conversion scripts to transform all data into a standardized, common format (e.g., HDF5) before integration [10]. Careful selection of components with open standards or well-documented APIs during the experimental design phase can significantly reduce this problem.

Q6: How can I monitor data quality and heterogeneity in a multimodal pipeline that includes both structured metadata and unstructured image data? A: Adopt a split and monitor strategy. Independently monitor different data types and combine the results on a unified dashboard [16]:

- Structured Metadata: Use descriptive statistics, check for missing values, and monitor distribution drift.

- Unstructured Images: Use embedding monitoring or generate structured descriptors from the images (e.g., average brightness, texture metrics) and analyze them alongside your other structured data [16].
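
For the image side, structured descriptors can be computed and compared against a reference window, as in the sketch below. It assumes grayscale images supplied as nested lists of 0-255 intensities; the descriptor set, the `dark_fraction` threshold of 32, and the three-sigma drift rule are illustrative assumptions rather than recommendations from [16].

```python
from statistics import mean, pstdev


def image_descriptors(pixels):
    """Summarize a grayscale image (2-D list of 0-255 values) as numbers."""
    flat = [p for row in pixels for p in row]
    return {
        "brightness": mean(flat),            # average intensity
        "contrast": pstdev(flat),            # spread of intensities
        "dark_fraction": sum(p < 32 for p in flat) / len(flat),
    }


def is_drifted(value, reference_values, k=3.0):
    """Flag a descriptor value more than k standard deviations from the
    mean of a reference window (e.g. descriptors of the training images)."""
    mu = mean(reference_values)
    sigma = max(pstdev(reference_values), 1e-9)  # guard against zero spread
    return abs(value - mu) > k * sigma


training_brightness = [100, 102, 98, 101]
new_image = [[200, 210], [190, 205]]
stats = image_descriptors(new_image)
alert = is_drifted(stats["brightness"], training_brightness)
```

Because the descriptors are ordinary numeric columns, they can be tracked with the same tooling used for the structured metadata.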

The table below summarizes key quantitative metrics and thresholds related to the core principles, derived from experimental protocols and system specifications.

Table 1: Quantitative Metrics for Multimodal Data Principles

| Principle | Metric | Reported Value / Threshold | Context / Rationale |
|---|---|---|---|
| Complementarity | Classification Accuracy | 82.61% | Achieved on 979 plant classes using a fused multimodal (flower, leaf, fruit, stem) model [1]. |
| Complementarity | Performance Gain over Late Fusion | +10.33 percentage points | Accuracy increase over a late fusion baseline (82.61% vs. 72.28%), highlighting the value of how complementary data is fused [1]. |
| Alignment | Synchronization Tolerance | <1 ms (typical target) | Required precision for temporal alignment to avoid erroneous conclusions in behavioral or physiological analysis [10]. |
| Alignment | Common Sampling Rates | EEG: 1000 Hz, Eye-tracker: 240 Hz, Video: 60 fps | Example rates leading to sampling rate mismatch [10]. |
| Heterogeneity | Data Format Variety | CSV, EDF, HDF5, proprietary binary | Common formats causing data format inconsistency [10]. |
| Heterogeneity | Network Bandwidth Requirement | Gigabit/10-Gigabit Ethernet | Recommended infrastructure to prevent data loss from bandwidth limitations during collection [10]. |

Experimental Protocols

Detailed Methodology: Automatic Fused Multimodal Deep Learning for Plant Identification

This protocol outlines the process for building a plant classification model using images from multiple plant organs [1].

1. Dataset Preprocessing and Curation:

- Input: A unimodal dataset (e.g., PlantCLEF2015) containing images labeled by plant species and organ type.

- Transformation: Restructure the dataset into a multimodal format, Multimodal-PlantCLEF, where each data sample consists of a set of images corresponding to different organs (flowers, leaves, fruits, stems) from the same plant species [1].

- Handling Missing Modalities: During training, apply multimodal dropout to simulate missing organ images and ensure model robustness [1].

2. Unimodal Model Training:

- For each modality (plant organ), train a separate deep learning model (e.g., MobileNetV3Small) using transfer learning from a pre-trained model [1].

3. Automated Multimodal Fusion:

- Fusion Algorithm: Apply a Multimodal Fusion Architecture Search (MFAS). This algorithm automatically discovers the optimal way to combine the features extracted from the unimodal models, rather than relying on a fixed, human-defined fusion strategy like late fusion [1].

- Output: A single, compact multimodal model that effectively integrates information from all available plant organs.

4. Model Evaluation:

- Metrics: Use standard performance metrics such as classification accuracy.

- Baseline Comparison: Validate the model against established benchmarks (e.g., late fusion with averaging) and use statistical tests like McNemar's test to confirm superiority [1].
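
The baseline comparison in step 4 can be illustrated with an exact McNemar test, which looks only at the samples on which the two models disagree. This is a sketch under stated assumptions: the per-sample correctness flags are made up, and the exact binomial form is one common variant of the test, not necessarily the one used in [1].

```python
from math import comb

def mcnemar_exact(correct_a, correct_b):
    """Exact two-sided McNemar test on paired per-sample correctness flags."""
    b = sum(1 for a, m in zip(correct_a, correct_b) if a and not m)  # only A right
    c = sum(1 for a, m in zip(correct_a, correct_b) if m and not a)  # only B right
    n = b + c
    if n == 0:
        return b, c, 1.0  # the models never disagree
    # Under the null hypothesis, disagreements split 50/50 between the models.
    tail = sum(comb(n, i) for i in range(min(b, c) + 1)) / 2 ** n
    return b, c, min(1.0, 2.0 * tail)

# Hypothetical results on 16 test samples (True = correct prediction).
baseline_ok = [False] * 10 + [True] * 1 + [True] * 5
fused_ok = [True] * 10 + [False] * 1 + [True] * 5
b, c, p_value = mcnemar_exact(baseline_ok, fused_ok)
```

A small p-value indicates that the disagreement pattern is unlikely if both models had the same error rate; samples that both models classify correctly (or incorrectly) do not influence the result.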

Diagram: Multimodal Plant Data Preprocessing Workflow

Multimodal Dataset Creation and Model Training Pipeline

Diagram: Data Synchronization and Alignment Challenge

Common Challenges in Multimodal Data Alignment

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for Multimodal Plant Research

| Item / Solution | Function / Application |

|---|---|

| Standardized Datasets (e.g., Multimodal-PlantCLEF) | Provides a curated, preprocessed benchmark for developing and evaluating multimodal plant classification models, ensuring reproducibility [1]. |

| Middleware (e.g., Lab Streaming Layer - LSL) | A software solution that abstracts away hardware-specific details, enabling the synchronization of data streams from different sensors and resolving compatibility issues [10]. |

| Multimodal Fusion Architecture Search (MFAS) | An algorithmic tool that automates the discovery of optimal neural network architectures for combining data from different modalities, outperforming manual fusion strategies [1]. |

| High-Performance Network Switch (Gigabit/10-Gigabit) | Critical hardware infrastructure to handle the enormous data volumes generated during multimodal collection, preventing data loss from bandwidth limitations [10]. |

| Graph Neural Networks (GNNs) | A class of deep learning models particularly effective for integrating and analyzing heterogeneous, network-structured data, such as biological interaction networks in drug discovery [17] [18]. |

Frequently Asked Questions (FAQs)

Q1: What are the key differences between the Multimodal-PlantCLEF and Augmented PlantVillage benchmarks?

Table 1: Core Characteristics of Featured Multimodal Benchmarks

| Feature | Multimodal-PlantCLEF | Augmented PlantVillage |

|---|---|---|

| Primary Task | Plant species identification [1] | Crop disease detection and diagnosis [19] [20] |

| Core Modalities | Images of multiple plant organs (flowers, leaves, fruits, stems) [1] | Plant disease images + Textual symptom descriptions & metadata [19] |

| Data Source | Restructured from PlantCLEF2015 [1] | Augmented from the original PlantVillage collection [19] |

| Key Innovation | Automatic modality fusion strategy for robust classification [1] | Expert-curated text prompts for vision-language model training [19] |

| Typical Model | Multimodal Deep Learning with fusion architecture search [1] | Vision-Language Models (e.g., CLIP, BLIP), Multimodal LLMs [19] [20] |

Q2: Why is a data preprocessing pipeline critical for building a multimodal plant dataset from unimodal sources? A robust preprocessing pipeline is essential to address the modality gap—the inherent differences in data structure and representation between various data types. Without careful processing, models cannot effectively learn the complementary relationships between modalities, such as how the visual features of a leaf correspond to its textual disease description [1] [19]. The creation of Multimodal-PlantCLEF from PlantCLEF2015 demonstrates a pipeline that reorganizes single-organ images into a structured, multi-organ (multimodal) dataset where each sample combines specific views of the same species [1].

Q3: What is modality dropout and how is it used to improve model robustness? Modality dropout is a training technique where one or more input modalities (e.g., a fruit image) are randomly omitted during training. This forces the model to learn to make accurate predictions even with incomplete data, mimicking real-world scenarios where certain data might be missing. Research on Multimodal-PlantCLEF has shown that this technique significantly enhances model robustness [1].
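
A minimal sketch of modality dropout at the data-loading level is shown below. The function name, the `drop_prob` value, and the rule of always keeping at least one organ are assumptions made for illustration; the exact scheme used in [1] may differ.

```python
import random

ORGANS = ("flower", "leaf", "fruit", "stem")

def modality_dropout(sample, drop_prob=0.25, rng=random):
    """Return a copy of `sample` with organs randomly set to None.

    At least one organ that was present is always kept so the sample stays usable.
    """
    present = [o for o in ORGANS if sample.get(o) is not None]
    kept = [o for o in present if rng.random() >= drop_prob]
    if not kept and present:
        kept = [rng.choice(present)]  # never drop everything
    return {o: (sample.get(o) if o in kept else None) for o in ORGANS}

# Hypothetical training sample: image paths per organ, stem already missing.
sample = {"flower": "f.jpg", "leaf": "l.jpg", "fruit": "fr.jpg", "stem": None}
augmented = modality_dropout(sample, drop_prob=0.5, rng=random.Random(0))
```

Applying this per sample and per epoch means the model sees many different subsets of organs for the same plant, which is what encourages it to cope with incomplete inputs at test time.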

Q4: My multimodal model performs well on lab data but fails in the field. What could be wrong? This is a common challenge due to the simplicity gap between controlled lab images and complex field conditions [9]. Field images contain variable lighting, complex backgrounds, and different plant growth stages. To mitigate this:

- Utilize Augmented PlantVillage's contextual metadata (e.g., soil conditions, climate data) to make your model aware of environmental factors [19].

- Incorporate data augmentation during training to simulate field conditions.

- Fine-tune models on real-field datasets if available, as models trained solely on lab images like the original PlantVillage see significant accuracy drops (from 95-99% to 70-85%) when deployed in real environments [9].

Troubleshooting Guides

Issue: Poor Model Performance Due to Missing Modalities

Problem: Your trained multimodal model encounters samples during testing where one or more modalities (e.g., stem image) are missing, leading to unreliable or failed predictions.

Solution: Implement robustness strategies during training and inference.

- Step 1: Apply Modality Dropout. During the training phase, intentionally and randomly drop modalities. This teaches the model not to become over-reliant on any single data source and to leverage cross-modal relationships [1].

- Step 2: Design a Robust Inference Pipeline. Structure your inference code to handle missing inputs gracefully. The model, trained with modality dropout, will be capable of producing a prediction even with an incomplete sample [1].

- Step 3 (Advanced): Explore Generative Imputation. For critical applications, investigate using generative models to create plausible synthetic data for the missing modality based on the available ones, though this adds complexity.

Issue: Data Scarcity and Class Imbalance for Rare Species/Diseases

Problem: Certain plant species or diseases have very few examples in your dataset, leading to a model that is biased toward common classes.

Solution: Leverage Few-Shot Learning (FSL) techniques and data augmentation strategies.

- Step 1: Employ Contrastive Pre-training. Use a contrastive learning objective (e.g., using a Siamese network) on all available data. This helps the model learn a powerful and generalized feature representation that is effective even with few examples per class [21].

- Step 2: Adopt a Few-Shot Learning Framework. Utilize prototype-based networks, which compute a prototypical representation for each class from its few support examples. Query samples are classified based on their distance to these prototypes [21].

- Step 3: Augment with Synthetic Data. Use Large Language Models (LLMs) to generate additional textual descriptions for rare diseases, as demonstrated in multimodal few-shot learning research [21]. For images, consider Generative Adversarial Networks (GANs) to create synthetic samples.
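
The prototype-based classification in Step 2 can be sketched as follows, assuming embeddings have already been produced by some encoder. The two-dimensional vectors and class names are hypothetical, and Euclidean distance is one common choice of metric rather than a requirement of [21].

```python
from math import dist

def class_prototypes(support):
    """Average the support embeddings of each class into one prototype vector."""
    prototypes = {}
    for label, vectors in support.items():
        n = len(vectors)
        prototypes[label] = [sum(col) / n for col in zip(*vectors)]
    return prototypes

def classify(query, prototypes):
    """Assign the query embedding to the class with the nearest prototype."""
    return min(prototypes, key=lambda label: dist(query, prototypes[label]))

# Hypothetical 2-D embeddings: two support examples per rare disease class.
support = {
    "rust": [[0.9, 0.1], [1.1, -0.1]],
    "blight": [[-1.0, 0.8], [-0.8, 1.2]],
}
prototypes = class_prototypes(support)
prediction = classify([0.7, 0.2], prototypes)
```

Because a new class only needs a handful of support embeddings to obtain a prototype, rare species or diseases can be added without retraining the encoder.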

Issue: Ineffective Fusion of Multimodal Features

Problem: Simply combining image and text features (e.g., by concatenation) does not lead to performance improvement, indicating poor fusion strategy.

Solution: Systematically explore and search for an optimal fusion architecture rather than relying on a fixed, manual design.

- Step 1: Benchmark Standard Fusion Techniques. Start by implementing and comparing early (feature-level), late (decision-level), and hybrid fusion to establish a baseline [1].

- Step 2: Implement Automated Fusion Search. Move beyond manual design by using a Multimodal Fusion Architecture Search (MFAS). This algorithm automatically discovers the most effective way to combine features from different encoders, which has been shown to significantly outperform simple late fusion (e.g., by over 10% accuracy) [1].

- Step 3: Analyze Cross-Modal Attention. If using transformer-based models, analyze the attention maps between image patches and text tokens. This can reveal if the model is truly learning meaningful cross-modal interactions or ignoring one modality.

Table 2: Comparison of Multimodal Fusion Strategies

| Fusion Strategy | Description | Advantages | Disadvantages |

|---|---|---|---|

| Early Fusion | Raw data from modalities is combined before feature extraction. | Allows modeling of low-level interactions. | Highly susceptible to noise and misalignment; requires synchronized data [1]. |

| Late Fusion | Decisions from unimodal models are combined (e.g., by averaging). | Simple, flexible, and modalities can be processed independently [1]. | Cannot capture complex cross-modal relationships at the feature level [1]. |

| Intermediate (Hybrid) Fusion | Features from unimodal encoders are merged within the model. | Balances flexibility with the capacity for rich interaction. | The fusion point and method are critical and non-trivial to design manually [1]. |

| Automated Fusion (MFAS) | Uses neural architecture search to find the optimal fusion structure. | Data-driven, can discover highly effective and non-intuitive architectures [1]. | Computationally more expensive during the search phase. |
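
To make the late and intermediate rows of the table concrete, the sketch below contrasts averaging unimodal predictions with concatenating unimodal features. The function names and the example probabilities are hypothetical, and a real intermediate-fusion model would pass the concatenated vector through further trainable layers.

```python
def late_fusion(prob_by_modality):
    """Average class-probability vectors from the unimodal models that produced output."""
    available = [p for p in prob_by_modality.values() if p is not None]
    n_classes = len(available[0])
    return [sum(p[k] for p in available) / len(available) for k in range(n_classes)]

def concat_features(features_by_modality, order):
    """Intermediate-fusion input: concatenate per-modality feature vectors in a fixed order."""
    fused = []
    for name in order:
        fused.extend(features_by_modality[name])
    return fused

# Hypothetical outputs of three unimodal classifiers; the stem image is missing.
probs = {"flower": [0.7, 0.2, 0.1], "leaf": [0.4, 0.5, 0.1], "stem": None}
fused_probs = late_fusion(probs)
predicted_class = max(range(len(fused_probs)), key=fused_probs.__getitem__)
```

Late fusion handles the missing stem simply by skipping it, which reflects its flexibility, but the averaged vector carries no information about how flower and leaf features relate to each other.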

Experimental Protocols & Methodologies

Protocol: Replicating the Multimodal-PlantCLEF Preprocessing Pipeline

This protocol outlines the steps to create a multimodal dataset from a unimodal source, based on the methodology used to create Multimodal-PlantCLEF from PlantCLEF2015 [1].

Objective: To transform a collection of single-organ plant images into a structured multimodal dataset where each data point consists of multiple organ views for a single plant species.

Materials: Source dataset (e.g., PlantCLEF2015), computing environment with storage.

Procedure:

- Data Annotation Audit: Review the source dataset's annotations to identify labels specifying the plant organ depicted in each image (e.g., "leaf," "flower," "fruit," "stem").

- Species-Organ Grouping: Group all images by their species label. Within each species group, further subgroup the images by their organ type.

- Multimodal Sample Construction:

- For each species, create a new data sample.

- For each required modality (e.g., leaf, flower), select one image at random from the corresponding organ subgroup.

- If an organ type is not available for a species, mark that modality as null or missing. This prepares the dataset for robustness techniques like modality dropout.

- Dataset Splitting: Perform a stratified split on the newly constructed multimodal samples (not the original images) to create training, validation, and test sets, ensuring all species are represented in each split.
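
The grouping and sample-construction steps of this procedure can be sketched as below. The record layout `(image_path, species, organ)`, the function name, and the `samples_per_species` parameter are assumptions for illustration and are not taken from the Multimodal-PlantCLEF code.

```python
import random
from collections import defaultdict

ORGANS = ("flower", "leaf", "fruit", "stem")

def build_multimodal_samples(records, samples_per_species=1, seed=0):
    """Build multi-organ samples from (image_path, species, organ) records."""
    rng = random.Random(seed)
    # Species-organ grouping: species -> organ -> list of image paths.
    by_species = defaultdict(lambda: defaultdict(list))
    for path, species, organ in records:
        by_species[species][organ].append(path)

    samples = []
    for species, by_organ in sorted(by_species.items()):
        for _ in range(samples_per_species):
            sample = {"species": species}
            for organ in ORGANS:
                images = by_organ.get(organ)
                # Pick one image at random, or mark the modality as missing.
                sample[organ] = rng.choice(images) if images else None
            samples.append(sample)
    return samples

# Hypothetical annotation records.
records = [
    ("a.jpg", "Rosa canina", "flower"),
    ("b.jpg", "Rosa canina", "leaf"),
    ("c.jpg", "Rosa canina", "leaf"),
    ("d.jpg", "Quercus robur", "leaf"),
]
samples = build_multimodal_samples(records)
```

The resulting list of dictionaries is the unit on which the stratified split should operate, so that images of the same constructed sample never end up in different splits.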

Protocol: Fine-tuning a Vision-Language Model on Augmented PlantVillage

This protocol describes how to adapt a general-purpose Vision-Language Model (VLM) for the specialized task of plant disease diagnosis using a dataset like the Augmented PlantVillage [19] [20] [22].

Objective: To specialize a pre-trained VLM (e.g., CLIP, LLaVA, Qwen-VL) to accurately diagnose plant diseases from images and textual prompts.

Materials: Augmented PlantVillage dataset (images and text), access to a GPU cluster, pre-trained VLM weights.

Procedure:

- Data Preparation: Load the dataset, which includes image files and paired JSON annotation files containing text prompts, disease classes, and contextual metadata [19].

- Model Selection: Choose a base VLM. Models like Qwen-VL have shown success in this domain after fine-tuning [22].

- Fine-tuning Strategy:

- Full Fine-tuning: For maximum performance, fine-tune the entire model (visual encoder, connector, and language model) on the target dataset. This can be computationally intensive.

- Parameter-Efficient Fine-Tuning (PEFT): Use methods like Low-Rank Adaptation (LoRA). LoRA keeps the pre-trained weights frozen and injects trainable rank decomposition matrices into the layers of the visual encoder, adapter, and language model, so all three components are adapted simultaneously. This dramatically reduces the number of trainable parameters and the computational cost while maintaining high performance [22].

- Training & Evaluation: Train the model using standard vision-language objectives (e.g., contrastive loss, captioning loss). Evaluate on a held-out test set, reporting metrics like classification accuracy, F1-score, and qualitative analysis of generated diagnoses.
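
The parameter savings that motivate LoRA in the fine-tuning strategy above can be checked with simple arithmetic. For a frozen weight matrix of shape d_out x d_in, LoRA trains two factors, B (d_out x r) and A (r x d_in), whose product is added to the frozen weights. The layer size and rank below are hypothetical values chosen for illustration, not settings reported in [22].

```python
def lora_param_counts(d_out, d_in, rank):
    """Trainable parameters for one weight matrix: full update vs. LoRA factors."""
    full = d_out * d_in            # updating W directly
    lora = rank * (d_out + d_in)   # B is d_out x rank, A is rank x d_in
    return full, lora

# Hypothetical projection layer of a large model, adapted with rank 8.
full, lora = lora_param_counts(d_out=4096, d_in=4096, rank=8)
reduction = full / lora
```

For this example layer the LoRA factors hold 65,536 trainable values instead of 16,777,216, a 256-fold reduction for that matrix.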

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Resources for Multimodal Plant Data Research

| Resource Name / Type | Function in Research | Example / Source |

|---|---|---|

| Pre-trained Vision Models | Serves as a feature extractor for image modalities, providing a strong starting point and transfer learning. | MobileNetV3, EfficientNetB0, ResNet-50 [1] [20] [23] |

| Vision-Language Models (VLMs) | Base architecture for building systems that jointly understand plant images and textual descriptions. | CLIP, BLIP, LLaVA, Qwen-VL [19] [20] [24] |

| Neural Architecture Search (NAS) | Automates the design of optimal neural network architectures, including multimodal fusion layers. | Multimodal Fusion Architecture Search (MFAS) [1] |

| Parameter-Efficient Fine-Tuning (PEFT) | Enables effective adaptation of large models to new tasks with minimal computational overhead. | Low-Rank Adaptation (LoRA) [22] |

| Explainable AI (XAI) Tools | Provides post-hoc interpretations of model predictions, building trust and providing biological insights. | LIME (for images), SHAP (for tabular/weather data) [23] |

| Contrastive Learning Framework | Used for pre-training to learn high-quality, generalized feature representations, beneficial for few-shot learning. | Siamese Networks, Prototypical Networks [21] |

The How: A Step-by-Step Pipeline for Multimodal Data Curation and Fusion

For researchers building preprocessing pipelines for multimodal plant datasets, the acquisition and sourcing of high-quality, diverse data is a critical first step. This process often involves integrating disparate sources, including citizen science platforms, structured field studies, and public data repositories. Each source presents unique advantages and specific challenges that can impact data quality and usability. This technical support center provides targeted troubleshooting guides and FAQs to help you navigate common issues, mitigate data biases, and implement robust experimental protocols for effective multimodal data integration.

FAQs and Troubleshooting Guides

Citizen Science Data

Q1: How can we address spatial and taxonomic biases in citizen science data? Citizen science platforms, such as iNaturalist, are among the largest sources of plant occurrence data but are prone to spatial biases (e.g., oversampling in easily accessible areas) and taxonomic biases (e.g., under-sampling of cryptic or non-charismatic species) [25]. To mitigate this:

- Leverage Multispecies Deep Learning Models: Employ Deep Neural Networks (DNNs) designed for multispecies distribution modeling. These models are comparatively robust to spatial sampling bias because they learn from the relative observation probabilities across many species simultaneously, reducing the effect of uneven sampling intensity [25].

- Implement Strategic Sampling Guidance: Proactively guide citizen scientists to explore identified plant diversity "darkspots"—regions with many undescribed or unrecorded species. Provide them with guidance on when to search and which diagnostic plant characters to photograph [26].

Q2: What are the best practices for discovering new species or rare phenotypes using citizen science? The discovery of novel species is often dependent on expert engagement with citizen science platforms [26].

- For Experts: Routinely review observations in your taxonomic group of expertise. Build collaboration networks with the community by regularly identifying records and interacting with other users. This cultivates a culture where users will actively notify you of potentially significant records [26].

- For All Researchers: Prioritize the collection of data for ephemeral species or those with diagnostic characters that are better captured by photographs than by preserved specimens, such as flower color or corolla shape, which can be lost in herbarium samples [26].

Field Studies and Sensor-Based Data Acquisition

Q3: What is a systematic method for troubleshooting a field-based data acquisition system that is not recording data? A structured approach to troubleshooting is crucial for resuming data collection quickly [27].

- Start with the Basics: Verify the data logger has power and is turned on [27].

- Test Your Assumptions: Systematically ask, "Is my assumption correct that...?" for each component. For every "yes" answer, support it with evidence from a simple test (e.g., "How do I know the data logger has power? Because my voltmeter reads 13.4 V") [27].

- Isolate the Problem: Understand the measurement chain: environmental parameter → sensor → electrical signal → data logger → stored data → computer. Test each link independently. For a faulty temperature reading, independently verify the sensor by placing it in ice water (0°C) to see if the reading changes as expected [27].

Q4: What are the common problems with data loggers, and how can they be solved? Data loggers, while useful, have several limitations that can be mitigated by moving towards real-time data acquisition systems [28].

Table 1: Common Data Logger Problems and Solutions

| Problem | Impact | Solution |

|---|---|---|

| Gaps Between Measurements [28] | Missed events that occur between logging intervals, jeopardizing sample integrity. | Use a real-time data acquisition system that can trigger high-frequency measurement and alarms immediately when a parameter is breached. |

| Missed Alarms During Network Failure [28] | No timely alert for out-of-spec conditions, leading to potential data or sample loss. | Implement a system with 4G failover connectivity and unlimited data buffering to ensure alarm delivery even during network issues. |

| Battery Power Limitations [28] | Requires manual replacement, risking invalid sensor calibration and data loss. | Use a professionally installed system with battery backup only for power outages, not as primary power. |

| Risk of Human Error in Setup [28] | Portable loggers can be moved or misconfigured, invalidating calibration and data. | Opt for a professionally installed system where sensors and recording units are integrated to ensure correct setup. |

Public Repositories and Multimodal Integration

Q5: How can we effectively integrate multimodal data from different sources (e.g., images and environmental data) for plant disease diagnosis? Integrating diverse data types addresses the limitations of single-modality systems [23].

- Adopt a Multimodal Deep Learning Framework: Implement a model that uses separate streams for different data types (e.g., EfficientNetB0 for image classification and a Recurrent Neural Network for environmental time-series data) and fuses the predictions [23].

- Implement Explainable AI (XAI) Techniques: Use tools like LIME (for image-based predictions) and SHAP (for weather-based predictions) to interpret the model's decisions. This improves transparency and trust in the diagnostic outcomes, which is critical for real-world applications [23].

Q6: What are the key challenges in using public image repositories for AI model training, and how can they be overcome? A major barrier in agricultural AI is the lack of large, well-labeled, and curated image sets that account for the high variability in real-world conditions [29].

- Challenge: A stop sign looks the same everywhere, but a pea plant's appearance varies with genetics, weather, and growth stage. Models trained on limited datasets fail to generalize [29].

- Solution: Utilize large-scale, open-source repositories like the Ag Image Repository (AgIR). These provide high-quality images of plants across different growth stages, varieties, and environmental conditions, which are essential for training robust computer vision models [29].

Experimental Protocols for Data Sourcing

Protocol 1: Building a Multimodal Plant Image Dataset

This methodology is derived from the development of the Ag Image Repository and related research [29] [30].

- Image Acquisition: Use automated systems like wheel-mounted "Benchbots" with high-resolution cameras programmed to capture time-series images of plants in pots arranged in rows. This ensures consistent, high-quality data collection over the plant's lifecycle [29].

- Data Curation and Annotation: Develop software tools to streamline the creation of "cut-outs" (plants removed from their background) and to annotate images with detailed metadata (species, growth stage, health status). This step is critical for creating a dataset usable for supervised learning [29].

- Multimodal Structuring: For plant identification tasks, restructure the dataset to include images of multiple plant organs (flowers, leaves, fruits, stems) per species, forming a multimodal dataset like Multimodal-PlantCLEF [30].

- Repository Deployment: Share the final, curated dataset on a publicly accessible platform or high-performance computing cluster (e.g., USDA SCINet) to accelerate AI research in agriculture [29].

Protocol 2: Modeling Plant Distributions from Citizen Science Data

This protocol uses multispecies Deep Neural Networks (DNNs) to handle biases in opportunistic observations [25].

- Data Compilation: Gather and quality-filter citizen science occurrence records (e.g., from platforms like InfoFlora). Compile high-resolution environmental predictors (e.g., climate, topography) and seasonal predictors (sine-cosine transforms of the day of the year) for the study region [25].

- Model Training: Train an ensemble of DNNs using cost functions like Cross-Entropy Loss (CEL) or Normalized Discounted Cumulative Gain (NDCG). The multispecies, joint modeling approach makes the model more robust to spatial sampling bias [25].

- Validation and Analysis:

- Performance Validation: Validate the model against left-out citizen science data and independently collected, systematically distributed plant community inventories [25].

- Ecological Insight Extraction: Use the trained model to map fine-grained species distributions, investigate spatial variations in flowering phenology (by analyzing the timing of peak observation probability), and project future changes under climate scenarios [25].
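
The seasonal predictors mentioned in the Data Compilation step can be sketched as a sine-cosine encoding of the day of the year. The function name and the 365.25-day period are assumptions for illustration; [25] may use a different period or scaling.

```python
import math

def seasonal_predictors(day_of_year, period=365.25):
    """Map a day of the year onto the unit circle so the calendar wraps around."""
    angle = 2.0 * math.pi * day_of_year / period
    return math.sin(angle), math.cos(angle)

jan1 = seasonal_predictors(1)
dec31 = seasonal_predictors(365)
jul2 = seasonal_predictors(183)
```

With this encoding 31 December and 1 January are neighbours in feature space while early July is on the opposite side of the circle, which a raw day number (1 versus 365) would not convey to the model.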

Workflow Visualization

Data Sourcing and Integration Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Data Acquisition and Analysis

| Item | Function | Application Context |

|---|---|---|

| Digital Multimeter [27] | Provides independent verification of voltages and checks electrical continuity. | Troubleshooting field data acquisition systems and sensors. |

| iNaturalist Platform [26] | A citizen science platform for recording and identifying biodiversity observations. | Sourcing large volumes of plant occurrence data and facilitating species discovery. |

| Ag Image Repository (AgIR) [29] | A public repository of high-quality, curated plant images with metadata. | Training and benchmarking robust computer vision and deep learning models for agriculture. |

| Deep Neural Networks (DNNs) [25] | Machine learning models for joint, multispecies distribution modeling. | Predicting species distributions and community composition from biased citizen science data. |

| Explainable AI (XAI) Tools (LIME & SHAP) [23] | Provides post-hoc explanations for predictions made by complex AI models. | Interpreting and validating diagnoses from multimodal plant disease models. |

| Benchbots / Automated Imaging Rigs [29] | Robotic systems for automated, high-throughput plant imaging. | Generating consistent, time-series image data for phenotyping and dataset creation. |

| Species Distribution Models (SDMs) [7] | Algorithms to characterize habitat suitability and species' environmental niches. | Modeling potential species ranges based on environmental variables. |

| Darwin Core Standards [7] | A standardized framework for publishing biodiversity data. | Ensuring interoperability and integration of biodiversity data from different sources. |

Troubleshooting Guide: Image Standardization for Plant Phenotyping

Q: What are the primary challenges in aligning images from different camera technologies for plant phenotyping, and how can they be addressed?

A: The main challenges are parallax effects and occlusion effects inherent in plant canopy imaging. An effective solution is to integrate 3D information from a depth camera (e.g., a time-of-flight camera) into the registration process. This depth data helps mitigate parallax, facilitating more accurate pixel alignment. Furthermore, implementing an automated mechanism to identify and filter out various types of occlusions can minimize registration errors. This method is robust across different plant types and is not reliant on detecting plant-specific image features [31].

Q: How do I standardize a dataset containing plant images from multiple organs for a multimodal classification model?

A: Standardizing multi-organ images involves creating a cohesive dataset and processing pipeline. You can transform an existing unimodal dataset into a multimodal one by implementing a data preprocessing pipeline that groups images by plant organ (e.g., flowers, leaves, fruits, stems). Each organ, treated as a distinct modality, should be processed through a dedicated feature extractor (e.g., a pre-trained CNN like MobileNetV3). The fusion of these features can then be optimized automatically using algorithms like Multimodal Fusion Architecture Search (MFAS) to determine the most effective integration point, significantly boosting classification performance [1].

Experimental Protocol: 3D Multimodal Plant Image Registration

This protocol is adapted from a novel registration algorithm for plant phenotyping [31]:

- Data Acquisition: Set up a multimodal monitoring system with a time-of-flight depth camera and other arbitrary camera technologies (e.g., RGB, multispectral).

- Depth Data Capture: Capture 3D information of the plant canopy using the depth camera.

- Ray Casting for Registration: Leverage the depth data and a ray casting technique to align pixels from the different camera modalities accurately.

- Occlusion Filtering: Run the integrated automated detection algorithm to identify and filter out pixels affected by occlusion.

- Output: Generate the final registered images and 3D point clouds of the plants for downstream analysis.

Troubleshooting Guide: Text Tokenization for Agricultural Literature Mining

Q: What are the essential steps for preprocessing text data, such as research abstracts or field notes, for summarization or classification tasks in an agricultural context?

A: A standard preprocessing pipeline for textual data involves several key steps [32]:

- Data Cleaning: Remove irrelevant characters, correct typos, and handle encoding issues.

- Tokenization: Split the raw text into smaller units (tokens), which can be words, subwords, or characters.

- Normalization: Convert text to a standard form, such as converting all characters to lowercase.

- Truncation and Padding: Ensure all text sequences are of uniform length by truncating long sequences or padding short ones to a fixed length.

- Attention Masks: For models like Transformers, create attention masks to indicate to the model which tokens are actual data and which are padding.
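
The steps above can be sketched end to end with a toy word-level tokenizer. This is an illustrative assumption only: production Transformer models ship with their own subword tokenizers, and the vocabulary, special tokens, and field notes below are hypothetical.

```python
import re

PAD, UNK = "<pad>", "<unk>"

def tokenize(text):
    """Clean, normalize, and split: lowercase, then keep alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def build_vocab(texts):
    """Assign an integer id to every token seen in the corpus."""
    vocab = {PAD: 0, UNK: 1}
    for text in texts:
        for token in tokenize(text):
            vocab.setdefault(token, len(vocab))
    return vocab

def encode(text, vocab, max_len):
    """Return (input_ids, attention_mask), truncated or padded to max_len."""
    ids = [vocab.get(t, vocab[UNK]) for t in tokenize(text)][:max_len]  # truncate
    mask = [1] * len(ids)            # 1 = real token
    pad = max_len - len(ids)
    return ids + [vocab[PAD]] * pad, mask + [0] * pad  # 0 = padding

# Hypothetical field notes.
notes = ["Leaf rust observed on wheat.", "Soil moisture LOW; leaf curl."]
vocab = build_vocab(notes)
input_ids, attention_mask = encode("Leaf rust on barley", vocab, max_len=6)
```

The unseen word "barley" maps to the unknown-token id, and the two trailing zeros in the attention mask tell the model to ignore the padding positions.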

Experimental Protocol: Text Preprocessing for Model Training

This protocol outlines the steps for preparing a text dataset (e.g., the CNN/Daily Mail dataset) for training a summarization model [32]:

- Data Loading: Load the dataset, typically divided into training, validation, and test CSV files.

- Cleaning & Preprocessing: Apply the techniques listed above: tokenization, normalization, truncation, and the creation of attention masks.

- Exploratory Data Analysis (EDA): Perform EDA to understand data insights, such as the distribution of article and summary lengths.

- Model Input Preparation: Feed the preprocessed tokens and attention masks into deep learning models (e.g., T5, BART, PEGASUS) for training.

- Evaluation: Use metrics like ROUGE and BLEU scores to assess the model's summarization performance.

Troubleshooting Guide: Environmental Data Normalization

Q: How do I identify and handle outliers in my environmental dataset, such as sensor readings for temperature or soil moisture?

A: Outliers can be identified using several statistical methods [33]:

- Interquartile Range (IQR) method: Any data point below Q1 - 1.5 * IQR or above Q3 + 1.5 * IQR is considered an outlier.

- Z-score method: Data points that fall more than 3 standard deviations from the mean are flagged.

- Tukey's fences: A more stringent variant using Q1 - 3 * IQR and Q3 + 3 * IQR.

- Model-based methods: Algorithms like Local Outlier Factor (LOF) use the local density of neighboring data points to identify outliers.

It is critical to combine these methods with an understanding of the data collection process and domain knowledge to decide whether to correct or remove outliers [33].
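
The IQR and z-score rules can be sketched with the Python standard library as follows. The temperature readings are hypothetical, and the quartile convention (`method="inclusive"`) is an assumption; other conventions shift the fences slightly.

```python
import statistics

def iqr_outliers(values, k=1.5):
    """Flag values outside [Q1 - k*IQR, Q3 + k*IQR]; k=3 gives the wider fences."""
    q1, _, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    lo, hi = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < lo or v > hi]

def zscore_outliers(values, threshold=3.0):
    """Flag values more than `threshold` sample standard deviations from the mean."""
    mean = statistics.fmean(values)
    sd = statistics.stdev(values)
    return [v for v in values if abs(v - mean) / sd > threshold]

# Hypothetical greenhouse temperatures (deg C) with one sensor glitch.
temps = [21.0, 21.4, 20.8, 21.9, 22.1, 21.3, 20.9, 21.6, 21.2, 45.0]
flagged_iqr = iqr_outliers(temps)
flagged_z = zscore_outliers(temps)
```

On this small sample the IQR rule flags the 45.0 reading, whereas the z-score rule with a threshold of 3 flags nothing, because the glitch itself inflates the standard deviation. This is one reason to cross-check methods against domain knowledge rather than rely on a single rule.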

Q: My environmental data (e.g., nutrient concentrations, pollutant levels) is highly skewed. Which normalization method should I use and why?

A: For skewed environmental data, logarithmic transformation is often the most appropriate method. The goal of normalization is to change the values to a common scale without distorting value ranges, and to make the data's distribution more Gaussian (bell-curved) for further statistical analysis. The Shapiro-Wilk test can confirm if data is normally distributed. A p-value < 0.05 indicates a non-normal distribution. Log transformation compresses the scale for large values, effectively reducing positive skewness and making the data more suitable for parametric statistics and regression analysis [34].

Experimental Protocol: Normalizing Skewed Environmental Data

This protocol is based on standard practices for handling non-Gaussian environmental data [34]:

- Assess Distribution: Visually inspect the data distribution using histograms or kernel density plots. Perform the Shapiro-Wilk test for normality. A p-value < 0.05 confirms the data is not normally distributed.

- Apply Log Transformation: Transform the data using a logarithmic function (log(original_value)). All values must be strictly positive for the logarithm to be defined.

- Re-assess Distribution: Run the Shapiro-Wilk test again on the log-transformed data. A p-value > 0.05 means normality can no longer be rejected, which is consistent with (though not proof of) a normal distribution. Visually compare box plots and density plots before and after transformation to confirm the decrease in skewness.

- Proceed with Analysis: The normalized data is now ready for further analysis, such as multivariate linear regression.
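The protocol can be sketched with SciPy as follows. The data here are synthetic (drawn from a log-normal distribution purely for illustration), and the variable names are assumptions; with real measurements the transformed data may still fail the normality test.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(0)
# Synthetic right-skewed "concentrations"; real values would come from field or lab data.
concentrations = rng.lognormal(mean=1.0, sigma=1.0, size=200)

# Step 1: assess the raw distribution.
stat_raw, p_raw = stats.shapiro(concentrations)
skew_raw = stats.skew(concentrations)

# Step 2: log-transform (only defined for strictly positive values).
if np.any(concentrations <= 0):
    raise ValueError("log transform needs strictly positive values")
log_values = np.log(concentrations)

# Step 3: re-assess the transformed distribution.
stat_log, p_log = stats.shapiro(log_values)
skew_log = stats.skew(log_values)

print(f"raw: skew={skew_raw:.2f}, Shapiro-Wilk p={p_raw:.3g}")
print(f"log: skew={skew_log:.2f}, Shapiro-Wilk p={p_log:.3g}")
```

If the dataset contains zeros (for example, concentrations below a detection limit), a plain logarithm is undefined and the handling of those values needs a separate, documented decision.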

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 1: Key computational tools and data solutions for multimodal plant research.

| Item Name | Function/Brief Explanation | Relevant Context |
|---|---|---|
| Time-of-Flight (ToF) Depth Camera | Captures 3D information to mitigate parallax effects in image registration [31]. | Plant phenotyping, 3D reconstruction. |
| Multimodal Fusion Architecture Search (MFAS) | Automates the discovery of optimal fusion points for combining data from multiple modalities [1]. | Integrating image, environmental, and genomic data. |
| Pre-trained Deep Learning Models (T5, BART, PEGASUS) | Provide a foundation for natural language processing (NLP) tasks like text summarization [32]. | Mining agricultural literature and reports. |
| Explainable AI (XAI) Libraries (LIME, SHAP) | Provide post-hoc explanations for model predictions, enhancing interpretability and trust [23]. | Diagnosing plant disease and validating model decisions. |
| Python Libraries (e.g., Scikit-learn, PyOD) | Offer comprehensive algorithms for data preprocessing, outlier detection, and machine learning [33]. | General-purpose data cleaning and analysis. |

Workflow Visualization: Multimodal Preprocessing Pipeline

The diagram below illustrates a logical workflow for preprocessing the three data modalities discussed, preparing them for a fusion-based model.

Diagram 1: A logical workflow for preprocessing multimodal data.

FAQs on Data Annotation and Labeling

Q1: What are the primary strategies to create labeled datasets when annotated data is scarce? A combination of expert curation and weak supervision is highly effective. Expert curation provides high-quality labels but is resource-intensive. Weak supervision uses lower-cost, noisier sources to generate labels programmatically. For example, multiple noisy labeling functions—such as heuristics, knowledge bases, or predictions from other models—can be aggregated to create a probabilistic training set [35]. In species-level trait imputation, models can be trained on existing data to predict missing traits for related species.

Q2: How can weak supervision be applied to complex, non-categorical data like plant trait rankings? Traditional weak supervision focuses on classification, but it can be universalized. For rankings, the label model can be reoriented to minimize a specific distance metric, such as the Kendall Tau distance, which measures the number of adjacent swaps needed to match two permutations [35]. This framework allows weak supervision to be applied to regression, graphs, and other complex structures where simple categorization isn't sufficient.
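To make the distance concrete, here is a small pure-Python sketch of the Kendall Tau distance between two rankings, followed by a deliberately naive aggregation step. The species names and the "pick the candidate closest to all others" rule are illustrative assumptions, not the label model described in [35].

```python
from itertools import combinations

def kendall_tau_distance(ranking_a, ranking_b):
    """Count item pairs that the two rankings order differently.

    This equals the minimum number of adjacent swaps needed to turn one
    ranking into the other.
    """
    if (len(ranking_a) != len(ranking_b)
            or set(ranking_a) != set(ranking_b)
            or len(set(ranking_a)) != len(ranking_a)):
        raise ValueError("rankings must be permutations of the same items")
    pos_b = {item: i for i, item in enumerate(ranking_b)}
    # Pairs come out in ranking_a order; a pair is discordant if ranking_b reverses it.
    return sum(1 for x, y in combinations(ranking_a, 2) if pos_b[x] > pos_b[y])

# Hypothetical drought-tolerance rankings produced by three noisy labeling sources.
weak_rankings = [
    ["oak", "pine", "birch", "willow"],
    ["pine", "oak", "birch", "willow"],
    ["oak", "pine", "willow", "birch"],
]

# Naive aggregation: keep the candidate with the smallest total distance to the rest.
consensus = min(
    weak_rankings,
    key=lambda cand: sum(kendall_tau_distance(cand, r) for r in weak_rankings),
)
```

Here `consensus` is the first ranking, which is one swap away from each of the other two.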

Q3: Can large language models (LLMs) be used for weak supervision in specialized domains like plant science? Yes, LLMs can be prompted to generate weak labels (pseudo-labels) for training smaller, more efficient downstream models. To enhance performance in a specialized domain, the LLM can first be fine-tuned on a small set of expert-annotated data. The fine-tuned LLM then generates weak labels for a much larger unlabeled dataset, which are used to train a compact model like BERT. This strategy minimizes the need for domain knowledge to create labeling functions and avoids the computational expense of deploying large LLMs in production [36].

Q4: What is the key challenge in building multimodal plant classification models, and how can it be addressed? A major challenge is modality fusion—determining the optimal strategy to combine information from different data sources (e.g., images of flowers, leaves, fruits, and stems) [1] [37]. Manually designed fusion architectures can be suboptimal. This can be addressed by using a Multimodal Fusion Architecture Search (MFAS), which automates the discovery of the best fusion strategy, leading to more accurate and robust models compared to common practices like late fusion [1] [37].

Q5: How can we ensure our model is robust when some data modalities are missing? Incorporating multimodal dropout during training is a key technique. It randomly drops subsets of modalities, forcing the model to learn robust representations that do not over-rely on any single data type. This results in a model that maintains higher performance even when, for example, only leaf images are available instead of the full set of organ images [1] [37].
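A minimal NumPy sketch of the idea is shown below. It assumes that a dropped modality is represented by zero-filling its features and that at least one modality is always kept per sample; the source does not specify either detail, and the function name and `drop_prob` parameter are hypothetical.

```python
import numpy as np

def modality_dropout(batch, drop_prob=0.3, rng=None):
    """Zero out whole modalities per sample, always keeping at least one.

    batch: dict mapping modality name -> array of shape (n_samples, ...).
    Returns the masked batch and a boolean keep-mask of shape
    (n_samples, n_modalities).
    """
    rng = rng if rng is not None else np.random.default_rng()
    names = list(batch)
    n = len(next(iter(batch.values())))
    keep = rng.random((n, len(names))) >= drop_prob
    # If every modality was dropped for a sample, restore one at random.
    empty = ~keep.any(axis=1)
    keep[empty, rng.integers(len(names), size=empty.sum())] = True
    masked = {}
    for j, name in enumerate(names):
        x = np.asarray(batch[name], dtype=float)
        mask = keep[:, j].reshape((n,) + (1,) * (x.ndim - 1))
        masked[name] = x * mask
    return masked, keep

# Toy batch: 6 specimens, 8 features per organ (stand-ins for image embeddings).
rng = np.random.default_rng(0)
batch = {organ: np.ones((6, 8)) for organ in ("flower", "leaf", "fruit", "stem")}
masked, keep = modality_dropout(batch, drop_prob=0.5, rng=rng)
```

The function would be applied to each training batch before the forward pass, so the model sees a different subset of organs for each specimen across epochs.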

Troubleshooting Guides

Problem: Labels generated through weak supervision are noisy, leading to poor model performance.

- Potential Cause: The labeling functions (LFs) are low-quality, conflicting, or have broad coverage errors.

- Solution:

- Analyze LF Conflicts: Use the data programming framework to compute a covariance matrix between your LFs. This helps identify groups of LFs that frequently disagree.

- Refine LFs: Based on the analysis, rewrite heuristics or adjust knowledge-based LFs to reduce conflict.

- Leverage the Label Model: Use the label model (e.g., in Snorkel AI) to de-noise the weak labels by estimating their accuracies and correlations, rather than simply taking a majority vote [35].
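The conflict analysis can be approximated without any framework. The sketch below uses a pairwise disagreement rate in place of the covariance analysis described above, and a plain majority vote as the baseline that a label model is meant to improve on. The label matrix and the `ABSTAIN = -1` convention are illustrative assumptions.

```python
import numpy as np

ABSTAIN = -1

def lf_conflict_matrix(L):
    """Pairwise disagreement rate between LFs, counted only where both voted."""
    n_lfs = L.shape[1]
    conflict = np.zeros((n_lfs, n_lfs))
    for i in range(n_lfs):
        for j in range(n_lfs):
            both = (L[:, i] != ABSTAIN) & (L[:, j] != ABSTAIN)
            if both.any():
                conflict[i, j] = np.mean(L[both, i] != L[both, j])
    return conflict

def majority_vote(L, n_classes):
    """Baseline aggregation; ties and all-abstain rows return ABSTAIN."""
    labels = np.full(L.shape[0], ABSTAIN)
    for r, row in enumerate(L):
        votes = np.bincount(row[row != ABSTAIN], minlength=n_classes)
        if votes.sum() and (votes == votes.max()).sum() == 1:
            labels[r] = votes.argmax()
    return labels

# Hypothetical label matrix: rows are samples, columns are three LFs
# (0 = healthy, 1 = diseased, -1 = abstain).
L = np.array([
    [1, 1, 0],
    [0, 0, 1],
    [1, -1, 0],
    [0, 0, 0],
    [-1, 1, 0],
])
conflict = lf_conflict_matrix(L)
baseline = majority_vote(L, n_classes=2)
```

In this toy matrix the third LF disagrees with each of the other two on 75% of the samples where both vote, which marks it as the first candidate for refinement.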

Problem: My multimodal model performs worse than a unimodal one.

- Potential Cause: Suboptimal fusion strategy or poor alignment between modalities.

- Solution:

- Audit Unimodal Backbones: First, ensure each unimodal model (e.g., a CNN for images) is competently trained on its specific task.

- Automate Fusion Search: Replace manual fusion (e.g., simple concatenation or averaging) with a Neural Architecture Search (NAS) method tailored for multimodal problems, such as MFAS, to find a better fusion structure [1] [37].

- Check Data Alignment: Verify that samples from different modalities (e.g., a leaf image and a corresponding soil measurement) are correctly paired and aligned in your dataset.
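A quick pairing audit for the last step might look like the sketch below. It assumes every record carries a shared sample identifier; the modality names and IDs are hypothetical.

```python
def audit_pairing(modalities):
    """Report which sample IDs are complete and which are missing per modality.

    modalities: dict mapping modality name -> iterable of sample IDs.
    Returns (sorted list of IDs present in every modality,
             dict of modality name -> sorted list of IDs it lacks).
    """
    id_sets = {name: set(ids) for name, ids in modalities.items()}
    all_ids = set().union(*id_sets.values())
    complete = set.intersection(*id_sets.values())
    missing = {name: sorted(all_ids - ids) for name, ids in id_sets.items()}
    return sorted(complete), missing

complete, missing = audit_pairing({
    "leaf_image": ["plant_01", "plant_02", "plant_03"],
    "soil_measurement": ["plant_02", "plant_03", "plant_04"],
})
```

Matching IDs only shows that a pair exists. Whether the leaf image and the soil measurement were actually taken from the same plant at a comparable time still has to be checked against the collection metadata.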

Problem: High computational cost of using LLMs for weak labeling on a large dataset.

- Potential Cause: Performing inference with a full-scale LLM (e.g., Llama2-13B) on thousands of samples is computationally expensive [36].

- Solution:

- Use a Two-Stage Pipeline: Fine-tune an LLM on a small gold-standard dataset, then use it to generate pseudo-labels for the entire unlabeled corpus.

- Distill Knowledge: Use the generated pseudo-labels to train a much smaller, task-specific model (e.g., BERT). This smaller model is then used for production inference, drastically reducing computational costs [36].

Experimental Protocols and Data

Table 1: Performance Comparison of Multimodal Fusion Strategies on Plant Identification

| Fusion Strategy | Description | Accuracy on Multimodal-PlantCLEF | Key Advantage |
|---|---|---|---|
| Late Fusion (Baseline) | Averages predictions from unimodal models [1] [37]. | ~72.28% | Simple to implement |
| Automatic Fusion (MFAS) | Uses architecture search to find optimal fusion points [1] [37]. | 82.61% | Superior performance |
| With Multimodal Dropout | MFAS model trained with randomly dropped modalities [1] [37]. | High robustness | Handles missing data |

Table 2: Weak Supervision Pipeline Performance with Limited Gold-Standard Data

| Method | 3 Gold Standard Notes (F1) | 10 Gold Standard Notes (F1) | Key Insight |
|---|---|---|---|
| BERT (Fine-tuned) | 0.5953 (Events) / 0.2753 (Time) | N/A | Struggles with very low data |
| LLM (Fine-tuned) | 0.7418 (Events) / 0.6045 (Time) | N/A | Better, but computationally heavy |
| LLM-WS-BERT | 0.7765 (Events) / 0.7538 (Time) | 0.8466 (Events) / 0.8448 (Time) | Dominant strategy: Combines weak supervision and efficient final model [36] |

Workflow Visualization

Weak Supervision and Imputation Workflow

Automated Multimodal Fusion with MFAS

The Scientist's Toolkit

Table 3: Essential Research Reagents and Resources

| Item | Function in the Pipeline |
|---|---|
| Multimodal-PlantCLEF Dataset | A restructured version of PlantCLEF2015 providing aligned images of flowers, leaves, fruits, and stems for the same plant specimen, enabling multimodal model development [1] [37]. |
| Pre-trained Models (e.g., MobileNetV3) | Provide a strong feature extraction backbone for image-based modalities, enabling effective transfer learning, especially when training data is limited [1] [37]. |
| Multimodal Fusion Architecture Search (MFAS) | An algorithm that automates the discovery of the optimal neural architecture for fusing information from different data modalities, replacing error-prone manual design [1] [37]. |
| Weak Supervision Framework (e.g., Snorkel) | Provides a programming model for defining labeling functions and a label model that aggregates their noisy signals to create a probabilistic training set without manual labeling [35]. |
| Large Language Model (e.g., Llama2) | Can be fine-tuned and used as a source of weak labels for textual or structured data, minimizing the need for hand-crafted rules and domain-specific ontologies [36]. |

Frequently Asked Questions

Q1: What is the core difference between early, intermediate, and late fusion? The core difference is the stage of the model pipeline at which data from the different modalities is combined [38] [39].

- Early Fusion: Integration happens at the input level, combining raw or low-level data before feature extraction.

- Intermediate Fusion: Integration happens at the feature level, combining latent representations extracted from each modality.

- Late Fusion: Integration happens at the decision level, combining the outputs or predictions of modality-specific models.
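The three integration points can be contrasted in a short NumPy sketch. The "models" here are random linear layers used only as placeholders for trained networks, and the feature sizes are arbitrary assumptions, so the outputs are meaningless. Only the position of the combination step matters.

```python
import numpy as np

rng = np.random.default_rng(0)
n, n_classes = 4, 3
leaf = rng.random((n, 16))    # stand-in for flattened leaf-image inputs
flower = rng.random((n, 16))  # stand-in for flattened flower-image inputs

def linear(in_dim, out_dim):
    """Random linear layer used as a placeholder for a trained network."""
    w = rng.normal(size=(in_dim, out_dim))
    return lambda x: x @ w

def softmax(z):
    e = np.exp(z - z.max(axis=1, keepdims=True))
    return e / e.sum(axis=1, keepdims=True)

# Early fusion: concatenate the inputs, then run a single model.
early_model = linear(32, n_classes)
early_probs = softmax(early_model(np.concatenate([leaf, flower], axis=1)))

# Intermediate fusion: encode each modality, concatenate the embeddings, then classify.
enc_leaf, enc_flower = linear(16, 8), linear(16, 8)
head = linear(16, n_classes)
embeddings = np.concatenate(
    [np.tanh(enc_leaf(leaf)), np.tanh(enc_flower(flower))], axis=1
)
inter_probs = softmax(head(embeddings))

# Late fusion: run separate models, then average their class probabilities.
clf_leaf, clf_flower = linear(16, n_classes), linear(16, n_classes)
late_probs = (softmax(clf_leaf(leaf)) + softmax(clf_flower(flower))) / 2
```

Note that only the late-fusion path can still produce a prediction if one of the two inputs is unavailable, since each classifier runs independently.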

Q2: How do I choose the right fusion strategy for my plant dataset? The choice depends on your data characteristics and research goal [38] [40] [39].

- Use Early Fusion if your modalities are tightly synchronized and you want to model low-level interactions, but be wary of its sensitivity to noise and misalignment.

- Use Intermediate Fusion to capture rich, non-linear interactions between modalities; this is the most widely used strategy but requires all modalities to be present.

- Use Late Fusion if your data modalities are asynchronous, might be missing at inference, or are best processed by specialized models; it is simpler but may miss cross-modal interactions.

Q3: What are the common data alignment issues in multimodal plant studies? Challenges include temporal misalignment (e.g., RGB images and hyperspectral scans taken at different times) and spatial misalignment (e.g., different resolutions or fields of view). Furthermore, data from various sensors may have different sampling rates, requiring synchronization [41] [39].
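For temporal misalignment, one common approach is nearest-timestamp matching with a tolerance. The sketch below is one possible implementation in NumPy; the function name, the timestamps, and the 10-second tolerance are all illustrative assumptions.

```python
import numpy as np

def align_nearest(ref_times, other_times, tolerance):
    """For each reference timestamp, return the index of the nearest entry in
    `other_times`, or -1 if none lies within `tolerance`.

    Both inputs use the same numeric unit (e.g. seconds); `other_times` must
    be non-empty and sorted in ascending order.
    """
    ref_times = np.asarray(ref_times, dtype=float)
    other_times = np.asarray(other_times, dtype=float)
    right = np.searchsorted(other_times, ref_times)
    left = np.clip(right - 1, 0, len(other_times) - 1)
    right = np.clip(right, 0, len(other_times) - 1)
    pick_left = (np.abs(ref_times - other_times[left])
                 <= np.abs(other_times[right] - ref_times))
    nearest = np.where(pick_left, left, right)
    gap = np.abs(other_times[nearest] - ref_times)
    return np.where(gap <= tolerance, nearest, -1)

# Hypothetical capture times in seconds since the start of a session.
rgb_times = [0, 60, 120, 300]
hyperspectral_times = [5, 58, 200]
matches = align_nearest(rgb_times, hyperspectral_times, tolerance=10)
```

RGB frames with a match index of -1 have no hyperspectral scan close enough in time. They can be discarded or treated as samples with a missing modality.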

Q4: How can I handle missing modalities in my dataset during training? A technique called Modality Dropout can be used. During training, one or more modalities are randomly dropped or obscured in each iteration. This forces the model to adapt and learn robust representations, enabling it to make reasonable predictions even when some data is missing at inference time [38].

Q5: Why does my multimodal model perform well in the lab but poorly in the field? This is a common issue often due to the domain gap between controlled lab conditions and variable field environments. Field data introduces new challenges like complex backgrounds, varying illumination, and occlusions. Techniques such as data augmentation, domain adaptation, and using more robust architectures (e.g., Transformers) can help bridge this gap [9] [42].

Troubleshooting Guides

Issue 1: Model Performance is Poor with Early Fusion

- Potential Cause: High-dimensional and noisy input space due to concatenating raw data [40] [39].

- Solution:

- Apply rigorous preprocessing to each modality to reduce noise (e.g., artifact removal, filtering) [41].

- Implement dimensionality reduction techniques (e.g., PCA) on the raw features before fusion.

- Consider switching to an intermediate fusion strategy, which is often more robust for learning from heterogeneous data [38].
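As an illustration of the dimensionality-reduction step, the sketch below implements PCA with a singular value decomposition in NumPy. The synthetic "spectra" are generated from three latent factors purely for demonstration. In practice, fit the projection on training data only.

```python
import numpy as np

def pca_reduce(x, n_components):
    """Project the rows of x onto the top principal components (via SVD).

    Returns the reduced data and the fraction of variance explained by each
    retained component.
    """
    x = np.asarray(x, dtype=float)
    centered = x - x.mean(axis=0)
    _, s, vt = np.linalg.svd(centered, full_matrices=False)
    explained = (s ** 2) / np.sum(s ** 2)
    return centered @ vt[:n_components].T, explained[:n_components]

rng = np.random.default_rng(0)
# Synthetic 200-band spectra for 60 samples, driven by 3 latent factors plus noise.
latent = rng.normal(size=(60, 3))
spectra = latent @ rng.normal(size=(3, 200)) + 0.01 * rng.normal(size=(60, 200))

reduced, explained = pca_reduce(spectra, n_components=3)
```

The reduced features (60 x 3 here) can then be concatenated with other modalities in place of the original 200 bands.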

Issue 2: Inconsistent Results When Data from One Sensor Is Missing

- Potential Cause: The model was trained on a complete dataset and cannot handle missing inputs [38].

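- Solution:

- Retrain the model with modality dropout, randomly dropping or masking one or more modalities in each training iteration, so that it learns to make reasonable predictions from incomplete inputs [38].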

Issue 3: Difficulty Combining Image and Numerical Sensor Data

- Potential Cause: The features from different modalities exist in incompatible scales and representations [3].

- Solution:

- Normalize all features into a common numerical range. For image data, this means pixel normalization; for sensor data, use Z-score or Min-Max scaling [43].

- Process each modality into a common embedding space (e.g., convert both images and sensor readings into 128-length vectors) before fusion, which is the core of intermediate fusion [38].
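Both steps are sketched below in NumPy. The random projections stand in for a trained image encoder and sensor encoder, and the batch sizes, sensor channels, and the 128-dimensional embedding are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical batch: 8 RGB images (32x32, uint8) and 8 rows of 5 sensor readings.
images = rng.integers(0, 256, size=(8, 32, 32, 3), dtype=np.uint8)
sensors = rng.normal(loc=[20.0, 60.0, 6.5, 400.0, 1.2],
                     scale=[5.0, 10.0, 0.5, 50.0, 0.3], size=(8, 5))

# Pixel normalization: scale 0..255 intensities into 0..1.
images_norm = images.astype(np.float32) / 255.0

# Z-score scaling per sensor channel. In practice, compute mean and std on the
# training split only and reuse them for validation and test data.
mean, std = sensors.mean(axis=0), sensors.std(axis=0)
sensors_norm = (sensors - mean) / np.where(std == 0, 1.0, std)

# Placeholder encoders: random projections standing in for trained networks.
EMBED_DIM = 128
w_img = rng.normal(scale=0.01, size=(32 * 32 * 3, EMBED_DIM))
w_sen = rng.normal(scale=0.1, size=(5, EMBED_DIM))
img_emb = np.tanh(images_norm.reshape(len(images), -1) @ w_img)
sen_emb = np.tanh(sensors_norm @ w_sen)

# Both modalities now share the same embedding size and can be fused.
fused = np.concatenate([img_emb, sen_emb], axis=1)
```

After this step each sample is represented by two 128-length vectors on comparable scales, so neither modality dominates the fused representation simply because of its units.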

Issue 4: Low Accuracy in Real-World Field Deployment

- Potential Cause: The model has overfitted to clean, lab-condition data and fails to generalize [9] [42].

- Solution:

- Use datasets that contain field-environment variations (e.g., PlantDoc) rather than relying only on images captured under controlled conditions, such as those in PlantVillage.

- Employ data augmentation techniques during training to simulate field conditions (e.g., background substitution, lighting changes, occlusions).

- Utilize more robust model architectures like Vision Transformers (ViTs) or hybrid ViT-CNN models, which have shown better performance in field conditions compared to traditional CNNs [9].
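Two of the augmentations mentioned above, lighting changes and occlusions, can be sketched in NumPy as follows. The brightness range, patch size, and grey fill value are arbitrary assumptions. Background substitution is omitted because it requires a plant segmentation mask.

```python
import numpy as np

def augment_field_conditions(image, rng):
    """Apply a random brightness change and a random occluding patch.

    image: float array in [0, 1] with shape (H, W, 3). Returns a new array;
    the input is left unchanged.
    """
    # Lighting change: scale brightness by a factor in [0.6, 1.4].
    out = np.clip(image * rng.uniform(0.6, 1.4), 0.0, 1.0)
    # Occlusion: grey out a patch spanning up to a quarter of each side.
    h, w = out.shape[:2]
    ph, pw = rng.integers(1, h // 4 + 1), rng.integers(1, w // 4 + 1)
    top, left = rng.integers(0, h - ph + 1), rng.integers(0, w - pw + 1)
    out[top:top + ph, left:left + pw] = 0.5
    return out

rng = np.random.default_rng(0)
image = rng.random((64, 64, 3))  # stand-in for a normalized leaf photograph
original = image.copy()
augmented = augment_field_conditions(image, rng)
```

Applying the function with fresh random draws at every epoch exposes the model to a different lighting level and occlusion for each image.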