Building Resilient Bioregenerative Life Support: Strategies for System Recovery from BLSS Compartment Failure

Abstract

This article addresses the critical challenge of ensuring system resilience and recovery in Bioregenerative Life Support Systems (BLSS) for long-duration space missions. Aimed at researchers, scientists, and systems engineers, it synthesizes foundational principles, methodological approaches, optimization strategies, and validation frameworks for managing compartment failures. By exploring the interconnectedness of biological producers, consumers, and degraders, it provides a comprehensive roadmap for developing robust failure response protocols, enhancing system autonomy, and validating recovery strategies to ensure crew safety and mission success on lunar and Martian outposts.

The Bedrock of BLSS: Understanding Compartment Interdependencies and Failure Risks

Frequently Asked Questions

Q1: What are the core compartments of a Bioregenerative Life Support System (BLSS)? A BLSS is an artificial ecosystem made of several interconnected compartments where the waste products of one compartment become the vital resources for another. The three fundamental compartments are [1]:

- Producers: Organisms such as plants, microalgae, and photosynthetic bacteria that produce biomass (food) and oxygen through photosynthesis and contribute to water purification [1].

- Consumers: The crew, who consume the oxygen, water, and food produced by the system [1].

- Degraders and Recyclers: Microbes (e.g., fermentative and nitrifying bacteria) that break down and recycle organic waste into inorganic nutrients that can be used again by the producers [1].

Q2: Why might my plant growth experiments show reduced yields in a confined environment? Reduced yields can stem from multiple factors beyond basic nutrient delivery. In a closed system, plants are exposed to unique stressors [1]:

- Confinement Stress: Altered atmospheric composition or the buildup of trace gases like ethylene can affect plant metabolism and growth.

- Limited Root-Zone Volume: The physical constraints of growth chambers can restrict root architecture and function.

- Abnormal Light Cycles: Non-24-hour light/dark cycles used in spaceflight can disrupt plant circadian rhythms and physiology.

- Methodology: Systematically vary one parameter at a time (e.g., light cycle) while holding others constant. Monitor plant growth, gas exchange (O₂ production, CO₂ consumption), and signs of stress. Compare these results with Earth-based control experiments.

Q3: Following a microbial degrader failure, what is the priority for system recovery? The immediate priority is to stabilize the producer compartment and ensure crew safety [1].

- Diagnose Failure Cause: Determine if the failure was due to contamination, suboptimal pH, temperature, or a toxic buildup of waste products.

- Bypass and Isolate: Isolate the failed bioreactor to prevent system-wide contamination. Use physicochemical methods as a backup for critical functions like air and water revitalization.

- Re-inoculate: Introduce a backup, healthy culture of the microbial degrader. Monitor the re-establishment of the microbial community and its waste processing efficiency before fully re-integrating it into the closed loop.

Q4: How can I model a compartment failure to study system resilience? You can simulate a compartment failure to observe its effects and test recovery protocols [1]:

- Producer Failure: Halt the plant growth chamber lighting to simulate a power failure, then monitor the drop in oxygen and the rise in carbon dioxide.

- Degrader Failure: Stop the flow of waste to a bioreactor, then monitor the accumulation of ammonia and organic waste in the system.

- Methodology: Use real-time system monitoring (gas composition, water quality, microbial activity) to track the failure's propagation. Implement your recovery protocol and document the time required for the system to return to baseline parameters.

Troubleshooting Guides

Problem: Unexpected Drop in Dissolved Oxygen in Hydroponic Plant Growth Unit

| Symptom | Potential Cause | Diagnostic Steps | Resolution |

|---|---|---|---|

| Plant roots appearing brown and slimy; wilting leaves despite sufficient water. | Root Zone Hypoxia or Microbial Contamination [1]. | 1. Check water circulation pumps for failure. 2. Measure dissolved O₂ in nutrient solution. 3. Inspect roots for rot and sample for microbial analysis. | 1. Repair or replace circulation pumps. 2. Increase aeration. 3. Treat with approved biocide or replace nutrient solution. |

Problem: Reduced Efficiency in Nitrifying Bioreactor

| Symptom | Potential Cause | Diagnostic Steps | Resolution |

|---|---|---|---|

| Accumulation of ammonia (NH₃) and drop in nitrate (NO₃⁻) levels in recycled nutrient solution. | Inhibition of Nitrifying Bacteria [1]. | 1. Test pH (optimum is typically 7.5-8.0). 2. Check for presence of toxic substances (e.g., heavy metals, antibiotics). 3. Monitor temperature for deviations from 25-30°C. | 1. Adjust pH to optimal range. 2. Identify and remove source of contamination. 3. Consider re-inoculating with a fresh, active bacterial culture. |
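The pH and temperature checks in the table above lend themselves to a simple automated range check. The following is a minimal sketch, assuming sensor readings arrive as plain numbers; the ranges are the ones quoted in the table, while the function name and message wording are hypothetical and purely illustrative.

```python
# Operating ranges quoted in the troubleshooting table above.
PH_RANGE = (7.5, 8.0)
TEMP_RANGE_C = (25.0, 30.0)


def check_nitrifier_conditions(ph, temp_c):
    """Return a list of warnings for out-of-range bioreactor readings.

    Hypothetical helper: the name and message format are illustrative only.
    """
    warnings = []
    if not PH_RANGE[0] <= ph <= PH_RANGE[1]:
        warnings.append(f"pH {ph:.2f} outside {PH_RANGE[0]}-{PH_RANGE[1]}")
    if not TEMP_RANGE_C[0] <= temp_c <= TEMP_RANGE_C[1]:
        warnings.append(
            f"temperature {temp_c:.1f} C outside "
            f"{TEMP_RANGE_C[0]}-{TEMP_RANGE_C[1]} C"
        )
    return warnings


if __name__ == "__main__":
    # Example reading with an acidic drift but a nominal temperature.
    for message in check_nitrifier_conditions(ph=6.9, temp_c=27.0):
        print(message)
```

An empty list means both readings are within range; the check does not cover the toxic-substance step, which needs laboratory analysis.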

Problem: Decline in Crew Well-being and System Performance

| Symptom | Potential Cause | Diagnostic Steps | Resolution |

|---|---|---|---|

| Reports of stress, fatigue; increased errors; minor conflicts among crew. | Psychological Stress from System Failures or Inadequate Diet [1]. | 1. Conduct private crew interviews or surveys. 2. Review logs of system stability and recent failure events. 3. Analyze nutritional intake, especially fresh food. | 1. Provide psychological support and adjust workloads. 2. Increase access to fresh food from the plant compartment, which provides psychological benefits. 3. Stabilize the life support systems to restore crew confidence. |

Experimental Protocols & System Modeling

Quantitative Data on BLSS Plant Compartments

The design of the plant compartment must be tuned to the mission scenario [1].

| Mission Scenario | Duration | Recommended Plant Types | Primary Role | Key Resource Contribution |

|---|---|---|---|---|

| Short-Term (LEO) | Days to Months | Leafy greens (lettuce, kale), microgreens, sprouts [1]. | Diet Supplement & Psychology [1]. | High-nutrient fresh food; psychological support. Minimal resource recycling [1]. |

| Long-Term (Planetary Outpost) | Months to Years | Staple crops (potato, wheat, rice, soy), fruits, and vegetables [1]. | Major Food Production & Resource Recycling [1]. | Provides carbohydrates, proteins, fats; substantial contribution to O₂ production, CO₂ removal, and water purification [1]. |

Protocol: Testing System Resilience to a Simulated Producer Failure

Objective: To understand the impact of a sudden plant compartment failure on gas exchange and to test recovery procedures.

Materials:

- Integrated BLSS test facility with plant growth chamber, crew habitat, and microbial recycling unit.

- Real-time gas monitors (O₂, CO₂).

- Backup oxygen supply and CO₂ scrubbers.

Methodology:

- Baseline Phase: Operate the BLSS in a closed-loop mode for 72 hours, recording baseline levels of O₂ and CO₂.

- Failure Induction: Simulate a producer failure by turning off the lights in the plant growth chamber.

- Failure Monitoring: Record the rate of O₂ decline and CO₂ accumulation over the next 24 hours. Monitor crew compartment conditions closely.

- Recovery Initiation: Once O₂ reaches a predefined lower safety limit, activate backup physicochemical systems (O₂ supply, CO₂ scrubbers).

- System Restoration: Restore lighting to the plant growth chamber. Monitor the time taken for the plant compartment to resume net O₂ production and for the system to return to baseline gas levels.

- Data Analysis: Calculate the system's buffer capacity and the recovery time post-failure.
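Before running the protocol, it can help to estimate how long the cabin atmosphere will buffer a complete loss of plant O₂ production. The sketch below is a rough estimate under stated assumptions: a well-mixed cabin, ideal-gas behaviour, no leakage, and zero plant O₂ production after lights-off (plant respiration is ignored). The default of 0.89 kg O₂ per crewmember per day is the reference-astronaut figure given in this article's metabolic requirements table; the cabin volume, pressure limits, and crew size in the example are illustrative assumptions, not values from the source.

```python
R_GAS = 8.314462618  # J/(mol*K), universal gas constant
M_O2 = 0.031998      # kg/mol, molar mass of O2


def hours_to_o2_limit(volume_m3, p_o2_start_kpa, p_o2_limit_kpa,
                      crew_size, o2_kg_per_crew_day=0.89, temp_k=295.15):
    """Estimate hours until cabin O2 partial pressure reaches the limit.

    Assumes a well-mixed cabin, ideal-gas behaviour, no leakage, and
    no biological O2 production during the failure.
    """
    delta_p_pa = (p_o2_start_kpa - p_o2_limit_kpa) * 1000.0
    # Ideal gas law: mass = p * V * M / (R * T)
    usable_o2_kg = delta_p_pa * volume_m3 * M_O2 / (R_GAS * temp_k)
    consumption_kg_per_hour = crew_size * o2_kg_per_crew_day / 24.0
    return usable_o2_kg / consumption_kg_per_hour


if __name__ == "__main__":
    # Illustrative inputs only: 100 m^3 cabin, 21 kPa -> 18 kPa, crew of 4.
    hours = hours_to_o2_limit(100.0, 21.0, 18.0, crew_size=4)
    print(f"Estimated buffer time: {hours:.1f} h")
```

With these illustrative inputs the estimate comes out at roughly 26 hours. Comparing such an estimate with the measured time to reach the safety limit gives a first check on the buffer capacity calculated in the Data Analysis step.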

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in BLSS Research |

|---|---|

| Nitrifying Bacterial Consortia | Reagents containing Nitrosomonas and Nitrobacter species to convert toxic ammonia into nitrate in the nutrient recycling loop [1]. |

| Hydroponic Nutrient Solution | A precisely formulated solution of macro and micronutrients (N, P, K, Ca, Mg, Fe, etc.) for soilless plant cultivation in BLSS [1]. |

| Luminometric Assay Kits | For rapid, high-frequency measurement of key metabolites like ATP, indicating microbial activity and vitality in degrader compartments. |

| Gas Chromatography System | For detailed analysis of atmospheric composition, including trace gases like ethylene and methane, which can accumulate and affect system balance [1]. |

| DNA/RNA Extraction Kits | For molecular analysis of the microbial community in degrader compartments to monitor its health and stability. |

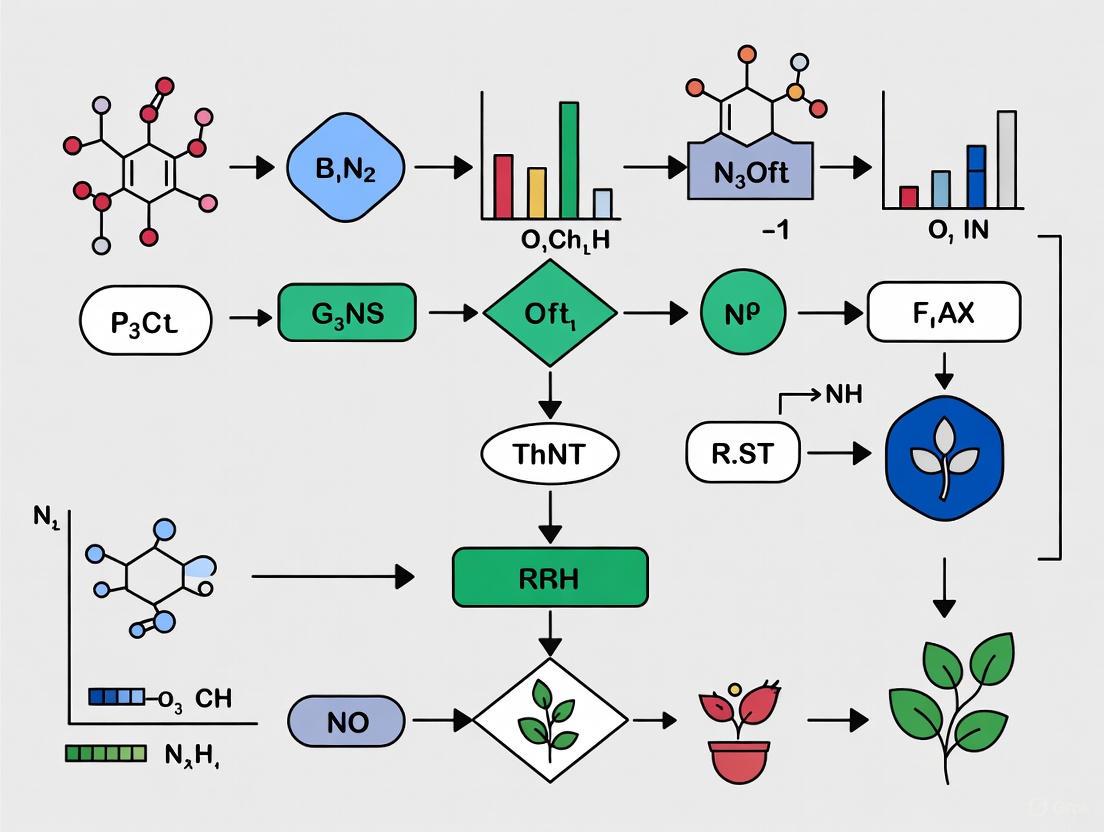

BLSS Compartment Interactions and Resilience

The following diagram illustrates the core material flows between BLSS compartments and the resilience feedback loop that is activated during a failure.

Technical Support Center

Frequently Asked Questions (FAQs)

Q1: Our BLSS photobioreactor is experiencing a sudden drop in oxygen output. What are the primary investigative steps? A sudden decline in oxygen production is a critical failure mode. The immediate investigative protocol should follow a structured path to isolate the cause [2]:

- Contamination Check: Aseptically collect a culture sample for microscopic analysis and streak plating on agar to detect microbial contamination.

- Gas Analysis: Verify the carbon dioxide (CO₂) inflow rate and concentration. The system requires a steady CO₂ supply; a malfunction here directly limits photosynthesis.

- Optical Inspection: Use integrated sensors to measure Photosynthetically Active Radiation (PAR). A failure in the light delivery system will halt photosynthetic activity.

- Culture Vitality: Analyze for a pH shift outside the optimal range (6.5-7.5 for many cyanobacteria) and check cell density via optical density (OD) measurements.

Q2: What is the proven recovery protocol for a spacecraft system that becomes unresponsive to commands? The CAPSTONE mission provides a real-world recovery blueprint for this scenario [3].

- Allow Fault Protection Engagement: Do not continuously send commands. Spacecraft are designed with onboard fault protection systems that need time to automatically diagnose and clear the anomaly. For CAPSTONE, this process took 11 days.

- Monitor for Beacon Signal: Once the fault protection system has resolved the issue, the spacecraft should re-establish communication by sending a beacon signal.

- Implement Procedural Updates: Post-recovery, analyze telemetry data to understand the root cause and update operational procedures to prevent recurrence, such as modifying command sequences or fault detection thresholds.

Q3: How does drug potency degrade in the space environment, and what is the associated risk of medication failure? Quantitative analysis of medications stored on the International Space Station (ISS) reveals a clear trend [4].

- Degradation Rate: Medications exhibit a small but measurable increase in the rate of active pharmaceutical ingredient (API) loss. The overall mean rate of API loss for spaceflight-exposed drugs is approximately 0.004% per day.

- Failure Risk: After 880 days of storage in space, 25 out of 36 medications (69%) fell below United States Pharmacopeia (USP) potency standards, compared to 17 out of 36 (47%) in lot-matched terrestrial controls.

- Primary Cause: Non-protective repackaging of drugs is a major contributing factor, often more detrimental than the space environment itself. Ensuring protective, USP-compliant repackaging is critical for long-duration missions.
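The figures above can be reproduced with a few lines of arithmetic. The sketch below assumes a constant (linear) daily loss rate, which is a simplification introduced here for illustration; real degradation kinetics vary by drug and need not be linear. The function names are hypothetical.

```python
def linear_api_loss_percent(rate_percent_per_day, days):
    """Cumulative API loss (%) assuming a constant daily loss rate."""
    return rate_percent_per_day * days


def failure_fraction(failed, total):
    """Fraction of formulations falling below the potency standard."""
    return failed / total


if __name__ == "__main__":
    loss = linear_api_loss_percent(0.004, 880)
    flight = failure_fraction(25, 36)
    control = failure_fraction(17, 36)
    print(f"Linear loss estimate over 880 days: {loss:.2f}%")
    print(f"Flight failures: {flight:.0%}, control failures: {control:.0%}")
```

The linear estimate is only an order-of-magnitude check on the mean rate; it does not predict which individual formulations fall below the USP limits.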

Q4: What redundancy architecture is used for mission-critical flight computers? For crewed missions, the tolerance for failure is virtually zero, necessitating sophisticated hardware and software redundancy [5].

- Architecture: The Space Shuttle program employed a quintuple redundancy system. Four primary computers ran identical software and operated on a "voting" principle—if one computer disagreed with the other three, it was reset. A fifth, independent computer with different software was on standby to ensure a safe ascent, abort, or reentry.

- Software Philosophy: The software is designed to be asynchronous and resilient. It can automatically dump low-priority tasks to ensure critical functions (like guidance and navigation) continue uninterrupted, a design that saved the Apollo 11 moon landing.
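The voting principle described above can be illustrated with a small majority-vote function. This is a simplified sketch of the general idea, not a reconstruction of the Shuttle flight software: the function name, the string outputs, and the error handling are all assumptions made for the example.

```python
from collections import Counter


def vote(outputs):
    """Return (majority_value, dissenting_indices) for redundant outputs.

    Raises ValueError when no strict majority exists.
    """
    counts = Counter(outputs)
    value, n = counts.most_common(1)[0]
    if n <= len(outputs) / 2:
        raise ValueError("no majority among redundant computers")
    dissenters = [i for i, out in enumerate(outputs) if out != value]
    return value, dissenters


if __name__ == "__main__":
    # Four hypothetical primary computers; the third one disagrees.
    command, faulty = vote(["PITCH+2", "PITCH+2", "PITCH+5", "PITCH+2"])
    print(command, faulty)
```

In this sketch the dissenting index identifies the unit to reset or ignore. A two-against-two split raises an error, which is the kind of situation an independent backup computer is meant to cover.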

Troubleshooting Guides

Issue: Complete loss of communication with spacecraft

| Step | Action | Rationale & Reference |

|---|---|---|

| 1 | Verify ground station equipment and network connectivity. | Rule out terrestrial issues before attributing the problem to the spacecraft. |

| 2 | Wait for onboard fault protection system to engage and clear the anomaly. | Spacecraft are designed to autonomously recover. The CAPSTONE mission recovered after 11 days in this state [3]. |

| 3 | Monitor for a beacon or "heartbeat" signal across all communication bands. | Indicates the spacecraft has rebooted and is attempting to re-establish contact [3]. |

| 4 | If beacon is acquired, initiate a minimal command set to assess vehicle health and status. | Avoid overloading the potentially fragile system; gather essential telemetry first [5]. |

Issue: Uncontrolled spin or attitude deviation after a thruster anomaly

| Step | Action | Rationale & Reference |

|---|---|---|

| 1 | Utilize star trackers and sun sensors to precisely determine the spacecraft's spin rate and axis. | Essential for planning a recovery maneuver. The CAPSTONE team maintained excellent navigation knowledge despite anomalies [3]. |

| 2 | Calculate and uplink a controlled thruster burn sequence to counteract the spin. | Burns must be precisely timed to gradually slow rotation without inducing a new spin. |

| 3 | Verify spacecraft attitude stability post-maneuver using onboard sensors. | Confirm the vehicle is back in a stable, controlled orientation. |

| 4 | Re-establish the correct trajectory and orbital path. | The primary mission objective can be resumed once the vehicle is fully under control [3]. |

Issue: Critical sensor failure (e.g., inertial measurement unit) providing erroneous data

| Step | Action | Rationale & Reference |

|---|---|---|

| 1 | Isolate the sensor and switch to a redundant backup unit if available. | Standard redundancy practice to restore immediate functionality [5]. |

| 2 | If no hardware redundancy exists, upload new software to utilize an alternative sensor. | Demonstrated by NASA, where orbiters nearing the end of their sensor life were reconfigured to use a star-tracking camera for positioning [5]. |

| 3 | Cross-reference data from other operational systems to validate the new data source. | Ensures the new navigation solution is accurate and reliable. |

| 4 | Update the vehicle's fault detection parameters to ignore the failed sensor. | Prevents the spacecraft from triggering unnecessary safe modes based on bad data [5]. |

Quantitative Data on Mission Resilience

Spaceflight Drug Stability Profile

Data from 36 drug products stored on the ISS reveals the effect of the space environment on pharmaceutical stability [4].

| Storage Duration | Mean API Content vs. Control (Flight) | Formulations Failing USP (Flight) | Formulations Failing USP (Control) |

|---|---|---|---|

| 13 Days | -1.18% | Not Provided | Not Provided |

| 880 Days | -4.76% | 25 / 36 (69%) | 17 / 36 (47%) |

Human Metabolic Requirements for Life Support Sizing

These values are for an 82 kg reference astronaut and are the foundation for sizing BLSS components [6].

| Consumable | Daily Requirement (per crewmember) | Daily Production (per crewmember) |

|---|---|---|

| Oxygen | 0.89 kg | - |

| Carbon Dioxide | - | 1.08 kg |

| Food (Dry Mass) | 0.80 kg | - |

| Drinking Water | 2.79 kg | - |

| Water (from respiration/perspiration) | - | 3.04 kg |
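To show how these per-crewmember values feed into sizing, the sketch below scales them to a whole mission. The daily values are taken from the table above; the dictionary keys, the function name, and the example crew size and duration are illustrative assumptions.

```python
# Daily values per crewmember (kg), taken from the table above.
DAILY_PER_CREW_KG = {
    "oxygen_consumed": 0.89,
    "co2_produced": 1.08,
    "food_dry_mass_consumed": 0.80,
    "drinking_water_consumed": 2.79,
    "water_respiration_perspiration_produced": 3.04,
}


def mission_totals(crew_size, days):
    """Scale per-crewmember daily masses to total mission mass (kg)."""
    return {name: kg * crew_size * days
            for name, kg in DAILY_PER_CREW_KG.items()}


if __name__ == "__main__":
    # Illustrative scenario: 4 crew for 180 days.
    for name, total_kg in mission_totals(4, 180).items():
        print(f"{name}: {total_kg:.1f} kg")
```

These totals represent the open-loop demand, that is, what would have to be resupplied or regenerated if nothing were recycled.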

Experimental Protocols for BLSS Research

Protocol 1: Stress-Testing Cyanobacteria for Bioweathering

This methodology outlines the first stage of a proposed three-stage BLSS/ISRU system for processing lunar or Martian regolith [6].

- Organism Selection: Select siderophilic (iron-loving) species of cyanobacteria, such as Anabaena or Nostoc strains known for their resilience.

- Growth Medium Preparation: Create a liquid growth medium according to standard recipes (e.g., BG-11). Sterilize via autoclaving.

- Regolith Simulation: Use a certified lunar or Martian regolith simulant (e.g., JSC-1A for the Moon or JSC Mars-1 for Mars) as the substrate.

- Inoculation and Cultivation: Inoculate the sterilized simulant with the cyanobacteria culture in a sealed photobioreactor. Maintain temperature at 25°C ± 2°C and provide continuous illumination.

- Gas Exchange: Continuously bubble a mixture of air and CO₂ (approx. 95:5) through the culture to provide a carbon source.

- Analysis:

  - Weekly Sampling: Measure pH and OD to monitor culture growth.

  - Endpoint Analysis (Day 30): Use Inductively Coupled Plasma (ICP) spectroscopy to analyze the liquid medium for concentrations of leached elements (e.g., Fe, Si, Mg, Ca) to quantify bioweathering efficiency.

Protocol 2: Quantifying Pharmaceutical Degradation in Simulated Space Conditions

This protocol is designed to systematically assess the risk of medication failure on long-duration missions [4].

- Sample Preparation: Select solid oral drug products. Repackage a subset into proposed flight containers (e.g., polypropylene). Keep a control group in the original, manufacturer's packaging.

- Storage Conditions:

  - Control Group: Store at standard conditions (e.g., 25°C/60% relative humidity).

  - Test Group: Expose to accelerated degradation conditions, such as elevated temperature (40°C) and humidity (75% RH), and/or a controlled radiation source to simulate space stressors.

- Sampling Intervals: Remove samples for analysis at defined time points (e.g., T=0, 1, 3, 6, 9, 12 months).

- Analytical Testing: Use stability-indicating High-Performance Liquid Chromatography (HPLC) to quantify the amount of active pharmaceutical ingredient (API) remaining and to identify any degradation impurities.

System Architecture and Workflow Visualizations

BLSS Three-Stage Reactor Architecture

Spacecraft Anomaly Recovery Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in BLSS & Resilience Research |

|---|---|

| Cyanobacteria Strains (Anabaena, Nostoc) | Siderophilic strains used in Stage 1 reactors for bioweathering regolith to release nutrients [6]. |

| Lunar/Martian Regolith Simulant | Geologically accurate terrestrial soil analogs (e.g., JSC-1A) for testing ISRU and bioweathering processes [6]. |

| Photobioreactor (PBR) | Controlled environment system for cultivating photosynthetic organisms; provides data on O₂ production and CO₂ sequestration [2]. |

| Stability-Indicating HPLC Assay | Analytical method to quantify Active Pharmaceutical Ingredient (API) degradation and impurity formation in medications under space-like conditions [4]. |

| Chip Scale Atomic Clock (CSAC) | High-precision timing device enabling advanced one-way navigation techniques, critical for autonomous spacecraft positioning [3]. |

| Protective Drug Packaging | Containers meeting USP standards for vapor transmission to mitigate the primary cause of drug potency loss in space [4]. |

Modeling Trophic Connections and Resource Flows in Ecological Networks

Frequently Asked Questions (FAQs)

Q1: What are the most common causes of failure when calibrating an ecological network model? Calibration most often fails because trophic links are parameterized incorrectly or the biomass flow equations do not balance. Ensure that mass balance holds for every functional node, meaning that consumption equals the sum of production, respiration, and unassimilated food. Markov Chain Monte Carlo (MCMC) methods can help test alternative network structures and parameter sets to find a balanced solution [7].

Q2: How can I diagnose a "frozen" or unresponsive network state in my dynamic model? A frozen network state often indicates that the model has settled into an unrealistic equilibrium due to faulty feedback loops or incorrect interaction strengths. Use qualitative and discrete-event models to exhaustively compute all possible dynamics from a given initial state. This helps identify whether the observed trajectory is anomalous and can reveal missing or incorrect trophic interactions causing the unresponsive state [8].

Q3: My model shows unrealistic cascading failures; how can I improve its resilience? Cascading failures often result from over-reliance on a few key species or pathways, creating single points of failure. Introduce redundancy and functional diversity into your network structure. Model reorganization by incorporating switches in selective grazing by multiple consumers, which allows the system to maintain function despite perturbations. Furthermore, techniques like degraded mode operations can allow the model to gracefully switch to a well-defined, alternative state rather than failing completely [7] [9].

Q4: What does it mean if my model's transfer efficiency between trophic levels is anomalously low? Low transfer efficiency suggests bottlenecks in energy or biomass movement. Analyze your Lindeman spines (simplified grazing and detritus chains) to pinpoint where production is being dissipated. This often relates to incorrect assimilation efficiencies, overestimated respiration rates, or a lack of pathways for detritus recycling. Re-evaluate the physiological rates and diet compositions of key connector species [7].

Troubleshooting Guides

Issue: Failure to Achieve Biomass Balance in Network Nodes

Problem: The model cannot find a solution where, for each functional node, consumption equals the sum of production, respiration, and unassimilated food.

Solution:

- Step 1: Verify the initial biomass ranges and physiological rates (production, consumption) for all nodes against empirical data. Ensure they are realistic and within observed bounds for the system [7].

- Step 2: Check the weighting of trophic links. Use an MCMC approach to generate numerous alternative network structures and link weights, then select the best model that satisfies all balance constraints [7].

- Step 3: Simplify the network by temporarily removing highly uncertain nodes or links, achieving balance, and then carefully re-incorporating them.
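A quick way to see which nodes are blocking a balanced solution is to compute the residual of the balance equation for each node. The sketch below is a minimal illustration: the node names, flow values, and the 1% relative tolerance are hypothetical, and the dictionary layout is an assumption rather than the format of any particular modeling tool.

```python
def balance_residual(flows):
    """Consumption minus (production + respiration + unassimilated food)."""
    return flows["consumption"] - (
        flows["production"] + flows["respiration"] + flows["unassimilated"]
    )


def unbalanced_nodes(nodes, rel_tol=0.01):
    """Return {node: residual} for nodes whose residual exceeds rel_tol."""
    result = {}
    for name, flows in nodes.items():
        residual = balance_residual(flows)
        if abs(residual) > rel_tol * flows["consumption"]:
            result[name] = residual
    return result


if __name__ == "__main__":
    # Hypothetical nodes with flows in arbitrary carbon units.
    nodes = {
        "copepods": {"consumption": 100.0, "production": 30.0,
                     "respiration": 40.0, "unassimilated": 30.0},
        "ciliates": {"consumption": 80.0, "production": 30.0,
                     "respiration": 30.0, "unassimilated": 10.0},
    }
    print(unbalanced_nodes(nodes))
```

A positive residual means the node consumes more than its outflows account for; a negative residual means the outflows exceed what is consumed.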

Issue: Model is Overly Sensitive to Minor Parameter Changes

Problem: Small adjustments to input parameters (e.g., a grazing rate) lead to disproportionately large and unrealistic shifts in network stability or output.

Solution:

- Step 1: Identify keystone nodes using mixed trophic impact analysis. These nodes, despite potentially low biomass, have a large overall effect on the rest of the web. Focus on refining the parameters associated with these highly influential nodes [7].

- Step 2: Conduct a sensitivity analysis to formally identify which parameters the model is most sensitive to. Prioritize obtaining high-quality data for these parameters.

- Step 3: Implement circuit breaker patterns in dynamic simulations. This technique can prevent a single component's failure from cascading by blocking its effects after a certain threshold is crossed, allowing the rest of the system to stabilize [9].
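The circuit breaker idea from Step 3 can be sketched as a small filter placed on a simulated flux. This is one possible interpretation under stated assumptions: the class name, the consecutive-violation rule, and the fixed cooldown are illustrative design choices, not a method prescribed by the cited source.

```python
class CircuitBreaker:
    """Blocks a simulated flux after repeated threshold violations."""

    def __init__(self, threshold, max_violations=3, cooldown_steps=5):
        self.threshold = threshold
        self.max_violations = max_violations
        self.cooldown_steps = cooldown_steps
        self.violations = 0
        self.open_steps_left = 0

    def filter(self, flux):
        """Return the flux to pass downstream for this time step."""
        if self.open_steps_left > 0:
            # Breaker is open: block the flux while the system stabilizes.
            self.open_steps_left -= 1
            return 0.0
        if abs(flux) > self.threshold:
            self.violations += 1
            if self.violations >= self.max_violations:
                # Too many consecutive violations: trip the breaker.
                self.open_steps_left = self.cooldown_steps
                self.violations = 0
                return 0.0
        else:
            self.violations = 0
        return flux


if __name__ == "__main__":
    breaker = CircuitBreaker(threshold=10, max_violations=2, cooldown_steps=2)
    print([breaker.filter(f) for f in [5, 12, 15, 20, 20, 4]])
```

In the example, the flux is blocked from the second consecutive violation onward and passes again once the cooldown has elapsed and values return below the threshold.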

Issue: Inability to Replicate Observed "State Shifts" (e.g., Bloom to Non-Bloom)

Problem: The model remains in a single stable state and cannot replicate observed sharp transitions, such as the shift between planktonic "green" (bloom) and "blue" (non-bloom) states.

Solution:

- Step 1: Model the two states (e.g., 'green' and 'blue') as separate network variants with distinct organizations of trophic roles and carbon fluxes [7].

- Step 2: Incorporate mechanisms for switches in selective grazing by both metazoan and protozoan consumers. This re-routes carbon fluxes and is a key internal mechanism for state changes [7].

- Step 3: Use a qualitative discrete-event model to define rules that govern transitions between states. This approach helps map out all possible trajectories, including sharp regime shifts triggered by specific environmental or biological thresholds [8].

Quantitative Data for Ecological Network Analysis

The table below summarizes key metrics used to diagnose the structure and function of ecological networks, particularly in plankton food-webs. These metrics are essential for benchmarking your models.

Table 1: Key Diagnostic Indicators for Ecological Network Models

| Indicator | Description | Interpretation in Plankton Food-Webs |

|---|---|---|

| Weighted Degree | The rank of nodes based on biomass taken from/delivered to others [7]. | Identifies main "hubs"; the top 5 nodes are critical for carbon flow. |

| Trophic Level (TL) | The average number of trophic steps from primary producers (TL=1) to a given node [7]. | Maps the hierarchy of energy transfer; helps locate inefficient chains. |

| Keystoneness | Measures nodes that, despite low biomass, induce large changes in others if removed [7]. | Highlights functionally critical species that are not necessarily abundant. |

| Transfer Efficiency (TE) | The percentage of net production at TL n converted to production at TL n+1 [7]. | A key measure of ecosystem function; in plankton models, a 7-fold decrease in phytoplankton may yield only a 2-fold decrease in potential fish biomass [7]. |

| Relative Ascendency | A scaled measure of the system's organization and its capability to cope with perturbations [7]. | Higher values indicate a more organized and robust network. |
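As a worked example of the Transfer Efficiency definition in Table 1, the sketch below computes TE between successive trophic levels from a list of net production values. The production numbers are hypothetical and chosen only to make the arithmetic easy to follow.

```python
def transfer_efficiency(production_by_tl):
    """TE (%) between successive trophic levels.

    production_by_tl lists net production ordered from TL 1 upward.
    """
    return [
        100.0 * upper / lower
        for lower, upper in zip(production_by_tl, production_by_tl[1:])
    ]


if __name__ == "__main__":
    # Hypothetical net production at TL 1, TL 2, TL 3 (carbon units).
    print(transfer_efficiency([1000.0, 150.0, 18.0]))
```

Here 15% of TL 1 production reaches TL 2 and 12% of TL 2 production reaches TL 3; a sharp drop at one step points to the part of the chain worth re-examining.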

Experimental Protocol: Constructing a Balanced Plankton Food-Web Model

This protocol is adapted from methodologies used to develop highly resolved plankton food-web models integrating most trophic diversity [7].

1. Define Functional Nodes (FNs):

- Create a list of functional nodes representing auto-, mixo-, and heterotrophic organisms in the system. A resolution of ~60 nodes is sufficient to capture most trophic diversity [7].

- Assign each FN a biomass value (e.g., in Carbon units) based on in situ observations or literature.

2. Establish Trophic Links:

- Define a connectivity matrix outlining all possible consumer-resource relationships between FNs.

- Assign initial weights to these links based on expert knowledge, literature, and diet studies.

3. Parameterize Physiological Rates:

- For each FN, define a range of plausible values for:

  - Production rate

  - Consumption rate

  - Respiration rate

  - Unassimilated food fraction

4. Implement Mass-Balance Calculation:

- Use an ecological network approach (e.g., Ecopath-style) to ensure mass-balance for each node: Consumption = Production + Respiration + Unassimilated Food [7].

- Use a Markov Chain Monte Carlo (MCMC) method to iteratively adjust link weights and physiological rates within their predefined ranges.

- Select the best model that satisfies all balance constraints and produces realistic biomass fluxes.
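To make the MCMC step more concrete, the following is a deliberately simplified sketch: a random-walk Metropolis search that adjusts the three outflow rates of a single node within predefined ranges until they balance a fixed consumption. It is not the procedure used in the cited work, which operates on the whole network and its link weights. The function names, ranges, step size, and tolerance are all assumptions chosen for illustration.

```python
import math
import random


def imbalance(consumption, rates):
    """Consumption minus the sum of the outflow rates."""
    return consumption - sum(rates.values())


def metropolis_balance(consumption, ranges, steps=5000, step_sd=1.0,
                       tolerance=2.0, seed=0):
    """Random-walk Metropolis search for rates that balance one node.

    ranges maps rate name -> (low, high). Returns the best rates found.
    """
    rng = random.Random(seed)
    current = {k: (lo + hi) / 2.0 for k, (lo, hi) in ranges.items()}

    def log_score(rates):
        # Gaussian-shaped score: higher when the node is closer to balance.
        return -(imbalance(consumption, rates) ** 2) / (2.0 * tolerance ** 2)

    best = dict(current)
    for _ in range(steps):
        proposal = {k: v + rng.gauss(0.0, step_sd) for k, v in current.items()}
        in_bounds = all(ranges[k][0] <= v <= ranges[k][1]
                        for k, v in proposal.items())
        if not in_bounds:
            continue  # reject proposals outside the plausible ranges
        log_ratio = log_score(proposal) - log_score(current)
        if rng.random() < math.exp(min(0.0, log_ratio)):
            current = proposal
            if abs(imbalance(consumption, current)) < abs(
                    imbalance(consumption, best)):
                best = dict(current)
    return best


if __name__ == "__main__":
    # Hypothetical ranges in carbon units for one functional node.
    ranges = {"production": (20.0, 40.0), "respiration": (30.0, 50.0),
              "unassimilated": (10.0, 30.0)}
    best = metropolis_balance(100.0, ranges)
    print(best, imbalance(100.0, best))
```

A full implementation would sample link weights and rates for all nodes jointly and keep the ensemble of accepted configurations rather than a single best one.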

5. Validate and Diagnose Network Structure:

- Run the balanced model and calculate the diagnostic indicators listed in Table 1.

- Validate the model's behavior by testing if it can replicate observed system states (e.g., bloom vs. non-bloom conditions) by re-parameterizing trophic links to represent switching grazing pressures [7].

Experimental Workflow and Diagnostic Logic

The following diagram illustrates the workflow for building and diagnosing an ecological network model, from node definition to resilience assessment.

The diagram below outlines a diagnostic logic tree for investigating common model failures, linking symptoms to their potential causes and solutions.

The Scientist's Toolkit: Research Reagent Solutions

While ecological network modeling does not use chemical reagents, it relies on critical analytical "tools." The following table lists essential components for constructing and analyzing these models.

Table 2: Essential Tools for Ecological Network Modeling & Analysis

| Tool / Component | Function in Modeling |

|---|---|

| Ecopath with Ecosim (EwE) | A widely used software tool for constructing, balancing, and simulating mass-balanced trophic network models [7]. |

| Markov Chain Monte Carlo (MCMC) | A computational algorithm used to explore the parameter space of a model to find the most probable configurations that meet balance constraints [7]. |

| Qualitative Discrete-Event Models | A formal modeling framework from computer science used to exhaustively characterize all possible state transitions and dynamics in a network, ideal for diagnosing regime shifts [8]. |

| Lindeman Spine Analysis | A method to aggregate complex food-webs into simplified trophic chains (producer → herbivore → carnivore) to calculate overall transfer efficiency between discrete trophic levels [7]. |

| Mixed Trophic Impact (MTI) Matrix | A matrix algebra technique to quantify the net effect (both direct and indirect) that a small change in the biomass of one node has on the biomass of all other nodes in the network [7]. |

Frequently Asked Questions (FAQs)

Q1: What is a Single Point of Failure in a research system? A Single Point of Failure (SPOF) is a critical component within a system that, if it fails, will cause the entire system to stop functioning. In the context of a BLSS or a complex biological experiment, this could be a unique reagent, a specific piece of equipment, or a single biological strain that has no backup or redundant alternative. The presence of a SPOF makes a system substantially more vulnerable to disruption [10].
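One simple way to screen a system for SPOFs is to list, for each critical function, every component able to provide it, and then flag the functions with only one provider. The sketch below assumes such an inventory already exists as a dictionary; the function names and component names are hypothetical examples.

```python
def single_points_of_failure(providers_by_function):
    """Return {function: sole_provider} for functions with one provider."""
    return {
        function: providers[0]
        for function, providers in providers_by_function.items()
        if len(providers) == 1
    }


if __name__ == "__main__":
    # Hypothetical inventory of critical functions and their providers.
    inventory = {
        "oxygen production": ["plant chamber", "physicochemical backup"],
        "nitrification": ["nitrifying bioreactor"],
        "pH monitoring": ["pH probe A", "pH probe B"],
    }
    print(single_points_of_failure(inventory))
```

In this example only nitrification is flagged, which suggests where a backup culture or a second reactor would add the most resilience.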

Q2: How does the concept of 'system resilience' apply to laboratory experiments? System resilience is "the ability to provide required capability when facing adversity" [11]. For an experiment, this means designing your protocols and systems to anticipate, withstand, and recover from potential failures. This involves proactive measures (like having backup reagents) and reactive capabilities (like a clear troubleshooting plan) to maintain the integrity and continuity of your research in the face of unexpected problems [11].

Q3: My microbial co-culture has collapsed. What are the first steps I should take? Follow a structured troubleshooting approach:

- Identify the problem: Define the specific symptom (e.g., "no bacterial growth" or "complete death of one species").

- List possible causes: Consider contamination, expired growth media, incorrect incubation conditions, or an imbalance in the initial inoculum ratios.

- Collect data: Check your lab notebook for procedure modifications, verify the expiration dates of all media components, and review equipment logs (e.g., incubator temperature charts).

- Eliminate explanations: Rule out the simplest causes first.

- Check with experimentation: Design a simple experiment to test your leading hypothesis (e.g., re-test media with a known control strain).

- Identify the cause: Use the experimental results to pinpoint the root cause [12].

Q4: What is the difference between a failure in a 'module' and a 'system-level' failure? A module-level failure is contained within a specific component of your system, such as the failure of a single microbial strain or a malfunctioning pH probe. A system-level failure occurs when an initial module-level failure propagates, causing the entire integrated system to collapse. A core objective of resilience engineering is to prevent module-level failures from becoming system-level failures through strategies like redundancy and isolation [10] [11].

Troubleshooting Guides

Guide 1: Troubleshooting Disruptions in Plant-Microbe Modules

This guide addresses failures in the critical symbiotic relationship between plants and rhizosphere microbiota.

- Problem: Stunted plant growth and unhealthy rhizosphere microbiome.

- Potential Single Points of Failure:

- Low Microbial Diversity: A non-resilient, simple microbial community that cannot withstand environmental fluctuations [13].

- Key Microbial Strain Absence: The loss of a keystone bacterium (e.g., Bacillus or Sphingomonas) that plays an outsized role in nutrient cycling [14].

- Shift in Dominant Environmental Driver: A change in the primary factor controlling the microbiome (e.g., a shift from carbon availability to pH) that the system was not designed to handle [13].

Diagnostic Table for Plant-Microbe Failures

| Observation | Possible SPOF | Diagnostic Experiment | Resilience Improvement |

|---|---|---|---|

| Reduced plant biomass and yellowing leaves | Depletion of soil organic carbon (SOC) [14] | Measure SOC and Total Nitrogen (TN) via elemental analysis [13]. | Introduce organic carbon supplements and establish a monitoring schedule. |

| Shift in rhizosphere pH | Loss of pH-buffering microbial consortia [13] | Perform soil pH and electrical conductivity (EC) tests [13]. | Use pH-buffered media; inoculate with pH-tolerant strains. |

| Collapse of microbial network complexity | Over-dominance of a single plant species, reducing microbial diversity [13] | Use 16S rRNA sequencing to analyze microbial diversity and co-occurrence networks [14] [13]. | Introduce a greater variety of plant species to support a more complex, stable network [13]. |

Experimental Workflow for Analysis

The following diagram outlines a general workflow for analyzing the plant-microbe-physicochemical system to identify points of failure.

Guide 2: Troubleshooting Physicochemical Monitoring Failures

This guide addresses failures in the non-biological parameters that are essential for maintaining module health.

- Problem: Erroneous or drifting readings from sensors monitoring the physicochemical environment.

- Potential Single Points of Failure:

- Single Sensor Unit: Relying on one sensor for a critical parameter like pH, dissolved O₂, or temperature with no backup [10].

- Calibration Solution: Using a single batch of calibration buffer that may be contaminated or expired.

- Data Logging System: A single data cable or connection that, if disconnected, halts all data acquisition.

Diagnostic Table for Physicochemical Sensor Failures

| Observation | Possible SPOF | Diagnostic Check | Resilience Improvement |

|---|---|---|---|

| Sudden "zero" or constant reading | Sensor disconnect or power failure to a single sensor unit [10] | Inspect physical connections and power supply. | Install redundant sensors on independent power circuits [10]. |

| Gradual sensor drift | Exhaustion or contamination of a unique calibration solution | Re-calibrate with a fresh, certified solution from a different batch. | Use multiple, independently sourced calibration standards. |

| Complete loss of data from all sensors | Failure of the central data logger or its single network connection [10] | Check the status of the data logger and network switch. | Implement a distributed logging system or a secondary, independent backup logger. |
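
The redundancy measures in the table can be illustrated with a small sketch. It assumes three redundant probes on one parameter and uses median voting plus a flatline check; the function names, the `max_spread` tolerance, and the sample readings are hypothetical and are not taken from the cited sources.

```python
from statistics import median

def vote(readings, max_spread):
    """Median-vote redundant readings and flag channels far from the median."""
    valid = [r for r in readings if r is not None]  # None = channel disconnected
    if not valid:
        raise ValueError("no sensor channel is reporting")
    mid = median(valid)
    suspects = [i for i, r in enumerate(readings)
                if r is None or abs(r - mid) > max_spread]
    return mid, suspects

def is_flatlined(history, min_samples=5):
    """True when the last `min_samples` values are identical (stuck or zeroed sensor)."""
    tail = history[-min_samples:]
    return len(tail) == min_samples and len(set(tail)) == 1

# Three hypothetical pH probes; the third has drifted away from the others.
value, suspects = vote([6.81, 6.79, 7.45], max_spread=0.2)
print(value, suspects)
print(is_flatlined([0.0, 0.0, 0.0, 0.0, 0.0]))
```

Median voting tolerates one faulty channel out of three without any prior knowledge of which probe is wrong, which is why it is a common first choice for small redundant sensor sets.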

The Scientist's Toolkit: Research Reagent Solutions

This table details key materials and their functions, highlighting potential SPOFs if they are not managed with redundancy.

| Item | Function | Single Point of Failure Risk if Not Managed |

|---|---|---|

| PCR Master Mix | Provides enzymes, dNTPs, and buffer for DNA amplification. | A single, expiring batch can halt all genetic analysis. Use multiple lots or suppliers [12]. |

| Competent Cells | Essential for molecular cloning transformations. | A single vial or strain with low efficiency can cause experimental failure. Maintain multiple, high-efficiency strains [12]. |

| Selective Antibiotics | Maintains selection pressure for plasmids in microbial cultures. | A single stock solution that degrades or is contaminated can lead to loss of engineered strains. Aliquot and validate stocks. |

| Key Microbial Strain | A unique, engineered, or isolated strain central to an experiment. | The loss of a live culture can be irrecoverable. Always create a large, aliquoted glycerol stock stored in multiple locations [11]. |

| Specialized Growth Media | Supports the growth of fastidious organisms. | A single, custom-prepared media batch with an error is a SPOF. Prepare multiple batches or validate with a control organism [12]. |

Principles of Resilience Engineering for Experimental Design

Building on the troubleshooting guides, the following diagram maps the core principles of engineering resilience into your biological systems to proactively avoid failures.

The strategies in the diagram above can be implemented through specific technical features.

| Resilience Strategy | Technical Implementation in a BLSS/Experiment |

|---|---|

| Redundancy [10] | Having backup components (e.g., redundant sensors, multiple aliquots of critical reagents, backup microbial stock cultures) that can take over if the primary one fails. |

| Modularity & Disaggregation [11] | Physically or logically isolating system modules (e.g., plant growth chamber, microbial bioreactor). This contains failures and prevents them from cascading through the entire system. |

| Failover Systems [15] | Automatically or manually switching to a secondary system. For example, a "warm site" backup incubator that can be activated if the primary one fails [15]. |

| Diversification [11] | Using heterogeneous components to minimize common vulnerabilities. Examples include using microbial consortia instead of a single strain, or multiple suppliers for critical chemicals. |

| Monitoring & Anomaly Detection [11] | Continuously observing system states (e.g., with real-time pH monitors) to project future status and allow for early detection and response to deviations. |

| Graceful Degradation [11] | Designing the system to transition to a partially functional state after a failure, rather than failing completely. This ensures some data can still be collected and the system is easier to recover. |

FAQs and Troubleshooting Guides for BLSS Experimentation

This guide addresses common operational challenges in Bioregenerative Life Support System (BLSS) research, drawing on empirical data from long-duration missions like the 370-day Lunar Palace 1 experiment [16].

Frequently Asked Questions (FAQs)

1. What is the expected operational lifetime of a BLSS, and how reliable is it? Based on a 370-day closed human experiment in the Lunar Palace 1 (LP1) facility, the mean lifetime of a BLSS was estimated to be 19,112.37 days (about 52.4 years) under normal operation and maintenance. The 95% confidence interval for this lifetime is [17,367.11, 20,672.68] days, or approximately [47.58, 56.64] years. This estimation was derived from time-series failure data and Monte Carlo simulations [16].

2. Which BLSS units are most critical to overall system reliability? Sensitivity analysis from the LP1 experiment identified five units whose failure has a greater impact on the overall system's reliability and lifetime [16]:

- Water Treatment Unit (WTU)

- Mineral Element Supply Unit (MESU)

- LED Light Source Unit (LLSU)

- Atmosphere Management Unit (AMU)

- Temperature and Humidity Control Unit (THCU)

Proactive monitoring and redundant design for these units are crucial for mission success.

3. How can a BLSS maintain stability during long-term operation and crew shifts? The "Lunar Palace 365" mission demonstrated robust system stability over 370 days with crew rotations. Key strategies included [17]:

- Active Gas Management: Regulating CO₂ and O₂ concentrations by adjusting soybean photoperiods and controlling the activity of solid waste reactors.

- High-Closure Performance: Achieving 100% recycling of O₂ and water for crew use and a 98.2% overall system closure degree for crucial survival materials.

The system showed strong resilience, quickly minimizing disturbances through various regulation methods.

4. What are the key verification methods for ensuring system resilience? System resilience, which is the ability to protect critical capabilities from adverse events, can be verified through several methods [18]:

- Inspection: Visual examination and technical reviews of the system and its documentation.

- Analysis: Using modeling and calculations (e.g., Mean Time Between Critical Failure analysis, Fault Tree Analysis) to verify requirements.

- Demonstration: Executing the system to show it meets requirements under specific conditions.

- Testing: Executing the system with known inputs to uncover defects, with a focus on resilience testing under adverse conditions.

Troubleshooting Common BLSS Failures

| Failure Mode | Symptoms | Immediate Actions | Long-term Solutions |

|---|---|---|---|

| Water Treatment Unit (WTU) Failure [16] | Decline in water quality/purity; system alerts. | Isolate unit; switch to backup if available. | Implement more reliable components; add parallel redundant subsystems. |

| Atmosphere Imbalance (O₂/CO₂) [17] | O₂ or CO₂ concentration outside safe/optimal range. | Adjust photosynthetic organism photoperiods (e.g., soybean); regulate solid waste reactor activity. | Optimize control algorithms for biological O₂/CO₂ exchange; diversify plant species. |

| Temperature & Humidity Fluctuations [16] | Deviations from set environmental parameters. | Check sensor calibration; inspect HVAC systems. | Improve robustness of control unit (THCU) design; install redundant sensors. |

| LED Light Source Unit Failure [16] | Light intensity drop; plant growth inhibition. | Activate backup lighting arrays. | Design with modular, easily replaceable LED units; implement predictive maintenance. |

Quantitative Data from Ground Analog Missions

| BLSS Unit | Relative Impact on System Failure | Key Reliability Findings |

|---|---|---|

| Water Treatment Unit (WTU) | High | High failure probability; significant impact on overall system reliability. |

| Temperature & Humidity Control (THCU) | High | High failure probability; major influence on system lifetime. |

| Mineral Element Supply (MESU) | High | Failure significantly affects system reliability and lifetime. |

| LED Light Source (LLSU) | High | Critical unit; failure greatly impacts overall BLSS performance. |

| Atmosphere Management (AMU) | High | Failure strongly influences system longevity. |

| Solid Waste Treatment | Medium | Recorded 4 failures during the 370-day LP1 experiment. |

The Scientist's Toolkit: Key Research Reagents & Materials

| Item | Function in BLSS Research |

|---|---|

| Higher Plant Cultivars | Primary producers for O₂ generation, CO₂ removal, food production, and water purification. 35 plant types were used in Lunar Palace 365 [17]. |

| Yellow Mealworms (Tenebrio molitor) | Convert inedible plant biomass into animal protein for crew consumption, closing the food waste loop [16] [17]. |

| Porcine Cardiac Myosin | Used in rodent models to induce Experimental Autoimmune Myocarditis (EAM) for studying cardiovascular health in confined environments [19]. |

| Melissa officinalis Extract | Investigated as a potential supplement for mitigating oxidative stress and inflammation, relevant to crew health [19]. |

| Solid Waste Fermentation System | Bioconverts inedible plant biomass, human feces, and food residues into soil-like substrate for plant growth [16]. |

Experimental Protocols & Methodologies

Protocol 1: Reliability and Lifetime Estimation for BLSS

Objective: To quantitatively estimate the reliability and operational lifetime of a BLSS using empirical failure data [16].

Methodology:

- Data Collection: Accurately record the number and time of each unit failure during long-term, closed human experiments (e.g., the 370-day LP1 mission).

- Parameter Estimation: Use maximum likelihood estimation to identify the strength (λ) of the failure stochastic process for each unit.

- Probability Distribution: Formulate a failure number probability distribution function for each unit and for the overall system, based on the system's series and parallel structure.

- Sensitivity Analysis: Determine the influence of each unit's failure on the overall system reliability and lifetime.

- Monte Carlo Simulation: Generate numerous pseudo-random numbers that obey the overall system's failure probability distribution to model long-term performance and estimate mean lifetime and confidence intervals.
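
The parameter estimation and Monte Carlo steps can be sketched in a few lines. This is a simplified illustration, not the model used in [16]: it assumes each unit fails as a homogeneous Poisson process (so the maximum likelihood rate is failures divided by observation time), treats the units as a pure series system, and simulates only the time to the first unit failure with no repair. The solid waste count of 4 failures in 370 days comes from the table above; `unit_b`, `unit_c`, and their counts are hypothetical.

```python
import random

def mle_rate(n_failures, observation_days):
    """MLE of a homogeneous Poisson failure rate, in failures per day."""
    return n_failures / observation_days

def simulate_first_failure(rates, n_runs=20000, seed=0):
    """Monte Carlo time to first failure of a series system without repair.

    Each unit's time to failure is drawn from Exponential(rate); a series
    system fails as soon as its first unit fails.
    """
    rng = random.Random(seed)
    samples = sorted(min(rng.expovariate(r) for r in rates) for _ in range(n_runs))
    mean = sum(samples) / n_runs
    # Empirical 2.5th and 97.5th percentiles of the simulated times
    # (a spread of outcomes, not a confidence interval for the mean).
    low = samples[int(0.025 * n_runs)]
    high = samples[int(0.975 * n_runs)]
    return mean, (low, high)

OBSERVATION_DAYS = 370
# solid_waste is from the LP1 table; unit_b and unit_c are hypothetical.
failure_counts = {"solid_waste": 4, "unit_b": 2, "unit_c": 1}
rates = [mle_rate(n, OBSERVATION_DAYS) for n in failure_counts.values()]

mean_days, (low, high) = simulate_first_failure(rates)
print(round(mean_days, 1), round(low, 1), round(high, 1))
```

The result of this sketch is not comparable to the multi-decade lifetime reported in [16], which accounts for maintenance and for the system's series and parallel structure. It only shows how estimated rates feed a simulation.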

Protocol 2: Resilience Testing for Critical Systems

Objective: To verify a system's ability to handle and recover from failures, ensuring continuity of critical services [18].

Methodology:

- Define Scope: Identify critical system components and set clear resilience objectives (e.g., minimize downtime).

- Plan Scenarios: Simulate realistic failure scenarios (e.g., server crashes, network outages, hardware failures) under various load conditions.

- Design Test Cases: Use scripts to automate failure introduction (e.g., Chaos Monkey) or manually trigger failures.

- Execute Tests: Closely monitor and log system behavior, failure responses, and recovery times.

- Analyze Results: Measure downtime, identify root causes of slow recovery or failures, and evaluate fault tolerance.

- Report & Improve: Document weaknesses, provide recommendations, fix issues, and retest to validate improvements.
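
As a rough illustration of the "design test cases, execute, analyze" steps, the sketch below injects a failure into a simulated service and measures downtime with and without a backup. It is a toy discrete-time simulation, not a use of Chaos Monkey or any other real fault-injection tool; every name and parameter in it is hypothetical.

```python
def run_fault_injection(total_steps, fail_at, detect_delay, has_backup):
    """Simulate one failure scenario and return a per-step availability log.

    The primary fails at step `fail_at`. If a backup exists, it takes over
    `detect_delay` steps later; otherwise the service stays down.
    """
    log = []
    for step in range(total_steps):
        if step < fail_at:
            up = True                      # primary healthy
        elif has_backup and step >= fail_at + detect_delay:
            up = True                      # failover completed
        else:
            up = False                     # outage window
        log.append(up)
    return log

def downtime(log):
    """Number of steps during which the service was unavailable."""
    return sum(1 for up in log if not up)

scenarios = {
    "with_backup": dict(fail_at=10, detect_delay=3, has_backup=True),
    "no_backup": dict(fail_at=10, detect_delay=3, has_backup=False),
}
results = {name: downtime(run_fault_injection(total_steps=30, **params))
           for name, params in scenarios.items()}
print(results)
```

Keeping each scenario as a small parameter set makes it easy to rerun the same cases after a fix, which is the "retest to validate improvements" step of the protocol.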

System Resilience Engineering Framework

Resilience is the degree to which a system rapidly and effectively protects its critical capabilities from harm caused by adverse events and conditions. This can be broken down into key functions and verified through specific tests [18].

Proactive Defense and Active Response: Methodologies for Failure Management

This guide provides a structured framework for researchers, scientists, and drug development professionals to diagnose, troubleshoot, and recover from failures in complex experimental systems, particularly within the context of Bioregenerative Life Support System (BLSS) compartment research. System resilience is defined as the capacity to withstand disruptions and quickly recover to pre-disruption performance levels [20]. A resilience-based approach, as opposed to simple reliability metrics, focuses on full-cycle system performance: resisting failures, maintaining core function during the event, and recovering efficiently afterward [20]. The following sections offer a technical support framework to guide your team from initial failure detection to full system recovery.

Troubleshooting Guides & FAQs

Q1: Our experimental data shows a sudden, sustained drop in system performance. How do we begin diagnosing the root cause?

A: A sustained performance drop indicates a potential compartment failure. Follow this structured diagnostic process:

Phase 1: Understand the Problem

- Ask Targeted Questions: What specific performance metric dropped (e.g., pressure, flow rate, chemical concentration)? What was the system state immediately before the drop? What were the environmental conditions? [21]

- Gather Information: Collect all system logs, sensor data (SCADA outputs), and product usage information from the time of the event. Review these logs to identify anomalous readings or error codes that correlate with the performance loss [21].

- Reproduce the Issue: If safe and feasible, attempt to recreate the failure mode by simulating the same system state and inputs. This helps confirm the failure trigger and illuminates the true issue [21].

Phase 2: Isolate the Issue

- Remove Complexity: Simplify the system to a known functioning state. This may involve temporarily bypassing non-essential modules or subsystems to isolate the faulty compartment [21].

- Change One Thing at a Time: Systematically test individual components. For example, adjust pump settings, modify valve positions, or introduce a control reagent. Changing only one variable at a time allows you to pinpoint the exact factor causing the failure [21].

- Compare to a Working Baseline: Compare the current system state and all collected data to a known good baseline from a previous, stable experiment. This can help spot critical differences that may be causing the problem [21].

Phase 3: Find a Fix or Workaround

- Develop a Solution: Based on the isolated cause, the solution may involve a software setting adjustment, a hardware component repair, or a specific chemical intervention.

- Test the Fix: Before fully implementing the solution, test it on a small-scale reproduction of the failure to confirm it resolves the problem without unintended side-effects [21].

- Implement Permanently: Apply the fix to the main system and document the entire process for future reference.

Q2: After identifying a failed component, how do we prioritize recovery actions to maximize system resilience?

A: Prioritization should be based on a component's functional reliability and its importance weight within the entire system network [20]. The goal is to maximize the recovery of overall system functionality with each action, a concept known as resilience-based optimization.

The table below summarizes key metrics to quantify and compare for prioritization.

Table 1: Quantitative Metrics for Recovery Prioritization

| Metric | Description | Application in BLSS Research |

|---|---|---|

| Functional Reliability | The probability that a component will perform its intended function without failure under given conditions [20]. | Calculate based on pipe material age, previous failure history, and operating pressure data [20]. |

| Importance Weight | A measure of a component's criticality to the overall system's performance, often derived from its network connectivity and function [20]. | Determine by analyzing the system topology; a component with many connections (high degree) or critical supply function has a higher weight [20]. |

| Lack of Resilience (LoR) | The area between the system's time-dependent performance trajectory and its target performance level during recovery. A lower LoR indicates a faster, more resilient recovery [22]. | Use as the key objective to minimize when planning the recovery sequence. It integrates both the depth of performance loss and the duration of recovery [22]. |
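
The LoR definition in the table can be computed directly from a sampled performance trajectory. The sketch below integrates the gap between a target level and the measured performance with the trapezoidal rule; the normalized target of 1.0 and the two recovery trajectories are hypothetical values chosen only to show the comparison.

```python
def lack_of_resilience(times, performance, target=1.0):
    """Trapezoidal area between the target level and the performance curve."""
    area = 0.0
    for i in range(1, len(times)):
        gap_prev = max(target - performance[i - 1], 0.0)
        gap_curr = max(target - performance[i], 0.0)
        area += 0.5 * (gap_prev + gap_curr) * (times[i] - times[i - 1])
    return area

hours = [0, 1, 2, 3, 4]
fast_recovery = [1.0, 0.4, 0.8, 1.0, 1.0]  # hypothetical repair sequence A
slow_recovery = [1.0, 0.4, 0.5, 0.7, 1.0]  # hypothetical repair sequence B

lor_fast = lack_of_resilience(hours, fast_recovery)
lor_slow = lack_of_resilience(hours, slow_recovery)
print(lor_fast, lor_slow)
```

Both sequences suffer the same initial drop, but sequence A restores performance sooner and therefore accumulates a smaller LoR, which is what a recovery planner would try to minimize.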

Q3: What operational strategies can we use to maintain system performance while a failed compartment is being repaired?

A: Implementing dynamic response strategies is crucial for maintaining baseline functionality. Research on water distribution systems shows that optimizing the operation of core system components, such as pumps and valves, can effectively restore performance during a failure event, even before the physical repair is complete [20].

Experimental Protocol: Pump-Valve Response Strategy for Performance Maintenance

- Objective: To determine the optimal operational settings for pumps and pressure-reducing valves (PRVs) to mitigate the impact of a single compartment failure.

- Methodology:

- System Topology Simplification: Convert the complex system layout into a simplified segment-valve (S-V) model. This model helps rapidly identify which isolation valves need to be closed to contain the failure [20].

- Resilience Assessment: Using the S-V model, calculate the system's robustness by quantifying the change in key performance indicators (e.g., flow rate, pressure, chemical delivery) caused by the failure and the proposed valve closures [20].

- Multi-Objective Optimization: Develop an optimization model that balances two objectives:

- Maximizing Resilience: The primary goal is to improve system robustness by restoring hydraulic (or analogous) performance and quality safety.

- Minimizing Response Cost: The secondary goal is to reduce the operational costs associated with the response, such as energy consumption by pumps or usage of backup reagents [20].

- Implementation: Run the optimization model to output the ideal pump speeds and PRV settings. Apply these settings to the system controls and monitor the performance recovery.
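
The two-objective trade-off in this protocol can be illustrated without a hydraulic model. The sketch below assumes each candidate pump and PRV setting has already been scored for resilience and response cost (both normalized to [0, 1]), then filters the non-dominated settings and picks one with a weighted sum. The candidate names, scores, and the 0.7 weight are hypothetical; the optimization method used in [20] is not specified here.

```python
def pareto_front(candidates):
    """Keep settings not dominated on (higher resilience, lower cost)."""
    front = []
    for name, res, cost in candidates:
        dominated = any(
            (r2 >= res and c2 <= cost) and (r2 > res or c2 < cost)
            for _, r2, c2 in candidates
        )
        if not dominated:
            front.append(name)
    return front

def weighted_choice(candidates, w_resilience=0.7):
    """Pick one setting by weighted sum; assumes both scores lie in [0, 1]."""
    def score(c):
        return w_resilience * c[1] - (1 - w_resilience) * c[2]
    return max(candidates, key=score)[0]

# (setting, resilience score, response cost): all values hypothetical.
candidates = [
    ("pump 60%, PRV low", 0.70, 0.30),
    ("pump 80%, PRV mid", 0.85, 0.50),
    ("pump 100%, PRV mid", 0.90, 0.90),
    ("pump 80%, PRV high", 0.80, 0.60),  # dominated by "pump 80%, PRV mid"
]

front = pareto_front(candidates)
choice = weighted_choice(candidates)
print(front, choice)
```

Showing the whole non-dominated set before collapsing it to one choice keeps the cost and resilience trade-off visible to the operator instead of hiding it inside a weight.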

Q4: How can we visually map the system's resilience and recovery pathway after a failure event?

A: The resilience curve is a standard method for visualizing a system's recovery trajectory. The following diagram maps system performance against time, highlighting key resilience metrics and decision points.

Q5: What are the essential reagents and materials for establishing a resilience testing protocol?

A: The following toolkit is essential for conducting experiments focused on failure response and system recovery.

Table 2: Research Reagent Solutions for Resilience Testing

| Item | Function / Explanation |

|---|---|

| Pipe Health Assessment Model | A computational model (often combining heuristic, physical, and statistical methods) used to calculate the failure probability of system components based on age, material, and operational stress [20]. |

| Segment-Valve (S-V) Model | A simplified topological representation of the experimental system that allows for rapid identification of critical isolation valves and segments during a failure event [20]. |

| Hydraulic & Quality Sensors | Sensors integrated into a SCADA system to monitor key performance indicators like pressure, flow rate, and chemical concentration in real-time, enabling failure detection and localization [20]. |

| Deep Reinforcement Learning (DRL) Models | Advanced computational models, such as Double Deep Q-Networks (DDQN), that can learn optimal recovery sequences by mapping system states to repair actions, maximizing long-term resilience [22]. |

| Multi-Objective Optimization Framework | A software framework that balances competing objectives, such as maximizing system resilience and minimizing operational costs, to determine the most effective failure response strategy [20]. |

Implementing Real-Time Anomaly Detection with Sensor Data and SCADA Systems

Troubleshooting Guides

Guide 1: Resolving High False Positive Rates in Anomaly Detection

Problem: Your anomaly detection system is triggering an excessive number of false alarms, causing alert fatigue and potentially masking real threats.

Check Feature Selection and Engineering: Overly simplistic features may not capture normal behavioral patterns. Implement behavioral attribute extension by modeling network nodes as graph vertices to create advanced features that improve characterization of normal SCADA traffic. Research shows this can increase the F₁ score from 0.6 to 0.9 and MCC from 0.3 to 0.8 [23].

Validate Threshold Configuration: Examine if your detection thresholds are too sensitive. For reconstruction-based models like LSTM Autoencoders, use precision-recall curves on validation data to determine the optimal threshold [24]. Implement dynamic thresholding that adapts to changing operational states.

Confirm Data Preprocessing: Ensure proper handling of missing values and normalization. For continuous physiological parameters with <10% missing data, mean imputation can maintain consistency with real-world clinical monitoring [25]. For SCADA data, verify all sensor readings are properly scaled and timestamp-aligned.

Assess Model-Data Compatibility: A model trained on one type of operational data may not perform well on another. For network-based detection, ensure your training data represents normal IEC 104 protocol communication patterns specific to your system [23].
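
The threshold validation step can be sketched as a scan over candidate thresholds on labeled validation data. This example assumes the detector outputs a reconstruction error per sample and picks the threshold with the highest F₁ score, which is one common way to read a precision-recall curve; the function name and the sample values are hypothetical.

```python
def best_threshold(errors, labels):
    """Scan candidate thresholds and return (best F1, threshold).

    errors: reconstruction error per validation sample
    labels: 1 for a known anomaly, 0 for normal
    """
    best = (0.0, None)
    for thr in sorted(set(errors)):
        tp = sum(1 for e, y in zip(errors, labels) if e >= thr and y == 1)
        fp = sum(1 for e, y in zip(errors, labels) if e >= thr and y == 0)
        fn = sum(1 for e, y in zip(errors, labels) if e < thr and y == 1)
        if tp == 0:
            continue  # precision and recall are undefined or zero here
        precision = tp / (tp + fp)
        recall = tp / (tp + fn)
        f1 = 2 * precision * recall / (precision + recall)
        if f1 > best[0]:
            best = (f1, thr)
    return best

# Hypothetical validation set: three labeled anomalies with large errors.
errors = [0.02, 0.03, 0.05, 0.04, 0.30, 0.45, 0.06, 0.50]
labels = [0, 0, 0, 0, 1, 1, 0, 1]

f1, threshold = best_threshold(errors, labels)
print(f1, threshold)
```

If false alarms are more costly than missed detections in your system, the same scan can maximize precision at a minimum acceptable recall instead of F₁.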

Guide 2: Addressing Latency in Real-Time Detection Systems

Problem: Anomaly detection system exhibits unacceptable delay between data acquisition and alert generation, compromising real-time response.

Evaluate Processing Location: Cloud-based processing introduces significant latency. Migrate to Edge AI architecture where data processing occurs locally on devices or nearby edge servers. Studies show this can achieve sub-50 ms inference latency on platforms like Raspberry Pi [26].

Optimize Model Complexity: Complex models may be too computationally intensive. For resource-constrained environments, Isolation Forest algorithms offer faster inference and lower power consumption compared to LSTM Autoencoders, though with potentially lower accuracy [26].

Implement Model Quantization: Apply optimization strategies such as 8-bit quantization to reduce model size and computational requirements. Research demonstrates this can reduce LSTM-AE inference time by 76% and power consumption by 35% [26].

Verify Data Flow Architecture: Check for bottlenecks in data acquisition pipelines. For sequence-based models, ensure your time window configuration (e.g., 150 packets for network data) balances detection accuracy with latency requirements [24].
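
To make the quantization step concrete, the sketch below shows the numeric idea behind 8-bit quantization on a plain list of weights. It assumes symmetric linear quantization with a single scale factor; real deployments would use the quantization tooling of their ML framework, and the weights here are hypothetical. It does not reproduce the latency or power figures reported in [26].

```python
def quantize_int8(weights):
    """Symmetric linear quantization of floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Map the stored integers back to approximate float weights."""
    return [v * scale for v in quantized]

weights = [0.50, -1.27, 0.003, 1.00]  # hypothetical model weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, max_error)
```

Each weight is stored in one byte instead of four or eight, and the rounding error per weight stays within half a quantization step, which is the trade the model accepts in exchange for faster inference.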

Guide 3: Diagnosing Complete System Communication Failures

Problem: SCADA system has lost communication with field devices, resulting in no data flow for anomaly detection.

Perform HMI Verification: Check the human-machine interface for simple configuration issues. Verify settings are correct and examine mundane but critical aspects like power supply, caps lock, and number lock [27].

Inspect Communication Hardware: Locate Ethernet or communication ports and verify signal transmission via blinking indicator lights. If lights are off, no signal is getting through the wire. For radio systems, check antennas for physical damage [27].

Conduct Field Verification: Visit the data point and check the Remote Terminal Unit (RTU) for power and normal operation. For instrumentation, manipulate expected values to known quantities (e.g., zero flow with pump off) and verify SCADA readings match [27].

Apply Circuit Breaker Pattern: Implement a circuit breaker object between service consumer and provider to monitor message success. If consecutive failures exceed a threshold, the breaker trips to prevent cascading failures and allows controlled recovery attempts after timeout [9].
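
A minimal sketch of the circuit breaker pattern described above follows. It assumes a breaker that opens after a fixed number of consecutive failures and allows a single trial call once a timeout has elapsed; the class, its parameters, and the `poll_rtu` function are hypothetical and do not correspond to any specific SCADA library.

```python
import time

class CircuitBreaker:
    """Opens after `max_failures` consecutive errors; retries after `reset_after` seconds."""

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the breaker is closed

    def call(self, func, *args):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise RuntimeError("circuit open: call skipped")
            self.opened_at = None  # half-open: allow one trial call
        try:
            result = func(*args)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()  # trip (or re-trip) the breaker
            raise
        self.failures = 0  # any success closes the breaker fully
        return result

def poll_rtu():
    """Hypothetical field-device poll that always fails."""
    raise ConnectionError("RTU not responding")

breaker = CircuitBreaker(max_failures=3, reset_after=30.0)
for attempt in range(4):
    try:
        breaker.call(poll_rtu)
    except ConnectionError:
        print(attempt, "poll failed")
    except RuntimeError as err:
        print(attempt, err)
```

Passing the clock in as a parameter is a design choice that lets the timeout behavior be tested without waiting in real time.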

Frequently Asked Questions (FAQs)

Q1: What are the most effective machine learning techniques for real-time SCADA anomaly detection?

The optimal technique depends on your specific requirements for accuracy, latency, and computational resources. For network-based detection in IEC 104 protocols, One-Class SVM has demonstrated stable performance for detecting various attacks [23]. For time-series sensor data, LSTM Autoencoders can achieve up to 93.6% accuracy by learning normal pattern sequences and detecting deviations [26]. When computational resources are constrained, Isolation Forest provides faster inference with lower power consumption [26]. Hybrid approaches that combine multiple techniques often provide the best balance between detection performance and operational efficiency.

Q2: How can we ensure our anomaly detection system supports overall system resilience?

Anomaly detection is one component of a comprehensive resilience strategy. Effective systems implement multiple resilience techniques including: resistance (EM shielding, authentication), detection (health checkers, checksums, denial of service monitoring), reaction (alerts, failover, degraded mode operations), and recovery (checkpointing, immutable server pattern, infrastructure as code) [9]. Specifically, for BLSS compartment failure research, your system should automatically switch to degraded mode operations when anomalies are detected, preserving critical functions while maintaining system safety [9].

Q3: What metrics should we use to evaluate our anomaly detection system's performance?

A comprehensive evaluation should include multiple metrics to provide a complete performance picture. The following table summarizes key quantitative metrics from recent research:

Table 1: Performance Metrics for Anomaly Detection Systems

| Metric | Description | Reported Performance | Context |

|---|---|---|---|

| F₁ Score | Balance of precision and recall | Increased from 0.6 to 0.9 [23] | SCADA network with attribute extension |

| Matthews Correlation Coefficient (MCC) | Overall quality of binary classification | Improved from 0.3 to 0.8 [23] | SCADA network communication |

| Area Under ROC Curve (AUC) | Overall detection capability | 0.825 [25] | Medical sedation detection |

| Accuracy (ACC) | Overall correctness | 0.741 [25] | Non-EEG physiological signals |

| Recall | Ability to find all positives | 0.86 [24] | Modbus/TCP attack detection |

| Latency | Time from data acquisition to alert | <50 ms [26] | Edge AI smart home detection |
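
For reference, the classification metrics in the table can all be derived from a confusion matrix. The sketch below uses the standard definitions of precision, recall, F₁, accuracy, and MCC; the confusion-matrix counts are hypothetical and unrelated to the cited studies.

```python
from math import sqrt

def classification_metrics(tp, fp, tn, fn):
    """Standard binary-classification metrics from confusion-matrix counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    accuracy = (tp + tn) / (tp + fp + tn + fn)
    denom = sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    mcc = (tp * tn - fp * fn) / denom if denom else 0.0
    return {"precision": precision, "recall": recall,
            "f1": f1, "accuracy": accuracy, "mcc": mcc}

# Hypothetical result: 50 true anomalies among 1000 samples.
metrics = classification_metrics(tp=40, fp=10, tn=940, fn=10)
print(metrics)
```

With rare anomalies, accuracy stays high even when a fifth of the anomalies are missed, which is why F₁ and MCC are usually the more informative numbers for this kind of detector.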

Q4: How can we handle the integration of sensor data from multiple heterogeneous sources?

Effective sensor data integration requires both technical and business process solutions. Implement standardized data formats and lexicons to create a unified view of data across sources [28]. Use embedding layers to encode categorical features based on relationships between different values, and separate categorical/numerical input data into statics and dynamics [24]. For temporal alignment, implement dynamic time windowing approaches that approximate the calculation principles of your target metrics, enabling models to incorporate short-term physiological variability [25]. Successful integration follows examples from other industries like Bluetooth standards and payment card specifications that enabled widespread interoperability [28].

Experimental Protocols & Methodologies

Protocol 1: Developing Behavioral Attribute Extension for SCADA Networks

This methodology enhances anomaly detection in IEC 60870-5-104 (IEC 104) SCADA protocol communication by extending the attribute set through topological behavior analysis [23].

Node Relationship Modeling: Model SCADA network nodes as graph vertices to construct attributes that enhance network characterization. Represent relationships between interacting SCADA nodes to capture behavioral patterns not apparent in raw data [23].

Attribute Construction: Develop features that represent both individual node behavior and relational characteristics between nodes. Focus on constructing attributes that differentiate normal and anomalous communication patterns in IEC 104 protocol traffic [23].

Anomaly Detection Implementation: Apply One-Class SVM algorithm to the extended attribute set. Utilize its proven stable performance for SCADA protocol data and ability to segregate communication network data effectively [23].

Performance Validation: Evaluate using F₁ score and Matthews Correlation Coefficient (MCC). Compare performance with and without attribute extension to quantify improvement. Benchmark against existing unsupervised detection scores in related literature [23].
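
The node relationship modeling step can be sketched as follows. The example treats each observed (source, destination) packet as a directed edge and derives simple per-node graph features. The exact attributes constructed in [23] are not listed here, so the three features below, the node names, and the traffic sample are hypothetical illustrations of the idea only.

```python
from collections import defaultdict

def node_features(packets):
    """Per-node out-degree, in-degree, and packet count from (src, dst) pairs."""
    out_peers = defaultdict(set)
    in_peers = defaultdict(set)
    sent = defaultdict(int)
    for src, dst in packets:
        out_peers[src].add(dst)   # distinct nodes this node talks to
        in_peers[dst].add(src)    # distinct nodes that talk to this node
        sent[src] += 1
    nodes = sorted(set(out_peers) | set(in_peers))
    return {n: {"out_degree": len(out_peers[n]),
                "in_degree": len(in_peers[n]),
                "packets_sent": sent[n]} for n in nodes}

# Hypothetical traffic: a master polls two RTUs, and an unknown node probes both.
packets = [
    ("master", "rtu1"), ("rtu1", "master"),
    ("master", "rtu2"), ("rtu2", "master"),
    ("master", "rtu1"),
    ("rogue", "rtu1"), ("rogue", "rtu2"),
]
features = node_features(packets)
print(features["rtu1"], features["rogue"])
```

A node that sends to several RTUs but never receives replies stands out in these features even when each individual packet looks normal, which is the kind of relational signal a one-class model can then learn from.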

Protocol 2: Implementing Sequence-to-Sequence Autoencoder for Network Anomaly Detection

This protocol details implementation of a deep learning approach for detecting data manipulation attacks in Modbus/TCP-based SCADA systems [24].

Model Architecture Design: Implement a sequence-to-sequence Autoencoder using Long Short-Term Memory (LSTM) units. Incorporate an embedding layer to encode categorical features based on relationships between different values. Apply teacher forcing technique using original inputs from prior time steps as Decoder inputs to prevent deviation and enable faster convergence [24].

Input Data Separation: Separate categorical/numerical input data into statics and dynamics. Process static and dynamic features through appropriate pathways to improve model learning and generalization [24].

Attention Mechanism Integration: Incorporate attention mechanisms to make the model more efficient at each time step. This enhances the model's ability to focus on relevant portions of input sequences when detecting anomalies [24].

Threshold Determination: Establish detection thresholds based on precision-recall curves on validation data sets. This data-driven approach optimizes the balance between detection sensitivity and false positive rates [24].

System Architecture & Workflows

System Architecture for Resilient Anomaly Detection

Research Reagents & Essential Materials

Table 2: Essential Research Components for SCADA Anomaly Detection Systems

| Component | Function | Implementation Examples |
|---|---|---|
| Behavioral Attribute Extension | Enhances network characterization by modeling node relationships | Graph-based features for IEC 104 protocol [23] |
| Sequence-to-Sequence Autoencoder | Learns normal network patterns to detect deviations | LSTM with attention mechanism for Modbus/TCP [24] |
| Hybrid Detection Models | Balances accuracy and computational efficiency | Isolation Forest + LSTM Autoencoder on Edge devices [26] |
| Resilience Techniques | Maintains system operation during adverse conditions | Circuit breaker, checkpointing, degraded mode operations [9] |
| Edge AI Optimization | Enables real-time processing on resource-constrained devices | Model quantization, federated learning, power-efficient inference [26] |
| Sensor Data Integration | Combines multiple data sources for comprehensive monitoring | Standardized formats, dynamic time windowing, embedding layers [28] [25] |

Technical Support Center

Troubleshooting Guides

Q: What are the initial steps when a pressure loss is detected in a single BLSS compartment? A systematic approach is required to diagnose and contain the failure. Follow this logical sequence of steps to understand and isolate the problem [21] [29]:

- Confirm and Characterize the Failure: Use sensor data to confirm the pressure reading is not an instrumentation error. Determine the rate of pressure loss (sudden vs. gradual).

- Isolate the Compartment: Immediately initiate the closure of the primary and secondary isolation valves for the affected compartment. This prevents the failure from propagating to other parts of the system [30] [31].

- Activate Bypass Pathways: Engage the appropriate fluid or gas bypass circuits to re-route essential resources around the compromised compartment, maintaining overall system function [32] [31].

- Diagnose Root Cause: While the system is stabilized via the bypass, investigate the root cause. This may involve checking for simulated blockages, valve actuator failures, or leaks in membrane filters.
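The "confirm and characterize" step above can be sketched as a least-squares estimate of the pressure trend over a short window. The units (kPa, seconds), the noise floor, and the sudden-loss threshold are placeholder assumptions for illustration; real values would come from the compartment's design limits and sensor specifications.

```python
def pressure_loss_rate(times_s, pressures_kpa):
    """Least-squares slope of pressure vs. time in kPa/s; negative means loss."""
    n = len(times_s)
    if n < 2 or n != len(pressures_kpa):
        raise ValueError("need at least two paired samples")
    mean_t = sum(times_s) / n
    mean_p = sum(pressures_kpa) / n
    sxx = sum((t - mean_t) ** 2 for t in times_s)
    if sxx == 0:
        raise ValueError("timestamps must not all be identical")
    sxy = sum((t - mean_t) * (p - mean_p) for t, p in zip(times_s, pressures_kpa))
    return sxy / sxx

def classify_pressure_event(times_s, pressures_kpa,
                            noise_floor=0.005, sudden_rate=0.5):
    """Classify a window of readings; both thresholds (kPa/s) are assumed values."""
    rate = pressure_loss_rate(times_s, pressures_kpa)
    if rate > -noise_floor:
        return "no confirmed loss"   # trend is within the assumed sensor noise
    if rate <= -sudden_rate:
        return "sudden"              # e.g. a simulated rupture
    return "gradual"                 # e.g. a slow leak

# Hypothetical five-second windows sampled once per second.
t = [0, 1, 2, 3, 4]
print(classify_pressure_event(t, [101.3, 101.2, 101.1, 101.0, 100.9]))
print(classify_pressure_event(t, [101.3, 99.3, 97.3, 95.3, 93.3]))
print(classify_pressure_event(t, [101.3, 101.3, 101.3, 101.3, 101.3]))
```

Fitting a slope over several samples, rather than comparing two readings, makes the classification less sensitive to a single spurious sensor value.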

Q: The system's resource re-routing is inefficient, leading to suboptimal recovery times. How can this be improved? Inefficient re-routing often stems from static protocols that cannot adapt to dynamic failure conditions. Implement a dynamic adaptive re-routing strategy [32] [33].

- Implement Real-Time Data Integration: Ensure the re-routing logic receives live data on resource availability, valve states, and pressure differentials across all compartments [33].

- Utilize Incremental Computation: Employ algorithms that recalculate optimal pathways incrementally as new data arrives (e.g., a new blockage is identified), rather than recomputing from scratch, which reduces latency [33].

- Compare Pathway Options: Evaluate multiple potential re-routing paths (k-shortest paths) based on criteria such as flow resistance, volume capacity, and energy consumption to select the most efficient one [32].
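The pathway comparison above can be illustrated with a small k-shortest-paths search. This sketch uses a plain best-first enumeration of loop-free paths rather than the algorithm from [32], and it is only practical for small networks. The node names and edge costs (standing in for flow resistance) are hypothetical.

```python
import heapq

def k_shortest_paths(graph, source, target, k):
    """Return up to k lowest-cost loop-free paths as (cost, [nodes]) tuples.

    graph maps node -> {neighbor: non-negative cost}. Partial paths are
    expanded in order of increasing cost, so complete paths are found
    cheapest first.
    """
    heap = [(0.0, [source])]
    found = []
    while heap and len(found) < k:
        cost, path = heapq.heappop(heap)
        node = path[-1]
        if node == target:
            found.append((cost, path))
            continue
        for neighbor, weight in graph.get(node, {}).items():
            if neighbor not in path:          # keep paths loop-free
                heapq.heappush(heap, (cost + weight, path + [neighbor]))
    return found

def block_edge(graph, u, v):
    """Return a copy of the graph with edge u -> v removed (simulated blockage)."""
    return {n: {m: w for m, w in nbrs.items() if (n, m) != (u, v)}
            for n, nbrs in graph.items()}

# Hypothetical gas-loop topology; costs are illustrative flow resistances.
network = {
    "producer": {"bypass_a": 1.0, "bypass_b": 2.0},
    "bypass_a": {"crew": 4.0, "bypass_b": 0.5},
    "bypass_b": {"crew": 1.0},
}

print(k_shortest_paths(network, "producer", "crew", k=2))
# A new blockage between the two bypass lines changes the ranking.
print(k_shortest_paths(block_edge(network, "bypass_a", "bypass_b"),
                       "producer", "crew", k=2))
```

This example recomputes the ranking from scratch after the blockage. An incremental approach, as described in the list above, would update only the paths that used the blocked edge.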

Q: A bypass valve fails to open or close during a simulated compartment failure. What is the diagnostic protocol? This is a critical failure point that requires immediate isolation and diagnosis [21].

- Isolate the Valve: Manually override and close the upstream and downstream isolation valves for the faulty bypass valve to take it out of the circuit [30].

- Check Actuator and Power Supply: Verify the electrical or pneumatic signal to the valve actuator. Use a multimeter (electrical actuators) or a pressure gauge (pneumatic actuators) to confirm that the expected voltage or pressure is reaching the actuator.

- Inspect for Mechanical Obstruction: With the valve isolated and power disconnected, inspect for internal obstructions or mechanical seizure. This may require physical disassembly in a simulated environment.

- Verify Control Logic: Check the system's control unit to ensure the command signal to open/close the valve was sent correctly and was not overridden by a higher-priority safety interlock.

Frequently Asked Questions (FAQs)

Q: How do you validate that a dynamic response strategy will work under unexpected failure conditions? Validation combines high-fidelity simulation with physical testing. A traffic scenario model built to imitate real events can serve as an analogue for testing re-routing strategies under varying failure intensities and locations [32]. In the cited work, the model automatically identifies congestion patterns (the analogue of blockages) and initiates a suitable re-routing strategy in a timely manner [32].

Q: What is the most common point of failure in valve-based isolation systems? Based on post-disaster recovery analysis of critical infrastructures, interdependencies between systems are a key factor [34]. The most common points of failure are often not the valves themselves, but the interdependencies with their support systems, such as the electrical power for automated valve actuators or the control system network. Ensuring the resiliency of these power systems is paramount for the recovery of the entire infrastructure [34].

Q: Why is it critical to change only one variable at a time during troubleshooting? Changing one variable at a time is a fundamental principle of the scientific method and is critical for isolating the root cause of a problem. If you change multiple things at once and the problem is resolved, you cannot know which change fixed the issue. This leads to an unreliable understanding of the system and an unrepeatable solution [21].

The following tables summarize key performance metrics and parameters from the cited methodologies.

Table 1: Dynamic Adaptive Re-routing Algorithm Performance [32]

| Metric | Description | Simulated Result / Value |
|---|---|---|
| Congestion Mitigation | Algorithm's effectiveness in alleviating traffic congestion in a grid network. | Outperformed comparable methods under heavy traffic conditions. |
| k-Shortest Path (kSP) Inspiration | Basis for the re-routing strategy, evaluating multiple potential pathways. | Adapted with a dynamic congestion re-routing strategy. |
| Model Basis | Foundation for the testing scenario. | A custom-designed, medium-scale grid traffic network model. |

Table 2: Valve Functional Specifications [30] [31]

| Component | Key Feature / Parameter | Function in System |
|---|---|---|
| Radiator Isolation & Bypass Valve | Adjustable bypass ratio; built-in shut-off for supply/return lines. | Prevents flow disruption in a 1-pipe system by allowing bypass during isolation [30]. |
| Dual-Action Bypass Sub | Two sets of ports; two internal ball seats; can be run in open or closed position. | Enables jetting/cleaning while running in or pulling out of hole; used as a bypass valve [31]. |

Experimental Protocols

Protocol 1: Evaluating Compartment Isolation and Bypass Activation Time

Objective: To quantitatively measure the time required to fully isolate a compromised BLSS compartment and establish a stable bypass pathway, under different failure scenarios.

Methodology:

- Instrumentation: Ensure all isolation valves, bypass valves, and critical pressure/flow sensors are connected to a data acquisition system with millisecond time resolution.

- Baseline Establishment: For each test scenario, run the system to a steady state and record all baseline parameters.

- Failure Induction: Initiate a simulated failure in a target compartment. Example failures include a rapid pressure decay (simulating a rupture) or a slow pressure increase (simulating a blockage).

- Data Recording: The data acquisition system should automatically record:

  - Time T~0~: The moment the failure is detected by the system's sensors.

  - Time T~1~: The moment the primary and secondary isolation valves for the compartment achieve a fully closed state.

  - Time T~2~: The moment the designated bypass valve is fully open and stable flow is confirmed via sensors.

  - Pressure P~B~ and Flow F~B~ in the bypass circuit once stable.

- Analysis: Calculate key metrics: Isolation Time (T~1~ - T~0~), Bypass Stabilization Time (T~2~ - T~0~), and system efficiency post-bypass.
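The timing metrics in the analysis step reduce to simple differences between logged timestamps. The sketch below assumes millisecond timestamps from the data acquisition system; the `IsolationTrial` class, its field names, and the two example trials are hypothetical.

```python
from dataclasses import dataclass
from statistics import mean

@dataclass
class IsolationTrial:
    """Timestamps (ms) logged for one simulated compartment failure."""
    t0_ms: int  # failure detected by sensors
    t1_ms: int  # primary and secondary isolation valves fully closed
    t2_ms: int  # bypass valve fully open and stable flow confirmed

    def __post_init__(self):
        # Detection must precede both isolation and bypass confirmation.
        if self.t1_ms < self.t0_ms or self.t2_ms < self.t0_ms:
            raise ValueError("T1 and T2 must not precede T0")

    @property
    def isolation_time_ms(self):
        return self.t1_ms - self.t0_ms        # T1 - T0

    @property
    def bypass_stabilization_time_ms(self):
        return self.t2_ms - self.t0_ms        # T2 - T0

def summarize(trials):
    """Mean isolation and bypass stabilization times across repeated trials."""
    return {
        "mean_isolation_ms": mean([t.isolation_time_ms for t in trials]),
        "mean_bypass_stabilization_ms": mean(
            [t.bypass_stabilization_time_ms for t in trials]),
    }

# Hypothetical timestamps from two runs of the same failure scenario.
trials = [IsolationTrial(1000, 1450, 2300), IsolationTrial(5000, 5400, 6150)]
print(summarize(trials))
```

Repeating each scenario several times and reporting the spread as well as the mean gives a more defensible comparison between failure types.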

Protocol 2: Testing the Resiliency of Interdependent Systems

Objective: To validate the interdependencies identified between the primary flow system (the analogue of the power systems studied in [34]) and the other critical support systems following a compartment failure event [34].

Methodology:

- System Mapping: Identify and document all interdependent systems (e.g., electrical power for valve actuators, control system network, data processing unit).

- Define Metrics: Establish quantitative recovery metrics for each system (e.g., for power: voltage stability; for network: data packet loss).

- Induce Cascade: Initiate a primary compartment failure and record the subsequent failure or performance degradation in the interdependent systems.

- Monitor Recovery: As dynamic response strategies are deployed (isolation, bypass, re-routing), meticulously track the recovery trajectory of each system.

- Validation: Analyze the recovery data to quantify the strength of the interdependencies. A strong interdependency is indicated if the recovery of the support system is a direct prerequisite for the recovery of the primary flow system, and vice versa [34].
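A first-pass check on the recovery data is to extract a recovery time for each system and compare their ordering. In this sketch, "recovered" is assumed to mean the metric returns to within a tolerance of its baseline and stays there. The tolerance values and sample series are hypothetical, and ordering alone is a necessary but not sufficient indication of a prerequisite relationship.

```python
def recovery_time(samples, baseline, tolerance):
    """Earliest time after which every later sample stays within tolerance.

    samples is a time-ordered list of (time_s, value) pairs. Returns None if
    the metric never settles back within the tolerance band.
    """
    recovered_at = None
    for time_s, value in samples:
        if abs(value - baseline) <= tolerance:
            if recovered_at is None:
                recovered_at = time_s      # start of a candidate recovery
        else:
            recovered_at = None            # excursion: discard the candidate
    return recovered_at

def recovers_first(support_time, primary_time):
    """True when the support system recovered no later than the primary one."""
    if support_time is None or primary_time is None:
        return False
    return support_time <= primary_time

# Hypothetical traces after an induced failure at t = 0 s.
actuator_voltage = [(0, 28.0), (5, 22.0), (10, 24.5), (15, 27.8), (20, 28.1)]
bypass_flow = [(0, 10.0), (5, 4.0), (10, 4.5), (15, 6.0), (20, 9.0), (25, 9.8)]

power_recovery = recovery_time(actuator_voltage, baseline=28.0, tolerance=0.5)
flow_recovery = recovery_time(bypass_flow, baseline=10.0, tolerance=0.5)
print(power_recovery, flow_recovery, recovers_first(power_recovery, flow_recovery))
```

If the support system consistently recovers first across repeated trials and failure locations, that supports treating it as a prerequisite in the interdependency map.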

System Workflow and Interdependency Diagrams

Troubleshooting Process Flow

System Interdependency Map

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for BLSS Resilience Experimentation

| Item | Function / Explanation |
|---|---|
| Isolation Valve Actuators | Automated components that physically open or close valves upon an electrical signal. Critical for rapid, remote isolation of failed compartments. |
| Bypass Valves with Adjustable Ratio | Valves that can be configured to allow a specific percentage of flow to bypass a main pathway. Essential for fine-tuning resource re-routing around a failure point [30]. |
| Dual-Action Bypass Sub | A specialized valve tool that can be run in an open position for cleaning/jetting and then closed for normal circulation. Analogous to a multi-mode bypass for managing debris during a failure event [31]. |
| k-Shortest Path (kSP) Algorithm | A computational method used to find several potential pathways between two points, not just the absolute shortest. The foundation for dynamic adaptive re-routing strategies that evaluate multiple options [32]. |
| Real-Time Data Integration Platform | Software that unifies fresh data from disparate sources (sensors, valves, controllers). Provides the foundational, trustworthy data required for correct and timely dynamic responses [33]. |
| Incremental Computation Engine | A system that recalculates outputs (like optimal routes) by only processing new data changes. Dramatically reduces latency, enabling sub-second re-routing decisions in complex systems [33]. |

Multi-Objective Optimization for Balancing Resilience, Cost, and Performance

FAQs and Troubleshooting Guides

Frequently Asked Questions

Q1: What is the core challenge of multi-objective optimization in resilience engineering? The core challenge is balancing conflicting objectives, such as minimizing economic loss, reducing repair time or population dislocation, and maintaining system functionality, when no single solution optimizes all of them simultaneously. The usual approach is to find a set of Pareto-optimal solutions that represent the best available trade-offs [35].
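Pareto optimality can be made concrete with a small dominance filter. In this sketch all objectives are minimized; the strategy names and their objective values (repair time in hours, cost in arbitrary units, fraction of functionality lost) are invented for illustration.

```python
def dominates(a, b):
    """a dominates b if it is no worse in every objective and better in one.

    All objectives are assumed to be minimized.
    """
    return (all(x <= y for x, y in zip(a, b))
            and any(x < y for x, y in zip(a, b)))

def pareto_front(candidates):
    """Keep the candidates that no other candidate dominates.

    candidates maps a strategy name to a tuple of objective values.
    """
    return {
        name: objectives
        for name, objectives in candidates.items()
        if not any(dominates(other, objectives)
                   for other_name, other in candidates.items()
                   if other_name != name)
    }

# Hypothetical recovery strategies: (repair time h, cost, functionality loss).
strategies = {
    "fast_repair": (4, 10, 0.30),
    "balanced": (8, 6, 0.20),
    "slow_costly": (9, 7, 0.25),   # worse than "balanced" on every objective
    "emergency": (3, 15, 0.35),
}

print(sorted(pareto_front(strategies)))
```

The filter only removes strategies that are strictly worse. Choosing among the remaining trade-offs is still a decision for the operator or a preference model.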

Q2: How can I prevent reward hacking when using data-driven predictive models for optimization? Reward hacking occurs when optimization algorithms exploit inaccuracies in predictive models for data points far outside the training dataset. To prevent this, implement a reliability framework like DyRAMO that uses Applicability Domains (AD) for each predictive model. This ensures that designed solutions or strategies fall within the chemical or parameter space where your property predictions are reliable [36].

Q3: My evolutionary algorithm converges to solutions with low diversity. How can I improve it? To maintain population diversity in evolutionary algorithms, avoid over-reliance on similarity to a single lead structure. Incorporate a Tanimoto similarity-based crowding distance calculation within your multi-objective algorithm (e.g., an improved NSGA-II). This better captures structural differences and prevents premature convergence to local optima [37].
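The Tanimoto similarity itself is simple to compute on set-valued encodings. The sketch below is not the crowding distance defined in [37]; it shows only the similarity measure and one illustrative diversity score (mean dissimilarity to the rest of the population). Encoding each candidate as a set of integer feature identifiers is an assumption.

```python
def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity of two feature sets: |A & B| / |A | B|."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 1.0

def mean_dissimilarity(index, population):
    """Average of (1 - Tanimoto) from one individual to all the others.

    Higher values mark individuals in sparsely populated regions, which a
    diversity-preserving selection step would prefer to keep.
    """
    others = [ind for i, ind in enumerate(population) if i != index]
    if not others:
        return 0.0
    target = population[index]
    return sum(1.0 - tanimoto(target, other) for other in others) / len(others)

# Hypothetical population: each candidate is a set of feature identifiers.
population = [{1, 2, 3, 4}, {1, 2, 3, 5}, {7, 8, 9}]

for i in range(len(population)):
    print(i, round(mean_dissimilarity(i, population), 3))
```

In this example the third candidate shares no features with the others, so it receives the highest score and would be favored when ties on Pareto rank are broken by diversity.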

Q4: What is the benefit of a multi-objective approach over single-objective optimization for post-failure recovery? A single-objective approach may maximize one metric, such as system functionality, but at an unacceptable cost or repair time. A multi-objective framework simultaneously optimizes for several key metrics (e.g., hydraulic recovery, repair time, and repair cost), allowing decision-makers to select a balanced strategy that offers the most favorable overall outcome for a specific situation [38].