Building End-to-End Non-Destructive Plant Phenotyping Workflows: From Foundational Concepts to Validated Applications

Abstract

This article provides a comprehensive guide to developing and implementing end-to-end workflows for non-destructive plant phenotyping. It explores the foundational principles of optical sensing and the limitations of traditional methods, then details the integration of advanced imaging technologies like multimodal 3D imaging, hyperspectral sensors, and AI-based analysis. The content covers methodological applications across various plant species and stress scenarios, addresses key challenges in data processing and platform selection, and validates these approaches through comparative analysis with conventional techniques. Aimed at researchers and scientists, this review synthesizes current advancements to empower the development of robust, high-throughput phenotyping systems for precision agriculture and plant research.

The Principles and Evolution of Non-Destructive Plant Phenotyping

Non-destructive phenotyping represents a paradigm shift in plant science, enabling researchers to quantify plant traits without damaging or destroying the sample. This approach involves using advanced sensors and imaging technologies to capture detailed morphological, physiological, and biochemical information from plants throughout their development cycle [1]. The core principle centers on maintaining sample integrity while collecting high-dimensional phenotypic data, allowing for repeated measurements on the same plant over time. This capability is revolutionizing how researchers monitor plant growth, assess stress responses, and accelerate breeding programs by providing dynamic insights into plant development and function.

The technological foundation of non-destructive phenotyping rests on multiple sensing modalities that capture different aspects of plant biology. These include visible light imaging (RGB), hyperspectral and multispectral imaging, thermal imaging, fluorescence sensing, X-ray computed tomography (CT), magnetic resonance imaging (MRI), and 3D reconstruction techniques [1] [2] [3]. Each modality offers unique advantages for assessing specific plant traits, from overall biomass and structure to internal tissue integrity and physiological function. When integrated through sophisticated data analytics, these technologies provide a comprehensive understanding of plant phenotype that was previously unattainable through conventional destructive methods.

Core Concepts and Defining Characteristics

Non-destructive phenotyping is defined by several interconnected core concepts that distinguish it from traditional approaches. Understanding these fundamental principles is essential for effectively implementing these methodologies in plant research.

Fundamental Principles

High-Throughput Data Collection: Advanced phenotyping platforms automate the measurement process, enabling rapid assessment of large plant populations. This scalability is crucial for breeding programs and genetic studies where thousands of genotypes must be evaluated [4]. Throughput is enhanced by automated conveyor systems, robotics, and unmanned aerial vehicles that minimize human intervention while maximizing data acquisition speed.

Non-Destructive Assessments: The preservation of sample integrity allows for repeated measurements on the same plants throughout their life cycle [4]. This longitudinal monitoring captures dynamic developmental processes and transient responses to environmental stimuli, providing temporal data trajectories that are impossible to obtain through destructive sampling.

Real-Time Analysis and Decision-Making: Integrated data processing pipelines transform raw sensor data into actionable insights rapidly, often through cloud-based platforms and automated analysis workflows [4]. This immediacy enables researchers to make timely interventions and adjustments to experimental conditions based on current phenotypic status.

Operational Framework

The operational framework for non-destructive phenotyping typically follows a structured workflow: (1) sample preparation and mounting, (2) automated or semi-automated image acquisition using multiple sensors, (3) data preprocessing and storage, (4) feature extraction and trait quantification, and (5) data visualization and interpretation [5] [2]. This systematic approach ensures consistency and reproducibility across measurements and experimental sessions.

A key conceptual advancement is the end-to-end workflow that connects raw data acquisition directly to phenotypic predictions without intermediate destructive validation. For example, recent research demonstrates complete pipelines where multimodal 3D imaging of grapevine trunks combines with machine learning to automatically classify tissue health status without physical dissection [2]. Similarly, deep learning regression models can directly compute phenotypic traits from image data, bypassing traditional segmentation steps that can introduce errors [5].

Comparative Advantages Over Destructive Methods

Non-destructive phenotyping offers significant advantages across multiple research domains, fundamentally enhancing what is possible in plant science.

Table 1: Comparative Analysis of Phenotyping Approaches

| Parameter | Destructive Methods | Non-Destructive Methods |
|---|---|---|
| Sample Integrity | Samples destroyed during measurement | Samples remain intact for repeated use |
| Temporal Resolution | Single time point per sample | Multiple time points from same sample |
| Data Type | Static snapshot | Dynamic developmental trajectories |
| Throughput | Limited by manual processing | High-throughput with automation |
| Trait Coverage | Often limited to single traits | Multiple traits simultaneously |
| Early Detection | Difficult for subtle changes | Sensitive to pre-symptomatic changes |
| Labor Requirements | High manual effort | Reduced human intervention |
| Longitudinal Studies | Requires large sample sizes | Smaller populations sufficient |

Scientific Advantages

The ability to monitor the same plants throughout development enables researchers to capture growth dynamics and temporal patterns that are completely missed by destructive approaches [4]. This longitudinal dimension is particularly valuable for understanding plant responses to gradually changing environmental conditions or transient stress events. For instance, daily imaging of oak trees in drought tolerance research allowed researchers to track the dynamics of tree development and understand the evolution of each variety's resilience to climate change [4].

Non-destructive methods also enable the detection of subtle, pre-symptomatic responses to stresses before visible symptoms appear. Hyperspectral imaging can reveal biochemical changes in leaves associated with herbicide damage, nutrient deficiencies, or pathogen infections at stages when interventions are most effective [3]. This early-warning capability significantly enhances research on plant stress physiology and resistance mechanisms.

Practical and Economic Advantages

From a practical standpoint, non-destructive phenotyping reduces the sample sizes required for statistical power in experiments. Since each plant serves as its own control across time points, fewer individuals are needed to detect significant treatment effects [4]. This efficiency translates to substantial cost savings in terms of materials, growth space, and labor.
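To make the sample-size argument concrete, the sketch below applies the standard normal-approximation formulas for detecting a mean change `delta` between two time points. It is an illustration, not the analysis in [4]: `sigma` (between-plant standard deviation), `rho` (an assumed correlation between repeated measurements of the same plant), and the function names are hypothetical. A destructive design needs a separate group of plants at each time point, whereas a non-destructive design measures one cohort twice.

```python
import math
from statistics import NormalDist


def _z_total(alpha, power):
    # Two-sided critical value plus the normal quantile for the desired power.
    nd = NormalDist()
    return nd.inv_cdf(1 - alpha / 2) + nd.inv_cdf(power)


def n_independent_per_group(delta, sigma, alpha=0.05, power=0.80):
    """Plants per time point when every time point needs its own (destroyed) group."""
    return math.ceil(2 * (_z_total(alpha, power) * sigma / delta) ** 2)


def n_paired(delta, sigma, rho, alpha=0.05, power=0.80):
    """Plants in total when the same plants are measured at both time points."""
    if not -1 < rho < 1:
        raise ValueError("rho must lie strictly between -1 and 1")
    var_diff = 2 * sigma ** 2 * (1 - rho)  # variance of within-plant differences
    return math.ceil(_z_total(alpha, power) ** 2 * var_diff / delta ** 2)
```

With `delta = sigma = 1` and `rho = 0.5`, `n_independent_per_group` returns 16 (32 plants harvested across the two time points), while `n_paired` returns 8 plants in total; a stronger correlation between repeated measurements shrinks the paired requirement further.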

The automation inherent in advanced phenotyping systems also eases human resource constraints. For example, the IPENS framework can extract grain-level point clouds for multiple targets within three minutes from a single round of image interaction, a task that would otherwise require extensive manual effort [6]. This efficiency gain allows researchers and breeders to screen larger populations more quickly, accelerating the selection process in breeding programs.

Application Notes and Experimental Protocols

Protocol 1: Multimodal 3D Imaging for Internal Tissue Health Assessment

This protocol outlines the procedure for non-destructive assessment of internal tissue structure in woody plants using combined X-ray CT and MRI, adapted from grapevine trunk disease studies [2].

Research Reagent Solutions: Table 2: Essential Materials for Multimodal 3D Imaging

| Item | Specification | Function |
|---|---|---|
| X-ray CT System | Clinical or micro-CT scanner | Visualizes internal tissue density and structure |
| MRI Scanner | Preferably 3 T or higher field strength | Assesses physiological status and water distribution |
| Plant Mounting Apparatus | Customizable, non-metallic | Secures plant during imaging while avoiding artifacts |
| Registration Software | Custom algorithm or commercial solution | Aligns multimodal 3D image datasets |
| Machine Learning Classifier | Random Forest, SVM, or deep learning | Automates voxel classification into tissue health categories |

Step-by-Step Procedure:

Sample Preparation: Select intact plants spanning the range of health status of interest. Secure each plant in a custom mounting apparatus to ensure stability during imaging. For grapevines, use a minimum of twelve plants, including both symptomatic and asymptomatic individuals based on their foliar symptom history [2].

Multimodal Image Acquisition:

- Acquire X-ray CT images using standardized parameters (e.g., 120 kV tube voltage, 200 μA current, 0.5-1.0 mm slice thickness).

- Perform MRI acquisitions using multiple protocols: T1-weighted, T2-weighted, and PD-weighted sequences to capture different tissue properties.

- Maintain consistent positioning between imaging sessions to facilitate subsequent registration.

Data Registration and Preprocessing:

- Apply automatic 3D registration pipeline to align all multimodal images into a unified coordinate system [2].

- Resample images to consistent voxel dimensions (e.g., 0.5×0.5×0.5 mm³) across all modalities.

- Normalize signal intensities within and between imaging sessions to account for instrument variability.
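The resampling and normalization steps can be sketched with NumPy as below. This is a minimal illustration assuming the volumes are already registered; nearest-neighbour resampling (which keeps label volumes valid) and z-score standardization are swapped-in choices, not necessarily what the pipeline in [2] uses, and the function names are hypothetical. The optional `mask` lets the statistics be computed from plant voxels only, excluding air and the mounting apparatus.

```python
import numpy as np


def resample_nearest(volume, spacing_mm, target_mm=(0.5, 0.5, 0.5)):
    """Nearest-neighbour resampling of a 3D array to a target voxel size (mm)."""
    volume = np.asarray(volume)
    index_per_axis = []
    for n, s, t in zip(volume.shape, spacing_mm, target_mm):
        m = max(1, int(round(n * s / t)))  # output length along this axis
        centres = (np.arange(m) + 0.5) * t / s  # output voxel centres in source-index units
        index_per_axis.append(np.minimum(np.floor(centres).astype(int), n - 1))
    return volume[np.ix_(*index_per_axis)]


def zscore(volume, mask=None):
    """Standardize intensities to zero mean and unit variance (optionally within `mask`)."""
    v = np.asarray(volume, dtype=float)
    ref = v[np.asarray(mask, dtype=bool)] if mask is not None else v
    std = ref.std()
    if std == 0:
        raise ValueError("constant intensities cannot be standardized")
    return (v - ref.mean()) / std
```

Applying `zscore` per modality and per session puts CT densities and the different MRI weightings on comparable scales before they are stacked as classifier features.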

Expert Annotation and Training:

- Following imaging, carefully section plants and photograph both sides of each cross-section (approximately 120 pictures per plant).

- Have domain experts manually annotate random cross-sections according to visual inspection, defining tissue classes including: healthy-looking tissues, black punctuations, reaction zones, dry tissues, necrosis, and white rot [2].

- Map these 2D annotations to the 3D imaging data using the registration pipeline.

Machine Learning Classification:

- Train a segmentation model using the annotated data to automatically classify voxels into three simplified tissue categories: 'intact' (functional or nonfunctional healthy tissues), 'degraded' (necrotic and altered tissues), and 'white rot' (decayed wood).

- Validate model performance using cross-validation and compute accuracy metrics (the reference grapevine study reported a global accuracy above 91% [2]).
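The accuracy metrics can be computed from flattened voxel labels as in the sketch below (function names are hypothetical). Per-class recall is included because a high global accuracy can hide a poorly classified minority class, such as white rot occupying relatively few voxels.

```python
from collections import Counter

CLASSES = ("intact", "degraded", "white rot")


def confusion_matrix(reference, predicted, classes=CLASSES):
    """Rows = expert reference class, columns = predicted class (voxel counts)."""
    counts = Counter(zip(reference, predicted))
    return [[counts[(r, p)] for p in classes] for r in classes]


def global_accuracy(reference, predicted):
    """Fraction of annotated voxels whose predicted class matches the expert label."""
    if len(reference) != len(predicted) or not reference:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(r == p for r, p in zip(reference, predicted)) / len(reference)


def per_class_recall(cm, classes=CLASSES):
    """Recall per class from a confusion matrix (NaN if a class has no reference voxels)."""
    return {
        c: (row[i] / sum(row) if sum(row) else float("nan"))
        for i, (c, row) in enumerate(zip(classes, cm))
    }
```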

Quantification and Analysis:

- Calculate the volumetric proportions of each tissue class within the entire plant or specific regions of interest.

- Correlate internal tissue distribution with external symptom expression and historical data.

- Generate 3D visualization maps of tissue health status for comparative analysis.
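Volumetric proportions follow directly from voxel counts and the voxel size. The sketch below assumes the classified volume has been flattened into per-voxel labels with non-plant voxels marked `"background"`; the label string and function name are hypothetical, and the default voxel size matches the 0.5 mm example above.

```python
from collections import Counter


def tissue_volumes(labels, voxel_size_mm=(0.5, 0.5, 0.5), background="background"):
    """Volume (mm^3) and proportion of each tissue class in a labelled volume.

    `labels` is any iterable of per-voxel class labels (e.g. a flattened 3D array
    restricted to the trunk or a region of interest); background voxels are ignored.
    """
    vx, vy, vz = voxel_size_mm
    voxel_mm3 = vx * vy * vz
    counts = Counter(label for label in labels if label != background)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {
        cls: {"volume_mm3": n * voxel_mm3, "proportion": n / total}
        for cls, n in counts.items()
    }
```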

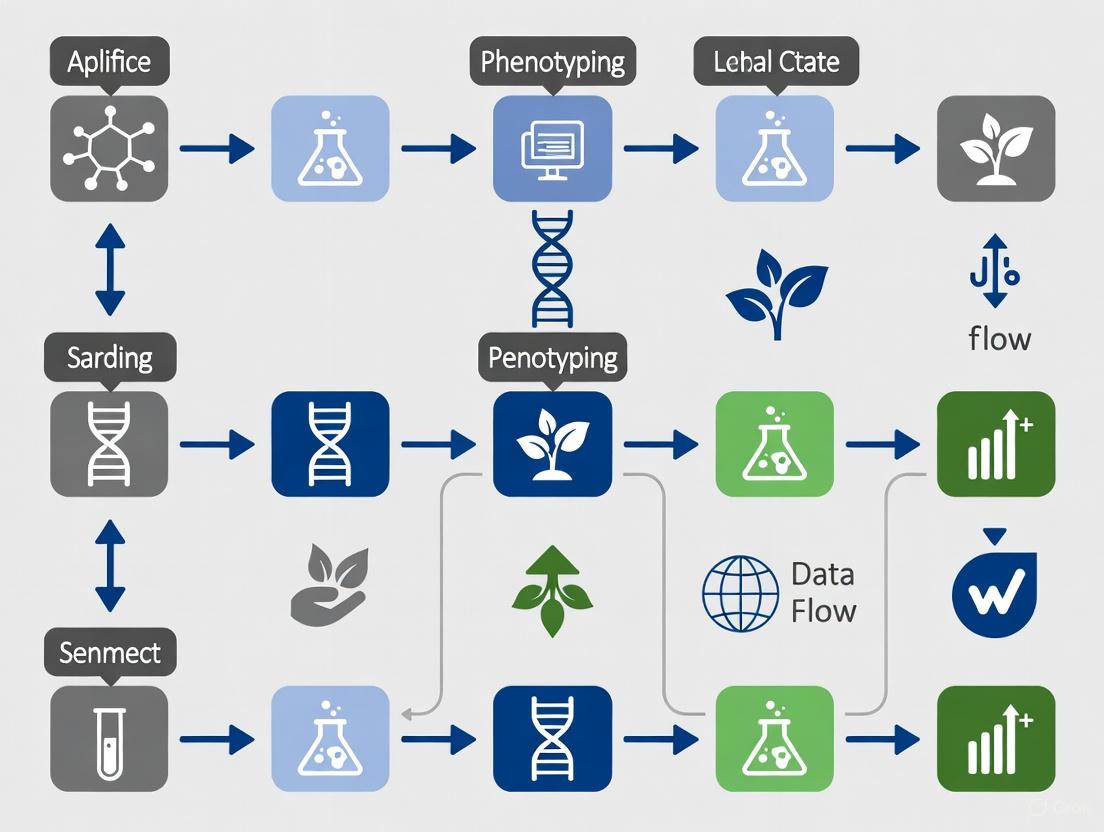

Multimodal tissue analysis workflow.

Protocol 2: End-to-End Deep Learning for Automated Trait Extraction

This protocol describes an end-to-end approach to directly compute phenotypic traits from images using deep learning regression models, bypassing intermediate segmentation steps [5].

Research Reagent Solutions: Table 3: Essential Materials for End-to-End Deep Learning Phenotyping

| Item | Specification | Function |
|---|---|---|
| Imaging System | LemnaTec-Scanalyzer3D or equivalent | Standardized image acquisition under controlled conditions |
| Computing Hardware | GPU-accelerated workstation (NVIDIA recommended) | Model training and inference |
| Deep Learning Framework | MATLAB R2024a, Python/TensorFlow, or PyTorch | Implementation of neural network architectures |
| Data Annotation Tool | kmSeg or similar semi-automated software | Efficient ground truth generation for model training |
| Validation Dataset | 1,476+ images with accurate annotations | Model training and performance assessment |

Step-by-Step Procedure:

Image Data Acquisition and Preparation:

- Acquire visible light images of plants (Arabidopsis, maize, barley, etc.) using standardized phenotyping platforms (e.g., LemnaTec-Scanalyzer3D) [5].

- Maintain consistent imaging protocols throughout experiments, including fixed camera resolutions, background illumination, and photochamber installations.

- For model training, curate a set of images (e.g., 1,476 images) with accurate annotation of foreground and background regions as ground-truth data.

Ground-Truth Trait Calculation:

- Compute target phenotypic traits from ground-truth segmented images for all images in the training set. Key traits include:

- Plant area and convex hull (growth vigor indicators)

- Plant height and width (morphological information)

- Average red, green, and blue colors (health status and nutrient content) [5]

- These calculated values serve as the target variables for end-to-end model training.
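The ground-truth traits listed above can be derived from a binary segmentation mask and the corresponding RGB image. The sketch below is an illustration under stated assumptions, not the trait definitions of [5]: values are in pixels (convert with the imaging scale), height and width are taken as the bounding-box extent of an upright plant in a side view, and the convex hull is computed from pixel centres with Andrew's monotone chain, which slightly underestimates the hull of the pixel corners. Function names are hypothetical.

```python
import numpy as np


def _cross(o, a, b):
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def convex_hull_area(points):
    """Area of the convex hull of 2D points (monotone chain + shoelace formula)."""
    pts = sorted(set(points))
    if len(pts) < 3:
        return 0.0
    lower, upper = [], []
    for p in pts:
        while len(lower) >= 2 and _cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)
    for p in reversed(pts):
        while len(upper) >= 2 and _cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)
    hull = lower[:-1] + upper[:-1]
    twice_area = sum(x1 * y2 - x2 * y1 for (x1, y1), (x2, y2) in zip(hull, hull[1:] + hull[:1]))
    return abs(twice_area) / 2.0


def mask_traits(mask, rgb):
    """Area, convex hull, height, width and mean colour of the foreground pixels."""
    mask = np.asarray(mask, dtype=bool)
    rows, cols = np.nonzero(mask)
    if rows.size == 0:
        raise ValueError("mask contains no plant pixels")
    mean_r, mean_g, mean_b = np.asarray(rgb, dtype=float)[mask].mean(axis=0)
    return {
        "area_px": int(mask.sum()),
        "convex_hull_px": convex_hull_area(list(zip(rows.tolist(), cols.tolist()))),
        "height_px": int(rows.max() - rows.min() + 1),
        "width_px": int(cols.max() - cols.min() + 1),
        "mean_rgb": (float(mean_r), float(mean_g), float(mean_b)),
    }
```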


Model Architecture Design:

- Implement a conventional CNN architecture with six hierarchical convolution layers of increasing size (8, 16, 32, 64, 128, and 256 filters) followed by two fully connected layers producing a single trait value [5].

- Configure the final output layer for regression (linear activation) rather than classification.

- Train separate models for each plant trait and imaging modality (e.g., 45 models for nine traits across five plant imaging modalities).
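A minimal PyTorch sketch of this architecture is shown below. Table 3 lists MATLAB, TensorFlow, and PyTorch as options; this is not the implementation from [5]. The 3×3 kernels, batch normalization, ReLU, 2×2 max pooling after each block, global average pooling, 64-unit hidden layer, and dropout are all assumptions. Inputs should be at least 64×64 pixels so that the six pooling stages leave a non-empty feature map.

```python
import torch
from torch import nn


class TraitRegressor(nn.Module):
    """Six conv blocks (8 to 256 filters) + two fully connected layers -> one trait value."""

    def __init__(self, in_channels=3, hidden=64, dropout=0.5):
        super().__init__()
        blocks, channels = [], in_channels
        for filters in (8, 16, 32, 64, 128, 256):
            blocks += [
                nn.Conv2d(channels, filters, kernel_size=3, padding=1),
                nn.BatchNorm2d(filters),
                nn.ReLU(inplace=True),
                nn.MaxPool2d(2),  # halves spatial resolution after each block
            ]
            channels = filters
        self.features = nn.Sequential(*blocks)
        self.pool = nn.AdaptiveAvgPool2d(1)  # makes the head independent of input size
        self.head = nn.Sequential(
            nn.Flatten(),
            nn.Linear(256, hidden),  # fully connected layer 1
            nn.ReLU(inplace=True),
            nn.Dropout(dropout),
            nn.Linear(hidden, 1),  # fully connected layer 2: linear output for regression
        )

    def forward(self, x):
        return self.head(self.pool(self.features(x))).squeeze(1)


def train_step(model, optimizer, images, targets):
    """One optimization step with mean squared error loss."""
    model.train()
    optimizer.zero_grad()
    loss = nn.functional.mse_loss(model(images), targets)
    loss.backward()
    optimizer.step()
    return loss.item()
```

One instance is trained per trait; pairing it with `torch.optim.AdamW(model.parameters(), lr=1e-3, weight_decay=1e-4)` supplies the weight decay, and the `Dropout` layer the dropout, mentioned under training.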

Model Training and Validation:

- Partition data into training, validation, and test sets (typical split: 70%/15%/15%).

- Train models using appropriate loss functions (mean squared error for regression) and optimization algorithms.

- Employ regularization techniques (dropout, weight decay) to prevent overfitting.

- Validate model performance by comparing predictions directly against ground-truth trait values rather than segmentation accuracy metrics.
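A reproducible 70%/15%/15% partition can be sketched with the standard library (the function name is hypothetical). When the same plant is imaged repeatedly, it is safer to split plant identifiers rather than individual images, so that images of one plant never appear in both training and test sets.

```python
import random


def split_indices(n_items, fractions=(0.70, 0.15, 0.15), seed=0):
    """Shuffle item indices and split them into train/validation/test lists."""
    if len(fractions) != 3 or abs(sum(fractions) - 1.0) > 1e-9:
        raise ValueError("fractions must be three values summing to 1")
    indices = list(range(n_items))
    random.Random(seed).shuffle(indices)  # reproducible shuffle
    n_train = round(fractions[0] * n_items)
    n_val = round(fractions[1] * n_items)
    return (
        indices[:n_train],
        indices[n_train:n_train + n_val],
        indices[n_train + n_val:],  # remainder goes to the test set
    )
```

For the 1,476-image example, this yields 1,033 training, 221 validation, and 222 test items.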

Performance Evaluation and Interpretation:

- Evaluate models using correlation coefficients, R² values, and root mean square error (RMSE) between predicted and actual trait values.

- Compare end-to-end approach performance against conventional segmentation-based pipelines.

- Use activation layer visualization techniques to maintain model interpretability despite the absence of explicit segmentation.
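The evaluation metrics can be computed as in the sketch below (function name hypothetical). Here R² is the coefficient of determination, 1 − SS_res/SS_tot, which differs from the squared Pearson correlation whenever predictions are biased.

```python
import math


def regression_metrics(y_true, y_pred):
    """Pearson r, coefficient of determination R^2 and RMSE between two sequences."""
    n = len(y_true)
    if n != len(y_pred) or n < 2:
        raise ValueError("need two equal-length sequences with at least two values")
    mean_t = sum(y_true) / n
    mean_p = sum(y_pred) / n
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_t) ** 2 for t in y_true)
    ss_pred = sum((p - mean_p) ** 2 for p in y_pred)
    cov = sum((t - mean_t) * (p - mean_p) for t, p in zip(y_true, y_pred))
    return {
        "r": cov / math.sqrt(ss_tot * ss_pred),
        "R2": 1.0 - ss_res / ss_tot,
        "RMSE": math.sqrt(ss_res / n),
    }
```

For predictions that are all offset by +1 from `[1, 2, 3, 4]`, the result is r = 1 but R² = 0.2 and RMSE = 1. This is why reporting all three is informative: r measures only linear association, while R² and RMSE also penalize bias.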

End-to-end trait prediction workflow.

Protocol 3: Hyperspectral Imaging for Pigment Content Prediction

This protocol details the use of hyperspectral imaging combined with machine learning for non-destructive prediction of photosynthetic pigments in Ginkgo biloba, applicable to large-scale germplasm screening [7].

Research Reagent Solutions: Table 4: Essential Materials for Hyperspectral Pigment Phenotyping

| Item | Specification | Function |
|---|---|---|
| Hyperspectral Imaging System | VNIR (400-1000 nm) range recommended | Captures spectral signatures of plant tissues |
| Reference Pigment Data | Acetone/ethanol extraction and spectrophotometry | Provides ground truth for model training |
| Sample Population | 3,460+ seedlings from diverse genetic backgrounds | Ensures model robustness and generalizability |
| Machine Learning Algorithms | AdaBoost, PLSR, Random Forest | Builds prediction models from spectral data |
| Feature Selection Method | Successive Projections Algorithm (SPA) | Identifies most informative wavelengths |

Step-by-Step Procedure:

Experimental Design and Sample Preparation:

- Establish a large and diverse population (e.g., 3,460 seedlings from 590 families) representing the genetic diversity of interest [7].

- Ensure samples cover various color development phases and physiological states to enhance model robustness.

- Maintain standardized growing conditions while allowing natural phenotypic variation.

Hyperspectral Image Acquisition and Pigment Quantification:

- Acquire hyperspectral images of all samples using consistent illumination and camera settings.

- Then destructively measure pigment contents (chlorophyll a, chlorophyll b, and carotenoids) for a representative subset using conventional acetone/ethanol extraction.

- This creates the paired dataset of spectral signatures and reference pigment values required for supervised learning.

Data Preprocessing and Optimization:

- Test multiple preprocessing methods: raw reflectance, normalization, first derivative, and second derivative.

- Apply normalization, which significantly improved model accuracy in the Ginkgo study [7].

- Extract spectral data from regions of interest corresponding to tissues used for reference measurements.
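The preprocessing variants above can be sketched with NumPy as follows. The exact normalization used in [7] is not specified here, so per-spectrum min-max scaling is an assumption (standard normal variate is a common alternative); derivatives use finite differences via `np.gradient`, and the function names are hypothetical.

```python
import numpy as np


def roi_mean_spectrum(cube, mask):
    """Mean reflectance spectrum of the pixels in `mask` for a (rows, cols, bands) cube."""
    return np.asarray(cube, dtype=float)[np.asarray(mask, dtype=bool)].mean(axis=0)


def minmax_normalize(spectra):
    """Scale each spectrum (row) to the range [0, 1]."""
    s = np.asarray(spectra, dtype=float)
    lo = s.min(axis=1, keepdims=True)
    span = s.max(axis=1, keepdims=True) - lo
    return (s - lo) / np.where(span > 0, span, 1.0)


def derivative(spectra, wavelengths_nm, order=1):
    """First (order=1) or second (order=2) derivative along the wavelength axis."""
    d = np.asarray(spectra, dtype=float)
    for _ in range(order):
        d = np.gradient(d, np.asarray(wavelengths_nm, dtype=float), axis=1)
    return d
```

Finite differences amplify noise, so smoothed derivatives (for example `scipy.signal.savgol_filter` with `deriv=1`) are often preferred on real spectra.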

Feature Selection and Model Training:

- Apply the Successive Projections Algorithm (SPA) for effective spectral dimensionality reduction while preserving predictive power [7].

- Train and compare multiple machine learning algorithms (AdaBoost, PLSR, Random Forest).

- Select the best-performing algorithm (AdaBoost achieved R² > 0.83 and RPD > 2.4 for Ginkgo pigments [7]).
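The core of SPA is a projection chain: starting from one wavelength, each step projects all remaining wavelengths onto the subspace orthogonal to the last selected one and picks the wavelength with the largest remaining norm, which favors bands with little collinearity. The sketch below implements only this chain. Starting from the band with the largest norm is an assumption, and the usual final stage (evaluating candidate chains with a regression model on validation data) is omitted. The function name is hypothetical.

```python
import numpy as np


def spa_select(X, n_select, start=None):
    """Successive Projections Algorithm (projection chain only).

    X: (n_samples, n_bands) calibration spectra. Returns selected band indices.
    """
    X = np.asarray(X, dtype=float)
    if not 1 <= n_select <= min(X.shape):
        raise ValueError("n_select must be between 1 and min(n_samples, n_bands)")
    if start is None:
        # Assumption: start from the band with the largest vector norm.
        start = int(np.argmax(np.linalg.norm(X, axis=0)))
    P = X.copy()
    selected = [start]
    for _ in range(n_select - 1):
        xk = P[:, selected[-1]].copy()
        denom = xk @ xk
        if denom <= 1e-12:
            raise ValueError("spectra are rank-deficient for this many bands")
        # Project every band onto the subspace orthogonal to the last selected band.
        P = P - np.outer(xk, xk @ P) / denom
        norms = np.linalg.norm(P, axis=0)
        norms[selected] = -1.0  # never re-select a band
        selected.append(int(np.argmax(norms)))
    return selected
```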

Model Validation and Deployment:

- Validate model performance using cross-validation and independent test sets.

- Deploy the optimized model for large-scale prediction of pigment contents using only hyperspectral images.

- Establish a framework for efficient, accurate, and scalable pigment phenotyping for germplasm screening and precision breeding.
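The ratio of performance to deviation (RPD) reported above is the standard deviation of the reference values divided by the root mean square error of prediction on the validation or test set. A minimal sketch is shown below, assuming the sample standard deviation (n − 1) convention; the function name is hypothetical.

```python
import math
import statistics


def rpd(y_ref, y_pred):
    """Ratio of performance to deviation: SD(reference) / RMSEP."""
    if len(y_ref) != len(y_pred) or len(y_ref) < 2:
        raise ValueError("need two equal-length sequences with at least two values")
    rmsep = math.sqrt(sum((r - p) ** 2 for r, p in zip(y_ref, y_pred)) / len(y_ref))
    return statistics.stdev(y_ref) / rmsep
```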

Integration in End-to-End Research Workflow

Non-destructive phenotyping technologies serve as the foundation for complete end-to-end research workflows in modern plant science. These integrated approaches connect raw data acquisition directly to biological insights without intermediate destructive steps, dramatically accelerating the research cycle.

The workflow begins with automated, non-destructive data collection using multiple sensor modalities, continues through data processing and trait extraction via machine learning algorithms, and concludes with biological interpretation and decision support [2] [8]. This seamless pipeline maintains sample integrity throughout, allowing the same plants to be monitored temporally and subsequently used in further experiments or breeding programs.

Within a broader end-to-end workflow, these protocols show how non-destructive phenotyping creates closed-loop systems in which phenotypic assessments directly inform subsequent research directions, avoiding the delays and costs of destroying and replacing samples. The longitudinal data obtained through these methods provides unprecedented insights into dynamic biological processes, enabling more accurate gene-to-phenotype associations and more efficient selection in crop improvement programs [1] [9].

End-to-end phenotyping research cycle.

Plant phenotyping, the quantitative assessment of plant traits, is crucial for understanding the interplay between genetic variations and environmental influences [10]. The journey from one-dimensional (1D) spectroscopic measurements to sophisticated three-dimensional (3D) imaging represents a significant evolution in our ability to capture complex plant characteristics non-destructively. This progression has transformed plant breeding and agricultural research by enabling high-throughput, precise measurements of plant morphology, physiology, and architecture [11] [10]. This document outlines the integrated workflows, applications, and experimental protocols across the dimensional spectrum of phenotyping technologies, providing researchers with practical guidance for implementation in non-destructive plant research.

The Phenotyping Spectrum: From 1D to 3D

1D Phenotyping: Spectroscopy

Overview and Workflow

1D phenotyping primarily involves spectroscopic measurements that capture data along a single dimension: the electromagnetic spectrum. These methods generate spectral signatures that serve as proxies for various biochemical and physiological plant traits.

Table 1: Primary Technologies in 1D Phenotyping

| Technology | Measured Parameters | Primary Applications | Output Format |
|---|---|---|---|
| Spectroradiometry | Reflectance across specific wavelengths | Vegetation indices (NDVI, EVI), chlorophyll content | Spectral curves |
| Fluorescence Sensing | Fluorescence emission when excited by specific light | Photosynthetic efficiency, stress responses | Emission spectra |
| Thermal Sensing | Infrared radiation emitted | Canopy temperature, water stress detection | Temperature profiles |

Experimental Protocol: Vegetation Index Measurement

Objective: Calculate NDVI (Normalized Difference Vegetation Index) to assess plant health and biomass.

Materials: Spectroradiometer (or multispectral sensor), calibration panel, data logging software.

Procedure:

- Calibrate the sensor using a standard reference panel before measurement

- Position sensor perpendicular to the plant canopy at specified distance (typically 1-2 m)

- Capture reflectance measurements in red (600-700 nm) and near-infrared (700-1100 nm) bands

- Calculate NDVI using formula: (NIR - Red) / (NIR + Red)

- Repeat measurements across multiple plants and time points for statistical robustness
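The calibration and NDVI steps can be sketched as follows. The raw readings are converted to reflectance relative to the reference panel, optionally subtracting a dark reading. The default panel reflectance of 0.99 is an assumed nominal value (use the value on the panel's calibration certificate), and the function names are hypothetical.

```python
def reflectance(target_signal, panel_signal, panel_reflectance=0.99, dark_signal=0.0):
    """Convert a raw sensor reading to reflectance using a reference panel."""
    denom = panel_signal - dark_signal
    if denom <= 0:
        raise ValueError("panel signal must exceed dark signal")
    return panel_reflectance * (target_signal - dark_signal) / denom


def ndvi(nir, red):
    """NDVI = (NIR - Red) / (NIR + Red), computed from reflectance values."""
    total = nir + red
    if total == 0:
        raise ValueError("NIR + Red must be non-zero")
    return (nir - red) / total
```

For example, NIR and red readings of 4500 and 900 against a panel reading of 9000 (panel reflectance 1.0) give reflectances of 0.5 and 0.1 and an NDVI of about 0.67.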

Applications and Limitations: 1D phenotyping excels at high-throughput screening of physiological traits but provides limited information on structural attributes [10].

2D Phenotyping: Planar Imaging

Overview and Workflow

2D phenotyping utilizes conventional imaging across various spectra to extract morphological and physiological information from planar projections.

Table 2: 2D Imaging Modalities in Plant Phenotyping

| Imaging Modality | Spectral Bands | Extractable Traits | Analysis Approaches |
|---|---|---|---|
| RGB Imaging | Red, Green, Blue | Leaf area, plant size, color analysis | Pixel classification, edge detection |
| Multispectral Imaging | Discrete bands (3-10) | Vegetation indices, nutrient status | Spectral index calculation |
| Hyperspectral Imaging | Continuous narrow bands | Biochemical composition, stress detection | Spectral analysis, machine learning |
| Thermal Imaging | Long-wave infrared | Canopy temperature, stomatal conductance | Temperature thresholding |

Experimental Protocol: RGB-Based Morphological Analysis

Objective: Quantify leaf area and plant architecture from RGB images.

Materials: Digital RGB camera, controlled lighting environment, calibration scale, image analysis software (e.g., ImageJ, PlantCV).

Procedure:

- Set up consistent imaging environment with uniform lighting and neutral background

- Include calibration scale (e.g., color checker, ruler) in each image

- Capture images from consistent angle and distance

- Pre-process images: color correction, background removal, noise reduction

- Segment plant from background using color thresholding or machine learning

- Calculate morphological parameters: projected leaf area, compactness, aspect ratio

- Validate measurements against manual/destructive samples
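The segmentation and area steps can be sketched with NumPy using the excess green index (ExG = 2g − r − b on chromatic coordinates). The fixed threshold of 0.1 is an assumption (Otsu thresholding or a trained classifier are common alternatives), `mm_per_pixel` is assumed to come from the ruler in the image, and the function names are hypothetical rather than PlantCV or ImageJ calls.

```python
import numpy as np


def segment_exg(rgb, threshold=0.1):
    """Plant mask from the excess green index on chromatic (sum-normalized) coordinates."""
    img = np.asarray(rgb, dtype=float)
    total = img.sum(axis=2)
    total[total == 0] = 1.0  # avoid division by zero on black pixels
    r, g, b = (img[..., i] / total for i in range(3))
    return (2 * g - r - b) > threshold


def projected_area_mm2(mask, mm_per_pixel):
    """Projected leaf area from a binary mask and the image scale."""
    return float(np.count_nonzero(mask)) * mm_per_pixel ** 2
```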

While 2D methods have advanced high-throughput phenotyping, they face limitations in capturing complex morphological traits and are susceptible to perspective artifacts [10].

3D Phenotyping: Volumetric Reconstruction

Overview and Workflow

3D phenotyping captures the spatial geometry of plants, enabling precise measurement of structural attributes that are insufficiently captured in lower dimensions [10]. This approach has emerged as a powerful tool for analyzing plant architecture by addressing occlusion challenges through depth perception and multiple viewpoints [11] [10].

Table 3: Comparison of 3D Imaging Technologies for Plant Phenotyping

| Technology | Principle | Resolution | Pros | Cons | Best Suited For |
|---|---|---|---|---|---|
| LiDAR | Laser triangulation/Time of Flight | ~1-10 cm [12] | Fast acquisition; Light independent; Long range [12] | Poor XY resolution; Blurry edges; Requires calibration [12] | Canopy-level measurements; Field applications [11] |
| Laser Line Scanning | Laser line shift detection | Up to 0.2 mm [12] | High precision; Robust systems; Light independent [12] | Requires movement; Defined range only [12] | High-precision lab measurements; Architectural traits |
| Structured Light | Pattern deformation analysis | Sub-millimeter to millimeter | Insensitive to movement; Inexpensive systems; Color information [12] | Sensitive to sunlight; Limited outdoor use [12] | Indoor plant phenotyping; Root imaging |
| Multi-view Stereo | Feature matching across images | Variable (depends on camera) | Cost-effective (standard cameras); Color texture; Flexible setup [11] | Computationally expensive; Requires feature-rich surfaces [11] | General purpose phenotyping; Growth monitoring |
| Time of Flight (ToF) | Light pulse roundtrip time | Millimeter to centimeter | Real-time capability; Cost-effective (e.g., Kinect) [11] | Lower resolution; Sensitive to ambient light [11] | Real-time monitoring; Robotics applications |

Experimental Protocol: 3D Plant Reconstruction Using Multi-view Stereo

Objective: Generate an accurate 3D model of a plant for morphological trait extraction.

Materials: Digital camera (DSLR or high-quality RGB), rotation stage or multiple camera positions, calibration pattern, computer with 3D reconstruction software (e.g., Meshroom, Agisoft Metashape).

Procedure:

- System Setup: Arrange camera positions around plant (minimum 12-24 positions at 15-30° intervals) or use automated rotation stage

- Calibration: Capture calibration images using checkerboard pattern to determine camera intrinsic parameters

- Image Acquisition: Capture images from all positions ensuring 60-80% overlap between consecutive images, maintaining consistent lighting

- Data Pre-processing: Resize images if needed, apply lens distortion correction using calibration parameters

- 3D Reconstruction:

- Import images into reconstruction software

- Run feature detection and matching algorithms

- Generate sparse point cloud through structure-from-motion

- Create dense point cloud using multi-view stereo

- Generate mesh model and apply texture

- Trait Extraction:

- Calculate volume: Voxel-based or convex hull methods

- Determine plant height: Maximum Z-coordinate relative to the plant base (e.g., soil surface or pot rim)

- Estimate leaf area: Surface area of mesh model

- Measure branching angles: Vector analysis between segments
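Three of the trait-extraction steps can be sketched in plain Python as below. The sketch assumes a point cloud or mesh in metric units with Z pointing up, a triangle mesh given as vertex coordinates and index triples, and branch segments already expressed as direction vectors. Function names are hypothetical. For a single-sheet leaf mesh, the triangle-area sum approximates one-sided leaf area; a closed surface would count both sides.

```python
import math


def plant_height(points, base_z=None):
    """Height as max Z minus the base level (lowest point if base_z is not given)."""
    zs = [p[2] for p in points]
    base = min(zs) if base_z is None else base_z
    return max(zs) - base


def mesh_surface_area(vertices, faces):
    """Sum of triangle areas (half the norm of each edge cross product)."""
    total = 0.0
    for i, j, k in faces:
        a, b, c = vertices[i], vertices[j], vertices[k]
        u = [b[n] - a[n] for n in range(3)]
        v = [c[n] - a[n] for n in range(3)]
        cross = (u[1] * v[2] - u[2] * v[1], u[2] * v[0] - u[0] * v[2], u[0] * v[1] - u[1] * v[0])
        total += 0.5 * math.sqrt(sum(x * x for x in cross))
    return total


def branch_angle_deg(v1, v2):
    """Angle in degrees between two segment direction vectors."""
    dot = sum(a * b for a, b in zip(v1, v2))
    n1 = math.sqrt(sum(a * a for a in v1))
    n2 = math.sqrt(sum(b * b for b in v2))
    cos_angle = max(-1.0, min(1.0, dot / (n1 * n2)))  # clamp rounding errors
    return math.degrees(math.acos(cos_angle))
```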

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for Non-Destructive Plant Phenotyping

| Category | Item/Technology | Function/Application | Key Considerations |
|---|---|---|---|
| Imaging Hardware | RGB/Multispectral Camera | 2D morphological analysis, color assessment | Resolution, frame rate, spectral bands |
| | LiDAR/Laser Scanner | 3D point cloud acquisition for structural traits | Scanning frequency, accuracy, range [12] |
| | Hyperspectral Imaging System | Biochemical composition analysis | Spectral resolution, spatial resolution, acquisition speed |
| | Thermal Camera | Canopy temperature, stress detection | Thermal sensitivity, accuracy, resolution |
| Software & Analysis | Image Analysis Software (PlantCV, ImageJ) | 2D trait extraction, image processing | Algorithm availability, batch processing capability |
| | 3D Reconstruction Software (Meshroom, Agisoft) | 3D model generation from 2D images | Processing speed, automation options, accuracy [11] |
| | Point Cloud Processing (CloudCompare, PCL) | 3D point cloud analysis and measurement | Visualization, filtering, segmentation tools |
| Accessories & Calibration | Color/Size Reference | Image calibration, scale reference | Color accuracy, dimensional stability |
| | Controlled Lighting | Consistent illumination conditions | Spectrum, intensity, uniformity |
| | Positioning System | Precise sensor or plant movement | Accuracy, repeatability, programmability |

Integrated Workflow for Multi-Dimensional Phenotyping

Modern plant phenotyping leverages the complementary strengths of different dimensional approaches through integrated workflows. The fusion of 1D spectroscopic data with 3D structural information enables researchers to correct spectral measurements based on plant organ inclination and distance, leading to more accurate biochemical assessments [12]. This multi-dimensional approach provides a comprehensive understanding of plant function and structure, bridging the gap between laboratory-based precision and field-based relevance.

Implementation Considerations:

- Trait Selection: Match technology to trait complexity—1D for biochemical, 2D for planar morphology, 3D for structural architecture

- Scalability: Balance between resolution and throughput based on research objectives

- Data Management: Implement robust data pipelines for multi-dimensional data storage and processing

- Validation: Establish correlation between non-destructive measurements and traditional destructive assays

The dimensional spectrum of phenotyping technologies offers researchers a powerful toolkit for comprehensive plant assessment. While 1D methods provide efficient biochemical profiling and 2D imaging enables high-throughput morphological screening, 3D technologies unlock unprecedented capability for measuring plant architecture and growth dynamics [11] [10]. The integration of these approaches across dimensional boundaries represents the future of plant phenotyping, enabling deeper insights into gene-phenotype-environment interactions and accelerating crop improvement programs. As these technologies continue to evolve, emphasis should be placed on developing standardized protocols, improving computational efficiency, and enhancing accessibility to ensure broad adoption across the plant research community.

Optical sensing technologies are fundamental to modern, non-destructive plant phenotyping, enabling the high-throughput assessment of complex traits related to plant growth, yield, and adaptation to biotic or abiotic stresses. These technologies function by quantifying the interactions between light and plant tissues, including how photons are reflected, absorbed, or transmitted. The measured signals provide deep insights into the plant's physiological, biochemical, and structural condition without causing harm. In the context of an end-to-end workflow for non-destructive plant phenotyping research, optical sensors serve as the primary data acquisition tool, feeding information into analytical models that bridge the gap between genotype and phenotype [13] [14]. This document details three core optical sensing technologies—reflectance, fluorescence, and thermal imaging—providing application notes and experimental protocols for their implementation in a robust phenotyping pipeline.

The table below summarizes the key characteristics, measured parameters, and applications of the three primary optical sensing technologies.

Table 1: Comparative overview of key optical sensing technologies for plant phenotyping.

| Technology | Principle of Operation | Primary Measured Parameters | Key Applications in Phenotyping | Example Species |
|---|---|---|---|---|
| Reflectance Imaging (Hyperspectral/Multispectral) | Measures light reflected from plant tissues across specific wavelengths [14]. | Reflectance spectra; Vegetation Indices (e.g., NDVI, PRI) [14]. | Quantifying pigment, water, and nutrient content; estimating photosynthetic parameters (Vcmax, Jmax) [14]. | Maize, Wheat, Rice, Soybean [14] [13] |
| Chlorophyll Fluorescence Imaging | Measures light re-emitted by chlorophyll molecules after absorption of light energy [15]. | Maximum quantum yield of PSII (Fv/Fm), Non-photochemical quenching (NPQ) [15]. | Assessing photosynthetic performance and efficiency; early detection of biotic and abiotic stresses [15]. | Arabidopsis, Wheat, Barley, Tomato [15] [13] |
| Thermal Imaging | Captures long-wavelength infrared radiation emitted from the plant surface, which correlates with temperature [15]. | Canopy or leaf surface temperature [15]. | Monitoring stomatal conductance and plant water status; detecting water stress [15]. | Barley, Wheat, Grapevine, Maize [13] [15] |

Detailed Application Notes and Protocols

Hyperspectral Reflectance Imaging

Application Notes: Hyperspectral reflectance data captures the intensity of light reflected from a plant across a continuous range of wavelengths, typically from the visible to the short-wave infrared (400–2500 nm) [14] [15]. The probability of light being reflected, absorbed, or transmitted is wavelength-dependent and governed by the chemical composition and physical structure of the plant tissues. This technology is particularly powerful because a single set of hyperspectral data can be analyzed with various models to predict a wide array of traits. For instance, natural variation in nutrient and metabolite abundance, as well as photosynthetic capacity, can be estimated, enabling genetic studies that were previously limited by low-throughput destructive sampling [14]. In an end-to-end workflow, this allows for the re-analysis of historical spectral datasets as new predictive models are developed, maximizing data utility.

Experimental Protocol:

- Instrument Calibration: Use a handheld spectrometer or hyperspectral camera with an internal light source or calibrated external illumination. Immediately before measurement, calibrate the sensor against a standard panel of known reflectance (e.g., a white Spectralon panel) to correct for the illumination conditions and the sensor's response [14].

- Data Acquisition: For leaf-level measurements, ensure the sensor is held at a consistent distance and angle from the leaf surface. For canopy-level measurements from UAVs or ground platforms, note that canopy structure can influence the signal; techniques like vector normalization can help minimize this confounding effect [14]. Acquire spectra from a representative number of plants per genotype.

- Data Processing and Model Application: Process raw spectra to extract relevant features. Two primary analytical approaches are:

- Vegetation Indices: Calculate specific indices (e.g., Normalized Difference Vegetation Index, Photochemical Reflectance Index) from ratios of reflectance at key wavelengths [14].

- Full-Spectrum Modeling: Employ machine learning techniques like Partial Least Squares Regression (PLSR) or deep learning to build predictive models for traits such as nitrogen content, leaf mass per area, and photosynthetic parameters (Vcmax, Jmax) [14] [16]. The performance of such models is quantified in the table below.
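The calibration, normalization, and index steps above can be sketched in Python with NumPy. This is a minimal illustration rather than the cited authors' pipeline: the panel reflectance of 0.99, the band centres (670 nm and 800 nm for NDVI, 531 nm and 570 nm for PRI), and the nearest-band lookup are assumptions, and vector normalization is shown as simple unit-norm scaling of each spectrum.

```python
import numpy as np


def to_reflectance(raw, white, dark, panel_reflectance=0.99):
    """Convert raw counts to reflectance using white- and dark-reference scans.

    All inputs share the same wavelength axis (the last axis). The default panel
    reflectance is illustrative; use the value certified for your panel.
    """
    raw, white, dark = (np.asarray(a, dtype=float) for a in (raw, white, dark))
    return panel_reflectance * (raw - dark) / (white - dark)


def vector_normalize(spectra):
    """Scale each spectrum to unit Euclidean norm to damp canopy-structure effects."""
    spectra = np.asarray(spectra, dtype=float)
    return spectra / np.linalg.norm(spectra, axis=-1, keepdims=True)


def band(spectra, wavelengths, target_nm):
    """Reflectance at the band closest to target_nm."""
    idx = int(np.argmin(np.abs(np.asarray(wavelengths, dtype=float) - target_nm)))
    return np.asarray(spectra, dtype=float)[..., idx]


def ndvi(spectra, wavelengths, red_nm=670, nir_nm=800):
    red, nir = band(spectra, wavelengths, red_nm), band(spectra, wavelengths, nir_nm)
    return (nir - red) / (nir + red)


def pri(spectra, wavelengths):
    r531, r570 = band(spectra, wavelengths, 531), band(spectra, wavelengths, 570)
    return (r531 - r570) / (r531 + r570)


# Synthetic leaf spectrum: low red reflectance, high NIR reflectance.
wl = np.arange(400, 1001)
leaf = np.where(wl < 700, 0.05, 0.50)
print(round(float(ndvi(leaf, wl)), 3))  # 0.818
```

Because every function works on the last axis, the same calls apply unchanged to a single spectrum or to a whole hyperspectral cube of shape (rows, columns, bands).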

Table 2: Performance examples of hyperspectral reflectance models for predicting plant traits (adapted from [14]).

| Trait | Species | Sample Size | Modeling Method | Prediction Performance (R²) |
|---|---|---|---|---|
| Leaf Nitrogen Content | Maize | 203 | PLSR | 0.95 |
| Chlorophyll Content | Maize | 268 | PLSR | 0.85 |
| Vcmax | Maize | 214 | PLSR | 0.65 |
| Vcmax | Various Trees | 78 | PLSR | 0.89 |
| Sucrose Content | Maize | 61 | PLSR | 0.60 |
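Full-spectrum PLSR can be illustrated with a compact NIPALS implementation of single-response PLS (PLS1) in NumPy. This is a sketch for clarity, not the modelling code behind Table 2. In practice a maintained implementation such as scikit-learn's `PLSRegression` would normally be used, and the number of latent components should be chosen by cross-validation; the synthetic data below are purely illustrative.

```python
import numpy as np


def pls1_fit(X, y, n_components):
    """Single-response PLS regression (NIPALS). Returns (coef, intercept)."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xk, yk = X - x_mean, y - y_mean          # centred copies, deflated in the loop
    W, P, q = [], [], []
    for _ in range(n_components):
        w = Xk.T @ yk                         # weights: covariance of each band with y
        w /= np.linalg.norm(w)
        t = Xk @ w                            # latent scores
        tt = t @ t
        p = Xk.T @ t / tt                     # spectral loadings
        c = (yk @ t) / tt                     # trait loading
        Xk = Xk - np.outer(t, p)              # remove the variation already explained
        yk = yk - c * t
        W.append(w)
        P.append(p)
        q.append(c)
    W, P, q = np.array(W).T, np.array(P).T, np.array(q)
    coef = W @ np.linalg.solve(P.T @ W, q)    # B = W (P'W)^-1 q
    return coef, y_mean - x_mean @ coef


def r_squared(y, y_hat):
    y, y_hat = np.asarray(y, dtype=float), np.asarray(y_hat, dtype=float)
    return 1.0 - np.sum((y - y_hat) ** 2) / np.sum((y - y.mean()) ** 2)


# Synthetic "spectra" (200 samples x 20 bands) and a linearly related trait.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 20))
y = X @ rng.normal(size=20) + 3.0 + 0.01 * rng.normal(size=200)
coef, intercept = pls1_fit(X, y, n_components=5)
print(r_squared(y, X @ coef + intercept))
```

With as many components as bands, PLS1 reduces to ordinary least squares, which is a convenient sanity check; the benefit of PLSR on real spectra comes from using far fewer components than the hundreds of collinear bands.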

Chlorophyll Fluorescence Imaging

Application Notes: Chlorophyll fluorescence imaging is a non-invasive technique that measures the efficiency of photosystem II (PSII), which is highly sensitive to a wide range of biotic and abiotic stresses [15]. A major advantage is that changes in chlorophyll fluorescence kinetics often occur before other effects of stress are visible, making it an excellent tool for early stress detection. In a phenotyping workflow, this allows for the dynamic monitoring of plant physiological status. Modern systems use pulse-amplitude modulated (PAM) fluorometers to measure fluorescence kinetics, providing a wealth of information on a plant's photosynthetic capacity and metabolic condition [15]. The heterogeneity of stress responses across a leaf or canopy can be easily visualized and quantified through imaging.

Experimental Protocol:

- System Setup: Use an imaging system equipped with a high-sensitivity CCD camera, a multi-color LED light panel for actinic illumination and saturation pulses, and appropriate filters [15]. The system should be enclosed in a light-isolated imaging box to control ambient light.

- Plant Adaptation: Dark-adapt the plant or leaf for at least 20 minutes to fully open PSII reaction centers and allow accurate measurement of the minimal fluorescence (F₀).

- Image Acquisition Sequence: Execute a programmable measurement protocol. A standard protocol includes:

- Application of a modulated measuring beam to determine F₀.

- A saturating light pulse (up to 6000 µmol m⁻² s⁻¹) to determine maximal fluorescence (Fm) in the dark-adapted state.

- Actinic light illumination to drive photosynthesis, with periodic saturation pulses to determine maximal (Fm') and steady-state (Fs) fluorescence in the light-adapted state.

- Data Analysis: Calculate key parameters from the fluorescence values. The most common is the maximum quantum yield of PSII, Fv/Fm = (Fm - F₀)/Fm, a robust indicator of plant health. Healthy, unstressed leaves of most species show values of about 0.83; values clearly below this indicate photoinhibition or other stress.
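The parameter calculations can be applied pixel by pixel to co-registered fluorescence images. The sketch below assumes NumPy arrays for F₀, Fm, Fm' and Fs and a boolean leaf mask from a separate segmentation step; it uses the standard definitions ΦPSII = (Fm' - Fs)/Fm' and the Stern-Volmer form NPQ = (Fm - Fm')/Fm'.

```python
import numpy as np


def fluorescence_parameters(f0, fm, fm_prime, fs):
    """Per-pixel PAM parameters from co-registered fluorescence images."""
    f0, fm, fm_prime, fs = (np.asarray(a, dtype=float) for a in (f0, fm, fm_prime, fs))
    with np.errstate(divide="ignore", invalid="ignore"):  # background pixels may be 0
        fv_fm = (fm - f0) / fm                  # maximum quantum yield (dark-adapted)
        phi_psii = (fm_prime - fs) / fm_prime   # effective quantum yield (light-adapted)
        npq = (fm - fm_prime) / fm_prime        # Stern-Volmer non-photochemical quenching
    return {"Fv/Fm": fv_fm, "PhiPSII": phi_psii, "NPQ": npq}


def leaf_mean(param_image, leaf_mask):
    """Average a parameter image over leaf pixels only, ignoring NaNs."""
    values = np.asarray(param_image, dtype=float)[np.asarray(leaf_mask, dtype=bool)]
    return float(np.nanmean(values))
```

Keeping the per-pixel maps, rather than only leaf means, preserves the spatial heterogeneity of the stress response that imaging makes visible.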

Thermal Imaging

Application Notes: Thermal imaging cameras capture radiation in the long-wavelength infrared spectrum, which is directly related to the surface temperature of the object [15]. In plants, leaf temperature is governed by the balance between energy absorption, transpirational cooling, and heat loss. When stomata close in response to water deficit, transpirational cooling is reduced, leading to an increase in leaf temperature. Therefore, thermal imaging serves as a proxy for stomatal conductance and plant water status. This technology is critical for phenotyping programs aimed at improving crop water use efficiency and drought tolerance. It allows for the rapid screening of large populations to identify genotypes that better maintain stomatal opening and cooler canopy temperatures under water-limited conditions.

Experimental Protocol:

- Environmental Control: Perform imaging under stable, high-light conditions where transpirational cooling is the dominant factor affecting leaf temperature. Avoid windy conditions, which can disrupt the leaf boundary layer.

- Reference Surfaces: Include well-watered and water-stressed control plants of the same genotype within the imaging frame to provide reference temperatures for relative comparison.

- Image Acquisition: Use a high-performance industrial infrared camera. For a comprehensive view, acquire images from both top and side views, potentially using a rotating table [15]. Ensure the camera is calibrated for emissivity; plant leaves typically have an emissivity of approximately 0.97.

- Data Processing: Analyze the thermal images to extract the mean leaf temperature of each plant or region of interest. Calculate indices like the Crop Water Stress Index (CWSI) or simply use the temperature difference between a genotype and a well-watered reference (ΔT) to rank genotypes for their water stress response.
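Both indices reduce to simple arithmetic once mean leaf temperatures are extracted. The sketch below uses the empirical form CWSI = (Tc - Twet)/(Tdry - Twet); how the wet and dry reference temperatures are obtained (e.g., wet and dry reference surfaces or modelled baselines) is an assumption left to the experimenter, and the function names are illustrative.

```python
import numpy as np


def cwsi(t_canopy, t_wet, t_dry):
    """Empirical Crop Water Stress Index: 0 at the wet (fully transpiring) reference,
    1 at the dry (non-transpiring) reference."""
    return (np.asarray(t_canopy, dtype=float) - t_wet) / (t_dry - t_wet)


def delta_t(plant_temps, reference_temps):
    """Mean leaf-temperature difference (deg C) from well-watered reference plants."""
    return float(np.mean(plant_temps) - np.mean(reference_temps))


def rank_by_delta_t(genotype_temps, reference_temps):
    """Genotype names ordered from coolest (smallest delta T) to warmest."""
    return sorted(genotype_temps, key=lambda g: delta_t(genotype_temps[g], reference_temps))
```

Under water limitation, genotypes near the top of this ranking are those that keep transpiring and therefore stay cooler.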

Integrated Workflow and Data Analysis

A modern phenotyping workflow integrates multiple sensing modalities and leverages advanced data analysis to generate actionable biological insights. The synergy between technologies provides a more complete picture of plant health and function than any single method alone.

Figure 1: An integrated workflow showing how data from multiple optical sensors are fused and analyzed to support decision-making in plant research and breeding.

As illustrated in Figure 1, an end-to-end workflow begins with automated, non-destructive data acquisition using the various imaging sensors. The subsequent critical step is data registration and fusion, where information from RGB, hyperspectral, fluorescence, and thermal cameras is spatially aligned. This creates a multi-dimensional dataset where each plant voxel (3D pixel) is characterized by structural, spectral, and thermal properties [2]. Machine learning algorithms are then trained on these multimodal datasets to automatically segment and classify tissues and quantify traits of interest. For example, a model can be trained to discriminate between intact, degraded, and white rot tissues in grapevine trunks with high accuracy by combining MRI and X-ray CT data [2] [17]. Similarly, deep learning and chemometrics can be combined to detect drought stress in Arabidopsis from spectral images [16]. The output is a predictive model or a digital twin of the plant, which provides key indicators for precise diagnosis and selection.

Figure 2: The data processing pipeline, from raw sensor data to quantitative phenotypic traits, highlighting the role of machine learning and chemometrics.

The Scientist's Toolkit: Essential Research Reagents and Materials

The successful implementation of optical phenotyping protocols relies on a suite of specialized instruments and software.

Table 3: Essential materials and tools for optical plant phenotyping.

| Category | Item | Specification/Function |
|---|---|---|
| Core Sensing Instruments | Hyperspectral Spectrometer/Imager | Covers visible to short-wave infrared (400-2500 nm); for reflectance-based trait analysis [14] [15]. |
| | Pulse-Amplitude Modulated (PAM) Fluorometer | Measures chlorophyll fluorescence kinetics; includes saturating light pulse and actinic light sources [15]. |
| | Thermal Infrared Camera | Measures leaf and canopy surface temperature; high thermal sensitivity required [15]. |
| | High-Resolution RGB Camera | For 2D/3D morphological and color analysis [15]. |
| Calibration & Accessories | Calibration Panels (White & Black Reference) | Provides known reflectance for spectrometer calibration before plant measurement [14]. |
| | Controlled Illumination Source | Homogeneous LED panels for consistent, repeatable lighting in indoor setups [15]. |
| | Environmental Monitoring Sensors | Logs photosynthetically active radiation (PAR), soil moisture, and temperature [18]. |
| Data Analysis Software | Image Processing Software | For segmentation, feature extraction, and analysis of 2D/3D image data [13]. |
| | Statistical & Machine Learning Platforms (e.g., R, Python) | For implementing PLSR, deep learning, and other classification/regression models [2] [14] [16]. |

Application Notes

High-throughput phenotyping (HTP) has emerged as a transformative solution to a critical bottleneck in plant science: the inability to rapidly and precisely measure complex plant traits at scale. While genomic technologies have advanced rapidly, the slow pace of phenotypic data collection has limited gains in crop breeding and stress resilience research. This document details standardized protocols for non-destructive image-based phenotyping, enabling researchers to integrate these methods into end-to-end workflows for plant research.

The power of HTP lies in its ability to capture dynamic plant responses to environmental challenges through automated, non-invasive monitoring. For instance, one study characterizing 106 Mediterranean maize inbred lines demonstrated how HTP could accurately capture dynamic responses to combined drought and heat stress, followed by recovery under control conditions [19]. This approach provides the rich, temporal phenotypic data necessary for dissecting the genetic basis of complex traits through genome-wide association studies (GWAS).

Table 1: Key Agronomic Traits Quantified Through High-Throughput Phenotyping

| Trait Category | Specific Traits | Measurement Significance | Associated Stress Responses |
|---|---|---|---|
| Morphological | Whole-Plant Area (WPA), Convex Hull, Top View Area, Compactness [20] | Biomass accumulation, canopy structure, early seedling vigor [20] | Drought resilience, nutrient efficiency [19] [20] |
| Physiological | Stomatal Pore Area, Guard Cell Orientation, Opening Ratio [21] | Gas exchange regulation, water use efficiency [21] | Heat stress, drought response [21] |
| Growth Dynamics | Absolute Growth Rate (AGR), Crop Growth Rate (CGR), Relative Growth Rate (RGR) [20] | Plant growth and development over time [20] | Combined stress tolerance and recovery [19] |
| Spectral/Color | Color Profiles, Multispectral Signatures [22] [23] | Plant health, photosynthetic efficiency, pathogen presence [22] | Biotic and abiotic stress detection [22] [24] |

Experimental Protocols

Protocol 1: Non-Destructive Phenotyping for Early Seedling Vigor in Rice

This protocol, adapted from Plant Methods, details an affordable, image-based method to screen for early seedling vigor—a critical trait for crop establishment in direct-seeded rice systems. This method reduces observation time by 80% and labor costs by 50% compared to traditional destructive sampling [20].

Materials and Reagents

- Plant Material: Seeds of genotypes under investigation (e.g., seven diverse rice cultivars).

- Growth Facility: Glasshouse or net house with controlled conditions.

- Imaging Equipment: Digital SLR camera mounted on a stable platform (e.g., tripod) with consistent lighting.

- Pots and Growth Medium: Standard pots filled with clean soil mixture.

- Analysis Software: Image analysis software (e.g., PlantCV, ImageJ) with capacity for batch processing.

Experimental Workflow

Procedure

- Plant Cultivation: Sow pre-germinated seeds in pots filled with a clean soil mixture. Grow plants in a net house under normal conditions without water stagnation [20].

- Image Acquisition: Capture top-view images of each plant at regular intervals, ensuring consistent camera distance, angle, and lighting. Key time points include 14 and 28 Days After Sowing (DAS) [20].

- Image Analysis: Process images using analysis software to extract geometric traits.

- Segmentation: Separate plant pixels from background.

- Trait Extraction: Calculate Whole-Plant Area from images (WPAi), Convex Hull, Top View Area, and Compactness [20].

- Validation with Destructive Sampling: Harvest a subset of plants for traditional measurements.

- Whole-Plant Area from scanner (WPAs): Flatten and scan shoots using a flatbed scanner.

- Dry Weight: Measure shoot and root dry weight.

- Growth Rate Calculations: Calculate Absolute Growth Rate (AGR), Crop Growth Rate (CGR), and Relative Growth Rate (RGR) from the destructive data [20].

- Data Analysis: Perform regression analysis between WPAi (non-destructive) and WPAs (destructive) to validate the image-based method. A strong relationship (R² > 0.83) supports the validity of the protocol [20].
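The image-analysis and validation steps above can be sketched with NumPy. Several choices are assumptions rather than details of the cited protocol [20]: segmentation uses the Excess Green index (ExG = 2g - r - b, on chromatic coordinates) with a zero threshold, compactness is taken as plant area divided by convex-hull area, and CGR is expressed as AGR per unit ground area. Tools such as PlantCV provide tested implementations of comparable shape measurements.

```python
import numpy as np


def excess_green_mask(rgb, threshold=0.0):
    """Plant/background segmentation of an (H, W, 3) image with ExG = 2g - r - b."""
    rgb = np.asarray(rgb, dtype=float)
    total = rgb.sum(axis=-1)
    total = np.where(total == 0, 1.0, total)      # avoid dividing by zero on black pixels
    r, g, b = (rgb[..., i] / total for i in range(3))
    return (2 * g - r - b) > threshold


def _convex_hull_area(points):
    """Convex hull area of 2-D points (Andrew's monotone chain + shoelace formula)."""
    pts = sorted(set(map(tuple, np.asarray(points).tolist())))
    if len(pts) < 3:
        return 0.0

    def cross(o, a, b):
        return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])

    def half(seq):
        chain = []
        for p in seq:
            while len(chain) >= 2 and cross(chain[-2], chain[-1], p) <= 0:
                chain.pop()
            chain.append(p)
        return chain

    hull = half(pts)[:-1] + half(reversed(pts))[:-1]
    x, y = np.array(hull, dtype=float).T
    return 0.5 * abs(np.dot(x, np.roll(y, -1)) - np.dot(y, np.roll(x, -1)))


def geometric_traits(mask, mm2_per_pixel=1.0):
    """Projected plant area, convex hull area and compactness (area / hull area)."""
    rows, cols = np.nonzero(mask)
    if rows.size == 0:
        return {"area": 0.0, "convex_hull": 0.0, "compactness": 0.0}
    # Use the four corners of every plant pixel so a filled rectangle's hull equals its area.
    corners = np.concatenate([np.column_stack([rows + dr, cols + dc])
                              for dr in (0, 1) for dc in (0, 1)])
    area = rows.size * mm2_per_pixel
    hull = _convex_hull_area(corners) * mm2_per_pixel
    return {"area": area, "convex_hull": hull, "compactness": area / hull}


def growth_rates(w1, w2, t1, t2, ground_area=1.0):
    """AGR, CGR (AGR per unit ground area) and RGR between two sampling dates."""
    agr = (w2 - w1) / (t2 - t1)
    rgr = (np.log(w2) - np.log(w1)) / (t2 - t1)
    return {"AGR": agr, "CGR": agr / ground_area, "RGR": rgr}


def regression_r2(x, y):
    """R^2 of an ordinary least-squares line, e.g. WPAs regressed on WPAi."""
    x, y = np.asarray(x, dtype=float), np.asarray(y, dtype=float)
    slope, intercept = np.polyfit(x, y, 1)
    residuals = y - (slope * x + intercept)
    return 1.0 - residuals.var() / y.var()
```

For the 14 and 28 DAS time points, `growth_rates(w14, w28, 14, 28)` gives per-day rates from the two destructive dry weights.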

Protocol 2: Automated Stomatal Phenotyping Using Deep Learning

This protocol uses the YOLOv8 deep learning model for high-throughput, automated analysis of stomatal morphology and orientation—a key physiological trait linked to plant stress responses [21].

Materials and Reagents

- Plant Material: Leaves of the target species (e.g., Hedyotis corymbosa).

- Microscopy Equipment: Inverted microscope (e.g., CKX41) coupled with a high-resolution camera (e.g., DFC450).

- Sample Preparation: Microscope slides, cyanoacrylate glue.

- Computing Hardware: Computer with powerful GPU for model training and inference.

- Software: Python environment with YOLOv8 implementation and image processing libraries.

Analytical Workflow

Procedure

- Sample Preparation and Imaging:

- Affix the abaxial (lower) surface of the leaf (e.g., the fifth leaf from the top) to a microscope slide using cyanoacrylate glue [21].

- Capture high-resolution images (e.g., 2592 × 1458 pixels) using an inverted microscope and digital camera.

- Image Pre-processing: Apply the Lucy-Richardson deblurring algorithm iteratively to enhance image clarity and stomatal outlines [21].

- Dataset Preparation: Manually annotate pre-processed images, marking bounding boxes and segmentation masks for stomatal pores and guard cells. Split the annotated dataset into training, validation, and test sets [21].

- Model Training and Inference:

- Configure the YOLOv8 architecture (e.g., learning rate, batch size) and train the model on the annotated dataset [21].

- Use the trained model to perform instance segmentation on new images, generating precise masks for each stomatal pore and guard cell pair.

- Trait Extraction and Analysis:

- Standard Traits: Calculate stomatal density, pore area, and guard cell area from the segmentation masks.

- Novel Traits:

- Stomatal Orientation: Fit an ellipse to the segmented guard cell pair and calculate its angle relative to the leaf's longitudinal axis [21].

- Opening Ratio: Calculate a new metric from the areas of the guard cells and the stomatal pore, providing a morphological descriptor for physiological research [21].
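Once the segmentation model has produced binary masks, the trait calculations are short. The sketch below assumes one boolean mask per stomatal pore or guard-cell pair and estimates orientation from second-order image moments, which define the ellipse with the same second moments as the object. The exact opening-ratio formula of [21] is not given here, so `opening_ratio` uses a hypothetical pore-to-guard-cell area ratio for illustration only. For the deblurring step, scikit-image provides a Richardson-Lucy implementation (`skimage.restoration.richardson_lucy`).

```python
import numpy as np


def orientation_deg(mask, leaf_axis_deg=0.0):
    """Major-axis angle of a binary object relative to the leaf axis, in degrees.

    Image coordinates are used: x = column, y = row (pointing down).
    Results are wrapped to [-90, 90).
    """
    rows, cols = np.nonzero(mask)
    x, y = cols.astype(float), rows.astype(float)
    mu20, mu02 = np.var(x), np.var(y)
    mu11 = np.mean((x - x.mean()) * (y - y.mean()))
    theta = 0.5 * np.degrees(np.arctan2(2.0 * mu11, mu20 - mu02))
    # An axis has no direction, so fold the difference into a 180-degree range.
    return float((theta - leaf_axis_deg + 90.0) % 180.0 - 90.0)


def stomatal_density(n_stomata, field_area_mm2):
    """Stomata per mm^2 of imaged leaf surface."""
    return n_stomata / field_area_mm2


def opening_ratio(pore_mask, guard_cell_mask):
    """HYPOTHETICAL definition: pore area divided by guard-cell-pair area."""
    guard = np.count_nonzero(guard_cell_mask)
    return np.count_nonzero(pore_mask) / guard if guard else float("nan")
```

The leaf's longitudinal axis must be measured in the same image coordinate frame (for example, by aligning the leaf midrib with the image x-axis during slide preparation) for the relative angle to be meaningful.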

Protocol 3: Image Standardization for Large-Scale Phenotyping

Variation in image quality due to factors like fluctuating light intensity can bias phenotypic data. This protocol standardizes an image dataset using a color reference panel to ensure robust and reproducible analyses [23].

Materials and Reagents

- Reference Target: ColorChecker Passport Photo (X-Rite, Inc.) or similar panel with industry-standard color chips.

- Imaging Platform: Any imaging system (from micro-computers to robotic platforms) where the reference can be placed within the field of view.

- Analysis Software: Software capable of performing linear algebra operations (e.g., R, Python with OpenCV, PlantCV).

Procedure

- Image Acquisition with Reference: Include the ColorChecker panel within every image captured throughout the experiment [23].

- Define Source and Target Matrices:

- Let S be the matrix of R, G, and B values for the 24 reference chips in a source image that needs correction.

- Let T be the matrix of R, G, and B values for the same chips in a designated target (reference) image with ideal color profile [23].

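- Calculate the Transformation Matrix: estimate the matrix that maps the chip values in S onto the corresponding values in T, for example by least-squares fitting.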

- Apply the Transformation: Use the calculated standardization vectors to transform every pixel in the source image, effectively mapping its color profile to that of the target image. This corrects for batch effects like temperature-dependent light intensity [23].
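A minimal version of this standardization can be written with NumPy's least-squares solver. The affine model below (R, G, B plus a constant term) is an assumption chosen for simplicity; the method cited in [23] may use a different or higher-order model. The sketch also assumes the 24 chip colours have already been sampled from each image as mean RGB values.

```python
import numpy as np


def fit_color_transform(source_chips, target_chips):
    """Least-squares affine map from source chip RGB values to target chip RGB values.

    source_chips, target_chips: (24, 3) arrays of mean R, G, B per reference chip.
    Returns a (4, 3) matrix M such that [R, G, B, 1] @ M approximates the target.
    """
    S = np.asarray(source_chips, dtype=float)
    S = np.hstack([S, np.ones((S.shape[0], 1))])
    M, *_ = np.linalg.lstsq(S, np.asarray(target_chips, dtype=float), rcond=None)
    return M


def apply_color_transform(image, M):
    """Apply the fitted map to every pixel of an (H, W, 3) image, clipped to 0-255."""
    img = np.asarray(image, dtype=float)
    flat = np.hstack([img.reshape(-1, 3), np.ones((img.shape[0] * img.shape[1], 1))])
    return np.clip(flat @ M, 0, 255).reshape(img.shape)
```

Fitting one matrix per image and applying it to all of that image's pixels maps every image in the experiment onto the colour profile of the chosen target image.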

Table 2: The Scientist's Toolkit: Essential Reagents and Materials for High-Throughput Phenotyping

| Item | Function/Application | Example Use Case |
|---|---|---|
| ColorChecker Passport | Standardizes color profile and corrects batch effects across images [23]. | Ensuring consistent color measurements in time-series experiments under variable light [23]. |
| Calcined Clay Growth Profile | Provides a uniform, controlled root environment for pot-based studies [23]. | Studying nutrient stress responses in sorghum [23]. |
| Cyanoacrylate Glue | Affixes leaf samples to microscope slides for imaging [21]. | Preparing leaf samples for high-resolution stomatal phenotyping [21]. |
| RGB and Multispectral Cameras | Capture morphological and spectral data non-destructively [25]. | Daily monitoring of plant growth and stress symptoms [19] [25]. |
| YOLOv8 Deep Learning Model | Segments and analyzes stomatal guard cells and pores automatically [21]. | High-throughput measurement of stomatal orientation and opening ratio [21]. |
| Lucy-Richardson Algorithm | Deblurs images to enhance clarity of fine structures [21]. | Improving the visibility of stomatal outlines in microscope images [21]. |

Implementing Integrated Workflows: Sensors, Platforms, and AI-Driven Analysis

The integration of multi-modal imaging techniques is revolutionizing non-destructive plant phenotyping by providing comprehensive insights into both structural and functional traits. Multi-modal medical image fusion (MMIF) approaches, although developed for clinical diagnostics, offer useful frameworks for the plant sciences because they combine data from complementary imaging sources into a single, more informative representation [26]. In agricultural research, this integration is particularly valuable for addressing complex challenges such as grapevine trunk diseases (GTDs), where internal degradation occurs long before external symptoms become visible [2] [17]. This protocol details an end-to-end workflow for combining MRI, X-ray CT, and hyperspectral imaging to enable high-throughput, non-destructive phenotyping of internal plant structures and physiological processes.

Application Notes

Rationale for Modality Integration

Each imaging modality provides unique and complementary information about plant structure and function. X-ray Computed Tomography (X-ray CT) excels at visualizing high-resolution three-dimensional internal structures by detecting differences in tissue density and energy absorption, making it ideal for quantifying architectural features [27]. Magnetic Resonance Imaging (MRI), which relies on radio-frequency signals from hydrogen nuclei (mostly in water), provides exceptional contrast for soft tissues and can reveal functional information about water content and physiological status [2] [27]. Hyperspectral Imaging (HSI) captures spatial and spectral information across hundreds of narrow, contiguous bands, enabling detailed biochemical analysis and detection of stress responses through spectral signatures [27].

The synergy between these modalities was demonstrated in grapevine studies, where MRI proved superior for assessing tissue functionality and early degradation, while X-ray CT better discriminated advanced degradation stages like white rot [2]. Hyperspectral imaging extends these capabilities by detecting specific biochemical changes associated with pathogen responses and nutrient deficiencies before morphological symptoms appear [27].

Performance Metrics and Validation

Quantitative validation of the multimodal approach shows significant advantages over single-modality analysis:

Table 1: Performance Metrics of Multimodal Imaging for Tissue Classification

| Imaging Modality | Classification Accuracy | Key Strengths | Limitations |
|---|---|---|---|
| MRI Only | ~83% | Excellent soft tissue contrast, functional assessment | Lower resolution for structural details |
| X-ray CT Only | ~79% | High-resolution structural imaging | Limited functional information |
| Hyperspectral Only | ~81% | Biochemical composition analysis | Limited depth penetration |
| Multimodal Fusion (MRI+X-ray CT+HSI) | >91% | Comprehensive structural & functional profiling | Computational complexity, data alignment challenges |

The integrated pipeline achieved a mean global accuracy exceeding 91% for discriminating between intact, degraded, and white rot tissues in grapevine trunks, significantly outperforming single-modality approaches [2] [17]. This accuracy is maintained across different plant architectures and degradation patterns when proper calibration and validation protocols are followed.

Experimental Protocols

Sample Preparation and Imaging

Plant Material Selection: Select representative plants based on experimental design. For disease studies, include both symptomatic and asymptomatic specimens. Twelve grapevine plants were used in the validation study, providing sufficient statistical power for method development [2].

Pre-imaging Preparation:

- Water plants normally 24 hours before imaging so that they are at their natural water status

- Remove soil from root systems while minimizing root damage

- Mount plants in imaging-friendly containers using supportive foam

- Attach fiducial markers at strategic locations for multimodal registration

- Include calibration objects of known dimensions and composition in the field of view

Multimodal Image Acquisition Sequence:

- Hyperspectral Imaging: Capture data in the 200-2500 nm range using push-broom or snapshot HSI systems. Maintain consistent illumination and distance-to-canopy.

- X-ray CT Scanning: Acquire volumetric data using micro-CT or clinical CT systems. Typical parameters: 80-140 kV tube voltage, 100-500 µA current, 0.5-1 mm slice thickness.

- MRI Acquisition: Perform using clinical or preclinical MRI systems. Essential sequences include:

- T1-weighted (T1-w) imaging

- T2-weighted (T2-w) imaging

- Proton Density-weighted (PD-w) imaging

- Custom sequences optimized for plant tissue properties

Data Processing and Integration Pipeline

Image Preprocessing:

- Apply modality-specific corrections (geometric distortion, intensity inhomogeneity)

- Remove noise using anisotropic diffusion filters or non-local means algorithms

- Normalize intensity ranges across samples and modalities

Multimodal Registration: Rigid and non-rigid registration transforms align images into a common coordinate system. The process involves:

- Feature detection using SIFT or SURF algorithms

- Initial alignment based on fiducial markers

- Fine registration using mutual information maximization

- Visual validation of alignment accuracy
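The mutual-information criterion used for fine registration can be illustrated with a joint intensity histogram. The sketch below is deliberately simplified: it searches only integer 2D translations by brute force, whereas real pipelines optimize rigid, affine, or non-rigid transforms in 3D (for example with SimpleITK's `ImageRegistrationMethod` and its Mattes mutual-information metric). The bin count and search range are illustrative assumptions.

```python
import numpy as np


def mutual_information(img_a, img_b, bins=32):
    """Mutual information (nats) between two equally sampled images."""
    joint, _, _ = np.histogram2d(np.ravel(img_a), np.ravel(img_b), bins=bins)
    pxy = joint / joint.sum()
    px = pxy.sum(axis=1, keepdims=True)          # marginal of image A
    py = pxy.sum(axis=0, keepdims=True)          # marginal of image B
    nz = pxy > 0                                 # skip empty bins (0 * log 0 = 0)
    return float(np.sum(pxy[nz] * np.log(pxy[nz] / (px @ py)[nz])))


def best_shift(fixed, moving, max_shift=5):
    """Integer (dy, dx) shift of `moving` that maximizes MI with `fixed`."""
    best = (0, 0, -np.inf)
    for dy in range(-max_shift, max_shift + 1):
        for dx in range(-max_shift, max_shift + 1):
            shifted = np.roll(moving, (dy, dx), axis=(0, 1))
            mi = mutual_information(fixed, shifted)
            if mi > best[2]:
                best = (dy, dx, mi)
    return best[:2]
```

Mutual information suits multimodal data because it rewards any consistent statistical relationship between intensities; even an inverted contrast between modalities still aligns correctly.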

Data Fusion and Segmentation: Implement a machine learning framework for voxel-wise classification:

- Extract multi-dimensional feature vectors combining information from all modalities

- Train random forest or convolutional neural network classifiers

- Apply trained models to segment tissues into predefined classes

- Post-process to remove segmentation artifacts and smooth boundaries
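The voxel-wise fusion step amounts to stacking co-registered volumes into one feature vector per voxel. To keep the sketch dependency-free, a nearest-centroid rule stands in for the random forest or CNN named above; scikit-learn's `RandomForestClassifier` could be fitted on the same feature matrix instead. The z-scoring over the whole volume and the synthetic two-class data are assumptions for illustration.

```python
import numpy as np


def stack_features(volumes):
    """Stack co-registered (Z, Y, X) volumes into an (n_voxels, n_modalities) matrix."""
    return np.stack([np.asarray(v, dtype=float).ravel() for v in volumes], axis=1)


def zscore(features):
    """Put modalities with different intensity scales on a common footing."""
    mu, sd = features.mean(axis=0), features.std(axis=0)
    return (features - mu) / np.where(sd > 0, sd, 1.0)


def fit_centroids(features, labels):
    """Mean feature vector of each annotated tissue class."""
    classes = np.unique(labels)
    return classes, np.array([features[labels == c].mean(axis=0) for c in classes])


def predict(features, classes, centroids):
    """Assign each voxel to the class with the nearest centroid."""
    d = ((features[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
    return classes[np.argmin(d, axis=1)]
```

Reshaping the predictions with `.reshape(volume.shape)` turns the label vector back into a segmented volume for post-processing.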

Table 2: Characteristic Signatures of Plant Tissues Across Imaging Modalities

| Tissue Type | X-ray CT Absorption | T1-w MRI Signal | T2-w MRI Signal | Hyperspectral Features |
|---|---|---|---|---|
| Intact Functional | High (reference) | High | High | Healthy vegetation indices |
| Non-Functional | ~10% lower | ~30-60% lower | ~30-60% lower | Altered water band features |
| Necrotic | ~30% lower | Medium to low | ~60-85% lower | Stress-related spectral shifts |
| White Rot | ~70% lower | ~70-98% lower | ~70-98% lower | Decay-specific signatures |
| Reaction Zones | Medium | Medium | High (hypersignal) | Early stress indicators |

Quantitative Analysis and Phenotype Extraction

Morphological Phenotyping:

- Calculate volume metrics for different tissue classes using voxel counting

- Extract three-dimensional distribution patterns of degraded tissues

- Quantify spatial relationships between different tissue types
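Volume metrics by voxel counting, and a simple along-axis distribution profile, can be sketched as follows. The integer class codes, the voxel size, and the choice to express fractions relative to all labelled tissue (excluding background) are illustrative assumptions.

```python
import numpy as np


def class_volumes(label_volume, voxel_size_mm, class_names):
    """Volume (mm^3) of each tissue class and its share of all labelled tissue."""
    labels = np.asarray(label_volume)
    voxel_mm3 = float(np.prod(voxel_size_mm))
    counts = {name: int(np.count_nonzero(labels == code)) for code, name in class_names.items()}
    tissue = sum(counts.values())
    return {name: {"volume_mm3": n * voxel_mm3, "fraction": n / tissue if tissue else 0.0}
            for name, n in counts.items()}


def slice_profile(label_volume, codes, tissue_codes, axis=0):
    """Fraction of tissue voxels belonging to `codes` in each slice along `axis`."""
    labels = np.asarray(label_volume)
    other_axes = tuple(i for i in range(labels.ndim) if i != axis)
    hit = np.isin(labels, codes).sum(axis=other_axes)
    tissue = np.isin(labels, tissue_codes).sum(axis=other_axes)
    return np.divide(hit, tissue, out=np.zeros(hit.shape, dtype=float), where=tissue > 0)
```

With the trunk axis aligned to `axis`, the profile shows how degraded tissue is distributed along the stem.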

Physiological Assessment:

- Derive functional indices from MRI parameters

- Calculate biochemical indices from hyperspectral data (e.g., chlorophyll content, water status)

- Correlate internal tissue status with external symptoms

Workflow Visualization

The Scientist's Toolkit

Table 3: Essential Research Reagents and Materials for Multimodal Plant Imaging

| Item | Specifications | Application & Function |
|---|---|---|
| MRI Contrast Agents | Gadolinium-based compounds (e.g., Gd-DTPA) | Enhance tissue contrast in MRI, highlight vascular transport |
| Fiducial Markers | Vitamin E capsules, agarose beads, ceramic beads | Provide reference points for multimodal image registration |
| Calibration Phantoms | Custom objects with known dimensions and density | Validate geometric accuracy and enable quantitative intensity measurements |
| Plant Support Systems | 3D-printed holders, foam blocks, non-metallic stakes | Immobilize specimens during imaging while minimizing artifacts |
| Data Processing Software | 3D Slicer, FIJI/ImageJ, custom Python/Matlab scripts | Image registration, segmentation, and quantitative analysis |
| AI Segmentation Models | U-Net, Random Forest, Transformer architectures | Automated tissue classification and phenotyping [2] [28] |
| Spectral Calibration Standards | White reference panels, wavelength calibration cards | Ensure hyperspectral data accuracy and reproducibility |
| 3D Reconstruction Tools | Gaussian Splatting, Planar-based Reconstruction [29] | Generate high-fidelity 3D models from multi-view images |

Implementation Considerations

Computational Requirements: The multimodal pipeline demands significant computational resources for data storage, processing, and analysis. A single plant can generate terabytes of multi-modal image data, necessitating high-performance computing infrastructure with adequate GPU acceleration for machine learning components [30].

Validation and Quality Control:

- Perform regular calibration of all imaging systems using standardized phantoms

- Validate segmentation accuracy against manual expert annotations

- Establish standardized protocols for cross-laboratory reproducibility

- Implement version control for analytical pipelines to ensure result consistency

Integration with Complementary Data: For comprehensive phenotyping, correlate imaging data with:

- Genomic information for genome-wide association studies (GWAS) [28]

- Environmental sensor data (temperature, humidity, soil conditions)

- Yield and quality metrics at harvest

- Traditional destructive measurements for validation

This multimodal imaging pipeline represents a powerful framework for non-destructive plant phenotyping, enabling researchers to quantify internal structural and functional traits with unprecedented accuracy and detail. The integration of MRI, X-ray CT, and hyperspectral data provides complementary information that surpasses the capabilities of any single modality, opening new possibilities for understanding plant physiology, pathology, and responses to environmental stresses.

Plant phenomics, the large-scale study of plant growth, performance, and composition, has been transformed by advanced sensing technologies. The integration of multiple imaging modalities—termed sensor fusion—enables a comprehensive, non-destructive analysis of plant morphological, physiological, and biochemical traits that cannot be captured by any single sensor alone [31] [32]. This holistic approach is crucial for elucidating complex genotype-environment interactions and accelerating the development of climate-resilient crops [31] [33]. By combining the strengths of RGB, thermal, depth, and spectral imaging, researchers can now obtain a multidimensional view of plant health and function, from the cellular level to entire canopies, in both controlled and field environments [32] [33]. This document outlines practical application notes and protocols for implementing these integrated sensor systems within an end-to-end workflow for non-destructive plant phenotyping research.

Comparative Analysis of Imaging Modalities

Table 1: Core Imaging Modalities in Plant Phenotyping: Characteristics and Applications

| Imaging Modality | Spectral Range | Primary Measured Parameters | Key Applications in Plant Phenotyping | Strengths | Limitations |
|---|---|---|---|---|---|
| RGB Imaging | 380–780 nm [32] | Color, texture, shape, structure [15] [34] | Morphological analysis (leaf area, plant height, biomass), growth dynamics, color indices [31] [34] | Cost-effective, high spatial resolution, intuitive data interpretation [34] | Limited to visible spectrum, low accuracy for physiological traits, sensitive to lighting conditions [31] [34] |
| Thermal Imaging (TI) | 1000–14000 nm [32] | Canopy/leaf temperature [31] [15] | Stomatal conductance, transpiration rate, drought and heat stress detection [31] [32] | Non-contact measure of plant water status, rapid stress detection [31] [15] | Affected by ambient conditions, requires reference for absolute temperature calibration [32] |
| Depth/3D Imaging (LiDAR, Laser Scanners) | Varies (e.g., time-of-flight) [32] | Distance, point clouds, 3D structure [32] [15] | Plant architecture, biomass estimation, canopy coverage, 3D modeling [32] [15] | Precise volumetric and structural data, less affected by lighting [32] | Lower spatial resolution compared to RGB, can be costly, complex data processing [32] |
| Hyperspectral Imaging (HSI) | 200–2500 nm [32] | Reflectance across hundreds of narrow, contiguous bands [31] [32] | Biochemical profiling (chlorophyll, water content, pigments), early stress detection, nutrient status [31] [32] | Rich spectral data for quantifying biochemical traits, enables early stress detection before visible symptoms [31] | High data volume, computationally intensive, can be expensive [31] |
| Chlorophyll Fluorescence Imaging (ChlF) | Emission: ~600–750 nm [32] | Photosynthetic efficiency (Fv/Fm, etc.) [15] | Photosynthetic performance, metabolic activity, early detection of biotic and abiotic stresses [31] [32] | Highly sensitive indicator of photosynthetic function, reveals stress before other symptoms [15] | Requires controlled lighting during measurement, specialized setup [15] |
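As a concrete example of turning raw modality data into traits, the sketch below computes two widely used indices: NDVI from a reflectance cube, and the Crop Water Stress Index (CWSI) from thermal canopy temperature normalized between wet and dry reference temperatures (one way to handle the reference requirement noted for thermal imaging). It assumes a VNIR cube that covers the red (~670 nm) and near-infrared (~800 nm) bands; these band centres and all function names are illustrative choices, not taken from the cited studies.

```python
import numpy as np

def band_index(wavelengths, target_nm):
    """Index of the band whose centre wavelength is closest to target_nm."""
    return int(np.argmin(np.abs(np.asarray(wavelengths) - target_nm)))

def ndvi_from_cube(cube, wavelengths, red_nm=670.0, nir_nm=800.0):
    """NDVI = (NIR - Red) / (NIR + Red) per pixel of a (rows, cols, bands) reflectance cube.

    The 670/800 nm defaults are assumed band centres; adjust to the camera in use.
    """
    red = cube[..., band_index(wavelengths, red_nm)].astype(float)
    nir = cube[..., band_index(wavelengths, nir_nm)].astype(float)
    denom = nir + red
    # Dark background pixels (denominator 0) are set to 0 instead of dividing by zero
    return np.divide(nir - red, denom, out=np.zeros_like(denom), where=denom != 0)

def cwsi(canopy_temp, t_wet, t_dry):
    """Crop Water Stress Index from canopy temperature and wet/dry reference temperatures.

    0 means the canopy is as cool as the fully transpiring (wet) reference,
    1 means it is as warm as the non-transpiring (dry) reference.
    """
    return np.clip((canopy_temp - t_wet) / (t_dry - t_wet), 0.0, 1.0)
```

Clipping to [0, 1] is a design choice that absorbs small calibration errors; it can be dropped if out-of-range values are themselves informative.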

Integrated Workflow for Multimodal Plant Phenotyping

Combining sensors creates a pipeline in which each modality contributes complementary traits. Figure 1 generalizes the process from data acquisition to actionable knowledge.

Figure 1: End-to-End Multimodal Phenotyping Workflow. This diagram outlines the integrated process from multi-sensor data acquisition to the generation of actionable insights for plant research.

Experimental Protocols for Multimodal Phenotyping

Protocol: Drought Stress Assessment in Watermelon

This protocol is adapted from a study on high-throughput phenotyping of drought-stressed watermelon plants, integrating RGB, short-wave infrared hyperspectral (SWIR-HSI), multispectral fluorescence (MSFI), and thermal imaging [31].

1. Experimental Setup & Plant Material

- Plant Material: Utilize watermelon (Citrullus lanatus) plants. Genotypes with varying known drought tolerance are recommended for robust model training.

- Growth Conditions: Grow plants in a controlled environment (e.g., greenhouse) with standardized soil, nutrient, and initial watering regimes.

- Stress Induction: Divide plants into two groups: a well-watered control group and a drought-stressed treatment group where irrigation is withheld.
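When several genotypes are used, assigning plants to treatments at random within each genotype keeps every genotype represented in both the control and drought groups. The helper below is a hypothetical sketch of that design step, not part of the cited protocol; with an odd number of plants per genotype, the extra plant goes to the drought group.

```python
import random
from collections import defaultdict

def assign_treatments(plants, seed=0):
    """Randomly split the plants of each genotype into 'control' and 'drought'.

    plants: list of (plant_id, genotype) tuples (hypothetical input format).
    A fixed seed makes the assignment reproducible.
    """
    rng = random.Random(seed)
    by_genotype = defaultdict(list)
    for plant_id, genotype in plants:
        by_genotype[genotype].append(plant_id)

    assignment = {}
    for genotype, ids in sorted(by_genotype.items()):
        rng.shuffle(ids)          # randomize order within this genotype
        half = len(ids) // 2      # first half -> control, rest -> drought
        for pid in ids[:half]:
            assignment[pid] = "control"
        for pid in ids[half:]:
            assignment[pid] = "drought"
    return assignment
```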

2. Automated Multimodal Image Acquisition

- Platform: Use a fully automated, high-throughput phenotyping platform with dedicated screening chambers and a synchronized multi-sensor array [31].

- Synchronization: Implement a custom software platform for synchronized system control and real-time data acquisition to ensure temporal and spatial alignment of images from all sensors [31].

- Acquisition Schedule: Image plants at the same time daily to minimize diurnal variation effects.
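Where sensors run at different rates or their clocks drift, acquisitions can also be paired after the fact by timestamp. The sketch below matches each reference frame (for example, the RGB image) to the nearest frame from another sensor and rejects pairs that differ by more than a tolerance. It complements, rather than reproduces, the custom synchronization software in [31]; the function name and the 1 s default tolerance are assumptions.

```python
from bisect import bisect_left

def match_frames(reference_times, sensor_times, tolerance_s=1.0):
    """For each reference timestamp (seconds), return the index of the nearest
    frame in sensor_times, or None if no frame lies within tolerance_s.

    sensor_times must be sorted in ascending order.
    """
    matches = []
    for t in reference_times:
        i = bisect_left(sensor_times, t)
        # The nearest frame is either just before or just after the insertion point
        candidates = [j for j in (i - 1, i) if 0 <= j < len(sensor_times)]
        best = min(candidates, key=lambda j: abs(sensor_times[j] - t), default=None)
        if best is not None and abs(sensor_times[best] - t) <= tolerance_s:
            matches.append(best)
        else:
            matches.append(None)
    return matches
```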

3. Data Processing & Analysis

- Feature Extraction: For each sensor, extract relevant features (e.g., vegetation indices from HSI, temperature from thermal, morphological parameters from RGB).

- Data Fusion & Modeling: Fuse the extracted multi-sensor features into a combined dataset. Employ machine learning (e.g., Random Forests, Support Vector Machines) or deep learning models (e.g., Convolutional Neural Networks) for two primary tasks:

- Validation: Validate model performance on a held-out test set of plants not used in training, reporting metrics such as accuracy (for classification) or mean squared error (for regression).
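The fusion and validation steps can be sketched with scikit-learn as follows. It assumes feature-level fusion (simple column concatenation of per-sensor features) and one row per plant per imaging day; because the same plant appears on several days, the split holds out whole plants with `GroupShuffleSplit` so the test set contains only unseen plants. The Random Forest matches one of the model families named above; the helper names and hyperparameters are illustrative.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import accuracy_score
from sklearn.model_selection import GroupShuffleSplit

def fuse_features(*feature_blocks):
    """Feature-level fusion: stack per-observation features from each sensor column-wise.

    Each block has one row per observation (1D blocks become a single column).
    """
    return np.hstack([np.asarray(b, dtype=float).reshape(len(b), -1) for b in feature_blocks])

def train_and_validate(X, y, plant_ids, test_size=0.25, seed=0):
    """Train a Random Forest and score it on plants never seen during training."""
    splitter = GroupShuffleSplit(n_splits=1, test_size=test_size, random_state=seed)
    train_idx, test_idx = next(splitter.split(X, y, groups=plant_ids))
    model = RandomForestClassifier(n_estimators=200, random_state=seed)
    model.fit(X[train_idx], y[train_idx])
    acc = accuracy_score(y[test_idx], model.predict(X[test_idx]))
    return model, acc, train_idx, test_idx
```

Splitting by row instead of by plant would let repeated images of one plant leak into both sets and inflate the reported accuracy.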

Protocol: Internal Wood Structure Phenotyping in Grapevine

This protocol leverages the fusion of MRI and X-ray CT for non-destructive diagnosis of trunk diseases in perennial plants [2] [17].

1. Plant Material & Preparation

- Plant Material: Collect grapevine (Vitis vinifera L.) plants from the field, selecting both symptomatic and asymptomatic-looking vines based on foliar symptom history [2].

- Sample Handling: Keep the root ball moist and ensure the plant is stable during transport and imaging. No destructive preparation is needed.

2. Multimodal 3D Image Acquisition

- Imaging Facility: Perform imaging in a clinical or specialized facility equipped with both MRI and X-ray CT scanners.

- MRI Acquisition: Acquire 3D images using multiple MRI protocols: T1-weighted (T1-w), T2-weighted (T2-w), and Proton Density-weighted (PD-w). These sequences provide complementary information on the physiological status and water content of the wood [2].

- X-ray CT Acquisition: Perform a high-resolution CT scan of the entire trunk. This modality provides structural information and tissue density [2] [32].

- Spatial Alignment: Ensure the plant is positioned consistently between scans to facilitate subsequent image registration.

3. Data Processing, Registration, and Voxel Classification

- 3D Image Registration: Use an automatic 3D registration pipeline to spatially align the MRI volumes and X-ray CT data into a single, cohesive 4D multimodal image dataset [2].

- Expert Annotation & Ground Truthing: After non-destructive imaging, destructively slice the trunk and photograph the cross-sections. Have experts manually annotate these sections to define tissue classes (e.g., intact, degraded, white rot) [2].

- Machine Learning Model Training: Train a voxel-wise classification algorithm (e.g., a Random Forest classifier) using the registered multimodal imaging data (MRI and CT signals) as input and the expert annotations as the ground truth. This model learns the "multimodal signature" of each tissue type [2].

- In-Vivo Diagnosis: Apply the trained model to new, unseen multimodal scans of living plants to automatically segment and quantify the volume of intact, degraded, and white rot tissues in 3D, enabling a non-destructive diagnosis.
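The voxel-wise training and inference steps can be sketched as below, assuming the MRI (T1-w, T2-w, PD-w) and CT volumes have already been registered onto a common voxel grid and that expert annotations have been transferred onto that grid, with -1 marking unannotated voxels. The class coding (0 intact, 1 degraded, 2 white rot), the helper names, and the Random Forest hyperparameters are assumptions for illustration; the pipeline in [2] may differ in features and preprocessing.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier

CLASSES = {0: "intact", 1: "degraded", 2: "white rot"}  # assumed label coding

def stack_modalities(*volumes):
    """Turn co-registered 3D volumes into an (n_voxels, n_modalities) feature matrix."""
    if len({v.shape for v in volumes}) != 1:
        raise ValueError("volumes must be co-registered to the same grid")
    return np.stack([v.ravel() for v in volumes], axis=1).astype(float)

def train_voxel_classifier(features, labels, seed=0):
    """Fit on annotated voxels only; label -1 marks voxels without expert annotation."""
    flat = labels.ravel()
    mask = flat >= 0
    clf = RandomForestClassifier(n_estimators=100, random_state=seed)
    clf.fit(features[mask], flat[mask])
    return clf

def classify_volume(clf, features, shape):
    """Predict a tissue class for every voxel and reshape back onto the 3D grid."""
    return clf.predict(features).reshape(shape)
```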

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Equipment and Software for Multimodal Phenotyping

| Category | Item | Function & Application Notes |
|---|---|---|
| Core Sensors | High-resolution RGB Camera [31] [15] | Captures morphological and color-based traits. Use industrial-grade cameras with homogeneous LED lighting for consistency [31]. |
| | Hyperspectral Camera (VNIR/SWIR) [31] [15] | For biochemical profiling and early stress detection. Can be a line scanner; requires specific illumination and calibration [31]. |
| | Thermal Infrared Camera [31] [15] | Measures canopy temperature as a proxy for stomatal conductance and transpiration. Must be calibrated for accurate readings [31]. |
| | 3D Laser Scanner or LiDAR [32] [15] | For precise plant architecture and biomass estimation. Generates 3D point clouds for volumetric analysis [32]. |
| | Chlorophyll Fluorescence Imager [31] [15] | Assesses photosynthetic performance. Requires a pulse-amplitude modulated (PAM) system with actinic and saturating light sources [15]. |
| Platform & Control | Automated Phenotyping Platform [31] | A conveyor-based or gantry system that moves plants or sensors for high-throughput, consistent data acquisition. |
| | Synchronized Control Software [31] | Custom software is critical for orchestrating the simultaneous operation of multiple sensors and managing the resulting large datasets [31]. |
| Data Analysis | Image Processing & Analysis Software (e.g., FluorCam, PlantScreen) [15] | Vendor-specific software for initial data extraction, such as calculating fluorescence parameters or basic vegetation indices. |
| | Machine Learning Frameworks (e.g., Python with TensorFlow/PyTorch, R) [31] [16] | Used for developing custom models for trait prediction, stress classification, and segmenting complex structures (e.g., using DeepLabV3+) [31] [2]. |

The integration of RGB, thermal, depth, and spectral imaging represents a paradigm shift in non-destructive plant phenotyping. By fusing data from these complementary modalities, researchers can move beyond isolated trait analysis to a systems-level understanding of plant growth, health, and response to environmental stresses. The protocols and frameworks outlined herein provide a practical foundation for implementing these powerful technologies. As the field evolves, the continued development of automated platforms, robust data fusion algorithms, and accessible analytical tools will be crucial for unlocking the full potential of sensor fusion in accelerating crop breeding and precision agriculture.

AI and Machine Learning for Automated Segmentation and Voxel Classification

The adoption of artificial intelligence (AI) and machine learning (ML) is transforming non-destructive plant phenotyping. These technologies enable precise, automated analysis of plant structures in three dimensions, allowing researchers to extract phenotypic traits without harming the plants. This document outlines application notes and protocols for automated segmentation and voxel classification, framed within an end-to-end phenotyping workflow. These computational techniques are accelerating the development of smart agriculture and giving researchers powerful tools to study plant health, development, and responses to environmental stress [35] [2].

Core AI Methodologies in Plant Phenotyping

Automated 3D Organ Segmentation

Organ segmentation involves partitioning a 3D representation of a plant into its constituent organs, such as leaves, stems, and roots. Fully supervised learning methods have traditionally dominated this area but require extensive, point-wise annotated datasets, which are time-consuming and costly to produce [35]. To overcome this bottleneck, self-supervised learning approaches are gaining traction.

The Plant-MAE framework is a self-supervised method for point cloud segmentation. Its innovations include a kernel-based point convolution embedding module and a multi-angle feature extraction block (MAFEB) based on attention mechanisms. This architecture has demonstrated competitive performance on multiple point cloud datasets, achieving an average precision of 92.08%, recall of 88.50%, F1 score of 89.80%, and Intersection over Union (IoU) of 84.03%. It outperforms deep learning networks such as PointNet++ and Point Transformer, with an average IoU improvement of at least 2.38 percentage points. A significant advantage is its data efficiency: on the Pheno4D dataset, it required only half of the training data for fine-tuning to achieve performance comparable to other models [35] [36].
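The text does not detail Plant-MAE's pretext task, so the following is only a generic sketch of the masked-autoencoder idea as commonly applied to point clouds (Point-MAE style): farthest point sampling picks patch centers, k-nearest neighbours form local patches, and a random subset of patches is hidden so that an encoder-decoder can be trained to reconstruct them from the visible ones. Plant-MAE's kernel-based point convolution embedding and MAFEB attention block are not reproduced; the function names, patch count, k, and 60% mask ratio are illustrative assumptions.

```python
import numpy as np

def farthest_point_sampling(points, n_centers, seed=0):
    """Greedy FPS: repeatedly pick the point farthest from all centers chosen so far."""
    rng = np.random.default_rng(seed)
    centers = [int(rng.integers(points.shape[0]))]
    dist = np.linalg.norm(points - points[centers[0]], axis=1)
    for _ in range(n_centers - 1):
        idx = int(np.argmax(dist))
        centers.append(idx)
        # Distance of every point to its nearest chosen center
        dist = np.minimum(dist, np.linalg.norm(points - points[idx], axis=1))
    return np.array(centers)

def group_and_mask(points, n_patches=32, k=16, mask_ratio=0.6, seed=0):
    """Split a cloud into k-NN patches around FPS centers and hide a random subset.

    Returns (patches, visible_idx, masked_idx); patches has shape (n_patches, k, 3)
    with coordinates expressed relative to each patch center.
    """
    rng = np.random.default_rng(seed)
    centers = farthest_point_sampling(points, n_patches, seed)
    # (n_patches, n_points) distances from each center to every point
    d = np.linalg.norm(points[None, :, :] - points[centers][:, None, :], axis=2)
    knn = np.argsort(d, axis=1)[:, :k]
    patches = points[knn] - points[centers][:, None, :]
    n_masked = int(round(mask_ratio * n_patches))
    perm = rng.permutation(n_patches)
    return patches, np.sort(perm[n_masked:]), np.sort(perm[:n_masked])
```

During pre-training, only the visible patches would be encoded and the loss computed on the reconstructed masked patches; fine-tuning then attaches a segmentation head, which is where the reported data efficiency would come into play.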

For high-resolution phenotyping, the OmniPlantSeg pipeline addresses the limitation of fixed input sizes in 3D segmentation networks. It employs a novel sub-sampling algorithm called KD-SS that splits point clouds of arbitrary size into sub-samples while retaining the full original resolution. This is crucial for preserving fine details in high-resolution scans from modalities such as photogrammetry, laser triangulation, and LiDAR. The approach is species- and modality-agnostic, making it a versatile tool for plant phenotyping research [37].
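The internals of KD-SS are not described here, so the hypothetical `kd_split` below only illustrates the general idea of KD-tree-style partitioning that the name suggests: the cloud is recursively halved at the median of its widest axis until each chunk fits a network's input capacity, and every original point lands in exactly one chunk, so no resolution is lost. Per-chunk predictions are then written back in the original point order by the equally hypothetical `predict_in_chunks`.

```python
import numpy as np

def kd_split(points, max_points, idx=None):
    """Recursively halve the cloud at the median of its widest axis until each chunk
    holds at most max_points points. Returns index arrays; no point is dropped."""
    if max_points < 1:
        raise ValueError("max_points must be >= 1")
    if idx is None:
        idx = np.arange(len(points))
    if len(idx) <= max_points:
        return [idx]
    sub = points[idx]
    axis = int(np.argmax(sub.max(axis=0) - sub.min(axis=0)))  # widest extent
    order = idx[np.argsort(sub[:, axis], kind="stable")]
    half = len(order) // 2
    return kd_split(points, max_points, order[:half]) + kd_split(points, max_points, order[half:])

def predict_in_chunks(points, max_points, predict_fn):
    """Run a fixed-capacity segmentation model chunk by chunk and stitch labels back."""
    labels = np.empty(len(points), dtype=int)
    for chunk in kd_split(points, max_points):
        labels[chunk] = predict_fn(points[chunk])
    return labels
```

A network that needs exactly N input points would additionally pad or resample each chunk; that step is omitted here.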

Voxel Classification for Internal Tissue Analysis

Beyond external organ segmentation, classifying the internal condition of plant tissues is vital for assessing plant health, particularly for diseases that are not externally visible. An end-to-end workflow combining multimodal 3D imaging and machine learning has been successfully developed for the non-destructive diagnosis of grapevine trunk internal structure [2] [17].

This workflow uses X-ray Computed Tomography (CT) and Magnetic Resonance Imaging (MRI) to acquire structural and physiological information from living plants. The 3D data from these modalities are aligned into a 4D multimodal image. A machine learning model, trained on expert-annotated data, then performs voxel-wise classification to discriminate between different tissue conditions. The model categorizes tissues into three main classes: 'intact' (functional or non-functional but healthy tissues), 'degraded' (necrotic and other altered tissues), and 'white rot' (decayed wood) [2].

This approach has achieved a mean global accuracy of over 91% in distinguishing these tissue types. The study identified quantitative structural and physiological markers characterizing wood degradation steps, demonstrating that white rot and intact tissue contents are key measurements for evaluating vine sanitary status [2] [17].
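Global accuracy is typically the proportion of annotated voxels assigned to the correct class, and the diagnostic measurements are the volumes (or volume fractions) of each tissue class. A minimal sketch, assuming a predicted label volume coded 0 intact, 1 degraded, 2 white rot, with -1 for background, and a known voxel size in millimetres; how [2] averages accuracy across samples to obtain its mean value is not reproduced here.

```python
import numpy as np

TISSUES = ("intact", "degraded", "white rot")  # assumed coding 0, 1, 2

def global_accuracy(pred, truth, ignore=-1):
    """Fraction of annotated voxels whose predicted class matches the expert label."""
    mask = truth != ignore
    return float((pred[mask] == truth[mask]).mean())

def tissue_volumes(pred, voxel_size_mm=(1.0, 1.0, 1.0), background=-1):
    """Volume (mm^3) and fraction of the trunk volume for each tissue class."""
    voxel_vol = float(np.prod(voxel_size_mm))
    n_trunk = int((pred != background).sum())
    out = {}
    for cls, name in enumerate(TISSUES):
        n = int((pred == cls).sum())
        out[name] = {
            "volume_mm3": n * voxel_vol,
            "fraction": n / n_trunk if n_trunk else 0.0,
        }
    return out
```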

Table 1: Performance Comparison of Segmentation Models

| Model / Metric | Precision (%) | Recall (%) | F1 Score (%) | IoU (%) | Key Feature |
|---|---|---|---|---|---|
| Plant-MAE [35] [36] | 92.08 | 88.50 | 89.80 | 84.03 | Self-supervised learning |
| Point Transformer (Comparative) | ~91.55 | ~87.14 | ~88.92 | ~81.65 | Fully supervised |
| PointNet++ (Comparative) | ~91.55 | ~87.14 | ~88.92 | ~81.65 | Fully supervised |
| OmniPlantSeg (Cherry Trees) [37] | - | - | - | 94.30 | Modality-agnostic |
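For reference, the table's metrics can be computed from point-wise labels as sketched below (per class, then macro-averaged over classes). Averaging per class, or per sample, is one plausible reason why a reported mean F1 (89.80%) need not equal the harmonic mean of the mean precision and recall (about 90.25%); the exact averaging used in [35] [36] is not specified here, so treat this as an assumption.

```python
import numpy as np

def segmentation_metrics(pred, truth, n_classes):
    """Per-class precision, recall, F1 and IoU from point-wise labels,
    plus their unweighted (macro) averages over classes."""
    per_class = []
    for c in range(n_classes):
        tp = np.sum((pred == c) & (truth == c))
        fp = np.sum((pred == c) & (truth != c))
        fn = np.sum((pred != c) & (truth == c))
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        iou = tp / (tp + fp + fn) if tp + fp + fn else 0.0  # |A ∩ B| / |A ∪ B|
        per_class.append((precision, recall, f1, iou))
    macro = np.mean(per_class, axis=0)
    return per_class, dict(zip(("precision", "recall", "f1", "iou"), macro))
```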

Table 2: Tissue Classes and Imaging Signatures Used for Voxel Classification of Internal Tissues [2] [17]

| Tissue Class | Description | Key Imaging Signatures | Role in Diagnosis |
|---|---|---|---|
| Intact | Functional or healthy-looking tissues | High X-ray absorbance; High MRI (T1, T2, PD) signals | Indicator of plant's healthy functional capacity |
| Degraded | Necrotic and altered tissues | Medium X-ray absorbance; Low to medium MRI signals | Marks the presence of disease and degradation |
| White Rot | Advanced decayed wood | Very low X-ray absorbance (~70% reduction); Near-zero MRI signals | Key measurement for evaluating sanitary status |

Experimental Protocols