Bridging Prediction and Proof: A Comprehensive Guide to Validating In Silico Variant Predictions in Biomedical Research


Abstract

This article provides a comprehensive framework for the experimental validation of in silico variant predictions, a critical step for applications in clinical genetics and drug discovery. We explore the foundational principles of computational variant effect prediction, contrasting traditional association studies with modern AI-powered sequence-to-function models. The review details state-of-the-art methodological approaches for validating predictions across coding and regulatory regions, addresses common challenges and optimization strategies for improving prediction accuracy, and presents rigorous comparative analyses of tool performance in specific gene contexts. Designed for researchers, scientists, and drug development professionals, this guide synthesizes recent advances and practical validation protocols to enhance the reliability and translational potential of in silico predictions.

The Rise of In Silico Predictions: From Traditional Genetics to AI-Powered Models

Contrasting Traditional Association Studies and Modern Sequence Models

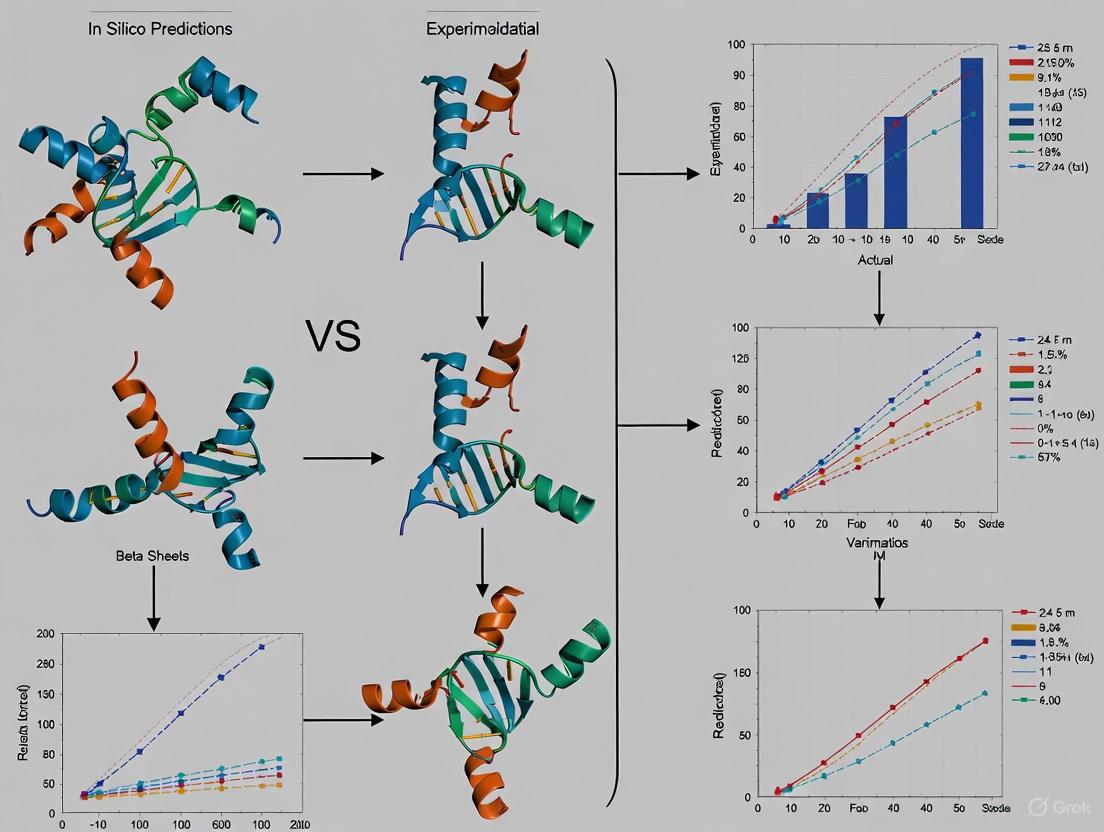

The interpretation of genetic variants represents a central challenge in modern genomics, with profound implications for understanding disease biology and guiding drug development. For decades, traditional association studies have served as the cornerstone for identifying links between genetic variation and phenotypic traits. However, the emergence of modern sequence models powered by deep learning is fundamentally reshaping this landscape. These approaches differ not only in their computational frameworks but also in their underlying assumptions about the genotype-phenotype relationship. This guide provides an objective comparison of these methodologies, focusing on their performance characteristics, experimental validation protocols, and practical implementation considerations for researchers and drug development professionals working on in silico variant prediction.

Methodological Foundations

Core Principles and Statistical Frameworks

Traditional association studies and modern sequence models operate on fundamentally different principles for linking genetic variation to biological function.

Traditional association studies, primarily genome-wide association studies (GWAS) and quantitative trait locus (QTL) mapping, employ mass univariate testing where each genetic variant is tested individually for statistical association with a phenotype [1] [2]. This approach uses linear regression models that estimate genotype-phenotype correlations separately for each locus, with statistical significance determined through hypothesis testing. The method relies on linkage disequilibrium to implicate regions containing causal variants, requiring dense sets of single-nucleotide polymorphisms (SNPs) throughout candidate gene regions [3]. These studies excel at detecting variants with measurable effects on macroscopic traits directly relevant to breeding objectives and human disease [1].
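The mass univariate idea can be sketched in a few lines. The example below is a minimal illustration, assuming genotypes coded as 0/1/2 allele dosages and a quantitative phenotype; the function names are hypothetical, and it omits the covariates and mixed-model terms a real GWAS would include.

```python
import math

def simple_regression(dosages, phenotype):
    """Least-squares fit of phenotype on one variant; returns (slope, t_statistic).

    Returns None for a monomorphic variant (no genotype variation to test).
    Assumes more than two samples.
    """
    n = len(dosages)
    mean_x = sum(dosages) / n
    mean_y = sum(phenotype) / n
    sxx = sum((x - mean_x) ** 2 for x in dosages)
    if sxx == 0:
        return None
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(dosages, phenotype))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    rss = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(dosages, phenotype))
    se = math.sqrt(rss / (n - 2) / sxx)
    if se == 0.0:
        # Perfect fit: t is unbounded (or 0 when the phenotype is constant).
        t = math.copysign(math.inf, slope) if slope != 0 else 0.0
    else:
        t = slope / se
    return slope, t

def mass_univariate_scan(genotypes, phenotype):
    """Fit one separate model per variant; genotypes maps variant id -> dosages."""
    results = {}
    for variant_id, dosages in genotypes.items():
        fit = simple_regression(dosages, phenotype)
        if fit is not None:
            results[variant_id] = fit
    return results
```

Each variant gets its own coefficient and test statistic, which is exactly why the results cannot be extrapolated to variants absent from the sample.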

Modern sequence models represent a paradigm shift from this locus-specific approach. Instead of fitting separate functions for each variant, these models estimate a unified function to predict variant effects based on genomic, cellular, and environmental context [1]. Deep learning architectures—including convolutional neural networks (CNNs), Transformers, and hybrid approaches—learn complex sequence-to-function relationships by identifying DNA sequence features that influence regulatory activity [4]. These models extract hierarchical representations where early layers capture low-level features (e.g., k-mer composition) and deeper layers integrate these into higher-order regulatory signals, effectively learning the "regulatory grammar" of the genome [4].

Table 1: Fundamental Methodological Differences Between Approaches

| Feature | Traditional Association Studies | Modern Sequence Models |
|---|---|---|
| Statistical Framework | Mass univariate testing via linear regression | Unified function approximation via deep learning |
| Variant Effect Estimation | Separate coefficient for each locus | Context-aware prediction across all loci |
| Key Assumption | Phenotype-genotype correlations reflect biological causation | Sequence determinants follow learnable patterns |
| Data Requirements | Large sample sizes for statistical power | Diverse training datasets for model generalization |
| Resolution | Limited by linkage disequilibrium (moderate to low) | Base-pair level (theoretically unlimited) |

Experimental Workflows and Validation Paradigms

The experimental workflows for these approaches differ significantly in design, execution, and interpretation.

Traditional association studies follow a standardized workflow beginning with sample collection from hundreds to thousands of individuals, followed by genotype and phenotype measurement [2]. The core analysis involves association testing typically performed using (generalized) linear regression models that account for potential confounders such as population structure or genetic relatedness [1]. Significance is determined through multiple testing correction (e.g., Bonferroni, FDR), with subsequent replication in independent cohorts to confirm findings [2]. The final stage involves functional validation of associated variants through targeted experiments.

Diagram 1: Traditional Association Study Workflow
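The multiple testing step in this workflow can be illustrated with the two corrections named above. This is a minimal sketch with hypothetical function names; it implements the standard Bonferroni cutoff and the Benjamini-Hochberg step-up procedure for FDR control, and is not taken from any cited study.

```python
def bonferroni(p_values, alpha=0.05):
    """Reject a test when its p-value is at most alpha divided by the number of tests."""
    m = len(p_values)
    return [p <= alpha / m for p in p_values]

def benjamini_hochberg(p_values, alpha=0.05):
    """Benjamini-Hochberg step-up procedure controlling the false discovery rate."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    # Find the largest rank k whose sorted p-value satisfies p_(k) <= (k / m) * alpha.
    max_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * alpha:
            max_rank = rank
    # Reject every hypothesis ranked at or below that k.
    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= max_rank:
            rejected[idx] = True
    return rejected
```

Bonferroni is the more conservative of the two, so on the same p-values it rejects at most as many hypotheses as Benjamini-Hochberg.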

Modern sequence models employ a substantially different workflow centered on data curation from diverse experimental methodologies (MPRA, raQTL, eQTL) [4]. The core process involves model training where deep learning architectures learn sequence-function relationships from the training data. For Transformer-based models, this often includes pre-training on large-scale genomic sequences followed by task-specific fine-tuning [4]. The trained model then performs in silico variant effect prediction on novel sequences, with results validated through high-throughput experimental benchmarking [4] [5]. Model performance is quantified using standardized metrics on held-out test data, with the most promising predictions selected for experimental confirmation.

Diagram 2: Modern Sequence Model Workflow
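The in silico variant effect prediction step usually reduces to scoring the reference and alternate sequences with the same model and taking the difference. The sketch below assumes that framing; `toy_model` is a hypothetical stand-in that counts one invented motif, not the interface of TREDNet, SEI, Borzoi, or any other real model.

```python
def apply_snv(sequence, position, ref, alt):
    """Return the sequence with a single-nucleotide substitution at a 0-based position."""
    if sequence[position] != ref:
        raise ValueError("reference allele does not match the sequence")
    return sequence[:position] + alt + sequence[position + 1:]

def toy_model(sequence):
    """Hypothetical stand-in for a trained model: counts occurrences of one motif."""
    motif = "TATA"  # illustrative motif only
    width = len(motif)
    return float(sum(1 for i in range(len(sequence) - width + 1)
                     if sequence[i:i + width] == motif))

def variant_effect(sequence, position, ref, alt, model=toy_model):
    """Predicted effect = model(alternate sequence) - model(reference sequence)."""
    return model(apply_snv(sequence, position, ref, alt)) - model(sequence)
```

Because the score depends only on sequence, the same call works for variants never observed in any cohort, which is the property that separates this workflow from association testing.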

Performance Comparison and Experimental Data

Quantitative Performance Metrics

Standardized benchmarking reveals distinct performance profiles for traditional and modern approaches across different variant interpretation tasks.

Table 2: Performance Comparison on Variant Effect Prediction Tasks

| Task | Best-Performing Approach | Performance Metrics | Key Findings |
|---|---|---|---|
| Regulatory Impact Prediction | CNN models (TREDNet, SEI) [4] | Superior for estimating enhancer regulatory effects of SNPs | CNNs most reliable for predicting direction/magnitude of regulatory impact |
| Causal Variant Prioritization | Hybrid CNN-Transformer (Borzoi) [4] [6] | Best for identifying causal SNPs within LD blocks | Effectively integrates long-range dependencies for fine-mapping |
| RNA-seq Coverage Prediction | Borzoi model [6] | Mean Pearson's R=0.74-0.75 on held-out test sequences | Accurately predicts exon-intron coverage patterns for long genes |
| Splicing and Polyadenylation | Borzoi model [6] | Matches or exceeds state-of-the-art specialized tools | Unified modeling of multiple regulatory layers improves performance |
| Experimental Success Rate | Composite metrics (COMPSS) [5] | Improved rate by 50-150% after computational filtering | Computational pre-screening significantly enhances experimental efficiency |
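For readers unfamiliar with the coverage metric in the table, Pearson's R between predicted and observed values can be computed as follows. This is a generic sketch with hypothetical function names; how the cited work aggregates across tracks and test sequences is not described here, so the simple mean over pairs is an assumption.

```python
import math

def pearson_r(predicted, observed):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(predicted)
    mean_p = sum(predicted) / n
    mean_o = sum(observed) / n
    cov = sum((p - mean_p) * (o - mean_o) for p, o in zip(predicted, observed))
    var_p = sum((p - mean_p) ** 2 for p in predicted)
    var_o = sum((o - mean_o) ** 2 for o in observed)
    if var_p == 0 or var_o == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_p * var_o)

def mean_pearson(pairs):
    """Average Pearson's R over (predicted, observed) pairs (assumed aggregation)."""
    values = [pearson_r(pred, obs) for pred, obs in pairs]
    return sum(values) / len(values)
```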

Resolution and Context Specificity

The resolution and context specificity of predictions represent another key differentiator between approaches. Association testing provides population-level insights with resolution limited by linkage disequilibrium (typically 1-100 kb) [1]. Predictions are restricted to variants observed in the study sample, with effects that cannot be extrapolated to unobserved variants. In contrast, sequence models offer base-pair resolution and can generalize to novel variants never observed in nature [1]. For example, Borzoi successfully predicts RNA-seq coverage at 32 bp resolution across 524 kb genomic windows, capturing tissue-specific expression and isoform usage [6].

Practical Implementation

Research Reagent Solutions

Implementing these approaches requires specific computational and experimental resources.

Table 3: Essential Research Reagents and Resources

| Resource | Type | Function | Example Implementations |
|---|---|---|---|
| Deep Learning Models | Software | Variant effect prediction | TREDNet (CNN), SEI (CNN), Borzoi (Hybrid CNN-Transformer), DNABERT-2 (Transformer) [4] |
| Benchmark Datasets | Data | Model training and evaluation | MPRA, raQTL, eQTL datasets profiling 54,859 SNPs across four human cell lines [4] |
| Experimental Validation Platforms | Experimental | Functional confirmation | Massively Parallel Reporter Assays (MPRAs), enzyme activity assays [4] [5] |
| Performance Metrics | Analytical | Model evaluation | COMPSS framework, Pearson's R on held-out test sequences [5] [6] |
| Validation Tools | Software | Sequence assignment validation | checkMySequence for detecting register-shift errors [7] |

Integration Strategies for Optimal Performance

Rather than positioning these approaches as mutually exclusive, strategic integration leverages their complementary strengths. Association studies provide unbiased discovery of variant-trait associations at genome-wide scale, effectively nominating candidate regions and variants for further investigation [2]. Sequence models then enable fine-mapping and mechanistic interpretation within these associated regions, distinguishing causal from linked variants and generating testable hypotheses about molecular mechanisms [4] [6]. This integrated approach is particularly powerful for drug target identification and validation, where understanding causal mechanisms is essential for clinical development.

Traditional association studies and modern sequence models offer complementary approaches to variant interpretation, each with distinct strengths and limitations. Association studies remain powerful for initial discovery of variant-trait associations, particularly for complex diseases and traits, while sequence models excel at fine-mapping and mechanistic interpretation. The choice between approaches should be guided by the specific biological question, available data resources, and validation requirements. As the field advances, integration of these methodologies—leveraging the discovery power of association studies with the resolution of sequence models—will provide the most comprehensive framework for variant interpretation in research and drug development.

The advent of high-throughput technologies has transformed biology into a data-rich science, producing vast amounts of information across functional genomics and comparative genomics [8]. These disciplines, which respectively study how genomic components function and evolve, generate data of such volume and complexity that traditional analytical approaches struggle to extract meaningful biological insights [8] [1]. This data deluge has made machine learning indispensable for modern genomic research. Within artificial intelligence (AI), supervised and unsupervised learning represent two fundamentally distinct approaches for pattern recognition and prediction [9]. The choice between these paradigms carries significant implications for experimental design, resource allocation, and interpretability in genomic studies, particularly in the critical task of variant effect prediction for precision medicine and breeding [1].

This review provides a comprehensive comparison of supervised and unsupervised learning methodologies as applied to functional and comparative genomics. We examine their underlying principles, relative performance across genomic applications, and experimental validation protocols, and we provide a practical toolkit for researchers applying these approaches to in silico variant prediction.

Fundamental Divergences: Conceptual Frameworks and Applications

Core Methodological Differences

The fundamental distinction between supervised and unsupervised learning lies in their use of labeled data. Supervised learning requires a labeled dataset where each input data point is paired with a corresponding output label, training models to learn the mapping function from inputs to outputs [9] [10]. This approach encompasses both classification (predicting categorical outcomes) and regression (predicting continuous values) tasks [9]. In contrast, unsupervised learning identifies inherent patterns, structures, and relationships within unlabeled data without pre-existing labels or correct outputs, primarily through clustering, association, and dimensionality reduction techniques [9] [10].

These methodological differences translate directly to their applications in genomics. Supervised learning excels when predicting predefined outcomes—such as classifying variants as pathogenic or benign, or predicting drought-responsive genes in crops [11] [12]. Unsupervised learning shines in exploratory analyses where the underlying structure is unknown—such as discovering novel cell types from single-cell RNA-sequencing data or identifying patterns in high-dimensional clinical data [13] [14].

Characteristic Workflows in Genomic Research

The application of these learning paradigms follows distinct workflows in genomic research. The diagram below illustrates the characteristic processes for both supervised and unsupervised learning in genomic studies:

Performance Comparison: Experimental Data and Quantitative Metrics

Empirical Performance in Genomic Applications

Multiple studies have systematically evaluated the performance of supervised and unsupervised approaches across various genomic tasks. In cell type identification from single-cell RNA-sequencing data, a comprehensive evaluation of 8 supervised and 10 unsupervised methods revealed that supervised methods generally outperform unsupervised approaches in most scenarios—except for identifying unknown cell types [13]. This performance advantage is most pronounced when supervised methods utilize reference datasets with high informational sufficiency, low complexity, and high similarity to query datasets [13].

In genomic prediction for plant and animal breeding, comparative studies of regularized regression, ensemble, instance-based, and deep learning methods demonstrate that the relative predictive performance and computational expense depend on both the data characteristics and target traits [15]. Notably, increasing model complexity in classical regularized methods often incurs huge computational costs without necessarily improving predictive accuracy [15].

The table below summarizes key performance comparisons across genomic applications:

Table 1: Performance Comparison of Supervised vs. Unsupervised Learning in Genomic Studies

| Application Domain | Supervised Performance | Unsupervised Performance | Key Findings | Reference |
|---|---|---|---|---|
| Cell Type Identification (scRNA-seq) | Superior in most scenarios (except unknown cell types) | Lower overall performance but effective for novel cell type discovery | Supervised methods outperform when reference data has high informational sufficiency and similarity to query data | [13] |
| Genomic Prediction | Competitive predictive performance, computationally efficient with simple parameters | Varies by data type and traits | Classical linear mixed models and regularized regression remain strong contenders; complex models don't always improve accuracy | [15] |
| Variant Pathogenicity Prediction | High accuracy for specific genes (e.g., SIFT: 93% sensitivity for CHD variants) | Shows promise in emerging AI tools (AlphaMissense, ESM-1b) | Performance is gene-specific and dependent on training data; BayesDel most accurate overall | [12] |
| High-Dimensional Clinical Data Analysis | Requires many labeled examples for deep learning applications | REGLE method improves genetic discovery and disease prediction from unlabeled data | Unsupervised representation learning extracts clinically relevant information beyond expert-defined features | [14] |

In Silico Variant Prediction Performance

The performance of in silico prediction tools exhibits significant gene-specific variation, highlighting the importance of contextual validation. A comprehensive assessment of variant effect predictors revealed that while SIFT demonstrated 93% sensitivity for classifying pathogenic variants in CHD nucleosome remodelers, sensitivity dropped considerably for other genes—below 65% for pathogenic TERT variants and ≤81% for benign TP53 variants [16] [12]. This gene-specific performance underscores how tool accuracy depends heavily on the training data used to develop the algorithms [16].

Emergent AI-based tools like AlphaMissense and ESM-1b show significant promise for future pathogenicity prediction, potentially overcoming limitations of current approaches [12]. For genes with insufficient validated variants for training, consideration of missense variant-protein structural impact relationships is recommended over relying solely on gene-agnostic in silico score cutoffs [16].

Experimental Protocols and Validation Frameworks

Methodologies for Performance Benchmarking

Rigorous experimental protocols are essential for validating the performance of supervised and unsupervised learning methods in genomic applications. In comparative studies of cell type identification methods, researchers have employed standardized evaluation workflows using multiple public scRNA-seq datasets encompassing different tissues, sequencing protocols, and species [13]. These protocols typically utilize 5-fold cross-validation for intradataset evaluation and carefully constructed experimental datasets to assess the impact of various factors including cell quantity, cell type number, sequencing depth, batch effects, reference bias, population imbalance, and unknown cell types [13].
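The 5-fold cross-validation used for intradataset evaluation can be sketched as an index generator. This is a minimal, unstratified version with a hypothetical function name; whether the cited benchmarks stratify folds by cell type is not stated here, so that detail is left out.

```python
import random

def k_fold_indices(n_items, k=5, seed=0):
    """Yield (train_indices, test_indices) for each of k shuffled folds."""
    indices = list(range(n_items))
    random.Random(seed).shuffle(indices)
    # Deal shuffled indices round-robin so fold sizes differ by at most one.
    folds = [indices[i::k] for i in range(k)]
    for i in range(k):
        test = folds[i]
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, test
```

Every item appears in exactly one test fold, so each cell contributes once to the held-out evaluation.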

For genomic prediction studies, common methodologies involve comparing machine learning methods using both synthetic and empirical breeding datasets, with evaluation metrics focusing on predictive accuracy and computational efficiency [15]. The scRNA-seq benchmarks, in turn, typically implement a standardized preprocessing pipeline: quality control excludes cells with abnormal detected counts, but atypical cell types and genes are not filtered, so that the integrity of the raw datasets is preserved [13].

Validation Techniques for In Silico Predictions

Validation of in silico prediction tools requires special consideration, as performance varies significantly across genes and genomic contexts [16] [1]. The following workflow outlines a recommended validation protocol for genomic prediction tools:

Where sufficient numbers of established benign and pathogenic missense variants exist based on clinical and functional evidence, researchers should validate in silico tool scores for individual genes rather than relying solely on gene-agnostic thresholds [16]. For genomic discovery applications, representation learning methods like REGLE (Representation Learning for Genetic Discovery on Low-Dimensional Embeddings) leverage variational autoencoders to compute nonlinear disentangled embeddings of high-dimensional clinical data, which subsequently serve as inputs for genome-wide association studies [14].
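Gene-level validation of a score cutoff amounts to computing sensitivity and specificity separately for each gene. The sketch below assumes a list of `(gene, score, is_pathogenic)` records with established labels; the function name and the record layout are hypothetical.

```python
from collections import defaultdict

def per_gene_performance(records, threshold, higher_is_damaging=True):
    """Sensitivity and specificity of one score cutoff, computed gene by gene.

    records: iterable of (gene, score, is_pathogenic) with established labels.
    A metric is None when a gene has no variants of the relevant class.
    """
    counts = defaultdict(lambda: {"TP": 0, "FN": 0, "TN": 0, "FP": 0})
    for gene, score, is_pathogenic in records:
        predicted = score >= threshold if higher_is_damaging else score <= threshold
        if is_pathogenic:
            counts[gene]["TP" if predicted else "FN"] += 1
        else:
            counts[gene]["FP" if predicted else "TN"] += 1
    results = {}
    for gene, c in counts.items():
        positives = c["TP"] + c["FN"]
        negatives = c["TN"] + c["FP"]
        results[gene] = {
            "sensitivity": c["TP"] / positives if positives else None,
            "specificity": c["TN"] / negatives if negatives else None,
        }
    return results
```

A cutoff that performs well in one gene and poorly in another, as in the SIFT example above, shows up directly as diverging entries in the returned dictionary.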

Genomic researchers have access to an extensive toolkit of computational methods and resources for implementing supervised and unsupervised learning approaches. The table below catalogs key analytical tools and their applications in genomic research:

Table 2: Research Reagent Solutions for Genomic Machine Learning Applications

| Tool/Method | Category | Primary Application | Key Features | Reference |
|---|---|---|---|---|
| Seurat v3 Mapping | Supervised | Cell type identification | Reference-based annotation using labeled scRNA-seq data | [13] |
| SingleR | Supervised | Cell type identification | Reference-based annotation using reference transcriptomes | [13] |
| XGBoost | Supervised | Gene function prediction | Ensemble method with high accuracy for transcriptomic data (90% accuracy, 0.97 AUC in drought gene discovery) | [11] |
| Random Forest | Supervised | Gene function prediction | Ensemble method effective for high-dimensional gene expression data | [11] |
| Seurat v3 Clustering | Unsupervised | Cell type identification | Unsupervised clustering of scRNA-seq data | [13] |
| SC3 | Unsupervised | Cell type identification | Unsupervised clustering optimized for scRNA-seq data | [13] |
| REGLE | Unsupervised | High-dimensional clinical data analysis | Variational autoencoders for nonlinear embedding of spirograms, PPG data | [14] |
| AlphaMissense | AI-Based | Variant pathogenicity prediction | Emerging deep learning approach for missense variant classification | [12] |
| ESM-1b | AI-Based | Variant pathogenicity prediction | Protein language model for variant effect prediction | [12] |
| BayesDel | Composite Score | Variant pathogenicity prediction | Most accurate overall tool for CHD variant prediction | [12] |

The comparative analysis of supervised and unsupervised learning in functional and comparative genomics reveals context-dependent advantages for each paradigm. Supervised learning generally provides higher accuracy for well-defined prediction tasks with sufficient labeled data, while unsupervised learning offers unique capabilities for exploratory analysis and discovery of novel patterns in unlabeled datasets [13] [9] [10].

The future of genomic research will likely see increased integration of both approaches, with semi-supervised learning and hybrid methods gaining prominence [9] [10]. Emerging AI-based tools, including deep learning models like AlphaMissense and ESM-1b, show particular promise for advancing variant effect prediction [12]. Representation learning methods that combine strengths of both paradigms, such as REGLE, demonstrate how unsupervised feature learning can enhance genetic discovery and disease prediction [14].

For researchers conducting in silico variant prediction, the evidence suggests a strategic approach: validate tool performance for specific genes of interest where possible, consider the structural impact of missense variants when using gene-agnostic thresholds, and leverage the complementary strengths of both supervised and unsupervised approaches to maximize discovery potential while maintaining predictive accuracy [16] [1]. As genomic datasets continue to grow in size and complexity, the thoughtful application of these machine learning paradigms will remain essential for extracting biologically meaningful insights and advancing precision medicine.

Next-generation sequencing yields thousands of genetic variants per sample, creating a significant interpretation challenge that requires substantial expertise and computational power for classification [17]. Researchers have established protocols with several parameters to classify these variants, among which in silico pathogenicity prediction tools have become one of the most widely applicable parameters for evaluating both germline and somatic variants [17]. Variant classification requires multiple levels of evidence, from supporting to very strong, and in silico tools serve as critical filters that remove variants unlikely to be associated with the disease in question [17]. These tools have evolved from basic conservation analysis to sophisticated artificial intelligence (AI)-driven frameworks that integrate structural, evolutionary, and functional data to predict variant effects with increasing accuracy. This guide provides an objective comparison of current in silico prediction methodologies, their performance across different variant types and genes, and the experimental protocols essential for validating their predictions in pharmaceutical and clinical research settings.

Performance Comparison of In Silico Prediction Tools

Categorical Classification Tools

Tools that provide categorical classifications (e.g., "deleterious" or "neutral") offer straightforward interpretations for researchers. Based on recent benchmarking studies, the following tools have demonstrated particular utility in specific contexts.

Table 1: Performance Characteristics of Categorical Prediction Tools

| Tool | Primary Methodology | Optimal Threshold | Reported Sensitivity | Reported Specificity | Strengths | Key Applications |
|---|---|---|---|---|---|---|
| SIFT | Sequence conservation | <0.05 (Deleterious) | 93% (CHD genes) [12] | Variable by gene family | High sensitivity for pathogenic variants | Neurodevelopmental disorder genes [12] |
| PolyPhen-2 | Structure/physicochemical parameters | ≥0.957 (Probably damaging) [17] | ~80% (general) | ~85% (general) | Integrates structural parameters | Missense variants with known structures [17] |
| MutationTaster | Supervised machine learning | >0.5 (Disease causing) [17] | High for disease variants | Moderate | Comprehensive variant type analysis | Broad variant screening [17] |
| PROVEAN | Sequence conservation | ≤-2.282 (Deleterious) [17] | Good for indels | Moderate for missense | Handles indels and missense | Cancer variants, indel prediction [17] |
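Because the tools in the table above score in different directions (low SIFT and PROVEAN scores are damaging, high PolyPhen-2 and MutationTaster scores are damaging), it helps to encode each cutoff together with its comparison. The sketch below uses the thresholds exactly as listed in the table; the dictionary, function names, and the simple vote count are hypothetical and are not part of any tool's own interface.

```python
import operator

# Cutoffs and directions as listed in the table above.
THRESHOLDS = {
    "SIFT": (operator.lt, 0.05),
    "PolyPhen-2": (operator.ge, 0.957),
    "MutationTaster": (operator.gt, 0.5),
    "PROVEAN": (operator.le, -2.282),
}

def call_damaging(tool, score):
    """Return True when the score falls on the damaging side of the tool's cutoff."""
    compare, cutoff = THRESHOLDS[tool]
    return compare(score, cutoff)

def damaging_votes(scores):
    """Per-tool calls plus the number of tools calling the variant damaging."""
    calls = {tool: call_damaging(tool, score) for tool, score in scores.items()}
    return calls, sum(calls.values())
```

The vote count is only a convenience for triage; as the gene-specific results discussed earlier show, agreement between tools does not replace validation against known variants in the gene of interest.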

Score-Based and Ensemble Prediction Tools

Score-based tools provide continuous scores that reflect confidence levels, allowing researchers to apply custom thresholds based on their specific requirements. Ensemble methods that combine multiple approaches generally show superior performance.

Table 2: Performance of Score-Based and Ensemble Prediction Tools

| Tool | Methodology Category | Score Threshold | Reported Accuracy | Key Performance Metrics | Limitations |
|---|---|---|---|---|---|
| BayesDel (addAF) | Ensemble method with allele frequency | >0.069 [17] | Highest overall for CHD genes [12] | Most robust overall performance [12] | Performance varies by gene family |
| APF2 | Pharmacogenomic-optimized ensemble | N/A (ensemble score) | 92% (pharmacogenomic test set) [18] | Balanced pharmacogenomic performance | Specialized for pharmacogenes |
| CADD | Supervised machine learning | >20 [17] | Variable across domains | Broad genomic context | Can be overly conservative [18] |
| REVEL | Ensemble method | >0.5 [17] | Good for rare variants | Strong for missense variants | Limited to missense variants |
| AlphaMissense | AI with structural predictions | >0.5 (Pathogenic) [18] | High specificity [18] | Excellent structural context | Newer, less validated [12] |

Specialized Tools for Pharmacogenomic Applications

Pharmacogenomic variants present unique challenges as they often do not follow the same evolutionary constraints as disease-causing variants. Specialized tools have been developed to address this specific niche.

Table 3: Performance Comparison on Pharmacogenomic Variants

| Tool | Sensitivity | Specificity | Accuracy | Balanced Performance | Clinical Actionability Prediction |
|---|---|---|---|---|---|
| APF2 | High | High | 92% (test set) [18] | Most balanced [18] | Excellent for CPIC guideline variants [18] |
| AlphaMissense | Moderate | Highest [18] | Good | Specificity-focused [18] | Good for structural impact |
| APF (previous version) | Good | Good | ~85% | Balanced, but inferior to APF2 [18] | Moderate |
| Traditional Tools (SIFT, PolyPhen-2) | Variable, often poor [18] | Variable | <80% (average) [18] | Generally poor for pharmacogenes [18] | Limited |

Experimental Protocols for Validation

High-Confidence Variant Curation for Benchmarking

Establishing a reliable ground truth dataset is fundamental for validating in silico prediction tools. The following protocol outlines the standard approach for curating high-confidence variant sets.

Variant Curation Workflow

Protocol Steps:

Source Variant Collection: Extract variants from authoritative databases including:

- ClinVar: Focus on variants with expert panel review (3-4 stars) or practice guidelines [18].

- PharmGKB: Prioritize variants with evidence levels 1-2 for drug response or pharmacokinetic impact [18].

- CPIC Guidelines: Include all variants with clinical pharmacogenetic recommendations [18].

- Literature-Curated Sets: Incorporate variants with high-quality experimental characterization from peer-reviewed publications [18] [12].

Functional Annotation:

- Classify variants as deleterious if experimental data shows <50% of wild-type enzyme activity or clear loss-of-function evidence [18].

- Classify variants as neutral if activity is ≥50% of wild-type with no demonstrated functional impact [18].

- For disease contexts, use established pathogenicity criteria from ACMG/AMP guidelines [17].

Dataset Partitioning:

- Training Set: For tool development (e.g., 385 pharmacogenetic variants across 45 genes) [18].

- Validation Set: For parameter optimization (e.g., CPIC variants excluded from training) [18].

- Test Set: Truly independent evaluation (e.g., 146 variants across 61 pharmacogenes not used in training/validation) [18].
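Two steps of the curation protocol above are easy to express as code: the 50% activity rule for functional annotation and a check that the three partitions share no variants. The sketch below is a minimal illustration with hypothetical function names and variant identifiers; it captures only the activity fraction and ignores the other evidence (for example, ACMG/AMP criteria) that the protocol also considers.

```python
def annotate_by_activity(variant_activity, wild_type_activity, cutoff=0.5):
    """Label a variant from its measured activity relative to wild type.

    Below the cutoff fraction -> "deleterious"; at or above it -> "neutral".
    """
    if wild_type_activity <= 0:
        raise ValueError("wild-type activity must be positive")
    fraction = variant_activity / wild_type_activity
    return "deleterious" if fraction < cutoff else "neutral"

def find_leakage(training, validation, test):
    """Return variants shared between any two partitions, keyed by partition pair."""
    partitions = {
        "training": set(training),
        "validation": set(validation),
        "test": set(test),
    }
    names = list(partitions)
    overlaps = {}
    for i, first in enumerate(names):
        for second in names[i + 1:]:
            shared = partitions[first] & partitions[second]
            if shared:
                overlaps[(first, second)] = shared
    return overlaps
```

An empty result from `find_leakage` is a necessary condition for a truly independent test set, though it does not rule out subtler leakage such as near-identical variants in the same gene.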

In Vitro Functional Characterization of Variant Effects

Experimental validation provides the ground truth for assessing computational predictions. Enzyme activity assays represent a gold standard for pharmacogene validation.

Functional Assay Workflow

Experimental Protocol:

Enzyme Preparation:

- Use recombinant enzyme systems (e.g., Supersomes) expressing individual cytochrome P450 isoforms at consistent concentrations [19].

- Include control enzymes (wild-type and known variants) in each experiment batch.

Inhibition Assay:

- Test each substance at multiple concentrations (0.1, 1, and 10 µM) to capture concentration-dependent effects [19].

- Use luminescence-based P450-Glo assays with isoform-specific substrates (e.g., Luciferin-IPA) according to manufacturer protocols [19].

- Include appropriate positive controls (known inhibitors) and negative controls (solvent-only) in each run.

Activity Calculation and Classification:

- Calculate maximum inhibitory activity across tested concentrations for each substance-enzyme pair.

- Classify as "inhibitor" (positive) if maximum inhibition ≥15%, and "non-inhibitor" (negative) if <15% [19].

- For quantitative assessments, calculate IC50 values and intrinsic clearance relative to wild-type.
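The classification rule in the protocol above can be sketched as follows. The 15% cutoff and the use of the maximum across concentrations come from the protocol; the percent-inhibition formula relative to the solvent-only control is an assumption of this sketch, as are the function names and the raw luminescence inputs.

```python
def percent_inhibition(signal, solvent_control_signal):
    """Percent loss of signal relative to the solvent-only control (assumed formula)."""
    if solvent_control_signal <= 0:
        raise ValueError("control signal must be positive")
    return 100.0 * (1.0 - signal / solvent_control_signal)

def classify_inhibition(signals_by_concentration, solvent_control_signal, cutoff=15.0):
    """Label a substance-enzyme pair from its maximum inhibition across concentrations.

    signals_by_concentration: mapping of concentration (µM) -> measured signal.
    Returns (label, maximum_percent_inhibition).
    """
    inhibitions = [
        percent_inhibition(signal, solvent_control_signal)
        for signal in signals_by_concentration.values()
    ]
    maximum = max(inhibitions)
    label = "inhibitor" if maximum >= cutoff else "non-inhibitor"
    return label, maximum
```

For example, `classify_inhibition({0.1: 980, 1: 900, 10: 800}, 1000)` labels the pair an inhibitor because the highest concentration removes roughly a fifth of the control signal.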

Performance Metrics and Statistical Analysis

Standardized evaluation metrics ensure objective comparison between prediction tools.

Calculation Methods:

- Sensitivity: TP/(TP+FN) - Ability to correctly identify deleterious variants [18].

- Specificity: TN/(TN+FP) - Ability to correctly identify neutral variants [18].

- Accuracy: (TP+TN)/(TP+TN+FP+FN) - Overall correctness [18].

- Area Under ROC Curve (AUC): Overall discrimination ability between deleterious and neutral variants [18].

- Youden's J: Sensitivity + Specificity - 1, maximized over candidate score thresholds to select a cutoff - Balanced performance metric [18].
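The metrics listed above can be computed directly from labels and scores. The following is a minimal sketch with hypothetical function names; it assumes higher scores mean "more likely deleterious", that both classes are present, and it computes AUC by pairwise comparison, which is equivalent to the area under the ROC curve but slow for large datasets.

```python
def summary_metrics(tp, fp, tn, fn):
    """Sensitivity, specificity, accuracy and Youden's J from confusion counts."""
    sensitivity = tp / (tp + fn)
    specificity = tn / (tn + fp)
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "accuracy": (tp + tn) / (tp + tn + fp + fn),
        "youden_j": sensitivity + specificity - 1,
    }

def confusion_at_threshold(labels, scores, threshold):
    """Counts (tp, fp, tn, fn) when scores >= threshold are called deleterious."""
    tp = sum(1 for l, s in zip(labels, scores) if l and s >= threshold)
    fn = sum(1 for l, s in zip(labels, scores) if l and s < threshold)
    fp = sum(1 for l, s in zip(labels, scores) if not l and s >= threshold)
    tn = sum(1 for l, s in zip(labels, scores) if not l and s < threshold)
    return tp, fp, tn, fn

def roc_auc(labels, scores):
    """Probability that a deleterious variant outscores a neutral one (ties count half)."""
    positives = [s for l, s in zip(labels, scores) if l]
    negatives = [s for l, s in zip(labels, scores) if not l]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in positives for n in negatives)
    return wins / (len(positives) * len(negatives))

def best_youden_threshold(labels, scores):
    """Return (max_J, threshold) over all observed scores used as cutoffs."""
    best_j, best_threshold = -1.0, None
    for threshold in sorted(set(scores)):
        counts = confusion_at_threshold(labels, scores, threshold)
        j = summary_metrics(*counts)["youden_j"]
        if j > best_j:
            best_j, best_threshold = j, threshold
    return best_j, best_threshold
```

Choosing the threshold on the same data used to report performance inflates the result, which is one reason the validation approach below separates internal cross-validation from external testing.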

Validation Approach:

- Perform 5×2 nested cross-validation for robust internal validation [19].

- Conduct external validation on completely independent test sets not used in training [18] [19].

- Establish applicability domains to define chemical space for reliable predictions [19].
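Nested cross-validation keeps hyperparameter tuning inside each outer training set so the outer test fold stays untouched. The sketch below reads "5×2" as five outer folds with two inner folds each; that reading is an assumption, since the configuration is not spelled out here, and the function names are hypothetical.

```python
import random

def split_folds(items, k, rng):
    """Shuffle items and deal them round-robin into k folds."""
    shuffled = list(items)
    rng.shuffle(shuffled)
    return [shuffled[i::k] for i in range(k)]

def nested_cv(n_items, outer_k=5, inner_k=2, seed=0):
    """Yield (outer_train, outer_test, inner_splits) for each outer fold.

    inner_splits is a list of (inner_train, inner_validation) pairs drawn only
    from outer_train, intended for tuning before scoring on outer_test.
    """
    rng = random.Random(seed)
    outer_folds = split_folds(range(n_items), outer_k, rng)
    for i, outer_test in enumerate(outer_folds):
        outer_train = [idx for j, fold in enumerate(outer_folds) if j != i for idx in fold]
        inner_folds = split_folds(outer_train, inner_k, rng)
        inner_splits = []
        for a, inner_validation in enumerate(inner_folds):
            inner_train = [idx for b, fold in enumerate(inner_folds) if b != a for idx in fold]
            inner_splits.append((inner_train, inner_validation))
        yield outer_train, outer_test, inner_splits
```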

Table 4: Key Research Reagent Solutions for Experimental Validation

| Resource Category | Specific Examples | Function/Application | Key Features |
|---|---|---|---|
| Variant Databases | ClinVar [17], PharmGKB [18], CPIC Guidelines [18], gnomAD [17] | Reference datasets for variant interpretation and frequency data | Expert-curated, evidence-ranked, population frequency data |
| Experimental Assay Systems | P450-Glo Assay Systems [19], Supersomes [19] | Functional characterization of variant effects on enzyme activity | Isoform-specific, high-throughput compatible, luminescence-based readout |
| Structural Biology Resources | AlphaFold DB [18], UniProt [17] | Protein structure analysis and variant mapping | Predicted and experimental structures, functional annotation |
| Software & Computing | STELLA [20], GastroPlus [20], ANNOVAR [18] | PK/PD modeling and variant annotation | Compartmental modeling, PBPK simulation, multi-algorithm integration |
| Cell-Based Models | Patient-derived organoids/tumoroids [21], PDX models [21] | Functional validation in biologically relevant systems | Patient-specific genetic background, 3D architecture preservation |

Applications in Drug Development and Precision Medicine

Drug Discovery and Development

In silico prediction tools have become integral throughout the drug development pipeline, from target identification to clinical trial design.

Target Identification and Validation: Deep learning-based classifiers now enable fast and accurate identification of potentially druggable proteins, with hybrid models (CNN-RNN + DNN) reaching 90.0% accuracy [22]. These models help prioritize targets with favorable therapeutic profiles before extensive experimental investment.

Drug Combination Optimization: In complex diseases like cancer, combination therapies often provide superior efficacy. In silico pharmacokinetic models developed using approaches like STELLA or GastroPlus can predict the in vivo performance of drug combinations by integrating in vitro assay results [20]. These models can simulate tissue drug concentration and percentage of cell growth inhibition over time, identifying synergistic interactions while minimizing toxicity [20] [21].

Toxicity and Safety Assessment: Machine learning-based classification models using XGBoost can predict cytochrome P450 inhibition with area under the receiver operating characteristic curve (ROC-AUC) of 0.8 or more in internal validation [19]. This capability is crucial for anticipating drug-drug interactions and specific toxicity endpoints in early development stages.

Clinical Translation and Precision Medicine

The translation of in silico predictions to clinical applications requires careful validation and consideration of population-specific factors.

Clinical Variant Interpretation: For neurodevelopmental disorders linked to CHD chromatin remodelers, BayesDel has emerged as the most robust tool for pathogenicity prediction, outperforming other methods in accurate classification of pathogenic variants [12]. Similarly, for pharmacogenes, APF2 provides quantitative variant effect estimates that correlate well with experimental results (R² = 0.91, p = 0.003) [18].

Population-Specific Dosing Strategies: Application of optimized prediction tools like APF2 to population-scale sequencing data from over 800,000 individuals has revealed drastic ethnogeographic differences in pharmacogene variation [18]. These findings have important implications for population-specific pharmacotherapy and help refine risk assessment for non-response or adverse drug events.

Real-World Safety Monitoring: The FDA's Adverse Event Reporting System (FAERS) provides post-market surveillance that can potentially validate in silico predictions [23]. With the recent shift to daily publication of adverse event data, researchers have enhanced capability to correlate predicted variant effects with real-world drug response and toxicity patterns [24].

The evolution of in silico prediction tools from simple conservation-based algorithms to sophisticated AI-driven frameworks has substantially streamlined variant prioritization in both research and clinical applications. Current evidence demonstrates that no single tool dominates all scenarios—SIFT excels in sensitivity for neurodevelopmental disorder genes [12], BayesDel shows robust overall performance for CHD variants [12], and APF2 provides optimal balanced performance for pharmacogenomic applications [18]. The most effective variant prioritization strategies employ a carefully selected ensemble of tools appropriate for the specific biological context, combined with rigorous experimental validation using the standardized protocols outlined in this guide. As these tools continue to evolve—particularly through the integration of structural predictions from advances like AlphaFold—and validation datasets expand, in silico predictions will play an increasingly central role in bridging genomic discoveries to therapeutic applications, ultimately accelerating the development of personalized medicine.

In the high-stakes realms of clinical research and drug development, validation serves as the critical bridge between theoretical predictions and real-world application. It is the rigorous process that determines whether a promising computational prediction, a novel biomarker, or a new therapeutic candidate can be reliably translated into clinical practice. The immense costs and timelines associated with drug development—requiring approximately 12-16 years and $1-2 billion to bring a new drug to market—make robust validation processes not merely an academic exercise but an economic and ethical necessity [25].

This guide examines the multifaceted role of validation across the research pipeline, with a specific focus on in silico variant predictions and their pathway to clinical implementation. As artificial intelligence and machine learning become increasingly integrated into biomedical research, establishing rigorous validation frameworks has never been more crucial. The transition from computational predictions to clinically actionable tools requires navigating complex technical and regulatory landscapes, which we will explore through comparative performance data, experimental protocols, and visual workflows essential for researchers and drug development professionals.

Validation Landscapes: Preclinical to Clinical Translation

Defining Validation Across the Pipeline

Validation methodologies evolve significantly as research progresses from early discovery to clinical application. The table below outlines the distinct characteristics and requirements across this continuum.

Table 1: Validation Characteristics Across the Research Pipeline

| Aspect | Preclinical Validation | Clinical Validation |

|---|---|---|

| Primary Purpose | Predict drug efficacy and safety in early research; assess variant impact computationally | Confirm efficacy, safety, and therapeutic benefit in human populations |

| Models & Systems | In vitro models (organoids, cell lines), in vivo models (PDX, GEMMs), computational simulations | Human patient samples, clinical trials, real-world evidence, biomarker monitoring |

| Key Methods | High-throughput screening, functional assays, in silico prediction tools, animal studies | Randomized controlled trials, biomarker assays, imaging, outcome studies |

| Validation Standards | Analytical performance, reproducibility in model systems, computational accuracy | Clinical utility, safety, regulatory standards, reproducibility in diverse populations |

| Regulatory Role | Supports Investigational New Drug (IND) applications | Required for FDA/EMA drug approval and clinical implementation [26] |

The Challenge of Translation

A significant challenge in biomedical research is the translational gap between preclinical discoveries and clinical application. Many promising biomarkers and predictions identified in laboratory settings fail to demonstrate the same predictive power in human trials due to biological complexity, species differences, and patient variability [26]. For in silico variant predictors, performance can be highly gene-specific, with recent studies showing inferior sensitivity (<65%) for pathogenic variants in certain genes like TERT, highlighting the limitations of generalizable tools [16].

Validating In Silico Variant Predictions: Methods and Performance

Computational Validation Approaches

For in silico variant effect predictors, validation begins with computational approaches before progressing to experimental confirmation. A systematic review of computational drug repurposing found several established computational validation methods [25]:

- Retrospective clinical analysis: Using electronic health record (EHR) data or insurance claims to validate drug repurposing candidates, or searching existing clinical trial registries such as ClinicalTrials.gov

- Literature support: Manual searches of biomedical literature to find connections between predictions and existing knowledge

- Public database search: Leveraging specialized databases for protein interactions, gene expression, and variant classifications

- Benchmark dataset testing: Evaluating performance against established gold-standard datasets

- Online resource search: Utilizing specialized online validation tools and repositories

These computational methods help researchers prioritize the most promising predictions before committing resources to experimental validation.

Experimental Validation Protocols

Following computational validation, experimental confirmation provides essential evidence for biological relevance. Key experimental approaches include:

Functional Assays for Variant Impact:

- Protocol for Cell-Based Functional Assays: Transfect cells with transgenic constructs containing wild-type versus variant sequences, then measure channel conductance using patch-clamp electrophysiology. For example, in KCNN3 gene studies, cells transfected with constructs carrying increasing numbers of CAG repeats showed a significant reduction in overall conductance and stronger inward rectification [27].

- Binding Affinity Assays: Utilize enzyme-linked immunosorbent assays (ELISA) to screen compound libraries for candidates with desired effects. This approach has been used successfully for repurposing FDA-approved drugs by inhibiting protein-protein interactions [28].

- Cell Viability Assays: Employ colorimetric or fluorescent indicators to monitor cell health in response to incubation with compounds during optimization phases [28].

Structural Prediction Validation:

- Protocol for Structural Impact Analysis: Predict protein structures using AlphaFold2 for reference and ColabFold for variant structures. Define functional domains (e.g., transmembrane helices, pore loops, binding domains) and superimpose them onto reference structures. Assess domain integrity based on completeness of all expected residue indices within each functional region [27].
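The domain-integrity check in the structural protocol reduces to a set comparison once residue indices are available. The following sketch assumes the indices have already been extracted from the predicted variant structure; the function name, the domain boundaries, and the example residues are hypothetical and only illustrate the completeness criterion described above.

```python
from typing import Dict, Iterable, Tuple


def domain_integrity(domains: Dict[str, Tuple[int, int]],
                     modelled_residues: Iterable[int]) -> Dict[str, dict]:
    """Report, per functional domain, which expected residue indices are present.

    domains maps a domain name to an inclusive (start, end) residue range
    defined on the reference structure.
    """
    present = set(modelled_residues)
    report = {}
    for name, (start, end) in domains.items():
        expected = set(range(start, end + 1))
        missing = sorted(expected - present)
        report[name] = {
            "completeness": 1 - len(missing) / len(expected),
            "intact": not missing,
            "missing": missing,
        }
    return report


# Hypothetical domain boundaries and a variant structure truncated after residue 22
domains = {"pore_loop": (10, 14), "binding_domain": (20, 25)}
variant_residues = range(1, 23)
for name, result in domain_integrity(domains, variant_residues).items():
    print(name, result)
```

A domain is treated as intact only when every expected index is present, which mirrors the completeness criterion but says nothing about whether the residues superimpose well; that would require a separate structural comparison.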

Performance Comparison of In Silico Prediction Tools

Rigorous benchmarking is essential for selecting appropriate in silico prediction tools. Recent studies have evaluated multiple tools across different gene families and variant types.

Table 2: Performance Comparison of In Silico Pathogenicity Prediction Tools

| Tool | Methodology | Reported Sensitivity | Reported Accuracy | Best Application Context |

|---|---|---|---|---|

| SIFT | Sequence homology-based | 93% (CHD variants) [12] | Variable | First-pass screening for pathogenic variants |

| BayesDel_addAF | Ensemble method with allele frequency | N/A | Most accurate for CHD variants [12] | Clinical diagnostics for neurodevelopmental disorders |

| AlphaMissense | AI-based protein language model | Promising but gene-specific [12] | Emerging evidence | Missense variant prioritization |

| ESM-1b | Evolutionary scale modeling | Comparable to established tools [12] | Gene-specific performance | Structural impact predictions |

| ClinPred | Machine learning integration | High for common variants | Dependent on training data | Combined evidence integration |

These performance characteristics demonstrate that tool selection must be context-dependent, considering the specific gene family and variant type being studied. As noted in recent research, "in silico tool performance can be gene-specific and is dependent on the 'training set' on which the algorithm is built" [16].

Visualization: Validation Workflows

Comprehensive Validation Pipeline

Diagram: Integrated workflow for validating in silico predictions, from initial computational assessment through clinical implementation.

Assay Development and Validation Workflow

For laboratory assays used in validation, a systematic approach to development and quality control is essential.

Diagram: Assay development and validation workflow.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Platforms for Validation Studies

| Reagent/Platform | Primary Function | Application Context |

|---|---|---|

| Patient-Derived Organoids | 3D culture systems replicating human tissue biology | Preclinical biomarker discovery, drug response modeling [26] |

| CRISPR-Based Functional Genomics | Systematic gene modification in cell-based models | Identification of genetic biomarkers influencing drug response [26] |

| AlphaFold2/ColabFold | Protein structure prediction from sequence | Structural impact assessment of genetic variants [27] |

| Microfluidic Organ-on-a-Chip | Mimics human physiological conditions | Predictive ADME/Tox screening, biomarker discovery [26] |

| Liquid Biopsy Platforms | Non-invasive cancer detection via ctDNA | Clinical biomarker monitoring, treatment response assessment [26] |

| Automated Liquid Handlers | High-precision liquid handling for assay miniaturization | Increased assay throughput, reduced human error [28] |

| Single-Cell RNA Sequencing | Resolution of cellular heterogeneity within populations | Biomarker signature identification, cellular response characterization [26] |

Regulatory and Clinical Implementation Frameworks

Regulatory Validation Requirements

For any predictive tool or biomarker to achieve clinical adoption, it must navigate rigorous regulatory pathways. Clinical biomarkers must undergo both analytical validation (ensuring the test accurately measures the intended parameter) and clinical validation (demonstrating correlation with clinical outcomes) [26]. Regulatory agencies like the FDA and EMA require extensive clinical trial data to ensure safety, efficacy, and reliability before approval.

The emerging "TechBio" sector must adopt rigorous clinical validation frameworks, prioritizing real-world performance and prospective clinical evidence over algorithmic novelty alone [29]. This is particularly crucial for AI-based tools, where there's a significant gap between technical performance and clinical utility. As noted in recent analysis, "despite the proliferation of peer-reviewed publications describing AI systems in drug development, the number of tools that have undergone prospective evaluation in clinical trials remains vanishingly small" [29].

The Imperative of Prospective Clinical Validation

Retrospective benchmarking in static datasets often proves inadequate for validating tools in real-world clinical environments. Prospective validation is essential because it [29]:

- Assesses how AI systems perform when making forward-looking predictions rather than identifying patterns in historical data

- Evaluates performance in actual clinical workflows, revealing integration challenges not apparent in controlled settings

- Measures impact on clinical decision-making and patient outcomes, providing evidence of real-world utility beyond technical metrics

For the most transformative AI solutions, validation through randomized controlled trials (RCTs) may be necessary, analogous to the drug development process itself. This comprehensive validation framework serves to protect patients, ensure efficient resource allocation, and build essential trust among stakeholders [29].

The validation pathway from computational prediction to clinical application is complex and multifaceted, requiring rigorous assessment at each transition point. For in silico variant predictions, this begins with computational validation using established tools—understanding their performance characteristics, limitations, and appropriate contexts—then proceeds through experimental confirmation in model systems, and ultimately requires clinical validation in human populations.

Successful navigation of this pathway demands careful attention to regulatory requirements, consideration of clinical workflow integration, and demonstration of tangible clinical utility. By understanding the stakes and implementing comprehensive validation strategies, researchers and drug developers can significantly enhance the translation of promising predictions into clinically impactful tools and therapies.

As the field continues to evolve with emerging technologies like AI-powered biomarker discovery and multi-omics integration, validation frameworks must similarly advance to ensure that innovation translates reliably to improved patient care and treatment outcomes.

A Practical Toolkit: Methods for Modeling and Experimentally Testing Variant Effects

The rapid expansion of genomic data has created an urgent need for computational methods to interpret the functional and clinical significance of genetic variants. In silico prediction tools have evolved from early conservation-based methods to sophisticated machine learning and deep learning approaches that can analyze nearly all possible missense variants in the human genome. These tools address a fundamental challenge in clinical genetics: the classification of variants of uncertain significance (VUS), which currently represent approximately 36% of variants in the ClinVar database and pose significant obstacles for genetic diagnosis and clinical decision-making [30].

This guide provides an objective comparison of established and emerging variant effect predictors, focusing on their performance characteristics, underlying methodologies, and appropriate applications within research and clinical contexts. As the field moves toward precision medicine, understanding the strengths and limitations of these tools becomes paramount for researchers, scientists, and drug development professionals working to translate genomic findings into clinical applications.

Evolution and Methodology of Prediction Tools

Historical Development and Technical Approaches

Variant effect prediction has evolved through several generations of computational approaches. Early methods like SIFT (Sorting Intolerant From Tolerant) and PolyPhen-2 relied on evolutionary conservation and protein structure information to predict variant impact [31] [32]. These were followed by meta-predictors such as REVEL and BayesDel, which integrate multiple individual predictors and conservation scores to improve accuracy [31] [32]. The most recent advancement comes from protein language models like ESM1b and structural-aware models like AlphaMissense, which leverage deep learning on protein sequences and structures without explicit evolutionary comparisons [33] [30].

Table: Generational Evolution of Variant Effect Predictors

| Generation | Representative Tools | Core Methodology | Key Innovations |

|---|---|---|---|

| First Generation | SIFT, PolyPhen-2 | Evolutionary conservation, protein structure | Phylogenetic analysis, structural impact |

| Meta-Predictors | REVEL, BayesDel, CADD | Ensemble machine learning | Integration of multiple evidence sources |

| Deep Learning Era | ESM1b, AlphaMissense | Protein language models, structural deep learning | Whole-genome prediction, structural context |

Key Technical Methodologies

Protein language models like ESM1b represent a paradigm shift in variant effect prediction. These models are deep neural networks trained on millions of protein sequences from UniProt, learning the underlying "language" of proteins without explicit evolutionary comparisons [33]. The ESM1b model contains 650 million parameters and processes protein sequences to generate likelihood estimates for amino acid substitutions. The variant effect score is calculated as the log-likelihood ratio between the variant and wild-type residues at the mutated position, providing a quantitative measure of how strongly the model disfavors the substitution in its sequence context [33].
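As a worked illustration of the log-likelihood ratio, the sketch below computes the score from per-residue probabilities at a single position. It does not call the ESM1b model: the probabilities are hypothetical values standing in for model output, the function name is invented here, and the sign convention (variant minus wild-type, so that more negative means less tolerated) is an assumption.

```python
import math
from typing import Mapping


def llr_score(position_probs: Mapping[str, float], wt: str, variant: str) -> float:
    """Log-likelihood ratio: log P(variant) - log P(wild-type) at one position."""
    return math.log(position_probs[variant]) - math.log(position_probs[wt])


# Hypothetical model probabilities for three amino acids at one position
probs = {"L": 0.60, "I": 0.20, "P": 0.01}

print("L>I:", llr_score(probs, wt="L", variant="I"))  # mildly negative
print("L>P:", llr_score(probs, wt="L", variant="P"))  # strongly negative
```

Under this convention a substitution the model considers as likely as the wild-type residue scores 0, and increasingly implausible substitutions score further below zero.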

Meta-predictors like REVEL employ a different approach, integrating scores from multiple individual predictors (including MutationAssessor, PolyPhen-2, SIFT, and others) along with conservation metrics and protein domain information [31] [32]. REVEL specifically uses a random forest classifier trained on known pathogenic and benign variants to generate its composite prediction scores [32].

AlphaMissense combines structural insights from AlphaFold2 with protein language modeling. Unlike other tools, it was not directly trained on known pathogenic variants but learned from the sequence-structure relationship of proteins, allowing it to predict the impact of missense mutations based on their predicted structural consequences [30].

Performance Comparison of Major Prediction Tools

Clinical Classification Accuracy

Multiple studies have systematically evaluated the performance of variant effect predictors using clinically classified variants from databases such as ClinVar and HGMD. The table below summarizes key performance metrics across major tools:

Table: Performance Comparison on Clinical Variant Classification

| Tool | ROC-AUC (ClinVar) | Sensitivity | Specificity | Key Strengths | Evidence Strength |

|---|---|---|---|---|---|

| ESM1b | 0.905 [33] | 81% [33] | 82% [33] | Genome-wide coverage, no MSA required | Not yet established |

| REVEL | N/A | 92% [30] | 78% [30] | High PPV, well-validated | Supporting to Strong [34] |

| BayesDel | Comparable to REVEL [31] | N/A | N/A | High yield, low false positive rate | Supporting to Strong [34] |

| AlphaMissense | N/A | 92% [30] | 78% [30] | Structural awareness, comprehensive database | Under evaluation |

| CADD | Lower than REVEL/BayesDel [31] | N/A | N/A | Broad variant coverage | Supporting [32] |

In head-to-head comparisons using clinically annotated variants, ESM1b achieved a ROC-AUC of 0.905 for distinguishing 19,925 pathogenic from 16,612 benign variants in ClinVar, outperforming EVE (0.885) and other methods [33]. Similarly, when evaluating 5,845 missense variants across 59 genes associated with neurological and musculoskeletal disorders, AlphaMissense demonstrated sensitivity and specificity of 92% and 78%, respectively [30].

A comprehensive evaluation of meta-predictors using 4,094 ClinVar-curated missense variants found that REVEL and BayesDel outperformed other meta-predictors (CADD, MetaSVM, Eigen) with higher positive predictive value, comparable negative predictive value, and greater overall prediction performance [31].

Experimental Validation Using Deep Mutational Scanning

Beyond clinical annotations, variant effect predictors have been validated against experimental data from deep mutational scanning (DMS) studies. These assays provide quantitative measurements of variant effects on protein function at scale.

When evaluated against 28 deep mutational scanning assays covering 15 human genes and 166,132 experimental measurements, ESM1b outperformed all 45 other variant effect prediction methods included in the comparison [33]. This demonstrates its strong performance not only on clinical classifications but also on experimental functional data.

Diagram 1: Experimental Validation Workflow for variant effect predictors using deep mutational scanning data.
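One common way to compare predictor scores with DMS measurements is a rank correlation, since the two are on different scales. The source does not state which metric was used in [33], so the choice of Spearman correlation here is an assumption, as are the function names and example values. The sketch uses only the standard library and handles ties with average ranks.

```python
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks, with tied values sharing the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    if vx == 0 or vy == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / (vx * vy) ** 0.5


def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman correlation = Pearson correlation of the rank vectors."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length sequences with at least 2 items")
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical predictor scores and DMS functional scores for four variants
predicted = [-9.1, -7.5, -2.0, -0.3]
measured = [0.05, 0.20, 0.85, 1.00]
print(spearman(predicted, measured))
```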

Performance Across Gene-Specific Contexts

An important limitation of genome-wide evaluations is that they can obscure significant variation in tool performance across individual genes. A 2024 study systematically evaluated gene-specific performance of REVEL and BayesDel across 3,668 disease-relevant genes [34]. The researchers found that approximately 70% of evaluable score intervals were "trending discordant," meaning the evidence strength assigned based on genome-wide calibration was inappropriate for the specific gene context [34]. This highlights the critical need for gene-specific calibration when sufficient control variants are available.

This gene-specific variation was also observed in cancer predisposition genes, where in silico tools showed notably low sensitivity for pathogenic TERT variants (<65%) and for benign TP53 variants (≤81%) [32]. Tool performance is therefore gene-specific and depends on the training set used for algorithm development [32].

Experimental Protocols and Validation Frameworks

Standardized Evaluation Methodology

To ensure fair comparisons between prediction tools, researchers have established standardized evaluation protocols. The typical workflow involves:

Variant Curation: Compiling high-confidence pathogenic and benign variants from ClinVar, excluding those with conflicting interpretations or uncertain significance [31] [33]. Variants are typically filtered to include only those with review status of 1+ stars (variants where at least one submitter has provided assertion criteria) [34].

Score Annotation: Annotating each variant with predictor scores using databases such as dbNSFP or tool-specific APIs [31] [32].

Performance Calculation: Computing standard performance metrics including sensitivity, specificity, positive predictive value, negative predictive value, and area under the receiver operating characteristic curve (ROC-AUC) [31] [33].

Statistical Analysis: Using appropriate statistical tests such as Fisher's exact test for differences in sensitivity/specificity and Monte Carlo permutation tests for overall prediction performance differences [31].
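The curation and performance steps of this workflow can be sketched as follows. The record fields (`significance`, `stars`, `score`) are hypothetical and do not reflect the actual ClinVar or dbNSFP schemas, and the example assumes that higher scores indicate greater predicted pathogenicity. ROC-AUC is computed from its pairwise definition, the probability that a pathogenic variant outscores a benign one, which avoids any external dependency.

```python
from typing import Dict, List, Sequence

PATHOGENIC = {"Pathogenic", "Likely pathogenic"}
BENIGN = {"Benign", "Likely benign"}


def curate(variants: List[Dict], min_stars: int = 1) -> List[Dict]:
    """Keep confidently classified variants; drop VUS, conflicts and low review status."""
    kept = []
    for v in variants:
        if v["stars"] < min_stars:
            continue
        if v["significance"] in PATHOGENIC:
            kept.append({**v, "label": 1})
        elif v["significance"] in BENIGN:
            kept.append({**v, "label": 0})
    return kept


def roc_auc(scores: Sequence[float], labels: Sequence[int]) -> float:
    """Fraction of pathogenic/benign pairs ranked correctly (ties count half)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("both classes are required")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical annotated variants
variants = [
    {"significance": "Pathogenic", "stars": 2, "score": 0.90},
    {"significance": "Likely pathogenic", "stars": 1, "score": 0.70},
    {"significance": "Benign", "stars": 1, "score": 0.20},
    {"significance": "Likely benign", "stars": 1, "score": 0.80},
    {"significance": "Uncertain significance", "stars": 1, "score": 0.50},
    {"significance": "Conflicting interpretations", "stars": 1, "score": 0.60},
    {"significance": "Pathogenic", "stars": 0, "score": 0.95},
]
curated = curate(variants)
auc = roc_auc([v["score"] for v in curated], [v["label"] for v in curated])
print(len(curated), auc)
```

The pairwise form is quadratic in the number of variants, which is acceptable for a few thousand curated variants; a rank-based implementation would be preferable at larger scale.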

Clinical Validation Framework

For clinical applications, the ClinGen Sequence Variant Interpretation (SVI) Working Group has established a framework for calibrating variant effect predictions [34]. This approach involves:

Genome-wide Calibration: Aggregating variants across 1,913 genes from ClinVar and dividing predictor score ranges into sliding windows [34].

Likelihood Ratio Calculation: For each score window, calculating positive likelihood ratios (PLRs) from the proportion of pathogenic variants falling in that window relative to the proportion of benign variants falling in it [32] [34].

Evidence Strength Assignment: Mapping likelihood ratios to ACMG/AMP evidence strengths (supporting, moderate, strong, very strong) based on predetermined thresholds [32].

Gene-Specific Validation: Where sufficient gene-specific control variants exist, validating or recalibrating thresholds for individual genes [34].

Diagram 2: Clinical Validation Framework showing the process for calibrating variant effect predictors according to ClinGen SVI recommendations.
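The likelihood-ratio and evidence-mapping steps can be illustrated with a simplified calculation. This sketch is not the ClinGen SVI procedure itself: it uses one fixed window rather than a sliding one, the function names and example scores are hypothetical, and the cut-offs (2.08, 4.33, 18.7, 350) are taken as an assumption from the commonly cited Bayesian adaptation of the ACMG/AMP guidelines; the thresholds of the calibration actually being applied should be substituted.

```python
from typing import Optional, Sequence

# Assumed likelihood-ratio cut-offs, ordered from strongest to weakest
EVIDENCE_THRESHOLDS = [
    (350.0, "very strong"),
    (18.7, "strong"),
    (4.33, "moderate"),
    (2.08, "supporting"),
]


def window_plr(scores: Sequence[float], labels: Sequence[int],
               lo: float, hi: float) -> Optional[float]:
    """PLR for scores in [lo, hi]: share of pathogenic in window / share of benign in window."""
    n_path = sum(1 for y in labels if y == 1)
    n_ben = len(labels) - n_path
    p_in = sum(1 for s, y in zip(scores, labels) if y == 1 and lo <= s <= hi)
    b_in = sum(1 for s, y in zip(scores, labels) if y == 0 and lo <= s <= hi)
    # Without benign variants in the window the ratio is unbounded; flag it instead
    if n_path == 0 or n_ben == 0 or b_in == 0:
        return None
    return (p_in / n_path) / (b_in / n_ben)


def evidence_strength(plr: Optional[float]) -> str:
    if plr is None:
        return "not evaluable"
    for threshold, name in EVIDENCE_THRESHOLDS:
        if plr >= threshold:
            return name
    return "none"


# Hypothetical predictor scores: 8 pathogenic (label 1) and 8 benign (label 0) variants
path_scores = [0.95, 0.90, 0.85, 0.80, 0.75, 0.70, 0.40, 0.30]
ben_scores = [0.72, 0.50, 0.40, 0.30, 0.25, 0.20, 0.15, 0.10]
scores = path_scores + ben_scores
labels = [1] * len(path_scores) + [0] * len(ben_scores)

plr = window_plr(scores, labels, lo=0.70, hi=1.00)
print(plr, evidence_strength(plr))
```

For gene-specific validation, the same calculation could be repeated on the control variants of a single gene and the resulting evidence strength compared with the genome-wide assignment for that score window.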

Research Reagent Solutions: Essential Databases and Tools

Table: Essential Research Resources for Variant Effect Prediction Studies

| Resource Name | Type | Primary Function | Application in Validation |

|---|---|---|---|

| ClinVar | Public Database | Archive of human genetic variants with clinical interpretations | Provides curated pathogenic/benign variants for validation [31] [34] |

| dbNSFP | Database | Comprehensive collection of variant effect predictions | Source of pre-computed scores for multiple tools [31] |

| gnomAD | Population Database | Catalog of human genetic variation from large populations | Provides allele frequency data for benign variant filtering [33] [34] |

| UniProtKB | Protein Database | Manually and automatically annotated protein sequences | Training data for protein language models [33] [35] |

| Mastermind Genomic Database | Evidence Platform | Curated genomic evidence from scientific literature | Gold-standard manual variant interpretations [30] |

The landscape of variant effect prediction tools has evolved significantly, with modern protein language models like ESM1b and AlphaMissense demonstrating superior performance in genome-wide evaluations. However, established meta-predictors like REVEL and BayesDel continue to show robust performance and have the advantage of extensive clinical validation.

Critical considerations for researchers and clinicians include:

- Gene-specific performance variation necessitates caution when applying genome-wide thresholds [34]

- Tool performance is context-dependent - the best tool may vary by gene and variant type [32]

- Combining multiple complementary tools may provide more reliable predictions than relying on a single method [31]

- Experimental validation remains essential for resolving variants of uncertain significance [30]

Future development should focus on improving gene-specific calibration, integrating structural information more comprehensively, and enhancing performance on non-coding variants. As these tools continue to mature, they hold promise for reducing the variant interpretation bottleneck and accelerating precision medicine initiatives.

The interpretation of genetic variation is a cornerstone of modern genomics, yet a significant challenge persists in deciphering the functional impact of variants outside the protein-coding exome. While non-synonymous variants have traditionally been the focus of pathogenicity prediction, two particularly challenging categories have emerged: variants in regulatory sequences and synonymous variants within coding regions. The former governs gene expression through complex mechanisms operating in non-coding DNA, and the latter, once considered "silent," are now known to influence RNA splicing, stability, and protein folding despite not altering the amino acid sequence [36]. This guide provides a comparative analysis of computational strategies developed to predict the effects of these variants, framing the discussion within the broader thesis that robust experimental validation is paramount for establishing the utility of any in silico prediction tool in research and clinical diagnostics.

Understanding the Variant Effect Prediction Landscape

The computational prediction of variant effects has evolved into a sophisticated field leveraging machine learning and deep learning. Methods can be broadly categorized by the type of variants they target and their underlying approach.

For synonymous variants, tools aim to capture subtle signals that disrupt various stages of gene expression. Key mechanisms include: disruption of splicing regulatory elements, alteration of codon optimality affecting translation efficiency and co-translational folding, and changes to mRNA structure and stability [36]. Predictors must therefore integrate features beyond simple conservation, including genomic context, RNA structure, and protein-level constraints.

For regulatory variants, the challenge lies in modeling the non-coding genome's regulatory grammar. The primary mechanisms involve: alteration of transcription factor (TF) binding motifs, changes to chromatin accessibility, and disruption of long-range enhancer-promoter interactions [37]. State-of-the-art models are increasingly sequence-based, trained on functional genomics data to learn this complex code de novo.

A third category of general-purpose predictors also exists, designed to evaluate all variant types, including synonymous and regulatory, often by integrating large-scale functional and conservation annotations.

Comparative Performance of Prediction Strategies

Benchmarking Synonymous Variant Predictors

The performance of synonymous variant predictors is often benchmarked using curated sets of known pathogenic and benign variants. A key finding from recent studies is that DNA-level features, particularly those related to splicing and evolutionary conservation, contribute the most to prediction accuracy, while protein-level features add only marginal utility [38]. This underscores that synonymous mutations primarily exert effects through perturbations in splicing or transcriptional efficiency.

Table 1: Comparison of Selected Synonymous Variant Predictors

| Predictor | Core Methodology | Key Features | Reported Performance |
|---|---|---|---|
| DRP-PSM [38] | Multi-level feature integration (DNA, RNA, protein) | Genomic context, conservation, splicing effects, sequence-derived features | DNA-level features contributed most; splicing and conservation features dominated. |
| synVep [39] | Extreme Gradient Boosting (XGBoost) with Positive-Unlabeled learning | Codon bias, mRNA stability, protein structure, expression profiles | 90% precision/recall on an unseen variant set; correlated with evolutionary distance. |
| SilVA [36] | Random Forest | Conservation scores, splicing, DNA and RNA properties | One of the earlier specific tools; performance varies. |
| CADD [36] | Linear Support Vector Machine (SVM) in the original release; logistic regression in later versions | Integrative annotation-based scoring, including conservation | A general-purpose tool; often used as a baseline for comparison. |

Benchmarking Regulatory Variant Predictors

Benchmarking regulatory variant predictors requires carefully curated datasets of causal non-coding variants, such as those from TraitGym [40]. Performance varies significantly based on the trait (Mendelian vs. complex) and genomic context (enhancers vs. promoters).

Table 2: Benchmarking Results for Regulatory Variant Prediction (Adapted from TraitGym [40] and Other Studies)

| Model Class | Example Models | Best-Suited Application | Key Findings |
|---|---|---|---|
| Alignment-Based & Integrative | CADD, GPN-MSA | Mendelian traits & complex diseases [40] | Compare favorably for traits where evolutionary constraint is a strong signal. |
| Functional-Genomics-Supervised | Enformer, Borzoi | Complex non-disease traits [40] | Excel at predicting molecular traits (e.g., gene expression) from sequence. |
| CNN-Based | TREDNet, SEI | Predicting regulatory impact in enhancers [37] | Most reliable for estimating SNP effects on enhancer activity. |
| Hybrid CNN-Transformer | Borzoi | Causal SNP prioritization within LD blocks [37] | Superior for identifying the single causal variant among linked SNPs. |
| Hybrid Sequence-Oriented | SVEN [41] | Effects of both small variants and Structural Variants (SVs) | Accurately predicts tissue-specific expression (Mean Spearman R=0.892) and SV impact (Spearman R=0.921). |

A unified benchmark of deep learning models on enhancer variants revealed that Convolutional Neural Network (CNN) models like TREDNet and SEI performed best for predicting the regulatory impact of SNPs in enhancers, likely due to their proficiency in capturing local motif-level features [37]. In contrast, hybrid CNN-Transformer models like Borzoi were superior for the distinct task of causal variant prioritization within linkage disequilibrium blocks [37].
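One common way to run such a comparison is to score each model by how well its predictions separate experimentally supported variants from controls, for example with the area under the ROC curve (AUROC). The sketch below assumes binary labels and one score per variant. The labels and scores are invented for illustration and are not results from any of the cited benchmarks.

```python
def auroc(scores, labels):
    """AUROC as the probability that a positive outscores a negative (ties count 0.5)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative variant")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in pos
        for n in neg
    )
    return wins / (len(pos) * len(neg))


# Hypothetical benchmark: 1 = variant with a validated regulatory effect, 0 = control.
labels = [1, 1, 0, 0, 1, 0]
model_scores = {
    "model_a": [0.90, 0.70, 0.40, 0.20, 0.60, 0.65],
    "model_b": [0.55, 0.30, 0.50, 0.45, 0.60, 0.40],
}

results = {name: auroc(scores, labels) for name, scores in model_scores.items()}
for name, value in sorted(results.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name}: AUROC = {value:.3f}")
```

The pairwise formulation is quadratic in the number of variants, which is acceptable for small benchmark sets. For continuous readouts such as MPRA effect sizes, a rank correlation is often a more natural metric than AUROC.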

Experimental Protocols for Validation

The true test of any in silico prediction lies in its experimental validation. The following are key protocols used to generate ground-truth data for benchmarking and refining computational models.

Massively Parallel Reporter Assays (MPRAs)

Purpose: To simultaneously test thousands of genetic variants for their regulatory activity in a high-throughput manner.

Workflow:

- Library Design: Oligonucleotides containing the reference and alternative alleles of regulatory variants are synthesized.

- Cloning & Delivery: These sequences are cloned into reporter vectors (e.g., with a GFP or barcode sequence) upstream of a minimal promoter and introduced into target cell lines.

- Expression Measurement: After a set period, RNA is sequenced to quantify the abundance of each barcode, serving as a proxy for the regulatory activity of each variant.

- Data Analysis: The effect size of a variant is calculated by comparing the expression output of the alternative allele to the reference allele.

Utility in Validation: MPRAs provide direct, functional evidence of a variant's effect on regulatory activity and are a gold standard for benchmarking sequence-based models [37] [41]. A model's ability to separate MPRA-positive from MPRA-negative variants is a useful indicator of its accuracy on regulatory variants.
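The data-analysis step can be sketched as a log2 ratio of allele activities. The example below assumes that each barcode has both an RNA count and a plasmid DNA count and that activity is the RNA/DNA ratio. The DNA normalization, the pseudocount, and all counts are assumptions added for illustration, and real analyses typically also model replicates and sequencing depth.

```python
import math
from statistics import mean


def barcode_activity(rna, dna, pseudocount=1.0):
    """Log2 RNA/DNA ratio for one barcode; the pseudocount guards against zeros."""
    return math.log2((rna + pseudocount) / (dna + pseudocount))


def allelic_effect(ref_barcodes, alt_barcodes):
    """Mean alt-allele activity minus mean ref-allele activity (log2 scale)."""
    ref_activity = mean(barcode_activity(rna, dna) for rna, dna in ref_barcodes)
    alt_activity = mean(barcode_activity(rna, dna) for rna, dna in alt_barcodes)
    return alt_activity - ref_activity


# Hypothetical (RNA count, DNA count) pairs for the barcodes tagging each allele.
ref_barcodes = [(99, 99), (199, 199)]
alt_barcodes = [(399, 99), (799, 199)]

effect = allelic_effect(ref_barcodes, alt_barcodes)
print(f"log2 allelic effect (alt vs ref): {effect:.2f}")
```

A positive value means the alternative allele drives more reporter expression than the reference allele, and a value near zero means no detectable allelic difference in this assay.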

Saturation Genome Editing (SGE) and Functional Assays

Purpose: To comprehensively test the functional impact of all possible single-nucleotide changes in a genomic region of interest, often applied to coding sequences.

Workflow:

- Variant Library Creation: A library of cells is generated, each containing a single defined nucleotide change in the gene of interest, typically via CRISPR/Cas9-mediated homology-directed repair.

- Phenotypic Selection: Cells are subjected to a selection pressure relevant to the gene's function (e.g., drug selection, cell growth assay).

- Deep Sequencing: Pre- and post-selection DNA is sequenced to determine which variants are enriched or depleted.

- Variant Scoring: A functional score is calculated for each variant based on its change in frequency after selection.

Utility in Validation: SGE provides high-resolution functional data for thousands of variants at once, offering an unparalleled dataset for training and testing predictors, including those for synonymous variants [36].
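The variant-scoring step can be sketched as a log2 enrichment of each variant's frequency after selection relative to before. The example below additionally centers scores on a set of presumed-neutral variants. That centering, the pseudocount, the variant names, and the read counts are illustrative assumptions and are not taken from a specific SGE study.

```python
import math
from statistics import median


def log2_enrichment(pre_count, post_count, pre_total, post_total, pseudocount=0.5):
    """Log2 ratio of post-selection to pre-selection variant frequency."""
    pre_freq = (pre_count + pseudocount) / pre_total
    post_freq = (post_count + pseudocount) / post_total
    return math.log2(post_freq / pre_freq)


def functional_scores(counts, neutral_ids):
    """Score every variant and center on the median of presumed-neutral variants."""
    pre_total = sum(pre for pre, _ in counts.values())
    post_total = sum(post for _, post in counts.values())
    raw = {
        variant: log2_enrichment(pre, post, pre_total, post_total)
        for variant, (pre, post) in counts.items()
    }
    baseline = median(raw[variant] for variant in neutral_ids)
    return {variant: score - baseline for variant, score in raw.items()}


# Hypothetical read counts: variant -> (pre-selection, post-selection).
counts = {
    "c.1A>G": (1000, 1000),
    "c.2T>C": (1000, 1100),
    "c.3G>A": (1000, 50),
    "c.4C>T": (1000, 900),
}
neutral_ids = ["c.1A>G", "c.4C>T"]  # assumed neutral controls

scores = functional_scores(counts, neutral_ids)
for variant, score in sorted(scores.items(), key=lambda kv: kv[1]):
    print(f"{variant}: {score:+.2f}")
```

Strongly negative scores mark variants depleted under selection, which is the pattern expected for loss-of-function changes in a gene required for growth.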

Expression Quantitative Trait Loci (eQTL) Fine-Mapping

Purpose: To link genetic variation to changes in gene expression in a natural population context and pinpoint putative causal variants.

Workflow:

- Data Collection: Obtain genotype and RNA-seq data from a large cohort of individuals (e.g., from GTEx or UK Biobank).

- Association Testing: Perform statistical tests to identify genetic variants whose alleles correlate with differences in the expression levels of nearby genes (eQTLs).

- Fine-Mapping: Use statistical fine-mapping methods (e.g., based on Bayesian approaches) to narrow down the set of associated variants to a credible set that likely contains the causal variant(s).

Utility in Validation: Fine-mapping results from large-scale eQTL studies provide strong, in vivo evidence for causal regulatory variants and are used to create benchmark datasets like TraitGym [40].
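The credible-set idea in the fine-mapping step can be sketched under the simplifying assumption of exactly one causal variant per locus with a uniform prior. In that setting, each variant's posterior probability is proportional to its Bayes factor, and the credible set is the smallest group of top-ranked variants whose posteriors reach the target coverage. The variant IDs and log Bayes factors below are hypothetical, and production fine-mapping methods relax the single-causal-variant assumption.

```python
import math


def posteriors_from_log_bf(log_bfs):
    """Posterior probabilities under one causal variant and a uniform prior."""
    max_lbf = max(log_bfs.values())  # subtract the maximum for numerical stability
    weights = {v: math.exp(lbf - max_lbf) for v, lbf in log_bfs.items()}
    total = sum(weights.values())
    return {v: w / total for v, w in weights.items()}


def credible_set(posteriors, coverage=0.95):
    """Smallest set of top-ranked variants whose posteriors sum to >= coverage."""
    ranked = sorted(posteriors.items(), key=lambda kv: kv[1], reverse=True)
    chosen, cumulative = [], 0.0
    for variant, prob in ranked:
        chosen.append(variant)
        cumulative += prob
        if cumulative >= coverage:
            break
    return chosen


# Hypothetical natural-log Bayes factors for four linked variants at one eQTL locus.
log_bfs = {"rs_a": 10.0, "rs_b": 8.0, "rs_c": 3.0, "rs_d": 0.0}

pips = posteriors_from_log_bf(log_bfs)
cs95 = credible_set(pips, coverage=0.95)
print("95% credible set:", cs95)
```

Small credible sets containing a high-probability variant are the most useful entries for benchmarking, because they leave little ambiguity about which variant a model should prioritize.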

Diagram 1: Experimental validation workflows for regulatory variants. Two primary paths, Massively Parallel Reporter Assays (MPRA) and eQTL fine-mapping, provide complementary evidence for a variant's regulatory potential.

The Scientist's Toolkit: Essential Research Reagents and Frameworks

Implementing and applying these prediction strategies requires a suite of computational tools and resources. The following table details key solutions for researchers in this field.

Table 3: Essential Research Reagent Solutions for In Silico Variant Effect Prediction

| Tool/Framework | Type | Primary Function | Key Application |
|---|---|---|---|
| gReLU [42] | Comprehensive Software Framework | Unifies data processing, model training, interpretation, variant effect prediction, and sequence design. | Enables building and interpreting custom models; provides a model zoo with pre-trained networks like Enformer and Borzoi. |
| TraitGym [40] | Curated Benchmark Dataset | Provides standardized sets of putative causal non-coding variants for Mendelian and complex traits. | Benchmarking and comparing the performance of different models on a level playing field. |
| Enformer / Borzoi [40] [37] | Pre-trained Deep Learning Model (Functional-Genomics-Supervised) | Predicts gene expression and chromatin profiles from long DNA sequences (roughly 200 kb of input for Enformer and roughly 500 kb for Borzoi). | Predicting the effects of variants, especially those involving long-range regulatory interactions. |
| CADD [38] [36] | Integrative Annotation-Based Score | Integrates diverse functional annotations to provide a single score for variant deleteriousness. | A widely used general-purpose tool for initial variant prioritization. |
| DRP-PSM [38] | Specific Prediction Method | Predicts pathogenicity of synonymous mutations by integrating multi-level (DNA, RNA, protein) features. | Prioritizing synonymous variants for further experimental study in disease contexts. |
| SVEN [41] | Hybrid Sequence-Oriented Model | Predicts tissue-specific gene expression and quantifies impacts of both small variants and Structural Variants (SVs). | Interpreting the transcriptomic impact of large-scale SVs and small non-coding variants. |

Integrated Workflow for Variant Interpretation and a Path Forward

To effectively move beyond coding regions, researchers should adopt an integrated workflow that leverages the strengths of multiple computational strategies, followed by rigorous experimental validation.

Diagram 2: An integrated workflow for interpreting non-coding and synonymous variants. The process flows from initial prioritization to specialized prediction, mechanistic interpretation, and finally, experimental validation.

The field continues to evolve rapidly. Future directions include improving the prediction of cell-type-specific effects, better integration of 3D genomic data, and enhancing the interpretation of complex structural variation. Furthermore, as demonstrated by studies like the one on IRF6, even advanced models like AlphaMissense can disagree with experimental findings, highlighting a critical need for gene-specific structural and functional insights to improve accuracy [43]. The synergy between sophisticated in silico models and high-throughput experimental validation will remain the driving force for deciphering the functional genome and accelerating therapeutic development [44].

The rapid advancement of in silico tools for predicting variant effects represents a transformative shift in biomedical research and therapeutic development. Machine learning and deep learning platforms have evolved to better integrate biological factors, leading to unprecedented improvements in predicting functional variants [45]. However, the predictive power of these computational models hinges on their validation through robust, well-designed biological experiments. This guide provides a comparative analysis of validation methodologies, from functional cellular assays to traditional animal models, to help researchers establish rigorous workflows for confirming in silico predictions. As regulatory agencies like the FDA evolve their acceptance of non-animal alternatives for investigational new drug applications, understanding the strengths and limitations of each validation approach becomes increasingly critical for drug development success [46].

The Validation Imperative: Why Experimental Confirmation Matters

In silico tools for variant effect prediction, though increasingly sophisticated, produce computational inferences that require biological validation. State-of-the-art sequence-based AI models show great potential for predicting variant effects at high resolution, but their practical value remains contingent on rigorous validation studies [1]. Even the most advanced algorithms can generate false positives or overlook context-dependent effects that only biological systems can reveal.

The validation pipeline typically progresses from simpler, higher-throughput cellular systems to more complex organismal models, with each stage serving distinct purposes in confirming computational predictions. This tiered approach balances practical efficiency with biological relevance, ensuring that resources are allocated effectively while comprehensively assessing variant impact.

Comparative Analysis of Validation Platforms

The following comparison outlines the core methodologies available for validating in silico variant predictions, highlighting their respective applications, advantages, and limitations in the context of modern biomedical research.

Table 1: Comparison of Validation Platforms for In Silico Variant Predictions

| Validation Platform | Best Applications | Key Advantages | Key Limitations | Throughput | Relative Cost |
|---|---|---|---|---|---|
| Stem Cell Organoids | Disease modeling, developmental biology, tissue-specific toxicity [47] | Human-relevant, captures some tissue complexity, amenable to high-content imaging [47] | Limited maturation, variable reproducibility, lacks systemic circulation [47] | Medium | Medium |
| Organ-on-a-Chip | Barrier function studies, drug transport, mechanical stress responses [47] [46] | Controlled microenvironment, incorporates physiological flow, human cells | Technically complex, single-tissue focus typically, specialized equipment required | Low-medium | High |
| Induced Pluripotent Stem Cell (iPSC) Models | Patient-specific modeling, genetic disease mechanisms, personalized toxicology [47] [46] | Patient-specific genetic background, multiple lineage differentiation, renewable cell source | Potential epigenetic memory, differentiation variability, time-consuming | Medium | Medium |
| Traditional Animal Models | Systemic toxicity assessment, complex behavior studies, whole-organism physiology [47] | Intact biological system, established regulatory acceptance, complex physiology | Species-specific differences, high cost, ethical concerns, poor translatability for human-specific effects [47] [46] | Low | High |

Experimental Protocols for Key Validation Assays

Protocol 1: Organoid-Based Functional Validation for Synonymous Variants

Purpose: To validate the impact of synonymous variants on protein expression and function in a human-relevant 3D tissue context.

Materials:

- iPSCs with and without synonymous variant of interest

- Organoid differentiation media (tissue-specific)

- Matrigel or similar extracellular matrix

- Immunostaining reagents for target protein

- Western blot equipment and reagents

- Functional assay reagents (calcium imaging for neuronal variants, albumin ELISA for hepatic variants, etc.)

Procedure:

- Differentiate iPSCs (wild-type and variant-containing) into target tissue organoids using established protocols (14-21 days typically)

- Harvest organoids at maturity stages (day 28-35 typically) for analysis

- Analyze mRNA expression levels using qRT-PCR with primers flanking the variant region