Breaking the Barrier: A Practical Guide to Cross-Laboratory Protocol Standardization for Reproducible Science


Abstract

This article provides a comprehensive framework for researchers, scientists, and drug development professionals aiming to enhance the reproducibility of their work across multiple laboratories. It explores the foundational importance of standardization, details methodological best practices for protocol implementation, offers solutions for common troubleshooting and optimization challenges, and outlines robust strategies for validation and comparative analysis. Drawing on recent multi-laboratory case studies from fields like microbiome and lipidomics research, the content is tailored to equip scientific teams with the practical tools needed to achieve reliable, replicable, and impactful results in a collaborative research landscape.

Why Standardization is the Bedrock of Reproducible Science

What is the reproducibility crisis in science? The reproducibility crisis refers to a widespread concern across scientific fields where independent researchers cannot recreate the results of previously published studies using the same data and methods. A 2016 survey in Nature revealed that over 70% of researchers have tried and failed to reproduce another scientist's experiments, and over 50% have failed to reproduce their own [1] [2]. This undermines the self-correcting nature of science and wastes immense resources.

What is the scale of the financial cost? In the United States alone, an estimated US$28 billion per year is spent on research that cannot be reproduced [1]. This represents a massive inefficiency in the allocation of scientific resources.

What are the main causes of irreproducible research? Leading causes include [1] [3]:

- Pressure to Publish ("Publish or Perish"): Nearly three-quarters of biomedical researchers cite this as a leading cause.

- Inadequate Statistical Power: Many studies have low statistical power (estimated 8-35%), making true findings hard to distinguish from chance.

- Questionable Research Practices (QRPs): These include p-hacking, HARKing, and cherry-picking results.

- Lack of Transparency: Insufficient detail in methods, reagent information, and data prevents others from replicating the work.

How do "Publish or Perish" culture and QRPs contribute to the problem? The academic system often rewards quantity and novelty of publications over rigor. This creates perverse incentives, leading to [1]:

- P-hacking: Manipulating data collection or analysis until a statistically significant result (p < 0.05) is achieved.

- HARKing (Hypothesizing After the Results are Known): Presenting unexpected findings as if they were the original hypothesis.

- Cherry-picking: Selectively reporting only results that support the desired conclusion.

Are some fields more affected than others? Yes, concerns have been openly raised in fields like oncology, cardiovascular biology, and neuroscience [2] [4]. For instance, a project focused on high-impact cancer biology papers found that fewer than half of the experiments assessed were reproducible [1].

What is the difference between reproducibility and replicability? These terms are sometimes used interchangeably, but they have distinct meanings [5] [6]:

- Reproducibility: Obtaining the same results using the original data and analysis code.

- Replicability: Obtaining consistent results using new data collected by following the same experimental procedures.

Troubleshooting Guides for Common Experimental Issues

Issue 1: Inconsistent Cell Culture Results

Problem: Experimental outcomes vary between labs or even within the same lab over time when using the same cell line.

Potential Causes and Solutions:

| Potential Cause | Troubleshooting Step | Specific Protocol/Methodology |

|---|---|---|

| Misidentified or Cross-contaminated Cell Lines | Perform routine cell line authentication using Short Tandem Repeat (STR) profiling. | - Culture cells until 70-80% confluent. Harvest cells and send sample for STR analysis. Compare profile to reference database (e.g., ATCC). Re-authenticate every 6 months and after every freeze-thaw cycle. |

| Variation in Cell Seeding Density | Establish and adhere to a Standard Operating Procedure (SOP) for cell seeding. | - Create a detailed seeding protocol: "Harvest cells at 80-90% confluency. Count using an automated cell counter. Dilute cell suspension to precisely 50,000 cells/mL. Seed 100 µL per well in a 96-well plate (5,000 cells/well). Gently rock plate side-to-side and front-to-back to ensure even distribution before incubation." |

| Inconsistent Reagent Quality | Use reagents from qualified sources and implement strict quality control. | - Use the same lot of serum for an entire project. Test new lots of critical reagents (e.g., growth factors, antibodies) for performance before full adoption. Record the catalog and lot numbers for all reagents in your lab notebook. |
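
The seeding SOP above relies on a dilution calculation that is easy to get wrong at the bench. The following is a minimal sketch of that arithmetic (C1 x V1 = C2 x V2), assuming a stock count of 1.2 million cells/mL and a 12 mL working volume; both numbers and the function names are hypothetical and only illustrate the 50,000 cells/mL and 100 µL per well figures from the table.

```python
def seeding_dilution(stock_cells_per_ml, target_cells_per_ml, final_volume_ml):
    """Return (stock_ml, medium_ml) needed to reach the target density."""
    if stock_cells_per_ml < target_cells_per_ml:
        raise ValueError("Stock is more dilute than the target density.")
    # C1 * V1 = C2 * V2, solved for V1 (volume of stock suspension).
    stock_ml = target_cells_per_ml * final_volume_ml / stock_cells_per_ml
    return stock_ml, final_volume_ml - stock_ml


def cells_per_well(cells_per_ml, well_volume_ul):
    """Number of cells delivered to one well for a given dispensed volume."""
    return cells_per_ml * well_volume_ul / 1000.0


# Hypothetical count from the cell counter: 1.2e6 cells/mL.
stock_ml, medium_ml = seeding_dilution(1.2e6, 50_000, 12.0)
print(f"Mix {stock_ml:.2f} mL stock with {medium_ml:.2f} mL medium")
print(f"Cells per well: {cells_per_well(50_000, 100):.0f}")
```

Recording the counted stock density alongside the computed volumes in the lab notebook makes the seeding step auditable later.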

Issue 2: Irreproducible Findings in Preclinical Animal Studies

Problem: Findings from animal models fail to translate to human clinical trials.

Potential Causes and Solutions:

| Potential Cause | Troubleshooting Step | Specific Protocol/Methodology |

|---|---|---|

| Underpowered Studies | Conduct an a priori sample size calculation before starting the experiment. | - Use power analysis software (e.g., G*Power). Input the expected effect size (from pilot data or literature), desired power (typically 80%), and alpha (typically 0.05). The output is the minimum number of animals per group needed to detect an effect of that size with the specified power. |

| Lack of Blinding | Implement blinding during data collection and analysis to prevent unconscious bias. | - Assign a random code to each animal group. The investigator performing the treatment, measurement, or data analysis should be unaware of the group assignments (control vs. treatment). Unblind the data only after the analysis is complete. |

| Poorly Defined Experimental Endpoints | Pre-register the study plan and define primary and secondary endpoints clearly. | - Submit a detailed study protocol to a registry (e.g., OSF Registries). The protocol must explicitly state the primary outcome measure, how it will be measured, the statistical test for analysis, and a pre-determined stopping rule. |
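
The a priori sample size calculation described above can be approximated in a few lines. This is a rough sketch for a two-sided comparison of two group means, using the normal approximation rather than the t-distribution that dedicated tools such as G*Power use, so it tends to give a slightly smaller number; treat it as a sanity check, not a replacement for a full power analysis. The function name is hypothetical.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(effect_size_d, alpha=0.05, power=0.80):
    """Approximate animals per group for a two-sided, two-group comparison.

    effect_size_d is the standardized mean difference (Cohen's d).
    Uses the normal approximation: n = 2 * ((z_alpha + z_beta) / d) ** 2.
    """
    if effect_size_d <= 0:
        raise ValueError("effect_size_d must be positive")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided critical value
    z_beta = NormalDist().inv_cdf(power)
    return ceil(2 * ((z_alpha + z_beta) / effect_size_d) ** 2)


for d in (0.5, 0.8, 1.0):
    print(f"d = {d}: about {n_per_group(d)} animals per group")
```

The calculation makes the cost of optimistic effect size estimates visible: halving the expected effect size roughly quadruples the required group size.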

Issue 3: Non-Reproducible Computational Analyses (Machine Learning/Bioinformatics)

Problem: Unable to reproduce the computational results or model performance from a published paper.

Potential Causes and Solutions:

| Potential Cause | Troubleshooting Step | Specific Protocol/Methodology |

|---|---|---|

| Unset Random Seeds | Always set the random seed at the beginning of any script that involves randomness. | - In Python (using NumPy and TensorFlow): import numpy as np; import tensorflow as tf; np.random.seed(123); tf.random.set_seed(123). Document the seed value used in the code comments and manuscript methods section. |

| Silent Default Parameters | Explicitly state all software parameters and versions used in the analysis. | - Record the environment with a dependency management tool (e.g., run conda env export > environment.yml). In your methods, write: "Analysis was performed using scikit-learn version 1.2.0. The RandomForestClassifier was instantiated with n_estimators=1000, max_depth=10, random_state=123," rather than relying on defaults. |

| Inaccessible Data and Code | Share analysis code and data in a public, version-controlled repository. | - Create a repository on GitHub or GitLab. Include: a) The full analysis script, b) A "README" file with setup instructions, c) A list of all dependencies (e.g., a requirements.txt file). If data cannot be shared publicly, provide a detailed synthetic dataset or instructions for authorized access. |
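
The seed and version practices in the table can be combined into a small helper that runs at the top of every analysis script. The sketch below uses only the standard library and seeds NumPy only if it happens to be installed; the function names and the default package list are illustrative assumptions, not part of any cited workflow.

```python
import json
import platform
import random
import sys
from importlib import metadata


def set_seed(seed):
    """Seed Python's RNG, and NumPy's global RNG if NumPy is available."""
    random.seed(seed)
    try:
        import numpy as np
        np.random.seed(seed)
    except ImportError:
        pass  # NumPy not installed; nothing else to seed here.


def environment_record(seed, packages=("numpy", "scikit-learn")):
    """Collect the seed plus interpreter and package versions for the methods section."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None  # Not installed in this environment.
    return {
        "seed": seed,
        "python": sys.version.split()[0],
        "platform": platform.platform(),
        "packages": versions,
    }


set_seed(123)
print(json.dumps(environment_record(123), indent=2))
```

Saving the printed record next to the results gives reviewers the seed and versions without having to reconstruct them from the code.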

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent/Material | Function | Key Considerations for Reproducibility |

|---|---|---|

| Validated Antibodies | Used for detecting specific proteins (e.g., in Western blotting, immunohistochemistry). | - Cite unique identifiers (e.g., RRIDs). Check application-specific validation data. Do not use past the expiration date. Record catalog number, lot number, and dilution factor [1]. |

| Cell Lines | Fundamental model systems for in vitro research. | - Authenticate via STR profiling upon receipt and regularly thereafter. Test for mycoplasma contamination frequently. Maintain detailed culture SOPs and passage number records [2]. |

| Critical Chemicals & Biomolecules | Includes growth factors, enzymes, and substrates for assays. | - Purchase from qualified suppliers. Use the same lot for an entire project. For powdered reagents, document the buffer, pH, and dissolution protocol precisely. |

| Standard Operating Procedures (SOPs) | Detailed, step-by-step instructions for any experimental protocol. | - SOPs should be living documents that include reagent sources, equipment settings, timing, and safety information. They are essential for cross-laboratory standardization [2] [6]. |

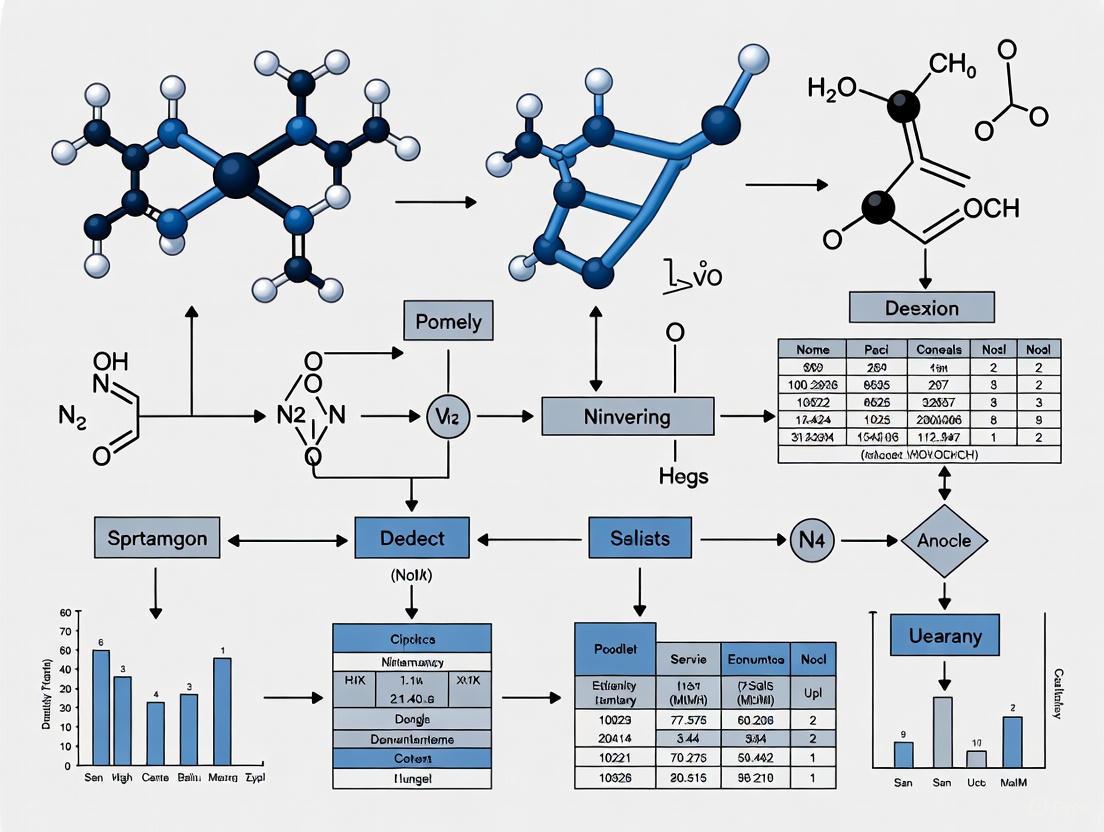

Visualizing Workflows for Robust Science

[Diagram: Standardized In Vitro Assay Workflow]

[Diagram: Pathway to Reproducible Research]

In modern scientific research, particularly in fields like drug development and biotechnology, the terms reproducibility, replicability, and robustness are fundamental to establishing reliable knowledge. However, their meanings are often confused or used inconsistently across different scientific disciplines, leading to challenges in cross-laboratory collaboration and protocol standardization [7]. A clear, shared understanding of these concepts is the first critical step toward improving the transparency, rigor, and ultimately, the trustworthiness of research outcomes. This guide provides definitive explanations, troubleshooting advice, and practical tools to help researchers integrate these principles into their daily work.

Defining the Core Concepts

The scientific community has not yet reached a universal consensus on the definitions of reproducibility and replicability. The following table outlines the two most common interpretation frameworks, with Framework A being the recommended standard for this guide [7].

Table: Two Common Frameworks for Defining Key Concepts

| Concept | Framework A (Recommended) | Framework B (Alternative) |

|---|---|---|

| Reproducibility | The ability to recompute results using the same original data and the same computational methods [7]. | The ability of an independent team to achieve consistent results using their own data and methods in a new study [7]. |

| Replicability | The ability to confirm a scientific finding by collecting new data and using independent methods or conditions [7]. | The ability to regenerate results using the original author's data and code [7]. |

Beyond these, Robustness refers to the ability of a scientific conclusion to hold true under a variety of conditions. A finding is considered robust if it can be confirmed not only by precise replication (narrow robustness) but also by different experiments testing the same hypothesis under varying circumstances, covariates, and sources of noise (broad robustness) [8]. Broadly robust findings are often seen as having greater explanatory power and real-world applicability [8].

Frequently Asked Questions (FAQs) and Troubleshooting

Q1: Our lab failed to reproduce our own computational analysis. What are the most common causes?

A: This is a frequent issue in data-intensive science. The primary causes and solutions are:

- Cause: Unrecorded changes in the computational environment (e.g., software versions, library dependencies, or operating system).

- Solution: Use software containers (e.g., Docker, BioContainers) to package the entire computational environment, ensuring it runs consistently across different machines [9].

- Cause: Missing or undocumented "hard-coded" parameters in analysis scripts.

- Solution: Implement version control (e.g., Git) for all code and scripts. Use configuration files for all parameters and document them thoroughly in a shared lab repository [9].
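
As an illustration of moving hard-coded parameters into a configuration file, the sketch below loads parameters from JSON, rejects missing or unexpected keys, and computes a short fingerprint that can be logged with each result. The parameter names and the helper functions are hypothetical; a real project would substitute its own.

```python
import hashlib
import json

# Hypothetical parameters an analysis script might otherwise hard-code.
REQUIRED_KEYS = {"n_estimators", "max_depth", "random_state"}


def load_params(config_text):
    """Parse a JSON config and fail loudly on missing or unexpected keys."""
    params = json.loads(config_text)
    missing = REQUIRED_KEYS - params.keys()
    unknown = params.keys() - REQUIRED_KEYS
    if missing or unknown:
        raise ValueError(f"missing={sorted(missing)} unknown={sorted(unknown)}")
    return params


def params_fingerprint(params):
    """Short, order-independent hash to store alongside every output file."""
    canonical = json.dumps(params, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


config_text = '{"n_estimators": 1000, "max_depth": 10, "random_state": 123}'
params = load_params(config_text)
print(params, params_fingerprint(params))
```

Committing the configuration file to the same Git repository as the code means any result can be traced back to the exact parameters that produced it.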

Q2: How can we design a replication study that journal reviewers will find compelling?

A: A well-designed replication proposal should clearly articulate:

- Its Contribution: Explain how the replication will build on prior studies and strengthen the evidence base, even if it is a direct (narrow) replication [10].

- Variations and Rationale: Clearly specify any justified variations from the original study's methods and the scientific reason for those changes [10].

- Objectivity: Ensure the investigation is independent, or if the original investigators are involved, establish clear safeguards to maintain objectivity [10].

Q3: A collaborator could not replicate our experimental protocol. How do we troubleshoot this?

A: This often points to incomplete methodological reporting.

- Action: Use a detailed checklist (e.g., the PECANS checklist for cognitive and neuropsychological research) to ensure your methods section includes all necessary information for an independent lab to repeat the experiment [11]. Similar field-specific guidelines are available through the EQUATOR network.

- Action: Proactively share detailed protocols, including information on reagents, equipment settings, and data processing steps, via a platform like GitHub, which is designed for tracking changes and collaboration [9].

Q4: How can we make our data visualizations accessible to colleagues with color vision deficiency (CVD)?

A: This is a common but often overlooked aspect of scientific communication.

- Avoid Red-Green: The standard "stoplight" palette (red/green) is problematic for the most common forms of CVD [12].

- Use Friendly Palettes: Opt for colorblind-friendly palettes built into software like Tableau, or use combinations like blue/orange or blue/red [12].

- Leverage Light vs. Dark: If you must use problematic colors, use a very light version of one and a very dark version of the other so they can be distinguished by value (lightness), not just hue [12].

- Add Redundant Coding: Use textures, patterns, or direct labels in addition to color to encode information [12].
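
The "light vs. dark" advice can be checked numerically. The sketch below computes relative luminance and contrast ratio using the sRGB-based formulas from the WCAG guidelines; it measures lightness separation only and does not simulate color vision deficiency, and what counts as sufficient separation for a given figure is left to the reader. The example hex values are arbitrary.

```python
def relative_luminance(hex_color):
    """Relative luminance (0 = black, 1 = white) of an sRGB hex color."""
    hex_color = hex_color.lstrip("#")
    channels = [int(hex_color[i:i + 2], 16) / 255 for i in (0, 2, 4)]
    # Undo the sRGB gamma encoding before weighting the channels.
    linear = [c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4
              for c in channels]
    r, g, b = linear
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(color_a, color_b):
    """Contrast ratio between two colors, from 1 (identical) to 21 (black/white)."""
    lighter, darker = sorted(
        (relative_luminance(color_a), relative_luminance(color_b)), reverse=True
    )
    return (lighter + 0.05) / (darker + 0.05)


# Arbitrary example: a dark red against a light green.
print(round(contrast_ratio("#8B0000", "#CCFFCC"), 2))
```

A pair of hues with a high contrast ratio remains distinguishable by lightness even when the hues themselves are confused.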

Experimental Protocols for Assessing Robustness

Protocol 1: Testing Experimental Robustness through Parameter Variation

Objective: To determine if a biological assay or experimental outcome is broadly robust to minor, clinically or biologically relevant variations in protocol.

- Identify Critical Parameters: List key experimental parameters (e.g., incubation time, temperature, reagent concentration, pH, cell passage number).

- Define Variation Ranges: For each parameter, define a realistic range of variation based on potential real-world scenarios or inter-lab differences.

- Design Experimental Matrix: Create a set of experimental conditions where each parameter is systematically varied within its defined range while keeping others constant.

- Execute and Analyze: Run the experiment under all conditions. The primary outcome is the consistency of the core finding (e.g., a significant effect, a successful synthesis) across the varied conditions.
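
The experimental matrix in the third step, where each parameter is varied while the others are held constant, is a one-factor-at-a-time design. A minimal sketch of generating such a matrix follows; the parameter names, baseline values, and ranges are hypothetical placeholders.

```python
def one_factor_at_a_time(baseline, variations):
    """Return the baseline condition plus one condition per varied level.

    baseline: dict of parameter name to its standard value.
    variations: dict of parameter name to the list of levels to test.
    """
    conditions = [dict(baseline)]
    for name, levels in variations.items():
        for level in levels:
            if level == baseline[name]:
                continue  # Already covered by the baseline condition.
            condition = dict(baseline)
            condition[name] = level
            conditions.append(condition)
    return conditions


# Hypothetical assay parameters and ranges.
baseline = {"incubation_min": 30, "temperature_c": 37.0, "passage": 10}
variations = {
    "incubation_min": [25, 30, 35],
    "temperature_c": [36.0, 37.0, 38.0],
}
for condition in one_factor_at_a_time(baseline, variations):
    print(condition)
```

This design keeps the number of runs small but cannot reveal interactions between parameters; a full factorial design would be needed for that.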

Protocol 2: Computational Robustness Analysis

Objective: To ensure computational findings and model predictions are not overly sensitive to specific analytical choices or random noise.

- Sensitivity Analysis: Vary key input parameters or model assumptions within a plausible range and observe the impact on the final results.

- Resampling/Bootstrapping: Use statistical techniques like bootstrapping to assess the stability of model parameters and confidence intervals.

- Algorithm Variation: Repeat the analysis using different but theoretically justified algorithms or statistical methods to see if the conclusion remains consistent.
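
To make the resampling step concrete, here is a small percentile-bootstrap sketch using only the standard library. The data values, the number of resamples, and the function name are illustrative assumptions; the percentile method is one of several bootstrap interval constructions.

```python
import random
from statistics import mean


def bootstrap_ci(values, stat=mean, n_resamples=2000, level=0.95, seed=123):
    """Percentile bootstrap confidence interval for a statistic."""
    rng = random.Random(seed)  # Local, seeded RNG keeps the result repeatable.
    n = len(values)
    # Resample with replacement and recompute the statistic each time.
    estimates = sorted(stat(rng.choices(values, k=n)) for _ in range(n_resamples))
    lower_index = int((1 - level) / 2 * n_resamples)
    upper_index = int((1 + level) / 2 * n_resamples) - 1
    return estimates[lower_index], estimates[upper_index]


# Hypothetical measurements from one experiment.
measurements = [4.1, 3.9, 4.4, 4.0, 4.2, 3.8, 4.3, 4.1]
low, high = bootstrap_ci(measurements)
print(f"mean = {mean(measurements):.2f}, 95% CI = ({low:.2f}, {high:.2f})")
```

A wide interval relative to the effect of interest is a signal that the conclusion may be sensitive to sampling noise.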

Standardized Workflow Visualization

The following diagram illustrates a standardized workflow that integrates reproducibility and replicability checks into the research lifecycle, from initial idea to final publication. This workflow helps institutionalize best practices.

[Diagram: Standardized Research Workflow]

Research Reagent and Material Solutions

Table: Essential Tools for Reproducible Research

| Tool / Reagent Category | Function | Example / Standard |

|---|---|---|

| Electronic Lab Notebooks (ELNs) | Digital documentation of experiments, protocols, and observations. | Platforms like GitHub can be adapted as a structured, version-controlled lab notebook [9]. |

| Version Control Systems | Tracks changes to code, scripts, and documents over time, enabling full audit trails. | Git [9]. |

| Software Containers | Packages all software, libraries, and dependencies into a portable, reproducible environment. | Docker, BioContainers [9]. |

| Reference Materials & Standards | Provides a benchmark to ensure consistency and accuracy of measurements across experiments and labs. | International standards for specific materials (e.g., those developed by the graphene community) [13]. |

| Structured Checklists | Ensures all critical information for replicating a study is reported in publications. | PECANS (cognitive science), CONSORT (clinical trials), STROBE (epidemiology) [11]. |

Data Presentation and Visualization Standards

To ensure that visualizations of data, such as sequence alignments, are both informative and accessible, the following standards are recommended. These are based on substitution matrix-driven color schemes, which automatically assign similar colors to biologically similar amino acids, and are adaptable for color vision deficiency [14].

Table: Color Palette for Accessible Scientific Visualizations

| Color Name | Hex Code | Recommended Use |

|---|---|---|

| Blue | #4285F4 | Primary positive result, main data series. |

| Red | #EA4335 | Primary negative result, control data series. |

| Yellow | #FBBC05 | Warning, secondary data series. |

| Green | #34A853 | Confirmation, tertiary data series. |

| White | #FFFFFF | Graph background, node fill. |

| Light Grey | #F1F3F4 | Alternate background, subtle elements. |

| Dark Grey | #202124 | Primary text, arrows, and lines. |

| Medium Grey | #5F6368 | Secondary text, borders. |

Technical Support Center

This support center is designed to assist researchers and scientists in implementing standardized protocols to overcome common challenges in cross-laboratory reproducibility research. The following guides and FAQs address specific issues encountered during experimental workflows.

Troubleshooting Guides

Issue: High Inter-Laboratory Variation in Quantitative Results

Problem: Different laboratories reporting significantly different results when analyzing the same sample.

- Step 1: Verify that all sites are using the same extraction protocol. In lipidomics, methyl-tert-butyl ether (MTBE) extraction has been shown to outperform the classic Bligh and Dyer approach for consistency [15].

- Step 2: Confirm use of standardized reference materials. Utilize pooled plasma reference materials like NIST SRM 1950 or the NIST candidate RM 8231 Suite to benchmark results across sites [15].

- Step 3: Implement a common set of internal standards. Employ a standardized quantitative platform that uses 54 deuterated internal standards for accurate quantitation [15].

- Step 4: Calculate the coefficient of variation (CV) across laboratories to quantify variability and identify outliers [15].
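
Step 4 can be sketched as follows: the coefficient of variation is the standard deviation divided by the mean, expressed as a percentage. The outlier rule shown here (a robust z-score based on the median absolute deviation, with a cutoff of 3) is an assumption chosen for illustration and is not taken from [15]; the lab values are made up.

```python
from statistics import mean, median, stdev


def coefficient_of_variation(values):
    """Inter-laboratory CV in percent (sample standard deviation / mean)."""
    return 100.0 * stdev(values) / mean(values)


def flag_outlier_labs(lab_means, cutoff=3.0):
    """Flag labs whose value is far from the median, using a robust z-score."""
    values = list(lab_means.values())
    center = median(values)
    mad = median(abs(v - center) for v in values)
    if mad == 0:
        return []  # All labs essentially agree; nothing to flag.
    scale = 1.4826 * mad  # Makes the MAD comparable to a standard deviation.
    return [lab for lab, v in lab_means.items() if abs(v - center) / scale > cutoff]


# Hypothetical concentrations of one lipid species reported by six labs.
lab_means = {"A": 100, "B": 102, "C": 98, "D": 101, "E": 99, "F": 130}
print(f"CV = {coefficient_of_variation(list(lab_means.values())):.1f}%")
print("Flagged:", flag_outlier_labs(lab_means))
```

A flagged lab is a prompt to review that site's extraction and calibration records, not grounds for discarding its data automatically.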

Issue: Irreproducible Microbiome Assembly in Plant Studies

Problem: Inconsistent microbial community structure when repeating synthetic community (SynCom) experiments across different labs.

- Step 1: Standardize biotic and abiotic factors. Use a fabricated ecosystem (EcoFAB) device where all initial conditions are specified and controlled [16].

- Step 2: Source biological materials from a central repository. Obtain a standardized model community of bacterial isolates from a public biobank like the Leibniz-Institute DSMZ to ensure strain consistency [16].

- Step 3: Follow a detailed, shared protocol. Adhere to a centralized, written protocol with annotated videos to minimize procedural variation across laboratories [16].

- Step 4: Centralize sample analysis. Send all collected samples to a single organizing laboratory for sequencing and metabolomic analyses to minimize analytical variation [16].

Issue: Inconsistent Mouse Behavior Across Behavioral Neuroscience Labs

Problem: The same mouse strain exhibits different learning speeds or decision-making behaviors in different laboratories.

- Step 1: Standardize the animal pipeline. Use a consistent mouse strain, provider, age range, and weight range [17].

- Step 2: Control for critical husbandry variables. Standardize water access, diet (food protein and fat), and surgical procedures for headbar implantation [17].

- Step 3: Document non-standardized variables. Regularly measure and record environmental factors like light-dark cycle, temperature, humidity, and sound, even if they are not standardized [17].

- Step 4: Use shared hardware and software. Standardize experimental apparatus, data collection software, and data analysis pipelines across all participating laboratories [17].

Frequently Asked Questions (FAQs)

Q: What is the primary benefit of using standardized clinical practice guidelines (CPGs) in a research setting? A: CPGs distill the large amount of available evidence into explicit care recommendations, reducing unwanted variations in practice and improving healthcare delivery, quality, and efficiency. They provide a basis for measuring institutional performance and subsequent quality improvement initiatives [18].

Q: How can we create an effective troubleshooting guide for our lab's standard operating procedures? A: An effective guide should include a clear description of the equipment or system, a list of potential problems with their symptoms and causes, a flowchart for logical problem-solving, necessary tools and materials, and safety precautions. It should be regularly revised based on user feedback [19].

Q: What is a "ring trial" and how does it improve reproducibility? A: A ring trial is an inter-laboratory comparison study, used in proficiency testing of analytical methods. Multiple laboratories perform the same experiment using the same materials and protocols. This powerful tool identifies sources of variation and helps validate the robustness of methods across different environments [16].

Q: We have standardized our methods, but our results are still not reproducible across sites. What could be wrong? A: This highlights the difference between methods reproducibility and results reproducibility. Ensure you are also controlling for "extraneous factors" such as the sex of the experimenter, animal handling techniques, and subtle environmental cues, which can significantly sway outcomes even with standardized apparatus [17].

Q: What infrastructure can help maintain standardized troubleshooting processes across a large, distributed team? A: Implement a centralized knowledge base where all team members can contribute experiences and expertise. Using a collaborative platform with unified reporting and analytics ensures everyone follows the same step-by-step workflows and can access past solutions [20].

Experimental Protocols & Data

Table 1: Impact of Standardization on Inter-Laboratory Reproducibility Across Research Fields

| Study Focus | Number of Laboratories | Key Standardized Element | Outcome |

|---|---|---|---|

| Quantitative Lipidomics [15] | 9 | Lipidyzer Platform with 54 internal standards | Enabled assignment of consensus concentration values for hundreds of lipid species in human plasma. |

| Plant-Microbiome Research [16] | 5 | EcoFAB 2.0 devices & synthetic communities (SynComs) | All labs observed consistent, inoculum-dependent changes in plant phenotype and final bacterial community structure. |

| Decision-Making in Mice [17] | 7 | Training protocol, hardware, and software | No significant differences in behavior across labs after training completion; database of 5 million mouse choices created. |

Table 2: Clinical Outcomes of Standardization in Pediatric Surgery

| Clinical Context | Type of Standardization | Outcome Improvement | Reference |

|---|---|---|---|

| Perforated Appendicitis [18] | Standardized antibiotic use, operative procedure, discharge criteria | Significant reduction in postoperative abscess and length of hospital stay. | Yousef et al. |

| Pediatric Colorectal Surgery [18] | Eight-element perioperative "colon bundle" | Significantly reduced surgical site infections (SSI) in the high-compliance cohort. | Tobias et al. |

Detailed Methodologies for Key Experiments

Protocol: Cross-Laboratory Lipidomics Analysis using the Lipidyzer Platform [15]

- Sample Preparation: Extract lipids from plasma samples (e.g., NIST SRM 1950) using the MTBE extraction protocol.

- Internal Standards: Spike the sample with a kit of 54 deuterated internal standards covering 13 lipid classes.

- Instrumentation Analysis: Analyze samples on a SCIEX QTRAP 5500 mass spectrometer equipped with a SelexION differential mobility spectrometry (DMS) interface.

- Data Processing: Use automated informatics software to quantify >1000 lipid species based on the internal standards.

- Data Inclusion Criteria: For consensus value calculation, a lipid species must be reported by at least 7 out of 9 participating sites.
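
The inclusion criterion in the last step can be expressed as a simple filter. In the sketch below, a lipid species contributes a consensus value only if at least 7 sites reported it; using the median as the consensus estimator is an assumption made for illustration, and the species names and concentrations are invented.

```python
from statistics import median


def consensus_values(reports, min_sites=7):
    """Return {species: consensus} for species reported by enough sites.

    reports: {species: {site: concentration or None if not reported}}.
    """
    consensus = {}
    for species, by_site in reports.items():
        values = [v for v in by_site.values() if v is not None]
        if len(values) >= min_sites:
            consensus[species] = median(values)  # Assumed estimator.
    return consensus


# Hypothetical reports from 9 sites (None marks a missing value).
sites = [f"site_{i}" for i in range(1, 10)]
reports = {
    "lipid_X": dict(zip(sites, [10.1, 9.8, 10.4, 10.0, None, 9.9, 10.2, 10.3, None])),
    "lipid_Y": dict(zip(sites, [2.1, None, None, 2.0, None, 1.9, 2.2, 2.3, 2.0])),
}
print(consensus_values(reports))
```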

Protocol: Reproducible Plant-Microbiome Study in EcoFAB 2.0 [16]

- Material Distribution: The organizing laboratory ships all supplies, including EcoFABs 2.0, seeds, and SynCom inoculum, to all participating laboratories.

- Experimental Setup: In sterile EcoFAB devices, plant the model grass Brachypodium distachyon and inoculate with either a full 17-member SynCom or a 16-member SynCom lacking a key bacterial strain.

- Sample Collection: All labs follow the same protocol to measure plant biomass, collect root and media samples for 16S rRNA amplicon sequencing, and filter media for metabolomics.

- Centralized Analysis: All collected samples are sent to a single organizing laboratory for sequencing and metabolomic analysis via LC-MS/MS to eliminate analytical variation.

Workflow Diagrams

[Diagram: Cross-Laboratory Standardization Workflow]

[Diagram: Multi-Lab Reproducibility Verification Process]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Cross-Laboratory Reproducibility Research

| Item | Function | Example from Research |

|---|---|---|

| Standardized Reference Materials | Provides a benchmark with consensus values to calibrate instruments and validate methods across different sites. | NIST SRM 1950 (Metabolites in Frozen Human Plasma) [15]. |

| Deuterated Internal Standards | Enables accurate quantification of analytes by correcting for losses during sample preparation and instrument variability. | Kit of 54 internal standards for lipidomics on the Lipidyzer platform [15]. |

| Synthetic Microbial Communities (SynComs) | Limits complexity while retaining functional diversity, allowing for replicable studies of community assembly and host-microbe interactions. | 17-member bacterial SynCom for the grass Brachypodium distachyon [16]. |

| Fabricated Ecosystem (EcoFAB) | A sterile, controlled laboratory habitat that minimizes environmental variation for highly reproducible plant-microbiome studies. | EcoFAB 2.0 device [16]. |

| Standardized Software & Pipelines | Ensures consistent data acquisition, processing, and analysis, which is critical for comparing results across laboratories. | Open-access data architecture pipeline and standardized training software for mouse behavior [17]. |

Frequently Asked Questions (FAQs)

Q1: What was the primary challenge in combining data from 69 different cohorts, and how was it addressed? The main challenge was the heterogeneity in participant demographics, enrollment criteria, follow-up periods, data elements, and collection methods across the cohorts [21]. The ECHO Program addressed this by developing the ECHO-wide Cohort Protocol (EWCP), which defined a Common Data Model (CDM) and established a rigorous process for data harmonization to pool both extant (existing) and new data [21] [22].

Q2: How does the ECHO-wide Cohort define "environmental exposures"? In the ECHO-wide Cohort, "environmental exposures" encompass the totality of early life conditions. This includes not only traditional exposures like air pollution and chemical toxicants but also broader factors such as home and neighborhood conditions, socioeconomic status, and behavioral and psychosocial factors [21].

Q3: What are "essential" versus "recommended" data elements in the EWCP? The EWCP classifies data elements as either essential or recommended [21].

- Essential elements are mandatory; they must be collected by all cohorts for new data collection and are the required set to be submitted from existing data [21].

- Recommended elements provide data for deeper investigation into an area. Cohorts are not required to collect them, but if they do, they should use the measure specified in the protocol [21].

Q4: What are "preferred," "acceptable," and "alternative" measures? To balance standardization with practicality, the EWCP allows for flexibility in measurement tools:

- Preferred and Acceptable Measures: These are the standardized instruments listed in the protocol for collecting new data [21].

- Alternative (Legacy) Measures: These are cohort-specific measures that were in use before ECHO. Allowing their continuation facilitates longitudinal analysis within a cohort, though the data requires harmonization before cross-cohort analysis [21].

Q5: What statistical approaches are recommended for determining positive responses in assays like ELISPOT? While the core ECHO protocol focuses on broader data harmonization, experiences from cross-laboratory research highlight the limitations of empirical rules (e.g., fixed thresholds) for assay response determination. Non-parametric statistical tests (e.g., permutation or bootstrap tests) are better suited because they account for inherent variability in the data, especially when sample sizes are small (e.g., triplicate wells), and provide uniform control of false-positive rates [23].
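
To illustrate the kind of non-parametric test mentioned above, the following sketch runs an exact one-sided permutation test on the difference in mean spot counts between experimental and control wells. It is a generic textbook permutation test, not the specific procedure of [23], and the well counts are invented. Note that with triplicate wells in each group there are only 20 possible relabelings, so the smallest attainable p-value is 1/20 = 0.05.

```python
from itertools import combinations
from statistics import mean


def permutation_p_value(experimental, control):
    """Exact one-sided permutation p-value for mean(experimental) > mean(control)."""
    pooled = list(experimental) + list(control)
    k = len(experimental)
    observed = mean(experimental) - mean(control)
    extreme = 0
    total = 0
    # Enumerate every way of relabeling k wells as "experimental".
    for indices in combinations(range(len(pooled)), k):
        chosen = set(indices)
        group_a = [pooled[i] for i in chosen]
        group_b = [pooled[i] for i in range(len(pooled)) if i not in chosen]
        total += 1
        if mean(group_a) - mean(group_b) >= observed - 1e-12:
            extreme += 1
    return extreme / total


# Hypothetical spot counts from triplicate wells.
print(permutation_p_value([50, 55, 60], [5, 8, 6]))
```

Because the achievable p-values are so coarse with triplicates, the significance threshold and the number of replicate wells should be fixed in the protocol before data collection.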

Troubleshooting Guides

Issue: Inconsistent Data Across Cohorts for the Same Construct

Problem: Different cohorts used different measurement tools (legacy measures) to assess the same underlying concept (e.g., stress), making combined analysis impossible.

Solution: Implement a systematic data harmonization process.

- Assemble Expert Team: Form a working group with subject matter experts, statisticians, and data scientists [21].

- Map Variables: Use a tool like the Cohort Measurement Identification Tool (CMIT) to identify all different measures used for the construct across cohorts [21].

- Create Harmonized Variables: Derive new analytical variables (called "derived variables" in ECHO) that represent the common construct. This can be done through:

  - Linking: Using established cross-walk tables or statistical equating methods if available.

  - Standardization: Converting scores to a common metric (e.g., z-scores) within each cohort before pooling.

  - Model-Based Approaches: Using the original measures as inputs in statistical models to estimate the latent trait [21].

- Document and Validate: Transparently document all harmonization decisions and algorithms. Validate the new harmonized variable by ensuring it behaves as expected in relation to other key variables [21].
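
The standardization option above (z-scores within each cohort) might look like the sketch below. The record layout, cohort names, and scores are hypothetical. Keep in mind that within-cohort standardization removes genuine differences in mean level between cohorts, so it only suits analyses where relative standing within a cohort is the quantity of interest.

```python
from statistics import mean, stdev


def zscore_within_cohort(records):
    """Convert raw scores to z-scores computed separately for each cohort.

    records: list of (cohort, participant_id, raw_score) tuples.
    Each cohort needs at least two distinct scores.
    """
    scores_by_cohort = {}
    for cohort, _, score in records:
        scores_by_cohort.setdefault(cohort, []).append(score)
    stats = {c: (mean(v), stdev(v)) for c, v in scores_by_cohort.items()}
    return [
        (cohort, pid, (score - stats[cohort][0]) / stats[cohort][1])
        for cohort, pid, score in records
    ]


# Two hypothetical cohorts that measured stress on different scales.
records = [
    ("cohort_A", "a1", 10), ("cohort_A", "a2", 20), ("cohort_A", "a3", 30),
    ("cohort_B", "b1", 1), ("cohort_B", "b2", 2), ("cohort_B", "b3", 3),
]
for row in zscore_within_cohort(records):
    print(row)
```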

Problem: Cohorts have data stored in various formats and local systems, making centralized pooling inefficient and error-prone.

Solution: Utilize a centralized data transformation and capture system.

- Use a Common Data Model (CDM): Define a standard structure for all data (e.g., the EWC SQL server database) [21].

- Provide a Data Mapping Tool: Implement a system like ECHO's Data Transform tool. This allows each cohort to provide a detailed "roadmap" for converting their local data into the CDM [21].

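- Offer Flexible Data Capture: Allow cohorts to submit data either from their local systems (mapped to the CDM through the Data Transform tool) or by direct entry into the centralized REDCap Central system [21].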

The following workflow diagram illustrates the integrated data pipeline from cohort registration to final analysis, as implemented in the ECHO-wide Cohort Study.

Issue: Ensuring Data Quality Before Cross-Cohort Analysis

Problem: Even after harmonization, data quality may vary between cohorts, potentially biasing results.

Solution: Apply rigorous data quality checks and consider the limit of detection for assays.

- Implement a Variance Filter: For assay data (e.g., ELISPOT), use a quality control metric like the ratio of variance to (median + 1) to identify and exclude samples with extreme outliers that could skew results [23].

- Account for Limit of Detection (LOD): A result can be statistically significant but not scientifically relevant if it is below the assay's LOD. Establish the LOD for key assays within each laboratory using guidelines such as the ICH Q2(R1) signal-to-noise method, under which a signal-to-noise ratio of 3:1 or 2:1 is generally considered acceptable for estimating the detection limit. Do not claim a positive response if the mean of the experimental wells is below the LOD, even if the result is statistically significant [23].

- Team Science Review: Before final analysis, have the relevant working group review the quality-controlled and harmonized dataset to ensure it is fit for purpose [21].
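The two assay-level checks above can be sketched in a few lines of Python. The function names, the use of the sample variance, and any cut-off passed as `max_ratio` are assumptions made for illustration; the actual threshold and LOD must come from each laboratory's own validation data.

```python
from statistics import mean, median, variance


def variance_filter_ratio(wells):
    """Quality metric: sample variance divided by (median + 1)."""
    return variance(wells) / (median(wells) + 1)


def passes_variance_filter(wells, max_ratio):
    """True if replicate wells are consistent enough to keep.

    max_ratio is a laboratory-defined cut-off (hypothetical here).
    """
    return variance_filter_ratio(wells) <= max_ratio


def meets_lod(experimental_wells, lod):
    """True if the mean of the experimental wells is not below the LOD."""
    return mean(experimental_wells) >= lod


# Hypothetical triplicates: one consistent, one with an extreme outlier.
consistent = variance_filter_ratio([10, 12, 11])   # 1 / 12
outlier = variance_filter_ratio([10, 12, 100])     # far larger
```

A sample would be called positive only if it passes the variance filter, meets the LOD, and is also significant in the chosen statistical test.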

The following chart outlines the key steps for planning and executing a successful data harmonization project, from initial assessment to final documentation.

ECHO's Data Harmonization Toolkit

The following table summarizes the key tools and systems developed for the ECHO-wide Cohort to facilitate standardization and harmonization [21].

| Tool / System Name | Primary Function | Role in Standardization & Harmonization |

|---|---|---|

| Common Data Model (CDM) | A standard structure for the central database. | Provides a unified target format for all data, enabling pooling and efficient analysis. |

| ECHO-wide Cohort Protocol (EWCP) | Defines essential/recommended data elements and preferred/acceptable measures. | Standardizes all new data collection across the 69 cohorts. |

| Cohort Measurement Identification Tool (CMIT) | A survey tool to identify measures cohorts used for each data element. | Identified legacy measures for harmonization and informed protocol revisions. |

| Data Transform Tool | Allows cohorts to map local data to the CDM. | Enables the transformation of disparate extant and new local data into the standardized CDM. |

| REDCap Central | A centralized, secure web-based data capture system. | Standardizes the collection of new data for cohorts that use the central system. |

Research Reagent Solutions: Key Materials for Cross-Lab Harmonization

The following table details essential non-laboratory materials and tools that are critical for successful large-scale, collaborative research like the ECHO-wide Cohort Study.

| Item / Tool | Function in Harmonization |

|---|---|

| Common Data Model (CDM) | A standardized data structure that acts as a "reagent" for combining datasets, ensuring all data components are compatible [21]. |

| Standardized Protocol (EWCP) | Defines the "recipe" for new data collection, specifying the required ingredients (essential data elements) and steps (measures) to ensure consistency [21] [22]. |

| Data Mapping Tool (Data Transform) | Functions as a "conversion kit," providing the instructions (the roadmap) to translate cohort-specific data into the standard CDM format [21]. |

| Centralized Data Capture (REDCap Central) | Serves as a "standardized container" for collecting new data, minimizing variation introduced by different local data entry systems [21]. |

Building a Reproducible Workflow: From Theory to Practice

This technical support guide outlines the core components of a standardized protocol to achieve cross-laboratory reproducibility in scientific research. Consistent results across different labs and researchers are fundamental to scientific credibility and progress. Standardizing the elements of Materials, Measurements, and Methods provides a robust framework to minimize experimental variation and enhance the reliability of your findings [24]. The following FAQs and troubleshooting guides address common challenges and provide practical solutions for implementing these standards in your work.

Frequently Asked Questions (FAQs)

1. Why is a standardized protocol critical for multi-laboratory studies? Standardized protocols are essential because they ensure that all participating laboratories are performing experiments in the same way, using the same materials and measurements. This directly controls for procedural variation, making any observed biological differences more likely to be true effects rather than artifacts of the experimental process. A multi-laboratory ring trial demonstrated that when five different labs used identical protocols, materials, and devices, they observed highly consistent results in plant phenotype, root exudate composition, and final bacterial community structure [16].

2. What are the most common factors that ruin experimental reproducibility? Several interrelated factors can compromise reproducibility. Key issues include:

- Inadequate access to methodological details, raw data, and research materials [24].

- Use of unauthenticated or contaminated biological materials, such as cell lines or microorganisms [24].

- Poorly described methods and experimental design that lack critical parameters like blinding, replication, and randomization [24].

- The inability to manage and share complex datasets effectively [24].

- A research culture that often undervalues the publication of negative results [24].

3. How can I ensure the biological reagents I use are reliable? Using authenticated, low-passage reference materials is crucial for data integrity [24]. You should:

- Source biomaterials from reputable biorepositories whenever possible.

- Authenticate all cell lines and microorganisms upon receipt and at regular intervals during your research. This confirms their genotypic and phenotypic traits and ensures they are free from contaminants like mycoplasma [24].

- Avoid long-term serial passaging, which can lead to genetic and phenotypic drift, altering your experimental results [24].

4. What should a thoroughly described method include? A comprehensively described method goes beyond a simple list of steps. It should provide a detailed protocol that enables other experts to replicate your work exactly. This includes [16] [25] [24]:

- A comprehensive list of all required reagents and equipment, including catalog numbers and lot numbers if critical.

- A step-by-step procedure with precise quantities, timings, and environmental conditions (e.g., temperature, pH).

- Explicit details on sample size, the number of replicates, and how replicates were defined.

- A description of how data was processed and analyzed, including the statistical tests used.

- A troubleshooting section that addresses common problems encountered during the protocol.

Troubleshooting Guides

Problem: Inconsistent Results with a Solid-Phase Extraction (SPE) Protocol

Potential Cause 1: Analytical system malfunction.

- Solution: Verify that your entire analytical system is functioning correctly. Check for sample-to-sample carryover, detector issues, or a malfunctioning autosampler [26].

Potential Cause 2: Variation in sample loading or elution.

- Solution: Ensure that all steps of the SPE procedure—including column conditioning, sample loading, washing, and elution—are performed with precise timing and volumetric control. Using automated liquid handlers can improve consistency.

Problem: Failure to Reproduce a Published Experimental Result

Potential Cause 1: Incomplete methodological details in the original publication.

- Solution: Actively seek out the study's extended protocols, if available. Some journals now publish detailed Research Protocols as supplementary information or standalone articles to combat this issue [25]. Contact the corresponding author to request the full, detailed protocol.

Potential Cause 2: Unavailable or poorly characterized research materials.

- Solution: Check if the original study's key reagents (e.g., antibodies, cell lines, synthetic microbial communities) are available from a public biobank or repository. Using materials with a known provenance, such as bacterial isolates from a public collection, is a cornerstone of reproducible science [16].

Potential Cause 3: Inability to manage complex data or analysis scripts.

- Solution: Advocate for and adopt practices of robust data sharing. Reproducibility is greatly enhanced when raw data and analysis scripts are deposited in publicly available databases, allowing for direct reanalysis and verification of results [16] [27] [24].

The Scientist's Toolkit: Key Research Reagent Solutions

The table below details essential materials for ensuring reproducibility, particularly in environmental microbiome studies, based on a successful multi-laboratory trial.

Table 1: Key Research Reagents for Reproducible Plant-Microbiome Research

| Item | Function in the Protocol |

|---|---|

| Fabricated Ecosystem (EcoFAB 2.0) | A sterile, standardized laboratory habitat that provides a controlled environment for studying plant-microbe interactions, minimizing variability from growth conditions [16]. |

| Synthetic Microbial Community (SynCom) | A defined mixture of bacterial isolates that limits complexity while retaining functional diversity, allowing researchers to dissect specific microbe-microbe and plant-microbe interactions [16]. |

| Reference Plant Lines (e.g., Brachypodium distachyon) | A model organism with consistent genetics and phenotype, providing a uniform host for studying microbiome assembly and function across laboratories [16]. |

| Authenticated Bacterial Isolates | Individual microbial strains that are traceable to a certified repository (e.g., DSMZ), ensuring genotypic and phenotypic consistency for all experiments [16] [24]. |

Standardized Experimental Workflow for Multi-Laboratory Studies

The following diagram illustrates a generalized workflow for implementing a standardized protocol across multiple research sites, based on methodologies proven to enhance reproducibility.

Standardized Multi-Lab Workflow

Data Presentation: Quantifying the Reproducibility Challenge

Understanding the scope of the reproducibility problem is the first step to addressing it. The data below, derived from analyses of published literature, highlights key transparency and reproducibility gaps.

Table 2: Indicators of Reproducibility in Published Empirical Research (Sample of 271 Neurology Publications, 2014-2018) [27]

| Indicator | Availability Rate in Sampled Publications |

|---|---|

| Provided access to study materials | 9.4% |

| Provided access to raw data | 9.2% |

| Linked to the research protocol | 0.7% |

| Provided access to analysis scripts | 0.7% |

| Were pre-registered | 3.7% |

Table 3: Researcher Self-Reported Experiences with Reproducibility (2016 Survey) [24]

| Experience | Percentage of Researchers |

|---|---|

| Were unable to reproduce other scientists' findings | >70% |

| Were unable to reproduce their own findings | >50% |

This technical support guide is based on a pioneering multi-laboratory study that successfully established a standardized framework for reproducible plant-microbiome research. The research demonstrated that by using fabricated ecosystems (EcoFABs) and defined synthetic microbial communities (SynComs), consistent results in plant phenotype, root exudate composition, and bacterial community assembly can be achieved across different laboratories [16] [28]. The core experiment involved five independent laboratories across three continents using the model grass Brachypodium distachyon and two different bacterial SynComs within sterile EcoFAB 2.0 devices [16] [29]. This case study breaks down the protocols, troubleshooting guides, and FAQs to help your laboratory implement this reproducible system.

Key Research Reagent Solutions

The following table details the essential materials and reagents used in the standardized protocol, which is critical for ensuring cross-laboratory reproducibility.

Table 1: Essential Research Reagents and Materials

| Item Name | Type/Description | Function in the Experiment | Source/Availability |

|---|---|---|---|

| EcoFAB 2.0 Device | Fabricated ecosystem; a sterile, controlled growth chamber | Provides a standardized habitat for highly reproducible plant growth and microbiome studies [16]. | Provided by the organizing lab; protocols available online [16] [28]. |

| Brachypodium distachyon | Model grass species | Standardized plant host for studying plant-microbe interactions [16] [30]. | Seeds were shipped from the organizing lab to ensure uniformity [28]. |

| Synthetic Community (SynCom) | Defined consortium of 17 or 16 bacterial isolates from a grass rhizosphere | Tools to study microbiome assembly and function with limited complexity but retained functional diversity [16] [28]. | Available via public biobank (DSMZ) with cryopreservation protocols [16] [30]. |

| Paraburkholderia sp. OAS925 | A specific bacterial isolate | A dominant root colonizer used to test its specific impact on microbiome composition and plant phenotype [16] [29]. | Component of the SynCom17; its absence defines SynCom16 [16]. |

Detailed Experimental Protocol & Workflow

The successful experiment followed a meticulously detailed protocol. The diagram below outlines the key stages of the experimental workflow.

Critical Protocol Steps

- Device Assembly: Use the standardized EcoFAB 2.0 device. Consistency in labware is crucial, so adhere to the specified part numbers provided in the detailed protocol [28].

- Plant Preparation: Brachypodium distachyon seeds must be dehusked, surface-sterilized, and stratified at 4°C for 3 days, followed by germination on agar plates for 3 days before transfer to the EcoFAB [28].

- SynCom Inoculation: Synthetic communities are prepared as 100x concentrated stocks in glycerol and shipped on dry ice. The inoculum is resuspended and added to 10-day-old seedlings at a final concentration of 1 × 10^5 bacterial cells per plant. Converting optical density (OD600) readings to colony-forming units (CFU) is essential for ensuring equal cell numbers [28].

- Data Collection: At harvest (22 days after inoculation), measure plant biomass (shoot fresh/dry weight), perform root scans, and collect root/media samples for downstream 16S rRNA amplicon sequencing and metabolomic analysis like LC-MS/MS [16] [28].
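The inoculation arithmetic can be sketched as follows. This is an illustration under stated assumptions: the calibration factors (CFU per mL per OD600 unit), the strain names, and the equal split of the 1 × 10^5 cells across strains are hypothetical placeholders, and the published protocol [28] remains the authoritative source for the actual procedure.

```python
def stock_volume_ul(target_cells, od600, cfu_per_ml_per_od):
    """Volume of culture (in microliters) that contains target_cells.

    cfu_per_ml_per_od is a calibration factor determined empirically for
    each strain (hypothetical values are used in the example below).
    """
    if od600 <= 0 or cfu_per_ml_per_od <= 0:
        raise ValueError("OD600 and calibration factor must be positive")
    cells_per_ml = od600 * cfu_per_ml_per_od
    return target_cells / cells_per_ml * 1000.0


def per_strain_volumes(total_cells_per_plant, strains):
    """Split the total inoculum equally across strains (an assumption).

    strains: dict mapping strain name -> (od600, cfu_per_ml_per_od).
    Returns a dict mapping strain name -> volume in microliters.
    """
    target = total_cells_per_plant / len(strains)
    return {
        name: stock_volume_ul(target, od, factor)
        for name, (od, factor) in strains.items()
    }


# Hypothetical two-strain example with 1e5 cells per plant in total.
volumes = per_strain_volumes(
    1e5,
    {"strain_a": (1.0, 1e9), "strain_b": (0.5, 1e9)},
)
```

In practice the resulting volumes are often too small to pipette accurately, so cultures are usually diluted first; the same calculation applies to the diluted stock.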

Troubleshooting Guides & FAQs

Frequently Asked Questions (FAQs)

Q1: What is the most critical factor for achieving reproducibility across labs? A: The study identified that standardizing every possible variable is key. This includes using the same source for materials (EcoFABs, seeds, SynCom inoculum), following a detailed, video-annotated protocol, and centralizing key analytical steps like sequencing and metabolomics to minimize analytical variation [16] [28].

Q2: Why use a synthetic community (SynCom) instead of a natural soil sample? A: SynComs bridge the gap between complex natural communities and single-isolate studies. By limiting complexity while retaining key functional diversity, they allow researchers to unravel the mechanistic underpinnings of microbe-microbe and plant-microbe interactions in a controllable and reproducible manner [16] [31].

Q3: We encountered microbial contamination in our EcoFABs. How was this managed in the study? A: The multi-lab study maintained a very high sterility rate (over 99%). They performed sterility tests by incubating spent medium on LB agar plates at two time points. Contamination was minimal and was attributed to specific issues like a cracked plate lid. Ensure all containers are properly sealed and follow the surface sterilization protocol for seeds meticulously [28].

Q4: How significant was the impact of the dominant colonizer, Paraburkholderia? A: The presence of Paraburkholderia sp. OAS925 had a dramatic and reproducible effect. In SynCom17, it dominated the final root microbiome (98% average relative abundance), and its presence correlated with a significant decrease in plant shoot biomass and root development compared to the SynCom16 treatment where it was absent [16] [29] [28].

Troubleshooting Common Experimental Issues

Table 2: Troubleshooting Common Problems in EcoFAB-SynCom Experiments

| Problem | Potential Cause | Solution |

|---|---|---|

| High variability in plant biomass between labs. | Differences in growth chamber conditions (light quality/intensity, temperature) [28]. | Use data loggers to monitor environmental conditions. Where possible, standardize growth chamber specifications or account for these variables in data analysis. |

| Unexpected bacterial community composition in final samples. | Inaccurate inoculum preparation or concentration; cross-contamination. | Use pre-calibrated OD600 to CFU conversions for SynCom preparation. Ensure strict sterile technique during inoculation and handling. |

| Low yield or poor quality of metabolites from root exudates. | Degradation of metabolites during sample collection or storage. | Follow the protocol for immediate filtering of media and flash-freezing samples in liquid nitrogen. Store at -80°C until analysis [16]. |

| SynCom diversity not maintained after cryopreservation. | Improper cryopreservation or resuscitation techniques. | Use the published cryopreservation protocol with glycerol and ensure proper, standardized resuscitation steps are followed by all team members [30]. |

Quantitative Results & Benchmarking Data

The multi-laboratory trial generated consistent, quantifiable results. The following table summarizes the key benchmarking data that your experimental outcomes can be measured against.

Table 3: Key Quantitative Outcomes from the Multi-Laboratory Study

| Parameter Measured | Axenic (Sterile) Control | SynCom16 Inoculated | SynCom17 Inoculated | Notes & Variability |

|---|---|---|---|---|

| Shoot Biomass | Highest | Moderate decrease | Significant decrease | Consistent trend across all 5 labs; some inter-lab variability observed [28]. |

| Root Development (after 14 DAI) | Normal | Moderate decrease | Consistent decrease | Image analysis of root scans showed a clear inoculum-dependent effect [28]. |

| Dominant Root Colonizer | N/A | Rhodococcus sp. OAS809 (68% ± 33%) | Paraburkholderia sp. OAS925 (98% ± 0.03%) | SynCom17 led to highly reproducible dominance. SynCom16 showed higher variability in community structure [28]. |

| Sterility Success Rate | >99% | >99% | >99% | Only 2 out of 210 sterility tests showed contamination [28]. |

Abbreviation: DAI: Days After Inoculation.

This case study demonstrates that high reproducibility in plant-microbiome research is achievable through rigorous standardization. The successful implementation of this framework relies on several best practices: utilizing shared model systems like EcoFABs and SynComs, adhering to detailed, publicly available protocols, and centralizing data analysis where possible. The data, protocols, and benchmarking standards from this study are publicly available, providing a solid foundation for other labs to build upon, replicate, and further advance the field of mechanistic microbiome science [16] [28].

What are LIMS and ELNs?

A Laboratory Information Management System (LIMS) is a software platform designed to manage laboratory operations and structured data [32]. It serves as a central hub for tracking samples, managing workflows, ensuring compliance, and integrating with laboratory instruments [33] [32]. LIMS are particularly strong in managing repetitive, high-throughput analyses and are sample-centric [34].

An Electronic Laboratory Notebook (ELN) is the digital counterpart to a traditional paper lab notebook [32]. It provides a flexible platform for researchers to document experimental procedures, observations, and unstructured data [34] [35]. ELNs excel at capturing the narrative of research, facilitating collaboration, and supporting exploratory R&D work [34].

Core Functions and Differences

The table below summarizes the primary functions of each system, which are often complementary.

| Feature | LIMS (Laboratory Information Management System) | ELN (Electronic Laboratory Notebook) |

|---|---|---|

| Primary Focus | Sample and workflow management [34] [32] | Experimental documentation and collaboration [34] [32] |

| Data Type | Structured, standardized data [32] | Unstructured data, observations, and notes [32] |

| Key Capabilities | Sample registration & tracking, workflow automation, quality control, inventory management, regulatory compliance (e.g., FDA 21 CFR Part 11, ISO 17025) [34] [33] [32] | Customizable templates, version control, result recording, data sharing, audit trails for intellectual property [36] [34] [32] |

| Ideal For | Standardized, repetitive processes in clinical, quality control, or diagnostic labs [32] | Research and Development (R&D), experimental design, and collaborative projects [34] [32] |

Figure 1: LIMS and ELN Data Flow for Reproducibility

Troubleshooting Common System Issues

Data Integration and Workflow Errors

Problem: Data silos and inability to connect instruments or other software.

- Cause: Lack of interoperability between systems, incompatible file formats, or insufficient API (Application Programming Interface) support [37] [35].

- Solution:

- Pre-purchase Check: Before selecting a system, verify its integration capabilities with your specific laboratory instruments (e.g., mass spectrometers, PCR machines) and existing software ecosystem [37] [33].

- Utilize APIs: Choose vendors that offer robust APIs to enable seamless, real-time data flow between instruments, LIMS, and ELNs, minimizing manual transcription [38] [35].

- Adopt Unified Platforms: Consider integrated ELN/LIMS solutions designed from the ground up to work as a single system, eliminating the need for complex integrations later [34] [35].

Problem: Inconsistent or non-reproducible workflows across different laboratory sites.

- Cause: Lack of standardized and enforced protocols in the digital system [38].

- Solution:

- Implement Universal SOPs: Use the LIMS to deploy and enforce global Standard Operating Procedures (SOPs) with version control and mandatory sign-off across all sites [38].

- Configure Workflow Templates: Build and lock down customizable workflow templates within the LIMS to guide users through each step, ensuring consistency in sample processing and data capture [36] [34].

User Adoption and Data Integrity Issues

Problem: User resistance to the new system.

- Cause: The system was selected without end-user input, has a non-intuitive interface, or lacks adequate training [39].

- Solution:

- Involve Users Early: Include laboratory personnel in the selection and testing process via demo presentations and free trial periods [39].

- Prioritize Usability: Choose a system with an intuitive interface and ensure the vendor provides comprehensive training resources and ongoing technical support [39] [33].

- Appoint Laboratory Referents: Designate experienced scientists or technicians as "laboratory data managers" to lead implementation and act as power users and champions for the system [36].

Problem: Errors in data entry and incomplete audit trails.

- Cause: Reliance on manual data entry and a system lacking robust tracking features [32].

- Solution:

- Automate Data Capture: Integrate instruments for direct electronic data acquisition and use barcoding for sample and reagent tracking to eliminate manual entry errors [36] [32] [40].

- Leverage System Features: Ensure your LIMS/ELN has features like detailed audit trails that log all data modifications, role-based access control, and electronic signatures to enforce data integrity and compliance [33] [32] [40].

Frequently Asked Questions (FAQs)

Q1: Our lab does both routine testing and exploratory research. Do we need a LIMS, an ELN, or both? For labs with mixed workflows, an integrated ELN/LIMS platform is often the most effective solution [34] [35]. This unified approach allows you to manage structured sample data (LIMS) and unstructured experimental narratives (ELN) within a single environment, breaking down data silos and providing a complete context for all research activities [35] [32]. If a single platform is not feasible, prioritize a LIMS if high-throughput sample tracking is your primary bottleneck, or an ELN if collaborative, reproducible research documentation is the immediate need [33] [32].

Q2: How can these systems directly support cross-site reproducibility? Digital systems are foundational for cross-site reproducibility. Key strategies include:

- Protocol Standardization: Use the ELN and LIMS to mandate universal SOPs, ensuring identical experimental procedures are followed at every site [38].

- Data Harmonization: Enforce standardized data capture with consistent metadata, units, and naming conventions across all labs, creating a unified data structure [38].

- Centralized Data Access: A cloud-based LIMS/ELN acts as a single source of truth, giving all researchers real-time access to the same protocols, samples, and results, which is crucial for replicating experiments [38] [35].
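To illustrate what enforcing consistent metadata and units can look like, the sketch below validates a record before it is accepted. It does not use any real LIMS or ELN API; the field names, analytes, and allowed units are hypothetical and would be replaced by the conventions agreed across sites.

```python
# Hypothetical conventions shared by all sites.
REQUIRED_FIELDS = ("sample_id", "site", "analyte", "value", "unit")
ALLOWED_UNITS = {
    "glucose": {"mmol/L"},
    "shoot_dry_weight": {"mg"},
}


def validate_record(record):
    """Return a list of problems found in one data record (empty if valid)."""
    problems = []
    for field in REQUIRED_FIELDS:
        if record.get(field) in (None, ""):
            problems.append(f"missing field: {field}")

    analyte = record.get("analyte")
    unit = record.get("unit")
    if analyte in ALLOWED_UNITS and unit and unit not in ALLOWED_UNITS[analyte]:
        problems.append(f"unit {unit!r} not allowed for {analyte!r}")
    return problems


good = {"sample_id": "S1", "site": "Lab-1", "analyte": "glucose",
        "value": 5.2, "unit": "mmol/L"}
bad = {"sample_id": "S2", "site": "", "analyte": "glucose",
       "value": 94, "unit": "mg/dL"}
```

Running the same check at every site, ideally at the point of data entry, catches unit and naming mismatches before they reach the pooled dataset.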

Q3: What are the common hidden costs we should anticipate when implementing a LIMS or ELN? Beyond the initial license or subscription fee, laboratories should budget for:

- Customization & Configuration: Costs for tailoring workflows and interfaces to your lab's specific needs [39] [33].

- Implementation & Integration: Expenses related to system setup, data migration, and integrating with other software and instruments [33].

- Training & Ongoing Support: Costs for initial user training and ongoing technical support, maintenance, and software updates [39] [33].

Q4: We have a small lab. Are there affordable or open-source options available? Yes, open-source LIMS do exist and can be a good fit for smaller teams with strong internal IT support [33]. However, for labs requiring validated workflows, reliable vendor support, faster implementation, and seamless instrument integrations, a commercial solution may offer a better total cost of ownership despite a higher upfront price [33]. Many commercial vendors also offer scalable, subscription-based cloud solutions that can be more accessible for smaller labs [41] [40].

Essential Research Reagent Solutions

Proper management of research reagents is critical for experimental reproducibility. The following table outlines key materials and how a LIMS can manage them.

| Reagent / Material | Primary Function in Research | LIMS/ELN Management Solution |

|---|---|---|

| Chemical Stocks | Raw materials for synthesis and analysis. | Centralize in a searchable chemical database with structures, properties, and safety information [36]. |

| Plasmids & Antibodies | Key biological tools for genetic engineering and detection. | Maintain detailed biological registries (e.g., plasmid, antibody databases) to track source, sequence, and validation data [36]. |

| Samples & Assays | The core subjects and tests of experimental research. | Track the entire lifecycle from collection to disposal using unique barcodes, managing lineage and storage location [36] [33] [32]. |

| Inventory & Storage | Preservation of reagent integrity and availability. | Manage laboratory storage locations (freezers, cabinets); track stock levels, expiration dates, and aliquot histories to prevent waste [36] [32]. |

Figure 2: Integrated ELN/LIMS Workflow for Protocol Standardization

Frequently Asked Questions (FAQs) and Troubleshooting Guides

General Replication Principles

Q1: What is the difference between "replicability" and "reproducibility" in cross-laboratory research?

In the context of scientific research, these terms have specific meanings [42]:

- Replicability refers to obtaining the same results using the same experimental setup, measurement procedure, and artifacts (e.g., code and data) as the original study. It is sometimes called "repeatability."

- Reproducibility refers to obtaining the same results using a different experimental setup, different measuring systems, or independently developed artifacts.

For cross-laboratory studies, reproducibility is the higher standard, demonstrating that findings are robust across different research environments [42].

Q2: Why should my lab invest time in creating replication packages?

Creating replication packages requires an initial investment but provides significant long-term benefits [43]:

- Strengthened Rigor and Reliability: Enables other researchers to verify your work, strengthening scientific credibility [42].

- Institutional Memory: Preserves knowledge when students graduate or postdocs move on, preventing the need to recreate work from scratch [43].

- Future Efficiency: Well-documented code and data help you retrace your steps, saving time and effort when revisiting projects [43].

- Scientific Contribution: Published replication materials become citable research outputs that advance your field [43].

Data Management

Q3: What is the best way to organize files in a replication package?

A clear, consistent folder structure is crucial. Avoid disorganized directories with confusing file names [44]. A recommended structure separates code, data, and outputs:

Table: Core Components of a Replication Package Folder Structure

| Folder | Purpose | Example Contents |

|---|---|---|

| code/ | All analysis scripts | Master scripts, data cleaning, figure generation |

| data/raw/ | Raw, read-only primary data | Immutable source data |

| data/processed/ | Analysis-ready datasets | Cleaned and merged data |

| output/ | All generated results | Figures, tables, model outputs |

This structure keeps the raw data safe, organizes the workflow logically, and makes it easy to regenerate all results [44].

Q4: How should we handle raw data to ensure it remains unchanged?

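Always keep your raw data read-only [44]. After copying raw data into your package (e.g., into the data/raw/ folder), set the file permissions to prevent accidental modification. On Windows, you can mark files as read-only through their properties; on Linux/Unix systems, use chmod 444 on the raw data files (for example, chmod 444 data/raw/survey.csv) [44]. Apply the mode to the files rather than to the folder itself, because a directory needs its execute bit to remain accessible.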
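For packages with many raw files, the same protection can be scripted. The following is a minimal sketch using only the Python standard library; the function name and folder layout are assumptions. It clears the write bits on every file under a folder and leaves directory permissions untouched.

```python
import stat
from pathlib import Path

# Write permission bits for owner, group, and others.
WRITE_BITS = stat.S_IWUSR | stat.S_IWGRP | stat.S_IWOTH


def make_files_read_only(folder):
    """Remove write permission from every file below folder.

    Directories are left unchanged so they stay traversable.
    Returns the list of files that were processed.
    """
    processed = []
    for path in sorted(Path(folder).rglob("*")):
        if path.is_file():
            mode = stat.S_IMODE(path.stat().st_mode)
            path.chmod(mode & ~WRITE_BITS)
            processed.append(path)
    return processed
```

A typical call would be `make_files_read_only("data/raw")`, run once after the raw data has been copied into the package. On Windows, Python's chmod only toggles the read-only flag, which is sufficient for this purpose.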

Code and Analysis

Q5: What are the key practices for writing reproducible code?

- Use a Master Script: Create a master file (e.g., main.do or master.R) that sets paths once at the top and then calls all other subsidiary files in sequence. This allows the entire analysis to be run at once [44].

- Use Relative Paths: Design your code to use relative paths instead of absolute paths (e.g., ../data/raw/survey.csv instead of C:/Users/Name/Project/data/raw/survey.csv). This ensures the code runs on different machines without manual path adjustments [44].

- Cross-OS Compatibility: Write file paths in a way that is compatible across operating systems. For example, in Stata, always use forward slashes (/) to separate directories, even on Windows [44].

- Automate Output: Ensure your code saves all tables and figures as files in the output/ directory instead of only displaying them on screen [44].
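The practices above can be sketched as a small master script. Python is used here purely for illustration; the same pattern applies to Stata or R. The file names (survey.csv, row_count.txt) and the two pipeline steps are hypothetical stand-ins for a project's real cleaning and analysis code.

```python
from pathlib import Path


def project_paths(root):
    """Define every path once, relative to the project root."""
    root = Path(root)
    return {
        "raw": root / "data" / "raw",
        "processed": root / "data" / "processed",
        "output": root / "output",
    }


def clean_data(paths):
    """Step 1: read raw data, drop blank lines, write an analysis-ready file."""
    paths["processed"].mkdir(parents=True, exist_ok=True)
    lines = (paths["raw"] / "survey.csv").read_text().splitlines()
    kept = [line for line in lines if line.strip()]
    (paths["processed"] / "survey_clean.csv").write_text("\n".join(kept) + "\n")


def make_table(paths):
    """Step 2: save a result to output/ instead of only printing it."""
    paths["output"].mkdir(parents=True, exist_ok=True)
    rows = (paths["processed"] / "survey_clean.csv").read_text().splitlines()
    n_rows = len(rows) - 1  # exclude the header line
    (paths["output"] / "row_count.txt").write_text(f"rows={n_rows}\n")


def main(root="."):
    """Master entry point: run every step in order from a single call."""
    paths = project_paths(root)
    clean_data(paths)
    make_table(paths)
```

Calling `main()` from the project root regenerates everything in data/processed/ and output/. Because paths are built with pathlib from a single root, the script runs unchanged on Windows, macOS, and Linux.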

Q6: A collaborator cannot run our code on their machine. What is the most likely cause?

The most common cause is hard-coded file paths specific to your computer [43]. The solution is to use relative paths and a master script that sets the project root directory at the beginning. Other common issues include missing dependencies (libraries/packages) or an undocumented specific software version.

Cross-Laboratory Standardization

Q7: How can we ensure our experimental protocols are replicable in other labs?

The PLOS Biology study on plant-microbiome research provides a successful model for cross-laboratory replication [16]. The key is extreme standardization and detailed documentation.

Table: Essential Materials and Documentation for Cross-Lab Protocols

| Component | Function in Standardization | Example from Plant-Microbiome Study [16] |
|---|---|---|
| Standardized Reagents | Eliminates batch-to-batch variability | Synthetic bacterial communities (SynComs) from a public biobank (DSMZ) |
| Standardized Habitats | Controls the physical environment | Sterile EcoFAB 2.0 devices shipped to all labs |
| Detailed Protocol | Specifies every step of the procedure | Written protocols with annotated videos |
| Centralized Analysis | Reduces analytical variation | All sequencing and metabolomic analyses performed by a single lab |

Q8: What should we do if we need to modify the original protocol during replication?

Any changes from the original study must be explicitly documented. A replication report should clearly discuss all changes to the design, participants, artifacts, or procedures, along with the motivation for each change [45]. This transparency is critical for interpreting the replication's results.

Troubleshooting Common Problems

Q9: We are getting different results when re-running our own code. How can we stabilize the analysis?

- Set Random Seeds: If your analysis involves random number generation (e.g., for simulations or modeling), always set a seed at the beginning of the script to ensure you get the same result every time.

- Version Control: Use version control systems (e.g., Git) to track exact versions of all code and data files [44].

- Document Software Versions: Record the versions of all software and packages used (e.g., by using sessionInfo() in R). Consider using containerization tools like Docker to capture the entire computational environment.
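The seed and version-recording practices above have direct equivalents in Python. The following sketch uses only the standard library; the seed value and the package names passed to environment_record are arbitrary examples, and libraries with their own random generators would need to be seeded separately.

```python
import json
import platform
import random
import sys
from importlib import metadata


def set_seed(seed):
    """Seed Python's built-in generator; other libraries need their own seeding."""
    random.seed(seed)


def environment_record(packages):
    """Collect interpreter, OS, and package versions for the analysis log."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = "not installed"
    return {
        "python": sys.version.split()[0],
        "platform": platform.platform(),
        "packages": versions,
    }


set_seed(20240101)  # arbitrary example seed
first_draw = random.random()
set_seed(20240101)
second_draw = random.random()  # identical to first_draw because the seed matches

# Save this record next to the results, for example in the output/ directory.
print(json.dumps(environment_record(["numpy", "pandas"]), indent=2))
```

Writing the record to a file on every run gives collaborators a concrete list to compare against when results diverge.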

Q10: Our data is proprietary and cannot be shared publicly. How can we still enable some level of transparency?

Even when data cannot be shared, you can provide [46]:

- All code and scripts used for the analysis.

- Instructional appendices detailing the steps taken.

- References to the proprietary data sources.

- Synthetic or aggregated data that mimics the structure and properties of the original data, allowing others to run the code workflow.

Workflow and Relationship Diagrams

[Diagrams not reproduced: Replication Package Creation Workflow; Cross-Laboratory Replication Protocol; Replication Package Components]

Navigating Real-World Challenges in Multi-Lab Studies

Inter-laboratory variation presents a significant challenge in scientific research and clinical diagnostics, affecting the reliability, reproducibility, and comparability of results across different facilities. This variation arises from multiple sources, including differences in equipment, reagents, personnel training, and protocol implementation. In clinical settings, such variation can impact diagnostic accuracy and patient care, while in research, it undermines the validity of findings and hampers collaborative efforts. Standardizing protocols and implementing robust quality assurance systems are therefore critical for enhancing cross-laboratory reproducibility. This technical support center provides troubleshooting guides and FAQs to help researchers, scientists, and drug development professionals identify, control, and minimize these variations in their work.

Quantitative Evidence of Inter-Laboratory Variation

The following tables summarize key quantitative findings from recent studies investigating the scope and impact of inter-laboratory variation.

Table 1: Inter-Laboratory Variation in Clinical HbA1c Measurement (2020-2023 Study) [47]

| Metric | Low QC Level | High QC Level | Overall Inter-Laboratory |
|---|---|---|---|
| Performance Goal (CV) | < 1.5% | < 1.5% | < 2.5% |
| Median CV in 2020 | 1.6% | 1.2% | 2.1% - 3.1% |
| Median CV in 2023 | 1.4% | 1.0% | 2.1% - 2.6% |
| % of Labs Meeting Goal (2023) | 58.9% | 79.8% | 96.9% (per EQA criterion) |

Table 2: Inter-Laboratory Variation in Agricultural Soil Testing [48]

| Nutrient | Mean Absolute Percentage Error (MAPE) | Observation |
|---|---|---|
| All Nutrients | 48% | Far exceeds the acceptable 10-15% range |
| Buffer pH | 1% | Within acceptable variation |
| Nitrate Nitrogen | 91% | "Dramatic" variation observed |
| Phosphorus | 73% | "Widely results can vary" |
| Potassium | Not specified | Some results "more than doubled" |
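The two metrics used in Tables 1 and 2 are simple to compute. The sketch below shows the standard textbook definitions of the coefficient of variation (CV) and the mean absolute percentage error (MAPE); the cited studies may have applied additional steps such as outlier removal, and the numbers are illustrative only, not data from those studies.

```python
from statistics import mean, stdev


def coefficient_of_variation(values):
    """CV in percent: sample standard deviation divided by the mean."""
    return 100.0 * stdev(values) / mean(values)


def mape(reported, reference):
    """Mean absolute percentage error of reported values against reference values."""
    errors = [abs(r - ref) / abs(ref) for r, ref in zip(reported, reference)]
    return 100.0 * mean(errors)


# Illustrative numbers only.
lab_results = [6.4, 6.5, 6.6]  # one result for the same sample from each of three labs
cv_percent = coefficient_of_variation(lab_results)

reported = [9.0, 22.0]    # values returned by a test lab
reference = [10.0, 20.0]  # values accepted as the reference
mape_percent = mape(reported, reference)

print(round(cv_percent, 2), round(mape_percent, 1))
```

A CV summarizes the spread of results around their own mean, while MAPE needs a reference value for each measurement, which is why the two tables report different metrics.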

A Systematic Troubleshooting Methodology

A structured approach is essential for diagnosing and resolving the sources of inter-laboratory variation.

Troubleshooting Guide: Resolving Inconsistent Inter-Laboratory Results

Problem: Your laboratory cannot replicate the experimental results or quantitative measurements generated by a collaborator's laboratory.

Initial Assessment & Replication [49]

- Step 1: Repeat the Experiment: Unless it is cost- or time-prohibitive, repeat the experiment to rule out simple human error or one-off procedural mistakes.

- Step 2: Verify the Result: Re-examine the literature and scientific rationale. Could the discrepancy be a valid, unexpected outcome rather than a technical failure?

Investigation of Core Protocol Elements [49] [50]

- Step 3: Implement Controls: Introduce both positive and negative controls. If the positive control also fails, a fundamental issue with the protocol or reagents is likely. [49]

- Step 4: Audit Equipment and Materials:

  - Check calibration records for all instruments.

  - Verify storage conditions and expiration dates for all reagents.

  - Confirm material authenticity (e.g., cell line authentication via STR profiling). [50]

Systematic Variable Analysis [49] [51]

- Step 5: Change One Variable at a Time: Generate a list of potential variables and test them systematically.

  - Common Variables: Antibody concentration, incubation times, buffer pH, sample preparation method, temperature fluctuations, data analysis parameters.

  - Efficient Testing: Where possible, test a range of conditions in parallel (e.g., multiple antibody concentrations) with clearly labeled samples.

- Step 6: Document Everything: Maintain a detailed lab notebook logging every change, its justification, and the outcome. This creates an audit trail and is crucial for cross-laboratory communication. [49] [50]

Inter-laboratory comparisons are formal exercises used to compare performance across a group of laboratories.

Methodology:

- Sample Selection & Distribution: A central organizing body prepares and distributes identical, stable test samples (artifacts, control materials) to all participating laboratories. [52]

- Stability Testing: The homogeneity and stability of the samples are confirmed per international standards (e.g., ISO 13528:2022). [47]

- Blinded Analysis: Participating laboratories analyze the samples using their standard in-house protocols and instruments.

- Data Submission: Results are submitted electronically to the organizing body for centralized analysis. [47]

- Statistical Analysis: Data are analyzed using robust statistical methods (e.g., Algorithm A from ISO 13528) to determine a consensus value and calculate inter-laboratory variation metrics such as CV and bias. [47] [52]

- Performance Reporting: Each laboratory receives a report comparing its results to the group consensus and pre-defined performance goals, enabling self-assessment. [47]
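The statistical analysis step can be illustrated with a short Python sketch of Algorithm A as it is commonly described: an iterative winsorization that yields a robust mean and robust standard deviation. The constants 1.483, 1.5, and 1.134 follow that common description; the function name, tolerance, and iteration cap are assumptions of this sketch, and the standard itself should be consulted before using the result for formal performance scoring.

```python
from statistics import mean, median, stdev


def algorithm_a(results, tol=1e-6, max_iter=100):
    """Robust mean and standard deviation by iterative winsorization.

    Starts from the median and the scaled median absolute deviation, pulls
    extreme values in to x* +/- 1.5 s*, then recomputes both estimates until
    they stop changing (or max_iter is reached).
    """
    x_star = median(results)
    s_star = 1.483 * median([abs(x - x_star) for x in results])
    for _ in range(max_iter):
        delta = 1.5 * s_star
        # Winsorize: values outside the limits are replaced by the limits.
        clipped = [min(max(x, x_star - delta), x_star + delta) for x in results]
        new_x = mean(clipped)
        new_s = 1.134 * stdev(clipped)
        converged = abs(new_x - x_star) <= tol and abs(new_s - s_star) <= tol
        x_star, s_star = new_x, new_s
        if converged:
            break
    return x_star, s_star


# Illustrative results from seven laboratories, one of them an outlier.
lab_results = [5.0, 5.1, 4.9, 5.0, 5.2, 4.8, 9.0]
consensus, robust_sd = algorithm_a(lab_results)
print(round(consensus, 3), round(robust_sd, 3))
```

Unlike a plain mean, the consensus value stays close to the bulk of the laboratories because the outlying result is pulled in to the winsorization limit rather than counted at face value.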

Case Studies and Standardization Solutions

- Challenge: HbA1c is critical for diagnosing diabetes, but variation between labs can lead to misdiagnosis.

- Solution: Implementation of a rigorous External Quality Assessment (EQA) program coupled with analysis of Internal Quality Control (IQC) data.

- Outcome: Over a four-year period, the use of EQA and IQC led to a significant decrease in both intra-laboratory and inter-laboratory variations. The study highlighted that while performance improved, manufacturer-specific bias remains a key source of variation that requires ongoing management. [47]

- Challenge: Replicating complex microbiome assembly experiments across different laboratories.

- Solution: A global collaborative effort using standardized synthetic bacterial communities, the model grass Brachypodium distachyon, and sterile, fabricated ecosystems (EcoFAB 2.0 devices).

- Outcome: All participating labs observed consistent, inoculum-dependent changes in plant phenotype and bacterial community structure. The project succeeded by providing detailed, standardized protocols, benchmarking datasets, and best practices. [16]

The Role of Schema-Driven Tools

Frameworks like ReproSchema address reproducibility by providing a structured, schema-centric approach to defining surveys and experimental protocols. This ensures that every data element is linked to its metadata (collection method, timing, conditions), enforcing consistency across studies and over time, which is vital for longitudinal and multi-site projects. [53]

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Materials and Their Functions in Standardized Experiments

| Item | Function | Quality Control Consideration |
|---|---|---|
| Certified Reference Materials | Provides a material with a known, standardized property value to calibrate equipment and validate methods. [52] | Source from accredited providers; verify certificate of analysis. |
| Liquid Control Samples (Human Whole Blood) | Used in EQA programs to assess a laboratory's ability to accurately measure analytes like HbA1c. [47] | Confirm homogeneity and stability; use within specified timeframe. |
| Cell Lines | Model systems for biological research. | Perform regular authentication (e.g., STR profiling) and mycoplasma testing to prevent misidentification and contamination. [50] |
| Validated Antibodies | Detect specific proteins in assays like Western Blot, IHC, and Flow Cytometry. [54] | Validate specificity in-house for your application; do not rely solely on manufacturer data. [50] |
| Calibrators and Reagents | Essential components for diagnostic and analytical assays. | Document lot numbers; test new lots in parallel with old lots before full implementation to account for batch variability. [47] [50] |

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between intra-laboratory and inter-laboratory variation?

- A: Intra-laboratory variation refers to the inconsistency of results when an experiment is repeated within the same lab by different personnel or over time. Inter-laboratory variation refers to the differences in results obtained when different labs analyze the same sample using the same or similar methods. [47]

Q2: Our lab is starting a collaboration with two other sites. What is the first step to ensure data consistency?

- A: Before any data collection begins, all laboratories should align on a single, highly detailed protocol. The most effective first step is often to conduct a small-scale inter-laboratory comparison, where all labs analyze the same set of samples. This will baseline the existing variation and help identify which protocol steps are most susceptible to divergence. [47] [52]

Q3: We followed the protocol exactly, but our results are still inconsistent with the published literature. What should we investigate?

- A: Focus on reagent quality and validation. Antibodies from different lots or suppliers can have varying affinities. Cell lines can become contaminated or misidentified. Ensure you are using positive and negative controls to validate your assay's performance under your specific lab conditions. [49] [50]

Q4: How can computational tools improve cross-laboratory reproducibility?

- A: Using version-controlled scripts (e.g., in R or Python) for data analysis and adhering to FAIR data principles (Findable, Accessible, Interoperable, Reusable) makes the data processing pipeline transparent and repeatable. When every site runs the same scripted pipeline, analytical variability is largely removed as a source of inter-laboratory differences. [50]

Q5: What is the role of EQA and IQC in controlling variation?

- A: Internal Quality Control (IQC) is used daily to monitor a lab's precision and detect sudden errors. External Quality Assessment (EQA) is a periodic, independent check of a lab's accuracy compared to peers. Together, they are a critical quality assurance system for monitoring and improving performance over time. [47]
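As an illustration of the daily IQC monitoring described above, the sketch below labels each control result by its distance from an established mean, using the widely taught 2 SD warning and 3 SD rejection limits. These limits are an assumption of this example rather than a rule taken from the cited study, and the target mean, SD, and control values are hypothetical.

```python
def classify_control(value, target_mean, target_sd):
    """Label one control result by its distance from the established mean."""
    z = abs(value - target_mean) / target_sd
    if z > 3:
        return "reject"
    if z > 2:
        return "warning"
    return "in control"


# Hypothetical control material with an established mean of 5.5 and SD of 0.1.
daily_results = [5.4, 5.5, 5.72, 5.1]
labels = [classify_control(v, target_mean=5.5, target_sd=0.1) for v in daily_results]
print(labels)
```

A laboratory's own quality manual defines which rules actually trigger a rerun or a stop; the point of the sketch is only that the check is mechanical enough to automate and log.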

Workflow for Root Cause Analysis

The following diagram visualizes the logical workflow for a systematic root cause analysis of inter-laboratory variation.

Process for Cross-Lab Protocol Standardization

Standardizing a protocol across multiple laboratories involves a structured process of planning, testing, and refinement, as illustrated below.

Troubleshooting Guides and FAQs

Data Harmonization and Legacy Data