Beyond the Visible: How RGB and Hyperspectral Imaging Are Revolutionizing Plant Analysis

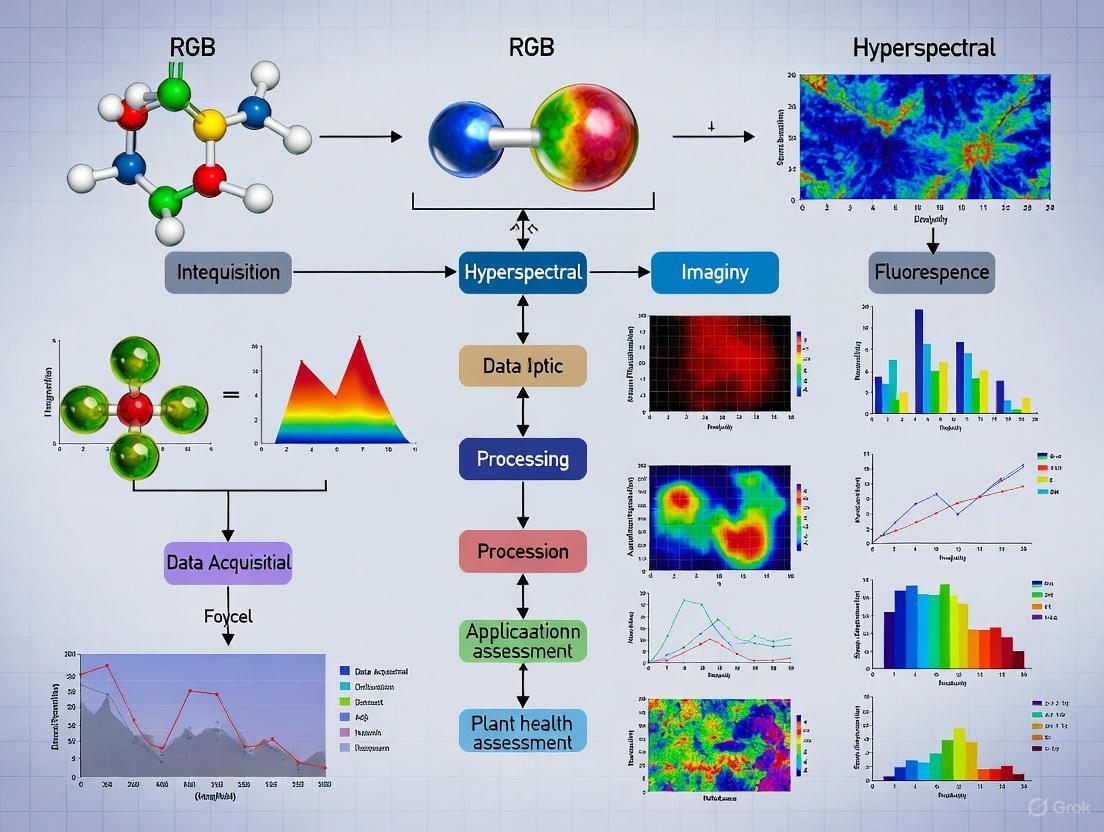



Abstract

This article explores the synergistic integration of RGB and hyperspectral imaging technologies for advanced plant analysis. Aimed at researchers and drug development professionals, it details how this combination bridges the gap between morphological data and deep biochemical insights. The content covers foundational principles, practical methodologies for non-invasive phenotyping and disease detection, strategies to overcome technical hurdles, and validation through comparative performance studies. By drawing these topics together, the article provides a comprehensive guide to applying this multimodal approach in plant science and the development of plant-derived therapeutics.

Seeing More Than Meets the Eye: Fundamental Principles of RGB and Hyperspectral Plant Imaging

The Limitation of Human Vision and Standard RGB Imaging

The human visual system and conventional red-green-blue (RGB) imaging form the foundation of traditional plant phenotyping and agricultural assessment. However, these methods are fundamentally constrained by their limited spectral perception, capturing only a fraction of the information contained in light-plant interactions. This technical guide examines the physiological and technological boundaries of these conventional approaches and demonstrates how hyperspectral imaging overcomes these limitations by capturing continuous spectral data, enabling early detection of plant stress, precise nutrient assessment, and advanced growth stage classification. When strategically combined, RGB and hyperspectral imaging create a powerful synergistic toolset for plant researchers, offering both practical visual context and deep biochemical insights.

Human vision operates within a narrow band of the electromagnetic spectrum (approximately 400-700 nanometers), perceiving only three color channels through specialized cone cells. This trichromatic system, while sufficient for everyday tasks, misses critical information contained in near-infrared and ultraviolet ranges that reveal plant physiology and health status. Standard RGB imaging systems mimic this limited human visual perception, capturing only broad wavelength ranges corresponding to red, green, and blue light [1]. In agricultural research and drug development from plant sources, this spectral deficiency presents significant diagnostic limitations, as many biochemical processes exhibit spectral signatures outside this visible range or require finer spectral resolution for accurate quantification.

Fundamental Limitations of Human Vision and RGB Imaging

Physiological and Technological Constraints

The limitations of human vision and RGB imaging in plant research stem from both biological constraints and technological simplifications:

- Limited Spectral Resolution: Human eyes contain three types of cones with overlapping spectral sensitivities, while RGB cameras utilize filters with three broad spectral bands. This provides only generalized color information without the fine spectral resolution needed to identify specific biochemical compounds [1].

- Inability to Detect Pre-Visual Stress: Plant stress from pathogens, nutrient deficiencies, or water deficit induces biochemical changes that precede visible symptoms. These changes often manifest in spectral regions beyond human vision or as subtle spectral shifts indistinguishable by RGB analysis [2] [1].

- Subjectivity and Qualitative Nature: Human visual assessment is subjective and influenced by environmental conditions, observer experience, and physiological factors. Similarly, RGB image analysis often relies on color indices that lack direct correlation with specific biochemical traits [3].

Table 1: Quantitative Comparison of Imaging Modalities for Plant Research

| Parameter | Human Vision | Standard RGB Imaging | Hyperspectral Imaging |
|---|---|---|---|
| Spectral Range | 400-700 nm | 400-700 nm | 400-2500 nm (depending on sensor) |
| Number of Bands | 3 (cones) | 3 (R, G, B) | 100-250+ contiguous bands |
| Spatial Resolution | ~60 pixels per degree (visual acuity) | Limited only by sensor | Limited only by sensor |
| Early Stress Detection | Only when visible symptoms appear | Limited to visible symptoms | Before visible symptoms appear [2] |
| Biochemical Specificity | Low | Low | High (specific pigment/protein detection) |
| Cost & Accessibility | High (labor-intensive) | Low | Moderate to High |

Practical Implications for Plant Research

The technical limitations of human vision and RGB imaging translate directly into practical constraints for research and drug development:

- Growth Stage Misclassification: Visual classification of closely spaced wheat growth stages (Zadoks Z37, Z39, Z41) faces significant inter-observer variability, whereas hyperspectral imaging with machine learning achieved F1 scores of 0.832 for the same task [3].

- Late Intervention Timeline: By the time stress symptoms become visible to human observers or detectable with RGB imaging, plants may have already experienced significant physiological damage, reducing potential intervention efficacy and compromising research results [1].

- Incomplete Phenotypic Profiling: RGB-based phenotyping captures morphological traits but misses critical biochemical information essential for comprehensive phenotypic characterization in pharmaceutical development from plant sources.
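
The F1 scores quoted above summarize precision and recall per class. As a minimal sketch of how such a score can be computed for a three-stage classification, the snippet below averages per-class F1 values (macro averaging). The labels are hypothetical, and whether the cited study used macro averaging is an assumption, not something the source states.

```python
def macro_f1(y_true, y_pred):
    """Unweighted mean of the per-class F1 scores."""
    labels = sorted(set(y_true) | set(y_pred))
    scores = []
    for label in labels:
        pairs = list(zip(y_true, y_pred))
        tp = sum(1 for t, p in pairs if t == label and p == label)
        fp = sum(1 for t, p in pairs if t != label and p == label)
        fn = sum(1 for t, p in pairs if t == label and p != label)
        # F1 = 2*TP / (2*TP + FP + FN); defined as 0 when the class never occurs.
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)


# Hypothetical expert labels and model predictions for six plants
# (illustrative only, not data from the cited study).
y_true = ["Z37", "Z37", "Z39", "Z39", "Z41", "Z41"]
y_pred = ["Z37", "Z39", "Z39", "Z39", "Z41", "Z41"]
score = macro_f1(y_true, y_pred)
print(f"macro F1 = {score:.3f}")
```

With one Z37 plant mislabeled as Z39, the per-class scores are 0.667, 0.800, and 1.000, so a single confusion between adjacent stages already pulls the average well below 1.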

Hyperspectral Imaging: Overcoming Spectral Limitations

Technological Advancements

Hyperspectral imaging (HSI) addresses the fundamental limitations of human vision and RGB imaging by capturing a full spectrum for each pixel in an image. Rather than three broad bands, HSI collects hundreds of narrow, contiguous spectral bands, creating a continuous spectral signature that serves as a unique biochemical fingerprint for each plant component [2]. Recent technological advances have transformed HSI from a specialized laboratory tool to a practical field instrument:

- Miniaturization and Portability: Modern hyperspectral sensors have become compact enough for deployment on drones, handheld devices, and field-based platforms, enabling in-situ measurement [2] [1].

- Snapshot HSI Technology: Next-generation snapshot hyperspectral cameras eliminate the need for scanning motions, capturing full hyperspectral cubes at video rates (25+ Hz) for real-time plant assessment [4] [1].

- AI-Enhanced Analytics: Cloud-based artificial intelligence and machine learning algorithms can process the vast datasets generated by HSI, extracting meaningful biochemical and physiological information [2].

Research Applications Revealing Invisible Plant Properties

The rich spectral data provided by HSI enables researchers to detect plant properties that remain invisible to human vision and standard RGB imaging:

- Early Stress Detection: HSI identifies fungal, viral, or bacterial infections through subtle biochemical changes before visible symptoms manifest, enabling intervention 7-14 days earlier than conventional methods [2].

- Nutrient Deficiency Mapping: Each nutrient deficiency produces a unique spectral signature detectable by HSI, allowing precise mapping of nitrogen, phosphorus, and potassium distribution across individual plants or entire fields [2].

- Growth Stage Classification: HSI with Support Vector Machine classification successfully distinguished subtle pre-anthesis wheat growth stages (Z37, Z39, Z41) with F1 scores of 0.832, far exceeding human visual assessment reliability [3].

- Biochemical Quantification: HSI enables non-destructive measurement of chlorophyll, anthocyanins, water content, and other biochemical traits through specific spectral features, many located outside the visible range [5].

Table 2: Key Spectral Regions for Plant Traits Beyond RGB Capability

| Plant Trait | Key Spectral Regions | Detection Method | Research Application |
|---|---|---|---|
| Chlorophyll Content | 450-670 nm, 700-750 nm (red edge) | Spectral index calculation | Photosynthetic efficiency assessment |
| Water Stress | 950-970 nm (water absorption) | Depth of water absorption features | Irrigation optimization, drought studies |
| Nitrogen Status | 550-570 nm, 700-720 nm | Spectral shift detection | Nutrient management, fertilizer optimization |
| Cell Structure | 1200-1300 nm, 1600-1700 nm | SWIR reflectance | Plant vigor assessment, disease impact |
| Anthocyanins | 530-560 nm, 670-680 nm | Specific absorption features | Plant stress response, product quality |
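
To illustrate the "spectral index calculation" entries in the table, here is a minimal sketch that computes three widely used band-ratio indices from one reflectance spectrum: NDVI, a red-edge normalized difference, and the water band index (R900/R970). The spectrum is synthetic (a smooth red-edge curve), and the specific band centers are common choices taken as assumptions here, not values prescribed by the article.

```python
import numpy as np


def band_value(wavelengths, spectrum, target_nm):
    """Reflectance at the band whose center is closest to target_nm."""
    idx = int(np.argmin(np.abs(wavelengths - target_nm)))
    return float(spectrum[idx])


def normalized_difference(a, b):
    """Generic (a - b) / (a + b) index."""
    return (a - b) / (a + b)


# Synthetic leaf-like spectrum: low visible reflectance, sigmoid red edge
# near 715 nm, high NIR plateau. Illustrative only.
wavelengths = np.arange(400.0, 1001.0, 5.0)
spectrum = 0.05 + 0.45 / (1.0 + np.exp(-(wavelengths - 715.0) / 15.0))

r670 = band_value(wavelengths, spectrum, 670)
r705 = band_value(wavelengths, spectrum, 705)
r750 = band_value(wavelengths, spectrum, 750)
r800 = band_value(wavelengths, spectrum, 800)
r900 = band_value(wavelengths, spectrum, 900)
r970 = band_value(wavelengths, spectrum, 970)

ndvi = normalized_difference(r800, r670)      # greenness / canopy vigor
red_edge = normalized_difference(r750, r705)  # red-edge position sensitivity
wbi = r900 / r970                             # rises as the 970 nm water feature deepens

print(f"NDVI={ndvi:.3f}  red-edge ND={red_edge:.3f}  WBI={wbi:.3f}")
```

The synthetic curve has no water absorption feature, so the water band index stays at about 1 here; on real leaf spectra it moves away from 1 as the 970 nm feature changes.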

Experimental Protocols: Bridging Conventional and Advanced Imaging

Hyperspectral Imaging for Fine-Scale Growth Stage Classification

Objective: To automatically classify closely spaced wheat growth stages (Z37, Z39, Z41) at the individual plant level using hyperspectral imaging, overcoming limitations of human visual assessment [3].

Experimental Setup:

- Plant Material: Wheat cultivar 'Scepter' grown in greenhouse and semi-natural environments with controlled substrate [3].

- Imaging Systems:

  - WIWAM hyperspectral imaging system with Specim FX10 camera (400-1000 nm range, 5.5 nm FWHM resolution)

  - Top-down imaging from 1.4 m height, 512 pixels/line, 2.6 mm spatial resolution

  - Halogen lighting in closed cabinet to eliminate external variations

- Reference Data: Visual growth stage assessment by trained experts following Zadoks scale

Methodology:

- Image Acquisition: Capture hyperspectral images between 12:00 PM-2:00 PM to minimize diurnal variation

- Calibration: Collect dark and white reference images after each plant image for radiometric correction

- Spectral Extraction: Isolate leaf regions by masking background elements

- Data Transformation: Apply spectral transformations (Standard Normal Variate, Hyper-hue, Principal Component Analysis)

- Feature Selection: Identify most informative wavelengths (achieved 0.752 F1 score with only 5 wavelengths)

- Classification: Implement Support Vector Machine model with cross-validation

Key Findings: The combined use of multiple spectral transformations outperformed reliance on any single transformation, with SNV transformation demonstrating robust performance under limited training conditions [3].
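
As one illustration of the data transformation step, the sketch below applies Principal Component Analysis to a matrix of per-plant spectra using a singular value decomposition. It is a generic NumPy implementation under stated assumptions: the spectra are random synthetic values, and the band count and number of components are arbitrary choices rather than settings reported in the cited study.

```python
import numpy as np


def pca_scores(spectra, n_components):
    """Project mean-centered spectra onto the leading principal components.

    spectra: array of shape (n_samples, n_bands).
    Returns (scores, explained_variance_ratio) for the kept components.
    """
    centered = spectra - spectra.mean(axis=0)
    # Rows of vt are the principal axes, ordered by decreasing singular value.
    _, singular_values, vt = np.linalg.svd(centered, full_matrices=False)
    explained = singular_values**2 / np.sum(singular_values**2)
    scores = centered @ vt[:n_components].T
    return scores, explained[:n_components]


# Synthetic stand-in data: 30 plants x 224 bands (both numbers are arbitrary).
rng = np.random.default_rng(0)
spectra = rng.normal(size=(30, 224))
scores, explained = pca_scores(spectra, n_components=5)
print(scores.shape, np.round(explained, 3))
```

The resulting score matrix (one row per plant, one column per component) is the kind of compact input a classifier can be trained on instead of the full spectrum.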

Protocol for Hyperspectral Analysis of Leaf Color Patterns

Objective: To analyze complex leaf color patterns using hyperspectral reflectance imaging to reveal previously undetectable biochemical features [6].

Experimental Workflow:

- Hyperspectral Image Acquisition: Capture hyperspectral data cubes of plant leaves under controlled illumination

- Background Masking: Implement processing algorithms to isolate leaf regions from background

- Lighting Correction: Apply corrections for uneven lighting across the sample

- Spectral Component Analysis: Identify key spectral components representing distinct biochemical features

- Spatial Projection: Project hyperspectral cubes onto spectral components to highlight specific traits

This protocol enables researchers to move beyond subjective color descriptions to quantitative spectral pattern analysis, revealing features undetectable by human vision or RGB imaging [6].
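
The masking and projection steps of this workflow can be sketched in a few lines. The example below is a simplified illustration, not the cited protocol: it assumes a cube laid out as (rows, columns, bands), separates leaf from background with an NDVI threshold (the 0.4 threshold is a hypothetical choice), and uses the normalized mean leaf spectrum as a stand-in for a spectral component. Lighting correction is omitted.

```python
import numpy as np


def leaf_mask(cube, wavelengths, red_nm=670.0, nir_nm=800.0, threshold=0.4):
    """Boolean mask of vegetation pixels based on a per-pixel NDVI threshold."""
    red = cube[:, :, int(np.argmin(np.abs(wavelengths - red_nm)))]
    nir = cube[:, :, int(np.argmin(np.abs(wavelengths - nir_nm)))]
    ndvi = (nir - red) / (nir + red + 1e-12)
    return ndvi > threshold


def project(cube, component):
    """Dot product of every pixel spectrum with one spectral component (2D map)."""
    return cube @ component


# Synthetic 4x4 scene: flat-spectrum background with a 2x2 "leaf" in the middle.
wavelengths = np.linspace(400.0, 1000.0, 61)
cube = np.full((4, 4, wavelengths.size), 0.3)
cube[1:3, 1:3, :] = np.where(wavelengths < 700.0, 0.05, 0.5)

mask = leaf_mask(cube, wavelengths)
mean_leaf = cube[mask].mean(axis=0)                  # mean spectrum over leaf pixels
component = mean_leaf / np.linalg.norm(mean_leaf)    # stand-in spectral component
trait_map = project(cube, component)
print(mask.astype(int))
```

In a real analysis the component would come from a decomposition of the leaf spectra, and the projected map would be inspected only inside the mask.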

Synergistic Integration: Combining RGB and Hyperspectral Imaging

AI-Based Spectral Prediction from RGB Images

Emerging research demonstrates the potential for combining the practical advantages of RGB imaging with the analytical power of hyperspectral data through artificial intelligence:

- Spectral Reconstruction: AI models trained on paired RGB and hyperspectral images can predict absorption spectra from standard RGB images, preserving spectral information while reducing cost and acquisition time [7].

- Cost-Effective Deployment: This approach leverages the widespread availability and lower cost of RGB systems while providing access to hyperspectral-grade data for plant trait analysis [7].

- Trait Quantification: AI-derived RGB models can estimate critical plant traits such as chlorophyll and anthocyanin content with strong agreement to hyperspectral reference values [7].
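
The idea of learning a mapping from RGB values to spectra on paired data can be shown with a deliberately simple stand-in. The sketch below fits a linear least-squares map from three channel values to a 31-band spectrum; the published approaches use AI models, so this is only an analogy. Everything in it is synthetic and hypothetical: the spectra are built from three Gaussian basis curves and the camera sensitivities are assumed Gaussians, which is why the linear map recovers the held-out spectra almost exactly. Real spectra are not confined to three dimensions, and that is where nonlinear models become necessary.

```python
import numpy as np

rng = np.random.default_rng(1)
n_pixels, n_bands = 200, 31
wavelengths = np.linspace(400.0, 700.0, n_bands)


def gaussian(center, width):
    return np.exp(-0.5 * ((wavelengths - center) / width) ** 2)


# Synthetic "hyperspectral" ground truth: mixtures of three smooth curves.
basis = np.stack([gaussian(c, 40.0) for c in (450.0, 550.0, 650.0)])
weights = rng.uniform(0.1, 1.0, size=(n_pixels, 3))
spectra = weights @ basis                      # (n_pixels, n_bands)

# Hypothetical camera sensitivities for R, G, B (assumed, not measured).
sensitivities = np.stack([gaussian(c, 30.0) for c in (610.0, 540.0, 460.0)])
rgb = spectra @ sensitivities.T                # (n_pixels, 3) simulated RGB values

# Fit RGB (+ bias) -> spectrum on the first 150 pixels, evaluate on the rest.
features = np.hstack([rgb, np.ones((n_pixels, 1))])
coef, *_ = np.linalg.lstsq(features[:150], spectra[:150], rcond=None)
pred = features[150:] @ coef
rmse = float(np.sqrt(np.mean((pred - spectra[150:]) ** 2)))
print(f"held-out RMSE = {rmse:.2e}")
```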

Operational Frameworks for Multi-Modal Imaging

Strategic combination of RGB and hyperspectral imaging in research pipelines creates complementary advantages:

- RGB for Morphological Assessment: Utilize RGB imaging for high-spatial-resolution morphological analysis, plant counting, and canopy structure assessment

- HSI for Biochemical Profiling: Apply hyperspectral imaging for detailed biochemical characterization, stress detection, and nutrient analysis

- Temporal Monitoring: Employ RGB for continuous monitoring due to its faster acquisition rates, with periodic HSI for deep biochemical profiling

The Researcher's Toolkit: Essential Materials and Methods

Table 3: Key Research Reagent Solutions for Plant Imaging Studies

| Item | Function | Application Example |
|---|---|---|
| Specim FX10 Hyperspectral Camera | Captures VNIR spectral range (400-1000 nm) | High-throughput plant phenotyping [5] |
| WIWAM Hyperspectral Imaging System | Provides controlled environment imaging | Growth stage classification studies [3] |
| Standard Normal Variate (SNV) Transformation | Normalizes spectral data for enhanced analysis | Improves robustness in classification models [3] |
| Living Optics Snapshot HSI Camera | Enables video-rate hyperspectral imaging | Real-time plant stress monitoring [1] |
| Support Vector Machine (SVM) | Machine learning classification algorithm | Fine-scale growth stage classification [3] |
| TraitDiscover Platform | Integrated high-throughput phenotyping | Multi-dimensional plant data collection [5] |

The limitations of human vision and standard RGB imaging in plant research are significant and multifaceted, spanning spectral range, resolution, and biochemical specificity. Hyperspectral imaging technologies effectively address these limitations by providing continuous, high-resolution spectral data that reveals plant properties invisible to conventional methods. For researchers and drug development professionals, the strategic integration of RGB and hyperspectral imaging offers a powerful approach, combining the practical advantages and morphological capabilities of RGB with the deep biochemical insights of hyperspectral analysis. As these technologies continue to advance and become more accessible, they will play an increasingly critical role in accelerating plant research, pharmaceutical development from plant sources, and addressing global agricultural challenges.

Hyperspectral imaging (HSI) has emerged as a transformative technology for plant research, enabling non-invasive analysis of plant physiology, biochemistry, and health status by capturing unique spectral fingerprints. Unlike conventional RGB (Red, Green, Blue) imaging that records only three broad wavelength bands, HSI measures reflected light across hundreds of narrow, contiguous spectral bands, creating a continuous spectrum for each pixel in an image. This detailed spectral data provides researchers with unprecedented capability to detect plant stress, monitor growth stages, and assess chemical composition before visible symptoms appear. This technical guide explores the core principles of HSI, its synergistic application with RGB imaging, and provides detailed experimental protocols for implementing these technologies in plant research applications, with a specific focus on bridging the gap between laboratory research and field deployment.

The fundamental limitation of conventional RGB imaging lies in its simplification of the continuous light spectrum into just three broad bands corresponding to the red, green, and blue receptors of the human eye. While sufficient for representing color perception, this approach discards vast amounts of spectral information that reveal critical details about plant composition and function. Hyperspectral imaging overcomes this limitation by capturing the complete spectral signature of plant materials across a wide range of wavelengths, typically from the visible (400-700 nm) through the near-infrared and short-wave infrared (700-2500 nm) regions [8].

Each material and biochemical component within plant tissues interacts uniquely with light, absorbing specific wavelengths while reflecting others. This interaction creates a unique spectral signature that serves as a chemical "fingerprint" [9]. For example, chlorophyll strongly absorbs red and blue light while reflecting green and near-infrared wavelengths, creating characteristic absorption features at around 430-450 nm and 650-700 nm, and high reflectance in the NIR region [10]. These subtle spectral variations, often invisible to RGB cameras, become clearly detectable with HSI's fine spectral resolution, enabling researchers to decipher the biochemical language of plants.

Core Technology: HSI vs. RGB Imaging

Fundamental Differences in Data Acquisition

The technological distinction between RGB and hyperspectral imaging begins at the sensor level. RGB cameras utilize a Bayer filter mosaic with separate filters for red, green, and blue channels, effectively averaging light detection across three broad spectral bands [9]. In contrast, hyperspectral imaging systems employ sophisticated spectrographs that disperse incoming light across hundreds of detector elements, capturing narrow, contiguous wavelength bands throughout the electromagnetic spectrum.

This fundamental difference in data acquisition creates a significant disparity in information content. While RGB produces a three-channel image, HSI generates a three-dimensional data structure known as a hypercube, containing two spatial dimensions and one spectral dimension [11] [8]. This hypercube can be visualized as a stack of images, each representing a specific narrow wavelength band, with each pixel containing a complete, continuous spectrum from the imaged scene.
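
A short sketch can make the hypercube structure concrete. It assumes the cube is stored as a NumPy array ordered (rows, columns, bands), which is one common convention among several; the dimensions and the 400-1000 nm wavelength axis are hypothetical.

```python
import numpy as np

# Hypothetical cube dimensions; real sensors and file formats differ.
rows, cols, bands = 64, 48, 224
wavelengths = np.linspace(400.0, 1000.0, bands)        # band centers in nm
cube = np.zeros((rows, cols, bands), dtype=np.float32)


def nearest_band(target_nm):
    """Index of the band whose center is closest to target_nm."""
    return int(np.argmin(np.abs(wavelengths - target_nm)))


# One pixel holds a full spectrum (the spectral dimension).
pixel_spectrum = cube[10, 20, :]

# One band holds a full grayscale image (the two spatial dimensions).
band_image = cube[:, :, nearest_band(680.0)]

# Picking three bands near red, green, and blue gives an RGB-like preview.
rgb_like = cube[:, :, [nearest_band(650.0), nearest_band(550.0), nearest_band(450.0)]]

size_mb = cube.nbytes / 1e6
print(pixel_spectrum.shape, band_image.shape, rgb_like.shape, f"{size_mb:.2f} MB")
```

Even this small float32 cube occupies about 2.75 MB, roughly 75 times the three bytes per pixel of an 8-bit RGB image of the same size, which is the storage cost of keeping a full spectrum at every pixel.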

Quantitative Comparison of Capabilities

Table 1: Technical comparison between RGB, Multispectral, and Hyperspectral imaging systems for plant research.

| Parameter | RGB Imaging | Multispectral Imaging | Hyperspectral Imaging |
|---|---|---|---|
| Spectral Bands | 3 (Red, Green, Blue) | 3-10 broad, discrete bands | 50-250+ narrow, contiguous bands |
| Spectral Range | 400-700 nm (Visible) | Typically 400-900 nm | 400-2500 nm (VNIR-SWIR) |
| Spectral Resolution | 50-100 nm | 10-50 nm | 1-10 nm |
| Primary Data Output | 2D color image | Limited spectral indices | Full spectral signature per pixel |
| Information Depth | Morphology, color | General health, limited stress detection | Biochemical composition, early stress identification |
| Weed/Pest Discrimination | Limited | Moderate | High accuracy based on biochemical differences |
| Early Disease Detection | Not possible | Limited, after symptom appearance | Possible before visual symptoms [10] [12] |
| Cost & Complexity | Low | Medium | High |

The practical implication of these technical differences is profound for plant research. While RGB imaging can identify visible symptoms such as color changes or lesions, HSI can detect pre-symptomatic stress through subtle biochemical alterations. For instance, HSI can identify nutrient deficiencies, water stress, and pathogen infections days before any visible symptoms manifest [1] [12]. This early detection capability provides a critical window for intervention, potentially preventing significant crop losses and reducing unnecessary pesticide applications.

The Scientific Toolkit: Essential Equipment and Analytical Approaches

Implementing hyperspectral imaging for plant research requires specific hardware, software, and analytical tools. The selection of appropriate equipment depends on the research objectives, scale of analysis, and operational environment.

Research Reagent Solutions and Essential Materials

Table 2: Essential components of a hyperspectral imaging system for plant research.

| Component | Specifications | Function & Importance |
|---|---|---|
| Hyperspectral Camera | VNIR (400-1000 nm) and/or SWIR (900-2500 nm); Spectral resolution: 1-10 nm; Spatial resolution: Varies with platform | Captures spectral data cube; Core component defining data quality and application scope |
| Illumination System | Halogen lights (lab) or calibrated LEDs (field/space) [12]; Uniform, stable broadband source | Provides consistent illumination crucial for reproducible spectral measurements |
| Spectral Calibration Tools | White reference panel (Spectralon); Dark current reference | Enables conversion of raw data to reflectance values; Essential for quantitative analysis |
| Platform & Positioning | Laboratory scanners, UAVs, ground vehicles, or handheld systems [13] | Determines spatial scale and operational environment; Affects spatial resolution and coverage |
| Data Processing Software | Python, ENVI, or specialized platforms (Specim, Living Optics) | Handles large datasets, calibration, and analysis including machine learning algorithms |
| Reference Chemicals | Laboratory standards for pigments (chlorophyll, carotenoids), nutrients | Validates spectral signatures and develops quantitative models |
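
The white and dark references listed in the table are typically used in a flat-field style correction, reflectance = (raw - dark) / (white - dark). The sketch below shows that arithmetic on synthetic digital numbers. It assumes the white panel is close to 100% reflective; with a certified panel, the result would additionally be multiplied by the panel's known reflectance. The function name and the clipping of negative values are illustrative choices.

```python
import numpy as np


def to_reflectance(raw, white, dark, eps=1e-9):
    """Convert raw digital numbers to relative reflectance.

    raw:   (rows, cols, bands) image of the plant
    white: white reference, broadcastable to raw
    dark:  dark-current reference, broadcastable to raw
    """
    raw = np.asarray(raw, dtype=np.float64)
    white = np.asarray(white, dtype=np.float64)
    dark = np.asarray(dark, dtype=np.float64)
    # Guard against division by zero in dead or saturated bands.
    denom = np.maximum(white - dark, eps)
    # Sensor noise can push values slightly below zero; clip them.
    return np.clip((raw - dark) / denom, 0.0, None)


# Synthetic digital numbers (hypothetical 12-bit style counts).
n_bands = 5
dark = np.full((1, 1, n_bands), 100.0)
white = np.full((1, 1, n_bands), 4100.0)
raw = np.full((2, 3, n_bands), 2100.0)

reflectance = to_reflectance(raw, white, dark)
print(reflectance[0, 0])  # every band is (2100 - 100) / (4100 - 100) = 0.5
```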

Integrated System Configuration

Advanced HSI systems often combine multiple imaging modalities to enhance analytical capabilities. For example, NASA's plant health monitoring system for space crop production integrates both reflectance and fluorescence imaging within a single automated platform [12]. This system utilizes two LED line lights—one providing VNIR broadband illumination for reflectance measurements and another providing UV-A (365 nm) excitation for fluorescence imaging—enabling comprehensive plant health assessment through complementary data streams.

For field applications, systems like the Specim AFX series offer turn-key solutions for UAV-based hyperspectral imaging, allowing researchers to create detailed material maps across large agricultural areas [13]. These systems are radiometrically calibrated and optimized for the challenging environmental conditions encountered in outdoor research.

Experimental Protocols: Methodologies for Plant Research Applications

Protocol 1: Early Detection of Pathogen-Induced Stress

Objective: To detect and identify fungal pathogens in cabbage (Brassica oleracea) before visible symptoms appear using hyperspectral imaging.

Materials and Setup:

- Hyperspectral imaging system with spectral range covering 400-1000 nm (VNIR)

- Uniform illumination system (halogen lights or calibrated LEDs)

- White reference panel (Spectralon) for calibration

- Plant samples: Healthy and Alternaria brassicae-inoculated cabbage plants

- Controlled environment growth chamber with stable lighting conditions

Procedure:

- System Calibration: Acquire white reference images using the Spectralon panel and dark current images with the lens covered before each imaging session.

- Image Acquisition: Place plants in the imaging area at a constant distance (e.g., 1.4 m) from the camera. Capture hyperspectral images of both healthy and inoculated plants at 24-hour intervals post-inoculation.

- Spectral Feature Identification: Extract mean spectra from regions of interest (ROIs) on leaves. Identify key spectral regions affected by pathogen infection:

  - 400-450 nm: Flavonoid content alterations

  - 430-450 nm & 680-700 nm: Chlorophyll degradation

  - 470-520 nm: Carotenoid changes

  - 520-600 nm: Xanthophyll cycle activity

  - 700-750 nm & 718-722 nm: Phenolic compounds/mycotoxins [10]

- Classification Model Development: Apply machine learning algorithms (Support Vector Machines or Random Forest) to spectral data. Use 70% of data for training and 30% for validation.

- Validation: Compare classification results with molecular diagnostic tests to verify pathogen presence.

Expected Outcomes: The system should achieve over 90% classification accuracy for early infection stages, enabling detection before visible symptoms manifest [12].
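
The 70/30 split in the procedure can be sketched as follows. The protocol only specifies the proportions; splitting within each class (stratification) so that healthy and inoculated samples keep the same ratio in both sets is an assumption added here, and the labels are synthetic.

```python
import numpy as np


def stratified_split(labels, train_fraction=0.7, seed=0):
    """Return (train_idx, test_idx) with the class ratio preserved in both sets."""
    rng = np.random.default_rng(seed)
    labels = np.asarray(labels)
    train_idx, test_idx = [], []
    for cls in np.unique(labels):
        # Shuffle the indices of this class, then cut at the train fraction.
        idx = rng.permutation(np.flatnonzero(labels == cls))
        n_train = int(round(train_fraction * len(idx)))
        train_idx.extend(idx[:n_train].tolist())
        test_idx.extend(idx[n_train:].tolist())
    return np.array(train_idx), np.array(test_idx)


# Synthetic labels: 50 healthy (0) and 50 inoculated (1) samples.
labels = np.array([0] * 50 + [1] * 50)
train_idx, test_idx = stratified_split(labels)
print(len(train_idx), len(test_idx))
```

When several spectra come from the same plant, splitting by plant rather than by individual spectrum avoids near-duplicate samples appearing in both sets and inflating the validation accuracy.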

Protocol 2: Classification of Individual Wheat Growth Stages

Objective: To automatically classify pre-anthesis growth stages (Zadoks Z37, Z39, Z41) in individual wheat plants using hyperspectral imaging.

Materials and Setup:

- Pushbroom hyperspectral camera (e.g., Specim FX10) covering 400-1000 nm range

- Automated conveyor system or translational stage for consistent scanning

- Controlled lighting environment (closed imaging cabinet with halogen lighting)

- Pot-grown wheat plants (Triticum aestivum) at target growth stages

Procedure:

- Plant Cultivation: Grow wheat plants under controlled conditions. Precisely record growth stages according to Zadoks scale through daily visual inspection.

- Hyperspectral Data Collection: Image plants from top-down perspective at constant distance (e.g., 1.4 m). Capture images between 12:00 PM-2:00 PM to minimize diurnal variation.

- Spectral Transformation: Apply mathematical transformations to spectral data:

  - Standard Normal Variate (SNV): Normalizes spectral data to reduce scattering effects

  - Hyper-hue: Enhances color-related spectral features

  - Principal Component Analysis (PCA): Reduces dimensionality while preserving variance

- Feature Selection: Identify most discriminative wavelengths (5-10 bands) for growth stage differentiation using feature selection algorithms.

- Model Training and Validation: Implement Support Vector Machine classifier with cross-validation. Compare performance against RGB-based classification.

Expected Outcomes: The hyperspectral approach should achieve F1 scores of approximately 0.832 for growth stage classification, significantly outperforming RGB-based methods [3].
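
The feature selection step does not name a specific algorithm, so the sketch below uses one simple option as an assumption: ranking bands by a Fisher-style ratio of between-class to within-class variance. The data are synthetic, with three bands deliberately made informative so the ranking can be checked.

```python
import numpy as np


def fisher_scores(spectra, labels):
    """Per-band ratio of between-class to within-class sum of squares."""
    overall_mean = spectra.mean(axis=0)
    between = np.zeros(spectra.shape[1])
    within = np.zeros(spectra.shape[1])
    for cls in np.unique(labels):
        group = spectra[labels == cls]
        group_mean = group.mean(axis=0)
        between += len(group) * (group_mean - overall_mean) ** 2
        within += ((group - group_mean) ** 2).sum(axis=0)
    return between / (within + 1e-12)


# Synthetic data: 3 growth-stage classes, 20 plants each, 50 bands of noise.
rng = np.random.default_rng(0)
labels = np.repeat([0, 1, 2], 20)
spectra = rng.normal(0.0, 0.05, size=(60, 50))

# Make three (arbitrarily chosen) bands depend on the class label.
informative = [5, 17, 30]
spectra[:, informative] += 0.5 * labels[:, None]

scores = fisher_scores(spectra, labels)
top_bands = np.argsort(scores)[::-1][:3]
print(sorted(top_bands.tolist()))
```

A univariate ranking like this ignores correlation between neighboring bands, so in practice the selected wavelengths are usually checked for redundancy before training the classifier.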

[Diagram: workflow for the hyperspectral analysis of wheat growth stages]

Data Processing and Analysis: From Spectral Data to Actionable Insights

Spectral Preprocessing and Transformation

Raw hyperspectral data requires substantial preprocessing before analysis to remove instrumental artifacts and environmental noise. Essential preprocessing steps include:

- Radiometric Correction: Converting raw digital numbers to reflectance values using white and dark reference images

- Geometric Correction: Addressing spatial distortions from camera optics or platform movement

- Noise Reduction: Applying smoothing filters (Savitzky-Golay, median filtering) to reduce spectral noise

- Spectral Transformation: Using mathematical transformations to enhance spectral features:

  - Standard Normal Variate (SNV): Normalizes each spectrum to zero mean and unit variance, reducing light scattering effects

  - Derivative Analysis: First and second derivatives help resolve overlapping spectral features and eliminate baseline offsets

These preprocessing steps are critical for ensuring that subsequent analysis reflects actual biological variation rather than measurement artifacts.
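
Two of the transformations above are short enough to show directly. The sketch below implements SNV as defined in the list (each spectrum centered to zero mean and scaled to unit standard deviation) and a first derivative along the wavelength axis. The spectra are synthetic, and the plain finite-difference derivative is a simplification: smoothed derivatives, for example with a Savitzky-Golay filter, are more common on noisy data.

```python
import numpy as np


def snv(spectra):
    """Standard Normal Variate: normalize each spectrum (row) individually."""
    spectra = np.asarray(spectra, dtype=np.float64)
    mean = spectra.mean(axis=1, keepdims=True)
    std = spectra.std(axis=1, ddof=1, keepdims=True)  # sample standard deviation
    return (spectra - mean) / std


def first_derivative(spectra, wavelengths):
    """Finite-difference derivative of each spectrum with respect to wavelength."""
    return np.gradient(spectra, wavelengths, axis=1)


wavelengths = np.linspace(400.0, 1000.0, 61)
base = 0.1 + 0.0005 * (wavelengths - 400.0)

# The same spectrum seen under a multiplicative and additive scatter effect.
scattered = np.vstack([base, 1.5 * base + 0.1])
after_snv = snv(scattered)

# The same spectrum with a constant baseline offset.
offset = np.vstack([base, base + 0.2])
after_derivative = first_derivative(offset, wavelengths)

print(np.allclose(after_snv[0], after_snv[1]))                # True
print(np.allclose(after_derivative[0], after_derivative[1]))  # True
```

SNV removes the gain and offset differences between the two rows, and the derivative removes the constant baseline, which is why both pairs become identical after transformation.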

Machine Learning Integration for Spectral Analysis

The high dimensionality of hyperspectral data makes machine learning approaches particularly valuable for extracting meaningful biological information. Both conventional and deep learning methods have demonstrated success in plant research applications:

Conventional Machine Learning:

- Support Vector Machines (SVM): Effective for classification tasks with limited training data, achieving F1 scores up to 0.832 for wheat growth stage classification [3]

- Random Forests: Robust for regression and classification tasks, handling high-dimensional data well

- Partial Least Squares Regression (PLSR): Useful for quantifying biochemical constituents from spectral data

Deep Learning Approaches:

- Convolutional Neural Networks (CNNs): Can automatically learn relevant spectral features from raw data, reducing need for manual feature engineering

- Spectral-Spatial Networks: Incorporate both spectral and spatial information for improved classification accuracy

The integration of machine learning with HSI has enabled the development of automated systems capable of detecting plant stress, predicting yield, and classifying growth stages with minimal human intervention.
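
Cross-validation, mentioned alongside the classifiers above, can be sketched without any machine learning library. The example below runs 5-fold cross-validation with a nearest-centroid classifier, which is used here only as a lightweight stand-in for an SVM so that the sketch depends on NumPy alone. The spectra, class separation, and fold count are all synthetic or assumed.

```python
import numpy as np


def nearest_centroid_predict(train_x, train_y, test_x):
    """Assign each test spectrum to the class with the closest mean spectrum."""
    classes = np.unique(train_y)
    centroids = np.stack([train_x[train_y == c].mean(axis=0) for c in classes])
    distances = np.linalg.norm(test_x[:, None, :] - centroids[None, :, :], axis=2)
    return classes[np.argmin(distances, axis=1)]


def k_fold_accuracy(x, y, k=5, seed=0):
    """Mean accuracy over k folds; each sample is tested exactly once."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), k)
    accuracies = []
    for i in range(k):
        test_idx = folds[i]
        train_idx = np.concatenate([folds[j] for j in range(k) if j != i])
        pred = nearest_centroid_predict(x[train_idx], y[train_idx], x[test_idx])
        accuracies.append(np.mean(pred == y[test_idx]))
    return float(np.mean(accuracies))


# Synthetic, well-separated data: 3 classes x 30 samples x 20 bands.
rng = np.random.default_rng(42)
y = np.repeat([0, 1, 2], 30)
x = rng.normal(0.0, 0.1, size=(90, 20)) + y[:, None] * 1.0

accuracy = k_fold_accuracy(x, y)
print(f"5-fold accuracy = {accuracy:.2f}")
```

The classes here are separated far more cleanly than real growth stages or stress levels would be, so the accuracy says nothing about expected field performance; the point is the fold structure.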

Integrated Data Visualization: Combining Spatial and Spectral Information

The power of hyperspectral imaging lies in its ability to simultaneously preserve spatial and spectral information. This integrated data structure enables researchers to visualize both the distribution and chemical identity of materials within a scene.

[Diagram: structure of hyperspectral data and the process of extracting biological information from it]

Future Perspectives and Challenges

Despite its significant potential, several challenges remain for widespread adoption of hyperspectral imaging in plant research. The high cost of traditional HSI systems, while decreasing, still presents a barrier for many research institutions [11]. Data management poses another significant challenge, as hyperspectral datasets are large and computationally demanding to process and store. Additionally, technical expertise requirements for operating HSI systems and interpreting results remain substantial.

Future developments are likely to focus on:

- Miniaturization and cost reduction of hyperspectral sensors [1] [13]

- Improved data processing algorithms and user-friendly software interfaces

- Enhanced integration with other sensing modalities (thermal, fluorescence, 3D)

- Development of standardized spectral libraries for different plant species and stress conditions

- Advancements in real-time processing capabilities for field applications

As these technological advancements progress, hyperspectral imaging is poised to become an increasingly accessible and indispensable tool for plant researchers, enabling deeper insights into plant biology and more sustainable agricultural practices.

Hyperspectral imaging represents a paradigm shift in plant research methodology, moving beyond the morphological assessments possible with RGB imaging to enable non-invasive biochemical characterization and early stress detection. By capturing the unique spectral fingerprint of plants across hundreds of narrow, contiguous wavelength bands, HSI provides researchers with a powerful tool for deciphering the complex relationships between plant physiology, environmental conditions, and genetic expression.

The integration of HSI with machine learning analytics and complementary imaging modalities creates a powerful framework for advancing plant science. While challenges remain in cost, data handling, and technical complexity, ongoing technological developments are steadily addressing these limitations. As hyperspectral systems continue to become more accessible, portable, and user-friendly, their application in both controlled environments and field settings will undoubtedly expand, contributing significantly to our understanding of plant biology and the development of more sustainable agricultural systems.

This technical guide explores the fundamental principles and applications of light reflectance spectroscopy for quantifying plant biochemical traits. The interaction between light and plant tissues produces unique spectral signatures that can be decoded to measure photosynthetic pigments, structural components, water content, and nutrients non-destructively. Within the broader context of plant research, this whitepaper demonstrates how hyperspectral imaging provides detailed biochemical insights that complement the spatial and cost advantages of RGB imaging, creating a powerful synergistic framework for advanced phenotyping and precision agriculture.

Plant leaves interact with electromagnetic radiation through specific mechanisms across different spectral regions. In the visible range (400-700 nm), light absorption is primarily dominated by photosynthetic pigments, with chlorophyll absorbing strongly in the blue and red regions while reflecting green light [14]. The near infrared plateau (NIR, 800-1300 nm) is characterized by multiple scattering within the leaf's internal air spaces, making this region highly sensitive to leaf structure and cellular organization [14]. The short-wave infrared (SWIR, 1300-2500 nm) contains absorption features primarily associated with water (at 1450 nm and 1940 nm) and dry matter constituents including proteins, lignins, cellulose, and other carbon-based compounds [15] [14].

Each biochemical constituent exhibits specific absorption features arising from vibrations of C-O, O-H, C-H, and N-H bonds, plus overtones and combinations of these vibrations [15]. These characteristic spectral signatures enable researchers to distinguish between biochemical components even where their absorption features overlap.

Quantitative Relationships: Biochemical-Spectral Correlations

Table 1: Correlation between Hyperspectral Reflectance and Key Wheat Physiological Traits (Partial Least Squares Regression Models) [16]

| Trait | Coefficient of Determination (R²) | Bias (%) | Relative Error of Prediction | Measurement Significance |

|---|---|---|---|---|

| Vcmax25 (Rubisco activity) | 0.62 | <0.7% | Slightly greater | Photosynthetic capacity |

| J (Electron transport rate) | 0.70 | <0.7% | Slightly greater | Light reaction efficiency |

| SPAD (Chlorophyll) | 0.81 | <0.7% | Similar | Chlorophyll content |

| LMA (Leaf mass per area) | 0.89 | <0.7% | Slightly greater | Leaf thickness/structure |

| Narea (Leaf nitrogen) | 0.93 | <0.7% | Slightly greater | Nitrogen status |

Table 2: Key Spectral Regions for Biochemical Constituents in Vegetation [15] [14]

| Biochemical Constituent | Key Spectral Regions (nm) | Specific Absorption Features (nm) | Chemical Bonds Involved |

|---|---|---|---|

| Nitrogen/Proteins | 1510, 1730, 1940, 2060, 2180, 2240, 2300 | 1690, 1940, 2060, 2180, 2240, 2300 | N-H, C-H, O-H bonds |

| Lignin | 1120, 1200, 1420, 1450, 1690, 1940, 2100 | 1120, 1420, 1690, 2100 | C-H, C-O bonds in phenolics |

| Cellulose | 1200, 1490, 1780, 1820, 2000, 2100, 2280, 2340 | 1200, 1490, 1780, 2100, 2280 | C-H, C-O bonds in polysaccharides |

| Leaf Water Content | 970, 1200, 1450, 1940 | 1450, 1940 | O-H bonds |

| Chlorophyll/Pigments | 430-470, 660-680, 700-750 (red edge) | 531, 570 (PRI) | Porphyrin ring structure |

Experimental Protocols and Methodologies

Hyperspectral Data Acquisition Protocol

Equipment Requirements:

- Field spectroradiometer or hyperspectral imaging system (400-2500 nm range)

- Standard reflectance reference panel (e.g., Spectralon)

- Controlled illumination source (for laboratory measurements)

- Stable measurement platform with standardized geometry

- Environmental monitoring sensors (light, temperature, humidity)

Standardized Measurement Procedure: [16] [14]

- Instrument Calibration: Warm up spectrometer for recommended duration (typically 30-60 minutes). Perform wavelength calibration using standard sources.

- Reference Measurement: Measure the Spectralon reference panel under the same illumination conditions as the sample measurements.

- Sample Presentation: For leaf measurements, maintain consistent orientation (typically adaxial surface up). Use a leaf clip holder or dark background to minimize background contamination of the signal.

- Spectral Collection: Acquire multiple spectra per sample (minimum 3-5 readings) to account for heterogeneity. Maintain constant distance and viewing geometry.

- Data Quality Assessment: Check for saturation, signal-to-noise ratio, and abnormal spectral shapes indicating measurement artifacts.

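- Reflectance Calculation: Convert raw radiance to reflectance by dividing sample radiance by reference panel radiance; for absolute reflectance, also multiply by the panel's calibrated reflectance factor (~99% for Spectralon).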
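The spectral collection and reflectance calculation steps can be sketched in a few lines of NumPy. The function name `to_reflectance`, the example radiance values, and the fixed panel reflectance of 0.99 are assumptions for illustration; in practice the panel's calibration certificate supplies a wavelength-dependent factor.

```python
import numpy as np

def to_reflectance(sample_radiance, panel_radiance, panel_reflectance=0.99):
    """Average replicate readings and convert them to reflectance.

    sample_radiance: array of shape (n_readings, n_bands)
    panel_radiance:  array of shape (n_bands,), measured under the same illumination
    panel_reflectance: assumed calibrated reflectance of the reference panel
    """
    sample = np.asarray(sample_radiance, dtype=float)
    panel = np.asarray(panel_radiance, dtype=float)
    mean_sample = sample.mean(axis=0)  # average the replicate readings per band
    return panel_reflectance * mean_sample / panel

# Three replicate readings of one leaf at three example wavelengths (nm).
wavelengths = np.array([550.0, 680.0, 800.0])
panel = np.array([1000.0, 1000.0, 1000.0])
readings = np.array([
    [120.0, 50.0, 480.0],
    [118.0, 52.0, 500.0],
    [122.0, 48.0, 520.0],
])
reflectance = to_reflectance(readings, panel)
print(dict(zip(wavelengths, reflectance.round(4))))
```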

Continuum-Removal and Band-Depth Analysis

For quantitative biochemical estimation, continuum-removal enhances detection of specific absorption features: [15]

- Continuum Definition: Identify convex hull points surrounding absorption features of interest (e.g., 1730 nm, 2100 nm, 2300 nm for nitrogen).

- Continuum Removal: Divide original reflectance values by corresponding continuum line values at each wavelength.

- Band Depth Calculation: Compute absorption band depth as BD(λ) = 1 - R(λ)/Rc(λ), where R(λ) is reflectance at wavelength λ and Rc(λ) is continuum reflectance at same wavelength.

- Normalization: Normalize band depths by either the depth at band center or area under band depth curve to reduce non-foliar influences.

- Statistical Modeling: Apply stepwise multiple linear regression between normalized band depths and chemically determined constituent concentrations.
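The continuum-removal and band-depth steps above can be sketched as follows. This is a minimal illustration on a synthetic absorption feature near 2100 nm, assuming the continuum is the upper convex hull of the spectrum with straight-line segments between hull points; the function names are hypothetical and the regression step is omitted.

```python
import numpy as np

def upper_hull_indices(x, y):
    """Indices of the points on the upper convex hull (x must be increasing)."""
    hull = []
    for i in range(len(x)):
        while len(hull) >= 2:
            a, b = hull[-2], hull[-1]
            # Cross product of (b - a) and (i - a): >= 0 means b lies on or
            # below the segment a -> i, so b is not part of the upper hull.
            cross = (x[b] - x[a]) * (y[i] - y[a]) - (y[b] - y[a]) * (x[i] - x[a])
            if cross >= 0:
                hull.pop()
            else:
                break
        hull.append(i)
    return hull

def band_depth(wavelengths, reflectance):
    """BD(lambda) = 1 - R(lambda) / Rc(lambda), with Rc the hull continuum."""
    wavelengths = np.asarray(wavelengths, dtype=float)
    reflectance = np.asarray(reflectance, dtype=float)
    hull = upper_hull_indices(wavelengths, reflectance)
    continuum = np.interp(wavelengths, wavelengths[hull], reflectance[hull])
    return 1.0 - reflectance / continuum

# Synthetic spectrum: sloping baseline with a 20 % deep absorption at 2100 nm.
wl = np.linspace(2000.0, 2200.0, 201)
baseline = 0.5 - 0.0005 * (wl - 2000.0)
spectrum = baseline * (1.0 - 0.2 * np.exp(-((wl - 2100.0) / 20.0) ** 2))

bd = band_depth(wl, spectrum)
center = wl[np.argmax(bd)]
normalized_bd = bd / bd.max()  # normalization by the depth at band center
print(f"band center = {center:.0f} nm, depth = {bd.max():.3f}")
```

The normalized band depths, computed per sample, would then serve as predictors in the regression against chemically determined concentrations.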

Band Depth Analysis Workflow: From raw spectra to biochemical quantification

Genetic Application Protocol: Spectral Phenotyping of Plant Populations

Advanced applications include detecting genetic variation through spectral phenotyping: [14]

Experimental Design:

- Plant Materials: Utilize diverse germplasm including wild accessions, recombinant inbred lines (RILs), and transgenic lines with targeted gene modifications.

- Environmental Control: Implement replicated designs across controlled (glasshouse) and field environments to partition genetic and environmental variance.

- Standardized Measurement Timing: Measure leaves at consistent developmental stages (e.g., fully expanded source leaves).

Spectral Data Processing for Genetic Analysis:

- Quality Control: Remove spectra with obvious artifacts, noise, or contamination.

- Spectral Normalization: Apply standard normal variate (SNV) or multiplicative scatter correction to reduce light-scattering effects.

- Population-Level Analysis: Conduct principal component analysis (PCA) to visualize spectral variation among genotypes.

- Heritability Estimation: Calculate broad-sense heritability for spectral features using mixed models.

- Genome-Wide Association: Correlate specific spectral features with genetic markers to identify loci controlling biochemical traits.
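The normalization and population-level analysis steps can be illustrated with a short NumPy sketch. It applies SNV to synthetic spectra from two hypothetical genotype groups that differ in the depth of one absorption feature, then projects them onto principal components. The data, group sizes, and function names are assumptions; heritability estimation and association mapping require dedicated statistical tooling and are not shown.

```python
import numpy as np

def snv(spectra):
    """Standard normal variate: center and scale each spectrum (row) individually."""
    spectra = np.asarray(spectra, dtype=float)
    mean = spectra.mean(axis=1, keepdims=True)
    std = spectra.std(axis=1, ddof=1, keepdims=True)
    return (spectra - mean) / std

def pca_scores(data, n_components=2):
    """PCA via SVD of the column-centered data matrix."""
    centered = data - data.mean(axis=0)
    u, s, _ = np.linalg.svd(centered, full_matrices=False)
    scores = u[:, :n_components] * s[:n_components]
    explained = (s ** 2) / np.sum(s ** 2)
    return scores, explained[:n_components]

rng = np.random.default_rng(1)
x = np.linspace(0.0, 1.0, 50)              # normalized wavelength axis
dip = np.exp(-((x - 0.5) / 0.1) ** 2)      # shape of one absorption feature

# Two synthetic genotype groups (10 plants each) with different feature depth,
# plus random multiplicative and additive scatter effects per measurement.
rows = []
for depth in [0.02] * 10 + [0.08] * 10:
    base = 0.4 + 0.1 * x - depth * dip
    scale, offset = rng.uniform(0.8, 1.2), rng.uniform(-0.05, 0.05)
    rows.append(scale * base + offset + rng.normal(scale=0.001, size=x.size))
spectra = np.array(rows)

scores, explained = pca_scores(snv(spectra))
print("variance explained by PC1 and PC2:", explained.round(3))
```

Because SNV removes per-spectrum offset and scale, the remaining variation along the first component is dominated by the difference between the two groups rather than by scatter.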

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Tools for Plant Reflectance Spectroscopy

| Item | Function/Purpose | Technical Specifications | Application Context |

|---|---|---|---|

| Field Spectroradiometer | Measures reflected radiance across spectral range | 350-2500 nm range, 3-10 nm resolution, fiber optic input | Field and laboratory measurements |

| Hyperspectral Imaging System | Spatial-spectral data acquisition | VNIR (400-1000 nm) and/or SWIR (1000-2500 nm) cameras | High-throughput phenotyping, spatial mapping |

| Spectralon Reference Panel | Provides baseline reflectance (~99%) for calibration | Labsphere Spectralon or equivalent | Essential for reflectance calculation |

| Controlled Illumination Source | Standardized lighting conditions | Tungsten-halogen, uniform light field | Laboratory measurements |

| Leaf Clips | Fixed geometry for repeated measurements | With or without integrated light source | Standardized leaf-level measurements |

| Spectral Library Data | Reference spectra for known materials | USGS, JPL, ASTER spectral libraries | Material identification, validation |

| Chemical Analysis Kits | Ground truth biochemical data | Nitrogen (Kjeldahl), lignin (acetyl bromide, AcBr), chlorophyll (extraction) | Model calibration, validation |

| ENVI/SPECPR Software | Spectral data processing and analysis | Continuum removal, derivative analysis, classification | Data processing, model development |

Synergistic Integration: RGB and Hyperspectral Imaging in Plant Research

While hyperspectral imaging provides detailed biochemical information through continuous spectral sampling across hundreds of bands, RGB imaging offers complementary advantages through its accessibility, spatial resolution, and cost-effectiveness. The integration of these technologies creates a powerful framework for plant research.

RGB Imaging Applications and Hidden Potential:

- Spatial Analysis: RGB cameras provide high-resolution structural information for assessing plant architecture, leaf area, and growth patterns [1].

- Color-Based Indices: Despite being limited to three broad bands, RGB imagery can yield useful vegetation indices when properly calibrated [17].

- Hidden Contrast Enhancement: Advanced processing of RGB images can reveal contrast variations beyond human visual perception, extracting analytical information about stress patterns and field variability [17].

- Temporal Monitoring: Cost-effectiveness enables high-frequency monitoring for phenological studies and growth trajectory analysis.
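As one example of a color-based index, the sketch below computes the Excess Green index (ExG = 2g - r - b on chromatic coordinates), a widely used RGB vegetation index. The choice of ExG and the 0.2 segmentation threshold are illustrative assumptions; the source does not specify which indices [17] relies on.

```python
import numpy as np

def excess_green(rgb):
    """Excess Green index from an (..., 3) RGB array, using chromatic coordinates."""
    rgb = np.asarray(rgb, dtype=float)
    total = rgb.sum(axis=-1, keepdims=True)
    total[total == 0] = 1.0  # black pixels: avoid division by zero, ExG becomes 0
    r, g, b = np.moveaxis(rgb / total, -1, 0)
    return 2.0 * g - r - b

# 2 x 2 test image: pure green, mid gray, black, and a muted green pixel.
image = np.array([
    [[0, 255, 0], [100, 100, 100]],
    [[0, 0, 0], [60, 120, 60]],
], dtype=np.uint8)

exg = excess_green(image)
vegetation_mask = exg > 0.2  # assumed threshold; tune per camera and lighting
print(exg)
print(vegetation_mask)
```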

Hyperspectral Advantages for Biochemistry:

- Early Stress Detection: Identification of drought, nutrient deficiency, pests, and disease before visible symptoms appear [1].

- Quantitative Biochemistry: Precise measurement of nitrogen, lignin, cellulose, and photosynthetic parameters through specific spectral features [16] [15].

- Genetic Studies: Detection of spectral traits linked to genetic variation, enabling high-throughput phenotyping for breeding programs [14].

RGB-Hyperspectral Synergy: Complementary technologies for comprehensive plant assessment

Light reflectance spectroscopy provides a powerful, non-destructive approach for quantifying plant biochemical traits across multiple scales from individual leaves to canopies. The fundamental interactions between light and biochemical constituents create detectable spectral signatures that can be decoded through rigorous experimental protocols and analytical methods. The continuous spectrum measured by hyperspectral imaging enables precise quantification of nitrogen, lignin, cellulose, water content, and photosynthetic parameters, while RGB imaging offers complementary spatial and temporal monitoring capabilities. Together, these technologies form an integrated framework that advances plant phenotyping, precision agriculture, and ecological research by bridging the gap between visible traits and underlying biochemical composition. As spectroscopic technologies continue to evolve toward more portable, affordable, and automated systems, the application of light reflectance for decoding plant biochemistry will expand, enabling researchers to address critical challenges in food security, environmental sustainability, and plant-based product development.

In plant research, the transition from traditional RGB (Red, Green, Blue) imaging to hyperspectral analysis represents a fundamental shift in observational capability. Human vision, and by extension conventional RGB cameras, is limited to perceiving reflected light in three broad wavelength bands, providing information primarily about color and morphology. While useful for identifying visible symptoms, this approach cannot detect the subtle biochemical changes that occur in plants during early stress or disease development [2] [1]. Hyperspectral imaging (HSI) shatters this limitation by capturing light across hundreds of narrow, contiguous spectral bands, creating a detailed data structure known as a hypercube [11]. This hypercube contains both spatial information (x, y) and extensive spectral data (λ) for each pixel, enabling researchers to quantify biochemical and physiological changes in plants before visible symptoms appear [2] [18].

The true power of modern plant phenotyping and disease detection lies not in choosing between RGB and hyperspectral modalities, but in strategically combining them. RGB imaging provides high-spatial-resolution morphological data at lower cost and computational requirements, while HSI delivers unparalleled biochemical insight through high spectral resolution [19] [20]. This technical guide explores the theoretical foundations, practical methodologies, and analytical frameworks for integrating these complementary technologies, providing researchers with a comprehensive toolkit for advancing plant science and agricultural innovation.

Technical Foundations: From RGB to the Hyperspectral Data Cube

RGB Imaging: Spatial Resolution and Morphological Analysis

RGB imaging captures reflected light in three broad wavelength bands corresponding to human visual perception (approximately 400-500 nm for blue, 500-600 nm for green, and 600-700 nm for red). The resulting data structure is a two-dimensional array of pixels, with each pixel containing three intensity values representing these color channels [21]. In plant research, RGB imaging excels at quantifying morphological traits such as leaf area, plant architecture, and visible symptom progression [19]. Its advantages include relatively low hardware costs, straightforward data processing, and high spatial resolution, making it suitable for high-throughput phenotyping applications where visible traits are the primary interest [22] [23].

The fundamental limitation of RGB imaging stems from its spectral poverty. With only three data points per pixel spectrum, it cannot resolve the subtle spectral signatures associated with biochemical changes during early stress responses. Additionally, RGB data are sensitive to varying illumination conditions, requiring careful standardization for quantitative comparisons [19].

Hyperspectral Imaging: Spectral Dimension and Biochemical Insight

Hyperspectral imaging fundamentally expands the data dimensionality by capturing reflected light across hundreds of narrow, contiguous spectral bands, typically ranging from the visible to short-wave infrared regions (400-2500 nm) [11] [21]. The resulting data structure is a three-dimensional hypercube with two spatial dimensions (x, y) and one spectral dimension (λ), where each pixel contains a complete spectral signature representing the biochemical composition of that specific location [11].
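To make the hypercube structure concrete, the following sketch represents a small cube as a NumPy array and shows the three most common ways of slicing it. The (rows, columns, bands) axis order, the cube size, and the 224-band count are assumptions for illustration; file formats and libraries differ in how they order the axes.

```python
import numpy as np

rows, cols, bands = 4, 5, 224
wavelengths = np.linspace(400.0, 2500.0, bands)  # nm, one value per band

rng = np.random.default_rng(0)
hypercube = rng.uniform(0.0, 1.0, size=(rows, cols, bands))  # placeholder reflectance

# 1) Full spectral signature of a single pixel (fixed x, y; all wavelengths).
pixel_spectrum = hypercube[2, 3, :]

# 2) Grayscale image at the band closest to a wavelength of interest.
band_index = int(np.argmin(np.abs(wavelengths - 680.0)))
band_image = hypercube[:, :, band_index]

# 3) Flattened (pixels x bands) matrix, the usual input for PCA or classifiers.
pixels_by_band = hypercube.reshape(-1, bands)

print(pixel_spectrum.shape, band_image.shape, pixels_by_band.shape)
```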

This rich spectral data enables the detection of subtle changes in plant physiology long before they become visible to the human eye or RGB sensors. Specific molecular bonds and compounds, including chlorophylls, carotenoids, water, and other biochemical constituents, interact with light at characteristic wavelengths, creating unique absorption features in the spectral profile [21]. By analyzing these spectral fingerprints, researchers can detect early responses to biotic and abiotic stresses, often with 60-90% accuracy, and up to 95% in controlled conditions [21] [18].

Table 1: Comparative Analysis of RGB and Hyperspectral Imaging Technologies

| Parameter | RGB Imaging | Multispectral Imaging | Hyperspectral Imaging |

|---|---|---|---|

| Spectral Bands | 3 broad bands (R, G, B) | 3-10 discrete bands | Hundreds of narrow, contiguous bands |

| Spectral Range | 400-700 nm (Visible) | Visible to NIR | UV to SWIR (250-2500 nm) |

| Spatial Resolution | High | Medium to High | Typically lower due to data volume |

| Data Dimensionality | 2D + 3 channels | 2D + limited spectral data | 3D hypercube (x, y, λ) |

| Primary Applications | Morphological assessment, visible symptom detection | Broad stress detection, vegetation indices | Early stress detection, biochemical analysis, pathogen identification |

| Cost Considerations | $500-$2,000 | $2,000-$10,000 | $20,000-$50,000+ |

| Data Processing Complexity | Low | Medium | High |

Table 2: Quantitative Performance Comparison for Disease Detection

| Performance Metric | RGB with Deep Learning | Hyperspectral Imaging |

|---|---|---|

| Laboratory Accuracy | 95-99% | 95-99% |

| Field Deployment Accuracy | 70-85% | 80-90% |

| Early Detection Capability | Limited to visible symptoms | Pre-symptomatic (3-7 days before visual symptoms) |

| Multiple Infection Classification | Low accuracy (approximately 53% with CNNs) | High accuracy (81% with EfficientNet 2D CNN) |

| Cross-Specificity | Limited, confounded by multiple stressors | High, can distinguish between similar diseases |

Integrated Experimental Protocols

Multi-Modal Image Registration Pipeline

The fusion of RGB and hyperspectral data requires precise pixel-level registration to ensure spatial correspondence between modalities. The following protocol, adapted from successful implementations, enables robust multi-modal image registration [20]:

Materials and Equipment:

- RGB camera (e.g., high-resolution scientific-grade with global shutter)

- Hyperspectral imaging system (e.g., Specim FX series pushbroom scanner)

- Chlorophyll fluorescence imager (e.g., PhenoVation Plant Explorer XS)

- Calibration targets (e.g., checkerboard pattern, Spectralon reference panel)

- Computational infrastructure for data processing

Procedure:

System Calibration:

- Perform individual camera calibration for each sensor using checkerboard targets

- Calculate lens distortion parameters and apply correction

- Verify subpixel alignment accuracy (target mean reprojection error <0.5 pixels)

Data Acquisition:

- Acquire RGB images under consistent illumination conditions

- Collect hyperspectral data using pushbroom scanner with consistent movement speed

- Capture chlorophyll fluorescence kinetics using standardized excitation protocols

- Include reference standards in each imaging session for radiometric correction

Image Registration:

- Select reference image (typically high-contrast RGB or ChlF)

- Apply affine transformation to align multimodal datasets

- Implement normalized cross-correlation (NCC) for similarity measurement

- Utilize phase-only correlation (POC) for robustness to intensity variations

- Apply feature-based methods (e.g., ORB keypoints with RANSAC outlier rejection) for coarse alignment

Validation:

- Quantify overlap ratio using convex hull analysis (target >95%)

- Verify registration accuracy across multiple regions of interest

- Assess impact on downstream analytical results

This protocol has demonstrated overlap ratios of 98.0±2.3% for RGB-to-ChlF and 96.6±4.2% for HSI-to-ChlF registration in Arabidopsis thaliana studies, and 98.9±0.5% for RGB-to-ChlF and 98.3±1.3% for HSI-to-ChlF in Rosa × hybrida infection assays [20].
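Two of the similarity measures named in the registration step, phase-only correlation and normalized cross-correlation, can be sketched with NumPy alone. The example is deliberately simplified: it recovers a pure integer translation between two synthetic images, whereas the protocol in [20] also handles affine differences between sensors. Function names and image sizes are assumptions.

```python
import numpy as np

def phase_correlation_shift(reference, moving):
    """Integer (row, col) shift to apply to `moving` so that it aligns with `reference`."""
    cross_power = np.fft.fft2(reference) * np.conj(np.fft.fft2(moving))
    cross_power /= np.abs(cross_power) + 1e-12  # keep phase only
    correlation = np.fft.ifft2(cross_power).real
    peak = np.unravel_index(np.argmax(correlation), correlation.shape)
    # Peaks past the midpoint correspond to negative shifts (FFT wrap-around).
    return tuple(int(p) if p <= s // 2 else int(p) - s
                 for p, s in zip(peak, correlation.shape))

def ncc(a, b):
    """Normalized cross-correlation of two equally sized images (1.0 = identical)."""
    a = a - a.mean()
    b = b - b.mean()
    return float((a * b).sum() / np.sqrt((a ** 2).sum() * (b ** 2).sum()))

rng = np.random.default_rng(0)
reference = rng.random((64, 64))                          # e.g. one band used as reference
moving = np.roll(reference, shift=(5, -3), axis=(0, 1))   # misaligned copy

shift = phase_correlation_shift(reference, moving)
aligned = np.roll(moving, shift=shift, axis=(0, 1))
print("estimated shift:", shift)
print("NCC before:", round(ncc(reference, moving), 3), "after:", round(ncc(reference, aligned), 3))
```

Because only the phase of the cross-power spectrum is used, the shift estimate is insensitive to global intensity differences between modalities, which is the property the protocol relies on.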

Hyperspectral Analysis of Multiple Co-Infections

The following experimental protocol details the procedure for hyperspectral imaging and classification of multiple concurrent infections in wheat, as demonstrated in recent research [18]:

Plant Material and Growth Conditions:

- Wheat variety 'Vuka' (susceptible to yellow rust, mildew, and Septoria)

- Growth conditions: 17/11°C with a 16/8 h light/dark cycle for 10 days post-sowing

- Pathogen isolates: Yellow rust (WYR 19/215), Mildew (NIAB 21-001), Septoria (R13 and R16)

Infection Protocol:

- Single infections: Inoculate at 10 days post-sowing with adjusted temperatures for each pathogen

- Combined infections: Stagger inoculations to synchronize symptom development

- Yellow rust + mildew: YR at day 10, mildew at day 13

- Yellow rust + Septoria: Septoria at day 10, YR at day 17

Hyperspectral Image Acquisition:

- Imaging system: Pushbroom hyperspectral scanner (400-1000 nm range)

- Spatial resolution: Adjust to capture leaf-level details (approximately 0.1 mm/pixel)

- Spectral resolution: 3-5 nm bandwidth across visible and NIR regions

- Standardization: Include white reference and dark current measurement for each session

Data Processing Pipeline:

- Preprocessing: Radiometric correction, noise reduction, and spectral normalization

- Feature extraction: Identify disease-specific spectral bands (e.g., 689 nm and 753 nm for early infection)

- Classification: Train convolutional neural networks (EfficientNet 2D CNN) on labeled data

- Validation: Assess accuracy using k-fold cross-validation and independent test sets

This methodology achieved 81% overall classification accuracy for single and concurrent infections, with 72% accuracy specifically for combined yellow rust and mildew infections [18].

Diagram 1: Multi-modal plant imaging workflow. This workflow integrates RGB, hyperspectral, and chlorophyll fluorescence data through precise image registration and analysis.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Research Equipment for Multi-Modal Plant Imaging

| Equipment Category | Specific Examples | Technical Specifications | Primary Research Function |

|---|---|---|---|

| Hyperspectral Imaging Systems | Specim FX10/FX17, Living Optics camera | VNIR (400-1000 nm) or SWIR (1000-2500 nm) range; Spectral resolution: 3-12 nm | Capture detailed spectral signatures for biochemical analysis |

| RGB Imaging Systems | Scientific-grade CCD/CMOS cameras | High spatial resolution (20+ MP); Global shutter; Controlled illumination | High-resolution morphological assessment and visible symptom documentation |

| Chlorophyll Fluorescence Imagers | PhenoVation Plant Explorer XS | Modulated measuring light; Saturation pulse capability; Multiple fluorescence parameters | Quantify photosynthetic efficiency and early stress responses |

| Laboratory Scanners | Specim LabScanner 40×20 | Automated scanning stages; Integrated illumination; Calibration targets | Standardized hyperspectral data acquisition in controlled environments |

| Field Deployment Systems | Specim AFX series for UAV/drone | Lightweight design; Robust mounting; GPS synchronization | Airborne hyperspectral data collection for large-scale field studies |

| Data Processing Platforms | Python with scikit-learn, TensorFlow | HSI-specific libraries (HyperSpy, ENVI); GPU acceleration | Data preprocessing, analysis, and machine learning model development |

Data Analysis Framework: From Raw Data to Actionable Insights

Hyperspectral Data Preprocessing and Feature Extraction

Raw hyperspectral data requires extensive preprocessing to extract meaningful biological information. The standard workflow includes:

Radiometric Correction:

- Dark current subtraction to remove the sensor's dark signal offset

- White reference normalization using Spectralon panels

- Conversion to reflectance values for comparative analysis
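The three radiometric correction steps are commonly combined into a single expression, reflectance = (raw - dark) / (white - dark), applied per band. The sketch below assumes one dark and one white reference value per band and a cube laid out as (rows, columns, bands); pushbroom systems often use per-column references instead, which broadcast in the same way.

```python
import numpy as np

def calibrate_reflectance(raw, white, dark, eps=1e-9):
    """Convert raw digital numbers to relative reflectance.

    raw:   (rows, cols, bands) raw cube
    white: (bands,) mean signal from the white reference panel
    dark:  (bands,) mean signal with the shutter closed (dark current)
    """
    raw, white, dark = (np.asarray(a, dtype=float) for a in (raw, white, dark))
    # eps guards against division by zero in dead or saturated bands.
    return (raw - dark) / np.maximum(white - dark, eps)

dark = np.array([100.0, 100.0])
white = np.array([1100.0, 2100.0])
raw_cube = np.array([[[600.0, 1100.0], [100.0, 2100.0]]])  # 1 x 2 pixels, 2 bands

reflectance_cube = calibrate_reflectance(raw_cube, white, dark)
print(reflectance_cube)
```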

Spectral Preprocessing:

- Savitzky-Golay smoothing to reduce high-frequency noise

- Standard Normal Variate (SNV) transformation to minimize scattering effects

- First and second derivative analysis to enhance subtle spectral features

- Continuum removal to emphasize absorption features [21] [23]
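Smoothing and derivative calculation are often done in one pass with SciPy's `savgol_filter`. The following sketch applies it to a synthetic noisy spectrum; the window length of 11 bands, the second-order polynomial, and the 3 nm band spacing are assumed values that would need tuning for a real sensor.

```python
import numpy as np
from scipy.signal import savgol_filter

band_spacing = 3.0                                   # nm between adjacent bands (assumed)
wavelengths = np.arange(400.0, 1000.0, band_spacing)  # 200 bands
rng = np.random.default_rng(0)

clean = 0.3 + 0.2 * np.sin(2 * np.pi * (wavelengths - 400.0) / 600.0)
noisy = clean + rng.normal(scale=0.01, size=clean.size)

# Smoothing: local second-order polynomial fit over an 11-band window.
smoothed = savgol_filter(noisy, window_length=11, polyorder=2)

# Derivatives come from the same local fit; delta converts to units per nm.
first_derivative = savgol_filter(noisy, window_length=11, polyorder=2,
                                 deriv=1, delta=band_spacing)
second_derivative = savgol_filter(noisy, window_length=11, polyorder=2,
                                  deriv=2, delta=band_spacing)

rmse_noisy = np.sqrt(np.mean((noisy - clean) ** 2))
rmse_smoothed = np.sqrt(np.mean((smoothed - clean) ** 2))
print(f"RMSE vs clean spectrum: noisy = {rmse_noisy:.4f}, smoothed = {rmse_smoothed:.4f}")
```

Wider windows suppress more noise but also flatten narrow absorption features, so the window is usually kept narrower than the features of interest.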

Feature Extraction:

- Vegetation indices calculation (NDVI, PRI, etc.) for specific physiological traits

- Spectral Disease Indices (SDIs) development for pathogen-specific detection

- Principal Component Analysis (PCA) for dimensionality reduction

- Identification of key wavelength regions for specific stresses [21]:

  - 550-600 nm: Pigment composition changes

  - 690 nm: Chlorophyll fluorescence and photosynthetic activity

  - 753 nm: Early stress response detection

  - 1400-1450 nm: Water content and hydric stress
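Narrow-band vegetation indices such as NDVI and PRI are normalized differences between reflectance at two wavelengths. The sketch below picks the nearest available band for each target wavelength. PRI uses 531 nm and 570 nm; the 800 nm and 670 nm bands for NDVI are a common choice assumed here, and the reflectance values are invented for illustration.

```python
import numpy as np

def band_at(data, wavelengths, target_nm):
    """Reflectance at the band closest to target_nm (last axis = spectral axis)."""
    index = int(np.argmin(np.abs(np.asarray(wavelengths) - target_nm)))
    return np.asarray(data, dtype=float)[..., index]

def normalized_difference(a, b):
    return (a - b) / (a + b)

wavelengths = np.array([531.0, 570.0, 670.0, 800.0])
spectrum = np.array([0.10, 0.12, 0.05, 0.50])  # example healthy-leaf reflectance

ndvi = normalized_difference(band_at(spectrum, wavelengths, 800.0),
                             band_at(spectrum, wavelengths, 670.0))
pri = normalized_difference(band_at(spectrum, wavelengths, 531.0),
                            band_at(spectrum, wavelengths, 570.0))
print(f"NDVI = {ndvi:.3f}, PRI = {pri:.3f}")
```

Because `band_at` indexes the last axis, the same functions work unchanged on a full (rows, columns, bands) cube and return per-pixel index maps.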

Machine Learning Integration for Disease Classification

The high dimensionality of hyperspectral data makes it particularly suitable for machine learning approaches. Recent advances demonstrate compelling performance:

Algorithm Selection:

- Random Forest: Effective for feature selection and classification

- Convolutional Neural Networks (CNNs): Superior for spatial-spectral feature extraction

- EfficientNet with 2D convolution: 81% accuracy for multiple infection classification

- Vision Transformers: Emerging approach for capturing long-range dependencies [22] [18]

Model Training Strategies:

- Transfer learning to overcome limited dataset sizes

- Data augmentation through spectral transformations

- Attention mechanisms to focus on diagnostically relevant regions

- Ensemble methods to improve generalization across environmental conditions
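As a small illustration of the Random Forest entry above, the sketch below trains a classifier on synthetic per-pixel spectra, estimates accuracy with five-fold cross-validation, and uses feature importances to locate the informative bands. The data are simulated (a reflectance drop in bands 30 to 34 for the "infected" class) and all sizes and hyperparameters are assumptions; it does not reproduce the CNN or transformer models cited in [22] [18].

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import cross_val_score

rng = np.random.default_rng(0)
n_per_class, n_bands = 100, 60

# Class 0 = healthy, class 1 = infected (synthetic labels).
labels = np.repeat([0, 1], n_per_class)
spectra = rng.normal(loc=0.4, scale=0.02, size=(2 * n_per_class, n_bands))
spectra[labels == 1, 30:35] -= 0.08  # simulated disease signature in five bands

classifier = RandomForestClassifier(n_estimators=100, random_state=0)
cv_accuracy = cross_val_score(classifier, spectra, labels, cv=5)

# Fit on all data to inspect which bands drive the decision.
classifier.fit(spectra, labels)
top_band = int(np.argmax(classifier.feature_importances_))

print(f"mean CV accuracy = {cv_accuracy.mean():.2f}, most important band index = {top_band}")
```

On real data, folds should be split by plant or by imaging session rather than by pixel, since pixels from the same leaf are strongly correlated and would otherwise inflate the accuracy estimate.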

Diagram 2: Hyperspectral data analysis pipeline. This pipeline transforms raw hypercubes into actionable insights through preprocessing, feature extraction, and machine learning.

The strategic integration of RGB and hyperspectral imaging technologies represents a paradigm shift in plant research methodology. While RGB provides cost-effective morphological data with high spatial resolution, hyperspectral imaging delivers unparalleled biochemical insight through spectral analysis. The combination enables researchers to correlate visible symptoms with their underlying physiological causes, creating a more comprehensive understanding of plant health and stress responses.

Current research demonstrates the practical viability of this integrated approach, with successful applications in disease detection, nutrient stress monitoring, and plant phenotyping. As both hardware and analytical methods continue to advance—with improvements in sensor miniaturization, computational efficiency, and machine learning algorithms—the fusion of morphological and spectral data will become increasingly accessible to researchers across diverse agricultural and botanical disciplines. This multimodal approach promises to accelerate breeding programs, enhance sustainable agricultural practices, and provide new insights into plant-pathogen interactions, ultimately contributing to global food security in the face of climate change and emerging plant diseases.

In plant research, the transition from traditional Red-Green-Blue (RGB) imaging to advanced hyperspectral imaging represents a fundamental shift from superficial visual assessment to deep physiological probing. RGB imaging, which captures reflectance in three broad visible wavelength bands (centered at approximately 650 nm, 520 nm, and 475 nm), has long been the workhorse for digital phenotyping, providing excellent data on morphological traits such as leaf area, plant architecture, and visible color changes [24] [25]. However, this technology shares the fundamental limitation of the human eye: it can only perceive what is already visually apparent. By the time stress symptoms become visible in RGB images, physiological damage has often already progressed significantly, compromising research interventions and agricultural management [22] [25].

Hyperspectral imaging (HSI) shatters this limitation by capturing reflected light across hundreds of narrow, contiguous spectral bands, typically ranging from the visible spectrum into the near-infrared (NIR) and short-wave infrared (SWIR) regions (approximately 400-2500 nm) [2] [26]. This creates a continuous spectral signature for each pixel in an image, forming a rich three-dimensional data cube that contains both spatial and spectral information. This technological difference is not merely incremental; it is transformative, enabling researchers to detect subtle changes in plant biochemistry that precede visible symptoms by days or even weeks [22]. This whitepaper delineates the critical blind spots of RGB imaging that hyperspectral technology illuminates, framing this analysis within the compelling thesis that the synergistic combination of both modalities provides the most powerful approach for comprehensive plant research.

The Technical Divide: A Comparative Foundation

The core distinction between these imaging modalities lies in their spectral resolution and range. RGB imaging is limited to three broad bands in the visible spectrum, making it highly effective for quantifying what is visible to the human eye but incapable of probing biochemical composition. In contrast, hyperspectral imaging captures a full spectral profile, with modern systems covering 350-1000 nm (VNIR) and extending to 2500 nm (SWIR) at resolutions as fine as 1-3 nm [26] [27]. This allows for the identification of specific molecular absorption features related to plant pigments, water content, structural compounds, and other biochemical constituents [28].

Table 1: Fundamental Technical Comparison Between RGB and Hyperspectral Imaging

| Parameter | RGB Imaging | Hyperspectral Imaging |

|---|---|---|

| Spectral Bands | 3 broad bands (Red, Green, Blue) [25] | 50-250+ narrow, contiguous bands [2] |

| Spectral Range | ~400-700 nm (Visible light only) [25] | ~350-2500 nm (VIS, NIR, SWIR) [26] [28] |

| Primary Data Output | 2D image with color information | 3D hypercube (x, y, λ) with spectral signatures [26] |

| Key Measurables | Morphology, color, texture | Biochemical composition, water content, pigment ratios [28] [27] |

| Cost & Accessibility | Low cost, highly accessible [22] | High cost ($20,000-$50,000+), requires expertise [22] |

Critical Blind Spots in RGB Imaging and Hyperspectral Solutions

Pre-Symptomatic Disease and Stress Detection

Perhaps the most significant blind spot of RGB imaging is its inability to detect plant stress before visible symptoms manifest. Research indicates that physiological and biochemical changes within plant tissues, such as alterations in cell structure and pigment composition, occur significantly before these changes become visible as discoloration, lesions, or wilting [22]. Hyperspectral imaging fills this void by detecting subtle spectral shifts associated with these early physiological responses.

A systematic review of disease detection methods revealed that while RGB-based deep learning models can achieve 95-99% accuracy in laboratory conditions, their performance drops dramatically to 70-85% in field deployments due to environmental variability and the challenge of identifying early infections [22]. Hyperspectral systems, particularly those leveraging Transformer-based architectures like SWIN, demonstrate superior robustness, maintaining around 88% accuracy in real-world conditions for identifying diseases like powdery mildew before symptom visibility [22]. This early detection capability, sometimes occurring days before visual symptoms, provides a critical window for intervention that can prevent significant crop loss and reduce unnecessary pesticide applications [2].

Quantification of Photosynthetic Pigments and Biochemical Constituents

While RGB imaging can detect gross color changes associated with chlorophyll degradation (e.g., yellowing leaves), it cannot accurately quantify specific pigment concentrations or distinguish between different photosynthetic pigments. Hyperspectral imaging directly targets the specific absorption features of key plant pigments across the electromagnetic spectrum.

A landmark study on Ginkgo biloba involving 3,460 seedlings from 590 families demonstrated the power of hyperspectral imaging combined with machine learning (Adaptive Boosting algorithm) to non-destructively quantify chlorophyll a, chlorophyll b, and carotenoids with remarkable accuracy (R² > 0.83, RPD > 2.4) [27]. The study implemented a sophisticated analytical workflow:

- Hyperspectral Data Acquisition: A portable Image-λ-V10E-HR hyperspectral imager (350-1000 nm range, 176 spectral channels) captured spectral data from all samples in a controlled darkroom environment with halogen lamp illumination to ensure consistency [27].

- Phased Optimization Strategy: The methodology involved: (1) screening preprocessing methods (raw reflectance, normalization, derivatives), finding normalization most effective; (2) comparing machine learning algorithms (PLSR, RF, AdaBoost); and (3) applying feature wavelength selection (SPA, CARS) to optimize the model [27].

- Biochemical Validation: Following spectral capture, leaf discs were destructively sampled for conventional biochemical analysis to create the ground-truth dataset for model training and validation [27].

This non-destructive approach enables large-scale, dynamic monitoring of pigment remodeling during critical physiological transitions, such as autumn senescence—a capability far beyond the reach of RGB imaging or traditional destructive sampling methods [27].
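The two accuracy metrics quoted for the pigment models, R² and RPD, can be computed directly from reference and predicted values. RPD is taken here as the standard deviation of the reference values divided by the root mean square error of prediction, which is the usual definition; whether [27] uses exactly this variant is an assumption, and the numbers below are invented for illustration.

```python
import numpy as np

def r2_and_rpd(reference, predicted):
    """Return (R^2, RPD) for laboratory reference values vs. model predictions."""
    reference = np.asarray(reference, dtype=float)
    predicted = np.asarray(predicted, dtype=float)
    residuals = reference - predicted
    rmse = np.sqrt(np.mean(residuals ** 2))
    r2 = 1.0 - np.sum(residuals ** 2) / np.sum((reference - reference.mean()) ** 2)
    rpd = reference.std(ddof=1) / rmse  # ratio of performance to deviation
    return r2, rpd

# Hypothetical chlorophyll values (reference assay vs. spectral model).
measured = [1.0, 2.0, 3.0, 4.0, 5.0]
estimated = [1.1, 1.9, 3.2, 3.8, 5.0]

r2, rpd = r2_and_rpd(measured, estimated)
print(f"R^2 = {r2:.3f}, RPD = {rpd:.2f}")
```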

Figure 1: Experimental workflow for non-destructive pigment quantification in Ginkgo biloba using hyperspectral imaging and machine learning, as detailed in [27].

Early Water and Nutrient Stress Identification

Drought and nutrient deficiencies trigger specific biochemical responses in plants that hyperspectral imaging can identify before these stresses manifest as wilting or chlorosis in RGB images. The short-wave infrared (SWIR) region, covered by an 894-2504 nm sensor in the study discussed here, is particularly sensitive to water content and to molecular bonds in compounds such as nitrogen-containing proteins [28].

Advanced research now involves sensor fusion to create a more comprehensive picture of plant health. A study on strawberry plants developed separate hyperspectral imaging systems for the VIS-NIR (397-1003 nm) and SWIR (894-2504 nm) regions, then fused the data to significantly improve the identification of drought stress [28]. The fusion process required sophisticated image registration and alignment techniques to create a composite image combining the enhanced spectral information from both sensors. This fused data more accurately differentiated between control, recoverable, and non-recoverable plants before the emergence of visually apparent indicators [28]. The VIS-NIR region is sensitive to photosynthetic pigments, while the SWIR region provides stronger features related to water and protein content, demonstrating the value of a broad spectral range for stress detection [28].

Fine-Scale Growth Stage Classification

RGB imaging struggles to differentiate between closely related growth stages, particularly in the pre-anthesis phase where morphological changes are subtle. Research on growth stage classification in genetically modified wheat highlights this limitation, showing that hyperspectral imaging is better at distinguishing the fine-scale Zadoks growth stages Z37, Z39, and Z41 (from flag leaf just visible to flag leaf sheath extending) [3].

The experimental protocol involved:

- Data Collection: Capturing hyperspectral and RGB images of individual wheat plants in both controlled greenhouse and semi-natural environments using a WIWAM hyperspectral imaging system with a Specim FX10 camera (400-1000 nm range) [3].

- Spectral Transformations: Applying spectral transformations like Standard Normal Variate (SNV), Hyper-hue, and Principal Component Analysis (PCA) to enhance spectral features [3].

- Machine Learning Classification: Using Support Vector Machine (SVM) classification, which achieved an F1 score of 0.832 for growth stage classification, significantly outperforming RGB-based methods [3].

Notably, after feature selection, the model retained an F1 score of 0.752 using only five key wavelengths. This indicates that a small set of specific spectral features carries much of the information needed to distinguish these subtle growth stages, and such narrow-band features are not available in three-band RGB data [3].
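Two of the spectral transformations named in the protocol, SNV and PCA, are compact enough to sketch directly. The code below is a generic illustration rather than the implementation used in [3]. It assumes spectra are stored one per row, and the function names `snv` and `pca_scores` are chosen here for the example.

```python
import numpy as np

def snv(spectra):
    """Standard Normal Variate: center and scale each spectrum by its own mean and std."""
    spectra = np.asarray(spectra, dtype=float)
    mean = spectra.mean(axis=1, keepdims=True)
    std = spectra.std(axis=1, ddof=1, keepdims=True)
    return (spectra - mean) / std

def pca_scores(spectra, n_components):
    """Project spectra onto their first principal components via SVD."""
    centered = spectra - spectra.mean(axis=0)
    u, s, _ = np.linalg.svd(centered, full_matrices=False)
    return u[:, :n_components] * s[:n_components]

# Placeholder data: 20 spectra with 50 bands each.
rng = np.random.default_rng(0)
spectra = rng.random((20, 50)) + 0.5
features = pca_scores(snv(spectra), n_components=5)
print(features.shape)
```

Because SNV removes a per-spectrum offset and scale, two measurements of the same leaf that differ only by such an offset and gain map to the same transformed spectrum. The resulting scores could then be passed to a classifier such as an SVM.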

Table 2: Quantitative Performance Comparison for Key Agricultural Applications

| Application | RGB Performance Limitations | Hyperspectral Performance Advantages |
|---|---|---|
| Early Disease Detection | 70-85% accuracy in field conditions [22] | 88% accuracy with SWIN Transformer; pre-symptomatic detection [22] |
| Pigment Quantification | Limited to color change detection; cannot quantify specific pigments [24] | Quantifies Chl a, Chl b, Carotenoids (R² > 0.83) [27] |
| Water Stress Detection | Relies on visible wilting; late detection [28] | Identifies water content changes in SWIR region before wilting [28] |
| Growth Stage Classification | Poor accuracy for fine-scale stages (Z37-Z41) [3] | SVM classification achieves F1 score of 0.832 [3] |
| Nutrient Deficiency | Detects advanced chlorosis only | Identifies specific nutrient deficiencies via unique spectral signatures [2] |

The Synergistic Approach: Data Fusion for Enhanced Classification

The limitations of both modalities point toward a synergistic solution. While hyperspectral imaging provides superior biochemical insight, its high cost, computational complexity, and sometimes lower spatial resolution present practical challenges [22] [26]. RGB imaging remains more accessible, cost-effective, and superior for certain morphological analyses [24]. The most powerful approach, therefore, combines the strengths of both.

A compelling case study on vegetable soybean freshness classification demonstrates this principle effectively. Researchers developed a novel ResNet-R&H model that incorporates fused data from both RGB and hyperspectral images [29]. The fusion process involved:

- Hyperspectral Data Extraction: Using ENVI software to extract spectral information.

- RGB Image Reconstruction: Applying downsampling technology to standardize RGB images.

- Data Concatenation: Transforming 1D hyperspectral data into 2D space and concatenating it with RGB image data in the channel direction [29].

The results were striking: the fused-data model achieved a testing accuracy of 97.6%, a significant enhancement of 4.0% and 7.2% compared to using only hyperspectral or RGB data, respectively [29]. This demonstrates that the spatial and textural information from RGB images complements the biochemical information from hyperspectral data, creating a more robust and accurate classification system.
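The concatenation step can be illustrated with a small array example. The source does not specify how the 1D spectrum is mapped into 2D space, so the mapping below (zero-pad, reshape to a square, then nearest-neighbour upsampling to the RGB size) is purely an assumption for illustration, as are the function names `spectrum_to_plane` and `fuse_rgb_spectrum`. It should not be read as the ResNet-R&H preprocessing of [29].

```python
import numpy as np

def spectrum_to_plane(spectrum, size):
    """Map a 1D spectrum onto a size x size plane (assumed mapping, see lead-in)."""
    spectrum = np.asarray(spectrum, dtype=float)
    side = int(np.ceil(np.sqrt(spectrum.size)))
    padded = np.zeros(side * side)
    padded[: spectrum.size] = spectrum       # zero-pad to a perfect square
    small = padded.reshape(side, side)
    idx = np.arange(size) * side // size     # nearest-neighbour index map
    return small[np.ix_(idx, idx)]

def fuse_rgb_spectrum(rgb, spectrum):
    """Append the 2D spectral plane to a square RGB image as a fourth channel."""
    if rgb.ndim != 3 or rgb.shape[2] != 3 or rgb.shape[0] != rgb.shape[1]:
        raise ValueError("expected a square RGB image of shape (size, size, 3)")
    plane = spectrum_to_plane(spectrum, rgb.shape[0])
    return np.concatenate([rgb.astype(float), plane[:, :, None]], axis=2)

# Placeholder inputs: a 64 x 64 RGB image and a 224-band mean spectrum.
rng = np.random.default_rng(0)
rgb = rng.random((64, 64, 3))
spectrum = rng.random(224)
fused = fuse_rgb_spectrum(rgb, spectrum)
print(fused.shape)
```

The fused array has four channels, so a convolutional network consuming it would need its first layer configured for four input channels rather than three.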

Figure 2: Data fusion workflow for vegetable soybean freshness classification, combining RGB and hyperspectral inputs to achieve superior accuracy [29].

The Scientist's Toolkit: Essential Research Reagent Solutions

Implementing a hyperspectral or multimodal imaging research program requires specific hardware, software, and analytical tools. The following table details key solutions mentioned across the cited research.

Table 3: Essential Research Reagent Solutions for Hyperspectral Plant Phenotyping

| Solution Category | Specific Tool / Platform | Research Application & Function |
|---|---|---|
| Hyperspectral Imagers | Specim FX10 camera (400-1000 nm) [3] | Wheat growth stage classification in controlled environments [3] |
| | Specim IQ camera (397-1000 nm) [30] | Plant phenotyping in fabricated ecosystems (EcoFABs) [30] |
| | Image-λ-V10E-HR (350-1000 nm) [27] | Portable field-based pigment quantification in Ginkgo seedlings [27] |
| Imaging Systems | WIWAM Hyperspectral Imaging System [3] | Integrated system with LemnaTec 3D Scanalyzer for high-throughput phenotyping [3] |
| | Line-scan HSI systems (VIS-NIR & SWIR) [28] | Custom-assembled systems for drought stress detection in strawberries [28] |
| Analytical Software | ENVI Software [29] | Industry-standard software for hyperspectral data extraction and analysis [29] |
| | SpecVIEW (v2.9.3.8) [27] | Control software for hyperspectral image acquisition and calibration [27] |
| Machine Learning Algorithms | Support Vector Machine (SVM) [3] | Classification of fine-scale wheat growth stages (Z37, Z39, Z41) [3] |
| | Adaptive Boosting (AdaBoost) [27] | High-accuracy non-destructive prediction of photosynthetic pigments [27] |
| | ResNet-based Models [29] | Deep learning architecture for fused RGB-HSI data classification [29] |
| | Sparse Mixed-Scale Networks (SMSNets) [30] | Convolutional neural networks for hyperspectral image segmentation with minimal training data [30] |

The "blind spots" of RGB imaging in plant research are not merely minor technical limitations but fundamental gaps in our ability to understand and monitor plant physiology at a biochemical level. Hyperspectral imaging effectively illuminates these blind spots by enabling pre-symptomatic stress detection, precise pigment quantification, early water and nutrient deficiency identification, and fine-scale growth stage classification. The evidence from contemporary research overwhelmingly supports an integrated approach where the morphological strengths of RGB imaging are combined with the biochemical probing capabilities of hyperspectral sensing. This multimodal paradigm, facilitated by advanced machine learning and data fusion techniques, represents the future of precision plant science, offering unprecedented insights into plant health, productivity, and physiology from the laboratory to the field.

From Lab to Field: Methodologies and Real-World Applications in Plant Science

Non-invasive plant phenotyping has emerged as a transformative discipline in agricultural research, enabling the precise quantification of plant growth, health, and development without disturbing the organism or its environment. By leveraging advanced imaging technologies, researchers can capture detailed phenotypic traits throughout the plant lifecycle, facilitating breakthroughs in plant breeding, stress response analysis, and precision agriculture. This technical guide focuses specifically on the synergistic integration of RGB (Red, Green, Blue) and hyperspectral imaging technologies, which together provide complementary data streams that significantly enhance research capabilities across diverse agricultural applications.

The fundamental advantage of combining these imaging modalities lies in their ability to capture different aspects of plant physiology and biochemistry. RGB imaging excels in quantifying morphological and structural traits with high spatial resolution, while hyperspectral imaging detects biochemical and physiological changes through detailed spectral signatures across hundreds of narrow, contiguous wavelength bands [9] [31]. This multi-modal approach enables researchers to correlate visual characteristics with underlying biochemical processes, providing a more comprehensive understanding of plant status than either technology could deliver independently.

Recent technological advancements have made both RGB and hyperspectral imaging more accessible to research institutions. The development of compact, automated phenotyping systems like PhenoGazer, which integrates hyperspectral spectrometers with multiple Raspberry Pi cameras and LED lighting systems, demonstrates the trend toward integrated solutions that capture complementary data types simultaneously [32]. Similarly, NASA's hyperspectral plant health monitoring system for space crop production exemplifies how these technologies can be deployed in controlled environments for precise health assessment [33]. These systems represent a paradigm shift from manual, destructive sampling approaches toward automated, non-invasive monitoring that supports higher-throughput phenotyping with minimal human intervention.

Technological Foundations: RGB and Hyperspectral Imaging

RGB Imaging Technology

RGB imaging represents the fundamental approach to digital plant phenotyping, capturing reflected light in three broad wavelength bands corresponding to red (approximately 650 nm), green (520 nm), and blue (475 nm) [31]. This technology emulates human vision but with greater consistency and quantitative capabilities. Modern RGB sensors deployed in phenotyping applications range from standard digital cameras to specialized scientific imaging systems with calibrated output.

The primary strength of RGB imaging lies in its high spatial resolution and cost-effectiveness for quantifying morphological traits. Applications include measuring plant architecture, leaf area, growth rates, and visible symptoms of stress or disease [24]. However, standard RGB imaging is limited to the visible spectrum (400-700 nm) and cannot detect biochemical changes or pre-visual stress indicators [9]. Despite this limitation, recent advances in image analysis algorithms, particularly those incorporating machine learning, have expanded the utility of RGB imaging for certain physiological assessments when combined with appropriate validation methods.
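As an illustration of how a morphological trait such as projected leaf area can be derived from an RGB image, the sketch below segments vegetation with the Excess Green index (ExG = 2g - r - b on chromatic coordinates). This index is a common choice in the literature rather than one named in the source, and the threshold of 0.1 and the `mm2_per_pixel` calibration factor are assumed values that would need tuning for a real camera setup.

```python
import numpy as np

def excess_green(rgb):
    """Excess Green index computed on chromatic coordinates r, g, b."""
    rgb = np.asarray(rgb, dtype=float)
    total = rgb.sum(axis=2)
    total[total == 0] = 1.0  # avoid division by zero on black pixels
    r, g, b = (rgb[:, :, i] / total for i in range(3))
    return 2.0 * g - r - b

def projected_leaf_area(rgb, threshold=0.1, mm2_per_pixel=1.0):
    """Return the plant mask and its area (pixel count times calibration factor)."""
    mask = excess_green(rgb) > threshold
    return mask, float(mask.sum() * mm2_per_pixel)

# Placeholder image: gray background with a 2 x 2 green patch.
image = np.full((4, 4, 3), 100, dtype=np.uint8)
image[1:3, 1:3] = (0, 200, 0)
mask, area = projected_leaf_area(image)
print(int(mask.sum()), area)
```

Tracking this area over a time series of images gives a simple, non-destructive growth curve, which is the kind of morphological measurement where RGB imaging is strongest.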

Hyperspectral Imaging Technology

Hyperspectral imaging represents a significant technological advancement beyond conventional RGB imaging by capturing spectral information across hundreds of narrow, contiguous bands spanning extended wavelength ranges [8]. While typical systems cover the visible to near-infrared (VNIR, 400-1000 nm), advanced systems extend into short-wave infrared (SWIR, 1000-2500 nm) ranges, enabling detection of a wider array of biochemical properties [31].

The core principle of hyperspectral imaging is that each material possesses a unique spectral signature or "fingerprint" based on its chemical composition and how it interacts with electromagnetic radiation [9]. This signature is represented as a spectral reflectance curve, which plots reflectance values against wavelengths. Plants under different stress conditions exhibit characteristic alterations in these spectral profiles, enabling early detection before visible symptoms manifest [34].

Hyperspectral data is structured as a three-dimensional "hypercube" comprising two spatial dimensions and one spectral dimension [8]. This rich dataset allows researchers to identify subtle changes in plant physiology through various analytical approaches, including spectral indices, machine learning classification, and spectral unmixing techniques. The technology's ability to detect pre-symptomatic stress responses, nutrient deficiencies, and pathogen infections makes it particularly valuable for precision agriculture and plant breeding applications [35].
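The hypercube layout and the idea of a spectral index can be made concrete with a short array example. The sketch assumes a cube stored as (rows, columns, bands) with a matching wavelength vector. NDVI is used as a familiar example of a spectral index, and the band centers of 670 nm and 800 nm are illustrative choices rather than values taken from the source.

```python
import numpy as np

def band_index(wavelengths, target_nm):
    """Index of the band whose center wavelength is closest to target_nm."""
    return int(np.argmin(np.abs(wavelengths - target_nm)))

def ndvi(cube, wavelengths, red_nm=670.0, nir_nm=800.0):
    """Per-pixel NDVI = (NIR - red) / (NIR + red) from a (rows, cols, bands) cube."""
    red = cube[:, :, band_index(wavelengths, red_nm)].astype(float)
    nir = cube[:, :, band_index(wavelengths, nir_nm)].astype(float)
    denom = nir + red
    # Pixels where both bands are zero are assigned an NDVI of 0.
    return np.divide(nir - red, denom, out=np.zeros_like(denom), where=denom != 0)

# Placeholder hypercube: 10 x 12 pixels, 61 bands from 400 to 1000 nm.
wavelengths = np.linspace(400.0, 1000.0, 61)
cube = np.full((10, 12, wavelengths.size), 0.1)
cube[:, :, band_index(wavelengths, 800.0)] = 0.5  # strong NIR reflectance

pixel_spectrum = cube[3, 4, :]   # spectral signature of a single pixel
index_map = ndvi(cube, wavelengths)
print(pixel_spectrum.shape, index_map.shape)
```

Slicing along the third axis yields a single-band image, while fixing the two spatial indices yields the reflectance curve of one pixel. These are the two views of the hypercube that most analyses build on.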

Table 1: Comparative Analysis of RGB and Hyperspectral Imaging Technologies

| Parameter | RGB Imaging | Hyperspectral Imaging |
|---|---|---|
| Spectral Bands | 3 broad bands (R, G, B) [31] | Hundreds of narrow, contiguous bands [9] |
| Spectral Range | 400-700 nm (visible spectrum) [31] | Typically 400-2500 nm (VNIR-SWIR) [8] |
| Spatial Resolution | High | Variable, often lower due to spectral data volume |
| Information Captured | Morphological features, color, texture [24] | Biochemical composition, pigment content, water status [36] |
| Early Stress Detection | Limited to visible symptoms | Pre-visual detection possible [34] |
| Cost & Accessibility | Low to moderate | Moderate to high |
| Data Volume | Moderate | Very high (3D hypercubes) [8] |

Integrated Experimental Design and Methodologies