Beyond the Standard: Advanced Strategies for Predicting Domains in Atypical NBS Protein Architectures

Abstract

Accurately predicting the structure of non-canonical nucleotide-binding site (NBS) domain architectures is a critical challenge in structural biology with profound implications for understanding immune signaling and drug discovery. This article provides a comprehensive guide for researchers and drug development professionals, exploring the unique characteristics of atypical NBS proteins and the experimental evidence of their structural variations. We delve into the latest computational methodologies, including hybrid deep learning-physics approaches and innovative multiple sequence alignment techniques, that are pushing the boundaries of prediction accuracy. The content further addresses common pitfalls in predicting multi-domain and chimeric proteins, offering practical optimization strategies and troubleshooting protocols. Finally, we present a rigorous framework for the validation and comparative analysis of predicted models against state-of-the-art tools, synthesizing key takeaways and future directions for biomedical research.

Decoding Atypical NBS Architectures: From Sequence Anomalies to Functional Consequences

FAQ 1: What defines an atypical NBS protein and how is it classified?

An atypical NBS protein contains a nucleotide-binding site (NBS) domain but lacks one or more of the other domains found in a full-length NBS-LRR (NLR) protein. Typical NLRs have an N-terminal domain (either TIR or CC), a central NBS domain, and a C-terminal LRR domain; atypical NBS proteins lack the N-terminal domain, the LRR domain, or both [1] [2].

The classification is based on the specific domain architecture, as outlined in the table below [1] [2]:

| Classification | Domain Architecture | Description |
|---|---|---|
| N (NBS only) | NBS | Contains only the Nucleotide-Binding Site domain. |
| TN (TIR-NBS) | TIR-NBS | Contains the TIR and NBS domains, but lacks the LRR domain. |
| CN (CC-NBS) | CC-NBS | Contains the Coiled-Coil and NBS domains, but lacks the LRR domain. |
| NL (NBS-LRR) | NBS-LRR | Contains the NBS and LRR domains, but lacks a defined N-terminal (TIR/CC) domain. |
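The classification rules in the table can be expressed as a small function. This is a minimal sketch, assuming an upstream domain scan has already reduced each protein to a set of labels named "TIR", "CC", "NBS", and "LRR" (these label names are our assumption). Full-length TNL/CNL proteins are included for completeness, and RPW8-type (RNL) N-termini are not handled here.

```python
def classify_nbs_architecture(domains):
    """Map detected domain labels to an architecture class.

    Returns "TNL"/"CNL" for typical NLRs, "TN"/"CN"/"NL"/"N" for atypical
    NBS proteins, or None when no NBS domain was detected.
    """
    found = set(domains)
    if "NBS" not in found:
        return None  # not an NBS protein at all
    has_tir, has_cc, has_lrr = "TIR" in found, "CC" in found, "LRR" in found
    if has_tir and has_cc:
        # Unusual combination: flag it for manual review rather than force a class.
        return "ambiguous (TIR and CC)"
    n_term = "T" if has_tir else "C" if has_cc else ""
    if n_term and has_lrr:
        return n_term + "NL"  # typical full-length NLR
    if n_term:
        return n_term + "N"   # atypical: N-terminal domain + NBS, no LRR
    if has_lrr:
        return "NL"           # atypical: no defined N-terminal domain
    return "N"                # atypical: NBS only
```

For example, a protein with hits for CC and NBS only is reported as "CN", matching the third row of the table.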

FAQ 2: What are the conserved sequence motifs in the NBS domain and how can I identify them?

The NBS domain contains several highly conserved amino acid motifs critical for ATP/GTP binding and hydrolysis, which are essential for the protein's role in immune signaling. These motifs can be used to identify NBS domains, including atypical ones, in sequence analyses [2].

Key Conserved Motifs in the NBS Domain [2]:

| Motif Name | Key Function |
|---|---|
| P-loop | ATP/GTP binding and hydrolysis |
| RNBS-A | Role in nucleotide binding |
| Kinase-2 | Catalytic function |
| RNBS-B | Structural and functional integrity |
| RNBS-C | Nucleotide binding and signaling |
| GLPL | Conserved role in resistance signaling |
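A quick first pass over candidate sequences can use regular expressions for the better-defined motifs. This is a minimal sketch; the patterns below are simplified consensus approximations we are assuming (Walker A for the P-loop, a hydrophobic stretch followed by DD for Kinase-2, and the literal GLPL), not the motif definitions used in the cited studies. RNBS-A/B/C are too variable for a single regex and are omitted.

```python
import re

# Assumed, simplified consensus patterns; tune them for your protein family.
NBS_MOTIF_PATTERNS = {
    "P-loop": r"G.{4}GK[TS]",     # Walker A, e.g. GMGGVGKT
    "Kinase-2": r"[LIVMF]{4}DD",  # Walker B-like, e.g. LLVLDD
    "GLPL": r"GLPL",
}


def find_nbs_motifs(seq, patterns=NBS_MOTIF_PATTERNS):
    """Return {motif: [(start, end, text), ...]} with 0-based, end-exclusive coordinates."""
    seq = seq.upper()
    return {
        name: [(m.start(), m.end(), m.group()) for m in re.finditer(pattern, seq)]
        for name, pattern in patterns.items()
    }


hits = find_nbs_motifs("MAGMGGVGKTTLAKLLVLDDVWGLPLALK")
for motif, matches in hits.items():
    print(motif, matches)
```

An empty P-loop result does not by itself rule out an NBS domain: atypical proteins such as rice Pb1 carry a degenerated P-loop, so HMM-based detection and alignment (Steps 2 to 4 below) remain the primary evidence.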

Experimental Protocol: Identifying NBS Domains and Conserved Motifs

- Step 1: Sequence Retrieval: Obtain the protein or genome sequence of interest from a relevant database.

- Step 2: HMMER Search: Use the Hidden Markov Model (HMM) profiles for the NBS domain (e.g., PF00931 from Pfam) to search against your sequence dataset using tools like HMMER. This will identify candidate sequences containing the NBS domain [1] [2].

- Step 3: Domain Analysis: Utilize domain prediction servers (e.g., Pfam, InterPro) to confirm the presence of the NBS domain and identify other domains (TIR, CC, LRR) to classify the protein as typical or atypical [2].

- Step 4: Multiple Sequence Alignment: Perform a multiple sequence alignment of your candidate NBS domains with well-characterized NBS domains from model plants (e.g., Arabidopsis thaliana). Visually inspect or use motif discovery tools to locate the conserved motifs listed in the table above [2].
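Steps 2 and 3 typically start from HMMER's per-domain table, produced with a command such as `hmmsearch --domtblout nbs.domtbl PF00931.hmm proteome.fasta` (file names here are placeholders). The sketch below parses that table into per-protein domain hits; the independent E-value cutoff of 1e-5 is an assumption you should adjust to your dataset.

```python
from collections import defaultdict


def parse_domtblout(lines, max_ievalue=1e-5):
    """Collect per-target domain hits from HMMER --domtblout output.

    Returns {target: [(query_name, env_from, env_to, i_evalue), ...]},
    each list sorted by envelope start.
    """
    hits = defaultdict(list)
    for line in lines:
        if not line.strip() or line.startswith("#"):
            continue  # skip comments and blank lines
        fields = line.split()  # the first 22 columns are whitespace-delimited
        target, query = fields[0], fields[3]
        i_evalue = float(fields[12])  # independent E-value of this domain
        env_from, env_to = int(fields[19]), int(fields[20])  # envelope coordinates
        if i_evalue <= max_ievalue:
            hits[target].append((query, env_from, env_to, i_evalue))
    return {t: sorted(h, key=lambda x: x[1]) for t, h in hits.items()}


# Usage: with open("nbs.domtbl") as fh: domains = parse_domtblout(fh)
```

Envelope coordinates are used rather than alignment coordinates because they give a more inclusive domain boundary, which is convenient when extracting NBS regions for the alignment in Step 4.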

FAQ 3: Why are my AI structural predictions for atypical NBS proteins inaccurate, especially in flexible regions?

Deep learning platforms like AlphaFold and RoseTTAFold excel at predicting the 3D structure of well-folded, globular domains. However, they face challenges with multidomain proteins that have flexible linkers or regions that undergo conformational changes, which is common in NLR proteins and their atypical variants [3].

Key Limitations and Solutions for AI Structural Prediction:

| Challenge | Impact on Prediction | Recommended Solution |
|---|---|---|
| Bias Towards Compactness | AI models tend to predict the most compact, often inactive, configuration of a protein, even when the active state is more open [3]. | Use a piecewise modeling approach. Predict domains separately and use experimental data (e.g., Cryo-EM, SAXS) to guide the reconstruction of the global architecture [3]. |
| Modeling Morphing Regions | Coiled-coil (CC) domains and other flexible linkers are often modeled inaccurately. AI may mix segments from different conformational states [3]. | For CC domains, do not rely solely on AI output. Use dedicated coiled-coil prediction servers (e.g., DeepCoil, Marcoil) to inform your model. |
| Ligand-State Insensitivity | The presence of a ligand (e.g., ATP vs. ADP) may not be sufficient to drive the prediction toward the correct conformational state [3]. | If the ligand state is known, use it as a constraint during modeling. Be aware that the protein moiety might still be modeled in the incorrect state. |
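The piecewise approach starts by cutting the full-length sequence into per-domain segments that are predicted separately. A minimal sketch follows, assuming domain boundaries are already known (for example from the HMMER envelopes above); the 10-residue linker padding is an arbitrary assumption whose purpose is to leave overlapping residues that can later anchor superposition during reassembly.

```python
def piecewise_segments(seq, domains, pad=10):
    """Cut a sequence into per-domain segments for separate structure prediction.

    domains: list of (name, start, end), 1-based inclusive boundaries.
    pad: flanking linker residues added on each side, clipped at the sequence ends.
    Returns [(name, start, end, subsequence)] with 1-based inclusive coordinates.
    """
    segments = []
    for name, start, end in sorted(domains, key=lambda d: d[1]):
        if not 1 <= start <= end <= len(seq):
            raise ValueError(f"bad boundaries for {name}: {start}-{end}")
        s = max(1, start - pad)
        e = min(len(seq), end + pad)
        segments.append((name, s, e, seq[s - 1:e]))
    return segments


# Usage: write each segment to its own FASTA record and predict it independently.
for name, s, e, sub in piecewise_segments("M" * 120, [("CC", 1, 40), ("NBS", 46, 120)], pad=5):
    print(f">{name}_{s}-{e}\n{sub}")
```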

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Material | Function in Experiment |
|---|---|
| HMMER Software Suite | For identifying NBS domains in genomic sequences using Hidden Markov Models [1] [2]. |
| Pfam / InterPro Databases | For confirming domain architecture (NBS, TIR, CC, LRR) of identified protein sequences [2]. |
| AlphaFold / RoseTTAFold | For predicting the three-dimensional structure of protein domains [3]. |
| NANEX (Nanobody Exchange Chromatography) | A purification technique that uses immobilized nanobodies to capture and elute target proteins, useful for studying membrane proteins and complexes [4]. |
| Phage Display Library | A screening platform for identifying nanobodies or other binding partners that interact with a specific antigen [5] [4]. |

FAQ 4: What is the evolutionary significance of finding numerous atypical NBS genes in a genome?

The prevalence of atypical NBS genes reflects the dynamic and ongoing evolution of the plant immune system. These genes are not merely broken remnants; they are often functional and contribute to the diversity of pathogen recognition [1] [2].

A primary mechanism for generating this diversity is tandem gene duplication, leading to the formation of NBS gene clusters. In pepper, for example, 54% of all NBS-LRR genes are physically clustered in the genome [2]. Atypical NBS genes within these clusters can evolve new functions or serve as genetic reservoirs for creating new resistance specificities through recombination and natural selection. The reduction or loss of entire subfamilies (like the TNL subfamily in Salvia species and monocots) further illustrates how lineage-specific evolutionary pressures shape the NBS-LRR repertoire [1].

Experimental Evidence of Domain Variation in Different Architectural Contexts

Frequently Asked Questions (FAQs)

What are the key challenges in predicting structures for multidomain proteins like NBS-LRR receptors? Deep learning platforms like AlphaFold and RoseTTAFold demonstrate excellent performance in predicting well-established domain folds but face significant challenges with morphing regions like coiled-coil domains and multistate configurations. These tools typically bias toward the most compact, ordered configurations even when biological evidence suggests more sparse, active architectures. [3]

How does cofactor binding (ADP/ATP) affect structural predictions in NBS domains? Experimental studies reveal that AI predictors often maintain proteins in compact ADP-bound configurations even when modeling with ATP present. The ligand information appears correctly positioned in binding sites, but the overall protein architecture frequently remains in the inactive state, indicating limited sensitivity to nucleotide-driven domain rearrangements. [3]

What strategies improve prediction accuracy for atypical NBS protein architectures? Targeted filtering of structural templates and multiple sequence alignments to specific active or inactive states significantly enhances prediction quality. When global templates are unavailable, a piecewise modeling approach with experimental constraints for global architecture reconstruction yields more biologically realistic models. [3]

How can researchers accurately identify and annotate NLR genes in complex genomes? The DaapNLRSeek pipeline enables accurate prediction and annotation of NLR genes from complex polyploid genomes by leveraging diploidy-assisted annotation, allowing researchers to analyze architecture, collinearity, and evolution of resistance genes despite genomic complexity. [6]

Troubleshooting Guides

Problem: Incorrect Coiled-Coil Domain Prediction

Symptoms: Cα RMSD above 12 Å in coiled-coil regions relative to experimental structures; CC segments modeled as four-helix bundles instead of the experimentally observed conformation. [3]

Solution:

- Apply filtered template workflows: Use state-specific structural templates (active/inactive) to constrain predictions

- Implement experimental constraints: Integrate biochemical data and cross-linking information to guide folding

- Utilize hybrid approaches: Combine AI prediction with molecular dynamics refinement

- Validate with orthogonal methods: Check predictions against independent data, such as circular dichroism for secondary-structure content or cryo-EM maps

Prevention: Always compare predictions across multiple deep learning platforms (AlphaFold2, AlphaFold3, RoseTTAFold All-Atom) and inspect coiled-coil regions for secondary structure inaccuracies.

Problem: Bias Toward Compact Domain Configurations

Symptoms: Models consistently favor the inactive, ADP-bound state even when ATP is supplied as a ligand in the prediction; domain rotations and open (sparse) configurations are not captured. [3]

Solution:

- Employ multi-state modeling: Run separate predictions with different template restrictions

- Utilize the "AF2—Active MSA" workflow: Combine active-state templates with MSA steps

- Implement piecewise reconstruction: Model domains separately and assemble using experimental constraints

- Leverage molecular dynamics: Use MD simulations to test model stability and explore conformational space

Verification: Check interdomain interfaces against experimental data and monitor NBD-Arc rotation states characteristic of activation.

Problem: Limited Performance with Atypical Architectures

Symptoms: Poor prediction quality for proteins with integrated domains, unusual connectors, or non-canonical arrangements; inaccurate interdomain interfaces. [3]

Solution:

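- Start from correct gene models: For complex (e.g., polyploid) genomes, use specialized NLR annotation pipelines such as DaapNLRSeek so that domain architectures are annotated correctly before modeling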

- Increase taxonomic sampling: Include diverse evolutionary representatives in MSAs

- Incorporate experimental data: Integrate cryo-EM, NMR, or SAXS constraints during modeling

- Use hierarchical modeling: Predict individual domains first, then assemble with flexible linkers

Table 1: Domain-Level Prediction Performance Against Experimental Structures (Cα RMSD in Å)

| Domain Region | AF2 Default | AF3 Default | RFAA Default | AF2 Filtered | Experimental Reference |
|---|---|---|---|---|---|
| CC Domain | >12.0 | >12.0 | >12.0 | <3.0 | Cryo-EM (Active/Inactive) |
| NBD Domain | <2.0 | <2.0 | <2.0 | <1.5 | Cryo-EM (Active/Inactive) |
| LRR Domain | <2.5 | <2.5 | <2.5 | <2.0 | Cryo-EM (Active/Inactive) |
| Global Architecture | ~6.0 (Inactive) | ~6.0 (Inactive) | ~6.0 (Inactive) | <3.0 (Targeted) | Cryo-EM Multistate |

Table 2: Platform Performance with Multistate Proteins

| Modeling Condition | Global RMSD vs Active | Global RMSD vs Inactive | Ligand Positioning | Domain Interfaces |
|---|---|---|---|---|
| AF2 Default (Full MSA) | >20 Å | ~6 Å | Correct in Wrong Architecture | Accurate for Compact State |
| AF3 with ATP | >20 Å | ~6 Å | Correct in Wrong Architecture | Accurate for Compact State |
| RFAA with ATP | >20 Å | ~6 Å | Correct in Wrong Architecture | Accurate for Compact State |
| AF2 Active-Filtered | <4 Å | >15 Å | Correct in Proper Architecture | Accurate for Active State |

Detailed Experimental Protocols

Protocol 1: Multiplatform Validation for Domain Prediction

Purpose: To validate domain predictions across AI platforms and identify consistent inaccuracies in atypical architectures.

Materials:

- Protein sequences of interest (FASTA format)

- AlphaFold2 local installation

- AlphaFold3 access (via server or local)

- RoseTTAFold All-Atom installation

- Molecular dynamics simulation software (GROMACS, AMBER)

- Validation datasets (Experimental structures if available)

Procedure:

- Sequence Preparation: Curate sequences and generate multiple sequence alignments using standard databases

- Parallel Prediction: Run structural predictions using all three platforms with default parameters

- State-Specific Prediction: Implement filtered workflows for active/inactive states using template restrictions

- Domain Isolation: Extract individual domains from full-length models using domain boundary predictions

- Comparative Analysis: Calculate RMSD values for each domain region against experimental data

- Interface Assessment: Analyze interdomain interfaces using PISA or similar tools

- Validation Integration: Incorporate experimental constraints from cryo-EM, SAXS, or biochemical data
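The Comparative Analysis step needs a per-domain RMSD after optimal superposition. The sketch below uses the Kabsch algorithm on Cα coordinates with NumPy; it assumes model and reference coordinates have already been extracted in the same residue order (the residue matching itself is not shown), and the domain boundaries in the usage example are hypothetical.

```python
import numpy as np


def kabsch_rmsd(P, Q):
    """RMSD between two N x 3 coordinate sets after optimal rigid superposition of P onto Q."""
    P = np.asarray(P, dtype=float)
    Q = np.asarray(Q, dtype=float)
    if P.shape != Q.shape or P.ndim != 2 or P.shape[1] != 3:
        raise ValueError("P and Q must both be N x 3 arrays")
    P_c = P - P.mean(axis=0)  # remove translation
    Q_c = Q - Q.mean(axis=0)
    H = P_c.T @ Q_c  # 3 x 3 covariance matrix
    U, _, Vt = np.linalg.svd(H)
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0  # avoid improper rotations
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T  # rotation taking P_c onto Q_c
    diff = P_c @ R.T - Q_c
    return float(np.sqrt((diff ** 2).sum() / len(P)))


def per_domain_rmsd(model, ref, domains):
    """domains: {name: (start, end)}, 1-based inclusive indices into both coordinate arrays."""
    model, ref = np.asarray(model), np.asarray(ref)
    return {name: kabsch_rmsd(model[s - 1:e], ref[s - 1:e]) for name, (s, e) in domains.items()}
```

Superposing each domain independently is what separates a correct local fold (low NBD or LRR RMSD) from a wrong global arrangement, the pattern reported in Table 1.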

Troubleshooting: When CC domain RMSD exceeds 8Å, implement template-free modeling or integrate experimental constraints from cross-linking mass spectrometry.

Protocol 2: DaapNLRSeek Pipeline for Complex Genomes

Purpose: To accurately identify and annotate NLR genes in polyploid genomes with atypical architectures. [6]

Materials:

- Assembled polyploid genome sequences

- Reference NLR domain databases (Pfam, InterPro)

- High-performance computing cluster

- Python environment with Biopython, BLAST+

- Visualization tools (Circos, ggplot2)

Procedure:

- Genome Preprocessing: Annotate genomes using diploid reference-guided approaches

- Domain Scanning: Identify NBS, LRR, TIR, and CC domains using HMMER and InterProScan

- Gene Assembly: Reconstruct full-length NLR genes from domain fragments

- Collinearity Analysis: Identify syntenic regions and gene expansions

- Architecture Classification: Categorize NLRs by domain organization and integrated domains

- Evolutionary Analysis: Determine expansion timing relative to polyploidization events

- Functional Validation: Test candidate NLRs through transient expression in Nicotiana benthamiana

Validation: Confirm immune response activation through cell death assays and reporter gene expression.
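For the Architecture Classification step, ordered domain hits can be collapsed into an architecture string with integrated domains flagged. This is a minimal sketch of the idea, not DaapNLRSeek's implementation; it assumes hits are (label, start, end) tuples and treats anything outside an assumed canonical set (TIR, CC, RPW8, NBS, LRR) as an integrated domain.

```python
# Assumed canonical NLR domain labels; everything else is reported as integrated.
CANONICAL = {"TIR", "CC", "RPW8", "NBS", "LRR"}


def summarize_architecture(hits):
    """hits: iterable of (domain_label, start, end).

    Returns (architecture_string, integrated_domains), collapsing adjacent
    repeats of the same label (e.g. many individual LRR units) into one.
    """
    arch = []
    for label, _start, _end in sorted(hits, key=lambda h: h[1]):
        if not arch or arch[-1] != label:
            arch.append(label)
    integrated = [label for label in arch if label not in CANONICAL]
    return "-".join(arch), integrated


hits = [("LRR", 600, 620), ("NBS", 160, 400), ("CC", 1, 120), ("LRR", 630, 650), ("WRKY", 700, 760)]
print(summarize_architecture(hits))
```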

Research Reagent Solutions

Table 3: Essential Research Reagents for Domain Variation Studies

| Reagent/Resource | Function | Application Examples | Key Features |
|---|---|---|---|
| InterPro Database | Protein family classification | Domain annotation and functional prediction | Integrates 12 member databases; 85,000 protein families [7] |
| AlphaFold2/3 | Deep learning structure prediction | Multidomain protein modeling | High accuracy for well-folded domains; MSA integration [3] |
| RoseTTAFold All-Atom | Deep learning structure prediction | Multidomain protein modeling | All-atom modeling capability; ligand handling [3] |
| DaapNLRSeek Pipeline | NLR gene annotation | Complex genome analysis | Diploidy-assisted polyploid annotation; NLR architecture classification [6] |
| InterProScan | Domain recognition | User sequence annotation | Processes 40M+ searches annually; weekly UniProtKB updates [7] |
| Molecular Dynamics Software | Structure validation | Model refinement and stability testing | Energy optimization; RMSD monitoring during simulations [3] |

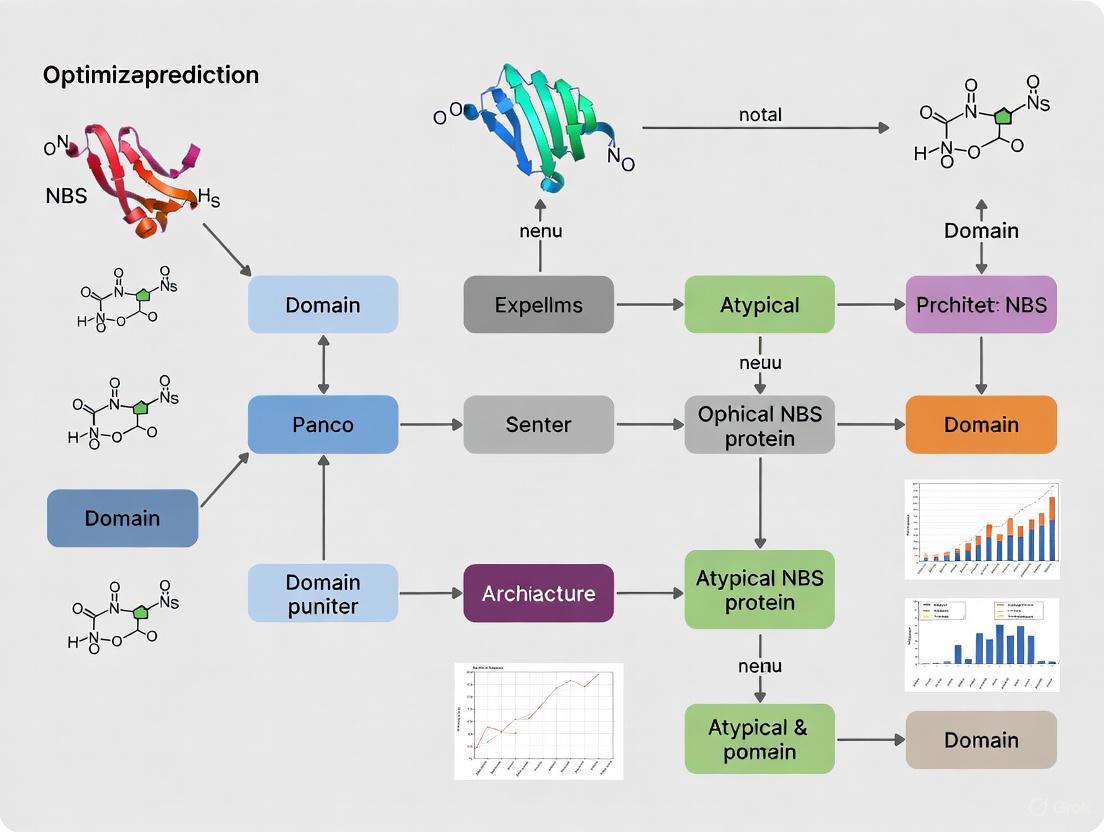

Experimental Workflow Visualization

Multidomain Protein Validation Workflow

DaapNLRSeek Annotation Pipeline

Technical Support Center

Troubleshooting Guide: Common Experimental Challenges

Issue 1: Low Confidence in Predicting Interactions for Atypical Domains

- Problem: Standard domain prediction tools fail to identify divergent motifs in atypical NBS or CheW-like domains.

- Solution: Use profile hidden Markov model (pHMM)-based methods such as interaction profile HMMs (ipHMMs), which explicitly model interacting residues. For transmembrane prediction in receptor proteins, use DeepTMHMM rather than the older TMHMM-2.0 [8] [9].

- Preventative Step: Always perform manual domain architecture verification against reference databases like Pfam when working with non-canonical proteins [10].

Issue 2: Difficulty Distinguishing Between Helper and Sensor NLRs

- Problem: In NRC networks, functional specialization is not always clear from sequence data alone.

- Solution: Conduct phylogenetic analysis. Helper NLRs (NRCs) tend to fall into conserved subclades (for example, NRC0), whereas sensor NLRs are more diverse and may show signatures of diversifying selection [11] [12].

- Verification: Perform functional assays (e.g., transient expression in Nicotiana benthamiana) to test for cell death initiation capability, a hallmark of helper NLR function [12].

Issue 3: Differentiating Atypical NBS Domains from Non-Functional Pseudogenes

- Problem: Proteins like the rice Pb1 gene encode an atypical CC-NBS-LRR protein with a degenerated P-loop, raising questions about functionality [13].

- Solution: Analyze expression patterns and genomic context. Functional atypical genes like Pb1 show characteristic expression profiles (e.g., increasing during development) and are generated through specific evolutionary events like local genome duplication [13].

Frequently Asked Questions (FAQs)

Q1: What is the key functional distinction between a generic two-component system and a chemotaxis system? A1: In a chemotaxis system, the sensor and kinase functions are physically separated into distinct proteins: methyl-accepting chemotaxis proteins (MCPs) and the kinase CheA. This separation allows CheA to integrate signals from multiple chemoreceptors, a process facilitated by CheW scaffold proteins [14].

Q2: How are NRC immune receptor networks genetically organized? A2: NRCs form a complex genetic network where multiple sensor NLRs detect pathogen effectors and signal through a partially redundant set of helper NLRs. This network shows diversified hierarchical architecture across plant lineages, with significant expansion in lamiids [11] [12].

Q3: What are the major classes of CheW-like domains and their likely specializations? A3: Analysis of ~1900 prokaryotic species revealed six classes, including the following [14]:

- Class 1: The most abundant (~80%), found in CheW and CheV scaffold proteins.

- Class 2: Rare (~1%), with properties of both CheA and CheW-lineage proteins.

- Class 3 & 4: Primarily in CheA-lineage kinases.

- Class 6: Comprises ~20% of CheW-lineage proteins, potentially interacting with distinct receptor structures.

Q4: Why might my genome assembly lack TNL-type NBS-LRR genes? A4: This reflects a known phylogenetic pattern: TNL genes are absent from monocot genomes, including yam (Dioscorea rotundata) and the grasses. Your observation is consistent with evolutionary history and is not necessarily an assembly error [15].

Data Presentation: Key Classifications and Architectures

Table 1: Classification of CheW-like Domains and Their Properties [14]

| Class | Prevalence | Primary Protein Architecture | Likely Functional Specialization |
|---|---|---|---|
| Class 1 | ~80% | CheW, CheV | Standard scaffold function in MCP•CheW•CheA arrays |
| Class 2 | ~1% | CheW.I | Hybrid properties; often co-occurs with MAC proteins |
| Class 3/4 | Majority of CheA | CheA (Various) | Histidine kinase function; signal integration |
| Class 6 | ~20% of CheW-lineage | CheW | May interact with different chemoreceptor structures |

Table 2: Categories of NLR Immune Receptors and Their Functions [11] [12] [15]

| Category | Mode of Action | Key Domains | Example | Function |
|---|---|---|---|---|
| Singleton | Acts independently | CC-NBS-LRR or TIR-NBS-LRR | ZAR1, Sr35 | Directly or indirectly senses effectors and initiates immunity |
| Pair | Sensor-Helper Pair | Integrated Domain (ID) in Sensor | RRS1/RPS4, Pik-1/Pik-2 | Sensor detects pathogen, helper transduces signal |
| Network | Multiple Sensors to Helpers | CC-NBS-LRR (CCRx-type) | NRC Network | Multiple sensor NLRs signal through redundant helper NRCs |

Experimental Protocols

Protocol 1: Identifying and Classifying NBS-LRR Genes from a Genome

- Sequence Identification: Use HMMER or similar tools with HMM profiles (e.g., from Pfam) for NBS (NB-ARC), LRR, TIR, CC, and RPW8 domains to scan the proteome [15] [10].

- Classification: Assign genes to subclasses (TNL, CNL, RNL) based on their N-terminal domain architecture. Note that TNLs are absent in monocots [15].

- Architecture Analysis: Categorize genes into groups (e.g., full-length CNL, NL, CN, N) based on domain combinations and identify any integrated domains [15].

- Genomic Distribution: Map genes to chromosomes to identify singleton genes versus those in multigene clusters, which often arise from tandem duplication [15].
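The Genomic Distribution step can be sketched as a simple distance-based grouping of NLR gene coordinates. The cluster definition here is an assumption for illustration: consecutive NLR genes on the same chromosome separated by at most 200 kb are chained into one cluster. Published studies often add further criteria (for example, a limit on intervening non-NLR genes), so adapt the rule to the definition you report.

```python
from collections import defaultdict


def _flush(group, clusters, singletons):
    """Store a finished group as a cluster (2+ genes) or a singleton."""
    if len(group) > 1:
        clusters.append(group)
    elif group:
        singletons.append(group[0])


def nlr_clusters(genes, max_gap=200_000):
    """genes: list of (gene_id, chromosome, start, end) in bp.

    Returns (clusters, singletons): clusters is a list of gene-id lists.
    """
    by_chrom = defaultdict(list)
    for gene_id, chrom, start, end in genes:
        by_chrom[chrom].append((start, end, gene_id))
    clusters, singletons = [], []
    for chrom in sorted(by_chrom):
        group, group_end = [], 0
        for start, end, gene_id in sorted(by_chrom[chrom]):
            if group and start - group_end > max_gap:
                _flush(group, clusters, singletons)
                group = []
            group_end = max(group_end, end) if group else end
            group.append(gene_id)
        _flush(group, clusters, singletons)
    return clusters, singletons


def clustered_fraction(genes, **kwargs):
    """Fraction of NLR genes that fall in multigene clusters."""
    clusters, _ = nlr_clusters(genes, **kwargs)
    return sum(len(c) for c in clusters) / len(genes)
```

A figure such as "54% of NBS-LRR genes are clustered" depends directly on the chosen `max_gap`, so the threshold should be stated alongside any reported percentage.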

Protocol 2: Phylogenomic Analysis of NRC Helper NLRs

- Data Collection: Identify NLR genes from genomes of target plant lineages (e.g., asterids, Caryophyllales) using domain-based searches [12].

- Sequence Alignment: Perform multiple sequence alignment of the identified NLR proteins, focusing on conserved domains.

- Tree Construction: Build a phylogenetic tree (e.g., using Maximum Likelihood or Bayesian methods) to reconstruct evolutionary relationships.

- Clade Identification: Identify and define subclades within the NRC superclade, such as the conserved NRC0 and family-specific NRCs in lamiids [12].

- Selection Analysis: Test for signatures of positive (diversifying) selection, particularly in the LRR domains of sensor NLRs and family-specific helper NLRs [11].
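Before running maximum likelihood or Bayesian inference in dedicated software (e.g., IQ-TREE, RAxML, or MrBayes), a pairwise p-distance matrix from the alignment is a cheap sanity check that putative helper sequences group tightly and sensors spread out. This sketch is a swapped-in exploratory technique, not part of the cited protocol; it ignores columns where either sequence has a gap, and the sequence names in the usage example are hypothetical.

```python
from itertools import combinations


def p_distance(a, b, gap="-"):
    """Proportion of differing sites between two aligned sequences, skipping gapped columns."""
    if len(a) != len(b):
        raise ValueError("sequences must be aligned to equal length")
    compared = diffs = 0
    for x, y in zip(a.upper(), b.upper()):
        if x == gap or y == gap:
            continue
        compared += 1
        diffs += x != y
    return diffs / compared if compared else float("nan")


def distance_matrix(alignment):
    """alignment: {name: aligned_sequence}. Returns (sorted names, square nested-list matrix)."""
    names = sorted(alignment)
    n = len(names)
    mat = [[0.0] * n for _ in range(n)]
    for i, j in combinations(range(n), 2):
        mat[i][j] = mat[j][i] = p_distance(alignment[names[i]], alignment[names[j]])
    return names, mat


names, mat = distance_matrix({"NRC0_a": "MKVL-AG", "NRC0_b": "MKVLTAG", "sensor": "MRIL-SG"})
```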

Protocol 3: Feature-Based Prediction of Domain-Domain Interaction (DDI)

Model Training:

- Build interaction profile Hidden Markov Models (ipHMMs) for domain families using 3D structural data from databases like 3DID, which annotate interacting residues [8].

- Align protein sequences to the ipHMMs and calculate feature vectors (Fisher scores) for each sequence [8].

- Apply feature selection or dimensionality reduction (e.g., singular value decomposition, SVD) to the feature vectors [8].

- Train a Support Vector Machine (SVM) classifier using concatenated feature vectors from known interacting and non-interacting domain pairs [8].

Prediction:

- For a novel protein pair, generate feature vectors via alignment to the relevant ipHMMs.

- Input the concatenated, feature-selected vector into the trained SVM to predict interaction potential [8].
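The training and prediction steps can be sketched with NumPy once per-domain Fisher-score vectors exist. Computing Fisher scores from ipHMM alignments is not shown; they are taken as given arrays. The SVM here is a swapped-in Pegasos-style linear SVM trained by stochastic subgradient descent (the bias is appended as a regularized feature for simplicity), not the cited authors' implementation, and the regularization and epoch values are assumptions.

```python
import numpy as np


def pair_features(fisher_a, fisher_b):
    """Concatenate the Fisher-score vectors of the two domains in a candidate pair."""
    return np.concatenate([fisher_a, fisher_b])


def fit_svd_projection(X, k):
    """Return the feature mean and top-k right singular vectors for projecting vectors."""
    mu = X.mean(axis=0)
    _, _, Vt = np.linalg.svd(X - mu, full_matrices=False)
    return mu, Vt[:k].T


def train_linear_svm(X, y, lam=0.01, epochs=200, seed=0):
    """Pegasos-style linear SVM; labels y must be -1 or +1."""
    rng = np.random.default_rng(seed)
    Xb = np.hstack([X, np.ones((len(X), 1))])  # append a constant bias feature
    w = np.zeros(Xb.shape[1])
    t = 0
    for _ in range(epochs):
        for i in rng.permutation(len(Xb)):
            t += 1
            eta = 1.0 / (lam * t)
            margin = y[i] * (Xb[i] @ w)  # margin under the current weights
            w *= 1 - eta * lam           # regularization shrink
            if margin < 1:
                w += eta * y[i] * Xb[i]  # hinge-loss subgradient step
    return w


def predict(w, X):
    """Return +1 (predicted interacting) or -1 for each row of X."""
    Xb = np.hstack([X, np.ones((len(X), 1))])
    return np.where(Xb @ w >= 0, 1, -1)


# Usage: Z = (X - mu) @ V projects new concatenated pair vectors before predict(w, Z).
```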

Pathway and Workflow Visualizations

Figures not reproduced: Chemotaxis Signaling Core Pathway [14]; Atypical Protein Analysis Workflow [8] [10]; NRC Immune Receptor Network Logic [12].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Resources

| Item | Function/Application | Example/Reference |
|---|---|---|
| ipHMM (interaction profile HMM) | Enhanced domain identification that models interacting residues for improved DDI prediction [8]. | Custom-built from 3DID structural data [8]. |
| Pfam Database | Core repository of protein family HMMs for standard domain identification and classification [14] [10]. | PF01584 (CheW-like), PF00931 (NBS) [14]. |
| DeepTMHMM | Prediction of transmembrane helices in proteins; critical for analyzing membrane-associated receptors like MCPs [9]. | https://services.healthtech.dtu.dk/service.php?DeepTMHMM [9]. |
| 3DID Database | Source of interacting protein pairs with known 3D structures for training predictive models like ipHMM-SVM [8]. | https://3did.irbbarcelona.org/ [8]. |
| Nicotiana benthamiana | Model plant for transient expression assays to test NLR function (e.g., cell death) and network interactions [11] [12]. | Used for validating NRC helper and sensor functions [12]. |
| Support Vector Machine (SVM) | A discriminative classifier that can be trained on features from ipHMMs to predict domain-domain interactions [8]. | Trained on Fisher score vectors from known interactions [8]. |

The Impact of Atypical Architectures on Protein Function and Drug Targeting

Frequently Asked Questions (FAQs)

FAQ 1: Why do standard domain prediction tools often fail with atypical NBS protein architectures, and how can I improve accuracy? Standard tools primarily rely on sequence homology to canonical domains. Atypical architectures may have low sequence similarity, divergent functions, or novel domain combinations that escape detection. To improve accuracy, use an integrated prediction pipeline: combine multiple profile-based tools (e.g., FFAS, SUPERFAMILY) with deep learning-based structure predictors (e.g., AlphaFold2, ESMFold) [16] [17]. Clustering the results from these different methods can generate a consensus prediction that is more robust to the weaknesses of any single algorithm [16].
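One simple way to combine several predictors is per-residue voting: each tool votes once for every residue it places inside a domain, and consensus segments are kept where enough tools agree. This is a minimal sketch of a swapped-in voting scheme, not the clustering used by meta-BASIC; the vote threshold, minimum segment length, and tool labels are assumptions.

```python
def consensus_domains(predictions, seq_len, min_votes=2, min_len=30):
    """Per-residue voting across domain predictors.

    predictions: {tool_name: [(start, end), ...]}, 1-based inclusive boundaries.
    Returns [(start, end, max_votes)] for segments where at least min_votes tools
    place a domain, keeping only segments of at least min_len residues.
    """
    votes = [0] * (seq_len + 1)  # index 0 unused so positions stay 1-based
    for intervals in predictions.values():
        covered = set()
        for start, end in intervals:
            covered.update(range(max(1, start), min(seq_len, end) + 1))
        for pos in covered:
            votes[pos] += 1  # one vote per tool per residue, even if its intervals overlap
    segments, start = [], None
    for pos in range(1, seq_len + 2):  # one extra step closes a segment at the sequence end
        ok = pos <= seq_len and votes[pos] >= min_votes
        if ok and start is None:
            start = pos
        elif not ok and start is not None:
            if pos - start >= min_len:
                segments.append((start, pos - 1, max(votes[start:pos])))
            start = None
    return segments


preds = {"FFAS": [(10, 150)], "SUPERFAMILY": [(20, 160), (200, 280)], "AF2_domains": [(15, 155), (210, 290)]}
print(consensus_domains(preds, seq_len=300))
```

Segments supported by only one method (here residues 200 to 209) drop out, which is the intended robustness to the weaknesses of any single algorithm.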

FAQ 2: What experimental validation is essential after a computational prediction of an atypical domain? Computational predictions are hypotheses that require experimental confirmation. Key validations include:

- Site-Directed Mutagenesis: Introduce targeted mutations to the predicted functional or binding residues. Ablation of activity confirms functional importance [18] [19].

- Biophysical Binding Assays: Use Surface Plasmon Resonance (SPR) or Isothermal Titration Calorimetry (ITC) to quantify interactions between the purified protein and its predicted ligand or target [20].

- Cell-Based Functional Assays: Assess the impact of the mutation or modulation on relevant cellular pathways, such as cancer cell migration in the case of LIM-kinase inhibitors [20].

FAQ 3: How can I assess the 'druggability' of a protein with a non-canonical architecture? Druggability depends on more than just the primary sequence. A holistic assessment should integrate:

- Structure-Based Analysis: Use tools like Fpocket on an AlphaFold2-predicted structure to identify potential binding cavities and characterize their properties (e.g., hydrophobicity, volume) [21].

- Network and Functional Context: Evaluate the protein's position in protein-protein interaction networks and its biological functions to understand potential efficacy and off-target effects [21].

- Machine Learning Predictors: Employ interpretable models like PINNED, which generate druggability sub-scores based on sequence, structure, localization, and network information [21].

FAQ 4: Can protein structure prediction tools like AlphaFold2 reliably model atypical architectures for drug discovery? AlphaFold2 has revolutionized structure prediction, but caution is advised. While it can generate accurate backbone structures, it may have difficulty with inherently disordered regions and cannot model allostery or the effects of specific ligands on conformation [18] [22]. Always check the per-residue confidence score (pLDDT); regions with low confidence (pLDDT < 70) may be unreliable for docking studies [22]. For critical applications, use the predicted structures as a starting point for further refinement with molecular dynamics simulations [19].
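AlphaFold writes per-residue pLDDT into the B-factor column of its PDB output, so low-confidence stretches can be listed before docking. This sketch reads the fixed-width ATOM records (Cα atoms only) and reports contiguous runs below the pLDDT 70 cutoff mentioned above; mmCIF output (as produced by AlphaFold3) would need a different parser.

```python
def plddt_per_residue(pdb_lines, chain=None):
    """Read per-residue pLDDT from the B-factor column of CA atoms in an AlphaFold PDB file."""
    scores = {}
    for line in pdb_lines:
        if not line.startswith("ATOM") or line[12:16].strip() != "CA":
            continue
        if chain is not None and line[21] != chain:
            continue
        scores[int(line[22:26])] = float(line[60:66])  # resSeq -> B-factor (pLDDT)
    return scores


def low_confidence_regions(scores, cutoff=70.0, min_len=1):
    """Return contiguous (start, end) residue ranges with pLDDT below cutoff."""
    regions, start, prev = [], None, None
    for res in sorted(scores):
        low = scores[res] < cutoff
        if low and start is not None and res == prev + 1:
            prev = res  # extend the current low-confidence run
            continue
        if start is not None:  # close the open run
            if prev - start + 1 >= min_len:
                regions.append((start, prev))
            start = None
        if low:
            start = prev = res  # open a new run
    if start is not None and prev - start + 1 >= min_len:
        regions.append((start, prev))
    return regions


# Usage: with open("model.pdb") as fh: print(low_confidence_regions(plddt_per_residue(fh)))
```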

Troubleshooting Guides

Problem: Inconsistent Domain Predictions for a Single Atypical Protein Sequence

This issue arises when different algorithms yield conflicting domain annotations.

Table: Troubleshooting Inconsistent Domain Predictions

| Problem Root Cause | Diagnostic Steps | Solution & Recommended Action |
|---|---|---|
| Weak sequence homology to known domain profiles [16]. | Run the sequence through a meta-predictor that clusters results from multiple servers (e.g., meta-BASIC) [16]. Check if any prediction is consistently present across a cluster of models. | Move from sequence-based to structure-based inference. Generate a 3D model with AlphaFold2 and use fold-comparison tools (e.g., Foldseek) to identify structural homologs, which are more conserved than sequence [19] [17]. |
| Novel domain combination not present in training databases. | Manually inspect the multiple sequence alignment used by the predictor. Look for conserved regions that are not fully captured by a single known domain. | Perform functional mapping. Statistically map compound interactions or functional traits to specific protein regions, as in the DRUIDom method, to identify potential functional domains de novo [20]. |

Problem: Low Confidence (pLDDT) in Predicted Protein Structure Regions

AlphaFold2 outputs a per-residue confidence score; low scores indicate unreliable regions.

Table: Troubleshooting Low Confidence in Predicted Structures

| Problem Root Cause | Diagnostic Steps | Solution & Recommended Action |
|---|---|---|
| Intrinsically Disordered Region (IDR) that lacks a fixed structure [22]. | Check the pLDDT scores. IDRs typically have very low scores (pLDDT < 50). Use dedicated disorder predictors (e.g., IUPred2A) for confirmation. | Focus experimental efforts on high-confidence structured domains. For IDRs, investigate function through biochemical assays that do not require a fixed structure. |
| Lack of evolutionary constraints or sparse homologous sequences in databases [22]. | Examine the depth and diversity of the Multiple Sequence Alignment (MSA) used by AlphaFold2. A shallow MSA often leads to poor confidence. | Use homology modeling with a highly confident, structurally similar template (if one can be found) to model the specific domain of interest [19]. |
| Sensitivity to the cellular environment (e.g., allostery, partner binding) not captured in silico. | Compare the predicted structure with any existing experimental data (e.g., mutagenesis, cross-linking). | Employ Molecular Dynamics (MD) simulations to assess the dynamic stability of the predicted model and explore conformational flexibility [19]. |
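To examine MSA depth and diversity, raw sequence counts can be misleading because near-identical homologs inflate them. A common diversity measure is the effective number of sequences (Neff), where each sequence is down-weighted by how many sequences share at least 80% identity with it. This sketch assumes an A3M file (lowercase letters are insertion states and are dropped); the 80% threshold is a conventional choice rather than something the cited sources specify, and the O(N²) loop is only suitable for modest alignments.

```python
def read_a3m(text):
    """Parse A3M text into aligned sequences, dropping lowercase insertion states."""
    seqs, current = [], []
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        if line.startswith(">"):
            if current:
                seqs.append("".join(current))
            current = []
        else:
            current.append("".join(c for c in line if not c.islower() and c != "."))
    if current:
        seqs.append("".join(current))
    return seqs


def identity(a, b):
    """Fraction of identical residues over columns where neither sequence has a gap."""
    pairs = [(x, y) for x, y in zip(a, b) if x != "-" and y != "-"]
    return sum(x == y for x, y in pairs) / len(pairs) if pairs else 0.0


def neff(seqs, threshold=0.8):
    """Effective number of sequences: each sequence weighted by 1 / (size of its >= threshold neighborhood)."""
    total = 0.0
    for a in seqs:
        n_similar = sum(1 for b in seqs if identity(a, b) >= threshold)
        total += 1.0 / max(1, n_similar)  # max() guards against an all-gap row
    return total
```

An alignment with thousands of sequences but a small Neff is still effectively shallow, which is consistent with the low confidence described in the table.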

Problem: Validated Atypical Domain Fails in Initial Drug Screening

The domain is confirmed but appears "undruggable" in virtual or high-throughput screens.

Table: Troubleshooting Atypical Domains in Drug Screening

| Problem Root Cause | Diagnostic Steps | Solution & Recommended Action |
|---|---|---|
| Flat or shallow binding pocket not amenable to small-molecule binding. | Analyze the predicted structure with Fpocket. Visually inspect the top-ranked pockets for depth and enclosure. | Shift screening strategy. Consider medium-sized molecules (e.g., peptides, macrocycles) or explore PROTAC technology that targets the protein for degradation rather than inhibition. |
| Insufficient functional data for optimal ligand-based screening. | Review the biological context. Is the domain's active site or protein-protein interaction interface well-defined? | Use a domain-centric interaction prediction method like DRUIDom. It maps compounds to domains, and this association can be propagated to other proteins with the same domain, expanding the list of candidate inhibitors [20]. |
| Ligand binding is allosterically controlled and the predicted structure represents an inactive state. | Check literature for evidence of allosteric regulation in similar protein families. | Perform blind docking across the entire protein surface to identify potential cryptic or allosteric sites not obvious from the static structure [19]. |

Experimental Protocols for Validation

Protocol: Domain-Centric Compound-Target Prediction (DRUIDom Method)

This protocol outlines a computational method to map compounds to protein domains, enabling the prediction of new drug targets, particularly for proteins with atypical architectures [20].

1. Principle: Statistically map known bioactive compounds to the structural domains of their target proteins. This association allows any other protein containing the same mapped domain to become a candidate target for that compound.

2. Reagents & Data Sources:

- Bioactivity Data: Curated datasets from ChEMBL and PubChem, filtered for active/interacting and inactive/non-interacting compound-target pairs.

- Protein Domain Annotations: From databases like Pfam or InterPro.

- Compound Libraries: Small molecule compounds (e.g., from PubChem).

- Clustering Software: For grouping compounds based on molecular similarity.

3. Procedure:

- Step 1: Data Curation. Meticulously filter public bioactivity data to create high-confidence training sets of active and inactive compound-target pairs.

- Step 2: Domain-Compound Mapping. For each compound-target pair, statistically map the compound to the specific domain(s) of the target protein. This generates a set of high-confidence compound-domain associations.

- Step 3: Similarity-Based Propagation. Cluster a large set of small molecules based on structural similarity. The domain associations from Step 2 are then propagated to other compounds within the same cluster.

- Step 4: New Interaction Prediction. The final output is a large set of predicted compound-protein interactions, in which proteins are targeted based on shared domain architecture.

4. Experimental Validation (Example):

- Synthesis & Bioactivity Analysis: Synthesize compounds predicted to target a protein of interest (e.g., LIM-kinase).

- Cell Migration Assay: Test the compounds in a functional assay, such as a cancer cell migration assay.

- Western Blot Analysis: Confirm the mechanism of action by analyzing the inhibition of target phosphorylation (e.g., LIMK phosphorylation) and its downstream effects (e.g., cofilin activity) [20].
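The core logic of Steps 2 and 4 can be illustrated with a small sketch. It is deliberately simplified and is not the published DRUIDom scoring:

- It associates a compound with a domain when the compound's active targets carrying that domain reach a minimum count and precision.
- It then proposes the compound for every untested protein that carries the mapped domain.

The thresholds (`min_active`, `min_precision`) are hypothetical. The compound-cluster propagation of Step 3 is omitted.

```python
from collections import defaultdict
from typing import Dict, Iterable, Set, Tuple

def map_compounds_to_domains(
    bioactivity: Iterable[Tuple[str, str, bool]],
    domains: Dict[str, Set[str]],
    min_active: int = 2,
    min_precision: float = 0.7,
) -> Dict[Tuple[str, str], float]:
    """Associate each compound with domains enriched among its active targets.

    Simplified illustration, not the published DRUIDom score:
    precision = active targets carrying the domain / all tested targets carrying it.
    """
    counts = defaultdict(lambda: [0, 0])  # (compound, domain) -> [n_active, n_inactive]
    for compound, protein, is_active in bioactivity:
        for domain in domains.get(protein, ()):
            counts[(compound, domain)][0 if is_active else 1] += 1
    mapping = {}
    for pair, (n_act, n_inact) in counts.items():
        precision = n_act / (n_act + n_inact)
        if n_act >= min_active and precision >= min_precision:
            mapping[pair] = precision
    return mapping

def propagate_to_proteins(mapping, domains, tested):
    """Predict untested compound-protein pairs for every protein sharing a mapped domain."""
    predictions = set()
    for compound, domain in mapping:
        for protein, protein_domains in domains.items():
            if domain in protein_domains and (compound, protein) not in tested:
                predictions.add((compound, protein))
    return predictions

# Toy example with hypothetical proteins (PF00069 = protein kinase, PF00412 = LIM domain)
domains = {"P1": {"PF00069"}, "P2": {"PF00069", "PF00412"}, "P3": {"PF00069"}, "P4": {"PF00412"}}
records = [("C1", "P1", True), ("C1", "P2", True), ("C1", "P4", False)]
mapping = map_compounds_to_domains(records, domains)
tested = {(c, p) for c, p, _ in records}
predicted = propagate_to_proteins(mapping, domains, tested)
```

Here C1 maps only to the kinase domain, because its single LIM-domain association is too weak. The only new prediction is therefore the untested kinase-domain protein P3.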

Protocol: Multi-scale Validation of Atypical Domain Function

This protocol provides a framework for validating the function of a predicted atypical domain from computational prediction to cellular phenotype.

1. In Silico Validation of Generated Structures:

- Geometric Plausibility: Analyze generated backbone structures using Ramachandran plots to ensure dihedral angles (φ and ψ) fall within allowed and favored regions [19].

- Conserved Residue Consistency: Align the sequence of the generated structure with experimentally derived sequences. Use tools like ConSurf to identify and confirm that evolutionarily conserved, functionally critical residues are preserved in the model [19].

- Dynamic Stability with MD: Perform Molecular Dynamics (MD) simulations under physiological conditions (e.g., solvated in a water box with ions, 310 K, 1 atm) for at least 10 ns. Analyze the root-mean-square deviation (RMSD) to ensure the structure remains stable over time [19].

2. Functional Ligand Binding Validation:

- Blind Docking: Use docking software (e.g., AutoDock Vina) to scan the entire surface of the generated protein structure without pre-defining a binding site. This helps identify potential binding pockets that may be atypical [19].

- Binding Affinity Measurement: Experimentally quantify the interaction using biophysical techniques like Surface Plasmon Resonance (SPR) or Isothermal Titration Calorimetry (ITC) with the purified protein and its putative ligand.
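The geometric and stability checks in step 1 can be prototyped with NumPy. The sketch below assumes that backbone N, CA and C coordinates (and per-frame CA coordinates for the MD check) have already been extracted as arrays; file parsing is left to your structure library. It computes backbone phi/psi angles for a Ramachandran plot and a Kabsch-superposed RMSD, the quantity usually tracked along an MD trajectory.

```python
import numpy as np

def dihedral(p0, p1, p2, p3):
    """Signed dihedral angle in degrees (IUPAC sign convention) for four points."""
    b0 = p0 - p1
    b1 = (p2 - p1) / np.linalg.norm(p2 - p1)
    b2 = p3 - p2
    v = b0 - np.dot(b0, b1) * b1  # b0 projected perpendicular to the central bond
    w = b2 - np.dot(b2, b1) * b1  # b2 projected perpendicular to the central bond
    x = np.dot(v, w)
    y = np.dot(np.cross(b1, v), w)
    return float(np.degrees(np.arctan2(y, x)))

def phi_psi(N, CA, C):
    """Backbone phi/psi per residue from (L x 3) arrays; undefined termini are NaN."""
    L = len(CA)
    phi = np.full(L, np.nan)
    psi = np.full(L, np.nan)
    for i in range(L):
        if i > 0:
            phi[i] = dihedral(C[i - 1], N[i], CA[i], C[i])
        if i < L - 1:
            psi[i] = dihedral(N[i], CA[i], C[i], N[i + 1])
    return phi, psi

def kabsch_rmsd(P, Q):
    """RMSD between (N x 3) coordinate sets after optimal rigid superposition of P onto Q."""
    P = P - P.mean(axis=0)
    Q = Q - Q.mean(axis=0)
    U, _, Vt = np.linalg.svd(P.T @ Q)
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0  # avoid improper rotations
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    diff = P @ R.T - Q
    return float(np.sqrt(np.mean(np.sum(diff ** 2, axis=1))))

def rmsd_series(frames, reference):
    """Kabsch RMSD of each trajectory frame against a reference (e.g. the predicted model)."""
    return [kabsch_rmsd(frame, reference) for frame in frames]
```

An RMSD series that rises and then plateaus usually indicates relaxation into a stable state. A steady climb suggests the model is drifting away from the predicted fold.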

Diagram 1: Multi-scale domain validation workflow.

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Resources for Atypical Architecture Research

| Research Reagent / Tool | Function / Application | Example Tools / Sources |

|---|---|---|

| Meta-Prediction Servers | Clusters results from multiple prediction algorithms to generate a more reliable consensus, overcoming individual tool weaknesses. | mGenTHREADER, meta-BASIC [16] |

| AI Structure Predictors | Generates 3D protein structure models from amino acid sequence, crucial for visualizing atypical architectures. | AlphaFold2, ESMFold, RoseTTAFold [18] [17] |

| Structural Comparison Tools | Compares protein structures (experimental or predicted) to identify remote homology and classify folds based on 3D shape. | Foldseek [19] |

| Binding Pocket Detectors | Automatically detects and characterizes potential small-molecule binding cavities in protein structures. | Fpocket [21] |

| Domain-Centric DTI Predictors | Predicts drug-target interactions based on protein domain-compound relationships, ideal for novel domain combinations. | DRUIDom [20] |

| Molecular Dynamics Software | Simulates the physical movements of atoms and molecules over time, assessing the dynamic stability of predicted structures. | GROMACS [19] |

| Docking Software | Predicts the preferred orientation and binding affinity of a small molecule (ligand) to a protein target. | AutoDock Vina [19] |

Diagram 2: DRUIDom domain-centric prediction workflow.

Cutting-Edge Computational Methods for Atypical Domain Prediction

Frequently Asked Questions (FAQs)

General Principles

Q1: What is the core advantage of combining deep learning with physics-based simulations for protein structure prediction?

Hybrid approaches leverage the complementary strengths of both paradigms. Deep learning models, particularly AlphaFold2 and RoseTTAFold, excel at extracting evolutionary constraints and patterns from vast sequence databases to generate highly accurate static structures [23] [24]. Physics-based simulations, such as molecular dynamics, model the physical forces and temporal dynamics that govern protein movement and interactions [25]. Integrating them allows researchers to start with a high-confidence deep learning-predicted structure and then refine it or study its dynamics using physics-based methods, achieving both accuracy and mechanistic insight [25] [26].

Q2: For an atypical NBS-LRR protein architecture, which strategy is recommended for initial structure prediction: deep learning or homology modeling?

For atypical or novel architectures where homologous templates are scarce, deep learning approaches are strongly recommended for the initial structure prediction [24]. Models like AlphaFold2, which rely on multiple sequence alignments (MSAs), can often succeed where traditional homology modeling fails due to a lack of close templates [23] [24]. The deep learning model provides a foundational structure, which can then be validated and refined using physics-based methods.

Technical and Computational Challenges

Q3: My deep learning-predicted NBS model shows a poorly structured loop region. How can I refine this specific domain?

This is a common challenge for flexible loops; it is well documented, for example, for the complementarity-determining regions (CDRs) of antibodies and nanobodies. A recommended protocol is:

- Use the deep learning output as a starting point: Extract the initial coordinates from the AlphaFold2 or RoseTTAFold prediction.

- Set up a restrained molecular dynamics (MD) simulation: Apply positional restraints to the well-structured parts of the protein to keep them stable.

- Run an accelerated MD simulation focused on the flexible loop region to enhance conformational sampling, letting the loop explore low-energy conformations under a physics-based force field [25].

- Analyze the resulting trajectories to identify the most stable conformations of the refined loop.
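One practical way to decide which residues to restrain is to use the model's own confidence. AlphaFold writes per-residue pLDDT into the B-factor column of its PDB output. The sketch below reads that column for CA atoms and splits residues into a restrained set and a flexible set. The cutoff of 70 is an assumption (AlphaFold treats pLDDT 70 to 90 as "confident"), and the function names are hypothetical.

```python
from typing import List, Tuple

def split_by_plddt(pdb_text: str, threshold: float = 70.0) -> Tuple[List[int], List[int]]:
    """Split residues into (restrained, flexible) lists using CA pLDDT.

    Reads the B-factor field (PDB columns 61-66), where AlphaFold stores pLDDT.
    The threshold is an assumed cutoff, not a fixed rule.
    """
    restrained, flexible = [], []
    for line in pdb_text.splitlines():
        if line.startswith("ATOM") and line[12:16].strip() == "CA":
            resseq = int(line[22:26])
            plddt = float(line[60:66])
            (restrained if plddt >= threshold else flexible).append(resseq)
    return restrained, flexible

def contiguous_segments(residues: List[int]) -> List[Tuple[int, int]]:
    """Collapse residue numbers into (start, end) ranges, e.g. for restraint selections."""
    segments: List[Tuple[int, int]] = []
    for r in sorted(residues):
        if segments and r == segments[-1][1] + 1:
            segments[-1] = (segments[-1][0], r)
        else:
            segments.append((r, r))
    return segments
```

The flexible segments become the targets for enhanced sampling. The restrained list can feed directly into your MD engine's restraint selection.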

Q4: How can I integrate predicted structural data into a systems biology model of an NBS-LRR mediated signaling pathway?

This involves converting structural information into kinetic parameters. A method demonstrated for the BMP pathway can be adapted [26]:

- Predict the structure of your NBS-LRR protein and its potential interactors (e.g., pathogen effectors, host guardees) using a tool like AlphaFold2 or RoseTTAFold.

- Perform protein-protein docking (e.g., with HADDOCK) to model the complex, using evolutionary conservation data to guide the docking.

- Predict the binding affinity (dissociation constant, $K_d$) of the complex from the docked structure using a tool like Prodigy [26].

- Convert the structure-derived binding affinity ($K_{\text{struct}}$) into a mass-action kinetics equilibrium constant ($K_{\text{eq}}$) for use in your systems biology model, thereby constraining the model with physically plausible parameters [26].
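The conversion step rests on standard thermodynamics: $\Delta G = RT \ln K_d$, with $K_d$ in molar units relative to a 1 M standard state. For $A + B \rightleftharpoons AB$, mass-action kinetics gives $K_d = k_{\text{off}}/k_{\text{on}}$ and the association constant $K_a = 1/K_d$. The sketch below applies these relations. It is not necessarily the exact procedure of [26]. The default temperature of 25 °C and the on-rate of $10^6$ M⁻¹ s⁻¹ are assumptions, since $K_d$ fixes only the ratio of the two rate constants.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol*K)

def kd_from_dg(dg_kcal: float, temp_c: float = 25.0) -> float:
    """Dissociation constant (M) from binding free energy in kcal/mol (negative = favourable)."""
    return math.exp(dg_kcal / (R_KCAL * (temp_c + 273.15)))

def dg_from_kd(kd_molar: float, temp_c: float = 25.0) -> float:
    """Binding free energy (kcal/mol) from a dissociation constant in molar units."""
    return R_KCAL * (temp_c + 273.15) * math.log(kd_molar)

def association_constant(kd_molar: float) -> float:
    """K_a = 1 / K_d (M^-1), the equilibrium constant in the binding direction."""
    return 1.0 / kd_molar

def mass_action_rates(kd_molar: float, kon: float = 1e6):
    """Return (kon, koff) consistent with K_d = koff / kon.

    kon (M^-1 s^-1) is an assumed value; only the ratio is constrained by K_d.
    """
    return kon, kon * kd_molar

kd = kd_from_dg(-10.0)              # about 47 nM at 25 degrees C
kon, koff = mass_action_rates(kd)   # koff in s^-1 for the assumed kon
```

Whichever convention your model uses, define $K_{\text{eq}}$ consistently in the binding direction ($K_a$) or the dissociation direction ($K_d$) throughout.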

Q5: What are the common sources of error when applying these hybrid methods to large, multi-domain proteins like NBS-LRRs?

Key challenges include:

- Sampling Limitations: Physics-based simulations may struggle to sample the full conformational landscape of large proteins on biologically relevant timescales.

- Data Scarcity for DL: Atypical architectures may have poor MSAs, reducing deep learning prediction confidence.

- Force Field Inaccuracies: Inaccurate parameterization in physics simulations can lead to drift from the native state.

- Domain-Domain Interactions: Both methods can find it difficult to accurately model the flexible linkers and dynamic interactions between domains like the NBS, LRR, and TIR/CC domains [27].

Troubleshooting Guides

Issue 1: Low Confidence in Deep Learning Prediction for a Specific Domain

Problem: The predicted aligned error (PAE) plot from AlphaFold2 shows low confidence, specifically in the LRR domain of your NBS-LRR protein.

Solution:

- Step 1: Verify the Input. Check the quality and depth of your multiple sequence alignment (MSA). A shallow MSA is a common cause of poor predictions. Try using a larger sequence database or different MSA generation tools.

- Step 2: Leverage Hybrid Sampling. Use the deep learning prediction as a starting structure for molecular dynamics simulations. The physics-based force field can help refine the low-confidence region.

- Step 3: Experimental Validation. If possible, use any existing experimental data (e.g., from mutagenesis studies showing critical residues for pathogen detection [27]) as distance restraints in a subsequent physics-based simulation to guide the structure towards a biologically plausible conformation.
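For Step 1, a common way to quantify MSA depth is the effective number of sequences (Neff). Each sequence is down-weighted by the number of near-identical sequences, so redundant hits do not inflate the apparent depth. The sketch below assumes an aligned FASTA-style MSA (equal-length strings, "-" for gaps) and an 80% identity cutoff. Tools differ in the cutoff and in how they count gapped columns, so treat the absolute value as tool-specific.

```python
import numpy as np

def neff(msa, identity_threshold=0.8):
    """Effective number of sequences in an aligned MSA.

    Each sequence gets weight 1 / (number of sequences, itself included, with
    identity >= identity_threshold). Identity is measured over columns where
    both sequences have a residue; this is one convention among several.
    """
    if len({len(s) for s in msa}) != 1:
        raise ValueError("all aligned sequences must have the same length")
    arr = np.array([list(s.upper()) for s in msa])
    nongap = arr != "-"
    weights = np.empty(len(msa))
    for i in range(len(msa)):
        both = nongap[i] & nongap                 # columns where both sequences have residues
        same = (arr[i] == arr) & both             # identical residues in those columns
        identity = same.sum(axis=1) / np.maximum(both.sum(axis=1), 1)
        weights[i] = 1.0 / max(int(np.sum(identity >= identity_threshold)), 1)
    return float(weights.sum())
```

Computing Neff separately for the columns that cover the low-confidence LRR region shows whether the problem is local alignment depth rather than the alignment as a whole.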

Issue 2: Molecular Dynamics Simulation Diverges from Initial DL Structure

Problem: After a few nanoseconds of MD simulation, the protein backbone RMSD increases dramatically from the deep learning-predicted structure.

Solution:

- Step 1: Check Simulation Stability. Ensure your system was properly solvated and neutralized, and that it was equilibrated correctly before the production run.

- Step 2: Apply Restraints. Use soft harmonic restraints on the protein's alpha-carbon atoms during an initial equilibration phase to gently relax the solvent around the protein without allowing the protein to unfold.

- Step 3: Verify the Force Field. Some force fields are known to be over- or under-stabilizing for certain secondary structure elements. Consider trying a different, more modern protein force field.

- Step 4: Cross-Validate. This divergence could indicate a genuine flexibility not captured in the static DL prediction, or an error in either the prediction or simulation setup. Compare the diverged state with other available information, such as known functional motifs [27].
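In GROMACS, the soft Cα restraints of Step 2 are usually written as a `[ position_restraints ]` block. Each entry lists the atom index, function type 1 (harmonic) and force constants in kJ mol⁻¹ nm⁻². The block is included under `#ifdef POSRES` and activated with `define = -DPOSRES` in the .mdp file. The built-in `gmx genrestr` tool can also generate it. The sketch below builds the block from a .gro file. It assumes that atom order in the file matches the moleculetype numbering, which holds for a single-chain protein written by `gmx pdb2gmx`. The default force constant is only illustrative; for a gentle equilibration, decrease it stepwise.

```python
def ca_posres_itp(gro_text: str, force_constant: float = 1000.0, residues=None) -> str:
    """Build a GROMACS [ position_restraints ] block for C-alpha atoms.

    Atom indices are 1-based positions in the .gro file (assumed to match the
    moleculetype numbering). Optionally restrict to a set of residue numbers,
    e.g. only high-confidence residues.
    """
    lines = gro_text.splitlines()
    natoms = int(lines[1].strip())
    out = ["[ position_restraints ]", "; ai  funct  fcx  fcy  fcz"]
    fc = f"{force_constant:g}"
    for idx, line in enumerate(lines[2:2 + natoms], start=1):
        resnr = int(line[0:5])            # .gro fixed columns: residue number
        atom_name = line[10:15].strip()   # .gro fixed columns: atom name
        if atom_name == "CA" and (residues is None or resnr in residues):
            out.append(f"{idx:6d}     1  {fc}  {fc}  {fc}")
    return "\n".join(out) + "\n"
```

Passing only the well-structured residues (for example, those above a pLDDT cutoff) restrains the confident core while leaving uncertain regions free to move.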

Research Reagent Solutions

The following table details key computational tools and their functions for hybrid deep learning and physics-based research on NBS protein architectures.

| Research Reagent | Type | Primary Function in Workflow |
|---|---|---|
| AlphaFold2 / AlphaFold3 [23] | Deep Learning Model | Predicts 3D protein structures from amino acid sequences with high accuracy; provides initial structural models for refinement. |
| RoseTTAFold [25] [23] | Deep Learning Model | A deep learning-based protein structure prediction tool that integrates sequence, distance, and coordinate information in a three-track architecture. |
| HADDOCK [26] | Docking Software | Performs protein-protein docking, which can be informed by evolutionary data to model complexes (e.g., NBS-LRR with pathogen effectors). |
| Prodigy [26] | Binding Affinity Predictor | Predicts the binding affinity (dissociation constant, Kd) from a pre-docked protein complex structure, useful for parameterizing systems biology models. |
| GROMACS / AMBER | Molecular Dynamics Engine | Performs physics-based molecular dynamics simulations to refine structures, study conformational dynamics, and assess stability. |
| SBASE Domain Collection [28] | Domain Database | A reference database of protein domain sequences; can be used for homology-based checks and functional domain recognition. |

Experimental Workflows and Data Visualization

Workflow for Hybrid Structure Prediction and Refinement

The diagram below outlines a robust protocol for determining a refined, dynamic protein structure.

Pathway for Integrating Structural Data into Systems Biology Models

This diagram illustrates how to connect a predicted protein complex structure to a quantitative systems biology model.

Performance Comparison of Structure Prediction Methods on Nanobodies

Table 1: A comparison of method performance across different nanobody categories, highlighting the complementary strengths of deep learning and physics-based approaches. Adapted from a systematic study on nanobody structure prediction [25].

| Method Category | Specific Method | Concave-type CDR3 (e.g., Nb32) | Loop-type CDR3 (e.g., Nb80) | Convex-type CDR3 (e.g., Nb35) | Key Strength |
|---|---|---|---|---|---|
| Physics-Based | Homology Modeling + MD | Moderate Accuracy | Moderate Accuracy | Lower Accuracy | Models dynamics and flexibility |
| Deep Learning | AlphaFold2 | High Accuracy | High Accuracy | High Accuracy | High accuracy for static structure |
| Deep Learning | RoseTTAFold | High Accuracy | High Accuracy | High Accuracy | Integrated sequence-space reasoning |

Impact of Cohesin and CTCF Perturbations on Chromatin Organization

Table 2: Quantitative assessment from a deep learning analysis (Twins) of Hi-C data, showing the distinct biological impact of cohesin (NIPBL) and CTCF perturbations [29]. This demonstrates how DL can extract meaningful, quantitative features from complex biological data.

| Biological Perturbation | Twins Separation Index | Twins Mean Performance | Biological Interpretation from ChIP-seq Validation |
|---|---|---|---|
| NIPBL Deletion (Cohesin loss) | High (e.g., ~0.70) | High (e.g., ~0.85) | Significant changes in high-density cohesin (RAD21/SMC3) regions (p < 1e-190) |
| CTCF Degradation | High | High | Significant changes in high-density CTCF regions (p < 1e-35), but not in H3K27me3 regions |

The Power of Multiple Sequence Alignments (MSAs) and Advanced Language Models

Troubleshooting Guides

Guide 1: Resolving Poor MSA Accuracy for Distantly Related Proteins

- Problem: My MSA of divergent NBS-domain sequences has many gaps and poor alignment in functionally critical regions.

- Explanation: Progressive alignment methods, common in tools like Clustal Omega, are heuristic and can propagate early errors, especially when sequences are distantly related. This misalignment can obscure conserved residues vital for domain prediction [30].

- Solution:

- Switch Alignment Tool: Use an iterative aligner like MUSCLE or MAFFT, which repeatedly refines the alignment to optimize an objective function and can produce more accurate results for divergent sequences [30] [31].

- Apply Consensus Methods: Run multiple alignments with different algorithms (e.g., Clustal Omega, MAFFT, T-Coffee) and use a consensus tool like MergeAlign to identify reliably aligned regions based on agreement between methods [30].

- Inspect Alignment: Visually inspect the alignment using a viewer that displays conservation scores or sequence logos to verify that known functional motifs are correctly aligned [31].
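The consensus idea can be made concrete by asking, for each column of one alignment, what fraction of its aligned residue pairs another aligner also aligns. Columns with high support are the "reliably aligned" regions. The sketch below is a simplified pairwise-agreement score in that spirit. It is not MergeAlign's algorithm. It assumes both alignments contain the same sequences in the same order. Columns in which no two sequences share a residue receive a support of 0.

```python
from itertools import combinations
from typing import List, Set, Tuple

Pair = Tuple[int, int, int, int]  # (seq_i, residue_i, seq_j, residue_j)

def residue_indices(msa: List[str]) -> List[List[int]]:
    """Per column, the ungapped residue index of each sequence (-1 for a gap)."""
    counters = [0] * len(msa)
    columns = []
    for col in range(len(msa[0])):
        idx = []
        for s, seq in enumerate(msa):
            if seq[col] == "-":
                idx.append(-1)
            else:
                idx.append(counters[s])
                counters[s] += 1
        columns.append(idx)
    return columns

def column_pairs(column: List[int]) -> Set[Pair]:
    """All residue pairs aligned to each other in one column."""
    return {(i, column[i], j, column[j])
            for i, j in combinations(range(len(column)), 2)
            if column[i] >= 0 and column[j] >= 0}

def column_support(msa_a: List[str], msa_b: List[str]) -> List[float]:
    """For each column of msa_a, the fraction of its residue pairs also aligned in msa_b."""
    pairs_b = set().union(*(column_pairs(c) for c in residue_indices(msa_b)))
    support = []
    for col in residue_indices(msa_a):
        pairs = column_pairs(col)
        support.append(len(pairs & pairs_b) / len(pairs) if pairs else 0.0)
    return support
```

Running this for each pair of aligners (e.g., Clustal Omega versus MAFFT) and flagging low-support columns in known NBS motifs gives a quick check before manual inspection.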

Guide 2: Addressing Low Confidence in Protein Language Model Predictions

- Problem: The embeddings from a PLM like ESM for my atypical NBS protein yield low-confidence domain predictions.

- Explanation: PLMs are trained on large-scale sequence databases. Atypical architectures with few homologous sequences in the training data may not be well-represented in the model's latent space.

- Solution:

- Try a Specialized Model: Use a PLM suited to your task. For function prediction, a model such as ProteinBERT, whose pre-training includes a Gene Ontology annotation task alongside language modeling, may capture more function-relevant features; ProtTrans embeddings are another widely used option [32].

- Fine-tune the Model: If you have a sufficient dataset of annotated atypical NBS proteins, fine-tune a general-purpose PLM (e.g., ESM) on this specific data. This adapts the model's representations to your domain of interest [33].

- Combine with MSA Data: Integrate the embeddings from the PLM with traditional evolutionary features derived from an MSA. This hybrid approach leverages both the deep learning model's power and explicit evolutionary information [33] [32].
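A minimal version of this hybrid input concatenates, per residue, the PLM embedding with an MSA-derived profile (amino-acid frequencies at each aligned position of the query). The sketch below assumes three things. First, the per-residue embeddings have already been computed, with any special start/end tokens stripped so that their length equals the query length. Second, the query is the first row of the MSA. Third, a simple frequency profile stands in for richer features such as a PSSM.

```python
import numpy as np

AMINO = "ACDEFGHIKLMNPQRSTVWY"

def msa_profile(msa, query_index=0):
    """Amino-acid frequencies (L x 20) at each non-gap column of the query sequence."""
    query = msa[query_index]
    cols = [c for c, aa in enumerate(query) if aa != "-"]
    profile = np.zeros((len(cols), len(AMINO)))
    for row, c in enumerate(cols):
        for seq in msa:
            aa = seq[c]
            if aa in AMINO:
                profile[row, AMINO.index(aa)] += 1
        total = profile[row].sum()
        if total > 0:
            profile[row] /= total
    return profile

def fuse_features(embeddings, profile):
    """Concatenate per-residue PLM embeddings (L x D) with an MSA profile (L x 20)."""
    if embeddings.shape[0] != profile.shape[0]:
        raise ValueError("embedding and profile lengths differ")
    return np.concatenate([embeddings, profile], axis=1)
```

The fused (L x (D + 20)) matrix can then be fed to a per-residue classifier for domain-boundary prediction.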

Guide 3: Handling Large-Scale MSAs on Limited Hardware

- Problem: Constructing an MSA for thousands of NBS protein sequences is computationally prohibitive on my local server.

- Explanation: Multiple sequence alignment is computationally hard. Exact dynamic-programming alignment scales exponentially with the number of sequences, and even heuristic methods need time and memory that grow quickly with the number and length of sequences [30] [34].

- Solution:

- Use Efficient Algorithms: Employ tools designed for large datasets. MAFFT can align tens of thousands of sequences, and Clustal Omega is capable of aligning over 2,000 sequences efficiently [31].

- Leverage Divide-and-Conquer Strategies: Use software like MAGUS, which divides the sequence set into smaller subsets, aligns them separately, and then merges the results. This strategy significantly reduces the computational burden [34].

- Utilize Cloud Resources: Perform the alignment using cloud computing resources, which can provide the necessary computational power for large-scale analyses [31].

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between a traditional MSA and a Protein Language Model?

- Answer: An MSA is an explicit, human-readable comparison of three or more biological sequences that identifies regions of similarity and difference, which are used to infer evolutionary relationships and functional domains [30]. A Protein Language Model (PLM) is a deep learning model (often based on the Transformer architecture) that is pre-trained with self-supervision on millions of protein sequences. It learns numerical embeddings (dense vector representations) that implicitly capture structural, functional, and evolutionary information without requiring an explicit alignment [33] [32].

FAQ 2: When should I use a progressive versus an iterative MSA method?

- Answer: Use a progressive method (e.g., Clustal Omega) for its speed when working with large numbers of clearly related sequences. However, be aware that it is sensitive to the order of alignment and can propagate early errors [30] [31]. Use an iterative method (e.g., MUSCLE) when aligning more distantly related sequences, as it repeatedly refines the alignment to find a more optimal solution, leading to higher accuracy at the cost of greater computational time [30].

FAQ 3: How can PLMs possibly outperform methods that use explicit evolutionary information from MSAs?

- Answer: PLMs learn statistical patterns from tens of millions of sequences. During pre-training, they develop a deep, contextual understanding of protein sequences that can infer evolutionary constraints, co-evolutionary relationships, and structural principles directly from the primary sequence. This allows them to make predictions for proteins with few or no known homologs, a scenario where traditional MSA-based methods struggle [33] [32].

FAQ 4: For optimizing domain prediction in atypical NBS proteins, should I prioritize MSA or PLM-based approaches?

- Answer: A hybrid approach is often most powerful. Start with a high-quality MSA to identify conserved motifs and structural domains, providing a ground-truthed evolutionary context. Then, use a PLM to generate deep contextual embeddings that can capture subtle, non-local sequence relationships that might be missed in the MSA. Combining these two data types as input to a final machine learning classifier has been shown to significantly improve prediction accuracy for challenging protein families [33].

Experimental Protocols

Protocol 1: Generating a High-Quality MSA for NBS Domain Analysis

- Objective: To create a reliable multiple sequence alignment of NBS-domain proteins for phylogenetic analysis and conserved feature identification.

- Detailed Methodology:

- Sequence Collection: Gather your protein sequences of interest from databases like UniProt.

- Pre-processing: Remove redundant sequences and truncate all sequences to the NBS domain using Pfam domain annotations (e.g., the NB-ARC family, PF00931), so that only homologous regions are aligned.

- Tool Selection: Choose an alignment algorithm based on your data. For a few hundred sequences of moderate divergence, MAFFT is a robust choice due to its progressive-iterative approach [31].

- Execution: Run the alignment with default parameters initially. For sequences with long, poorly conserved terminal or internal regions, consider a MAFFT strategy designed for such cases, such as E-INS-i (mafft --genafpair --maxiterate 1000).

- Visual Inspection: Load the resulting MSA into a viewer (e.g., Geneious). Generate a consensus sequence and a sequence logo to visually identify conserved residues and potential misalignments [31].

- Refinement: Based on inspection, you may need to mask poorly aligned regions or, in rare cases, make minor manual adjustments to correct obvious errors around key functional sites.
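The inspection and refinement steps can be backed by simple per-column statistics: gap fraction, Shannon entropy (low entropy means high conservation) and the majority-rule consensus residue. The sketch below computes these and masks columns that exceed a gap-fraction cutoff. The 0.5 cutoff is an assumption; tune it to your data. Note that this is a plain entropy score, not the scoring used by any particular viewer.

```python
import math
from collections import Counter

def column_stats(msa):
    """Per column: (gap_fraction, Shannon entropy in bits over residues, consensus residue)."""
    stats = []
    for col in zip(*msa):
        residues = [aa for aa in col if aa != "-"]
        gap_fraction = 1 - len(residues) / len(col)
        counts = Counter(residues)
        n = len(residues)
        entropy = -sum((c / n) * math.log2(c / n) for c in counts.values()) if n else 0.0
        consensus = counts.most_common(1)[0][0] if n else "-"
        stats.append((gap_fraction, entropy, consensus))
    return stats

def mask_columns(msa, max_gap_fraction=0.5):
    """Drop columns whose gap fraction exceeds the cutoff (an assumed default)."""
    keep = [i for i, (g, _, _) in enumerate(column_stats(msa)) if g <= max_gap_fraction]
    return ["".join(seq[i] for i in keep) for seq in msa]
```

Checking that the consensus residues in the Walker A (P-loop) and other NBS motifs come out with low entropy is a quick sanity check that these motifs are aligned in register.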

Protocol 2: Fine-Tuning a Protein Language Model for Atypical Domain Prediction

- Objective: To adapt a general-purpose PLM to specifically recognize and predict functional domains in atypical NBS protein architectures.

- Detailed Methodology:

- Model Acquisition: Download a pre-trained PLM such as ESM-1b or ProtTrans [33] [32].

- Dataset Preparation: Curate a high-quality dataset of NBS protein sequences with accurate domain boundary annotations. Split this data into training, validation, and test sets.

- Embedding Extraction: Pass your sequences through the pre-trained model to obtain the initial embeddings.

- Model Architecture: Add a task-specific prediction head (e.g., a multi-layer perceptron) on top of the PLM for classification or regression.

- Fine-tuning: Train the entire model (or just the final layers) on your labeled dataset using an appropriate optimizer. Use the validation set to monitor for overfitting.

- Validation: Evaluate the final model's performance on the held-out test set using metrics like accuracy, F1-score, or mean squared error to ensure it generalizes well.
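The "train just the final layers" option can be sketched without a deep learning framework. Treat the extracted embeddings as fixed features, train a logistic-regression head on them, and score the held-out test set with F1. In this illustration the single linear head stands in for the MLP described above, and the random split, learning rate and epoch count are assumptions. In practice, tune these on the validation set. Splitting by sequence-similarity clusters, rather than at random, gives a more honest test of generalization.

```python
import numpy as np

def split_indices(n, fractions=(0.8, 0.1, 0.1), seed=0):
    """Random train/validation/test index split."""
    order = np.random.default_rng(seed).permutation(n)
    n_train = int(fractions[0] * n)
    n_val = int(fractions[1] * n)
    return order[:n_train], order[n_train:n_train + n_val], order[n_train + n_val:]

def train_logistic_head(X, y, lr=0.1, epochs=500, l2=1e-3):
    """Fit a logistic-regression head on frozen embeddings by batch gradient descent."""
    w = np.zeros(X.shape[1])
    b = 0.0
    for _ in range(epochs):
        p = 1.0 / (1.0 + np.exp(-(X @ w + b)))   # predicted probabilities
        grad = p - y                              # gradient of the log-loss w.r.t. logits
        w -= lr * (X.T @ grad / len(y) + l2 * w)
        b -= lr * grad.mean()
    return w, b

def predict(X, w, b):
    """Binary labels from the linear head (threshold at probability 0.5)."""
    return (X @ w + b > 0).astype(int)

def f1_score(y_true, y_pred):
    """F1 for the positive class."""
    tp = np.sum((y_true == 1) & (y_pred == 1))
    fp = np.sum((y_true == 0) & (y_pred == 1))
    fn = np.sum((y_true == 1) & (y_pred == 0))
    return 0.0 if tp == 0 else float(2 * tp / (2 * tp + fp + fn))
```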

Data Presentation

Table 1: Comparison of Multiple Sequence Alignment Software

| Program | Algorithm Type | Best Use Case | Key Consideration |
|---|---|---|---|
| Clustal Omega [31] | Progressive | Alignments of >2,000 sequences; sequences with long terminal extensions. | Not suitable for sequences with large internal indels. |
| MUSCLE [31] | Iterative | Alignments of up to ~1,000 sequences. | Improved accuracy over purely progressive methods for divergent sequences [30]. |
| MAFFT [31] | Progressive-Iterative | Large alignments (up to 30,000 sequences); sequences with long gaps. | Offers a good balance of speed and accuracy, with various strategies for different data types [34]. |
| T-Coffee [30] | Progressive | Smaller sets of distantly related sequences. | Generally more accurate but slower than Clustal; uses consensus information from multiple alignments. |

Table 2: Comparison of Protein Language Models

| Model | Base Architecture | Key Feature / Application | Training Data |
|---|---|---|---|
| ESM-1b / ESM-2 [33] [32] | Transformer (Encoder) | State-of-the-art performance on structure and function prediction tasks. | Millions of UniRef sequences. |
| ProtTrans [32] | Transformer (Encoder, e.g., BERT) | Family of models providing protein embeddings for downstream tasks. | UniRef and BFD databases [32]. |
| ProteinBERT [32] | Transformer (Encoder) | Incorporates global attention and is trained with multi-task learning for function prediction. | Custom dataset. |
| UniRep [32] | mLSTM (Recurrent Network) | Early influential model for generating single-vector protein representations. | UniRef50. |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Experiment |
|---|---|
| MAFFT Software Suite | A multiple sequence alignment program used to align the collected NBS protein sequences, crucial for identifying conserved regions and evolutionary relationships [31] [34]. |
| ESM-2 Model Weights | The parameters of a pre-trained protein language model, used to generate contextual embeddings from raw NBS protein sequences for subsequent function or structure prediction tasks [33] [32]. |
| UniProt Database | A comprehensive resource for protein sequence and functional information, used to gather initial NBS protein sequences and validate functional annotations [33]. |
| Geneious Prime Software | A bioinformatics platform that provides visualization and analysis tools for MSAs, including the generation of consensus sequences and sequence logos to inspect alignment quality [31]. |

Frequently Asked Questions (FAQs)

FAQ 1: Why do specialized splitting and reassembly protocols exist for multi-domain proteins? While end-to-end deep learning methods excel at single-domain prediction, multi-domain proteins present unique challenges. They often have complex inter-domain interactions, flexible linkers, and can adopt multiple conformational states. For proteins with weak evolutionary signals or large sizes, a "divide and conquer" strategy of predicting individual domains and then assembling them has been shown to achieve higher accuracy than full-chain, end-to-end prediction [35] [36].

FAQ 2: My AlphaFold2/3 model for a multi-domain protein looks compact, but I suspect it has a more open conformation. What could be wrong? This is a recognized behavior. AI predictors have a marked tendency to model multi-domain proteins in their most compact configuration, which often corresponds to an inactive state. This occurs even when the protein is known to have a less compact (open) active state, or when it is modeled in the presence of ligands specific to an open conformation. The predictor's bias toward order and compactness can override biochemical data [3].

FAQ 3: What is the most common point of failure when reassembling predicted domain structures? The most common failure points are the inter-domain linkers and interfaces. Inaccurate modeling of the flexible regions connecting domains can lead to incorrect relative domain orientations and atomic conflicts during reassembly. Furthermore, if the predicted inter-domain interactions are weak or incorrect, the final assembled model will have a low global accuracy, even if the individual domains are perfectly predicted [3] [36].
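A quick way to diagnose this failure mode before reassembly is to compare predicted aligned error (PAE) within domains and between domains. Low intra-domain PAE combined with high inter-domain PAE means the individual domains are confident but their relative placement is not. The sketch below assumes you have already loaded the L x L PAE matrix as a NumPy array (the JSON key name differs between AlphaFold DB, ColabFold and local runs). Domain boundaries are 1-based inclusive ranges, and the domain names are hypothetical. Because PAE is not symmetric, both off-diagonal blocks are reported.

```python
import numpy as np

def domain_pae_summary(pae, domains):
    """Mean PAE for every (domain_a, domain_b) block of an L x L PAE matrix.

    domains: dict name -> (start, end), 1-based inclusive residue ranges.
    Diagonal entries (a, a) are intra-domain; off-diagonal entries are inter-domain.
    """
    idx = {name: np.arange(start - 1, end) for name, (start, end) in domains.items()}
    summary = {}
    for a, ia in idx.items():
        for b, ib in idx.items():
            summary[(a, b)] = float(pae[np.ix_(ia, ib)].mean())
    return summary
```

For an NBS-LRR model, a small ("NBS", "NBS") value alongside a large ("NBS", "LRR") value points to the inter-domain arrangement, not the domain folds, as the weak point.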

FAQ 4: How can I improve the prediction for a protein that has known multiple conformational states? For multi-state proteins, a single model is insufficient. To drive modeling toward a specific, less compact state, you can use a template-based filtering approach. This involves curating the input multiple sequence alignments (MSAs) or templates to include only structural information from the desired state (e.g., only active-state templates). Because the inputs then carry information only about that state, the prediction is steered away from the default compact configuration [3].

Troubleshooting Guide

Problem 1: Inaccurate Global Architecture in Assembled Model

Symptoms: The full-chain model has a low TM-score (or a high RMSD) relative to an experimental structure, despite high accuracy in the individual domains. The relative orientation of the domains is incorrect.

| Potential Cause | Solution | Key Reference |
|---|---|---|
| Default predictor bias toward compactness | Use a multi-objective conformational sampling algorithm (e.g., M-DeepAssembly) that explicitly optimizes for both inter-domain and full-chain distance restraints. | [36] |
| Weak evolutionary signals for domain pairing | Integrate inter-domain interaction features predicted by specialized convolutional neural networks (e.g., DeepAssembly) to guide the assembly process. | [36] |
| Single, incorrect conformational state | Generate a diverse ensemble of models and use a model quality assessment (MQA) algorithm to select the best, or analyze the ensemble for alternative conformations. | [36] |

Problem 2: Poor Performance on Large, Atypical Protein Architectures

Symptoms: The prediction pipeline fails to produce a complete model, crashes due to memory limitations, or produces extremely low-confidence models for large, multi-domain proteins with non-canonical domain arrangements, such as atypical NBS protein architectures.

| Potential Cause | Solution | Key Reference |
|---|---|---|
| High computational demand for full-chain folding | Employ a proven "divide and conquer" strategy. Use a domain parser (e.g., DomBpred) to split the sequence, fold domains independently, and then reassemble. | [35] [36] |
| Morphing regions (e.g., coiled coils) are poorly modeled | For regions like coiled coils in NBS proteins, treat AI predictions with caution. Use piecewise modeling with experimental data (e.g., from cross-linking or cryo-EM) to constrain the global architecture. | [3] |
| Atomic clashes in the final assembled model | Implement a protocol that performs population-based dihedral angle optimization of the linkers, guided by a multi-objective energy function to resolve clashes. | [36] |

Experimental Protocols

Protocol 1: The D-I-TASSER Pipeline for Multi-Domain Protein Structure Prediction

D-I-TASSER is a hybrid approach that integrates deep learning potentials with iterative threading assembly simulations.

- Input: Provide the full-length amino acid sequence of the multi-domain protein.

- Deep Multiple Sequence Alignment (MSA): The pipeline iteratively searches genomic and metagenomic databases to construct deep MSAs. The optimal MSA is selected via a deep-learning-guided process.

- Spatial Restraint Generation: Generate multiple spatial restraints using:

- DeepPotential: Based on deep residual convolutional networks.

- AttentionPotential: Based on self-attention transformer networks.

- AlphaFold2: To leverage end-to-end neural network predictions.

- Domain Partition: A domain-splitting module iteratively identifies domain boundaries.

- Domain-Level Modeling: For each identified domain, domain-level MSAs, threading alignments, and spatial restraints are created.

- Full-Chain Reassembly: The individual domain models are assembled into a full-chain structure using replica-exchange Monte Carlo (REMC) simulations. This step is guided by a hybrid force field that incorporates both the deep learning-derived restraints and classical physics-based knowledge.

- Output: The result is an atomic-level model of the full multi-domain protein. Benchmark tests have demonstrated that this pipeline can outperform methods like AlphaFold2 and AlphaFold3 on multi-domain proteins [35].
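The full-chain reassembly step relies on replica-exchange Monte Carlo (REMC). Several copies of the system are sampled at different temperatures, and neighbouring replicas periodically swap configurations with probability $\min\{1, \exp[(\beta_i - \beta_j)(E_i - E_j)]\}$, where $\beta = 1/T$. This lets low-temperature replicas escape local minima. The toy sketch below shows only these mechanics, on a one-dimensional tilted double-well energy. It uses none of D-I-TASSER's force field or move set. The temperatures, step size and swap interval are arbitrary assumptions.

```python
import math
import random

def remc(energy, x0, temperatures, n_steps=2000, step_size=0.5, swap_every=10, seed=0):
    """Minimal replica-exchange Monte Carlo on a 1-D coordinate; returns best (x, E) seen."""
    rng = random.Random(seed)
    xs = [x0] * len(temperatures)
    es = [energy(x0)] * len(temperatures)
    best_x, best_e = x0, es[0]
    for step in range(1, n_steps + 1):
        # Metropolis move within each replica at its own temperature
        for k, T in enumerate(temperatures):
            trial = xs[k] + rng.uniform(-step_size, step_size)
            e_trial = energy(trial)
            if e_trial <= es[k] or rng.random() < math.exp(-(e_trial - es[k]) / T):
                xs[k], es[k] = trial, e_trial
                if e_trial < best_e:
                    best_x, best_e = trial, e_trial
        # Attempt swaps between neighbouring temperatures
        if step % swap_every == 0:
            for k in range(len(temperatures) - 1):
                delta = (1 / temperatures[k] - 1 / temperatures[k + 1]) * (es[k] - es[k + 1])
                if delta >= 0 or rng.random() < math.exp(delta):
                    xs[k], xs[k + 1] = xs[k + 1], xs[k]
                    es[k], es[k + 1] = es[k + 1], es[k]
    return best_x, best_e

# Tilted double well: local minimum near x = +2, global minimum near x = -2
best_x, best_e = remc(lambda x: (x * x - 4) ** 2 + x, 2.0, [0.1, 1.0, 5.0, 20.0])
```

Started in the higher local minimum, the cold replica alone would rarely cross the barrier. Exchanges with the hot replicas carry it into the global minimum.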

Protocol 2: The M-DeepAssembly Protocol for Multi-Domain Conformation Sampling

M-DeepAssembly uses a multi-objective optimization strategy to generate diverse and accurate domain assemblies.

- Domain Parsing: Split the full-length sequence into single-domain sequences using a domain boundary prediction tool like DomBpred.

- Feature Extraction:

- Inter-domain Interactions: Feed MSA, template, and inter-domain features into the DeepAssembly network to predict inter-domain interactions.

- Full-length Distance Features: Use AlphaFold2 to obtain predicted distance maps for the entire sequence.

- Single-Domain Structures: Generate 3D models for each parsed domain using a high-accuracy method like AlphaFold2.

- Multi-Objective Energy Model: Construct an energy model with at least two objective functions:

- $f_{\text{inter}}(x)$: Measures the deviation from predicted inter-domain distances.

- $f_{\text{full}}(x)$: Measures the deviation from the full-length sequence distance predictions.

- Conformational Sampling: Randomly initialize the dihedral angles of the inter-domain linkers to create a population of initial conformations. Subject this population to a multi-objective optimization algorithm to explore the conformational space and generate a diverse ensemble of full-chain models.

- Model Selection: Apply a model quality assessment (MQA) algorithm to the generated ensemble and select the top-ranking model as the final output. On a test set of 164 multi-domain proteins, this method achieved an average TM-score that was 15.4% higher than AlphaFold2 alone [36].
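The two objectives can be sketched as distance-deviation scores. This is a minimal illustration under stated assumptions: the exact functional forms of finter(x) and ffull(x) in M-DeepAssembly are not given here, so a mean absolute deviation between model and predicted distances is assumed, and a plain Pareto (non-dominance) filter stands in for the published multi-objective optimizer. The helper names (`deviation`, `objectives`, `pareto_front`) and the restraint format are hypothetical.

```python
import math
from typing import Dict, List, Sequence, Tuple

Coord = Tuple[float, float, float]
# (i, j) residue-index pair -> predicted distance in Å (hypothetical restraint format)
Restraints = Dict[Tuple[int, int], float]


def deviation(coords: Sequence[Coord], restraints: Restraints) -> float:
    """Mean absolute deviation between model distances and predicted distances."""
    errors = [abs(math.dist(coords[i], coords[j]) - d) for (i, j), d in restraints.items()]
    return sum(errors) / len(errors)


def objectives(coords: Sequence[Coord], inter: Restraints, full: Restraints) -> Tuple[float, float]:
    """(f_inter, f_full) for one conformation; both are minimized."""
    return deviation(coords, inter), deviation(coords, full)


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """True if score vector a is no worse than b everywhere and strictly better somewhere."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def pareto_front(scores: Sequence[Tuple[float, float]]) -> List[int]:
    """Indices of conformations not dominated by any other conformation."""
    return [
        i
        for i, s in enumerate(scores)
        if not any(dominates(t, s) for j, t in enumerate(scores) if j != i)
    ]
```

In a sampling loop, each conformation in the population would be scored with `objectives`, and the non-dominated set returned by `pareto_front` would form the diverse ensemble passed on to the MQA step.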

The Scientist's Toolkit

Research Reagent Solutions

| Item | Function in Protocol | Reference |
|---|---|---|
| DomBpred | A sequence-based domain parser used to split a full-length protein sequence into its constituent domain sequences, which is the critical first step in a "divide and conquer" strategy. | [36] |
| DeepAssembly | A convolutional neural network that predicts inter-domain interactions. These interactions serve as crucial spatial restraints to guide the correct assembly of individual domains. | [36] |
| Multi-Objective Conformational Sampling Algorithm | The core computational engine in M-DeepAssembly that explores different domain orientations by optimizing conflicting energy functions (e.g., inter-domain vs. full-chain distances) to produce a diverse ensemble of models. | [36] |
| Replica-Exchange Monte Carlo (REMC) | An advanced sampling simulation method used in D-I-TASSER to assemble full-chain models under the guidance of a hybrid deep learning and physics-based force field, helping to avoid local energy minima. | [35] |
| Model Quality Assessment (MQA) Algorithm | A method to rank and select the most accurate model from a large ensemble of generated protein structures, as the highest-scoring model may not always be the most accurate. | [36] |

Method Performance Data

Table 1: Benchmark Performance of Multi-Domain Protein Prediction Methods

The following table summarizes quantitative performance data from large-scale benchmark studies, providing a comparison of different methodologies.

| Method / Pipeline | Key Feature | Benchmark Performance | Reference |
|---|---|---|---|
| D-I-TASSER | Hybrid approach; integrates deep learning with physics-based simulations and domain splitting. | Outperformed AlphaFold2 and AlphaFold3 on single-domain and multi-domain proteins in CASP15. Folded 73% of full-chain sequences in the human proteome. | [35] |
| M-DeepAssembly | Multi-objective conformation sampling for domain assembly. | Average TM-score was 15.4% higher than AlphaFold2 on a test set of 164 multi-domain proteins. | [36] |
| AlphaFold2/3 | End-to-end deep learning. | High reliability on single domains; performance challenges persist on large multi-domain assemblies with weak evolutionary signals. | [35] [3] [37] |
| Piecewise Modeling with Experimental Constraints | "Divide and conquer" augmented with biophysical data. | Recommended for modeling morphing regions (e.g., coiled coils) and multi-state proteins where global templates are absent. | [3] |
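TM-score, the metric behind several comparisons in this table, can be sketched as follows. The sketch assumes residues have already been aligned and superposed (the reference TM-score program also searches over superpositions to maximize the score, which is omitted here), uses the standard length-dependent scale d0 = 1.24·(L − 15)^(1/3) − 1.8 Å with a 0.5 Å floor, and treats "X% higher" as a relative change. Whether a reported benchmark figure is a relative or absolute difference should be checked against the original study; the helper names are illustrative.

```python
from typing import Sequence


def d0(target_length: int) -> float:
    """Length-dependent distance scale (Å) used by TM-score, floored at 0.5 Å."""
    return max(0.5, 1.24 * max(target_length - 15, 0) ** (1.0 / 3.0) - 1.8)


def tm_score(aligned_distances: Sequence[float], target_length: int) -> float:
    """TM-score for one fixed superposition.

    aligned_distances: distances (Å) between aligned residue pairs after superposition.
    Unaligned target residues contribute nothing; normalization is by target length.
    """
    scale = d0(target_length)
    return sum(1.0 / (1.0 + (d / scale) ** 2) for d in aligned_distances) / target_length


def relative_improvement(baseline: float, new: float) -> float:
    """Percentage change of `new` relative to `baseline`."""
    return (new - baseline) / baseline * 100.0
```

A perfect model scores 1.0, and a residue whose deviation equals d0 contributes exactly half of a perfectly placed one, which is why TM-score is less dominated by a few badly placed residues than RMSD is.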

Workflow Visualization

D-I-TASSER Multi-Domain Modeling Workflow

M-DeepAssembly Sampling Workflow

Frequently Asked Questions

Q1: What is the Windowed MSA strategy and why is it needed for chimeric proteins? Standard protein structure prediction tools like AlphaFold often fail to accurately predict the structure of engineered chimeric proteins, where a target peptide is fused to a scaffold protein. This failure occurs because the Multiple Sequence Alignment (MSA), which detects co-evolving residues, loses critical evolutionary signals when the chimeric sequence is aligned as a single unit. The Windowed MSA strategy independently computes MSAs for the target and scaffold regions, then merges them, restoring prediction accuracy [38].

Q2: In what scenarios should a researcher consider using the Windowed MSA approach? You should consider this approach when:

- Your research involves atypical protein architectures, such as N-terminal or C-terminal fusions of peptide tags to scaffold proteins.

- Standard AlphaFold predictions for your fusion construct show high accuracy for individual domains but poor accuracy for the fused target peptide.

- You are working with non-natural proteins or engineered constructs beyond those found in nature [38].

Q3: Which structure prediction tools are compatible with the Windowed MSA method? The method has been empirically validated with AlphaFold-2, AlphaFold-3, and ESMFold. The core of the strategy is the generation of a modified MSA, which can then be provided as input to these deep learning models for structure prediction [38].

Q4: Does the attachment point (N-terminus vs. C-terminus) affect prediction accuracy? Yes. The cited study reports that prediction accuracy for peptide targets is typically worse when they are attached to the N-terminus rather than the C-terminus of a scaffold protein. With the Windowed MSA approach, accuracy becomes comparable for both attachment points [38].

Q5: How does linker length between protein parts impact the prediction? Testing on a small number of fusions showed that linker length does not significantly affect the prediction accuracy of the peptide tag when using the Windowed MSA method [38].

Troubleshooting Guide

Problem: Poor Prediction Accuracy for Fused Domains

| Symptom | Possible Cause | Solution |
|---|---|---|
| High RMSD in fused peptide region, while scaffold is correct. | Standard MSA fails to find homologs for the chimeric sequence, losing co-evolution signals for the peptide [38]. | Generate a Windowed MSA by independently creating MSAs for the peptide and scaffold, then merge them. |
| Accuracy loss is more severe for N-terminal fusions. | Inherent bias in the MSA construction algorithm for terminal regions [38]. | Apply the Windowed MSA strategy, which has been shown to equalize performance for N- and C-terminal fusions. |
| Poor accuracy despite using state-of-the-art predictors. | The model is struggling to generalize beyond natural sequences in its training set [38]. | Use the Windowed MSA to provide the model with the correct evolutionary information for each independent domain. |

Quantitative Performance of Windowed MSA

The following table summarizes the improvement in prediction accuracy achieved by the Windowed MSA strategy on a benchmark set of 408 fusion constructs, as compared to the standard MSA approach [38].

| Performance Metric | Standard MSA | Windowed MSA | Improvement |
|---|---|---|---|
| Cases with strictly lower RMSD | -- | 65% of cases | Significant |
| Cases with marginal RMSD increase | -- | 35% of cases | No visibly worse model |
| Peptide prediction accuracy (N-terminus) | Low | Restored to C-terminus level | High |
| Peptide prediction accuracy (C-terminus) | Medium | Maintained high | Moderate |
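The RMSD comparison in this table can be reproduced on your own constructs roughly as follows. The sketch assumes you have matched Cα coordinates for the peptide region from a reference structure and from each prediction; it superposes them with the Kabsch algorithm and then counts the share of constructs where the Windowed MSA model has strictly lower RMSD. Whether the cited study superposed on the peptide alone or on the full chain is not stated here, so treat the choice of atoms as an assumption. The function names are illustrative, and NumPy is required.

```python
from typing import Sequence

import numpy as np


def kabsch_rmsd(P, Q) -> float:
    """RMSD between matched (N, 3) coordinate sets after optimal rigid superposition."""
    P = np.asarray(P, dtype=float)
    Q = np.asarray(Q, dtype=float)
    P = P - P.mean(axis=0)  # remove translation
    Q = Q - Q.mean(axis=0)
    H = P.T @ Q  # 3x3 covariance matrix
    U, _, Vt = np.linalg.svd(H)
    # Flip the last axis if the optimal orthogonal matrix would be a reflection.
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    diff = P @ R.T - Q
    return float(np.sqrt(np.mean(np.sum(diff ** 2, axis=1))))


def fraction_improved(standard: Sequence[float], windowed: Sequence[float]) -> float:
    """Share of constructs where the Windowed MSA model has strictly lower RMSD."""
    if len(standard) != len(windowed) or not standard:
        raise ValueError("need two equal-length, non-empty RMSD lists")
    return sum(w < s for s, w in zip(standard, windowed)) / len(standard)
```

Using a strict inequality mirrors the table's "strictly lower RMSD" wording; ties are counted as not improved.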

Experimental Protocol: Implementing Windowed MSA

This section provides a detailed methodology for generating and using a Windowed MSA for chimeric protein structure prediction, based on the cited research [38].

Detailed Step-by-Step Guide

Step 1: Generate Independent MSAs

Generate a separate MSA for each of the scaffold region and the peptide tag.

- Tool: Use MMseqs2 via the ColabFold API (api.colabfold.com).

- Database: Search against UniRef30.

- Scaffold MSA: Include homologs spanning the scaffold sequence and explicitly incorporate your chosen linker (e.g., "GLY-SER").

- Peptide MSA: Build this exclusively from peptide homologs. The study filtered out peptides with fewer than 2 MSA hits [38].
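Step 1 can be sketched as two small helpers: one that builds the scaffold query with the linker attached on the side facing the peptide, and one that applies the minimum-hit filter to a peptide MSA. The sketch assumes A3M-formatted text in which the first record is the query, as in ColabFold-style output, so the hit count is the number of records minus one. The function names and the `peptide_at` parameter are hypothetical, and the MMseqs2/ColabFold search itself is not reproduced.

```python
from typing import List


def a3m_headers(a3m_text: str) -> List[str]:
    """Return the header lines (starting with '>') of an A3M/FASTA string."""
    return [ln for ln in a3m_text.splitlines() if ln.startswith(">")]


def count_hits(a3m_text: str) -> int:
    """Number of homolog records, assuming the first record is the query itself."""
    return max(len(a3m_headers(a3m_text)) - 1, 0)


def keep_peptide(a3m_text: str, min_hits: int = 2) -> bool:
    """Keep a peptide only if its MSA has at least `min_hits` homologs."""
    return count_hits(a3m_text) >= min_hits


def scaffold_segment(scaffold: str, linker: str, peptide_at: str = "C") -> str:
    """Scaffold query sequence with the linker on the side that faces the peptide.

    peptide_at="C": fusion is scaffold-linker-peptide, so the linker follows the scaffold.
    peptide_at="N": fusion is peptide-linker-scaffold, so the linker precedes it.
    """
    if peptide_at == "C":
        return scaffold + linker
    if peptide_at == "N":
        return linker + scaffold
    raise ValueError("peptide_at must be 'C' or 'N'")
```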

Step 2: Merge the Sub-alignments

Concatenate the scaffold and peptide MSAs, inserting gap characters (-) to fill non-homologous positions.

- Sequences from the peptide-derived MSA carry gaps across the entire scaffold region.

- Sequences from the scaffold-derived MSA carry gaps across the entire peptide region.

- This prevents spurious residue pairing between the non-homologous scaffold and peptide segments [38].
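The merge in Step 2 amounts to block-diagonal gap padding. The sketch below assumes each sub-alignment is a list of rows whose first entry is its query, and strips A3M lowercase insertion states so that every row has one character per query column (a simplification; a production pipeline might retain insertions). It places the peptide either after (`peptide_at="C"`) or before (`"N"`) the scaffold-plus-linker segment. The function name and argument layout are illustrative, not taken from the cited work.

```python
from typing import List


def strip_insertions(row: str) -> str:
    """Drop A3M insertion states (lowercase letters) so one character = one query column."""
    return "".join(ch for ch in row if not ch.islower())


def windowed_msa(scaffold_rows: List[str], peptide_rows: List[str], peptide_at: str = "C") -> List[str]:
    """Merge independently built scaffold and peptide MSAs into one gapped alignment.

    Row 0 of each input is its query (scaffold plus linker, or peptide).
    """
    s_rows = [strip_insertions(r) for r in scaffold_rows]
    p_rows = [strip_insertions(r) for r in peptide_rows]
    s_len, p_len = len(s_rows[0]), len(p_rows[0])
    if any(len(r) != s_len for r in s_rows) or any(len(r) != p_len for r in p_rows):
        raise ValueError("rows within each sub-alignment must match their query length")
    s_gap, p_gap = "-" * s_len, "-" * p_len
    if peptide_at == "C":
        query = s_rows[0] + p_rows[0]
        # Scaffold homologs get gaps over the peptide; peptide homologs get gaps over the scaffold.
        body = [r + p_gap for r in s_rows[1:]] + [s_gap + r for r in p_rows[1:]]
    elif peptide_at == "N":
        query = p_rows[0] + s_rows[0]
        body = [p_gap + r for r in s_rows[1:]] + [r + s_gap for r in p_rows[1:]]
    else:
        raise ValueError("peptide_at must be 'C' or 'N'")
    return [query] + body
```

Usage: with scaffold rows `["MKVGS", "MRVGS"]` (scaffold plus a GS linker) and peptide rows `["WAY", "WAF"]`, a C-terminal fusion yields the query row `"MKVGSWAY"`, the scaffold homolog padded as `"MRVGS---"`, and the peptide homolog padded as `"-----WAF"`. No row ever contains residues from both segments, so the predictor sees no artificial co-variation between them.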

Step 3: Structure Prediction

Use the finalized, merged Windowed MSA as the direct input for structure prediction tools.

- The research validated this using AlphaFold-2 (via ColabFold) and a local installation of AlphaFold-3, providing them with the same Windowed MSAs for a like-for-like comparison [38].
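Before prediction, the merged rows must be written in a format the predictor accepts. A minimal sketch follows, assuming a plain A3M file (FASTA-style records with the ungapped query first) is what your ColabFold or AlphaFold-3 setup expects. The record names `query` and `seq_N` are placeholders, and how the file is passed to each tool depends on that tool's own input options, which are not shown here.

```python
from typing import List


def to_a3m(rows: List[str]) -> str:
    """Serialize aligned rows as A3M text, query first.

    Assumes rows contain no lowercase insertion states, so all rows must share
    the query's length (as produced by a gap-padded Windowed MSA merge).
    """
    if not rows:
        raise ValueError("need at least the query row")
    width = len(rows[0])
    if "-" in rows[0]:
        raise ValueError("the query row must be gap-free")
    for i, row in enumerate(rows):
        if len(row) != width:
            raise ValueError(f"row {i} has length {len(row)}, expected {width}")
    lines = [">query", rows[0]]
    for i, row in enumerate(rows[1:], start=1):
        lines += [f">seq_{i}", row]
    return "\n".join(lines) + "\n"
```

Providing the identical file to each predictor keeps the comparison like-for-like, in the spirit of the validation described above.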

Workflow Visualization

Logical Relationship: Standard MSA vs. Windowed MSA

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Context |
|---|---|
| Scaffold Proteins (e.g., SUMO, GST, GFP, MBP) | Serves as the base protein for fusion, aiding in solubility, purification, or visualization. The folded structure should be minimally perturbed by the fusion [38]. |
| Structured Peptide Targets | The functional domain of interest whose structure is being investigated. Should be stably folded independently and in the fusion context [38]. |
| Flexible Linker (e.g., GLY-SER) | Connects the scaffold and target peptide, alleviating potential steric constraints. A short, flexible linker is often sufficient [38]. |
| MMseqs2 Software | A tool for fast and efficient generation of Multiple Sequence Alignments (MSAs) from protein sequences, used here to create the independent scaffold and peptide MSAs [39]. |
| UniRef30 Database | A clustered version of UniRef100, used as the target database for MSA searches to find homologous sequences while improving computational speed [39]. |
| AlphaFold-2/3 & ESMFold | Deep learning models for protein structure prediction that utilize MSA inputs. They are the final step for generating the 3D structural model from the Windowed MSA [38]. |

Overcoming Prediction Hurdles: A Troubleshooting Guide for Complex Architectures

FAQ: Why is the low-homology challenge particularly problematic for NBS protein research?

NBS (Nucleotide-Binding Site) proteins, such as NLRs (NOD-like receptors), often exhibit multidomain architectures and multiple structural states (e.g., inactive ADP-bound and active ATP-bound states) [3]. Traditional homology modeling relies on finding close evolutionary relatives in the Protein Data Bank (PDB). However, for these atypical architectures, suitable experimental templates are often scarce because their sequences are highly specific and their conformational flexibility makes them difficult to crystallize. This scarcity creates a significant bottleneck for structural and functional studies.

FAQ: What are the primary limitations of current deep learning predictors like AlphaFold in low-homology scenarios?

While revolutionary, deep learning platforms exhibit specific biases that can impact low-homology NBS protein modeling [3]:

- Compactness Bias: A marked tendency to model proteins in their most compact configuration, even when evidence suggests a more open state.

- Secondary Structure Bias: A tendency to over-predict ordered secondary structures like alpha-helices, potentially at the expense of morphing regions like coiled-coils.

- Template Dependency: Performance can degrade for targets with few detectable homologs for the model to learn from, although the use of protein language models partially mitigates this.
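One rough way to screen for the compactness bias is to compare radii of gyration (Rg). The sketch below computes an unweighted Rg from Cα coordinates (ignoring atomic masses is an assumption). A model whose Rg is markedly smaller than that of models from other predictors, or than an experimental estimate where one exists, may be over-compacted. What counts as "markedly" is left to the user, and the function name is illustrative.

```python
import math
from typing import Sequence, Tuple


def radius_of_gyration(coords: Sequence[Tuple[float, float, float]]) -> float:
    """Unweighted radius of gyration (Å) of a set of atom coordinates, e.g. Cα atoms."""
    n = len(coords)
    if n == 0:
        raise ValueError("need at least one coordinate")
    cx = sum(c[0] for c in coords) / n
    cy = sum(c[1] for c in coords) / n
    cz = sum(c[2] for c in coords) / n
    sq = sum((x - cx) ** 2 + (y - cy) ** 2 + (z - cz) ** 2 for x, y, z in coords)
    return math.sqrt(sq / n)
```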

Strategy Guides & Troubleshooting

Guide: Implementing a Hybrid Prediction and Experimental Validation Protocol

When PDB templates are scarce, a single computational method is insufficient. The following integrated workflow combines multiple computational approaches with experimental validation to achieve reliable models. This is especially critical for multistate proteins like NBS proteins, which transition between inactive and active states.

Diagram 1: Hybrid prediction and validation workflow for low-homology proteins.

Detailed Protocol:

- Run Multiple Deep Learning Predictors: Execute AlphaFold2 (or AlphaFold-Multimer, AFM, for complexes), AlphaFold3, and RoseTTAFold All-Atom on your target sequence. Do not rely on a single tool [3] [40].

- Comparative Analysis: Use the fetch_pdb function from the R package protti (or similar bioinformatics tools) to analyze the outputs. Focus on:

- Domain-level RMSD: Calculate RMSD for individual domains (e.g., NBD, LRR, CC) against any available experimental data. Domains like NBD and LRR are often predicted with high accuracy (RMSD potentially <2 Å), while coiled-coil regions may show severe deviations (>12 Å) [3].

- Predicted Aligned Error (PAE): Analyze inter-domain PAE to assess confidence in relative domain orientations.

- Identify Reliable Regions: Based on the consensus across predictors and per-residue confidence metrics (pLDDT), identify which domains or regions are reliably modeled.

- Incorporate Experimental Restraints: For regions with low consensus or low confidence, use sparse experimental data to guide modeling.

- Cross-linking Mass Spectrometry (XL-MS): Provides distance restraints between amino acids to guide domain assembly [40].