Beyond the Black Box: A Practical Guide to Refining Feature Importance for Robust Biomedical Discovery

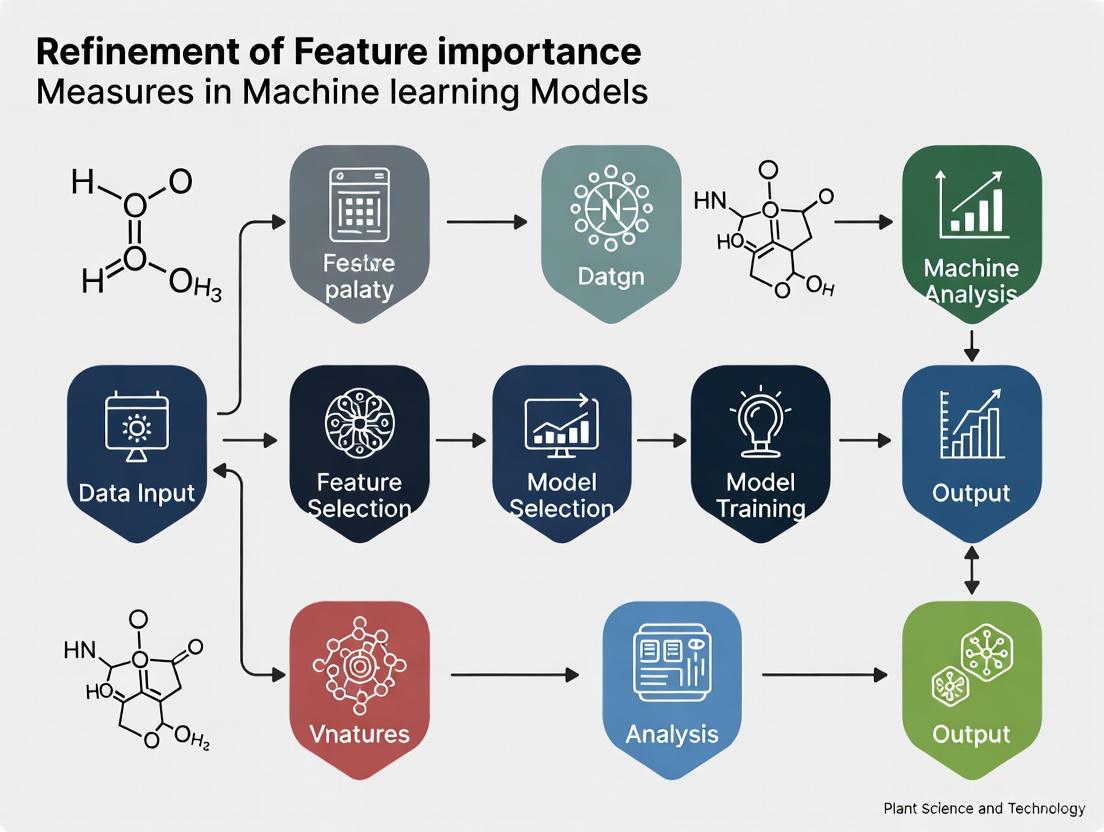

This article provides a comprehensive framework for researchers, scientists, and drug development professionals to refine feature importance measures in machine learning models.


Abstract

This article provides a comprehensive framework for researchers, scientists, and drug development professionals to refine feature importance measures in machine learning models. It bridges the gap between theoretical methodology and practical application, addressing foundational concepts, advanced techniques for high-dimensional data, troubleshooting for conflicting results, and rigorous validation strategies. By synthesizing the latest research, this guide empowers the biomedical community to derive stable, interpretable, and biologically meaningful insights from complex datasets, ultimately accelerating biomarker discovery and clinical model development.

Demystifying Feature Importance: Core Concepts and Scientific Interpretation

Understanding Global vs. Local Feature Importance in Biomedical Contexts

Core Concept FAQs

What is the fundamental difference between global and local feature importance?

Global feature importance provides a bird's-eye view of your model's behavior across the entire dataset, identifying which features the model relies on most for its overall predictions [1] [2]. It's essential for model auditing, feature selection, and understanding general patterns [1].

Local feature importance zooms in on a single prediction to explain why the model made a specific decision for that particular instance [1] [2]. This is crucial for explaining individual outcomes to patients or clinicians and for debugging specific misclassifications [1].

Table: Comparison of Global vs. Local Feature Importance

| Aspect | Global Feature Importance | Local Feature Importance |
|---|---|---|
| Scope | Entire dataset and model behavior [1] | Single prediction or data point [1] |
| Primary Question | "How does the model behave overall?" [1] | "Why did the model make this specific prediction?" [1] |
| Common Techniques | Permutation Feature Importance, Partial Dependence Plots (PDP), Global Surrogate Models [1] | LIME, SHAP, Counterfactual Explanations [1] [3] |
| Biomedical Applications | Model validation for regulatory compliance, identifying systematic bias, understanding disease mechanisms [1] [4] | Explaining individual diagnoses, treatment recommendations, building clinician trust [1] |
| Key Limitations | May conceal subgroup nuances; no individual reasoning [1] | Doesn't describe overall model behavior; potentially unstable [1] |

Why is this distinction particularly critical in biomedical research?

In biomedical contexts, the stakes for model interpretability are exceptionally high. Global explainability helps ensure your model's overall behavior aligns with established medical knowledge and doesn't exhibit systematic bias against certain patient demographics [1] [5]. Meanwhile, local explainability provides the necessary transparency for clinical decision-making, allowing healthcare providers to understand why a model generated a specific diagnosis or treatment recommendation for an individual patient [1].

Biomedical machine learning serves two distinct objectives: performance optimization for diagnostics/prognostics, and causal inference for mechanistic interpretation [6]. The distinction between global and local feature importance bridges these objectives—global patterns may suggest biological mechanisms, while local explanations verify these mechanisms hold for individual cases [1] [4].

Troubleshooting Guides

Problem: My feature importance rankings conflict between different interpretation methods

Issue Description: You obtain different feature importance rankings when using various interpretation techniques (e.g., SHAP vs. permutation importance), creating uncertainty about which features are truly important.

Diagnosis Steps:

- Check for feature correlations: Highly correlated features can cause unstable importance scores across methods [3]. Calculate correlation matrices among your features.

- Validate with statistical methods: Compare ML-based importance with model-agnostic statistical measures like non-parametric correlation and mutual information [4].

- Assess model stability: Evaluate if small changes in training data produce significantly different importance rankings, indicating high variance.

- Examine domain consistency: Check if the identified important features align with established biomedical knowledge [5].
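The first diagnosis step, checking for feature correlations, can be sketched as follows. This is a minimal illustration with NumPy on synthetic data; the helper name `correlated_pairs`, the feature names, and the 0.8 threshold are assumptions chosen for the example, not values from the cited sources.

```python
import numpy as np

def correlated_pairs(X, names, threshold=0.8):
    """Return (name_i, name_j, r) for feature pairs with |Pearson r| >= threshold."""
    corr = np.corrcoef(X, rowvar=False)          # p x p correlation matrix
    p = corr.shape[0]
    pairs = []
    for i in range(p):
        for j in range(i + 1, p):
            if abs(corr[i, j]) >= threshold:
                pairs.append((names[i], names[j], float(corr[i, j])))
    return pairs

# Synthetic data (hypothetical features): gene_b is a noisy copy of gene_a.
rng = np.random.default_rng(0)
n = 500
gene_a = rng.normal(size=n)
gene_b = gene_a + 0.1 * rng.normal(size=n)
age = rng.normal(size=n)                         # unrelated to the other two
X = np.column_stack([gene_a, gene_b, age])

flagged = correlated_pairs(X, ["gene_a", "gene_b", "age"])
print(flagged)
```

Pairs flagged this way are candidates for grouping, dimensionality reduction, or a correlation-robust importance method before any ranking is interpreted.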

Resolution Protocols:

- For correlated features: Use methods robust to feature correlation like the BoCSoR approach [3], or apply dimensionality reduction techniques before interpretation.

- Employ consensus approaches: Combine multiple interpretation methods and prioritize features consistently ranked as important across different techniques.

- Statistical validation: Supplement ML interpretation with traditional statistical tests to verify relationships between features and outcomes [4].

- Domain expert review: Engage biomedical experts to assess the clinical plausibility of identified important features [5].
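One simple way to implement the consensus approach is to convert each method's scores to ranks and average them. The sketch below assumes importance scores have already been computed; the feature names and score values are hypothetical placeholders, and mean rank is only one of several possible aggregation rules.

```python
import numpy as np

def consensus_rank(scores_by_method):
    """Average per-method ranks (1 = most important) for each feature.

    Ties within a method are broken arbitrarily by argsort.
    """
    ranks = []
    for scores in scores_by_method.values():
        scores = np.asarray(scores, dtype=float)
        order = np.argsort(-scores)              # indices, most to least important
        r = np.empty(len(scores))
        r[order] = np.arange(1, len(scores) + 1)
        ranks.append(r)
    return np.mean(ranks, axis=0)

# Hypothetical scores for four features from three interpretation methods.
features = ["CRP", "age", "BMI", "IL6"]
scores_by_method = {
    "shap": [0.40, 0.30, 0.05, 0.25],
    "permutation": [0.35, 0.10, 0.02, 0.30],
    "loco": [0.20, 0.15, 0.01, 0.22],
}

mean_rank = consensus_rank(scores_by_method)
consensus_order = [features[i] for i in np.argsort(mean_rank)]
print(dict(zip(features, mean_rank.round(2))), consensus_order)
```

Features whose rank varies widely across methods are the ones worth sending to statistical validation and expert review.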

Diagram: Troubleshooting Conflicting Feature Importance Rankings

Problem: My model has high predictive accuracy but uninterpretable feature importance

Issue Description: Your model achieves strong performance metrics (e.g., high AUC, accuracy) but the feature importance explanations lack coherence, contradict medical knowledge, or vary unpredictably.

Diagnosis Steps:

- Verify explanation fidelity: Check if your interpretation method accurately represents the model's reasoning, not just approximating it [5].

- Analyze feature interactions: Complex interactions in black-box models may make single-feature importance scores misleading [1].

- Check for data leakage: Ensure no extraneous features are artificially inflating performance while confounding interpretations [7].

- Evaluate on subsets: Assess whether importance patterns hold consistently across different patient subgroups or data segments [1].

Resolution Protocols:

- Use intrinsically interpretable models: When possible, employ models like decision trees, K-nearest neighbors, or generalized additive models that offer better transparency [8].

- Implement model-based constraints: Incorporate domain knowledge directly into the model architecture to regularize feature importance [5].

- Adopt hybrid approaches: Combine powerful black-box models with interpretable surrogates for specific sub-tasks or populations [1] [8].

- Prioritize local explanations: If global patterns remain unclear, focus on trustworthy local explanations for individual predictions while acknowledging global limitations [1].

Problem: I need to validate that my feature importance reflects true biological mechanisms

Issue Description: You suspect your model's feature importance might capture statistical artifacts rather than genuine biological relationships, potentially leading to spurious conclusions.

Diagnosis Steps:

- Conduct robustness testing: Evaluate how stable your importance scores are under data perturbation and resampling.

- Perform causal analysis: Assess whether identified features have plausible causal relationships with the outcome versus mere correlation [6].

- Check dataset representativeness: Verify your training data adequately represents the biological variability in the target population.

- Compare with null models: Generate importance distributions under null hypotheses to establish significance thresholds.
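The robustness-testing step can be illustrated with a bootstrap stability check. The sketch below is an assumption-laden toy: it uses the absolute standardized least-squares coefficient as a stand-in importance score and the mean pairwise Jaccard similarity of top-k feature sets as the stability metric. Any importance method could be substituted for the `importance` function.

```python
import numpy as np
from itertools import combinations

def importance(X, y):
    """Toy importance (assumption): |standardized least-squares coefficient|."""
    Xs = (X - X.mean(axis=0)) / X.std(axis=0)
    coef, *_ = np.linalg.lstsq(Xs, y - y.mean(), rcond=None)
    return np.abs(coef)

def topk_stability(X, y, k=2, n_boot=50, seed=0):
    """Mean pairwise Jaccard similarity of top-k feature sets across bootstraps."""
    rng = np.random.default_rng(seed)
    n = len(y)
    top_sets = []
    for _ in range(n_boot):
        idx = rng.integers(0, n, size=n)         # resample rows with replacement
        imp = importance(X[idx], y[idx])
        top_sets.append(frozenset(np.argsort(-imp)[:k].tolist()))
    sims = [len(a & b) / len(a | b) for a, b in combinations(top_sets, 2)]
    return float(np.mean(sims))

rng = np.random.default_rng(1)
X = rng.normal(size=(300, 10))
y_signal = 3 * X[:, 0] + 2 * X[:, 1] + rng.normal(size=300)   # two true drivers
y_noise = rng.normal(size=300)                                 # no true drivers

stab_signal = topk_stability(X, y_signal)
stab_noise = topk_stability(X, y_noise)
print(stab_signal, stab_noise)
```

A stability score near 1 means the same features keep appearing in the top-k set; a markedly lower score, as expected for the pure-noise outcome, suggests the ranking may reflect sampling artifacts.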

Resolution Protocols:

- Independent cohort validation: Test your model and feature importance on completely independent datasets from different sources or populations.

- Experimental validation: Design wet-lab experiments to test predictions generated from your feature importance analysis.

- Incorporate mechanistic models: Combine data-driven ML approaches with established mechanistic models of the biological system [5].

- Multimodal data integration: Correlate important features across multiple data modalities (e.g., genomics, imaging, clinical) to establish convergent evidence [9].

Experimental Protocols

Protocol: Statistical Validation of Feature Importance

Purpose: To ensure that feature importance derived from machine learning models reflects statistically significant relationships rather than random variations or artifacts [4].

Table: Research Reagent Solutions for Feature Validation

| Reagent/Resource | Function in Validation | Implementation Considerations |
|---|---|---|
| Permutation Testing Framework | Generates null distribution for importance scores by randomly shuffling feature-outcome relationships | Number of permutations should be sufficient for multiple comparison correction (typically 1000+) |
| Non-parametric Correlation Measures | Assesses feature-outcome relationships independent of ML model assumptions | Choose appropriate measures (Spearman's rank, Kendall's τ) based on data characteristics |
| Mutual Information Estimators | Quantifies non-linear dependencies between features and outcomes | Requires careful parameter selection for reliable estimation with finite samples |
| Stability Assessment Metrics | Evaluates consistency of importance rankings across data perturbations | Includes measures like Jaccard similarity of top-k features across bootstrap samples |
| Multiple Hypothesis Testing Correction | Controls false discovery rates across multiple features | Benjamini-Hochberg procedure recommended for high-dimensional biomedical data |

Methodology:

- Null Distribution Establishment:

  - Generate null importance distributions for each feature through permutation testing (repeatedly shuffling outcome labels).

  - Compute empirical p-values for observed importance scores based on their position in the null distribution.

  - Apply false discovery rate correction to account for multiple comparisons.

- Model-Agnostic Validation:

  - Calculate traditional statistical associations between each feature and outcome using non-parametric correlation and mutual information.

  - Compare ML-derived importance rankings with these model-agnostic measures.

  - Identify discrepancies that may indicate model artifacts versus convergent evidence.

- Stability Assessment:

  - Perform bootstrap resampling to create multiple dataset variants.

  - Compute feature importance on each bootstrap sample.

  - Quantify stability using rank correlation or top-feature overlap metrics across bootstrap iterations.
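The null-distribution part of this protocol can be sketched in a few lines of NumPy. To stay self-contained, the example uses the absolute Pearson correlation as a stand-in importance score (an assumption; in practice the score would come from your model), shuffles the outcome labels 1000 times, computes empirical p-values, and applies the Benjamini-Hochberg procedure. Function names and the synthetic data are illustrative.

```python
import numpy as np

def abs_corr(X, y):
    """Toy importance score (assumption): |Pearson r| of each feature with y."""
    Xc = X - X.mean(axis=0)
    yc = y - y.mean()
    return np.abs(Xc.T @ yc) / (np.linalg.norm(Xc, axis=0) * np.linalg.norm(yc))

def permutation_pvalues(X, y, n_perm=1000, seed=0):
    """Empirical p-values from a label-shuffling null distribution."""
    rng = np.random.default_rng(seed)
    observed = abs_corr(X, y)
    exceed = np.zeros(X.shape[1])
    for _ in range(n_perm):
        exceed += abs_corr(X, rng.permutation(y)) >= observed
    return (exceed + 1) / (n_perm + 1)           # add-one correction avoids p = 0

def benjamini_hochberg(pvals, alpha=0.05):
    """Boolean mask of discoveries under the Benjamini-Hochberg procedure."""
    p = np.asarray(pvals, dtype=float)
    m = len(p)
    order = np.argsort(p)
    passed = p[order] <= alpha * np.arange(1, m + 1) / m
    keep = np.zeros(m, dtype=bool)
    if passed.any():
        cutoff = np.max(np.nonzero(passed)[0])   # largest rank meeting its threshold
        keep[order[: cutoff + 1]] = True
    return keep

# Synthetic data: only features 0 and 3 drive the outcome.
rng = np.random.default_rng(42)
X = rng.normal(size=(200, 20))
y = 1.5 * X[:, 0] - 1.0 * X[:, 3] + rng.normal(size=200)

pvals = permutation_pvalues(X, y)
significant = benjamini_hochberg(pvals)
print(np.nonzero(significant)[0])
```

With 1000 permutations the smallest attainable p-value is 1/1001, which is why the protocol table recommends enough permutations to survive multiple-comparison correction.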

Diagram: Statistical Validation Workflow for Feature Importance

Protocol: Implementing Local to Global Explanation Integration

Purpose: To create a comprehensive model interpretation framework by aggregating local explanations into robust global insights, particularly valuable when direct global interpretation is challenging [3].

Methodology:

- Local Explanation Generation:

  - Select appropriate local explanation methods (SHAP, LIME, counterfactuals) based on model type and data modality.

  - Compute local feature importance for a representative sample of instances, ensuring coverage of different data regions and prediction types.

  - For each instance, identify the minimal set of features that crucially influence the specific prediction.

- Local-to-Global Aggregation:

  - Implement aggregation mechanisms such as the Boundary Crossing Solo Ratio (BoCSoR) which quantifies how frequently individual feature changes lead to prediction alterations [3].

  - Cluster local explanations to identify common explanation patterns across instance subgroups.

  - Analyze how feature importance varies across different data regions and patient subgroups.

- Global Pattern Validation:

  - Compare aggregated local patterns with direct global importance measures.

  - Identify consistencies and discrepancies that may reveal model limitations or data heterogeneity.

  - Validate global patterns with domain experts for biological plausibility and clinical relevance.
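A minimal sketch of local-to-global aggregation, assuming a linear model so that local attributions have a closed form: each feature's contribution to a prediction is its coefficient times the feature's deviation from its mean (for a linear model with independent features this coincides with the SHAP value). The global score is then the mean absolute local attribution. The data and coefficients are synthetic.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 400
X = rng.normal(size=(n, 3))
X[:, 2] *= 0.1                                    # feature 2 varies very little
y = 2.0 * X[:, 0] - 1.0 * X[:, 1] + 5.0 * X[:, 2] + 0.1 * rng.normal(size=n)

# Fit a linear model with intercept by least squares.
A = np.column_stack([np.ones(n), X])
beta, *_ = np.linalg.lstsq(A, y, rcond=None)
w = beta[1:]

# Local attributions: per-instance contribution of each feature,
# measured relative to the average prediction.
local = w * (X - X.mean(axis=0))                  # shape (n, 3)

# Additivity check: average prediction + attributions = individual prediction.
pred = A @ beta
assert np.allclose(pred.mean() + local.sum(axis=1), pred)

# Global importance: mean absolute local attribution per feature.
global_importance = np.abs(local).mean(axis=0)
ranking = np.argsort(-global_importance)
print(global_importance.round(3), ranking)
```

Note that feature 2 has the largest coefficient but the smallest aggregated importance, because its values barely vary; aggregating local attributions reflects how much a feature actually moves predictions in the data.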

Advanced Methodologies

Boundary Crossing Solo Ratio (BoCSoR): A Robust Alternative

The BoCSoR method addresses key limitations of traditional feature importance measures by leveraging local counterfactual explanations [3]. This approach is particularly valuable for fMRI data and other biomedical signals where features are often highly correlated.

Implementation Workflow:

- Identify boundary instances: Select data points near the model's decision boundary where small changes would alter predictions.

- Generate counterfactuals: For each boundary instance, create modified versions where individual features are systematically altered.

- Track boundary crossings: Count how often altering each feature in isolation causes a prediction change.

- Compute importance scores: Calculate the ratio of boundary crossings for each feature relative to alteration attempts.
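The four workflow steps can be illustrated with a deliberately simplified sketch. This is not the published BoCSoR algorithm from [3]: it assumes a hypothetical fixed linear classifier, selects boundary instances by a known margin, and "alters" a feature by swapping in values from other boundary instances. It only demonstrates the idea of a ratio of prediction flips to single-feature alteration attempts.

```python
import numpy as np

def boundary_crossing_ratio(predict, X, n_draws=20, seed=0):
    """Per feature: fraction of single-feature alterations that flip the prediction."""
    rng = np.random.default_rng(seed)
    base = predict(X)
    n, p = X.shape
    crossings = np.zeros(p)
    for j in range(p):
        for _ in range(n_draws):
            X_alt = X.copy()
            X_alt[:, j] = rng.permutation(X[:, j])    # alter feature j in isolation
            crossings[j] += np.sum(predict(X_alt) != base)
    return crossings / (n * n_draws)

# Hypothetical stand-in classifier: a fixed linear decision rule
# that ignores feature 2 entirely.
def predict(X):
    return (2.0 * X[:, 0] + 0.5 * X[:, 1] > 0).astype(int)

rng = np.random.default_rng(3)
X = rng.normal(size=(500, 3))

# Step 1: keep instances close to the decision boundary. With a real model,
# a predicted probability near 0.5 could play the role of this margin.
margin = np.abs(2.0 * X[:, 0] + 0.5 * X[:, 1])
near_boundary = X[margin < 1.0]

# Steps 2-4: alter one feature at a time, count flips, take the ratio.
ratios = boundary_crossing_ratio(predict, near_boundary)
print(ratios.round(3))
```

In this toy setting the ignored feature never causes a crossing, and the feature with the larger weight flips predictions more often than the weaker one.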

Advantages for Biomedical Applications:

- More robust to feature correlation than SHAP and other traditional methods [3].

- Less computationally expensive for high-dimensional data [3].

- Provides intuitive explanations based on minimal feature changes that alter outcomes.

- Particularly effective for medical decision support systems with correlated features extracted from the same physiological measures [3].

Diagram: BoCSoR Methodology Workflow

Frequently Asked Questions (FAQs)

1. What is the core theoretical difference between how PFI and LOCO measure feature importance?

Both PFI and LOCO measure importance by removing a feature's information and assessing the performance drop, but they differ fundamentally in how they remove this information. PFI randomly permutes the feature's values, breaking the feature-target relationship while keeping the feature's marginal distribution intact. In contrast, LOCO completely removes the feature by retraining the model without it [10]. This distinction means PFI is theoretically inclined to measure unconditional association (a feature's importance on its own), while LOCO is better suited for assessing conditional association (a feature's importance given the presence of all other features) [10].
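The difference between the two removal mechanisms can be made concrete with a small sketch. It assumes a least-squares linear model and synthetic data in which `x1` is a near-copy of the true driver `x0`; the helper names `fit`, `pfi`, and `loco` are illustrative and do not correspond to any specific library.

```python
import numpy as np

def fit(X, y):
    """Least-squares linear model with intercept; returns a predict function."""
    A = np.column_stack([np.ones(len(X)), X])
    beta, *_ = np.linalg.lstsq(A, y, rcond=None)
    return lambda Z: np.column_stack([np.ones(len(Z)), Z]) @ beta

def mse(model, X, y):
    return float(np.mean((model(X) - y) ** 2))

def pfi(model, X, y, j, rng, n_repeats=20):
    """Mean increase in MSE after permuting column j (model is not retrained)."""
    base = mse(model, X, y)
    increases = []
    for _ in range(n_repeats):
        Xp = X.copy()
        Xp[:, j] = rng.permutation(Xp[:, j])
        increases.append(mse(model, Xp, y) - base)
    return float(np.mean(increases))

def loco(X_tr, y_tr, X_te, y_te, j):
    """Increase in test MSE when the model is retrained without column j."""
    keep = [c for c in range(X_tr.shape[1]) if c != j]
    full = fit(X_tr, y_tr)
    reduced = fit(X_tr[:, keep], y_tr)
    return mse(reduced, X_te[:, keep], y_te) - mse(full, X_te, y_te)

rng = np.random.default_rng(0)
n = 2000
x0 = rng.normal(size=n)
x1 = x0 + 0.1 * rng.normal(size=n)                # near-copy of x0
x2 = rng.normal(size=n)
X = np.column_stack([x0, x1, x2])
y = 2.0 * x0 + 1.0 * x2 + 0.5 * rng.normal(size=n)
X_tr, X_te, y_tr, y_te = X[:1000], X[1000:], y[:1000], y[1000:]

model = fit(X_tr, y_tr)
pfi_scores = [pfi(model, X_te, y_te, j, rng) for j in range(3)]
loco_scores = [loco(X_tr, y_tr, X_te, y_te, j) for j in range(3)]
print(np.round(pfi_scores, 3), np.round(loco_scores, 3))
```

In this setup PFI rates `x0` well above `x2`, while LOCO rates `x0` far below `x2`: once the model is retrained, the near-copy `x1` supplies almost all of the information `x0` carried, so its conditional contribution is small.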

2. Why do PFI and LOCO sometimes provide conflicting feature importance rankings?

Conflicting rankings occur because PFI and LOCO measure different types of associations. PFI can mistakenly highlight features that are only correlated with other important features rather than those that directly affect the target. Since it permutes features individually, correlated features can "cover" for each other, leading to underestimated importance for genuinely important but correlated features [11] [10]. LOCO, by retraining the model without the feature, more accurately captures a feature's unique contribution conditional on all others [10].

3. My SHAP computation is extremely slow for a high-dimensional dataset. What are my options?

SHAP's slow computation stems from its need to evaluate all possible feature subsets (coalitions), leading to exponential complexity of O(2^n) for n features [12]. For high-dimensional data, consider these alternatives:

- Use TreeSHAP for tree-based models (e.g., XGBoost, LightGBM), which computes exact SHAP values in polynomial time by leveraging the tree structure [13] [12].

- For non-tree models, KernelSHAP provides model-agnostic approximations, though it is slower than TreeSHAP [13] [14].

- The emerging RAMPART framework uses adaptive sequential halving and ensembling to efficiently rank top-k features without computing all importances, ideal when only the most important features are needed [15].
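To see where the exponential cost comes from, the sketch below computes exact Shapley values by brute-force enumeration of coalitions. The `value` function is a hypothetical toy payoff (features 0 and 1 only contribute together, feature 2 contributes on its own); in a real SHAP computation it would be the model's expected output given the features in the coalition.

```python
from itertools import combinations
from math import factorial

def exact_shapley(value, n_features):
    """Exact Shapley values by enumerating every coalition of the other features."""
    players = range(n_features)
    phi = [0.0] * n_features
    for i in players:
        others = [j for j in players if j != i]
        for size in range(n_features):
            # Shapley weight for coalitions of this size.
            weight = (factorial(size) * factorial(n_features - size - 1)
                      / factorial(n_features))
            for coalition in combinations(others, size):
                s = frozenset(coalition)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi

def value(coalition):
    """Hypothetical payoff: features 0 and 1 interact, feature 2 is additive."""
    v = 0.0
    if 0 in coalition and 1 in coalition:
        v += 4.0
    if 2 in coalition:
        v += 1.0
    return v

phi = exact_shapley(value, 3)
print(phi)
```

Each feature requires a pass over all 2^(n-1) coalitions of the remaining features, so the loop is feasible for a handful of features and hopeless for thousands, which is what motivates TreeSHAP, sampling-based approximations, and top-k methods.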

4. How do correlated features impact SHAP and PFI interpretations?

Correlated features pose significant challenges:

- PFI: Underestimates importance due to "information masking." When a feature is permuted, correlated features can compensate, making it appear less important than it truly is [11] [10]. Recursive Feature Elimination (RFE) that recalculates PFI after each elimination can mitigate this [11].

- SHAP: Many implementations assume feature independence. When features are correlated, this assumption is violated, and SHAP can yield misleading interpretations by allocating credit in non-causal ways [12]. For example, it might assign high importance to a feature that is predictive only because it is correlated with the true causal feature.

5. When should I use SHAP over simpler methods like PFI or LOCO?

SHAP is particularly valuable when you need:

- Local explanations to understand individual predictions, not just global feature importance [13] [12].

- A unified framework with strong theoretical guarantees (Efficiency, Symmetry, Dummy, Additivity) that ensure consistent and fair attribution of contributions among features [12].

- Insights into feature interactions, as the deviation of individual SHAP values from the main effect can hint at interaction patterns [14].

If you only need global feature importance, PFI (for unconditional association) or LOCO (for conditional association) may suffice and is often cheaper to compute [10].

Troubleshooting Guides

Issue 1: Unstable or Misleading PFI Results with Correlated Features

Problem: PFI scores are low for known important features, or rankings change unpredictably due to feature correlations.

Solution: Implement a correlation-aware PFI workflow.

Experimental Protocol:

- Calculate Baseline Performance: Compute your model's performance score (e.g., accuracy, R²) on the original validation set.

- Perform Recursive Feature Elimination (RFE):

  - Train your model on the current feature set (initially, the full feature set).

  - Compute PFI for all features by permuting each and measuring the performance drop from the baseline.

  - Remove the feature with the lowest PFI.

  - Retrain the model on the reduced feature set and repeat the PFI computation and removal until a stopping criterion (e.g., desired number of features) is met [11].

- Validate: Compare the out-of-bag error or validation error of the final, reduced model against the model using features from a non-recursive approach. Empirical results, such as on the Landsat Satellite dataset, show RFE achieves significantly lower error rates with fewer variables [11].
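The recursive loop in this protocol might look like the sketch below. It assumes a least-squares linear model and mean squared error as the performance score, purely to keep the example dependency-free; the helper names are illustrative, and any model with fit and predict steps could be substituted.

```python
import numpy as np

def fit(X, y):
    """Least-squares linear model with intercept; returns a predict function."""
    A = np.column_stack([np.ones(len(X)), X])
    beta, *_ = np.linalg.lstsq(A, y, rcond=None)
    return lambda Z: np.column_stack([np.ones(len(Z)), Z]) @ beta

def pfi_all(model, X, y, rng, n_repeats=10):
    """PFI for every column: mean MSE increase after permuting that column."""
    base = np.mean((model(X) - y) ** 2)
    scores = np.zeros(X.shape[1])
    for j in range(X.shape[1]):
        for _ in range(n_repeats):
            Xp = X.copy()
            Xp[:, j] = rng.permutation(Xp[:, j])
            scores[j] += np.mean((model(Xp) - y) ** 2) - base
    return scores / n_repeats

def pfi_rfe(X_tr, y_tr, X_val, y_val, n_keep, seed=0):
    """Recursively drop the lowest-PFI feature, retraining after each removal."""
    rng = np.random.default_rng(seed)
    active = list(range(X_tr.shape[1]))
    while len(active) > n_keep:
        model = fit(X_tr[:, active], y_tr)            # retrain on current set
        scores = pfi_all(model, X_val[:, active], y_val, rng)
        active.pop(int(np.argmin(scores)))            # remove the weakest feature
    return active

# Synthetic data: only features 1, 4, and 6 drive the outcome.
rng = np.random.default_rng(7)
X = rng.normal(size=(600, 8))
y = 3 * X[:, 1] - 2 * X[:, 4] + X[:, 6] + 0.5 * rng.normal(size=600)

selected = pfi_rfe(X[:400], y[:400], X[400:], y[400:], n_keep=3)
print(selected)
```

The key design point is that PFI is recomputed on a freshly retrained model at every step, so a feature that was masked by a correlated partner can gain importance once that partner has been removed.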

Diagram: PFI-RFE Workflow for Correlated Features

Issue 2: Handling SHAP's Computational Complexity

Problem: Calculating SHAP values is computationally infeasible for models with many features or complex models.

Solution: Select the appropriate SHAP estimator and leverage approximations.

Experimental Protocol:

- Identify Model Type:

  - For tree-based models (XGBoost, LightGBM, CatBoost, scikit-learn), use `TreeExplainer` for exact and fast computation [14].

  - For deep learning models (TensorFlow, PyTorch), use `DeepExplainer` or `GradientExplainer` [14].

  - For model-agnostic explanations, use `KernelExplainer` with a subset of background data and a limited number of feature coalitions (`nsamples`) [14].

- Approximate for High Dimensions: If you are only interested in the top-k most important features, use a framework like RAMPART that avoids computing importances for all features. It uses minipatch ensembling and recursive trimming to focus resources on promising candidates [15].

Diagram: SHAP Estimator Selection

Issue 3: Interpreting Conflicting Results from Different Methods

Problem: PFI, LOCO, and SHAP yield different feature rankings, leading to confusion.

Solution: Systematically compare methods by understanding and testing for the type of association each one measures.

Experimental Protocol:

- Establish Ground Truth (if possible): On synthetic data with known data-generating processes, verify which method correctly identifies causal features.

- Profile Your Features: Analyze correlation structure among features. High correlation suggests conditional methods (LOCO, conditional PFI) may be more reliable than marginal ones (standard PFI) [11] [10].

- Run a Comparative Analysis:

- Compute global SHAP importance (mean absolute SHAP value).

- Compute PFI and LOCO.

- Tabulate rankings and look for consensus and discrepancies.

- Interpret Discrepancies:

- A feature important in PFI but not LOCO/SHAP may have only unconditional association.

- A feature important in LOCO/SHAP but not PFI is likely conditionally important but masked by correlations in PFI [10].

Method Comparison & Quantitative Data

Table 1: Theoretical and Computational Characteristics

| Method | Theoretical Basis | Association Type Measured | Computational Complexity | Handles Correlated Features? |
|---|---|---|---|---|
| PFI | Performance drop from permutation | Tends towards Unconditional | Low (O(n * p)) | Poor; importance is underestimated due to masking [11] [10] |
| LOCO | Performance drop from model retraining | Conditional | High (O(p) model retrains) | Good; unique contribution is isolated by retraining [10] |
| SHAP | Shapley values from cooperative game theory | Conditional (averaged over subsets) | Very High (exact: O(2^p)), Approx: varies | Varies; standard SHAP can be biased, requires careful handling [12] [15] |

Note: n = number of instances, p = number of features.

Table 2: Empirical Performance on Landsat Dataset (PFI with and without RFE) [11]

| Procedure | PFI Recalculated at Each Step? | Robust to Correlation? | Empirical Error (5 features) |
|---|---|---|---|
| NRFE (Non-Recursive) | No | No | Up to 0.48 |
| RFE (Recursive) | Yes | Yes | ~0.13 (low variance) |

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Software and Analytical Tools

| Tool / "Reagent" | Function / Purpose | Key Considerations |
|---|---|---|
| `shap` Python Library [14] | Comprehensive implementation of SHAP (KernelSHAP, TreeSHAP, DeepSHAP) for model explanations. | Use `TreeExplainer` for efficiency with tree models. Be mindful of the independence assumption in `KernelExplainer`. |
| `fippy` Python Library [10] | Implements a range of feature importance methods (PFI, CFI, RFI, LOCO, SAGE) for systematic comparison. | Useful for benchmarking different importance methods on the same model and dataset. |
| Recursive Feature Elimination (RFE) [11] | Wrapper method to improve PFI's reliability with correlated features by recursively removing weak features and retraining. | Increases computational cost but provides more stable and accurate feature subsets. |
| RAMPART Framework [15] | Algorithm for efficient top-k feature importance ranking using minipatch ensembling and recursive trimming. | Optimized for high-dimensional settings; avoids computing full importance set, saving resources. |

Interpreting Conditional vs. Unconditional Associations for Causal Insight

Frequently Asked Questions (FAQs)

FAQ 1: Why do I get conflicting feature importance results from different methods?

Different feature importance methods measure different types of associations. Permutation Feature Importance (PFI) measures unconditional association—whether a feature is predictive on its own. Leave-One-Covariate-Out (LOCO) measures conditional association—whether a feature adds predictive value even when other features are known [10]. If a feature is important unconditionally but not conditionally, it may be correlated with the true drivers but not causally relevant itself [10] [16].

FAQ 2: How can an association be conditionally dependent?

Conditional dependence occurs when the relationship between two variables (X and Y) depends on a third variable (Z). For example, the number of ice creams sold (X) and the number of people at the beach (Y) may only be related on hot days (high Z) [17]. In a causal graph, this can occur when conditioning on a collider variable (a common effect), which can create a spurious association between its causes [18].

FAQ 3: What is the difference between a confounder and a collider?

- Confounder: A common cause of both your treatment (D) and outcome (Y). It creates a spurious association that must be controlled for (conditioned on) to isolate the true causal effect [18] [19].

- Collider: A common effect of both your treatment (D) and another variable (A). Conditioning on a collider creates a spurious association between its causes and can introduce bias [18].
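Collider bias is easy to reproduce in simulation. In the sketch below, treatment D and variable A are generated independently, and C is their common effect. Restricting the analysis to a narrow slice of C is used here as a simple stand-in for conditioning on it; the variable names follow the definitions above and the numeric settings are arbitrary.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 20000
d = rng.normal(size=n)                   # treatment D
a = rng.normal(size=n)                   # variable A, independent of D
c = d + a + 0.5 * rng.normal(size=n)     # collider: common effect of D and A

# Marginally, D and A are unrelated.
r_marginal = np.corrcoef(d, a)[0, 1]

# Conditioning on the collider (here: keeping only a narrow slice of C)
# induces a spurious negative association between its causes.
mask = np.abs(c) < 0.25
r_conditional = np.corrcoef(d[mask], a[mask])[0, 1]

print(round(r_marginal, 3), round(r_conditional, 3))
```

The same mechanism applies when a collider is included as a covariate in a model: a feature can appear conditionally important purely because of what was adjusted for.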

The following diagram illustrates the basic structures of confounding and collider bias, which are fundamental to understanding conditional and unconditional dependencies.

FAQ 4: My model has high predictive accuracy. Does this mean I have found causal relationships?

No. Machine learning models excel at exploiting all available information, including causes, effects, and spurious correlations, for prediction [16]. A model can accurately predict an outcome using the effects of that outcome (e.g., predicting COVID from a dry cough, which is its effect) [16]. High predictive accuracy is therefore not sufficient for establishing causality.

FAQ 5: How can I move from association to causation in my analysis?

- Formal Causal Inference: Use frameworks like Potential Outcomes (e.g., with g-computation [20]) or Structural Causal Models (e.g., with do-calculus [18]) that require explicit causal assumptions.

- Causal Diagrams: Draw a Directed Acyclic Graph (DAG) to map out your assumptions about the causal relationships between all variables, including unmeasured confounders [18] [21]. This helps identify what to control for and what not to.

- Experimental Validation: Whenever possible, use Randomized Controlled Trials (RCTs), which remain the gold standard for establishing causality by breaking links to confounders through random assignment [16] [22].

Troubleshooting Guides

Problem 1: Your feature importance results are misleading your causal interpretation.

Symptoms:

- PFI and LOCO rankings for key features are significantly different [10].

- A feature known to be non-causal from domain knowledge ranks as highly important.

Solution:

- Diagnose the Association Type: Determine if you need to measure conditional or unconditional importance based on your causal question. To infer a direct cause, you typically need to establish conditional importance [10].

- Select the Right Tool: Use a feature importance method that matches your target association. For conditional importance, use methods like LOCO that retrain the model without the feature, thereby testing its contribution given all other features [10].

- Validate with a Causal Graph: Map the suspected feature and outcome into a DAG with other relevant variables. This helps you see if the feature's importance is likely due to a confounder or another structure [18] [21].

Problem 2: You suspect unmeasured variables are confounding your results.

Symptoms:

- An observed association is strong but lacks biological plausibility [19].

- The estimated effect of a treatment changes drastically when including or excluding different sets of covariates.

Solution:

- Sensitivity Analysis: Quantify how strong an unmeasured confounder would need to be to explain away the observed association [23].

- Instrumental Variables (if available): Use a variable that influences the treatment but only affects the outcome through the treatment to estimate a causal effect [20].

- Expert Elicitation: Work with domain experts to formally map the system in a DAG, making assumptions about unmeasured confounders explicit. This can guide which sensitivity analyses are most critical [21].
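One widely used way to quantify the sensitivity-analysis question is the E-value of VanderWeele and Ding, which the source does not name explicitly; it is shown here as an assumption about what such an analysis could look like. For an observed risk ratio RR of at least 1, the E-value is RR + sqrt(RR × (RR − 1)); for protective effects the reciprocal of RR is used first. The observed risk ratio below is a hypothetical number.

```python
from math import sqrt

def e_value(rr):
    """E-value for an observed risk ratio (point estimate)."""
    if rr <= 0:
        raise ValueError("risk ratio must be positive")
    if rr < 1:
        rr = 1.0 / rr                    # protective effects: use the reciprocal
    return rr + sqrt(rr * (rr - 1.0))

observed_rr = 2.0                        # hypothetical observed risk ratio
needed_strength = e_value(observed_rr)
print(round(needed_strength, 2))
```

The result is read as the minimum risk-ratio association an unmeasured confounder would need with both treatment and outcome, beyond the measured covariates, to fully explain away the observed association.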

Problem 3: You need to design an analysis to establish a causal relationship from observational data.

Symptoms:

- You have observational data and need to estimate the causal effect of an intervention.

- A randomized experiment is not feasible due to cost, ethics, or practicality [23].

Solution: Follow a formal causal inference workflow. The following diagram outlines a robust workflow for moving from a causal question to a validated estimate, integrating feature importance as a preliminary step.

- Define a Precise Causal Question: Frame it as "What is the average effect of intervention A on outcome Y in population P?" [21].

- Draw a Causal DAG: Based on literature and domain knowledge, map all known or plausible causes of your outcome and treatment. This is a non-negotiable step for clarifying assumptions [18] [21].

- Use Feature Importance for Exploration: Apply conditional feature importance methods on your observational data to identify promising variables for inclusion in your causal model, but do not interpret these as causal effects [16] [23].

- Select a Causal Estimator: Based on your DAG, choose a method like g-computation [20], propensity score matching, or instrumental variables [20].

- Estimate and Validate: Run the analysis and perform sensitivity analyses to test the robustness of your findings to violations of your assumptions [23].
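The estimation step can be illustrated with a minimal g-computation sketch on simulated data where the true effect is known. It assumes a single measured confounder Z, a binary treatment D, and a correctly specified linear outcome model; real analyses need a DAG-justified adjustment set and a more carefully chosen outcome model.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 5000
z = rng.normal(size=n)                                   # confounder Z
d = (z + rng.normal(size=n) > 0).astype(float)           # treatment depends on Z
y = 1.0 * d + 2.0 * z + rng.normal(size=n)               # true effect of D is 1.0

# Naive contrast: ignores confounding by Z and is biased upward here.
naive = y[d == 1].mean() - y[d == 0].mean()

# G-computation step 1: fit an outcome model for E[Y | D, Z].
A = np.column_stack([np.ones(n), d, z])
beta, *_ = np.linalg.lstsq(A, y, rcond=None)

def predict(d_value):
    """Predicted outcome for everyone if treatment were set to d_value."""
    return np.column_stack([np.ones(n), np.full(n, d_value), z]) @ beta

# Steps 2 and 3: predict under both interventions, then average the difference.
ate_gcomp = float(np.mean(predict(1.0) - predict(0.0)))

print(round(naive, 2), round(ate_gcomp, 2))
```

The naive difference in means is far from the simulated effect, while the standardized contrast recovers it closely. That recovery depends entirely on Z being measured and the outcome model being adequate, which is why the sensitivity analyses in the final step matter.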

The following table summarizes key methodological tools and their primary function in causal analysis.

| Research Reagent / Method | Function in Causal Analysis |
|---|---|
| Directed Acyclic Graph (DAG) | A visual tool representing assumptions about causal relationships, confounding, and bias. Essential for planning a valid analysis [18] [21]. |
| Potential Outcomes Framework | A formal mathematical framework for defining causal effects (e.g., contrasting the potential outcomes Y(1) and Y(0), written as do(D=1) vs. do(D=0) in structural causal model notation) and clarifying the "fundamental problem of causal inference" [18] [22]. |
| G-Computation (G-Formula) | A causal inference technique used to estimate the effect of an exposure or treatment in the presence of confounding in observational studies [20]. |
| Permutation Feature Importance (PFI) | A model-agnostic method that measures a feature's unconditional association with the target, useful for initial feature screening [10]. |
| Leave-One-Covariate-Out (LOCO) | A model-agnostic method that measures a feature's conditional association with the target, getting closer to testing for direct causal relevance [10]. |
| Randomized Controlled Trial (RCT) | The gold-standard experimental design that, via randomization, breaks the link between treatment and confounders, allowing for a direct estimate of the causal effect [16] [22]. |

Frequently Asked Questions (FAQs)

FAQ 1: Why does my model's feature importance ranking change every time I re-run the model, even with the same dataset?

This is a common issue, primarily caused by the stochastic (random) nature of many machine learning algorithms. Random seeds control steps such as parameter initialization, bootstrap sampling, and data shuffling, so changing the seed alters the model's starting point and optimization path and, ultimately, the resulting feature importance rankings [24]. This is a significant reproducibility challenge. Furthermore, if your dataset has a high number of features relative to samples, or contains noisy and irrelevant features, the model might overfit and latch onto different spurious correlations in each run, leading to inconsistent importance scores [25].

FAQ 2: I used both Permutation Importance and SHAP on the same model, and they produced different top features. Which one should I trust?

This conflict arises because the methods measure different concepts of importance.

- Permutation Importance measures a feature's contribution to the model's overall predictive performance (e.g., accuracy) [26] [27].

- SHAP (Shapley Additive Explanations) explains the output of the model itself by quantifying the marginal contribution of each feature to an individual prediction, based on game theory [28] [26].

Trusting one over the other depends on your research objective. If your goal is to understand which features are most critical for your model's global accuracy, Permutation Importance is a strong choice. If you need to explain how the model makes decisions for individual predictions or require local interpretability, SHAP is more appropriate. The "conflict" is often a reflection of these different perspectives.

FAQ 3: How can the choice of feature set itself impact the perceived importance of a variable?

A feature's importance is not an intrinsic property; it is context-dependent and can vary dramatically based on the other features in the model. Research has shown that when you train multiple models with different combinations of features, the importance and ranking of a given feature can change significantly [29]. This occurs due to interactions and correlations between features. A feature might be a strong predictor on its own, but its importance can diminish if another highly correlated feature is present in the set, as the model can use either one to make the prediction. Therefore, evaluating a feature's importance in isolation can be misleading.

FAQ 4: How can overfitting lead to unreliable feature importance?

Overfitting occurs when a model learns the noise and random fluctuations in the training data instead of the underlying pattern. An overfit model will often assign high importance to irrelevant features that coincidentally align with the noise in the training set [25]. This leads to:

- Inconsistent feature importance rankings across different data samples.

- Inflated importance scores for noisy features, causing you to mistakenly retain them.

- Poor generalization, where the feature importance derived from the training data does not hold up on new, unseen validation or test data [25].

Troubleshooting Guide

Use the following flowchart to diagnose and address common issues with conflicting feature importance results.

Diagram 1: Troubleshooting conflicting feature importance.

Detailed Troubleshooting Steps

Problem: Model Instability and Non-Reproducibility

- Symptoms: Large variations in feature importance rankings when the model is re-trained on the same data.

- Solution Protocol: Implement a repeated trials validation approach [24].

- For a given dataset and model, run the training process multiple times (e.g., 100-400 trials).

- Randomly vary the random seed between each trial to capture the effect of stochastic initialization.

- Aggregate the feature importance rankings (e.g., calculate the mean rank or frequency of appearance in the top-N) across all trials.

- Use the aggregated ranking to identify the most consistently important features, reducing the impact of random noise [24].
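The repeated-trials protocol above can be sketched as follows. This is a minimal illustration rather than the implementation from [24]: the random forest, the synthetic data from `make_classification`, the helper name `repeated_trial_ranks`, and the reduced trial count (30 instead of the 100-400 suggested above, purely to keep the run short) are all assumptions.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier


def repeated_trial_ranks(X, y, n_trials=30, n_estimators=50):
    """Train one model per seed; return an (n_trials, n_features) array of ranks (1 = most important)."""
    n_features = X.shape[1]
    ranks = np.empty((n_trials, n_features), dtype=int)
    for seed in range(n_trials):
        # Only the random seed changes between trials; data and hyperparameters stay fixed.
        model = RandomForestClassifier(n_estimators=n_estimators, random_state=seed)
        model.fit(X, y)
        order = np.argsort(-model.feature_importances_)
        ranks[seed, order] = np.arange(1, n_features + 1)
    return ranks


# Synthetic data (assumption): with shuffle=False the first 3 columns are the informative ones.
X, y = make_classification(n_samples=300, n_features=10, n_informative=3,
                           n_redundant=0, shuffle=False, random_state=0)

ranks = repeated_trial_ranks(X, y)
mean_rank = ranks.mean(axis=0)              # lower is better
top3_frequency = (ranks <= 3).mean(axis=0)  # share of trials in which a feature reaches the top 3
stable_order = np.argsort(mean_rank)

for j in stable_order:
    print(f"feature {j}: mean rank {mean_rank[j]:.2f}, top-3 frequency {top3_frequency[j]:.2f}")
```

Reporting both the mean rank and the top-N frequency makes it easier to spot features that are only occasionally ranked highly.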

Problem: Overfitting to Training Data

- Symptoms: The model performs exceptionally well on training data but poorly on validation/test data. Feature importance is dominated by seemingly irrelevant variables.

- Solution Protocol: Apply regularization and simplify the model [25].

- Use Regularization: Incorporate L1 (Lasso) or L2 (Ridge) regularization into your model. L1 regularization can drive feature weights to zero, acting as an embedded feature selection method.

- Simplify the Model: For tree-based models, reduce `max_depth` or increase `min_samples_leaf`. For neural networks, use dropout or early stopping.

- Validate with Permutation: Use permutation importance on the held-out test set. If a feature has high importance on the training set but low importance on the test set, it is likely a sign of overfitting.
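The train-versus-test permutation check can be sketched as below. This is an illustrative setup, not a prescribed procedure: the synthetic data (only feature 0 carries signal), the fully grown random forest, and the 50/50 split are assumptions chosen so that overfitting is easy to see.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
n = 400
X = rng.normal(size=(n, 6))
y = 2.0 * X[:, 0] + rng.normal(scale=1.0, size=n)  # features 1-5 are pure noise

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.5, random_state=0)

# Fully grown trees (no depth limit) memorize part of the training noise.
model = RandomForestRegressor(n_estimators=100, random_state=0).fit(X_train, y_train)

pfi_train = permutation_importance(model, X_train, y_train, n_repeats=10, random_state=0)
pfi_test = permutation_importance(model, X_test, y_test, n_repeats=10, random_state=0)

# A large positive gap flags features the model leans on only within the training data.
gap = pfi_train.importances_mean - pfi_test.importances_mean
for j in np.argsort(-gap):
    print(f"feature {j}: train {pfi_train.importances_mean[j]:.3f}, "
          f"test {pfi_test.importances_mean[j]:.3f}, gap {gap[j]:.3f}")
```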

Problem: Incompatible Interpretation Methods

- Symptoms: Different explanation methods (e.g., SHAP vs. Permutation Importance) yield different top features.

- Solution Protocol: Understand and align methods with your goal.

- Define Your Question: Are you asking "Which features are most important for my model's global performance?" (use Permutation Importance) or "How did the model use features to make this specific prediction?" (use SHAP or LIME) [26].

- Don't Rely on a Single Method: Use multiple methods to triangulate your findings. If a feature is consistently important across several methods, you can have higher confidence in its significance.

Experimental Protocols for Robust Feature Importance

Protocol 1: Repeated Trials for Stable Feature Ranking

This methodology is designed to stabilize feature importance in models with inherent stochasticity [24].

- Objective: To generate a stable, reproducible ranking of feature importance at both group and subject-specific levels.

- Materials: See "Research Reagent Solutions" below.

- Workflow:

Diagram 2: Repeated trials workflow for stability.

Protocol 2: Validation via Reduce and Retrain

This protocol validates the identified important features by testing the performance of models retrained on reduced feature sets [30].

- Objective: To verify that a selected subset of features retains the essential predictive power of the model.

- Method:

- Train a baseline model with the full set of features and record its performance on a test set.

- Select a subset of top-K features based on your aggregated importance score.

- Retrain the model from scratch using only the selected subset of top-K features.

- Compare the performance of this reduced model to the baseline. A high degree of performance retention indicates a successful feature selection.

- As a control, retrain a model on a subset of low-importance features. A significant performance drop is expected [30].
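A minimal sketch of the Reduce and Retrain steps above, under stated assumptions: the data are synthetic, the classifier is a random forest, and the impurity-based importances of the baseline model stand in for the "aggregated importance score". The helper name `retrain_and_score` is hypothetical.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split

X, y = make_classification(n_samples=1000, n_features=20, n_informative=4,
                           n_redundant=0, random_state=0)
X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, random_state=0)


def retrain_and_score(cols):
    """Retrain from scratch on the given columns and return (model, test accuracy)."""
    model = RandomForestClassifier(n_estimators=200, random_state=0)
    model.fit(X_tr[:, cols], y_tr)
    return model, model.score(X_te[:, cols], y_te)


# Step 1: baseline with all features.
baseline_model, baseline_acc = retrain_and_score(np.arange(X.shape[1]))

# Step 2: rank features using training-data importances only (no test-set leakage).
order = np.argsort(-baseline_model.feature_importances_)
k = 4

# Steps 3-5: retrain on the top-K subset, and on the bottom-K subset as a control.
_, top_acc = retrain_and_score(order[:k])
_, bottom_acc = retrain_and_score(order[-k:])

print(f"baseline: {baseline_acc:.3f}  top-{k}: {top_acc:.3f}  bottom-{k}: {bottom_acc:.3f}")
```

In practice the ranking would come from whichever importance method is being validated, ideally aggregated over repeated trials.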

Comparative Data & Research Reagent Solutions

Table 1: Comparison of Common Feature Importance Methods

| Method | Scope | Model-Specific? | Key Principle | Best Use Case |

|---|---|---|---|---|

| Permutation Importance [26] [27] | Global | Agnostic | Measures increase in model error after shuffling a feature's values. | Identifying features critical for global model performance. |

| SHAP [28] [26] | Global & Local | Agnostic | Calculates each feature's marginal contribution to prediction based on game theory. | Explaining individual predictions and understanding global feature effects. |

| Gini Importance [27] | Global | Specific (Tree-based) | Measures total reduction in node impurity (e.g., Gini index) weighted by node probability. | Fast, built-in importance for Random Forest and GBDT models. |

| LIME [26] | Local | Agnostic | Approximates a complex model locally with an interpretable one to explain single instances. | Debugging individual model predictions and trust verification. |

| Global Feature Importance [31] | Global | Agnostic | Aggregates feature importance scores from multiple models to create a unified score. | Feature exploration and selection in organizations with many related ML models. |

Table 2: Research Reagent Solutions

This table details key computational "reagents" for refining feature importance analysis.

| Reagent Solution | Function | Example / Notes |

|---|---|---|

| Repeated Trials Framework [24] | Stabilizes feature rankings by aggregating results over many model runs with random seed variation. | Run 400 trials, aggregate rankings. Mitigates stochastic initialization effects. |

| Global Feature Importance Score [31] | Provides a cross-model view of feature importance by normalizing and aggregating scores from multiple models. | Uses percentile normalization. Helps discover features that are robust across related tasks. |

| Reduce and Retrain Methodology [30] | Validates feature selection by measuring performance retention in models trained on selected subsets. | Crucial for confirming that a pruned feature set retains predictive power. |

| SHAP / LIME Explainers [28] [26] | Provides local and global model explanations, helping to debug predictions and understand feature interactions. | Python libraries: shap, lime. |

| Regularization Techniques (L1/L2) [25] | Prevents overfitting by penalizing model complexity, leading to more reliable and generalizable importance scores. | L1 (Lasso) can produce sparse models, acting as a feature selector. |

The Critical Link Between Feature Importance and Model Interpretability

Frequently Asked Questions (FAQs)

Q1: What is feature importance and why does it matter for interpretable machine learning in drug discovery? Feature importance refers to techniques that quantify the contribution of each input variable (feature) to a machine learning model's predictions. In drug discovery, this is crucial because understanding which molecular descriptors, biological activities, or chemical properties drive predictions helps researchers validate models, generate hypotheses, and trust AI recommendations. Unlike black-box models where predictions lack explanation, feature importance methods provide transparency into the model's decision-making process, which is essential for high-stakes applications like pharmaceutical development [32] [33].

Q2: My SHAP results seem inconsistent across different models for the same dataset. Is this expected? Yes, this is a recognized challenge. SHAP (SHapley Additive exPlanations) values are subject to model-specific biases and can vary depending on the underlying machine learning algorithm. A recent critical examination highlighted that although SHAP aids interpretability, different models may emphasize different relationships in the same data. It's recommended to complement SHAP analysis with robust statistical methods like Spearman's correlation with p-values or Kendall's tau to strengthen the integrity of your findings [34] [35].

Q3: How can I validate that my feature importance results are reliable, especially without ground truth? Without ground truth, researchers often employ the "Reduce and Retrain" methodology. This involves:

- Using your feature importance method to rank features.

- Creating subsets of your data containing only the top-k most important features.

- Retraining your model on these reduced datasets.

- Evaluating performance retention. A reliable importance ranking will show minimal performance drop with a small subset of features, indicating the selected features are truly informative. Conversely, performance should significantly degrade when using only low-importance features [30].

Q4: What are the practical differences between local and global feature importance?

- Local explanations (e.g., SHAP) explain individual predictions, answering "Why did the model make this specific prediction for this single compound?" This is valuable for debugging and understanding edge cases.

- Global explanations (e.g., SAGE - Shapley Additive Global Importance) provide an overview of feature importance across the entire dataset, answering "Which features are most important for the model's overall performance?" [36]

The choice depends on your research goal: inspecting specific instances or understanding the model's overall behavior.

Q5: Are there lightweight, interpretable models suitable for deployment on resource-constrained systems? Yes. For applications like real-time stress detection using physiological signals, lightweight models such as k-Nearest Neighbors (k-NN) and Decision Trees have demonstrated high accuracy (e.g., >99%) with minimal computational demands. These models can be deployed on edge devices like the NVIDIA Jetson platform, making them ideal for IoT-based health monitoring where both performance and efficiency are critical [37].

Troubleshooting Guides

Issue 1: Handling Unreliable or Noisy Feature Importance Estimates

Problem: Feature importance scores vary significantly between training runs, or seem to highlight features that don't make domain sense.

Solution: Implement a framework that estimates uncertainty in feature importance.

- Step 1: For tree-based models, consider using the Sub-SAGE method, which can be estimated without computationally expensive resampling and provides a stable importance value [36].

- Step 2: Estimate confidence intervals for your feature importance scores using bootstrapping. This involves repeatedly resampling your dataset with replacement, calculating feature importance for each sample, and then determining the variability of these estimates.

- Step 3: When interpreting results, focus on features whose confidence intervals are well-separated from zero (or from the confidence intervals of less important features). This provides a more robust hierarchy of feature relevance [36].
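The bootstrapping idea in Steps 2 and 3 can be sketched as follows. This is one possible arrangement, not the procedure from [36]: it uses permutation importance as the statistic, evaluates each resample on its out-of-bag rows, and reports percentile intervals. The helper name `bootstrap_importance_ci` and the synthetic data are assumptions.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.inspection import permutation_importance


def bootstrap_importance_ci(X, y, n_boot=30, alpha=0.05, seed=0):
    """Percentile confidence intervals for permutation importance across bootstrap resamples."""
    rng = np.random.default_rng(seed)
    n = X.shape[0]
    scores = np.empty((n_boot, X.shape[1]))
    for b in range(n_boot):
        idx = rng.integers(0, n, size=n)        # resample rows with replacement
        oob = np.setdiff1d(np.arange(n), idx)   # rows not drawn act as held-out data
        model = RandomForestRegressor(n_estimators=50, random_state=b).fit(X[idx], y[idx])
        result = permutation_importance(model, X[oob], y[oob], n_repeats=5, random_state=b)
        scores[b] = result.importances_mean
    lower = np.quantile(scores, alpha / 2, axis=0)
    upper = np.quantile(scores, 1 - alpha / 2, axis=0)
    return scores.mean(axis=0), lower, upper


# Synthetic data (assumption): feature 0 is strong, feature 1 is weak, features 2-4 are noise.
rng = np.random.default_rng(1)
X = rng.normal(size=(300, 5))
y = 3.0 * X[:, 0] + 1.0 * X[:, 1] + rng.normal(size=300)

mean_imp, lower, upper = bootstrap_importance_ci(X, y)
for j in range(X.shape[1]):
    print(f"feature {j}: {mean_imp[j]:.3f}  [{lower[j]:.3f}, {upper[j]:.3f}]")
```

Features whose interval overlaps zero, or overlaps the intervals of known noise features, are the ones to treat with caution.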

Issue 2: Model-Specific Biases in Interpretation

Problem: Your post-hoc explanations (like SHAP) may be skewed by the specific architecture and training dynamics of your chosen model.

Solution:

- Cross-Model Validation: Run the same analysis using multiple, inherently different model types (e.g., Random Forest, Gradient Boosting, and k-NN). Look for features that are consistently important across all models [34].

- Use Agnostic Methods: Apply model-agnostic interpretation methods like LIME (Local Interpretable Model-agnostic Explanations) to complement your analysis. LIME approximates any black-box model locally with an interpretable one (like a linear model) to explain individual predictions [37].

- Statistical Correlation: Correlate your model-derived importance scores with simple, model-agnostic statistical measures of association (e.g., Spearman's correlation) between features and the target variable. This can help validate that the model is capturing real underlying relationships [35].
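The statistical cross-check in the last bullet might look like the sketch below. It assumes permutation importance as the model-derived score, a gradient boosting model, and synthetic data with monotone feature-target relationships; none of these choices come from [35]. Kendall's tau is used here to summarize how well the two rankings agree.

```python
import numpy as np
from scipy.stats import kendalltau, spearmanr
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
X = rng.normal(size=(500, 6))
# Features 0 and 1 drive the target (monotonically); features 2-5 are noise.
y = 2.0 * X[:, 0] + np.exp(X[:, 1]) + 0.5 * rng.normal(size=500)

X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.5, random_state=0)
model = GradientBoostingRegressor(random_state=0).fit(X_tr, y_tr)
pfi = permutation_importance(model, X_te, y_te, n_repeats=10, random_state=0).importances_mean

# Model-free association between each feature and the target.
rho = np.empty(X.shape[1])
p_value = np.empty(X.shape[1])
for j in range(X.shape[1]):
    rho[j], p_value[j] = spearmanr(X_te[:, j], y_te)

# Rank agreement between model-derived importance and absolute Spearman correlation.
tau, tau_p = kendalltau(pfi, np.abs(rho))

for j in range(X.shape[1]):
    print(f"feature {j}: PFI {pfi[j]:.3f}, Spearman rho {rho[j]:+.3f} (p = {p_value[j]:.3g})")
print(f"Kendall tau between the two rankings: {tau:.2f}")
```

Spearman's correlation only detects monotone associations, so a feature with a strong non-monotone or interaction-only effect can legitimately score high in the model and low here.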

Issue 3: Managing High-Dimensional Feature Spaces in Materials Science and Drug Discovery

Problem: With hundreds or thousands of initial descriptors (e.g., for predicting material elasticity or compound efficacy), it's computationally inefficient and noisy to use all features.

Solution: Implement a standardized benchmarking and feature ranking workflow.

- Step 1 - Initial Feature Selection: Use an algorithm like mRMR (Minimum Redundancy Maximum Relevance) to identify a subset of features that are highly relevant to the target property while having low redundancy among themselves [38].

- Step 2 - Model Benchmarking: Train and evaluate multiple ML models (KRR, GPR, GB, RF, etc.) on this reduced feature space.

- Step 3 - Unified Ranking: For the best-performing models, use SHAP analysis to derive a task-specific ranking of features.

- Step 4 - Knowledge Transfer: This unified feature ranking can even be used to improve the performance of complex models like Graph Neural Networks (GNNs) when training data is limited, by focusing the model on the most informative descriptors [38].
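To make Step 1 concrete, here is a simplified greedy mRMR sketch. It is not the implementation used in [38]: relevance is estimated with scikit-learn's `mutual_info_regression`, and redundancy is approximated by the mean absolute Pearson correlation with already-selected features (classical mRMR uses mutual information for both terms). The function name `mrmr_select` and the synthetic data are assumptions.

```python
import numpy as np
from sklearn.feature_selection import mutual_info_regression


def mrmr_select(X, y, k, seed=0):
    """Greedy selection maximizing relevance minus mean redundancy (simplified mRMR)."""
    relevance = mutual_info_regression(X, y, random_state=seed)
    abs_corr = np.abs(np.corrcoef(X, rowvar=False))
    selected = [int(np.argmax(relevance))]  # start with the most relevant feature
    while len(selected) < k:
        remaining = [j for j in range(X.shape[1]) if j not in selected]
        # Mean absolute correlation of each candidate with the features picked so far.
        redundancy = abs_corr[np.ix_(remaining, selected)].mean(axis=1)
        scores = relevance[remaining] - redundancy
        selected.append(remaining[int(np.argmax(scores))])
    return selected


rng = np.random.default_rng(0)
n = 1000
x0 = rng.normal(size=n)
x1 = x0 + 0.05 * rng.normal(size=n)  # near-duplicate of x0 (redundant)
x2 = rng.normal(size=n)              # independent and informative
x3 = rng.normal(size=n)              # noise
X = np.column_stack([x0, x1, x2, x3])
y = x0 + x2 + 0.1 * rng.normal(size=n)

selected = mrmr_select(X, y, k=2)
print("selected feature indices:", selected)
```

Because `x0` and `x1` carry nearly the same information, the redundancy penalty keeps the procedure from selecting both.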

Experimental Protocols & Data Presentation

Table 1: Comparison of Key Feature Importance Estimation Methods

| Method | Scope | Model Agnostic? | Key Strength | Key Limitation | Primary Use Case |

|---|---|---|---|---|---|

| SHAP [30] | Local & Global | Yes | Solid theoretical foundation (Shapley values); explains individual predictions. | Computationally expensive; can exhibit model-specific biases [34]. | Explaining individual predictions to domain experts. |

| SAGE / Sub-SAGE [36] | Global | Yes | Decomposes model loss; directly tied to predictive performance. | Computation can be complex; requires approximation for large feature sets. | Understanding which features are most important for overall model accuracy. |

| Gradient/Weight Analysis [30] | Global | No (NN-specific) | Leverages internal model parameters; can be very fast. | Tied to a specific model's parameters; may not generalize. | Rapid, embedded feature selection during neural network training. |

| LIME [37] | Local | Yes | Creates simple, local surrogate models; highly interpretable. | Explanations are local and may not capture global behavior. | Providing intuitive, local explanations for any black-box model. |

| mRMR [38] | Global | Yes | Reduces redundancy in selected feature set. | Does not use a predictive model to evaluate importance directly. | Preprocessing and initial feature filtering in high-dimensional spaces. |

Table 2: Essential Research Reagent Solutions for Interpretable ML Experiments

| Reagent / Resource | Function in Experiment | Example / Notes |

|---|---|---|

| Benchmark Datasets (e.g., MNIST, scikit-feat) [30] | Provides standardized data for method validation and comparison. | Crucial for establishing baselines and ensuring methodological correctness. |

| Specialized Domain Datasets (e.g., Materials Project [38], UK Biobank [36]) | Supplies real-world, high-dimensional data from specific scientific fields. | Enables application-grounded testing and discovery. |

| SHAP Library | Calculates Shapley values for model explanations. | The de facto standard for Shapley-based explanations in ML [34] [38]. |

| Reduce and Retrain Framework [30] | Methodology for validating feature selection by retraining on subsets. | The gold standard for empirically verifying that important features retain predictive power. |

| Bootstrapping Libraries | Used to estimate confidence intervals and uncertainty for any statistic, including feature importance scores. | Essential for robust reporting; allows researchers to assess the stability of their findings [36]. |

Workflow Diagram: A Standardized Protocol for Robust Feature Importance Analysis

The diagram below outlines a generalized workflow for conducting a robust feature importance analysis, integrating the best practices discussed in this section.

Diagram: Troubleshooting Path for Unreliable Feature Importance

This flowchart provides a structured path to diagnose and solve common problems with feature importance stability.

Advanced Methods and Scalable Frameworks for High-Dimensional Data

Leveraging Permutation Importance and SHAP for Model-Agnostic Insights

Frequently Asked Questions (FAQs)

1. What is the fundamental difference in what Permutation Feature Importance (PFI) and SHAP measure?

Permutation Feature Importance measures the increase in a model's prediction error after a feature's values are shuffled, which breaks the feature's relationship with the true outcome. It directly links feature importance to model performance degradation [39]. In contrast, SHAP (SHapley Additive exPlanations) explains individual predictions by fairly attributing the prediction output to each feature based on Shapley values from cooperative game theory. It shows how much each feature contributes to pushing the model's output from a base value (the average prediction) to the final prediction for a specific instance [40] [13] [41].

2. My SHAP summary plot shows a feature as important, but its PFI score is low. Which one should I trust?

This discrepancy often reveals different aspects of your model's behavior. If PFI is low, it means shuffling the feature does not significantly harm the model's predictive performance on your test data. If SHAP shows high importance, it indicates the feature has a substantial effect on the model's output values for many instances.

- Trust PFI for Performance-Centric Insights: If your goal is to understand which features are essential for your model to make accurate predictions, PFI is more reliable. A low PFI suggests the model does not rely on this feature to be correct, which is crucial for feature selection aimed at building robust models [42] [39].

- Trust SHAP for Model Behavior Audit: If your goal is to audit the model's internal mechanics and understand how it uses a feature to make its predictions (regardless of their ultimate correctness), SHAP provides a valid view. This can be particularly useful for detecting when a model has learned to use a feature for overfitting, as SHAP will show its contribution while PFI will not [42].

3. How should I handle highly correlated features when using PFI and SHAP?

- PFI Challenge: The standard (marginal) PFI can be misleading with correlated features. Shuffling one feature in a correlated group creates unrealistic data points, as the broken relationship between the shuffled feature and its correlated partners is not representative of real-world scenarios. This can lead to unreliable importance scores [39].

- Solution - Conditional PFI: Advanced implementations of PFI use conditional permutation, which samples from the conditional distribution of a feature given the others, preserving the correlation structure. This provides a more realistic measure of importance [39].

- SHAP Handling: SHAP values, by their game-theoretic design, account for interactions among features by evaluating all possible coalitions of features. They tend to distribute importance more fairly among correlated features, though the interpretation can become more complex [43].

4. Why are my SHAP value computations so slow, and how can I speed them up?

SHAP value computation is inherently computationally expensive because it requires evaluating the model for many different combinations (coalitions) of features [42] [13]. The computation time depends on the explainer method and the model type.

- For Tree-Based Models (XGBoost, Random Forest): Always use `TreeSHAP`. It is an optimized algorithm that computes SHAP values exactly and is vastly faster than model-agnostic methods [13].

- For Other Models (Neural Networks, SVMs): Use the `PermutationExplainer` or `KernelExplainer`. `PermutationExplainer` is often faster and guarantees local accuracy [44]. You can control the speed/accuracy trade-off by reducing the number of permutations (`npermutations` parameter) or by using a smaller, representative background dataset [44] [41].

5. When working with a linear model, is there any benefit to using SHAP over analyzing model coefficients directly?

While the coefficients of a linear model are inherently interpretable, SHAP provides several additional benefits [41]:

- Unified Scale: SHAP values are on the same scale as the model's output (e.g., log-odds, probability), making the contribution of each feature easy to understand (e.g., "Feature A increased the predicted probability by 5%").

- Handling of Non-linearity in Preprocessing: If your model pipeline includes non-linear transformations of input features, the coefficients of the linear model become harder to interpret. SHAP values consistently measure the effect on the final prediction.

- Consistent Framework: Using SHAP allows you to use the same interpretation framework (beeswarm plots, dependence plots) across linear models and complex black-box models, simplifying comparative analysis.
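The "unified scale" point can be illustrated without the SHAP library. For a linear model, and under the assumption that features are treated as independent, the SHAP value of feature j for instance x has the closed form coefficient_j × (x_j − mean of feature j). The sketch below computes this by hand on synthetic data (an assumption) and checks that the contributions add up to each prediction.

```python
import numpy as np
from sklearn.linear_model import LinearRegression

rng = np.random.default_rng(0)
# Three features with different locations and scales (synthetic, for illustration only).
X = rng.normal(loc=[1.0, -2.0, 0.5], scale=[1.0, 2.0, 0.5], size=(200, 3))
y = 1.5 * X[:, 0] - 0.7 * X[:, 1] + 0.1 * rng.normal(size=200)

model = LinearRegression().fit(X, y)

# Base value: the prediction at the feature means, which equals the average prediction.
background_mean = X.mean(axis=0)
base_value = model.predict(background_mean.reshape(1, -1))[0]

# Closed-form linear SHAP values (independence assumption): one row per instance.
phi = model.coef_ * (X - background_mean)

# Additivity: base value plus contributions reproduces each prediction.
reconstructed = base_value + phi.sum(axis=1)
print("max reconstruction error:", np.abs(reconstructed - model.predict(X)).max())
print("mean |SHAP| per feature:", np.abs(phi).mean(axis=0).round(3))
```

Unlike raw coefficients, these contributions are expressed in the units of the model output, so features recorded on different scales can be compared directly.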

Troubleshooting Guides

Issue 1: Permutation Importance Identifies a Feature as Important, but Ablation (Removing it and Retraining) Shows No Performance Loss

Problem: The results of PFI and feature ablation seem to contradict each other.

Diagnosis: This is a classic sign of feature correlation [39]. Your model relies on the permuted feature during prediction. When you permute it at inference time, performance drops. However, when you completely remove the feature and retrain the model, the model learns to use a different, correlated feature as a surrogate, successfully maintaining performance.

Solution:

- Investigate Feature Correlations: Calculate the correlation matrix of your features.

- Use Conditional Permutation Importance: If available, use a PFI implementation that conditions on correlated features to get a more accurate estimate of the unique importance of the permuted feature [39].

- Interpret in Context: Understand that PFI, in this case, indicates that the information the feature carries is important. For feature selection, you might consider dropping the entire group of correlated features or using dimensionality reduction.
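The diagnosis above can be reproduced on a small synthetic example. The data-generating process, the linear model, and the noise levels are assumptions chosen to make the effect obvious: `x2` is a near-copy of `x1`, and only `x1` enters the target.

```python
import numpy as np
from sklearn.inspection import permutation_importance
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
n = 2000
x1 = rng.normal(size=n)
x2 = x1 + 0.1 * rng.normal(size=n)  # highly correlated surrogate for x1
x3 = rng.normal(size=n)             # unrelated feature
X = np.column_stack([x1, x2, x3])
y = 3.0 * x1 + 0.5 * rng.normal(size=n)

X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, random_state=0)

# Step 1: inspect the feature correlation matrix.
corr = np.corrcoef(X_tr, rowvar=False)
print(corr.round(2))

# PFI on the full model: shuffling x1 at inference time hurts performance.
full = LinearRegression().fit(X_tr, y_tr)
r2_full = full.score(X_te, y_te)
pfi_x1 = permutation_importance(full, X_te, y_te, n_repeats=10,
                                random_state=0).importances_mean[0]

# Ablation: drop x1 and retrain; the model falls back on x2.
ablated = LinearRegression().fit(X_tr[:, 1:], y_tr)
ablation_drop = r2_full - ablated.score(X_te[:, 1:], y_te)

print(f"PFI of x1 (R^2 drop when shuffled): {pfi_x1:.3f}")
print(f"R^2 drop after removing x1 and retraining: {ablation_drop:.3f}")
```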

Issue 2: SHAP Values Appear Noisy or Inconsistent for a Feature

Problem: The SHAP values for a feature do not show a clear trend (e.g., in a dependence plot), appearing as a vertical smear of points.

Diagnosis: This is typically caused by interaction effects. The feature's impact on the prediction is not uniform but depends on the value of another feature.

Solution:

- Visualize Interactions: Use a SHAP dependence plot colored by a potential interacting feature. For example, `shap.dependence_plot('Feature_A', shap_values, X, interaction_index='Feature_B')`.

- Identify Interaction Partners: Look for features where the coloring reveals distinct patterns (e.g., one color cluster has a positive slope, another has a negative slope). This confirms a strong interaction.

- Report Interactions: Account for this in your interpretation. The global importance of the feature might be high, but its local effect can only be understood in the context of its interacting partner.

Issue 3: Permutation Importance is High for a Feature Deemed Statistically Insignificant in a Linear Model

Problem: A feature with a high p-value in a linear regression (suggesting it is not statistically significant) receives a high importance score from PFI.

Diagnosis: These two methods answer fundamentally different questions. A high p-value suggests that, assuming the linear model is the true data-generating process, the coefficient for that feature is not reliably different from zero. A high PFI score indicates that the trained model (whether the true process is linear or not) uses that feature to reduce prediction error.

Solution:

- Acknowledge the Difference: This discrepancy can reveal that the linear model assumption is incorrect. The feature may have a non-linear relationship with the target that the linear model's coefficient cannot capture effectively, but that the more flexible model (e.g., Random Forest) you used for PFI can exploit.

- Investigate Non-linearity: Plot the partial dependence of the feature to visually check for a non-linear relationship.
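A small synthetic example illustrates the discrepancy. The quadratic relationship, the random forest, and the manual partial-dependence loop are assumptions made for this sketch; the partial dependence is computed by hand to avoid relying on version-specific plotting utilities.

```python
import numpy as np
from scipy.stats import linregress
from sklearn.ensemble import RandomForestRegressor
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
n = 1000
X = rng.uniform(-1, 1, size=(n, 2))
y = X[:, 0] ** 2 + 0.1 * rng.normal(size=n)  # symmetric, purely non-linear effect of feature 0

X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, random_state=0)

# Linear view: the slope is near zero, so the linear coefficient looks unimportant.
lin = linregress(X_tr[:, 0], y_tr)
print(f"linear slope {lin.slope:+.3f}, r {lin.rvalue:+.3f}, p-value {lin.pvalue:.3f}")

# Flexible model view: the same feature is essential for prediction.
rf = RandomForestRegressor(n_estimators=200, min_samples_leaf=5, random_state=0).fit(X_tr, y_tr)
pfi = permutation_importance(rf, X_te, y_te, n_repeats=10, random_state=0).importances_mean
print("PFI (R^2 drop):", pfi.round(3))

# Manual partial dependence of feature 0: fix it to each grid value, average the predictions.
grid = np.linspace(-1, 1, 9)
pd_curve = []
for value in grid:
    X_mod = X_te.copy()
    X_mod[:, 0] = value
    pd_curve.append(rf.predict(X_mod).mean())
pd_curve = np.array(pd_curve)
print("partial dependence:", pd_curve.round(2))  # a U-shape signals the non-linear relationship
```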

Table 1: Comparison of Permutation Feature Importance and SHAP.

| Aspect | Permutation Feature Importance (PFI) | SHAP |

|---|---|---|

| Core Idea | Measures increase in model error when a feature is permuted [39]. | Fairly attributes the prediction output to each feature using Shapley values [40] [13]. |

| Interpretation Scale | Scale of the model's loss function (e.g., MSE, LogLoss) [42] [39]. | Scale of the model's raw output (e.g., log-odds, probability) [42] [41]. |

| Scope | Global (dataset-level) importance [39]. | Both local (instance-level) and global (aggregated) importance [40] [43]. |

| Handling of Correlated Features | Problematic; standard marginal PFI can be biased. Requires conditional variants [39]. | Generally more robust, as it accounts for feature interactions by design [43]. |

| Computational Cost | Low to moderate. Requires model evaluations for each feature permutation [39]. | High to very high. Requires evaluating the model for many coalitions of features [42] [13]. |

| Primary Use Case | Feature selection based on predictive power; understanding what features the model relies on for accuracy [42] [39]. | Explaining individual predictions; auditing model behavior and debugging [42] [40]. |

Table 2: Performance of PermFIT (a PFI-based method) vs. SHAP and others in a simulation study [45]. The study evaluated the ability to correctly identify true causal features among 100 variables, with varying correlation (ρ).

| Method | ρ = 0 | ρ = 0.2 | ρ = 0.5 | ρ = 0.8 |

|---|---|---|---|---|

| PermFIT-DNN | ~1.00 | ~1.00 | ~0.99 | ~0.98 |

| PermFIT-RF | ~0.95 | ~0.95 | ~0.93 | ~0.90 |

| SHAP-DNN | ~0.65 | ~0.63 | ~0.60 | ~0.55 |

| LIME-DNN | ~0.55 | ~0.53 | ~0.50 | ~0.45 |

| Vanilla-RF | ~0.75 | ~0.74 | ~0.72 | ~0.65 |

Experimental Protocols

Protocol 1: Computing and Validating Permutation Feature Importance

Methodology: This protocol is based on the model-agnostic permutation importance algorithm described by Fisher, Rudin, and Dominici (2019) [39].

- Input: Trained model \( \hat{f} \), feature matrix \( \mathbf{X} \), target vector \( \mathbf{y} \), error measure \( L \) (e.g., MAE, MSE).

- Estimate Original Error: Compute the original model error on a test set (not used for training): \( e_{orig} = \frac{1}{n_{test}} \sum_{i=1}^{n_{test}} L(y^{(i)}, \hat{f}(\mathbf{x}^{(i)})) \) [39].

- For Each Feature \( j \):

  a. Permute Feature: Create a new feature matrix \( \mathbf{X}_{perm,j} \) by randomly shuffling the values of feature \( j \) in the test set. This breaks the statistical association between feature \( j \) and the target \( y \) [39].

  b. Compute New Error: Calculate the model error using the permuted data: \( e_{perm,j} = \frac{1}{n_{test}} \sum_{i=1}^{n_{test}} L(y^{(i)}, \hat{f}(\mathbf{x}_{perm,j}^{(i)})) \).

  c. Calculate Importance: The permutation importance for feature \( j \) can be the difference \( FI_j = e_{perm,j} - e_{orig} \) or the ratio \( FI_j = e_{perm,j} / e_{orig} \) [39].

- Sort Features: Sort features by descending \( FI_j \) to get a ranking.

Key Control:

- Always compute PFI on a held-out test set. Computing it on the training data will give overly optimistic results, especially for overfit models, and can falsely identify irrelevant features as important [39].
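The protocol translates almost line by line into code. The sketch below is a from-scratch illustration, not a replacement for library implementations: the function name `permutation_feature_importance`, the linear model, and the synthetic data are assumptions, and averaging over `n_repeats` shuffles is an addition to the single-shuffle description above that reduces noise.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split


def permutation_feature_importance(model, X_test, y_test, loss=mean_squared_error,
                                   n_repeats=5, seed=0):
    """Return (difference, ratio) importances per feature, computed on held-out data."""
    rng = np.random.default_rng(seed)
    e_orig = loss(y_test, model.predict(X_test))        # original error
    n_features = X_test.shape[1]
    diff = np.empty(n_features)
    ratio = np.empty(n_features)
    for j in range(n_features):
        errors = []
        for _ in range(n_repeats):
            X_perm = X_test.copy()
            X_perm[:, j] = rng.permutation(X_perm[:, j])  # break the link between feature j and y
            errors.append(loss(y_test, model.predict(X_perm)))
        e_perm = np.mean(errors)
        diff[j] = e_perm - e_orig
        ratio[j] = e_perm / e_orig
    return diff, ratio


# Synthetic data (assumption): feature 0 is strong, feature 1 is weaker, features 2-3 are noise.
rng = np.random.default_rng(0)
X = rng.normal(size=(600, 4))
y = 4.0 * X[:, 0] + 1.0 * X[:, 1] + 0.5 * rng.normal(size=600)

X_tr, X_te, y_tr, y_te = train_test_split(X, y, test_size=0.3, random_state=0)
model = LinearRegression().fit(X_tr, y_tr)

diff, ratio = permutation_feature_importance(model, X_te, y_te)
ranking = np.argsort(-diff)  # sort features by descending importance
print("ranking:", ranking, "difference:", diff.round(3), "ratio:", ratio.round(2))
```

For scikit-learn estimators, `sklearn.inspection.permutation_importance` provides the same computation based on a scorer rather than a loss.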

Protocol 2: Estimating and Interpreting SHAP Values for a Black-Box Model

Methodology:

This protocol uses the shap.PermutationExplainer, which is model-agnostic and guarantees local accuracy [44].

- Input: Trained model \( \hat{f} \), instance(s) to explain \( \mathbf{X} \), background dataset \( \mathbf{X}_{background} \) (e.g., 100 samples from the training data).

- Initialize Explainer: Create a `shap.PermutationExplainer` from the model's prediction function and the background dataset.

- Compute SHAP Values: Apply the explainer to the instances to explain. The `npermutations` parameter can be adjusted for a trade-off between accuracy and speed [44].

- Interpretation and Visualization:

  - Local Explanation (Single Instance): Use `shap.plots.waterfall(shap_values[i])` to see how each feature pushed the prediction from the base value to the final output for the i-th instance [41].

  - Global Explanation (Dataset): Use `shap.plots.beeswarm(shap_values)` to see the distribution of feature impacts and their relationship with feature values across the entire dataset [41].

Workflow and Relationship Diagrams

Decision Flowchart: Choosing Between PFI and SHAP.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software Tools for Feature Importance Analysis.

| Tool / "Reagent" | Function / Purpose | Key Application Notes |

|---|---|---|

| SHAP (Python Library) | A unified library for computing SHAP values across many model types (TreeSHAP, KernelSHAP, PermutationExplainer) [44] [41]. | Use Case: Primary tool for local and global model interpretation. Tip: Use TreeSHAP for tree-based models (XGBoost, LightGBM) for exact, fast explanations [13]. |

| ELI5 (Python Library) | Provides a unified API for model inspection, including calculation of permutation importance [39]. | Use Case: Computing and visualizing PFI in a model-agnostic way. Tip: The eli5.sklearn module integrates seamlessly with scikit-learn pipelines. |

| scikit-learn | The sklearn.inspection module contains the permutation_importance function for direct computation of PFI [39]. | Use Case: Integrated PFI calculation for scikit-learn compatible estimators. Tip: Always pass a test set to the X and y parameters, not the training set. |

| InterpretML (Python Library) | Provides a glassbox (interpretable) modeling framework, including Explainable Boosting Machines (EBMs), which are highly interpretable and can be used as a benchmark [41]. | Use Case: Training inherently interpretable models to compare against black-box model explanations. |

| Pandas & NumPy | Core data manipulation and numerical computation libraries. | Use Case: Essential for data preprocessing, handling feature matrices, and analyzing results. Tip: Ensure data is properly cleaned and encoded before analysis. |

FAQs and Troubleshooting Guides

L1 Regularization (LASSO)

Q1: Why does my L1-regularized model produce a less accurate but more sparse model than my L2-regularized model?

A: This is expected behavior. L1 regularization (LASSO) adds a penalty equal to the absolute value of the magnitude of coefficients to the loss function [46]. This specific penalty form has a "thresholding" effect during gradient descent, where the gradients of the loss function must be large enough to overcome a constant penalty term that tries to push coefficients to zero [47]. As a result, features with low importance have their coefficients shrunk to exactly zero, creating sparsity and performing implicit feature selection [46] [48]. While this often improves model interpretability and reduces overfitting, it can sometimes remove features that provide minor predictive benefits, potentially leading to a slight decrease in accuracy compared to L2, which only shrinks coefficients but rarely sets them to zero [49].
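The thresholding effect is easiest to see in the idealized case of an orthonormal design matrix, which is an assumption made only for this sketch. There, the L1 solution is a soft-thresholded version of the unpenalized coefficients, while the L2 solution merely rescales them. The coefficient values and penalty strength below are made up for illustration.

```python
import numpy as np


def soft_threshold(beta, lam):
    """L1 (lasso) solution for an orthonormal design: shrink by lam, clip at zero."""
    return np.sign(beta) * np.maximum(np.abs(beta) - lam, 0.0)


def ridge_shrink(beta, lam):
    """L2 (ridge) solution for an orthonormal design: proportional shrinkage, never exactly zero."""
    return beta / (1.0 + lam)


ols_coefficients = np.array([2.0, -0.8, 0.3, -0.05])  # hypothetical unpenalized estimates
lam = 0.5

l1_coefficients = soft_threshold(ols_coefficients, lam)
l2_coefficients = ridge_shrink(ols_coefficients, lam)

print("L1:", l1_coefficients)
print("L2:", l2_coefficients)
print("non-zero under L1:", np.count_nonzero(l1_coefficients),
      "| non-zero under L2:", np.count_nonzero(l2_coefficients))
```

Coefficients whose magnitude is below the penalty are set exactly to zero under L1, which is the source of both the sparsity and the occasional loss of small but real effects.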

Q2: How do I interpret the results of L1 regularization for feature selection in a high-dimensional drug discovery dataset?

A: After fitting a model with L1 regularization, you should examine the model's coefficients. Features with non-zero coefficients are those the model has selected as important [48]. In a biological context, this list can be interpreted as the set of molecular descriptors, genomic markers, or other variables most strongly associated with the biological activity or property you are predicting. This provides a data-driven way to prioritize compounds or genes for further experimental validation [50].

Q3: What is the most common pitfall when using L1 regularization for the first time?

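A: A common pitfall is forgetting to standardize your input features before applying L1 regularization. The L1 penalty acts on coefficient magnitudes, and a coefficient's magnitude depends on the units of its feature: a feature recorded on a small numeric scale needs a large coefficient to have the same effect, so it is penalized more heavily than an equally informative feature on a large scale. Always scale your data so that each feature has a mean of 0 and a standard deviation of 1 before training.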
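The effect of feature scale on LASSO selection can be illustrated with a small synthetic example. In this sketch, two features are equally informative, but one is recorded in units 100 times smaller; all data, the alpha value, and the variable names are assumptions made for illustration.

```python
import numpy as np
from sklearn.linear_model import Lasso
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
n = 500
x1 = rng.normal(size=n)
x2 = rng.normal(size=n)
y = x1 + x2 + rng.normal(scale=0.1, size=n)  # both features matter equally

# Same information, different units: the second column is 100x smaller,
# so it would need a coefficient of about 100 to contribute equally.
X_raw = np.column_stack([x1, x2 / 100.0])

# Without scaling, the penalty on that large coefficient removes the feature.
raw = Lasso(alpha=0.05).fit(X_raw, y)

# With standardization inside a pipeline, both features are treated alike.
scaled = make_pipeline(StandardScaler(), Lasso(alpha=0.05)).fit(X_raw, y)
scaled_coef = scaled.named_steps["lasso"].coef_

print("Unscaled coefficients:    ", raw.coef_)
print("Standardized coefficients:", scaled_coef)
```

Placing the scaler inside a pipeline also keeps the scaling statistics tied to whatever data the model is fit on, which helps avoid leakage during cross-validation.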

Tree-Based Feature Importance

Q4: My random forest model returns different feature importance rankings each time I run it. Is this normal?

A: Yes, this is a known characteristic of random forest. The algorithm is non-deterministic; it relies on random sampling of data and features to build each tree [50]. This inherent randomness can lead to variability in feature importance estimates, especially if the number of trees is too low or if many features are highly correlated. To mitigate this, you should increase the number of trees until the importance rankings stabilize and use techniques like the optRF package to find the optimal number of trees for stability [50].

Q5: When using permutation importance, what does a negative importance score indicate?

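A: A negative permutation importance score indicates that randomly shuffling the values of that feature improved the model's performance on the test data. This counter-intuitive result typically happens for irrelevant or noisy features. Small negative values usually reflect chance: the feature contributes nothing, and the shuffled copy happens to score marginally better, so such scores are best read as zero importance. A consistently large negative value suggests that the model's reliance on that feature was harming its performance, and breaking its relationship with the target variable by shuffling removed that source of error [48].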
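A short sketch of how such scores arise, using scikit-learn's permutation_importance on a held-out test set. The data are synthetic (one informative feature plus four pure-noise features), and the model settings are illustrative assumptions.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
n = 600
signal = rng.normal(size=n)
noise = rng.normal(size=(n, 4))           # irrelevant features
X = np.column_stack([signal, noise])      # column 0 is the only real predictor
y = (signal + 0.5 * rng.normal(size=n) > 0).astype(int)

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=0)
model = RandomForestClassifier(n_estimators=200, random_state=0)
model.fit(X_train, y_train)

# Importance = drop in test-set score after shuffling each column.
result = permutation_importance(model, X_test, y_test,
                                n_repeats=20, random_state=0)
for i in np.argsort(result.importances_mean)[::-1]:
    print(f"feature {i}: {result.importances_mean[i]:+.3f} "
          f"+/- {result.importances_std[i]:.3f}")
```

Scores for the noise columns should scatter around zero, and some may come out slightly negative. Comparing each mean against its standard deviation across repeats is one simple way to judge whether a negative value is distinguishable from zero.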

Q6: In a decision tree model for patient stratification, how can I ensure the feature importance is stable and reliable?

A: For stable and reliable feature importance in decision trees or random forests:

- Ensure an adequate number of trees: Use packages like optRF to determine the optimal number of trees that maximizes stability without unnecessary computational cost [50].

- Use ensemble methods: Aggregate feature importance from multiple model runs or use a random forest instead of a single tree to average out variability.

- Validate with multiple techniques: Cross-check the results of Gini-based importance (built into the tree algorithm) with model-agnostic methods like permutation importance to confirm your findings [48].

Experimental Protocols and Data Presentation

Protocol 1: Implementing L1 Regularization for Feature Selection

This protocol details how to use L1 regularization (LASSO) to identify the most important features in a high-dimensional dataset, such as genomic data for drug response prediction.

Methodology:

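- Data Preprocessing: Split the data into training and testing sets. Standardize all features to a mean of 0 and a standard deviation of 1, fitting the scaler on the training set only and applying it to the test set so that test-set statistics do not leak into training.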

- Model Training: Train a LASSO regression model on the training data. The loss function minimized is:

  Cost = (1/n) * Σ(y_i - ŷ_i)^2 + λ * Σ|w_i|

  where λ (alpha) is the key hyperparameter controlling the strength of regularization [46].

- Hyperparameter Tuning: Perform cross-validation on the training set to find the optimal value of λ that minimizes the cross-validation error.

- Feature Extraction: Fit the final model on the entire training set using the optimal λ. Examine the model's coefficients (model.coef_). Features with non-zero coefficients are the ones selected by the LASSO algorithm [48].
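The steps above can be sketched with scikit-learn as follows. This is one possible implementation under stated assumptions: the data are synthetic stand-ins for a high-dimensional dataset, and the sample sizes, fold count, and variable names are illustrative. Note that scikit-learn's Lasso objective scales the squared-error term by 1/(2n) rather than 1/n, so its alpha is not numerically identical to λ in the formula above.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import LassoCV
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic high-dimensional data (assumed): 300 features, 10 informative.
X, y = make_regression(n_samples=150, n_features=300, n_informative=10,
                       noise=5.0, random_state=0)

# Step 1: split first, so scaling statistics come from training data only.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0)

# Steps 2-3: standardize, then let LassoCV pick the penalty by 5-fold CV.
model = make_pipeline(
    StandardScaler(),
    LassoCV(cv=5, random_state=0, max_iter=10000),
)
model.fit(X_train, y_train)

# Step 4: features with non-zero coefficients are the selected ones.
lasso = model.named_steps["lassocv"]
selected = np.flatnonzero(lasso.coef_)
print(f"Chosen alpha: {lasso.alpha_:.4f}")
print(f"Selected {selected.size} of {X.shape[1]} features")
print(f"Held-out R^2: {model.score(X_test, y_test):.3f}")
```

LassoCV refits on the full training set with the chosen alpha after cross-validation, which corresponds to the final step of the protocol.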

Table: Key Research Reagents for L1 Regularization Experiments

| Item | Function in Experiment |
|---|---|
| StandardScaler | Standardizes features to mean=0 and variance=1, ensuring the L1 penalty is applied uniformly. |
| LassoCV | Scikit-learn class that implements Lasso with built-in cross-validation to find the optimal regularization parameter (λ). |
| Permutation Importance Function | Used to validate the features selected by L1 by measuring performance drop when a feature is shuffled [48]. |

Protocol 2: Assessing and Improving Stability in Tree-Based Feature Importance

This protocol addresses the challenge of non-deterministic feature importance in random forest models, common in genomic selection studies [50].

Methodology:

- Baseline Model: Train an initial random forest model with a default number of trees (e.g., 500) and record the feature importance (e.g., Gini importance or permutation importance).

- Stability Analysis: Use the optRF R package (or similar stability assessment methods) to model the relationship between the number of trees and the stability of predictions and variable importance estimates. The package calculates stability metrics like the Intraclass Correlation Coefficient (ICC) for regression or Fleiss' Kappa for classification [50].

- Determine Optimal Trees: Identify the point where increasing the number of trees no longer provides a significant improvement in stability, thus optimizing the trade-off between stability and computation time.

- Final Model: Retrain the random forest model using the optimal number of trees determined in the previous step to obtain a stable and reliable estimate of feature importance.
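optRF is an R package, so the sketch below does not use its API. It is a simplified Python stand-in for the same idea: refit the forest with different random seeds at several forest sizes and measure how well the importance rankings agree. Mean pairwise Spearman correlation is used here as an assumed, easy-to-compute stability score, not the ICC or Fleiss' Kappa metrics that optRF reports; the data and the helper name importance_stability are hypothetical.

```python
from itertools import combinations

import numpy as np
from scipy.stats import spearmanr
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier

# Synthetic classification data (assumed for illustration).
X, y = make_classification(n_samples=300, n_features=30, n_informative=5,
                           random_state=0)

def importance_stability(n_trees, n_repeats=5):
    """Mean pairwise Spearman correlation of importances across seeds."""
    runs = [
        RandomForestClassifier(n_estimators=n_trees, random_state=seed)
        .fit(X, y)
        .feature_importances_
        for seed in range(n_repeats)
    ]
    corrs = []
    for a, b in combinations(runs, 2):
        rho, _ = spearmanr(a, b)
        corrs.append(rho)
    return float(np.mean(corrs))

# Stability as a function of forest size; look for where the curve flattens.
stability = {n: importance_stability(n) for n in (10, 50, 250)}
for n_trees, score in stability.items():
    print(f"{n_trees:>4} trees: mean rank agreement = {score:.3f}")
```

The number of trees at which the agreement score stops improving noticeably is a reasonable candidate for the final model, in the spirit of the trade-off described in the protocol.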

Table: Stability Metrics for Random Forest Models

| Metric | Use Case | Interpretation |
|---|---|---|
| Intraclass Correlation Coefficient (ICC) | Regression Problems | Measures the consistency of metric predictions across repeated runs. A value of 1 indicates perfect stability [50]. |
| Fleiss' Kappa (κ) | Classification Problems | Measures the agreement in class predictions across repeated runs. A value of 1 indicates perfect stability [50]. |
| Selection Stability | Genomic Selection | Based on metrics like Cohen's Kappa, it measures the agreement in selection decisions (e.g., top individuals) based on predictions from different model runs [50]. |
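To make the classification metric concrete, the following is a small NumPy sketch of Fleiss' Kappa, treating each repeated model run as a "rater" and each sample as a "subject". The function name and the toy prediction arrays are hypothetical; this follows the standard textbook formula and is not the optRF implementation.

```python
import numpy as np

def fleiss_kappa(predictions):
    """Fleiss' kappa for class labels with shape (n_runs, n_samples)."""
    predictions = np.asarray(predictions)
    n_runs, n_samples = predictions.shape
    classes = np.unique(predictions)
    # counts[i, j] = number of runs that assigned sample i to class j
    counts = np.stack([(predictions == c).sum(axis=0) for c in classes],
                      axis=1)
    # Observed agreement: share of run pairs that agree, averaged over samples
    p_i = (np.sum(counts ** 2, axis=1) - n_runs) / (n_runs * (n_runs - 1))
    p_bar = p_i.mean()
    # Agreement expected by chance from the overall class proportions
    p_j = counts.sum(axis=0) / (n_samples * n_runs)
    p_e = np.sum(p_j ** 2)
    # Undefined (division by zero) if every run predicts one single class.
    return float((p_bar - p_e) / (1.0 - p_e))

# Three runs, four samples: identical predictions vs. partial disagreement.
perfect = [[0, 1, 0, 1], [0, 1, 0, 1], [0, 1, 0, 1]]
partial = [[0, 1, 0, 1], [0, 1, 1, 1], [0, 1, 0, 0]]
print("Identical runs:  ", fleiss_kappa(perfect))
print("Disagreeing runs:", round(fleiss_kappa(partial), 3))
```

Identical runs give a kappa of 1, and the value falls as runs disagree on more samples.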

The Scientist's Toolkit: Essential Materials and Solutions

Table: Key Research Reagent Solutions for Embedded Feature Importance

| Reagent / Tool | Function / Explanation |
|---|---|
| L1 Regularization (LASSO) | An embedded feature selection method that adds a penalty proportional to the absolute value of coefficients, driving less important feature coefficients to exactly zero [46] [48]. |
| Random Forest Variable Importance | An importance measure embedded in the tree-building process, often based on the total decrease in node impurity (Gini impurity or mean squared error) from splitting on a variable [50]. |
| Permutation Importance | A model-inspection technique that measures the increase in prediction error after randomly shuffling a single feature's values, indicating its importance to the model's performance [48]. |
| optRF R Package | A specialized tool for quantifying the impact of non-determinism in random forests and recommending the optimal number of trees to maximize stability of predictions and variable importance [50]. |
| Recursive Feature Elimination (RFE) | A wrapper method that recursively trains a model (like random forest), removes the least important feature(s), and repeats the process until the desired number of features is reached [48]. |
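Of the tools in this table, Recursive Feature Elimination is the one whose loop is least obvious from a description alone. A minimal sketch using scikit-learn's RFE with a random forest follows; the synthetic data, the target of 5 features, and the step size are assumptions chosen for illustration.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_selection import RFE

# Synthetic data (assumed): 40 features, 5 informative.
X, y = make_classification(n_samples=200, n_features=40, n_informative=5,
                           random_state=0)

# Each round refits the forest and drops the 5 least important features
# until only 5 remain.
selector = RFE(
    estimator=RandomForestClassifier(n_estimators=100, random_state=0),
    n_features_to_select=5,
    step=5,
)
selector.fit(X, y)

selected = np.flatnonzero(selector.support_)
print("Selected feature indices:", selected)
print("Elimination rank per feature (1 = kept):", selector.ranking_)
```

A larger step reduces the number of refits at the cost of a coarser elimination order.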

A fundamental challenge in interpretable machine learning is accurately determining not just which features influence model predictions, but their relative importance ranking. In scientific domains like genomics and drug development, this capability is crucial for prioritizing a small number of top-ranked candidates for costly downstream validation and decision-making processes [15]. The RAMPART (Ranked Attributions with MiniPatches And Recursive Trimming) framework represents a significant advancement in this domain by introducing a novel algorithm specifically engineered for ranking the top-k features, moving beyond traditional feature importance estimation approaches that merely convert importance scores to ranks as a post-processing step [51] [15]. This technical support center provides comprehensive guidance for researchers implementing RAMPART within their feature importance refinement research.

Technical Support Center: Troubleshooting Guides & FAQs

Frequently Asked Questions (FAQs)

Q1: What distinguishes RAMPART from previous feature importance methods?

A: RAMPART fundamentally differs from conventional approaches that first estimate feature importance values for all features before sorting and selecting the top-k. Instead, it utilizes a recursive trimming strategy that progressively focuses computational resources on promising features while eliminating suboptimal ones, explicitly optimizing for ranking accuracy rather than treating it as a byproduct of importance scoring [15].

Q2: Why are my top-k rankings unstable with high-dimensional genomic data?

A: High-dimensional data with correlated features presents a known challenge where traditional importance estimates become unstable and unreliable. RAMPART addresses this through its MiniPatches ensembling strategy (RAMP component) that aggregates models trained on random subsamples of both observations and features, effectively breaking harmful correlation patterns while maintaining statistical power [15].

Q3: How does RAMPART compare to other multivariate feature selection methods like k-TSP?

A: While k-TSP (Top Scoring Pairs) employs effective multivariate feature ranking based on relative expression ordering, it utilizes a relatively simple voting scheme in classification. RAMPART separates feature ranking from the final predictive model, allowing integration with various machine learning classifiers and importance measures while providing theoretical guarantees on top-k recovery [15] [52].

Q4: Can RAMPART integrate with knowledge-based feature selection approaches?

A: Yes, RAMPART is model-agnostic and can utilize any existing feature importance measure, including those incorporating biological knowledge. This flexibility enables researchers to combine the framework's efficient ranking capabilities with domain-specific insights, potentially enhancing performance in applications like drug response prediction [15] [53].
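The two ideas named in these answers, ensembling over random subsamples of observations and features and recursively trimming the candidate set, can be illustrated with a toy sketch. This is not the published RAMPART algorithm or its reference implementation: the base importance (absolute least-squares coefficient), the halving schedule, all parameter values, and the function names are assumptions chosen to keep the example short.

```python
import numpy as np

def minipatch_scores(X, y, active, n_patches, n_obs, n_feat, rng):
    """Average |OLS coefficient| per feature over random row/column patches."""
    totals = np.zeros(X.shape[1])
    counts = np.zeros(X.shape[1])
    for _ in range(n_patches):
        rows = rng.choice(X.shape[0], size=n_obs, replace=False)
        cols = rng.choice(active, size=min(n_feat, len(active)),
                          replace=False)
        Xp = X[np.ix_(rows, cols)]
        Xp = Xp - Xp.mean(axis=0)            # center so no intercept is needed
        yp = y[rows] - y[rows].mean()
        coef, *_ = np.linalg.lstsq(Xp, yp, rcond=None)
        totals[cols] += np.abs(coef)
        counts[cols] += 1
    return totals / np.maximum(counts, 1)    # features never sampled score 0

def rank_top_k(X, y, k, n_patches=200, n_feat=10, seed=0):
    """Halve the active feature set each round until k features remain."""
    rng = np.random.default_rng(seed)
    active = np.arange(X.shape[1])
    while True:
        scores = minipatch_scores(X, y, active, n_patches,
                                  X.shape[0] // 2, n_feat, rng)
        order = active[np.argsort(scores[active])[::-1]]
        if len(active) <= k:
            return order                     # final ranking of the survivors
        active = order[:max(k, len(active) // 2)]

# Toy data: features are standard normal, so coefficients are comparable.
rng = np.random.default_rng(0)
X = rng.normal(size=(300, 40))
y = 3 * X[:, 0] + 2 * X[:, 1] + X[:, 2] + 0.5 * rng.normal(size=300)

top3 = rank_top_k(X, y, k=3)
print("Top-3 features, best first:", top3)
```

Because each round discards half of the remaining candidates, later rounds spend the same patch budget on fewer features, which is the intuition behind focusing computation on promising features.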

Troubleshooting Common Experimental Issues

Problem: Inconsistent Top-k Rankings Across Repeated Experiments

- Symptoms: Variability in identified top features when running RAMPART multiple times on the same dataset.

- Potential Causes:

  - Insufficient MiniPatch samples for the dataset complexity

  - Overly aggressive recursive trimming thresholds

  - High correlation structure not adequately addressed

- Solutions:

  - Increase the number of MiniPatches (N_MP) in the RAMP component, especially for high-dimensional data (>10,000 features)

  - Adjust the trimming fraction parameters to be less aggressive in early iterations

  - Ensure the MiniPatch size (number of features sampled) is appropriately tuned to balance correlation breaking and statistical power

Problem: Excessive Computational Time with Large Feature Sets

- Symptoms: Experiments taking impractically long to complete with high-dimensional data.

- Potential Causes:

  - Inefficient base importance measure computation

  - Lack of parallelization in the ensembling step

  - Suboptimal trimming schedule

- Solutions:

  - Utilize faster feature importance measures (e.g., permutation importance) as the base estimator when appropriate

  - Implement parallel processing for MiniPatch training and importance calculation

  - Consider a more aggressive trimming schedule for very large feature spaces (>50,000 features), leveraging the theoretical guarantees of sequential halving
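As one way to approach the parallelization suggestion, the sketch below distributes independent patch computations with joblib and gives each patch its own seed so that results stay reproducible regardless of worker count. It is a generic illustration, not part of any RAMPART implementation: the base importance (absolute least-squares coefficient), the thread-based backend, and all names and parameter values are assumptions.

```python
import numpy as np
from joblib import Parallel, delayed

def score_one_patch(X, y, n_obs, n_feat, seed_seq):
    """Fit one random row/column patch and return (columns, |coefficients|)."""
    rng = np.random.default_rng(seed_seq)
    rows = rng.choice(X.shape[0], size=n_obs, replace=False)
    cols = rng.choice(X.shape[1], size=n_feat, replace=False)
    Xp = X[np.ix_(rows, cols)]
    Xp = Xp - Xp.mean(axis=0)
    yp = y[rows] - y[rows].mean()
    coef, *_ = np.linalg.lstsq(Xp, yp, rcond=None)
    return cols, np.abs(coef)

def parallel_patch_scores(X, y, n_patches=200, n_obs=100, n_feat=10,
                          seed=0, n_jobs=2):
    # One independent child seed per patch keeps runs reproducible.
    seeds = np.random.SeedSequence(seed).spawn(n_patches)
    results = Parallel(n_jobs=n_jobs, prefer="threads")(
        delayed(score_one_patch)(X, y, n_obs, n_feat, s) for s in seeds
    )
    totals = np.zeros(X.shape[1])
    counts = np.zeros(X.shape[1])
    for cols, importance in results:
        totals[cols] += importance
        counts[cols] += 1
    return totals / np.maximum(counts, 1)

# Toy data (assumed): only feature 0 drives the response.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 50))
y = 2 * X[:, 0] + 0.5 * rng.normal(size=200)

scores = parallel_patch_scores(X, y)
print("Highest-scoring feature:", int(np.argmax(scores)))
```

Threads are used here because the linear algebra releases the interpreter lock; for base learners that do not, a process-based backend may be the better choice.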

Problem: Poor Correlation with Downstream Experimental Validation

- Symptoms: Top-ranked features failing to show significance in wet-lab validation experiments.

- Potential Causes:

  - Disconnect between the feature importance metric and biological relevance

  - Inadequate sample size for the complexity of the biological system

  - Improper handling of technical confounding factors

- Solutions:

  - Incorporate biological knowledge by using pathway-aware importance measures or pre-filtering features using knowledge-based methods like drug pathway genes or OncoKB genes [53]

  - Perform power analysis to ensure adequate sample size and adjust the top-k target accordingly

  - Include relevant confounding factors as covariates in the base model

Experimental Protocols & Methodologies

Protocol 1: Benchmarking RAMPART Against Alternative Methods

Objective: Compare the top-k ranking performance of RAMPART against established feature importance methods.