Beyond Sequence Similarity: Advanced Strategies for Novel Gene Discovery in Newborn Screening

Abstract

The expansion of genomic newborn screening (gNBS) is critically limited by the challenge of low sequence homology, which impedes the identification of novel disease-associated genes using conventional bioinformatic tools. This article provides a comprehensive resource for researchers and drug development professionals, detailing the foundational principles of low homology, advanced methodological workarounds, optimization techniques to enhance precision, and robust validation frameworks. By synthesizing cutting-edge computational and experimental strategies—from federated learning and AI-driven structure prediction to sophisticated library construction—this review outlines a clear pathway to overcome homology barriers, thereby accelerating the discovery of actionable genetic targets for rare disease screening and therapeutic development.

The Low Homology Challenge: Understanding the Bottleneck in gNBS Gene Discovery

Defining Low Homology and Its Impact on Variant Pathogenicity Assessment

In genomic research, the problem discussed here under the label "low homology" is more precisely one of low mappability: genomic regions whose sequences share a high degree of similarity with other distinct regions of the genome, such as pseudogenes or paralogous gene families. These regions present significant challenges for next-generation sequencing (NGS) because short sequence reads can map ambiguously to multiple locations [1] [2]. In the context of novel newborn screening (NBS) gene discovery, this can lead to misassembly, coverage gaps, and false variant calls, ultimately hindering the accurate assessment of variant pathogenicity. This guide provides troubleshooting steps and FAQs to help researchers overcome these technical obstacles.

Frequently Asked Questions (FAQs)

1. What are the primary technical challenges posed by low homology regions in NBS gene research?

The main challenge is the inaccurate mapping of short-read NGS data. In highly homologous regions, sequencing reads cannot be uniquely aligned to a single genomic location. This can result in:

- Incomplete or uneven coverage: Regions may have low or zero sequencing depth, creating gaps in data [1].

- Mismapping: Reads may be incorrectly assigned to a paralogous region instead of the gene of interest [1] [2].

- False positives/negatives in variant calling: Mismapping can lead to incorrect identification of variants, potentially obscuring true pathogenic mutations [1].

2. Which NBS-related genes are known to be most problematic due to low homology?

Research has identified several genes whose exonic regions are particularly hard to map because of homology to other loci. A study examining a 158-gene NBS panel found widespread homology, identifying 17 genes as most problematic for short-read mapping [1]. Notably, the paralogous genes SMN1 and SMN2 are a classic example: they are nearly identical and therefore highly challenging to sequence and map. Other genes identified include CBS and CORO1A [1].

3. How does read length in NGS affect the analysis of low-homology regions?

Increasing the read length of your NGS assay can significantly improve mapping accuracy and coverage in homologous regions. Longer reads provide more unique sequence context, allowing bioinformatic tools to place them correctly [1]. One study demonstrated that while 35 of 43 low-coverage genes were remedied by using 250 bp reads, eight genes had regions of such extensive homology that even 250 bp reads could not resolve them [1]. The table below summarizes the impact of read length on mapping performance.

Table 1: Impact of NGS Read Length on Mapping Accuracy and Coverage [1]

| Read Length (bp) | Average Depth of Coverage | Standard Deviation | Key Finding |
|---|---|---|---|
| 70 | 38.029 | 4.060 | Highest variability and lowest coverage. |
| 100 | 38.214 | 3.594 | -- |
| 150 | 38.394 | 3.231 | -- |
| 250 | 38.636 | 2.929 | Highest coverage and lowest variability; resolves most, but not all, homology issues. |
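The reason longer reads help can be illustrated with a toy calculation. The sketch below is not the simulation used in [1]; it assumes a hypothetical random sequence containing two identical 120 bp copies (standing in for a gene and its paralog) and counts how many exact substrings of a given read length occur only once. Real mappability tracks also tolerate mismatches, which this example ignores.

```python
import random
from collections import Counter


def unique_kmer_fraction(genome: str, k: int) -> float:
    """Fraction of k-mer start positions whose k-mer occurs exactly once."""
    kmers = [genome[i:i + k] for i in range(len(genome) - k + 1)]
    counts = Counter(kmers)
    return sum(1 for kmer in kmers if counts[kmer] == 1) / len(kmers)


def random_sequence(rng: random.Random, length: int) -> str:
    """Random DNA string of the requested length."""
    return "".join(rng.choice("ACGT") for _ in range(length))


# Toy sequence (hypothetical): two identical 120 bp copies embedded in
# random flanking sequence.
rng = random.Random(0)
repeat = random_sequence(rng, 120)
genome = (random_sequence(rng, 300) + repeat + random_sequence(rng, 300)
          + repeat + random_sequence(rng, 300))

# Read lengths taken from Table 1.
for read_length in (70, 100, 150, 250):
    print(read_length, round(unique_kmer_fraction(genome, read_length), 3))
```

Reads shorter than the duplicated segment can fall entirely inside it and are then ambiguous; reads longer than the segment always include some unique flanking sequence.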

4. What alternative 'omics' technologies can help validate findings in low-homology regions?

When NGS is confounded by homology, orthogonal methods are essential. Mass spectrometry (MS)-based proteomics is a powerful tool for this purpose [3]. It does not rely on read mapping and can directly assess the functional outcome of a genetic variant by measuring:

- Protein abundance: A significant reduction can confirm a pathogenic loss-of-function variant.

- Protein complexes: MS can detect defects in complex assembly (e.g., through complexome profiling).

- Interacting partners: Changes in a protein's interactors can provide evidence for pathogenicity [3]. This approach has been successfully used to validate variants of uncertain significance (VUS) in genes associated with mitochondrial disorders and other rare diseases [3].

5. Can bioinformatic adjustments improve variant calling in low-homology NBS genes?

Yes, alterations to standard variant calling pipelines can retrieve some variants that would otherwise be missed [1]. Specific algorithms are not detailed here, but the principle is to optimize mapping and calling parameters for these challenging regions. Furthermore, for confirmed low-coverage regions in critical genes, Sanger sequencing remains a gold-standard orthogonal method to confirm NGS findings.

Key Experimental Protocols for Overcoming Low Homology

Protocol for Identifying and Prioritizing Low-Homology Genes

This methodology is derived from simulations used to assess NBS gene panels [1].

- Objective: Systematically identify genes in your panel with potential low-homology regions.

- Materials: Reference genome (e.g., GRCh37/hg19), list of target genes, BLAST+ software, CGR Alignability track data.

- Methodology:

- BLAST+ Analysis: Perform a BLAST+ analysis of all exonic regions in your gene panel against the reference genome.

- Filter for High Homology: Filter results for close matches (e.g., ≤10 mismatches and a difference in alignment length ≤10).

- Assess Mappability: Use a k-mer-based alignability track (e.g., 75 k-mer CGR) to identify regions with low mappability scores (e.g., ≤0.5).

- Create a Conservative List: Combine the results from both analyses to generate a final list of genes requiring special attention during sequencing and analysis [1].
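The filtering and combination steps of this protocol can be sketched as follows. This is a minimal illustration, not the pipeline from [1]. It assumes that BLAST+ hits have already been reduced to tuples of (query exon, subject chromosome, alignment length, mismatches, subject start), that "difference in alignment length" means the difference between exon length and alignment length, and that "combine" means the union of both filters. All gene names, coordinates, and scores are hypothetical.

```python
def flag_low_mappability_genes(blast_rows, exon_info, mappability,
                               max_mismatch=10, max_len_diff=10, max_map=0.5):
    """Return genes flagged by the BLAST filter or the mappability filter."""
    flagged = set()
    for exon_id, subject_chrom, aln_length, mismatches, subject_start in blast_rows:
        gene, exon_length, chrom, start = exon_info[exon_id]
        # Skip the exon's alignment to its own locus.
        if subject_chrom == chrom and subject_start == start:
            continue
        if mismatches <= max_mismatch and abs(exon_length - aln_length) <= max_len_diff:
            flagged.add(gene)
    for exon_id, score in mappability.items():
        if score <= max_map:
            flagged.add(exon_info[exon_id][0])
    return sorted(flagged)


# Hypothetical inputs: exon_id -> (gene, exon length, chromosome, start).
exon_info = {
    "A_ex1": ("GENE_A", 200, "chr5", 1000),
    "B_ex1": ("GENE_B", 150, "chr1", 5000),
    "C_ex1": ("GENE_C", 180, "chr2", 9000),
}
blast_rows = [
    ("A_ex1", "chr5", 200, 0, 1000),   # self hit, ignored
    ("A_ex1", "chr5", 198, 3, 90000),  # near-identical second locus
    ("B_ex1", "chr1", 150, 0, 5000),   # self hit, ignored
    ("B_ex1", "chr7", 60, 2, 400),     # short partial match, not flagged
    ("C_ex1", "chr2", 180, 0, 9000),   # self hit, ignored
]
mappability = {"A_ex1": 0.5, "B_ex1": 1.0, "C_ex1": 0.33}

print(flag_low_mappability_genes(blast_rows, exon_info, mappability))
```

Taking the union keeps the list conservative: a gene is carried forward if either analysis raises a concern.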

Protocol for Orthogonal Validation Using Mass Spectrometry-Based Proteomics

This protocol summarizes the application of MS-based proteomics for validating genetic findings, as used in rare disease diagnosis [3].

- Objective: Provide functional evidence for variant pathogenicity by quantifying protein-level changes.

- Materials: Patient-derived cells (e.g., skin fibroblasts), liquid chromatography coupled to tandem mass spectrometry (LC-MS/MS) instrumentation.

- Methodology:

- Sample Preparation: Extract proteins from patient and control cell lines.

- LC-MS/MS Analysis: Digest proteins into peptides, separate them via liquid chromatography, and analyze using tandem MS.

- Data Analysis:

- Quantitative Proteomics: Compare protein abundance between patient and control samples. A significant reduction in the candidate protein supports a loss-of-function mechanism.

- Complexome Profiling: For mitochondrial or complex-related disorders, separate protein complexes by native gel electrophoresis and analyze gel fractions by MS to identify assembly defects [3].

- Interactome Analysis: Examine the abundance of known protein interactors; a co-reduction of physical partners strengthens the evidence for pathogenicity [3].
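As a rough illustration of the quantitative comparison step, the sketch below computes a log2 fold change between patient and control intensities and lists proteins that fall below a cutoff. The protein names, intensity values, and the cutoff of -1 (a two-fold reduction) are assumptions for illustration only; a real analysis would use replicate-aware statistics and multiple-testing correction.

```python
import math
from statistics import mean


def log2_fold_change(patient, control):
    """log2 ratio of mean patient intensity to mean control intensity."""
    return math.log2(mean(patient) / mean(control))


def reduced_proteins(intensities, threshold=-1.0):
    """Names of proteins whose log2 fold change is at or below the threshold."""
    return sorted(
        name for name, (patient, control) in intensities.items()
        if log2_fold_change(patient, control) <= threshold
    )


# Hypothetical intensities: name -> (patient replicates, control replicates).
intensities = {
    "CANDIDATE": ([240.0, 260.0], [1000.0, 1000.0]),
    "PARTNER_1": ([450.0, 550.0], [1000.0, 1000.0]),
    "UNRELATED": ([980.0, 1020.0], [1000.0, 1000.0]),
}

print(reduced_proteins(intensities))
```

A reduction in the candidate together with a known physical partner, as in this toy input, is the co-reduction pattern described under interactome analysis.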

Research Reagent Solutions

Table 2: Essential Reagents and Kits for NGS and Orthogonal Analysis

| Item | Function | Example Use Case |
|---|---|---|
| DNA Extraction Kit (e.g., QIAamp DNA Investigator Kit, QIAsymphony DNA Investigator Kit) | Isolate high-quality DNA from various sources, including dried blood spots (DBS). | Preparing template DNA for NGS library construction in NBS studies [4]. |
| Targeted NGS Panel (e.g., Twist Bioscience custom capture panels) | Enrich for a specific set of genes of interest prior to sequencing. | Focusing sequencing power on a curated NBS gene panel, improving cost-efficiency [4]. |
| NGS Library Prep Kit (Illumina-compatible) | Prepare DNA fragments for sequencing by adding adapters and indices. | Constructing libraries for sequencing on platforms like Illumina NovaSeq or NextSeq [4]. |
| LC-MS/MS System | Separate, ionize, and quantify proteins/peptides from complex samples. | Performing orthogonal proteomic validation of genetic variants identified via NGS [3]. |

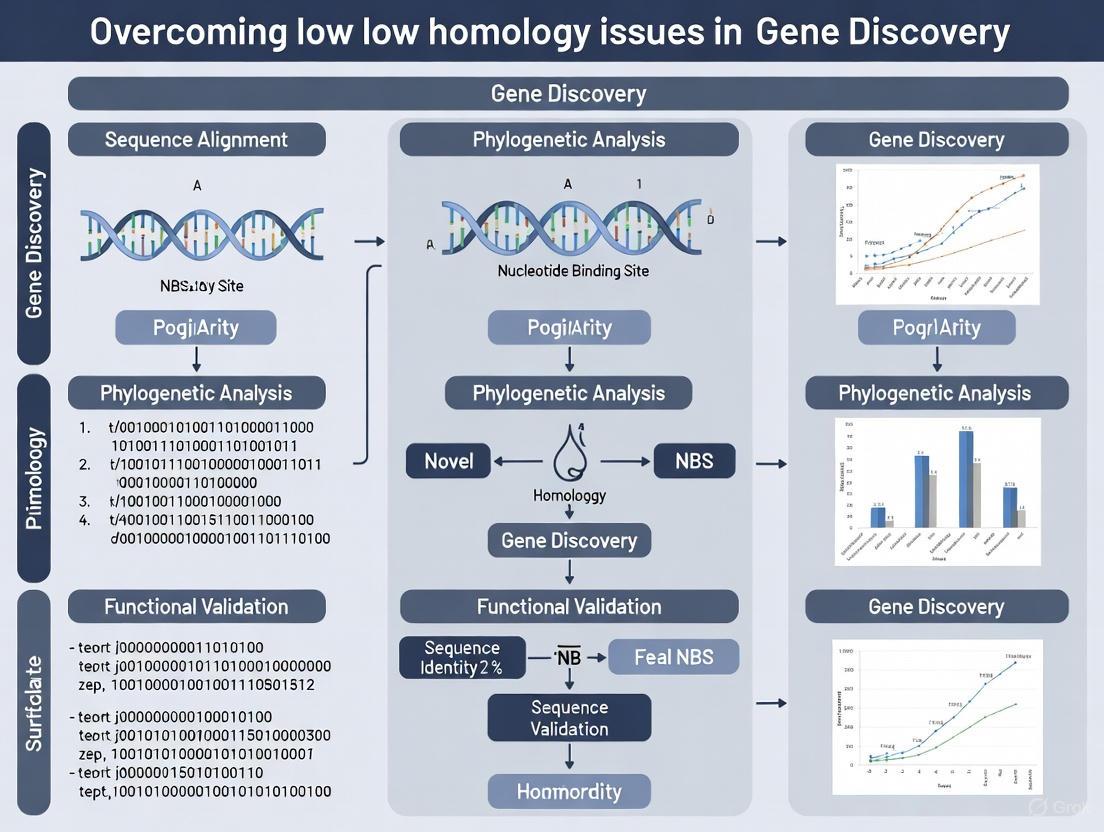

Visualizing the Workflows

NBS Gene Homology Assessment

Multi-Omics Variant Validation

Troubleshooting Guide: Addressing False Positives in Genomic Screens

Q1: Our genomic screen identified numerous significant hits, but we suspect a high false-positive rate. What are the primary culprits and initial steps for confirmation?

A: A high number of initial significant hits is common in genomic screens due to multiple testing. The first steps are to scrutinize your significance thresholds and the genetic map density you are using.

- Confirm Significance Thresholds: Using a nominal p-value (e.g., α=0.05) without correction for genome-wide multiple comparisons will inherently produce a high number of false positives. In one study, a 2 cM genomic screen with α=0.05 yielded 54 false positives out of 63 significant markers for a quantitative trait. Increasing the stringency to α=0.01 reduced false positives to 25, but at the cost of increased false negatives [5].

- Evaluate Map Density: A denser genetic map (e.g., 2 cM) provides more resolution but can produce more false positives compared to a less dense map (e.g., 10 cM). The same study found the 2 cM map detected more true major genes but also resulted in significantly more false positives than the 10 cM map [5].

- Investigate Genomic Amplifications: In CRISPR-based loss-of-function screens, a major source of false positives is genomic amplifications. sgRNAs that target amplified regions cause excessive DNA damage, leading to reduced cell proliferation or survival regardless of whether the targeted gene is essential. This effect is gene-independent and correlates directly with target site copy number [6].
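To see why a nominal threshold inflates false positives, it helps to work through the arithmetic. The sketch below assumes, for simplicity, that every marker is null and that tests are independent, which linked markers are not, so a Bonferroni threshold is conservative here. Only the marker count (367) comes from the study cited above; the function names are illustrative.

```python
def expected_false_positives(n_tests: int, alpha: float) -> float:
    """Expected number of false positives if every test is truly null."""
    return n_tests * alpha


def bonferroni_threshold(alpha: float, n_tests: int) -> float:
    """Per-test threshold that keeps the family-wise error rate at alpha."""
    return alpha / n_tests


n_markers = 367  # marker count of the 2 cM screen
for alpha in (0.05, 0.01, 0.001):
    print(alpha,
          expected_false_positives(n_markers, alpha),
          bonferroni_threshold(alpha, n_markers))
```

At a nominal threshold of 0.05, roughly 18 of 367 markers would be expected to reach significance by chance alone under these simplifying assumptions.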

Q2: We are using CRISPR screens in aneuploid cancer cell lines and are concerned about false positives. How can we validate that a phenotype is due to gene loss and not an amplification artifact?

A: This is a critical issue when working with genetically unstable cell lines. To confirm true positives, you must employ complementary approaches.

- Correlate Lethality with Copy Number: Analyze whether the anti-proliferative effect of your sgRNAs positively correlates with the target site's copy number. A strong correlation suggests a false-positive mechanism [6].

- Target Intergenic Regions: As a control, design sgRNAs that target intergenic sequences within the amplified genomic region. If these control sgRNAs are as lethal as those targeting genes, the effect is likely a false positive caused by the amplification itself and not gene inactivation [6].

- Use Alternative Functional Assays: Employ orthogonal methods to validate essential genes.

- RNAi Knockdown: Confirm the phenotype using RNA interference, which is not subject to the same DNA damage-related false positives [6].

- cDNA Rescue: Re-introduce a CRISPR-resistant cDNA version of the candidate gene. If the phenotype is rescued (e.g., cell viability is restored), it confirms the result is a true positive [6].
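The copy-number check can be sketched with a plain Pearson correlation. The data below are hypothetical: each sgRNA has a target-site copy number and an anti-proliferative effect, defined here as the negative log2 fold change in sgRNA abundance. This is an illustration of the idea, not the analysis from [6].

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)


# Hypothetical per-sgRNA values.
copy_number = [2, 2, 4, 6, 8, 10]
depletion = [0.1, 0.2, 0.6, 1.0, 1.5, 1.9]  # -log2 fold change

r = pearson_r(copy_number, depletion)
print(round(r, 3))
```

A strong positive correlation in real data would point toward an amplification artifact, especially if intergenic control sgRNAs in the same amplicon show the same trend.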

Q3: In diagnostic or clinical screening, how should we communicate the risk of a false positive to clinicians and patients?

A: Clear communication about the difference between a screening test and a diagnostic test is paramount to managing expectations.

- Explain Positive Predictive Value (PPV): Emphasize that specificity alone can be misleading. The key metric for a person with a positive result is the Positive Predictive Value (PPV), which is highly dependent on the prevalence of the condition in the screened population.

- Provide Condition-Specific PPVs: For example, in non-invasive prenatal testing (NIPT), despite specificities over 99%, the PPV can vary dramatically [7]:

- Trisomy 21: ~94%

- Trisomy 18: ~59%

- Trisomy 13: ~44%

- Sex Chromosome Aneuploidy: ~38%

- Mandate Confirmatory Testing: All positive results from a genomic screen must be confirmed with a diagnostic-grade test, such as cytogenetic testing for aneuploidies or Sanger sequencing for single-gene disorders [7] [8]. The screening result should never be considered definitive.
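The dependence of PPV on prevalence follows directly from Bayes' rule: PPV = (sensitivity × prevalence) / (sensitivity × prevalence + (1 − specificity) × (1 − prevalence)). The sketch below uses hypothetical sensitivity, specificity, and prevalence values; they are not taken from [7] and only illustrate the trend.

```python
def positive_predictive_value(sensitivity: float, specificity: float,
                              prevalence: float) -> float:
    """Probability that a screen-positive individual truly has the condition."""
    true_pos = sensitivity * prevalence
    false_pos = (1.0 - specificity) * (1.0 - prevalence)
    return true_pos / (true_pos + false_pos)


# Hypothetical test characteristics, held fixed while prevalence varies.
for prevalence in (1 / 100, 1 / 1000, 1 / 10000):
    ppv = positive_predictive_value(0.99, 0.999, prevalence)
    print(f"prevalence={prevalence:.4f}  PPV={ppv:.3f}")
```

Even with specificity of 99.9%, the PPV drops sharply as the condition becomes rarer, which is why rare conditions show lower PPVs than common ones for the same assay.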

Frequently Asked Questions (FAQs)

Q1: What strategies can minimize false positives in a research genomic screen without missing true signals?

A: A multi-faceted approach is most effective.

- Use "Plateau" Analysis: Instead of following up on single significant markers, require that significant results form a "plateau"—defined as two or more adjacent markers with statistically significant linkage. This helps eliminate sporadic false positives [5].

- Apply Multipoint Analysis: Following a two-point screen, performing multipoint analysis on identified plateaus can further refine priority regions and reduce the number of false positives carried forward [5].

- Replicate in Independent Datasets: Repeating the analysis in an independent data set (e.g., a different genetic replicate) can help distinguish true positives from false positives, though this method is not infallible [5].

- Leverage Gene Constraint Metrics: In novel gene discovery, a "gene-to-patient" approach that prioritizes variants in genes intolerant to loss-of-function (e.g., using the loss-of-function observed/expected upper-bound fraction metric, LOEUF) can focus analysis on the most biologically plausible candidates and reduce noise [9].
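The plateau criterion described above can be sketched as a search for runs of adjacent significant markers. The p-values, the threshold, and the function name are hypothetical; markers are assumed to be listed in map order along one chromosome.

```python
def find_plateaus(p_values, alpha=0.05, min_run=2):
    """Return (start, end) index pairs for runs of adjacent significant markers."""
    plateaus = []
    run_start = None
    # A trailing sentinel of 1.0 closes any run that reaches the last marker.
    for i, p in enumerate(list(p_values) + [1.0]):
        if p < alpha:
            if run_start is None:
                run_start = i
        else:
            if run_start is not None and i - run_start >= min_run:
                plateaus.append((run_start, i - 1))
            run_start = None
    return plateaus


# Hypothetical two-point p-values for consecutive markers.
p_values = [0.20, 0.01, 0.30, 0.04, 0.03, 0.001, 0.50, 0.02]
print(find_plateaus(p_values))
```

In this toy input, the isolated hits at positions 1 and 7 are dropped, and only the three adjacent markers at positions 3 to 5 are carried forward.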

Q2: How does the issue of low-sequence identity homology relate to false positives in novel gene discovery?

A: Low-sequence identity complicates the accurate computational prediction of gene function and the pathological nature of variants. This creates a bottleneck where researchers are faced with a large number of Variants of Uncertain Significance (VUS) in genes of unknown function, making it difficult to prioritize candidates for costly functional validation experiments. This can lead to false-positive associations if genes are incorrectly linked to disease [10] [9]. Improving homology modeling, even for templates with sequence identity as low as 20%, is crucial for generating accurate structural models that can better inform on gene function and variant impact [11].

Q3: Our lab is transitioning to genomic newborn screening (gNBS). How do we handle confirmation of screen-positive results?

A: Genomic newborn screening is a screening tool, not a diagnostic test. A key innovation is developing efficient pathways to confirm screen-positive findings.

- Standard Protocol: The current protocol requires collecting a new diagnostic specimen (e.g., blood) and repeating the genomic assay or performing a targeted diagnostic test to confirm the variant [8].

- Emerging Strategy: To reduce cost and turnaround time, research is exploring strategies to "upgrade" screening-grade data to diagnostic-grade. One proposed method is to use a rapid, low-cost panel of single-nucleotide variants (SNVs) on a newly collected diagnostic specimen. By matching this SNV profile to the original gNBS data, the screening data can be confidently linked to the diagnostically-accredited specimen, thereby confirming its provenance and validity without repeating the entire genome sequencing [8].
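The matching step in the emerging strategy amounts to a genotype concordance check between two profiles. The sketch below is a simplified illustration, not the method in [8]: SNV identifiers and genotypes are hypothetical, genotypes are compared as plain strings, and no acceptance threshold is implied.

```python
def genotype_concordance(screen_profile, confirm_profile):
    """Return (fraction of matching genotypes, number of SNVs compared)."""
    shared = [
        snv for snv in confirm_profile
        if screen_profile.get(snv) is not None and confirm_profile[snv] is not None
    ]
    if not shared:
        raise ValueError("no SNVs genotyped in both profiles")
    matches = sum(screen_profile[snv] == confirm_profile[snv] for snv in shared)
    return matches / len(shared), len(shared)


# Hypothetical genotypes: gNBS data versus the SNV panel on the new specimen.
screen_profile = {"snv_01": "0/1", "snv_02": "1/1", "snv_03": "0/0", "snv_04": None}
confirm_profile = {"snv_01": "0/1", "snv_02": "1/1", "snv_03": "0/0", "snv_04": "0/1"}

concordance, n_compared = genotype_concordance(screen_profile, confirm_profile)
print(concordance, n_compared)
```

Sites missing from either profile are excluded rather than counted as mismatches, so the number of compared SNVs should be reported alongside the concordance.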

Experimental Protocols for Validation

Protocol 1: Distinguishing True Positives from Amplification-Associated False Positives in CRISPR Screens

This protocol is adapted from methods used to identify false positives in cancer cell lines [6].

1. Design Control sgRNAs:

- Target Gene sgRNAs: Design 3-5 sgRNAs targeting exonic regions of your candidate essential gene.

- Intergenic Control sgRNAs: Design 3-5 sgRNAs targeting non-genic, amplified regions on the same amplicon as your candidate gene. Ensure these have no overlap with known functional elements.

2. Perform Cell Viability Assay:

- Transduce your cell model with lentivirus containing the sgRNAs (both target and control) at a low multiplicity of infection (MOI) to avoid multiple integrations.

- Monitor cell proliferation and viability over 10-14 days using a validated assay (e.g., CellTiter-Glo, confluence imaging).

- Compare the depletion rate of cells with target gene sgRNAs versus intergenic control sgRNAs.

3. Analyze DNA Damage Response:

- Harvest cells 72-96 hours post-transduction.

- Perform western blot analysis for phospho-histone H2AX (γ-H2AX) to quantify DNA damage response activation.

- Compare γ-H2AX levels between cells transduced with target gene sgRNAs, intergenic control sgRNAs, and a non-targeting control sgRNA.

4. Interpretation:

- True Positive: Target gene sgRNAs cause significant cell depletion without a strong, sustained γ-H2AX signal, and intergenic controls show no phenotype.

- False Positive (Amplification): Both target gene sgRNAs and intergenic control sgRNAs cause significant cell depletion and a strong γ-H2AX signal.

Protocol 2: Multi-Template Homology Modeling for Low-Identity Targets

This protocol outlines steps to improve the accuracy of homology models when sequence identity to known structures is low (e.g., 20-40%), which is critical for generating reliable structural hypotheses in novel gene discovery [11].

1. Generate a Structure-Guided Multiple Sequence Alignment (MSA):

- Obtain initial sequences from a database like GPCRdb.

- Align the available template structures in PyMOL.

- Manually curate the alignment, starting from the most conserved residue in each secondary structure element (e.g., transmembrane helix) and extending outwards.

- For loop regions, align vectors of Cα to Cβ atoms between structures. Preserve any secondary structural elements in loops (disulfides, small helices).

2. Select and Rank Multiple Templates:

- Calculate pairwise sequence identity covering the structured domains (e.g., transmembrane bundle and loops, excluding long termini).

- Rank all potential templates by sequence identity to your target.

- Select the top 3-5 templates with identities below 40% for the modeling process.

3. Run Rosetta Multiple Template Homology Modeling:

- Use the hybridize application in Rosetta.

- Input the curated MSA and the selected template structures.

- The protocol will simultaneously hold all templates in a defined global geometry and randomly swap segments from different templates using Monte Carlo sampling.

- This process is performed in parallel with traditional peptide fragment swapping from a PDB-derived fragment library.

4. Analyze Output Models:

- Cluster the top-scoring output models by RMSD.

- Inspect the conserved core regions and the predicted structure of loop regions involved in ligand binding or protein-protein interactions.
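The template ranking in step 2 can be sketched with a simple identity calculation over an existing alignment. This is an illustration only: the aligned sequences and template names are hypothetical toy data, and identity is computed here over columns where neither sequence has a gap, which is one of several conventions in use.

```python
def percent_identity(aligned_a: str, aligned_b: str) -> float:
    """Percent identity over alignment columns where neither sequence has a gap."""
    if len(aligned_a) != len(aligned_b):
        raise ValueError("aligned sequences must have equal length")
    pairs = [(a, b) for a, b in zip(aligned_a, aligned_b) if a != "-" and b != "-"]
    if not pairs:
        return 0.0
    return 100.0 * sum(a == b for a, b in pairs) / len(pairs)


def rank_templates(target: str, templates: dict, top_n: int = 5):
    """Template names sorted by decreasing identity to the target."""
    ranked = sorted(templates,
                    key=lambda name: percent_identity(target, templates[name]),
                    reverse=True)
    return ranked[:top_n]


# Hypothetical rows taken from a curated alignment of the structured domains.
target = "MKT-LLVAGG"
templates = {
    "template_B": "MRS-LIVAGA",
    "template_A": "MKTALLVSGG",
    "template_C": "QRSGILIAGA",
}
print(rank_templates(target, templates, top_n=3))
```

Restricting the input rows to the structured domains, as the protocol recommends, keeps long unaligned termini from distorting the ranking.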

Data Presentation

This table summarizes results from a genomic screen of 239 nuclear pedigrees for three quantitative traits (Q1, Q2, Q3), showing how adjustments to common parameters affect outcomes.

| Screen & Trait | Significance Level (α) | Significant Markers (N) | Major Genes Detected | False Positives (Count & Rate) | False Negatives (Rate) |
|---|---|---|---|---|---|
| 2 cM (367 markers) | | | | | |
| Q1 | 0.05 | 63 | 3/3 | 54 (16%) | 64% |
| Q1 | 0.01 | 27 | 2/3 | 25 (7%) | 92% |
| Q1 | 0.001 | 6 | 1/3 | 5 (1%) | 96% |
| Q2 | 0.05 | 47 | 1/1 | 46 (13%) | 89% |
| Q3 | 0.05 | 36 | 1/1 | 34 (9%) | 71% |
| 10 cM (80 markers) | | | | | |
| Q1 | 0.05 | 11 | 2/3 | 9 (12%) | 67% |
| Q2 | 0.05 | 11 | 0/1 | 11 (14%) | 100% |
| Q3 | 0.05 | 8 | 0/1 | 8 (10%) | 100% |

Table 2: Research Reagent Solutions for Genomic Screening and Validation

| Reagent / Tool | Function / Application | Key Consideration |
|---|---|---|
| CRISPR sgRNA Library | Genome-wide or targeted loss-of-function screening. | For aneuploid cells, design sgRNAs with minimal off-target matches to avoid false positives from multi-cut lethality [6]. |
| shRNA Library (RNAi) | Gene knockdown via the RNA interference pathway. | Useful as an orthogonal method to validate CRISPR hits, as it is not prone to DNA damage-induced false positives [6]. |
| LanthaScreen Eu Kinase Binding Assay | A TR-FRET binding assay to study kinase-inhibitor interactions. | Can be used to study both active and inactive forms of kinases, unlike activity assays [12]. |
| Z'-LYTE Kinase Assay | A fluorescence-based coupled enzyme assay to measure kinase activity. | Output is a ratio (blue/green), which controls for pipetting and reagent variability [12]. |
| NBN Molecular Testing | Targeted analysis for the c.657_661del5 founder variant to diagnose Nijmegen Breakage Syndrome. | Accounts for ~100% of pathogenic alleles in Slavic populations and >70% in the US [13]. |
| Rosetta Software | Protein structure prediction and design, including homology modeling from multiple low-identity templates. | Improved protocol allows accurate modeling of GPCRs using templates as low as 20% sequence identity [11]. |

Workflow and Pathway Visualizations

Diagram 1: Multi-Template Homology Modeling Workflow

Diagram 2: CRISPR Screen Hit Validation Strategy

Nucleotide-binding site-leucine rich repeat (NBS-LRR) genes represent the largest family of plant disease resistance (R) genes, playing crucial roles in pathogen recognition and defense activation. However, the discovery of novel NBS genes is frequently hampered by low sequence homology across plant species, creating significant bottlenecks in resistance breeding programs. This technical support center addresses the specific experimental challenges researchers face when working with these rapidly evolving gene families, providing targeted troubleshooting guidance for overcoming homology-related barriers.

The evolutionary dynamics of NBS genes are characterized by frequent gene duplication events and subsequent diversification, which contribute to the homology challenges. Studies across multiple plant species reveal that NBS genes often expand through species-specific duplication mechanisms. For instance, in five Rosaceae species, widespread species-specific duplications have driven NBS-LRR expansion, with percentages ranging from 37.01% in peach to 66.04% in apple [14]. These duplication events create complex gene families where orthologous relationships are often obscured by lineage-specific expansions, complicating cross-species comparative analyses and primer design for novel gene discovery.

Technical Challenges & Core Concepts

Why Low Homology Occurs in NBS Gene Families

- Rapid Diversification: NBS genes evolve under strong selective pressure from rapidly co-evolving pathogens, leading to accelerated divergence rates. This results in low conservation between orthologs even in closely related species.

- Diverse Duplication Mechanisms: NBS genes expand through various duplication mechanisms including tandem duplications, segmental duplications, and whole genome duplications [15] [16]. Each mechanism leaves different signatures and creates different challenges for homology-based identification.

- Structural Rearrangements: Following duplication, genes frequently undergo significant structural changes including domain loss, fusion, and rearrangement, creating unusual domain architectures that evade standard homology detection approaches [17].

- Differential Evolutionary Rates: Different NBS subfamilies evolve at distinct rates. TNL genes (TIR-NBS-LRR) generally exhibit higher evolutionary rates than CNL genes (CC-NBS-LRR), as evidenced by significantly higher Ks and Ka/Ks values [14].

Impact on Experimental Outcomes

| Experimental Challenge | Consequence | Frequency in NBS Research |
|---|---|---|
| Failed PCR amplification | No products for downstream analysis | High (≥70% of novel gene attempts) |
| Cross-species hybridization failure | Unable to transfer markers across species | Moderate-High (≈60% of cases) |
| Incomplete genome assembly | Fragmented R gene sequences | High in complex genomes (≥80%) |
| Misannotation of NBS genes | Incorrect gene models and counts | Variable by genome quality (30-60%) |
| Inaccurate phylogenetic placement | Flawed evolutionary inference | Moderate (≈40% of analyses) |

Experimental Protocols & Workflows

Advanced NBS Gene Identification Pipeline

Principle: Overcome low homology limitations by combining multiple complementary identification strategies rather than relying on single approaches.

Step-by-Step Protocol:

Iterative BLAST Search

- Begin with known NBS protein sequences from closely related species as queries (e.g., use Allium sequences for asparagus studies) [15]

- Perform BLASTP searches against target genome with initial cutoff of 30% identity, 30% query coverage, E-value < 1×10⁻³⁰

- Use newly identified sequences as subsequent queries in iterative searches until no new candidates emerge

- Troubleshooting: If iterative search yields few hits, gradually relax identity threshold to 25% while maintaining coverage requirements

Hidden Markov Model (HMM) Scanning

- Download NB-ARC domain HMM profile (PF00931) from Pfam database

- Perform HMMER search against target proteome with default E-value cutoff

- Combine results with BLAST outputs to create non-redundant candidate set

- Troubleshooting: For divergent sequences, adjust HMM E-value to 0.1 to capture more distant homologs

Domain Architecture Validation

- Verify NBS domain presence using NCBI Conserved Domain Database (CDD) with E-value 0.01

- Identify additional domains (TIR, CC, LRR, RPW8) using PfamScan and SMART databases

- Detect coiled-coil domains with COILS program (threshold 0.9) or similar tools

- Critical Step: Manually inspect domain boundaries and architectures to eliminate false positives

Classification and Clustering Analysis

- Classify genes into subfamilies (TNL, CNL, RNL) based on N-terminal domains

- Identify gene clusters using established criteria: ≥2 genes within <200 kb with ≤8 intervening genes [15]

- Analyze duplication patterns by assessing sequence identity (>70%) and coverage (>70%) between paralogs
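The cluster criterion in the last step can be sketched as follows. This is one possible reading of the rule, stated as an assumption: consecutive NBS genes on a chromosome are chained together when they lie less than 200 kb apart and are separated by at most 8 non-NBS genes, and chains of two or more genes are reported as clusters. Gene names and coordinates are hypothetical.

```python
def find_nbs_clusters(genes, max_dist=200_000, max_intervening=8):
    """Group NBS genes on one chromosome into clusters.

    genes: iterable of (name, start_bp, is_nbs) for all annotated genes.
    """
    ordered = sorted(genes, key=lambda g: g[1])
    clusters, current = [], []
    prev_idx = None  # index of the previous NBS gene in `ordered`
    for idx, (name, start, is_nbs) in enumerate(ordered):
        if not is_nbs:
            continue
        if current:
            distance = start - ordered[prev_idx][1]
            intervening = idx - prev_idx - 1  # non-NBS genes in between
            if distance < max_dist and intervening <= max_intervening:
                current.append(name)
            else:
                if len(current) >= 2:
                    clusters.append(current)
                current = [name]
        else:
            current = [name]
        prev_idx = idx
    if len(current) >= 2:
        clusters.append(current)
    return clusters


# Hypothetical annotation for one chromosome.
genes = [
    ("N1", 10_000, True), ("g1", 50_000, False), ("N2", 120_000, True),
    ("N3", 900_000, True), ("N4", 1_000_000, True), ("N5", 2_000_000, True),
]
print(find_nbs_clusters(genes))
```

Distances are measured between gene start coordinates for simplicity; measuring from the end of one gene to the start of the next is an equally reasonable choice and would change borderline cases.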

NBS-Tagging for Polymorphism Discovery

Application: This method enables researchers to profile NBS domain diversity across multiple genotypes while overcoming homology barriers through targeted sequencing of conserved NBS motifs.

Detailed Methodology:

Primer Design Strategy

- Target three highly conserved NBS motifs: P-loop, Kinase-2, and GLPL

- Design degenerate primers accounting for natural variation at polymorphic positions

- Include primers that extend from GLPL motif into variable LRR region (minimum 60 nt extension) to capture adjacent diversity [18]

- Example: In potato, 16 primers targeting these motifs successfully captured nearly all NBS domains across 91 cultivars [18]
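When designing degenerate primers, it is useful to know how many distinct sequences a primer represents, since very high degeneracy dilutes the effective concentration of each variant. The sketch below expands standard IUPAC nucleotide codes; the example primer is hypothetical and is not one of the primers from [18].

```python
from itertools import product

# Standard IUPAC nucleotide ambiguity codes.
IUPAC = {
    "A": "A", "C": "C", "G": "G", "T": "T",
    "R": "AG", "Y": "CT", "S": "CG", "W": "AT", "K": "GT", "M": "AC",
    "B": "CGT", "D": "AGT", "H": "ACT", "V": "ACG", "N": "ACGT",
}


def degeneracy(primer: str) -> int:
    """Number of distinct sequences encoded by a degenerate primer."""
    total = 1
    for base in primer.upper():
        total *= len(IUPAC[base])
    return total


def expand(primer: str) -> list:
    """All concrete sequences encoded by a degenerate primer."""
    return ["".join(bases) for bases in product(*(IUPAC[b] for b in primer.upper()))]


hypothetical_primer = "GGNGGNGTNGGNAARAC"  # illustrative only
print(degeneracy(hypothetical_primer))
print(expand("GGN"))
```

Checking degeneracy before ordering primers helps decide whether to split one highly degenerate primer into several less degenerate ones.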

Library Preparation and Sequencing

- Amplify NBS tags from genomic DNA using multiplexed primer approach

- Sequence amplicons using Illumina platforms (250 bp paired-end recommended)

- Include barcodes for multiplexing multiple samples in single sequencing run

Bioinformatic Processing

- Map NBS tags to reference genome using BWA or Bowtie2

- Detect polymorphisms by comparing tag sequences across cultivars

- Identify haplotypes and assess copy number variation

- Troubleshooting: If mapping fails due to high diversity, use de novo assembly followed by reference-based clustering

Research Reagent Solutions

Essential Materials for NBS Gene Discovery

| Reagent/Tool | Specific Function | Application Notes |

|---|---|---|

| NB-ARC HMM Profile (PF00931) | Identifies NBS domains in protein sequences | Critical for initial candidate identification; use with HMMER suite |

| Degenerate Primers (P-loop, Kinase-2, GLPL) | Amplification of NBS domains across homology barriers | Design degeneracy based on target species diversity; test multiplex compatibility |

| Coiled-Coil Prediction Tools (COILS, DeepCoil) | Detects CC domains in CNL proteins | CC domains often missed by standard domain databases; requires specialized tools |

| MEME Suite | Identifies conserved motifs in NBS domains | Set motif width 6-50 amino acids; E-value < 1×10⁻¹⁰ for stringency [15] |

| OrthoFinder | Determines orthologous relationships among NBS genes | Resolves homology challenges through phylogenetic orthology inference |

| BLAST+ Suite | Local similarity searching for divergent sequences | Adjust parameters for distant homologs: E-value 0.001, word size 3, filter complexity |

Frequently Asked Questions

Experimental Design & Troubleshooting

Q: Our degenerate primers fail to amplify NBS domains from our target species. What optimization strategies do you recommend?

A: Failed amplification commonly results from mismatches in conserved motifs. Implement these solutions:

- Redesign Primers: Extract species-specific motif sequences from any available genomic data (even transcriptomes) to design custom degenerate primers

- Temperature Gradient: Test annealing temperatures from 45-65°C in 2°C increments to identify optimal stringency

- Add DMSO: Include 2-5% DMSO in reactions to overcome secondary structure in GC-rich regions

- Nested Approach: Design outer primers targeting less conserved regions flanking NBS domains

- Positive Control: Always include a known working template (from related species) to validate primer functionality

Q: How can we accurately distinguish recent duplications from ancient paralogs in NBS gene clusters?

A: Employ these analytical approaches:

- Calculate Ks Values: Estimate synonymous substitution rates; recent duplications typically show Ks < 0.3 [14]

- Analyze Gene Context: Compare flanking genes; recent tandem duplicates share syntenic boundaries

- Assess Motif Conservation: Recent duplicates maintain identical motif compositions and orders

- Check Phylogenetic Patterns: Recent duplicates form species-specific clades with high bootstrap support

- Experimental Validation: Recent duplicates often show similar expression patterns across tissues/conditions

Bioinformatics & Data Analysis

Q: Our NBS gene annotations contain numerous fragmented genes. How can we improve gene model accuracy?

A: Fragmented annotations are common in NBS genes due to their complex structure. Apply these solutions:

- Integrate Transcriptomic Evidence: Use RNA-seq data to correct exon-intron boundaries and identify missing exons

- Manual Curation: Visually inspect gene models in genomic context using tools like Apollo or WebApollo

- Comparative Annotation: Compare with closely related species with well-annotated NBS genes

- Pipeline Combination: Merge annotations from multiple pipelines (e.g., MAKER, BRAKER, GeneMark) to maximize completeness

- Targeted PCR: Design primers spanning predicted gaps to experimentally validate and connect fragmented regions

Q: What are the best practices for handling the mapping challenges in highly homologous NBS regions?

A: Homology-related mapping errors can be mitigated through:

- Long-Read Sequencing: Implement Oxford Nanopore or PacBio sequencing to span repetitive regions

- Read Length Optimization: Use 250 bp Illumina reads instead of shorter reads where possible [1]

- Adjust Mapping Parameters: Increase mismatch allowance in mapping tools to accommodate diversity

- Assembly-Based Approaches: For highly divergent sequences, use de novo assembly followed by orthology assignment

- Validation: Confirm mapping accuracy through PCR and Sanger sequencing of problematic regions

Evolutionary Analysis & Interpretation

Q: We've identified significant species-specific expansion of NBS genes in our study system. How do we determine the evolutionary forces driving this expansion?

A: To decipher expansion mechanisms:

- Calculate Ka/Ks Ratios: Identify signatures of selection (Ka/Ks < 1 indicates purifying selection; >1 suggests positive selection) [14]

- Analyze Chromosomal Distribution: Tandem duplicates cluster locally; segmental duplicates distribute across chromosomes

- Date Duplication Events: Use Ks distributions to infer historical duplication timescales

- Correlate with Pathogen Pressure: Test associations between expansion timing and known pathogen emergence events

- Compare with Related Species: Determine if expansions are lineage-specific or shared across clades
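The Ka/Ks interpretation in the list above can be sketched as a small helper. This is an illustrative sketch only: the function name `selection_regime` and the `tolerance` band around 1 are assumptions introduced here, and Ka and Ks are assumed to have been estimated beforehand.

```python
def selection_regime(ka, ks, tolerance=0.05):
    """Label a gene pair by its Ka/Ks ratio.

    Returns None when Ks is zero or negative, because the ratio is undefined.
    The tolerance band around 1 is an illustrative assumption.
    """
    if ks <= 0:
        return None
    ratio = ka / ks
    if ratio > 1 + tolerance:
        return "positive"   # Ka/Ks > 1 suggests positive selection
    if ratio < 1 - tolerance:
        return "purifying"  # Ka/Ks < 1 indicates purifying selection
    return "neutral"
```

Pairs with very small Ks give unstable ratios, so it may be worth filtering them before interpreting the label.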

Q: How can we reliably identify orthologous NBS genes across species for comparative evolutionary analyses?

A: Orthology detection in rapidly evolving NBS genes requires:

- Phylogenetic Orthology: Use phylogeny-aware methods (e.g., OrthoFinder, which infers orthologs from gene trees) or graph-based clustering (e.g., OrthoMCL) rather than pairwise similarity alone

- Conserved Synteny: Identify orthologs through conserved gene order in genomic regions

- Domain Architecture Conservation: Prioritize genes sharing identical domain organization patterns

- Expression Pattern Similarity: Orthologs often maintain conserved tissue-specific or condition-responsive expression

- Functional Validation: Test orthology through complementation assays where possible

Limitations of Traditional Homology-Dependent Tools in Identifying Novel Gene-Disease Associations

Troubleshooting Guide: Common Issues in Homology-Based Gene Discovery

This guide addresses the specific challenges you may encounter when using traditional homology-dependent tools for novel gene-disease association research, particularly with low-homology gene families like Nucleotide-Binding Site (NBS) genes.

Table 1: Common Issues & Solutions in Homology-Based Gene Discovery

| Problem Category | Specific Failure Signs | Root Causes | Recommended Solutions |

|---|---|---|---|

| Sequence Mapping & Assembly | Low mapping accuracy, incomplete gene models, assembly collapse in gene clusters [2] [19]. | High sequence homology between paralogs or pseudogenes; short-read NGS limitations; repeat masking of functional genes [2] [19] [20]. | Use longer-read sequencing technologies (>150 bp); implement manual curation pipelines; adjust bioinformatic parameters to avoid masking functional R-genes [2] [19]. |

| Homology Search & Annotation | High false-negative rate; fragmented gene annotations; missing true homologs with divergent sequences [21] [19]. | Overly stringent statistical thresholds; inappropriate query sequences; reliance on single domain searches [21]. | Use manual, multi-step pipelines; combine BLAST with HMMER searches; incorporate domain analysis and phylogenetic validation [21]. |

| Functional Validation | Incorrect functional attribution based on sequence similarity alone [21]. | Assumption that orthologs always share identical functions; conserved domains mistaken for full functional similarity [21]. | Hypothesis testing through gene expression or other functional analyses; do not rely solely on in silico predictions [21]. |

Frequently Asked Questions (FAQs)

FAQ 1: Why does my short-read NGS data fail to accurately map and assemble members of the NBS-LRR gene family?

Short-read sequencing technologies face significant challenges in regions of high sequence homology. The primary issue is that the short length of the reads makes it difficult for alignment algorithms to uniquely place them in the correct genomic location, especially within gene families like NBS-LRRs that contain many similar paralogous sequences and pseudogenes [2] [20]. This can lead to false positives, false negatives, and incomplete gene models [19].

- Solution: Increasing the read length has been demonstrated to significantly improve mapping accuracy and depth of coverage in homologous regions. One study showed that moving from 70 bp to 250 bp reads remedied low-coverage regions in 35 out of 43 problematic genes [2]. For the most complex regions, even longer-read technologies (e.g., PacBio, Oxford Nanopore) may be necessary.

FAQ 2: My automated annotation pipeline seems to be missing a significant number of NBS genes. What is the underlying cause, and how can I address this?

Automated gene prediction pipelines are often inadequate for correctly annotating NBS-LRR genes due to their complex genomic organization. These genes are frequently arranged in tandem clusters, which can cause assembly algorithms to collapse these regions. Furthermore, their low expression levels provide little RNA-Seq evidence for prediction, and they are sometimes incorrectly identified and masked as repetitive elements [19].

- Solution: Transition from a purely automated Protein Domain-based Search (PDS) to a manual, Homology-based Prediction pipeline. The Full-length Homology-based R-gene Prediction (HRP) method, for example, uses an initial set of R-genes identified in the automated annotation to perform a second, more sensitive homology search directly against the genome assembly. This method has been shown to identify up to 45% more full-length NBS-LRR genes compared to conventional PDS approaches [19].

FAQ 3: What is the best-practice workflow for manually identifying and validating a low-homology gene family?

A curated, multi-step manual pipeline is the gold standard for precise gene family identification. This approach allows for critical curation at each step, reducing both false positives and false negatives [21].

A typical workflow involves the following stages, which are also summarized in the diagram below:

- Homology Search: Use tools like BLAST or HMMER to identify candidate homologs from a proteome or genome using carefully selected query sequences [21].

- Sequence Extraction & Curation: Extract sequences that meet a defined significance threshold and manually review the output [21].

- Multiple Sequence Alignment: Align the candidate sequences using a tool like MUSCLE or MAFFT [21].

- Phylogenetic Analysis: Construct a phylogenetic tree (e.g., with RAxML) to confirm that the candidate sequences group with known members of the targeted gene family [21].

- Functional Annotation & Validation: Analyze sequences for conserved domains and motifs. Hypothesized functions based on homology must be confirmed through experimental validation, such as gene expression studies or functional assays [21].

Diagram 1: A manual pipeline for precise gene family identification. This multi-step process allows for curation between stages to ensure high-confidence results [21].
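The sequence extraction stage of this workflow can be sketched as a filter over BLAST tabular output (`-outfmt 6`), where the e-value is the eleventh column. This is a minimal sketch: the function name `filter_blast_hits`, the 1e-5 default cutoff, and the sample rows are assumptions chosen for illustration, and the retained hits would still need manual review.

```python
def filter_blast_hits(lines, max_evalue=1e-5):
    """Keep hits from BLAST tabular output (-outfmt 6) at or below an e-value cutoff.

    Returns a list of (query_id, subject_id, evalue) tuples.
    """
    hits = []
    for line in lines:
        # Skip blank lines and comment lines (present with -outfmt 7).
        if not line.strip() or line.startswith("#"):
            continue
        fields = line.rstrip("\n").split("\t")
        evalue = float(fields[10])  # column 11 of the standard 12-column layout
        if evalue <= max_evalue:
            hits.append((fields[0], fields[1], evalue))
    return hits


# Hypothetical rows in the standard 12-column order.
sample = [
    "\t".join(["seedR1", "chr3", "78.5", "310", "60", "4", "1", "305",
               "10400", "11320", "2e-40", "160"]),
    "\t".join(["seedR1", "chr7", "31.0", "120", "70", "9", "20", "135",
               "8800", "9150", "0.003", "38.1"]),
]
kept = filter_blast_hits(sample)
```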

FAQ 4: I have identified a candidate gene with homology to a known disease-associated gene. Can I confidently assign the same function to it?

Not with confidence based on sequence similarity alone. While identifying a homolog provides a strong starting hypothesis for function, sequence similarity can be driven by conserved domains that do not guarantee identical overall function or expression patterns. This is especially true for orthologs from evolutionarily distant species [21].

- Solution: Use the homology-based identification as a foundation for forming a testable hypothesis. The function of the candidate gene must be confirmed through downstream functional studies, such as analyzing gene expression patterns or conducting mutant analyses [21].

Featured Experimental Protocol: Full-Length Homology-Based R-Gene Prediction (HRP)

The following protocol is adapted from a method proven to outperform standard domain-search approaches for identifying full-length NBS-LRR genes, effectively overcoming limitations caused by low homology and complex genomic organization [19].

Objective: To comprehensively identify and annotate the full repertoire of NBS-LRR genes in a genome assembly.

Principle: This two-level homology search first uses protein domains to find an initial set of R-genes within a standard automated gene prediction. It then uses these genes as queries for a more sensitive, full-length homology search directly against the genome assembly to find paralogs that were missed by initial annotation [19].

Materials & Reagents:

- High-quality genome assembly of your target species.

- Automated gene prediction set (e.g., from BRAKER or AUGUSTUS) for the assembly.

- Computing cluster or high-performance workstation.

- Bioinformatics software: BLAST+ suite, gene prediction software (e.g., AUGUSTUS), sequence alignment tools.

Procedure:

Initial Domain Search:

- From the automated gene prediction set, extract all protein sequences.

- Perform a domain search (e.g., using PFAM models or InterProScan) to identify sequences containing characteristic NBS and LRR domains. This forms your initial, high-confidence "seed" set of R-genes.

Whole-Genome Tiling:

- Use the protein sequences of the seed R-genes as queries in a tBLASTn search, which compares protein queries against the translated genome, run against the entire genome assembly (not just the annotated genes).

- Use a relaxed e-value threshold (e.g., 1e-5) to maximize sensitivity and capture divergent homologs.

Locus Identification and Extraction:

- Collect all genomic regions with significant hits from the tBLASTn search.

- Extract these genomic sequences, along with generous flanking regions (e.g., 5-10 kb) to ensure complete gene models are captured.

De Novo Gene Prediction:

- On each extracted genomic locus, perform ab initio gene prediction.

- Critical Step: Use the protein sequences from your seed R-gene set as extrinsic evidence to guide and train the prediction algorithm. This significantly improves the accuracy of predicting the correct intron-exon structure.

Validation and Curation:

- Analyze the newly predicted gene models for the presence of complete NB-ARC and LRR domains.

- Manually curate the predictions by comparing them to the original seed genes and known R-gene structures from related species. This step is crucial for resolving complex loci.
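The locus identification and extraction step of this procedure can be sketched as padding each hit with a flanking region and merging overlapping intervals per contig. This is a sketch under stated assumptions, not the published HRP implementation: the function name `hits_to_loci`, the 5 kb default flank, and the example coordinates are hypothetical.

```python
def hits_to_loci(hits, flank=5000, contig_lengths=None):
    """Pad hit intervals with flanking sequence and merge overlaps per contig.

    hits: iterable of (contig, start, end), 1-based inclusive; start may exceed
          end, as happens for minus-strand tBLASTn hits.
    contig_lengths: optional {contig: length} used to clip the right flank.
    """
    contig_lengths = contig_lengths or {}
    padded = {}
    for contig, start, end in hits:
        lo, hi = min(start, end), max(start, end)
        lo = max(1, lo - flank)
        hi = hi + flank
        if contig in contig_lengths:
            hi = min(hi, contig_lengths[contig])
        padded.setdefault(contig, []).append((lo, hi))

    loci = []
    for contig in sorted(padded):
        merged = []
        for lo, hi in sorted(padded[contig]):
            if merged and lo <= merged[-1][1]:
                # Overlaps the previous locus: extend it.
                merged[-1] = (merged[-1][0], max(merged[-1][1], hi))
            else:
                merged.append((lo, hi))
        loci.extend((contig, lo, hi) for lo, hi in merged)
    return loci


# Hypothetical hit coordinates.
hits = [("chr1", 12000, 13000), ("chr1", 20000, 19000),
        ("chr1", 60000, 61000), ("chr2", 500, 900)]
loci = hits_to_loci(hits, flank=5000, contig_lengths={"chr2": 3000})
```

Merging before extraction keeps clustered paralogs in a single locus, which is why the flank size affects how many fragmented predictions appear downstream.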

Troubleshooting Notes:

- If the initial seed set is too small, consider using curated R-gene sequences from a closely related model species.

- A high number of fragmented predictions may indicate that the flanking regions extracted in the Locus Identification and Extraction step were too small.

Research Reagent Solutions

Table 2: Essential Tools for Overcoming Homology Challenges

| Research Reagent / Tool | Function / Application | Relevance to Low-Homology Research |

|---|---|---|

| Long-Read Sequencing (PacBio, Nanopore) | Generates sequencing reads thousands of base pairs long. | Spans repetitive and highly homologous regions, preventing assembly collapse and enabling complete gene model construction [2]. |

| Hidden Markov Model (HMM) Profiles (e.g., from PFAM) | Statistical models of conserved protein domains. | More sensitive than BLAST for detecting distant homologs based on conserved domain architecture, even with low overall sequence identity [21]. |

| Manual Curation Pipelines (e.g., HRP method [19]) | A multi-step process separating homology search, alignment, and phylogeny. | Allows researcher oversight to reduce false positives/negatives, which is critical for accurately identifying members of complex gene families [21] [19]. |

| BLAST+ Suite | A fundamental tool for performing local sequence alignment searches. | The core engine for both initial domain searches (BLASTp) and sensitive whole-genome homology scans (tBLASTn) in manual pipelines [21] [19]. |

| Phylogenetic Software (e.g., RAxML, MrBayes) | Infers evolutionary relationships among sequences. | Used to validate candidate homologs by confirming they cluster phylogenetically with known members of the target gene family [21]. |

Comparative Analysis of Gene Identification Methods

The diagram below illustrates the logical workflow and key advantages of the HRP method over a conventional Protein Domain-based Search (PDS).

Diagram 2: A comparison of gene identification method workflows. The HRP method uses an iterative homology approach to discover more complete gene models than the single-step PDS method [19].

Moving Beyond Alignment: Computational and Experimental Methods for Low-Homology Targets

Leveraging AI for Protein Structure Prediction (e.g., AlphaFold) When Sequence Homology Fails

Troubleshooting Guides

Guide 1: Handling Low pLDDT Confidence Regions in AlphaFold Predictions

Issue: Your AlphaFold2 (AF2) prediction for a novel NBS-LRR gene shows large regions with very low per-residue confidence (pLDDT < 50), which are typically interpreted as disordered.

Why This Happens: AlphaFold2 relies heavily on co-evolutionary information from Multiple Sequence Alignments (MSAs) to predict structures [22]. For novel genes, such as those found in plant genomes like Vernicia fordii and Vernicia montana, a shallow MSA or lack of documented homologs can result in low confidence predictions, even for segments that are potentially foldable [22]. Low pLDDT regions may be truly disordered, but they could also contain "hidden order" – segments capable of folding that AF2 cannot model due to insufficient evolutionary data [22].

Step-by-Step Troubleshooting:

Assess Foldability with Complementary Tools:

- Use a tool like pyHCA, which is based on Hydrophobic Cluster Analysis (HCA), to identify foldable segments from the amino acid sequence alone, independent of homology [22].

- Procedure: Input your protein sequence into pyHCA. It will automatically delineate segments with a high density of hydrophobic clusters, which are indicative of regions that can form regular secondary structures [22].

- Interpretation: Compare the pyHCA results with your AF2 prediction. If a segment has a low pLDDT but is identified as a soluble, foldable segment by pyHCA (with an HCA score between -1 and 3.5), it may possess "hidden order" and warrant further investigation [22].

Check for Conditional Order:

- Low-confidence regions might be Intrinsically Disordered Regions (IDRs) that undergo a disorder-to-order transition upon binding to a partner molecule [22].

- Procedure: Analyze the sequence of the low-pLDDT region for known short linear motifs (SLiMs) or features characteristic of molecular recognition.

- Interpretation: If such motifs are found, the region may be conditionally folded and its structure should be investigated in the context of its binding partner, for example, using AlphaFold 3 [23].

Investigate "Dark Proteome" Regions:

- The "dark proteome" consists of proteins or regions that lack sequence or structural annotation in databases and may escape confident AF2 prediction [22].

- Procedure: Cross-reference your gene of interest with databases of dark proteomes and check if its sequence characteristics (e.g., amino acid composition) are atypical.

- Interpretation: If your novel NBS gene falls into this category, its low-confidence AF2 model should be treated with caution, and experimental validation becomes paramount.
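Before running the checks in this guide, it helps to list exactly which residue ranges fall below the pLDDT threshold. The sketch below assumes the per-residue pLDDT values have already been read into a list; the function name `low_confidence_segments` and the `min_length` filter are assumptions introduced here for illustration.

```python
def low_confidence_segments(plddt, threshold=50.0, min_length=10):
    """Return 1-based (start, end) residue ranges where pLDDT stays below threshold.

    Runs shorter than min_length residues are ignored.
    """
    segments, start = [], None
    for i, score in enumerate(plddt, start=1):
        if score < threshold:
            if start is None:
                start = i  # a low-confidence run begins here
        elif start is not None:
            if i - start >= min_length:
                segments.append((start, i - 1))
            start = None
    # Close a run that extends to the C-terminus.
    if start is not None and len(plddt) - start + 1 >= min_length:
        segments.append((start, len(plddt)))
    return segments


# Hypothetical per-residue scores for a 34-residue toy sequence.
scores = [90] * 5 + [30] * 12 + [85] * 4 + [40] * 3 + [20] * 10
segments = low_confidence_segments(scores)
```

The resulting ranges can then be compared with the foldable segments reported by a tool such as pyHCA.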

Guide 2: Overcoming Low Homology in De Novo Protein Structure Prediction

Issue: You are working with a protein sequence that has very few or no homologs, leading to a poor Multiple Sequence Alignment (MSA) and a failed or low-confidence structure prediction from AlphaFold2.

Why This Happens: AF2's accuracy is directly tied to the depth and breadth of evolutionary information captured in the MSA. A shallow MSA provides insufficient co-evolutionary signals for the model to accurately infer residue-residue contacts [22] [24].

Step-by-Step Troubleshooting:

Utilize the Latest Generation of AI Tools:

- Employ AlphaFold 3 (AF3), which has a substantially updated architecture.

- Procedure: Submit your protein sequence to an AF3 server (when publicly available). Note that AF3 reduces the amount of MSA processing by replacing the complex "evoformer" from AF2 with a simpler "pairformer" module [23].

- Interpretation: While AF3 still uses MSAs, its reduced reliance on them may yield better performance on targets with sparse homology.

Leverage Ab Initio or Threading-Based Approaches:

- If homology-based methods fail, use ab initio modeling, which predicts structure from physical principles rather than homology, or threading.

- Procedure: Input your sequence into a threading server (e.g., I-TASSER). The algorithm will attempt to fit your sequence into a library of known protein folds to find the best match [24].

- Interpretation: These methods can provide plausible structural hypotheses when homology is too weak to detect, but their accuracy is generally lower than AF2 for targets with good MSAs.

Consider the Protein's Biochemical Context:

- If your protein is known or suspected to interact with other molecules (e.g., DNA, ligands, other proteins), predict its structure in complex with them.

- Procedure: Use AlphaFold 3, which is specifically designed for predicting complexes of proteins, nucleic acids, small molecules, and ions [23].

- Interpretation: The presence of a binding partner can stabilize the structure of your protein of interest, potentially leading to a higher-confidence prediction for the entire complex.

Frequently Asked Questions (FAQs)

FAQ 1: My protein has a long region with very low pLDDT scores. Does this definitely mean it is unstructured?

Answer: Not necessarily. While low pLDDT is a strong indicator of disorder, it can also result from a lack of evolutionary information in the MSA. The region might be foldable but belong to the "dark proteome," or it could be an Intrinsically Disordered Domain (IDD) that folds upon binding to a partner [22]. It is recommended to use tools like pyHCA to independently assess the segment's foldability from its sequence [22].

FAQ 2: Besides pLDDT, what other confidence metrics should I examine, and what do they mean?

Answer: You should also review the Predicted Aligned Error (PAE). For each pair of residues, the PAE gives the expected positional error (in ångströms) at one residue when the predicted and true structures are aligned on the other. A low PAE between two regions suggests high confidence in their relative orientation, which is crucial for evaluating domain arrangements and oligomeric interfaces. AlphaFold 3 also introduces a Predicted Distance Error (PDE) matrix, which directly estimates error in the pairwise distance matrix of the predicted structure [23].
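To turn a PAE matrix into a single number for a pair of domains, one option is to average the two off-diagonal blocks that link them. The sketch below assumes the PAE matrix is already loaded as a square list of lists; the function name `mean_interdomain_pae`, the domain boundaries, and the toy values are hypothetical.

```python
def mean_interdomain_pae(pae, domain_a, domain_b):
    """Average PAE over both off-diagonal blocks linking two domains.

    pae: square matrix as a list of lists, in angstroms.
    domain_a, domain_b: (start, end) residue indices, 0-based, end exclusive.
    Both blocks are used because a PAE matrix is not necessarily symmetric.
    """
    a = range(*domain_a)
    b = range(*domain_b)
    values = [pae[i][j] for i in a for j in b]
    values += [pae[j][i] for i in a for j in b]
    return sum(values) / len(values)


# Toy 4-residue example: two 2-residue "domains" with high inter-domain error.
pae = [
    [0, 1, 20, 22],
    [1, 0, 21, 23],
    [19, 20, 0, 2],
    [21, 22, 2, 0],
]
interdomain = mean_interdomain_pae(pae, (0, 2), (2, 4))
```

A high value relative to the within-domain error would suggest that the relative placement of the two domains should not be trusted, even if each domain is individually confident.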

FAQ 3: Can I use AlphaFold2/3 to predict the structure of a protein with no homologs in the database?

Answer: This is a significant challenge. AlphaFold's performance drops substantially when no homologous sequences are found. In such cases, the model operates with high uncertainty, often resulting in low pLDDT scores across the entire prediction [22]. For these de novo proteins, you should rely more heavily on ab initio or threading methods and treat the AF2/AF3 output as one of several possible structural hypotheses, not a definitive answer.

FAQ 4: How does AlphaFold 3's approach differ from AlphaFold 2 when dealing with low-homology targets?

Answer: AlphaFold 3's architecture is less dependent on deep MSA processing. It uses a simpler "pairformer" module compared to AF2's complex "evoformer," and it generates structures through a diffusion-based process that directly predicts atom coordinates [23]. This diffusion approach is a generative method, which helps the model learn protein structure at multiple scales, potentially allowing it to handle targets with less evolutionary information more robustly than AF2.

FAQ 5: What are the most common pitfalls when interpreting AlphaFold models for novel protein classes?

Answer:

- Over-interpreting low-confidence regions: Assuming a low-pLDDT loop or terminus is functionally unimportant without investigating its potential for conditional folding.

- Ignoring protein context: Failing to model the protein in its biologically relevant complex (e.g., with DNA, ions, or other proteins), which can alter the stability and confidence of the prediction.

- Misunderstanding the model's limitations: Treating the prediction as an experimental truth rather than a computational hypothesis, especially for low-homology targets. All computational models require experimental validation for definitive conclusions.

Experimental Protocols & Data

Quantitative Performance Data of AlphaFold Versions

Table 1: Key Architectural and Performance Differences Between AlphaFold 2 and 3

| Feature | AlphaFold 2 [22] [24] | AlphaFold 3 [23] |

|---|---|---|

| Core Architecture | Evoformer + Structure Module | Pairformer + Diffusion Module |

| Coordinate Generation | Predicts torsion angles and frames | Directly predicts raw atom coordinates via diffusion |

| Handling of Ligands/Nucleic Acids | Limited (via modifications) | Native support for proteins, nucleic acids, ligands, ions |

| Reported Accuracy (CASP14) | Backbone atom accuracy: ~0.96 Å RMSD | Surpasses specialized tools in protein-ligand, protein-nucleic acid, and antibody-antigen prediction |

| Primary Confidence Metrics | pLDDT, PAE | pLDDT, PAE, PDE (Predicted Distance Error) |

Table 2: Troubleshooting Low-Confidence AlphaFold Predictions

| Observed Issue | Potential Cause | Recommended Action | Alternative Tool/Method |

|---|---|---|---|

| Large regions of low pLDDT (<50) | True disorder OR lack of evolutionary constraints OR "hidden order" | Run foldability analysis (e.g., pyHCA); check for binding motifs | pyHCA [22], IUPred2 [22] |

| Poor model quality overall | Shallow/weak Multiple Sequence Alignment (MSA) | Use AlphaFold 3; try threading/ab initio methods | AlphaFold 3 [23], RoseTTAFold [23], threading [24] |

| Inability to model complexes | Target exists in a complex in vivo | Predict structure as a complex with known partners | AlphaFold 3 [23] |

| Uncertainty in domain arrangement | High inter-domain PAE | Focus on high-confidence individual domains; consider experimental constraints | PAE analysis [23] |

Workflow Visualization

Title: Troubleshooting Low Homology in AI-Based Protein Structure Prediction

The Scientist's Toolkit

Table 3: Essential Research Reagents and Tools for Investigating Novel Protein Structures

| Tool / Reagent | Function / Purpose | Example in NBS Gene Research |

|---|---|---|

| AlphaFold 2 & 3 | AI systems for predicting protein 3D structures from sequence. | Generating initial structural hypotheses for novel NBS-LRR genes from Vernicia montana [22] [23]. |

| pyHCA | Tool to identify foldable segments and estimate order/disorder from sequence using hydrophobic clusters. | Independently verifying if low-confidence regions in an AF2 prediction are potentially foldable, indicating "hidden order" [22]. |

| IUPred2 | Algorithm to predict intrinsically disordered regions from amino acid sequence. | Complementing AF2 analysis to confirm if low-pLDDT regions are likely disordered [22]. |

| Mol*/PyMOL | 3D structure visualization software. | Visualizing and analyzing predicted models, measuring distances, and creating publication-quality images [25] [26]. |

| UniProt | Comprehensive resource for protein sequence and functional information. | Gathering background information on protein domains, active sites, and post-translational modifications [26]. |

| Protein Data Bank (PDB) | Database for experimental 3D structural data of proteins and nucleic acids. | Finding template structures for threading and comparing AI predictions with experimentally solved structures [25] [26]. |

| Virus-Induced Gene Silencing (VIGS) | A technique to knock down gene expression in plants. | Functionally validating the role of a candidate NBS-LRR gene (e.g., Vm019719) in Fusarium wilt resistance [27]. |

Frequently Asked Questions (FAQs)

General Framework & Participation

Q1: How does Federated Learning (FL) enable research on low-homology gene discovery without sharing raw biobank data? FL is a distributed machine learning paradigm that allows multiple biobanks to collaboratively train a model without exchanging or centralizing their raw, privacy-sensitive genomic data [28] [29]. In the context of overcoming low homology, each biobank trains the neural network on its local data. Only the model parameters (e.g., weights and gradients) are shared with a federation controller, which aggregates them into a global model [28]. This process repeats, allowing the model to learn from the collective data of all participating biobanks while the data itself remains private and secure at each original site [29].

Q2: Can a new biobank join an ongoing FL training consortium? Yes, an FL client (e.g., a biobank) can join the training process at any time [30]. As long as the total number of participating clients does not exceed the predefined maximum, the new client will receive the current global model and begin contributing to the federation's training efforts [30].

Q3: What are the network and security requirements for biobanks to participate in an FL network? FL clients (biobanks) do not need to open their firewalls for inbound traffic [30]. The server never sends uninvited requests. Instead, clients initiate all communication with the server, which only responds to these requests [30]. For the FL server, the network must open a specific port (e.g., port 8002) for TCP traffic so that outside clients can reach it [30].

Technical Configuration & Troubleshooting

Q4: What happens if a client biobank crashes or loses connection during training? FL clients typically send a heartbeat signal to the server at regular intervals (e.g., once per minute) [30]. If the server does not receive a heartbeat from a client for a configured timeout period (e.g., 10 minutes), the server will remove that client from the active training list [30]. If the server itself crashes, clients will attempt to reconnect for a period before shutting down gracefully [30].
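The heartbeat rule described in Q4 can be sketched as a simple timeout check on the server side. This is an illustration of the logic only, not the API of any particular FL framework: the function name `prune_inactive_clients`, the client identifiers, and the 600-second default are assumptions.

```python
def prune_inactive_clients(last_heartbeat, now, timeout_s=600):
    """Split clients into active and removed sets based on heartbeat age.

    last_heartbeat: {client_id: timestamp_in_seconds of the latest heartbeat}
    now: current time in the same units.
    """
    active = {c for c, t in last_heartbeat.items() if now - t <= timeout_s}
    removed = set(last_heartbeat) - active
    return active, removed


# Hypothetical timestamps, in seconds.
last_seen = {"biobank_a": 1000, "biobank_b": 350, "biobank_c": 400}
active, removed = prune_inactive_clients(last_seen, now=1000)
```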

Q5: How can researchers address the problem of non-IID data (data that are not independent and identically distributed) across biobanks, a common challenge in genomics? Non-IID data, where the statistical distribution of data differs between sites, is a recognized challenge in federated learning [28]. Research has shown that FL can achieve performance comparable to centralized analysis even in heterogeneous, non-IID environments [28]. The performance gap can be further minimized when federations enroll more sites than would be possible in a data-sharing consortium, thus increasing the total training data volume [28].

Q6: Is it possible to use different computational resources (like multiple GPUs) across different biobanks? Yes, FL frameworks are designed to handle heterogeneity in client hardware [30]. Different clients can train using different numbers of GPUs. Administrative commands are typically available to start client instances with specific GPU configurations [30].

Experimental Protocols & Methodologies

This section details a proven methodology for implementing FL in a genomic context, as demonstrated in multi-center studies.

Protocol: Implementing a Federated Genome Interpretation Neural Network

The following workflow, used in the FedCrohn project, provides a template for exome-based disease risk prediction across multiple biobanks [29].

1. Data Preparation and Annotation at Each Local Biobank:

- Input: Variant Call Format (VCF) files from Whole Exome Sequencing (WES) at each site.

- Annotation: Annotate all variants using a tool like Annovar [29]. This identifies variant types (e.g., "exonic," "UTR3," "UTR5," "splicing").

- Feature Encoding: For each gene, create a compact feature vector by counting the occurrences of each variant type. This creates a histogram of mutational damage per gene [29].

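- Feature Enrichment: Append gene-level contextual information to the feature vector. Features of this kind include the RVIS score and the publication weight score listed in the federated analysis toolkit table later in this section [29].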

- Final Input: The process yields an 11-dimensional feature vector for each of a pre-selected set of disease-related genes, ready for model training [29].
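The feature encoding step above (a per-gene histogram of variant types) can be sketched as follows. This is a minimal sketch, not the FedCrohn code: the function name `encode_gene_features` is hypothetical, `VARIANT_TYPES` contains only the four categories named in the text rather than the full set behind the 11-dimensional vector, and the gene symbols are example values.

```python
from collections import Counter

# Illustrative subset only: the four variant types named in the text.
VARIANT_TYPES = ["exonic", "UTR3", "UTR5", "splicing"]


def encode_gene_features(variants, genes, variant_types=VARIANT_TYPES):
    """Count annotated variant types per gene.

    variants: iterable of (gene, variant_type) pairs from an annotated VCF.
    genes: the pre-selected genes to encode, in output order.
    Returns {gene: [count for each entry of variant_types]}.
    """
    counts = {gene: Counter() for gene in genes}
    for gene, vtype in variants:
        # Variants in unselected genes or of untracked types are ignored.
        if gene in counts and vtype in variant_types:
            counts[gene][vtype] += 1
    return {gene: [counts[gene][t] for t in variant_types] for gene in genes}


# Hypothetical annotated variants for one individual.
variants = [("NOD2", "exonic"), ("NOD2", "exonic"), ("NOD2", "UTR3"),
            ("IL23R", "splicing"), ("XYZ", "exonic")]
features = encode_gene_features(variants, genes=["NOD2", "IL23R"])
```

Gene-level context scores would then be appended to each count vector before training.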

2. Federated Training Setup:

- Federation Controller: Deploy a central server responsible for model aggregation. This server does not require a GPU [30].

- Local Clients: Each biobank instantiates a client with the agreed-upon model architecture and its local, pre-processed data.

- Training Loop:

- The server initializes a global model and sends it to all clients.

- Each client trains the model on its local data for a set number of epochs.

- Clients send their updated model parameters back to the server.

- The server aggregates these parameters (e.g., using Federated Averaging) to create an improved global model.

- The new global model is distributed back to the clients, and the process repeats [28] [29].
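The aggregation step in this loop can be sketched as a sample-weighted average of the client parameters, which is the usual formulation of Federated Averaging. The sketch below uses plain lists instead of a deep learning framework; the function name `federated_average` and the example numbers are assumptions, and a real controller would operate on full model tensors.

```python
def federated_average(client_updates):
    """Sample-weighted average of client parameter vectors.

    client_updates: list of (num_samples, params), where params are
    equal-length lists of floats from each client's local training.
    """
    total = sum(n for n, _ in client_updates)
    if total == 0:
        raise ValueError("no training samples reported")
    size = len(client_updates[0][1])
    averaged = [0.0] * size
    for n, params in client_updates:
        if len(params) != size:
            raise ValueError("parameter vectors differ in length")
        for k, value in enumerate(params):
            # Each client contributes in proportion to its local sample count.
            averaged[k] += value * n / total
    return averaged


# Two hypothetical biobanks with 100 and 300 local samples.
global_params = federated_average([(100, [1.0, 2.0]), (300, [3.0, 6.0])])
```

Weighting by sample count means larger biobanks influence the global model more, which is one reason non-IID data across sites can matter.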

3. Security and Privacy Enhancements:

- Encryption: Before transmission, encrypt all model parameters. The global model aggregation can be performed under Fully Homomorphic Encryption (FHE), which adds a low runtime overhead (~7%) [28].

- Information Leakage Control: To protect against model inversion or membership inference attacks from a "curious" site, add information-theoretic noise to the gradients during training [28].

The workflow for this protocol is summarized in the diagram below.

Performance Data & Security Benchmarking

The following tables summarize key quantitative findings from real-world implementations of FL in biomedical research, providing benchmarks for expected outcomes.

Table 1: Federated vs. Centralized Model Performance in Neuroimaging Analysis (Based on Alzheimer's Disease Prediction & BrainAGE Estimation from MRI Data [28])

| Training Environment | Data Distribution | Relative Performance (vs. Centralized) | Key Condition |

|---|---|---|---|

| Federated | Uniform & IID | Performs comparably | Same total data volume |

| Federated | Skewed or Non-IID | Small performance gap | Same total data volume |

| Federated | Mixed | Outperforms Centralized | Federation has 5x more total data |

Table 2: Overhead and Security Features of a Secure FL Framework (MetisFL) [28]

| Feature | Method/Technology | Performance Impact / Outcome |

|---|---|---|

| Data Privacy | Data never leaves site | Fundamental privacy guarantee |

| Outsider Attack Protection | Fully Homomorphic Encryption (FHE) | Low runtime overhead (~7%) |

| Insider Attack Protection | Information-theoretic gradient noise | Limits model inversion & membership attacks |

| Controller Optimization | MetisFL architecture | 10-fold reduction in training time |

The Scientist's Toolkit: Key Research Reagents & Materials

Table 3: Essential Tools for Federated Genomic Analysis

| Item | Function in Federated Learning Context |

|---|---|

| Annovar [29] | An annotation tool for genetic variants from sequencing data; used at each local site to standardize feature extraction from VCF files. |

| RVIS Score [29] | (Residual Variation Intolerance Score) A gene-level metric appended to feature vectors to provide context on a gene's tolerance to mutations. |

| Publication Weight Score [29] | A metric quantifying the association between a gene and a specific disease from literature; enriches the feature vector with prior knowledge. |

| Fully Homomorphic Encryption (FHE) [28] | A cryptographic system that allows computation on encrypted data. Protects model parameters during aggregation from outsider attacks. |

| Federation Controller | The central server that orchestrates the FL process: distributes the model, aggregates updates, and manages client membership [28] [30]. |

| Docker Containers | Technology used to encapsulate and deploy standardized analysis environments (e.g., databases, APIs) across different client sites, ensuring consistency and simplifying installation [31]. |

Computational Pipelines for Functional Gene Discovery from Transcriptomic Data

In the field of plant genomics, the discovery of Nucleotide-Binding Site Leucine-Rich Repeat (NBS-LRR) resistance genes is crucial for developing disease-resistant crops. However, researchers frequently encounter a significant obstacle: low sequence homology across species. Traditional homology-based methods often fail to identify novel NBS genes because these genes evolve rapidly, leading to substantial sequence divergence even among closely related species. This technical limitation hinders the identification of potentially valuable resistance genes in non-model and understudied plant species.

This guide provides a structured approach to overcoming these challenges through advanced computational pipelines that leverage transcriptomic data, moving beyond traditional sequence homology to identify functional genes based on co-expression patterns and functional clustering.

Key Research Reagent Solutions

Table 1: Essential Computational Tools for Functional Gene Discovery

| Tool Category | Specific Tool/Reagent | Primary Function | Application in NBS Gene Discovery |

|---|---|---|---|

| Sequence Alignment | HISAT2 [32] [33] | Aligns RNA-seq reads to reference genome | Initial mapping of transcriptomic data prior to NBS identification |

| Read Quantification | featureCounts [32] [33] | Generates count matrix from aligned reads | Quantifying expression levels of putative NBS genes |

| Batch Effect Correction | ComBat-seq [32] [33] | Adjusts for technical variance between datasets | Harmonizing data from multiple experiments or conditions |

| Differential Expression | DESeq2 [32] [33] | Identifies differentially expressed genes | Finding NBS genes responsive to pathogen challenge |

| Domain Identification | HMMER (PF00931) [34] [35] | Identifies NBS domains using hidden Markov models | Initial screening for NBS-containing genes in genomic data |

| Functional Annotation | clusterProfiler [32] [33] | Performs gene ontology enrichment analysis | Functional characterization of identified NBS gene clusters |

Workflow Diagram: Pipeline for Functional Gene Discovery

Detailed Experimental Protocols

Transcriptome-Wide NBS Gene Identification Protocol

Objective: Comprehensively identify NBS-LRR genes in a plant species with low homology to reference organisms.

Methodology:

Data Acquisition and Quality Control

- Download RNA-seq data from public repositories (e.g., NCBI SRA) or generate new sequencing data [32] [33]

- Perform quality control using FastQC or MultiQC to assess read quality, GC content, and adapter contamination [36]

- Use Trimmomatic or Cutadapt to remove low-quality bases and adapter sequences [36]

Domain Identification Using HMMER

- Download the NB-ARC domain (PF00931) HMM profile from Pfam database [34] [35]

- Perform HMM search against protein sequences using HMMER v3.1b2 with E-value cutoff of 1e-10 [34]

- Verify identified domains using Pfam and SMART databases [34]

- Identify coiled-coil (CC) domains using Paircoil2 with P-score cutoff of 0.025 [34]

- Classify candidates into subfamilies: TNL, CNL, and RNL [35]
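The E-value filtering in the HMMER step above can be sketched as a small parser. It assumes the search was run with something like `hmmsearch --tblout hits.tbl -E 1e-10 NB-ARC.hmm proteins.fasta` (the file names are placeholders) and that the full-sequence E-value sits in the fifth whitespace-separated column of the `--tblout` table. The example rows are invented for illustration.

```python
from typing import Iterable, List, Tuple


def filter_tblout(lines: Iterable[str],
                  max_evalue: float = 1e-10) -> List[Tuple[str, float]]:
    """Return (target_name, full_sequence_evalue) for hits at or below the cutoff."""
    hits = []
    for line in lines:
        # Skip blank lines and the '#' comment/header lines HMMER writes.
        if not line.strip() or line.startswith("#"):
            continue
        fields = line.split()
        target, evalue = fields[0], float(fields[4])
        if evalue <= max_evalue:
            hits.append((target, evalue))
    return hits


# Invented example rows in the --tblout column order.
example = [
    "# target name  accession  query name  accession  E-value  score  bias",
    "geneA  -  NB-ARC  PF00931  3.2e-45  150.1  0.1  4.0e-45  149.8  0.1  1.0  1  0  0  1  1  1  1  -",
    "geneB  -  NB-ARC  PF00931  2.0e-04   15.3  0.0  2.5e-04   15.0  0.0  1.0  1  0  0  1  1  1  1  -",
]
print(filter_tblout(example))
```

Re-applying the cutoff while parsing makes the threshold explicit in downstream code even if the search itself was run with a looser setting.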

Genomic Distribution and Cluster Analysis

- Map physical locations of identified NBS genes on chromosomes using positional information from GFF files [34]

- Define gene clusters as regions where the distance between two neighboring NBS genes is < 200 kb with ≤ 8 non-NBS genes intervening [34]

- Calculate the percentage of NBS genes located in clusters versus singletons [35]
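The cluster definition above (neighboring NBS genes less than 200 kb apart with at most 8 intervening non-NBS genes) can be sketched for a single chromosome as follows. The `Gene` record and function names are hypothetical, and measuring distance from the end of one NBS gene to the start of the next is an assumption, since the text does not say which coordinates are compared.

```python
from typing import List, NamedTuple, Optional


class Gene(NamedTuple):
    name: str
    start: int
    end: int
    is_nbs: bool


def nbs_clusters(genes: List[Gene], max_gap: int = 200_000,
                 max_intervening: int = 8) -> List[List[str]]:
    """Group NBS genes on one chromosome into clusters of two or more genes."""
    clusters: List[List[str]] = []
    current: List[str] = []
    prev_end: Optional[int] = None
    intervening = 0  # non-NBS genes seen since the previous NBS gene
    for g in sorted(genes, key=lambda x: x.start):
        if not g.is_nbs:
            intervening += 1
            continue
        linked = (prev_end is not None
                  and g.start - prev_end < max_gap
                  and intervening <= max_intervening)
        if linked:
            current.append(g.name)
        else:
            if len(current) > 1:
                clusters.append(current)
            current = [g.name]
        prev_end, intervening = g.end, 0
    if len(current) > 1:
        clusters.append(current)
    return clusters


def percent_clustered(genes: List[Gene], clusters: List[List[str]]) -> float:
    """Percentage of NBS genes that fall inside a cluster (the rest are singletons)."""
    n_nbs = sum(g.is_nbs for g in genes)
    return 100.0 * sum(len(c) for c in clusters) / n_nbs if n_nbs else 0.0
```

In practice the gene list would be built per chromosome from the GFF file, and the function would be called once for each chromosome.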

Table 2: NBS Gene Classification Criteria Based on Protein Domains

| NBS Subfamily | N-Terminal Domain | Central Domain | C-Terminal Domain | Example Species Distribution |

|---|---|---|---|---|

| TNL | TIR (PF01582) | NBS (PF00931) | LRR (PF08191) | Abundant in dicots [34] |

| CNL | Coiled-Coil (CC) | NBS (PF00931) | LRR (PF08191) | Found in both monocots and dicots [35] |

| RNL | RPW8 (PF05659) | NBS (PF00931) | LRR (PF08191) | NRG1 and ADR1 lineages [35] |
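The classification in Table 2 amounts to a lookup on the set of domains detected in each protein. A minimal sketch is given below; the domain labels, the function name, and the precedence TIR > RPW8 > CC when more than one N-terminal domain is reported are all assumptions made for illustration. Proteins lacking an LRR region are given a truncated label (for example `TN`), which the table itself does not cover.

```python
from typing import Set


def classify_nbs(domains: Set[str]) -> str:
    """Map detected domain labels to an NBS subfamily label such as TNL, CNL, or RNL."""
    if "NB-ARC" not in domains:
        return "not NBS"
    # Assumed precedence when several N-terminal domains are detected.
    if "TIR" in domains:
        prefix = "T"
    elif "RPW8" in domains:
        prefix = "R"
    elif "CC" in domains:
        prefix = "C"
    else:
        prefix = ""
    suffix = "L" if "LRR" in domains else ""
    return prefix + "N" + suffix


print(classify_nbs({"TIR", "NB-ARC", "LRR"}))
print(classify_nbs({"CC", "NB-ARC"}))
```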

Expression-Based Functional Gene Discovery Protocol

Objective: Identify novel functional NBS genes through co-expression analysis and functional clustering.

Methodology:

Sequence Processing and Differential Expression

- Align quality-filtered reads to reference genome using HISAT2 [32] [33]

- Generate count matrix using featureCounts [32] [33]

- Correct for batch effects using ComBat-seq when integrating multiple datasets [32] [33]

- Identify differentially expressed genes using DESeq2 with p-value < 0.05 [32] [33]

- Select top 3000 DEGs for further analysis based on significance [32] [33]
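The final two steps above (significance filter, then top-3000 selection) can be sketched on a table of DESeq2 results exported from R. The input is assumed to be a mapping from gene ID to p-value, with `None` standing in for the `NA` values DESeq2 can report; the function name is hypothetical. The same function works unchanged if adjusted p-values are supplied instead.

```python
from typing import Dict, List, Optional


def select_top_degs(pvalues: Dict[str, Optional[float]],
                    alpha: float = 0.05, top_n: int = 3000) -> List[str]:
    """Return up to top_n genes with p < alpha, most significant first."""
    significant = [(p, gene) for gene, p in pvalues.items()
                   if p is not None and p < alpha]
    significant.sort()  # ascending p-value; ties broken by gene ID
    return [gene for _, gene in significant[:top_n]]


# Toy input: gene "d" has no p-value, gene "b" is not significant.
print(select_top_degs({"a": 0.01, "b": 0.2, "c": 0.001, "d": None}))
```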

Optimal Clustering Using Gap Statistics

- Normalize count data using TPM (transcripts per million) method [32] [33]

- Apply gap statistics method to determine optimal cluster number (K) [32] [33]

- Perform K-means clustering with the determined optimal K value [32] [33]

- Group genes into clusters with similar expression patterns across conditions/time points [32] [33]
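The TPM normalization used before clustering can be written out explicitly. The sketch assumes one sample at a time, raw counts per gene, and gene lengths in base pairs (for example, exon lengths taken from the annotation); the function and argument names are hypothetical.

```python
from typing import Dict


def tpm(counts: Dict[str, float], lengths_bp: Dict[str, float]) -> Dict[str, float]:
    """Transcripts per million for one sample."""
    # Step 1: length-normalize each count (reads per kilobase).
    rates = {gene: counts[gene] / (lengths_bp[gene] / 1000.0) for gene in counts}
    # Step 2: rescale so that the values in the sample sum to one million.
    scale = sum(rates.values())
    return {gene: (rate / scale * 1e6 if scale else 0.0)
            for gene, rate in rates.items()}


# Two toy genes with the same per-kilobase coverage receive the same TPM.
print(tpm({"g1": 100, "g2": 300}, {"g1": 1000, "g2": 3000}))
```

Because length normalization happens before scaling, TPM values sum to the same total in every sample, which makes expression profiles comparable across conditions before K-means is applied.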

Functional Annotation and Literature Mining

- Perform Gene Ontology enrichment analysis for each cluster using clusterProfiler [32] [33]

- Identify clusters enriched for defense response or immune system processes [32]

- Cross-reference cluster genes with known NBS genes from manually curated databases [32]

- Perform PubMed literature searches for genes without established functions in the target biological process [32]

- Select candidate genes with no literature evidence for function in the specific process but expressed in relevant tissues [32]
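The enrichment step above rests on an over-representation test. clusterProfiler is an R package, so the following Python sketch only illustrates the underlying hypergeometric calculation for a single GO term and a single cluster; the function and parameter names are hypothetical, and multiple-testing correction is deliberately left out.

```python
from math import comb


def enrichment_pvalue(universe_size: int, annotated_in_universe: int,
                      cluster_size: int, annotated_in_cluster: int) -> float:
    """P(X >= annotated_in_cluster) under the hypergeometric distribution."""
    N, K, n, k = (universe_size, annotated_in_universe,
                  cluster_size, annotated_in_cluster)
    total = comb(N, n)  # number of ways to draw a cluster of size n
    upper = min(K, n)   # cannot observe more annotated genes than this
    favourable = sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1))
    return favourable / total


# Toy case: 10 genes in total, 3 carry the term, and the 3-gene cluster contains all of them.
print(enrichment_pvalue(10, 3, 3, 3))
```

A small p-value for a defense-related term marks the cluster as a place to look for uncharacterized genes, which can then be checked against curated NBS lists and the literature as described above.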

Troubleshooting Guide: Addressing Common Experimental Challenges

Data Quality and Preprocessing Issues

Table 3: Troubleshooting RNA-seq Data Quality Problems

| Problem | Potential Causes | Solutions | Preventive Measures |

|---|---|---|---|

| Low alignment rate | Poor read quality, adapter contamination, species mismatch | Re-trim reads with stricter parameters, verify reference genome suitability | Perform QC before alignment, use species-specific reference when available |

| High duplication rates | PCR over-amplification, low input RNA, technical artifacts | Use duplication-aware aligners, analyze with dupRadar [36] | Optimize PCR cycles, use unique molecular identifiers (UMIs) |

| Batch effects obscuring biological signals | Different sequencing runs, library preparation dates, personnel | Apply batch correction (ComBat-seq) [32] [33] | Randomize samples across sequencing runs, standardize protocols |

Functional Annotation Challenges

Problem: Incomplete or inaccurate functional annotation for novel NBS genes.

Solutions:

- Use multiple annotation tools (DAVID, QuickGO) through pipelines like Transcriptator to increase coverage [37]

- Incorporate domain-based annotation using Pfam, SMART, and InterPro in addition to sequence similarity [34] [35]

- Leverage protein family-specific databases and orthology-based inference when direct homology is weak

Problem: High false positive rate in NBS gene identification.

Solutions:

- Implement conservative E-value thresholds (1e-10) for HMM searches [34]

- Require presence of multiple conserved motifs (P-loop, GLPL, kinase-2a, kinase-3a) [34]

- Validate predictions with transcript evidence from RNA-seq data
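The motif requirement above can be expressed as a simple sequence filter. The two patterns below are simplified illustrations only: the P-loop is written as the general Walker A consensus G-x(4)-G-K-[ST] and GLPL as a literal match. Patterns for kinase-2a and kinase-3a are omitted because they would need curated definitions for the species under study. All names and the toy sequences are hypothetical.

```python
import re
from typing import Dict, Iterable

# Simplified, illustrative patterns; replace with curated motif definitions.
MOTIFS = {
    "P-loop": re.compile(r"G.{4}GK[ST]"),  # Walker A consensus GxxxxGK[S/T]
    "GLPL": re.compile(r"GLPL"),
}


def motifs_present(protein: str) -> Dict[str, bool]:
    """Report which of the configured motifs occur in a protein sequence."""
    seq = protein.upper()
    return {name: bool(pattern.search(seq)) for name, pattern in MOTIFS.items()}


def passes_motif_filter(protein: str,
                        required: Iterable[str] = ("P-loop", "GLPL")) -> bool:
    """True only if every required motif is found."""
    found = motifs_present(protein)
    return all(found.get(name, False) for name in required)


# Invented toy sequences, not real NBS proteins.
print(passes_motif_filter("MDSGMGGVGKTTLAQKVYNDGLPLALKV"))
print(passes_motif_filter("MDSGMGGVGKTTLAQKVYND"))
```

Regular expressions are a coarse screen; a profile-based motif search would tolerate more sequence variation, at the cost of extra setup.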

Overcoming Low Homology Limitations

Problem: Traditional BLAST-based methods fail to identify divergent NBS genes.

Solutions:

- Implement profile HMM searches using the NB-ARC domain (PF00931) instead of pairwise sequence comparison [34] [35]

- Utilize co-expression analysis to identify genes that cluster with known NBS genes or defense response genes despite low sequence similarity [32]

- Apply synteny-based approaches to identify orthologous NBS genes in related species, even with low direct sequence similarity

- Use machine learning approaches like PORTRAIT for non-coding RNA identification when standard methods fail [37]
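The co-expression idea in the list above can be sketched as a guilt-by-association screen: genes whose expression profiles track those of known NBS genes become candidates regardless of sequence similarity. Pearson correlation and the 0.9 threshold are assumptions chosen for illustration, as are the function names and the toy profiles.

```python
from math import sqrt
from typing import Dict, List, Sequence, Set, Tuple


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation of two equal-length profiles (0.0 if either is constant)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / sqrt(vx * vy) if vx and vy else 0.0


def coexpressed_candidates(expression: Dict[str, Sequence[float]],
                           known: Set[str],
                           min_r: float = 0.9) -> List[Tuple[str, float]]:
    """Genes outside `known` whose best correlation with a known gene is >= min_r."""
    references = [expression[g] for g in known if g in expression]
    hits = []
    for gene, profile in expression.items():
        if gene in known:
            continue
        best = max((pearson(profile, ref) for ref in references), default=0.0)
        if best >= min_r:
            hits.append((gene, best))
    return sorted(hits, key=lambda item: -item[1])


# Toy profiles across four conditions.
profiles = {"NBS1": [1, 2, 4, 8], "cand1": [2, 4, 8, 16], "cand2": [8, 4, 2, 1]}
print(coexpressed_candidates(profiles, {"NBS1"}))
```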

Frequently Asked Questions (FAQs)

Q1: How can I identify NBS genes in species with no reference genome?

A: For non-model organisms without reference genomes, employ the following strategy:

- Perform de novo transcriptome assembly using tools like Trinity or SOAPdenovo-Trans

- Use HMMER with the NB-ARC domain (PF00931) to identify NBS-containing transcripts in the assembled transcriptome [34]

- Annotate assembled transcripts using pipelines like Transcriptator that leverage BLAST against SwissProt and UniProt databases [37]

- Perform functional enrichment analysis to identify defense-related transcripts

Q2: What is the optimal clustering method for grouping co-expressed genes, and how do I determine the right number of clusters?

A: One effective approach combines:

- Gap statistics to objectively determine the optimal number of clusters (K) rather than subjective dendrogram interpretation [32] [33]

- K-means clustering with the determined K value for grouping genes with similar expression patterns [32] [33]

- In the source study, K=30 worked well for retinal development data [32]; this value is dataset-specific, so determine K for your own expression matrix using gap statistics rather than reusing it
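For reference, the gap statistic mentioned in this answer can be written in its standard form. Here $C_r$ is cluster $r$ with $n_r$ members, $d_{ii'}$ is the squared Euclidean distance between expression profiles $i$ and $i'$, and $W_{kb}^{*}$ is the within-cluster dispersion of the $b$-th of $B$ reference datasets drawn uniformly over the range of the observed data. The symbol names are chosen for this sketch, and the cited pipeline may implement a variant.

```latex
W_k = \sum_{r=1}^{k} \frac{1}{2 n_r} \sum_{i, i' \in C_r} d_{ii'}
\qquad
\mathrm{Gap}(k) = \frac{1}{B} \sum_{b=1}^{B} \log W_{kb}^{*} - \log W_k

\hat{k} = \min \left\{ k : \mathrm{Gap}(k) \ge \mathrm{Gap}(k+1) - s_{k+1} \right\},
\qquad
s_k = \mathrm{sd}_k \sqrt{1 + 1/B}
```

In this notation $\mathrm{sd}_k$ is the standard deviation of $\log W_{kb}^{*}$ over the $B$ reference datasets. The selection rule picks the smallest K whose gap is within one standard error of the next value, which guards against over-splitting.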

Q3: How can I distinguish genuine NBS genes from pseudogenes or non-functional copies?

A: Apply multiple filtering criteria:

- Require evidence of expression (RNA-seq read support) to exclude pseudogenes [35]

- Check for intact open reading frames without premature stop codons

- Verify presence of complete conserved motifs within the NBS domain [34]

- Look for evolutionary signatures of purifying selection (Ka/Ks < 1) rather than neutral evolution [34]
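The open-reading-frame criterion above lends itself to a direct check on the predicted coding sequence. The sketch assumes the standard genetic code, a CDS given 5' to 3' on the coding strand, and a complete model that starts with ATG and ends with a stop codon; the function name is hypothetical.

```python
STOP_CODONS = {"TAA", "TAG", "TGA"}  # standard genetic code


def has_intact_orf(cds: str) -> bool:
    """True if the CDS starts with ATG, ends with a stop, and has no premature stop."""
    seq = cds.upper()
    if len(seq) < 6 or len(seq) % 3 != 0:
        return False  # too short, or length not a multiple of three
    codons = [seq[i:i + 3] for i in range(0, len(seq), 3)]
    if codons[0] != "ATG" or codons[-1] not in STOP_CODONS:
        return False
    # Any stop codon before the last position is a premature stop.
    return not any(codon in STOP_CODONS for codon in codons[1:-1])


print(has_intact_orf("ATGGCTTGA"))     # intact toy ORF
print(has_intact_orf("ATGTAAGCTTGA"))  # premature TAA
```

Partial gene models from fragmented assemblies fail this check even when the gene is functional, so it is best combined with the expression and motif criteria rather than used alone.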

Q4: What validation methods are recommended for computationally predicted NBS genes?

A: Employ a multi-tier validation approach:

- Molecular validation: RT-PCR or qPCR to confirm expression and response to pathogens [34]

- Spatial expression analysis: RNA in situ hybridization or spatial transcriptomics to verify expression in defense-related tissues [38]

- Functional validation: Virus-induced gene silencing (VIGS) or CRISPR-based mutagenesis followed by pathogen challenge assays [35]

Q5: How can I handle the problem of fragmented NBS gene predictions in draft genomes?

A: Address assembly fragmentation with:

- Targeted assembly improvement using RNA-seq data to bridge gaps in NBS gene regions

- Use of specialized assemblers that handle repeat-rich regions more effectively

- Validation of gene models with full-length transcriptome sequencing (Iso-Seq) to recover complete coding sequences

NBS Gene Family Evolution and Duplication Analysis

Evolutionary Analysis Protocol

Objective: Understand evolutionary forces shaping NBS gene family expansion and diversification.

Methodology:

Gene Duplication Analysis

Selection Pressure Analysis

Phylogenetic Analysis

- Construct maximum likelihood phylogenetic trees using MEGA 6.0 with 1000 bootstrap replicates [34]

- Compare NBS gene relationships across species to infer orthology and paralogy relationships

- Map gene duplication events onto phylogenetic trees to understand temporal patterns of expansion

Table 4: Interpretation of Evolutionary Parameters in NBS Gene Analysis

| Evolutionary Parameter | Calculation Method | Interpretation | Biological Significance |

|---|---|---|---|

| Ka/Ks Ratio | Non-synonymous vs synonymous substitution rate | >1: positive selection; <1: purifying selection; =1: neutral evolution | Indicates adaptive evolution for pathogen recognition |

| Ks Value | Synonymous substitution rate | Higher values indicate older duplication events | Dates expansion events in evolutionary history |

| Tandem Duplication Frequency | Proportion of NBS genes in clusters | High frequency suggests rapid adaptation | Mechanism for generating recognition specificity |

| Motif Conservation | Presence of P-loop, GLPL, kinase-2a, kinase-3a | High conservation indicates functional constraint | Essential ATP/GTP binding and signaling function |
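The Ka/Ks interpretation in Table 4 can be captured in a small helper. The tolerance band around 1 is a hypothetical convenience for labeling near-neutral ratios and is not a statistical test; a real analysis would assess significance with a likelihood-based method. The function name is also an assumption.

```python
def interpret_ka_ks(ka: float, ks: float, tolerance: float = 0.05) -> str:
    """Label a gene pair by its Ka/Ks ratio, following the thresholds in Table 4."""
    if ks <= 0:
        return "undefined (Ks = 0)"  # ratio cannot be computed
    ratio = ka / ks
    if abs(ratio - 1.0) <= tolerance:
        return "neutral evolution"
    return "positive selection" if ratio > 1.0 else "purifying selection"


print(interpret_ka_ks(0.02, 0.10))  # ratio 0.2
print(interpret_ka_ks(0.30, 0.10))  # ratio 3.0
```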

Foundational Concepts: Library Types and Characteristics

What are the main types of nanobody libraries and how do they differ in construction and application?

Nanobodies (Nbs), or single-domain antibodies derived from camelid heavy-chain-only antibodies, are typically generated from three primary library types: immune, naïve, and synthetic/semi-synthetic libraries. Each offers distinct advantages and limitations for different research scenarios [39] [40].

Table 1: Comparison of Nanobody Library Types

| Library Type | Source Material | Construction Process | Key Advantages | Common Limitations | Optimal Applications |

|---|---|---|---|---|---|

| Immune Library | Lymphocytes from immunized camels, llamas, alpacas, or dromedaries [39] | Animal immunization, blood collection, lymphocyte isolation, mRNA extraction, cDNA synthesis, VHH gene amplification [39] | High-affinity binders due to in vivo affinity maturation; typically contains 10⁶+ unique transformants [39] | Requires animal immunization; time-consuming; not suitable for toxic antigens [39] | Targets where immunization is feasible and high affinity is paramount |