Beyond Known Genes: Unlocking and Predicting Non-Canonical Antimicrobial Resistance


Abstract

Antimicrobial resistance (AMR) poses a catastrophic threat to global health and is projected to cause 10 million deaths annually by 2050. Traditional, gene-centric AMR prediction models fall short because a significant portion of resistance emerges from non-canonical mechanisms that standard genomic databases do not capture. This article synthesizes recent research into a framework for improving prediction accuracy for these elusive determinants. We explore the foundational biology of non-canonical resistance, from global regulatory networks to small proteins from the 'dark proteome.' We then detail methodological approaches, including machine learning on transcriptomic data and non-canonical metatranscriptomics, that are achieving high-accuracy resistance prediction. The article further addresses troubleshooting and optimization strategies for model training and data interpretation, and concludes with validation and comparative techniques to benchmark new prediction tools against existing paradigms. This resource is tailored for researchers, scientists, and drug development professionals aiming to build the next generation of AMR diagnostics and surveillance systems.

The Uncharted Territory of Resistance: Defining Non-Canonical AMR Mechanisms

FAQs: Understanding the Limits of Traditional Resistance Gene Detection

FAQ 1: Why does my analysis, based on the CARD database, fail to identify the genetic basis for a confirmed antibiotic resistance phenotype in my bacterial isolates?

Your experience highlights a key limitation of traditional, gene-centric detection methods. The Comprehensive Antibiotic Resistance Database (CARD), while a valuable and rigorously curated resource, primarily catalogs genes with experimental validation and established links to resistance mechanisms [1]. This reliance on known, peer-reviewed data creates a fundamental gap when facing novel or uncharacterized resistance determinants.

Recent evidence underscores this limitation. A 2025 study on Pseudomonas aeruginosa revealed that machine learning models could predict antibiotic resistance with over 96% accuracy using transcriptomic data, yet only 2-10% of the predictive gene signatures overlapped with known markers in CARD [2]. This indicates that a vast landscape of resistance mechanisms operates outside the boundaries of traditional, sequence-homology-based detection, involving diverse regulatory and metabolic genes not yet annotated as "resistance genes" [2].

FAQ 2: What are the main types of resistance mechanisms that traditional databases like CARD and ResFinder might miss?

The following table summarizes key resistance determinants that often evade detection by traditional database queries.

| Resistance Determinant Type | Description | Why Traditional Methods Miss It |

|---|---|---|

| Non-Canonical Proteins [3] | Proteins derived from genomic regions outside annotated protein-coding genes (e.g., long non-coding RNAs, alternative open reading frames). | These proteins are not part of standard gene annotations and their sequences are not present in reference databases. |

| Transcriptional Regulators [2] | Genes involved in global regulatory networks (e.g., stress responses, metabolism) that indirectly confer resistance when over- or under-expressed. | The resistance is not caused by the presence of the gene itself, but by changes in its expression level, which is not detected by genomic screens. |

| Point Mutations [1] | Single nucleotide changes in chromosomal genes (e.g., in gyrA conferring fluoroquinolone resistance). | Requires specialized tools (e.g., PointFinder) and is not always comprehensively covered in general ARG databases. |

| Low-Abundance or Novel ARGs [4] | Genes with low sequence similarity to known references or those present in low copy numbers in metagenomic samples. | Homology-based tools (BLAST, Bowtie) with strict cutoffs fail to identify genes with significant but imperfect matches. |

FAQ 3: My genomic analysis shows the presence of a known resistance gene, but the phenotype is susceptible. What could explain this discrepancy?

This common issue, known as genotype-phenotype discordance, arises because the mere presence of a gene sequence does not guarantee its expression or activity. Several factors can explain it:

- Regulatory Control: The gene may be present but transcriptionally silent under your test conditions due to tight regulatory control [2].

- Gene Incompleteness: The detected sequence might be a pseudogene or a fragment that does not code for a functional protein.

- Context Dependence: The resistance conferred by the gene might be dependent on other genetic backgrounds or synergistic effects with other genes that are absent in your isolate [2].

Troubleshooting Guides

Guide 1: Troubleshooting Failed Resistance Prediction in Genomic Data

Problem: Your whole-genome sequencing data from a resistant bacterial isolate fails to identify a known resistance gene using standard database searches (e.g., with RGI or ResFinder).

Solution: Employ a tiered, multi-modal troubleshooting approach.

| Step | Action | Rationale & Technical Details |

|---|---|---|

| 1 | Verify Data Quality | Ensure sequencing coverage is sufficient (>30x) and the genome assembly is contiguous. Low coverage can miss genes. |

| 2 | Expand Database Search | Run your analysis against multiple databases (CARD, ResFinder, MEGARes, NDARO) as each has unique curation focuses and content [1]. |

| 3 | Lower Search Stringency | If using BLAST-based methods, cautiously relax the parameters (e.g., reduce the percent identity cutoff, raise the E-value threshold). Warning: This increases false-positive risk [4]. |

| 4 | Use Advanced ML Tools | Employ deep learning tools like DeepARG, HMD-ARG, or MCT-ARG that are designed to detect remote homologs and novel ARGs beyond strict sequence homology [4] [5]. |

| 5 | Shift to Transcriptomics | If the genotype remains elusive, profile the transcriptome (RNA-Seq) under antibiotic stress. This can reveal if unannotated genes or known genes with novel functions are being highly expressed to confer resistance [2]. |
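
Step 3 of the table above can be sketched in a few lines of Python. This is a minimal illustration, assuming the search was run with BLAST tabular output (`-outfmt 6`) in its default 12-column layout. The hit lines, gene names, and thresholds below are hypothetical and are not recommended cutoffs. Hits that appear only under the relaxed settings are candidates for manual review, not confirmed resistance genes.

```python
# Default column order of BLAST tabular output (-outfmt 6).
FIELDS = ["qseqid", "sseqid", "pident", "length", "mismatch", "gapopen",
          "qstart", "qend", "sstart", "send", "evalue", "bitscore"]

def parse_hits(lines):
    """Parse tab-separated BLAST outfmt 6 lines into dictionaries."""
    hits = []
    for line in lines:
        if not line.strip() or line.startswith("#"):
            continue  # skip blank lines and comments
        row = dict(zip(FIELDS, line.rstrip("\n").split("\t")))
        row["pident"] = float(row["pident"])
        row["evalue"] = float(row["evalue"])
        hits.append(row)
    return hits

def filter_hits(hits, min_identity, max_evalue):
    """Keep hits at or above the identity cutoff and at or below the E-value cutoff."""
    return [h for h in hits
            if h["pident"] >= min_identity and h["evalue"] <= max_evalue]

# Hypothetical hits, for illustration only.
example = [
    "contig1_orf12\tARG_A\t98.5\t300\t4\t0\t1\t300\t1\t300\t1e-150\t550",
    "contig3_orf7\tARG_B\t62.0\t280\t100\t3\t5\t284\t2\t281\t1e-40\t160",
    "contig9_orf2\tARG_C\t35.0\t90\t55\t4\t1\t90\t10\t99\t0.5\t30",
]

hits = parse_hits(example)
strict = filter_hits(hits, min_identity=90.0, max_evalue=1e-10)
relaxed = filter_hits(hits, min_identity=50.0, max_evalue=1e-10)

# Hits recovered only by relaxing the identity cutoff: review these by hand.
relaxed_only = [h for h in relaxed if h not in strict]
for h in relaxed_only:
    print(h["qseqid"], h["sseqid"], h["pident"], h["evalue"])
```

Keeping the strict and relaxed result sets separate makes the added false-positive risk explicit: every relaxed-only hit needs independent support before it is reported.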

Guide 2: Designing an Experiment to Identify Non-Canonical Resistance Mechanisms

Problem: You suspect your bacterial strain possesses a novel, non-canonical resistance mechanism not found in existing databases.

Objective: To identify and validate the genetic and molecular basis of this unknown resistance.

Experimental Protocol:

Phenotypic Confirmation:

- Perform Antibiotic Susceptibility Testing (AST) using broth microdilution to determine the Minimum Inhibitory Concentration (MIC) according to EUCAST/CLSI guidelines [6]. This provides a robust baseline.

- Confirm stability of the resistance phenotype through serial passage without antibiotic pressure.

Multi-Omics Profiling:

- Genome Sequencing: Perform Whole-Genome Sequencing (WGS) to catalog all potential genetic determinants. Use both Illumina (accuracy) and Oxford Nanopore (completeness) if possible [6] [1].

- Transcriptome Sequencing (RNA-Seq): Grow the isolate with and without a sub-inhibitory concentration of the antibiotic. Sequence the transcriptome to identify genes that are significantly upregulated or downregulated during the antibiotic challenge [2].

Bioinformatic Integration & Discovery:

- Genomic Analysis: Analyze WGS data with a broad-spectrum tool like AMRFinderPlus and deep learning models like MCT-ARG, which integrates protein sequence, structure, and solvent accessibility for better prediction [5].

- Transcriptomic Analysis: Map RNA-Seq reads to the assembled genome. Identify differentially expressed genes (DEGs). Do not restrict analysis to known ARGs; include all genes, especially those with unknown function.

- Prioritize Candidates: Cross-reference DEGs with the list of genes identified by machine learning models from the genome. Genes appearing in both analyses are high-priority candidates.
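
The prioritization step above amounts to intersecting two gene lists. The sketch below is a simplified illustration only: the gene names, counts, and the |log2 fold change| ≥ 1 cutoff are hypothetical, and a real analysis would call DEGs with replicate-aware statistics, not a bare fold-change threshold.

```python
import math

def log2_fold_change(treated, untreated, pseudocount=1.0):
    """log2 ratio of treated vs. untreated expression, with a pseudocount to avoid log(0)."""
    return math.log2((treated + pseudocount) / (untreated + pseudocount))

# Hypothetical mean normalized counts: gene -> (with antibiotic, without antibiotic).
expression = {
    "geneA": (799.0, 99.0),
    "geneB": (120.0, 110.0),
    "geneC": (9.0, 159.0),
    "geneD": (399.0, 99.0),
}

# Genes flagged by a genome-based ML model (hypothetical list).
ml_candidates = {"geneA", "geneC", "geneX"}

# Call a gene differentially expressed if it changes at least 2-fold in either direction.
degs = {gene for gene, (treated, untreated) in expression.items()
        if abs(log2_fold_change(treated, untreated)) >= 1.0}

# Genes supported by both lines of evidence are the high-priority candidates.
high_priority = sorted(degs & ml_candidates)
print(high_priority)
```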

Functional Validation:

- Gene Knockout/Complementation: Use CRISPR-Cas or allelic exchange to knock out the candidate gene in the resistant strain. The successful knockout should show a decrease in MIC. Re-introducing the gene on a plasmid (complementation) should restore the resistance phenotype.

- Heterologous Expression: Clone and express the candidate gene in a susceptible, model strain (e.g., E. coli). If the recipient strain becomes resistant, this is strong evidence of the gene's function.

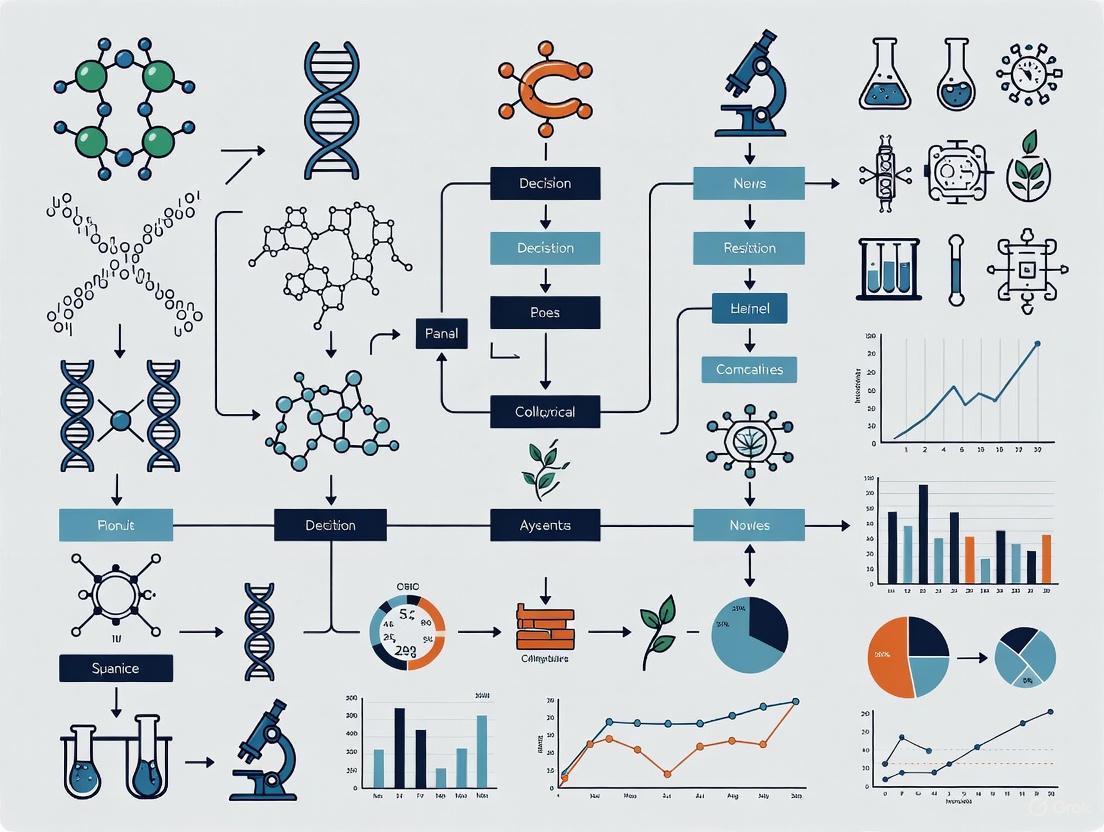

Workflow for Identifying Novel Resistance Mechanisms

The Scientist's Toolkit: Research Reagent Solutions

The following table lists essential resources for moving beyond traditional gene-centric analysis.

| Tool / Resource Name | Type | Function & Application |

|---|---|---|

| CARD & RGI [1] | Manually Curated Database & Tool | The gold standard for identifying known ARGs via homology. Serves as an essential baseline for analysis. |

| ResFinder/PointFinder [1] | Specialized Database & Tool | Excellent for detecting acquired resistance genes and chromosomal point mutations in specific bacterial species. |

| MCT-ARG [5] | Deep Learning Model (Multi-channel Transformer) | Integrates protein sequence, structure, and solvent accessibility for highly accurate and interpretable ARG prediction. |

| DeepARG & HMD-ARG [4] | Deep Learning Models (CNN/LSTM) | Uses different neural network architectures to identify ARGs from sequence data, capable of finding remote homologs. |

| ProtBert-BFD / ESM-1b [4] | Protein Language Models (PLMs) | Converts protein sequences into numerical feature vectors that encapsulate structural and evolutionary information for ML input. |

| Ribo-seq | Experimental Technique | Maps the positions of translating ribosomes genome-wide, crucial for identifying non-canonical proteins (microproteins) [3]. |

| Mass Spectrometry (MS) | Experimental Technique | Directly detects and identifies expressed proteins, providing validation for proteins predicted from genomic or transcriptomic data [3]. |

In the evolving landscape of antimicrobial resistance (AMR), bacteria employ sophisticated regulatory networks to survive antibiotic pressure. Beyond canonical resistance genes encoded in the core genome, global regulators and two-component systems (TCSs) enable rapid, adaptive responses to environmental threats. These systems control widespread changes in gene expression that alter cell physiology, leading to transient but clinically significant resistance phenotypes that often evade traditional genetic diagnostics. This technical support center provides troubleshooting guidance for researchers studying these complex systems, with emphasis on improving prediction accuracy for non-canonical resistance mechanisms.

Troubleshooting Guides

MarA, SoxS, and Rob Activation

Problem: Unexpected multidrug resistance emergence in E. coli without acquisition of known resistance genes.

Background: The paralogous transcriptional activators MarA, SoxS, and Rob regulate a common set of promoters controlling multidrug efflux and membrane permeability. They bind to a specific 19 bp DNA sequence called the "marbox" [7]. Activation can occur through different mechanisms: MarA expression increases in response to salicylate, SoxS in response to superoxide stress (e.g., paraquat), and Rob activity increases post-translationally with 2,2′-dipyridyl, bile salts, or decanoate [7].

Troubleshooting Steps:

- Check marbox configuration: Verify the marbox orientation and distance from the -10 promoter hexamer. Functional configurations include:

- Class II: Marbox ~20 bp from -10, overlaps -35 hexamer, "forward" orientation (e.g., fumC)

- Class I: Marbox ~39-50 bp from -10, "backward" orientation (e.g., marRAB, acrAB)

- Class I: Marbox ~30 bp from -10, functional in either orientation (e.g., zwf) [7]

- Confirm activator-specific induction:

- Test MarA activation with salicylate (e.g., 5 mM)

- Test SoxS activation with paraquat (e.g., 100 µM)

- Test Rob activation with 2,2′-dipyridyl (e.g., 400 µM) [7]

- Investigate promoter usage: For tolC, note that MarA/SoxS/Rob activate the p3 and p4 promoters downstream of previously identified p1 and p2 promoters, with a single marbox activating both p3 and p4 through different spacing (20 bp and 30 bp from -10, respectively) [7]

Experimental Validation:

- Use transcriptional fusions with the relevant promoter region (e.g., tolC region containing the marbox but lacking p1 and p2 promoters)

- Perform primer extension assays to identify transcription start sites under activating conditions

PhoPQ Two-Component System Function

Problem: Pleiotropic effects on antibiotic susceptibility, stress adaptation, and virulence in Gram-negative pathogens.

Background: The PhoPQ TCS consists of sensor kinase PhoQ and response regulator PhoP. PhoQ responds to low Mg²⁺, cationic antimicrobial peptides (CAPs), acidic pH, and osmotic upshift. Activated PhoP regulates genes involved in membrane modification, stress response, and virulence [8] [9].

Troubleshooting Steps:

- Confirm system activation:

- Grow bacteria in low-Mg²⁺ media (e.g., N-minimal medium with <10 µM Mg²⁺)

- Test response to CAPs (e.g., polymyxin B sub-inhibitory concentrations)

- Check growth at acidic pH (e.g., pH 5.5-6.0)

- Address unexpected mutant phenotypes:

- If you are unable to construct a phoQ single mutant, consider polar effects or an essential function in some species

- For complementation, use a plasmid expressing the entire phoPQ operon rather than phoP alone, as PhoP protein levels may not be restored with phoP-only complementation [8]

- Check downstream effects:

- Assess lipid A modifications (e.g., addition of 4-amino-4-deoxy-L-arabinose)

- Evaluate membrane permeability changes

- Test for cross-talk with PmrAB system, which is often activated downstream of PhoPQ [10]

Expected Phenotypes in PhoPQ Mutants:

- Increased susceptibility to β-lactams, quinolones, aminoglycosides, macrolides, chloramphenicol, and trimethoprim-sulfamethoxazole [8]

- Reduced swimming motility

- Enhanced sensitivity to oxidative stress (menadione, H₂O₂), envelope stress (SDS), and iron depletion (2,2′-dipyridyl) [8]

- Attenuated virulence in infection models

General Two-Component System Analysis

Problem: Difficulty identifying regulons and connectivity between TCS pathways.

Background: TCSs typically consist of a sensor histidine kinase (HK) that autophosphorylates in response to environmental signals, then transfers the phosphate to a cognate response regulator (RR) that mediates changes in gene expression [9]. However, significant cross-talk and connectivity exist between systems.

Troubleshooting Steps:

- Map regulons systematically:

- Use constitutive activation approaches (phosphatase-deficient HK mutants) to identify regulons independent of environmental signals [11]

- For Streptococcus agalactiae, proven phosphatase-altering mutations include:

- SaeS T133A, BceS V124A, VncS T245A, HK11030 T245A [11]

- Combine RNA-seq with ChIP-seq for comprehensive regulon mapping [12]

- Check for system interconnectivity:

- Test for positive feedback loops through TCS operon transcription

- Look for regulation of non-cognate TCSs in activation mutants

- Consider network-level approaches to identify functional modules [12]

- Account for evolutionary rewiring:

- Be aware that regulons can differ even between closely related species

- Consider laboratory evolution experiments to identify adaptive TCS mutations under specific selection pressures [13]

Interpretation Guidance:

- TCSs can be highly specialized or part of global regulatory networks

- Some TCSs have essential functions or show conditional essentiality

- Compensatory mutations may arise rapidly in TCS mutants with fitness defects

Frequently Asked Questions (FAQs)

Q1: How can I distinguish between MarA, SoxS, and Rob activation when they recognize the same marbox sequence?

A1: Use specific inducing conditions and genetic constructs:

- MarA: Induce with salicylate; use marA-deficient strains

- SoxS: Induce with paraquat; use soxS-deficient strains

- Rob: Activate with 2,2′-dipyridyl, bile salts, or decanoate; note that Rob is constitutively expressed but requires post-translational activation [7]

Q2: Why does my PhoP complementation not restore wild-type phenotypes?

A2: The most common cause is that phoP-only complementation does not restore sufficient PhoP protein levels [8]. To address this:

- Use a plasmid expressing the entire phoPQ operon for effective complementation [8]

- Ensure the PhoPQ system is properly activated during testing (use low Mg²⁺ conditions)

Q3: How do global regulators contribute to antibiotic resistance without canonical resistance genes?

A3: Through coordinated regulation of:

- Efflux pump expression (e.g., AcrAB-TolC upregulation by MarA/SoxS/Rob) [7] [10]

- Porin downregulation (e.g., OmpF reduction by MarA) [10]

- Cell envelope modifications (e.g., lipid A modification by PhoPQ) [9] [10]

- Stress response activation that provides collateral resistance [10]

Q4: What experimental approaches can reveal non-canonical resistance mechanisms?

A4:

- Transcriptomics: Machine learning on transcriptomic data can identify predictive gene sets beyond known resistance markers [2]

- Constitutive TCS activation: Phosphatase-deficient HK mutants reveal regulons without specific signals [11]

- Laboratory evolution: Track evolutionary trajectories under antibiotic selection [13]

- Network analysis: Map connectivity between regulatory systems [12]

Q5: How can I improve prediction of resistance phenotypes from genomic or transcriptomic data?

A5:

- Include regulatory genes and their expression states in predictive models

- Consider minimal gene signatures identified through genetic algorithms and machine learning (e.g., sets of 35-40 genes achieving 96-99% accuracy) [2]

- Account for condition-specific regulation that may not be apparent in standard growth conditions

- Incorporate epigenetic factors such as DNA methylation that can influence gene expression [14]

Table 1: Antibiotic Susceptibility Changes in Stenotrophomonas maltophilia PhoPQ Mutants [8]

| Antibiotic Class | Specific Antibiotic | MIC Wild-type (μg/ml) | MIC ΔPhoPQ (μg/ml) | Fold Reduction |

|---|---|---|---|---|

| β-lactam | Ceftazidime | 256 | 16 | 16× |

| β-lactam | Ticarcillin-clavulanate | 128 | 8 | 16× |

| Quinolone | Ciprofloxacin | 1 | 0.125 | 8× |

| Quinolone | Levofloxacin | 1 | 0.125 | 8× |

| Aminoglycoside | Kanamycin | 256 | 4 | 64× |

| Aminoglycoside | Tobramycin | 256 | 4 | 64× |

| Macrolide | Erythromycin | 64 | 8 | 8× |

| Chloramphenicol | Chloramphenicol | 8 | 2 | 4× |

| SXT | Trimethoprim-sulfamethoxazole | 2 | 0.25 | 8× |
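
The fold reductions in Table 1 follow directly from the MIC values. The short sketch below recomputes a few of them and also expresses each change as the number of two-fold dilution steps, since broth microdilution uses doubling dilutions. The function names are illustrative.

```python
import math

# MIC values (μg/ml) copied from Table 1: antibiotic -> (wild-type, ΔPhoPQ).
mics = {
    "Ceftazidime": (256, 16),
    "Ciprofloxacin": (1, 0.125),
    "Kanamycin": (256, 4),
    "Chloramphenicol": (8, 2),
}

def fold_reduction(mic_wt, mic_mutant):
    """Fold decrease in MIC in the mutant relative to wild-type."""
    return mic_wt / mic_mutant

def dilution_steps(mic_wt, mic_mutant):
    """Number of two-fold dilution steps separating the two MICs."""
    return math.log2(mic_wt / mic_mutant)

for drug, (wt, mutant) in mics.items():
    print(f"{drug}: {fold_reduction(wt, mutant):g}x "
          f"({dilution_steps(wt, mutant):g} two-fold dilution steps)")
```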

Table 2: Machine Learning Prediction Performance for P. aeruginosa Antibiotic Resistance Using Minimal Gene Sets [2]

| Antibiotic | Accuracy | F1 Score | Gene Set Size | Key Features |

|---|---|---|---|---|

| Meropenem | ~99% | 0.99 | 35-40 | Limited CARD overlap (3-5%); includes efflux genes mexA, mexB |

| Ciprofloxacin | ~99% | 0.99 | 35-40 | Distinct, non-overlapping gene subsets |

| Tobramycin | ~96% | 0.93 | 35-40 | Performance plateaus with ~35-40 genes |

| Ceftazidime | ~96% | 0.93 | 35-40 | Multiple predictive gene combinations possible |
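
Accuracy and F1 score in Table 2 measure different things, which is why an F1 of 0.93 can accompany ~96% accuracy. The sketch below computes both from a confusion matrix. The counts are hypothetical and are not taken from the cited study. They only illustrate how the two metrics diverge when resistant isolates are the minority class.

```python
def accuracy(tp, fp, fn, tn):
    """Fraction of all isolates classified correctly."""
    return (tp + tn) / (tp + fp + fn + tn)

def f1_score(tp, fp, fn):
    """Harmonic mean of precision and recall for the resistant class."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)

# Hypothetical confusion matrix for 100 isolates (resistant = positive class).
tp, fp, fn, tn = 20, 2, 1, 77

acc = accuracy(tp, fp, fn, tn)
f1 = f1_score(tp, fp, fn)
print(f"accuracy = {acc:.3f}, F1 = {f1:.3f}")
```

Because true negatives do not enter the F1 calculation, a few errors on a small resistant class lower F1 more than they lower accuracy.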

Table 3: Key Two-Component Systems in Antibiotic Resistance and Their Mechanisms [9]

| TCS | Example Species | Resistance Mechanism | Antibiotics Affected |

|---|---|---|---|

| PhoPQ | Salmonella, E. coli, P. aeruginosa | Lipid A modification, efflux pump regulation | Polymyxins, AMPs, multiple classes |

| PmrAB | K. pneumoniae, Salmonella, Acinetobacter | LPS modification (often downstream of PhoPQ) | Colistin, polymyxins |

| CpxAR | E. coli, P. aeruginosa | Porin downregulation, efflux upregulation | Aminoglycosides, β-lactams |

| BaeSR | E. coli, Salmonella | Multidrug efflux system upregulation | Chloramphenicol, novobiocin |

| CreBC | P. aeruginosa | β-lactamase activation, biofilm formation | β-lactams |

| EvgAS | E. coli | Multidrug efflux pump upregulation | Multiple classes |

Experimental Protocols

Protocol 1: Identifying MarA/SoxS/Rob-Activated Promoters

Based on: Martin et al. Mol Microbiol. 2008 [7]

Procedure:

- Construct transcriptional fusions:

- Clone promoter regions into λRS45 vector or similar transcriptional fusion vector

- Create progressive deletions to identify essential regulatory regions

- For tolC, include region from +91 to -19 relative to marbox for MarA/SoxS/Rob responsiveness

- Generate single-copy lysogens:

- Integrate fusions into bacterial chromosome for single-copy analysis

- Measure reporter expression:

- Grow cultures with and without inducers:

- MarA: 5 mM sodium salicylate

- SoxS: 100 µM paraquat

- Rob: 400 µM 2,2′-dipyridyl

- Assay β-galactosidase activity at mid-exponential phase


- Map transcription start sites:

- Isolate total RNA from induced and uninduced cultures

- Perform primer extension with gene-specific primers

- Identify transcription start sites by comparison with sequencing ladder

Expected Results:

- MarA/SoxS/Rob-activated promoters should show 2-10 fold induction with specific inducers

- Multiple start sites may be present (e.g., tolC p3 and p4 promoters)
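
Fold induction can be computed from the β-galactosidase readings as sketched below. This assumes the classic Miller assay readout (absorbance at 420, 550, and 600 nm), which the protocol above does not specify. The absorbance values are hypothetical.

```python
def miller_units(a420, a550, a600, minutes, volume_ml):
    """Standard Miller formula: 1000 * (A420 - 1.75 * A550) / (t * v * A600)."""
    return 1000.0 * (a420 - 1.75 * a550) / (minutes * volume_ml * a600)

# Hypothetical readings for one promoter fusion, 10 min reaction, 0.1 ml of culture.
uninduced = miller_units(a420=0.25, a550=0.02, a600=0.5, minutes=10, volume_ml=0.1)
induced = miller_units(a420=0.90, a550=0.02, a600=0.5, minutes=10, volume_ml=0.1)

fold_induction = induced / uninduced
print(f"uninduced = {uninduced:.0f} MU, induced = {induced:.0f} MU, "
      f"fold induction = {fold_induction:.1f}")
```

Comparing the fold induction against the 2-10 fold range above gives a quick check on whether a fusion behaves like a MarA/SoxS/Rob-activated promoter.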

Protocol 2: Constitutive Activation of Two-Component Systems

Based on: Burcham et al. Nat Commun. 2024 [11]

Procedure:

- Design phosphatase-deficient HK mutants:

- For HisKA family HKs: Identify E/DxxT/N motif and mutate threonine to alanine

- For HisKA_3 family HKs: Identify DxxxQ/H motif and mutate glutamine/histidine to alanine

- Use allelic exchange for chromosomal mutation

- Verify TCS activation:

- Check for positive feedback through HK/RR operon transcription (RNA-seq)

- Assess RR phosphorylation levels (Phos-tag electrophoresis + Western)

- Compare transcriptomes of mutant vs wild-type under standard conditions

- Characterize regulons:

- Perform RNA-seq on the phosphatase-deficient (HK+) mutant strains

- Identify differentially expressed genes (DEGs)

- Validate key targets with complementary approaches (e.g., qRT-PCR)
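
The motif search in the design step can be sketched with regular expressions. One assumption here goes beyond the protocol text: the patterns are anchored to an immediately preceding histidine (the phospho-acceptor residue) to reduce spurious matches. The protein sequences are toy examples, not real histidine kinases, and any match should be checked against a domain annotation before designing a mutation.

```python
import re

# Motifs from the protocol, anchored to a preceding His (an assumption, see above).
MOTIFS = {
    "HisKA": re.compile(r"H[ED]..[TN]"),    # E/DxxT/N: mutate the final T to A
    "HisKA_3": re.compile(r"HD...[QH]"),    # DxxxQ/H: mutate the final Q/H to A
}

def find_phosphatase_residue(protein_seq, family):
    """Return (motif, residue, 1-based position, suggested mutation) per match."""
    results = []
    for match in MOTIFS[family].finditer(protein_seq):
        position = match.end()              # 1-based index of the motif's last residue
        residue = protein_seq[position - 1]
        results.append((match.group(), residue, position, f"{residue}{position}A"))
    return results

# Toy sequences for illustration only.
print(find_phosphatase_residue("MKLSAHELRTPLTAIRG", "HisKA"))
print(find_phosphatase_residue("MSTAHDLGSQLTA", "HisKA_3"))
```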

Applications:

- Identify regulons without knowing specific signals

- Reveal TCS connectivity and network interactions

- Uncover biological functions beyond known phenotypes


The Scientist's Toolkit

Table 4: Essential Research Reagents and Materials

| Reagent/Material | Function/Application | Example Usage | Key Considerations |

|---|---|---|---|

| Sodium Salicylate | MarA-specific inducer | 5 mM final concentration for MarA activation | Prepare fresh solution in water or culture medium |

| Paraquat | SoxS-specific inducer | 100 µM final concentration for SoxS activation | Handle with caution - toxic compound |

| 2,2′-Dipyridyl | Rob activator | 400 µM final concentration for Rob activation | Iron chelator - may have pleiotropic effects |

| Low Mg²⁺ Media | PhoPQ system activation | N-minimal medium with <10 µM Mg²⁺ | Include controls with supplemented Mg²⁺ |

| λRS45 Vector | Transcriptional fusion construction | Single-copy promoter fusions for regulon analysis | Enables stable chromosomal integration |

| Phosphatase-deficient HK mutants | Constitutive TCS activation | Identify regulons without specific signals | HisKA: T→A in E/DxxT/N; HisKA_3: Q/H→A in DxxxQ/H [11] |

| Machine Learning Classifiers | Resistance prediction from transcriptomics | Sets of 35-40 genes for P. aeruginosa resistance prediction | Multiple gene combinations can yield similar accuracy [2] |

What are non-canonical proteins and the "dark proteome"?

The dark proteome consists of proteins that remain largely unexplored because they originate from genomic regions that defy conventional gene annotation paradigms. Non-canonical proteins are encoded by previously overlooked genomic regions (part of the "dark genome") and include proteins derived from long non-coding RNAs (lncRNAs), circular RNAs, alternative open reading frames (AltORFs), and other non-canonical genomic regions [3]. These proteins often possess unique functions and regulatory roles compared to their canonical counterparts and significantly expand the known proteome beyond what canonical gene annotations capture [3].

What defines a small open reading frame (sORF) and its encoded peptide?

Small open reading frames (sORFs) are generally defined as open reading frames shorter than 300 codons [15], though many studies focus on those encoding proteins shorter than 100 amino acids [16]. The functional peptides encoded by sORFs within lncRNAs are called sORF-encoded peptides (SEPs) [15]. SEPs regulate critical biological processes, including gene expression, cell signaling, and morphogenesis, and can also serve as partner proteins [15].

Computational Prediction & Analysis

Which computational tools are available for predicting protein-coding sORFs?

Several computational methods have been developed to predict the coding potential of sORFs. The table below summarizes key prediction tools and their performance characteristics based on comprehensive evaluations [16]:

Table 1: Performance Evaluation of sORF Prediction Tools

| Program | Specialization | Reported Accuracy Range | Strengths | Limitations |

|---|---|---|---|---|

| SORFPP | sORFs/SEPs | High (MCC: 12.2%-24.2% improvement) | Ensemble learning, multiple feature encodings | Complex implementation [15] |

| MiPepid | sORFs | Variable across datasets | Specifically designed for peptides | Performance varies by organism [16] |

| CPPred-sORF | sORFs | Variable across datasets | Specialized for sORFs | Limited to eukaryotic data [16] |

| DeepCPP | sORFs | Variable across datasets | Deep learning approach | Trained mainly on human data [16] |

| CPC2 | General ORFs | Moderate | User-friendly online interface | Not sORF-specialized [16] |

| CPAT | General ORFs | Moderate | Fast analysis | Not optimized for short sequences [16] |

| sORFfinder | sORFs | Moderate | Specifically designed for sORFs | Limited evaluation data [16] |

Why do sORF prediction tools sometimes yield inconsistent results?

Prediction tools exhibit variable performance due to several factors:

- Sequence length bias: Traditional coding potential calculators trained on longer ORFs often perform poorly on sORFs because features like codon bias scale with sequence length [16].

- Species-specific differences: Tools trained on eukaryotic data may not transfer well to prokaryotic sORFs and vice versa due to fundamental biological differences [16].

- Inadequate negative datasets: The lack of objectively verified non-coding sORF sequences complicates model training and evaluation [16].

- Feature extraction limitations: Most methods utilize either amino acid or nucleotide features, but not both, and often overlook structural representation information [15].

What is the SORFPP framework and how does it improve prediction accuracy?

SORFPP (sORF Finder and Predictor Platform) addresses current methodological limitations through an integrated ensemble approach [15]:

SORFPP Integrated Workflow

The SORFPP methodology involves four key innovation points [15]:

- Multi-perspective feature extraction: Simultaneously encodes nucleotide sequences of sORFs (using 3-mer, 4-mer, Fickett, and CTD encoding) and amino acid sequences of SEPs (using AAC, APAAC, QSOrder, PAAC, 2-mer, and 4-mer encoding)

- Protein language model integration: Uses ESM-2 to extract protein representation information and analyzes it with a Self-attention model

- Sparsity handling: Employs CatBoost to solve the sparsity problem of traditional encoding

- Ensemble framework: Combines both models with logistic regression for final predictions

This approach has demonstrated improvements of 12.2%-24.2% in the Matthews correlation coefficient (MCC) compared to other state-of-the-art models across three benchmark datasets [15].
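
Two of the simpler encodings named above, nucleotide 3-mer frequencies and amino acid composition (AAC), can be sketched in plain Python. This is not the SORFPP implementation. It only illustrates how a sequence becomes a fixed-length numeric vector that a classifier such as CatBoost could consume.

```python
from collections import Counter
from itertools import product

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def kmer_frequencies(seq, k=3, alphabet="ACGT"):
    """Frequency of every possible k-mer (overlapping windows), in a fixed order."""
    kmers = ["".join(p) for p in product(alphabet, repeat=k)]
    windows = [seq[i:i + k] for i in range(len(seq) - k + 1)]
    counts = Counter(windows)
    total = max(len(windows), 1)
    return [counts[kmer] / total for kmer in kmers]

def amino_acid_composition(peptide):
    """Fraction of each of the 20 standard amino acids in the peptide."""
    counts = Counter(peptide)
    total = max(len(peptide), 1)
    return [counts[aa] / total for aa in AMINO_ACIDS]

# Toy sORF and the peptide it encodes (ATG AAA TAA -> M K stop).
nucleotide_features = kmer_frequencies("ATGAAATAA")   # 4**3 = 64 values
peptide_features = amino_acid_composition("MK")       # 20 values

# Concatenate nucleotide- and peptide-level views into one feature vector.
features = nucleotide_features + peptide_features
print(len(features))
```

With k = 3 most entries are zero for a short sORF, which is the sparsity problem the list above refers to.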

Experimental Validation & Troubleshooting

What experimental workflow provides comprehensive sORF validation?

A robust experimental framework for sORF and novel peptide discovery integrates multiple complementary technologies [17]:

Comprehensive sORF Validation Workflow

How can I troubleshoot low peptide detection rates in mass spectrometry?

Low detection rates of novel peptides in mass spectrometry experiments can be addressed by:

- Database optimization: Use a comprehensive reference library like RLNPORF (Reference Library of Novel Peptide ORFs) that includes ORFs initiating at the canonical ATG as well as at non-canonical start codons (CTG, GTG, TTG), with ORFs up to 250 amino acids [17].

- Sample processing modifications: Implement ultrafiltration steps prior to tandem MS to enrich for small peptides [17].

- Library size consideration: Ensure sufficient database coverage; the RLNPORF approach identified 8,945 previously unannotated peptides from gastric cancer samples using a library of 11,668,944 potential sORFs [17].

- Multi-technology correlation: Combine ribosome profiling (Ribo-seq) with MS validation to distinguish translated ORFs from potential non-coding transcripts [17].
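
Building a search database of candidate sORFs, as in the database optimization point above, can be sketched as follows. This is a simplified illustration and not the RLNPORF pipeline: it scans only the forward strand, reports every in-frame start upstream of a stop codon, and uses a toy sequence.

```python
START_CODONS = {"ATG", "CTG", "GTG", "TTG"}
STOP_CODONS = {"TAA", "TAG", "TGA"}

def find_sorfs(seq, max_aa=250, min_aa=1):
    """Return (start, end, length_aa) for forward-strand ORFs, 0-based, end-exclusive.

    length_aa counts the start codon but not the stop codon.
    """
    seq = seq.upper()
    orfs = []
    for frame in range(3):
        open_starts = []                      # starts seen since the last stop codon
        for i in range(frame, len(seq) - 2, 3):
            codon = seq[i:i + 3]
            if codon in STOP_CODONS:
                for start in open_starts:
                    length_aa = (i - start) // 3
                    if min_aa <= length_aa <= max_aa:
                        orfs.append((start, i + 3, length_aa))
                open_starts = []
            elif codon in START_CODONS:
                open_starts.append(i)
    return orfs

# Toy sequence: an ATG-initiated ORF with a nested CTG-initiated ORF.
print(find_sorfs("CCATGAAACTGGGGTAACC"))
```

Allowing the three near-cognate start codons increases the number of candidate ORFs, so false discovery rate control in the downstream MS search becomes more important as the library grows.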

What could cause discrepancies between Ribo-seq and proteomics data?

Discrepancies between ribosome profiling and mass spectrometry results may stem from:

- Different temporal resolutions: Ribo-seq captures transient translation events while MS detects stable proteins [17]

- Protein stability variations: Cryptic proteins often exhibit lower stability and rapid turnover compared to canonical proteins [18]

- Sensitivity limitations: MS may fail to detect low-abundance peptides despite robust Ribo-seq signals [17]

- Technical artifacts: Ribo-seq can occasionally generate false positives from non-translating ribosome interactions [17]

Functional Characterization

What are the key functional categories of non-canonical proteins?

Non-canonical proteins participate in diverse cellular processes [3]:

Table 2: Functional Roles of Non-Canonical Proteins

| Functional Category | Specific Examples | Biological Significance |

|---|---|---|

| Cellular Signaling | Myoregulin (MLN) | Regulation of muscle calcium handling [16] |

| Metabolic Regulation | PEP5-nc-TRHDE-AS1 | Impact on mitochondrial complex assembly and energy metabolism [17] |

| Stress Response | Multiple uncharacterized SEPs | Phagocytosis, DNA repair, and metabolic adaptation [3] |

| Development | Tarsal-less gene products | Regulation of actin-based cell morphogenesis [19] |

| Immune Response | Cryptic proteins | Efficient generation of MHC-I peptides (5-fold more efficient per translation event) [18] |

How do I determine if a predicted sORF is functionally relevant?

A systematic framework for functional characterization includes [17]:

- CRISPR-based screening: Identify sORFs essential for cell proliferation or specific functions

- Molecular validation: Confirm protein expression through Flag-knockin or epitope tagging

- Localization studies: Determine subcellular localization using tools like MicroID [17]

- Interaction mapping: Construct peptide-protein interaction networks using AlphaFold2 prediction and co-immunoprecipitation

- Phenotypic assessment: Evaluate in xenograft models and correlate with clinical outcomes

Research Reagent Solutions

What essential reagents and tools are needed for sORF research?

Table 3: Essential Research Reagents for Non-Canonical Protein Studies

| Reagent/Tool | Function/Application | Implementation Example |

|---|---|---|

| Ribotricer | ORF extraction from transcriptome data | Identify potential sORFs from assembled transcripts [17] |

| Ultrafiltration Tandem MS | Small peptide enrichment and detection | Identify novel peptides from complex tissue samples [17] |

| CRISPR Libraries | High-throughput functional screening | Identify sORFs essential for cell proliferation [17] |

| AlphaFold2 | Protein structure prediction | Predict peptide-protein interactions and functional mechanisms [17] |

| Flag-knockin System | Endogenous protein tagging | Validate expression and localization of novel peptides [17] |

| ESM-2 Model | Protein language model | Feature extraction for computational prediction [15] |

| CatBoost Classifier | Machine learning with sparse data | Handle traditional feature encoding in SORFPP pipeline [15] |

Data Integration & Interpretation

How can I integrate multi-omics data for non-canonical protein research?

Effective data integration requires [17] [18]:

- Ribosome profiling: Identify translated regions regardless of annotation

- Transcriptome sequencing: Provide expression context and transcript models

- Mass spectrometry: Confirm protein existence and quantify abundance

- Epigenetic profiling: Understand regulatory context of non-canonical regions

- CRISPR screening: Connect genotypes to phenotypic outcomes

Remarkably, integrated studies have revealed that of 14,498 proteins identified in human B cell lymphomas, 2,503 were non-canonical proteins, with 72% being cryptic proteins encoded by ostensibly non-coding regions (60%) or frameshifted canonical genes (12%) [18].

What analytical pitfalls should I avoid when interpreting non-canonical protein data?

Common analytical pitfalls include:

- Annotation bias: Over-reliance on canonical gene annotations may cause researchers to discard valid non-canonical proteins [3]

- Conservation assumptions: Many functional non-canonical proteins show limited evolutionary conservation [3]

- Size discrimination: Exclusion of small proteins from standard proteomic analyses [16]

- Start codon dogma: Limiting searches to AUG start codons misses proteins with non-canonical initiation [17]

- Functional analogies: Assuming non-canonical proteins function similarly to canonical ones, despite evidence of unique mechanisms [18]
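
To make the start-codon pitfall concrete, the sketch below scans the three forward reading frames of a nucleotide sequence for short ORFs and lets the set of permitted start codons be widened beyond ATG. The near-cognate start set (CTG, GTG, TTG), the size window, and the function name `find_sorfs` are illustrative assumptions rather than parameters from the cited studies; reverse-strand scanning and Ribo-seq evidence are deliberately left out.

```python
# Assumed, illustrative codon sets; adjust to the organism and evidence at hand.
START_CODONS = {"ATG", "CTG", "GTG", "TTG"}
STOP_CODONS = {"TAA", "TAG", "TGA"}


def find_sorfs(seq, min_codons=10, max_codons=100, starts=START_CODONS):
    """Return (start, end, frame) tuples for forward-strand ORFs in the size window.

    Coordinates are 0-based and end-exclusive, and the span includes the stop codon.
    ORF length is counted in codons from the start codon up to (not including) the stop.
    """
    seq = seq.upper()
    hits = []
    for frame in range(3):
        open_start = None  # first start codon seen since the last stop in this frame
        for i in range(frame, len(seq) - 2, 3):
            codon = seq[i:i + 3]
            if open_start is None and codon in starts:
                open_start = i
            elif open_start is not None and codon in STOP_CODONS:
                n_codons = (i - open_start) // 3
                if min_codons <= n_codons <= max_codons:
                    hits.append((open_start, i + 3, frame))
                open_start = None
    return hits


# Toy sequence with a CTG-initiated ORF that an ATG-only search cannot see.
toy = "CC" + "CTG" + "GCA" * 12 + "TAA" + "G"
print(find_sorfs(toy))                  # relaxed start codons
print(find_sorfs(toy, starts={"ATG"}))  # canonical start only
```

Restricting `starts` to `{"ATG"}` on the same sequence returns no candidates, which is the kind of loss the start-codon pitfall describes.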

FAQ: Mechanisms and Experimental Design

Q1: What is the core relationship between transcriptional plasticity and non-canonical antimicrobial resistance?

Transcriptional plasticity allows bacteria to dynamically alter gene expression in response to environmental stresses, such as antibiotic exposure, without acquiring permanent genetic mutations. This facilitates several non-canonical resistance mechanisms:

- Efflux Pump Overexpression: Global transcriptional regulators (e.g., MarA, SoxS, Rob) activate genes encoding multidrug efflux pumps like AcrAB-TolC, which expel diverse antibiotics, reducing their intracellular concentration [20] [10] [21].

- Membrane Remodeling: Two-component systems (e.g., PhoPQ, PmrAB) modify membrane composition by altering lipid A, reducing membrane permeability, and decreasing the binding of antimicrobial peptides and other drugs [10] [22].

- Integrated Stress Response: The general stress response, coordinated by sigma factors like RpoS, upregulates a broad regulon that enhances bacterial survival under antibiotic pressure, often linking efflux and membrane changes to other adaptive processes [23] [24].

This plasticity creates a transient, multifactorial resistance phenotype that is often missed by traditional genetic diagnostics but is crucial for accurate resistance prediction [2] [10].

Q2: Why might my gene expression data not correlate with observed resistance phenotypes, and how can I troubleshoot this?

Discrepancies between transcriptomic data and observed resistance are common in studying transcriptional plasticity. The table below summarizes potential causes and solutions.

Table: Troubleshooting Discrepancies Between Gene Expression and Resistance Phenotypes

| Potential Issue | Description | Troubleshooting Approach |

|---|---|---|

| Post-Transcriptional Regulation | Protein activity or stability is modified after transcription (e.g., by small RNAs or proteolysis). | Perform complementary proteomics or western blotting to assess protein levels and activity [10]. |

| Phenotypic Heterogeneity | Resistance is present only in a subpopulation (e.g., persisters). | Use single-cell techniques (e.g., single-cell RNA-seq, flow cytometry) to analyze cell-to-cell variation [10]. |

| Insufficient Model Features | Predictive models rely only on known resistance genes, missing non-canonical players. | Use machine learning on full transcriptomic datasets to identify minimal, predictive gene sets beyond known databases [2]. |

| Condition-Specific Expression | Gene expression is transient and highly dependent on exact experimental conditions (e.g., growth phase, stressor duration). | Standardize culture conditions, harvest cells at multiple time points, and use continuous monitoring techniques like bioreactors [23] [21]. |

Q3: What are the best experimental approaches to validate that an efflux pump is functionally contributing to resistance?

Confirming the functional role of an efflux pump requires a combination of genetic, phenotypic, and pharmacological assays.

Efflux Pump Inhibition (EPI) Assays:

- Protocol: Perform Minimum Inhibitory Concentration (MIC) assays for the antibiotic of interest in the presence and absence of a broad-spectrum EPI such as Phe-Arg-β-naphthylamide (PAβN), or of the protonophore carbonyl cyanide m-chlorophenylhydrazone (CCCP), which de-energizes pumps driven by the proton motive force. A ≥4-fold reduction in MIC in the presence of the inhibitor is indicative of efflux pump activity [21].

- Controls: Include a strain with a known deletion of the efflux pump operon as a negative control. Always assess the EPI's potential toxicity and its own effect on bacterial growth.
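
The ≥4-fold criterion above is simple arithmetic, and a small helper makes it explicit when screening many isolates. This is a minimal sketch: the function names are hypothetical, and the example MIC values are invented for illustration.

```python
def mic_fold_reduction(mic_without_epi, mic_with_epi):
    """Fold change in MIC caused by the inhibitor (values in the same units, e.g. µg/mL)."""
    if mic_without_epi <= 0 or mic_with_epi <= 0:
        raise ValueError("MIC values must be positive")
    return mic_without_epi / mic_with_epi


def efflux_indicated(mic_without_epi, mic_with_epi, threshold=4.0):
    """True when the MIC drops by at least `threshold`-fold in the presence of the EPI."""
    return mic_fold_reduction(mic_without_epi, mic_with_epi) >= threshold


# Invented example: MIC falls from 32 to 4 µg/mL when the EPI is added.
print(mic_fold_reduction(32, 4), efflux_indicated(32, 4))
```

A positive call here only suggests efflux involvement; the deletion-strain and EPI-toxicity controls listed above are still needed to interpret it.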

Genetic Knockout/Complementation:

- Protocol: Create a defined deletion mutant of the efflux pump gene (e.g., ∆acrB). Compare the MIC and survival rates of the wild-type, mutant, and complemented strain (where the gene is reintroduced on a plasmid) when exposed to antibiotics [25].

- Outcome: A significant increase in susceptibility in the mutant, with wild-type resistance restored in the complemented strain, confirms the pump's role.

Dye Accumulation/Efflux Assays:

- Protocol: Use fluorescent substrates (e.g., ethidium bromide, Hoechst 33342) to measure pump activity. Load bacteria with the dye and measure fluorescence accumulation over time. After equilibrium, add an energy source (e.g., glucose) to initiate active efflux, which will cause a decrease in fluorescence. Compare efflux rates between wild-type and mutant strains [20].
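
One way to turn the post-energization fluorescence trace into a single comparable number is to fit a rate constant. The sketch below assumes, purely for illustration, that the decay after glucose addition is approximately first-order, so that ln(fluorescence) falls linearly with time; real traces may need background subtraction or a different model. The function name and the example readings are hypothetical.

```python
import math


def efflux_rate_constant(times_min, fluorescence):
    """Least-squares slope of ln(fluorescence) versus time, returned as a positive rate k (1/min).

    Assumes first-order decay F(t) = F0 * exp(-k * t) after the energy source is added.
    """
    if len(times_min) != len(fluorescence) or len(times_min) < 2:
        raise ValueError("need at least two paired time/fluorescence readings")
    logs = [math.log(f) for f in fluorescence]
    n = len(times_min)
    mean_t = sum(times_min) / n
    mean_y = sum(logs) / n
    sxx = sum((t - mean_t) ** 2 for t in times_min)
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(times_min, logs))
    return -sxy / sxx


# Invented readings: the wild type loses dye faster than a pump-deletion mutant.
times = [0, 2, 4, 6, 8]
wild_type = [1000, 670, 450, 300, 200]
mutant = [1000, 960, 925, 890, 855]
print(efflux_rate_constant(times, wild_type) > efflux_rate_constant(times, mutant))
```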

Q4: How can I map the complex regulatory network controlling efflux pumps and membrane remodeling?

A systems biology approach is needed to unravel these interconnected networks.

Network Construction:

- Begin with transcriptomic data (RNA-seq) from bacteria under stress conditions (antibiotic, oxidative, nutrient limitation).

- Identify Differentially Expressed Genes (DEGs) (e.g., |Log2FC| ≥ 1, FDR ≤ 0.05) [24].

- Input the DEGs into a Protein-Protein Interaction (PPI) network tool like STRING to map physical and functional interactions [24].
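
The DEG thresholds above can be applied with a short filter before the gene list is submitted to a PPI tool. This sketch assumes the differential expression results have already been loaded into a dictionary of `gene -> (log2 fold change, FDR)`; the function name and the numeric values are invented for illustration.

```python
def select_degs(results, min_abs_log2fc=1.0, max_fdr=0.05):
    """Return genes passing |log2FC| >= min_abs_log2fc and FDR <= max_fdr, sorted by name."""
    return sorted(
        gene
        for gene, (log2fc, fdr) in results.items()
        if abs(log2fc) >= min_abs_log2fc and fdr <= max_fdr
    )


# Invented example values, not measurements from the cited work.
example = {
    "acrA": (2.3, 0.001),
    "marA": (1.1, 0.04),
    "rpoS": (-1.6, 0.01),   # down-regulated genes pass on absolute fold change
    "ompF": (-2.0, 0.20),   # large change but fails the FDR cutoff
    "gapA": (0.2, 0.80),
}
print(select_degs(example))
```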

Identify Key Regulators:

- Perform topological analysis on the PPI network to find hub-bottleneck nodes (proteins with many connections that also act as bridges in the network). These are central mediators of the stress response [24]. Studies have identified 31 such common hub-bottlenecks across multiple pathogens, many within the RpoS regulon [24].

Pathway Enrichment Analysis:

- Use tools like KOBAS to identify enriched metabolic pathways. Common pathways in bacterial stress response include carbon metabolism, amino acid biosynthesis, and purine metabolism [24]. This reveals the metabolic rewiring that supports resistance.

Validation:

The following diagram illustrates the transcriptional network that connects stress signals to resistance phenotypes.

Diagram: Transcriptional Network Linking Stress to Resistance

FAQ: Data Analysis & Modern Techniques

Q5: How can machine learning improve the prediction of resistance based on transcriptional profiles?

Traditional models that rely solely on known resistance genes have limited accuracy because transcriptional plasticity involves many non-canonical genes. Machine learning (ML) models trained on full transcriptomic data can capture these complex patterns.

- Feature Selection: Genetic Algorithms (GA) can identify minimal, highly predictive gene sets (~35-40 genes) from thousands of transcripts. For P. aeruginosa, such models achieved 96-99% accuracy in predicting resistance to meropenem, ciprofloxacin, tobramycin, and ceftazidime [2].

- Beyond Known Markers: Strikingly, only 2-10% of the genes in these high-accuracy ML signatures overlapped with known resistance genes in the Comprehensive Antibiotic Resistance Database (CARD), highlighting the critical role of non-canonical genes [2].

- Model Robustness: Multiple distinct, non-overlapping gene subsets can achieve similar high accuracy, indicating that resistance is a pervasive phenotype achievable through various transcriptional routes [2].
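
The overlap figure quoted above is a set intersection expressed as a percentage of the signature. A minimal sketch, assuming the CARD gene names have been exported to a plain collection of identifiers that match the naming used in the signature (the placeholder `gene_*` names are hypothetical):

```python
def card_overlap(signature, card_genes):
    """Return (shared genes, percent of the signature found in the CARD gene list)."""
    signature = set(signature)
    if not signature:
        raise ValueError("signature is empty")
    shared = signature & set(card_genes)
    return sorted(shared), 100.0 * len(shared) / len(signature)


# Hypothetical 40-gene signature in which two genes are known efflux components.
signature = ["mexA", "mexB"] + [f"gene_{i}" for i in range(38)]
card_subset = {"mexA", "mexB", "oprM"}
print(card_overlap(signature, card_subset))
```

Identifier mismatches (locus tags versus gene symbols) will silently deflate this number, so harmonizing names first is worth the effort.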

Table: Key Steps for Building a Predictive ML Model for AMR

| Step | Action | Consideration |

|---|---|---|

| 1. Data Collection | Generate RNA-seq data from a large collection of clinical isolates with known antibiotic susceptibility profiles (e.g., 414 isolates) [2]. | Ensure balanced representation of resistant and susceptible strains. |

| 2. Feature Selection | Apply a Genetic Algorithm to iteratively select minimal gene subsets that maximize predictive power [2]. | This reduces overfitting and improves clinical feasibility. |

| 3. Model Training | Use Automated Machine Learning (AutoML) to train classifiers (e.g., SVM, Logistic Regression) on the selected gene subsets [2]. | AutoML automates hyperparameter tuning for optimal performance. |

| 4. Validation | Evaluate the model on a held-out test set of isolates not used in training [2]. | Target performance metrics: Accuracy >0.95, F1 score >0.93. |
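
The two validation metrics named in step 4 can be computed directly from held-out predictions. The sketch below uses only the standard library; the label encoding (1 = resistant) and the example vectors are assumptions for illustration.

```python
def accuracy_and_f1(y_true, y_pred, positive=1):
    """Accuracy and F1 score for a binary classifier, with `positive` as the resistant class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    correct = sum(t == p for t, p in pairs)
    accuracy = correct / len(pairs)
    denom = 2 * tp + fp + fn
    f1 = 2 * tp / denom if denom else 0.0
    return accuracy, f1


# Invented held-out labels and predictions.
y_true = [1, 1, 1, 0, 0, 0, 1, 0]
y_pred = [1, 1, 0, 0, 0, 0, 1, 1]
print(accuracy_and_f1(y_true, y_pred))
```

Reporting F1 alongside accuracy matters when resistant and susceptible isolates are imbalanced, since accuracy alone can look high for a model that rarely predicts the minority class.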

Q6: What is a network biology approach to identifying central stress response proteins?

A network biology approach can identify central, cross-pathogen proteins that mediate stress responses and are potential targets for novel antibiotics.

- Dataset Compilation: Gather transcriptomic datasets (e.g., from GEO) for multiple pathogens under diverse stressors (antibiotics, pH, temperature, oxidative stress) [24].

- Network Construction:

- For each stress condition in each pathogen, identify DEGs and build a Protein-Protein Interaction Network (PPIN).

- Merge all stress-specific PPINs for each pathogen to create a unified stress response network [24].

- Identification of Central Nodes:

- Calculate network topology metrics: Degree (number of connections) and Betweenness Centrality (influence over information flow).

- Identify hub-bottleneck nodes—proteins that are both highly connected and critical connectors [24].

- Cross-Validation:

- Validate findings by checking if the same hub-bottlenecks appear in an independent cross-stress response dataset from E. coli [24].

This approach has identified 31 central hub-bottleneck proteins common across multiple major pathogens, which are often part of the RpoS-mediated general stress regulon [24].
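
As a rough illustration of the topology step, the sketch below computes degree and betweenness centrality for an unweighted, undirected network (using Brandes' algorithm) and reports nodes that rank highly on both. The top-fraction cutoff, the function names, and the toy network are assumptions; the cited study's exact thresholds are not given here, and a graph library would normally replace this hand-written version.

```python
from collections import deque


def degree_and_betweenness(adj):
    """adj maps each node to the set of its neighbours (undirected, unweighted)."""
    degree = {v: len(nbrs) for v, nbrs in adj.items()}
    bc = dict.fromkeys(adj, 0.0)
    for s in adj:
        # Breadth-first search from s, counting shortest paths to every node.
        order, dist = [], {s: 0}
        preds = {v: [] for v in adj}
        sigma = dict.fromkeys(adj, 0)
        sigma[s] = 1
        queue = deque([s])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in adj[v]:
                if w not in dist:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        # Back-propagate path dependencies from the farthest nodes inward.
        delta = dict.fromkeys(adj, 0.0)
        for w in reversed(order):
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                bc[w] += delta[w]
    # Each unordered pair was counted from both endpoints.
    return degree, {v: b / 2 for v, b in bc.items()}


def hub_bottlenecks(adj, top_fraction=0.2):
    """Nodes in the top fraction for both degree (hub) and betweenness (bottleneck)."""
    degree, bc = degree_and_betweenness(adj)
    k = max(1, round(top_fraction * len(adj)))
    top_degree = set(sorted(adj, key=degree.get, reverse=True)[:k])
    top_between = set(sorted(adj, key=bc.get, reverse=True)[:k])
    return sorted(top_degree & top_between)


# Toy network: one central node bridging two pairs and a leaf (edges are invented).
toy = {
    "hub": {"a", "b", "c", "d", "e"},
    "a": {"hub", "b"}, "b": {"hub", "a"},
    "c": {"hub", "d"}, "d": {"hub", "c"},
    "e": {"hub"},
}
print(hub_bottlenecks(toy))
```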

The workflow for this systems-level analysis is detailed in the diagram below.

Diagram: Network Biology Workflow for Stress Response

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Reagents for Investigating Transcriptional Plasticity in AMR

| Reagent / Tool | Function / Application | Key Considerations |

|---|---|---|

| Phe-Arg-β-naphthylamide (PAβN) | Broad-spectrum efflux pump inhibitor (EPI). Used in EPI assays to confirm efflux-mediated resistance [21]. | Can be toxic to cells at high concentrations; requires careful dose optimization. |

| Ethidium Bromide | Fluorescent substrate for efflux pumps. Used in dye accumulation/efflux assays to measure pump activity [20]. | Handle as a mutagen; use appropriate safety precautions. |

| CRISPR-Cas9 Systems | For targeted gene knockout (e.g., of efflux pump genes acrB, mexF) or mutagenesis of regulatory genes (e.g., marR, rpoS) [25]. | Essential for functional validation of identified genes. |

| RNA-seq Kits | For comprehensive transcriptomic profiling of bacterial cultures under antibiotic stress [2] [24]. | Critical for capturing genome-wide expression changes driving plasticity. |

| Anti-RpoS Antibody | To measure protein levels of the key stress sigma factor σS (RpoS) via western blot, complementing transcriptomic data [23]. | Helps confirm post-transcriptional regulation. |

| STRING Database | Public database of known and predicted protein-protein interactions. Used to construct PPINs from transcriptomic data [24]. | A foundational resource for network biology studies. |

| CARD (Database) | The Comprehensive Antibiotic Resistance Database. Used as a reference to compare ML-identified gene signatures against known resistance genes [2]. | Highlights the novelty of non-canonical resistance mechanisms. |

Frequently Asked Questions (FAQs)

Q1: My transcriptomic data for antibiotic resistance prediction is high-dimensional and noisy. How can I identify a minimal, reliable gene signature?

A1. You can employ a Genetic Algorithm (GA) for automated feature selection. This method efficiently sifts through thousands of genes to find a compact set of ~35-40 genes that maintain high predictive accuracy. The process involves [2]:

- Initialization: Start with a randomly generated population of candidate gene subsets (e.g., 40 genes each).

- Evaluation: Assess the performance of each subset by training a classifier (e.g., SVM or Logistic Regression) and evaluating it using metrics like ROC-AUC and F1-score.

- Evolution: Iteratively refine the subsets over hundreds of generations using selection, crossover, and mutation operations, preferentially keeping high-performing gene combinations.

- Consensus: After many independent runs (e.g., 1,000), generate a consensus gene list by ranking genes based on their selection frequency. This list provides a robust, minimal signature for your final model [2].
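
The initialization, evaluation, and evolution steps above can be sketched in a few lines. This is a simplified illustration, not the pipeline from [2]: the population size, mutation rate, and elitist selection scheme are assumptions, and the fitness function is a toy stand-in (it counts how many pre-designated "informative" genes a subset contains) where a real run would train a classifier and return a cross-validated ROC-AUC or F1 score.

```python
import random


def run_ga(n_genes, subset_size, fitness, pop_size=30, generations=50,
           mutation_rate=0.2, seed=0):
    """Evolve fixed-size gene subsets (sets of column indices) toward higher fitness."""
    rng = random.Random(seed)
    population = [frozenset(rng.sample(range(n_genes), subset_size))
                  for _ in range(pop_size)]
    for _ in range(generations):
        # Selection: keep the better-scoring half unchanged (elitism).
        parents = sorted(population, key=fitness, reverse=True)[: pop_size // 2]
        children = []
        while len(parents) + len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            # Crossover: the child draws its genes from the union of both parents.
            child = set(rng.sample(sorted(a | b), subset_size))
            # Mutation: occasionally drop one gene and draw a random replacement.
            if rng.random() < mutation_rate:
                child.remove(rng.choice(sorted(child)))
                outside = [g for g in range(n_genes) if g not in child]
                child.add(rng.choice(outside))
            children.append(frozenset(child))
        population = parents + children
    return max(population, key=fitness)


# Toy stand-in for classifier performance (hypothetical): informative genes captured.
INFORMATIVE = frozenset(range(10))


def toy_fitness(subset):
    return len(subset & INFORMATIVE)


best = run_ga(n_genes=200, subset_size=10, fitness=toy_fitness)
print(sorted(best), toy_fitness(best))
```

Because the better half of each generation is carried over unchanged, the best score never decreases between generations; repeating the run with different seeds yields the independent runs from which a consensus list is built.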

Q2: What could explain the low overlap between my predictive gene signature and known resistance genes in databases like CARD?

A2. This is a common finding that highlights a key challenge in the field. Limited overlap with the Comprehensive Antibiotic Resistance Database (CARD) suggests that your model is capturing non-canonical resistance mechanisms [2]. Only 2-10% of genes in a high-performing signature may be annotated in CARD. These uncharacterized genes likely represent [2]:

- Novel regulatory or metabolic pathways associated with the resistant phenotype.

- Genes involved in broader cellular stress responses (e.g., oxidative stress, DNA repair, efflux regulation) that indirectly confer survival advantages under antibiotic pressure. This underscores the need to look beyond known resistance markers in your analysis.

Q3: My single-cell experiments show high phenotypic heterogeneity. How can I track whether a phenotype is stochastically fluctuating or stably inherited?

A3. You can use Microcolony-seq to distinguish between these two scenarios. This protocol tracks phenotypic inheritance by sequencing microcolonies derived from single bacterial cells [26].

- Stable Inheritance: If a phenotype (e.g., a specific virulence state) is stably inherited, you will observe distinct and consistent transcriptomic profiles across all cells within a microcolony for over 20 generations.

- Stochastic Fluctuation: If the phenotype is transient and stochastic, the transcriptomic profiles will be heterogeneous and unstructured within and between microcolonies.

- Key Control: Note that growth to stationary phase can erase this epigenetic inheritance, resetting the phenotypic memory [26].

Q4: How can I investigate if cellular aging and asymmetric damage partitioning contribute to bacterial persistence in my samples?

A4. You can use single-cell microscopy combined with microfluidic devices (e.g., the "mother machine") to track lineages and correlate damage inheritance with dormancy. The experimental workflow is as follows [27]:

- Cell Loading and Tracking: Trap individual bacterial cells in a microfluidic device that provides a constant flow of fresh medium. Follow lineages over multiple generations, distinguishing between "old" and "new" daughter cells based on which inherited the old, damage-rich pole of the mother cell.

- Persistence Assay: Expose the entire population to a lethal dose of a bactericidal antibiotic.

- Correlation Analysis: After treatment, identify the persister cells that survived. Track back through the lineage data to determine if these persisters were significantly more likely to be "old" daughters that had inherited more cellular damage [27].
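
Whether persisters are enriched among "old" daughters is a question about a 2x2 contingency table. One reasonable choice, assumed here rather than taken from [27], is a one-sided Fisher's exact test, which the sketch below implements with the standard library. The counts in the example are invented.

```python
from math import comb


def fisher_one_sided(old_persist, old_other, new_persist, new_other):
    """P-value for observing at least `old_persist` persisters among old daughters.

    Uses the hypergeometric null in which persistence is unrelated to pole age.
    """
    n_old = old_persist + old_other
    n_new = new_persist + new_other
    n_persist = old_persist + new_persist
    denom = comb(n_old + n_new, n_persist)
    upper = min(n_old, n_persist)
    tail = sum(
        comb(n_old, k) * comb(n_new, n_persist - k)
        for k in range(old_persist, upper + 1)
    )
    return tail / denom


# Invented counts: 12 of 200 old daughters persist versus 3 of 200 new daughters.
print(fisher_one_sided(12, 188, 3, 197))
```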

Troubleshooting Guides

Table 1: Troubleshooting Persister Cell Experiments

| Problem | Potential Cause | Solution |

|---|---|---|

| Low persister cell yield | Incorrect antibiotic concentration or exposure time. | Perform a biphasic killing curve to establish the optimal antibiotic concentration and exposure time that kills growing cells but leaves persisters. |

| High variability in persistence levels | Heterogeneous pre-culture conditions. | Standardize the growth phase (stationary phase for Type I; exponential phase for Type II) and ensure consistent culture conditions before the assay [28]. |

| Inability to distinguish between resistance and persistence | Lack of a proper regrowth assay. | After antibiotic treatment, wash cells to remove the drug and plate on fresh medium. Cultures regrown from persisters remain as susceptible to the same antibiotic as the parent strain (unchanged MIC), whereas resistant mutants regrow with an elevated MIC [28]. |

Table 2: Troubleshooting Predictive Model Performance

| Problem | Potential Cause | Solution |

|---|---|---|

| Model overfitting on transcriptomic data | High dimensionality (many genes, few samples). | Implement rigorous feature selection (e.g., Genetic Algorithms). Use cross-validation and hold-out test sets to evaluate performance on unseen data [2]. |

| Poor generalizability to new clinical isolates | Model trained on a non-representative dataset. | Ensure your training data includes a diverse set of clinical isolates reflecting real-world genetic and phenotypic diversity. |

| Biologically uninterpretable gene signatures | Focus on pure prediction accuracy without biological context. | Map predictive genes to operons and independently modulated gene sets (iModulons) to uncover coherent functional modules and regulatory programs [2]. |

Experimental Protocols

Protocol 1: Identifying a Minimal Predictive Gene Signature using a Genetic Algorithm and AutoML

This protocol details the workflow for using a GA-AutoML pipeline to predict antibiotic resistance from transcriptomic data [2].

Key Research Reagent Solutions

- Bacterial Isolates: 414 clinical isolates of Pseudomonas aeruginosa.

- RNA Sequencing Kit: For generating transcriptomic profiles (6,026 genes).

- Genetic Algorithm Software: For evolutionary feature selection.

- AutoML Platform: For automated training and validation of classifiers (e.g., SVM, Logistic Regression).

Methodology

- Data Preparation: Collect RNA-seq data from 414 clinical isolates with known antibiotic susceptibility profiles (e.g., for meropenem, ciprofloxacin). Split data into training and hold-out test sets.

- Baseline Model: Train an AutoML classifier using all 6,026 genes to establish a performance baseline.

- Feature Selection with GA:

- Initialize: Generate an initial population of random 40-gene subsets.

- Evaluate: For each subset, train an SVM/Logistic Regression model and evaluate performance using ROC-AUC on validation data drawn from the training set, leaving the hold-out test set untouched.

- Evolve: Run the GA for 300 generations per run, over 1,000 independent runs. In each generation, select top-performing subsets and create new ones via crossover and mutation.

- Consensus Signature: Across all runs and generations, rank all genes by their frequency of selection. The top 35-40 genes form the consensus signature.

- Validation: Train a final classifier using only the consensus gene signature and evaluate its accuracy and F1-score on the held-out test set.
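
The consensus step in this protocol reduces to counting how often each gene appears among the selected subsets. A minimal sketch, assuming each GA run contributes one final subset of gene names (the protocol also allows counting across generations); the alphabetical tie-break and the example names other than mexA are hypothetical.

```python
from collections import Counter


def consensus_signature(selected_subsets, top_n):
    """Rank genes by how many subsets selected them; ties are broken alphabetically."""
    counts = Counter(gene for subset in selected_subsets for gene in set(subset))
    ranked = sorted(counts, key=lambda gene: (-counts[gene], gene))
    return ranked[:top_n]


# Invented output of three GA runs.
runs = [
    {"mexA", "gene_2", "gene_7"},
    {"mexA", "gene_2", "gene_9"},
    {"mexA", "gene_4", "gene_2"},
]
print(consensus_signature(runs, top_n=2))
```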

Protocol 2: Microcolony-seq for Profiling Inherited Phenotypic Heterogeneity

This protocol describes how to use Microcolony-seq to uncover stably inherited phenotypic states directly from infected human samples [26].

Key Research Reagent Solutions

- Microfluidic Device or Agar Pads: For growing microcolonies from single cells.

- Lysis Buffer: For inactivating and lysing bacterial microcolonies.

- RNA Stabilization Reagent: (e.g., RNAlater) to preserve transcriptomic profiles.

- Bulk RNA-seq Library Prep Kit: For sequencing the transcriptome of each microcolony.

Methodology

- Sample Preparation and Dispersion: Gently disperse infected human samples (e.g., urine, blood) to separate individual bacteria.

- Microcolony Growth: Seed single bacterial cells into a microfluidic device or onto agar pads containing fresh, rich medium. Allow each cell to divide and form a microcolony of ~16-64 cells.

- Lysis and RNA Extraction: For each microcolony, quickly lyse the cells and extract total RNA. Pool RNA from all cells of the same microcolony.

- RNA Sequencing and Analysis: Perform bulk RNA-seq on each microcolony's transcriptome. Use dimensionality reduction and clustering to identify distinct transcriptomic profiles. Microcolonies derived from a common ancestor will cluster together, indicating an inherited phenotype.

- Phenotypic Correlation: Correlate the identified transcriptomic states with phenotypic assays (e.g., virulence factor expression, antibiotic tolerance) to determine their functional impact.

Data Presentation

Table 3: Performance of Minimal Gene Signatures for Predicting Antibiotic Resistance in P. aeruginosa

Data derived from a GA-AutoML framework applied to 414 clinical isolates. Performance metrics are on a held-out test set [2].

| Antibiotic | Number of Genes in Signature | Prediction Accuracy (%) | F1-Score | Key Overlap with CARD Database |

|---|---|---|---|---|

| Meropenem (MEM) | 35-40 | ~99% | ~0.99 | ~3-5% (e.g., mexA, mexB) |

| Ciprofloxacin (CIP) | 35-40 | ~99% | ~0.99 | 2-10% across all antibiotics |

| Tobramycin (TOB) | 35-40 | ~96% | ~0.93 | 2-10% across all antibiotics |

| Ceftazidime (CAZ) | 35-40 | ~96% | ~0.95 | 2-10% across all antibiotics |

Diagnostic and Experimental Workflow Diagrams

Phenotypic Heterogeneity and Persistence

GA-AutoML Workflow

Next-Generation Tools: Machine Learning and Multi-Omics for Non-Canonical AMR Prediction

Core Concepts: From Bulk Sequencing to Targeted Signatures

Transcriptomic analysis has evolved from broad, discovery-focused approaches to targeted, efficient diagnostic methods. This shift is crucial for research on non-canonical resistance genes, where improved prediction accuracy can accelerate therapeutic development.

Whole Transcriptome Shotgun Sequencing (WTSS) provides a comprehensive, unbiased view of all RNA molecules within a biological sample. As reviewed by Zhao et al., this approach is foundational for deciphering genome structure and function, identifying genetic networks, and establishing molecular biomarkers [29]. It typically involves capturing both coding and non-coding RNA, converting them to cDNA, and using next-generation sequencing (NGS) platforms for analysis [29]. While powerful for discovery, WTSS generates immense datasets that are costly and computationally intensive to analyze, making it less suitable for rapid clinical diagnostics or large-scale screening.

Minimal Gene Signatures represent a focused approach, using a small set of highly informative genes to classify biological states accurately. The core principle is that cellular states are governed by transcriptional programs where genes are co-regulated, meaning a minimal set can act as a proxy for the entire transcriptomic state [30]. The goal is to identify the smallest possible number of genes that reliably predict an outcome—such as antibiotic resistance or viral infection—with performance comparable to a full-transcriptome analysis [2] [31]. This dramatically reduces the cost and complexity of testing, facilitating the development of rapid, point-of-care diagnostic tools.

Experimental Protocols for Signature Discovery

Protocol 1: A Standard RNA-Seq Workflow for Transcriptome Profiling

This protocol is used for initial discovery phases to generate comprehensive transcriptome data.

Step 1: Sample Preparation and RNA Extraction

- Obtain biological samples (e.g., bacterial isolates, patient blood, tissue biopsies) under appropriate ethical approval and preservation conditions [31].

- Extract total RNA using standardized kits, ensuring RNA Integrity Number (RIN) > 8 for high-quality libraries. Treat samples with DNase to remove genomic DNA contamination.

Step 2: Library Preparation

- Poly-A Selection: For eukaryotic mRNA, use oligo(dT) beads to enrich for poly-adenylated RNA. This is standard for host-response studies [29] [31].

- rRNA Depletion: For bacterial RNA or non-polyadenylated transcripts, use ribo-depletion kits to remove ribosomal RNA [29].

- cDNA Synthesis and Adapter Ligation: Fragment RNA and reverse-transcribe it into cDNA. Ligate platform-specific sequencing adapters to the cDNA fragments. This may involve adding unique molecular identifiers (UMIs) to correct for PCR amplification biases [29].

Step 3: Sequencing

Step 4: Bioinformatic Analysis

- Quality Control: Use tools like FastQC to assess read quality. Trim adapters and low-quality bases with Trimmomatic or Cutadapt.

- Alignment: Map reads to a reference genome using STAR (for eukaryotes) or Bowtie2/BWA (for prokaryotes).

- Quantification: Generate a count matrix of reads per gene using featureCounts or HTSeq.

- Differential Expression: Identify genes significantly differentially expressed between conditions (e.g., resistant vs. susceptible strains) using tools like DESeq2 or edgeR.
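
Downstream signature discovery typically starts from length- and depth-normalized expression rather than raw counts. As an illustration, the sketch below converts one sample's per-gene read counts into transcripts per million (TPM); it assumes gene lengths in base pairs are available from the annotation, and the function name and example numbers are hypothetical.

```python
def counts_to_tpm(counts, lengths_bp):
    """Convert one sample's read counts to TPM using per-gene lengths in base pairs."""
    # Reads per kilobase corrects for the fact that longer genes collect more reads.
    rates = {gene: counts[gene] / (lengths_bp[gene] / 1000.0) for gene in counts}
    total = sum(rates.values())
    if total == 0:
        raise ValueError("sample has no mapped reads")
    # Scaling to one million makes samples with different depths comparable.
    return {gene: rate / total * 1e6 for gene, rate in rates.items()}


# Invented counts and lengths for three genes.
counts = {"geneA": 100, "geneB": 200, "geneC": 0}
lengths = {"geneA": 1000, "geneB": 2000, "geneC": 1500}
print(counts_to_tpm(counts, lengths))
```

Differential expression tools such as DESeq2 and edgeR expect raw counts, so TPM values of this kind are for visualization and machine-learning input, not for those tests.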

Protocol 2: Identifying a Minimal Signature with a Genetic Algorithm and AutoML

This protocol details a hybrid machine-learning approach for distilling a full transcriptome down to a minimal, predictive gene set, as demonstrated for antibiotic resistance prediction [2].

Step 1: Input Data Preparation

- Start with a normalized gene expression matrix (e.g., TPM or FPKM values) from RNA-seq for a cohort of samples with confirmed phenotypes (e.g., resistant/susceptible).

- Split data into training (e.g., 80%) and hold-out test (e.g., 20%) sets.
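
When resistant and susceptible isolates are imbalanced, a random 80/20 split can leave the test set with too few examples of one class. The sketch below performs the split separately within each phenotype class; stratification is an assumption added here for illustration (the protocol only specifies the proportions), and the function name and labels are hypothetical.

```python
import random
from collections import defaultdict


def stratified_split(labels, test_fraction=0.2, seed=0):
    """Return (train_indices, test_indices), sampling the test set within each class."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)
    train, test = [], []
    for label in sorted(by_class):
        indices = by_class[label]
        rng.shuffle(indices)
        n_test = max(1, round(test_fraction * len(indices)))
        test.extend(indices[:n_test])
        train.extend(indices[n_test:])
    return sorted(train), sorted(test)


# Invented cohort: 30 resistant ("R") and 20 susceptible ("S") isolates.
labels = ["R"] * 30 + ["S"] * 20
train_idx, test_idx = stratified_split(labels)
print(len(train_idx), len(test_idx))
```

The test indices should be fixed before any feature selection begins so that the GA never sees held-out isolates.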

Step 2: Feature Selection via Genetic Algorithm (GA)

- Initialization: Generate an initial population of random gene subsets, each containing a fixed number of genes (e.g., 40) [2].

- Evaluation: For each gene subset in the population, train a simple classifier (e.g., Support Vector Machine or Logistic Regression) and evaluate its performance using metrics like ROC-AUC or F1-score on a validation set.

- Evolution: Apply evolutionary operations over hundreds of generations:

- Selection: Preferentially retain the highest-performing gene subsets.

- Crossover: Recombine genes from high-performing subsets to create "offspring."

- Mutation: Randomly add or remove a small number of genes to/from subsets to introduce novelty.

- Consensus Building: Run the GA for many independent iterations (e.g., 1,000 runs). Rank all genes by their frequency of selection across all runs to create a consensus list of the most important predictors [2].

Step 3: Model Training with Automated Machine Learning (AutoML)

- Take the top-ranked genes (e.g., 35-40) from the GA consensus list.

- Use an AutoML framework to automatically train, validate, and tune multiple machine learning models (e.g., random forests, gradient boosting) using only this minimal gene set.

- The final output is an optimized, compact classifier ready for validation on the held-out test set [2].

Protocol 3: Validation via Reverse Transcription Quantitative PCR (RT-qPCR)

This protocol validates a discovered signature in a clinically applicable format.

Step 1: Primer/Probe Design

- Design specific primers and TaqMan probes for each gene in the minimal signature (e.g., a 3-gene signature: HERC6, IGF1R, NAGK) [31].

- Include reference genes (e.g., GAPDH, ACTB) for normalization.

Step 2: cDNA Synthesis

- Using an independent set of validation samples, reverse-transcribe a fixed amount of total RNA (e.g., 100 ng) into cDNA using a high-capacity reverse transcription kit.

Step 3: qPCR Amplification

- Perform qPCR reactions in triplicate for each target and reference gene.

- Calculate the cycle threshold (Ct) value for each reaction.

Step 4: Data Analysis and Score Calculation

- Normalize target gene Ct values to reference genes (ΔCt).

- Apply a pre-defined model (e.g., a logistic regression formula derived during discovery) to the normalized expression values to generate a diagnostic score for each sample [31].
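
The normalization and scoring steps can be sketched as follows. ΔCt is computed as the mean target Ct minus the mean reference Ct, and the score is a logistic function of a weighted sum of ΔCt values. The coefficients and intercept below are placeholders invented for illustration, not the published model from [31]; a real assay would use the values fixed during discovery.

```python
import math


def delta_ct(target_cts, reference_cts):
    """Mean Ct of the target replicates minus mean Ct of the reference measurements."""
    return sum(target_cts) / len(target_cts) - sum(reference_cts) / len(reference_cts)


def diagnostic_score(delta_cts, coefficients, intercept):
    """Logistic score in (0, 1) from per-gene ΔCt values and model coefficients."""
    z = intercept + sum(coefficients[gene] * delta_cts[gene] for gene in coefficients)
    return 1.0 / (1.0 + math.exp(-z))


# Invented triplicate Ct values; reference Cts are pooled across reference genes.
reference = [18.0, 18.1, 17.9]
sample = {
    "HERC6": delta_ct([24.0, 24.2, 23.8], reference),
    "IGF1R": delta_ct([26.1, 26.0, 25.9], reference),
    "NAGK": delta_ct([25.0, 25.1, 24.9], reference),
}
# Placeholder coefficients (hypothetical), chosen only to make the example run.
weights = {"HERC6": -0.8, "IGF1R": 0.5, "NAGK": 0.3}
print(diagnostic_score(sample, weights, intercept=0.0))
```

Because a lower Ct means higher expression, the sign of each coefficient has to be interpreted with that inversion in mind.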

Diagram 1: Overall workflow for developing a minimal gene signature, from initial sample collection to final validation.

Troubleshooting Guides & FAQs

FAQ 1: Signature Discovery and Robustness

Q: My minimal gene signature performs perfectly on my training data but fails on an independent dataset. What went wrong?

A: This is a classic sign of overfitting. Solutions include:

- Increase Cohort Heterogeneity: Ensure your discovery cohort includes the genetic, demographic, and technical diversity expected in real-world applications. A network-based meta-analysis that pools data from multiple studies can build a more robust and generalizable signature [32].

- Apply Regularization: Use machine learning techniques like elastic net regression during model training, which penalizes model complexity and reduces reliance on any single gene [31].

- Validate Extensively: Always validate your signature on a completely held-out test set and multiple external cohorts that were not used in any part of the discovery process [2] [32].

Q: Why do different studies discover completely different gene signatures for the same condition?

A: This is common and can be due to several factors:

- Biological Redundancy: Multiple distinct transcriptional pathways can lead to the same phenotype (e.g., antibiotic resistance). The GA-AutoML approach often finds multiple, non-overlapping gene subsets with comparable predictive power, reflecting this biological reality [2].

- Cohort-Specific Biases: Signatures derived from a single, geographically restricted cohort may capture local genetic or environmental factors rather than the core biology [32].

- Technical Variation: Differences in sample collection (PAXgene vs. Tempus tubes), RNA-seq protocols, and bioinformatic pipelines can significantly influence which genes are selected [32].

FAQ 2: Technical and Analytical Challenges

Q: I am working with non-canonical resistance genes or poorly annotated genomes. How does this impact transcriptomic analysis?

A: This is both a key challenge and an opportunity.

- Discovery Bottleneck: Traditional differential expression analysis relies on a well-annotated reference genome. Non-canonical proteins, derived from regions like long non-coding RNAs (lncRNAs) and alternative open reading frames, are often missed [3].

- Alternative Approaches: Use de novo transcriptome assembly tools (e.g., Trinity) to reconstruct transcripts without a reference genome. Subsequently, employ proteogenomic approaches, integrating ribosome profiling (Ribo-seq) and mass spectrometry data, to identify translated open reading frames in these unannotated regions [3].

- Functional Insight: Genes identified by signatures that fall outside known resistance databases (like CARD) may point to these novel, non-canonical resistance mechanisms and are prime candidates for further functional validation [2] [3].

Q: How do I choose the right feature selection method for my dataset? A: The choice depends on your data size and goals.

- For Large Datasets (>50,000 cells): Active learning methods like ActiveSVM are highly efficient. They iteratively identify misclassified cells and select genes that maximize classification improvement, focusing computational resources only on problematic cells [30].

- For High-Dimensional Clinical Cohorts: Evolutionary algorithms like Genetic Algorithms (GA) or Particle Swarm Optimization (PSO) are excellent for searching a vast gene space to find compact, high-performing subsets [2] [33].

- For a Balanced, Smaller Dataset: Regularized regression methods like Elastic Net or Forward Selection-Partial Least Squares (FS-PLS) provide a strong, straightforward approach [31].

Diagram 2: A decision guide for selecting a feature selection method based on dataset characteristics.

Quantitative Data & Performance Comparison

The following tables summarize the performance of minimal gene signatures as reported in recent literature, highlighting their accuracy and potential for clinical translation.

Table 1: Performance of Minimal Gene Signatures in Diagnostic Applications

| Signature / Study | Condition | Number of Genes | Performance (AUROC unless noted) | Sensitivity / Specificity | Key Finding |
|---|---|---|---|---|---|
| GA-AutoML [2] | Antibiotic Resistance in P. aeruginosa | 35–40 | 0.96–0.99 | N/A | Multiple non-overlapping gene sets achieved similar high accuracy, suggesting diverse transcriptional paths to resistance. |
| Three-Gene Signature [31] | Viral vs. Bacterial Infection | 3 | 0.976 | 97.3% / 100% | Outperformed CRP and leukocyte count in discriminating viral infections, including COVID-19. |
| ActiveSVM [30] | PBMC Cell Type Classification | 15 | ~0.90 (Accuracy) | N/A | Achieved high classification accuracy while analyzing only a small fraction (298) of the total cells. |
| Network Meta-Analysis [32] | Active Tuberculosis | 45 | 0.85 (Prognosis) | 74.2% / 78.3% | Validated across 57 studies, approximating WHO target product profile for TB prediction. |

Table 2: Comparison of Computational Methods for Gene Signature Discovery

| Method | Key Principle | Advantages | Disadvantages / Challenges |
|---|---|---|---|
| Genetic Algorithm (GA) with AutoML [2] | Evolves gene subsets over generations to optimize a classifier's performance. | Discovers multiple, unique, high-performing signatures; balances accuracy and interpretability. | Computationally intensive; can produce biologically distinct signatures that are difficult to interpret. |
| ActiveSVM [30] | Active learning that selects genes based on misclassified cells in a classification task. | Highly scalable to massive datasets (>1M cells); computationally efficient. | Requires pre-defined cell state labels; performance is tied to the quality of these labels. |
| Particle Swarm Optimization (PSO) [33] | Models social behavior to explore the gene space and find optimal feature sets. | Can identify succinct, highly accurate signatures with faster runtimes than some other evolutionary algorithms. | Like GA, may require significant parameter tuning. |
| Network-Based Meta-Analysis [32] | Identifies genes that are differentially expressed and co-vary consistently across multiple independent studies. | Produces highly robust and generalizable signatures by inherently accounting for cohort heterogeneity. | Relies on availability of multiple, high-quality public datasets. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Materials for Transcriptomic Signature Workflows

| Item | Function / Application | Example / Note |
|---|---|---|
| RNA Stabilization Tubes | Preserves the RNA transcriptome at the moment of collection for accurate expression profiling. | PAXgene Blood RNA Tubes; Tempus Blood RNA Tubes [31] [32]. |
| Total RNA Extraction Kits | Isolates high-quality, intact total RNA from various sample types. | Qiagen RNeasy; Zymo Research Quick-RNA kits. |
| RNA-seq Library Prep Kits | Prepares cDNA libraries from RNA for next-generation sequencing. | Illumina TruSeq Stranded mRNA; KAPA mRNA HyperPrep kits. Includes poly-A selection or rRNA depletion [29]. |
| RT-qPCR Master Mix | Enzymes and buffers for reverse transcription and quantitative PCR amplification. | TaqMan Gene Expression Master Mix; SYBR Green-based systems [31]. |
| Custom TaqMan Assays | Gene-specific primers and probes for targeted quantification of signature genes. | Designed for minimal signature genes (e.g., HERC6, IGF1R, NAGK) [31]. |

Frequently Asked Questions (FAQs)

Q1: What are the main advantages of using a Genetic Algorithm (GA) for feature selection in transcriptomic studies? Genetic Algorithms are powerful for feature selection because they can efficiently search a vast number of possible gene subsets to find a small, highly predictive group. In a study on Pseudomonas aeruginosa antimicrobial resistance (AMR), a GA identified minimal gene sets of 35–40 genes that achieved 96–99% accuracy in predicting antibiotic resistance, outperforming models using the entire transcriptome (6,026 genes) [34]. Because the search is stochastic, GAs are less prone to getting trapped in local optima, and they are particularly effective for high-dimensional data where the number of features (genes) far exceeds the number of samples [35] [36].

Q2: My GA keeps selecting different gene subsets in each run. Is this an error? Not necessarily. It is a common characteristic of GAs to find multiple, distinct feature subsets that yield similar high performance. In the AMR research, thousands of independent GA runs produced non-overlapping gene sets, yet all maintained high accuracy (F1 scores: 0.93–0.99) [34]. This suggests that the resistance phenotype may be linked to diverse transcriptional programs. You can address this by creating a consensus list from top-performing runs or by analyzing the biological pathways the different subsets represent.

Q3: Why is my GA-based model overfitting? Overfitting in GA feature selection usually arises when the fitness function rewards gene subsets that fit noise in the training data, which is easy when genes far outnumber samples. To mitigate this, use a robust validation method such as k-fold cross-validation during fitness evaluation [37] [38]. Applying regularization (L1/L2) within the classifier used in your fitness function can also help [37]. Finally, report performance on a held-out test set that is never used during feature selection, so that no information leaks from the test data into the selected signature [37].
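
As a rough illustration of the split described in Q3, the sketch below sets aside a test set once and then builds k folds from the remaining isolates for use inside the fitness function. It uses only the Python standard library. The 20% hold-out fraction, `k=5`, the seeds, and the names `kfold_indices` and `cv_fitness` are assumptions made for this example, not details reported in [34]; the cohort size of 414 comes from the case study later in this article.

```python
import random

def kfold_indices(n_samples, k=5, seed=0):
    """Yield (train_idx, val_idx) pairs that partition range(n_samples) into k folds."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]  # k roughly equal, disjoint folds
    for i in range(k):
        val = folds[i]
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, val

def cv_fitness(score_fn, n_samples, k=5, seed=0):
    """Average a user-supplied score_fn(train_idx, val_idx) over k folds.

    score_fn is a hypothetical callable that trains a classifier on the
    training indices and returns, e.g., an F1-score on the validation indices.
    """
    scores = [score_fn(tr, va) for tr, va in kfold_indices(n_samples, k, seed)]
    return sum(scores) / len(scores)

# Hold out a test set ONCE, before any GA run (assumed 20% of 414 isolates).
all_idx = list(range(414))
random.Random(42).shuffle(all_idx)
test_idx, dev_idx = all_idx[:83], all_idx[83:]

# Folds index positions within dev_idx; the GA never sees test_idx.
folds = list(kfold_indices(len(dev_idx), k=5))
print(len(folds), len(test_idx), len(dev_idx))
```

Keeping the test indices out of every fold means the final reported score reflects data the GA never used for selection.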

Q4: How do I evaluate the performance of my model on an imbalanced dataset? For imbalanced datasets, common in medical research, accuracy can be a misleading metric. It is recommended to use a suite of evaluation metrics, including Precision, Recall, F1-score, and AUC-ROC [37] [38]. The AMR study used F1-scores (0.93–0.99) alongside accuracy to reliably measure performance [34]. For highly imbalanced data, Precision-Recall curves can be more informative than ROC curves [37].
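
To make the point about misleading accuracy concrete, here is a minimal sketch that computes accuracy, precision, recall, and F1 from scratch. The cohort of 90 susceptible and 10 resistant isolates and the "always susceptible" classifier are hypothetical, chosen only to illustrate the effect of class imbalance.

```python
def confusion_counts(y_true, y_pred):
    """Return (tp, fp, fn, tn) for binary labels where 1 = resistant."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    return tp, fp, fn, tn

def summarize(y_true, y_pred):
    """Compute accuracy, precision, recall, and F1, guarding against division by zero."""
    tp, fp, fn, tn = confusion_counts(y_true, y_pred)
    accuracy = (tp + tn) / len(y_true)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}

# Hypothetical imbalanced cohort: 90 susceptible (0), 10 resistant (1).
y_true = [0] * 90 + [1] * 10
always_susceptible = [0] * 100  # a useless classifier that never calls resistance

metrics = summarize(y_true, always_susceptible)
print(metrics)  # accuracy is 0.9 while recall and F1 are both 0.0
```

The classifier never detects a resistant isolate, yet its accuracy looks respectable, which is why F1 or precision-recall analysis is the safer choice here.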

Q5: What is the role of AutoML in this pipeline? AutoML (Automated Machine Learning) automates the process of selecting and optimizing the best machine learning model and its hyperparameters. In the referenced framework, a GA handles the "outer loop" of feature selection, while AutoML optimizes the "inner loop" classifier, creating a powerful hybrid pipeline that reduces manual tuning and mitigates bias [34] [39].

Troubleshooting Guides

Issue 1: Poor Model Performance After Feature Selection

Problem: The classifier trained on your GA-selected features shows low accuracy or F1-score on the test set.

| Potential Cause | Solution |
|---|---|
| Data Quality Issues | Perform rigorous data cleaning: handle missing values, remove duplicates, and normalize or standardize expression data [37] [38]. |
| Incorrect Fitness Function | Use a robust, multi-faceted fitness function. Don't rely solely on accuracy, especially for imbalanced data. Incorporate metrics like F1-score or AUC-ROC into your fitness evaluation [34] [40]. |
| Underfitting | The model is too simple. Increase GA parameters like population size or number of generations. Allow for more complex models in your AutoML step [37] [36]. |
| Data Leakage | Ensure that no information from the test set leaks into the training (and feature selection) process. Split the data first, then fit every preprocessing step (including scaling) on the training data only, ideally inside a pipeline [37]. |
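
The data leakage row can be illustrated with a small, standard-library sketch: the scaling parameters are estimated from the training split only and then reused unchanged on the test split. The expression values and the helper names `fit_scaler` and `apply_scaler` are hypothetical; in practice a pipeline object from a machine learning library would typically handle this.

```python
from statistics import mean, pstdev

def fit_scaler(train_values):
    """Estimate mean and standard deviation from TRAINING data only."""
    mu = mean(train_values)
    sigma = pstdev(train_values) or 1.0  # avoid division by zero for constant genes
    return mu, sigma

def apply_scaler(values, mu, sigma):
    """Standardize values with previously fitted parameters."""
    return [(v - mu) / sigma for v in values]

# Hypothetical expression values for a single gene, already split.
train_expr = [2.0, 4.0, 6.0, 8.0]
test_expr = [10.0, 12.0]

mu, sigma = fit_scaler(train_expr)                   # the test set is not consulted
train_scaled = apply_scaler(train_expr, mu, sigma)
test_scaled = apply_scaler(test_expr, mu, sigma)     # reuse the training parameters

print(mu, round(sigma, 3))
print([round(v, 3) for v in test_scaled])
```

Fitting the scaler on the full dataset instead would let test-set statistics shape the training features, which is exactly the leakage the table warns about.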

Issue 2: Genetic Algorithm Fails to Converge

Problem: The GA's performance fluctuates wildly without showing a clear improvement over generations.

| Potential Cause | Solution |
|---|---|
| Improper GA Parameters | Tune key parameters: keep the mutation rate small (e.g., 0.01), since a rate that is too high makes the search erratic while one that is too low starves the population of diversity; adjust the crossover rate; and use elitism to preserve the best solutions from one generation to the next [35] [36]. |
| Weak Selection Pressure | Use a selection method like rank-based selection or tournament selection to give fitter individuals a higher chance of reproducing, which helps guide the search [36]. |
| Poor Initialization | Instead of purely random initialization, seed the initial population with genes known to have high correlation with the target phenotype to give the algorithm a head start [36]. |

Issue 3: Selected Gene Set is Biologically Uninterpretable

Problem: The GA identifies a high-performing gene set, but it lacks overlap with known biological pathways or databases like CARD.

| Potential Cause | Solution |
|---|---|
| Focus on Non-Canonical Mechanisms | This may not be an error but a discovery. AMR research found that only 2–10% of predictive genes overlapped with known resistance markers in CARD, highlighting non-canonical resistance mechanisms [34]. |
| Lack of Pathway-Level Analysis | Move beyond individual genes. Perform enrichment analysis on the gene set and map the genes to independently modulated gene sets (iModulons) or operons to uncover higher-order regulatory programs [34]. |
| Ignoring Model Explainability | Use explainable AI (XAI) tools like SHAP or LIME on your final model to understand how individual genes contribute to predictions, providing biological insights [37]. |
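
One common way to perform the enrichment analysis mentioned above is a one-sided hypergeometric (over-representation) test, sketched here with the standard library. The source does not state which enrichment method was used in [34], so treat this as one possible approach. The background of 6,026 genes and the 40-gene signature follow the case study figures; the pathway size of 50 and the overlap of 4 are hypothetical.

```python
from math import comb

def hypergeom_enrichment_p(n_background, n_pathway, n_signature, n_overlap):
    """P(overlap >= n_overlap) when n_signature genes are drawn at random,
    without replacement, from n_background genes of which n_pathway belong
    to the pathway (or iModulon, operon, etc.)."""
    total = comb(n_background, n_signature)
    upper = min(n_pathway, n_signature)
    tail = sum(
        comb(n_pathway, i) * comb(n_background - n_pathway, n_signature - i)
        for i in range(n_overlap, upper + 1)
    )
    return tail / total

# Hypothetical example: 4 of 40 signature genes fall in a 50-gene pathway.
p_value = hypergeom_enrichment_p(
    n_background=6026, n_pathway=50, n_signature=40, n_overlap=4
)
print(p_value)
```

When many pathways are tested, the resulting p-values should be corrected for multiple testing before any pathway is called enriched.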

Experimental Protocol: A Case Study in AMR Research

This protocol summarizes the methodology from a study that achieved 96-99% accuracy in predicting antibiotic resistance in P. aeruginosa using a GA-AutoML pipeline [34].

Data Preparation and Preprocessing

- Data Source: 414 clinical isolates of P. aeruginosa.

- Transcriptomic Data: RNA-seq data yielding expression values for 6,026 genes.

- Phenotypic Labels: Binary labels (Resistant/Susceptible) for four antibiotics: Meropenem (MEM), Ciprofloxacin (CIP), Tobramycin (TOB), and Ceftazidime (CAZ).

- Preprocessing: Data was likely normalized (e.g., TPM or FPKM) and scaled. The dataset was split into training and held-out test sets.

Genetic Algorithm Setup for Feature Selection

The core of the feature selection process is implemented as follows:

- Encoding Scheme: Binary encoding. Each individual in the population is a binary vector of length 6,026, where '1' indicates the gene is selected and '0' indicates it is excluded [35] [36].

- Population & Initialization: A population of candidate solutions (e.g., 100 individuals) is initialized randomly. Each individual initially represents a random subset of ~40 genes.

- Fitness Function: The fitness of an individual (a gene subset) is evaluated by:

  - Training a classifier (e.g., SVM, Logistic Regression) using only the selected genes on the training set.

  - Evaluating the classifier's performance using a metric like F1-score or AUC-ROC on a validation set.

  - The fitness score is this performance metric. The GA aims to maximize it.

- Genetic Operators:

  - Selection: Roulette wheel or rank-based selection is used to choose parents for reproduction, favoring individuals with higher fitness [36].

  - Crossover: Single-point or uniform crossover is applied to pairs of parents to create offspring, combining their gene subsets [35].

  - Mutation: Each gene in the offspring has a small probability (mutation rate, e.g., 0.01) of being flipped (1 to 0 or vice versa) to maintain population diversity [35] [36].

- Termination Criteria: The algorithm runs for a fixed number of generations (e.g., 300) or until performance plateaus.
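
The setup above can be sketched as a compact, standard-library GA with binary encoding, rank-based selection, uniform crossover, bit-flip mutation, and elitism. This is not the implementation from [34]. The fitness function is a toy stand-in for a classifier's validation F1-score, and `N_GENES`, `INFORMATIVE`, the size penalty of 0.002, and the inclusion of elitism are assumptions made so the example runs without expression data.

```python
import random

N_GENES = 200                 # toy size; the study's vectors had length 6,026
INFORMATIVE = set(range(10))  # hypothetical "truly predictive" genes

def fitness(individual):
    """Toy stand-in for a validation F1-score: reward informative genes, penalize size."""
    selected = {i for i, bit in enumerate(individual) if bit}
    if not selected:
        return 0.0
    return len(selected & INFORMATIVE) / len(INFORMATIVE) - 0.002 * len(selected)

def random_individual(rng, n_selected=40):
    """Binary vector with ~40 randomly chosen genes switched on."""
    chosen = set(rng.sample(range(N_GENES), n_selected))
    return [1 if i in chosen else 0 for i in range(N_GENES)]

def rank_select(population, scores, rng):
    """Rank-based selection: the selection weight equals the fitness rank."""
    order = sorted(range(len(population)), key=lambda i: scores[i])
    weights = [0] * len(population)
    for rank, i in enumerate(order, start=1):
        weights[i] = rank
    return rng.choices(population, weights=weights, k=1)[0]

def uniform_crossover(a, b, rng):
    """Each position is inherited from either parent with equal probability."""
    return [x if rng.random() < 0.5 else y for x, y in zip(a, b)]

def mutate(individual, rng, rate=0.01):
    """Flip each bit independently with probability `rate`."""
    return [1 - bit if rng.random() < rate else bit for bit in individual]

def run_ga(pop_size=100, generations=300, mutation_rate=0.01, seed=0):
    rng = random.Random(seed)
    population = [random_individual(rng) for _ in range(pop_size)]
    history = []  # best fitness per generation
    for _ in range(generations):
        scores = [fitness(ind) for ind in population]
        best = max(range(pop_size), key=lambda i: scores[i])
        history.append(scores[best])
        next_gen = [population[best][:]]  # elitism: carry the best forward unchanged
        while len(next_gen) < pop_size:
            p1 = rank_select(population, scores, rng)
            p2 = rank_select(population, scores, rng)
            child = uniform_crossover(p1, p2, rng)
            next_gen.append(mutate(child, rng, mutation_rate))
        population = next_gen
    scores = [fitness(ind) for ind in population]
    best = max(range(pop_size), key=lambda i: scores[i])
    history.append(scores[best])
    return population[best], history

# Small run for demonstration; the defaults mirror the example values in the text.
best_individual, history = run_ga(pop_size=30, generations=40)
print(sum(best_individual), round(history[0], 3), round(history[-1], 3))
```

In a real pipeline, `fitness` would train a classifier on the selected genes and return a cross-validated metric, which dominates the runtime and is the main reason GA-based selection is computationally intensive.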

Model Training and Evaluation with AutoML

- Consensus Gene Set: After many GA runs (e.g., 1,000), genes are ranked by how frequently they appear in high-performing subsets. A consensus set of the top 35-40 genes is selected for final model building [34].

- AutoML Modeling: An AutoML framework is used to automatically train, validate, and tune a suite of classifiers (e.g., SVM, Random Forest, XGBoost) using the consensus gene set.
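
The consensus step described above can be sketched with a simple frequency count. The function name `consensus_genes`, the `min_fitness` cut-off for what counts as "high-performing", and the gene labels are hypothetical; [34] may have defined the ranking differently.

```python
from collections import Counter

def consensus_genes(runs, top_n=40, min_fitness=0.0):
    """Rank genes by how often they appear in high-performing GA runs.

    runs: iterable of (gene_list, fitness_score) pairs, one per GA run.
    Only runs with fitness_score >= min_fitness contribute to the counts.
    Ties are broken alphabetically so the result is deterministic.
    """
    counts = Counter()
    for genes, score in runs:
        if score >= min_fitness:
            counts.update(set(genes))  # count each gene at most once per run
    ranked = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))
    return [gene for gene, _ in ranked[:top_n]]

# Hypothetical output of three GA runs (placeholder gene labels and scores).
runs = [
    (["gene_A", "gene_B", "gene_C"], 0.97),
    (["gene_B", "gene_C", "gene_D"], 0.95),
    (["gene_C", "gene_E"], 0.60),  # below the cut-off, so ignored
]

top = consensus_genes(runs, top_n=2, min_fitness=0.9)
print(top)  # ['gene_B', 'gene_C']
```

With about 1,000 runs, as in the protocol, `top_n` would be set to the target signature size (35–40 genes), and the resulting list would be passed to the AutoML step.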