Benchmarking Variant Effect Prediction in Plants: From Models to Precision Breeding Applications


Abstract

Accurately predicting the effects of genetic variants is crucial for advancing plant breeding from traditional phenotypic selection toward precision breeding. This article provides a comprehensive framework for benchmarking variant effect prediction (VEP) models in plants, addressing the unique challenges posed by plant genomes. We explore foundational concepts, contrast traditional statistical methods with emerging machine learning and deep learning approaches, and outline strategies for overcoming obstacles like complex plant genomes and data scarcity. By presenting rigorous validation methodologies and comparative analyses of tools across species—from Arabidopsis to major crops like maize and rice—this review serves as an essential guide for researchers and breeders seeking to leverage computational predictions for crop improvement. The insights provided aim to bridge the gap between model development and practical application in agricultural biotechnology.

The Foundations of Plant Variant Effect Prediction: Why Benchmarking Matters

Defining Variant Effect Prediction in the Context of Plant Genomics and Breeding

Variant Effect Prediction (VEP) encompasses computational methods designed to assess the impact of genetic variants—such as single nucleotide polymorphisms (SNPs) and insertions/deletions (indels)—on gene function and, ultimately, on plant phenotypes. In the realm of plant breeding, these methods are emerging as efficient alternatives or complements to traditional, costly mutagenesis screens, supporting a strategic shift toward precision breeding where causal variants are directly targeted based on their predicted effects [1]. The core challenge VEP addresses is the identification of disease-causing or agronomically valuable variants among the millions present in a plant's genome, a process critical for unlocking genetic diversity within genebanks and accelerating the development of improved crop cultivars [2] [3].

Traditional methods for identifying variant effects have relied heavily on association mapping (e.g., QTL mapping and GWAS) and comparative genomics based on sequence conservation across species [1]. However, these approaches have inherent limitations, including moderate-to-low resolution and dependency on the availability of closely related genomes [1]. Modern VEP tools, particularly those powered by artificial intelligence (AI) and foundation models, aim to overcome these limitations by generalizing across genomic contexts, fitting a unified model across loci rather than requiring a separate model for each locus [1] [4].

Categories of Prediction Models and Methodologies

Variant effect predictors can be broadly categorized based on their underlying methodologies, which align with two primary research fields: functional genomics and comparative genomics [1].

Supervised Learning in Functional Genomics

This approach uses machine learning models trained on experimentally labeled genomic data. These sequence-to-function models predict molecular traits (e.g., gene expression) or complex phenotypes from sequence data by estimating a single, unified function that considers the genomic, cellular, and environmental context [1]. They contrast with traditional association testing, which fits a separate linear function for each locus and is often confounded by linkage disequilibrium [1].

Unsupervised Learning in Comparative Genomics

Leveraging principles from evolutionary genetics, these methods typically use unsupervised or self-supervised learning on unlabeled sequence data from multiple species or populations. They predict the fitness effects of variants by assessing conservation, with modern AI models aiming to predict conservation by considering the sequence context of the focal locus, either with or without explicit alignment information [1].
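As a rough illustration of what "assessing conservation" means at a single site, the sketch below scores one column of a multiple sequence alignment by its Shannon entropy. It is a minimal classical example, not the method of any tool cited here. The toy alignment, the function name `column_conservation`, and the 0 to 1 scaling are assumptions made for illustration.

```python
import math
from collections import Counter

def column_conservation(alignment, column):
    """Return a 0-1 conservation score for one alignment column.

    1.0 means all non-gap residues are identical; 0.0 means the four
    nucleotides are equally frequent. Gaps ("-") are ignored.
    """
    residues = [seq[column] for seq in alignment if seq[column] != "-"]
    if not residues:
        return 0.0
    total = len(residues)
    counts = Counter(residues)
    entropy = -sum((n / total) * math.log2(n / total) for n in counts.values())
    max_entropy = math.log2(4)  # DNA alphabet of size 4
    return 1.0 - entropy / max_entropy

# Hypothetical alignment of orthologous sequences from four species.
toy_alignment = [
    "ACGTAC",
    "ACGTTC",
    "ACGAGC",
    "ACG-CC",
]

conservation = [column_conservation(toy_alignment, i) for i in range(6)]
```

Under this scoring, a variant at a fully conserved column (score 1.0) would be flagged as more likely deleterious than one at a freely varying column (score 0.0). Sequence models aim to learn this kind of signal from context rather than from a fixed per-column statistic.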

Table 1: Methodological Categories of Variant Effect Predictors

| Category | Core Methodology | Training Data | Typical Application in Plants |
|---|---|---|---|
| Supervised Models (Functional Genomics) | Supervised machine learning | Experimentally labeled sequences (e.g., phenotypic or molecular trait data) | Predicting variant effects on specific agronomic or molecular traits [1] |
| Unsupervised Models (Comparative Genomics) | Unsupervised/self-supervised learning | Unlabeled sequence variation data across populations/species | Identifying deleterious mutations and inferring fitness-related traits [1] |
| Foundation Models | Self-supervised pre-training on large genomic datasets | Large-scale genomic sequences (e.g., whole genomes) | Zero-shot embeddings for diverse downstream tasks like pathogenicity prediction and gene expression [4] |

Comparative Performance of Model Architectures

Recent benchmarking efforts have systematically evaluated the performance of different DNA foundation models across a range of genomic tasks. These evaluations reveal that model performance is not uniform but varies significantly depending on the specific task, model architecture, and even the method used to generate sequence embeddings [4].

The Impact of Embedding Strategies

A critical finding from comprehensive benchmarks is that the method used to generate sequence-level embeddings from DNA foundation models has a substantial impact on performance in sequence classification tasks. The mean token embedding strategy, which averages the embeddings of all non-padding tokens, has been shown to consistently and significantly outperform other pooling strategies, such as using a sentence-level summary token ([CLS] or [SEP]) or maximum pooling [4].

For instance, in promoter identification tasks for the GM12878 cell line, switching from a summary token to mean token embedding improved the Area Under the Curve (AUC) for DNABERT-2 from 0.964 to 0.986. Even more dramatically, for the B. amyloliquefaciens genome, the same switch increased the AUC for HyenaDNA from 0.689 to 0.864 [4]. This suggests that mean token embedding provides a more comprehensive representation of the entire DNA sequence, which is particularly beneficial when discriminative features are distributed throughout the sequence [4].
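The three pooling strategies compared above can be sketched in a few lines. This is a minimal illustration using plain Python lists; the token vectors, the mask convention (1 = real token, 0 = padding), and the function names are assumptions rather than the API of any specific foundation model.

```python
def mean_token_embedding(token_embeddings, attention_mask):
    """Average the embeddings of all non-padding tokens."""
    kept = [vec for vec, keep in zip(token_embeddings, attention_mask) if keep]
    if not kept:
        raise ValueError("sequence has no non-padding tokens")
    dim = len(kept[0])
    return [sum(vec[d] for vec in kept) / len(kept) for d in range(dim)]

def summary_token_embedding(token_embeddings, index=0):
    """Use a single summary token (e.g. [CLS] at position 0) as the sequence embedding."""
    return list(token_embeddings[index])

def max_pool_embedding(token_embeddings, attention_mask):
    """Take the per-dimension maximum over non-padding tokens."""
    kept = [vec for vec, keep in zip(token_embeddings, attention_mask) if keep]
    if not kept:
        raise ValueError("sequence has no non-padding tokens")
    dim = len(kept[0])
    return [max(vec[d] for vec in kept) for d in range(dim)]

# Hypothetical per-token embeddings for one sequence (2 dimensions).
tokens = [
    [0.0, 0.0],  # summary token
    [1.0, 4.0],
    [3.0, 2.0],
    [9.0, 9.0],  # padding position, masked out below
]
mask = [1, 1, 1, 0]

mean_vec = mean_token_embedding(tokens, mask)
cls_vec = summary_token_embedding(tokens)
max_vec = max_pool_embedding(tokens, mask)
```

Mean pooling uses every real token, which is consistent with the explanation that it helps when discriminative features are spread along the sequence. The summary-token vector depends on a single position only.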

Model Performance Across Genomic Tasks

When benchmarked on diverse tasks using optimal mean token embedding, general-purpose DNA foundation models show competitive but variable performance [4].

Table 2: Benchmarking Performance of DNA Foundation Models on Selected Tasks (AUC scores where reported; qualitative summaries otherwise)

| Genomic Task | DNABERT-2 | Nucleotide Transformer V2 | HyenaDNA | Caduceus-Ph | GROVER |
|---|---|---|---|---|---|
| Pathogenic Variant Identification | Competitive Performance | Competitive Performance | Competitive Performance | Competitive Performance | Competitive Performance |
| Splice Site Prediction (Donor) | 0.906 [4] | Not reported | Not reported | Not reported | Not reported |
| Splice Site Prediction (Acceptor) | 0.897 [4] | Not reported | Not reported | Not reported | Not reported |
| Promoter Identification (GM12878) | 0.986 [4] | Not reported | Not reported | Not reported | Not reported |
| Transcription Factor Binding Site Prediction | Not reported | Not reported | Not reported | Superior Performance [4] | Not reported |
| Gene Expression Prediction (from zero-shot embeddings) | Less Effective | Less Effective | Less Effective | Less Effective | Less Effective |
| Identifying Putative Causal QTLs | Less Effective | Less Effective | Less Effective | Less Effective | Less Effective |

As illustrated in Table 2, while models like DNABERT-2 and Caduceus-Ph excel in specific tasks like splice site and transcription factor binding site prediction, their zero-shot embeddings are less effective for predicting gene expression and identifying quantitative trait loci (QTLs) compared to specialized models designed for these purposes [4]. This highlights that despite their generalizability, foundation models are not a panacea and task-specific tools remain important.

Experimental Protocols for Benchmarking

To ensure fair and reproducible comparisons of VEP tools, standardized benchmarking protocols are essential. These protocols typically involve using curated datasets, defined evaluation metrics, and consistent experimental workflows.

Protocol for Sequence Classification Benchmarking

This protocol evaluates how well a model's sequence representations can be used to classify genomic regions (e.g., promoters, enhancers) [4].

- Dataset Curation: Assemble a collection of labeled sequences for the classification task (e.g., promoter vs. non-promoter sequences). Resources like EasyGeSe provide curated datasets from multiple plant species in ready-to-use formats [5].

- Generate Zero-Shot Embeddings: Input the sequences into the pre-trained foundation model (with frozen weights) and generate sequence-level embeddings using the mean token pooling strategy [4].

- Train Downstream Classifier: Split the embedded samples into training and testing sets. Train a standard classifier, such as a Random Forest model, on the training embeddings. Random Forest is often selected for its strong performance, minimal hyperparameter tuning, and capacity to handle complex, non-linear relationships [4].

- Evaluate Performance: Use the trained classifier to predict labels for the test set sequences. Report standard performance metrics such as the Area Under the Receiver Operating Characteristic Curve (AUC) [4].
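The steps above can be sketched end to end on toy data. To keep the example dependency-free, a nearest-centroid scorer stands in for the Random Forest classifier named in the protocol, and AUC is computed directly from its rank-based definition. The 2-D "embeddings", the hold-out rule, and all function names are assumptions for illustration only.

```python
import random

def roc_auc(labels, scores):
    """AUC as the probability that a positive outscores a negative (ties count 0.5)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def centroid(vectors):
    """Component-wise mean of a list of equal-length vectors."""
    return [sum(col) / len(vectors) for col in zip(*vectors)]

def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

# Hypothetical frozen-model embeddings: positives cluster near (1, 1),
# negatives near (0, 0). Labels alternate 0, 1, 0, 1, ...
rng = random.Random(0)
samples = [([label + rng.gauss(0, 0.3), label + rng.gauss(0, 0.3)], label)
           for label in (i % 2 for i in range(40))]

# Deterministic hold-out: every fifth sample goes to the test set.
test = samples[::5]
train = [s for i, s in enumerate(samples) if i % 5 != 0]

# "Training" the stand-in classifier: one centroid per class.
pos_center = centroid([v for v, y in train if y == 1])
neg_center = centroid([v for v, y in train if y == 0])

# Score = how much closer a test embedding is to the positive centroid.
test_scores = [squared_distance(v, neg_center) - squared_distance(v, pos_center)
               for v, _ in test]
test_labels = [y for _, y in test]
auc = roc_auc(test_labels, test_scores)
```

In a real benchmark the embeddings would come from the frozen foundation model and the classifier would be the one specified in the protocol. The evaluation step, ranking held-out sequences by score and summarizing with AUC, stays the same.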

Protocol for Genomic Prediction in Breeding

This protocol assesses the accuracy of predicting complex phenotypic traits from genotypic data, a common application in plant breeding programs [5].

- Population Genotyping: Generate high-density genotype data (e.g., SNP markers) for a training population of plants. Ensure data is filtered for quality, removing markers with high missing data rates or low minor allele frequency [6] [5].

- Phenotypic Evaluation: Measure the traits of interest (e.g., yield, disease resistance, days to flowering) in the training population under controlled or field conditions [5].

- Model Training: Employ a genomic prediction model to learn the relationship between genotypes and phenotypes.

- Model Validation & Comparison: Use cross-validation to estimate the predictive performance of the model. The primary metric is often Pearson's correlation coefficient (r) between the predicted and observed phenotypic values in the validation set. Compare the predictive accuracy and computational efficiency (e.g., model fitting time, RAM usage) of different methods [5].
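The validation step can be sketched as k-fold cross-validation scored with Pearson's r. The model here is a deliberately simple stand-in (a regression of phenotype on total alternate-allele dosage), not one of the genomic prediction methods benchmarked in [5]. The simulated genotypes, the phenotypes, and all function names are assumptions.

```python
import math
import random

def pearson_r(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return float("nan")
    return sxy / math.sqrt(sxx * syy)

def k_fold_indices(n, k, seed=0):
    """Shuffle sample indices and deal them into k disjoint folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]

def fit_predict_dosage_sum(train_X, train_y, test_X):
    """Toy model: least-squares fit of phenotype on summed allele dosage."""
    xs = [sum(row) for row in train_X]
    n = len(xs)
    mx, my = sum(xs) / n, sum(train_y) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = 0.0 if sxx == 0 else sum((x - mx) * (y - my) for x, y in zip(xs, train_y)) / sxx
    intercept = my - slope * mx
    return [intercept + slope * sum(row) for row in test_X]

def cross_validated_r(X, y, k=5, fit_predict=fit_predict_dosage_sum, seed=0):
    """Mean Pearson r between predicted and observed values across k folds."""
    fold_rs = []
    for test_idx in k_fold_indices(len(y), k, seed):
        held_out = set(test_idx)
        train_idx = [i for i in range(len(y)) if i not in held_out]
        preds = fit_predict([X[i] for i in train_idx],
                            [y[i] for i in train_idx],
                            [X[i] for i in test_idx])
        fold_rs.append(pearson_r(preds, [y[i] for i in test_idx]))
    return sum(fold_rs) / len(fold_rs)

# Simulated data: 60 plants, 20 SNPs coded as 0/1/2 alternate-allele dosage.
rng = random.Random(1)
genotypes = [[rng.choice((0, 1, 2)) for _ in range(20)] for _ in range(60)]
phenotypes = [sum(row) + rng.gauss(0, 1.0) for row in genotypes]

mean_r = cross_validated_r(genotypes, phenotypes, k=5)
```

Passing a different `fit_predict` callable lets several methods be compared on identical folds, which keeps the accuracy comparison fair.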

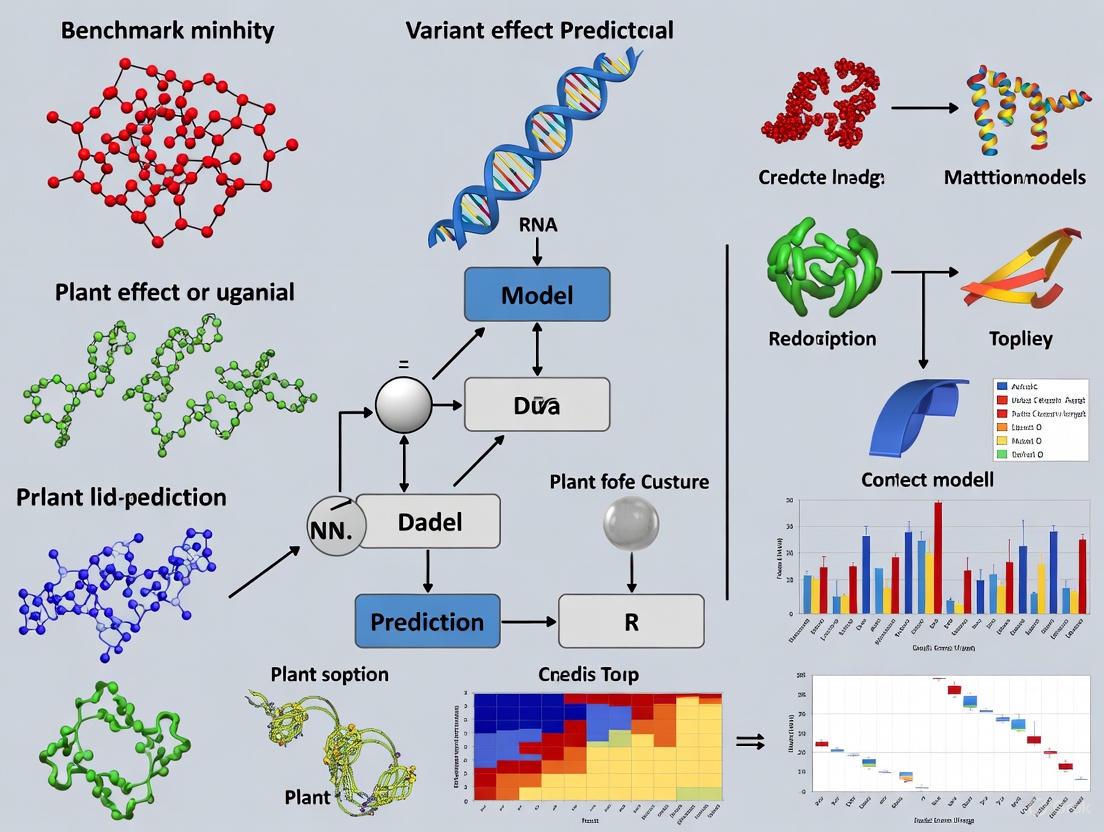

Figure 1: A generalized workflow for the benchmarking of variant effect prediction tools and genomic prediction models.

A suite of databases, software tools, and curated data resources is fundamental for VEP research and its application in plant breeding.

Table 3: Key Research Reagents and Resources for VEP

| Resource Name | Type | Function and Application |
|---|---|---|
| Ensembl VEP [7] | Computational Tool | Annotates variants with their functional consequences (e.g., effect on transcripts, regulatory regions) and overlays known variant data from databases. |
| VIPdb [3] | Database | A curated database of over 400 Variant Impact Predictors (VIPs), facilitating the exploration and selection of appropriate tools for specific variant types and contexts. |
| EasyGeSe [5] | Database / Tool | Provides a curated collection of datasets from multiple species for standardized benchmarking of genomic prediction methods, promoting reproducible research. |
| dbNSFP [7] | Database | Hosts precomputed predictions from multiple functional prediction scores for non-synonymous and splice-site variants, enabling consolidated analysis. |
| OpenCRAVAT [3] | Tool / Platform | Integrates hundreds of variant analysis tools into a single platform, particularly useful for cancer-related variants but also applicable to other contexts. |
| SPET/Probe-Based Genotyping [6] | Laboratory Technique | A targeted sequencing method (Single Primer Enrichment Technology) for cost-effective, high-density SNP genotyping in breeding populations. |
| Chlorophyll a Fluorescence (ChlF) [6] | Phenotyping Assay | A non-invasive endophenotype used in "phenomic prediction" to model and predict growth-related traits, serving as an alternative to genomic predictors. |

Integration with Plant Breeding and Future Outlook

The ultimate validation of VEP lies in its successful integration into plant breeding pipelines to enhance genetic gain. Precision breeding, which directly introduces targeted variants using techniques like CRISPR-Cas9, greatly benefits from accurate in silico predictions to identify optimal editing targets [1] [8]. VEP tools can help pinpoint causal variants for traits such as disease resistance, abiotic stress tolerance, and yield components, thereby informing which edits are most likely to produce desired phenotypes [1] [2].

However, several challenges remain before in silico prediction becomes a routine driver of precision breeding. The accuracy and generalizability of sequence models, especially for complex traits and in regulatory regions, heavily depend on the quality and breadth of training data [1]. Furthermore, plant genomes present specific hurdles, such as large sizes, high repetitiveness, and polyploidy, which are not as prevalent in mammalian systems [1]. Future advancements will likely come from improved model architectures, better integration of multi-omics data, and, crucially, more rigorous validation through direct experimentation in diverse plant species and environments [1] [4] [2]. As these tools mature, they are poised to become an indispensable component of the modern breeder's toolbox, helping to develop resilient crop varieties needed for future food security [1] [8].

The growing field of plant genomics has witnessed rapid advancements in variant effect prediction (VEP) tools, which are increasingly crucial for both precision breeding and the management of deleterious genetic variation. These computational models address a fundamental challenge in plant genetics: distinguishing functional variants with desirable traits from those that are detrimental to plant health and productivity. As plant breeding shifts from traditional phenotype-based selection toward precision breeding strategies, accurate VEP becomes essential for directly targeting causal variants rather than broader genomic segments [1].

Modern VEP tools leverage machine learning approaches and protein language models to predict the functional consequences of genetic variants. In recent benchmarks, these tools performed strongly at classifying pathogenic versus benign variants and at predicting experimental measurements from deep mutational scanning studies [9] [10]. For plant researchers, these capabilities translate into practical applications ranging from the identification of candidate causal variants for precise gene editing to the systematic purging of deleterious mutations that accumulate during domestication and intensive selection [1] [11].

This guide provides a comprehensive comparison of VEP methodologies, their performance benchmarks, and practical protocols for implementation in plant research programs. By synthesizing recent benchmarking studies and experimental validations, we aim to equip researchers with the knowledge to select appropriate VEP tools for specific applications in both model and crop plants.

Comparative Performance of Variant Effect Predictors

Performance Benchmarks Across Multiple Studies

Recent large-scale evaluations have systematically compared the performance of numerous VEP tools using diverse datasets, including clinical variants, functional measurements, and population cohort data. These benchmarks provide critical insights for researchers selecting appropriate tools for plant genomics applications.

Table 1: Comprehensive Benchmarking of Variant Effect Predictors

| Predictor | Clinical Variant Classification (AUC) | DMS Correlation Performance | Plant Research Applicability | Key Strengths |
|---|---|---|---|---|
| AlphaMissense | 0.905 (ClinVar) [9] | Top performer [10] | High | Best overall performance, user-friendly [12] |
| ESM1b | 0.897 (HGMD/gnomAD) [9] | High accuracy [9] | High | Genome-wide coverage, no MSA dependency [9] |
| EVE | 0.885 (ClinVar) [9] | Not evaluated in cohort studies | Moderate | Unsupervised approach, no clinical data training [9] |
| VARITY | Not specified | Comparable to AlphaMissense for some traits [10] | Moderate | Strong performance on quantitative traits [10] |
| Meta-predictors | Varies | Generally strong | High | Consistent performance across variant types [12] |

In a study evaluating 24 computational variant effect predictors using UK Biobank and All of Us cohort data, AlphaMissense emerged as the top-performing tool, outperforming others in 132 of 140 gene-trait combinations [10]. This performance was particularly notable for rare missense variants (MAF < 0.1%), which are especially relevant for breeding applications where novel mutations may be introduced and selected. AlphaMissense performed significantly better than every other predictor except VARITY, with which it was statistically tied (FDR > 10%) [10].

Another extensive benchmark of 65 different VEP tools confirmed that AlphaMissense consistently ranked among the best options, with the additional advantage of being accessible to non-specialists [12]. The study also revealed that tools leveraging evolutionary information generally performed well for functional variants, while meta-predictors showed strong average performance across diverse variant types [12].

Specialized Performance in Different Genomic Contexts

The performance of VEP tools can vary significantly across different genomic contexts, an important consideration for plant researchers working with diverse genomic elements:

- Coding vs. Non-coding Variants: Protein language models like ESM1b and AlphaMissense excel for coding variants but may have limitations for regulatory regions [1] [9].

- Isoform-specific Effects: ESM1b has demonstrated capability to assess variant effects in the context of different protein isoforms, identifying isoform-sensitive variants in 85% of alternatively spliced genes [9]. This is particularly relevant for plant species with complex alternative splicing patterns.

- Structural Variants: Most VEP tools focus on single nucleotide variants and small indels, with limited capability for predicting effects of larger structural variants that are common in plant genomes [11].

Table 2: Performance Across Variant Types and Genomic Contexts

| Genomic Context | Top Performing Tools | Key Limitations | Considerations for Plant Research |
|---|---|---|---|
| Missense Variants | AlphaMissense, ESM1b, EVE [9] [12] | Limited to coding regions | Critical for identifying deleterious mutations in breeding lines |
| Regulatory Variants | Not clearly established | Generally lower accuracy | Important for complex agronomic traits; area needing improvement [1] |
| In-frame Indels | ESM1b (generalized approach) [9] | Few tools support this variant class | Relevant for gene editing applications in plants |
| Isoform-specific Effects | ESM1b [9] | Most tools don't distinguish isoforms | Important for plants with complex transcriptomes |
| Structural Variants | Specialized population genomics approaches [11] | Not covered by standard VEP tools | Significant in crop domestication studies [11] |

Experimental Protocols for Validation

Benchmarking Against Clinical and Functional Datasets

Robust validation of VEP predictions requires multiple complementary approaches. The following protocol outlines a comprehensive benchmarking strategy adapted from recent large-scale evaluations:

Protocol 1: Clinical and Functional Benchmarking

- Variant Curation: Compile high-confidence pathogenic and benign variants from curated databases (e.g., ClinVar, HGMD for human models; plant-specific databases when available) [9] [12].

- Performance Metrics Calculation:

- Statistical Validation:

- Cross-validation: Implement leave-one-gene-out or similar cross-validation strategies to assess generalizability across different genomic contexts.

This approach was used effectively in benchmarking ESM1b, which achieved a true-positive rate of 81% and true-negative rate of 82% at an optimal log-likelihood ratio threshold of -7.5 for distinguishing pathogenic from benign variants [9].
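The threshold-based evaluation described above can be sketched as follows. The code assumes that more negative log-likelihood ratio (LLR) scores indicate more damaging variants, so a variant is called pathogenic when its score falls below the threshold. The scores and labels are invented toy values, and `rates_at_threshold` is a hypothetical helper name.

```python
def rates_at_threshold(llr_scores, is_pathogenic, threshold=-7.5):
    """Return (true-positive rate, true-negative rate) for a score cutoff.

    Assumes lower LLR = more damaging: score < threshold -> predicted pathogenic.
    """
    tp = fn = tn = fp = 0
    for score, pathogenic in zip(llr_scores, is_pathogenic):
        predicted_pathogenic = score < threshold
        if pathogenic and predicted_pathogenic:
            tp += 1
        elif pathogenic:
            fn += 1
        elif predicted_pathogenic:
            fp += 1
        else:
            tn += 1
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("need both pathogenic and benign variants")
    return tp / (tp + fn), tn / (tn + fp)

# Toy benchmark set: four labeled pathogenic, four labeled benign (made-up scores).
scores = [-12.1, -9.0, -6.0, -8.2, -3.3, -1.0, -7.9, -0.5]
labels = [True, True, True, True, False, False, False, False]

tpr, tnr = rates_at_threshold(scores, labels, threshold=-7.5)
```

Sweeping `threshold` over the observed score range and recording each (TPR, TNR) pair traces the ROC curve from which AUC values such as those in the benchmark table are derived.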

Population-Based Validation in Cohort Studies

Population-scale cohorts with genotype and phenotype data provide an unbiased approach for VEP validation that avoids circularity concerns:

Protocol 2: Population Cohort Validation

- Cohort Selection: Identify population cohorts with whole-genome or exome sequencing and detailed phenotype data (e.g., UK Biobank, All of Us) [10].

- Gene-Trait Association Curation: Compile established gene-trait associations from rare-variant burden association studies [10].

- Variant Filtering: Extract rare variants (MAF < 0.1%) from trait-associated genes, as rare variants are more likely to have large phenotypic effects and represent a critical test case for VEP tools [10].

- Effect Aggregation: For participants with multiple missense variants in a given gene, sum predicted scores under an additive model [10].

- Trait Correlation: Assess correlation between aggregated variant effect predictions and trait values across the population.

This method demonstrated that AlphaMissense significantly outperformed 23 other predictors in correlating with human traits in the UK Biobank cohort, with consistent replication in the independent All of Us cohort [10].
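The filtering, aggregation, and correlation steps of Protocol 2 can be sketched on a toy cohort. Variant identifiers, predicted scores, allele frequencies, and trait values below are all invented, and the function names are hypothetical. The additive sum and the MAF < 0.1% cutoff follow the protocol text.

```python
import math

def aggregate_gene_scores(carrier_variants, variant_scores, variant_maf, maf_cutoff=0.001):
    """Sum predicted effect scores of rare variants per participant (additive model)."""
    return {
        participant: sum(variant_scores[v] for v in variants
                         if variant_maf[v] < maf_cutoff)
        for participant, variants in carrier_variants.items()
    }

def pearson_r(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

# Hypothetical missense variants in one trait-associated gene.
variant_scores = {"v1": 0.9, "v2": 0.2, "v3": 0.6, "v4": 0.8}
variant_maf = {"v1": 0.0002, "v2": 0.0005, "v3": 0.0009, "v4": 0.05}  # v4 is common

# Which variants each participant carries, and their measured trait value.
carriers = {"p1": ["v1"], "p2": ["v2"], "p3": ["v1", "v3"], "p4": ["v4"], "p5": []}
trait = {"p1": 5.1, "p2": 3.2, "p3": 6.8, "p4": 2.9, "p5": 3.0}

burden = aggregate_gene_scores(carriers, variant_scores, variant_maf)
participants = sorted(burden)
r = pearson_r([burden[p] for p in participants], [trait[p] for p in participants])
```

Repeating this for every predictor on the same gene-trait pair, then comparing the resulting correlations, gives the kind of head-to-head ranking reported in [10]. In a plant setting the "participants" would be accessions or breeding lines.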

Figure 1: Experimental workflow for comprehensive validation of variant effect predictors, incorporating clinical, functional, and population-based approaches.

Applications in Precision Breeding

From Traditional Breeding to Precision Approaches

Plant breeding has evolved from traditional phenotype-based selection toward increasingly precise genetic interventions. This transition creates specific requirements for VEP tools:

- Traditional Breeding: Relied on phenotypic selection with limited genomic information, a process described as "costly and time-consuming" [1].

- Marker-Assisted Selection: Used genetic markers to guide transfer of genomic segments containing causal variants [1].

- Genomic Prediction: Jointly uses genome-wide markers and phenotypes to accelerate evaluations [1].

- Precision Breeding: Directly targets causal variants through gene transformation and CRISPR-based genome editing [1].

Precision breeding has been successfully applied in crops including rice, tomato, and wheat to improve traits of interest [1]. However, in most applications, variants introduced by precision breeding techniques were identified through experimental mutagenesis screens, which "remain relatively costly and time-consuming" compared to computational approaches [1].

VEP Workflow for Precision Breeding Applications

Implementing VEP in precision breeding programs involves specific steps tailored to plant systems:

Protocol 3: VEP for Precision Breeding

- Target Gene Identification: Prioritize genes based on prior knowledge (QTL studies, orthology, expression patterns).

- Variant Effect Prediction: Apply multiple VEP tools (e.g., AlphaMissense, ESM1b) to predict functional consequences of natural or engineered variants.

- Variant Prioritization: Rank variants based on predicted effect scores and functional annotations.

- Experimental Validation: Implement CRISPR-based genome editing to introduce prioritized variants.

- Phenotypic Assessment: Evaluate edited lines for desired trait improvements.
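One simple way to combine several predictors in the prioritization step is mean-rank aggregation, sketched below. This is an illustrative choice, not a procedure prescribed by the cited sources. The tool names `tool_a` and `tool_b`, the variant labels, and the scores are hypothetical, and every tool's scores are assumed to be oriented so that higher means more damaging (scores with the opposite orientation would need to be negated first).

```python
def rank_positions(scores):
    """Map each variant to its rank (1 = highest score, assumed most damaging)."""
    ordered = sorted(scores, key=scores.get, reverse=True)
    return {variant: position + 1 for position, variant in enumerate(ordered)}

def prioritize(per_tool_scores):
    """Order variants by their mean rank across tools (ties broken by name)."""
    shared = set.intersection(*(set(s) for s in per_tool_scores.values()))
    ranks = {tool: rank_positions({v: s[v] for v in shared})
             for tool, s in per_tool_scores.items()}
    mean_rank = {v: sum(ranks[t][v] for t in ranks) / len(ranks) for v in shared}
    return sorted(shared, key=lambda v: (mean_rank[v], v)), mean_rank

# Hypothetical predictions for three candidate edits in one target gene.
predictions = {
    "tool_a": {"A10T": 0.91, "G45D": 0.30, "P77L": 0.65},
    "tool_b": {"A10T": 0.60, "G45D": 0.20, "P77L": 0.80},
}

ranked_variants, mean_rank = prioritize(predictions)
```

Working with ranks rather than raw scores avoids having to calibrate tools whose outputs are on different scales. Variants on which the tools disagree strongly can be flagged for closer inspection before committing to an edit.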

In plant systems, VEP faces unique challenges including "large repetitive genomes, rapid functional turnover, and the relative scarcity of experimental data compared to mammals" [1]. Nevertheless, sequence models show strong potential for precision breeding applications due to their ability to generalize across genomic contexts [1].

Managing Deleterious Variation in Breeding Programs

Understanding Deleterious Burden in Domesticated Species

Domestication and intensive breeding often lead to accumulation of deleterious variants through genetic bottlenecks and hitchhiking of linked variants during selection. Studies across diverse species provide insights into these patterns:

- Foxtail Millet: Domestication resulted in reduced structural (25.76%) and deleterious variant (40.40%) burdens in cultivars, reflecting dramatic loss of genetic diversity from wild progenitors [11].

- Raccoon Dogs: White breeds showed increased homozygous missense mutations despite comparable total deleterious mutation numbers, indicating "accumulation of small-effect deleterious mutations may be facilitated during the development of white breeds" [13].

- Lord Howe Island Stick Insect: An extreme population bottleneck (only two mating pairs) demonstrated that "stop-codon mutations were preferentially depleted in captivity compared with other mutations," suggesting purging of highly deleterious mutations [14].

These patterns highlight the dual processes of deleterious variant accumulation through bottlenecks and their potential purging through inbreeding and selection.

Purging Protocols for Breeding Programs

Managing deleterious variation requires specific breeding strategies:

Protocol 4: Deleterious Variant Purging

- Variant Identification: Use VEP tools to identify deleterious variants in breeding populations.

- Homozygosity Mapping: Identify runs of homozygosity (ROH) where deleterious variants may be exposed [14] [13].

- Selection Against Homozygotes: Implement selective breeding to reduce frequency of homozygous deleterious genotypes.

- Outcrossing: Introduce genetic diversity from wild relatives or diverse breeding lines to mask deleterious recessive alleles.

- Monitoring: Track deleterious allele frequencies across generations to assess purging effectiveness.

The effectiveness of purging depends on the severity of deleterious mutations. Theory suggests that "in most cases only highly deleterious mutations can be purged effectively during bottlenecks" [14]. This was demonstrated in the Lord Howe Island stick insect, where "the more deleterious a mutation was predicted to be, the more likely it was found outside of runs of homozygosity, implying that inbreeding facilitates the expression and thus removal of deleterious mutations" [14].
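The relationship between runs of homozygosity and deleterious variants can be explored with a sketch like the one below. It is a deliberately simplified ROH caller that counts consecutive homozygous markers for one individual; production tools also consider physical distance, marker density, and genotyping error. The genotype vector, the marker indices, and the `min_length` value are assumptions.

```python
def runs_of_homozygosity(genotypes, min_length=3):
    """Return (start, end) marker-index pairs (end exclusive) of homozygous runs.

    genotypes: alternate-allele dosage per ordered marker for one individual
    (0 or 2 = homozygous, 1 = heterozygous).
    """
    runs = []
    start = None
    for i, dosage in enumerate(genotypes):
        if dosage != 1:
            if start is None:
                start = i
        else:
            if start is not None and i - start >= min_length:
                runs.append((start, i))
            start = None
    if start is not None and len(genotypes) - start >= min_length:
        runs.append((start, len(genotypes)))
    return runs

def fraction_in_roh(variant_indices, runs):
    """Fraction of the given marker indices that fall inside any run."""
    if not variant_indices:
        return 0.0
    inside = sum(any(s <= i < e for s, e in runs) for i in variant_indices)
    return inside / len(variant_indices)

# Toy individual: 12 ordered markers.
genotypes = [0, 2, 2, 0, 1, 2, 1, 0, 0, 0, 2, 1]
roh = runs_of_homozygosity(genotypes, min_length=3)

# Hypothetical marker indices carrying predicted-deleterious alleles.
deleterious_sites = [2, 5, 9]
share_in_roh = fraction_in_roh(deleterious_sites, roh)
```

Computing this share separately for variants binned by predicted severity, and tracking it across generations, is one way to check whether the most damaging mutations are being depleted from homozygous regions as the purging hypothesis predicts.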

Figure 2: Workflow for managing deleterious variation in breeding programs, showing both purging through bottlenecks and alternative outcrossing strategies.

Successful implementation of VEP in plant research requires access to specific datasets, computational resources, and experimental materials:

Table 3: Essential Research Reagents and Resources for VEP in Plant Research

| Resource Category | Specific Examples | Application in VEP | Availability for Plants |
|---|---|---|---|
| Reference Genomes | Arabidopsis TAIR10, Maize B73, Rice IRGSP | Variant calling and annotation | Variable quality across species |
| Variant Databases | Plant-specific databases (e.g., PlantVar, PlantGVA) | Training and benchmarking | Limited compared to human resources |
| VEP Tools | AlphaMissense, ESM1b, EVE, VARITY | Effect prediction | Most tools species-agnostic |
| Computational Infrastructure | High-memory GPUs, Cloud computing | Running large models (e.g., ESM1b) | Essential for protein language models |
| Genome Editing Tools | CRISPR-Cas systems, Transformation protocols | Experimental validation | Well-established for model crops |
| Phenotyping Platforms | High-throughput phenotyping, Field trials | Functional validation | Critical for bridging prediction to function |

Variant effect prediction has matured into an essential component of plant genomics and breeding programs. Through comprehensive benchmarking, AlphaMissense and ESM1b have emerged as top-performing tools for predicting variant effects, with particular strengths for coding variants [9] [10] [12]. These tools show significant promise for precision breeding applications, though challenges remain for non-coding variants and regulatory regions [1].

The integration of VEP into deleterious variant management enables more strategic breeding approaches that balance trait improvement with genetic health. Studies across diverse species demonstrate that while bottlenecks and artificial selection can increase deleterious burden, targeted approaches can facilitate purging of particularly harmful mutations [11] [14] [13].

As VEP tools continue to evolve, plant researchers should prioritize validation in plant-specific contexts, development of plant-optimized models, and integration of multi-omics data for improved prediction accuracy. The rapid advancement of protein language models and other AI-driven approaches suggests that VEP will play an increasingly central role in bridging genomic variation to phenotypic outcomes in plant research and breeding.

Plant genomics presents a unique set of challenges that distinguish it from research in most model animal systems. Three interconnected features—large and repetitive genomes, prevalent polyploidy, and rapid functional turnover—complicate everything from basic sequencing to the prediction of how genetic variants influence traits. For researchers focused on benchmarking variant effect prediction models, these characteristics demand specialized experimental and computational approaches. This guide compares the performance of various strategies and reagents developed to navigate these complexities, providing a foundation for robust and reproducible plant genomics research.

Core Challenges in Plant Genomics

Large and Repetitive Genomes

The enormous size and repetitive nature of many plant genomes pose significant barriers to sequencing and annotation.

- Genome Size Variation: Plant genomes exhibit extreme size variation, with the average angiosperm genome being about 6.2 gigabases—twice the size of the human genome [15]. Some species, like the Japanese canopy plant (Paris japonica), have genomes as large as 152 gigabases [15].

- High Repetitive Content: Repetitive elements, such as transposable elements (TEs), can constitute the majority of a plant's DNA. In maize, for example, highly repetitive 20-mers constituted 44% of the genome in one study, yet represented only 1% of all possible k-mers, indicating extremely low sequence complexity [16]. Similar patterns are found in other grasses like sorghum and rice [16].

- Impact on Research: This repetitiveness confounds gene finding, alignment of homologous sequences, and genome assembly [16]. Traditional whole-genome sequencing becomes impractical from both cost and computational perspectives [17].
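The k-mer statistics quoted above (a large share of the genome covered by a small share of k-mers) can be reproduced in miniature. The sketch counts k-mers in a toy sequence and reports both fractions. The sequence, the small `k`, and the `min_count` cutoff for "repetitive" are assumptions, since the definition used in the maize study is not given here. The second value is the share of distinct observed k-mers, a simplification of the "all possible k-mers" denominator.

```python
from collections import Counter

def kmer_repeat_summary(sequence, k, min_count):
    """Return (fraction of k-mer positions that are repetitive,
    fraction of distinct observed k-mers that are repetitive)."""
    kmers = [sequence[i:i + k] for i in range(len(sequence) - k + 1)]
    if not kmers:
        raise ValueError("sequence is shorter than k")
    counts = Counter(kmers)
    repetitive = {kmer for kmer, n in counts.items() if n >= min_count}
    frac_positions = sum(counts[kmer] for kmer in repetitive) / len(kmers)
    frac_distinct = len(repetitive) / len(counts)
    return frac_positions, frac_distinct

# Toy sequence: a short tandem repeat followed by unique sequence.
toy_genome = "ACACACACACGTTGCA"
frac_positions, frac_distinct = kmer_repeat_summary(toy_genome, k=2, min_count=3)
```

Here two of seven distinct 2-mers account for two thirds of all positions, the same qualitative pattern as in maize, where a small set of 20-mers covers a large part of the genome. Regions dominated by such k-mers are where read alignment and variant calling are least reliable.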

Pervasive Polyploidy

Polyploidy, or whole genome duplication (WGD), is a ubiquitous feature of plant evolution.

- Prevalence: Most green plant species are recent polyploids or carry signatures of ancient polyploid events [18]. Polyploidy is arguably the most important force in plant speciation and genome evolution [18].

- Consequences for Genomics: Polyploidy introduces complexity such as:

- Homoeologous Genes: In allopolyploids, the genome contains multiple divergent but related versions of each gene (homoeologues) from its different ancestral species, complicating genotyping and variant calling [18].

- Genome Restructuring: Following polyploidization, genomes undergo rapid changes, including chromosome rearrangements, gene loss, and repetitive DNA amplification or elimination [18].

- Altered Traits: Polyploidy can directly affect phenotypic traits. For instance, tetraploid barley exhibits thicker leaves, larger stomata, more photosynthetic pigments, and an enhanced photosynthetic rate compared to its diploid counterpart [19].

Rapid Functional Turnover

Plant genomes and their functional elements can evolve rapidly, presenting challenges for cross-species comparisons and prediction models.

- Regulatory Evolution: The regulatory regions controlling gene expression can experience rapid functional turnover. This complicates the use of evolutionary conservation-based methods to identify functionally important non-coding sequences [1].

- Ecological Implications: Trait turnover can occur rapidly in plant communities in response to environmental drivers. While more frequently studied in animals, this principle applies to plant functional traits as well [20].

Comparative Analysis of Experimental Solutions

Researchers have developed various strategies to overcome these hurdles. The table below summarizes the performance, advantages, and limitations of key methodological approaches.

Table 1: Comparison of Genomic Approaches for Challenging Plant Genomes

| Methodology | Primary Application | Key Advantages | Key Limitations | Representative Performance |
|---|---|---|---|---|
| Target Capture Sequencing [17] | Variant discovery in large genomes | Enriches specific genomic regions; produces high-quality, codominant genotypes; cost-effective for population studies. | Probe design is challenging; enrichment efficiency can be low; repetitive elements in baits can reduce performance. | Successfully identified 12,390 segregating sites from 4,452 genes in whitebark pine (27 Gb genome). |
| Genome Skimming [15] | Evolutionary studies in large genomes | Avoids full genome assembly; provides wide (if shallow) understanding; cost-effective for comparing context of genes. | Does not provide a deep, complete view of the genome; limited utility for fine-scale variant discovery. | Enabled study of genome evolution in Nicotiana genus, revealing paternal genome degradation. |
| Genotyping-by-Sequencing (GBS) [15] [5] | Genomic prediction & breeding | Reduces complexity; cost-effective for high-throughput genotyping; useful for mapping in polyploids. | Difficulties in polyploid genotyping; can miss rare variants; data complexity due to genome rearrangements. | Used in Brassica napus to detect translocations and introgress beneficial alleles from wild relatives. |
| K-mer Frequency Analysis (Tallymer) [16] | Repeat annotation & genome characterization | De novo method, needs no pre-existing library; flexible k-mer size; memory-efficient for large datasets. | Limited by sequence coverage depth; identifies repetitive profiles but not necessarily full repeat families. | In maize, detected transposon-encoded genes with 92% sensitivity vs. 96% for alignment-based methods. |

Benchmarking Variant Effect Prediction in Plants

The unique features of plant genomes directly impact the accuracy and application of variant effect prediction models, which are crucial for precision breeding.

- Limitations of Traditional GWAS: Association studies like GWAS estimate variant effects separately for each locus and are confounded by linkage disequilibrium, leading to low resolution (from 1 kb to >100 kb) [1]. Their power is also low for rare variants, and they cannot predict the effects of unobserved variants [1].

- Promise of Sequence-Based AI Models: Modern machine learning models offer a unified approach to predict variant effects based on genomic context. They generalize across loci and can predict the effects of even unobserved variants [1].

- Benchmarking Resources: Tools like EasyGeSe provide curated datasets from multiple species (e.g., barley, maize, wheat, loblolly pine) for standardized benchmarking of genomic prediction methods [5]. This allows for fair comparison of parametric (e.g., GBLUP, Bayesian methods), semi-parametric (e.g., RKHS), and non-parametric models (e.g., Random Forest, XGBoost) [5].

Table 2: Benchmarking Data for Genomic Prediction in Plants (from EasyGeSe) [5]

| Species | Ploidy | Sample Size | Number of SNPs | Example Traits |
|---|---|---|---|---|
| Barley (Hordeum vulgare) | Diploid | 1,751 accessions | 176,064 | Disease resistance (BaYMV, BaMMV) |
| Maize (Zea mays) | Diploid | Information missing | Information missing | Information missing |
| Loblolly Pine (Pinus taeda) | Diploid | 926 trees | 4,782 | Stem diameter, tree height, wood density |
| Wheat (Triticum aestivum) | Hexaploid (6x) | Information missing | Information missing | Information missing |
| Common Bean (Phaseolus vulgaris) | Diploid | 444 lines | 16,708 | Yield, days to flowering, seed weight |

Detailed Experimental Protocols

Protocol 1: Targeted Capture Sequencing for Large, Repetitive Genomes

This protocol is adapted from a study on whitebark pine, which has a 27 Gb genome [17].

- Probe Design: Design hybridization-based capture probes to target specific genomic regions (e.g., 7,849 distinct genes). Probes are typically 200 bases targeting contiguous genomic regions. Note: Despite challenges, including repetitive elements in the probe pool can still yield successful results [17].

- Library Preparation and Screening: Prepare genomic DNA libraries from sampled individuals (e.g., 48 trees). Hybridize the libraries with the designed probe pool to enrich the targeted regions.

- Sequencing and Analysis: Sequence the enriched libraries on a high-throughput platform. Process the data to call variants (e.g., single nucleotide polymorphisms).

- Outcome: Despite non-optimal conditions, this protocol successfully provided data on 4,452 genes and identified 12,390 segregating sites, demonstrating its utility for conservation genetics and population studies in species with massive genomes [17].

Protocol 2: Differentiating Diploid and Tetraploid Plants for Polyploidy Research

This protocol is used in studies comparing diploid and tetraploid forms, such as in barley [19].

- Chromosome Counting:

  - Collect 1-2 cm long root tips from seedlings.

  - Incubate tips in precooled 90% glacial acetic acid for ~10 minutes.

  - Preserve tips in 70% ethanol at -20°C.

  - Dissociate root tips in 45% acetic acid for 2 hours and observe chromosomes under a microscope.

- Stomatal Guard Cell Measurement:

  - Sample the middle section of a leaf.

  - Soak the leaf section in Carnoy's fixative (3:1 anhydrous ethanol to glacial acetic acid) until completely discolored.

  - Rinse with distilled water and measure the length of stomatal guard cells under a microscope at 400x magnification.

- Photosynthetic Analysis:

  - Use a portable photosynthesis system (e.g., LI-6800) to measure parameters like net photosynthetic rate (Pn), stomatal conductance (Gs), and intercellular CO2 concentration (Ci).

  - Construct light- and CO2-response curves to calculate advanced parameters like maximum RuBP carboxylation rate (Vc,max).

Visualizing Workflows and Relationships

Diagram: Target Capture Sequencing Workflow

Diagram: Variant Effect Prediction Benchmarking Logic

Table 3: Key Research Reagents and Resources for Plant Genomics

| Resource / Reagent | Function/Application | Example Use Case |
|---|---|---|
| PlantGDB [21] | Database of plant molecular sequences; EST contig assembly and functional annotation. | Accessing assembled and annotated ESTs for gene discovery in species without sequenced genomes. |
| Tallymer Software [16] | K-mer counting and indexing for large sequence sets; repeat annotation. | De novo characterization of the repetitive fraction in a newly sequenced plant genome. |
| EasyGeSe Resource [5] | Curated collection of datasets for benchmarking genomic prediction methods across multiple species. | Testing a new machine learning model for genomic prediction on standardized datasets from barley, maize, pine, etc. |
| LI-6800 Portable Photosynthesis System [19] | Measurement of photosynthetic parameters (Pn, Gs, Ci, Tr). | Phenotyping the physiological effects of ploidy or genetic variants on plant growth and efficiency. |
| High-C0t DNA Sequences [16] | Gene-enriched genomic fraction obtained via biochemical selection. | Reducing genome complexity for sequencing by enriching for low-copy, gene-rich regions. |

Benchmarking datasets are standardized collections of data used to evaluate and compare the performance of computational models and algorithms. In the life sciences, they provide a consistent and reproducible framework for assessing methods ranging from genomic prediction to variant effect prediction, enabling objective comparisons and driving methodological progress [22]. The availability of high-quality, curated benchmarks is particularly crucial in plant research, where the accurate prediction of how genetic variations influence traits of agricultural importance is fundamental to advancing precision breeding [23].

The development and testing of computational methods are dependent on experimental data, and accurate predictors can only be built using reliable, verified cases [24]. Benchmark resources address this need by gathering data from multiple sources, standardizing formats, and providing clear evaluation protocols. This simplifies the benchmarking process, ensures fair comparisons, and broadens access to data, encouraging interdisciplinary researchers to contribute novel modelling strategies [5]. This guide objectively compares several key benchmarking resources, with a focus on their application in plant genomic research, detailing their core features, experimental protocols, and performance.

The table below provides a structured comparison of several curated benchmarking resources, highlighting their primary focus, data composition, and key performance metrics.

Table 1: Comparison of Curated Benchmarking Datasets

| Resource Name | Primary Focus / Domain | Data Composition & Scale | Key Performance Findings |
|---|---|---|---|
| EasyGeSe [5] | Genomic Prediction (Plants & Animals) | Data from 10 species (barley, maize, rice, etc.); Phenotypic and genotypic data (SNPs). | Non-parametric models (XGBoost, LightGBM) showed modest accuracy gains (+0.021 to +0.025) and were 10x faster with 30% lower RAM usage vs. Bayesian alternatives. |
| VariBench [24] | Variation Interpretation (General) | 559 data sets; over 90 million variants; includes insertions/deletions, coding substitutions, regulatory elements, etc. | Widely used for training and testing pathogenicity, protein stability, and disease-specific predictors. Data set quality is variable and requires user evaluation. |
| PMLB (Penn Machine Learning Benchmark) [25] | General Supervised Machine Learning | A large, curated repository for classification and regression; covers a broad range of applications and data types. | Provides standardized data and evaluation procedures to ensure fair comparison of general machine learning algorithms. |
| OpenML Benchmark Suites [26] | General Machine Learning | Curated multi-dataset benchmarks (e.g., OpenML-CC18); datasets have 500-100,000 observations and do not exceed 5000 features. | Facilitates reproducible benchmarking at scale through standardized tasks, train-test splits, and centralized results sharing. |

EasyGeSe: A Resource for Genomic Prediction

EasyGeSe provides a curated collection of datasets specifically designed for testing genomic prediction methods. The resource encompasses data from multiple species, including barley, common bean, lentil, maize, rice, soybean, and wheat, representing broad biological diversity [5]. The data have been filtered and arranged in convenient formats, and loading functions are provided in R and Python.

A key study benchmarked various modelling strategies using EasyGeSe. Predictive performance, measured by Pearson’s correlation coefficient (r), varied significantly by species and trait, ranging from -0.08 to 0.96, with a mean of 0.62 [5]. The benchmarking compared parametric (e.g., GBLUP, Bayesian methods), semi-parametric (e.g., RKHS), and non-parametric models (e.g., machine learning). The comparisons revealed modest but statistically significant gains in accuracy for the non-parametric methods random forest (+0.014), LightGBM (+0.021), and XGBoost (+0.025) [5]. These methods also offered major computational advantages, with model fitting times typically an order of magnitude faster and RAM usage approximately 30% lower than Bayesian alternatives, though these measurements did not account for the computational costs of hyperparameter tuning [5].

VariBench: A Database for Variation Interpretation

VariBench is a generic database that serves as a benchmark resource for all types of genetic variations and their effects [24]. It collects data from literature, databases, and predictors, and contains a wide array of variation types, including insertions and deletions, coding region substitutions, structural variants, and effect-specific data sets related to RNA splicing, protein stability, and protein-protein interactions [24].

A core function of VariBench is to support the development and testing of computational methods for predicting the functional consequences of variants, often in relation to disease. The database has been widely used to train and test predictors for pathogenicity, protein stability, solubility, and disease-specific variations, including in plants and animals [24]. The quality of data sets within VariBench is variable, and the resource deliberately includes data sets known to be of low quality so that they can be used for comparison or as raw material for building new data sets. Users are therefore responsible for evaluating whether the data are suitable for their intended application [24].

General Machine Learning Benchmarks (PMLB and OpenML)

For context and comparison, general machine learning benchmarks like PMLB and OpenML provide critical resources for the broader ML community, which often influences method development in bioinformatics. PMLB is a large, curated repository of benchmark datasets for evaluating supervised machine learning algorithms. It covers binary and multi-class classification and regression problems, with all data stored in a common format and a Python wrapper available for easy access [25].

OpenML Benchmark Suites are curated sets of machine learning tasks designed for comprehensive, standardized evaluations. A prominent example is the OpenML-CC18 suite, which contains datasets that satisfy specific requirements, such as having between 500 and 100,000 observations and a balanced class ratio, to ensure practical and thorough benchmarking [26]. These suites are seamlessly integrated into the OpenML platform, allowing for easy programmatic access, standardized train-test splits, and the sharing of reproducible results [26].

Experimental Protocols for Benchmarking

Standardized Evaluation Using Benchmark Suites

A standardized protocol is essential for obtaining fair and reproducible benchmark results. The general workflow, as implemented by platforms like OpenML, involves accessing a curated set of tasks, running an algorithm on each task using predefined data splits, and uploading the results for comparison [26].

Table 2: Essential Research Reagent Solutions for Computational Benchmarking

| Resource / Reagent | Function in Benchmarking |
|---|---|
| Curated Benchmark Suite (e.g., OpenML-CC18, EasyGeSe) | Provides standardized datasets and evaluation tasks, ensuring consistent and comparable results across different studies. |
| Programming Language APIs (Python, R, Java) | Facilitates programmatic access to benchmark data and integration with data analysis and machine learning libraries. |
| Reference Databases (e.g., GenBank, UniProt) | Provides reference sequences and functional annotations essential for curating and validating biological benchmark data. |
| Computational Frameworks (e.g., scikit-learn, TensorFlow, PyTorch) | Offers implementations of machine learning algorithms and utilities for model training, evaluation, and hyperparameter tuning. |

Case Study: Benchmarking Genomic Prediction Models with EasyGeSe

The following diagram illustrates the experimental workflow for a benchmarking study in genomic prediction, as exemplified by the EasyGeSe resource.

The specific methodology for the EasyGeSe benchmark involved several key steps [5]:

- Data Sourcing and Curation: Data was drawn from ten publicly available studies on different species. The genotypic data, which originated from various formats (e.g., VCF, HDF5), was filtered and imputed. Common filters included removing SNPs with a minor allele frequency (MAF) below 5% or with excessive missing data. The data was then arranged into consistent, easy-to-use formats.

- Model Training and Evaluation: A range of modelling strategies was implemented, including:

  - Parametric: Genomic Best Linear Unbiased Prediction (GBLUP), Bayesian methods (BayesA, BayesB, Bayesian Lasso).

  - Semi-parametric: Reproducing Kernel Hilbert Spaces (RKHS).

  - Non-parametric: Machine learning methods including Random Forest, LightGBM, and XGBoost.

- Performance Assessment: The primary metric for evaluating predictive performance was Pearson's correlation coefficient (r) between the predicted and observed phenotypic values. The statistical significance of differences in performance between model types was tested (e.g., p < 1e-10). Computational performance, including model fitting time and RAM usage, was also recorded.
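The steps above (marker filtering, model fitting, and assessment by Pearson's r) can be sketched end to end on simulated data. This is a minimal standard-library illustration under stated assumptions: it does not use the EasyGeSe loaders or datasets, ridge regression stands in for the parametric family, and all function names, the simulated effect sizes, and the penalty `lam` are hypothetical.

```python
import random
from statistics import mean


def maf_filter(genotypes, min_maf=0.05):
    """Drop marker columns whose minor allele frequency is below min_maf.
    genotypes: list of individuals, each a list of 0/1/2 allele dosages."""
    n = len(genotypes)
    keep = []
    for j in range(len(genotypes[0])):
        freq = sum(row[j] for row in genotypes) / (2 * n)
        if min(freq, 1 - freq) >= min_maf:
            keep.append(j)
    return [[row[j] for j in keep] for row in genotypes], keep


def pearson_r(x, y):
    """Pearson correlation between predicted and observed values."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def solve(a, b):
    """Solve the linear system a x = b by Gaussian elimination."""
    n = len(b)
    aug = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(aug[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (aug[i][n] - tail) / aug[i][i]
    return x


def ridge_fit(X, y, lam):
    """Ridge regression on centred data: (X'X + lam * I) beta = X'y."""
    m = len(X[0])
    col_means = [mean(row[j] for row in X) for j in range(m)]
    y_mean = mean(y)
    Xc = [[row[j] - col_means[j] for j in range(m)] for row in X]
    xtx = [[sum(r[i] * r[j] for r in Xc) + (lam if i == j else 0.0)
            for j in range(m)] for i in range(m)]
    xty = [sum(r[i] * (v - y_mean) for r, v in zip(Xc, y)) for i in range(m)]
    return solve(xtx, xty), col_means, y_mean


def ridge_predict(model, X):
    beta, col_means, y_mean = model
    return [y_mean + sum(b * (v - c) for b, v, c in zip(beta, row, col_means))
            for row in X]


def cross_validated_r(X, y, k=5, lam=1.0, seed=0):
    """Mean Pearson r over k folds, each held out once."""
    idx = list(range(len(y)))
    random.Random(seed).shuffle(idx)
    scores = []
    for fold in (idx[i::k] for i in range(k)):
        held_out = set(fold)
        train = [i for i in idx if i not in held_out]
        model = ridge_fit([X[i] for i in train], [y[i] for i in train], lam)
        preds = ridge_predict(model, [X[i] for i in fold])
        scores.append(pearson_r(preds, [y[i] for i in fold]))
    return mean(scores)


# Simulated data: 30 polymorphic markers plus one monomorphic column.
rng = random.Random(1)
freqs = [rng.uniform(0.2, 0.8) for _ in range(30)]
genotypes = [[(rng.random() < p) + (rng.random() < p) for p in freqs] + [0]
             for _ in range(120)]
phenotypes = [sum(row[:5]) + rng.gauss(0, 0.5) for row in genotypes]

filtered, kept = maf_filter(genotypes)
mean_r = cross_validated_r(filtered, phenotypes)
print(f"markers kept: {len(kept)}, mean cross-validated r: {mean_r:.2f}")
```

The same `cross_validated_r` harness could wrap any other fit and predict pair, which is what makes a like-for-like comparison between model families possible.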

The development of specialized benchmarks like EasyGeSe is particularly significant for plant research. It provides a resource that accounts for the unique challenges in plant genomics, such as diverse reproduction systems, varying ploidy levels, and large, repetitive genomes [5] [23]. By enabling the benchmarking of genomic prediction methods across a wide range of species, EasyGeSe facilitates the transfer of insights and adoption of novel modelling approaches across different plant breeding programs.

Furthermore, the shift in plant breeding towards precision breeding, which directly targets causal variants, increases the need for accurate in silico prediction of variant effects [23]. While traditional methods like genome-wide association studies (GWAS) estimate effects separately for each locus, modern sequence-based models aim to fit a unified function that generalizes across genomic contexts [23]. The rigorous validation of these emerging models will heavily rely on high-quality benchmark data. Resources like those discussed here provide the foundation for this validation, ultimately helping to build a more robust and predictive toolkit for plant breeders. The complementary strengths of different types of benchmarks—from domain-specific to general—create an ecosystem that supports continuous improvement in computational methods, driving progress in both basic research and applied agricultural science.

The field of genomics is undergoing a profound transformation, driven by the shift from traditional analytical approaches to modern artificial intelligence-based sequence models. This evolution is particularly impactful in plant research, where the accurate prediction of variant effects is crucial for advancing precision breeding and functional genomics [1]. Traditional methods, such as quantitative trait loci (QTL) mapping and sequence alignment-based techniques, have provided foundational insights into genotype-phenotype relationships for decades. However, these approaches face significant limitations in resolution, scalability, and ability to model complex genomic contexts [1].

Modern sequence models, particularly those built on large language model architectures, represent a paradigm shift in biological sequence analysis. These models leverage self-supervised learning on massive-scale genomic data to capture complex patterns and long-range dependencies that elude traditional methods [27]. By framing biological sequences as a "language" with its own grammar and syntax, these models can predict the functional consequences of genetic variants with unprecedented accuracy, enabling researchers to prioritize causal variants for experimental validation [1].

This review provides a comprehensive comparison between traditional and modern approaches for variant effect prediction in plants, with a specific focus on benchmarking methodologies, performance metrics, and practical applications in plant genomics. We examine the experimental evidence supporting both approaches and provide a framework for researchers to select appropriate methods for specific biological questions.

Traditional Approaches: Foundations and Limitations

Core Methodologies

Traditional approaches to variant effect prediction in plants have primarily relied on statistical genetics principles established in the late 20th century. These methods can be broadly categorized into association-based approaches and alignment-based techniques.

Association Mapping: Genome-wide association studies (GWAS) and QTL mapping have been the cornerstone of plant genetics for decades. These methods use linear regression frameworks to identify statistical associations between genetic markers and phenotypes of interest in population samples [1]. The fundamental principle involves testing each variant independently for its correlation with trait variation, while accounting for population structure and relatedness. This approach has successfully identified numerous loci controlling important agronomic traits in major crops, providing valuable markers for breeding programs.

Alignment-Based Techniques: For identifying deleterious mutations, comparative genomics approaches have relied on evolutionary conservation metrics derived from multiple sequence alignments across related species [1]. Methods based on this principle assume that functionally important genomic elements are under evolutionary constraint, so that variants at conserved positions are disproportionately likely to be deleterious. These techniques have been particularly valuable for classifying variants in protein-coding regions and identifying functional non-coding elements.
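The conservation idea can be made concrete with a deliberately simple per-column score. The sketch below uses Shannon entropy over alignment columns; it is an illustrative stand-in and not how PhyloP or PhastCons work, since those tools fit phylogenetic models. The function name, the gap handling, and the toy alignment are assumptions.

```python
from collections import Counter
from math import log2


def column_conservation(alignment: list[str]) -> list[float]:
    """Per-column conservation in [0, 1], computed as 1 - entropy / log2(4).

    Gap characters ('-') are ignored; an all-gap column scores 0.0."""
    scores = []
    for column in zip(*alignment):
        bases = [b for b in column if b != "-"]
        if not bases:
            scores.append(0.0)
            continue
        counts = Counter(bases)
        entropy = -sum((c / len(bases)) * log2(c / len(bases))
                       for c in counts.values())
        scores.append(1.0 - entropy / 2.0)  # log2(4) == 2 for DNA
    return scores


# Toy alignment of one locus across four hypothetical species.
toy_alignment = [
    "ACGTA",
    "ACGAA",
    "ACTCA",
    "AC-GA",
]
scores = column_conservation(toy_alignment)
print([round(s, 2) for s in scores])
```

Under this scheme a variant falling in a column with a score near 1 would be flagged as more likely deleterious than one in a column near 0.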

Table 1: Key Traditional Approaches for Variant Effect Prediction in Plants

| Method Category | Representative Techniques | Underlying Principle | Primary Applications in Plants |
|---|---|---|---|
| Association Mapping | QTL mapping, GWAS | Linear regression between genotype and phenotype | Identifying loci for yield, disease resistance, abiotic stress tolerance |
| Alignment-Based Methods | PhyloP, PhastCons | Evolutionary conservation across species | Identifying deleterious variants, functional non-coding elements |
| Expression-Based Analysis | eQTL mapping | Genotype-expression correlations | Uncovering genetic regulation of gene expression |

Experimental Protocols and Workflows

The standard workflow for traditional variant effect prediction involves carefully designed experiments with specific methodological considerations:

Population Design: For association mapping, researchers typically assemble a diverse panel of individuals representing the genetic variation within a species. For plants, this may include landraces, wild relatives, and cultivated varieties to capture a broad spectrum of genetic diversity. Population sizes typically range from hundreds to thousands of individuals to ensure sufficient statistical power [1].

Phenotyping Protocols: Precise phenotyping is critical for association studies. Measurements may include morphological traits, yield components, stress tolerance indices, and quality parameters. Replicated trials across multiple environments are often necessary to account for genotype-by-environment interactions.

Genotyping and Sequencing: Genetic variation is assessed using genotyping arrays or, increasingly, whole-genome sequencing. For alignment-based methods, homologous sequences are identified across multiple species using algorithms such as BLAST, followed by multiple sequence alignment using tools like CLUSTAL [28].

Statistical Analysis: For GWAS, the standard protocol involves fitting mixed linear models that account for population structure. Each variant is tested independently, with significance thresholds adjusted for multiple testing. For alignment-based methods, evolutionary conservation scores are calculated based on substitution rates, with lower rates indicating higher constraint.
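The per-variant testing logic can be sketched as follows. This is a simplified illustration on simulated data: it fits an ordinary single-marker regression with a normal approximation for the p-value and a Bonferroni threshold, and it omits the population-structure and kinship terms that a mixed linear model would include. The function name, sample sizes, and effect size are assumptions.

```python
import random
from statistics import NormalDist, mean


def marker_test(dosage, phenotype):
    """Regress phenotype on allele dosage for one marker.

    Returns (slope, two-sided p-value from a normal approximation)."""
    n = len(dosage)
    mx, my = mean(dosage), mean(phenotype)
    sxx = sum((x - mx) ** 2 for x in dosage)
    if sxx == 0:  # a monomorphic marker carries no information
        return 0.0, 1.0
    sxy = sum((x - mx) * (y - my) for x, y in zip(dosage, phenotype))
    syy = sum((y - my) ** 2 for y in phenotype)
    slope = sxy / sxx
    residual_var = (syy - slope * sxy) / (n - 2)
    z = slope / (residual_var / sxx) ** 0.5
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return slope, p_value


# Simulated panel: 300 individuals, 50 unlinked markers, one causal marker.
rng = random.Random(42)
n_ind, n_markers, causal = 300, 50, 7
genotypes = [[(rng.random() < 0.5) + (rng.random() < 0.5)
              for _ in range(n_markers)] for _ in range(n_ind)]
phenotypes = [1.0 * row[causal] + rng.gauss(0, 1) for row in genotypes]

# Each marker is tested independently; Bonferroni adjusts for 50 tests.
threshold = 0.05 / n_markers
results = [marker_test([row[j] for row in genotypes], phenotypes)
           for j in range(n_markers)]
significant = [j for j, (_, p) in enumerate(results) if p < threshold]
print("markers passing the Bonferroni threshold:", significant)
```

Because the simulated markers are unlinked, the signal localizes to a single marker; in real populations, linkage disequilibrium spreads it across neighbouring variants.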

Limitations in Plant Genomics

Despite their widespread adoption, traditional approaches face several limitations in plant genomics applications:

Resolution Challenges: Association mapping typically identifies broad genomic regions containing dozens to hundreds of genes, making it difficult to pinpoint causal variants [1]. The resolution is limited by linkage disequilibrium, which in plants can extend over hundreds of kilobases, particularly in self-pollinating species.

Reference Bias: Alignment-based methods depend heavily on the availability and quality of reference genomes and multi-species alignments. For many plant species with complex, repetitive genomes (e.g., maize with over 80% repetitive sequences), generating accurate alignments is challenging [27].

Context Insensitivity: Traditional methods estimate variant effects independently of genomic context, treating each variant in isolation [1]. This approach fails to capture epistatic interactions and position-specific effects that are increasingly recognized as important determinants of variant impact.

Scalability Issues: As genomic datasets grow exponentially, traditional methods face computational bottlenecks. Alignment-based approaches particularly struggle with the large, repetitive genomes characteristic of many plant species [28].

The Rise of Modern Sequence Models

Conceptual Foundations

Modern sequence models represent a fundamental shift from traditional approaches by leveraging artificial intelligence to learn complex sequence-function relationships directly from genomic data. Inspired by breakthroughs in natural language processing (NLP), these models treat biological sequences as texts written in a "language" of nucleotides or amino acids, applying similar architectural principles to decode their meaning [27].

The core innovation of these models is their ability to learn a unified function that predicts variant effects based on their genomic context, rather than analyzing each variant in isolation [1]. This approach allows them to capture the complex, non-linear relationships between sequence elements and their functional consequences.

Key Architectural Innovations

Transformer Architecture: The transformer architecture, with its self-attention mechanism, has emerged as the foundation for most modern sequence models [27]. Unlike recurrent neural networks that process sequences sequentially, transformers process all sequence elements in parallel, enabling efficient capture of long-range dependencies that are common in genomic regulation.
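A stripped-down view of self-attention shows why distance along the sequence does not limit which positions interact. The sketch below is an assumption-laden toy: it uses one-hot nucleotide embeddings directly as queries, keys, and values, with no learned projections, no positional encoding, and a single head, so it only illustrates the mechanism and not any published model.

```python
from math import exp, sqrt

ONE_HOT = {
    "A": [1.0, 0.0, 0.0, 0.0],
    "C": [0.0, 1.0, 0.0, 0.0],
    "G": [0.0, 0.0, 1.0, 0.0],
    "T": [0.0, 0.0, 0.0, 1.0],
}


def softmax(values):
    """Numerically stable softmax over a list of floats."""
    top = max(values)
    exps = [exp(v - top) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(seq):
    """Scaled dot-product self-attention with queries = keys = values."""
    x = [ONE_HOT[base] for base in seq]
    d = len(x[0])
    # Every position is scored against every other position in one pass.
    weights = [
        softmax([sum(qi * ki for qi, ki in zip(q, k)) / sqrt(d) for k in x])
        for q in x
    ]
    # Each output vector is the attention-weighted mix of all value vectors.
    output = [
        [sum(w * v[j] for w, v in zip(row, x)) for j in range(d)]
        for row in weights
    ]
    return weights, output


weights, output = self_attention("ACGTTGCA")
# Position 0 (A) attends to the distant A at position 7 as strongly as to itself.
print(round(weights[0][7], 3), round(weights[0][1], 3))
```

In a trained model the learned projection matrices decide which distant positions matter, but the all-pairs computation is the same.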

Self-Supervised Learning: Modern sequence models typically employ self-supervised pre-training on massive unlabeled sequence datasets, learning to predict masked elements based on their context [27]. This pre-training phase allows the models to develop a rich understanding of biological sequence grammar without requiring labeled data.
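One common way to turn masked prediction into a variant score is a log-likelihood ratio between the alternate and reference alleles at the masked position. The sketch below assumes that scoring scheme and replaces the neural network with a count-based context table, so it is a toy stand-in rather than any published model; the function names, flank size, pseudocount, and toy corpus are all hypothetical.

```python
from collections import Counter, defaultdict
from math import log

BASES = "ACGT"


def train_masked_counts(sequences, flank=2):
    """Count which base fills each (left flank, right flank) context."""
    table = defaultdict(Counter)
    for seq in sequences:
        for i in range(flank, len(seq) - flank):
            context = (seq[i - flank:i], seq[i + 1:i + 1 + flank])
            table[context][seq[i]] += 1
    return table


def masked_probs(table, context, pseudocount=1.0):
    """Smoothed probability of each base at a masked position."""
    counts = table.get(context, Counter())
    total = sum(counts.values()) + pseudocount * len(BASES)
    return {b: (counts[b] + pseudocount) / total for b in BASES}


def variant_llr(table, seq, pos, alt, flank=2):
    """log P(alt | context) - log P(ref | context); negative values mean
    the alternate allele is less expected than the reference."""
    context = (seq[pos - flank:pos], seq[pos + 1:pos + 1 + flank])
    probs = masked_probs(table, context)
    return log(probs[alt] / probs[seq[pos]])


# Toy corpus in which a TATAAA-like motif recurs in different flanks.
corpus = ["GGTATAAAGG", "CCTATAAACC", "AGTATAAAGT"]
table = train_masked_counts(corpus)

reference = "GGTATAAAGG"
score = variant_llr(table, reference, pos=4, alt="G")  # T>G inside the motif
print(round(score, 3))
```

No labels were needed to build the table, which mirrors the self-supervised setting: the sequence itself supplies the training signal.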

Transfer Learning: After pre-training, models can be fine-tuned on specific downstream tasks with relatively small labeled datasets. This transfer learning paradigm has proven particularly valuable in plant genomics, where experimental data may be limited [27].

Table 2: Prominent Modern Sequence Models in Plant Genomics

| Model Name | Molecular Focus | Key Innovations | Plant-Specific Applications |
|---|---|---|---|
| AgroNT | DNA | Transformer trained on plant genomes; captures plant-specific regulatory codes | Prediction of functional non-coding variants in crops |
| PDLLMs | DNA, RNA, Protein | Plant-specific foundation models; multi-modal capabilities | Trait prediction, variant effect estimation across species |
| GPN-MSA | DNA | Incorporates multi-species alignment data with deep learning | Enhanced prediction of functional variants in non-coding regions |
| mRNABERT | RNA | Dual tokenization (nucleotides & codons); protein sequence alignment | mRNA optimization, splicing prediction, therapeutic design |

Plant-Specific Adaptations

The development of modern sequence models for plant genomics has required specific adaptations to address unique challenges:

Addressing Genome Complexity: Plant-specific models like AgroNT and PDLLMs incorporate architectural innovations to handle polyploidy, high repetitive content, and extensive structural variation characteristic of plant genomes [27].

Environmental Response Modeling: Because plants are sessile, they must continuously adapt to environmental changes. Modern sequence models for plants are increasingly designed to incorporate environmental context, enabling prediction of genotype-by-environment interactions [27].

Cross-Species Generalization: Several plant-focused models are trained across multiple species to leverage evolutionary information while maintaining performance on specific crops of agricultural importance [27].

Direct Performance Comparison

Benchmarking Frameworks

Rigorous benchmarking is essential for objectively comparing traditional and modern approaches. The AFproject (http://afproject.org) provides a community resource for comprehensive evaluation of alignment-free sequence comparison methods, establishing standards for performance assessment across different biological applications [28]. This platform characterizes methods based on multiple criteria including accuracy, scalability, and applicability to different data types.

Specialized benchmarks have also been developed for modern sequence models. These typically involve carefully curated datasets with known variant effects, enabling direct comparison of prediction accuracy between approaches [1]. For plant-specific applications, benchmarks often focus on traits of agricultural importance and validated causal variants.

Quantitative Performance Metrics

Multiple studies have systematically compared the performance of traditional and modern approaches across various genomic tasks:

Variant Effect Prediction Accuracy: Modern sequence models consistently outperform traditional methods in predicting variant effects, particularly in non-coding regions. For example, models like GPN-MSA show superior accuracy in identifying functional non-coding variants compared to alignment-based methods, with improvements in area under the precision-recall curve of up to 30% in some genomic contexts [27].
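For readers unfamiliar with the metric, the area under the precision-recall curve can be estimated with the step-wise average-precision formula. The sketch below is a minimal illustration with made-up scores and labels; the two "models" are hypothetical, ties between scores are not handled specially, and the relative-improvement calculation is only one possible reading of a percentage gain.

```python
def average_precision(scores, labels):
    """Average precision: mean of precision values at each true positive,
    with variants ranked from highest to lowest score.
    labels are 1 (functional) or 0 (non-functional)."""
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    n_positive = sum(labels)
    hits, total = 0, 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label:
            hits += 1
            total += hits / rank  # precision at this recall step
    return total / n_positive


# Five toy variants, two of which are truly functional.
labels = [1, 0, 1, 0, 0]
baseline_scores = [0.9, 0.8, 0.7, 0.2, 0.1]  # hypothetical conservation scores
model_scores = [0.9, 0.1, 0.8, 0.3, 0.2]     # hypothetical sequence-model scores

ap_baseline = average_precision(baseline_scores, labels)
ap_model = average_precision(model_scores, labels)
relative_gain = (ap_model - ap_baseline) / ap_baseline
print(round(ap_baseline, 3), round(ap_model, 3), round(relative_gain, 3))
```

Precision-recall metrics are usually preferred over ROC curves when functional variants are rare, because they are sensitive to false positives among the top-ranked predictions.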

Resolution and Specificity: While traditional GWAS identifies association signals spanning hundreds of kilobases, modern sequence models can pinpoint causal variants at single-base resolution. This enhanced resolution has been demonstrated in several plant species, including tomato and maize, where model predictions have been experimentally validated [1].

Generalization Across Contexts: Modern sequence models show better generalization across tissue types, developmental stages, and environmental conditions compared to traditional methods. This is particularly valuable for plant research, where gene expression is highly context-dependent [27].

Table 3: Performance Comparison of Traditional vs. Modern Approaches

| Performance Metric | Traditional Approaches | Modern Sequence Models | Experimental Evidence |
|---|---|---|---|
| Variant Effect Prediction (Coding) | Moderate (Alignment-based: ~70% accuracy) | High (ESM models: >90% accuracy) | Superior prediction of deleterious missense variants [1] |
| Variant Effect Prediction (Non-coding) | Low (Limited by conservation) | Moderate-High (GPN-MSA: ~25% improvement) | Better identification of regulatory variants [27] |
| Resolution | Low (100 kb - 1 Mb regions) | High (Single-base resolution) | Fine-mapping of causal variants in plant QTL [1] |
| Handling Long-Range Dependencies | Limited | High (Transformers capture dependencies >1 kb) | Improved enhancer-promoter interaction prediction [27] |
| Scalability to Large Genomes | Low (Alignment computationally intensive) | Moderate-High (Efficient architectures like HyenaDNA) | Processing of megabase-scale sequences [27] |

Experimental Validation Studies

Robust validation is essential for establishing the practical utility of variant effect predictions. Several studies have employed complementary approaches to validate predictions from both traditional and modern methods:

Cross-Validation: The standard approach, in which models are trained on subsets of the data and tested on held-out samples. Modern sequence models typically perform better in cross-validation experiments and overfit less than traditional methods [1].

Functional Enrichment Analysis: Successful variant effect predictors should show enrichment for variants with known functional impacts. Modern sequence models consistently show stronger enrichment for experimentally validated functional elements, such as STARR-seq enhancers and ATAC-seq accessible regions in plants [1].

Direct Experimental Evidence: The most compelling validation comes from direct experimental testing of predictions. For example, in several studies, modern sequence models have successfully predicted the effects of CRISPR-induced mutations in plant regulatory elements, with validation rates exceeding 70% in some cases [1].
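The functional enrichment check described above can be sketched as a fold-enrichment calculation with a one-sided hypergeometric test. This is a minimal illustration using only the standard library; the function name `enrichment` and all counts are hypothetical, and a real analysis would also need to control for confounders such as local mutation rate or distance to genes.

```python
from math import comb

def enrichment(n_total, n_functional, n_selected, n_overlap):
    """Fold enrichment and one-sided hypergeometric p-value.

    n_total:      all variants scored
    n_functional: variants inside validated functional elements
    n_selected:   top-scoring variants chosen by the predictor
    n_overlap:    selected variants that fall in functional elements
    """
    # Overlap expected if the predictor picked variants at random.
    expected = n_selected * n_functional / n_total
    fold = n_overlap / expected
    # P(overlap >= n_overlap) under random selection without replacement.
    max_k = min(n_functional, n_selected)
    tail = sum(
        comb(n_functional, k) * comb(n_total - n_functional, n_selected - k)
        for k in range(n_overlap, max_k + 1)
    )
    p_value = tail / comb(n_total, n_selected)
    return fold, p_value

# Hypothetical counts: 1,000 variants, 100 in accessible chromatin,
# 50 top-ranked by the model, 20 of which are in accessible chromatin.
fold, p_value = enrichment(1000, 100, 50, 20)
print(f"fold enrichment = {fold:.1f}, p = {p_value:.2e}")
```

Comparing the fold enrichment of two predictors at the same number of selected variants gives a simple, threshold-matched way to rank them against the same experimental annotation.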

Practical Implementation Guide

The Scientist's Toolkit

Implementing variant effect prediction requires specific computational resources and software tools:

Table 4: Essential Research Reagents and Computational Tools

| Tool/Resource | Type | Function | Applicability |
|---|---|---|---|
| PLINK | Software Tool | Genome association analysis | Traditional GWAS in plant populations |
| GATK | Software Tool | Variant discovery and analysis | Processing plant sequencing data |
| AFproject | Web Service | Benchmarking alignment-free methods | Comparing performance of different approaches [28] |
| DNABERT | Pre-trained Model | DNA sequence analysis | Predicting regulatory elements in plant genomes [27] |
| AgroNT | Pre-trained Model | Plant-specific genomic analysis | Variant effect prediction in crop species [27] |
| ESM | Pre-trained Model | Protein sequence analysis | Predicting effects of missense variants in plants [1] |
| High-Performance Computing Cluster | Infrastructure | Model training and inference | Handling large plant genomes and datasets |

Workflow Visualization

The following diagram illustrates the typical workflows for both traditional and modern approaches to variant effect prediction in plants:

[Figure: Variant Effect Prediction Workflows]

Method Selection Framework

Choosing between traditional and modern approaches depends on multiple factors:

Data Availability: Modern sequence models typically require large training datasets to achieve optimal performance. For species with limited genomic resources, traditional methods may be more appropriate.

Biological Question: For initial discovery of genomic regions associated with traits, traditional GWAS remains valuable. For pinpointing causal variants and predicting functional effects, modern models offer superior resolution.

Computational Resources: Modern sequence models, particularly large transformer architectures, require significant computational resources for both training and inference. Traditional methods are generally less computationally intensive.

Validation Capacity: The higher resolution of modern sequence models generates specific, testable hypotheses, but these still require experimental validation through methods like CRISPR genome editing, so the capacity to run such experiments should factor into the choice of method.

Future Perspectives and Challenges

Emerging Trends

The field of variant effect prediction continues to evolve rapidly, with several emerging trends shaping future development:

Multi-Modal Integration: Next-generation models are increasingly integrating multiple data types, including genomic, epigenomic, transcriptomic, and structural information [27]. This multi-modal approach is particularly powerful for plants, where environmental responses involve complex regulatory networks.

Generalizable Architectures: Models like ESM3 demonstrate the potential of general-purpose architectures that can jointly reason about sequence, structure, and function [27]. Similar approaches adapted for plants could transform our ability to predict variant effects across different biological scales.

Interpretability Advances: A key focus of current research is improving model interpretability to extract biological insights from predictive models. Attention mechanisms in transformer models can help identify important sequence motifs and regulatory patterns [27].

Persistent Challenges

Despite rapid progress, significant challenges remain in applying modern sequence models to plant genomics:

Data Scarcity: For many plant species, especially orphan crops, limited high-quality genomic and phenotypic data constrains model performance [1] [27].

Computational Barriers: The scale of modern sequence models creates accessibility challenges for many research groups, particularly in resource-limited settings [27].

Biological Complexity: Plant-specific biological phenomena, such as polyploidy, extensive alternative splicing, and complex gene families, present unique modeling challenges that are not fully addressed by current approaches [27].

Experimental Validation Lag: The rapid pace of computational model development has outstripped capacity for experimental validation, creating a bottleneck in translating predictions to biological insights [1].

The evolution from traditional approaches to modern sequence models represents a fundamental shift in how researchers approach variant effect prediction in plants. Traditional methods like association mapping and alignment-based techniques provide established, interpretable frameworks that continue to offer value for specific applications. However, modern sequence models offer superior resolution, accuracy, and ability to model complex genomic contexts.

Benchmarking studies consistently demonstrate the advantages of modern approaches, particularly for predicting variant effects in non-coding regions and identifying causal variants at single-base resolution. The development of plant-specific models like AgroNT and PDLLMs further enhances the applicability of these methods to agricultural research.

As the field progresses, the integration of multi-modal data, improved interpretability, and expanded experimental validation will be crucial for realizing the full potential of modern sequence models in plant genomics. Researchers should consider a hybrid approach, leveraging the complementary strengths of both traditional and modern methods to advance precision breeding and functional genomics in plants.

Methodological Landscape: From Statistical Models to AI-Driven Approaches

In the field of genomic selection (GS) and association studies, accurately predicting the genetic merit of individuals is fundamental for accelerating genetic gains in plant and animal breeding. Genomic selection has revolutionized breeding programs by enabling the selection of superior individuals based on genomic estimated breeding values (GEBVs) rather than relying solely on phenotypic records or progeny testing. The accuracy of these predictions hinges on the statistical models employed, each with distinct assumptions and computational demands. This guide provides an objective comparison of three cornerstone methodologies: Genomic Best Linear Unbiased Prediction (GBLUP), Bayesian approaches, and association testing frameworks. We focus on their performance in variant effect prediction, framing the discussion within the context of benchmarking models for plant research, supported by experimental data and detailed protocols.

Methodological Foundations

Genomic Best Linear Unbiased Prediction (GBLUP)

GBLUP is a linear mixed model that has become a benchmark method in genomic prediction due to its computational efficiency and reliability. The core model is represented by the equation:

\[ \mathbf{y} = \mathbf{1}\mu + \mathbf{Zg} + \mathbf{e} \]

Here, \(\mathbf{y}\) is the vector of observed phenotypes (or deregressed proofs), \(\mu\) is the overall mean, \(\mathbf{1}\) is a vector of ones, \(\mathbf{Z}\) is an incidence matrix linking observations to the random genetic effects \(\mathbf{g}\), and \(\mathbf{e}\) is the vector of residual errors. The random effects are assumed to follow a normal distribution: \(\mathbf{g} \sim N(0, \mathbf{G}\sigma^2_g)\) and \(\mathbf{e} \sim N(0, \mathbf{I}\sigma^2_e)\), where \(\mathbf{G}\) is the genomic relationship matrix (GRM) derived from marker data [29].

The GRM quantifies the genetic similarity between individuals based on their genotypes. For individuals \(i\) and \(j\), the relationship is calculated as:

\[ G_{ij} = \frac{1}{m} \sum_{k=1}^{m} \frac{(M_{ik} - 2p_k)(M_{jk} - 2p_k)}{2p_k(1-p_k)} \]

where \(m\) is the total number of markers, \(M_{ik}\) and \(M_{jk}\) are the genotypes of individuals \(i\) and \(j\) at marker \(k\) (coded as 0, 1, 2), and \(p_k\) is the frequency of the coded allele [29]. A key characteristic of GBLUP is its assumption that all single nucleotide polymorphisms (SNPs) contribute equally to the genetic variance, which is suitable for traits governed by many genes with small effects but may limit its accuracy for traits influenced by major-effect genes [30].
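The GRM formula and the GBLUP model above can be sketched in a few lines of NumPy. This is a simplified illustration under several assumptions that are not from the source: one phenotype record per individual (so the incidence matrix is the identity), the mean estimated as the plain training average rather than by generalized least squares, a known variance ratio `lam` standing in for \(\sigma^2_e / \sigma^2_g\), and simulated genotypes and phenotypes. Monomorphic markers are dropped to avoid division by zero, so \(m\) here counts the retained markers.

```python
import numpy as np

def genomic_relationship_matrix(M):
    """GRM from an (individuals x markers) genotype matrix coded 0/1/2."""
    M = np.asarray(M, dtype=float)
    p = M.mean(axis=0) / 2.0              # frequency of the coded allele
    keep = (p > 0) & (p < 1)              # drop monomorphic markers
    M, p = M[:, keep], p[keep]
    # Center by 2p and scale by sqrt(2p(1-p)), marker by marker.
    W = (M - 2 * p) / np.sqrt(2 * p * (1 - p))
    return W @ W.T / M.shape[1]

def gblup(y, G, lam, train):
    """Predict genetic values for all individuals from training phenotypes.

    lam is the assumed ratio of residual to genetic variance.
    """
    y = np.asarray(y, dtype=float)
    mu = y[train].mean()                  # simplification: plain mean
    G_tt = G[np.ix_(train, train)]
    alpha = np.linalg.solve(G_tt + lam * np.eye(len(train)), y[train] - mu)
    # Covariance between every individual and the training set times alpha.
    return mu + G[:, train] @ alpha

# Simulated example: 30 individuals, 200 markers, 20 used for training.
rng = np.random.default_rng(0)
M = rng.binomial(2, 0.3, size=(30, 200))
G = genomic_relationship_matrix(M)
true_effects = rng.normal(0, 0.1, size=200)
y = M @ true_effects + rng.normal(0, 0.5, size=30)
train = np.arange(20)
pred = gblup(y, G, lam=1.0, train=train)
print("predicted values for held-out individuals:", np.round(pred[20:], 2))
```

In practice the variance components are estimated from the data (for example by REML) rather than fixed in advance, and dedicated mixed-model software is typically used for that step.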

Bayesian Approaches

Bayesian methods offer a flexible alternative to GBLUP by relaxing the assumption of equal variance for all markers. These approaches assign different prior distributions to marker effects, allowing for variable selection and shrinkage. The general Bayesian model is:

\[ \mathbf{y} = \mathbf{1}\mu + \sum_{k=1}^{m} \mathbf{X}_k \beta_k + \mathbf{e} \]

where \(\mathbf{X}_k\) is the vector of genotypes for marker \(k\), and \(\beta_k\) is its effect. The distinction between different Bayesian "alphabets" lies in the prior assumed for \(\beta_k\) [30].

The following table summarizes the key prior assumptions and properties of common Bayesian methods:

Table 1: Key Characteristics of Bayesian Genomic Prediction Models

| Method | Prior on Marker Effects | Variance Assumption | Key Feature |
|---|---|---|---|
| BayesA | t-distribution | Marker-specific variance | All markers have non-zero effects, but with different variances [30]. |
| BayesB | Mixture distribution (spike-slab) | Marker-specific variance; some effects are zero | A proportion of markers (π) have zero effect [29]. |
| BayesCπ | Mixture distribution (spike-slab) | Common variance for non-zero effects | A proportion of markers (π) have zero effect; non-zero effects share a common variance [29]. |
| BayesR | Mixture of normal distributions | Multiple variance classes | Models markers into several effect size categories [29]. |
| Bayesian LASSO | Double-exponential (Laplace) | Marker-specific variance | Induces strong shrinkage of small effects towards zero [30]. |

The posterior distributions of the parameters are typically estimated using Markov Chain Monte Carlo (MCMC) algorithms, such as Gibbs sampling, which can be computationally intensive [30].
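To make the differences between these priors more tangible, the following sketch draws marker effects from simplified versions of three of them, plus a single normal distribution as the GBLUP-like reference, and compares how sparse and how heavy-tailed the draws are. It only samples from priors and does not fit any model; the scale of 0.05, the degrees of freedom, and the value of π are arbitrary illustrative choices, not values from the cited studies.

```python
import numpy as np

def excess_kurtosis(x):
    """Sample excess kurtosis (0 for a normal distribution)."""
    x = np.asarray(x, dtype=float)
    z = (x - x.mean()) / x.std()
    return float((z ** 4).mean() - 3.0)

rng = np.random.default_rng(1)
m = 10_000      # number of markers (illustrative)
scale = 0.05    # arbitrary effect-size scale

# Reference: one normal distribution for all markers (GBLUP-like).
normal_effects = rng.normal(0.0, scale, size=m)

# BayesA: marker-specific variances give a marginal scaled t prior.
bayes_a = scale * rng.standard_t(df=4, size=m)

# BayesCπ: a fraction pi of markers has exactly zero effect,
# the rest share one common normal distribution.
pi = 0.95
is_nonzero = rng.random(m) > pi
bayes_cpi = np.where(is_nonzero, rng.normal(0.0, scale, size=m), 0.0)

# Bayesian LASSO: double-exponential (Laplace) prior.
bayes_lasso = rng.laplace(0.0, scale, size=m)

draws = {
    "normal (GBLUP-like)": normal_effects,
    "BayesA": bayes_a,
    "BayesCpi": bayes_cpi,
    "Bayesian LASSO": bayes_lasso,
}
summary = {
    name: (float(np.mean(x == 0.0)), excess_kurtosis(x))
    for name, x in draws.items()
}
for name, (frac_zero, kurt) in summary.items():
    print(f"{name:20s} zero fraction={frac_zero:.3f} excess kurtosis={kurt:.2f}")
```

Only the spike-slab prior produces exact zeros, while the t and Laplace priors keep every marker in the model but allow a few effects to be much larger than a normal prior would.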

Association Testing and Error Control

Association testing, such as in Genome-Wide Association Studies (GWAS) or Epigenome-Wide Association Studies (EWAS), aims to identify specific markers linked to traits. A major challenge is the multiple testing problem, where thousands of hypotheses are tested simultaneously.

- Traditional FDR Control: Methods like the Benjamini-Hochberg (BH) procedure control the False Discovery Rate (FDR) but treat all hypotheses equally, which can be suboptimal [31].

- Covariate-Adaptive FDR Control: Newer methods leverage auxiliary information to improve power. For example, in EWAS, covariates like methylation mean/variance or genomic annotation (e.g., CpG island location) can inform the likelihood of a true association. Methods like Independent Hypothesis Weighting (IHW) and Covariate Adaptive Multiple Testing (CAMT) use these covariates to weight hypotheses, relaxing the rejection threshold for more promising tests while maintaining the overall FDR [31]. These have been shown to improve detection power by 25% to 68% compared to standard procedures in some contexts [31].

- Conditional FDR (cFDR): This approach uses a covariate, such as p-values from a related trait, to calculate a trait-specific FDR. Recent advancements provide better type-1 error control and can substantially increase power in high-dimensional studies like transcriptome-wide association studies [32].
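The difference between standard and covariate-weighted FDR control can be illustrated with a short sketch of the Benjamini-Hochberg step-up procedure and its weighted variant, in which each p-value is divided by a weight and the weights average one. This is not an implementation of IHW or CAMT: those methods learn the weights from covariates, whereas here the weights are supplied by hand and are purely hypothetical, as are the p-values. Weights must be chosen independently of the p-values under the null for the FDR guarantee to hold.

```python
import numpy as np

def benjamini_hochberg(pvalues, alpha=0.05, weights=None):
    """Return a boolean array marking the hypotheses rejected at FDR alpha.

    weights: optional positive weights; they are rescaled to average 1
    and each p-value is divided by its weight (weighted BH).
    """
    p = np.asarray(pvalues, dtype=float)
    n = p.size
    if weights is not None:
        w = np.asarray(weights, dtype=float)
        w = w * n / w.sum()          # rescale so the mean weight is 1
        p = p / w
    order = np.argsort(p)
    thresholds = alpha * np.arange(1, n + 1) / n
    passed = p[order] <= thresholds
    reject = np.zeros(n, dtype=bool)
    if passed.any():
        # Step-up rule: reject everything up to the largest passing rank.
        k = np.max(np.nonzero(passed)[0])
        reject[order[: k + 1]] = True
    return reject

# Toy p-values for ten markers.
pvals = [0.001, 0.008, 0.039, 0.041, 0.042, 0.060, 0.074, 0.205, 0.212, 0.216]
# Hypothetical prior weights, e.g. up-weighting markers 3-5 because an
# annotation suggests they lie in regulatory regions.
weights = [1, 1, 4, 4, 4, 0.2, 0.2, 0.2, 0.2, 0.2]

unweighted = benjamini_hochberg(pvals, alpha=0.05)
weighted = benjamini_hochberg(pvals, alpha=0.05, weights=weights)
print("rejections without weights:", int(unweighted.sum()))
print("rejections with weights:   ", int(weighted.sum()))
```

Note that weighting redistributes power rather than creating it: hypotheses that are down-weighted become harder to reject, so informative covariates matter.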

Comparative Performance Analysis

Prediction Accuracy Across Genetic Architectures

The choice between GBLUP and Bayesian methods often depends on the underlying genetic architecture of the trait—that is, the number of genes influencing the trait and the distribution of their effect sizes.

Table 2: Comparative Prediction Accuracy of GBLUP and Bayesian Methods

| Study Context | Trait Type / Genetic Architecture | GBLUP Performance | Bayesian Method Performance | Key Findings |

|---|---|---|---|---|

| Holstein Cattle (16,122 individuals) [29] | Nine production & type traits (e.g., milk yield, conformation) | Baseline accuracy | BayesR achieved the highest average accuracy (0.625). | Bayesian models (e.g., BayesCπ) outperformed GBLUP by 0.8% to 2.2% on average. For some traits like fat percentage, SNP-weighted GBLUP showed a 4.9% gain over standard GBLUP. |

| Various Plant Species [30] | Traits governed by a few major-effect QTLs | Lower accuracy | Higher accuracy | Bayesian methods (e.g., BayesB, BayesLASSO) are more accurate when a limited number of loci have large effects. |