Automated Multimodal Image Registration for High-Throughput Plant Phenotyping: Methods, Applications, and Benchmarks

Abstract

This article comprehensively reviews automated multimodal image registration techniques essential for high-throughput plant phenotyping. It covers the foundational principles of fusing data from diverse imaging sensors (RGB, hyperspectral, chlorophyll fluorescence, 3D) to enable non-destructive, precise analysis of plant growth and stress responses. The scope extends from core concepts and deep learning methodologies to optimization strategies for challenging field conditions and rigorous validation benchmarks. Tailored for researchers and scientists in plant biology and agriculture, this review synthesizes current technological advancements to address the critical phenotyping bottleneck in breeding programs and precision agriculture.

The Core Principles and Imperative of Multimodal Registration in Plant Phenotyping

Defining Multimodal Image Registration and Its Role in Modern Agriculture

Multimodal image registration is the computational process of aligning two or more images of the same scene that were captured at different times, from diverse viewpoints, and/or by different sensor technologies into a single, unified coordinate system [1] [2]. In the context of modern agriculture, this technique is foundational for fusing complementary data from various imaging sensors, such as RGB (visible light), thermal, hyperspectral, and chlorophyll fluorescence cameras [3]. The effective utilization of cross-modal patterns depends on pixel-precise alignment, which enables a more comprehensive assessment of plant phenotypes [4] [5]. This capability is critical for overcoming the inherent challenges of agricultural imaging, which include parallax effects, occlusion by dense plant canopies, and the vastly different image characteristics produced by each type of sensor [4] [1].

The Critical Role in Modern Agricultural Phenotyping

Fusing data from multi-domain sensor systems through precise image registration provides a larger set of potentially discriminative features for machine learning models and can supply synergistic information, thereby increasing the specificity and reliability of plant stress detection [3]. In practice, this technology enables several advanced agricultural applications:

- Enhanced Stress Detection: Combining thermal and visual imagery allows for the detection of water stress in crops before it becomes visible to the human eye [1]. Similarly, fusing hyperspectral and chlorophyll fluorescence data facilitates the early identification of biotic stresses, such as fungal infections [3].

- Robotic Harvesting and Precision Spraying: Automated agricultural robots rely on the fusion of data from multiple sensors (e.g., RGB, thermal, laser) to accurately locate fruits or weeds and perform selective operations, reducing chemical usage and improving yield [1] [6].

- High-Throughput Phenotyping: Registration pipelines are essential for platforms that screen thousands of plants, allowing for the non-destructive quantification of growth, morphology, and physiological function over time by aligning images from different modalities and camera views [3] [6].

Key Methodologies and Technical Approaches

Several advanced methodologies have been developed to address the specific challenges of multimodal image registration in unstructured agricultural environments. The table below summarizes the principal technical approaches identified in current research.

Table 1: Key Methodologies for Multimodal Image Registration in Agriculture

| Methodology | Core Principle | Sensor Compatibility | Reported Performance/Advantage |
|---|---|---|---|
| 3D Registration with Depth Sensing [4] [5] | Integrates depth information from a Time-of-Flight (ToF) camera and uses ray casting to mitigate parallax. | RGB, ToF, Multispectral, Thermal | Robust to parallax; Automated occlusion detection; Suitable for arbitrary camera setups and plant species. |
| Distance-Dependent Transformation Matrix (DDTM) [1] [2] | Pre-calibrates a projective transformation matrix where each element is a function of the distance to the target, measured by a range sensor. | RGB, Thermal, Laser Scanner | Compactly represents infinite registration transformations; Accurate for varying sensing ranges in the field. |
| Automated 2D Affine Registration [3] | Uses algorithms like Phase-Only Correlation (POC) or Enhanced Correlation Coefficient (ECC) to compute a global affine transformation (translation, rotation, scaling, shearing). | RGB, Hyperspectral (HSI), Chlorophyll Fluorescence (ChlF) | High overlap ratios (e.g., 96.6% - 98.9%); Computationally efficient; Reversible transformation. |
| Two-Step Registration-Classification [6] | First, co-registers high-contrast fluorescence (FLU) and visible light (VIS) images; then applies a classifier to eliminate residual background pixels. | FLU, VIS | Achieves ~93% segmentation accuracy; Robust to motion artifacts and inhomogeneous backgrounds. |
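
To make the DDTM idea concrete, the sketch below fits one function of distance for every element of the 3x3 projective matrix, using homographies calibrated at a few known distances, and then evaluates a matrix at any measured distance. It assumes that each element is a low-order polynomial in distance. The functional form used in [1] [2] may be different, and the names `fit_ddtm`, `ddtm_at`, and `apply_homography` are hypothetical.

```python
import numpy as np

def fit_ddtm(distances, homographies, degree=2):
    """Fit one polynomial in distance per element of a 3x3 homography.

    distances:    (n,) calibration distances measured by the range sensor
    homographies: (n, 3, 3) homographies calibrated at those distances
    Returns a (3, 3, degree + 1) array of polynomial coefficients.
    Assumption: every element varies polynomially with distance.
    """
    d = np.asarray(distances, dtype=float)
    H = np.asarray(homographies, dtype=float)
    # Homographies are only defined up to scale, so normalise to H[2, 2] == 1.
    H = H / H[:, 2:3, 2:3]
    coeffs = np.empty((3, 3, degree + 1))
    for i in range(3):
        for j in range(3):
            coeffs[i, j] = np.polyfit(d, H[:, i, j], degree)
    return coeffs

def ddtm_at(coeffs, distance):
    """Evaluate the distance-dependent transformation matrix at one distance."""
    H = np.empty((3, 3))
    for i in range(3):
        for j in range(3):
            H[i, j] = np.polyval(coeffs[i, j], distance)
    return H

def apply_homography(H, points):
    """Map (n, 2) pixel coordinates through a 3x3 projective transform."""
    pts = np.hstack([points, np.ones((len(points), 1))]) @ H.T
    return pts[:, :2] / pts[:, 2:3]
```

With this design, field registration only needs the range reading and a cheap polynomial evaluation. No per-frame matching is required.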

Detailed Experimental Protocol: 3D Registration with a Depth Camera

This protocol is adapted from the 3D multimodal image registration method that utilizes a Time-of-Flight (ToF) camera [4] [5].

1. Research Reagent Solutions

Table 2: Essential Materials and Equipment

| Item | Function/Description |
|---|---|
| Time-of-Flight (ToF) Camera | Provides per-pixel depth information, which is crucial for constructing 3D scene geometry and mitigating parallax errors. |
| Multimodal Camera Rig | A custom setup housing the ToF camera and other sensors (e.g., RGB, hyperspectral). Must allow for geometric calibration. |
| Artificial Control Points (ACPs) | Physically constructed markers easily identifiable across all sensor modalities. Used for initial coarse calibration of the system. |
| Computational Workstation | A computer with sufficient CPU/GPU resources for running ray casting and registration algorithms. |
| Plant Specimens | A diverse set of plant species with varying leaf geometries (e.g., six species as used in the cited study) to test robustness. |

2. Step-by-Step Procedure

Step 1: System Calibration and Data Acquisition

- Rigidly mount the ToF camera and the other multimodal cameras (e.g., visual, thermal) into a single rig.

- Use specially designed Artificial Control Points (ACPs) [1] to perform an initial geometric calibration between all sensors. This establishes a baseline spatial relationship.

- Capture simultaneous image sets (RGB, depth, other modalities) of the plant specimens from the desired viewpoints.

Step 2: 3D Point Cloud Generation and Ray Casting

- Use the depth information from the ToF camera to generate a 3D point cloud of the plant canopy.

- Employ a ray casting technique [4] from the perspective of each multimodal camera. This simulates how each camera pixel projects into the 3D space of the point cloud.
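
The first half of Step 2, which turns every depth pixel into a 3D point, amounts to inverting the pinhole projection. The sketch below is minimal and rests on three assumptions: the ToF images are undistorted, the intrinsic matrix is `K`, and depth is stored as metric distance along the optical (z) axis. The function name `depth_to_points` is hypothetical.

```python
import numpy as np

def depth_to_points(depth, K):
    """Back-project a depth map into an (N, 3) point cloud in the ToF camera frame.

    depth: (H, W) array of z-depths in metres; 0 or NaN marks invalid pixels
    K:     3x3 pinhole intrinsics [[fx, 0, cx], [0, fy, cy], [0, 0, 1]]
    Points are returned in row-major pixel order.
    """
    h, w = depth.shape
    fx, fy = K[0, 0], K[1, 1]
    cx, cy = K[0, 2], K[1, 2]
    # Pixel grid: u runs along columns, v along rows.
    u, v = np.meshgrid(np.arange(w), np.arange(h))
    z = depth.astype(float)
    valid = np.isfinite(z) & (z > 0)
    # Invert u = fx * x / z + cx (and likewise for v) to recover x and y.
    x = (u - cx) * z / fx
    y = (v - cy) * z / fy
    return np.stack([x[valid], y[valid], z[valid]], axis=1)
```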

Step 3: Projection and Alignment

- Project the 3D point cloud onto the image plane of a chosen reference camera (e.g., the RGB camera). This creates a virtual image that is geometrically aligned with the reference.

- Use this projected image as a bridge to align the other multimodal images. Since the projection is derived from the 3D geometry, it inherently corrects for parallax.

Step 4: Occlusion Handling

- Automatically identify and filter out occluded areas by analyzing the ray casting results. A ray that is interrupted by a closer object indicates an occlusion in the viewpoint of that particular camera [4].

- Mask these occluded regions to prevent them from introducing errors into the final fused image.

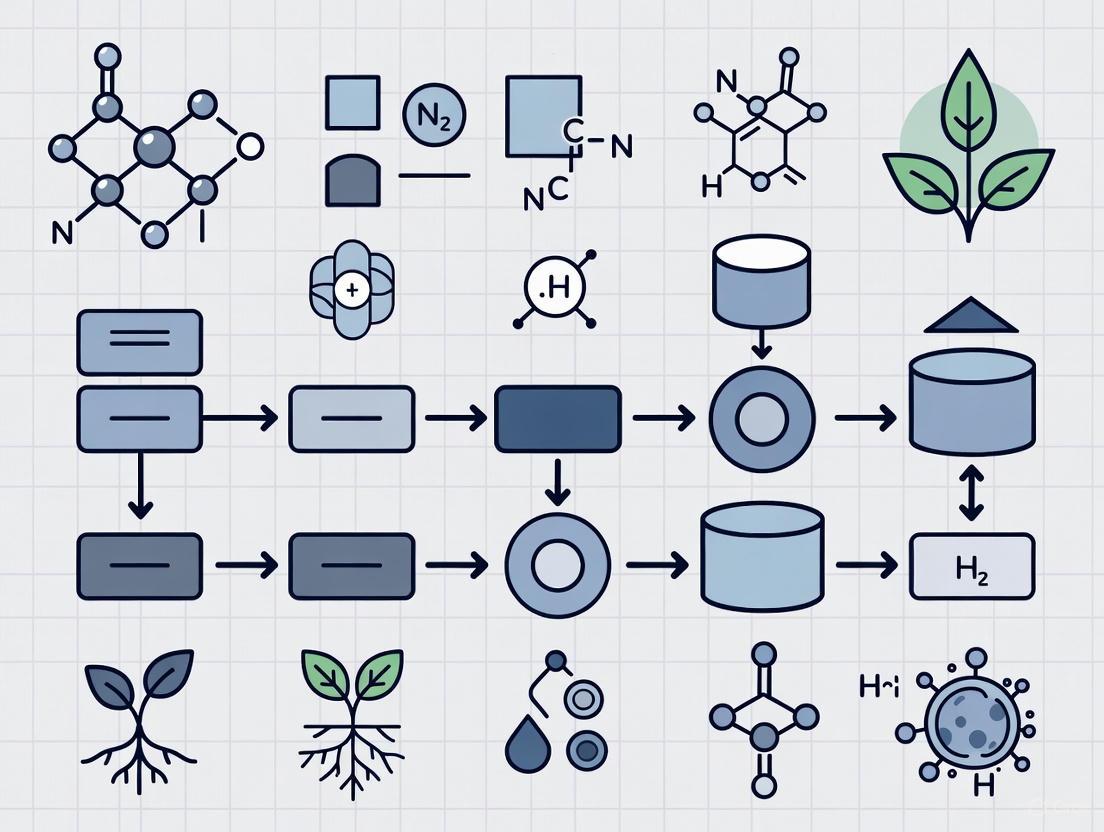

The following workflow diagram illustrates this 3D registration process:

Detailed Experimental Protocol: Automated 2D Affine Registration

This protocol is based on the open-source approach for registering RGB, hyperspectral (HSI), and chlorophyll fluorescence (ChlF) images [3].

1. Research Reagent Solutions

Table 3: Essential Materials and Equipment

| Item | Function/Description |
|---|---|
| Hyperspectral Imaging System | A push-broom or snapshot camera capturing spectral data across many bands (e.g., 500-1000 nm). |
| Chlorophyll Fluorescence Imager | A camera system capable of capturing fluorescence kinetics parameters and reflectance images. |
| RGB Camera | A standard color camera providing high-spatial-resolution reference images. |
| Calibration Target | A standard chessboard or ChArUco board for camera calibration and distortion correction. |
| Multi-Well Plates or Plant Trays | Standardized containers for holding plants to ensure consistent positioning and high-throughput screening. |

2. Step-by-Step Procedure

Step 1: Pre-processing and Camera Calibration

- Capture images of a calibration target (e.g., chessboard) with all cameras.

- Perform camera calibration to correct for lens distortion, geometric misalignment, and other non-linear effects. The mean reprojection error should be minimized, ideally to a subpixel level for the RGB and ChlF cameras [3].

Step 2: Reference Image Selection

- Select one image modality as the fixed reference image (e.g., the ChlF image due to its high contrast). The other images (e.g., RGB, HSI) are the moving images to be transformed.

Step 3: Affine Transformation Estimation

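- Choose a registration algorithm to compute an affine transformation matrix (accounting for translation, rotation, scaling, and shearing). Suitable methods include Phase-Only Correlation (POC), the Enhanced Correlation Coefficient (ECC), and feature-based matching such as ORB [3].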
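
Phase-Only Correlation recovers the translational part of the transformation from the normalised cross-power spectrum. The sketch below handles translation only. Estimating rotation and scale as well would need an extra step, for example log-polar resampling, which is omitted here. It also assumes that the two single-channel images have the same size. `phase_only_correlation` is a hypothetical name and is not the API of the pipeline described in [3].

```python
import numpy as np

def phase_only_correlation(fixed, moving):
    """Estimate the integer (dy, dx) circular shift that maps `fixed` onto `moving`.

    Only the phase of the cross-power spectrum is kept, so global gain and
    offset differences between the images do not move the correlation peak.
    """
    F = np.fft.fft2(fixed.astype(float))
    G = np.fft.fft2(moving.astype(float))
    cross = G * np.conj(F)
    # Normalise every frequency to unit magnitude; epsilon avoids division by zero.
    poc = np.fft.ifft2(cross / (np.abs(cross) + 1e-12)).real
    dy, dx = np.unravel_index(np.argmax(poc), poc.shape)
    # Peaks past the half-size correspond to negative shifts (wrap-around).
    h, w = poc.shape
    if dy > h // 2:
        dy -= h
    if dx > w // 2:
        dx -= w
    return int(dy), int(dx)
```

A usage example: if `moving = np.roll(fixed, (5, -7), axis=(0, 1))`, the function returns `(5, -7)`. Real multimodal pairs are usually converted first into comparable representations, such as plant masks or high-contrast channels.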

Step 4: Image Transformation and Validation

- Apply the computed affine transformation matrix to warp the moving image into the coordinate system of the reference image.

- Evaluate registration performance using metrics like the Overlap Ratio (ORConvex), which measures the percentage of overlapping area between the segmented plant regions in the registered images. Reported overlap ratios exceed 96% [3].
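
Step 4 can be sketched in two parts. First, the moving plant mask is warped into the reference frame by inverse mapping with nearest-neighbour sampling. Second, its overlap with the reference mask is scored as intersection over union. This is a minimal sketch. The ORConvex metric in [3] may compute the overlap on convex hulls of the plant regions, which the name suggests, and that step is not reproduced here. Both function names are hypothetical.

```python
import numpy as np

def warp_affine_nearest(image, A, out_shape):
    """Warp `image` with a 2x3 affine matrix A that maps moving -> reference coordinates.

    Each output pixel (x, y) is read from the moving image at A^-1 (x, y)
    using nearest-neighbour sampling; samples outside the image become 0.
    """
    M_inv = np.linalg.inv(np.vstack([A, [0.0, 0.0, 1.0]]))
    h, w = out_shape
    ys, xs = np.mgrid[0:h, 0:w]
    coords = np.stack([xs.ravel(), ys.ravel(), np.ones(h * w)])
    src = M_inv @ coords
    sx = np.rint(src[0]).astype(int)
    sy = np.rint(src[1]).astype(int)
    inside = (sx >= 0) & (sx < image.shape[1]) & (sy >= 0) & (sy < image.shape[0])
    out = np.zeros(h * w, dtype=image.dtype)
    out[inside] = image[sy[inside], sx[inside]]
    return out.reshape(h, w)

def overlap_ratio(mask_a, mask_b):
    """Intersection over union of two binary plant masks, in percent."""
    a, b = mask_a.astype(bool), mask_b.astype(bool)
    union = np.logical_or(a, b).sum()
    return 100.0 * np.logical_and(a, b).sum() / union if union else 100.0
```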

The workflow for this 2D affine registration is summarized below:

Multimodal image registration has evolved from a manual, error-prone process to an automated, robust, and essential technology in modern plant phenotyping. The methodologies detailed here, ranging from 3D depth-aware registration to 2D affine transformations and hybrid registration-classification pipelines, provide researchers with a powerful toolkit to fuse disparate sensory data. This fusion is pivotal for unlocking deeper insights into plant health, development, and resilience, thereby accelerating breeding programs and enhancing the sustainability of agricultural practices. Continued refinement of these protocols, especially in handling complex canopies and integrating with machine learning models, will further establish multimodal registration as a cornerstone of precision agriculture.

Plant phenotyping has evolved from relying on simple, manual observations to employing advanced, automated sensor technologies that can non-destructively quantify complex plant traits. This evolution is critical for bridging the genotype-to-phenotype gap, a major bottleneck in modern plant breeding and agricultural research [3]. Integrating multiple imaging modalities, including RGB, hyperspectral, chlorophyll fluorescence, and 3D imaging, provides a more comprehensive picture of plant health, structure, and function than any single sensor could achieve alone. When these data streams are aligned through multimodal image registration and then fused, researchers can gain synergistic insights into plant responses to various biotic and abiotic stressors, ultimately accelerating the development of more resilient and productive crops [3] [7].

The core challenge this addresses is that monomodal detection of plant stressors is often limited by non-specific or indirect features, leading to low cross-specificity between different types of stress [3]. A multi-sensor approach overcomes this by providing a richer set of discriminative features for machine learning models and enabling the development of new, more robust plant status proxies. The following sections detail the individual sensor technologies, the methods for their integration, and the practical protocols for implementing these systems in plant phenotyping research.

Core Sensor Technologies and Their Applications

Quantitative Comparison of Phenotyping Sensors

Table 1: Technical specifications and primary applications of core plant phenotyping sensor technologies.

| Sensor Technology | Measured Parameters | Spatial Resolution | Spectral Range/Resolution | Primary Applications in Plant Phenotyping |
|---|---|---|---|---|
| RGB Imaging | Color, texture, morphology, architecture | High (Limited by camera optics) | Visible light (Red, Green, Blue channels) | Plant segmentation [3], growth monitoring [7], morphological trait extraction (leaf area, count) [8] |
| Hyperspectral Imaging (HSI) | Spectral reflectance across numerous narrow bands | Medium to High | Visible to Near-Infrared (e.g., 500–1000 nm) [3] | Pigment composition analysis [3], biochemical trait quantification, early stress detection [9] |
| Chlorophyll Fluorescence (ChlF) | Light emission from photosynthetic apparatus | High | Emission spectra typically in red and far-red region | Photosynthetic efficiency [3] [8], functional status of PSII, non-destructive stress response monitoring [9] |
| 3D Imaging (RGB-D) | Depth, point cloud, surface geometry | High (Depth-dependent) | Not applicable (Geometric data) | 3D plant architecture [8], biomass estimation [10], leaf angle and stem morphology [8] |

Technology-Specific Principles and Workflows

RGB Imaging serves as the foundational modality, providing high-contrast, high-resolution structural information that is easily interpretable. In automated phenotyping, it is primarily used for precise plant segmentation and as a structural reference for aligning data from other sensors [3]. The workflow involves capturing top-view or side-view images under consistent, diffuse lighting to minimize shadows and specular reflections. Subsequent image analysis can extract traits like projected leaf area, compactness, and color indices correlated with health status.

Hyperspectral Imaging (HSI) extends vision beyond the human eye by capturing reflectance across hundreds of contiguous spectral bands. This high-dimensional data forms a "spectral signature" unique to different biochemical components (e.g., chlorophylls, carotenoids, water content) [3]. Push-broom line scanners are a common HSI technology used in phenotyping systems [3]. The critical steps in HSI data processing include radiometric calibration to convert raw digital numbers to reflectance, and spectral calibration to ensure accurate wavelength assignment. The enhanced RotaPrism system, for example, uses a hyperspectral sensor for reflectance measurements to understand canopy structural and physiological dynamics [9].
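
A common way to perform radiometric calibration uses a white reference panel and a dark (shutter-closed) frame. The sketch below shows that standard flat-field conversion. It assumes the white panel has a known, spectrally flat reflectance, passed as the parameter `white_reflectance` (default 1.0), and that the cube is ordered (rows, columns, bands). The function name is hypothetical, and a vendor's own calibration routine may differ.

```python
import numpy as np

def to_reflectance(raw, white, dark, white_reflectance=1.0):
    """Convert raw digital numbers of a hypercube to reflectance.

    raw:   (rows, cols, bands) raw sensor counts of the scene
    white: white-reference counts, broadcastable to `raw`
           (e.g. (cols, bands) for one push-broom line)
    dark:  dark-current counts, broadcastable to `raw`
    """
    raw, white, dark = (np.asarray(a, dtype=float) for a in (raw, white, dark))
    denom = white - dark
    # Dead pixels where white and dark coincide would divide by zero; mark them NaN.
    denom = np.where(denom == 0, np.nan, denom)
    return white_reflectance * (raw - dark) / denom
```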

Chlorophyll Fluorescence (ChlF) Imaging is a functional imaging technique that probes the photosynthetic machinery. It measures the re-emission of light at longer wavelengths by chlorophyll molecules after absorption of light, which is a highly sensitive indicator of photosynthetic performance and plant stress [3]. Specialized pulsed measuring light systems (e.g., the Plant Explorer XS from PhenoVation) are used to capture ChlF kinetics [3]. The standard protocol involves dark-adapting a plant for a set period (e.g., 20-30 minutes) to fully open photosynthetic reaction centers before applying a saturating light pulse to measure key parameters like Fv/Fm (maximum quantum yield of PSII).
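
Fv/Fm is computed from two images of the dark-adapted plant. F0 is the minimal fluorescence, recorded under the measuring light alone, and Fm is the maximal fluorescence, recorded during the saturating pulse. The variable fluorescence is Fv = Fm - F0. The per-pixel sketch below assumes both images are already background-corrected and registered. The masking threshold `min_fm` is a hypothetical parameter for excluding non-plant pixels.

```python
import numpy as np

def fv_fm(f0, fm, min_fm=1e-6):
    """Per-pixel maximum quantum yield of PSII, Fv/Fm = (Fm - F0) / Fm.

    f0: minimal fluorescence image of the dark-adapted plant
    fm: maximal fluorescence image recorded during the saturating pulse
    Pixels with Fm <= min_fm (e.g. background) are returned as NaN.
    """
    f0 = np.asarray(f0, dtype=float)
    fm = np.asarray(fm, dtype=float)
    out = np.full(fm.shape, np.nan)
    valid = fm > min_fm
    out[valid] = (fm[valid] - f0[valid]) / fm[valid]
    return out
```

Unstressed leaves typically give values of roughly 0.8. Lower values point to photoinhibition or other stress affecting PSII.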

3D Imaging technologies, such as RGB-D cameras, capture the three-dimensional geometry of plants. This is crucial for traits that cannot be accurately described in 2D, such as plant biomass, leaf angle distribution, and complex canopy architecture [8]. The workflow involves capturing multiple RGB-D images from different viewpoints around the plant. These multiple depth views are then processed and aligned using algorithms like the Iterative Closest Point (ICP) to construct a merged, comprehensive 3D point cloud model of the plant [8].
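
A compact point-to-point ICP shows how the alignment step works. It alternates between two operations: pairing each source point with its nearest target point, and solving for the best rigid transform with the SVD-based (Kabsch) solution. This is a minimal sketch that uses brute-force nearest-neighbour search, so it only suits small clouds. Practical pipelines add k-d trees, outlier rejection, and a good initial pose. The function names are hypothetical, and this is not the implementation used in [8].

```python
import numpy as np

def best_rigid_transform(src, dst):
    """Least-squares rotation R and translation t such that R @ src_i + t ~= dst_i."""
    mu_s, mu_d = src.mean(axis=0), dst.mean(axis=0)
    H = (src - mu_s).T @ (dst - mu_d)          # 3x3 cross-covariance
    U, _, Vt = np.linalg.svd(H)
    # Flip the last axis if the SVD solution would be a reflection.
    D = np.diag([1.0, 1.0, np.sign(np.linalg.det(Vt.T @ U.T))])
    R = Vt.T @ D @ U.T
    return R, mu_d - R @ mu_s

def icp(source, target, iterations=30, tol=1e-10):
    """Point-to-point ICP aligning `source` (N, 3) to `target` (M, 3).

    Returns the accumulated rotation, translation, and the final mean squared
    distance between transformed source points and their matched targets.
    """
    R_total, t_total = np.eye(3), np.zeros(3)
    current = np.asarray(source, dtype=float).copy()
    target = np.asarray(target, dtype=float)
    prev_err, err = np.inf, np.inf
    for _ in range(iterations):
        # Brute-force nearest target point for every current source point.
        d2 = ((current[:, None, :] - target[None, :, :]) ** 2).sum(axis=2)
        nn = d2.argmin(axis=1)
        R, t = best_rigid_transform(current, target[nn])
        current = current @ R.T + t
        R_total, t_total = R @ R_total, R @ t_total + t
        err = ((current - target[nn]) ** 2).sum(axis=1).mean()
        if prev_err - err < tol:
            break
        prev_err = err
    return R_total, t_total, err
```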

Integrated Multimodal Registration Workflows

The true power of multimodal phenotyping is unlocked by precisely aligning the data from all sensors into a unified coordinate system, a process known as image registration.

Workflow Diagram for Multimodal Data Fusion

The following diagram illustrates the integrated workflow for fusing data from RGB, Hyperspectral, Chlorophyll Fluorescence, and 3D sensors.

Key Registration Methods and Performance

Table 2: Comparison of image registration methods and their reported performance in plant phenotyping.

| Registration Method | Core Principle | Applicable Sensor Combinations | Reported Performance | Key Considerations |
|---|---|---|---|---|
| Affine Transformation | Global linear transformation (translation, rotation, scaling, shearing) | RGB-to-ChlF, HSI-to-ChlF [3] | ORConvex of 98.0 ± 2.3% (RGB-ChlF) and 96.6 ± 4.2% (HSI-ChlF) on A. thaliana [3] | Computationally fast, robust, but may not account for local non-linear distortions [3] |
| Feature-Based (e.g., ORB) | Identifies and matches key points (edges, corners) between images | RGB-to-ChlF, 3D-to-ChlF [8] | Used for ChlF-to-RGB-D alignment in 3D systems [8] | Performance depends on distinct feature availability; can fail with low-feature or noisy images [3] |
| Phase-Only Correlation (POC) | Uses phase information in the Fourier domain to estimate transformation | General multi-modal registration [3] | Evaluated as part of automated registration pipeline [3] | Robust to intensity differences and noise [3] |
| Enhanced Correlation Coefficient (ECC) | An extension of Normalized Cross-Correlation (NCC) for intensity-based alignment | General multi-modal registration [3] | NCC-based selection used for robust registration [3] | A similarity metric used for optimization, can handle some intensity variations [3] |
| Iterative Closest Point (ICP) | Aligns 3D point clouds by iteratively minimizing distances between corresponding points | 3D point cloud merging and integration [8] | RMSE for morphological traits: Leaf Area (2.97 cm²), Length (0.78 cm) [8] | Used for 3D reconstruction from multiple RGB-D views [8] |
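
The table mentions NCC-based selection. One plausible reading is to score several candidate registrations, for example the outputs of different algorithms, by normalised cross-correlation against the reference and keep the best one. The sketch below follows that reading, which is an assumption. `ncc` and `select_best` are hypothetical helpers, and the exact selection rule in [3] may differ.

```python
import numpy as np

def ncc(a, b, mask=None):
    """Normalised cross-correlation of two equally sized images, in [-1, 1]."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    if mask is not None:
        a, b = a[mask], b[mask]
    a = a - a.mean()
    b = b - b.mean()
    denom = np.sqrt((a * a).sum() * (b * b).sum())
    return float((a * b).sum() / denom) if denom > 0 else 0.0

def select_best(reference, candidates):
    """Return (index, score) of the registered candidate most similar to the reference."""
    scores = [ncc(reference, c) for c in candidates]
    best = int(np.argmax(scores))
    return best, scores[best]
```

NCC is invariant to linear gain and offset changes in intensity but not to nonlinear ones. This is one reason cross-modal pipelines often compare masks or other derived, high-contrast representations rather than raw intensities.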

Detailed Experimental Protocols

Protocol 1: 2D Multimodal Registration (RGB, HSI, ChlF)

This protocol is adapted from high-throughput studies on A. thaliana and Rosa × hybrida [3].

System Setup and Calibration: Position the multi-well plates or plants under each imaging sensor (RGB, HSI, ChlF). The position under the ChlF imager can be fixed, whereas plates under the RGB and HSI systems need only be roughly aligned. Perform camera calibration for each sensor using a checkerboard pattern to correct for lens distortion. Aim for a mean reprojection error of less than 0.5 pixels for RGB and ChlF cameras; a slightly higher error (~2 pixels) may be acceptable for HSI push-broom scanners due to their lower signal-to-noise ratio [3].
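
Once calibration has produced reprojected corner positions, the reprojection-error targets above take only a few lines to check. The sketch assumes that detected and reprojected checkerboard corners are available as (N, 2) arrays, for example from a calibration toolbox. The thresholds come from the text. The function and dictionary names are hypothetical. Note that some toolboxes report an RMS error rather than the mean Euclidean error computed here.

```python
import numpy as np

# Thresholds from the protocol: subpixel for RGB/ChlF, about 2 px for push-broom HSI.
MAX_REPROJECTION_ERROR_PX = {"rgb": 0.5, "chlf": 0.5, "hsi": 2.0}

def mean_reprojection_error(detected, reprojected):
    """Mean Euclidean distance (pixels) between detected and reprojected corners."""
    diff = np.asarray(detected, dtype=float) - np.asarray(reprojected, dtype=float)
    return float(np.linalg.norm(diff, axis=1).mean())

def calibration_ok(sensor, detected, reprojected):
    """True if the sensor's mean reprojection error is below its threshold."""
    return mean_reprojection_error(detected, reprojected) < MAX_REPROJECTION_ERROR_PX[sensor]
```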

Data Acquisition: Capture images sequentially from all sensors. For ChlF, ensure plants are dark-adapted prior to measurement. For HSI, ensure consistent and uniform illumination across the spectral range.

Image Preprocessing: Convert all images to a common coordinate system if possible. Apply distortion correction parameters obtained during calibration. For HSI data, perform radiometric calibration to convert to reflectance.

Reference Image Selection: Select the ChlF image or the high-contrast RGB image as the reference (fixed) image to which the HSI (moving) image will be aligned. The choice of reference can impact performance and should be consistent [3].

Coarse Global Registration: Compute an affine transformation matrix using a chosen algorithm (e.g., Phase-Only Correlation, Feature-Based ORB, or an NCC-based approach) to align the moving image to the reference image globally [3].
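
For the feature-based option, the affine matrix can be estimated by linear least squares once keypoints have been matched, for example with ORB. The sketch below assumes the matches have already been filtered. Practical pipelines typically wrap this step in RANSAC to reject outliers. `estimate_affine` is a hypothetical name.

```python
import numpy as np

def estimate_affine(src_pts, dst_pts):
    """Least-squares 2x3 affine matrix A with A @ [x, y, 1] ~= [x', y'].

    src_pts, dst_pts: (N, 2) matched keypoint coordinates, N >= 3, not collinear.
    """
    src = np.asarray(src_pts, dtype=float)
    dst = np.asarray(dst_pts, dtype=float)
    X = np.hstack([src, np.ones((len(src), 1))])   # (N, 3) homogeneous coordinates
    # Solve X @ A.T = dst for all six affine parameters in one call.
    A_T, *_ = np.linalg.lstsq(X, dst, rcond=None)
    return A_T.T
```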

Fine Object-Level Registration: To address heterogeneity across the image, segment individual plants or objects (e.g., using the high-contrast RGB or ChlF data). Apply an additional fine registration step to each segmented object to achieve pixel-precise alignment. This two-step process has been shown to achieve overlap ratios exceeding 96% [3].

Validation: Quantify registration accuracy using metrics like the Overlap Ratio (ORConvex), which measures the intersection over union of the segmented plant regions from the different modalities after alignment [3].

Protocol 2: 3D Multimodal Reconstruction with Chlorophyll Fluorescence

This protocol is based on a gantry robot system for generating 3D ChlF point clouds [8].

Synchronized Data Capture: A gantry robot system with a mounted RGB-D camera and a top-view ChlF camera automatically moves around the plant, capturing multiple RGB-D images from different viewpoints. Simultaneously, the top-view ChlF camera captures a corresponding fluorescence image.

3D Point Cloud Generation: Process the multiple RGB-D images. Use the Iterative Closest Point (ICP) algorithm to align and merge these individual depth views into a single, consolidated 3D point cloud of the plant [8].

2D-3D Registration: Align the top-view ChlF image with the corresponding top-view RGB-D image using a feature-based registration method. This establishes the correspondence between the 2D fluorescence data and the 2D projection of the 3D model [8].

ChlF Data Integration into 3D Model: Using the pinhole camera model and the transformation parameters obtained in the previous step, map the pixel-level ChlF data onto the 3D plant point cloud. This results in a comprehensive 3D model where each point contains both spatial (X, Y, Z) and physiological (ChlF) information [8].
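
The mapping step can be sketched with the pinhole model in three moves: transform each 3D point into the ChlF camera frame, project it with the camera intrinsics, and sample the fluorescence value at the resulting pixel. This minimal sketch assumes known world-to-camera extrinsics (`R`, `t`) and intrinsics `K`, undistorted images, and nearest-pixel sampling. It ignores occlusion, and the function name is hypothetical.

```python
import numpy as np

def sample_chlf_at_points(points, chlf, K, R, t):
    """Attach a ChlF value to every 3D point; NaN where the point is not imaged.

    points: (N, 3) world coordinates; chlf: (H, W) fluorescence image
    K: 3x3 intrinsics; R (3x3) and t (3,) map world to camera coordinates.
    """
    cam = points @ R.T + t                   # world -> camera frame
    values = np.full(len(points), np.nan)
    front = cam[:, 2] > 0                    # only points in front of the camera
    uvw = cam[front] @ K.T                   # pinhole projection (homogeneous)
    u = np.rint(uvw[:, 0] / uvw[:, 2]).astype(int)
    v = np.rint(uvw[:, 1] / uvw[:, 2]).astype(int)
    h, w = chlf.shape
    inside = (u >= 0) & (u < w) & (v >= 0) & (v < h)
    idx = np.flatnonzero(front)[inside]
    values[idx] = chlf[v[inside], u[inside]]
    return values
```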

Trait Extraction and Validation: Segment individual leaves from the 3D model using a clustering-based algorithm. Extract morphological traits (leaf length, width, surface area) and correlate ChlF signals with specific leaf regions. Validate the accuracy of extracted morphological traits by comparing them against manual measurements, with reported R² values exceeding 0.92 [8].
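
Validating against manual measurements comes down to two standard statistics. In the minimal sketch below, R² is the coefficient of determination of the measurements about the 1:1 line. Some studies instead report the squared Pearson correlation of a fitted regression, so check which definition [8] uses before comparing numbers. The function name is hypothetical.

```python
import numpy as np

def validation_stats(estimated, manual):
    """Return (R^2, RMSE) of image-derived traits against manual measurements.

    R^2 = 1 - SS_res / SS_tot, with residuals taken about the 1:1 line.
    """
    est = np.asarray(estimated, dtype=float)
    ref = np.asarray(manual, dtype=float)
    residuals = ref - est
    ss_res = np.sum(residuals ** 2)
    ss_tot = np.sum((ref - ref.mean()) ** 2)
    rmse = np.sqrt(np.mean(residuals ** 2))
    return 1.0 - ss_res / ss_tot, rmse
```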

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key commercial systems, software, and analytical tools used in automated plant phenotyping.

| Item / Solution | Provider Examples | Primary Function in Phenotyping |
|---|---|---|
| Automated Phenotyping Platforms | LemnaTec GmbH [7] [11], WPS (Wageningen Plant Systems) [7] | Provides integrated, high-throughput systems with conveyor belts, robotic gantries, and multiple integrated sensors for controlled environments. |
| Hyperspectral Imaging Systems | Various specialized manufacturers | Push-broom or snapshot cameras capturing high-dimensional spectral data in Visible-NIR range (500-1000 nm) for biochemical analysis [3]. |
| Chlorophyll Fluorescence Imagers | PhenoVation (Plant Explorer XS) [3], Heinz Walz GmbH [7] [11], Photon Systems Instruments [7] [11] | Specialized cameras with pulsed measuring light systems to capture ChlF kinetics and assess photosynthetic performance [3]. |
| 3D/RGB-D Cameras | Often integrated into custom gantry or robotic systems | Sensors that capture both color (RGB) and depth (D) information for reconstructing 3D plant geometry and architecture [8]. |
| Data Management & Integration Software | Custom and commercial solutions (e.g., from LemnaTec, PSI) | Handles the massive data flows from sensors, performs image analysis, manages data, and integrates different data streams [7]. |
| Image Analysis & AI Software | Open-source (Python, R) and commercial packages | Employs AI and machine learning for tasks like plant segmentation, trait identification, and predictive modeling from complex image data [7]. |

Automated multimodal image registration is a cornerstone of high-throughput plant phenotyping, enabling the fusion of complementary data from various camera technologies for a comprehensive assessment of plant traits. However, this process is fundamentally challenged by several natural and technical factors. Parallax effects, caused by the spatial separation of cameras imaging a complex 3D plant canopy, lead to misalignment. Occlusion, where plant structures like leaves and stems hide other parts from view, results in incomplete data. Furthermore, the large intra-class variability inherent in plants—across species, developmental stages, and growing conditions—complicates the development of universal registration algorithms. This application note details these primary challenges and provides structured protocols and resources to address them, facilitating robust and accurate multimodal plant image analysis for research and development.

Key Challenges in Multimodal Plant Image Registration

The effective utilization of cross-modal patterns in plant phenotyping depends on achieving pixel-precise alignment, a task complicated by physical and biological factors [4] [5]. The table below summarizes the core challenges and their impact on the registration process.

Table 1: Core Challenges in Automated Multimodal Plant Image Registration

| Challenge | Description | Impact on Registration |
|---|---|---|
| Parallax | Apparent displacement of foreground objects against the background due to different camera viewpoints. | Causes misalignment and geometric distortions, preventing pixel-precise fusion of data from different sensors. [4] [5] |
| Occlusion | The hiding of plant structures (e.g., bunches, leaves) by other plant parts, a common issue in dense canopies. [12] | Leads to incomplete data, registration errors in hidden areas, and inaccurate trait quantification (e.g., yield estimation). [6] [12] |
| Large Intra-Class Variability | Significant differences in shape, size, color, and architecture among plant species, genotypes, and developmental stages. [13] [14] | Hinders development of universal algorithms; methods tuned for one species may fail on another. [4] [14] |
| Non-Rigid Plant Motion | Dynamic movement of leaves and stems between image captures in different photochambers. [6] [14] | Introduces non-uniform local deformations, making simple rigid registration models (translation, rotation) insufficient. |

Quantitative Data and Method Comparison

Addressing these challenges requires specific methodological approaches. The following table synthesizes techniques from recent research, highlighting their applicability to the core problems.

Table 2: Methodologies for Addressing Plant Image Registration Challenges

| Methodology | Core Principle | Targeted Challenges | Reported Efficacy / Performance |
|---|---|---|---|
| 3D Multimodal Registration with Depth Data [4] [5] | Uses a Time-of-Flight (ToF) camera for 3D information and ray casting to model camera geometry. | Parallax, Occlusion | Robust alignment across 6 plant species with varying leaf geometries; automated occlusion detection. |
| Two-Step Registration-Classification [6] | Co-registers high-contrast fluorescence (FLU) and visible light (VIS) images, then uses classifiers to refine segmentation. | Occlusion, Intra-Class Variability | Achieved ~93% average segmentation accuracy on Arabidopsis, wheat, and maize. |
| Feature-Point, Frequency Domain, and Intensity-Based Registration [14] | Compares and extends three classic techniques (e.g., SIFT, Phase Correlation, Mutual Information) for plant images. | Intra-Class Variability, Non-Rigid Motion | Success rates of 60-100% across species; requires preprocessing for robustness. |
| Canopy Porosity & Bunch Area Modeling [12] | Uses a multiple regression model with canopy porosity and visible bunch area to estimate total occluded bunch area in vineyards. | Occlusion | Model R² of 0.80 for estimating bunch exposure; yield estimation error of 0.2% on validation set. |

Experimental Protocols

Protocol 1: 3D Multimodal Image Registration with Depth Sensing

This protocol leverages 3D depth information to mitigate parallax and automatically identify occlusions [4] [5].

System Setup and Calibration:

- Equipment: Configure a multimodal system with a Time-of-Flight (ToF) depth camera and at least one other sensor (e.g., RGB, hyperspectral). Ensure all cameras are firmly mounted.

- Calibration: Precisely calibrate the extrinsic (position, orientation) and intrinsic (lens distortion, focal length) parameters for all cameras within the system. The relative position between the ToF camera and other sensors must be known.

Image and Data Acquisition:

- Simultaneously capture images from all modalities (e.g., RGB, FLU) along with the corresponding depth map from the ToF camera.

- Ensure plants are within the optimal working distance of all sensors for focus and illumination.

3D Point Cloud Generation:

- Use the depth data and camera calibration parameters to reconstruct a 3D point cloud of the plant scene.

Ray Casting-Based Registration:

- For each pixel in a secondary camera (e.g., the RGB camera), cast a ray from the camera's optical center through the pixel into the 3D scene.

- Determine the intersection point of this ray with the plant's 3D point cloud. This step directly addresses parallax by projecting the 2D pixel onto its correct 3D location.

Projection and Occlusion Handling:

- Project the 3D intersection point onto the image plane of the other cameras (e.g., the FLU camera).

- Automated Occlusion Detection: A point is flagged as occluded if its projection does not correspond to a plant pixel in the target image, or if several 3D points project to the same 2D pixel. In the latter case only the point closest to the target camera is visible and the others are occluded. Occluded regions can then be filtered out to prevent registration errors.
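
The projection and occlusion-handling steps map naturally onto a z-buffer. The sketch below is minimal and makes several assumptions. The 3D points are already expressed in the target camera's frame, for example by applying calibrated extrinsics to the ToF point cloud. The intrinsics `K` are for an undistorted pinhole camera, and pixels are found by nearest rounding. A point counts as occluded when another point projecting to the same pixel lies closer to the camera by more than a tolerance `tol`, which is a hypothetical parameter. The "not a plant pixel" test is omitted. This is a point-based approximation of the ray casting in [4] [5], not its implementation.

```python
import numpy as np

def project_with_occlusion(points_cam, K, shape, tol=0.01):
    """Project camera-frame 3D points into an image and flag visible ones.

    points_cam: (N, 3) points in the target camera frame (z = depth, metres)
    K: 3x3 pinhole intrinsics; shape: (height, width) of the target image
    tol: depth tolerance in metres (hypothetical default) for z-buffer ties
    Returns integer pixel coordinates u, v and a boolean `visible` mask.
    """
    h, w = shape
    z = points_cam[:, 2]
    with np.errstate(divide="ignore", invalid="ignore"):
        uvw = points_cam @ K.T
        u = np.rint(uvw[:, 0] / z)
        v = np.rint(uvw[:, 1] / z)
        # Keep points in front of the camera that land inside the image.
        ok = (z > 0) & (u >= 0) & (u < w) & (v >= 0) & (v < h)
    u = np.where(ok, u, 0).astype(int)
    v = np.where(ok, v, 0).astype(int)
    # z-buffer: nearest depth observed at each target pixel.
    zbuf = np.full((h, w), np.inf)
    np.minimum.at(zbuf, (v[ok], u[ok]), z[ok])
    # A point is occluded if a closer point projects to the same pixel.
    visible = ok & (z <= zbuf[v, u] + tol)
    return u, v, visible
```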

Validation:

- Quantify registration accuracy by comparing automated results with manually annotated ground truth data, using metrics like Dice coefficient or mean squared error of corresponding points.
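
Both validation metrics named above are simple to compute. The sketch below assumes binary masks as input to the Dice coefficient, and (N, 2) arrays of corresponding landmark coordinates (registered versus manually annotated) as input to the point error. The function names are hypothetical.

```python
import numpy as np

def dice(mask_a, mask_b):
    """Dice coefficient 2|A & B| / (|A| + |B|) of two binary masks."""
    a, b = np.asarray(mask_a, bool), np.asarray(mask_b, bool)
    total = a.sum() + b.sum()
    return 2.0 * np.logical_and(a, b).sum() / total if total else 1.0

def landmark_mse(registered, annotated):
    """Mean squared Euclidean distance (pixels^2) between corresponding points."""
    d = np.asarray(registered, float) - np.asarray(annotated, float)
    return float((d ** 2).sum(axis=1).mean())
```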

Protocol 2: Two-Step Registration-Classification for Occlusion-Resilient Segmentation

This protocol uses fluorescence and visible light images to achieve accurate segmentation despite occlusions and background noise [6].

Image Acquisition and Pre-processing:

- Acquire paired Fluorescence (FLU) and Visible Light (VIS) images. Due to plant motion between chambers, these will be misaligned. [6]

- Convert VIS images to grayscale to simplify initial registration.

Distance-Based Pre-Segmentation:

- Compute the Euclidean distance in RGB space between a reference background image and the plant-containing VIS image.

- Cluster the distance image using the k-means algorithm (e.g., N=25 clusters).

- Calculate z-scores between color distributions of background and plant images for all clusters. Select clusters with z-scores above a defined threshold (e.g., >5) to create a "roughly cleaned" VIS image and a well-segmented FLU image. [6]
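
The pre-segmentation step can be sketched as follows. Because the text does not fully specify the z-score, the sketch adopts one plausible definition: for each k-means cluster of the distance image, a two-sample z-statistic compares the mean colour of the plant image with that of the background image inside the cluster, and a cluster is kept when any channel exceeds the threshold. Several other choices are also assumptions rather than the implementation in [6]: the simple 1D k-means with quantile initialisation, the `min_size` guard against tiny clusters, and all function names.

```python
import numpy as np

def kmeans_1d(values, k, iterations=50):
    """Plain k-means on a 1D array with quantile initialisation; returns labels."""
    centers = np.quantile(values, np.linspace(0.0, 1.0, k))
    labels = np.zeros(len(values), dtype=int)
    for _ in range(iterations):
        labels = np.abs(values[:, None] - centers[None, :]).argmin(axis=1)
        new = np.array([values[labels == c].mean() if np.any(labels == c) else centers[c]
                        for c in range(k)])
        if np.allclose(new, centers):
            break
        centers = new
    return labels

def presegment(vis, background, n_clusters=25, z_threshold=5.0, min_size=10):
    """Rough plant mask from a VIS image and a plant-free background image, both (H, W, 3)."""
    vis = np.asarray(vis, dtype=float)
    bg = np.asarray(background, dtype=float)
    # Per-pixel Euclidean distance in RGB space between plant and background images.
    dist = np.linalg.norm(vis - bg, axis=2).ravel()
    labels = kmeans_1d(dist, n_clusters)
    vis_px, bg_px = vis.reshape(-1, 3), bg.reshape(-1, 3)
    mask = np.zeros(dist.shape, dtype=bool)
    for c in np.unique(labels):
        sel = labels == c
        n = sel.sum()
        if n < min_size:        # too few pixels for a stable z-score (assumed guard)
            continue
        # Assumed z-score: per-channel difference of means over its standard error.
        diff = vis_px[sel].mean(axis=0) - bg_px[sel].mean(axis=0)
        se = np.sqrt((vis_px[sel].var(axis=0) + bg_px[sel].var(axis=0)) / n) + 1e-9
        if np.max(np.abs(diff) / se) > z_threshold:
            mask |= sel
    return mask.reshape(vis.shape[:2])
```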

FLU/VIS Image Co-Registration:

Feature Space Transformation and Data Reduction:

- Transform the VIS image from RGB into additional color spaces (e.g., HSV, Lab, CMYK) and merge the resulting channels into a 10D color space.

- Apply Principal Component Analysis (PCA) to obtain a compact "Eigen-color" representation. [6]

- Reduce data complexity by using k-means clustering on the image to describe plant and background regions by their Average Colors of K-Means Regions (AC-KMR).
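
The PCA and AC-KMR steps can be sketched on a generic per-pixel colour feature matrix. The sketch below assumes the 10D colour features have already been computed, so the colour-space conversions are omitted, and it runs a plain k-means on whatever feature space it is given. The number of components, the cluster count, the random seed, and the function names are illustrative assumptions, not values from [6].

```python
import numpy as np

def eigen_colors(features, n_components=3):
    """Project (N, D) per-pixel colour features onto their top principal components."""
    centered = features - features.mean(axis=0)
    # Right singular vectors of the centred data are the principal axes ("Eigen-colors").
    _, _, Vt = np.linalg.svd(centered, full_matrices=False)
    return centered @ Vt[:n_components].T

def ac_kmr(features, k=8, iterations=30, seed=0):
    """k-means on pixel features; returns labels and the average colour of each region."""
    rng = np.random.default_rng(seed)
    centers = features[rng.choice(len(features), size=k, replace=False)]
    for _ in range(iterations):
        d2 = ((features[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = d2.argmin(axis=1)
        # Each centre becomes the mean colour of the pixels currently labelled with it.
        centers = np.array([features[labels == c].mean(axis=0) if np.any(labels == c)
                            else centers[c] for c in range(k)])
    return labels, centers
```

The returned centres are exactly the region averages for the returned labels. These averages serve as the compact AC-KMR descriptors that the classifier in the next step would consume.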

Supervised Classification for Final Segmentation:

- Train a binary classifier (e.g., Support Vector Machine, Random Forest) using AC-KMR from manually annotated ground truth data to distinguish between plant and background regions.

- Apply the classifier to the AC-KMR of new images to perform the final, refined segmentation, effectively removing any remaining marginal background regions included during registration.

Workflow Diagrams

Workflow for 3D Multimodal Registration

Two-Step Registration-Classification Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Technologies for Multimodal Plant Phenotyping

| Category / Item | Specification / Example | Primary Function in Protocol |
|---|---|---|
| Imaging Sensors | | |
| Time-of-Flight (ToF) Camera | e.g., Microsoft Azure Kinect | Captures depth information to build 3D point clouds, enabling parallax correction and 3D registration. [4] [5] |
| Hyperspectral Imaging (HSI) System | Handheld line scanner (e.g., Blackmobile); VNIR sensor [15] | Captures spatial and spectral data in a hypercube for assessing physiological traits and disease. [15] [16] |
| Visible Light (RGB) Camera | High-resolution CMOS sensor | Captures morphological and color information of plants for traditional image analysis. [6] [16] |
| Fluorescence (FLU) Camera | With specific excitation/emission filters | Provides high-contrast images of photosynthetic material, simplifying initial plant segmentation. [6] [17] |
| Computational Tools | | |
| Registration Algorithms | Feature-based (SIFT, SURF), Phase Correlation, Mutual Information [14] | Aligns images from different modalities by finding geometric transformations. |
| Machine Learning Classifiers | Support Vector Machines (SVM), Random Forests, Convolutional Neural Networks (CNN) [15] [6] | Refines segmentation and classifies plant structures, pixels, or health status. |
| Analysis Software | MATLAB Image Processing Toolbox, Python (OpenCV, scikit-image) | Provides built-in functions and an environment for implementing and testing registration and analysis pipelines. [6] [14] |
| Supporting Materials | | |
| Calibration Materials & Targets | ChArUco boards, Spectralon | For spatial and spectral calibration of imaging systems to ensure measurement accuracy. [15] |
| Controlled Illumination | Halogen lamps, integrated LED arrays [15] | Provides consistent, evenly distributed diffuse light to minimize shadows and specular reflections. |

The "phenotyping bottleneck" describes the critical limitation in plant sciences where the ability to generate vast genomic data far surpasses the capacity to measure physical and physiological traits (phenotypes). High-Throughput Phenotyping (HTP) aims to overcome this constraint through automated, non-destructive trait measurement [18]. However, a significant secondary bottleneck emerges in effectively processing and interpreting the massive, complex datasets generated by HTP platforms. Multimodal image registration—the precise alignment of images captured from different sensors, angles, or times—serves as the foundational computational step that enables accurate, biologically meaningful trait extraction. This protocol details how advanced registration techniques transform raw, misaligned sensor data into precisely aligned information streams, thereby unlocking the full potential of HTP for genetic and physiological research.

Technical Solutions: Multimodal Image Registration

The Core Computational Challenge

Multimodal plant phenotyping involves deploying various imaging sensors (e.g., visible light/RGB, infrared, hyperspectral, depth cameras) to capture complementary aspects of plant structure and function [17]. The effective utilization of these cross-modal patterns depends on image registration to achieve pixel-precise alignment, a challenge often complicated by parallax and occlusion effects inherent in complex plant canopy architectures [4]. Without robust registration, trait extraction from multiple sensors becomes unreliable, as corresponding features do not align spatially, leading to erroneous biological interpretations.

A Novel 3D Multimodal Registration Algorithm

A recent registration method addresses these challenges by integrating 3D depth information from a Time-of-Flight (ToF) camera directly into the alignment process [4]. Its workflow comprises the following steps:

- 3D Data Acquisition: A multimodal camera setup simultaneously captures 2D images (e.g., RGB, thermal) alongside 3D point clouds from a depth camera.

- Ray Casting for Projection: The system uses ray casting to project pixels from the 2D image sensors onto the 3D points of the depth map. This step creates a direct spatial correspondence between the 2D image data and the 3D plant structure.

- Mitigation of Parallax: By leveraging the actual 3D geometry of the scene, the algorithm effectively mitigates parallax errors that occur when the same point is viewed from different camera positions.

- Automated Occlusion Handling: An integrated method automatically detects and filters out various types of occlusions (e.g., leaves overlapping), minimizing registration errors caused by hidden surfaces.

- Generation of Registered Outputs: The final output consists of pixel-precise aligned images from all modalities and a consolidated, multimodal 3D point cloud of the plant.
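The core geometric idea can be sketched with a pinhole model and a z-buffer. Each 3-D point is linked to the pixel it lands on in a calibrated 2-D camera, and points hidden behind nearer surfaces are discarded. This is a simplified stand-in, not the ray-casting implementation of [4]. It makes four assumptions: the camera is an undistorted pinhole with intrinsics `K`; `R` and `t` give the world-to-camera pose; projections are rounded to the nearest pixel; and a depth tolerance `depth_tol` (an assumed parameter) decides visibility.

```python
import numpy as np


def sample_visible(points_world, K, R, t, image, depth_tol=0.01):
    """Attach image values to 3-D points seen by a pinhole camera, skipping occluded points.

    points_world: N x 3 array; K: 3 x 3 intrinsics; R (3 x 3), t (3,): world-to-camera pose.
    Returns (values, visible): values[i] is NaN unless point i is visible in the image.
    """
    h, w = image.shape[:2]
    pc = points_world @ R.T + t                      # world -> camera coordinates
    visible = np.zeros(len(pc), dtype=bool)
    values = np.full((len(pc),) + image.shape[2:], np.nan)

    front = np.flatnonzero(pc[:, 2] > 0)             # only points in front of the camera
    uvw = pc[front] @ K.T
    u = np.round(uvw[:, 0] / uvw[:, 2]).astype(int)
    v = np.round(uvw[:, 1] / uvw[:, 2]).astype(int)
    inside = (u >= 0) & (u < w) & (v >= 0) & (v < h)
    idx, u, v = front[inside], u[inside], v[inside]
    z = pc[idx, 2]

    # z-buffer: smallest depth landing on each pixel.
    zbuf = np.full((h, w), np.inf)
    np.minimum.at(zbuf, (v, u), z)
    # A point is visible if it lies on (or within depth_tol of) the nearest surface.
    ok = z <= zbuf[v, u] + depth_tol
    visible[idx[ok]] = True
    values[idx[ok]] = image[v[ok], u[ok]]
    return values, visible
```

Running the same call once per calibrated 2-D camera attaches every modality's value to each point. This corresponds to the consolidated multimodal point cloud described above.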

This approach is notably robust as it does not rely on detecting plant-specific image features, making it suitable for a wide range of plant species with varying leaf geometries and canopy architectures, from Arabidopsis to crops like maize and sorghum [4]. Furthermore, the method is scalable to arbitrary numbers of cameras with varying resolutions and wavelengths, making it adaptable to diverse phenotyping platform configurations.

Experimental Protocols

Protocol 1: 3D Multimodal Image Registration for Trait Extraction

This protocol provides a detailed methodology for implementing the 3D multimodal registration algorithm described in the preceding section.

- Objective: To achieve pixel-precise alignment of images from multiple sensors for accurate, multimodal trait extraction.

- Experimental Setup & Reagents:

- Plant Material: Six distinct plant species with varying leaf geometries (e.g., Arabidopsis, tobacco, maize, sorghum) [4] [17].

- Imaging Platform: A phenotyping system equipped with:

- A Time-of-Flight (ToF) or other depth-sensing camera.

- Multiple 2D image sensors (e.g., visible light/RGB, thermal infrared, hyperspectral).

- A controlled processing environment for data acquisition.

- Step-by-Step Procedure:

- System Calibration: Calibrate all cameras (2D and 3D) intrinsically and extrinsically to determine their precise positions, orientations, and lens distortions relative to a common coordinate system.

- Synchronized Data Acquisition: For each time point, simultaneously trigger all sensors to capture plant images. Ensure consistent lighting conditions.

- Depth Data Pre-processing: Process the raw data from the depth camera to generate a 3D point cloud of the plant.

- Ray Casting Projection: For each 2D sensor, use a ray-casting algorithm to project every pixel from the 2D image plane onto the 3D points of the plant's point cloud.

- Occlusion Detection & Filtering: Automatically identify and flag 3D points that are occluded from the view of a particular 2D sensor. Exclude these points from the final registered image for that sensor.

- Image Generation: Generate the registered 2D image for each modality by sampling the original 2D image data at the pixel locations determined by the successful 3D projections.

- Validation: Manually or semi-automatically check alignment accuracy by visualizing overlays of contours from different modalities (e.g., RGB edges overlaid on thermal images).

Protocol 2: SpaTemHTP Pipeline for Temporal Phenotyping Data Analysis

This protocol outlines the use of a specialized data analysis pipeline for processing temporal HTP data, which relies on high-quality, registered images as a starting point [19].

- Objective: To efficiently process and utilize temporal high-throughput phenotyping data for robust genotype analysis.

- Experimental Setup:

- Plant Material: Diversity panels of crops (e.g., 288 chickpea genotypes, 384 sorghum genotypes) [19].

- Imaging & Design: Data generated from outdoor HTP platforms (e.g., LeasyScan) with replicated experiments laid out in an alpha design.

- Step-by-Step Procedure:

- Data Preprocessing (Outlier Detection): Apply statistical methods to the raw trait measurements (e.g., 3D leaf area, plant height) to detect and remove extreme values generated by system inaccuracies or failures.

- Data Preprocessing (Imputation): Impute missing values in the time series using longitudinal methods. The pipeline is reported to remain robust at 20-30% data contamination and with up to 50% missing data [19].

- Spatial Adjustment and Genotype Mean Calculation: Use the SpATS model (a two-dimensional P-spline approach within a mixed model framework) to compute genotype adjusted means. This step accounts for spatial heterogeneity in the field or growth platform.

- Temporal Analysis (Change-Point Analysis): Model the genotype growth curves and apply change-point analysis to the time-series of adjusted means to identify critical growth phases where genotypic differences are maximized.

- Genotype Clustering: Use the estimated genotypic values during the identified optimal growth phase to cluster genotypes into consistent groups for breeding decisions.
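SpaTemHTP itself is an R pipeline, and its exact outlier and imputation methods are not reproduced here. The sketch below is a rough stand-in showing what the two preprocessing steps do to a single plant's time series. It flags points that deviate from a rolling median by more than `k` scaled median absolute deviations. It then fills the resulting gaps, and any values missing in the input, by linear interpolation. The window size and `k` are assumed defaults.

```python
import numpy as np


def clean_series(y, window=5, k=3.5):
    """Flag outliers against a rolling median (MAD rule), then fill gaps by linear interpolation.

    Returns (cleaned, flagged); NaN marks missing observations in the input.
    """
    y = np.asarray(y, dtype=float).copy()
    n, half = len(y), window // 2
    flagged = np.zeros(n, dtype=bool)
    for i in range(n):
        if np.isnan(y[i]):
            continue
        seg = y[max(0, i - half): i + half + 1]
        seg = seg[~np.isnan(seg)]
        if seg.size < 3:
            continue
        med = np.median(seg)
        mad = 1.4826 * np.median(np.abs(seg - med))   # scaled to approximate a standard deviation
        if mad > 0 and abs(y[i] - med) > k * mad:
            flagged[i] = True
    y[flagged] = np.nan
    missing = np.isnan(y)
    if missing.any() and not missing.all():
        x = np.arange(n)
        y[missing] = np.interp(x[missing], x[~missing], y[~missing])
    return y, flagged
```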

Key Findings and Data Synthesis

Performance of Phenotyping Pipelines

Table 1: Robustness of the SpaTemHTP Data Analysis Pipeline [19]

| Pipeline Component | Function | Performance / Robustness |
|---|---|---|
| Outlier Detection & Imputation | Removes extreme values and infers missing data | Can handle up to 50% missing data; robust to 20-30% data contamination |
| Spatial Adjustment (SpATS Model) | Accounts for field heterogeneity to compute accurate genotype means | Improves heritability estimates by reducing error variance |
| Change-Point Analysis | Identifies critical growth phases from time-series data | Determines the optimal timing for observing maximum genotypic variance |

High-Throughput Phenotyping Platforms and Traits

Table 2: Exemplar High-Throughput Phenotyping Platforms and Applications [18]

| Platform Name | Primary Traits Recorded | Crop Species | Stress Context |
|---|---|---|---|
| PHENOPSIS | Plant responses to soil water stress | Arabidopsis thaliana | Drought |
| LemnaTec 3D Scanalyzer | Salinity tolerance traits | Rice (Oryza sativa) | Salinity |
| HyperART | Leaf chlorophyll content, disease severity | Barley, Maize, Tomato, Rapeseed | Biotic & Abiotic |
| PhenoBox | Detection of head smut and corn smut | Maize, Brachypodium | Biotic (Disease) |
| PHENOVISION | Drought stress and recovery traits | Maize (Zea mays) | Drought |

The Scientist's Toolkit

Table 3: Essential Research Reagents and Solutions for Multimodal Phenotyping

| Item / Solution | Function / Application | Example Use-Case |
|---|---|---|
| Time-of-Flight (ToF) Depth Camera | Provides 3D point cloud data of plant structure. | Core sensor for 3D multimodal registration to mitigate parallax [4]. |
| Multimodal Camera Suite (RGB, Thermal, Hyperspectral) | Captures complementary data on morphology, temperature, and physiology. | Simultaneous assessment of plant growth, water status, and photosynthetic pigment content [17]. |
| SpATS Model (Statistical Tool) | Performs spatial adjustment within a mixed-model framework. | Accounting for micro-environmental variation in field-based HTP platforms to compute accurate genotype adjusted means [19]. |
| SpaTemHTP R Pipeline | An automated data analysis pipeline for temporal HTP data. | Processing raw, noisy phenotypic time-series data from outdoor platforms to extract smooth genotype growth curves [19]. |
| Public Benchmark Datasets (e.g., LSC, MSU-PID) | Provide standardized data for algorithm development and validation. | Testing and comparing the performance of leaf segmentation, counting, and tracking algorithms [17]. |

Workflow Visualization

Diagram 1: From Raw Images to Genetic Insights. This workflow illustrates the streamlined data processing pipeline enabled by robust multimodal image registration, which transforms raw, unaligned sensor data into reliable genetic insights.

Diagram 2: 3D Multimodal Registration Engine. This diagram details the core registration process that uses 3D information and ray casting to align 2D sensor data and generate consolidated 3D point clouds, forming the basis for accurate downstream analysis.

Automated multimodal image registration represents a foundational breakthrough in plant phenotyping research, enabling the precise integration of complementary data streams from diverse imaging sensors. This technological advancement is crucial for bridging the gap between laboratory-based discoveries and field applications, particularly in the analysis of plant stress responses and the acceleration of precision breeding programs. By aligning and combining images from various modalities such as RGB, hyperspectral, thermal, and depth sensors, researchers can now generate comprehensive digital representations of plant phenotypes with unprecedented resolution and accuracy. This integration allows for the correlation of anatomical features with physiological processes, revealing previously inaccessible insights into gene-environment interactions and stress adaptation mechanisms. The transition from manual, destructive sampling to automated, high-throughput phenotyping platforms has dramatically increased both the scale and precision of trait measurement, ultimately supporting the development of climate-resilient crop varieties needed for future food security.

Application Notes: Multimodal Imaging in Plant Phenotyping

Sensor Technologies and Data Acquisition

Modern plant phenotyping leverages multiple imaging modalities, each providing unique insights into plant structure and function. RGB imaging serves as the foundational modality, offering high-resolution morphological data for tasks such as plant architecture analysis, organ counting, and visual symptom assessment [20]. Hyperspectral imaging captures spectral data across hundreds of narrow, contiguous bands, typically ranging from visible to short-wave infrared (400-1700 nm), enabling the detection of biochemical changes associated with stress responses before visible symptoms appear [20]. Depth sensors and time-of-flight cameras facilitate 3D reconstruction of plant architecture, allowing accurate measurement of volumetric traits and canopy structure [20] [5]. Thermal imaging provides surface temperature data that serves as a proxy for stomatal conductance and water stress status [21].

The effective integration of these diverse data streams requires sophisticated registration algorithms that align spatial information across modalities. Recent advances in 3D multimodal image registration have addressed the significant challenges posed by parallax effects and occlusion in complex plant canopies [5]. By incorporating depth information directly into the registration pipeline, these methods achieve pixel-accurate alignment essential for correlating structural features with physiological measurements across different sensor outputs.

Quantitative Performance in Stress Response Analysis

Multimodal phenotyping platforms have been reported to detect and quantify plant stress responses with high accuracy. The following table summarizes performance metrics reported for various stress assessment applications:

Table 1: Performance Metrics of Multimodal Phenotyping in Stress Response Analysis

| Application | Crop | Imaging Modalities | Analysis Method | Reported Performance | Reference |
|---|---|---|---|---|---|
| Drought severity classification | Rice | Hyperspectral (900-1700 nm) | Random Forest with CARS feature selection | Accuracy 97.7-99.6% across five drought levels | [20] |
| Wheat ear detection | Wheat | RGB | YOLOv8m deep learning model | Precision: 0.783, Recall: 0.822, mAP: 0.853 | [20] |
| Rice panicle segmentation | Rice | RGB | SegFormer_B0 model | mIoU: 0.949, Accuracy: 0.987 | [20] |
| 3D plant height estimation | Maize | RGB-D depth camera | SIFT and ICP algorithms | R² = 0.99 with manual measurements | [20] |
| Water stress detection | Maize | Thermal + RGB | DarkNet53 deep learning | High classification accuracy across sowing dates | [21] |

These quantitative demonstrations highlight the transformative potential of automated multimodal phenotyping in providing objective, high-throughput assessments of plant stress responses—capabilities that far exceed the throughput and consistency of traditional visual scoring methods.

Field Deployment and Robotic Platforms

The transition from controlled environments to field conditions introduces significant challenges, including variable lighting, wind-induced plant movement, and soil heterogeneity. Robotic platforms such as PhenoRob-F represent a technological solution to these challenges, equipped with integrated RGB, hyperspectral, and depth sensors for autonomous navigation and data capture in field conditions [20]. These systems can complete phenotyping rounds in 2–2.5 hours and process up to 1875 potted plants per hour, demonstrating the scalability of multimodal phenotyping approaches [20].

A critical innovation in field-based multimodal phenotyping is the development of registration methods that leverage depth information to mitigate parallax effects—a persistent challenge when imaging complex plant structures from multiple viewpoints [5]. These algorithms automatically identify and differentiate various types of occlusions, minimizing registration errors that could compromise downstream analysis. The robustness of such approaches has been validated across diverse plant species with varying leaf geometries, confirming their applicability to broad phenotyping research [5].

Experimental Protocols

Protocol 1: Multimodal Registration for 3D Plant Architecture Analysis

Research Objective and Applications

This protocol details a method for capturing and aligning multimodal image data to reconstruct 3D plant architecture and extract quantitative morphological traits. Applications include monitoring growth dynamics, assessing architectural responses to environmental stresses, and evaluating genetic variation in canopy structure.

Materials and Equipment

- PhenoRob-F robotic platform or similar autonomous ground vehicle equipped with multimodal sensors [20]

- RGB-D depth camera (e.g., Intel RealSense D435i) for simultaneous color and depth capture

- Hyperspectral imaging system covering 900-1700 nm range for physiological assessment

- Calibration targets for spatial and spectral alignment across sensors

- Computational workstation with adequate GPU resources for deep learning inference

- Data processing software including Open3D, CloudCompare, or custom Python pipelines

Experimental Workflow

The following diagram illustrates the integrated workflow for multimodal data acquisition, registration, and trait extraction:

Procedure

Pre-deployment Calibration:

- Conduct geometric calibration of all sensors using checkerboard patterns to determine intrinsic and extrinsic parameters.

- Perform spectral calibration of hyperspectral sensors using standardized reflectance panels.

- Synchronize all imaging systems so that their timestamps agree to within 100 ms.
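One way to check the 100 ms synchronization requirement after acquisition is to pair each frame of a reference sensor with the nearest-in-time frame of another sensor. Pairs that differ by more than the tolerance are then rejected. The sketch assumes timestamps in seconds on a common clock, and a sorted, non-empty timestamp array for the second sensor.

```python
import numpy as np


def match_timestamps(reference, other, tolerance=0.1):
    """For each reference timestamp, the index of the nearest timestamp in `other`.

    Returns -1 where the nearest one is more than `tolerance` seconds away.
    `other` must be sorted.
    """
    ref = np.asarray(reference, dtype=float)
    oth = np.asarray(other, dtype=float)
    pos = np.searchsorted(oth, ref)                 # insertion points into `other`
    left = np.clip(pos - 1, 0, len(oth) - 1)
    right = np.clip(pos, 0, len(oth) - 1)
    # Pick whichever neighbour is closer in time.
    choose_right = np.abs(oth[right] - ref) < np.abs(oth[left] - ref)
    nearest = np.where(choose_right, right, left)
    ok = np.abs(oth[nearest] - ref) <= tolerance
    return np.where(ok, nearest, -1)
```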

Field Data Acquisition:

- Navigate robotic platform along predetermined transects maintaining constant speed (0.1-0.3 m/s).

- Capture synchronized image bursts from all sensors at 1-2 second intervals.

- Ensure overlap between consecutive capture positions ≥60% for robust 3D reconstruction.
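The three acquisition settings interact. The distance travelled between captures is speed times interval, and forward overlap is one minus that distance divided by the along-track image footprint. The footprint depends on the sensor's field of view and mounting height, which the protocol does not specify, so it is an assumed input here.

```python
def forward_overlap(speed_m_s, interval_s, footprint_m):
    """Fractional overlap between consecutive captures along the travel direction.

    footprint_m is the along-track ground footprint of one image (an assumed input).
    """
    advance = speed_m_s * interval_s          # distance travelled between captures
    return max(0.0, 1.0 - advance / footprint_m)


def max_interval(speed_m_s, footprint_m, min_overlap=0.6):
    """Longest capture interval that still keeps the overlap at or above min_overlap."""
    return (1.0 - min_overlap) * footprint_m / speed_m_s
```

With an assumed 1 m footprint, the upper end of both ranges (0.3 m/s, 2 s) gives only 40% overlap. Keeping at least 60% at those settings would need a footprint of at least 1.5 m, or a shorter interval (about 1.33 s at 0.3 m/s).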

Multimodal Registration:

- Apply scale-invariant feature transform (SIFT) to detect keypoints in RGB images [20].

- Project depth information onto RGB keypoints using known camera transformations.

- Employ iterative closest point (ICP) algorithm to align point clouds from sequential positions [20].

- Transform hyperspectral data into the unified 3D coordinate system using projective geometry.
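The ICP step can be sketched as point-to-point ICP, shown below. Each iteration matches every point to its brute-force nearest neighbour, then applies the closed-form least-squares rigid fit (Kabsch/SVD). The SIFT and projective-geometry steps are not shown. Brute-force matching costs O(NM) memory and time, so field-scale clouds would use a KD-tree or a library implementation such as Open3D's ICP registration. The iteration count and tolerance are assumed defaults.

```python
import numpy as np


def best_rigid_transform(src, dst):
    """Least-squares R, t such that R @ src_i + t approximates dst_i (Kabsch/SVD)."""
    cs, cd = src.mean(axis=0), dst.mean(axis=0)
    H = (src - cs).T @ (dst - cd)
    U, _, Vt = np.linalg.svd(H)
    D = np.eye(3)
    D[2, 2] = np.sign(np.linalg.det(Vt.T @ U.T))   # guard against reflections
    R = Vt.T @ D @ U.T
    return R, cd - R @ cs


def icp(source, target, n_iter=30, tol=1e-8):
    """Point-to-point ICP with brute-force nearest neighbours (small clouds only).

    Returns the accumulated (R, t) and the transformed source points.
    """
    R_total, t_total = np.eye(3), np.zeros(3)
    current = source.copy()
    prev_err = np.inf
    for _ in range(n_iter):
        d2 = ((current[:, None, :] - target[None, :, :]) ** 2).sum(axis=2)
        nn = d2.argmin(axis=1)                      # nearest target point for each source point
        R, t = best_rigid_transform(current, target[nn])
        current = current @ R.T + t
        R_total, t_total = R @ R_total, R @ t_total + t
        err = np.sqrt(d2[np.arange(len(current)), nn]).mean()
        if abs(prev_err - err) < tol:
            break
        prev_err = err
    return R_total, t_total, current
```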

Trait Extraction:

- Segment plant organs from background using region-growing algorithms on 3D point clouds.

- Quantify architectural traits: plant height, leaf area index, leaf inclination angles.

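- Calculate vegetation indices (e.g., NDVI, PRI) from hyperspectral data mapped to the 3D structure. Both indices require bands below 900 nm (red for NDVI; 531 and 570 nm for PRI), so a sensor covering only 900-1700 nm must be complemented by a VNIR imager for this step.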
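Both indices are normalized differences of two reflectance bands: NDVI = (R_NIR - R_red) / (R_NIR + R_red) and PRI = (R531 - R570) / (R531 + R570). The sketch below picks the band nearest to each requested wavelength in a reflectance cube of shape H x W x bands. The 800 nm and 670 nm choices for NDVI are common but assumed here. The cube must actually contain bands near the requested wavelengths.

```python
import numpy as np


def band_index(wavelengths, target_nm):
    """Index of the band whose centre wavelength is closest to target_nm."""
    return int(np.argmin(np.abs(np.asarray(wavelengths, dtype=float) - target_nm)))


def normalized_difference(cube, wavelengths, nm_a, nm_b):
    """Per-pixel (R_a - R_b) / (R_a + R_b) for a reflectance cube of shape H x W x bands."""
    a = cube[..., band_index(wavelengths, nm_a)].astype(float)
    b = cube[..., band_index(wavelengths, nm_b)].astype(float)
    denom = a + b
    with np.errstate(divide="ignore", invalid="ignore"):
        return np.where(denom != 0, (a - b) / denom, np.nan)


def ndvi(cube, wavelengths, nir_nm=800.0, red_nm=670.0):
    return normalized_difference(cube, wavelengths, nir_nm, red_nm)


def pri(cube, wavelengths):
    return normalized_difference(cube, wavelengths, 531.0, 570.0)
```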

Data Analysis and Interpretation

- Export quantitative traits in standardized formats (CSV, JSON) for statistical analysis.

- Conduct time-series analysis to track growth dynamics under different environmental conditions.

- Perform genome-wide association studies (GWAS) using extracted phenotypic traits to identify genetic loci controlling architecture.

Protocol 2: Hyperspectral Imaging for Early Stress Detection

Research Objective and Applications

This protocol describes a method for detecting abiotic and biotic stress in plants before visible symptoms manifest, using hyperspectral imaging and machine learning classification. Applications include early warning systems for drought, nutrient deficiency, and pathogen infection in breeding programs.

Materials and Equipment

- Hyperspectral imaging system with spectral range 400-1700 nm

- Controlled illumination system with stable light source

- Sainfoin seed samples or other plant material of interest [22]

- Computational resources for feature selection and model training

- Random Forest implementation (e.g., scikit-learn Python package)

Experimental Workflow

The following diagram illustrates the spectral analysis pipeline for early stress detection:

Procedure

Experimental Design:

- Establish stress treatments with appropriate controls (e.g., drought, salinity, pathogen inoculation).

- Arrange plants in randomized complete block design to account for environmental variation.

- Include multiple time points to capture progression of stress responses.

Spectral Data Acquisition:

- Capture hyperspectral imagery under consistent illumination conditions.

- Acquire reference measurements from calibration panels with each imaging session.

- Maintain consistent camera-to-canopy distance (1-2 m) and viewing geometry.

Feature Selection:

- Implement Competitive Adaptive Reweighted Sampling (CARS) to identify most informative wavelengths [20].

- Reduce dimensionality from hundreds of spectral bands to 10-20 key features.

- Validate feature stability across biological replicates.
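In CARS, the fraction of wavelengths retained at Monte Carlo run i follows an exponentially decreasing function, r_i = a exp(-k i). The constants a and k are chosen so that all p bands are kept at the first run and only two remain at the last run (run N). The sketch below computes only that retention schedule. It omits the PLS-coefficient weighting, the adaptive reweighted sampling, and the final choice of the subset with the lowest cross-validated error.

```python
import numpy as np


def cars_retention_schedule(n_bands, n_runs):
    """Number of wavelengths kept at each CARS run under the exponentially decreasing function.

    r_i = a * exp(-k * i), with a = (p/2)**(1/(N-1)) and k = ln(p/2)/(N-1),
    so that r_1 = 1 (all p bands kept) and r_N = 2/p (two bands kept).
    """
    p, N = n_bands, n_runs
    a = (p / 2) ** (1 / (N - 1))
    k = np.log(p / 2) / (N - 1)
    i = np.arange(1, N + 1)
    ratios = a * np.exp(-k * i)
    return np.round(p * ratios).astype(int)
```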

Model Training and Validation:

- Partition data into training (70%), validation (15%), and test (15%) sets.

- Train Random Forest classifier with 500-1000 decision trees.

- Optimize hyperparameters (tree depth, split criteria) via cross-validation.

- Assess model performance using precision, recall, and F1-score metrics.
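The data split and the evaluation metrics in this step need no special library. The sketch below shuffles indices into 70/15/15 partitions and computes one-vs-rest precision, recall, and F1 per class. The classifier itself is not shown. With scikit-learn it would typically be `RandomForestClassifier` with `n_estimators` in the 500-1000 range given above. The seed and helper names are illustrative.

```python
import numpy as np


def split_indices(n, fractions=(0.70, 0.15, 0.15), seed=0):
    """Shuffle sample indices and cut them into train / validation / test sets."""
    rng = np.random.default_rng(seed)
    idx = rng.permutation(n)
    n_train = int(round(fractions[0] * n))
    n_val = int(round(fractions[1] * n))
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]


def per_class_prf(y_true, y_pred, labels):
    """Precision, recall and F1 for each class label, from one-vs-rest counts."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    out = {}
    for c in labels:
        tp = np.sum((y_pred == c) & (y_true == c))
        fp = np.sum((y_pred == c) & (y_true != c))
        fn = np.sum((y_pred != c) & (y_true == c))
        p = tp / (tp + fp) if tp + fp else 0.0
        r = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * p * r / (p + r) if p + r else 0.0
        out[c] = (float(p), float(r), float(f1))
    return out
```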

Data Analysis and Interpretation

- Calculate classification accuracy for different stress severity levels.

- Identify spectral regions most predictive of specific stress types.

- Correlate spectral predictions with conventional physiological measurements (chlorophyll content, photosynthetic rate).

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Essential Research Reagents and Materials for Multimodal Plant Phenotyping

| Category | Specific Product/Technology | Function/Application | Example Use Cases |
|---|---|---|---|
| Imaging Sensors | RGB-D cameras (e.g., Intel RealSense) | Simultaneous color and depth capture; 3D reconstruction | Plant architecture analysis, biomass estimation [20] [5] |
| | Hyperspectral imagers (400-1700 nm) | Spectral fingerprinting; biochemical composition analysis | Early stress detection, pigment quantification [20] |
| | Thermal infrared cameras | Surface temperature measurement; stomatal conductance proxy | Drought response monitoring, irrigation scheduling [21] |
| Computational Tools | Log-Gabor filter banks | Frequency-domain feature extraction; illumination-invariant analysis | Multimodal image registration [23] |
| | Phase Congruency algorithms | Illumination- and contrast-invariant feature detection | Robust feature matching across modalities [23] |
| | Deep learning models (YOLOv8, SegFormer) | High-throughput organ detection and segmentation | Panicle counting, leaf segmentation [20] |
| Platform Systems | PhenoLab automated phenotyping platform | Controlled environment phenotyping; multispectral imaging | Abiotic and biotic stress response quantification [24] |
| | Autonomous robotic platforms (PhenoRob-F) | Field-based high-throughput phenotyping | Large-scale genetic evaluation [20] |
| Analysis Pipelines | MIRACL (Multimodal Image Registration And Connectivity Analysis) | Integration of heterogeneous image data | Cross-scale correlation of phenotypes [25] |
| | Competitive Adaptive Reweighted Sampling (CARS) | Wavelength selection for spectral models | Dimensionality reduction in hyperspectral data [20] |

Implementation Challenges and Future Directions

Despite significant advances, several challenges persist in the widespread implementation of automated multimodal image registration for plant phenotyping. Data scalability remains a concern, as high-resolution multimodal datasets can easily reach terabytes per experiment, creating storage and computational bottlenecks [26]. Model generalization across species, growth stages, and environmental conditions requires further development, particularly for deep learning approaches that typically require large, annotated datasets for training [26]. Standardization of protocols and data formats across research groups would enhance reproducibility and enable meta-analyses across studies.

Future developments will likely focus on edge computing solutions that perform initial data processing directly on phenotyping platforms, reducing data transfer requirements [26]. Digital twin technology, which creates virtual replicas of plants that can be manipulated in silico, represents another promising direction for predicting plant responses to different environmental scenarios [26]. Foundation models pre-trained on large, diverse plant image datasets could enable few-shot learning for new species or traits, dramatically reducing annotation requirements [26]. As these technologies mature, their integration into breeding programs will accelerate the development of climate-resilient crops, ultimately contributing to global food security.

Deep Learning and Algorithmic Strategies for Robust Plant Image Registration

Automated multimodal image registration is a cornerstone of modern plant phenotyping research, enabling the integration of complementary data from diverse imaging modalities. This integration provides a holistic view of plant morphology, physiology, and health, which is critical for advancing agricultural science and crop development. Registration methodology has evolved from classical feature-based techniques to end-to-end deep learning networks. Classical approaches, such as those based on SIFT or ORB, rely on handcrafted features and explicit geometric transformation models. In contrast, learning-based methods use convolutional neural networks (CNNs) and transformers to learn data-driven representations and spatial correspondences directly from image data. This section details application notes and experimental protocols for implementing these approaches in plant phenotyping, providing researchers with practical guidance for multimodal data integration.

Comparative Analysis of Registration Approaches

The selection between classical and learning-based image registration strategies involves critical trade-offs between data requirements, computational efficiency, registration accuracy, and implementation complexity. The following table summarizes the core characteristics of each approach:

Table 1: Comparison of Classical and Learning-Based Registration Approaches

| Feature | Classical/Feature-Based Approaches | Learning-Based/End-to-End Approaches |
|---|---|---|
| Core Principle | Alignment based on handcrafted features (e.g., SIFT, ORB) and geometric transformation models. [27] [28] | Learning feature representation and spatial transformation directly from data using deep neural networks. [29] [30] |
| Data Dependency | Low; requires only the image pair to be registered. [27] | High; often requires large, annotated datasets for training. [31] |
| Computational Efficiency | High efficiency during registration; potential bottlenecks in feature matching. [27] | High computational cost during training; fast inference after model deployment. [29] |
| Typical Accuracy | Good under ideal conditions; susceptible to failure with poor feature detection. [27] [28] | High; superior performance in complex scenarios with sufficient data. [29] [32] |
| Multimodal Robustness | Moderate; requires tailored feature descriptors for different modality pairs. [27] | High; capable of learning invariant representations across modalities. [27] [30] |
| Implementation Complexity | Low to moderate; relies on established algorithmic pipelines. [28] | High; involves complex architecture design and training protocols. [29] [30] |

Experimental Protocols for Plant Phenotyping

Protocol 1: Classical Feature-Based Registration for Multimodal Plant Images

This protocol outlines a feature-based strategy for aligning images from different sensors, such as RGB and multispectral cameras, inspired by methodologies applied in biomedical imaging and manufacturing. [27] [28]

1. Application Scope: Aligning in-field RGB images with thermal or multispectral images for stress response analysis.

2. Materials and Reagents:

- Image Acquisition System: UAV or ground vehicle equipped with multiple co-mounted cameras (e.g., RGB, multispectral). [31]

- Computing Environment: Workstation with Python and libraries such as OpenCV for computer vision tasks.

- Software Tools: OpenCV for implementing feature detection and matching algorithms.

3. Step-by-Step Procedure:

- Image Preprocessing: Convert all images to grayscale. Apply histogram equalization to enhance contrast and a Gaussian filter to reduce noise. [32]

- Feature Detection: Detect keypoints in both the fixed (reference) and moving (to-be-aligned) images using a robust detector such as SIFT or ORB. [27] [28] SIFT is generally more robust than ORB to illumination and scale changes, while ORB is faster.

- Feature Description: Compute a feature descriptor (e.g., SIFT, KAZE) for each detected keypoint, capturing the local image pattern. [28]

- Feature Matching: Establish correspondences between descriptors from the two images using a brute-force or FLANN-based matcher, and discard ambiguous matches with Lowe's ratio test. [27]

- Transformation Estimation: Use the coordinates of matched keypoints to estimate a spatial transformation model (e.g., affine or projective) with a robust estimator such as RANSAC, which eliminates remaining incorrect matches. [27] [33]

- Image Warping: Apply the estimated transformation to warp the moving image into the coordinate system of the fixed image.

4. Visualization of Workflow:
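The matching and transformation-estimation steps of this protocol can be sketched in NumPy, as shown below. This is an illustrative re-implementation, not the cited pipelines. It uses brute-force L2 matching with Lowe's ratio test, followed by RANSAC over minimal three-point samples for an affine model. In practice OpenCV covers these steps, for example with `BFMatcher.knnMatch` and `cv2.estimateAffine2D` using RANSAC. The ratio of 0.75 and the 3-pixel inlier threshold are assumed defaults, and at least two fixed descriptors are required.

```python
import numpy as np


def ratio_test_matches(desc_fixed, desc_moving, ratio=0.75):
    """Brute-force L2 matching with Lowe's ratio test; returns (i_moving, j_fixed) pairs."""
    d = np.linalg.norm(desc_moving[:, None, :] - desc_fixed[None, :, :], axis=2)
    order = np.argsort(d, axis=1)
    best, second = order[:, 0], order[:, 1]
    rows = np.arange(len(desc_moving))
    keep = d[rows, best] < ratio * d[rows, second]   # best match clearly better than runner-up
    return np.stack([rows[keep], best[keep]], axis=1)


def fit_affine(src, dst):
    """Least-squares 2 x 3 affine A such that [src, 1] @ A.T approximates dst."""
    X = np.hstack([src, np.ones((len(src), 1))])
    A_T, *_ = np.linalg.lstsq(X, dst, rcond=None)
    return A_T.T


def ransac_affine(src, dst, n_iter=500, thresh=3.0, seed=0):
    """RANSAC over minimal 3-point samples, then a refit on the largest inlier set."""
    rng = np.random.default_rng(seed)
    X = np.hstack([src, np.ones((len(src), 1))])
    best_inliers = np.zeros(len(src), dtype=bool)
    for _ in range(n_iter):
        sample = rng.choice(len(src), 3, replace=False)
        A = fit_affine(src[sample], dst[sample])
        residual = np.linalg.norm(X @ A.T - dst, axis=1)
        inliers = residual < thresh
        if inliers.sum() > best_inliers.sum():
            best_inliers = inliers
    return fit_affine(src[best_inliers], dst[best_inliers]), best_inliers
```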

Protocol 2: End-to-End Semantic Segmentation for Structural Phenotyping

This protocol describes using a deep learning-based semantic segmentation model to parse plant images, which can serve as a feature-rich preprocessing step for registration or for direct phenotypic trait extraction. [29] [34] [32]

1. Application Scope: High-throughput segmentation of plant structures (leaves, stems) from complex backgrounds for morphological analysis and disease detection. [29] [35]

2. Materials and Reagents:

- Dataset: Labeled plant image dataset with pixel-wise annotations (e.g., custom maize dataset, CVPPP, PlantVillage). [29] [35] [32]

- Computing Environment: GPU-equipped workstation (e.g., NVIDIA Tesla V100 or RTX 3090).

- Software Frameworks: Python with PyTorch or TensorFlow and model-specific libraries.

3. Step-by-Step Procedure:

- Data Preparation: Split data into training, validation, and test sets. Apply data augmentation (random flipping, rotation, color jittering) to improve model generalization. [29]

- Model Selection: Choose a segmentation architecture. DSC-DeepLabv3+ is a lightweight, effective option: it uses MobileNetV2 as a backbone and depthwise separable convolutions to reduce parameters. [29]

- Model Training: Train the model with an appropriate loss function (e.g., cross-entropy loss, Dice loss) and an optimizer such as Adam with a learning rate scheduler.

- Model Evaluation: Evaluate the model on the held-out test set using metrics such as mean Intersection over Union (mIoU) and accuracy. For example, DSC-DeepLabv3+ achieved an mIoU of 85.57% on a maize weed dataset. [29]

- Inference & Trait Extraction: Deploy the trained model to segment new images and extract phenotypic traits (e.g., leaf area, disease coverage) directly from the segmentation masks. [35] [32]

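The evaluation metrics of step 4 and the trait extraction of step 5 both reduce to counting pixels in label masks. The NumPy sketch below computes pixel accuracy, per-class IoU, mean IoU, and Dice from a confusion matrix. The label numbering and the `lesion_coverage` trait are hypothetical illustrations, not definitions taken from [29], [32], or [35].

```python
import numpy as np


def confusion_matrix(pred, target, num_classes):
    """Pixel confusion matrix: rows are ground-truth classes, columns predictions."""
    idx = target.ravel().astype(np.int64) * num_classes + pred.ravel().astype(np.int64)
    return np.bincount(idx, minlength=num_classes ** 2).reshape(num_classes, num_classes)


def segmentation_scores(pred, target, num_classes):
    """Pixel accuracy, per-class IoU and Dice, and mean IoU over present classes."""
    cm = confusion_matrix(pred, target, num_classes).astype(float)
    tp = np.diag(cm)
    fp = cm.sum(axis=0) - tp  # predicted as the class but labelled otherwise
    fn = cm.sum(axis=1) - tp  # labelled as the class but predicted otherwise
    with np.errstate(divide="ignore", invalid="ignore"):
        iou = tp / (tp + fp + fn)
        dice = 2 * tp / (2 * tp + fp + fn)
    # Classes absent from both masks give NaN IoU and are skipped by nanmean.
    return {"pixel_accuracy": tp.sum() / cm.sum(), "iou": iou,
            "miou": float(np.nanmean(iou)), "dice": dice}


def lesion_coverage(mask, leaf_label, lesion_label):
    """Hypothetical trait: share of plant-tissue pixels labelled as lesion."""
    leaf = np.count_nonzero(mask == leaf_label)
    lesion = np.count_nonzero(mask == lesion_label)
    total = leaf + lesion
    return lesion / total if total else 0.0
```

Benchmark mIoU values are often computed from a confusion matrix accumulated over the entire test set rather than averaged per image, so the two conventions can yield different numbers for the same model.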

Protocol 3: 3D Plant Reconstruction via Multi-View Registration

This protocol details a method for creating complete 3D models of plants, which is essential for measuring structural phenotypes like plant height, crown width, and leaf angle. [33]

1. Application Scope: Generating accurate 3D models of tree seedlings or small plants for architectural and growth analysis.

2. Materials and Reagents:

- Imaging System: A multi-view acquisition setup, such as a turntable with a fixed binocular camera (e.g., ZED 2) or a UAV circling a plant. [33]

- Calibration Object: Spherical markers for coarse point cloud alignment. [33]

- Software: COLMAP or AliceVision for SfM/MVS, and the Point Cloud Library (PCL) for point cloud processing.

3. Step-by-Step Procedure:

   1. Multi-View Image Acquisition: Capture high-resolution images of the plant from multiple viewpoints (e.g., 6-8 angles around the plant). [33]
   2. Sparse Reconstruction (SfM): Use Structure from Motion (SfM) to estimate camera poses and generate a sparse point cloud from the acquired images. [33]
   3. Dense Reconstruction (MVS): Apply Multi-View Stereo (MVS) to the images and their estimated camera poses to generate a dense, high-fidelity point cloud for each viewpoint. [33]
   4. Point Cloud Coarse Alignment: Perform an initial registration of the per-viewpoint point clouds using a marker-based Self-Registration (SR) method that aligns the spherical calibration objects. [33]
   5. Point Cloud Fine Alignment: Refine the alignment with the Iterative Closest Point (ICP) algorithm, which iteratively minimizes the distance between corresponding points in overlapping clouds. [33]
   6. Phenotypic Trait Extraction: Analyze the unified 3D model to extract traits. In [33], plant height and crown width derived from the model correlated strongly with manual measurements (R² > 0.92).

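The two alignment stages (steps 4 and 5) can be sketched in NumPy. The sketch assumes the spherical markers have already been segmented and paired across views; it fits each sphere's centre by algebraic least squares, solves for the coarse rigid transform with the Kabsch (SVD) method, and then refines it with point-to-point ICP. This is a generic stand-in, not the specific SR method of [33], and unlike the geometry-plus-intensity ICP listed in Table 2 it uses geometry only.

```python
import numpy as np


def fit_sphere(points):
    """Algebraic least-squares sphere fit: |p|^2 = 2 c.p + (r^2 - |c|^2)."""
    A = np.hstack([2 * points, np.ones((len(points), 1))])
    b = (points ** 2).sum(axis=1)
    sol, *_ = np.linalg.lstsq(A, b, rcond=None)
    center = sol[:3]
    return center, np.sqrt(sol[3] + center @ center)


def kabsch(src, dst):
    """Least-squares rigid transform (R, t) such that dst ≈ src @ R.T + t."""
    mu_s, mu_d = src.mean(axis=0), dst.mean(axis=0)
    H = (src - mu_s).T @ (dst - mu_d)
    U, _, Vt = np.linalg.svd(H)
    d = np.sign(np.linalg.det(Vt.T @ U.T))  # correct a reflection into a rotation
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    return R, mu_d - R @ mu_s


def nearest(moved, dst):
    """Brute-force nearest neighbours (only practical for small clouds)."""
    d2 = ((moved[:, None, :] - dst[None, :, :]) ** 2).sum(axis=-1)
    idx = d2.argmin(axis=1)
    return idx, np.sqrt(d2[np.arange(len(moved)), idx])


def icp(src, dst, R=None, t=None, iters=50, tol=1e-10):
    """Point-to-point ICP refining an initial rigid transform from src onto dst."""
    R = np.eye(3) if R is None else R
    t = np.zeros(3) if t is None else t
    prev = np.inf
    for _ in range(iters):
        idx, dist = nearest(src @ R.T + t, dst)
        if prev - dist.mean() < tol:  # stop once the mean residual stops improving
            break
        prev = dist.mean()
        R, t = kabsch(src, dst[idx])  # re-solve on the current correspondences
    return R, t
```

In use, the sphere centres fitted in two views would be passed to `kabsch` to obtain `(R0, t0)`, which then seeds `icp(src, dst, R0, t0)`. For full-size plant clouds, the brute-force search would be replaced by a k-d tree, or the ICP implementation in PCL would be used instead.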

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table catalogues key software and methodological "reagents" essential for conducting experiments in automated multimodal plant image registration and analysis.

Table 2: Key Research Reagent Solutions for Image-Based Plant Phenotyping

| Category | Item | Function/Application |
|---|---|---|
| Algorithms & Features | SIFT / ORB / KAZE [27] [28] | Classical feature detection and description for identifying robust keypoints in multimodal images. |
| | RANSAC [27] [33] | Robust algorithm for estimating geometric transformations from noisy feature matches. |
| | Iterative Closest Point (ICP) [33] | Algorithm for fine alignment of 4D point clouds (3D geometry + 1D intensity) during 3D reconstruction. |
| Deep Learning Models | DSC-DeepLabv3+ [29] | Lightweight semantic segmentation model for efficient plant structure and weed identification. |
| | RSL Linked-TransNet [32] | Advanced segmentation model for multi-class plant disease detection and severity assessment. |
| | U-Net [35] | Encoder-decoder CNN architecture widely used for precise biomedical and plant image segmentation. |
| Software & Libraries | OpenCV | Open-source computer vision library providing implementations of classic registration algorithms. |
| | PyTorch / TensorFlow | Deep learning frameworks for developing and training end-to-end registration and segmentation models. |
| | COLMAP | End-to-end pipeline for 3D reconstruction from images using SfM and MVS. |
| Imaging Modalities | RGB Camera | Captures standard color images for morphological assessment. [31] |
| | Multispectral / Hyperspectral Sensor | Captures data beyond the visible spectrum for assessing plant health and physiology. [31] |
| | Binocular Stereo Camera (e.g., ZED) | Captures image pairs for calculating depth and generating 3D point clouds. [33] |

Quantitative evaluation is critical for assessing and comparing the performance of different registration and analysis pipelines. The following table consolidates key metrics reported from the protocols and studies discussed.

Table 3: Quantitative Performance Metrics of Featured Methods

| Method / Model | Primary Application | Key Performance Metrics | Reported Values |
|---|---|---|---|
| Feature-Based Registration [27] | Multimodal Biomedical/Plant Registration | Dice coefficient; computational time | 0.95-0.97; ~50% faster than intensity-based |
| DSC-DeepLabv3+ [29] | Maize Weed Segmentation | Mean IoU (mIoU); parameters; inference speed | 85.57%; 2.89 million; 42.89 FPS |
| 3D Reconstruction Workflow [33] | 3D Plant Phenotyping | Correlation (R²) with manual measurements: plant height & crown width; leaf parameters | > 0.92; 0.72-0.89 |
| RSL Linked-TransNet [32] | Citrus Disease Segmentation | Average accuracy; mean IoU | 97.55%; 75.67% |

The application of deep learning to automated multimodal image registration in plant phenotyping research has traditionally been constrained by a heavy reliance on accurately annotated ground-truth data, which is labor-intensive and costly to create. This application note explores the pivotal role of unsupervised deep learning models in overcoming this fundamental bottleneck. We detail how these techniques exploit intrinsic image structure and similarity metrics to align images from diverse modalities, such as RGB, hyperspectral, and chlorophyll fluorescence, without paired annotations. Supported by quantitative data and structured protocols, this document provides researchers with a framework for implementing these methods, thereby accelerating high-throughput, high-dimensional plant phenotyping and enabling more robust analysis of genotype-environment-phenotype interactions.

Plant phenomics, the comprehensive study of plant phenotypes, is a vital discipline for unraveling the complex relationships between genotypes and the environment [36]. The advent of optical imaging techniques has enabled cost-efficient, non-destructive quantification of plant traits and stress states [3]. A particularly powerful approach involves multimodal imaging, which integrates data from various sensors—like RGB, hyperspectral (HSI), and chlorophyll fluorescence (ChlF)—to provide a more holistic view of plant health and architecture by capturing synergistic information [3] [5].

However, the effective fusion of these cross-modal patterns is critically dependent on precise image registration, the process of aligning two or more images into a single coordinate system. Achieving pixel-accurate alignment is notoriously challenging due to factors like parallax, occlusion, and the fundamental differences in how various sensors depict the same scene [5]. While deep learning has revolutionized many image analysis tasks, its success in registration has often been gated by the need for vast amounts of manually annotated ground-truth data (e.g., corresponding keypoints between image pairs) to supervise model training. The creation of such datasets is a significant hurdle, limiting the pace and scale of phenotyping research.

This application note addresses this challenge by focusing on unsupervised deep learning models. These models learn to perform registration by optimizing metrics of alignment and similarity directly from the data itself, bypassing the need for curated labels. Framed within a broader thesis on automated multimodal image registration for plant phenotyping, this document provides a detailed examination of the principles, protocols, and practical tools for implementing these data-efficient methodologies.

Core Principles and Quantitative Comparison

Unsupervised learning paradigms for image registration shift the objective from replicating human annotations to maximizing intrinsic alignment quality. These models are trained to optimize a similarity metric between the reference and the transformed moving image, such as Normalized Cross-Correlation (NCC) or Mutual Information.
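Both similarity metrics named above are short to write down. NCC is the Pearson correlation of the pixel intensities of the two images, while mutual information measures how well one image's intensities predict the other's, estimated from their joint histogram. The NumPy sketch below is a minimal illustration; the 32-bin histogram is an arbitrary assumption. An unsupervised registration network would be trained to minimize the negative of such a score between the reference and the warped moving image.

```python
import numpy as np


def ncc(a, b):
    """Normalized cross-correlation (Pearson correlation of intensities), in [-1, 1]."""
    a = a.astype(float).ravel()
    b = b.astype(float).ravel()
    a -= a.mean()
    b -= b.mean()
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))


def mutual_information(a, b, bins=32):
    """Plug-in estimate of mutual information (nats) from a joint intensity histogram."""
    joint, _, _ = np.histogram2d(a.ravel(), b.ravel(), bins=bins)
    p_ab = joint / joint.sum()
    p_a = p_ab.sum(axis=1, keepdims=True)  # marginal of a (column vector)
    p_b = p_ab.sum(axis=0, keepdims=True)  # marginal of b (row vector)
    nz = p_ab > 0                          # empty cells contribute zero
    return float(np.sum(p_ab[nz] * np.log(p_ab[nz] / (p_a @ p_b)[nz])))
```

For example, with `img = np.random.default_rng(0).random((64, 64))`, `ncc(img, 1 - img)` is -1 (up to rounding), whereas `mutual_information(img, 1 - img)` matches `mutual_information(img, img)`. Contrast inversion defeats NCC but not mutual information, which is why MI-type metrics are commonly used when two sensors render the same tissue with unrelated intensities.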

Table 1: Quantitative Performance of Unsupervised and Traditional Registration Methods in Plant Phenotyping

| Registration Method | Modalities Aligned | Key Metric | Reported Performance | Plant Species |
|---|---|---|---|---|
| Affine Transform (NCC-based) [3] | RGB-to-ChlF | Overlap Ratio (ORConvex) | 98.0% ± 2.3% | A. thaliana |
| Affine Transform (NCC-based) [3] | HSI-to-ChlF | Overlap Ratio (ORConvex) | 96.6% ± 4.2% | A. thaliana |
| Affine Transform (NCC-based) [3] | RGB-to-ChlF | Overlap Ratio (ORConvex) | 98.9% ± 0.5% | Rosa × hybrida |
| Affine Transform (NCC-based) [3] | HSI-to-ChlF | Overlap Ratio (ORConvex) | 98.3% ± 1.3% | Rosa × hybrida |
| 3D Multimodal (Depth-integrated) [5] | RGB/HSI/3D-ToF | Pixel Alignment Accuracy | Robust alignment across 6 plant species with varying leaf geometries | Multiple |

The high overlap ratios (96.6% to 98.9%) show that annotation-free methods, even a classical NCC-based affine transformation, can achieve highly accurate alignment when paired with an effective similarity metric and processing pipeline. Integrating 3D depth information is a significant further advance, because it directly addresses parallax and improves robustness across diverse plant architectures [5].

Experimental Protocols for Multimodal Registration

This section outlines detailed protocols for implementing unsupervised multimodal image registration, drawing from successful pipelines documented in recent literature.

Protocol 1: Affine Transformation-Based Registration for High-Throughput Systems

This protocol is adapted from studies involving A. thaliana and Rosa × hybrida in multi-well plates [3].

- Image Acquisition: Capture images of the same plant sample using RGB, HSI, and ChlF imaging systems. Ensure the plant sample is roughly positioned within the field of view of all sensors, though precise alignment is not required.

- Camera Calibration and Distortion Rectification: Prior to registration, calibrate each camera to correct for lens-induced distortions. Calculate the mean reprojection error for each sensor (e.g., ~0.3 pixels for RGB, ~0.3 pixels for ChlF, ~2.1 pixels for HSI push-broom scanners) to ensure data quality [3].

- Reference Image Selection: Designate one modality (e.g., a high-contrast ChlF image) as the fixed reference image. The other modalities (e.g., RGB, HSI) will be the moving images to be transformed.

- Global Affine Transformation:

- Objective: Estimate a global transformation matrix (accounting for translation, rotation, scaling, and shearing) to align the moving image to the reference.

- Method: Use an optimization algorithm (e.g., gradient descent) to maximize the Normalized Cross-Correlation (NCC) between the reference and the moving image.

- Implementation: This can be achieved using open-source Python packages. The enhanced correlation coefficient (ECC) is a suitable metric for this optimization [3].

- Fine Registration per Object:

- Rationale: A single global transformation may not account for local misalignments, especially in images containing multiple, distinct objects (e.g., multiple wells in a plate).

- Method: Segment the image to isolate individual objects (plants or leaf discs). Apply a separate, localized affine transformation to each segmented object to refine the alignment further.

- Validation: Calculate the Overlap Ratio (ORConvex) between the segmented plant regions in the registered images to quantify performance. The aforementioned values of >96% indicate successful registration [3].

Protocol 2: 3D Depth-Integrated Multimodal Registration

This protocol leverages 3D information to achieve more robust alignment, overcoming parallax errors common in 2D approaches [5].

- Multimodal 3D Data Acquisition: Utilize a sensor suite that includes a time-of-flight (ToF) or other 3D camera (e.g., RGB-D) alongside traditional RGB and HSI sensors.

- Depth Data Pre-processing: Process the raw depth data to generate a 3D point cloud of the plant canopy.

- Occlusion Identification and Masking:

- Objective: Automatically identify and create masks for occluded regions from each camera's viewpoint.

- Method: Use the 3D point cloud to perform a visibility analysis, differentiating between self-occlusion and object occlusion. This prevents the introduction of errors by excluding non-visible pixels from the alignment metric.

- 3D-Enhanced Transformation Estimation:

- Principle: Use the depth information to mitigate parallax effects, which are a primary source of misalignment in complex 3D structures like plant canopies.

- Method: The registration algorithm integrates the 3D geometric information to compute a more accurate transformation that aligns pixels based on their true 3D position rather than their 2D projection alone. This method is not reliant on detecting plant-specific image features, making it generalizable across species [5].
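The depth-based idea can be illustrated with a minimal NumPy sketch, assuming calibrated pinhole cameras with known intrinsics `K_a`, `K_b`, a known relative pose `(R_ab, t_ab)`, and a depth map registered to camera A, as a ToF or RGB-D sensor provides. Each pixel is back-projected to 3D, transformed into camera B, and re-projected, so the mapping follows each point's 3D position instead of a single 2D transform; a z-buffer flags pixels hidden from camera B. This is a generic illustration, not the algorithm of [5]; in particular it does not distinguish self-occlusion from object occlusion, and the function names are hypothetical.

```python
import numpy as np


def reproject_with_occlusion(depth_a, K_a, K_b, R_ab, t_ab, shape_b, z_tol=0.01):
    """Map each pixel of camera A into camera B through A's depth map.

    depth_a: (H, W) metric depth in camera A, 0 where missing.
    K_a, K_b: 3x3 pinhole intrinsics. R_ab, t_ab: rigid pose, X_b = R_ab @ X_a + t_ab.
    Returns (u_b, v_b, visible): integer pixel coordinates in B (-1 if invalid) and a
    mask that is True where the point lands inside B's image and is not occluded.
    """
    H, W = depth_a.shape
    Hb, Wb = shape_b
    v, u = np.mgrid[0:H, 0:W]
    pix = np.stack([u.ravel(), v.ravel(), np.ones(H * W)]).astype(float)
    z = depth_a.ravel().astype(float)

    X_a = (np.linalg.inv(K_a) @ pix) * z                  # back-project into A's frame
    X_b = R_ab @ X_a + np.asarray(t_ab, float)[:, None]  # move into B's frame
    proj = K_b @ X_b                                      # project with B's intrinsics

    valid = (z > 0) & (X_b[2] > 1e-9)
    u_b = np.full(z.shape, -1)
    v_b = np.full(z.shape, -1)
    u_b[valid] = np.round(proj[0, valid] / proj[2, valid]).astype(int)
    v_b[valid] = np.round(proj[1, valid] / proj[2, valid]).astype(int)
    valid &= (u_b >= 0) & (u_b < Wb) & (v_b >= 0) & (v_b < Hb)

    # Z-buffer: the nearest point landing on a B pixel hides any farther ones.
    zbuf = np.full(Hb * Wb, np.inf)
    idx = v_b[valid] * Wb + u_b[valid]
    np.minimum.at(zbuf, idx, X_b[2, valid])
    visible = valid.copy()
    visible[valid] = X_b[2, valid] <= zbuf[idx] + z_tol
    return u_b.reshape(H, W), v_b.reshape(H, W), visible.reshape(H, W)


def sample_b_into_a(img_b, u_b, v_b, visible, fill=0):
    """Resample camera B's image onto A's pixel grid; occluded pixels get `fill`."""
    out = np.full(u_b.shape + img_b.shape[2:], fill, dtype=img_b.dtype)
    out[visible] = img_b[v_b[visible], u_b[visible]]
    return out
```

With identical intrinsics and a purely horizontal baseline b, a point at depth Z shifts by f·b/Z pixels, so near leaves and far background move by different amounts. This depth-dependent shift is the parallax that a single global 2D transform cannot represent. Nearest-pixel forward mapping is a simplification; holes and sub-pixel accuracy would need interpolation in practice.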