Automated Multimodal Feature Fusion for Advanced Plant Organ Classification: Methods, Applications, and Future Directions

Abstract

This article explores the transformative potential of automated multimodal feature fusion for plant organ classification, a critical task in agricultural technology and botanical science. It addresses the limitations of traditional unimodal deep learning models by presenting advanced methodologies that intelligently integrate data from multiple plant organs—such as flowers, leaves, fruits, and stems—to achieve more biologically comprehensive and accurate species identification. The content covers foundational principles, cutting-edge fusion techniques like Multimodal Fusion Architecture Search (MFAS), strategies for overcoming computational and data heterogeneity challenges, and rigorous validation frameworks. Designed for researchers, scientists, and technology developers in precision agriculture and plant science, this resource provides both theoretical insights and practical guidance for implementing robust, automated multimodal systems that demonstrate significant performance improvements over conventional approaches.

The Foundation of Multimodal Learning in Plant Science: From Single-Organ Limits to Multi-Organ Integration

The Critical Need for Plant Classification in Agriculture and Ecology

Plant classification is a cornerstone of ecological conservation and agricultural productivity, enabling detailed understanding of plant growth dynamics, preservation of species, and effective crop health management [1]. In agriculture, plant diseases present a severe threat, causing an estimated $220 billion in global crop losses annually and jeopardizing food security [2]. Ecologically, accurate species classification is fundamental for monitoring biodiversity, understanding species distribution, and informing conservation planning in the face of habitat loss and climate change [3]. Traditional classification methods, which often depend on manual feature extraction and expert visual inspection, are increasingly inadequate because they are labor-intensive, prone to human error, and difficult to scale [3] [4].

The emergence of deep learning (DL) and multimodal feature fusion represents a paradigm shift, moving beyond the limitations of single-organ, single-data-source approaches. By integrating complementary information from multiple plant organs and data types, these advanced methods provide a more holistic and biologically comprehensive representation of plant species, leading to significant improvements in classification accuracy and robustness [1] [3] [5]. This document provides application notes and detailed experimental protocols for implementing state-of-the-art multimodal fusion techniques in plant organ classification research.

Key Performance Data of Advanced Classification Models

The table below summarizes the performance of recent advanced plant classification models, demonstrating the efficacy of deep learning and multimodal approaches.

Table 1: Performance Metrics of Recent Plant Classification Models

| Model Name | Core Approach | Dataset | Key Metric | Performance |
|---|---|---|---|---|
| LWDSC-SA [4] | Lightweight CNN with Depthwise Separable Convolution & Spatial Attention | PlantVillage (38 classes, 55k images) | Accuracy | 98.70% |
| | | | Average Precision (K=5 CV) | 98.30% |
| CNN-SEEIB [2] | CNN with Squeeze-and-Excitation Attention Mechanism | PlantVillage (54,305 images) | Accuracy | 99.79% |
| | | | F1 Score | 0.9971 |
| Automatic Fused Multimodal DL [1] [6] | Neural Architecture Search for Multimodal Fusion | Multimodal-PlantCLEF (979 classes) | Accuracy | 82.61% |
| PlantIF [5] | Graph Learning for Image-Text Feature Fusion | Multimodal Disease Dataset (205k images, 410k texts) | Accuracy | 96.95% |
| Plant-MAE [7] | Self-Supervised Learning for 3D Point Cloud Segmentation | Multiple Plant Point Cloud Datasets | Average IoU | 84.03% |

Experimental Protocols for Multimodal Plant Organ Classification

This section outlines detailed methodologies for implementing and validating multimodal plant classification systems.

Protocol: Automated Multimodal Fusion Architecture Search

Objective: To automatically design an optimal neural network for fusing images from multiple plant organs (e.g., flowers, leaves, fruits, stems) for species identification [1].

Materials:

- A multimodal dataset (e.g., Multimodal-PlantCLEF [1]).

- Computational resources (GPU recommended).

- Software: Python, deep learning framework (e.g., PyTorch, TensorFlow).

Procedure:

- Dataset Preparation:

- Input: Restructure a unimodal dataset (e.g., PlantCLEF2015) into a multimodal format where each data sample consists of a set of images, each depicting a different organ of the same plant species.

- Preprocessing: Apply standard augmentation techniques: random horizontal and vertical flips, central cropping, and adjustments in contrast, saturation, and brightness [4].
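The restructuring step can be sketched as follows. This is a minimal illustration, not the published Multimodal-PlantCLEF pipeline: the record layout `(species, organ, image_path)`, the function name `build_multimodal_samples`, the file names, and the choice to pair images by Cartesian product are all assumptions made for the example.

```python
from collections import defaultdict
from itertools import islice, product

ORGANS = ("flower", "leaf", "fruit", "stem")


def build_multimodal_samples(records, max_per_species=None):
    """Combine (species, organ, image_path) records into multi-organ samples."""
    by_species = defaultdict(lambda: {organ: [] for organ in ORGANS})
    for species, organ, path in records:
        if organ in ORGANS:  # ignore organ labels outside the four modalities
            by_species[species][organ].append(path)

    samples = []
    for species, organs in by_species.items():
        # A complete sample needs at least one image of every organ.
        if any(not organs[organ] for organ in ORGANS):
            continue
        # Assumption: every combination of one image per organ is a valid sample.
        combos = product(*(organs[organ] for organ in ORGANS))
        for combo in islice(combos, max_per_species):
            samples.append({"species": species, **dict(zip(ORGANS, combo))})
    return samples


# Hypothetical records for illustration only.
records = [
    ("Rosa canina", "flower", "rc_flower_1.jpg"),
    ("Rosa canina", "flower", "rc_flower_2.jpg"),
    ("Rosa canina", "leaf", "rc_leaf_1.jpg"),
    ("Rosa canina", "fruit", "rc_fruit_1.jpg"),
    ("Rosa canina", "stem", "rc_stem_1.jpg"),
    ("Acer campestre", "leaf", "ac_leaf_1.jpg"),  # incomplete species: skipped
]
samples = build_multimodal_samples(records)
```

Capping combinations with `max_per_species` keeps species with many images from dominating the dataset; whether species with missing organs should be dropped or kept with empty slots is a design decision the sketch does not settle.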

Unimodal Model Training:

- Train a separate, pre-trained convolutional neural network (e.g., MobileNetV3Small) on each individual organ modality (flowers, leaves, etc.) to create expert feature extractors for each organ [1].

Multimodal Fusion with NAS:

- Employ a Multimodal Fusion Architecture Search (MFAS) algorithm [1]. This algorithm automatically explores different ways to combine the features extracted from the unimodal models.

- The search space includes operations such as concatenation, element-wise addition, and attention-based fusion, determining the optimal fusion points and functions within the network architecture.
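The three candidate operations named above can be illustrated on plain feature vectors. The sketch below is a toy version under stated assumptions: the function names, the `FUSION_OPS` registry, and the dot-product scoring with a `query` vector are hypothetical and are not taken from the MFAS implementation, where such operations act on learned intermediate features.

```python
import math


def concat_fusion(a, b):
    """Concatenation: keeps both feature vectors side by side."""
    return list(a) + list(b)


def add_fusion(a, b):
    """Element-wise addition: requires equal-length feature vectors."""
    if len(a) != len(b):
        raise ValueError("element-wise addition needs equal-length vectors")
    return [x + y for x, y in zip(a, b)]


def softmax(scores):
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_fusion(features, query):
    """Weighted sum of modality vectors; weights come from dot-product scores."""
    scores = [sum(q * x for q, x in zip(query, f)) for f in features]
    weights = softmax(scores)
    dim = len(features[0])
    return [sum(w * f[i] for w, f in zip(weights, features)) for i in range(dim)]


# A search procedure would pick among candidates like these at each fusion point.
FUSION_OPS = {"concat": concat_fusion, "add": add_fusion}

flower = [0.0, 2.0]
leaf = [2.0, 0.0]
fused_concat = FUSION_OPS["concat"](flower, leaf)
fused_add = FUSION_OPS["add"](flower, leaf)
fused_attn = attention_fusion([flower, leaf], query=[0.0, 0.0])
```

With an all-zero query both modalities receive equal weight, so attention fusion reduces to a plain average; a learned query would shift weight toward the more informative organ.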

Validation and Robustness Testing:

- Evaluate the final fused model on a held-out test set.

- To test robustness to missing data, evaluate the model with one or more organ inputs masked, simulating real-world scenarios where not all organs are available for identification. Training with multimodal dropout, which randomly masks organ inputs during training, prepares the model for these conditions [1].
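Random masking of organ inputs can be sketched as below. This is a hedged illustration: the function name `mask_modalities`, the use of `None` as the mask value, and the `keep_at_least_one` safeguard are assumptions for the example; a real model would typically substitute a zero tensor or a learned placeholder for a masked input.

```python
import random


def mask_modalities(sample, drop_prob, rng, keep_at_least_one=True):
    """Return a copy of `sample` with each organ masked with probability `drop_prob`."""
    organs = list(sample)
    dropped = [organ for organ in organs if rng.random() < drop_prob]
    if keep_at_least_one and len(dropped) == len(organs):
        # Never mask every input: restore one randomly chosen organ.
        dropped.remove(rng.choice(dropped))
    return {organ: (None if organ in dropped else value)
            for organ, value in sample.items()}


rng = random.Random(0)
sample = {"flower": "f.jpg", "leaf": "l.jpg", "fruit": "fr.jpg", "stem": "s.jpg"}
unchanged = mask_modalities(sample, drop_prob=0.0, rng=rng)
heavily_masked = mask_modalities(sample, drop_prob=1.0, rng=rng)
```

Sweeping `drop_prob`, or masking fixed organ subsets instead of random ones, gives a per-condition accuracy profile rather than a single robustness number.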

Protocol: Graph-Based Semantic Interactive Fusion for Disease Diagnosis

Objective: To integrate image and textual data for robust plant disease diagnosis by modeling the spatial and semantic dependencies between phenotypes and descriptive text [5].

Materials:

- A multimodal dataset of plant disease images paired with textual descriptions [5].

- Pre-trained image and text models (e.g., CNN, BERT).

Procedure:

- Feature Extraction:

- Image Branch: Use a pre-trained CNN to extract visual features from the input plant image.

- Text Branch: Use a pre-trained language model to extract semantic features from the associated textual description.

Semantic Space Encoding:

- Map the extracted visual and textual features into two complementary semantic spaces:

- A shared semantic space to capture common, correlated information across modalities.

- Modality-specific spaces to preserve unique information present only in images or text [5].

Multimodal Feature Fusion with Graph Learning:

- The shared and modality-specific features from the previous step are passed to a multimodal feature fusion module.

- Within this module, a Self-Attention Graph Convolutional Network (SA-GCN) is applied. This constructs a graph where nodes represent features, and uses self-attention to model the complex spatial and semantic relationships between visual patterns in the plant phenotype and concepts in the text [5].
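The general idea of combining self-attention with graph convolution can be sketched in a few lines. This is one common formulation, assumed here for illustration, and is not PlantIF's actual layer: attention scores between node features define a soft adjacency matrix, which then drives a standard aggregate-and-project graph convolution. All function names and the toy node features are hypothetical.

```python
import math


def matmul(a, b):
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def row_softmax(matrix):
    out = []
    for row in matrix:
        peak = max(row)
        exps = [math.exp(v - peak) for v in row]
        total = sum(exps)
        out.append([e / total for e in exps])
    return out


def attention_adjacency(nodes):
    """Soft adjacency from scaled dot-product attention between node features."""
    dim = len(nodes[0])
    scores = [[sum(a * b for a, b in zip(u, v)) / math.sqrt(dim) for v in nodes]
              for u in nodes]
    return row_softmax(scores)


def sa_gcn_layer(nodes, weight):
    """One layer: aggregate neighbours by attention, project, apply ReLU."""
    adjacency = attention_adjacency(nodes)
    aggregated = matmul(adjacency, nodes)
    projected = matmul(aggregated, weight)
    return [[max(0.0, v) for v in row] for row in projected]


# Two identical toy nodes (e.g., one visual and one textual feature vector).
nodes = [[1.0, 0.0], [1.0, 0.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]
adjacency = attention_adjacency(nodes)
output = sa_gcn_layer(nodes, identity)
```

Because the adjacency is computed from the features themselves, no hand-built graph is needed; the trade-off is a cost that grows quadratically with the number of nodes.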

Classification and Evaluation:

- The output from the graph convolution is used for the final disease classification.

- Model performance is evaluated using standard metrics such as accuracy, precision, and recall.
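For reference, the three metrics can be computed directly from true and predicted labels. The sketch below uses macro averaging (an unweighted mean over classes), which is an assumption; the source does not say which averaging the cited studies use. The labels are made up for the example.

```python
def classification_metrics(y_true, y_pred):
    """Accuracy plus macro-averaged precision and recall."""
    pairs = list(zip(y_true, y_pred))
    labels = sorted(set(y_true) | set(y_pred))
    accuracy = sum(t == p for t, p in pairs) / len(pairs)

    precisions, recalls = [], []
    for label in labels:
        tp = sum(t == label and p == label for t, p in pairs)
        fp = sum(t != label and p == label for t, p in pairs)
        fn = sum(t == label and p != label for t, p in pairs)
        # Convention: a class with no predictions (or no samples) scores 0.
        precisions.append(tp / (tp + fp) if tp + fp else 0.0)
        recalls.append(tp / (tp + fn) if tp + fn else 0.0)

    return {
        "accuracy": accuracy,
        "macro_precision": sum(precisions) / len(labels),
        "macro_recall": sum(recalls) / len(labels),
    }


y_true = ["rust", "rust", "healthy", "blight"]
y_pred = ["rust", "healthy", "healthy", "blight"]
metrics = classification_metrics(y_true, y_pred)
```

Macro averaging treats rare and common diseases equally, which matters on imbalanced datasets where overall accuracy can hide poor performance on minority classes.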

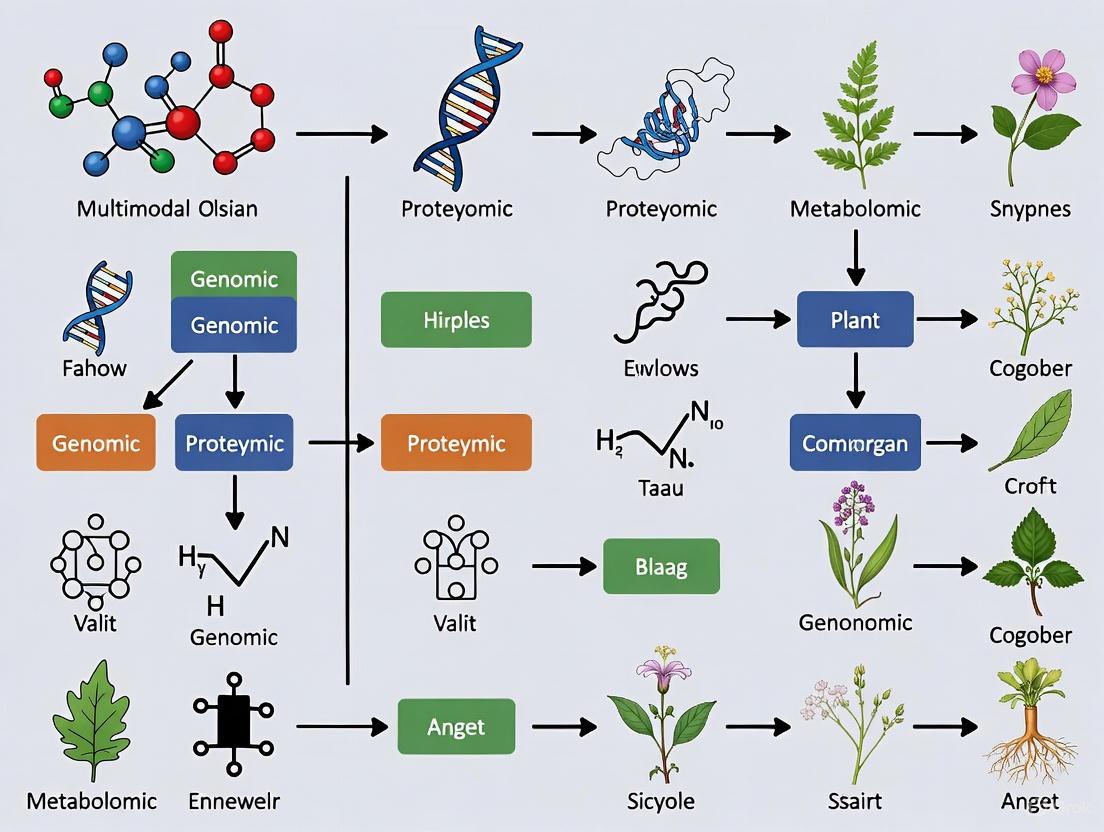

The following diagram illustrates the logical workflow and architecture of the PlantIF model.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Materials and Computational Tools for Multimodal Plant Classification

| Item Name | Function/Application | Specifications/Examples |
|---|---|---|
| Benchmark Datasets | Training and evaluation of models; enables reproducibility and fair comparison. | PlantVillage [4] [2], Multimodal-PlantCLEF [1], Pl@ntNet, GBIF-derived data [8], iNaturalist [9]. |
| Pre-trained Models | Provides foundational feature extractors; reduces training time and data requirements. | MobileNetV3 [1], other CNNs (e.g., VGGNet, ResNet) [4], Transformer models (for text) [5]. |
| Spatial Transcriptomics Data | Creates foundational atlases of gene expression across plant organs and developmental stages. | Single-cell RNA sequencing and spatial transcriptomics data from model plants like Arabidopsis thaliana [10]. |
| Self-Supervised Learning Frameworks | Reduces dependency on large, manually annotated datasets for tasks like 3D organ segmentation. | Masked Autoencoder (MAE) frameworks (e.g., Plant-MAE) for point cloud data [7]. |
| Neural Architecture Search (NAS) | Automates the design of optimal network architectures and fusion strategies. | Multimodal Fusion Architecture Search (MFAS) algorithms [1]. |

The integration of multimodal feature fusion and deep learning is revolutionizing plant classification, offering unprecedented accuracy and robustness for both agricultural and ecological applications. The protocols and tools outlined herein provide researchers with a roadmap to implement these advanced methodologies. Future research directions include further exploration of self-supervised and few-shot learning to reduce annotation burdens, the integration of 3D phenotypic data with genomic information [10] [3] [7], and the development of more efficient models for real-time, in-field deployment on edge devices [4] [2].

Limitations of Unimodal Deep Learning for Single-Organ Analysis

In the domain of plant phenotyping and classification, deep learning (DL) has emerged as a transformative technology, enabling automated feature extraction and reducing the dependency on manual expertise [1] [11]. However, a significant proportion of established DL approaches operates within a unimodal framework, relying exclusively on imagery of a single plant organ—typically leaves—for classification tasks [1]. This paradigm stands in stark contrast to botanical practice, where expert taxonomists integrate characteristics from multiple organs to achieve accurate species identification. The inherent limitations of single-organ analysis become particularly pronounced when confronting the vast biological diversity of plant species, where intra-species variation and inter-species similarity can confound models based on a limited set of features [1]. This application note details the fundamental constraints of unimodal deep learning for plant organ analysis, provides quantitative comparisons of performance limitations, outlines experimental protocols for benchmarking, and proposes pathways toward more robust multimodal solutions essential for scientific and drug discovery applications.

Core Limitations of Unimodal Approaches

Biological and Technical Constraints

Unimodal deep learning models for plant classification face several intrinsic constraints that limit their real-world applicability and accuracy:

- Biologically Incomplete Representation: From a biological standpoint, a single organ provides insufficient information for reliable classification [1]. Variations in appearance can occur within the same species due to environmental factors, developmental stages, and health status, while different species frequently converge on similar morphological adaptations (e.g., similar leaf shapes among unrelated species) [1]. This fundamental biological reality creates a ceiling for unimodal model performance.

- Feature Representation Failures: Classifiers engineered around specific hand-crafted features, such as leaf teeth or contour, prove ineffective for species that lack these prominent features or share them across species boundaries [1]. While deep learning automates feature extraction, a model trained only on leaves will never learn to recognize the distinctive fruit or flower patterns that are crucial for botanical discrimination.

- Scale and Detail Capture Issues: Capturing the intricate details of diverse plant organs—from minute floral structures to complex bark textures—at a consistent and useful scale within a single image is often impractical [1]. A unimodal approach is inherently constrained by the resolution and field of view of the input image.

Quantitative Performance Gaps

The theoretical constraints of unimodal analysis translate directly into measurable performance deficits. The following table synthesizes key quantitative findings from recent comparative studies, highlighting the performance gap between unimodal and multimodal deep learning models in plant classification.

Table 1: Performance Comparison of Unimodal vs. Multimodal Deep Learning Models in Plant Classification

| Model Type | Data Modalities (Organs) | Dataset | Number of Classes | Reported Accuracy | Key Limitation / Advantage |
|---|---|---|---|---|---|
| Unimodal (Typical) | Leaf (single organ) | Various (as reported in literature) | Varies | Performance ceiling significantly lower than multimodal [1] | Fails to capture comprehensive biological diversity; performance plateaus with species complexity. |
| Late Fusion Multimodal | Flower, Leaf, Fruit, Stem | Multimodal-PlantCLEF | 979 | ~72.28% (Baseline for comparison) [12] | Simple fusion improves over unimodal but is suboptimal. |
| Automated Fusion Multimodal | Flower, Leaf, Fruit, Stem | Multimodal-PlantCLEF | 979 | 82.61% [1] [12] | Outperforms late fusion by 10.33 percentage points, demonstrating the benefit of optimized multi-organ fusion. |

The reported results indicate that models integrating multiple plant organs outperform unimodal systems. On the 979-class Multimodal-PlantCLEF benchmark, the automated fusion model reaches 82.61% accuracy, 10.33 percentage points above the late-fusion baseline, which suggests that a searched fusion architecture captures discriminative features that simple score averaging misses [1] [12].
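The gap between the two fusion strategies is a difference between two accuracies, so it is most precisely stated in percentage points. The short calculation below separates that absolute gain from the relative improvement, using only the two accuracies reported in the table; the variable names are illustrative.

```python
late_fusion_acc = 72.28        # late-fusion baseline accuracy, in percent
automated_fusion_acc = 82.61   # automated fusion accuracy, in percent

# Absolute gain: difference of the two accuracies, in percentage points.
absolute_gain_pp = automated_fusion_acc - late_fusion_acc

# Relative gain: the same difference expressed as a share of the baseline.
relative_gain_pct = absolute_gain_pp / late_fusion_acc * 100

print(f"absolute gain: {absolute_gain_pp:.2f} percentage points")
print(f"relative gain: {relative_gain_pct:.1f}% over the baseline")
```

Keeping the two figures apart avoids reading "10.33%" as a relative improvement, which would understate the reported difference.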

Experimental Protocols for Validating Limitations

To empirically validate the limitations of unimodal deep learning in a controlled research environment, the following experimental protocol is recommended. This workflow guides the comparison of unimodal and multimodal architectures using a standardized dataset.

Dataset Preparation and Curation

Objective: To create a structured dataset suitable for both unimodal and multimodal model training from a source like PlantCLEF2015 [1] [11].

- Source Data: Acquire the PlantCLEF2015 dataset or a similar comprehensive unimodal plant image dataset.

- Data Restructuring: Implement a preprocessing pipeline to transform the unimodal dataset into a multimodal one. This involves grouping images by species and then by organ type (flower, leaf, fruit, stem) to create the Multimodal-PlantCLEF dataset [1].

- Data Cleaning and Standardization:

- Manually or automatically filter images to ensure each input corresponds exclusively to a specific organ.

- Resize all images to a uniform resolution (e.g., 224x224 pixels).

- Apply standard data augmentation techniques (random flipping, rotation, color jitter) to increase robustness and prevent overfitting.

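- Dataset Splitting: Partition the dataset into training, validation, and test sets (e.g., a 70/15/15 split), stratified by species so that every class is represented in each partition. To avoid data leakage, keep images that come from the same individual plant or observation in a single partition wherever the dataset records this.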
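A leakage-aware split can be sketched as follows. The sketch assumes each image carries an observation identifier linking it to one individual plant; that item layout, the function name `stratified_group_split`, and the rounding rule are assumptions for illustration. Observations, not single images, are assigned to partitions, and the assignment is done separately within each species.

```python
import random
from collections import defaultdict


def stratified_group_split(items, fractions=(0.70, 0.15, 0.15), seed=0):
    """Split (species, observation_id, image_path) items into train/val/test."""
    rng = random.Random(seed)
    observations = defaultdict(set)
    for species, obs_id, _ in items:
        observations[species].add(obs_id)

    assignment = {}
    for species in sorted(observations):
        obs_ids = sorted(observations[species])
        rng.shuffle(obs_ids)
        # Every species keeps at least one observation in the training set.
        n_train = max(1, round(len(obs_ids) * fractions[0]))
        n_val = round(len(obs_ids) * fractions[1])
        for index, obs_id in enumerate(obs_ids):
            if index < n_train:
                split = "train"
            elif index < n_train + n_val:
                split = "val"
            else:
                split = "test"
            assignment[(species, obs_id)] = split

    splits = {"train": [], "val": [], "test": []}
    for item in items:
        splits[assignment[(item[0], item[1])]].append(item)
    return splits


# Hypothetical data: 2 species x 20 observations x 2 images per observation.
items = [(sp, f"{sp}-obs{o}", f"{sp}-obs{o}-img{i}.jpg")
         for sp in ("A", "B") for o in range(20) for i in range(2)]
splits = stratified_group_split(items)
```

Species with very few observations end up with empty validation or test partitions under this rule, so rare classes may need a minimum-count filter or a different allocation.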

Unimodal Model Training and Benchmarking

Objective: To establish a performance baseline for classification using individual plant organs.

- Model Selection: Choose a standard pre-trained CNN architecture such as MobileNetV3Small or ResNet50 as the backbone for all unimodal models to ensure a fair comparison [1] [13].

- Training Protocol:

- For each organ modality (flower, leaf, fruit, stem), train a separate classification model.

- Replace the final layer of the pre-trained network to match the number of plant species (classes).

- Use a consistent optimizer (e.g., Adam or SGD with momentum) and loss function (Categorical Cross-Entropy) across all models.

- Fine-tune the models on the training set, using the validation set for early stopping.

- Evaluation: Record the top-1 and top-5 accuracy for each unimodal model on the held-out test set. This establishes the performance ceiling for a single-organ analysis.
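Top-1 and top-5 accuracy differ only in how many of the highest-scoring classes count as a hit. A minimal sketch, assuming per-class scores are available as plain lists and labels are integer class indices (the function name and toy scores are illustrative):

```python
def top_k_accuracy(scores, labels, k):
    """Fraction of samples whose true label is among the k highest-scoring classes."""
    hits = 0
    for row, label in zip(scores, labels):
        ranked = sorted(range(len(row)), key=lambda i: row[i], reverse=True)
        hits += label in ranked[:k]
    return hits / len(labels)


# Toy example with three classes; k=2 stands in for top-5 on a real label set.
scores = [
    [0.1, 0.7, 0.2],
    [0.5, 0.3, 0.2],
    [0.2, 0.3, 0.5],
]
labels = [1, 1, 0]
top1 = top_k_accuracy(scores, labels, k=1)
top2 = top_k_accuracy(scores, labels, k=2)
```

Reporting both values for every organ model makes it visible when an organ is rarely right outright but often ranks the correct species near the top, which is the kind of signal fusion can exploit.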

Multimodal Fusion and Comparative Analysis

Objective: To demonstrate the performance gain achieved by integrating information from multiple organs.

- Baseline Multimodal (Late Fusion): Implement a late fusion baseline by averaging the softmax probabilities from the four trained unimodal models [1]. Evaluate its accuracy on the test set.

- Advanced Multimodal (Automated Fusion):

- Utilize a Multimodal Fusion Architecture Search (MFAS) algorithm to automatically find the optimal fusion points between the unimodal models [1] [12] [13].

- Let the MFAS algorithm search for the best connections between intermediate layers of the unimodal networks.

- Perform end-to-end training of the discovered optimal fusion architecture.

- Robustness Testing: Evaluate the robustness of the fused model to missing modalities (e.g., when only three of the four organs are available) using techniques like multimodal dropout [1] [12]. Compare its graceful performance degradation against the complete failure of a unimodal model missing its sole input.
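The late-fusion baseline described above amounts to averaging class probabilities across the organ models. The sketch below also averages over only the organs that are present, which is an assumption added to illustrate the missing-modality case rather than something the source specifies; the function name and probabilities are made up.

```python
def late_fusion(probs_by_organ):
    """Average softmax outputs over the available organs; return (probs, class)."""
    available = [p for p in probs_by_organ.values() if p is not None]
    if not available:
        raise ValueError("at least one organ prediction is required")
    n_classes = len(available[0])
    fused = [sum(p[c] for p in available) / len(available) for c in range(n_classes)]
    predicted = max(range(n_classes), key=fused.__getitem__)
    return fused, predicted


# Two-class toy example in which the fruit image is missing.
probs_by_organ = {
    "flower": [0.6, 0.4],
    "leaf": [0.2, 0.8],
    "fruit": None,
    "stem": [0.1, 0.9],
}
fused, predicted = late_fusion(probs_by_organ)
```

Averaging gives every organ the same say regardless of how reliable its model is, which is one reason a searched fusion architecture can do better than this baseline.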

The Scientist's Toolkit: Research Reagent Solutions

The transition from unimodal to multimodal plant analysis requires a specific set of computational tools and data resources. The following table catalogues essential components for building such a research pipeline.

Table 2: Essential Research Tools for Multimodal Plant Organ Analysis

| Tool / Resource | Type | Primary Function in Research |
|---|---|---|
| PlantCLEF2015 / Multimodal-PlantCLEF | Dataset | Benchmark dataset for training and evaluating plant identification models; provides the foundational data for restructuring into a multimodal format [1]. |
| MobileNetV3, ResNet | Pre-trained Model | Provides a powerful starting point for feature extraction via transfer learning, reducing training time and improving performance on unimodal streams [1] [13]. |
| Multimodal Fusion Architecture Search (MFAS) | Algorithm | Automates the discovery of the optimal neural network architecture for combining features from different organ modalities, overcoming the bias and suboptimality of manual fusion design [1] [12]. |
| Multimodal Dropout | Regularization Technique | Enhances model robustness by simulating scenarios with missing organ data during training, ensuring the model remains functional even when not all modalities are present in the field [1] [12]. |
| scTriangulate | Decision-Level Integration Framework | A conceptual framework from single-cell biology that inspires decision-level integration strategies, demonstrating the value of combining multiple clustering results or predictions for a more stable final output [14]. |

The limitations of unimodal deep learning for single-organ analysis are not merely incremental challenges but fundamental constraints that hinder the development of robust, accurate, and biologically realistic plant classification systems. The quantitative evidence clearly shows that multimodal approaches, which mirror the expert taxonomist's methodology, achieve significantly higher accuracy [1] [12]. For researchers in botany, ecology, and drug discovery—where misidentification can have significant consequences—moving beyond unimodal analysis is imperative. The future of automated plant phenotyping lies in the development of intelligent, flexible, and robust multimodal systems that can seamlessly integrate diverse biological information, paving the way for more reliable scientific insights and applications in agricultural and pharmaceutical development.

In plant phenotyping, the complementarity principle posits that disparate data modalities capture unique and non-redundant biological information across spatial and functional scales. The integration of these complementary perspectives enables the construction of a more holistic and accurate model of plant system dynamics than any single data source can provide. This rationale is foundational to advancing plant organ classification, moving beyond the limitations of unimodal approaches to achieve robust, high-resolution phenotypic characterization. This protocol outlines the application of this principle through multi-omics and multimodal image fusion, providing a detailed framework for researchers.

Theoretical Foundation: The Layers of Biological Information

Biological systems are hierarchically organized, and this hierarchy is reflected in the different types of data that can be collected. The following table summarizes the core complementary data types relevant to plant organ classification.

Table 1: Complementary Data Modalities in Plant Phenotyping

| Data Modality | Biological Layer Captured | Functional Insight Provided | Representative Data Format |
|---|---|---|---|
| Genomics [15] | DNA sequence variation | Genetic potential and underlying alleles for traits | SNP markers (0, 1, 2) |
| Transcriptomics [15] | Gene expression dynamics | Active biological processes and responses to stimuli | RNA-seq read counts |
| Metabolomics [15] | Biochemical phenotype | End products of cellular processes and stress responses | Metabolite abundance levels |
| RGB Imagery [16] [17] | Surface morphology and color | Visual health status, color, texture, and structure | High-resolution pixel arrays |
| Thermal Imagery (TRI) [16] [17] | Canopy temperature | Stomatal conductance and water stress status | Temperature value matrices |
| 3D Point Clouds [18] | Volumetric structural data | Plant and organ architecture, biomass, and size | 3D coordinate sets (x, y, z) |

The power of multimodal integration is demonstrated in specific research contexts. For instance, in disease resistance, genomics identifies potential resistance genes (R-genes), while transcriptomics and metabolomics reveal the active pathways and antimicrobial compounds produced during pathogen attack [15]. Similarly, fusing RGB and thermal imagery allows for the classification of water stress by combining visual symptoms with physiological responses that are not visible to the naked eye [16] [17].

Experimental Protocols for Multimodal Data Acquisition

This section provides detailed methodologies for acquiring key data modalities from featured studies.

Protocol A: Acquisition of RGB and Thermal Imagery for Water Stress Classification

This protocol is adapted from research on sweet potato water stress classification using low-altitude platforms [16] [17].

Application Note: This method is optimized for capturing high-resolution, co-registered RGB and thermal data from individual plants in field conditions, enabling precise correlation of visual and physiological traits.

- Key Materials:

- Low-Altitude Platform: A fixed or mobile rig positioned 1-3 meters above the plant canopy to avoid perspective distortion and ensure high resolution.

- Co-registered RGB-Thermal Camera: A camera system (e.g., FLIR ONE Pro) that simultaneously captures RGB and thermal images, or two separate cameras rigidly mounted and calibrated for spatial alignment.

- Color Calibration Target: A standard reference card (e.g., X-Rite ColorChecker) for consistent color accuracy across imaging sessions.

- Thermal Reference Sources: Objects with known emissivity (e.g., black electrical tape) within the field of view for thermal calibration.

Procedure:

- Experimental Setup:

- Establish plots with controlled soil moisture levels. For example, define five classes: Severe Dry (SD, ≤10% VWC), Dry (D, 20 ± 2% VWC), Optimal (O, 30 ± 3% VWC), Wet (W, 40 ± 3% VWC), and Severe Wet (SW, 50% VWC) [17].

- Ensure imaging is conducted between 11:00 AM and 1:00 PM local solar time to minimize variations in solar angle and illumination.

- Image Acquisition:

- Position the camera system perpendicular to the plant canopy.

- Capture images ensuring both the color calibration target and thermal references are visible in the frame.

- For time-series studies, repeat the process at the same time of day at regular intervals (e.g., daily or weekly).

- Data Pre-processing:

- RGB Processing: Correct lens distortion, and use the ColorChecker to perform white balance and color normalization.

- Thermal Processing: Convert raw sensor data to temperature values using the camera's calibration parameters and the known reference sources.

- Registration: Precisely align the RGB and thermal images to create pixel-wise correlated multimodal data pairs.

Protocol B: Generation of 3D Point Clouds for Organ-Level Segmentation

This protocol is based on the creation of the Cotton3D dataset for semantic segmentation of leaves, bolls, and branches [18].

Application Note: This method uses multi-view photography and 3D reconstruction to generate dense, high-quality point clouds, which are essential for extracting precise phenotypic parameters of individual organs.

- Key Materials:

- Digital SLR or High-Resolution Camera: A camera with manual settings to ensure consistent exposure across all images.

- Controlled Lighting Environment: A setup with multiple photographic lights (e.g., three lights placed 120 degrees apart) to eliminate shadows and ensure uniform illumination [18].

- Turntable or Systematic Shooting Grid: For capturing images from multiple viewpoints around the plant specimen.

- Software for 3D Reconstruction: Structure-from-Motion (SfM) software such as Agisoft Metashape or COLMAP.

Procedure:

- Sample Preparation and Setup:

- Place the potted plant specimen in the center of the shooting arena.

- Ensure the lighting is uniform and avoids specular highlights on the plant surface.

- Multi-View Image Capture:

- If using a turntable, rotate the plant in small, fixed increments (e.g., 10-15 degrees) and capture an image at each position at multiple vertical angles.

- If the plant is too large for a turntable, establish a systematic grid and move the camera around the stationary plant to capture overlapping images from all angles [18].

- Capture several hundred images per plant to ensure sufficient overlap for high-quality reconstruction.

- Point Cloud Generation:

- Import all images into the SfM software.

- Run the standard pipeline: feature detection, feature matching, sparse reconstruction, and dense cloud generation.

- The output is a 3D point cloud where each point has (x, y, z) coordinates and often (R, G, B) color values.

- Post-processing:

- Clean the dense point cloud by removing noise and non-plant points (e.g., the pot, background).

- The final point cloud can be down-sampled to a standardized number of points (e.g., 40,960 points [18]) for input into deep learning models.
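Down-sampling to a fixed point count can be sketched with uniform random sampling. This is the simplest option and is an assumption here; the source does not state which sampling method was used, and farthest point sampling is a common alternative in point cloud pipelines. The function name and the padding rule for small clouds are also illustrative.

```python
import random


def downsample_point_cloud(points, target=40960, seed=0):
    """Return exactly `target` points by uniform random sampling."""
    if not points:
        raise ValueError("point cloud is empty")
    rng = random.Random(seed)
    if len(points) >= target:
        # Enough points: sample without replacement.
        return rng.sample(points, target)
    # Too few points: keep all and pad with randomly repeated points.
    return list(points) + rng.choices(points, k=target - len(points))


# Toy cloud of 100 points reduced to 10 for illustration.
cloud = [(float(i), 0.0, 0.0) for i in range(100)]
reduced = downsample_point_cloud(cloud, target=10)
padded = downsample_point_cloud(cloud[:5], target=8)
```

Uniform sampling preserves the density distribution of the original cloud, so thin structures such as petioles may be under-represented compared with a spatially even method.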

Data Integration and Modeling Workflows

The fusion of complementary data requires specialized computational workflows. The following diagram illustrates a generalized pipeline for multimodal feature fusion, integrating concepts from the reviewed studies.

The fusion of different data types, such as images and text, can be further enhanced through graph-based learning. The PlantIF model demonstrates this by mapping image and text features into shared and modality-specific semantic spaces before fusing them [5].

Table 2: Machine Learning Models for Multimodal Integration in Plant Science

| Model Category | Specific Model | Application Example | Reported Performance |
|---|---|---|---|
| Traditional ML | K-Nearest Neighbors (KNN) | Water stress level classification in sweet potato [16] [17] | Outperformed LR, RF, MLP, and SVM |
| Deep Learning (DL) | Convolutional Neural Network (CNN) | Feature extraction from RGB and thermal imagery [16] [17] | Used as a core feature extractor |
| DL & Transformer | Vision Transformer (ViT)-CNN | Water stress classification via image analysis [16] [17] | Simplified 5-level to 3-level classification effectively |
| 3D Point Cloud DL | TPointNetPlus (PointNet++ + Transformer) | Semantic segmentation of cotton leaves, bolls, branches [18] | 98.39% accuracy in leaf segmentation |
| Multimodal DL | PlantIF (Graph Learning) | Plant disease diagnosis fusing image and text data [5] | 96.95% accuracy on multimodal dataset |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Computational Tools for Multimodal Plant Research

| Item / Solution | Function / Application | Example / Specification |
|---|---|---|
| Co-registered RGB-Thermal Camera | Simultaneous acquisition of visual and canopy temperature data for stress phenotyping. | FLIR ONE Pro or similar; critical for calculating CWSI [16] [17]. |
| Low-Altitude Imaging Platform | Enables high-resolution, close-proximity image capture of individual plants. | Fixed/mobile rigs 1-3m above canopy; cost-effective alternative to UAVs [16]. |
| Structure-from-Motion (SfM) Software | Generates high-precision 3D point clouds from multi-view 2D images. | Agisoft Metashape, COLMAP; used for constructing plant point cloud datasets [18]. |
| Graphical User Interface (GUI) System | Allows intuitive interpretation and actionable decision-making from complex models. | Sweet potato water monitor system; integrates Grad-CAM and XAI for usability [17]. |
| Transformer-based Networks | Captures global features and long-range dependencies in complex data (e.g., point clouds, images). | TPointNetPlus for point clouds [18]; ViT-CNN for images [16] [17]. |
| Multi-omics Data | Provides complementary layers of biological information from genome to metabolome. | Genomics, transcriptomics, metabolomics data for predicting disease resistance [15]. |

The biological rationale for multimodal integration is firmly rooted in the complementarity principle, where each data type illuminates a distinct facet of a plant's phenotype and underlying physiology. The protocols and tools detailed herein provide a concrete pathway for researchers to implement this principle. By systematically acquiring and fusing complementary data—from genomic and metabolomic layers to RGB, thermal, and 3D structural information—scientists can achieve a more comprehensive understanding of plant biology, leading to more accurate classification, improved breeding outcomes, and enhanced agricultural management.

Application Notes

Conceptual Foundation of Modalities in Plant Science

In the context of multimodal feature fusion for plant organ classification, a modality refers to a distinct type of biological data source that provides complementary information about a plant species. The integration of multiple modalities enables a more comprehensive representation of plant characteristics, mirroring botanical expertise that considers multiple organs for accurate species identification [1]. From a data perspective, images of different plant organs—specifically flowers, leaves, fruits, and stems—constitute distinct modalities because each encapsulates a unique set of biological features despite all being represented as RGB images [1]. This multimodal approach addresses fundamental limitations of single-organ classification, where variations within the same species and similarities between different species can significantly impair model accuracy [1].

The biological rationale for this framework stems from the fact that different plant organs exhibit diverse morphological characteristics that are taxonomically informative. While leaves may provide information about venation patterns and margin characteristics, flowers offer distinct floral morphometrics, fruits present specific structural features, and stems contribute with bark texture and growth patterns. When combined, these modalities create a robust feature set that significantly enhances classification accuracy compared to unimodal approaches [1]. This approach is particularly valuable for challenging classification tasks involving species with high inter-class similarity or significant intra-class variation.

Experimental Evidence and Performance Metrics

Recent research demonstrates that the way modalities are fused matters as much as the decision to use several of them. The automatic fused multimodal deep learning approach achieves 82.61% accuracy on the 979 classes of the Multimodal-PlantCLEF dataset, outperforming the late fusion baseline (72.28%) by 10.33 percentage points [1] [12]. This gain highlights the importance of the fusion strategy in multimodal plant classification systems.

Table 1: Performance Comparison of Plant Classification Approaches

| Methodology | Data Modalities | Number of Classes | Reported Accuracy | Key Advantages |
|---|---|---|---|---|
| Automatic Fused Multimodal DL [1] | Flowers, Leaves, Fruits, Stems | 979 | 82.61% | Optimal fusion strategy, robust to missing modalities |
| Late Fusion (Baseline) [1] | Flowers, Leaves, Fruits, Stems | 979 | 72.28% | Simple implementation, adaptable to different models |
| Houseplant Leaf Classification (ResNet-50) [19] | Leaves only | 10 | 99.00% | High accuracy for limited classes, effective for single-organ focus |
| Deep Learning (Xception) [19] | Multiple (unspecified) | Not specified | 86.21% | Balance between architecture complexity and performance |

The robustness of multimodal approaches is further enhanced through techniques such as multimodal dropout, which enables the model to maintain strong performance even when some modalities are missing during inference [1] [12]. This capability is particularly valuable for real-world applications where capturing images of all plant organs may not be feasible due to seasonal availability or environmental obstructions.
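As a rough illustration of multimodal dropout, the sketch below randomly masks whole modalities in a training sample while always keeping at least one. The function name `multimodal_dropout`, the drop probability, and the use of `None` to mark a missing modality are assumptions made for this example, not details taken from [1].

```python
import random

ORGANS = ("flower", "leaf", "fruit", "stem")

def multimodal_dropout(sample, drop_prob=0.25, rng=random):
    """Return a copy of `sample` with some modalities replaced by None.

    `sample` maps organ name -> feature vector (or image); None marks a
    modality that is already missing. At least one available modality is
    always kept so the model never receives an empty input.
    """
    available = [k for k, v in sample.items() if v is not None]
    if not available:
        raise ValueError("sample has no available modality")
    # Keep each available modality with probability 1 - drop_prob.
    kept = [k for k in available if rng.random() >= drop_prob]
    if not kept:
        kept = [rng.choice(available)]
    return {k: (v if k in kept else None) for k, v in sample.items()}

rng = random.Random(0)
sample = {organ: [0.1, 0.2, 0.3] for organ in ORGANS}
masked = multimodal_dropout(sample, drop_prob=0.5, rng=rng)
```

Applied once per training sample and per epoch, a mask like this exposes the fusion layers to many organ combinations, which is the intuition behind the robustness described above.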

Protocols

Multimodal Dataset Preparation Protocol

Principle: Transforming existing unimodal plant datasets into multimodal resources requires systematic data curation and organization to ensure proper alignment of different organ modalities across species.

Procedure:

- Dataset Selection: Identify comprehensive unimodal datasets with multiple organ images per species. The PlantCLEF2015 dataset serves as an exemplary foundation for this transformation [1].

- Modality Categorization: Systematically categorize all images into four distinct modality classes: flowers, leaves, fruits, and stems. Each image must be exclusively assigned to one modality category.

- Species-Organ Alignment: Establish a structured database where each plant species entry contains associated images for all available organs, creating a many-to-one relationship between modality images and species labels.

- Quality Filtering: Implement rigorous quality control measures to remove images with excessive occlusion, poor focus, or inconsistent lighting conditions that may impair model training.

- Data Balancing: Address class imbalance through strategic data augmentation techniques, including rotation, scaling, and color jittering, to enhance model generalizability [19].

- Dataset Validation: Employ botanical experts to verify species-organ assignments and ensure taxonomic accuracy throughout the dataset.

The resulting Multimodal-PlantCLEF dataset exemplifies this protocol, providing a standardized benchmark for evaluating multimodal plant classification algorithms [1].
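The categorization and alignment steps above can be sketched as a small indexing routine. This is a minimal illustration that assumes each image comes with a `(path, species, organ)` record; the record layout, file names, and the `branch` label are hypothetical and do not describe the actual PlantCLEF2015 metadata schema.

```python
from collections import defaultdict

ORGANS = ("flower", "leaf", "fruit", "stem")

def build_species_organ_index(records):
    """Group image paths as index[species][organ] -> list of paths.

    `records` is an iterable of (image_path, species, organ) tuples;
    images whose organ is not one of the four modalities are skipped.
    """
    index = defaultdict(lambda: {organ: [] for organ in ORGANS})
    for path, species, organ in records:
        if organ in ORGANS:
            index[species][organ].append(path)
    return dict(index)

def missing_organs(index):
    """Report, per species, which modalities have no image at all."""
    return {sp: [o for o in ORGANS if not groups[o]]
            for sp, groups in index.items()}

records = [
    ("img_001.jpg", "Quercus robur", "leaf"),
    ("img_002.jpg", "Quercus robur", "fruit"),
    ("img_003.jpg", "Rosa canina", "flower"),
    ("img_004.jpg", "Rosa canina", "branch"),  # not a target modality
]
index = build_species_organ_index(records)
gaps = missing_organs(index)
```

The `gaps` report makes it easy to see which species lack a given organ before training, which is where missing-modality handling becomes relevant.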

Automated Multimodal Fusion Architecture Search Protocol

Principle: Optimal fusion of multiple modalities requires specialized neural architectures that can effectively integrate complementary information from different plant organs.

Procedure:

Unimodal Model Pretraining:

- Initialize separate feature extractors for each modality using pre-trained models (e.g., MobileNetV3Small)

- Train each unimodal model independently on its corresponding organ images

- Freeze model layers to reduce the number of trainable parameters and the training time [19]

Multimodal Fusion Architecture Search:

- Implement Multimodal Fusion Architecture Search (MFAS) to automatically discover optimal fusion points [1]

- Search for optimal fusion strategies across early, intermediate, late, or hybrid fusion approaches

- Evaluate candidate architectures based on validation accuracy and computational efficiency

Fusion Architecture Evaluation:

- Compare discovered architecture against established baselines (e.g., late fusion with averaging strategy)

- Employ statistical validation methods such as McNemar's test to confirm performance superiority [1]

- Assess robustness to missing modalities through multimodal dropout techniques

Model Deployment Optimization:

- Optimize discovered architecture for resource-constrained devices

- Implement quantization and pruning techniques to reduce model size

- Ensure compatibility with mobile deployment platforms for field applications
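To make the deployment step more concrete, the following sketch applies magnitude pruning and symmetric 8-bit quantization to a flat list of weights. It is a toy illustration under simplifying assumptions (per-tensor scale, unstructured pruning, plain Python lists); the function names are hypothetical, and a real deployment would rely on the tooling of the chosen deep learning framework.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the smallest-magnitude fraction `sparsity` of the weights."""
    k = int(len(weights) * sparsity)
    if k == 0:
        return list(weights)
    threshold = sorted(abs(w) for w in weights)[k - 1]
    pruned, removed = [], 0
    for w in weights:
        # `removed < k` keeps the count exact when magnitudes are tied.
        if abs(w) <= threshold and removed < k:
            pruned.append(0.0)
            removed += 1
        else:
            pruned.append(w)
    return pruned

def quantize_int8(weights):
    """Symmetric per-tensor quantization to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    """Map quantized integers back to approximate float weights."""
    return [v * scale for v in q]

weights = [0.02, -0.5, 0.31, -0.01, 0.9, 0.07]
pruned = magnitude_prune(weights, sparsity=0.5)
q, scale = quantize_int8(pruned)
restored = dequantize(q, scale)
```

Each restored weight differs from its pruned value by at most half a quantization step, which is the accuracy-for-size trade-off that has to be checked against validation accuracy.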

Diagram 1: Automated Multimodal Fusion Workflow for Plant Organ Classification

Performance Validation and Statistical Testing Protocol

Principle: Rigorous evaluation of multimodal plant classification systems requires both standard performance metrics and statistical significance testing to demonstrate meaningful improvements over baseline methods.

Procedure:

Metric Selection:

- Employ comprehensive evaluation metrics including accuracy, precision, recall, and F1-score

- Calculate per-class metrics to identify modality effectiveness across different species

- Report aggregate metrics for overall system performance

Baseline Comparison:

- Compare against established unimodal baselines (leaf-only, flower-only models)

- Evaluate against simple fusion strategies (late fusion with averaging)

- Assess computational efficiency parameters (inference time, model size)

Statistical Validation:

- Implement McNemar's test for paired nominal data to confirm statistical significance

- Conduct cross-validation with multiple random seeds to ensure result stability

- Perform ablation studies to quantify contribution of individual modalities

Robustness Testing:

- Evaluate performance with missing modalities using multimodal dropout

- Test with progressively reduced modality availability

- Assess performance degradation compared to complete modality set
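The statistical validation step can be sketched with a small implementation of McNemar's test on paired predictions. This version uses the chi-squared approximation with continuity correction and only the standard library; the toy labels and predictions are invented for illustration. When the number of discordant pairs is small, an exact binomial version of the test is generally preferred.

```python
import math

def mcnemar_test(y_true, pred_a, pred_b):
    """McNemar's chi-squared test with continuity correction.

    Returns (b, c, statistic, p_value), where b counts samples that only
    model A classifies correctly and c those that only model B does.
    """
    b = c = 0
    for t, a, m in zip(y_true, pred_a, pred_b):
        a_ok, m_ok = (a == t), (m == t)
        if a_ok and not m_ok:
            b += 1
        elif m_ok and not a_ok:
            c += 1
    if b + c == 0:
        return b, c, 0.0, 1.0
    # Corrected numerator is clamped at zero so that b == c gives p = 1.
    stat = max(abs(b - c) - 1, 0) ** 2 / (b + c)
    # Survival function of the chi-squared distribution with 1 degree of freedom.
    p_value = math.erfc(math.sqrt(stat / 2))
    return b, c, stat, p_value

# Toy example: 20 test samples, all with true label 1.
y_true = [1] * 20
fused_pred = [1] * 18 + [0] * 2            # fusion model: 18 correct
late_pred = [1] * 6 + [0] * 12 + [1] * 2   # baseline: 8 correct
b, c, stat, p_value = mcnemar_test(y_true, fused_pred, late_pred)
```

Only the discordant pairs (`b` and `c`) enter the statistic, which is why the test suits two classifiers evaluated on the same test set.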

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Materials for Multimodal Plant Classification

| Research Reagent | Specifications | Function in Experimental Protocol |
|---|---|---|
| Multimodal-PlantCLEF Dataset [1] | 979 plant classes; 4 organ modalities (flowers, leaves, fruits, stems) | Primary benchmark dataset for training and evaluating multimodal fusion algorithms |
| SIMPD Version 1 [20] | 20 medicinal plant species; 2,503 high-resolution images | Region-specific dataset for evaluating model transferability and ethnobotanical applications |
| MobileNetV3Small [1] | Pre-trained on ImageNet; optimized for mobile deployment | Base architecture for unimodal feature extraction and efficient model deployment |
| Neural Architecture Search (NAS) Framework [1] | Automated multimodal fusion discovery; supports multiple fusion strategies | Identifies optimal fusion points between modalities without manual design |
| Data Augmentation Pipeline [19] | Rotation, scaling, color jittering; addresses class imbalance | Enhances dataset diversity and improves model generalization to real-world conditions |
| Multimodal Dropout [1] | Random modality exclusion during training | Enhances model robustness to missing modalities in practical applications |

Diagram 2: Complete Experimental Protocol for Multimodal Plant Classification Research

Multimodal feature fusion represents a paradigm shift in plant organ classification, addressing the inherent limitations of unimodal deep learning models that rely on single data sources. By integrating complementary information from multiple plant organs—such as flowers, leaves, fruits, and stems—multimodal fusion strategies create a more comprehensive representation of plant species characteristics, aligning with botanical principles that emphasize the need for multiple organs for accurate classification [1] [21]. The selection of an appropriate fusion strategy is a critical architectural decision that directly impacts model performance, robustness, and computational efficiency. This article provides a detailed examination of early, intermediate, late, and hybrid fusion strategies within the context of plant organ classification, supported by experimental protocols, performance comparisons, and implementation guidelines tailored for research applications.

Fusion Strategy Theoretical Framework

Defining Fusion Types

Multimodal fusion strategies are categorized based on the stage at which information from different modalities is integrated:

- Early Fusion (Feature-Level Fusion): Combines raw data or low-level features from multiple modalities before feature extraction. This approach operates on the assumption that all modalities are aligned and can be directly combined at the input level [22].

- Intermediate Fusion (Model-Level Fusion): Integrates features at intermediate layers within a deep learning architecture, allowing the model to learn complex interactions between modalities through shared representations [22].

- Late Fusion (Decision-Level Fusion): Processes each modality through separate models and combines the outputs at the decision level, typically through averaging or weighted voting [1] [21].

- Hybrid Fusion: Strategically combines elements of early, intermediate, and late fusion to leverage the strengths of each approach while mitigating their individual limitations [1].
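Of the four strategies, late fusion is the simplest to write down. The sketch below averages per-modality class probabilities, optionally with weights, and skips modalities that are unavailable. The function name, the probability values, and the convention of marking a missing modality with `None` are assumptions for illustration.

```python
def late_fusion(probabilities, weights=None):
    """Weighted average of per-modality class-probability vectors.

    `probabilities` maps modality -> list of class probabilities, or None
    when that modality is unavailable; missing modalities are skipped and
    the remaining weights are renormalised.
    """
    present = {m: p for m, p in probabilities.items() if p is not None}
    if not present:
        raise ValueError("at least one modality is required")
    if weights is None:
        weights = {m: 1.0 for m in present}
    total = sum(weights[m] for m in present)
    n_classes = len(next(iter(present.values())))
    fused = [0.0] * n_classes
    for m, p in present.items():
        for i, value in enumerate(p):
            fused[i] += weights[m] / total * value
    return fused

probs = {
    "flower": [0.7, 0.2, 0.1],
    "leaf":   [0.4, 0.5, 0.1],
    "fruit":  None,              # organ not photographed
    "stem":   [0.1, 0.6, 0.3],
}
fused = late_fusion(probs)
predicted = max(range(len(fused)), key=fused.__getitem__)
```

Because each modality is reduced to a probability vector before combination, this scheme tolerates missing organs easily but cannot exploit interactions between intermediate features, which is the gap that intermediate and hybrid fusion aim to close.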

Botanical Justification for Multimodal Fusion

From a biological perspective, relying on a single plant organ is insufficient for accurate classification due to several factors: variations in appearance within the same species, similar features across different species, and the practical challenge of capturing all organ details in a single image [1] [21]. Research by Nhan et al. demonstrates that leveraging images from multiple plant organs significantly outperforms single-organ approaches, consistent with botanical expertise that emphasizes the importance of examining multiple organs for reliable identification [1] [21].

Table 1: Comparative Analysis of Fusion Strategies for Plant Organ Classification

| Fusion Strategy | Theoretical Basis | Advantages | Limitations | Ideal Use Cases |
|---|---|---|---|---|
| Early Fusion | Combines raw input data before feature extraction | Preserves correlation between modalities; Simple implementation | Requires modality alignment; Sensitive to missing modalities | Aligned multi-organ images; Controlled environments |
| Intermediate Fusion | Integrates features at intermediate network layers | Learns complex cross-modal interactions; Flexible representation | Higher computational complexity; Complex architecture design | Complex plant species with complementary organ features |
| Late Fusion | Combines predictions from modality-specific models | Robust to missing modalities; Modular training | Cannot model cross-modal correlations; Suboptimal feature learning | Distributed systems; When modality availability varies |
| Hybrid Fusion | Combines multiple fusion strategies strategically | Leverages strengths of different approaches; Highly adaptable | Architecturally complex; Requires careful design | Large-scale plant classification with diverse organ sets |

Experimental Protocols for Fusion Strategies

Protocol 1: Implementing Automatic Hybrid Fusion

Objective: To implement an automated hybrid fusion strategy for plant organ classification using Multimodal Fusion Architecture Search (MFAS).

Materials and Reagents:

- Multimodal-PlantCLEF dataset (restructured from PlantCLEF2015) [1]

- Pre-trained MobileNetV3Small models for each modality [21]

- MFAS algorithm implementation [21]

- Computational resources: GPU with sufficient memory (e.g., RTX 3090 with 24 GB) [23]

Procedure:

Dataset Preparation:

- Apply data preprocessing pipeline to transform unimodal PlantCLEF2015 into multimodal format

- Organize images into four distinct modalities: flowers, leaves, fruits, and stems

- Ensure balanced representation across 979 plant classes

Unimodal Model Training:

- Train separate MobileNetV3Small models for each plant organ modality

- Use transfer learning with ImageNet pre-trained weights

- Freeze initial layers and fine-tune on plant-specific data

Fusion Architecture Search:

- Implement MFAS algorithm to automatically discover optimal fusion points

- Maintain pre-trained unimodal models static during search process

- Progressively merge individual models at different layers

- Train only fusion layers to reduce computational requirements

Model Integration:

- Construct unified model architecture based on MFAS results

- Implement multimodal dropout for robustness to missing modalities

- Apply consistency and complementarity constraints for feature alignment

Validation:

- Evaluate using standard performance metrics (accuracy, AUC)

- Perform McNemar's statistical test for significance validation

- Compare against late fusion baseline with averaging strategy

Expected Outcome: A hybrid fusion model achieving 82.61% accuracy on 979 classes, outperforming late fusion (72.28%) by 10.33 percentage points [1] [21].

Protocol 2: Comparative Analysis of Fusion Strategies

Objective: To systematically evaluate and compare early, intermediate, late, and hybrid fusion strategies for plant organ classification.

Materials and Reagents:

- High-quality multimodal plant dataset with annotated organ images

- Vision Transformer (ViT) models for visual analysis [23]

- Contextual metadata (environmental conditions, geographic location, phenological traits) [23]

- M2F-Net framework for multimodal classification [24]

Procedure:

Data Preparation:

- Curate dataset containing images of multiple plant organs per species

- Collect corresponding contextual metadata (geographic location, environmental conditions)

- Implement data augmentation techniques to address class imbalance

Early Fusion Implementation:

- Combine multiple 2D organ images into a single tensor input

- Process fused input through Vision Transformer architecture

- Extract joint features for classification

Intermediate Fusion Implementation:

- Process each organ modality through separate feature extraction branches

- Integrate features at intermediate transformer layers

- Apply cross-attention mechanisms between modalities

Late Fusion Implementation:

- Train separate classification models for each organ modality

- Combine predictions through averaging or weighted voting

- Optimize weights for each modality based on validation performance

Hybrid Fusion Implementation:

- Implement M2F-Net framework combining agrometeorological and image data [24]

- Assess fusion at multiple stages (input, feature, decision levels)

- Optimize fusion strategy through ablation studies

Evaluation:

- Measure accuracy, Mean Reciprocal Rank (MRR), and computational efficiency

- Test robustness to missing modalities

- Evaluate performance on morphologically similar species

Expected Outcome: Comprehensive performance comparison with metadata fusion expected to achieve up to 97.27% accuracy [23].
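Among the evaluation metrics listed above, Mean Reciprocal Rank (MRR) is the least self-explanatory: it averages the reciprocal of the rank at which the true species appears in each ranked prediction. A minimal sketch, with invented scores and labels:

```python
def mean_reciprocal_rank(score_lists, true_labels):
    """Average of 1 / rank of the true class in each ranked prediction."""
    total = 0.0
    for scores, truth in zip(score_lists, true_labels):
        # Class indices sorted from highest to lowest score.
        ranking = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
        total += 1.0 / (ranking.index(truth) + 1)
    return total / len(true_labels)

scores = [
    [0.1, 0.7, 0.2],   # true class 1 is ranked 1st
    [0.5, 0.2, 0.3],   # true class 2 is ranked 2nd
    [0.6, 0.3, 0.1],   # true class 2 is ranked 3rd
]
labels = [1, 2, 2]
mrr = mean_reciprocal_rank(scores, labels)
```

Here the reciprocal ranks are 1, 1/2, and 1/3, so the MRR is 11/18 (about 0.61), whereas top-1 accuracy on the same predictions would be 1/3. MRR therefore rewards near misses, which is useful when morphologically similar species compete for the top rank.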

Performance Analysis and Quantitative Comparisons

Experimental Results Across Fusion Strategies

Table 2: Quantitative Performance Comparison of Fusion Strategies in Plant Classification

| Fusion Strategy | Reported Accuracy | Dataset | Number of Classes | Key Advantages | Implementation Complexity |
|---|---|---|---|---|---|
| Automatic Hybrid Fusion | 82.61% | Multimodal-PlantCLEF | 979 | Optimal fusion discovery; Robust to missing modalities | High (requires architecture search) |
| Late Fusion Baseline | 72.28% | Multimodal-PlantCLEF | 979 | Simple implementation; Modular training | Low (independent models) |
| Metadata Fusion with ViT | 97.27% | Custom multimodal | Not specified | Handles morphologically similar species | Medium (requires metadata collection) |
| M2F-Net Multimodal | 91% | Amaranthus fertilizer | Binary classification | Integrates image and non-image data | Medium (multiple data pipelines) |
| Computer Vision Only | 69% | Soybean maturity | 4 | Simple data requirements | Low (single modality) |
| PWC-based Model | 79% | Soybean maturity | 4 | Captures physiological relevance | Medium (sensor data required) |

Robustness Analysis with Missing Modalities

When trained with multimodal dropout, the automatic hybrid fusion approach is reported to remain robust to missing modalities. This capability is particularly valuable in real-world plant classification scenarios where certain organs may be seasonal, damaged, or otherwise unavailable [1] [21]. Evaluation on subsets of plant organs indicates that performance is largely maintained when some modalities are absent, a significant advantage over early fusion strategies, which typically require all modalities to be present.
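A subset evaluation of this kind can be organised by enumerating every non-empty combination of organs and masking the rest. The sketch below only builds the masks; the helper names and the `None` convention for a missing modality are assumptions, and the model evaluation itself is left out.

```python
from itertools import combinations

ORGANS = ("flower", "leaf", "fruit", "stem")

def modality_subsets(modalities=ORGANS):
    """Yield every non-empty subset of modalities, largest first."""
    for size in range(len(modalities), 0, -1):
        for subset in combinations(modalities, size):
            yield subset

def mask_sample(sample, subset):
    """Keep only the modalities in `subset`; mark the rest as missing."""
    return {m: (x if m in subset else None) for m, x in sample.items()}

subsets = list(modality_subsets())
sample = {organ: f"{organ}.jpg" for organ in ORGANS}
leaf_only = mask_sample(sample, ("leaf",))
```

With four organs there are 15 non-empty subsets, so reporting accuracy for each one gives a complete picture of how performance degrades as organs become unavailable.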

Implementation Guidelines and Best Practices

Selection Criteria for Fusion Strategies

Choosing an appropriate fusion strategy depends on multiple factors:

- Data Availability and Quality: Late fusion is preferable when modalities may be missing, while early fusion requires complete aligned datasets [22]

- Computational Resources: Intermediate and hybrid fusion strategies demand greater computational capacity [22]

- Project Scale: Large-scale classification with diverse organ sets benefits from hybrid approaches [1]

- Real-world Constraints: Deployments on resource-limited devices may favor late fusion or simplified architectures [21]

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagent Solutions for Multimodal Plant Classification

| Reagent/Resource | Function | Example Implementation | Application Context |
|---|---|---|---|
| Multimodal-PlantCLEF Dataset | Benchmark dataset for multimodal plant classification | Restructured PlantCLEF2015 with flower, leaf, fruit, stem images [1] | Algorithm development and comparative evaluation |
| MobileNetV3Small | Lightweight backbone for unimodal feature extraction | Pre-trained on ImageNet, fine-tuned on specific plant organs [21] | Resource-efficient model deployment |
| MFAS Algorithm | Automated search for optimal fusion points | Progressive merging of unimodal models at different layers [21] | Hybrid fusion architecture discovery |
| Vision Transformer (ViT) | Advanced visual analysis of plant organs | Metadata fusion for morphologically similar species [23] | High-accuracy plant species identification |
| Multimodal Dropout | Enhanced robustness to missing modalities | Training with random modality exclusion [1] [21] | Real-world deployment with incomplete data |
| M2F-Net Framework | Multimodal fusion of image and non-image data | Integrating agrometeorological data with plant images [24] | Comprehensive phenotypic analysis |

Visual Guide to Fusion Architectures

Multimodal Fusion Strategy Workflows

Automatic Fusion Architecture Search

The strategic implementation of multimodal fusion approaches represents a significant advancement in plant organ classification research. While late fusion provides a straightforward baseline, automated hybrid fusion strategies demonstrate superior performance by discovering optimal integration points across modalities. The selection of an appropriate fusion strategy must consider dataset characteristics, computational constraints, and real-world deployment requirements. As multimodal plant classification continues to evolve, approaches that automatically adapt fusion strategies to specific contexts and maintain robustness to missing modalities will drive the next generation of plant identification systems, with profound implications for ecological conservation, agricultural productivity, and botanical research.

The Emergence of Automated Fusion to Overcome Manual Architecture Design Biases

The field of plant organ classification is undergoing a significant paradigm shift, moving from reliance on manual, expert-driven model design to automated, data-driven fusion strategies. Traditional deep learning models for plant classification have predominantly relied on single data sources, such as leaf or whole-plant images, which are biologically insufficient for comprehensive species identification [1]. From a botanical perspective, a single organ cannot adequately capture the full biological diversity of plant species, as variations in appearance can occur within the same species, while different species may exhibit similar features [1] [11]. This limitation has prompted researchers to explore multimodal learning techniques that integrate images from multiple plant organs—flowers, leaves, fruits, and stems—to create more robust and accurate classification systems [1].

A critical challenge in multimodal learning involves determining the optimal strategy for fusing these diverse data modalities. Conventional approaches, including early, intermediate, and late fusion strategies, have largely depended on the discretion of model developers, introducing potential biases and leading to suboptimal architectures [1] [11]. The emergence of automated fusion techniques represents a transformative advancement, systematically addressing these manual design biases through algorithmic architecture discovery. By leveraging Neural Architecture Search (NAS) principles specifically tailored for multimodal problems, these automated methods enable the discovery of more optimal and efficient fusion architectures, ultimately enhancing classification performance while reducing human bias in model development [1].

Key Experimental Findings and Quantitative Comparisons

Recent research demonstrates the significant advantages of automated fusion approaches over traditional manual design strategies. The table below summarizes key performance metrics from pioneering studies in automated multimodal fusion for plant classification.

Table 1: Performance Comparison of Fusion Strategies in Plant Classification

| Fusion Strategy | Dataset | Number of Classes | Key Metric | Performance | Reference |
|---|---|---|---|---|---|
| Automatic Fusion (MFAS) | Multimodal-PlantCLEF | 979 | Accuracy | 82.61% | [1] |
| Late Fusion (Averaging) | Multimodal-PlantCLEF | 979 | Accuracy | 72.28% | [1] |
| Feature Fusion (NCA-CNN) | Medicinal Leaf Dataset | Not Specified | Accuracy | 98.90% | [25] |
| CNN with Optimization | Medicinal Plant Images | Not Specified | Accuracy | Outperforms conventional methods | [26] |

The implementation of a modified Multimodal Fusion Architecture Search (MFAS) algorithm on the Multimodal-PlantCLEF dataset, which contains images of flowers, leaves, fruits, and stems, yielded a remarkable 10.33% absolute improvement in accuracy compared to traditional late fusion with averaging [1]. This performance enhancement highlights the critical limitation of manual fusion strategies: their inherent dependence on researcher intuition and extensive experimentation, which often fails to identify the most effective architectural configurations for integrating multimodal data [1].

Furthermore, automated fusion approaches demonstrate practical advantages beyond raw accuracy. Studies report that these methods lead to more compact models with significantly smaller parameter counts, facilitating deployment on resource-constrained devices such as smartphones [1]. This characteristic is particularly valuable for agricultural and ecological applications, where real-time, in-field plant identification can empower farmers, ecologists, and citizen scientists with immediate, actionable insights.

Experimental Protocols for Automated Fusion

Protocol 1: Constructing a Multimodal Dataset from PlantCLEF2015

Application Note: This protocol is essential when existing datasets are not structured for multimodal learning, which was a primary challenge in early automated fusion research [1].

Objective: To transform the standard PlantCLEF2015 dataset into Multimodal-PlantCLEF, a dataset suitable for multimodal learning with fixed inputs for specific plant organs.

Materials and Reagents:

- Source Dataset: PlantCLEF2015 or similar unimodal plant image dataset [1].

- Computing Hardware: GPU-enabled workstation for efficient image processing.

- Software: Python with libraries for image processing (e.g., OpenCV, Pillow) and data management (e.g., Pandas).

Procedure:

- Data Audit: Inventory all images in the source dataset, noting the plant organ depicted in each image (e.g., flower, leaf, fruit, stem) based on available metadata or annotations.

- Species-Organ Grouping: For each plant species, group images by their organ type. This creates separate pools of flower, leaf, fruit, and stem images for every species.

- Multimodal Sample Construction: For a given species, create a multimodal data sample by randomly selecting one image from each of its available organ groups. This ensures each input sample during training consists of a set of images representing different organs of the same species.

- Dataset Splitting: Partition the data into training, validation, and test sets so that every species is represented in each set, while no individual image (or, where observation metadata is available, no single plant observation) is shared across sets, to prevent data leakage.

- Handling Missing Modalities: Apply strategies such as multimodal dropout during training so that the model remains robust when images of certain organs are unavailable at inference time [1].
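The sample construction step above can be sketched as follows. The sketch assumes a species-to-organ index of image paths already exists; the index layout, the file names, and the choice to store a missing organ as `None` are illustrative assumptions rather than the procedure used in [1].

```python
import random

ORGANS = ("flower", "leaf", "fruit", "stem")

def build_samples(index, samples_per_species, seed=0):
    """Draw multimodal samples from index[species][organ] -> image list.

    Each sample picks one random image per available organ; organs with
    no image for that species are stored as None.
    """
    rng = random.Random(seed)
    samples = []
    for species in sorted(index):
        groups = index[species]
        for _ in range(samples_per_species):
            images = {o: (rng.choice(groups[o]) if groups.get(o) else None)
                      for o in ORGANS}
            samples.append({"species": species, "images": images})
    return samples

index = {
    "Quercus robur": {"leaf": ["q1.jpg", "q2.jpg"], "fruit": ["q3.jpg"]},
    "Rosa canina": {"flower": ["r1.jpg"], "leaf": ["r2.jpg"],
                    "fruit": ["r3.jpg"], "stem": ["r4.jpg"]},
}
samples = build_samples(index, samples_per_species=3)
```

Fixing the random seed keeps the constructed samples reproducible, which matters when different fusion strategies are later compared on the same data.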

Protocol 2: Implementing Automatic Fusion with MFAS

Application Note: This protocol outlines the core methodology for automating the fusion of unimodal deep learning models, directly addressing the bias in manual architecture design.

Objective: To automatically find the optimal fusion architecture for integrating four unimodal models (processing flower, leaf, fruit, and stem images) into a single, high-performance multimodal classification system.

Materials and Reagents:

- Pretrained Models: Four MobileNetV3Small models, each pretrained on ImageNet [1].

- Software Framework: Deep learning framework (e.g., TensorFlow, PyTorch) with implementations of MFAS or similar NAS algorithms.

- Computational Resources: High-performance computing cluster or multi-GPU server, as architecture search is computationally intensive.

Procedure:

- Unimodal Model Training: Independently train each of the four MobileNetV3Small models on its corresponding plant organ image type (flowers, leaves, fruits, stems) from the training set of Multimodal-PlantCLEF. This creates specialized feature extractors for each modality.

- Fusion Search Space Definition: Define a search space of possible operations for combining features from the unimodal streams. This space typically includes various types of concatenation, element-wise addition/multiplication, and more complex cross-modal interactions.

- Architecture Search: Run the MFAS algorithm to explore the predefined search space. The algorithm evaluates different fusion architectures and their performance on the validation set.

- Architecture Evaluation: Once the search is complete, select the top-performing fusion architecture identified by the MFAS process.

- Final Model Training: Retrain this discovered architecture from scratch on the full training dataset to obtain the final multimodal plant classification model.

- Performance Validation: Evaluate the final model on the held-out test set, comparing its performance against established baselines like late fusion using statistical tests such as McNemar's test [1].
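The search-space definition and architecture search steps can be illustrated with a deliberately simplified sketch. It enumerates candidates made of one tapped layer per modality plus a fusion operation and scores a random selection of them. Note the assumptions: plain random search stands in for the MFAS search procedure, the layer indices are hypothetical, and `toy_evaluate` is a placeholder for training fusion layers and measuring validation accuracy.

```python
import itertools
import random

MODALITIES = ("flower", "leaf", "fruit", "stem")
TAP_LAYERS = (0, 1, 2)            # hypothetical indices of layers to tap
FUSION_OPS = ("concat", "add", "multiply")

def search_space():
    """Every candidate: one tapped layer per modality plus a fusion op."""
    for layers in itertools.product(TAP_LAYERS, repeat=len(MODALITIES)):
        for op in FUSION_OPS:
            yield {"layers": dict(zip(MODALITIES, layers)), "op": op}

def random_search(evaluate, n_trials, seed=0):
    """Score `n_trials` random candidates and return (score, candidate).

    `evaluate(candidate)` must return a validation score; in practice it
    would train only the fusion layers on top of frozen unimodal models.
    """
    rng = random.Random(seed)
    candidates = list(search_space())
    trials = rng.sample(candidates, min(n_trials, len(candidates)))
    return max(((evaluate(c), c) for c in trials), key=lambda pair: pair[0])

def toy_evaluate(candidate):
    # Stand-in score so the sketch runs; NOT a real validation accuracy.
    depth = sum(candidate["layers"].values())
    bonus = {"concat": 0.02, "add": 0.01, "multiply": 0.0}[candidate["op"]]
    return 0.5 + 0.03 * depth + bonus

best_score, best = random_search(toy_evaluate, n_trials=243)
```

Even this small space holds 3^4 x 3 = 243 candidates, which shows why exhaustive evaluation quickly becomes impractical and why guided search strategies are used instead.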

Visualization of Workflows

The following diagram illustrates the logical workflow of the automatic multimodal fusion process, from data preparation to the final model.

Figure 1: Automated Multimodal Fusion Workflow for Plant Classification

The diagram below details the core MFAS process, showing how the algorithm automatically discovers the optimal fusion strategy.

Figure 2: Multimodal Fusion Architecture Search (MFAS) Core Process

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagents and Computational Tools for Automated Fusion Experiments

| Item Name | Function/Application | Specifications/Notes |
|---|---|---|
| PlantCLEF2015 Dataset | Primary source data for constructing multimodal datasets. | Provides a large volume of plant images with organ annotations. Serves as the base for creating Multimodal-PlantCLEF [1]. |
| Multimodal-PlantCLEF | Benchmark dataset for training and evaluating multimodal fusion models. | A restructured version of PlantCLEF2015 containing aligned images of flowers, leaves, fruits, and stems for 979 plant classes [1]. |
| MobileNetV3Small | Lightweight convolutional neural network used as a unimodal feature extractor. | Pre-trained on ImageNet. Chosen for its efficiency, enabling faster search and deployment on resource-constrained devices [1]. |
| MFAS Algorithm | Core algorithm for automating the discovery of optimal fusion points. | A Neural Architecture Search method specialized for multimodal problems. Reduces human bias and outperforms manually designed fusions [1]. |
| Medicinal Leaf Dataset | Specialized dataset for evaluating performance on medically relevant species. | Used in studies demonstrating high accuracy (e.g., 98.90%) with feature fusion techniques, validating the general approach [25]. |
| Binary Chimp Optimization | Feature selection algorithm used in conjunction with CNNs. | An optimization technique that helps improve accuracy and processing speed by selecting the most relevant features for classification [26]. |

Implementing Automated Fusion Architectures: From Theory to Practice

Neural Architecture Search (NAS) Tailored for Multimodal Problems

The integration of multiple data modalities significantly enhances the robustness and accuracy of computational models. In plant phenotyping, where biological complexity is best captured through images of various organs, multimodal fusion is particularly crucial [1]. The core challenge, however, lies in designing an optimal fusion scheme to effectively combine this complementary information [27].

Neural Architecture Search (NAS) has emerged as a powerful solution, automating the design of high-performing neural architectures. Tailoring NAS for multimodal problems moves beyond simply searching for a unified model; it involves discovering how and where to fuse information from distinct streams—such as images of leaves, flowers, fruits, and stems—to maximize predictive performance for tasks like plant classification [1] [11]. This document details the application notes and experimental protocols for implementing NAS in a multimodal context, specifically for plant organ classification.

Foundational NAS Strategies for Multimodal Fusion

Multimodal NAS frameworks can be broadly categorized by their search strategy. The table below summarizes the core characteristics of three predominant approaches.

Table 1: Comparison of Multimodal Neural Architecture Search Strategies

| Search Strategy | Core Principle | Key Advantages | Reported Limitations |
|---|---|---|---|
| Differentiable ARchiTecture Search (DARTS) [27] | Uses continuous relaxation and gradient-based optimization to jointly learn architecture parameters and model weights. | High search efficiency. | Prone to "Matthew Effect" or performance collapse in multimodal fusion, favoring modalities/features with faster convergence [27]. |
| Single-Path One-Shot (SPOS) [27] | Decouples search and training. A single-path supernet is trained, and the best architecture is found by evaluating SubNets without training. | Robustness against search bias; fairer to different modalities [27]. | Requires a well-designed search space and efficient SubNet evaluation method. |
| Sequential Model-Based Optimization (SMBO) [1] | Iteratively uses a surrogate model to predict promising architectures and evaluates them to update the model. | Can handle complex, non-differentiable search spaces and objectives. | Computationally intensive, as each candidate evaluation typically requires full training [27]. |
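To give some intuition for the continuous relaxation used by DARTS, the sketch below mixes candidate outputs with softmax weights over architecture parameters. It is a toy illustration, not the framework of [27]: the two "candidates" stand for features from two modalities, the parameter values are invented, and the training dynamics that change the parameters are not simulated.

```python
import math

def softmax(values):
    """Numerically stable softmax over a list of floats."""
    m = max(values)
    exps = [math.exp(v - m) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def mixed_output(alphas, candidate_outputs):
    """Softmax-weighted sum of candidate outputs (continuous relaxation)."""
    weights = softmax(alphas)
    dim = len(candidate_outputs[0])
    return [sum(w * out[i] for w, out in zip(weights, candidate_outputs))
            for i in range(dim)]

# Two candidate fusion inputs, e.g. features from two modalities.
outputs = [[1.0, 0.0], [0.0, 1.0]]
balanced = mixed_output([0.0, 0.0], outputs)   # equal architecture weights
skewed = mixed_output([3.0, 0.0], outputs)     # first candidate pulled ahead
```

A modest lead in one architecture parameter already gives that candidate over 95% of the mixture, so the other candidate contributes little to the output. One plausible reading of the "Matthew Effect" noted in the table is that this imbalance then feeds back into training and favors whichever modality converges first.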

Application Notes: NAS for Plant Organ Classification

The following protocols are framed within a research context that aims to build a high-accuracy classifier for 979 plant species by fusing images of four distinct plant organs: flowers, leaves, fruits, and stems [1] [11]. The success of this multimodal approach hinges on finding a superior fusion strategy compared to manual designs like late fusion.

Quantitative Performance Benchmark

Implementing a tailored NAS framework for this task has demonstrated significant performance improvements over established baseline methods, as summarized in the table below.

Table 2: Experimental Performance of NAS vs. Baselines on Multimodal-PlantCLEF

| Model / Framework | Fusion Strategy | Top-1 Accuracy (%) | Key Features & Notes |
|---|---|---|---|
| Late Fusion (Baseline) [1] | Decision-level averaging of unimodal models. | 72.28 | Common baseline; simple but suboptimal [1]. |
| Automatic Fusion (MFAS) [1] [11] | NAS-searched multi-layer fusion. | 82.61 | 10.33 percentage-point accuracy gain over late fusion; uses modified MFAS algorithm [1]. |
| Multi-scale NAS Framework [27] | NAS-searched multi-scale fusion. | Not reported | High robustness and efficiency; achieves state-of-the-art on other datasets; circumvents DARTS "Matthew Effect" [27]. |

Experimental Protocols

Protocol 1: Dataset Curation for Multimodal Plant Classification

Objective: To create a multimodal dataset, "Multimodal-PlantCLEF," from the unimodal PlantCLEF2015 dataset to support model development with fixed inputs for specific plant organs [1].

Materials:

- Source Data: PlantCLEF2015 dataset [1].

- Computing Resource: Standard workstation with adequate storage.

Procedure:

- Data Identification: Parse the original dataset to identify and extract images containing the target organs: flowers, leaves, fruits, and stems.

- Image Curation & Filtering: Manually or semi-automatically curate the extracted images to ensure each image predominantly features a single, specified organ.

- Sample Alignment: For each plant specimen, create a data sample comprising a set of images, each corresponding to one of the four organ modalities. Handle missing modalities using techniques like multimodal dropout during training [1].

- Dataset Splitting: Partition the aligned dataset into standard training, validation, and test sets, ensuring all samples from a single plant are contained within one split to prevent data leakage.
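The alignment and splitting steps can be sketched in a few lines. This is a minimal illustration, assuming each source record is a hypothetical `(plant_id, organ, path)` tuple and an 80/10/10 split; neither the record layout nor the split ratios come from the cited work.

```python
import random
from collections import defaultdict

ORGANS = ("flower", "leaf", "fruit", "stem")

def align_samples(records):
    """Group (plant_id, organ, path) records into one sample per plant.

    Organs with no image are stored as None so that missing modalities
    stay explicit and can be handled later during training.
    """
    by_plant = defaultdict(dict)
    for plant_id, organ, path in records:
        by_plant[plant_id].setdefault(organ, path)  # keep the first image per organ
    return {pid: {o: imgs.get(o) for o in ORGANS} for pid, imgs in by_plant.items()}

def split_by_plant(samples, fractions=(0.8, 0.1, 0.1), seed=0):
    """Split at the plant level so no plant appears in more than one split."""
    plant_ids = sorted(samples)
    random.Random(seed).shuffle(plant_ids)
    n_train = int(fractions[0] * len(plant_ids))
    n_val = int(fractions[1] * len(plant_ids))
    return {
        "train": plant_ids[:n_train],
        "val": plant_ids[n_train:n_train + n_val],
        "test": plant_ids[n_train + n_val:],
    }

# Toy records: ten plants, one of which has no fruit image.
records = [
    (f"plant{i}", organ, f"img/{i}_{organ}.jpg")
    for i in range(10)
    for organ in ORGANS
    if not (i == 3 and organ == "fruit")
]
samples = align_samples(records)
splits = split_by_plant(samples)
```

Splitting on plant identifiers rather than on individual images is what enforces the no-leakage requirement: every image of a given plant follows that plant into a single split.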

Validation: The resulting Multimodal-PlantCLEF dataset should enable the training and evaluation of models that take four specific image inputs (one per organ) for classifying 979 plant species [1].

Protocol 2: Implementing a Robust Multimodal NAS with SPOS

Objective: To discover an optimal multimodal fusion architecture for plant organ classification using the SPOS algorithm, avoiding the pitfalls of DARTS [27].

Materials:

- Dataset: Multimodal-PlantCLEF (from Protocol 1).

- Software: Deep learning framework (e.g., PyTorch, TensorFlow).

- Hardware: Several GPUs for supernet training and SubNet evaluation.

Procedure:

- Design the Search Space: a. Unimodal Backbones: Pre-train and freeze efficient networks (e.g., MobileNetV3Small) for each modality [1]. b. Fusion Pathways: Design a search space that defines a set of candidate operations (e.g., 1x1 convolution, 3x3 depthwise convolution, skip-connect, zero) for fusing features from different modalities and at multiple scales (e.g., from early, middle, and late layers of the backbones) [27]. c. Fusion Nodes: Define nodes in the computational graph where features from different modalities can be combined.

- Construct and Train the Supernet: a. Build a one-shot supernet that encompasses all possible pathways and operations within the defined search space. b. Train the supernet once using a single-path uniform sampling strategy, where for each training batch, one random path is activated and updated [27].

- Search for the Optimal Architecture: a. After supernet training, freeze its weights. b. Use an evolutionary search or other discrete search method to evaluate the performance of many SubNets (different architecture choices) on the validation set. This evaluation is efficient as it involves only forward passes without training [27]. c. Select the SubNet with the highest validation accuracy as the final architecture.

- Retrain and Evaluate: a. (Optional) Retrain the discovered optimal architecture from scratch on the full training set. b. Evaluate the final model's performance on the held-out test set.
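The sampling and search steps of this procedure can be illustrated without any deep learning code. The sketch below assumes a hypothetical three-node search space and uses `toy_evaluate` as a placeholder for "validation accuracy of a SubNet with inherited supernet weights"; the population size, mutation rate, and scores are illustrative choices, not values from the cited work.

```python
import random

# Hypothetical search space: one candidate operation per fusion node.
OPS = ["conv1x1", "dwconv3x3", "skip", "zero"]
SEARCH_SPACE = {"early": OPS, "middle": OPS, "late": OPS}

def sample_path(rng):
    """Uniform single-path sampling: pick one operation per node."""
    return {node: rng.choice(ops) for node, ops in SEARCH_SPACE.items()}

def mutate(path, rng, prob=0.3):
    """Resample each node's operation with probability `prob`."""
    child = dict(path)
    for node, ops in SEARCH_SPACE.items():
        if rng.random() < prob:
            child[node] = rng.choice(ops)
    return child

def crossover(a, b, rng):
    """Take each node's operation from one of the two parents."""
    return {node: rng.choice((a[node], b[node])) for node in SEARCH_SPACE}

def evolutionary_search(evaluate, rng, population=16, generations=10, top_k=4):
    """Keep the top_k paths each generation and refill by mutation/crossover."""
    pop = [sample_path(rng) for _ in range(population)]
    for _ in range(generations):
        parents = sorted(pop, key=evaluate, reverse=True)[:top_k]
        children = []
        while len(children) < population - top_k:
            if rng.random() < 0.5:
                children.append(mutate(rng.choice(parents), rng))
            else:
                children.append(crossover(rng.choice(parents), rng.choice(parents), rng))
        pop = parents + children
    return max(pop, key=evaluate)

def toy_evaluate(path):
    """Placeholder score; a real run would do a forward pass on validation data."""
    scores = {"conv1x1": 0.6, "dwconv3x3": 0.8, "skip": 0.4, "zero": 0.0}
    return sum(scores[op] for op in path.values()) / len(path)

rng = random.Random(0)
best = evolutionary_search(toy_evaluate, rng)
```

During supernet training, `sample_path` would be called once per batch to decide which operations receive gradient updates; during search, only `evaluate` is called, which is why no additional training is needed at that stage.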

Troubleshooting: If search results are poor, verify that the search space is sufficiently diverse and that supernet training has converged. Because SPOS samples paths uniformly instead of learning architecture weights jointly with model weights, it inherently mitigates the "Matthew Effect" [27].

Diagram: A multi-scale NAS framework for fusing multiple plant organ images. The framework searches for optimal fusion (micro-level) between related features and the best way to combine these fused outputs (macro-level).

Protocol 3: Robustness Evaluation with Multimodal Dropout

Objective: To test the model's resilience to missing plant organ images during inference, simulating real-world scenarios where not all organs are present or visible.

Materials:

- Trained Model: The final model from Protocol 2.

- Test Set: The test split of Multimodal-PlantCLEF.

Procedure:

- Baseline Performance: Evaluate the model on the complete test set where all four modalities are present.

- Ablation with Dropout: Systematically ablate each modality by setting its input to zero (or a masked value) and record the performance.

- Partial Modality Evaluation: Create test subsets with only K out of the four modalities available (e.g., only leaf and flower) and evaluate the model's accuracy on these subsets.
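A minimal sketch of this evaluation loop follows. It assumes each test sample is a dictionary of per-organ feature vectors and that missing organs are represented by zero vectors; `toy_predict` and the two-sample dataset are hypothetical stand-ins for the trained model and the real test split.

```python
from itertools import combinations

ORGANS = ("flower", "leaf", "fruit", "stem")

def modality_subsets(k):
    """All ways of keeping exactly k of the four organs."""
    return [frozenset(c) for c in combinations(ORGANS, k)]

def mask_sample(sample, keep):
    """Zero out every organ that is not in `keep`."""
    return {organ: (list(feat) if organ in keep else [0.0] * len(feat))
            for organ, feat in sample.items()}

def accuracy_with_subset(predict, dataset, keep):
    """Accuracy when only the organs in `keep` are available."""
    correct = sum(predict(mask_sample(x, keep)) == y for x, y in dataset)
    return correct / len(dataset)

def toy_predict(sample):
    """Stand-in model: sum per-organ class scores and take the argmax."""
    totals = [sum(feat[i] for feat in sample.values()) for i in range(2)]
    return max(range(2), key=totals.__getitem__)

# Toy test set: in the first sample the fruit image alone is misleading.
dataset = [
    ({"flower": [1.0, 0.0], "leaf": [1.0, 0.0], "fruit": [0.0, 1.0], "stem": [1.0, 0.0]}, 0),
    ({"flower": [0.0, 1.0], "leaf": [0.0, 1.0], "fruit": [0.0, 1.0], "stem": [0.0, 1.0]}, 1),
]

# Accuracy for every non-empty subset of organs, keyed by the sorted organ names.
report = {
    tuple(sorted(keep)): accuracy_with_subset(toy_predict, dataset, keep)
    for k in range(1, len(ORGANS) + 1)
    for keep in modality_subsets(k)
}
```

Reporting accuracy per subset, rather than a single averaged number, makes it visible which organs the model depends on most.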

Validation: A robust model will maintain high classification accuracy even with one or more missing modalities. The integration of techniques like multimodal dropout during training is critical for achieving this [1] [11].

The Scientist's Toolkit

Table 3: Essential Research Reagents and Resources

| Item Name | Function / Application | Example / Specification |
|---|---|---|
| Multimodal-PlantCLEF | A curated dataset for multimodal plant classification research. | Contains images of flowers, leaves, fruits, and stems for 979 plant species [1]. |
| Pre-trained Unimodal Backbones | Feature extractors for each input modality. | MobileNetV3Small, pre-trained on ImageNet and fine-tuned on specific organ images [1]. |
| Multimodal Dropout | A regularization technique that forces the model to be robust to missing data modalities. | Randomly drops entire feature maps from one modality during training [1] [11]. |
| Multi-scale Search Space | Defines where and how to fuse information from different modalities. | Includes candidate operations (conv, skip-connect) and fusion points across network depths [27]. |
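The multimodal dropout entry in the table can be made concrete with a small sketch. It assumes per-modality features are plain lists of floats and adds one design choice that is not stated in the source: at least one modality is always kept, so the model never receives an all-zero input.

```python
import random

def multimodal_dropout(features, drop_prob, rng):
    """Zero out whole modalities, each independently with probability drop_prob.

    Assumption (not from the source): if every modality would be dropped,
    one of them is restored at random.
    """
    names = list(features)
    dropped = [name for name in names if rng.random() < drop_prob]
    if len(dropped) == len(names):
        dropped.remove(rng.choice(dropped))  # always keep one modality
    return {
        name: ([0.0] * len(feat) if name in dropped else list(feat))
        for name, feat in features.items()
    }

features = {
    "flower": [0.2, 0.9],
    "leaf": [0.5, 0.1],
    "fruit": [0.7, 0.3],
    "stem": [0.4, 0.6],
}
rng = random.Random(0)
augmented = multimodal_dropout(features, drop_prob=0.25, rng=rng)
```

Applied only at training time, this exposes the fusion layers to the same kind of incomplete inputs they will encounter when an organ is absent at inference.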

Multimodal Fusion Architecture Search (MFAS) represents a specialized class of Neural Architecture Search (NAS) that automates the discovery of optimal neural network architectures for fusing information from multiple data sources, or modalities [28]. In the context of plant organ classification, this addresses the critical challenge of determining how and when to integrate features from different plant organs—such as flowers, leaves, fruits, and stems—to maximize classification accuracy [1] [11]. Traditional handcrafted fusion strategies, including early, intermediate, and late fusion, rely heavily on researcher intuition and extensive experimentation, often resulting in suboptimal performance [1]. MFAS overcomes these limitations by systematically exploring a defined search space of possible fusion architectures, identifying configurations that outperform manually designed approaches. For plant phenotyping and species identification, where biological characteristics are complex and complementary across organs, MFAS enables the creation of models that more comprehensively capture plant diversity [1] [11].

Table: Comparison of Traditional Fusion Strategies

| Fusion Type | Integration Point | Advantages | Limitations |
|---|---|---|---|
| Early Fusion | Input data level | Simple implementation; enables low-level feature interaction | Requires input alignment; may learn redundant correlations |
| Intermediate Fusion | Feature representation level | Captures complex modal interactions; flexible integration | Requires separate feature extractors; more parameters |
| Late Fusion | Decision/output level | Modular and simple; robust to missing modalities | Cannot capture cross-modal interactions at feature level |
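The three integration points in the table differ only in where the modalities are merged. The sketch below makes that explicit using toy stand-ins: `encode` and `classify` are hypothetical placeholders for a feature extractor and a classifier head, and images are flat lists of pixel intensities in [0, 1].

```python
def encode(image):
    """Stand-in feature extractor: mean and max pixel value."""
    return [sum(image) / len(image), max(image)]

def classify(features):
    """Stand-in classifier head: two class scores that sum to 1."""
    p = min(max(sum(features) / len(features), 0.0), 1.0)
    return [p, 1.0 - p]

def early_fusion(images):
    """Merge raw inputs first, then run one encoder and one classifier."""
    stacked = [px for img in images for px in img]
    return classify(encode(stacked))

def intermediate_fusion(images):
    """Encode each modality separately, merge features, then classify once."""
    fused = [value for img in images for value in encode(img)]
    return classify(fused)

def late_fusion(images):
    """Classify each modality separately, then average the class scores."""
    preds = [classify(encode(img)) for img in images]
    return [sum(p[i] for p in preds) / len(preds) for i in range(2)]

leaf = [0.2, 0.4]
flower = [0.6, 0.8]
results = {
    "early": early_fusion([leaf, flower]),
    "intermediate": intermediate_fusion([leaf, flower]),
    "late": late_fusion([leaf, flower]),
}
```

In `late_fusion` the modalities never interact before the decision stage, which is the limitation noted in the table; an architecture search effectively chooses among many intermediate variants of the middle function.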

Core Principles of MFAS

The operational framework of MFAS is built upon several foundational principles. First, it operates under the assumption that each modality possesses a pre-trained model, which substantially reduces the search space by keeping these modality-specific networks static during the architecture search process [29]. Second, MFAS employs a sequential model-based exploration approach to efficiently navigate the vast space of possible fusion architectures [30]. This method iteratively proposes and evaluates candidate fusion points between the pre-trained unimodal networks, progressively building a joint architecture. A key advantage is its focus on training only the fusion layers, which yields significant computational savings compared to searching entire network architectures from scratch [29].

The algorithm specifically targets the search for fusion layers and their connectivity patterns between fixed unimodal backbones [30] [28]. This approach recognizes that different layers within deep neural networks capture features at various levels of abstraction, and the optimal fusion point may not necessarily be at the highest layers [29]. By systematically testing fusion at different depths and with different operations, MFAS can discover architectures that leverage both low-level and high-level complementary features across modalities. For plant organ classification, this means the algorithm can learn, for instance, whether to fuse stem and leaf features immediately after initial convolution layers or at deeper, more abstract representation levels.
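The sequential, surrogate-guided exploration described above can be sketched on a toy problem. Here a fusion architecture is a tuple of `(layer_in_A, layer_in_B)` pairs for two hypothetical backbones; `MeanSurrogate` is a deliberate simplification chosen for brevity rather than the predictor used in the cited work, and `toy_evaluate` stands in for the expensive step of training the fusion layers and measuring validation accuracy.

```python
import itertools

LAYERS_A = [0, 1, 2]  # hypothetical fusion-eligible layers of backbone A
LAYERS_B = [0, 1, 2]  # hypothetical fusion-eligible layers of backbone B
CHOICES = list(itertools.product(LAYERS_A, LAYERS_B))

class MeanSurrogate:
    """Predicts an architecture's score as the mean observed score of its fusion choices."""

    def __init__(self):
        self.totals, self.counts = {}, {}

    def update(self, arch, score):
        for choice in arch:
            self.totals[choice] = self.totals.get(choice, 0.0) + score
            self.counts[choice] = self.counts.get(choice, 0) + 1

    def predict(self, arch):
        known = [self.totals[c] / self.counts[c] for c in arch if c in self.counts]
        return sum(known) / len(known) if known else 0.0

def smbo_search(evaluate, max_fusions=3, top_k=4):
    """Grow architectures one fusion at a time, evaluating only surrogate-ranked candidates."""
    surrogate, history = MeanSurrogate(), {}
    frontier = [(c,) for c in CHOICES]  # start with every single-fusion architecture
    for depth in range(1, max_fusions + 1):
        if depth > 1:
            candidates = [arch + (c,) for arch in frontier for c in CHOICES]
            candidates.sort(key=surrogate.predict, reverse=True)
            frontier = candidates[:top_k]  # only these are actually evaluated
        for arch in frontier:
            score = evaluate(arch)
            history[arch] = score
            surrogate.update(arch, score)
        frontier = sorted(frontier, key=history.get, reverse=True)[:top_k]
    return max(history, key=history.get), history

def toy_evaluate(arch):
    """Placeholder score that rewards fusing layers of similar depth."""
    return sum(1.0 - abs(a - b) / 2 for a, b in arch) / len(arch)

best_arch, history = smbo_search(toy_evaluate)
```

With nine fusion choices and up to three fusion layers there are 9 + 81 + 729 possible architectures, but this loop evaluates only 17 of them; that gap is the computational saving the surrogate is meant to provide.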

MFAS Workflow

The implementation of MFAS follows a structured workflow that transforms pre-trained unimodal networks into an optimally fused multimodal architecture. The complete process is visualized below, with detailed explanations of each component following the diagram.

Workflow Component Definitions

- Input Modalities: In plant classification, these typically include images of different plant organs (flowers, leaves, fruits, stems), each treated as a distinct modality despite all being RGB images, as they capture complementary biological features [1] [11].

- Pre-trained Models: Individual models (e.g., MobileNetV3Small) pre-trained on each modality, providing feature extractors that remain fixed during fusion search [29].

- Fusion Operations: Mathematical operations considered for combining features, which may include concatenation, element-wise addition, multiplication, or weighted summation [28].

- Fusion Points: Specific layers within the pre-trained models where cross-modal connections can be established.

- Candidate Architectures: Proposed fusion configurations generated during the search process.

- Optimal Fusion Model: The best-performing architecture identified through the search process, ready for final training and deployment.
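The fusion operations listed among the components above are simple to state in code. The sketch below treats features as flat lists of floats; the function names and the scalar weight `w` in `weighted_sum` are illustrative choices, and in a trained model such a weight would typically be learned.

```python
def _check_same_length(a, b):
    if len(a) != len(b):
        raise ValueError("element-wise fusion needs features of equal length")

def concat(a, b):
    """Concatenation: output length is len(a) + len(b)."""
    return list(a) + list(b)

def add(a, b):
    """Element-wise addition."""
    _check_same_length(a, b)
    return [x + y for x, y in zip(a, b)]

def multiply(a, b):
    """Element-wise multiplication."""
    _check_same_length(a, b)
    return [x * y for x, y in zip(a, b)]

def weighted_sum(a, b, w):
    """Convex combination: w * a + (1 - w) * b."""
    _check_same_length(a, b)
    return [w * x + (1.0 - w) * y for x, y in zip(a, b)]

leaf_features = [1.0, 2.0]
flower_features = [3.0, 4.0]
fused = {
    "concat": concat(leaf_features, flower_features),
    "add": add(leaf_features, flower_features),
    "multiply": multiply(leaf_features, flower_features),
    "weighted_sum": weighted_sum(leaf_features, flower_features, w=0.25),
}
```

Concatenation is the only one of the four that accepts features of different sizes, which matters when fusion points sit at different depths of the two backbones.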

MFAS Fusion Cell Operation

The core innovation of MFAS lies in its fusion cells, which determine how information flows between modalities. The following diagram illustrates the internal structure and operation of these fusion cells.

Experimental Protocol for Plant Organ Classification

Dataset Preparation and Preprocessing

For effective MFAS implementation in plant organ classification, proper dataset construction is essential. The Multimodal-PlantCLEF dataset provides a benchmark example, created by restructuring the unimodal PlantCLEF2015 dataset into a multimodal format [1]. The preprocessing pipeline involves several critical steps. First, images must be organized by plant species and organ type, ensuring each sample contains multiple images of different organs from the same species. Second, data cleaning removes mislabeled or poor-quality images. Third, standard image preprocessing includes resizing to a consistent dimension (e.g., 224×224 pixels for MobileNet compatibility), normalization using ImageNet statistics, and data augmentation through random cropping, rotation, and flipping to improve model generalization [1] [29].
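The normalization and flipping steps can be sketched in plain Python. The mean and standard deviation values are the widely used ImageNet channel statistics; the image representation (rows of `(R, G, B)` integer tuples) and the function names are assumptions made for illustration, and resizing, cropping, and rotation are left to an image library.

```python
import random

# Commonly used ImageNet per-channel statistics for inputs scaled to [0, 1].
IMAGENET_MEAN = (0.485, 0.456, 0.406)
IMAGENET_STD = (0.229, 0.224, 0.225)

def normalize_pixel(rgb):
    """Scale an 8-bit (R, G, B) pixel to [0, 1], then standardize each channel."""
    return tuple(
        (channel / 255.0 - mean) / std
        for channel, mean, std in zip(rgb, IMAGENET_MEAN, IMAGENET_STD)
    )

def random_horizontal_flip(image, rng, prob=0.5):
    """Mirror the image left to right with probability `prob`."""
    if rng.random() < prob:
        return [row[::-1] for row in image]
    return [list(row) for row in image]

def preprocess(image, rng, train=True):
    """Apply augmentation only in training mode, then normalize every pixel."""
    if train:
        image = random_horizontal_flip(image, rng)
    return [[normalize_pixel(px) for px in row] for row in image]

# Toy 1x2 image: one black pixel and one white pixel.
image = [[(0, 0, 0), (255, 255, 255)]]
rng = random.Random(0)
train_input = preprocess(image, rng, train=True)
eval_input = preprocess(image, rng, train=False)
```

Keeping augmentation behind the `train` flag ensures validation and test images are only normalized, so reported accuracy is not affected by random transformations.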