Automated Image Segmentation for Plant Organs: Advanced AI Tools and Applications in Biomedical Research


Abstract

This article provides a comprehensive guide to automated image segmentation for plant organs, tailored for biomedical researchers, scientists, and drug development professionals. We explore the foundational principles of this technology, detail current methodological approaches from semantic to instance segmentation using CNNs and Vision Transformers (ViTs), and discuss practical applications in compound screening and phenomic analysis. The content further addresses common challenges such as data scarcity and model generalization, offering troubleshooting and optimization strategies. Finally, we present a validation and comparative analysis of leading tools and datasets, synthesizing performance metrics to guide tool selection. The conclusion outlines future implications for accelerating botanical drug discovery and plant-based biomedical research.

Plant Organ Segmentation Essentials: Key Concepts and Biomedical Relevance

Defining Image Segmentation in the Context of Plant Biology

Image segmentation, the process of partitioning a digital image into distinct regions or objects, is a foundational step in automated plant phenotyping. Within plant biology, it specifically refers to the computational isolation of plant organs (e.g., leaves, roots, stems) or structures (e.g., cells, vasculature, lesions) from complex backgrounds or from each other for subsequent quantification of morphological, physiological, and pathological traits. This capability is central to a thesis on automating plant organ research, enabling high-throughput, non-destructive analysis of growth, development, and stress responses.

Current Methodologies and Performance Data

Modern segmentation relies predominantly on deep learning models, with convolutional neural networks (CNNs) and vision transformers (ViTs) setting state-of-the-art benchmarks. The following table summarizes key architectures and their reported performance on standard plant datasets.

Table 1: Performance of Selected Segmentation Models on Public Plant Image Datasets

| Model Architecture | Dataset (Focus) | Metric (e.g., mIoU) | Reported Performance | Key Advantage for Plant Biology |

|---|---|---|---|---|

| U-Net | CVPPP Leaf Segmentation (Arabidopsis rosettes) | Symmetric Best Dice | 0.96 | Effective with limited training data, precise boundary delineation. |

| DeepLabv3+ (ResNet-50 backbone) | PlantVillage (Diseased leaves) | Mean Intersection over Union (mIoU) | 0.891 | Robust to multi-scale structures (e.g., lesions at different scales). |

| Mask R-CNN | Root Imaging (Root architecture) | Average Precision (AP@50) | 0.94 | Simultaneous instance segmentation of overlapping root objects. |

| SegFormer (MiT-B1 backbone) | LSC Leaf Segmentation (Multiple species) | Pixel Accuracy | 0.987 | Captures long-range contextual dependencies in dense canopies. |

| nnU-Net | Custom Root & Soil X-ray CT | Dice Similarity Coefficient | 0.93 | Demonstrated adaptability to 3D volumetric data from imaging. |

Detailed Experimental Protocol: Instance Segmentation of Arabidopsis Rosette Leaves

This protocol details the application of a Mask R-CNN model for segmenting individual leaves from top-view Arabidopsis rosette images, a common task in growth analysis.

1. Image Acquisition & Dataset Preparation:

- Imaging Setup: Capture high-resolution RGB images of Arabidopsis plants against a homogeneous, contrasting background (e.g., blue felt) under consistent, diffuse lighting.

- Annotation: Using a tool like LabelMe or VGG Image Annotator, manually label each leaf instance with a polygon mask. Assign all non-plant pixels to a "background" class.

- Dataset Splitting: Divide annotated images into training (70%), validation (15%), and test (15%) sets. Ensure all sets contain plants at various growth stages.

2. Model Training with Transfer Learning:

- Base Model: Initialize a Mask R-CNN model with weights pre-trained on the MS COCO dataset.

- Configuration: Adjust the model head to predict only two classes (Background, Leaf). Set hyperparameters: batch size = 2, initial learning rate = 0.001, optimizer = Adam.

- Data Augmentation: Apply on-the-fly augmentation to the training set to improve generalization: random horizontal/vertical flips, ±30° rotation, and minor brightness/contrast shifts.

- Training: Train the model for 50 epochs, monitoring loss on the validation set. Employ early stopping if validation loss plateaus for 10 epochs.

3. Evaluation & Analysis:

- Primary Metric: Calculate Average Precision at a 50% Intersection-over-Union threshold (AP@50) on the held-out test set.

- Secondary Metrics: Compute per-leaf Dice Coefficient and boundary F1 score to assess segmentation quality and boundary accuracy.

- Phenotype Extraction: For each segmented leaf instance, extract traits: projected leaf area, perimeter, major axis length, and leaf count per rosette.
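The secondary metric and the trait-extraction step can be sketched as follows. This is a minimal NumPy sketch, not the protocol's own pipeline. It assumes masks are boolean arrays and that leaf instances are stored as an integer label map with 0 as background. The function names and the `mm_per_pixel` calibration parameter are hypothetical. Perimeter and major axis length are left out because they normally come from an image-analysis library.

```python
import numpy as np

def dice(pred: np.ndarray, truth: np.ndarray) -> float:
    """Dice coefficient between two binary masks of the same shape."""
    pred, truth = pred.astype(bool), truth.astype(bool)
    total = pred.sum() + truth.sum()
    if total == 0:
        return 1.0  # assumption: two empty masks count as perfect agreement
    return float(2.0 * np.logical_and(pred, truth).sum() / total)

def leaf_traits(instance_map: np.ndarray, mm_per_pixel: float = 1.0) -> dict:
    """Leaf count and projected area per leaf from a label map (0 = background)."""
    ids = [int(i) for i in np.unique(instance_map) if i != 0]
    areas = {i: float((instance_map == i).sum()) * mm_per_pixel ** 2 for i in ids}
    return {"leaf_count": len(ids), "area_mm2": areas}

# Toy example: one predicted leaf versus its annotation, then a two-leaf rosette.
pred = np.zeros((4, 4), dtype=bool)
pred[0:2, 0:2] = True
truth = np.zeros((4, 4), dtype=bool)
truth[0:2, 0:3] = True
rosette = np.array([[1, 1, 0], [1, 2, 2]])
print(dice(pred, truth), leaf_traits(rosette))
```

Physical areas are only meaningful if `mm_per_pixel` comes from a calibration target imaged in the same session.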

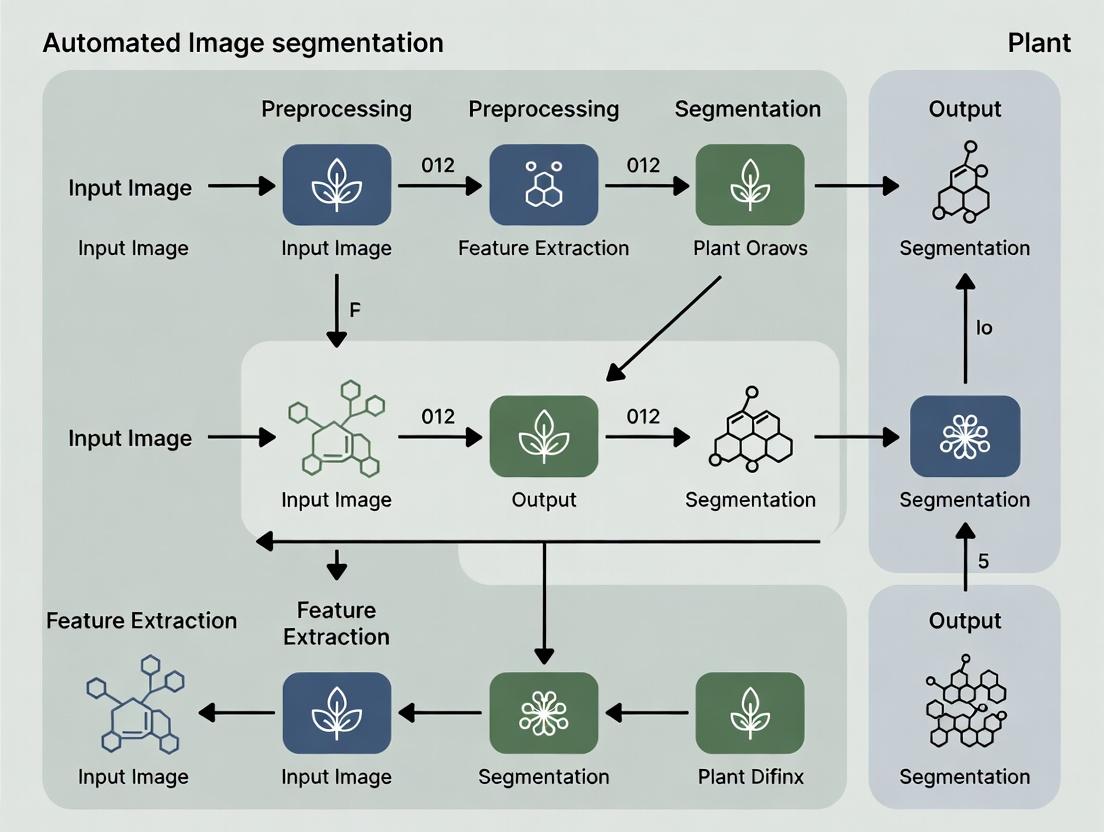

Visualization of Workflow

Title: Workflow for Automated Plant Organ Instance Segmentation

The Scientist's Toolkit: Key Reagent Solutions

Table 2: Essential Research Reagents and Materials for Plant Segmentation Studies

| Item | Function in Experiment |

|---|---|

| High-Contrast Growth Substrates (e.g., Blue Felt, Gel-Based Media) | Provides uniform, non-plant background to simplify initial foreground/background segmentation. |

| Fluorescent Dyes and Autofluorescence (e.g., propidium iodide for roots, chlorophyll autofluorescence) | Enhances contrast of specific organs/cells for confocal or fluorescence microscopy imaging. |

| Rhizotron/Phenotyping Pots | Designed for non-destructive root imaging, compatible with MRI, X-ray CT, or camera systems. |

| Calibration Targets (ColorChecker, Ruler) | Ensures color fidelity and spatial scale consistency across imaging sessions for longitudinal studies. |

| Public Benchmark Datasets (CVPPP, PlantVillage, LSC) | Provides standardized, annotated images for training and benchmarking algorithm performance. |

| Deep Learning Frameworks (PyTorch, TensorFlow) | Open-source libraries for implementing, training, and deploying custom segmentation models. |

| Annotation Software (LabelMe, VIA, ITK-SNAP) | Tools for manually labeling ground truth data, which is essential for supervised model training. |

Signaling Pathway in Segmentation-Guided Analysis

Title: From Segmentation to Biological Insight: Feedback Loop

Within automated image segmentation for plant organs research, the choice between semantic and instance segmentation is foundational. Semantic segmentation classifies every pixel in an image into predefined organ classes (e.g., leaf, root, flower), treating multiple objects of the same class as a single entity. Instance segmentation differentiates between individual objects within the same class, identifying each distinct leaf, root branch, or flower. The selection of method directly dictates the type of quantifiable data extracted, impacting downstream biological interpretation.

Application Notes:

- Semantic Segmentation is optimal for measuring total organ area, coverage, and pixel-wise classification accuracy. It answers questions like "What percentage of the image is root mass?" or "What is the total leaf area under stress?"

- Instance Segmentation is critical for counting, sizing, and analyzing morphology of individual organs. It answers questions like "How many leaves are present at day 14?" or "What is the distribution of root hair lengths?"
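The two output types answer different questions, as a small example shows. The sketch below assumes a semantic map stores one class id per pixel and an instance map stores one object id per pixel (0 = background). Both encodings and the function names are illustrative assumptions.

```python
import numpy as np

def class_coverage(semantic_map: np.ndarray, class_id: int) -> float:
    """Fraction of all image pixels assigned to one class (semantic question)."""
    return float((semantic_map == class_id).mean())

def instance_sizes(instance_map: np.ndarray) -> dict:
    """Pixel count per object id, ignoring background id 0 (instance question)."""
    ids, counts = np.unique(instance_map, return_counts=True)
    return {int(i): int(c) for i, c in zip(ids, counts) if i != 0}

# The same 2x3 scene in both encodings: class 1 = leaf, ids 1 and 2 = two leaves.
semantic = np.array([[0, 1, 1], [0, 1, 1]])
instances = np.array([[0, 1, 1], [0, 2, 2]])
print(class_coverage(semantic, 1))   # share of the image that is leaf
print(instance_sizes(instances))     # one entry per individual leaf
```

The semantic map cannot say how many leaves are present. The instance map can, because each leaf keeps its own id.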

Comparative Performance Data

Table 1: Model Performance Comparison on Standard Plant Datasets (e.g., CVPPP, ROOT)

| Organ | Segmentation Type | Common Model (Example) | Key Metric (Typical Range) | Primary Application |
|---|---|---|---|---|
| Leaves | Semantic | U-Net, DeepLabV3+ | mIoU: 0.85 - 0.95 | Rosette area, pixel-wise disease mapping. |
| Leaves | Instance | Mask R-CNN, YOLOv8 | AP@50: 0.80 - 0.92 | Leaf counting, individual leaf growth tracking. |
| Roots | Semantic | U-Net, SegNet | mIoU: 0.90 - 0.98 | Total root system area, soil coverage analysis. |
| Roots | Instance | Mask R-CNN, StarDist | AP@50: 0.75 - 0.90 | Lateral root count, primary/tap root differentiation. |
| Flowers | Semantic | FPN, PSPNet | mIoU: 0.80 - 0.90 | Flowering region localization, bloom stage classification. |
| Flowers | Instance | Mask R-CNN, SOLOv2 | AP@50: 0.85 - 0.95 | Flower bud counting, individual petal/seed pod analysis. |

mIoU: Mean Intersection over Union; AP@50: Average Precision at 50% IoU threshold.
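For reference, both metrics reduce to intersection-over-union computations. The sketch below is a simplified illustration under two assumptions: semantic maps hold integer class ids, and an instance prediction counts as a match at the AP@50 threshold when its IoU with a ground-truth mask is at least 0.5. Full AP additionally ranks predictions by confidence, which is not shown. The function names are hypothetical.

```python
import numpy as np

def mean_iou(pred: np.ndarray, truth: np.ndarray, num_classes: int) -> float:
    """Mean of per-class IoU; classes absent from both maps are skipped."""
    ious = []
    for c in range(num_classes):
        p, t = pred == c, truth == c
        union = np.logical_or(p, t).sum()
        if union == 0:
            continue
        ious.append(np.logical_and(p, t).sum() / union)
    return float(np.mean(ious))

def instance_iou(a: np.ndarray, b: np.ndarray) -> float:
    """IoU between two binary instance masks."""
    a, b = a.astype(bool), b.astype(bool)
    union = np.logical_or(a, b).sum()
    return float(np.logical_and(a, b).sum() / union) if union else 0.0

truth_map = np.array([[0, 0], [1, 1]])
pred_map = np.array([[0, 1], [1, 1]])
print(mean_iou(pred_map, truth_map, num_classes=2))

pred_leaf = np.array([[1, 1, 0], [1, 1, 0]])
true_leaf = np.array([[1, 1, 1], [1, 1, 1]])
print(instance_iou(pred_leaf, true_leaf) >= 0.5)  # match at the 50% threshold
```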

Detailed Experimental Protocols

Protocol 3.1: Semantic Segmentation for Leaf Stress Phenotyping

- Objective: Quantify total chlorotic area in Arabidopsis thaliana rosettes.

- Materials: RGB images of plants, annotated masks (pixel classes: healthy leaf, chlorotic leaf, background).

- Model Training:

- Preprocess images (resize to 512x512, normalize).

- Split dataset (70% train, 15% validation, 15% test).

- Train a U-Net model using a loss function like Dice Loss or Cross-Entropy.

- Validate using mIoU per class.

- Inference & Analysis:

- Apply trained model to unseen images.

- Calculate the proportion of 'chlorotic leaf' pixels to total 'leaf' pixels per plant.

- Correlate chlorotic area with physiological data (e.g., chlorophyll content).
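The per-plant ratio in the inference step can be sketched as below. The class encoding (0 = background, 1 = healthy leaf, 2 = chlorotic leaf) is an assumption made for illustration, and the function name is hypothetical.

```python
import numpy as np

BACKGROUND, HEALTHY, CHLOROTIC = 0, 1, 2  # assumed label encoding

def chlorotic_fraction(class_map: np.ndarray) -> float:
    """Chlorotic pixels divided by all leaf pixels (healthy + chlorotic)."""
    chlorotic = int((class_map == CHLOROTIC).sum())
    leaf = chlorotic + int((class_map == HEALTHY).sum())
    return chlorotic / leaf if leaf else float("nan")

# Toy prediction for one plant: 3 healthy pixels, 1 chlorotic pixel.
plant = np.array([[0, 1, 1], [2, 1, 0]])
print(chlorotic_fraction(plant))
```

The function returns NaN when no leaf pixels are found, so empty wells are not silently reported as 0% chlorosis.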

Protocol 3.2: Instance Segmentation for Root Architecture Analysis

- Objective: Count lateral roots and measure primary root length.

- Materials: High-contrast root images (e.g., on agar), annotations with unique IDs for each root instance.

- Model Training:

- Use data augmentation (rotation, flipping) to improve robustness.

- Train a Mask R-CNN model with a ResNet-50 backbone.

- The model learns to propose regions, classify them ('primary root', 'lateral root'), and generate binary masks.

- Post-Processing & Analysis:

- Filter predictions by confidence score (e.g., >0.8).

- Extract mask for the primary root instance and skeletonize to measure length.

- Count all detected 'lateral root' instances.

- Export data for statistical comparison across genotypes or conditions.
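The confidence filtering and counting steps can be sketched in plain Python. The `Detection` record below is a hypothetical stand-in for whatever structure the detection framework returns. Skeleton-based length measurement is omitted because it normally relies on an image-processing library.

```python
from dataclasses import dataclass

@dataclass
class Detection:
    label: str    # 'primary root' or 'lateral root'
    score: float  # model confidence in [0, 1]

def summarize_roots(detections, min_score: float = 0.8) -> dict:
    """Keep detections above the confidence threshold and count lateral roots."""
    kept = [d for d in detections if d.score > min_score]
    return {
        "lateral_count": sum(d.label == "lateral root" for d in kept),
        "has_primary": any(d.label == "primary root" for d in kept),
    }

seedling = [
    Detection("primary root", 0.95),
    Detection("lateral root", 0.90),
    Detection("lateral root", 0.60),  # dropped by the 0.8 threshold
    Detection("lateral root", 0.85),
]
print(summarize_roots(seedling))
```

The threshold trades missed laterals against false detections. Tune it on the validation set rather than on the images being analyzed.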

Visual Workflows

(Title: Semantic Segmentation Workflow)

(Title: Instance Segmentation Workflow)

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Plant Organ Segmentation Studies

| Item / Reagent | Function / Purpose | Example Application |

|---|---|---|

| Fluorescent Dyes (e.g., Propidium Iodide) | Stains cell walls of living tissue (and nuclei of dead cells), creating high contrast for root imaging. | Instance segmentation of root system architecture on agar plates. |

| Chlorophyll Fluorescence Imager | Provides non-RGB channels (e.g., Fv/Fm) correlating with plant health. | Semantic segmentation of stressed vs. healthy leaf tissue. |

| Gel-Based Growth Media (Agar) | Provides transparent, uniform background for root growth. | Standardized imaging for both semantic and instance root analysis. |

| Annotation Software (e.g., CVAT, LabelMe) | Enables pixel-accurate labeling of training data for both segmentation types. | Creating ground truth datasets from plant imagery. |

| Pre-trained Model Weights (e.g., on ImageNet) | Accelerates training via transfer learning, especially with limited plant data. | Initializing backbones for custom Mask R-CNN or U-Net models. |

The Role of Plant Phenotyping in Drug Discovery and Compound Screening

Within the broader thesis on automated image segmentation for plant organs research, high-throughput plant phenotyping emerges as a critical bridge between botanical systems and pharmaceutical discovery. Plants serve as biofactories for complex secondary metabolites, many with therapeutic potential. Automated, non-invasive phenotyping platforms enable the rapid, quantitative assessment of plant physiological and morphological responses to chemical libraries. This allows researchers to screen for bioactive compounds that modulate specific biological pathways, identify novel drug leads, or understand compound toxicity, all within a living, multicellular context.

Core Applications in Drug Discovery

Screening for Bioactive Natural Products

Phenotyping tracks changes in plant growth, pigment composition, and leaf morphology induced by external compounds or genetic modifications designed to alter metabolic pathways. This facilitates the identification of conditions that maximize yield of target metabolites.

Toxicity and Mode-of-Action Screening

Plants are sensitive indicators of phytotoxicity. Phenotyping can reveal compound-specific stress signatures (e.g., chlorosis, necrosis, growth inhibition) that inform on environmental impact and suggest cellular targets, serving as a preliminary in planta toxicity assay.

Validating Target Engagement in Complex Organisms

When screening for compounds designed to modulate conserved plant targets (e.g., tubulin, hormone pathways), phenotyping provides direct functional readouts of target engagement in a whole-organism setting, offering insights beyond in vitro assays.

Table 1: Key Phenotypic Parameters and Their Relevance in Compound Screening

| Phenotypic Trait | Measurement Method | Drug Discovery Relevance | Typical Assay Throughput (Plants/Day) |

|---|---|---|---|

| Biomass Accumulation | Projected leaf area, rosette diameter from top-view images. | Indicator of general growth promotion/toxicity. | 500 - 5,000 |

| Chlorophyll Fluorescence | Fv/Fm (PSII efficiency), NPQ (non-photochemical quenching). | Detects disruption of photosynthesis (herbicide mode). | 200 - 2,000 |

| Hyperspectral Reflectance | Indices like NDVI, PRI, Anthocyanin Reflectance Index. | Non-destructive quantification of pigment & biochemical changes. | 100 - 1,000 |

| Leaf Morphology | Segmentation-based analysis of leaf shape, serration, thickness. | Identifies compounds affecting cell division/expansion. | 200 - 1,000 |

| Root Architecture | Length, branching, angle analysis from segmented root images. | Screens for modulators of hormone signaling (e.g., auxin). | 100 - 500 |

Table 2: Example Phenotyping-Driven Drug Discovery Outcomes

| Plant System | Screened Library/Intervention | Key Phenotypic Readout | Identified Lead/Outcome |

|---|---|---|---|

| Arabidopsis thaliana | Library of synthetic small molecules. | Root architecture alteration. | Identification of novel auxin transport inhibitors. |

| Medicago truncatula | Elicitor compounds. | Hyperspectral imaging of leaf reflectance. | Increased production of antimicrobial triterpenes. |

| Marchantia polymorpha | Natural product extracts. | Growth rate & gametophore morphology. | Discovery of a novel compound inhibiting cell plate formation. |

Detailed Experimental Protocols

Protocol 1: High-Throughput Rosette-Based Growth Inhibition Screen

Objective: To screen a chemical library for compounds that inhibit or promote shoot growth in Arabidopsis.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Plant Preparation: Sow surface-sterilized Arabidopsis (Col-0) seeds in 96-well agar plates (one seed/well) containing half-strength MS media. Stratify for 48h at 4°C.

- Growth & Treatment: Grow plates vertically in phenotyping cabinet (22°C, 16h light/8h dark) for 5 days. Using a liquid handler, add 10 µL of test compound (from DMSO stock, final conc. 10 µM) or DMSO control to each well.

- Image Acquisition: Place plates in automated imager 72 hours post-treatment. Acquire high-resolution RGB top-view images daily for 5 days under consistent lighting.

- Image Segmentation & Analysis:
  a. Use the pre-trained U-Net model (from core thesis work) to segment each rosette from the background.
  b. Extract the rosette area (in pixels) for each plant over time.
  c. Calculate the relative growth rate: $\mathrm{RGR} = (\ln A_{\text{final}} - \ln A_{\text{initial}}) / t$, where $A$ is rosette area and $t$ is the elapsed time.

- Data Analysis: Normalize the RGR of treated plants to the DMSO controls and express it as a Z-score. A Z-score > 2 indicates significant growth promotion; a Z-score < -2 indicates significant inhibition.
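The growth-rate and hit-calling arithmetic can be sketched with the standard library. One assumption goes beyond the protocol text: Z-scores are computed against the mean and sample standard deviation of the DMSO control wells on the same plate. The function names are hypothetical.

```python
import math
from statistics import mean, stdev

def relative_growth_rate(area_initial: float, area_final: float, days: float) -> float:
    """RGR = (ln(area_final) - ln(area_initial)) / elapsed time."""
    return (math.log(area_final) - math.log(area_initial)) / days

def z_scores(treated_rgr, control_rgr):
    """Z-score of each treated well relative to the DMSO control distribution."""
    mu, sigma = mean(control_rgr), stdev(control_rgr)
    return [(r - mu) / sigma for r in treated_rgr]

def call_hit(z: float, threshold: float = 2.0) -> str:
    """Classify a well using the |Z| > 2 rule from the protocol."""
    if z > threshold:
        return "promotion"
    if z < -threshold:
        return "inhibition"
    return "no effect"

controls = [0.10, 0.12, 0.14]   # RGR of DMSO wells (toy values)
treated = [0.06, 0.12]          # RGR of compound wells (toy values)
print([call_hit(z) for z in z_scores(treated, controls)])
```

With only a few control wells the standard deviation estimate is unstable, so plates usually carry many DMSO wells.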

Protocol 2: Chlorophyll Fluorescence-Based Herbicide Mode-of-Action Screening

Objective: To classify unknown compounds by their effect on photosystem II (PSII).

Materials: See "The Scientist's Toolkit."

Procedure:

- Plant Material: Grow Arabidopsis in 96-well pots for 10 days.

- Compound Treatment: Spray plants with test compound (e.g., 100 µM) or known PSII inhibitor (Diuron, 10 µM) as positive control.

- Dark Adaptation & Imaging: Dark-adapt plants for 20 minutes. Load into chlorophyll fluorescence imager.

- Measurement Protocol:
  a. Record minimal fluorescence (F₀) under weak measuring light, then apply a saturating light pulse (3000 µmol/m²/s, 0.8 s) to record maximal fluorescence (Fₘ).
  b. Calculate Fv/Fm = (Fₘ - F₀)/Fₘ for each pixel.
  c. Generate heatmaps of Fv/Fm values per plant using automated segmentation of individual rosettes.

- Analysis: Compounds causing a uniform reduction in Fv/Fm across the leaf similar to Diuron suggest a PSII-inhibitor mode of action.
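The per-pixel Fv/Fm calculation and its per-plant summary can be sketched with NumPy. The sketch assumes F₀ and Fₘ are available as two aligned 2D arrays and that each rosette has a binary mask from the segmentation step. The function names are hypothetical.

```python
import numpy as np

def fv_fm(f0: np.ndarray, fm: np.ndarray) -> np.ndarray:
    """Per-pixel Fv/Fm = (Fm - F0) / Fm; pixels with Fm == 0 are set to 0."""
    f0 = np.asarray(f0, dtype=float)
    fm = np.asarray(fm, dtype=float)
    out = np.zeros_like(fm)
    np.divide(fm - f0, fm, out=out, where=fm > 0)
    return out

def mean_fv_fm(f0: np.ndarray, fm: np.ndarray, rosette_mask: np.ndarray) -> float:
    """Average Fv/Fm over the pixels of one segmented rosette."""
    return float(fv_fm(f0, fm)[rosette_mask.astype(bool)].mean())

# Toy 2x2 frame: top row is plant tissue, bottom row is background or noise.
f0_img = np.array([[100, 200], [0, 150]])
fm_img = np.array([[500, 1000], [0, 300]])
mask = np.array([[1, 1], [0, 0]])
print(mean_fv_fm(f0_img, fm_img, mask))
```

Restricting the average to the rosette mask keeps background pixels from lowering the plant-level value.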

Diagrams

Diagram 1 Title: Phenotyping Workflow for Drug Screening

Diagram 2 Title: Plant Stress Signaling from Compound

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function & Relevance in Phenotyping Screens |

|---|---|

| Arabidopsis (Col-0) Seeds | Standardized plant model; uniform genetic background ensures reproducible phenotypic responses. |

| Half-Strength MS Media Agar | Defined growth medium for consistent in vitro cultivation in multi-well plates. |

| 96-/384-Well Plant Growth Plates | Enable high-throughput screening of chemical libraries on individual seedlings. |

| Automated Phenotyping Cabinet | Provides controlled, reproducible light, temperature, and humidity for unbiased growth. |

| RGB & Fluorescence Imaging System | Captures morphological and physiological data (e.g., chlorophyll fluorescence Fv/Fm). |

| Hyperspectral Camera | Measures reflectance spectra to derive biochemical indices (chlorophyll, anthocyanins). |

| Pre-trained Segmentation Model (U-Net) | (Core thesis output) Automatically isolates plant/organs from images for trait extraction. |

| Image Analysis Software (e.g., PlantCV, Fiji) | Processes segmented images to compute quantitative phenotypic descriptors. |

| Chemical Library (in DMSO) | Diverse collection of small molecules or natural product extracts for screening. |

| Liquid Handling Robot | Ensures precise, high-throughput application of compound solutions to assay plates. |

1. Introduction in Thesis Context

Automated image segmentation of plant organs is a foundational task in quantitative phenomics, enabling non-destructive measurement of morphological and physiological traits. Within the broader thesis on this topic, benchmarking against standardized, publicly available datasets is critical for evaluating algorithm robustness, generalizability, and performance. This protocol details the access, utilization, and evaluation of two cornerstone resources: the PlantCV ecosystem and the Leaf Segmentation Challenge (LSC) dataset.

2. Key Datasets & Benchmarks: Specifications and Access

The following table summarizes the core quantitative attributes of the primary datasets used for benchmarking plant organ segmentation algorithms.

Table 1: Core Benchmark Datasets for Plant Organ Segmentation

| Dataset / Resource | Primary Organ Focus | Sample Size (Images) | Annotation Type | Key Benchmark Metrics | Access Link |
|---|---|---|---|---|---|
| PlantCV Example Datasets | Leaves, Roots, Rosettes | ~50 (variable per set) | Binary masks, Landmarks | Accuracy, Precision, Recall | plantcv.org |
| Leaf Segmentation Challenge (LSC) 2016 | Arabidopsis leaves | ~60 (High-Res) | Pixel-wise leaf instance segmentation | Symmetric Best Dice (SBD), Counting Accuracy | Leaf Segmentation Challenge |
| CVPPP 2017 LSC | Arabidopsis & Tobacco | 249 (A1-A4) | Pixel-wise instance segmentation | SBD, Difference in Count (DiC), Absolute Difference in Count (\|DiC\|) | CVPPP Dataset |

3. Experimental Protocol: Benchmarking a Segmentation Model on the LSC Dataset

A. Dataset Acquisition and Preprocessing

- Download: Acquire the LSC (CVPPP 2017) dataset from the official source (see Table 1). The dataset includes folders `A1`, `A2`, `A3`, `A4` containing RGB images and corresponding ground truth label masks.

- Data Partition: Use the official train/test split as defined in the challenge. Sets `A1`, `A2`, `A3` are typically used for training/validation, while `A4` is held out for final testing.

- Normalization: Apply channel-wise mean subtraction and standard deviation division using pre-calculated dataset statistics (e.g., mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225] for models pretrained on ImageNet).

- Augmentation (Training Phase): Apply real-time spatial and color augmentations including random rotation (±15°), horizontal/vertical flips, and slight color jitter to improve model generalization.
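The normalization and flip steps can be sketched with NumPy alone. The sketch assumes images are H×W×3 `uint8` RGB arrays and masks are H×W label maps. Rotation and color jitter are omitted, and the function names are hypothetical. The essential point is that every spatial transform is applied identically to the image and its mask.

```python
import numpy as np

IMAGENET_MEAN = np.array([0.485, 0.456, 0.406])
IMAGENET_STD = np.array([0.229, 0.224, 0.225])

def normalize(image_uint8: np.ndarray) -> np.ndarray:
    """Scale to [0, 1], then standardize each RGB channel."""
    scaled = image_uint8.astype(np.float64) / 255.0
    return (scaled - IMAGENET_MEAN) / IMAGENET_STD

def random_flip(image: np.ndarray, mask: np.ndarray, rng: np.random.Generator):
    """Apply the same random horizontal and vertical flips to image and mask."""
    if rng.random() < 0.5:
        image, mask = image[:, ::-1], mask[:, ::-1]  # horizontal flip
    if rng.random() < 0.5:
        image, mask = image[::-1], mask[::-1]        # vertical flip
    return image, mask

rng = np.random.default_rng(0)
leaf_mask = np.array([[1, 0], [0, 0]])
leaf_image = np.stack([leaf_mask * 255] * 3, axis=-1).astype(np.uint8)
flipped_image, flipped_mask = random_flip(leaf_image, leaf_mask, rng)
print(normalize(flipped_image).shape, flipped_mask.sum())
```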

B. Model Training & Validation

- Model Selection: Choose an instance segmentation architecture (e.g., Mask R-CNN, U-Net with instance post-processing).

- Loss Function: Configure a composite loss: L = L_class + L_box + L_mask, where `L_mask` is typically binary cross-entropy or Dice loss for the segmentation head.

- Training Loop: Train for a fixed number of epochs (e.g., 100) with early stopping. Use the `A1`, `A2`, `A3` sets with an 80/20 random split for training and validation.

- Validation Metric: Monitor the Symmetric Best Dice (SBD) on the validation split after each epoch.
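To make the mask term of the composite loss concrete, the sketch below gives NumPy versions of binary cross-entropy and a soft Dice loss. In practice these would come from the deep learning framework with automatic differentiation. The function names and the `eps` smoothing constant are assumptions for illustration.

```python
import numpy as np

def bce_loss(prob: np.ndarray, target: np.ndarray, eps: float = 1e-7) -> float:
    """Mean binary cross-entropy between predicted probabilities and a 0/1 mask."""
    prob = np.clip(prob, eps, 1 - eps)
    return float(-np.mean(target * np.log(prob) + (1 - target) * np.log(1 - prob)))

def soft_dice_loss(prob: np.ndarray, target: np.ndarray, eps: float = 1e-7) -> float:
    """1 - soft Dice overlap; eps avoids division by zero on empty masks."""
    inter = np.sum(prob * target)
    return float(1.0 - (2.0 * inter + eps) / (np.sum(prob) + np.sum(target) + eps))

def composite_loss(l_class: float, l_box: float, l_mask: float) -> float:
    """L = L_class + L_box + L_mask, with equal weights as written above."""
    return l_class + l_box + l_mask

target = np.array([1.0, 1.0, 0.0, 0.0])
uncertain = np.full(4, 0.5)
print(bce_loss(uncertain, target), soft_dice_loss(uncertain, target))
```

Dice loss is less sensitive to the imbalance between leaf and background pixels than cross-entropy, which is one reason the two are often offered as alternatives.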

C. Final Evaluation on Test Set

- Inference: Run the trained model on the held-out test set (`A4`). Generate predicted instance masks for each RGB image.

- Post-processing: Apply standard post-processing (e.g., thresholding at 0.5, connected components analysis) to predicted masks.

- Metric Calculation: Use the official challenge evaluation code to compute:

- Symmetric Best Dice (SBD): The primary metric for segmentation quality.

- Difference in Count (DiC): Signed difference between predicted and true leaf count.

- Absolute Difference in Count (|DiC|): Absolute value of DiC.

- Reporting: Report the aggregated SBD, |DiC|, and standard deviation of DiC across all test images, allowing direct comparison to published challenge results.
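For intuition about what the official scripts compute, the following sketch re-implements SBD and DiC on integer label maps (0 = background). It is an illustrative approximation, not the challenge code. The handling of images with no instances is an assumption, and reported numbers should always come from the official evaluation scripts.

```python
import numpy as np

def _best_dice(a: np.ndarray, b: np.ndarray) -> float:
    """Average, over instances in a, of the best Dice against any instance in b."""
    ids_a = [i for i in np.unique(a) if i != 0]
    ids_b = [j for j in np.unique(b) if j != 0]
    if not ids_a:
        return 1.0 if not ids_b else 0.0  # assumed convention for empty maps
    scores = []
    for i in ids_a:
        mask_a = a == i
        best = 0.0
        for j in ids_b:
            mask_b = b == j
            inter = np.logical_and(mask_a, mask_b).sum()
            best = max(best, 2.0 * inter / (mask_a.sum() + mask_b.sum()))
        scores.append(best)
    return float(np.mean(scores))

def symmetric_best_dice(pred: np.ndarray, truth: np.ndarray) -> float:
    """SBD = min(BestDice(pred, truth), BestDice(truth, pred))."""
    return min(_best_dice(pred, truth), _best_dice(truth, pred))

def difference_in_count(pred: np.ndarray, truth: np.ndarray) -> int:
    """Signed DiC: predicted leaf count minus ground-truth leaf count."""
    count = lambda m: len(np.unique(m[m != 0]))
    return count(pred) - count(truth)

truth_labels = np.array([[1, 1, 0, 2, 2]])
pred_labels = np.array([[1, 1, 0, 0, 0]])  # second leaf missed
print(symmetric_best_dice(pred_labels, truth_labels),
      difference_in_count(pred_labels, truth_labels))
```

Taking the minimum of the two directions penalizes both missed leaves and spurious extra segments.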

4. Workflow and Pathway Visualizations

Title: LSC Dataset Benchmarking Workflow

Title: Data's Role in Segmentation Thesis

5. The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials and Tools for Plant Organ Segmentation Research

| Item / Reagent | Provider / Example | Function in Experiment |

|---|---|---|

| Reference Dataset (LSC) | CVPPP / Plant-Phenotyping.org | Provides gold-standard annotated images for algorithm training and benchmarking. |

| Segmentation Software Library (PlantCV) | PlantCV Development Team | Open-source toolkit for building reproducible image analysis pipelines for plant phenotyping. |

| Deep Learning Framework | PyTorch, TensorFlow | Provides environment for developing, training, and deploying custom segmentation models (e.g., U-Net). |

| High-Throughput Imaging System | LemnaTec Scanalyzer, Phenospex PlantEye | Acquires standardized, high-resolution RGB/NIR/FLU image data for analysis. |

| Annotation Software | LabelMe, CVAT, ImageJ | Used for generating new ground truth segmentation masks when expanding datasets. |

| Evaluation Code | Official LSC Challenge Scripts | Ensures metrics (SBD, DiC) are calculated correctly for fair comparison to state-of-the-art. |

| Growth Substrate | Peat-based soil, Agar plates | Standardized medium for growing Arabidopsis or other model plants for imaging. |

Why Plant Organ Segmentation Matters for Bioactive Compound Research

Automated image segmentation of plant organs is a pivotal computational tool within plant phenomics, directly accelerating the discovery and analysis of bioactive compounds. By precisely isolating regions of interest (e.g., leaves, roots, flowers, fruits, and specialized structures like trichomes or glands), researchers can quantitatively link morphological and anatomical traits to the production, accumulation, and localization of phytochemicals. This targeted approach replaces labor-intensive manual dissection and subjective visual scoring, enabling high-throughput, non-destructive screening of plant populations under various treatments. Within the broader thesis on automated segmentation, this application note details how these techniques provide the spatial context essential for elucidating the biosynthetic pathways and ecological roles of plant-derived drug candidates.

Application Notes: Linking Segmentation to Compound Localization

Key Insights from Recent Studies (2023-2024)

Current research underscores that organ-specific segmentation is not merely morphological profiling but a gateway to spatially resolved metabolomics.

Table 1: Quantitative Outcomes of Segmentation-Driven Bioactive Compound Research

| Plant Organ & Target Compound | Segmentation Method | Key Quantitative Finding | Impact on Research |

|---|---|---|---|

| Cannabis sativa (Glandular trichomes on flowers) | DeepLabv3+ (CNN) on macro imagery | Identified a 230% variance in trichome density between cultivars. Density positively correlated (r=0.89) with cannabinoid concentration (HPLC-MS). | Enables non-destructive potency prediction and breeding for high-yield chemotypes. |

| Catharanthus roseus (Leaf idioblasts and laticifers) | U-Net on hyperspectral images | Monoterpenoid indole alkaloids (MIAs) localized primarily in leaf margin idioblasts (85% of total leaf content). Segmentation accuracy (mIoU: 0.94) allowed precise micro-dissection. | Revealed previously underestimated compartmentalization, refining metabolic engineering targets. |

| Panax ginseng (Root vasculature vs. cortex) | 3D U-Net on X-ray micro-CT scans | Ginsenoside content (LC-MS) was 3.2x higher in the root cortex versus the vascular cylinder. Segmentation enabled volumetric tissue-specific calculation. | Informs optimal harvest times and post-harvest processing to maximize compound yield. |

| Mentha spp. (Leaf secretory peltate trichomes) | Mask R-CNN on SEM images | A 15% increase in peltate trichome area index (PTAI) under drought stress correlated with a 40% rise in menthol concentration (GC-MS). | Links environmental response mechanisms to secondary metabolism for stress-resilient cultivation. |

The Signaling Pathway Context

Plant organ development and stress responses are governed by complex signaling pathways that directly regulate the biosynthesis of bioactive compounds. Precise organ segmentation allows researchers to quantify pathway activity markers (e.g., fluorescent reporter genes) within specific tissues.

Diagram Title: Signaling to Synthesis: The Segmentation Feedback Loop

Experimental Protocols

Protocol 1: High-Throughput Leaf Segmentation & Trichome Analysis for Metabolite Correlation

Objective: To non-destructively screen a population of Mentha plants for trichome density and area, then correlate these metrics with essential oil composition.

Materials: See "The Scientist's Toolkit" below.

Workflow:

Diagram Title: Workflow for Leaf & Trichome Analysis

Procedure:

- Standardized Imaging: Grow plants under controlled conditions. At a defined developmental stage, place each potted plant on the imaging turntable. Capture top-down RGB images at 5 MP resolution using a dome lighting system to minimize shadows. Include a scale bar in every image.

- Leaf ROI Segmentation: Use a pre-trained U-Net model (e.g., on PlantCV) to segment all individual leaves from background. Export binary masks for each leaf.

- Trichome Segmentation: Crop images using leaf masks. Process cropped images through a Mask R-CNN model fine-tuned to detect peltate and capitate trichomes. Validate with manual annotations (minimum 100 instances).

- Quantitative Morphometrics: For each leaf, calculate:

- Trichome Density = (Number of peltate trichomes) / (Leaf area in cm²).

- Peltate Trichome Area Index (PTAI) = (Total area of peltate trichome masks) / (Leaf area).

- Spatially-Correlated Metabolomics: Immediately after imaging, perform Headspace Solid-Phase Microextraction (HS-SPME) on the same imaged leaf, followed by GC-MS. Quantify key compounds (e.g., menthol, menthone).

- Data Integration: Perform Pearson correlation analysis between PTAI and absolute concentrations of each compound. Build a linear regression model to predict metabolite levels from image-derived features.
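The morphometric formulas and the correlation step can be sketched as below. The sketch assumes pixel counts come from the leaf and trichome masks and that a `px_per_cm` scale is derived from the scale bar. All values are toy numbers, not measurements, and the function names are hypothetical.

```python
import numpy as np

def trichome_metrics(n_peltate: int, trichome_area_px: float,
                     leaf_area_px: float, px_per_cm: float) -> dict:
    """Trichome density (per cm^2) and Peltate Trichome Area Index (PTAI)."""
    leaf_area_cm2 = leaf_area_px / px_per_cm ** 2
    return {
        "density_per_cm2": n_peltate / leaf_area_cm2,
        "ptai": trichome_area_px / leaf_area_px,
    }

def pearson_r(x, y) -> float:
    """Pearson correlation coefficient between two equal-length sequences."""
    return float(np.corrcoef(x, y)[0, 1])

def fit_line(x, y):
    """Least-squares slope and intercept for predicting y from x."""
    slope, intercept = np.polyfit(x, y, 1)
    return float(slope), float(intercept)

leaf = trichome_metrics(n_peltate=50, trichome_area_px=2000,
                        leaf_area_px=40000, px_per_cm=100)
ptai_values = [1.0, 2.0, 3.0]      # toy PTAI values for three leaves
menthol_values = [2.0, 4.0, 6.0]   # toy concentrations, arbitrary units
print(leaf, pearson_r(ptai_values, menthol_values), fit_line(ptai_values, menthol_values))
```

PTAI is dimensionless, so it needs no scale calibration. Density does, which is why the scale bar matters.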

Protocol 2: 3D Root System Segmentation for Compound Distribution Mapping

Objective: To map the spatial distribution of ginsenosides within the complex architecture of a Panax ginseng root.

Materials: See "The Scientist's Toolkit" below.

Workflow:

Diagram Title: 3D Root Segmentation & Metabolite Mapping

Procedure:

- Fixation & Staining: Wash fresh root carefully. Fix in 70% ethanol for 48 hours. Immerse in 1% iodine (in KI) solution for 72 hours to enhance X-ray contrast of internal structures.

- Micro-CT Scanning: Mount stained root vertically on specimen stage. Acquire X-ray projections at 0.25-degree rotations (1440 projections). Reconstruct using filtered back-projection to generate a 3D volume stack.

- 3D Semantic Segmentation: Train a 3D U-Net on a manually annotated sub-volume. Input the full CT stack to the trained model to classify each voxel as background, soil, root cortex, stele, or lateral root. Apply morphological post-processing.

- Architectural Analysis: For the segmented root cortex volume, calculate total volume, surface area, and tissue volume ratio (Cortex:Stele).

- Correlative Metabolomics: Using the 3D model as a guide, perform cryo-sectioning of the root at specific positions (e.g., taproot vs. lateral root). Micro-dissect cortical and stelar tissues under a stereomicroscope using laser capture microdissection (LCM). Extract metabolites from each tissue pool and analyze via targeted LC-MS/MS for ginsenosides.

- Model Integration: Map quantitative ginsenoside data back onto the corresponding 3D segmented volumes, creating a heatmap model of compound distribution.
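The architectural analysis step reduces to counting voxels per label and scaling by voxel size. The sketch below assumes an integer-labeled 3D volume with the hypothetical encoding shown in `LABELS` and an isotropic voxel size. Surface area is omitted because it normally requires a meshing routine.

```python
import numpy as np

# Assumed label encoding for the segmented CT volume.
LABELS = {"background": 0, "soil": 1, "cortex": 2, "stele": 3, "lateral_root": 4}

def tissue_volumes_mm3(volume: np.ndarray, voxel_size_mm: float) -> dict:
    """Volume of each root tissue class in mm^3 (isotropic voxels assumed)."""
    voxel_mm3 = voxel_size_mm ** 3
    tissues = ("cortex", "stele", "lateral_root")
    return {t: float((volume == LABELS[t]).sum()) * voxel_mm3 for t in tissues}

def cortex_to_stele_ratio(volume: np.ndarray) -> float:
    """Tissue volume ratio Cortex:Stele; NaN if no stele voxels are present."""
    stele = int((volume == LABELS["stele"]).sum())
    cortex = int((volume == LABELS["cortex"]).sum())
    return cortex / stele if stele else float("nan")

# Toy 2x2x2 volume: 4 cortex voxels, 2 stele voxels, 2 background voxels.
ct = np.zeros((2, 2, 2), dtype=int)
ct[0] = LABELS["cortex"]
ct[1, 0] = LABELS["stele"]
print(tissue_volumes_mm3(ct, voxel_size_mm=0.5), cortex_to_stele_ratio(ct))
```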

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Segmentation-Driven Bioactive Compound Research

| Item Name / Category | Function & Relevance |

|---|---|

| High-Throughput Phenotyping System (e.g., Scanalyzer, PhenoBox) | Provides controlled, uniform imaging environment for RGB, fluorescence, or NIR, generating consistent 2D image datasets for whole-plant or organ-level segmentation. |

| Micro-CT Scanner (e.g., SkyScan 1272) | Generates high-resolution 3D volumetric data of internal plant structures (roots, stems, fruits) non-destructively, essential for 3D segmentation pipelines. |

| Hyperspectral Imaging Camera (400-1000 nm range) | Captures spectral-spatial data cubes that can be segmented based on spectral signatures correlated with chemical composition (e.g., alkaloid-rich regions). |

| Deep Learning Software Framework (e.g., PyTorch, TensorFlow with PlantSeg plugins) | Provides the environment to train, validate, and deploy custom convolutional neural network (CNN) models (U-Net, Mask R-CNN) for specific plant organ segmentation tasks. |

| Laser Capture Microdissection (LCM) System (e.g., Leica LMD7) | Allows for precise, segmentation-guided physical isolation of specific cell types or tissue regions (e.g., trichome, vascular bundle) for downstream targeted metabolomics. |

| Liquid Chromatography Tandem Mass Spectrometry (LC-MS/MS) with ESI source | The gold standard for identifying and quantifying non-volatile bioactive compounds (e.g., ginsenosides, alkaloids) from micro-dissected, segmented tissue samples. |

| Headspace Solid-Phase Microextraction (HS-SPME) Fibers (e.g., DVB/CAR/PDMS) | Enables non-destructive sampling of volatile organic compounds (VOCs) from living plant tissue immediately after imaging, linking morphology to volatile metabolite profiles. |

| Fluorescent Biosensor Reporter Lines (e.g., for Ca2+, ROS, hormones) | Genetically encoded sensors that visualize signaling pathway activity in vivo. Segmentation of fluorescence signals quantifies activity within specific organs/cells under treatment. |

AI in Action: Methodologies and Real-World Biomedical Applications

This document provides an in-depth technical analysis of Convolutional Neural Networks (CNNs), U-Nets, and Vision Transformers (ViTs) within the context of automated image segmentation for plant organ research. These architectures are foundational for quantifying phenotypic traits, such as leaf area, root length, and fruit morphology, which are critical for plant physiology studies and agricultural biotechnology.

Core Architecture Comparison & Quantitative Benchmarks

The following table summarizes the key architectural components and quantitative performance metrics for CNN, U-Net, and ViT models on plant image segmentation tasks, based on recent literature.

Table 1: Architectural Comparison and Performance on Plant Organ Segmentation

| Feature | CNN (e.g., ResNet-50 Backbone) | U-Net | Vision Transformer (ViT) |

|---|---|---|---|

| Core Mechanism | Hierarchical convolution & pooling | Encoder-decoder with skip connections | Multi-head self-attention on image patches |

| Key Strength | Spatial feature extraction, translation equivariance | Precise localization, efficient with few samples | Global contextual understanding, scalability |

| Primary Limitation | Limited receptive field, loses fine details | Can be limited by encoder capacity | High data requirements, computational cost |

| Typical mIoU (Leaf) | 78-82% | 88-92% | 85-89% |

| Inference Speed (FPS) | ~45 | ~32 | ~22 |

| Trainable Parameters | ~25M | ~31M | ~86M (ViT-Base) |

| Data Efficiency | Moderate | High | Low (requires pre-training) |

| Common Use Case | Feature extractor, initial coarse segmentation | Standard for bio-image segmentation | Complex scene segmentation, multi-organ |

Data synthesized from recent studies (2023-2024) on Arabidopsis, tomato, and wheat phenotyping datasets. mIoU = mean Intersection over Union, FPS = Frames per Second on an NVIDIA V100 GPU.

Detailed Experimental Protocols

Protocol 2.1: U-Net Training for Root System Segmentation

Objective: Train a U-Net model to segment root architecture from rhizotron images. Materials: RhizoVision Explorer dataset, Python 3.9+, PyTorch 1.12, CUDA 11.6.

Procedure:

- Image Preprocessing: Resize all RGB images and corresponding ground truth masks to 512x512 pixels. Apply augmentation: random rotation (±15°), horizontal/vertical flips, and contrast adjustment (±10%).

- Data Splitting: Split dataset into 70% training, 15% validation, 15% testing.

- Model Configuration: Implement U-Net with a ResNet-34 encoder pre-trained on ImageNet. Set decoder channels to [256, 128, 64, 32, 16].

- Training: Use Adam optimizer (lr=1e-4), Dice-Cross-Entropy loss. Train for 200 epochs, batch size 16. Reduce learning rate on plateau (factor=0.5, patience=10).

- Validation: Monitor validation Dice Similarity Coefficient (DSC) every epoch. Early stopping if DSC does not improve for 25 epochs.

- Evaluation: Apply trained model on test set. Report DSC, mIoU, and root length estimation error vs. manual annotation.
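The combined Dice and cross-entropy objective named in the training step can be sketched as follows. This is a minimal NumPy illustration for the binary root/background case, not the protocol's PyTorch implementation; the function name `dice_ce_loss`, the smoothing constant `eps`, and the equal weighting of the two terms are assumptions made for the example.

```python
import numpy as np

def dice_ce_loss(probs, target, eps=1e-6):
    """Binary Dice + cross-entropy loss for a root/background mask.

    probs  : predicted foreground probabilities in [0, 1], any shape
    target : ground-truth mask of the same shape (0 = background, 1 = root)
    """
    probs = np.clip(np.asarray(probs, dtype=np.float64), eps, 1.0 - eps)
    target = np.asarray(target, dtype=np.float64)

    # Soft Dice: overlap between prediction and ground truth.
    intersection = np.sum(probs * target)
    dice = (2.0 * intersection + eps) / (np.sum(probs) + np.sum(target) + eps)

    # Pixel-wise binary cross-entropy, averaged over the image.
    ce = -np.mean(target * np.log(probs) + (1.0 - target) * np.log(1.0 - probs))

    # Equal weighting of both terms is an assumption of this sketch.
    return (1.0 - dice) + ce
```

The Dice term is insensitive to the large number of background pixels in thin-root images, while the cross-entropy term keeps per-pixel gradients well behaved, which is one common motivation for combining them.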

Protocol 2.2: Fine-tuning a Vision Transformer for Multi-Organ Segmentation

Objective: Adapt a pre-trained ViT for simultaneous segmentation of leaves, stems, and fruits. Materials: Plant phenotyping dataset (e.g., CVPPP A1), TIMM library, pre-trained ViT-Base (ImageNet-21k).

Procedure:

- Patch Embedding: Load ViT-Base. Input images (224x224) are split into fixed 16x16 patches.

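- Decoder Attachment: Replace the standard ViT classification head with a lightweight convolutional decoder (e.g., Feature Pyramid Network). Reshape the patch embeddings into a 2D feature map (a 14x14 grid for 224x224 inputs with 16x16 patches) and upsample it to a full-resolution segmentation mask. The [CLS] token has no spatial position and is typically discarded or used only as global context.

- Partial Fine-tuning: Freeze the ViT encoder weights except for its LayerNorm parameters. For the first 50 epochs, train only the attached decoder and those LayerNorm parameters.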

- Full Fine-tuning: Unfreeze the entire network. Train for an additional 150 epochs with a reduced learning rate (5e-5). Use a weighted cross-entropy loss to handle class imbalance (background, leaf, stem, fruit).

- Inference & Analysis: Perform inference on test images. Use connected component analysis on output masks to count individual organs.
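Before a convolutional decoder can upsample ViT outputs, the patch tokens have to be arranged back into a 2D grid. The sketch below shows that bookkeeping with NumPy only. The helper name `tokens_to_feature_map` is hypothetical, row-major patch order is assumed, and in this sketch the [CLS] token is simply set aside.

```python
import numpy as np

def tokens_to_feature_map(tokens, image_size=224, patch_size=16):
    """Drop the [CLS] token and reshape patch tokens into a 2D grid.

    tokens : array of shape (1 + N, D), [CLS] first, then N patch tokens
    returns: array of shape (D, H, W) with H = W = image_size // patch_size
    """
    grid = image_size // patch_size          # 224 // 16 = 14 patches per side
    patch_tokens = tokens[1:]                # set the [CLS] token aside
    if patch_tokens.shape[0] != grid * grid:
        raise ValueError("unexpected number of patch tokens")
    dim = patch_tokens.shape[1]
    # Row-major patch order is assumed, as in the standard ViT patch embedding.
    return patch_tokens.reshape(grid, grid, dim).transpose(2, 0, 1)

tokens = np.zeros((1 + 14 * 14, 768))        # ViT-Base: 196 patches, width 768
feature_map = tokens_to_feature_map(tokens)
print(feature_map.shape)                     # (768, 14, 14)
```

The resulting 14x14 map is 16 times coarser than the input in each dimension, which is why the decoder must upsample before a per-pixel mask can be produced.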

Architectural Diagrams

Diagram 1: U-Net with skip connections for segmentation

Diagram 2: ViT image patching and embedding process

Diagram 3: Benchmarking workflow for three architectures

The Scientist's Toolkit: Key Reagent Solutions

Table 2: Essential Tools for AI-Based Plant Image Segmentation Research

| Item / Solution | Provider / Example | Primary Function in Research |

|---|---|---|

| High-Throughput Imaging System | LemnaTec Scanalyzer, PhenoVation | Automated, standardized image acquisition of plants under controlled lighting. |

| Annotation Software | CVAT, Labelbox, VGG Image Annotator | Creation of pixel-wise ground truth masks for training supervised models. |

| Deep Learning Framework | PyTorch, TensorFlow with Keras | Platform for implementing, training, and evaluating CNN, U-Net, and ViT models. |

| Pre-trained Model Repository | TIMM, Hugging Face, TorchVision | Source of model backbones (ResNet, ViT) for transfer learning, reducing data needs. |

| Bio-image Analysis Suite | ImageJ/Fiji, PlantCV | For pre-processing raw images and post-processing model outputs (e.g., morphological analysis). |

| Augmentation Library | Albumentations, TorchVision Transforms | Expands training dataset diversity by applying rotations, flips, color jitter, etc. |

| Experiment Tracking Tool | Weights & Biases, MLflow | Logs training metrics, hyperparameters, and model versions for reproducibility. |

| High-Performance Compute | NVIDIA GPU (V100/A100) with CUDA | Accelerates model training, which is computationally intensive for ViTs and large CNNs. |

Within the broader thesis on automated image segmentation for plant organs, this protocol details the critical pre-processing workflow from acquiring raw images to physical organ isolation. This pipeline generates the ground truth data essential for training and validating robust segmentation algorithms used in high-throughput phenotyping and phytochemical drug discovery.

Image Acquisition Protocol

The initial step involves capturing high-fidelity images under standardized conditions to ensure consistency for algorithmic processing.

Protocol 1.1: Controlled Environment Plant Imaging

- Plant Material Preparation: Grow Arabidopsis thaliana (Col-0) or target medicinal plant (e.g., Catharanthus roseus) under controlled growth chamber conditions (22°C, 16/8h light/dark, 65% RH) for 21 days.

- Imaging Setup: Position the potted plant against a neutral, high-contrast background (e.g., dark gray, #202124). Avoid green backgrounds, which reduce contrast with foliage.

- Camera Configuration: Mount a calibrated RGB camera (e.g., Nikon D850) on a fixed tripod. Use a macro lens (60mm f/2.8) for rosettes. Settings: Manual mode, ISO 100, f/8, shutter speed 1/125s, with two diffused LED ring lights at 45° angles.

- Image Capture: Capture images in RAW+JPEG format. Include a calibrated color checker card (X-Rite ColorChecker Classic) in the first frame of each session.

- Data Transfer: Transfer images to a secure server using the naming convention Species_Genotype_Date_PlantID_View.ext.
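A naming convention is only useful if it can be generated and parsed consistently. The following sketch shows one way to do both. The helper names, the YYYYMMDD date format, and the example values are illustrative assumptions, not part of the protocol.

```python
from datetime import date

FIELDS = ("species", "genotype", "date", "plant_id", "view")

def build_name(species, genotype, day, plant_id, view, ext="nef"):
    """Compose Species_Genotype_Date_PlantID_View.ext (date as YYYYMMDD)."""
    parts = [species, genotype, day.strftime("%Y%m%d"), plant_id, view]
    if any("_" in p or "." in p for p in parts):
        raise ValueError("fields must not contain '_' or '.'")
    return "_".join(parts) + "." + ext

def parse_name(filename):
    """Split a file name back into its five fields plus the extension."""
    stem, ext = filename.rsplit(".", 1)
    values = stem.split("_")
    if len(values) != len(FIELDS):
        raise ValueError(f"expected {len(FIELDS)} fields, got {len(values)}")
    record = dict(zip(FIELDS, values))
    record["ext"] = ext
    return record

# Example values are made up for illustration.
name = build_name("Athaliana", "Col-0", date(2024, 5, 17), "P012", "top")
print(name)  # Athaliana_Col-0_20240517_P012_top.nef
```

Rejecting underscores inside individual fields keeps the name unambiguous when it is split again during dataset assembly.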

Table 1: Quantitative Specifications for Image Acquisition

| Parameter | Specification | Rationale |

|---|---|---|

| Resolution | 45 Megapixels (8256 x 5504) | Enables sub-organ feature detection. |

| Color Depth | 16-bit per channel (RAW) | Maximizes color data for segmentation. |

| Spatial Reference | Scale bar (10 mm) in frame | Allows pixel-to-real-world conversion. |

| Lighting Uniformity | >90% across field of view | Minimizes segmentation artifacts. |

| Image Format | RAW (.NEF/.CR2) & JPEG | RAW for analysis, JPEG for quick review. |

Image Pre-processing & Annotation

Raw images undergo standardization and manual annotation to create training data.

Protocol 2.1: Image Standardization and Labeling

- Color Correction: Use Python (OpenCV, ccm module) to apply a color correction matrix derived from the ColorChecker card to all images in a session.

- Background Subtraction: Apply a binary threshold on the green-difference index or use a U-Net pre-trained model to remove the neutral background.

- Manual Annotation: Load corrected images into annotation software (LabelBox, CVAT). Manually delineate organ boundaries (leaf, stem, flower, root if visible) as polygons.

- Label Export: Export annotations in COCO JSON or Pascal VOC XML format, linking each polygon to its image and organ class ID.
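To make the export step concrete, the sketch below builds a single COCO-style annotation record from one polygon. The field layout follows the publicly documented COCO object-detection format. The helper names, IDs, and the example polygon are assumptions for illustration, and annotation tools such as CVAT produce these records automatically.

```python
import json

def polygon_area(points):
    """Shoelace formula for a simple polygon given as [(x, y), ...]."""
    total = 0.0
    for (x1, y1), (x2, y2) in zip(points, points[1:] + points[:1]):
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0

def to_coco_annotation(ann_id, image_id, category_id, points):
    """Build one COCO-style annotation record from a polygon."""
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    return {
        "id": ann_id,
        "image_id": image_id,
        "category_id": category_id,
        # COCO stores polygons as flat [x1, y1, x2, y2, ...] lists.
        "segmentation": [[c for pt in points for c in pt]],
        "area": polygon_area(points),
        # Bounding box is [x_min, y_min, width, height].
        "bbox": [min(xs), min(ys), max(xs) - min(xs), max(ys) - min(ys)],
        "iscrowd": 0,
    }

# Hypothetical leaf outline: a 40 x 30 pixel rectangle.
leaf = [(10, 10), (50, 10), (50, 40), (10, 40)]
annotation = to_coco_annotation(1, image_id=7, category_id=1, points=leaf)
print(json.dumps(annotation))
```

The `image_id` and `category_id` fields are what link each polygon to its image and organ class, as the export step requires.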

Table 2: Annotation Quality Control Metrics

| Metric | Target Value | Measurement Tool |

|---|---|---|

| Inter-annotator Agreement (IoU) | >0.85 | Jaccard Index between two annotators. |

| Pixel Accuracy of Ground Truth | >99% | Review by senior plant morphologist. |

| Annotation Time per Image | 5-7 min | For a mature Arabidopsis rosette. |

Automated Segmentation Model Application

The annotated dataset trains a segmentation model, which is then applied to new images.

Protocol 3.1: Training and Inference with DeepLabV3+

- Model Configuration: Initialize a DeepLabV3+ architecture (ResNet-101 backbone) pre-trained on ImageNet. Set output channels to number of organ classes + background.

- Training: Split annotated data 70:15:15 (train:validation:test). Train for 100 epochs using PyTorch, with cross-entropy loss, Adam optimizer (lr=0.001), and a batch size of 8.

- Validation: Monitor mean Intersection-over-Union (mIoU) on the validation set. Proceed to inference when mIoU plateaus above 0.90.

- Batch Inference: Apply the trained model to new acquisition batches. Output includes segmentation masks where each pixel is assigned an organ class.
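The relationship between the per-class output channels, the final class mask, and the mIoU used for validation can be illustrated with a few lines of NumPy. This is a sketch under stated assumptions: `predict_mask` and `mean_iou` are hypothetical helper names, and classes absent from both prediction and ground truth are skipped, which is one of several conventions in use.

```python
import numpy as np

def predict_mask(logits):
    """Assign each pixel the class with the highest score.

    logits : array of shape (C, H, W), C = organ classes + 1 background
    """
    return np.argmax(logits, axis=0)

def mean_iou(pred, truth, num_classes):
    """Mean IoU over the classes that occur in prediction or ground truth."""
    ious = []
    for c in range(num_classes):
        p, t = pred == c, truth == c
        union = np.logical_or(p, t).sum()
        if union == 0:          # class absent from both masks: skip it
            continue
        ious.append(np.logical_and(p, t).sum() / union)
    return float(np.mean(ious))
```

Averaging per class rather than per pixel prevents the background class from dominating the score when organs occupy a small part of the image.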

From Pixel to Physical Organ: Isolation Guidance

Segmentation maps guide precise, automated or manual dissection.

Protocol 4.1: Robotic Organ Isolation Based on Segmentation

- Coordinate Translation: Use the scale bar reference to convert the pixel coordinates of a target organ mask centroid into real-world coordinates relative to a fixed pot corner.

- Robot Programming: Program a 6-degree-of-freedom robotic arm (e.g., UR5) with a soft gripper or micro-scalpel. Input the real-world coordinates and the mask boundary polygon.

- Isolation Execution: The arm navigates to the centroid, and the tool follows the mask boundary to excise the organ. Isolated organs are deposited into pre-labeled, deep-well plates.

- Validation: Weigh isolated organ and capture a post-isolation image to verify fidelity to the intended mask.
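The coordinate translation step reduces to a calibration factor and an offset from the reference corner. The sketch below assumes a camera plane parallel to the plant, negligible lens distortion, and a scale bar measured in pixels. All function names and numeric values are illustrative.

```python
def mm_per_pixel(scale_bar_mm, scale_bar_px):
    """Calibration factor from a scale bar of known physical length."""
    return scale_bar_mm / scale_bar_px

def mask_centroid(pixels):
    """Centroid (x, y) of a mask given as a list of (x, y) pixel coordinates."""
    n = len(pixels)
    return (sum(x for x, _ in pixels) / n, sum(y for _, y in pixels) / n)

def to_world_mm(point_px, origin_px, factor):
    """Offset from the reference pot corner, converted to millimetres."""
    return ((point_px[0] - origin_px[0]) * factor,
            (point_px[1] - origin_px[1]) * factor)

# Assumed example: the 10 mm scale bar spans 400 pixels.
factor = mm_per_pixel(scale_bar_mm=10.0, scale_bar_px=400.0)
centroid = mask_centroid([(1200, 800), (1240, 800), (1200, 840), (1240, 840)])
print(to_world_mm(centroid, origin_px=(200, 100), factor=factor))
```

A real system would additionally need a hand-eye calibration between the camera frame and the robot base frame, which is outside the scope of this sketch.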

Table 3: Organ Isolation Performance Metrics

| Metric | Leaf | Flower | Stem |

|---|---|---|---|

| Isolation Precision (mg) | ± 1.2 mg | ± 0.3 mg | ± 0.8 mg |

| Average Time per Organ | 12 sec | 18 sec | 15 sec |

| Success Rate (Intact) | 98% | 95% | 99% |

The Scientist's Toolkit

Table 4: Key Research Reagent Solutions & Materials

| Item | Function in Workflow |

|---|---|

| Controlled Growth Chamber | Standardizes plant material at the phenotypic level, reducing biological variance in images. |

| Calibrated Color Checker Card | Enables color calibration across imaging sessions, critical for model generalizability. |

| High-Resolution RGB Camera | Captures the raw spectral and spatial data required for pixel-level classification. |

| Annotation Platform (e.g., CVAT) | Provides tools for efficient, collaborative creation of ground truth segmentation labels. |

| Deep Learning Framework (PyTorch/TensorFlow) | Environment for developing, training, and deploying the segmentation neural networks. |

| Robotic Micro-Dissection System | Translates digital segmentation maps into precise physical sampling actions. |

| Deep-Well Plate with LN2 Flash Freeze | Preserves the molecular state (e.g., transcriptome, metabolome) of isolated organs immediately post-dissection. |

Workflow and Pathway Visualizations

Title: Plant Organ Segmentation & Isolation Workflow

Title: Segmentation Model Training Feedback Loop

Framed within a thesis on automated image segmentation for plant organ phenotyping, this application note details how high-throughput screening (HTS) of plant extracts leverages automated image analysis to accelerate bioactive compound discovery. The protocol outlines a workflow for preparing extract libraries from segmented plant organs, conducting phenotypic HTS using cell-based assays, and employing automated image segmentation for quantitative analysis of cellular and subcellular phenotypes.

Experimental Protocol: HTS of Plant Extracts for Cytotoxic Compounds

Objective: To screen a library of leaf and root extracts for cytotoxic activity against a cancer cell line using automated live/dead cell imaging and analysis.

Part A: Plant Extract Library Preparation from Segmented Organs

- Plant Material & Organ Segmentation: Fresh plant tissue is dissected. High-resolution images are acquired and processed using a pre-trained convolutional neural network (CNN) model for automated segmentation of leaves and roots.

- Extraction: Segmented organs are lyophilized, pulverized, and extracted separately with 80% methanol (1:10 w/v) via sonication (30 min). Extracts are centrifuged, and supernatants are dried under vacuum.

- Library Formatting: Dried extracts are reconstituted in DMSO to a stock concentration of 50 mg/mL. Using an automated liquid handler, extracts are diluted in cell culture medium and dispensed into 384-well microplates (final test concentration: 50 µg/mL, 0.1% DMSO). Each plate includes controls: cell-only (negative), DMSO-only (vehicle), and 10 µM staurosporine (positive cytotoxicity control).
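The concentrations in the library formatting step are mutually consistent, which the short calculation below verifies: diluting a 50 mg/mL DMSO stock to 50 µg/mL is a 1000-fold dilution and therefore leaves 0.1% DMSO. The well volume of 50 µL is an assumption added for the example, as the protocol does not state it.

```python
def dilution_plan(stock_mg_per_ml, final_ug_per_ml, well_volume_ul):
    """Work out the dilution factor, solvent fraction and stock volume per well."""
    stock_ug_per_ml = stock_mg_per_ml * 1000.0          # mg/mL -> ug/mL
    factor = stock_ug_per_ml / final_ug_per_ml          # fold dilution
    dmso_percent = 100.0 / factor                       # stock is 100% DMSO
    stock_volume_nl = well_volume_ul * 1000.0 / factor  # uL -> nL
    return factor, dmso_percent, stock_volume_nl

# well_volume_ul=50 is an illustrative assumption; the protocol does not state it.
factor, dmso_percent, stock_nl = dilution_plan(50.0, 50.0, well_volume_ul=50.0)
print(factor, dmso_percent, stock_nl)   # 1000.0 0.1 50.0
```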

Part B: Cell-Based Screening & Automated Image Acquisition

- Cell Seeding: HeLa cells are seeded at 2,000 cells/well in 384-well plates and incubated for 24 hours.

- Treatment: The pre-dosed extract library plates are used to treat cells for 48 hours.

- Staining: Post-incubation, cells are stained with a live/dead viability kit (e.g., Calcein-AM for live cells, Ethidium homodimer-1 for dead cells).

- Imaging: Plates are imaged using a high-content imaging system (e.g., PerkinElmer Opera Phenix, ImageXpress Micro Confocal) with a 20x objective. Four fields per well are acquired per fluorescence channel.

Part C: Automated Image Segmentation & Quantitative Analysis

- Nuclei Segmentation: The Hoechst channel (if used) or the dead-cell channel is used as input. A U-Net-based segmentation model identifies individual nuclei/cells.

- Cytoplasm/Region Expansion: A cytoplasm mask is generated by expanding the nuclear mask by a defined pixel radius or using cytoplasmic marker intensity.

- Phenotype Quantification: For each segmented cell, intensity and morphological features are measured:

- Viability Metrics: Mean Calcein intensity (live), Mean EthD-1 intensity (dead).

- Morphological Metrics: Nuclear area, cytoplasmic area, nuclear/cytoplasmic ratio.

- Hit Identification: Wells with a statistically significant increase in dead-cell fluorescence intensity (>3 standard deviations above the vehicle control mean) and a concurrent reduction in cell count (>50%) are flagged as primary hits.
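The two-part hit criterion (dead-cell signal more than 3 standard deviations above the vehicle mean, together with a cell count reduction of more than 50%) can be expressed as a short filter. The sketch uses only the Python standard library. The function name `call_hits` and all numeric values are illustrative and are not data from the screen.

```python
from statistics import mean, stdev

def call_hits(wells, vehicle_dead, vehicle_count, sd_cutoff=3.0, count_drop=0.5):
    """Flag wells that pass both the dead-signal and the cell-loss criterion.

    wells         : {well_id: (dead_intensity, cell_count)}
    vehicle_dead  : dead-cell intensities of the vehicle control wells
    vehicle_count : cell counts of the vehicle control wells
    """
    dead_threshold = mean(vehicle_dead) + sd_cutoff * stdev(vehicle_dead)
    count_threshold = mean(vehicle_count) * (1.0 - count_drop)
    return sorted(
        well for well, (dead, count) in wells.items()
        if dead > dead_threshold and count < count_threshold
    )

# Illustrative numbers only, not data from the screen.
vehicle_dead = [95, 100, 105, 98, 102]
vehicle_count = [1950, 2000, 2050, 1980, 2020]
wells = {"A01": (105, 1960), "B05": (890, 900), "D08": (1250, 440)}
print(call_hits(wells, vehicle_dead, vehicle_count))   # ['B05', 'D08']
```

Requiring both conditions guards against wells where fluorescence rises because of extract autofluorescence rather than genuine cell death.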

Table 1: Representative HTS Data from a 384-Well Plant Extract Screen

| Well ID | Plant Organ (Source) | Cell Count (Normalized) | Dead Cell Intensity (A.U.) | Nuclear Area (px²) | Hit Status |

|---|---|---|---|---|---|

| A01 | Leaf | 98% | 105 | 245 | Inactive |

| B05 | Root | 45% | 890 | 310 | Active |

| C12 | Leaf | 102% | 98 | 230 | Inactive |

| D08 | Root | 22% | 1250 | 350 | Active |

| Vehicle Ctrl | N/A | 100% | 100 | 250 | Control |

| Staurosporine | N/A | 15% | 1400 | 400 | Control |

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in HTS of Plant Extracts |

|---|---|

| Automated Image Segmentation Software (e.g., CellProfiler, Ilastik, custom CNN) | Enables high-throughput, unbiased quantification of cellular phenotypes (count, size, intensity) from thousands of microscopy images, directly linking plant organ extracts to biological activity. |

| High-Content Screening (HCS) Microscope | Automated microscope for rapid, multi-channel fluorescence imaging of cells in microplates. Essential for generating the raw image data for segmentation. |

| 384-Well Cell Culture Microplates | Standard format for HTS, allowing testing of hundreds of samples in parallel with minimal reagent volumes. |

| Live/Dead Viability/Cytotoxicity Kit | Fluorescent dyes that distinguish live from dead cells within a population, providing a direct phenotypic readout for cytotoxicity screens. |

| Automated Liquid Handler | Robots for precise, high-speed dispensing of extracts, cells, and reagents into microplates, ensuring assay reproducibility and throughput. |

| DMSO (Dimethyl Sulfoxide) | Universal solvent for reconstituting organic plant extracts and delivering them to cell-based assays at consistent, low concentrations. |

Visualization: Workflows and Pathways

Diagram 1: HTS workflow from plant to hit

Diagram 2: Automated image analysis pipeline

Diagram 3: Key cytotoxicity signaling pathways

This case study, framed within a broader thesis on automated image segmentation for plant organ research, details the application of high-throughput phenotyping to quantify morphological responses to abiotic stress. The integration of image-based analysis with automated segmentation pipelines enables precise, non-destructive measurement of key traits, providing invaluable data for researchers and drug development professionals investigating plant adaptive mechanisms.

Application Notes

Automated segmentation of plant organs (roots, shoots, leaves) from digital images allows for the continuous, quantitative tracking of phenotypic plasticity under stress conditions. This approach moves beyond traditional, destructive endpoint measurements to capture dynamic growth patterns and stress response kinetics. Key quantified traits include primary root length, lateral root density, leaf area, leaf count, and shoot biomass proxies. Data generated supports genome-wide association studies (GWAS) and the screening of chemical libraries for compounds that modulate stress resilience.

Table 1: Key Morphological Traits Quantified Under Drought Stress (14-Day Treatment)

| Plant Organ | Trait Measured | Control Mean (±SD) | Stress Treatment Mean (±SD) | Percent Change | P-value |

|---|---|---|---|---|---|

| Root System | Primary Root Length (cm) | 24.3 (±2.1) | 18.7 (±3.2) | -23.0% | <0.001 |

| Root System | Lateral Root Count | 45.2 (±5.8) | 62.1 (±7.4) | +37.4% | <0.001 |

| Shoot System | Total Leaf Area (cm²) | 58.6 (±4.9) | 42.3 (±6.1) | -27.8% | <0.001 |

| Shoot System | Shoot Fresh Weight (mg) | 320 (±25) | 245 (±31) | -23.4% | <0.001 |

Table 2: Correlation of Morphological Traits with Physiological Stress Markers

| Morphological Trait | Physiological Marker (e.g., Chlorophyll Content) | Pearson Correlation Coefficient (r) |

|---|---|---|

| Total Leaf Area | Chlorophyll a | 0.89 |

| Primary Root Length | Proline Accumulation | -0.76 |

| Lateral Root Density | Abscisic Acid (ABA) Level | 0.82 |

Experimental Protocols

Protocol 1: High-Throughput Plant Imaging and Segmentation for Stress Response

Objective: To acquire and process top-view and side-view images of plants for automated organ segmentation and trait extraction under controlled stress.

- Plant Growth & Stress Application: Grow Arabidopsis thaliana (Col-0) on vertical agar plates (½ MS media) in controlled environment chambers. Apply abiotic stress (e.g., 200 mM Mannitol for osmotic stress) at day 7 post-germination.

- Image Acquisition: Use an automated phenotyping cabinet with integrated RGB cameras. Capture top-view images daily at a fixed time. For roots, use side-view imaging against the agar plate.

- Automated Segmentation Workflow: a. Pre-processing: Apply flat-field correction to correct illumination artifacts. b. Background Subtraction: Use a green/blue excess index (GBEI) for shoot images to separate plant from background. For roots on agar, use contrast thresholding. c. Organ Segmentation: Implement a pre-trained U-Net convolutional neural network (CNN) model specific for Arabidopsis root and shoot architectures. The model is trained on manually annotated images. d. Post-processing: Apply morphological operations (closing, hole-filling) to refine segmentation masks.

- Trait Quantification: Extract numerical data from binary masks and skeletonized images using custom Python scripts (utilizing libraries like OpenCV, scikit-image).

- Leaf Area: Pixel count in shoot mask × calibration factor (mm²/pixel).

- Root Length: Skeleton length of primary root (mm).
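Both trait formulas are simple once a mask and a calibration factor are available. The sketch below assumes a boolean shoot mask and a root skeleton that has already been traced into an ordered list of pixel coordinates (the tracing itself, for example with scikit-image, is not shown). Helper names and the scale are hypothetical.

```python
import math
import numpy as np

def leaf_area_mm2(shoot_mask, mm2_per_pixel):
    """Projected leaf area: foreground pixel count times the calibration factor."""
    return int(np.count_nonzero(shoot_mask)) * mm2_per_pixel

def path_length_mm(path_px, mm_per_pixel):
    """Length of an ordered skeleton path given as [(row, col), ...]."""
    steps = zip(path_px, path_px[1:])
    length_px = sum(math.dist(p, q) for p, q in steps)
    return length_px * mm_per_pixel

mask = np.zeros((100, 100), dtype=bool)
mask[10:50, 20:70] = True                     # 40 x 50 = 2000 foreground pixels
mm_per_pixel = 10.0 / 100.0                   # assumed: 10 mm scale bar spans 100 px
area = leaf_area_mm2(mask, mm_per_pixel ** 2)
root = path_length_mm([(0, 0), (1, 0), (2, 1)], mm_per_pixel)
print(round(area, 3), round(root, 3))
```

Summing Euclidean step lengths counts diagonal moves as the square root of 2 pixels, which avoids the underestimate that a plain skeleton pixel count gives for oblique roots.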

Protocol 2: Validation of Segmentation Accuracy Against Ground Truth

Objective: To validate the output of the automated segmentation pipeline against manual annotation.

- Manual Annotation: Randomly select 50 images from the dataset. Using ImageJ software, manually trace the outline of primary roots and individual leaves to create ground truth masks.

- Accuracy Metrics Calculation: Compare the algorithm-generated mask (prediction) with the manual mask (ground truth) for the same image.

- Calculate Intersection over Union (IoU) = (Area of Overlap) / (Area of Union).

- Calculate Dice-Sørensen Coefficient (DSC) = (2 * True Positives) / (2 * True Positives + False Positives + False Negatives).

- Statistical Validation: Require a mean IoU > 0.85 and DSC > 0.90 for the segmentation model to be considered valid for trait extraction.
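The two accuracy metrics and the acceptance rule above translate directly into code. The following is a minimal NumPy sketch for binary masks. The function names are hypothetical, and treating two empty masks as perfect agreement is a convention chosen for the example.

```python
import numpy as np

def iou_and_dice(pred, truth):
    """Return (IoU, DSC) for two binary masks of equal shape."""
    pred = np.asarray(pred, dtype=bool)
    truth = np.asarray(truth, dtype=bool)
    tp = np.logical_and(pred, truth).sum()     # overlap
    fp = np.logical_and(pred, ~truth).sum()    # predicted but not annotated
    fn = np.logical_and(~pred, truth).sum()    # annotated but missed
    union = tp + fp + fn
    if union == 0:
        return 1.0, 1.0   # both masks empty: treated as agreement (a convention)
    return tp / union, 2 * tp / (2 * tp + fp + fn)

def is_valid(scores, min_iou=0.85, min_dice=0.90):
    """Apply the acceptance rule to a list of (IoU, DSC) pairs."""
    ious, dices = zip(*scores)
    return bool(np.mean(ious) > min_iou and np.mean(dices) > min_dice)
```

Because DSC = 2·IoU / (1 + IoU), an IoU of 0.85 corresponds to a DSC of about 0.92, so the two thresholds are of similar strictness.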

Visualizations

Plant Phenotyping & Segmentation Workflow

Stress-ABA-Morphology Signaling Pathway

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions & Materials

| Item | Function in Experiment |

|---|---|

| ½ Strength Murashige & Skoog (MS) Medium | Standardized plant growth medium providing essential macro and micronutrients for consistent in vitro cultivation. |

| Agar, Plant Grade | Solidifying agent for preparing growth plates, ensuring sterile and stable physical support for root imaging. |

| Abscisic Acid (ABA) ELISA Kit | Quantifies endogenous ABA hormone levels, validating the activation of the stress signaling pathway. |

| Mannitol or Polyethylene Glycol (PEG) | Osmoticum used to induce controlled water-deficit stress in the growth media, simulating drought conditions. |

| Chlorophyll Extraction Buffer (e.g., 80% Acetone) | Solvent for extracting chlorophyll from leaf tissue, enabling subsequent spectrophotometric quantification as a health marker. |

| Proline Assay Kit (Ninhydrin-based) | For colorimetric quantification of proline, a common osmoprotectant whose accumulation correlates with stress severity. |

| Custom U-Net CNN Model Weights | Pre-trained neural network parameters enabling accurate, automated segmentation of plant organs from raw images. |

| Image Analysis Software (Python w/ OpenCV, scikit-image) | Open-source libraries for implementing image processing pipelines, trait extraction algorithms, and statistical analysis. |

Overcoming Segmentation Challenges: Optimization Strategies for Robust Results

Automated segmentation of plant organs (e.g., leaves, roots, stems) is foundational for high-throughput phenotyping in agricultural research and phytochemical drug discovery. A core thesis in this field posits that robust segmentation algorithms must explicitly model and compensate for specific imaging artifacts to achieve biological accuracy. This document details application notes and experimental protocols for addressing three pervasive pitfalls: occlusion, variable lighting, and low contrast, which critically impede the extraction of quantifiable morphological and physiological data.

Pitfall Analysis & Mitigation Strategies

Occlusion

Challenge: Overlapping leaves or structures create ambiguous boundaries, leading to under-segmentation (multiple organs labeled as one). Mitigation: Employ deep learning architectures designed for instance segmentation and occlusion reasoning.

- Key Solution: Mask R-CNN variants with attention mechanisms (e.g., Occlusion-Aware R-CNN) that explicitly learn to separate overlapping instances.

- Data Augmentation: Synthetic occlusion generation during training improves model robustness.

Variable Lighting

Challenge: Uneven illumination in growth chambers or field settings causes shadow artifacts and intensity inhomogeneity, falsely interpreted as texture or color changes. Mitigation: Pre-processing and invariant feature learning.

- Key Solution: Application of CLAHE (Contrast Limited Adaptive Histogram Equalization) or Retinex-based algorithms to normalize illumination.

- Model Strategy: Training models on datasets with extreme lighting variations or using histogram-matching for domain adaptation.

Low Contrast

Challenge: Poor differentiation between target organ and background (e.g., green stem against green soil) or within-organ low contrast (disease spots). Mitigation: Enhance feature discriminability.

- Key Solution: Use of multi-spectral imaging (beyond RGB) to capture reflectance in NIR or UV bands where contrast is naturally higher. For RGB, learned color space transformations can optimize contrast.

- Algorithmic Approach: Loss functions like contrastive loss or Dice loss that directly optimize for separation of foreground from background.

Table 1: Performance Impact of Pitfalls on Segmentation Models (mAP@0.5)

| Model Architecture | Ideal Conditions | +Occlusion | +Variable Lighting | +Low Contrast | Combined Challenges |

|---|---|---|---|---|---|

| U-Net (Baseline) | 0.94 | 0.71 | 0.68 | 0.65 | 0.52 |

| DeepLabv3+ | 0.95 | 0.75 | 0.80 | 0.70 | 0.58 |

| Mask R-CNN | 0.96 | 0.85 | 0.82 | 0.73 | 0.67 |

| Occlusion-Aware Mask R-CNN | 0.95 | 0.91 | 0.83 | 0.75 | 0.76 |

| Occlusion-Aware Mask R-CNN + CLAHE Pre-processing | 0.95 | 0.91 | 0.89 | 0.78 | 0.81 |

Table 2: Efficacy of Pre-processing for Contrast Enhancement (PSNR in dB)

| Enhancement Method | Average PSNR (dB) | Resulting Dice Score Improvement |

|---|---|---|

| No Enhancement | 18.5 | Baseline (0.65) |

| Histogram Equalization | 20.1 | +0.04 |

| CLAHE | 22.3 | +0.09 |

| Multi-Spectral Fusion (RGB+NIR) | N/A | +0.15 |

Experimental Protocols

Protocol 4.1: Synthetic Occlusion Dataset Generation

Purpose: To train and evaluate model robustness to leaf occlusion. Materials: Clean, segmented image dataset (e.g., Leaf Segmentation Challenge). Procedure:

- For each training image, randomly select 1-3 foreground object masks.

- Apply affine transformations (rotation, scaling) to these masks.

- Overlay the transformed masks onto other randomly selected training images, using Poisson blending for realism.

- Adjust the ground truth labels accordingly to account for the synthetic overlaps.

- Incorporate 30% synthetically occluded images into each training batch.
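The core of the occlusion procedure is pasting a masked object onto another image while updating the labels underneath it. The sketch below shows only that step, with a hard paste in NumPy. The affine transformation and Poisson blending from the procedure are deliberately omitted, and the function name and argument layout are assumptions.

```python
import numpy as np

def paste_occluder(image, labels, occ_pixels, occ_mask, top, left, new_id):
    """Paste a masked object onto an image and update the instance labels.

    image      : (H, W, 3) target image
    labels     : (H, W) integer instance map of the target (0 = background)
    occ_pixels : (h, w, 3) crop containing the occluding object
    occ_mask   : (h, w) boolean mask of the object inside the crop
    The crop must fit entirely inside the target at (top, left).
    """
    image, labels = image.copy(), labels.copy()
    h, w = occ_mask.shape
    region = (slice(top, top + h), slice(left, left + w))
    # Hard paste; the protocol's Poisson blending is not reproduced here.
    image[region][occ_mask] = occ_pixels[occ_mask]
    # Pixels hidden by the occluder now belong to the new instance.
    labels[region][occ_mask] = new_id
    return image, labels
```

Overwriting the label map at the pasted pixels is what "adjusting the ground truth" amounts to: the occluded leaf keeps only its visible part.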

Protocol 4.2: Illumination Normalization for Growth Chamber Imaging

Purpose: To standardize image intensity across different lighting conditions. Materials: RGB plant images, computational software (Python/OpenCV, MATLAB). Procedure:

- Convert image from RGB to LAB color space.

- Apply CLAHE to the L (Lightness) channel only.

- Parameters: Clip Limit = 2.0, Tile Grid Size = 8x8.

- Merge the processed L channel with the original A and B channels.

- Convert the image back to RGB color space for downstream model input.

- Validate by comparing the intensity histogram of the foreground region across multiple corrected images.

Protocol 4.3: Multi-Spectral Imaging for Low-Contrast Organ Delineation

Purpose: To segment plant organs under low RGB contrast using near-infrared (NIR). Materials: Multi-spectral camera (RGB + NIR), calibration panels, plant specimens. Procedure:

- Calibration: Capture images of calibration panels under identical lighting to derive reflectance conversion coefficients.

- Acquisition: Simultaneously capture registered RGB and NIR (e.g., 850nm) images of the plant.

- Calculate NDVI: Compute the Normalized Difference Vegetation Index: (NIR - Red) / (NIR + Red).

- Fusion: Use the NDVI map as an additional input channel to a segmentation model, or threshold it to create a preliminary vegetative mask to guide RGB segmentation.
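The NDVI computation and both fusion options can be sketched in NumPy as follows. The inputs are assumed to be co-registered reflectance arrays. The small `eps` term that avoids division by zero and the threshold of 0.3 are illustrative choices that would need tuning per setup.

```python
import numpy as np

def ndvi(nir, red, eps=1e-8):
    """Normalized Difference Vegetation Index: (NIR - Red) / (NIR + Red)."""
    nir = np.asarray(nir, dtype=np.float64)
    red = np.asarray(red, dtype=np.float64)
    return (nir - red) / (nir + red + eps)   # eps guards against 0 / 0

def vegetation_mask(ndvi_map, threshold=0.3):
    """Preliminary plant mask; the threshold is an assumed starting value."""
    return ndvi_map > threshold

def stack_channels(rgb, ndvi_map):
    """Append NDVI as a fourth input channel: (H, W, 3) -> (H, W, 4)."""
    return np.dstack([rgb.astype(np.float64), ndvi_map])
```

Healthy vegetation reflects strongly in NIR and absorbs red light, so plant pixels receive high NDVI values even when stem and background look similar in RGB.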

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Robust Plant Image Segmentation

| Item | Function & Relevance |

|---|---|

| Multi-Spectral Imaging System | Captures data beyond RGB (e.g., NIR, UV) to provide inherent contrast for organs and stress indicators invisible in standard RGB. |

| Controlled LED Growth Chambers | Provides standardized, programmable lighting to minimize variable lighting artifacts at the source. |

| Calibration Panels (Gray & Spectral) | Essential for radiometric calibration to ensure intensity values are comparable across time and different setups. |

| Synthetic Data Generation Software | Tools (e.g., Blender with plant models) or scripts to create controlled, annotated datasets of occlusions for model training. |

| CLAHE Algorithm Library | A standard pre-processing function (in OpenCV, MATLAB) to normalize local contrast and compensate for uneven illumination. |

| Occlusion-Aware Mask R-CNN Model | A specialized neural network architecture that includes modules to reason about object boundaries under overlap. |

| Domain Adaptation Framework | Software tools (e.g., DALIB, a PyTorch-based domain adaptation library) to adapt models trained on ideal lab images to field images with challenging conditions. |

This document provides application notes and protocols for addressing data scarcity in automated image segmentation of plant organs, a critical task for research in plant phenotyping, stress response analysis, and phytochemical drug discovery. The methodologies outlined herein—data augmentation, synthetic data generation, and transfer learning—enable robust model development when annotated biological image datasets are limited.

Data Augmentation Protocols for Plant Organ Images

Data augmentation artificially expands a training dataset by creating modified versions of existing images. This technique improves model generalization and prevents overfitting.

Standard Spatial & Color Augmentation Protocol

Objective: To generate variant images for training a segmentation model (e.g., U-Net) on Arabidopsis thaliana root system images. Materials: Original annotated dataset of plant organ RGB images. Software: Python with libraries: OpenCV, Albumentations, TensorFlow/PyTorch.

Procedure:

- Load Data: Import image-mask pairs. Masks should be label-encoded (e.g., 0: background, 1: root, 2: leaf).

- Define Augmentation Pipeline: Using the Albumentations library, apply the following transformations sequentially with a probability of 0.5-0.8 per operation:

- Spatial: HorizontalFlip, VerticalFlip, RandomRotate90, ElasticTransform (alpha=120, sigma=120, approx. 0.3 shift), GridDistortion, OpticalDistortion.

- Color/Radiometric: RandomBrightnessContrast (brightness_limit=0.2, contrast_limit=0.2), RGBShift (r_shift_limit=20, g_shift_limit=20, b_shift_limit=20), Blur (blur_limit=3), RandomGamma.

- Crop/Scale: RandomSizedCrop (min_max_height=(256, 512), height=512, width=512).

- Application: Apply the identical random transformation to both the input image and its corresponding segmentation mask to preserve alignment.

- Batch Generation: Integrate the pipeline into the data loader for on-the-fly augmentation during model training.
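The key requirement in the procedure, applying the identical random transformation to image and mask, can be illustrated without any augmentation library. The sketch below is a plain NumPy stand-in covering only flips and 90° rotations. It is not the Albumentations pipeline itself, and `augment_pair` is a hypothetical helper.

```python
import numpy as np

def augment_pair(image, mask, rng):
    """Apply one random flip/rotation to an image and its mask together.

    image : (H, W, C) array, mask : (H, W) label array, rng : np.random.Generator
    """
    if rng.random() < 0.5:                     # horizontal flip
        image, mask = image[:, ::-1], mask[:, ::-1]
    if rng.random() < 0.5:                     # vertical flip
        image, mask = image[::-1], mask[::-1]
    k = int(rng.integers(0, 4))                # 0 to 3 quarter turns
    image, mask = np.rot90(image, k), np.rot90(mask, k)
    return np.ascontiguousarray(image), np.ascontiguousarray(mask)
```

Because each random decision is drawn once and applied to both arrays, every pixel keeps its label. Color transforms, by contrast, should be applied to the image only, since mask values are class IDs rather than intensities.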

Table 1.1: Impact of Augmentation on Model Performance

| Augmentation Strategy | Dataset Size (Pre-Aug) | Dataset Size (Post-Aug) | Segmentation Accuracy (mIoU) | Notes |
|---|---|---|---|---|
| None (Baseline) | 500 images | 500 images | 0.68 | High overfitting observed |
| Spatial Only | 500 images | 4000 images | 0.78 | Improved shape invariance |
| Color Only | 500 images | 4000 images | 0.74 | Improved lighting invariance |
| Combined (Spatial+Color) | 500 images | 4000 images | 0.83 | Best overall performance |

Advanced: Mixup & CutMix Augmentation

Objective: To regularize the model by blending images and labels, encouraging linear behavior between classes.

Protocol (Mixup for Segmentation):

- Sample two image-mask pairs (Xᵢ, Yᵢ) and (Xⱼ, Yⱼ) from a batch.

- Draw a mixing coefficient λ from a Beta(α, α) distribution (α=0.4 is typical).

- Create a new mixed image: X' = λXᵢ + (1-λ)Xⱼ.

- Create a new mixed one-hot encoded mask: Y' = λYᵢ + (1-λ)Yⱼ.

- Train the model on (X', Y'). This protocol requires modifying the loss function to handle soft, continuous labels.
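
The Mixup steps above can be sketched in plain Python. This is a minimal illustration on flattened toy images, not a training-ready implementation: the helper names (`one_hot`, `mixup`, `soft_cross_entropy`) are illustrative, and the uniform "model output" is a stand-in used only to exercise the loss. The loss shown is one possible way to handle soft labels, a cross-entropy that weights each class log-probability by its mixed target value.

```python
import math
import random

def one_hot(label, num_classes):
    """Encode an integer class label as a one-hot list."""
    return [1.0 if c == label else 0.0 for c in range(num_classes)]

def mixup(x_i, y_i, x_j, y_j, lam):
    """Blend two flattened images and their per-pixel one-hot masks."""
    x_mix = [lam * a + (1 - lam) * b for a, b in zip(x_i, x_j)]
    y_mix = [[lam * p + (1 - lam) * q for p, q in zip(pa, pb)]
             for pa, pb in zip(y_i, y_j)]
    return x_mix, y_mix

def soft_cross_entropy(probs, soft_targets, eps=1e-12):
    """Mean over pixels of -sum_c target_c * log(prob_c); accepts soft labels."""
    total = 0.0
    for p_pix, t_pix in zip(probs, soft_targets):
        total -= sum(t * math.log(p + eps) for p, t in zip(p_pix, t_pix))
    return total / len(probs)

rng = random.Random(42)
alpha = 0.4                              # value suggested in the protocol
lam = rng.betavariate(alpha, alpha)      # mixing coefficient in [0, 1]

# Two toy 4-pixel images (flattened) with label masks over 3 classes.
x_i, labels_i = [0.1, 0.9, 0.8, 0.1], [0, 1, 1, 0]
x_j, labels_j = [0.5, 0.5, 0.2, 0.7], [2, 2, 0, 2]
y_i = [one_hot(l, 3) for l in labels_i]
y_j = [one_hot(l, 3) for l in labels_j]

x_mix, y_mix = mixup(x_i, y_i, x_j, y_j, lam)

# Stand-in for model output: uniform class probabilities at every pixel.
uniform_probs = [[1 / 3] * 3 for _ in x_mix]
loss = soft_cross_entropy(uniform_probs, y_mix)
print("lambda:", lam, "loss:", loss)
```

Because each mixed target still sums to 1 per pixel, the soft cross-entropy reduces to the usual hard-label cross-entropy whenever λ is exactly 0 or 1.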

Synthetic Data Generation Protocols

Synthetic data generation creates novel, photorealistic images from 3D models or simulations. Because the scene geometry is known, annotations are produced automatically and are pixel-accurate.

Protocol: Blender-Based Synthetic Plant Image Generation

Objective: Generate synthetic images of Nicotiana benthamiana leaves with precise segmentation masks for pest/disease segmentation.

Software: Blender 3.0+, Botany add-ons (e.g., ANATREE), Python scripting.

Procedure:

- Modeling: Create 3D plant organ models using procedural generation (via Sapling Tree Gen, ANATREE) or by importing scanned mesh data.

- Texturing & Material: Apply high-quality, scanned PBR (Physically Based Rendering) materials to leaf and stem models. Use subsurface scattering for realistic light penetration.

- Scene Composition: Randomize plant pose, camera angle (azimuth: 0-360°, elevation: 15-75°), and lighting (HDRi environment maps for natural skydomes).

- Pathology Simulation: Programmatically apply lesion textures (from isolated real lesions) or 3D deformations to leaf surfaces to simulate disease.

- Rendering & Ground Truth: Render the scene to produce the RGB image. Simultaneously, render object index passes and material passes to automatically generate pixel-perfect masks for organs, lesions, and background.

- Domain Randomization: Randomize every non-essential parameter (pot texture, soil color, camera noise, light temperature) across renders to maximize domain variation.
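
The Scene Composition and Domain Randomization steps amount to drawing a fresh parameter set for every render. The sketch below shows one way to sample such a set in plain Python before handing it to a rendering script. Only the azimuth and elevation ranges come from the protocol; the `SceneParams` fields, the other numeric ranges, and the texture names are hypothetical placeholders, and no Blender API is called.

```python
import random
from dataclasses import dataclass, asdict

@dataclass
class SceneParams:
    azimuth_deg: float
    elevation_deg: float
    light_temperature_k: float
    soil_rgb: tuple
    camera_noise_sigma: float
    pot_texture: str

# Hypothetical texture names; substitute the assets available in your scene.
POT_TEXTURES = ["terracotta", "black_plastic", "white_ceramic"]

def sample_scene(rng):
    """Draw one randomized scene configuration for a single render."""
    return SceneParams(
        azimuth_deg=rng.uniform(0.0, 360.0),              # range from the protocol
        elevation_deg=rng.uniform(15.0, 75.0),            # range from the protocol
        light_temperature_k=rng.uniform(3000.0, 7500.0),  # assumed range
        soil_rgb=tuple(rng.uniform(0.05, 0.45) for _ in range(3)),  # assumed range
        camera_noise_sigma=rng.uniform(0.0, 0.03),        # assumed range
        pot_texture=rng.choice(POT_TEXTURES),
    )

rng = random.Random(7)  # fixed seed so a render batch can be reproduced
scenes = [sample_scene(rng) for _ in range(5)]
for scene in scenes:
    print(asdict(scene))
```

Storing each sampled configuration alongside its rendered image and mask makes it possible to trace later which parameter ranges contribute to a synthetic-to-real gap.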

Table 2.1: Efficacy of Synthetic Data in Plant Disease Segmentation

| Training Data Composition | Model | Dice Score (Leaf) | Dice Score (Lesion) | Real-World Test mIoU |
|---|---|---|---|---|
| 100% Real Data (n=200) | U-Net | 0.91 | 0.45 | 0.48 |
| 100% Synthetic Data (n=5000) | U-Net | 0.89 | 0.87 | 0.52 |
| 10% Real + 90% Synthetic (n=200+4500) | U-Net | 0.93 | 0.89 | 0.81 |
| Real + Style-Transferred Synthetic | DeepLabV3+ | 0.92 | 0.87 | 0.79 |
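
The tables in this section report Dice scores and mIoU. As a reference for how these metrics relate, the sketch below computes both from flattened label lists using the standard definitions, IoU = TP / (TP + FP + FN) and Dice = 2TP / (2TP + FP + FN). The function names are illustrative, and skipping classes absent from both prediction and ground truth is an assumed convention; evaluation toolkits differ on this point.

```python
def _counts(pred, truth, cls):
    """True positives, false positives, false negatives for one class."""
    tp = sum(1 for p, t in zip(pred, truth) if p == cls and t == cls)
    fp = sum(1 for p, t in zip(pred, truth) if p == cls and t != cls)
    fn = sum(1 for p, t in zip(pred, truth) if p != cls and t == cls)
    return tp, fp, fn

def iou(pred, truth, cls):
    """Intersection-over-Union for one class, or None if the class is absent."""
    tp, fp, fn = _counts(pred, truth, cls)
    denom = tp + fp + fn
    return tp / denom if denom else None

def dice(pred, truth, cls):
    """Dice coefficient for one class, or None if the class is absent."""
    tp, fp, fn = _counts(pred, truth, cls)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else None

def mean_iou(pred, truth, classes):
    """Average IoU over classes that occur in prediction or ground truth."""
    scores = [s for s in (iou(pred, truth, c) for c in classes) if s is not None]
    return sum(scores) / len(scores) if scores else None

# Flattened toy masks: 0 = background, 1 = leaf, 2 = lesion.
pred  = [0, 0, 1, 1, 2, 2, 2, 0]
truth = [0, 1, 1, 1, 2, 2, 0, 0]

for cls in (0, 1, 2):
    print(cls, iou(pred, truth, cls), dice(pred, truth, cls))
print("mIoU:", mean_iou(pred, truth, [0, 1, 2]))
```

For a single class, Dice is never lower than IoU, so Dice and mIoU columns are not directly comparable without conversion.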

Transfer Learning & Fine-Tuning Protocols

Transfer learning leverages knowledge from a model pre-trained on a large, general dataset (e.g., ImageNet) and adapts it to a specific, smaller plant imaging task.

Protocol: Fine-Tuning a Pre-Trained Encoder for Root Segmentation

Objective: Adapt a ResNet-50 backbone, pre-trained on ImageNet, for semantic segmentation of wheat root images in soil.

Software: PyTorch or TensorFlow, segmentation library (e.g., segmentation-models-pytorch).

Procedure:

- Base Model: Initialize a segmentation model (e.g., U-Net, FPN) with a ResNet-50 encoder. Load weights pre-trained on ImageNet.

- Two-Phase Training:

- Phase 1 - Frozen Encoder: Freeze the weights of the entire encoder. Train only the decoder and segmentation head for 20-50 epochs. This allows the decoder to learn to map the encoder's general features to your specific labels.

- Phase 2 - Full Fine-Tuning: Unfreeze the entire encoder, or only its later blocks (e.g., ResNet stages 4 and 5). Use a lower learning rate (10x smaller than in Phase 1) to gently adjust the pre-trained features to the new domain. Train for an additional 50-100 epochs.

- Learning Rate Scheduling: Use a cosine annealing or reduce-on-plateau scheduler during Phase 2.

- Differential Learning Rates: Optionally, assign lower learning rates to earlier encoder blocks and higher rates to later blocks and the decoder.
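
The learning-rate arithmetic behind Phase 2 can be sketched independently of any deep learning framework. The example below computes a cosine annealing schedule and a set of differential learning rates per block. The Phase 1 rate of 1e-3, the block names, the decay factor of 0.5, and the floor of 1e-6 are assumptions chosen for illustration; only the 10x reduction between phases comes from the protocol.

```python
import math

def cosine_annealing(step, total_steps, lr_max, lr_min=0.0):
    """Cosine schedule: starts at lr_max (step 0), ends at lr_min (step total_steps)."""
    cos_term = 1 + math.cos(math.pi * step / total_steps)
    return lr_min + 0.5 * (lr_max - lr_min) * cos_term

def differential_lrs(base_lr, block_names, decay=0.5):
    """Last block gets base_lr; each earlier block gets `decay` times the next one."""
    n = len(block_names)
    return {name: base_lr * decay ** (n - 1 - i)
            for i, name in enumerate(block_names)}

phase1_lr = 1e-3            # assumed decoder learning rate with the encoder frozen
phase2_lr = phase1_lr / 10  # protocol: 10x smaller for full fine-tuning

# Hypothetical block names, ordered from earliest encoder block to decoder.
blocks = ["stage1", "stage2", "stage3", "stage4", "decoder"]
group_lrs = differential_lrs(phase2_lr, blocks)

# Per-epoch learning rate for the decoder group over a 50-epoch Phase 2.
schedule = [cosine_annealing(epoch, 50, phase2_lr, lr_min=1e-6)
            for epoch in range(51)]

for name, lr in group_lrs.items():
    print(name, lr)
print("first epoch lr:", schedule[0], "last epoch lr:", schedule[-1])
```

In a framework such as PyTorch, a mapping like `group_lrs` would typically be translated into optimizer parameter groups, with the scheduler applied on top.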

Table 3.1: Comparison of Transfer Learning Strategies for Plant Organ Segmentation

| Strategy | Pre-training Dataset | Trainable Parameters | Training Time (Epochs to Convergence) | Performance (mIoU on Target) |
|---|---|---|---|---|
| Training from Scratch | None | 100% | 300+ | 0.61 |
| Frozen Feature Extractor | ImageNet | ~20% (Decoder only) | 100 | 0.76 |
| Full Fine-Tuning | ImageNet | 100% | 150 | 0.85 |
| Fine-Tuning (Last 2 Blocks) | ImageNet & PlantCV-50k | ~30% | 120 | 0.88 |

Visualization of Methodological Workflows

Title: Three-Pronged Strategy to Overcome Data Scarcity

Title: Sequential Data Augmentation Pipeline for Plant Images

Title: Two-Phase Transfer Learning and Fine-Tuning Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 5.1: Essential Tools & Platforms for Addressing Data Scarcity

| Item Name | Category | Function/Benefit | Example Vendor/Platform |
|---|---|---|---|
| Albumentations | Software Library | Fast, optimized library for image augmentation with perfect mask alignment support. Essential for spatial/color transforms. | Open Source (GitHub) |
| Blender | Software | Open-source 3D creation suite. Core platform for photorealistic synthetic data generation of plant models. | Blender Foundation |
| ANATREE / Sapling | Blender Add-on | Procedural generator for botanically-plausible 3D tree and plant models within Blender. | Open Source / Blender Market |
| NVIDIA Omniverse Replicator | Software SDK | Domain randomization and synthetic data generation SDK built on Pixar USD, useful for scalable synthetic data creation. | NVIDIA |
| CVAT (Computer Vision Annotation Tool) | Software | Open-source tool for annotating images and videos. Integrates with models for AI-assisted labeling, reducing annotation burden. | Open Source (Intel) |
| Weights & Biases (W&B) | MLOps Platform | Tracks experiments, datasets (including synthetic data versions), and model performance, crucial for iterative methodology. | Weights & Biases Inc. |
| BioPreprocessD Dataset | Reference Dataset | A large, public dataset of preprocessed plant images for initial model pre-training or style transfer. | Plant Phenomics Portals |
| StyleGAN2-ADA | Model Architecture | Generative Adversarial Network for high-quality image synthesis and style transfer to bridge synthetic-real domain gaps. | Open Source (NVlabs) |
| segmentation-models-pytorch | Software Library | Provides pre-trained encoders (on ImageNet) and U-Net/FPN/PSPNet decoders for immediate transfer learning. | Open Source (GitHub) |
| Roboflow | Platform | End-to-end platform for managing, augmenting, preprocessing, and versioning computer vision datasets. | Roboflow Inc. |

Application Notes

This document details protocols for optimizing deep learning models within an automated image segmentation pipeline for plant organ phenotyping. Efficient optimization is critical for deploying models in resource-constrained research environments.

Hyperparameter Tuning Strategies

1.1. Core Parameters for Segmentation Models

For U-Net and DeepLabV3+ architectures, key hyperparameters impacting performance on plant organ datasets (e.g., Arabidopsis, tomato root systems) include:

| Hyperparameter | Typical Search Range | Impact on Model & Training | Optimal Value (Sample Experiment) |
|---|---|---|---|
| Initial Learning Rate | 1e-4 to 1e-2 | Controls step size in gradient descent. Too high causes divergence; too low leads to slow convergence. | 3e-4 |
| Batch Size | 4, 8, 16, 32 | Affects gradient stability and memory use. Smaller batches can regularize but increase variance. | 8 |
| Optimizer | Adam, SGD, AdamW | Algorithm for updating weights. AdamW often superior for generalization. | AdamW |
| Weight Decay (L2) | 1e-5 to 1e-3 | Regularization to prevent overfitting on limited botanical image data. | 1e-4 |
| Backbone Network | ResNet-18, ResNet-50, MobileNetV3 | Depth vs. speed trade-off. Deeper networks capture more features but are slower. | ResNet-34 |

1.2. Automated Tuning Protocol: Bayesian Optimization

- Objective: Maximize the Mean Intersection-over-Union (mIoU) on a validation set of annotated plant organ images.

- Tools: Optuna or Ray Tune framework.

- Procedure:

- Define Search Space: Specify ranges for each hyperparameter (see table above).

- Initialize Trial: Run an initial model with default parameters.

- Iterate: For n_trials=50:

- The optimizer suggests a new set of hyperparameters.

- Train the segmentation model for a fixed number of epochs (e.g., 30) using the suggested parameters.

- Evaluate on the validation set and report mIoU to the optimizer.

- Select Best: After completion, identify the trial yielding the highest validation mIoU.

- Expected Outcome: A tuned model configuration achieving 2-5% higher mIoU than baseline.
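
The suggest, train, evaluate, and report loop above has the same shape regardless of the sampler. The sketch below reproduces that loop using plain random search as a stand-in for the Bayesian sampler that Optuna or Ray Tune would provide, so that it runs with the standard library alone. The `train_and_validate` function is a hypothetical placeholder returning a synthetic score, not real training, and the default configuration is an assumption.

```python
import math
import random

SEARCH_SPACE = {
    "lr": (1e-4, 1e-2),            # sampled log-uniformly
    "weight_decay": (1e-5, 1e-3),  # sampled log-uniformly
    "batch_size": [4, 8, 16, 32],
    "optimizer": ["Adam", "SGD", "AdamW"],
}

def suggest(rng):
    """Draw one configuration (random search, standing in for a Bayesian sampler)."""
    def log_uniform(lo, hi):
        return math.exp(rng.uniform(math.log(lo), math.log(hi)))
    return {
        "lr": log_uniform(*SEARCH_SPACE["lr"]),
        "weight_decay": log_uniform(*SEARCH_SPACE["weight_decay"]),
        "batch_size": rng.choice(SEARCH_SPACE["batch_size"]),
        "optimizer": rng.choice(SEARCH_SPACE["optimizer"]),
    }

def train_and_validate(params):
    """HYPOTHETICAL placeholder: returns a synthetic 'validation mIoU'.
    Replace with real training for a fixed number of epochs plus evaluation."""
    score = 0.80
    score -= 0.05 * abs(math.log10(params["lr"]) - math.log10(3e-4))
    score -= 0.02 * abs(math.log10(params["weight_decay"]) + 4)
    score += 0.01 if params["optimizer"] == "AdamW" else 0.0
    return max(0.0, min(1.0, score))

def run_search(n_trials=50, seed=0):
    rng = random.Random(seed)
    # Initial trial with assumed default parameters serves as the baseline.
    defaults = {"lr": 1e-3, "weight_decay": 1e-4, "batch_size": 16, "optimizer": "Adam"}
    baseline = train_and_validate(defaults)
    best_params, best_score = defaults, baseline
    for _ in range(n_trials):
        params = suggest(rng)               # sampler proposes hyperparameters
        score = train_and_validate(params)  # train, then evaluate on validation set
        if score > best_score:              # keep the best trial seen so far
            best_params, best_score = params, score
    return best_params, best_score, baseline

best_params, best_score, baseline = run_search()
print("baseline:", baseline, "best:", best_score, best_params)
```

A Bayesian sampler differs only in the `suggest` step, where it would use the scores of earlier trials to propose the next configuration instead of sampling independently.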

Diagram Title: Bayesian Hyperparameter Tuning Workflow

Lightweight Model Deployment Protocols

2.1. Model Compression Techniques

Quantitative comparison of compression techniques applied to a plant leaf segmentation model (ResNet-50 backbone):

| Technique | Method | Model Size (Original) | Model Size (Compressed) | mIoU Change | Inference Latency (CPU) |
|---|---|---|---|---|---|
| Pruning | Remove 30% of smallest-magnitude weights in convolutional layers. | 98 MB | 68 MB | -0.8% | 120 ms |
| Quantization (FP16) | Convert weights from 32-bit floats to 16-bit floats. | 98 MB | 49 MB | -0.2% | 85 ms |
| Quantization (INT8) | Post-training quantization to 8-bit integers (TensorRT/TFLite). | 98 MB | 25 MB | -1.5% | 45 ms |
| Knowledge Distillation | Train a small "student" (MobileNetV2) from the large "teacher" model. | 98 MB | 9 MB | -2.0% | 30 ms |
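
The pruning row describes unstructured magnitude pruning. A minimal sketch of that operation on a flat list of weights is shown below; the function name and the toy weights are illustrative, and a real model would apply this per layer through the pruning utilities of its framework.

```python
def magnitude_prune(weights, sparsity=0.3):
    """Zero the `sparsity` fraction of weights with the smallest magnitude."""
    k = int(len(weights) * sparsity)  # number of weights to remove
    if k == 0:
        return list(weights)
    # Indices sorted by absolute value; the first k are the smallest.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]

# Toy convolution kernel flattened to 10 weights.
weights = [0.8, -0.05, 0.3, -0.9, 0.01, 0.4, -0.2, 0.6, -0.02, 0.7]
pruned = magnitude_prune(weights, sparsity=0.3)

print(pruned)
print("zeroed:", sum(1 for w in pruned if w == 0.0), "of", len(pruned))
```

Zeroed weights reduce the stored model size only when the file uses a sparse or compressed format, and they speed up inference only on runtimes that exploit sparsity.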

2.2. Deployment Protocol: TensorFlow Lite for Edge Devices

- Objective: Deploy a quantized leaf segmentation model on a Raspberry Pi 4 for real-time analysis in a growth chamber.

- Procedure:

- Model Conversion: Convert the trained TensorFlow/Keras model to TensorFlow Lite format using the TensorFlow Lite Converter.

- Apply Full Integer Quantization: Use a representative dataset (200-300 plant images) to calibrate the quantization ranges.

- Benchmark: Use the TFLite Benchmark Tool to verify latency and memory usage on the target hardware.

- Integration: Package the .tflite model and a lightweight inference script into a Python application using the tflite_runtime interpreter.
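
The role of the representative dataset in full integer quantization can be illustrated with the underlying arithmetic. The sketch below is not the TensorFlow Lite converter; it is a simplified affine (scale and zero-point) quantizer in plain Python, with illustrative function names and toy calibration values, showing how observed value ranges determine the mapping to 8-bit integers.

```python
def calibrate(values, qmin=-128, qmax=127):
    """Derive scale and zero point from observed values (the calibration step)."""
    lo = min(min(values), 0.0)  # range is widened to include 0 so that
    hi = max(max(values), 0.0)  # zero is exactly representable
    if hi == lo:
        return 1.0, 0           # degenerate case: all calibration values are 0
    scale = (hi - lo) / (qmax - qmin)
    zero_point = int(round(qmin - lo / scale))
    return scale, zero_point

def quantize(x, scale, zero_point, qmin=-128, qmax=127):
    """Map a float to an int8 code, clamping values outside the calibrated range."""
    q = int(round(x / scale)) + zero_point
    return max(qmin, min(qmax, q))

def dequantize(q, scale, zero_point):
    """Recover the approximate float value from an int8 code."""
    return scale * (q - zero_point)

# Toy activations standing in for values seen on representative plant images.
calibration = [-1.0, -0.5, 0.0, 0.25, 2.0]
scale, zero_point = calibrate(calibration)

for x in calibration:
    q = quantize(x, scale, zero_point)
    print(x, "->", q, "->", dequantize(q, scale, zero_point))
```

If the calibration images do not cover the value ranges seen in deployment, out-of-range activations are clamped, which is one reason the representative set should reflect the lighting and growth stages of the target chamber. The storage saving follows directly from the bit width: 98 MB of 32-bit weights correspond to roughly 24.5 MB at 8 bits.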

Diagram Title: Model Quantization & Edge Deployment Pipeline

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Model Optimization/Deployment |
|---|---|
| Optuna Framework | Open-source hyperparameter optimization framework for automating the search for optimal model parameters. |
| PyTorch Lightning / Keras Tuner | High-level libraries that abstract training loops and provide built-in tuning capabilities. |
| TensorRT / TensorFlow Lite | SDKs for high-performance deep learning inference on edge devices, enabling model quantization and acceleration. |
| ONNX Runtime | Cross-platform inference engine for exporting and running models from various frameworks (PyTorch, TensorFlow) in a standardized format. |
| Weights & Biases (W&B) | Experiment tracking tool to log hyperparameters, metrics, and model artifacts, facilitating comparison across trials. |
| Labeled Plant Organ Datasets (e.g., PlantCV, PPP) | High-quality, annotated image data for training and validating segmentation models. Critical for domain-specific tuning. |
| Docker | Containerization platform to create reproducible environments for model training and deployment across different systems. |

Automated image segmentation of plant organs (e.g., roots, leaves, flowers) is a cornerstone of high-throughput phenotyping. A persistent challenge in deploying computer vision models for agricultural and pharmaceutical research is generalization—the ability of a single model to perform accurately across diverse plant species and distinct growth stages. This application note provides detailed protocols and strategies to develop robust, generalizable segmentation models, framed within a broader thesis on enabling scalable, cross-species plant organ analysis for trait discovery and drug development from plant-based compounds.

Core Challenges and Strategic Framework

Generalization failure stems from dataset bias and feature distribution shifts. Key challenges include:

- Morphological Variance: Differences in organ shape, size, texture, and venation patterns between species (e.g., Arabidopsis vs. maize leaves).

- Ontogenetic Variance: Changes in organ appearance, structure, and occlusion from seedling to maturity.