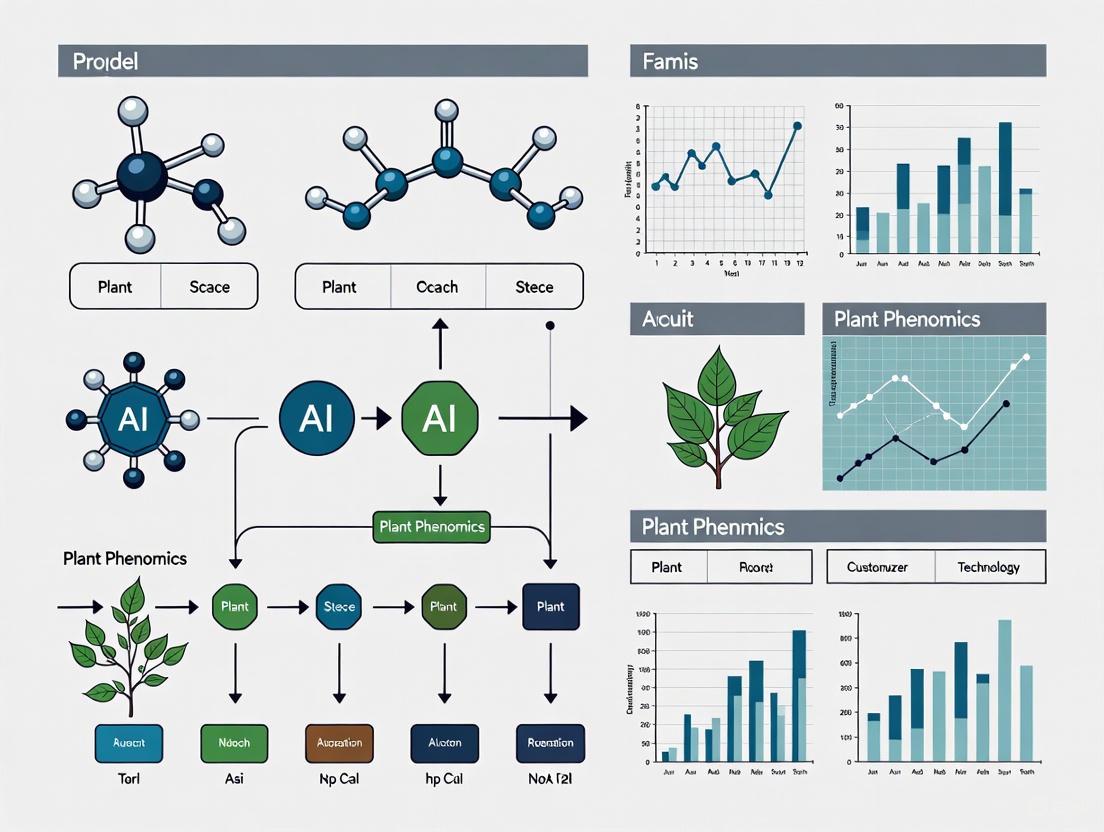

AI in Plant Phenomics: Revolutionizing Data Analysis for Sustainable Agriculture and Drug Discovery


Abstract

This article explores the transformative role of Artificial Intelligence (AI) in plant phenomics, the high-throughput study of plant traits. Aimed at researchers, scientists, and drug development professionals, it details how machine learning and deep learning are overcoming the challenges of analyzing complex, large-scale phenotypic data. The scope spans from foundational concepts and core AI methodologies to practical applications in crop improvement and stress resilience. It further addresses critical challenges like data heterogeneity and model interpretability, evaluates AI's performance against traditional methods, and discusses its emerging cross-disciplinary potential in biomedical research, offering a comprehensive guide to this rapidly evolving field.

From Images to Insights: Defining AI's Role in Modern Plant Phenomics

What is Plant Phenomics? The Data Bottleneck in Traditional Analysis

Plant phenomics is defined as the systematic study of the phenome—the comprehensive set of physical and biochemical traits of an organism—as it changes in response to genetic mutation and environmental influences [1] [2]. It is a high-throughput, path-breaking field dedicated to the accurate, rapid, and multi-faceted collection of phenotypic data [3]. The primary goal is to bridge the critical gap between a plant's genotype and its expressed phenotype, thereby enabling researchers to understand why a particular genotype outperforms others under specific environmental conditions [3] [4].

A phenotype results from the complex interplay between a plant's genetics (G), its environment (E), and even the phenotypic history of its parents, a concept encapsulated as GxExP [5]. In the past, phenotypic assessments were performed manually by researchers. These methods were often extremely time-consuming, labor-intensive, and subjective, with assessments varying between individuals. Furthermore, they often required the destructive harvesting of plants that had taken months to grow [6] [1].

The Phenotyping Bottleneck: Constraining Genomic Advances

The rapid development of high-throughput genetic analysis techniques, such as next-generation sequencing, has drastically reduced the cost and time required for plant genotyping [4]. However, the ability to acquire high-quality phenotypic data has not kept pace. This disparity has created a significant constraint known as the "phenotyping bottleneck" [7] [5].

This bottleneck severely restricts progress in understanding the genetic basis of complex quantitative traits—such as yield, stress tolerance, and resource use efficiency—which are governed by many genes and are highly influenced by the environment [4]. Without precise, high-throughput phenotyping to match the scale and resolution of genomic data, the full potential of genetic advancements in crop improvement cannot be realized [3] [7].

High-Throughput Phenotyping Technologies: Accelerating Data Acquisition

To overcome this bottleneck, plant phenomics employs a suite of non-invasive, high-throughput technologies. These platforms automate data acquisition, enabling the characterization of large numbers of plants at a fraction of the time, cost, and labor of traditional techniques [6].

The following table categorizes the primary platforms and sensing technologies used in modern plant phenomics.

Table 1: High-Throughput Plant Phenotyping Platforms and Technologies

| Platform Scale | Sensing Technology | Measured Traits & Applications | Level of Detail |
|---|---|---|---|
| Microscopic [4] | Micro-computed tomography, High-resolution microscopy [4] | Cellular structure, tissue morphology, seed morphometric features [4] | High-resolution detail of individual plant components (cells, tissues) [4] |
| Ground-Based [4] | RGB (digital) imaging, Chlorophyll fluorescence, Thermal imaging, Hyperspectral, 3D/Lidar [4] [6] | Plant architecture (height, leaf area), physiological status (water stress, photosynthetic efficiency), biomass [4] [6] | Detailed information on individual plants or plots [4] |
| Aerial (Field) [4] | Multispectral & Hyperspectral sensors (on drones, satellites), Thermal imaging [4] | Crop vigor, stress responses (drought, nutrient deficiency), yield prediction over large areas [4] [8] | Large-scale phenotypes at the canopy, plot, or field level [4] |

These technologies generate massive, multi-dimensional datasets. The subsequent challenge shifts from data acquisition to data management and analysis, which represents the next frontier in the phenomics pipeline [4].

The Data Analysis Bottleneck and the Rise of Artificial Intelligence

Robust high-throughput phenotyping techniques permit continuous imaging of plants at brief intervals, generating vast amounts of data [4]. Analyzing and interpreting these large, complex datasets is a significant challenge in its own right, creating a secondary bottleneck that is increasingly being addressed by Artificial Intelligence (AI), specifically machine learning (ML) and deep learning (DL) [4] [6].

- Machine Learning: ML algorithms can search large datasets to discover patterns by simultaneously analyzing a combination of features. They have been successfully applied in tasks such as plant disease identification, stress classification, and organ segmentation [6].

- Deep Learning: This subset of machine learning, particularly Convolutional Neural Networks (CNNs), has created a paradigm shift in image-based plant phenotyping [4] [6]. Unlike traditional ML that requires manual feature extraction, deep learning automatically discovers the complex structures in high-dimensional image data, making it highly efficient for tasks like object detection, semantic segmentation, and image classification [4] [6]. This has proven invaluable for everything from counting leaf numbers to diagnosing fruit quality [4].

Table 2: Applications of Artificial Intelligence in Plant Phenomics

| AI Technology | Key Application in Phenomics | Specific Use Cases |
|---|---|---|
| Machine Learning (ML) [6] | Pattern discovery and classification from large datasets [6] | Identification and classification of plant diseases; Taxonomic classification of leaves; Plant image segmentation [6] |
| Deep Learning (DL) / Computer Vision (CV) [4] [6] [8] | Automated image analysis for trait extraction and plant monitoring [4] [6] | Yield prediction; Detection and quantification of biotic (pests, diseases) and abiotic (drought, nutrient) stresses; Monitoring of morphological and physiological traits [8] |
| Cyberinfrastructure (CI) & Open-Source Tools [6] | Data management, sharing, and collaborative analysis [6] | Facilitating collaboration among researchers; Community-driven development of software (e.g., PlantCV) and data-sharing platforms [6] |

The integration of AI is crucial for translating raw image data into biologically meaningful information, thereby breaking through the data analysis bottleneck.

Experimental Protocols for High-Throughput Phenotyping

Setting up appropriate and well-defined experimental procedures is fundamental for generating reliable and reproducible phenomic data. The following workflow outlines the critical steps for a quantitative high-throughput phenotyping experiment, from initial setup to data analysis.

- Plant Material Standardization: The phenotype is influenced by the parent's phenotype (GxExP). Using simultaneously propagated seed material and accounting for seed size and quality is critical to minimize unplanned variation.

- Environmental Control & Monitoring: Even in controlled environments, microclimatic fluctuations (e.g., between chamber center and sides) exist. Employing wireless sensor networks to monitor conditions at the plant level is recommended. This data can be used to refine experimental designs.

- Randomization and Replication: To counteract environmental inhomogeneities within growth chambers, a sufficiently randomized design with adequate replication is necessary to detect subtle genotypic differences.
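The randomization step above can be sketched as a randomized complete block layout, in which every genotype appears once per block and its position is shuffled independently within each block. This is a minimal sketch, not a prescribed protocol: the function name `randomized_block_layout`, the genotype labels, and the choice of three blocks are all illustrative assumptions.

```python
import random


def randomized_block_layout(genotypes, n_blocks, seed=0):
    """Return one independently shuffled genotype order per block.

    Each block (e.g. one shelf or tray of a growth chamber) holds every
    genotype exactly once, so position effects are spread across genotypes.
    """
    rng = random.Random(seed)  # fixed seed so the layout can be reproduced
    layout = []
    for _ in range(n_blocks):
        order = list(genotypes)
        rng.shuffle(order)
        layout.append(order)
    return layout


# Hypothetical genotype labels, for illustration only.
genotypes = ["Col-0", "mutant_a", "mutant_b", "mutant_c"]
layout = randomized_block_layout(genotypes, n_blocks=3)
for block_id, order in enumerate(layout, start=1):
    print(f"block {block_id}: {order}")
```

Recording the seed alongside the experiment metadata lets the same layout be regenerated later when the data are analyzed.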

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key materials and resources essential for conducting modern plant phenomics research.

Table 3: Essential Toolkit for Plant Phenomics Research

| Tool / Resource | Category | Function & Application |
|---|---|---|
| Arabidopsis thaliana [9] [5] | Model Organism | A widely used model plant for developing and optimizing phenotyping protocols in controlled environments due to its short life cycle and small size. |
| Wild Type & Mutant Lines [9] | Genetic Material | Essential for comparative studies to understand gene function and the effect of genetic mutations on the phenotype. |
| High-Throughput Phenotyping Platforms (e.g., LemnaTec Scanalyzer, PlantScreen) [5] | Core Infrastructure | Automated conveyor-based systems in controlled environments that transport plants to imaging stations for non-destructive, multi-sensor data acquisition. |
| Imaging Sensors (RGB, Fluorescence, Hyperspectral, Thermal, 3D/Lidar) [4] [6] | Sensing Technology | Capture different aspects of plant morphology, physiology, and biochemistry for comprehensive trait assessment. |
| Standardized Data Formats & Ontologies (MIAPPE, Crop Ontology, Breeding API) [10] [2] | Data Management | Ensure data is Findable, Accessible, Interoperable, and Reusable (FAIR), enabling data sharing, integration, and meta-analysis. |
| Analysis Software & Cyberinfrastructure (PlantCV, DIRT, IAP, HTPheno) [6] [2] [5] | Data Analysis | Software tools and cyberinfrastructure for processing plant images, extracting features, and managing the large datasets generated. |

Plant phenomics has emerged as a critical discipline to overcome the historical bottleneck in phenotypic data acquisition, which had been limiting the application of genomic advances in crop improvement. By leveraging high-throughput, non-invasive technologies, it enables the precise and large-scale measurement of plant traits. However, the vast data streams generated by these technologies have created a new challenge in data analysis. The integration of Artificial Intelligence is now proving to be the key to unlocking this subsequent bottleneck. Through machine and deep learning, researchers can efficiently extract meaningful biological insights from complex phenomic datasets, ultimately accelerating the development of crops with higher yields and greater resilience to environmental stresses.

Plant phenomics, the high-throughput study of plant traits in relation to their genetic and environmental factors, has emerged as a critical discipline for addressing global food security challenges [11]. With the necessity to increase global food production by 70% by 2050, researchers face immense pressure to accelerate the development of crops with higher yield, better nutrition, and greater resilience to climate change [12]. The rapid advancement of artificial intelligence (AI) technologies, particularly machine learning (ML), deep learning (DL), and computer vision, is transforming plant phenomics from a labor-intensive bottleneck into a powerful, data-driven science. These core AI technologies enable researchers to extract quantitative phenotypic information from complex plant systems at unprecedented scales, speeds, and accuracies, thereby creating a vital bridge between genomic information and observable plant characteristics [11].

The integration of AI into plant phenomics represents a paradigm shift from traditional observational methods to automated, intelligent systems capable of learning from vast amounts of multimodal data. Where plant scientists once relied on manual measurements that were slow, subjective, and destructive, AI-powered systems can now continuously monitor plant growth, architecture, and physiological responses in both controlled and field environments [11]. This technological transformation is making it possible to establish more precise genotype-to-phenotype relationships, which is fundamental to accelerating plant breeding programs and developing more effective crop management strategies in the face of changing environmental conditions [11] [12].

Core AI Technologies: Theoretical Foundations and Methodologies

Machine Learning: The Foundational Framework

Machine learning provides the fundamental framework for enabling computers to learn patterns from data without being explicitly programmed for specific tasks. In the context of plant phenomics, ML algorithms parse complex biological data, learn from it, and make determinations or predictions about plant traits and behaviors [13]. The practice of ML consists predominantly of data processing and cleaning (approximately 80% of effort) with the remaining focus on algorithm application, emphasizing that predictive power depends critically on high-quality, well-curated datasets [13].

ML techniques are broadly categorized into supervised and unsupervised learning approaches. Supervised learning methods train models on known input and output data relationships to predict future outputs for new inputs, making them particularly valuable for classification tasks (e.g., disease identification) and regression analysis (e.g., yield prediction) in plant phenomics [13]. Unsupervised learning techniques identify hidden patterns or intrinsic structures in input data without pre-defined output labels, enabling clustering of plant phenotypes in meaningful ways that might not be immediately apparent to human observers [13]. The selection of appropriate ML models depends on multiple factors including prediction accuracy, training speed, variable handling capacity, and the specific biological question being addressed.

A critical consideration in ML application is managing model generalization: the ability of a model to apply learned concepts to new, unseen data. Overfitting occurs when models learn not only the underlying signal but also noise and unusual features from training data, negatively impacting performance on new data. Conversely, underfitting describes models that fail to capture the underlying patterns in both training and new data [13]. Plant phenomics researchers employ various strategies to mitigate these issues, including resampling methods, validation datasets, regularization techniques (Ridge, LASSO, elastic nets), and dropout methods in neural networks [13].
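To make the effect of regularization concrete, the sketch below fits a one-feature ridge regression without an intercept, for which the penalized least-squares solution has the closed form w = Σxy / (Σx² + λ). The data values and the function name `ridge_slope` are illustrative assumptions rather than anything taken from the cited work.

```python
def ridge_slope(xs, ys, lam):
    """Closed-form ridge estimate for y ~ w * x (no intercept).

    Minimizes sum((y - w*x)^2) + lam * w^2, giving
    w = sum(x*y) / (sum(x^2) + lam).
    """
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return sxy / (sxx + lam)


# Made-up measurements, e.g. a sensor reading (x) against a trait value (y).
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.1, 3.9, 6.2, 7.8]

for lam in (0.0, 1.0, 10.0):
    # A larger penalty shrinks the fitted slope toward zero.
    print(f"lambda={lam:>4}: slope={ridge_slope(xs, ys, lam):.3f}")
```

With λ = 0 this reduces to ordinary least squares; in practice λ would be chosen on validation data rather than fixed in advance.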

Deep Learning: Advanced Pattern Recognition in Plant Systems

Deep learning represents a sophisticated evolution of traditional neural networks, characterized by multiple layers of abstraction that enable automatic feature detection from massive datasets [13]. While traditional neural networks typically use one or two hidden layers due to hardware limitations, DL architectures leverage modern GPU and TPU hardware to construct networks with numerous hidden layers, dramatically increasing their capacity to learn complex, hierarchical representations from raw data [13]. This capability is particularly valuable in plant phenomics, where phenotypic traits often emerge from complex interactions across multiple biological scales.

Table 1: Deep Learning Architectures Relevant to Plant Phenomics

| Architecture | Key Characteristics | Plant Phenomics Applications |
|---|---|---|
| Convolutional Neural Networks (CNNs) | Locally connected layers that hierarchically compose simple features into complex models | Image-based trait analysis, disease identification, growth monitoring [13] |
| Recurrent Neural Networks (RNNs) | Chain of repeating modules with connections forming directed graphs along sequences | Analysis of plant development over time, growth trajectory prediction [13] |
| Fully Connected Feedforward Networks | Each input neuron connected to every neuron in subsequent layers | Predictive modeling from high-dimensional data like gene expression [13] |
| Deep Autoencoder Networks | Unsupervised learning for dimensionality reduction while preserving essential variables | Compression of complex phenotypic data, identification of latent representations [13] |
| Generative Adversarial Networks (GANs) | Paired networks where one generates content and the other evaluates it | Synthetic image generation for data augmentation, phenotype simulation [13] |

The application of deep learning in plant phenotyping has demonstrated superior performance over traditional analysis methods across numerous studies, leading to accelerated adoption in the research community [12]. However, the "black box" nature of DL models, where the internal decision-making processes remain opaque, presents significant challenges for biological interpretation and validation [12]. This limitation has stimulated growing interest in Explainable AI (XAI) approaches that aim to make DL models more transparent and interpretable for plant scientists [12].

Computer Vision: Visual Data Interpretation at Scale

Computer vision provides the technological foundation for extracting meaningful information from visual data, making it indispensable for modern high-throughput plant phenotyping systems. Imaging-based phenotyping has become the preferred method for non-destructive, automated measurement of multiple morphological and physiological traits from individual plants across temporal scales [11]. While manual measurement of plant traits may currently offer superior accuracy for specific applications, computer vision enables unprecedented throughput, allowing researchers to characterize thousands of plants simultaneously under controlled or field conditions.

Advanced imaging methodologies deployed in plant phenomics span multiple regions of the electromagnetic spectrum, including visible light (RGB), hyperspectral, thermal, and fluorescence imaging [11]. Each modality captures distinct aspects of plant physiology and structure, enabling comprehensive phenotypic profiling. RGB imaging provides information about plant architecture, morphology, and color characteristics; hyperspectral imaging captures detailed spectral signatures related to biochemical composition; thermal imaging reveals canopy temperature variations indicative of water stress; and fluorescence imaging offers insights into photosynthetic efficiency and metabolic activity [11].

The integration of computer vision with deep learning has created particularly powerful synergies for plant phenomics. CNNs can automatically learn relevant features from plant images without manual feature engineering, detecting patterns that might escape human observation [13]. This capability is revolutionizing everything from root system architecture analysis to fine-grained disease symptom detection, enabling quantitative assessment of traits that were previously difficult or impossible to measure at scale.

Implementation Framework: Experimental Protocols and Workflows

Data Acquisition and Management Standards

Effective implementation of AI technologies in plant phenomics requires rigorous data management practices throughout the experimental lifecycle. The Minimum Information About a Plant Phenotyping Experiment (MIAPPE) standard provides a foundational framework for ensuring data quality, interoperability, and reusability [14]. This standard encompasses three core components: (1) experiment description including organization, objectives and location; (2) biological material description and identification; and (3) traits description including measurement methodology [14].
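As a rough illustration of how the three components might be grouped in code, the sketch below defines a small metadata record and a completeness check. The class and field names are hypothetical and are not the official MIAPPE field names; a real project should follow the MIAPPE templates themselves.

```python
from dataclasses import dataclass, field


@dataclass
class ExperimentDescription:
    organization: str
    objectives: str
    location: str


@dataclass
class BiologicalMaterial:
    material_id: str
    species: str


@dataclass
class TraitDescription:
    trait: str
    method: str
    unit: str


@dataclass
class PhenotypingRecord:
    """Hypothetical container mirroring the three components named above."""
    experiment: ExperimentDescription
    materials: list = field(default_factory=list)
    traits: list = field(default_factory=list)

    def missing_components(self):
        """List the components that have not been filled in yet."""
        missing = []
        if not self.materials:
            missing.append("biological material")
        if not self.traits:
            missing.append("traits")
        return missing


# All values below are placeholders for illustration.
record = PhenotypingRecord(
    experiment=ExperimentDescription("Example Institute", "Drought response", "Growth chamber 2"),
)
print(record.missing_components())  # both lists are still empty here

record.materials.append(BiologicalMaterial("ACC-001", "Arabidopsis thaliana"))
record.traits.append(TraitDescription("projected leaf area", "RGB top-view imaging", "cm^2"))
print(record.missing_components())
```

Checking completeness before images are archived is cheaper than reconstructing missing metadata after the experiment has ended.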

Data acquisition in AI-driven phenomics typically involves automated imaging systems that capture high-dimensional data from plants under controlled or field conditions. These systems must balance spatial and temporal resolution with throughput requirements, often employing multiple camera systems synchronized with plant handling automation [11]. The resulting image data requires careful annotation with metadata describing growth conditions, developmental stages, and experimental treatments to facilitate meaningful model training and analysis.

Table 2: Essential Research Reagents and Computational Tools for AI-Driven Plant Phenomics

| Tool/Resource | Type | Function in AI-Powered Phenomics |
|---|---|---|
| MIAPPE Templates | Data Standardization | Standardized metadata collection for plant phenotyping experiments [14] |
| PHIS | Data Management System | Manages heterogeneous phenotyping data from multiple sources and scales [14] |
| PlantCV | Image Analysis | Processing and feature extraction from plant images [14] |
| FAIRDOM-SEEK | Data Sharing Platform | MIAPPE-compliant data sharing and collaboration [14] |
| BrAPI | Web Services | Standard API for plant data interoperability [14] |
| TensorFlow/PyTorch | ML Frameworks | Developing and training custom deep learning models [13] |
| AgroPortal | Ontology Repository | Vocabulary and ontology services for agricultural domains [14] |

Deep Learning Model Development Protocol

The development of deep learning models for plant phenotyping follows a systematic protocol designed to ensure robust performance and biological relevance. A comprehensive workflow begins with data collection and curation, acquiring representative images across expected variations in genotypes, growth stages, environmental conditions, and imaging parameters. This is followed by data preprocessing, including image normalization, augmentation, and annotation, where techniques such as rotation, scaling, and color variation can increase dataset diversity and improve model generalization [13].
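The augmentation step can be illustrated on a tiny grayscale image stored as nested lists. This is a minimal sketch under simplifying assumptions: it shows a 90-degree rotation, a horizontal flip, and a brightness change as a stand-in for color variation, and the function names are illustrative. Production pipelines would normally use an image library and arbitrary-angle transforms.

```python
def rotate90(img):
    """Rotate a row-major image 90 degrees clockwise."""
    return [list(row) for row in zip(*img[::-1])]


def flip_horizontal(img):
    """Mirror the image left to right."""
    return [row[::-1] for row in img]


def scale_brightness(img, factor):
    """Multiply pixel intensities by `factor`, clipped to the 0-255 range."""
    return [[min(255, max(0, round(p * factor))) for p in row] for row in img]


def augment(img):
    """Return the original image plus three simple variants."""
    return [img, rotate90(img), flip_horizontal(img), scale_brightness(img, 1.5)]


image = [
    [10, 20],
    [30, 200],
]
for variant in augment(image):
    print(variant)
```

Each variant keeps the label of the source image, so one annotated plant image yields several training examples.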

The model architecture selection phase involves choosing an appropriate neural network structure based on the specific phenotyping task. CNNs are typically selected for image classification and object detection tasks, while fully connected networks may be preferable for integrating multimodal data from various sources [13]. During model training, optimization algorithms adjust network parameters to minimize the difference between predicted and actual outputs, with validation datasets used to monitor for overfitting. The trained models then undergo comprehensive evaluation using holdout test datasets, with performance metrics tailored to the specific application (e.g., accuracy, F1-score, mean absolute error) [13].
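The training, validation, and holdout test sets described above can be produced with a simple shuffled split. The sketch below splits at the level of plant identifiers rather than individual images, which is an assumption made here so that repeated images of one plant cannot land in both the training and test sets. The fractions and the function name `split_dataset` are illustrative.

```python
import random


def split_dataset(plant_ids, val_frac=0.2, test_frac=0.2, seed=0):
    """Shuffle plant IDs and split them into train, validation, and test lists."""
    rng = random.Random(seed)  # fixed seed for a reproducible split
    shuffled = list(plant_ids)
    rng.shuffle(shuffled)
    n_test = round(len(shuffled) * test_frac)
    n_val = round(len(shuffled) * val_frac)
    test = shuffled[:n_test]
    val = shuffled[n_test:n_test + n_val]
    train = shuffled[n_test + n_val:]
    return train, val, test


# Hypothetical plant identifiers.
plant_ids = [f"plant_{i:02d}" for i in range(20)]
train, val, test = split_dataset(plant_ids)
print(len(train), len(val), len(test))
```

The validation list is used during training to monitor overfitting, while the test list is touched only once for the final evaluation.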

For enhanced biological insight, the protocol should incorporate Explainable AI (XAI) techniques to interpret model decisions and relate detected features to underlying plant physiology [12]. Methods such as saliency maps, class activation mapping, and feature visualization help researchers understand which image regions most strongly influence model predictions, facilitating validation against biological knowledge and identification of potentially novel phenotypic indicators [12].
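One simple way to see which image regions drive a prediction is occlusion sensitivity: mask one patch at a time and record how much the model score drops. This is a swapped-in illustration rather than one of the specific methods named above, and `toy_score` is a hypothetical stand-in for a trained model.

```python
def occlusion_saliency(image, score_fn, patch=1, fill=0.0):
    """Return a map of score drops caused by masking each patch of the image."""
    base = score_fn(image)
    height, width = len(image), len(image[0])
    saliency = [[0.0] * width for _ in range(height)]
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            rows = range(top, min(top + patch, height))
            cols = range(left, min(left + patch, width))
            occluded = [row[:] for row in image]  # copy so the input is untouched
            for r in rows:
                for c in cols:
                    occluded[r][c] = fill
            drop = base - score_fn(occluded)
            for r in rows:
                for c in cols:
                    saliency[r][c] = drop
    return saliency


def toy_score(image):
    """Hypothetical model: its score depends only on the centre pixel."""
    return image[1][1]


image = [
    [0.2, 0.2, 0.2],
    [0.2, 0.9, 0.2],
    [0.2, 0.2, 0.2],
]
for row in occlusion_saliency(image, toy_score):
    print(row)
```

Only the centre pixel receives a non-zero value here, which matches how the toy model was built. With a real model, a domain expert would compare the highlighted regions against known symptoms or structures.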

Performance Evaluation and Validation Metrics

Rigorous evaluation of AI models is essential for establishing trust in phenotypic predictions and ensuring their utility for downstream applications like breeding decisions. Evaluation metrics must be carefully selected based on the specific phenotyping task and the nature of the target traits. For classification tasks (e.g., disease identification, stress detection), common metrics include accuracy, precision, recall, F1-score, and area under the ROC curve (AUC) [13]. These metrics provide complementary perspectives on model performance: precision falls as false positives increase, while recall falls as false negatives increase.
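The classification metrics can be computed directly from the counts of true positives, false positives, and false negatives. The sketch below does this for a binary task; the labels (1 for diseased, 0 for healthy) and the function name are illustrative assumptions.

```python
def classification_metrics(y_true, y_pred, positive=1):
    """Return precision, recall, and F1 for the chosen positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


# Made-up labels: 1 = diseased, 0 = healthy.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 1, 0, 0, 1, 0, 0, 1]
precision, recall, f1 = classification_metrics(y_true, y_pred)
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
```

In this example there are three true positives, one false positive, and one false negative, so all three metrics come out at 0.75.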

For regression tasks (e.g., biomass prediction, yield estimation), appropriate metrics include mean absolute error (MAE), root mean square error (RMSE), and coefficient of determination (R²) [13]. These quantify the magnitude of prediction errors and the proportion of variance explained by the model. In addition to numerical metrics, visual validation through XAI techniques provides critical biological context by highlighting image regions influencing model decisions, allowing domain experts to assess whether models are leveraging biologically plausible features [12].
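The three regression metrics follow from the residuals between measured and predicted values. The sketch below uses made-up biomass values, and the function name `regression_metrics` is an illustrative choice.

```python
import math


def regression_metrics(y_true, y_pred):
    """Return MAE, RMSE, and R^2 for paired measurements and predictions."""
    n = len(y_true)
    residuals = [t - p for t, p in zip(y_true, y_pred)]
    mae = sum(abs(e) for e in residuals) / n
    rmse = math.sqrt(sum(e * e for e in residuals) / n)
    mean_true = sum(y_true) / n
    ss_res = sum(e * e for e in residuals)            # unexplained variation
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)  # total variation
    r2 = 1.0 - ss_res / ss_tot
    return mae, rmse, r2


# Made-up biomass values (g) for four plants.
measured = [2.0, 4.0, 6.0, 8.0]
predicted = [2.5, 3.5, 6.5, 7.5]
mae, rmse, r2 = regression_metrics(measured, predicted)
print(f"MAE={mae:.2f} RMSE={rmse:.2f} R2={r2:.2f}")
```

Every prediction here is off by 0.5 g, so MAE and RMSE are both 0.5 and R² is 0.95. RMSE exceeds MAE whenever the errors vary in size, because squaring weights large errors more heavily.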

Model validation should extend beyond technical performance to include biological validation, establishing that model predictions correlate meaningfully with ground truth measurements and demonstrate expected responses to genetic or environmental variation. This comprehensive evaluation framework ensures that AI models produce not just statistically accurate predictions, but biologically meaningful insights that can reliably inform breeding and management decisions.

Applications in Plant Phenomics: From Laboratory to Field

High-Throughput Phenotyping in Controlled Environments

AI technologies have revolutionized phenotyping in controlled environments, where imaging systems can automatically monitor plants throughout their development with minimal disturbance. In greenhouse and growth chamber settings, automated conveyor systems transport plants through imaging stations equipped with multiple camera types, capturing structural and physiological data at regular intervals [11]. Deep learning models then process these image sequences to quantify growth dynamics, architectural features, and stress responses with temporal resolution impossible through manual methods.

Specific applications include root system architecture analysis using specialized imaging systems that capture root growth and distribution patterns in soil or gel media; leaf area and biomass estimation through RGB image analysis; photosynthetic performance assessment via chlorophyll fluorescence imaging; and stress response quantification through thermal and hyperspectral imaging [11]. The integration of multiple sensing modalities with deep learning enables comprehensive phenotypic profiling that captures complex trait relationships and developmental trajectories.
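For the RGB-based leaf area case, a common starting point is to separate plant from background pixels with the excess green index, ExG = 2g - r - b, computed on channel values normalized by their sum. The sketch below is a simplified illustration: the threshold of 0.1 and the pixel values are assumptions, and converting a pixel fraction to physical area would additionally need a camera calibration that is not shown.

```python
def excess_green(r, g, b):
    """Excess green index on sum-normalized channels: 2g - r - b."""
    total = r + g + b
    if total == 0:
        return 0.0  # treat pure black as background
    return (2 * g - r - b) / total


def plant_pixel_fraction(image, threshold=0.1):
    """Fraction of pixels whose excess green index exceeds the threshold."""
    pixels = [px for row in image for px in row]
    plant = sum(1 for r, g, b in pixels if excess_green(r, g, b) > threshold)
    return plant / len(pixels)


# Tiny made-up top-view image: two leaf-like pixels, two background pixels.
image = [
    [(40, 160, 40), (120, 90, 60)],
    [(100, 100, 100), (60, 140, 50)],
]
print(plant_pixel_fraction(image))
```

Tracking this fraction over a time series of images gives a simple proxy for growth, which deep learning segmentation models refine when backgrounds are more complex.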

These automated systems generate massive datasets that require sophisticated AI approaches for meaningful analysis. For example, time-series imaging of thousands of plants can produce terabytes of data, necessitating efficient feature extraction and pattern recognition algorithms [11]. The resulting high-dimensional phenotypic data provides unprecedented resolution for connecting genetic variation to phenotypic outcomes, accelerating the identification of candidate genes and molecular markers for desirable traits.

Field-Based Phenotyping and Agricultural Applications

Extending AI-powered phenotyping from controlled environments to field conditions presents additional challenges, including variable lighting, complex backgrounds, and environmental heterogeneity. Despite these difficulties, significant progress has been made in deploying computer vision and machine learning for field-based phenotyping using ground vehicles, drones, and satellites [11]. These platforms capture phenotypic data at multiple scales, from individual plants to entire fields, enabling selection of genotypes optimized for real agricultural environments.

UAV (unmanned aerial vehicle) platforms equipped with multispectral and RGB cameras have proven particularly valuable for field phenotyping, providing high-resolution imagery across large breeding trials and production fields [11]. Deep learning models process these images to quantify canopy cover, vegetation indices, lodging resistance, and maturity timing. For more detailed phenotypic characterization, ground-based platforms with sophisticated sensor arrays can capture individual plant architecture and disease symptoms while moving through fields.
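A widely used vegetation index from multispectral imagery is the normalized difference vegetation index, NDVI = (NIR - Red) / (NIR + Red). The sketch below averages it over the pixels of one plot; the reflectance values and function names are illustrative assumptions.

```python
def ndvi(nir, red):
    """Normalized difference vegetation index for one pixel."""
    denom = nir + red
    return (nir - red) / denom if denom else 0.0


def plot_mean_ndvi(nir_band, red_band):
    """Mean NDVI over all pixels of a plot given two equally shaped bands."""
    values = [
        ndvi(n, r)
        for nir_row, red_row in zip(nir_band, red_band)
        for n, r in zip(nir_row, red_row)
    ]
    return sum(values) / len(values)


# Made-up reflectance values (0-1) for a 2x2 pixel plot.
nir_band = [[0.50, 0.60], [0.55, 0.30]]
red_band = [[0.10, 0.10], [0.05, 0.20]]
print(round(plot_mean_ndvi(nir_band, red_band), 3))
```

Dense, healthy canopy tends toward high NDVI because it reflects strongly in the near infrared and absorbs red light, while bare soil gives values closer to zero.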

A critical application of field-based phenotyping is the identification of genotypes with enhanced resilience to abiotic stresses such as drought, heat, and salinity [11]. By training machine learning models on imagery collected under stress conditions, researchers can identify visual indicators of stress tolerance and select breeding materials with superior performance in challenging environments. Similarly, AI approaches can detect early symptoms of biotic stresses including fungal infections, insect damage, and viral diseases, enabling timely interventions and resistance breeding.

Challenges and Future Directions

Technical and Biological Limitations

Despite rapid progress, the application of AI technologies in plant phenomics faces several significant challenges. Data quality and standardization remain persistent issues, with inconsistent imaging protocols, metadata annotation, and experimental designs complicating model generalization and data integration across studies [11] [14]. The black box nature of many deep learning models creates interpretability challenges, making it difficult to understand the biological basis for predictions and potentially limiting adoption by plant breeders and growers [12].

From a biological perspective, the complexity of genotype-phenotype relationships influenced by environmental interactions presents fundamental challenges for model prediction. Phenotypic traits often exhibit low heritability and high plasticity, with similar genetic variants producing different phenotypes under varying environmental conditions [11]. This biological complexity necessitates sophisticated modeling approaches that can account for these interactions while remaining interpretable and actionable for breeding applications.

Technical limitations include the computational resources required for training complex models on large image datasets, which can present barriers for research groups with limited infrastructure [13]. There are also challenges related to model transferability across species, growth environments, and imaging systems, often requiring extensive retraining or domain adaptation to maintain performance in new contexts. Addressing these limitations requires continued development of more efficient, interpretable, and robust AI approaches specifically tailored to biological applications.

Emerging Trends and Future Prospects

The future of AI in plant phenomics is likely to be shaped by several emerging trends. Explainable AI (XAI) is receiving increasing attention, with growing recognition that model interpretability is essential for biological discovery and translation to breeding applications [12]. XAI techniques that highlight image regions influencing model decisions help researchers validate biological relevance and identify potentially novel phenotypic indicators not previously recognized in manual analysis.

Multimodal data integration represents another important direction, combining imaging data with genomic, environmental, and metabolic information to build more comprehensive models of plant function and performance [11]. Advanced neural network architectures such as graph convolutional networks are being explored to better represent structured biological knowledge and relationships within integrated datasets [13].

The development of foundation models pre-trained on large, diverse plant image datasets holds promise for improving performance on specific phenotyping tasks with limited training data. These models could capture generalizable features of plant morphology and physiology that transfer across species and environments, reducing the need for extensive task-specific training. Similarly, generative AI approaches including generative adversarial networks (GANs) are being investigated for synthetic data generation to augment limited training datasets and simulate plant phenotypes under different conditions [13].

As these technologies mature, the plant phenomics community is increasingly focused on establishing standardized benchmarks, evaluation protocols, and data sharing frameworks to accelerate progress and ensure reproducibility. Initiatives such as the Computer Vision in Plant Phenotyping and Agriculture workshop at major conferences provide venues for presenting advances and identifying key unsolved problems [15]. Through these collaborative efforts, AI technologies are poised to dramatically increase the scale, efficiency, and insight of plant phenotyping, contributing essential tools for addressing global food security challenges.

Plant phenomics, the comprehensive study of plant growth, performance, and composition, has undergone a revolutionary transformation over the past decade through the integration of artificial intelligence. Where traditional phenotyping methods once relied on manual measurements with rulers and visual scoring, AI-powered systems now enable high-throughput, precise, and automated quantification of complex plant traits across vast populations and environments [16] [17]. This evolution has fundamentally accelerated the pace of genetic gain in crop improvement programs by bridging the critical gap between genomic potential and phenotypic expression. The emergence of sophisticated deep learning architectures, combined with advanced imaging technologies and scalable computing infrastructure, has positioned AI-driven phenotyping as an indispensable tool for addressing global food security challenges in the face of climate change and growing population demands.

The significance of this transformation extends beyond mere methodological convenience. AI-powered phenotyping has unveiled previously inaccessible relationships between subtle morphological features and agriculturally important traits, enabling breeders to select for optimal plant architectures with unprecedented precision [16] [18]. From initial applications addressing specific abiotic stresses like iron deficiency chlorosis in soybean to contemporary systems capable of characterizing three-dimensional plant structures and predicting yield potential, the field has matured into a multidisciplinary domain leveraging the full spectrum of computer vision advancements [17] [19]. This technical guide examines the evolutionary pathway of AI in phenotyping, details current methodologies and applications, and explores emerging trends that will define the future of plant sciences research.

Historical Progression: From Manual Measurements to AI-Driven Pipelines

The journey of AI in phenotyping began with addressing critical bottlenecks in traditional methods. Initial approaches relied on basic digital imaging and machine learning algorithms to automate what was previously labor-intensive visual scoring. A seminal 2017 framework for phenotyping iron deficiency chlorosis (IDC) in soybean exemplifies this transition period, implementing a complete workflow from image capture to smartphone app deployment [17]. This system investigated ten different classification approaches, with the best classifier achieving a mean per-class accuracy of approximately 96%, surpassing human consistency in visual ratings while enabling rapid assessment of thousands of field plots.

Table: Evolution of AI Approaches in Plant Phenotyping

| Time Period | Primary Technologies | Key Applications | Limitations |
|---|---|---|---|
| Early-Mid 2010s | Basic machine learning classifiers, digital RGB imaging | Abiotic stress scoring (e.g., iron deficiency), basic morphology | Limited generalization, manual feature engineering required |
| Late 2010s | Convolutional Neural Networks, deeper architectures | Multi-stress phenotyping, yield prediction | Computational intensity, data hunger |
| Early 2020s | Instance segmentation (Mask R-CNN), transfer learning | Fine-scale trait extraction, 3D phenotyping | Model complexity, annotation requirements |
| Current (2025) | Transformer architectures, self-supervised learning, foundation models | Genome-to-phenome prediction, real-time breeding decisions | Integration challenges, multimodal data fusion |

The progression of AI in phenotyping has followed the broader trajectory of computer science advancements, with each generation overcoming previous limitations. Early bag-of-words models and support vector machines provided initial automation but struggled with biological complexity and environmental variability [20] [17]. The breakthrough came with the adoption of deep learning, particularly convolutional neural networks, which could automatically learn relevant features from raw images without manual engineering. This transition enabled handling of more complex phenotypes and environmental interactions, setting the stage for the sophisticated pipelines available today [16] [19].

Current AI Methodologies in Plant Phenomics

Advanced Deep Learning Architectures

Contemporary plant phenotyping leverages sophisticated deep learning architectures tailored to specific biological questions. The SpikePheno pipeline for wheat spike characterization exemplifies this trend, combining a ResNet50-UNet semantic segmentation model to isolate wheat spikes and stems from backgrounds with a YOLOv8x-seg instance segmentation model to identify and characterize individual spikelets [16] [18]. This hierarchical approach achieved exceptional accuracy, with spike segmentation reaching mean intersection-over-union values near 0.95 and spikelet detection achieving mAP50 scores as high as 0.986, significantly outperforming previous methods like Mask R-CNN and PointRend [16].

For three-dimensional phenotyping, point cloud processing architectures have enabled the quantification of complex plant structures that cannot be captured through 2D imaging alone [19]. These approaches have evolved from traditional point processing methods to specialized deep learning techniques that can handle the irregular and unstructured nature of 3D point cloud data, facilitating the assessment of canopy architecture, root systems, and other volumetric traits critical for understanding plant-environment interactions.

Integration of Large Language Models and Transformers

While initially developed for natural language processing, transformer architectures and large language models (LLMs) are beginning to be explored for plant phenotyping challenges [20] [8]. The clearest evidence so far comes from outside plant science: in medical phenotyping, a foundational LLM derived from Llama 2 identified patients with Alzheimer's disease and related dementias more accurately than conventional methods (AUC = 0.9534) [20], illustrating the potential of these architectures for complex pattern recognition tasks. Direct applications in plant sciences are still emerging, but the self-supervised learning capabilities and contextual understanding of transformers show promise for genomic sequence analysis, scientific literature mining, and multimodal data integration in plant phenomics.

Detailed Experimental Protocols and Implementation

Case Study: High-Throughput Wheat Spike Phenotyping

The SpikePheno pipeline represents the cutting edge in AI-driven phenotyping implementation, with a meticulously designed experimental protocol [16] [18]:

Imaging Protocol and Data Acquisition:

- Plant Material: 221 diverse wheat cultivars from across China's major agricultural regions

- Imaging Setup: Standardized imaging protocol with consistent lighting, background, and calibration

- Validation Set: 100 accessions grown in the 2024-2025 season for manual comparison

AI Model Development and Training:

- Semantic Segmentation: ResNet50-UNet architecture trained to isolate spikes and stems from background

- Instance Segmentation: YOLOv8x-seg model trained to identify individual spikelets

- Performance Validation: Two test sets - Test 1 (cultivars seen during training, new images/plants) and Test 2 (entirely unseen cultivars)

- Evaluation Metrics: Mean intersection-over-union for segmentation quality, mAP50 for detection accuracy
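The segmentation metric named above can be made concrete with a small sketch. The following is an illustrative, standard-library-only computation of per-class IoU and mean IoU on toy label sequences. It is not the SpikePheno evaluation code; the function names, the class coding (0 = background, 1 = spike, 2 = stem), and the data are assumptions made for illustration.

```python
def iou(pred, truth, cls):
    """Intersection-over-union for one class over flat label sequences."""
    inter = sum(1 for p, t in zip(pred, truth) if p == cls and t == cls)
    union = sum(1 for p, t in zip(pred, truth) if p == cls or t == cls)
    # A class absent from both prediction and truth has no defined IoU.
    return inter / union if union else float("nan")


def mean_iou(pred, truth, classes):
    """Average the per-class IoU, skipping classes with undefined IoU."""
    scores = [iou(pred, truth, c) for c in classes]
    scores = [s for s in scores if s == s]  # NaN is the only value != itself
    return sum(scores) / len(scores) if scores else float("nan")


# Hypothetical flattened masks: 0 = background, 1 = spike, 2 = stem
truth = [0, 0, 1, 1, 1, 2, 2, 0]
pred = [0, 1, 1, 1, 0, 2, 2, 0]

print(round(mean_iou(pred, truth, [0, 1, 2]), 3))  # 0.667
```

In practice the same counts would be accumulated over all pixels of all test images, usually with an array library, before averaging across classes.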

Trait Extraction and Correlation Analysis:

- Feature Quantification: 45 distinct spike and spikelet traits extracted automatically

- Yield Correlation: Statistical analysis linking morphological features to thousand-grain weight and yield per spike

- Population Structure: Principal component analysis and hierarchical clustering to identify spike architectural classes

This comprehensive approach ensured robust model performance across diverse genetic materials and environmental conditions, with automated predictions agreeing closely with manual measurements (r = 0.9865, 0.9753, and 0.9635 for spike length, spikelet number per spike, and fertile spikelet number, respectively) [16].
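The agreement statistic reported here is a Pearson correlation coefficient between automated and manual measurements. A minimal sketch of that calculation follows; the spike-length values are invented for illustration and are not data from the study.

```python
import math


def pearson_r(x, y):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return sxy / math.sqrt(sxx * syy)


# Hypothetical spike lengths in cm: manual ruler vs. pipeline output
manual = [8.1, 9.4, 10.2, 11.0, 12.3]
predicted = [8.0, 9.6, 10.1, 11.2, 12.1]

print(round(pearson_r(manual, predicted), 4))
```

A high r shows that the two measurements rank and scale samples alike, but it does not rule out a constant offset, so a direct error measure such as mean absolute error is often reported alongside it.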

Case Study: Real-Time Soybean Stress Phenotyping

The earlier but influential framework for soybean iron deficiency chlorosis (IDC) assessment established a paradigm for field-based stress phenotyping [17]:

Field Experimental Design:

- Genetic Material: 478 soybean genotypes with wide diversity in leaf and canopy shape

- Field Layout: Randomized complete block design with four replications

- Soil Conditions: Calcareous soil with high pH (7.75-7.95) to induce IDC symptoms

- Visual Ratings: Expert field visual ratings on a 1-5 scale at multiple growth stages

Image Acquisition and Processing Pipeline:

- Imaging Protocol: Standardized imaging protocol with color calibration, consistent camera settings, and controlled lighting

- Feature Engineering: Extraction of biologically meaningful features (amount of yellowing, browning) from digital images

- Classifier Training: Evaluation of 10 different machine learning approaches for severity classification

- Mobile Deployment: Implementation of best-performing classifier as smartphone application for real-time field assessment

This end-to-end workflow demonstrated the potential for AI-powered phenotyping to provide accurate, rapid, and scalable solutions for breeding programs, achieving approximately 96% mean per-class accuracy in severity assessment [17].
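Mean per-class accuracy differs from overall accuracy when severity classes are imbalanced, as is common when most plots show few symptoms. The sketch below illustrates the distinction with made-up ratings; it is not the classifier or the data from the cited framework, and the function name is an assumption.

```python
def mean_per_class_accuracy(truth, pred):
    """Average, over classes present in truth, of the fraction of each
    class's samples that were predicted correctly (per-class recall)."""
    per_class = []
    for cls in sorted(set(truth)):
        idx = [i for i, t in enumerate(truth) if t == cls]
        correct = sum(1 for i in idx if pred[i] == cls)
        per_class.append(correct / len(idx))
    return sum(per_class) / len(per_class)


# Hypothetical IDC severity ratings on the 1-5 scale (only 1, 3, 5 shown)
truth = [1, 1, 1, 1, 1, 1, 3, 3, 5, 5]
pred = [1, 1, 1, 1, 1, 1, 3, 1, 5, 3]

overall = sum(t == p for t, p in zip(truth, pred)) / len(truth)
print(overall)                                          # 0.8
print(round(mean_per_class_accuracy(truth, pred), 3))   # 0.667
```

Here the dominant class inflates overall accuracy, while the per-class mean exposes weaker performance on the rarer, more severe ratings.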

Quantitative Performance and Validation Metrics

The advancement of AI in phenotyping is demonstrated through rigorous quantitative validation against traditional methods and biological ground truths. The table below summarizes key performance metrics from recent implementations:

Table: Performance Metrics of Contemporary AI Phenotyping Systems

| Phenotyping System | Application | AI Architecture | Accuracy Metrics | Comparison to Manual |
|---|---|---|---|---|
| SpikePheno [16] | Wheat spike architecture | ResNet50-UNet + YOLOv8x-seg | Spike segmentation mIoU = 0.948, Spikelet detection mAP50 = 0.986 | Correlation: 0.9865 (spike length), 0.9753 (spikelet number) |
| Soybean IDC Classifier [17] | Iron deficiency chlorosis | Hierarchical classifier | Mean per-class accuracy ~96% | Superior to human rater consistency |
| 3D Phenotyping DL [19] | Plant architecture analysis | Point cloud deep learning | Varies by specific task and representation | Enables traits impossible with manual methods |
| LLM Medical Phenotyping [20] | Alzheimer's disease detection | Llama 2-derived foundation model | AUC = 0.9534, F1 score = 0.8571 | Outperformed standard CCW algorithm (AUC = 0.8482) |

Biological validation remains paramount, with the most sophisticated AI systems requiring correlation with agronomically important traits. In the SpikePheno implementation, the pipeline revealed strong correlations between specific morphological features and yield indicators, with spike area and fertile spikelet area showing stronger relationships to thousand-grain weight and yield per spike than traditional measurements like spike length [16]. This demonstrates how AI-driven phenotyping not only automates measurements but uncovers novel biological insights that can inform breeding decisions.

Table: Key Research Reagents and Technologies for AI Phenotyping

| Resource Category | Specific Examples | Function/Application | Implementation Considerations |
|---|---|---|---|
| Imaging Hardware | Canon EOS DSLR cameras, hyperspectral sensors, 3D scanners | Image acquisition across visible and non-visible spectra | Standardized imaging protocols essential for consistency [17] |
| Annotation Tools | Labelbox, CVAT, custom annotation platforms | Generating ground truth data for model training | Major bottleneck; active learning approaches can reduce burden [19] |
| AI Frameworks | PyTorch, TensorFlow, MMDetection | Model development and training | Transfer learning from pretrained models reduces data requirements [16] |
| Specialized Architectures | ResNet50-UNet, YOLOv8x-seg, PointNet++ | Task-specific phenotyping applications | Architecture selection depends on data type and biological question [16] [19] |
| Validation Metrics | mIoU, mAP50, correlation coefficients | Performance assessment and biological validation | Multiple metrics required for comprehensive evaluation [16] |
| Deployment Platforms | Smartphone apps, cloud APIs, edge computing devices | Field deployment and real-time analysis | Resource constraints influence model selection for mobile use [17] |

Emerging Trends and Future Perspectives

Current Research Frontiers

The field of AI-powered phenotyping continues to evolve rapidly, with several key trends shaping its trajectory in 2025. Three-dimensional phenotyping represents a significant frontier, with deep learning methods enabling the quantification of complex plant architectures that cannot be captured through 2D imaging alone [19]. Current research focuses on addressing the challenges of 3D data acquisition, processing, and analysis, with particular emphasis on benchmark dataset construction through synthetic data generation and self-supervised learning approaches.

Multimodal data fusion is another active research area, combining imaging data with genomic, environmental, and sensor-based information to build comprehensive models of plant growth and development [8] [19]. This approach recognizes that plant phenotypes emerge from complex interactions between genetics and environment, requiring integrated analytical frameworks to decode. The emergence of foundation models pretrained on massive biological datasets promises to accelerate this trend, enabling more efficient transfer learning across species and experimental conditions.

Implementation Challenges and Solutions

Despite remarkable progress, significant challenges remain in the widespread adoption of AI-powered phenotyping. Data quality and availability continue to constrain model development, particularly for rare traits or specialized environments. Proposed solutions include generative AI for synthetic data creation, unsupervised and weakly supervised learning to reduce annotation burdens, and benchmark dataset establishment for standardized comparison [19].

Model interpretability and biological relevance present another challenge, as the most accurate deep learning models often function as "black boxes." Research initiatives are increasingly focusing on explainable AI techniques that connect model decisions to biological mechanisms, ensuring that phenotyping insights can effectively guide breeding decisions and biological discovery [16] [19]. Additionally, computational efficiency remains critical for deployment in resource-constrained environments, driving development of lightweight models and edge computing implementations.

The evolution of AI in phenotyping over the past decade represents a paradigm shift in how plant biologists quantify and understand phenotypic expression. From initial applications automating simple stress scoring to contemporary systems capable of characterizing complex three-dimensional architectures and predicting yield potential, AI has fundamentally transformed the scale, precision, and biological insight of phenotyping. The integration of advanced deep learning architectures with high-throughput imaging technologies has enabled discoveries that were previously inaccessible through manual methods, such as the relationship between fine-scale wheat spike morphology and grain yield [16] [18].

As the field progresses, the convergence of AI-powered phenotyping with genomics, environmental sensing, and predictive analytics promises to accelerate the development of climate-resilient crops and sustainable agricultural systems. Current trends toward multimodal data integration, foundation models, and real-time decision support systems reflect the maturation of phenotyping from a descriptive tool to a predictive science capable of guiding breeding decisions and agricultural management [8] [21] [19]. While challenges remain in data quality, model interpretability, and computational efficiency, the rapid pace of innovation suggests that AI-driven phenotyping will continue to be a cornerstone of plant sciences research, enabling breakthroughs in understanding and manipulating the genetic basis of complex traits for improved agricultural productivity and sustainability.

In modern agricultural and biological research, a fundamental challenge persists: accurately predicting how genetic information (genotype) manifests as observable traits (phenotype) in living organisms. This genotype-to-phenotype relationship is complicated by environmental influences, complex genetic interactions, and the multidimensional nature of phenotypic expression. Traditional plant phenotyping methods, which rely heavily on manual observation and measurement, are labor-intensive, time-consuming, and prone to human error, creating a critical bottleneck in breeding programs and functional biology research [22] [23].

Artificial intelligence is rapidly transforming this landscape by enabling high-throughput, precise, and automated phenotypic data acquisition. AI technologies, particularly computer vision and deep learning, are now bridging the functional biology gap by creating direct pipelines from genetic information to quantitative phenotypic assessment. This technological revolution is accelerating crop improvement programs and supporting global food security by providing researchers with unprecedented tools to link molecular biology to observable plant characteristics [24] [23]. The integration of AI into phenomics represents nothing less than a paradigm shift, replacing labor-intensive, human-driven workflows with intelligent systems capable of extracting nuanced biological insights from complex visual and sensor data.

AI Technologies Revolutionizing Phenotypic Data Acquisition

Advanced Imaging and Sensing Platforms

The foundation of AI-powered phenotyping lies in acquiring high-quality, multidimensional data from living plants. Several advanced platforms have emerged to address this need across different scales and environments:

Autonomous Field Robots: Systems like PhenoRob-F represent a significant advancement in field-based phenotyping. This robot is equipped with RGB, hyperspectral, and depth sensors and navigates crop fields autonomously. Its reported capabilities include detecting wheat ears, segmenting rice panicles, reconstructing 3D plant structures, and classifying drought severity in rice with accuracies of 97.7% to 99.6%. The system can complete phenotyping rounds in 2–2.5 hours and process up to 1875 potted plants per hour, dramatically outpacing manual methods [25].

Drone-Based Systems: High-throughput phenotyping platforms such as PhenoScale process drone-captured data into valuable phenotypic information, facilitating frictionless plant analysis at field scale. These systems are particularly valuable for breeding programs requiring assessment of thousands of plots throughout the growing season [26].

Handheld and Ground-Based Devices: Agile and flexible handheld devices like Literal provide ultra-precise plant measurements under field conditions, allowing for detailed assessments of various crops almost in real-time thanks to automated trait processing. These tools make sophisticated phenotyping accessible without massive infrastructure investments [26].

Computer Vision and Deep Learning Frameworks

The raw data captured by sensing platforms becomes biologically meaningful through the application of sophisticated AI frameworks:

3D Plant Reconstruction Systems: The IPENS framework integrates Neural Radiance Fields (NeRF) with Segment Anything Model 2 (SAM2) to reconstruct detailed 3D models of different parts of crops like rice and wheat. This system allows computers to 'see' and understand plants in three dimensions, making plant phenotyping faster and more accurate. In experiments, IPENS automatically extracted and reconstructed detailed 3D models with high accuracy, completing each process in just three minutes [22].

Spatiotemporal Growth Monitoring: The 3D-NOD framework presents a highly sensitive 3D deep learning approach for detecting new plant organs, enabling more accurate and real-time growth monitoring. Tested across multiple crop species, the system achieved an impressive mean F1-score of 88.13% and IoU of 80.68%, offering a powerful tool for real-time, organ-level plant phenotyping. This approach mimics the way experienced human observers track growth over time through novel labeling, registration, and data augmentation strategies [27].

Multimodal AI Pipelines: CIMMYT's AI-powered phenotyping pipeline transforms how plant traits are measured in the field. It begins with geo-referenced images taken using smartphones or tablets, then curates and annotates these images to build high-quality datasets. Advanced AI models are trained to identify key traits—such as stand counts, pod numbers, or disease symptoms—with speed and precision. These models are rigorously validated across different environments, seasons, and genetic backgrounds to ensure accuracy, consistency, and fairness [24].

Table 1: Performance Metrics of Featured AI Phenotyping Frameworks

| Framework Name | Primary Technology | Key Capabilities | Reported Accuracy/Performance | Crop Applications |
|---|---|---|---|---|
| IPENS [22] | NeRF + SAM2 | 3D reconstruction, organ segmentation | About 3 minutes per reconstruction | Rice, Wheat |
| PhenoRob-F [25] | Multi-sensor robot + YOLOv8m, SegFormer_B0 | Wheat ear detection, rice panicle segmentation, drought classification | Precision: 0.783, Recall: 0.822, mAP: 0.853 (wheat); Drought classification: 97.7–99.6% | Wheat, Rice, Maize, Rapeseed |
| 3D-NOD [27] | 3D deep learning (DGCNN) | New organ detection, growth monitoring | F1-score: 88.13%, IoU: 80.68% | Tobacco, Tomato, Sorghum |
| ImageSafari [24] | Computer vision + mobile technology | Multi-trait analysis, disease assessment | Scalable across environments and seasons | Finger millet, groundnut, pearl millet, pigeon pea, maize, sorghum |

Experimental Protocols and Methodologies

Protocol 1: Automated 3D Plant Reconstruction with IPENS

Purpose: To generate detailed 3D models of plant structures for quantitative trait extraction.

Materials and Equipment:

- RGB cameras (standard digital cameras or smartphones)

- IPENS software framework (integrating NeRF and SAM2)

- Computational resources with GPU acceleration

Procedure:

- Image Acquisition: Capture multiple overlapping images of the target plants (rice, wheat, or other crops) from various angles under consistent lighting conditions.

- Data Preprocessing: Organize images and associated metadata, ensuring proper sequencing and orientation.

- NeRF Processing: Input images into the Neural Radiance Fields component to reconstruct the 3D scene geometry and view-dependent appearance.

- SAM2 Segmentation: Apply Segment Anything Model 2 (SAM2) to identify and segment individual plant organs within the reconstructed 3D model.

- Trait Extraction: Quantify morphological parameters (leaf area, stem diameter, organ counts) from the segmented 3D model.

- Validation: Compare AI-generated measurements with manual measurements to ensure accuracy.

Typical Results: The system typically generates accurate 3D models of plant structures within approximately three minutes per sample, dramatically improving efficiency over traditional methods. The framework has shown excellent cross-species adaptability, proving effective in analyzing diverse crop organs [22].
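To give a sense of what the trait extraction step involves once a 3D model has been segmented, the sketch below derives simple traits from a labeled point cloud. It does not use or reproduce the IPENS software: the point format `(x, y, z, organ_id)`, the organ names, and the function names are hypothetical, and real pipelines would compute richer traits such as leaf area from surface meshes.

```python
from collections import defaultdict


def organ_summary(points):
    """Map each organ label to (number of points, vertical extent)."""
    heights = defaultdict(list)
    for _x, _y, z, organ in points:
        heights[organ].append(z)
    return {organ: (len(zs), max(zs) - min(zs)) for organ, zs in heights.items()}


def plant_height(points):
    """Vertical extent of the whole point cloud, in the cloud's units."""
    zs = [p[2] for p in points]
    return max(zs) - min(zs)


# Hypothetical segmented point cloud (metres); labels are assumptions
cloud = [
    (0.0, 0.0, 0.0, "stem"), (0.0, 0.0, 0.5, "stem"), (0.0, 0.0, 1.0, "stem"),
    (0.1, 0.0, 0.6, "leaf_1"), (0.3, 0.0, 0.8, "leaf_1"),
    (-0.1, 0.0, 0.9, "leaf_2"), (-0.2, 0.0, 1.2, "leaf_2"),
]

summary = organ_summary(cloud)
leaf_count = sum(1 for organ in summary if organ.startswith("leaf"))
print(leaf_count, plant_height(cloud))  # 2 1.2
```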

Protocol 2: Field-Based High-Throughput Phenotyping with PhenoRob-F

Purpose: To autonomously collect and analyze multimodal phenotypic data under field conditions.

Materials and Equipment:

- PhenoRob-F robotic platform

- RGB, hyperspectral, and RGB-D depth sensors

- YOLOv8m and SegFormer_B0 deep learning models

- Random forest classification algorithms

Procedure:

- System Calibration: Calibrate all sensors and validate robotic navigation systems in the target environment.

- Autonomous Data Collection: Deploy the robot to autonomously navigate crop fields, capturing RGB, hyperspectral, and depth data according to predefined routes.

- Wheat Ear Detection: Process RGB images using YOLOv8m model to detect and count wheat ears with precision of 0.783, recall of 0.822, and mAP of 0.853.

- Rice Panicle Segmentation: Apply SegFormer_B0 model to segment rice panicles, achieving a mean intersection over union (mIoU) of 0.949 and accuracy of 0.987.

- 3D Reconstruction: Use scale-invariant feature transform (SIFT) and iterative closest point (ICP) algorithms with RGB-D data to reconstruct 3D structures of maize and rapeseed plants.

- Drought Stress Classification: Process hyperspectral data (900-1700 nm range) using CARS algorithm for feature reduction, then apply random forest model to classify drought severity.

Typical Results: The system achieves high correlation with manual measurements for plant height (R² = 0.99 for maize and 0.97 for rapeseed) and classifies drought severity with accuracies ranging from 97.7% to 99.6% across five drought levels [25].
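The plant height agreement above is expressed as R². One common definition is the coefficient of determination against the 1:1 line, sketched below; the cited study may instead report the squared correlation of a fitted regression line, so treat this as an illustration under that assumption. The height values are invented.

```python
def r_squared(observed, predicted):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    if ss_tot == 0:
        raise ValueError("R^2 is undefined when observations are constant")
    return 1 - ss_res / ss_tot


# Hypothetical maize heights in cm: manual vs. 3D reconstruction
manual_cm = [150, 160, 170, 180, 190]
robot_cm = [151, 159, 171, 179, 191]

print(round(r_squared(manual_cm, robot_cm), 3))  # 0.995
```

Unlike a correlation coefficient, this form penalizes systematic offsets, so it can be lower than the squared Pearson r for the same data.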

Protocol 3: Real-Time Growth Monitoring with 3D-NOD

Purpose: To detect new plant organ emergence and monitor growth dynamics in 3D.

Materials and Equipment:

- 3D imaging sensors (depth cameras, laser scanners)

- 3D-NOD software framework

- DGCNN backbone network

- Semantic Segmentation Editor under Ubuntu

Procedure:

- Data Collection: Capture time-series 3D point clouds of plants (tobacco, tomato, sorghum) across multiple growth stages.

- Data Annotation: Annotate all points using Backward & Forward Labeling (BFL) strategy into "old organ" and "new organ" semantic classes.

- Data Augmentation: Apply Humanoid Data Augmentation (HDA) to generate variants for training.

- Model Training: Train DGCNN backbone on annotated datasets with mixed point clouds.

- Organ Detection: Deploy trained model to detect new organ emergence across growth sequences.

- Performance Validation: Assess detection accuracy using Precision, Recall, F1-score, and Intersection over Union (IoU) metrics.

Typical Results: The framework achieves sensitive detection of tiny buds across all three species with F1 and IoU for new organs reaching 76.65% and 62.14%, respectively, despite many buds being too small for human identification [27].
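The four validation metrics in this protocol all derive from the same counts of true positives, false positives, and false negatives for the class of interest. The sketch below computes them for point-wise "new organ" labels; the labels and data are illustrative and are not taken from the 3D-NOD code or datasets.

```python
def binary_metrics(truth, pred, positive="new"):
    """Precision, recall, F1, and IoU for one class of point-wise labels."""
    tp = sum(1 for t, p in zip(truth, pred) if t == positive and p == positive)
    fp = sum(1 for t, p in zip(truth, pred) if t != positive and p == positive)
    fn = sum(1 for t, p in zip(truth, pred) if t == positive and p != positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * tp / (2 * tp + fp + fn) if tp + fp + fn else 0.0
    iou = tp / (tp + fp + fn) if tp + fp + fn else 0.0
    return {"precision": precision, "recall": recall, "f1": f1, "iou": iou}


# Hypothetical labels for ten points: 6 on old organs, 4 on a new bud
truth = ["old"] * 6 + ["new"] * 4
pred = ["old"] * 5 + ["new"] + ["new"] * 3 + ["old"]

m = binary_metrics(truth, pred)
print(m)
```

For a single class, F1 and IoU are linked by IoU = F1 / (2 − F1). The reported new-organ figures (F1 of 76.65%, IoU of 62.14%) are consistent with this relation, which is why IoU always reads lower than F1 for the same predictions.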

Visualization of AI Phenotyping Workflows

[Figure: AI Phenotyping Workflow: From Data to Biological Insights]

[Figure: 3D-NOD Organ Detection Framework]

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 2: Key Research Reagents and Solutions for AI-Enabled Plant Phenotyping

| Tool/Technology | Type | Primary Function | Example Applications |
|---|---|---|---|
| NeRF (Neural Radiance Fields) [22] | AI Algorithm | 3D scene reconstruction from 2D images | Creating detailed 3D models of plant structures from ordinary photos |
| SAM2 (Segment Anything Model 2) [22] | AI Algorithm | Image segmentation and object identification | Automatically identifying and segmenting plant organs in images |
| PhenoRob-F Robot [25] | Hardware Platform | Autonomous field-based data collection | Capturing multimodal sensor data (RGB, hyperspectral, depth) in crop fields |
| YOLOv8m & SegFormer_B0 [25] | Deep Learning Models | Object detection and semantic segmentation | Detecting wheat ears and segmenting rice panicles for yield estimation |
| 3D-NOD Framework [27] | Software Framework | 3D organ detection and growth monitoring | Identifying new organ emergence in tobacco, tomato, and sorghum |
| ImageSafari Platform [24] | Mobile Data Collection System | Standardized image capture and annotation | Building high-quality datasets for computer vision model training |
| Hyperspectral Imaging [25] | Sensing Technology | Capturing spectral data beyond visible light | Classifying drought stress severity in rice plants |
| DGCNN Backbone [27] | Neural Network Architecture | Processing 3D point cloud data | Analyzing spatiotemporal plant growth patterns |

Data Integration and Biological Insights

The true power of AI in phenotyping emerges when multidimensional phenotypic data is integrated with other biological information streams. AI methods are increasingly being used to combine phenotypic data with genomic, environmental, and management practice datasets to build comprehensive models of plant function and performance [23]. This integrated approach enables researchers to move beyond simple trait measurement to understanding the complex interactions between genes, environment, and management that ultimately determine crop performance.

The application of deep learning-based text generation frameworks further enhances the utility of phenotypic data by automatically generating summaries of plant health metrics, highlighting potential risks, and suggesting interventions in natural language [28]. These systems can process high-dimensional imaging data, effectively capturing complex plant traits while overcoming issues like occlusion and variability, then translating these findings into actionable insights for researchers and breeders.

As these technologies mature, they are creating new opportunities for predictive breeding and phenomic predictions where plant traits can be used as input to predict the characteristics of future hybrids or crosses [26]. This capability could streamline breeding cycles and product development pipelines, making them faster and more efficient than ever before. The continuous evolution of digital phenotyping technologies promises to further revolutionize agriculture by enhancing precision agriculture, plant breeding, and agricultural product development efforts.

AI technologies are fundamentally transforming our ability to connect genotype to phenotype by providing unprecedented tools for quantitative, high-throughput phenotypic assessment. From autonomous robots capturing multimodal data in field conditions to sophisticated deep learning algorithms extracting nuanced biological insights from complex visual data, these approaches are bridging the functional biology gap that has long constrained agricultural research and breeding programs. As these technologies continue to evolve and integrate with other biological data streams, they promise to accelerate the development of improved crop varieties with enhanced yield, resilience, and sustainability characteristics—critical tools for addressing the growing global food security challenges of the 21st century.

The integration of artificial intelligence (AI) into plant phenomics has transformed agricultural research, creating an unprecedented demand for robust, multi-scale data sources. High-throughput imaging, sensor networks, and satellite data collectively provide the foundational inputs that power machine learning algorithms and deep learning models. These technologies enable researchers to move beyond traditional manual phenotyping methods, which have long been a bottleneck in plant science [29]. By capturing comprehensive phenotypic data across molecular, tissue, whole-plant, and canopy levels, these data sources allow AI systems to establish complex relationships between genotype, phenotype, and environment. The resulting data streams provide the training material necessary for AI systems to identify patterns, predict traits, and ultimately accelerate the development of improved crop varieties with enhanced resilience to climate stressors such as drought and heat [30]. This technical guide examines the core data sources powering the AI revolution in plant phenomics, detailing their operational principles, implementation protocols, and integration frameworks.

High-Throughput Imaging Systems

High-throughput imaging systems form the core of modern plant phenomics, enabling non-destructive, automated quantification of plant traits across scales. These systems leverage various imaging modalities to capture both two-dimensional and three-dimensional structural information.

Imaging Modalities and Platforms

Table 1: High-Throughput Imaging Modalities in Plant Phenomics

| Imaging Modality | Captured Parameters | Spatial Resolution | Application Examples |
|---|---|---|---|
| RGB Imaging | Morphological structure, color, texture | Up to 100 megapixels [29] | Canopy coverage estimation, disease assessment [29] |
| Multispectral Imaging | Surface reflectance in specific wavelength bands | Varies with platform (cm-level with UAS) | Vegetation indices (e.g., NDVI), disease detection [31] |
| Hyperspectral Imaging | Continuous spectral signatures across numerous narrow bands | mm to cm level | Detailed stress response analysis, pigment estimation [32] |
| Thermal Imaging | Canopy temperature, stomatal conductance | Varies with platform | Drought stress monitoring, water use efficiency [30] |
| Chlorophyll Fluorescence Imaging | Photosynthetic efficiency, plant stress | Varies with platform | Blue light-induced chlorophyll fluorescence at night [32] |
| 3D Reconstruction (SfM-MVS/LiDAR) | Plant architecture, canopy height, biomass | Sub-cm to cm level | Canopy height estimation, biomass prediction [29] [19] |

Imaging platforms span controlled environments to field conditions, each with distinct advantages. The PhenoGazer system exemplifies an integrated controlled-environment platform, combining a portable hyperspectral spectrometer with eight fiber optics, four Raspberry Pi cameras, and blue LED lights for comprehensive plant health assessment [32]. This system features automated moveable racks for continuous measurements, with the lower rack equipped for nighttime chlorophyll fluorescence capture and the upper rack for daytime hyperspectral reflectance and RGB imaging [32]. For field-based phenotyping, Unmanned Aircraft Systems (UAS) equipped with various sensors have become predominant due to their flexibility and reasonable cost [29]. Ground-based vehicle platforms and stationary systems provide additional options for specific phenotyping applications.

Experimental Protocol: 3D Plant Phenotyping Using UAS

Objective: To quantify canopy architectural traits (height, coverage, biomass) for genetic analysis under field conditions.

Materials and Equipment:

- UAS platform (e.g., quadcopter or fixed-wing) with GPS and inertial measurement unit

- RGB camera (minimum 20 megapixels recommended)

- Multispectral camera (optional, for vegetation indices)

- Ground control points (at least 5, with known coordinates)

- Measurement targets for radiometric calibration (for multispectral/hyperspectral imaging)

- Data processing workstation with adequate computational resources

Procedure:

- Flight Planning: Define the area of interest and establish a flight grid with sufficient forward and side overlap (≥80% recommended). Set flight altitude to achieve target ground sampling distance (typically 1-5 cm/pixel for breeding plots).

- Pre-flight Calibration: Place ground control points evenly throughout the field. For multispectral imaging, capture images of calibration targets before and after flight.

- Image Acquisition: Conduct flights under optimal lighting conditions (mid-day, with minimal shadows). Keep flight parameters consistent across timepoints, and capture images at regular intervals throughout the growing season.

- Data Processing:

  - Image Alignment: Use structure from motion (SfM) software to align images and generate sparse point cloud.

  - 3D Reconstruction: Apply multi-view stereo (MVS) algorithms to generate dense point cloud.

  - Georeferencing: Align model to geographic coordinates using ground control points.

  - Canopy Height Model Generation: Subtract digital terrain model from digital surface model.

  - Trait Extraction: Implement algorithms to quantify canopy height, coverage, and volume from 3D models.

- Data Analysis: Extract plot-level means for genomic studies or combine with temporal data for growth curve analysis.
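The canopy height model and trait extraction steps above can be sketched in a few lines. This is a minimal illustration, not the pipeline used in the cited studies: it assumes the digital surface model (DSM) and digital terrain model (DTM) are already co-registered grids of elevations in metres, represents them as plain Python lists to stay dependency-free, and uses hypothetical names (`canopy_height_model`, `plot_traits`) and an assumed 0.1 m threshold for deciding which cells count as canopy.

```python
from statistics import mean


def canopy_height_model(dsm, dtm):
    """Return CHM = DSM - DTM cell by cell; negative heights are clamped to 0."""
    chm = []
    for dsm_row, dtm_row in zip(dsm, dtm):
        row = []
        for surface, terrain in zip(dsm_row, dtm_row):
            if surface is None or terrain is None:
                row.append(None)  # propagate missing cells
            else:
                row.append(max(surface - terrain, 0.0))
        chm.append(row)
    return chm


def plot_traits(chm, row_slice, col_slice, cell_size_m, canopy_threshold_m=0.1):
    """Summarise one plot: mean canopy height, coverage fraction, canopy volume.

    canopy_threshold_m is an assumed cut-off separating canopy from bare soil.
    """
    cells = [h for row in chm[row_slice] for h in row[col_slice] if h is not None]
    canopy = [h for h in cells if h > canopy_threshold_m]
    return {
        "mean_height_m": mean(canopy) if canopy else 0.0,
        "coverage_fraction": len(canopy) / len(cells) if cells else 0.0,
        # Volume approximated as the sum of (height x cell area) over canopy cells.
        "volume_m3": sum(canopy) * cell_size_m ** 2,
    }


# Toy 3 x 4 rasters (elevations in metres); values are illustrative only.
dsm = [[100.0, 100.8, 100.9, 100.0],
       [100.1, 100.9, 101.1, 100.0],
       [100.0, 100.0, 100.0, 100.0]]
dtm = [[100.0] * 4 for _ in range(3)]
dtm[1][0] = 100.1

chm = canopy_height_model(dsm, dtm)
# The plot occupies the first two raster rows; cells are 2 cm x 2 cm.
traits = plot_traits(chm, slice(0, 2), slice(0, 4), cell_size_m=0.02)
print(traits)
```

In practice the rasters would come from the SfM-MVS software as GeoTIFFs and the plot boundaries from a field map; the per-plot summary is what feeds the plot-level means mentioned in the Data Analysis step.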

AI Integration: Convolutional Neural Networks (CNNs) can automate trait extraction from the generated 3D models. Deep learning approaches are particularly valuable for segmenting plant organs, classifying growth stages, and identifying anomalous patterns [19]. For enhanced interpretability, Explainable AI (XAI) methods can be applied to determine which features in the 3D models most strongly influence the AI's predictions [31].
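For the flight-planning step of this protocol, the link between flight altitude, ground sampling distance (GSD), and image overlap follows from the standard pinhole camera relation. The sketch below is illustrative: the function names are hypothetical, and the camera parameters (13.2 mm sensor width, 8.8 mm focal length, 5472 × 3648 pixels) are assumed values for a typical 20-megapixel camera rather than figures from the cited sources.

```python
def ground_sampling_distance(altitude_m, sensor_width_mm, focal_length_mm, image_width_px):
    """GSD in metres per pixel for a nadir-pointing camera (pinhole model)."""
    return (sensor_width_mm * altitude_m) / (focal_length_mm * image_width_px)


def altitude_for_gsd(target_gsd_m, sensor_width_mm, focal_length_mm, image_width_px):
    """Flight altitude (m above ground) that yields the target GSD."""
    return target_gsd_m * focal_length_mm * image_width_px / sensor_width_mm


def spacing_for_overlap(footprint_m, overlap):
    """Distance between adjacent images (or flight lines) for a given overlap fraction."""
    return footprint_m * (1.0 - overlap)


# Assumed camera parameters (hypothetical 20 MP camera).
SENSOR_WIDTH_MM = 13.2
FOCAL_LENGTH_MM = 8.8
IMAGE_WIDTH_PX = 5472
IMAGE_HEIGHT_PX = 3648

target_gsd = 0.01  # 1 cm/pixel, the fine end of the 1-5 cm range in the protocol
altitude = altitude_for_gsd(target_gsd, SENSOR_WIDTH_MM, FOCAL_LENGTH_MM, IMAGE_WIDTH_PX)

# Ground footprint of a single image at that altitude.
footprint_across = target_gsd * IMAGE_WIDTH_PX   # perpendicular to flight direction
footprint_along = target_gsd * IMAGE_HEIGHT_PX   # along flight direction

line_spacing = spacing_for_overlap(footprint_across, overlap=0.80)    # side overlap
trigger_spacing = spacing_for_overlap(footprint_along, overlap=0.80)  # forward overlap

print(f"altitude: {altitude:.2f} m")
print(f"flight-line spacing: {line_spacing:.2f} m, trigger spacing: {trigger_spacing:.2f} m")
```

The calculation assumes flat terrain and that the long sensor side is oriented across the flight direction; most flight-planning software performs the same computation internally.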

Sensor Networks for Continuous Phenotypic Monitoring

Sensor networks provide continuous, real-time monitoring of plant and environmental parameters, capturing dynamic responses to environmental fluctuations. These systems are particularly valuable for understanding genotype × environment (G×E) interactions.

Architecture and Deployment

Modern sensor networks for plant phenomics integrate multiple sensor types deployed across spatial scales. The PhenoGazer system exemplifies an integrated approach: automated moveable racks carry the optical instruments, a datalogger continuously records photosynthetically active radiation (PAR), soil moisture, and temperature, and additional analog or digital sensors can be attached as needed [32]. Such systems are typically managed by a small embedded computer (e.g., a Raspberry Pi running Python scripts), which provides precise control and data acquisition with minimal human intervention [32].
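A Python acquisition script of the kind described above might be structured as follows. This is a hardware-agnostic sketch, not the PhenoGazer code: the function `log_sensors`, the channel names, and the stub readers are all hypothetical, and on a real system each stub would be replaced by a call into the relevant sensor driver.

```python
import csv
import io
import time
from datetime import datetime, timezone


def log_sensors(readers, writer, n_samples, interval_s=0.0, clock=None):
    """Poll every sensor n_samples times and write timestamped rows.

    readers: dict mapping a channel name to a zero-argument callable
             that returns the current reading.
    writer:  a csv.writer-like object.
    clock:   callable returning the current time (injectable for testing).
    """
    clock = clock or (lambda: datetime.now(timezone.utc))
    names = sorted(readers)
    writer.writerow(["timestamp_utc"] + names)
    for i in range(n_samples):
        row = [clock().isoformat()]
        for name in names:
            try:
                row.append(readers[name]())
            except Exception:
                # Keep the row and leave the failed reading empty, so one
                # faulty sensor does not interrupt the whole acquisition.
                row.append("")
        writer.writerow(row)
        if i < n_samples - 1:
            time.sleep(interval_s)


# Stub readers standing in for real drivers (values are illustrative only).
readers = {
    "par_umol_m2_s": lambda: 412.0,
    "soil_moisture_vwc": lambda: 0.31,
    "soil_temp_c": lambda: 21.4,
}

buffer = io.StringIO()  # on a real system this would be an open CSV file
log_sensors(readers, csv.writer(buffer), n_samples=3)
print(buffer.getvalue())
```

Writing a UTC timestamp with every row is what later allows these environmental records to be aligned with imaging and spectral data from other instruments.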

Field-based sensor networks often employ IoT environmental sensors such as the Field Server, which can monitor microclimate conditions including air temperature, humidity, solar radiation, and soil parameters [29]. These platforms enable high-resolution temporal tracking of environmental conditions and plant responses, providing essential data for interpreting genetic performance across different environments.

Research Reagent Solutions for Sensor-Based Phenotyping

Table 2: Essential Research Reagents and Materials for Sensor-Based Phenotyping

| Category | Specific Items | Function/Application |
|---|---|---|
| Calibration Standards | Spectral calibration targets, thermal reference sources | Ensure measurement accuracy and cross-platform consistency |
| Fluorescence Imaging Reagents | Blue LED illumination systems | Activate chlorophyll fluorescence for photosynthetic efficiency measurements [32] |
| Environmental Sensors | PAR sensors, soil moisture probes, temperature sensors | Quantify environmental variables for G×E studies [32] |
| Multiplex Immunofluorescence Reagents | CD3, CD4, CD8, CD20, CD56, CD68, CD163, FOXP3, Granzyme B, PD-1, PD-L1, cytokeratin antibodies | Enable cell phenotype classification in AI-powered spatial cell phenomics [33] |
| Data Acquisition Systems | Raspberry Pi single-board computers, dataloggers, analog/digital sensor interfaces | Automate data collection and system control [32] |

Experimental Protocol: Sensor Network Implementation for Drought Stress Phenotyping

Objective: To monitor dynamic plant responses to drought stress using an integrated sensor network.

Materials and Equipment:

- Controller unit (e.g., Raspberry Pi single-board computer) with Python scripting capability

- Hyperspectral spectrometer with fiber optics

- RGB cameras for temporal imaging

- Blue LED lights for chlorophyll fluorescence induction

- PAR sensors

- Soil moisture and temperature sensors

- Moveable rack system for comprehensive canopy access

- Data storage and transmission infrastructure

Procedure:

- System Configuration: Integrate sensors with the controller unit, ensuring precise synchronization of all measurement devices. Develop Python scripts for automated data acquisition and system control [32].

- Sensor Placement: Position soil moisture sensors at multiple depths within root zones. Install PAR sensors above canopy level. Arrange hyperspectral fiber optics and cameras to capture representative canopy sections.

- Measurement Protocol:

  - Daytime Operations: Capture hyperspectral reflectance and RGB images during daylight hours at predetermined intervals (e.g., hourly).

  - Nighttime Operations: Activate blue LED lights to induce chlorophyll fluorescence, captured by spectrometer fiber optics on lower rack system [32].

  - Environmental Monitoring: Continuously record PAR, soil moisture, and temperature measurements.

- Data Integration: Synchronize all sensor data streams using timestamps. Preprocess data to ensure consistency and quality.

- Stress Application: Implement controlled drought stress treatments while maintaining well-watered controls. Monitor sensor responses throughout stress progression.

- AI-Enabled Analysis: Apply machine learning algorithms to identify patterns in high-dimensional sensor data. Use Explainable AI (XAI) approaches to interpret which sensor features most strongly predict stress responses [31].
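The Data Integration step above, synchronizing streams recorded at different rates, is commonly handled with an "as-of" join: each record from the slower stream is paired with the most recent record from the faster stream, provided it is recent enough. The sketch below illustrates the idea with the standard library only. The function name `asof_join`, the 15-minute tolerance, and all data values are assumptions made for illustration.

```python
from bisect import bisect_right
from datetime import datetime, timedelta


def asof_join(primary, secondary, tolerance):
    """Pair each primary record with the latest secondary record at or before it.

    primary, secondary: lists of (timestamp, value), sorted by timestamp.
    tolerance: maximum allowed age of the secondary record (timedelta).
    Returns a list of (timestamp, primary_value, secondary_value_or_None).
    """
    sec_times = [t for t, _ in secondary]
    joined = []
    for t, value in primary:
        i = bisect_right(sec_times, t) - 1  # index of last secondary time <= t
        match = None
        if i >= 0 and t - sec_times[i] <= tolerance:
            match = secondary[i][1]
        joined.append((t, value, match))
    return joined


t0 = datetime(2024, 6, 1, 10, 0)

# Hypothetical vegetation index derived from hourly hyperspectral scans.
index_series = [
    (t0, 0.81),
    (t0 + timedelta(hours=1), 0.78),
    (t0 + timedelta(hours=3), 0.70),
]

# Hypothetical soil moisture readings (volumetric water content).
soil_series = [
    (t0 - timedelta(minutes=5), 0.31),
    (t0 + timedelta(minutes=55), 0.29),
    (t0 + timedelta(minutes=90), 0.27),
]

joined = asof_join(index_series, soil_series, tolerance=timedelta(minutes=15))
for timestamp, index_value, soil_value in joined:
    print(timestamp.isoformat(), index_value, soil_value)
```

The last scan has no soil reading within the tolerance and is therefore paired with `None`, which makes gaps explicit instead of silently reusing stale values. Data-frame libraries offer equivalent operations for larger datasets.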

Applications: This approach successfully phenotyped soybean plants representing three conditions (healthy well-watered, healthy droughted, and diseased), evaluating growth and stress responses in a walk-in growth chamber [32]. The integration of nighttime blue light-induced chlorophyll fluorescence, hyperspectral reflectance-based vegetation indices, and RGB imagery enables comprehensive assessment of plant phenology, stress responses, and growth dynamics throughout the entire crop growth cycle [32].

Satellite Data for Macro-Scale Phenotyping

Satellite-based phenotyping provides unprecedented capabilities for monitoring crop performance across diverse environments and geographic scales, enabling phenotypic analysis in multi-environment trials (METs) essential for modern breeding programs.

Satellite Platforms and Data Products

Table 3: Satellite Platforms for High-Throughput Plant Phenotyping

| Platform | Spatial Resolution | Spectral Bands | Revisit Time | Key Applications |
|---|---|---|---|---|
| SkySat Constellation | 0.5 m (resampled) [34] | Blue, green, red, infrared | Daily acquisition attempts [34] | NDVI estimation, phenology monitoring, genotypic differentiation |
| Sentinel-2 | 10-60 m | 13 spectral bands | 5 days | Vegetation monitoring, stress detection, yield prediction |
| Landsat 8/9 | 15-30 m | 11 spectral bands | 16 days per satellite (8 days combined) | Long-term phenological studies, stress monitoring |
| MODIS | 250-1000 m | 36 spectral bands | 1-2 days | Regional-scale phenology, stress assessment |

The advent of a new generation of high-resolution satellites has significantly advanced breeding applications. The SkySat constellation, offering multispectral images at 0.5 m resolution since 2020, represents a particularly promising platform for phenotyping breeding plots [34]. With a fleet of 21 high-resolution satellites guaranteeing daily acquisition attempts, this system can provide cloud-free images every 7 to 10 days for most regions on Earth, enabling comprehensive monitoring throughout growing seasons [34].

Experimental Protocol: Satellite-Based Phenotyping for Breeding Programs

Objective: To estimate the satellite-derived normalized difference vegetation index (NDVI_SAT) for detecting genotypic differences and seasonal changes in breeding plots.

Materials and Equipment:

- Access to satellite imagery (e.g., SkySat, Sentinel-2)

- Ground validation data (e.g., UAV-based NDVI, ground measurements)

- Geographic Information System (GIS) software

- Cloud computing resources for large-scale image processing

- Field plot maps with precise geographic coordinates

Procedure:

- Experimental Design: Plant breeding trials with appropriate experimental design (e.g., α-lattice design with replication). Ensure plot dimensions are compatible with satellite spatial resolution (≥0.7 m width recommended) [34].

- Image Acquisition: Schedule satellite image acquisitions throughout the growing season. Target key phenological stages (emergence, vegetative growth, flowering, maturity).

- Preprocessing:

  - Orthorectification: Correct images for topographic distortion and align with geographic coordinates [34].

  - Radiometric Calibration: Convert digital numbers to surface reflectance.

  - Atmospheric Correction: Compensate for atmospheric effects on spectral signatures.

- Plot Extraction: Precisely delineate individual breeding plots using geographic boundaries. Extract spectral data for each plot.

- Vegetation Index Calculation: Compute NDVI and other relevant indices (e.g., EVI, SAVI) from spectral bands.

  - NDVI = (NIR - Red) / (NIR + Red)

- Temporal Analysis: Develop time series of vegetation indices to track phenology. Estimate key phenological metrics (date of emergence, heading, senescence) [34].

- Validation: Compare NDVI_SAT with the NDVI derived from unmanned aerial vehicle imagery (NDVI_UAV). Assess reliability in detecting genotypic differences and seasonal changes [34].
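The index calculation and validation steps above can be sketched as follows. NDVI follows the formula given in the protocol; the SAVI and EVI expressions use their standard published forms with the usual default coefficients (soil factor L = 0.5 for SAVI; 2.5, 6, 7.5, and 1 for EVI). The plot identifiers, reflectance values, and UAV-derived NDVI values are invented for illustration, and Pearson correlation is used here as one simple agreement measure, not necessarily the statistic used in [34].

```python
from math import sqrt


def ndvi(nir, red):
    """Normalized difference vegetation index from surface reflectance."""
    denom = nir + red
    return (nir - red) / denom if denom else float("nan")


def savi(nir, red, soil_factor=0.5):
    """Soil-adjusted vegetation index; soil_factor is the usual L = 0.5."""
    return (1.0 + soil_factor) * (nir - red) / (nir + red + soil_factor)


def evi(nir, red, blue):
    """Enhanced vegetation index with the standard coefficients."""
    return 2.5 * (nir - red) / (nir + 6.0 * red - 7.5 * blue + 1.0)


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)


# Hypothetical plot-level mean reflectances extracted from a satellite image.
plot_reflectance = {
    "P1": {"nir": 0.45, "red": 0.05, "blue": 0.03},
    "P2": {"nir": 0.40, "red": 0.08, "blue": 0.04},
    "P3": {"nir": 0.30, "red": 0.12, "blue": 0.05},
}

# Hypothetical UAV-derived NDVI for the same plots, used as the reference.
ndvi_uav = {"P1": 0.84, "P2": 0.70, "P3": 0.47}

plots = sorted(plot_reflectance)
ndvi_sat = [ndvi(plot_reflectance[p]["nir"], plot_reflectance[p]["red"]) for p in plots]
agreement = pearson_r(ndvi_sat, [ndvi_uav[p] for p in plots])

for plot, value in zip(plots, ndvi_sat):
    print(plot, round(value, 3))
print("Pearson r between satellite and UAV NDVI:", round(agreement, 3))
```

A high correlation alone does not rule out a systematic offset between platforms, so a slope and intercept from a regression of satellite on UAV values is a useful complement in a real validation.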

AI Integration: Machine learning algorithms can enhance the extraction of meaningful phenotypic information from satellite imagery. Deep learning models can automatically identify patterns associated with stress responses or yield potential. The resulting data can be integrated with environmental information from sources such as AgERA5 and ERA5 reanalysis products to better understand environmental influences on gene expression [34].
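Before any machine learning is applied, the Temporal Analysis step of this protocol can already yield simple phenological metrics from a vegetation index time series. One common heuristic, assumed here for illustration and not taken from [34], dates green-up and senescence as the moments when the index crosses a fixed fraction of its seasonal amplitude, with linear interpolation between acquisitions. The function name `crossing_dates`, the 50% fraction, and the NDVI values are hypothetical.

```python
from datetime import date, timedelta


def crossing_dates(dates, values, fraction=0.5):
    """Estimate green-up and senescence dates from an index time series.

    The threshold is set at `fraction` of the seasonal amplitude above the
    minimum. Green-up is the first upward crossing before the peak and
    senescence the first downward crossing after it; both are linearly
    interpolated between the two bracketing acquisitions.
    """
    low, high = min(values), max(values)
    threshold = low + fraction * (high - low)
    peak = values.index(high)

    def interpolate(i, j):
        span_days = (dates[j] - dates[i]).days
        frac = (threshold - values[i]) / (values[j] - values[i])
        return dates[i] + timedelta(days=round(frac * span_days))

    green_up = None
    for i in range(peak):
        if values[i] < threshold <= values[i + 1]:
            green_up = interpolate(i, i + 1)
            break

    senescence = None
    for i in range(peak, len(values) - 1):
        if values[i] >= threshold > values[i + 1]:
            senescence = interpolate(i, i + 1)
            break

    return green_up, senescence


# Hypothetical cloud-free acquisitions every 10 days for one breeding plot.
dates = [date(2023, 5, 1) + timedelta(days=10 * k) for k in range(7)]
values = [0.20, 0.30, 0.60, 0.80, 0.78, 0.45, 0.25]

green_up, senescence = crossing_dates(dates, values)
print("green-up:", green_up, "senescence:", senescence)
```

With acquisitions 7 to 10 days apart, the interpolated dates carry an uncertainty of several days, which should be kept in mind when comparing genotypes whose phenology differs only slightly.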

Integrated AI Frameworks for Multi-Scale Data Fusion

The true power of modern plant phenomics emerges from integrating data across scales through advanced AI frameworks that connect molecular-level responses to field-scale performance.

The "Pixels-to-Proteins" Paradigm