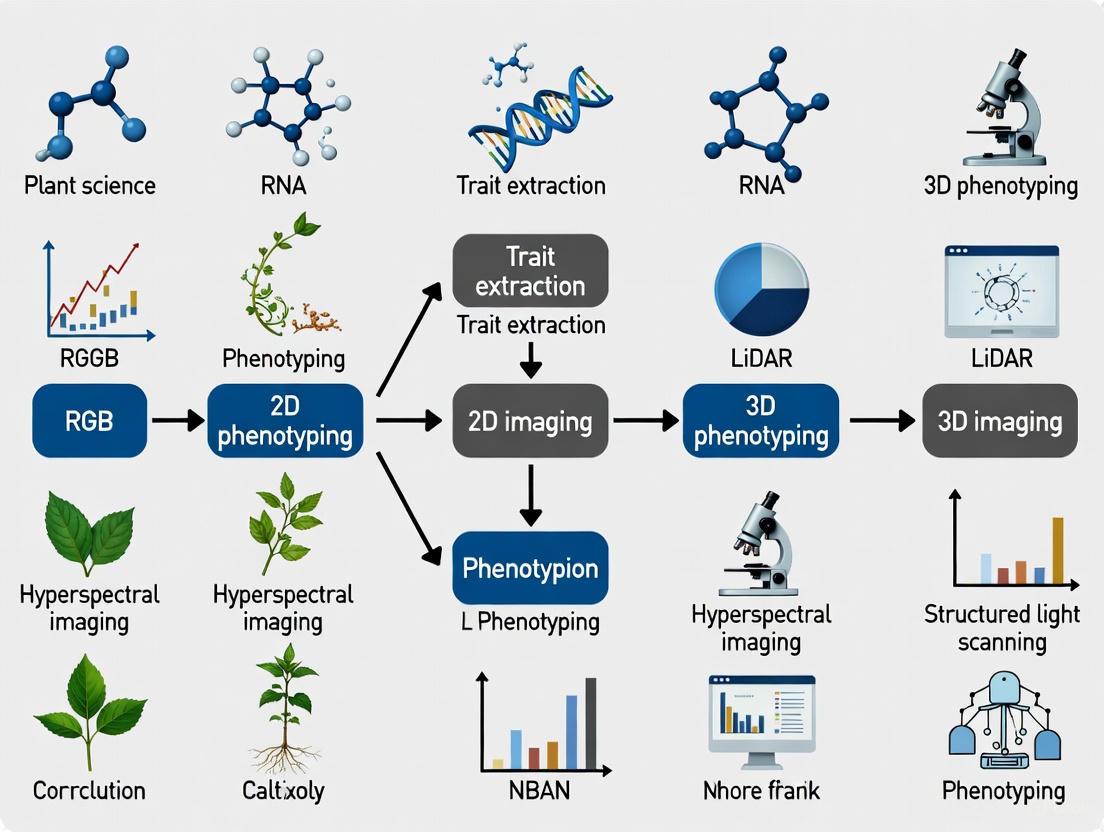

2D vs. 3D Plant Phenotyping: A Comparative Guide for Researchers on Trait Extraction Accuracy and Applications

This article provides a comprehensive comparison of 2D and 3D plant phenotyping methodologies for trait extraction, tailored for researchers and professionals in drug development and biomedical sciences.

Abstract

This article provides a comprehensive comparison of 2D and 3D plant phenotyping methodologies for trait extraction, tailored for researchers and professionals in drug development and biomedical sciences. It explores the foundational principles of both approaches, detailing key 3D imaging technologies like LiDAR, stereo vision, and structured light. The content delves into methodological applications for extracting critical morphological traits, addresses common troubleshooting and optimization challenges, and presents a rigorous validation of 3D methods against traditional 2D techniques through recent case studies. By synthesizing performance metrics and emerging trends, including AI and deep learning, this guide aims to inform strategic decisions in adopting phenotyping technologies for enhanced research and development outcomes.

From Flat Images to Spatial Data: Understanding the Core Principles of 2D and 3D Phenotyping

Plant phenotyping, the quantitative assessment of plant traits, serves as a critical bridge between genomics and plant performance in agriculture and drug development. For decades, scientific research has relied heavily on two-dimensional (2D) projection methods for trait extraction due to their simplicity and low cost. However, a paradigm shift is underway toward three-dimensional (3D) approaches that capture the complex spatial architecture of biological structures. This comparison guide objectively examines the fundamental limitations of 2D projection against emerging 3D technologies, providing researchers with experimental data and methodological insights to inform their experimental designs. The transition from 2D to 3D phenotyping represents more than a technical upgrade—it constitutes a fundamental reimagining of how we quantify biological form and function across diverse domains from crop improvement to preclinical drug testing.

Theoretical Foundations: The Inherent Constraints of 2D Projection

The Spatial Information Deficit

The primary limitation of 2D projection lies in its fundamental inability to capture spatial depth. When complex 3D structures are projected onto a 2D plane, depth information is permanently lost, leading to measurement inaccuracies and structural ambiguities. In plant phenotyping, this manifests as an inability to accurately characterize root system architecture or canopy structure, where spatial arrangement directly correlates with function [1]. Similarly, in biomedical research, 2D cell cultures fail to recapitulate the three-dimensional tissue architecture that governs cellular behavior and drug response in vivo [2].
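The depth loss described above can be made concrete with a small geometric sketch. It assumes a hypothetical flat, rectangular "leaf" tilted away from the image plane and compares its true surface area with the area visible in a top-down projection; the helper names and leaf dimensions are illustrative only and do not come from the cited studies.

```python
import math

def triangle_area_3d(a, b, c):
    """Area of a 3D triangle: half the norm of the cross product of two edges."""
    ux, uy, uz = (b[i] - a[i] for i in range(3))
    vx, vy, vz = (c[i] - a[i] for i in range(3))
    cx = uy * vz - uz * vy
    cy = uz * vx - ux * vz
    cz = ux * vy - uy * vx
    return 0.5 * math.sqrt(cx * cx + cy * cy + cz * cz)

def projected_area_xy(a, b, c):
    """Area of the same triangle after discarding z (a top-down 2D view)."""
    return 0.5 * abs((b[0] - a[0]) * (c[1] - a[1]) - (c[0] - a[0]) * (b[1] - a[1]))

def tilted_leaf(length, width, tilt_deg):
    """Hypothetical flat rectangular leaf, tilted about the y-axis, as two triangles."""
    t = math.radians(tilt_deg)
    dx, dz = length * math.cos(t), length * math.sin(t)
    p0, p1 = (0.0, 0.0, 0.0), (dx, 0.0, dz)
    p2, p3 = (dx, width, dz), (0.0, width, 0.0)
    return [(p0, p1, p2), (p0, p2, p3)]

leaf = tilted_leaf(length=10.0, width=4.0, tilt_deg=60.0)
true_area = sum(triangle_area_3d(*tri) for tri in leaf)
seen_area = sum(projected_area_xy(*tri) for tri in leaf)
print(f"true area: {true_area:.1f}, projected area: {seen_area:.1f}")
```

For a planar surface the projected area shrinks by the cosine of the tilt angle, so a 60° tilt halves the measured area. Real leaves are curved, so the error varies across the blade and cannot be corrected with a single factor.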

The Occlusion Problem

A second fundamental constraint arises from occlusion, where foreground structures obscure elements behind them. In complex biological systems like plant root systems or tissue models, this results in incomplete data capture and systematic measurement errors. Studies demonstrate that 2D root imaging frequently fails to capture the full root system architecture, with one study noting that field-based approaches often extract "only the top portion of the root system" [1]. The occlusion problem is particularly limiting for traits like leaf area index, branching patterns, and vascularization in tissue models.

Structural Simplification

Biological systems possess intricate 3D geometries that are inherently simplified when reduced to 2D representations. This structural simplification distorts critical phenotypic traits including surface area-to-volume ratios, spatial orientation, and mechanical properties. In cancer research, for instance, this simplification has profound implications, as 2D cultured cells "lose the peculiar signals coming from their niches" and are "constantly exposed to high levels of nutrients and oxygen" unlike the gradient conditions found in vivo [2].

Experimental Comparisons: Quantitative Performance Assessment

Accuracy Metrics in Trait Measurement

Table 1: Comparison of 2D vs. 3D Phenotyping Accuracy Across Domains

| Application Domain | Trait Measured | 2D Method Accuracy | 3D Method Accuracy | Citation |
|---|---|---|---|---|
| Soybean Root Phenotyping | Root Tip Counting | 79% correlation with manual counts | 95% correlation with manual counts (with background correction) | [1] |
| Plant Morphology Extraction | Plant Height | R² not reported for 2D | R² > 0.92 with manual measurements | [3] |
| Plant Morphology Extraction | Crown Width | R² not reported for 2D | R² > 0.92 with manual measurements | [3] |
| Leaf Parameter Extraction | Leaf Length/Width | Significant information loss from projection | R² = 0.72-0.89 with manual measurements | [3] |
| Organ Segmentation | Training Efficiency | Required 25 annotated plants for comparable performance | Achieved similar performance with only 5 annotated plants | [4] [5] |

Comprehensive Trait Extraction Capabilities

Table 2: Trait Extraction Capabilities of 2D vs. 3D Phenotyping Platforms

| Phenotypic Trait Category | 2D Projection Capability | 3D Reconstruction Capability | Research Implications |
|---|---|---|---|
| Root System Architecture | Limited to basic morphology (length, tips) | Comprehensive analysis (volume, distribution, spatial arrangement) | Enables identification of genes for deeper root systems [1] |
| Plant Biomass Estimation | Indirect estimation with occlusion errors | Direct volume calculation from 3D models | More accurate yield prediction and growth monitoring [6] |
| Gravitropic Responses | Basic directional assessment | Quantitative analysis of root and shoot angles over time | Enables study of developmental plasticity [7] |
| Drug Response Prediction | Poor clinical translation (5% efficacy) | Better recapitulation of in vivo conditions | Improved drug development success rates [2] [8] |
| Multi-Organ Interactions | Limited to separate analysis | Simultaneous tracking of 6+ plant structures [7] | Holistic understanding of plant development |

Methodological Approaches: Experimental Protocols

2D Phenotyping Workflow

The standard 2D phenotyping protocol for root system architecture analysis involves several established steps. Plants are typically grown in pouch growth systems with a transparent viewing surface, employing a black filter paper background to enhance contrast between roots and background [1]. Image acquisition uses standard RGB cameras under consistent lighting conditions. The critical image processing phase involves binary segmentation to separate roots from background, followed by skeletonization to extract topological attributes. Trait extraction typically includes basic morphological parameters like total root length, number of tips, and projection area. This approach has been widely adopted in high-throughput systems like the original ChronoRoot platform, which provided temporal analysis but was limited to binary segmentation of root structures alone [7].
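The trait-extraction step of this workflow can be sketched on a toy example. The snippet below assumes segmentation and skeletonization have already been done (in practice an image-processing library would handle both) and starts from a hand-written, one-pixel-wide skeleton. The grid, the function name `skeleton_traits`, and the 8-neighbour conventions are illustrative assumptions, not the procedure used in [1] or [7].

```python
import math

# Toy, already-skeletonized root image: '#' is root, '.' is background.
SKELETON = [
    "...#...",
    "...#...",
    "...#...",
    "..#.#..",
    ".#...#.",
]

def skeleton_traits(rows):
    """Count endpoints and branch points and estimate length on an 8-connected skeleton."""
    pixels = {(r, c) for r, row in enumerate(rows) for c, ch in enumerate(row) if ch == "#"}
    offsets = [(dr, dc) for dr in (-1, 0, 1) for dc in (-1, 0, 1) if (dr, dc) != (0, 0)]
    endpoints = branch_points = 0
    length = 0.0
    for r, c in pixels:
        nbrs = [(r + dr, c + dc) for dr, dc in offsets if (r + dr, c + dc) in pixels]
        if len(nbrs) == 1:
            endpoints += 1          # a pixel with a single neighbour ends a root segment
        elif len(nbrs) >= 3:
            branch_points += 1      # three or more neighbours mark a junction
        # Every link is seen from both of its ends, so add half of each link length.
        length += sum(math.hypot(nr - r, nc - c) for nr, nc in nbrs) / 2.0
    return {
        "endpoints": endpoints,
        "branch_points": branch_points,
        "length_px": length,
        "projected_area_px": len(pixels),
    }

traits = skeleton_traits(SKELETON)
print(traits)
```

Note that the endpoint count includes the root base at the top of the image, so a tip count would subtract it. Lengths are in pixels and need a scale factor to convert to physical units, and all values describe only the 2D projection of the root system.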

Advanced 3D Reconstruction Methodologies

Multi-View Stereo Reconstruction

Advanced 3D phenotyping relies on multi-stage reconstruction workflows. The two-phase plant reconstruction workflow described in [3] first bypasses the depth estimation modules built into standard binocular cameras. Instead, Structure from Motion (SfM) and Multi-View Stereo (MVS) techniques are applied to the captured high-resolution images, producing high-fidelity single-view point clouds that avoid distortion and drift [3]. The second phase addresses self-occlusion by registering the point clouds from six viewpoints into a complete plant model: a marker-based Self-Registration method provides rapid coarse alignment, and the Iterative Closest Point (ICP) algorithm then performs fine alignment [3]. The resulting 3D models enable extraction of volumetric traits, surface areas, and spatial distribution parameters that 2D methods cannot provide.
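The coarse-then-fine registration idea can be illustrated with a deliberately simplified sketch. It works in 2D rather than 3D, uses a closed-form rigid fit on three hypothetical marker pairs for the coarse step, and then runs a brute-force ICP loop for refinement. All point coordinates, marker offsets, and function names are assumptions made for illustration; the pipeline in [3] operates on 3D point clouds and its exact implementation is not described here.

```python
import math

def rigid_fit_2d(src, dst):
    """Least-squares rotation angle and translation mapping paired src points onto dst."""
    n = len(src)
    sx, sy = sum(p[0] for p in src) / n, sum(p[1] for p in src) / n
    dx, dy = sum(q[0] for q in dst) / n, sum(q[1] for q in dst) / n
    s_cos = s_sin = 0.0
    for (x, y), (u, v) in zip(src, dst):
        x, y, u, v = x - sx, y - sy, u - dx, v - dy
        s_cos += x * u + y * v
        s_sin += x * v - y * u
    theta = math.atan2(s_sin, s_cos)
    c, s = math.cos(theta), math.sin(theta)
    return theta, dx - (c * sx - s * sy), dy - (s * sx + c * sy)

def transform(points, theta, tx, ty):
    """Rotate points by theta (radians) and then translate by (tx, ty)."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + tx, s * x + c * y + ty) for x, y in points]

def nearest(p, cloud):
    """Brute-force nearest neighbour of p in cloud."""
    return min(cloud, key=lambda q: (q[0] - p[0]) ** 2 + (q[1] - p[1]) ** 2)

def icp_2d(src, dst, iterations=10):
    """Fine alignment: repeatedly match nearest neighbours and re-fit the rigid motion."""
    current = list(src)
    for _ in range(iterations):
        matches = [nearest(p, dst) for p in current]
        current = transform(current, *rigid_fit_2d(current, matches))
    return current

def max_residual(a, b):
    return max(math.hypot(p[0] - q[0], p[1] - q[1]) for p, q in zip(a, b))

# Hypothetical reference view, and the same shape seen from a rotated, shifted pose.
reference = [(0, 0), (4, 0), (4, 1), (1, 1), (1, 5), (0, 5), (2, 3)]
moving = transform(reference, math.radians(40), 3.0, -2.0)

# Coarse step: three markers whose detected reference positions are slightly noisy.
markers_moving = moving[:3]
markers_reference = [(0.05, -0.03), (3.96, 0.02), (4.03, 1.04)]
coarse = transform(moving, *rigid_fit_2d(markers_moving, markers_reference))

# Fine step: ICP on all points, starting from the coarse alignment.
fine = icp_2d(coarse, reference)
coarse_error = max_residual(coarse, reference)
fine_error = max_residual(fine, reference)
print(f"coarse error: {coarse_error:.4f}, fine error: {fine_error:.2e}")
```

The split matters because ICP only converges to the right answer when the starting pose is already close; the marker step supplies that starting pose, and ICP removes the residual marker error.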

AI-Enhanced Temporal Phenotyping

Modern platforms like ChronoRoot 2.0 combine 3D imaging with artificial intelligence for comprehensive temporal analysis. The system employs infrared imaging with Raspberry Pi-controlled cameras and LED backlighting to eliminate variations from day/night cycles [7]. At the core of the analysis pipeline is an nnUNet segmentation module that performs simultaneous multi-class segmentation of six distinct plant structures: main root, lateral roots, seed, hypocotyl, leaves, and petiole [7]. This enables comprehensive tracking of plant development from seed to mature seedling, capturing intricate relationships between different organs during growth. The system incorporates Functional Principal Component Analysis for time series comparison across different experimental groups, enabling discovery of new data-driven phenotypic parameters.

2D-to-3D Projection Segmentation

An innovative hybrid approach has emerged that leverages well-established 2D segmentation algorithms for 3D analysis. This method involves reprojecting 2D predictions to 3D point clouds and using a majority vote algorithm to merge multiple predictions [4] [5]. Research demonstrates that this 2D-to-3D method achieves comparable performance to state-of-the-art 3D segmentation algorithms like Swin3D-s and Point Transformer v3, while offering significantly higher training efficiency [4]. With only five annotated plants, the 2D-to-3D approach achieved similar performance to training Swin3D-s on 25 plants, demonstrating remarkable efficiency gains [5].

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 3: Key Research Reagent Solutions for 2D and 3D Phenotyping

| Tool/Platform | Category | Function | Research Application |
|---|---|---|---|
| 2D Pouch System [1] | Growth Platform | Provides flat plane for root growth against transparent surface | High-throughput root screening in controlled conditions |
| ChronoRoot 2.0 [7] | Imaging Hardware | Automated infrared imaging system with AI analysis | Temporal phenotyping of plant development with multi-organ tracking |
| ZED 2 & ZED Mini Binocular Cameras [3] | 3D Capture Device | Capture stereo image pairs for 3D reconstruction | Generation of high-resolution point clouds for plant models |
| nnUNet Segmentation [7] | AI Software | Self-configuring neural network for image segmentation | Precise identification of plant organs in complex images |
| ResDGCNN [9] | Specialized Algorithm | Point cloud segmentation integrating residual learning with dynamic graph convolution | Cotton organ segmentation across entire growth cycle |
| Structure from Motion (SfM) [3] [10] | Reconstruction Algorithm | 3D point cloud generation from multiple 2D images | Non-destructive plant modeling from multi-view images |
| 3D Gaussian Splatting (3DGS) [10] | Emerging Technique | Represents geometry through Gaussian primitives | Efficient and scalable reconstruction of plant structures |

The experimental evidence clearly demonstrates that 3D phenotyping approaches overcome fundamental limitations of 2D projection across biological research domains. The spatial information captured by 3D methods enables more accurate trait measurement, better prediction of in vivo behavior, and discovery of previously inaccessible phenotypic relationships. However, 2D methods retain value for high-throughput screening scenarios where cost and simplicity are primary considerations. The emerging 2D-to-3D projection methods represent a promising intermediate approach, leveraging well-established 2D computer vision algorithms while capturing essential 3D structural information. As the field advances, the integration of dimensional approaches will continue to evolve, enabling researchers to select appropriate phenotyping strategies based on their specific accuracy requirements, throughput needs, and resource constraints. This dimensional integration promises to accelerate discoveries across fundamental plant science, agricultural improvement, and pharmaceutical development.

Plant phenotyping, the science of quantitatively measuring plant traits, serves as the critical link between genetics, environment, and observable characteristics. Traditional phenotyping has relied heavily on manual measurements and 2D imaging techniques, which project complex three-dimensional plant structures onto a flat plane. This process inevitably loses depth information and fails to accurately capture crucial architectural traits such as leaf curvature, stem inclination, and volumetric distribution [3]. These limitations have constrained our understanding of plant morphology and its functional implications.

The emergence of 3D phenotyping technologies addresses these fundamental shortcomings. By preserving spatial relationships and volumetric data, 3D methods enable researchers to move beyond simple length and area measurements to extract complex traits related to plant architecture, biomass distribution, and structural complexity [10] [11]. This paradigm shift offers unprecedented opportunities for advancing breeding programs and precision agriculture. This guide objectively compares the performance of 2D and 3D phenotyping approaches, supported by experimental data and methodological insights from current research.

Technical Comparison: 2D vs. 3D Phenotyping Performance

The transition from 2D to 3D phenotyping represents more than just technological advancement—it fundamentally enhances the quality, scope, and accuracy of trait extraction. Quantitative comparisons across multiple studies demonstrate clear advantages for 3D approaches, particularly for architectural traits.

Table 1: Performance Comparison of 2D vs. 3D Phenotyping for Key Plant Traits

| Plant Trait | 2D Method Limitations | 3D Method Advantages | Experimental Validation (R²) |
|---|---|---|---|
| Plant Height | Perspective distortion; reference scaling required | Direct measurement from 3D point clouds | 0.92-0.95 [3] |
| Crown Width | Single-view projection underestimates actual volume | Multi-view reconstruction captures true extent | >0.92 [3] |
| Leaf Area | Projection errors; inability to account for curvature | Surface area calculation from 3D mesh | 0.72-0.89 [3] |
| Internode Length | Destructive dissection often required | Non-destructive extraction from skeletonized models | Enabled via 3D skeletonization [11] |
| Leaf Angle | Manual protractor measurements; single point sampling | Automated calculation from 3D normals/vectors | Included in TomatoWUR dataset [11] |
| Organ Segmentation | Overlap obscures individual organs; loss of spatial context | 3D spatial separation enables instance segmentation | mIoU: 83.05-89.21% across species [12] |

The data reveal that 3D methods particularly excel in capturing traits that require spatial context. For example, while 2D approaches struggle with leaf area estimation due to their inability to account for curvature, 3D reconstructions can accurately measure actual surface area, achieving R² values of 0.72-0.89 compared to manual measurements [3]. Similarly, architectural traits like internode length and leaf angle, which traditionally required destructive sampling or cumbersome manual tools, can now be extracted automatically from 3D skeletonized models [11].
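For readers who want to run the same kind of validation on their own data, the sketch below computes R² as the squared Pearson correlation between manual and automatically extracted measurements, which equals the coefficient of determination of a simple linear fit. The source does not state which R² definition the cited studies used, and the measurement values here are invented for illustration.

```python
def r_squared(manual, estimated):
    """Squared Pearson correlation between paired manual and estimated measurements."""
    n = len(manual)
    mean_m = sum(manual) / n
    mean_e = sum(estimated) / n
    s_me = sum((m - mean_m) * (e - mean_e) for m, e in zip(manual, estimated))
    s_mm = sum((m - mean_m) ** 2 for m in manual)
    s_ee = sum((e - mean_e) ** 2 for e in estimated)
    return (s_me * s_me) / (s_mm * s_ee)

# Hypothetical plant heights in cm: ruler measurements vs. values from a 3D model.
manual_cm = [10.0, 20.0, 30.0, 40.0]
model_cm = [12.0, 19.0, 33.0, 38.0]
r2 = r_squared(manual_cm, model_cm)
print(f"R^2 = {r2:.3f}")
```

A high R² shows that the two measurement sets vary together, not that they agree in absolute terms, so it is worth reporting a bias or error statistic such as RMSE alongside it.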

Experimental Approaches in Modern 3D Plant Phenotyping

2D-to-3D Reprojection Segmentation

An innovative approach that leverages well-established 2D computer vision for 3D segmentation has been developed and compared against native 3D methods. The experimental protocol involves:

- Image Segmentation: Applying the Mask2Former model (pre-trained on diverse 2D datasets) to segment individual plant organs from 2D images [4].

- Reprojection to 3D: Projecting the 2D segmentation predictions back onto the corresponding 3D point cloud using known camera parameters [4].

- Majority Vote Fusion: Employing a majority vote algorithm to merge multiple predictions from different viewpoints into a consensus 3D segmentation [4].

This method demonstrated no significant performance difference compared to state-of-the-art 3D segmentation algorithms like Swin3D-s and Point Transformer v3, while achieving higher training efficiency. Remarkably, training on just five annotated plants with the 2D-to-3D method yielded similar performance to training Swin3D-s on 25 plants, highlighting its data efficiency [4].
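The reprojection and majority-vote steps can be sketched as follows. The example assumes a pinhole camera model, known extrinsics per view, and per-pixel class masks that a 2D model has already produced; it omits occlusion handling, so a point hidden in one view still receives that view's label. The camera parameters, masks, class IDs, and function names are hypothetical and are not taken from [4].

```python
from collections import Counter

def to_camera(point, rotation, translation):
    """Transform a world-frame point into a camera frame (rotation given as row tuples)."""
    return tuple(
        sum(rotation[i][j] * point[j] for j in range(3)) + translation[i] for i in range(3)
    )

def project(point_cam, fx, fy, cx, cy):
    """Pinhole projection to an integer (row, col) pixel; None if behind the camera."""
    x, y, z = point_cam
    if z <= 0:
        return None
    return int(round(fy * y / z + cy)), int(round(fx * x / z + cx))

def fuse_labels(points, views):
    """Give each 3D point the label predicted most often across the 2D views."""
    fused = []
    for point in points:
        votes = Counter()
        for view in views:
            pixel = project(to_camera(point, view["R"], view["t"]), *view["intrinsics"])
            if pixel is None:
                continue
            row, col = pixel
            mask = view["mask"]
            if 0 <= row < len(mask) and 0 <= col < len(mask[0]):
                votes[mask[row][col]] += 1
        # Ties resolve to the label that was voted first; None if no view sees the point.
        fused.append(votes.most_common(1)[0][0] if votes else None)
    return fused

def mask_with(labels, size=5):
    """Build a size x size mask of background (0) with the given {(row, col): label} set."""
    mask = [[0] * size for _ in range(size)]
    for (row, col), label in labels.items():
        mask[row][col] = label
    return mask

IDENTITY = ((1, 0, 0), (0, 1, 0), (0, 0, 1))
INTRINSICS = (2.0, 2.0, 2.0, 2.0)  # hypothetical fx, fy, cx, cy in pixels

# Class IDs (assumed): 0 = background, 1 = stem, 2 = leaf. View 3 mislabels the first point.
views = [
    {"R": IDENTITY, "t": (0, 0, 0), "intrinsics": INTRINSICS,
     "mask": mask_with({(2, 2): 1, (2, 3): 2})},
    {"R": IDENTITY, "t": (-1, 0, 0), "intrinsics": INTRINSICS,
     "mask": mask_with({(2, 1): 1, (2, 2): 2})},
    {"R": IDENTITY, "t": (0, 1, 0), "intrinsics": INTRINSICS,
     "mask": mask_with({(3, 2): 2, (3, 3): 2})},
]
points = [(0.0, 0.0, 2.0), (1.0, 0.0, 2.0)]
fused = fuse_labels(points, views)
print(fused)
```

The vote lets two consistent views override one wrong prediction, which is how redundancy across viewpoints compensates for errors in any single 2D segmentation.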

Table 2: Comparison of 3D Segmentation Method Performance

| Algorithm | Principle | Key Advantage | Training Efficiency |
|---|---|---|---|
| 2D-to-3D Reprojection | Projects 2D segmentation to 3D space | Leverages well-developed 2D models; high data efficiency | 5 plants for comparable performance [4] |
| Swin3D-s | Voxel-based 3D transformer | State-of-the-art on structured data | Required 25 plants for comparable performance [4] |
| Point Transformer v3 | Point-based neural network | Directly processes point clouds | Similar accuracy but lower efficiency than 2D-3D [4] |
| MinkUNet34C | Sparse convolutional networks | Memory efficient for large scenes | Lower performance than other 3D methods [4] |
| PointNeXt | Point-based deep learning | High accuracy across species | mIoU: 83.05-89.21% across crops [12] |

Multi-View 3D Reconstruction with Fine Registration

A comprehensive two-phase workflow for high-fidelity plant reconstruction addresses common challenges in 3D plant phenotyping:

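Phase 1: High-Fidelity Single-View Reconstruction. Rather than relying on the binocular cameras' built-in depth estimation, SfM and MVS are applied to the captured high-resolution images, yielding single-view point clouds that avoid distortion and drift [3].

Phase 2: Multi-View Registration. Point clouds from six viewpoints are coarsely aligned with a marker-based Self-Registration (SR) method and then finely aligned with the Iterative Closest Point (ICP) algorithm, producing a complete plant model that overcomes self-occlusion [3].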

This workflow was validated on Ilex verticillata and Ilex salicina, demonstrating high correlation with manual measurements (R² > 0.92 for plant height and crown width) and successfully extracting fine-scale traits like leaf length and width, which are rarely addressed in multi-view fusion studies [3].

Figure 1: Workflow for multi-view 3D plant reconstruction and trait extraction.

Two-Stage Deep Learning for Organ Segmentation

A two-stage deep learning approach addresses the challenge of organ-level segmentation across multiple crop species:

Stage 1: Semantic Segmentation

Stage 2: Instance Segmentation

This method consistently outperformed four state-of-the-art networks (ASIS, JSNet, DFSP, and PSegNet), achieving average values of 93.32% precision, 85.60% recall, 87.94% F1, and 81.46% mIoU across all tested crops [12].
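The reported metrics can be reproduced on any labeled point cloud with a few lines of code. The sketch below computes per-class precision, recall, F1, and IoU from point-wise labels and averages the IoU into an mIoU. The labels are invented, and the source does not specify how [12] averaged its scores across classes, instances, or crops, so the averaging here is an assumption.

```python
def per_class_metrics(true_labels, pred_labels, classes):
    """Point-wise precision, recall, F1, and IoU for each class."""
    results = {}
    pairs = list(zip(true_labels, pred_labels))
    for k in classes:
        tp = sum(1 for t, p in pairs if t == k and p == k)
        fp = sum(1 for t, p in pairs if t != k and p == k)
        fn = sum(1 for t, p in pairs if t == k and p != k)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        iou = tp / (tp + fp + fn) if tp + fp + fn else 0.0
        results[k] = {"precision": precision, "recall": recall, "f1": f1, "iou": iou}
    return results

# Hypothetical ground truth and predictions for eight points of one plant.
truth = ["leaf"] * 4 + ["stem"] * 4
preds = ["leaf", "leaf", "leaf", "stem", "stem", "stem", "stem", "stem"]

metrics = per_class_metrics(truth, preds, classes=["leaf", "stem"])
miou = sum(m["iou"] for m in metrics.values()) / len(metrics)
print(metrics)
print(f"mIoU = {miou:.3f}")
```

Here one leaf point is mislabeled as stem, which lowers leaf recall and stem precision at the same time; IoU penalizes both kinds of error in a single number.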

The Scientist's Toolkit: Essential Research Reagents & Materials

Successful implementation of 3D plant phenotyping requires specific hardware, software, and datasets. The following table summarizes key resources referenced in the experimental studies.

Table 3: Essential Research Reagents and Materials for 3D Plant Phenotyping

| Category | Specific Product/Method | Function/Application | Research Context |
|---|---|---|---|
| Imaging Hardware | ZED 2 & ZED Mini binocular cameras | Capture stereo image pairs for 3D reconstruction | Multi-view plant reconstruction [3] |
| Benchmark Dataset | TomatoWUR dataset | Comprehensive benchmark for algorithm development | Includes point clouds, skeletons, manual measurements [11] |
| Segmentation Models | Mask2Former | 2D segmentation for reprojection methods | 2D-to-3D segmentation pipeline [4] |
| 3D Deep Learning | PointNeXt framework | Point-based semantic segmentation | Two-stage organ segmentation [12] |
| Reconstruction Algorithms | SfM + MVS pipeline | Generate 3D point clouds from 2D images | High-fidelity plant reconstruction [3] |
| Registration Tools | ICP algorithm + Marker-based SR | Align multi-view point clouds | Complete plant model creation [3] |
| Synthetic Data | AI-generated leaf point clouds | Augment training data; algorithm benchmarking | Trait estimation without manual labeling [13] |
| Evaluation Metrics | mIoU, Precision, Recall | Standardize algorithm performance assessment | Cross-study method comparison [4] [12] |

Emerging Frontiers and Future Directions

The field of 3D plant phenotyping continues to evolve with several promising technological developments:

- 3D Gaussian Splatting (3DGS): An emerging technique that represents plant geometry through Gaussian primitives, offering potential benefits in both efficiency and scalability compared to traditional point clouds [10].

- AI-Generated Synthetic Data: Generative models that produce realistic 3D leaf point clouds with known geometric traits are reducing the bottleneck caused by limited labeled data [13].

- Neural Radiance Fields (NeRF): This recent advancement enables high-quality, photorealistic 3D reconstructions from sparse viewpoints, though computational cost remains a challenge [10].

These technologies collectively address current limitations in 3D plant phenotyping, particularly regarding computational efficiency, annotation requirements, and applicability in field conditions.

Figure 2: Complete workflow from image acquisition to 3D trait extraction.

The comparative evidence presented in this guide demonstrates the clear advantage of 3D phenotyping over traditional 2D approaches for capturing plant architecture and complex traits. While 2D methods remain valuable for specific applications, 3D technologies enable precise, non-destructive measurement of structural traits that are fundamental to understanding plant growth and function.

The integration of advanced computer vision, deep learning, and high-fidelity reconstruction algorithms has transformed plant phenotyping from a primarily manual, destructive process to an automated, quantitative science. As these technologies continue to mature and become more accessible, they promise to accelerate crop improvement programs and enhance our understanding of gene-environment interactions in plants.

For researchers adopting 3D phenotyping, the choice between specific approaches (2D-to-3D reprojection vs. native 3D algorithms, SfM vs. depth cameras) should be guided by the target traits, available resources, and scale of operation. The methodologies and performance benchmarks provided in this guide offer a foundation for making these critical decisions in experimental design.

Three-dimensional (3D) imaging has emerged as a powerful tool in plant phenotyping, moving beyond the limitations of traditional two-dimensional approaches. While 2D imaging captures only intensity and color across a flat plane, 3D imaging technologies capture spatial depth and geometric structure, creating a comprehensive three-dimensional representation of objects and environments by measuring the X, Y, and Z coordinates of each point in the observed space [14]. This capability is revolutionizing trait extraction research by enabling a more accurate understanding of spatial relationships, plant dimensions, and environmental structures.

In the specific context of plant phenotyping, 3D reconstruction models provide significant advantages for morphological classification and growth tracking [15]. These technologies allow researchers to resolve occlusions and crossings of plant structures by reconstructing distance, orientation, and illumination from multiple viewing angles—capabilities that are hard or impossible to achieve with 2D models alone [15]. As the field advances, understanding the fundamental distinction between active and passive 3D imaging approaches becomes essential for selecting appropriate methodologies for specific research applications in trait extraction.

Fundamental Principles: Active vs. Passive 3D Imaging

3D imaging methods can be broadly classified into active and passive approaches, each with distinct operating principles, advantages, and limitations [15]. The distinction lies in whether the system supplies its own controlled illumination to interact with the subject being measured.

Active 3D imaging approaches use sensors that emit structured light or laser light and measure its radiometric interaction with the object, directly capturing a 3D point cloud that records the coordinates of each part of the subject in 3D space [15]. These systems project their own controlled energy onto the scene and measure how it interacts with the objects. Because they rely on emitted energy rather than ambient light, active approaches avoid several problems associated with passive methods, such as the correspondence problem (determining which parts of one image correspond to which parts of another) [15]. Common active techniques include structured light, laser scanning (LiDAR), Time-of-Flight (ToF) cameras, and laser triangulation.

Passive 3D imaging approaches, in contrast, rely on ambient light or naturally available illumination and project no energy onto the scene [15]. These systems typically use multiple cameras or sensors to capture images from different viewpoints and then apply computational algorithms to reconstruct the 3D structure. Because they need no specialized illumination equipment and typically run on commodity, off-the-shelf hardware, passive techniques tend to be more cost-effective, but the data are often of comparatively lower quality and require significant computational processing to be useful [15]. Common passive methods include stereo vision, photogrammetry, and structure from motion.

The following diagram illustrates the fundamental working principles of both active and passive 3D imaging approaches:

Active 3D Imaging Modalities: Technologies and Protocols

Laser Triangulation

Experimental Protocol: Laser triangulation involves shining a laser beam to illuminate the object of interest and using a sensor array to capture the laser reflection [15]. The deformation of the laser line or point on the target surface reveals topological information through triangulation geometry. As either the object or sensor moves, a sequence of profiles is collected and stitched together to form a high-resolution 3D reconstruction [16]. This method excels in precision applications and has been successfully implemented in laboratory experiments for producing 3D point clouds of barley plants [15], wheat canopies [15], and rapeseed [15].

System Requirements: Low-cost setup typically including a laser source (line or point), a camera positioned at a known angle to the laser, and a precision translation stage for moving either the sensor or object. The system requires precise calibration to determine the exact spatial relationship between the laser and camera [16].

3D Laser Scanning (LiDAR)

Experimental Protocol: LiDAR (Light Detection and Ranging) systems use laser pulses to create detailed point clouds representing 3D space with high precision [14]. These high-precision instruments require calibration objects or repeated scanning to accomplish point cloud registration and stitching [15]. Terrestrial laser scanners (TLS) allow for large volumes of plants to be measured with relatively high accuracy and are therefore mostly used for determining parameters of plant canopies and fields of plants [15]. Chebrolu et al. used a laser scanner to record time-series data of tomato and maize plants over a period of two weeks, while Paulus et al. used a 3D laser scanner to create point clouds of grapevine and wheat [15].

System Requirements: A laser scanner (ranging from high-precision research-grade instruments to low-cost consumer depth sensors such as the Microsoft Kinect), calibration objects, and substantial computational resources for processing large data volumes [15] [16].

Time of Flight (ToF)

Experimental Protocol: ToF cameras use light emitted by a laser or LED source and measure the round-trip time between the emission of a light pulse and its reflection from thousands of points to build up a 3D image [15]. The time delay is translated directly into a distance measurement for each pixel in the image [16]. Applications in plant phenotyping include the work of Chaivivatrakul et al. on maize plants, Baharav et al. on sorghum plants, and Kazmi et al. on various plants including cyclamen, hydrangea, and Orchidaceae [15].
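The conversion from time delay to distance follows from the fact that the pulse travels to the target and back, so the distance is half the round-trip time multiplied by the speed of light. The sketch below applies that relation to a single delay and to a small, made-up grid of per-pixel delays; the function names and delay values are illustrative assumptions.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0

def tof_distance_m(round_trip_s):
    """Distance to the target: the pulse covers the path twice, hence the division by 2."""
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0

def depth_map_m(round_trip_map_s):
    """Convert a 2D grid of per-pixel round-trip times (seconds) to distances (metres)."""
    return [[tof_distance_m(t) for t in row] for row in round_trip_map_s]

# Hypothetical 2 x 2 sensor readout, in seconds.
delays = [[10e-9, 10e-9], [12e-9, 20e-9]]
depths = depth_map_m(delays)
print(depths)
```

A 10 ns delay corresponds to roughly 1.5 m, which shows why ToF sensors need sub-nanosecond timing (or phase-based measurement) to resolve plant structures at centimetre scale.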

System Requirements: ToF sensor or camera, controlled lighting conditions, and onboard correction mechanisms for multipath interference, temperature drift, and ambient light noise [16].

Structured Light

Experimental Protocol: Structured light systems project a predefined pattern, such as stripes, dot matrices, or fringe patterns, onto a target scene [16]. The deformation of this pattern, as observed from a separate camera viewpoint, reveals surface topology by analyzing how the structured light distorts over varying depths [15]. This method offers excellent spatial resolution and is particularly useful for detailed scanning tasks. The approach requires very accurate correspondence between images for optimal performance [15].

System Requirements: Pattern projector (often using DLP technology), camera positioned at a known angle, and computational resources for analyzing pattern deformation [16].

Table 1: Technical Specifications of Active 3D Imaging Modalities

| Modality | Depth Sensing Principle | Resolution Potential | Operating Range | Scanning Speed |
|---|---|---|---|---|
| Laser Triangulation | Triangulation geometry | High (sub-millimeter) | Short (cm to few m) | Medium |
| 3D Laser Scanning (LiDAR) | Laser pulse measurement | Very High | Long (m to km) | Slow to Fast (depends on system) |
| Time of Flight (ToF) | Light pulse roundtrip time | Medium to High | Medium (cm to tens of m) | Very Fast (real-time) |
| Structured Light | Pattern deformation analysis | Very High | Short to Medium (cm to m) | Fast |

Passive 3D Imaging Modalities: Technologies and Protocols

Stereo Vision

Experimental Protocol: Stereo vision leverages two or more cameras to mimic human binocular perception [16]. By identifying corresponding points between the captured images and calculating their disparity, a stereo system can triangulate depth and generate dense depth maps [16]. This approach works well in environments with sufficient texture and lighting and is commonly used in robotic navigation and real-time inspection [16]. For plant phenotyping applications, the method requires multiple synchronized cameras positioned at different angles to capture the plant structure from various viewpoints.
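For a rectified stereo pair, the triangulation step reduces to a single relation: depth equals focal length times baseline divided by disparity. The sketch below applies it with hypothetical camera values (focal length in pixels, baseline in metres); the numbers are not the specifications of any particular camera.

```python
def depth_from_disparity(focal_px, baseline_m, disparity_px):
    """Depth (metres) of a point in a rectified stereo pair; None if disparity is invalid."""
    if disparity_px <= 0:
        return None  # no match found, or the point is effectively at infinity
    return focal_px * baseline_m / disparity_px

# Hypothetical rig: 700 px focal length, 12 cm baseline.
FOCAL_PX = 700.0
BASELINE_M = 0.12

near = depth_from_disparity(FOCAL_PX, BASELINE_M, 84.0)
far = depth_from_disparity(FOCAL_PX, BASELINE_M, 42.0)
print(f"84 px disparity -> {near:.2f} m, 42 px disparity -> {far:.2f} m")
```

Because depth is inversely proportional to disparity, a one-pixel matching error costs far more accuracy on distant points than on close ones, which is one reason texture-poor leaf surfaces are difficult for stereo methods.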

System Requirements: Two or more calibrated and synchronized cameras, precise knowledge of camera positions and orientations, and significant computational power for disparity map calculation (often implemented on FPGAs or GPUs) [16].

Photogrammetry

Experimental Protocol: Photogrammetry reconstructs 3D geometry from a series of overlapping 2D images taken from different viewpoints [16]. Using algorithms such as structure-from-motion (SfM) and multi-view stereo (MVS), it identifies shared features across images and estimates their position in 3D space [16]. While photogrammetry requires more post-processing than other methods, it can produce highly detailed models using off-the-shelf cameras [16]. It is widely used in digital archiving of plant specimens and growth monitoring.

System Requirements: Standard digital camera (multiple cameras optional but not required), controlled lighting for consistent image capture, and substantial computational resources for processing overlapping images [16].

Neural Radiance Fields (NeRF)

Experimental Protocol: NeRF is a recent advancement that enables high-quality, photorealistic 3D reconstructions from sparse viewpoints [10]. This method uses a fully-connected deep network to represent a continuous volumetric scene function, optimizing the network to minimize the error between rendered and true images from various viewing directions. The network input is a continuous 5D coordinate (spatial location and viewing direction), and the output is the volume density and view-dependent emitted radiance at that location [10].
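The rendering step that NeRF optimizes against can be sketched without any neural network. The snippet below applies the standard discrete volume-rendering rule along one ray: each sample contributes its color weighted by its own opacity and by the transmittance of everything in front of it. In a real NeRF the densities and colors come from the trained network; here they are hard-coded, hypothetical values.

```python
import math

def render_ray(densities, colors, step_sizes):
    """Composite samples along a ray, front to back, using the volume-rendering quadrature."""
    transmittance = 1.0           # fraction of light not yet absorbed
    rgb = [0.0, 0.0, 0.0]
    weights = []
    for sigma, color, delta in zip(densities, colors, step_sizes):
        alpha = 1.0 - math.exp(-sigma * delta)   # opacity of this ray segment
        weight = transmittance * alpha
        weights.append(weight)
        for k in range(3):
            rgb[k] += weight * color[k]
        transmittance *= 1.0 - alpha
    return rgb, weights

# Hypothetical ray: empty air, then a dense green leaf, then a blue object behind it.
densities = [0.0, 50.0, 50.0]
colors = [(1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)]
step_sizes = [0.1, 0.1, 0.1]

pixel_rgb, sample_weights = render_ray(densities, colors, step_sizes)
print(pixel_rgb, sample_weights)
```

The leaf sample absorbs almost all of the light, so the object behind it barely affects the pixel. Training adjusts the network until pixels rendered this way match the captured images from every viewpoint.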

System Requirements: Multiple images from different viewpoints, significant GPU resources for training the neural network, and specialized software implementations of the NeRF algorithm [10].

3D Gaussian Splatting (3DGS)

Experimental Protocol: The emerging 3D Gaussian Splatting technique introduces a new paradigm in reconstructing plant structures by representing geometry through Gaussian primitives, offering potential benefits in both efficiency and scalability [10]. This approach represents scenes as a collection of 3D Gaussians that have controllable properties including position, covariance, and color. The optimization process involves adapting these Gaussians to best represent the scene, followed by a tile-based rasterization approach for real-time rendering [10].

System Requirements: Multiple calibrated images or video frames, modern GPU with support for CUDA, and implementation of the 3DGS algorithm which is computationally intensive during training but enables real-time rendering [10].
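As a rough illustration of what a single Gaussian primitive holds, the sketch below builds a 3D covariance from a per-axis scale and a rotation quaternion, then evaluates the unnormalized Gaussian falloff at a query point. The scale-plus-rotation factorization is the commonly used parameterization and is an assumption here, since the text above only lists position, covariance, and color; all function names are hypothetical.

```python
import numpy as np

def quat_to_rotmat(q):
    """Rotation matrix from a quaternion given as (w, x, y, z)."""
    q = np.asarray(q, dtype=float)
    w, x, y, z = q / np.linalg.norm(q)
    return np.array([
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ])

def gaussian_covariance(scale, quat):
    """Covariance = R S S^T R^T, which is symmetric positive semi-definite by construction."""
    M = quat_to_rotmat(quat) @ np.diag(np.asarray(scale, dtype=float))
    return M @ M.T

def gaussian_falloff(x, mean, cov):
    """Unnormalized Gaussian weight exp(-0.5 * d^T cov^-1 d) at point x."""
    d = np.asarray(x, dtype=float) - np.asarray(mean, dtype=float)
    return float(np.exp(-0.5 * d @ np.linalg.solve(cov, d)))
```

Optimizing scale and rotation separately, rather than the covariance entries directly, keeps every intermediate covariance valid during gradient descent.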

Table 2: Technical Specifications of Passive 3D Imaging Modalities

| Modality | Depth Sensing Principle | Resolution Potential | Texture Dependency | Computational Demand |

|---|---|---|---|---|

| Stereo Vision | Binocular disparity | Medium to High | High (requires texture) | High (real-time processing) |

| Photogrammetry | Multi-view feature matching | High | High (requires texture) | Very High (post-processing) |

| Neural Radiance Fields (NeRF) | Neural volume rendering | Very High | Medium | Extremely High (training) |

| 3D Gaussian Splatting (3DGS) | Gaussian primitive optimization | Very High | Medium | High (training), Low (rendering) |

Comparative Analysis: Performance Metrics in Plant Phenotyping

When selecting 3D imaging modalities for plant phenotyping applications, researchers must consider multiple performance metrics to align technological capabilities with research objectives. The following comparative analysis highlights key considerations for trait extraction research.

Accuracy and Resolution Requirements: Active methods generally provide higher accuracy and resolution for detailed morphological studies. Structured light systems offer excellent spatial resolution for capturing fine plant structures [16], while laser triangulation provides high (sub-millimeter) precision suitable for laboratory experiments on individual plants [15]. Passive methods like photogrammetry can also achieve high resolution but are more dependent on optimal lighting conditions and surface texture [16].

Data Acquisition and Processing Speed: For dynamic growth studies and high-throughput phenotyping, acquisition speed is critical. Time-of-Flight systems can operate in real time, making them ideal for capturing plant movement or rapid scans [16]. Stereo vision also enables real-time processing when implemented with appropriate hardware [16]. In contrast, methods like photogrammetry and NeRF require significant post-processing time but can produce more detailed models [10] [16].

Cost and Accessibility Considerations: Passive techniques tend to be more cost-effective as they typically use commodity or off-the-shelf hardware [15], making them accessible to research groups with limited budgets. Active methods generally require specialized and often expensive equipment [15], creating higher barriers to entry but potentially offering superior performance for specific applications.

Scalability to Different Plant Architectures: The complexity and diversity of plant structures present unique challenges for 3D reconstruction. Methods like 3D Gaussian Splatting show promise for efficiently representing complex plant geometries [10], while stereo vision and photogrammetry struggle with occlusions in dense canopies. Laser scanning approaches have proven effective for various architectures from barley plants to wheat canopies [15].

The following workflow diagram illustrates a recommended decision-making process for selecting appropriate 3D imaging modalities based on specific research requirements:

The Researcher's Toolkit: Essential Solutions for 3D Plant Phenotyping

Implementing successful 3D imaging protocols for plant phenotyping requires specific hardware and software solutions. The following table details essential research reagent solutions and their functions in trait extraction studies.

Table 3: Essential Research Reagent Solutions for 3D Plant Phenotyping

| Solution Category | Specific Products/Technologies | Primary Function | Application Examples |

|---|---|---|---|

| Active 3D Sensors | Microsoft Kinect, Terrestrial Laser Scanners (TLS), Structured Light Projectors | Direct 3D data capture via projected energy | Root architecture studies, canopy density measurement, growth monitoring |

| Multi-Camera Systems | Synchronized industrial cameras, Stereo vision setups, Smartphone arrays | Multi-view image capture for passive 3D reconstruction | Leaf area index estimation, plant volume calculation, morphological analysis |

| Computational Hardware | GPU clusters (NVIDIA), FPGAs, Edge computing devices | Processing large 3D datasets and reconstruction algorithms | Real-time plant growth tracking, neural network training for NeRF/3DGS |

| Software Platforms | Photogrammetry software (Agisoft Metashape), NeRF implementations, 3DGS codebase | 3D model reconstruction and analysis | Creating digital plant models, quantifying trait variations over time |

| Calibration Tools | Checkerboard patterns, calibration spheres, reference objects | System calibration and accuracy validation | Ensuring measurement precision across imaging sessions |

| Data Management Solutions | Cloud-based PACS, High-capacity storage arrays | Storing and managing large 3D datasets | Long-term growth studies, multi-institutional collaboration |

The selection between active and passive 3D imaging modalities for plant phenotyping involves careful consideration of research objectives, environmental constraints, and available resources. Active methods like structured light and laser scanning provide high accuracy for controlled laboratory environments, while passive approaches like photogrammetry and stereo vision offer flexibility and cost-effectiveness for field applications. Emerging technologies such as Neural Radiance Fields and 3D Gaussian Splatting represent promising directions for capturing complex plant architectures with unprecedented detail.

As the field advances, the integration of artificial intelligence with both active and passive 3D imaging modalities will likely enhance automated trait extraction capabilities, potentially overcoming current limitations in processing speed and accuracy. Researchers should consider establishing multimodal imaging platforms that leverage the complementary strengths of both approaches to address the diverse challenges in plant phenotyping across different species, growth stages, and environmental conditions.

Methodologies in Action: A Practical Guide to 3D Trait Extraction Technologies

The transition from traditional 2D image analysis to advanced 3D sensing represents a paradigm shift in plant phenotyping and agricultural research. While 2D methods project complex 3D plant structures onto a plane, causing loss of depth information and inaccurate morphological capture, 3D sensing technologies provide comprehensive structural data essential for precise trait extraction [3]. This evolution is particularly crucial for pharmaceutical development from plant sources, where accurate morphological and structural phenotyping directly impacts understanding of plant physiology, stress responses, and compound production [10] [9].

Active sensing technologies, particularly LiDAR and laser triangulation, have emerged as powerful tools for generating high-fidelity 3D structural data. Unlike passive imaging systems, these active methods project their own energy sources (typically laser light) and measure returned signals, enabling precise 3D mapping regardless of ambient lighting conditions. This capability is revolutionizing how researchers quantify plant architecture, monitor growth dynamics, and extract phenotypic traits with unprecedented accuracy [3] [10]. The integration of these technologies into research pipelines provides the robust, quantitative structural data necessary for advancing pharmaceutical development from plant resources.

Technology Fundamentals: Operating Principles and Mechanisms

LiDAR (Light Detection and Ranging)

LiDAR operates on the time-of-flight principle, emitting laser pulses and measuring the time taken for reflected light to return to the sensor. From this interval and the known speed of light, precise distance measurements are obtained. Through rapid scanning, LiDAR systems generate dense 3D point clouds representing surface geometry [17]. Modern LiDAR systems achieve remarkable precision, with range accuracies between 0.5 and 10 mm relative to the sensor, though environmental factors, target surface properties, and measurement distance can affect this precision [17].
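The pulse-timing relationship can be written out in a few lines. This is a minimal sketch with hypothetical function names; it assumes propagation at the vacuum speed of light and ignores atmospheric and detector effects.

```python
import math

C = 299_792_458.0  # speed of light in vacuum, m/s

def pulse_range_m(round_trip_s):
    """Range from a pulse's round-trip time: the light covers the distance twice."""
    return C * round_trip_s / 2.0

def timing_resolution_s(range_resolution_m):
    """Timing precision needed to resolve a given range difference."""
    return 2.0 * range_resolution_m / C

def polar_to_xyz(range_m, azimuth_rad, elevation_rad):
    """Convert one range reading plus beam angles into a Cartesian point."""
    horizontal = range_m * math.cos(elevation_rad)
    return (
        horizontal * math.cos(azimuth_rad),
        horizontal * math.sin(azimuth_rad),
        range_m * math.sin(elevation_rad),
    )
```

Resolving 1 mm in range corresponds to resolving roughly 6.7 picoseconds in time, which helps explain why millimeter-level accuracy depends so strongly on sensor quality and measurement conditions.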

LiDAR Technology Variants:

- Terrestrial LiDAR: Tripod-mounted systems providing millimeter-level accuracy for detailed structural documentation [17]

- Mobile LiDAR: Wearable or vehicle-mounted systems utilizing SLAM (Simultaneous Localization and Mapping) algorithms for rapid data capture over larger areas [17]

- Aerial LiDAR: UAV-mounted systems covering extensive territories efficiently, particularly valuable for canopy penetration and topographic mapping [17]

Laser Triangulation

Laser triangulation sensors employ geometric principles to determine distance measurements. A laser diode projects a visible spot or line onto a target surface, while a camera positioned at a known angle captures the reflection. Displacement of the laser point in the camera's field of view corresponds directly to distance changes, enabling high-precision measurements through trigonometric calculations [18]. These sensors are categorized by their measurement ranges, from ultra-precise 0-2μm systems for micro-assembly to 101-500μm range sensors for larger-scale applications [18].
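The trigonometric step can be sketched for an idealized geometry. The assumptions, which are not taken from any specific sensor, are that the laser beam is perpendicular to the baseline joining laser and camera, and that the camera reports the angle between the baseline and its line of sight to the laser spot. Real sensors rely on calibrated lens models instead.

```python
import math

def triangulation_distance(baseline_m, view_angle_rad):
    """Distance along the laser beam: z = b * tan(theta)."""
    return baseline_m * math.tan(view_angle_rad)

def distance_sensitivity(baseline_m, view_angle_rad):
    """Change in distance per radian of viewing angle: dz/dtheta = b / cos(theta)^2."""
    return baseline_m / math.cos(view_angle_rad) ** 2
```

Because the sensitivity grows as the viewing angle approaches 90 degrees, a fixed angular measurement error produces a larger distance error for far targets, which is consistent with the short working ranges typical of these sensors.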

The global laser triangulation sensor market, estimated at $1.5 billion in 2025, reflects the growing adoption of this technology across research and industrial applications, with particularly strong utilization in automotive, aerospace, and electronics manufacturing where precision measurement is critical [18].

Comparative Performance Analysis: Quantitative Data Comparison

Table 1: Performance Specifications of LiDAR and Laser Triangulation Systems

| Parameter | Terrestrial LiDAR | Mobile LiDAR | Aerial LiDAR | Laser Triangulation |

|---|---|---|---|---|

| Accuracy | 1-3mm (at optimal range) [17] | 5-20mm [17] | 10-50mm (depends on altitude) [17] | Varies by range: 0-2μm to 101-500μm [18] |

| Measurement Range | Up to 350m [17] | Several hundred meters [17] | 1600-3900m AGL [19] | Limited to sensor range (typically <1m) [18] |

| Data Capture Speed | Moderate (stationary setup required) [17] | High (walking speed operation) [17] | Very High (aerial coverage) [17] | Very High (thousands of measurements/second) [18] |

| Point Density | Very High (stationary scanning) [17] | Moderate (depends on movement speed) [17] | Variable (depends on altitude) [17] | Extremely High (focused measurement area) [18] |

| Best Applications | Architectural documentation, high-precision plant phenotyping [17] | Corridor mapping, large facility documentation [17] | Topographical mapping, forestry, environmental monitoring [17] [19] | Micro-scale plant organ measurement, leaf surface analysis [18] |

Table 2: Cost Analysis and Implementation Considerations

| Factor | Terrestrial LiDAR | Mobile LiDAR | Aerial LiDAR | Laser Triangulation |

|---|---|---|---|---|

| Equipment Cost | $35,000-$80,000 [17] | $10,500-$60,000 [17] | Varies widely by platform | Varies by precision requirements [18] |

| Operational Workflow | Multiple scan positions requiring registration [17] | Continuous scanning with SLAM processing [17] | Flight planning, GPS/IMU integration [19] | Single-point or line scanning |

| Processing Complexity | High (manual registration often needed) [17] | Moderate (automated SLAM with potential manual correction) [17] | Moderate (specialized photogrammetry software) [17] | Low to Moderate (direct measurement or basic profiling) |

| Environmental Limitations | Atmospheric conditions, temperature variations [17] | Feature-poor areas cause SLAM drift [17] | Weather, flight regulations, lighting [17] | Sensitive to surface properties, ambient light [18] |

Experimental Protocols for Plant Phenotyping Applications

Multi-View 3D Reconstruction Workflow for Plant Phenotyping

Protocol Objective: Generate complete 3D models of plants by integrating multiple viewpoints to overcome occlusion issues common in plant structures [3].

Materials and Equipment:

- Binocular stereo vision camera (e.g., ZED 2 or ZED mini) [3]

- Controlled rotation platform or U-shaped rotating arm [3]

- Calibration objects (spheres/markers for registration) [3]

- High-resolution computing workstation for processing

Methodology:

- Multi-View Image Acquisition: Position plant specimen on rotation platform. Capture images from six viewpoints around the plant, with additional captures from varying heights at each position. For each viewpoint, acquire 8 RGB images with 2208×1242 resolution [3].

- Structure from Motion (SfM) Processing: Apply SfM algorithms to captured high-resolution images to generate initial 3D point clouds, bypassing integrated depth estimation modules to avoid distortion and drift [3].

- Multi-View Stereo (MVS) Enhancement: Implement MVS techniques to produce high-fidelity, single-view point clouds with improved density and accuracy [3].

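- Point Cloud Registration: Register the single-view point clouds from all viewpoints into one complete 3D plant model, using the calibration markers and the Iterative Closest Point (ICP) algorithm [3].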

- Phenotypic Trait Extraction: From the complete 3D model, extract key phenotypic parameters including plant height, crown width, leaf length, and leaf width using automated measurement algorithms [3].
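The registration and trait-extraction steps above can be sketched with NumPy. This is an illustration under stated assumptions, not the pipeline from [3]: marker correspondences between two views are taken as already known, alignment uses the standard least-squares (Kabsch) solution, and crown width is defined here as the larger horizontal bounding-box extent. Function names are hypothetical.

```python
import numpy as np

def rigid_transform_from_markers(src, dst):
    """Least-squares rotation R and translation t such that dst ~ src @ R.T + t."""
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    src_c, dst_c = src.mean(axis=0), dst.mean(axis=0)
    # Cross-covariance of the centered marker sets.
    H = (src - src_c).T @ (dst - dst_c)
    U, _, Vt = np.linalg.svd(H)
    # Flip the last axis if needed so the result is a rotation, not a reflection.
    d = np.sign(np.linalg.det(Vt.T @ U.T))
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = dst_c - R @ src_c
    return R, t

def plant_height_and_crown_width(points, up_axis=2):
    """Height along the up axis and the larger horizontal bounding-box extent."""
    points = np.asarray(points, dtype=float)
    height = points[:, up_axis].max() - points[:, up_axis].min()
    horizontal = np.delete(points, up_axis, axis=1)
    crown_width = (horizontal.max(axis=0) - horizontal.min(axis=0)).max()
    return float(height), float(crown_width)
```

Applying `cloud @ R.T + t` to each view brings it into the reference frame; an ICP refinement, if used, would repeat the same solve with nearest-neighbor correspondences instead of markers.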

Validation: Experimental validation on Ilex species demonstrated strong correlation with manual measurements, with coefficients of determination (R²) exceeding 0.92 for plant height and crown width, and ranging from 0.72 to 0.89 for leaf parameters [3].
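For readers reproducing this kind of validation, the sketch below computes R² and RMSE between manual and automated measurements. It assumes R² is reported as the squared Pearson correlation of a simple linear fit, which is a common convention but is not confirmed by the text above; function names are hypothetical.

```python
import numpy as np

def r_squared_linear(manual, estimated):
    """Coefficient of determination of a simple linear fit (squared Pearson r)."""
    r = np.corrcoef(np.asarray(manual, dtype=float), np.asarray(estimated, dtype=float))[0, 1]
    return float(r ** 2)

def rmse(manual, estimated):
    """Root mean square error between paired measurements, in the input units."""
    diff = np.asarray(estimated, dtype=float) - np.asarray(manual, dtype=float)
    return float(np.sqrt(np.mean(diff ** 2)))
```

Reporting both is useful because R² alone does not reveal a systematic offset: estimates that are uniformly too large can still correlate perfectly with the manual values.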

High-Throughput Cotton Phenotyping Using 3D Point Cloud Segmentation

Protocol Objective: Achieve automated organ-level segmentation and phenotypic extraction across the complete growth cycle of cotton plants [9].

Materials and Equipment:

- Smartphone camera or structured light scanner for data acquisition

- Digital calipers for manual validation measurements

- GPU-enabled computing system for deep learning processing

- Controlled growth environment (greenhouse with maintained temperature 25-27°C) [9]

Methodology:

- Data Collection: Capture video of each cotton plant with a smartphone, recording clips of approximately 40 seconds that yield about 1200 frames. Include full rotations from top-down, horizontal, and bottom-up perspectives, and move the camera slowly to prevent motion blur [9].

- 3D Reconstruction: Generate point clouds from video frames using SfM and MVS approaches, creating comprehensive 3D models of cotton plants throughout growth cycle [9].

- Deep Learning Segmentation: Implement ResDGCNN network architecture integrating residual learning with dynamic graph convolution to address significant structural variations in cotton organs across growth stages [9].

- Organ-Level Segmentation Optimization: Apply improved region-growing algorithm incorporating point distance mapping with curvature-based normal vectors to address overlapping regions between different cotton organs [9].

- Phenotypic Parameter Calculation: Extract plant height, stem length, and leaf dimensions, and additionally calculate the boll drop rate, a critical phenotypic trait for cotton yield estimation [9].

Validation: The method achieved a segmentation accuracy of 67.55% with 4.86% improvement in mIoU compared to baseline models. In overlapping leaf segmentation, the model achieved R² of 0.962 and RMSE of 2.0. Average relative error in stem length estimation was 0.973 [9].
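The mIoU metric quoted above can be computed from per-point labels as follows. This is a generic sketch with a hypothetical function name; skipping classes that are absent from both label sets is one possible convention and may differ from the evaluation protocol in [9].

```python
import numpy as np

def mean_iou(true_labels, pred_labels, num_classes):
    """Mean intersection-over-union across classes for per-point labels."""
    true_labels = np.asarray(true_labels)
    pred_labels = np.asarray(pred_labels)
    ious = []
    for c in range(num_classes):
        t = true_labels == c
        p = pred_labels == c
        union = np.logical_or(t, p).sum()
        if union == 0:
            continue  # class absent from both ground truth and prediction
        ious.append(np.logical_and(t, p).sum() / union)
    return float(np.mean(ious))
```

Because each class contributes equally regardless of how many points it contains, mIoU penalizes poor segmentation of small organs more heavily than overall point accuracy does.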

Essential Research Reagent Solutions for 3D Phenotyping

Table 3: Research Reagent Solutions for Active Sensing in Phenotyping

| Reagent Category | Specific Product/Technology | Research Application & Function |

|---|---|---|

| Terrestrial LiDAR Systems | FARO Focus Premium Max [19] | High-precision outdoor plant scanning with 266-megapixel resolution for detailed structural phenotyping |

| Mobile LiDAR Systems | Wearable SLAM-based systems [17] | Rapid phenotyping of large agricultural fields or greenhouse facilities with centimeter-level accuracy |

| Aerial LiDAR Systems | RIEGL VQ-1560 III-S [19] | Large-scale crop monitoring, canopy structure analysis, and environmental interaction studies |

| Laser Triangulation Sensors | KEYENCE, SICK, Panasonic sensors [18] | Micro-scale measurement of plant organs, leaf surface topography, and detailed morphological analysis |

| Binocular Stereo Cameras | ZED 2 and ZED Mini [3] | Cost-effective 3D reconstruction for plant phenotyping using multi-view stereo approaches |

| Registration Algorithms | Iterative Closest Point (ICP) [3] | Alignment of multi-view point clouds into complete 3D plant models |

| Segmentation Algorithms | ResDGCNN with residual learning [9] | Organ-level segmentation of complex plant structures across full growth cycles |

| Validation Instruments | Digital calipers, manual measurement tools [9] | Ground-truth validation of automated phenotypic extraction algorithms |

The strategic selection between LiDAR and laser triangulation technologies depends fundamentally on the specific requirements of the phenotyping research. LiDAR systems offer superior range and flexibility for macroscopic plant architecture studies, while laser triangulation provides exceptional precision for microscopic organ-level analysis.

For comprehensive phenotyping pipelines, integrated approaches often yield optimal results. Terrestrial LiDAR captures overall plant architecture with millimeter precision, while laser triangulation sensors can be deployed for detailed analysis of specific organs. Mobile LiDAR systems enable high-throughput phenotyping of large populations, essential for breeding programs and pharmaceutical source selection [17].

The integration of these active sensing technologies with advanced computational methods—including deep learning segmentation, multi-view registration, and automated trait extraction—represents the future of high-throughput 3D plant phenotyping. As these technologies continue evolving with improvements in speed, accuracy, and accessibility, they will play an increasingly vital role in advancing pharmaceutical development from plant resources and addressing challenges in sustainable agriculture [10] [9].

Researchers should consider establishing technology stacks that combine the strengths of both LiDAR and laser triangulation, creating complementary phenotyping workflows that capture both macroscopic architectural traits and microscopic morphological features. This integrated approach provides the comprehensive structural data necessary for breakthroughs in plant-based pharmaceutical research and development.

Plant phenotyping, the quantitative assessment of complex plant traits, has traditionally relied on two-dimensional (2D) imaging and manual measurements [9]. However, projecting complex three-dimensional plant structures onto a 2D plane results in significant information loss, particularly depth information, making it difficult to accurately capture morphological features [3]. This limitation is especially pronounced for complex plant architectures with issues such as shading, overlapping leaves, and multiple branches [9]. In drug discovery, traditional 2D cell cultures face a similar challenge, as they cannot accurately illustrate and mimic the complex environment found in living organisms [20] [21].

The emergence of three-dimensional (3D) reconstruction technologies represents a paradigm shift, enabling non-destructive, high-throughput analysis of biological specimens. Among these technologies, image-based methods utilizing Structure-from-Motion (SfM) and Multi-View Stereo (MVS) have gained prominence due to their ability to generate highly detailed 3D models from standard 2D images [22]. These methods are revolutionizing phenotyping by providing access to accurate morphological and structural data that was previously difficult or impossible to obtain with conventional 2D approaches [3]. This guide provides a comprehensive comparison of SfM and MVS methodologies, their performance relative to alternative 3D reconstruction techniques, and their specific applications in trait extraction research.

Understanding the Core Technologies: SfM and MVS

Structure-from-Motion (SfM)

Structure-from-Motion is a photogrammetric technique that estimates three-dimensional structures from two-dimensional image sequences [23] [24]. A key advantage of SfM is its ability to handle unordered image sets, making it suitable for scenarios where image capture is less controlled [24]. The SfM process begins by detecting distinctive feature points across multiple images, matching these features across different views, and then simultaneously calculating camera parameters (positions, orientations, and intrinsic calibration) and a sparse 3D point cloud through a process called bundle adjustment [22] [23]. This results in a geometrically consistent configuration of the image set and an initial sparse reconstruction of the scene [22].

Multi-View Stereo (MVS)

Multi-View Stereo builds upon the output of SfM to create highly detailed 3D models [24]. Once camera parameters and sparse point clouds are established through SfM, MVS algorithms perform dense image matching to compute pixel-wise correspondences between images, generating a dense and detailed surface model [22]. The primary strength of MVS is its ability to generate dense point clouds and detailed textures, making it indispensable for projects requiring high-resolution 3D models with fine surface geometry [24]. Unlike SfM, MVS typically requires more controlled image capture with systematic coverage of the subject from various angles to ensure comprehensive overlapping views [24].

The Integrated SfM-MVS Workflow

In practice, SfM and MVS are complementary techniques often deployed in an integrated pipeline [22] [24]. The SfM stage derives the initial camera parameters and sparse point clouds, setting the stage for MVS to generate a dense and detailed 3D model [24]. This combination leverages the strengths of both techniques, allowing for robust reconstructions even in complex scenarios [24]. The following diagram illustrates this integrated workflow:

SfM-MVS Reconstruction Workflow

Comparative Performance Analysis of 3D Reconstruction Techniques

SfM-MVS vs. Emerging Neural Approaches

Recent studies have compared traditional SfM-MVS photogrammetry with emerging neural rendering approaches like Neural Radiance Fields (NeRF) and Gaussian Splatting (GS). The table below summarizes key quantitative findings from comparative studies:

Table 1: Performance Comparison of 3D Reconstruction Techniques

| Technique | Geometric Accuracy | Processing Time | Completeness | Fine Detail Capture | Primary Strengths |

|---|---|---|---|---|---|

| SfM-MVS | High (RMS error: ~0.1-0.5 mm for small artifacts) [25] | Moderate to High [26] | Minor gaps possible [26] | Excellent for fine geometric details [25] | Highest geometric precision [26] |

| NeRF | Lower than SfM [25] [26] | Fast [26] | High completeness [26] | Lower for fine geometry [25] | Superior rendering, good completeness [26] |

| Gaussian Splatting | Lower than SfM [25] | Fast (real-time capable) [25] | Moderate | Outperformed by NeRF geometrically [25] | Real-time rendering [25] |

| LiDAR | High [3] | Fast acquisition | Limited by occlusion [3] | Good for structural features | Direct distance measurement [3] |

| Binocular Stereo | Moderate (prone to distortion) [3] | Fast | Limited by occlusion [3] | Challenging for low-texture surfaces [3] | Real-time acquisition |

For archaeological artifact reconstruction, SfM demonstrated superior geometric fidelity with root mean square (RMS) error measurements significantly lower than both NeRF and Gaussian Splatting alternatives [25]. Similarly, in architectural heritage documentation, SfM-MVS provided the highest geometric precision despite minor gaps in reconstruction, while NeRF and GS fell short of the accuracy required for precise geometric documentation [26].

Application-Specific Performance in Phenotyping

In plant phenotyping applications, SfM-MVS has demonstrated remarkable accuracy for trait extraction. Studies on Ilex species showed that key phenotypic parameters extracted from SfM-MVS models exhibited a strong correlation with manual measurements, with coefficients of determination (R²) exceeding 0.92 for plant height and crown width, and ranging from 0.72 to 0.89 for leaf parameters [3]. Another study on cotton plants achieved a mean relative error of only 0.973 for stem length estimation using SfM-MVS based reconstruction [9].

Experimental Protocols for High-Fidelity 3D Reconstruction

Image Acquisition Protocol for Plant Phenotyping

A standardized image acquisition protocol is fundamental for high-quality 3D reconstruction. The following methodology has been validated for plant phenotyping applications:

- Equipment Setup: Use a robotic arm to control an industrial RGB camera for maximum flexibility in image acquisition [22]. This mobility ensures comprehensive coverage of plants of different sizes and architectures while minimizing occlusions [22].

- Camera Parameters: Set exposure time to 50 milliseconds, camera-to-object distance of 16 centimeters, and use an optimized parameter tweak value of 0.9 to improve reconstruction of thin and delicate plant parts [22].

- Acquisition Configuration: Employ multiple height levels (3 levels recommended) with systematic coverage at each level [22]. Capture approximately 40 frames per position to balance processing time and model quality [22].

- Lighting Conditions: Use diffuse and uniform lighting to minimize shadows and reflections that can interfere with feature matching [22]. Overcast weather conditions provide ideal natural lighting for outdoor acquisition [27].

- Background Separation: Apply background separation techniques such as chroma keying or use plain, low-texture surfaces to simplify feature detection [22].

SfM-MVS Processing Pipeline with Optimized Parameters

Recent research has identified key optimization parameters that significantly enhance reconstruction quality:

- Minimum Triangulation Angle: Set a minimum triangulation angle of 3° to improve geometric stability [23].

- Bundle Adjustment: Reduce overall re-projection error by simultaneously optimizing all camera poses and 3D points in the bundle adjustment step [23].

- Tiling Configuration: Use a tiling buffer size of 1024 × 1024 pixels for processing high-resolution images [23].

- Feature Matching: Ensure thorough feature detection and matching across images, potentially facilitated by placing distinctive multicolored texture objects in the scene [22].

- Dense Reconstruction: Apply MVS algorithms to the aligned images to generate dense point clouds, followed by surface reconstruction and texturing [22].
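Two of the quantities in this list, the triangulation angle and the re-projection error, can be made concrete with a short NumPy sketch. It assumes an ideal pinhole camera without lens distortion; the function names and the example intrinsics are hypothetical and not tied to any of the software packages cited here.

```python
import numpy as np

MIN_ANGLE_DEG = 3.0  # minimum triangulation angle quoted in the list above [23]

def triangulation_angle_deg(center_a, center_b, point):
    """Angle in degrees between the rays joining a 3D point to two camera centers."""
    point = np.asarray(point, dtype=float)
    ray_a = np.asarray(center_a, dtype=float) - point
    ray_b = np.asarray(center_b, dtype=float) - point
    cos_angle = ray_a @ ray_b / (np.linalg.norm(ray_a) * np.linalg.norm(ray_b))
    return float(np.degrees(np.arccos(np.clip(cos_angle, -1.0, 1.0))))

def reprojection_error_px(K, R, t, point, observed_px):
    """Pixel distance between an observed feature and the projection of its 3D point."""
    p_cam = np.asarray(R, dtype=float) @ np.asarray(point, dtype=float) + np.asarray(t, dtype=float)
    u, v, w = np.asarray(K, dtype=float) @ p_cam
    return float(np.hypot(u / w - observed_px[0], v / w - observed_px[1]))

# Example: two cameras 0.2 m apart viewing a point 1 m away (made-up values).
K = np.array([[1000.0, 0.0, 640.0], [0.0, 1000.0, 360.0], [0.0, 0.0, 1.0]])
angle = triangulation_angle_deg([-0.1, 0, 0], [0.1, 0, 0], [0, 0, 1.0])
keep_point = angle >= MIN_ANGLE_DEG
err = reprojection_error_px(K, np.eye(3), np.zeros(3), [0, 0, 1.0], (641.0, 360.0))
```

Points seen under very small angles are poorly constrained in depth, which is why they are filtered out; bundle adjustment then minimizes the sum of squared re-projection errors over all remaining points and cameras.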

The following diagram illustrates a complete experimental setup for plant phenotyping:

3D Plant Phenotyping Workflow

Essential Research Toolkit for SfM-MVS Implementation

Equipment and Software Solutions

Table 2: Essential Research Toolkit for SfM-MVS 3D Reconstruction

| Category | Specific Tools | Specifications/Functions | Application Context |

|---|---|---|---|

| Acquisition Hardware | DSLR/Mirrorless Camera (Canon EOS R) [25] | High-resolution sensor with macro lens | Laboratory-controlled artifact digitization [25] |

| Acquisition Hardware | Smartphone (iPhone 13 Pro) [25] [27] | Consumer-grade RGB sensor with video capability | Field documentation and rapid scanning [25] |

| Acquisition Hardware | Binocular Stereo Camera (ZED 2) [3] | Simultaneous multi-view capture | Automated plant phenotyping systems [3] |

| Software Platforms | Meshroom [23] | Open-source pipeline with customizable features | Academic research, educational use [23] |

| Software Platforms | Agisoft Metashape [22] [23] | Commercial-grade with automated processing | Professional documentation, high-precision requirements [22] |

| Software Platforms | Pix4Dmapper [27] [23] | Commercial solution with robust algorithms | Field mapping, architectural documentation [27] |

| Processing Parameters | Minimum Triangulation Angle [23] | 3° minimum for geometric stability | All reconstruction scenarios |

| Processing Parameters | Bundle Adjustment [23] | Simultaneous optimization of all parameters | Improving overall model accuracy |

| Processing Parameters | Tiling Buffer Size [23] | 1024 × 1024 pixels for memory management | Handling high-resolution datasets |

| Accessory Equipment | Robotic Positioning Arm [22] | Precise camera positioning | Automated laboratory systems |

| Accessory Equipment | Rotation Stage [3] | Controlled object rotation | Multi-view acquisition for small specimens |

| Accessory Equipment | Calibration Objects [3] | Known dimensions for scale reference | Metric accuracy validation |

Protocol Selection Guide

The choice of acquisition protocol depends on specific research requirements:

- High-Precision Laboratory Studies: Implement the optimized robotic arm system with controlled lighting and parameter tweak (0.9) for maximum reconstruction fidelity of delicate structures [22].

- Field Documentation and Rapid Scanning: Utilize smartphone-based video acquisition with subsequent frame extraction, suitable for time-sensitive documentation or when professional equipment is unavailable [25] [9].

- High-Throughput Phenotyping: Employ integrated systems with stereo cameras and automated rotation stages, capturing images from six viewpoints with marker-based registration for complete 3D model reconstruction [3].

SfM-MVS photogrammetry remains the most reliable image-based method for generating high-fidelity 3D models when geometric accuracy is the primary requirement [26]. While emerging neural approaches like NeRF and Gaussian Splatting offer advantages in processing speed and visual rendering quality, they currently cannot match the geometric precision of SfM-MVS for scientific applications requiring metric accuracy [25] [26]. In plant phenotyping and drug development research, where quantitative trait extraction is essential, SfM-MVS provides the necessary balance of accuracy, accessibility, and non-destructive analysis, enabling researchers to move beyond the limitations of 2D imaging while maintaining scientific rigor in 3D data acquisition.

The shift from traditional 2D imaging to advanced 3D vision represents a fundamental transformation in phenotyping for trait extraction research. While 2D methods excel at structure, color analysis, and character recognition, they project the complex 3D spatial structure of a subject onto a 2D plane, resulting in an inherent loss of depth information [28]. This limitation makes accurate capture of morphological features, such as volume, shape, and spatial orientation, challenging [28]. Depth cameras, particularly Time-of-Flight (ToF) and Stereo Vision systems, address this gap by providing precise depth perception, thereby enabling high-fidelity 3D reconstruction and quantitative analysis essential for modern phenotyping [29] [30]. This guide provides an objective comparison of these two dominant 3D imaging technologies, framing their performance within the context of plant phenotyping research.

Time-of-Flight (ToF) Cameras

ToF is an active 3D sensing technology that measures distance by calculating the time taken for emitted light to travel to an object and back to the sensor. The core components of a ToF camera include a computing unit, a light source (typically near-infrared), a control unit, and a ToF sensor [31]. The camera emits modulated light pulses from an integrated source; these pulses hit the object and are reflected back. By precisely measuring the phase shift or time delay for the light's round trip, the camera determines the distance for each pixel, generating a depth map or point cloud in real-time [28] [31]. A significant advantage of this method is that it does not rely on ambient light contrast or surface textures for 3D capture [28].
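For the phase-shift variant of this measurement, distance follows directly from the measured phase and the modulation frequency. The sketch below uses hypothetical function names and assumes a single modulation frequency with no phase unwrapping.

```python
import math

C = 299_792_458.0  # speed of light in vacuum, m/s

def phase_to_distance_m(phase_rad, mod_freq_hz):
    """Distance from the phase shift of a continuously modulated signal."""
    return C * phase_rad / (4.0 * math.pi * mod_freq_hz)

def unambiguous_range_m(mod_freq_hz):
    """Largest distance measurable before the phase wraps past 2*pi."""
    return C / (2.0 * mod_freq_hz)
```

At a 20 MHz modulation frequency, for example, the unambiguous range is about 7.5 m. Raising the frequency improves distance resolution but shortens this range, which is one reason working distances for such cameras are bounded.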

Stereo Vision Cameras

Stereo vision is a passive technology that mimics human binocular vision. It uses two or more 2D cameras separated by a known distance (baseline) to capture synchronous images of a scene from slightly different viewpoints [32]. Depth information is calculated through a process called triangulation. First, the images are rectified. Then, a matching algorithm searches for corresponding pixels in the left and right images. The disparity—the difference in the horizontal location of these corresponding pixels—is inversely proportional to the distance of the object from the camera [32] [28]. Using the camera's calibration parameters (both intrinsic and extrinsic), this disparity map is converted into a dense depth image [28].
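The inverse relationship between disparity and distance can be written as Z = f · B / d for a rectified pair, where f is the focal length in pixels, B the baseline, and d the disparity in pixels. The Python sketch below illustrates this relationship and a first-order estimate of how a matching error propagates into depth. The function names and the numbers in the usage note are hypothetical and not taken from any cited camera.

```python
def depth_from_disparity(disparity_px, focal_px, baseline_m):
    """Triangulated depth Z = f * B / d for a rectified stereo pair."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a finite depth")
    return focal_px * baseline_m / disparity_px


def depth_error(depth_m, focal_px, baseline_m, disparity_error_px):
    """First-order depth uncertainty: dZ ~= Z^2 / (f * B) * dd."""
    return depth_m ** 2 / (focal_px * baseline_m) * disparity_error_px
```

With an assumed focal length of 700 px and a 0.12 m baseline, a disparity of 42 px corresponds to a depth of about 2 m. Because the error term grows with the square of the depth, doubling the distance roughly quadruples the depth uncertainty for the same matching error, which helps explain why stereo accuracy degrades quickly at longer working distances.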

The diagram below illustrates the core workflow of a stereo vision system.

Performance Comparison: Quantitative Data and Analysis

A direct comparison of key performance metrics is crucial for selecting the appropriate technology for a specific phenotyping application. The following table summarizes the characteristic differences between Stereo Vision and ToF.

Table 1: Characteristic Performance Comparison of Stereo Vision vs. Time-of-Flight

| Performance Metric | Stereo Vision | Time-of-Flight (ToF) |
|---|---|---|
| Typical Working Distance | ≤ 2 m [32] | 0.4 - 5 m [32] |
| Depth Accuracy | 5 - 10% of distance [32] | ≤ 0.5% of distance [32] |
| Depth Data Resolution | Medium [32] | Low [32] |
| Low-Light Performance | Poor (requires ambient light) [32] [28] | Excellent (active illumination) [32] [28] |
| Performance on Homogeneous Surfaces | Poor (requires texture) [28] | Excellent (texture-independent) [28] |
| Frame Rate | High [32] | Variable [32] |
| Power Consumption | Comparatively High [32] | Medium [32] |
| Performance in Bright Ambient Light | Suitable for bright ambient light [28] | Requires optimization (e.g., 940 nm filter) [28] [31] |

The data in Table 1 highlights a fundamental trade-off. ToF cameras generally provide superior absolute accuracy and perform reliably in various lighting conditions and on textureless surfaces. In contrast, stereo vision systems can achieve higher spatial resolution but are dependent on ambient light and surface texture to generate accurate depth maps.

Beyond these general characteristics, performance in real-world phenotyping is best evaluated through application-specific experimental data. The table below synthesizes findings from recent research studies that have employed these technologies for precise trait extraction.

Table 2: Experimental Performance in Plant Phenotyping Applications

| Study Focus | Technology Used | Experimental Results & Correlation with Manual Measurements |
|---|---|---|
| 3D Fine-Grained Plant Reconstruction [29] | Binocular Stereo Vision (ZED 2 / ZED mini) with SfM-MVS processing | Plant Height & Crown Width: R² > 0.92; Leaf Parameters (Length/Width): R² = 0.72 - 0.89 |
| Tomato Fruit Phenotypic Recognition [30] | ToF Depth Camera (Azure Kinect 3.0) | Fruit Transverse/Longitudinal Diameter: High correlation (R²), validated by a Hybrid Depth Regression Model (HDRM). |
| General Morphological Phenotyping [29] | ToF Cameras | Effective for measuring plant height and leaf area, but lower resolution can miss fine details like stalks and petioles. |

The experimental data in Table 2 demonstrates that both technologies, when coupled with advanced processing algorithms, can achieve high correlation with manual measurements. The choice between them depends on the specific traits of interest: ToF is well-suited for gross morphological measurements, while stereo vision, with specialized processing, can capture more fine-grained details.
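The R² values in Table 2 quantify agreement between sensor-derived and manually measured traits. A minimal Python sketch of one common way to compute such agreement is given below, taking R² as the squared Pearson correlation (equivalent to the coefficient of determination of a simple linear regression) alongside the RMSE. The cited studies may define their metrics differently, and the height values in the code are hypothetical, not data from those studies.

```python
import math


def r_squared(manual, estimated):
    """Squared Pearson correlation between manual and estimated values."""
    n = len(manual)
    mean_m = sum(manual) / n
    mean_e = sum(estimated) / n
    s_mm = sum((m - mean_m) ** 2 for m in manual)
    s_ee = sum((e - mean_e) ** 2 for e in estimated)
    s_me = sum((m - mean_m) * (e - mean_e) for m, e in zip(manual, estimated))
    return s_me ** 2 / (s_mm * s_ee)


def rmse(manual, estimated):
    """Root-mean-square error, in the same unit as the trait."""
    n = len(manual)
    return math.sqrt(sum((m - e) ** 2 for m, e in zip(manual, estimated)) / n)


# Hypothetical plant heights in cm (illustration only).
manual_height_cm = [32.1, 45.0, 51.3, 60.2, 74.8]
estimated_height_cm = [31.5, 46.2, 50.1, 61.0, 75.9]

print(r_squared(manual_height_cm, estimated_height_cm))
print(rmse(manual_height_cm, estimated_height_cm))
```

Reporting RMSE next to R² is useful because a high correlation can coexist with a systematic offset: R² alone does not show whether the estimates are biased.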

Experimental Protocols for Phenotyping

Protocol 1: High-Fidelity 3D Plant Reconstruction Using Stereo Vision

This protocol, adapted from a 2025 study on Ilex species, bypasses the onboard depth estimation of binocular cameras to achieve higher accuracy through photogrammetric processing [29].

Workflow Diagram: Multi-View 3D Plant Reconstruction

Key Methodology:

- Image Acquisition: A stereo camera (e.g., ZED 2) is mounted on a rotational arm to capture high-resolution RGB images from six viewpoints (0°, 60°, 120°, 180°, 240°, 300°) around the plant [29].

- 3D Reconstruction: Instead of using the camera's built-in depth calculation, the high-resolution 2D images are processed using Structure from Motion (SfM) and Multi-View Stereo (MVS) algorithms. This produces high-fidelity, single-view point clouds, effectively avoiding the distortion and drift common in standard stereo vision [29].

- Point Cloud Registration: To overcome plant self-occlusion, point clouds from all six viewpoints are merged. This involves a rapid coarse alignment using a marker-based Self-Registration (SR) method, followed by a precise fine alignment using the Iterative Closest Point (ICP) algorithm, resulting in a complete 3D plant model [29].

- Trait Extraction: Morphological parameters such as plant height, crown width, leaf length, and leaf width are automatically extracted from the unified 3D model [29].
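The fine-alignment and trait-extraction steps above can be sketched in a few lines of NumPy. This is not the implementation used in the cited study: it is a basic point-to-point ICP with brute-force nearest neighbours (practical for small clouds only), it assumes a coarse alignment has already been applied, and it assumes the z axis is vertical. Crown width is approximated here as the larger horizontal bounding-box extent, which is a simplification.

```python
import numpy as np


def best_rigid_transform(src, dst):
    """Least-squares rotation R and translation t with dst ~= R @ src + t (Kabsch)."""
    src_c, dst_c = src.mean(axis=0), dst.mean(axis=0)
    H = (src - src_c).T @ (dst - dst_c)          # 3x3 cross-covariance
    U, _, Vt = np.linalg.svd(H)
    d = np.sign(np.linalg.det(Vt.T @ U.T))       # guard against reflections
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = dst_c - R @ src_c
    return R, t


def icp(src, dst, iterations=30):
    """Point-to-point ICP: match nearest points, solve for the pose, repeat."""
    aligned = src.copy()
    for _ in range(iterations):
        # Squared distance from every aligned point to every target point.
        d2 = ((aligned[:, None, :] - dst[None, :, :]) ** 2).sum(axis=2)
        matches = dst[d2.argmin(axis=1)]
        R, t = best_rigid_transform(aligned, matches)
        aligned = aligned @ R.T + t
    return aligned


def height_and_crown_width(points):
    """Plant height (z extent) and crown width (larger x/y bounding-box extent)."""
    height = points[:, 2].max() - points[:, 2].min()
    extent_xy = points[:, :2].max(axis=0) - points[:, :2].min(axis=0)
    return height, extent_xy.max()
```

In use, each view's point cloud would be passed through `icp` against a reference view, the aligned clouds concatenated with `np.vstack`, and the merged cloud passed to `height_and_crown_width`. ICP only converges to the correct pose from a good starting point, which is why the protocol performs a marker-based coarse alignment first.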

Protocol 2: Tomato Fruit Phenotyping Using a ToF Depth Camera

This protocol leverages the active sensing of a ToF camera to automate the extraction of complex phenotypic traits from tomato fruits [30].

Key Methodology:

- Image Acquisition: Transverse and longitudinal sections of tomato fruits are placed on a uniform background. A ToF depth camera (e.g., Azure Kinect 3.0) is fixed in a top-down view to capture synchronized RGB and depth (RGB-D) images under consistent illumination [30].

- Segmentation: An improved deep learning model (SegFormer-MLLA) is used to accurately segment complex structures within the fruit, such as locules (the gel-filled cavities) and stem scars [30].

- Depth Optimization and Trait Extraction: A key challenge with ToF sensors is depth error from optical interference. This protocol uses a designed Hybrid Depth Regression Model (HDRM) to optimize depth estimation by modeling parameter errors and applying random forest-based residual correction [30]. This refined depth data, fused with RGB information, enables the high-accuracy measurement of traits like fruit longitudinal/transverse diameter, mesocarp thickness, and stem scar depth and width [30].
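The idea of combining a parametric error model with a learned residual correction can be illustrated with a small two-stage sketch. This is not the HDRM from the cited study, whose details are not reproduced here. Stage 1 fits a linear scale-and-offset model, and stage 2 uses a simple per-bin mean of the remaining residuals as a plainly named stand-in for the random forest the study describes. All function names and parameters are hypothetical.

```python
import numpy as np


def fit_linear_stage(measured, reference):
    """Stage 1: parametric error model, reference ~= a * measured + b."""
    a, b = np.polyfit(measured, reference, deg=1)
    return a, b


def _bin_index(measured, edges, n_bins):
    """Index of the depth bin each measurement falls into (clipped at the ends)."""
    return np.clip(np.digitize(measured, edges) - 1, 0, n_bins - 1)


def fit_residual_stage(measured, residual, n_bins=20):
    """Stage 2 stand-in: mean residual per depth bin (the study uses a random forest)."""
    edges = np.linspace(measured.min(), measured.max(), n_bins + 1)
    idx = _bin_index(measured, edges, n_bins)
    means = np.array([residual[idx == k].mean() if np.any(idx == k) else 0.0
                      for k in range(n_bins)])
    return edges, means


def correct_depth(measured, linear, residual_model):
    """Apply the linear model, then add the binned residual correction."""
    a, b = linear
    edges, means = residual_model
    idx = _bin_index(measured, edges, len(means))
    return a * measured + b + means[idx]
```

To use this, reference depths (for example from a calibration target at known distances) are needed for fitting. The residuals passed to stage 2 are `reference - (a * measured + b)` on the training data. In practice, a regressor such as scikit-learn's `RandomForestRegressor` could replace the binned lookup and take additional input features.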

The Researcher's Toolkit: Essential Research Reagents and Materials

Selecting the appropriate hardware and software is critical for establishing a reliable phenotyping workflow. The following table details key solutions used in the featured research.

Table 3: Essential Research Toolkit for Depth Camera-Based Phenotyping

| Item Name & Example | Function / Key Characteristics | Representative Use Case |
|---|---|---|
| Stereo Vision Camera (e.g., ZED 2, Basler Stereo) | Captures synchronized image pairs for depth perception via triangulation. Often includes onboard software for robotics. | High-accuracy 3D reconstruction of plant architecture when used with multi-view SfM processing [29] [28]. |
| ToF Depth Camera (e.g., Azure Kinect, Basler ToF) | Active sensor using infrared light to measure distance directly for each pixel. Real-time depth image output. | High-throughput phenotyping of fruit dimensions and volume, effective in varied lighting [30] [28]. |
| FoundationStereo Model [33] | A foundation model for stereo depth estimation designed for strong zero-shot generalization, trained on a large-scale synthetic dataset. | Generating accurate dense disparity maps from rectified stereo images without application-specific fine-tuning [33]. |
| SegFormer-MLLA Model [30] | A deep learning model for image segmentation, enhanced with a linear attention mechanism to reduce computational cost. | Precise segmentation of fine anatomical structures in tomato fruits, such as locules and stem scars [30]. |
| Calibration Spheres/Markers | Passive markers with known dimensions and non-reflective surfaces. | Providing reference points for coarse alignment and registration of multi-view point clouds [29]. |
| Iterative Closest Point (ICP) | An algorithm for the fine alignment of 3D point clouds by minimizing the distance between points in two clouds. | Precisely merging point clouds from different viewpoints into a single, complete 3D model [29]. |

Both Time-of-Flight and Stereo Vision depth cameras are powerful tools that have moved 3D phenotyping from a manual, labor-intensive process to an automated, high-throughput endeavor. The choice between them is not a matter of which is universally better, but which is more appropriate for the specific research context.

ToF technology, with its active illumination, offers robustness in varying light conditions and on textureless surfaces, providing excellent absolute accuracy for gross morphological measurements at a medium working distance. In contrast, Stereo Vision systems, particularly when their high-resolution RGB outputs are processed with advanced algorithms like SfM-MVS and foundation models, can achieve exceptional resolution and accuracy for fine-grained structural analysis, albeit with a greater dependency on ambient light and surface texture.

The ongoing integration of these sensing technologies with sophisticated AI and machine learning algorithms, as demonstrated by the cited protocols, is continuously pushing the boundaries of what is possible in trait extraction research. This synergy ensures that 3D depth sensing will remain a cornerstone technology in the quest for precise, non-invasive, and high-throughput phenotyping.

The accurate measurement of plant phenotypic traits is fundamental to advancing plant breeding, enhancing crop yields, and understanding plant responses to environmental stresses. Traditional phenotyping has relied heavily on manual, two-dimensional (2D) measurements, which are often labor-intensive, destructive, and limited in their ability to capture the full complexity of plant architecture. The transition to three-dimensional (3D) phenotyping, powered by technologies that generate detailed point clouds, represents a paradigm shift. This guide provides a comparative analysis of 2D and 3D phenotyping methodologies for extracting key morphological traits—plant height, crown width, and leaf dimensions—framed within the broader thesis of evaluating the performance and applicability of these contrasting approaches in research settings. We objectively compare the underlying technologies, present supporting experimental data, and detail the protocols that enable researchers to move from raw point cloud data to accurate phenotypic parameters.

The core distinction between 2D and 3D phenotyping lies in the dimensionality of the data and the subsequent completeness of the plant representation. 2D phenotyping projects the complex 3D structure of a plant onto a single plane, inevitably losing depth information and leading to occlusions, which can result in the underestimation of traits like leaf area and total root length [3] [15]. In contrast, 3D phenotyping constructs a spatial model of the plant, typically represented as a point cloud—a set of data points in a 3D coordinate system. This allows for the accurate measurement of plant geometry, the resolution of occlusions, and the extraction of traits that are impossible to measure reliably in 2D, such as leaf angle and 3D root system architecture [34] [15].
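The underestimation of leaf area under projection can be made concrete with a simple geometric case. The Python sketch below assumes a flat leaf viewed from directly above with an orthographic projection, so the projected area is the true area multiplied by the cosine of the leaf's inclination from horizontal. Real leaves are curved and often occluded, so this is only a lower-bound illustration of the effect. The function names are hypothetical.

```python
import math


def projected_area(true_area_cm2, inclination_deg):
    """Top-down projected area of a flat leaf tilted from the horizontal."""
    return true_area_cm2 * math.cos(math.radians(inclination_deg))


def underestimation_percent(inclination_deg):
    """Share of the true area lost when only the 2D projection is measured."""
    return (1.0 - math.cos(math.radians(inclination_deg))) * 100.0


for angle in (0, 30, 60):
    print(angle, projected_area(20.0, angle), underestimation_percent(angle))
```

Under these assumptions, a leaf inclined at 60° shows only half of its true area in a top-down image, and the 2D image alone gives no way to recover the angle needed to correct for it. A 3D point cloud provides both the surface and its orientation.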

Table 1: Fundamental Comparison of 2D and 3D Phenotyping Approaches

| Feature | 2D Phenotyping | 3D Phenotyping |
|---|---|---|
| Data Foundation | 2D images (RGB) | 3D point clouds, meshes |
| Depth Information | Lost due to projection | Preserved |
| Occlusion Handling | Poor; leads to missing data | Good; can be resolved via multi-view fusion [3] |
| Trait Scope | Limited to planar traits (e.g., projected leaf area) | Comprehensive (e.g., 3D leaf area, stem volume, leaf angle) |
| Typical Technologies | Standard photography, flatbed scanners | LiDAR, stereo imaging, Structure from Motion (SfM), depth cameras [15] |
| Root Phenotyping | Grown in rhizoboxes with transparent media; does not mimic soil conditions [34] | X-ray CT scanning in soil; provides in-situ, realistic 3D architecture [34] |

The choice between these systems involves a clear trade-off: 2D methods often offer higher throughput and lower computational cost, while 3D methods provide superior accuracy and a more complete phenotypic profile, which is critical for correlating morphology with function.

Technologies for 3D Point Cloud Acquisition

The first step in 3D phenotyping is acquiring the point cloud. The technologies for this purpose can be broadly categorized into active and passive sensing methods, each with distinct advantages and limitations [15].

Active Sensing Methods

Active sensors emit energy (e.g., laser or patterned light) and measure the returned signal to calculate 3D coordinates directly.